Posture Estimation Method, Posture Estimation Device, And Vehicle

NAKAOKA; Yasushi ; et al.

U.S. patent application number 16/526297 was filed with the patent office on 2019-07-30 and published on 2020-02-06 for posture estimation method, posture estimation device, and vehicle. This patent application is currently assigned to SEIKO EPSON CORPORATION. The applicant listed for this patent is SEIKO EPSON CORPORATION. Invention is credited to Yasushi NAKAOKA, Fumikazu OTANI, Kentaro YODA.

| Application Number | 20200039522 16/526297 |

| Document ID | / |

| Family ID | 69228213 |

| Filed Date | 2019-07-30 |

| United States Patent Application | 20200039522 |

| Kind Code | A1 |

| NAKAOKA; Yasushi ; et al. | February 6, 2020 |

POSTURE ESTIMATION METHOD, POSTURE ESTIMATION DEVICE, AND VEHICLE

Abstract

A posture estimation method includes calculating a posture change amount of an object based on an output of an angular velocity sensor, predicting posture information of the object by using the posture change amount, adjusting error information in a manner of determining whether or not the output of the angular velocity sensor is within an effective range and, when it is determined that the output of the angular velocity sensor is not within the effective range, increasing a posture error component in error information and reducing a correlation component between the posture error component and an error component other than the posture error component in the error information, and correcting the predicted posture information of the object based on the error information.

| Inventors: | NAKAOKA; Yasushi (Shiojiri-shi, JP); OTANI; Fumikazu (Matsumoto-shi, JP); YODA; Kentaro (Chino-shi, JP) |

| Applicant: | SEIKO EPSON CORPORATION |

| Assignee: | SEIKO EPSON CORPORATION, Tokyo, JP |

| Family ID: | 69228213 |

| Appl. No.: | 16/526297 |

| Filed: | July 30, 2019 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G01P 3/44 20130101; G01C 9/02 20130101; B60W 30/02 20130101; B60Y 2200/50 20130101; B60W 40/10 20130101; G05D 1/027 20130101; G01C 21/16 20130101 |

| International Class: | B60W 40/10 20060101 B60W040/10; G01C 9/02 20060101 G01C009/02; G01P 3/44 20060101 G01P003/44; G05D 1/02 20060101 G05D001/02; B60W 30/02 20060101 B60W030/02 |

Foreign Application Data

| Date | Code | Application Number |

|---|---|---|

| Jul 31, 2018 | JP | 2018-143691 |

Claims

1. A posture estimation method comprising: calculating a posture change amount of an object based on an output of an angular velocity sensor; predicting posture information of the object by using the posture change amount; adjusting error information in a manner of determining whether or not the output of the angular velocity sensor is within an effective range, and when it is determined that the output of the angular velocity sensor is not within the effective range, increasing a posture error component in error information and reducing a correlation component between the posture error component and an error component other than the posture error component in the error information; and correcting the predicted posture information of the object based on the error information.

2. The posture estimation method according to claim 1, wherein the adjusting of the error information includes determining whether or not a current time is in a first period after it is determined that the output of the angular velocity sensor is not within the effective range, increasing the posture error component in the first period, and reducing the correlation component between the posture error component and the error component other than the posture error component, in the first period.

3. The posture estimation method according to claim 1, wherein the error component other than the posture error component includes a bias error component of an angular velocity.

4. The posture estimation method according to claim 2, wherein the error component other than the posture error component includes a bias error component of an angular velocity.

5. The posture estimation method according to claim 1, wherein the correlation component between the posture error component and the error component other than the posture error component is zero.

6. The posture estimation method according to claim 1, further comprising: calculating a velocity change amount of the object based on an output of an acceleration sensor and the output of the angular velocity sensor, wherein in the adjusting of the error information, whether or not the output of the acceleration sensor is within an effective range is determined, and, when it is determined that the output of the angular velocity sensor or the output of the acceleration sensor is not within the corresponding effective range, a motion velocity error component in the error information is increased, and a correlation component between the motion velocity error component and an error component other than the motion velocity error component in the error information is reduced, and in the predicting of the posture information, velocity information of the object is predicted by using the velocity change amount.

7. The posture estimation method according to claim 6, wherein the adjusting of the error information includes determining whether or not a current time is in a second period after it is determined that the output of the acceleration sensor is not within the effective range, increasing the motion velocity error component in the second period, and reducing the correlation component between the motion velocity error component and the error component other than the motion velocity error component, in the second period.

8. The posture estimation method according to claim 6, wherein the error component other than the motion velocity error component includes a bias error component of an acceleration.

9. The posture estimation method according to claim 7, wherein the error component other than the motion velocity error component includes a bias error component of an acceleration.

10. The posture estimation method according to claim 6, wherein the correlation component between the motion velocity error component and the error component other than the motion velocity error component is zero.

11. A posture estimation device comprising: a posture-change-amount calculation unit that calculates a posture change amount of an object based on an output of an angular velocity sensor; a posture information prediction unit that predicts posture information of the object by using the posture change amount; an error information adjustment unit that determines whether or not the output of the angular velocity sensor is within an effective range, and when it is determined that the output of the angular velocity sensor is not within the effective range, increases a posture error component in error information, and reduces a correlation component between the posture error component and an error component other than the posture error component in the error information; and a posture information correction unit that corrects the predicted posture information of the object based on the error information.

12. A vehicle comprising: the posture estimation device according to claim 11; and a control device that controls a posture of the vehicle based on posture information of the vehicle, which is estimated by the posture estimation device.

Description

[0001] The present application is based on, and claims priority from JP Application Serial Number 2018-143691, filed Jul. 31, 2018, the disclosure of which is hereby incorporated by reference herein in its entirety.

BACKGROUND

1. Technical Field

[0002] The present disclosure relates to a posture estimation method, a posture estimation device, and a vehicle.

2. Related Art

[0003] Devices and systems are known in which an inertial measurement unit (IMU) is mounted on an object and the position or posture of the object is calculated from the output signal of the IMU. The output signal of the IMU contains a bias error, which in turn causes an error in the posture calculation. Thus, a technique has been proposed that corrects such an error with a Kalman filter and estimates the accurate posture of the object. For example, JP-A-2015-179002 discloses a posture estimation method of calculating a posture change amount of an object by using an output of an angular velocity sensor and estimating the posture of the object by using the posture change amount.

[0004] JP-A-2015-179002 is an example of the related art.

[0005] An angular velocity sensor has a defined effective measurement range. When an angular velocity outside this range is temporarily input, the output of the angular velocity sensor may include an unexpectedly large error. The posture estimation method disclosed in JP-A-2015-179002 does not account for the case where the output of the angular velocity sensor is out of the effective range, even temporarily, and thus the accuracy of estimating the posture of an object may decrease.

SUMMARY

[0006] An aspect of a posture estimation method according to the present disclosure includes calculating a posture change amount of an object based on an output of an angular velocity sensor, predicting posture information of the object by using the posture change amount, adjusting error information in a manner of determining whether or not the output of the angular velocity sensor is within an effective range, and when it is determined that the output of the angular velocity sensor is not within the effective range, increasing a posture error component in error information and reducing a correlation component between the posture error component and an error component other than the posture error component in the error information, and correcting the predicted posture information of the object based on the error information.

[0007] In the aspect of the posture estimation method, the adjusting of the error information may include determining whether or not the current time is in a first period after it is determined that the output of the angular velocity sensor is not within the effective range, increasing the posture error component in the first period, and reducing the correlation component between the posture error component and the error component other than the posture error component, in the first period.

[0008] In the aspect of the posture estimation method, the error component other than the posture error component may include a bias error component of an angular velocity.

[0009] In the aspect of the posture estimation method, the correlation component between the posture error component and the error component other than the posture error component may be zero.
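The adjustment of the error information described above can be illustrated on a small covariance matrix. The following is a minimal sketch under stated assumptions, not the claimed implementation: it assumes the error information is held as a symmetric covariance matrix, that the posture error components occupy the hypothetical indices `posture_idx`, and that the increase is a fixed additive variance `extra_var`. The function name, parameter names, and the additive form are all assumptions made for illustration.

```python
def adjust_error_information(cov, posture_idx, extra_var, keep=0.0):
    """Return a copy of cov with the posture variances increased and the
    correlations between posture and non-posture errors scaled by `keep`
    (keep=0.0 sets those correlation components to zero)."""
    n = len(cov)
    posture = set(posture_idx)
    out = [row[:] for row in cov]
    for i in range(n):
        for j in range(n):
            if i == j and i in posture:
                out[i][j] += extra_var   # increase the posture error component
            elif (i in posture) != (j in posture):
                out[i][j] *= keep        # reduce the cross-correlation component
    return out

# Hypothetical 3-state example: index 0 is a posture error,
# indices 1 and 2 are other error components (for example, bias errors).
cov = [[1.0, 0.4, 0.2],
       [0.4, 2.0, 0.1],
       [0.2, 0.1, 3.0]]
adjusted = adjust_error_information(cov, posture_idx=[0], extra_var=10.0)
```

With `keep=0.0` the sketch corresponds to the case in which the correlation component is zero; the correlations among the non-posture components are left unchanged.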

[0010] The aspect of the posture estimation method may further include calculating a velocity change amount of the object based on an output of an acceleration sensor and the output of the angular velocity sensor. In the adjusting of the error information, whether or not the output of the acceleration sensor is within an effective range may be determined, and, when it is determined that the output of the angular velocity sensor or the output of the acceleration sensor is not within the corresponding effective range, a motion velocity error component in the error information may be increased, and a correlation component between the motion velocity error component and an error component other than the motion velocity error component in the error information may be reduced. In the predicting of the posture information, velocity information of the object may be predicted by using the velocity change amount.

[0011] In the aspect of the posture estimation method, the adjusting of the error information may include determining whether or not the current time is in a second period after it is determined that the output of the acceleration sensor is not within the effective range, increasing the motion velocity error component in the second period, and reducing the correlation component between the motion velocity error component and the error component other than the motion velocity error component, in the second period.

[0012] In the aspect of the posture estimation method, the error component other than the motion velocity error component may include a bias error component of an acceleration.

[0013] In the aspect of the posture estimation method, the correlation component between the motion velocity error component and the error component other than the motion velocity error component may be zero.

[0014] An aspect of a posture estimation device according to the present disclosure includes a posture-change-amount calculation unit that calculates a posture change amount of an object based on an output of an angular velocity sensor, a posture information prediction unit that predicts posture information of the object by using the posture change amount, an error information adjustment unit that determines whether or not the output of the angular velocity sensor is within an effective range, and when it is determined that the output of the angular velocity sensor is not within the effective range, increases a posture error component in error information, and reduces a correlation component between the posture error component and an error component other than the posture error component in the error information, and a posture information correction unit that corrects the predicted posture information of the object based on the error information.

[0015] An aspect of a vehicle according to the present disclosure includes the aspect of the posture estimation device and a control device that controls a posture of the vehicle based on posture information of the vehicle, which is estimated by the posture estimation device.

BRIEF DESCRIPTION OF THE DRAWINGS

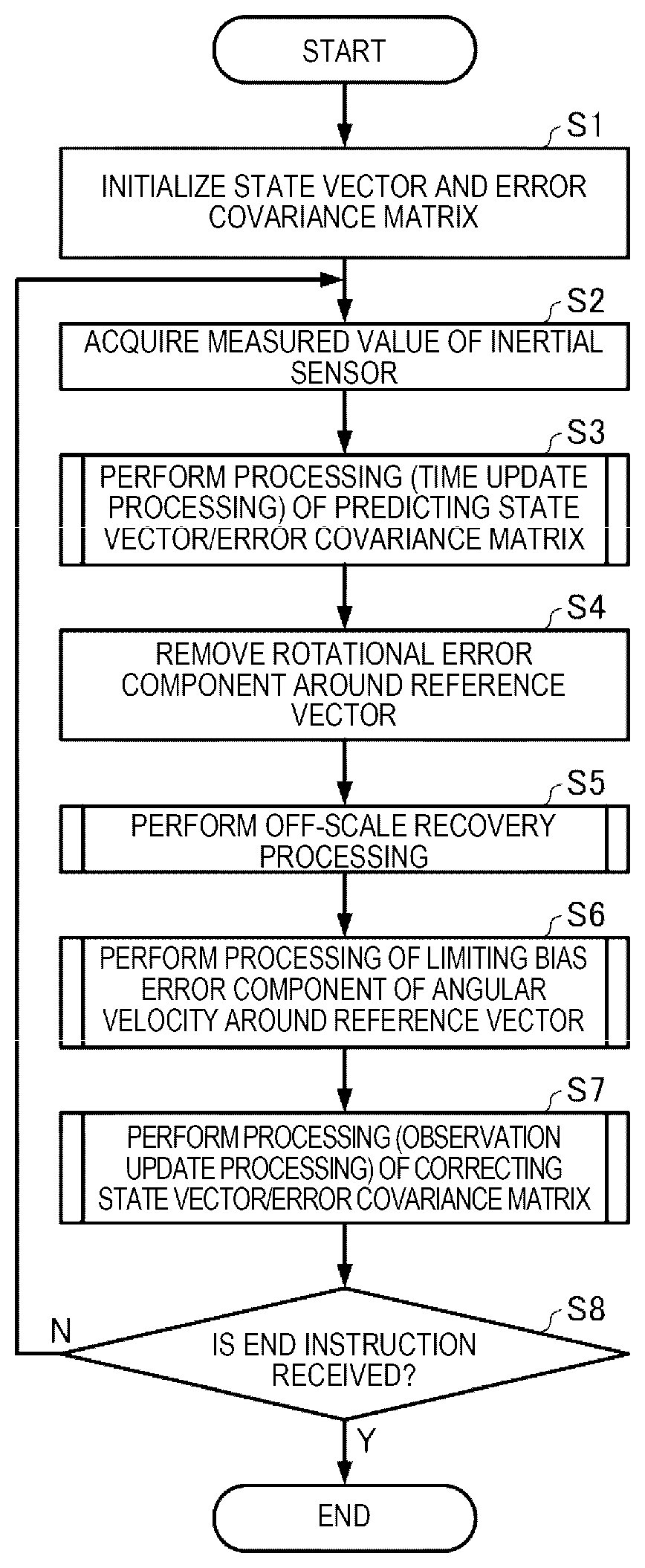

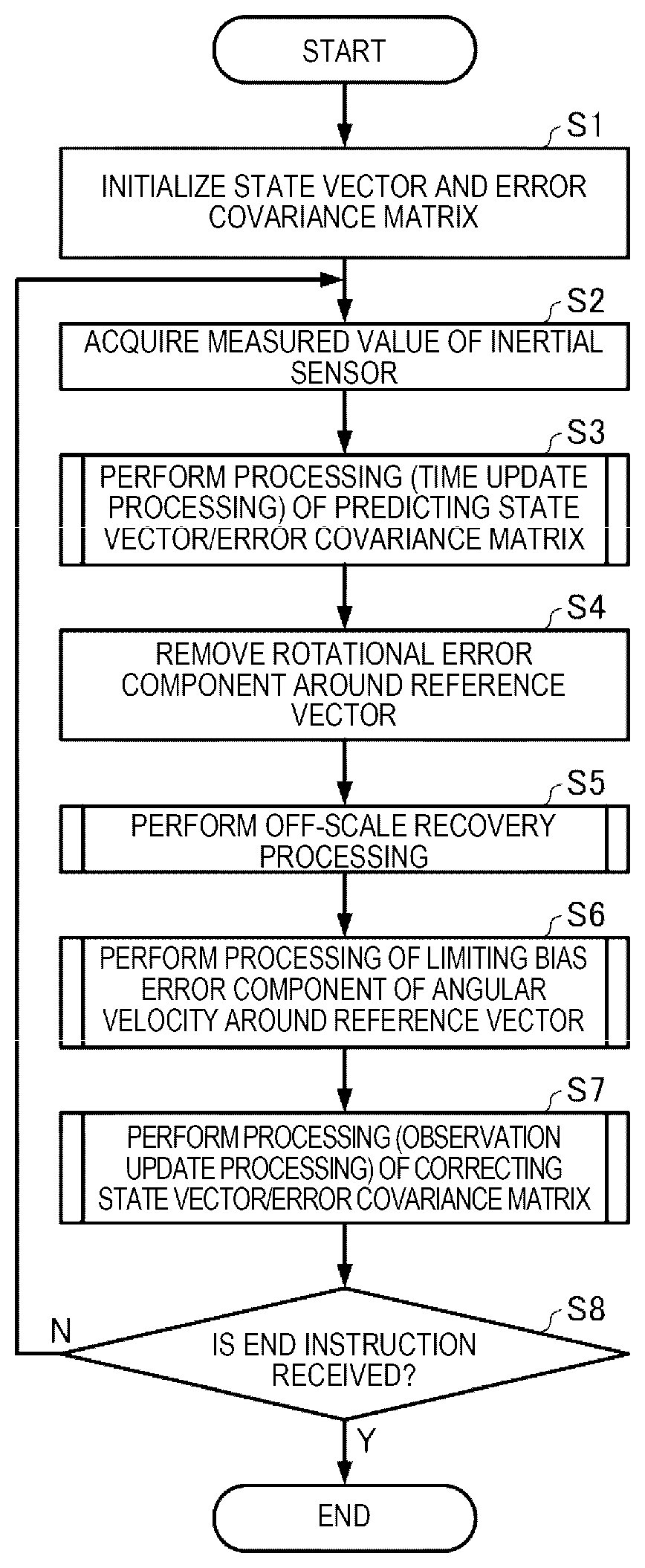

[0016] FIG. 1 is a flowchart illustrating an example of procedures of a posture estimation method.

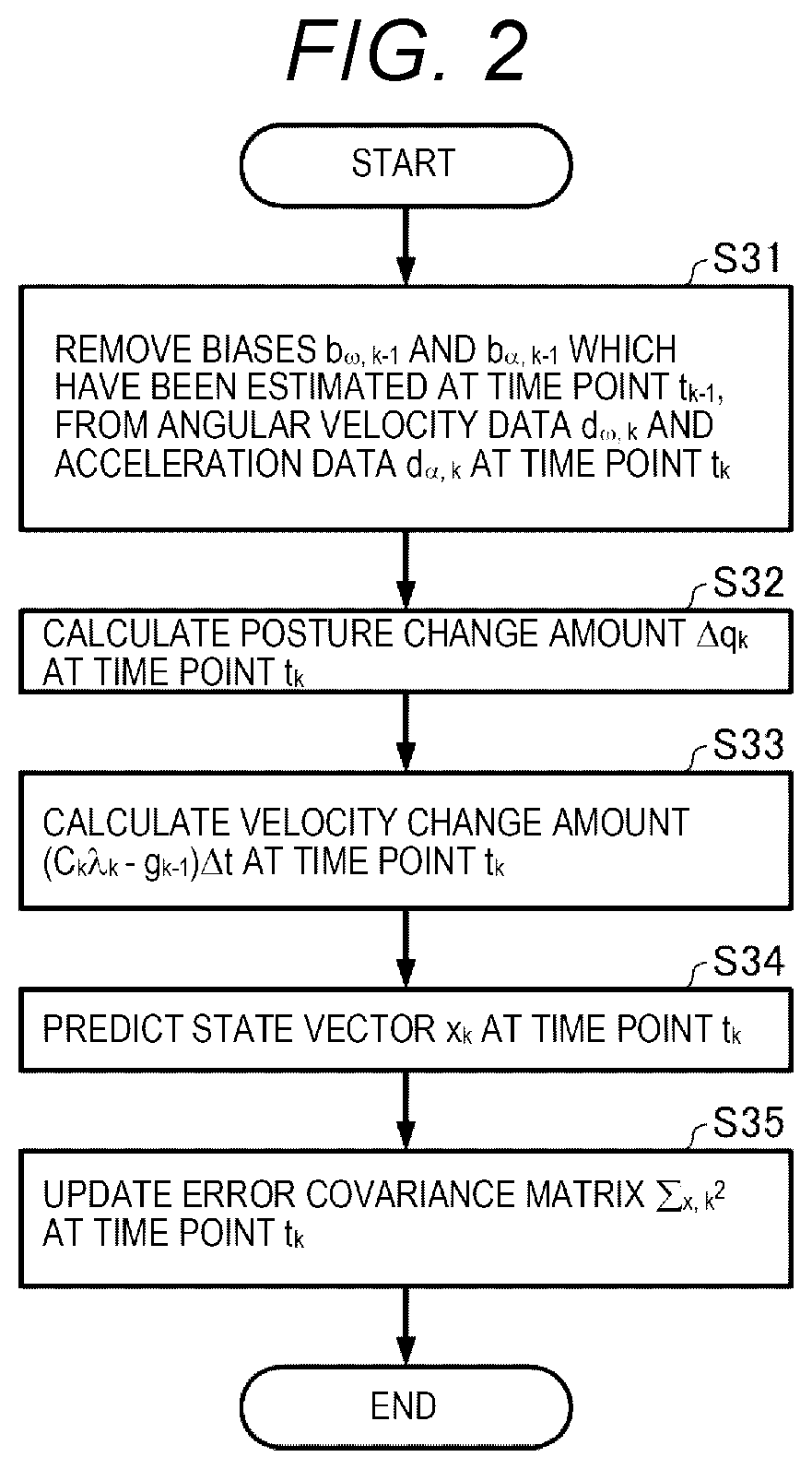

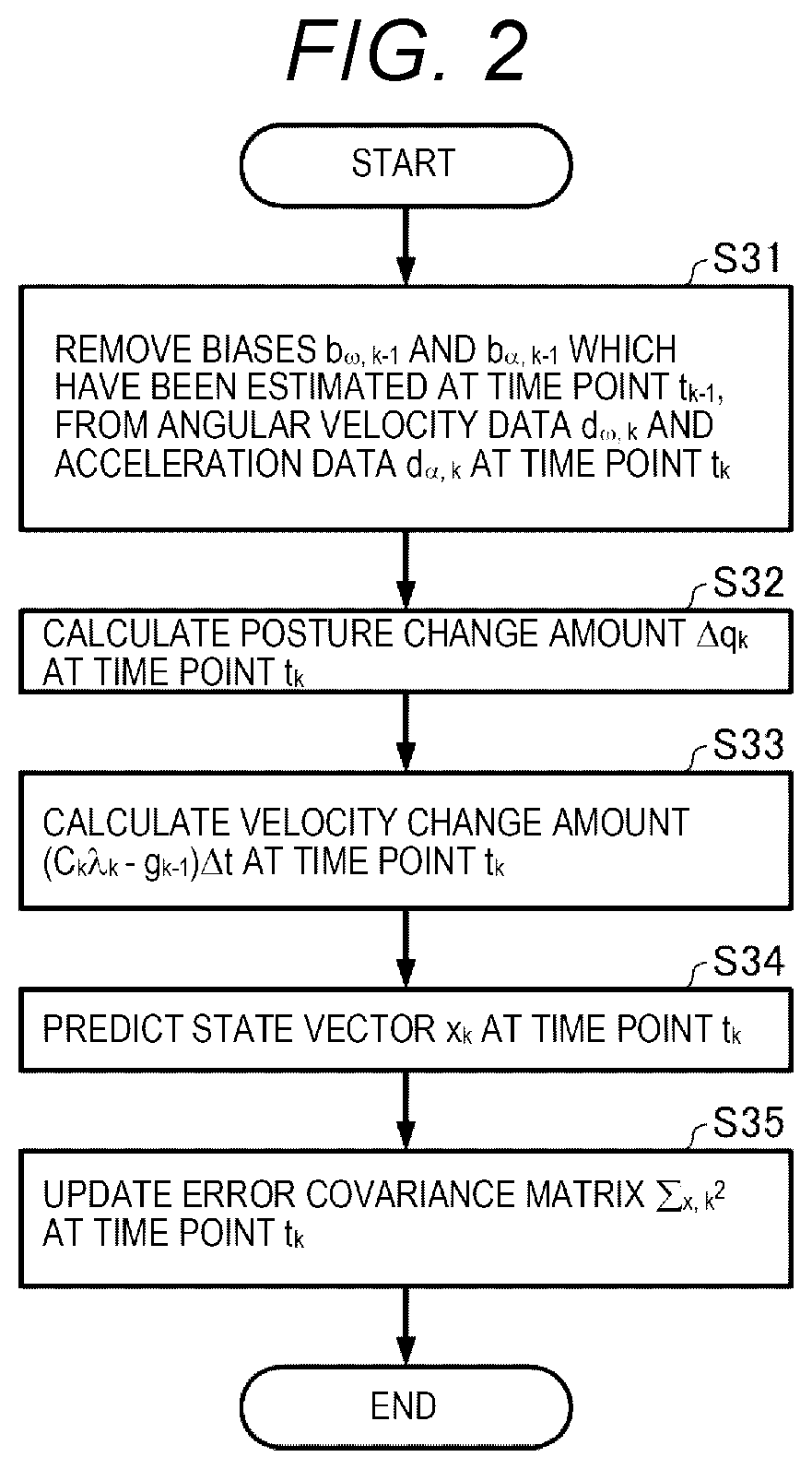

[0017] FIG. 2 is a flowchart illustrating an example of procedures of a process S3 in FIG. 1.

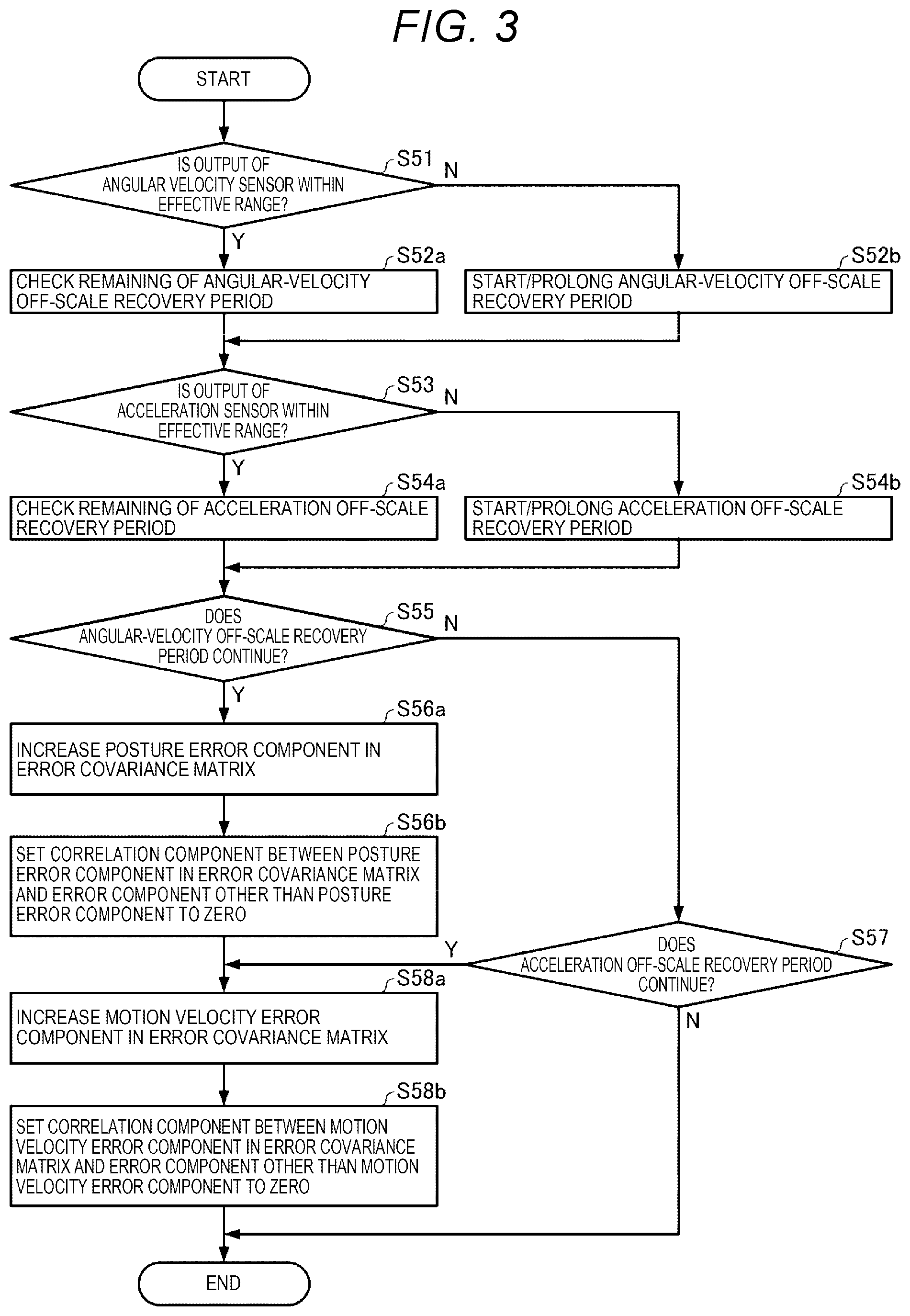

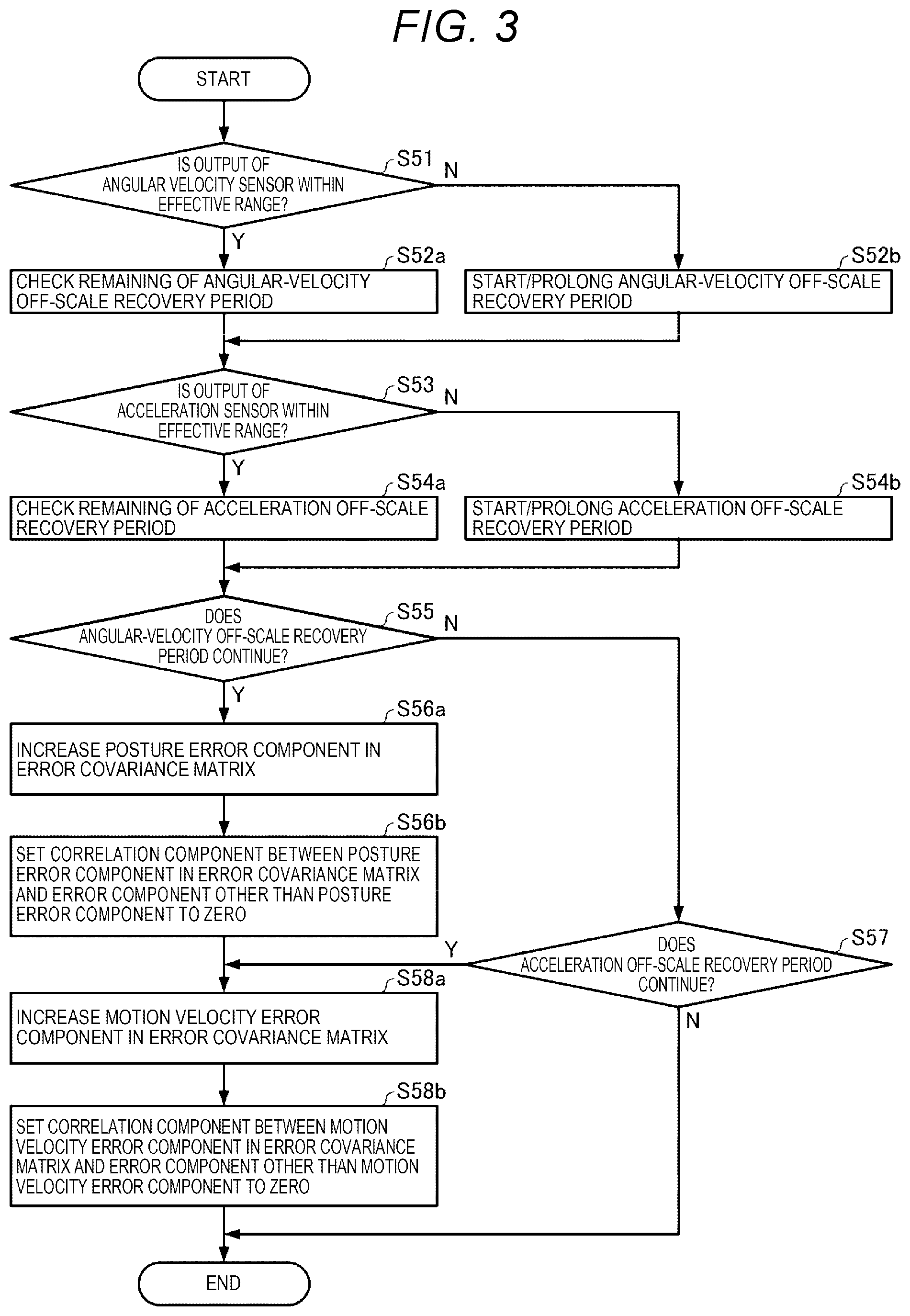

[0018] FIG. 3 is a flowchart illustrating an example of procedures of a process S5 in FIG. 1.

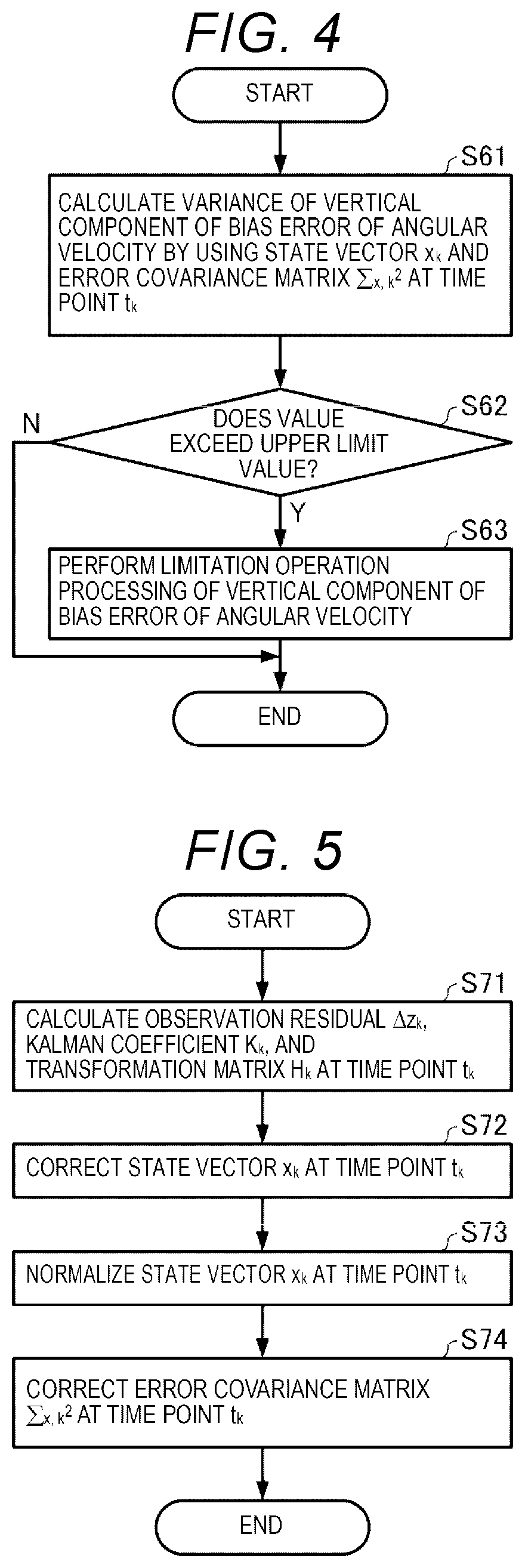

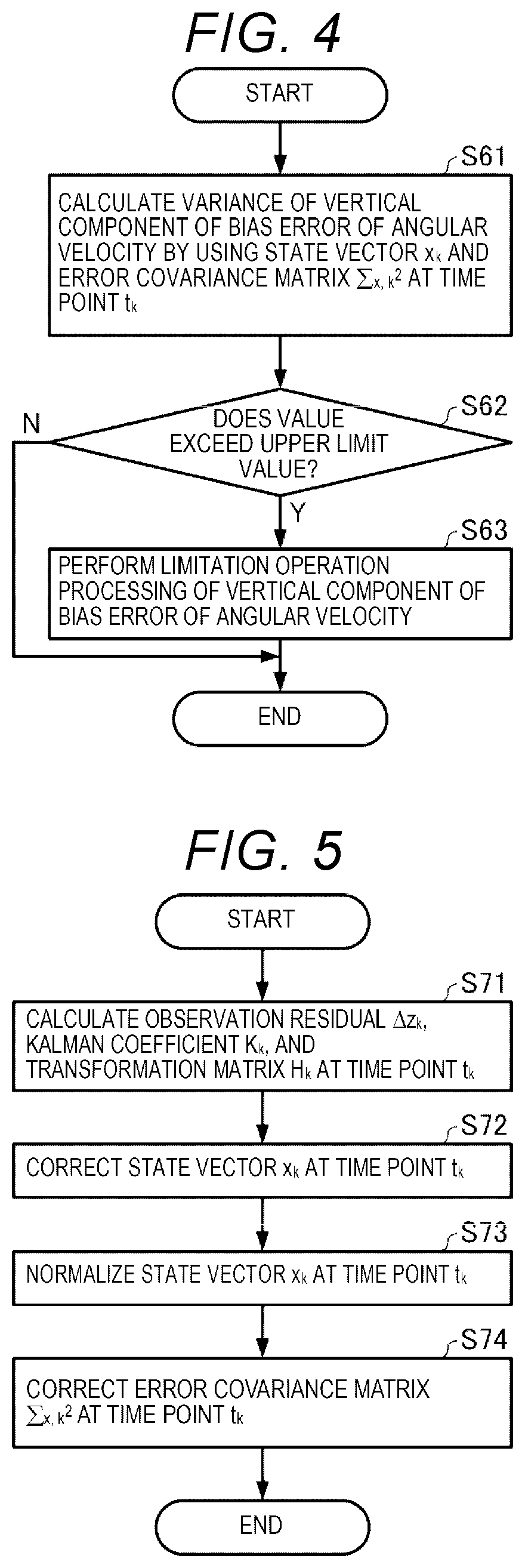

[0019] FIG. 4 is a flowchart illustrating an example of procedures of a process S6 in FIG. 1.

[0020] FIG. 5 is a flowchart illustrating an example of procedures of a process S7 in FIG. 1.

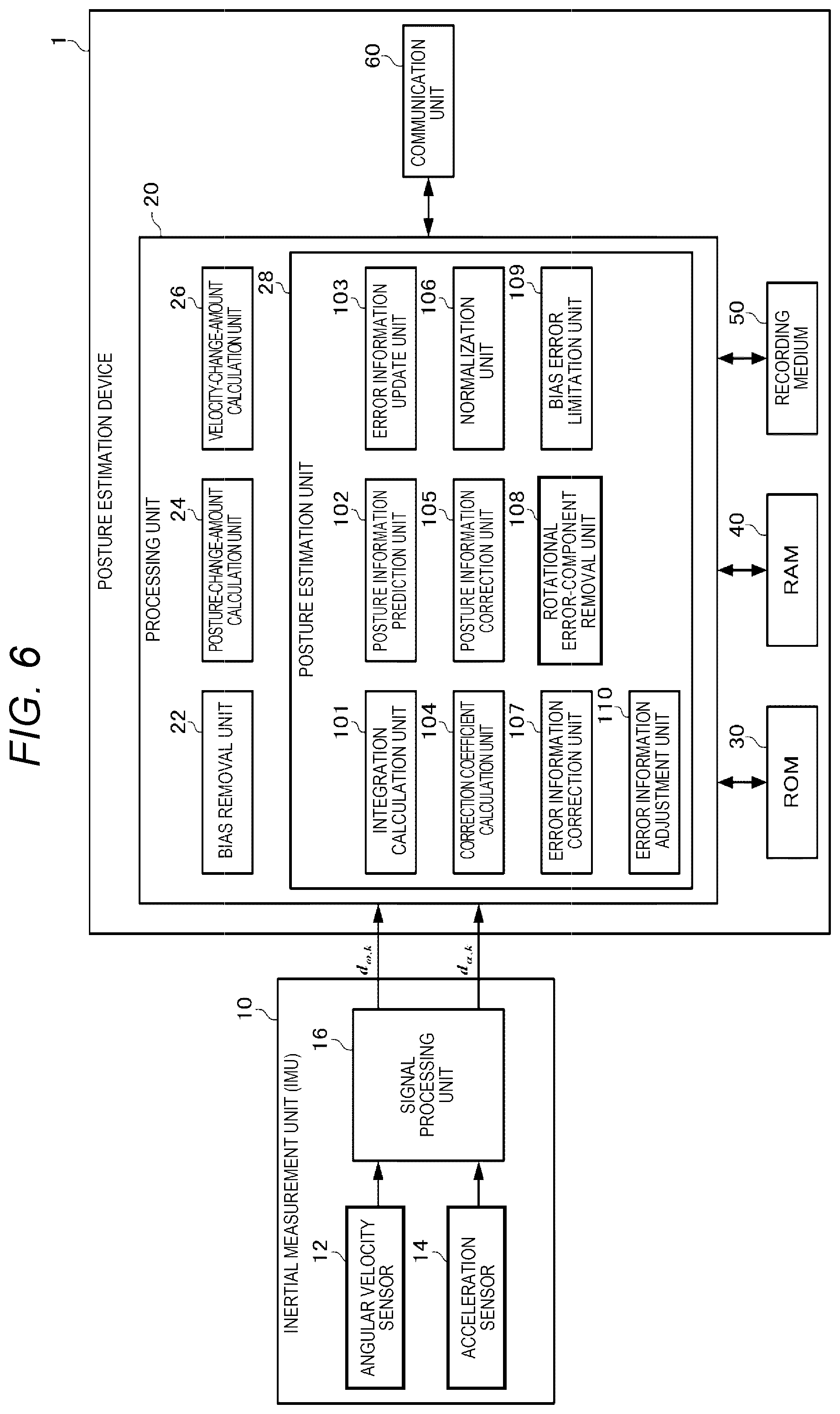

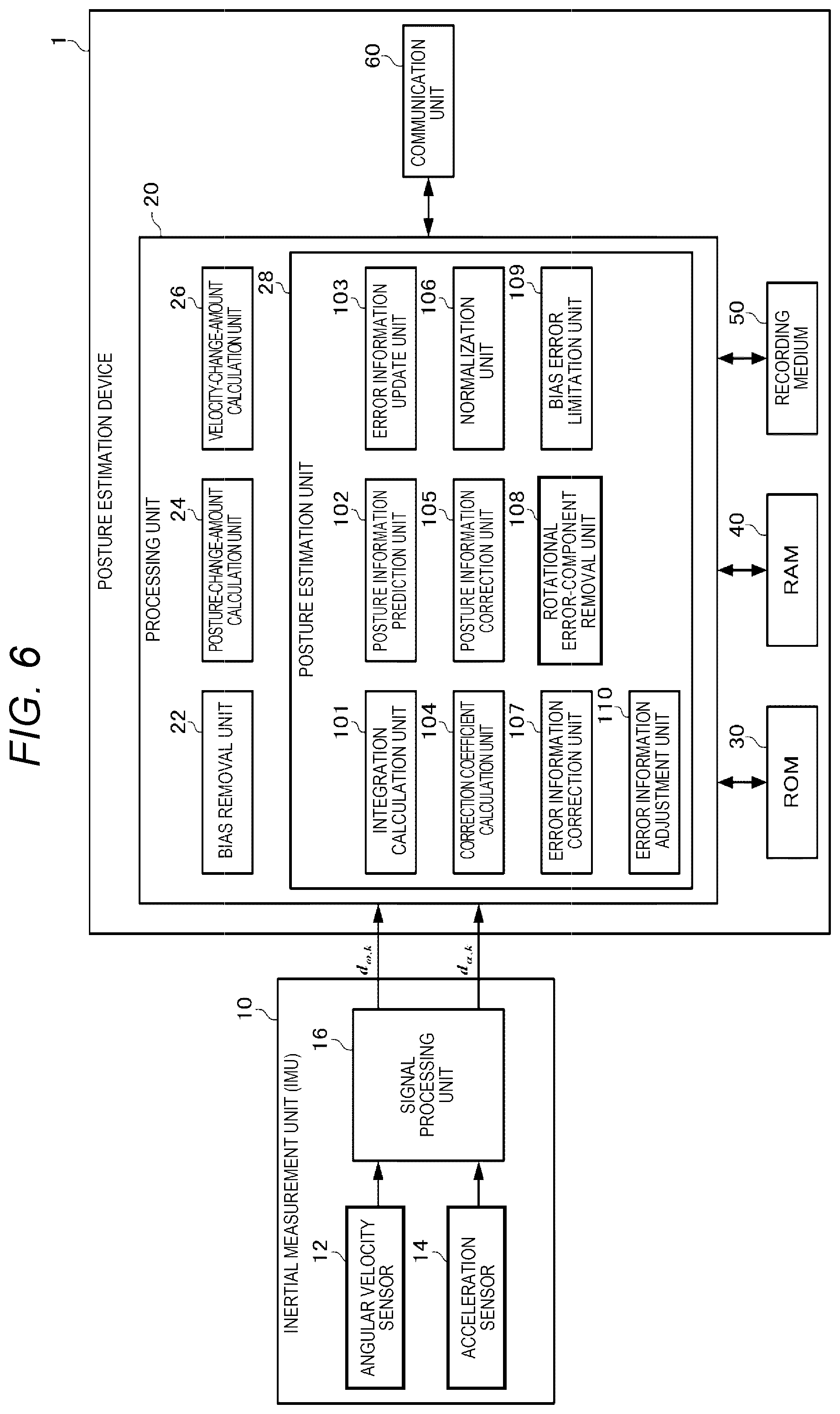

[0021] FIG. 6 is a diagram illustrating an example of a configuration of a posture estimation device according to an embodiment.

[0022] FIG. 7 is a diagram illustrating a sensor coordinate system and a local coordinate system.

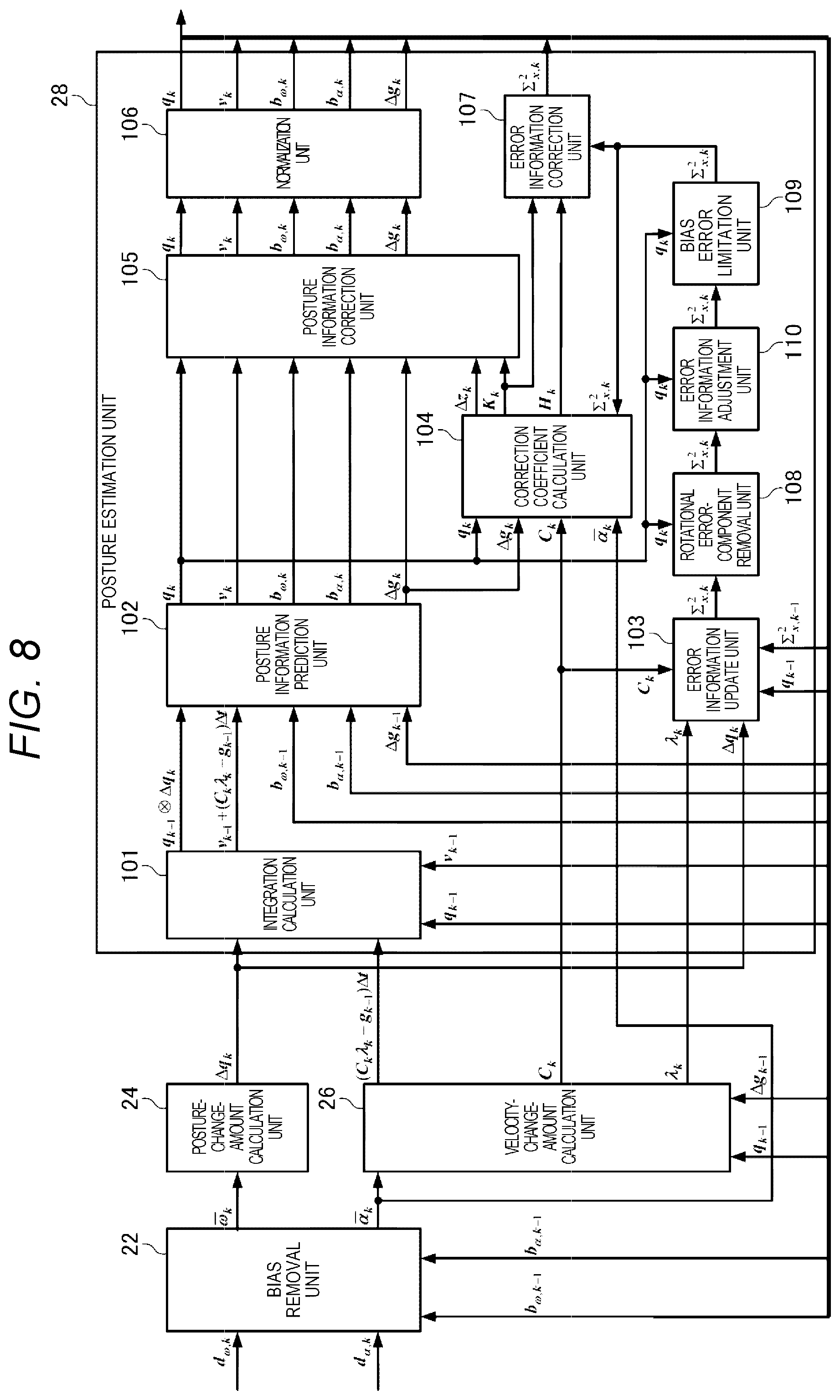

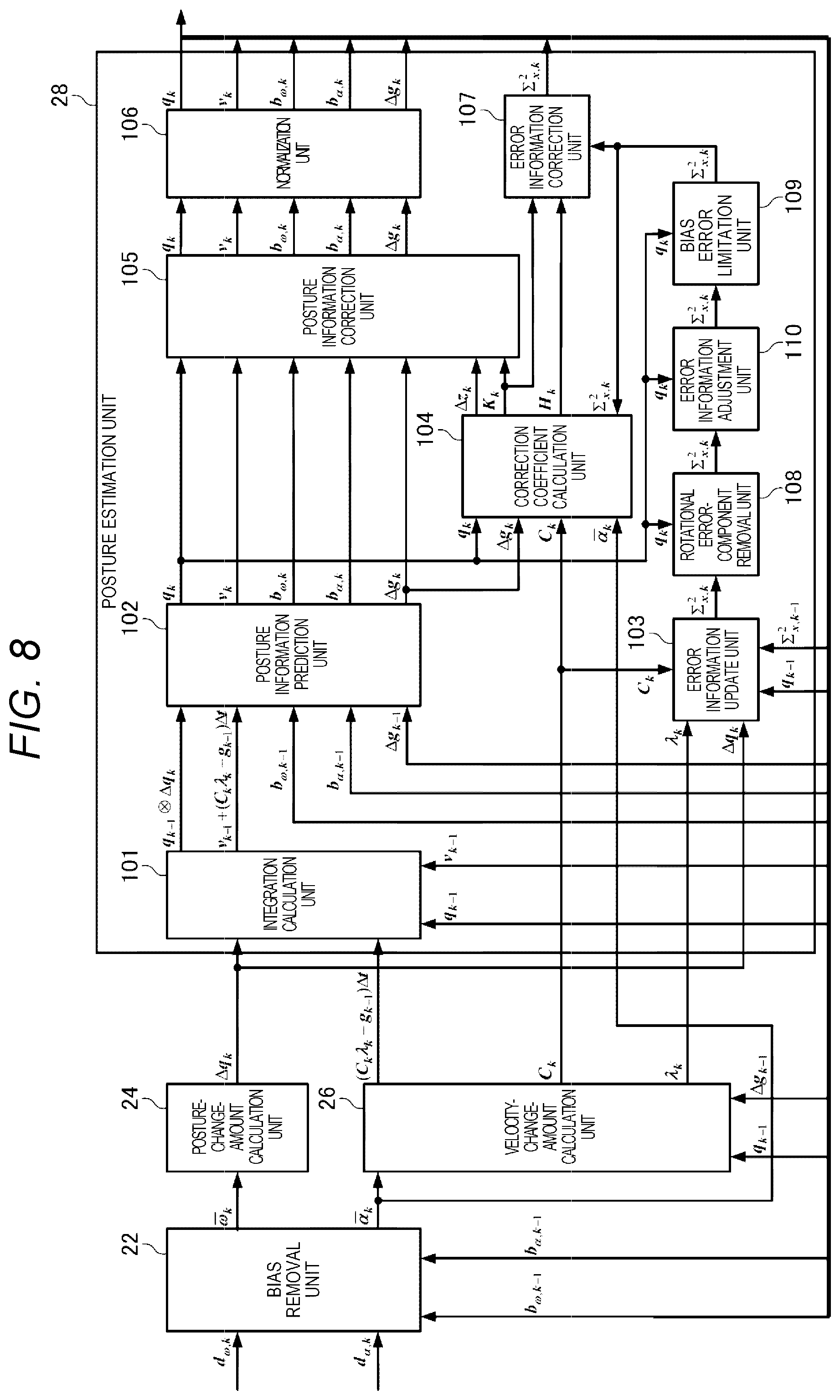

[0023] FIG. 8 is a diagram illustrating an example of a configuration of a processing unit in the embodiment.

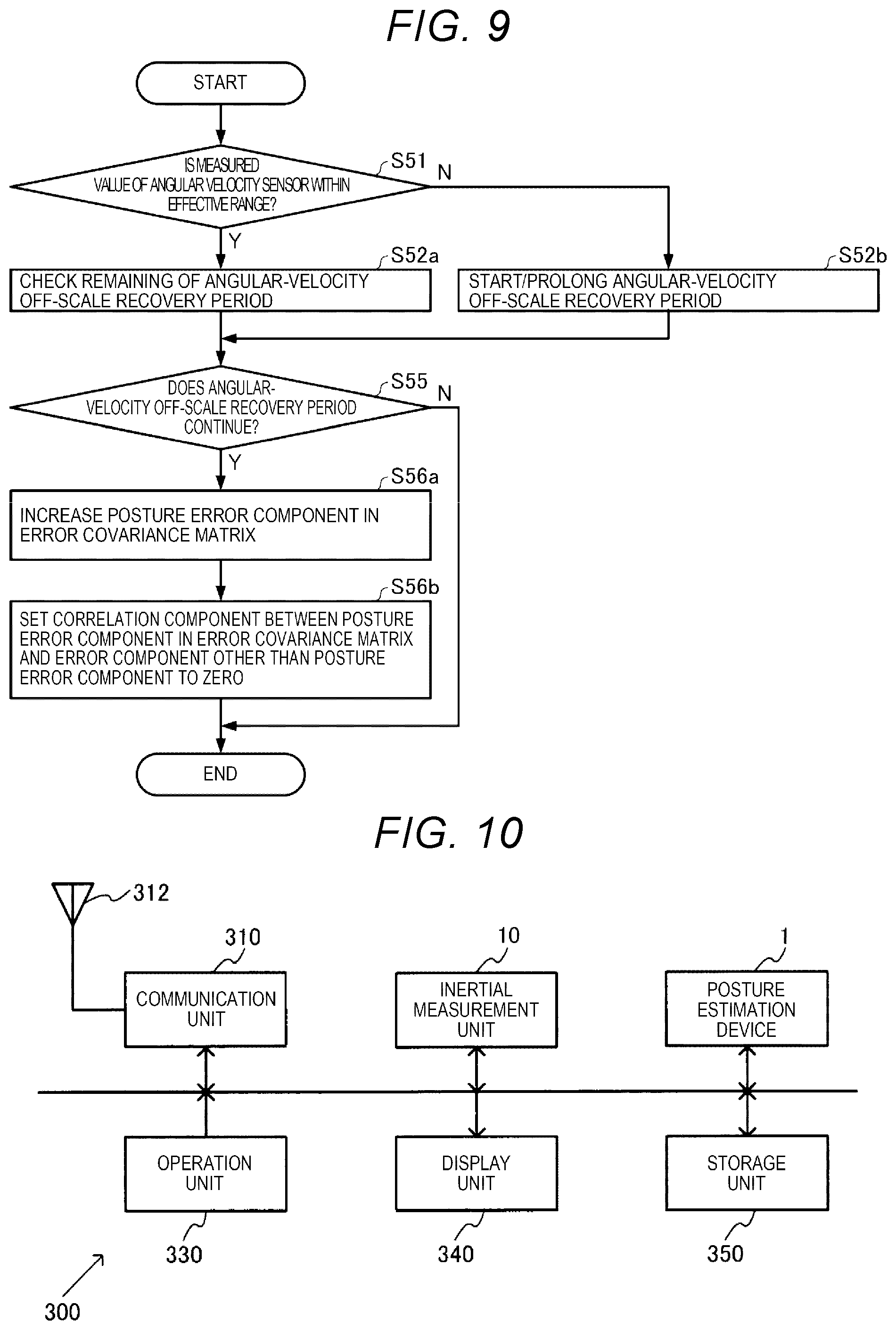

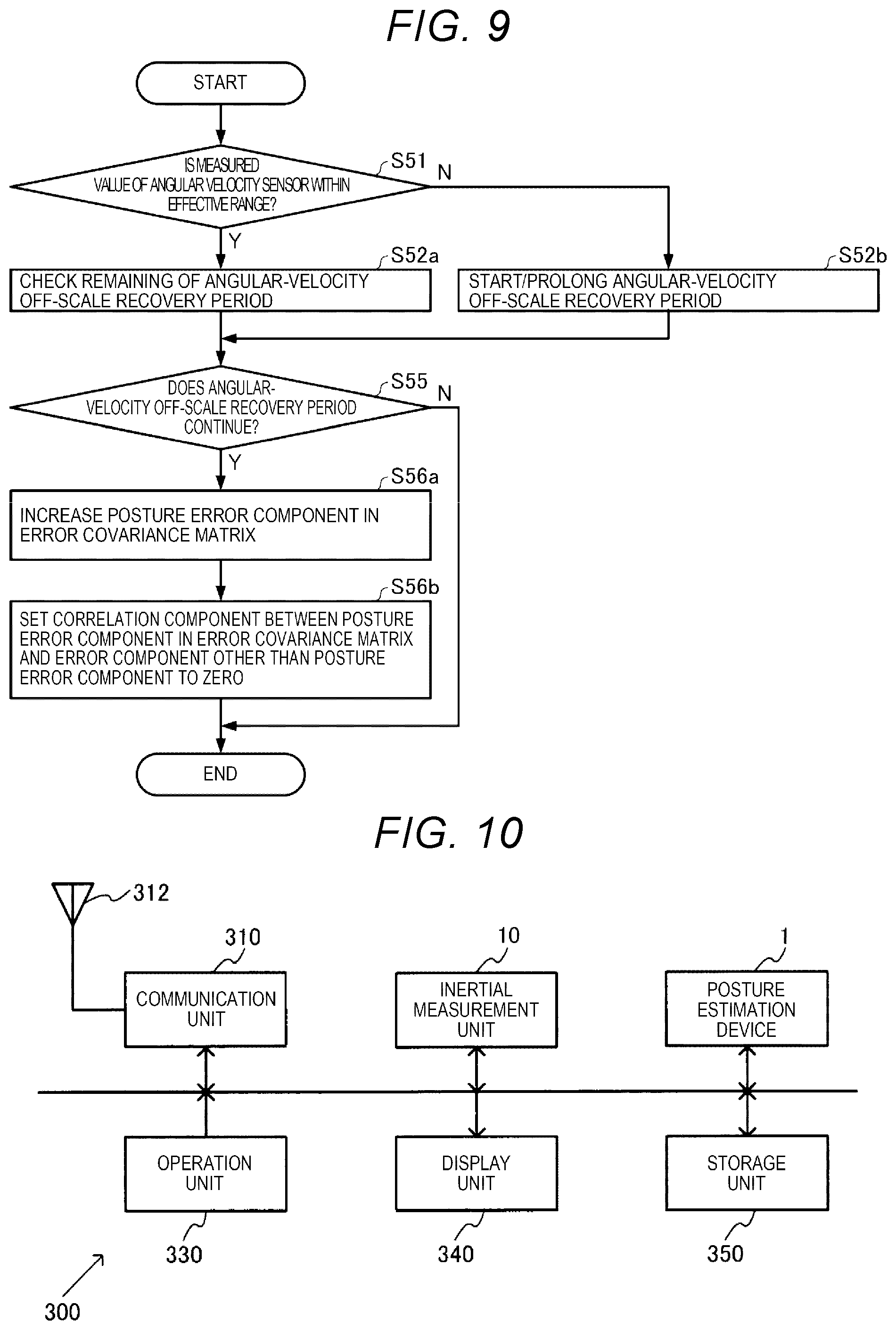

[0024] FIG. 9 is a flowchart illustrating another example of the procedures of the process S5 in FIG. 1.

[0025] FIG. 10 is a block diagram illustrating an example of a configuration of an electronic device in the embodiment.

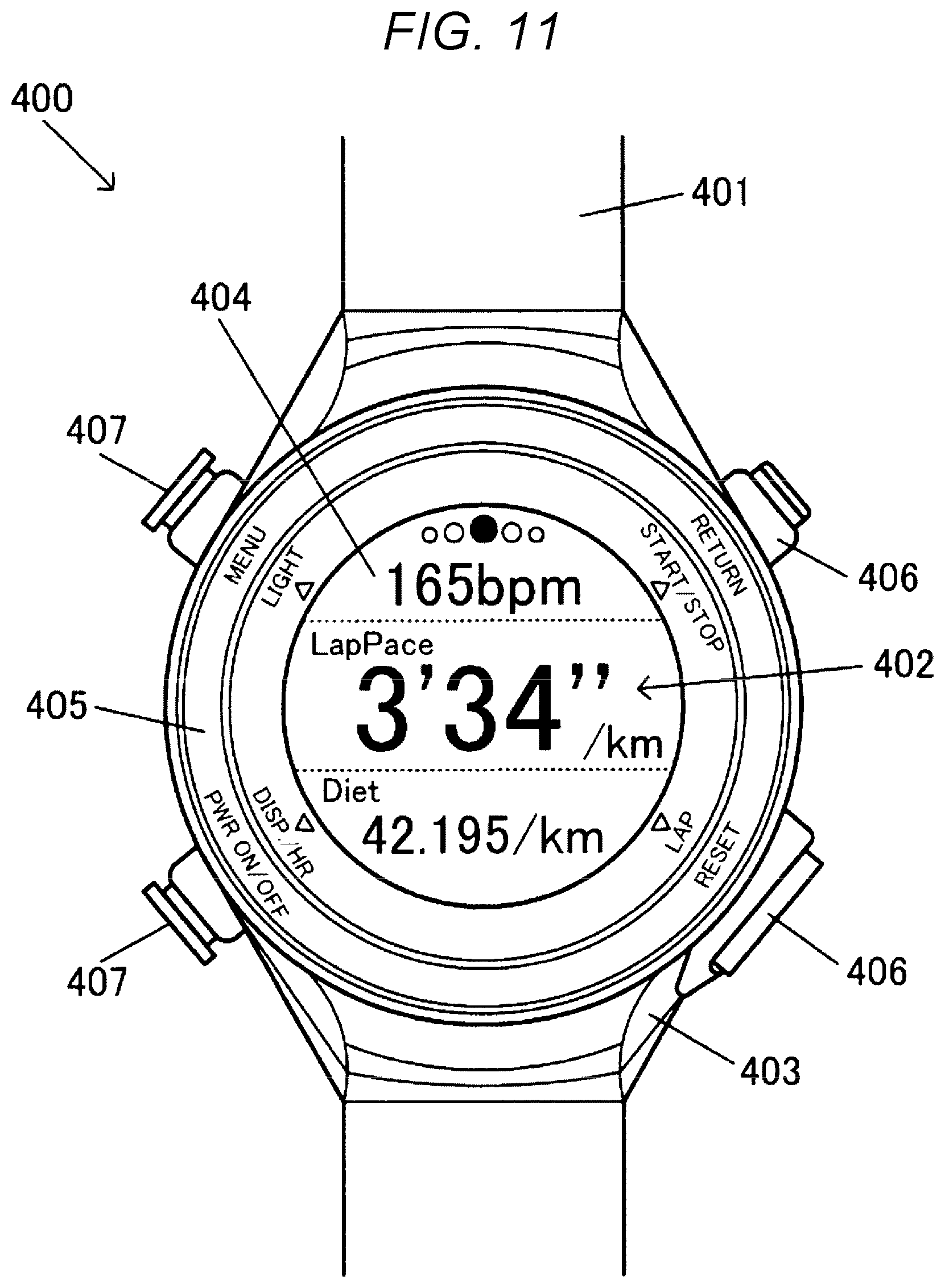

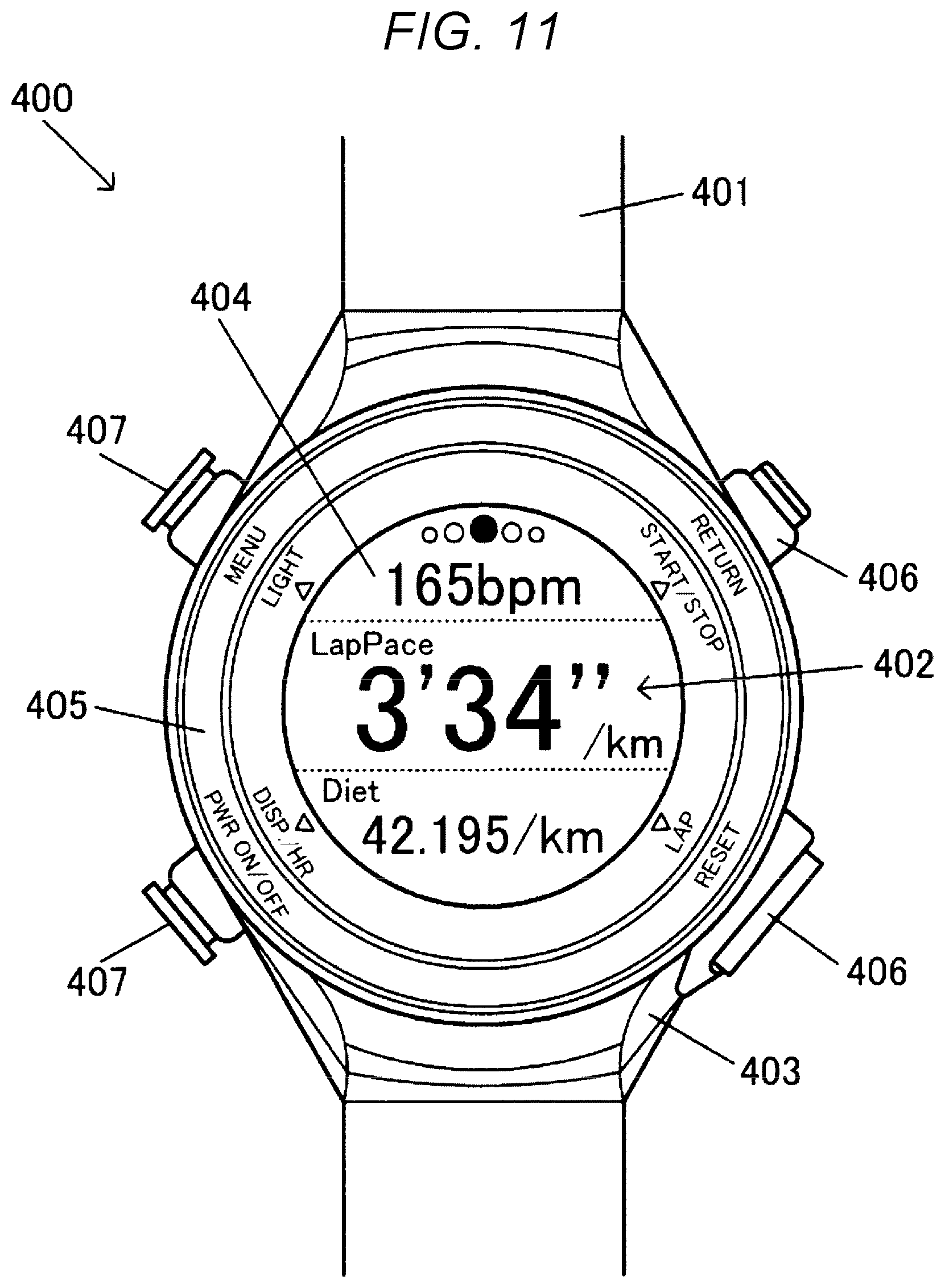

[0026] FIG. 11 is a plan view illustrating a wrist watch-type activity meter as a portable type electronic device.

[0027] FIG. 12 is a block diagram illustrating an example of a configuration of the wrist watch-type activity meter as the portable type electronic device.

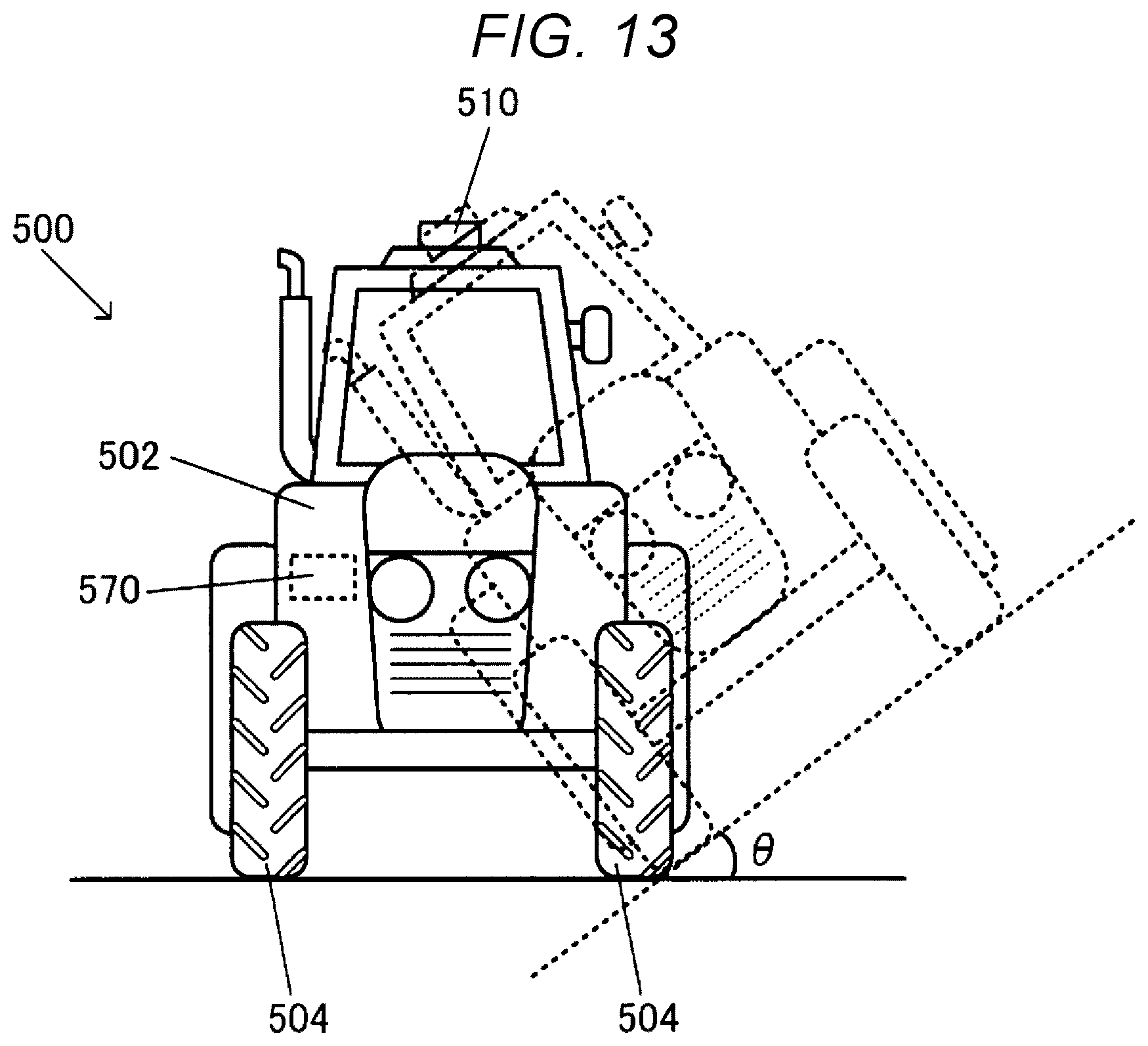

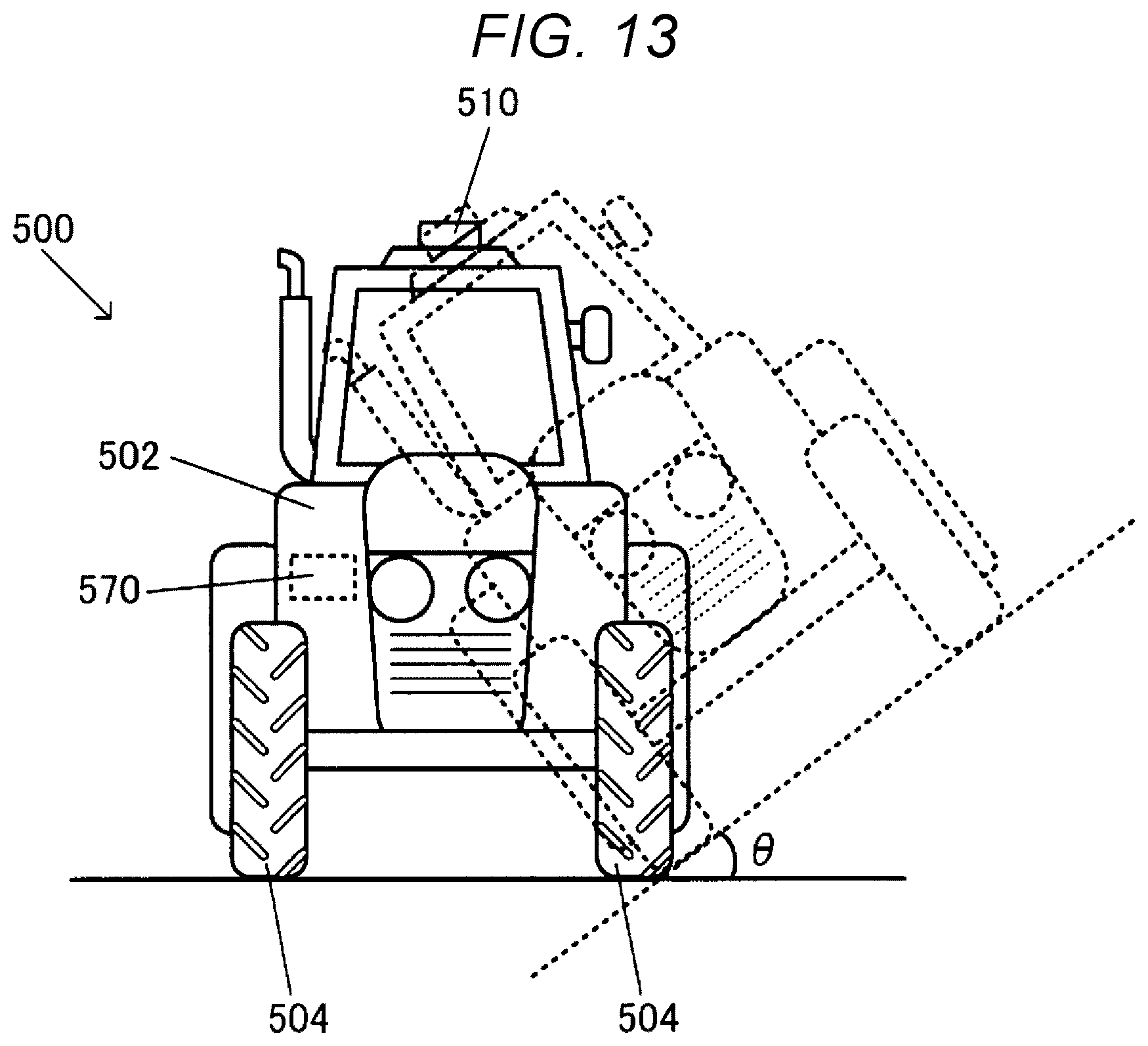

[0028] FIG. 13 illustrates an example of a vehicle in the embodiment.

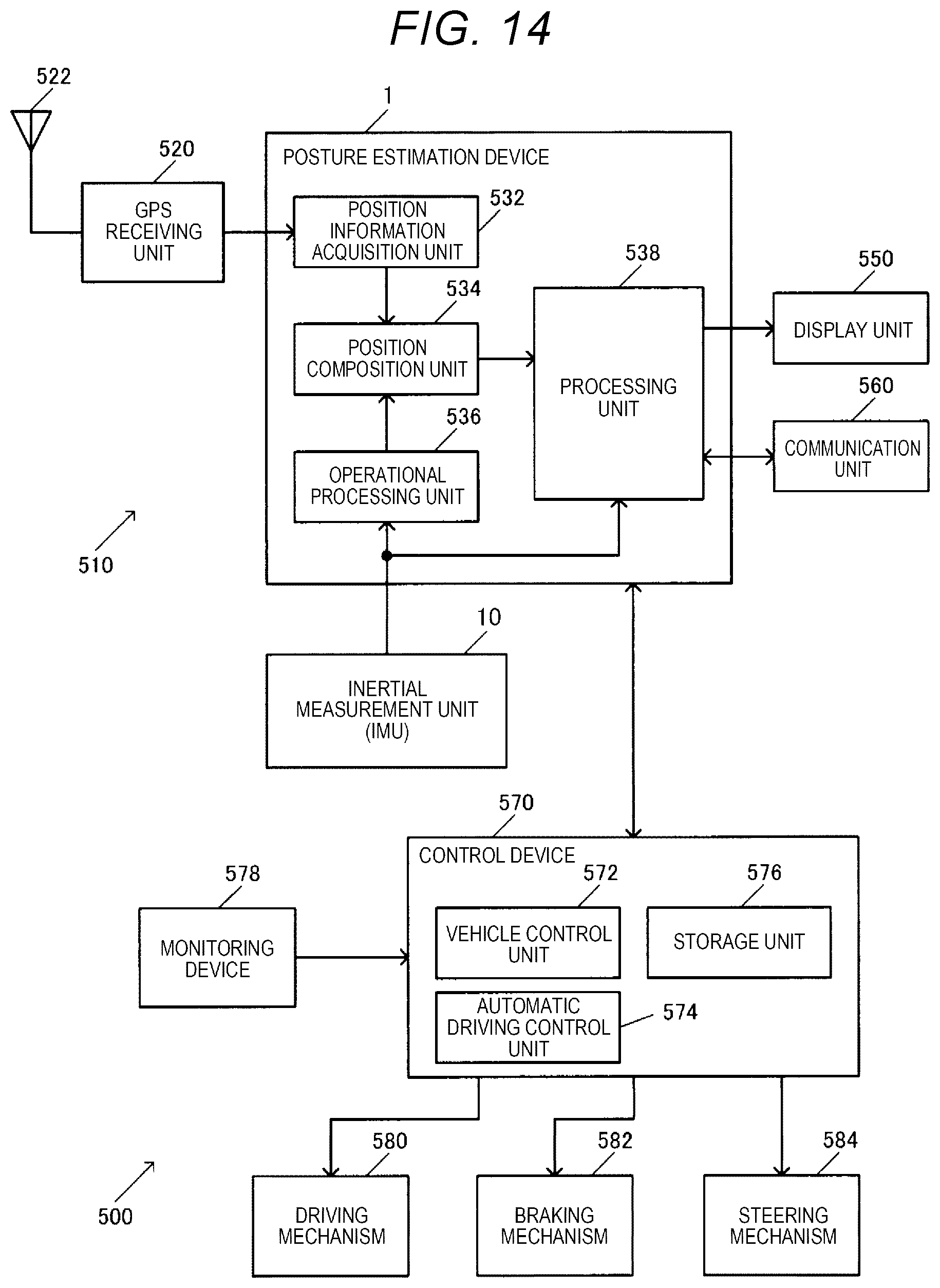

[0029] FIG. 14 is a block diagram illustrating an example of a configuration of the vehicle.

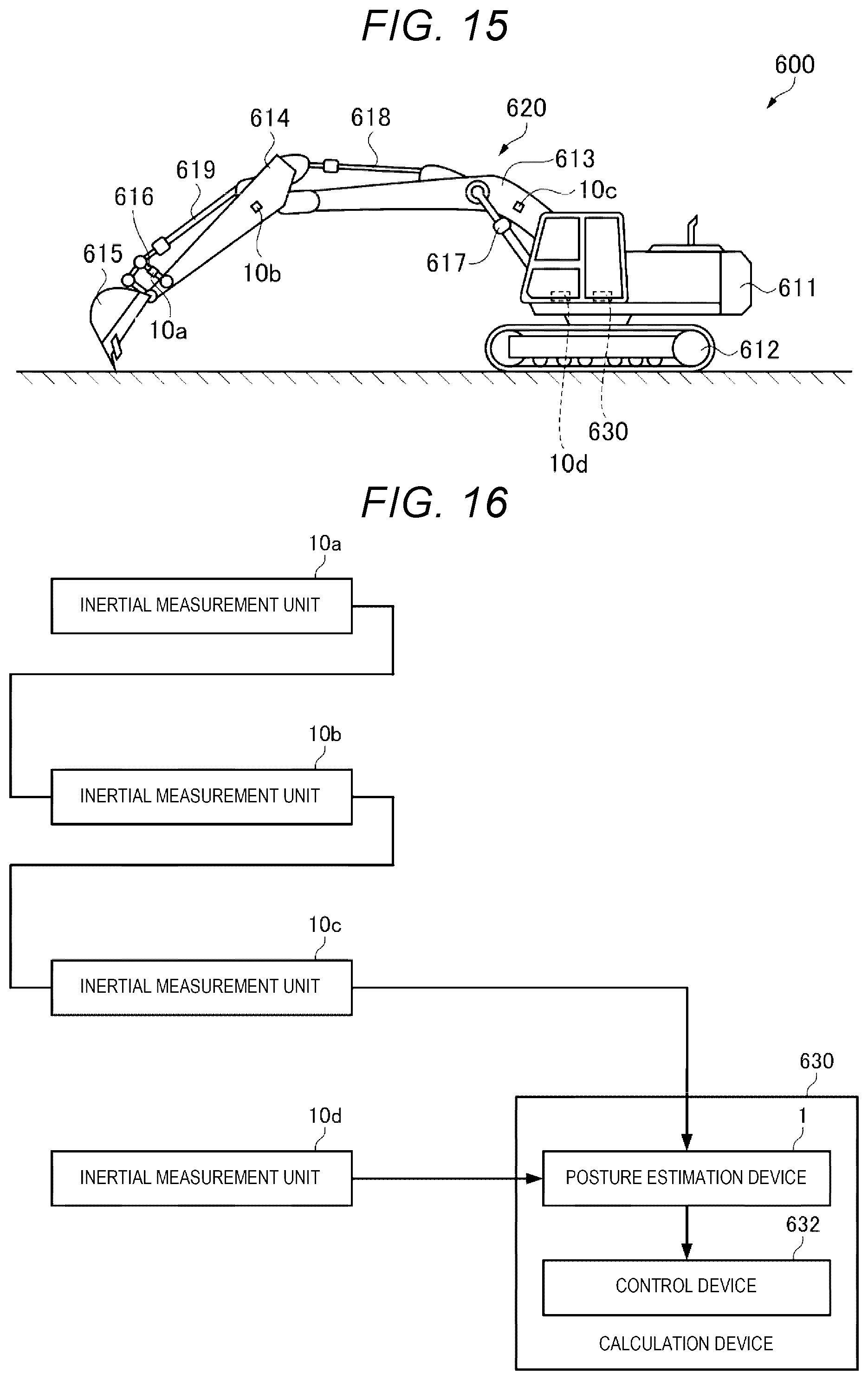

[0030] FIG. 15 illustrates another example of the vehicle in the embodiment.

[0031] FIG. 16 is a block diagram illustrating an example of a configuration of the vehicle.

DESCRIPTION OF EXEMPLARY EMBODIMENTS

[0032] Hereinafter, a preferred embodiment according to the present disclosure will be described in detail with reference to the drawings. The embodiments described below do not unduly limit the contents of the present disclosure described in the appended claims. Not all of the components described below are necessarily essential components of the present disclosure.

1. Posture Estimation Method

1-1. Posture Estimation Theory

1-1-1. IMU Output Model

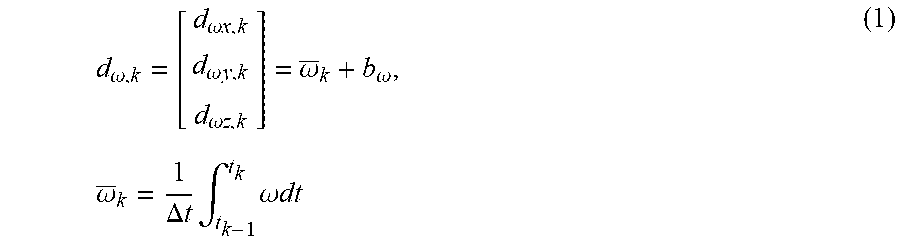

[0033] The output of the inertial measurement unit (IMU) includes angular velocity data $d_{\omega,k}$ as an output of a three-axis angular velocity sensor and acceleration data $d_{\alpha,k}$ as an output of a three-axis acceleration sensor at each sampling time point ($t_k$). Here, as shown in Expression (1), the angular velocity data $d_{\omega,k}$ is represented by the sum of an average value of an angular velocity vector $\omega$ in a sampling interval ($\Delta t = t_k - t_{k-1}$) and the residual bias $b_\omega$.

$$
d_{\omega,k} = \begin{bmatrix} d_{\omega x,k} \\ d_{\omega y,k} \\ d_{\omega z,k} \end{bmatrix} = \bar{\omega}_k + b_\omega, \qquad \bar{\omega}_k = \frac{1}{\Delta t}\int_{t_{k-1}}^{t_k} \omega \, dt \tag{1}
$$

[0034] Similarly, as shown in Expression (2), the acceleration data $d_{\alpha,k}$ is also represented by the sum of an average value of an acceleration vector $\alpha$ and the residual bias $b_\alpha$.

$$
d_{\alpha,k} = \begin{bmatrix} d_{\alpha x,k} \\ d_{\alpha y,k} \\ d_{\alpha z,k} \end{bmatrix} = \bar{\alpha}_k + b_\alpha, \qquad \bar{\alpha}_k = \frac{1}{\Delta t}\int_{t_{k-1}}^{t_k} \alpha \, dt \tag{2}
$$

1-1-2. Calculation of Three-Dimensional Posture by Angular Velocity Integration

[0035] When a three-dimensional posture is represented by quaternions, a relation between the posture quaternion $q$ and the angular velocity vector $\omega$ [rad/s] is represented by the differential equation in Expression (3).

$$
\frac{d}{dt} q = \frac{1}{2}\, q \otimes \omega \tag{3}
$$

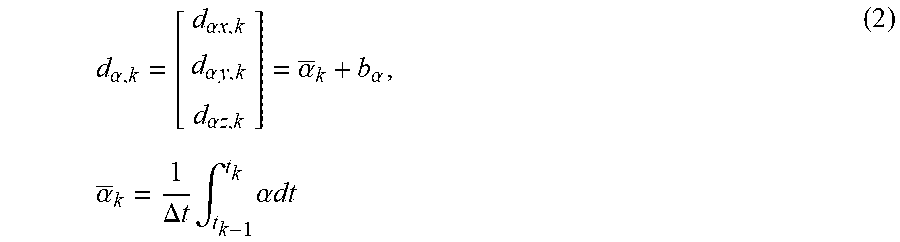

[0036] Here, the symbol $\otimes$ (a circle with a cross superimposed on it) indicates quaternion multiplication. For example, the elements of the quaternion product of $q$ and $p$ are calculated as shown in Expression (4).

$$
q \otimes p =
\begin{bmatrix}
+q_0 & -q_1 & -q_2 & -q_3 \\
+q_1 & +q_0 & -q_3 & +q_2 \\
+q_2 & +q_3 & +q_0 & -q_1 \\
+q_3 & -q_2 & +q_1 & +q_0
\end{bmatrix}
\begin{bmatrix} p_0 \\ p_1 \\ p_2 \\ p_3 \end{bmatrix}
=
\begin{bmatrix}
q_0 p_0 - q_1 p_1 - q_2 p_2 - q_3 p_3 \\
q_1 p_0 + q_0 p_1 - q_3 p_2 + q_2 p_3 \\
q_2 p_0 + q_3 p_1 + q_0 p_2 - q_1 p_3 \\
q_3 p_0 - q_2 p_1 + q_1 p_2 + q_0 p_3
\end{bmatrix} \tag{4}
$$
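As an illustration only, the element-wise product of Expression (4) can be written as a short function. This is a minimal sketch; the function name `quat_mul` and the representation of a quaternion as a 4-tuple `(q0, q1, q2, q3)` are assumptions made for this example.

```python
def quat_mul(q, p):
    """Quaternion product q (x) p, element by element as in Expression (4)."""
    q0, q1, q2, q3 = q
    p0, p1, p2, p3 = p
    return (
        q0 * p0 - q1 * p1 - q2 * p2 - q3 * p3,
        q1 * p0 + q0 * p1 - q3 * p2 + q2 * p3,
        q2 * p0 + q3 * p1 + q0 * p2 - q1 * p3,
        q3 * p0 - q2 * p1 + q1 * p2 + q0 * p3,
    )

# Unit imaginary quaternions: the product is not commutative.
i = (0.0, 1.0, 0.0, 0.0)
j = (0.0, 0.0, 1.0, 0.0)
ij = quat_mul(i, j)   # equals k = (0, 0, 0, 1)
ji = quat_mul(j, i)   # equals -k = (0, 0, 0, -1)
```

The example shows that the order of the operands matters, which is why the process model multiplies the posture change quaternion on the right of the previous posture quaternion.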

[0037] As shown in Expression (5), the angular velocity vector $\omega$ is considered as being equivalent to a quaternion in which the real (scalar) component is zero and the imaginary (vector) component coincides with the components of $\omega$.

$$
\omega = \begin{bmatrix} \omega_x \\ \omega_y \\ \omega_z \end{bmatrix} \equiv \begin{bmatrix} 0 \\ \omega_x \\ \omega_y \\ \omega_z \end{bmatrix} = \begin{bmatrix} 0 \\ \omega \end{bmatrix} \tag{5}
$$

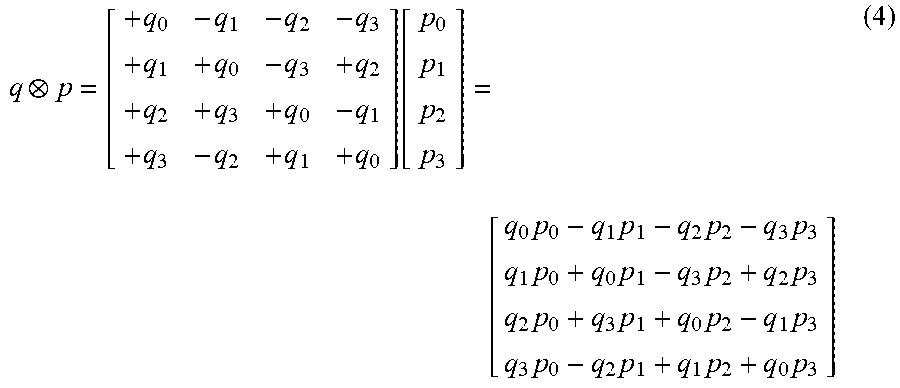

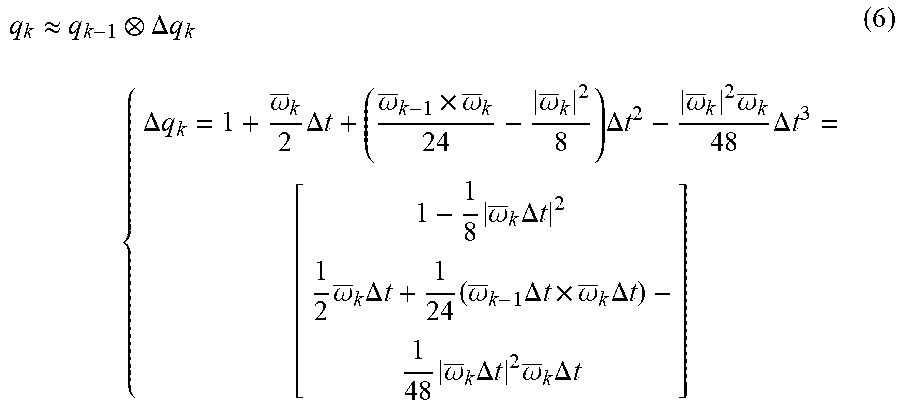

[0038] Solving the differential equation (3) would yield the three-dimensional posture. However, a general solution thereof has not been found. Further, the value of the angular velocity vector $\omega$ is obtained only in the form of discrete average values. Thus, an approximate calculation must be performed with Expression (6) at each short (sampling) interval.

$$
q_k \approx q_{k-1} \otimes \Delta q_k, \qquad
\begin{aligned}
\Delta q_k &= 1 + \frac{\bar{\omega}_k}{2}\Delta t + \left( \frac{\bar{\omega}_{k-1} \times \bar{\omega}_k}{24} - \frac{|\bar{\omega}_k|^2}{8} \right)\Delta t^2 - \frac{|\bar{\omega}_k|^2\, \bar{\omega}_k}{48}\Delta t^3 \\
&= \begin{bmatrix}
1 - \frac{1}{8}\,|\bar{\omega}_k \Delta t|^2 \\
\frac{1}{2}\bar{\omega}_k \Delta t + \frac{1}{24}\left(\bar{\omega}_{k-1}\Delta t \times \bar{\omega}_k \Delta t\right) - \frac{1}{48}\,|\bar{\omega}_k \Delta t|^2\, \bar{\omega}_k \Delta t
\end{bmatrix}
\end{aligned} \tag{6}
$$

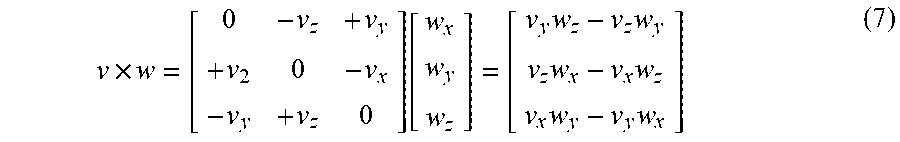

[0039] Expression (6) is obtained by Taylor expansion about $t = t_{k-1}$ up to the third-order term in $\Delta t$, taking into account the relation between the posture quaternion $q$ and the integral of the angular velocity vector $\omega$ for each axis. The term including $\bar{\omega}_{k-1}$ in the expression corresponds to the coning correction term. The symbol $\times$ indicates a cross product (vector product) of three-dimensional vectors. For example, the elements of $v \times w$ are calculated as in Expression (7).

$$
v \times w =
\begin{bmatrix}
0 & -v_z & +v_y \\
+v_z & 0 & -v_x \\
-v_y & +v_x & 0
\end{bmatrix}
\begin{bmatrix} w_x \\ w_y \\ w_z \end{bmatrix}
=
\begin{bmatrix}
v_y w_z - v_z w_y \\
v_z w_x - v_x w_z \\
v_x w_y - v_y w_x
\end{bmatrix} \tag{7}
$$
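The approximation of Expression (6) can be checked numerically against the exact rotation quaternion for a constant angular velocity. The following is a minimal sketch; the function names `cross` and `delta_q`, the tuple representation, and the sample values (1 rad/s about the z axis, 10 ms sampling interval) are assumptions made for this example.

```python
import math

def cross(v, w):
    """Cross product of two 3-vectors, as in Expression (7)."""
    return (v[1] * w[2] - v[2] * w[1],
            v[2] * w[0] - v[0] * w[2],
            v[0] * w[1] - v[1] * w[0])

def delta_q(w_prev, w_cur, dt):
    """Posture change quaternion (scalar first), second form of Expression (6)."""
    th_prev = [x * dt for x in w_prev]   # previous mean angular velocity times dt
    th = [x * dt for x in w_cur]         # current mean angular velocity times dt
    n2 = sum(x * x for x in th)          # squared norm |w_cur * dt|^2
    coning = cross(th_prev, th)          # coning correction term
    scalar = 1.0 - n2 / 8.0
    vector = [0.5 * th[i] + coning[i] / 24.0 - n2 * th[i] / 48.0 for i in range(3)]
    return (scalar, vector[0], vector[1], vector[2])

# Constant rotation about z (hypothetical values), so the coning term vanishes.
w = (0.0, 0.0, 1.0)
dt = 0.01
dq = delta_q(w, w, dt)
exact = (math.cos(0.5 * dt), 0.0, 0.0, math.sin(0.5 * dt))
max_err = max(abs(a - b) for a, b in zip(dq, exact))
```

For these values the difference between the approximation and the exact half-angle form is far below the sensor noise level, which is consistent with truncating the expansion at the third-order term.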

1-1-3. Tilt Error Observation by Gravitational Acceleration

[0040] The acceleration sensor detects an acceleration generated by its own movement. On the earth, however, the detected acceleration normally includes a gravitational acceleration of about 1 [G] (=9.80665 [m/s$^2$]). The gravitational acceleration is normally a vector in the vertical direction. Thus, an error of the tilt (roll and pitch) component of the posture can be obtained by comparison with the output of the three-axis acceleration sensor. For this purpose, the acceleration vector $\alpha$ in the sensor coordinate system (xyz coordinate system), which is observed by the three-axis acceleration sensor, must first be transformed to an acceleration vector $\alpha'$ in the coordinate system (XYZ coordinate system) of a local space defined by horizontal orthogonal axes and a vertical axis. The coordinate (rotation) transformation can be calculated with the posture quaternion $q$ and its conjugate quaternion $q^*$, as shown in Expression (8).

$$
\alpha' = q \otimes \alpha \otimes q^* \tag{8}
$$

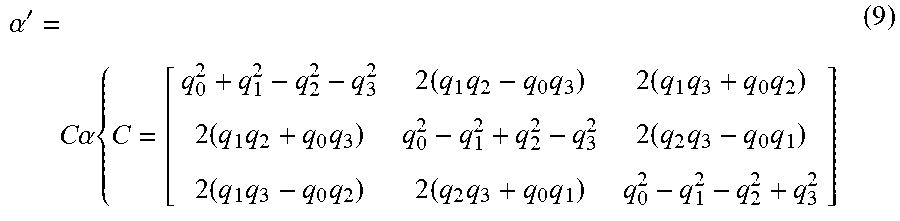

[0041] Expression (8) can be expressed with a three-dimensional coordinate transformation matrix C, as in Expression (9).

$$
\alpha' = C\alpha, \qquad
C = \begin{bmatrix}
q_0^2 + q_1^2 - q_2^2 - q_3^2 & 2(q_1 q_2 - q_0 q_3) & 2(q_1 q_3 + q_0 q_2) \\
2(q_1 q_2 + q_0 q_3) & q_0^2 - q_1^2 + q_2^2 - q_3^2 & 2(q_2 q_3 - q_0 q_1) \\
2(q_1 q_3 - q_0 q_2) & 2(q_2 q_3 + q_0 q_1) & q_0^2 - q_1^2 - q_2^2 + q_3^2
\end{bmatrix} \tag{9}
$$
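The equivalence of Expressions (8) and (9) can be confirmed with a small numerical example. This is a minimal sketch; the helper names (`quat_mul`, `quat_conj`, `rotate_by_quaternion`, `rotation_matrix`, `mat_vec`) and the sample posture (a 90 degree rotation about the z axis) are assumptions made for this example.

```python
import math

def quat_mul(q, p):
    """Quaternion product, element by element as in Expression (4)."""
    q0, q1, q2, q3 = q
    p0, p1, p2, p3 = p
    return (q0 * p0 - q1 * p1 - q2 * p2 - q3 * p3,
            q1 * p0 + q0 * p1 - q3 * p2 + q2 * p3,
            q2 * p0 + q3 * p1 + q0 * p2 - q1 * p3,
            q3 * p0 - q2 * p1 + q1 * p2 + q0 * p3)

def quat_conj(q):
    """Conjugate quaternion q*."""
    return (q[0], -q[1], -q[2], -q[3])

def rotate_by_quaternion(q, a):
    """Expression (8): a' = q (x) a (x) q*, with a treated as a pure quaternion."""
    r = quat_mul(quat_mul(q, (0.0, a[0], a[1], a[2])), quat_conj(q))
    return r[1:]

def rotation_matrix(q):
    """Coordinate transformation matrix C of Expression (9)."""
    q0, q1, q2, q3 = q
    return [
        [q0*q0 + q1*q1 - q2*q2 - q3*q3, 2*(q1*q2 - q0*q3), 2*(q1*q3 + q0*q2)],
        [2*(q1*q2 + q0*q3), q0*q0 - q1*q1 + q2*q2 - q3*q3, 2*(q2*q3 - q0*q1)],
        [2*(q1*q3 - q0*q2), 2*(q2*q3 + q0*q1), q0*q0 - q1*q1 - q2*q2 + q3*q3],
    ]

def mat_vec(C, a):
    """Product of a 3x3 matrix and a 3-vector."""
    return tuple(sum(C[i][j] * a[j] for j in range(3)) for i in range(3))

half = math.radians(90.0) / 2.0
q = (math.cos(half), 0.0, 0.0, math.sin(half))   # 90 degrees about z
a = (1.0, 0.0, 0.0)                              # sensor x axis
by_quat = rotate_by_quaternion(q, a)
by_matrix = mat_vec(rotation_matrix(q), a)
```

Both routes map the sensor x axis onto the local Y axis for this posture; the matrix form avoids two quaternion products per vector when several vectors are transformed with the same posture.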

[0042] A tilt error is obtained by comparing the acceleration vector $\alpha'$ to the gravitational acceleration vector $g$ in the local-space coordinate system (XYZ coordinate system). The gravitational acceleration vector $g$ is represented by Expression (10). In Expression (10), $\Delta g$ indicates a gravitational-acceleration correction value indicating a difference [G] from the standard value of the gravitational acceleration vector $g$.

$$
g = \begin{bmatrix} 0 \\ 0 \\ -(1 + \Delta g) \end{bmatrix} \tag{10}
$$

1-1-4. Observation of Zero Motion Velocity

[0043] In user interface applications in particular, the motion velocity of the IMU can be considered to be substantially zero over the long term. The relation between the motion velocity vector $v$ in the local-space coordinate system, and the acceleration vector $\alpha$ and the angular velocity vector $\omega$ in the sensor coordinate system, is expressed with the coordinate transformation matrix $C$ by the differential equations in Expression (11).

$$
\frac{d}{dt} v = C\alpha - g, \qquad
\frac{d}{dt} C = C \begin{bmatrix}
0 & -\omega_z & +\omega_y \\
+\omega_z & 0 & -\omega_x \\
-\omega_y & +\omega_x & 0
\end{bmatrix} \tag{11}
$$

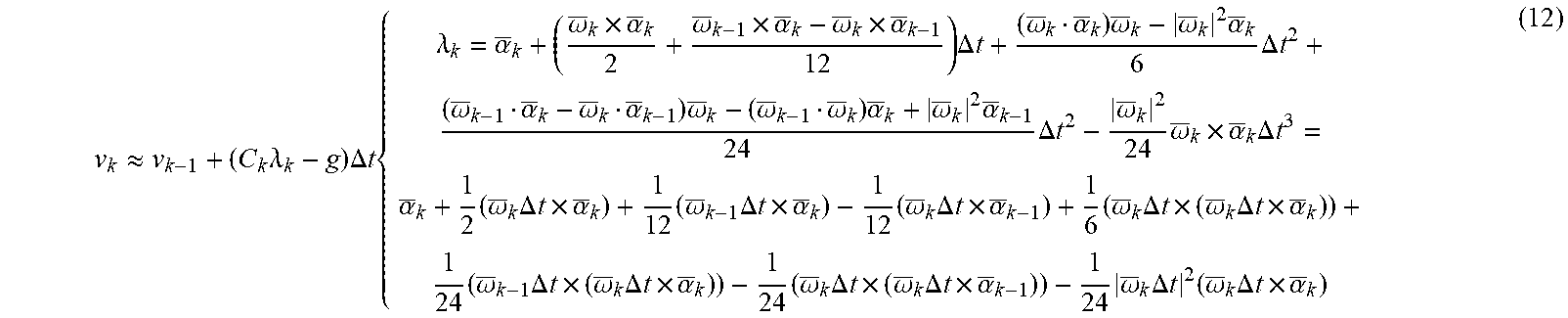

[0044] Here, the values of the acceleration vector $\alpha$ and the angular velocity vector $\omega$ are obtained only in the form of discrete average values. Thus, the motion velocity vector is calculated by performing an approximate calculation with Expression (12) at each short (sampling) interval.

$$
v_k \approx v_{k-1} + (C_k \lambda_k - g)\,\Delta t,
$$

$$
\begin{aligned}
\lambda_k &= \bar{\alpha}_k + \left( \frac{\bar{\omega}_k \times \bar{\alpha}_k}{2} + \frac{\bar{\omega}_{k-1} \times \bar{\alpha}_k - \bar{\omega}_k \times \bar{\alpha}_{k-1}}{12} \right)\Delta t
+ \frac{(\bar{\omega}_k \cdot \bar{\alpha}_k)\,\bar{\omega}_k - |\bar{\omega}_k|^2\,\bar{\alpha}_k}{6}\,\Delta t^2 \\
&\quad + \frac{(\bar{\omega}_{k-1} \cdot \bar{\alpha}_k - \bar{\omega}_k \cdot \bar{\alpha}_{k-1})\,\bar{\omega}_k - (\bar{\omega}_{k-1} \cdot \bar{\omega}_k)\,\bar{\alpha}_k + |\bar{\omega}_k|^2\,\bar{\alpha}_{k-1}}{24}\,\Delta t^2
- \frac{|\bar{\omega}_k|^2}{24}\,\bar{\omega}_k \times \bar{\alpha}_k\,\Delta t^3 \\
&= \bar{\alpha}_k + \frac{1}{2}\left(\bar{\omega}_k \Delta t \times \bar{\alpha}_k\right) + \frac{1}{12}\left(\bar{\omega}_{k-1} \Delta t \times \bar{\alpha}_k\right) - \frac{1}{12}\left(\bar{\omega}_k \Delta t \times \bar{\alpha}_{k-1}\right)
+ \frac{1}{6}\left(\bar{\omega}_k \Delta t \times \left(\bar{\omega}_k \Delta t \times \bar{\alpha}_k\right)\right) \\
&\quad + \frac{1}{24}\left(\bar{\omega}_{k-1} \Delta t \times \left(\bar{\omega}_k \Delta t \times \bar{\alpha}_k\right)\right) - \frac{1}{24}\left(\bar{\omega}_k \Delta t \times \left(\bar{\omega}_k \Delta t \times \bar{\alpha}_{k-1}\right)\right)
- \frac{1}{24}\,|\bar{\omega}_k \Delta t|^2 \left(\bar{\omega}_k \Delta t \times \bar{\alpha}_k\right)
\end{aligned} \tag{12}
$$

[0045] Expression (12) is obtained by Taylor expansion about $t = t_{k-1}$ up to the third-order term in $\Delta t$, taking into account the relation between the motion velocity vector $v$ and the integrals of the acceleration vector $\alpha$ and the angular velocity vector $\omega$ for each axis. Although a residual error $\varepsilon_\lambda$ remains in the third-order term, it is sufficiently small and is ignored in Expression (12). The symbol $\cdot$ indicates a dot product (scalar product) of three-dimensional vectors. For example, $v \cdot w$ is calculated as in Expression (13).

$$
v \cdot w = v_x w_x + v_y w_y + v_z w_z \tag{13}
$$
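The two forms of $\lambda_k$ in Expression (12), one written with dot products and one with nested cross products, can be compared numerically. The following is a minimal sketch; the helper names (`cross`, `dot`, `scale`, `add`, `lam_nested`, `lam_expanded`) and the sample sensor values are assumptions made for this example.

```python
def cross(v, w):
    """Cross product, Expression (7)."""
    return [v[1]*w[2] - v[2]*w[1], v[2]*w[0] - v[0]*w[2], v[0]*w[1] - v[1]*w[0]]

def dot(v, w):
    """Dot product, Expression (13)."""
    return sum(a * b for a, b in zip(v, w))

def scale(s, v):
    return [s * x for x in v]

def add(*vs):
    return [sum(c) for c in zip(*vs)]

def lam_nested(w_prev, w, a_prev, a, dt):
    """Second form of Expression (12), using nested cross products."""
    th_prev, th = scale(dt, w_prev), scale(dt, w)
    return add(
        a,
        scale(1/2, cross(th, a)),
        scale(1/12, cross(th_prev, a)),
        scale(-1/12, cross(th, a_prev)),
        scale(1/6, cross(th, cross(th, a))),
        scale(1/24, cross(th_prev, cross(th, a))),
        scale(-1/24, cross(th, cross(th, a_prev))),
        scale(-dot(th, th) / 24, cross(th, a)),
    )

def lam_expanded(w_prev, w, a_prev, a, dt):
    """First form of Expression (12), using dot products."""
    w2 = dot(w, w)
    t1 = add(scale(1/2, cross(w, a)),
             scale(1/12, cross(w_prev, a)),
             scale(-1/12, cross(w, a_prev)))
    t2 = add(scale(dot(w, a) / 6, w), scale(-w2 / 6, a))
    t3 = add(scale((dot(w_prev, a) - dot(w, a_prev)) / 24, w),
             scale(-dot(w_prev, w) / 24, a),
             scale(w2 / 24, a_prev))
    t4 = scale(-w2 / 24, cross(w, a))
    return add(a, scale(dt, t1), scale(dt**2, add(t2, t3)), scale(dt**3, t4))

# Hypothetical bias-corrected samples: rad/s for w, G for a.
w_prev, w = [0.1, -0.2, 0.3], [0.2, 0.1, -0.1]
a_prev, a = [0.0, 0.1, 1.0], [0.05, -0.02, 0.98]
dt = 0.01
lam_a = lam_nested(w_prev, w, a_prev, a, dt)
lam_b = lam_expanded(w_prev, w, a_prev, a, dt)
max_diff = max(abs(x - y) for x, y in zip(lam_a, lam_b))
```

The agreement follows from the vector triple product identity $u \times (v \times w) = (u \cdot w)\,v - (u \cdot v)\,w$; the nested form may be preferable in code because it reuses a single cross-product routine.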

1-1-5. Posture Quaternion and Error Thereof

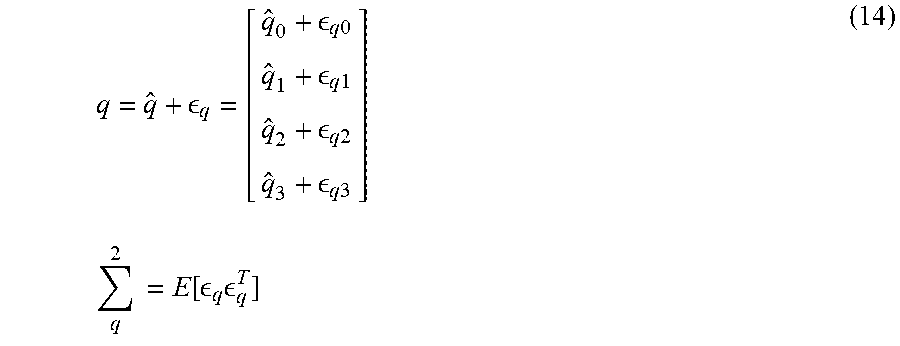

[0046] The calculated posture quaternion $q$ is considered to have an error $\varepsilon_q$ with respect to its true value $\hat{q}$ (the hat denotes a true value), as in Expression (14).

$$
q = \hat{q} + \varepsilon_q = \begin{bmatrix} \hat{q}_0 + \varepsilon_{q_0} \\ \hat{q}_1 + \varepsilon_{q_1} \\ \hat{q}_2 + \varepsilon_{q_2} \\ \hat{q}_3 + \varepsilon_{q_3} \end{bmatrix}, \qquad \Sigma_q^2 = E\!\left[\varepsilon_q \varepsilon_q^T\right] \tag{14}
$$

[0047] Here, $\Sigma_q^2$ represents an error covariance matrix indicating the magnitude of the error $\varepsilon_q$. $E[\,\cdot\,]$ indicates an expected value. The superscript $T$ indicates transposition of a vector or matrix.

[0048] The quaternion and its error each have four values, but a three-dimensional posture (rotational transformation) has only three degrees of freedom. The fourth degree of freedom of the posture quaternion corresponds to enlargement/reduction conversion. In posture detection processing, the enlargement/reduction ratio must normally be fixed to 1. In practice, however, the enlargement/reduction ratio component changes due to various calculation errors. Thus, processing of suppressing the change of the enlargement/reduction ratio is required.

[0049] In a case of the posture quaternion q, the square of the absolute value thereof corresponds to the enlargement/reduction ratio. Thus, the change is suppressed by normalization processing in which the absolute value is set to 1, as in Expression (15).

$$
q \leftarrow \frac{q}{|q|} = \frac{1}{\sqrt{q_0^2 + q_1^2 + q_2^2 + q_3^2}} \begin{bmatrix} q_0 \\ q_1 \\ q_2 \\ q_3 \end{bmatrix} \tag{15}
$$
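The normalization of Expression (15) amounts to dividing each element by the norm of the quaternion. A minimal sketch follows; the function name `normalize` and the sample quaternion are assumptions made for this example.

```python
import math

def normalize(q):
    """Expression (15): rescale the posture quaternion to unit absolute value."""
    norm = math.sqrt(sum(c * c for c in q))
    return tuple(c / norm for c in q)

# A quaternion whose absolute value has drifted to 2 (hypothetical values).
q = normalize((1.0, 1.0, 1.0, 1.0))
```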

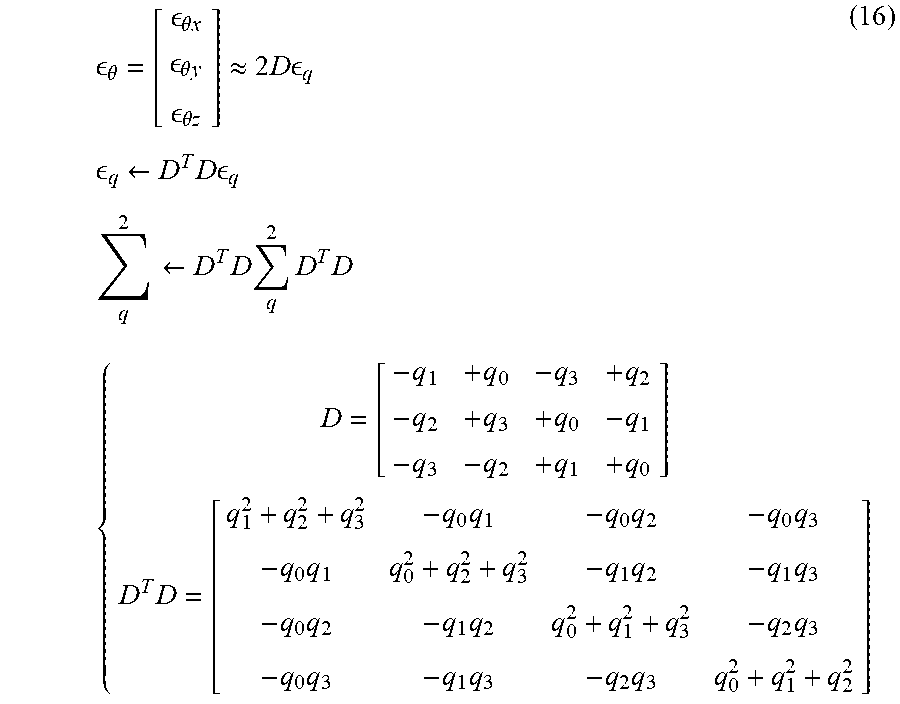

[0050] In a case of the posture error $\varepsilon_q$, it is necessary to hold the rank (order number) of the error covariance matrix $\Sigma_q^2$ to be three. Thus, the rank is limited as in Expression (16), considering a (three-dimensional) error rotation vector $\varepsilon_\theta$ in the local-space coordinate system (assuming that a posture error angle is sufficiently small).

$$
\varepsilon_\theta = \begin{bmatrix} \varepsilon_{\theta x} \\ \varepsilon_{\theta y} \\ \varepsilon_{\theta z} \end{bmatrix} \approx 2 D \varepsilon_q, \qquad
\varepsilon_q \leftarrow D^T D\, \varepsilon_q, \qquad
\Sigma_q^2 \leftarrow D^T D\, \Sigma_q^2\, D^T D \tag{16}
$$

$$
D = \begin{bmatrix}
-q_1 & +q_0 & -q_3 & +q_2 \\
-q_2 & +q_3 & +q_0 & -q_1 \\
-q_3 & -q_2 & +q_1 & +q_0
\end{bmatrix}, \qquad
D^T D = \begin{bmatrix}
q_1^2 + q_2^2 + q_3^2 & -q_0 q_1 & -q_0 q_2 & -q_0 q_3 \\
-q_0 q_1 & q_0^2 + q_2^2 + q_3^2 & -q_1 q_2 & -q_1 q_3 \\
-q_0 q_2 & -q_1 q_2 & q_0^2 + q_1^2 + q_3^2 & -q_2 q_3 \\
-q_0 q_3 & -q_1 q_3 & -q_2 q_3 & q_0^2 + q_1^2 + q_2^2
\end{bmatrix}
$$
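The effect of the matrix $D^T D$ in Expression (16) can be examined numerically: for a unit posture quaternion it acts as a projection that removes the error component along $q$ itself (the enlargement/reduction direction) and keeps the three rotational components. The following is a minimal sketch; the helper names (`d_matrix`, `transpose`, `matmul`, `mat_vec`) and the sample quaternion and covariance are assumptions made for this example.

```python
def d_matrix(q):
    """3x4 matrix D of Expression (16)."""
    q0, q1, q2, q3 = q
    return [[-q1,  q0, -q3,  q2],
            [-q2,  q3,  q0, -q1],
            [-q3, -q2,  q1,  q0]]

def transpose(m):
    return [list(r) for r in zip(*m)]

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def mat_vec(m, v):
    return [sum(m[i][j] * v[j] for j in range(len(v))) for i in range(len(m))]

q = (0.5, 0.5, 0.5, 0.5)               # hypothetical unit posture quaternion
D = d_matrix(q)
P = matmul(transpose(D), D)            # D^T D, a 4x4 matrix
trace = sum(P[i][i] for i in range(4))
P_times_q = mat_vec(P, q)              # component along q is removed
PP = matmul(P, P)                      # projecting twice changes nothing

# Rank limitation of a hypothetical isotropic 4x4 error covariance.
sigma = [[0.01 if i == j else 0.0 for j in range(4)] for i in range(4)]
sigma_limited = matmul(matmul(P, sigma), P)
limited_times_q = mat_vec(sigma_limited, q)
```

After the update, the covariance has no variance along $q$, so its rank is at most three.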

1-1-6. Removal (Ignoring) of Azimuth Error

[0051] When no azimuth observation section such as a magnetic sensor is provided for posture detection, the azimuthal component of the posture error only increases monotonically and serves no purpose. Further, the increased error estimate causes the feedback gain to increase unnecessarily, which causes the azimuth to change or vary unexpectedly. Thus, the azimuth error component $\varepsilon_{\theta z}$ is removed from Expression (16), as in Expression (17).

$$
\varepsilon_\theta' = \begin{bmatrix} \varepsilon_{\theta x} \\ \varepsilon_{\theta y} \end{bmatrix} \approx 2 D' \varepsilon_q, \qquad
\varepsilon_q \leftarrow D'^T D'\, \varepsilon_q, \qquad
\Sigma_q^2 \leftarrow D'^T D'\, \Sigma_q^2\, D'^T D' \tag{17}
$$

$$
D' = \begin{bmatrix}
-q_1 & +q_0 & -q_3 & +q_2 \\
-q_2 & +q_3 & +q_0 & -q_1
\end{bmatrix}, \qquad
D'^T D' = \begin{bmatrix}
q_1^2 + q_2^2 & -q_0 q_1 - q_2 q_3 & q_1 q_3 - q_0 q_2 & 0 \\
-q_0 q_1 - q_2 q_3 & q_0^2 + q_3^2 & 0 & q_0 q_2 - q_1 q_3 \\
q_1 q_3 - q_0 q_2 & 0 & q_0^2 + q_3^2 & -q_0 q_1 - q_2 q_3 \\
0 & q_0 q_2 - q_1 q_3 & -q_0 q_1 - q_2 q_3 & q_1^2 + q_2^2
\end{bmatrix}
$$
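The closed form of $D'^T D'$ in Expression (17) can be checked against a direct matrix product, and the removal of the azimuth component can be confirmed by applying it to the direction associated with $\varepsilon_{\theta z}$ (the third row of $D$ in Expression (16)). This is a minimal sketch; the helper names and the sample quaternion are assumptions made for this example.

```python
import math

def d_prime(q):
    """2x4 matrix D' of Expression (17): the first two rows of D."""
    q0, q1, q2, q3 = q
    return [[-q1, q0, -q3,  q2],
            [-q2, q3,  q0, -q1]]

def closed_form(q):
    """D'^T D' written out element by element."""
    q0, q1, q2, q3 = q
    a = q1*q1 + q2*q2
    b = q0*q0 + q3*q3
    c = -q0*q1 - q2*q3
    d = q1*q3 - q0*q2
    return [[a, c, d, 0.0],
            [c, b, 0.0, -d],
            [d, 0.0, b, c],
            [0.0, -d, c, a]]

def gram(m):
    """Compute m^T m for a matrix given as a list of rows."""
    n = len(m[0])
    return [[sum(row[i] * row[j] for row in m) for j in range(n)] for i in range(n)]

norm = math.sqrt(30.0)
q = tuple(c / norm for c in (1.0, 2.0, 3.0, 4.0))   # hypothetical unit quaternion
P2 = gram(d_prime(q))
ref = closed_form(q)
max_diff = max(abs(P2[i][j] - ref[i][j]) for i in range(4) for j in range(4))
trace = sum(P2[i][i] for i in range(4))

# Direction of the azimuth error in quaternion space (third row of D).
q0, q1, q2, q3 = q
azimuth_dir = [-q3, -q2, q1, q0]
removed = [sum(P2[i][j] * azimuth_dir[j] for j in range(4)) for i in range(4)]
```

The trace of 2 for a unit quaternion reflects that only the two tilt components (roll and pitch) are retained.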

1-1-7. Extended Kalman Filter

[0052] An extended Kalman filter that calculates a three-dimensional posture based on the above model expressions can be designed.

State Vector and Error Covariance Matrix

[0053] As in Expression (18), the posture quaternion $q$, the motion velocity vector $v$, the residual bias $b_\omega$ (offset to the angular velocity vector $\omega$) of the angular velocity sensor, the residual bias $b_\alpha$ (offset to the acceleration vector $\alpha$) of the acceleration sensor, and the gravitational-acceleration correction value $\Delta g$, as unknown state values to be obtained, constitute a state vector $x$ (14-dimensional vector) of the extended Kalman filter. In addition, the error covariance matrix $\Sigma_x^2$ is defined.

$$
x = \begin{bmatrix} q \\ v \\ b_\omega \\ b_\alpha \\ \Delta g \end{bmatrix}, \qquad
\Sigma_x^2 = E\!\left[\varepsilon_x \varepsilon_x^T\right], \qquad
\varepsilon_x = \begin{bmatrix} \varepsilon_q \\ \varepsilon_v \\ \varepsilon_{b_\omega} \\ \varepsilon_{b_\alpha} \\ \varepsilon_{\Delta g} \end{bmatrix}, \qquad
x = \hat{x} + \varepsilon_x \tag{18}
$$
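One possible way to lay out the 14-dimensional state vector of Expression (18) in software is shown below. This is a minimal sketch; the dictionary `STATE_LAYOUT`, its key names, the ordering of elements within a flat list, and the initial values are assumptions made for this example.

```python
# Index ranges of each block in the flat 14-element state vector.
STATE_LAYOUT = {
    "q": slice(0, 4),          # posture quaternion
    "v": slice(4, 7),          # motion velocity vector
    "b_omega": slice(7, 10),   # residual bias of the angular velocity sensor
    "b_alpha": slice(10, 13),  # residual bias of the acceleration sensor
    "delta_g": slice(13, 14),  # gravitational-acceleration correction value
}

def initial_state():
    """State with identity posture, zero velocity, zero biases, zero correction."""
    x = [0.0] * 14
    x[STATE_LAYOUT["q"]] = [1.0, 0.0, 0.0, 0.0]
    return x

x = initial_state()
dimension = sum(s.stop - s.start for s in STATE_LAYOUT.values())
```

With this layout, the error covariance matrix is a 14 by 14 matrix whose blocks are addressed by the same index ranges.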

Process Model

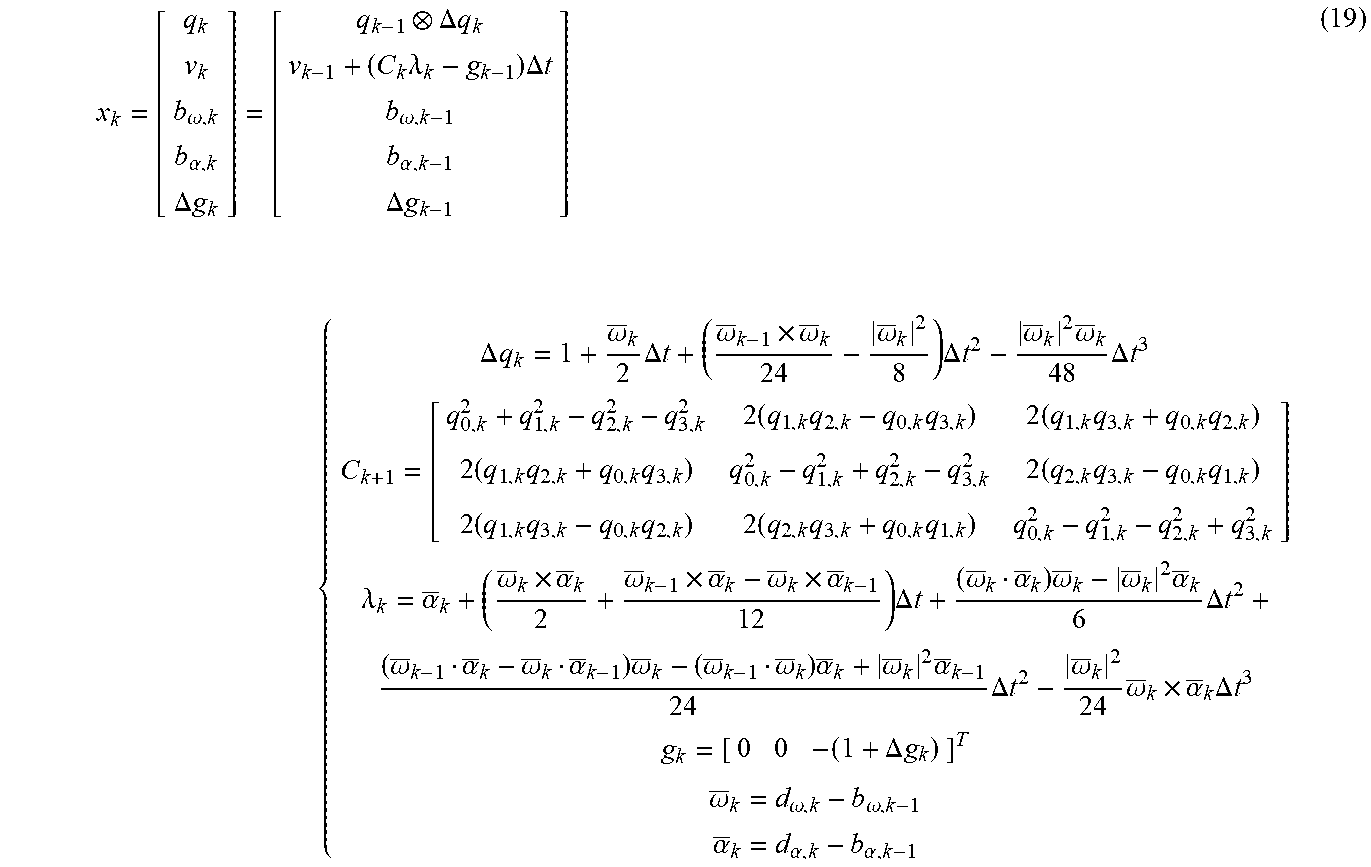

[0054] In a process model, the value of the latest state vector is predicted based on the sampling interval $\Delta t$ and the values of the angular velocity data $d_\omega$ and the acceleration data $d_\alpha$, as in Expression (19).

$$
x_k = \begin{bmatrix} q_k \\ v_k \\ b_{\omega,k} \\ b_{\alpha,k} \\ \Delta g_k \end{bmatrix}
= \begin{bmatrix}
q_{k-1} \otimes \Delta q_k \\
v_{k-1} + (C_k \lambda_k - g_{k-1})\,\Delta t \\
b_{\omega,k-1} \\
b_{\alpha,k-1} \\
\Delta g_{k-1}
\end{bmatrix} \tag{19}
$$

where

$$
\begin{aligned}
\Delta q_k &= 1 + \frac{\bar{\omega}_k}{2}\Delta t + \left( \frac{\bar{\omega}_{k-1} \times \bar{\omega}_k}{24} - \frac{|\bar{\omega}_k|^2}{8} \right)\Delta t^2 - \frac{|\bar{\omega}_k|^2\, \bar{\omega}_k}{48}\Delta t^3 \\
C_{k+1} &= \begin{bmatrix}
q_{0,k}^2 + q_{1,k}^2 - q_{2,k}^2 - q_{3,k}^2 & 2(q_{1,k} q_{2,k} - q_{0,k} q_{3,k}) & 2(q_{1,k} q_{3,k} + q_{0,k} q_{2,k}) \\
2(q_{1,k} q_{2,k} + q_{0,k} q_{3,k}) & q_{0,k}^2 - q_{1,k}^2 + q_{2,k}^2 - q_{3,k}^2 & 2(q_{2,k} q_{3,k} - q_{0,k} q_{1,k}) \\
2(q_{1,k} q_{3,k} - q_{0,k} q_{2,k}) & 2(q_{2,k} q_{3,k} + q_{0,k} q_{1,k}) & q_{0,k}^2 - q_{1,k}^2 - q_{2,k}^2 + q_{3,k}^2
\end{bmatrix} \\
\lambda_k &= \bar{\alpha}_k + \left( \frac{\bar{\omega}_k \times \bar{\alpha}_k}{2} + \frac{\bar{\omega}_{k-1} \times \bar{\alpha}_k - \bar{\omega}_k \times \bar{\alpha}_{k-1}}{12} \right)\Delta t
+ \frac{(\bar{\omega}_k \cdot \bar{\alpha}_k)\,\bar{\omega}_k - |\bar{\omega}_k|^2\,\bar{\alpha}_k}{6}\,\Delta t^2 \\
&\quad + \frac{(\bar{\omega}_{k-1} \cdot \bar{\alpha}_k - \bar{\omega}_k \cdot \bar{\alpha}_{k-1})\,\bar{\omega}_k - (\bar{\omega}_{k-1} \cdot \bar{\omega}_k)\,\bar{\alpha}_k + |\bar{\omega}_k|^2\,\bar{\alpha}_{k-1}}{24}\,\Delta t^2
- \frac{|\bar{\omega}_k|^2}{24}\,\bar{\omega}_k \times \bar{\alpha}_k\,\Delta t^3 \\
g_k &= \begin{bmatrix} 0 & 0 & -(1 + \Delta g_k) \end{bmatrix}^T \\
\bar{\omega}_k &= d_{\omega,k} - b_{\omega,k-1} \\
\bar{\alpha}_k &= d_{\alpha,k} - b_{\alpha,k-1}
\end{aligned}
$$

[0055] The covariance matrix of the state error is updated as in Expression (20), under the influence of the noise components $\eta_\omega$ and $\eta_\alpha$ of the angular velocity data $d_\omega$ and the acceleration data $d_\alpha$, and of the process noise $\rho_\omega$, $\rho_\alpha$, and $\rho_g$ indicating the instability of the residual bias $b_\omega$ of the angular velocity sensor, the residual bias $b_\alpha$ of the acceleration sensor, and the value (gravitational acceleration value) of the gravitational acceleration vector $g$.

$$
\Sigma_{x,k}^2 = A_k\, \Sigma_{x,k-1}^2\, A_k^T + W_k\, \Sigma_{\rho,k}^2\, W_k^T \tag{20}
$$

where

$$
A_k \approx \begin{bmatrix}
J_{q/q,k} & 0_{4\times3} & -J_{q/\omega,k} & 0_{4\times3} & 0_{4\times1} \\
J_{v/q,k} & I_{3\times3} & 0_{3\times3} & -C_k & 0_{3\times1} \\
0_{3\times4} & 0_{3\times3} & I_{3\times3} & 0_{3\times3} & 0_{3\times1} \\
0_{3\times4} & 0_{3\times3} & 0_{3\times3} & I_{3\times3} & 0_{3\times1} \\
0_{1\times4} & 0_{1\times3} & 0_{1\times3} & 0_{1\times3} & I_{1\times1}
\end{bmatrix}
$$

$$
W_k \approx \begin{bmatrix}
J_{q/\omega,k} & 0_{4\times3} & 0_{4\times3} & 0_{4\times3} & 0_{4\times1} \\
0_{3\times3} & C_k & 0_{3\times3} & 0_{3\times3} & 0_{3\times1} \\
0_{3\times3} & 0_{3\times3} & \Delta t\, I_{3\times3} & 0_{3\times3} & 0_{3\times1} \\
0_{3\times3} & 0_{3\times3} & 0_{3\times3} & \Delta t\, I_{3\times3} & 0_{3\times1} \\
0_{1\times3} & 0_{1\times3} & 0_{1\times3} & 0_{1\times3} & \Delta t\, I_{1\times1}
\end{bmatrix}
$$

$$
\Sigma_{\rho,k}^2 = \begin{bmatrix}
\Sigma_{\eta\omega,k}^2 & 0_{3\times3} & 0_{3\times3} & 0_{3\times3} & 0_{3\times1} \\
0_{3\times3} & \Sigma_{\eta\alpha,k}^2 & 0_{3\times3} & 0_{3\times3} & 0_{3\times1} \\
0_{3\times3} & 0_{3\times3} & \Sigma_{\rho\omega}^2 & 0_{3\times3} & 0_{3\times1} \\
0_{3\times3} & 0_{3\times3} & 0_{3\times3} & \Sigma_{\rho\alpha}^2 & 0_{3\times1} \\
0_{1\times3} & 0_{1\times3} & 0_{1\times3} & 0_{1\times3} & \sigma_{\rho g}^2\, I_{1\times1}
\end{bmatrix}
$$

[0056] Here, 0.sub.n.times.m indicates a zero matrix having n rows and m columns. I.sub.n.times.m indicates an identity matrix having n rows and m columns. J with a subscript indicates a matrix of propagation coefficients of each error obtained by partial differentiation of the process model, as in Expression (21). .SIGMA. with a subscript indicates a covariance matrix of each type of noise, as in Expression (22).

$$
\begin{aligned}
J_{q/q,k} &= \begin{bmatrix}
+\Delta q_{0,k} & -\Delta q_{1,k} & -\Delta q_{2,k} & -\Delta q_{3,k} \\
+\Delta q_{1,k} & +\Delta q_{0,k} & +\Delta q_{3,k} & -\Delta q_{2,k} \\
+\Delta q_{2,k} & -\Delta q_{3,k} & +\Delta q_{0,k} & +\Delta q_{1,k} \\
+\Delta q_{3,k} & +\Delta q_{2,k} & -\Delta q_{1,k} & +\Delta q_{0,k}
\end{bmatrix} \\
J_{q/\omega,k} &= \frac{1}{2} \begin{bmatrix}
-q_{1,k-1} & -q_{2,k-1} & -q_{3,k-1} \\
+q_{0,k-1} & -q_{3,k-1} & +q_{2,k-1} \\
+q_{3,k-1} & +q_{0,k-1} & -q_{1,k-1} \\
-q_{2,k-1} & +q_{1,k-1} & +q_{0,k-1}
\end{bmatrix} \Delta t \\
J_{v/q,k} &= 2 \begin{bmatrix}
+r_{1,k} & +r_{0,k} & +r_{3,k} & -r_{2,k} \\
+r_{2,k} & -r_{3,k} & +r_{0,k} & +r_{1,k} \\
0 & 0 & 0 & 0
\end{bmatrix} \Delta t, \quad
\begin{bmatrix} r_{0,k} \\ r_{1,k} \\ r_{2,k} \\ r_{3,k} \end{bmatrix} = \begin{bmatrix}
+q_{1,k-1} & +q_{2,k-1} & +q_{3,k-1} \\
+q_{0,k-1} & -q_{3,k-1} & +q_{2,k-1} \\
+q_{3,k-1} & +q_{0,k-1} & -q_{1,k-1} \\
-q_{2,k-1} & +q_{1,k-1} & +q_{0,k-1}
\end{bmatrix} \lambda_k
\end{aligned} \tag{21}
$$

$$
\begin{aligned}
\Sigma_{\eta\omega,k}^{2} &= E\left[\eta_\omega \eta_\omega^{T}\right] = \begin{bmatrix} \sigma_{\eta\omega x,k}^{2} & 0 & 0 \\ 0 & \sigma_{\eta\omega y,k}^{2} & 0 \\ 0 & 0 & \sigma_{\eta\omega z,k}^{2} \end{bmatrix} \\
\Sigma_{\eta\alpha,k}^{2} &= E\left[\eta_\alpha \eta_\alpha^{T}\right] = \begin{bmatrix} \sigma_{\eta\alpha x,k}^{2} & 0 & 0 \\ 0 & \sigma_{\eta\alpha y,k}^{2} & 0 \\ 0 & 0 & \sigma_{\eta\alpha z,k}^{2} \end{bmatrix} \\
\Sigma_{\rho\omega}^{2} &= E\left[\rho_\omega \rho_\omega^{T}\right] = \begin{bmatrix} \sigma_{\rho\omega x,k}^{2} & 0 & 0 \\ 0 & \sigma_{\rho\omega y,k}^{2} & 0 \\ 0 & 0 & \sigma_{\rho\omega z,k}^{2} \end{bmatrix} \\
\Sigma_{\rho\alpha}^{2} &= E\left[\rho_\alpha \rho_\alpha^{T}\right] = \begin{bmatrix} \sigma_{\rho\alpha x,k}^{2} & 0 & 0 \\ 0 & \sigma_{\rho\alpha y,k}^{2} & 0 \\ 0 & 0 & \sigma_{\rho\alpha z,k}^{2} \end{bmatrix}
\end{aligned} \tag{22}
$$
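The structure of the covariance update in Expression (20), a propagated term plus an injected noise term, can be illustrated with a small Python sketch. The helper names `matmul`, `transpose`, and `propagate_covariance` are hypothetical, matrices are plain nested lists, and the two-state matrices in the usage note are a toy stand-in rather than the 14-state A.sub.k and W.sub.k of the embodiment.

```python
def matmul(a, b):
    """Product of two matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(a):
    """Transpose of a matrix given as nested lists."""
    return [list(col) for col in zip(*a)]


def propagate_covariance(sigma, a_mat, w_mat, sigma_rho):
    """Sigma <- A Sigma A^T + W Sigma_rho W^T, the form of Expression (20)."""
    propagated = matmul(matmul(a_mat, sigma), transpose(a_mat))
    injected = matmul(matmul(w_mat, sigma_rho), transpose(w_mat))
    return [[p + n for p, n in zip(row_p, row_n)]
            for row_p, row_n in zip(propagated, injected)]
```

As a toy usage, `propagate_covariance([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.5], [0.0, 1.0]], [[0.0], [0.5]], [[4.0]])` couples the two states through the off-diagonal entry of A and adds noise only to the second state.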

Observation Model

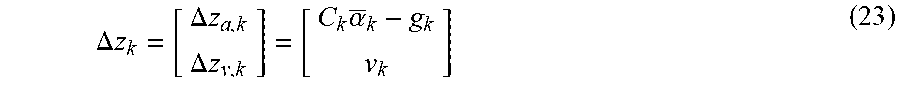

[0057] In an observation model, an observation residual .DELTA.z in which an observation residual .DELTA.z.sub.a of the gravitational acceleration based on acceleration data d.sub..alpha. and an observation residual .DELTA.z.sub.v of the zero motion velocity are used as elements is calculated as in Expression (23).

$$
\Delta z_k = \begin{bmatrix} \Delta z_{a,k} \\ \Delta z_{v,k} \end{bmatrix} = \begin{bmatrix} C_k \bar{\alpha}_k - g_k \\ v_k \end{bmatrix} \tag{23}
$$

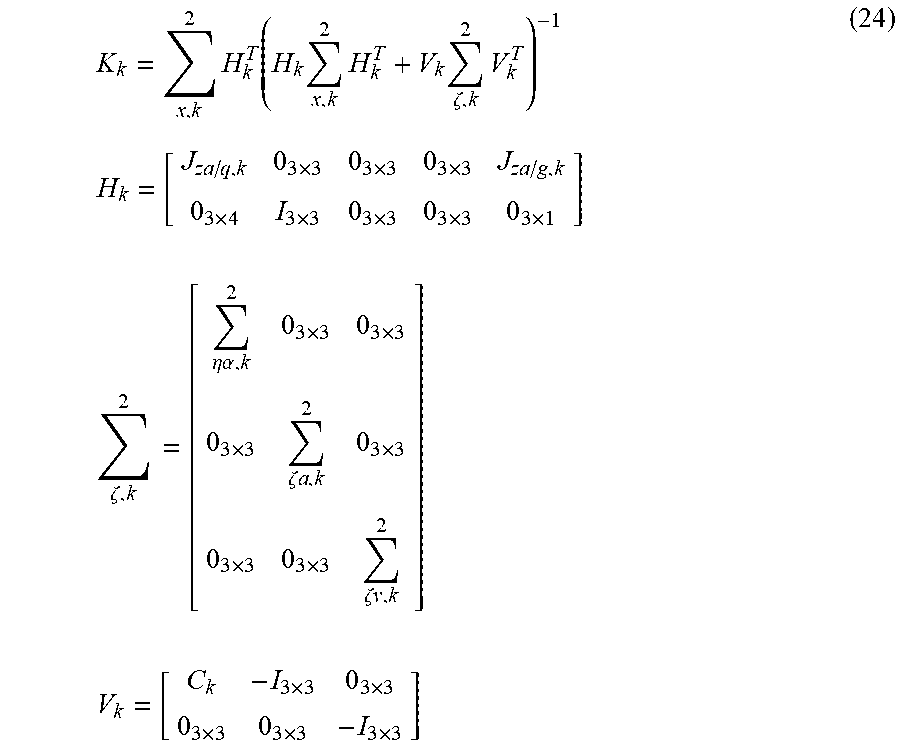

[0058] Here, a Kalman coefficient K is calculated, as in Expression (24), by adding the noise component .eta..sub..alpha. of the acceleration data d.sub..alpha. and the motion acceleration component .zeta..sub.a and the motion velocity component .zeta..sub.v as observation errors.

$$
\begin{aligned}
K_k &= \Sigma_{x,k}^{2} H_k^{T} \left( H_k \Sigma_{x,k}^{2} H_k^{T} + V_k \Sigma_{\zeta,k}^{2} V_k^{T} \right)^{-1} \\
H_k &= \begin{bmatrix}
J_{za/q,k} & 0_{3\times3} & 0_{3\times3} & 0_{3\times3} & J_{za/g,k} \\
0_{3\times4} & I_{3\times3} & 0_{3\times3} & 0_{3\times3} & 0_{3\times1}
\end{bmatrix} \\
\Sigma_{\zeta,k}^{2} &= \begin{bmatrix}
\Sigma_{\eta\alpha,k}^{2} & 0_{3\times3} & 0_{3\times3} \\
0_{3\times3} & \Sigma_{\zeta a,k}^{2} & 0_{3\times3} \\
0_{3\times3} & 0_{3\times3} & \Sigma_{\zeta v,k}^{2}
\end{bmatrix} \\
V_k &= \begin{bmatrix}
C_k & -I_{3\times3} & 0_{3\times3} \\
0_{3\times3} & 0_{3\times3} & -I_{3\times3}
\end{bmatrix}
\end{aligned} \tag{24}
$$

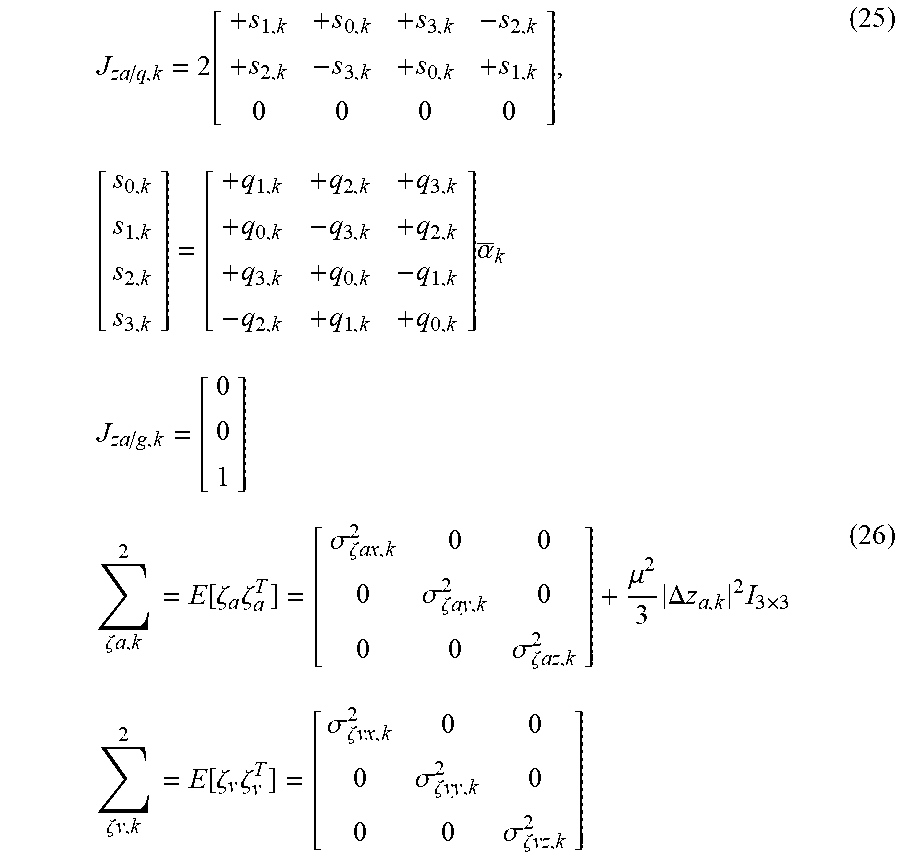

[0059] Here, J with a subscript indicates a matrix of propagation coefficients of each error obtained by partial differentiation of the observation model, as in Expression (25). .SIGMA. with a subscript indicates a covariance matrix of each type of noise, as in Expression (26).

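$$
\begin{aligned}
J_{za/q,k} &= 2 \begin{bmatrix}
+s_{1,k} & +s_{0,k} & +s_{3,k} & -s_{2,k} \\
+s_{2,k} & -s_{3,k} & +s_{0,k} & +s_{1,k} \\
0 & 0 & 0 & 0
\end{bmatrix}, \quad
\begin{bmatrix} s_{0,k} \\ s_{1,k} \\ s_{2,k} \\ s_{3,k} \end{bmatrix} = \begin{bmatrix}
+q_{1,k} & +q_{2,k} & +q_{3,k} \\
+q_{0,k} & -q_{3,k} & +q_{2,k} \\
+q_{3,k} & +q_{0,k} & -q_{1,k} \\
-q_{2,k} & +q_{1,k} & +q_{0,k}
\end{bmatrix} \bar{\alpha}_k \\
J_{za/g,k} &= \begin{bmatrix} 0 \\ 0 \\ 1 \end{bmatrix}
\end{aligned} \tag{25}
$$

$$
\begin{aligned}
\Sigma_{\zeta a,k}^{2} &= E\left[\zeta_a \zeta_a^{T}\right] = \begin{bmatrix} \sigma_{\zeta ax,k}^{2} & 0 & 0 \\ 0 & \sigma_{\zeta ay,k}^{2} & 0 \\ 0 & 0 & \sigma_{\zeta az,k}^{2} \end{bmatrix} + \frac{\mu^{2}}{3} \left\lvert \Delta z_{a,k} \right\rvert^{2} I_{3\times3} \\
\Sigma_{\zeta v,k}^{2} &= E\left[\zeta_v \zeta_v^{T}\right] = \begin{bmatrix} \sigma_{\zeta vx,k}^{2} & 0 & 0 \\ 0 & \sigma_{\zeta vy,k}^{2} & 0 \\ 0 & 0 & \sigma_{\zeta vz,k}^{2} \end{bmatrix}
\end{aligned} \tag{26}
$$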

[0060] Here, .mu. is a coefficient for calculating the RMS of the error of each axis from the predicted motion acceleration. The state vector x is corrected, as in Expression (27), and the error covariance matrix .SIGMA..sub.x.sup.2 thereof is updated, by using the Kalman coefficient K.

$$
\begin{aligned}
x_k &\leftarrow x_k - K_k\, \Delta z_k \\
\Sigma_{x,k}^{2} &\leftarrow \Sigma_{x,k}^{2} - K_k H_k \Sigma_{x,k}^{2}
\end{aligned} \tag{27}
$$

Posture Normalization Model

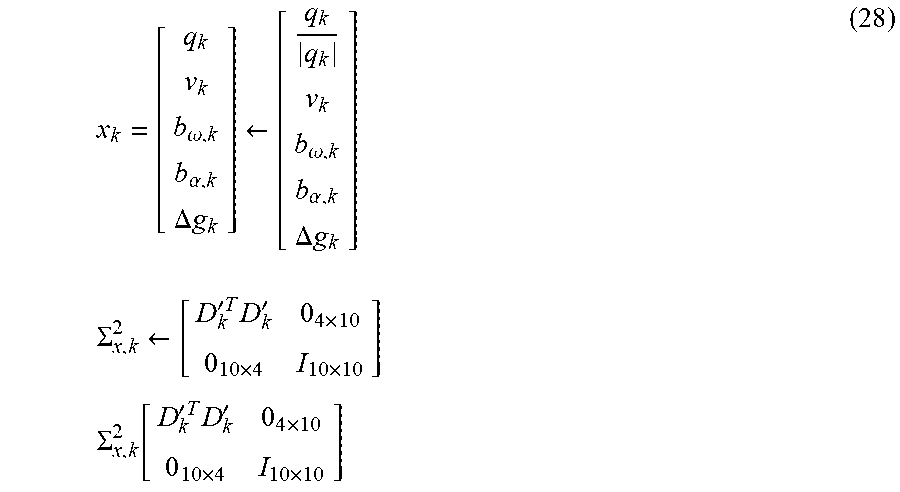

[0061] In the posture normalization model, the state vector and the error covariance matrix are updated as in Expression (28) in order to keep the posture quaternion and the error covariance thereof at proper values.

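$$
\begin{aligned}
x_k = \begin{bmatrix} q_k \\ v_k \\ b_{\omega,k} \\ b_{\alpha,k} \\ \Delta g_k \end{bmatrix} &\leftarrow \begin{bmatrix} \dfrac{q_k}{\lvert q_k \rvert} \\ v_k \\ b_{\omega,k} \\ b_{\alpha,k} \\ \Delta g_k \end{bmatrix} \\
\Sigma_{x,k}^{2} &\leftarrow \begin{bmatrix} D_k'^{T} D_k' & 0_{4\times10} \\ 0_{10\times4} & I_{10\times10} \end{bmatrix} \Sigma_{x,k}^{2} \begin{bmatrix} D_k'^{T} D_k' & 0_{4\times10} \\ 0_{10\times4} & I_{10\times10} \end{bmatrix}
\end{aligned} \tag{28}
$$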

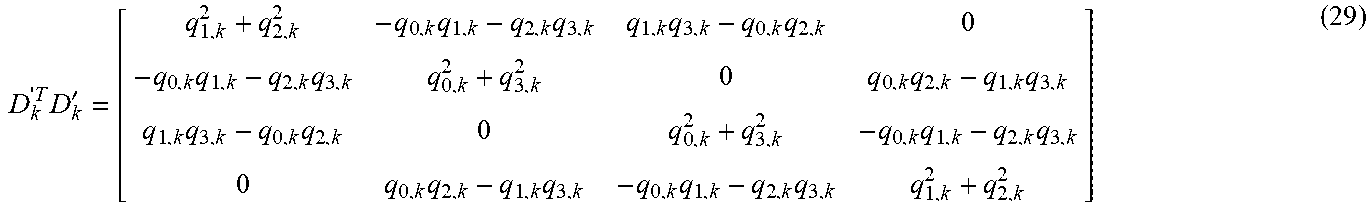

[0062] Here, D'.sup.TD' indicates a matrix of Expression (29) for limiting the rank of the posture error and removing the azimuthal component.

$$
D_k'^{T} D_k' = \begin{bmatrix}
q_{1,k}^{2} + q_{2,k}^{2} & -q_{0,k} q_{1,k} - q_{2,k} q_{3,k} & q_{1,k} q_{3,k} - q_{0,k} q_{2,k} & 0 \\
-q_{0,k} q_{1,k} - q_{2,k} q_{3,k} & q_{0,k}^{2} + q_{3,k}^{2} & 0 & q_{0,k} q_{2,k} - q_{1,k} q_{3,k} \\
q_{1,k} q_{3,k} - q_{0,k} q_{2,k} & 0 & q_{0,k}^{2} + q_{3,k}^{2} & -q_{0,k} q_{1,k} - q_{2,k} q_{3,k} \\
0 & q_{0,k} q_{2,k} - q_{1,k} q_{3,k} & -q_{0,k} q_{1,k} - q_{2,k} q_{3,k} & q_{1,k}^{2} + q_{2,k}^{2}
\end{bmatrix} \tag{29}
$$
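The matrix of Expression (29) can be checked numerically by forming it from the 2-by-4 matrix D' given in Expression (32). The following Python sketch is an illustration only: the function names are hypothetical, the quaternion is stored scalar-first, and only the 4-by-4 posture block of Expression (28) is handled, not the full 14-state matrix.

```python
import math


def normalize(q):
    """Scale a quaternion to unit norm (first update of Expression (28))."""
    n = math.sqrt(sum(c * c for c in q))
    return tuple(c / n for c in q)


def d_prime(q):
    """The 2x4 matrix D' as written in Expression (32)."""
    q0, q1, q2, q3 = q
    return [[-q1, q0, -q3, q2],
            [-q2, q3, q0, -q1]]


def dtd(q):
    """The 4x4 product D'^T D' of Expression (29)."""
    d = d_prime(q)
    return [[sum(d[r][i] * d[r][j] for r in range(2)) for j in range(4)]
            for i in range(4)]


def project_posture_block(sigma_q, q):
    """Posture block of Expression (28): (D'^T D') Sigma_q (D'^T D')."""
    p = dtd(q)
    tmp = [[sum(p[i][k] * sigma_q[k][j] for k in range(4)) for j in range(4)]
           for i in range(4)]
    return [[sum(tmp[i][k] * p[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]
```

For a unit quaternion the two rows of D' are orthonormal and both are orthogonal to q, so D'^T D' behaves as a rank-2 projector: applying it twice gives the same result as applying it once, and it maps q itself to zero. This is consistent with the description that the matrix limits the rank of the posture error.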

1-1-8. Initial Value

State Vector and Error Covariance Matrix

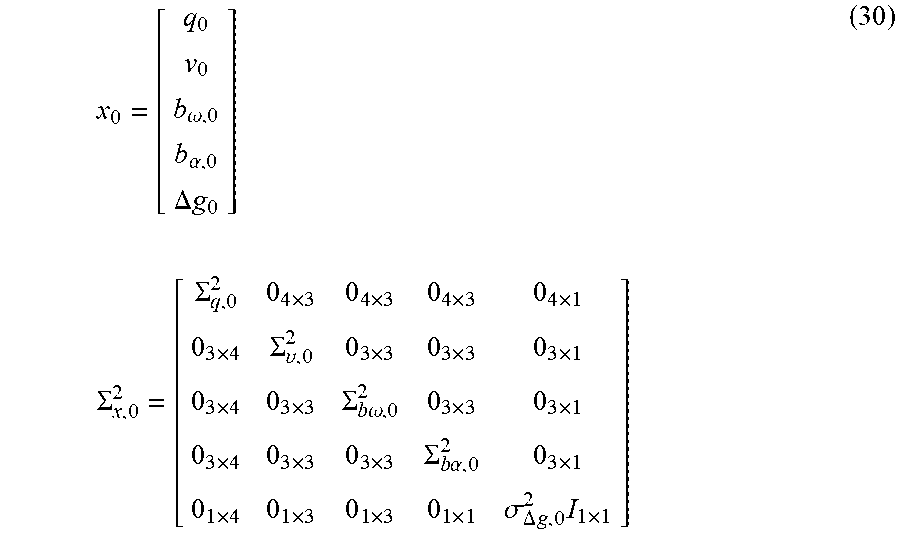

[0063] Initial values of the state vector x and the error covariance matrix .SIGMA..sub.x.sup.2 are given as in Expression (30).

$$
x_0 = \begin{bmatrix} q_0 \\ v_0 \\ b_{\omega,0} \\ b_{\alpha,0} \\ \Delta g_0 \end{bmatrix}, \quad
\Sigma_{x,0}^{2} = \begin{bmatrix}
\Sigma_{q,0}^{2} & 0_{4\times3} & 0_{4\times3} & 0_{4\times3} & 0_{4\times1} \\
0_{3\times4} & \Sigma_{v,0}^{2} & 0_{3\times3} & 0_{3\times3} & 0_{3\times1} \\
0_{3\times4} & 0_{3\times3} & \Sigma_{b\omega,0}^{2} & 0_{3\times3} & 0_{3\times1} \\
0_{3\times4} & 0_{3\times3} & 0_{3\times3} & \Sigma_{b\alpha,0}^{2} & 0_{3\times1} \\
0_{1\times4} & 0_{1\times3} & 0_{1\times3} & 0_{1\times3} & \sigma_{\Delta g,0}^{2}\, I_{1\times1}
\end{bmatrix} \tag{30}
$$

Posture Quaternion

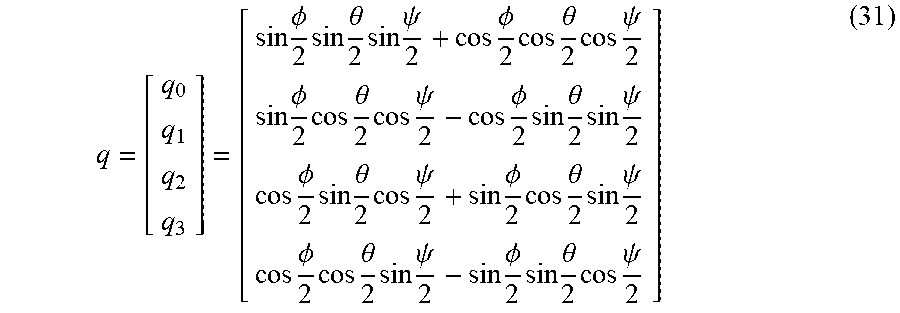

[0064] It is necessary that the posture of the IMU is given in quaternion expression. The posture quaternion q can be calculated from a roll (bank) angle .phi. [rad], a pitch (elevation) angle .theta. [rad], and a yaw angle (azimuth) .psi. [rad] used for the posture and the like of an aircraft, as in Expression (31).

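$$
q = \begin{bmatrix} q_0 \\ q_1 \\ q_2 \\ q_3 \end{bmatrix} = \begin{bmatrix}
\sin\frac{\phi}{2} \sin\frac{\theta}{2} \sin\frac{\psi}{2} + \cos\frac{\phi}{2} \cos\frac{\theta}{2} \cos\frac{\psi}{2} \\
\sin\frac{\phi}{2} \cos\frac{\theta}{2} \cos\frac{\psi}{2} - \cos\frac{\phi}{2} \sin\frac{\theta}{2} \sin\frac{\psi}{2} \\
\cos\frac{\phi}{2} \sin\frac{\theta}{2} \cos\frac{\psi}{2} + \sin\frac{\phi}{2} \cos\frac{\theta}{2} \sin\frac{\psi}{2} \\
\cos\frac{\phi}{2} \cos\frac{\theta}{2} \sin\frac{\psi}{2} - \sin\frac{\phi}{2} \sin\frac{\theta}{2} \cos\frac{\psi}{2}
\end{bmatrix} \tag{31}
$$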
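Expression (31) translates directly into code. The sketch below is illustrative; the function name `euler_to_quaternion` and the scalar-first tuple layout are assumptions made for the example.

```python
import math


def euler_to_quaternion(roll, pitch, yaw):
    """Posture quaternion (q0, q1, q2, q3) from roll, pitch, yaw [rad], per Expression (31)."""
    sr, cr = math.sin(roll / 2.0), math.cos(roll / 2.0)
    sp, cp = math.sin(pitch / 2.0), math.cos(pitch / 2.0)
    sy, cy = math.sin(yaw / 2.0), math.cos(yaw / 2.0)
    return (sr * sp * sy + cr * cp * cy,
            sr * cp * cy - cr * sp * sy,
            cr * sp * cy + sr * cp * sy,
            cr * cp * sy - sr * sp * cy)
```

With all three angles at zero the result is the identity quaternion (1, 0, 0, 0), and any combination of angles yields a unit quaternion.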

[0065] The error covariance matrix .SIGMA..sub.q.sup.2 is calculated from RMS.sigma..sub..PHI. [rad RMS] of a roll angle error and RMS.sigma..sub..theta. [rad RMS] of a pitch angle error, as in Expression (32) (yaw angle error is ignored).

$$
\Sigma_q^{2} = \frac{1}{4} D'^{T} \begin{bmatrix} \sigma_\phi^{2} & 0 \\ 0 & \sigma_\theta^{2} \end{bmatrix} D', \quad
D' = \begin{bmatrix} -q_1 & +q_0 & -q_3 & +q_2 \\ -q_2 & +q_3 & +q_0 & -q_1 \end{bmatrix} \tag{32}
$$
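As a worked illustration of Expression (32), the following Python sketch builds the initial 4-by-4 posture covariance from the roll and pitch RMS errors. The function name `initial_posture_covariance` is hypothetical and the quaternion is assumed to be stored scalar-first.

```python
def initial_posture_covariance(q, sigma_roll, sigma_pitch):
    """Sigma_q^2 = (1/4) D'^T diag(sigma_roll^2, sigma_pitch^2) D', per Expression (32).

    q: posture quaternion (q0, q1, q2, q3); sigma_roll, sigma_pitch in [rad RMS].
    """
    q0, q1, q2, q3 = q
    d = [[-q1, q0, -q3, q2],
         [-q2, q3, q0, -q1]]
    var = (sigma_roll ** 2, sigma_pitch ** 2)
    return [[0.25 * sum(d[r][i] * var[r] * d[r][j] for r in range(2))
             for j in range(4)]
            for i in range(4)]
```

For the identity quaternion (1, 0, 0, 0), only the q.sub.1 and q.sub.2 diagonal entries are non-zero, with values .sigma..sub..phi..sup.2/4 and .sigma..sub..theta..sup.2/4; the yaw-related entry for q.sub.3 stays at zero, matching the statement that the yaw angle error is ignored.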

Motion Velocity Vector

[0066] If the initial state is stationary, the motion velocity vector v may be set to 0. The error covariance matrix .SIGMA..sub.v.sup.2 is given based on RMS.sigma..sub.vx, RMS.sigma..sub.vy, and RMS.sigma..sub.vz [Gs RMS] of the errors of the motion velocity vector v in the axes, regardless of whether the initial state is stationary, as in Expression (33).

$$
\Sigma_v^{2} = \begin{bmatrix} \sigma_{vx}^{2} & 0 & 0 \\ 0 & \sigma_{vy}^{2} & 0 \\ 0 & 0 & \sigma_{vz}^{2} \end{bmatrix} \tag{33}
$$

Residual Biases of Angular Velocity/Acceleration Sensor

[0067] If the residual bias b.sub..omega. of the angular velocity sensor and the residual bias b.sub..alpha. of the acceleration sensor are known, the residual biases are required to be appropriately set. When the residual biases are unknown, zero as an expected value is given to the residual biases. Error covariance matrices .SIGMA..sub.b.omega..sup.2 and .SIGMA..sub.b.alpha..sup.2 are given based on the errors RMS.sigma..sub.b.omega.x, RMS.sigma..sub.b.omega.y, and RMS.sigma..sub.b.omega.z [rad/s RMS] of the residual biases of the angular velocity sensor in the axes and the errors RMS.sigma..sub.b.alpha.x, RMS.sigma..sub.b.alpha.y, and RMS.sigma..sub.b.alpha.z [G RMS] of the residual biases of the acceleration sensor in the axes, as in Expression (34).

$$
\Sigma_{b\omega}^{2} = \begin{bmatrix} \sigma_{b\omega x}^{2} & 0 & 0 \\ 0 & \sigma_{b\omega y}^{2} & 0 \\ 0 & 0 & \sigma_{b\omega z}^{2} \end{bmatrix}, \quad
\Sigma_{b\alpha}^{2} = \begin{bmatrix} \sigma_{b\alpha x}^{2} & 0 & 0 \\ 0 & \sigma_{b\alpha y}^{2} & 0 \\ 0 & 0 & \sigma_{b\alpha z}^{2} \end{bmatrix} \tag{34}
$$

Gravitational-Acceleration Correction Value

[0068] If the gravitational acceleration value is known, a difference from the standard value 1 [G] (=9.80665 [m/s.sup.2]) is required to be appropriately set. When the gravitational acceleration value is unknown, zero as an expected value is given to the gravitational-acceleration correction value. RMS.sigma..sub..DELTA.g [G RMS] of the error of the gravitational acceleration value is applied to the error covariance matrix.

1-1-9. Setting Value

Sampling Interval

[0069] Since the posture detection processing from the output of the IMU corresponds to a time integration operation in principle, the sampling interval .DELTA.t [s] is an important value, and thus is required to be appropriately set.

Output Noise of Angular Velocity/Acceleration Sensor

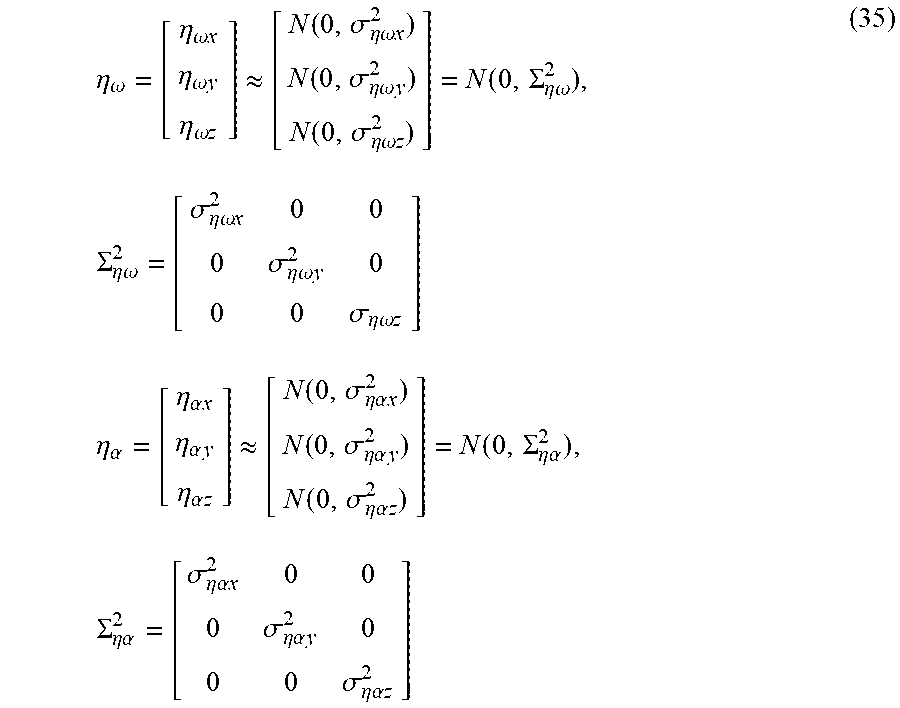

[0070] The noise components .eta. included in the outputs of the angular velocity sensor and the acceleration sensor are treated as zero-mean white Gaussian noise with variance .sigma..sub..eta..sup.2, independent for each axis. The magnitude of the noise components is designated by the corresponding RMS values (.sigma..sub..eta..omega.x, .sigma..sub..eta..omega.y, .sigma..sub..eta..omega.z) [rad/s RMS] and (.sigma..sub..eta..alpha.x, .sigma..sub..eta..alpha.y, .sigma..sub..eta..alpha.z) [G RMS], as in Expression (35).

$$
\begin{aligned}
\eta_\omega &= \begin{bmatrix} \eta_{\omega x} \\ \eta_{\omega y} \\ \eta_{\omega z} \end{bmatrix} \approx \begin{bmatrix} N\left(0, \sigma_{\eta\omega x}^{2}\right) \\ N\left(0, \sigma_{\eta\omega y}^{2}\right) \\ N\left(0, \sigma_{\eta\omega z}^{2}\right) \end{bmatrix} = N\left(0, \Sigma_{\eta\omega}^{2}\right), &
\Sigma_{\eta\omega}^{2} &= \begin{bmatrix} \sigma_{\eta\omega x}^{2} & 0 & 0 \\ 0 & \sigma_{\eta\omega y}^{2} & 0 \\ 0 & 0 & \sigma_{\eta\omega z}^{2} \end{bmatrix} \\
\eta_\alpha &= \begin{bmatrix} \eta_{\alpha x} \\ \eta_{\alpha y} \\ \eta_{\alpha z} \end{bmatrix} \approx \begin{bmatrix} N\left(0, \sigma_{\eta\alpha x}^{2}\right) \\ N\left(0, \sigma_{\eta\alpha y}^{2}\right) \\ N\left(0, \sigma_{\eta\alpha z}^{2}\right) \end{bmatrix} = N\left(0, \Sigma_{\eta\alpha}^{2}\right), &
\Sigma_{\eta\alpha}^{2} &= \begin{bmatrix} \sigma_{\eta\alpha x}^{2} & 0 & 0 \\ 0 & \sigma_{\eta\alpha y}^{2} & 0 \\ 0 & 0 & \sigma_{\eta\alpha z}^{2} \end{bmatrix}
\end{aligned} \tag{35}
$$

Biases of Angular Velocity/Acceleration Sensor and Instability of Gravitational Acceleration Value

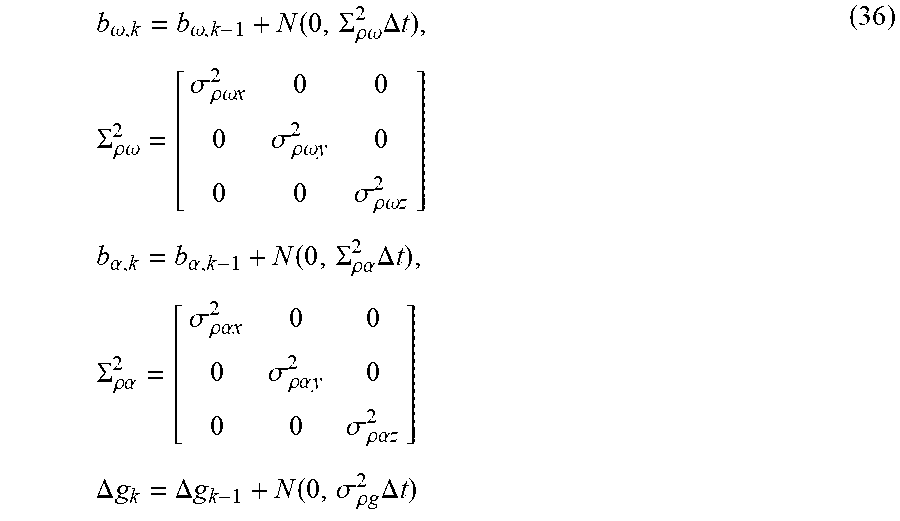

[0071] The biases of the angular velocity sensor and the acceleration sensor are considered not to be constant but to change with time. The gravitational acceleration value is also considered to change slightly depending on the surrounding environment. Treating each change as an individual random walk, the instability is designated by (.sigma..sub..rho..omega.x, .sigma..sub..rho..omega.y, .sigma..sub..rho..omega.z) [rad/s/√s], (.sigma..sub..rho..alpha.x, .sigma..sub..rho..alpha.y, .sigma..sub..rho..alpha.z) [G/√s], and .sigma..sub..rho.g [G/√s], as in Expression (36).

$$
\begin{aligned}
b_{\omega,k} &= b_{\omega,k-1} + N\left(0, \Sigma_{\rho\omega}^{2} \Delta t\right), &
\Sigma_{\rho\omega}^{2} &= \begin{bmatrix} \sigma_{\rho\omega x}^{2} & 0 & 0 \\ 0 & \sigma_{\rho\omega y}^{2} & 0 \\ 0 & 0 & \sigma_{\rho\omega z}^{2} \end{bmatrix} \\
b_{\alpha,k} &= b_{\alpha,k-1} + N\left(0, \Sigma_{\rho\alpha}^{2} \Delta t\right), &
\Sigma_{\rho\alpha}^{2} &= \begin{bmatrix} \sigma_{\rho\alpha x}^{2} & 0 & 0 \\ 0 & \sigma_{\rho\alpha y}^{2} & 0 \\ 0 & 0 & \sigma_{\rho\alpha z}^{2} \end{bmatrix} \\
\Delta g_k &= \Delta g_{k-1} + N\left(0, \sigma_{\rho g}^{2} \Delta t\right)
\end{aligned} \tag{36}
$$

Motion Acceleration

[0072] When the gravitational acceleration is observed in order to correct the posture, the motion acceleration component .zeta..sub.a acts as the observation error. If this observation error is treated as simple white Gaussian noise, the posture responds too sensitively to a large motion acceleration caused by a rapid motion. Thus, a noise model as in Expression (37), in which the noise level changes depending on the magnitude of the estimated motion acceleration (the difference between the observed acceleration and the estimated gravitational acceleration), is used, and a linear coefficient .mu. [N/A] and a constant term (.sigma..sub..zeta.ax, .sigma..sub..zeta.ay, .sigma..sub..zeta.az) [G RMS] are used as setting items.

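$$
\zeta_{a,k} \approx N\left(0, \Sigma_{\zeta a,k}^{2}\right), \quad
\Sigma_{\zeta a,k}^{2} = \begin{bmatrix} \sigma_{\zeta ax}^{2} & 0 & 0 \\ 0 & \sigma_{\zeta ay}^{2} & 0 \\ 0 & 0 & \sigma_{\zeta az}^{2} \end{bmatrix} + \frac{\mu^{2}}{3} \left\lvert \Delta z_{a,k} \right\rvert^{2} I_{3\times3} \tag{37}
$$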
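The level-dependent noise model of Expression (37) amounts to a small computation, sketched below in Python. The function name `motion_accel_variances` is hypothetical, and the sketch returns only the three diagonal entries because the matrix in Expression (37) is diagonal.

```python
def motion_accel_variances(sigma_const, mu, dz_a):
    """Diagonal of the motion-acceleration covariance in Expression (37).

    sigma_const: constant-term RMS values per axis [G RMS].
    mu: linear coefficient applied to the residual magnitude.
    dz_a: gravitational-acceleration observation residual (3-vector) [G].
    """
    norm_sq = sum(c * c for c in dz_a)      # squared magnitude of the residual
    extra = mu ** 2 / 3.0 * norm_sq         # shared equally among the three axes
    return tuple(s ** 2 + extra for s in sigma_const)
```

When the residual is zero, only the constant terms remain. As the residual grows, all three variances grow with its squared magnitude, so the Kalman coefficient of Expression (24) gives the acceleration observation less weight during rapid motion.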

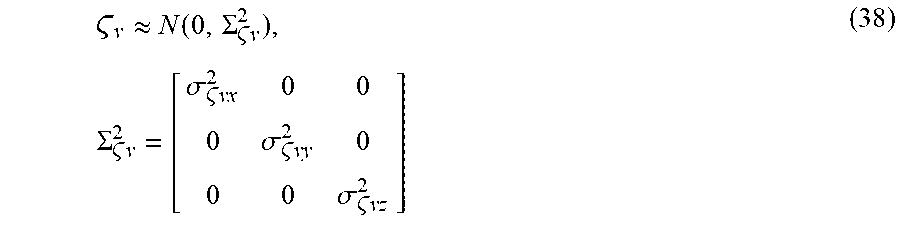

Motion Velocity

[0073] In observing the zero motion velocity, it is observed that the motion velocity of the IMU is substantially zero in a long term. The motion velocity component .zeta..sub.v appearing in a short term acts as the observation error. This observation error is considered as the white Gaussian noise independent for each axis, and the magnitude of this observation error is designated by the RMS values (.sigma..sub..zeta.vx, .sigma..sub..zeta.vy, .sigma..sub..zeta.vz) [Gs RMS], as in Expression (38).

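$$
\zeta_v \approx N\left(0, \Sigma_{\zeta v}^{2}\right), \quad
\Sigma_{\zeta v}^{2} = \begin{bmatrix} \sigma_{\zeta vx}^{2} & 0 & 0 \\ 0 & \sigma_{\zeta vy}^{2} & 0 \\ 0 & 0 & \sigma_{\zeta vz}^{2} \end{bmatrix} \tag{38}
$$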

1-1-10. Limitation of Yaw-Axis Component of Bias Error in Angular Velocity Sensor

[0074] When an azimuth observation section such as a magnetic sensor is not provided, the error estimate of only the yaw-axis component (vertical component) of the bias error of the angular velocity sensor increases monotonically. Normally, the yaw-axis direction in the sensor coordinates changes with the posture. Thus, for example, even if the z-axis of the angular velocity sensor coincides with the yaw axis at a certain time point and the error estimate increases, when the posture changes and the z-axis is inclined to a direction other than the yaw axis (for example, a horizontal-axis direction), correction is performed by observing the gravitational acceleration, and the error estimate is reduced. However, for example, when the posture changes only slightly and substantially the same posture continues for a long time, the error estimate of the yaw-axis component continues to increase, and the feedback gain increases. The increased feedback gain causes the bias estimation value of the angular velocity sensor to change unintentionally, and thus causes the azimuth of the posture to drift. In addition, in a practical angular velocity sensor, the bias estimation error of even the yaw-axis component is unlikely to increase without limit beyond, for example, the initial bias error. Accordingly, an upper limit value is provided only for the yaw-axis component of the bias estimation error of the angular velocity sensor in the state error covariance matrix, so that the yaw-axis component does not exceed the upper limit value.

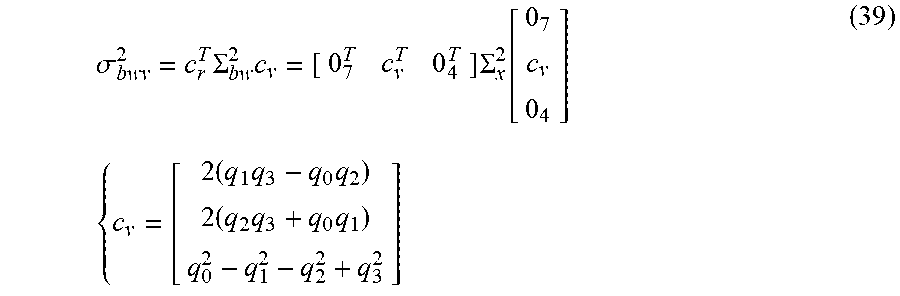

[0075] Firstly, as in Expression (39), the variance .sigma..sub.b.omega.v.sup.2 of the yaw-axis component is calculated from the error covariance matrix .SIGMA..sub.b.omega..sup.2 of the residual bias in the angular velocity sensor, based on the current posture quaternion.

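$$
\sigma_{b\omega v}^{2} = c_v^{T} \Sigma_{b\omega}^{2} c_v = \begin{bmatrix} 0_7^{T} & c_v^{T} & 0_4^{T} \end{bmatrix} \Sigma_x^{2} \begin{bmatrix} 0_7 \\ c_v \\ 0_4 \end{bmatrix}, \quad
c_v = \begin{bmatrix} 2\left(q_1 q_3 - q_0 q_2\right) \\ 2\left(q_2 q_3 + q_0 q_1\right) \\ q_0^{2} - q_1^{2} - q_2^{2} + q_3^{2} \end{bmatrix} \tag{39}
$$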

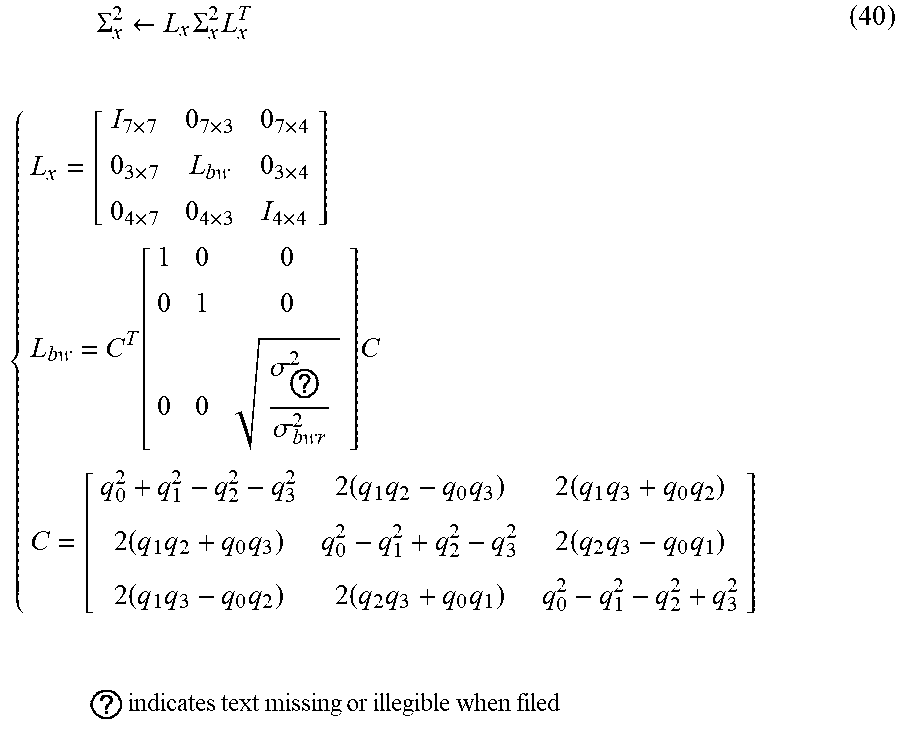

[0076] When the variance .sigma..sub.b.omega.v.sup.2 of the yaw-axis component exceeds the upper limit value .sigma..sub.b.omega.max.sup.2, the yaw-axis component is limited as in Expression (40).

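$$
\Sigma_x^{2} \leftarrow L_x \Sigma_x^{2} L_x^{T} \tag{40}
$$

$$
\left\{
\begin{aligned}
L_x &= \begin{bmatrix}
I_{7\times7} & 0_{7\times3} & 0_{7\times4} \\
0_{3\times7} & L_{b\omega} & 0_{3\times4} \\
0_{4\times7} & 0_{4\times3} & I_{4\times4}
\end{bmatrix} \\
L_{b\omega} &= C^{T} \begin{bmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & \dfrac{\sigma_{?}^{2}}{\sigma_{b\omega v}^{2}} \end{bmatrix} C \\
C &= \begin{bmatrix}
q_0^{2} + q_1^{2} - q_2^{2} - q_3^{2} & 2\left(q_1 q_2 - q_0 q_3\right) & 2\left(q_1 q_3 + q_0 q_2\right) \\
2\left(q_1 q_2 + q_0 q_3\right) & q_0^{2} - q_1^{2} + q_2^{2} - q_3^{2} & 2\left(q_2 q_3 - q_0 q_1\right) \\
2\left(q_1 q_3 - q_0 q_2\right) & 2\left(q_2 q_3 + q_0 q_1\right) & q_0^{2} - q_1^{2} - q_2^{2} + q_3^{2}
\end{bmatrix}
\end{aligned}
\right.
$$

(? indicates text missing or illegible when filed.)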
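The yaw-axis limiting of Expressions (39) and (40) can be sketched as follows. Several points are assumptions made for illustration and are not taken from the filing: the numerator of the scale factor is illegible in the filed Expression (40), so this sketch scales the yaw direction by the square root of (upper limit variance / current yaw variance), which makes the limited yaw variance equal to the upper limit; only the 3-by-3 bias block .SIGMA..sub.b.omega..sup.2 is processed, so cross-covariances with other states are not shown; and all function names are hypothetical.

```python
import math


def rotation_matrix(q):
    """Matrix C of Expression (40) for a unit quaternion (q0, q1, q2, q3)."""
    q0, q1, q2, q3 = q
    return [
        [q0*q0 + q1*q1 - q2*q2 - q3*q3, 2*(q1*q2 - q0*q3), 2*(q1*q3 + q0*q2)],
        [2*(q1*q2 + q0*q3), q0*q0 - q1*q1 + q2*q2 - q3*q3, 2*(q2*q3 - q0*q1)],
        [2*(q1*q3 - q0*q2), 2*(q2*q3 + q0*q1), q0*q0 - q1*q1 - q2*q2 + q3*q3],
    ]


def matmul(a, b):
    """Product of two matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(a):
    """Transpose of a matrix given as nested lists."""
    return [list(col) for col in zip(*a)]


def yaw_variance(sigma_bw, q):
    """Expression (39): c_v^T Sigma_bw c_v, with c_v the third row of C."""
    c_v = rotation_matrix(q)[2]
    return sum(c_v[i] * sigma_bw[i][j] * c_v[j]
               for i in range(3) for j in range(3))


def limit_yaw_variance(sigma_bw, q, var_max):
    """Shrink only the yaw-axis component so that it does not exceed var_max."""
    var_v = yaw_variance(sigma_bw, q)
    if var_v <= var_max:
        return [row[:] for row in sigma_bw]
    # ASSUMPTION: square-root ratio, so the limited variance equals var_max.
    scale = math.sqrt(var_max / var_v)
    c = rotation_matrix(q)
    diag = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, scale]]
    l_bw = matmul(matmul(transpose(c), diag), c)
    return matmul(matmul(l_bw, sigma_bw), transpose(l_bw))
```

Because C is orthonormal for a unit quaternion and c.sub.v is its third row, the matrix L.sub.b.omega. scales only the component along c.sub.v and leaves the two horizontal directions unchanged.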

1-1-11. Countermeasure for Off-Scale of Sensor

[0077] An actual inertial sensor (angular velocity sensor or acceleration sensor) has a defined effective measurement range. When a physical quantity (angular velocity or acceleration) exceeding the range is input, an accurate output cannot be obtained because the signal saturates in the inertial sensor. Exceeding the effective measurement range is referred to as "off-scale" below. Normally, a sensor is selected to match the range to be measured so that off-scale does not occur. However, depending on the application, a state in which the range is exceeded for a very short time by an impact or the like (off-scale state) is assumed.

Influence of Sensor Off-Scale

[0078] If the angular velocity sensor is in the off-scale state, a large error is included in the angular velocity, and the error in the posture angle obtained by integrating the angular velocity increases. The error in the motion velocity also increases, because the acceleration is integrated based on the posture angle having the increased error. If the acceleration sensor is in the off-scale state, a large error is included in the acceleration, and the error in the motion velocity obtained by integrating the acceleration increases. Because the error caused by off-scale is not a stochastic process, its mean and variance cannot be obtained. Modeling the error in a Kalman filter is difficult because the amount of error is huge and unpredictable. Thus, the Kalman filter described so far does not recognize the above error as an error to be corrected. Accordingly, when the output value of the sensor is out of the effective range of the sensor, this situation is recognized as off-scale, and a proper period after the time point at which the output value of the sensor goes out of the effective range is defined as a recovery period from the off-scale. During this period, processing different from that in the normal time is performed.

Off-Scale Recovery Processing

[0079] The following processing is applied to the state error covariance matrix to handle the state error increased by the influence of off-scale. That is, the error variance component of the covariance matrix is appropriately inflated in response to the increase of the error. The covariance component is set to zero in order to separate the increased error from the errors of the other state variables. By performing the above processing before the correction processing, it is possible to strongly correct the state variable whose error has increased while keeping the negative influence on other state variables, such as the bias estimation value, to a minimum.

Angular Velocity Off-Scale Recovery Processing

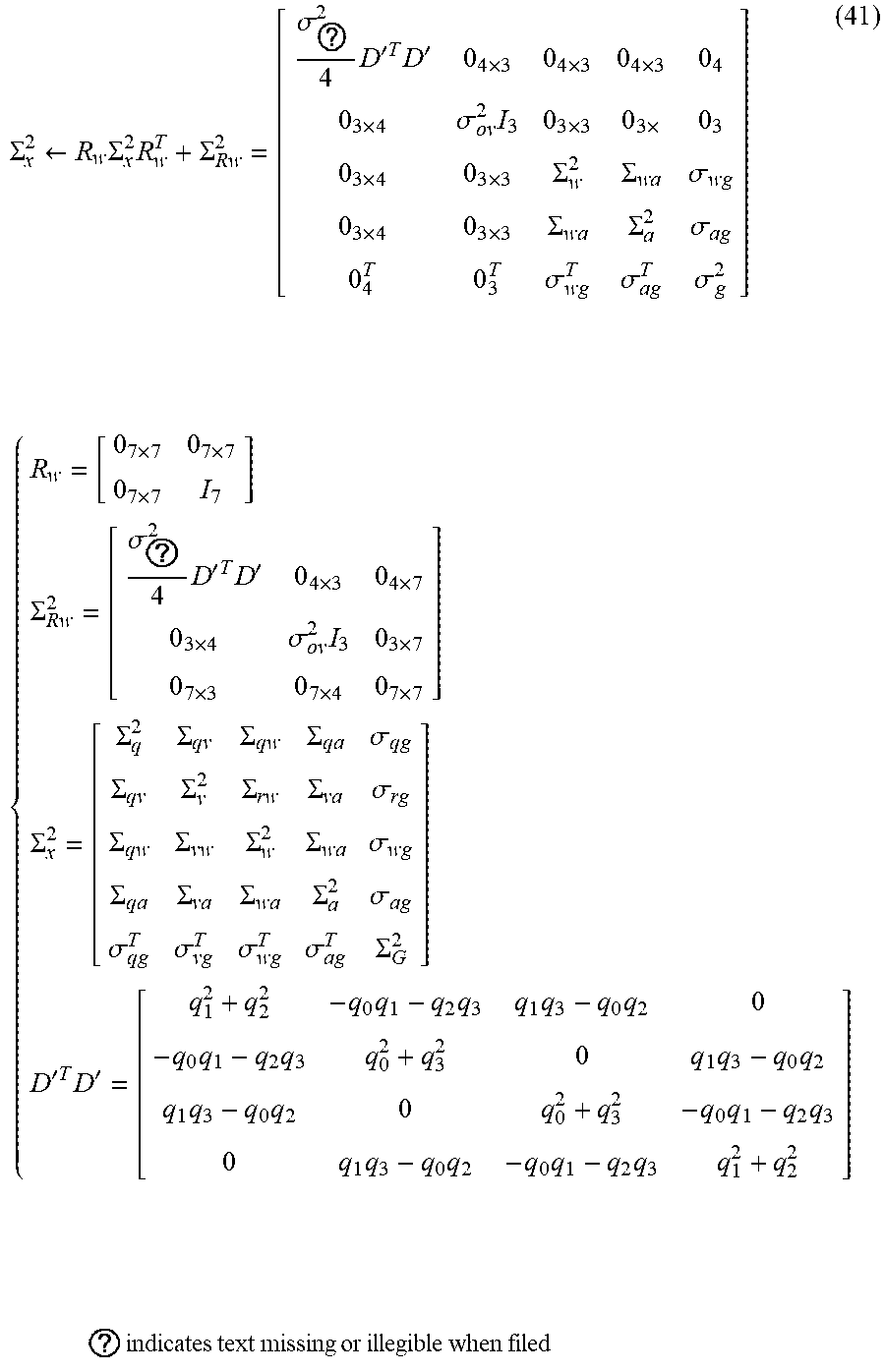

[0080] Processing of Expression (41) is applied in order to handle the posture angle and the error in motion velocity, which increase by the off-scale state of the angular velocity sensor. That is, the error component (posture error component) of the posture quaternion q in the error covariance matrix .SIGMA..sub.x.sup.2 is appropriately increased in response to an increase of the posture angle error. A correlation component (covariance component) between the posture error component and an error component other than the posture error component is set to zero in order to separate the posture error component from other error components, in the error covariance matrix .SIGMA..sub.x.sup.2. The error component other than the posture error component includes a motion velocity error component, an error component of the residual bias b.sub..omega. of the angular velocity sensor (bias error component of angular velocity), an error component of the residual bias b.sub..alpha. of the acceleration sensor (bias error component of acceleration), and an error component of the gravitational-acceleration correction value .DELTA.g. Setting the correlation component (covariance component) to zero can include a value approximate to zero and a value allowing a predetermined error component to be separated from other error components.

[0081] The error component of the motion velocity vector v (motion velocity error component) in the error covariance matrix .SIGMA..sub.x.sup.2 is appropriately increased in response to an increase of the motion velocity error. A correlation component (covariance component) between the motion velocity error component and an error component other than the motion velocity error component is set to zero in order to separate the motion velocity error component from other error components, in the error covariance matrix .SIGMA..sub.x.sup.2. The error component other than the motion velocity error component includes the posture error component, the bias error component of the angular velocity, the bias error component of the acceleration, and the error component of the gravitational-acceleration correction value. In Expression (41), .sigma..sub.oq.sup.2 indicates the variance of the posture error at the time of off-scale recovery, and .sigma..sub.ov.sup.2 indicates the variance of the motion velocity error at the time of off-scale recovery.

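$$
\Sigma_x^{2} \leftarrow R_\omega \Sigma_x^{2} R_\omega^{T} + \Sigma_{R\omega}^{2} = \begin{bmatrix}
\dfrac{\sigma_{?}^{2}}{4} D'^{T} D' & 0_{4\times3} & 0_{4\times3} & 0_{4\times3} & 0_4 \\
0_{3\times4} & \sigma_{ov}^{2} I_3 & 0_{3\times3} & 0_{3\times3} & 0_3 \\
0_{3\times4} & 0_{3\times3} & \Sigma_\omega^{2} & \Sigma_{\omega\alpha} & \sigma_{\omega g} \\
0_{3\times4} & 0_{3\times3} & \Sigma_{\omega\alpha} & \Sigma_\alpha^{2} & \sigma_{\alpha g} \\
0_4^{T} & 0_3^{T} & \sigma_{\omega g}^{T} & \sigma_{\alpha g}^{T} & \sigma_g^{2}
\end{bmatrix} \tag{41}
$$

$$
\left\{
\begin{aligned}
R_\omega &= \begin{bmatrix} 0_{7\times7} & 0_{7\times7} \\ 0_{7\times7} & I_7 \end{bmatrix} \\
\Sigma_{R\omega}^{2} &= \begin{bmatrix}
\dfrac{\sigma_{?}^{2}}{4} D'^{T} D' & 0_{4\times3} & 0_{4\times7} \\
0_{3\times4} & \sigma_{ov}^{2} I_3 & 0_{3\times7} \\
0_{7\times4} & 0_{7\times3} & 0_{7\times7}
\end{bmatrix} \\
\Sigma_x^{2} &= \begin{bmatrix}
\Sigma_q^{2} & \Sigma_{qv} & \Sigma_{q\omega} & \Sigma_{q\alpha} & \sigma_{qg} \\
\Sigma_{qv} & \Sigma_v^{2} & \Sigma_{v\omega} & \Sigma_{v\alpha} & \sigma_{vg} \\
\Sigma_{q\omega} & \Sigma_{v\omega} & \Sigma_\omega^{2} & \Sigma_{\omega\alpha} & \sigma_{\omega g} \\
\Sigma_{q\alpha} & \Sigma_{v\alpha} & \Sigma_{\omega\alpha} & \Sigma_\alpha^{2} & \sigma_{\alpha g} \\
\sigma_{qg}^{T} & \sigma_{vg}^{T} & \sigma_{\omega g}^{T} & \sigma_{\alpha g}^{T} & \sigma_g^{2}
\end{bmatrix} \\
D'^{T} D' &= \begin{bmatrix}
q_1^{2} + q_2^{2} & -q_0 q_1 - q_2 q_3 & q_1 q_3 - q_0 q_2 & 0 \\
-q_0 q_1 - q_2 q_3 & q_0^{2} + q_3^{2} & 0 & q_0 q_2 - q_1 q_3 \\
q_1 q_3 - q_0 q_2 & 0 & q_0^{2} + q_3^{2} & -q_0 q_1 - q_2 q_3 \\
0 & q_0 q_2 - q_1 q_3 & -q_0 q_1 - q_2 q_3 & q_1^{2} + q_2^{2}
\end{bmatrix}
\end{aligned}
\right.
$$

(? indicates text missing or illegible when filed.)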

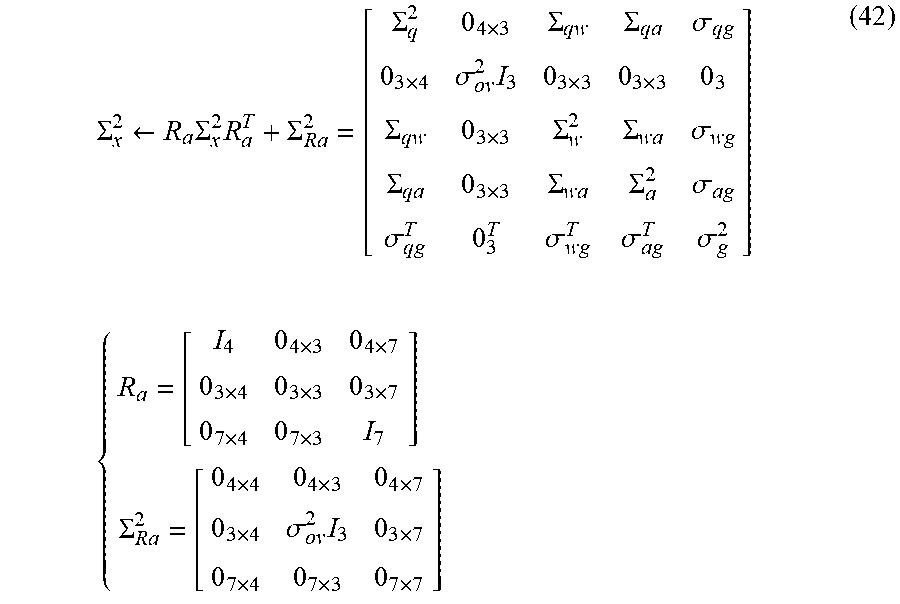

Acceleration Off-Scale Recovery Processing

[0082] Processing of Expression (42) is applied in order to handle the error in motion velocity, which increases by the off-scale state of the acceleration sensor. That is, the motion velocity error component in the error covariance matrix .SIGMA..sub.x.sup.2 is appropriately increased in response to an increase of the motion velocity error. A correlation component (covariance component) between the motion velocity error component and an error component other than the motion velocity error component is set to zero in order to separate the motion velocity error component from other state variable errors, in the error covariance matrix .SIGMA..sub.x.sup.2.

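$$
\Sigma_x^{2} \leftarrow R_\alpha \Sigma_x^{2} R_\alpha^{T} + \Sigma_{R\alpha}^{2} = \begin{bmatrix}
\Sigma_q^{2} & 0_{4\times3} & \Sigma_{q\omega} & \Sigma_{q\alpha} & \sigma_{qg} \\
0_{3\times4} & \sigma_{ov}^{2} I_3 & 0_{3\times3} & 0_{3\times3} & 0_3 \\
\Sigma_{q\omega} & 0_{3\times3} & \Sigma_\omega^{2} & \Sigma_{\omega\alpha} & \sigma_{\omega g} \\
\Sigma_{q\alpha} & 0_{3\times3} & \Sigma_{\omega\alpha} & \Sigma_\alpha^{2} & \sigma_{\alpha g} \\
\sigma_{qg}^{T} & 0_3^{T} & \sigma_{\omega g}^{T} & \sigma_{\alpha g}^{T} & \sigma_g^{2}
\end{bmatrix} \tag{42}
$$

$$
\left\{
\begin{aligned}
R_\alpha &= \begin{bmatrix}
I_4 & 0_{4\times3} & 0_{4\times7} \\
0_{3\times4} & 0_{3\times3} & 0_{3\times7} \\
0_{7\times4} & 0_{7\times3} & I_7
\end{bmatrix} \\
\Sigma_{R\alpha}^{2} &= \begin{bmatrix}
0_{4\times4} & 0_{4\times3} & 0_{4\times7} \\
0_{3\times4} & \sigma_{ov}^{2} I_3 & 0_{3\times7} \\
0_{7\times4} & 0_{7\times3} & 0_{7\times7}
\end{bmatrix}
\end{aligned}
\right.
$$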
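The effect of Expressions (41) and (42) on the 14-by-14 error covariance matrix can be sketched by direct index manipulation instead of forming the R matrices. The following Python is a sketch under assumptions: the state order is posture quaternion (indices 0 to 3), motion velocity (4 to 6), angular velocity bias (7 to 9), acceleration bias (10 to 12), and gravitational-acceleration correction value (13), as in Expression (19); the function and parameter names are hypothetical; `var_oq` and `var_ov` stand for the off-scale recovery variances .sigma..sub.oq.sup.2 and .sigma..sub.ov.sup.2 described in the text.

```python
def dtd(q):
    """D'^T D' for quaternion (q0, q1, q2, q3), with D' as in Expression (32)."""
    q0, q1, q2, q3 = q
    d = [[-q1, q0, -q3, q2],
         [-q2, q3, q0, -q1]]
    return [[sum(d[r][i] * d[r][j] for r in range(2)) for j in range(4)]
            for i in range(4)]


def recover_gyro_off_scale(sigma_x, q, var_oq, var_ov):
    """Angular velocity off-scale recovery in the form of Expression (41)."""
    out = [row[:] for row in sigma_x]
    for i in range(14):
        for j in range(14):
            if i < 7 or j < 7:          # posture and velocity rows and columns
                out[i][j] = 0.0         # drop variances and all correlations
    p = dtd(q)
    for i in range(4):
        for j in range(4):
            out[i][j] = var_oq / 4.0 * p[i][j]   # inflated posture error block
    for i in range(4, 7):
        out[i][i] = var_ov                       # inflated velocity error block
    return out


def recover_accel_off_scale(sigma_x, var_ov):
    """Acceleration off-scale recovery in the form of Expression (42)."""
    out = [row[:] for row in sigma_x]
    for i in range(14):
        for j in range(14):
            if 4 <= i < 7 or 4 <= j < 7:  # velocity rows and columns only
                out[i][j] = 0.0
    for i in range(4, 7):
        out[i][i] = var_ov
    return out
```

In both functions the bias and gravity blocks in the lower-right 7-by-7 corner are left untouched, which reflects the stated aim of keeping the influence on the bias estimation values small while the inflated components are corrected strongly.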

1-2. Flowchart of Posture Estimation Method

[0083] FIG. 1 is a flowchart illustrating an example of procedures of a posture estimation method according to the embodiment. The procedures in FIG. 1 are performed by a posture estimation device that estimates the posture of an object to which an IMU is attached, for example. The object is not particularly limited, and may be, for example, a vehicle, an electronic device, exercise equipment, a person, or an animal. The IMU may be detachable from the object. Alternatively, the IMU may be fixed to the object so that it cannot be detached, for example, by being mounted in the object. For example, the posture estimation device may be a personal computer (PC) or may be any of various portable devices such as a smart phone.

[0084] As illustrated in FIG. 1, in the posture estimation method in the embodiment, firstly, the posture estimation device initializes the state vector x.sub.k and the error covariance matrix .SIGMA..sub.x, k.sup.2 as error information (initialization step S1). That is, the posture estimation device sets k=0 and sets (initializes) the state vector x.sub.0 and the error covariance matrix .SIGMA..sub.x, 0.sup.2 at a time point t.sub.0. Specifically, the posture estimation device sets the elements q.sub.0, v.sub.0, b.sub..omega., 0, b.sub..alpha., 0, and .DELTA.g.sub.0 of the state vector x.sub.0 and .SIGMA..sub.q, 0.sup.2, .SIGMA..sub.v, 0.sup.2, .SIGMA..sub.b.omega., 0.sup.2, .SIGMA..sub.b.alpha., 0.sup.2, and .sigma..sub..DELTA.g, 0.sup.2 included in the error covariance matrix .SIGMA..sub.x, 0.sup.2, which are expressed as in Expression (30).

[0085] For example, the posture estimation device may set the initial posture of the inertial measurement unit (IMU) to have a roll angle, a pitch angle, and a yaw angle which have been determined in advance, and may set q.sub.0 by substituting the roll angle, the pitch angle, and the yaw angle into Expression (31). Alternatively, the posture estimation device may acquire acceleration data from the acceleration sensor in a state where the inertial measurement unit (IMU) is stopped. The posture estimation device may specify a direction of the gravitational acceleration from the acceleration data, calculate a roll angle and a pitch angle, and set the yaw angle to a predetermined value (for example, 0). Then, the posture estimation device may set q.sub.0 by substituting the roll angle, the pitch angle, and the yaw angle into Expression (31). The posture estimation device sets .SIGMA..sub.q, 0.sup.2 by substituting RMS.sigma..sub..PHI. of a roll angle error and RMS.sigma..sub..theta. of a pitch angle error into Expression (32).

[0086] For example, the posture estimation device sets a state where the inertial measurement unit (IMU) is stopped to be an initial state and sets v.sub.0 to 0. Then, the posture estimation device sets .SIGMA..sub.v, 0.sup.2 by substituting RMS.sigma..sub.vx, RMS.sigma..sub.vy, and RMS.sigma..sub.vz of the error of the motion velocity vector v in the axes into Expression (33).

[0087] If the residual bias b.sub..omega. of the angular velocity sensor and the residual bias b.sub..alpha. of the acceleration sensor are known, the posture estimation device sets the values of the residual biases to b.sub..omega., 0 and b.sub..alpha., 0. If b.sub..omega. and b.sub..alpha. are not known, the posture estimation device sets b.sub..omega., 0 and b.sub..alpha., 0 to zero. The posture estimation device sets .SIGMA..sub.b.omega., 0.sup.2 and .SIGMA..sub.b.alpha., 0.sup.2 by substituting the errors RMS.sigma..sub.b.omega.x, RMS.sigma..sub.b.omega.y, and RMS.sigma..sub.b.omega.z of the residual bias of the angular velocity sensor in the axes and the errors RMS.sigma..sub.b.alpha.x, RMS.sigma..sub.b.alpha.y, and RMS.sigma..sub.b.alpha.z of the residual bias of the acceleration sensor in the axes into Expression (34).

[0088] If the gravitational acceleration value is known, the posture estimation device sets the difference from 1 G to .DELTA.g.sub.0. If the gravitational acceleration value is not known, the posture estimation device sets .DELTA.g.sub.0 to zero. The posture estimation device sets RMS.sigma..sub..DELTA.g of the error of the gravitational acceleration value in .sigma..sub..DELTA.g, 0.sup.2.

[0089] Then, the posture estimation device acquires a measured value of the inertial measurement unit (IMU) (measured-value acquisition step S2). Specifically, the posture estimation device waits until the sampling interval .DELTA.t elapses. If the sampling interval .DELTA.t elapses, the posture estimation device sets k=k+1 and t.sub.k=t.sub.k-1+.DELTA.t and acquires angular velocity data d.sub..omega., k and acceleration data d.sub..alpha., k from the inertial measurement unit (IMU).

[0090] Then, the posture estimation device performs prediction processing (also referred to as time update processing) of the state vector x.sub.k (including the posture quaternion q.sub.k being the posture information at a time point t.sub.k, as an element) and the error covariance matrix .SIGMA..sub.x, k.sup.2 being error information at the time point t.sub.k (prediction step S3).

[0091] FIG. 2 is a flowchart illustrating an example of procedures of the step S3 in FIG. 1. As illustrated in FIG. 2, firstly, the posture estimation device performs processing of removing the residual biases b.sub..omega., k-1 and b.sub..alpha., k-1 which have been estimated at a time point t.sub.k-1, from angular velocity data d.sub..omega., k and acceleration data d.sub..alpha., k at the time point t.sub.k. The posture estimation device performs the above processing with Expression (19) (bias removal step S31).

[0092] Then, the posture estimation device performs processing (posture-change-amount calculation processing) of calculating the posture change amount .DELTA.q.sub.k at the time point t.sub.k based on the output of the angular velocity sensor (posture-change-amount calculation step S32). Specifically, the posture estimation device calculates the posture change amount .DELTA.q.sub.k with Expression (6), based on the angular velocity data d.sub..omega., k.

[0093] The posture estimation device performs processing (velocity-change-amount calculation processing) of calculating the velocity change amount (C.sub.k.lamda..sub.k-g.sub.k-1).DELTA.t of the object based on the output of the acceleration sensor and the output of the angular velocity sensor (velocity-change-amount calculation step S33). Specifically, the posture estimation device calculates the velocity change amount (C.sub.k.lamda..sub.k-g.sub.k-1).DELTA.t with Expressions (9), (10), and (12), based on the acceleration data d.sub..alpha., k and the angular velocity data d.sub..omega., k.

[0094] The posture estimation device performs processing (posture information prediction processing) of predicting the posture quaternion q.sub.k being posture information of the object at the time point t.sub.k, by using the posture change amount .DELTA.q.sub.k (posture information prediction step S34). In the posture information prediction step S34, the posture estimation device further performs processing (velocity information prediction processing) of predicting the motion velocity vector v.sub.k being velocity information of the object at the time point t.sub.k by using the velocity change amount (C.sub.k.lamda..sub.k-g.sub.k-1).DELTA.t. Specifically, in the posture information prediction step S34, the posture estimation device performs processing of predicting the posture quaternion q.sub.k and the state vector x.sub.k including the motion velocity vector v.sub.k as the element, by Expressions (6), (12), and (19).

[0095] Lastly, the posture estimation device performs processing of updating the error covariance matrix .SIGMA..sub.x, k.sup.2 at the time point t.sub.k by Expressions (20) and (21) (error information update step S35).
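
The bias removal, posture-change-amount calculation, and posture prediction of steps S31, S32, and S34 can be sketched roughly as follows. Expressions (6) and (19) are not reproduced in this passage, so the sketch substitutes a plain rotation-vector quaternion increment that assumes a constant angular velocity over .DELTA.t; it does not include the higher-order terms of the embodiment. The function names, the [w, x, y, z] quaternion layout, and the right-multiplication convention are all assumptions made for illustration.

```python
import numpy as np

def quat_multiply(p, q):
    """Hamilton product of two quaternions stored as [w, x, y, z]."""
    pw, px, py, pz = p
    qw, qx, qy, qz = q
    return np.array([
        pw * qw - px * qx - py * qy - pz * qz,
        pw * qx + px * qw + py * qz - pz * qy,
        pw * qy - px * qz + py * qw + pz * qx,
        pw * qz + px * qy - py * qx + pz * qw,
    ])

def posture_change(omega, dt):
    """Quaternion increment for a constant angular velocity over dt.

    Assumed stand-in for Expression (6), which is not shown here.
    """
    rate = np.linalg.norm(omega)
    angle = rate * dt
    if angle < 1e-12:
        return np.array([1.0, 0.0, 0.0, 0.0])
    axis = omega / rate
    return np.concatenate(([np.cos(angle / 2.0)], np.sin(angle / 2.0) * axis))

def predict_posture(q_prev, d_omega, b_omega, dt):
    """Predict the posture quaternion at t_k from the one at t_(k-1)."""
    omega = np.asarray(d_omega, dtype=float) - np.asarray(b_omega, dtype=float)  # S31
    dq = posture_change(omega, dt)                                               # S32
    q = quat_multiply(q_prev, dq)  # S34 (body-frame increment, assumed convention)
    return q / np.linalg.norm(q)
```

For example, an angular velocity of .pi./2 rad/s about the z axis held for one second, with a residual bias of 0.1 rad/s removed first, turns the identity quaternion into a 90-degree rotation about z.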

[0096] Returning to FIG. 1, the posture estimation device performs processing (rotational error component removal processing) of removing a rotational error component around a reference vector in the error covariance matrix .SIGMA..sub.x, k.sup.2 being the error information (rotational error-component removal step S4). The reference vector is a vector observed by the observation section. In the embodiment, the reference vector is a gravitational acceleration vector observed by the acceleration sensor being the observation section. In the embodiment, a rotation error around the reference vector is an azimuth error. Thus, in the step S4, the posture estimation device performs processing of removing the azimuth error in the error covariance matrix .SIGMA..sub.x, k.sup.2. Specifically, the posture estimation device generates the error covariance matrix .SIGMA..sub.x, k.sup.2 in which the rank limitation and removal of the azimuth error component .epsilon..sub..theta.z in the error covariance matrix .SIGMA..sub.q, k.sup.2 of the posture quaternion q.sub.k are performed, by Expressions (28) and (29).
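
As a rough illustration of removing the rotational error component around a reference vector, the sketch below projects a 3x3 small-angle rotation-error covariance onto the plane perpendicular to that vector. This is an assumed simplification: Expressions (28) and (29) operate on the error covariance of the posture quaternion and are not reproduced here, and the name `remove_error_about_axis` is hypothetical.

```python
import numpy as np

def remove_error_about_axis(P_theta, axis):
    """Zero the variance of rotation error about `axis` in a 3x3 covariance.

    P_theta is assumed to be the covariance of a small-angle rotation error
    vector expressed in the same frame as `axis` (for example, gravity).
    """
    u = np.asarray(axis, dtype=float)
    u = u / np.linalg.norm(u)
    M = np.eye(3) - np.outer(u, u)  # projector onto the plane normal to u
    return M @ P_theta @ M.T
```

With the gravitational acceleration vector along z, the result keeps the roll and pitch error terms and carries no error about the vertical axis, which corresponds to the azimuth error in the embodiment.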

[0097] Then, the posture estimation device performs off-scale recovery processing (error information adjustment step S5). Specifically, in the error information adjustment step S5, the posture estimation device determines whether or not the output of the angular velocity sensor is within the effective range. When it is determined that the output of the angular velocity sensor is not within the effective range, the posture estimation device performs processing of increasing the posture error component in the error covariance matrix .SIGMA..sub.x, k.sup.2 being the error information and reducing the correlation component between the posture error component and the error component other than the posture error component in the error covariance matrix .SIGMA..sub.x, k.sup.2. In the error information adjustment step S5, the posture estimation device also determines whether or not the output of the acceleration sensor is within the effective range. When it is determined that the output of the angular velocity sensor or the output of the acceleration sensor is not within the corresponding effective range, the posture estimation device performs processing of increasing the motion velocity error component in the error covariance matrix .SIGMA..sub.x, k.sup.2 being the error information and reducing the correlation component between the motion velocity error component and the error component other than the motion velocity error component in the error covariance matrix .SIGMA..sub.x, k.sup.2.

[0098] FIG. 3 is a flowchart illustrating an example of procedures of the step S5 in FIG. 1. As illustrated in FIG. 3, firstly, the posture estimation device determines whether or not the output (angular velocity data d.sub..omega.) of the angular velocity sensor is within the effective range (Step S51). When it is determined that the output of the angular velocity sensor is within the effective range (Y in Step S51), the posture estimation device checks the time remaining in an angular-velocity off-scale recovery period (Step S52a). When it is determined that the output of the angular velocity sensor is not within the effective range (N in Step S51), the posture estimation device starts or prolongs the angular-velocity off-scale recovery period (Step S52b).

[0099] Then, the posture estimation device determines whether or not the output (acceleration data d.sub..alpha.) of the acceleration sensor is within the effective range (Step S53). When the posture estimation device determines that the output of the acceleration sensor is within the effective range (Y in Step S53), the posture estimation device checks the time remaining in an acceleration off-scale recovery period (Step S54a). When the posture estimation device determines that the output of the acceleration sensor is not within the effective range (N in Step S53), the posture estimation device starts or prolongs the acceleration off-scale recovery period (Step S54b).

[0100] Then, the posture estimation device determines whether or not the current time is in the angular-velocity off-scale recovery period as a first period after it is determined that the output of the angular velocity sensor is not within the effective range (Step S55). When the posture estimation device determines that the current time is in the angular-velocity off-scale recovery period (Y in Step S55), the posture estimation device increases the posture error component in the error covariance matrix .SIGMA..sub.x, k.sup.2 being the error information (Step S56a). The posture estimation device reduces the correlation component between the posture error component and the error component other than the posture error component in the error covariance matrix .SIGMA..sub.x, k.sup.2, for example, sets the correlation component to zero (Step S56b). The posture estimation device increases the motion velocity error component in the error covariance matrix .SIGMA..sub.x, k.sup.2 (Step S58a). The posture estimation device reduces the correlation component between the motion velocity error component and the error component other than the motion velocity error component in the error covariance matrix .SIGMA..sub.x, k.sup.2, for example, sets the correlation component to zero (Step S58b). In practice, the posture estimation device performs the processes of Step S56a, Step S56b, Step S58a, and Step S58b by updating the error covariance matrix .SIGMA..sub.x, k.sup.2 with Expression (41).

[0101] When the posture estimation device determines that the current time is not in the angular-velocity off-scale recovery period (N in Step S55), the posture estimation device determines whether or not the current time is in the acceleration off-scale recovery period as a second period after it is determined that the output of the acceleration sensor is not within the effective range (Step S57). When the posture estimation device determines that the current time is in the acceleration off-scale recovery period (Y in Step S57), the posture estimation device increases the motion velocity error component in the error covariance matrix .SIGMA..sub.x, k.sup.2 (Step S58a). The posture estimation device reduces the correlation component between the motion velocity error component and the error component other than the motion velocity error component in the error covariance matrix .SIGMA..sub.x, k.sup.2, for example, sets the correlation component to zero (Step S58b). In practice, the posture estimation device performs the processes of Step S58a and Step S58b by updating the error covariance matrix .SIGMA..sub.x, k.sup.2 with Expression (42).

[0102] When the posture estimation device determines that the current time is in neither the angular-velocity off-scale recovery period nor the acceleration off-scale recovery period (N in Step S55 and N in Step S57), the posture estimation device does not perform the processes of Step S56a, Step S56b, Step S58a, and Step S58b.
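
The flow of FIG. 3 (Steps S51 to S58b) can be sketched as follows. Expressions (41) and (42) are not reproduced in this passage, so the covariance update here is an assumed form: the variances of the selected block are raised to at least a chosen value, and its correlations with all other states are set to zero. The class name, the symmetric range limits, the counting of the recovery period in filter steps, and the state index layout are all hypothetical.

```python
import numpy as np

def inflate_and_decorrelate(P, idx, variance):
    """Return a copy of P with block `idx` decorrelated from the other states
    and its variances raised to at least `variance`."""
    P = P.copy()
    idx = np.asarray(idx)
    others = np.setdiff1d(np.arange(P.shape[0]), idx)
    P[np.ix_(idx, others)] = 0.0   # correlation with other error components
    P[np.ix_(others, idx)] = 0.0
    P[idx, idx] = np.maximum(P[idx, idx], variance)  # diagonal terms only
    return P

class OffScaleRecovery:
    """Tracks the two recovery periods and adjusts the error covariance."""

    def __init__(self, gyro_limit, accel_limit, recovery_steps):
        self.gyro_limit = gyro_limit      # effective range assumed to be +/- limit
        self.accel_limit = accel_limit
        self.recovery_steps = recovery_steps
        self.gyro_left = 0                # remaining steps of each period
        self.accel_left = 0

    def adjust(self, P, d_omega, d_alpha, posture_idx, velocity_idx,
               posture_var, velocity_var):
        if np.all(np.abs(d_omega) < self.gyro_limit):        # S51
            self.gyro_left = max(self.gyro_left - 1, 0)      # S52a
        else:
            self.gyro_left = self.recovery_steps             # S52b
        if np.all(np.abs(d_alpha) < self.accel_limit):       # S53
            self.accel_left = max(self.accel_left - 1, 0)    # S54a
        else:
            self.accel_left = self.recovery_steps            # S54b

        if self.gyro_left > 0:                               # S55
            P = inflate_and_decorrelate(P, posture_idx, posture_var)    # S56a, S56b
            P = inflate_and_decorrelate(P, velocity_idx, velocity_var)  # S58a, S58b
        elif self.accel_left > 0:                            # S57
            P = inflate_and_decorrelate(P, velocity_idx, velocity_var)  # S58a, S58b
        return P
```

Because the adjustment only zeroes cross-block terms and adds non-negative amounts to the diagonal, a positive semidefinite covariance matrix stays positive semidefinite after the update.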

[0103] Returning to FIG. 1, the posture estimation device performs processing (bias error limitation processing) of limiting the bias error component of the angular velocity around the reference vector, in the error covariance matrix .SIGMA..sub.x, k.sup.2 being the error information (bias error limitation step S6). As described above, in the embodiment, the reference vector is the gravitational acceleration vector. Thus, in Step S6, the posture estimation device limits the bias error component of the angular velocity around the gravitational acceleration vector, that is, limits the vertical component (yaw-axis component) of the bias error of the angular velocity.

[0104] FIG. 4 is a flowchart illustrating an example of procedures of Step S6 in FIG. 1. As illustrated in FIG. 4, firstly, the posture estimation device performs processing of calculating the variance .sigma..sub.b.omega.v.sup.2 of the vertical component of the bias error in the angular velocity with Expression (39) and the state vector x.sub.k and the error covariance matrix .SIGMA..sub.x, k.sup.2 at the time point t.sub.k (bias-error vertical component calculation step S61).

[0105] Then, the posture estimation device performs processing of determining whether or not the variance .sigma..sub.b.omega.v.sup.2 exceeds the upper limit value (bias error determination step S62).

[0106] When the variance .sigma..sub.b.omega.v.sup.2 exceeds the upper limit value (Y in Step S62), the posture estimation device performs limitation operation processing of limiting the vertical component of the bias error in the angular velocity (limitation operation step S63). Specifically, when the variance .sigma..sub.b.omega.v.sup.2 exceeds the upper limit value .sigma..sub.b.omega.max.sup.2, the posture estimation device updates the error covariance matrix .SIGMA..sub.x, k.sup.2 by Expression (40). When the variance .sigma..sub.b.omega.v.sup.2 is equal to or less than the upper limit value (N in Step S62), the posture estimation device does not perform the limitation operation processing in Step S63.
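
Steps S61 to S63 can be illustrated with the sketch below. Expressions (39) and (40) are not reproduced in this passage, so the sketch assumes one plausible form: the variance of the vertical component is the quadratic form of the angular-velocity bias covariance block along a unit vertical vector, and the limitation shrinks the covariance along that direction until the variance equals the upper limit. The function name, the index layout, and the way the vertical direction is supplied are hypothetical.

```python
import numpy as np

def limit_vertical_bias_error(P, bias_idx, vertical, var_max):
    """Limit the variance of the gyro bias error along `vertical`.

    Returns the (possibly updated) covariance and the variance found in S61.
    `vertical` is assumed to be expressed in the same frame as the bias states.
    """
    u = np.asarray(vertical, dtype=float)
    u = u / np.linalg.norm(u)
    idx = np.asarray(bias_idx)

    var_v = u @ P[np.ix_(idx, idx)] @ u          # S61: variance along vertical
    if var_v <= var_max:                         # S62: within the upper limit
        return P, var_v

    s = np.sqrt(var_max / var_v)                 # shrink factor along u
    T = np.eye(P.shape[0])
    T[np.ix_(idx, idx)] -= (1.0 - s) * np.outer(u, u)
    return T @ P @ T.T, var_v                    # S63: limitation operation
```

Applying the same transformation to the whole matrix, rather than overwriting a single element, keeps the cross-covariance terms consistent with the reduced variance.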

[0107] Returning to FIG. 1, the posture estimation device performs correction processing (also referred to as observation update processing) of the state vector x.sub.k and the error covariance matrix .SIGMA..sub.x, k.sup.2 at the time point t.sub.k (correction step S7). In the embodiment, the posture estimation device performs processing (posture information correction step) of correcting the posture quaternion q.sub.k being the posture information of the object, which has been predicted in Step S3, based on the error covariance matrix .SIGMA..sub.x, k.sup.2 being the error information. Specifically, in the posture information correction step, the posture estimation device corrects the state vector x.sub.k including the posture quaternion q.sub.k being the posture information as the element, based on the observation residual .DELTA.z.sub.a, k being a difference between the acceleration vector (obtained based on the output of the acceleration sensor) and the gravitational acceleration vector being the reference vector. FIG. 5 is a flowchart illustrating an example of procedures of Step S7 in FIG. 1.

[0108] As illustrated in FIG. 5, firstly, the posture estimation device performs processing of calculating the observation residual .DELTA.z.sub.k, a Kalman coefficient K.sub.k, and a transformation matrix H.sub.k at the time point t.sub.k by Expressions (23) and (24) (correction coefficient calculation step S71).

[0109] Then, the posture estimation device performs processing (posture information correction processing) of correcting the posture quaternion q.sub.k being the predicted posture information of the object at the time point t.sub.k (posture information correction step S72). Specifically, the posture estimation device performs processing of correcting the state vector x.sub.k at the time point t.sub.k with Expression (27), the observation residual .DELTA.z.sub.k, and the Kalman coefficient K.sub.k.

[0110] The posture estimation device performs processing of normalizing the state vector x.sub.k at the time point t.sub.k by Expression (28) (normalization step S73).

[0111] Then, the posture estimation device performs processing of correcting the error covariance matrix .SIGMA..sub.x, k.sup.2 at the time point t.sub.k with Expression (27), the Kalman coefficient K.sub.k, and the transformation matrix H.sub.k (error information correction step S74).
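
The sequence of steps S71 to S74 follows the shape of a conventional Kalman filter observation update, which can be sketched as below. This is the textbook form and is not checked against Expressions (23), (24), (27), and (28), which are not reproduced in this passage. The observation noise covariance `R`, the position of the quaternion in the first four state elements, and the function name are assumptions.

```python
import numpy as np

def correct(x, P, dz, H, R, quat_idx=slice(0, 4)):
    """One observation update: gain, state correction, normalization, covariance."""
    S = H @ P @ H.T + R                      # innovation covariance
    K = np.linalg.solve(S, H @ P).T          # S71: K = P H^T S^-1 (P, S symmetric)
    x = x + K @ dz                           # S72: correct the state vector
    x[quat_idx] /= np.linalg.norm(x[quat_idx])   # S73: renormalize the quaternion
    P = (np.eye(P.shape[0]) - K @ H) @ P     # S74: correct the error covariance
    return x, P, K
```

A minimal usage example with a five-element state, where a single observation acts on the second quaternion element:

```python
x0 = np.array([1.0, 0.0, 0.0, 0.0, 0.0])
H = np.array([[0.0, 1.0, 0.0, 0.0, 0.0]])
x1, P1, K = correct(x0, np.eye(5), np.array([2.0]), H, np.array([[1.0]]))
```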

[0112] Returning to FIG. 1, the posture estimation device repeats the processes of Step S2 to Step S7 until an end instruction is received (N in Step S8). If the end instruction is received (Y in Step S8), the posture estimation device ends the processing.

[0113] The order of the steps in FIG. 1 can be appropriately changed. For example, the order of Step S4, Step S5, and Step S6 may be changed, and at least one of Step S4, Step S5, and Step S6 may be performed after Step S7.

[0114] As described above, according to the posture estimation method in the embodiment, the posture change amount and the velocity change amount of an object are calculated with Expressions (6) and (12) derived from Expressions (1) and (2) of the output model of the IMU. Then, the posture of the object is estimated with the posture change amount and the velocity change amount. In Expressions (6) and (12), a calculation error in the posture change amount or the velocity change amount is reduced in comparison to that in the related art, by calculating the posture change amount and the velocity change amount with not only the first-order term of .DELTA.t but also the second-order term and the third-order term thereof.

[0115] If the object rotates, the coordinate transformation matrix C.sub.k changes. However, the coordinate transformation matrix C.sub.k is calculated from the elements of the posture quaternion q.sub.k estimated by the Kalman filter. Thus, when the object rotates rapidly, the coordinate transformation matrix C.sub.k may not immediately follow the rotation of the object. In this regard, according to the posture estimation method in the embodiment, since the acceleration .lamda..sub.k is calculated with not only the acceleration but also the angular velocity in Expression (12), the rotation of the object is immediately reflected in the acceleration .lamda..sub.k. Thus, even when the object rotates rapidly, it is possible to reduce deterioration of calculation accuracy of the velocity change amount.

[0116] Further, according to the posture estimation method in the embodiment, with Expression (23), the observation residual of the gravitational acceleration based on the output of the acceleration sensor and the motion velocity of the object become zero in the long term, and the Kalman coefficient K.sub.k in Expression (24) is calculated with the observation residual of the zero motion velocity. Thus, even though observation information of the azimuth is not provided, it is possible to estimate the posture of the object with high accuracy.