Device, method, and graphical user interface for managing folders

U.S. patent number 10,788,953 (application number 16/020,804) was granted by the patent office on September 29, 2020, for a device, method, and graphical user interface for managing folders. The patent is currently assigned to Apple Inc., which is also the listed grantee. The invention is credited to Imran Chaudhri and Marcel Van Os.

| United States Patent | 10,788,953 |
|---|---|
| Chaudhri et al. | September 29, 2020 |

Abstract

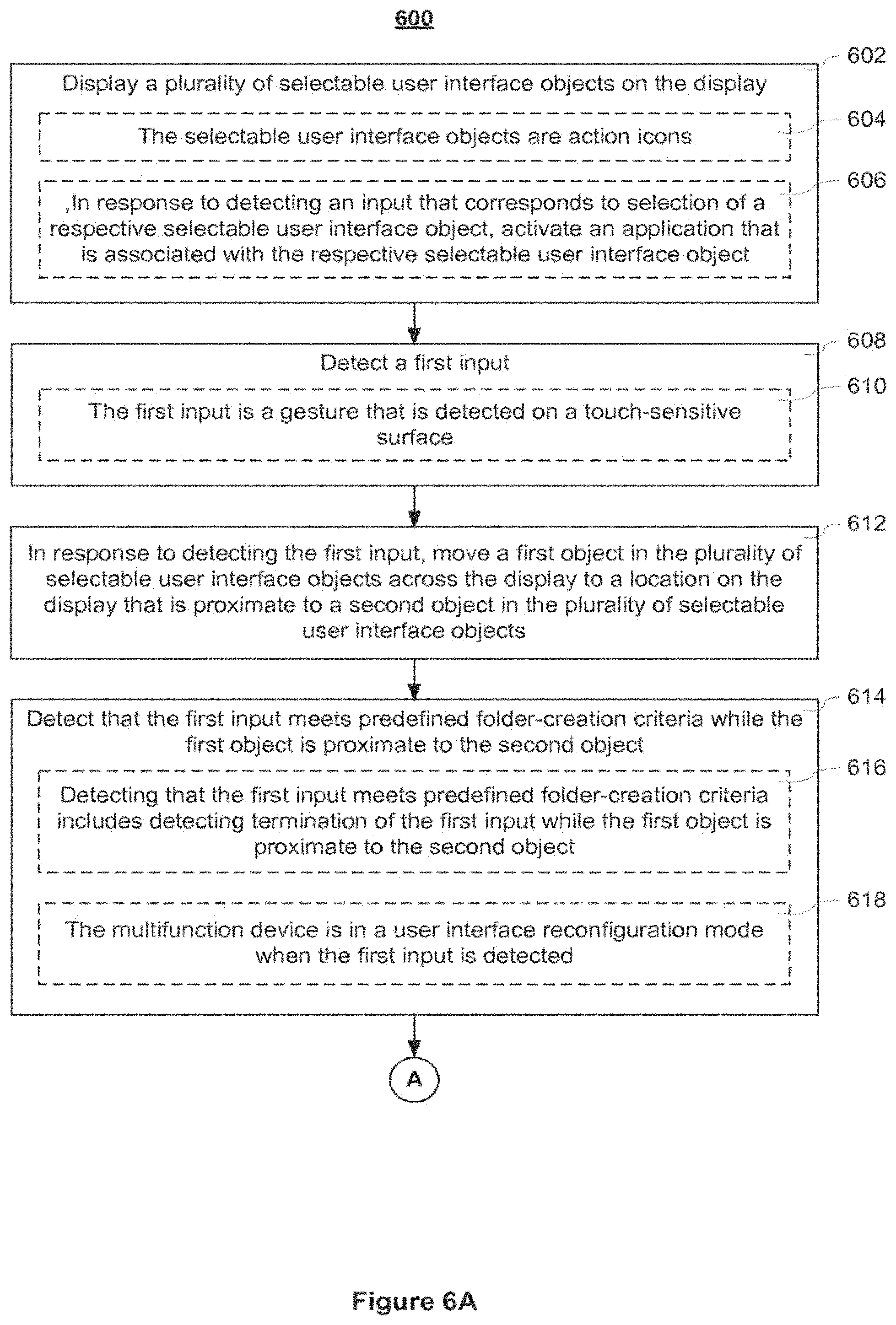

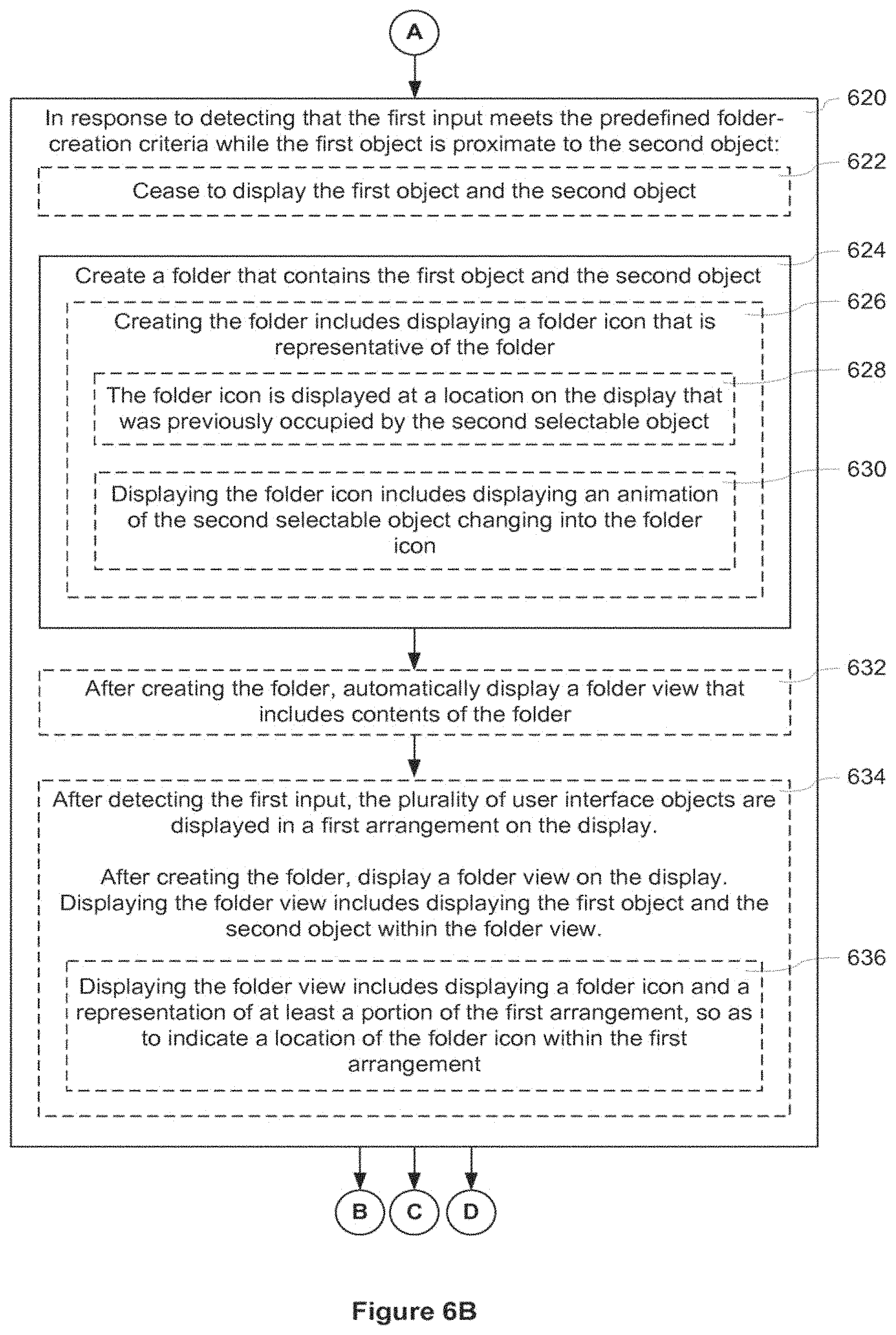

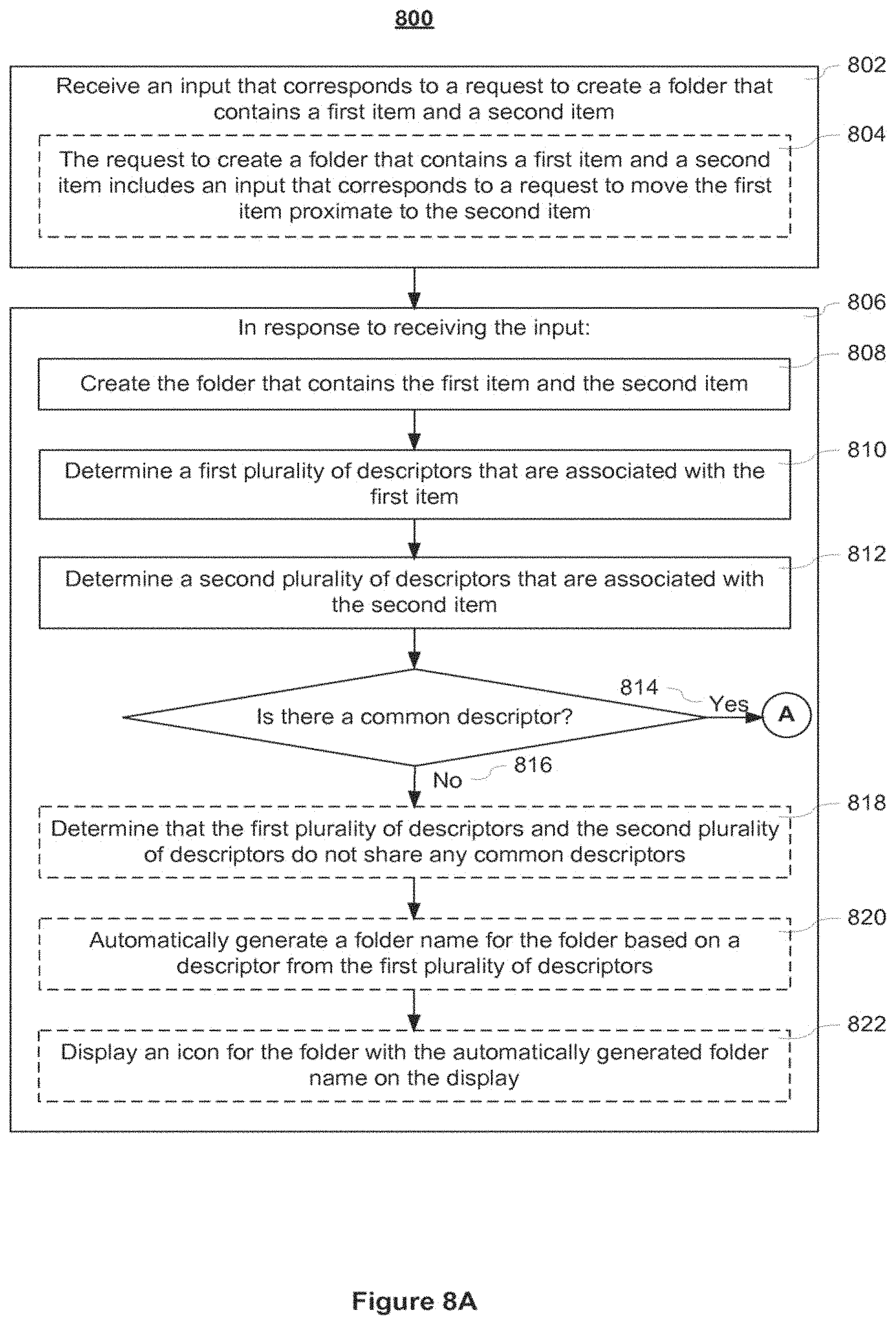

A multifunction device displays a plurality of selectable user interface objects on its display. In response to detecting a first input, the device moves a first object in the plurality of selectable user interface objects across the display to a location that is proximate to a second object in the plurality of selectable user interface objects. In response to detecting that the first input meets predefined folder-creation criteria while the first object is proximate to the second object, the device creates a folder that contains the first object and the second object.
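The flow described in the abstract can be illustrated with a minimal sketch. It is not the patented implementation: the `Icon` and `Folder` types, the `handle_drag` function, the distance threshold, and the hover-time criterion are all hypothetical, since the abstract says only that the objects must be "proximate" and that the input must meet "predefined folder-creation criteria" without giving values.

```python
from dataclasses import dataclass, field
from math import hypot


@dataclass(eq=False)  # compare by identity so two icons are never confused
class Icon:
    name: str
    x: float
    y: float


@dataclass(eq=False)
class Folder:
    x: float
    y: float
    items: list = field(default_factory=list)


# Hypothetical thresholds, chosen only for illustration.
PROXIMITY_RADIUS = 30.0    # how close counts as "proximate"
MIN_HOVER_SECONDS = 0.5    # stand-in for the "folder-creation criteria"


def is_proximate(a, b, radius=PROXIMITY_RADIUS):
    """Return True when the two objects are within `radius` of each other."""
    return hypot(a.x - b.x, a.y - b.y) <= radius


def handle_drag(screen, dragged, drop_x, drop_y, hover_seconds):
    """Move `dragged` to the drop point and create a folder if criteria are met.

    `screen` is a mutable list of Icon/Folder objects. Returns the new Folder,
    or None when no folder was created.
    """
    # Step 1: move the first object across the display.
    dragged.x, dragged.y = drop_x, drop_y

    # Step 2: look for a second object that the first one is now proximate to.
    for target in screen:
        if target is dragged or not isinstance(target, Icon):
            continue
        # Step 3: check the (assumed) folder-creation criteria.
        if is_proximate(dragged, target) and hover_seconds >= MIN_HOVER_SECONDS:
            folder = Folder(target.x, target.y, [target, dragged])
            screen[screen.index(target)] = folder  # folder takes the target's slot
            screen.remove(dragged)
            return folder
    return None
```

For example, dragging one icon to within the assumed radius of another and holding it for longer than `MIN_HOVER_SECONDS` replaces both icons on `screen` with a single `Folder`, while a shorter hover only moves the icon.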

| Inventors: | Chaudhri; Imran (San Francisco, CA), Van Os; Marcel (San Francisco, CA) |
|---|---|
| Applicant: | |
| Assignee: | Apple Inc. (Cupertino, CA) |
| Family ID: | 1000005083002 |
| Appl. No.: | 16/020,804 |
| Filed: | June 27, 2018 |

Prior Publication Data

| Document Identifier | Publication Date |
|---|---|
| US 20180307388 A1 | Oct 25, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Issue Date |
|---|---|---|---|
| 12888362 | Sep 22, 2010 | 10025458 | |
| 61321872 | Apr 7, 2010 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 9/451 (20180201); G06F 3/04886 (20130101); G06F 3/0482 (20130101); G06F 3/04812 (20130101); H04M 1/72583 (20130101); H04N 7/147 (20130101); H04N 7/15 (20130101); G06F 3/0486 (20130101); G06F 3/0488 (20130101); G06F 3/04817 (20130101) |

| Current International Class: | G06F 3/0482 (20130101); G06F 3/0481 (20130101); H04M 1/725 (20060101); H04N 7/15 (20060101); G06F 3/0486 (20130101); G06F 3/0488 (20130101); H04N 7/14 (20060101); G06F 9/451 (20180101) |

References Cited

U.S. Patent Documents

| 4899136 | February 1990 | Beard et al. |

| 5124959 | June 1992 | Yamazaki et al. |

| 5146556 | September 1992 | Hullot et al. |

| 5196838 | March 1993 | Meier et al. |

| 5237679 | August 1993 | Wang et al. |

| 5312478 | May 1994 | Reed et al. |

| 5452414 | September 1995 | Rosendahl et al. |

| 5491778 | February 1996 | Gordon et al. |

| 5497454 | March 1996 | Bates et al. |

| 5515486 | May 1996 | Amro et al. |

| 5544295 | August 1996 | Capps |

| 5546529 | August 1996 | Bowers et al. |

| 5572238 | November 1996 | Krivacic |

| 5598524 | January 1997 | Johnston et al. |

| 5621878 | April 1997 | Owens et al. |

| 5625818 | April 1997 | Zarmer |

| 5644739 | July 1997 | Moursund |

| 5657049 | August 1997 | Ludolph et al. |

| 5671381 | September 1997 | Strasnick et al. |

| 5678015 | October 1997 | Goh |

| 5726687 | March 1998 | Belfiore et al. |

| 5736974 | April 1998 | Selker |

| 5745096 | April 1998 | Ludolph et al. |

| 5745116 | April 1998 | Pisutha-Arnond |

| 5745718 | April 1998 | Cline et al. |

| 5745910 | April 1998 | Piersol et al. |

| 5754179 | May 1998 | Hocker et al. |

| 5754809 | May 1998 | Gandre |

| 5757371 | May 1998 | Oran et al. |

| 5760773 | June 1998 | Berman et al. |

| 5760774 | June 1998 | Grossman et al. |

| 5774119 | June 1998 | Alimpich et al. |

| 5796401 | August 1998 | Winer |

| 5798752 | August 1998 | Buxton et al. |

| 5801699 | September 1998 | Hooker et al. |

| 5801704 | September 1998 | Oohara et al. |

| 5812862 | September 1998 | Smith et al. |

| 5825352 | October 1998 | Bisset et al. |

| 5825357 | October 1998 | Malamud et al. |

| 5835079 | November 1998 | Shieh |

| 5835094 | November 1998 | Ermel et al. |

| 5838326 | November 1998 | Card et al. |

| 5861885 | January 1999 | Strasnick et al. |

| 5870683 | February 1999 | Wells et al. |

| 5870734 | February 1999 | Kao |

| 5877765 | March 1999 | Dickman et al. |

| 5877775 | March 1999 | Theisen et al. |

| 5880733 | March 1999 | Horvitz et al. |

| 5900876 | May 1999 | Yagita et al. |

| 5914716 | June 1999 | Rubin et al. |

| 5914717 | June 1999 | Kleewein et al. |

| 5923327 | July 1999 | Smith et al. |

| 5923908 | July 1999 | Schrock et al. |

| 5934707 | August 1999 | Johnson |

| 5943679 | August 1999 | Niles et al. |

| 5956025 | September 1999 | Goulden et al. |

| 5963204 | October 1999 | Ikeda et al. |

| 5995106 | November 1999 | Naughton et al. |

| 6005579 | December 1999 | Sugiyama et al. |

| 6012072 | January 2000 | Lucas et al. |

| 6043818 | March 2000 | Nakano et al. |

| 6049336 | April 2000 | Liu et al. |

| 6054989 | April 2000 | Robertson et al. |

| 6072486 | June 2000 | Sheldon et al. |

| 6088032 | July 2000 | Mackinlay |

| 6111573 | August 2000 | McComb et al. |

| 6121969 | September 2000 | Jain et al. |

| 6133914 | October 2000 | Rogers et al. |

| 6160551 | December 2000 | Naughton et al. |

| 6166738 | December 2000 | Robertson et al. |

| 6188407 | February 2001 | Smith et al. |

| 6195094 | February 2001 | Celebiler |

| 6211858 | April 2001 | Moon et al. |

| 6222465 | April 2001 | Kumar et al. |

| 6229542 | May 2001 | Miller |

| 6253218 | June 2001 | Aoki et al. |

| 6275935 | August 2001 | Barlow et al. |

| 6278454 | August 2001 | Krishnan |

| 6313853 | November 2001 | Lamontagne et al. |

| 6317140 | November 2001 | Livingston |

| 6353451 | March 2002 | Teibel et al. |

| 6396520 | May 2002 | Ording |

| 6477117 | November 2002 | Narayanaswami et al. |

| 6486895 | November 2002 | Robertson et al. |

| 6496206 | December 2002 | Mernyk et al. |

| 6496209 | December 2002 | Horii |

| 6525997 | February 2003 | Narayanaswami et al. |

| 6545669 | April 2003 | Kinawi et al. |

| 6549218 | April 2003 | Gershony et al. |

| 6571245 | May 2003 | Huang et al. |

| 6590568 | July 2003 | Astala et al. |

| 6597345 | July 2003 | Hirshberg |

| 6597378 | July 2003 | Shiraishi et al. |

| 6621509 | September 2003 | Eiref et al. |

| 6628309 | September 2003 | Dodson et al. |

| 6628310 | September 2003 | Hiura et al. |

| 6647534 | November 2003 | Graham |

| 6683628 | January 2004 | Nakagawa et al. |

| 6690623 | February 2004 | Maano |

| 6700612 | March 2004 | Anderson et al. |

| 6710788 | March 2004 | Freach et al. |

| 6714222 | March 2004 | Bjorn et al. |

| 6753888 | June 2004 | Kamiwada et al. |

| 6763388 | July 2004 | Tsimelzon |

| 6774914 | August 2004 | Benayoun |

| 6781575 | August 2004 | Hawkins et al. |

| 6798429 | September 2004 | Bradski |

| 6809724 | October 2004 | Shiraishi et al. |

| 6816175 | November 2004 | Hamp et al. |

| 6820111 | November 2004 | Rubin et al. |

| 6822638 | November 2004 | Dobies et al. |

| 6847387 | January 2005 | Roth |

| 6874128 | March 2005 | Moore et al. |

| 6880132 | April 2005 | Uemura |

| 6915490 | July 2005 | Ewing |

| 6931601 | August 2005 | Vronay et al. |

| 6934911 | August 2005 | Salmimaa et al. |

| 6940494 | September 2005 | Hoshino et al. |

| 6963349 | November 2005 | Nagasaki |

| 6970749 | November 2005 | Chinn et al. |

| 6976210 | December 2005 | Silva et al. |

| 6976228 | December 2005 | Bernhardson |

| 6978127 | December 2005 | Bulthuis et al. |

| 7003495 | February 2006 | Burger et al. |

| 7007239 | February 2006 | Hawkins et al. |

| 7010755 | March 2006 | Anderson et al. |

| 7017118 | March 2006 | Carroll |

| 7043701 | May 2006 | Gordon |

| 7071943 | July 2006 | Adler |

| 7075512 | July 2006 | Fabre et al. |

| 7080326 | July 2006 | Molander et al. |

| 7088340 | August 2006 | Kato |

| 7093201 | August 2006 | Duarte |

| 7107549 | September 2006 | Deaton et al. |

| 7117453 | October 2006 | Drucker et al. |

| 7119819 | October 2006 | Robertson et al. |

| 7126579 | October 2006 | Ritter |

| 7133859 | November 2006 | Wong |

| 7134092 | November 2006 | Fung et al. |

| 7134095 | November 2006 | Smith et al. |

| 7142210 | November 2006 | Schwuttke et al. |

| 7146576 | December 2006 | Chang et al. |

| 7155667 | December 2006 | Kotler et al. |

| 7173603 | February 2007 | Kawasome |

| 7173604 | February 2007 | Marvit et al. |

| 7178111 | February 2007 | Glein et al. |

| 7194527 | March 2007 | Drucker et al. |

| 7194698 | March 2007 | Gottfurcht et al. |

| 7215323 | May 2007 | Gombert et al. |

| 7216305 | May 2007 | Jaeger |

| 7231229 | June 2007 | Hawkins et al. |

| 7237240 | June 2007 | Chen et al. |

| 7242406 | July 2007 | Robotham et al. |

| 7249327 | July 2007 | Nelson et al. |

| 7278115 | October 2007 | Conway et al. |

| 7283845 | October 2007 | De Bast |

| 7287232 | October 2007 | Tsuchimura et al. |

| 7292243 | November 2007 | Burke |

| 7310636 | December 2007 | Bodin et al. |

| 7340678 | March 2008 | Chiu et al. |

| 7355593 | April 2008 | Hill et al. |

| 7362331 | April 2008 | Ording |

| 7383497 | June 2008 | Glenner et al. |

| 7392488 | June 2008 | Card et al. |

| 7403211 | July 2008 | Sheasby et al. |

| 7403910 | July 2008 | Hastings et al. |

| 7404151 | July 2008 | Borchardt et al. |

| 7406666 | July 2008 | Davis et al. |

| 7412650 | August 2008 | Gallo |

| 7415677 | August 2008 | Arend et al. |

| 7417680 | August 2008 | Aoki et al. |

| 7432928 | October 2008 | Shaw et al. |

| 7433179 | October 2008 | Hisano et al. |

| 7434177 | October 2008 | Ording et al. |

| 7437005 | October 2008 | Drucker et al. |

| 7444390 | October 2008 | Tadayon et al. |

| 7456823 | November 2008 | Poupyrev et al. |

| 7468742 | December 2008 | Ahn et al. |

| 7478437 | January 2009 | Hatanaka et al. |

| 7479948 | January 2009 | Kim et al. |

| 7480872 | January 2009 | Ubillos |

| 7480873 | January 2009 | Kawahara |

| 7487467 | February 2009 | Kawahara et al. |

| 7490295 | February 2009 | Chaudhri et al. |

| 7493573 | February 2009 | Wagner |

| 7496595 | February 2009 | Accapadi et al. |

| 7506268 | March 2009 | Jennings et al. |

| 7509321 | March 2009 | Wong et al. |

| 7509588 | March 2009 | Van Os et al. |

| 7511710 | March 2009 | Barrett |

| 7512898 | March 2009 | Jennings et al. |

| 7523414 | April 2009 | Schmidt et al. |

| 7526738 | April 2009 | Ording et al. |

| 7546548 | June 2009 | Chew et al. |

| 7546554 | June 2009 | Chiu et al. |

| 7552402 | June 2009 | Bilow |

| 7557804 | July 2009 | McDaniel |

| 7561874 | July 2009 | Wang et al. |

| 7584278 | September 2009 | Rajarajan et al. |

| 7587683 | September 2009 | Ito et al. |

| 7594185 | September 2009 | Anderson et al. |

| 7596766 | September 2009 | Sharma et al. |

| 7606819 | October 2009 | Audet et al. |

| 7607150 | October 2009 | Kobayashi |

| 7620894 | November 2009 | Kahn |

| 7624357 | November 2009 | De Bast |

| 7636898 | December 2009 | Takahashi |

| 7642934 | January 2010 | Scott |

| 7650575 | January 2010 | Cummins et al. |

| 7657842 | February 2010 | Matthews et al. |

| 7657845 | February 2010 | Drucker et al. |

| 7663620 | February 2010 | Robertson et al. |

| 7665033 | February 2010 | Byrne et al. |

| 7667703 | February 2010 | Hong et al. |

| 7680817 | March 2010 | Audet et al. |

| 7683883 | March 2010 | Touma et al. |

| 7698658 | April 2010 | Ohwa et al. |

| 7710423 | May 2010 | Drucker et al. |

| 7716604 | May 2010 | Kataoka et al. |

| 7719523 | May 2010 | Hillis |

| 7719542 | May 2010 | Gough et al. |

| 7724242 | May 2010 | Hillis et al. |

| 7725839 | May 2010 | Michaels |

| 7728821 | June 2010 | Hillis et al. |

| 7730401 | June 2010 | Gillespie et al. |

| 7730423 | June 2010 | Graham |

| 7735021 | June 2010 | Padawer et al. |

| 7739604 | June 2010 | Lyons et al. |

| 7747289 | June 2010 | Wang et al. |

| 7761813 | July 2010 | Kim et al. |

| 7765266 | July 2010 | Kropivny |

| 7770125 | August 2010 | Young et al. |

| 7783990 | August 2010 | Amadio et al. |

| 7791755 | September 2010 | Mori |

| 7797637 | September 2010 | Marcjan |

| 7805684 | September 2010 | Arvilommi |

| 7810038 | October 2010 | Matsa et al. |

| 7840901 | November 2010 | Lacey et al. |

| 7840907 | November 2010 | Kikuchi et al. |

| 7840912 | November 2010 | Elias et al. |

| 7843454 | November 2010 | Biswas |

| 7853972 | December 2010 | Brodersen et al. |

| 7856602 | December 2010 | Armstrong |

| 7873916 | January 2011 | Chaudhri |

| 7880726 | February 2011 | Nakadaira et al. |

| 7904832 | March 2011 | Ubillos |

| 7907124 | March 2011 | Hillis et al. |

| 7907476 | March 2011 | Lee |

| 7908569 | March 2011 | Ala-Rantala |

| 7917869 | March 2011 | Anderson |

| 7924444 | April 2011 | Takahashi |

| 7940250 | May 2011 | Forstall |

| 7956869 | June 2011 | Gilra |

| 7958457 | June 2011 | Brandenberg et al. |

| 7979879 | July 2011 | Kazama et al. |

| 7986324 | July 2011 | Funaki et al. |

| 7995078 | August 2011 | Baar |

| 7996789 | August 2011 | Louch et al. |

| 8020110 | September 2011 | Hurst |

| 8024671 | September 2011 | Lee et al. |

| 8046714 | October 2011 | Yahiro et al. |

| 8059101 | November 2011 | Westerman et al. |

| 8064704 | November 2011 | Kim et al. |

| 8065618 | November 2011 | Kumar et al. |

| 8069404 | November 2011 | Audet |

| 8072439 | December 2011 | Hillis et al. |

| 8078966 | December 2011 | Audet |

| 8099441 | January 2012 | Surasinghe |

| 8103963 | January 2012 | Ikeda et al. |

| 8111255 | February 2012 | Park |

| 8125481 | February 2012 | Gossweiler, III et al. |

| 8130211 | March 2012 | Abernathy |

| 8139043 | March 2012 | Hillis |

| 8151185 | April 2012 | Audet |

| 8152640 | April 2012 | Shirakawa et al. |

| 8156175 | April 2012 | Hopkins |

| 8161419 | April 2012 | Palahnuk et al. |

| 8185842 | May 2012 | Chang et al. |

| 8188985 | May 2012 | Hillis et al. |

| 8205172 | June 2012 | Wong et al. |

| 8209628 | June 2012 | Davidson |

| 8214793 | July 2012 | Muthuswamy |

| 8230358 | July 2012 | Chaudhri |

| 8232990 | July 2012 | King et al. |

| 8255808 | August 2012 | Lindgren et al. |

| 8259163 | September 2012 | Bell |

| 8266550 | September 2012 | Cleron |

| 8269729 | September 2012 | Han et al. |

| 8269739 | September 2012 | Hillis et al. |

| 8279241 | October 2012 | Fong |

| 8306515 | November 2012 | Ryu et al. |

| 8335784 | December 2012 | Gutt et al. |

| 8365084 | January 2013 | Lin et al. |

| 8423911 | April 2013 | Chaudhri |

| 8434027 | April 2013 | Jones |

| 8446371 | May 2013 | Fyke et al. |

| 8458615 | June 2013 | Chaudhri |

| 8519964 | August 2013 | Platzer et al. |

| 8519972 | August 2013 | Forstall et al. |

| 8525839 | September 2013 | Chaudhri |

| 8558808 | October 2013 | Forstall et al. |

| 8564544 | October 2013 | Jobs et al. |

| 8601370 | December 2013 | Chiang et al. |

| 8619038 | December 2013 | Chaudhri et al. |

| 8626762 | January 2014 | Seung et al. |

| 8672885 | March 2014 | Kriesel et al. |

| 8683349 | March 2014 | Roberts et al. |

| 8713011 | April 2014 | Asai et al. |

| 8730188 | May 2014 | Pasquero et al. |

| 8799777 | August 2014 | Lee et al. |

| 8799821 | August 2014 | De Rose et al. |

| 8826170 | September 2014 | Weber et al. |

| 8839128 | September 2014 | Krishnaraj et al. |

| 8881060 | November 2014 | Chaudhri et al. |

| 8881061 | November 2014 | Chaudhri et al. |

| 8957866 | February 2015 | Barnett et al. |

| 8972898 | March 2015 | Carter |

| 9026508 | May 2015 | Nagai |

| 9032438 | May 2015 | Ito et al. |

| 9053462 | June 2015 | Cadiz |

| 9082314 | July 2015 | Tsai |

| 9152312 | October 2015 | Terleski et al. |

| 9170708 | October 2015 | Chaudhri et al. |

| 9239673 | January 2016 | Shaffer et al. |

| 9256627 | February 2016 | Surasinghe |

| 9367232 | June 2016 | Platzer et al. |

| 9386432 | July 2016 | Chu et al. |

| 9417787 | August 2016 | Fong |

| 9448691 | September 2016 | Suda |

| 9619143 | April 2017 | Herz et al. |

| 9794397 | October 2017 | Min et al. |

| 10250735 | April 2019 | Butcher et al. |

| 10359907 | July 2019 | Van Os et al. |

| 2001/0024195 | September 2001 | Hayakawa |

| 2001/0024212 | September 2001 | Ohnishi |

| 2001/0038394 | November 2001 | Tsuchimura et al. |

| 2002/0008691 | January 2002 | Hanajima et al. |

| 2002/0015042 | February 2002 | Robotham et al. |

| 2002/0018051 | February 2002 | Singh |

| 2002/0024540 | February 2002 | McCarthy |

| 2002/0038299 | March 2002 | Zernik et al. |

| 2002/0054090 | May 2002 | Silva et al. |

| 2002/0057287 | May 2002 | Crow et al. |

| 2002/0067376 | June 2002 | Martin et al. |

| 2002/0078037 | June 2002 | Hatanaka et al. |

| 2002/0085037 | July 2002 | Leavitt et al. |

| 2002/0091697 | July 2002 | Huang et al. |

| 2002/0097261 | July 2002 | Gottfurcht et al. |

| 2002/0104096 | August 2002 | Cramer et al. |

| 2002/0109721 | August 2002 | Konaka et al. |

| 2002/0140698 | October 2002 | Robertson et al. |

| 2002/0140736 | October 2002 | Chen |

| 2002/0143949 | October 2002 | Rajarajan et al. |

| 2002/0149561 | October 2002 | Fukumoto et al. |

| 2002/0152222 | October 2002 | Holbrook |

| 2002/0167683 | November 2002 | Hanamoto et al. |

| 2002/0191029 | December 2002 | Gillespie et al. |

| 2002/0196238 | December 2002 | Tsukada et al. |

| 2003/0001898 | January 2003 | Bernhardson |

| 2003/0007012 | January 2003 | Bate |

| 2003/0016241 | January 2003 | Burke |

| 2003/0030664 | February 2003 | Parry |

| 2003/0048295 | March 2003 | Lilleness et al. |

| 2003/0063072 | April 2003 | Brandenberg et al. |

| 2003/0080991 | May 2003 | Crow et al. |

| 2003/0085931 | May 2003 | Card et al. |

| 2003/0090572 | May 2003 | Belz et al. |

| 2003/0098894 | May 2003 | Sheldon et al. |

| 2003/0122787 | July 2003 | Zimmerman et al. |

| 2003/0128242 | July 2003 | Gordon |

| 2003/0142136 | July 2003 | Carter et al. |

| 2003/0156119 | August 2003 | Bonadio |

| 2003/0156140 | August 2003 | Watanabe |

| 2003/0156756 | August 2003 | Gokturk et al. |

| 2003/0160825 | August 2003 | Weber |

| 2003/0164827 | September 2003 | Gottesman et al. |

| 2003/0169288 | September 2003 | Misawa |

| 2003/0169298 | September 2003 | Ording |

| 2003/0169302 | September 2003 | Davidsson et al. |

| 2003/0174170 | September 2003 | Jung et al. |

| 2003/0174172 | September 2003 | Conrad et al. |

| 2003/0184552 | October 2003 | Chadha |

| 2003/0184587 | October 2003 | Ording et al. |

| 2003/0189597 | October 2003 | Anderson et al. |

| 2003/0195950 | October 2003 | Huang et al. |

| 2003/0200289 | October 2003 | Kemp et al. |

| 2003/0206195 | November 2003 | Matsa et al. |

| 2003/0206197 | November 2003 | McInerney |

| 2003/0210278 | November 2003 | Kyoya et al. |

| 2004/0008224 | January 2004 | Molander et al. |

| 2004/0021643 | February 2004 | Hoshino et al. |

| 2004/0027330 | February 2004 | Bradski |

| 2004/0056839 | March 2004 | Yoshihara |

| 2004/0070608 | April 2004 | Saka |

| 2004/0103156 | May 2004 | Quillen et al. |

| 2004/0109013 | June 2004 | Goertz |

| 2004/0119757 | June 2004 | Corley et al. |

| 2004/0121823 | June 2004 | Noesgaard et al. |

| 2004/0125088 | July 2004 | Zimmerman et al. |

| 2004/0138569 | July 2004 | Grunwald et al. |

| 2004/0141011 | July 2004 | Smethers et al. |

| 2004/0143598 | July 2004 | Wagner |

| 2004/0155909 | August 2004 | Sheasby et al. |

| 2004/0160462 | August 2004 | Sheasby et al. |

| 2004/0196267 | October 2004 | Kawai et al. |

| 2004/0215719 | October 2004 | Altshuler |

| 2004/0222975 | November 2004 | Nakano et al. |

| 2004/0236769 | November 2004 | Smith et al. |

| 2004/0257375 | December 2004 | Cowperthwaite |

| 2005/0005246 | January 2005 | Card et al. |

| 2005/0005248 | January 2005 | Rockey et al. |

| 2005/0010955 | January 2005 | Elia et al. |

| 2005/0012862 | January 2005 | Lee |

| 2005/0024341 | February 2005 | Gillespie et al. |

| 2005/0026644 | February 2005 | Lien |

| 2005/0039134 | February 2005 | Wiggeshoff et al. |

| 2005/0043987 | February 2005 | Kumar et al. |

| 2005/0052471 | March 2005 | Nagasaki |

| 2005/0057524 | March 2005 | Hill et al. |

| 2005/0057530 | March 2005 | Hinckley et al. |

| 2005/0057548 | March 2005 | Kim |

| 2005/0060653 | March 2005 | Fukase et al. |

| 2005/0060664 | March 2005 | Rogers |

| 2005/0060665 | March 2005 | Rekimoto |

| 2005/0088423 | April 2005 | Keely et al. |

| 2005/0091596 | April 2005 | Anthony et al. |

| 2005/0091609 | April 2005 | Matthews et al. |

| 2005/0097089 | May 2005 | Nielsen et al. |

| 2005/0116026 | June 2005 | Burger et al. |

| 2005/0120142 | June 2005 | Hall |

| 2005/0131924 | June 2005 | Jones |

| 2005/0134578 | June 2005 | Chambers et al. |

| 2005/0138570 | June 2005 | Good et al. |

| 2005/0151742 | July 2005 | Hong et al. |

| 2005/0177796 | August 2005 | Takahashi |

| 2005/0210410 | September 2005 | Ohwa et al. |

| 2005/0216913 | September 2005 | Gemmell et al. |

| 2005/0229102 | October 2005 | Watson et al. |

| 2005/0246331 | November 2005 | De Vorchik et al. |

| 2005/0251755 | November 2005 | Mullins et al. |

| 2005/0259087 | November 2005 | Hoshino et al. |

| 2005/0262448 | November 2005 | Vronay et al. |

| 2005/0275636 | December 2005 | Dehlin et al. |

| 2005/0278757 | December 2005 | Grossman et al. |

| 2005/0283734 | December 2005 | Santoro et al. |

| 2005/0289476 | December 2005 | Tokkonen |

| 2005/0289482 | December 2005 | Anthony et al. |

| 2006/0004685 | January 2006 | Pyhalammi et al. |

| 2006/0005207 | January 2006 | Louch et al. |

| 2006/0007182 | January 2006 | Sato et al. |

| 2006/0020903 | January 2006 | Wang et al. |

| 2006/0022955 | February 2006 | Kennedy |

| 2006/0025110 | February 2006 | Liu |

| 2006/0026521 | February 2006 | Hotelling et al. |

| 2006/0026535 | February 2006 | Hotelling et al. |

| 2006/0026536 | February 2006 | Hotelling et al. |

| 2006/0031874 | February 2006 | Ok et al. |

| 2006/0033751 | February 2006 | Keely et al. |

| 2006/0035628 | February 2006 | Miller et al. |

| 2006/0036568 | February 2006 | Moore et al. |

| 2006/0048069 | March 2006 | Igeta |

| 2006/0051073 | March 2006 | Jung et al. |

| 2006/0053392 | March 2006 | Salmimaa et al. |

| 2006/0055700 | March 2006 | Niles et al. |

| 2006/0070007 | March 2006 | Cummins et al. |

| 2006/0075355 | April 2006 | Shiono et al. |

| 2006/0075396 | April 2006 | Surasinghe |

| 2006/0080386 | April 2006 | Roykkee et al. |

| 2006/0080616 | April 2006 | Vogel et al. |

| 2006/0080617 | April 2006 | Anderson et al. |

| 2006/0090022 | April 2006 | Flynn et al. |

| 2006/0092133 | May 2006 | Touma et al. |

| 2006/0092770 | May 2006 | Demas |

| 2006/0097991 | May 2006 | Hotelling et al. |

| 2006/0107231 | May 2006 | Matthews et al. |

| 2006/0112335 | May 2006 | Hofmeister et al. |

| 2006/0112347 | May 2006 | Baudisch |

| 2006/0116578 | June 2006 | Grunwald et al. |

| 2006/0117372 | June 2006 | Hopkins |

| 2006/0119619 | June 2006 | Fagans |

| 2006/0123359 | June 2006 | Schatzberger |

| 2006/0123360 | June 2006 | Anwar et al. |

| 2006/0125799 | June 2006 | Hillis et al. |

| 2006/0129586 | June 2006 | Arrouye et al. |

| 2006/0143574 | June 2006 | Ito et al. |

| 2006/0153531 | July 2006 | Kanegae et al. |

| 2006/0161863 | July 2006 | Gallo |

| 2006/0161871 | July 2006 | Hotelling et al. |

| 2006/0164418 | July 2006 | Hao et al. |

| 2006/0174211 | August 2006 | Hoellerer et al. |

| 2006/0187212 | August 2006 | Park et al. |

| 2006/0190833 | August 2006 | Sangiovanni et al. |

| 2006/0197752 | September 2006 | Hurst et al. |

| 2006/0197753 | September 2006 | Hotelling |

| 2006/0209035 | September 2006 | Jenkins et al. |

| 2006/0210958 | September 2006 | Rimas-Ribikauskas et al. |

| 2006/0212828 | September 2006 | Yahiro et al. |

| 2006/0212833 | September 2006 | Gallagher et al. |

| 2006/0236266 | October 2006 | Majava |

| 2006/0242596 | October 2006 | Armstrong |

| 2006/0242604 | October 2006 | Wong et al. |

| 2006/0242607 | October 2006 | Hudson |

| 2006/0253771 | November 2006 | Baschy |

| 2006/0262116 | November 2006 | Moshiri et al. |

| 2006/0267966 | November 2006 | Grossman et al. |

| 2006/0268100 | November 2006 | Karukka et al. |

| 2006/0271864 | November 2006 | Satterfield et al. |

| 2006/0271867 | November 2006 | Wang et al. |

| 2006/0271874 | November 2006 | Raiz et al. |

| 2006/0277460 | December 2006 | Forstall et al. |

| 2006/0277481 | December 2006 | Forstall et al. |

| 2006/0277486 | December 2006 | Skinner |

| 2006/0278692 | December 2006 | Matsumoto et al. |

| 2006/0282790 | December 2006 | Matthews et al. |

| 2006/0284852 | December 2006 | Hofmeister et al. |

| 2006/0290661 | December 2006 | Innanen et al. |

| 2007/0013665 | January 2007 | Vetelainen et al. |

| 2007/0016958 | January 2007 | Bodepudi |

| 2007/0024468 | February 2007 | Quandel et al. |

| 2007/0028269 | February 2007 | Nezu et al. |

| 2007/0030362 | February 2007 | Ota et al. |

| 2007/0032267 | February 2007 | Haitani et al. |

| 2007/0044029 | February 2007 | Fisher et al. |

| 2007/0050432 | March 2007 | Yoshizawa |

| 2007/0050726 | March 2007 | Wakai et al. |

| 2007/0055940 | March 2007 | Moore et al. |

| 2007/0055947 | March 2007 | Ostojic et al. |

| 2007/0061745 | March 2007 | Anthony et al. |

| 2007/0065044 | March 2007 | Park et al. |

| 2007/0067272 | March 2007 | Flynt et al. |

| 2007/0070066 | March 2007 | Bakhash |

| 2007/0083827 | April 2007 | Scott et al. |

| 2007/0083911 | April 2007 | Madden et al. |

| 2007/0091068 | April 2007 | Liberty |

| 2007/0101292 | May 2007 | Kupka |

| 2007/0101297 | May 2007 | Forstall et al. |

| 2007/0106950 | May 2007 | Hutchinson et al. |

| 2007/0113207 | May 2007 | Gritton |

| 2007/0120832 | May 2007 | Saarinen et al. |

| 2007/0121869 | May 2007 | Gorti et al. |

| 2007/0123205 | May 2007 | Lee et al. |

| 2007/0124677 | May 2007 | de los Reyes et al. |

| 2007/0126696 | June 2007 | Boillot |

| 2007/0126732 | June 2007 | Robertson et al. |

| 2007/0132789 | June 2007 | Ording et al. |

| 2007/0136351 | June 2007 | Dames et al. |

| 2007/0146325 | June 2007 | Poston et al. |

| 2007/0150810 | June 2007 | Katz et al. |

| 2007/0150834 | June 2007 | Muller et al. |

| 2007/0150835 | June 2007 | Muller |

| 2007/0152958 | July 2007 | Ahn et al. |

| 2007/0152980 | July 2007 | Kocienda et al. |

| 2007/0156697 | July 2007 | Tsarkova |

| 2007/0157089 | July 2007 | Van Os et al. |

| 2007/0157097 | July 2007 | Peters |

| 2007/0174785 | July 2007 | Perttula |

| 2007/0177803 | August 2007 | Elias et al. |

| 2007/0177804 | August 2007 | Elias et al. |

| 2007/0179938 | August 2007 | Ikeda et al. |

| 2007/0180395 | August 2007 | Yamashita et al. |

| 2007/0188518 | August 2007 | Vale et al. |

| 2007/0189737 | August 2007 | Chaudhri et al. |

| 2007/0192741 | August 2007 | Yoritate et al. |

| 2007/0209004 | September 2007 | Layard |

| 2007/0226652 | September 2007 | Kikuchi et al. |

| 2007/0240079 | October 2007 | Flynt et al. |

| 2007/0243862 | October 2007 | Coskun et al. |

| 2007/0245250 | October 2007 | Schechter et al. |

| 2007/0247425 | October 2007 | Liberty et al. |

| 2007/0250793 | October 2007 | Miura et al. |

| 2007/0250794 | October 2007 | Miura et al. |

| 2007/0266011 | November 2007 | Rohrs et al. |

| 2007/0271532 | November 2007 | Nguyen et al. |

| 2007/0288860 | December 2007 | Ording et al. |

| 2007/0288862 | December 2007 | Ording |

| 2007/0288868 | December 2007 | Rhee et al. |

| 2007/0294231 | December 2007 | Kaihotsu |

| 2008/0001924 | January 2008 | De los Reyes et al. |

| 2008/0005702 | January 2008 | Skourup et al. |

| 2008/0005703 | January 2008 | Radivojevic et al. |

| 2008/0006762 | January 2008 | Fadell et al. |

| 2008/0014917 | January 2008 | Rhoads et al. |

| 2008/0016468 | January 2008 | Chambers et al. |

| 2008/0016471 | January 2008 | Park |

| 2008/0024454 | January 2008 | Everest |

| 2008/0034013 | February 2008 | Cisler et al. |

| 2008/0034309 | February 2008 | Louch et al. |

| 2008/0034317 | February 2008 | Fard et al. |

| 2008/0040668 | February 2008 | Ala-Rantala |

| 2008/0059915 | March 2008 | Boillot |

| 2008/0062126 | March 2008 | Algreatly |

| 2008/0062141 | March 2008 | Chaudhri |

| 2008/0062257 | March 2008 | Corson |

| 2008/0067626 | March 2008 | Hirler et al. |

| 2008/0082930 | April 2008 | Omernick et al. |

| 2008/0089587 | April 2008 | Kim et al. |

| 2008/0091763 | April 2008 | Devonshire et al. |

| 2008/0094369 | April 2008 | Ganatra et al. |

| 2008/0104515 | May 2008 | Dumitru et al. |

| 2008/0109408 | May 2008 | Choi et al. |

| 2008/0117461 | May 2008 | Mitsutake et al. |

| 2008/0120568 | May 2008 | Jian et al. |

| 2008/0122796 | May 2008 | Jobs et al. |

| 2008/0125180 | May 2008 | Hoffman et al. |

| 2008/0126971 | May 2008 | Kojima |

| 2008/0130421 | June 2008 | Akaiwa et al. |

| 2008/0136785 | June 2008 | Baudisch et al. |

| 2008/0148182 | June 2008 | Chiang et al. |

| 2008/0155453 | June 2008 | Othmer |

| 2008/0155617 | June 2008 | Angiolillo et al. |

| 2008/0158145 | July 2008 | Westerman |

| 2008/0158172 | July 2008 | Hotelling et al. |

| 2008/0161045 | July 2008 | Vuorenmaa |

| 2008/0164468 | July 2008 | Chen et al. |

| 2008/0165140 | July 2008 | Christie et al. |

| 2008/0168365 | July 2008 | Chaudhri |

| 2008/0168367 | July 2008 | Chaudhri et al. |

| 2008/0168368 | July 2008 | Louch et al. |

| 2008/0168382 | July 2008 | Louch et al. |

| 2008/0168401 | July 2008 | Boule et al. |

| 2008/0168478 | July 2008 | Platzer et al. |

| 2008/0180406 | July 2008 | Han et al. |

| 2008/0182628 | July 2008 | Lee et al. |

| 2008/0184112 | July 2008 | Chiang et al. |

| 2008/0204424 | August 2008 | Jin et al. |

| 2008/0215980 | September 2008 | Lee et al. |

| 2008/0216017 | September 2008 | Kurtenbach et al. |

| 2008/0222545 | September 2008 | Lemay et al. |

| 2008/0225007 | September 2008 | Nakadaira et al. |

| 2008/0229254 | September 2008 | Warner |

| 2008/0231610 | September 2008 | Hotelling et al. |

| 2008/0244119 | October 2008 | Tokuhara et al. |

| 2008/0259045 | October 2008 | Kim et al. |

| 2008/0259057 | October 2008 | Brons |

| 2008/0266407 | October 2008 | Battles et al. |

| 2008/0276201 | November 2008 | Risch et al. |

| 2008/0282202 | November 2008 | Sunday |

| 2008/0300055 | December 2008 | Lutnick et al. |

| 2008/0300572 | December 2008 | Rankers et al. |

| 2008/0307350 | December 2008 | Sabatelli et al. |

| 2008/0307361 | December 2008 | Louch et al. |

| 2008/0307362 | December 2008 | Chaudhri et al. |

| 2008/0309632 | December 2008 | Westerman et al. |

| 2008/0313110 | December 2008 | Kreamer et al. |

| 2008/0313596 | December 2008 | Kreamer et al. |

| 2009/0002335 | January 2009 | Chaudhri |

| 2009/0007017 | January 2009 | Anzures et al. |

| 2009/0009815 | January 2009 | Karasik et al. |

| 2009/0019385 | January 2009 | Khatib et al. |

| 2009/0021488 | January 2009 | Kali et al. |

| 2009/0023433 | January 2009 | Walley et al. |

| 2009/0024946 | January 2009 | Gotz |

| 2009/0034805 | February 2009 | Perlmutter et al. |

| 2009/0055748 | February 2009 | Dieberger et al. |

| 2009/0058821 | March 2009 | White et al. |

| 2009/0063971 | March 2009 | Chaudhri et al. |

| 2009/0064055 | March 2009 | Staszak |

| 2009/0070708 | March 2009 | Finkelstein |

| 2009/0077501 | March 2009 | Heubel et al. |

| 2009/0103780 | April 2009 | Nishihara et al. |

| 2009/0113350 | April 2009 | Hibino et al. |

| 2009/0122018 | May 2009 | Vymenets et al. |

| 2009/0125842 | May 2009 | Nakayama |

| 2009/0132965 | May 2009 | Shimizu |

| 2009/0138194 | May 2009 | Geelen |

| 2009/0138827 | May 2009 | Van Os et al. |

| 2009/0144653 | June 2009 | Ubillos |

| 2009/0150775 | June 2009 | Miyazaki et al. |

| 2009/0158200 | June 2009 | Palahnuk et al. |

| 2009/0163193 | June 2009 | Fyke et al. |

| 2009/0164936 | June 2009 | Kawaguchi |

| 2009/0172606 | July 2009 | Dunn et al. |

| 2009/0178008 | July 2009 | Herz et al. |

| 2009/0183125 | July 2009 | Magal et al. |

| 2009/0184936 | July 2009 | Algreatly |

| 2009/0189911 | July 2009 | Ono |

| 2009/0199128 | August 2009 | Matthews et al. |

| 2009/0204920 | August 2009 | Beverley et al. |

| 2009/0204928 | August 2009 | Kallio et al. |

| 2009/0217187 | August 2009 | Kendall et al. |

| 2009/0217206 | August 2009 | Liu et al. |

| 2009/0217209 | August 2009 | Chen et al. |

| 2009/0222420 | September 2009 | Hirata |

| 2009/0222765 | September 2009 | Ekstrand |

| 2009/0222766 | September 2009 | Chae et al. |

| 2009/0228807 | September 2009 | Lemay |

| 2009/0228825 | September 2009 | Van Os et al. |

| 2009/0237371 | September 2009 | Kim et al. |

| 2009/0237372 | September 2009 | Kim et al. |

| 2009/0254869 | October 2009 | Ludwig et al. |

| 2009/0265669 | October 2009 | Kida et al. |

| 2009/0278806 | November 2009 | Duarte et al. |

| 2009/0278812 | November 2009 | Yasutake |

| 2009/0282369 | November 2009 | Jones |

| 2009/0303231 | December 2009 | Robinet et al. |

| 2009/0313584 | December 2009 | Kerr et al. |

| 2009/0313585 | December 2009 | Hellinger et al. |

| 2009/0315848 | December 2009 | Ku et al. |

| 2009/0319928 | December 2009 | Alphin et al. |

| 2009/0319935 | December 2009 | Figura |

| 2009/0322676 | December 2009 | Kerr et al. |

| 2009/0327969 | December 2009 | Estrada |

| 2010/0011304 | January 2010 | Van Os |

| 2010/0013780 | January 2010 | Ikeda et al. |

| 2010/0031203 | February 2010 | Morris et al. |

| 2010/0050133 | February 2010 | Nishihara et al. |

| 2010/0053151 | March 2010 | Marti et al. |

| 2010/0058182 | March 2010 | Jung |

| 2010/0063813 | March 2010 | Richter et al. |

| 2010/0077333 | March 2010 | Yang et al. |

| 2010/0082661 | April 2010 | Beaudreau |

| 2010/0083165 | April 2010 | Andrews et al. |

| 2010/0095206 | April 2010 | Kim |

| 2010/0095238 | April 2010 | Baudet |

| 2010/0095248 | April 2010 | Karstens |

| 2010/0105454 | April 2010 | Weber et al. |

| 2010/0107101 | April 2010 | Shaw et al. |

| 2010/0110025 | May 2010 | Lim |

| 2010/0115428 | May 2010 | Shuping et al. |

| 2010/0122195 | May 2010 | Hwang |

| 2010/0124152 | May 2010 | Lee |

| 2010/0146451 | June 2010 | Jun-Dong et al. |

| 2010/0153844 | June 2010 | Hwang et al. |

| 2010/0153878 | June 2010 | Lindgren et al. |

| 2010/0157742 | June 2010 | Relyea et al. |

| 2010/0159909 | June 2010 | Stifelman |

| 2010/0162108 | June 2010 | Stallings et al. |

| 2010/0169357 | July 2010 | Ingrassia et al. |

| 2010/0191701 | July 2010 | Beyda et al. |

| 2010/0211872 | August 2010 | Rolston et al. |

| 2010/0211919 | August 2010 | Brown et al. |

| 2010/0214216 | August 2010 | Nasiri et al. |

| 2010/0223563 | September 2010 | Green |

| 2010/0223574 | September 2010 | Wang et al. |

| 2010/0229129 | September 2010 | Price et al. |

| 2010/0229130 | September 2010 | Edge et al. |

| 2010/0241955 | September 2010 | Price et al. |

| 2010/0241967 | September 2010 | Lee |

| 2010/0241999 | September 2010 | Russ et al. |

| 2010/0248788 | September 2010 | Yook et al. |

| 2010/0251085 | September 2010 | Zearing et al. |

| 2010/0257468 | October 2010 | Bernardo et al. |

| 2010/0262591 | October 2010 | Lee et al. |

| 2010/0262634 | October 2010 | Wang |

| 2010/0287505 | November 2010 | Williams |

| 2010/0315413 | December 2010 | Izadi et al. |

| 2010/0318709 | December 2010 | Bell et al. |

| 2010/0325529 | December 2010 | Sun |

| 2010/0332497 | December 2010 | Valliani et al. |

| 2010/0333017 | December 2010 | Ortiz |

| 2011/0004835 | January 2011 | Yanchar et al. |

| 2011/0007000 | January 2011 | Lim |

| 2011/0010672 | January 2011 | Hope |

| 2011/0012921 | January 2011 | Cholewin et al. |

| 2011/0029934 | February 2011 | Locker et al. |

| 2011/0041098 | February 2011 | Kajiya et al. |

| 2011/0055722 | March 2011 | Ludwig |

| 2011/0061010 | March 2011 | Wasko |

| 2011/0078597 | March 2011 | Rapp et al. |

| 2011/0083104 | April 2011 | Minton |

| 2011/0087981 | April 2011 | Jeong et al. |

| 2011/0093821 | April 2011 | Wigdor et al. |

| 2011/0099299 | April 2011 | Vasudevan et al. |

| 2011/0107261 | May 2011 | Lin et al. |

| 2011/0119610 | May 2011 | Hackborn et al. |

| 2011/0119629 | May 2011 | Huotari et al. |

| 2011/0131534 | June 2011 | Subramanian et al. |

| 2011/0145758 | June 2011 | Rosales et al. |

| 2011/0148786 | June 2011 | Day et al. |

| 2011/0148798 | June 2011 | Dahl |

| 2011/0167058 | July 2011 | Van Os |

| 2011/0173556 | July 2011 | Czerwinski et al. |

| 2011/0179097 | July 2011 | Ala-Rantala |

| 2011/0179368 | July 2011 | King et al. |

| 2011/0225549 | September 2011 | Kim |

| 2011/0239155 | September 2011 | Christie |

| 2011/0246918 | October 2011 | Henderson |

| 2011/0246929 | October 2011 | Jones et al. |

| 2011/0252346 | October 2011 | Chaudhri |

| 2011/0252349 | October 2011 | Chaudhri |

| 2011/0252372 | October 2011 | Chaudhri |

| 2011/0252373 | October 2011 | Chaudhri |

| 2011/0283334 | November 2011 | Choi et al. |

| 2011/0285659 | November 2011 | Kuwabara et al. |

| 2011/0289423 | November 2011 | Kim et al. |

| 2011/0289448 | November 2011 | Tanaka |

| 2011/0298723 | December 2011 | Fleizach et al. |

| 2011/0302513 | December 2011 | Ademar et al. |

| 2011/0310005 | December 2011 | Chen et al. |

| 2011/0310058 | December 2011 | Yamada et al. |

| 2011/0314422 | December 2011 | Cameron et al. |

| 2012/0023471 | January 2012 | Fisher et al. |

| 2012/0030623 | February 2012 | Hoellwarth |

| 2012/0036460 | February 2012 | Cieplinski et al. |

| 2012/0042272 | February 2012 | Hong et al. |

| 2012/0084692 | April 2012 | Bae |

| 2012/0084694 | April 2012 | Sirpal et al. |

| 2012/0110031 | May 2012 | Lahcanski et al. |

| 2012/0117506 | May 2012 | Koch et al. |

| 2012/0124677 | May 2012 | Hoogerwerf et al. |

| 2012/0151331 | June 2012 | Pallakoff et al. |

| 2012/0169617 | July 2012 | Maenpaa |

| 2012/0216146 | August 2012 | Korkonen |

| 2012/0324390 | December 2012 | Tao |

| 2013/0067411 | March 2013 | Kataoka et al. |

| 2013/0111400 | May 2013 | Miwa |

| 2013/0194066 | August 2013 | Rahman et al. |

| 2013/0205244 | August 2013 | Decker et al. |

| 2013/0234924 | September 2013 | Janefalkar et al. |

| 2013/0321340 | December 2013 | Seo et al. |

| 2013/0332886 | December 2013 | Cranfill et al. |

| 2014/0015786 | January 2014 | Honda |

| 2014/0068483 | March 2014 | Platzer et al. |

| 2014/0108978 | April 2014 | Yu et al. |

| 2014/0109024 | April 2014 | Miyazaki |

| 2014/0143784 | May 2014 | Mistry et al. |

| 2014/0165006 | June 2014 | Chaudhri et al. |

| 2014/0195972 | July 2014 | Lee et al. |

| 2014/0215457 | July 2014 | Gaya et al. |

| 2014/0237360 | August 2014 | Chaudhri et al. |

| 2014/0276244 | September 2014 | Kamyar |

| 2014/0293755 | October 2014 | Geiser et al. |

| 2014/0317555 | October 2014 | Choi et al. |

| 2014/0328151 | November 2014 | Serber |

| 2014/0365126 | December 2014 | Vulcano et al. |

| 2015/0012853 | January 2015 | Chaudhri et al. |

| 2015/0089407 | March 2015 | Suzuki |

| 2015/0105125 | April 2015 | Min et al. |

| 2015/0112752 | April 2015 | Wagner et al. |

| 2015/0117162 | April 2015 | Tsai |

| 2015/0160812 | June 2015 | Yuan et al. |

| 2015/0172438 | June 2015 | Yang |

| 2015/0242092 | August 2015 | Van Os et al. |

| 2015/0242989 | August 2015 | Mun et al. |

| 2015/0281945 | October 2015 | Seo et al. |

| 2015/0301506 | October 2015 | Koumaiha |

| 2015/0379476 | December 2015 | Chaudhri et al. |

| 2016/0034148 | February 2016 | Wilson et al. |

| 2016/0034167 | February 2016 | Wilson et al. |

| 2016/0048296 | February 2016 | Gan et al. |

| 2016/0054710 | February 2016 | Jo et al. |

| 2016/0062572 | March 2016 | Yang et al. |

| 2016/0077495 | March 2016 | Brown et al. |

| 2016/0117141 | April 2016 | Ro et al. |

| 2016/0124626 | May 2016 | Lee et al. |

| 2016/0179310 | June 2016 | Chaudhri et al. |

| 2016/0182805 | June 2016 | Emmett et al. |

| 2016/0253065 | September 2016 | Platzer et al. |

| 2016/0269540 | September 2016 | Butcher et al. |

| 2017/0147198 | May 2017 | Herz et al. |

| 2017/0255169 | September 2017 | Lee et al. |

| 2017/0357426 | December 2017 | Wilson et al. |

| 2017/0357427 | December 2017 | Wilson et al. |

| 2017/0357433 | December 2017 | Boule et al. |

| 2017/0374205 | December 2017 | Panda |

| 2019/0171349 | June 2019 | Van Os et al. |

| 2019/0173996 | June 2019 | Butcher et al. |

| 2019/0179514 | June 2019 | Van Os et al. |

| 2019/0235724 | August 2019 | Platzer et al. |

| 2019/0320057 | October 2019 | Omernick et al. |

Foreign Patent Documents

| 2012202140 | May 2012 | AU | |||

| 2015100115 | Mar 2015 | AU | |||

| 2349649 | Jan 2002 | CA | |||

| 700242 | Jul 2010 | CH | |||

| 1392977 | Jan 2003 | CN | |||

| 1464719 | Dec 2003 | CN | |||

| 1695105 | Nov 2005 | CN | |||

| 1773875 | May 2006 | CN | |||

| 1940833 | Apr 2007 | CN | |||

| 101072410 | Nov 2007 | CN | |||

| 101308443 | Nov 2008 | CN | |||

| 102244676 | Nov 2011 | CN | |||

| 102446059 | May 2012 | CN | |||

| 103210366 | Jul 2013 | CN | |||

| 163032 | Dec 1985 | EP | |||

| 404373 | Dec 1990 | EP | |||

| 626635 | Nov 1994 | EP | |||

| 689134 | Dec 1995 | EP | |||

| 844553 | May 1998 | EP | |||

| 1003098 | May 2000 | EP | |||

| 1143334 | Oct 2001 | EP | |||

| 1186997 | Mar 2002 | EP | |||

| 1271295 | Jan 2003 | EP | |||

| 1517228 | Mar 2005 | EP | |||

| 1674976 | Jun 2006 | EP | |||

| 1724996 | Nov 2006 | EP | |||

| 1956472 | Aug 2008 | EP | |||

| 2150031 | Feb 2010 | EP | |||

| 2911377 | Aug 2015 | EP | |||

| 2819675 | Jul 2002 | FR | |||

| 2329813 | Mar 1999 | GB | |||

| 2407900 | May 2005 | GB | |||

| 6-208446 | Jul 1994 | JP | |||

| 8-221203 | Aug 1996 | JP | |||

| 9-73381 | Mar 1997 | JP | |||

| 9-101874 | Apr 1997 | JP | |||

| 9-258971 | Oct 1997 | JP | |||

| 9-292262 | Nov 1997 | JP | |||

| 9-297750 | Nov 1997 | JP | |||

| 10-40067 | Feb 1998 | JP | |||

| 10-214350 | Aug 1998 | JP | |||

| 11-508116 | Jul 1999 | JP | |||

| 2001-92430 | Apr 2001 | JP | |||

| 2001-092586 | Apr 2001 | JP | |||

| 2001-318751 | Nov 2001 | JP | |||

| 2002-41197 | Feb 2002 | JP | |||

| 2002-41206 | Feb 2002 | JP | |||

| 2002-132412 | May 2002 | JP | |||

| 2002-149312 | May 2002 | JP | |||

| 2002-189567 | Jul 2002 | JP | |||

| 2002-525705 | Aug 2002 | JP | |||

| 2002-297514 | Oct 2002 | JP | |||

| 2002-312105 | Oct 2002 | JP | |||

| 2003-66941 | Mar 2003 | JP | |||

| 2003-139546 | May 2003 | JP | |||

| 2003-198705 | Jul 2003 | JP | |||

| 2003-248538 | Sep 2003 | JP | |||

| 2003-256142 | Sep 2003 | JP | |||

| 2003-271310 | Sep 2003 | JP | |||

| 2003-295994 | Oct 2003 | JP | |||

| 2003-536125 | Dec 2003 | JP | |||

| 2004-38260 | Feb 2004 | JP | |||

| 2004-70492 | Mar 2004 | JP | |||

| 2004-132741 | Apr 2004 | JP | |||

| 2004-152075 | May 2004 | JP | |||

| 2004-341892 | Dec 2004 | JP | |||

| 2005-4396 | Jan 2005 | JP | |||

| 2005-4419 | Jan 2005 | JP | |||

| 2005-515530 | May 2005 | JP | |||

| 2005-198064 | Jul 2005 | JP | |||

| 2005-202703 | Jul 2005 | JP | |||

| 2005-227951 | Aug 2005 | JP | |||

| 2005-228088 | Aug 2005 | JP | |||

| 2005-228091 | Aug 2005 | JP | |||

| 2005-309933 | Nov 2005 | JP | |||

| 2005-321915 | Nov 2005 | JP | |||

| 2005-327064 | Nov 2005 | JP | |||

| 2006-99733 | Apr 2006 | JP | |||

| 2006-155232 | Jun 2006 | JP | |||

| 2006-259376 | Sep 2006 | JP | |||

| 2007-25998 | Feb 2007 | JP | |||

| 2007-124667 | May 2007 | JP | |||

| 2007-132676 | May 2007 | JP | |||

| 2007-512635 | May 2007 | JP | |||

| 2007-334984 | Dec 2007 | JP | |||

| 2008-15698 | Jan 2008 | JP | |||

| 2008-102860 | May 2008 | JP | |||

| 2008-304959 | Dec 2008 | JP | |||

| 2008-306667 | Dec 2008 | JP | |||

| 2009-9350 | Jan 2009 | JP | |||

| 2009-508217 | Feb 2009 | JP | |||

| 2009-136456 | Jun 2009 | JP | |||

| 2009-277192 | Nov 2009 | JP | |||

| 2010-61402 | Mar 2010 | JP | |||

| 2010-97552 | Apr 2010 | JP | |||

| 2010-187096 | Aug 2010 | JP | |||

| 2010-538394 | Dec 2010 | JP | |||

| 2012-208645 | Oct 2012 | JP | |||

| 2013-25357 | Feb 2013 | JP | |||

| 2013-25409 | Feb 2013 | JP | |||

| 2013-120468 | Jun 2013 | JP | |||

| 2013-191234 | Sep 2013 | JP | |||

| 2013-206274 | Oct 2013 | JP | |||

| 2013-211055 | Oct 2013 | JP | |||

| 2014-503891 | Feb 2014 | JP | |||

| 10-2002-0010863 | Feb 2002 | KR | |||

| 10-2009-0035499 | Apr 2009 | KR | |||

| 10-2009-0100320 | Sep 2009 | KR | |||

| 10-2010-0019887 | Feb 2010 | KR | |||

| 10-2011-0078008 | Jul 2011 | KR | |||

| 10-2011-0093729 | Aug 2011 | KR | |||

| 10-2012-0057800 | Jun 2012 | KR | |||

| 10-2013-0016329 | Feb 2013 | KR | |||

| 10-2015-0022599 | Mar 2015 | KR | |||

| 1996/06401 | Feb 1996 | WO | |||

| 1998/44431 | Oct 1998 | WO | |||

| 1999/38149 | Jul 1999 | WO | |||

| 2000/16186 | Mar 2000 | WO | |||

| 2002/13176 | Feb 2002 | WO | |||

| 2003/060622 | Jul 2003 | WO | |||

| 2005/041020 | May 2005 | WO | |||

| 2005/055034 | Jun 2005 | WO | |||

| 2006/012343 | Feb 2006 | WO | |||

| 2006/020304 | Feb 2006 | WO | |||

| 2006/020305 | Feb 2006 | WO | |||

| 2006/117438 | Nov 2006 | WO | |||

| 2006/119269 | Nov 2006 | WO | |||

| 2007/031816 | Mar 2007 | WO | |||

| 2007/032908 | Mar 2007 | WO | |||

| 2006/020304 | May 2007 | WO | |||

| 2007/069835 | Jun 2007 | WO | |||

| 2007/094894 | Aug 2007 | WO | |||

| 2007/142256 | Dec 2007 | WO | |||

| 2008/017936 | Feb 2008 | WO | |||

| 2008/114491 | Sep 2008 | WO | |||

| 2009/032638 | Mar 2009 | WO | |||

| 2009/089222 | Jul 2009 | WO | |||

| 2011/126501 | Oct 2011 | WO | |||

| 2012/078079 | Jun 2012 | WO | |||

| 2013/017736 | Feb 2013 | WO | |||

| 2013/157330 | Oct 2013 | WO | |||

Other References

|