Systems And Methods For Proactively Identifying And Surfacing Relevant Content On An Electronic Device With A Touch-sensitive Display

GROSS; Daniel C.; et al.

U.S. patent application number 17/030300 was filed with the patent office on 2020-09-23 and published on 2021-01-07 for systems and methods for proactively identifying and surfacing relevant content on an electronic device with a touch-sensitive display. The applicant listed for this patent is Apple Inc. Invention is credited to Jesper A. ANDERSEN, Hafid ARRAS, Jerome R. BELLEGARDA, Alexandre CARLHIAN, Kevin D. CLARK, Patrick L. COFFMAN, Richard R. DELLINGER, Thomas DENIAU, Jannes G.A. DOLFING, Christopher P. FOSS, Jason J. GAUCI, Daniel C. GROSS, Aria D. HAGHIGHI, Jun HATORI, Cyrus D. IRANI, Bronwyn A. JONES, Gaurav KAPOOR, Karl Christian KOHLSCHUETTER, Stephen O. LEMAY, Mathieu J. MARTEL, Alexandre R. MOHA, Colin C. MORRIS, Giulia P. PAGALLO, Brent D. RAMERTH, Michael R. SIRACUSA, Sofiane TOUDJI, Xin WANG, Lawrence Y. YANG.

| Application Number | 20210006943 17/030300 |

| Publication Date | 2021-01-07 |

| United States Patent Application | 20210006943 |

| Kind Code | A1 |

| GROSS; Daniel C.; et al. | January 7, 2021 |

Abstract

Systems and methods for proactively identifying and surfacing relevant content are disclosed herein. An example method includes: detecting, via the touch-sensitive display, a search activation gesture from a user of the electronic device. The method also includes: in response to detecting only the search activation gesture, displaying a search interface on substantially all of the touch-sensitive display, the search interface including: (i) a search entry portion; and (ii) a predictions portion with one or more user interface objects each associated with a respective locally-installed application. Each respective locally-installed application is selected from among a plurality of locally-installed applications for inclusion in the predictions portion based on an application usage history associated with the user of the electronic device.
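The abstract states only that applications are selected for the predictions portion based on an application usage history. As an illustration, the following is a minimal Python sketch under an assumed scoring rule in which each launch contributes a weight that decays exponentially with age. The application does not specify a scoring rule, and the names `LaunchEvent`, `predict_apps`, `limit`, and `half_life_s` are hypothetical.

```python
from __future__ import annotations

import math
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class LaunchEvent:
    """One entry in a hypothetical application usage history."""
    bundle_id: str    # identifier of a locally-installed application
    timestamp: float  # launch time, in seconds


def predict_apps(
    history: list[LaunchEvent],
    installed: set[str],
    now: float,
    limit: int = 4,
    half_life_s: float = 7 * 24 * 3600.0,  # assumed decay constant
) -> list[str]:
    """Return up to `limit` installed apps, highest usage score first.

    Each launch adds a weight that halves every `half_life_s` seconds,
    so both frequent and recent use raise an app's score.
    """
    scores: dict[str, float] = defaultdict(float)
    for event in history:
        if event.bundle_id not in installed:
            continue  # only locally-installed apps are eligible
        age = max(0.0, now - event.timestamp)
        scores[event.bundle_id] += math.pow(0.5, age / half_life_s)
    # Highest score first; ties broken alphabetically for determinism.
    ranked = sorted(scores, key=lambda app: (-scores[app], app))
    return ranked[:limit]
```

The half-life trades frequency against recency: a shorter half-life favors apps used in the last few hours, and a longer one favors habitual apps.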

| Inventors: | GROSS; Daniel C.; (San Francisco, CA) ; COFFMAN; Patrick L.; (San Francisco, CA) ; DELLINGER; Richard R.; (San Jose, CA) ; FOSS; Christopher P.; (San Francisco, CA) ; GAUCI; Jason J.; (Cupertino, CA) ; HAGHIGHI; Aria D.; (Seattle, WA) ; IRANI; Cyrus D.; (Los Altos, CA) ; JONES; Bronwyn A.; (London, GB) ; KAPOOR; Gaurav; (Santa Clara, CA) ; LEMAY; Stephen O.; (San Francisco, CA) ; MORRIS; Colin C.; (Sunnyvale, CA) ; SIRACUSA; Michael R.; (Mountain View, CA) ; YANG; Lawrence Y.; (Bellevue, WA) ; RAMERTH; Brent D.; (San Francisco, CA) ; BELLEGARDA; Jerome R.; (Saratoga, CA) ; DOLFING; Jannes G.A.; (Sunnyvale, CA) ; PAGALLO; Giulia P.; (Cupertino, CA) ; WANG; Xin; (San Jose, CA) ; HATORI; Jun; (San Francisco, CA) ; MOHA; Alexandre R.; (Los Altos, CA) ; CLARK; Kevin D.; (San Francisco, CA) ; KOHLSCHUETTER; Karl Christian; (Monte Sereno, CA) ; ANDERSEN; Jesper A.; (Portland, OR) ; ARRAS; Hafid; (Paris, FR) ; CARLHIAN; Alexandre; (Paris, FR) ; DENIAU; Thomas; (Paris, FR) ; MARTEL; Mathieu J.; (Paris, FR) ; TOUDJI; Sofiane; (San Francisco, CA) |

| Applicant: | Apple Inc. |

| Appl. No.: | 17/030300 |

| Filed: | September 23, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Continued By |
|---|---|---|---|
| 16893098 | Jun 4, 2020 | | 17030300 |
| 16147557 | Sep 28, 2018 | 10735905 | 16893098 |
| 15166226 | May 26, 2016 | 10200824 | 16147557 |
| 62172019 | Jun 5, 2015 | | |
| 62167265 | May 27, 2015 | | |

| Current U.S. Class: | 1/1 |

| International Class: | H04W 4/029 20060101 H04W004/029; G06F 9/451 20060101 G06F009/451; H04W 4/50 20060101 H04W004/50; G06F 3/0488 20060101 G06F003/0488; G06F 3/01 20060101 G06F003/01; H04W 4/40 20060101 H04W004/40 |

Claims

1. A non-transitory computer-readable storage medium storing executable instructions that, when executed by an electronic device with a display and a touch-sensitive surface, cause the electronic device to: present, on the display, a text-input field associated with a first application and textual content associated with the first application; determine, based on at least a portion of the textual content, whether a next input from a user of the electronic device to the text-input field likely relates to a first type of information; in accordance with a determination that a next input likely relates to the first type of information: obtain first information; and prepare the obtained first information for display as a predicted content item; display, within the first application, an affordance that includes the predicted content item; detect, via the touch-sensitive surface, a selection of the affordance; and in response to detecting the selection, display a representation of the predicted content item.

2. The non-transitory computer-readable storage medium of claim 1, wherein the first type of information is a location, and wherein obtaining the first information includes obtaining a suggested physical location.

3. The non-transitory computer-readable storage medium of claim 2, wherein obtaining the suggested physical location includes obtaining current location information from a location sensor on the electronic device.

4. The non-transitory computer-readable storage medium of claim 2, wherein obtaining the suggested physical location includes analyzing the textual content and determining, based at least in part on the portion of the analyzed textual content, the suggested physical location.

5. The non-transitory computer-readable storage medium of claim 4, wherein determining the suggested physical location is further based on location information recently viewed in a second application.

6. The non-transitory computer-readable storage medium of claim 2, wherein the representation of the predicted content item is an address for the suggested physical location.

7. The non-transitory computer-readable storage medium of claim 2, wherein the representation of the predicted content item is a maps object that includes an identifier for the suggested physical location.

8. The non-transitory computer-readable storage medium of claim 1, wherein the first type of information is a contact, and wherein obtaining the first information includes conducting a search on the electronic device for contact information related to the portion of the textual content.

9. The non-transitory computer-readable storage medium of claim 1, wherein the first type of information is an event, and wherein obtaining the first information includes conducting a new search on the electronic device for event information related to the portion of the textual content.

10. The non-transitory computer-readable storage medium of claim 1, wherein determining whether the next input to the text-input field likely relates to the first type of information includes: performing natural-language processing on the portion of the textual content; and determining that the portion of the textual content includes a question about the current location of the user of the electronic device.

11. The non-transitory computer-readable storage medium of claim 1, wherein determining whether the next input to the text-input field likely relates to the first type of information includes: parsing the textual content as it is received by the application to detect stored patterns known to relate to the first type of information.

12. The non-transitory computer-readable storage medium of claim 1, wherein the executable instructions, when executed by the electronic device, further cause the electronic device to: in accordance with detecting an additional input not selecting the affordance: cease to display the affordance.

13. The non-transitory computer-readable storage medium of claim 1, wherein the affordance is displayed adjacent to the text-input field.

14. A method, comprising: at an electronic device with a display and a touch-sensitive surface: presenting, on the display, a text-input field associated with a first application and textual content associated with the first application; determining, based on at least a portion of the textual content, whether a next input from a user of the electronic device to the text-input field likely relates to a first type of information; in accordance with a determination that a next input likely relates to the first type of information: obtaining first information; and preparing the obtained first information for display as a predicted content item; displaying, within the first application, an affordance that includes the predicted content item; detecting, via the touch-sensitive surface, a selection of the affordance; and in response to detecting the selection, displaying a representation of the predicted content item.

15. An electronic device, comprising: a touch-sensitive display; one or more processors; a memory; and one or more programs, wherein the one or more programs are stored in the memory and configured to be executed by the one or more processors, the one or more programs including instructions for: presenting, on the display, a text-input field associated with a first application and textual content associated with the first application; determining, based on at least a portion of the textual content, whether a next input from a user of the electronic device to the text-input field likely relates to a first type of information; in accordance with a determination that a next input likely relates to the first type of information: obtaining first information; and preparing the obtained first information for display as a predicted content item; displaying, within the first application, an affordance that includes the predicted content item; detecting, via the touch-sensitive surface, a selection of the affordance; and in response to detecting the selection, displaying a representation of the predicted content item.
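Claims 1 and 11 describe parsing textual content for stored patterns known to relate to a type of information, and then obtaining that information and preparing it as a predicted content item. The following is a minimal Python sketch that assumes simple regular expressions stand in for the stored patterns. `InfoType`, `STORED_PATTERNS`, `likely_next_input_type`, `suggest_content`, and the provider callables are hypothetical names. The natural-language processing of claim 10 is not modeled.

```python
from __future__ import annotations

import re
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class InfoType(Enum):
    LOCATION = "location"
    CONTACT = "contact"
    EVENT = "event"


# Hypothetical stored patterns known to relate to each type (claim 11).
STORED_PATTERNS: dict[InfoType, list[re.Pattern[str]]] = {
    InfoType.LOCATION: [re.compile(r"\bwhere are you\b", re.I),
                        re.compile(r"\bwhat'?s your address\b", re.I)],
    InfoType.CONTACT: [re.compile(r"\b(?:phone number|email) for\b", re.I)],
    InfoType.EVENT: [re.compile(r"\bwhen is\b", re.I),
                     re.compile(r"\bare you free\b", re.I)],
}


@dataclass(frozen=True)
class PredictedContentItem:
    info_type: InfoType
    label: str  # text an affordance would display


def likely_next_input_type(textual_content: str) -> Optional[InfoType]:
    """Return the type of information the next input likely relates to."""
    for info_type, patterns in STORED_PATTERNS.items():
        if any(p.search(textual_content) for p in patterns):
            return info_type
    return None


def suggest_content(
    textual_content: str,
    providers: dict[InfoType, Callable[[str], Optional[str]]],
) -> Optional[PredictedContentItem]:
    """Obtain first information and prepare it as a predicted content item."""
    info_type = likely_next_input_type(textual_content)
    if info_type is None or info_type not in providers:
        return None
    # Each provider is a hypothetical lookup (location, contacts, calendar).
    first_information = providers[info_type](textual_content)
    if first_information is None:
        return None
    return PredictedContentItem(info_type, first_information)
```

For example, `suggest_content("Where are you?", {InfoType.LOCATION: lambda _: "123 Main St"})` returns a location item whose label an affordance could show. The lambda is a placeholder for a location lookup such as the location-sensor query of claim 3.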

Description

RELATED APPLICATION

[0001] This application is a continuation of U.S. patent application Ser. No. 16/893,098, filed Jun. 4, 2020, which is a continuation of U.S. patent application Ser. No. 16/147,557, filed Sep. 28, 2018, which is a continuation of U.S. application Ser. No. 15/166,226, filed May 26, 2016, which claims priority to U.S. Provisional Application Ser. No. 62/172,019, filed Jun. 5, 2015, and U.S. Provisional Application Ser. No. 62/167,265, filed May 27, 2015. Each of these applications is incorporated by reference herein in its respective entirety.

TECHNICAL FIELD

[0002] The embodiments disclosed herein generally relate to electronic devices with touch-sensitive displays and, more specifically, to systems and methods for proactively identifying and surfacing relevant content on an electronic device with a touch-sensitive display.

BACKGROUND

[0003] Handheld electronic devices with touch-sensitive displays are ubiquitous. Users now install numerous applications on these devices and use them to perform their daily activities more efficiently. To access these applications, however, users typically must unlock their devices, locate a desired application (e.g., by navigating through a home screen to locate an icon associated with the desired application or by searching for the desired application within a search interface), and then locate a desired function within that application. As a result, users often spend a significant amount of time locating desired applications, and desired functions within those applications, instead of being able to immediately execute (e.g., with a single touch input) the desired application and/or perform the desired function.

[0004] Moreover, the numerous installed applications inundate users with a continuous stream of information that cannot be thoroughly reviewed immediately. As such, users often wish to return later to review a particular piece of information that they noticed earlier, or to use that information at a later time. Oftentimes, however, users are unable to locate the particular piece of information or fail to remember how to locate it.

[0005] As such, it is desirable to provide an intuitive and easy-to-use system and method for proactively identifying and surfacing relevant content (e.g., the particular piece of information) on an electronic device that is in communication with a display and a touch-sensitive surface.

SUMMARY

[0006] Accordingly, there is a need for electronic devices with faster, more efficient methods and interfaces for quickly accessing applications and desired functions within those applications. Moreover, there is a need for electronic devices that assist users with managing the continuous stream of information they receive daily by proactively identifying and providing relevant information (e.g., contacts, nearby places, applications, news articles, addresses, and other information available on the device) before the information is explicitly requested by a user. Such methods and interfaces optionally complement or replace conventional methods for accessing applications. Such methods and interfaces produce a more efficient human-machine interface by requiring fewer inputs for users to locate desired information. For battery-operated devices, such methods and interfaces conserve power and increase the time between battery charges (e.g., by requiring fewer touch inputs to perform various functions). Moreover, such methods and interfaces help to extend the life of the touch-sensitive display by requiring fewer touch inputs (e.g., instead of having to continuously and aimlessly tap on a touch-sensitive display to locate a desired piece of information, the methods and interfaces disclosed herein proactively provide that piece of information without requiring user input).

[0007] The above deficiencies and other problems associated with user interfaces for electronic devices with touch-sensitive surfaces are addressed by the disclosed devices. In some embodiments, the device is a desktop computer. In some embodiments, the device is portable (e.g., a notebook computer, tablet computer, or handheld device). In some embodiments, the device has a touchpad. In some embodiments, the device has a touch-sensitive display (also known as a "touch screen" or "touch-screen display"). In some embodiments, the device has a graphical user interface (GUI), one or more processors, memory and one or more modules, programs or sets of instructions stored in the memory for performing multiple functions. In some embodiments, the user interacts with the GUI primarily through stylus and/or finger contacts and gestures on the touch-sensitive surface. In some embodiments, the functions optionally include image editing, drawing, presenting, word processing, website creating, disk authoring, spreadsheet making, game playing, telephoning, video conferencing, e-mailing, instant messaging, fitness support, digital photography, digital video recording, web browsing, digital music playing, and/or digital video playing. Executable instructions for performing these functions are, optionally, included in a non-transitory computer-readable storage medium or other computer program product configured for execution by one or more processors.

[0008] (A1) In accordance with some embodiments, a method is performed at an electronic device (e.g., portable multifunction device 100, FIG. 1A, configured in accordance with any one of Computing Devices A-D, FIG. 1E) with a touch-sensitive display (e.g., touch screen 112, FIG. 1C). The method includes: executing, on the electronic device, an application in response to an instruction from a user of the electronic device. While executing the application, the method further includes: collecting usage data. The usage data at least includes one or more actions (or types of actions) performed by the user within the application. The method also includes: (i) automatically, without human intervention, obtaining at least one trigger condition based on the collected usage data and (ii) associating the at least one trigger condition with a particular action of the one or more actions performed by the user within the application. Upon determining that the at least one trigger condition has been satisfied, the method includes: providing an indication to the user that the particular action associated with the trigger condition is available.
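Paragraph [0008] leaves open how a trigger condition is obtained from usage data. One possible approach is sketched below in Python under stated assumptions: an action that recurs at least `min_support` times in the same context (here, the hour of day plus an optionally connected second device, compare A16) becomes a trigger condition associated with that action. The frequency rule and all names (`Context`, `UsageRecord`, `TriggerCondition`, `obtain_trigger_conditions`, `indication_for`) are hypothetical.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Context:
    """Hypothetical snapshot of device state when an action is performed."""
    hour: int                        # hour of day, 0-23
    connected_device: Optional[str]  # e.g. a paired accessory, or None


@dataclass(frozen=True)
class UsageRecord:
    app: str
    action: str
    context: Context


@dataclass(frozen=True)
class TriggerCondition:
    context: Context  # satisfied when the current context matches exactly


def obtain_trigger_conditions(
    usage: list[UsageRecord], min_support: int = 3
) -> dict[TriggerCondition, tuple[str, str]]:
    """Map each mined trigger condition to its associated (app, action)."""
    counts = Counter((r.context, r.app, r.action) for r in usage)
    associations: dict[TriggerCondition, tuple[str, str]] = {}
    # most_common() yields higher counts first, so the most frequent
    # action wins when several actions share the same context.
    for (context, app, action), n in counts.most_common():
        trigger = TriggerCondition(context)
        if n >= min_support and trigger not in associations:
            associations[trigger] = (app, action)
    return associations


def indication_for(
    current: Context, associations: dict[TriggerCondition, tuple[str, str]]
) -> Optional[str]:
    """Describe the available action if a trigger condition is satisfied."""
    match = associations.get(TriggerCondition(current))
    if match is None:
        return None
    app, action = match
    return f"{action} ({app})"
```

Exact context matching keeps the sketch short. A fuller design might tolerate nearby hours or weigh recent records more heavily.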

[0009] (A2) In some embodiments of the method of A1, obtaining the at least one trigger condition includes sending, to one or more servers that are remotely located from the electronic device, the usage data and receiving, from the one or more servers, the at least one trigger condition.

[0010] (A3) In some embodiments of the method of any one of A1-A2, providing the indication includes displaying, on a lock screen on the touch-sensitive display, a user interface object corresponding to the particular action associated with the trigger condition.

[0011] (A4) In some embodiments of the method of A3, the user interface object includes a description of the particular action associated with the trigger condition.

[0012] (A5) In some embodiments of the method of A4, the user interface object further includes an icon associated with the application.

[0013] (A6) In some embodiments of the method of any one of A3-A5, the method further includes: detecting a first gesture at the user interface object. In response to detecting the first gesture, the method includes: (i) displaying, on the touch-sensitive display, the application and (ii) while displaying the application, performing the particular action associated with the trigger condition.

[0014] (A7) In some embodiments of the method of A6, the first gesture is a swipe gesture over the user interface object.

[0015] (A8) In some embodiments of the method of any one of A3-A5, the method further includes: detecting a second gesture at the user interface object. In response to detecting the second gesture and while continuing to display the lock screen on the touch-sensitive display, the method includes: performing the particular action associated with the trigger condition.

[0016] (A9) In some embodiments of the method of A8, the second gesture is a single tap at a predefined area of the user interface object.
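A small state model can contrast the two gesture paths of A6-A9. This Python sketch is illustrative only. It assumes that displaying the application replaces the lock screen. `Gesture`, `Rect`, `DeviceState`, and `handle_gesture` are hypothetical names, and the tap target `tap_area` stands in for the predefined area of A9.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum, auto
from typing import Optional


class Gesture(Enum):
    SWIPE = auto()
    TAP = auto()


@dataclass(frozen=True)
class Rect:
    """Hypothetical predefined area within the user interface object."""
    x: float
    y: float
    width: float
    height: float

    def contains(self, px: float, py: float) -> bool:
        return (self.x <= px <= self.x + self.width
                and self.y <= py <= self.y + self.height)


@dataclass
class DeviceState:
    lock_screen_displayed: bool = True
    foreground_app: Optional[str] = None
    performed_actions: list[str] = field(default_factory=list)


def handle_gesture(
    state: DeviceState,
    gesture: Gesture,
    point: tuple[float, float],
    tap_area: Rect,
    app: str,
    action: str,
) -> None:
    """Dispatch a gesture detected at the lock-screen user interface object."""
    if gesture is Gesture.SWIPE:
        # A6/A7: display the application, then perform the action within it.
        state.lock_screen_displayed = False  # assumption: app replaces lock screen
        state.foreground_app = app
        state.performed_actions.append(action)
    elif gesture is Gesture.TAP and tap_area.contains(*point):
        # A8/A9: perform the action while the lock screen stays displayed.
        state.performed_actions.append(action)
    # Any other gesture, or a tap outside tap_area, is ignored in this sketch.
```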

[0017] (A10) In some embodiments of the method of any one of A3-A9, the user interface object is displayed in a predefined central portion of the lock screen.

[0018] (A11) In some embodiments of the method of A1, providing the indication to the user that the particular action associated with the trigger condition is available includes performing the particular action.

[0019] (A12) In some embodiments of the method of A3, the user interface object is an icon associated with the application and the user interface object is displayed substantially in a corner of the lock screen on the touch-sensitive display.

[0020] (A13) In some embodiments of the method of any one of A1-A12, the method further includes: receiving an instruction from the user to unlock the electronic device. In response to receiving the instruction, the method includes: displaying, on the touch-sensitive display, a home screen of the electronic device. The method also includes: providing, on the home screen, the indication to the user that the particular action associated with the trigger condition is available.

[0021] (A14) In some embodiments of the method of A13, the home screen includes (i) a first portion including one or more user interface pages for launching a first set of applications available on the electronic device and (ii) a second portion, that is displayed adjacent to the first portion, for launching a second set of applications available on the electronic device. The second portion is displayed on all user interface pages included in the first portion and providing the indication on the home screen includes displaying the indication over the second portion.

[0022] (A15) In some embodiments of the method of A14, the second set of applications is distinct from and smaller than the first set of applications.
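A14 and A15 describe a home screen whose second portion (a dock) appears on every page and hosts the indication. The following is a minimal Python data model, assuming that icons are identified by app name and reading "distinct from" as "non-overlapping". `HomeScreen`, `render`, and `satisfies_a15` are hypothetical names.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class HomeScreen:
    """Hypothetical model of the home screen described in A14."""
    pages: list[list[str]]  # first portion: pages of app icons (first set)
    dock: list[str]         # second portion: shown on every page (second set)

    def render(self, page_index: int, indication: Optional[str] = None) -> dict:
        """Return what is visible on one page; the dock appears on every page."""
        return {
            "page": self.pages[page_index],
            "dock": self.dock,
            "dock_overlay": indication,  # A14: indication shown over the dock
        }

    def satisfies_a15(self) -> bool:
        """A15, reading "distinct" as "non-overlapping" (an assumption)."""
        first_set = {app for page in self.pages for app in page}
        second_set = set(self.dock)
        return second_set.isdisjoint(first_set) and len(second_set) < len(first_set)
```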

[0023] (A16) In some embodiments of the method of any one of A1-A15, determining that the at least one trigger condition has been satisfied includes determining that the electronic device has been coupled with a second device, distinct from the electronic device.

[0024] (A17) In some embodiments of the method of any one of A1-A16, determining that the at least one trigger condition has been satisfied includes determining that the electronic device has arrived at an address corresponding to a home or a work location associated with the user.

[0025] (A18) In some embodiments of the method of A17, determining that the electronic device has arrived at an address corresponding to the home or the work location associated with the user includes monitoring motion data from an accelerometer of the electronic device and determining, based on the monitored motion data, that the electronic device has not moved for more than a threshold amount of time.
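A17 and A18 tie arrival to an accelerometer check that the device has stayed still for more than a threshold amount of time. The Python sketch below adds an assumed geofence test (the great-circle distance to stored home or work coordinates, computed with the haversine formula) so that stillness alone does not count as arrival. The geofence, both thresholds, the motion cutoff, and all names are hypothetical and do not come from the application.

```python
from __future__ import annotations

import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


@dataclass(frozen=True)
class AccelSample:
    t: float          # seconds
    magnitude: float  # acceleration magnitude with gravity removed, m/s^2


def stationary_duration(samples: list[AccelSample], motion_threshold: float = 0.05) -> float:
    """Seconds between the last above-threshold sample and the newest sample."""
    if not samples:
        return 0.0
    last_t = samples[-1].t
    for s in reversed(samples):
        if s.magnitude > motion_threshold:
            return last_t - s.t
    return last_t - samples[0].t  # no motion seen in the whole window


def has_arrived(
    device: tuple[float, float],
    place: tuple[float, float],
    samples: list[AccelSample],
    radius_m: float = 100.0,     # hypothetical geofence radius
    min_still_s: float = 120.0,  # hypothetical "threshold amount of time"
) -> bool:
    near = haversine_m(*device, *place) <= radius_m
    return near and stationary_duration(samples) > min_still_s
```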

[0026] (A19) In some embodiments of the method of any one of A1-A18, the usage data further includes verbal instructions, from the user, provided to a virtual assistant application while continuing to execute the application. The at least one trigger condition is further based on the verbal instructions provided to the virtual assistant application.

[0027] (A20) In some embodiments of the method of A19, the verbal instructions comprise a request to create a reminder that corresponds to a current state of the application, the current state corresponding to a state of the application when the verbal instructions were provided.

[0028] (A21) In some embodiments of the method of A20, the state of the application when the verbal instructions were provided is selected from the group consisting of: a page displayed within the application when the verbal instructions were provided, content playing within the application when the verbal instructions were provided, a notification displayed within the application when the verbal instructions were provided, and an active portion of the page displayed within the application when the verbal instructions were provided.

[0029] (A22) In some embodiments of the method of A20, the verbal instructions include the term "this" in reference to the current state of the application.
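A19-A22 describe a spoken request such as "remind me about this" that is bound to the application's current state. The following is a minimal Python sketch that assumes a regular-expression match on the already-transcribed utterance, plus an assumed priority order for resolving "this" among the state options listed in A21. `AppState`, `Reminder`, and `reminder_from_utterance` are hypothetical, and speech recognition itself is not modeled.

```python
from __future__ import annotations

import re
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AppState:
    """Hypothetical snapshot of the application's state (compare A21)."""
    app: str
    page: Optional[str] = None            # page displayed within the app
    now_playing: Optional[str] = None     # content playing within the app
    notification: Optional[str] = None    # notification displayed within the app
    active_portion: Optional[str] = None  # active portion of the displayed page


@dataclass(frozen=True)
class Reminder:
    text: str
    state: AppState  # state captured when the verbal instruction was given


# Hypothetical pattern for a reminder request that refers to "this".
_REMIND_THIS = re.compile(r"\bremind me\b.*\bthis\b", re.I)


def reminder_from_utterance(utterance: str, current: AppState) -> Optional[Reminder]:
    """Create a reminder bound to the current app state when "this" is used."""
    if not _REMIND_THIS.search(utterance):
        return None
    # Resolve "this" to the most specific state available. The priority
    # order is an assumption; A21 lists the options without ranking them.
    referent = (current.active_portion or current.now_playing
                or current.notification or current.page or current.app)
    return Reminder(text=f"{utterance.strip()} [{referent}]", state=current)
```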

[0030] (A23) In another aspect, a method is performed at one or more electronic devices (e.g., portable multifunction device 100, FIG. 5, and one or more servers 502, FIG. 5). The method includes: executing, on a first electronic device of the one or more electronic devices, an application in response to an instruction from a user of the first electronic device. While executing the application, the method includes: automatically, without human intervention, collecting usage data, the usage data at least including one or more actions (or types of actions) performed by the user within the application. The method further includes: automatically, without human intervention, establishing at least one trigger condition based on the collected usage data. The method additionally includes: associating the at least one trigger condition with a particular action of the one or more actions performed by the user within the application. Upon determining that the at least one trigger condition has been satisfied, the method includes: providing an indication to the user that the particular action associated with the trigger condition is available.

[0031] (A24) In another aspect, an electronic device is provided. In some embodiments, the electronic device includes: a touch-sensitive display, one or more processors, and memory storing one or more programs which, when executed by the one or more processors, cause the electronic device to perform the method described in any one of A1-A22.

[0032] (A25) In yet another aspect, an electronic device is provided and the electronic device includes: a touch-sensitive display and means for performing the method described in any one of A1-A22.

[0033] (A26) In still another aspect, a non-transitory computer-readable storage medium is provided. The non-transitory computer-readable storage medium stores executable instructions that, when executed by an electronic device with a touch-sensitive display, cause the electronic device to perform the method described in any one of A1-A22.

[0034] (A27) In still one more aspect, a graphical user interface on an electronic device with a touch-sensitive display is provided. In some embodiments, the graphical user interface includes user interfaces displayed in accordance with the method described in any one of A1-A22.

[0035] (A28) In one additional aspect, an electronic device is provided that includes a display unit (e.g., display unit 4201, FIG. 42), a touch-sensitive surface unit (e.g., touch-sensitive surface unit 4203, FIG. 42), and a processing unit (e.g., processing unit 4205, FIG. 42). In some embodiments, the electronic device is configured in accordance with any one of the computing devices shown in FIG. 1E (i.e., Computing Devices A-D). For ease of illustration, FIG. 42 shows display unit 4201 and touch-sensitive surface unit 4203 as integrated with electronic device 4200; however, in some embodiments one or both of these units are in communication with the electronic device while remaining physically separate from it. The processing unit is coupled with the touch-sensitive surface unit and the display unit. In some embodiments, the touch-sensitive surface unit and the display unit are integrated in a single touch-sensitive display unit (also referred to herein as a touch-sensitive display). The processing unit includes an executing unit (e.g., executing unit 4207, FIG. 42), a collecting unit (e.g., collecting unit 4209, FIG. 42), an obtaining unit (e.g., obtaining unit 4211, FIG. 42), an associating unit (e.g., associating unit 4213, FIG. 42), a providing unit (e.g., providing unit 4215, FIG. 42), a sending unit (e.g., sending unit 4217, FIG. 42), a receiving unit (e.g., receiving unit 4219, FIG. 42), a displaying unit (e.g., displaying unit 4221, FIG. 42), a detecting unit (e.g., detecting unit 4223, FIG. 42), a performing unit (e.g., performing unit 4225, FIG. 42), a determining unit (e.g., determining unit 4227, FIG. 42), and a monitoring unit (e.g., monitoring unit 4229, FIG. 42).
The processing unit (or one or more components thereof, such as the units 4207-4229) is configured to: execute (e.g., with the executing unit 4207), on the electronic device, an application in response to an instruction from a user of the electronic device; while executing the application, collect usage data (e.g., with the collecting unit 4209), the usage data at least including one or more actions performed by the user within the application; automatically, without human intervention, obtain (e.g., with the obtaining unit 4211) at least one trigger condition based on the collected usage data; associate (e.g., with the associating unit 4213) the at least one trigger condition with a particular action of the one or more actions performed by the user within the application; and upon determining that the at least one trigger condition has been satisfied, provide (e.g., with the providing unit 4215) an indication to the user that the particular action associated with the trigger condition is available.

[0036] (A29) In some embodiments of the electronic device of A28, obtaining the at least one trigger condition includes sending (e.g., with the sending unit 4217), to one or more servers that are remotely located from the electronic device, the usage data and receiving (e.g., with the receiving unit 4219), from the one or more servers, the at least one trigger condition.

[0037] (A30) In some embodiments of the electronic device of any one of A28-A29, providing the indication includes displaying (e.g., with the displaying unit 4221 and/or the display unit 4201), on a lock screen on the touch-sensitive display unit, a user interface object corresponding to the particular action associated with the trigger condition.

[0038] (A31) In some embodiments of the electronic device of A30, the user interface object includes a description of the particular action associated with the trigger condition.

[0039] (A32) In some embodiments of the electronic device of A31, the user interface object further includes an icon associated with the application.

[0040] (A33) In some embodiments of the electronic device of any one of A30-A32, the processing unit is further configured to: detect (e.g., with the detecting unit 4223 and/or the touch-sensitive surface unit 4203) a first gesture at the user interface object. In response to detecting the first gesture, the processing unit is configured to: (i) display (e.g., with the displaying unit 4221 and/or the display unit 4201), on the touch-sensitive display unit, the application and (ii) while displaying the application, perform (e.g., with the performing unit 4225) the particular action associated with the trigger condition.

[0041] (A34) In some embodiments of the electronic device of A33, the first gesture is a swipe gesture over the user interface object.

[0042] (A35) In some embodiments of the electronic device of any one of A30-A33, the processing unit is further configured to: detect (e.g., with the detecting unit 4223 and/or the touch-sensitive surface unit 4203) a second gesture at the user interface object. In response to detecting the second gesture and while continuing to display the lock screen on the touch-sensitive display unit, the processing unit is configured to: perform (e.g., with the performing unit 4225) the particular action associated with the trigger condition.

[0043] (A36) In some embodiments of the electronic device of A35, the second gesture is a single tap at a predefined area of the user interface object.

[0044] (A37) In some embodiments of the electronic device of any one of A30-A36, the user interface object is displayed in a predefined central portion of the lock screen.

[0045] (A38) In some embodiments of the electronic device of A28, providing the indication to the user that the particular action associated with the trigger condition is available includes performing (e.g., with the performing unit 4225) the particular action.

[0046] (A39) In some embodiments of the electronic device of A30, the user interface object is an icon associated with the application and the user interface object is displayed substantially in a corner of the lock screen on the touch-sensitive display unit.

[0047] (A40) In some embodiments of the electronic device of any one of A28-A39, the processing unit is further configured to: receive (e.g., with the receiving unit 4219) an instruction from the user to unlock the electronic device. In response to receiving the instruction, the processing unit is configured to: display (e.g., with the displaying unit 4221 and/or the display unit 4201), on the touch-sensitive display unit, a home screen of the electronic device. The processing unit is also configured to: provide (e.g., with the providing unit 4215), on the home screen, the indication to the user that the particular action associated with the trigger condition is available.

[0048] (A41) In some embodiments of the electronic device of A40, the home screen includes (i) a first portion including one or more user interface pages for launching a first set of applications available on the electronic device and (ii) a second portion, that is displayed adjacent to the first portion, for launching a second set of applications available on the electronic device. The second portion is displayed on all user interface pages included in the first portion and providing the indication on the home screen includes displaying (e.g., with the displaying unit 4221 and/or the display unit 4201) the indication over the second portion.

[0049] (A42) In some embodiments of the electronic device of A41, the second set of applications is distinct from and smaller than the first set of applications.

[0050] (A43) In some embodiments of the electronic device of any one of A28-A42, determining that the at least one trigger condition has been satisfied includes determining (e.g., with the determining unit 4227) that the electronic device has been coupled with a second device, distinct from the electronic device.

[0051] (A44) In some embodiments of the electronic device of any one of A28-A43, determining that the at least one trigger condition has been satisfied includes determining (e.g., with the determining unit 4227) that the electronic device has arrived at an address corresponding to a home or a work location associated with the user.
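A44 does not tie the arrival determination to any particular positioning technique. As one illustration, assuming the device has a latitude/longitude fix and a stored coordinate for each of the user's home and work locations, arrival could be approximated with a simple circular geofence. The `Place` type, the `arrived_at` helper, and the per-place radius are hypothetical names and parameters chosen for this sketch, not details taken from the description.

```python
import math
from dataclasses import dataclass
from typing import Iterable, Optional

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius, in meters


@dataclass(frozen=True)
class Place:
    """A hypothetical stored location associated with the user."""
    label: str        # e.g., "home" or "work"
    lat: float        # degrees
    lon: float        # degrees
    radius_m: float   # assumed geofence radius, in meters


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two latitude/longitude points, in meters."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def arrived_at(lat: float, lon: float, places: Iterable[Place]) -> Optional[str]:
    """Return the label of the first place whose geofence contains the fix, else None."""
    for place in places:
        if haversine_m(lat, lon, place.lat, place.lon) <= place.radius_m:
            return place.label
    return None
```

A fixed radius is the simplest choice; a real system would likely account for positioning error and combine this with other signals, such as the motion check described in A45.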

[0052] (A45) In some embodiments of the electronic device of A44, determining that the electronic device has arrived at an address corresponding to the home or the work location associated with the user includes monitoring (e.g., with the monitoring unit 4229) motion data from an accelerometer of the electronic device and determining (e.g., with the determining unit 4227), based on the monitored motion data, that the electronic device has not moved for more than a threshold amount of time.
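A45 leaves open how "has not moved" is computed from accelerometer data. One simplified approach, assumed here, treats a sample as stationary when the acceleration magnitude stays within a small tolerance of 1 g (gravity alone), and reports stillness once the trailing run of stationary samples spans more than the threshold. The sample format, the tolerance value, and the `has_been_still` name are illustrative assumptions; a production motion classifier would need to be considerably more robust.

```python
import math
from typing import Sequence, Tuple

# Hypothetical sample format: (timestamp_s, ax, ay, az), acceleration in units of g.
Sample = Tuple[float, float, float, float]


def has_been_still(samples: Sequence[Sample], threshold_s: float, tolerance_g: float = 0.05) -> bool:
    """True if the most recent run of stationary samples spans more than threshold_s seconds."""
    still_since = None  # timestamp at which the current stationary run began
    for t, ax, ay, az in samples:
        magnitude = math.sqrt(ax * ax + ay * ay + az * az)
        if abs(magnitude - 1.0) <= tolerance_g:
            # Only gravity is measured: treat the device as not moving.
            if still_since is None:
                still_since = t
        else:
            # Any acceleration beyond gravity resets the stationary run.
            still_since = None
    if still_since is None:
        return False
    return samples[-1][0] - still_since > threshold_s
```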

[0053] (A46) In some embodiments of the electronic device of any one of A28-A45, the usage data further includes verbal instructions, from the user, provided to a virtual assistant application while continuing to execute the application. The at least one trigger condition is further based on the verbal instructions provided to the virtual assistant application.

[0054] (A47) In some embodiments of the electronic device of A46, the verbal instructions comprise a request to create a reminder that corresponds to a current state of the application, the current state corresponding to a state of the application when the verbal instructions were provided.

[0055] (A48) In some embodiments of the electronic device of A47, the state of the application when the verbal instructions were provided is selected from the group consisting of: a page displayed within the application when the verbal instructions were provided, content playing within the application when the verbal instructions were provided, a notification displayed within the application when the verbal instructions were provided, and an active portion of the page displayed within the application when the verbal instructions were provided.

[0056] (A49) In some embodiments of the electronic device of A47, the verbal instructions include the term "this" in reference to the current state of the application.
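A47 through A49 describe capturing the application's current state when the user says something like "remind me about this." A minimal sketch, assuming the state is available as a few optional fields and that "this" resolves to the most specific populated field, is shown below. The priority order is an assumption rather than something the text specifies, and `AppState`, `Reminder`, and `reminder_from_utterance` are hypothetical names.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class AppState:
    """Hypothetical snapshot of the application when the verbal instructions were given."""
    app_id: str
    page: Optional[str] = None            # page displayed within the application
    now_playing: Optional[str] = None     # content playing within the application
    notification: Optional[str] = None    # notification displayed within the application
    active_portion: Optional[str] = None  # active portion of the displayed page


@dataclass
class Reminder:
    text: str
    app_id: str
    referent: Optional[str]  # the state captured for "this", if any


def resolve_this(state: AppState) -> Optional[str]:
    """Return the most specific populated state field (assumed priority order)."""
    for candidate in (state.active_portion, state.now_playing, state.notification, state.page):
        if candidate:
            return candidate
    return None


def reminder_from_utterance(utterance: str, state: AppState) -> Reminder:
    """Create a reminder, substituting the captured state for the word "this"."""
    referent = resolve_this(state)
    if referent is None or not re.search(r"\bthis\b", utterance, flags=re.IGNORECASE):
        return Reminder(text=utterance, app_id=state.app_id, referent=None)
    # A function replacement avoids treating backslashes in the referent as escapes.
    text = re.sub(r"\bthis\b", lambda _m: referent, utterance, count=1, flags=re.IGNORECASE)
    return Reminder(text=text, app_id=state.app_id, referent=referent)
```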

[0057] (B1) In accordance with some embodiments, a method is performed at an electronic device (e.g., portable multifunction device 100, FIG. 1A, configured in accordance with any one of Computing Devices A-D, FIG. 1E) with a touch-sensitive display (e.g., touch screen 112, FIG. 1C). The method includes: obtaining at least one trigger condition that is based on usage data associated with a user of the electronic device, the usage data including one or more actions (or types of actions) performed by the user within an application while the application was executing on the electronic device. The method also includes: associating the at least one trigger condition with a particular action of the one or more actions performed by the user within the application. Upon determining that the at least one trigger condition has been satisfied, the method includes: providing an indication to the user that the particular action associated with the trigger condition is available.
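The association step in B1 can be pictured as a table that pairs each trigger condition with the in-app action it was derived from, evaluated against the device's current context. The sketch below assumes conditions are predicates over a dictionary of context values; `TriggerCondition`, `ProactiveEngine`, and the context keys used in practice are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Tuple

Context = Dict[str, Any]  # hypothetical snapshot of device state


@dataclass
class TriggerCondition:
    name: str
    predicate: Callable[[Context], bool]  # True when the condition is satisfied


@dataclass
class ProactiveEngine:
    # Each entry associates one trigger condition with one previously performed action.
    associations: List[Tuple[TriggerCondition, str]] = field(default_factory=list)

    def associate(self, condition: TriggerCondition, action: str) -> None:
        self.associations.append((condition, action))

    def available_actions(self, context: Context) -> List[str]:
        """Actions whose trigger conditions are satisfied and should be indicated to the user."""
        return [action for condition, action in self.associations if condition.predicate(context)]
```

For example, a condition such as "headphones connected" (compare A43) could be associated with "resume podcast", so that the indication is provided whenever the context reports that pairing.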

[0058] (B2) In some embodiments of the method of B1, the method further includes the method described in any one of A2-A22.

[0059] (B3) In another aspect, an electronic device is provided. In some embodiments, the electronic device includes: a touch-sensitive display, one or more processors, and memory storing one or more programs which, when executed by the one or more processors, cause the electronic device to perform the method described in any one of B1-B2.

[0060] (B4) In yet another aspect, an electronic device is provided and the electronic device includes: a touch-sensitive display and means for performing the method described in any one of B1-B2.

[0061] (B5) In still another aspect, a non-transitory computer-readable storage medium is provided. The non-transitory computer-readable storage medium stores executable instructions that, when executed by an electronic device with a touch-sensitive display, cause the electronic device to perform the method described in any one of B1-B2.

[0062] (B6) In still one more aspect, a graphical user interface on an electronic device with a touch-sensitive display is provided. In some embodiments, the graphical user interface includes user interfaces displayed in accordance with the method described in any one of B1-B2.

[0063] (B7) In one additional aspect, an electronic device is provided that includes a display unit (e.g., display unit 4201, FIG. 42), a touch-sensitive surface unit (e.g., touch-sensitive surface unit 4203, FIG. 42), and a processing unit (e.g., processing unit 4205, FIG. 42). In some embodiments, the electronic device is configured in accordance with any one of the computing devices shown in FIG. 1E (i.e., Computing Devices A-D). For ease of illustration, FIG. 42 shows display unit 4201 and touch-sensitive surface unit 4203 as integrated with electronic device 4200; however, in some embodiments one or both of these units are in communication with the electronic device while remaining physically separate from it. The processing unit includes an executing unit (e.g., executing unit 4207, FIG. 42), a collecting unit (e.g., collecting unit 4209, FIG. 42), an obtaining unit (e.g., obtaining unit 4211, FIG. 42), an associating unit (e.g., associating unit 4213, FIG. 42), a providing unit (e.g., providing unit 4215, FIG. 42), a sending unit (e.g., sending unit 4217, FIG. 42), a receiving unit (e.g., receiving unit 4219, FIG. 42), a displaying unit (e.g., displaying unit 4221, FIG. 42), a detecting unit (e.g., detecting unit 4223, FIG. 42), a performing unit (e.g., performing unit 4225, FIG. 42), a determining unit (e.g., determining unit 4227, FIG. 42), and a monitoring unit (e.g., monitoring unit 4229, FIG. 42).
The processing unit (or one or more components thereof, such as the units 4207-4229) is configured to: obtain (e.g., with the obtaining unit 4211) at least one trigger condition that is based on usage data associated with a user of the electronic device, the usage data including one or more actions performed by the user within an application while the application was executing on the electronic device; associate (e.g., with the associating unit 4213) the at least one trigger condition with a particular action of the one or more actions performed by the user within the application; and upon determining that the at least one trigger condition has been satisfied, provide (e.g., with the providing unit 4215) an indication to the user that the particular action associated with the trigger condition is available.

[0064] (B8) In some embodiments of the electronic device of B7, obtaining the at least one trigger condition includes sending (e.g., with the sending unit 4217), to one or more servers that are remotely located from the electronic device, the usage data and receiving (e.g., with the receiving unit 4219), from the one or more servers, the at least one trigger condition.
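B8 has the trigger conditions computed remotely from uploaded usage data but does not say how the servers derive them. Purely as an illustration, a server could group the user's actions by the context in which they occurred and keep only contexts in which one action clearly dominates. The function name, the `(context, action)` input format, and both thresholds are assumptions made for this sketch.

```python
from collections import Counter, defaultdict
from typing import DefaultDict, Dict, Iterable, Tuple


def derive_trigger_conditions(
    usage: Iterable[Tuple[str, str]], min_count: int = 3, min_share: float = 0.6
) -> Dict[str, str]:
    """Map each context to its dominant action when that action recurs often enough."""
    by_context: DefaultDict[str, Counter] = defaultdict(Counter)
    for context_key, action in usage:
        by_context[context_key][action] += 1

    conditions: Dict[str, str] = {}
    for context_key, counts in by_context.items():
        action, n = counts.most_common(1)[0]
        total = sum(counts.values())
        # Require both an absolute count and a share of all actions in this context.
        if n >= min_count and n / total >= min_share:
            conditions[context_key] = action
    return conditions
```

Requiring both a minimum count and a minimum share keeps one-off actions and evenly split habits from producing trigger conditions.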

[0065] (B9) In some embodiments of the electronic device of any one of B7-B8, providing the indication includes displaying (e.g., with the displaying unit 4221 and/or the display unit 4201), on a lock screen on the touch-sensitive display, a user interface object corresponding to the particular action associated with the trigger condition.

[0066] (B10) In some embodiments of the electronic device of B9, the user interface object includes a description of the particular action associated with the trigger condition.

[0067] (B11) In some embodiments of the electronic device of B10, the user interface object further includes an icon associated with the application.

[0068] (B12) In some embodiments of the electronic device of any one of B9-B11, the processing unit is further configured to: detect (e.g., with the detecting unit 4223 and/or the touch-sensitive surface unit 4203) a first gesture at the user interface object. In response to detecting the first gesture, the processing unit is configured to: (i) display (e.g., with the displaying unit 4221 and/or the display unit 4201), on the touch-sensitive display, the application and (ii) while displaying the application, perform (e.g., with the performing unit 4225) the particular action associated with the trigger condition.

[0069] (B13) In some embodiments of the electronic device of B12, the first gesture is a swipe gesture over the user interface object.

[0070] (B14) In some embodiments of the electronic device of any one of B9-B12, the processing unit is further configured to: detect (e.g., with the detecting unit 4223 and/or the touch-sensitive surface unit 4203) a second gesture at the user interface object. In response to detecting the second gesture and while continuing to display the lock screen on the touch-sensitive display, the processing unit is configured to: perform (e.g., with the performing unit 4225) the particular action associated with the trigger condition.

[0071] (B15) In some embodiments of the electronic device of B14, the second gesture is a single tap at a predefined area of the user interface object.
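B12 through B15 distinguish two ways of acting on the lock-screen object: a swipe displays the application and performs the action there, while a single tap in a predefined area performs the action while the lock screen stays displayed. A minimal dispatch sketch follows; `Gesture`, `Rect`, `handle_lock_screen_gesture`, and the returned screen names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import Tuple


class Gesture(Enum):
    SWIPE = auto()
    TAP = auto()


@dataclass(frozen=True)
class Rect:
    x: float
    y: float
    w: float
    h: float

    def contains(self, px: float, py: float) -> bool:
        return self.x <= px <= self.x + self.w and self.y <= py <= self.y + self.h


def handle_lock_screen_gesture(gesture: Gesture, point: Tuple[float, float], action_area: Rect) -> Tuple[str, bool]:
    """Return (screen to display, whether to perform the particular action)."""
    if gesture is Gesture.SWIPE:
        # First gesture: display the application, then perform the action within it.
        return ("application", True)
    if gesture is Gesture.TAP and action_area.contains(*point):
        # Second gesture: perform the action while continuing to display the lock screen.
        return ("lock_screen", True)
    return ("lock_screen", False)
```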

[0072] (B16) In some embodiments of the electronic device of any one of B9-B15, the user interface object is displayed in a predefined central portion of the lock screen.

[0073] (B17) In some embodiments of the electronic device of B7, providing the indication to the user that the particular action associated with the trigger condition is available includes performing (e.g., with the performing unit 4225) the particular action.

[0074] (B18) In some embodiments of the electronic device of B9, the user interface object is an icon associated with the application and the user interface object is displayed substantially in a corner of the lock screen on the touch-sensitive display.

[0075] (B19) In some embodiments of the electronic device of any one of B7-B18, the processing unit is further configured to: receive (e.g., with the receiving unit 4219) an instruction from the user to unlock the electronic device. In response to receiving the instruction, the processing unit is configured to: display (e.g., with the displaying unit 4221 and/or the display unit 4201), on the touch-sensitive display, a home screen of the electronic device. The processing unit is also configured to: provide (e.g., with the providing unit 4215), on the home screen, the indication to the user that the particular action associated with the trigger condition is available.

[0076] (B20) In some embodiments of the electronic device of B19, the home screen includes (i) a first portion including one or more user interface pages for launching a first set of applications available on the electronic device and (ii) a second portion, displayed adjacent to the first portion, for launching a second set of applications available on the electronic device. The second portion is displayed on all user interface pages included in the first portion, and providing the indication on the home screen includes displaying (e.g., with the displaying unit 4221 and/or the display unit 4201) the indication over the second portion.

[0077] (B21) In some embodiments of the electronic device of B20, the second set of applications is distinct from and smaller than the first set of applications.

[0078] (B22) In some embodiments of the electronic device of any one of B7-B21, determining that the at least one trigger condition has been satisfied includes determining (e.g., with the determining unit 4227) that the electronic device has been coupled with a second device, distinct from the electronic device.

[0079] (B23) In some embodiments of the electronic device of any one of B7-B22, determining that the at least one trigger condition has been satisfied includes determining (e.g., with the determining unit 4227) that the electronic device has arrived at an address corresponding to a home or a work location associated with the user.

[0080] (B24) In some embodiments of the electronic device of B23, determining that the electronic device has arrived at an address corresponding to the home or the work location associated with the user includes monitoring (e.g., with the monitoring unit 4229) motion data from an accelerometer of the electronic device and determining (e.g., with the determining unit 4227), based on the monitored motion data, that the electronic device has not moved for more than a threshold amount of time.

[0081] (B25) In some embodiments of the electronic device of any one of B7-B24, the usage data further includes verbal instructions, from the user, provided to a virtual assistant application while continuing to execute the application. The at least one trigger condition is further based on the verbal instructions provided to the virtual assistant application.

[0082] (B26) In some embodiments of the electronic device of B25, the verbal instructions comprise a request to create a reminder that corresponds to a current state of the application, the current state corresponding to a state of the application when the verbal instructions were provided.

[0083] (B27) In some embodiments of the electronic device of B26, the state of the application when the verbal instructions were provided is selected from the group consisting of: a page displayed within the application when the verbal instructions were provided, content playing within the application when the verbal instructions were provided, a notification displayed within the application when the verbal instructions were provided, and an active portion of the page displayed within the application when the verbal instructions were provided.

[0084] (B28) In some embodiments of the electronic device of B26, the verbal instructions include the term "this" in reference to the current state of the application.

[0085] (C1) In accordance with some embodiments, a method is performed at an electronic device (e.g., portable multifunction device 100, FIG. 1A, configured in accordance with any one of Computing Devices A-D, FIG. 1E) with a touch-sensitive display (e.g., touch screen 112, FIG. 1C). The method includes: detecting a search activation gesture on the touch-sensitive display from a user of the electronic device. In response to detecting the search activation gesture, the method includes: displaying a search interface on the touch-sensitive display that includes: (i) a search entry portion and (ii) a predictions portion that is displayed before receiving any user input at the search entry portion. The predictions portion is populated with one or more of: (a) at least one affordance for contacting a person of a plurality of previously-contacted people, the person being automatically selected from the plurality of previously-contacted people based at least in part on a current time and (b) at least one affordance for executing a predicted action within an application of a plurality of applications available on the electronic device, the predicted action being automatically selected based at least in part on an application usage history associated with the user of the electronic device.
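C1 states what the predictions portion contains but not how the person or the action is ranked. The sketch below assumes simple frequency counting: the person most often contacted near the current hour of day, and the most frequent (application, action) pair in the usage history. These ranking rules and the names `predict_person`, `predict_action`, and `populate_predictions` are illustrative assumptions, not the described selection method.

```python
from collections import Counter
from datetime import datetime
from typing import List, Optional, Sequence, Tuple


def predict_person(contact_log: Sequence[Tuple[str, datetime]], now: datetime, window_h: int = 1) -> Optional[str]:
    """Pick the person most often contacted within window_h hours of the current hour of day."""
    counts: Counter = Counter()
    for person, when in contact_log:
        diff = abs(when.hour - now.hour)
        diff = min(diff, 24 - diff)  # wrap around midnight
        if diff <= window_h:
            counts[person] += 1
    return counts.most_common(1)[0][0] if counts else None


def predict_action(usage_history: Sequence[Tuple[str, str]]) -> Optional[Tuple[str, str]]:
    """Pick the most frequent (application, action) pair in the usage history."""
    counts = Counter(usage_history)
    return counts.most_common(1)[0][0] if counts else None


def populate_predictions(contact_log, usage_history, now) -> List[Tuple[str, object]]:
    """Build the predictions portion before any input is received at the search entry portion."""
    predictions: List[Tuple[str, object]] = []
    person = predict_person(contact_log, now)
    if person is not None:
        predictions.append(("contact", person))
    action = predict_action(usage_history)
    if action is not None:
        predictions.append(("action", action))
    return predictions
```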

[0086] (C2) In some embodiments of the method of C1, the person is further selected based at least in part on location data corresponding to the electronic device.

[0087] (C3) In some embodiments of the method of any one of C1-C2, the application usage history and contact information for the person are retrieved from a memory of the electronic device.

[0088] (C4) In some embodiments of the method of any one of C1-C2, the application usage history and contact information for the person are retrieved from a server that is remotely located from the electronic device.

[0089] (C5) In some embodiments of the method of any one of C1-C4, the predictions portion is further populated with at least one affordance for executing a predicted application, the predicted application being automatically selected based at least in part on the application usage history.

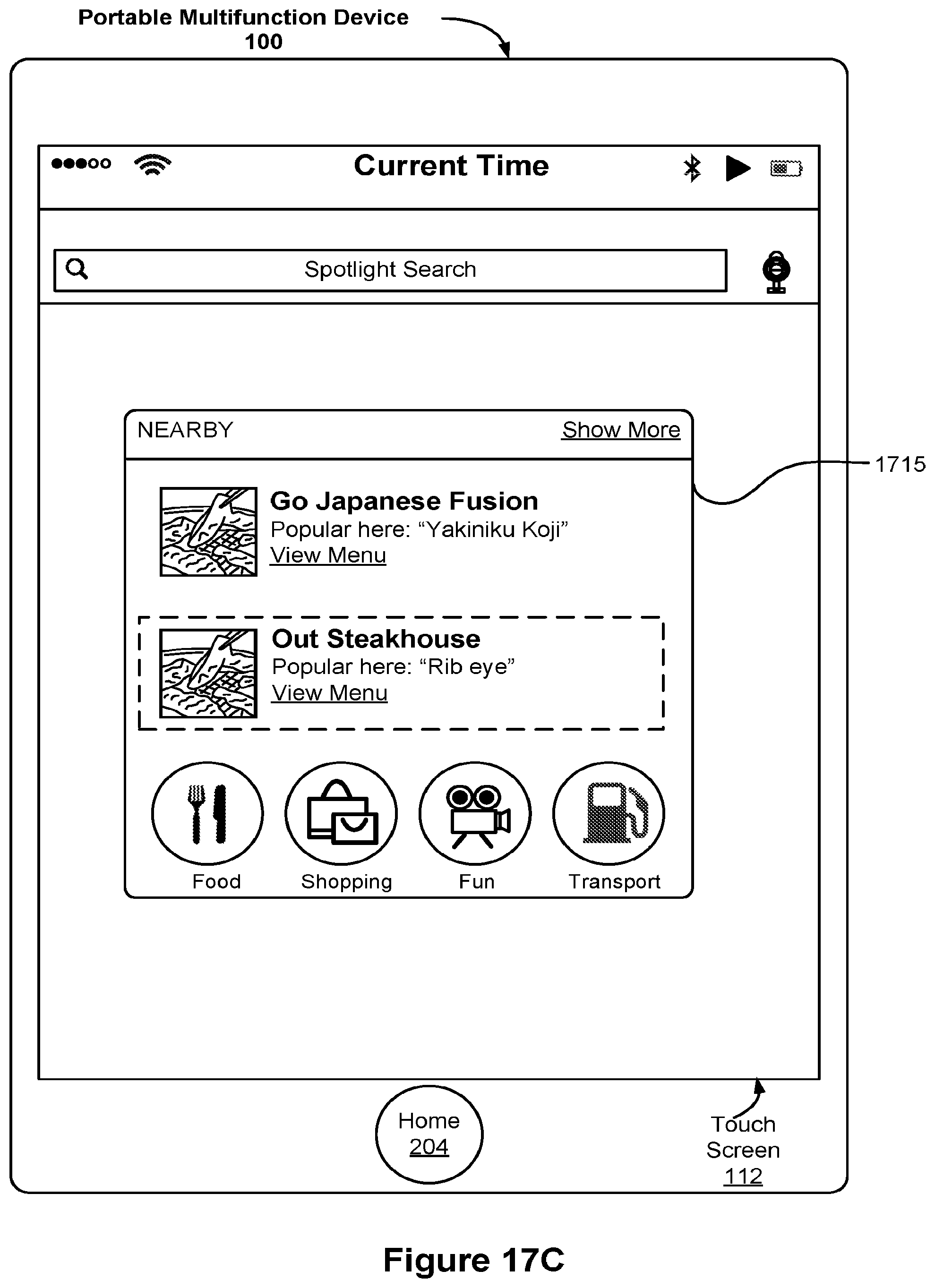

[0090] (C6) In some embodiments of the method of any one of C1-C5, the predictions portion is further populated with at least one affordance for a predicted category of places (or nearby places), and the predicted category of places is automatically selected based at least in part on one or more of: the current time and location data corresponding to the electronic device.
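As a toy illustration of selecting a place category from the current time and location data, the sketch below maps hour-of-day ranges to categories and only makes a suggestion when a coarse location signal says the user is away from home. The hour ranges, category names, and the away-from-home policy are all hypothetical; the description does not specify them.

```python
from typing import Optional

# Hypothetical mapping from hour-of-day ranges to place categories.
CATEGORY_BY_HOUR = [
    (range(6, 11), "Breakfast"),
    (range(11, 14), "Lunch"),
    (range(14, 17), "Coffee"),
    (range(17, 22), "Dinner"),
]


def predict_place_category(hour: int, away_from_home: bool) -> Optional[str]:
    """Suggest a category of nearby places from the current hour and a coarse location signal."""
    if not away_from_home:
        # Assumed policy: only suggest nearby places when location data shows the user is out.
        return None
    for hours, category in CATEGORY_BY_HOUR:
        if hour in hours:
            return category
    return None
```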

[0091] (C7) In some embodiments of the method of any one of C1-C6, the method further includes: detecting user input to scroll the predictions portion. In response to detecting the user input to scroll the predictions portion, the method includes: scrolling the predictions portion in accordance with the user input. In response to the scrolling, the method includes: revealing at least one affordance for a predicted news article in the predictions portion (e.g., the predicted news article is one that is predicted to be of interest to the user).

[0092] (C8) In some embodiments of the method of C7, the predicted news article is automatically selected based at least in part on location data corresponding to the electronic device.

[0093] (C9) In some embodiments of the method of any one of C1-C8, the method further includes: detecting a selection of the at least one affordance for executing the predicted action within the application. In response to detecting the selection, the method includes: displaying, on the touch-sensitive display, the application and executing the predicted action within the application.

[0094] (C10) In some embodiments of the method of any one of C3-C4, the method further includes: detecting a selection of the at least one affordance for contacting the person. In response to detecting the selection, the method includes: contacting the person using the contact information for the person.

[0095] (C11) In some embodiments of the method of C5, the method further includes: detecting a selection of the at least one affordance for executing the predicted application. In response to detecting the selection, the method includes: displaying, on the touch-sensitive display, the predicted application.

[0096] (C12) In some embodiments of the method of C6, the method further includes: detecting a selection of the at least one affordance for the predicted category of places. In response to detecting the selection, the method further includes: (i) receiving data corresponding to at least one nearby place and (ii) displaying, on the touch-sensitive display, the received data corresponding to the at least one nearby place.

[0097] (C13) In some embodiments of the method of C7, the method further includes: detecting a selection of the at least one affordance for the predicted news article. In response to detecting the selection, the method includes: displaying, on the touch-sensitive display, the predicted news article.

[0098] (C14) In some embodiments of the method of any one of C1-C13, the search activation gesture is available from at least two distinct user interfaces, and a first user interface of the at least two distinct user interfaces corresponds to displaying a respective home screen page of a sequence of home screen pages on the touch-sensitive display.

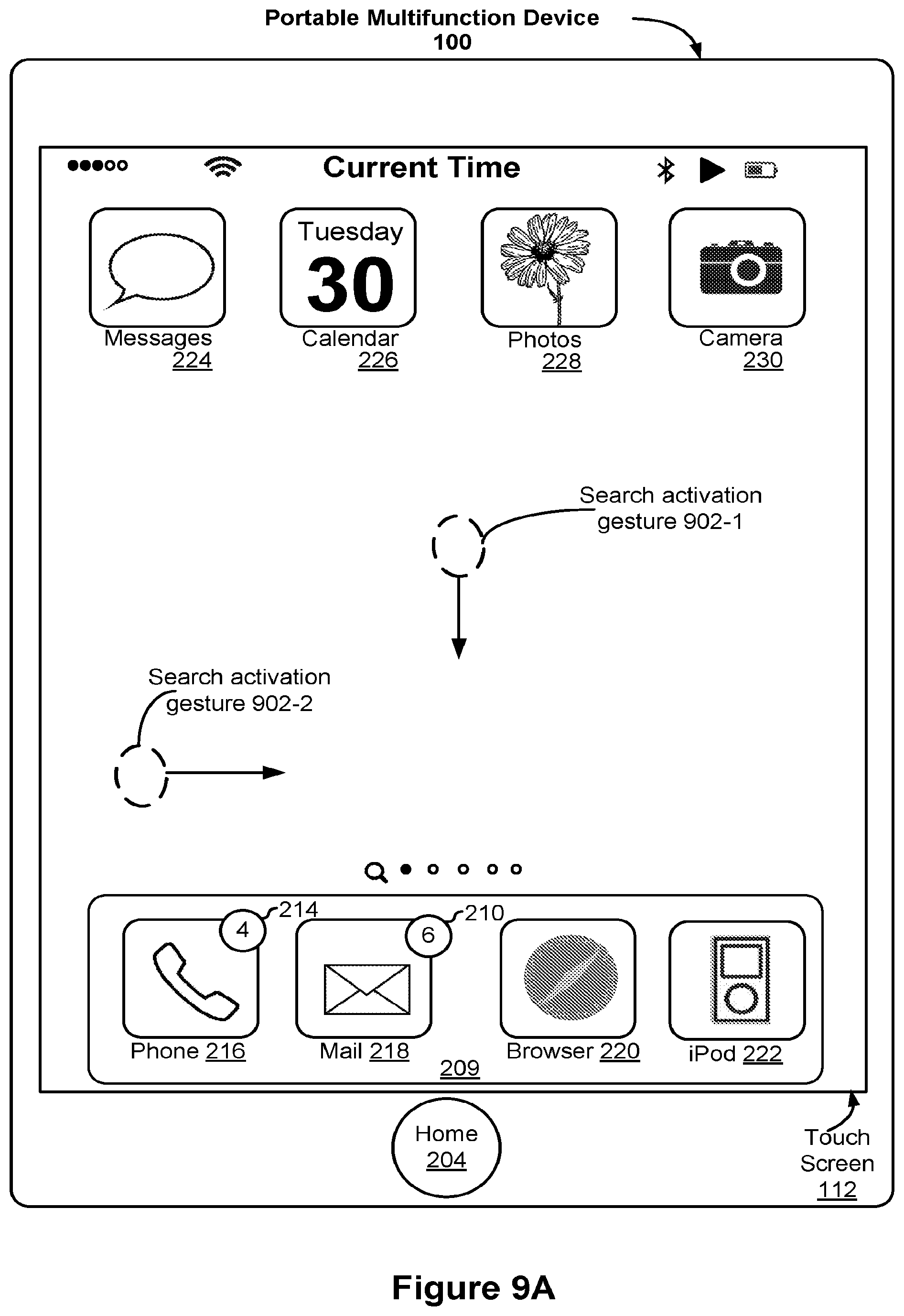

[0099] (C15) In some embodiments of the method of C14, when the respective home screen page is a first home screen page in the sequence of home screen pages, the search activation gesture comprises one of the following: (i) a gesture moving in a substantially downward direction relative to the user of the electronic device or (ii) a continuous gesture moving in a substantially left-to-right direction relative to the user and substantially perpendicular to the downward direction.

[0100] (C16) In some embodiments of the method of C15, when the respective home screen page is a second home screen page in the sequence of home screen pages, the search activation gesture comprises the continuous gesture moving in the substantially downward direction relative to the user of the electronic device.
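Read together, C15 and C16 give per-page rules for the search activation gesture: on the first home screen page either a downward or a left-to-right gesture activates search, while on a later page a downward gesture does. A minimal sketch, assuming screen coordinates in which y grows downward, a dominant-axis test for "substantially," and a hypothetical minimum travel distance, is:

```python
import math
from typing import Optional


def swipe_direction(dx: float, dy: float, min_distance: float = 30.0) -> Optional[str]:
    """Classify a swipe by its dominant axis; screen y grows downward. Short moves return None."""
    if math.hypot(dx, dy) < min_distance:
        return None
    if abs(dy) >= abs(dx):
        return "down" if dy > 0 else "up"
    return "right" if dx > 0 else "left"


def is_search_activation(page_index: int, dx: float, dy: float) -> bool:
    """First page (index 0) accepts down or left-to-right; other pages accept down only."""
    direction = swipe_direction(dx, dy)
    if page_index == 0:
        return direction in ("down", "right")
    return direction == "down"
```

The names `swipe_direction` and `is_search_activation`, and the zero-based page index, are assumptions for illustration.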

[0101] (C17) In some embodiments of the method of C14, a second user interface of the at least two distinct user interfaces corresponds to displaying an application switching interface on the touch-sensitive display.

[0102] (C18) In some embodiments of the method of C17, the search activation gesture comprises a contact, on the touch-sensitive display, at a predefined search activation portion of the application switching interface.

[0103] (C19) In another aspect, an electronic device is provided. In some embodiments, the electronic device includes: a touch-sensitive display, one or more processors, and memory storing one or more programs which, when executed by the one or more processors, cause the electronic device to perform the method described in any one of C1-C18.

[0104] (C20) In yet another aspect, an electronic device is provided and the electronic device includes: a touch-sensitive display and means for performing the method described in any one of C1-C18.

[0105] (C21) In still another aspect, a non-transitory computer-readable storage medium is provided. The non-transitory computer-readable storage medium stores executable instructions that, when executed by an electronic device with a touch-sensitive display, cause the electronic device to perform the method described in any one of C1-C18.

[0106] (C22) In still one more aspect, a graphical user interface on an electronic device with a touch-sensitive display is provided. In some embodiments, the graphical user interface includes user interfaces displayed in accordance with the method described in any one of C1-C18.

[0107] (C23) In one additional aspect, an electronic device is provided that includes a display unit (e.g., display unit 4301, FIG. 43), a touch-sensitive surface unit (e.g., touch-sensitive surface unit 4303, FIG. 43), and a processing unit (e.g., processing unit 4305, FIG. 43). In some embodiments, the electronic device is configured in accordance with any one of the computing devices shown in FIG. 1E (i.e., Computing Devices A-D). For ease of illustration, FIG. 43 shows display unit 4301 and touch-sensitive surface unit 4303 as integrated with electronic device 4300; however, in some embodiments one or both of these units are in communication with the electronic device while remaining physically separate from it. The processing unit includes a displaying unit (e.g., displaying unit 4309, FIG. 43), a detecting unit (e.g., detecting unit 4307, FIG. 43), a retrieving unit (e.g., retrieving unit 4311, FIG. 43), a populating unit (e.g., populating unit 4313, FIG. 43), a scrolling unit (e.g., scrolling unit 4315, FIG. 43), a revealing unit (e.g., revealing unit 4317, FIG. 43), a selecting unit (e.g., selecting unit 4319, FIG. 43), a contacting unit (e.g., contacting unit 4321, FIG. 43), a receiving unit (e.g., receiving unit 4323, FIG. 43), and an executing unit (e.g., executing unit 4325, FIG. 43).
The processing unit (or one or more components thereof, such as the units 4307-4325) is configured to: detect (e.g., with the detecting unit 4307 and/or the touch-sensitive surface unit 4303) a search activation gesture on the touch-sensitive display from a user of the electronic device; in response to detecting the search activation gesture, display (e.g., with the displaying unit 4309 and/or the display unit 4301) a search interface on the touch-sensitive display that includes: (i) a search entry portion; and (ii) a predictions portion that is displayed before receiving any user input at the search entry portion, the predictions portion populated with one or more of: (a) at least one affordance for contacting a person of a plurality of previously-contacted people, the person being automatically selected (e.g., by the selecting unit 4319) from the plurality of previously-contacted people based at least in part on a current time; and (b) at least one affordance for executing a predicted action within an application of a plurality of applications available on the electronic device, the predicted action being automatically selected (e.g., by the selecting unit 4319) based at least in part on an application usage history associated with the user of the electronic device.

[0108] (C24) In some embodiments of the electronic device of C23, the person is further selected (e.g., by the selecting unit 4319) based at least in part on location data corresponding to the electronic device.

[0109] (C25) In some embodiments of the electronic device of any one of C23-C24, the application usage history and contact information for the person are retrieved (e.g., by the retrieving unit 4311) from a memory of the electronic device.

[0110] (C26) In some embodiments of the electronic device of any one of C23-C24, the application usage history and contact information for the person are retrieved (e.g., by the retrieving unit 4311) from a server that is remotely located from the electronic device.

[0111] (C27) In some embodiments of the electronic device of any one of C23-C26, the predictions portion is further populated (e.g., by the populating unit 4313) with at least one affordance for executing a predicted application, the predicted application being automatically selected (e.g., by the selecting unit 4319) based at least in part on the application usage history.

[0112] (C28) In some embodiments of the electronic device of any one of C23-C27, the predictions portion is further populated (e.g., by the populating unit 4313) with at least one affordance for a predicted category of places, and the predicted category of places is automatically selected (e.g., by the selecting unit 4319) based at least in part on one or more of: the current time and location data corresponding to the electronic device.

[0113] (C29) In some embodiments of the electronic device of any one of C23-C28, the processing unit is further configured to: detect (e.g., with the detecting unit 4307 and/or the touch-sensitive surface unit 4303) user input to scroll the predictions portion. In response to detecting the user input to scroll the predictions portion, the processing unit is configured to: scroll (e.g., with the scrolling unit 4315) the predictions portion in accordance with the user input. In response to the scrolling, the processing unit is configured to: reveal (e.g., with the revealing unit 4317) at least one affordance for a predicted news article in the predictions portion (e.g., the predicted news article is one that is predicted to be of interest to the user).

[0114] (C30) In some embodiments of the electronic device of C29, the predicted news article is automatically selected (e.g., with the selecting unit 4319) based at least in part on location data corresponding to the electronic device.

[0115] (C31) In some embodiments of the electronic device of any one of C23-C30, the processing unit is further configured to: detect (e.g., with the detecting unit 4307 and/or the touch-sensitive surface unit 4303) a selection of the at least one affordance for executing the predicted action within the application. In response to detecting the selection, the processing unit is configured to: display (e.g., with the displaying unit 4309), on the touch-sensitive display (e.g., display unit 4301), the application and execute (e.g., with the executing unit 4325) the predicted action within the application.

[0116] (C32) In some embodiments of the electronic device of any one of C25-C26, the processing unit is further configured to: detect (e.g., with the detecting unit 4307 and/or the touch-sensitive surface unit 4303) a selection of the at least one affordance for contacting the person. In response to detecting the selection, the processing unit is configured to: contact (e.g., with the contacting unit 4321) the person using the contact information for the person.

[0117] (C33) In some embodiments of the electronic device of C27, the processing unit is further configured to: detect (e.g., with the detecting unit 4307 and/or the touch-sensitive surface unit 4303) a selection of the at least one affordance for executing the predicted application. In response to detecting the selection, the processing unit is configured to: display (e.g., with the displaying unit 4309), on the touch-sensitive display (e.g., display unit 4301), the predicted application.

[0118] (C34) In some embodiments of the electronic device of C28, the processing unit is further configured to: detect (e.g., with the detecting unit 4307 and/or the touch-sensitive surface unit 4303) a selection of the at least one affordance for the predicted category of places. In response to detecting the selection, the processing unit is configured to: (i) receive (e.g., with the receiving unit 4323) data corresponding to at least one nearby place and (ii) display (e.g., with the displaying unit 4309), on the touch-sensitive display (e.g., display unit 4301), the received data corresponding to the at least one nearby place.

[0119] (C35) In some embodiments of the electronic device of C29, the processing unit is further configured to: detect (e.g., with the detecting unit 4307 and/or the touch-sensitive surface unit 4303) a selection of the at least one affordance for the predicted news article. In response to detecting the selection, the processing unit is configured to: display (e.g., with the displaying unit 4309), on the touch-sensitive display (e.g., display unit 4301), the predicted news article.

[0120] (C36) In some embodiments of the electronic device of any one of C23-C35, the search activation gesture is available from at least two distinct user interfaces, and a first user interface of the at least two distinct user interfaces corresponds to displaying a respective home screen page of a sequence of home screen pages on the touch-sensitive display.

[0121] (C37) In some embodiments of the electronic device of C36, when the respective home screen page is a first home screen page in the sequence of home screen pages, the search activation gesture comprises one of the following: (i) a gesture moving in a substantially downward direction relative to the user of the electronic device or (ii) a continuous gesture moving in a substantially left-to-right direction relative to the user and substantially perpendicular to the downward direction.

[0122] (C38) In some embodiments of the electronic device of C37, when the respective home screen page is a second home screen page in the sequence of home screen pages, the search activation gesture comprises the continuous gesture moving in the substantially downward direction relative to the user of the electronic device.

[0123] (C39) In some embodiments of the electronic device of C36, a second user interface of the at least two distinct user interfaces corresponds to displaying an application switching interface on the touch-sensitive display.

[0124] (C40) In some embodiments of the electronic device of C39, the search activation gesture comprises a contact, on the touch-sensitive display, at a predefined search activation portion of the application switching interface.

[0125] Thus, electronic devices with displays, touch-sensitive surfaces, and optionally one or more sensors to detect intensity of contacts with the touch-sensitive surface are provided with faster, more efficient methods and interfaces for proactively accessing applications and proactively performing functions within applications, thereby increasing the effectiveness, efficiency, and user satisfaction with such devices. Such methods and interfaces may complement or replace conventional methods for accessing applications and functions associated therewith.

[0126] (D1) In accordance with some embodiments, a method is performed at an electronic device (e.g., portable multifunction device 100, FIG. 1A, configured in accordance with any one of Computing Device A-D, FIG. 1E) with a touch-sensitive surface (e.g., touch-sensitive surface 195, FIG. 1D) and a display (e.g., display 194, FIG. 1D). The method includes: displaying, on the display, content associated with an application that is executing on the electronic device. The method further includes: detecting, via the touch-sensitive surface, a swipe gesture that, when detected, causes the electronic device to enter a search mode that is distinct from the application. The method also includes: in response to detecting the swipe gesture, entering the search mode, the search mode including a search interface that is displayed on the display. In conjunction with entering the search mode, the method includes: determining at least one suggested search query based at least in part on information associated with the content. Before receiving any user input at the search interface, the method includes: populating the displayed search interface with the at least one suggested search query. In this way, instead of having to remember and re-enter information into a search interface, users are given relevant suggestions based on the app content they were viewing, and they need only select one of the suggestions without typing anything.
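The ordering in D1 (enter search mode, derive suggestions from the displayed content, and populate the interface before any input arrives) can be sketched in Python as follows. `Device`, `SearchInterface`, and `naive_suggest` are hypothetical names, and the capitalized-word heuristic is a deliberately crude stand-in for however the suggestions are actually determined.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

@dataclass
class SearchInterface:
    entry_text: str = ""  # nothing typed yet
    suggested_queries: List[str] = field(default_factory=list)

@dataclass
class Device:
    displayed_content: str  # content of the application that is executing
    search_interface: Optional[SearchInterface] = None

    def on_swipe(self, suggest: Callable[[str], List[str]]) -> SearchInterface:
        # Enter a search mode that is distinct from the application.
        interface = SearchInterface()
        # In conjunction with entering search mode, derive suggestions from
        # information associated with the displayed content...
        interface.suggested_queries = suggest(self.displayed_content)
        # ...and populate the interface before any user input is received.
        self.search_interface = interface
        return interface

def naive_suggest(content: str) -> List[str]:
    """Hypothetical heuristic: treat capitalized words as candidate queries."""
    candidates: List[str] = []
    for word in content.split():
        token = word.strip(".,!?;:")
        if token[:1].isupper() and token not in candidates:
            candidates.append(token)
    return candidates[:3]
```

For example, swiping while the app shows "flights to Paris and Rome are cheap" would pre-populate the interface with "Paris" and "Rome" while the entry field is still empty.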

[0127] (D2) In some embodiments of the method of D1, detecting the swipe gesture includes detecting the swipe gesture over at least a portion of the content that is currently displayed.

[0128] (D3) In some embodiments of the method of any one of D1-D2, the method further includes: before detecting the swipe gesture, detecting an input that corresponds to a request to view a home screen of the electronic device; and in response to detecting the input, ceasing to display the content associated with the application and displaying a respective page of the home screen of the electronic device. In some embodiments, the respective page is an initial page in a sequence of home screen pages and the swipe gesture is detected while the initial page of the home screen is displayed on the display.

[0129] (D4) In some embodiments of the method of any one of D1-D3, the search interface is displayed as translucently overlaying the application.
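D4 does not specify how the translucent overlay is rendered. One common technique, shown here as an assumption rather than as the disclosed method, is "source-over" alpha compositing, in which each output color channel equals alpha * overlay + (1 - alpha) * app. The function name and the 0.0 to 1.0 channel range are illustrative choices.

```python
from typing import Tuple

RGB = Tuple[float, float, float]  # channels assumed to lie in [0.0, 1.0]

def composite_over(overlay: RGB, app: RGB, alpha: float) -> RGB:
    """Blend a search-interface pixel over an application pixel (source-over)."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    # alpha = 1 hides the app entirely; alpha = 0 shows only the app.
    r, g, b = (alpha * o + (1.0 - alpha) * a for o, a in zip(overlay, app))
    return (r, g, b)
```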

[0130] (D5) In some embodiments of the method of any one of D1-D4, the method further includes: in accordance with a determination that the content includes textual content, determining the at least one suggested search query based at least in part on the textual content.

[0131] (D6) In some embodiments of the method of D5, determining the at least one suggested search query based at least in part on the textual content includes analyzing the textual content to detect one or more predefined keywords that are used to determine the at least one suggested search query.
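The predefined-keyword analysis of D6 might look like the following sketch. The keyword-to-query table is entirely hypothetical; the disclosure does not list its keywords or say how a detected keyword is turned into a query. This version matches whole lowercase words only, so "restaurants" would not match "restaurant".

```python
import re
from typing import Dict, List

# Hypothetical table mapping predefined keywords to suggested queries.
PREDEFINED_KEYWORDS: Dict[str, str] = {
    "restaurant": "restaurants near me",
    "flight": "flight status",
    "weather": "weather forecast",
}

def suggest_from_keywords(text: str, keywords: Dict[str, str] = PREDEFINED_KEYWORDS) -> List[str]:
    """Scan textual content for predefined keywords and map each hit to a query."""
    words = re.findall(r"[a-z']+", text.lower())
    suggestions: List[str] = []
    for word in words:
        query = keywords.get(word)
        # Keep the first occurrence only, preserving reading order.
        if query is not None and query not in suggestions:
            suggestions.append(query)
    return suggestions
```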

[0132] (D7) In some embodiments of the method of any one of D1-D6, determining the at least one suggested search query includes determining a plurality of suggested search queries, and populating the search interface includes populating the search interface with the plurality of suggested search queries.

[0133] (D8) In some embodiments of the method of any one of D1-D7, the method further includes: detecting, via the touch-sensitive surface, a new swipe gesture over new content that is currently displayed; in response to detecting the new swipe gesture, entering the search mode, which includes displaying the search interface on the display; and in conjunction with entering the search mode and in accordance with a determination that the new content does not include textual content, populating the search interface with suggested search queries that are based on a selected set of historical search queries from a user of the electronic device.
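The branch in D8 can be sketched as a single function: use the text when the new content has any, and otherwise fall back to historical queries. In this sketch, `history` is assumed to be the already-selected set of historical queries, best candidates first, and `from_text` is any hypothetical text-based suggester passed in by the caller.

```python
from typing import Callable, List, Optional, Sequence

def populate_suggestions(
    text: Optional[str],
    history: Sequence[str],
    from_text: Callable[[str], List[str]],
    limit: int = 3,
) -> List[str]:
    """Return suggested queries for newly displayed content."""
    if text is not None and text.strip():
        # Textual content present: derive suggestions from the text itself.
        return from_text(text)[:limit]
    # No textual content (e.g., only an image): fall back to search history.
    return list(history[:limit])
```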

[0134] (D9) In some embodiments of the method of D8, the search interface is displayed with a point of interest based on location information provided by a second application that is distinct from the application.

[0135] (D10) In some embodiments of the method of any one of D8-D9, the search interface further includes one or more suggested applications.

[0136] (D11) In some embodiments of the method of any one of D8-D10, the set of historical search queries is selected based at least in part on frequency of recent search queries.
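One way to select historical queries "based at least in part on frequency of recent search queries" (D11) is to count occurrences within a recent window and rank by count. The 20-entry window, the three-query limit, and the tie-break toward the more recently used query are assumptions made for this sketch.

```python
from collections import Counter
from typing import List, Sequence

def select_historical_queries(history: Sequence[str], window: int = 20, limit: int = 3) -> List[str]:
    """Rank recent queries by frequency.

    `history` is assumed to be ordered oldest-first; only the last `window`
    entries count as recent. Ties go to the more recently used query.
    """
    recent = list(history)[-window:] if window > 0 else []
    counts = Counter(recent)
    # Index of each query's latest occurrence; a larger index is more recent.
    last_seen = {query: i for i, query in enumerate(recent)}
    ranked = sorted(counts, key=lambda q: (-counts[q], -last_seen[q]))
    return ranked[:limit]
```

For the history maps, news, maps, weather, news, maps, this returns maps, news, weather.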

[0137] (D12) In some embodiments of the method of any one of D1-D11, the method further includes: in conjunction with entering the search mode, obtaining the information that is associated with the content by using one or more accessibility features that are available on the electronic device.

[0138] (D13) In some embodiments of the method of D12, using the one or more accessibility features includes using the one or more accessibility features to generate the information that is associated with the content by: (i) applying a natural language processing algorithm to textual content that is currently displayed within the application and (ii) using data obtained from the natural language processing algorithm to determine one or more keywords that describe the content, and the at least one suggested search query is determined based on the one or more keywords.

[0139] (D14) In some embodiments of the method of D13, determining the one or more keywords that describe the content also includes (i) retrieving metadata that corresponds to non-textual content that is currently displayed in the application and (ii) using the retrieved metadata, in addition to the data obtained from the natural language processing algorithm, to determine the one or more keywords.
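D13 and D14 combine keywords derived from the displayed text with metadata for non-textual content. The sketch below substitutes a simple stopword-filtered term-frequency count for the natural language processing algorithm. That substitution is an assumption, not the disclosed technique. The `title` metadata field is likewise hypothetical.

```python
import re
from collections import Counter
from typing import Iterable, List, Mapping

STOPWORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "on", "at", "for", "is", "are", "with"}

def text_keywords(text: str, top_n: int = 3) -> List[str]:
    """Stand-in for an NLP step: most frequent non-stopword terms."""
    tokens = [t for t in re.findall(r"[a-z]+", text.lower()) if t not in STOPWORDS]
    return [word for word, _ in Counter(tokens).most_common(top_n)]

def describe_content(text: str, media_metadata: Iterable[Mapping[str, str]], top_n: int = 3) -> List[str]:
    """Merge text-derived keywords with metadata for non-textual content."""
    keywords = text_keywords(text, top_n)
    for item in media_metadata:
        # Hypothetical metadata field, e.g. the title of a displayed photo.
        title = item.get("title")
        if title and title.lower() not in keywords:
            keywords.append(title.lower())
    return keywords
```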

[0140] (D15) In some embodiments of the method of any one of D1-D14, the search interface further includes one or more trending queries.

[0141] (D16) In some embodiments of the method of D15, the search interface further includes one or more applications that are predicted to be of interest to a user of the electronic device.

[0142] (D17) In another aspect, an electronic device is provided. In some embodiments, the electronic device includes: a touch-sensitive surface and a display, one or more processors, and memory storing one or more programs that, when executed by the one or more processors, cause the electronic device to perform the method described in any one of D1-D16.

[0143] (D18) In yet another aspect, an electronic device is provided and the electronic device includes: a touch-sensitive surface and a display and means for performing the method described in any one of D1-D16.

[0144] (D19) In still another aspect, a non-transitory computer-readable storage medium is provided. The non-transitory computer-readable storage medium stores executable instructions that, when executed by an electronic device with a touch-sensitive surface and a display, cause the electronic device to perform the method described in any one of D1-D16.

[0145] (D20) In still one more aspect, a graphical user interface on an electronic device with a touch-sensitive surface and a display is provided. In some embodiments, the graphical user interface includes user interfaces displayed in accordance with the method described in any one of D1-D16. In one more aspect, an information processing apparatus for use in an electronic device that includes a touch-sensitive surface and a display is provided. The information processing apparatus includes: means for performing the method described in any one of D1-D16.

[0146] (D21) In one additional aspect, an electronic device is provided that includes a display unit (e.g., display unit 4401, FIG. 44), a touch-sensitive surface unit (e.g., touch-sensitive surface unit 4403, FIG. 44), and a processing unit (e.g., processing unit 4405, FIG. 44). The processing unit is coupled with the touch-sensitive surface unit and the display unit. In some embodiments, the electronic device is configured in accordance with any one of the computing devices shown in FIG. 1E (i.e., Computing Devices A-D). For ease of illustration, FIG. 44 shows display unit 4401 and touch-sensitive surface unit 4403 as integrated with electronic device 4400; however, in some embodiments one or both of these units are in communication with the electronic device while remaining physically separate from it. In some embodiments, the touch-sensitive surface unit and the display unit are integrated in a single touch-sensitive display unit (also referred to herein as a touch-sensitive display). The processing unit includes a detecting unit (e.g., detecting unit 4407, FIG. 44), a displaying unit (e.g., displaying unit 4409, FIG. 44), a retrieving unit (e.g., retrieving unit 4411, FIG. 44), a search mode entering unit (e.g., search mode entering unit 4412, FIG. 44), a populating unit (e.g., populating unit 4413, FIG. 44), an obtaining unit (e.g., obtaining unit 4415, FIG. 44), a determining unit (e.g., determining unit 4417, FIG. 44), and a selecting unit (e.g., selecting unit 4419, FIG. 44).
The processing unit (or one or more components thereof, such as the units 4407-4419) is configured to: display (e.g., with the displaying unit 4409), on the display unit (e.g., the display unit 4401), content associated with an application that is executing on the electronic device; detect (e.g., with the detecting unit 4407), via the touch-sensitive surface unit (e.g., the touch-sensitive surface unit 4403), a swipe gesture that, when detected, causes the electronic device to enter a search mode that is distinct from the application; in response to detecting the swipe gesture, enter the search mode (e.g., with the search mode entering unit 4412), the search mode including a search interface that is displayed on the display unit (e.g., the display unit 4401); in conjunction with entering the search mode, determine (e.g., with the determining unit 4417) at least one suggested search query based at least in part on information associated with the content; and before receiving any user input at the search interface, populate (e.g., with the populating unit 4413) the displayed search interface with the at least one suggested search query.

[0147] (D22) In some embodiments of the electronic device of D21, detecting the swipe gesture includes detecting (e.g., with the detecting unit 4407) the swipe gesture over at least a portion of the content that is currently displayed.

[0148] (D23) In some embodiments of the electronic device of any one of D21-D22, the processing unit is further configured to: before detecting the swipe gesture, detect (e.g., with the detecting unit 4407) an input that corresponds to a request to view a home screen of the electronic device; and in response to detecting (e.g., with the detecting unit 4407) the input, cease to display the content associated with the application and display a respective page of the home screen of the electronic device (e.g., with the displaying unit 4409), wherein: the respective page is an initial page in a sequence of home screen pages; and the swipe gesture is detected (e.g., with the detecting unit 4407) while the initial page of the home screen is displayed on the display unit.

[0149] (D24) In some embodiments of the electronic device of any one of D21-D23, the search interface is displayed (e.g., with the displaying unit 4409 and/or the display unit 4401) as translucently overlaying the application.

[0150] (D25) In some embodiments of the electronic device of any one of D21-D24, the processing unit is further configured to: in accordance with a determination that the content includes textual content, determine (e.g., with the determining unit 4417) the at least one suggested search query based at least in part on the textual content.

[0151] (D26) In some embodiments of the electronic device of D25, determining the at least one suggested search query based at least in part on the textual content includes analyzing the textual content to detect (e.g., with the detecting unit 4407) one or more predefined keywords that are used to determine (e.g., with the determining unit 4417) the at least one suggested search query.

[0152] (D27) In some embodiments of the electronic device of any one of D21-D26, determining the at least one suggested search query includes determining (e.g., with the determining unit 4417) a plurality of suggested search queries, and populating the search interface includes populating (e.g., with the populating unit 4413) the search interface with the plurality of suggested search queries.

[0153] (D28) In some embodiments of the electronic device of any one of D21-D27, the processing unit is further configured to: detect (e.g., with the detecting unit 4407), via the touch-sensitive surface unit (e.g., the touch-sensitive surface unit 4403), a new swipe gesture over new content that is currently displayed; in response to detecting the new swipe gesture, enter the search mode (e.g., with the search mode entering unit 4412), where entering the search mode includes displaying the search interface on the display unit (e.g., with the displaying unit 4409); and in conjunction with entering the search mode and in accordance with a determination that the new content does not include textual content, populate (e.g., with the populating unit 4413) the search interface with suggested search queries that are based on a selected set of historical search queries from a user of the electronic device.

[0154] (D29) In some embodiments of the electronic device of D28, the search interface is displayed (e.g., with the displaying unit 4409) with a point of interest based on location information provided by a second application that is distinct from the application.

[0155] (D30) In some embodiments of the electronic device of any one of D28-D29, the search interface further includes one or more suggested applications.

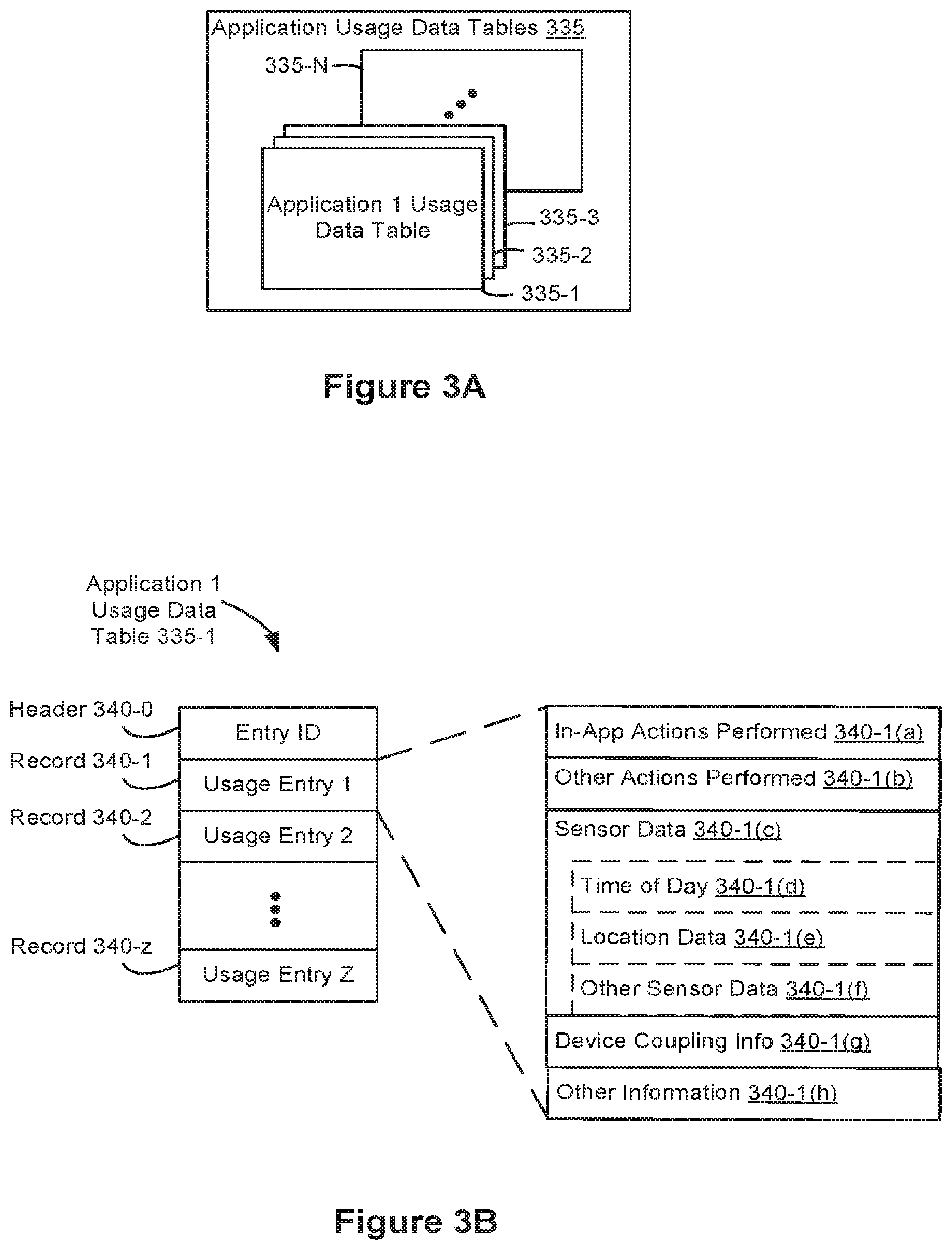

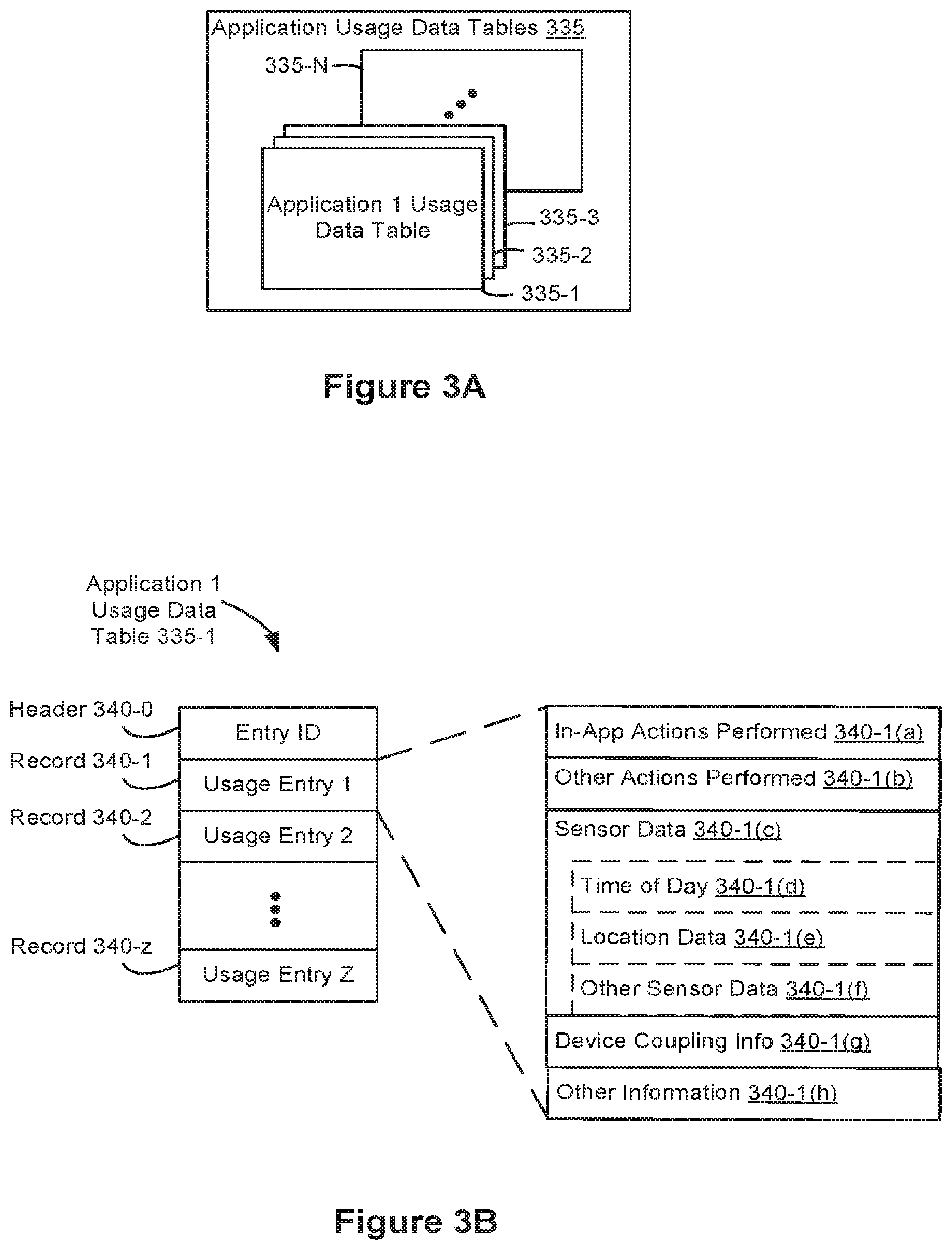

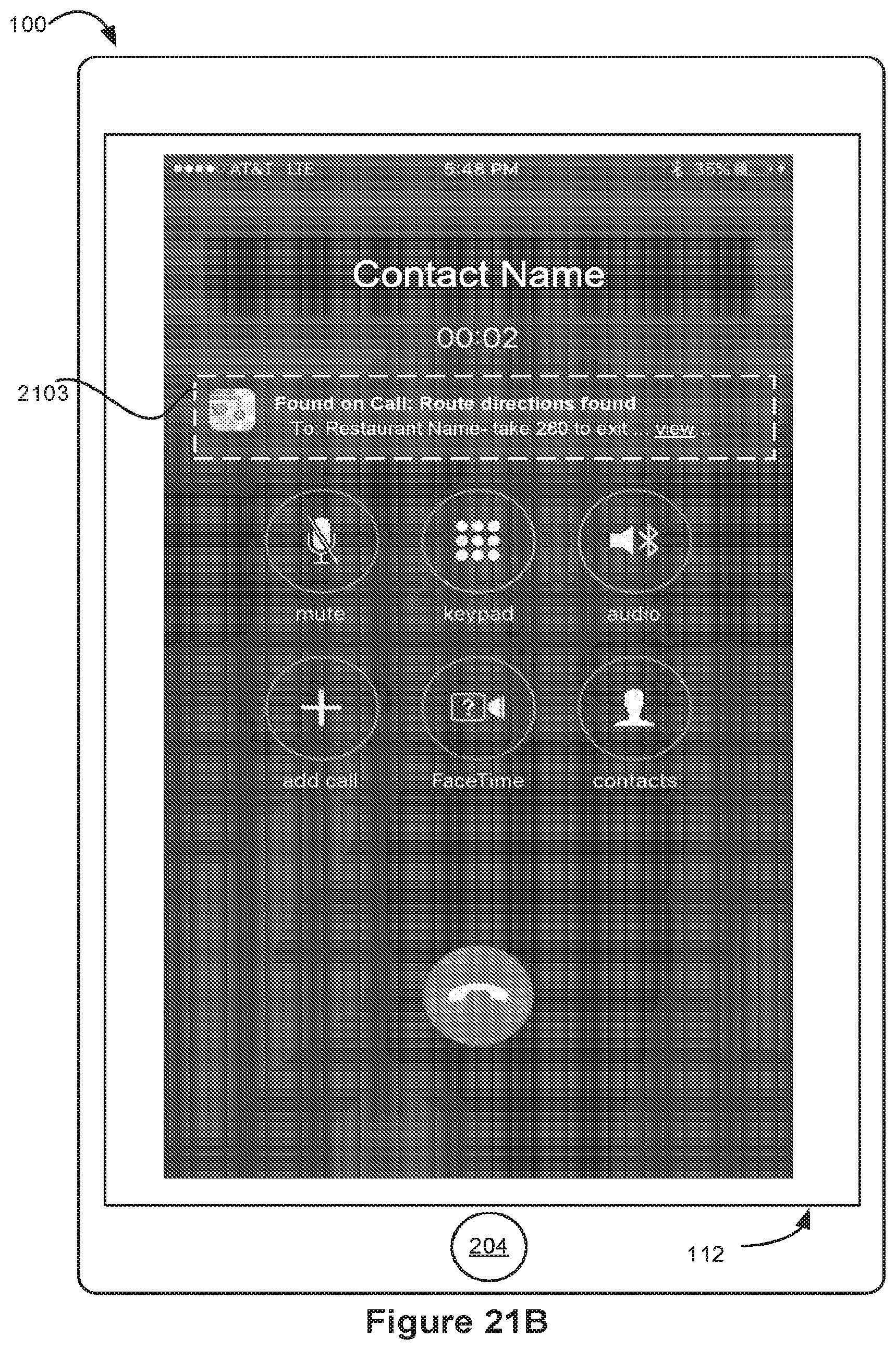

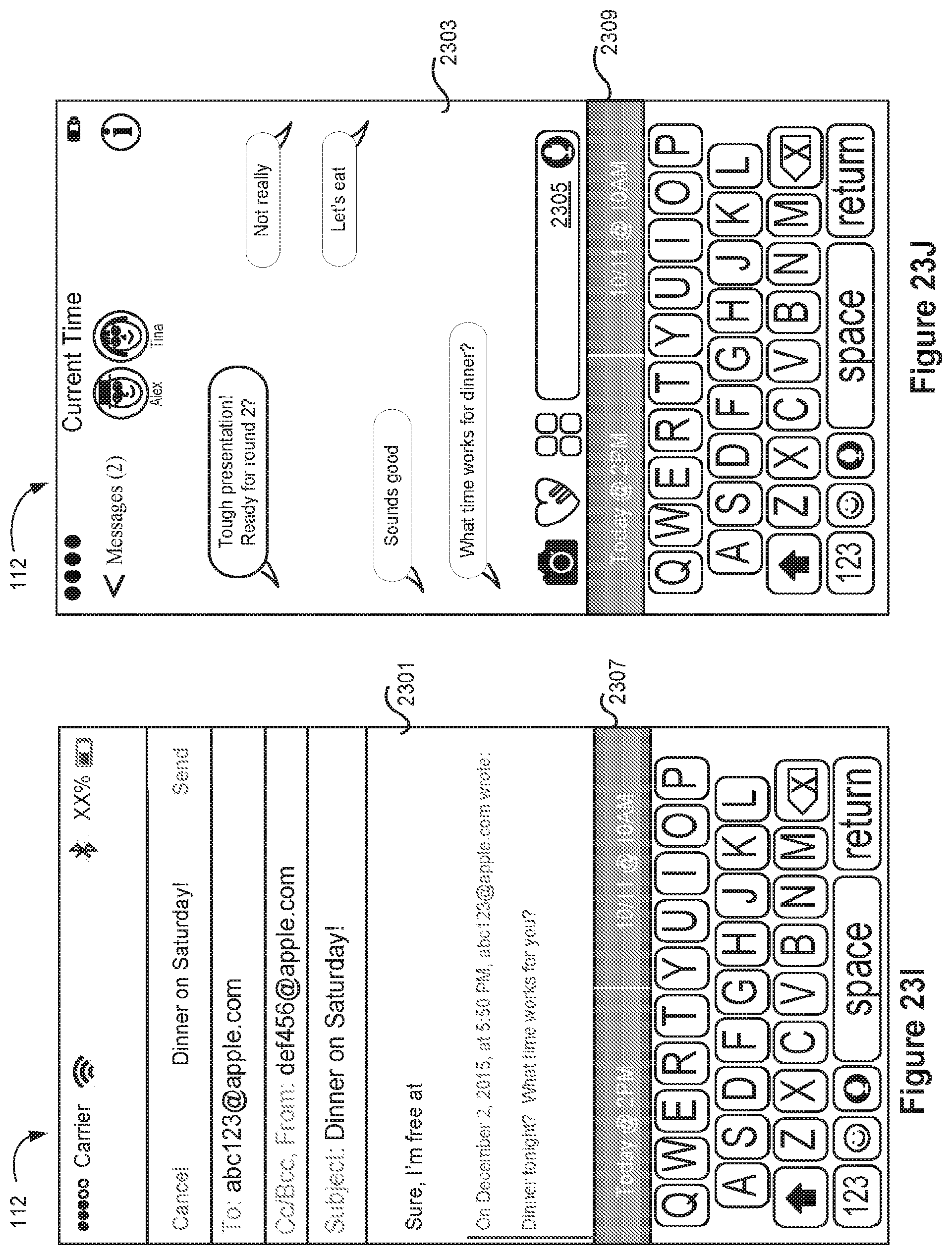

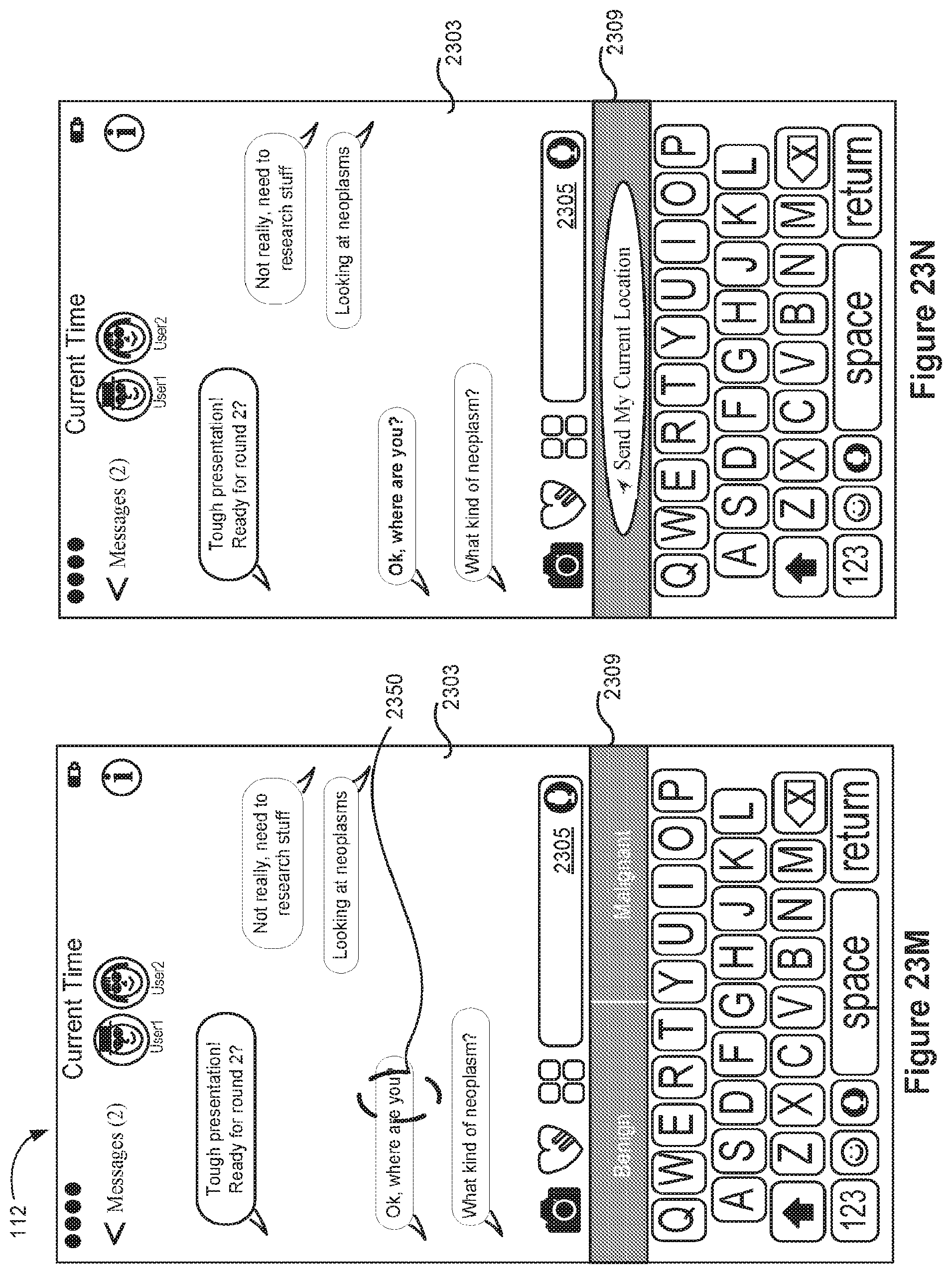

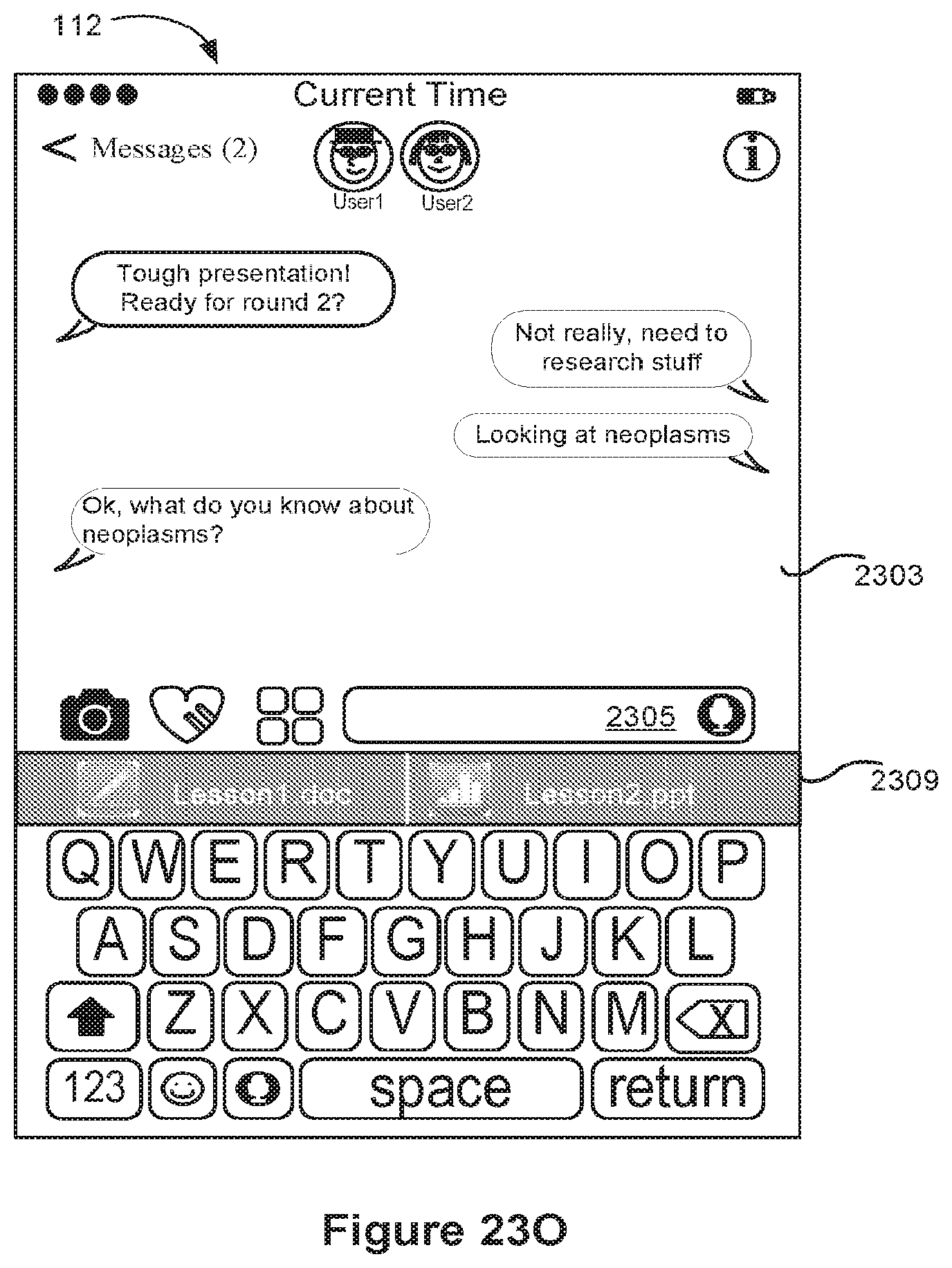

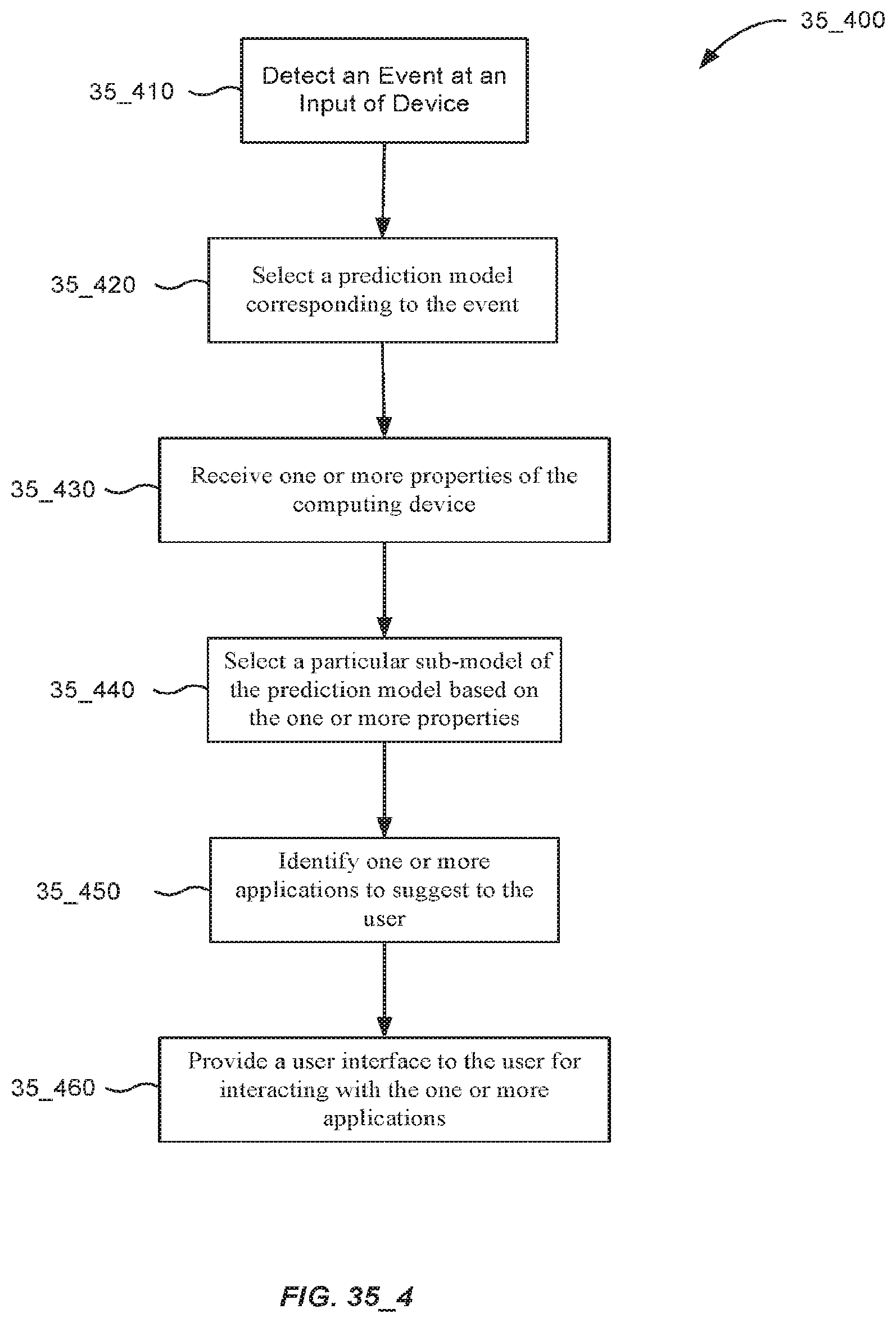

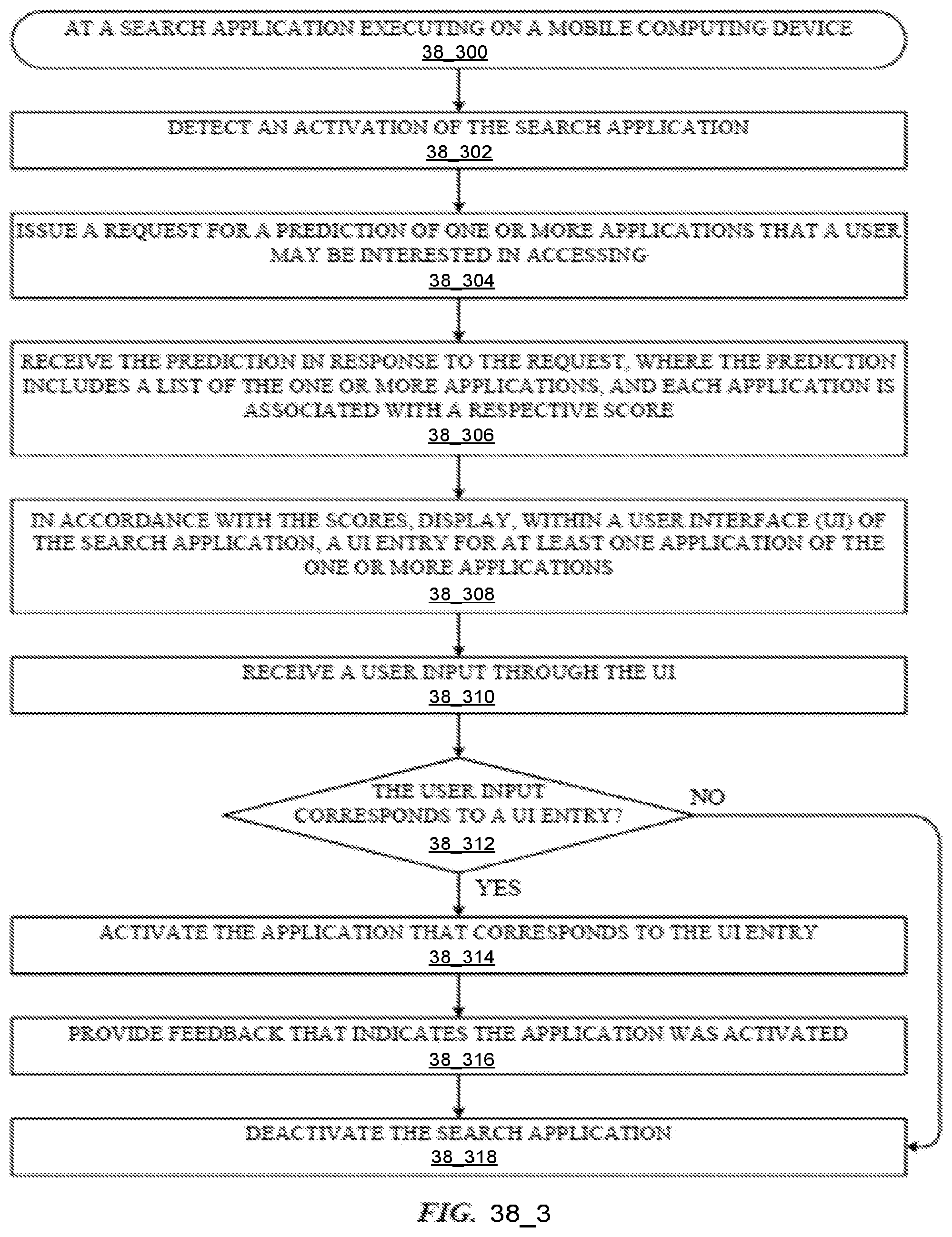

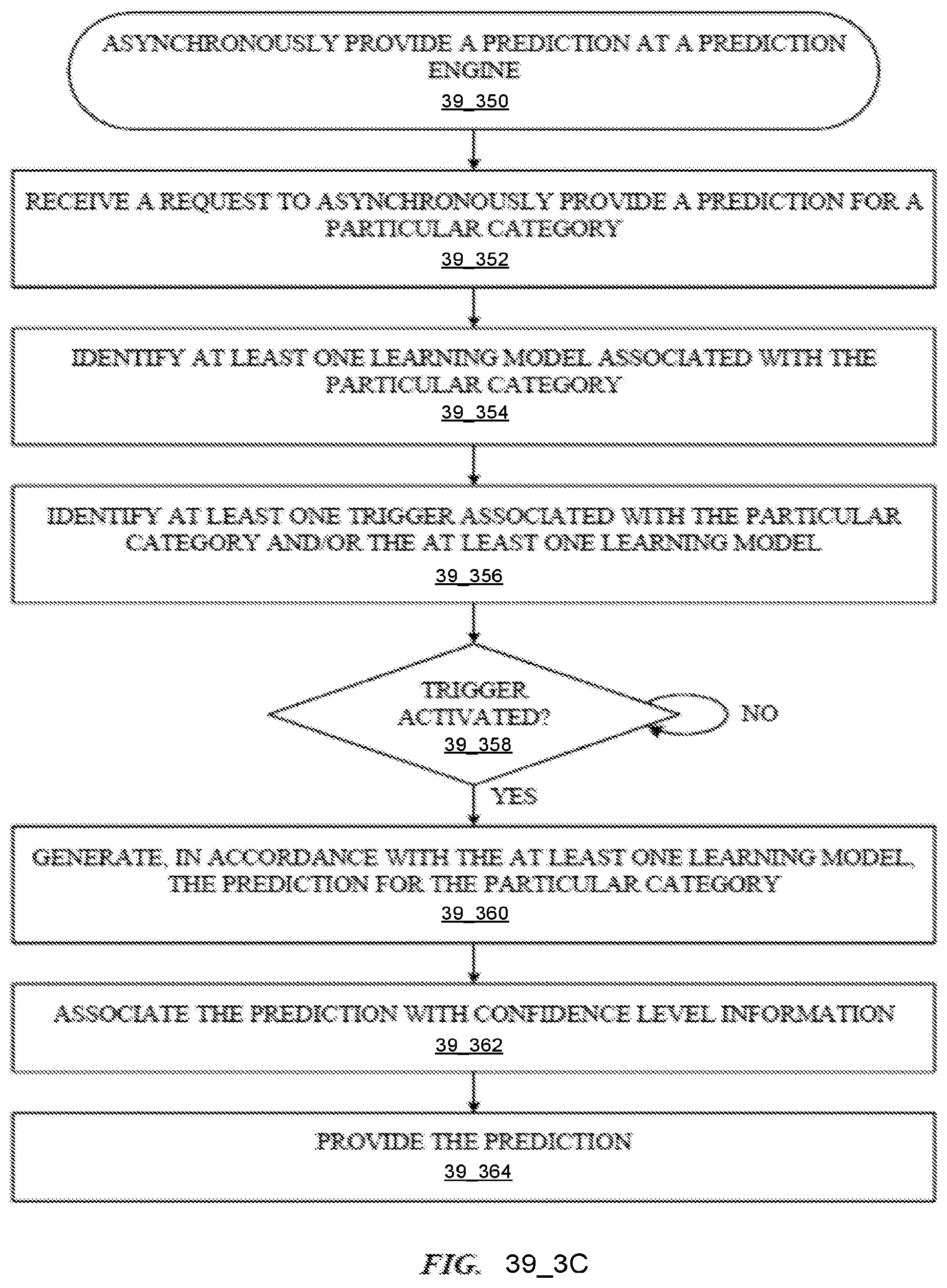

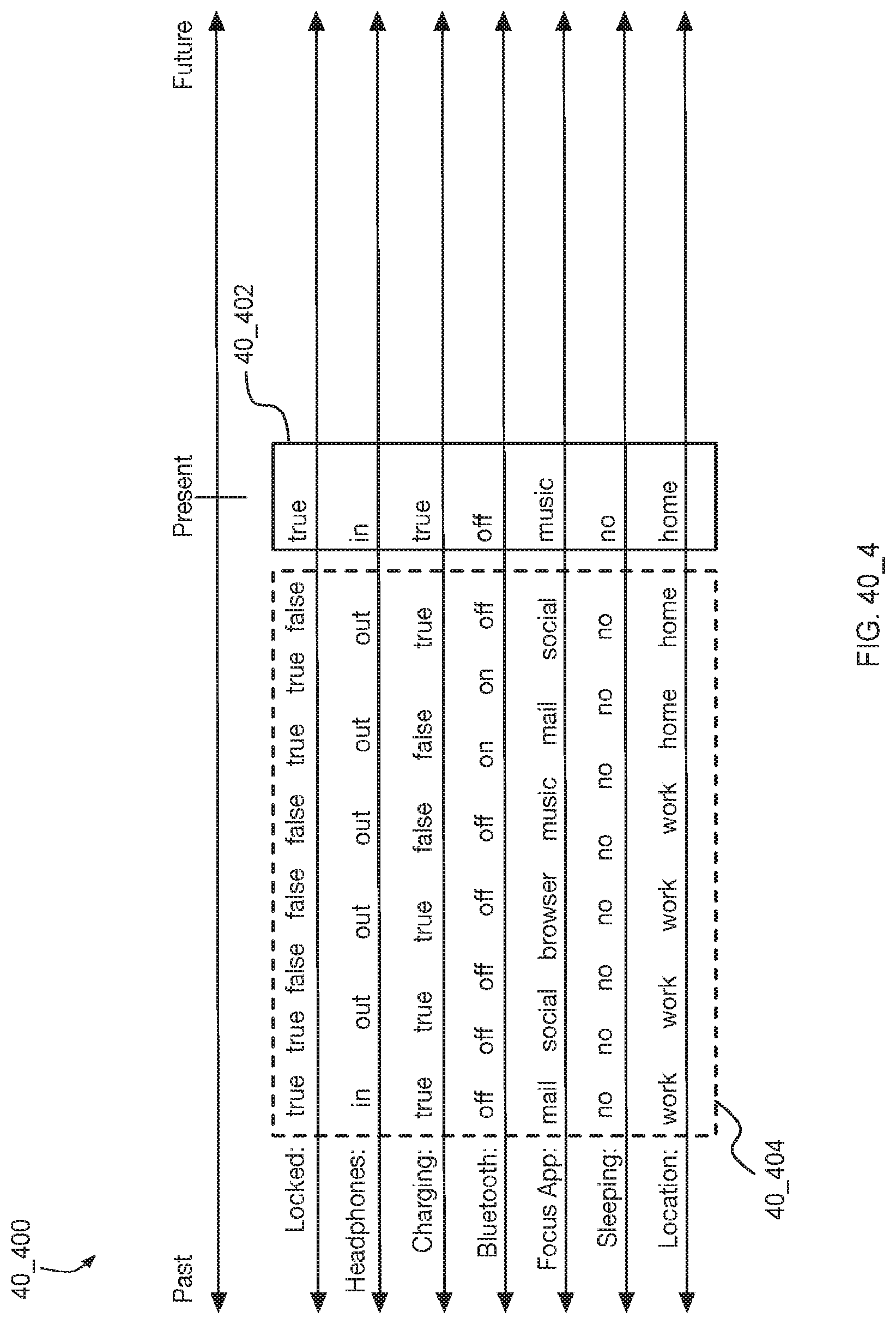

[0156] (D31) In some embodiments of the electronic device of any one of D28-D30, the set of historical search queries is selected (e.g., with the selecting unit 4419) based at least in part on frequency of recent search queries.