High-Precision Privacy-Preserving Real-Valued Function Evaluation

Gama; Nicolas ; et al.

U.S. patent application number 16/643833 was filed with the patent office on 2020-09-24 for high-precision privacy-preserving real-valued function evaluation. The applicant listed for this patent is Inpher, Inc.. Invention is credited to Jordan Brandt, Nicolas Gama, Dimitar Jetchev, Stanislav Peceny, Alexander Petric.

| Application Number | 20200304293 16/643833 |

| Document ID | / |

| Family ID | 1000004901233 |

| Filed Date | 2020-09-24 |

View All Diagrams

| United States Patent Application | 20200304293 |

| Kind Code | A1 |

| Gama; Nicolas ; et al. | September 24, 2020 |

High-Precision Privacy-Preserving Real-Valued Function Evaluation

Abstract

A method for performing privacy-preserving or secure multi-party computations enables multiple parties to collaborate to produce a shared result while preserving the privacy of input data contributed by individual parties. The method can produce a result with a specified high degree of precision or accuracy in relation to an exactly accurate plaintext (non-privacy-preserving) computation of the result, without unduly burdensome amounts of inter-party communication. The multi-party computations can include a Fourier series approximation of a continuous function or an approximation of a continuous function using trigonometric polynomials, for example, in training a machine learning classifier using secret shared input data. The multi-party computations can include a secret share reduction that transforms an instance of computed secret shared data stored in floating-point representation into an equivalent, equivalently precise, and equivalently secure instance of computed secret shared data having a reduced memory storage requirement.

| Inventors: | Gama; Nicolas; (Lausanne, CH) ; Brandt; Jordan; (Holton, KS) ; Jetchev; Dimitar; (St-Saphorin-Lavaux, CH) ; Peceny; Stanislav; (New York, NY) ; Petric; Alexander; (New York, NY) | ||||||||||

| Applicant: |

|

||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|

| Family ID: | 1000004901233 | ||||||||||

| Appl. No.: | 16/643833 | ||||||||||

| Filed: | August 30, 2018 | ||||||||||

| PCT Filed: | August 30, 2018 | ||||||||||

| PCT NO: | PCT/US2018/048963 | ||||||||||

| 371 Date: | March 10, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | ||

|---|---|---|---|---|

| 62647635 | Mar 24, 2018 | |||

| 62641256 | Mar 9, 2018 | |||

| 62560175 | Sep 18, 2017 | |||

| 62552161 | Aug 30, 2017 | |||

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 17/16 20130101; G06N 3/08 20130101; H04L 2209/04 20130101; G06N 3/0481 20130101; H04L 2209/46 20130101; H04L 9/085 20130101; G06F 17/147 20130101 |

| International Class: | H04L 9/08 20060101 H04L009/08; G06F 17/14 20060101 G06F017/14; G06N 3/08 20060101 G06N003/08; G06N 3/04 20060101 G06N003/04; G06F 17/16 20060101 G06F017/16 |

Claims

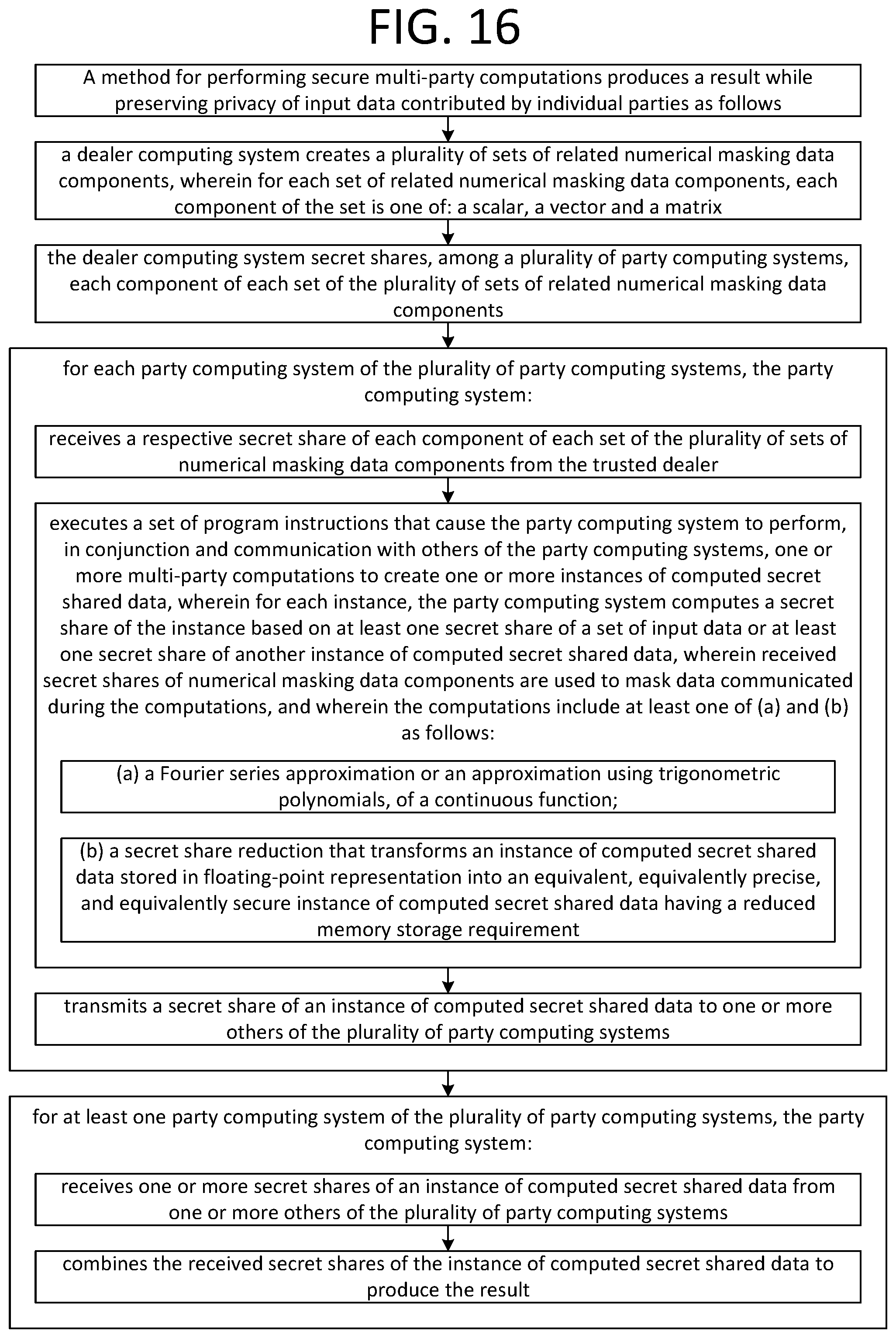

1. A method for performing secure multi-party computations to produce a result while preserving privacy of input data contributed by individual parties, the method comprising: a dealer computing system creating a plurality of sets of related numerical masking data components, wherein for each set of related numerical masking data components, each component of the set is one of: a scalar, a vector and a matrix; the dealer computing system secret sharing, among a plurality of party computing systems, each component of each set of the plurality of sets of related numerical masking data components; for each party computing system of the plurality of party computing systems, the party computing system: receiving a respective secret share of each component of each set of the plurality of sets of numerical masking data components from the dealer computing system, and for at least one set of input data, receiving a secret share of the set of input data; executing a set of program instructions that cause the party computing systems to perform one or more multi-party computations to create one or more instances of computed secret shared data, wherein for each instance, each party computing system computes a secret share of the instance based on at least one secret share of a set of input data or at least one secret share of another instance of computed secret shared data, wherein received secret shares of numerical masking data components are used to mask data communicated during the computations, and wherein the computations comprise at least one of (a), (b) and (c) as follows: (a) approximating a value of a continuous function using a Fourier series selected, based on the set of input data or the another instance of computed secret shared data, from a plurality of determined Fourier series, wherein each of the plurality of determined Fourier series is configured to approximate the continuous function on an associated subinterval of a domain of the continuous function, (b) a secret share reduction that transforms an instance of computed secret shared data stored in floating-point representation into an equivalent, equivalently precise, and equivalently secure instance of computed secret shared data, wherein each secret share of the instance has a reduced memory storage requirement, and wherein the transformation is performed by at least: each party computing system of the plurality of computing systems: selecting a set of highest order digits of a secret share beyond a predetermined cutoff position; and retaining a set of lowest order digits of the secret share up to the cutoff position; determining a sum of values represented by the selected set of highest order digits across the plurality of computing systems; and distributing the determined sum across the retained sets of lowest order digits of the secret shares of the plurality of party computing systems, and (c) determining secret shares of a Fourier series evaluation on the set of input data or the another instance of computed secret shared data by at least: masking secret shares of the set of input data or the another instance of computed secret shared data with the secret shares of numerical masking data components; determining and revealing a value represented by the masked secret shares; calculating values of Fourier series basis functions based on the determined value represented by the masked secret shares; and calculating the secret shares of the Fourier series evaluation based on the calculated values of the Fourier series basis functions and the secret shares of numerical masking data components; for each party computing system of the plurality of party computing systems, the party computing system, transmitting a secret share of an instance of computed secret shared data to one or more others of the plurality of party computing systems; and for at least one party computing system of the plurality of party computing systems, the party computing system: receiving one or more secret shares of an instance of computed secret shared data from one or more others of the plurality of party computing systems; and combining the received secret shares of the instance of computed secret shared data to produce the result.

2. The method of claim 1, wherein the computations comprise (a) and (b).

3. The method of claim 1, wherein the computations comprise (a).

4. The method of claim 3, further comprising: partitioning a portion of the domain of the continuous function into a plurality of subintervals; and for each subinterval of the plurality of subintervals: determining a Fourier series approximation of the function on the subinterval.

5. The method of claim 3, wherein the multi-party computations further comprise selecting the associated subinterval using at least one of garbled circuits and oblivious selection.

6. The method of claim 3, wherein the approximation is a uniform approximation of the continuous function.

7. The method of claim 3, wherein the continuous function is a machine learning activation function.

8. The method of claim 7, wherein the machine learning activation function is the sigmoid function.

9. The method of claim 7, wherein the machine learning activation function is the hyperbolic tangent function.

10. The method of claim 7, wherein the machine learning activation function is a rectifier activation function for a neural network.

11. The method of claim 3, wherein the continuous function is the sigmoid function.

12. The method of claim 1, wherein the computations comprise (b).

13. The method of claim 12, wherein determining a sum of values represented by the selected set of highest order digits across the plurality of computing systems comprises: determining a set of numerical masking data components that sum to zero; distributing to each of the party computing systems one member of the determined set; each party computing system receiving a respective member of the determined set; each party computing system adding the received member to its selected set of highest order digits of its secret share to obtain a masked set of highest order digits; and summing the masked sets of highest order digits.

14. The method of claim 1, wherein the result is a set of coefficients of a logistic regression classification model.

15. The method of claim 1, wherein the method implements a logistic regression classifier, and wherein the result is a prediction of the logistic regression classifier based on the input data.

16. The method of claim 1, wherein the dealer computing system is a trusted dealer computing system, and wherein communications between the party computing systems are inaccessible to the trusted dealer computing system.

17. The method of claim 1, wherein the dealer computing system is an honest-but-curious dealer computing system, and wherein privacy of secret shared input data contributed by one or more of the party computing systems is preserved regardless of whether communications between the party computing systems can be accessed by the honest-but-curious dealer computing system.

18. The method of claim 1, further comprising: for at least one set of input data, performing a statistical analysis on the set of input data to determine a set of input data statistics; performing a pre-execution of a set of source code instructions using the set of input data statistics to generate statistical type parameters for each of one or more variable types; and compiling the set of source code instructions based on the set of statistical type parameters to generate the set of program instructions.

19. The method of claim 18, wherein the pre-execution is performed subsequent to: unrolling loops in the set of source code instructions having a determinable number of iterations; and unrolling function calls in the set of source code instructions.

20. The method of claim 1, wherein at least one set of related numerical masking data components consists of three components having a relationship where one of the components is equal to a multiplicative product of a remaining two of the components.

21. The method of claim 1, wherein at least one set of related numerical masking data components comprises a number and a set of one or more associated values of Fourier basis functions evaluated on the number.

22. The method of claim 1, wherein the computations comprise (c).

23. The method of claim 22, wherein the calculating the secret shares of the Fourier series evaluation is performed on the basis of the formula: e.sup.imx.sub.+=e.sup.im(x.sym..lamda.).sup.e.sup.im(-.lamda.).sub.+ where x represents the set of input data or the another instance of computed secret shared data, .lamda. represents the masking data, m represents an integer, the notation n.sub.+ denotes additive secret shares of a number n, and the notation .sym. denotes addition modulo 2.pi..

24. The method of claim 1, wherein the computations comprise (a), (b), and (c).

25. The method of claim 1, wherein the computations comprise (a) and (c).

26. The method of any one of claims 1 through 25, wherein the result has a predetermined degree of precision in relation to a plaintext computation of the result.

27. The method of any one of claims 1 through 25, further comprising at least one of the plurality of party computing systems secret sharing, among the plurality of party computing systems, a respective set of input data.

28. A system comprising a plurality of computer systems, wherein the plurality of computer systems are configured to perform the method of any one of claims 1 through 25.

29. A non-transitory computer-readable medium encoded with the set of program instructions of any one of claims 1 through 25.

30. A non-transitory computer-readable medium encoded with computer code that, when executed by plurality of computer systems, cause the plurality of computer systems to perform the method of any one of claims 1 through 25.

Description

RELATED APPLICATIONS

[0001] The subject matter of this application is related to U.S. Provisional Application No. 62/552,161, filed on 2017 Aug. 30, U.S. Provisional Application No. 62/560,175, filed on 2017 Sep. 18, U.S. Provisional Application No. 62/641,256, filed on 2018 Mar. 9, and U.S. Provisional Application No. 62/647,635, filed on 2018 Mar. 24, all of which applications are incorporated herein by reference in their entireties.

BACKGROUND OF THE INVENTION

[0002] There exist problems in privacy-preserving or secure multi-party computing that do not have effective solutions in the prior art. For example, suppose a number of organizations desire to collaborate in training a machine learning classifier in order to detect fraudulent activity, such as financial scams or phishing attacks. Each organization has a set of training data with examples of legitimate and fraudulent activity, but the individual organizations want to retain the privacy and secrecy of their data while still being able to collaboratively contribute their data to the training of the classifier. Such a training, in theory, can be accomplished using privacy-preserving or secure multi-party computing techniques. In order to be effective, however, the classifier must also support a very high level of precision to detect what may be relatively rare occurrences of fraudulent activity as compared to much more frequent legitimate activity. Existing secure multi-party computing techniques do not provide requisite levels of precision for such training without requiring unduly burdensome amounts of inter-party communication.

SUMMARY OF THE INVENTION

[0003] A method for performing privacy-preserving or secure multi-party computations enables multiple parties to collaborate to produce a shared result while preserving the privacy of input data contributed by individual parties. The method can produce a result with a specified high degree of precision or accuracy in relation to an exactly accurate plaintext (non-privacy-preserving) computation of the result, without unduly burdensome amounts of inter-party communication. The multi-party computations can include a Fourier series approximation of a continuous function or an approximation of a continuous function using trigonometric polynomials, for example, in training a machine learning classifier using secret shared input data. The multi-party computations can include a secret share reduction that transforms an instance of computed secret shared data stored in floating-point representation into an equivalent, equivalently precise, and equivalently secure instance of computed secret shared data having a reduced memory storage requirement.

[0004] As will be appreciated by one skilled in the art, multiple aspects described in the remainder of this summary can be variously combined in different operable embodiments. All such operable combinations, though they may not be explicitly set forth in the interest of efficiency, are specifically contemplated by this disclosure.

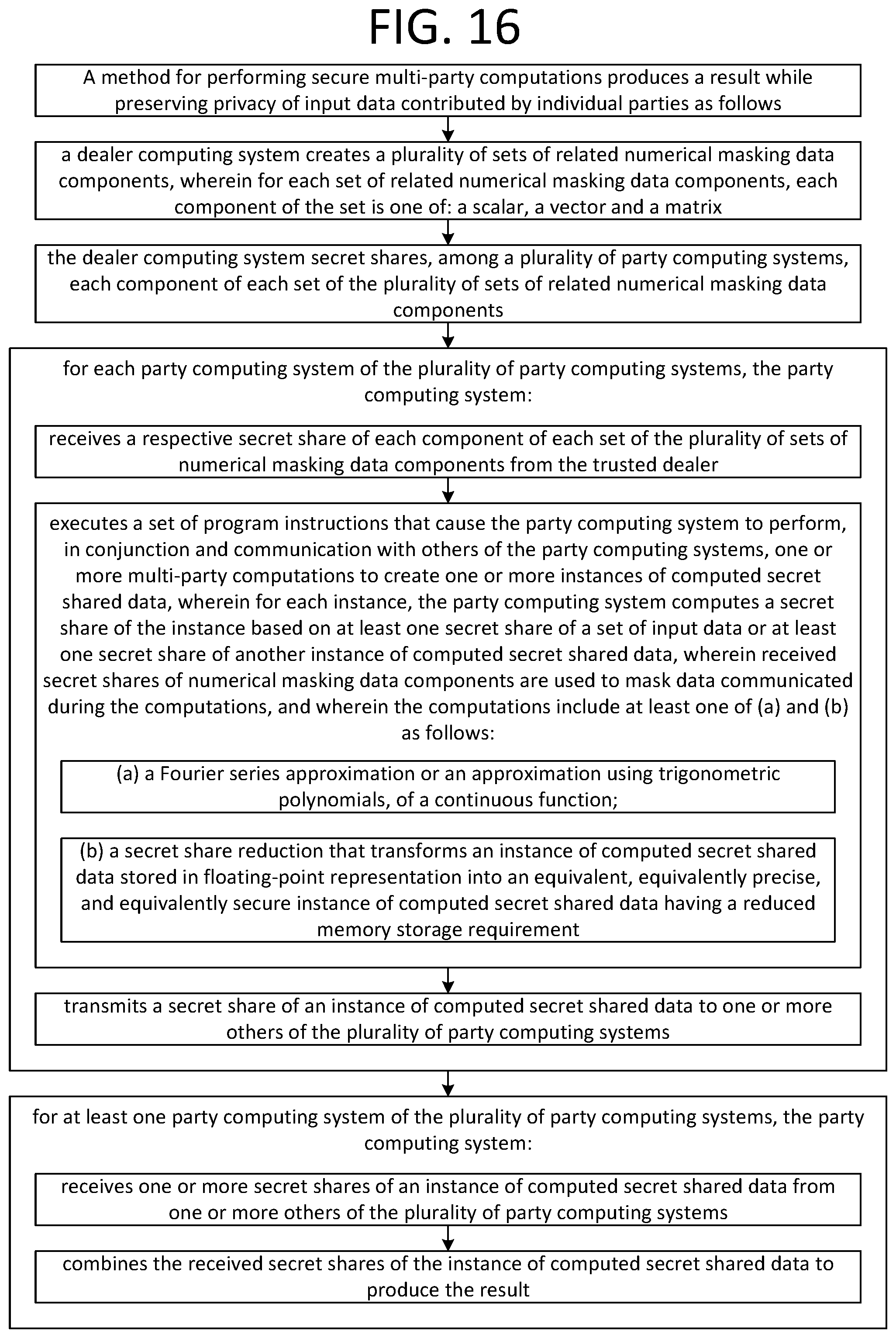

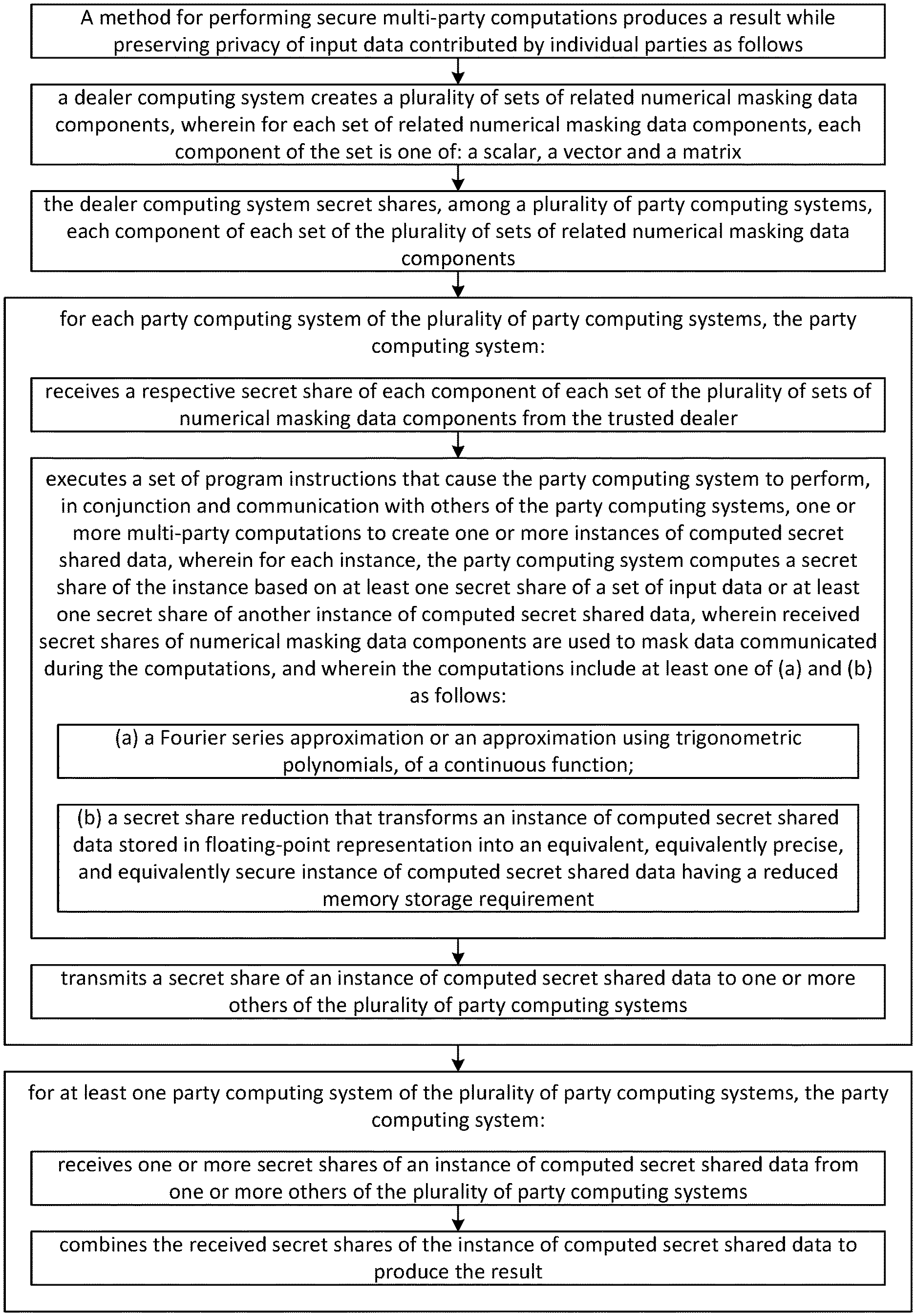

[0005] A method for performing secure multi-party computations can produce a result while preserving the privacy of input data contributed by individual parties.

[0006] In the method, a dealer computing system can create a plurality of sets of related numerical masking data components, wherein for each set of related numerical masking data components, each component of the set is one of: a scalar, a vector and a matrix. The dealer computing system can secret share, among a plurality of party computing systems, each component of each set of the plurality of sets of related numerical masking data components.

[0007] In the method, for each party computing system of the plurality of party computing systems, the party computing system can receive a respective secret share of each component of each set of the plurality of sets of numerical masking data components from the trusted dealer. The party computing system can, for at least one set of input data, receive a secret share of the set of input data. The party computing system can execute a set of program instructions that cause the party computing system to perform, in conjunction and communication with others of the party computing systems, one or more multi-party computations to create one or more instances of computed secret shared data. For each instance, the party computing system can compute a secret share of the instance based on at least one secret share of a set of input data or at least one secret share of another instance of computed secret shared data. Received secret shares of numerical masking data components can be used to mask data communicated during the computations.

[0008] The computations can include, for example, a Fourier series approximation of a continuous function or an approximation of a continuous function using trigonometric polynomials. The computations can also or alternatively include, for example, a secret share reduction that transforms an instance of computed secret shared data stored in floating-point representation into an equivalent, equivalently precise, and equivalently secure instance of computed secret shared data having a reduced memory storage requirement.

[0009] In the method, the party computing system can transmit a secret share of an instance of computed secret shared data to one or more others of the plurality of party computing systems. For at least one party computing system, the party computing system can receive one or more secret shares of an instance of computed secret shared data from one or more others of the plurality of party computing systems. The party computing system can combine the received secret shares of the instance of computed secret shared data to produce the result.

[0010] The method can be performed such that the computations further include partitioning a domain of a function into a plurality of subintervals; and for each subinterval of the plurality of subintervals: determining an approximation of the function on the subinterval, and computing an instance of computed secret shared data using at least one of garbled circuits and oblivious selection.

[0011] The approximation of the continuous function can be on an interval. The approximation can be a uniform approximation of the continuous function. The continuous function can be a machine learning activation function. The machine learning activation function can be the sigmoid function. The machine learning activation function can be the hyperbolic tangent function. The machine learning activation function can be a rectifier activation function for a neural network. The continuous function can be the sigmoid function.

[0012] The secret share reduction can include masking one or more most significant bits of each secret share of an instance of computed secret shared data. The result can be a set of coefficients of a logistic regression classification model. The method can implement a logistic regression classifier, and the result can be a prediction of the logistic regression classifier based on the input data.

[0013] The dealer computing system can be a trusted dealer computing system, and communications between the party computing systems can be made inaccessible to the trusted dealer computing system.

[0014] The dealer computing system can be an honest-but-curious dealer computing system, and privacy of secret shared input data contributed by one or more of the party computing systems can be preserved regardless of whether communications between the party computing systems can be accessed by the honest-but-curious dealer computing system.

[0015] The method can further include: for at least one set of input data, performing a statistical analysis on the set of input data to determine a set of input data statistics; performing a pre-execution of a set of source code instructions using the set of input data statistics to generate statistical type parameters for each of one or more variable types; and compiling the set of source code instructions based on the set of statistical type parameters to generate the set of program instructions. The pre-execution can be performed subsequent to: unrolling loops in the set of source code instructions having a determinable number of iterations; and unrolling function calls in the set of source code instructions.

[0016] The method can be performed such that at least one set of related numerical masking data components consists of three components having a relationship where one of the components is equal to a multiplicative product of a remaining two of the components.

[0017] The method can be performed such that at least one set of related numerical masking data components comprises a number and a set of one or more associated values of Fourier basis functions evaluated on the number.

[0018] The method can be performed such that the result has a predetermined degree of precision in relation to a plaintext computation of the result.

[0019] The method can be performed such that at least one of the plurality of party computing systems secret shares, among the plurality of party computing systems, a respective set of input data.

[0020] A system can include a plurality of computer systems, wherein the plurality of computer systems are configured to perform the method.

[0021] A non-transitory computer-readable medium can be encoded with the set of program instructions.

[0022] A non-transitory computer-readable medium can be encoded with computer code that, when executed by plurality of computer systems, cause the plurality of computer systems to perform the method.

BRIEF DESCRIPTION OF THE DRAWINGS

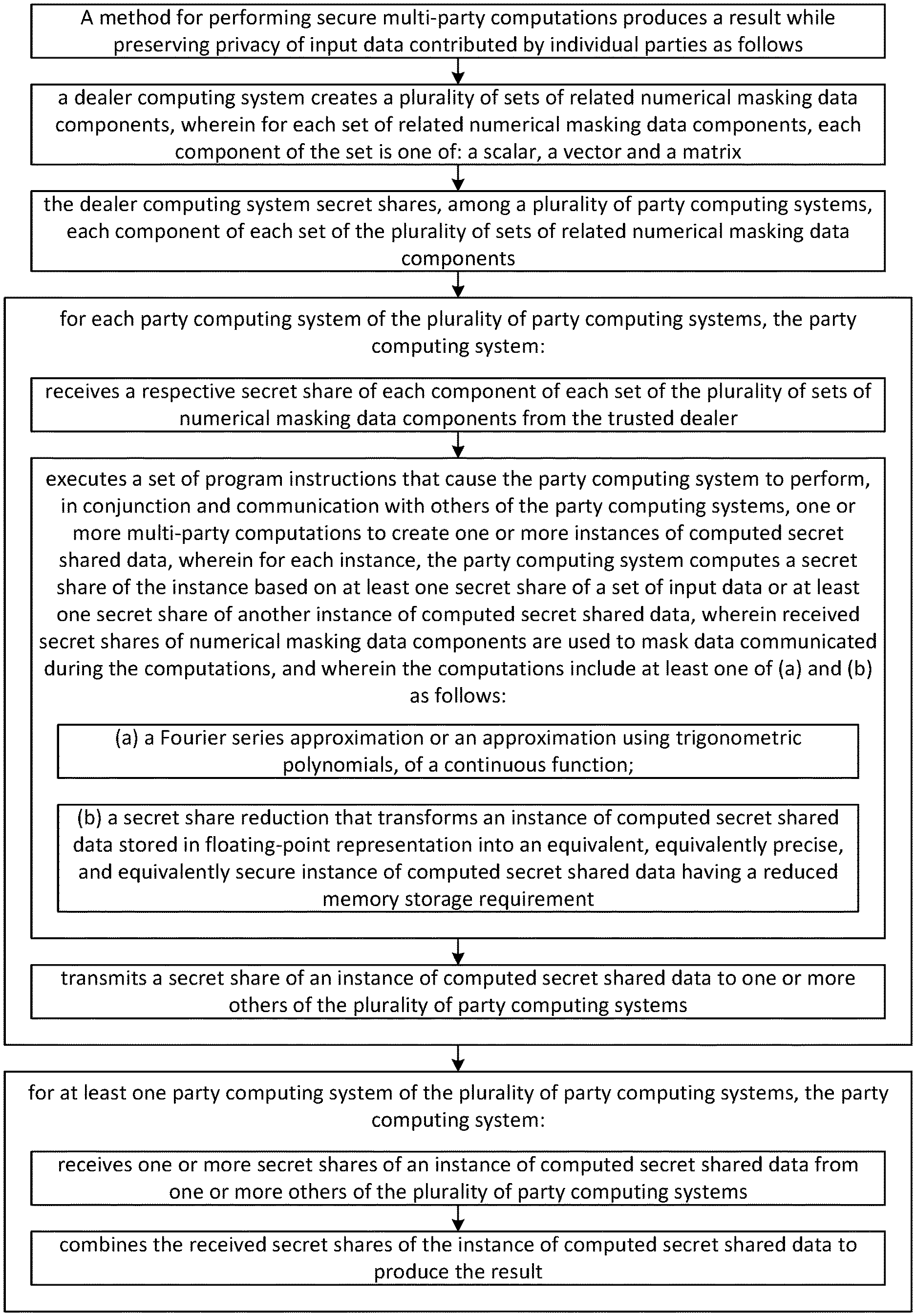

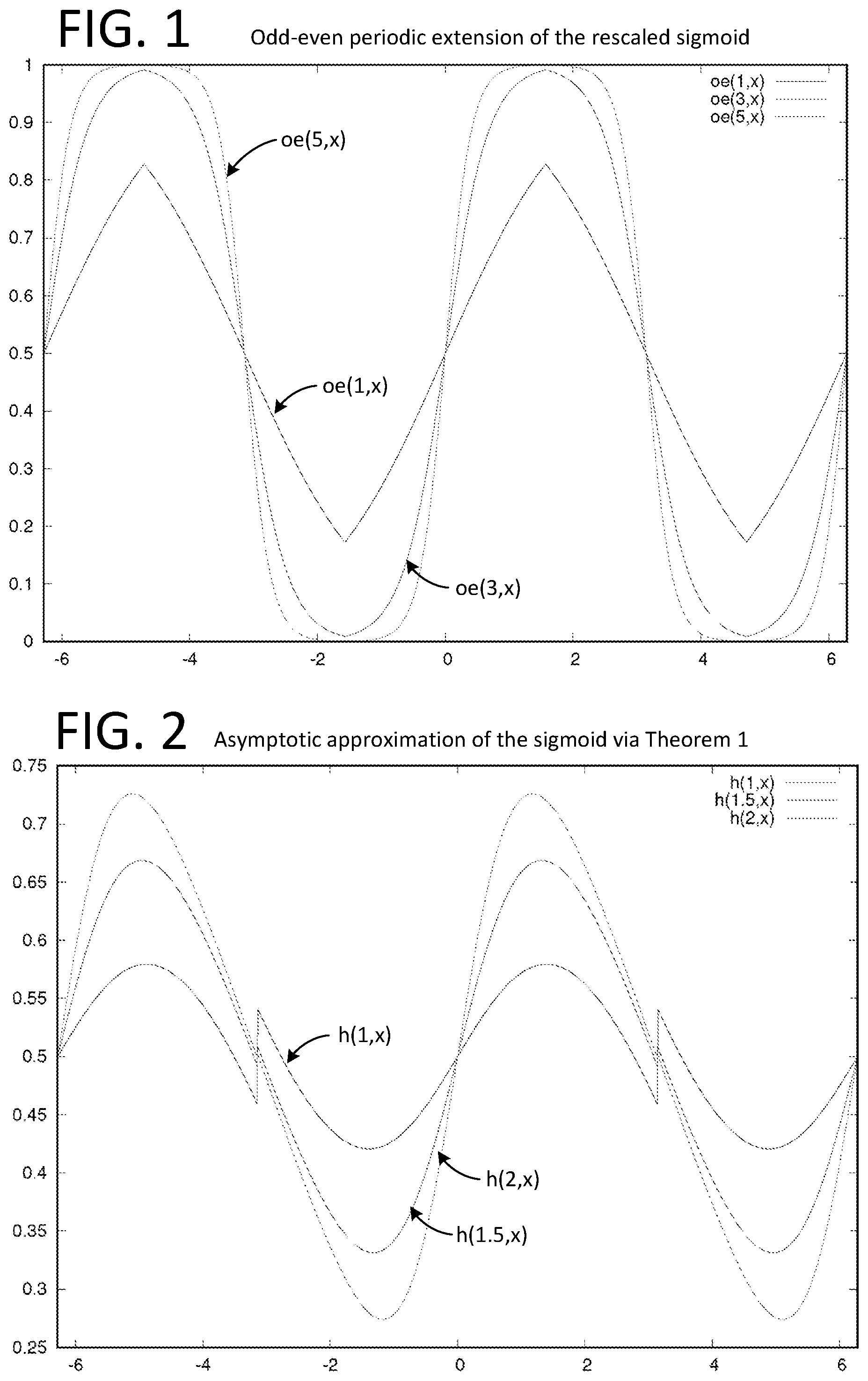

[0023] FIG. 1 illustrates a graph of the odd-even periodic extension of the rescaled sigmoid.

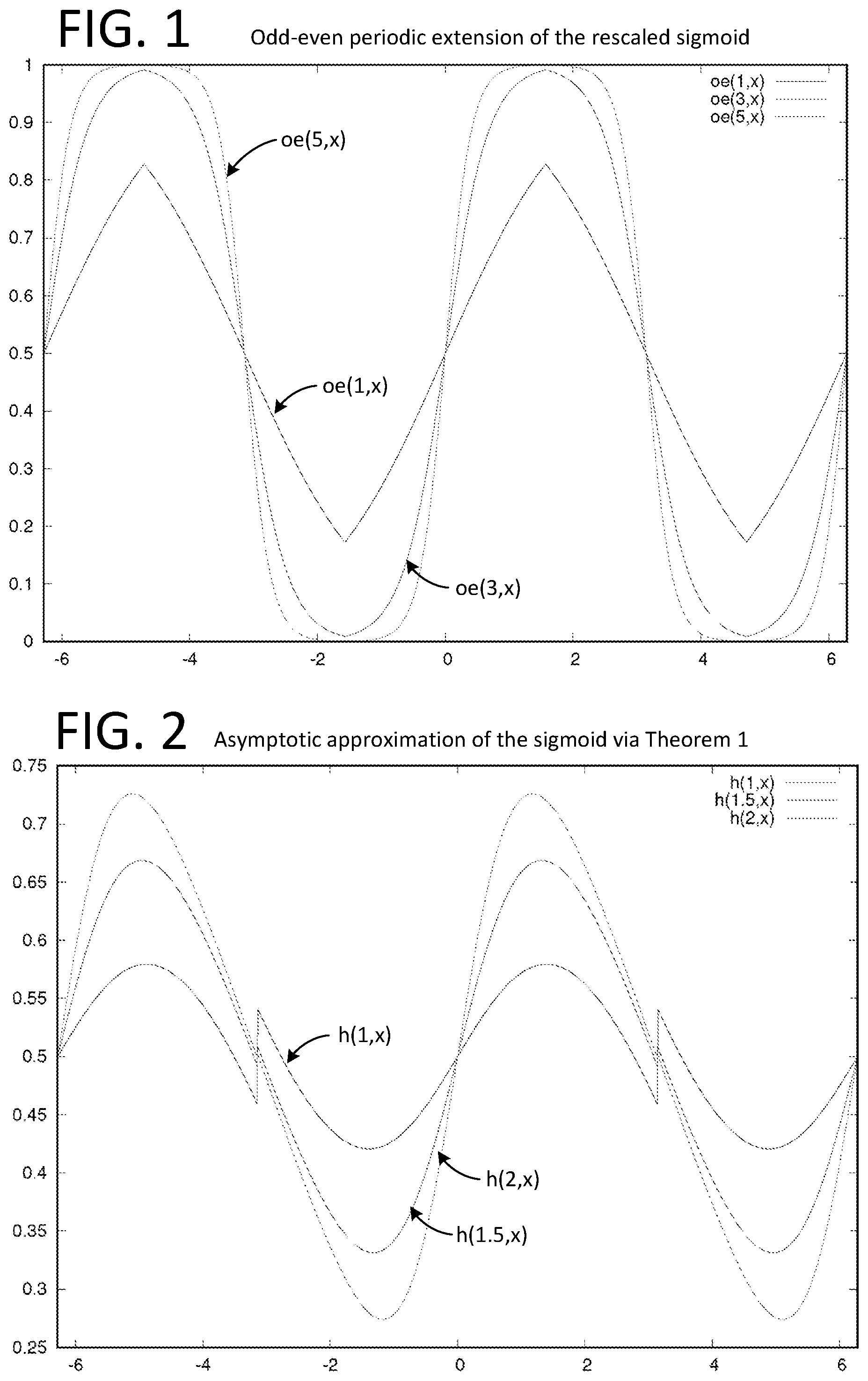

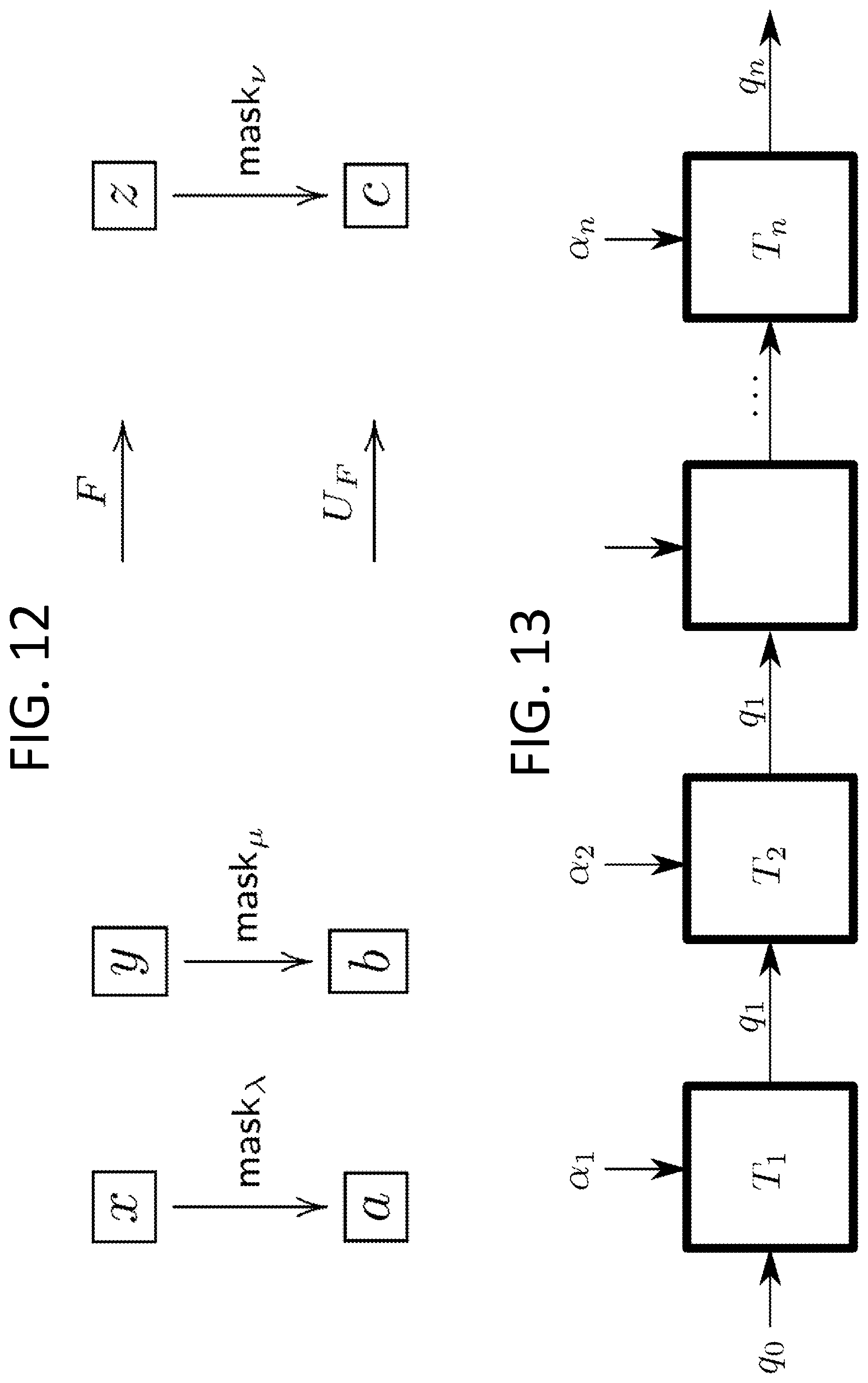

[0024] FIG. 2 illustrates an asymptotic approximation of the sigmoid via Theorem 1.

[0025] FIG. 3 illustrates a schematic of the connections during the offline phase of the MPC protocols in accordance with one embodiment.

[0026] FIG. 4 illustrates a schematic of the communication channels between players during the online phase in accordance with one embodiment.

[0027] FIG. 5 illustrates a table of results of our implementation summarizing the different measures we obtained during our experiments for n=3 players.

[0028] FIG. 6 shows the evolution of the cost function during the logistic regression as a function of the number of iterations.

[0029] FIG. 7 shows the evolution of the F-score during the same logistic regression as a function of the number of iterations.

[0030] FIG. 8 illustrates an example truth table and a corresponding encrypted truth table (encryption table).

[0031] FIG. 9 illustrates a table in which we give the garbling time, garbling size and the evaluation time for different garbling optimizations.

[0032] FIG. 10 illustrates an example comparison circuit.

[0033] FIG. 11 illustrates and example secret addition circuit.

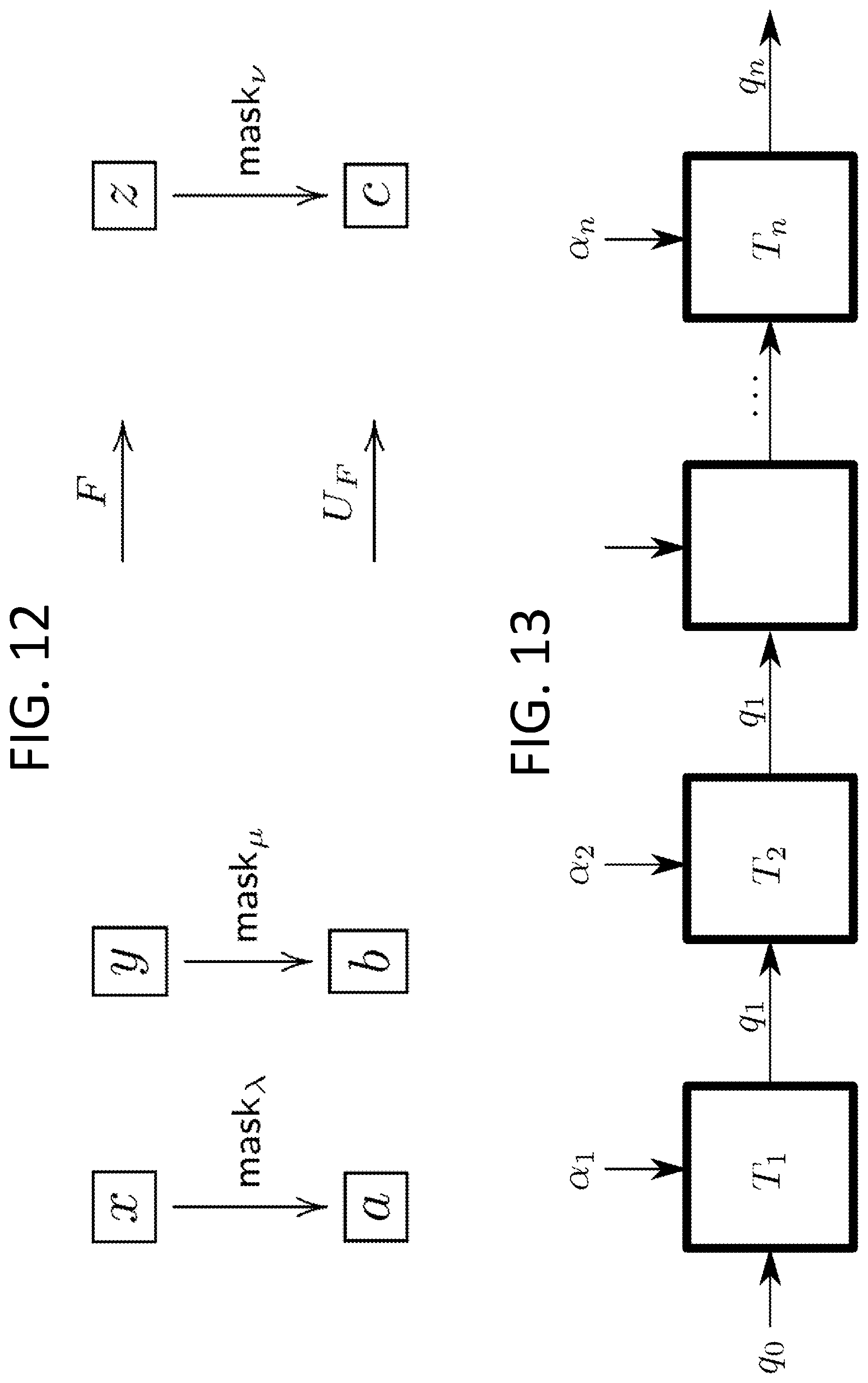

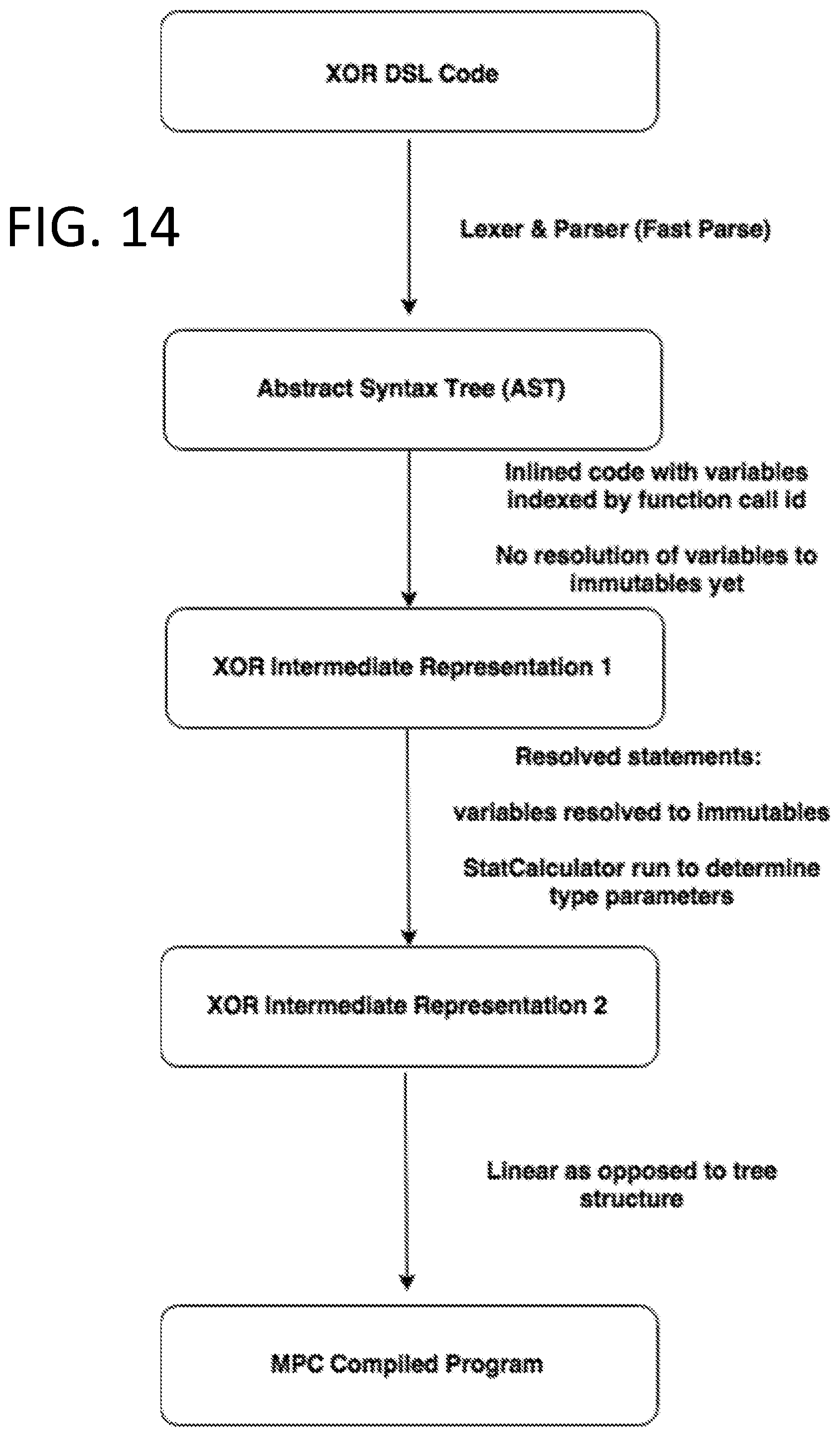

[0034] FIG. 12 illustrates a diagram of two example functions.

[0035] FIG. 13 illustrates a schematic of a state machine that processes n letters.

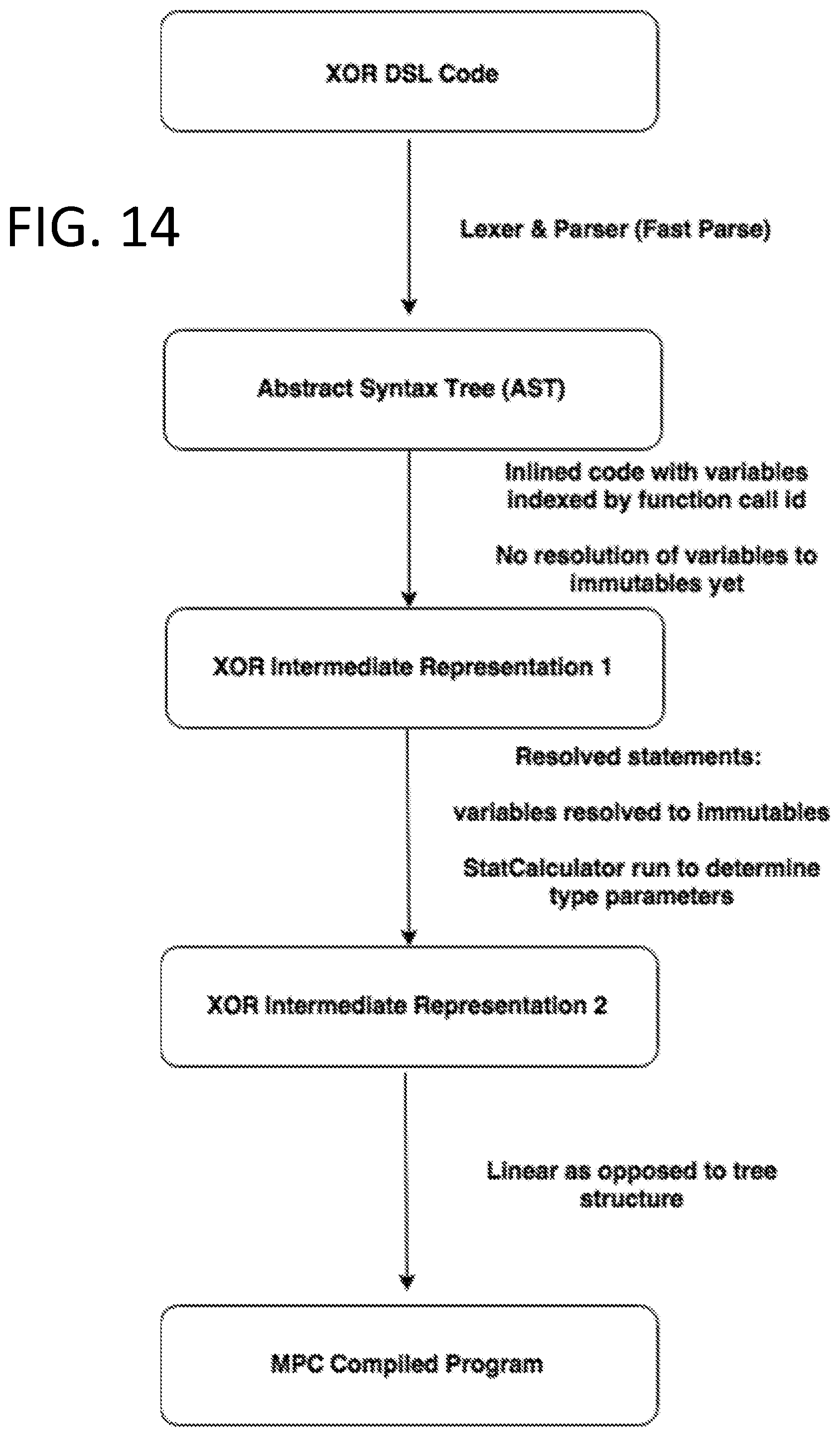

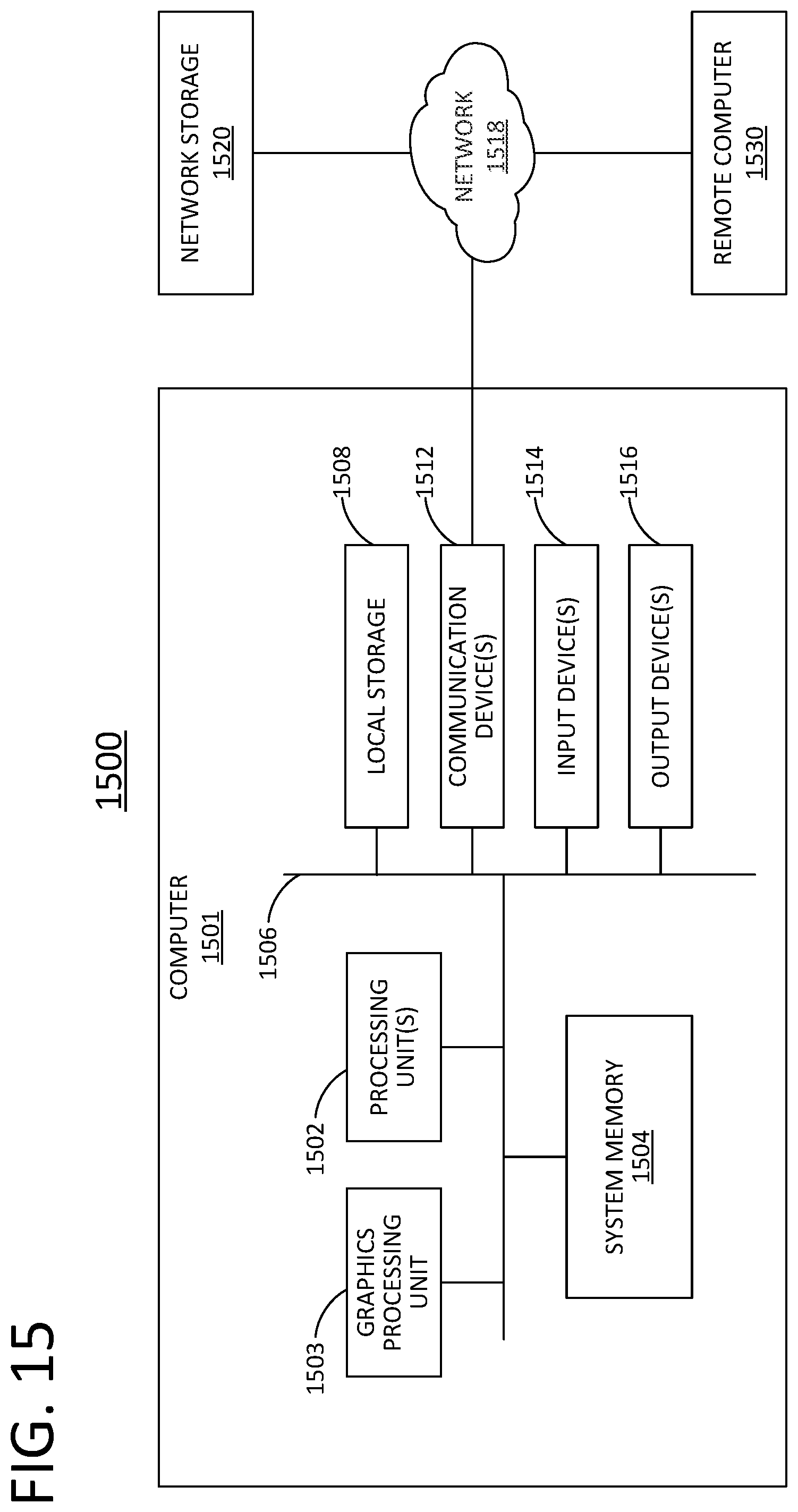

[0036] FIG. 14 illustrates a method for performing a compilation in accordance with one embodiment.

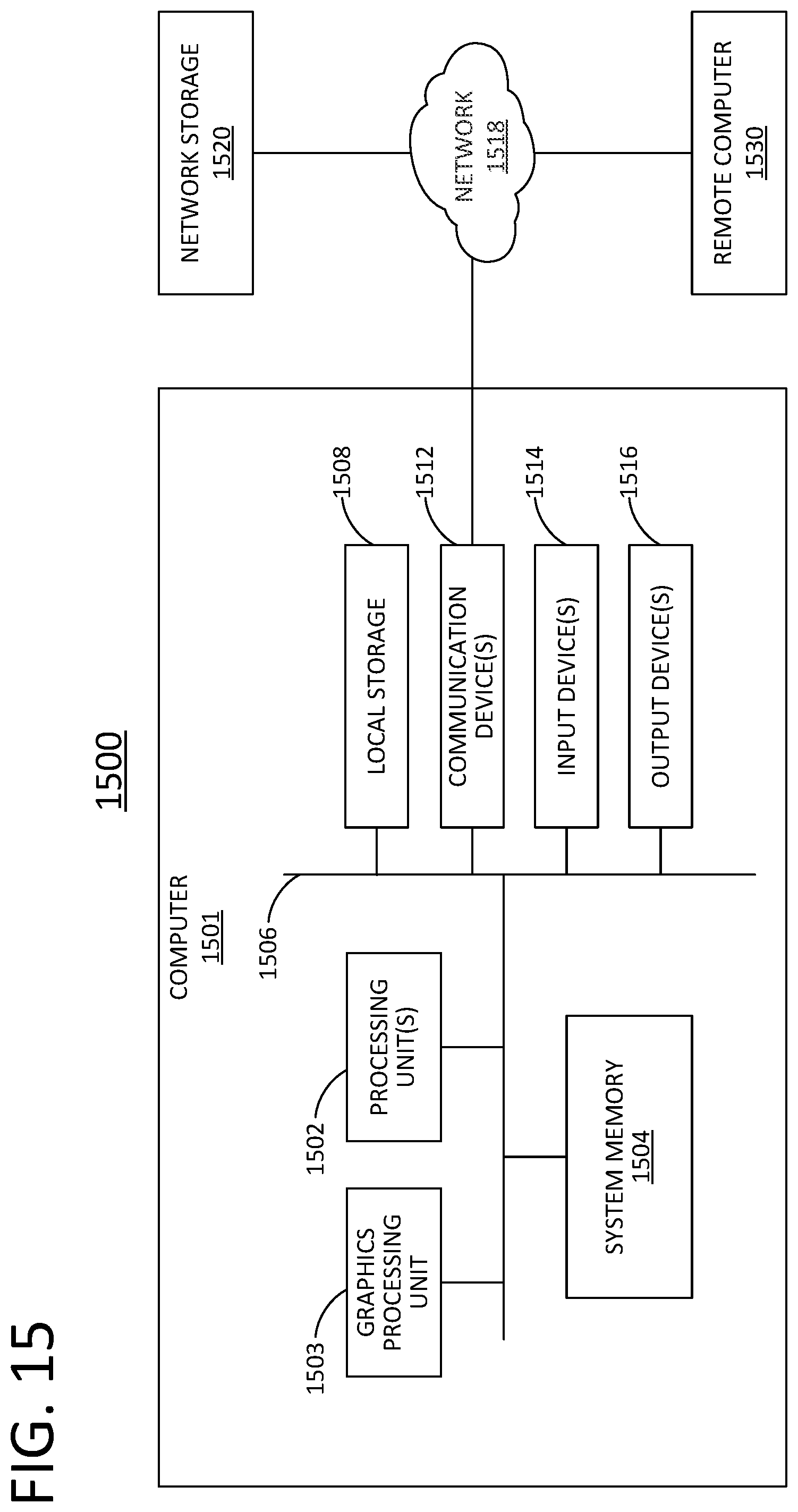

[0037] FIG. 15 illustrates a general computer architecture that can be appropriately configured to implement components disclosed in accordance with various embodiments.

[0038] FIG. 16 illustrates a method for performing secure multi-party computations in accordance with various embodiments.

DETAILED DESCRIPTION

[0039] In the following description, references are made to various embodiments in accordance with which the disclosed subject matter can be practiced. Some embodiments may be described using the expressions one/an/another embodiment or the like, multiple instances of which do not necessarily refer to the same embodiment. Particular features, structures or characteristics associated with such instances can be combined in any suitable manner in various embodiments unless otherwise noted.

[0040] I. High-Precision Privacy-Preserving Real-Valued Function Evaluation

0 Overview

[0041] We propose a novel multi-party computation protocol for evaluating continuous real-valued functions with high numerical precision. Our method is based on approximations with Fourier series and uses at most two rounds of communication during the online phase. For the offline phase, we propose a trusted-dealer and honest-but-curious aided solution, respectively. We apply our method to train a logistic regression classifier via a variant of Newton's method (known as IRLS) to compute unbalanced classification problems that detect rare events and cannot be solved using previously proposed privacy-preserving optimization methods (e.g., based on piecewise-linear approximations of the sigmoid function). Our protocol is efficient as it can be implemented using standard quadruple-precision floating point arithmetic. We report multiple experiments and provide a demo application that implements our method for training a logistic regression model.

1 Introduction

[0042] Privacy-preserving computing allows multiple parties to evaluate a function while keeping the inputs private and revealing only the output of the function and nothing else. Recent advances in multi-party computation (MPC), homomorphic encryption, and differential privacy made these models practical. An example of such computations, with applications in medicine and finance, among others, is the training of supervised models where the input data comes from distinct secret data sources [17], [23], [25], [26] and the evaluation of predictions using these models.

[0043] In machine learning classification problems, one trains a model on a given dataset to predict new inputs, by mapping them into discrete categories. The classical logistic regression model predicts a class by providing a probability associated with the prediction. The quality of the model can be measured in several ways, the most common one being the accuracy that indicates the percentage of correctly predicted answers.

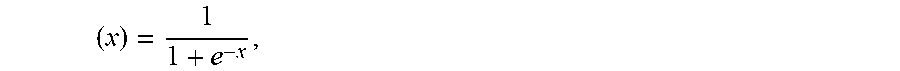

[0044] It appears that for a majority of the datasets (e.g., the MNIST database of handwritten digits [15] or the ARCENE dataset [14]), the classification achieves very good accuracy after only a few iterations of the gradient descent using a piecewise-linear approximation of the sigmoid function sigmo: .fwdarw.[0, 1] defined as

sigmo ( x ) = 1 1 + e - x , ##EQU00001##

although the current cost function is still far from the minimum value [25]. Other approximation methods of the sigmoid function have also been proposed in the past. In [29], an approximation with low degree polynomials resulted in a more efficient but less accurate method. Conversely, a higher-degree polynomial approximation applied to deep learning methods in [24] yielded more accurate, but less efficient methods (and thus, less suitable for privacy-preserving computing). In parallel, approximation solutions for privacy-preserving methods based on homomorphic encryption [2], [27], [18], [22] and differential privacy [1], [10] have been proposed in the context of both classification methods and deep learning.

[0045] Nevertheless, accuracy itself is not always a sufficient measure for the quality of the model, especially if, as mentioned in [19, p. 423], our goal is to detect a rare event such as a rare disease or a fraudulent financial transaction. If, for example, one out of every one thousand transactions is fraudulent, a nave model that classifies all transactions as honest achieves 99.9% accuracy; yet this model has no predictive capability. In such cases, measures such as precision, recall and F1-score allow for better estimating the quality of the model. They bound the rates of false positives or negatives relative to only the positive events rather than the whole dataset.

[0046] The techniques cited above achieve excellent accuracy for most balanced datasets, but since they rely on a rough approximation of the sigmoid function, they do not converge to the same model and thus, they provide poor scores on datasets with a very low acceptance rate. In this paper, we show how to regain this numerical precision in MPC, and to reach the same score as the plaintext regression. Our MPC approach is mostly based on additive secret shares with precomputed multiplication numerical masking data [4]. This means that the computation is divided in two phases: an offline phase that can be executed before the data is shared between the players (also referred to as parties or party computing systems), and an online phase that computes the actual result. For the offline phase, we propose a first solution based on a trusted dealer, and then discuss a protocol where the dealer is honest-but-curious. The dealer or trusted dealer can also be referred to as a dealer computing system.

[0047] 1.1 Our Contributions

[0048] A first contribution is a Fourier approximation of the sigmoid function. Evaluation of real-valued functions has been widely used in privacy-preserving computations. For instance, in order to train linear and logistic regression models, one is required to compute real-valued functions such as the square root, the exponential, the logarithm, the sigmoid or the softmax function and use them to solve non-linear optimization problems. In order to train a logistic regression model, one needs to minimize a cost function which is expressed in terms of logarithms of the continuous sigmoid function. This minimum is typically computed via iterative methods such as the gradient descent. For datasets with low acceptance rate, it is important to get much closer to the exact minimum in order to obtain a sufficiently precise model. We thus need to significantly increase the number of iterations (nave or stochastic gradient descent) or use faster-converging methods (e.g., IRLS [5, .sctn. 4.3]). The latter require a numerical approximation of the sigmoid that is much better than what was previously achieved in an MPC context, especially when the input data is not normalized or feature-scaled. Different approaches have been considered previously such as approximation by Taylor series around a point (yielding only good approximation locally at that point), or polynomial approximation (by e.g., estimating least squares). Although better than the first one, this method is numerically unstable due to the variation of the size of the coefficients. An alternative method based on approximation by piecewise-linear functions has been considered as well. In MPC, this method performs well when used with garbled circuits instead of secret sharing and masking, but does not provide enough accuracy.

[0049] In our case, we approximate the sigmoid using Fourier series, an approach applied for the first time in this context. This method works well as it provides a better uniform approximation assuming that the function is sufficiently smooth (as is the case with the sigmoid). In particular, we virtually re-scale and extend the sigmoid to a periodic function that we approximate with a trigonometric polynomial which we then evaluate in a stable privacy-preserving manner. To approximate a generic function with trigonometric polynomials that can be evaluated in MPC, one either uses the Fourier series of a smooth periodic extension or finds directly the closest trigonometric polynomial by the method of least squares for the distance on the half-period. The first approach yields a superalgebraic convergence at best, whereas the second converges exponentially fast. On the other hand, the first one is numerically stable whereas the second one is not (under the standard Fourier basis). In the case of the sigmoid, we show that one can achieve both properties at the same time.

[0050] A second contribution is a Floating-point representation and masking. A typical approach to multi-party computation protocols with masking is to embed fixed-point values into finite groups and use uniform masking and secret sharing. Arithmetic circuits can then be evaluated using, e.g., precomputed multiplication numerical masking data and following Beaver's method [4]. This idea has been successfully used in [13] and [12]. Whereas the method works well on low multiplicative depth circuits like correlations or linear regression [17], in general, the required group size increases exponentially with the multiplicative depth. In [25], this exponential growth is mitigated by a two-party rounding solution, but the technique does not extend to three or more players where an overflow in the most significant bits can occur. In this work, we introduce an alternative sharing scheme, where fixed-point values are shared directly using (possibly multibit) floating points, and present a technique to reduce the share sizes after each multiplication. This technique easily extends to an arbitrary number of players.

[0051] A third contribution is a significant reduction in communication time. In this paper, we follow the same approach as in [25] and define dedicated numerical masking data for high-level instructions, such as large matrix multiplications, a system resolution, or an oblivious evaluation of the sigmoid. This approach is less generic than masking low-level instructions as in SPDZ, but it allows to reduce the communication and memory requirements by large factors. Masks and operations are aware of the type of vector or matrix dimensions and benefit from the vectorial nature of the high-level operations. For example, multiplying two matrices requires a single round of communication instead of up to O(n.sup.3) for coefficient-wise approaches, depending on the batching quality of the compiler. Furthermore, masking is defined per immutable variable rather than per elementary operation, so a constant matrix is masked only once during the whole method. Combined with non-trivial local operations, these numerical masking data can be used to achieve much more than just ring additions or multiplications. In a nutshell, the amount of communications is reduced as a consequence of reusing the same masks, and the number of communication rounds is reduced as a consequence of masking directly matrices and other large structures. Therefore, the total communication time becomes negligible compared to the computing cost.

[0052] A fourth contribution is a new protocol for the honest but curious offline phase extendable to n players. We introduce a new protocol for executing the offline phase in the honest-but-curious model that is easily extendable to a generic number n of players while remaining efficient. To achieve this, we use a broadcast channel instead of peer-to-peer communication which avoids a quadratic explosion in the number of communications. This is an important contribution, as none of the previous protocols for n>3 players in this model are efficient. In [17], for instance, the authors propose a very efficient method in the trusted dealer model; yet the execution time of the oblivious transfer protocol is quite slow.

2 Notation and Preliminaries

[0053] Assume that P.sub.1, . . . , P.sub.n are distinct computing parties (players). We recall some basic concepts from multi-party computation that will be needed for this paper.

[0054] 2.1 Secret Sharing and Masking

[0055] Let (G, ) be a group and let x G be a group element. A secret share of x, denoted by x. (by a slight abuse of notation), is a tuple (x.sub.1, . . . , x.sub.n) G.sup.n such that x=x.sub.1 . . . x.sub.n. If (G, +) is abelian, we call the secret shares x.sub.1, . . . , x.sub.n additive secret shares. A secret sharing scheme is computationally secure if for any two elements x, y G, strict sub-tuples of shares x.sub. or y.sub. are indistinguishable. If G admits a uniform distribution, an information-theoretic secure secret sharing scheme consists of drawing x.sub.1, . . . , x.sub.n-1 uniformly at random and choosing x.sub.n=x.sub.n-1.sup.-1 . . . x.sub.1.sup.-1 x. When G is not compact, the condition can be relaxed to statistical or computational indistinguishability.

[0056] A closely related notion is the one of group masking. Given a subset X of G, the goal of masking X is to find a distribution D over G such that the distributions of x D for x X are all indistinguishable. Indeed, such distribution can be used to create a secret share: one can sample .lamda..rarw.D, and give .lamda..sup.-1 to a player and x .lamda. to the other. Masking can also be used to evaluate non-linear operations in clear over masked data, as soon as the result can be privately unmasked via homomorphisms (as in, e.g., the Beaver's triplet multiplication technique [4]).

[0057] 2.2 Arithmetic with Secret Shares Via Masking

[0058] Computing secret shares for a sum x+y (or a linear combination if (G, +) has a module structure) can be done non-interactively by each player by adding the corresponding shares of x and y. Computing secret shares for a product is more challenging. One way to do that is to use an idea of Beaver based on precomputed and secret shared multiplicative numerical masking data. From a general point of view, let (G.sub.1, +), (G.sub.2, +) and (G.sub.3, +) be three abelian groups and let .pi.:G.sub.1.times.G.sub.2.fwdarw.G.sub.3 be a bilinear map.

[0059] Given additive secret shares x.sub.+ and y.sub.+ for two elements x G.sub.1 and y G.sub.2, we would like to compute secret shares for the element .pi.(x, y) G.sub.3. With Beaver's method, the players must employ precomputed single-use random numerical masking data (.lamda..sub.+, .mu..sub.+, .pi.(.lamda., .mu.).sub.+) for .lamda. G.sub.1 and .mu. G.sub.2, and then use them to mask and reveal a=x+.lamda. and b=.gamma.+.mu.. The players then compute secret shares for .pi.(x, y) as follows: [0060] Player 1 computes z.sub.1=.pi.(a, b)-.pi.(a, .mu..sub.1)-.pi.(.lamda..sub.1, b)+(.pi.(.lamda., .mu.)).sub.1; [0061] Player i (for i=2, . . . , n) computes z.sub.i=-.pi.(a, .mu..sub.i)-.pi.(.lamda..sub.i, b)+(.pi.(.lamda., .mu.)).sub.i.

[0062] The computed z.sub.1, . . . , z.sub.n are the additive shares of .pi.(x, y). A given .lamda. can be used to mask only one variable, so one triplet (more generally, set of numerical masking data) must be precomputed for each multiplication during the offline phase (i.e. before the data is made available to the players). Instantiated with the appropriate groups, this abstract scheme allows to evaluate a product in a ring, but also a vectors dot product, a matrix-vector product, or a matrix-matrix product.

[0063] 2.3 MPC Evaluation of Real-Valued Continuous Functions

[0064] For various applications (e.g., logistic regression in Section 6, below), we need to compute continuous real-valued functions over secret shared data. For non-linear functions (e.g. exponential, log, power, cos, sin, sigmoid, etc.), different methods are proposed in the literature.

[0065] A straightforward approach consists of implementing a full floating point arithmetic framework [6, 12], and to compile a data-oblivious method that evaluates the function over floats. This is for instance what Sharemind and SPDZ use. However, these two generic methods lead to prohibitive running times if the floating point function has to be evaluated millions of times.

[0066] The second approach is to replace the function with an approximation that is easier to compute: for instance, [25] uses garbled circuits to evaluate fixed point comparisons and absolute values; it then replaces the sigmoid function in the logistic regression with a piecewise-linear function. Otherwise, [24] approximates the sigmoid with a polynomial of fixed degree and evaluates that polynomial with the Horner method, thus requiring a number of rounds of communications proportional to the degree.

[0067] Another method that is close to how SPDZ [13] computes inverses in a finite field is based on polynomial evaluation via multiplicative masking: using precomputed numerical masking data of the form (.lamda.+, .lamda..sup.-1+, . . . , .lamda..sup.-p.sub.+), players can evaluate P(x)=.SIGMA..sub.i=0.sup.pa.sub.px.sup.p by revealing u=x.lamda. and outputting the linear combination .SIGMA..sub.i=0.sup.pa.sub.iu.sup.i.lamda..sup.-i.sub.+.

[0068] Multiplicative masking, however, involves some leakage: in finite fields, it reveals whether x is null. The situation gets even worse in finite rings where the multiplicative orbit of x is disclosed (for instance, the rank would be revealed in a ring of matrices), and over : the order of magnitude of x would be revealed.

[0069] For real-valued polynomials, the leakage could be mitigated by translating and rescaling the variable x so that it falls in the range [1, 2). Yet, in general, the coefficients of the polynomials that approximate the translated function explode, thus causing serious numerical issues.

[0070] 2.4 Full Threshold Honest-but-Curious Protocol

[0071] Since our goal is to emphasize new functionalities, such as efficient evaluation of real-valued continuous functions and good quality logistic regression, we often consider a scenario where all players follow the protocol without introducing any errors. The players may, however, record the whole transaction history and try to learn illegitimate information about the data. During the online phase, the security model imposes that any collusion of at most n-1 players out of n cannot distinguish any semantic property of the data beyond the aggregated result that is legitimately and explicitly revealed. To achieve this, Beaver triplets (also referred to as numerical masking data, used to mask player's secret shares) can be generated and distributed by a single entity called the trusted dealer. In this case, no coalition of at most n-1 players should get any computational advantage on the plaintext numerical masking data information. However, the dealer himself knows the plaintext numerical masking data, and hence the whole data, which only makes sense on some computation outsourcing use-cases. In Section 5, below, we give an alternative honest-but-curious (or semi-honest) protocol to generate the same numerical masking data, involving this time bi-directional communications with the dealer. In this case, the dealer and the players collaborate during the offline phase in order to generate the precomputed material, but none of them have access to the whole plaintext numerical masking data. This makes sense as long as the dealer does not collude with any player, and at least one player does not collude with the other players. We leave the design of actively secure protocols for future work.

3 Statistical Masking and Secret Share Reduction

[0072] In this section, we present our masking technique for fixed-point arithmetic and provide an method for the MPC evaluation of real-valued continuous functions. In particular, we show that to achieve p bits of numerical precision in MPC, it suffices to have p+2.tau.-bit floating points where .tau. is a fixed security parameter.

[0073] The secret shares we consider are real numbers. We would like to mask these shares using floating point numbers. Yet, as there is no uniform distribution on , no additive masking distribution over reals can perfectly hide the arbitrary inputs. In the case when the secret shares belong to some known range of numerical precision, it is possible to carefully choose a masking distribution, depending on the precision range, so that the masked value computationally leaks no information about the input. A distribution with sufficiently large standard deviation could do the job: for the rest of the paper, we refer to this type of masking as "statistical masking". In practice, we choose a normal distribution with standard deviation .sigma.=2.sup.40.

[0074] On the other hand, by using such masking, we observe that the sizes of the secret shares increase every time we evaluate the multiplication via Beaver's technique (Section 2.2). In Section 3.3, we address this problem by introducing a technique that allows to reduce the secret share sizes by discarding the most significant bits of each secret share (using the fact that the sum of the secret shares is still much smaller than their size).

[0075] 3.1 Floating Point, Fixed Point and Interval Precision

[0076] Suppose that B is an integer and that p is a non-negative integer (the number of bits). The class of fixed-point numbers of exponent B and numerical precision p is:

C(B,p)={x 2.sup.B-p,|x|.ltoreq.2.sup.B}.

[0077] Each class C(B, p) is finite, and contains 2.sup.p+1+1 numbers. They could be rescaled and stored as (p+2)-bit integers. Alternatively, the number x C(B, p) can also be represented by the floating point value x, provided that the floating point representation has at least p bits of mantissa. In this case, addition and multiplication of numbers across classes of the same numerical precision are natively mapped to floating-point arithmetic. The main arithmetic operations on these classes are: [0078] Lossless Addition: C(B.sub.1, p.sub.1).times.C(B.sub.2, p.sub.2).fwdarw.C(B, p) where B=max(B.sub.1, B.sub.2)+1 and p=B min(B.sub.1-p.sub.1, B.sub.2-p.sub.2); [0079] Lossless Multiplication: C(B.sub.1, p.sub.1).times.C(B.sub.2, p.sub.2).fwdarw.C(B, p) where B=B.sub.1+B.sub.2 and p=p.sub.1+p.sub.2; [0080] Rounding: C(B.sub.1, p.sub.1).fwdarw.C(B, p), that maps x to its nearest element in 2.sup.B-p.

[0081] Lossless operations require p to increase exponentially in the multiplication depth, whereas fixed precision operations maintain p constant by applying a final rounding. Finally, note that the exponent B should be incremented to store the result of an addition, yet, B is a user-defined parameter in fixed point arithmetic. If the user forcibly chooses to keep B unchanged, any result |x|>2.sup.B will not be representable in the output domain (we refer to this type of overflow as plaintext overflow).

[0082] 3.2 Floating Point Representation

[0083] Given a security parameter .tau., we say that a set S is a .tau.-secure masking set for a class C(B, p) if the following distinguishability game cannot be won with advantage .gtoreq.2-.tau.: the adversary chooses two plaintexts m.sub.0, m.sub.1 in C(B, p), a challenger picks b {0, 1} and .alpha. S uniformly at random, and sends c=m.sub.b+.alpha. to the adversary. The adversary has to guess b. Note that increasing such distinguishing advantage from 2.sup.-.tau. to .apprxeq.1/2 would require to give at least 2.sup..tau. samples to the attacker, so .tau.=40 is sufficient in practice.

[0084] Proposition 1. The class C(B, p, .tau.)={.alpha. 2.sup.B-p , |.alpha.|.ltoreq.2.sup.B+.tau.} is a .tau.-secure masking set for C(B, p)

[0085] Proof. If a, b C(B, p) and U is the uniform distribution on C(B, p, .tau.), the statistical distance between a+U and b+U is (b-a)2.sup.p-B/# C(B, p, .tau.).ltoreq.2.sup.-.tau.. This distance upper-bounds any computational advantage.

[0086] Again, the class C(B, p, .tau.)=C(B+.tau., p+.tau.) fits in floating point numbers of p+.tau.-bits of mantissa, so they can be used to securely mask fixed point numbers with numerical precision p. By extension, all additive shares for C(B, p) will be taken in C(B, p, .tau.).

[0087] We now analyze what happens if we use Beaver's protocol to multiply two plaintexts x C(B.sub.1, p) and y C(B.sub.2, p). The masked values x+.lamda. and y+.mu. are bounded by 2.sup.B.sup.1.sup.+.tau. and 2.sup.B.sup.2.sup.+.tau. respectively. Since the mask .lamda. is also bounded by 2.sup.B.sup.1.sup.+.tau. and .mu. by 2.sup.B.sup.2.sup.+.tau., the computed secret shares of x y will be bounded by 2.sup.B.sup.1.sup.+B.sup.2.sup.+2.tau.. So the lossless multiplication sends C(B.sub.1, p, .tau.).times.C(B.sub.2, p, .tau.).fwdarw.C(B, 2p, 2.tau.) where B=B.sub.1+B.sub.2 instead of C(B, p, .tau.). Reducing p is just a matter of rounding, and it is done automatically by the floating point representation. However, we still need a method to reduce r, so that the output secret shares are bounded by 2.sup.B+.tau..

[0088] 3.3 Secret Share Reduction Method

[0089] The method we propose depends on two auxiliary parameters: the cutoff, defined as .eta.=B+.tau. so that 2.sup..eta. is the desired bound in absolute value, and an auxiliary parameter M=2.sup.K larger than the number of players.

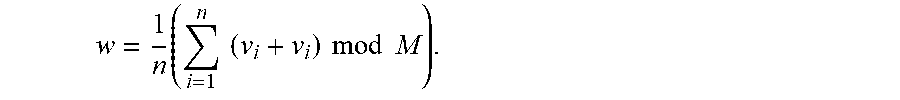

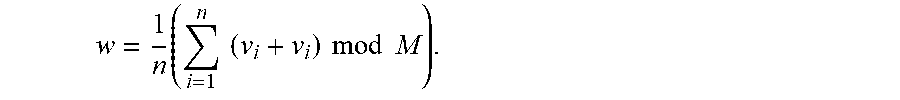

[0090] The main idea is that the initial share contains large components z.sub.1, . . . , z.sub.n that sum up to the small secret shared value z. Additionally, the most significant bits of the share beyond the cutoff position (say MSB(z.sub.i)=.left brkt-bot.z.sub.i/2.sup..eta..right brkt-bot.) do not contain any information on the data, and are all safe to reveal. We also know that the MSB of the sum of the shares (i.e. MSB of the data) is null, so the sum of the MSB of the shares is very small. The share reduction method simply computes this sum, and redistributes it evenly among the players. Since the sum is guaranteed to be small, the computation is done modulo M rather than on large integers. More precisely, using the cutoff parameter .eta., for i=1, . . . , n, player i writes his secret share z.sub.i of z as z.sub.i=u.sub.i+2.sup..eta.v.sub.i, with v.sub.i and u.sub.i [-2.sup..eta.-1, 2.sup..eta.-1). Then, he broadcasts v.sub.i mod M, so that each player computes the sum. The individual shares can optionally be re-randomized using a precomputed share v.sub.+, with v=0 mod M. Since w=.SIGMA.v.sub.i's is guaranteed to be between -M/2 and M/2, it can be recovered from its representation mod M. Thus, each player locally updates its share as u.sub.i+2.sup..eta.w/n, which have by construction the same sum as the original shares, but are bounded by 2.sup..eta..

[0091] 3.4 Mask Reduction Method

[0092] The following method details one embodiment for reducing the size of the secret shares as described above in Section 3.3. This procedure can be used inside the classical MPC multiplication involving floating points.

Input: z.sub.+ and one set of numerical masking data v.sub.+, with v=0 mod M. Output: Secret shares for the same value z with smaller absolute values of the shares. 1: Each player P.sub.i computes u.sub.i [-2.sup..eta.-1, 2.sup..eta.-1) and v.sub.i , such that z.sub.i=u.sub.i+2.sup..eta.v.sub.i. 2: Each player P.sub.i broadcasts v.sub.i+v.sub.i mod M to other players. 3: The players compute

w = 1 n ( i = 1 n ( v i + v i ) mod M ) . ##EQU00002##

4: Each player P.sub.i computes the new share of z as z.sub.i'=u.sub.i+2.sup.nw.

[0093] 4 Fourier Approximation

[0094] Fourier theory allows us to approximate certain periodic functions with trigonometric polynomials. The goal of this section is two-fold: to show how to evaluate trigonometric polynomials in MPC and, at the same time, to review and show extensions of some approximation results to non-periodic functions.

[0095] 4.1 Evaluation of Trigonometric Polynomials or Fourier Series in MPC

[0096] Recall that a complex trigonometric polynomial is a finite sum of the form t(x)=.SIGMA..sub.m=-P.sup.Pc.sub.me.sup.imx, where c.sub.m is equal to a.sub.m+ib.sub.m, with a.sub.m, b.sub.m . Each trigonometric polynomial is a periodic function with period 2.pi.. If c.sub.-m=c.sub.m for all m , then t is real-valued, and corresponds to the more familiar cosine decomposition t(x)=a.sub.0+.SIGMA..sub.m=1.sup.Na.sub.m cos(mx)+b.sub.m sin(mx). Here, we describe how to evaluate trigonometric polynomials in an MPC context, and explain why it is better than regular polynomials.

[0097] We suppose that, for all m, the coefficients a.sub.m and b.sub.m of t are publicly accessible and they are 0.ltoreq.a.sub.m, b.sub.m.ltoreq.1. As t is 2.pi. periodic, we can evaluate it on inputs modulo 2.pi.. Remark that as mod 2.pi. admits a uniform distribution, we can use a uniform masking: this method completely fixes the leakage issues that were related to the evaluation of classical polynomials via multiplicative masking. On the other hand, the output of the evaluation is still in : in this case we continue using the statistical masking described in previous sections. The inputs are secretly shared and additively masked: for sake of clarity, to distinguish the classical addition over reals from the addition modulo 2.pi., we temporarily denote this latter by .sym.. In the same way, we denote the additive secret shares with respect to the addition modulo 2.pi. by .sub..sym.. Then, the transition from to .sub..sym. can be achieved by trivially reducing the shares modulo 2.pi..

[0098] Then, a way to evaluate t on a secret shared input x.sub.+=(x.sub.1, . . . , x.sub.n) is to convert x.sub.+ to x.sub..sym. and additively mask it with a shared masking .lamda..sub..sym., then reveal x.sym..lamda. and rewrite our target e.sup.imx.sub.+ as e.sup.im(x.sym..lamda.) e.sup.im(-.lamda.).sub.+. Indeed, since x.sym..lamda. is revealed, the coefficient e.sup.im(x.sym..lamda.) can be computed in clear. Overall, the whole trigonometric polynomial t can be evaluated in a single round of communication, given precomputed trigonometric polynomial or Fourier series masking data such as (.lamda..sub..sym., e.sup.-i.lamda..sub.+, . . . , e.sup.-i.lamda.P.sub.+) and thanks to the fact that x.sym..lamda. has been revealed.

[0099] Also, we notice that to work with complex numbers of absolute value 1 makes the method numerically stable, compared to power functions in regular polynomials. It is for this reason that the evaluation of trigonometric polynomials is a better solution in our context.

[0100] 4.2 Approximating Non-Periodic Functions

[0101] If one is interested in uniformly approximating (with trigonometric polynomials on a given interval, e.g. [-.pi./2, .pi./2]) a non-periodic function f, one cannot simply use the Fourier coefficients. Indeed, even if the function is analytic, its Fourier series need not converge uniformly near the end-points due to Gibbs phenomenon.

[0102] 4.2.1 Approximations Via C.sup..infin.-Extensions.

[0103] One way to remedy this problem is to look for a periodic extension of the function to a larger interval and look at the convergence properties of the Fourier series for that extension. To obtain exponential convergence, the extension needs to be analytic too, a condition that can rarely be guaranteed. In other words, the classical Whitney extension theorem [28] will rarely yield an analytic extension that is periodic at the same time. A constructive approach for extending differentiable functions is given by Hestenes [20] and Fefferman [16] in a greater generality. The best one can hope for is to extend the function to a C.sup..infin.-function (which is not analytic). As explained in [8], [9], such an extension yields a super-algebraic approximation at best that is not exponential.

[0104] 4.2.2 Least-Square Approximations.

[0105] An alternative approach for approximating a non-periodic function with trigonometric functions is to search for these functions on a larger interval (say [-.pi., .pi.]), such that the restriction (to the original interval) of the L.sup.2-distance between the original function and the approximation is minimized. This method was first proposed by [7], but it was observed that the coefficients with respect to the standard Fourier basis were numerically unstable in the sense that they diverge (for the optimal solution) as one increases the number of basis functions. The method of [21] allows to remedy this problem by using a different orthonormal basis of certain half-range Chebyshev polynomials of first and second kind for which the coefficients of the optimal solution become numerically stable. In addition, one is able to calculate numerically these coefficients using a Gaussian quadrature rule.

[0106] 4.2.2.1 Approximation of Functions by Trigonometric Polynomial Over the Half Period

[0107] Let f be a square-integrable function on the interval [-.pi./2, .pi./2] that is not necessarily smooth or periodic.

[0108] 4.2.2.1.1 the Approximation Problem

[0109] Consider the set

G n = { g ( x ) = a 0 2 + k = 1 n a k sin ( kx ) + k = 1 n b k cos ( kx ) } ##EQU00003##

of 2.pi.-periodic functions and the problem

g.sub.n(x)=argmin.sub.g G.sub.n.parallel.f-g.parallel..sub.L.sub.[-.pi./2,.pi./2].sup.2.

[0110] As it was observed in [7], if one uses the nave basis to write the solutions, the Fourier coefficients of the functions g.sub.n are unbounded, thus resulting in numerical instability. It was explained in [21] how to describe the solution in terms of two families of orthogonal polynomials closely related to the Chebyshev polynomials of the first and second kind. More importantly, it is proved that the solution converges to f exponentially rather than super-algebraically and it is shown how to numerically estimate the solution g.sub.n(x) in terms of these bases.

[0111] We will now summarize the method of [21]. Let

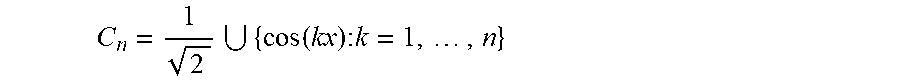

C n = 1 2 { cos ( kx ) : k = 1 , , n } ##EQU00004##

and let .sub.n be the -vector space spanned by these functions (the subspace of even functions). Similarly, let

S.sub.n={sin(kx):k=1, . . . ,n},

and let .sub.n be the -span of S.sub.n (the space of odd functions). Note that C.sub.n.orgate.S.sub.n is a basis for G.sub.n.

[0112] 4.2.2.1.2 Chebyshev's Polynomials of First and Second Kind

[0113] Let T.sub.k (y) for y [-1, 1] be the kth Chebyshev polynomial of first kind, namely, the polynomial satisfying T.sub.k (cos .theta.)=cos k.theta. for all .theta. and normalized so that T.sub.k (1)=1 (T.sub.k has degree k). As k varies, these polynomials are orthogonal with respect to the weight function w.sub.1(.gamma.)=1/ {square root over (1-y.sup.2)}. Similarly, let U.sub.k (y) for y [-1, 1] be the kth Chebyshev polynomial of second kind, i.e., the polynomial satisfying U.sub.k (cos .theta.)=sin((k+1).theta.)/sin .theta. and normalized so that U.sub.k (1)=k+1. The polynomials {U.sub.k(.gamma.)} are orthogonal with respect to the weight function w.sub.2(.gamma.)= {square root over (1-y.sup.2)}.

[0114] It is explained in [21, Thm.3.3] how to define a sequence {T.sub.k.sup.h} of half-range Chebyshev polynomials that form an orthonormal bases for the space of even functions. Similarly, [21, Thm.3.4] yields an orthonormal basis {U.sub.k.sup.h} for the odd functions (the half-range Chebyshev polynomials of second kind). According to [21, Thm.3.7], the solution g.sub.n to the above problem is given by

g n ( x ) = k = 0 n a k T k h ( cos x ) + k = 0 n - 1 b k U k h ( cos x ) sin x , where ##EQU00005## a k = 2 .pi. .intg. - .pi. 2 .pi. 2 f ( x ) T k h ( cos x ) dx , and ##EQU00005.2## b k = 2 .pi. .intg. - .pi. 2 .pi. 2 f ( x ) U k h ( cos x ) sin x dx . ##EQU00005.3##

[0115] While it is numerically unstable to express the solution g.sub.n in the standard Fourier basis, it is stable to express them in terms of the orthonormal basis {T.sub.k.sup.h}.orgate.{U.sub.k.sup.h}. In addition, it is shown in [21, Thm.3.14] that the convergence is exponential. To compute the coefficients a.sub.k and b.sub.k numerically, one uses Gaussian quadrature rules as explained in [21, .sctn. 5].

[0116] 4.2.3 Approximating the Sigmoid Function.

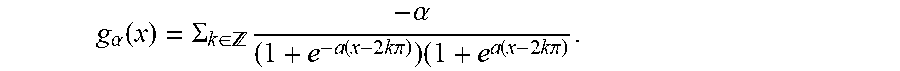

[0117] We now restrict to the case of the sigmoid function over the interval [-B/2, B/2] for some B>0. We can rescale the variable to approximate g(x)=sigmo(Bx/.pi.) over [-.pi./2, .pi./2]. If we extend g by anti-periodicity (odd-even) to the interval [.lamda./2, 3.lamda./2] with the mirror condition g(x)=g(.pi.-x), we obtain a continuous Dr-periodic piecewise C.sup.1 function. By Dirichlet's global theorem, the Fourier series of g converges uniformly over , so for all .epsilon.>0, there exists a degree N and a trigonometric polynomial g.sub.N such that .parallel.g.sub.N-g.parallel..sub..infin..ltoreq..epsilon.. To compute sigmo(t) over secret shared t, we first apply the affine change of variable (which is easy to evaluate in MPC), to get the corresponding x [-.pi./2, .pi./2], and then we evaluate the trigonometric polynomial g.sub.N(x) using Fourier numerical masking data. This method is sufficient to get 24 bits of precision with a polynomial of only 10 terms, however asymptotically, the convergence rate is only in .THETA.(n.sup.-2) due to discontinuities in the derivative of g. In other words, approximating g with .lamda. bits of precision requires to evaluate a trigonometric polynomial of degree 2.sup..lamda./2. Luckily, in the special case of the sigmoid function, we can compute this degree polynomial by explicitly constructing a 2.pi.-periodic analytic function that is exponentially close to the rescaled sigmoid on the whole interval [-.pi., .pi.] (not the half interval). Besides, the geometric decay of the coefficients of the trigonometric polynomial ensures perfect numerical stability. The following theorem summarizes this construction.

[0118] Theorem 1. Let h.sub..alpha.(x)=1/(1+.sup.e-.alpha.x)-x/2.pi. for x (-.pi., .pi.). For every .left brkt-top.>0, there exists .alpha.=O(log(1/.epsilon.)) such that h.sub..alpha. is at uniform distance .epsilon./2 from a 2.pi.-periodic analytic function g. There exists N=O(log.sup.2 (1/.epsilon.)) such that the Nth term of the Fourier series of g is at distance .epsilon./2 of g, and thus, at distance.ltoreq..epsilon. from h.sub..alpha..

[0119] We now prove Theorem 1, with the following methodology. We first bound the successive derivatives of the sigmoid function using a differential equation. Then, since the first derivative of the sigmoid decays exponentially fast, we can sum all its values for any x modulo 2.pi., and construct a C.sup..infin. periodic function, which approximates tightly the original function over [-.pi., .pi.]. Finally, the bounds on the successive derivatives directly prove the geometric decrease of the Fourier coefficients.

[0120] Proof. First, consider the .sigma.(x)=1/(1+e-x) the sigmoid function over . .sigma. satisfies the differential equation .sigma.'=.sigma.-.sigma..sup.2. By derivating n times, we have

.sigma. ( n + 1 ) = .sigma. ( n ) - k = 0 n ( n k ) .sigma. ( k ) .sigma. ( n - k ) = .sigma. ( n ) ( 1 - .sigma. ) - k = 1 n ( n k ) .sigma. ( k ) .sigma. ( n - k ) . ##EQU00006##

Dividing by (n+1)!, this yields

.sigma. ( n + 1 ) ( n + 1 ) ! .ltoreq. 1 n + 1 ( .sigma. ( n ) n ! + k = 1 n .sigma. ( k ) k ! .sigma. ( n - k ) ( n - k ) ! ) ##EQU00007##

From there, we deduce by induction that for all n.gtoreq.0 and for all x ,

| .sigma. ( n ) ( x ) n ! | .ltoreq. 1 ##EQU00008##

and it decreases with n, so for all n.gtoreq.1,

|.sigma..sup.(n)(x)|.ltoreq.n!.sigma.'(x).ltoreq.n!e.sup.-51 x|.

[0121] FIG. 1 illustrates a graph of the odd-even periodic extension of the rescaled sigmoid. The rescaled sigmoid function g(.alpha.x) is extended by anti-periodicity from

[ - .pi. 2 ; .pi. 2 ] ##EQU00009##

to

[ .pi. 2 ; 3 .pi. 2 ] . ##EQU00010##

This graph shows the extended function for .alpha.=1, 3, 5. By symmetry, the Fourier series of the output function has only odd sinus terms: 0.5+.alpha..sub.2n+1 sin((2n+1)x). For .alpha.=20/.pi., the first Fourier form a rapidly decreasing sequence: [6.12e-1, 1.51e-1, 5.37e-2, 1.99e-2, 7.41e-3, 2.75e-3, 1.03e-3, 3.82e-4, 1.44e-4, 5.14e-5, 1.87e-5, . . . ], which rapidly achieves 24 bits of precision. However, the sequence asymptotically decreases in O(n.sup.-2) due to the discontinuity in the derivative in

- .pi. 2 , ##EQU00011##

so this method is not suitable to get an exponentially good approximation.

[0122] FIG. 2 illustrates an asymptotic approximation of the sigmoid via Theorem 1. As .alpha. grows, the discontinuity in the rescaled sigmoid function

g ( .alpha. x ) - x 2 .pi. ##EQU00012##

vanishes, and it gets exponentially close to an analytic periodic function, whose Fourier coefficients decrease geometrically fast. This method is numerically stable, and can evaluate the sigmoid with arbitrary precision in polynomial time.

[0123] We now construct a periodic function that should be very close to the derivative of h.sub..alpha.: consider

g .alpha. ( x ) = .SIGMA. k .di-elect cons. - .alpha. ( 1 + e - a ( x - 2 k .pi. ) ) ( 1 + e a ( x - 2 k .pi. ) . ##EQU00013##

By summation of geometric series, g.sub..alpha. is a well-defined infinitely derivable 2.pi.-periodic function over . We can easily verify that for all x (-.pi., .pi.), the difference

h .alpha. ' ( x ) - 1 2 .pi. - g .alpha. ( x ) ##EQU00014##

is bounded by 2.alpha..

.SIGMA. k = 1 .infin. e .alpha. ( x - 2 k .pi. ) .ltoreq. 2 .alpha. e - a .pi. 1 - e - 2 .pi. a , ##EQU00015##

so by choosing

.alpha. = .theta. ( log ( 1 ) ) , ##EQU00016##

this difference can be made smaller than

2 . ##EQU00017##

[0124] We suppose now that a is fixed and we prove that g.sub..alpha. is analytic, i.e. its Fourier coefficients decrease exponentially fast. By definition, g.sub..alpha.(x)=.sigma.(.alpha.(x-2k.pi.)), so for all p N, g.sub..alpha..sup.(p)(x)=.alpha..sup.p+1.sigma..sup.(p+1)(.alpha.x-2.alph- a.k.pi.), so .parallel.g.sub..alpha..sup.(p).parallel..sub..infin..ltoreq.2.alpha..sup- .p+1(p+1)!. This proves that the n-th Fourier coefficient

c n ( g .alpha. ) .ltoreq. min p .di-elect cons. 2 .alpha. p + 1 ( p + 1 ) n p . ##EQU00018##

This minimum is reached for

p + 1 .apprxeq. n .alpha. , ##EQU00019##

and yields |c.sub.n(g.sub..alpha.)|=O(e.sup.-n/.alpha.).

[0125] Finally, this proves that by choosing N.apprxeq..alpha..sup.2=.THETA.(log(1/.epsilon.).sup.2), the N-th term of the Fourier series of g.sub..alpha. is at distance .ltoreq..epsilon. of g.sub..alpha., and thus from

h .alpha. ' - 1 2 .pi. . ##EQU00020##

This bound is preserved by integrating the trigonometric polynomial (the g from the theorem is the primitive of g.sub..alpha.), which yields the desired approximation of the sigmoid over the whole interval (-.pi., .pi.)..box-solid.

5 Honest but Curious Model

[0126] In the previous sections, we defined the shares of multiplication, power and Fourier numerical masking data, but did not explain how to generate them. Of course, a single trusted dealer approved by all players (TD model) could generate and distribute all the necessary shares to the players. Since the trusted dealer knows all the masks, and thus all the data, the TD model is only legitimate for few computation outsourcing scenarios.

[0127] We now explain how to generate the same numerical masking data efficiently in the more traditional honest-but-curious (HBC) model. To do so, we keep an external entity, called again the dealer, who participates in an interactive protocol to generate the numerical masking data, but sees only masked information. Since the numerical masking data in both the HBC and TD models are similar, the online phase is unchanged. Notice that in this HBC model, even if the dealer does not have access to the secret shares, he still has more power than the players. In fact, if one of the players wants to gain information on the secret data, he has to collude with all other players, whereas the dealer would need to collaborate with just one of them.

[0128] 5.1 Honest but Curious Communication Channels

[0129] In what follows, we suppose that, during the offline phase, a private channel exists between each player and the dealer. In the case of an HBC dealer, we also assume that an additional private broadcast channel (a channel to which the dealer has no access) exists between all the players. Afterwards, the online phase only requires a public broadcast channel between the players. In practice, because of the underlying encryption, private channels (e.g., SSL connections) have a lower throughput (generally .apprxeq.20 MB/s) than public channels (plain TCP connections, generally from 100 to 1000 MB/s between cloud instances).

[0130] The figures presented in this section represent the communication channels between the players and the dealer in both the trusted dealer and the honest but curious models. Two types of communication channels are used: the private channels, that correspond in practice to SSL channels (generally <20 MB/s), and the public channels, corresponding in practice to TCP connections (generally from 100 MB to 1 GB/s). In the figures, private channels are represented with dashed lines, while public channels are represented with plain lines.

[0131] FIG. 3 illustrates a schematic of the connections during the offline phase of the MPC protocols in accordance with one embodiment. The figure shows the communication channels in both the trusted dealer model (left) and in the honest but curious model (right) used during the offline phase. In the first model, the dealer sends the numerical masking data to each player via a private channel. In the second model, the players have access to a private broadcast channel, shared between all of them and each player shares an additional private channel with the dealer. The private channels are denoted with dashed lines. The figure represents 3 players, but each model can be extended to an arbitrary number n of players. In the TD model, the dealer is the only one generating all the precomputed data. He uses private channels to send to each player his share of the numerical masking data (one-way arrows). In the HBC model, the players collaborate for the generation of the numerical masking data. To do that, they need an additional private broadcast channel between them, that is not accessible to the dealer.

[0132] FIG. 4 illustrates a schematic of the communication channels between players during the online phase in accordance with one embodiment. The figure shows the communication channels used during the online phase. The players send and receive masked values via a public broadcast channel (public channels are denoted with plain lines). Their number, limited to 3 in the example, can easily be extended to a generic number n of players. The online phase is the same in both the TD and the HBC models and the dealer is not present.

[0133] 5.2 Honest but Curious Methods

[0134] The majority of HBC protocols proposed in the literature present a scenario with only 2 players. In [11] and [3], the authors describe efficient HBC protocols that can be used to perform a fast MPC multiplication in a model with three players. The two schemes assume that the parties follow correctly the protocol and that two players do not collude. The scheme proposed in [11] is very complex to scale for more than three parties, while the protocol in [3] can be extended to a generic number of players, but requires a quadratic number of private channels (one for every pair of players). We propose a different protocol for generating the multiplicative numerical masking data in the HBC scenario, that is efficient for any arbitrary number n of players. In our scheme, the dealer evaluates the non-linear parts in the numerical masking data generation, over the masked data produced by the players, then he distributes the masked shares. The mask is common to all players, and it is produced thanks to the private broadcast channel that they share. Finally, each player produces his numerical masking data by unmasking the precomputed data received from the dealer.

[0135] We now present in detail two methods in the honest-but-curious scenario: a first for the generation of multiplicative Beaver's numerical masking data, and a second for the generation of the numerical masking data used in the computation of a power function. In both methods, the dealer and the players collaborate for the generation of numerical masking data and none of them is supposed to have access to the whole information. The general idea is that the players generate their secret shares (of .lamda. and .mu., in the first case, and of .lamda. only, in the second case), that each one keeps secret. They also generate secret shares of a common mask, that they share between each other via the broadcast channel, but which remains secret to the dealer. The players then mask their secret shares with the common mask and send them to the dealer, who evaluates the non-linear parts (product in the first method and power in the second method). The dealer generates new additive shares for the result and sends these values back to each player via the private channel. This way, the players don't know each other's shares. Finally, the players, who know the common mask, can independently unmask their secret shares, and obtain their final share of the numerical masking data, which is therefore unknown to the dealer.

[0136] Honest but curious numerical masking data generation method

Output: Shares (.lamda., .mu., z) with z=.lamda..mu.. 1: Each player P.sub.i generates a.sub.i, b.sub.i, .lamda..sub.i, .mu..sub.i (from the according distribution). 2: Each player P.sub.i shares with all other players a.sub.i, b.sub.i. 3: Each player computes a=+a.sub.1+ . . . +a.sub.n and b=b.sub.1+ . . . +b.sub.n. 4: Each player P.sub.i sends to the dealer a.sub.i+.lamda..sub.i and b.sub.i+.mu..sub.i. 5: The dealer computes a+.lamda., b+.mu. and w=(a+.lamda.)(b+.mu.). 6. The dealer creates w.sub.+ and sends w.sub.i to player P.sub.i, for i=1, . . . , n. 7: Player P.sub.1 computes z.sub.1=w.sub.1-ab-a.mu..sub.1-b.lamda..sub.1. 8: Player i for i=2, . . . n computes z.sub.i=w.sub.io.mu..sub.i-b.lamda..sub.i.

[0137] Honest but curious numerical masking data generation for the power function method

Output: Shares .lamda. and .lamda..sup.-.alpha.. 1: Each player P.sub.i generates .lamda..sub.i, a.sub.i (from the according distribution). 2: Each player P.sub.i shares with all other players a.sub.i. 3: Each player computes a=a.sub.1+ . . . +a.sub.n. 4: Each player P.sub.i generates z.sub.i in a way that .SIGMA..sub.i=1.sup.nz.sub.i=0. 5: Each player P.sub.i sends to the dealer z.sub.i+a.lamda..sub.i. 6: The dealer computes .mu..lamda. and w=(.mu..lamda.).sup.-.alpha.. 7. The dealer creates w.sub.+ and sends w.sub.i to player P.sub.i, for i=1, . . . , n. 8: Each player P.sub.i right-multiplies w.sub.i with .mu..sup..alpha. to obtain (.lamda..sup.-.alpha.).sub.i.

[0138] We now present and a third method for the generation of numerical masking data used for the evaluation of a trigonometric polynomial in the HBC scenario.

Output: Shares (.lamda., e.sup.im.sup.1.sup..lamda..sub.+, . . . , .sup.im.sup.N.sup..lamda..sub.+). 1: Each player P.sub.i generates .lamda..sub.i, a.sub.i (uniformly modulo 2.pi.) 2: Each player P.sub.i broadcasts a.sub.i to all other players. 3: Each player computes a=a.sub.1+ . . . +a.sub.n mod 2.pi.. 4: Each player P.sub.i sends to the dealer .lamda..sub.i+a.sub.i mod 2.pi.. 5: The dealer computes .lamda.+a mod 2.pi. and w.sup.(1)=e.sup.im.sup.1.sup.(.lamda.+a), . . . , w.sup.(N)=e.sup.im.sup.N.sup.(.lamda.+a) 6: The dealer creates w.sup.(1).sub.+, . . . , w.sup.(N).sub.+ and sends w.sup.(1), . . . , w.sup.(N) to player P.sub.i. 7: Each player P.sub.i multiplies each w.sub.i.sup.(j) by e.sup.-im.sup.j.sup.a to get (e.sup.im.sup.j.sup..lamda.).sub.i, for all j [1, N].

6 Application to Logistic Regression

[0139] In a classification problem one is given a data set, also called a training set, that we will represent here by a matrix X M.sub.N,k (), and a training vector y {0, 1}.sup.N. The data set consists of N input vectors of k features each, and the coordinate y.sub.i of the vector y corresponds to the class (0 or 1) to which the i-th element of the data set belongs to. Formally, the goal is to determine a function h.sub..theta.:.sup.k.fwdarw.{0, 1} that takes as input a vector x, containing k features, and which outputs h.sub..theta.(x) predicting reasonably well y, the corresponding output value.

[0140] In logistic regression, typically one uses hypothesis functions h.sub..theta.:.sup.k+1.fwdarw.[0, 1] of the form h.sub..theta.(x)=sigmo(.theta..sup.Tx), where .theta..sup.Tx=.SIGMA..sub.i=0.sup.k.theta..sub.ix.sub.i and x.sub.0=1. The vector .theta., also called model, is the parameter that needs to be determined. For this, a convex cost function C.sub.x,y (.theta.) measuring the quality of the model at a data point (x, y) is defined as

C.sub.x,y(.theta.)=-y log h.sub..theta.(x)-(1-y)log(1-h.sub..theta.(x)).

[0141] The cost for the whole dataset is thus computed as .SIGMA..sub.i=1.sup.NC.sub.x.sub.i.sub.,y.sub.i(.theta.). The overall goal is to determine a model .theta. whose cost function is as close to 0 as possible. A common method to achieve this is the so called gradient descent which consists of constantly updating the model .theta. as

.theta.:=.theta.-.alpha..gradient.C.sub.x,y(.theta.),

where C.sub.x,y (.theta.) is the gradient of the cost function and .alpha.>0 is a constant called the learning rate. Choosing the optimal .alpha. depends largely on the quality of the dataset: if .alpha. is too large, the method may diverge, and if .alpha. is too small, a very large number of iterations are needed to reach the minimum. Unfortunately, tuning this parameter requires either to reveal information on the data, or to have access to a public fake training set, which is not always feasible in private MPC computations. This step is often silently ignored in the literature. Similarly, preprocessing techniques such as feature scaling, or orthogonalization techniques can improve the dataset, and allow to increase the learning rate significantly. But again, these techniques cannot easily be implemented when the input data is shared, and when correlation information should remain private.

[0142] In this work, we choose to implement the IRLS method [5, .sctn. 4.3], which does not require feature scaling, works with learning rate 1, and converges in much less iterations, provided that we have enough floating point precision. In this case, the model is updated as:

.theta.:=.theta.-H(.theta.).sup.-1C.sub.x,y(.theta.),

where H(.theta.) is the Hessian matrix.