Systems, Methods And Apparatuses For Stereo Vision And Tracking

TADI; Tej ; et al.

U.S. patent application number 16/532604 was filed with the patent office on 2019-08-06 and published on 2020-06-04 for systems, methods and apparatuses for stereo vision and tracking. The applicant listed for this patent is MINDMAZE HOLDING SA. Invention is credited to Corentin BARBIER, Leandre BOLOMEY, Nicolas BOURDAUD, Sylvain CARDIN, Frederic CONDOLO, Nicolas FREMAUX, Ieltxu GOMEZ LORENZO, Flavio LEVI CAPITAO CANTANTE, Jonas OSTLUND, Renaud OTT, Flavio ROTH, Jose RUBIO, Tej TADI.

| Publication Number | 20200177870 |

| Application Number | 16/532604 |

| Family ID | 62152585 |

| Publication Date | 2020-06-04 |


| United States Patent Application | 20200177870 |

| Kind Code | A1 |

| TADI; Tej ; et al. | June 4, 2020 |

SYSTEMS, METHODS AND APPARATUSES FOR STEREO VISION AND TRACKING

Abstract

A system, method and apparatus for stereo vision and tracking with a plurality of coupled cameras and optional sensors.

| Inventors: | Tej TADI (Lausanne, CH); Leandre BOLOMEY (Lausanne, CH); Nicolas FREMAUX (Lausanne, CH); Jose RUBIO (Lausanne, CH); Jonas OSTLUND (Lausanne, CH); Sylvain CARDIN (Lausanne, CH); Flavio ROTH (Lausanne, CH); Renaud OTT (Lausanne, CH); Frederic CONDOLO (Lausanne, CH); Nicolas BOURDAUD (Lausanne, CH); Flavio LEVI CAPITAO CANTANTE (Lausanne, CH); Corentin BARBIER (Lausanne, CH); Ieltxu GOMEZ LORENZO (Lausanne, CH) |
|---|---|
| Applicant: | MINDMAZE HOLDING SA |
| Family ID: | 62152585 |
| Appl. No.: | 16/532604 |
| Filed: | August 6, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| PCT/IB2018/000386 | Feb 7, 2018 | |
| 16532604 | | |
| 62598487 | Dec 14, 2017 | |
| 62553953 | Sep 4, 2017 | |
| 62456050 | Feb 7, 2017 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G02B 27/0172 20130101; G06F 2203/011 20130101; G02B 2027/0138 20130101; G06F 3/011 20130101; H04N 13/383 20180501; G06F 3/016 20130101; G06F 3/014 20130101; H04N 13/296 20180501; H04N 13/239 20180501; H04N 13/271 20180501 |

| International Class: | H04N 13/383 20180101 H04N013/383; G02B 27/01 20060101 G02B027/01 |

Claims

1. A stereo vision procurement apparatus for obtaining stereo visual data, comprising: a stereo RGB camera; a depth sensor; and an RGB-D fusion module, a processor; and a plurality of tracking devices to track movement of a subject, wherein: the processor is configured to process data from the tracking devices to form a plurality of sub-features, said sub-features are combined by said FPGA to form a feature to track movements of the subject, each of said stereo RGB camera and said depth sensor are configured to provide pixel data corresponding to a plurality of pixels, said RGB-D fusion module is configured to combine RGB pixel data from said stereo RGB camera and depth information pixel data from said depth sensor to form stereo visual pixel data (SVPD), and said RGB-D fusion module is implemented in an FPGA (field-programmable gate array).

2. The apparatus of claim 1, further comprising a de-mosaicing module configured to perform a method comprising: averaging the RGB pixel data associated with a plurality of green pixels surrounding red and blue sites for R(B) at B-G(R-G) sites or R(B) at R-G(B-G) sites, and reducing a number of green pixel values from the RGB pixel data to fit a predetermined pixel array (e.g., a 5×5 window) for R(B) at B(R) sites.

3. The apparatus of claim 2, wherein: said stereo RGB camera comprises a first camera and a second camera, each of said first and second cameras being associated with a clock on said FPGA, and said FPGA including a double clock sampler for synchronizing said clocks of said first and second cameras.

4. The apparatus of claim 3, further comprising: a histogram module comprising a luminance calculator for determining a luminance level of at least said RGB pixel data; and a classifier for classifying said RGB pixel data according to said luminance level, wherein said luminance level is transmitted to said stereo RGB camera as feedback.

5. The apparatus of claim 4, further comprising a white balance module configured to apply a smoothed GW (gray world) algorithm to said RGB pixel data.

6. The apparatus of claim 1, further comprising: a biological sensor configured to provide biological data, wherein: said biological sensor is selected from the group consisting of: an EEG sensor, a heartrate sensor, an oxygen saturation sensor, an EKG sensor, or EMG sensor, and a combination thereof, the processor is configured to process the biological data to form a plurality of sub-features, and said sub-features are combined by the FPGA to form a feature.

7. The apparatus of claim 1, wherein said FPGA is implemented as a field-programmable gate array (FPGA) comprising a system on a chip (SoC), including an operating system as a SOM (system on module).

8. The apparatus of claim 7, further comprising a CPU SOM for performing overflow operations from said FPGA.

9. The apparatus of claim 1, wherein said tracking devices comprise a plurality of wearable sensors.

10. The apparatus of claim 9, further comprising: a multi-modal interaction device in communication with a subject, said multi-modal interaction device comprising said plurality of tracking devices and at least one haptic feedback device, wherein: the processor is configured to process data from the tracking devices to form a plurality of tracking sub-features, and said sub-features are combined by said FPGA to form a feature to track movements of the subject and to provide feedback through said at least one haptic feedback device.

11. The apparatus of claim 1 further comprising: a memory; and wherein said processor is configured to perform a defined set of operations in response to receiving a corresponding instruction selected from an instruction set of codes, and said instruction set of codes include: a first set of codes for operating said RGB-D fusion module to synchronize RGB pixel data and depth pixel data, and for creating a disparity map; and a second set of codes for creating a point cloud from said disparity map and said depth pixel data.

12. The apparatus of claim 11, wherein said point cloud comprises a colorized point cloud.

13. The apparatus of claim 1 further comprising: a memory; and wherein said processor is configured to perform a defined set of operations for performing any of the functionality recited in claim 1 in response to receiving a corresponding instruction selected from an instruction set of codes, wherein said codes are stored in said memory.

14. The apparatus of claim 13, wherein said processor is configured to operate according to a set of codes selected from the instruction set for a de-noising process for a CFA (color filter array) image according to a W-means process.

15. The apparatus of claim 14, wherein said computational device comprises a second set of codes selected from the instruction set for operating a bad pixel removal process.

16. A system comprising the apparatus of claim 1, further comprising a display for displaying stereo visual data.

17. A method for processing image information comprising: receiving SVPD from the stereo camera of the apparatus of claim 1; performing RGB preprocessing on the input pixel data to produce preprocessed RGB image pixel data; using the RGB preprocessed image pixel data in the operation of the stereo camera with respect to at least one of an autogain and an autoexposure algorithm; rectifying the SVPD so as to control artifacts caused by the lens of the camera; and calibrating the SVPD so as to prevent distortion of the stereo pixel input data by the lens of the stereo camera.

18. The method of claim 17, further comprising colorizing the preprocessed RGB image pixel data, and creating a disparity map based on the colorized, preprocessed RGB image pixel data.

19. The method of claim 18, wherein calibration comprises matching the RGB pixel image data with depth pixel data.

20. The method of claim 19, wherein the disparity map is created by: obtaining depth pixel data from at least one of the stereo pixel input data, the preprocessed RGB image pixel data, and depth pixel data from a depth sensor, and checking differences between stereo images.

21. The method of claim 20, wherein said disparity map, plus depth pixel data from the depth sensor in the form of a calibrated depth map, is combined for the point cloud computation.

Description

FIELD OF THE DISCLOSURE

[0001] The present disclosure is directed to systems, methods and apparatuses for stereo vision and tracking, and in particular, to systems, methods and apparatuses for stereo vision which include a plurality of image sensors (e.g., cameras), as well as (in some embodiments) additional sensors, and which also include tracking of at least part of a user.

BACKGROUND OF THE DISCLOSURE

[0002] Stereoscopic cameras provide a stereo view and are well known. For example, International Patent Publication no. WO2014154839 is understood to describe a camera system for capturing stereo data using two RGB cameras combined with a depth sensor for tracking the motion of an object (e.g., a person). The computations of the system are performed by a separate computer, which can lead to lag. Other examples include:

[0003] The Persee product of Orbbec 3D (also known as Shenzhen Orbbec Co., Ltd.; https://orbbec3d.com/) combines camera functions with an ARM processor in a single apparatus. The apparatus includes a single RGB camera, a depth sensor, an infrared receiving port and a laser projector to provide stereo camera information;

[0004] International Patent Publication no. WO2016192437 describes a system in which infrared sensor data is combined with RGB data to create a 3D image; and

[0005] The Zed product of Stereolabs Inc (https://www.stereolabs.com/zed/specs/) provides a 3D camera with tracking capabilities.

BRIEF SUMMARY OF THE DISCLOSURE

[0006] Embodiments of the present disclosure are directed to systems, methods and apparatuses for stereo vision which include tracking, and in particular, to systems, methods and apparatuses for stereo vision which include a plurality of image sensors (e.g., cameras), as well as (in some embodiments) additional sensors.

[0007] According to at least some embodiments there is provided a stereo vision procurement apparatus for obtaining stereo visual data, comprising: a stereo RGB camera; a depth sensor; and an RGB-D fusion module, wherein: each of said stereo RGB camera and said depth sensor are configured to provide pixel data corresponding to a plurality of pixels, said RGB-D fusion module is configured to combine RGB pixel data from said stereo RGB camera and depth information pixel data from said depth sensor to form stereo visual pixel data (SVPD), and said RGB-D fusion module is implemented in an FPGA (field-programmable gate array).

[0008] Optionally the apparatus further comprises a de-mosaicing module configured to perform a method comprising: averaging the RGB pixel data associated with a plurality of green pixels surrounding red and blue sites for R(B) at B-G(R-G) sites or R(B) at R-G(B-G) sites, and reducing a number of green pixel values from the RGB pixel data to fit a predetermined pixel array (e.g., a 5×5 window) for R(B) at B(R) sites.

[0009] Optionally said stereo RGB camera comprises a first camera and a second camera, each of said first and second cameras being associated with a clock on said FPGA, and said FPGA including a double clock sampler for synchronizing said clocks of said first and second cameras.

[0010] Optionally the apparatus further comprises a histogram module comprising a luminance calculator for determining a luminance level of at least said RGB pixel data; and a classifier for classifying said RGB pixel data according to said luminance level, wherein said luminance level is transmitted to said stereo RGB camera as feedback.
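By way of non-limiting illustration, the luminance calculator, classifier, and camera feedback described above may be sketched in software as follows. This is a minimal sketch only: the Rec. 601 luma weights, the equal-width bins, the target level of 128, and the function names `luminance`, `luminance_histogram` and `exposure_feedback` are assumptions made for illustration and are not taken from the disclosure, which contemplates an FPGA implementation.

```python
from typing import Iterable, List, Tuple

Pixel = Tuple[int, int, int]  # 8-bit (R, G, B) values


def luminance(pixel: Pixel) -> float:
    """Approximate luminance using Rec. 601 weights (an assumed weighting)."""
    r, g, b = pixel
    return 0.299 * r + 0.587 * g + 0.114 * b


def luminance_histogram(pixels: Iterable[Pixel], bins: int = 4) -> List[int]:
    """Classify pixels into equal-width luminance bins over the range [0, 255]."""
    counts = [0] * bins
    for p in pixels:
        idx = min(int(luminance(p) * bins / 256), bins - 1)
        counts[idx] += 1
    return counts


def exposure_feedback(pixels: List[Pixel], target: float = 128.0) -> float:
    """Return a multiplicative exposure correction (> 1 means brighten).

    The target level is a hypothetical tuning parameter.
    """
    mean = sum(luminance(p) for p in pixels) / len(pixels)
    return target / max(mean, 1.0)
```

In such a sketch, the value returned by `exposure_feedback` stands in for the luminance feedback transmitted to the stereo RGB camera, for example as an input to an autoexposure loop.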

[0011] Optionally the apparatus further comprises a white balance module configured to apply a smoothed GW (gray world) algorithm to said RGB pixel data.
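As a non-limiting illustration, a gray world white balance may be sketched as below. The gray world assumption scales each color channel so that its mean matches the overall gray mean. The disclosure does not define how the algorithm is "smoothed"; this sketch assumes, purely for illustration, that the per-channel gains are smoothed over successive frames with an exponential moving average. The names `gray_world_gains`, `smooth_gains`, `apply_gains` and the parameter `alpha` are hypothetical.

```python
from typing import List, Sequence, Tuple

Pixel = Tuple[float, float, float]
Gains = Tuple[float, float, float]


def gray_world_gains(pixels: Sequence[Pixel]) -> Gains:
    """Per-channel gains that move each channel mean onto the gray mean."""
    n = len(pixels)
    means = [sum(p[c] for p in pixels) / n for c in range(3)]
    gray = sum(means) / 3.0
    return tuple(gray / m if m > 0 else 1.0 for m in means)


def smooth_gains(previous: Gains, current: Gains, alpha: float = 0.1) -> Gains:
    """Exponential moving average of gains across frames (assumed smoothing)."""
    return tuple((1 - alpha) * p + alpha * c for p, c in zip(previous, current))


def apply_gains(pixels: Sequence[Pixel], gains: Gains,
                max_value: float = 255.0) -> List[Pixel]:
    """Scale each channel by its gain and clip to the valid range."""
    return [tuple(min(ch * g, max_value) for ch, g in zip(p, gains))
            for p in pixels]
```

Smoothing the gains rather than the pixel data keeps the correction stable when scene content changes abruptly between frames, which is one plausible reason for preferring a smoothed variant.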

[0012] Optionally the apparatus further comprises a processor; and a biological sensor configured to provide biological data, wherein: said biological sensor is selected from the group consisting of: an EEG sensor, a heartrate sensor, an oxygen saturation sensor, an EKG sensor, or EMG sensor, and a combination thereof, the processor is configured to process the biological data to form a plurality of sub-features, said sub-features are combined by the FPGA to form a feature.

[0013] Optionally said FPGA is implemented as a field-programmable gate array (FPGA) comprising a system on a chip (SoC), including an operating system as a SOM (system on module).

[0014] Optionally the apparatus further comprises a CPU SOM for performing overflow operations from said FPGA.

[0015] Optionally the apparatus further comprises a processor; and a plurality of tracking devices to track movement of a subject, wherein: the processor is configured to process data from the tracking devices to form a plurality of sub-features, and said sub-features are combined by said FPGA to form a feature to track movements of the subject.

[0016] Optionally the tracking devices comprise a plurality of wearable sensors.

[0017] Optionally the apparatus further comprises a processor; and a multi-modal interaction device in communication with a subject, said multi-modal interaction device comprising said plurality of tracking devices and at least one haptic feedback device, wherein: the processor is configured to process data from the tracking devices to form a plurality of tracking sub-features, and said sub-features are combined by said FPGA to form a feature to track movements of the subject and to provide feedback through said at least one haptic feedback device.

[0018] Optionally the apparatus further comprises a processor configured to perform a defined set of operations in response to receiving a corresponding instruction selected from an instruction set of codes; and a memory; wherein: said defined set of operations including: a first set of codes for operating said RGB-D fusion module to synchronize RGB pixel data and depth pixel data, and for creating a disparity map; and a second set of codes for creating a point cloud from said disparity map and said depth pixel data.

[0019] Optionally said point cloud comprises a colorized point cloud.
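For illustration only, the creation of a colorized point cloud from a disparity map may be sketched using the standard pinhole stereo relation Z = f·B/d, where f is the focal length in pixels, B is the baseline between the two cameras, and d is the disparity in pixels. The sketch below assumes rectified images and omits the combination with depth pixel data from the depth sensor; the function name `disparity_to_point_cloud` and all parameter names are hypothetical.

```python
from typing import List, Sequence, Tuple

Color = Tuple[int, int, int]
Point = Tuple[float, float, float, int, int, int]  # (X, Y, Z, R, G, B)


def disparity_to_point_cloud(
    disparity: Sequence[Sequence[float]],
    rgb: Sequence[Sequence[Color]],
    focal_px: float,
    baseline_m: float,
    cx: float,
    cy: float,
) -> List[Point]:
    """Back-project each pixel with a valid disparity into a colored 3D point."""
    points: List[Point] = []
    for v, row in enumerate(disparity):
        for u, d in enumerate(row):
            if d <= 0:
                continue  # no stereo match at this pixel, so no depth estimate
            z = focal_px * baseline_m / d   # depth from disparity
            x = (u - cx) * z / focal_px     # pinhole back-projection
            y = (v - cy) * z / focal_px
            points.append((x, y, z, *rgb[v][u]))
    return points
```

Each output tuple carries the color of the source pixel alongside its 3D position, which is one simple way to represent a colorized point cloud.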

[0020] Optionally the apparatus further comprises a memory; and a processor configured to perform a defined set of operations for performing any of the functionality as described herein in response to receiving a corresponding instruction selected from an instruction set of codes.

[0021] Optionally said processor is configured to operate according to a set of codes selected from the instruction set for a de-noising process for a CFA (color filter array) image according to a W-means process.

[0022] Optionally said computational device comprises a second set of codes selected from the instruction set for operating a bad pixel removal process.

[0023] According to at least some embodiments there is provided a system comprising the apparatus as described herein, further comprising a display for displaying stereo visual data.

[0024] Optionally the system further comprises an object attached to a body of a user; and an inertial sensor, wherein said object comprises an active marker, input from said object is processed to form a plurality of sub-features, and said sub-features are combined by the FPGA to form a feature.

[0025] Optionally the system further comprises a processor for operating a user application, wherein said RGB-D fusion module is further configured to output a colorized point cloud to said user application.

[0026] Optionally said processor is configured to transfer SVPD to said display without being passed to said user application, and said user application is additionally configured to provide additional information for said display that is combined by said FPGA with said SVPD for output to said display.

[0027] Optionally said biological sensor is configured to output data via radio-frequency (RF), and wherein: the system further comprises an RF receiver for receiving the data from said biological sensor, and said feature from said FPGA is transmitted to said user application.

[0028] Optionally the system further comprises at least one of a haptic or tactile feedback device, the device configured to provide at least one of haptic or tactile feedback, respectively, according to information provided by said user application.

[0029] According to at least some embodiments there is provided a stereo vision procurement system comprising: a first multi-modal interaction platform configurable to be in communication with one or more additional second multi-modal interaction platforms; a depth camera; a stereo RGB camera; and an RGB-D fusion chip; wherein: each of said stereo RGB camera and said depth camera are configured to provide pixel data corresponding to a plurality of pixels, the RGB-D fusion chip comprises a processor operative to execute a plurality of instructions to cause the chip to fuse said RGB pixel data and depth pixel data to form stereo visual pixel data.

[0030] Optionally the depth camera is configured to provide depth pixel data according to TOF (time of flight).

[0031] Optionally the stereo camera is configured to provide SVPD from at least one first and at least one second sensor.

[0032] Optionally the RGB-D fusion chip is configured to preprocess at least one of SVPD and depth pixel data so as to form a 3D point cloud with RGB pixel data associated therewith.

[0033] Optionally the fusion chip is further configured to form the 3D point cloud for tracking at least a portion of a body by at least the first multi-modal interaction platform.

[0034] Optionally the system further comprises at least one of a display and a wearable haptic device, wherein at least the first multi-modal interaction platform is configured to output data to at least one of the display and the haptic device.

[0035] Optionally the system further comprises one or more interactive objects or tools configured to perform at least one of giving feedback, receiving feedback, and receiving instructions from at least one of the multi-modal interaction platforms.

[0036] Optionally the system further comprises one or more sensors configured to communicate with at least one of the multi-modal interaction platforms.

[0037] Optionally the one or more sensors include at least one of: a stereo vision AR (augmented reality) component configured to display an AR environment according to at least one of tracking data of a user and data received from the first multi-modal interaction platform, and a second additional multi-modal interaction platform; an object tracking sensor; a facial detection sensor configured to detect a human face, or emotions thereof; and a markerless tracking sensor in which an object is tracked without additional specific markers placed on it.

[0038] According to at least some embodiments there is provided a multi-modal interaction platform system comprising: a multi-modal interaction platform; a plurality of wearable sensors each comprising an active marker configured to provide an active signal for being detected; an inertial sensor configured to provide an inertial signal comprising position and orientation information; at least one of a heart rate and oxygen saturation sensor, or a combination thereof; an EEG sensor; and at least one wearable haptic device, including one or more of a tactile feedback device and a force feedback device.

[0039] According to at least some embodiments there is provided a method for processing image information comprising: receiving SVPD from a stereo camera; performing RGB preprocessing on the input pixel data to produce preprocessed RGB image pixel data; using the RGB preprocessed image pixel data in the operation of the stereo camera with respect to at least one of an autogain and an autoexposure algorithm; rectifying the SVPD so as to control artifacts caused by the lens of the camera; and calibrating the SVPD so as to prevent distortion of the stereo pixel input data by the lens of the stereo camera.

[0040] Optionally the method further comprises colorizing the preprocessed RGB image pixel data, and creating a disparity map based on the colorized, preprocessed RGB image pixel data.

[0041] Optionally calibration comprises matching the RGB pixel image data with depth pixel data.

[0042] Optionally the disparity map is created by: obtaining depth pixel data from at least one of the stereo pixel input data, the preprocessed RGB image pixel data, and depth pixel data from a depth sensor, and checking differences between stereo images.

[0043] Optionally said disparity map, plus depth pixel data from the depth sensor in the form of a calibrated depth map, is combined for the point cloud computation.

[0044] According to at least some embodiments there is provided an image depth processing method for depth processing of one or more images comprising: receiving TOF (time-of-flight) image data of an image from a TOF camera; creating at least one of a depth map or a level of illumination for each pixel from the TOF data; feeding the level of illumination into a low confidence pixel removal process comprising: comparing a distance that each pixel is reporting; correlating said distance of said each pixel to the illumination provided by said each pixel, removing any pixel upon the illumination provided by the pixel being outside a predetermined acceptable range such that the distance cannot be accurately determined; processing depth information to remove motion blur of the image, wherein motion blur is removed by removing artifacts at edges of moving objects in depth of the image; and applying at least one of temporal or spatial filters to the image data.
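As a non-limiting sketch of the low confidence pixel removal and temporal filtering steps described above, each depth pixel may be invalidated when its illumination falls outside an acceptable range, and the surviving pixels may then be blended with the previous frame. The threshold parameters, the use of 0.0 as the invalid marker, the blending rule, and the function names are assumptions for illustration only; motion blur removal is not shown.

```python
from typing import List, Sequence

Grid = Sequence[Sequence[float]]


def remove_low_confidence(depth: Grid, illumination: Grid,
                          min_level: float, max_level: float,
                          invalid: float = 0.0) -> List[List[float]]:
    """Invalidate depth pixels whose illumination is outside [min_level, max_level]."""
    return [
        [d if min_level <= a <= max_level else invalid
         for d, a in zip(depth_row, illum_row)]
        for depth_row, illum_row in zip(depth, illumination)
    ]


def temporal_filter(previous: Grid, current: Grid, alpha: float = 0.5,
                    invalid: float = 0.0) -> List[List[float]]:
    """Blend each valid pixel with the previous frame (simple assumed filter)."""
    out = []
    for prev_row, cur_row in zip(previous, current):
        row = []
        for p, c in zip(prev_row, cur_row):
            if c == invalid:
                row.append(invalid)      # keep removed pixels removed
            elif p == invalid:
                row.append(c)            # no history available for this pixel
            else:
                row.append(alpha * c + (1 - alpha) * p)
        out.append(row)
    return out
```

Pixels with too little illumination tend to report noisy distances and saturated pixels tend to report biased ones, which is why the sketch bounds the acceptable range on both sides.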

[0045] According to at least some embodiments there is provided a stereo image processing method comprising: receiving first data flow of at least one image from a first RGB camera and second data flow of at least one image from a second RGB camera; sending the first and second data flows to a frame synchronizer; and synchronizing, using the frame synchronizer, a first image frame from the first data flow and a second image frame from the second data flow such that time shift between the first image frame and the second image frame is substantially eliminated.

[0046] Optionally the method further comprises sampling, before sending the first and second data flows to the frame synchronizer, the first and second data flows such that each of the first and second data flows is synchronized with a single clock; and detecting which data flow is in advance of the other, and directing the advanced data flow to a First In, First Out (FIFO) buffer, such that the data from the advanced flow is retained by the frame synchronizer until the other data flow reaches the frame synchronizer.
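The FIFO-based frame synchronization described above may be illustrated, in a non-limiting way, by the following software sketch. It assumes that frames arrive in order and that none are dropped, so that frames can be paired by arrival order; an FPGA implementation would operate on clocked pixel streams rather than on whole frame objects. The class name `FrameSynchronizer` and the flow labels `"first"` and `"second"` are hypothetical.

```python
from collections import deque
from typing import Any, Deque, Dict, Optional, Tuple


class FrameSynchronizer:
    """Hold frames from the advanced flow in a FIFO until the other flow catches up."""

    def __init__(self) -> None:
        self._fifo: Dict[str, Deque[Any]] = {"first": deque(), "second": deque()}

    def push(self, flow: str, frame: Any) -> Optional[Tuple[Any, Any]]:
        """Queue a frame; return a (first, second) pair once both flows have one."""
        self._fifo[flow].append(frame)
        if self._fifo["first"] and self._fifo["second"]:
            return self._fifo["first"].popleft(), self._fifo["second"].popleft()
        return None  # the other flow has not yet delivered its frame
```

For example, if the first flow delivers two frames before the second flow delivers any, both are retained, and each subsequent frame from the second flow releases the oldest retained frame as a synchronized pair.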

[0047] Optionally the method further comprises serializing frame data of the first and second data flows as a sequence of bytes.

[0048] Optionally the method further comprises detecting non-usable pixels.

[0049] Optionally the method further comprises constructing a set of color data from each of the first and second data flows.

[0050] Optionally the method further comprises color correcting each of the first and second data flows.

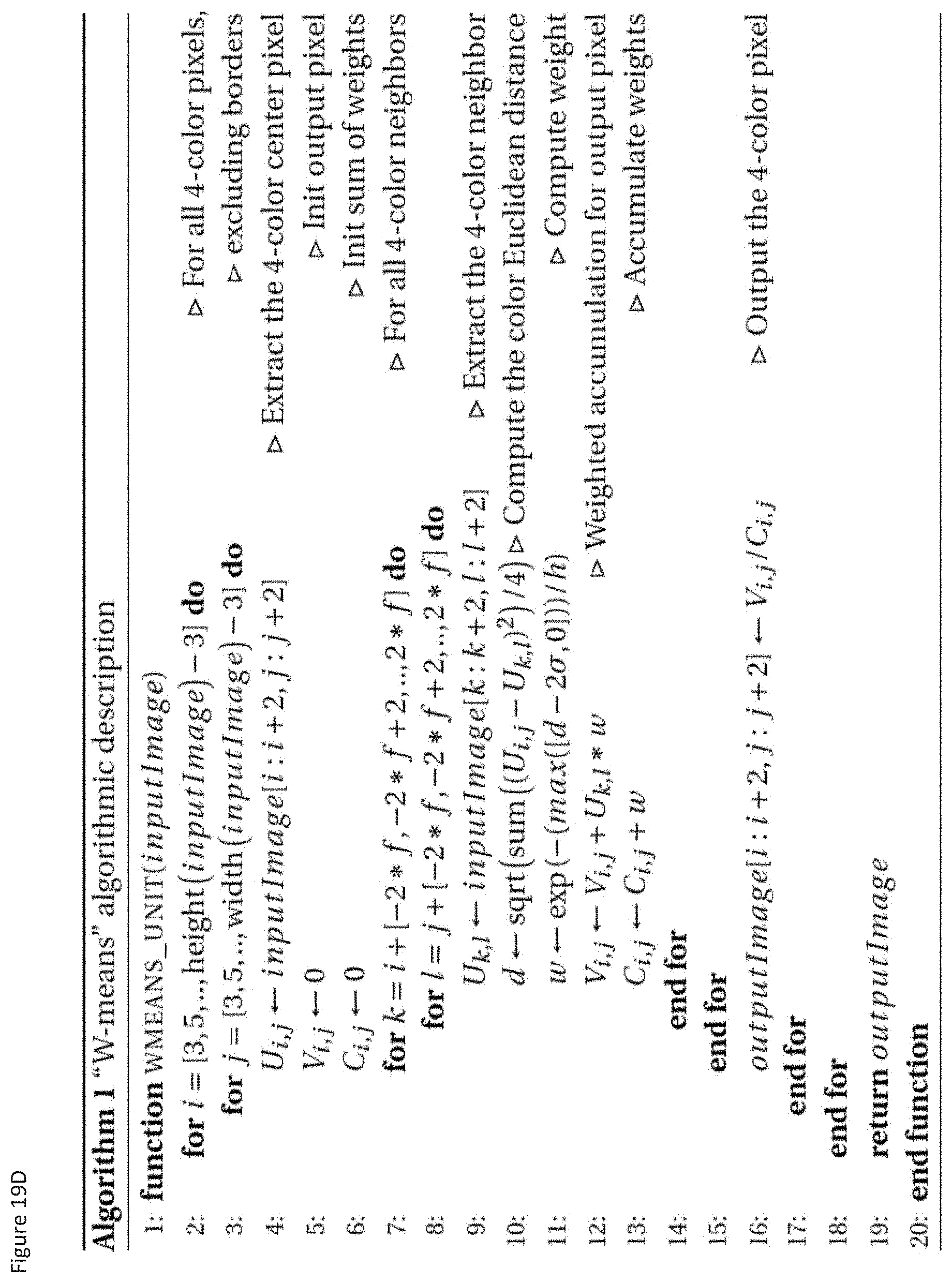

[0051] Optionally the method further comprises corresponding the first and second data flows into a CFA (color filter array) color image data; applying a denoising process for the CFA image data, the process comprising: grouping four (4) CFA colors to make a 4-color pixel for each pixel of the image data; comparing each 4-color pixel to neighboring 4-color pixels; attributing a weight to each neighbor pixel depending on its difference with the center 4-color pixel; and for each color, computing a weighted mean to generate the output 4-color pixel.

[0052] Optionally said denoising process further comprises performing a distance computation according to a Manhattan distance, computed between each color group neighbor and the center color group.
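By way of illustration only, the denoising process described above (grouping CFA colors into 4-color pixels, weighting neighbors by their Manhattan distance to the center, and computing a weighted mean per color) may be sketched as follows. The sketch makes several assumptions that are not stated in the disclosure: the 4-color pixels are formed from non-overlapping 2×2 CFA cells, the image dimensions are even, and the weight decays exponentially with distance under a hypothetical parameter `sigma`. All function names are likewise hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Cell = Tuple[float, float, float, float]  # one value per CFA color in a 2x2 cell


def to_four_color(cfa: Sequence[Sequence[float]]) -> List[List[Cell]]:
    """Group each non-overlapping 2x2 CFA cell into one 4-color pixel."""
    h, w = len(cfa), len(cfa[0])
    return [[(cfa[y][x], cfa[y][x + 1], cfa[y + 1][x], cfa[y + 1][x + 1])
             for x in range(0, w, 2)] for y in range(0, h, 2)]


def manhattan(a: Sequence[float], b: Sequence[float]) -> float:
    """Manhattan (L1) distance between two 4-color pixels."""
    return sum(abs(p - q) for p, q in zip(a, b))


def w_means_denoise(cells: Sequence[Sequence[Cell]], radius: int = 1,
                    sigma: float = 20.0) -> List[List[Cell]]:
    """Replace each 4-color pixel with a distance-weighted mean of its neighbors."""
    h, w = len(cells), len(cells[0])
    out: List[List[Cell]] = []
    for y in range(h):
        row: List[Cell] = []
        for x in range(w):
            center = cells[y][x]
            acc = [0.0, 0.0, 0.0, 0.0]
            total = 0.0
            for ny in range(max(0, y - radius), min(h, y + radius + 1)):
                for nx in range(max(0, x - radius), min(w, x + radius + 1)):
                    neighbor = cells[ny][nx]
                    # Similar neighbors get weights near 1, dissimilar ones near 0.
                    weight = math.exp(-manhattan(neighbor, center) / sigma)
                    total += weight
                    for c in range(4):
                        acc[c] += weight * neighbor[c]
            row.append(tuple(a / total for a in acc))
        out.append(row)
    return out
```

Because the center pixel always contributes with weight 1, the total weight is never zero, and neighbors across a strong edge contribute little, which tends to preserve edges while averaging out noise.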

[0053] Optionally the method further comprises applying a bad pixel removal algorithm before said denoising process.

[0054] Optionally the apparatus as described herein is able to obtain SVPD and to track a user, wherein the apparatus further comprises: a body model; and one or more processors having computer instructions operating thereon configured to cause the processor to: fit data points from the depth sensor to the body model according to a probabilistic fitting algorithm, said probabilistic fitting algorithm being constrained according to at least one constraint defined according to human anatomy, identifying a plurality of data points as super points and assigning each of said super points an additional weight; wherein: a plurality of said data points are identified with joints of the anatomy, said super points are defined according to one or more objects attached to a body, each of said stereo RGB camera and said depth sensor are configured to provide data as a plurality of pixels, said RGB-D fusion module is configured to combine RGB data from said stereo RGB camera and depth information from said depth sensor to SVPD, and the depth sensor provides data to determine a three-dimensional location of a body in space according to a distance of the body from the depth sensor.

[0055] Optionally said one or more objects attached to the body comprise one or more of at least one active marker configured to provide a detectable signal and a passive object.

[0056] Optionally said data points identified with joints of the human body are identified according to a previously determined position as an estimate.

[0057] Optionally the body model comprises a template, said template including a standard model of a skeleton according to a hierarchy of joints as vertices and skinning, and a first determination of a position of at least one of the joints of the hierarchy of joints according to said template.

[0058] According to at least some embodiments there is provided a system comprising an apparatus as described herein, optionally comprising a characteristic of a system as described herein, further comprising a display for displaying SVPD.

[0059] Optionally the system further comprises one or more objects attached to the user; and an inertial sensor, wherein said one or more objects comprises an active marker, the computer instructions are configured to cause the processor to form a plurality of sub-features from input from said one or more objects and combining said sub-features into a feature.

[0060] Optionally the system further comprises at least one of a haptic feedback device and a tactile feedback device configured to provide at least one of haptic and tactile feedback according to information provided by said user application.

[0061] Optionally computer instructions include instructions which cause the processor to perform as a tracking engine.

[0062] Optionally the tracking engine is configured to track at least one of the position of the user's body and the position of one or more body parts of the user, including, but not limited to, one or more of an arm, a leg, a hand, a foot, and a head.

[0063] Optionally the tracking engine is configured to decompose signals representing physical actions made by the user into data representing a series of gestures.

[0064] Optionally the tracking engine is configured to decompose signals representing physical actions into data representing a series of gestures via classifier functionality.

[0065] Optionally the system further comprises a plurality of templates, wherein the computer instructions are further configured to cause the processor to initialize a template of the plurality of templates. Optionally the template features a model of a human body configured only as a plurality of parameters, only as a plurality of features, or both.

[0066] Optionally the plurality of parameters and/or features include a skeleton, and one or more joints.

[0067] Optionally the computer instructions are additionally configured to cause the processor to utilize the plurality of parameters and/or features to assist in tracking of the user's movements.

[0068] Optionally the computer instructions are configured to map the sensor data onto a GMM (Gaussian mixture model).

[0069] Optionally the body model includes a sparse-skin representation.

[0070] Optionally the computer instructions are additionally configured to cause the processor to suppress corresponding Gaussians.

[0071] Optionally data is mapped to a GMM.

[0072] Optionally said data is mapped to said GMM by a classifier.

[0073] Optionally the tracking engine includes a template engine configured to read a template from a template database, and the computer instructions are additionally configured to: cause the processor to operate as a GMM mapper, and send the template into the GMM mapper.

[0074] Optionally the computer instructions are additionally configured to cause the processor to operate as a point cloud decomposer, and the GMM mapper is configured to receive point cloud information from the point cloud decomposer.

[0075] Unless otherwise defined, all technical and scientific terms used herein have the same meaning as commonly understood by one of ordinary skill in the art to which this disclosure belongs. The materials, systems, apparatuses, methods, and examples provided herein are illustrative only and not intended to be limiting.

[0076] Implementation of the embodiments of the present disclosure includes performing or completing tasks, steps, and functions manually, automatically, or a combination thereof. Specifically, steps can be implemented by hardware, by software on an operating system, by firmware, and/or by a combination thereof. For example, as hardware, steps of at least some embodiments of the disclosure can be implemented as a chip or circuit (e.g., ASIC). As software, steps of at least some embodiments of the disclosure can be implemented as a number of software instructions being executed by a computer (e.g., a processor) using an operating system. Thus, in any case, selected steps of methods of at least some embodiments of the disclosure can be performed by a processor for executing a plurality of instructions.

[0077] Software (e.g., an application, computer instructions, code) which is configured to perform (or cause to be performed) certain functionality of some of the disclosed embodiments may also be referred to as a "module" for performing that functionality, and also may be referred to as a "processor" for performing such functionality. Thus, a processor, according to some embodiments, may be a hardware component, or, according to some embodiments, a software component.

[0078] Further to this end, in some embodiments, a processor may also be referred to as a module, and, in some embodiments, a processor may comprise one or more modules. In some embodiments, a module may comprise computer instructions (which can be a set of instructions, an application, software) which are operable on a computational device (e.g., a processor) to cause the computational device to conduct and/or achieve one or more specific functionality. Furthermore, the phrase "abstraction layer" or "abstraction interface", as used with some embodiments, can refer to computer instructions (which can be a set of instructions, an application, software) which are operable on a computational device (as noted, e.g., a processor) to cause the computational device to conduct and/or achieve one or more specific functionality. The abstraction layer may also be a circuit (e.g., an ASIC; see above) to conduct and/or achieve one or more specific functionality. Thus, for some embodiments, and claims which correspond to such embodiments, the noted feature/functionality can be described/claimed in a number of ways (e.g., abstraction layer, computational device, processor, module, software, application, computer instructions, and the like).

[0079] Although some embodiments are described with regard to a "computer", a "computer network," and/or a "computer operational on a computer network," it is noted that any device featuring a processor (which may be referred to as a "data processor"; a "pre-processor" may also be referred to as a "processor") and the ability to execute one or more instructions may be described as a computer, a computational device, and a processor (e.g., see above), including but not limited to a personal computer (PC), a server, a cellular telephone, an IP telephone, a smart phone, a PDA (personal digital assistant), a thin client, a mobile communication device, a smart watch, a head mounted display or other wearable that is able to communicate externally, a virtual or cloud based processor, a pager, and/or a similar device. Two or more of such devices in communication with each other may be a "computer network."

BRIEF DESCRIPTION OF THE DRAWINGS

[0080] Embodiments of the present disclosure are herein described, by way of example only, with reference to the accompanying drawings. With specific reference now to the drawings in detail, it is stressed that the particulars shown are by way of example and for purposes of illustrative discussion of the preferred embodiments of inventions disclosed herein, and are presented in order to provide what is believed to be the most useful and readily understood description of the principles and conceptual aspects of various embodiments of the inventions disclosed herein.

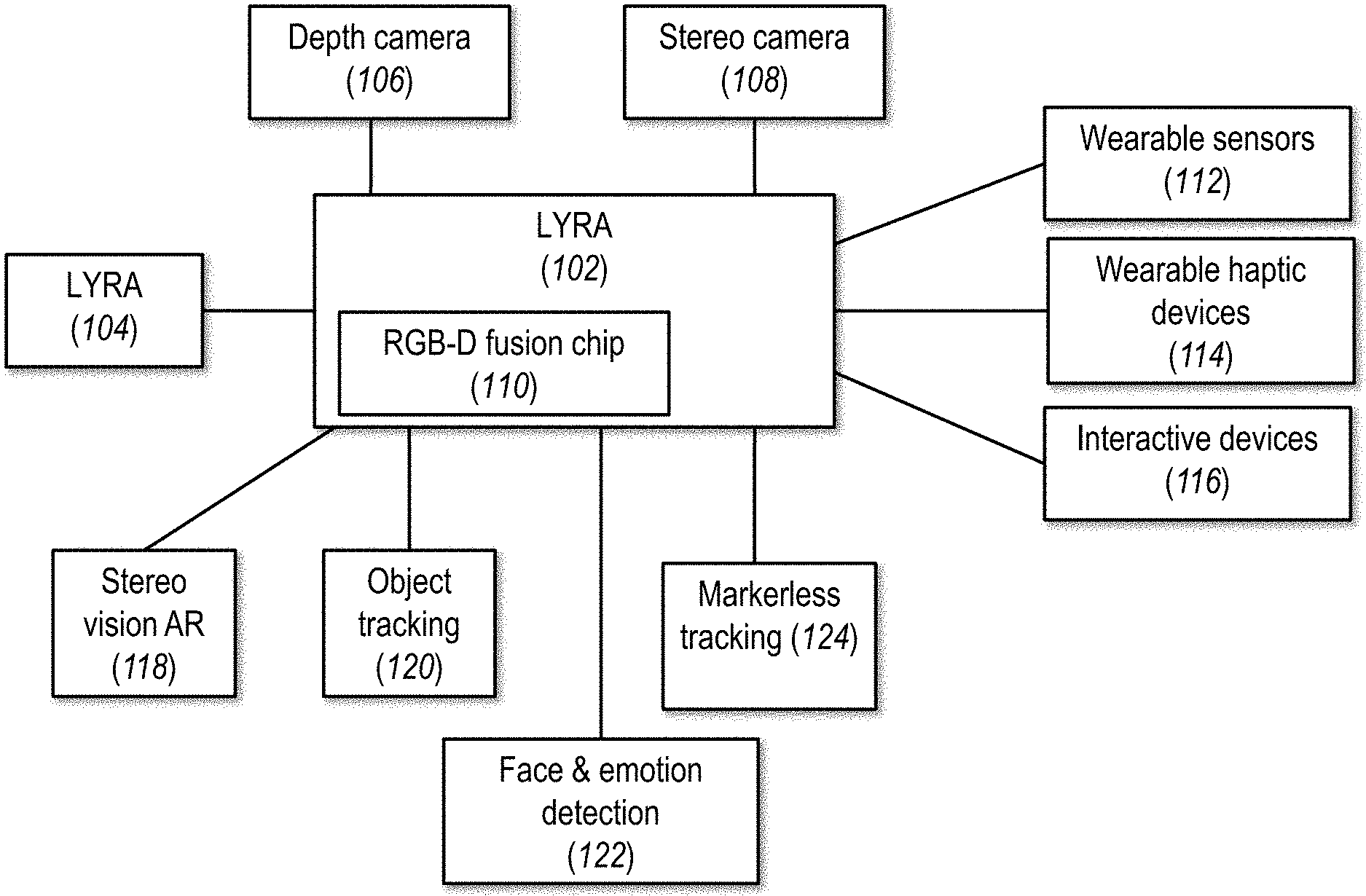

[0081] FIG. 1 shows a non-limiting example of a system according to at least some embodiments of the present disclosure;

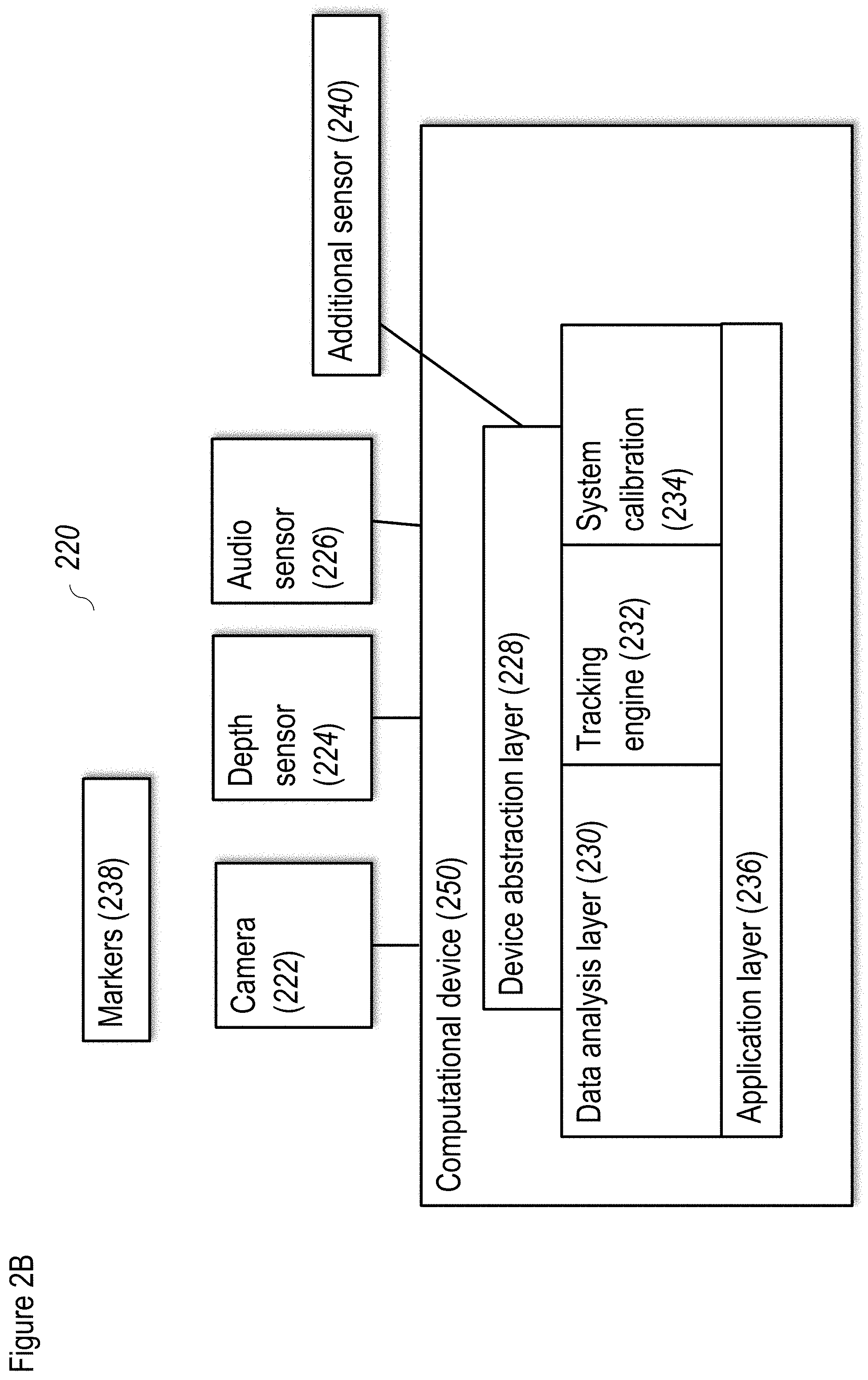

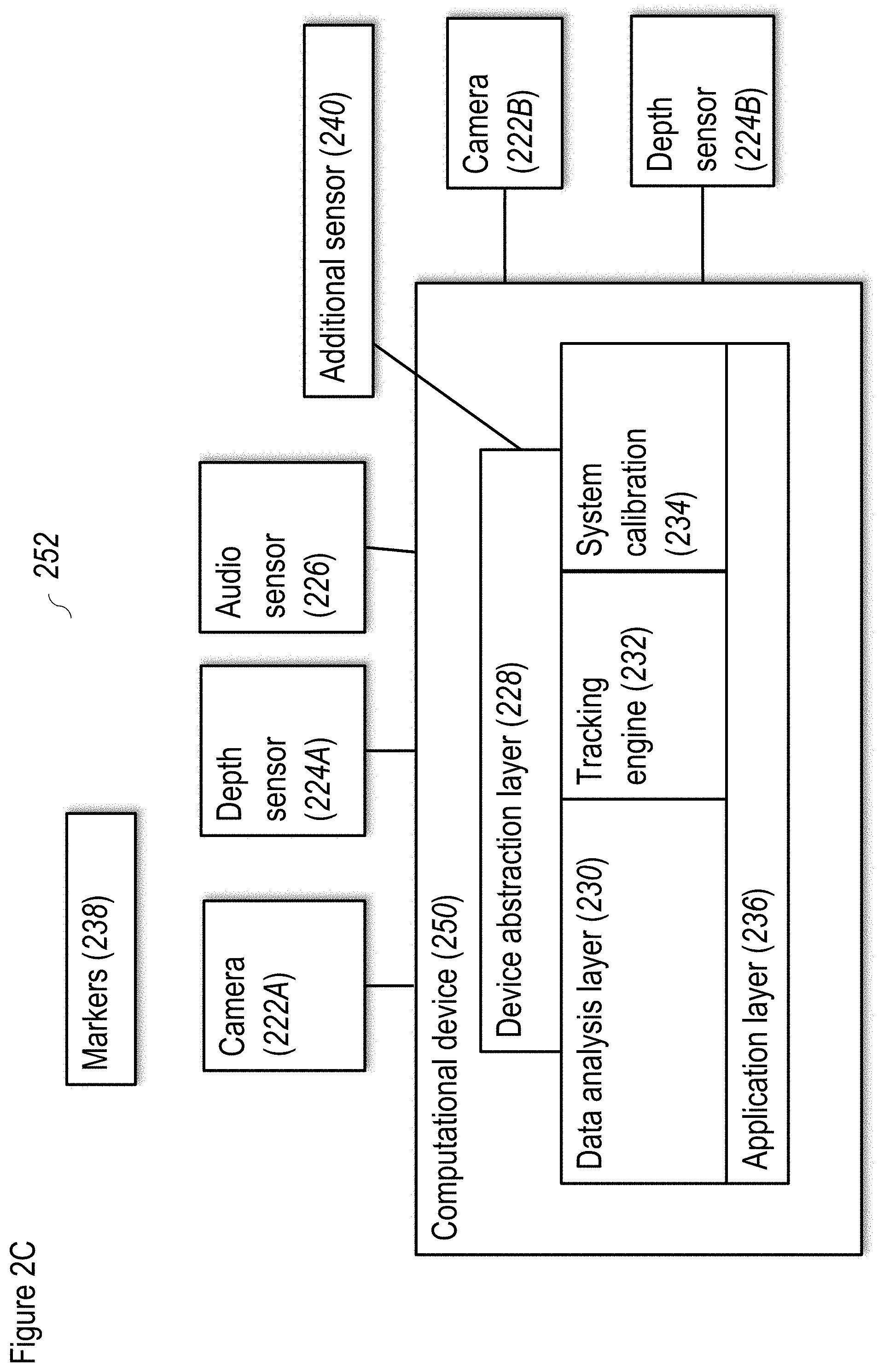

[0082] FIGS. 2A, 2B and 2C show additional details and embodiments of the system of FIG. 1;

[0083] FIG. 3 shows a non-limiting example of a method for preprocessing according to at least some embodiments of the present disclosure;

[0084] FIGS. 4A and 4B show a non-limiting example of a method for depth preprocessing according to at least some embodiments of the present disclosure;

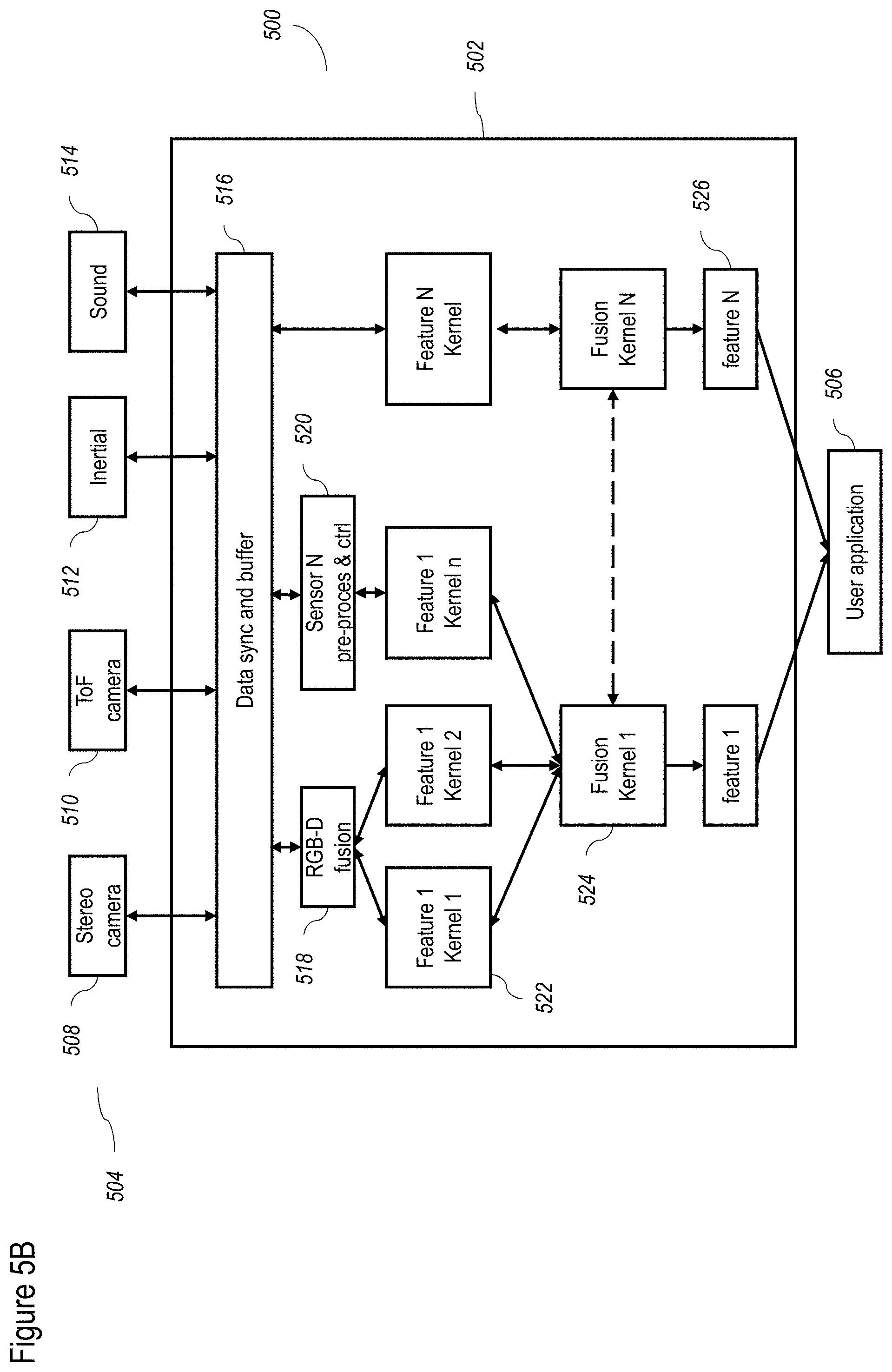

[0085] FIGS. 5A-5D show a non-limiting example of a data processing flow for the FPGA (field-programmable gate array) according to at least some embodiments of the present disclosure;

[0086] FIGS. 6A-6E show a non-limiting example of a hardware system for the camera according to at least some embodiments of the present disclosure;

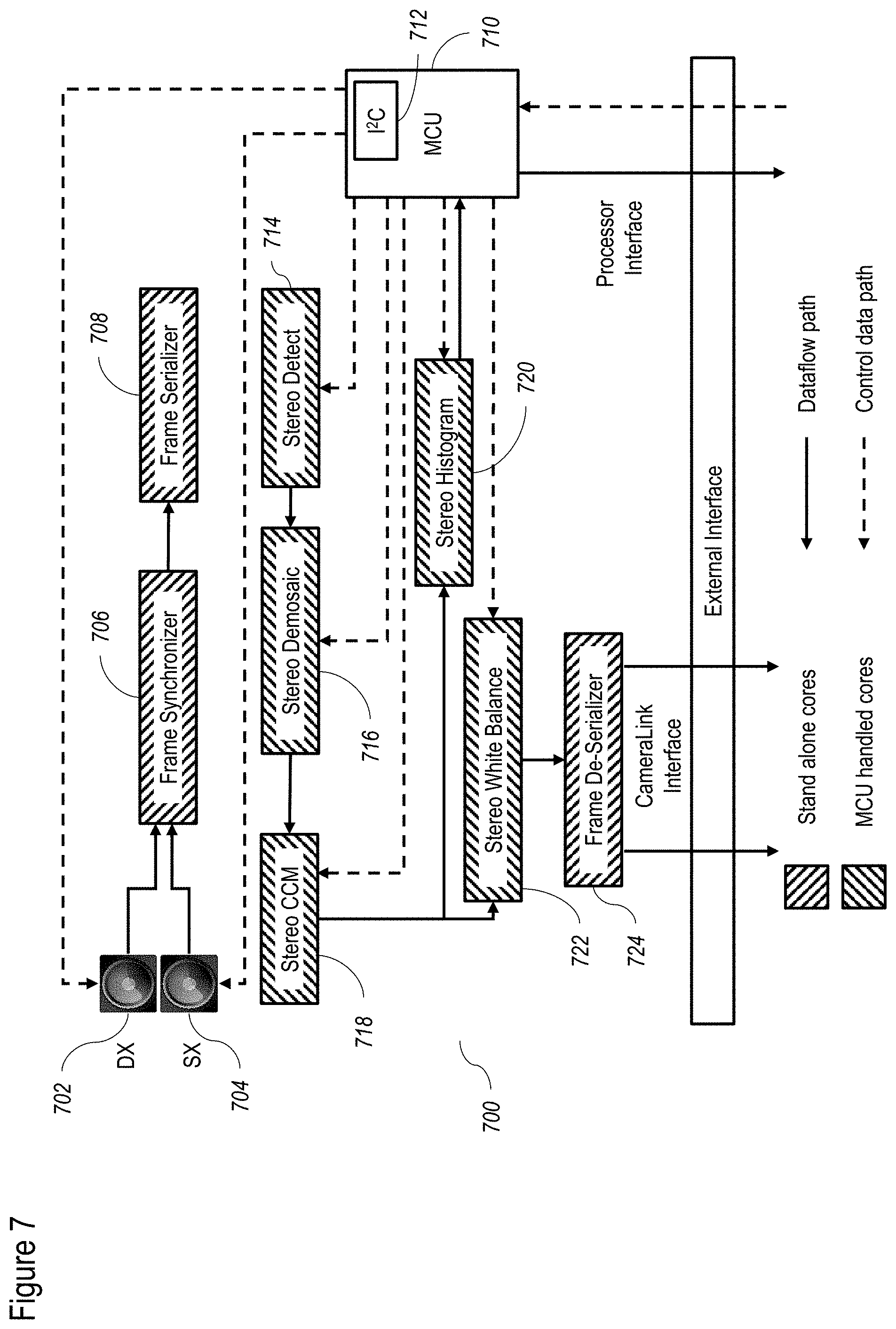

[0087] FIG. 7 shows a non-limiting example of a method for stereo processing according to at least some embodiments of the present disclosure;

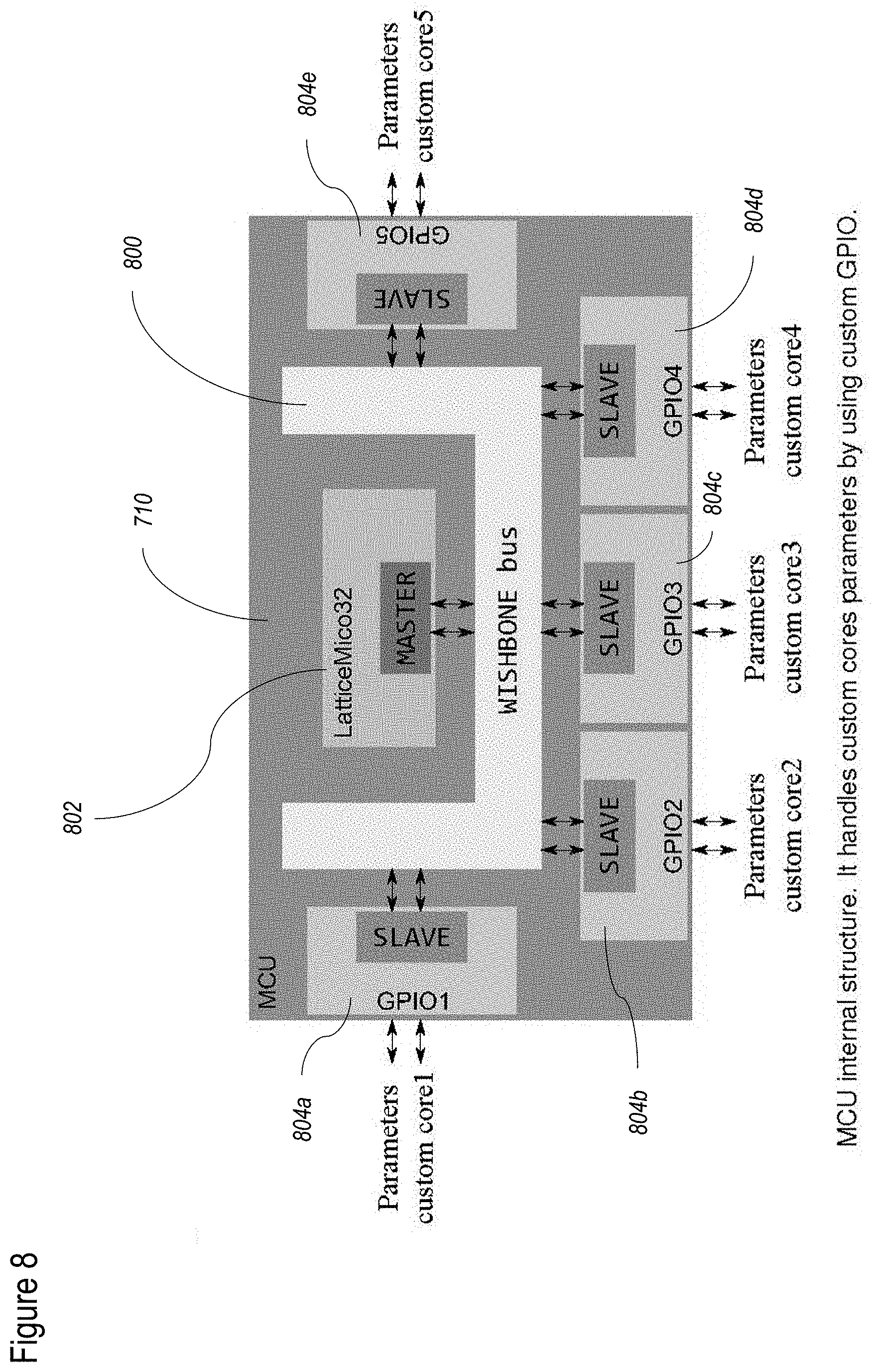

[0088] FIG. 8 shows a non-limiting example of an MCU configuration according to at least some embodiments of the present disclosure;

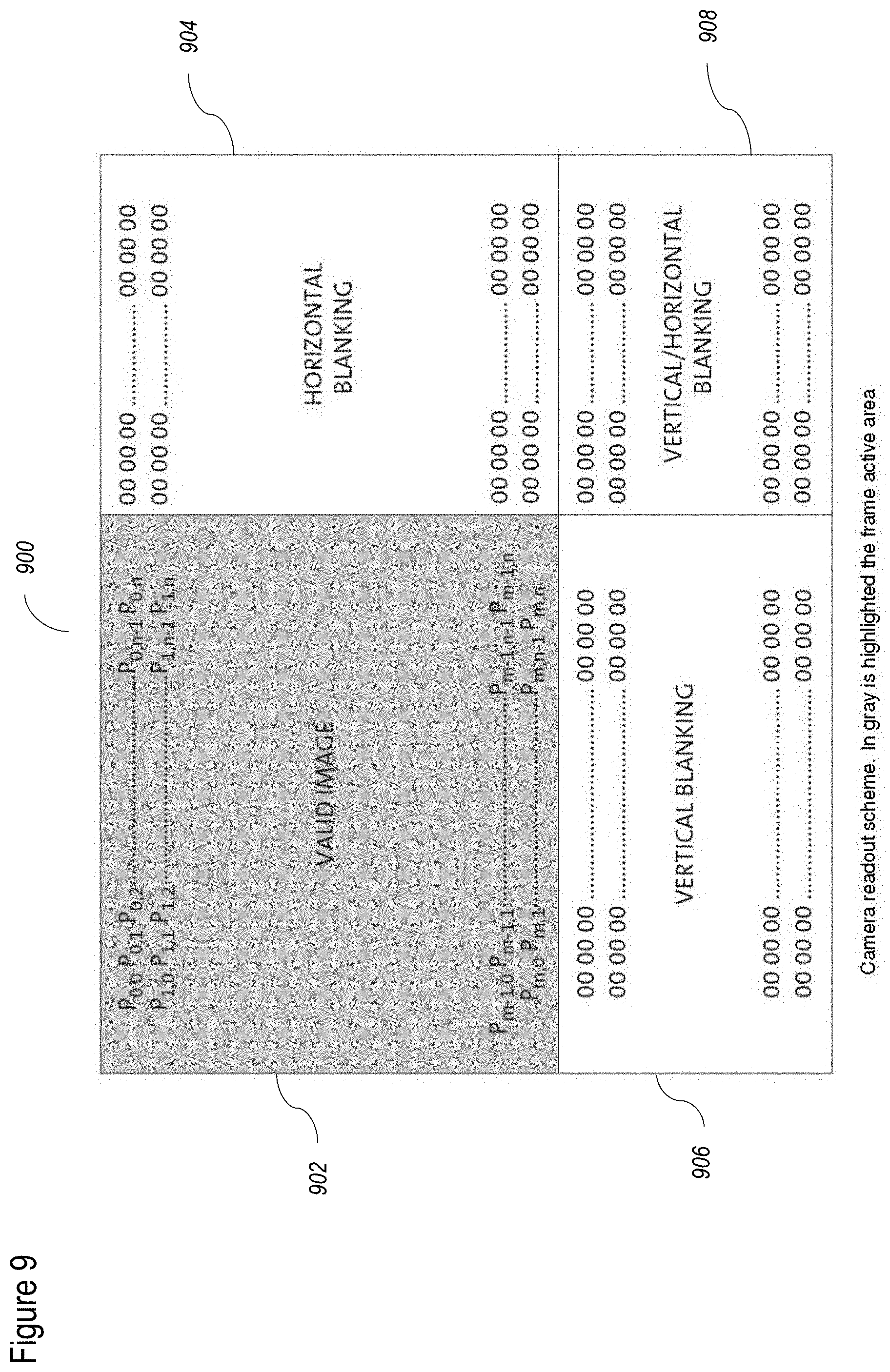

[0089] FIG. 9 shows a non-limiting example of a camera according to at least some embodiments of the present disclosure;

[0090] FIG. 10 shows a non-limiting example of a configuration for double clock sampler functions according to at least some embodiments of the present disclosure;

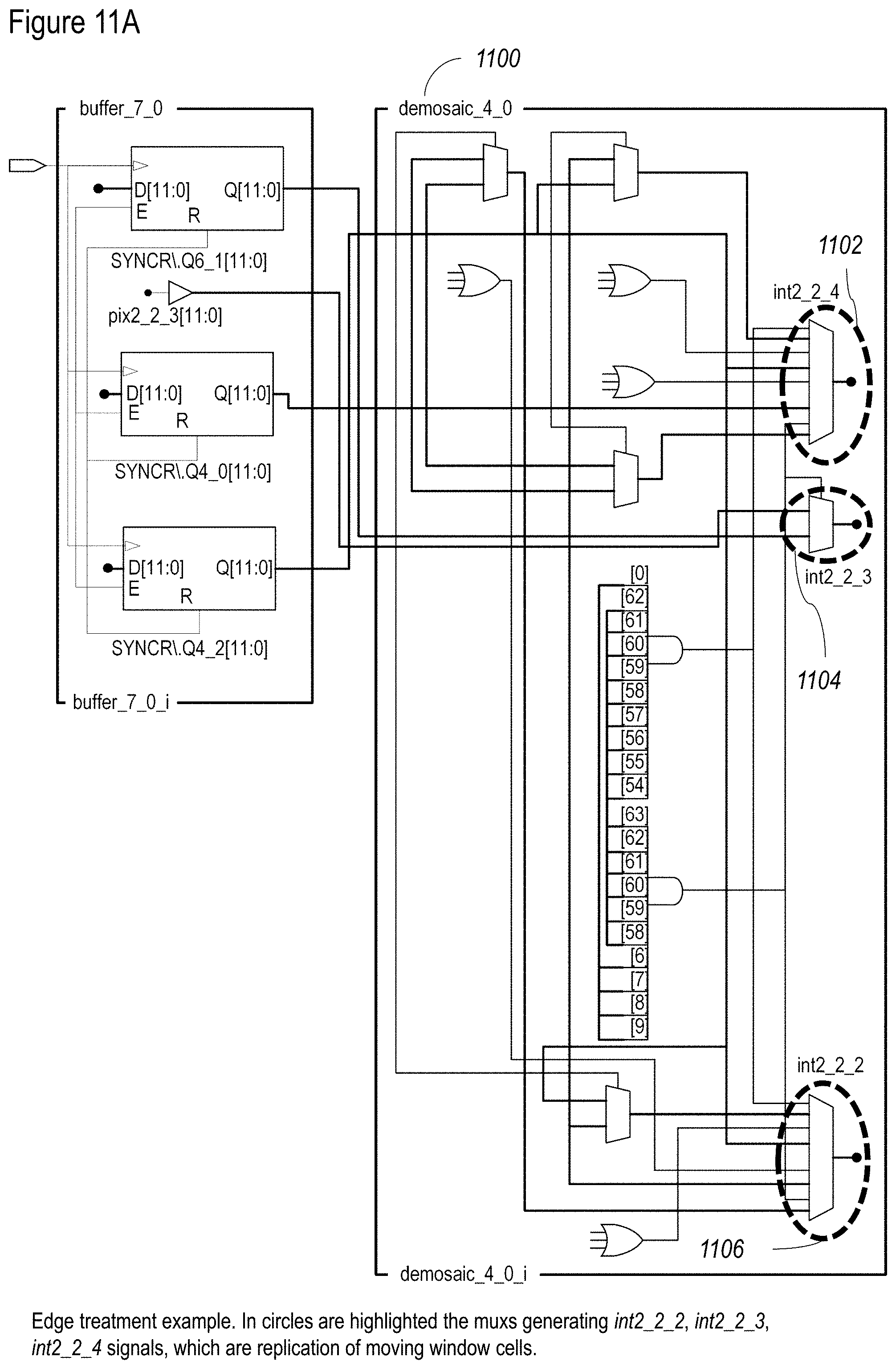

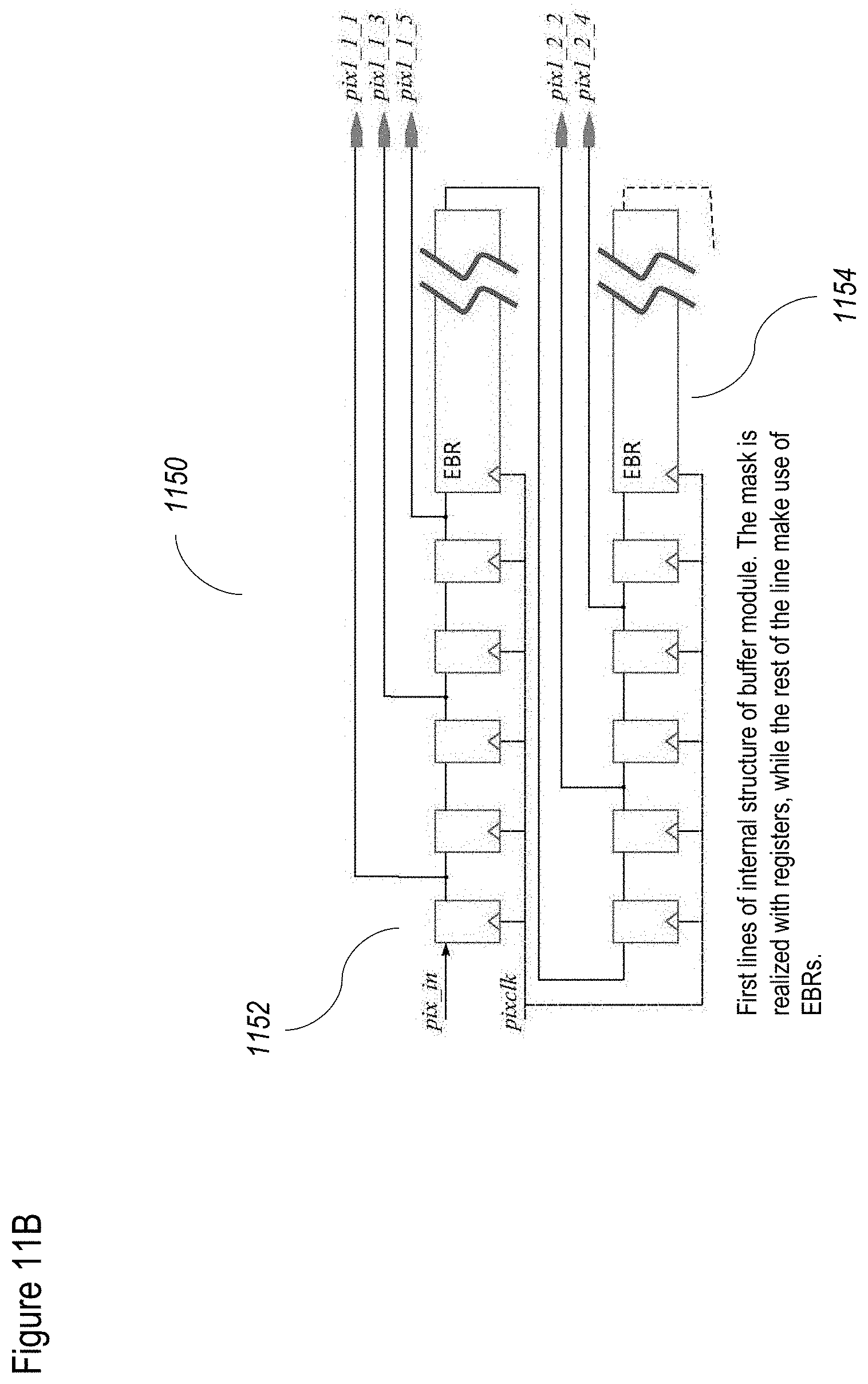

[0091] FIGS. 11A and 11B show a non-limiting example of a buffer configuration according to at least some embodiments of the present disclosure;

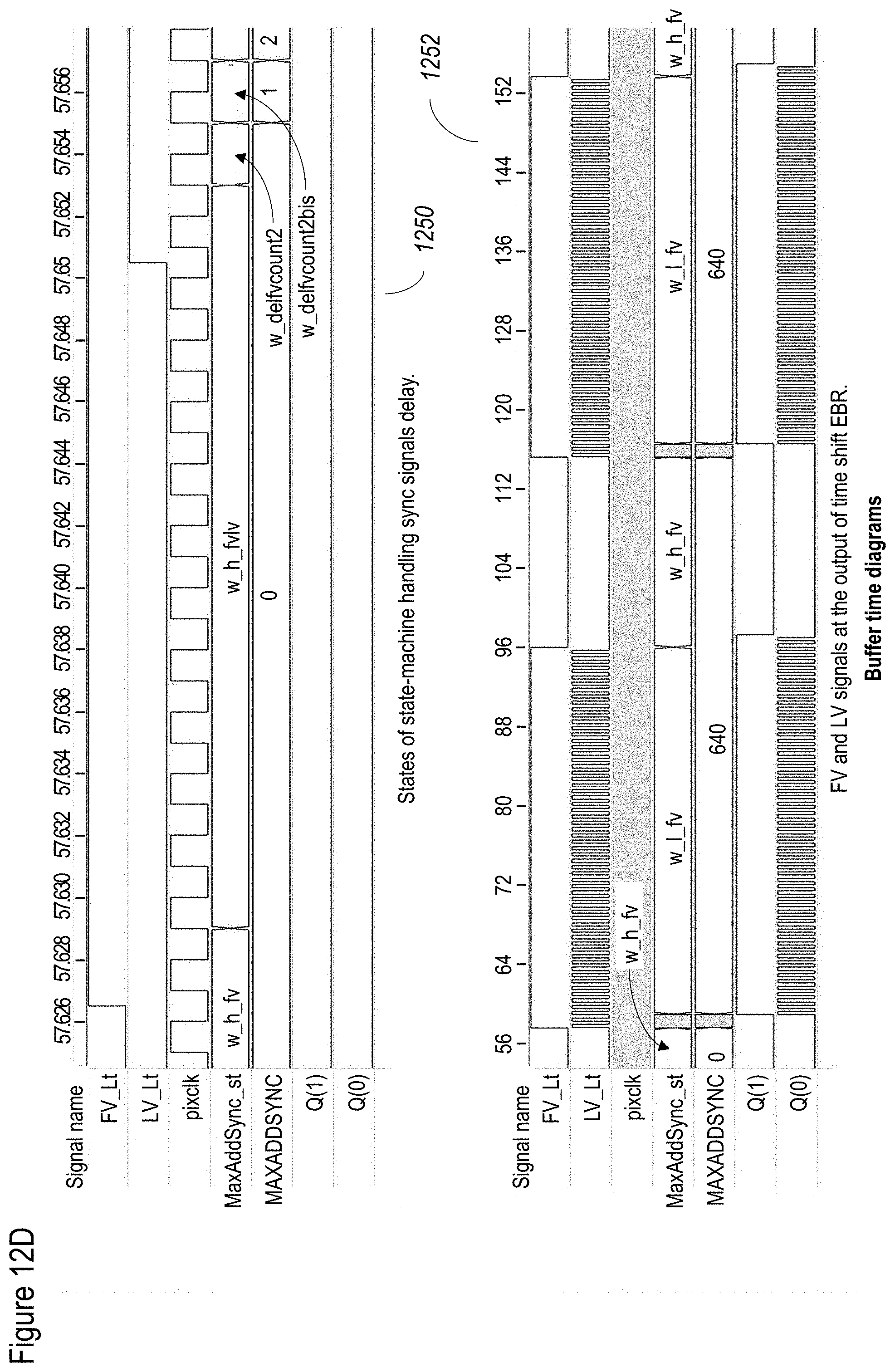

[0092] FIGS. 12A-12D show a non-limiting example of an internal buffer cells arrangement: FIG. 12A shows a global structure, FIG. 12B shows a mask for defective pixel detection, FIG. 12C shows a mask for the de-mosaic task, and FIG. 12D shows exemplary state machines;

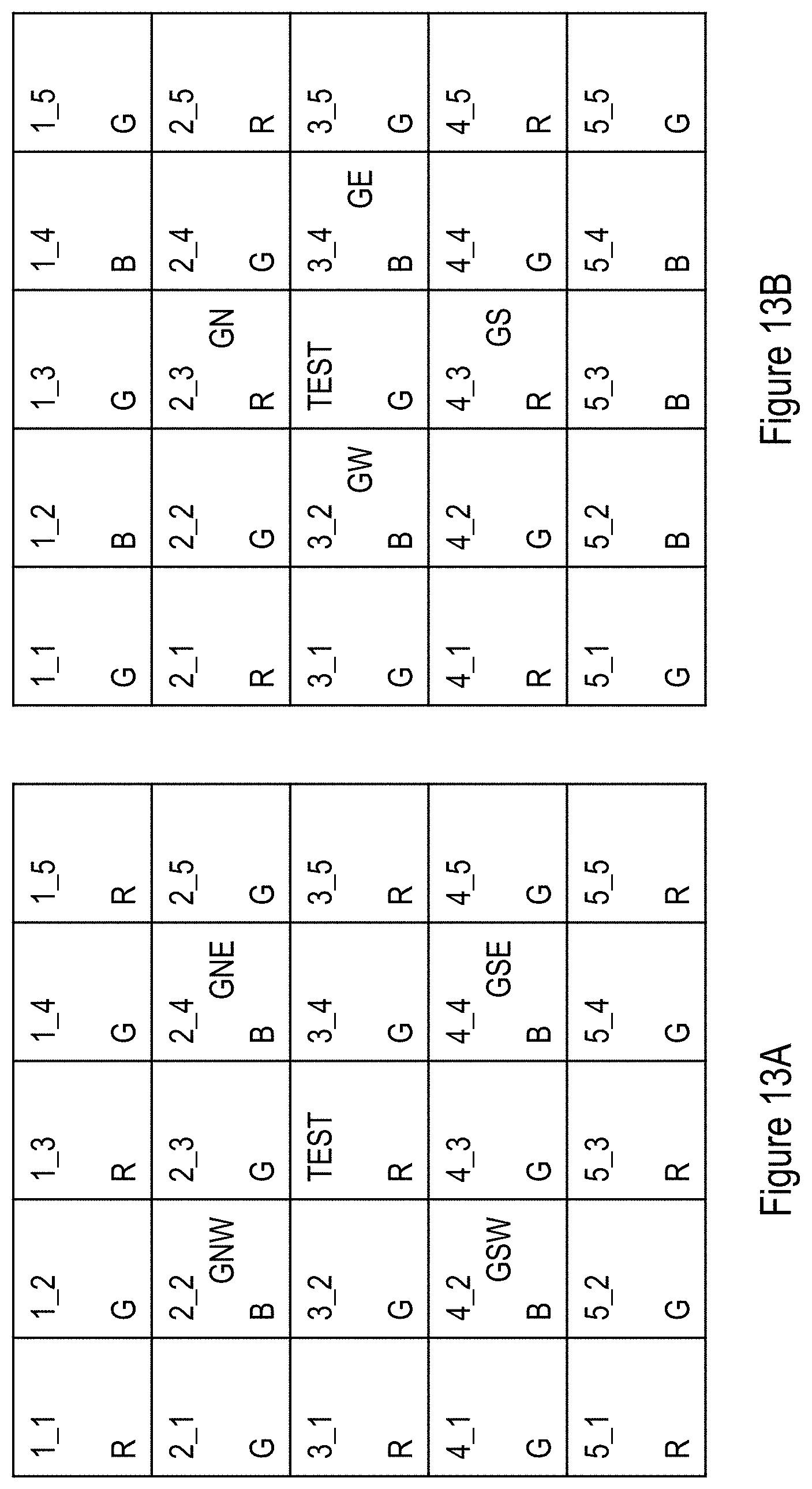

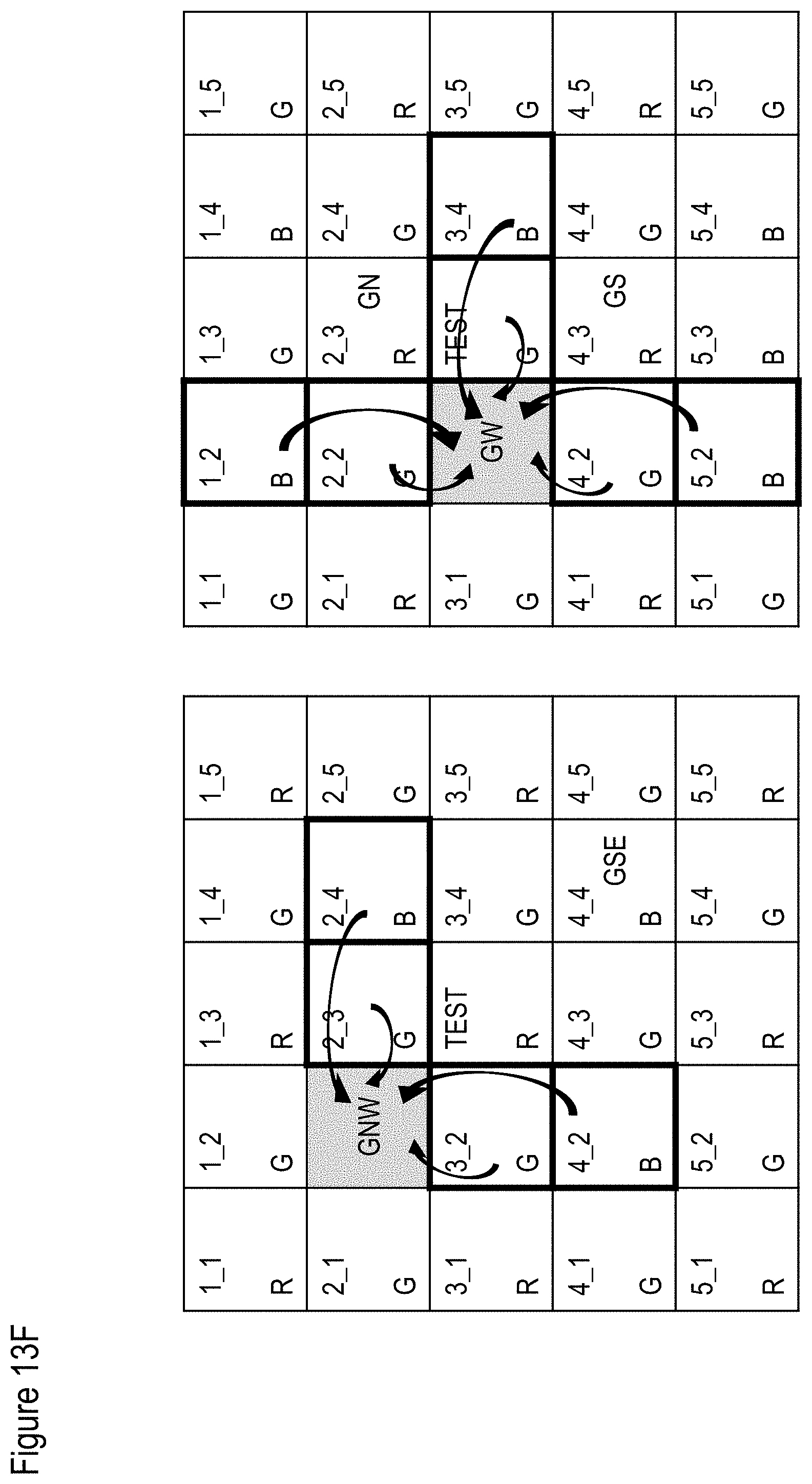

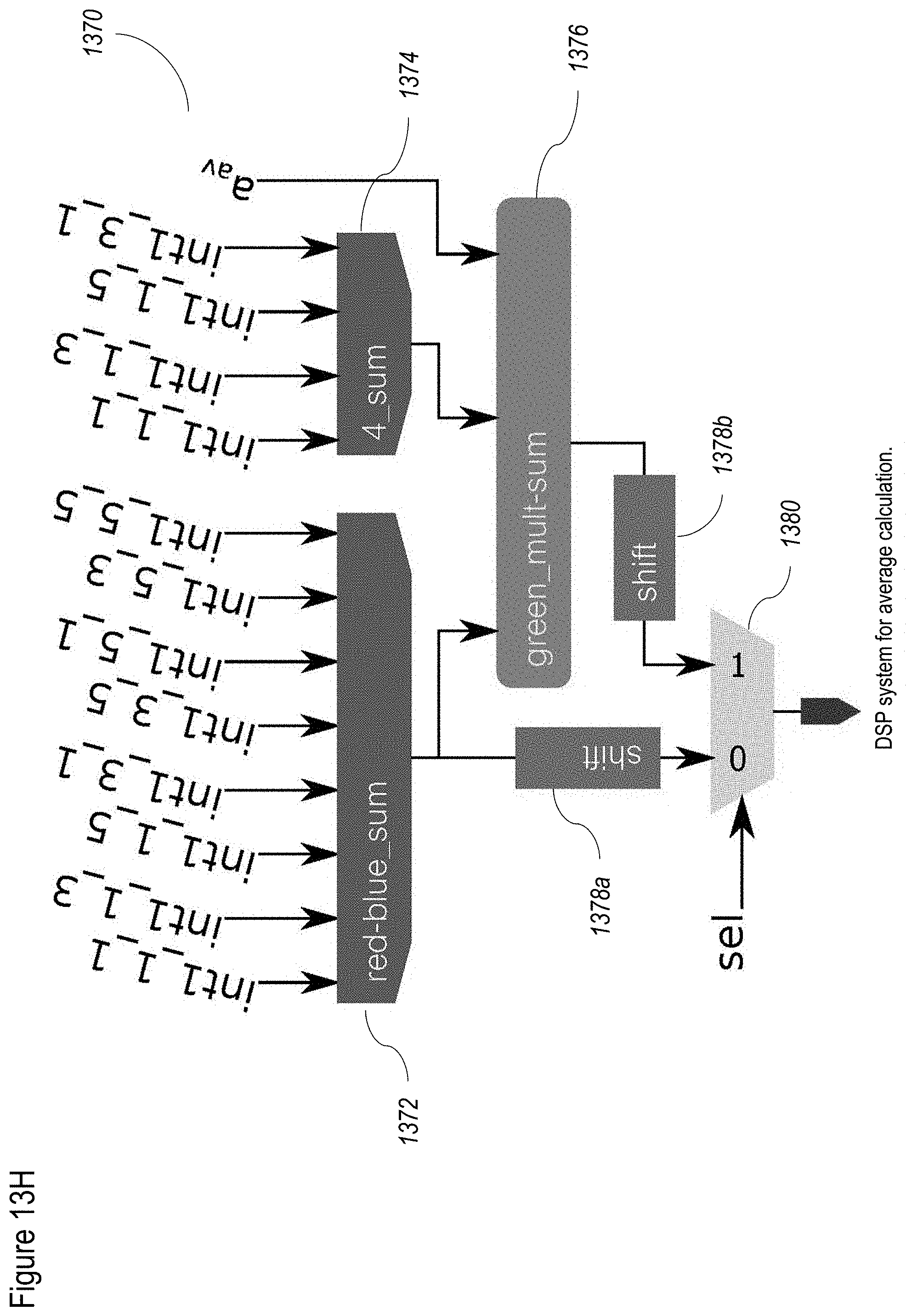

[0093] FIGS. 13A-13H show a non-limiting example of a method for de-mosaic according to at least some embodiments of the present disclosure;

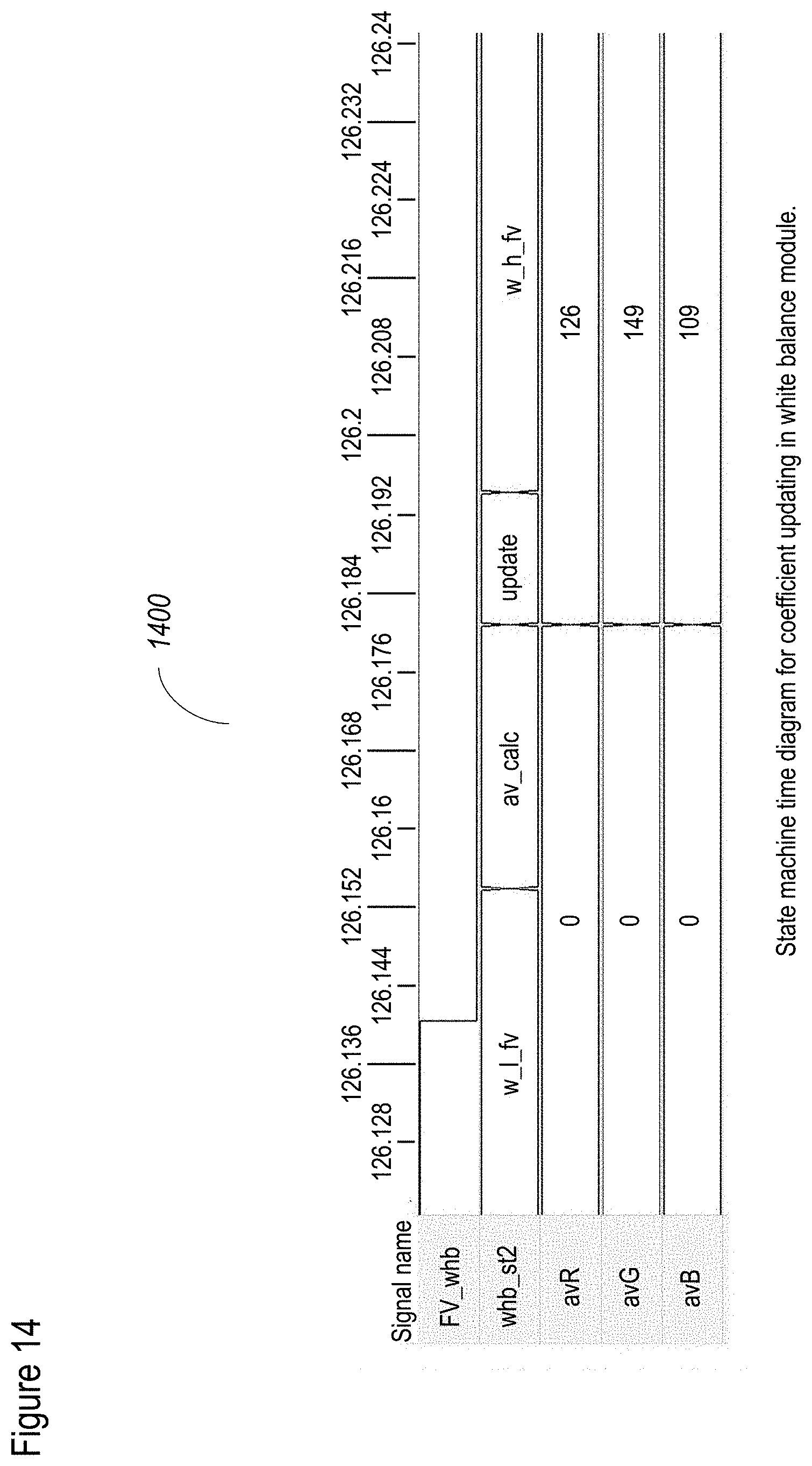

[0094] FIG. 14 shows a non-limiting example of a method for white balance correction according to at least some embodiments of the present disclosure;

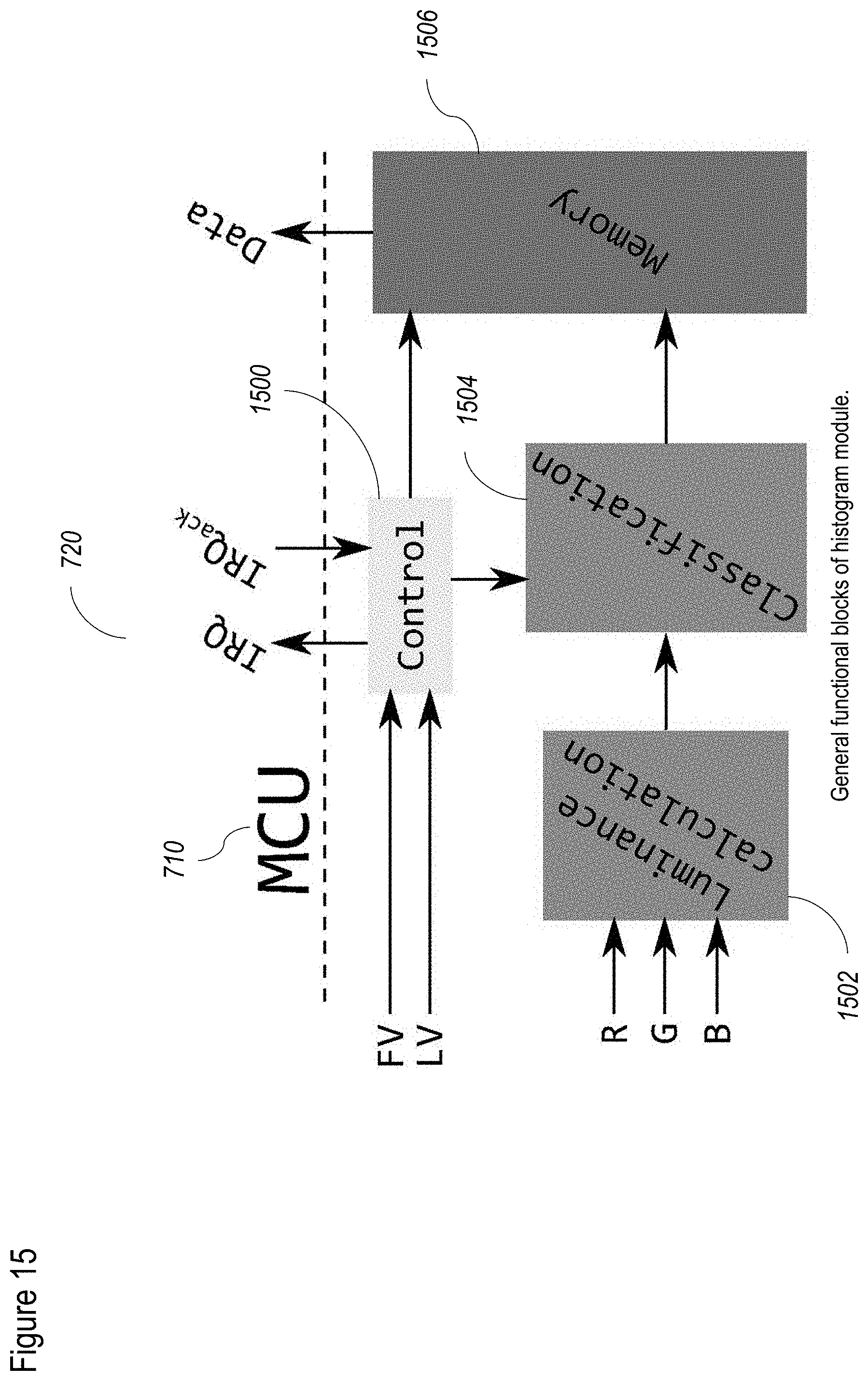

[0095] FIG. 15 shows a non-limiting example of a method for performing the histogram adjustment according to at least some embodiments of the present disclosure;

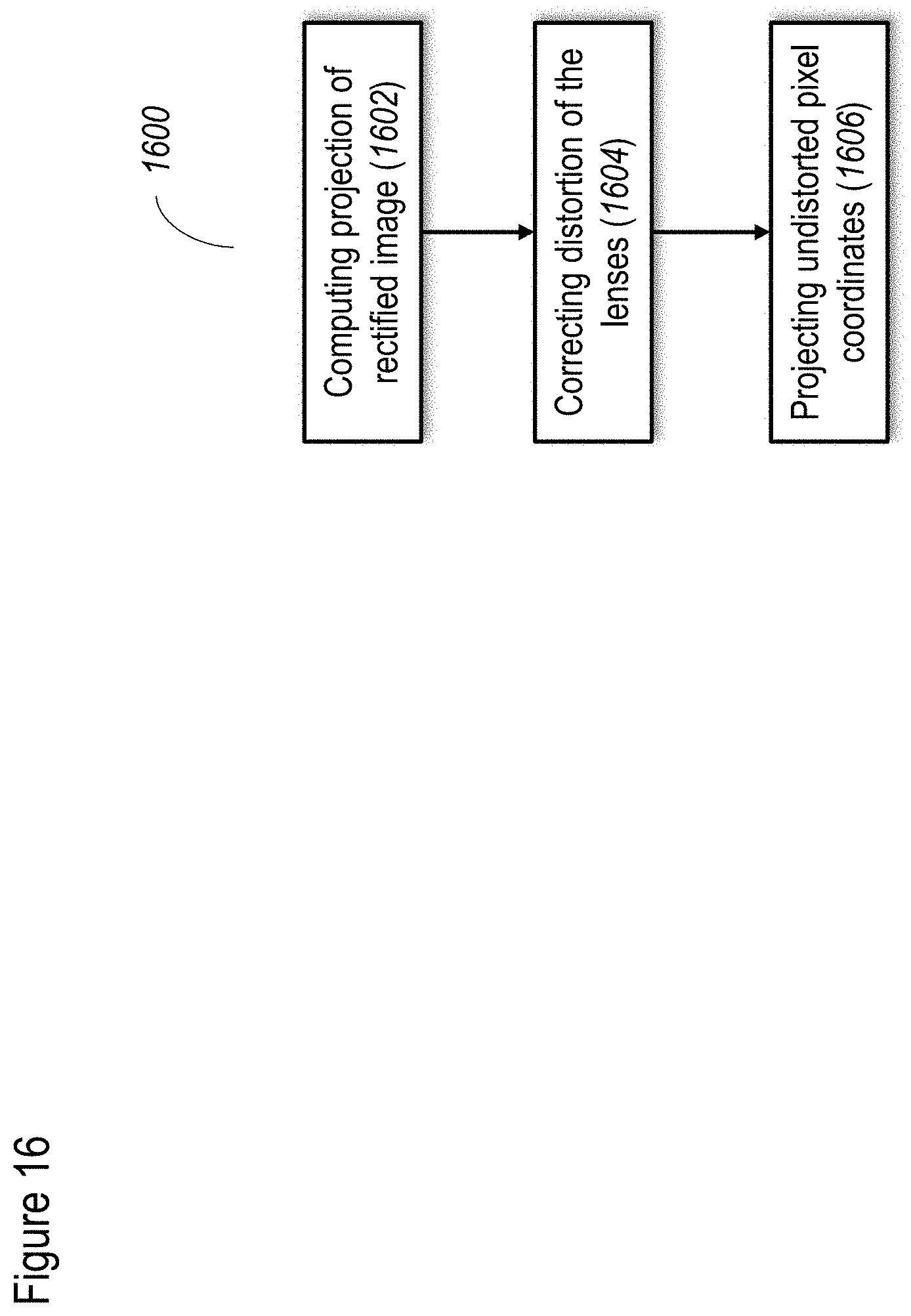

[0096] FIG. 16 shows an illustrative, exemplary, non-limiting process for stereo rectification according to at least some embodiments of the present disclosure;

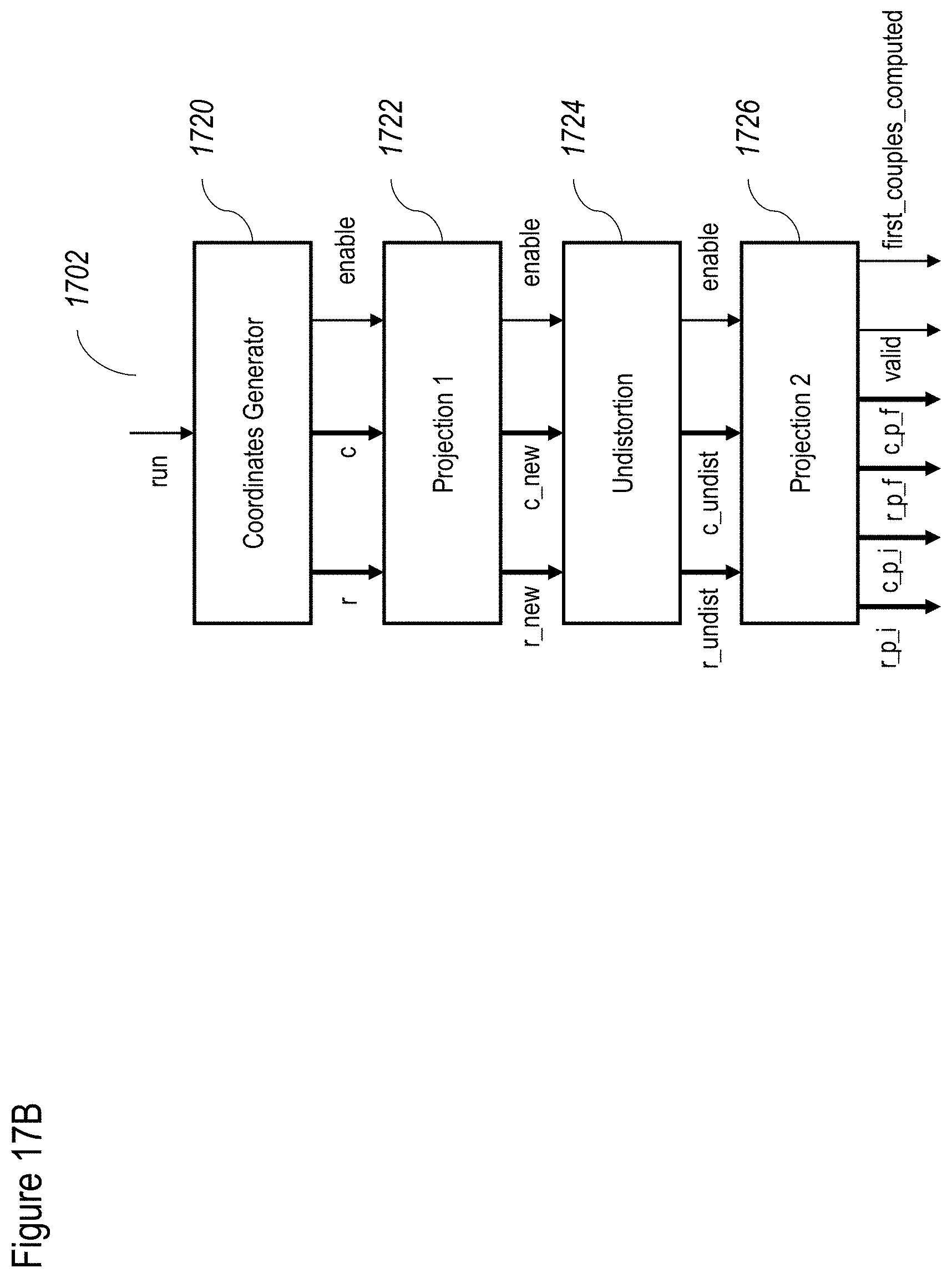

[0097] FIG. 17A shows an illustrative, exemplary, non-limiting system for stereo rectification according to at least some embodiments of the present disclosure;

[0098] FIG. 17B shows an illustrative, exemplary, non-limiting mapper module for use with the system of FIG. 17A according to at least some embodiments of the present disclosure;

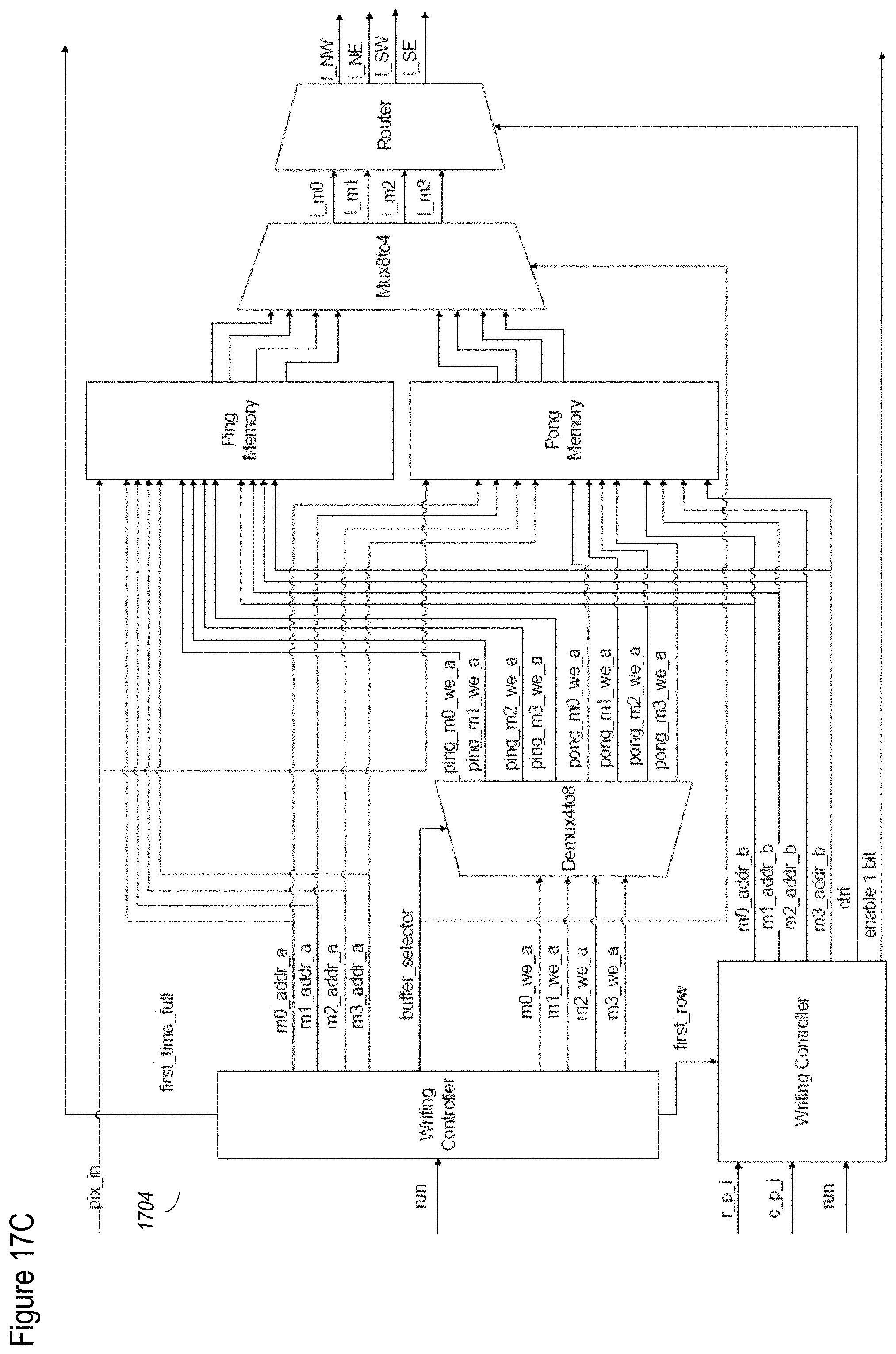

[0099] FIG. 17C shows an illustrative, exemplary, non-limiting memory management for use with the system of FIG. 17A according to at least some embodiments of the present disclosure;

[0100] FIG. 17D shows a non-limiting example of an image;

[0101] FIG. 17E shows the memory filling scheme for this image;

[0102] FIG. 17F shows a non-limiting, exemplary finite state machine for use with the system of FIG. 17A according to at least some embodiments of the present disclosure;

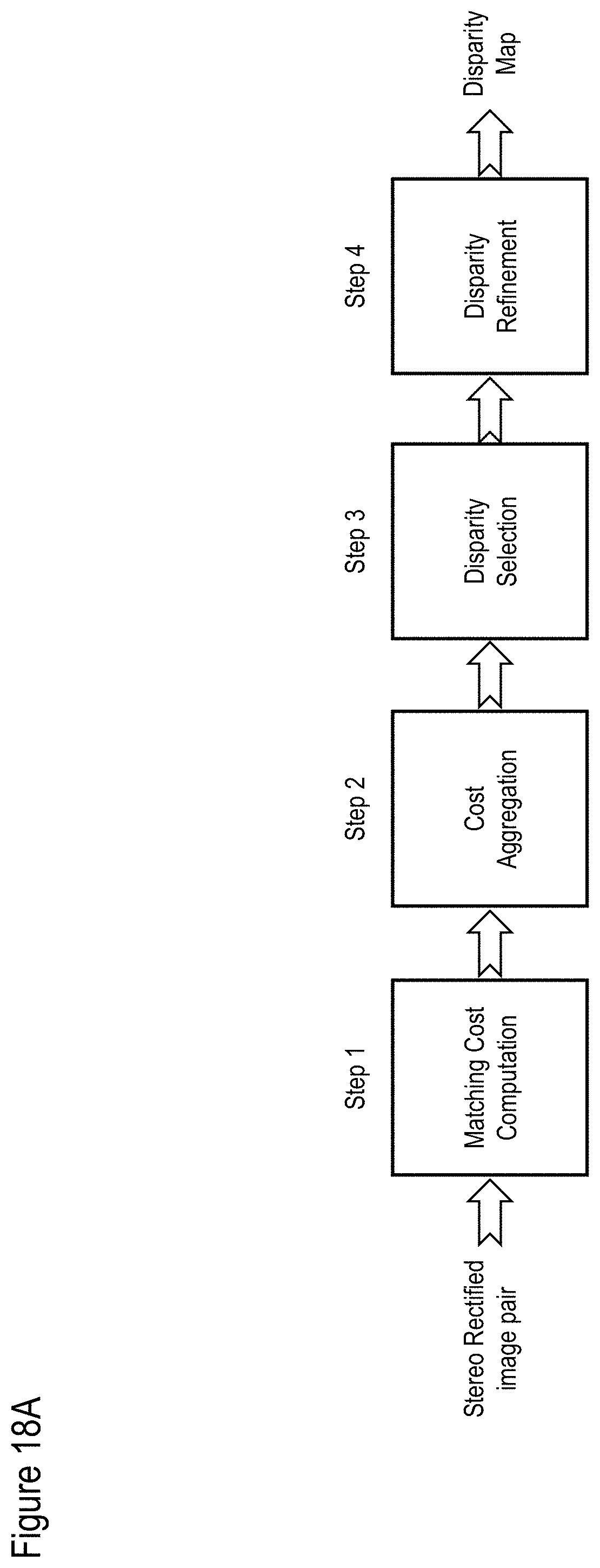

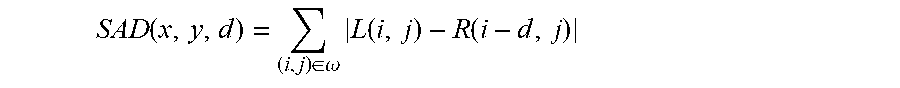

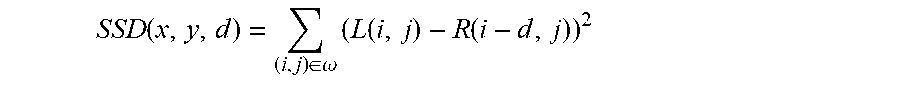

[0103] FIG. 18A shows an illustrative, exemplary, non-limiting disparity map method according to at least some embodiments of the present disclosure;

[0104] FIG. 18B shows an illustrative, exemplary, non-limiting method for calculating a cost for the disparity map method according to at least some embodiments of the present disclosure;

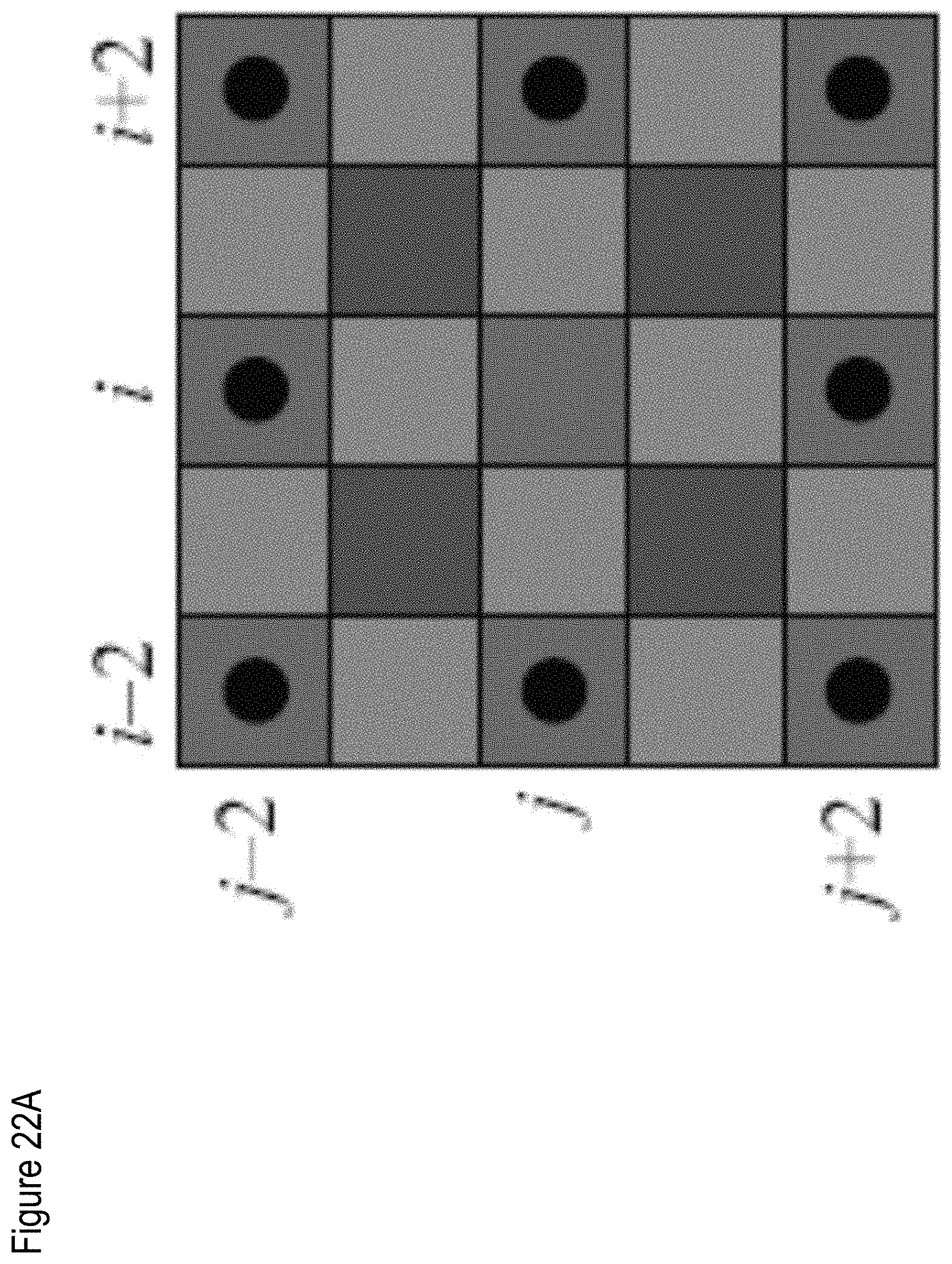

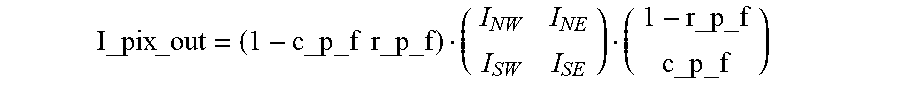

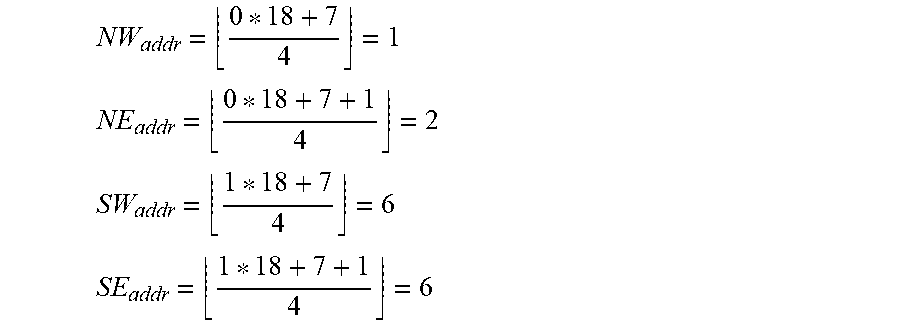

[0105] FIG. 19A shows an example of image representation for "W-means" algorithm;

[0106] FIG. 19B shows the effects of parameters on "W-means" weight;

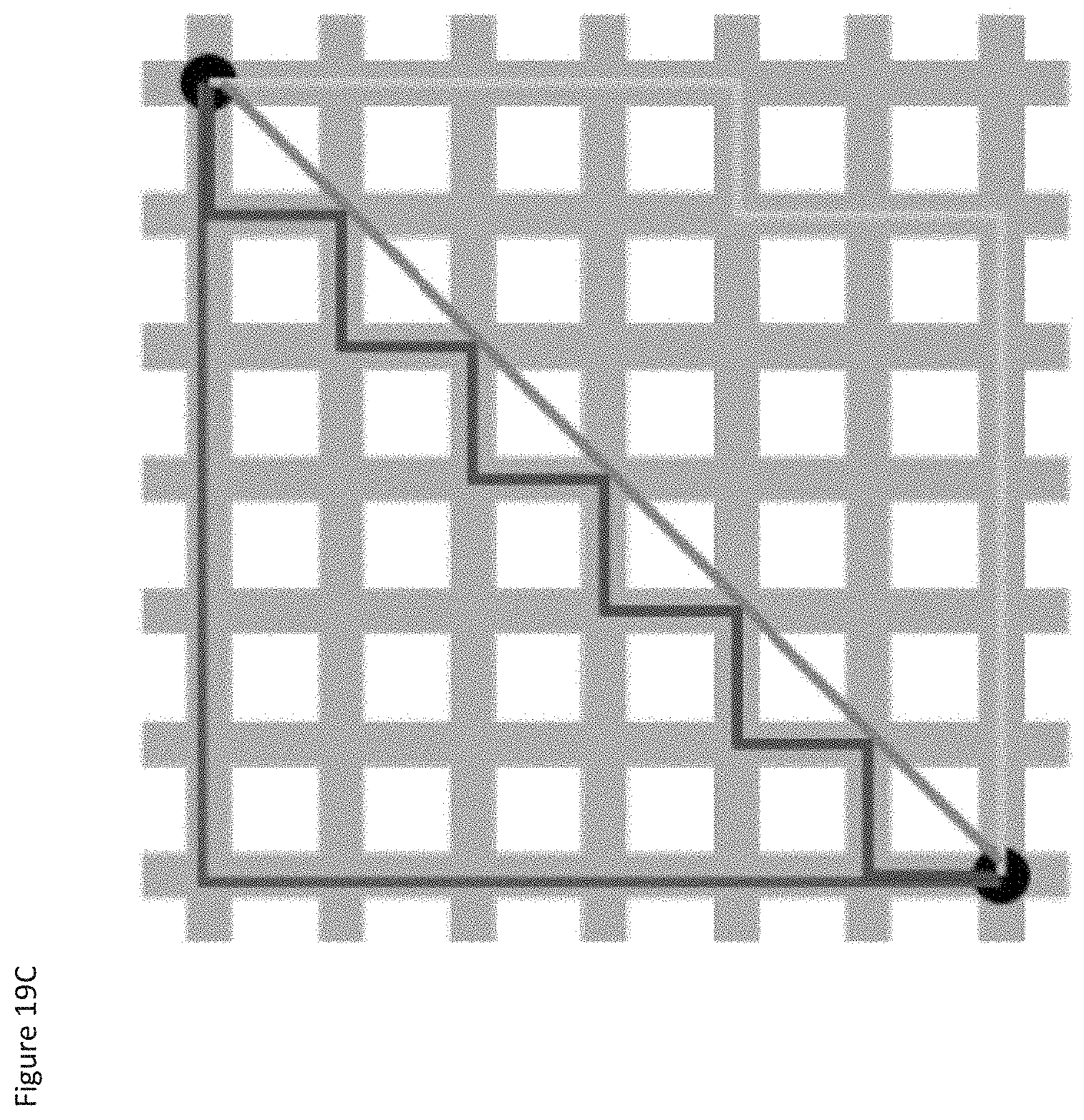

[0107] FIG. 19C shows taxicab geometry versus Euclidean distance: in taxicab geometry, the red, yellow, and blue paths all have the shortest length of |6|+|6|=12. In Euclidean geometry, the green line has length 6√2 ≈ 8.49, and is the unique shortest path;

[0108] FIG. 19D shows the W-means algorithm, in a non-limiting example;

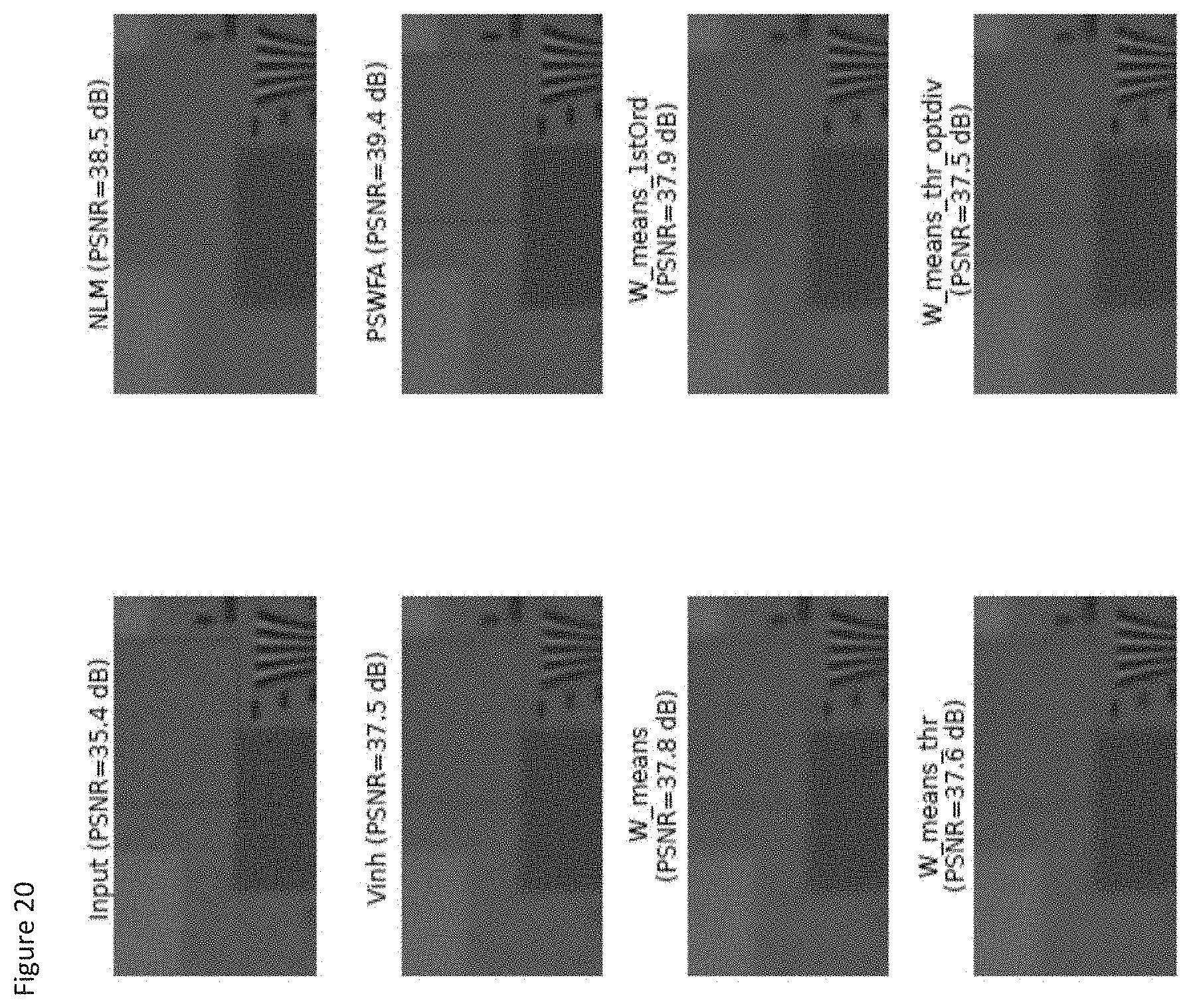

[0109] FIG. 20 shows the results of state of the art and "W-means" algorithms, after application of the debayer. Image size (150×80) (zoom). Algorithm parameters are: NLM(h=6, f=3, r=10), Vinh(p=8), PSWFA(n=5), W_means(h=16, σ=4), W_means_1stOrd(h=32, σ=2), W_means_thr(σ=12), W_means_thr_optdiv(σ=12);

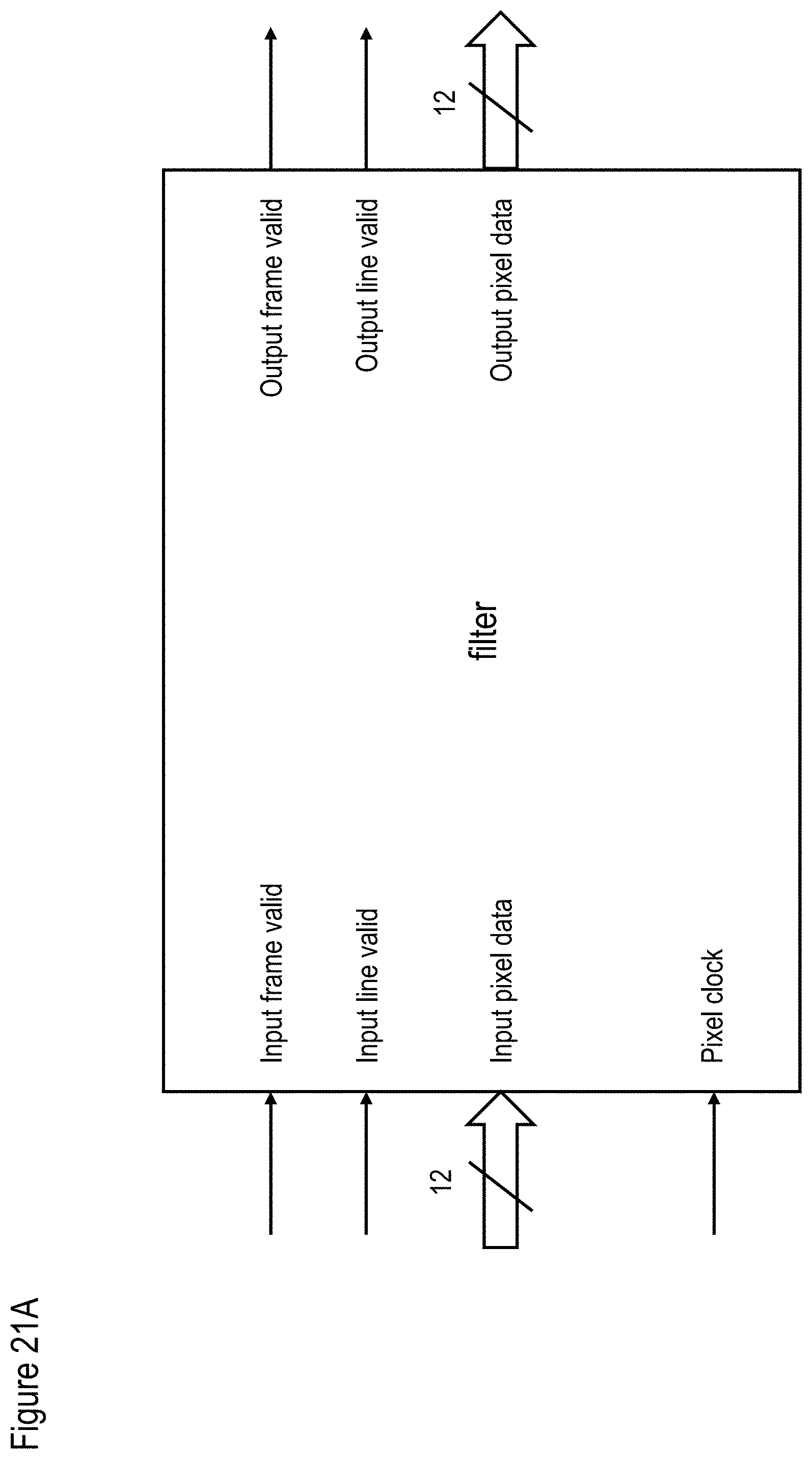

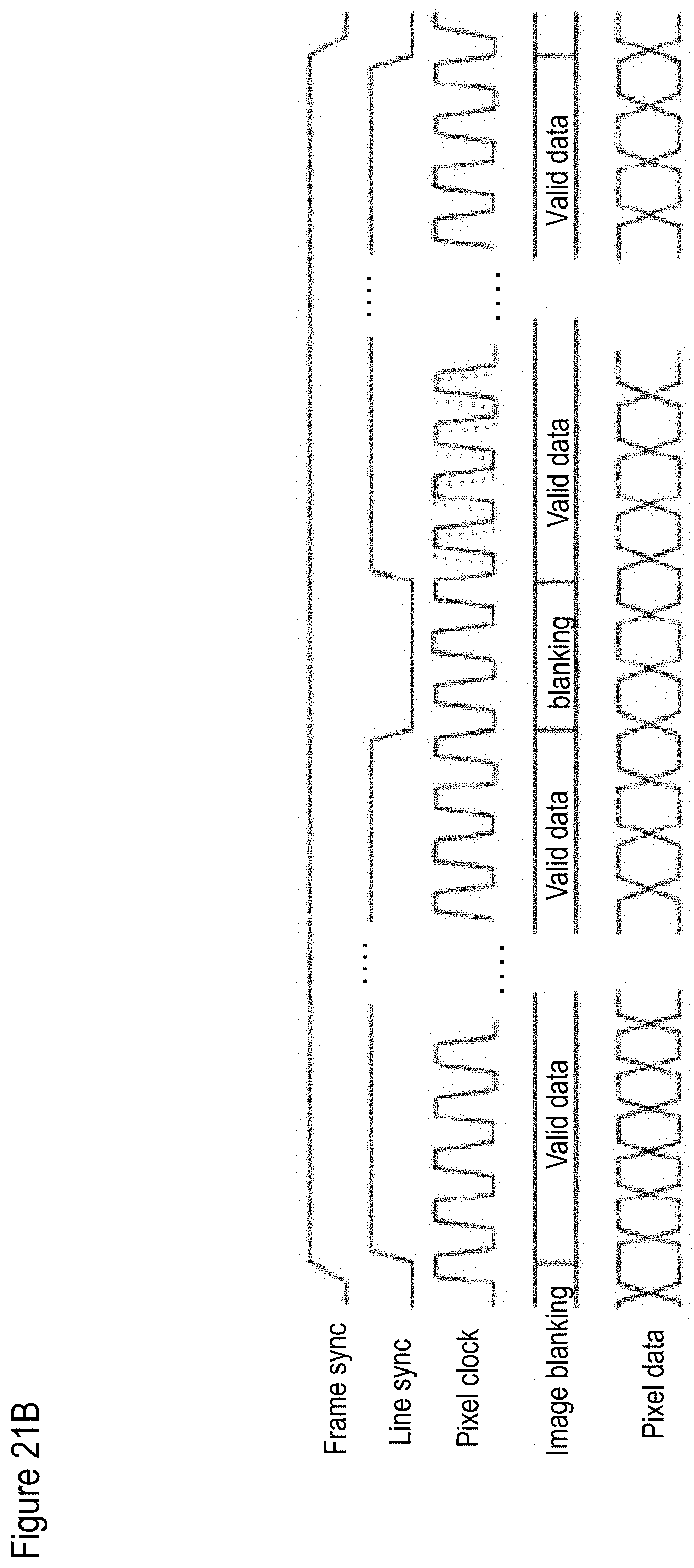

[0110] FIG. 21A shows required ports of the filter to be added in the image pipeline, while FIG. 21B shows a pixel stream interface chronogram;

[0111] FIG. 22A shows a schematic of the Bailey and Jimmy method, while FIG. 22B shows an exemplary implementation thereof;

[0112] FIG. 23 shows an exemplary bad pixel removal method FPGA implementation diagram, in which each yellow unit is a VHDL component;

[0113] FIG. 24 shows an exemplary, illustrative non-limiting data flow for bad pixel removal;

[0114] FIG. 25 shows an exemplary, illustrative non-limiting diagram for "W-means" unit FPGA implementation;

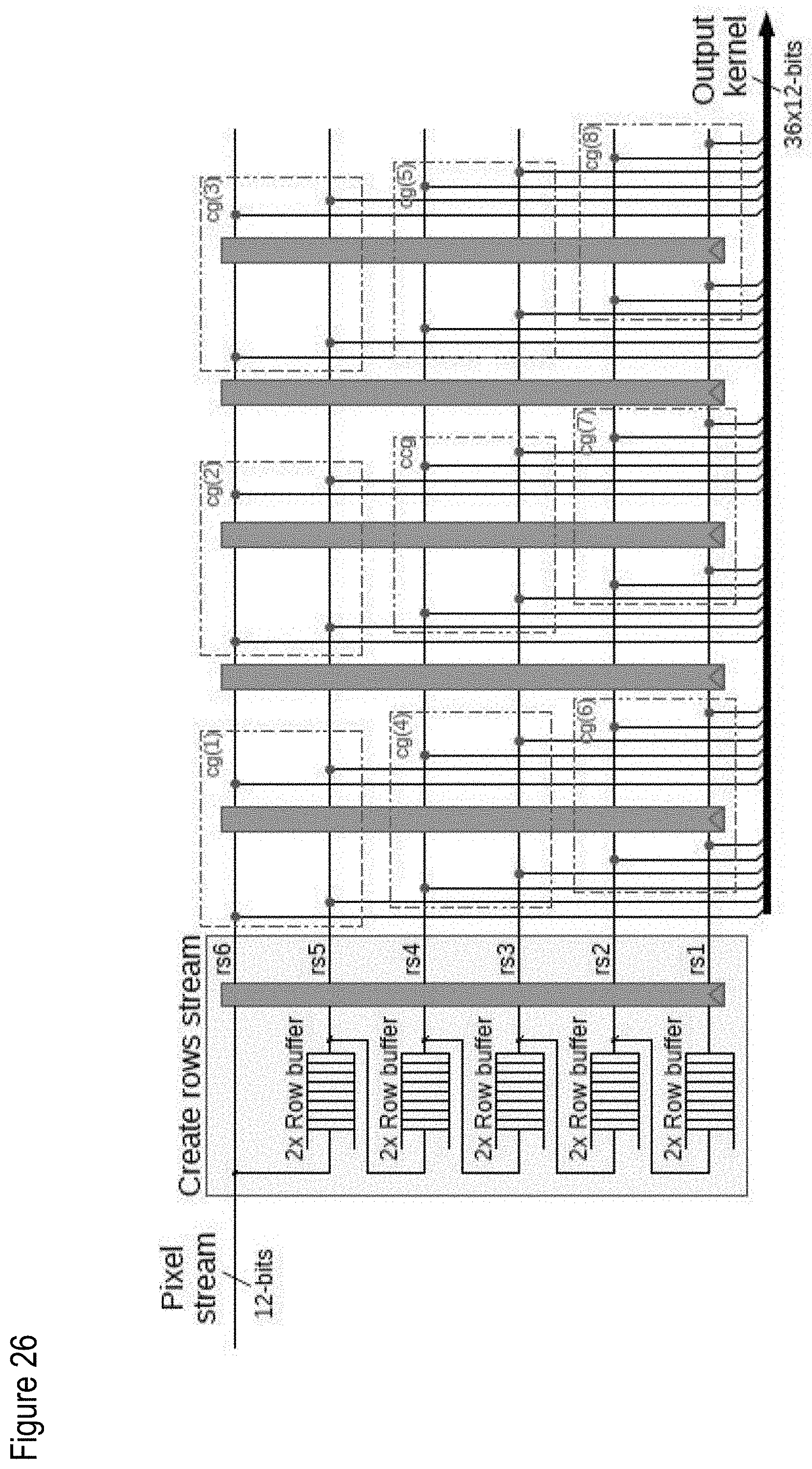

[0115] FIG. 26 shows an exemplary, illustrative non-limiting generate kernel component diagram for "W-means" algorithm, where the red annotations are color groups;

[0116] FIG. 27 shows an exemplary, illustrative non-limiting distance computation component diagram for "W-means" algorithm, in which "ccg(i)" is the center color group with color number i, "cg(x)(i)" is the neighbor number x with color number i and "d(x)" is the result distance for the neighbor number x, where i ∈ [1, 4] and x ∈ [1, 8];

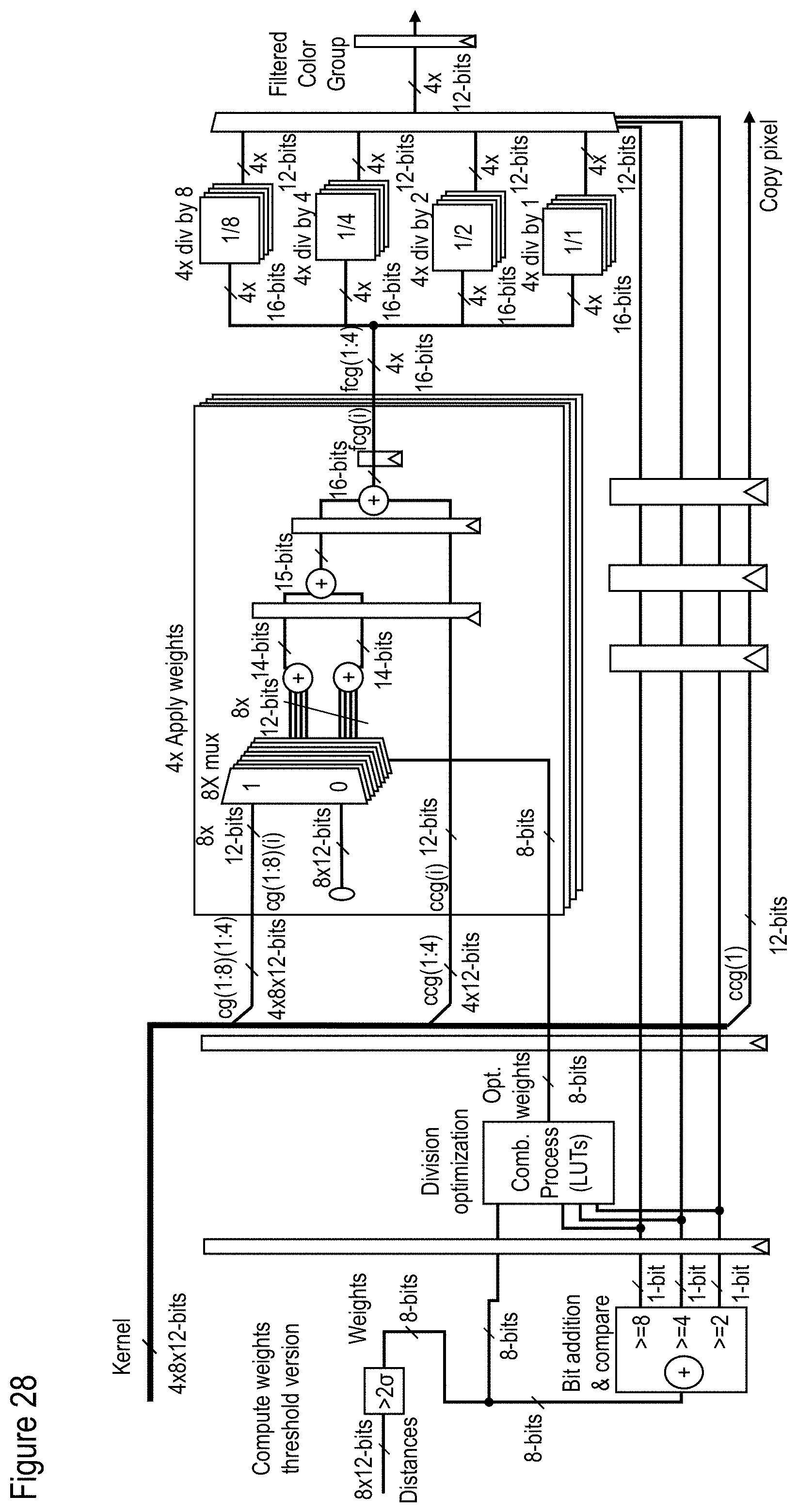

[0117] FIG. 28 shows an exemplary, illustrative non-limiting filter core "thr_optdiv" component diagram for "W-means" algorithm, in which "ccg(i)" is the center color group with color number i, "cg(x)(i)" is the neighbor number x with color number i, and "fcg(i)" is the center color group with color number i, where i ∈ [1, 4] and x ∈ [1, 8];

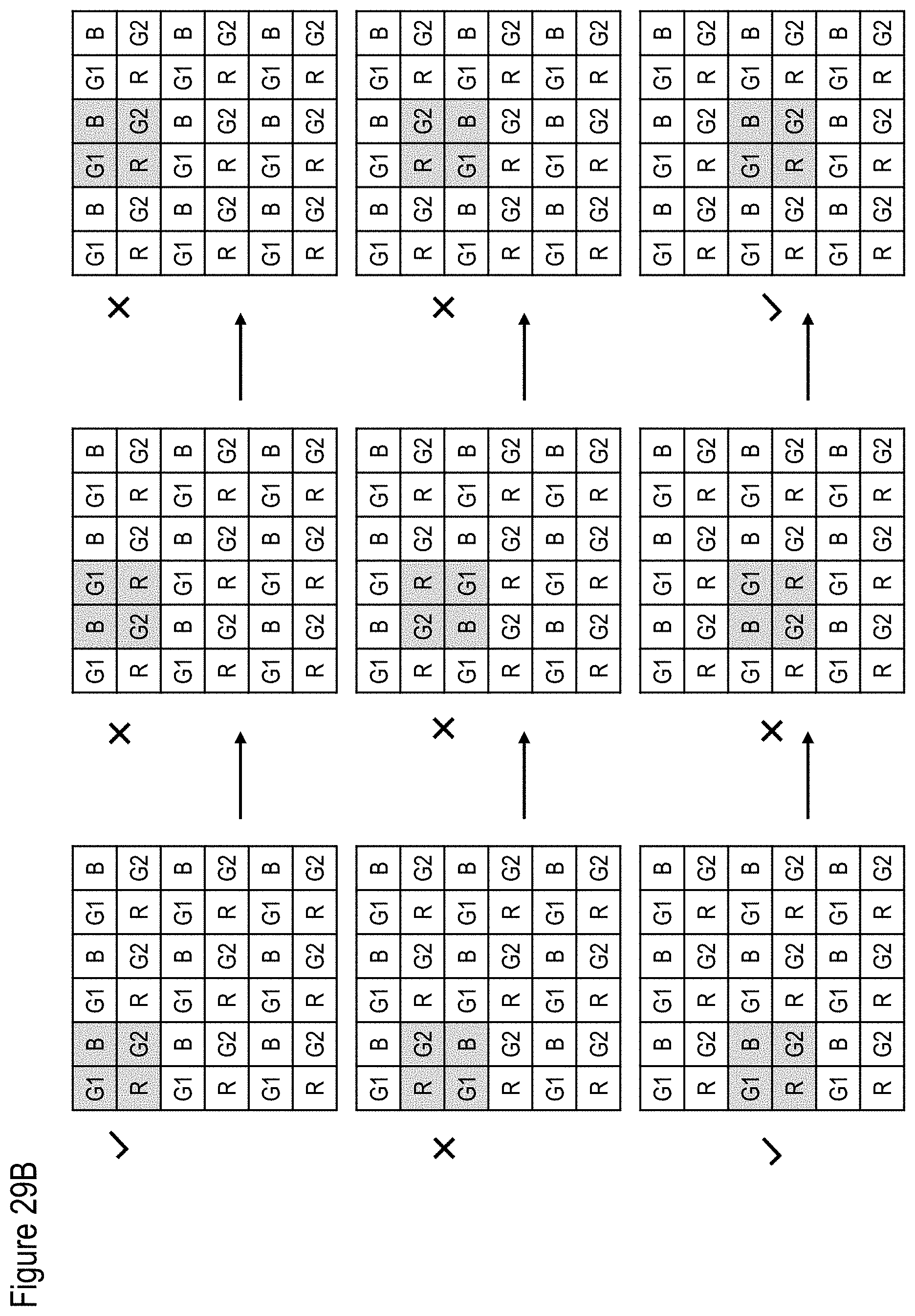

[0118] FIG. 29A shows an exemplary, illustrative non-limiting format output component diagram for "W-means" algorithm, while FIG. 29B shows an exemplary, illustrative valid output color group for "W-means" algorithm in a CFA (color filter array) image. In this example the CFA colors are "GBRG" (the first image row starts with green then blue, and the second row starts with red then green);

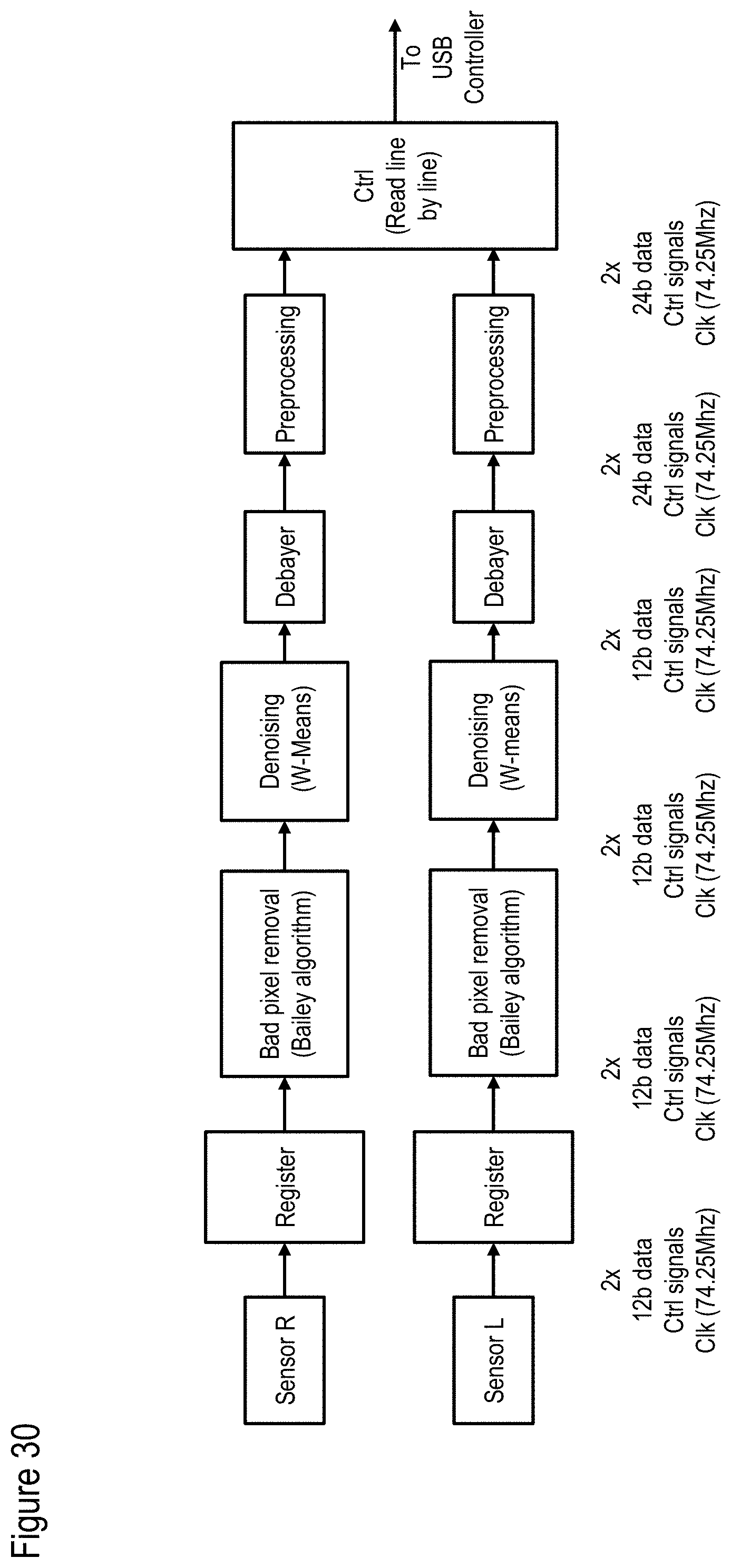

[0119] FIG. 30 shows an exemplary, illustrative non-limiting data flow for bad pixel removal and denoising;

[0120] FIGS. 31A and 31B show final test results on the camera module for both the bad pixel and "W-means" algorithms. Image size (150×150) (zoom);

[0121] FIG. 32 shows a non-limiting exemplary method for color correction according to at least some embodiments;

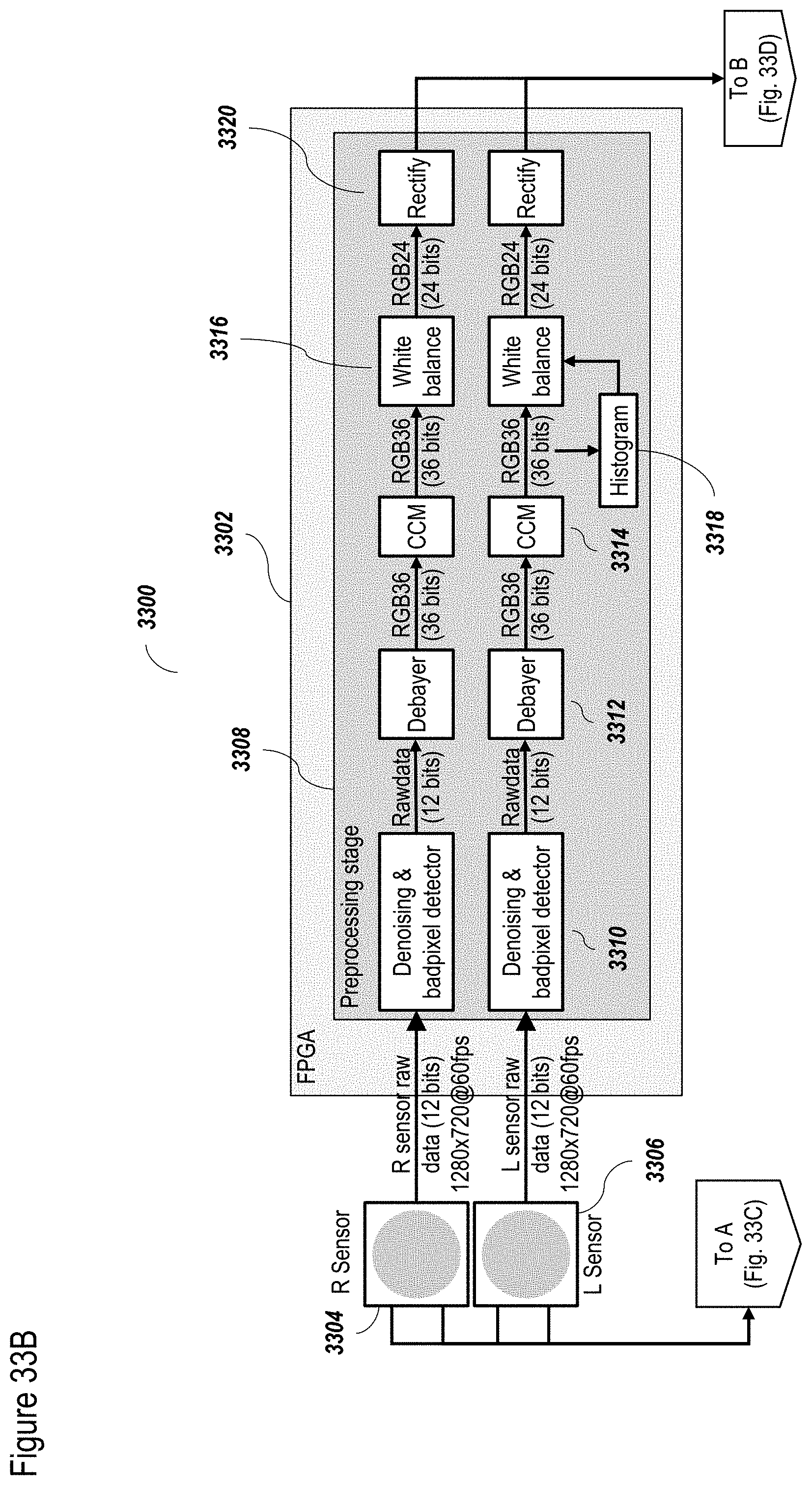

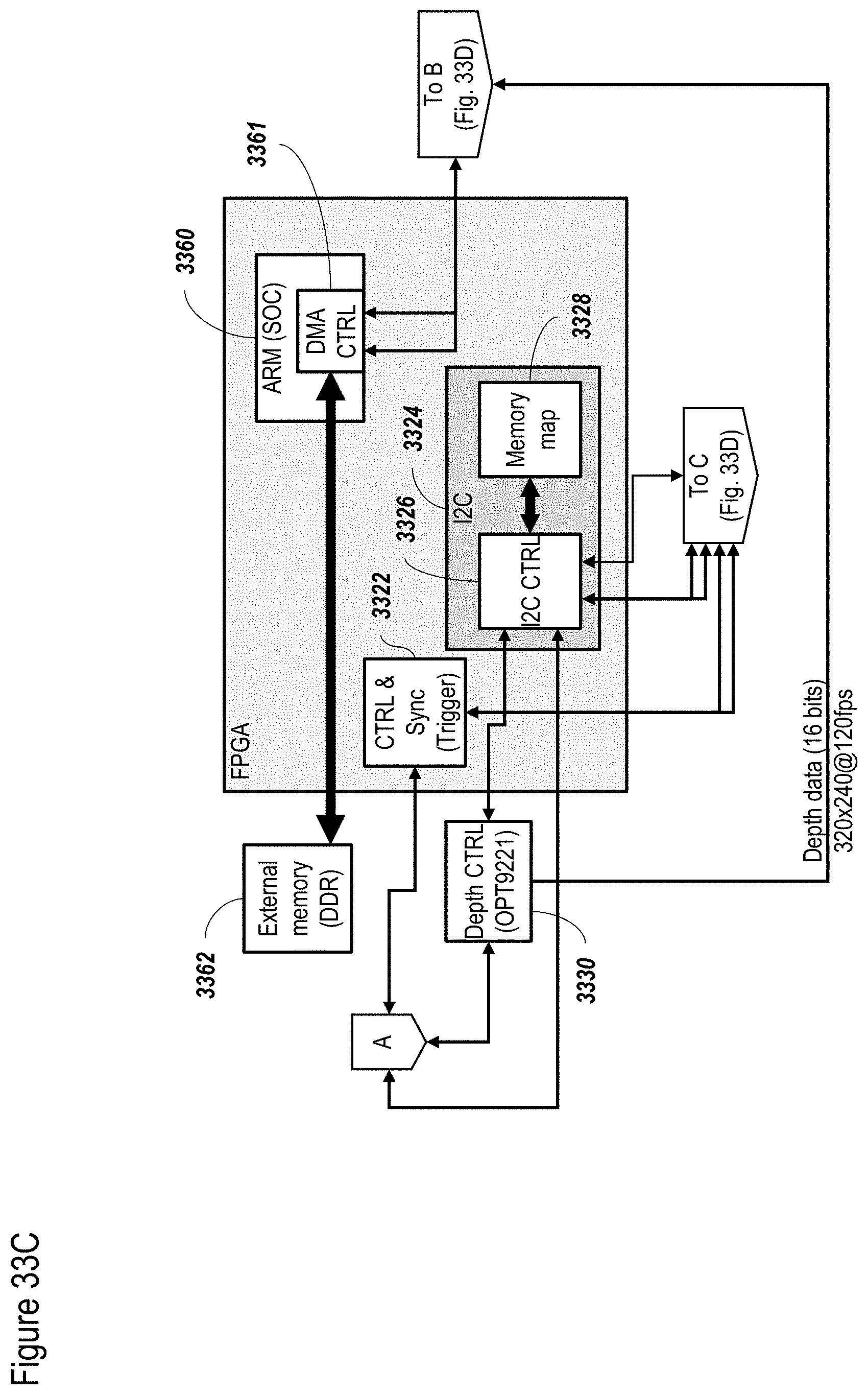

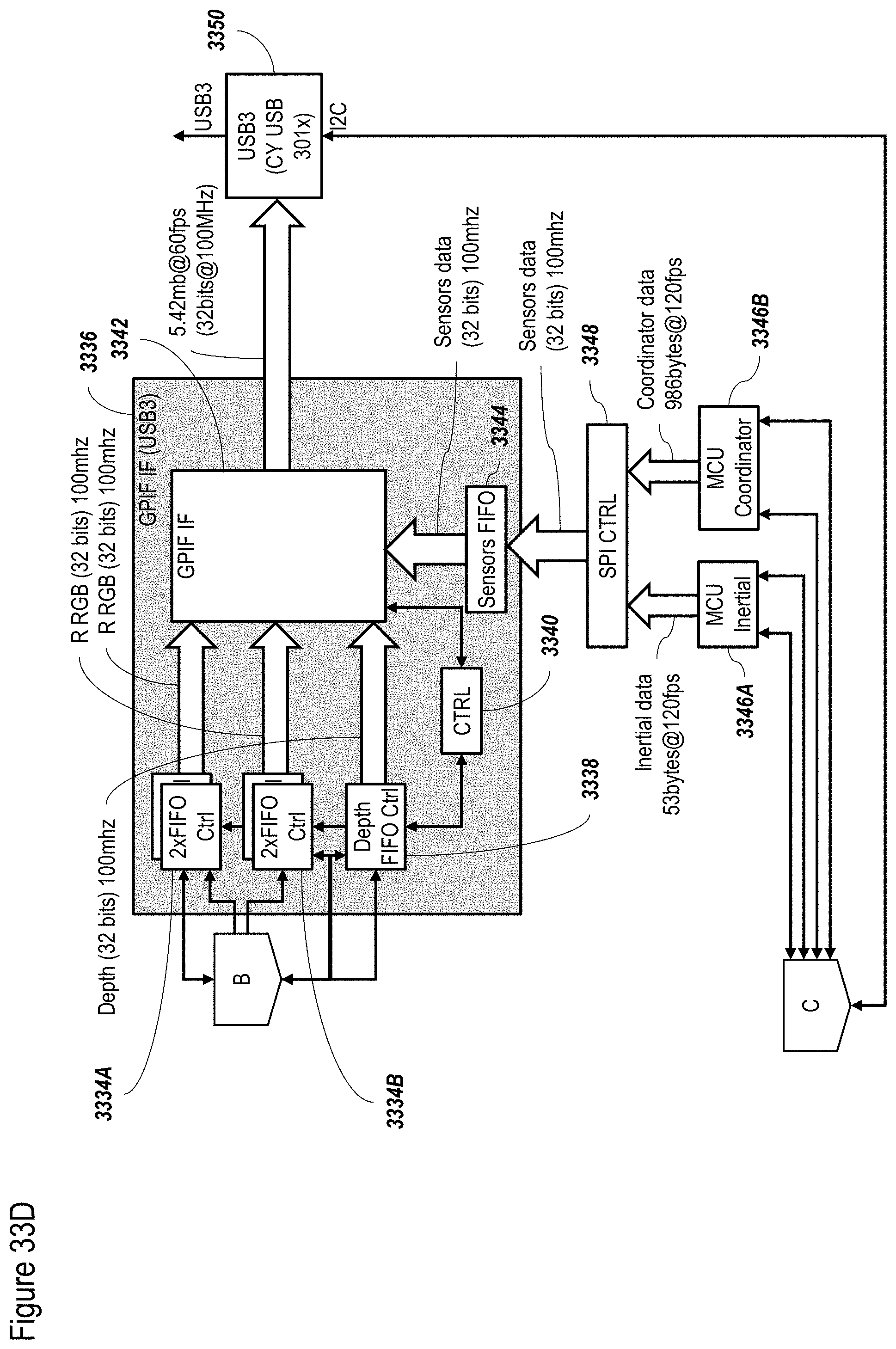

[0122] FIGS. 33A-33D show a non-limiting exemplary FPGA configuration according to at least some embodiments;

[0123] FIG. 34 shows a non-limiting example of a method for tracking the user, optionally performed with the system of FIG. 1 or 2, according to at least some embodiments of the present disclosure;

[0124] FIG. 35 shows a non-limiting example of a tracking engine, optionally for use with the system of FIG. 1 or 2, or the method of FIG. 34, according to at least some embodiments of the present disclosure;

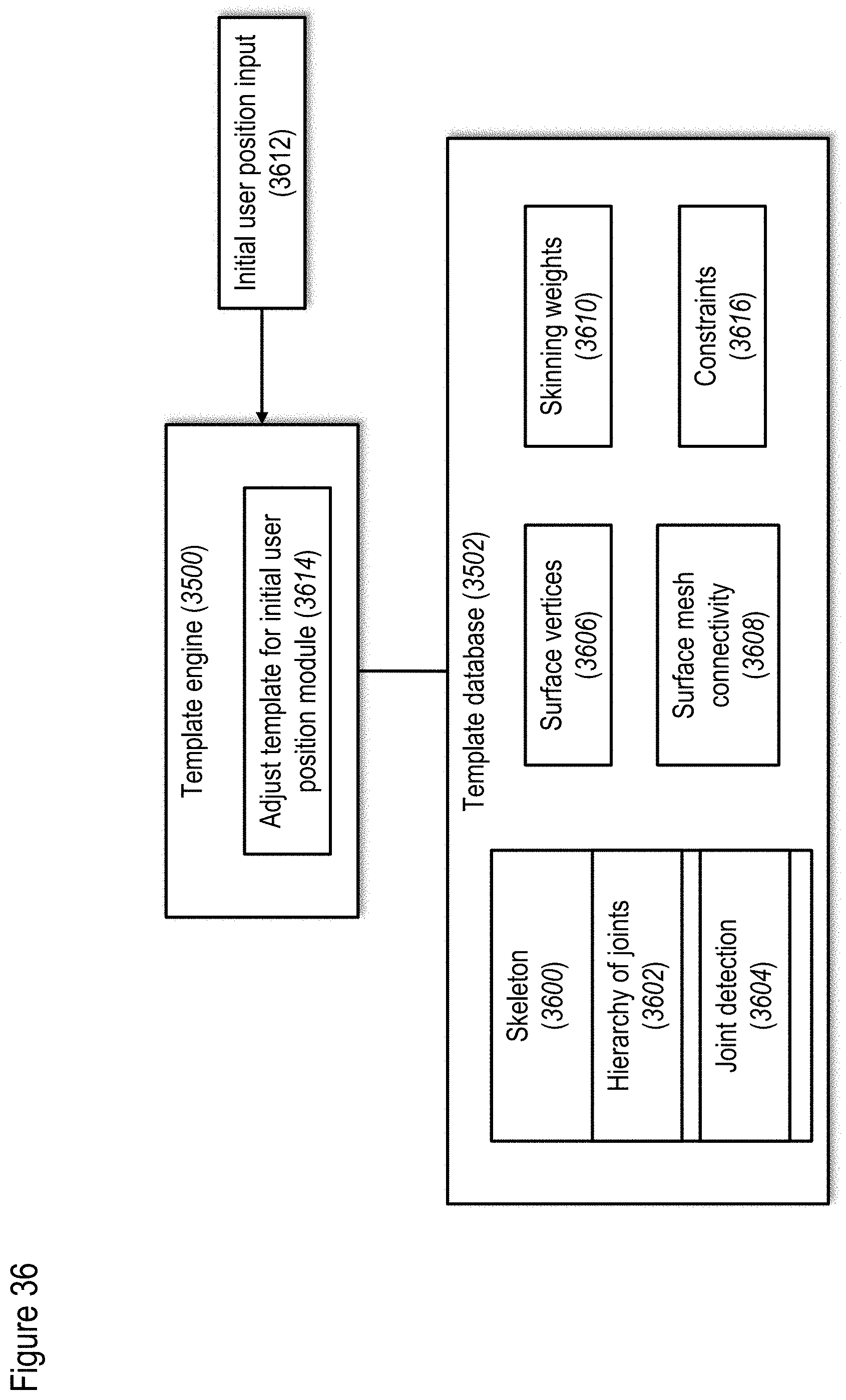

[0125] FIG. 36 shows templates and a template engine, according to at least some embodiments of the present disclosure;

[0126] FIG. 37 shows a non-limiting example of a method for creating and using templates, according to at least some embodiments of the present disclosure;

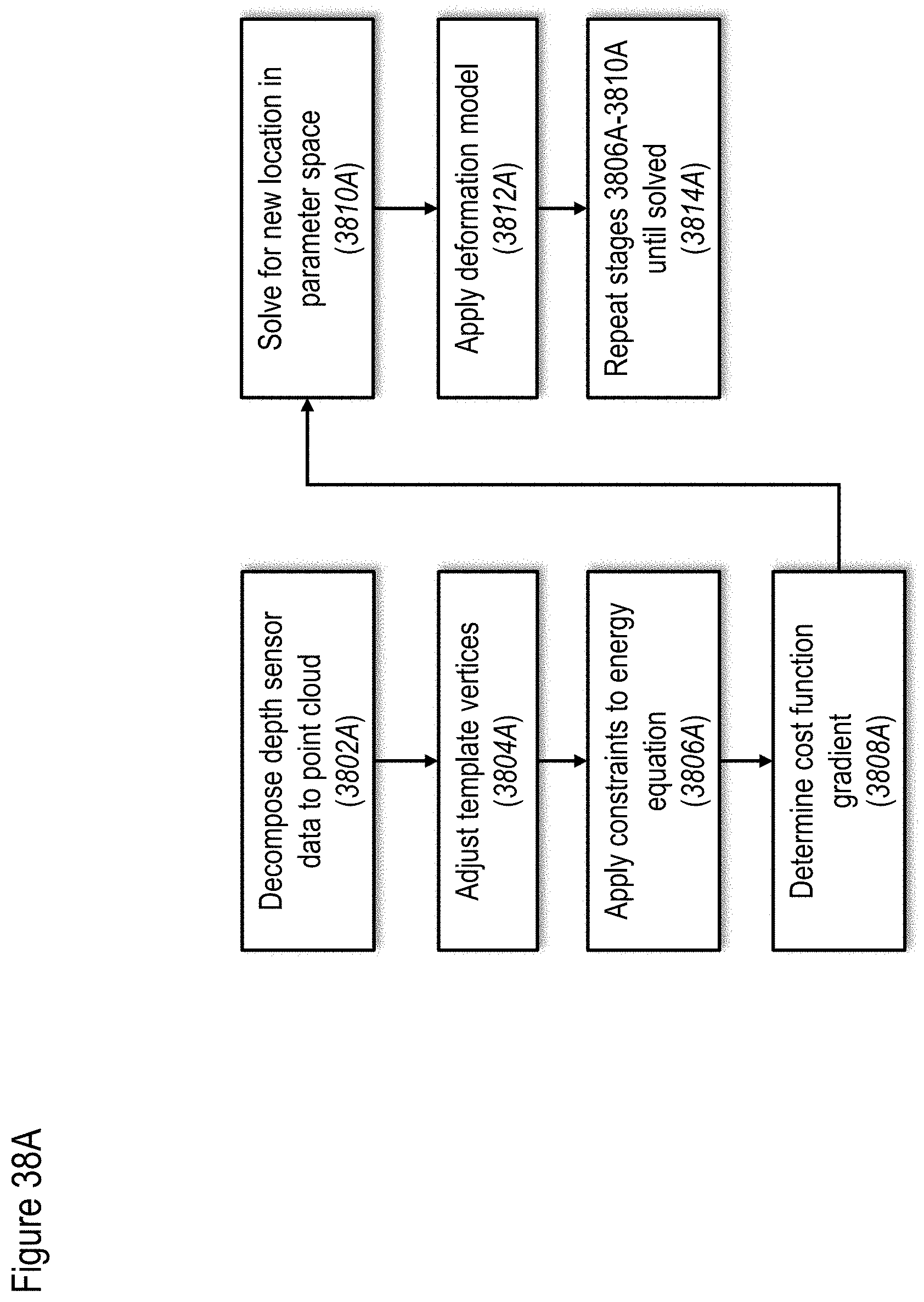

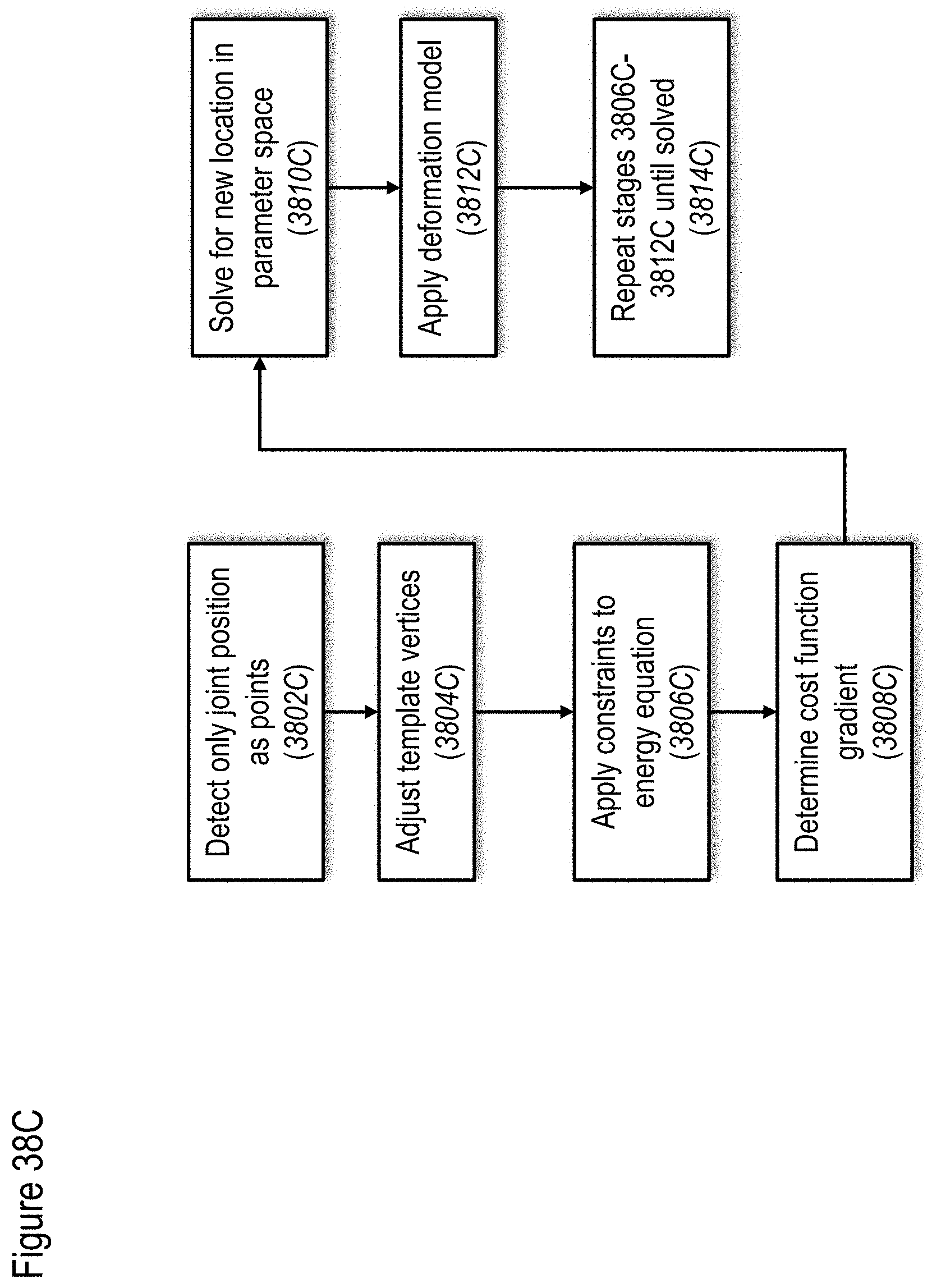

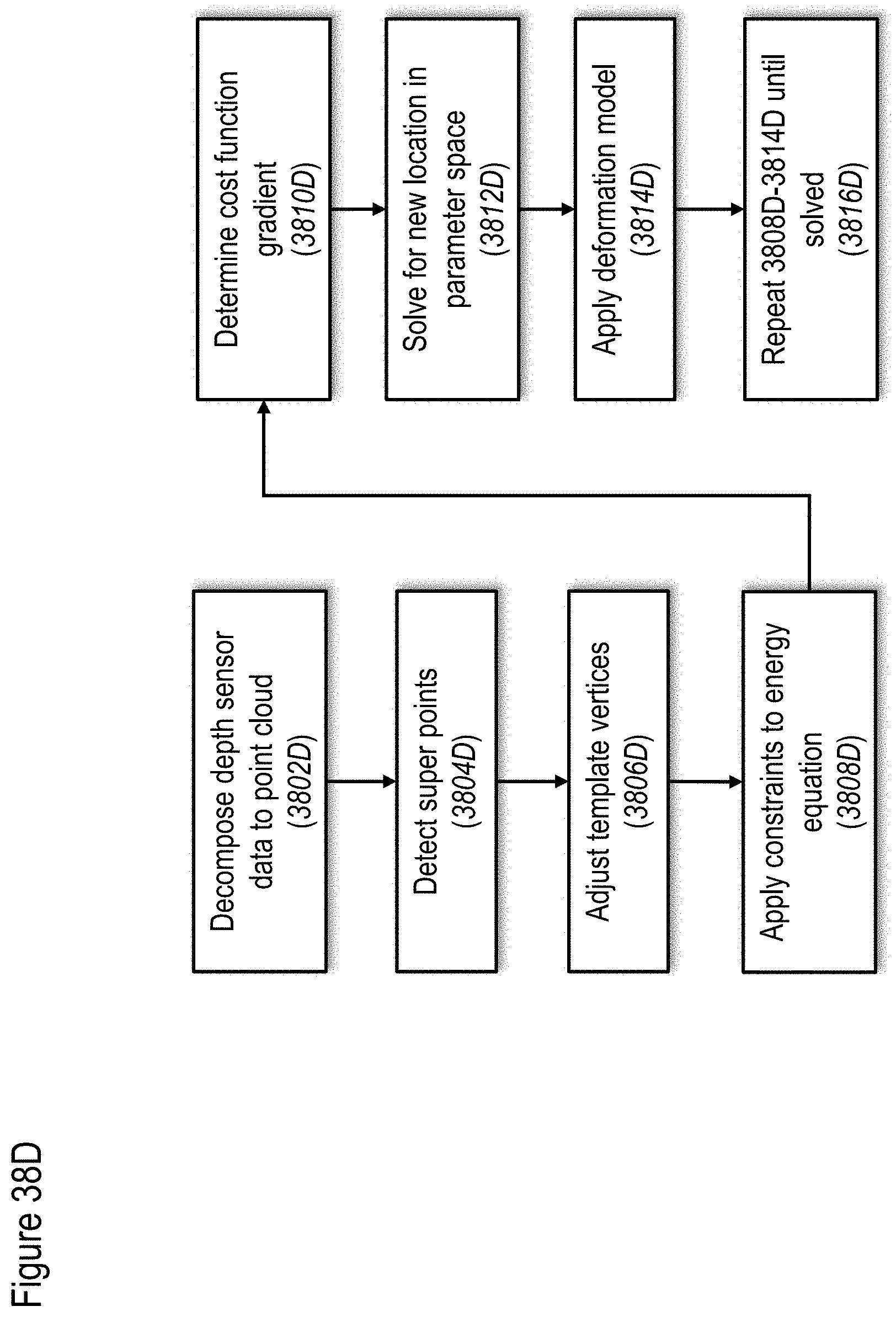

[0127] FIGS. 38A to 38E show non-limiting examples of methods for mapping data to track a user, according to at least some embodiments of the present disclosure;

[0128] FIG. 39 shows a non-limiting example of a method for applying a deformation model, according to at least some embodiments of the present disclosure;

[0129] FIG. 40 shows a non-limiting example of a method for pose recovery, according to at least some embodiments of the present disclosure;

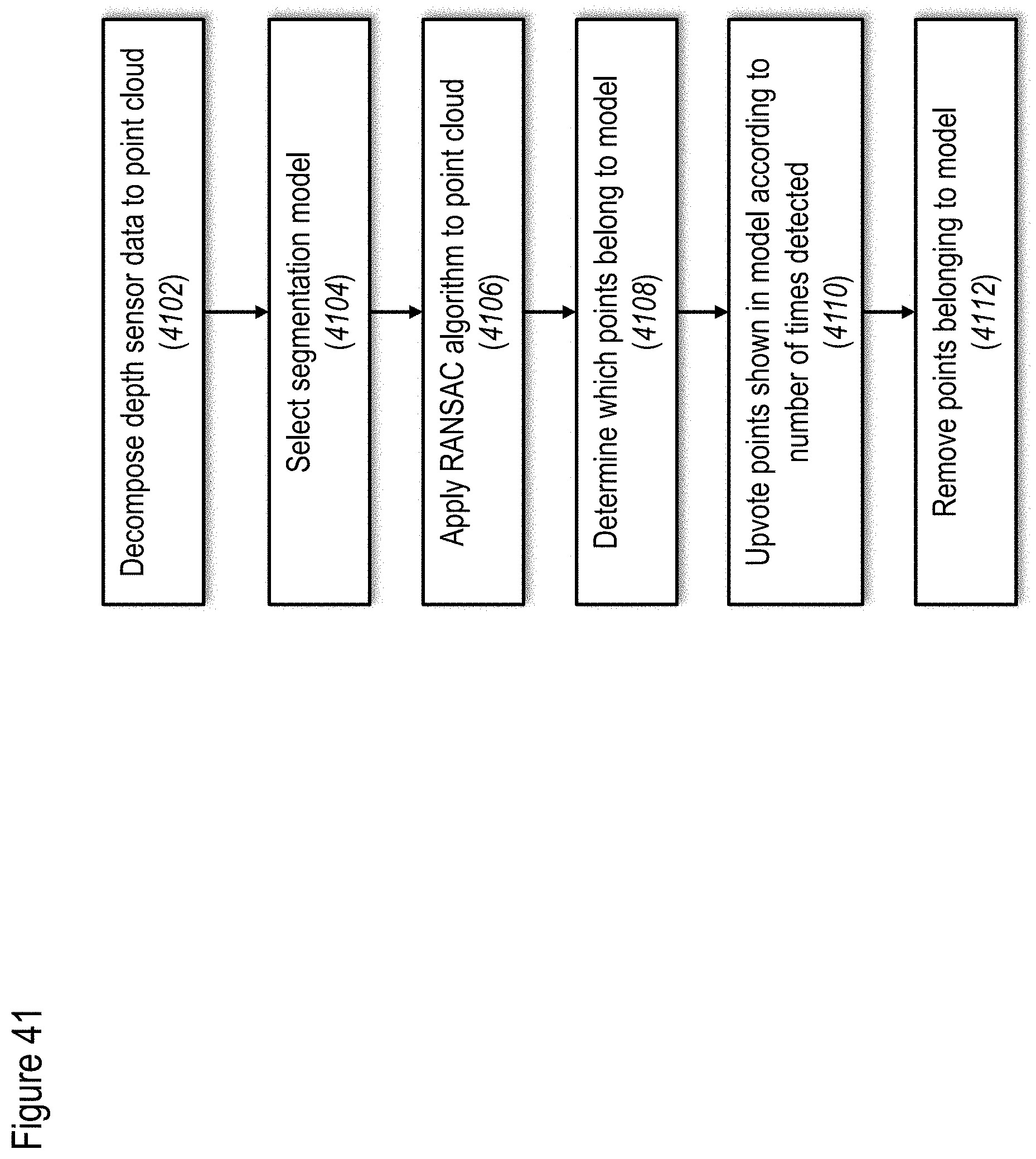

[0130] FIG. 41 shows a non-limiting example of a method for segmentation of a background object, according to at least some embodiments of the present disclosure;

[0131] FIG. 42 shows a non-limiting example of a method for joint detection, according to at least some embodiments of the present disclosure;

[0132] FIGS. 43 and 44 show two non-limiting example methods for applying VR to medical therapeutics according to at least some embodiments of the present disclosure;

[0133] FIG. 45 shows a non-limiting example method for applying VR to increase a user's ability to perform ADL (activities of daily living) according to at least some embodiments;

[0134] FIG. 46 shows a non-limiting example method for applying AR to increase a user's ability to perform ADL (activities of daily living) according to at least some embodiments;

[0135] FIG. 47 relates to another non-limiting example of a denoising method, using a bilateral filter with Gaussian blur filtering;

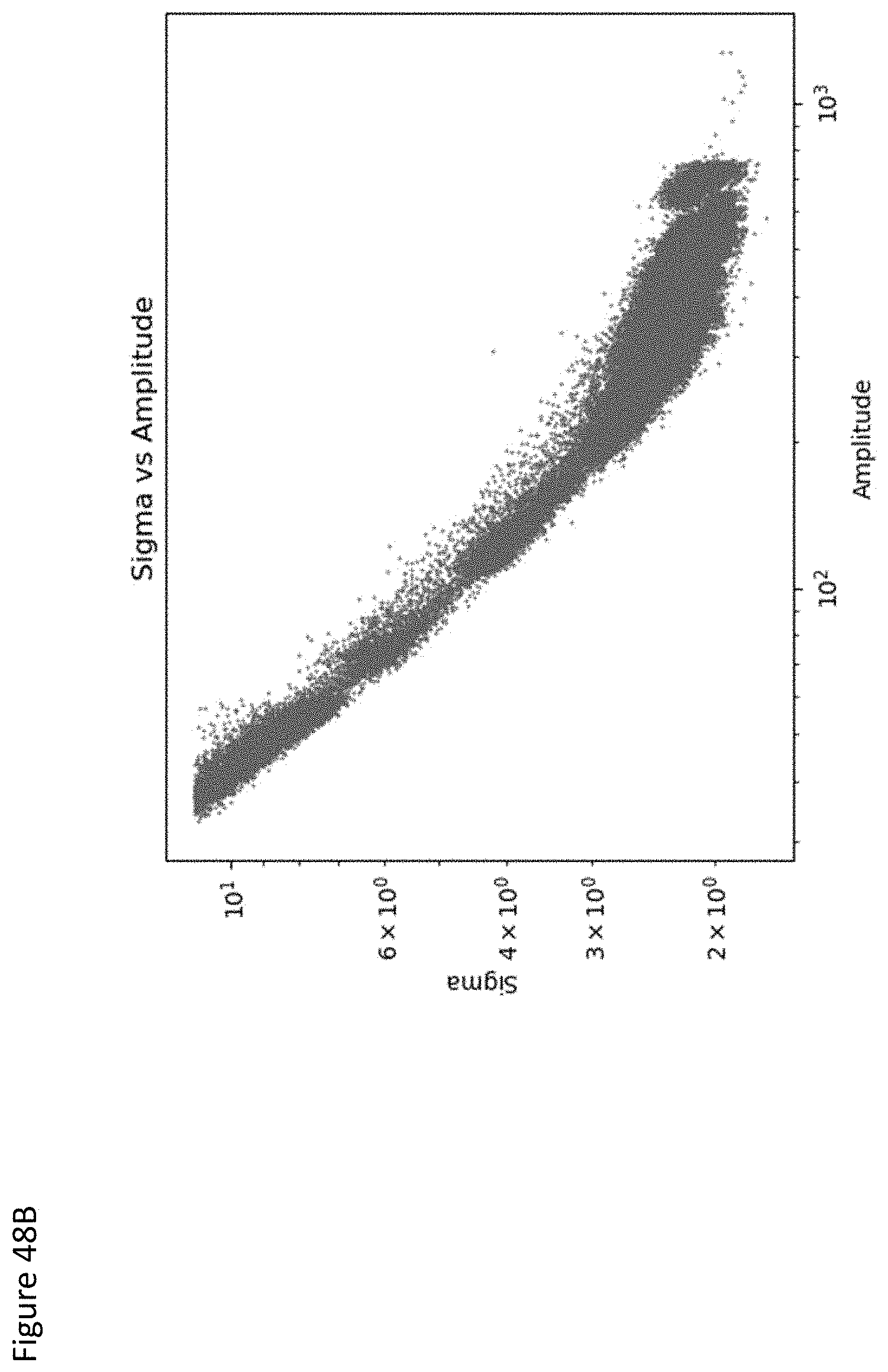

[0136] FIGS. 48A-48C relate to non-limiting exemplary data for fitting the sigma;

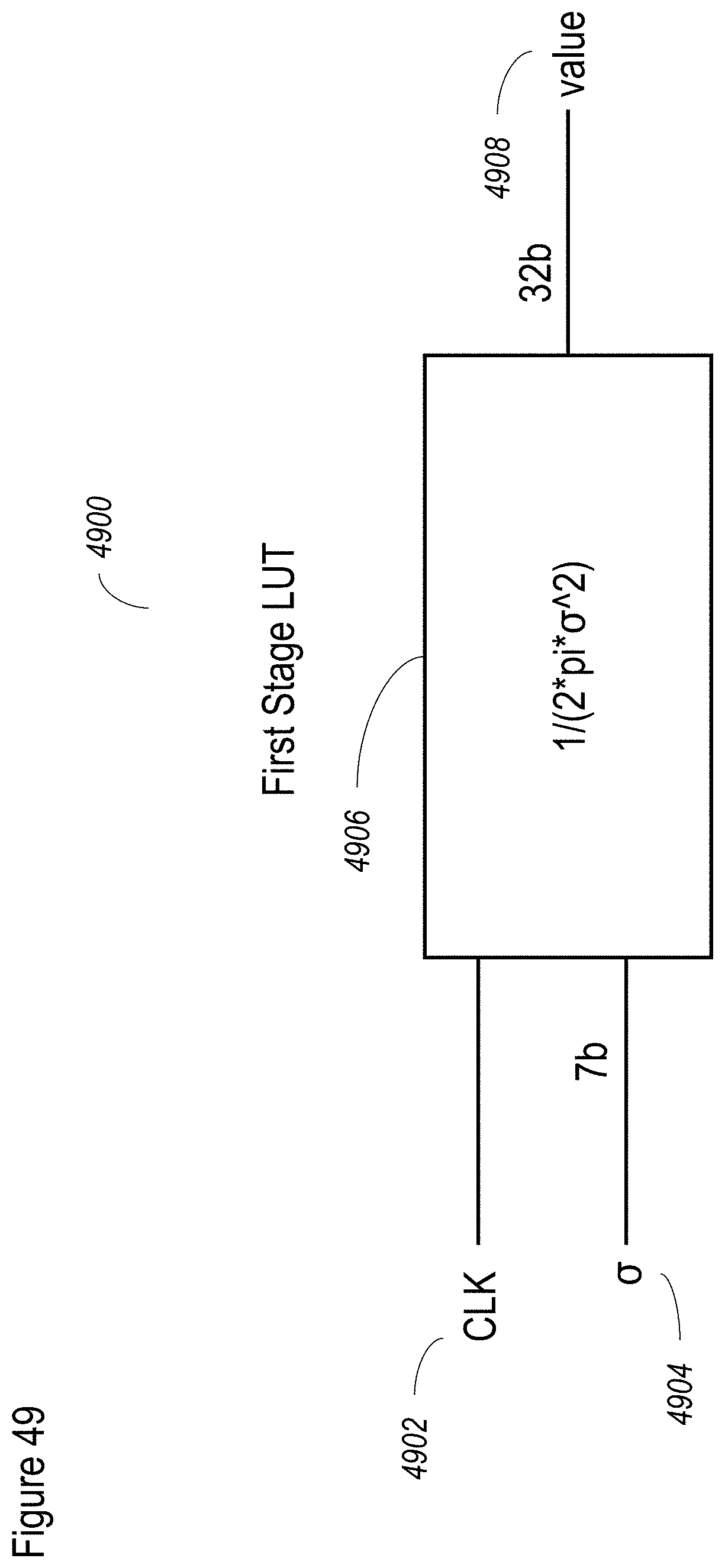

[0137] FIG. 49 shows a non-limiting, exemplary implementation of the LUT in hardware or firmware, which is preferably used for the first stage;

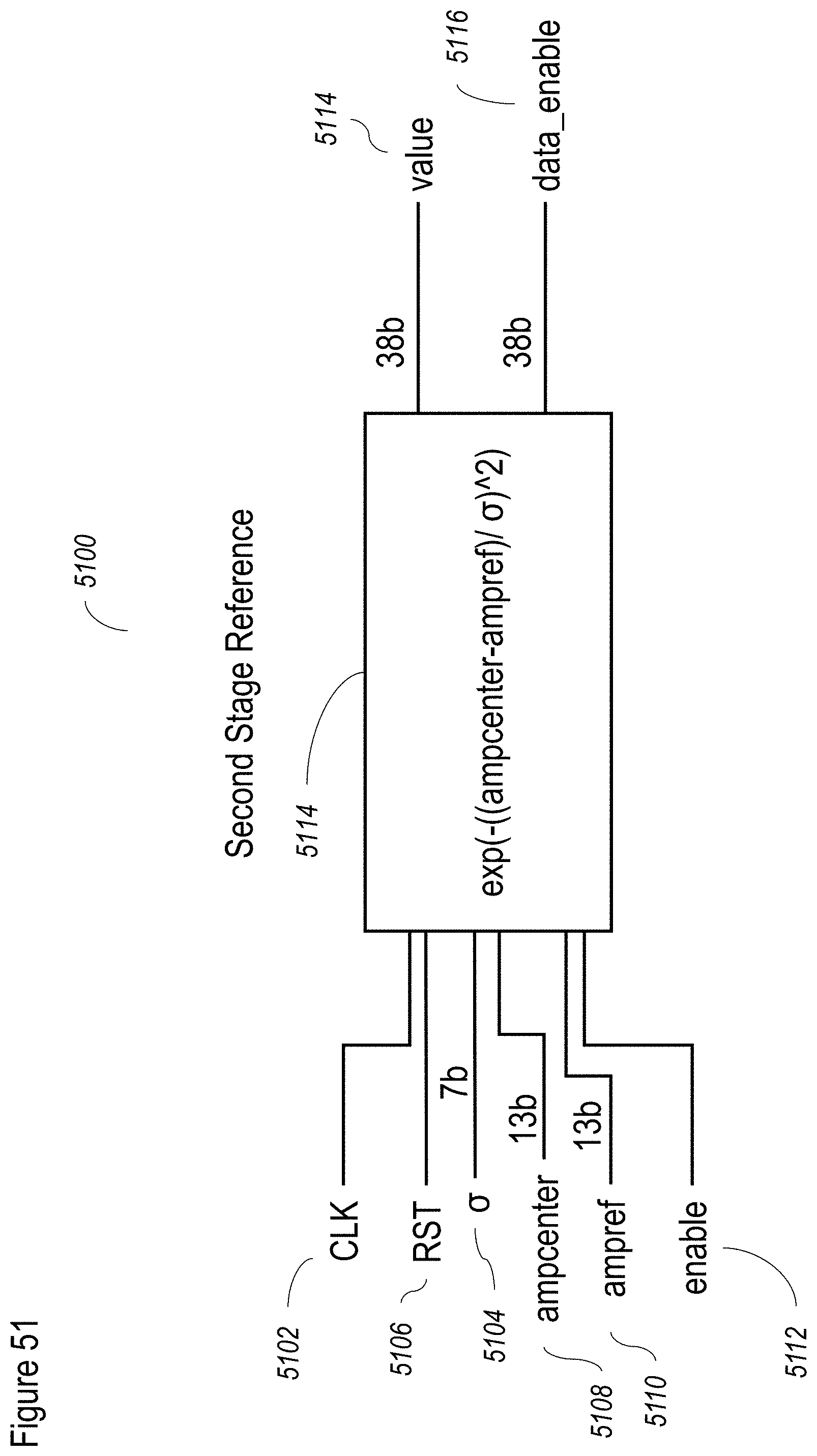

[0138] FIGS. 50-53 show non-limiting schematic implementations of pixel processing for hardware or firmware;

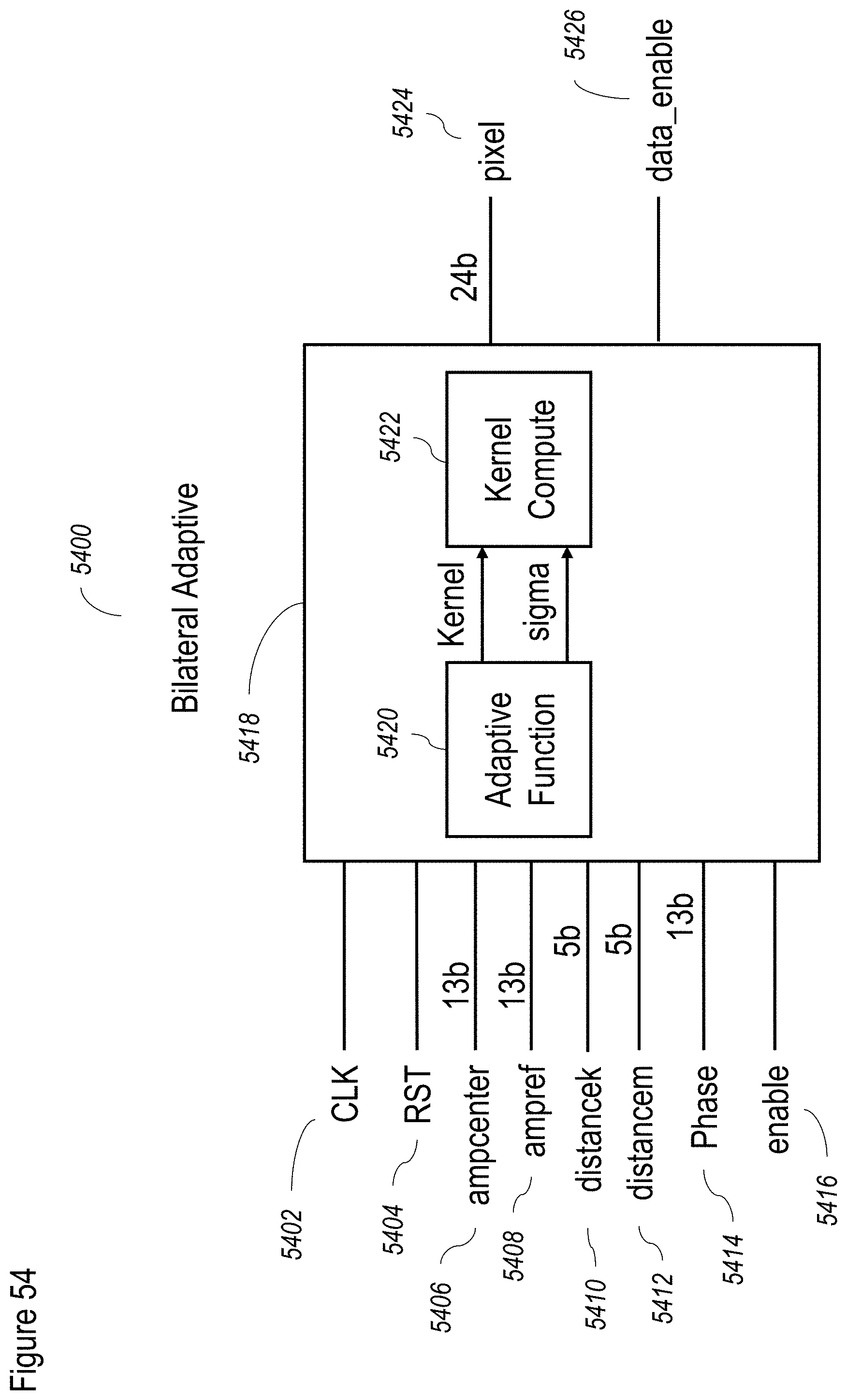

[0139] FIG. 54 shows an exemplary, schematic combined bilateral filter implementation;

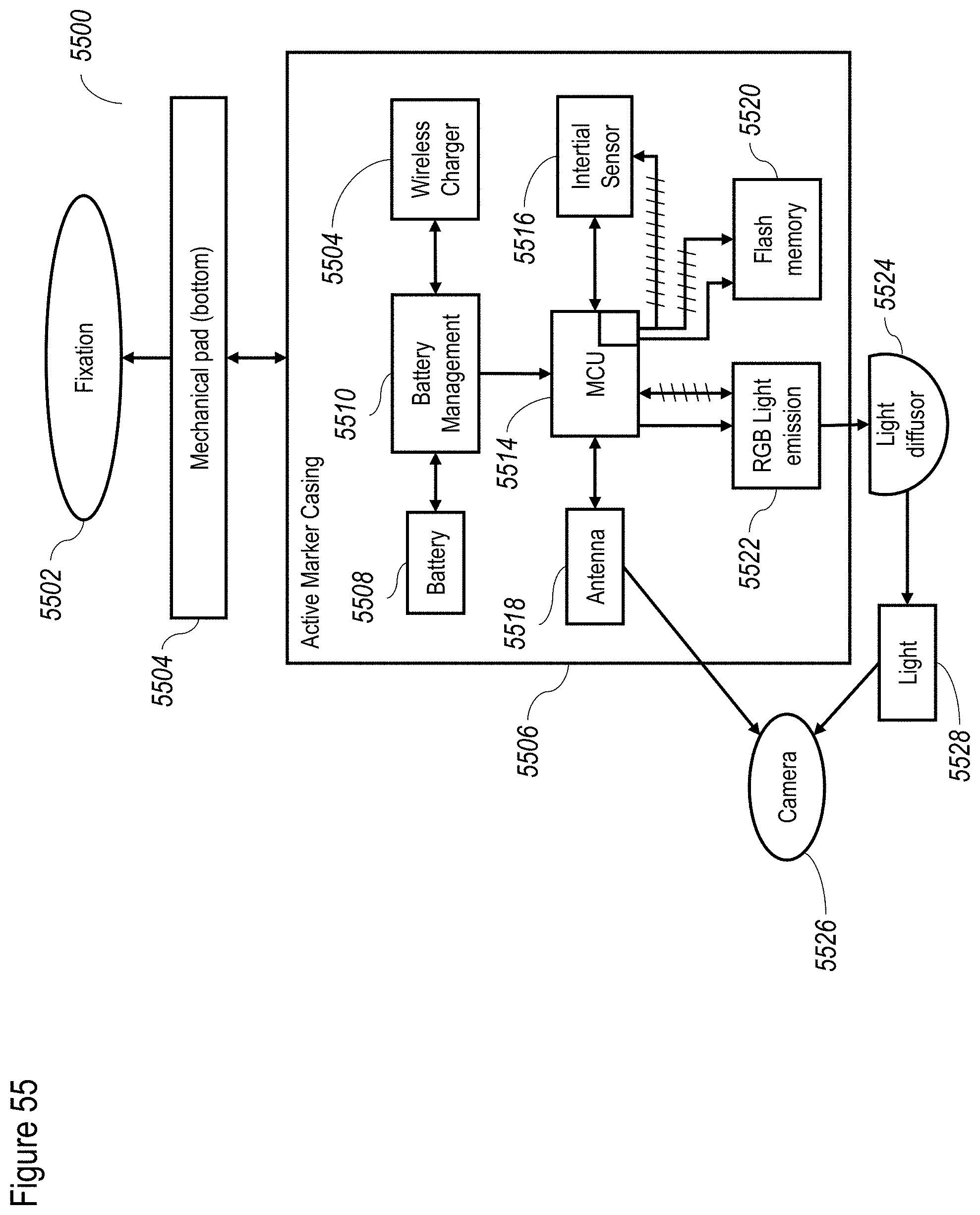

[0140] FIG. 55 shows a non-limiting exemplary system for layout for active markers;

[0141] FIG. 56A shows a non-limiting exemplary wireless marker operational method;

[0142] FIG. 56B shows a non-limiting exemplary wireless marker communication method;

[0143] FIG. 56C1 relates to an exemplary wireless marker packet structure;

[0144] FIG. 56C2 shows an exemplary wireless marker protocol for acquisition;

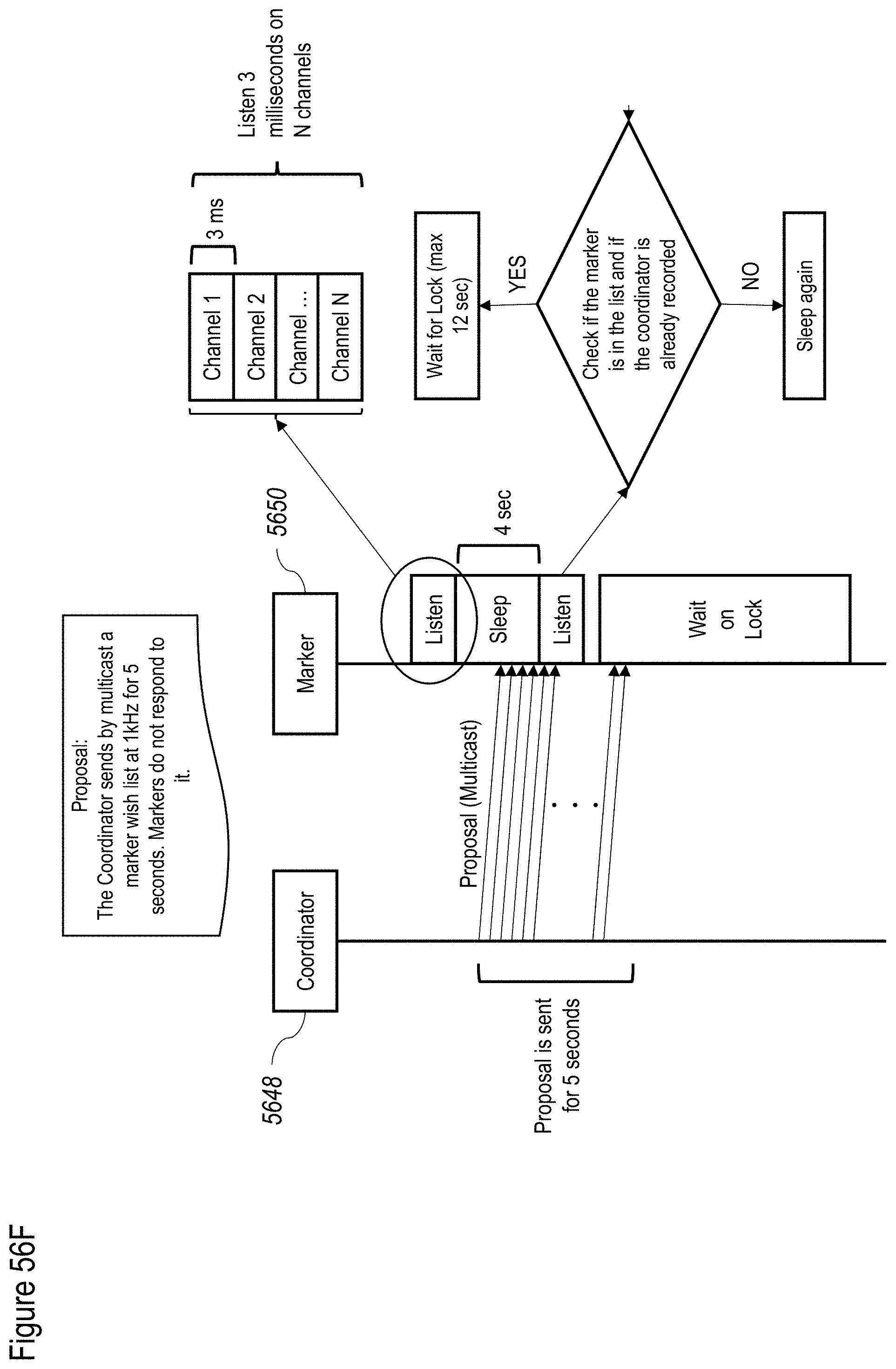

[0145] FIG. 56D shows a non-limiting exemplary process between a host 5644 and the coordinator 5646;

[0146] FIG. 56E shows again coordinator 5648 and marker 5650 to show the correspondence between the two of them as the coordinator locates the different markers;

[0147] FIG. 56F shows the next phase of communication for the markers; and

[0148] FIG. 57 shows a non-limiting exemplary timeline for the protocol.

DETAILED DESCRIPTION OF AT LEAST SOME EMBODIMENTS

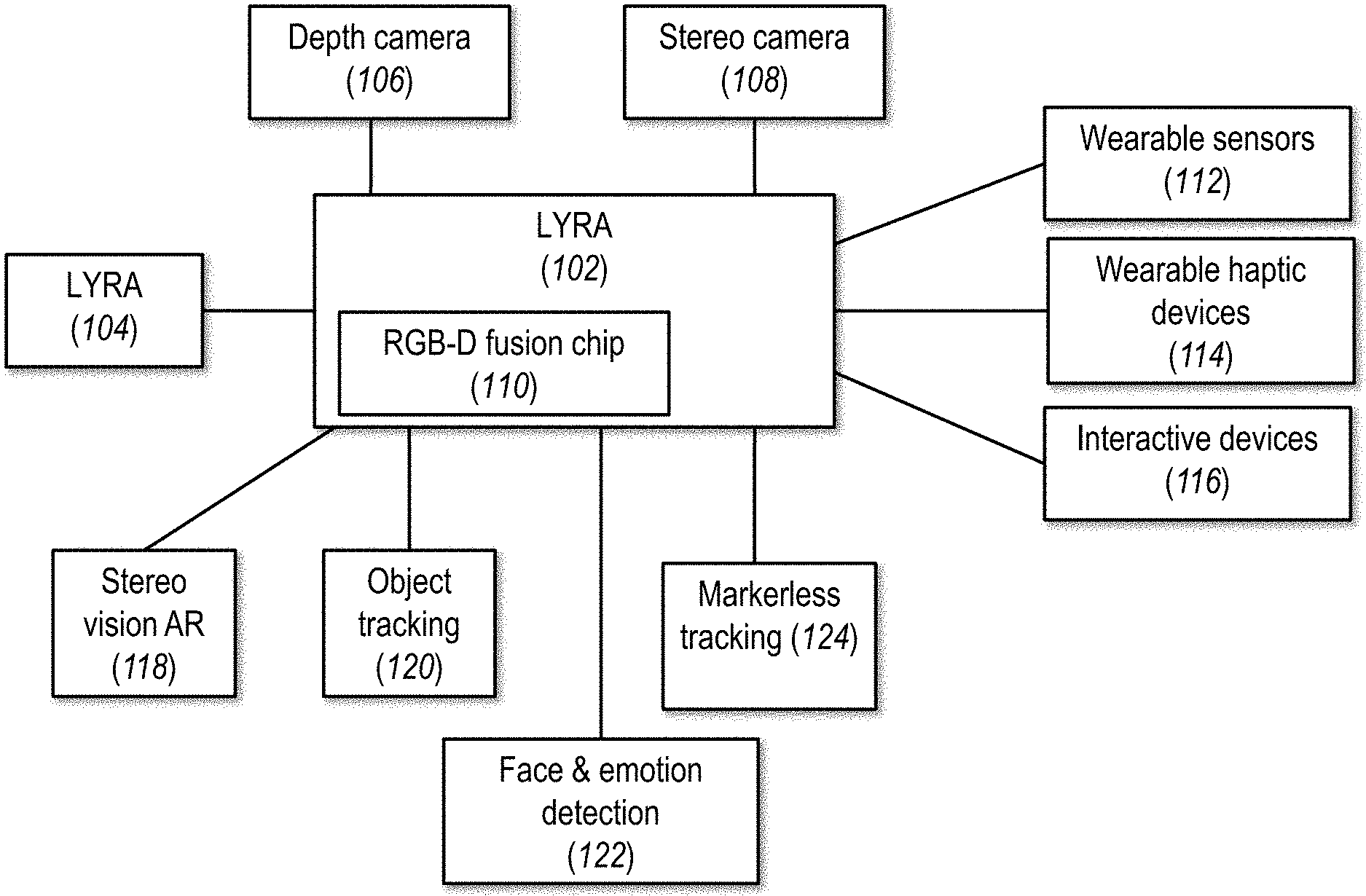

[0149] FIG. 1 shows a non-limiting example of a system according to at least some embodiments of the present disclosure. As shown, a system 100 features a multi-modal interaction platform 102, which can be chained to one or more additional multi-modal interaction platforms 104 as shown. Multi-modal interaction platform 102 can in turn be in communication with a depth sensor (e.g., camera) 106, a stereo sensor (e.g., camera) 108, and an RGB-D fusion chip 110. Depth camera 106 is configured to provide depth sensor data, which may be pixel data, for example, according to TOF (time of flight) relative to each pixel. Stereo camera 108 is configured to provide stereo camera data (pixel data) from left (first) and right (second) camera sensors (not shown). The functions of RGB-D fusion chip 110 are described in greater detail with regard to FIG. 3, but preferably include preprocessing of stereo camera data and depth data, to form a 3D point cloud with RGB data associated with it. The formation of the point cloud enables its use for tracking a body or a portion thereof, for example (or for other types of processing), by multi-modal interaction platform 102. Multi-modal interaction platform 102 can then output data to a visual display (not shown) or a wearable haptic device 114, for example to provide haptic feedback. One or more interactive objects or tools 116 may be provided to give or receive feedback or instructions from multi-modal interaction platform 102, or both.

[0150] A plurality of additional functions may be provided through the components described herein, alone or in combination, with one or more additional sensors, provided through outputs from multi-modal interaction platform 102. For example, a stereo vision AR (augmented reality) component 118 can be provided to display an AR environment according to tracking data of the subject and other information received from multi-modal interaction platform 102. Such object tracking can be enabled by an object tracking output 120. Detection of a human face, optionally with detection of emotion, may be provided through such an output 122. Markerless tracking 124, in which an object is tracked without additional specific markers placed on it, may also be provided. Other applications are also possible.

[0151] FIG. 2A shows a detail of the system of FIG. 1, shown as a system 200. In this figure, multi-modal interaction platform 102 is shown as connected to a plurality of different wearable sensors 112, including, but not limited to, an active marker 202, which can, for example, provide an active signal for being detected, such as an optical signal which would be detected by the stereo camera; an inertial sensor 204, for providing an inertial signal that includes position and orientation information; a heart rate/oxygen saturation sensor 206; EEG electrodes 208; and/or one or more additional sensors 210. Operation of some wearable sensors 112 in conjunction with multi-modal interaction platform 102 is described in greater detail below.

[0152] Multi-modal interaction platform 102 is also shown as connected to a plurality of different wearable haptic devices 114, including one or more of a tactile feedback device 212 and a force feedback device 214. For example and without limitation, such wearable haptic devices 114 could include a glove with small motors on the tips of the fingers to provide tactile feedback or such a motor connected to an active marker. Without wishing to be limited to a single benefit or to a closed list, connecting such sensors/feedback devices on a hardware platform enables better data synchronization, for example with timing provided by the same hardware clock signal, which can be useful for analysis.

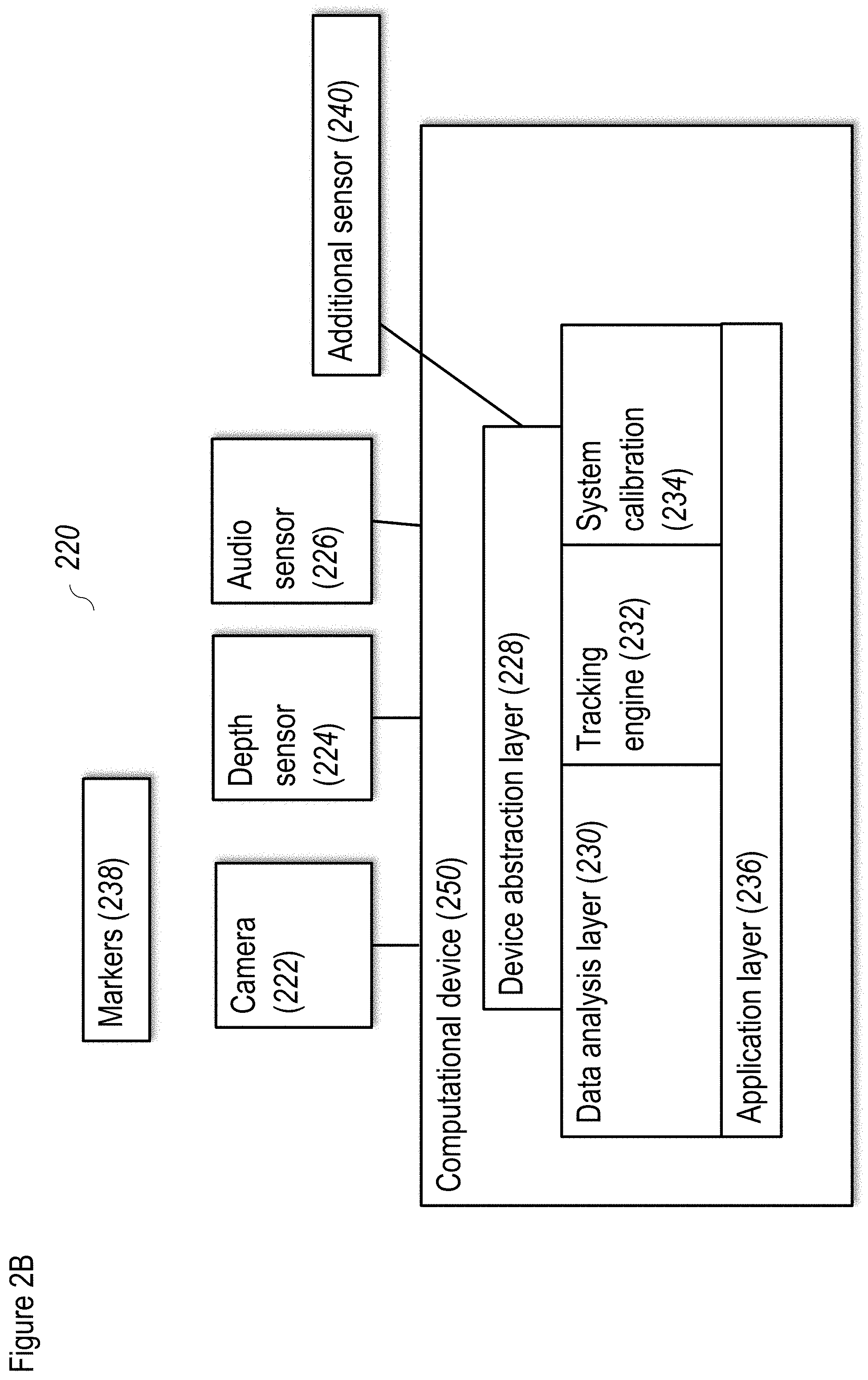

[0153] FIG. 2B shows a non-limiting example of a system according to at least some embodiments of the present disclosure. As shown, a system 220 features a camera 222, a depth sensor 224 and optionally an audio sensor 226. Optionally an additional sensor 240 is also included. Optionally camera 222 and depth sensor 224 are combined in a single product (e.g., Kinect® product of Microsoft®, and/or as described in U.S. Pat. No. 8,379,101). FIG. 1B shows an exemplary implementation for camera 222 and depth sensor 224. Optionally, camera 222 and depth sensor 224 can be implemented with the LYRA camera of Mindmaze SA. The integrated product (i.e., camera 222 and depth sensor 224) enables, according to some embodiments, the orientation of camera 222 to be determined with respect to a canonical reference frame. Optionally, three or all four sensors (e.g., a plurality of sensors) are combined in a single product.

[0154] The sensor data, in some embodiments, relates to physical actions of a user (not shown), which are accessible to the sensors. For example, camera 222 can collect video data of one or more movements of the user, while depth sensor 224 may provide data to determine the three dimensional location of the user in space according to the distance of the user from depth sensor 224 (or more specifically, the plurality of distances that represent the three dimensional volume of the user in space). Depth sensor 224 can provide TOF (time of flight) data regarding the position of the user, which, when combined with video data from camera 222, allows a three dimensional map of the user in the environment to be determined. As described in greater detail below, such a map enables the physical actions of the user to be accurately determined, for example, with regard to gestures made by the user. Audio sensor 226 preferably collects audio data regarding any sounds made by the user, optionally including, but not limited to, speech. Additional sensor 240 can collect biological signals about the user and/or may collect additional information to assist the depth sensor 224.

[0155] Sensor data is collected by a device abstraction layer 228, which preferably converts the sensor signals into data which is sensor-agnostic. Device abstraction layer 228 preferably handles the necessary preprocessing such that, if different sensors are substituted, only changes to device abstraction layer 228 would be required; the remainder of system 220 can continue functioning without changes (or, in some embodiments, at least without substantive changes). Device abstraction layer 228 preferably also cleans signals, for example, to remove or at least reduce noise as necessary, and can also be used to normalize the signals. Device abstraction layer 228 may be operated by a computational device 250, and any method steps may be performed by a computational device (note--modules and interfaces disclosed herein are assumed to incorporate, or to be operated by, a computational device, even if not shown).

[0156] The preprocessed signal data from the sensors can then be passed to a data analysis layer 230, which preferably performs data analysis on the sensor data for consumption by an application layer 236 (according to some embodiments, "application" means any type of interaction with a user). Preferably, such analysis includes tracking analysis, performed by a tracking engine 232, which can track the position of the user's body and also can track the position of one or more body parts of the user, including, but not limited to, one or more of arms, legs, hands, feet, head and so forth. Tracking engine 232 can decompose physical actions made by the user into a series of gestures. A "gesture" in this case may include an action taken by a plurality of body parts of the user, such as taking a step while swinging an arm, lifting an arm while bending forward, moving both arms, and so forth. Such decomposition and gesture recognition can also be done separately, for example, by a classifier trained on information provided by tracking engine 232 with regard to tracking the various body parts. Tracking engine 232 may be adjusted according to a presence or absence of each limb of the user. For example, if the user is an amputee who is missing a leg, tracking engine 232 can be calibrated to take such a loss into account. Such calibration may take place automatically or may occur as part of a user directed calibration process at the start of a session with a particular user.

[0157] It is noted that while the term "classifier" is used throughout, this term is also intended to encompass "regressor". For machine learning, the difference between the two terms is that for classifiers, the output or target variable takes class labels (that is, it is categorical). For regressors, the output variable takes continuous values (see for example http://scottge.net/2015/06/14/ml101-regression-vs-classification-vs-clustering-problems/).

[0158] The tracking of the user's body and/or body parts, optionally decomposed to a series of gestures, can then be provided to application layer 236, which translates the actions of the user into a type of reaction and/or analyzes these actions to determine one or more action parameters. For example, and without limitation, a physical action taken by the user to lift an arm is a gesture which could translate to application layer 236 as lifting a virtual object. Alternatively or additionally, such a physical action could be analyzed by application layer 236 to determine the user's range of motion or ability to perform the action.

[0159] To assist in the tracking process, optionally, one or more markers 238 can be placed on the body of the user. Markers 238 optionally feature a characteristic that can be detected by one or more of the sensors, such as by camera 222, depth sensor 224, audio sensor 226 or additional sensor 240. Markers 238 can be detectable by camera 222, for example, as optical markers. While such optical markers may be passive or active, preferably, markers 238 are active optical markers, for example featuring an LED light. More preferably, each of markers 238, or alternatively each pair of markers 238, can comprise an LED light of a specific color which is then placed on a specific location of the body of the user. The different colors of the LED lights, placed at a specific location, convey a significant amount of information to the system through camera 222; as described in greater detail below, such information can be used to make the tracking process efficient and accurate. Additionally, or alternatively, one or more inertial sensors can be added to the hands of the user as a type of marker 238, which can be enabled as Bluetooth or other wireless communication, such that the information would be sent to device abstraction layer 228. The inertial sensors can also be integrated with an optical component in at least markers 238 related to the hands, or even for more such markers 238. The information can then optionally be integrated to the tracking process, for example, to provide an estimate of orientation and location for a particular body part, for example as a prior restraint.

[0160] Data analysis layer 230, in some embodiments, includes a system calibration module 234. As described in greater detail below, system calibration module 234 is configured to calibrate the system with respect to the position of the user, in order for the system to track the user effectively. System calibration module 234 can perform calibration of the sensors with respect to the requirements of the operation of application layer 236 (although, in some embodiments--which can include this embodiment--device abstraction layer 228 is configured to perform sensor specific calibration). Optionally, the sensors may be packaged in a device (e.g., Microsoft® Kinect), which performs its own sensor specific calibration.

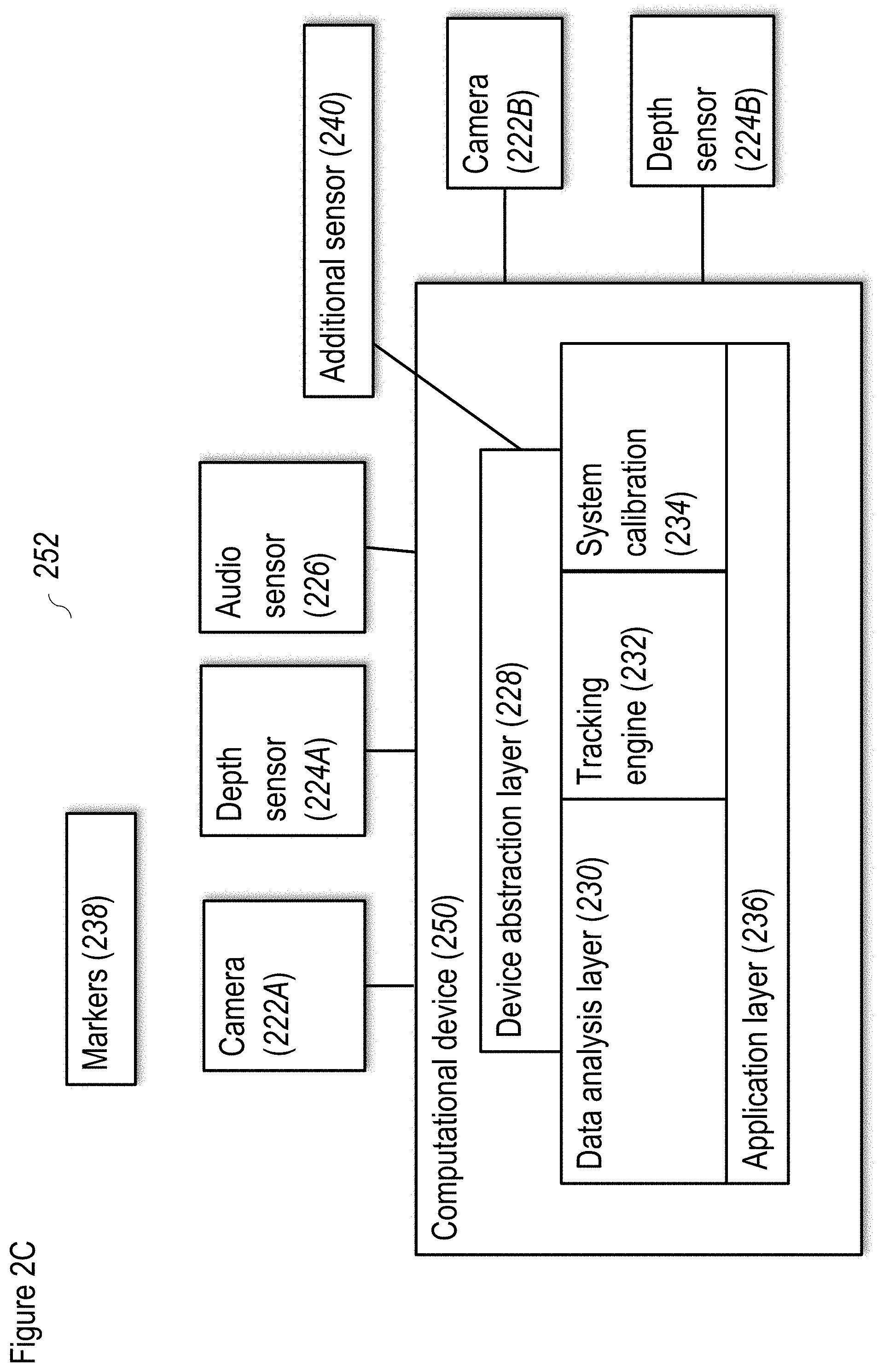

[0161] FIG. 2C shows a non-limiting example of a system according to at least some embodiments of the present disclosure. As shown, a system 252 includes the components of the system of FIG. 2B, and additionally features a second camera 222B and a second depth sensor 224B. As a non-limiting example of a use for system 252, it could be used to provide additional information about the movements of a user. For example, camera 222B and depth sensor 224B could be attached to the user, for example and without limitation to headgear worn by the user. Camera 222A and depth sensor 224A would be placed external to the user, for example at a short distance from the user. Such a configuration would enable the hands of the user to be tracked separately from the body of the user.

[0162] For this implementation, one of camera 222A and camera 222B, and one of depth sensor 224A and depth sensor 224B, is preferably selected as the master while the other is the slave device. For example, preferably camera 222B and depth sensor 224B would be the master devices, such that control would be provided according to the movements of the user. Optionally only one of camera 222B and depth sensor 224B is provided; if so, then preferably at least depth sensor 224B is provided.

[0163] Another non-limiting implementation would use system 252 to extend the range of operation. Each of camera 222A,B and depth sensor 224A,B has a trade off between field of view and resolution: the greater the field of view, the lower the angular resolution is, and vice versa. In order for the range of operation to be extended to 10 meters, for example, it would be necessary to provide a plurality of cameras 222 and a plurality of depth sensors 224, stationed at various points along this range. The data would therefore have the necessary resolution and field of view.
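The trade-off between field of view and angular resolution can be illustrated with a short calculation. The following is only a sketch under an idealized assumption that the pixels of a sensor divide its horizontal field of view evenly; the function names, the field-of-view values and the pixel count are hypothetical and are not taken from the present disclosure.

```python
import math


def angular_resolution_deg(fov_deg: float, pixels: int) -> float:
    """Approximate angle covered by one pixel (assumes an even split of the field of view)."""
    return fov_deg / pixels


def footprint_m(fov_deg: float, pixels: int, distance_m: float) -> float:
    """Approximate width of the scene covered by one pixel at the given distance."""
    per_pixel_rad = math.radians(angular_resolution_deg(fov_deg, pixels))
    return 2.0 * distance_m * math.tan(per_pixel_rad / 2.0)


# Hypothetical sensors with the same pixel count but different fields of view,
# both observing a subject at 10 meters.
wide = footprint_m(fov_deg=90.0, pixels=640, distance_m=10.0)
narrow = footprint_m(fov_deg=45.0, pixels=640, distance_m=10.0)
print(f"wide: {wide * 1000:.1f} mm/pixel, narrow: {narrow * 1000:.1f} mm/pixel")
```

Under these assumptions, halving the field of view roughly halves the scene width covered by each pixel, which is why several narrower sensors stationed along the range may be preferred over a single wide one.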

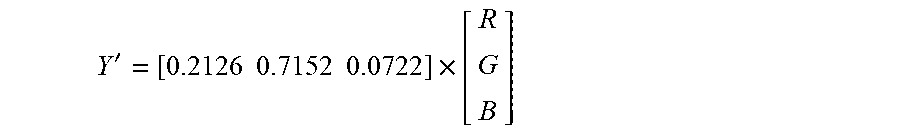

[0164] FIG. 3 shows a non-limiting example of a method for preprocessing according to at least some embodiments of the present disclosure. As shown, preprocessing starts at 302 with input from the stereo camera, provided as stereo data 304. Stereo data 304 undergoes RGB preprocessing 306, which in turn feeds back to the operation of stereo camera 302, for example, with regard to the autogain and autoexposure algorithm, described in greater detail below. In 308, image rectification is performed, to control artifacts caused by the lens of the camera. In some embodiments, a calibration process can be performed to prevent distortion of the image data by the lens, whether at the time of manufacture or at the time of use.

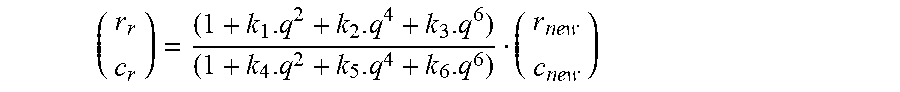

[0165] Optionally, the camera calibration process is performed as follows. To perform all these steps, intrinsic and extrinsic parameters of the cameras are needed in order to know how the cameras are positioned relative to each other, as well as their distortion, their focal length and so on. These parameters are often obtained from a calibration step. This calibration step optionally comprises taking several pictures of a chessboard pattern with the cameras and then computing the parameters by finding the pattern (of known size) inside the images.

[0166] From the intrinsic calibration process, the intrinsic parameters of each camera are extracted and may comprise the following:

[0167] Focal length: in pixels, (fx, fy);

[0168] Principal point: in pixels, (cx, cy);

[0169] Skew coefficient: defines the angle between the horizontal and vertical pixel axes, ac;

[0170] Distortion coefficients: radial (k₁, k₂, k₃, k₄, k₅, k₆) and tangential (p₁, p₂) distortion coefficients.

[0171] Then, from the extrinsic calibration process, the position of one camera to the other can be extracted by having a 3×3 rotation matrix r and a 3×1 translation vector t.
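As a non-limiting illustration of how such parameters may be used together, the sketch below moves a 3D point from the frame of one camera into the frame of the other using r and t, and then projects it to a pixel. Several details are assumptions rather than part of the disclosure: the convention X_other = r·X + t, the rational radial distortion model with six coefficients and two tangential coefficients (as used by common calibration toolboxes), and the way the skew coefficient enters the horizontal pixel coordinate. All names are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Intrinsics:
    fx: float
    fy: float
    cx: float
    cy: float
    skew: float = 0.0                                   # assumed to couple y into u
    radial: Sequence[float] = (0.0, 0.0, 0.0, 0.0, 0.0, 0.0)  # k1..k6
    tangential: Sequence[float] = (0.0, 0.0)            # p1, p2


def to_other_camera(point: Vec3, r: Sequence[Sequence[float]], t: Vec3) -> Vec3:
    """Apply the extrinsic transform, assuming X_other = r * X + t."""
    return tuple(sum(r[i][j] * point[j] for j in range(3)) + t[i] for i in range(3))


def project(point: Vec3, cam: Intrinsics) -> Tuple[float, float]:
    """Project a 3D point (camera frame) to pixel coordinates with lens distortion."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point must be in front of the camera")
    xn, yn = x / z, y / z                                # normalized image coordinates
    r2 = xn * xn + yn * yn
    k1, k2, k3, k4, k5, k6 = cam.radial
    p1, p2 = cam.tangential
    radial = (1 + k1 * r2 + k2 * r2**2 + k3 * r2**3) / (1 + k4 * r2 + k5 * r2**2 + k6 * r2**3)
    xd = xn * radial + 2 * p1 * xn * yn + p2 * (r2 + 2 * xn * xn)
    yd = yn * radial + p1 * (r2 + 2 * yn * yn) + 2 * p2 * xn * yn
    u = cam.fx * (xd + cam.skew * yd) + cam.cx
    v = cam.fy * yd + cam.cy
    return u, v


# Hypothetical values: identity rotation and a 10 cm horizontal offset between cameras.
identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
right_cam = Intrinsics(fx=500.0, fy=500.0, cx=320.0, cy=240.0)
point_in_right = to_other_camera((0.0, 0.0, 1.0), identity, (-0.1, 0.0, 0.0))
u, v = project(point_in_right, right_cam)
```

With all distortion coefficients set to zero the function reduces to the plain pinhole model, which makes it straightforward to check the intrinsic and extrinsic parts separately.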

[0172] In 310, stereo RGB images that have been preprocessed may then be processed for colorization and for creating a disparity map, which may then be fed to a colorized point cloud formation process 312. The process in 312 may be performed, for example, as described in the paper "Fusion of Terrestrial LiDAR Point Clouds with Color Imagery", by Colin Axel, 2013, available from https://www.cis.rit.edu/DocumentLibrary/admin/uploads/CIS000202.PDF. However, optionally, determination of the sensor position and orientation may be dropped, since the stereo camera and depth sensor can both be calibrated, with their position and orientation known before processing begins. In addition, pixels from the RGB camera can be matched with pixels from the depth sensor, providing an additional layer of calibration. The colorized point cloud can then be output as the 3D point cloud with RGB data in 314.
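A minimal sketch of forming a colorized point cloud is given below. It assumes, for illustration only, that the depth map and the RGB image have already been registered so that the same pixel index refers to the same scene point, and that a simple pinhole model without distortion applies; it is not the method of the cited paper. The function name and parameters are hypothetical.

```python
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, float, float, int, int, int]


def colorized_point_cloud(
    depth: Sequence[Sequence[Optional[float]]],
    rgb: Sequence[Sequence[Tuple[int, int, int]]],
    fx: float, fy: float, cx: float, cy: float,
) -> List[Point]:
    """Back-project each valid depth pixel to 3D and attach the RGB value at the same pixel."""
    points: List[Point] = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z is None or z <= 0:
                continue  # no usable depth at this pixel
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            red, green, blue = rgb[v][u]
            points.append((x, y, z, red, green, blue))
    return points


# Hypothetical 1x2 image: the second pixel has no depth and is skipped.
cloud = colorized_point_cloud(
    depth=[[2.0, None]],
    rgb=[[(255, 0, 0), (0, 255, 0)]],
    fx=100.0, fy=100.0, cx=0.0, cy=0.0,
)
```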

[0173] Turning back to 310, the disparity map is created in 312 by obtaining the depth information from the stereo RGB images and then checking the differences between stereo images. The disparity map, plus depth information from the depth sensor in the form of a calibrated depth map (as described in greater detail below), is combined for the point cloud computation in 318, for a more robust data set.
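For rectified stereo images, the relationship between disparity and depth is commonly written as Z = f·B/d, where f is the focal length in pixels, B is the baseline between the two cameras and d is the disparity in pixels. The sketch below applies this standard relationship; the function name, the parameter values and the use of None for unmatched pixels are assumptions made for illustration.

```python
from typing import List, Optional, Sequence


def disparity_to_depth(
    disparity: Sequence[Sequence[float]],
    focal_px: float,
    baseline_m: float,
    min_disparity: float = 1e-6,
) -> List[List[Optional[float]]]:
    """Convert a disparity map (pixels) to depth (meters) using Z = f * B / d.

    Pixels with no usable disparity are returned as None.
    """
    return [
        [(focal_px * baseline_m / d) if d > min_disparity else None for d in row]
        for row in disparity
    ]


# Hypothetical rig: 500-pixel focal length and a 6 cm baseline.
depth_map = disparity_to_depth([[10.0, 0.0]], focal_px=500.0, baseline_m=0.06)
```

Because depth is inversely proportional to disparity, a fixed disparity error translates into a larger depth error for distant points, which is one reason combining the result with depth sensor data can give a more robust data set.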

[0174] Depth information from the depth sensor can be obtained as follows. Depth and illumination data is obtained in 320, from TOF (time of flight) camera 326. The depth and illumination data may then be processed along two paths, a first path for TOF control 322, which in turn feeds back to TOF camera 326 to control illumination and exposure time according to the illumination data. A second path for TOF calibration 324 can then be used to correct the TOF image, by applying the factory calibration, which in turn feeds corrected TOF depth data into the depth map 328. Calibration of the TOF function may be required to be certain that the depth sensor data is correct, relative to the function of the depth sensor itself. Such calibration increases the accuracy of depth map 328. Depth map 328 can then be fed into 318, as described above, to increase the accuracy of creating the colorized point cloud.

[0175] FIGS. 4A and 4B show a non-limiting example of a method for depth preprocessing according to at least some embodiments of the present disclosure, which shows the depth processing method of FIG. 3 in more detail. Accordingly, as shown in FIG. 4A, a depth preprocessing process 400 starts with image (e.g., pixel) data being obtained from a TOF camera in 402, which may be used to create a depth map in 406, but may also be used to determine a level of illumination in 414 for each pixel. The level of illumination can then be fed into a low confidence pixel removal process 408. This process compares the distance that a pixel in the image is reporting and correlates this reported distance to the illumination provided by that pixel. The settings for process 408 can be decided in advance, according to the acceptable noise level, which may for example be influenced by the application using or consuming the data. The lower the acceptable noise level, the lower the amount of data which is available. If the illumination is outside of a predetermined acceptable range, the distance cannot be accurately determined. Preferably, if this situation occurs, the pixel is removed.
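The removal of low confidence pixels can be sketched as a per-pixel range check on the illumination. In the following illustration the acceptable range is a pair of fixed, hypothetical thresholds and removed pixels are marked as None; an actual implementation may correlate distance and illumination in a more elaborate way.

```python
from typing import List, Optional, Sequence


def remove_low_confidence(
    depth: Sequence[Sequence[float]],
    illumination: Sequence[Sequence[float]],
    min_illum: float,
    max_illum: float,
) -> List[List[Optional[float]]]:
    """Keep a depth value only if the illumination of the same pixel is within the acceptable range."""
    return [
        [d if min_illum <= a <= max_illum else None for d, a in zip(d_row, a_row)]
        for d_row, a_row in zip(depth, illumination)
    ]


# Hypothetical data: one under-exposed and one saturated pixel are removed.
filtered = remove_low_confidence(
    depth=[[1.0, 2.0], [3.0, 4.0]],
    illumination=[[5.0, 50.0], [500.0, 100.0]],
    min_illum=10.0,
    max_illum=200.0,
)
```

Narrowing the acceptable range lowers the noise in the remaining data but removes more pixels, which reflects the trade-off between acceptable noise level and available data described above.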

[0176] A histogram process 416, which enables autoexposure and autogain adjustments, is described in greater detail below.

[0177] After removal of low confidence pixels in 408, the depth processing can continue with motion blur removal in 410, which can remove artifacts at edges of moving objects in depth (i.e., removing the pixels involved). The application of temporal and spatial filters may be performed in 412, which are used to remove noise from the depth (spatial) and average data over time to remove noise (temporal). Spatial filters attenuate noise by reducing the variance among the neighborhood of a pixel, resulting in a smoother surface, but potentially at the cost of reduced contrast. Such a spatial filter may be implemented as a Gaussian filter for example, which uses a Gaussian weighting function, G(p-p') to average the pixels, p', within a square neighborhood, w, centered about the pixel, p. FIG. 47 relates to another non-limiting example of a denoising method, using a bilateral filter with Gaussian blur filtering.
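The spatial and temporal filtering described above can be sketched, as a non-limiting example, in plain Python. The sketch assumes an isotropic Gaussian weight G(p-p') = exp(-|p-p'|^2 / (2*sigma^2)), normalized over the part of the square neighborhood w that lies inside the image, and a simple mean over frames for the temporal filter. The names `gaussian_filter` and `temporal_average`, and the defaults for `radius` and `sigma`, are illustrative assumptions and are not taken from the disclosure.

```python
import math
from typing import List

Image = List[List[float]]


def gaussian_filter(img: Image, radius: int = 1, sigma: float = 1.0) -> Image:
    """Average each pixel p over a square neighborhood with Gaussian weights."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0.0   # weighted sum of neighbor values
            norm = 0.0  # sum of weights, so that the weights add up to 1
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    yy, xx = y + dy, x + dx
                    if 0 <= yy < h and 0 <= xx < w:
                        g = math.exp(-(dx * dx + dy * dy) / (2 * sigma * sigma))
                        acc += g * img[yy][xx]
                        norm += g
            out[y][x] = acc / norm
    return out


def temporal_average(frames: List[Image]) -> Image:
    """Average co-located pixels over a list of frames of equal size."""
    n = len(frames)
    h, w = len(frames[0]), len(frames[0][0])
    return [[sum(f[y][x] for f in frames) / n for x in range(w)]
            for y in range(h)]


spike = [[0.0, 0.0, 0.0], [0.0, 9.0, 0.0], [0.0, 0.0, 0.0]]
smoothed = gaussian_filter(spike)
```

In this sketch the isolated spike is spread over its neighbors, which illustrates both the noise attenuation and the loss of contrast noted above.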

[0178] Turning back to histogram process 416, the information obtained therefrom may also be passed to an exposure and illumination control process 418 as previously described, which is used to adjust the function of TOF camera 402. FIG. 4B shows an exemplary illustrative non-limiting method for detecting defective pixels according to at least some embodiments of the present disclosure, which can be used for example with the method of FIG. 4A, for example to remove low confidence pixels as previously described. The process 450 can be divided into three steps: interpolation, defect screening, candidate screening (for example).

[0179] When each incoming pixel (452) reaches the center of the moving window held in the buffer of the FPGA (field-programmable gate array), it is checked to determine whether it was previously stored (in memory) as being defective (454). If not previously stored, the module proceeds to perform the candidate screening process (456), in which the value of the pixel under test is compared with the average of its surrounding neighbors. If the difference exceeds a certain threshold, TH_NEIGH, the inspected pixel is suspected to be defective, and its data (value, position, neighbor average) are stored for further analysis.

[0180] A stored pixel is checked to determine whether it was previously labeled as defective (458); if so, it undergoes interpolation (460). If not previously labeled as defective, the pixel undergoes defect screening (462) by comparing its actual and previous values. A difference between these values that exceeds the threshold TH_DIFF (which is set to cancel the effects of noise) indicates that the pixel is changing regularly, such that the pixel is no longer suspected of being defective. A time constant is incremented for each period of time that the pixel remains under suspicion of being defective. Another threshold, TH_FRAME, is defined and compared against the value of the time constant. Once a pixel value (excluding noise) remains unchanged for a certain number of frames, such that the value of the time constant is equal to the second threshold, TH_FRAME, the pixel is determined to be defective. The interpolation step then becomes active, so that the defective pixel is corrected before it slides toward the first mask_2 memory cell. Interpolation may be performed by substituting the value of the investigated pixel with the average of its surrounding pixels. The average can be calculated among those pixels having the same filter color as the one in the center of the mask. An example of such a process is shown in the following pseudo-code:

TABLE-US-00001

    for pixel = 1 to endFrame do
        if pixel already stored then
            if pixel already defective then
                Interpolate pixel
            else if |pixel - previousPixelValue| ≤ TH_DIFF then
                if timeConst = TH_FRAME then
                    Add pixel to defects list
                else
                    Increment timeConst
                end
            else
                Remove pixel from candidate list
            end
        else if memory not full then
            if |pixel - neighborsAverage| ≥ TH_NEIGH then
                Add pixel to candidate list
            end
        end
    end
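The pseudo-code above can be rendered, as a non-limiting and hedged sketch, in runnable Python. The sketch is a software model only and not the FPGA implementation. It assumes a monochrome frame, so that all eight surrounding pixels count as neighbors (rather than only neighbors having the same filter color), and it stores the latest value of each candidate as its previous value. The class name `DefectDetector`, the default threshold values and `max_candidates` (standing in for "memory not full") are illustrative assumptions.

```python
from typing import Dict, List, Set, Tuple

Pos = Tuple[int, int]
Frame = List[List[float]]


class DefectDetector:
    """Software model of candidate screening, defect screening and interpolation."""

    def __init__(self, th_neigh: float = 100.0, th_diff: float = 2.0,
                 th_frame: int = 3, max_candidates: int = 64) -> None:
        self.th_neigh = th_neigh
        self.th_diff = th_diff
        self.th_frame = th_frame
        self.max_candidates = max_candidates  # stands in for "memory not full"
        self.candidates: Dict[Pos, Tuple[float, int]] = {}  # pos -> (prev, timeConst)
        self.defects: Set[Pos] = set()

    @staticmethod
    def _neighbor_average(frame: Frame, y: int, x: int) -> float:
        h, w = len(frame), len(frame[0])
        vals = [frame[yy][xx]
                for yy in range(max(0, y - 1), min(h, y + 2))
                for xx in range(max(0, x - 1), min(w, x + 2))
                if (yy, xx) != (y, x)]
        return sum(vals) / len(vals) if vals else frame[y][x]

    def process(self, frame: Frame) -> Frame:
        out = [row[:] for row in frame]
        for y, row in enumerate(frame):
            for x, value in enumerate(row):
                pos = (y, x)
                avg = self._neighbor_average(frame, y, x)
                if pos in self.defects:
                    out[y][x] = avg  # interpolation
                elif pos in self.candidates:
                    prev, time_const = self.candidates[pos]
                    if abs(value - prev) <= self.th_diff:
                        if time_const == self.th_frame:
                            del self.candidates[pos]
                            self.defects.add(pos)
                        else:
                            self.candidates[pos] = (value, time_const + 1)
                    else:
                        del self.candidates[pos]  # pixel is changing: not defective
                elif len(self.candidates) < self.max_candidates:
                    if abs(value - avg) >= self.th_neigh:
                        self.candidates[pos] = (value, 0)
        return out


det = DefectDetector()
stuck = [[10, 10, 10], [10, 255, 10], [10, 10, 10]]
for _ in range(6):
    corrected = det.process(stuck)
```

As in the pseudo-code, a pixel added to the defects list in one frame is interpolated from the following frame onward; an implementation could instead interpolate immediately upon detection.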

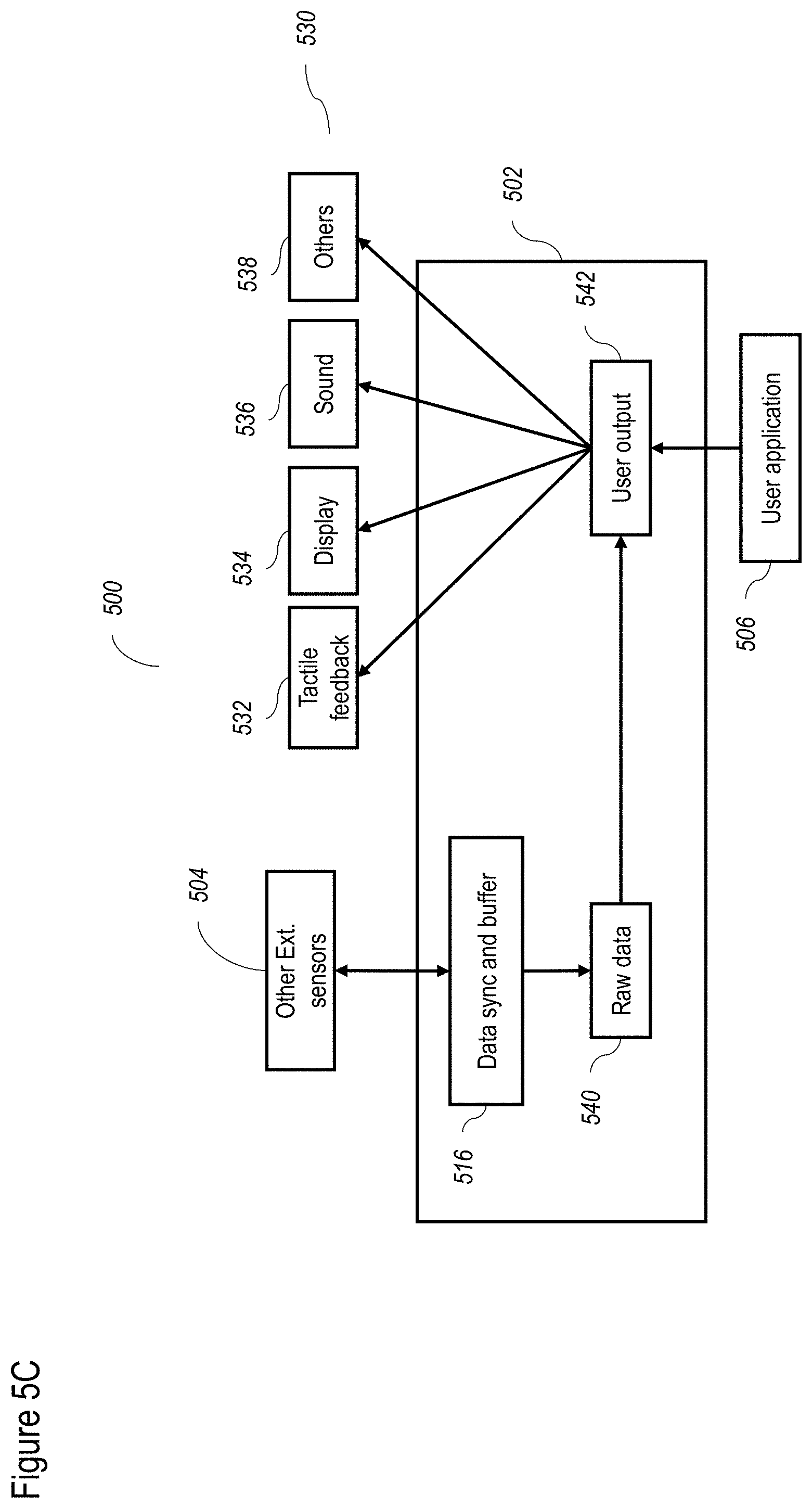

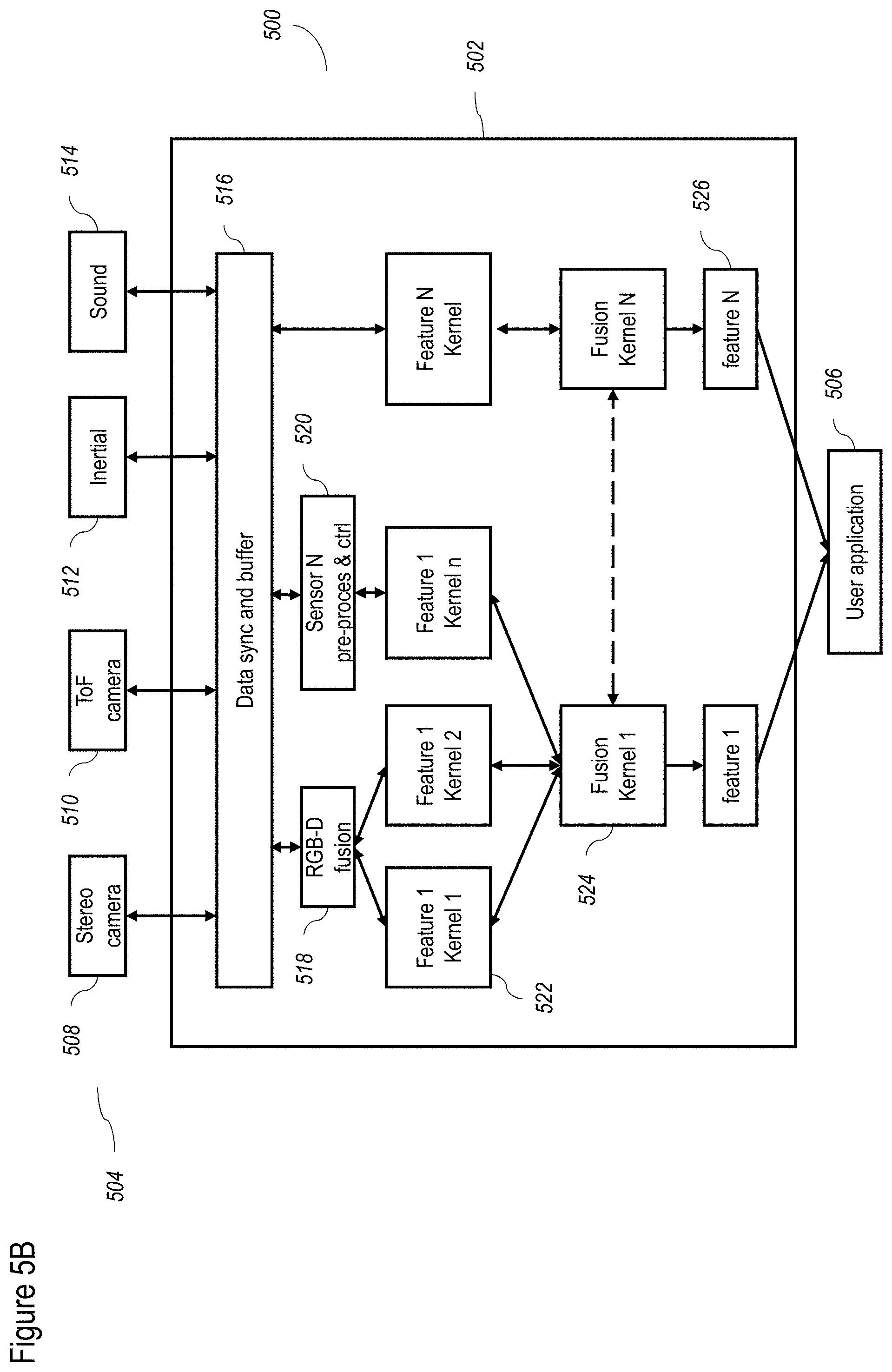

[0181] FIGS. 5A-5D show a non-limiting example of a data processing flow for the FPGA according to at least some embodiments of the present disclosure. FIG. 5A shows the overall flow 500, which includes input from one or more sensors 504, and output to one or more output devices 530. Input from sensors 504 can be processed through FPGA process 502 and then sent to a user application 506. User application 506 may then return output to output devices 530.

[0182] FIG. 5B describes the detailed flow for some exemplary input sensors 504. Thus, and for example, as shown, exemplary input sensors 504 include one or more of a stereo camera 508, a ToF camera 510, an inertial sensor 512 and a sound input device 514. A non-limiting example of sound input device 514 could include a microphone for example. Input from input sensors 504 may be received by a data sync and buffer 516, which operates as described in greater detail below, to synchronize various data streams (including without limitation between inputs of stereo camera 508, and between stereo camera 508 and ToF camera 510) according to a plurality of clocks. Data sync and buffer 516 can also buffer data as described in greater detail below. In terms of buffering functions, the buffer part of data sync and buffer 516 is configured to provide a moving window. This allows data processing to be performed on a portion of a frame when data are serially sent.
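As a non-limiting illustration of the moving window buffer described above, the following Python sketch consumes pixels one at a time in raster order and yields a square window as soon as enough rows have arrived, without waiting for the full frame. The function name `moving_windows` and the parameters `width` and `size` are assumptions for illustration; the actual buffer is implemented in the FPGA.

```python
from collections import deque
from typing import Deque, Iterable, Iterator, List, Tuple

Window = List[List[int]]


def moving_windows(pixels: Iterable[int], width: int,
                   size: int = 3) -> Iterator[Tuple[int, int, Window]]:
    """Yield (top_row, left_col, window) for each size x size window."""
    rows: Deque[List[int]] = deque(maxlen=size)  # only the last `size` rows are kept
    current: List[int] = []
    row_index = 0
    for p in pixels:
        current.append(p)
        if len(current) == width:          # a full row has been received
            rows.append(current)
            current = []
            if len(rows) == size:          # enough rows to form windows
                top = row_index - size + 1
                for col in range(width - size + 1):
                    yield top, col, [r[col:col + size] for r in rows]
            row_index += 1


# A 4 x 4 frame sent serially as the values 0..15.
windows = list(moving_windows(range(16), width=4))
```

Only `size` rows are held at any time, which is what allows processing to begin on a portion of a frame.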

[0183] Optionally one or more input sensors 504 are asynchronous sensors. As a non-limiting example, an asynchronous sensor implementation for a camera does not send data at a fixed frame rate. Instead, such a sensor would only send data when a change had been detected, thereby only sending the change data.
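The behavior of such an asynchronous sensor can be modeled, as a minimal and non-limiting sketch, by emitting only the pixels that changed between two successive readings. The function names `change_events` and `apply_events`, and the `threshold` parameter, are hypothetical and serve only to illustrate the idea of sending change data.

```python
from typing import List, Tuple

Frame = List[List[int]]
Event = Tuple[int, int, int]  # (row, column, new value)


def change_events(prev: Frame, curr: Frame, threshold: int = 0) -> List[Event]:
    """Return only the pixels whose value changed by more than threshold."""
    return [(y, x, v)
            for y, row in enumerate(curr)
            for x, v in enumerate(row)
            if abs(v - prev[y][x]) > threshold]


def apply_events(frame: Frame, events: List[Event]) -> Frame:
    """Rebuild the current frame on the receiving side from the change data."""
    out = [row[:] for row in frame]
    for y, x, v in events:
        out[y][x] = v
    return out


prev = [[1, 2], [3, 4]]
curr = [[1, 5], [3, 4]]
events = change_events(prev, curr)
```

When nothing changes, the list of events is empty and no data would be sent.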

[0184] Data may then pass to an RGB-D fusion chip process 518, the operation of which was described with regard to FIG. 3, and which preprocesses the data for depth and RGB processing. Data can also pass to a sensor specific preprocess and control 520 for sensors other than stereo camera 508 and ToF camera 510, to prepare the sensor data for further use (for example, in regard to calibration of the data).

[0185] Next, data may pass to a layer of feature specific kernels 520, which receive data from RGB-D fusion chip process 518, and sensor specific preprocess and control 520. Feature specific kernels 520 may be operated according to the OPENCL standard, which supports communication between the FPGA and the CPU of the computational device operating user application 506 (not shown). Feature specific kernels 520 may also receive data directly from data sync and buffer 516, for example, to control the sensor acquisition and to provide feedback to data sync and buffer 516, to feed back to sensors 504.

[0186] Feature specific kernels 520, according to some embodiments, take data related to particular features of interest to be calculated, such as the previously described point cloud of 3D and RGB data, and calculate sub-features related to the feature. Non-limiting examples of such features may also include portions of processes as described herein, such as the de-mosaic process, color correction, white balance and the like. Each feature specific kernel 520 may have an associated buffer (not shown), which is preferably designed in order to provide a moving window. This allows data processing to be performed on a portion of a frame when data is serially sent.

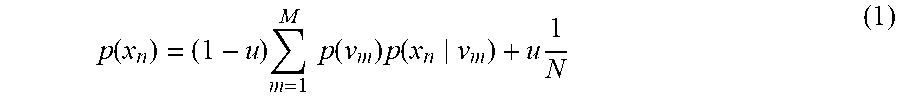

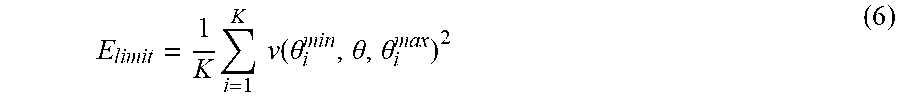

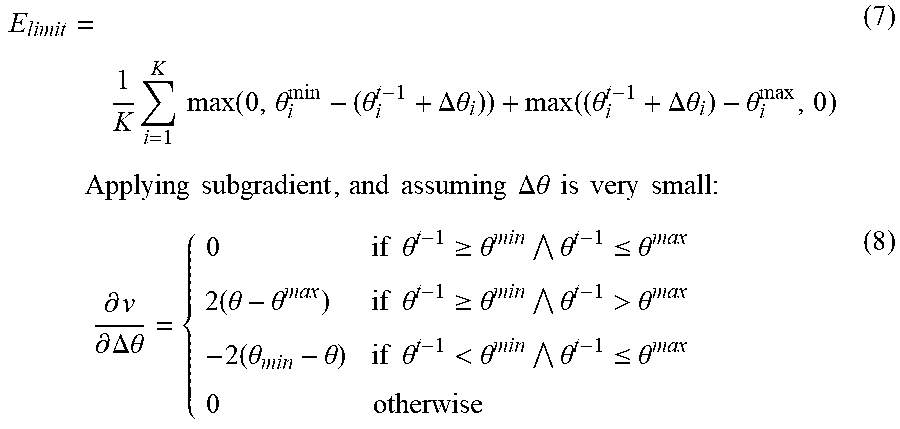

[0187] Next, the sub-features can be passed to a plurality of fusion kernels 522, to fuse the sub-features into the actual features, such as the previously described point cloud of 3D and RGB data. Specific processes for feature specific kernels 520 and fusion kernels 522 are described in greater detail below. A fusion kernel 522 can also report to a particular feature specific kernel 520 that it is missing information; that feature specific kernel in turn reports any missing information to sensors 504 through data sync and buffer 516. These features 526 may then be passed to user application 506, which may request specific features 526, for example by enabling specific fusion kernels 522, as needed for operation.
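The relationship between feature specific kernels, fusion kernels and the user application can be sketched in host-side Python as a non-limiting illustration. This is a conceptual model only; it does not represent the FPGA or OPENCL implementation. All names (`FusionKernel`, `depth_kernel`, `color_kernel`, `fuse_point_cloud`, `run_pipeline`) and the dictionary keys are assumptions made for illustration.

```python
from typing import Callable, Dict, List, Optional, Tuple

FeatureKernel = Callable[[dict], Optional[list]]


class FusionKernel:
    """Fuses sub-features from feature specific kernels into one feature."""

    def __init__(self, inputs: Dict[str, FeatureKernel],
                 fuse: Callable[[Dict[str, list]], object]) -> None:
        self.inputs = inputs
        self.fuse = fuse
        self.enabled = False  # the user application enables kernels it needs

    def run(self, sensor_data: dict) -> Tuple[Optional[object], List[str]]:
        subs = {name: kernel(sensor_data) for name, kernel in self.inputs.items()}
        missing = [name for name, value in subs.items() if value is None]
        if missing:
            return None, missing  # report which sub-features lack data
        return self.fuse(subs), []


def depth_kernel(data: dict) -> Optional[list]:
    return data.get("tof")


def color_kernel(data: dict) -> Optional[list]:
    return data.get("rgb")


def fuse_point_cloud(subs: Dict[str, list]) -> list:
    # Pair each depth sample with its color to form a colorized point.
    return list(zip(subs["depth"], subs["color"]))


def run_pipeline(kernels: Dict[str, FusionKernel], sensor_data: dict):
    features, missing = {}, {}
    for name, kernel in kernels.items():
        if not kernel.enabled:
            continue
        feature, lacking = kernel.run(sensor_data)
        if lacking:
            missing[name] = lacking
        else:
            features[name] = feature
    return features, missing


kernels = {"point_cloud": FusionKernel({"depth": depth_kernel,
                                        "color": color_kernel},
                                       fuse_point_cloud)}
kernels["point_cloud"].enabled = True
features, missing = run_pipeline(kernels, {"tof": [1.0], "rgb": [(255, 0, 0)]})
```

In this sketch, the `missing` report plays the role of the feedback that is passed back toward the sensors when a sub-feature cannot be calculated.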

[0188] Among the advantages of calculation by feature specific kernels 520 and fusion kernels 522, according to some embodiments, is that both are implemented in the FPGA (field-programmable gate array), and hence may be calculated very quickly. Both feature specific kernels 520 and fusion kernels 522 may be calculated by dedicated elements in the FPGA which can be specifically created or adjusted to operate very efficiently for these specific calculations. Even though features 526 may require intensive calculations, shifting such calculations away from a computational device that operates user application 506 (not shown) and to the FPGA process 502 significantly increases the speed and efficiency of performing such calculations.

[0189] Optionally the layer of feature specific kernels 520 and/or the layer of fusion kernels 522 may be augmented or replaced by one or more neural networks. Such neural network(s) could be trained on sensor data and/or on the feature data from the layer of feature specific kernels 520.

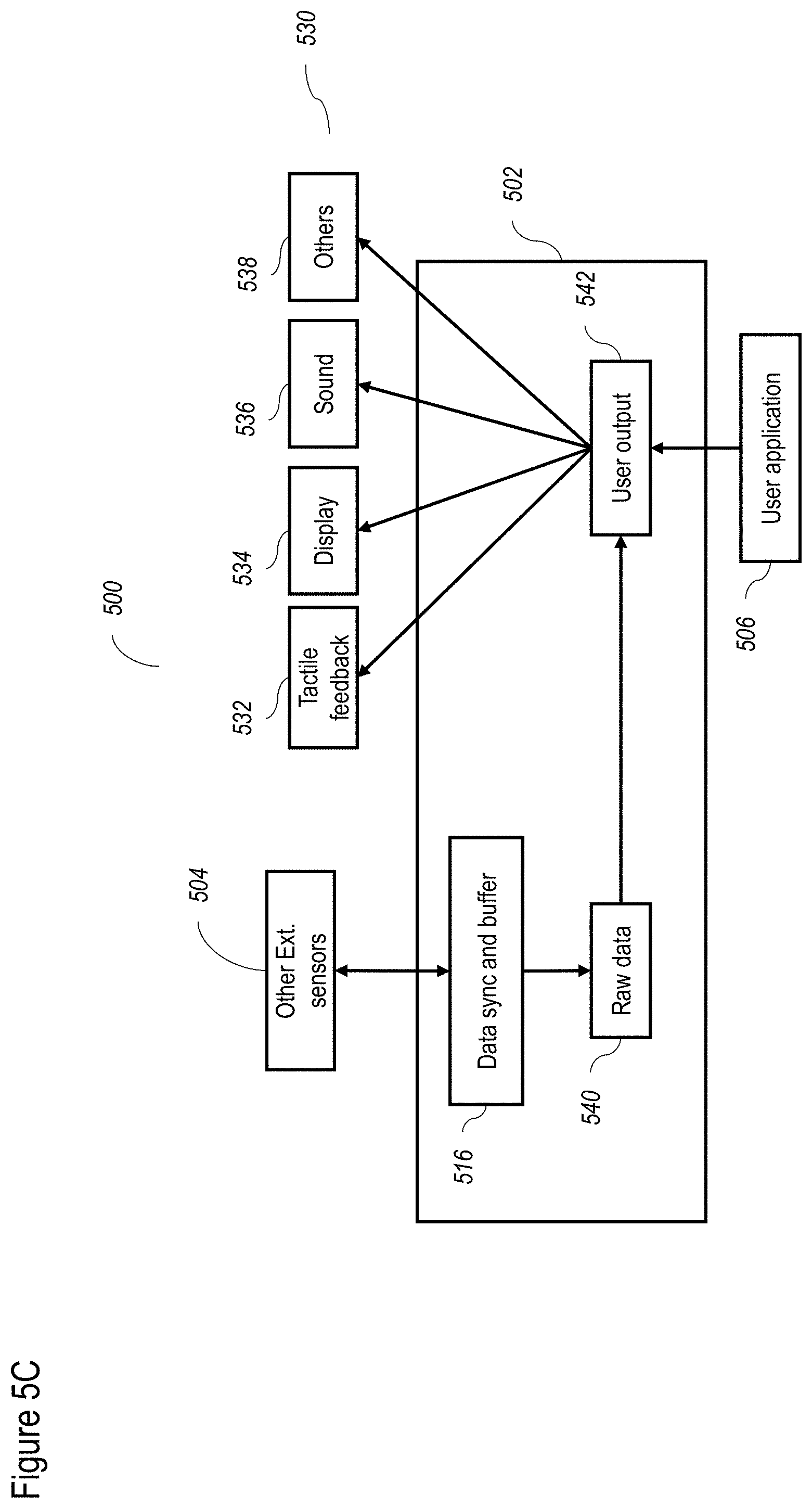

[0190] FIG. 5C shows the operation of the process 500 as it relates to additional external sensors 504 and output devices 530. Input from additional external sensors 504 may be transmitted to data sync and buffer 516, and then to a raw data processor 540, for example, for a display or other output device 530 that requires a raw pipe of data, optionally with minor modifications. This avoids sending all of the data to user application 506, which is operated by a slower computational device, and thereby avoids delay. Raw data processor 540 could also optionally receive data from stereo camera 508 (not shown) as a raw feed. From raw data processor 540, the sensor input data can be sent to a user output controller 542 for output to the user.

[0191] Output from user application 506 can also be sent to user output controller 542, and then to output devices 530. Non-limiting examples of output devices 530 include a tactile feedback device 532, a display 534, a sound output device 536 and optionally other output devices 538. Display 534 can display visual information to the user, for example, as part of a head mounted device, for example for VR (virtual reality) and AR (augmented reality) applications. Similarly, other output devices 530 could provide feedback to the user, such as tactile feedback by tactile feedback device 532, as part of VR or AR applications.

[0192] FIG. 5D shows the operation of a process 550 which features an additional stereo camera 508B and an additional ToF camera 510B. Stereo camera 508B and ToF camera 510B may be mounted on the head of the user as previously described. For this implementation, stereo camera 508B and ToF camera 510B would be the master devices, while stereo camera 508A and ToF camera 510A would be the slave devices. All of the devices would send their data to data sync and buffer 516; the process would then proceed as previously described. Again optionally only one of stereo camera 508B and ToF camera 510B is present, in which case preferably ToF camera 510B is present.

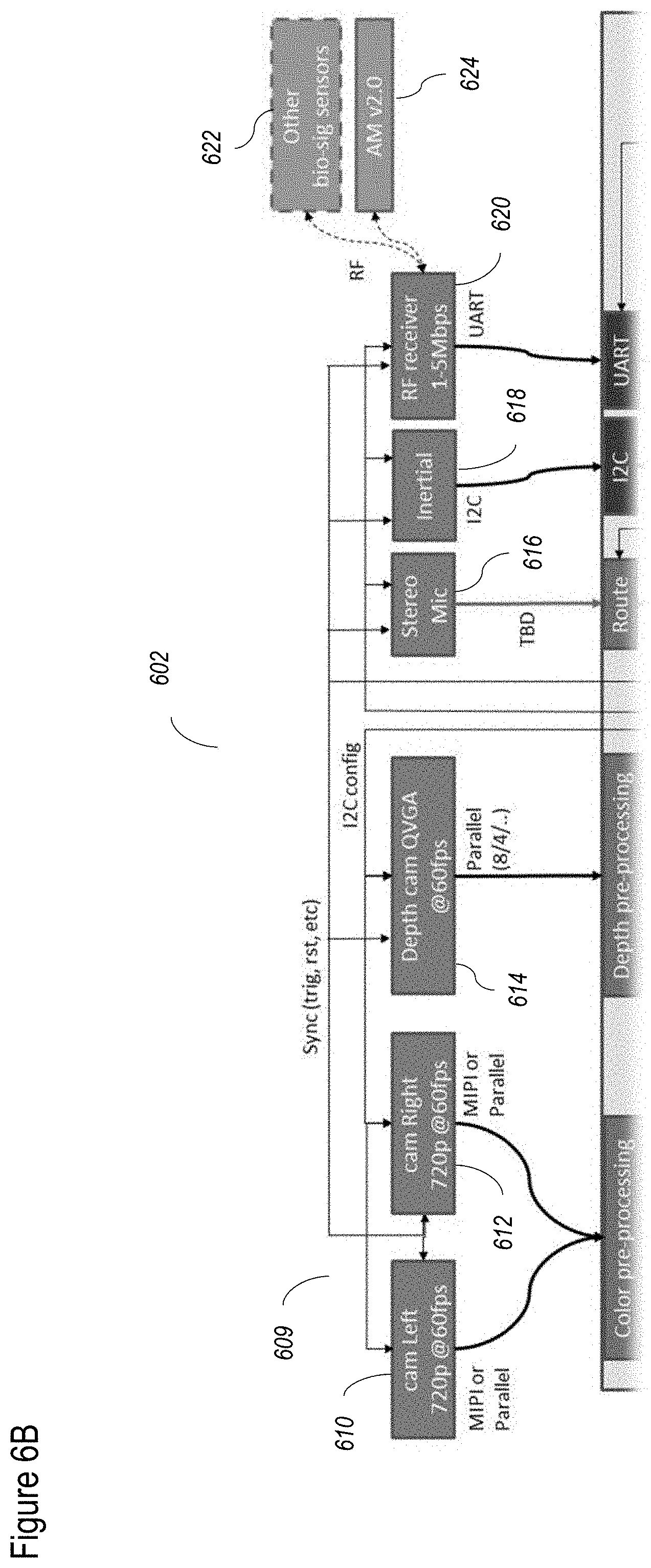

[0193] FIGS. 6A-6E show an exemplary, illustrative, non-limiting hardware system for the camera according to at least some embodiments of the present disclosure. FIG. 6A shows the overall hardware system 600, featuring a plurality of layers 602, 604, 606 and 608. Layer 602 features a plurality of inputs. Layer 604 features FPGA hardware, which may optionally function as described with regard to FIG. 5. Layer 606 relates to CPU hardware and associated accessories. Layer 608 relates to a host computer. FIG. 6B shows layer 602 in more detail, including various inputs such as a stereo camera 609, featuring a left camera 610 and a right camera 612, which in this non-limiting example, feature 720 pixels and 60 fps (frames per second). Each of left camera 610 and right camera 612 may communicate with the FPGA (shown in the next layer) according to a standard such as MIPI (Mobile Industry Processor Interface) or parallel communication.

[0194] A depth sensor 614 is shown as a ToF camera, in this non-limiting example implemented as a QVGA (Quarter Video Graphics Array) camera operating at 60 fps, which communicates with the FPGA according to parallel communication. Audio input may be obtained from a stereo microphone 616 as shown. An inertial sensor 618 may be used to obtain position and orientation data. A radio-frequency (RF) receiver 620 may be used to collect data from other external sensors, which may be worn by the user for example, such as a bio sensor 622 and an AM (active marker) sensor 624, as previously described.

[0195] FIG. 6C shows layer 604, which includes an FPGA 626, which may operate as described with regard to FIG. 5. FPGA 626 may be implemented as an FPGA SoC SOM, which is a field-programmable gate array (FPGA) which features an entire system on a chip (SoC), including an operating system (so it is a "computer on a chip" or SOM--system on module). FPGA 626 includes a color preprocessing unit 628 which receives data from stereo camera 609, and which preprocesses the data as previously described, for example with regard to FIG. 3. A depth preprocessing unit 630 receives depth data from depth sensor 614, and preprocesses the data as previously described, for example with regard to FIGS. 3 and 4.

[0196] A sensor config 646 optionally receives configuration information from stereo camera 609 and depth sensor 614, for example, to perform the previously described synchronization and calibration of FIG. 3. Similarly, sensor config 646 optionally receives configuration information from the remaining sensors of layer 602, again to perform synchronization and calibration of the data, and also the state and settings of the sensors. Synchronization is controlled by a data sync module 648, which instructs all sensors as to when to capture and transmit data, and which also provides a timestamp for the data that is acquired. A route module 632 can receive input from stereo microphone 616, to convert data for output to USB 640 or data transceiver 644.
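The role of the data sync module described above, which instructs all sensors when to capture and provides a timestamp for the acquired data, can be modeled by the following non-limiting Python sketch. The class name `DataSync`, the `period_us` parameter and the callable-per-sensor interface are assumptions for illustration only; the actual module is part of the FPGA and its interfaces are not specified here.

```python
from typing import Callable, Dict


class DataSync:
    """Triggers every sensor together and stamps the resulting samples."""

    def __init__(self, sensors: Dict[str, Callable[[], object]],
                 period_us: int) -> None:
        self.sensors = sensors      # name -> function that captures one sample
        self.period_us = period_us  # hypothetical capture period in microseconds
        self._tick = 0

    def trigger(self) -> dict:
        # One shared timestamp is applied to all samples of this capture.
        timestamp_us = self._tick * self.period_us
        self._tick += 1
        samples = {name: read() for name, read in self.sensors.items()}
        return {"timestamp_us": timestamp_us, "samples": samples}


sync = DataSync({"stereo": lambda: "stereo-frame",
                 "tof": lambda: "tof-frame"},
                period_us=16667)  # roughly 60 captures per second
first = sync.trigger()
second = sync.trigger()
```

Because every sample in a capture carries the same timestamp, downstream processing can pair stereo and depth data without comparing sensor clocks.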

[0197] Inertial sensor 618 may communicate with FPGA 626 according to the I2C (Inter Integrated Circuit) protocol, so FPGA 626 includes an I2C port 634. Similarly, RF receiver 620 may communicate with FPGA 626 according to the UART (universal asynchronous receiver/transmitter) protocol, so FPGA 626 features a UART port 636. For outputs, FPGA 626 can include one and/or another of a MIPI port 638, a USB port 640, an Ethernet port 642 and a data transceiver 644.

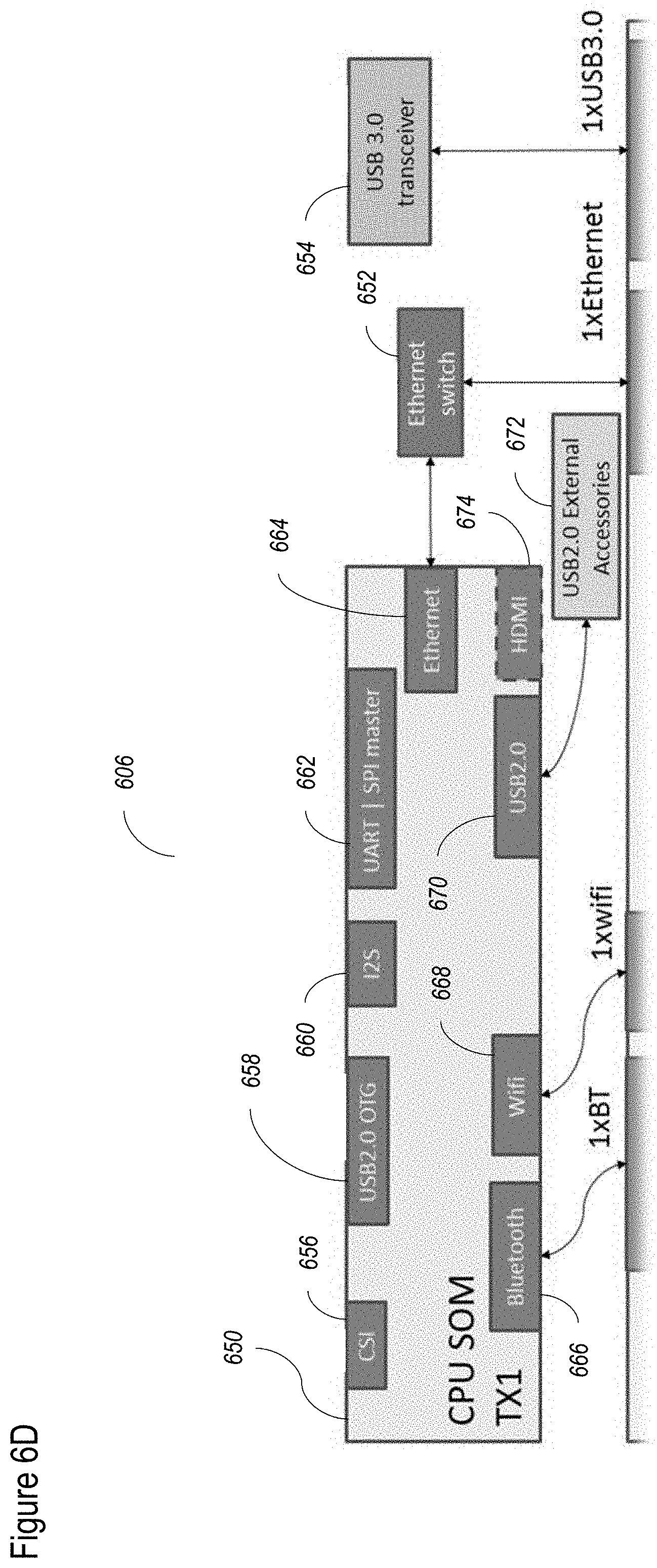

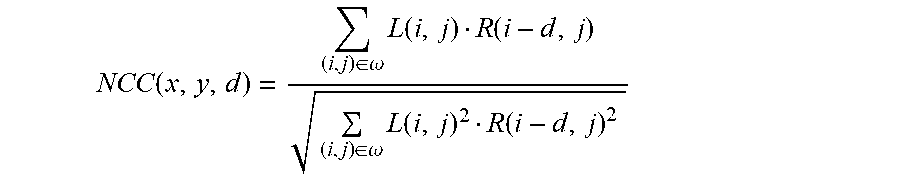

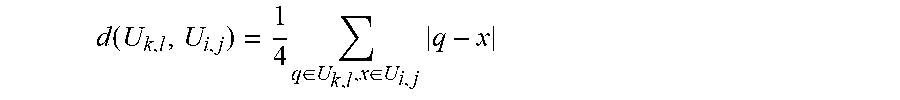

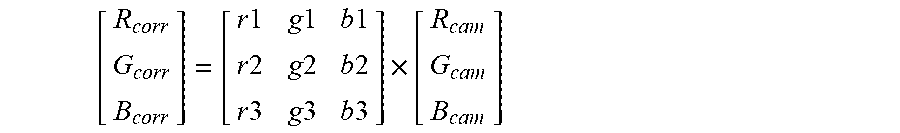

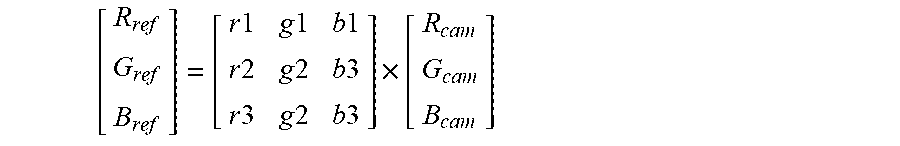

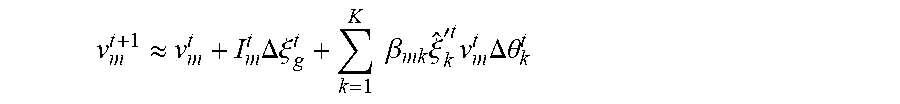

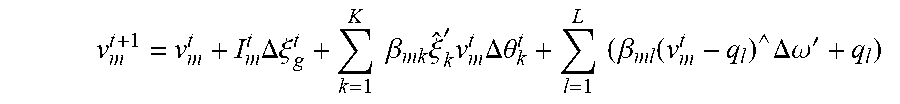

[0198] Turning now to FIG. 6D, the elements of layer 606 are shown, which can include one and/or another of a CPU 650, an Ethernet switch 652, and a USB transceiver 654. CPU 650 may handle calculations otherwise handled by FPGA 626 if the latter is temporarily unable to process further calculations, or to perform other functions, such as functions to assist the more efficient operation of a user application (which would be run by the host computer of layer 608). CPU 650 may be implemented as a SOM. Inputs to CPU 650 optionally include a CSI port 656 (for communicating with MIPI port 638 of FPGA 626); a USB port 658 (for communicating with USB port 640 of FPGA 626); an I2S 660 for transferring sound from the microphone; and UART/SPI master 662 for providing the RF receiver data to the CPU processors.