System, Methods, Device And Apparatuses For Preforming Simultaneous Localization And Mapping

TADI; Tej ; et al.

U.S. patent application number 16/513952 was filed with the patent office on 2019-07-17 and published on 2020-05-21 for system, methods, device and apparatuses for preforming simultaneous localization and mapping. The applicant listed for this patent is MINDMAZE HOLDING SA. Invention is credited to Leandre BOLOMEY, Nicolas BOURDAUD, Sylvain CARDIN, Blazej CZUPRYNSKI, Farzin DADASHI, Piotr Krzysztof GRODEK, Dat NGO, Julien PILET, Tej TADI, Tomasz TRZCINSKI, Krzysztof WROBEL.

| Application Number | 20200158517 16/513952 |


| Family ID | 70726292 |

| Filed Date | 2019-07-17 |


| United States Patent Application | 20200158517 |

| Kind Code | A1 |

| TADI; Tej ; et al. | May 21, 2020 |

SYSTEM, METHODS, DEVICE AND APPARATUSES FOR PREFORMING SIMULTANEOUS LOCALIZATION AND MAPPING

Abstract

Embodiments of the present disclosure are directed to various systems, methods and apparatuses for performing simultaneous localization and mapping (SLAM) for a wearable device, including without limitation, a head-mounted wearable device that optionally includes a display screen. Such embodiments enable accurate and quick localization of a wearable device within a dynamically constructed map, optionally through computations performed with a computational device (including those having limited resources). A non-limiting example of such a computational device is a smart cellular phone or other mobile computational device.

| Inventors: | TADI; Tej; (Lausanne, CH) ; PILET; Julien; (Lausanne, CH) ; NGO; Dat; (Lausanne, CH) ; DADASHI; Farzin; (Lausanne, CH) ; WROBEL; Krzysztof; (Lausanne, CH) ; CZUPRYNSKI; Blazej; (Lausanne, CH) ; TRZCINSKI; Tomasz; (Lausanne, CH) ; GRODEK; Piotr Krzysztof; (Lausanne, CH) ; BOURDAUD; Nicolas; (Lausanne, CH) ; BOLOMEY; Leandre; (Lausanne, CH) ; CARDIN; Sylvain; (Lausanne, CH) |
|---|---|
| Applicant: | MINDMAZE HOLDING SA |
| Family ID: | 70726292 |
| Appl. No.: | 16/513952 |
| Filed: | July 17, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| PCT/IB18/00281 | Jan 19, 2018 | |
| 16513952 | | |
| 62577751 | Oct 27, 2017 | |
| 62448370 | Jan 19, 2017 | |
| 62699836 | Jul 18, 2018 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06T 2207/30244 20130101; G01C 21/206 20130101; G06T 7/80 20170101; G01C 21/32 20130101; G06T 7/74 20170101; H04W 4/029 20180201; G06T 7/248 20170101; G06T 7/579 20170101 |

| International Class: | G01C 21/32 20060101 G01C021/32; H04W 4/029 20060101 H04W004/029; G06T 7/73 20060101 G06T007/73; G06T 7/246 20060101 G06T007/246; G06T 7/80 20060101 G06T007/80 |

Claims

1. An apparatus, comprising: a wearable device; an optical sensor coupled to the wearable device; a computational device; a simultaneous localization and mapping (SLAM) analyzer configured to operate on the computational device and to receive optical sensor data from said optical sensor and having a localization processor and a fast mapping processor, the fast mapping processor configured to rapidly create a map from said optical sensor data; and a map refinement processor to refine said map according to said optical sensor data; wherein said localization processor is configured to localize the optical sensor according to said optical sensor data within said map according to a SLAM process; and wherein at least two of said localization processor, said fast mapping processor, and said map refinement processor is configured to operate at a separate process speed of said computational device.

2. The apparatus of claim 1, wherein said computational device comprises a mobile computational device.

3. The apparatus of claim 2, wherein said computational device comprises a cellular phone.

4. The apparatus of claim 3, wherein said wearable device comprises headgear for mounting said wearable device to a user, said cellular phone comprises said optical sensor, and said cellular phone is mounted on said headgear or is otherwise connected to it.

5. The apparatus of claim 1, wherein said SLAM analyzer is configured to operate said map refinement processor at a first process speed and to operate said fast mapping processor at a second process speed, said first process speed being substantially slower than said second process speed.

6. The apparatus of claim 5, wherein said first process speed is at least 50% slower than said second process speed.

7. The apparatus of claim 5, wherein: said localization processor comprises a tracking processor and at least one of said SLAM analyzer and localization processor is configured to operate said tracking processor at a third process speed, said third process speed being different from said first process speed and different from said second process speed and being substantially faster than said second process speed; and said tracking processor is configured to localize said optical sensor on said map according to said optical sensor data and according to a last known position of said optical sensor on said map.

8. The apparatus of claim 7, wherein said third process speed is at least five times faster than said second process speed.

9. The apparatus of claim 7, wherein said tracking processor is configured to reduce jitter by spreading error across localizations.

10. The apparatus of claim 7, wherein said map refinement processor is configured to calibrate said optical sensor according to an estimate of difference between said map before and after said map refinement processor refines said map.

11. The apparatus of claim 7, wherein: said SLAM analyzer further comprises a map changes processor, and said map changes processor is configured to detect a change in the environment of said optical sensor represented by said map.

12. The apparatus of claim 11, further comprising an outside application configured to be operated by said computational device for manipulating, locating or representing an object, wherein said map changes processor is configured to send a message signal to said outside application that: a particular object has been moved, a particular object has disappeared from its last known location, or a new specific object has appeared.

13. The apparatus of claim 12, wherein said outside application comprises a VR (virtual reality) application or an AR (augmented reality) application.

14. The apparatus of claim 13, wherein: said outside application is an AR application; said SLAM analyzer further comprising a real object locator; and said real object locator determines a location and geometry of a physical object in an environment external to the apparatus, and provides said location and geometry to said AR application.

15. A method for performing SLAM for an apparatus comprising a wearable device, a sensor attached to the wearable device, a computational device, and a simultaneous localization and mapping (SLAM) analyzer operated by the computational device, the method comprising: receiving sensor data from said sensor by said SLAM analyzer; performing a SLAM process by said SLAM analyzer, said SLAM process comprising: simultaneously dynamically constructing a map and locating the wearable device according to said sensor data within said dynamically constructed map, wherein said SLAM process is adapted to be performed by said limited resources of said computational device; performing a fast mapping process to rapidly create said dynamically constructed map from said sensor data; performing a localization process to localize said wearable device in said dynamically constructed map according to said sensor data; and performing a map refinement process to refine said dynamically constructed map according to said sensor data, wherein: said map refinement processor is operated at a first process speed of said computational device and said fast mapping processor is operated at a second process speed of said computational device, said first process speed being substantially slower than said second process speed so as to adapt said SLAM process to be performed by said computational device.

16. The method of claim 15 wherein said first process speed is at least 50% slower than said second process speed.

17. The apparatus of claim 15, wherein: said performing a localization process comprises a tracking process operated at a third process speed of the computational device, said third process speed being different from said first process speed and different from said second process speed and being substantially faster than said second process speed.

18. The method of claim 17, wherein said third process speed is at least five times faster than said second process speed.

Description

FIELD OF THE DISCLOSURE

[0001] The present disclosure, in at least some embodiments, is directed to systems, methods, and apparatuses for performing simultaneous localization and mapping (SLAM), and in particular to such systems, methods, and apparatuses for performing SLAM with or for a wearable device.

BACKGROUND

[0002] The term SLAM refers to "Simultaneous Localization And Mapping," and was initially applied to problems of independent movement of a mobile robot (device). In some such systems, the location of the mobile device (e.g., robot) is necessary--that is, its location on a map of an environment--as is a map of the environment, so that the mobile device can determine its relative location within that environment. In some known systems, however, these tasks cannot be performed simultaneously, which results in substantial delays when processing mobile device location information.

[0003] SLAM can be performed with sensor data from a number of different sensor types. Visual SLAM refers to the use of visual data from a visual sensor, such as for example a camera, to perform the SLAM process. In some cases, only such visual data is used for the SLAM process (see for example Visual Simultaneous Localization and Mapping: A Survey, Artificial Intelligence Review 43(1) November 2015).

[0004] Various types of sensors and the use of their data in the SLAM process are described in "Past, Present, and Future of Simultaneous Localization And Mapping: Towards the Robust-Perception Age", Cadena et al, https://arxiv.org/pdf/1606.05830.pdf. This article also describes the importance of the "pose", or position and orientation, for the SLAM process. The pose relates to the position and orientation of the robot or other entity to which the sensor is attached, while the map describes the environment for that robot.

[0005] Additionally, some known systems cannot dynamically determine the nature of the mobile device's environment, and therefore, cannot dynamically determine navigation instructions, and/or other information. For example, in some known systems, a navigator for the mobile device can input pre-determined environment data into the known system so as to provide a description of the environment. Such known systems, however, cannot modify the description of the environment substantially in real-time, based on new environmental information, and/or the like.

[0006] U.S. Pat. No. 9,367,811 describes a method for context aware localization, mapping, and tracking (CALMT). However, this method does not feature simultaneous localization and mapping, such that it is less useful than SLAM. Furthermore, the method is focused on computer vision, which is a more limited activity.

[0007] US Patent Application No. 2014/0125700 describes one method for performing SLAM with sensor data, but is restricted to use in situations that have geometric constraints that are known a priori, which must be provided to the SLAM system before it can begin operation.

[0008] U.S. Pat. No. 9,674,507 describes a monocular SLAM system that creates a 3D map with panoramic and 6DOF camera movements. The system is limited to generating maps only by creating keyframes first, analyzing features, and then potentially saving the keyframe as part of the map. The system requires that all tasks operate in a single process or provides the possibility that all mapping tasks can be separated into another process with all mapping tasks in that single process. The system does not provide further flexibility given that mapping is done by keyframes only.

[0009] U.S. Pat. No. 9,390,344 describes a SLAM system using various motion sensors and that maps and tracks simultaneously using threaded processes. The system described similarly uses keyframes only to create maps.

[0010] Thus, a need exists for methods, apparatuses, and systems that can dynamically determine the location of a mobile device, dynamically determine the nature of the mobile device's environment, and can efficiently determine actions for the mobile device to take based on the dynamically-determined information. Methods and systems for mapping operations without any a priori constraints are also needed.

SUMMARY OF SOME OF THE EMBODIMENTS

[0011] Embodiments of the present disclosure include systems, methods and apparatuses for performing simultaneous localization and mapping (SLAM) which address the above-noted shortcomings.

[0012] In some embodiments, a SLAM system is provided for a wearable device, including without limitation, a head-mounted wearable device that optionally includes a display screen. Such systems, methods and apparatuses can be configured to accurately (and in some embodiments, quickly) localize a wearable device within a dynamically constructed map, e.g., through computations performed with a computational device. A non-limiting example of such a computational device is a smart cellular phone or other mobile computational device.

[0013] According to at least some embodiments, SLAM systems, methods and apparatuses can support a VR (virtual reality) application, an AR (augmented reality) application, and/or the like.

[0014] According to at least some embodiments, there is provided a wearable apparatus, comprising: a monocular optical sensor, a computational device, and a simultaneous localization and mapping (SLAM) analyzer operational on the computational device and configured for receiving optical sensor data from said sensor, the SLAM analyzer comprising: a localization module, and a fast mapping module configured to rapidly create said dynamically constructed map from said sensor data; and a map refinement module to refine said dynamically constructed map according to said sensor data; wherein said SLAM analyzer is configured to localize the sensor according to said optical sensor data within a dynamically constructed map according to a SLAM process; each of said localization module, said fast mapping module and said map refinement module is configured to operate at a separate process speed of said computational device; and said localization module localizes said sensor in said dynamically constructed map according to said sensor data.

[0015] Optionally said computational device comprises a mobile computational device.

[0016] Optionally said computational device comprises a cellular phone.

[0017] Optionally the apparatus further comprises headgear for mounting said apparatus to a user, wherein said cellular phone comprises said sensor, and said cellular phone is mounted on said headgear.

[0018] Optionally said computational device comprises a hardware processor configured to perform a predefined set of basic operations in response to receiving a corresponding basic instruction selected from a predefined native instruction set of codes, and memory; said SLAM analyzer comprises: a first set of machine codes selected from the native instruction set for receiving said optical sensor data, a second set of machine codes selected from the native instruction set for operating said localization module, a third set of machine codes selected from the native instruction set for operating said fast mapping module, and a fourth set of machine codes selected from the native instruction set for operating said map refinement module; and each of the first, second, third and fourth sets of machine code is stored in the memory.

[0019] Optionally said hardware processor operates said map refinement module at a process speed that is at least 50% slower than said fast mapping module.

[0020] Optionally said localization module comprises a tracking processor, said tracking processor operates at a separate process speed from each of a fast mapping processor and a map refinement processor; said process speed of said tracking processor is at least five times faster than said process speed of said fast mapping processor; and said tracking processor locates said sensor according to said sensor data and according to a last known position of said sensor on said map.
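The separate process speeds described above can be pictured with a small frame-driven schedule. The following is only a sketch under stated assumptions: the stage names, the function names `schedule` and `counts`, and the specific periods (tracking on every frame, fast mapping on every fifth frame, refinement on every tenth frame) are hypothetical choices that merely satisfy the "at least five times faster" and "at least 50% slower" relations, and a real system would more likely use separate threads or processes.

```python
def schedule(num_frames, fast_map_every=5, refine_every=10):
    """Return, for each frame index, the list of stages that would run.

    Assumed (illustrative) rates:
      - "track" runs on every frame,
      - "fast_map" runs once per `fast_map_every` frames (5x slower than tracking),
      - "refine" runs once per `refine_every` frames (half the fast-mapping rate).
    """
    plan = []
    for frame in range(num_frames):
        stages = ["track"]
        if frame % fast_map_every == 0:
            stages.append("fast_map")
        if frame % refine_every == 0:
            stages.append("refine")
        plan.append(stages)
    return plan


def counts(plan):
    """Count how many times each stage appears in a schedule."""
    totals = {"track": 0, "fast_map": 0, "refine": 0}
    for stages in plan:
        for stage in stages:
            totals[stage] += 1
    return totals
```

Over 20 frames this schedule runs tracking 20 times, fast mapping 4 times, and refinement twice, which matches the stated ratios.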

[0021] Optionally said tracking processor reduces jitter by spreading error across localizations.

[0022] Optionally said map refinement processor is configured to calibrate said sensor according to a difference estimate between said map before and after said map refinement processor refines said map.

[0023] Optionally said map refinement processor is configured to correct for drift caused by said fast mapping processor.

[0024] Optionally said map refinement processor is configured to perform map refinement by bundle adjustment.

[0025] Optionally the apparatus further comprises a sensor preprocessor operated by said computational device, said sensor comprises a camera, said data comprises video data, and said sensor preprocessor further comprises a calibration module for calibrating said video data of said camera according to a calibration process.

[0026] Optionally said calibration process includes at least one of determining lens distortion and focal length.

[0027] Optionally said calibration module is configured to calibrate said camera according to a model of said camera and/or of said cellular phone.

[0028] Optionally said sensor preprocessor comprises a sensor abstraction interface for abstracting data from said sensor.

[0029] Optionally said sensor comprises a camera, said data comprises video data, said localization module is configured to reduce jitter while determining a location a plurality of times according to at least one of maintaining a constant error, mixing frame-to-frame with keyframe-to-frame tracking, applying a Kalman filter, and a combination thereof.
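One of the jitter-reduction options named above is a Kalman filter. As a minimal sketch, assuming a single scalar coordinate and a constant-position model, the filter below smooths a sequence of noisy location estimates. The function name `kalman_smooth` and the variance values are assumptions chosen for illustration and are not taken from the disclosure.

```python
def kalman_smooth(measurements, process_var=1e-3, meas_var=0.25):
    """Smooth a 1-D sequence with a scalar constant-position Kalman filter.

    process_var: assumed variance of true motion between samples.
    meas_var:    assumed variance of the measurement noise.
    """
    estimate = measurements[0]   # initial state taken from the first sample
    error = 1.0                  # initial estimate variance (assumed)
    smoothed = [estimate]
    for z in measurements[1:]:
        # Predict: the state is assumed constant, uncertainty grows.
        error += process_var
        # Update: blend prediction and measurement by the Kalman gain.
        gain = error / (error + meas_var)
        estimate += gain * (z - estimate)
        error *= (1.0 - gain)
        smoothed.append(estimate)
    return smoothed
```

A lower `process_var` relative to `meas_var` produces heavier smoothing at the cost of slower response to genuine motion.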

[0030] Optionally said sensor comprises a camera, said data comprises video data, the apparatus further comprising a sensor preprocessor operated by said computational device, and said sensor preprocessor further comprises a sensor data preprocessor configured for converting said video data to grayscale if necessary and then applying a Gaussian pyramid to said grayscale video data.
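The grayscale conversion and Gaussian pyramid steps can be sketched as follows. This is an illustration only: images are plain nested lists, the luma weights (0.299, 0.587, 0.114) and the 1-2-1 separable blur kernel are common conventions assumed here rather than values stated in the disclosure, and the function names are hypothetical.

```python
def to_gray(rgb):
    """Convert rows of (r, g, b) tuples to grayscale with assumed luma weights."""
    return [[0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row]
            for row in rgb]


def blur(img):
    """Apply a separable 1-2-1 Gaussian-like blur with clamped borders."""
    h, w = len(img), len(img[0])

    def px(y, x):
        return img[min(max(y, 0), h - 1)][min(max(x, 0), w - 1)]

    # Horizontal pass.
    horiz = [[(px(y, x - 1) + 2 * px(y, x) + px(y, x + 1)) / 4
              for x in range(w)] for y in range(h)]

    def hp(y, x):
        return horiz[min(max(y, 0), h - 1)][x]

    # Vertical pass over the horizontally blurred image.
    return [[(hp(y - 1, x) + 2 * hp(y, x) + hp(y + 1, x)) / 4
             for x in range(w)] for y in range(h)]


def gaussian_pyramid(gray, levels):
    """Build a pyramid: each level is the previous one blurred and halved."""
    pyramid = [gray]
    for _ in range(levels - 1):
        smoothed = blur(pyramid[-1])
        pyramid.append([row[::2] for row in smoothed[::2]])
    return pyramid
```

Coarse pyramid levels allow features to be located quickly at low resolution before being refined at finer levels.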

[0031] Optionally said SLAM analyzer is configured to localize the sensor only according to said optical sensor data.

[0032] Optionally said optical sensor data comprises video data and wherein said SLAM analyzer is configured to perform an initialization process comprising: a keypoints reference frame detection process configured to select an image as a reference frame; a keypoints detection process configured for detecting a plurality of keypoints on the reference frame; and an initial map creation process configured for creating an initial map from said keypoints.

[0033] Optionally said keypoints detection process comprises a LK (Lucas-Kanade) process.

[0034] Optionally said initialization process further comprises a verification process configured to verify validity of the tracked points.

[0035] Optionally said verification process comprises a NCC (Normalized Cross Correlation) process.
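Normalized cross-correlation compares two image patches after removing each patch's mean and scaling by its energy, so the score is insensitive to uniform brightness and contrast changes. A minimal sketch, assuming patches are equally sized nested lists of intensities and using the hypothetical function name `ncc`:

```python
import math


def ncc(patch_a, patch_b):
    """Return the normalized cross-correlation of two equally sized patches.

    The result lies in [-1, 1]; 1 means the patches match up to an affine
    brightness change. A flat patch (zero variance) yields 0.0 by convention
    in this sketch.
    """
    a = [v for row in patch_a for v in row]
    b = [v for row in patch_b for v in row]
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    da = [v - mean_a for v in a]
    db = [v - mean_b for v in b]
    denom = math.sqrt(sum(x * x for x in da) * sum(y * y for y in db))
    if denom == 0:
        return 0.0
    return sum(x * y for x, y in zip(da, db)) / denom
```

A tracked point could then be accepted when its score exceeds some threshold; the threshold value is an application choice that the text above does not specify.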

[0036] Optionally said initialization process further comprises a pose calculation process configured to calculate a pose of said optical sensor before said initial map creation process creates said initial map.

[0037] Optionally said pose calculation process comprises applying homography and/or an essential matrix to said keypoints to determine the pose.

[0038] Optionally said pose calculation process comprises applying said homography and said essential matrix, and determining which of said applying provides a more accurate result.

[0039] Optionally said pose calculation process comprises applying said homography first to determine if a sufficiently accurate result is obtained; if said sufficiently accurate result is not obtained, applying said essential matrix.

[0040] Optionally said pose calculation process comprises a RANSAC process.
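RANSAC repeatedly fits a model to a small random sample and keeps the model that agrees with the most data points, which makes the estimate robust to outliers. The sketch below illustrates the idea on a deliberately simpler stand-in problem, fitting a 2-D line, rather than on a homography or essential matrix. The function name `ransac_line`, the iteration count, the threshold, and the fixed seed are assumptions for illustration.

```python
import random


def ransac_line(points, iterations=50, threshold=0.1, seed=0):
    """Fit y = m*x + c to points containing outliers using RANSAC.

    Returns ((m, c), inliers) for the sampled model with the most inliers.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    best_model, best_inliers = None, []
    for _ in range(iterations):
        # Minimal sample: two points define a line.
        (x1, y1), (x2, y2) = rng.sample(points, 2)
        if x1 == x2:
            continue  # vertical line cannot be written as y = m*x + c
        m = (y2 - y1) / (x2 - x1)
        c = y1 - m * x1
        # Consensus set: points whose vertical residual is within threshold.
        inliers = [(x, y) for x, y in points
                   if abs(y - (m * x + c)) <= threshold]
        if len(inliers) > len(best_inliers):
            best_model, best_inliers = (m, c), inliers
    return best_model, best_inliers
```

In a pose calculation the minimal sample would instead be a set of keypoint correspondences and the model a homography or essential matrix, but the sample, score, and keep-best loop has the same shape.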

[0041] Optionally said pose calculation process further comprises estimating said essential matrix according to a process selected from the group consisting of GOODSAC and RANSAC.

[0042] Optionally said map is generated without a priori constraint.

[0043] Optionally said map is generated de novo.

[0044] Optionally the apparatus further comprises at least one of an accelerometer, a gyroscope, a magnetometer, a barometric pressure sensor, a GPS (global positioning system) sensor, a microphone or other audio sensor, a proximity sensor, a temperature sensor, a UV (ultraviolet light) sensor, a depth sensor, and an IMU (inertial measurement unit).

[0045] Optionally said IMU comprises an accelerometer and a gyroscope.

[0046] Optionally said IMU further comprises a magnetometer.

[0047] Optionally during an initialization of said SLAM analyzer, said optical sensor data and said IMU data are interpolated according to a time based interpolation method, followed by initial bundle adjustment of interpolated data.
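Camera frames and IMU samples generally arrive at different rates and timestamps, so a time-based interpolation can resample one stream at the timestamps of the other. The following is a sketch under assumptions: it uses plain linear interpolation of a scalar IMU channel, the function name `interpolate_at` is hypothetical, and the disclosure does not state which interpolation formula is used.

```python
from bisect import bisect_left


def interpolate_at(timestamps, values, t):
    """Linearly interpolate `values` (sampled at sorted `timestamps`) at time t.

    Times outside the sampled range are clamped to the nearest sample.
    """
    if t <= timestamps[0]:
        return values[0]
    if t >= timestamps[-1]:
        return values[-1]
    i = bisect_left(timestamps, t)          # first index with timestamp >= t
    t0, t1 = timestamps[i - 1], timestamps[i]
    w = (t - t0) / (t1 - t0)                # fraction of the way from t0 to t1
    return values[i - 1] + w * (values[i] - values[i - 1])
```

For example, with IMU samples at 0.00 s, 0.01 s and 0.02 s, a camera frame stamped 0.015 s would receive the value halfway between the second and third samples.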

[0048] Optionally said SLAM analyzer is additionally configured to determine displacement of at least said optical sensor according to a combination of translation of said optical sensor and rotation of said IMU.

[0049] Optionally said SLAM analyzer is additionally configured to integrate rotation of said IMU of a first pose to determine a second pose of said optical sensor.
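Integrating IMU rotation from a first pose to obtain the orientation of a second pose can be sketched with unit quaternions. This is an assumption-laden illustration: quaternions are (w, x, y, z) tuples, gyroscope samples are body-frame angular rates in rad/s taken at a fixed interval `dt`, and the function names are hypothetical. Gyroscope bias and noise, which a practical system would need to handle, are ignored.

```python
import math


def quat_mul(a, b):
    """Hamilton product of two quaternions given as (w, x, y, z)."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (aw * bw - ax * bx - ay * by - az * bz,
            aw * bx + ax * bw + ay * bz - az * by,
            aw * by - ax * bz + ay * bw + az * bx,
            aw * bz + ax * by - ay * bx + az * bw)


def integrate_gyro(orientation, gyro_samples, dt):
    """Propagate an orientation through a list of (wx, wy, wz) gyro samples."""
    q = orientation
    for wx, wy, wz in gyro_samples:
        rate = math.sqrt(wx * wx + wy * wy + wz * wz)
        if rate == 0.0:
            continue  # no rotation during this interval
        half = 0.5 * rate * dt
        s = math.sin(half) / rate
        # Incremental rotation over dt about the instantaneous axis.
        dq = (math.cos(half), wx * s, wy * s, wz * s)
        q = quat_mul(q, dq)  # body-frame rates compose on the right
    return q
```

Starting from the identity orientation and rotating about the z axis at pi/2 rad/s for one second yields a 90 degree rotation about z.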

[0050] Optionally said SLAM analyzer is further configured to operate a loop closure process, and update said map according to said second pose, followed by performing said loop closure process.

[0051] Optionally said optical sensor comprises a camera selected from the group consisting of RGB camera, color camera, grayscale camera, infrared camera, a charge-coupled device (CCD), and a CMOS sensor.

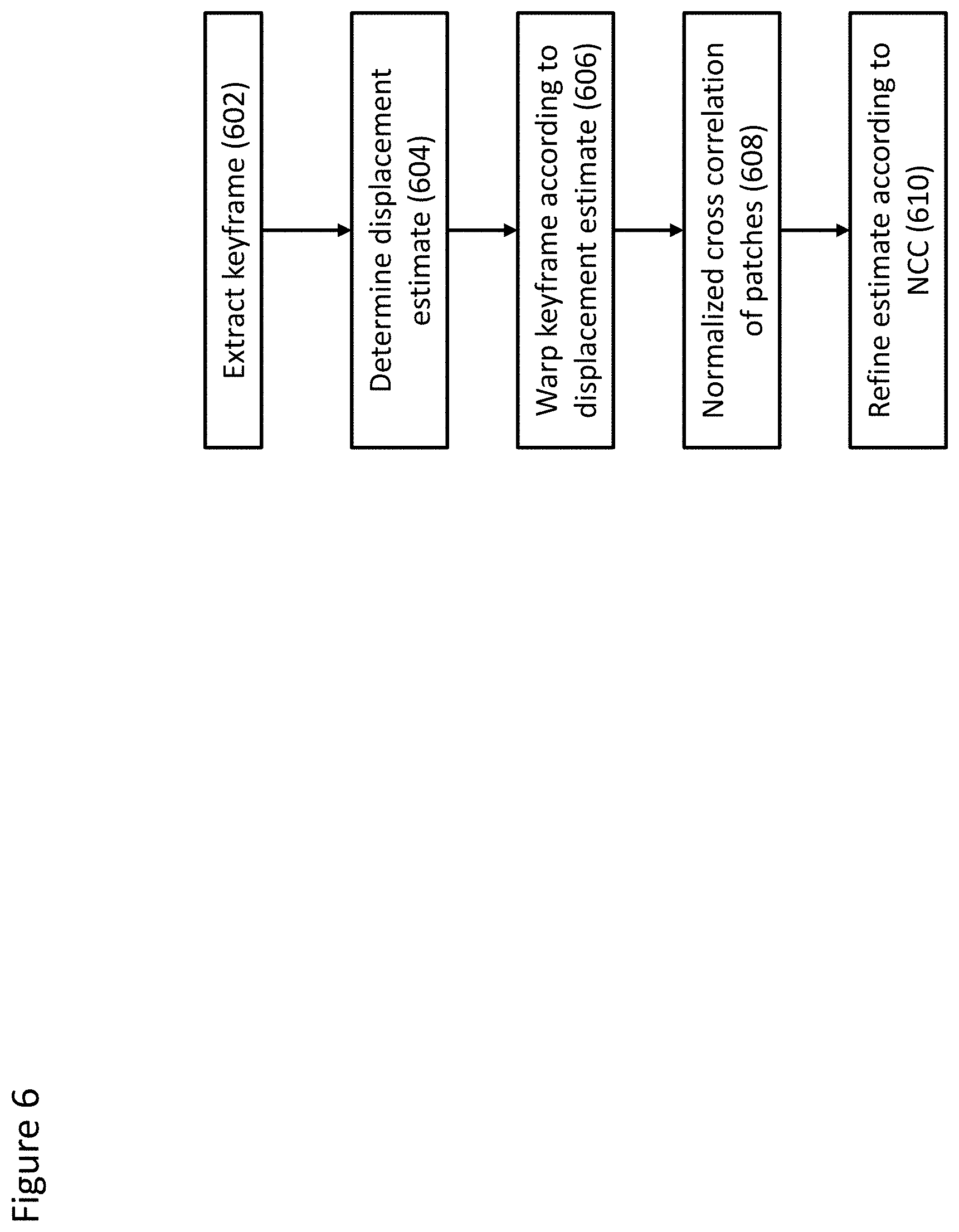

[0052] Optionally said SLAM analyzer is additionally configured to perform a SLAM process comprising: selecting a plurality of keyframes of said video data; determining a plurality of features of each of said keyframes; warping a plurality of patches of said keyframes around said plurality of features; performing a NCC (normalized cross-correlation) process on said warped keyframe patches; and determining a location of said optical sensor according to said NCC process.

[0053] Optionally said SLAM process further comprises determining a displacement estimate from a previous known location of said optical sensor, and said determining said location of said optical sensor according to said NCC process comprises applying a result of said NCC process to said displacement estimate.

[0054] Optionally said selecting said plurality of keyframes of said video data further comprises selecting a plurality of keyframes from said dynamically constructed map according to a plurality of feature points on said dynamically constructed map.

[0055] Optionally said SLAM process further comprises reducing jitter by maintaining a consistent error across analysis of a plurality of frames.

[0056] Optionally said SLAM process further comprises: determining if relocalization of said optical sensor is required according to a determination of reliability of said location of said optical sensor, and if so, performing relocalization by comparing a plurality of features of said keyframes to determine said previous known location of said optical sensor, performing said selecting a plurality of keyframes of said video data; determining a plurality of features of each of said keyframes; warping a plurality of patches of said keyframes around said plurality of features; performing a NCC (normalized cross-correlation) process on said warped keyframe patches; and determining a location of said optical sensor according to said NCC process.

[0057] Optionally said comparing said plurality of features of said keyframes comprises: determining a descriptor for each feature; sorting said descriptors for similarity; sorting said keyframes according to similar descriptors; and comparing said sorted descriptors to a plurality of known landmarks on said dynamically constructed map appearing on said sorted keyframes.

[0058] Optionally said sorting said descriptors for similarity is performed with a vocabulary tree.

[0059] Optionally said comparing said sorted descriptors to a plurality of known landmarks on said dynamically constructed map appearing on said sorted keyframes further comprises removing outliers and determining said previous known location.

[0060] Optionally said removing outliers and determining said previous known location is performed according to RANSAC.

[0061] Optionally said determining said location comprises: searching for a known landmark on a plurality of selected keyframes; if said known landmark is not found on said plurality of selected keyframes, determining said known landmark to be invalid; and if said known landmark is found on at least one of said plurality of selected keyframes, determining said known landmark to be validated.
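The landmark validation rule just described reduces to a membership check: a landmark is validated if it is found on at least one selected keyframe and is invalid otherwise. A minimal sketch, assuming each keyframe is represented simply as the set of landmark identifiers found on it (the representation and the function name `validate_landmarks` are hypothetical):

```python
def validate_landmarks(landmarks, keyframes):
    """Map each landmark id to True (validated) or False (invalid).

    landmarks: iterable of landmark identifiers to check.
    keyframes: list of sets, each holding the landmark ids found on that
               selected keyframe.
    """
    return {lm: any(lm in found for found in keyframes) for lm in landmarks}
```

In practice the "found" test would itself be the result of a search such as patch matching on the keyframe image; here it is assumed to have been done already.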

[0062] Optionally said SLAM analyzer further comprises a map collaboration processor configured for communicating map information to and receiving map information from at least one additional SLAM analyzer external to the apparatus.

[0063] Optionally said SLAM analyzer further comprises a map changes processor, and said map changes processor is configured to detect a change in the environment represented by said map.

[0064] Optionally the apparatus further comprises an object application, which may also be termed herein an outside application, operated by said computational device and configured for manipulating, locating or representing an object, wherein said map changes processor is configured to inform said object application that: a particular object has been moved, a particular object has disappeared from its last known location, or a new specific object has appeared. The object as described herein is a physical object in the physical world, but mapped onto the map as described herein.

[0065] Optionally said object application comprises a VR (virtual reality) application or an AR (augmented reality) application.

[0066] Optionally said object application is an AR application, said SLAM analyzer further comprising a real object locator, said real object locator is configured to determine a location and geometry of a physical object in an environment external to the apparatus, and to provide said location and geometry to said AR application.

[0067] Optionally the apparatus further comprises a housing for housing said optical sensor.

[0068] Optionally said housing further houses said computational device.

[0069] Optionally said computational device is located separately from said housing.

[0070] Optionally said computational device is located remotely from said housing.

[0071] According to at least some embodiments, there is provided a wearable apparatus, comprising: a sensor; a computational device; and a simultaneous localization and mapping (SLAM) analyzer configured for receiving data from said sensor and for being operated by said computational device, wherein: said SLAM analyzer is configured to localize the apparatus according to said sensor data within a dynamically constructed map according to a SLAM process; said sensor comprises a camera, said data comprises video data from said camera, said SLAM process comprises: selecting a plurality of keyframes of said video data; determining a plurality of features of each of said keyframes; warping a plurality of patches of said keyframes around said plurality of features; performing a NCC (normalized cross-correlation) process on said warped keyframe patches; and determining a location of said wearable device according to said NCC process.

[0072] Optionally said SLAM process further comprises determining a displacement estimate from a previous known location of said wearable device, and said determining said location of said wearable device according to said NCC process comprises applying a result of said NCC process to said displacement estimate.

[0073] Optionally said selecting said plurality of keyframes of said video data further comprises selecting a plurality of keyframes from said dynamically constructed map according to a plurality of feature points on said dynamically constructed map.

[0074] Optionally said SLAM process further comprises reducing jitter by maintaining a consistent error across analysis of a plurality of frames.

[0075] Optionally said SLAM process further comprises: determining if relocalization of said wearable device is required according to a determination of reliability of said location of said wearable device, and if so, performing relocalization by comparing a plurality of features of said keyframes to determine said previous known location of said wearable device, performing said selecting a plurality of keyframes of said video data; determining a plurality of features of each of said keyframes; warping a plurality of patches of said keyframes around said plurality of features; performing a NCC (normalized cross-correlation) process on said warped keyframe patches; and determining a location of said wearable device according to said NCC process.

[0076] Optionally said comparing said plurality of features of said keyframes comprises: determining a descriptor for each feature; sorting said descriptors for similarity; sorting said keyframes according to similar descriptors; and comparing said sorted descriptors to a plurality of known landmarks on said dynamically constructed map appearing on said sorted keyframes.

[0077] Optionally said sorting said descriptors for similarity is performed with a vocabulary tree.

[0078] Optionally said comparing said sorted descriptors to a plurality of known landmarks on said dynamically constructed map appearing on said sorted keyframes further comprises removing outliers and determining said previous known location.

[0079] Optionally said removing outliers and determining said previous known location is performed according to RANSAC.

[0080] Optionally said determining said location comprises: searching for a known landmark on a plurality of selected keyframes; if said known landmark is not found on said plurality of selected keyframes, determining said known landmark to be invalid; and if said known landmark is found on at least one of said plurality of selected keyframes, determining said known landmark to be validated.

[0081] Optionally the apparatus further comprises an AR (augmented reality) application, wherein: said SLAM analyzer further comprises an obstacle avoidance processor; said obstacle avoidance processor is configured to determine a location and geometry of each validated landmark that is a potential obstacle and to communicate said location and geometry to said AR application.

[0082] Optionally the apparatus further comprises a VR (virtual reality) application, wherein said SLAM analyzer further comprises an obstacle avoidance processor configured to determine a location and geometry of each validated landmark that is a potential obstacle and to communicate said location and geometry to said VR application.

[0083] Optionally said sensor comprises a plurality of cameras and wherein said video data is analyzed at least as stereo image data.

[0084] Optionally the apparatus further comprises an IMU, wherein said SLAM analyzer is further configured to analyze said IMU data for said SLAM process.

[0085] Optionally said SLAM analyzer is further configured to interpolate said optical data and said IMU data by said SLAM process.

[0086] Optionally said SLAM process is configured to interpolate said optical sensor data and said IMU data, and calculate a quaternion interpolation of said optical sensor data and said IMU data.

[0087] Optionally said SLAM process further comprises determining an initialization error for said IMU, and weighting said quaternion interpolation according to said initialization error.

[0088] Optionally said quaternion interpolation comprises a weighted SLERP interpolation.
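A weighted SLERP between an optically derived orientation and an IMU-derived orientation might be sketched as follows. Quaternions are assumed to be unit `(w, x, y, z)` tuples. The mapping from initialization error to interpolation weight in `imu_weight` is a hypothetical choice made only for this example; the disclosure does not specify the weighting function.

```python
import math


def slerp(q0, q1, t):
    """Spherical linear interpolation between unit quaternions (w, x, y, z)."""
    dot = sum(a * b for a, b in zip(q0, q1))
    if dot < 0.0:
        # q and -q encode the same rotation; flip to take the shorter arc.
        q1 = tuple(-c for c in q1)
        dot = -dot
    if dot > 0.9995:
        # Nearly identical orientations: fall back to linear interpolation.
        q = tuple(a + t * (b - a) for a, b in zip(q0, q1))
    else:
        theta = math.acos(dot)
        s0 = math.sin((1.0 - t) * theta) / math.sin(theta)
        s1 = math.sin(t * theta) / math.sin(theta)
        q = tuple(s0 * a + s1 * b for a, b in zip(q0, q1))
    norm = math.sqrt(sum(c * c for c in q))
    return tuple(c / norm for c in q)


def imu_weight(init_error):
    """Hypothetical weighting: equal trust at zero error, less IMU trust as error grows."""
    return 0.5 / (1.0 + init_error)


def fuse_orientation(q_optical, q_imu, init_error):
    """Interpolate from the optical estimate toward the IMU estimate."""
    return slerp(q_optical, q_imu, imu_weight(init_error))
```

With this assumed weighting, a large initialization error pulls the fused orientation toward the optical estimate, while a zero error yields the midpoint rotation between the two estimates.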

[0089] Optionally said IMU comprises a magnetometer, said apparatus further comprises a magnetometer separate from said IMU or a combination thereof, and wherein said SLAM process further comprises determining translation of said magnetometer according to magnetometer data, and applying said translation to said interpolated optical sensor data and IMU data.

[0090] According to some embodiments there is provided a SLAM apparatus configured for performing simultaneous localization and mapping (SLAM) process, comprising: a computational device, a SLAM analyzer operated by or operational on said computational device, an optical sensor in communication with said computational device, an IMU in communication with said computational device, wherein said IMU comprises an accelerometer and a gyroscope; and a structure for causing said optical sensor and said IMU to move in tandem; wherein: said computational device is configured to receive sensor data from said optical sensor and from said IMU for being analyzed by said SLAM analyzer; and said SLAM analyzer is configured to perform a SLAM process to create a map and to localize one or both of said optical sensor and said IMU in said map according to said optical sensor data and said IMU data, according to a time based localization method.

[0091] Optionally said structure comprises a housing for housing said optical sensor and said IMU.

[0092] Optionally said housing further houses said computational device.

[0093] Optionally said SLAM process further comprises performing initial bundle adjustment according to a spline, wherein said spline is determined according to said optical sensor data and said IMU data, and wherein a second derivative of said spline is determined according to accelerometer data.

[0094] Optionally said IMU comprises a magnetometer, said apparatus further comprises a magnetometer separate from said IMU or a combination thereof, and wherein said SLAM process further comprises determining translation of said magnetometer according to magnetometer data; wherein said SLAM process further comprises applying said translation to said interpolated optical sensor data and IMU data.

[0095] According to at least some embodiments, there is provided a SLAM method configured for performing SLAM for a wearable apparatus comprising a sensor, a computational device, and a simultaneous localization and mapping (SLAM) analyzer operated by the computational device, the method comprising: receiving sensor data from said sensor by said SLAM analyzer; performing a SLAM process by said SLAM analyzer, said SLAM process comprising: simultaneously dynamically constructing a map and locating the apparatus according to said sensor data within said dynamically constructed map, wherein said SLAM process is adapted to be performed by said limited resources of said computational device; wherein: said performing said SLAM process comprises: performing a fast mapping process to rapidly create said dynamically constructed map from said sensor data; performing a localization process to localize said wearable device in said dynamically constructed map according to said sensor data; and performing a map refinement process to refine said dynamically constructed map according to said sensor data, each of said fast mapping process and said map refinement process is operated at a separate process speed of said computational device, and said map refinement process operates at a process speed that is at least 50% slower than a process speed of said fast mapping process so as to adapt said SLAM process to be performed by said computational device.

[0096] According to at least some embodiments, there is provided a SLAM method for performing SLAM for a wearable apparatus comprising a sensor and a computational device, wherein said sensor comprises a camera providing video data; the method comprising: receiving video data from said camera by said computational device; simultaneously dynamically constructing a map and locating the apparatus according to said video data within said dynamically constructed map, by selecting a plurality of keyframes of said video data; determining a plurality of features of each of said keyframes; warping a plurality of patches of said keyframes around said plurality of features; performing NCC (normalized cross-correlation) process on said warped keyframe patches; and determining a location of said wearable device on said dynamically constructed map according to said NCC process.

[0097] Optionally the method further comprises adding IMU data for a more efficient and/or accurate SLAM process.
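The NCC step of the method above can be illustrated with a small, dependency-free Python sketch. It assumes two equally sized grayscale patches given as nested lists and omits the warping step entirely; the function name `ncc` and the convention of returning 0.0 for a textureless patch are assumptions made for this example.

```python
import math


def ncc(patch_a, patch_b):
    """Normalized cross-correlation of two equally sized grayscale patches.

    Returns a score in [-1, 1]; 1 means identical up to brightness gain and offset.
    """
    a = [v for row in patch_a for v in row]
    b = [v for row in patch_b for v in row]
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    da = [v - mean_a for v in a]
    db = [v - mean_b for v in b]
    denom = math.sqrt(sum(x * x for x in da) * sum(y * y for y in db))
    if denom == 0.0:
        # A flat patch carries no texture to correlate (assumed convention).
        return 0.0
    return sum(x * y for x, y in zip(da, db)) / denom
```

Because the score is invariant to affine brightness changes, a patch compared against a brighter, higher-contrast copy of itself still scores close to 1, which is one reason NCC is commonly used for patch matching under changing illumination.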

[0098] Unless otherwise defined, all technical and scientific terms used herein have the same meaning as commonly understood by one of ordinary skill in the art to which this disclosure belongs. The materials, methods, and examples provided herein are illustrative only and not intended to be limiting.

[0099] Various embodiments of the methods, systems and apparatuses of the present disclosure can be implemented by hardware and/or by software or a combination thereof. For example, as hardware, selected steps of methodology according to some embodiments can be implemented as a chip and/or a circuit. As software, selected steps of the methodology (e.g., according to some embodiments of the disclosure) can be implemented as a plurality of software instructions being executed by a computer (e.g., using any suitable operating system). Accordingly, in some embodiments, selected steps of methods, systems and/or apparatuses of the present disclosure can be performed by a processor (e.g., executing an application and/or a plurality of instructions).

[0100] Although embodiments of the present disclosure are described with regard to a "computer", and/or with respect to a "computer network," it should be noted that optionally any device featuring a processor and the ability to execute one or more instructions is within the scope of the disclosure, such as may be referred to herein as simply a computer or a computational device and which includes (but not limited to) any type of personal computer (PC), a server, a cellular telephone, an IP telephone, a smartphone, a PDA (personal digital assistant), a thin client, a mobile communication device, a smartwatch, head mounted display or other wearable that is able to communicate wired or wirelessly with a local or remote device. To this end, any two or more of such devices in communication with each other may comprise a "computer network."

BRIEF DESCRIPTION OF THE DRAWINGS

[0101] Embodiments of the disclosure are herein described, by way of example only, with reference to the accompanying drawings. With specific reference now to the drawings in detail, it is stressed that particulars shown are by way of example and for purposes of illustrative discussion of the various embodiments of the present disclosure only, and are presented in order to provide what is believed to be a useful and readily understood description of the principles and conceptual aspects of the various embodiments of inventions disclosed herein.

[0102] FIG. 1A shows a schematic of a non-limiting example of a SLAM system, according to at least some embodiments;

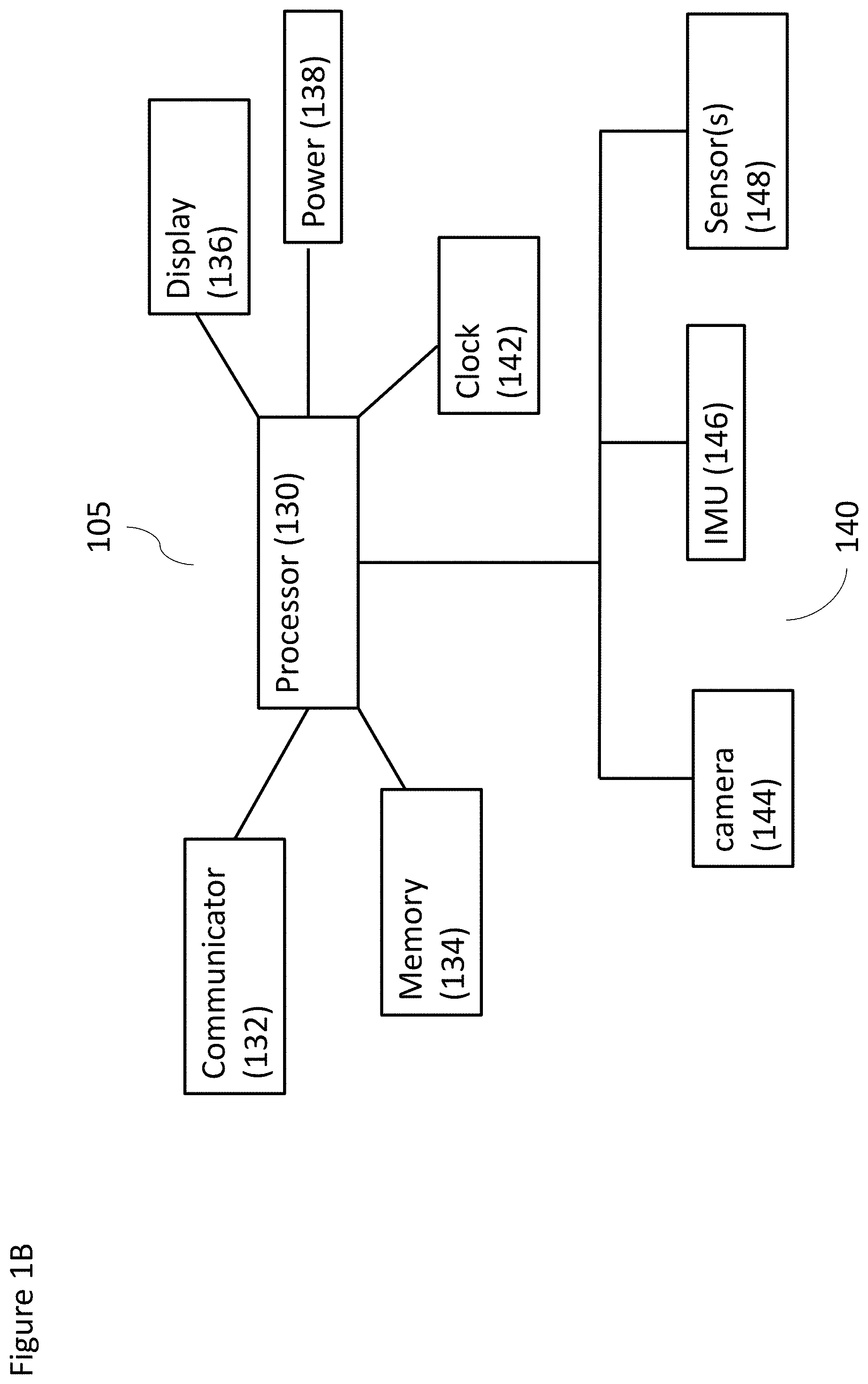

[0103] FIG. 1B shows a schematic of a non-limiting example of a wearable device, according to at least some embodiments;

[0104] FIG. 1C shows a schematic of a non-limiting example of a combination of a wearable device and a computational device, according to at least some embodiments;

[0105] FIG. 1D shows another schematic of a non-limiting example of a combination of a wearable device, a local data processing system, and a remote data processing system, according to at least some embodiments;

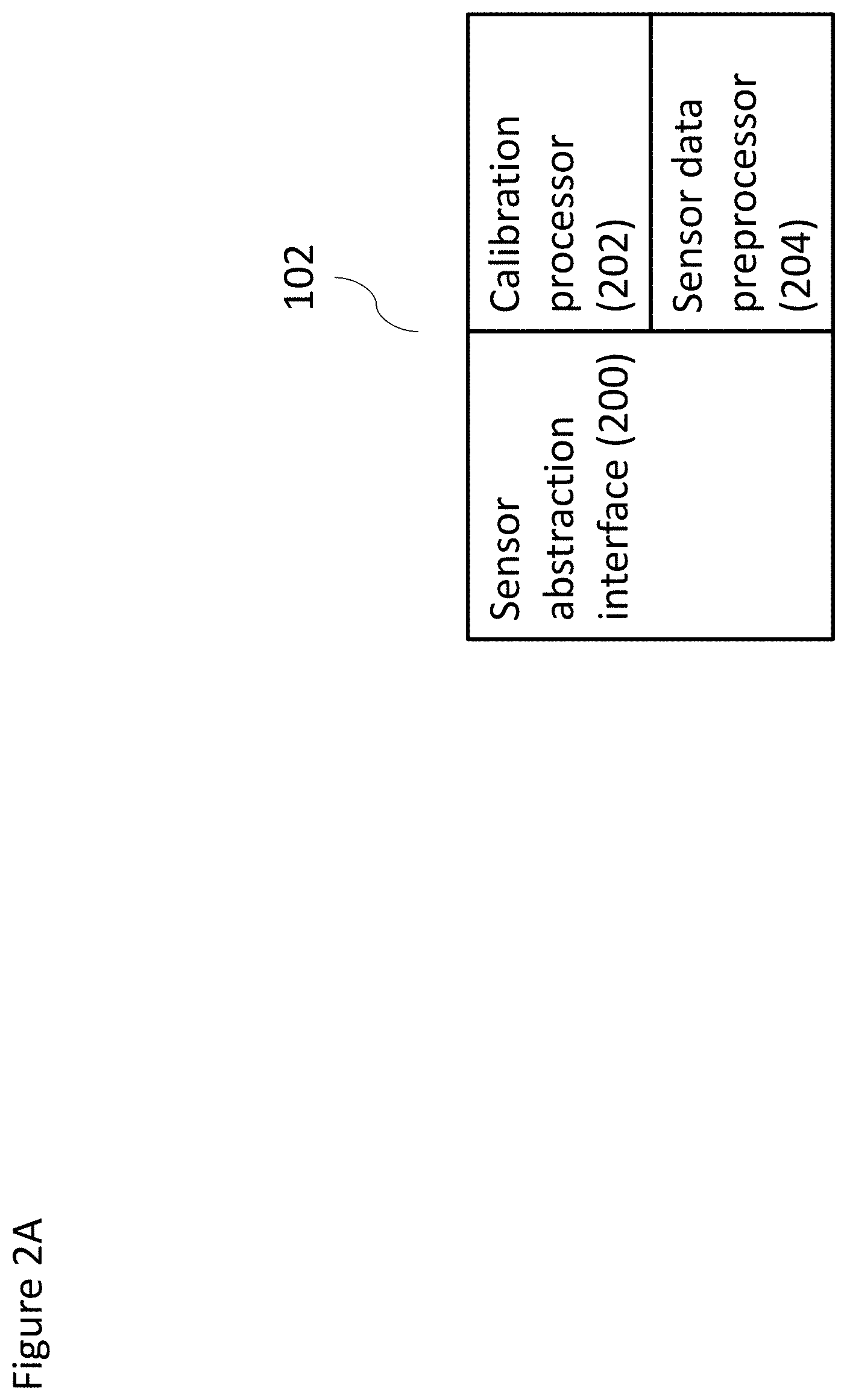

[0106] FIG. 2A shows a schematic of a non-limiting example of sensor preprocessor according to at least some embodiments;

[0107] FIG. 2B shows a schematic of a non-limiting example of a SLAM analyzer according to at least some embodiments;

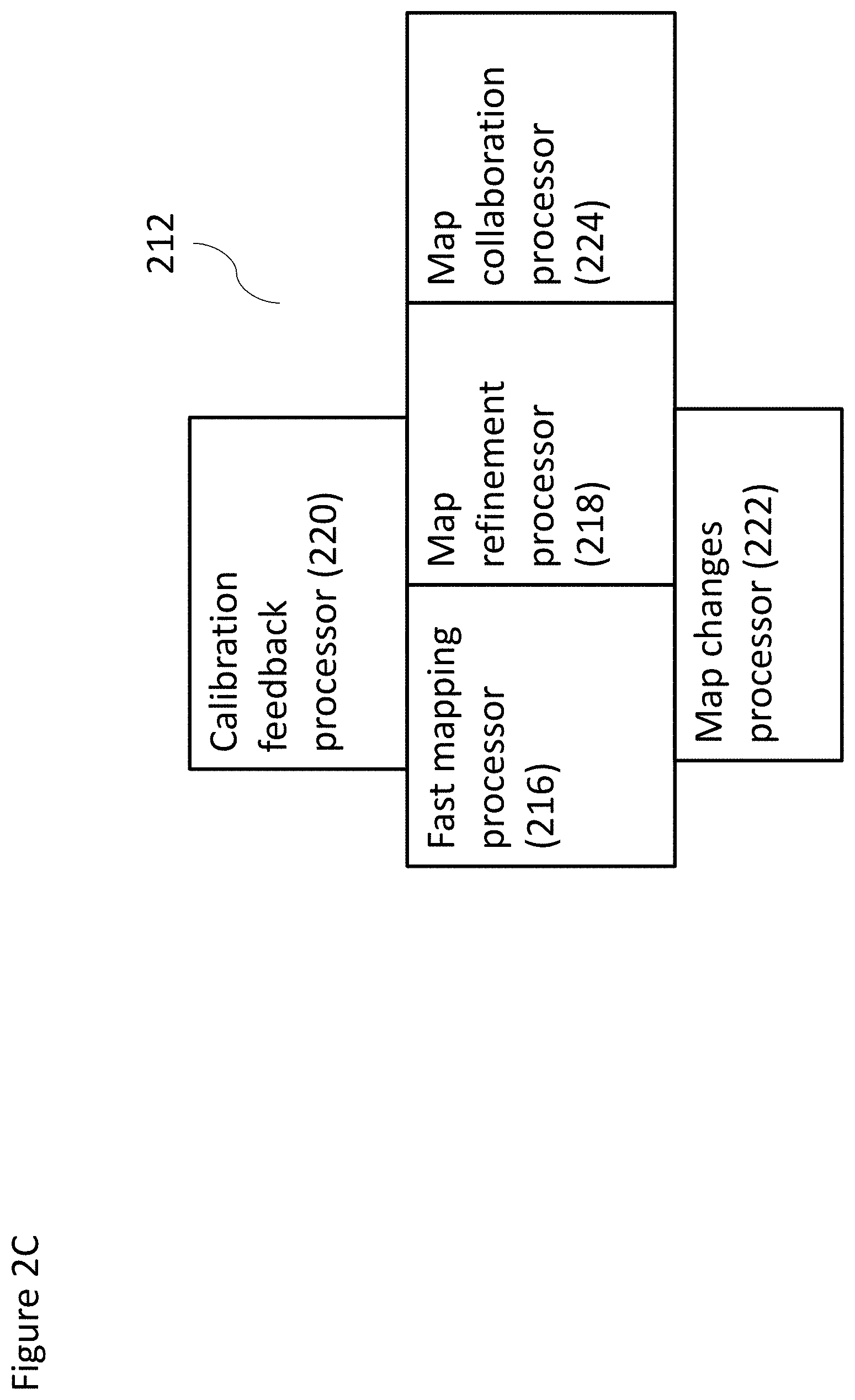

[0108] FIG. 2C shows a schematic of a non-limiting example of a mapping module according to at least some embodiments;

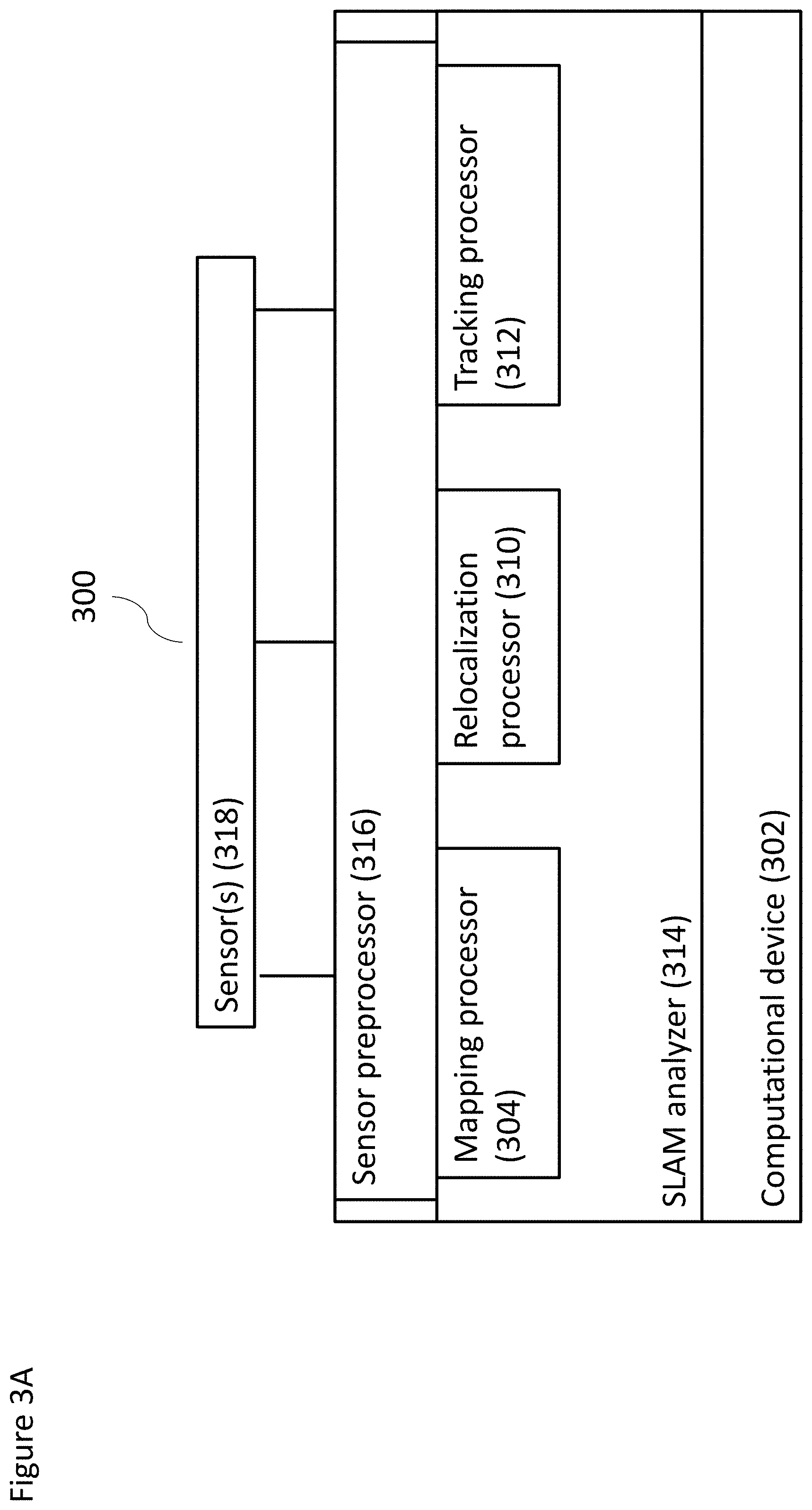

[0109] FIG. 3A shows a schematic of another non-limiting example of a system according to at least some embodiments;

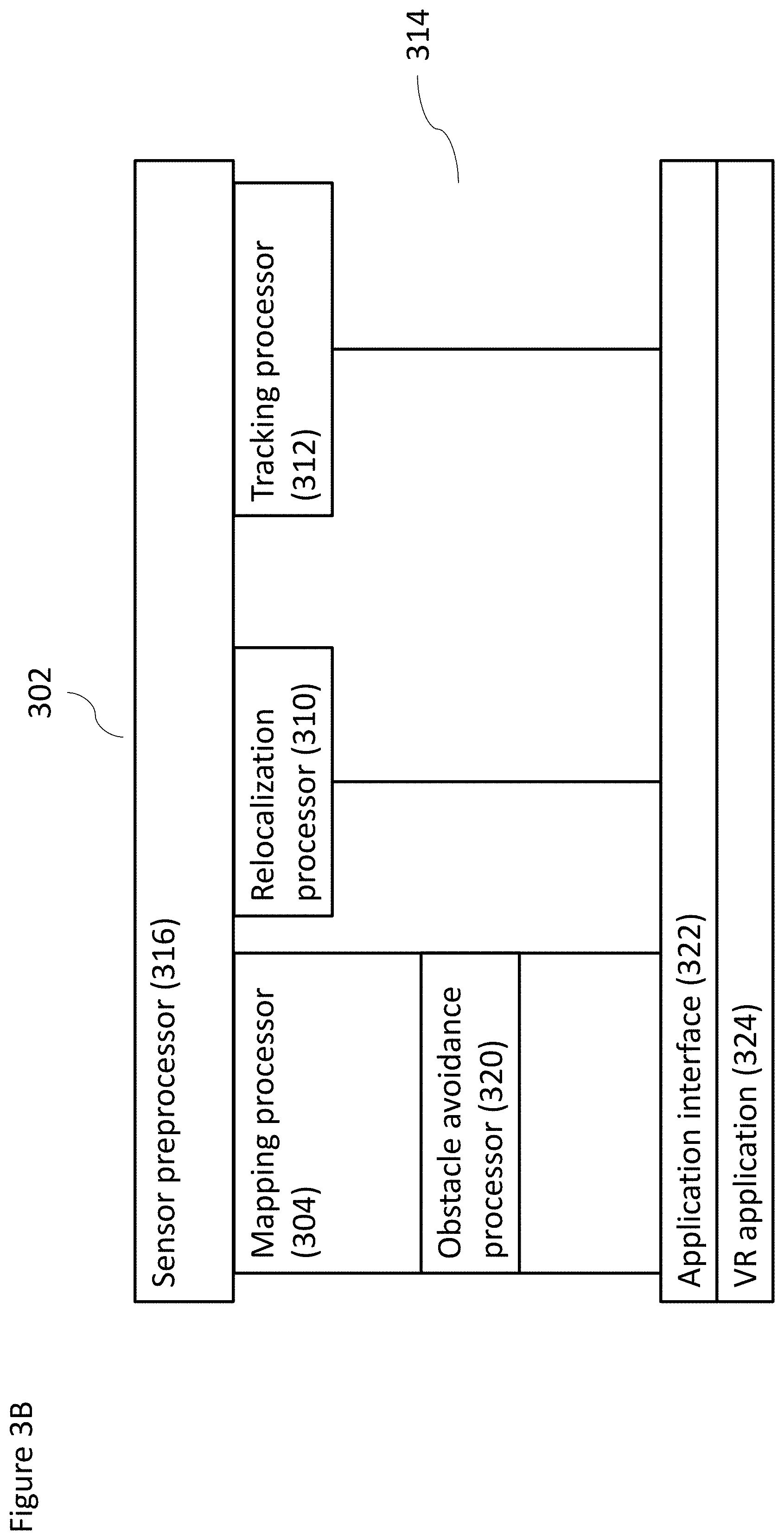

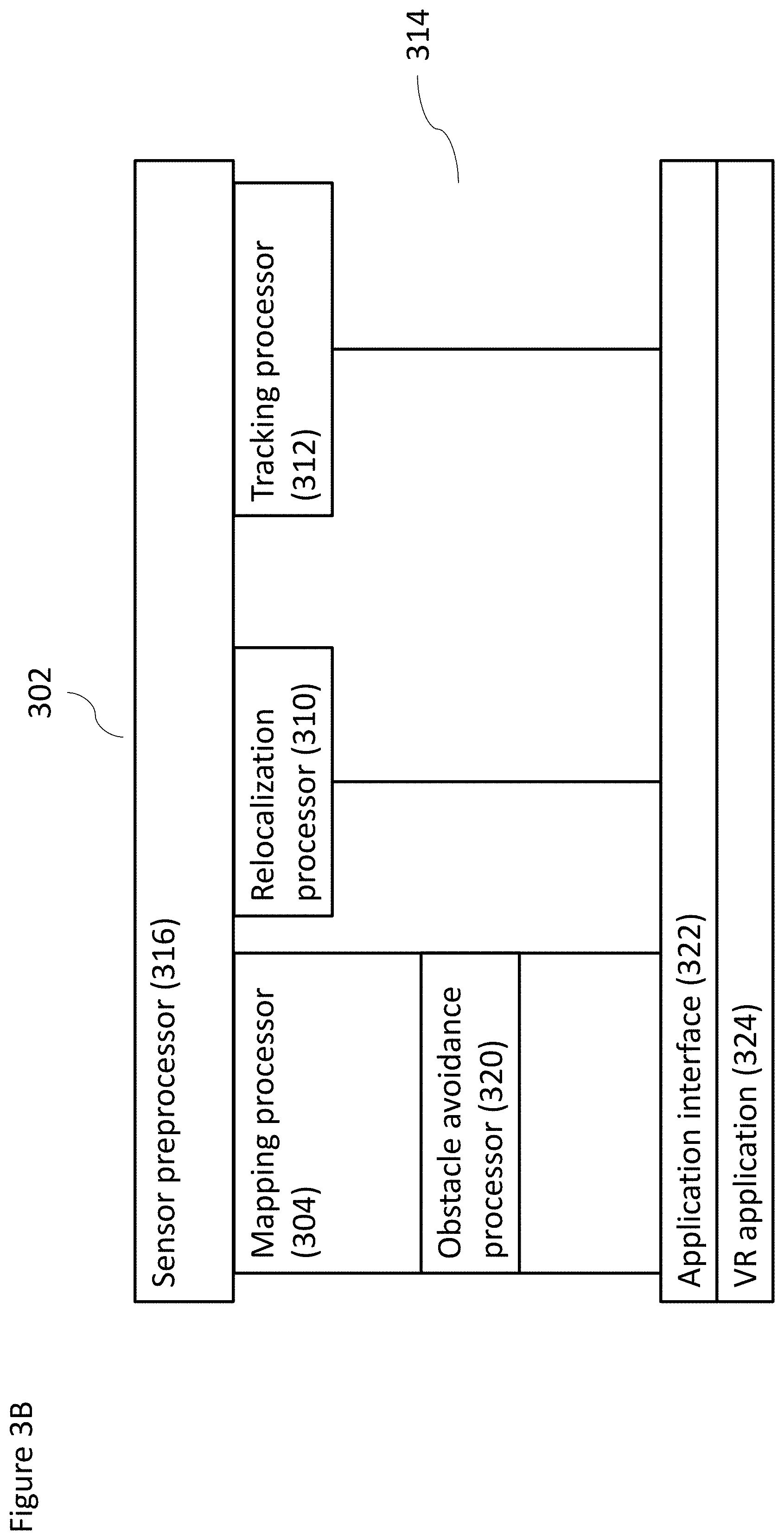

[0110] FIG. 3B shows a schematic of a non-limiting example implementation of a computational device operating at least some components of the system according to at least some embodiments;

[0111] FIG. 3C shows a schematic of another non-limiting example implementation of a computational device operating at least some components of the system according to at least some embodiments;

[0112] FIG. 4 shows a non-limiting exemplary method for performing SLAM according to at least some embodiments;

[0113] FIG. 5 shows a non-limiting exemplary method for performing localization according to at least some embodiments;

[0114] FIG. 6 shows another non-limiting example of a method for performing localization according to at least some embodiments;

[0115] FIG. 7 shows a non-limiting example of a method for updating system maps according to map refinement, according to at least some embodiments of the present invention; and

[0116] FIG. 8 shows a non-limiting example of a method for validating landmarks according to at least some embodiments of the present invention.

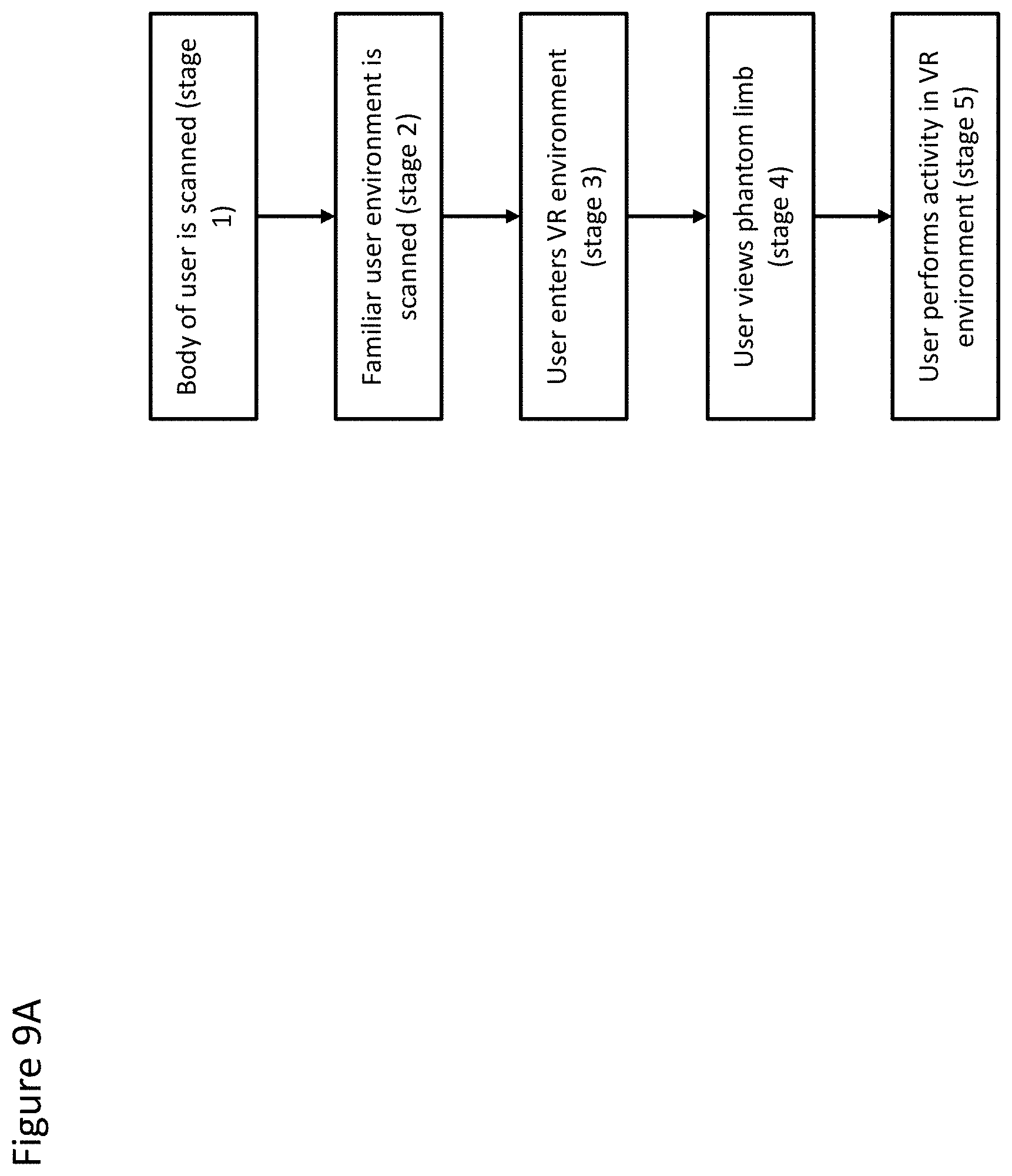

[0117] FIGS. 9A and 9B are example logic flow diagrams illustrating the performance of actions in a VR environment, according to at least some embodiments;

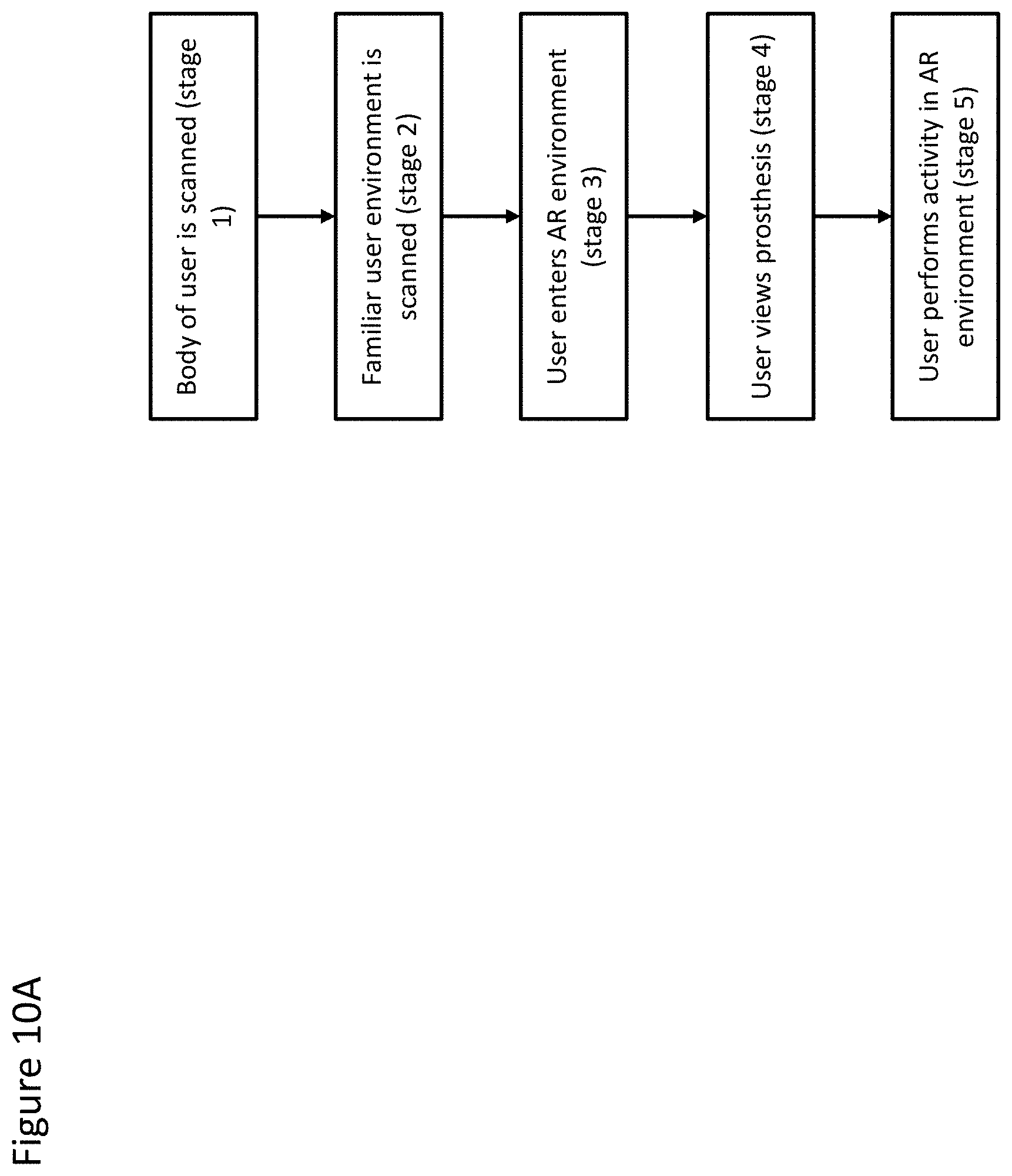

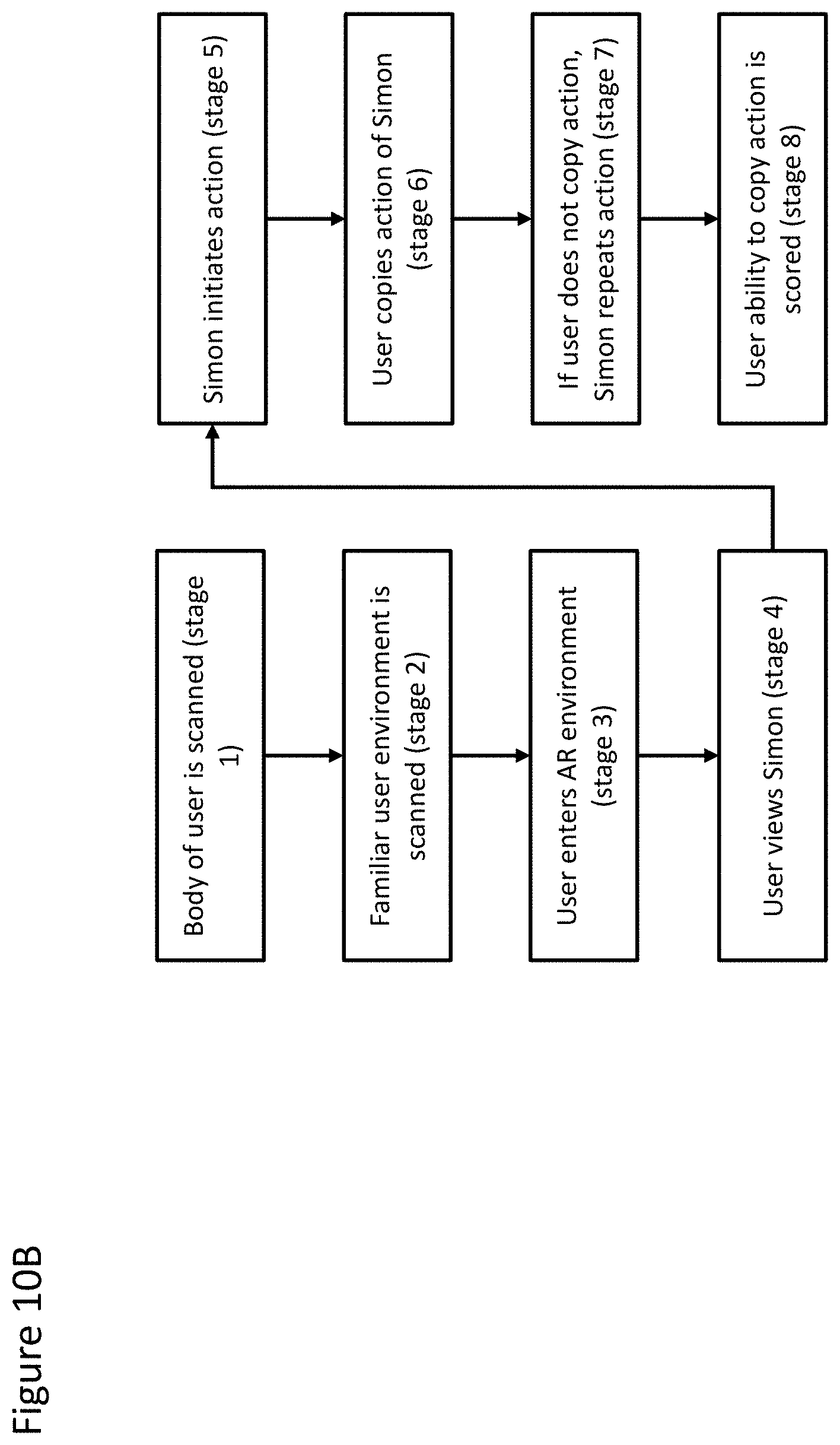

[0118] FIGS. 10A and 10B are example logic flow diagrams illustrating the performance of actions in an AR environment, according to at least some embodiments;

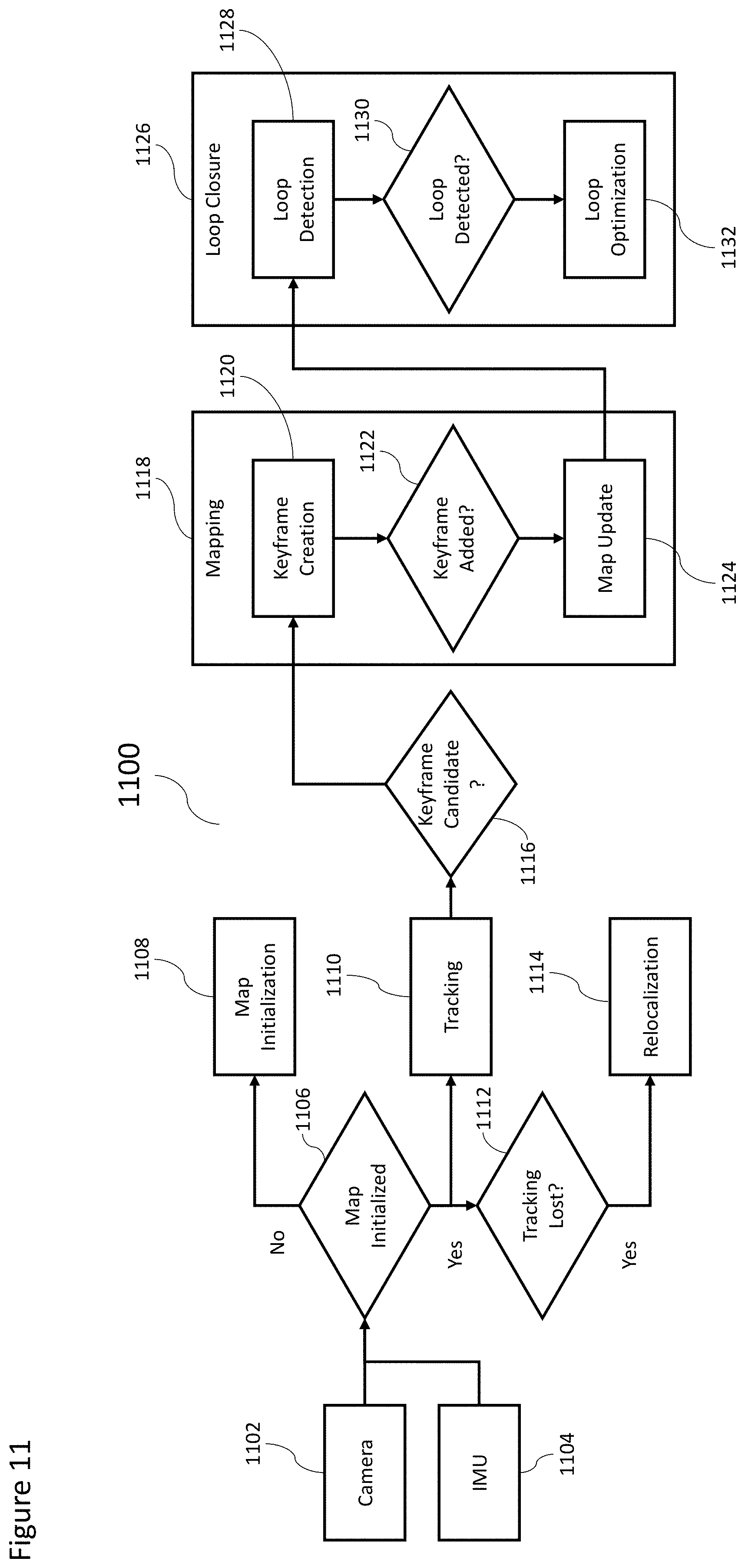

[0119] FIG. 11 shows an exemplary, non-limiting flow diagram for performing SLAM according to at least some embodiments;

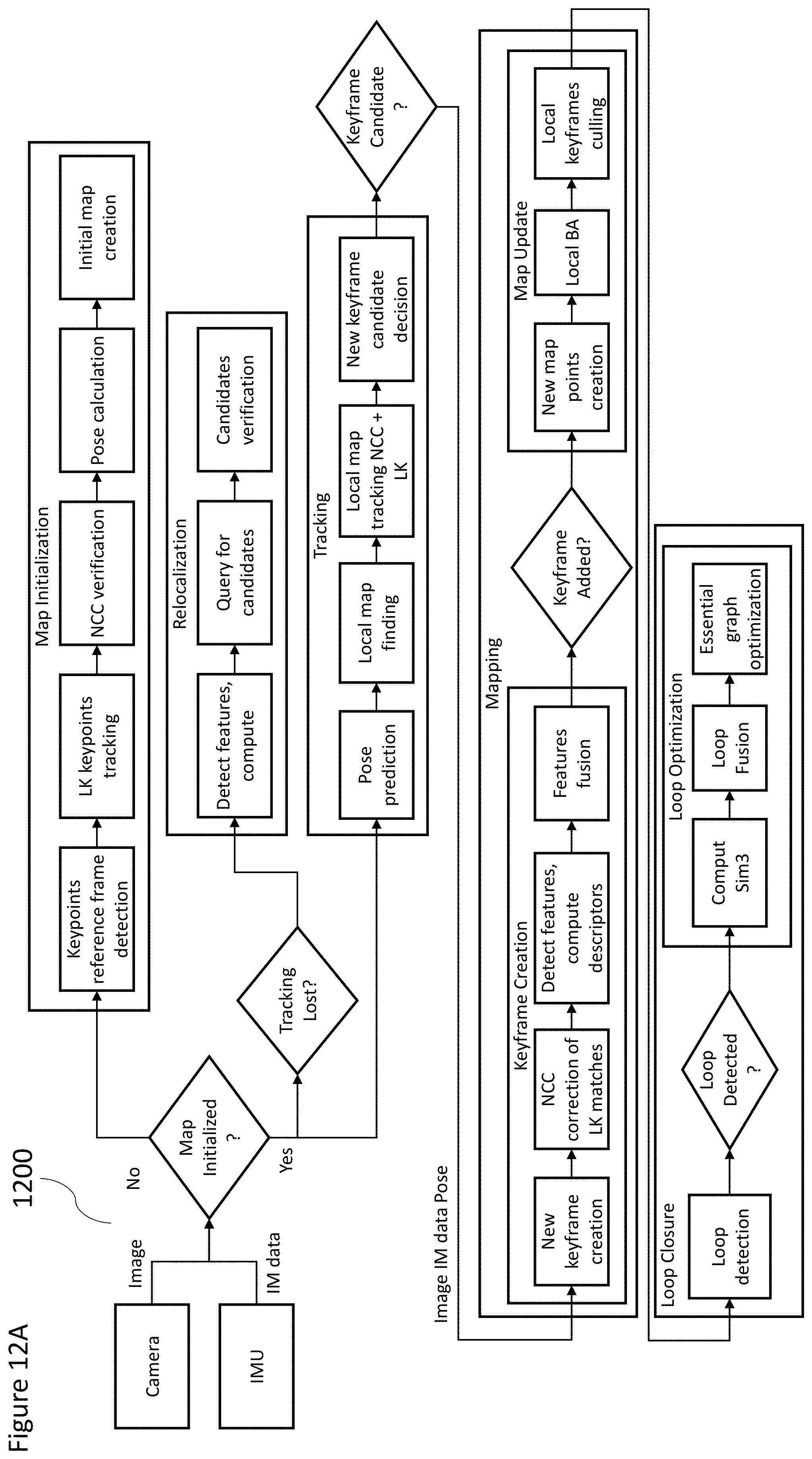

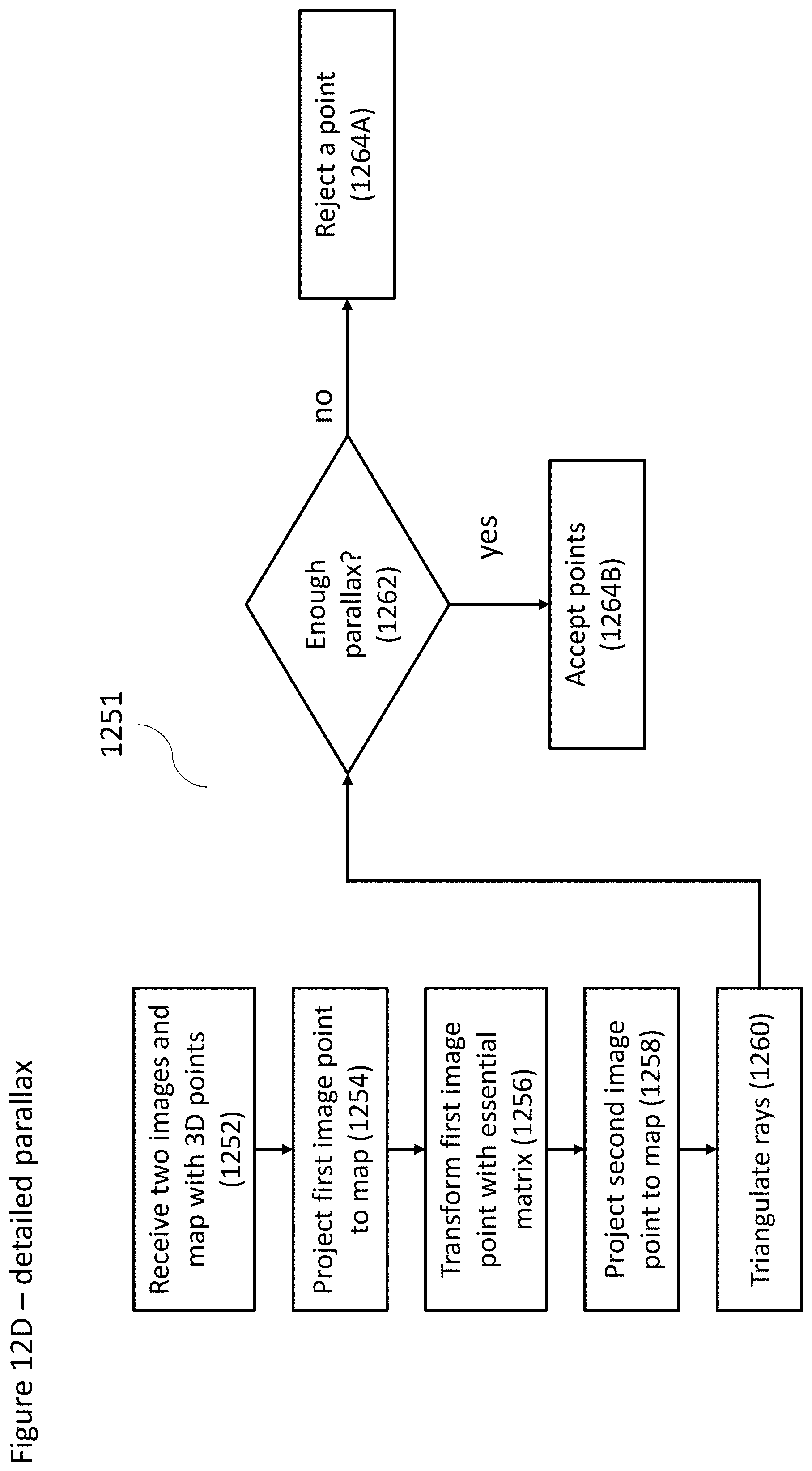

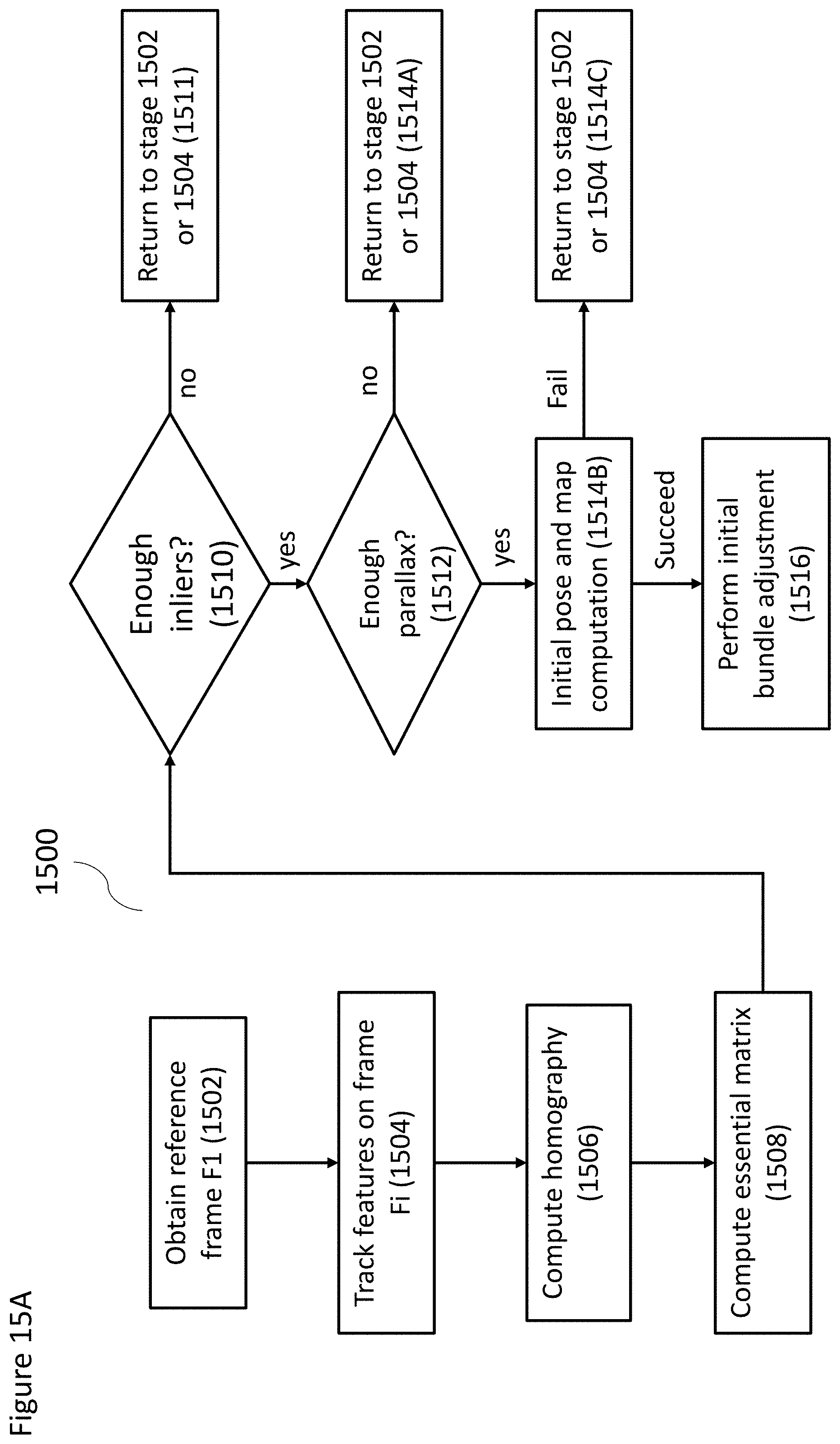

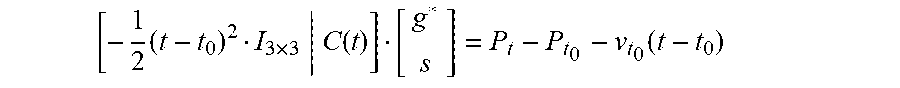

[0120] FIGS. 12A-12D show a detailed, exemplary, non-limiting flow diagram for performing SLAM according to at least some embodiments;

[0121] FIG. 13A shows a schematic graph of accelerometer data;

[0122] FIG. 13B shows an exemplary, non-limiting flow diagram for determining the coordinates scale and gravity vector from IMU (Inertial Measurement Unit) data according to at least some embodiments;

[0123] FIG. 13C shows an exemplary, non-limiting flow diagram for pose prediction according to at least some embodiments;

[0124] FIG. 14 shows an exemplary, non-limiting system for visual-inertial SLAM with IMU (inertial measurement unit) data according to at least some embodiments;

[0125] FIG. 15A shows an exemplary, non-limiting flow diagram for SLAM initialization according to at least some embodiments;

[0126] FIG. 15B shows an exemplary, non-limiting flow diagram for initial bundle adjustment with IMU data according to at least some embodiments;

[0127] FIG. 16 shows an exemplary, non-limiting flow diagram for SLAM initialization with interpolation of IMU data according to at least some embodiments;

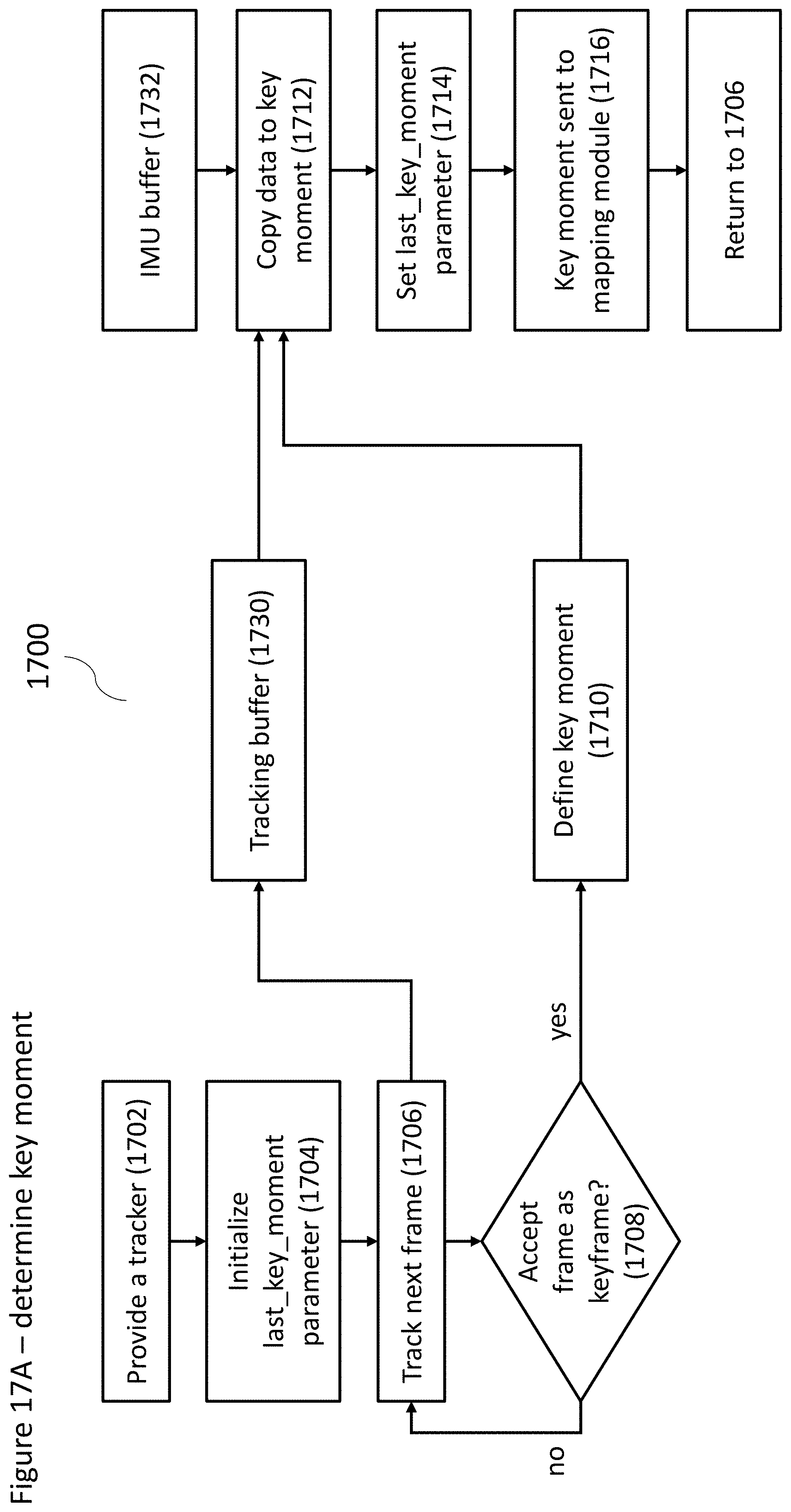

[0128] FIG. 17A shows an exemplary, non-limiting flow diagram for determining a key moment according to at least some embodiments; and

[0129] FIG. 17B shows an exemplary, non-limiting schematic diagram of a spline with a plurality of key moments and key frames.

DETAILED DESCRIPTION OF SOME OF THE EMBODIMENTS

[0130] FIG. 1A shows a schematic of a non-limiting example of a simultaneous localization and mapping (SLAM) system, according to at least some embodiments of the present disclosure. In some implementations, SLAM system 100 can include at least one computational device/computer 107 (as indicated earlier, the terms computer, processor and computational device can be used interchangeably in the present disclosure), a wearable device 105, and one or more sensors 103. The computational device 107 can include a sensor preprocessor 102 and a SLAM analyzer 104, and can be operatively coupled to the wearable device 105 (e.g., wired or wirelessly), and can be included in the wearable device 105, and/or some combination thereof. Sensor preprocessor 102 and SLAM analyzer 104 can be separate processors in and of themselves in the computational device, or may be software modules (e.g., an application program and/or a set of computer instructions for performing SLAM functionality operational on one or more processors). In some implementations, the computational device 107 can be configured to receive signal data (e.g., from the wearable device 105), to preprocess the signal data, so as to determine movement of the wearable device, and to instruct the wearable device to perform one or more actions based on the movement of the wearable device. Specifically, in some implementations, sensor preprocessor 102 can receive the sensor data from the wearable device 105, and can perform preprocessing on the sensor data. For example, sensor preprocessor 102 can generate abstracted sensor data based on the sensor data.

[0131] SLAM analyzer 104 is configured to operate a SLAM process so as to determine a location of wearable device 105 within a computational device-generated map, as well as being configured to determine a map of the environment surrounding wearable device 105. For example, the SLAM process can be used to translate movement of the user's head and/or body when wearing the wearable device (e.g., on the user's head or body). A wearable that is worn on the user's head would for example provide movement information with regard to turning the head from side to side, or up and down, and/or moving the body in a variety of different ways. Such movement information is needed for SLAM to be performed.

[0132] In some implementations, because the preprocessed sensor data is abstracted from the specific sensors, the SLAM analyzer 104, therefore, can be sensor-agnostic, and can perform various actions without knowledge of the particular sensors from which the sensor data was derived.

[0133] As a non-limiting example, if sensor 103 is a camera (e.g., digital camera including a resolution, for example, of 640×480 and greater, at any frame rate including, for example 60 fps), then movement information may be determined by SLAM analyzer 104 according to a plurality of images from the camera. For such an example, sensor preprocessor 102 preprocesses the images before SLAM analyzer 104 performs the analysis (which may include, for example, converting images to grayscale). Next, a Gaussian pyramid may be computed for one or more images, which is also known as a MIPMAP (multum in parvo map), in which the pyramid starts with a full resolution image, and the image is operated on multiple times, such that each time, the image is half the size and half the resolution of the previous operation. SLAM analyzer 104 may perform a wide variety of different variations on the SLAM process, including one or more of, but not limited to, PTAM (Parallel Tracking and Mapping), as described for example in "Parallel Tracking and Mapping on a Camera Phone" by Klein and Murray, 2009 (available from ieeexplore.ieee.org/document/5336495/); DSO (Direct Sparse Odometry), as described for example in "Direct Sparse Odometry" by Engel et al, 2016 (available from https://arxiv.org/abs/1607.02565); or any other suitable SLAM method, including those as described herein.
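The pyramid construction described above can be sketched in plain Python as follows. This is an illustration under stated assumptions rather than the disclosed implementation: the image is a nested list of grayscale values, smoothing uses a small separable [1, 2, 1]/4 kernel as a stand-in for a Gaussian, and the helper names are hypothetical.

```python
def _blur_rows(img):
    """Apply a [1, 2, 1] / 4 kernel along each row, clamping at the borders."""
    out = []
    for row in img:
        w = len(row)
        out.append([
            (row[max(c - 1, 0)] + 2 * row[c] + row[min(c + 1, w - 1)]) / 4.0
            for c in range(w)
        ])
    return out


def _transpose(img):
    return [list(col) for col in zip(*img)]


def downsample(img):
    """Smooth horizontally and vertically, then keep every second pixel."""
    blurred = _transpose(_blur_rows(_transpose(_blur_rows(img))))
    return [row[::2] for row in blurred[::2]]


def gaussian_pyramid(img, levels):
    """Return [full resolution, 1/2, 1/4, ...] with at most `levels` entries."""
    pyramid = [img]
    for _ in range(levels - 1):
        top = pyramid[-1]
        if min(len(top), len(top[0])) < 2:
            break  # cannot halve the image any further
        pyramid.append(downsample(top))
    return pyramid
```

Smoothing before subsampling limits aliasing, so coarse levels remain usable for matching features across large image motions before refinement at finer levels.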

[0134] In some implementations, the wearable device 105 can be operatively coupled to the one or more sensor(s) 103 and the computational device 107 (e.g., wired, wirelessly). The wearable device 105 can be a device (such as an augmented reality (AR) and/or virtual reality (VR) headset, and/or the like) configured to receive sensor data, so as to track a user's movement when the user is wearing the wearable device 105. The wearable device 105 can be configured to send sensor data from the one or more sensors 103 to the computational device 107, such that the computational device 107 can process the sensor data to identify and/or contextualize the detected user movement.

[0135] In some implementations, the one or more sensors 103 can be included in wearable device 105 and/or separate from wearable device 105. A sensor 103 can be one of a camera (as indicated above), an accelerometer, a gyroscope, a magnetometer, a barometric pressure sensor, a GPS (global positioning system) sensor, a microphone or other audio sensor, a proximity sensor, a temperature sensor, a UV (ultraviolet light) sensor, an IMU (inertial measurement unit), and/or other sensors. If implemented as a camera, sensor 103 can be one of an RGB, color, grayscale or infrared camera, a charge-coupled device (CCD), a CMOS sensor, a depth sensor, and/or the like. If implemented as an IMU, sensor 103 can be an accelerometer, a gyroscope, a magnetometer, a combination of two or more of same, and/or the like. When multiple sensors 103 are operatively coupled to and/or included in the wearable device 105, the sensors 103 can include one or more of the aforementioned types of sensors.

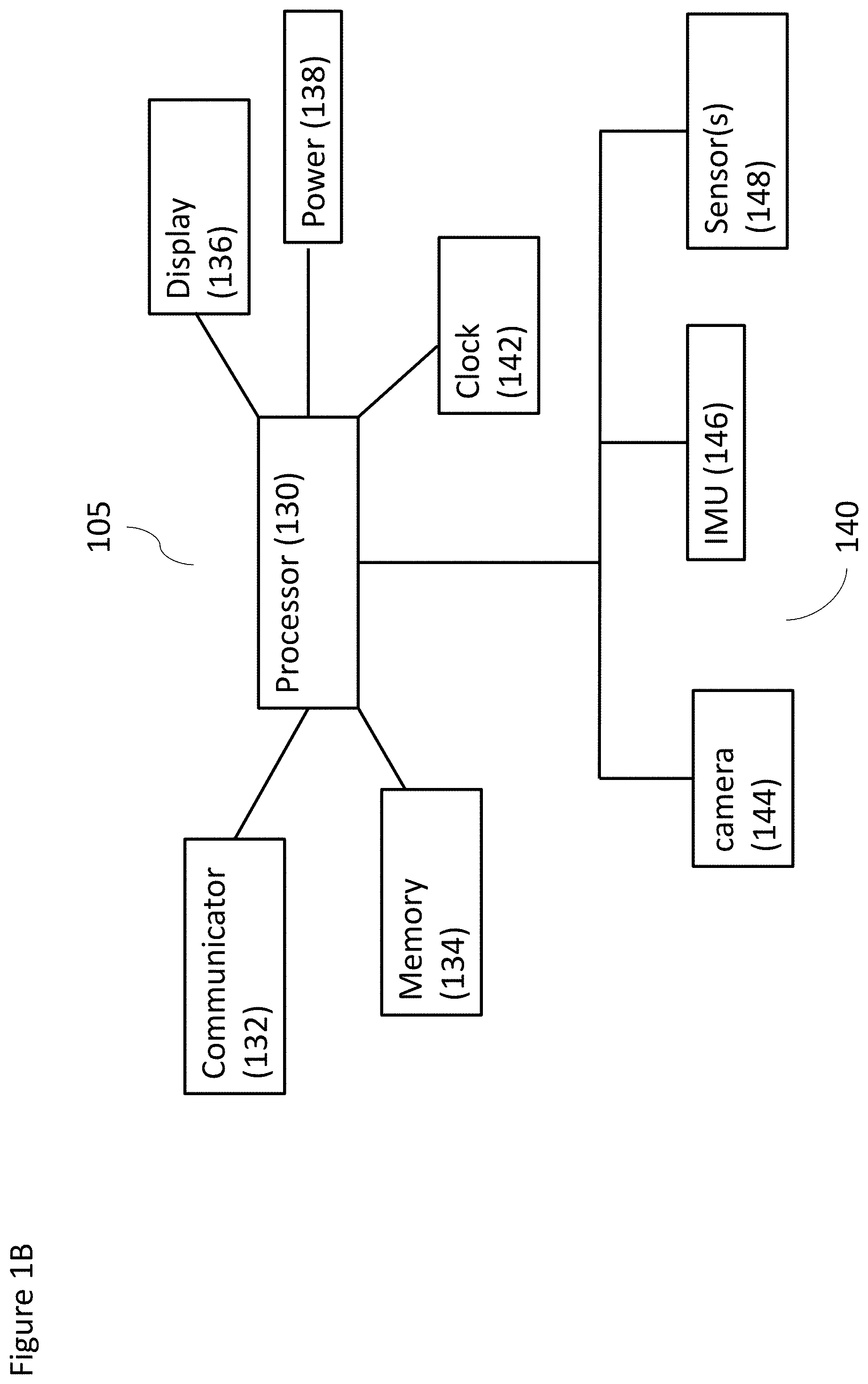

[0136] FIG. 1B shows a schematic of a non-limiting example of a wearable device 105 according to at least some embodiments. For example, in some implementations, a wearable device 105 can include a processor 130, a communicator 132, a memory 134, a display 136, a clock 142, and a power supply 138, and/or a number of sensors 103. In some implementations, each of the communicator 132, the memory 134, the display 136, the clock 142, and the power supply 138 can be operatively coupled to the processor 130. In implementations where the sensors 103 are operatively coupled to the wearable device 105, the sensors can be operatively coupled to the processor 130 (e.g., via the communicator 132); in implementations where the sensors are included in the wearable device 105, the sensors can be directly and operatively coupled to the processor 130.

[0137] Throughout the present disclosure, a "module" may refer to a designated circuit, a software application, set of computer instructions/software operational on a processor, or a processor itself (e.g., ASIC), for carrying out noted functionality.

[0138] In some implementations, the processor 130 can be a general purpose processor, a Field Programmable Gate Array (FPGA), an Application Specific Integrated Circuit (ASIC), a Digital Signal Processor (DSP), and/or the like. The memory 134 can be a hardware module and/or component configured to store data accessible by the processor 130, and/or to store code representing executable instructions for the processor 130. The memory 134 can be, for example, a random access memory (RAM), a memory buffer, a hard drive, a database, an erasable programmable read-only memory (EPROM), an electrically erasable programmable read-only memory (EEPROM), a read-only memory (ROM) and/or so forth. In some embodiments, the memory 134 stores instructions to cause the processor 130 to execute modules, processes and/or functions associated with the wearable device 105. The processor 130 can be configured to implement instructions stored in the memory 134. The memory 134 can be configured to store processor-readable instructions that are accessible and executable by the processor 130.

[0139] In some implementations, the communicator 132 can be an external communication channel device, including but not limited to a device for communicating on WiFi and/or cellular networks, through Bluetooth, through infrared, and/or through a similar communication modality. The communicator 132 can be operatively coupled to other electronic devices, e.g., such as the computational device 107, the sensors 103, and/or the like, and can be configured to send and/or receive data to and/or from the other electronic devices. In some implementations, the display 136 can be one of an audio, video, haptic feedback, and/or vibration display. In some implementations, display 136 can be configured to display image, video, and/or other data. In some implementations, power supply 138 can be configured to supply power to wearable device 105, for example through a battery and/or through an external power source. Processor 130 can also control a clock 142. In some implementations, the processor 130 can control a number of different sensors 103, e.g., including but not limited to a camera 144, an IMU 146 and/or one or more other sensors 148.

[0140] In some implementations, wearable device 105 can be an electronic device that is wearable and/or portable for a user, e.g., including a headset device, a helmet device, a mobile device (e.g., such as a cellular telephone, a laptop, a tablet, and/or a similar device), and/or other such electronic devices. As one non-limiting example, a wearable device 105 can be a smartphone device operatively coupled to a head mount. The smartphone can include a number of sensors (e.g., such as a camera, an accelerometer, a gyroscope, an IR sensor, and/or other sensors). The wearable device 105 can be configured to receive sensor data from the sensors and send the sensor data to the computational device 107. In some implementations, the computational device can be included in the wearable device 105.

[0141] Optionally sensor 103 and wearable device 105 are contained in a single housing (not shown). Optionally computational device 107 is also contained within the housing. Alternatively, computational device 107 is external to the housing. Also alternatively, computational device 107 is remote from the housing, such that computational device 107 is located at a distance of at least 5 cm, a distance of at least 10 cm, a distance of at least 20 cm, any distance in between or a greater distance.

[0142] FIG. 1C shows a non-limiting, example, illustrative schematic combination of a wearable device and a computational device according to at least some embodiments, shown as a system 170. For example, in some implementations, system 170 can include computational device 107, wearable device 105, sensor preprocessor 102, SLAM analyzer 104 and application logic 171. In some implementations, the system 170 can also include one or more sensor(s) 103; in other implementations, the one or more sensors may be external to the system 170, and can be operatively coupled to system 170 so as to provide sensor data to the system 170. The application logic 171 can be implemented via hardware or software, and can be configured to support the operation, for example, of a VR and/or AR application. In some implementations, system 170 can also include a display 174 (e.g., similar to display 136 as described in FIG. 1B) configured to display the output of application logic 171, such as information related to operation of a VR or AR application. Display 174 can be one or more of an audio, video, haptic feedback or vibration display.

[0143] FIG. 1D shows another non-limiting, exemplary, illustrative schematic combination of a wearable device 105 and a computational device 107 according to at least some embodiments, shown as a system 176. As shown, a system 176 can include a wearable device 105 such as a pair of smart glasses 178. Glasses 178 can include a display 180 similar to display 136 described in FIG. 1B. In some implementations, the glasses 178 can be operatively coupled to, for example, a local data processing system 182 (corresponding to the sensor preprocessor 102 of computational device 107), and optionally a remote processing system (according to some embodiments). Local data processing system 182 can, in turn, be operatively coupled to a remote data processing system 192 (e.g., corresponding to SLAM analyzer 104 and/or a similar analytics device), for example through a network 190. Network 190 can be a wired or wireless network, and can be one of a local area network (LAN), a cellular network, a wireless network (e.g., such as WiFi), a Bluetooth and/or similar network, and/or the like.

[0144] Local data processing system 182 can include, in some implementations, a local data processing module 184 (which may be referred to as a processor or module and may be hardware or software), a local data storage 186 and a local interface 188. The sensor(s) 103 can be configured to transmit sensor data to glasses 178, which are configured to transmit the sensor data to local data processor 184. Local data processor 184 can be configured to preprocess the sensor data. Local data processor 184 can also be configured to store the data in local data storage 186, and/or to transmit the data through local interface 188 and network 190 to the remote data processing system 192.

[0145] When the local data processing system 182 sends preprocessed sensor data to the remote data processing system 192, the remote data interface 194 of remote data processing system 192 can receive the preprocessed sensor data, and can store the preprocessed sensor data in remote data storage 198. The remote data processor 196 can be configured to analyze the data. For example, the remote data processor 196 can be configured to determine where the glasses 178 are oriented and/or where the glasses 178 have moved, using the preprocessed sensor data. In some implementations, the remote data processor 196 can be configured to determine other information relating to the glasses 178 based on the preprocessed sensor data. The remote data processor 196 can then be configured to send the results of the analysis of the preprocessed sensor data to local data processing system 182, e.g., via the network 190. The local data processing system 182 can be configured to use the results to alter information displayed by display 180 in the glasses 178 (e.g., to alter an area of vision within a virtual environment, and/or the like).

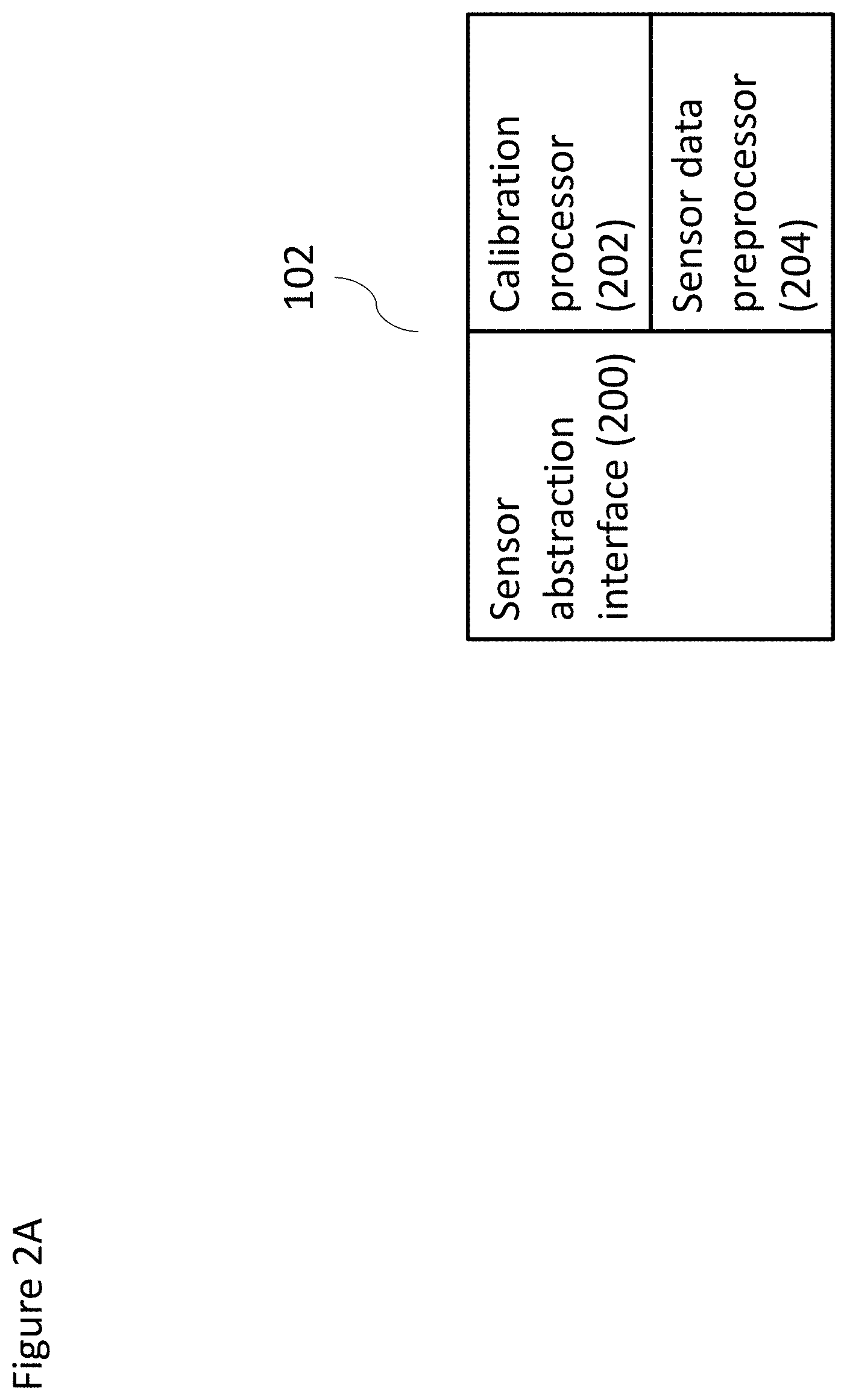

[0146] FIG. 2A shows a non-limiting, exemplary, illustrative schematic sensor preprocessor 102 according to at least some embodiments. As shown, sensor preprocessor 102 can include a sensor abstraction interface 200, a calibration processor 202 and a sensor data preprocessor 204. Sensor abstraction interface 200 can abstract the incoming sensor data (for example, abstract incoming sensor data from a plurality of different sensor types), such that sensor preprocessor 102 preprocesses sensor-agnostic sensor data.

[0147] In some implementations, calibration processor 202 can be configured to calibrate the sensor input, such that the input from individual sensors and/or from different types of sensors can be calibrated. As an example of the latter, if a sensor's sensor type is known and has been analyzed in advance, calibration processor 202 can be configured to provide the sensor abstraction interface 200 with information about device type calibration (for example), so that the sensor abstraction interface 200 can abstract the data correctly and in a calibrated manner. For example, the calibration processor 202 can be configured to include information for calibrating known makes and models of cameras, and/or the like. Calibration processor 202 can also be configured to perform a calibration process to calibrate each individual sensor separately, e.g., at the start of a session (upon a new use, turning on the system, and the like) using that sensor. The user (not shown), for example, can take one or more actions as part of the calibration process, including but not limited to displaying printed material on which a pattern is present. The calibration processor 202 can receive the input from the sensor(s) as part of an individual sensor calibration, such that calibration processor 202 can use this input data to calibrate the sensor input for each individual sensor. The calibration processor 202 can then send the calibrated data from sensor abstraction interface 200 to sensor data preprocessor 204, which can be configured to perform data preprocessing on the calibrated data, including but not limited to reducing and/or eliminating noise in the calibrated data, normalizing incoming signals, and/or the like. The sensor preprocessor 102 can then send the preprocessed sensor data to a SLAM analyzer (not shown).
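One possible way to picture the interplay of sensor abstraction and calibration described above is the following Python sketch. All class names, fields, and the simple scale-and-offset calibration model are hypothetical illustrations; the disclosure does not define a data format for abstracted sensor data.

```python
from dataclasses import dataclass
from typing import Dict, Sequence, Tuple


@dataclass(frozen=True)
class AbstractSample:
    """Sensor-agnostic sample handed on to later stages (hypothetical format)."""
    timestamp: float
    kind: str                    # e.g. "image" or "inertial"
    values: Tuple[float, ...]


@dataclass(frozen=True)
class Calibration:
    """Assumed per-sensor calibration: a linear gain and offset."""
    scale: float = 1.0
    offset: float = 0.0


class SensorAbstraction:
    """Converts raw readings into calibrated, sensor-agnostic samples."""

    def __init__(self) -> None:
        self._calibrations: Dict[str, Calibration] = {}

    def register(self, sensor_id: str, calibration: Calibration) -> None:
        # Calibration may come from known device types or a per-session procedure.
        self._calibrations[sensor_id] = calibration

    def abstract(self, sensor_id: str, kind: str, timestamp: float,
                 raw: Sequence[float]) -> AbstractSample:
        cal = self._calibrations.get(sensor_id, Calibration())
        values = tuple(cal.scale * v + cal.offset for v in raw)
        return AbstractSample(timestamp, kind, values)
```

Downstream code that consumes only `AbstractSample` objects does not need to know which physical sensor produced them, which is the sense in which later processing can be sensor-agnostic.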

[0148] FIG. 2B shows a non-limiting, example, illustrative schematic SLAM analyzer 104, according to at least some embodiments. In some implementations, the SLAM analyzer 104 can include a localization processor 206 and a mapping processor 212. The localization processor 206 of the SLAM analyzer 104 can be operatively coupled to the mapping processor 212 and/or vice-versa. In some implementations, the mapping processor 212 can be configured to create and update a map of an environment surrounding the wearable device (not shown). Mapping processor 212, for example, can be configured to determine the geometry and/or appearance of the environment, e.g., based on analyzing the preprocessed sensor data received from the sensor preprocessor 102. Mapping processor 212 can also be configured to generate a map of the environment based on the analysis of the preprocessed data. In some implementations, the mapping processor 212 can be configured to send the map to the localization processor 206 to determine a location of the wearable device within the generated map.

[0149] In some implementations, the localization processor 206 can include a relocalization processor 208 and a tracking processor 210. Relocalization processor 208, in some implementations, can be invoked when the current location of the wearable device 105--and more specifically, of the one or more sensors 103 associated with the wearable device 105--cannot be determined according to one or more criteria. For example, in some implementations, relocalization processor 208 can be invoked when the current location cannot be determined by processing the last known location with one or more adjustments. Such a situation may arise, for example, if SLAM analyzer 104 is inactive for a period of time and the wearable device 105 moves during this period of time. Such a situation may also arise if tracking processor 210 cannot track the location of the wearable device 105 on the map generated by mapping processor 212.

[0150] In some implementations, tracking processor 210 can determine the current location of the wearable device 105 according to the last known location of the device on the map and input information from one or more sensor(s), so as to track the movement of the wearable device 105. Tracking processor 210 can use algorithms such as a Kalman filter, or an extended Kalman filter, to account for the probabilistic uncertainty in the sensor data.
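To illustrate how a Kalman filter accounts for uncertainty in sensor data, the following Python sketch filters a single scalar quantity. It assumes a constant-state motion model with scalar process and measurement variances; these modeling choices and the name `kalman_1d` are assumptions for illustration, whereas a tracking processor would presumably estimate a full multi-dimensional pose.

```python
def kalman_1d(measurements, process_var, meas_var, x0=0.0, p0=1.0):
    """Scalar Kalman filter with a constant-state model.

    x is the state estimate and p its variance; returns the estimate after
    each measurement.
    """
    x, p = x0, p0
    estimates = []
    for z in measurements:
        # Predict: the state is assumed unchanged, but uncertainty grows.
        p = p + process_var
        # Update: blend prediction and measurement according to their variances.
        gain = p / (p + meas_var)
        x = x + gain * (z - x)
        p = (1.0 - gain) * p
        estimates.append(x)
    return estimates
```

A small measurement variance makes the filter follow the sensor closely, while a large one makes it rely more on the predicted state, smoothing out noisy readings.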

[0151] In some implementations, the tracking processor 210 can track the wearable device 105 so as to reduce jitter, e.g., by keeping a constant and consistent error through the mapping process, rather than estimating the error at each step of the process. For example, the tracking processor 210 can, in some implementations, use the same or a substantially similar error value when tracking a wearable device 105.

[0152] In some implementations, the tracking processor 210 can track the wearable device 105 so as to reduce jitter, e.g., by mixing frame-to-frame with keyframe-to-frame tracking, as described in "Stable Real-Time 3D Tracking using Online and Offline Information", by Vacchetti et al. However, the method described in this paper relies upon manually acquiring keyframes, while for the optional method described herein, the keyframes are created dynamically as needed, as described in greater detail below (for example as described in the discussion of FIGS. 6-8). In some implementations, the tracking processor 210 can also use Kalman filtering to address jitter, and can implement Kalman filtering in addition to, or in replacement of, the methods described herein.

[0153] In some implementations, the output of localization processor 206 can be sent to mapping processor 212, and the output of mapping processor 212 can be sent to the localization processor 206, so that the determination by each of the location of the wearable device 105 and the map of the surrounding environment can inform the determination of the other.

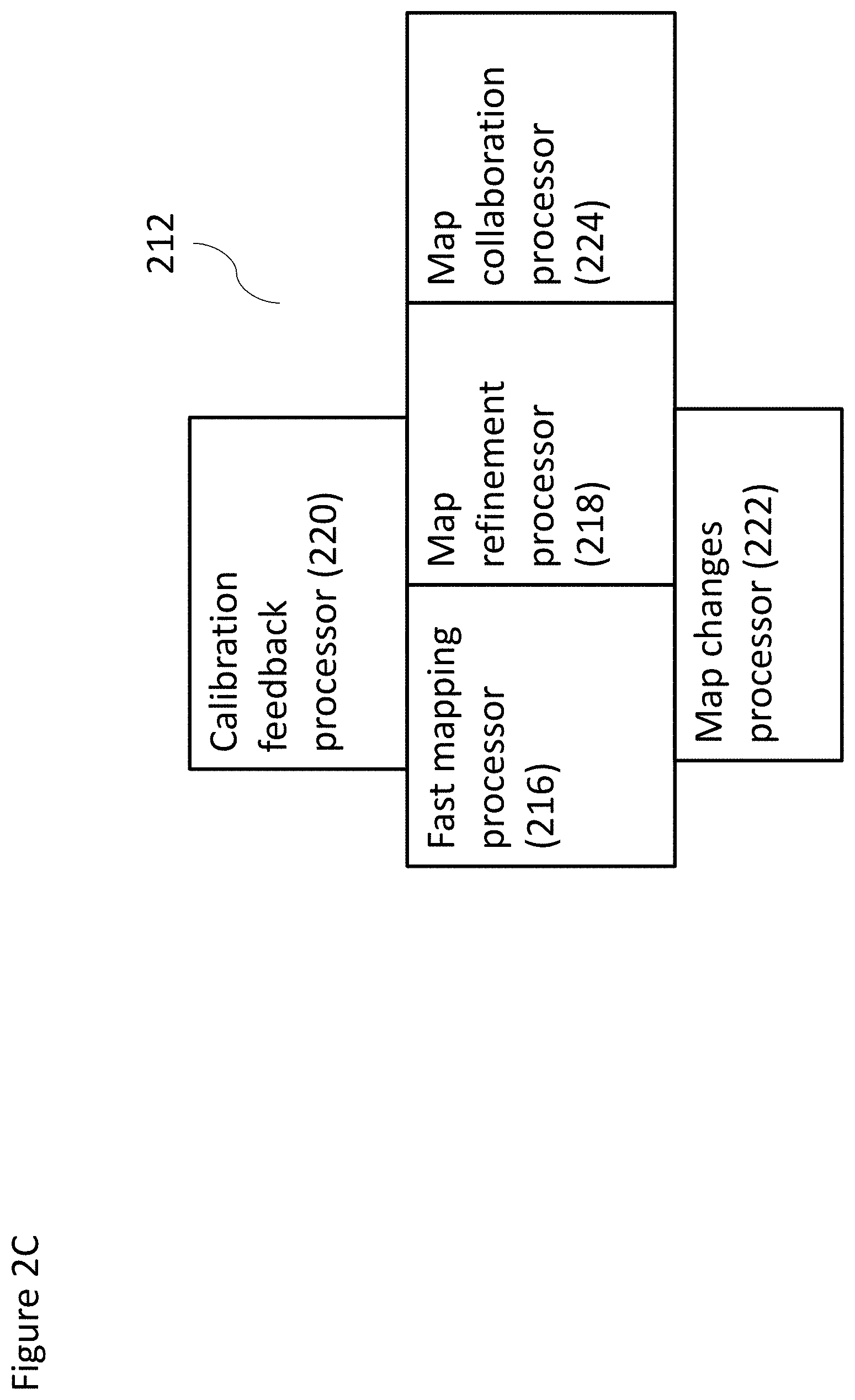

[0154] FIG. 2C shows a non-limiting, exemplary, illustrative schematic mapping module or processor according to at least some embodiments. For example, in some implementations, mapping module or processor 212 can include a fast mapping processor 216, a map refinement processor 218, a calibration feedback processor 220, a map changes processor 222 and a map collaboration processor 224. Each of fast mapping processor 216 and map refinement processor 218 can be in direct communication with each of calibration feedback processor 220 and map changes processor 222 separately. In some implementations, map collaboration processor 224 may be in direct communication with map refinement processor 218.

[0155] In some implementations, fast mapping processor 216 can be configured to define a map rapidly and in a coarse-grained or rough manner, using the preprocessed sensor data. Map refinement processor 218 can be configured to refine this rough map to create a more defined map, and to correct for drift. Drift can occur as the calculated map gradually begins to differ from the true map, due to measurement and sensor errors for example. For example, such drift can cause a circle to not appear to be closed, even if movement of the sensor should have led to its closure. Map refinement processor 218 can be configured to correct for drift by making certain that the map is accurate, and/or by spreading the error evenly throughout the map, so that drift does not become apparent. In some implementations, each of fast mapping processor 216 and map refinement processor 218 is operated as a separate thread on a computational device (not shown). For such an implementation, localization processor 206 can be configured to operate as yet another thread on such a device.
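
As a simplified illustration of spreading drift error evenly, the sketch below takes a two-dimensional path that should have returned to its starting point and distributes the closure gap linearly along the path. The function name `spread_closure_error` is hypothetical, and linear distribution is an assumption made for the example; the disclosure does not specify how the error is spread.

```python
def spread_closure_error(path):
    """Distribute the gap between the last and first point along the path.

    path: list of (x, y) positions where the last point should coincide
          with the first (a closed circuit affected by drift).
    """
    n = len(path) - 1
    if n < 1:
        return list(path)
    # Closure error: how far the end of the loop is from its start.
    ex = path[-1][0] - path[0][0]
    ey = path[-1][1] - path[0][1]
    # Each point absorbs a share of the error proportional to its index,
    # so the first point is unchanged and the last point closes the loop.
    return [(x - ex * i / n, y - ey * i / n) for i, (x, y) in enumerate(path)]
```

For example, a square path that ends at (0.2, 0.1) instead of (0, 0) is corrected so that the end point coincides with the start, with each intermediate point shifted by a proportionally smaller amount.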

[0156] Map refinement processor 218 performs mathematical minimization of the points on the map, including with regard to the position of all cameras and all three dimensional points. For example, and without limitation, if the sensor data comprises image data, then map refinement processor 218 may re-extract important features of the image data around locations that are defined as being important, for example because they are information-rich. Such information-rich locations may be defined according to landmarks on the map, as described in greater detail below. Other information-rich locations may be defined according to their use in the previous coarse-grained mapping by fast mapping processor 216.
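
The disclosure does not state the cost function that is minimized. One common formulation for a minimization over all camera positions and all three dimensional points, given here only as an assumed example, is the reprojection error used in bundle adjustment:

```latex
\min_{\{R_i,\,t_i\},\,\{X_j\}} \;
\sum_{i} \sum_{j \in \mathcal{V}(i)}
\left\| u_{ij} - \pi\!\left( K \,( R_i X_j + t_i ) \right) \right\|^2
```

Here $R_i$ and $t_i$ are the rotation and translation of camera $i$, $X_j$ is the $j$-th three dimensional point, $u_{ij}$ is the observed image position of point $j$ in camera $i$, $K$ is the camera intrinsic matrix, $\pi$ denotes perspective division, and $\mathcal{V}(i)$ is the set of points visible from camera $i$. All of these symbols are introduced for this illustration.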

[0157] The combination of the implementations of FIGS. 2B and 2C may optionally be implemented on three separate threads as follows. The tracking thread would optionally and preferably operate with the fastest processing speed, followed by the fast mapping thread; while the map refinement thread can operate at a relatively slower processing speed. For example, tracking can be operated at a process speed that is at least five times faster than the process speed of fast mapping, while the map refinement thread can be operated at a process speed that is at least 50% slower than the speed of fast mapping. The following processing speeds could be implemented as a non-limiting example: tracking being operated in a tracking thread at 60 Hz, fast mapping thread at 10 Hz, and the map refinement thread being operated once every 3 seconds.
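
The example rates above can be checked with a short sketch. For clarity it simulates the three threads on a single 60 Hz tick counter rather than starting real threads; the function name `run_counts` and its parameter names are hypothetical.

```python
def run_counts(duration_s, tracking_hz=60, fast_mapping_hz=10, refinement_period_s=3):
    """Count how often each stage runs over duration_s seconds.

    Single-threaded simulation of the example rates: tracking on every tick,
    fast mapping on every 6th tick, map refinement on every 180th tick.
    """
    ticks = int(duration_s * tracking_hz)
    mapping_every = tracking_hz // fast_mapping_hz        # 60 / 10 = 6 ticks
    refinement_every = tracking_hz * refinement_period_s  # 60 * 3 = 180 ticks
    counts = {"tracking": 0, "fast_mapping": 0, "map_refinement": 0}
    for tick in range(1, ticks + 1):
        counts["tracking"] += 1
        if tick % mapping_every == 0:
            counts["fast_mapping"] += 1
        if tick % refinement_every == 0:
            counts["map_refinement"] += 1
    return counts
```

With these values tracking runs six times for every fast mapping pass, which satisfies the "at least five times faster" relationship, and map refinement at once every 3 seconds is well over 50% slower than fast mapping at 10 Hz.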

[0158] Calibration feedback processor 220 can be operated in conjunction with input from one or both of fast mapping processor 216 and map refinement processor 218. For example, the output from map refinement processor 218 can be used to determine one or more calibration parameters for one or more sensors, and/or to adjust such one or more calibration parameters. For the former case, if the sensor was a camera, then output from map refinement processor 218 can be used to determine one or more camera calibration parameters, even if no previous calibration was known or performed. Such output can be used to solve for lens distortion and focal length, because the output from map refinement processor 218 can be configured to indicate where calibration issues related to the camera were occurring, as part of solving the problem of minimization by determining a difference between the map before refinement and the map after refinement. Alternatively or additionally, such calibration can feed into the mapping process, whether by fast mapping processor 216 and/or map refinement processor 218.

[0159] Map changes processor 222 can also be operated in conjunction with input from one or both of fast mapping processor 216 and map refinement processor 218, to determine what change(s) have occurred in the map as a result of a change in position of the wearable device. Map changes processor 222 can also receive output from fast mapping processor 216, to determine any coarse-grained changes in position. Map changes processor 222 can also (additionally or alternatively) receive output from map refinement processor 218, to determine more precise changes in the map. Such changes can include removal of a previous validated landmark, or the addition of a new validated landmark; as well as changes in the relative location of previously validated landmarks. By "validated landmark" it is meant a landmark whose location has been correctly determined and confirmed, for example by being found at the same location for more than one mapping cycle.
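
The landmark validation and change detection described above can be sketched as follows. This is an assumed illustration: the function names, the dictionary-based map representation, the string landmark identifiers and the tolerance `tol` are all hypothetical and are not taken from the disclosure.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float, float]


def is_validated(observations: List[Point], min_cycles: int = 2, tol: float = 0.05) -> bool:
    """True if the landmark was found at the same location (within tol)
    over the most recent min_cycles mapping cycles."""
    if len(observations) < min_cycles:
        return False
    recent = observations[-min_cycles:]
    return all(math.dist(recent[0], p) <= tol for p in recent[1:])


def diff_landmarks(previous: Dict[str, Point], current: Dict[str, Point], tol: float = 0.05):
    """Compare two maps of validated landmarks and report the changes."""
    removed = sorted(k for k in previous if k not in current)
    added = sorted(k for k in current if k not in previous)
    moved = sorted(
        k for k in previous
        if k in current and math.dist(previous[k], current[k]) > tol
    )
    return {"removed": removed, "added": added, "moved": moved}
```

The three result lists correspond to the three kinds of change named in the text: removal of a previously validated landmark, addition of a new validated landmark, and a change in the location of a previously validated landmark.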

[0160] Such changes can be explicitly used to increase the speed and/or accuracy of further localization and/or mapping activities, and/or can be fed to an outside application that relies upon SLAM in order to increase the speed and/or efficacy of operation of the outside application. By "outside application" it is meant any application that is not operative for performing SLAM.

[0161] As a non-limiting example of feeding this information to the outside application, such information can be used by the application, for example to warn the user that one of the following has occurred: a particular object has been moved; a particular object has disappeared from its last known location; or a new specific object has appeared. Such warning can be determined according to the available information from the last time the scene was mapped.

[0162] Map changes processor 222 can have a higher level understanding for determining that a set of coordinated or connected landmarks moved or disappeared, for example to determine a larger overall change in the environment being mapped. Again, such information may be explicitly used to increase the speed and/or accuracy of further localization and/or mapping activities, and/or may be fed to an outside application that relies upon SLAM in order to increase the speed and/or efficacy of operation of the outside application.

[0163] Map collaboration processor 224 can receive input from map refinement processor 218 in order for a plurality of SLAM analyzers in conjunction with a plurality of wearable devices to create a combined, collaborative map. For example, a plurality of users, wearing a plurality of wearable devices implementing such a map collaboration processor 224, can receive the benefit of pooled mapping information over a larger area. As a non-limiting example only, such a larger area can include an urban area, including at least outdoor areas, and also including public indoor spaces. Such a collaborative process can increase the speed and efficiency with which such a map is built, and can also increase the accuracy of the map, by receiving input from a plurality of different sensors from different wearable devices. While map collaboration processor 224 can also receive and implement map information from fast mapping processor 216, for greater accuracy, data from map refinement processor 218 is used.
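
A minimal sketch of pooling map information from several devices is shown below. It assumes, purely for illustration, that all devices already report landmarks in a shared coordinate frame and under shared identifiers, and it combines repeated observations by simple averaging; the disclosure does not specify the merging method, and the name `merge_maps` is hypothetical.

```python
def merge_maps(maps):
    """Merge per-device landmark maps into one collaborative map.

    maps: iterable of dicts mapping a landmark id to an (x, y, z) position.
    Landmarks seen by several devices are averaged; others are copied.
    """
    sums = {}
    for device_map in maps:
        for landmark_id, (x, y, z) in device_map.items():
            sx, sy, sz, n = sums.get(landmark_id, (0.0, 0.0, 0.0, 0))
            sums[landmark_id] = (sx + x, sy + y, sz + z, n + 1)
    return {
        landmark_id: (sx / n, sy / n, sz / n)
        for landmark_id, (sx, sy, sz, n) in sums.items()
    }
```

The merged map covers the union of the areas seen by the individual devices, and positions observed by more than one device are averaged, which illustrates how input from a plurality of sensors can improve both coverage and accuracy.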

[0164] Optionally, computational device 107 from FIG. 1A comprises a hardware processor configured to perform a predefined set of basic operations in response to receiving a corresponding basic instruction selected from a predefined native instruction set of codes, and memory. SLAM analyzer 104 optionally comprises a first set of machine codes selected from the native instruction set for receiving sensor data, which may be optical sensor data. SLAM analyzer 104 optionally comprises a second set of machine codes selected from the native instruction set for operating a localization module (such as the instructions for localization processor 206), a third set of machine codes selected from the native instruction set for operating a fast mapping module (such as the instructions for fast mapping processor 216); and a fourth set of machine codes selected from the native instruction set for operating a map refinement module (such as the instructions for map refinement processor 218). Each of the first, second, third and fourth sets of machine code is stored in the memory of computational device 107.

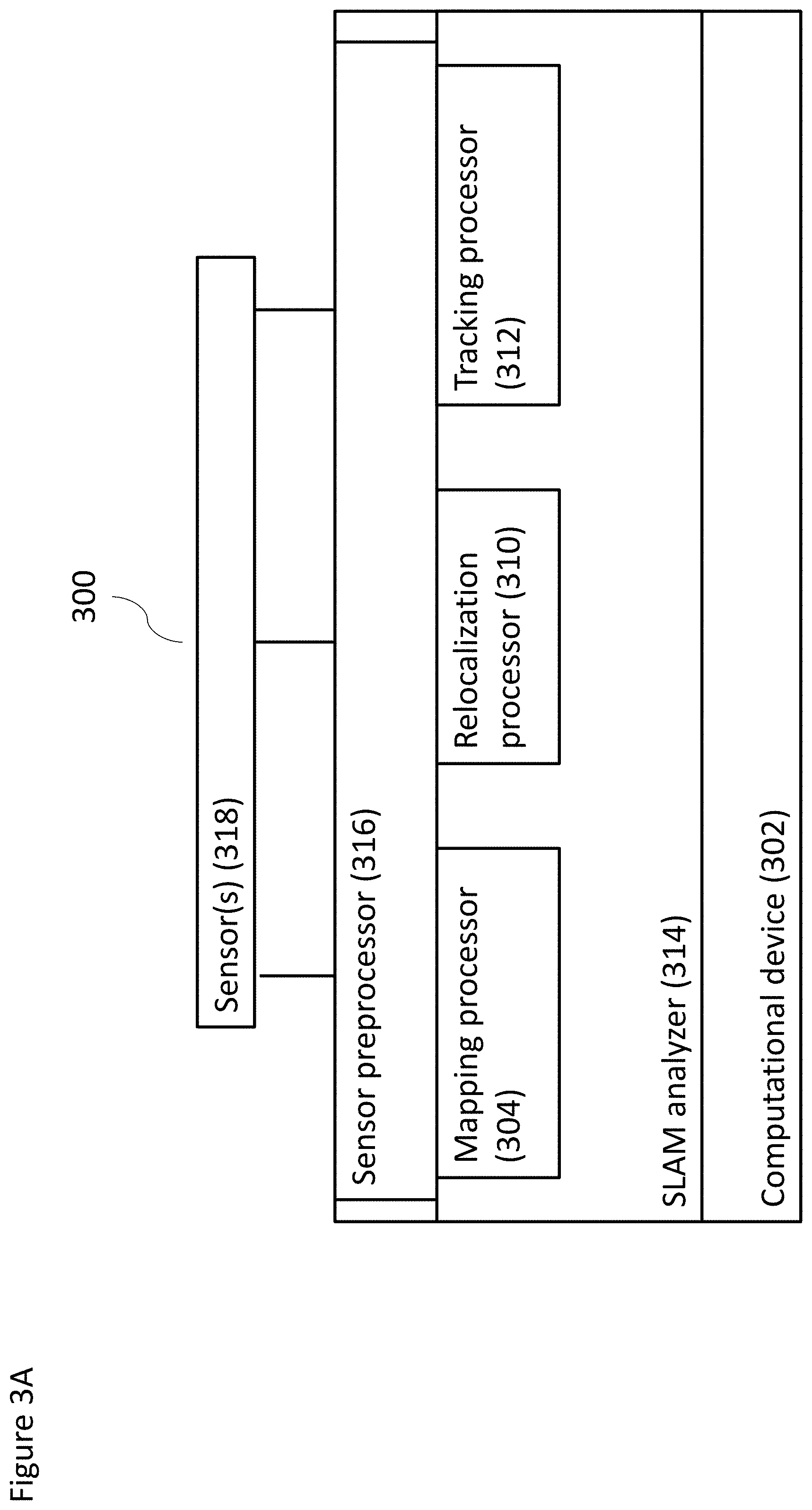

[0165] FIG. 3A shows a schematic of another non-limiting example system according to at least some embodiments of the present invention, relating to one or more sensors communicating with a computational device, shown as a system 300. As shown, system 300 includes a computational device 302 in communication with one or more sensors 318. Sensor(s) 318 may comprise any type of sensor as described in the present disclosure, or a plurality of different types of sensors.

[0166] Computational device 302 preferably operates a sensor preprocessor 316, which may optionally operate as previously described for other sensor preprocessors. Preferably, sensor preprocessor 316 receives input data from one or more sensors 318 and processes the input data to a form which is suitable for use by SLAM analyzer 314. SLAM analyzer 314 may operate as previously described for other SLAM analyzers.

[0167] SLAM analyzer 314 preferably comprises a mapping module or processor 304, which may operate as previously described for other mapping modules, and thus, perform mapping functions as previously described. SLAM analyzer 314 also preferably includes a relocalization module or processor 310 and a tracking module or processor 312. While in some embodiments relocalization module 310 and tracking module 312 can be separate modules, in other embodiments relocalization module 310 and tracking module 312 may be combined in a single module.

[0168] Relocalization module 310 may operate as previously described for other relocalization modules in the disclosure, so as to determine the location of system 300 (or rather of sensor(s) 318) in case such a location cannot be determined from a previously known location of same and data from sensor(s) 318. Furthermore, tracking module 312 may optionally operate as previously described for other tracking modules, to determine the location of system 300 (or rather of sensor(s) 318) from a previously known location of same and data from sensor(s) 318.

[0169] FIG. 3B shows a schematic of a non-limiting example of a computational device operating at least some components of the system according to at least some embodiments of the present disclosure. Computational device 302 includes some of the same components as in FIG. 3A (which are shown with the same numbering). SLAM analyzer 314 of computational device 302 preferably features an obstacle avoidance module or processor 320, which is optionally and preferably operated/controlled by mapping module or processor 304. Obstacle avoidance module 320 is configured to detect and map potential obstacles in a real, physical world, so as to assist the user of the wearable device 105 in avoiding potential obstacles. By tracking validated (i.e., actual) landmarks and corresponding geometry thereof in the real, physical world, mapping processor 212 can provide such information to the obstacle avoidance processor 320, enabling the obstacle avoidance processor 320 to identify such landmarks as potential obstacles. The obstacle avoidance processor 320 can thus be used to determine the distance of the landmarks to the user and/or a distance from the user to sensor(s) 103 that are providing the input data used for mapping.
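
The distance determination described above can be sketched as follows. The function name `nearby_obstacles`, the dictionary of landmark positions and the warning radius are assumptions made for the example and are not part of the disclosure.

```python
import math


def nearby_obstacles(user_pos, landmarks, warn_radius=1.0):
    """Return (name, distance) pairs for landmarks within warn_radius of the
    user, nearest first.

    user_pos:  (x, y, z) location of the user on the map
    landmarks: dict mapping a landmark name to its (x, y, z) position
    """
    hits = []
    for name, pos in landmarks.items():
        distance = math.dist(user_pos, pos)  # Euclidean distance
        if distance <= warn_radius:
            hits.append((name, distance))
    return sorted(hits, key=lambda hit: hit[1])
```

A fuller implementation would also use the geometry of each landmark rather than a single point, since the text refers to landmarks "and corresponding geometry thereof".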

[0170] In some implementations, the output of SLAM analyzer 104 (which may include information about the potential obstacles) is passed through an application interface 322 to a VR (virtual reality) application 324. Optionally, both the application interface 322 and VR application 324 are operated by computational device 107 (e.g., for either or both of the schematics shown in FIGS. 3A and 3B). The VR application 324 can use the mapping and localization information to feed into the map of the virtual world, as well as the location of the representation of the user, or "avatar", on such map. In addition, the VR application 324 can use information regarding potential obstacles as input to the map of the virtual world. For example, the VR application 324 can display a wall in the virtual world that corresponds to the location and geometry of a wall in the physical world, according to the information received. VR application 324 could also optionally receive other types of information, for example, regarding the location and movement of an object held in the user's hand (not shown), which would be extraneous to SLAM analyzer 314.