Method And System For Unsynchronized Structured Lighting

TAUBIN; Gabriel ; et al.

U.S. patent application number 16/434846 was filed with the patent office on 2019-11-28 for method and system for unsynchronized structured lighting. The applicant listed for this patent is Brown University. Invention is credited to Fatih CALAKLI, Daniel MORENO, Gabriel TAUBIN.

| Application Number | 20190364264 16/434846 |

| Document ID | / |

| Family ID | 54055938 |

| Filed Date | 2019-11-28 |


| United States Patent Application | 20190364264 |

| Kind Code | A1 |

| TAUBIN; Gabriel ; et al. | November 28, 2019 |

METHOD AND SYSTEM FOR UNSYNCHRONIZED STRUCTURED LIGHTING

Abstract

A system and method to capture the surface geometry of a three-dimensional object in a scene using unsynchronized structured lighting is disclosed. The method and system include a pattern projector configured and arranged to project a sequence of image patterns onto the scene at a pattern frame rate, a camera configured and arranged to capture a sequence of unsynchronized image patterns of the scene at an image capture rate, and a processor configured and arranged to synthesize a sequence of synchronized image frames from the unsynchronized image patterns of the scene. Each of the synchronized image frames corresponds to one image pattern of the sequence of image patterns.

| Inventors: | TAUBIN; Gabriel (Providence, RI); MORENO; Daniel (Norwich, CT); CALAKLI; Fatih (Isparta, TR) |

| Applicant: | Brown University |

| Family ID: | 54055938 |

| Appl. No.: | 16/434846 |

| Filed: | June 7, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15124176 | Sep 7, 2016 | |
| PCT/US15/19357 | Mar 9, 2015 | |
| 16434846 | | |
| 61949529 | Mar 7, 2014 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 13/167 20180501; G06T 2207/10028 20130101; G03B 35/02 20130101; G06T 7/521 20170101; H04N 13/254 20180501 |

| International Class: | H04N 13/254 20060101 H04N013/254; G03B 35/02 20060101 G03B035/02; H04N 13/167 20060101 H04N013/167; G06T 7/521 20060101 G06T007/521 |

Government Interests

STATEMENT REGARDING GOVERNMENT INTEREST

[0002] This invention was made with government support under grant number DE-FG02-08ER15937 awarded by the Department of Energy. The government has certain rights in the invention.

Claims

1. A method to synthesize a sequence of synchronized image frames synchronized from a sequence of unsynchronized image frames; each of the synchronized image frames corresponding to one of a sequence of image patterns; the synchronized image frames and unsynchronized image frames being image frames of the same width and height; the image frames comprising a plurality of common pixels; the method comprising the steps of:
a) partitioning the plurality of common pixels into a pixel partition; the pixel partition comprising a plurality of pixel sets; the pixel sets being disjoint; each of the plurality of common pixels being a member of one of the pixel sets;
b) selecting a pixel set;
c) building a pixel set measurement matrix;
d) estimating a pixel set projection matrix; the pixel set projection matrix projecting a measurement space vector onto the column space of the pixel set measurement matrix;
e) estimating a pixel set system matrix; the system matrix being parameterized by a set of system matrix parameters;
f) estimating a pixel set synchronized matrix as a function of the pixel set measurement matrix and the pixel set system matrix;
g) repeating steps b) to f) until all the pixel sets have been selected; and
h) constructing a sequence of synchronized image frames from the pixel set synchronized matrices.

2. A method as in claim 1, where the pixel partition comprises a single pixel set, and the single pixel set contains all the pixels of the image frames.

3. A method as in claim 1, where the number of pixel sets in the pixel partition is equal to the height of the image frames, and each row of the image frames is a pixel set.

4. A method as in claim 1, where the pixel set measurement matrix is modeled as the product of the system matrix times the pixel set synchronized matrix, and the step of estimating the pixel set synchronized matrices reduces to the solution of a linear least-squares problem.

5. A method as in claim 1, where the system matrix is parameterized by an image frame period parameter, an integration time parameter, and a first pattern delay parameter.

Description

CROSS REFERENCE TO RELATED APPLICATIONS

[0001] This application is a divisional application of U.S. patent application Ser. No. 15/124,176, which is a national phase filing under 35 U.S.C. § 371 of International Application No. PCT/US2015/019357 filed Mar. 9, 2015, which claims priority to earlier filed U.S. Provisional Application Ser. No. 61/949,529, filed Mar. 7, 2014, the contents of which are incorporated herein by reference.

BACKGROUND OF THE INVENTION

1. Field of the Invention

[0003] This patent document relates generally to the field of three-dimensional shape capture of the surface geometry of an object, and more particularly to structured lighting three-dimensional shape capture.

2. Background of the Related Art

[0004] Three-dimensional scanning and digitization of the surface geometry of objects is commonly used in many industries and services, and their applications are numerous. A few examples of such applications are inspection and measurement of shape conformity in industrial production systems, digitization of clay models for industrial design and styling applications, reverse engineering of existing parts with complex geometry for three-dimensional printing, interactive visualization of objects in multimedia applications, three-dimensional documentation of artwork, historic and archaeological artifacts, human body scanning for better orthotics adaptation, biometry or custom-fit clothing, and three-dimensional forensic reconstruction of crime scenes.

[0005] One technology for three-dimensional shape capture is based on structured lighting. Three-dimensional shape capture systems based on structured lighting are more accurate than those based on time-of-flight (TOF) image sensors. In a standard structured lighting 3D shape capture system, a pattern projector is used to illuminate the scene of interest with a sequence of known two-dimensional patterns, and a camera is used to capture a sequence of images, synchronized with the projected patterns. The camera captures one image for each projected pattern. Each sequence of images captured by the camera is decoded by a computer processor into a dense set of projector-camera pixel correspondences, and subsequently into a three-dimensional range image, using the principles of optical triangulation.

[0006] The main limitation of structured lighting three-dimensional shape capture systems is the required synchronization between projector and camera. To capture a three-dimensional snapshot of a moving scene, the sequence of patterns must be projected at a fast rate, the camera must capture image frames at exactly the same frame rate, and the camera has to start capturing the first frame of the sequence exactly when the projector starts to project the first pattern.

[0007] Therefore, there is a need for three-dimensional shape measurement methods and systems based on structured lighting where the camera and the pattern projector are not synchronized.

[0008] Further complicating matters, image sensors generally use one of two different technologies to capture an image, referred to as "rolling shutter" and "global shutter". "Rolling shutter" is a method of image capture in which a still picture or each frame of a video is captured not by taking a snapshot of the entire scene at a single instant in time but rather by scanning across the scene rapidly, either vertically or horizontally. In other words, not all parts of the image of the scene are recorded at exactly the same instant. This is in contrast with "global shutter", in which the entire frame is captured at the same instant. Even though most image sensors in consumer devices are rolling shutter sensors, many image sensors used in industrial applications are global shutter sensors.

[0009] Therefore, there is a need for three-dimensional shape measurement methods and systems based on structured lighting where the camera and the pattern projector are not synchronized, supporting both global shutter and rolling shutter image sensors.

SUMMARY OF THE INVENTION

[0010] A system and method to capture the surface geometry of a three-dimensional object in a scene using unsynchronized structured lighting solves the problems of the prior art. The method and system include a pattern projector configured and arranged to project a sequence of image patterns onto the scene at a pattern frame rate, a camera configured and arranged to capture a sequence of unsynchronized image patterns of the scene at an image capture rate, and a processor configured and arranged to synthesize a sequence of synchronized image frames from the unsynchronized image patterns of the scene, each of the synchronized image frames corresponding to one image pattern of the sequence of image patterns. Because the method enables use of an unsynchronized pattern projector and camera, significant cost savings can be achieved. The method enables use of inexpensive cameras, such as smartphone cameras, webcams, point-and-shoot digital cameras, and camcorders, as well as industrial cameras. Furthermore, the method and system enable processing the images with a variety of computing hardware, such as computers, digital signal processors, smartphone processors and the like. Consequently, three-dimensional image capture using structured lighting may be used with relatively little capital investment.

BRIEF DESCRIPTION OF THE DRAWINGS

[0011] These and other features, aspects, and advantages of the method and system will become better understood with reference to the following description, appended claims, and accompanying drawings where:

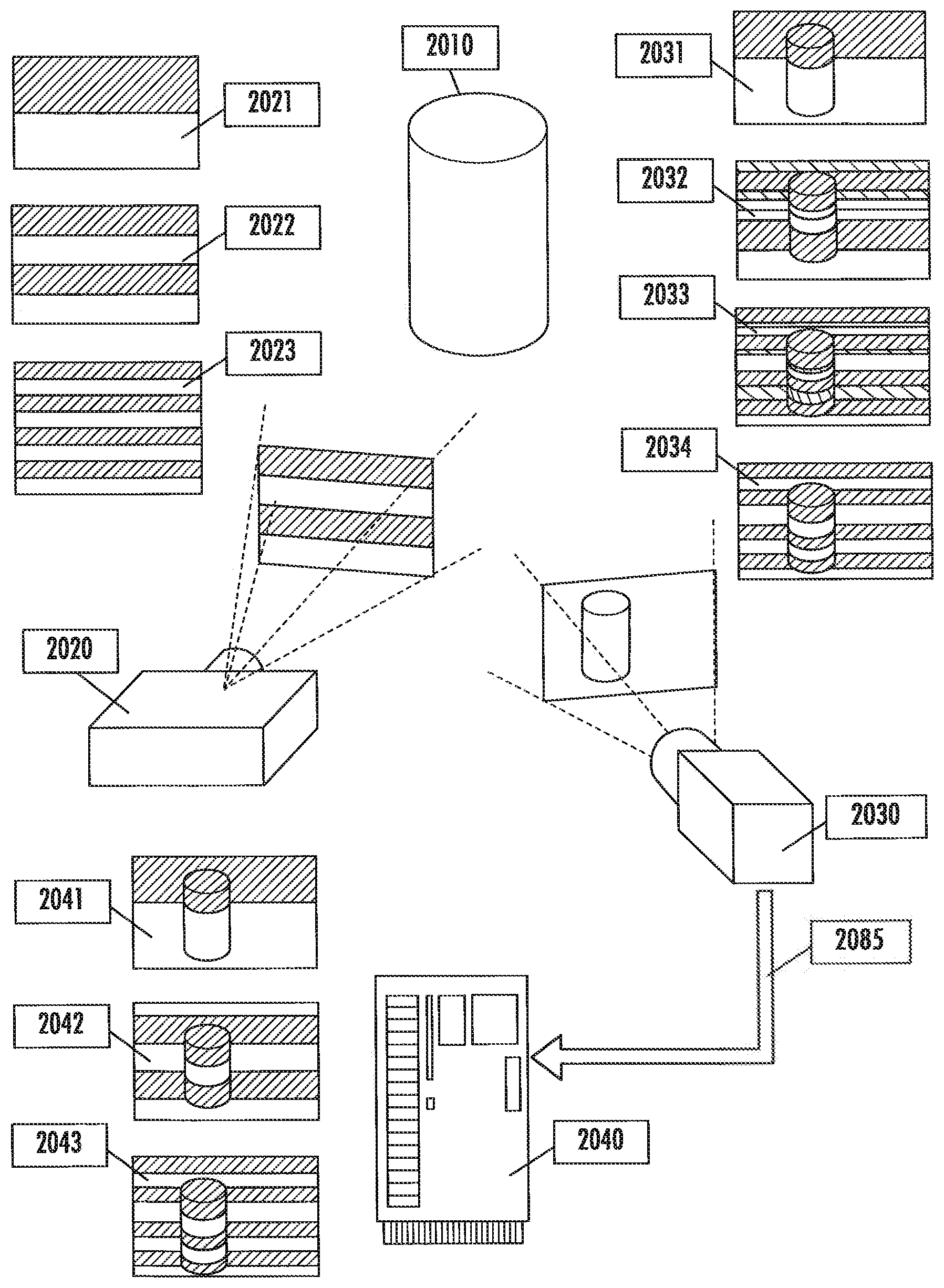

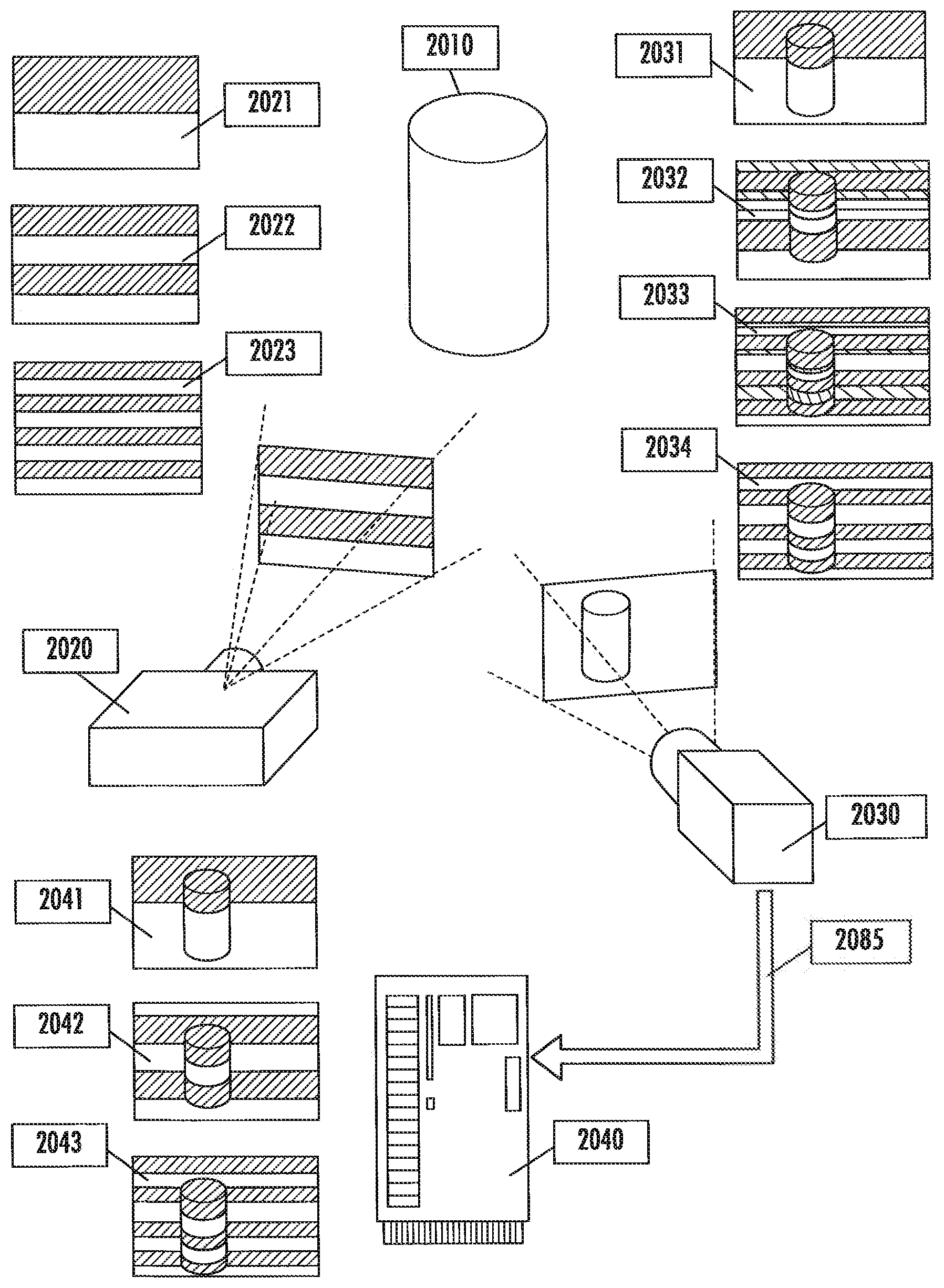

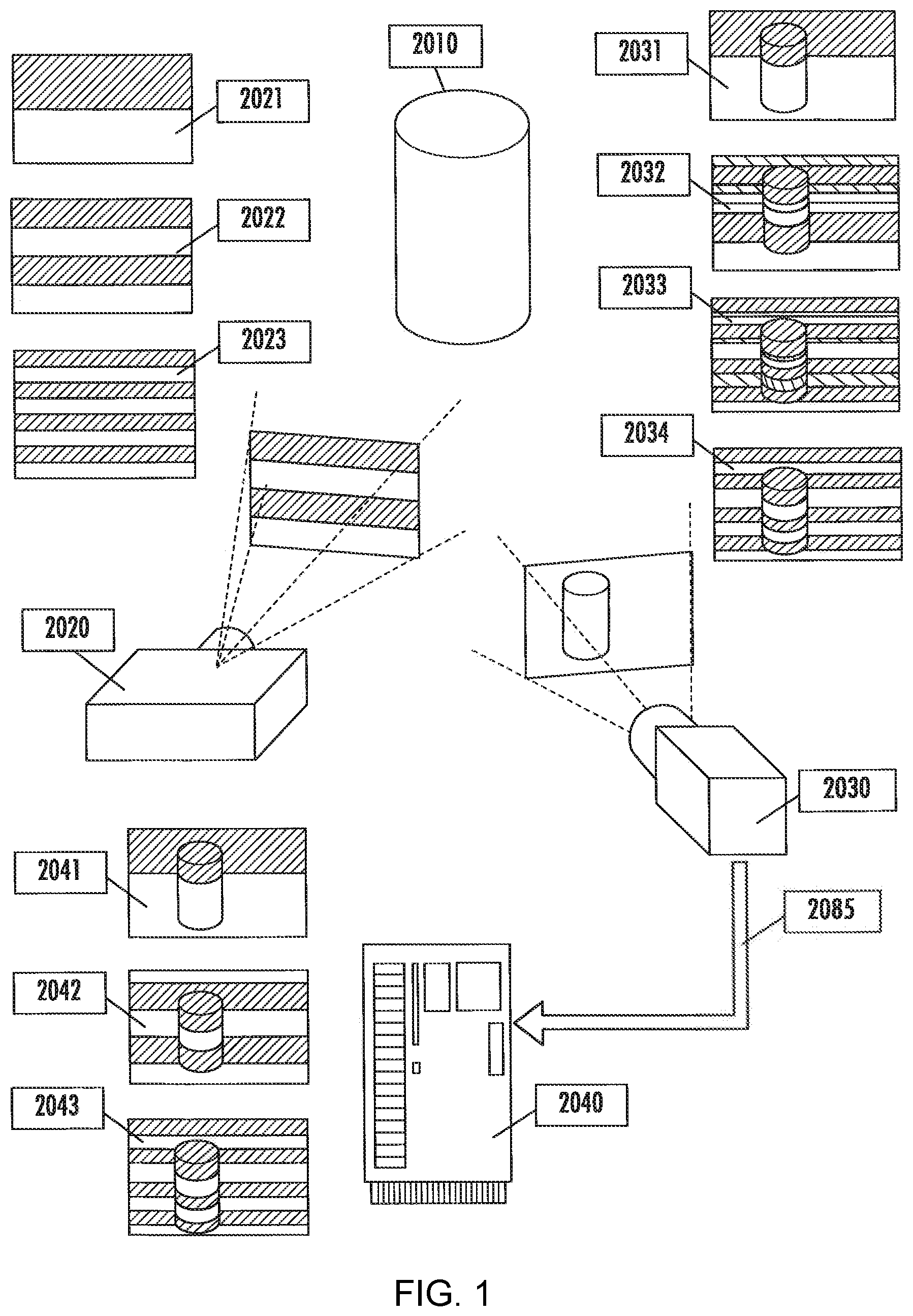

[0012] FIG. 1 is an illustration of an exemplary embodiment of the method and system for unsynchronized structured lighting;

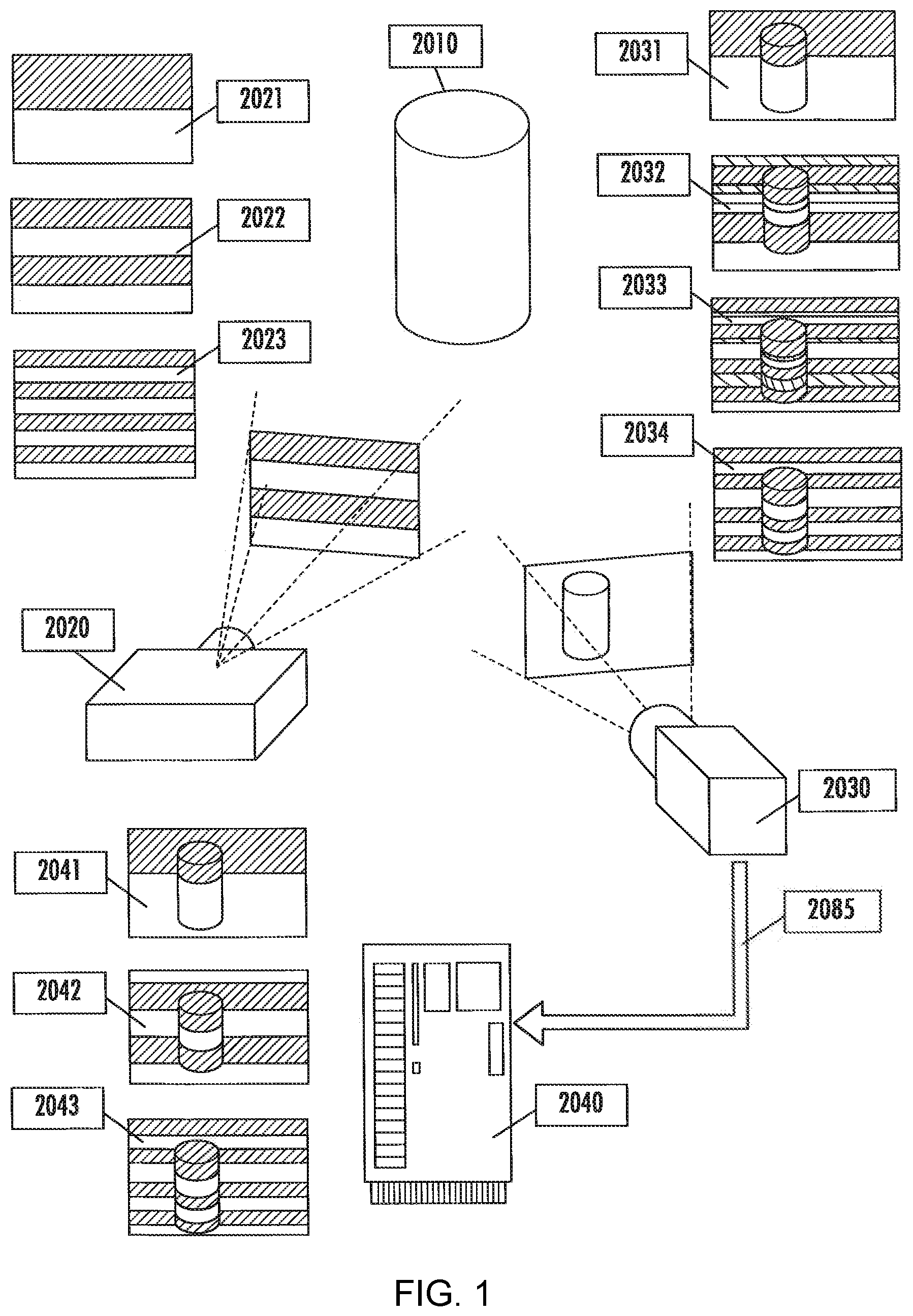

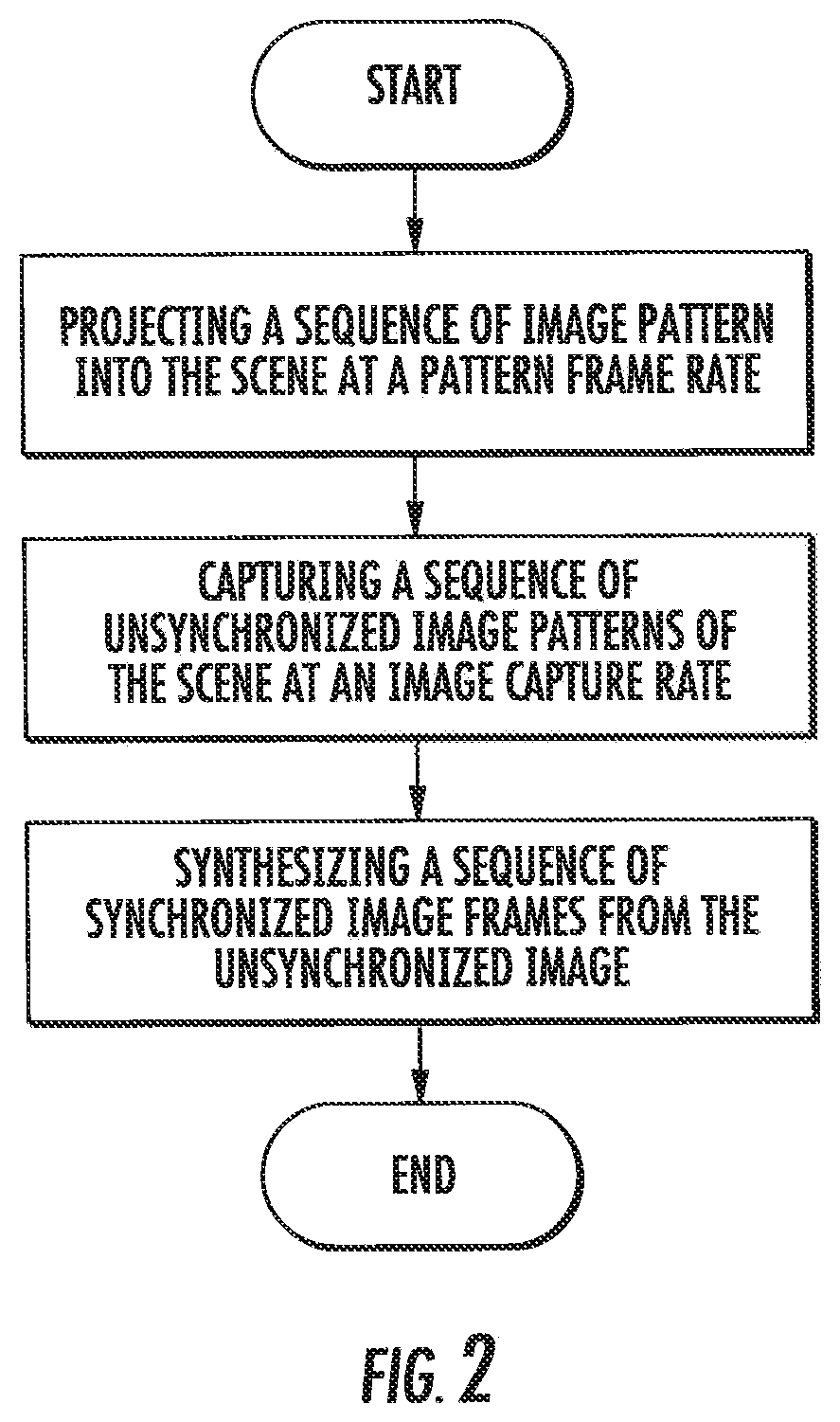

[0013] FIG. 2 shows a flowchart of an exemplary embodiment of the method and system for unsynchronized structured lighting;

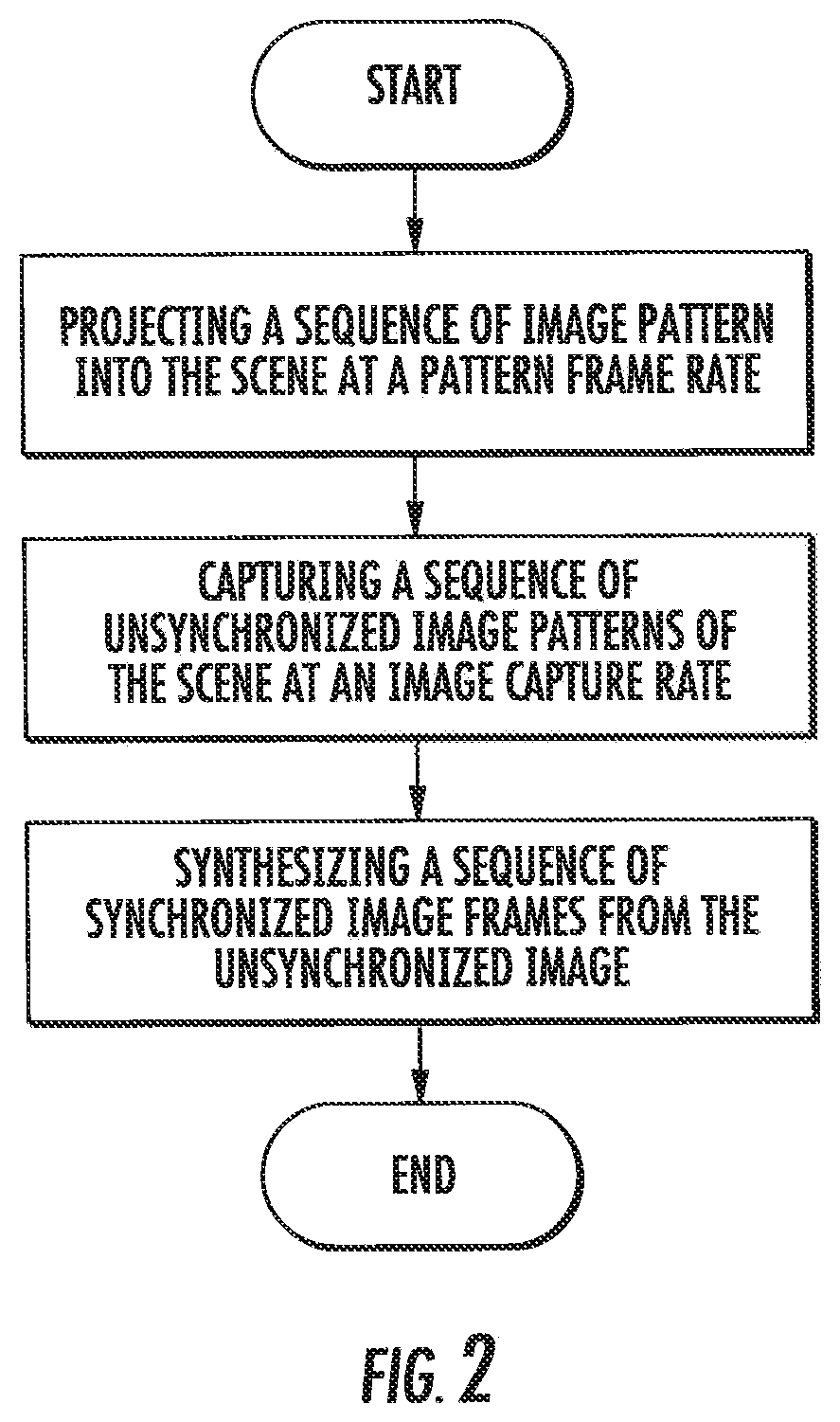

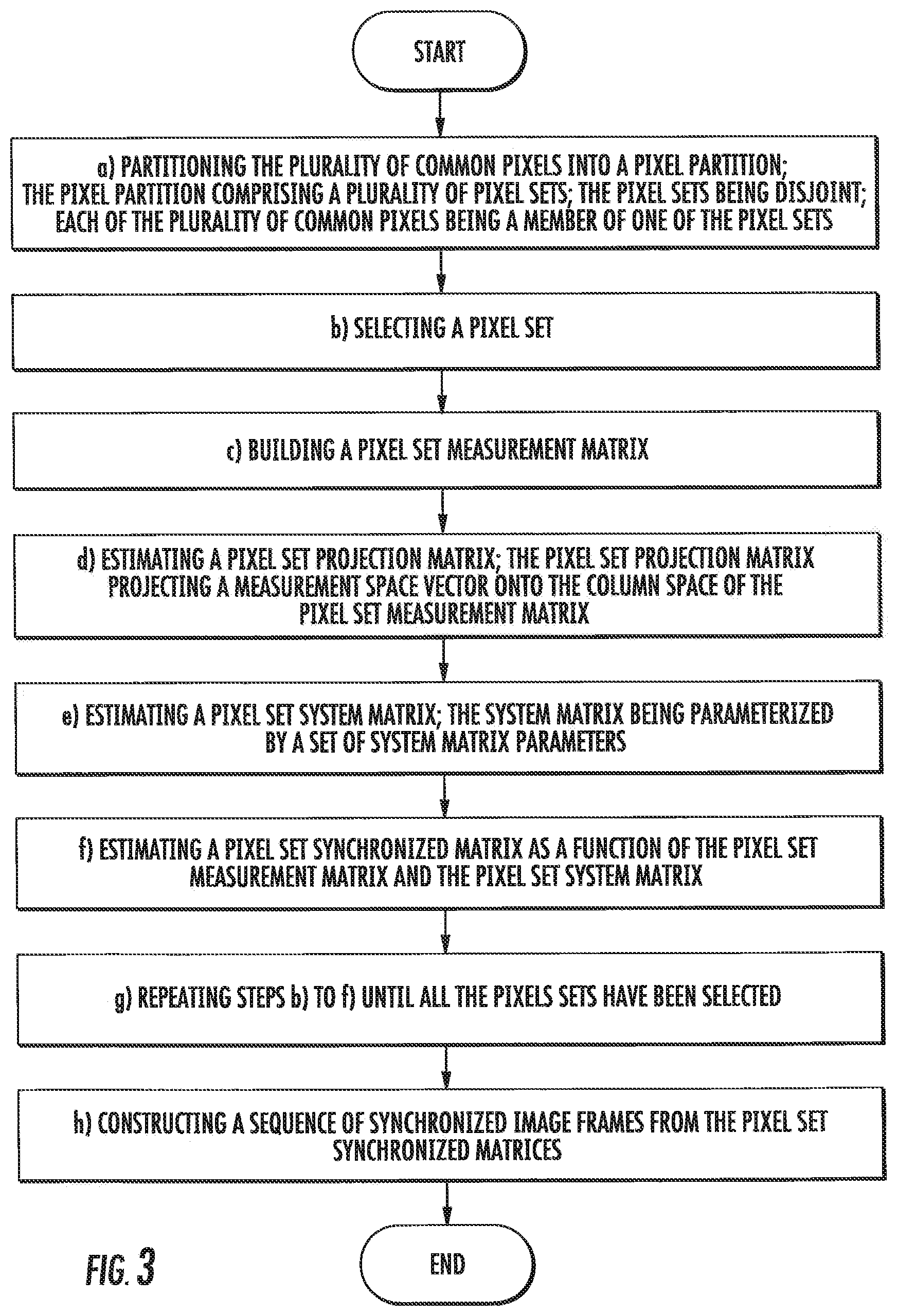

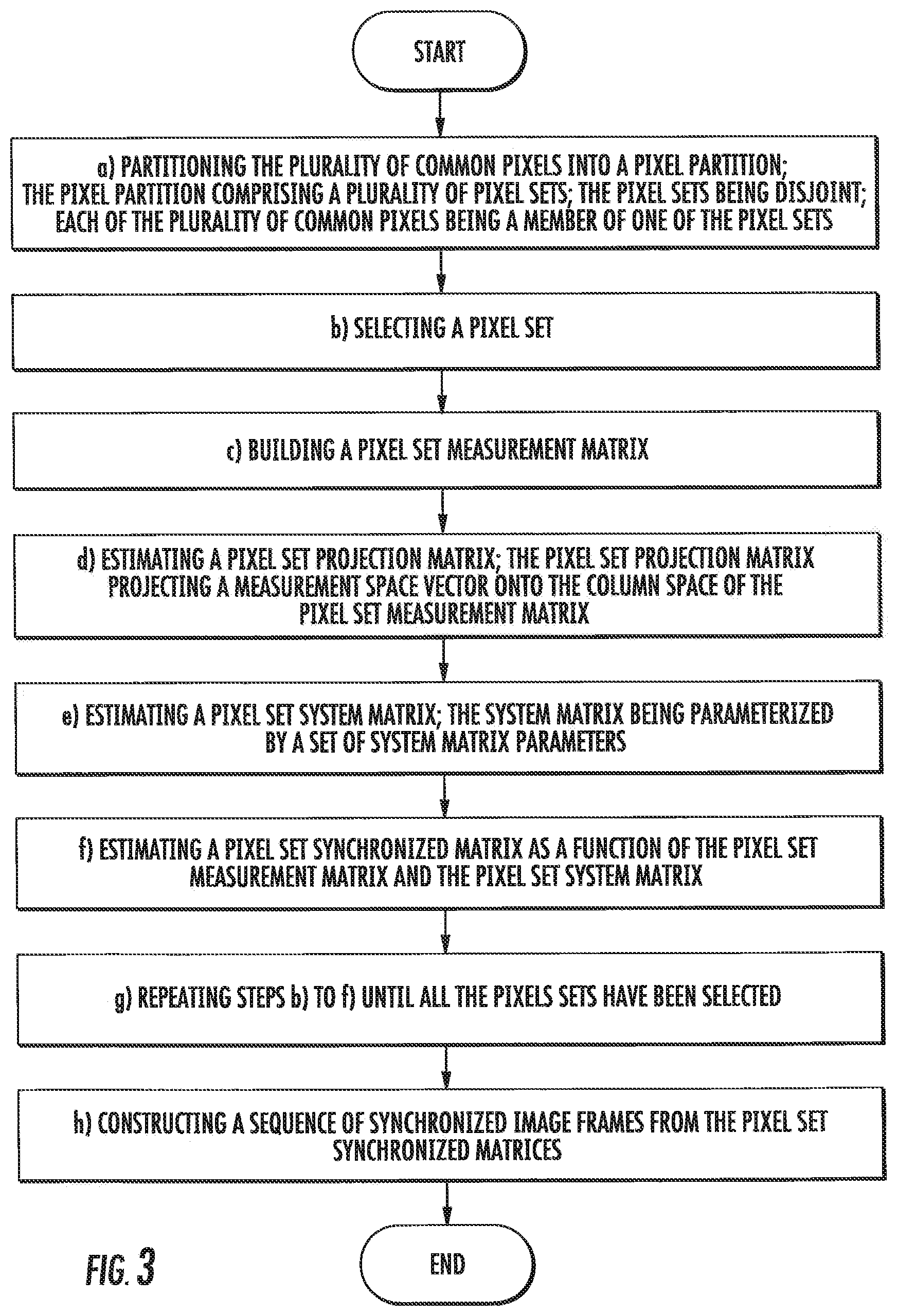

[0014] FIG. 3 shows a flowchart of an exemplary embodiment of the method of synthesizing the synchronized sequence of image frames;

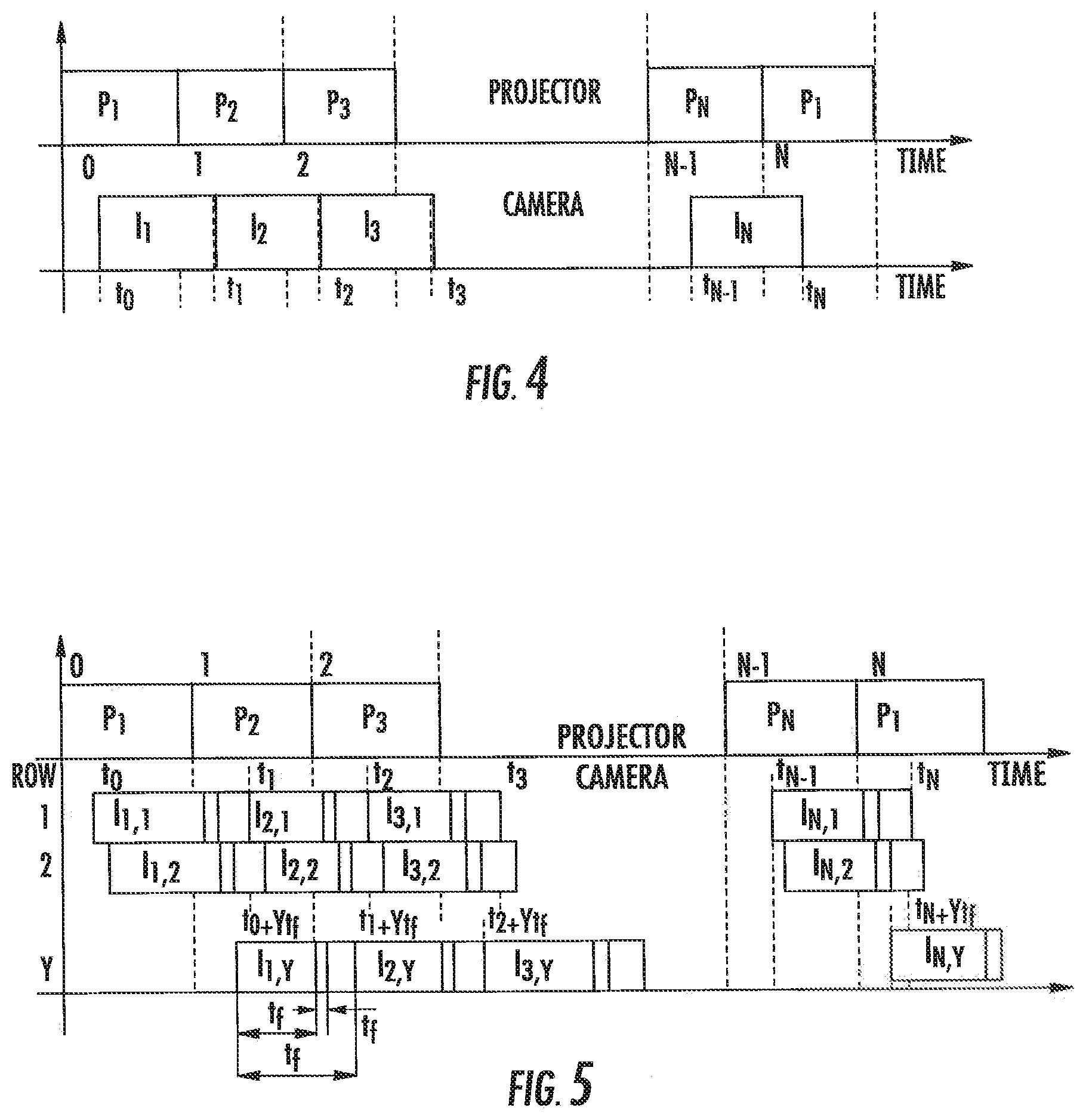

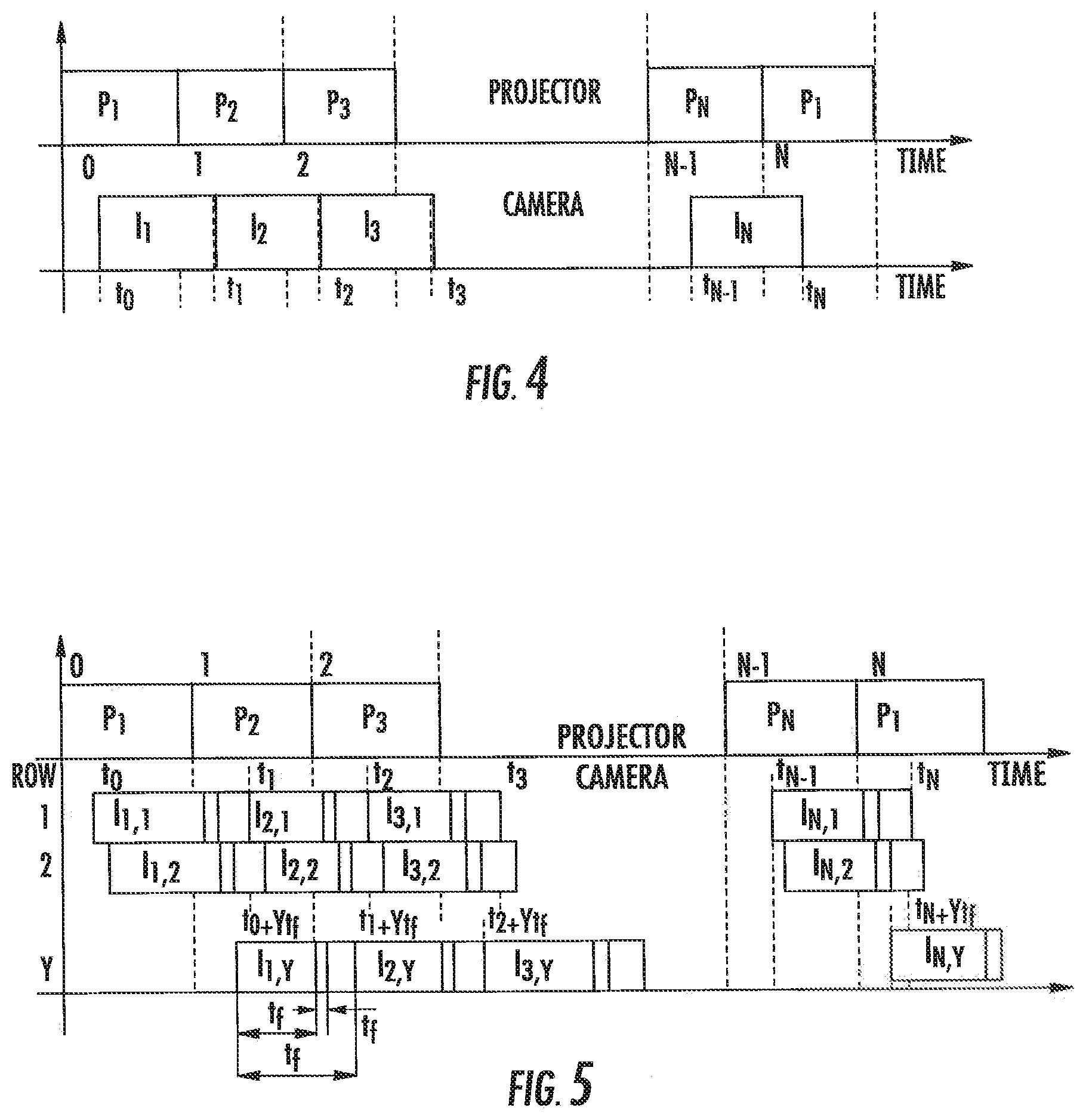

[0015] FIG. 4 is a chart illustrating the timing for a method to synthesize the synchronized sequence of image frames from an unsynchronized sequence of image frames, where a global shutter image sensor is used and where the image frame rate is identical to the pattern frame rate;

[0016] FIG. 5 is a chart illustrating the timing for a method to synthesize the synchronized sequence of image frames from an unsynchronized sequence of image frames, where a rolling shutter image sensor is used and where the image frame rate is identical to the pattern frame rate;

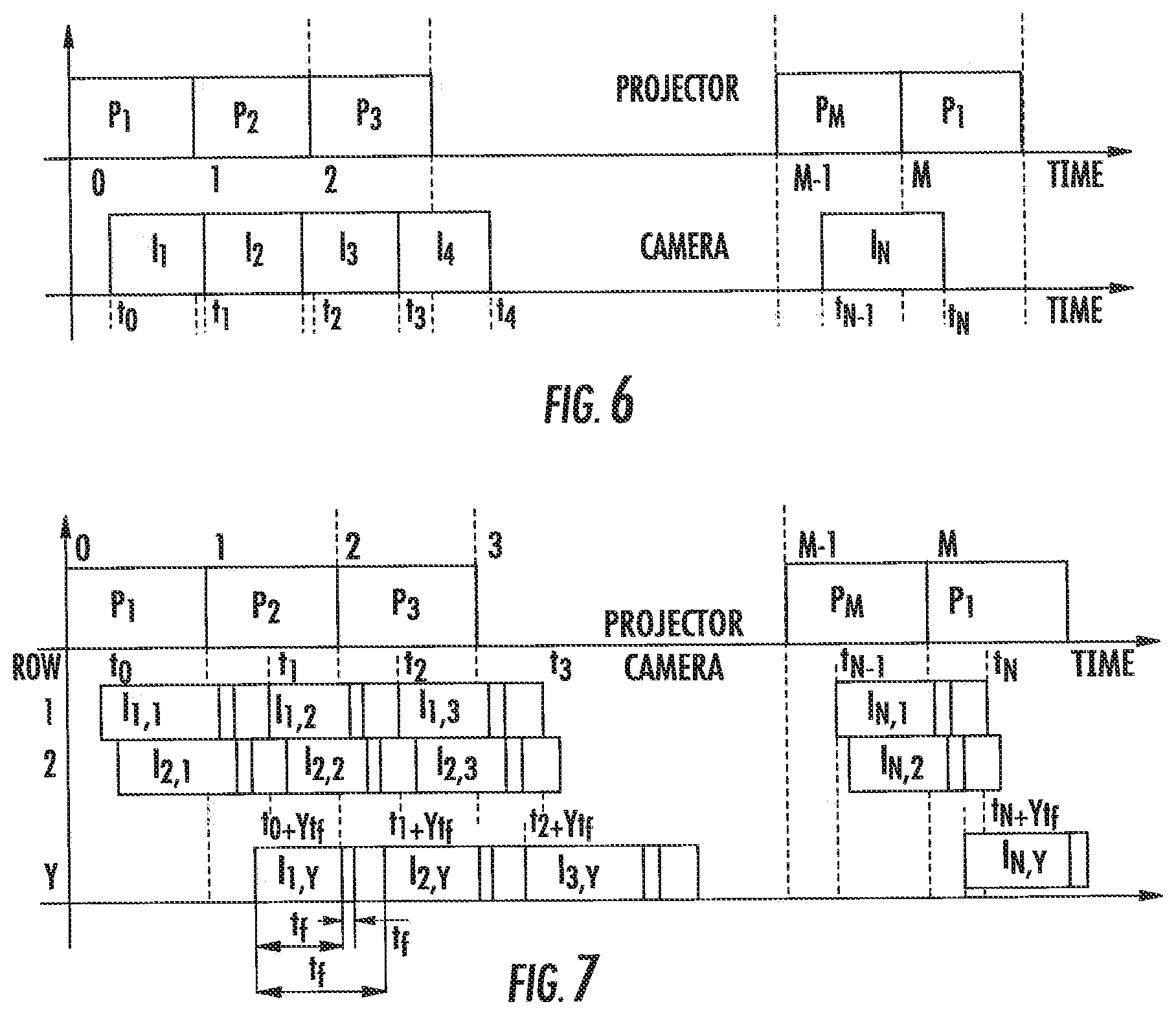

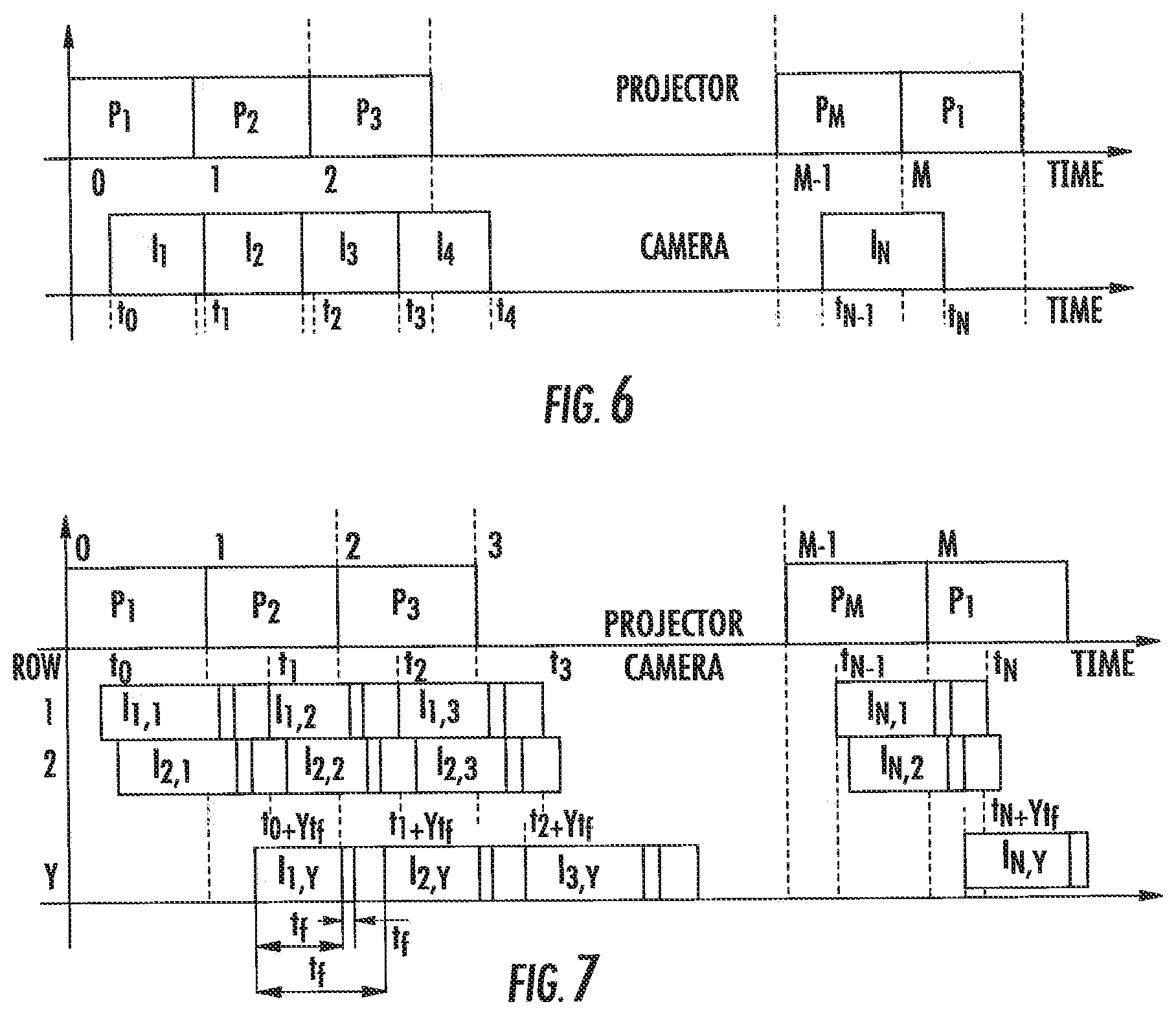

[0017] FIG. 6 is a chart illustrating the timing for a method to synthesize the synchronized sequence of image frames from an unsynchronized sequence of image frames, where a global shutter image sensor is used and where the image frame rate is higher than or equal to the pattern frame rate;

[0018] FIG. 7 is a chart illustrating the timing for a method to synthesize the synchronized sequence of image frames from an unsynchronized sequence of image frames, where a rolling shutter image sensor is used and where the image frame rate is higher than or equal to the pattern frame rate;

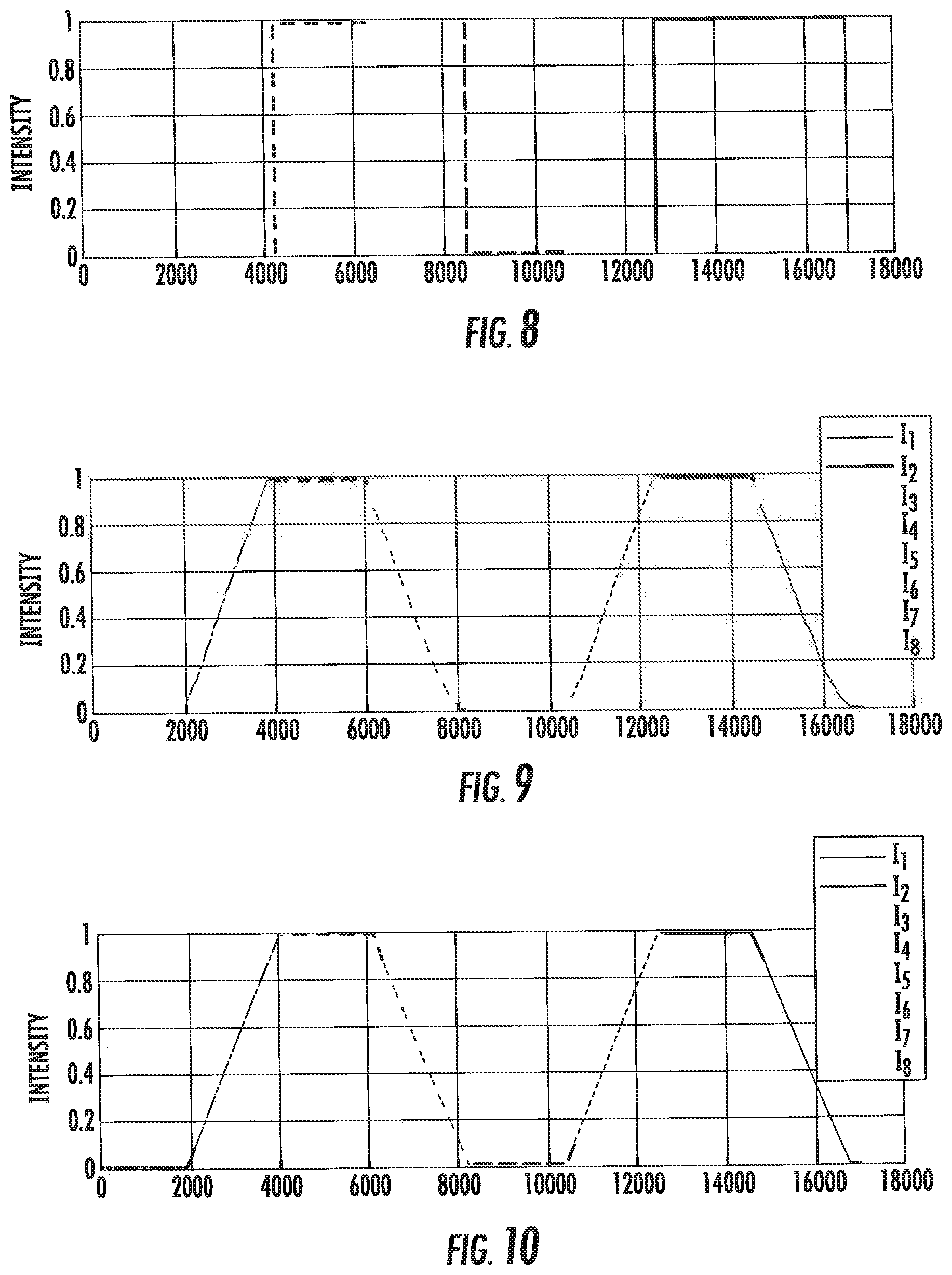

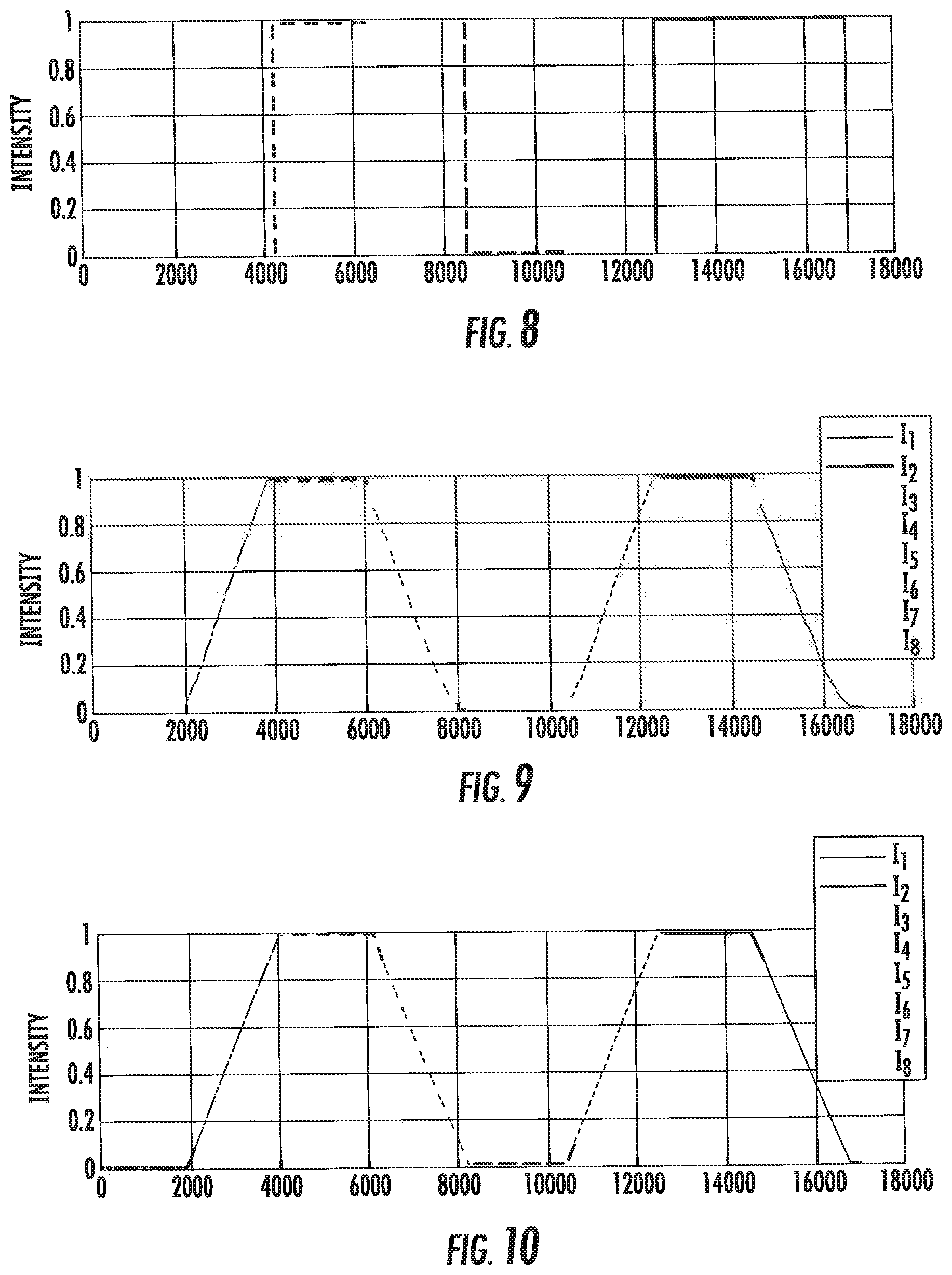

[0019] FIG. 8 shows a chart illustrating a calibration pattern used to correct the time;

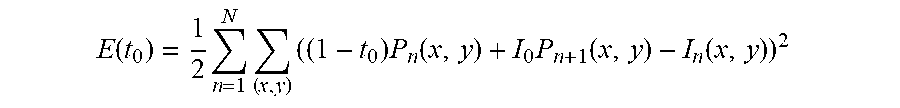

[0020] FIG. 9 shows a chart illustrating image data normalized according to the method described herein; and

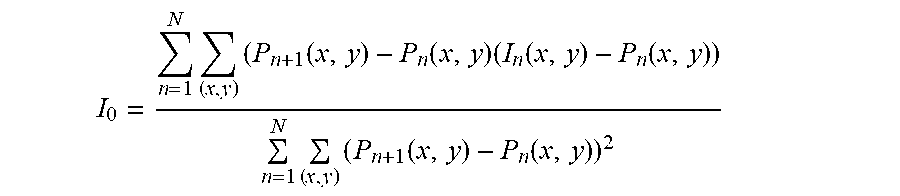

[0021] FIG. 10 shows a chart illustrating a model estimating the pattern value for each pixel in a captured image.

DETAILED DESCRIPTION

[0022] A system and method to capture the surface geometry of a three-dimensional object in a scene using unsynchronized structured lighting is shown generally in FIGS. 1 and 2. The method and system include a pattern projector configured and arranged to project a sequence of image patterns onto the scene at a pattern frame rate, a camera configured and arranged to capture a sequence of unsynchronized image patterns of the scene at an image capture rate, and a processor configured and arranged to synthesize a sequence of synchronized image frames from the unsynchronized image patterns of the scene, each of the synchronized image frames corresponding to one image pattern of the sequence of image patterns.

[0023] One object of the present invention is a system to synthesize a synchronized sequence of image frames from an unsynchronized sequence of image frames, illustrated in FIG. 1; the unsynchronized image frames are captured while a three-dimensional scene is illuminated by a sequence of patterns. The system comprises a pattern projector 2020 and a camera 2030. An object 2010 is partially illuminated by the pattern projector 2020, and partially visible to the camera 2030. The pattern projector projects a sequence of patterns 2021, 2022, 2023 at a certain pattern rate. The pattern rate is measured in patterns per second. The camera 2030 captures a sequence of unsynchronized image frames 2031, 2032, 2033, 2034 at a certain frame rate. The frame rate is greater than or equal to the pattern rate. The number of unsynchronized image frames captured by the camera is greater than or equal to the number of patterns in the sequence of patterns. The camera starts capturing the first unsynchronized image frame not earlier than the time when the projector starts projecting the first pattern of the sequence of image patterns. The camera ends capturing the last unsynchronized image frame not earlier than the time when the projector ends projecting the last pattern of the sequence of image patterns. To capture a frame, the camera opens the camera aperture, which it closes after an exposure time. The camera determines the pixel values by integrating the incoming light while the aperture is open. Since camera and projector are not synchronized, the projector may switch patterns while the camera has the aperture open. As a result, some or all of the pixels of the captured unsynchronized image frame 2032 will be partially exposed to two consecutive patterns 2021 and 2022. The resulting sequence of unsynchronized image frames is transmitted to a computer processor 2040, which executes a method to synthesize a synchronized sequence of image frames from the unsynchronized sequence of image frames. The number of frames in the synchronized sequence of image frames 2041, 2042, 2043 will be the same as the number of patterns 2021, 2022, 2023, and the frames represent estimates of what the camera would have captured if it were synchronized with the projector. In a preferred embodiment the camera 2030 and the computer processor 2040 are components of a single device such as a digital camera, smartphone, or computer tablet.

[0024] Another object of the invention is an unsynchronized three-dimensional shape capture system, comprising the system to synthesize a synchronized sequence of image frames from an unsynchronized sequence of image frames described above, and further comprising prior art methods for decoding, three-dimensional triangulation, and optionally geometric processing, executed by the computer processor.

[0025] Another object of the invention is a three-dimensional snapshot camera comprising the unsynchronized three-dimensional shape capture system, where the projector has the means to select the pattern rate from a plurality of supported pattern rates, the camera has the means to select the frame rate from a plurality of supported frame rates, and the camera is capable of capturing the unsynchronized image frames in burst mode at a fast frame rate. In a preferred embodiment the projector has a knob to select the pattern rate. In another preferred embodiment the pattern rate is set by a pattern rate code sent to the projector through a communications link. Furthermore, the system has means to set the pattern rate and the frame rate so that the frame rate is not slower than the pattern rate. In a more preferred embodiment the user sets the pattern rate and the frame rate.

[0026] In a more preferred embodiment of the snapshot camera, the camera has the means to receive a camera trigger signal, and the means to set the number of burst mode frames. In an even more preferred embodiment, the camera trigger signal is generated by a camera trigger push-button. When the camera receives the trigger signal it starts capturing the unsynchronized image frames at the set frame rate, and it stops capturing unsynchronized image frames after capturing the set number of burst mode frames.

[0027] In a first preferred embodiment of the snapshot camera with camera trigger signal, the projector continuously projects the sequence of patterns in a cyclic fashion. In a more preferred embodiment the system has the means of detecting when the first pattern is about to be projected, and the camera trigger signal is delayed until that moment.

[0028] In a second preferred embodiment of the snapshot camera with camera trigger signal, the projector has the means to receive a projector trigger signal. In a more preferred embodiment the camera generates the projector trigger signal after receiving the camera trigger signal, and the camera has the means to send the projector trigger signal to the projector. In an even more preferred embodiment the camera has a flash trigger output, and it sends the projector trigger signal to the projector through the flash trigger output. When the projector receives the trigger signal it starts projecting the sequence of patterns at the set pattern rate, and it stops projecting patterns after it projects the last pattern.

[0029] Another object of this invention is a method to synthesize a synchronized sequence of image frames from an unsynchronized sequence of image frames, generating a number of frames in the synchronized sequence of image frames equal to the number of projected patterns, and representing estimates of what the camera would have captured if it were synchronized with the projector.

[0030] As will be described in greater detail below in the associated proofs, the method to synthesize the synchronized sequence of image frames from the unsynchronized sequence of image frames is shown generally in FIG. 3. Each of the synchronized image frames corresponds to one of a sequence of image patterns. Further, the synchronized image frames and unsynchronized image frames have the same width and height. Each image frame includes a plurality of common pixels. In a first step a) the plurality of common pixels is partitioned into a pixel partition. The pixel partition includes a plurality of disjoint pixel sets. Further, each of the plurality of common pixels is a member of one of the pixel sets. In a step b) a pixel set is selected. In a step c) a pixel set measurement matrix is built. In a step d) a pixel set projection matrix is estimated. The pixel set projection matrix projects a measurement space vector onto the column space of the pixel set measurement matrix. In a step e) a pixel set system matrix is estimated. The system matrix is parameterized by a set of system matrix parameters. In a step f) a pixel set synchronized matrix is estimated as a function of the pixel set measurement matrix and the pixel set system matrix. In a step g) steps b) to f) are repeated until all the pixel sets have been selected. In a step h) a sequence of synchronized image frames is constructed from the pixel set synchronized matrices.
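By way of illustration only, the loop over pixel sets may be sketched in Python as follows. The sketch assumes that each image row is one pixel set, that the measurement matrix is modeled as the system matrix times the synchronized matrix, and that the system matrix for each row has already been estimated; the names `synthesize_synchronized`, `system_matrix_for_row`, and `blend_matrix` are hypothetical and do not appear in this document. Steps d) and e) are not shown.

```python
import numpy as np

def synthesize_synchronized(frames, num_patterns, system_matrix_for_row):
    """Sketch of steps a) to h) with one pixel set per image row.

    frames: (N, H, W) array of unsynchronized frames.
    system_matrix_for_row: hypothetical callback returning the (N, M) system
        matrix for row y; its estimation (steps d and e) is assumed done.
    """
    frames = np.asarray(frames, dtype=float)
    num_frames, height, width = frames.shape
    synchronized = np.empty((num_patterns, height, width))
    for y in range(height):                    # a), b), g): rows as pixel sets
        measurement = frames[:, y, :]          # c) N x W measurement matrix
        system = system_matrix_for_row(y)      # e) assumed already estimated
        # f) least-squares solution of measurement ~= system @ sync
        sync, *_ = np.linalg.lstsq(system, measurement, rcond=None)
        synchronized[:, y, :] = sync           # h) fill rows of the output frames
    return synchronized

# Synthetic usage: every row blends consecutive patterns with its own delay.
def blend_matrix(y, n=4, t0=0.2, d=0.05):
    s = t0 + y * d
    # Row k has weight (1 - s) on pattern k and s on pattern k + 1 (cyclic).
    return (1 - s) * np.eye(n) + s * np.roll(np.eye(n), 1, axis=1)

rng = np.random.default_rng(0)
true_patterns = rng.random((4, 3, 5))
frames = np.stack(
    [blend_matrix(y) @ true_patterns[:, y, :] for y in range(3)], axis=1
)
recovered = synthesize_synchronized(frames, 4, blend_matrix)
```

On this noise-free synthetic input the recovered frames agree with `true_patterns` up to rounding; with real data the quality of the result depends on how well the system matrix is estimated.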

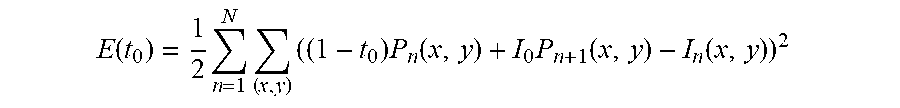

[0031] In a preferred embodiment, the method to synthesize the synchronized sequence of image frames from an unsynchronized sequence of image frames applies to a global shutter image sensor where the image frame rate is identical to the pattern frame rate. FIG. 4 illustrates the timing for this embodiment. In this embodiment, the projector projects N patterns at a fixed frame rate, and a global shutter image sensor captures N images at the identical frame rate. Capturing each image takes exactly one unit of time, normalized by the projector frame rate. The start time for the first image capture $t_0$ is unknown, but the start time for the n-th image capture is related to the start time for the first image capture by $t_n = t_0 + n - 1$. The actual value measured by the image sensor at the (x, y) pixel of the n-th image can be modeled as

$$I_n(x,y) = (1-t_0)\,P_n(x,y) + t_0\,P_{n+1}(x,y)$$

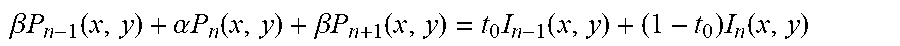

where $P_n(x,y)$ and $P_{n+1}(x,y)$ represent the pattern values to be estimated that contribute to the image pixel (x, y), and $P_{N+1} = P_1$. Projected patterns are known in advance, but since it is not known which projector pixel illuminates each image pixel, they have to be treated as unknown. To estimate the value of $t_0$, the following expression is minimized

$$E(t_0) = \frac{1}{2}\sum_{n=1}^{N}\sum_{(x,y)}\Big((1-t_0)\,P_n(x,y) + t_0\,P_{n+1}(x,y) - I_n(x,y)\Big)^2$$

[0032] with respect to $t_0$, where the sum is over a subset of pixels (x, y) for which the corresponding pattern pixel values $P_n(x,y)$ and $P_{n+1}(x,y)$ are known. Differentiating $E(t_0)$ with respect to $t_0$ and equating the result to zero, an expression to estimate $t_0$ is obtained

$$t_0 = \frac{\sum_{n=1}^{N}\sum_{(x,y)}\big(P_{n+1}(x,y)-P_n(x,y)\big)\big(I_n(x,y)-P_n(x,y)\big)}{\sum_{n=1}^{N}\sum_{(x,y)}\big(P_{n+1}(x,y)-P_n(x,y)\big)^2}$$
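As a non-limiting illustration, the closed-form estimate of the start time can be evaluated with a few lines of Python. The function name `estimate_t0` and the (N, K) array layout (N frames, K pixels with known pattern values) are assumptions made for this sketch only.

```python
import numpy as np

def estimate_t0(patterns, images):
    """Least-squares estimate of the start time t0 (global shutter, equal rates).

    patterns, images: arrays of shape (N, K) with the values of N frames at
    K pixels whose pattern values are known. Pattern N+1 wraps to pattern 1.
    """
    P = np.asarray(patterns, dtype=float)
    I = np.asarray(images, dtype=float)
    dP = np.roll(P, -1, axis=0) - P        # P_{n+1} - P_n, cyclic in n
    denom = np.sum(dP * dP)
    if denom == 0.0:
        raise ValueError("consecutive patterns must differ at some pixel")
    return float(np.sum(dP * (I - P)) / denom)

# Synthetic check: blend consecutive patterns with a known start time.
rng = np.random.default_rng(0)
P = rng.random((6, 50))
true_t0 = 0.3
I = (1 - true_t0) * P + true_t0 * np.roll(P, -1, axis=0)
t0_hat = estimate_t0(P, I)
```

With noise-free synthetic images, `t0_hat` matches `true_t0` up to rounding. The estimate is undefined when consecutive patterns are identical at every selected pixel, which is why the sketch raises an error in that case.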

[0033] Once the value of $t_0$ has been estimated, the N pattern pixel values $P_1(x,y), \ldots, P_N(x,y)$ can be estimated for each pixel (x, y) by minimizing the following expression

$$E\big(P_1(x,y),\ldots,P_N(x,y)\big) = \frac{1}{2}\sum_{n=1}^{N}\Big((1-t_0)\,P_n(x,y) + t_0\,P_{n+1}(x,y) - I_n(x,y)\Big)^2$$

[0034] which reduces to solving the following system of N linear equations

$$\beta\,P_{n-1}(x,y) + \alpha\,P_n(x,y) + \beta\,P_{n+1}(x,y) = t_0\,I_{n-1}(x,y) + (1-t_0)\,I_n(x,y)$$

[0035] for $n = 1, \ldots, N$, where $\alpha = t_0^2 + (1-t_0)^2$ and $\beta = t_0(1-t_0)$.
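The system of N linear equations has a cyclic tridiagonal coefficient matrix and can be solved for all pixels at once. The following Python sketch is offered as an example under the assumption that indices wrap cyclically ($P_0 = P_N$, $P_{N+1} = P_1$, $I_0 = I_N$); the function name `recover_patterns` and the (N, K) array layout are hypothetical.

```python
import numpy as np

def recover_patterns(images, t0):
    """Solve beta*P[n-1] + alpha*P[n] + beta*P[n+1] = t0*I[n-1] + (1-t0)*I[n].

    images: (N, K) array, N unsynchronized frames at K pixels.
    Returns an (N, K) array of estimated pattern values.
    """
    I = np.asarray(images, dtype=float)
    N = I.shape[0]
    alpha = t0**2 + (1 - t0) ** 2
    beta = t0 * (1 - t0)
    idx = np.arange(N)
    A = np.zeros((N, N))
    A[idx, idx] += alpha
    A[idx, (idx - 1) % N] += beta          # cyclic sub-diagonal
    A[idx, (idx + 1) % N] += beta          # cyclic super-diagonal
    b = t0 * np.roll(I, 1, axis=0) + (1 - t0) * I   # t0*I_{n-1} + (1-t0)*I_n
    return np.linalg.solve(A, b)

# Synthetic check with a known start time.
rng = np.random.default_rng(1)
P_true = rng.random((5, 20))
t0 = 0.3
I = (1 - t0) * P_true + t0 * np.roll(P_true, -1, axis=0)
P_hat = recover_patterns(I, t0)
```

The coefficient matrix becomes singular when $t_0 = 0.5$ and N is even, so a practical implementation would likely need a regularized or least-squares solve near that value.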

[0036] In another preferred embodiment, the method to synthesize the synchronized sequence of image frames from an unsynchronized sequence of image frames applies to a rolling shutter image sensor where the image frame rate is identical to the pattern frame rate. FIG. 5 illustrates the timing for this embodiment. We project N patterns at a fixed frame rate, and a rolling shutter camera captures N images. Capture begins while pattern $P_1$ is being projected. The projector frame rate is 1, so pattern $P_n$ is projected between time n-1 and n. A camera frame is read every $t_f$ units of time; the camera frame rate is assumed equal to the projector frame rate but in practice may vary a little. A camera row requires a time $t_r$ to be read out from the sensor; thus, a sensor with Y rows needs a time $Y t_r$ to read a complete frame, with $Y t_r \le t_f$. Each camera frame is exposed for a time $t_e$, and its readout begins immediately after exposure ends, with $t_e + t_r \le t_f$.

[0037] Camera row y in image n begins being exposed at time $t_{n,y}$

$$t_{n,y} = t_0 + (n-1)\,t_f + y\,t_r, \qquad y = 0, \ldots, Y-1,$$

[0038] and exposure ends at time $t_{n,y} + t_e$.

[0039] In this model image n is exposed while patterns $P_n$ and $P_{n+1}$ are being projected. The intensity level measured at a pixel in row y is given by

$$I_{n,y} = (n - t_{n,y})\,k_{n,y}\,P_n + (t_{n,y} + t_e - n)\,k_{n,y}\,P_{n+1} + C_{n,y}$$

[0040] The constants $k_{n,y}$ and $C_{n,y}$ are scene dependent.

[0041] Let $\min\{I_{n,y}\}$ be the value of a pixel exposed while P(t)=0, and $\max\{I_{n,y}\}$ the value of a pixel exposed while P(t)=1, so that $\min\{I_{n,y}\} = C_{n,y}$ and $\max\{I_{n,y}\} = t_e\,k_{n,y} + C_{n,y}$. Now, we define a normalized image $\tilde{I}_{n,y}$ as

$$\tilde{I}_{n,y} = \frac{(n - t_{n,y})\,P_n + (t_{n,y} + t_e - n)\,P_{n+1}}{t_e}$$

[0042] A normalized image is completely defined by the time variables and pattern values. In this section we want to estimate the time variables. Let us rewrite the previous equation as

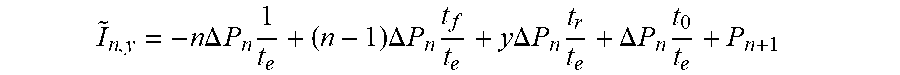

$$\tilde{I}_{n,y} = -n\,\Delta P_n\,\frac{1}{t_e} + (n-1)\,\Delta P_n\,\frac{t_f}{t_e} + y\,\Delta P_n\,\frac{t_r}{t_e} + \Delta P_n\,\frac{t_0}{t_e} + P_{n+1}$$

[0043] where $\Delta P_n = P_{n+1} - P_n$. Taking $t_f = t_e = 1$ and writing $d = t_r$ for the capture delay from one row to the next, with $t_0$ and $d$ unknown, image pixel values are given by

$$I_n(x,y) = (1 - t_0 - y\,d)\,P_n(x,y) + (t_0 + y\,d)\,P_{n+1}(x,y)$$

[0044] Same as before, $P_n(x,y)$ and $P_{n+1}(x,y)$ represent the pattern values contributing to camera pixel (x, y). We define $P_{N+1} = P_1$, $P_0 = P_N$, $I_{N+1} = I_1$, and $I_0 = I_N$, and we omit the pixel (x, y) to simplify the notation. We now minimize the following energy to find the time variables $t_0$ and $d$

$$E(t_0, d) = \frac{1}{2}\sum_{n=1}^{N}\sum_{x,y}\Big((1 - t_0 - y\,d)\,P_n + (t_0 + y\,d)\,P_{n+1} - I_n\Big)^2$$

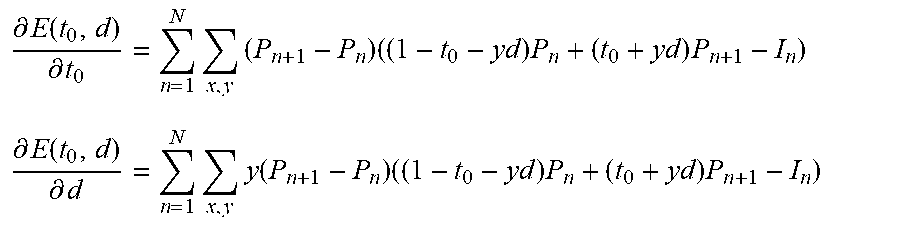

[0045] The partial derivatives are given by

$$\frac{\partial E(t_0,d)}{\partial t_0} = \sum_{n=1}^{N}\sum_{x,y}(P_{n+1}-P_n)\Big((1 - t_0 - y\,d)\,P_n + (t_0 + y\,d)\,P_{n+1} - I_n\Big)$$

$$\frac{\partial E(t_0,d)}{\partial d} = \sum_{n=1}^{N}\sum_{x,y} y\,(P_{n+1}-P_n)\Big((1 - t_0 - y\,d)\,P_n + (t_0 + y\,d)\,P_{n+1} - I_n\Big)$$

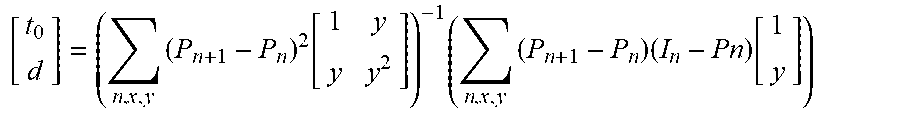

[0046] We set the gradient equal to the null vector and reorder as

$$\begin{bmatrix} t_0 \\ d \end{bmatrix} = \left(\sum_{n,x,y}(P_{n+1}-P_n)^2\begin{bmatrix} 1 & y \\ y & y^2 \end{bmatrix}\right)^{-1}\left(\sum_{n,x,y}(P_{n+1}-P_n)(I_n-P_n)\begin{bmatrix} 1 \\ y \end{bmatrix}\right)$$

[0047] We use the previous equation to compute $t_0$ and $d$ when we have some known (or estimated) pattern values.
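For illustration, the 2-by-2 linear system for the start time and the row delay may be assembled and solved as in the Python sketch below. The function name `estimate_t0_d` and the (N, Y, X) array layout (frames, rows, columns) are assumptions of this sketch, and pattern values are taken as known at every pixel.

```python
import numpy as np

def estimate_t0_d(patterns, images):
    """Estimate start time t0 and row delay d for a rolling shutter sensor.

    patterns, images: (N, Y, X) arrays; pattern N+1 wraps to pattern 1.
    """
    P = np.asarray(patterns, dtype=float)
    I = np.asarray(images, dtype=float)
    dP = np.roll(P, -1, axis=0) - P                        # P_{n+1} - P_n
    y = np.arange(P.shape[1], dtype=float)[None, :, None]  # row index per pixel
    w = dP * dP
    lhs = np.array([[np.sum(w), np.sum(w * y)],
                    [np.sum(w * y), np.sum(w * y * y)]])
    r = dP * (I - P)
    rhs = np.array([np.sum(r), np.sum(r * y)])
    t0, d = np.linalg.solve(lhs, rhs)
    return float(t0), float(d)

# Synthetic check: each row y blends patterns with weight t0 + y*d.
rng = np.random.default_rng(2)
P = rng.random((4, 8, 5))
true_t0, true_d = 0.2, 0.01
s = true_t0 + np.arange(8)[None, :, None] * true_d
I = (1 - s) * P + s * np.roll(P, -1, axis=0)
t0_hat, d_hat = estimate_t0_d(P, I)
```

On noise-free input the two estimates match the true values up to rounding. The solve requires pattern differences at more than one row, otherwise the 2-by-2 matrix is singular.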

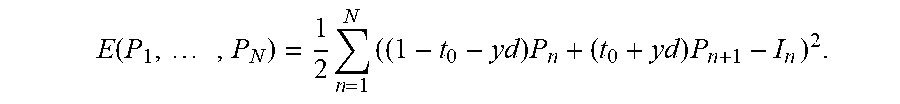

[0048] With known $t_0$ and $d$ we estimate pattern values minimizing

$$E(P_1, \ldots, P_N) = \frac{1}{2}\sum_{n=1}^{N}\Big((1 - t_0 - y\,d)\,P_n + (t_0 + y\,d)\,P_{n+1} - I_n\Big)^2.$$

[0049] Analogously to Case 1 (global shutter, identical frame rates) we obtain $Ap = b$, where $A$ is the coefficient matrix of the system of N linear equations of that case, with $\alpha$ on the diagonal and $\beta$ on the cyclic off-diagonals, and $\alpha$, $\beta$, and $b$ are defined as

$$\alpha = (1 - t_0 - y\,d)^2 + (t_0 + y\,d)^2, \qquad \beta = (1 - t_0 - y\,d)(t_0 + y\,d)$$

$$b = (1 - t_0 - y\,d)\,(I_1, I_2, \ldots, I_N)^T + (t_0 + y\,d)\,(I_N, I_1, \ldots, I_{N-1})^T$$

[0050] Pattern values for each pixel are given by $p = A^{-1} b$.

[0051] In another preferred embodiment, the method to synthesize the synchronized sequence of image frames from an unsynchronized sequence of image frames applies to a global shutter image sensor where the image frame rate is higher than or equal to the pattern frame rate. FIG. 6 illustrates the timing for this embodiment. We now project M patterns at a fixed frame rate and we capture N images with a global shutter camera, also at a fixed frame rate. We require that $N \ge M$. Capture begins while pattern $P_1$ is being projected. We introduce a new variable d which is the camera capture delay from one row to the next. Same as in Case 1 (global shutter, identical frame rates), up to two patterns may contribute to each image, but here we do not know which ones because the camera frame rate is unknown. The new image equation is

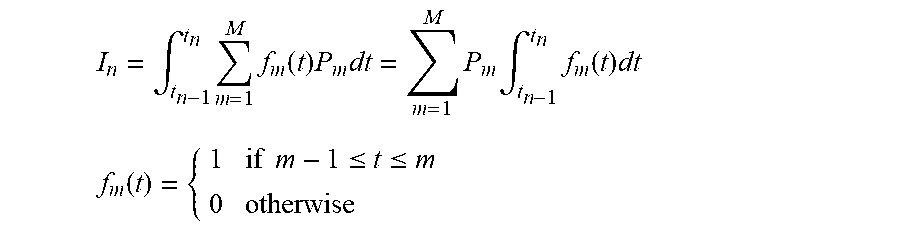

$$I_n = \int_{t_{n-1}}^{t_n}\sum_{m=1}^{M} f_m(t)\,P_m\,dt = \sum_{m=1}^{M} P_m \int_{t_{n-1}}^{t_n} f_m(t)\,dt$$

$$f_m(t) = \begin{cases} 1 & \text{if } m-1 \le t \le m \\ 0 & \text{otherwise} \end{cases}$$

[0052] Let $\Delta t = t_n - t_{n-1}$ be the time between image frames, let $p = (P_1, \ldots, P_M)^T$ and $\phi_n(t_0, \Delta t) = (\phi(n, 1, t_0, \Delta t), \ldots, \phi(n, M, t_0, \Delta t))^T$, and rewrite the image equation as

$$I_n = \frac{1}{\Delta t}\,\phi_n(t_0, \Delta t)^T\,p$$

[0053] Each function $\phi(n, m, t_0, \Delta t) = \int_{t_{n-1}}^{t_n} f_m(t)\,dt$ can be written as

$$\phi(n, m, t_0, \Delta t) = \max\big(0,\ \min(m, t_n) - \max(m-1, t_{n-1})\big)$$
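The overlap function above is simply the length of the intersection between the capture interval of image n and the projection interval of pattern m. A minimal Python sketch follows; it assumes that image n is captured between $t_{n-1} = t_0 + (n-1)\Delta t$ and $t_n = t_0 + n\,\Delta t$, a convention inferred from the definitions of the vectors $v_A$ and $v_b$ given further below, and the function name `phi` is chosen for this example.

```python
def phi(n, m, t0, dt):
    """Time during which pattern m (projected on [m-1, m]) is seen by image n.

    Assumes image n is captured on [t0 + (n-1)*dt, t0 + n*dt].
    """
    t_start = t0 + (n - 1) * dt
    t_end = t0 + n * dt
    return max(0.0, min(m, t_end) - max(m - 1, t_start))

# Example with t0 = 0.25 and dt = 0.5:
# image 1 spans [0.25, 0.75] and lies entirely inside pattern 1;
# image 2 spans [0.75, 1.25] and straddles patterns 1 and 2.
overlaps_image_2 = [phi(2, m, 0.25, 0.5) for m in (1, 2, 3)]
```

For an image that lies fully within the projected sequence, the overlaps over all patterns sum to the frame duration `dt`.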

[0054] Same as before, $P_m(x,y)$ represents a pattern value contributing to camera pixel (x, y). We define $P_{M+1} = P_1$, $P_0 = P_M$, $I_{N+1} = I_1$, and $I_0 = I_N$, and we omit the pixel (x, y) to simplify the notation.

[0055] We now minimize the following energy to find the time variables $t_0$ and $\Delta t$

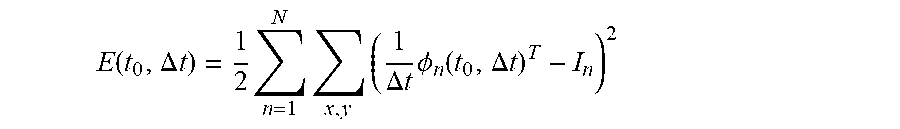

$$E(t_0, \Delta t) = \frac{1}{2}\sum_{n=1}^{N}\sum_{x,y}\left(\frac{1}{\Delta t}\,\phi_n(t_0, \Delta t)^T p - I_n\right)^2$$

[0056] We solve for $t_0$ and $\Delta t$ by making

$$\nabla E(t_0, \Delta t) = 0$$

$$\nabla E(t_0, \Delta t) = \sum_{n=1}^{N}\sum_{x,y}\frac{1}{\Delta t}\,J\phi_n(t_0, \Delta t)^T p\left(\frac{1}{\Delta t}\,\phi_n(t_0, \Delta t)^T p - I_n\right)$$

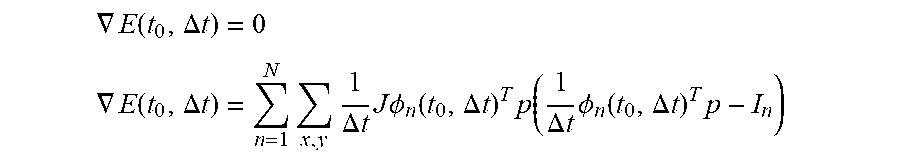

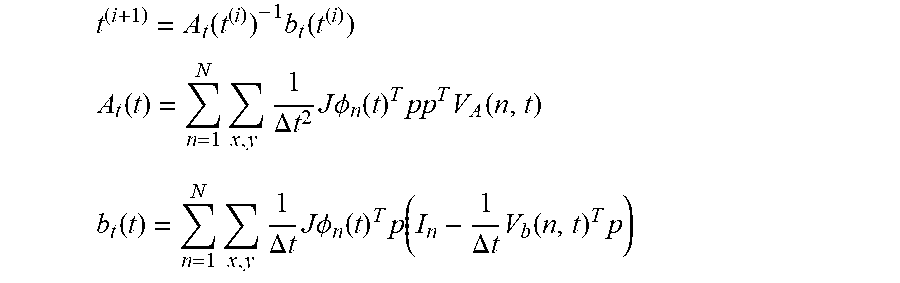

[0057] Because $J\phi_n(t_0, \Delta t)$ depends on the unknown value $t = (t_0, \Delta t)^T$, we solve for them iteratively

$$t^{(i+1)} = A_t\big(t^{(i)}\big)^{-1}\,b_t\big(t^{(i)}\big)$$

$$A_t(t) = \sum_{n=1}^{N}\sum_{x,y}\frac{1}{\Delta t^2}\,J\phi_n(t)^T\,p\,p^T\,V_A(n,t)$$

$$b_t(t) = \sum_{n=1}^{N}\sum_{x,y}\frac{1}{\Delta t}\,J\phi_n(t)^T\,p\left(I_n - \frac{1}{\Delta t}\,V_b(n,t)^T p\right)$$

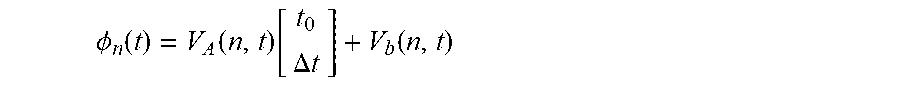

[0058] Matrix $V_A(n,t)$ and vector $V_b(n,t)$ are defined such that

$$\phi_n(t) = V_A(n,t)\begin{bmatrix} t_0 \\ \Delta t \end{bmatrix} + V_b(n,t)$$

[0059] For completeness we include the following definitions:

$$V_A(n,t) = \big[v_A(n,1,t), \ldots, v_A(n,M,t)\big]^T$$

$$V_b(n,t) = \big[v_b(n,1,t), \ldots, v_b(n,M,t)\big]^T$$

$$t_{\text{diff}} \equiv \min(m, t_n) - \max(m-1, t_{n-1})$$

$$v_A(n,m,t) = \begin{cases} [0,\ 1]^T & \text{if } m-1 \le t_{n-1} \le t_n \le m \text{ and } t_{\text{diff}} > 0 \\ [-1,\ 1-n]^T & \text{if } m-1 \le t_{n-1} \le m \le t_n \text{ and } t_{\text{diff}} > 0 \\ [1,\ n]^T & \text{if } t_{n-1} \le m-1 \le t_n \le m \text{ and } t_{\text{diff}} > 0 \\ [0,\ 0]^T & \text{if } t_{n-1} \le m-1 \le m \le t_n \text{ and } t_{\text{diff}} > 0 \\ [0,\ 0]^T & \text{otherwise} \end{cases}$$

$$v_b(n,m,t) = \begin{cases} 0 & \text{if } m-1 \le t_{n-1} \le t_n \le m \text{ and } t_{\text{diff}} > 0 \\ m & \text{if } m-1 \le t_{n-1} \le m \le t_n \text{ and } t_{\text{diff}} > 0 \\ 1-m & \text{if } t_{n-1} \le m-1 \le t_n \le m \text{ and } t_{\text{diff}} > 0 \\ 1 & \text{if } t_{n-1} \le m-1 \le m \le t_n \text{ and } t_{\text{diff}} > 0 \\ 0 & \text{otherwise} \end{cases}$$

$$J\phi_n(t) = V_A(n,t)$$

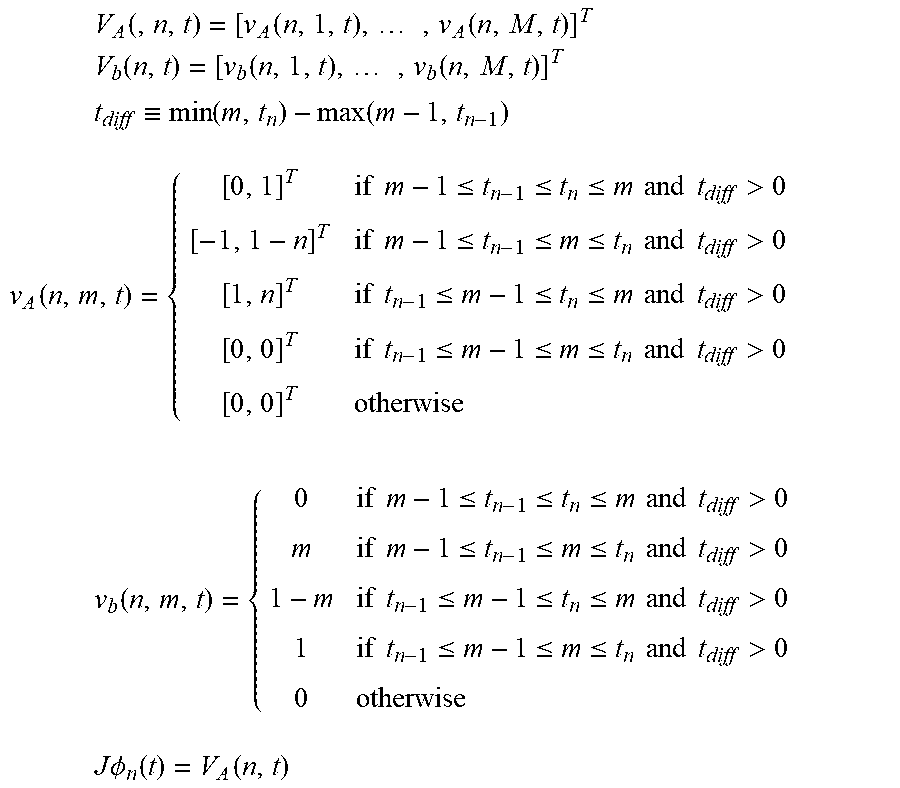

[0060] With known $t_0$ and $\Delta t$ we estimate pattern values minimizing

$$E(p) = \frac{1}{2}\sum_{n=1}^{N}\big(\phi_n(t)^T p - I_n\big)^2$$

[0061] Analogously to Case 1 we obtain $Ap = b$ with

$$A = \Phi^T\Phi, \qquad b = \Phi^T I, \qquad \Phi = \begin{bmatrix} \phi_1(t)^T \\ \vdots \\ \phi_N(t)^T \end{bmatrix}$$

where $I = (I_1, \ldots, I_N)^T$.

[0062] Pattern values for each pixel are given by $p = A^{-1} b$.
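As an example only, the normal equations can be formed and solved with NumPy once the time variables are known. The sketch below assumes image n is captured on $[t_0 + (n-1)\Delta t,\ t_0 + n\,\Delta t]$, applies no cyclic wrap-around of patterns, and models the images directly as the overlap matrix times the pattern values, as in the energy $E(p)$; the names `phi_matrix` and `recover_patterns` are hypothetical.

```python
import numpy as np

def phi_matrix(num_images, num_patterns, t0, dt):
    """N x M matrix whose (n, m) entry is the overlap of image n with pattern m."""
    n = np.arange(1, num_images + 1)[:, None]
    m = np.arange(1, num_patterns + 1)[None, :]
    t_start = t0 + (n - 1) * dt
    t_end = t0 + n * dt
    return np.maximum(0.0, np.minimum(m, t_end) - np.maximum(m - 1, t_start))

def recover_patterns(images, num_patterns, t0, dt):
    """Solve (Phi^T Phi) p = Phi^T I for every pixel column of `images` (N x K)."""
    I = np.asarray(images, dtype=float)
    Phi = phi_matrix(I.shape[0], num_patterns, t0, dt)
    A = Phi.T @ Phi
    b = Phi.T @ I
    return np.linalg.solve(A, b)

# Synthetic check: 3 patterns, 6 images captured twice as fast as the patterns.
rng = np.random.default_rng(3)
P_true = rng.random((3, 10))
Phi = phi_matrix(6, 3, 0.25, 0.5)
images = Phi @ P_true
P_hat = recover_patterns(images, 3, 0.25, 0.5)
```

The solve requires the overlap matrix to have full column rank, which holds here because every pattern is seen by at least one image that overlaps no other pattern.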

[0063] In another preferred embodiment, the method to synthesize the synchronized sequence of image frames from an unsynchronized sequence of image frames, applies to a rolling shutter image sensor where the image frame rate is higher or equal than the pattern frame rate. FIG. 7 illustrates the timing for this embodiment. Projector frame rate is 1, pattern P.sub.m is projected between time m-1 and m. A camera frame is read every t.sub.f. A camera row requires t.sub.r time to be readout from the sensor, thus, a sensor with Y rows needs a time Yt.sub.r to read a complete frame, t.sub.f.gtoreq.Yt.sub.r. Each camera frame is exposed t.sub.e time, its readout begins immediately after exposure ends, t.sub.e+t.sub.r>t.sub.f.

[0064] Camera row $y$ in image $n$ begins exposure at time $t_{n,y}$,

$$
t_{n,y} = t_0 + (n-1)\,t_f + y\,t_r, \qquad y = 0, \ldots, Y-1
$$

[0065] and exposure ends at time $t_{n,y} + t_e$.
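As a small illustration of this timing model, the sketch below computes the exposure window of a given row and checks the readout condition $t_f \geq Y t_r$. The function names and the numeric values are hypothetical and chosen only for the example.

```python
def row_exposure_window(n, y, t0, t_f, t_r, t_e):
    """Return (start, end) of the exposure of row y in frame n (n counts from 1, y from 0)."""
    start = t0 + (n - 1) * t_f + y * t_r
    return start, start + t_e


def readout_fits(num_rows, t_f, t_r):
    """Check t_f >= Y * t_r, i.e. all rows can be read out within one frame period."""
    return t_f >= num_rows * t_r


# Hypothetical timing, in units of the projector pattern period.
t0, t_f, t_r, t_e = 0.1, 0.5, 0.001, 0.02
windows = [row_exposure_window(3, y, t0, t_f, t_r, t_e) for y in (0, 100, 479)]
```

Rows further down the sensor start later by `y * t_r`, which is why different rows of the same frame can observe different mixtures of consecutive patterns.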

[0066] In this model a pixel intensity in image n at row y is given by

$$
I_{n,y} = \int_{t_{n,y}}^{t_{n,y}+t_e} k_{n,y}\, P(t)\, dt + C_{n,y}
$$

$$
I_{n,y} = \sum_{m=1}^{M} \max\left( 0, \int_{\max(t_{n,y},\, m-1)}^{\min(t_{n,y}+t_e,\, m)} k_{n,y}\, P_m\, dt \right) + C_{n,y}
$$

[0067] The constants $k_{n,y}$ and $C_{n,y}$ are scene dependent; $P_m$ is either 0 or 1.

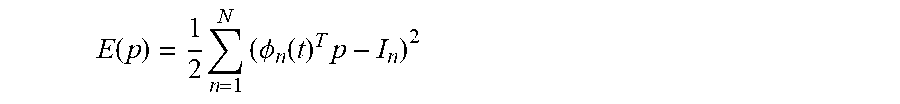

[0068] Let $\min\{I_{n,y}\}$ be the intensity of a pixel exposed while $P(t)=0$, and $\max\{I_{n,y}\}$ the intensity of a pixel exposed while $P(t)=1$:

$$
\min\{I_{n,y}\} = C_{n,y}
$$

$$
\max\{I_{n,y}\} = t_e\, k_{n,y} + C_{n,y}
$$

[0069] Now, we define a normalized image $\tilde{I}_{n,y}$ as

$$
\tilde{I}_{n,y} = \frac{I_{n,y} - \min\{I_{n,y}\}}{\max\{I_{n,y}\} - \min\{I_{n,y}\}}
$$

$$
\tilde{I}_{n,y} = \frac{1}{t_e} \sum_{m=1}^{M} \max\left( 0, \int_{\max(t_{n,y},\, m-1)}^{\min(t_{n,y}+t_e,\, m)} P_m\, dt \right)
$$
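The effect of the normalization can be checked numerically. In the sketch below, which uses hypothetical names and values, a pixel with gain $k$ and offset $C$ is simulated with the intensity model above, and normalizing against its all-black and all-white references recovers the fraction of the exposure spent on white patterns, independent of $k$ and $C$.

```python
def pixel_intensity(exposure_on_white, k, c):
    """Intensity model: gain k times the time spent on white patterns, plus offset c."""
    return k * exposure_on_white + c


def normalize(value, black_ref, white_ref):
    """Map a raw intensity to [0, 1] using the all-black and all-white references."""
    span = white_ref - black_ref
    if span <= 0:
        raise ValueError("white reference must be brighter than black reference")
    return (value - black_ref) / span


t_e = 0.5                                   # exposure time (hypothetical value)
k, c = 40.0, 10.0                           # hypothetical per-pixel gain and ambient offset
black_ref = pixel_intensity(0.0, k, c)      # min{I} = C
white_ref = pixel_intensity(t_e, k, c)      # max{I} = t_e * k + C
raw = pixel_intensity(0.125, k, c)          # white during a quarter of the exposure
fraction = normalize(raw, black_ref, white_ref)
```

Here `fraction` equals `0.125 / t_e`, which is the value the second form of the normalized image predicts.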

[0070] A normalized image is completely defined by the time variables and pattern values. In this section we want to estimate the time variables. Let's rewrite the previous equation as,

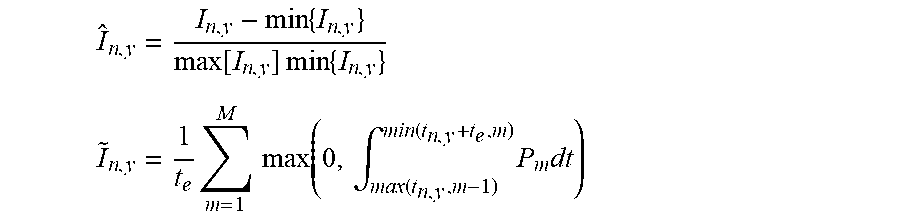

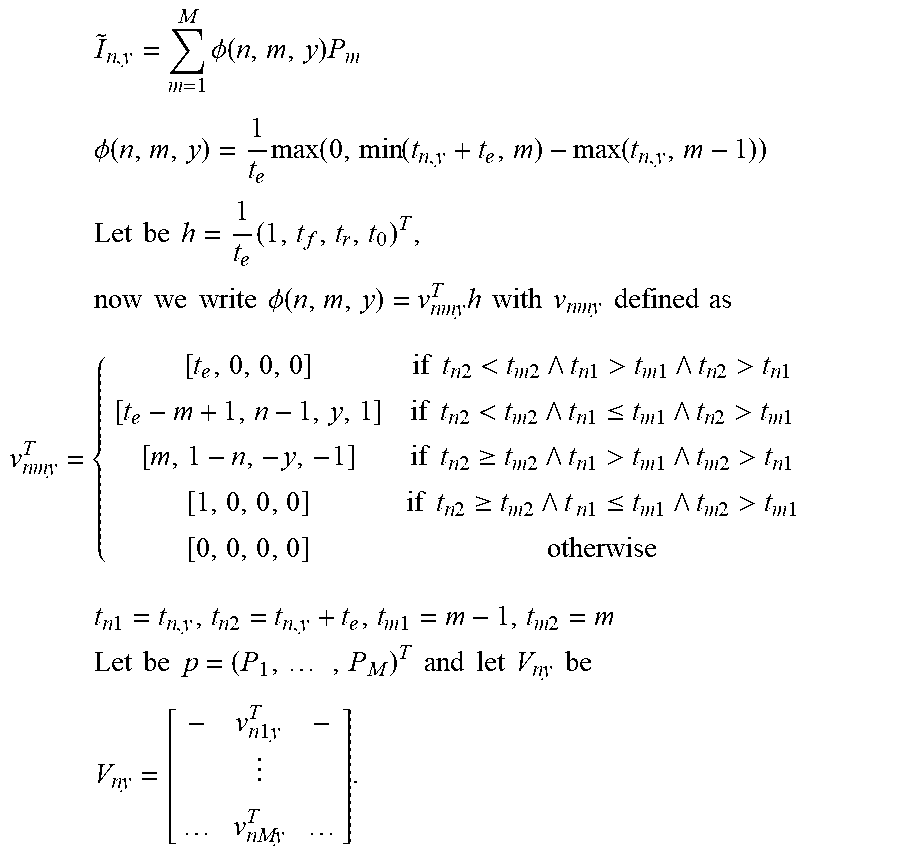

$$
\tilde{I}_{n,y} = \sum_{m=1}^{M} \phi(n,m,y)\, P_m
$$

$$
\phi(n,m,y) = \frac{1}{t_e} \max\left( 0,\ \min(t_{n,y}+t_e,\, m) - \max(t_{n,y},\, m-1) \right)
$$

Let $h = \frac{1}{t_e}\,(1,\ t_f,\ t_r,\ t_0)^T$. We now write $\phi(n,m,y) = v_{nmy}^T h$, with $v_{nmy}$ defined as

$$
v_{nmy}^T = \begin{cases}
[\,t_e,\ 0,\ 0,\ 0\,] & \text{if } t_{n2} < t_{m2} \,\wedge\, t_{n1} > t_{m1} \,\wedge\, t_{n2} > t_{n1} \\
[\,t_e - m + 1,\ n-1,\ y,\ 1\,] & \text{if } t_{n2} < t_{m2} \,\wedge\, t_{n1} \le t_{m1} \,\wedge\, t_{n2} > t_{m1} \\
[\,m,\ 1-n,\ -y,\ -1\,] & \text{if } t_{n2} \ge t_{m2} \,\wedge\, t_{n1} > t_{m1} \,\wedge\, t_{m2} > t_{n1} \\
[\,1,\ 0,\ 0,\ 0\,] & \text{if } t_{n2} \ge t_{m2} \,\wedge\, t_{n1} \le t_{m1} \,\wedge\, t_{m2} > t_{m1} \\
[\,0,\ 0,\ 0,\ 0\,] & \text{otherwise}
\end{cases}
$$

where $t_{n1} = t_{n,y}$, $t_{n2} = t_{n,y} + t_e$, $t_{m1} = m-1$, and $t_{m2} = m$.

Let $p = (P_1, \ldots, P_M)^T$ and let $V_{ny}$ be the matrix whose rows are the vectors $v_{nmy}^T$:

$$
V_{ny} = \begin{bmatrix} v_{n1y}^T \\ \vdots \\ v_{nMy}^T \end{bmatrix}
$$
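To make the rewriting concrete, the following sketch evaluates $\phi(n,m,y)$ in two ways: directly from the overlap formula, and as the product $v_{nmy}^T h$ using the case table. The function names and the timing values are hypothetical; the two results should agree up to rounding if the case table is transcribed correctly.

```python
def exposure_start(n, y, t0, t_f, t_r):
    """t_{n,y}: start of exposure of row y in frame n."""
    return t0 + (n - 1) * t_f + y * t_r


def phi_direct(n, m, y, t0, t_f, t_r, t_e):
    """Fraction of the exposure of (n, y) that overlaps pattern m, from the max/min formula."""
    start = exposure_start(n, y, t0, t_f, t_r)
    return max(0.0, min(start + t_e, m) - max(start, m - 1)) / t_e


def v_row(n, m, y, t0, t_f, t_r, t_e):
    """Case table for v_nmy; the selected case depends on the current timing values."""
    tn1 = exposure_start(n, y, t0, t_f, t_r)
    tn2 = tn1 + t_e
    tm1, tm2 = m - 1, m
    if tn2 < tm2 and tn1 > tm1 and tn2 > tn1:
        return [t_e, 0, 0, 0]               # exposure lies inside pattern m
    if tn2 < tm2 and tn1 <= tm1 and tn2 > tm1:
        return [t_e - m + 1, n - 1, y, 1]   # exposure ends inside pattern m
    if tn2 >= tm2 and tn1 > tm1 and tm2 > tn1:
        return [m, 1 - n, -y, -1]           # exposure starts inside pattern m
    if tn2 >= tm2 and tn1 <= tm1 and tm2 > tm1:
        return [1, 0, 0, 0]                 # exposure covers all of pattern m
    return [0, 0, 0, 0]                     # no overlap


def phi_linear(n, m, y, t0, t_f, t_r, t_e):
    """phi as the inner product v_nmy^T h with h = (1, t_f, t_r, t0) / t_e."""
    h = [1.0 / t_e, t_f / t_e, t_r / t_e, t0 / t_e]
    return sum(v * c for v, c in zip(v_row(n, m, y, t0, t_f, t_r, t_e), h))


# Hypothetical timing satisfying t_f >= Y * t_r and t_r + t_e <= t_f, with Y = 10 rows.
params = dict(t0=0.3, t_f=0.4, t_r=0.01, t_e=0.25)
max_gap = max(
    abs(phi_direct(n, m, y, **params) - phi_linear(n, m, y, **params))
    for n in range(1, 9) for m in range(1, 5) for y in range(10)
)
```

The linear form is what makes the energy quadratic in $h$ once the active case of every $(n, m, y)$ triple is fixed.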

[0071] We now minimize the following energy to find the unknown h

$$
E(h) = \frac{1}{2} \sum_{n=1}^{N} \sum_{x,y} \left( p(x,y)^T V_{ny}\, h - \tilde{I}_n(x,y) \right)^2
$$

[0072] with the following constraints

$$
h > \begin{bmatrix} 0 \\ 1 \\ 0 \\ 0 \end{bmatrix}
\quad \text{and} \quad
\begin{bmatrix} 0 & -1 & Y & 0 \\ 0 & -1 & 1 & 0 \end{bmatrix} h \le \begin{bmatrix} 0 \\ -1 \end{bmatrix}
$$

[0073] or equivalently

$$
\frac{t_r}{t_e} - \frac{t_f}{t_e} \le -1 \quad \Longleftrightarrow \quad t_r + t_e \le t_f
$$

[0074] Equation $E(h)$ cannot be minimized in closed form because the matrix $V_{ny}$ itself depends on the unknown values. Using an iterative approach, the current value $h^{(i)}$ is used to compute $V_{ny}^{(i)}$, which in turn is used to compute the next value $h^{(i+1)}$.

[0075] Up to this point we have assumed that the only unknown is $h$, meaning that pattern values are known for all image pixels. The difficulty lies in knowing which pattern pixel is being observed by each camera pixel. We simplify this issue by making the calibration patterns all `black` or all `white`, as best seen in FIG. 8. For example, a sequence of four patterns `{black, black, white, white}` will produce images with completely black and completely white pixels, as well as pixels in transition from black to white and vice versa. The all-black or all-white pixels are required to produce normalized images, as shown in FIG. 9, and the pixels in transition constrain the solution of the parameter $h$ in Equation $E(h)$.

[0076] Decoding is done in two steps: 1) the time offset $t_0$ is estimated for this particular sequence; 2) the pattern values are estimated for each camera pixel, as shown in FIG. 10. The value $t_0$ is estimated using Equation $E(h)$ with the known components of $h$ held fixed. Some pattern values must be known for this step; specifically, we need to know for some pixels whether they are transitioning from `black` to `white` or the opposite. Non-transitioning pixels provide no information in this step. So far, we have projected a few all-black and all-white patterns at the beginning of the sequence to ensure that all pixels can be normalized correctly and to simplify the estimation of $t_0$. We will revisit this point in the future for other pattern sequences.

[0077] As with the time variables, the pattern values are estimated by minimizing the following energy

$$
E(p) = \frac{1}{2} \sum_{n=1}^{N} \left( h^T V_{ny}^T\, p(x,y) - \tilde{I}_n(x,y) \right)^2, \qquad \text{s.t. } p_m \le 1
$$

[0078] The matrix $h^T V_{ny}^T$ is bi-diagonal for $N=M$, and it is fixed if $h$ is known.
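When the system is bi-diagonal, the pattern values of a pixel can be recovered by substitution instead of a general solve. The sketch below assumes a particular timing that the text does not state ($t_f = 1$, $0 \le t_0 + y\,t_r < 1$ and $t_e \le 1$), so that frame $n$ overlaps only patterns $n$ and $n+1$ and the matrix is upper bi-diagonal. Clamping to $[0, 1]$ is used here as a simple stand-in for the constraint on $p_m$, not as a true constrained minimization. Names and values are hypothetical.

```python
def phi(n, m, y, t0, t_f, t_r, t_e):
    """Fraction of the exposure of row y in frame n that overlaps pattern m."""
    start = t0 + (n - 1) * t_f + y * t_r
    return max(0.0, min(start + t_e, m) - max(start, m - 1)) / t_e


def decode_pixel(normalized, y, t0, t_f, t_r, t_e):
    """Back-substitute through the upper bi-diagonal system, last frame first."""
    count = len(normalized)                  # N = M
    p = [0.0] * count
    for n in range(count, 0, -1):
        diag = phi(n, n, y, t0, t_f, t_r, t_e)
        if diag <= 1e-9:
            raise ValueError("frame does not observe its own pattern; timing assumption violated")
        # Contribution of the following pattern, already decoded (none after the last one).
        carry = phi(n, n + 1, y, t0, t_f, t_r, t_e) * p[n] if n < count else 0.0
        p[n - 1] = min(1.0, max(0.0, (normalized[n - 1] - carry) / diag))
    return p


# Synthetic pixel on row y = 5 observing the binary sequence 1, 0, 1, 1.
timing = dict(t0=0.2, t_f=1.0, t_r=0.01, t_e=0.9)
true_p = [1, 0, 1, 1]
y = 5
normalized = [sum(phi(n, m, y, **timing) * true_p[m - 1] for m in range(1, 5))
              for n in range(1, 5)]
decoded = [round(v) for v in decode_pixel(normalized, y, **timing)]
```

With noisy images a least-squares solve over all frames would be more robust than substitution, since substitution propagates errors from one pattern to the next.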

[0079] Therefore, it can be seen that the exemplary embodiments of the method and system provide a unique solution to the problem of using structured lighting for three-dimensional image capture where the camera and projector are unsynchronized.

[0080] It would be appreciated by those skilled in the art that various changes and modifications can be made to the illustrated embodiments without departing from the spirit of the present invention. All such modifications and changes are intended to be within the scope of the present invention except as limited by the scope of the appended claims.

* * * * *
