Human-computer interface for computationally efficient placement and sizing of virtual objects in a three-dimensional representation of a real-world environment

Faulkner , et al. June 1, 2

U.S. patent number 11,023,093 [Application Number 15/993,519] was granted by the patent office on 2021-06-01 for human-computer interface for computationally efficient placement and sizing of virtual objects in a three-dimensional representation of a real-world environment. This patent grant is currently assigned to Microsoft Technology Licensing, LLC. The grantee listed for this patent is MICROSOFT TECHNOLOGY LICENSING, LLC. Invention is credited to Bogdan Berg, Jason Thomas Faulkner, Timothy David Kviz, Kathleen Patricia Mulcahy, Sandhya Rajendra Rao.

View All Diagrams

| United States Patent | 11,023,093 |

| Faulkner , et al. | June 1, 2021 |

Human-computer interface for computationally efficient placement and sizing of virtual objects in a three-dimensional representation of a real-world environment

Abstract

An improved human-computer interface for placing and sizing virtual objects in a three-dimensional ("3D") representation of a real-world environment is disclosed herein. The disclosed HCI can determine a location, size, and orientation of virtual objects in a 3D representation of a real-world environment based on simplified user gestures. In some embodiments, based on the timing and direction of an input gesture, a computing device can utilize (1) an automated mode that determines a position and orientation of a virtual object or utilize (2) a manual mode that determines position, orientation, and size of the virtual object. A computing device capable of 3D spatial mapping generates mesh data defining a 3D representation of a real-world environment and images of the real-world environment. The size of the virtual object can be based on a computed depth of real-world objects related to the virtual object.

| Inventors: | Faulkner; Jason Thomas (Seattle, WA), Rao; Sandhya Rajendra (Bellevue, WA), Mulcahy; Kathleen Patricia (Seattle, WA), Kviz; Timothy David (Seattle, WA), Berg; Bogdan (Redmond, WA) | ||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|

| Applicant: |

|

||||||||||

| Assignee: | Microsoft Technology Licensing,

LLC (Redmond, WA) |

||||||||||

| Family ID: | 1000005589960 | ||||||||||

| Appl. No.: | 15/993,519 | ||||||||||

| Filed: | May 30, 2018 |

Prior Publication Data

| Document Identifier | Publication Date | |

|---|---|---|

| US 20190369836 A1 | Dec 5, 2019 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 3/013 (20130101); G06T 7/50 (20170101); G06T 7/70 (20170101); G06F 3/04845 (20130101); G06F 3/167 (20130101); G06F 3/04883 (20130101); G06T 17/20 (20130101); G06F 3/04815 (20130101); G06T 19/006 (20130101); G06F 3/03543 (20130101) |

| Current International Class: | G06F 3/0481 (20130101); G06F 3/0346 (20130101); G06T 17/20 (20060101); G06F 3/16 (20060101); G06F 3/0488 (20130101); G06F 3/0484 (20130101); G06T 7/70 (20170101); G06F 3/0338 (20130101); G06F 3/01 (20060101); G06T 7/50 (20170101); G06T 19/00 (20110101); G06F 3/0354 (20130101) |

References Cited [Referenced By]

U.S. Patent Documents

| 6335731 | January 2002 | Yamamoto |

| 2006/0136552 | June 2006 | Krane et al. |

| 2009/0089322 | April 2009 | Naaman |

| 2009/0195656 | August 2009 | Zhou |

| 2011/0285811 | November 2011 | Langlotz |

| 2012/0072420 | March 2012 | Moganti et al. |

| 2012/0143361 | June 2012 | Kurabayashi et al. |

| 2014/0002444 | January 2014 | Bennett et al. |

| 2014/0006967 | January 2014 | Arumugam et al. |

| 2014/0306993 | October 2014 | Poulos et al. |

| 2015/0067615 | March 2015 | Sim |

| 2015/0254905 | September 2015 | Ramsby |

| 2016/0246948 | August 2016 | Xiao |

| 2016/0379409 | December 2016 | Gavriliuc et al. |

| 2017/0039770 | February 2017 | Lanier et al. |

| 2017/0061691 | March 2017 | Scott |

| 2018/0121214 | May 2018 | Faulkner et al. |

| 2019/0236842 | August 2019 | Bennett et al. |

| 2019/0318510 | October 2019 | Eronen et al. |

| 2020/0202634 | June 2020 | Faulkner et al. |

| 2977961 | Jan 2016 | EP | |||

| 2017027183 | Feb 2017 | WO | |||

Other References

|

"International Search Report and Written Opinion Issued in PCT Application No. PCT/US2019/031926", dated Jul. 24, 2019, 12 Pages. cited by applicant . "Non-Final Office Action Issued in U.S. Appl. No. 16/228,638", dated Mar. 6, 2020, 17 Pages. cited by applicant . "International Search Report and Written Opinion Issued in PCT Application No. PCT/US19/065572", dated Mar. 4, 2020, 12 Pages. cited by applicant . "Final Office Action Issued in U.S. Appl. No. 16/228,638", dated Aug. 10, 2020, 40 Pages. cited by applicant. |

Primary Examiner: To; Jennifer N

Assistant Examiner: Chen; Kc

Attorney, Agent or Firm: Newport IP, LLC Shigeta; Scott Y.

Claims

What is claimed is:

1. A system comprising: one or more data processing units; and a computer-readable medium having encoded thereon computer-executable instructions to cause the one or more data processing units to receive sensor data captured by one or more sensors of a computing device, the sensor data comprising an image of the real-world environment; render the image in a user interface (UI); receive input data indicating an input action and a position for a virtual object to be placed within the three-dimensional representation of the real-world environment; in response to determining that the input action meets one or more criteria, render the virtual object in the UI at the position within the three-dimensional representation of the real-world environment, wherein an orientation of the virtual object is based on the direction of movement indicated by an input device captured prior to the input action, wherein the input action meets the one or more criteria when a duration associated with the input action is less than a threshold amount of time, wherein the input action does not meet the one or more criteria when the duration exceeds the threshold amount of time; and in response to determining that the input action does not meet the one or more criteria, render the virtual object in the UI, wherein a first point of the virtual object is at the position, and a second point of the virtual object is located at a second position determined in response to a subsequent input action.

2. The system of claim 1, wherein the instructions further cause the one or more data processing units to: in response to determining that the input action does not meet the one or more criteria, monitor the input data indicating a movement indicated by the input device, wherein the movement indicated by the input device controls a movement of the second point of the virtual object; and modify a size of the virtual object based on a distance between the first point and the second point of the virtual object.

3. The system of claim 1, wherein the sensor data further comprises mesh data defining a three-dimensional representation of a real-world environment, and wherein the instructions further cause the one or more data processing units to: in response to determining that the input action meets the one or more criteria, determine a depth at the position within the three-dimensional representation of the real-world environment based on the mesh data; and determine a size parameter of the virtual object based upon the depth.

4. The system of claim 3, wherein the size parameter includes a length of the virtual object.

5. The system of claim 1, wherein the input action comprises a contact point between a user and a touch surface of the system, wherein the input action meets the one or more criteria when the contact point moves over a boundary that is a threshold distance from an initial contact point within the threshold amount of time.

6. The system of claim 5, wherein the input action further comprises detecting that the user stops making contact with the touch surface, wherein the position is based on a location where the user stopped making contact with the surface.

7. The system of claim 1, wherein the input action comprises a contact point between a user and a touch surface of the system, wherein the input action meets the one or more criteria when the contact point remains within a boundary that is a threshold distance from an initial contact point within the threshold amount of time.

8. The system of claim 7, wherein the input action comprises detecting that the user makes contact with the touch surface of the system, wherein the subsequent input action comprises detecting that the user has stopped making contact with the touch surface of the system.

9. The system of claim 1, wherein the duration is based on a first time that a button of the input device is activated to a second time that the button is deactivated, wherein the input action comprises activating the button and the subsequent input action comprises deactivating the button.

10. A system comprising: one or more data processing units; and a computer-readable medium having encoded thereon computer-executable instructions to cause the one or more data processing units to receive sensor data captured by one or more sensors of a computing device, the sensor data comprising mesh data defining a three-dimensional representation of a real-world environment and an image of the real-world environment; render the image in a user interface (UI); receive input data indicating a position for a virtual object to be placed within the three-dimensional representation of the real-world environment, wherein the position is selected in response to an input action; determine a direction of movement indicated by the input data, wherein the direction of movement is captured prior to the input action; in response to determining that the input action meets one or more criteria, render the virtual object in the UI at the position within the three-dimensional representation of the real-world environment, wherein an orientation of the virtual object is based on the direction of movement indicated by the input data, wherein the input action meets the one or more criteria when a duration associated with the input action is less than a threshold amount of time, wherein the input action does not meet the one or more criteria when the duration exceeds the threshold amount of time; and in response to determining that the input action does not meet the one or more criteria, render the virtual object in the UI, wherein a first point of the virtual object is at the position, and a second point of the virtual object is located at a second position determined in response to a subsequent input action.

11. The system of claim 10, wherein the instructions further cause the one or more data processing units to: determine a depth at the position within the three-dimensional representation of the real-world environment based on a distance between a real-world object and the one or more sensors of the computing device; and determine a size parameter of the virtual object based upon the depth.

12. The system of claim 10, wherein the input action involves receiving an input signal from a sensor tracking eye movement of a user to determine the position for the virtual object.

13. The system of claim 12, wherein the sensor generates the input data indicating the input action by a gesture performed by the user.

14. The system of claim 10, wherein the input action is a voice command captured by a microphone in communication with the system, wherein the voice command indicates the duration.

15. The system of claim 14, wherein the instructions further cause the one or more data processing units to: determine content for an annotation based on the voice command; and render the annotation in association with the virtual object.

16. A system comprising: means for receiving sensor data captured by one or more sensors of a computing device, the sensor data comprising mesh data defining a three-dimensional representation of a real-world environment and an image of the real-world environment; means for rendering the image in a user interface (UI); means for receiving input data indicating a position for a virtual object to be placed within the three-dimensional representation of the real-world environment, wherein the position is selected in response to an input action; means for determining a direction of movement indicated by the input data, wherein the direction of movement is captured prior to the input action; means for rendering the virtual object in the UI at the position within the three-dimensional representation of the real-world environment, wherein an orientation of the virtual object is based on the direction of movement indicated by the input data, wherein the virtual object is rendered in response to determining that the input action meets one or more criteria, wherein the input action meets the one or more criteria when a duration associated with the input action is less than a threshold amount of time, wherein the input action does not meet the one or more criteria when the duration exceeds the threshold amount of time; and means for rendering the virtual object in the UI in response to determining that the input action does not meet the one or more criteria, wherein a first point of the virtual object is at the position, and a second point of the virtual object is located at a second position determined in response to a subsequent input action.

17. The system of claim 16, wherein the instructions further cause the one or more data processing units to: determine a depth at the position within the three-dimensional representation of the real-world environment based, at least in part, on the mesh data; and determine a size of the virtual object based upon the depth.

18. The system of claim 16, wherein the input action is a voice command captured by a microphone in communication with the system, wherein the voice command indicates the duration.

19. The system of claim 16, wherein the duration is based on a first time that a button of an input device is activated to a second time that the button is deactivated, wherein the input action comprises activating the button and a subsequent action comprises deactivating the button.

20. The system of claim 3, wherein the depth is based on a distance between a real-world object and a sensor in communication with the system measuring the distance.

Description

BACKGROUND

A networked meeting represents one popular form of electronic collaboration that facilitates communication between two or more participants present at separate physical locations. Participants of a communication session in a networked meeting are able to exchange live video, audio, and other types of content to view, hear, and otherwise share information. Participants can also view a common space, e.g., a whiteboard or a shared application, through which ideas can be exchanged. Viewing of the common space can be complemented with a video and audio conference, an instant messaging session, or any combination thereof, such that the networked meeting can act as a near substitute for an in-person meeting.

As networked meetings have become ubiquitous, the types of devices that can be used to participate in networked meetings has increased. While once limited to desktop and laptop computers, users can now participate in networked meetings using many other types of hardware devices including, but not limited to, smartphones, tablet computing devices, set-top boxes, smart televisions, video game systems, and even augmented reality ("AR"), virtual reality ("VR"), and mixed reality ("MR") devices.

When a participant utilizes an AR device to participate in a networked meeting, it is currently possible for the AR device to capture that participant's view of their surrounding environment and transmit images or video of the view to the other meeting participants. The other meeting participants can then be presented with the images or video of the real-world environment surrounding the user wearing the AR device. The other meeting participants might also be able to view any virtual objects that the AR device has overlaid upon the real-world environment.

Despite the benefits of the features described above, some systems do have some drawbacks. For example, some traditional input devices cannot be used for placement and modification of virtual objects displayed within a mixed reality environment or a virtual reality environment. It can be appreciated that there is an ongoing need to improve techniques for allowing users to efficiently interact with objects in such environments. Inefficient interactions between a user and a computing device can lead to an undesirable user experience and, among other inefficiencies with respect to computing resources, production loss.

It is with respect to these considerations and others that the disclosure made herein is presented.

SUMMARY

An improved human-computer interface ("HCI") is disclosed herein for placing and sizing virtual objects in a three-dimensional ("3D") representation of a real-world environment. The disclosed HCI can determine a location, size, and orientation of virtual objects in a 3D representation of a real-world environment based on simplified user gestures. In some embodiments, based on the timing and direction of an input gesture, a computing device can utilize (1) an automated mode that determines a position and orientation of a virtual object or utilize (2) a manual mode that determines position, orientation, and size of the virtual object. The automated mode is invoked in response to a first type of input action, such as a short tap of a mouse button. In response to detecting the first type of input action, a virtual object, such as a tip of an arrow, can be placed at the position of a cursor at the time of the input action. The orientation of the virtual object is determined by the direction of the movement of an input device immediately prior to the input action. The size of the virtual object can correspond to a distance between a real-world object and one or more sensors.

The manual mode is invoked in response to a second type of input action, such as a press and hold action. In response to detecting the second type of input action, a first point of the virtual object, such as an arrow, is placed at the position of the input action. During the press and hold action, the first point of the virtual object is locked at the position, the size and orientation of the virtual object are manipulated by the movement of cursor. In response to detecting a subsequent input action, such as a release action, a second point of the virtual object, such as the tail of the arrow, is placed at the position of the cursor at the time of the subsequent input action.

The HCI disclosed herein can enable users to efficiently place, size, rotate, and otherwise modify virtual objects in a 3D representation of a real-world environment. This can also result in more efficient use of computing resources such as processor cycles, memory, network bandwidth, and power, as compared to previous solutions. Other technical benefits not specifically mentioned herein can also be realized through implementations of the disclosed subject matter.

In order to realize the technical benefits mentioned briefly above, and potentially others, a computing device configured with sensors and program code capable of 3D spatial mapping, such as an AR device or appropriately-configured smartphone, generates mesh data that defines a 3D representation of a real-world environment. The computing device also generates still or moving images (i.e. a video) of the real-world environment. Such a computing device can also be utilized to augment a user's view of the real-world environment with virtual objects. The virtual objects appear as if they are actually present in the real-world environment when the real-world environment is viewed with the computing device. The computing device transmits the mesh data and images to a remote computing device over a suitable data communications network.

The remote computing device executes an application program, or another type of program, that is configured to enable networked meetings, such as those described briefly above. The application program receives the mesh data and images generated by the computing device (e.g. a AR device) and renders the images in a user interface ("UI"). In this manner, a user of the remote computing device can see the view of the real-world environment as seen by the user of the computing device along with any virtual objects augmenting that view.

The configurations disclosed herein improve a user's interaction with the computing device. In particular, this invention enables users to indicate a position and a direction of an object in a 2D or 3D computing environment using simplified gestures. In some configurations, the application program can also receive user input (e.g. mouse or touch input) via the UI that defines a location on the images at which a virtual object is to be placed within the 3D representation of the real-world environment defined by the mesh data. For example, a user might utilize the UI to place an arrow-shaped virtual object at a location pointing to a real-world object or a second virtual object in order to bring attention to the real-world or virtual object. Other types of virtual objects can be placed in a similar manner.

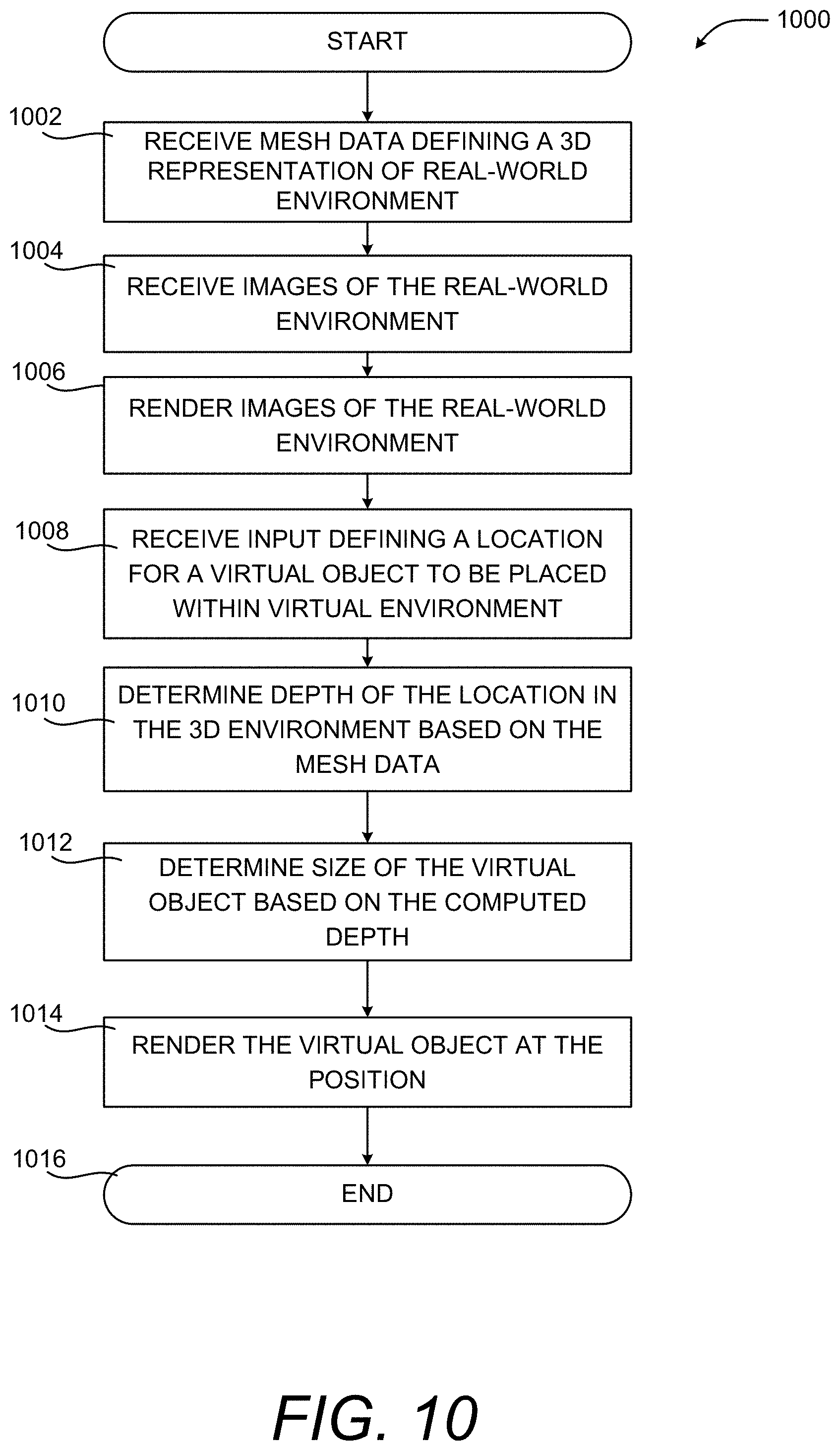

In some configurations, responsive to receiving an input for placing a virtual object, the application program can utilize the mesh data to determine the depth of the specified location within the 3D representation of the real-world environment. The application program can then determine the size at which the virtual object is to be rendered in the UI based upon the computed depth. For instance, virtual objects nearer to a user's viewpoint into the 3D representation of the real-world environment can be rendered larger than those virtual objects that are further away.

In some embodiments, the depth of the location within the 3D representation of the real-world environment corresponds to the depth of a real-world object at the specified location in the real-world environment. In this manner, virtual objects can be located at the same depth in the 3D representation of the real-world environment as real-world objects. The depth of the location within the 3D representation of the real-world environment might alternately correspond to the depth of another virtual object already present in the 3D representation of the real-world environment. In this manner, a user can define virtual objects located at the same depth in the 3D representation of the real-world environment as other virtual objects.

Once the size of the virtual object has been computed, the remote computing device can render the virtual object in the UI at the location within the 3D representation of the real-world environment. In this manner, the virtual object appears as if it were actually present in the real-world environment. The mesh data can be updated and provided to the computing device (e.g. an AR device) so that the user of the device can view the new virtual object as if were actually present in the real-world environment. The updated mesh data can also be provided to other participants in the networked meeting for display on their computing devices.

In some scenarios, the real-world object at the location in the real-world environment is planar such as, for instance, a wall or a whiteboard. In these scenarios, the virtual object can be rendered in the same plane as the real-world object. In this manner, both the size of the virtual object and its orientation can be defined such that the virtual object appears in perspective when rendered by the remote computing device.

In some embodiments, the virtual object is fixed at the location specified within the 3D representation of the real-world environment. In this manner, the virtual objects remain in their specified location even when a user of the computing device moves their head or otherwise changes position. Additionally, in some embodiments the UI further includes one or more UI controls for manipulating the virtual object. For example, and without limitation, the UI controls can provide functionality for rotating, coloring, transforming, deleting, replacing, or otherwise modifying the visual appearance of the virtual object.

It should be appreciated that various aspects of the subject matter described briefly above and in further detail below can be implemented as a hardware device, a computer-implemented method, a computer-controlled apparatus or device, a computing system, or an article of manufacture, such as a computer storage medium. While the subject matter described herein is presented in the general context of program modules that execute on one or more computing devices, those skilled in the art will recognize that other implementations can be performed in combination with other types of program modules. Generally, program modules include routines, programs, components, data structures, and other types of structures that perform particular tasks or implement particular abstract data types.

Those skilled in the art will also appreciate that aspects of the subject matter described herein can be practiced on or in conjunction with other computer system configurations beyond those specifically described herein, including multiprocessor systems, microprocessor-based or programmable consumer electronics, AR, VR, and MR devices, video game devices, handheld computers, smartphones, smart televisions, self-driving vehicles, smart watches, e-readers, tablet computing devices, special-purpose hardware devices, network appliances, and the others.

Features and technical benefits other than those explicitly described above will be apparent from a reading of the following Detailed Description and a review of the associated drawings. This Summary is provided to introduce a selection of concepts in a simplified form that are further described below in the Detailed Description. This Summary is not intended to identify key or essential features of the claimed subject matter, nor is it intended to be used as an aid in determining the scope of the claimed subject matter.

BRIEF DESCRIPTION OF THE DRAWINGS

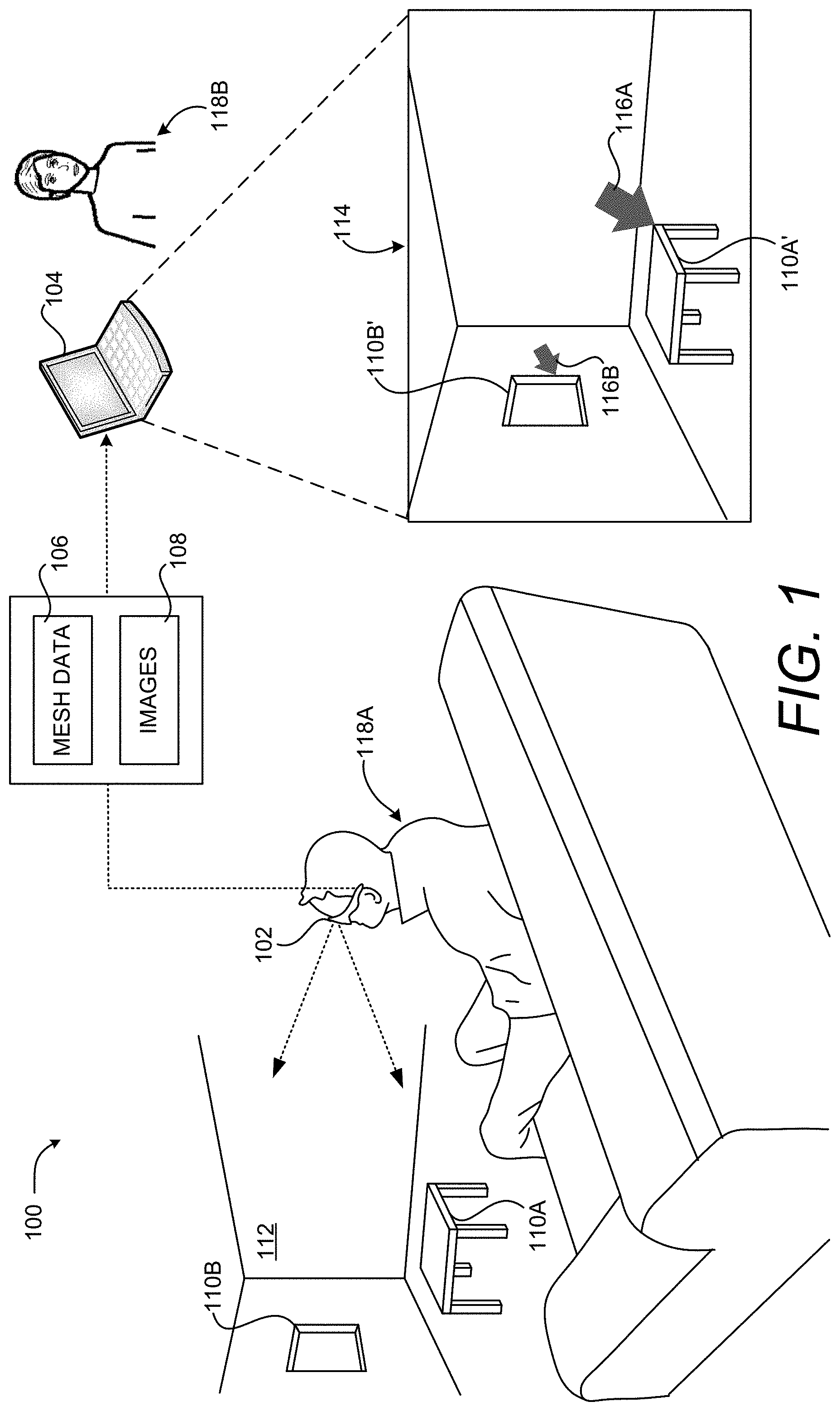

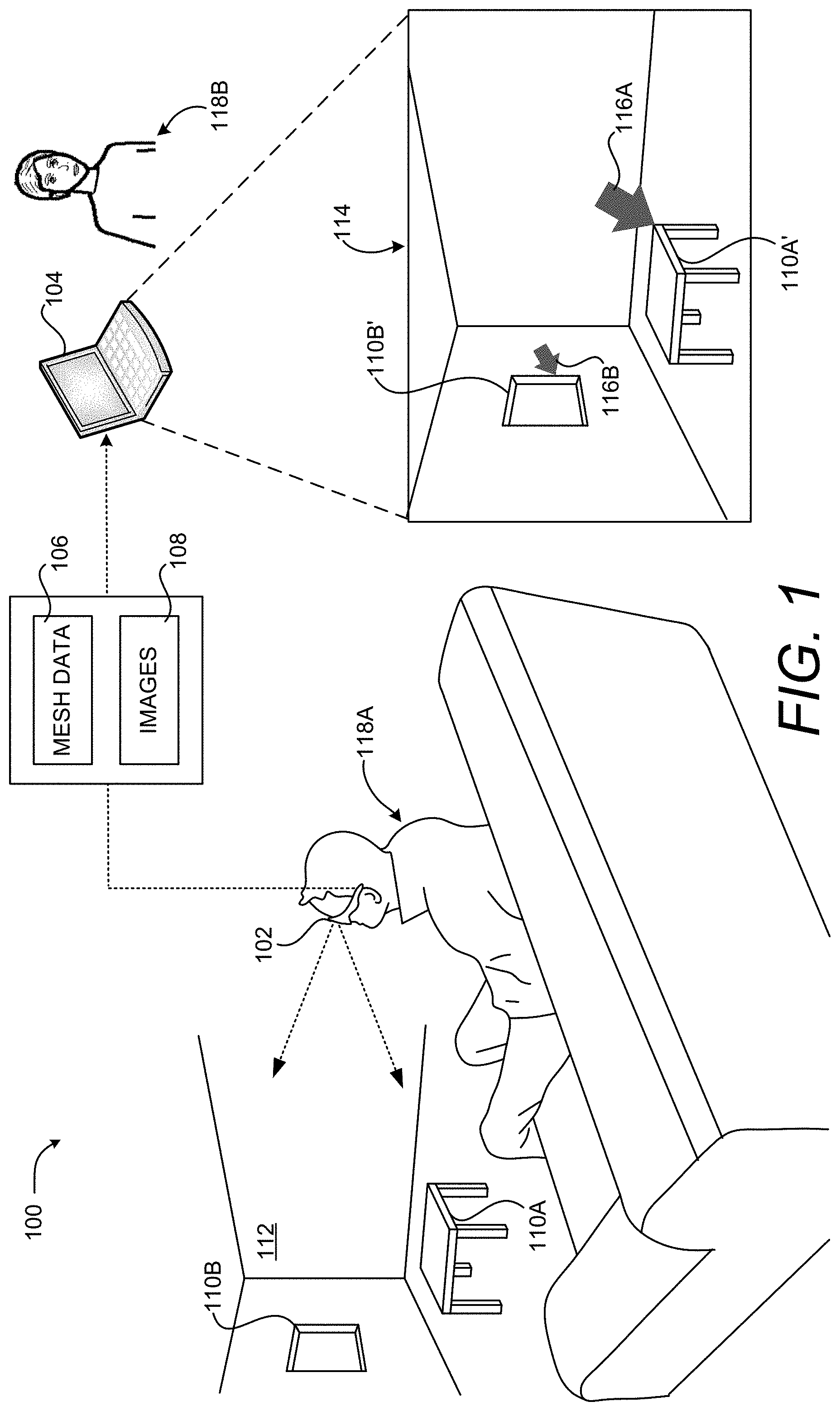

FIG. 1 is a computing system diagram illustrating aspects of an operating environment for the embodiments disclosed herein along with aspects of an illustrative HCI that enables computationally efficient placement and sizing of virtual objects in a 3D representation of a real-world environment, according to one embodiment disclosed herein.

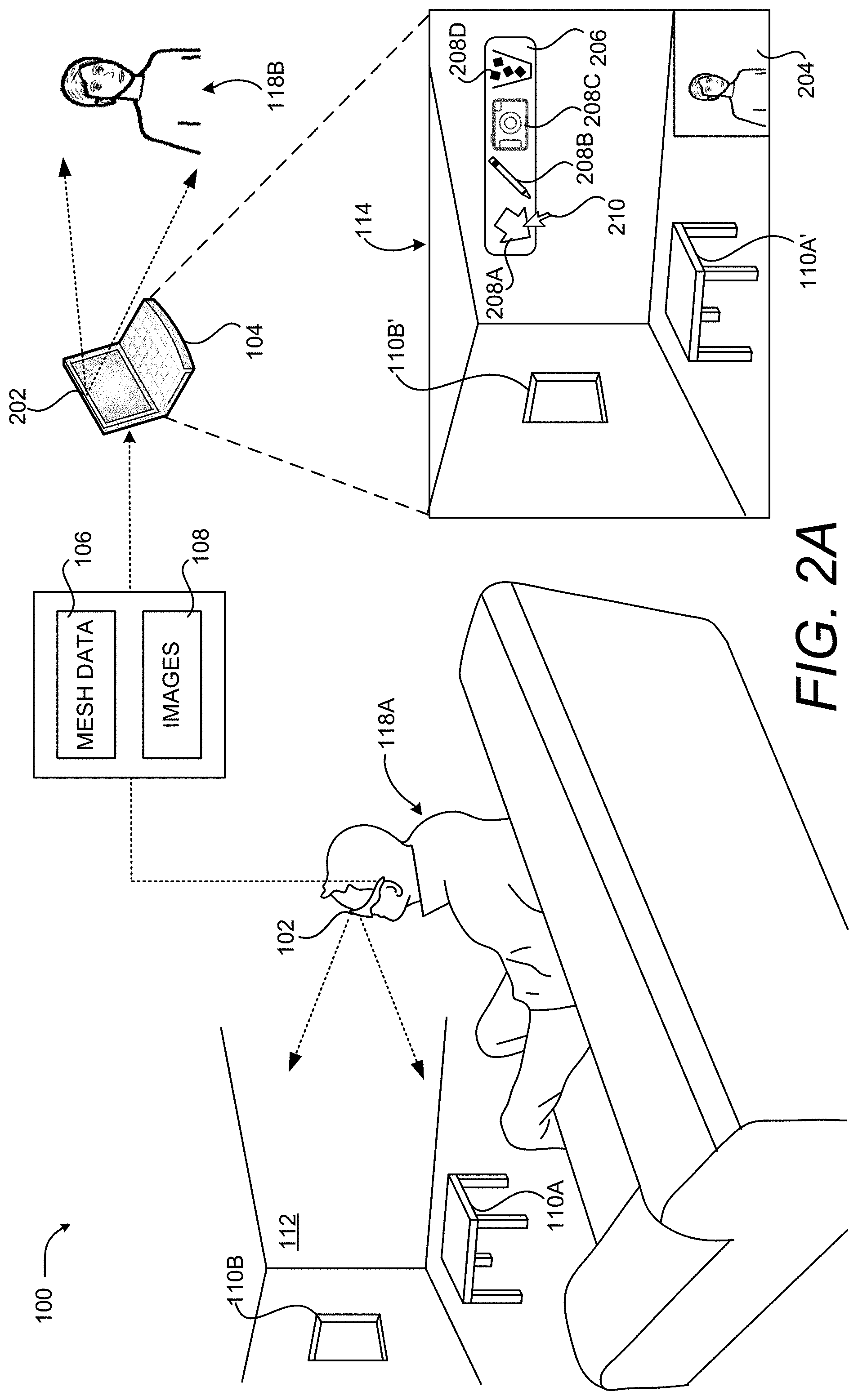

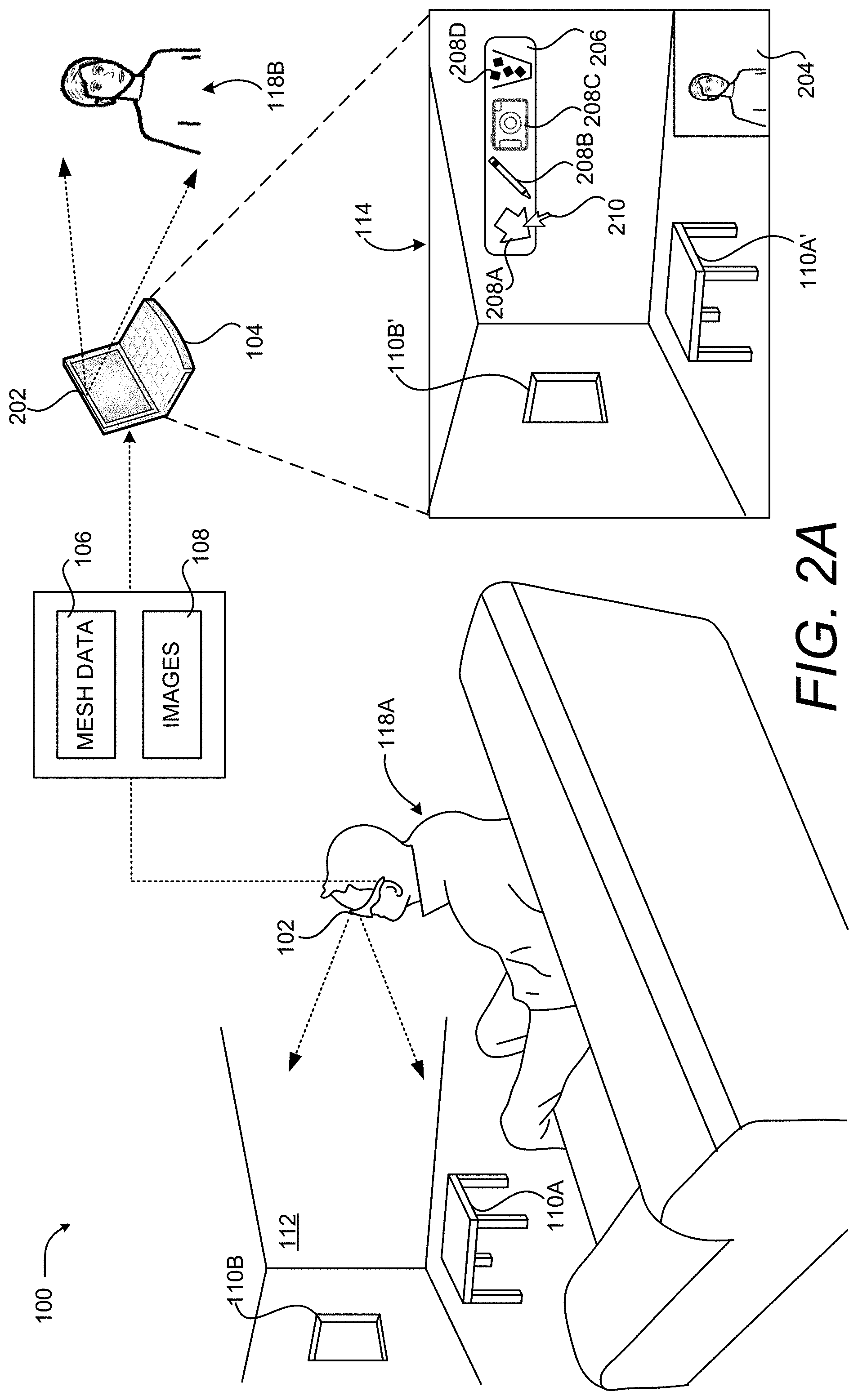

FIG. 2A is a computing system diagram illustrating additional aspects of the illustrative HCI shown in FIG. 1, according to one embodiment disclosed herein.

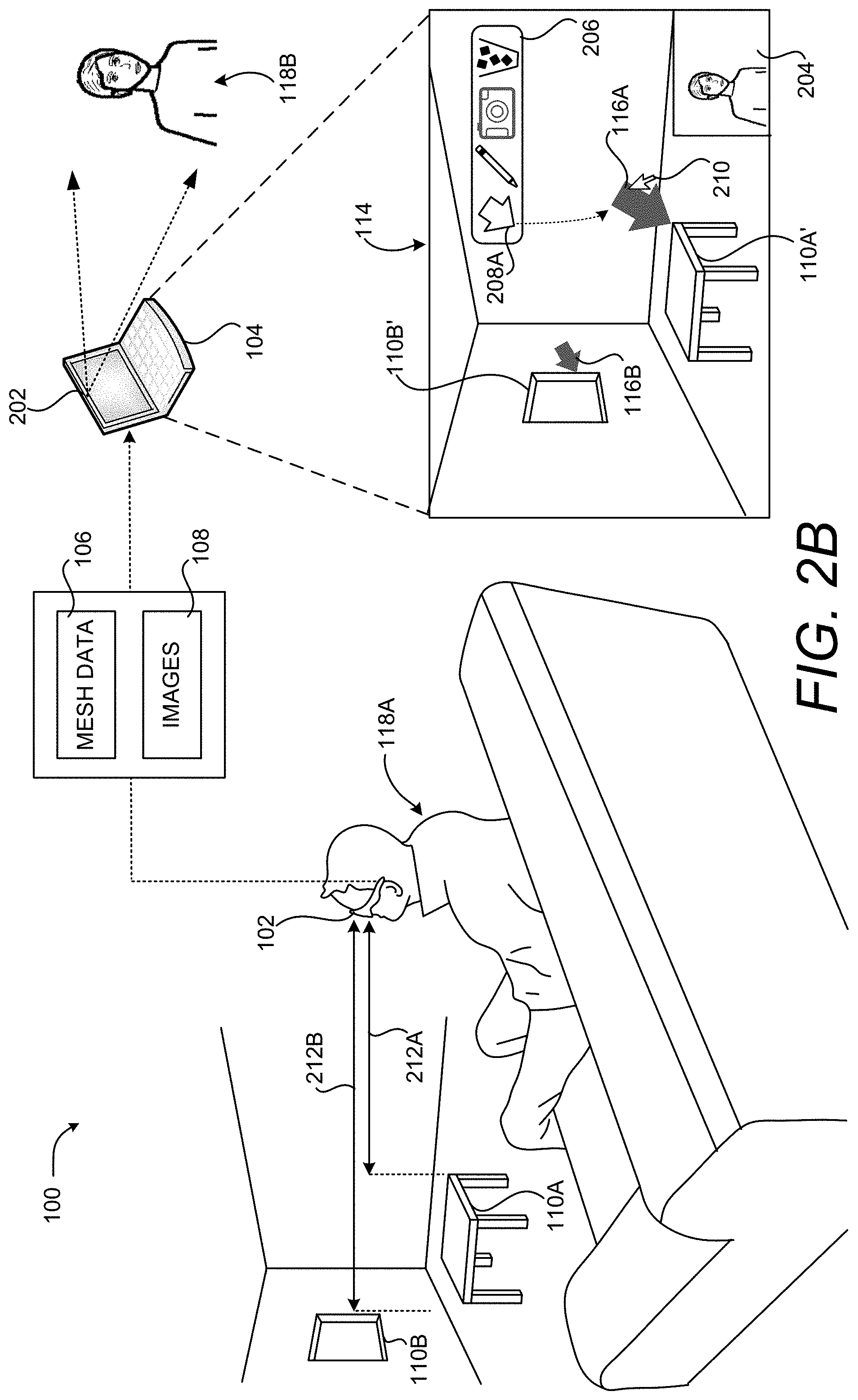

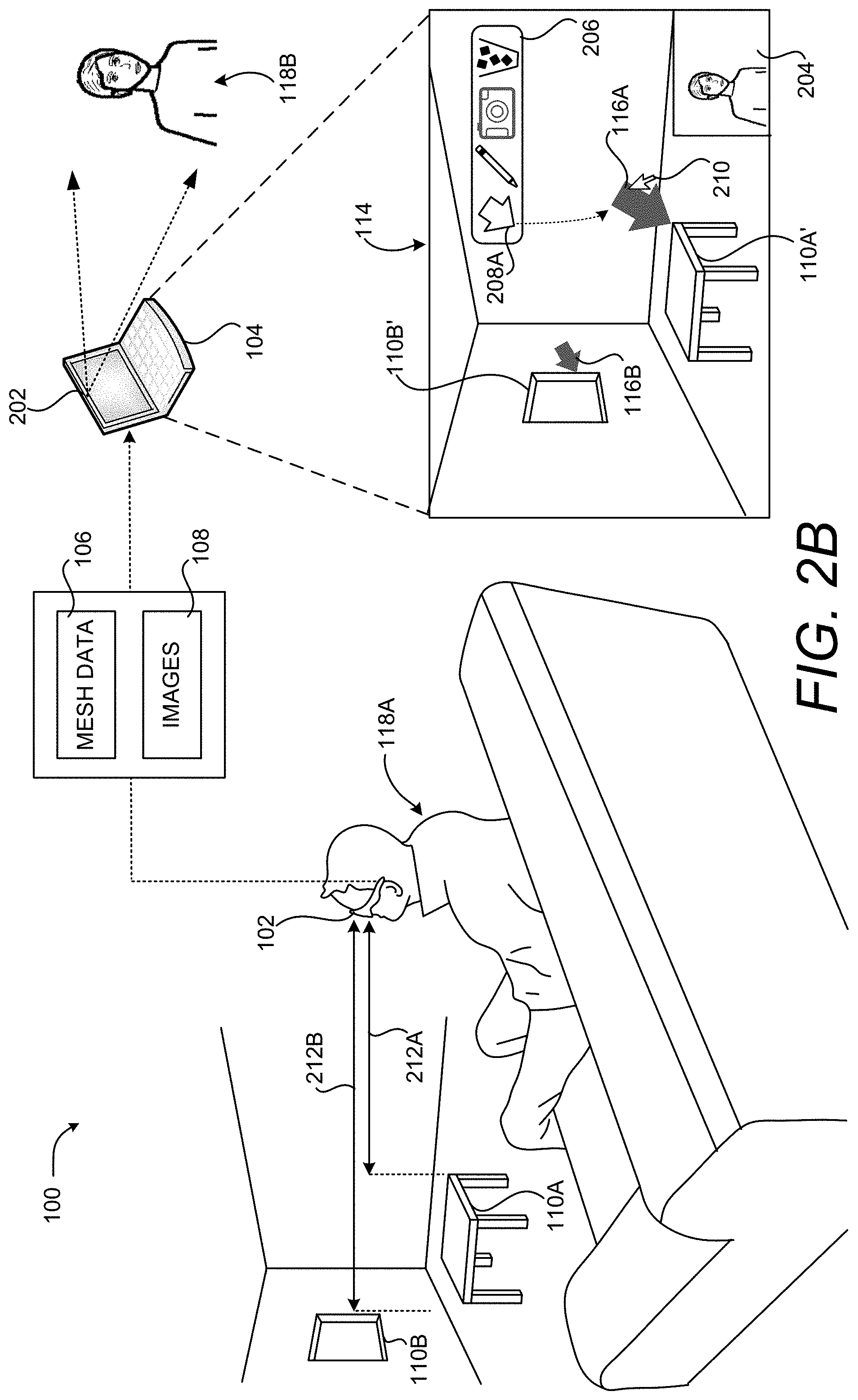

FIG. 2B is a computing system diagram showing additional aspects of the illustrative HCI shown in FIGS. 1 and 2A.

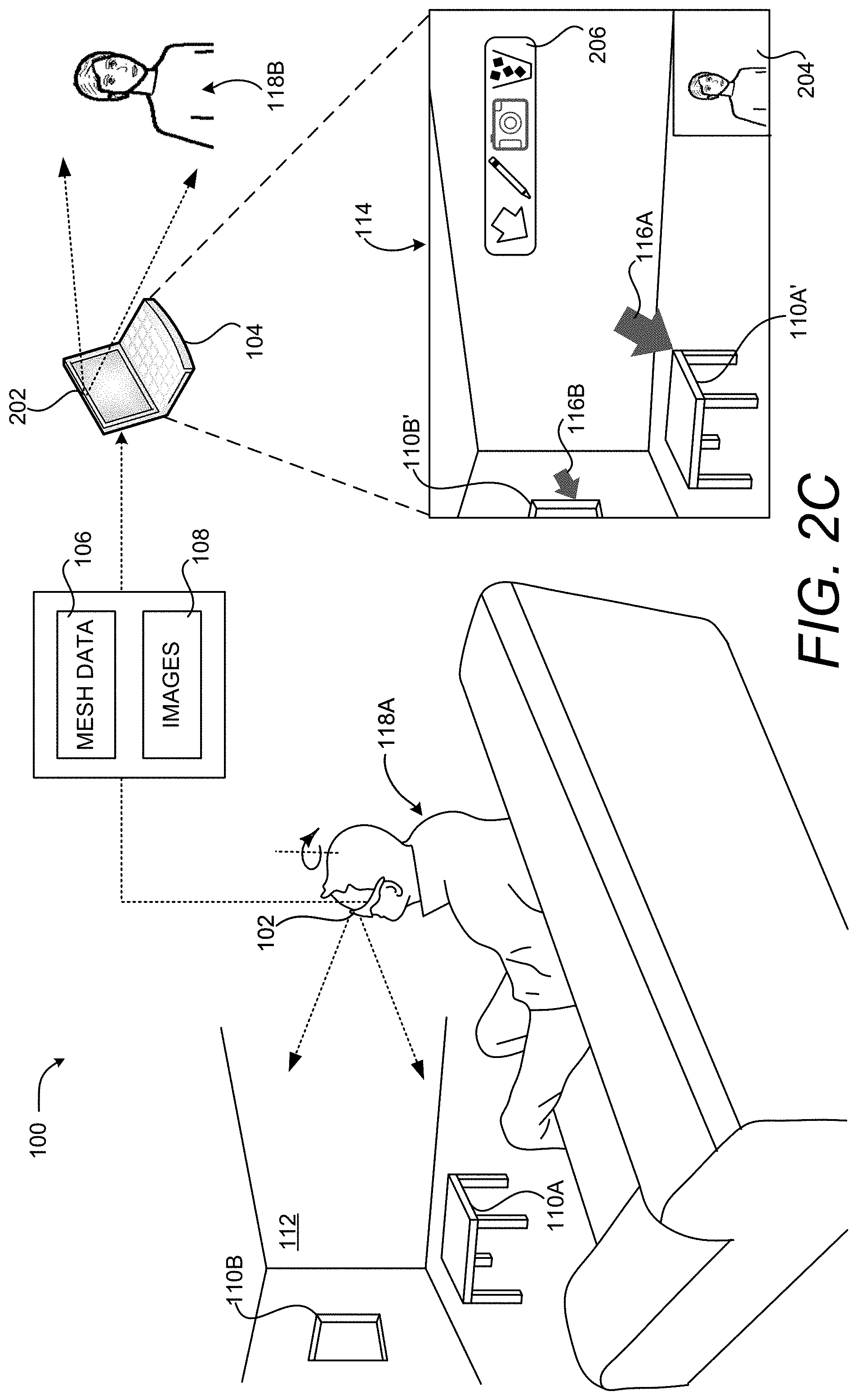

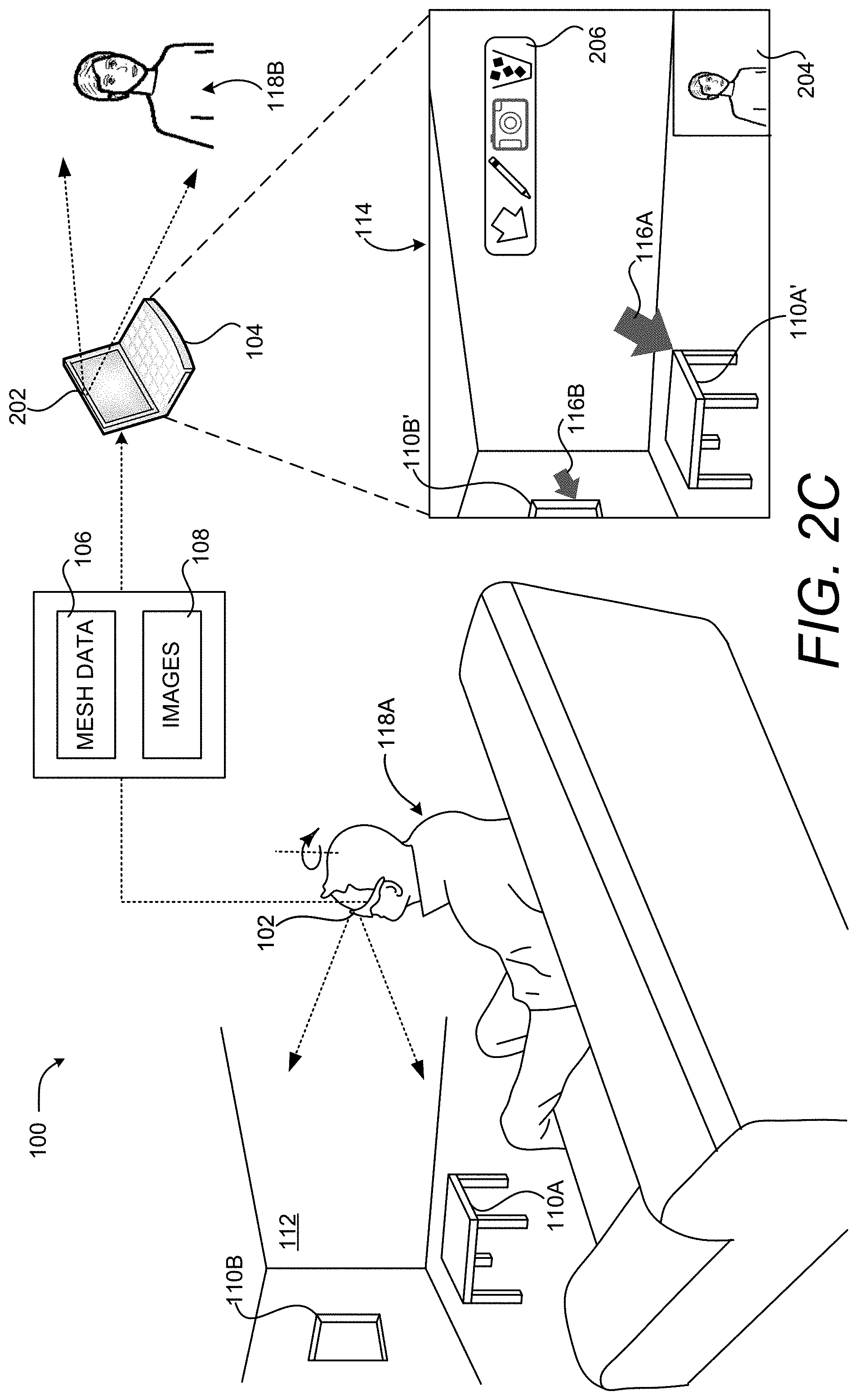

FIG. 2C is a computing system diagram illustrating additional aspects of the illustrative HCI shown in FIGS. 1, 2A, and 2B, according to one embodiment disclosed herein.

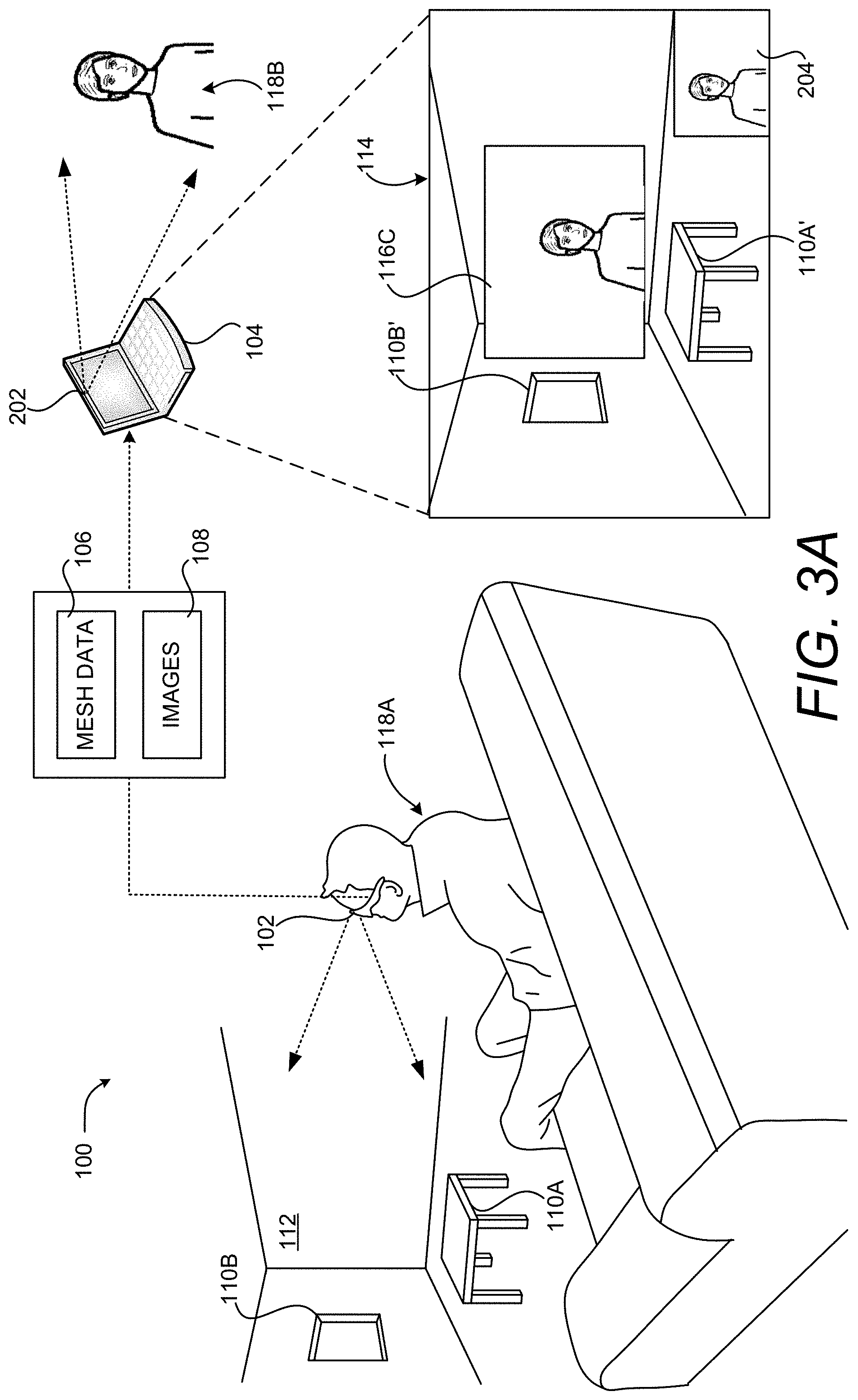

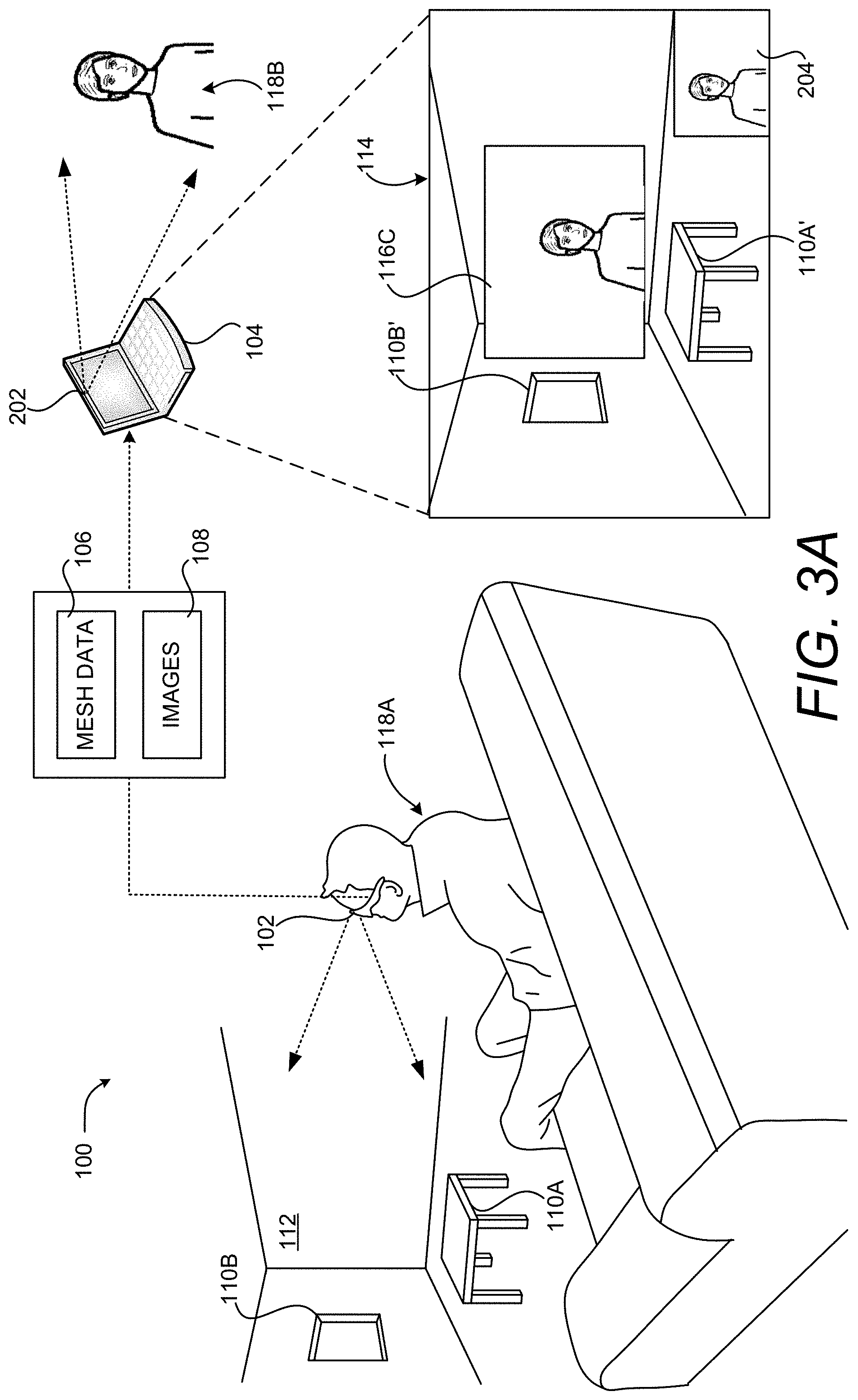

FIG. 3A is a computing system diagram illustrating aspects of another embodiment of the illustrative HCI shown in FIG. 1.

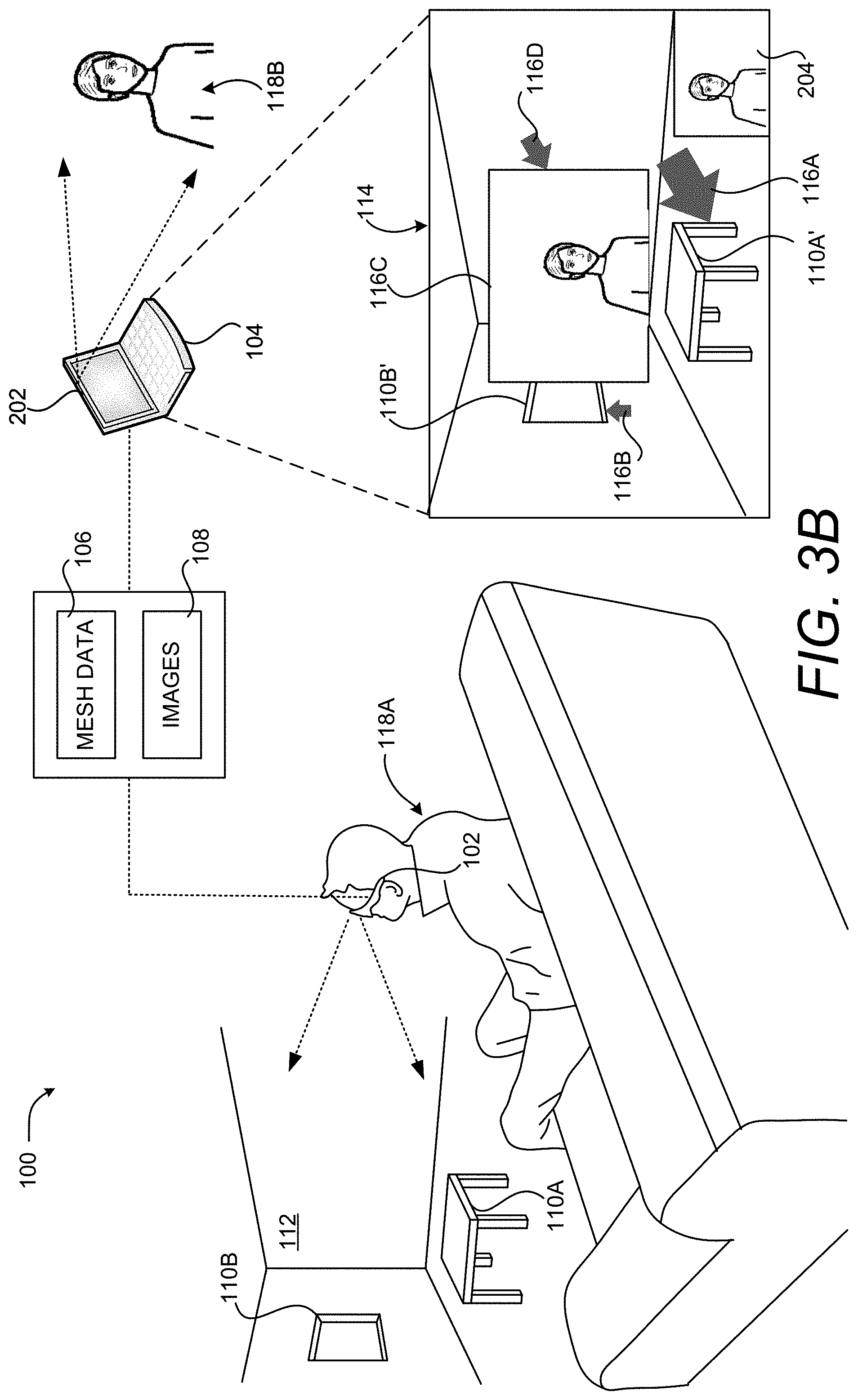

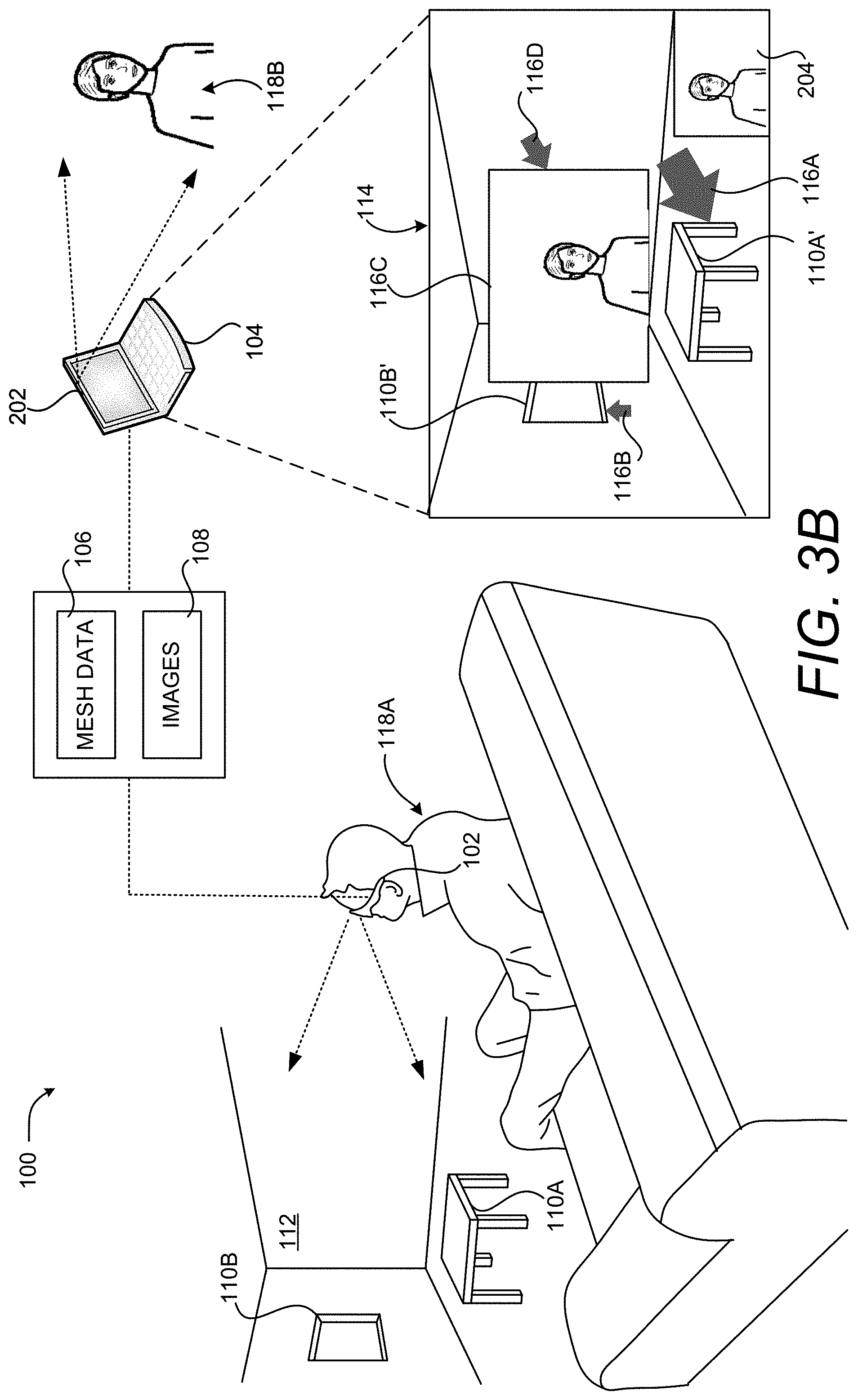

FIG. 3B is a computing system diagram showing additional aspects of the embodiment of the illustrative HCI shown in FIG. 3A;

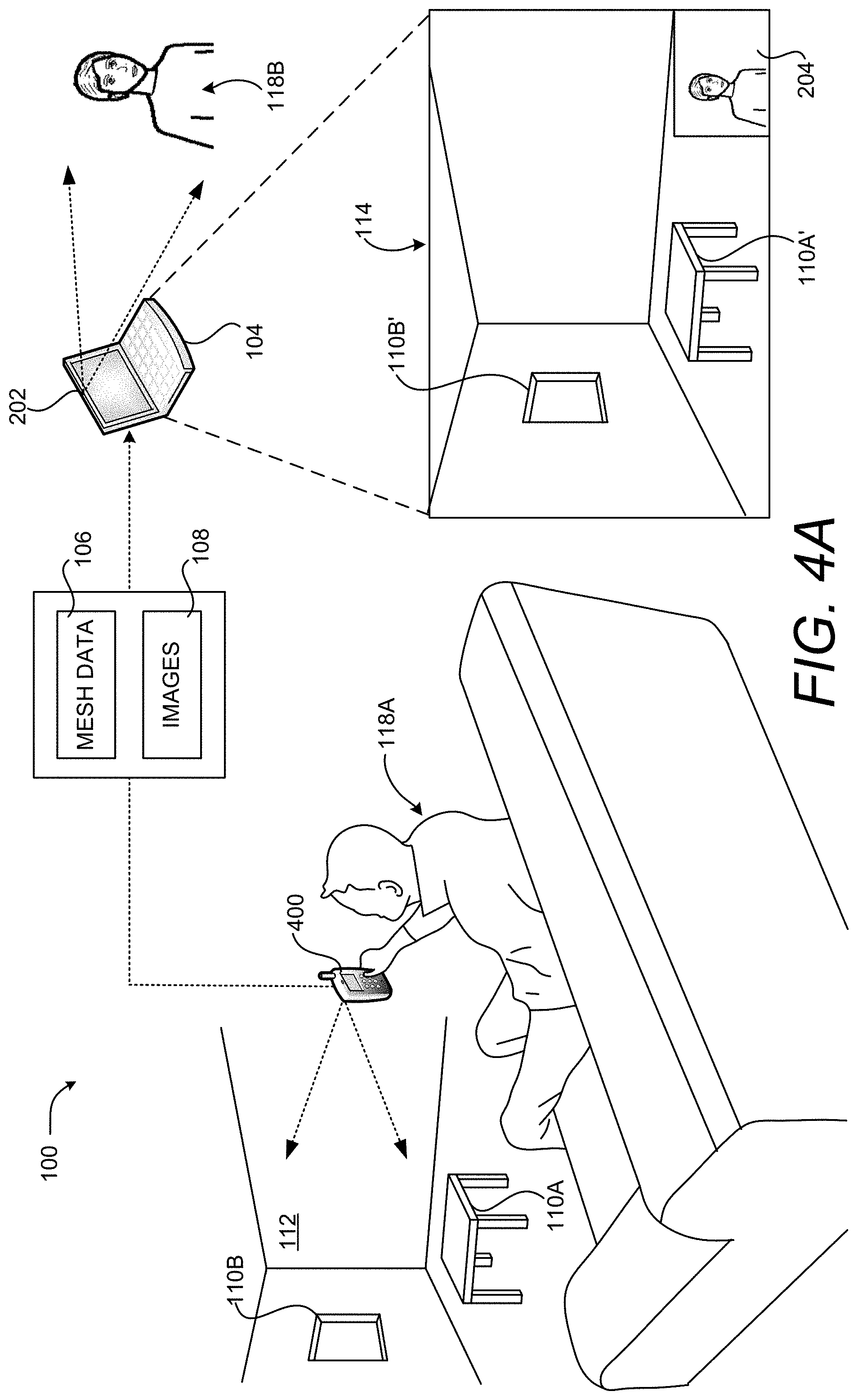

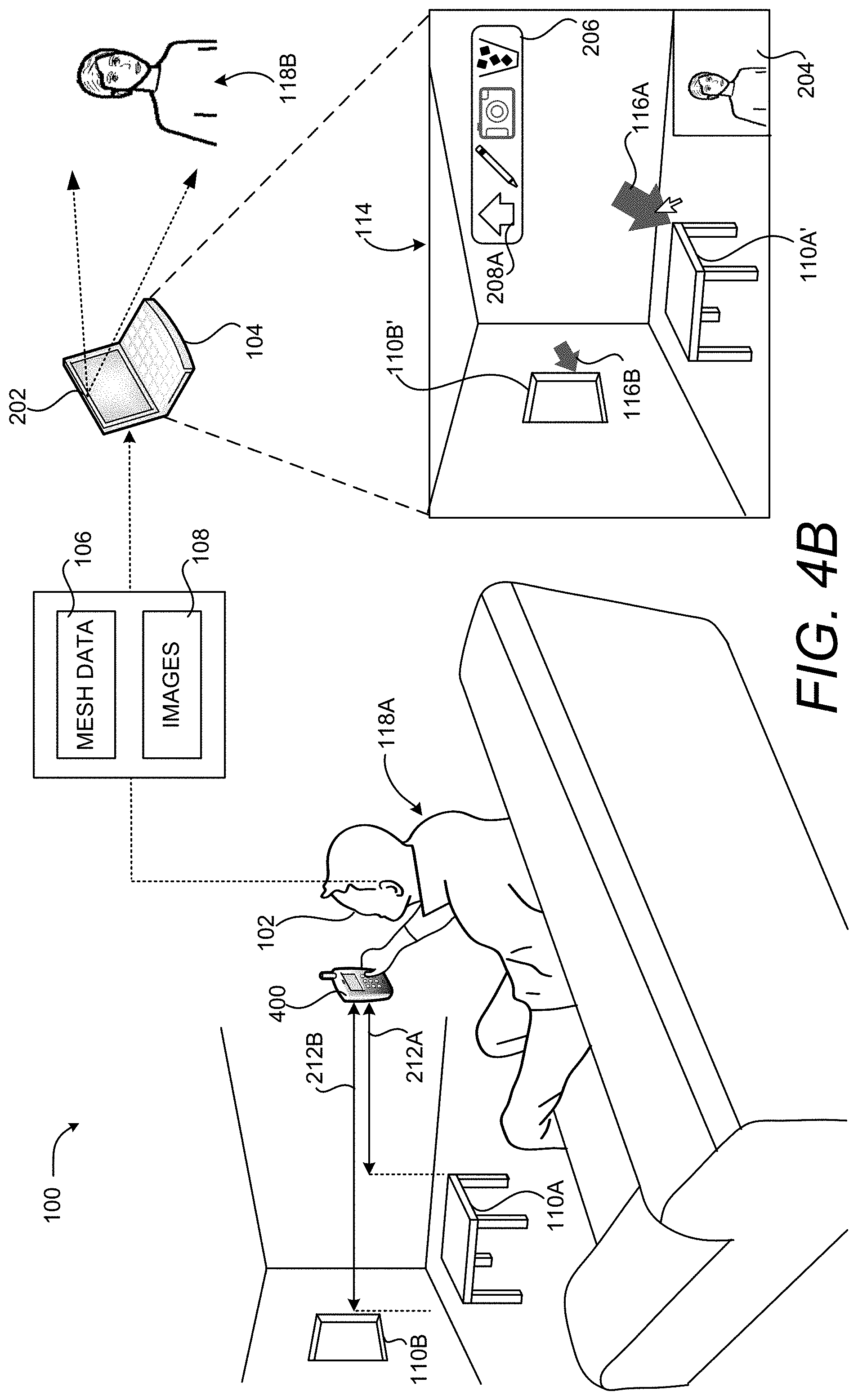

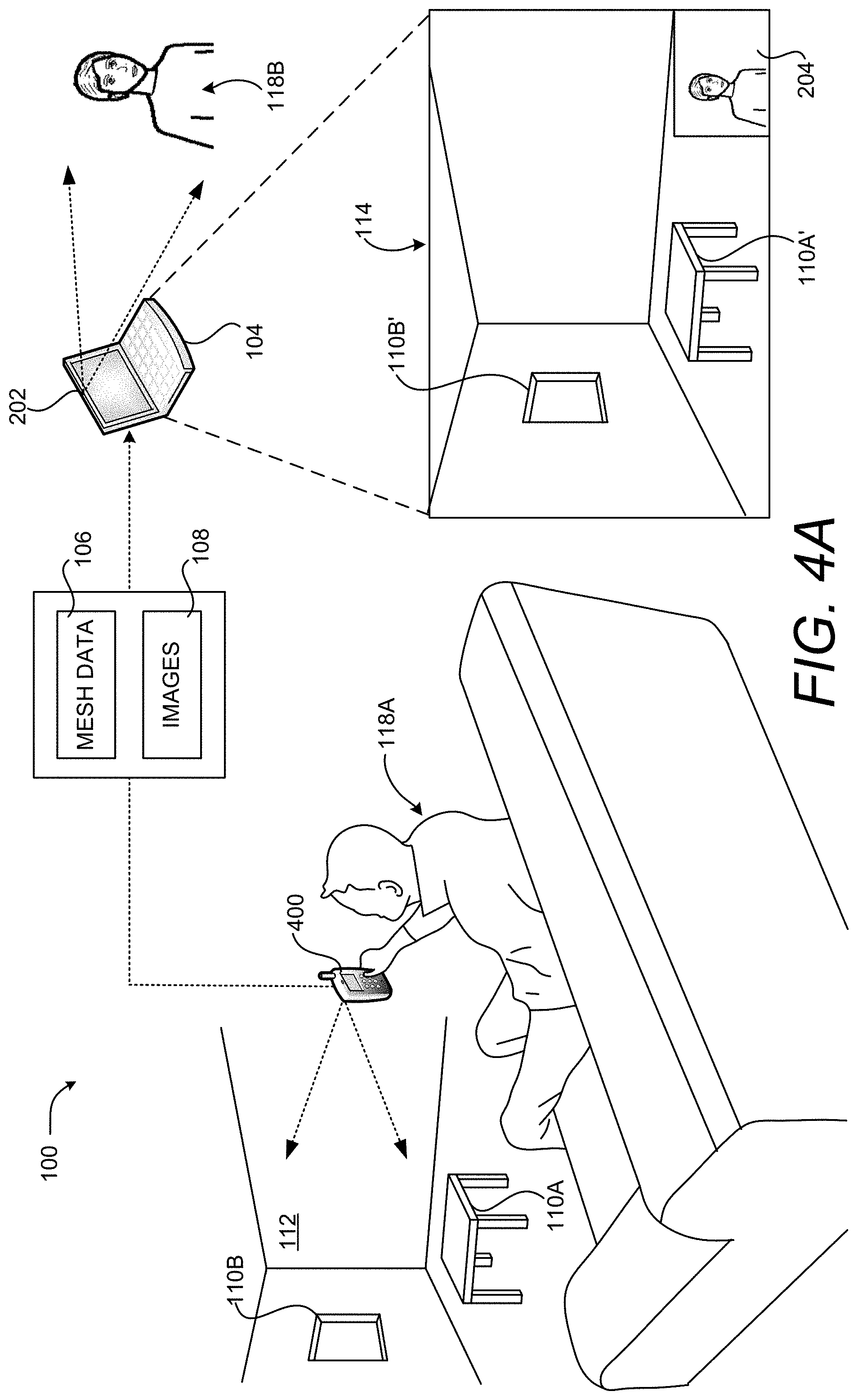

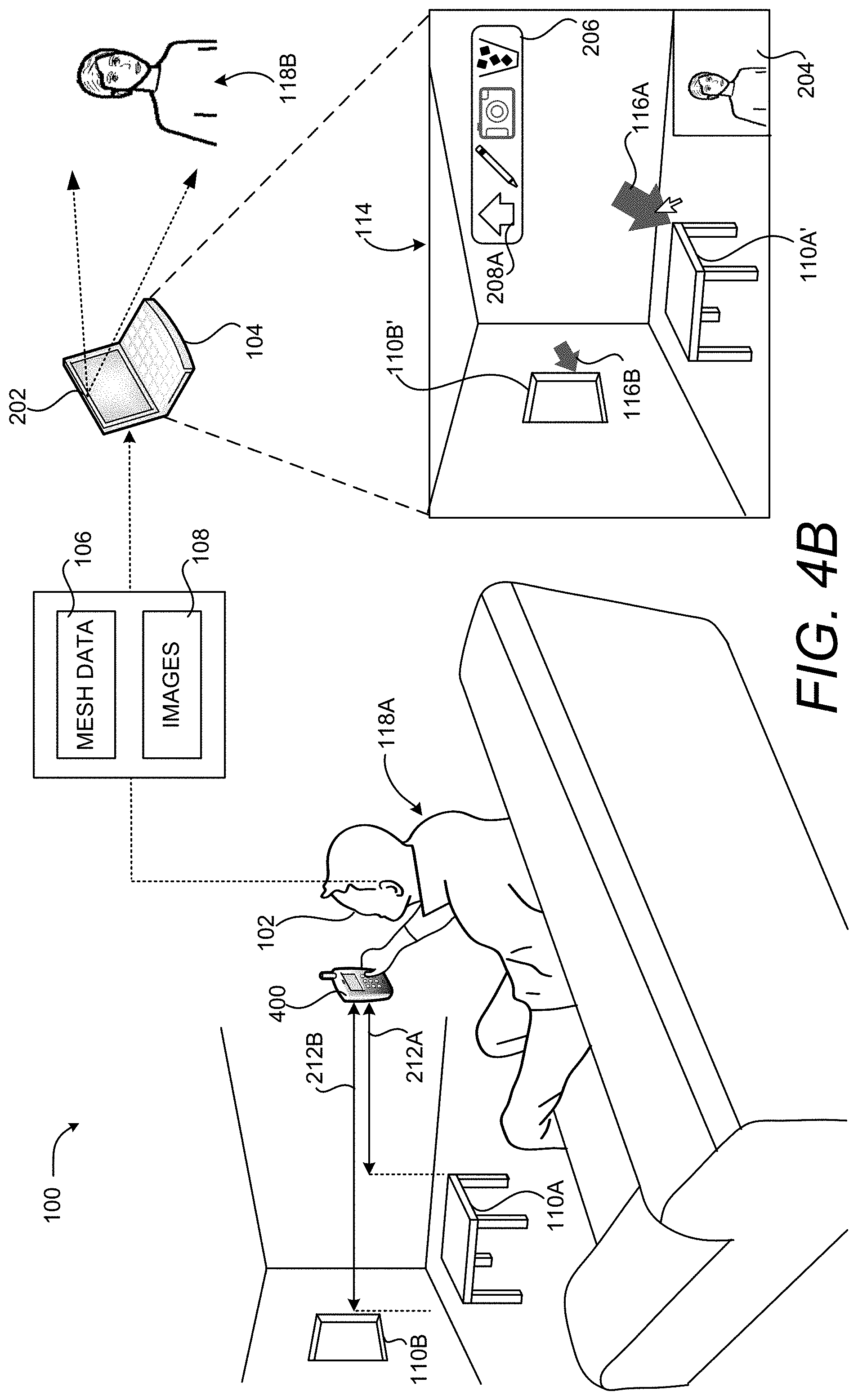

FIG. 4A is a computing system diagram showing aspects of another embodiment of the illustrative HCI shown in FIG. 1.

FIG. 4B is a computing system diagram illustrating additional aspects of the embodiment of the illustrative HCI shown in FIG. 4A.

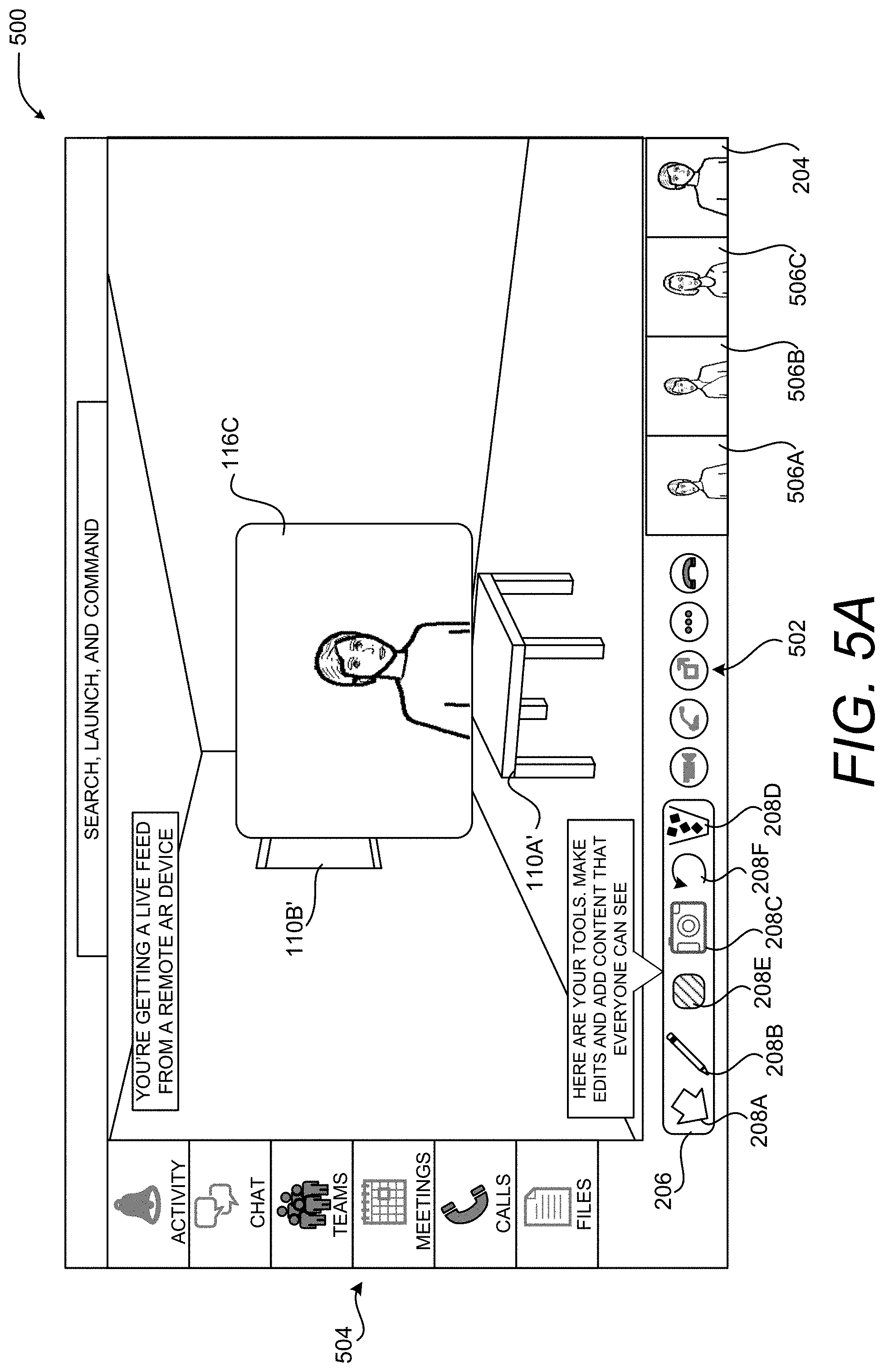

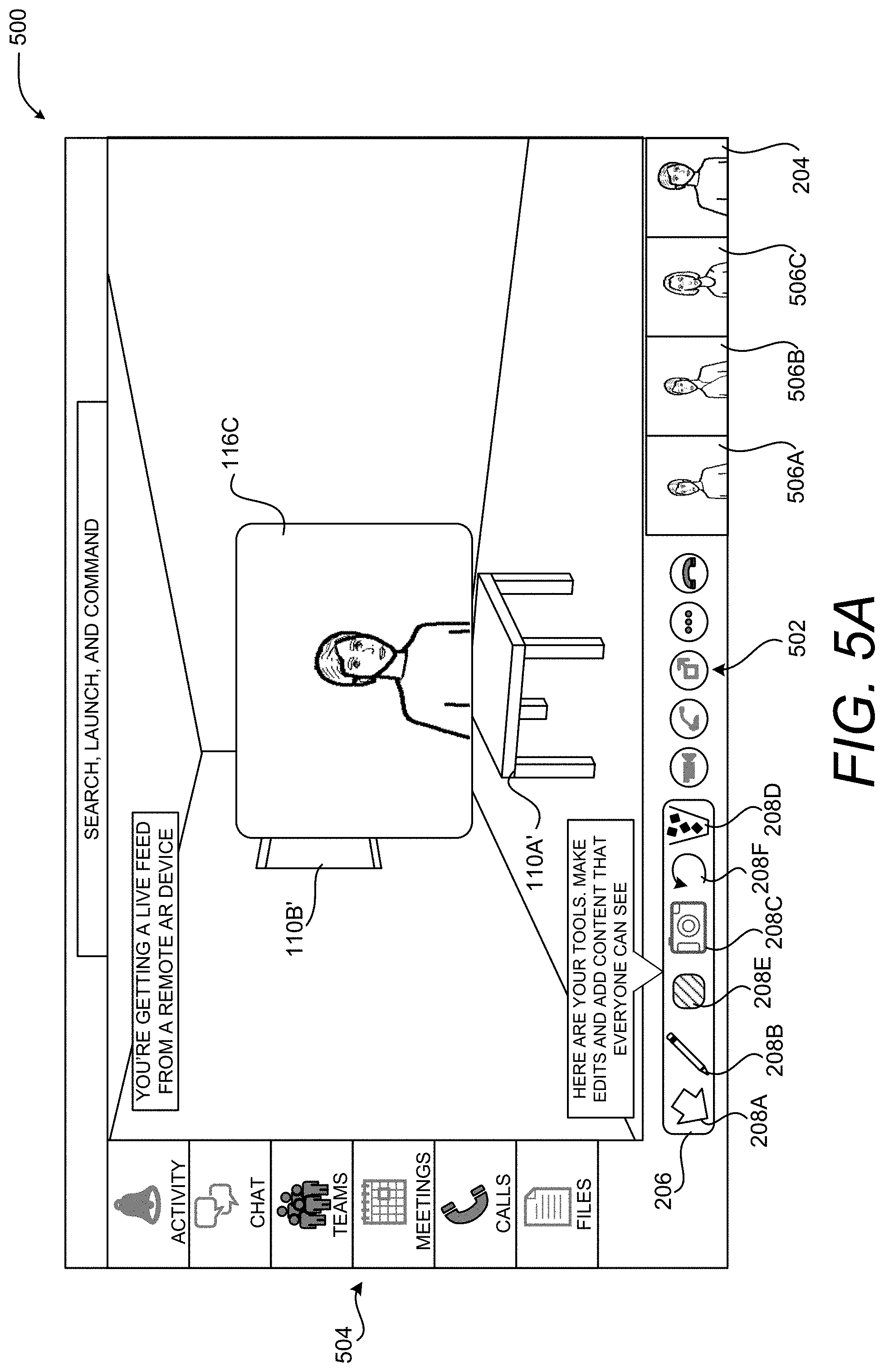

FIG. 5A is a UI diagram showing aspects of an illustrative UI that enables computationally efficient placement and sizing of virtual objects in a 3D representation of a real-world environment, according to one embodiment disclosed herein.

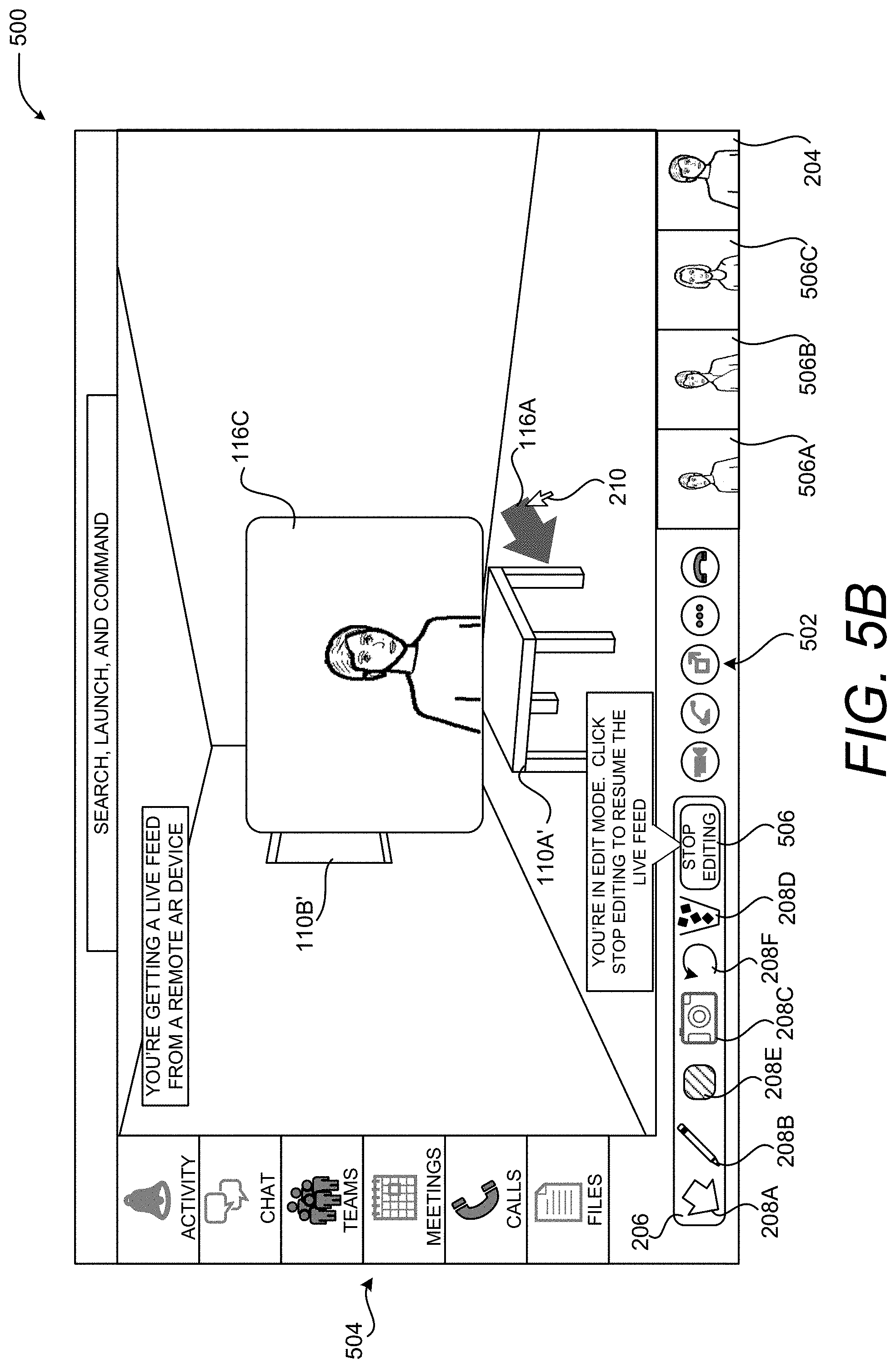

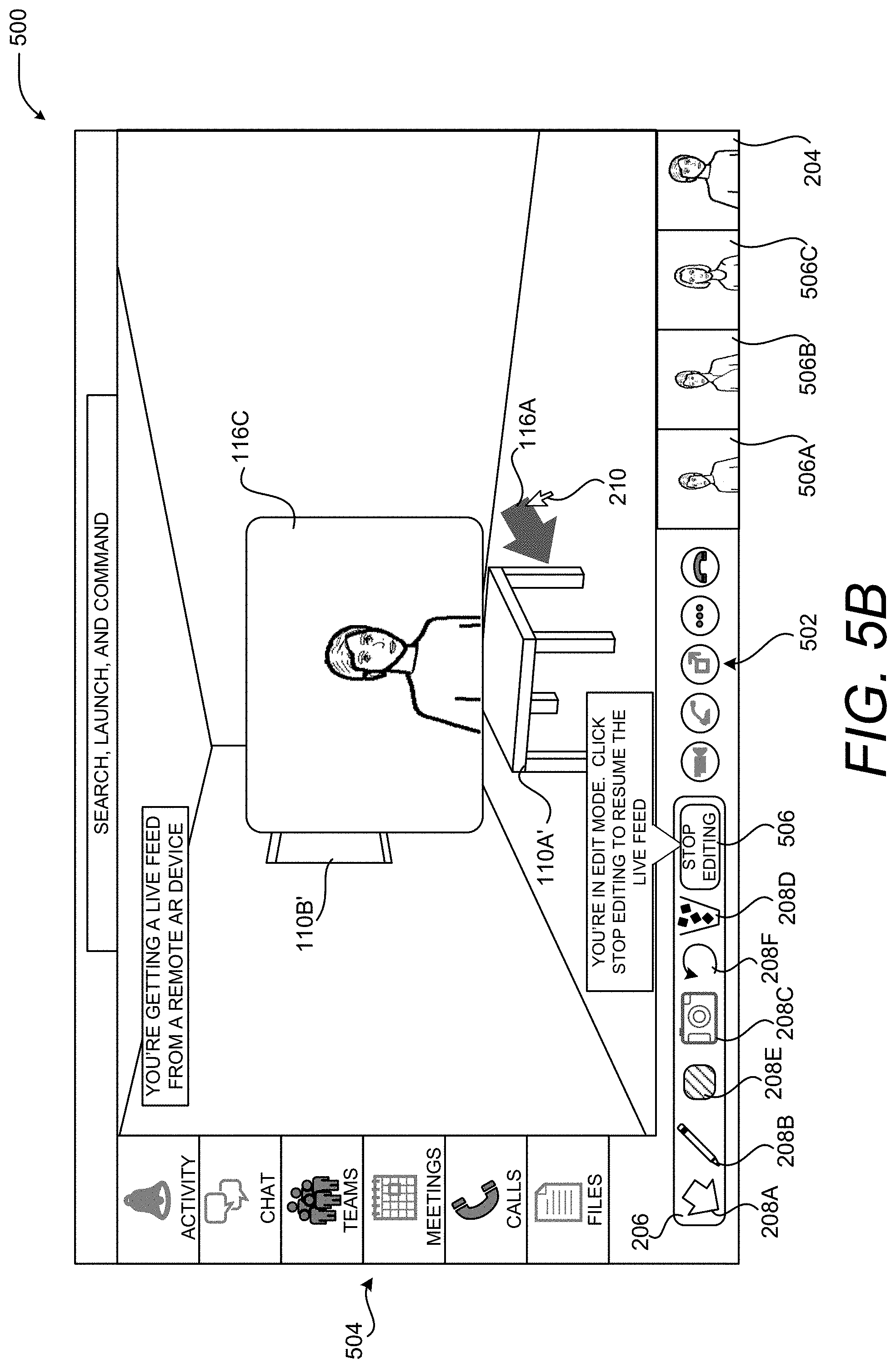

FIG. 5B is a UI diagram showing additional aspects of the illustrative UI shown in FIG. 5A, according to one embodiment disclosed herein.

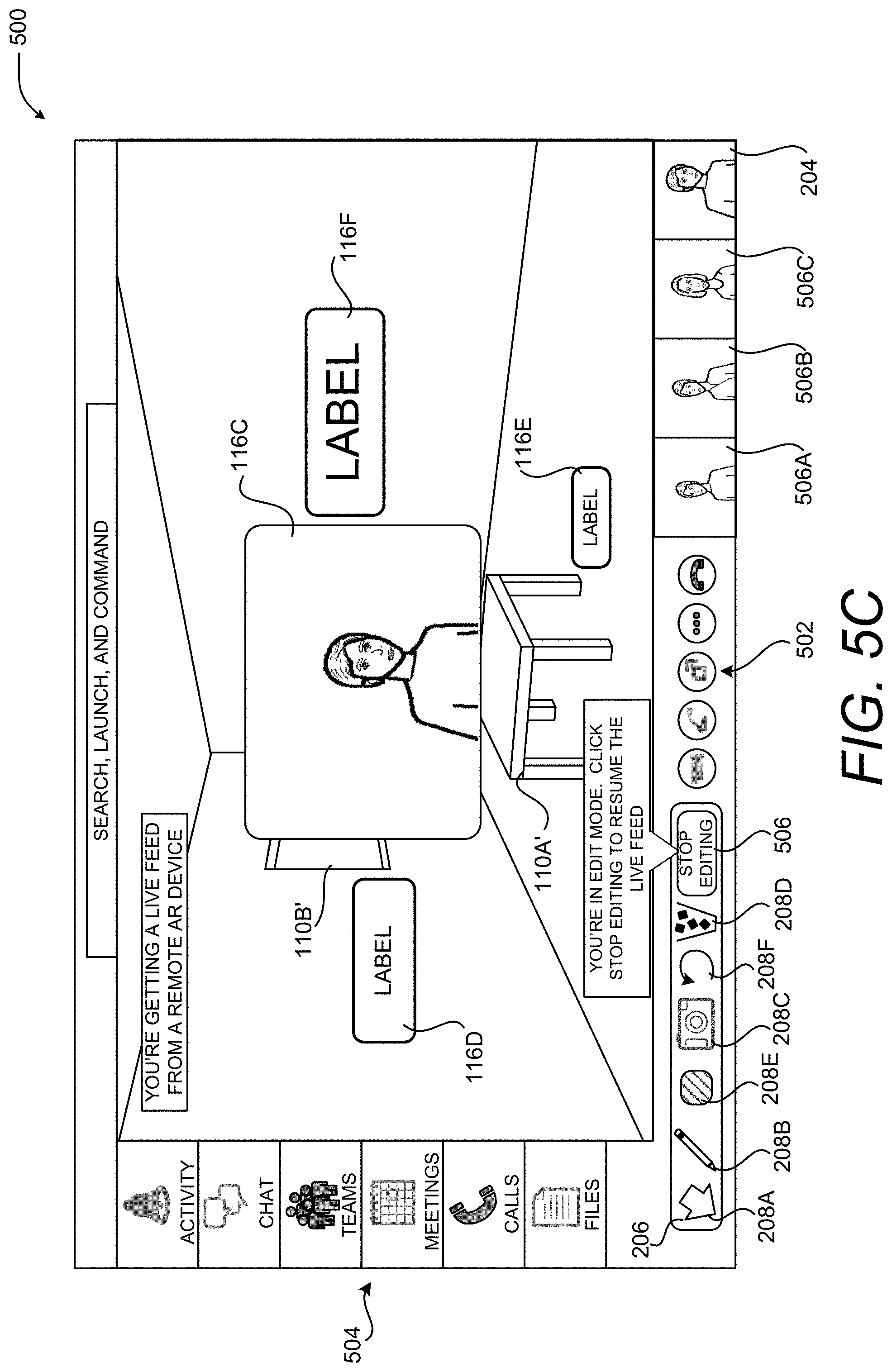

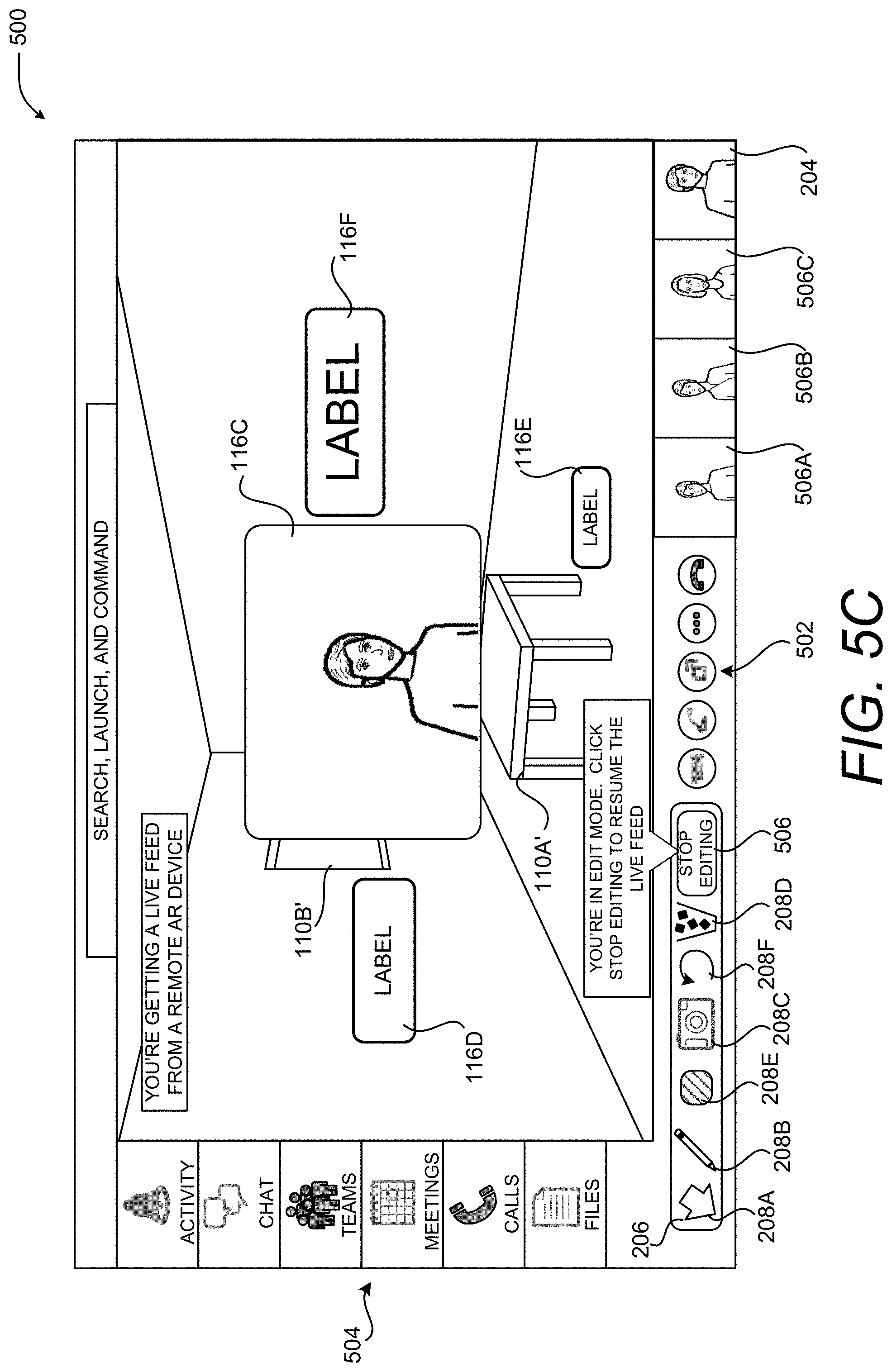

FIG. 5C is a UI diagram showing additional aspects of the illustrative UI shown in FIGS. 5A and 5B, according to one embodiment disclosed herein.

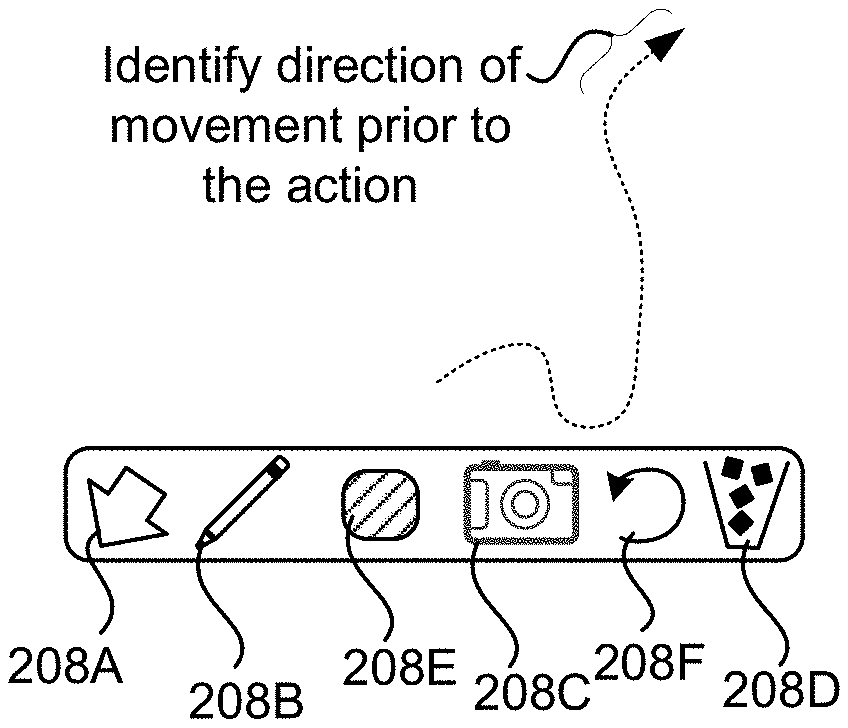

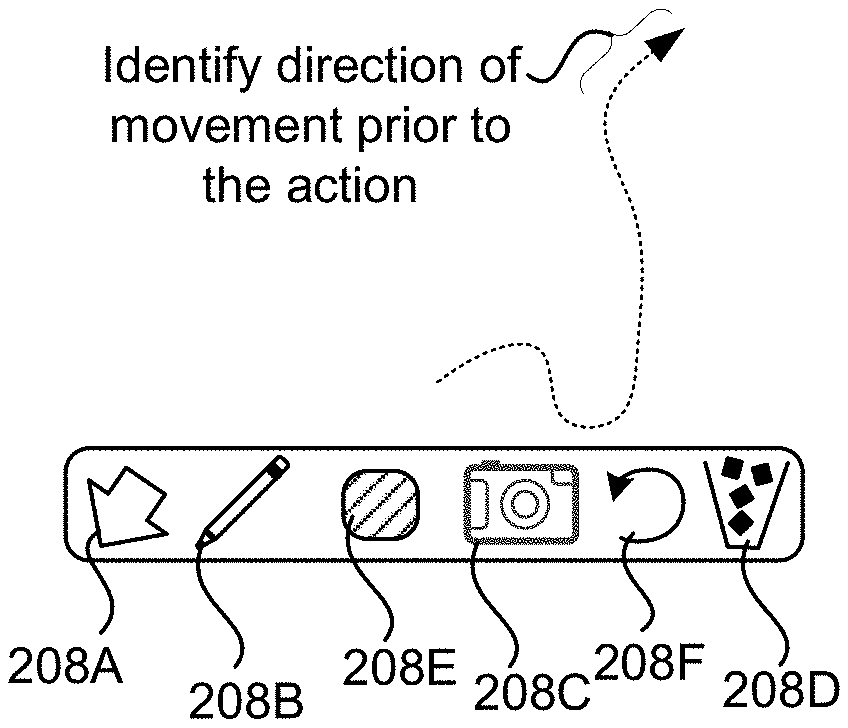

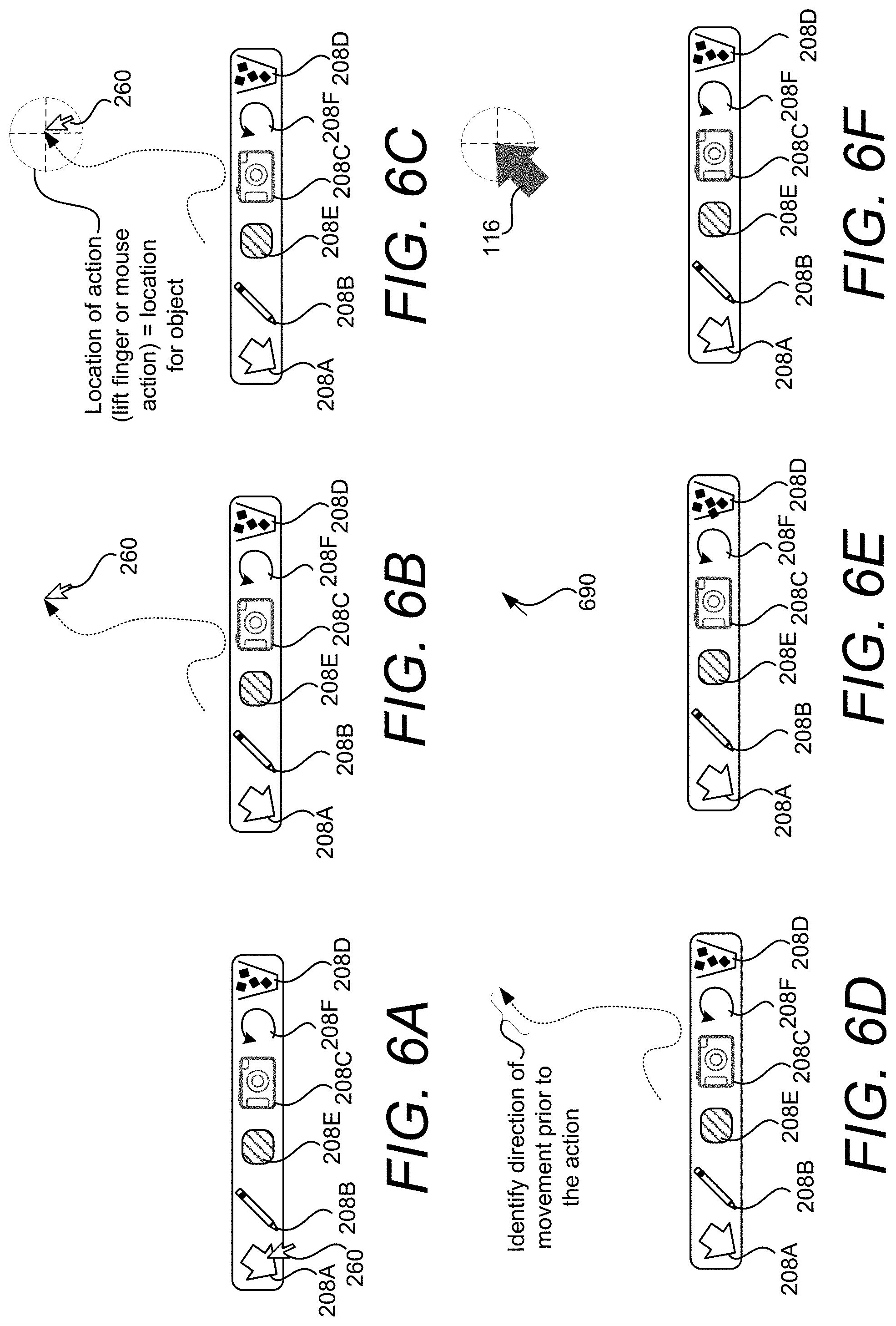

FIG. 6A is a UI diagram showing a selection process for invoking a computationally efficient placement and orientation of a virtual object in a 3D representation of a real-world environment.

FIG. 6B is a UI diagram showing the movement of an input gesture used for computationally efficient placement and orientation of a virtual object in a 3D representation of a real-world environment.

FIG. 6C is a UI diagram showing the location of the virtual object placed in response to an input gesture.

FIG. 6D is a UI diagram showing how the movement pattern of an input gesture can be used to identify an orientation for a virtual object.

FIG. 6E is a UI diagram showing a vector that is determined from the movement pattern of the pointing device.

FIG. 6F is a UI diagram showing the generation and rendering of a virtual object base on the movement pattern of the pointing device.

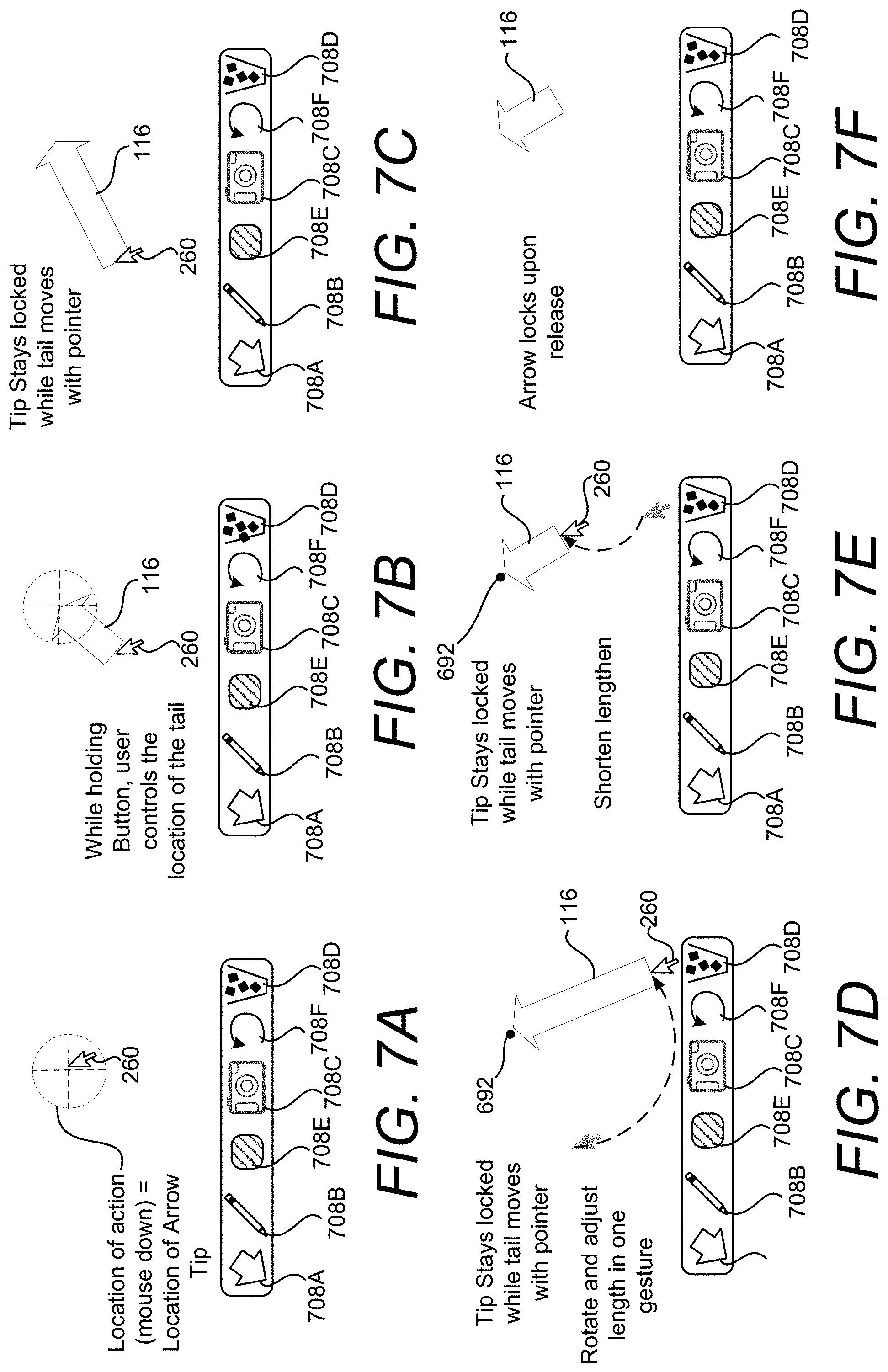

FIG. 7A is a UI diagram showing a location that is determined for placement of a virtual object based on an input action.

FIG. 7B is a UI that illustrates how the virtual object shown in FIG. 7A can be generated based on the input gesture.

FIG. 7C is a UI that illustrates how the virtual object shown in FIG. 7A can be resized and rotated in a clockwise direction based on the input gesture.

FIG. 7D is a UI that illustrates how the virtual object shown in FIG. 7A can be resized and rotated in a counterclockwise direction based on the input gesture.

FIG. 7E is a UI that illustrates how the virtual object shown in FIG. 7A can be resized to a smaller size based on the input gesture.

FIG. 7F is a UI diagram showing a full rendering of the virtual object that is positioned and oriented based on the input gesture.

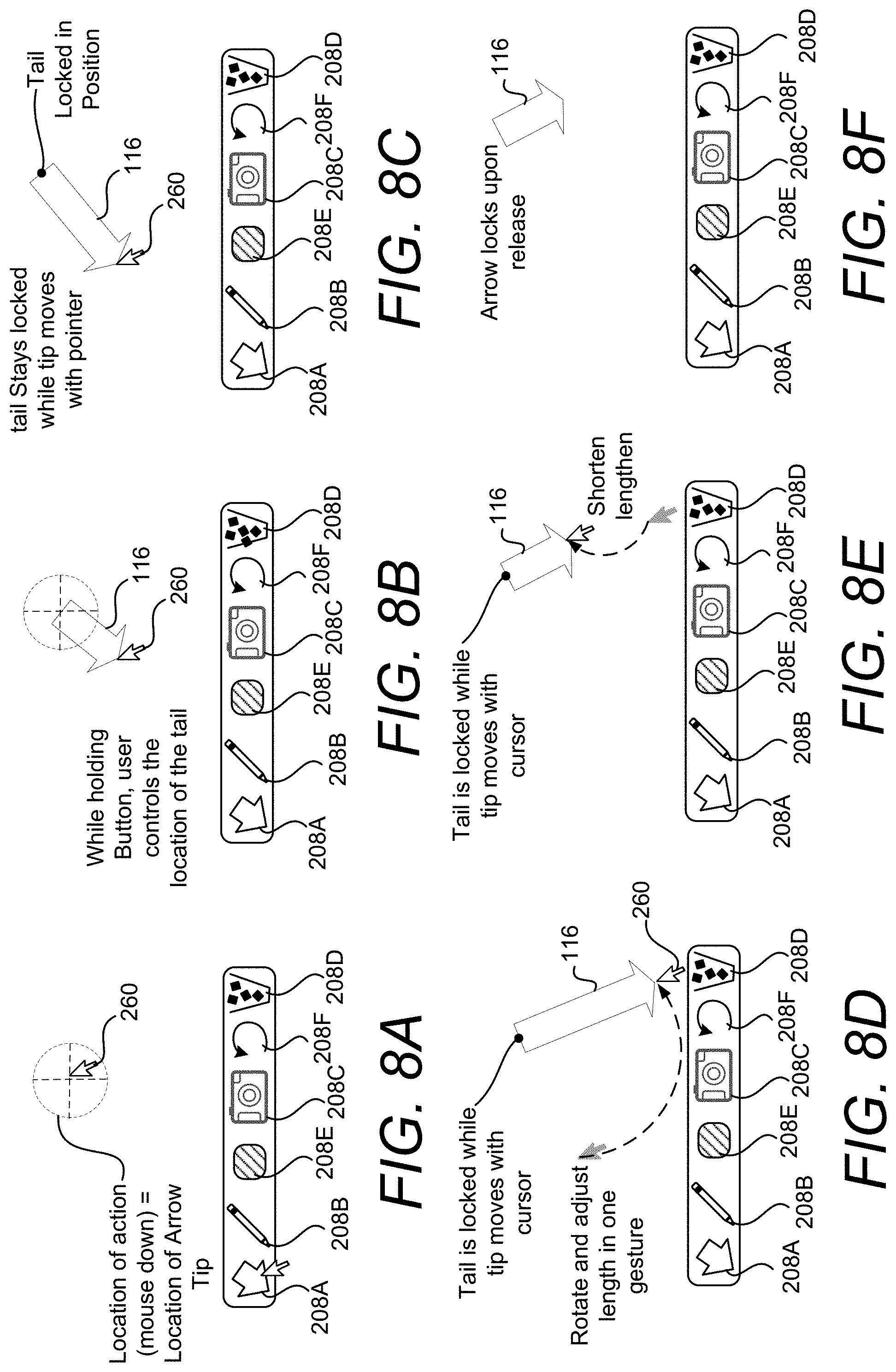

FIG. 8A is a UI diagram showing a location that is determined for placement of a virtual object based on an input action.

FIG. 8B is a UI that illustrates how the virtual object shown in FIG. 8A can be generated based on the input gesture.

FIG. 8C is a UI that illustrates how the virtual object shown in FIG. 8A can be resized and rotated in a clockwise direction based on the input gesture.

FIG. 8D is a UI that illustrates how the virtual object shown in FIG. 8A can be resized and rotated in a counterclockwise direction based on the input gesture.

FIG. 8E is a UI that illustrates how the virtual object shown in FIG. 8A can be resized to a smaller size based on the input gesture.

FIG. 8F is a UI diagram showing a full rendering of the virtual object that is positioned and oriented based on the input gesture.

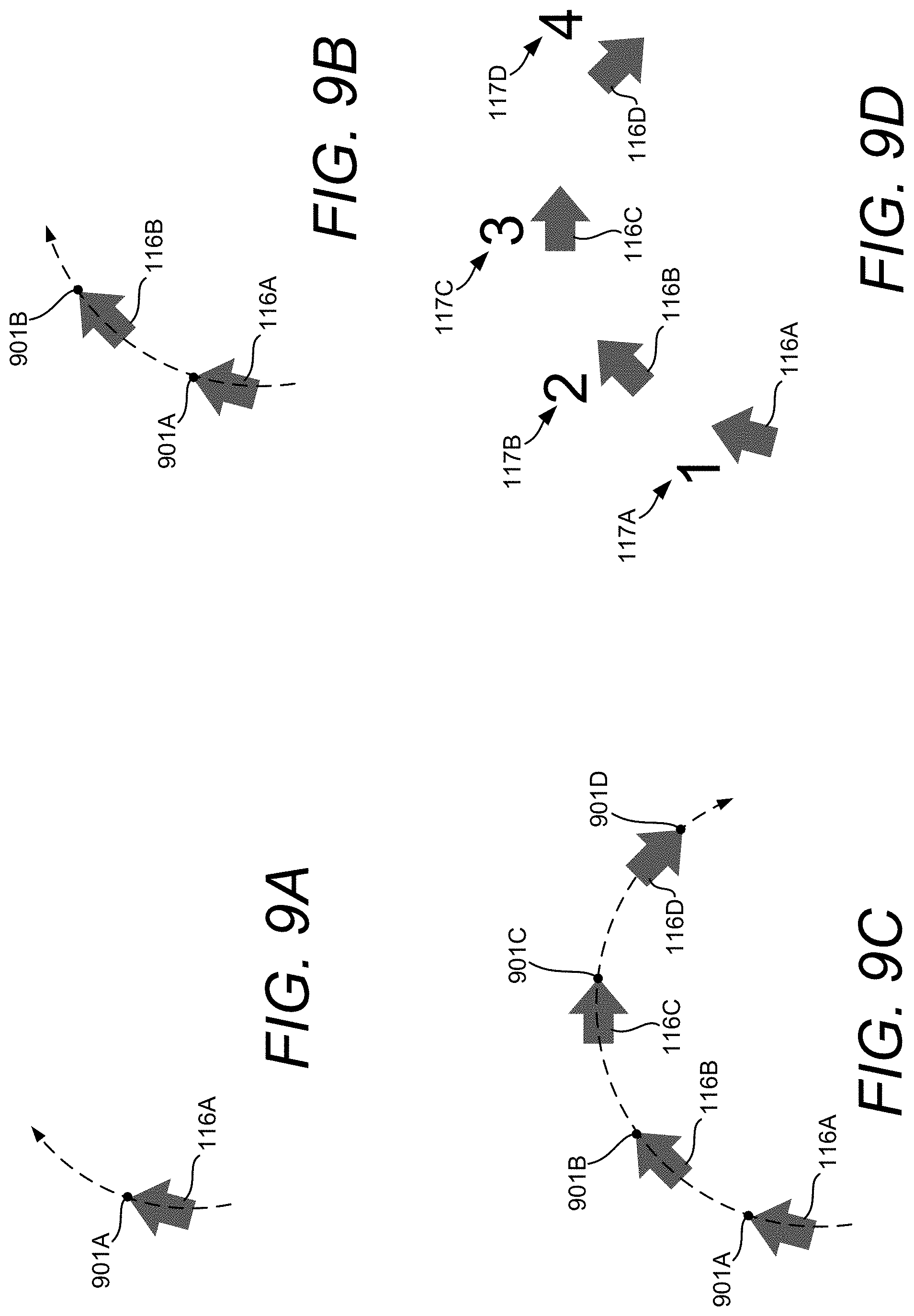

FIG. 9A is a UI diagram showing the start of an input gesture for placing a plurality of virtual objects.

FIG. 9B is a UI diagram showing the continuation of the input gesture shown in FIG. 9A for placing a plurality of virtual objects.

FIG. 9C is a UI diagram showing the continuation of the input gesture shown in FIG. 9B for placing a plurality of virtual objects.

FIG. 9D is a UI diagram showing the plurality of virtual objects placed by a user gesture shown in FIG. 9A through FIG. 9C.

FIG. 10 is a computing system diagram illustrating aspects of a routine for computationally efficient placement and sizing of virtual objects in a 3D representation of a real-world environment, according to one embodiment disclosed herein.

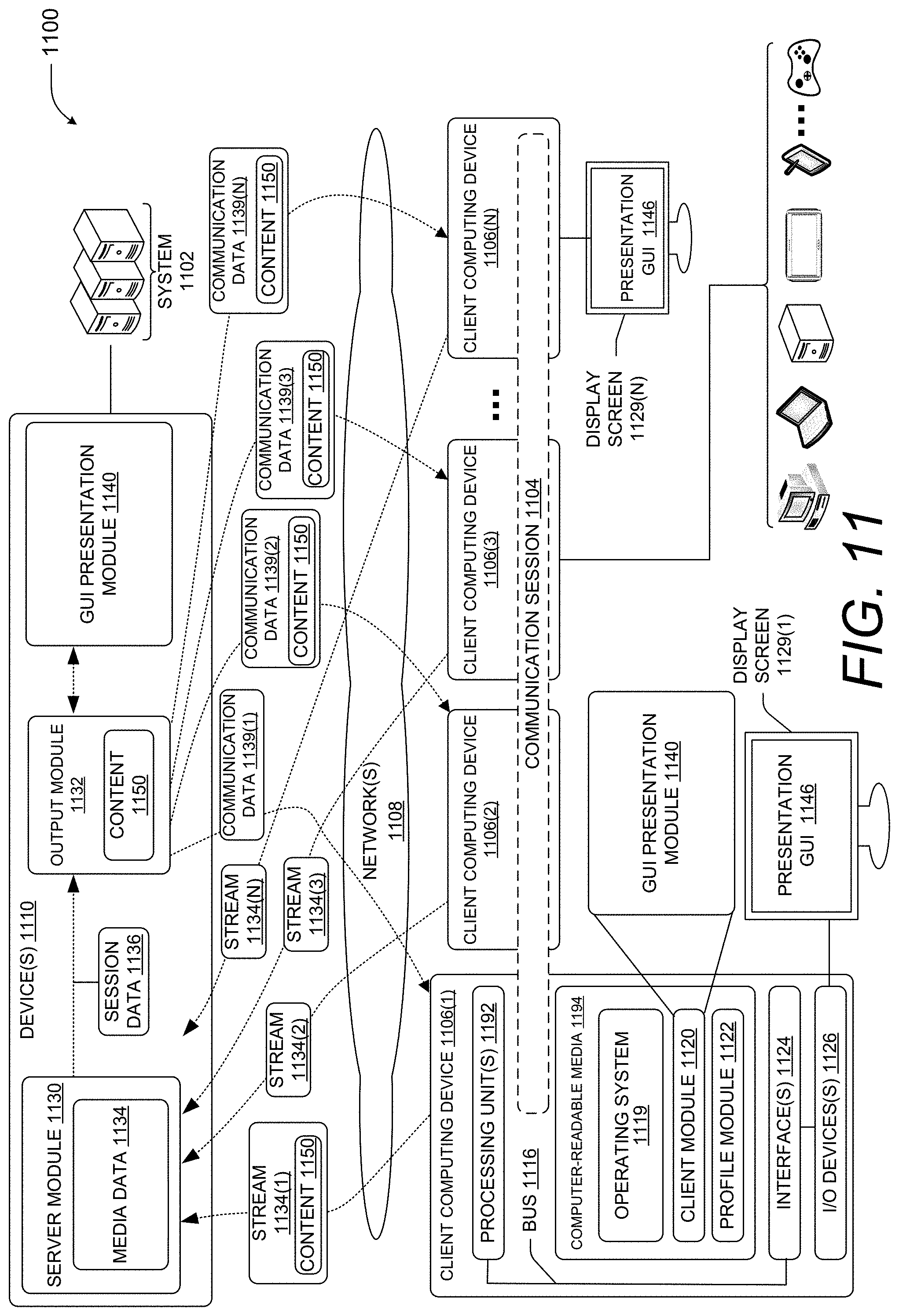

FIG. 11 is a computing system diagram showing aspects of an illustrative operating environment for the technologies disclosed herein.

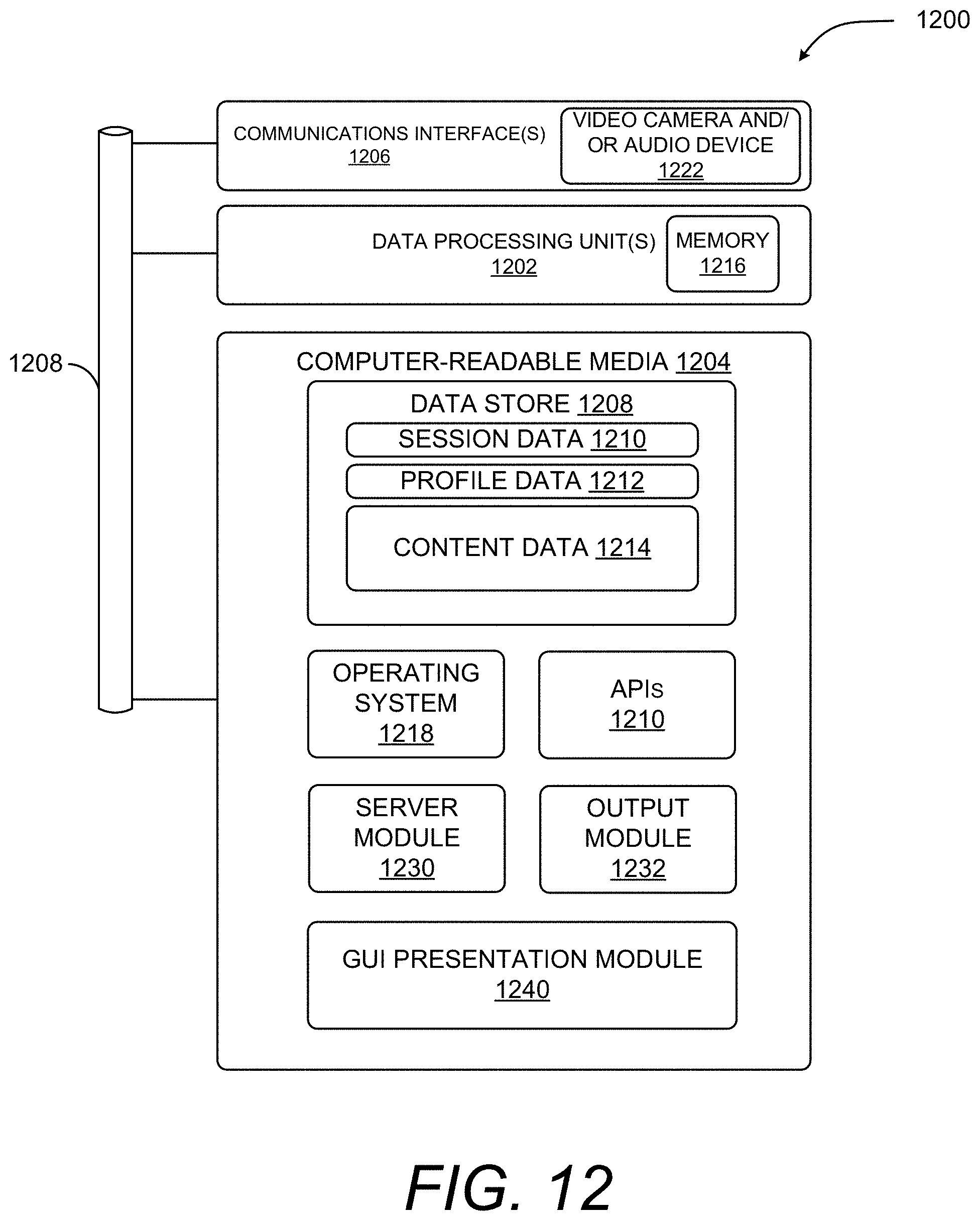

FIG. 12 is a computing architecture diagram showing aspects of the configuration and operation of a computing device that can implement aspects of the technologies disclosed herein.

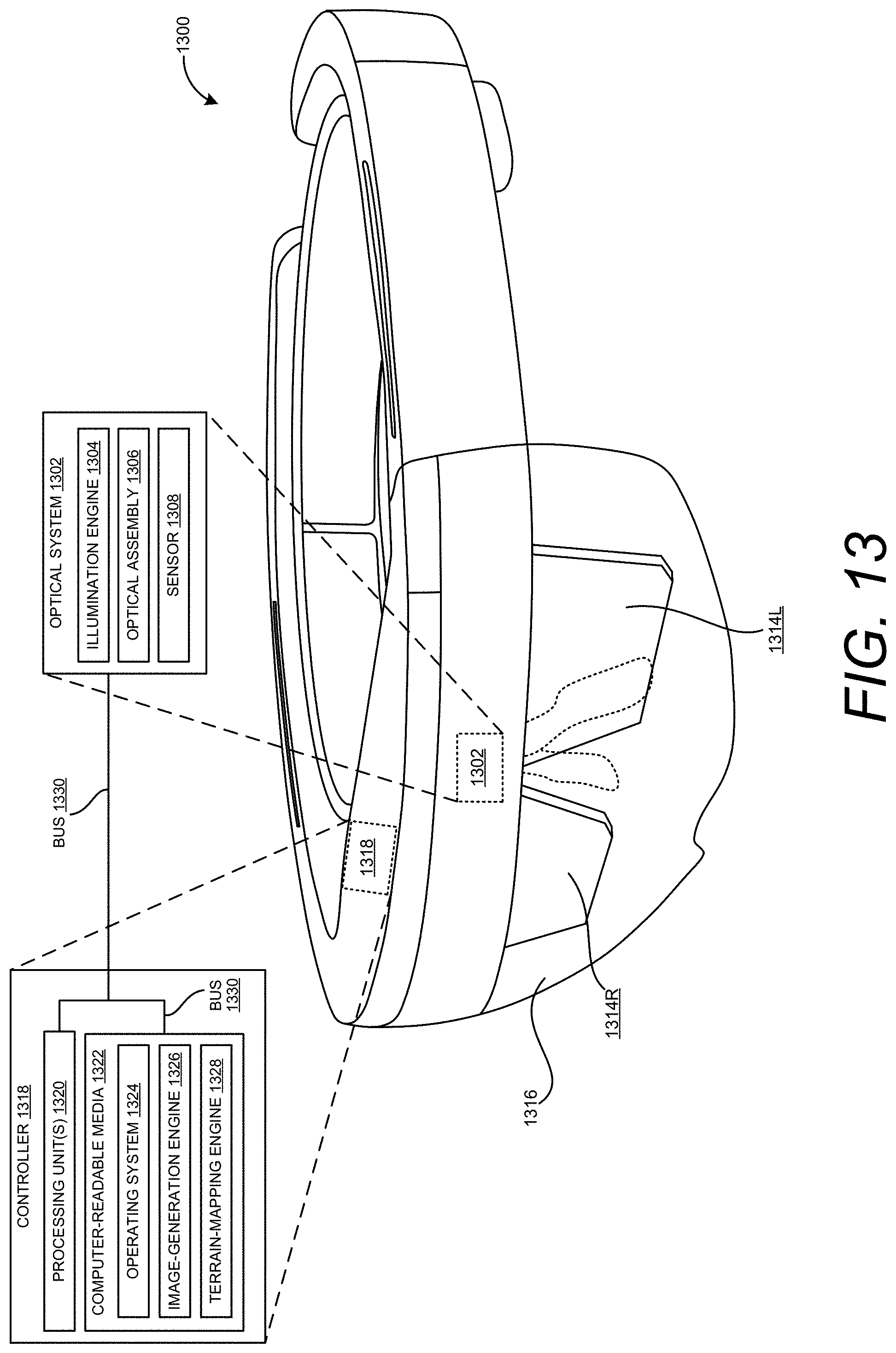

FIG. 13 is a computing device diagram showing aspects of the configuration and operation of an AR device that can implement aspects of the disclosed technologies, according to one embodiment disclosed herein.

DETAILED DESCRIPTION

The following Detailed Description describes an improved HCI for placing and sizing virtual objects in a 3D representation of a real-world environment. As mentioned above, the disclosed HCI can automatically size virtual objects in a 3D representation of a real-world environment such that the size of the objects corresponds to their depth in the 3D representation. This enables users to quickly place virtual objects at a desired location in the 3D representation of the real-world environment and have those virtual objects automatically sized such that they appear in perspective to the user. This can result in more efficient use of computing resources such as processor cycles, memory, network bandwidth, and power, as compared to previous solutions relying upon inefficient manual placement of virtual objects at a proper depth in a 3D environment. Technical benefits other than those specifically described herein might also be realized through implementations of the disclosed technologies.

As discussed briefly above, a networked meeting represents one popular form of electronic collaboration that utilizes an application program (e.g., CISCO WEBEX provided by CISCO SYSTEMS, Inc. of San Jose, Calif., GOTOMEETING provided by CITRIX SYSTEMS, INC. of Santa Clara, Calif., ZOOM provided by ZOOM VIDEO COMMUNICATIONS of San Jose, Calif., GOOGLE HANGOUTS by ALPHABET INC. of Mountain View, Calif., and SKYPE FOR BUSINESS and TEAMS provided by MICROSOFT CORPORATION, of Redmond, Wash.) to facilitate communication between two or more participants present at separate physical locations. As also discussed briefly above, participants of a communication session in a networked meeting are able to exchange live video, audio, and other types of content to view, hear, and otherwise share information. Participants can also view a common space, e.g., a whiteboard or a shared application, through which ideas can be exchanged. Viewing of the common space can be complemented with a video and audio conference, an instant messaging session, or any combination thereof, such that the networked meeting can act as a near substitute for an in-person meeting.

Various types of computing devices can be utilized to participate in networked meetings including, but not limited to, smartphones, tablet computing devices, set-top boxes, smart televisions, video game systems, and even AR, VR, and MR devices. When a participant utilizes an AR device to participate in a networked meeting, the AR device might capture that participant's view of their surrounding environment and transmit images or video of the view to the other meeting participants. The other meeting participants might then be presented with the images or video of the real-world environment surrounding the user wearing the AR device. The other meeting participants might also be able to view any virtual objects that the AR device has overlaid upon the real-world environment.

While meeting participants can view the real-world environment surrounding the user of an AR device and any virtual objects augmenting the user's view of the real-world environment, HCIs for allowing users to interact with, place, size, rotate, and otherwise modify such virtual objects have heretofore been very primitive. As a result, users often struggle to manually place and modify such virtual objects, which can result in inefficient use of computing resources such as, but not limited to, processor cycles, memory, network bandwidth, and power. Moreover, even when a user has successfully placed a virtual object in such an environment, the virtual object can be rendered in a manner that is confusing to the user. This can result in the user spending more time attempting to manually correct the size or placement of a new virtual object or to reorient an existing virtual object, which can also result in inefficient and unnecessary use of computing resources such as, but not limited to, processor cycles, memory, network bandwidth, and power. The disclosed HCI addresses the technical considerations set forth above, and potentially others, and thereby provides technical benefits to computing systems implementing the disclosed technologies.

Turning now to the figures (which might be referred to herein as a "FIG." or "FIGS."), additional details will be provided regarding an improved HCI disclosed herein with reference to the accompanying drawings that form a part hereof. The FIGS. show, by way of illustration, specific configurations or examples. Like numerals represent like or similar elements throughout the FIGS. In the FIGS., the left-most digit(s) of a reference number generally identifies the figure in which the reference number first appears. References made to individual items of a plurality of items can use a reference number with another number included within a parenthetical (and/or a letter without a parenthetical) to refer to each individual item. Generic references to the items might use the specific reference number without the sequence of letters. The drawings are not drawn to scale.

FIG. 1 is a computing system diagram illustrating aspects of an operating environment for the embodiments disclosed herein along with aspects of a HCI capable of enabling computationally efficient placement and sizing of virtual objects in a 3D representation of a real-world environment, according to one embodiment disclosed herein. As shown in FIG. 1, a system 100 disclosed herein utilizes a computing device 102 in some embodiments. The computing device 102, alone or in combination with one or more other devices (e.g. a local computer or one or more remote computing devices 104), might form a system 100 that performs or otherwise implements the various processes and techniques described herein.

In the configuration shown in FIGS., the computing device 102 takes the form of a wearable, head-mounted display device that is worn by a user. It will be understood, however, that the computing device 102 might take a variety of different forms other than the specific configurations depicted in the FIGS. Although the configurations disclosed herein are discussed primarily in the context of AR devices, it is to be appreciated that the technologies disclosed herein can also be utilized with other types of devices that include functionality for spatial mapping such as, but not limited to, appropriately configured VR devices, MR devices smartphones, and tablet computing devices.

The computing device 102 is configured with sensors, other hardware, and program code capable of 3D spatial mapping, such as an AR device or appropriately-configured smartphone, that generates mesh data 106. The mesh data 106 defines a 3D representation of a real-world environment 112, including any physical objects 110 in the real-world environment 112. Examples of mesh data 106 include, but are not limited to, a 3D depth map. The computing device 102 can also capture audio present in the real-world environment 112, such as speech of the user 118A. The computing device 102 also generates still or moving images 108 (i.e. a video) of the real-world environment 112. The terms "image" or "images," as used herein, encompass both still images and moving images, such as digital video.

The computing device 102 includes one or more display panels (not shown in FIG. 1) that display computer generated ("CG") graphics. For example, the computing device 102 might include a right-eye display panel for right-eye viewing and a left-eye display panel for left-eye viewing. A right-eye display panel is typically located near a right eye of the user to fully or partially cover a field of view of the right eye, and a left-eye display panel is located near a left eye of the user to fully or partially cover a field of view of the left eye.

In another example, a unitary display panel might extend over both the right and left eyes of a user and provide both right-eye and left-eye viewing via right-eye and left-eye viewing regions of the unitary display panel. In each of these implementations, the ability of the computing device 102 to separately display different right-eye and left-eye graphical content via right-eye and left-eye displays might be used to provide a user 118A of the computing device 102 with a stereoscopic viewing experience.

The computing device 102 might include a variety of on-board sensors. For example, and without limitation, a sensor subsystem (not shown in FIG. 1) might include one or more outward facing optical cameras (e.g., cameras located on an external surface of the computing device 102 and forward facing in a viewing direction of the user 118A), such as an optical camera. The computing device 102 can also include one or more inward facing optical cameras (also not shown in FIG. 1) (e.g., rearward facing toward the user 118A and/or toward one or both eyes of the user 118A).

The computing device 102 can also include a variety of other sensors (not shown in FIG. 1) including, but not limited to, accelerometers, gyroscopes, magnetometers, environment understanding cameras, depth cameras, inward or outward facing video cameras, microphones, ambient light sensors, and potentially other types of sensors. Data obtained by the sensors can be utilized to detect the location, orientation (which might be referred to as a "pose"), and movement of the computing device 102.

The one or more outward facing optical cameras of the computing device 102 can be configured to observe the real-world environment 112 and output images 108 illustrating the real-world environment 112 observed by a user 118A of the computing device 102. The optical cameras are red-green-blue ("RGB") cameras and infrared cameras in one embodiment. It is to be appreciated, however, that other types of cameras can be utilized in other configurations such as, but not limited to, black and white ("B&W") cameras. Additionally, and as mentioned above, the same or different cameras can be utilized for tracking motion of the head of the user 118A (i.e. "head tracking).

The computing device 102 might also include a processing subsystem (not shown in FIG. 1) that includes one or more processor devices that perform at least some of the processes and operations described herein, as defined by instructions executed by the processing subsystem. Such processes or operations might include generating and providing image signals to the display panels, receiving sensory signals from sensors such as cameras, enacting control strategies and procedures responsive to those sensory signals, generating the mesh data 106, and transmitting the mesh data 106 to one or more remote computing devices 104. Other computing systems, such as local or remote computing devices 104 might also perform some of the computational tasks disclosed herein.

The computing device 102 might also include an on-board data storage subsystem (not shown in FIG. 1) that includes one or more memory devices storing computer-executable instructions (e.g., software and/or firmware) executable by the processing subsystem and might additionally hold other suitable types of data. The computing device 102 might also include a communications subsystem supporting wired and/or wireless communications with remote devices (i.e., off-board devices) over a communications network (not shown in FIG. 1). As an example, the communication subsystem of the computing device 102 might be configured to wirelessly send or receive mesh data 106, images 108, digital audio, and/or other information to and from the remote computing device 104.

The computing device 102 can also be utilized to augment a user's view of the real-world environment 112 with virtual objects 116. The virtual objects 116 appear as if they are actually present in the real-world environment 112 when the real-world environment 112 is viewed with the computing device 102. Additional details regarding the configuration and operation of an illustrative computing device 102 will be provided below with regard to FIG. 11.

As described briefly above, the computing device 102 interacts with a remote computing device 104 in some embodiments. The remote computing device 104 may be a personal computer, a wearable computer, including a HMD, or any other type of computing device having components for causing a display of one or more images on a display, such as the illustrative graphical user interface ("UI") 114.

The remote computing device 104 executes an application program, or another type of program, that is configured to enable networked meetings, such as those described above. As also described briefly above, networked meetings can provide various types of communications sessions that enable participants, such as the user 118A and a user 118B of the remote computing device 104 to share information. Such communications sessions can include, but are not limited to, a broadcast session (i.e. one participant to many), a conference session (i.e. many participants to many participants), or a peer-to-peer session (i.e. one participant to one other participant). Additional details regarding one illustrative UI for participating in a networked meeting will be provided below with regard to FIGS. 5A-5C.

The remote computing device 104 also receives the mesh data 106 and images 108 generated by the computing device 102 and renders the images 108 in the UI 114. In this manner, a user 118B of the remote computing device can see the view of the real-world environment 112 as seen by the user 118A of the computing device 102 along with any virtual objects 116 augmenting that view in the UI 114. It should be appreciated that, although not illustrated in FIG. 1, various network devices and connections can be utilized to enable data communications between the computing device 102 and the remote computing device 104.

The application program executing on the remote computing device 104 can also receive user input (e.g. mouse or touch input) from the user 118B via the UI 114 that defines a location on the displayed images 108 at which a virtual object 116 is to be placed within the 3D representation of the real-world environment 112 defined by the mesh data 106. In the example shown in FIG. 1, for instance, the user 118B has utilized the UI 114 to place an arrow-shaped virtual object 116A that points to a rendering of a real-world object 110A' (i.e. the real-world object 110A (a table)). The user 118B has also utilized the UI 114 to place an arrow-shaped virtual object 116B that points to a rendering of a real-world object 110A' (i.e. the real-world object 110A (a window)).

Although not illustrated in FIG. 1, the user 118B can also place virtual objects 116 in association with other virtual objects 116. In this manner, the user 118B can bring attention to a real-world object 110 or a virtual object 116. Other types and shapes of two-dimensional ("2D") and 3D virtual objects 116 can be placed in a similar manner. One illustrative UI 114 for placing virtual objects 116 is described below with reference to FIGS. 5A-5C.

When a user 118B places a virtual object 116 on the rendering of the images 108, the application program executing on the computing device 104 utilizes the mesh data 106 to determine the depth of the location specified by the user 118B within the 3D representation of the real-world environment 112. For example, and without limitation, the computing device 104 can determine the depth of the specified location on the z-axis of the depth map defined by the mesh data 106.

The application program executing on the remote computing device 104 can then determine the size at which the virtual object 116 is to be rendered in the UI 114 based upon the computed depth. For instance, virtual objects 116 nearer to a user's viewpoint into the 3D representation of the real-world environment 112 can be rendered larger than those virtual objects 116 that appear further away from the user.

In some embodiments, the depth of the location within the 3D representation of the real-world environment 112 corresponds to the depth of a real-world object 110 at the specified location in the real-world environment 112. In this manner, virtual objects 116 can be located at the same depth in the 3D representation of the real-world environment 112 as real-world objects 110. In the example shown in FIG. 1, for instance, the virtual object 116A has been placed at the same depth as the real-world object 110A. Similarly, the virtual object 116B has been placed at the same depth as the real-world object 110B. As a result, the virtual object 116A appears larger than the virtual object 116B because the real-world object 110A is closer to the user 118A than the real-world object 110B.

The depth of the location within the 3D representation of the real-world environment 112 might alternately correspond to the depth of another virtual object 116 in the 3D representation of the real-world environment 112. In this manner, a user 118B can define virtual objects 116 located at the same depth in the 3D representation of the real-world environment 112 as other virtual objects 116. Additional details regarding this aspect will be provided below with regard to FIGS. 3A and 3B.

Once the size of the virtual object 116 to be placed has been computed, the remote computing device 104 can render the virtual object 116 in the UI 114 at the computing size and at the specified location within the 3D representation of the real-world environment 112. In this manner, the virtual object 116 appears as if it were actually present in the real-world environment 112. Moreover, using the mechanism described above, users can more quickly place virtual objects 116 at desired locations in a 3D representation of a real-world environment 112 and have those virtual objects 116 automatically sized such that they appear in perspective to the user 118B. This can result in more efficient use of computing resources such as processor cycles, memory, network bandwidth, and power, as compared to previous solutions relying upon inefficient manual placement of virtual objects 116 at a proper depth in a 3D environment.

The computing device 104 can also update the mesh data 106 to include the newly-placed virtual object 116 and provide the updated mesh data 106 to the computing device 102 so that the user 118A of the computing device 102 can view the newly-placed virtual object 116 as if were actually present in the real-world environment 112. In the example shown in FIG. 1, for instance, the virtual objects 116A and 116B appear to the user 118A as if they were actually present in the real-world environment 112. The updated mesh data 106 can also be provided to the computing devices of other participants in the networked meeting for display. Similarly, the updated mesh data 106 can be provided to a server computer (not shown in FIG. 1) for provision to other computing devices 102 and 104.

In some scenarios, the real-world object 110 at the specified location in the real-world environment 112 is planar such as, for instance, a wall, a whiteboard, or the window shown in FIG. 1. In these scenarios, a virtual object 116 can be transformed and rendered in the same plane as the real-world object 110. In this manner, both the size of the virtual object 116 and its orientation in 3D space can be defined such that the virtual object 116 appears in perspective when rendered by the remote computing device 102 and viewed by the user 118A.

The computing device 104 can also modify visual attributes of virtual objects 116 based upon their location within the 3D representation of the real-world environment 112. For example, and without limitation, the brightness or color of virtual objects 116 can be modified based on their depth in the 3D representation of the real-world environment 112. This can make virtual objects 116 that are deeper in the 3D representation to appear darker than those that are closer to a user's 118A viewpoint.

Visual attributes of virtual objects 116 can also be modified as the ambient lighting in the real-world environment 112 changes. For example, the intensity of virtual objects 116 might be reduced as the real-world environment 112 darkens. The virtual objects 116 might also be rendered with shadows depending upon the location of a light source within the real-world environment.

In some embodiments, the virtual object 116 is fixed at the location specified within the 3D representation of the real-world environment 112. In this manner, the virtual objects 116 remain in their specified location even when a user 118A of the computing device 102 moves their head or otherwise changes position. Additional details regarding this aspect will be provided below with regard to FIG. 2C.

In some embodiments the UI 114 further includes one or more UI controls (not shown in FIG. 1) for manipulating virtual objects 116. For example, and without limitation, the UI controls can provide functionality for rotating, coloring, transforming, deleting, replacing, or otherwise modifying the visual appearance of a virtual object 116. Additional details regarding this aspect will be provided below with regard to FIGS. 5A-5C.

FIGS. 2A-2C are computing system diagrams showing additional aspects of the illustrative HCI described above with reference to FIG. 1, according to one embodiment disclosed herein. As shown in FIG. 2A, the remote computing device 104 can be equipped with an imaging sensor 202, like a video camera, in some embodiments. The imaging sensor 202 faces the user 118B and captures images 108 of the user 118B. A rendering 204 of the images 108 of the user 118B on the remote computing device 104 can be displayed in the UI 114. In this manner, a user 118B can see themselves during a networked conference.

As shown in FIG. 2A, the UI 114 also includes a UI 206 containing UI controls 208 in some embodiments which, when selected, enable the placement, modification, or deletion of content on the images 108. The UI control 208A enables a user 118B to place a virtual object 116, in this example an arrow, on the rendering of the images 108. The UI control 208B enables a user 118B to draw on the rendering of the images 108. The UI control 208C enables a user to place a digital picture over the rendering of the images 108. The UI control 208D enables a user to delete a previously-placed virtual object 116, a drawing, or picture. Other UI controls 208 can be provided in other embodiments, some of which are described below with regard to FIGS. 5A-5C.

As discussed briefly above, a user 118B can interact with the UI 206 utilizing mouse input, touch input, or other types of input. In the example shown in FIG. 2A, for instance, the user 118B has moved a mouse cursor 210 over the UI control 208A in order to initiate the placement of a virtual object 116. As shown in FIG. 2B, the user 118B has clicked on the UI control 208A and dragged toward the rendering of the real-world object 110A'. When the user 118B releases the mouse button, the virtual object 116A is placed at the specified location. Details regarding one specific HCI for placing and positioning virtual objects 116 is described below with regard to FIGS. 6A-6C.

In one embodiment, the rendering of the real-world environment 112 shown in the UI 114 is paused while the user 118B is placing a virtual object 116. By pausing the rendering of the real-world environment 112, the user 118B can more easily place a virtual object 116 on the images 108. Once the virtual object 116 has been placed, the rendering of the real-world environment 112 can resume. In other embodiments, the rendering of the real-world environment 112 shown in the UI 114 is not paused while the user 118B is placing a virtual object 116.

As discussed briefly above, when a user 118B places a virtual object 116 on the rendering of the images 108, the application program executing on the computing device 104 utilizes the mesh data 106 to determine the depth of the location specified by the user 118B within the 3D representation of the real-world environment 112. In one embodiment, the depth of anew virtual object 116 is the same as the depth of a real-world object 110 or a virtual object 116 that exists at the specified location for the new virtual object 116.

In the example shown in FIG. 2B, for instance, the remote computing device 104 can determine the location for the virtual object 116A based upon the location of the real-world object 110A in 3D space. The computing device can also calculate the distance 212A from the computing device 102 to the real-world object 110A (i.e. the table) using the mesh data 106. This distance can be utilized to size the virtual object 116A. Similarly, the remote computing device 104 can determine the depth of the virtual object 116B based upon the depth of the real-world object 110B (i.e. the window). The remote computing device 104 can then calculate the distance 212B from the computing device 102 to the real-world object 110B using the mesh data 106. This distance is utilized to size the virtual object 116B.

Because the depth 212B is greater than the depth 212A, the size of the virtual object 116A is greater than the size of the virtual object 116B when rendered in the UI 114. In this manner, virtual objects 116 nearer to a user's viewpoint into the 3D representation of the real-world environment 112 can be rendered larger than those virtual objects 116 that appear further away from the user 118A.

Once the size of the virtual object 116 to be placed has been computed, the remote computing device 104 can render the virtual object 116 in the UI 114 at the computed size and at the specified location within the 3D representation of the real-world environment 112. In this manner, the virtual object 116 appears as if it were actually present in the real-world environment 112.

The computing device 104 can also update the mesh data 106 to include the newly-placed virtual object 116 and provide the updated mesh data 106 to the computing device 102 so that the user 118A of the computing device 102 can view the newly-placed virtual object 116 as if were actually present in the real-world environment 112. In the example shown in FIG. 1, for instance, the virtual objects 116A and 116B appear to the user 118A as if they were actually present in the real-world environment 112.

The updated mesh data 106 can also be provided to the remote computing devices 104 of other participants in the networked meeting for display. Similarly, the updated mesh data 106 can be provided to a server computer (not shown in FIG. 1) for storage and distribution to other computing devices 102 and 104.

As discussed above, the virtual object 116 is fixed, or "pinned," at the location specified within the 3D representation of the real-world environment 112 in some embodiments. In this manner, virtual objects 116 remain in their specified location with respect to real-world and virtual objects even when a user 118A of the computing device 102 moves their head or otherwise changes position.

In the example shown in FIG. 2C, for instance, the user 118A has rotated their head clockwise and, as a result, their view of the real-world environment 112 has changed. Consequently, the rendering of the real-world environment 112 shown in the UI 114 has changed accordingly. However, the locations of the virtual objects 116A and 116B have not changed relative to the locations of the rendered real-world objects 110A' and 110B' to which they are pinned.

FIGS. 3A and 3B are computing system diagrams illustrating aspects of another embodiment of the illustrative HCI described above with reference to FIG. 1. In the embodiment shown in FIGS. 3A and 3B, another virtual object 116C exists in the 3D representation of the real-world environment 112. In this example, the virtual object 116C is an image 108 of the user 118B of the remote computing device 104. The virtual object 116C is located in the 3D representation of the real-world environment 112 such that it appears at a comfortable viewing distance (e.g. six feet) from the user 118A when viewed using the computing device 102.

As shown in FIG. 3B, the user 118B can also place virtual objects 116 in association with other virtual objects 116, such as the virtual object 116C. In this example, the user 118B has placed a virtual object 116D at a location adjacent to the virtual object 116C. As a result, the computing device 104 has computed the depth for the virtual object 116D based upon the depth of the virtual object 116C in the 3D representation of the real-world environment 112 defined by the mesh data 106. Using this mechanism, the user 118B can bring attention to a real-world object 110 or a virtual object 116. Other types and shapes of 2D and 3D virtual objects 116 can be placed in a similar manner.

FIGS. 4A and 4B are computing system diagrams illustrating aspects of another embodiment of the illustrative HCI described above with reference to FIG. 1. As illustrated in FIG. 4A, other types of computing devices can be utilized to generate the mesh data 106 and the images 108 in some embodiments. In the illustrated embodiment, for example, a suitably equipped smartphone 400 can be utilized to generate the mesh data 106 and the images 108. In order to accomplish this, the smartphone 400 can be equipped with two cameras. The cameras generate images 108 of the same real-world environment. The smartphone 400 can then compute the depth map for the real-world environment using the stereo images.

As shown in FIG. 4B, the smartphone 400 can compute the distance 212A from the smartphone 400 to the real-world object 110A (i.e. the table) using the mesh data 106. Similarly, the smartphone 400 can determine the depth of the virtual object 116B based upon the depth of the real-world object 110B (i.e. the window). The smartphone 400 can then provide the mesh data 106 to the remote computing device 104 for use in the manner described above. The smartphone 400 can receive the updated mesh data 106 and augment its display of video captured by its camera with the virtual objects 116.

As discussed above, the mesh data 106, including the location of any virtual objects 116 contained therein, can be stored at a server computer. In this embodiment, a computing device such as a smartphone 400 can retrieve the mesh data 106 and utilize the mesh data 106 to augment its display of the real-world environment 112 even if the device is incapable of generating the mesh data 106.

FIGS. 5A-5C are UI diagrams showing aspects of an illustrative UI 500 that enables computationally efficient placement and sizing of virtual objects 116 in a 3D representation of a real-world environment 112, according to one embodiment disclosed herein. As discussed briefly above, the technologies disclosed herein can be utilized in conjunction with an application program that provides functionality for holding networked meetings. A UI 500 presented by such an application is shown in FIGS. 5A-5C and described below.

As with the UI 114, the UI 500 includes a rendering of the images 108 of the real-world environment 112 generated by the computing device 102. In this manner, a user 118B of the remote computing device 104 can see the view of the real-world environment 112 as seen by the user 118A of the computing device 102 along with any virtual objects 116 augmenting that view. As in the examples shown in FIGS. 3A and 3B, a virtual object 116C can also be presented that shows the live video of the user 118B. The UI 500 can also include thumbnails 506A-506C, static or moving, of the other participants in a networked meeting.

As illustrated in FIG. 5A, the UI 500 can also include UI controls 502 for controlling aspects of the networked meeting such as, but not limited to, initiating or ending a networked meeting, sharing content with other participants in the networked meeting, muting and unmuting an audio capture device, and starting or stopping the sharing of live video. Other UI controls 502 can be provided in other embodiments.

As also illustrated in FIG. 5A, the UI 500 can also include UI controls 504 for performing other tasks relating to a networked meeting. For example, and without limitation, the UI controls 504 can provide functionality for showing notifications, showing a list of users and associated chat sessions, showing available groups or teams of users, showing the meetings during a day or other time period, and showing any recently shared or used files. Other UI controls 504 for performing other types of functionality can be provided in other embodiments.

The UI 500 can also include the UI 206 shown in FIGS. 2A-2C and 4B and described above for enabling the placement, modification, and deletion of content on the images 108. As discussed above, the UI control 208A enables a user 118B to place a virtual object 116, the UI control 208B enables a user 118B to draw on the rendering of the images 108, the UI control 208C enables a user to place a digital picture over the rendering of the images 108, and the UI control 208D enables a user to delete a previously-placed virtual object 116, a drawing, or picture. The embodiment shown in FIGS. 5A-5C also includes UI control 208F for undoing a previous operation and a UI control 208E that provides functionality for selecting the color of a virtual object 116. Other UI controls 208 can be provided in other embodiments.

As in the examples described above, a user 118B can interact with the UI 500 utilizing mouse input, touch input, or other types of input. In the example shown in FIG. 5B, for instance, the user 118B has moved a mouse cursor 210 over the UI control 208A in order to initiate the placement of a virtual object 116A, clicked, and dragged the mouse cursor 210 toward the rendering of the real-world object 110A'. When the user 118B releases the mouse button, the virtual object 116A is placed at the specified location in the manner described above.

In one embodiment, the rendering of the real-world environment 112 shown in the UI 114 is paused while the user 118B is placing a virtual object 116. By pausing the rendering of the real-world environment 112, the user 118B can more easily place a virtual object 116 on the images 108. Once the virtual object 116 has been placed, the rendering of the real-world environment 112 can resume. In other embodiments, the rendering of the real-world environment 112 shown in the UI 114 is not paused while the user 118B is placing a virtual object 116.

As discussed briefly above, when a user 118B places a virtual object 116 on the rendering of the images 108, the application program executing on the computing device 104 utilizes the mesh data 106 to determine the depth of the location specified by the user 118B within the 3D representation of the real-world environment 112. In one embodiment, the depth of a new virtual object 116 is the same as the depth of a real-world object 110 or a virtual object 116 that exists at the specified location for the new virtual object 116.

As in the example described above, the rendering of the real-world environment 112 shown in the UI 500 can be paused while the user 118B is placing a virtual object 116. By pausing the rendering of the real-world environment 112, the user 118B can more easily place a virtual object 116 on the images 108. Once the virtual object 116 has been placed, the rendering of the real-world environment 112 can resume. Alternately, a UI control 506 shown in FIG. 5B can be provided which, when selected, will resume the rendering of the images 108 of the real-world environment 112.

As mentioned above, other types and shapes of 2D or 3D virtual objects 116 can be placed and sized in the manner described herein. In the example shown in FIG. 5B, for instance, virtual objects 116D-116F have been placed and sized in the 3D rendering of the real-world environment 112 in the manner described above. The virtual objects 116D-116F are labels that have text that can be customized to provide information to a viewer. Other types of virtual objects 116 can be placed and sized in a similar manner.

As summarized above, the techniques disclosed herein can enable a user to control a location, size, and orientation of virtual objects in a 3D representation of a real-world environment based on simplified user gestures. In some embodiments, based on the timing and direction of an input gesture, a computing device can utilize an automated mode that determines a position and orientation of a virtual object or utilize a manual mode that determines position, orientation, and size of the virtual object. The modes can be selected based on a type of user gesture. For example, a first type of user gesture may include a short tap of a button, e.g., if a mouse button is held for less than a threshold period of time. As will be described in more detail below, the manual mode may be invoked when a second type of user gesture is received. A second type of user gesture may include a press-and-hold action, e.g., if a mouse button is held for more than the threshold period of time. To illustrate these aspects, FIGS. 6A-6F illustrate an example of a user input gesture that invokes the automated mode. FIGS. 7A-7F and FIGS. 8A-8F illustrate examples of user input gesture that invokes the manual mode.

With reference to FIGS. 6A-6F, an example involving the manual mode is shown and described. In this example, the automated mode is invoked in response to a first type of input action, such as a short tap of a mouse button. In response to detecting the first type of input action, a virtual object, such as an arrow, can be placed at the position of the input action. The orientation of the virtual object is determined by the direction of the movement of an input device immediately prior to the input action. The size of the virtual object can be based on one or more factors. For instance, the size of the virtual object can correspond to a distance between a real-world object and one or more sensors.

As shown in FIG. 6A, to invoke the automatic mode, a user 118B first selects UI control 208A using a mouse, touch input, or another type of user input device. In the example shown in FIG. 6A, the user 118B has selected the UI control 208A using an input device and an associated mouse cursor 210. Once the user 118B has selected the UI control 208A, as shown in FIG. 6B, the user can move the cursor 260 to any desired location. In this example, the movement is illustrated by a dotted line.

As shown in FIG. 6C, a position (indicted by the dashed crosshairs 689) is selected in response to receiving an input action, such as an actuation of a mouse button, etc. The position that is selected for the virtual object is based on the location of the cursor 260. As summarized above, the timing of the input action controls whether the computing device utilizes automated mode or manual mode. In one illustrative example, the automated mode is invoked in response to a first type of input action, such as a short tap of a mouse button. A short tap can comprise both a button down and a button up action within a predetermined period of time.

The orientation of the virtual object that is to be placed is determined by the direction of the movement of the cursor 260 immediately prior to the input action. To achieve this feature, as shown in FIG. 6D, the computing device monitors the direction and path of the cursor 260 prior to the input action. In some implementations, as shown in FIG. 6E, the computing device may generate data defining a vector 690 and the vector 690 can be used to determine the orientation of the virtual object. Based on the position and the determined orientation, as shown in FIG. 6F, the virtual object 116 can be rendered on the user interface.

In some configurations, when using the automated mode, the size of the virtual object 116 can correspond to a distance between a real-world object and one or more sensors. When the virtual object 116 is placed in association with a real-world object, the length and/or width of the object can be based on the distance between the real-world object and one or more sensors. For instance, as described above, when an arrow is placed in association with the table, the arrow may be one size. When the arrow is placed in association with the window, which may be further from the computing device than the table, the arrow may be another size, small or larger. In some configurations, the size of the virtual object 116 can be based on a velocity of movement prior to the input action. Thus, in the example described above, an arrow may be larger or smaller based on the velocity of the cursor 260 prior to the input action.

Although the example described above involves an input device having a button, such as a mouse, it can be appreciated that the techniques disclosed herein can utilize any other suitable input device. For example, the techniques disclosed herein can utilize a computing device having a touchscreen. In such an implementation, once the user 118B first selects UI control 208A for placement of the virtual object, the user can trace a finger or pen on a touchscreen allowing the computing device to monitor the direction of movement. When the user performs an input action, e.g., the user lifts his or her finger or pen from the touch surface or provides a voice command, the computing device can determine a position based on the location of the contact point between the touchscreen and the finger or pen. The position of the virtual object can be at the point at which the user lifted his or her finger or pen, and the orientation of the object can be based on the direction movement prior to the input action.

The system can also utilize eye tracking features. For instance, the input action can involve eye movements that are captured by a sensor in communication with the system. The sensor can generate input data indicating the position of the object to be placed. The sensor can also generate input data indicating the input action by a gesture performed by the user. For instance, the user can blink to place virtual objects or provide a voice command to place virtual objects.

With reference to FIGS. 7A-7F, an example involving the manual mode is shown and described. The manual mode is invoked in response to a second type of input action, such as a press and hold action of an input device. In response to detecting the second type of input action, a first point of the virtual object, such as a tip of an arrow, is placed at the position of a cursor at the time of the input action. During the press and hold action, the first point of the virtual object is locked at the position and the size and orientation of the virtual object are manipulated by the movement of the cursor. In response to detecting a subsequent input action, such as a release action, a second point of the virtual object, such as the tail of the arrow, is placed at the position of the cursor at the time of the subsequent input action.

To invoke the manual mode, a user 118B can select the UI control 208A using a mouse, touch input, or another type of user input device. In the example shown in FIG. 7A, the user 118B has selected the UI control 208A using an input device and an associated mouse cursor 210. Once the user 118B has selected the UI control 208A, the user can move the cursor 260 to any desired location.

As shown in FIG. 7A, a first position for a first point of the virtual object is selected in response to receiving an input action, such as an actuation of a mouse button, etc. The first position (indicted by the dashed crosshairs) that is selected for the first point of the virtual object is based on the location of the cursor 260 at the time of the input action. In this example, the first point of the virtual object is the tip of an arrow. As summarized herein, the manual mode is invoked in response to a second type of input action, such as a press and hold action, where an input device is held for a threshold period of time.

In response to determining that an input device has been held for at least a threshold time, as shown in FIG. 7B, the virtual object 116 is rendered and a second point of the virtual object, such as the tail of a narrow, is configured to move with the cursor 260. Thus, as shown in FIG. 7B and FIG. 7C, given that the first point of the virtual object is locked at the first position, the length of the virtual object 116 can change based on the distance between the position of the cursor 260 and the first position. As shown in FIG. 7D, the orientation of the virtual object 116 can also be changed based on the movement of the cursor 260. Given that the second point of the virtual object moves with the cursor 260, orientation and size of the virtual object can be changed simultaneously. As shown in FIG. 7E, the virtual object can be shortened by moving the cursor 260 toward the first point. As shown in FIG. 7F, in response to receiving a subsequent input action, such as a release of the input device, the second point of the virtual object, such as the tail of the arrow, is placed at the position of the cursor at the time of the subsequent input action. As shown, the size (at least one dimension) of the virtual object can be based on a distance between the first point and the second point.

The example provided above is for illustrative purposes and is not to be construed as limiting. It can be appreciated that any virtual object of any shape, size, or orientation, can be configured according to the techniques disclosed herein. In one illustrative example, shown in FIGS. 8A-8F, the virtual object can be in the form of an arrow, where the first point of the virtual object is the tail of the arrow and the second point of the virtual object is the tip of the arrow.

As shown in FIG. 8A, a first position for a first point of the virtual object is selected in response to receiving an input action, such as an actuation of a mouse button, etc. The first position (indicted by the dashed crosshairs) that is selected for the first point of the virtual object is based on the location of the cursor 260 at the time of the input action. In this example, the first point of the virtual object is the tail of an arrow. As summarized herein, the manual mode is invoked in response to a second type of input action, such as a press and hold action, where an input device is held for a threshold period of time. While the input device is held, the first point of the virtual object remains locked at the first position.