Detection of business email compromise

Jakobsson

U.S. patent number 10,721,195 [Application Number 15/414,489] was granted by the patent office on 2020-07-21 for detection of business email compromise. This patent grant is currently assigned to ZapFraud, Inc. The grantee listed for this patent is ZapFraud, Inc. Invention is credited to Bjorn Markus Jakobsson.

| United States Patent | 10,721,195 |

| Jakobsson | July 21, 2020 |

Detection of business email compromise

Abstract

Detecting scam is disclosed. A sender, having a first email address, is associated with a set of secondary contact data items. The set of secondary contact data items comprises at least one of a phone number, a second email address, and an instant messaging identifier. It is determined that an email message purporting to originate from the sender's first email address has been sent to a recipient. Prior to allowing access by the recipient to the email message, it is requested, using at least one secondary contact item, that the sender confirm that the email message was indeed originated by the sender. In response to receiving a confirmation from the sender that the sender did originate the email message, the email message is delivered to the recipient.
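The hold-and-confirm flow described in the abstract can be sketched as follows. This is a minimal illustration, not the patented implementation: the `ConfirmationGate` and `HeldMessage` names, the in-memory `outbox` that stands in for an SMS, IM, or email transport, and the token format are all assumptions made for the example.

```python
import secrets
from dataclasses import dataclass, field


@dataclass
class HeldMessage:
    sender: str      # purported first email address
    recipient: str
    body: str


@dataclass
class ConfirmationGate:
    """Holds a message until the purported sender confirms it out of band."""
    # Hypothetical registry: first email address -> list of secondary contact items.
    secondary_contacts: dict
    pending: dict = field(default_factory=dict)
    delivered: list = field(default_factory=list)
    outbox: list = field(default_factory=list)  # stand-in for a real transport

    def receive(self, msg):
        """Hold the message and ask the sender to confirm via a secondary contact."""
        contacts = self.secondary_contacts.get(msg.sender)
        if not contacts:
            return None  # no secondary contact known; out of scope for this sketch
        token = secrets.token_urlsafe(16)
        self.pending[token] = msg
        self.outbox.append((contacts[0], token))
        return token

    def confirm(self, token):
        """Deliver the held message only if the confirmation token matches."""
        msg = self.pending.pop(token, None)
        if msg is None:
            return False
        self.delivered.append(msg)
        return True
```

The recipient gains access only after `confirm` succeeds, which mirrors the ordering in the abstract: confirmation is requested before access is allowed.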

| Inventors: | Jakobsson; Bjorn Markus (Portola Valley, CA) |
|---|---|
| Applicant: | |
| Assignee: | ZapFraud, Inc. (Portola Valley, CA) |
| Family ID: | 59398670 |
| Appl. No.: | 15/414,489 |
| Filed: | January 24, 2017 |

Prior Publication Data

| Document Identifier | Publication Date |
|---|---|
| US 20170230323 A1 | Aug 10, 2017 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Issue Date |
|---|---|---|---|
| 62287378 | Jan 26, 2016 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04L 63/083 (20130101); H04L 51/28 (20130101); H04L 63/1433 (20130101); H04L 51/12 (20130101); H04L 63/1483 (20130101); H04L 63/145 (20130101); H04L 63/0227 (20130101); H04L 51/38 (20130101); H04L 63/08 (20130101); H04L 2463/082 (20130101) |

| Current International Class: | H04L 29/06 (20060101); H04L 12/58 (20060101) |

Primary Examiner: Chen; Shin-Hon (Eric)

Attorney, Agent or Firm: Schwabe Williamson & Wyatt, PC

Parent Case Text

CROSS REFERENCE TO OTHER APPLICATIONS

This application claims priority to U.S. Provisional Patent Application No. 62/287,378 entitled DETECTION OF BUSINESS EMAIL COMPROMISE, filed Jan. 26, 2016, which is incorporated herein by reference for all purposes.

Claims

What is claimed is:

1. A system for detection of business email compromise, comprising: a processor configured to: automatically determine that a first party is trusted by a second party, based on at least one of determining that the first party and second party belong to the same organization and that at least a threshold number of messages have been transmitted between the second party and the first party during a period of time that exceeds a threshold time; receive a message addressed to the second party from a third party, the third party distinct from the first party; perform a risk determination of the received message to determine if the received message poses a risk by determining that a display name of the first party and a display name of third party are the same or that a domain name of the first party and a domain name of the third party are similar, wherein similarity is determined based on having a string distance below a first threshold, or being conceptually similar based on a list of conceptually similar character strings; responsive to the first party being trusted by the second party, and the received message is determined to pose a risk, automatically perform a security action and a report generation action without having received any user input from a user associated with the second party in response to the message, wherein the security action comprises marking the message up with a warning or quarantining the message, wherein the report generating action comprises including information about the received message in a report accessible to an admin of the system; and a memory coupled to the processor and configured to provide the processor with instructions.
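The risk determination recited in claim 1 can be illustrated with a short sketch. It assumes Levenshtein edit distance as the string distance, a case-insensitive display-name comparison, and a small hypothetical `CONCEPTUALLY_SIMILAR` list; the claim names none of these specifics. It also assumes that an identical domain is not treated as a lookalike, a case the claim text does not address.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance computed with a two-row dynamic program."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution
        prev = curr
    return prev[-1]


# Hypothetical list: each set groups domain names regarded as conceptually similar.
CONCEPTUALLY_SIMILAR = [
    {"example-bank.com", "examplebank-secure.com"},
]


def domains_similar(trusted: str, candidate: str, threshold: int = 3) -> bool:
    """True if the candidate domain resembles the trusted one without equalling it."""
    trusted, candidate = trusted.lower(), candidate.lower()
    if trusted == candidate:
        return False  # assumption: the same domain is not a lookalike
    if edit_distance(trusted, candidate) < threshold:
        return True
    return any(trusted in group and candidate in group
               for group in CONCEPTUALLY_SIMILAR)


def poses_risk(trusted_name: str, trusted_domain: str,
               sender_name: str, sender_domain: str, threshold: int = 3) -> bool:
    """Same display name, or similar domain, as a trusted first party."""
    same_name = trusted_name.strip().lower() == sender_name.strip().lower()
    return same_name or domains_similar(trusted_domain, sender_domain, threshold)
```

The default threshold of 3 is arbitrary; a deployment would tune it against the length of the domains being compared.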

2. The system of claim 1 wherein the risk determination is further based at least in part on at least one of an indication of spoofing, an indication of account takeover, a presence of a reply-to address, a determination of an abnormal delivery path, and a geographic inconsistency.
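One of the signals listed in claim 2, the presence of a reply-to address, can be read from message headers with Python's standard `email` package. The sketch below is illustrative only. The second helper, which checks whether the reply-to address differs from the sender address, is an assumed refinement that the claim does not recite.

```python
from email.message import EmailMessage
from email.utils import parseaddr


def has_reply_to(msg: EmailMessage) -> bool:
    """True if the message carries a non-empty Reply-To address."""
    _, reply_addr = parseaddr(str(msg.get("Reply-To", "")))
    return bool(reply_addr)


def reply_to_differs(msg: EmailMessage) -> bool:
    """Assumed refinement: Reply-To is present and differs from the From address."""
    _, from_addr = parseaddr(str(msg.get("From", "")))
    _, reply_addr = parseaddr(str(msg.get("Reply-To", "")))
    return bool(reply_addr) and reply_addr.lower() != from_addr.lower()
```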

3. The system of claim 1 wherein the risk determination is further based on at least one of: detection of a new signature file, detection of a new display name, detection of high-risk email content, detection of an abnormal delivery path, and based on analysis of attachments.

4. The system of claim 1 wherein an address associated with the first party is a secondary communication channel associated with at least one of the first party and an admin associated with the first party.

5. The system of claim 1 wherein the security action further comprises transmitting a confirmation request to an address associated with the first party, the confirmation request comprising at least a portion of the message, wherein the message is delivered to the second party based on verification of information received in response to the confirmation request.

6. The system of claim 1 wherein the security action further comprises modifying the message by at least one of: i) changing the display name based on a schedule or when an event occurs, ii) adding Unicode characters in the display name, iii) adding a title of the recipient to the display name, and iv) recording when the display name was modified to determine how old a connection is to the first party.

7. The system of claim 1 wherein the security action comprises at least one of: initiating a multi-factor authentication verification, modifying the display name of the message, transmitting a notification or a warning to an address associated with the second party, and transmitting a confirmation request to an address associated with the first party, the confirmation request comprising at least a portion of the message.
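The security action and report generation action of claim 1 (marking the message up with a warning or quarantining it, and recording it in a report available to an admin) can be sketched as below. The warning text, the `X-Impersonation-Warning` header name, and the list-based report are assumptions chosen for the example; the claims do not specify any of them.

```python
from email.message import EmailMessage


def apply_security_action(msg: EmailMessage, reason: str, report: list,
                          quarantine: bool = False):
    """Record the message in the report, then quarantine it or mark it with a warning.

    Returns None when the message is quarantined, otherwise the marked-up message.
    """
    report.append({
        "from": str(msg.get("From", "")),
        "to": str(msg.get("To", "")),
        "subject": str(msg.get("Subject", "")),
        "reason": reason,
        "action": "quarantine" if quarantine else "warn",
    })
    if quarantine:
        return None  # withheld from the recipient

    warned = f"[WARNING: possible impersonation] {msg.get('Subject', '')}"
    if "Subject" in msg:
        msg.replace_header("Subject", warned)
    else:
        msg["Subject"] = warned
    msg["X-Impersonation-Warning"] = reason  # hypothetical header name
    return msg
```

Both branches run without any input from the recipient, matching the claim language that the action is performed automatically.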

8. The system of claim 7 wherein a confirmation in response to the confirmation request comprises at least one of entering a code and clicking on a link included in the confirmation request.

9. The system of claim 8 wherein information associated with the clicking on the link is collected, wherein the information comprises at least one of an IP address, a cookie, and browser version information.
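The confirmation mechanics of claims 8 and 9 (entering a code or clicking a link, with information collected on the click) might look like the following sketch. The six-digit code, the token format, and the `ConfirmationRequest` class are illustrative assumptions rather than details taken from the claims.

```python
import hmac
import secrets


class ConfirmationRequest:
    """A one-time confirmation that can be satisfied by a code or by a link click."""

    def __init__(self):
        self.code = f"{secrets.randbelow(10**6):06d}"   # assumed six-digit code
        self.link_token = secrets.token_urlsafe(16)     # embedded in the link
        self.confirmed = False
        self.click_info = None

    def enter_code(self, submitted: str) -> bool:
        """Confirm if the submitted code matches (constant-time comparison)."""
        ok = hmac.compare_digest(submitted.encode(), self.code.encode())
        if ok:
            self.confirmed = True
        return ok

    def click_link(self, token: str, ip: str, cookie: str, browser: str) -> bool:
        """Confirm on a valid link click and keep the request metadata."""
        ok = hmac.compare_digest(token.encode(), self.link_token.encode())
        if ok:
            self.click_info = {"ip": ip, "cookie": cookie, "browser": browser}
            self.confirmed = True
        return ok
```

The collected `click_info` corresponds to the IP address, cookie, and browser version information named in claim 9.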

10. A non-monotonic system for determining whether an electronic message is deceptive, comprising: a processor configured to: automatically determine whether a first party is trusted by a second party, based on at least one of determining that the first party and second party belong to the same organization and that at least a threshold number of messages have been transmitted between the second party and the first party during a period of time that exceeds a threshold time; receive a message addressed to the second party from a third party, the third party distinct from the first party; perform a risk determination of the received message to determine if the received message poses a risk by determining that a display name of the first party and a display name of third party are the same or that a domain name of the first party and a domain name of the third party are similar, wherein similarity is determined based on having a string distance below a first threshold, or being conceptually similar based on a list of conceptually similar character strings; responsive to the first party being trusted by the second party, and the received message is determined to pose a risk, determine that the message is deceptive; responsive to a determination that the first party is not trusted by the second party, determine that the message is not deceptive; responsive to the message being found deceptive, automatically perform a security action and a report generation action without having received any user input from a user associated with the second party in response to the message, wherein the security action comprises marking the message up with a warning or quarantining the message, wherein the report generating action comprises including information about the received message in a report accessible to an admin of the system; and responsive to the message being found not deceptive, deliver the message to the second party; and a memory coupled to the processor and configured to provide the processor with instructions.
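The decision logic of claim 10 reduces to a small truth table, sketched below. The claim states two outcomes: trusted first party plus a risky message yields "deceptive", and an untrusted first party yields "not deceptive". The remaining case (trusted, no risk) is not spelled out, and this sketch assumes it is treated as not deceptive. The `Verdict`, `classify`, and `route` names are hypothetical.

```python
from enum import Enum


class Verdict(Enum):
    DECEPTIVE = "deceptive"
    NOT_DECEPTIVE = "not deceptive"


def classify(first_party_trusted: bool, poses_risk: bool) -> Verdict:
    """Apply the two rules recited in the claim, plus one assumed default."""
    if not first_party_trusted:
        return Verdict.NOT_DECEPTIVE   # rule from the claim
    if poses_risk:
        return Verdict.DECEPTIVE       # rule from the claim
    return Verdict.NOT_DECEPTIVE       # assumption: case not stated in the claim


def route(message, first_party_trusted, poses_risk, quarantine, inbox, report):
    """Quarantine and report deceptive messages; deliver everything else."""
    verdict = classify(first_party_trusted, poses_risk)
    if verdict is Verdict.DECEPTIVE:
        quarantine.append(message)
        report.append({"message": message, "verdict": verdict.value})
    else:
        inbox.append(message)
    return verdict
```

Note that resemblance alone never triggers the action here: the same lookalike message is delivered when the party it resembles is not trusted by the recipient.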

11. A method for detection of business email compromise, comprising: automatically determining that a first party is trusted by a second party, based on at least one of determining that the first party and second party belong to the same organization and that at least a threshold number of messages have been transmitted between the second party and the first party during a period of time that exceeds a threshold time, and by evaluating a transitive closure algorithm; receiving a message addressed to the second party from a third party, the third party distinct from the first party; performing a risk determination of the received message to determine if the received message poses a risk by determining that a display name of the first party and a display name of third party are the same or that a domain name of the first party and a domain name of the third party are similar, wherein similarity is determined based on having a string distance below a first threshold, or being conceptually similar based on a list of conceptually similar character strings; responsive to the first party being trusted by the second party, and the received message is determined to pose a risk, automatically performing a security action and a report generation action without having received any user input from a user associated with the second party in response to the message, wherein the security action comprises marking the message up with a warning or quarantining the message, wherein the report generating action comprises including information about the received message in a report accessible to an admin of the system.
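The trust determination in claim 11 combines a message-history test with a transitive closure evaluation. The sketch below shows one possible reading and makes several assumptions: the default thresholds are arbitrary, trust is modeled as a directed graph from each party to the parties it trusts, and closure means that trust propagates along chains of such edges. The claim does not state how the closure is applied.

```python
from collections import deque
from datetime import datetime, timedelta


def directly_trusted(timestamps, same_org, min_messages=5,
                     min_span=timedelta(days=30)):
    """Same organization, or enough messages spread over a long enough period."""
    if same_org:
        return True
    if not timestamps or len(timestamps) < min_messages:
        return False
    return max(timestamps) - min(timestamps) > min_span


def transitive_trust(direct):
    """Return, for each party, every party reachable through chains of direct trust.

    `direct` maps a party to the set of parties it directly trusts.
    """
    closure = {}
    for start in direct:
        seen = set()
        queue = deque(direct[start])
        while queue:
            party = queue.popleft()
            if party == start or party in seen:
                continue
            seen.add(party)
            queue.extend(direct.get(party, ()))
        closure[start] = seen
    return closure
```

For example, if `carol` directly trusts `bob` and `bob` directly trusts `alice`, the closure for `carol` contains both `bob` and `alice`.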

12. The method of claim 11 further comprising basing the risk determination at least in part on at least one of an indication of spoofing, an indication of account takeover, a presence of a reply-to address, a determination of an abnormal delivery path, and a geographic inconsistency.

13. The method of claim 11 further comprising generating the risk determination based on at least one of: detection of a new signature file, detection of a new display name, detection of high-risk email content, detection of an abnormal delivery path, and an analysis of attachments.

14. The method of claim 11 further comprising determining an address associated with the first party is a secondary communication channel associated with at least one of the first party and an admin associated with the first party.

15. The method of claim 11 wherein the security action further comprises transmitting a confirmation request to an address associated with the first party, the confirmation request comprising at least a portion of the message, the method further comprising enabling a confirmation in response to the confirmation request to comprise at least one of entering a code and clicking on a link included in the confirmation request.

16. The method of claim 15 further comprising collecting information associated with the clicking on the link, wherein the information comprises at least one of an IP address, a cookie, and browser version information.

17. The method of claim 11 further comprising delivering the message to the second party based on verification of information received in response to the confirmation request.

18. A non-monotonic method for determining whether an electronic message is deceptive, comprising: automatically determining whether a first party is trusted by a second party, based on at least one of determining that the first party and second party belong to the same organization and that at least a threshold number of messages have been transmitted between the second party and the first party during a period of time that exceeds a threshold time, and by evaluating a transitive closure algorithm; receiving a message addressed from a third party distinct from the first party and addressed to the second party; performing a risk determination of the received message to determine if the received message poses a risk by determining that a display name of the first party and a display name of third party are the same or that a domain name of the first party and a domain name of the third party are similar, wherein similarity is determined based on having a string distance below a first threshold, or being conceptually similar based on a list of conceptually similar character strings; responsive to the first party being trusted by the second party and the received message is determined to pose a risk, determining that the message is deceptive; responsive to a determination that the first party is not trusted by the second party, determining that the message is not deceptive; responsive to the message being found deceptive, automatically performing a security action and a report generation action without having received any user input from a user associated with the second party in response to the message, wherein the security action comprises marking the message up with a warning or quarantining the message, wherein the report generating action comprises including information about the received message in a report accessible to an admin of the system; and responsive to the message being found not deceptive, delivering the message to the second party.

Description

BACKGROUND OF THE INVENTION

Business Email Compromise (BEC) is a type of scam that has become dramatically more common in the recent past. In January 2015, the FBI released statistics showing that between Oct. 1, 2013 and Dec. 1, 2014, some 1,198 companies reported having lost a total of $179 million in BEC scams, also known as "CEO fraud." It is likely that many companies do not report being victimized, and that the actual numbers are much higher. There is therefore an ongoing need to protect users against such scams.

BRIEF DESCRIPTION OF THE DRAWINGS

Various embodiments of the invention are disclosed in the following detailed description and the accompanying drawings.

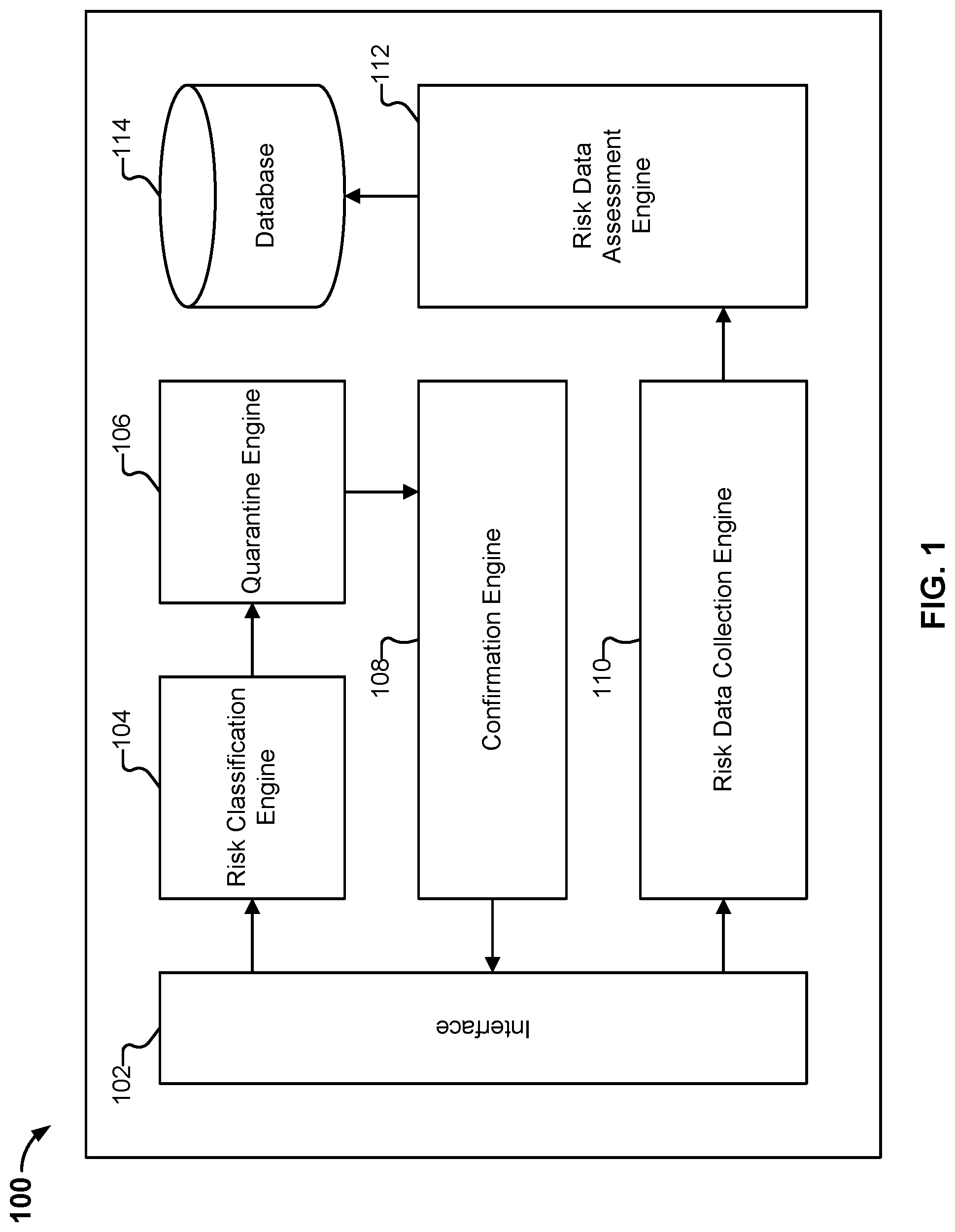

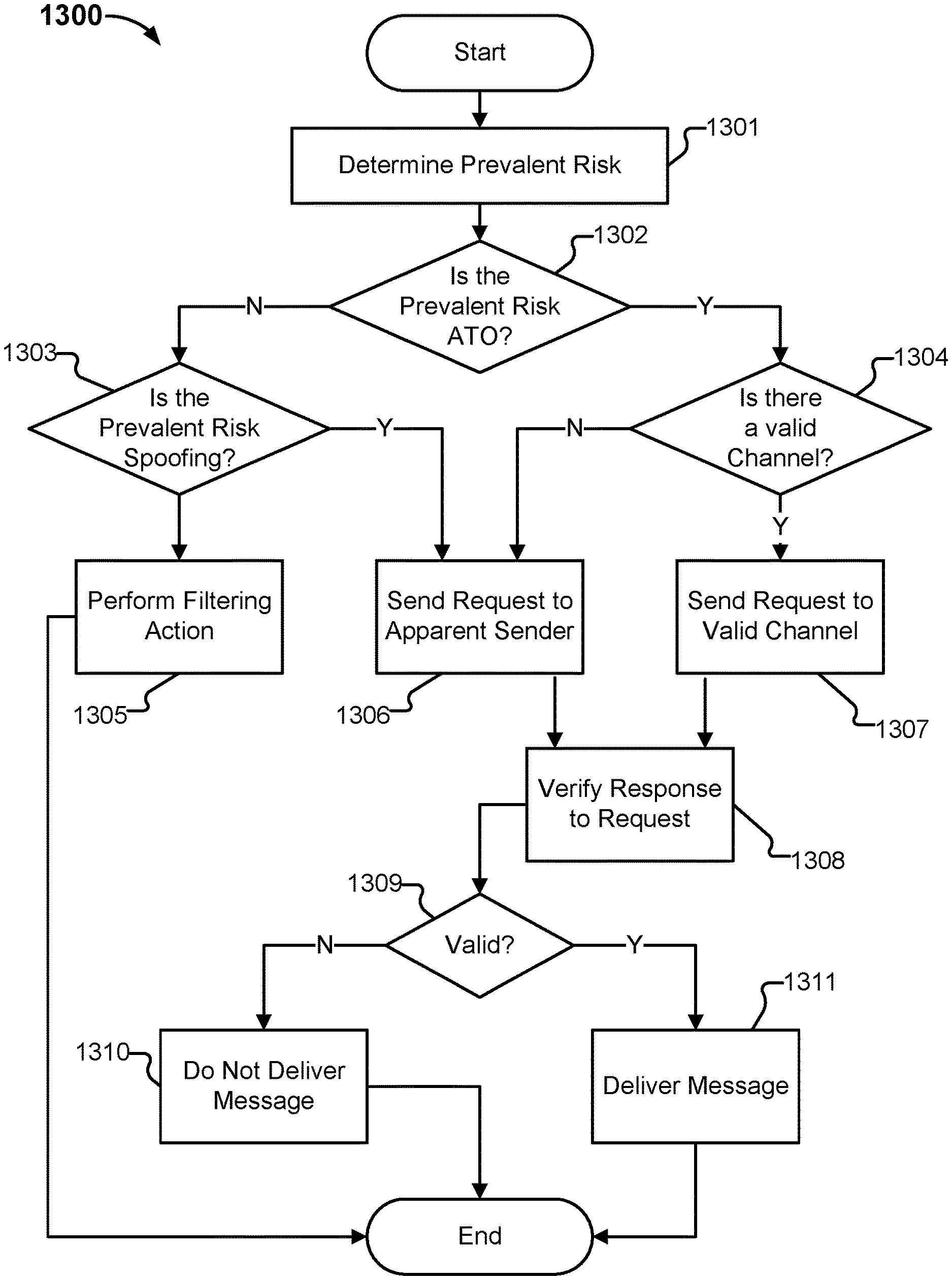

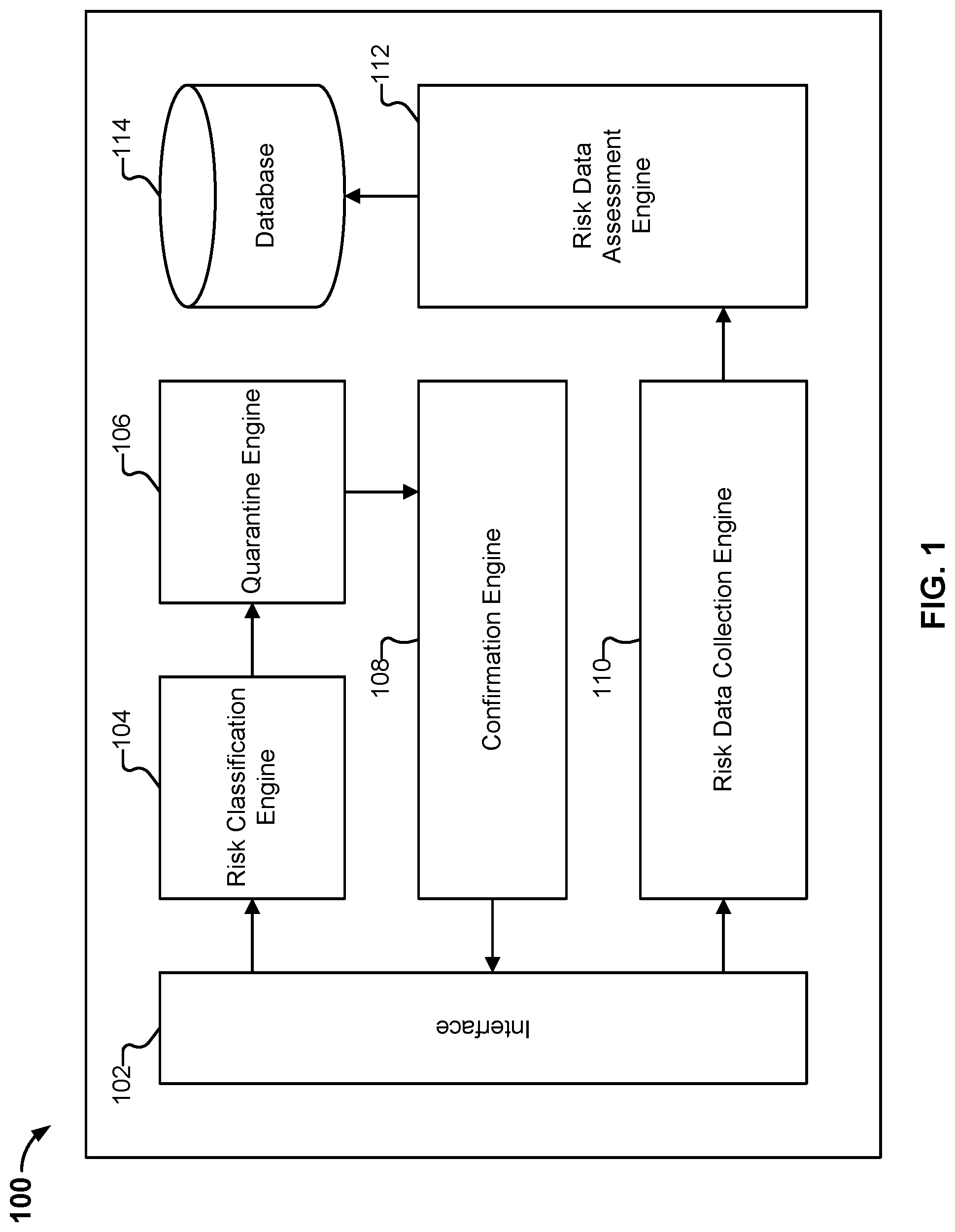

FIG. 1 is a block diagram illustrating an embodiment of a system for detecting scam.

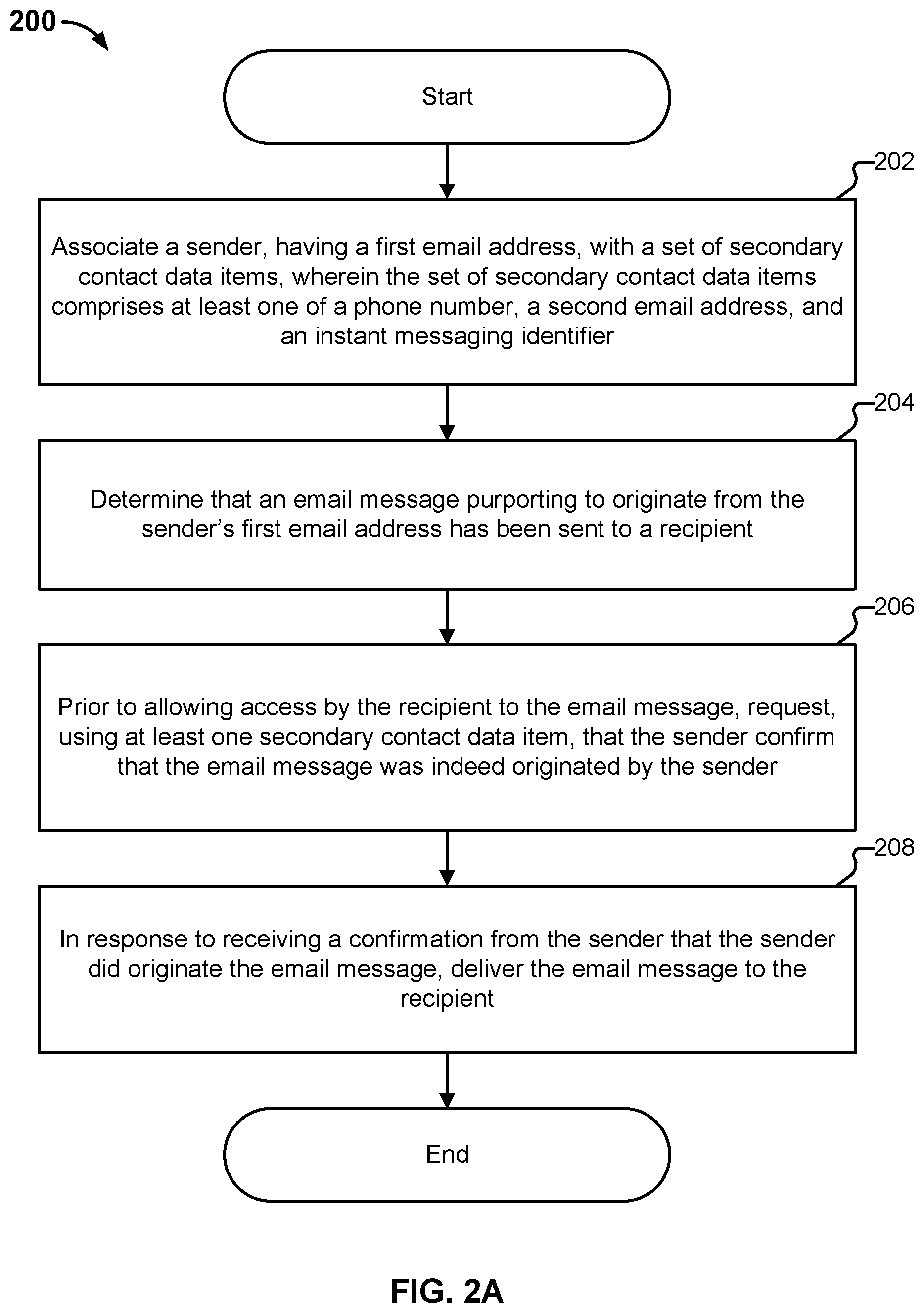

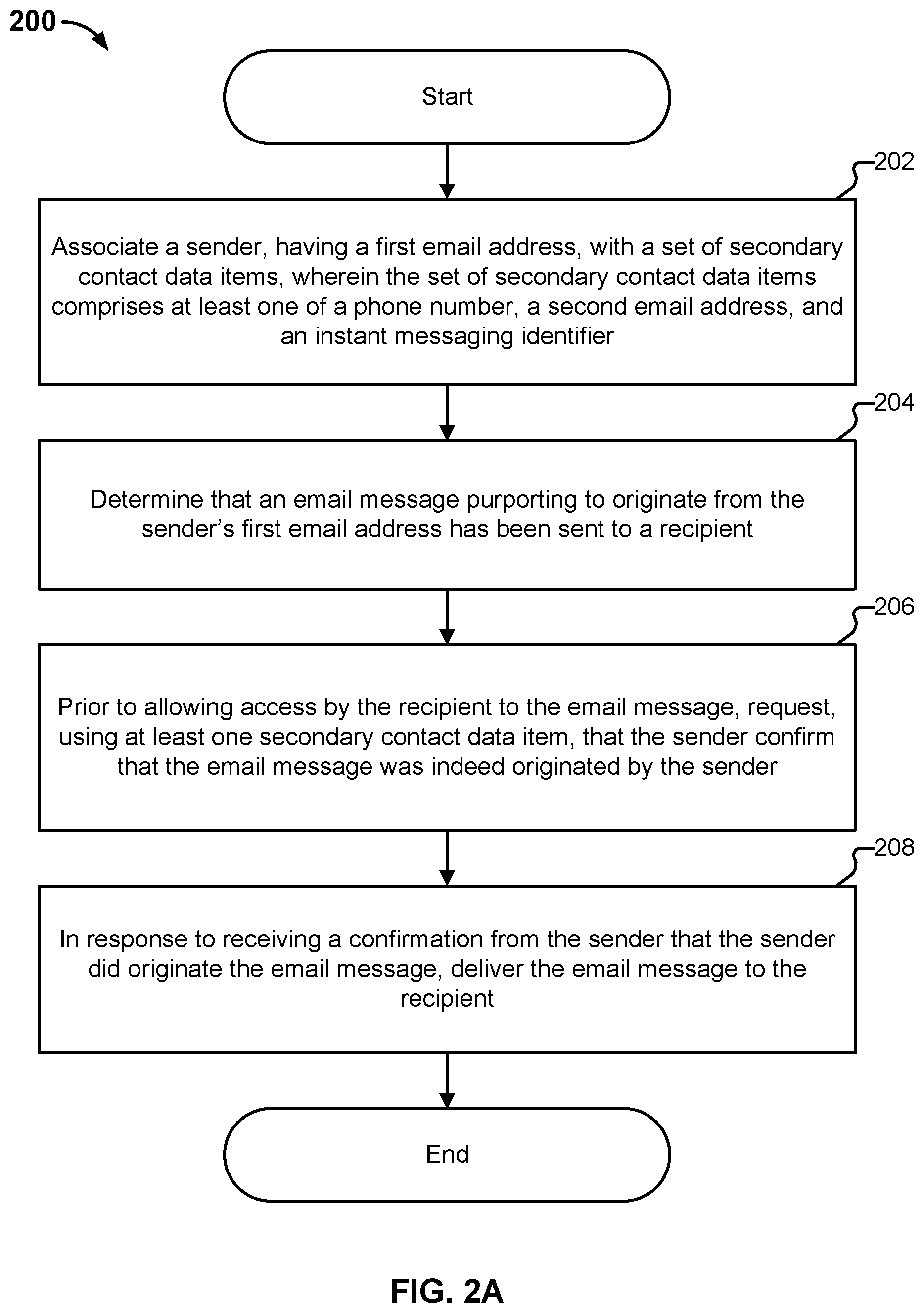

FIG. 2A is a flow diagram illustrating an embodiment of a process for detecting scam.

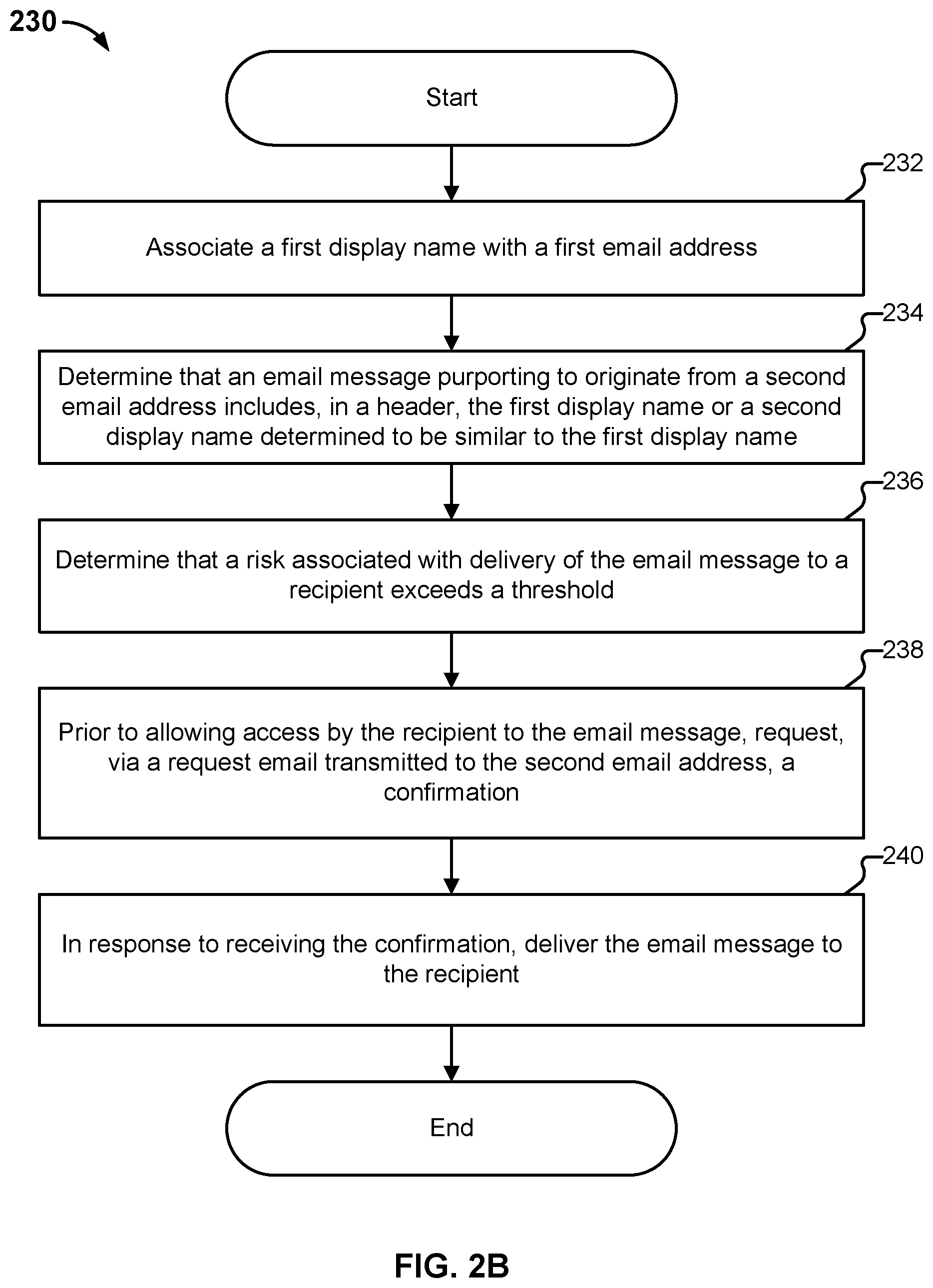

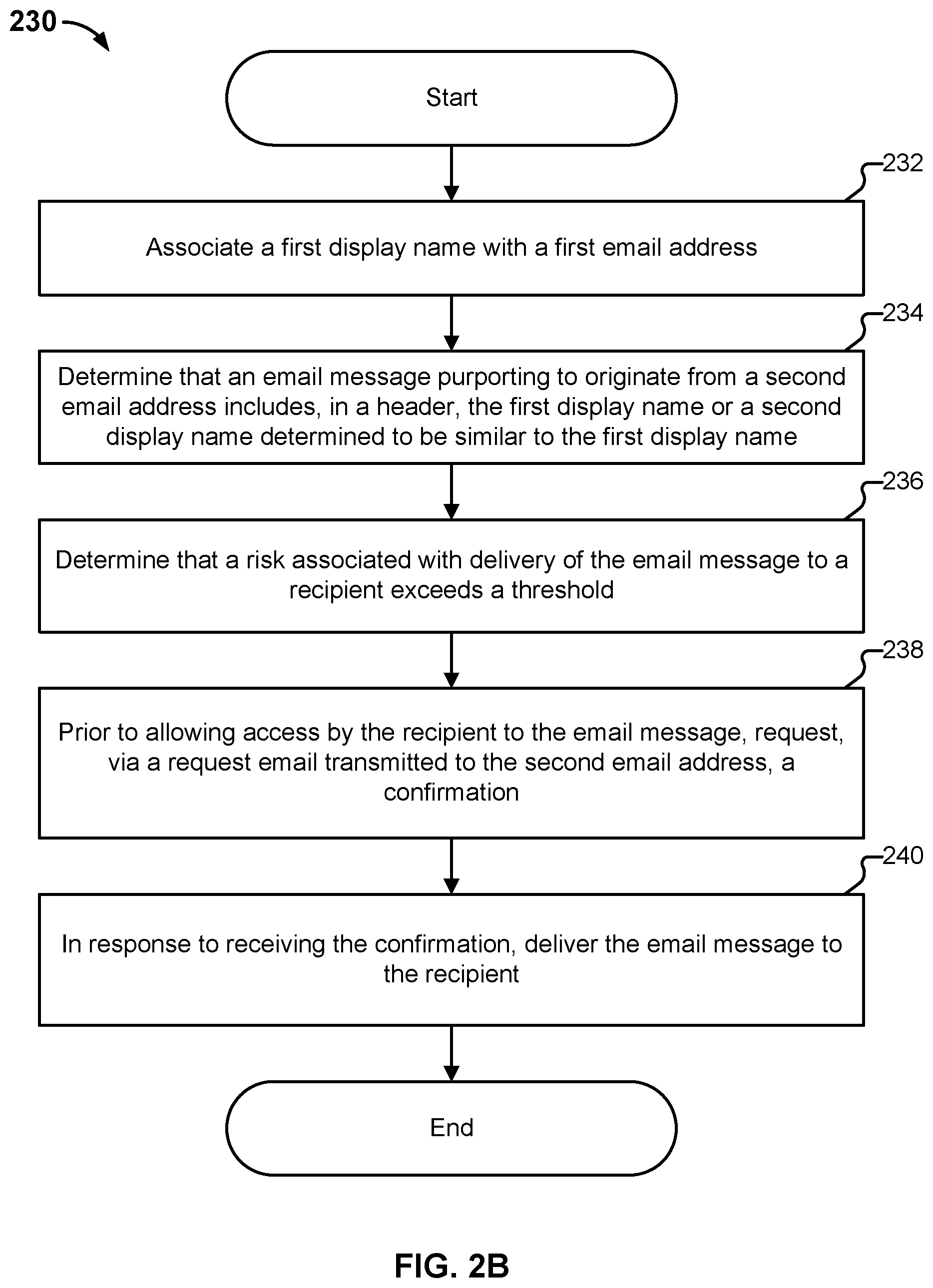

FIG. 2B is a flow diagram illustrating an embodiment of a process for detecting scam.

FIG. 2C is a flow diagram illustrating an embodiment of a process for detecting scam.

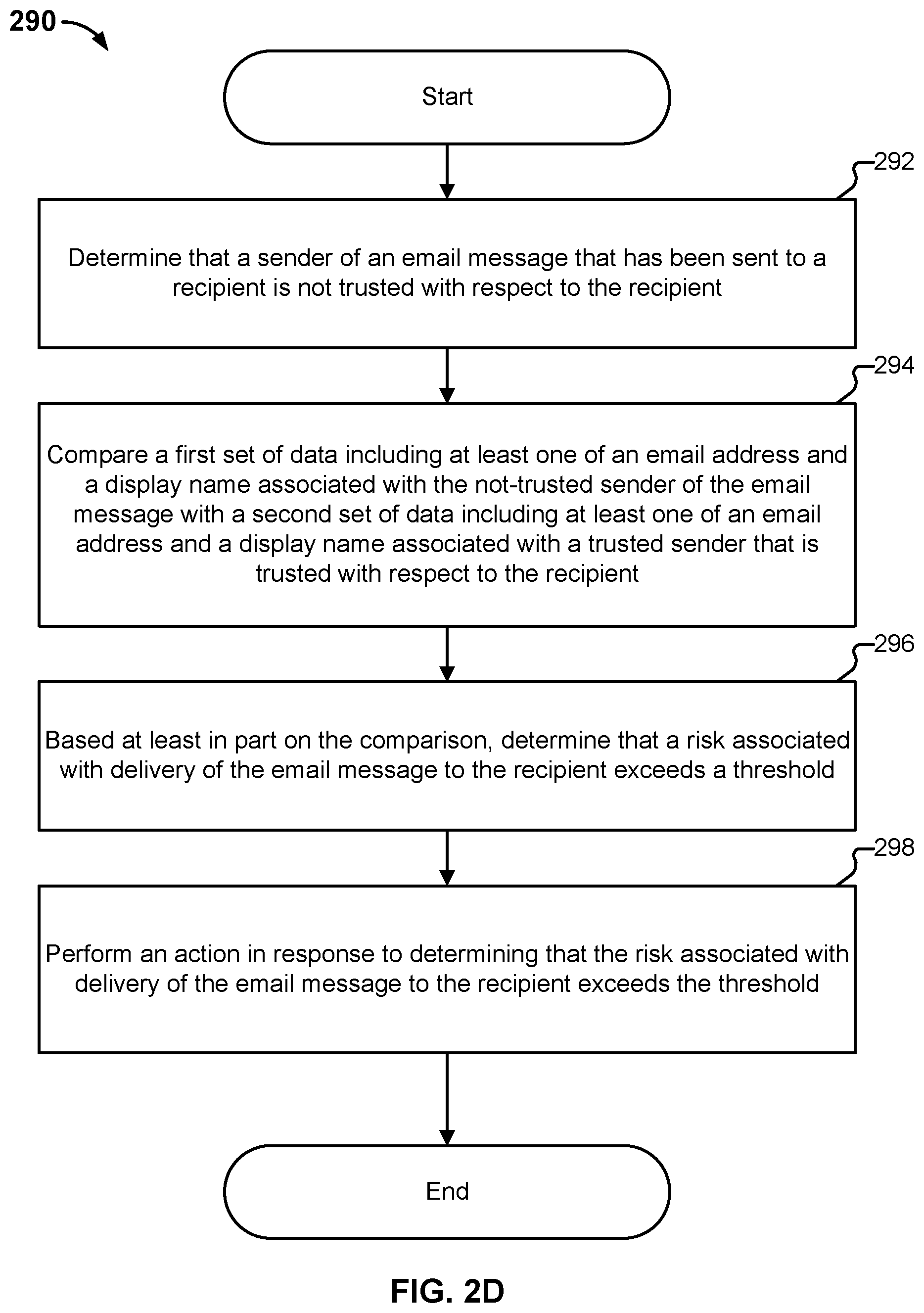

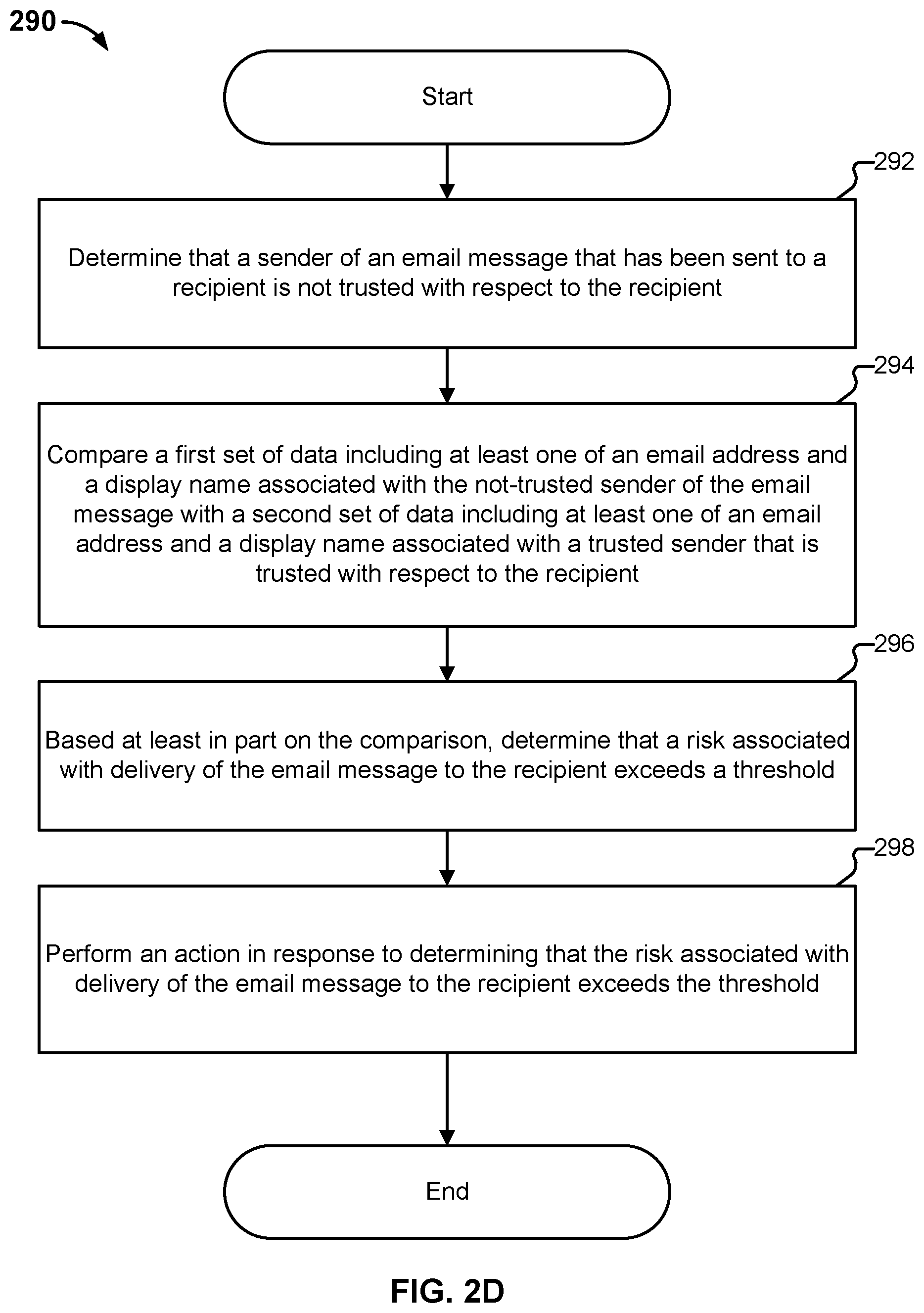

FIG. 2D is a flow diagram illustrating an embodiment of a process for detecting scam.

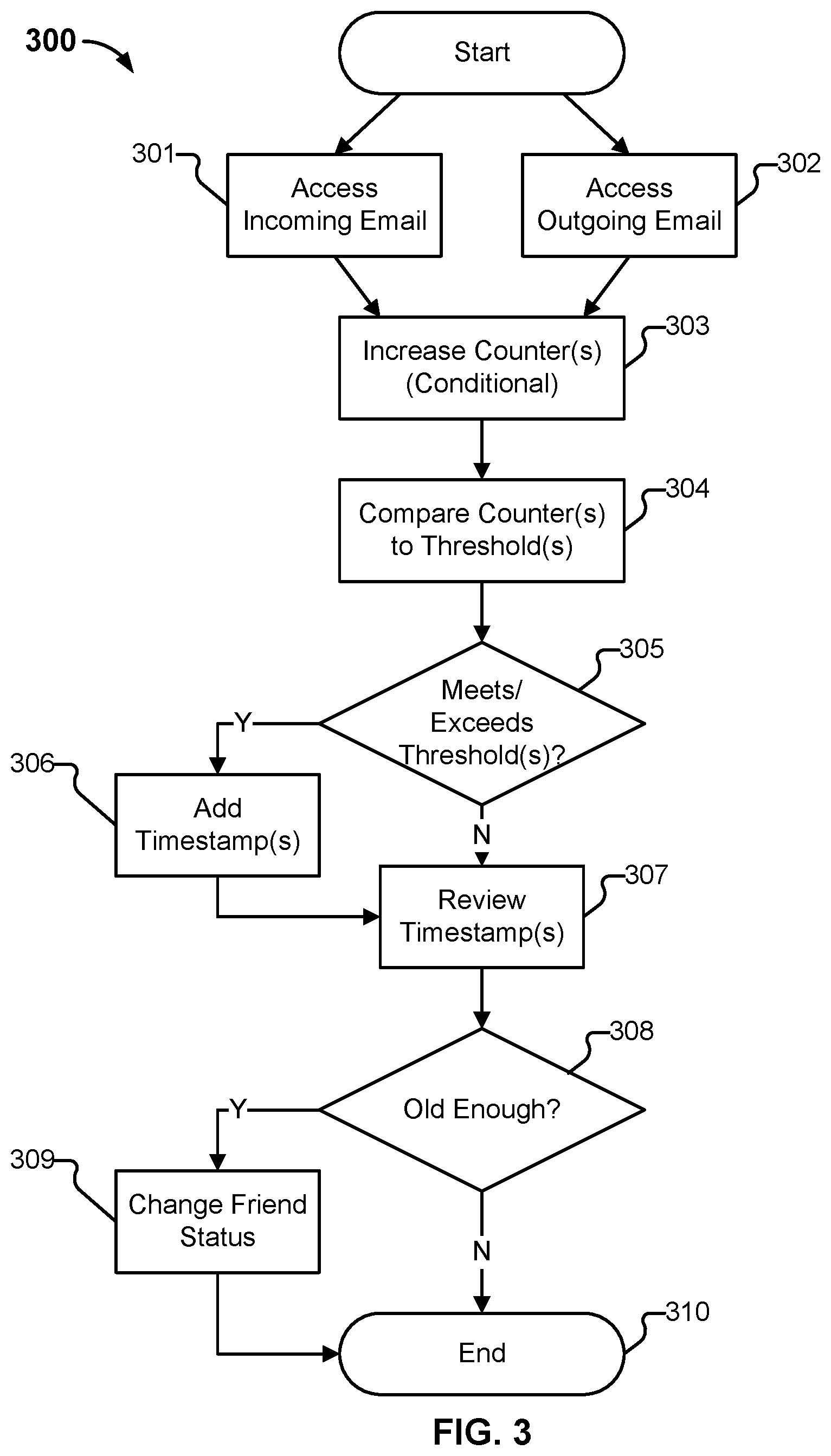

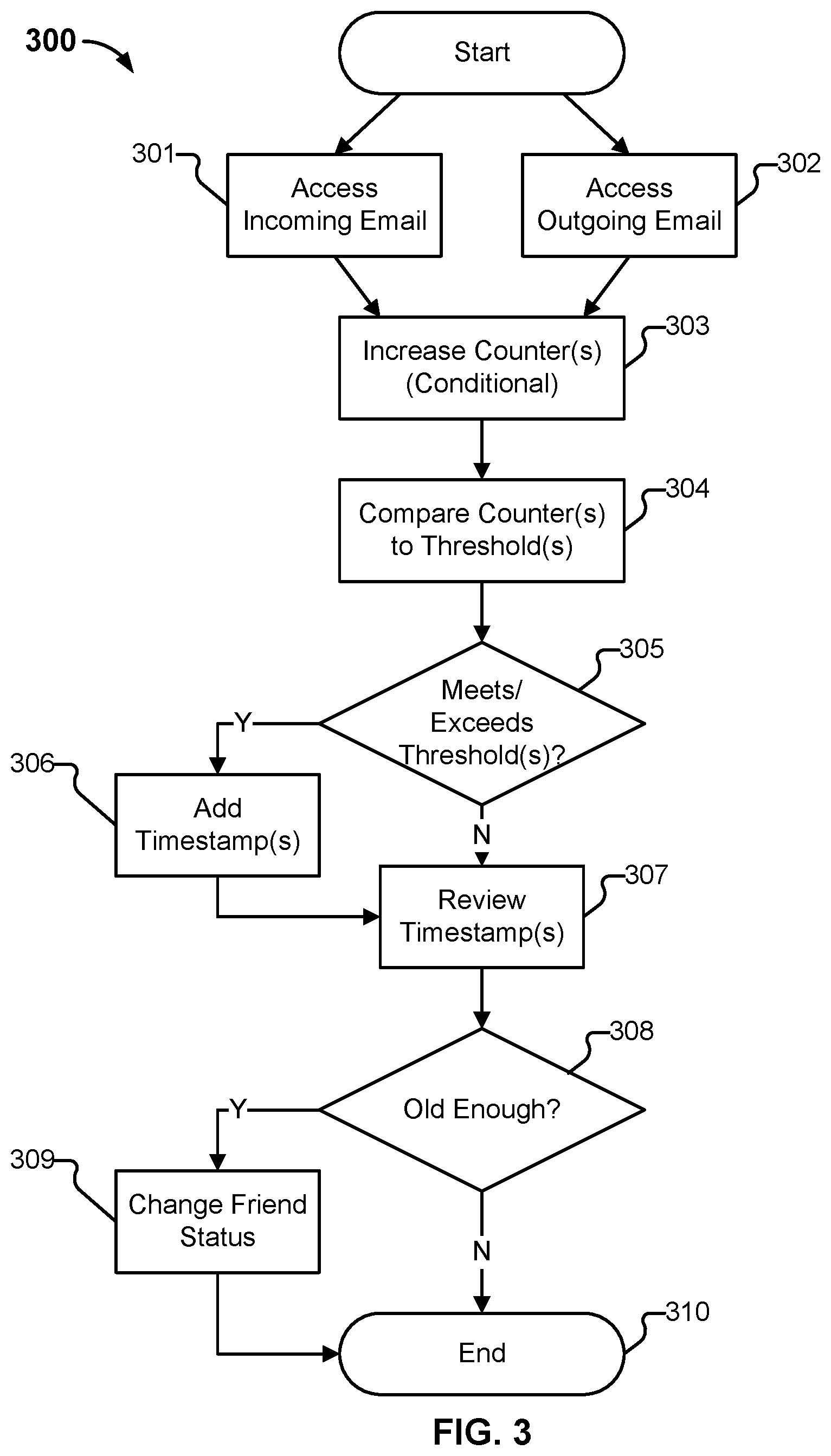

FIG. 3 illustrates an example process to determine that an account is a friend.

FIG. 4 illustrates an example process to determine that an email sender is trusted.

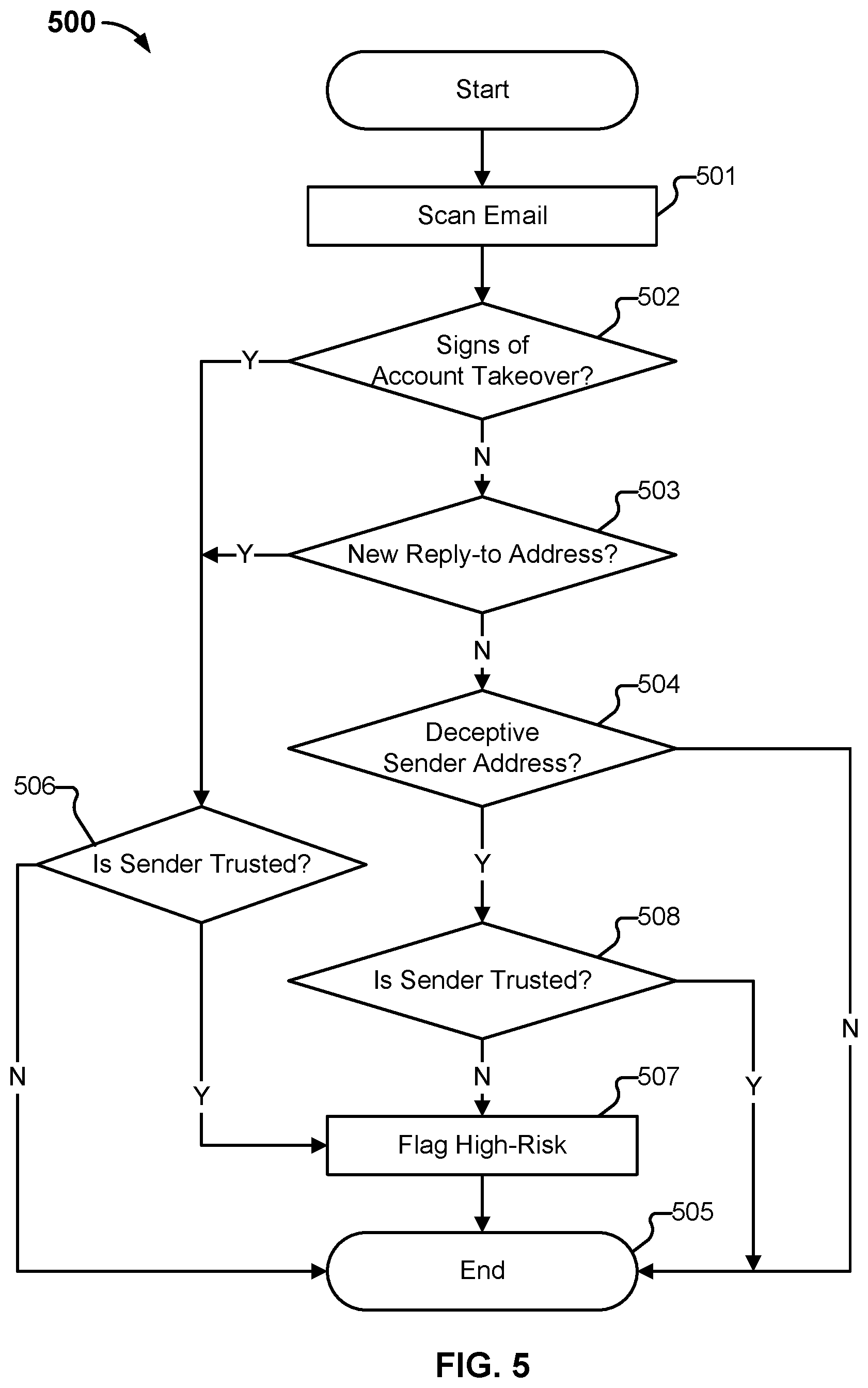

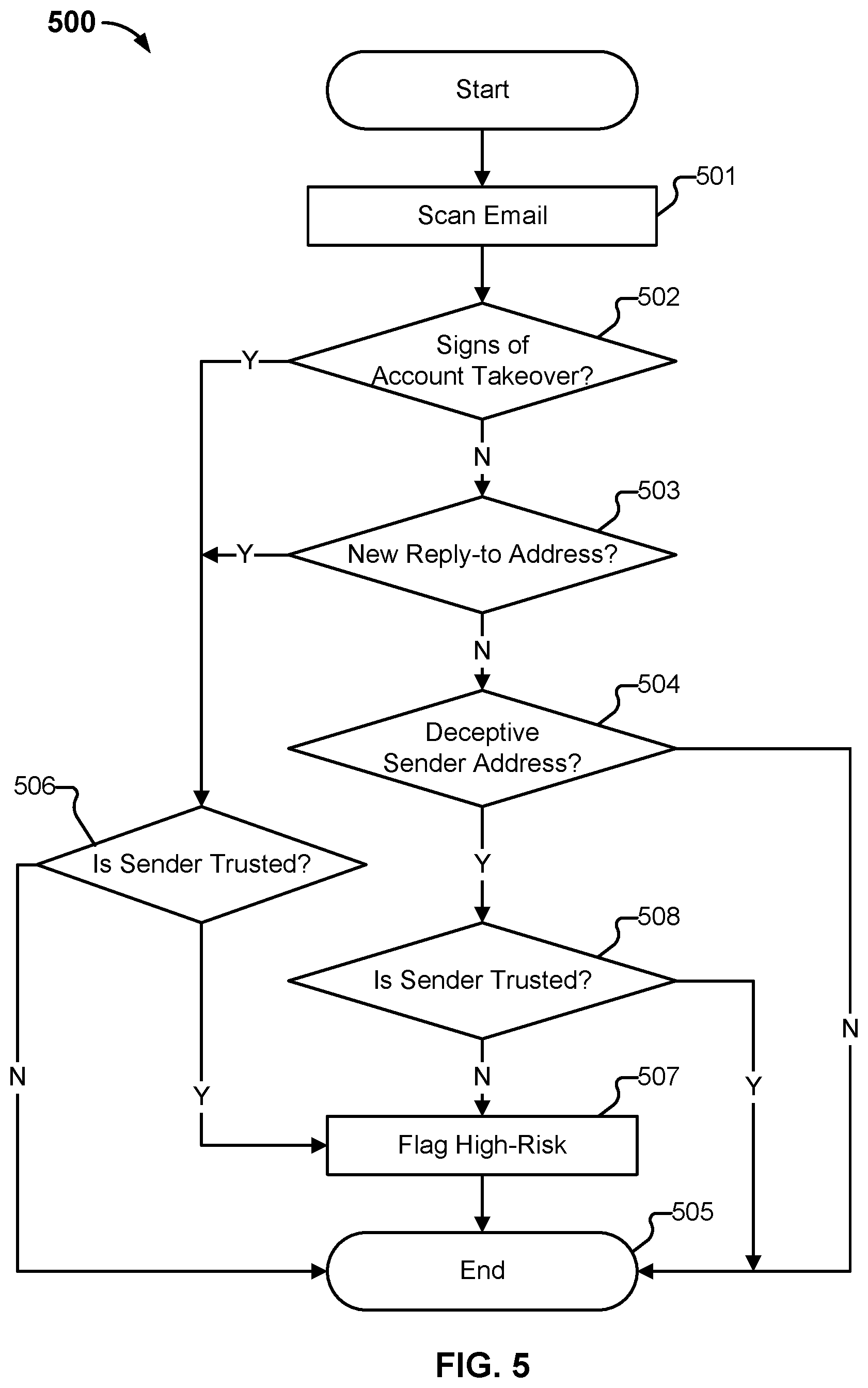

FIG. 5 illustrates an embodiment of a simplified non-monotonically increasing filter.

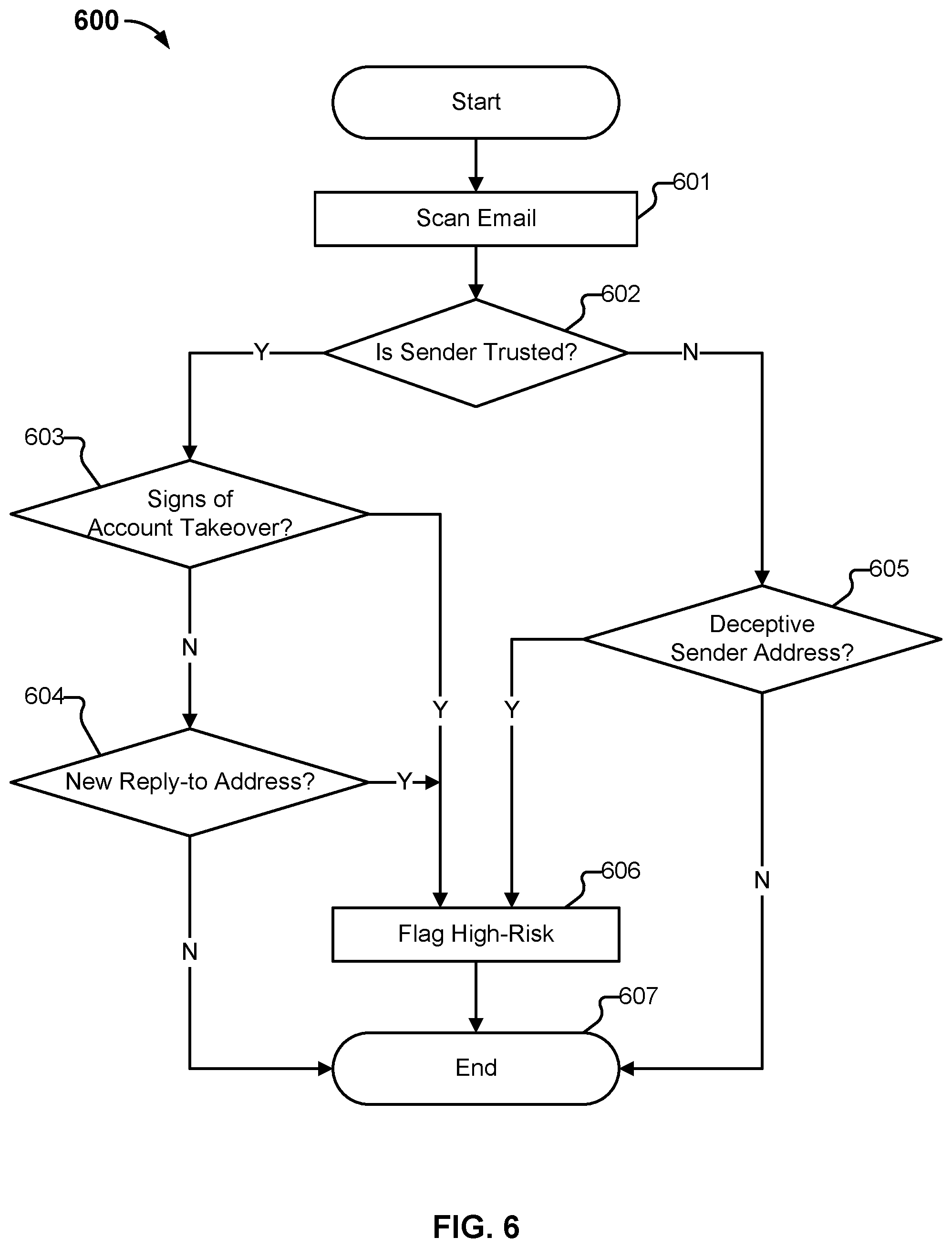

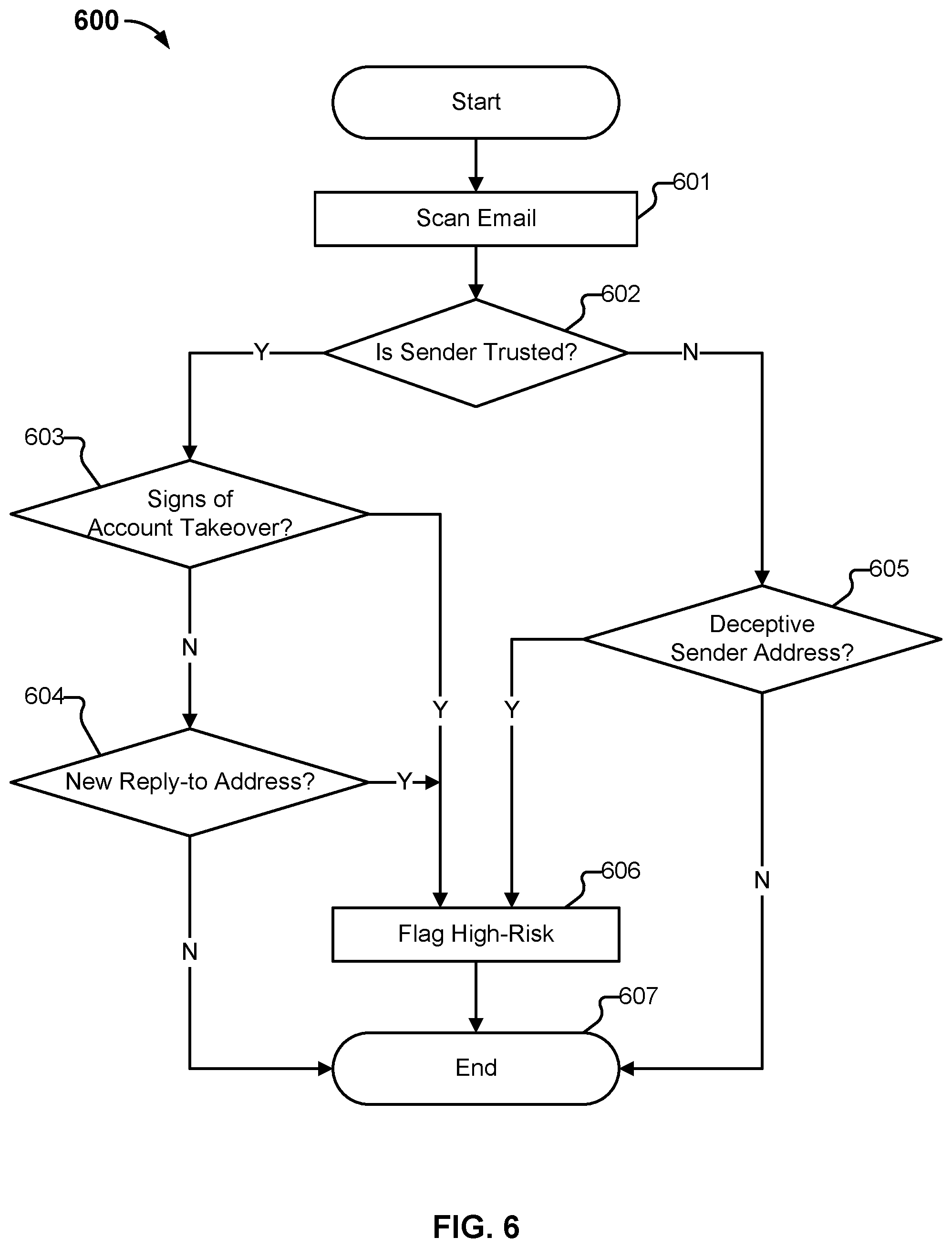

FIG. 6 illustrates an alternative embodiment of a non-monotonic combining logic.

FIG. 7 illustrates a second alternative embodiment of a non-monotonic combining logic.

FIG. 8 illustrates an example process for classification of primary risks associated with an email, using a non-monotonically increasing combining component.

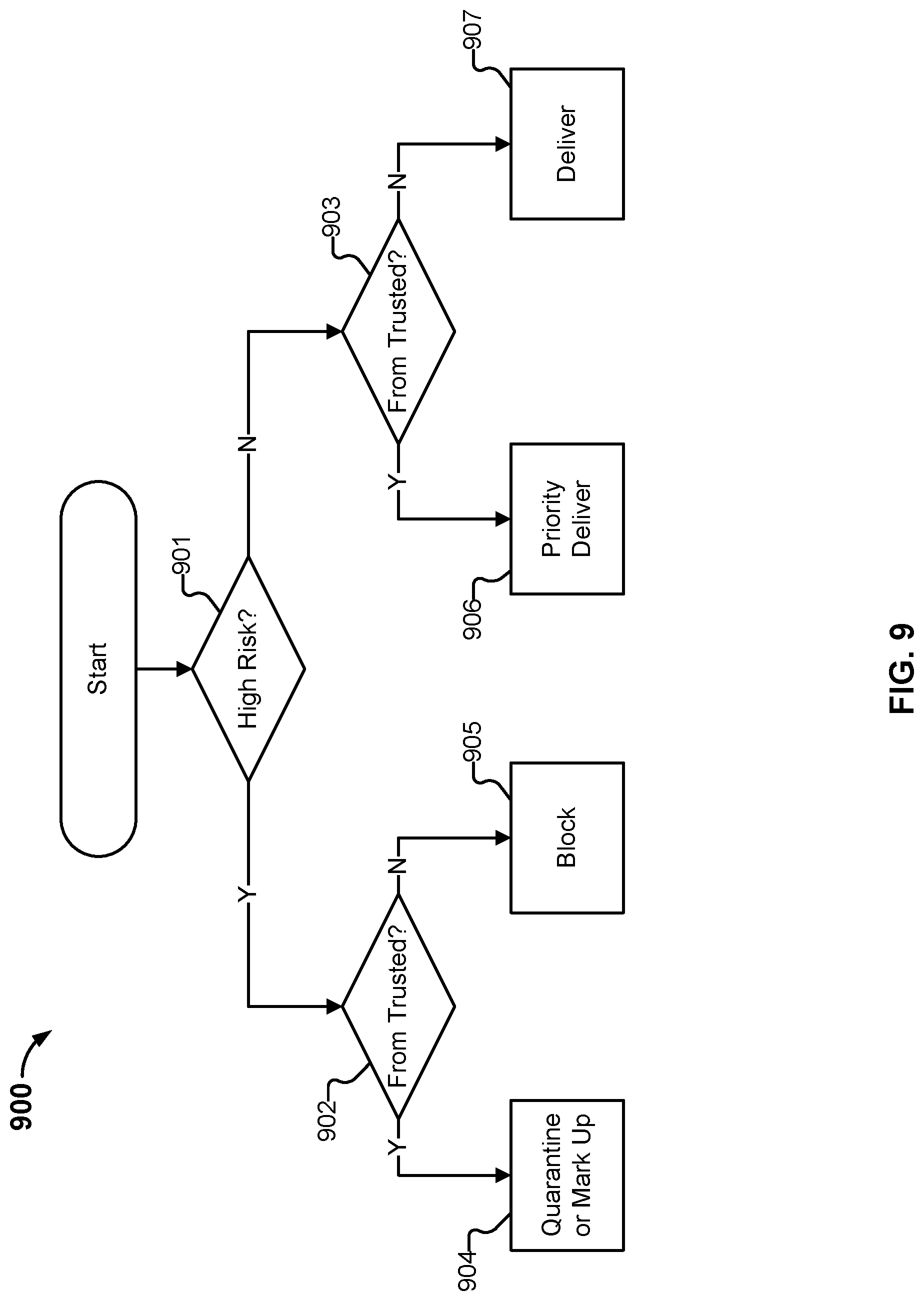

FIG. 9 illustrates an example embodiment of a process to identify what messages should be quarantined based on both high risk and a reasonable likelihood of being legitimate.

FIG. 10 illustrates an embodiment of a quarantine process using a secondary channel for release of quarantined messages.

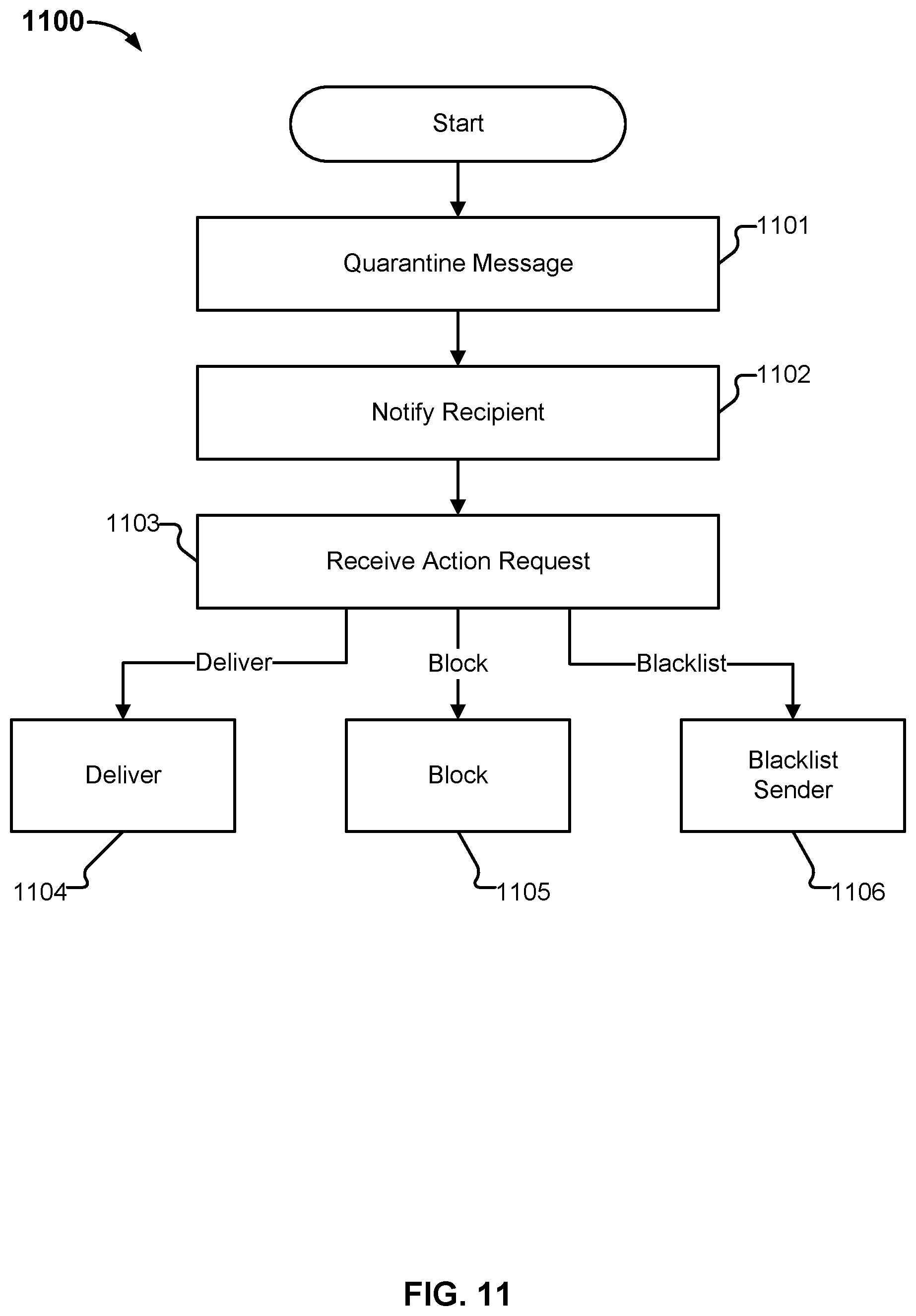

FIG. 11 illustrates an example embodiment of a process for processing of a quarantined email message.

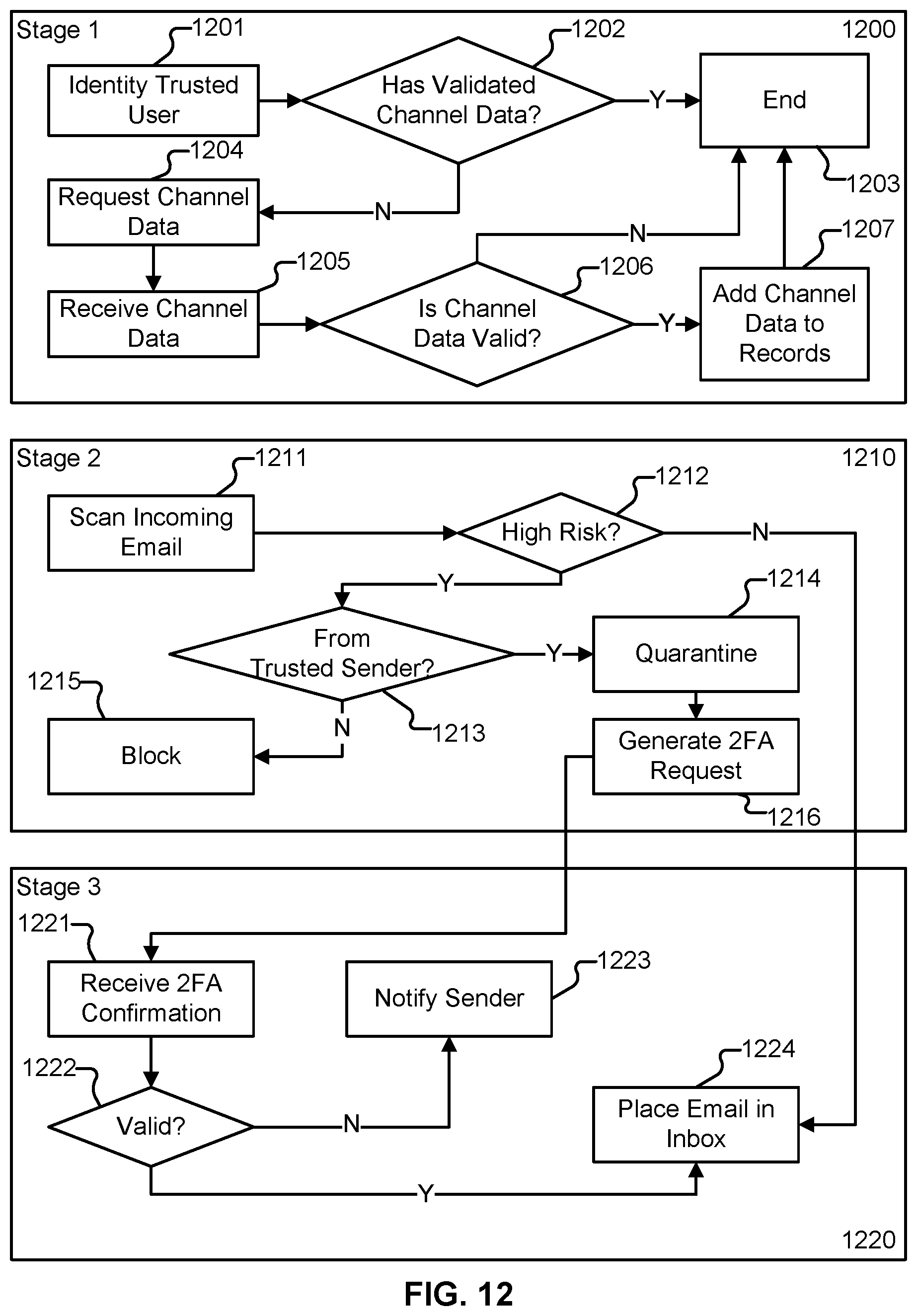

FIG. 12 illustrates an example of the three stages in one embodiment of a 2FA confirmation process.

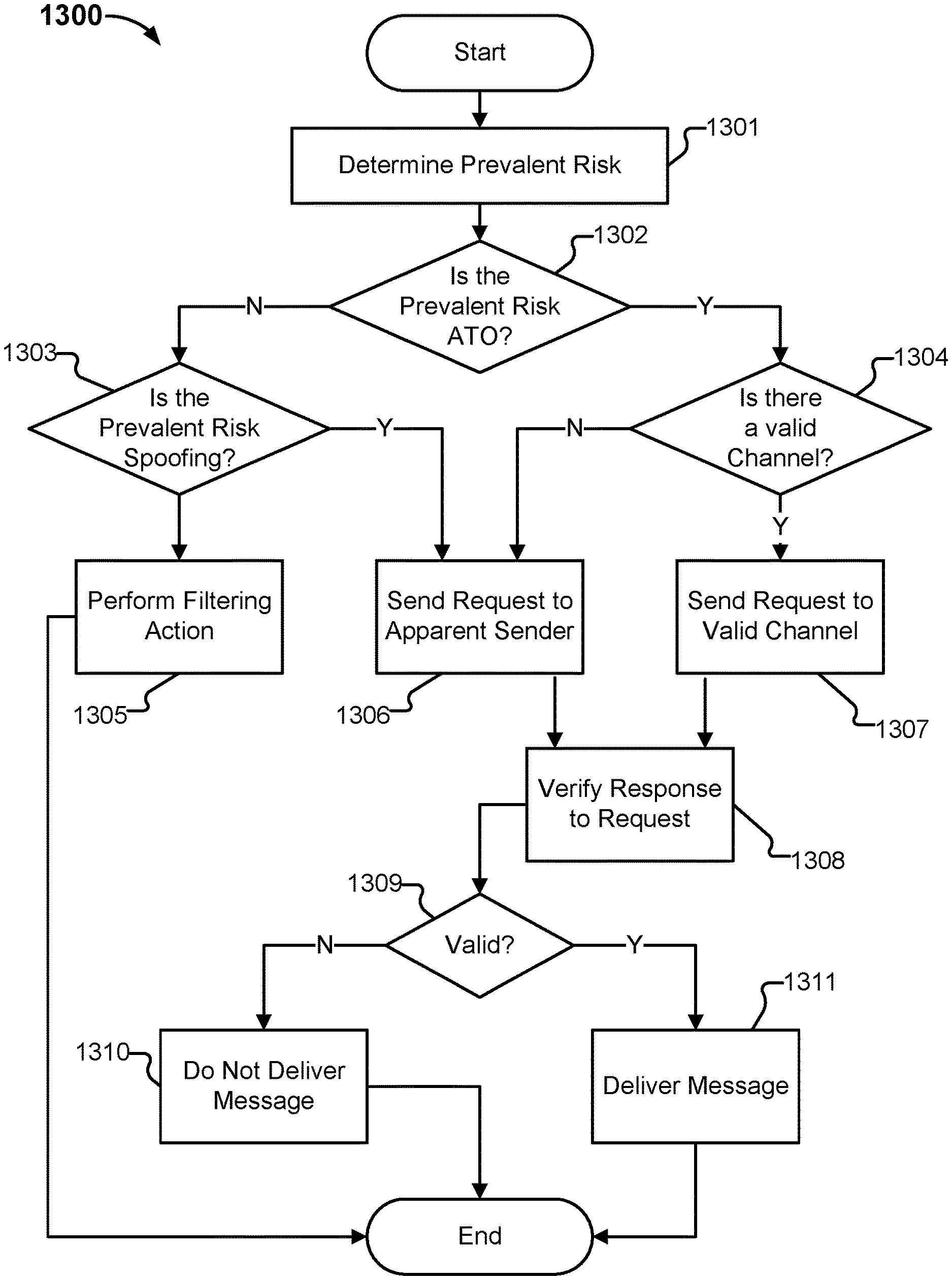

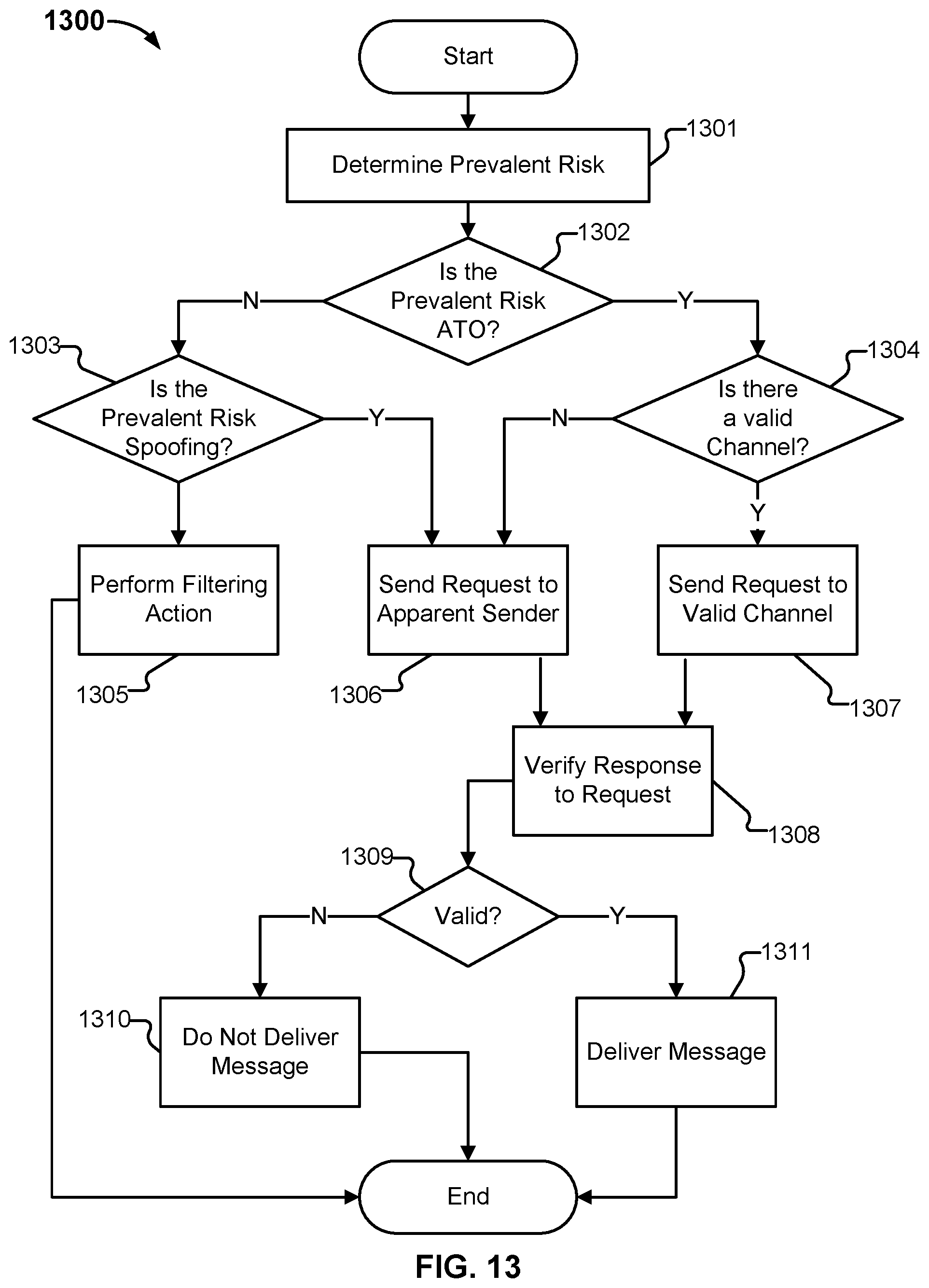

FIG. 13 illustrates an example embodiment of processing associated with sending a request to an account associated with the apparent sender of an email.

FIG. 14 illustrates an example embodiment of a request.

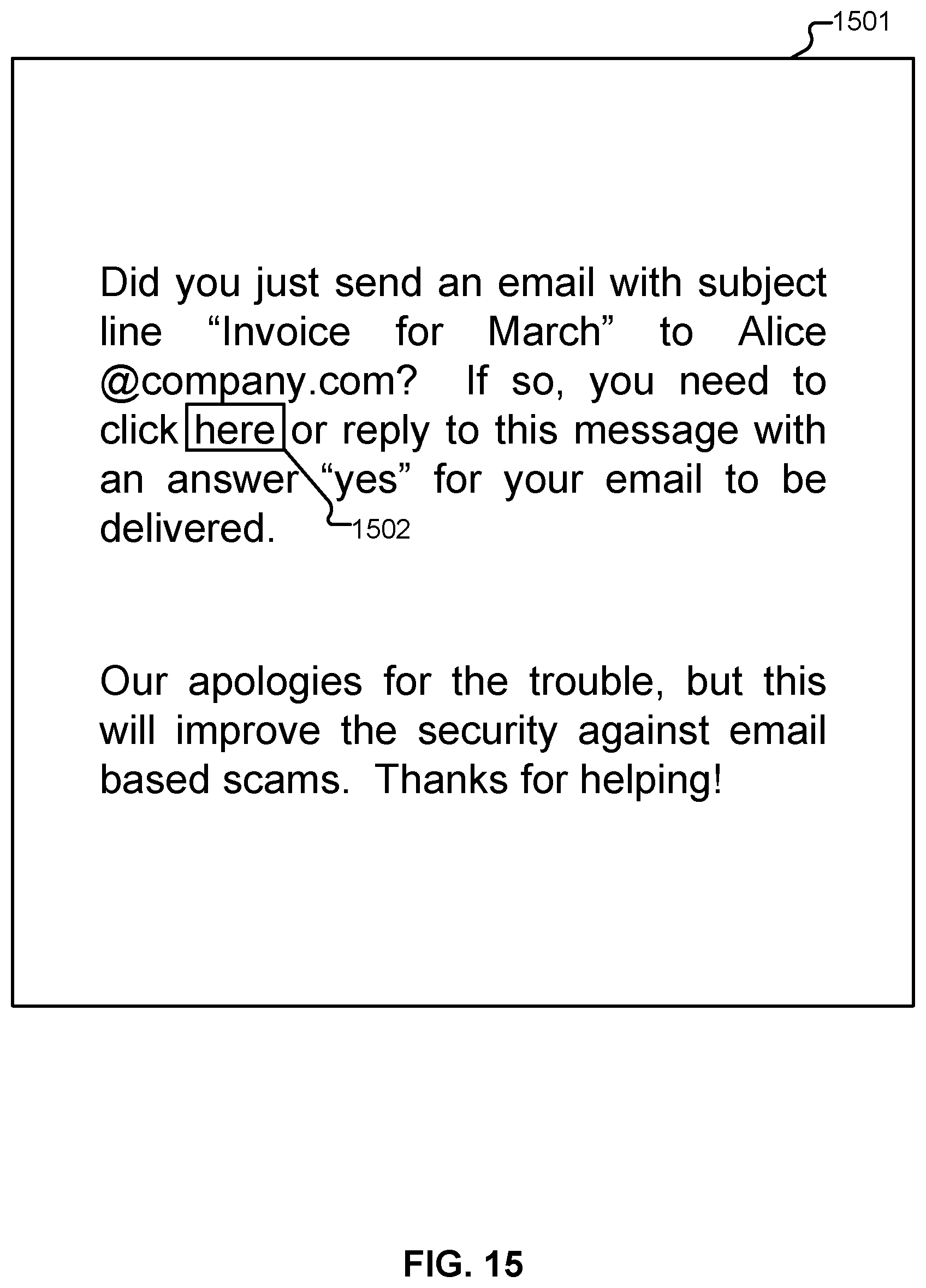

FIG. 15 illustrates an example embodiment of a request.

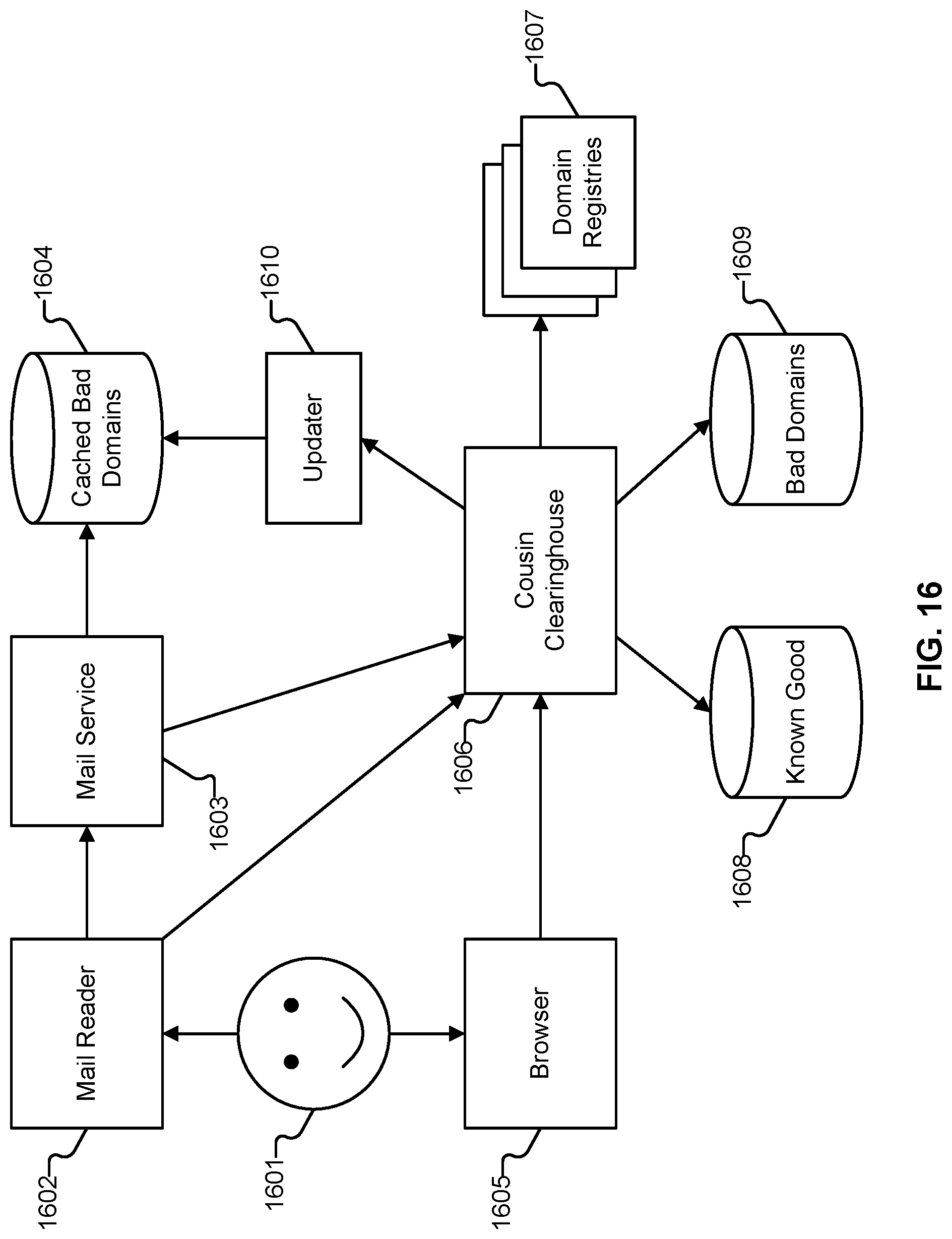

FIG. 16 illustrates an example embodiment of a cousin clearinghouse.

DETAILED DESCRIPTION

The invention can be implemented in numerous ways, including as a process; an apparatus; a system; a composition of matter; a computer program product embodied on a computer readable storage medium; and/or a processor, such as a processor configured to execute instructions stored on and/or provided by a memory coupled to the processor. In this specification, these implementations, or any other form that the invention may take, may be referred to as techniques. In general, the order of the steps of disclosed processes may be altered within the scope of the invention. Unless stated otherwise, a component such as a processor or a memory described as being configured to perform a task may be implemented as a general component that is temporarily configured to perform the task at a given time or a specific component that is manufactured to perform the task. As used herein, the term `processor` refers to one or more devices, circuits, and/or processing cores configured to process data, such as computer program instructions.

A detailed description of one or more embodiments of the invention is provided below along with accompanying figures that illustrate the principles of the invention. The invention is described in connection with such embodiments, but the invention is not limited to any embodiment. The scope of the invention is limited only by the claims and the invention encompasses numerous alternatives, modifications and equivalents. Numerous specific details are set forth in the following description in order to provide a thorough understanding of the invention. These details are provided for the purpose of example and the invention may be practiced according to the claims without some or all of these specific details. For the purpose of clarity, technical material that is known in the technical fields related to the invention has not been described in detail so that the invention is not unnecessarily obscured.

A BEC scam usually begins with the thieves either phishing an executive and gaining access to that individual's inbox, or emailing employees from a lookalike domain name that is, for example, one or two letters off from the target company's true domain name. For example, if the target company's domain was "example.com" the thieves might register "examp1e.com" (substituting the letter "L" with the numeral 1) or "example.co," and send messages from that domain. Other times, the thieves will spoof an email, e.g., using a mail server set up to act as an open relay, which permits them to send bogus emails with a real domain name that is not theirs. Yet other times, the thieves may create a personal email account with a user name suggesting that the email account belongs to the CEO, and then email the CEO's secretary with a request. Commonly, the thieves request that the recipient transfer money for some business transaction. In many cases, the thieves have studied the targeted organization well enough to know what kind of request will seem reasonable, making them likely to be more successful. For example, a thief can gain access to an internal email account, like the CEO's, and find a previous legitimate invoice that is then modified to become a scam.

Other, technically similar scams also face consumers. One example of this is the so-called "stranded traveler scam", which typically involves a friend of the victim who was robbed in a foreign country and needs a quick loan to get home. Other related scams include scams where young adults supposedly are jailed in a foreign country, and need help from grandparents. Many times, scams like these use accounts that have been compromised, e.g., in phishing attacks. Sometimes, spoofing is used, or other methods of deceit, including registration of email accounts with names related to the person in supposed need. What is common for all of these scams is that they use deception, and commonly take advantage of pre-existing trust relationships between the intended victim and the party in supposed need.

When BEC scams are referred to in this document, they refer to the collection of scams that have the general format of the BEC scam, which includes but is not limited to stranded traveler scams, imprisoned in Mexico scams, phishing emails, and other emails that suggest familiarity, authority, friendship or other relationship. Many targeted scams fall in this category, and scams of these types can be addressed by using the techniques described herein.

Unlike traditional phishing scams, spoofed emails used in CEO fraud schemes and related scams, such as those described above, are unlikely to set off traditional spam filters, because these are targeted phishing scams that are not mass emailed, and common spam filters rely heavily on the quantity of email of a certain type being sent. Also, the crooks behind them take the time to understand the target organization's relationships, activities, interests and travel and/or purchasing plans. This makes the scam emails look rather realistic--both to their recipients and to traditional spam filters.

Traditional spam filtering is designed to detect typical spam. This is typically sent in high volume, has low open rates, and even lower response rates. It is commonly placed in the spam folder by the recipient (if not already done so by the spam filter). It commonly contains a small set of keywords, corresponding to the products that are most profitable for spammers to sell. These keywords are typically not used in non-spam email traffic. To avoid detection by spam filters, spammers commonly obfuscate messages, e.g., write V-!-@-G.R-A instead of "Viagra". This commonly helps the spammers circumvent spam filters, but the message is typically still clear to the recipient.

In contrast, a typical BEC scam message is sent to only a small number of targeted recipients, such as one or two recipients within an organization. If similar messages are sent to recipients in other organizations, those are typically not verbatim copies, as there is a fair amount of customization, much of which is guided by contextual information obtained from data breaches, compromised accounts, and publicly available information, including social networks. There are typically no keywords specific to BEC emails--instead, BEC scammers attempt to mimic the typical emails of the people they interact with. As a result, there is typically no need for obfuscation. BEC scammers may purchase or register new domain names, like the lookalike domains described above, solely for the purpose of deceiving users within one specific organization targeted by the scammer, and may spend a significant amount of effort customizing their emails to make them credible, based on contextual information related to the intended victims. These factors contribute to the failure of traditional/existing spam filters to detect BEC scam emails.

In some embodiments, the techniques described herein address the problems of email scams, such as BEC scams, using a set of detection components. While example embodiments involving email are described below, the techniques described herein can variously be adapted to accommodate any type of communication channel, such as chat, (e.g., instant messaging (IM)), text (e.g., short message service (SMS)), etc., as applicable.

In various embodiments, the detection components include, but are not limited to, components to detect deceptive email content; to detect deceptive domains; to detect deceptive email addresses; to detect email header structures associated with deceptive practices; to detect deceptive attachments; and to detect hyperlinked material that is associated with deceptive emails.

Furthermore, in some embodiments, the outputs of at least two deception detection components are combined in a way that limits error rates, for example, using a non-monotonic combining logic that triggers on combinations of the above described deception detection components. Further details regarding this logic will be described below. In some embodiments, the logic reduces error rates by mirroring scammer strategies and associated uses of approaches that cause the deception detection components to trigger. In some embodiments, this reduces false negatives. At the same time, in some embodiments, the logic reduces false positives by not blocking benevolent emails, even if these cause the triggering of deception detection components, for example, as long as these are not triggered according to patterns indicative of common scammer strategies.

As will be illustrated in further detail below, the techniques described herein mitigate the threat associated with Business Email Compromise and associated scams. In some embodiments, this is done by detecting structural persuasion attempts. In some embodiments, this is in contrast to verbal persuasion attempts, which include text-based appeals in the content portion of a message. In some embodiments, structural persuasion relates to use of deceptive header information intended to cause the recipient of an email to be inclined to accept a message as legitimate and safe.

In some embodiments, the use of second factor authentication (2FA) for confirmation is beneficial to avoid risk. For example, if Alice sends an email to her broker, Bob, asking Bob to sell some of her stock, then it can be beneficial for Bob to confirm with Alice before performing the sale. This avoids performing transactions as a result of attacks, such as a spoofing attack in which Eve sends a spoofed message to Bob, appearing to come from Alice. It also mitigates the threat associated with malware and stolen computers. For example, consider a setting where Eve places malware on Alice's computer, causing an email to be sent from Alice to Bob, in which Bob is asked to sell some of Alice's stock. In these examples, using 2FA for confirmation reduces the threat: if Eve does not have the ability to receive the 2FA request and respond to it on Alice's behalf, then the email request will be ignored by Bob. In some embodiments, the 2FA confirmation requests include SMS messages or manually placed phone calls. Existing systems for sending 2FA confirmation requests are not automated. Instead, Bob reads his email from Alice, and determines on a case-by-case basis whether to initiate a 2FA confirmation request. Occasionally, Bob may make a mistake or be hurried by a high-priority request, thereby deciding to skip the 2FA confirmation. Scammers may also trick Bob into omitting the request. In some embodiments, the techniques described herein automate the determination of when to send a 2FA confirmation request, and integrate the confirmation with the delivery of the email. This way, Bob will not receive the email from Alice until Alice has confirmed it, unless it is an email that does not require a confirmation, in which case it will be delivered immediately.

Traditional spam filters typically have a logic that is monotonically increasing. What this means is that they may have combining logic functions that generate a filtering decision from two or more detection components, such as one velocity detector and one reputation detector, and where a "higher" detection on either of these results in a higher probability of blocking the email. For example, the output of the velocity detector may be three levels, corresponding to low, medium, and high velocities. Similarly, the output of the reputation detector may be three levels, corresponding to low, medium, and high reputation risk. The combining logic function may determine that a message is undesirable if it results in a high velocity level, a high reputation risk level, or medium levels from both the velocity detector and the reputation detector. This traditional combining logic is monotonically increasing, and works in a way that can be described as "additive": if any filter outputs a "higher" detection score, that means that it is more likely that the email will be blocked, as individual scores from different detection components are combined in a way in which each score contributes toward reaching a threshold in a manner that does not depend on the other scores. If the threshold is reached, a filter action is performed.
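The traditional combining logic described above can be sketched as follows. This is a minimal illustration only; the names `Level`, `monotonic_block`, and `is_monotonic` are hypothetical and do not appear in the disclosure. The helper `is_monotonic` checks the defining property: raising either detector output never turns a blocking decision back into a non-blocking one.

```python
from enum import IntEnum


class Level(IntEnum):
    """Three-level detector output (hypothetical encoding)."""
    LOW = 0
    MEDIUM = 1
    HIGH = 2


def monotonic_block(velocity: Level, reputation_risk: Level) -> bool:
    """Block on any HIGH output, or when both detectors output MEDIUM."""
    if velocity == Level.HIGH or reputation_risk == Level.HIGH:
        return True
    return velocity == Level.MEDIUM and reputation_risk == Level.MEDIUM


def is_monotonic(decide) -> bool:
    """Return True if raising either input never flips 'block' to 'allow'."""
    levels = list(Level)
    for v in levels:
        for r in levels:
            if not decide(v, r):
                continue
            # Every input pair at or above a blocked pair must also be blocked.
            for v2 in levels:
                for r2 in levels:
                    if v2 >= v and r2 >= r and not decide(v2, r2):
                        return False
    return True
```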

In contrast, in one embodiment, the disclosed scam detector (also referred to herein as "the system") corresponds to a logic combination function that is not monotonically increasing. This type of function is referred to herein as "non-monotonically increasing." For example, suppose that a first and a second detector each have three possible outputs, which for illustrative purposes, are referred to as low, medium, and high. In some embodiments, the combining logic function determines that an email is not desirable if the first detector outputs high and the second detector outputs low; the first detector outputs low and the second detector outputs high; or both generate a medium output; but otherwise determines that the email is desirable. In this example, it is clear that neither detector generates an output from which a classification decision can be made without also taking the output of the other detector into consideration. It is also clear in this example that at least one of the detectors produces an output for which one value is not always indicative of a safe email, but sometimes that value is indicative of an unsafe email. Seen another way, in some embodiments, the results of the individual detectors are combined using a combining function whose operations depend on at least one of the scores and types of the individual detectors. In some embodiments, such a detector identifies what other detectors are relevant for the classification, and how to combine the scores and types from those.
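The three-level example in the preceding paragraph can be written as a small lookup, shown here as a sketch. The names `Level`, `UNDESIRABLE`, and `non_monotonic_undesirable` are hypothetical. Note that the pair (high, low) is classified as undesirable while (high, high) is not, so a higher output from the second detector can lower the overall verdict, which is what makes the function non-monotonic.

```python
from enum import IntEnum


class Level(IntEnum):
    """Three-level detector output (hypothetical encoding)."""
    LOW = 0
    MEDIUM = 1
    HIGH = 2


# Output combinations that the example in the text treats as undesirable.
UNDESIRABLE = {
    (Level.HIGH, Level.LOW),
    (Level.LOW, Level.HIGH),
    (Level.MEDIUM, Level.MEDIUM),
}


def non_monotonic_undesirable(first: Level, second: Level) -> bool:
    """Classify using both outputs jointly; neither output decides alone."""
    return (first, second) in UNDESIRABLE
```

In this sketch, no single detector value is always safe or always unsafe: `Level.HIGH` on the first detector is undesirable when paired with `Level.LOW` but desirable when paired with `Level.HIGH`.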

While the above examples describe monotonically increasing and non-monotonically increasing functions in the context of email classification, the techniques described herein can be applied to more detectors than two, and to different types of detector outputs, such as binary detector outputs and detector outputs with more than three possible options. In some embodiments, the detector outputs are of different types for different detectors, such as a first detector with a binary output and a second detector with an output that can take ten different values. In some embodiments, the detector outputs can be represented as numeric values, Boolean values, class memberships, or any other appropriate types of values. Detectors can be implemented in software, hardware or a combination of these, and in some embodiments, may utilize some manual curation in cases where, for example, an automated classification is not supported by the system rules for a particular input email message.

The non-monotonic logic is described in further detail in the combining logic section below, where example pseudocode is provided, illustrating an example embodiment of the techniques described herein. One example element of relevance to the non-monotonic evaluation is the classification of the sender being, or not being, a trusted party. In one embodiment, a trusted sender is what is defined as a "friend" or an "internal" party in the example embodiment below. In another embodiment, a trusted sender is a party who the recipient has an entry for in his or her address book; is connected to on a network (e.g., social network such as Facebook or LinkedIn); has chatted or placed phone/video calls using a communications application/program such as Skype or similar software; or a combination of such properties. In one example embodiment, two associated parties share a list of trusted parties; if one email sender is qualified as a trusted party for one of the associated parties, then the same email sender is also automatically or conditionally qualified as a trusted party for the second associated party. Possible example conditions include the two associated parties being members of the same organization; having configured their respective systems to allow for the exchange of information related to who is a trusted party; conditions relating to the certainty of the classification and a minimum required certainty configuration of the second associated party; and any combination of such conditions. Further details regarding determining what users are trusted are described below.
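As a rough sketch of how a trusted-sender classification of this kind might be computed, the following combines three of the signals mentioned in this document: membership in the same organization, presence in the recipient's address book, and a message history that meets a count threshold over a sufficiently long period. The function names, the parameters, and the default thresholds (`min_messages=3`, `min_span` of 14 days) are assumptions made for illustration and are not values taken from the disclosure. The second function illustrates the sharing of trusted-party lists between two associated parties under a single hypothetical condition flag.

```python
from datetime import datetime, timedelta
from typing import List, Optional, Set


def is_trusted_sender(sender: str,
                      recipient_org: str,
                      sender_org: Optional[str],
                      address_book: Set[str],
                      message_times: List[datetime],
                      min_messages: int = 3,
                      min_span: timedelta = timedelta(days=14)) -> bool:
    """Hypothetical trust check; thresholds are illustrative assumptions."""
    # Internal party: same organization as the recipient.
    if sender_org is not None and sender_org == recipient_org:
        return True
    # The recipient has an address book entry for the sender.
    if sender in address_book:
        return True
    # Enough messages exchanged over a period exceeding the threshold time.
    if message_times and len(message_times) >= min_messages:
        return max(message_times) - min(message_times) > min_span
    return False


def effective_trusted_set(own_trusted: Set[str],
                          associate_trusted: Set[str],
                          sharing_allowed: bool) -> Set[str]:
    """Merge an associated party's trusted list when sharing is permitted."""
    if sharing_allowed:
        return own_trusted | associate_trusted
    return set(own_trusted)
```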

In some embodiments, the non-monotonic logic causes a different evaluation of messages sent from trusted senders and non-trusted senders. For example, in the example embodiment below, the presence of an untrusted reply-to address is associated with risk when it is part of a message from a trusted sender, but not from a non-trusted sender (e.g., from=bob@example.com is not the same as reply-to=bob@exampe.com). Similarly, in some embodiments, spoof indicators are associated with risk in a message from a trusted sender, but not from a non-trusted sender. Conversely, in some embodiments, deceptive links, deceptive attachments, deceptive domain names, deceptive email addresses, and the like are associated with risk primarily in messages from non-trusted parties. In other words, in some embodiments, the risk evaluation logic described herein is not "additive" in that the presence of an indicator implies greater risk in one context, while lesser risk in another context. In some embodiments, the non-monotonic logic associated with the risk evaluation maps to the business strategy of the scammers, where this business strategy corresponds to how they typically carry out their acts of trying to scam recipients.

Described herein are also techniques for determining when an email address is potentially deceptive. In some embodiments, a first component of this determination determines the similarity of two or more email addresses, using, for example, string comparison techniques specifically designed to compare email addresses and their associated display names with each other. In some embodiments, this comparison is made with respect to display name, user name, domain, TLD, and/or any combinations of these, where two addresses can be compared with respect to at least one such combination, which can include two or more. In some embodiments, this first component also includes techniques to match conceptually similar strings to each other, where the two strings may not be similar in traditional aspects. For example, the words "Bill" and "William" are not closely related in a traditional string-comparison sense; however, they are conceptually related since people named "William" are often called "Bill". Therefore, an email address with a display name "Bill" has a similar meaning to an email address with a display name "William", even though the two are not similar in a traditional string comparison sense. Furthermore, the words "mom" and "rnorn" are not very similar in a traditional string comparison sense, since one is a three-letter word and the other a five-letter word, and these two words only have one letter in common. However, they are visually related since "m" looks similar to "rn". This similarity may be greater for some fonts than for others, which is another aspect that is considered in one embodiment. In some embodiments, a string comparison technique that adds conceptual similarity detection to traditional string comparison improves the ability to detect deceptive email addresses. This can also include the use of Unicode character sets to create homographs, which are characters that look like other characters, and which can be confused with those.
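A minimal sketch of such a comparison is given below. It combines a standard Levenshtein edit distance with two hypothetical lookup tables: `VISUAL_SUBSTITUTIONS` for lookalike character sequences and `CONCEPTUAL_GROUPS` for names that are conceptually equivalent. The table contents, the function names, and the threshold value are assumptions chosen for illustration; a real deployment would need far larger tables, Unicode homograph handling, and font-aware tuning.

```python
# Hypothetical lookalike rewrites, applied in order to both strings.
VISUAL_SUBSTITUTIONS = [("rn", "m"), ("vv", "w"), ("1", "l"), ("0", "o")]

# Hypothetical list of conceptually similar character strings.
CONCEPTUAL_GROUPS = [{"bill", "william", "will"}, {"bob", "robert"}]


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance computed with a rolling dynamic-programming row."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        cur = [i]
        for j, cb in enumerate(b, start=1):
            cur.append(min(prev[j] + 1,                # deletion
                           cur[j - 1] + 1,             # insertion
                           prev[j - 1] + (ca != cb)))  # substitution
        prev = cur
    return prev[-1]


def normalize_visual(s: str) -> str:
    """Lowercase and collapse lookalike sequences to a canonical form."""
    s = s.lower()
    for src, dst in VISUAL_SUBSTITUTIONS:
        s = s.replace(src, dst)
    return s


def similar(a: str, b: str, threshold: int = 2) -> bool:
    """True if the strings are visually close or conceptually equivalent."""
    na, nb = normalize_visual(a), normalize_visual(b)
    if edit_distance(na, nb) < threshold:
        return True
    return any(na in group and nb in group for group in CONCEPTUAL_GROUPS)
```

With these tables, `similar("mom", "rnorn")` holds even though the raw edit distance between the two strings is 4, because both normalize to the same string.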

In some embodiments, a second component of the determination of whether an email address is potentially deceptive relies on the context in which this is used. This is another example of a non-monotonic filter function. In some embodiments, if an email address of the sender of an email corresponding to a non-trusted party is similar to that of a trusted party associated with the recipient of the email, then that is deceptive, as the sender may attempt to mimic a trusted party. On the other hand, if the sender of an email is trusted, then having a reply-to address that is similar to the sender email address is deceptive. For example, a scammer can gain access to an account and send emails to friends of the account owner but modify the reply-to address to a similar-looking address so that the real account holder does not see responses. Therefore, based on the trust relationship, the notion of "deceptive" changes meaning.

Another example of a non-monotonic aspect of the techniques disclosed herein is the presence of a reply-to address. In some embodiments, it matters less whether a non-trusted sender has a reply-to address, and this should not affect the filtering decision; on the other hand, it does matter whether a trusted sender has a reply-to address. If this reply-to address is deceptive with respect to the sender address, that is treated as a reason for taking a filtering action. In one embodiment, the fact that an email has a reply-to address--independently of whether it is deceptive--where the reply-to address is not previously associated with the sender, is sufficient to flag the email if the sender is a trusted party. In various embodiments, flagged emails can be blocked, quarantined, marked up, or otherwise processed to reduce the risk associated with them. The same is not true for a sender who is not a trusted party.
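The reply-to handling just described might be sketched as follows. The function names, the returned labels ("ignore", "flag", "filter"), and the use of `difflib.SequenceMatcher` with a 0.85 ratio as the deceptiveness test are assumptions made for this illustration; the disclosure does not prescribe a particular similarity measure here. The order of the checks, with previously associated reply-to addresses accepted before the similarity test, is likewise an assumption.

```python
from difflib import SequenceMatcher
from typing import Optional, Set


def looks_deceptive(from_addr: str, reply_to: str, threshold: float = 0.85) -> bool:
    """Different from the sender address, yet very similar to it (assumed test)."""
    a, b = from_addr.lower(), reply_to.lower()
    return a != b and SequenceMatcher(None, a, b).ratio() >= threshold


def evaluate_reply_to(sender_trusted: bool,
                      from_addr: str,
                      reply_to: Optional[str],
                      known_reply_tos: Set[str]) -> str:
    """Return 'ignore', 'flag', or 'filter' for the reply-to header.

    known_reply_tos holds lowercase addresses previously associated with
    the sender (a hypothetical store).
    """
    # A reply-to address on mail from a non-trusted sender carries no weight.
    if not sender_trusted:
        return "ignore"
    if reply_to is None or reply_to.lower() == from_addr.lower():
        return "ignore"
    if reply_to.lower() in known_reply_tos:
        return "ignore"
    # Trusted sender with a lookalike reply-to: reason for a filtering action.
    if looks_deceptive(from_addr, reply_to):
        return "filter"
    # Trusted sender with a new, non-deceptive reply-to: flag for review.
    return "flag"
```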

In one embodiment, the available filtering decisions are conditional for at least some of the detection components. For example, if it is determined that an email is sent from a non-trusted party, then it is acceptable to block it if it contains some elements associated with high risk. If the apparent sender of the email is a trusted party and the email headers contain a deceptive reply-to address, then it is also acceptable to block the message. If the apparent sender of the email is a trusted party and there is a new reply-to address that is not deceptive, then it is not acceptable to block the email, but more appropriate to quarantine, mark up, or otherwise flag the email. Similarly, if the apparent sender of the email is a trusted party and there is no reply-to address but content associated with risk, then based on the level of risk, the message may either be marked up or tagged, or simply let through, if the risk is not very high. Instead of blocking emails that are evaluated to be high-risk from a scam perspective as well as possibly having been sent by a trusted party, the emails can be marked up with a warning, sent along with a notification or warning, quarantined until a step-up action has been performed, or any combination of these or related actions. One example step-up action involves the filtering system or an associated system automatically sending a notification to the apparent sender, asking for a confirmation that the message was indeed sent by this party. In some embodiments, if a secondary communication channel has been established between the filtering system and the apparent sender, then this is used. 
For example, if the filtering system has access to a cell phone number associated with the sender, then an SMS or an automated phone call may be generated, informing the sender that if he or she just sent an email to the recipient, then he/she needs to confirm by responding to the SMS or phone call, or performing another confirming action, such as visiting a website with a URL included in the SMS. In some embodiments, the received email is identified to the recipient of the SMS/phone call, e.g., by inclusion of at least a portion of the subject line or greeting. If no secondary communication channel has been established, then in some embodiments, the system sends a notification to the sender requesting this to be set up, e.g., by registering a phone number at which SMSes can be received, and have this validated by receiving a message with a confirmation code to be entered as part of the setup. In some embodiments, to avoid spoofing of the system, the request is made in the context of an email recently sent by the party requested to register. For example, the registration request may quote the recently sent email, e.g., by referring to the subject line and the recipient, and then ask the sender to click on a link to register. Optionally, this setup can be initiated not only for high-risk messages, but also as a user is qualified as trusted (e.g., having been detected to be a friend), which allows the system to have access to a secondary communication channel later on. Phone numbers can also be obtained by the filtering system accessing address books of users who are protected by the system, extracting phone numbers from emails that are being processed, and associating these with senders, or other techniques. Other secondary channels are also possible to use, such as alternative email addresses, Skype messaging channels, Google Chat messages, etc. 
In an alternative embodiment, it is possible to transmit an email message to the sender of the high-risk message in response to the processing of the high-risk message, requiring the sender of the high-risk message to confirm that this was sent by him or her by performing an action such as responding to an identification challenge, whether interacting with an automated system or an operator. This can be done on the same channel as used by the sender of the message, or to another email address, if known by the system. Any identification challenge system can be used, as appropriate. This can be combined with the setup of a secondary channel, as the latter provides a more convenient method to confirm the transmission of messages.
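The conditional filtering decisions described above can be summarized in a small decision function, sketched below. The `Assessment` fields, the numeric `content_risk` score, and the two thresholds are hypothetical simplifications; the disclosure describes the conditions qualitatively and allows other actions (such as notifications or step-up confirmation) in place of those shown.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    DELIVER = "deliver"
    MARK_UP = "mark_up"
    QUARANTINE = "quarantine"
    BLOCK = "block"


@dataclass(frozen=True)
class Assessment:
    """Hypothetical summary of detector outputs for one message."""
    sender_trusted: bool
    has_new_reply_to: bool = False
    reply_to_deceptive: bool = False
    content_risk: float = 0.0  # assumed scale: 0.0 (benign) to 1.0 (high risk)


def choose_action(a: Assessment,
                  high_risk: float = 0.8,
                  mark_up_risk: float = 0.5) -> Action:
    """Pick an action whose severity depends on the trust context."""
    if not a.sender_trusted:
        # Non-trusted sender: blocking is acceptable for high-risk content.
        return Action.BLOCK if a.content_risk >= high_risk else Action.DELIVER
    if a.reply_to_deceptive:
        # Trusted apparent sender with a deceptive reply-to: acceptable to block.
        return Action.BLOCK
    if a.has_new_reply_to:
        # New but non-deceptive reply-to: quarantine rather than block.
        return Action.QUARANTINE
    if a.content_risk >= mark_up_risk:
        # Risky content from a trusted sender: warn instead of blocking.
        return Action.MARK_UP
    return Action.DELIVER
```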

In some embodiments, the technique for quarantining high-risk messages sent by trusted parties until a secondary channel confirmation has been received seamlessly integrates second factor authentication methods with delivery of sensitive emails, such as emails containing invoices or financial transfer requests. This can be beneficial in systems that do not focus on blocking of high-risk messages as well as in systems such as that described in the exemplary embodiment below.

In some embodiments configured to protect consumers, content analysis would not focus on mention of the word "invoice" and similar terms of high risk to enterprises, but would instead use terms of relevance to consumer fraud. For example, detection of likely matches to stranded traveler scams and similar scams can be done using a collection of terms or using traditional machine learning methods, such as Support Vector Networks (SVNs). In some embodiments, if a likely match is detected, this would invoke a second-factor authentication of the message.

The use of second factor authentication (2FA) for confirmation is beneficial to avoid risk. For example, if Alice sends an email to her broker, Bob, asking Bob to sell some of her stock, then it is beneficial for Bob to confirm with Alice before performing the sale. This avoids performing transactions as a result of attacks, such as a spoofing attack in which Eve is sending a spoofed message to Bob, appearing to come from Alice. It also mitigates the threat associated with malware and stolen computers. For example, consider a setting where Eve places malware on Alice's computer, causing an email to be sent from Alice to Bob, in which Bob is asked to sell some of Alice's stock. In these examples, using 2FA for confirmation reduces the threat, because if Eve does not have the ability to receive the 2FA request and respond to it on Alice's behalf, then the email request will be ignored by Bob. The 2FA confirmation requests can include SMS messages or (manually or automatically placed) phone calls. Existing systems for sending 2FA confirmation requests are not automated. Instead, for example, Bob reads his email from Alice, and determines on a case-by-case basis whether to initiate a 2FA confirmation request. Sometimes, Bob may make a mistake or be hurried by a high-priority request, thereby deciding to skip the 2FA confirmation. Scammers may also trick Bob into omitting the request. In some embodiments, the techniques described herein include automating the determination of when to send a 2FA confirmation request, and integrating the confirmation with the delivery of the email. This way, Bob will not receive the email from Alice until Alice has confirmed it, unless it is an email that does not require a confirmation, in which case it will be delivered immediately.

In some embodiments, the techniques described herein are usable to automate the use of 2FA for confirmation of emails associated with heightened risk. In some embodiments, this is a three-stage process, an example of which is provided below.

In the first stage, channel information is obtained. In some embodiments, this channel information is a phone number of a party, where this phone number can be used for a 2FA confirmation. For example, if the phone number is associated with a cell phone, then an SMS can later be sent for 2FA, as the need arises to verify that an email was sent by the user, as opposed to spoofed or sent by an attacker from the user's account. Whether it is a cell phone number or landline number, the number can be used for placing of an automated phone call. The channel can also be associated with other messaging methods, such as IM or an alternative email address. In one embodiment, the first stage is performed by access of records in a contact list, whether uploaded by a user of a protected system, by an admin associated with the protected system, or automatically obtained by the security system by finding the contact list on a computer storage associated with the protected system. Thus, in this embodiment, the setup associated with the first stage is performed by what will later correspond to the recipient of an email, where the recipient is a user in the protected organization. In another embodiment, the first stage is performed by the sender of emails, i.e., the party who will receive the 2FA confirmation request as a result of sending a high-risk email to a user of the protected system. In one embodiment, sender-central setup of the 2FA channel is performed after the sender has been identified as a trusted party relative to one or more recipients associated with the protected system, and in some embodiments, is verified before being associated with the sender. 
This verification can be performed using standard methods, in which a code is sent, for example, by SMS or using an automated phone call, to a phone number that has been added for a sender account; after the associated user has received the code and entered it correctly for the system to verify, the number is associated with the sender. If a sender already has a channel associated with his or her email address, for example, by the first stage of the process having been performed in the past, relative to another recipient, then in some embodiments, it is not required to perform the setup again. If later on, a 2FA confirmation request fails to be delivered, then, in some embodiments, the channel information is removed and new channel information requested. Channel information can be validated by sending an email containing a link to an email account associated with a sender, and sending a message with a code to the new channel, where the code needs to be entered in a webpage associated with the link in the email. In one embodiment, this is performed at a time when there is no suspicion of the email account being taken over. Alternatively, the validation can be performed by the recipient entering or uploading channel data associated with a sender. While the validation of the channel may not be completely foolproof, and there is a relatively small potential risk that an attacker would manage to register and validate a channel used for 2FA, the typical case would work simply by virtue of most people not suffering account take-overs most of the time, and therefore, this provides security for the common case.

An alternative approach to register a channel is to notify the user needing to register that he or she should call a number associated with the registration, which, in some embodiments, includes a toll-free number, and then enter a code that is contained in the notification. For example, the message could be "Your email to Alice@company.com with subject line `March invoice` was quarantined. To release your email from quarantine and have it delivered, please call <number here> and enter the code 779823 when prompted." In some embodiments, at any time, one code is given out to one user. When a code is entered, the phone number of the caller is obtained and stored. An alternative approach is to request an SMS. For example, the message could be "Your email to Alice@company.com with subject line `March invoice` was quarantined. To release your email from quarantine and have it delivered, please SMS the code 779823 to short code <SMS number here>."
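
The call-in or SMS registration described above can be illustrated with a minimal Python sketch. The class name `RegistrationCodes`, its methods, and the six-digit code format are hypothetical choices made for illustration only; the text requires only that, at any time, one code is given out to one user and that the caller's phone number is stored when the code is entered.

```python
import secrets


class RegistrationCodes:
    """Hypothetical registry: each outstanding code maps to exactly one sender."""

    def __init__(self):
        self._pending = {}   # code -> sender email address
        self.channels = {}   # sender email address -> phone number of the caller

    def issue(self, sender_email):
        # Draw six-digit codes until one is not already outstanding, so that
        # at any time one code is given out to one user.
        while True:
            code = f"{secrets.randbelow(10**6):06d}"
            if code not in self._pending:
                self._pending[code] = sender_email
                return code

    def redeem(self, code, caller_number):
        # Called when a caller (or SMS sender) submits a code. Returns the
        # sender the code was issued to, or None if the code is unknown.
        sender = self._pending.pop(code, None)
        if sender is not None:
            self.channels[sender] = caller_number
        return sender
```

In this sketch a code is consumed on first use, so a replayed code is rejected. A deployed system would presumably also expire unused codes and apply risk checks to the captured number before treating it as a valid channel.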

In some embodiments, if the phone number has previously been used to register more than a threshold number of channels, such as more than 10 channels, then a first exception is raised. If the phone number is associated with fraud, then a second exception is raised. If the phone number is associated with a VoIP service, then a third exception is raised. If the phone number is associated with a geographic region inconsistent with the likely area of the user, then a fourth exception is raised. Based on the exceptions raised, a first risk score is computed. In addition, in some embodiments, a second risk score is computed based on the service provider, the area code of the phone number, the time zone associated with the area code, the time of the call, and additional aspects of the phone number and the call. In some embodiments, the first and the second risk scores are combined, and the resulting value compared to a threshold, such as 75. In some embodiments, if the resulting value exceeds the threshold, the risk is considered too high, otherwise it is considered acceptable. If the risk is determined to be acceptable, then in some embodiments, the phone number is recorded as a valid channel. If later it is determined that a valid channel resulted in the delivery of undesirable email messages, then in some embodiments, the associated channel data is removed or invalidated, and is placed on a list of channel data that is associated with fraud.
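
As a hedged illustration of the scoring just described, the following Python sketch raises the four exceptions, derives a first risk score from them, and compares the combined score to the threshold. The per-exception weights, the field names in `PhoneFacts`, and the choice to combine the two scores by addition are assumptions; the text specifies only the exceptions, the example limit of 10 registrations, and the example threshold of 75.

```python
from dataclasses import dataclass

# Hypothetical weights: the text does not say how exceptions map to a score.
EXCEPTION_WEIGHTS = {
    "too_many_registrations": 30,
    "associated_with_fraud": 60,
    "voip_service": 20,
    "region_mismatch": 25,
}


@dataclass
class PhoneFacts:
    registrations: int         # channels previously registered with this number
    fraud_listed: bool         # number is associated with fraud
    is_voip: bool              # number belongs to a VoIP service
    region_matches_user: bool  # region is consistent with the user's likely area


def raised_exceptions(facts, max_registrations=10):
    """Return the names of the exceptions raised for a phone number."""
    raised = []
    if facts.registrations > max_registrations:
        raised.append("too_many_registrations")
    if facts.fraud_listed:
        raised.append("associated_with_fraud")
    if facts.is_voip:
        raised.append("voip_service")
    if not facts.region_matches_user:
        raised.append("region_mismatch")
    return raised


def first_risk_score(facts):
    """First risk score: sum of the (assumed) weights of the raised exceptions."""
    return sum(EXCEPTION_WEIGHTS[name] for name in raised_exceptions(facts))


def is_acceptable(first_score, second_score, threshold=75):
    # Combining by addition is an assumption. The risk is too high only if
    # the combined value exceeds the threshold.
    return first_score + second_score <= threshold
```

For instance, a VoIP number with no other exceptions scores 20 under these weights and is accepted when the second score is small, whereas a fraud-listed number registered 11 times from an inconsistent region scores 115 and is rejected.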

In the second stage, a high-risk email is sent to a user of a protected organization, from a sender that the system determines is trusted by the recipient. In one embodiment, the email is placed in quarantine and a 2FA confirmation request to the email sender is automatically initiated by the security system, where the sender is the party indicated, for example, in the `from` field of the email. In some embodiments, this 2FA confirmation request is sent to the channel registered in the first stage. In one embodiment, if this transmission fails, then a registration request is sent to the email address of the sender of the email, requesting that the sender register (as described in the first stage, above).

In a third stage, a valid confirmation to the 2FA confirmation request is received by the system and the quarantined message is removed from quarantine and delivered to the intended recipient(s). In the case where a registration request was sent in the second stage, in some embodiments, a different action is taken, to take into account that the new registration information may be entered by a criminal. An example action is to remove the quarantined message from quarantine, mark it up with a warning, the entered channel information, and a suggestion that the recipient manually verifies this channel information before acting on the email. The marked-up email can also contain a link for the recipient to confirm that the entered channel information is acceptable, or to indicate that it is not. If the system receives a confirmation from the recipient that the entered channel information is acceptable then this information is added to a record associated with the sender. The email is then transmitted to the intended recipient(s).
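
The second and third stages can be sketched as a small state machine. This is a minimal Python sketch under stated assumptions, not the patented implementation: the names `Gateway`, `Email`, and `Status`, and the two transport callables, are hypothetical, and the first-stage channel records are modeled as a plain dictionary.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    QUARANTINED = "quarantined"
    DELIVERED = "delivered"
    DELIVERED_WITH_WARNING = "delivered_with_warning"


@dataclass
class Email:
    sender: str
    recipient: str
    subject: str
    status: Status = Status.QUARANTINED
    awaiting: str = ""                     # "confirmation" or "registration"
    pending_channel: Optional[str] = None  # channel entered during registration


class Gateway:
    def __init__(self, channels, send_2fa, send_registration_request):
        self.channels = channels            # stage 1 result: sender -> channel
        self.send_2fa = send_2fa            # callable(channel, email) -> bool
        self.send_registration_request = send_registration_request

    def on_high_risk_email(self, email):
        # Stage 2: quarantine, then try the registered channel. Fall back to
        # a registration request if no channel exists or transmission fails.
        email.status = Status.QUARANTINED
        channel = self.channels.get(email.sender)
        if channel is not None and self.send_2fa(channel, email):
            email.awaiting = "confirmation"
        else:
            self.send_registration_request(email.sender, email)
            email.awaiting = "registration"

    def on_sender_response(self, email, new_channel=None):
        # Stage 3: a valid confirmation releases the email. A fresh
        # registration releases it with a warning, because the new channel
        # information may have been entered by a criminal.
        if email.awaiting == "confirmation":
            email.status = Status.DELIVERED
        elif email.awaiting == "registration":
            email.pending_channel = new_channel
            email.status = Status.DELIVERED_WITH_WARNING

    def on_recipient_accepts(self, email):
        # The recipient confirmed the entered channel; record it for the sender.
        if email.pending_channel is not None:
            self.channels[email.sender] = email.pending_channel
```

Note that in this sketch a newly entered channel is stored only after the recipient accepts it, mirroring the precaution described for registrations made in the second stage.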

An alternative authentication option is to request that the sender authenticate through a web page. A request with a URL link can be sent on a variety of channels including the original sending email address, an alternate email address, or an SMS containing a URL. The appropriate channel can be selected based on the likelihood of risk. A long random custom URL can be generated each time to minimize the likelihood of guessing by an attacker. The user can click on the link and be transparently verified by the device information including browser cookies, Flash cookies, browser version information or IP address. This information can be analyzed together to confirm that it is likely a previously known device. For example, if there is no prior cookie and the IP address is from another country, then this is unlikely to be the correct user. A second factor, in addition to device information, can be the entry of a previously established passcode for the user. The second factor can be a stronger factor including a biometric, or a token that generates unique time-based values. FIDO (Fast Identity Online) authentication tokens can be used to provide a strong factor with a good user experience.
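
A minimal Python sketch of the long random URL and the device check follows. The base URL is a placeholder, and the function names and the single rule in `looks_like_known_device` are assumptions that encode only the example given above (no prior cookie combined with an IP address from another country); a real system would weigh more signals together.

```python
import secrets


def make_challenge_url(base="https://verify.example.invalid/c/"):
    # 32 random bytes encode to 43 URL-safe characters, which makes the
    # path impractical for an attacker to guess.
    return base + secrets.token_urlsafe(32)


def looks_like_known_device(has_prior_cookie, ip_country, usual_country):
    # Assumed rule taken from the example in the text: a visitor with no
    # prior cookie AND an IP address from another country is unlikely to
    # be the correct user. Anything else passes this particular check.
    return has_prior_cookie or ip_country == usual_country
```

A fresh URL would be generated for every request, so a link leaked from one challenge cannot be reused for another.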

One authentication option is to reply with an email and ask the receiver to call a number to authenticate. This is an easy way to capture new phone numbers for accounts. Because the incoming phone number can be easily spoofed, a follow up call or SMS back to the same number can complete the authentication. In one scenario, the user can be asked what follow up they would like. For example, "Press 1 to receive an SMS, Press 2 to receive a phone call."

Authentication using a previously unknown phone number can also be performed. For example, authentication can be strengthened by performing various phone number checks, including a Name-Address-Phone (NAP) check with a vendor, a check against numbers previously used for scams, or a check against a list of free VoIP numbers.

Yet another example technique for 2FA involves hardware tokens displaying a temporary pass code. In some embodiments, the system detects a high-risk situation as described above and sends the apparent sender an email with a link, requesting that the apparent sender clicks on the link to visit a webpage and enter the code from the 2FA token there. After this code has been verified, in some embodiments, the high-risk email is removed from quarantine and delivered to the recipient. In this context, a second channel is not needed, as the use of the token makes abuse by a phisher or other scammer not possible.
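
The text does not say how the token's temporary pass code is computed. As one possibility, the sketch below assumes a time-based one-time password in the style of RFC 6238 (HMAC-SHA1, 30-second step), verified on the webpage against a shared secret. The function names and the one-step tolerance window are illustrative assumptions.

```python
import hashlib
import hmac
import struct


def totp(secret: bytes, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password in the style of RFC 6238 (HMAC-SHA1)."""
    counter = struct.pack(">Q", unix_time // step)
    digest = hmac.new(secret, counter, hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation offset
    value = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(value % 10**digits).zfill(digits)


def code_is_valid(secret: bytes, submitted: str, unix_time: int,
                  step: int = 30, window: int = 1) -> bool:
    # Accept the current time step and `window` steps on either side to
    # tolerate clock drift between the token and the server.
    for k in range(-window, window + 1):
        t = max(0, unix_time + k * step)
        if hmac.compare_digest(totp(secret, t, step), submitted):
            return True
    return False
```

With the RFC 6238 test secret `b"12345678901234567890"` and time 59, `totp(..., digits=8)` yields `94287082`, which matches the published test vector.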

Other verification techniques can be conditionally used for high-risk situations involving emails coming from trusted accounts. One of the benefits of the techniques described herein is the ability to selectively identify such contexts and automatically initiate a verification, while avoiding initiating a verification in other contexts.

In one embodiment, the conditional verification is replaced by a manual review by an expert trained in detecting scams. In some embodiments, the email under consideration is processed to hide potential personally identifiable information (PII) before it is sent for the expert to review. In some embodiments, at the same time, the email is placed in quarantine, from which it is removed after the expert review concludes. If the expert review indicates that the email is safe then, in some embodiments, it is delivered to its intended recipients, whereas if the expert review indicates that it is not desirable, then it is discarded.

When the terms "blocked" and "discarded" are used herein, they are interchangeably used to mean "not delivered", and in some embodiments, not bounced to the sender. In some instances, a notification may be sent to the sender, explaining that the email was not delivered. The choice of when to do this is, in some embodiments, guided by a policy operating on the identified type of threat and the risk score of the email.