Video decoding device and video decoding method

Hattori, et al.

U.S. patent number 10,708,586 [Application Number 16/688,475] was granted by the patent office on 2020-07-07 for video decoding device and video decoding method. This patent grant is currently assigned to MITSUBISHI ELECTRONIC CORPORATION. The grantee listed for this patent is Mitsubishi Electric Corporation. Invention is credited to Ryoji Hattori, Norimichi Hiwasa, Yusuke Itani, Akira Minezawa, Yoshimi Moriya, Shunichi Sekiguchi, Kazuo Sugimoto.


| United States Patent | 10,708,586 |
|---|---|
| Hattori, et al. | July 7, 2020 |


Abstract

A parallel processing starting unit 3 partitions an inputted image into tiles each having a predetermined size and distributes the resulting tiles among N tile encoding units 5-1 to 5-N, each of which carries out a prediction difference encoding process on the tile distributed to it to generate a local decoded image. Each of N tile loop filter units 7-1 to 7-N determines a filter per tile suitable for a filtering process on the local decoded image generated by the corresponding one of the tile encoding units 5-1 to 5-N, and carries out the filtering process on that local decoded image by using the filter.

| Inventors: | Hattori; Ryoji (Tokyo, JP), Minezawa; Akira (Tokyo, JP), Itani; Yusuke (Tokyo, JP), Sugimoto; Kazuo (Tokyo, JP), Sekiguchi; Shunichi (Tokyo, JP), Moriya; Yoshimi (Tokyo, JP), Hiwasa; Norimichi (Tokyo, JP) |
|---|---|
| Assignee: | MITSUBISHI ELECTRONIC CORPORATION (Tokyo, JP) |
| Family ID: | 48192020 |
| Appl. No.: | 16/688,475 |
| Filed: | November 19, 2019 |

Prior Publication Data

| Document Identifier | Publication Date |
|---|---|
| US 20200092549 A1 | Mar 19, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Issue Date |
|---|---|---|---|
| 15880260 | Jan 25, 2018 | 10523935 | |
| 14350732 | Apr 9, 2014 | 9986235 | May 29, 2018 |
| PCT/JP2012/078012 | Oct 30, 2012 | | |

Foreign Application Priority Data

| Oct 31, 2011 [JP] | 2011-239105 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 19/174 (20141101); H04N 19/82 (20141101); H04N 19/176 (20141101); H04N 19/117 (20141101); H04N 19/46 (20141101); H04N 19/86 (20141101); H04N 19/436 (20141101); H04N 19/11 (20141101); H04N 19/172 (20141101); H04N 19/159 (20141101) |

| Current International Class: | H04N 19/11 (20140101); H04N 19/436 (20140101); H04N 19/82 (20140101); H04N 19/176 (20140101); H04N 19/86 (20140101); H04N 19/117 (20140101); H04N 19/174 (20140101); H04N 19/46 (20140101); H04N 19/172 (20140101); H04N 19/159 (20140101) |

References Cited [Referenced By]

U.S. Patent Documents

| Document | Date | Inventor(s) |
|---|---|---|
| 2004/0013310 | January 2004 | Suino |
| 2008/0159639 | July 2008 | Dvir et al. |
| 2009/0274216 | November 2009 | Kato et al. |
| 2010/0118945 | May 2010 | Wada et al. |
| 2010/0225655 | September 2010 | Tung |
| 2012/0121188 | May 2012 | Kenji |
| 2013/0016771 | January 2013 | Misra et al. |
| 2013/0094568 | April 2013 | Hsu et al. |
| 2013/0101035 | April 2013 | Wang |
| 2013/0107973 | May 2013 | Wang |
| 2013/0301942 | November 2013 | Kondo |
| 2014/0270559 | September 2014 | Kondo |

Foreign Patent Documents

| Document | Date | Country |
|---|---|---|
| 2011-35620 | Feb 2011 | JP |
| 2014-525151 | Sep 2014 | JP |
| WO 2013/008942 | Jan 2013 | WO |
| WO 2013/063455 | May 2013 | WO |

Other References

- Bross, et al., "WD4: Working Draft 4 of High-Efficiency Video Coding", JCT-VC of ITU-T SG16 WP3 and ISO/IEC JTC1/SC29/WG11, 6th Meeting: Torino, IT, Oct. 28, 2011, JCTVC-F803_d5, pp. 25, 28-29. Cited by applicant.
- Fuldseth, et al., "Tiles", Joint Collaborative Team on Video Coding (JCT-VC) of ITU-T SG16 WP3 and ISO/IEC JTC1/SC29/WG11, JCTVC-E408_r1, 5th Meeting: Geneva, CH, Mar. 16-23, 2011, pp. 1-14. Cited by applicant.
- Fuldseth, et al., "Tiles", Joint Collaborative Team on Video Coding (JCT-VC) of ITU-T SG16 WP3 and ISO/IEC JTC1/SC29/WG11, JCTVC-F335, 6th Meeting: Torino, IT, Jul. 14-22, 2011, pp. 1-15. Cited by applicant.
- "Meeting report of the sixth meeting of the Joint Collaborative Team on Video Coding", Joint Collaborative Team on Video Coding (JCT-VC) of ITU-T SG16 WP3 and ISO/IEC JTC1/SC29/WG11, JCTVC-F_Notes_d7, 6th Meeting: Torino, IT, Jul. 14-22, 2011. Cited by applicant.
- "AHG4: Non-cross-tiles loop filtering for independent tiles", Joint Collaborative Team on Video Coding (JCT-VC) of ITU-T SG16 WP3 and ISO/IEC JTC1/SC29/WG11, JCTVC-G194, 7th Meeting: Geneva, CH, Nov. 21-30, 2011. Cited by applicant.
- "CE4 Subset 3: Slice Common Information Sharing", Joint Collaborative Team on Video Coding (JCT-VC), JCTVC-E045. Cited by applicant.
- "Generalized slices", Joint Collaborative Team on Video Coding (JCT-VC), JCTVC-Dxxx. Cited by applicant.
- "Parallel Processing of ALF and SAO for Tiles", Joint Collaborative Team on Video Coding (JCT-VC), JCTVC-G454. Cited by applicant.
- "Slice Boundary Processing and Picture Layer Raw Byte Sequence Payload", Joint Collaborative Team on Video Coding (JCT-VC), JCTVC-D128. Cited by applicant.
- "Test Model Under Consideration", Joint Collaborative Team on Video Coding (JCT-VC), JCTVC-B205. Cited by applicant.
- Yamakage, et al., "CE12: Deblocking Filter Parameter Adjustment in Slice Level", Joint Collaborative Team on Video Coding (JCT-VC) of ITU-T SG16 WP3 and ISO/IEC JTC1/SC29/WG11, JCTVC-F143_r1, 6th Meeting: Torino, Jul. 14-22, 2011, pp. 1-4. Cited by applicant.
- Kazui, et al., "Support of very low delay coding in the Tile", Joint Collaborative Team on Video Coding (JCT-VC) of ITU-T SG16 WP3 and ISO/IEC JTC1/SC29/WG11, JCTVC-F140, 6th Meeting: Torino, IT, Jul. 14-22, 2011, pp. 1-10. Cited by applicant.
- Office Action issued in related Indian Patent Application No. 2948/CHENP/2014, dated Sep. 26, 2018. Cited by applicant.
- Osamoto, "HM3.2 Fine Granularity Slice Implementation Issues to be Clarified", Joint Collaborative Team on Video Coding (JCT-VC) of ITU-T SG16 WP3 and ISO/IEC JTC1/SC29/WG11, JCTVC-F694m21177, 6th Meeting: Torino, IT, Jul. 14-22, 2011, pp. 1-5. Cited by applicant.
- Office Action issued in corresponding Brazilian Application No. 11 2014 009569 8, dated Apr. 14, 2020. Cited by applicant.

Primary Examiner: Vaughn, Jr.; William C

Assistant Examiner: Jean Baptiste; Jerry T

Attorney, Agent or Firm: Birch, Stewart, Kolasch & Birch, LLP

Parent Case Text

CROSS-REFERENCE TO RELATED APPLICATIONS

This application is a Divisional of copending U.S. application Ser. No. 15/880,260, filed on Jan. 25, 2018, which is a Divisional of U.S. application Ser. No. 14/350,732, filed on Apr. 9, 2014 (now U.S. Pat. No. 9,986,235 issued May 29, 2018), which was filed as PCT International Application No. PCT/JP2012/078012 on Oct. 30, 2012, which claims the benefit under 35 U.S.C. § 119(a) to Patent Application No. 2011-239105, filed in Japan on Oct. 31, 2011, all of which are hereby expressly incorporated by reference into the present application.

Claims

The invention claimed is:

1. A video decoding device comprising:

an encoded bit data distributor that separates encoded bit data separated from a bitstream into encoded bit data of tile;

one or more tile decoders each of which carries out a prediction difference decoding process on encoded bit data of tile separated by the encoded bit data distributor to generate a decoded image of tile; and

one or more tile filters each of which carries out a filtering process on the decoded image generated by one of the one or more tile decoders by using a filter per tile shown by a filter parameter separated from the bitstream,

wherein the video decoding device includes:

a demultiplexer that demultiplexes the bitstream into the encoded bit data, filter parameters each showing a filter per a tile which is a rectangular region having a predetermined size, and partitioning control information indicating a partitioning state of an inputted image, which are multiplexed into the bitstream;

an image memory that stores a decoded image on which a filtering process is carried out; and

a decoded image storage that combines the decoded image on which the filtering process is carried out by the one or more tile filters to generate a decoded image of frame according to the partitioning control information separated by the demultiplexer, and that stores the decoded image in the image memory, and

wherein when a filter share flag showing whether or not a filter is made to be shared among the tiles is multiplexed into the bitstream, the demultiplexer demultiplexes the bitstream into the filter share flag, and also demultiplexes the bitstream into the filter parameters each showing a filter per tile or a filter parameter showing a filter per frame, and, when the filter share flag separated by the demultiplexer shows that a filter is made not to be shared among the tiles, each of the one or more tile filters carries out a filtering process on the decoded image of tile generated by one of the one or more tile decoders by using a filter per tile shown by a filter parameter separated by the demultiplexer, otherwise, carries out a filtering process on the decoded image of tile generated by one of the one or more tile decoders by using the filter per frame shown by the filter parameter separated by the demultiplexer.

Description

FIELD OF THE INVENTION

The present invention relates to a video decoding device and a video decoding method for decoding encoded data transmitted from a video encoding device into an image.

BACKGROUND OF THE INVENTION

Conventionally, in international standard video encoding methods such as MPEG (Moving Picture Experts Group) and "ITU-T H.26x," an inputted video frame is partitioned into square blocks called macroblocks (MB), coding units (CU), or the like, and intra-frame prediction, inter-frame prediction, orthogonal transformation of a prediction error signal, quantization, entropy encoding, and so on are carried out on each of the blocks. Further, after the processes on all the macroblocks are completed and a local decoded image of one entire screen is generated, the parameters for a loop filter are determined, the local decoded image is filtered by using the loop filter, and an entropy encoding process is carried out.

The encoding process for each coding unit assumes that coding units are processed in raster scan order, and encoding a given coding unit requires the encoded results of coding units processed earlier in that order. Specifically, when an intra-frame prediction is carried out, the local decoded image of an adjacent coding unit is used for reference pixels. Further, in the entropy encoding process, the probability model used to estimate the occurrence probability of a symbol is shared with the coding unit processed previously in raster scan order, and the mode information of an adjacent coding unit must be referred to in order to switch between probability models. Therefore, before the encoding process on a given coding unit can proceed, part or all of the process on the previously processed coding unit must be completed. This interdependence between coding units is an obstacle to parallelizing both the encoding process and the decoding process.

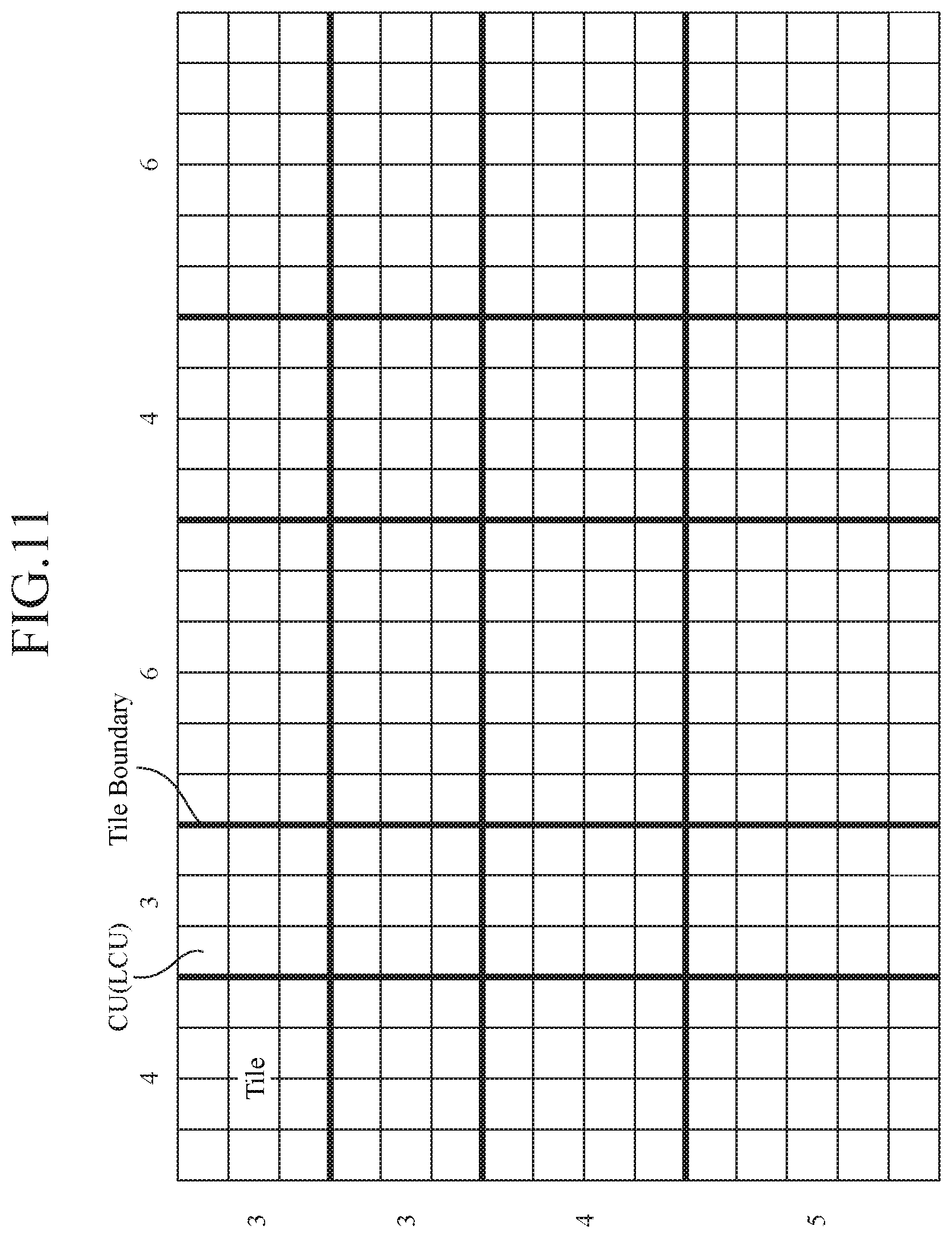

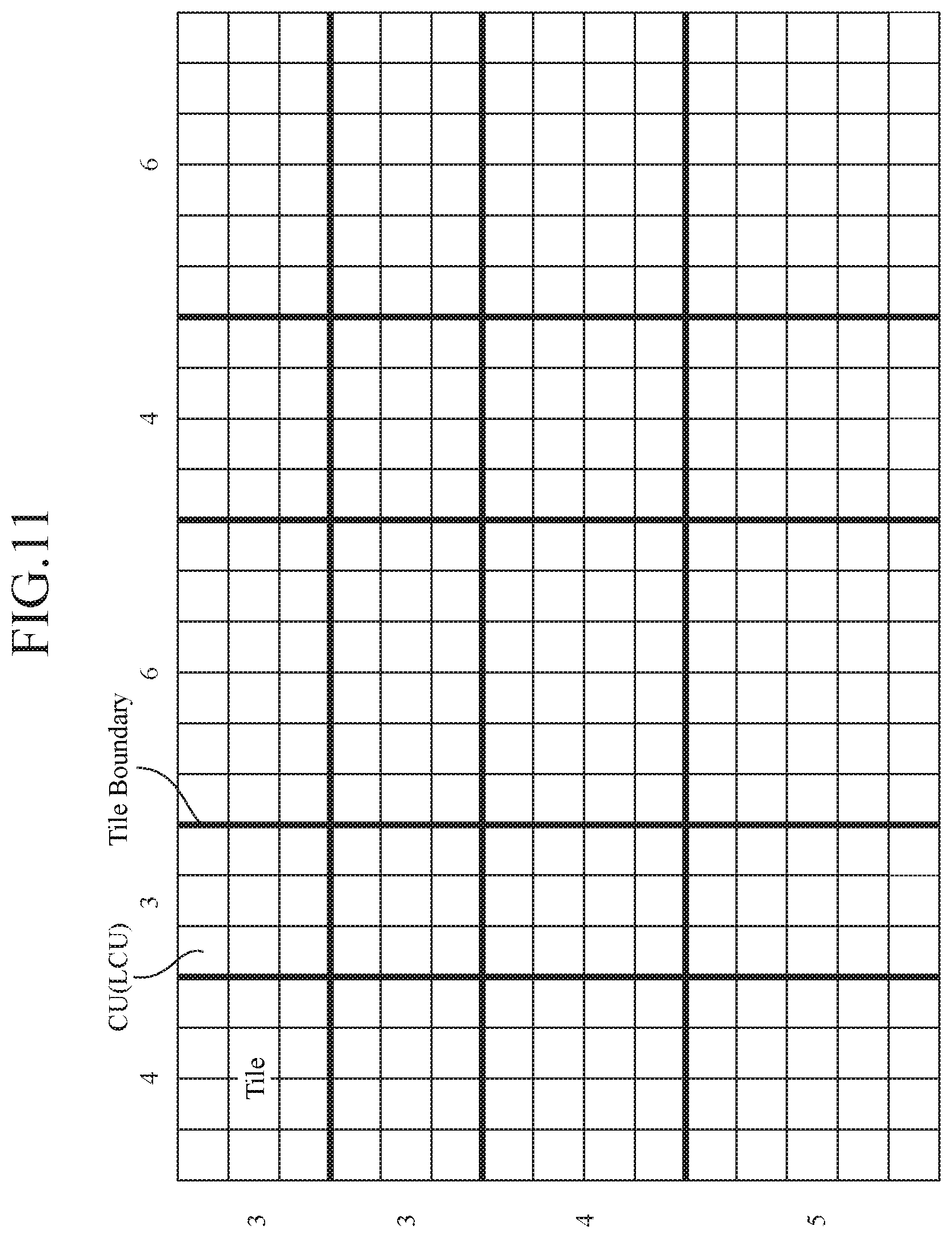

In nonpatent reference 1 listed below, a structural unit called a tile is used to solve the above-mentioned problem. In this specification, a tile refers to a rectangular region consisting of a plurality of coding units, as shown in FIG. 11. Because no coding unit depends on any coding unit belonging to a different tile, parallel processing can be implemented on a tile level. Specifically, a tile has the following characteristics.

(1) Partitioning of an image into tiles is carried out on a per coding unit basis.

(2) Partitioning an image into tiles produces a grid whose column widths and row heights can be non-uniform. More specifically, tiles adjacent in the vertical direction have the same left and right edge coordinates, and tiles adjacent in the horizontal direction have the same top and bottom edge coordinates.

(3) Coding units are processed in a raster scan order that is closed within each tile. Slice partitioning can also be carried out within a tile.

(4) Entropy encoding starts from an initial state at the first coding unit processed within a tile.

(5) In the intra-frame prediction process, the inter-frame prediction process, the entropy encoding process, and the entropy decoding process, when the local decoded image or the coding mode of an adjacent coding unit would be referred to and that adjacent coding unit belongs to a different tile, it is not referred to; the tile boundary is processed as a screen edge.

(6) A loop filtering process is carried out on the entire screen after the processes on all the tiles are completed and a decoded image of the entire screen is generated.
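The tile rules listed above can be sketched in Python. This is an illustration under stated assumptions, not code from the nonpatent reference: the tile grid is described by hypothetical lists `col_widths` and `row_heights` measured in coding units (CUs), which may be non-uniform as in rule (2). The sketch maps a CU to its tile, lists a tile's CUs in the raster scan order closed within that tile (rule (3)), and treats a neighbour in a different tile as unavailable, exactly like one beyond the screen edge (rule (5)).

```python
from bisect import bisect_right
from itertools import accumulate


def boundaries(sizes):
    """Start position of every tile column/row plus the end, e.g. [3, 2] -> [0, 3, 5]."""
    return [0] + list(accumulate(sizes))


def tile_of(cu_x, cu_y, col_widths, row_heights):
    """Return (tile_col, tile_row) of the CU at (cu_x, cu_y)."""
    cols, rows = boundaries(col_widths), boundaries(row_heights)
    # bisect_right over the start positions finds the tile containing the index.
    return bisect_right(cols, cu_x) - 1, bisect_right(rows, cu_y) - 1


def cus_in_tile(tile_col, tile_row, col_widths, row_heights):
    """CU coordinates of one tile in raster scan order closed within the tile."""
    cols, rows = boundaries(col_widths), boundaries(row_heights)
    return [(x, y)
            for y in range(rows[tile_row], rows[tile_row + 1])
            for x in range(cols[tile_col], cols[tile_col + 1])]


def neighbour_available(cu, neighbour, col_widths, row_heights):
    """A neighbour may be referenced only if it is inside the picture AND in the same tile."""
    nx, ny = neighbour
    if nx < 0 or ny < 0 or nx >= sum(col_widths) or ny >= sum(row_heights):
        return False  # beyond the screen edge
    # A neighbour in another tile is handled as if it were beyond the screen edge.
    return tile_of(*cu, col_widths, row_heights) == tile_of(nx, ny, col_widths, row_heights)
```

With `col_widths=[3, 2]` and `row_heights=[2, 2]`, CU (2, 0) lies in tile (0, 0) while CU (3, 0) lies in tile (1, 0), so the left neighbour of CU (3, 0) is reported as unavailable even though it is inside the picture.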

As mentioned above, in the encoding process on a coding unit belonging to a certain tile, only the results for coding units belonging to the same tile are needed for the prediction processes and for the orthogonal transformation, quantization, and entropy coding processes on the prediction error signal. Therefore, the intra-frame/inter-frame prediction processes and the orthogonal transformation, quantization, and entropy coding processes on the prediction error signal, which make up the encoding process, can be carried out in parallel on a tile level. Likewise, when decoding a bitstream generated using tiles, the entropy decoding process, the inverse quantization and inverse transformation process on the prediction error signal, and the intra-frame/inter-frame prediction processes can be carried out in parallel on a tile level.

RELATED ART DOCUMENT

Nonpatent Reference

Nonpatent reference 1: Arild Fuldseth, Michael Horowitz, Shilin Xu, Andrew Segall, Minhua Zhou, "JCTVC-F335: Tiles", Joint Collaborative Team on Video Coding (JCT-VC) of ITU-T SG16 WP3 and ISO/IEC JTC1/SC29/WG11, 6th Meeting: Torino, IT, Jul. 14-22, 2011

SUMMARY OF THE INVENTION

Problems to be Solved by the Invention

Because conventional video encoding devices are constructed as described above, the intra-frame/inter-frame prediction processes and the orthogonal transformation, quantization, and entropy encoding processes on the prediction error signal can be carried out in parallel, but the loop filtering process is carried out on the entire screen. Filtering a tile boundary requires the local decoded images of both tiles adjacent to that boundary, so the processes on both tiles must be completed first, and the loop filtering process cannot be carried out in parallel on a tile level.
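The dependency can be seen in a one-dimensional sketch. It is only an illustration, not the loop filter of any standard or of this patent: a 3-tap `[1, 2, 1] / 4` smoothing filter is applied to one pixel row spanning two tiles. Filtering the whole frame reads across the tile boundary, so the pixels next to the boundary need both tiles. Restricting each tile's filter to its own pixels with edge replication (an assumed boundary rule chosen for this sketch) lets each tile be filtered on its own, at the cost of different results at the boundary.

```python
def smooth(row, lo, hi):
    """Apply a [1, 2, 1] / 4 filter to row[lo:hi], reading only indices in [lo, hi).

    Out-of-range neighbours are replaced by the nearest in-range pixel
    (edge replication), so the result depends only on row[lo:hi].
    """
    out = []
    for i in range(lo, hi):
        left = row[max(i - 1, lo)]
        right = row[min(i + 1, hi - 1)]
        out.append((left + 2 * row[i] + right + 2) // 4)  # +2 rounds the division
    return out


row = [10, 10, 10, 10, 50, 50, 50, 50]   # one pixel row spanning two tiles
boundary = 4                              # first column of the right-hand tile

# Frame-level filtering: needs both tiles to be finished first.
whole_frame = smooth(row, 0, len(row))
# Tile-level filtering: each call touches only its own tile, so the calls can run in parallel.
per_tile = smooth(row, 0, boundary) + smooth(row, boundary, len(row))
```

Here `whole_frame` is `[10, 10, 10, 20, 40, 50, 50, 50]` and `per_tile` is `[10, 10, 10, 10, 50, 50, 50, 50]`; only the two pixels adjacent to the boundary differ.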

The present invention is made to solve the above-mentioned problem, and it is therefore an object of the present invention to provide a video decoding device and a video decoding method capable of carrying out a loop filtering process in parallel on a tile level.

Means for Solving the Problem

In accordance with the present invention, there is provided a video decoding device including: an encoded bit data distributor that separates encoded bit data separated from a bitstream into encoded bit data per tile; one or more tile decoders each of which carries out a prediction difference decoding process on the encoded bit data per tile separated by the encoded bit data distributor to generate a decoded image per tile; and one or more tile filters each of which carries out a filtering process on the decoded image generated by one of the one or more tile decoders by using a filter per tile shown by a filter parameter separated from the bitstream.
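A minimal sketch of this decoder structure follows, including the filter share flag that claim 1 adds. Everything concrete in it is hypothetical: the bitstream is assumed to be already demultiplexed into a `Bitstream` record, `decode_tile` is a placeholder for the prediction difference decoding, and a "filter" is reduced to a clipped pixel offset so that the control flow stays visible. Combining the tiles into a frame according to the partitioning control information is left out.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


@dataclass
class Bitstream:
    """Hypothetical, already demultiplexed bitstream contents."""
    tile_data: list                 # encoded bit data per tile (here: plain pixel lists)
    filter_share_flag: bool         # True: one filter is shared by the whole frame
    frame_filter: int = 0           # filter parameter per frame (here: an offset)
    tile_filters: list = field(default_factory=list)  # one filter parameter per tile


def decode_tile(data):
    """Placeholder for a tile decoder (prediction difference decoding)."""
    return list(data)


def filter_tile(pixels, offset):
    """Placeholder for a tile filter: add an offset, clipped to the 8-bit range."""
    return [min(255, max(0, p + offset)) for p in pixels]


def decode_frame(bs):
    def work(index):
        decoded = decode_tile(bs.tile_data[index])
        # Per-tile filter unless the share flag says one filter is shared per frame.
        param = bs.frame_filter if bs.filter_share_flag else bs.tile_filters[index]
        return filter_tile(decoded, param)

    with ThreadPoolExecutor() as pool:
        # map() yields results in tile order, ready for the decoded image storage.
        return list(pool.map(work, range(len(bs.tile_data))))
```

For example, `Bitstream([[10, 20], [250, 30]], False, tile_filters=[1, 10])` decodes to `[[11, 21], [255, 40]]`, each tile using its own parameter, while setting the share flag with `frame_filter=-5` applies the same offset to both tiles. The thread pool only shows that tiles need nothing from each other; CPython threads will not speed up pure-Python arithmetic.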

Advantages of the Invention

Because the video decoding device in accordance with the present invention includes the encoded bit data distributor, which separates encoded bit data separated from a bitstream into encoded bit data per tile; the one or more tile decoders, each of which carries out a prediction difference decoding process on the encoded bit data per tile separated by the encoded bit data distributor to generate a decoded image per tile; and the one or more tile filters, each of which carries out a filtering process on the decoded image generated by one of the one or more tile decoders by using a filter per tile shown by a filter parameter separated from the bitstream, the loop filters can carry out their filtering processes in parallel on a tile level.

BRIEF DESCRIPTION OF THE FIGURES

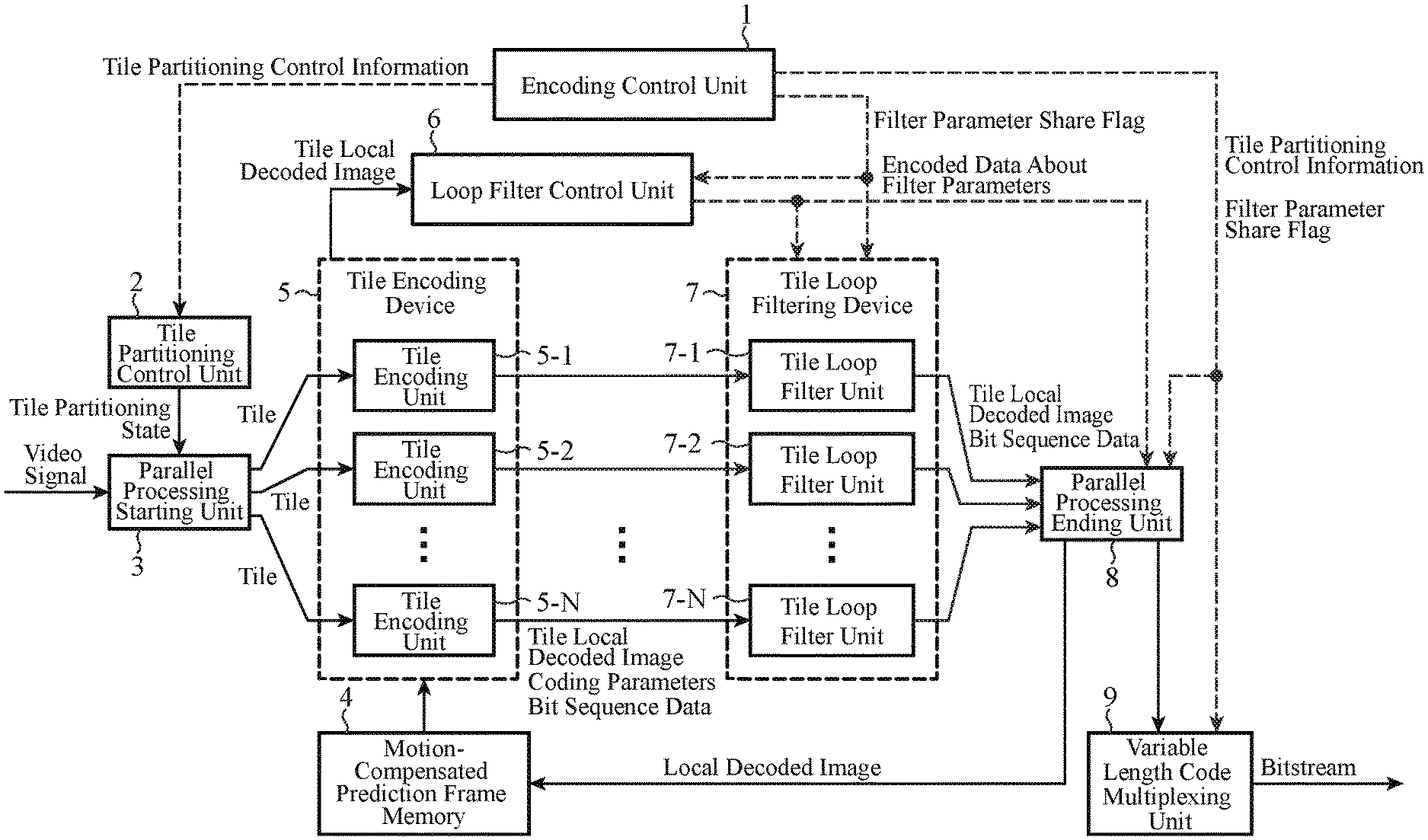

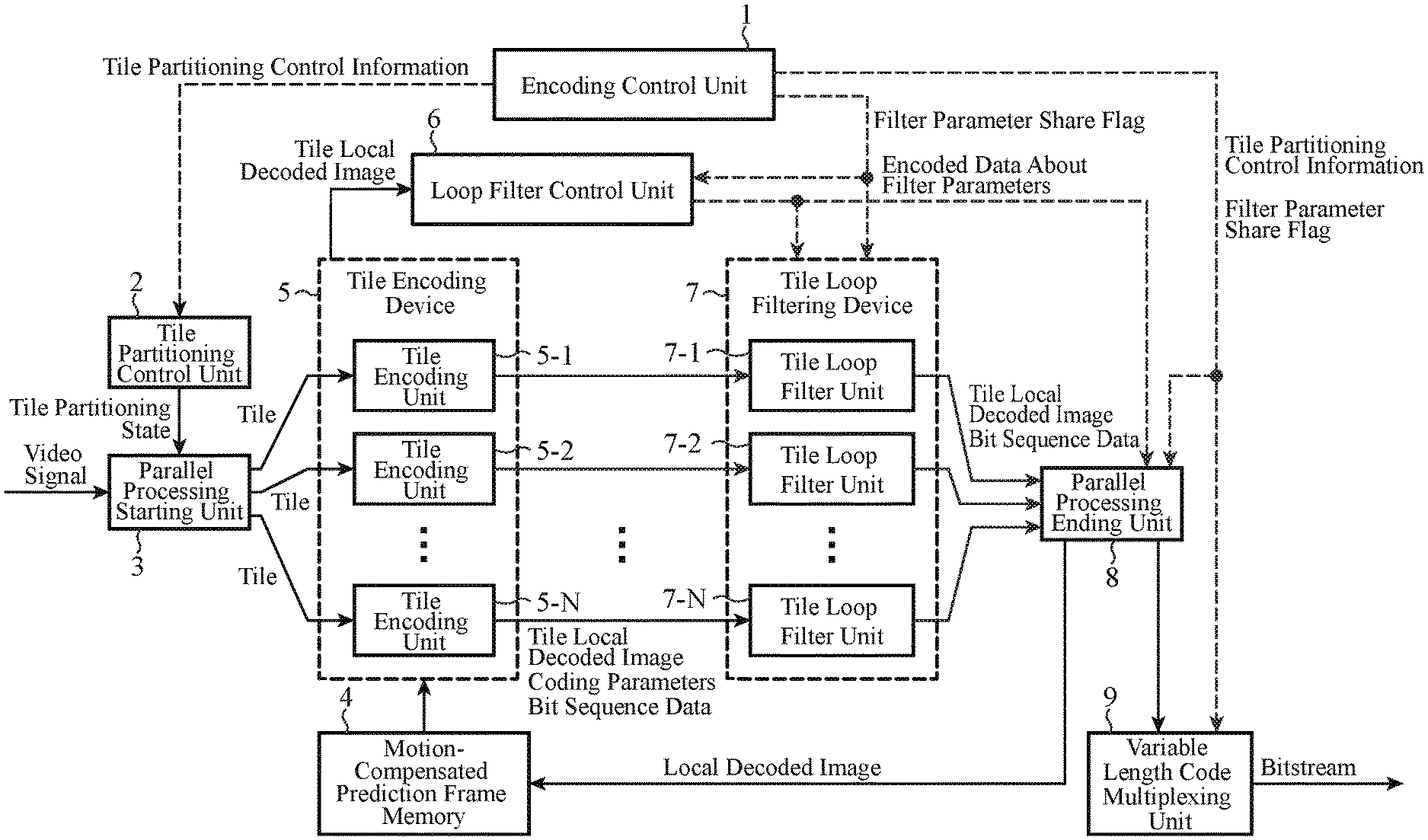

FIG. 1 is a block diagram showing a video encoding device in accordance with Embodiment 1 of the present invention;

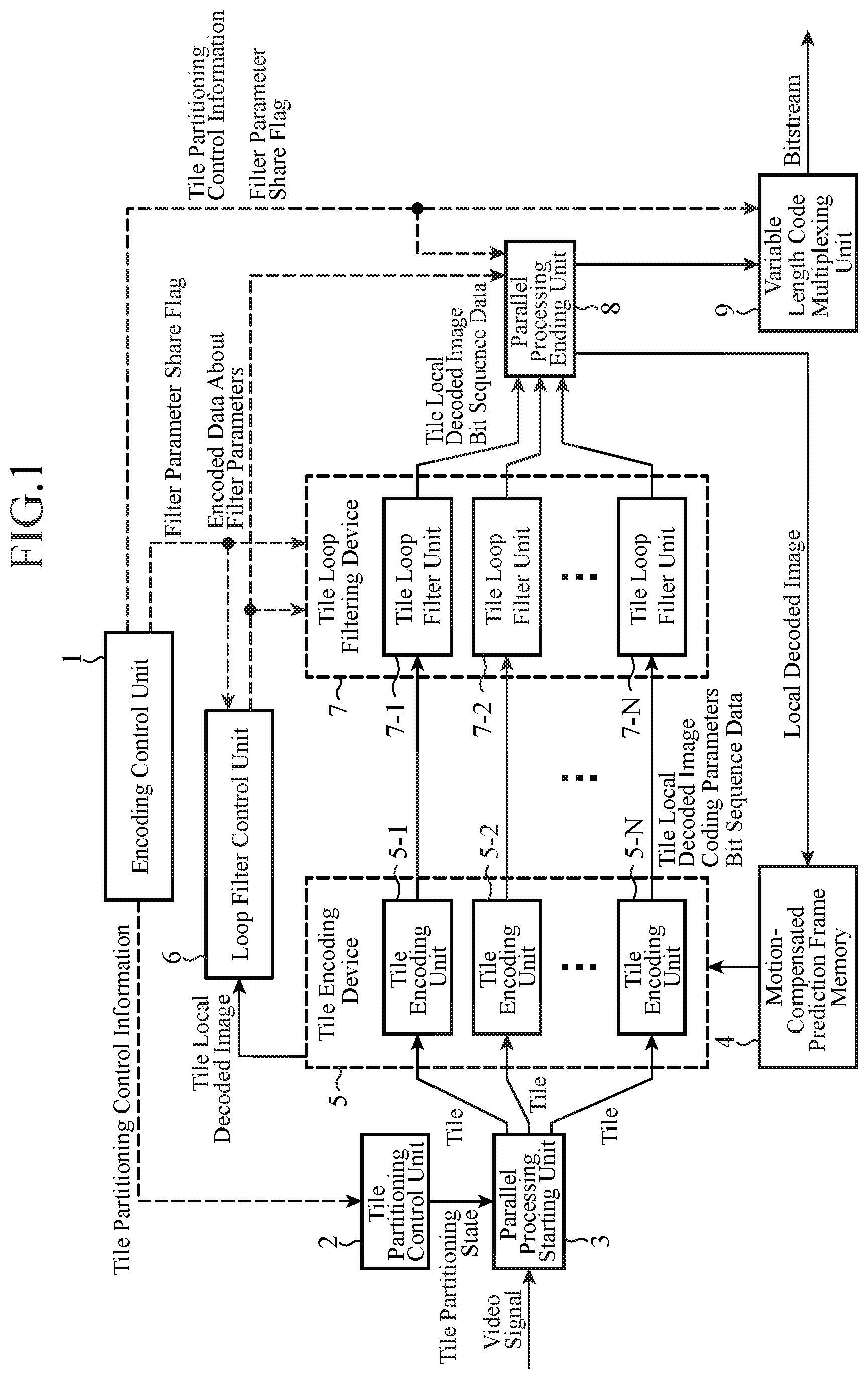

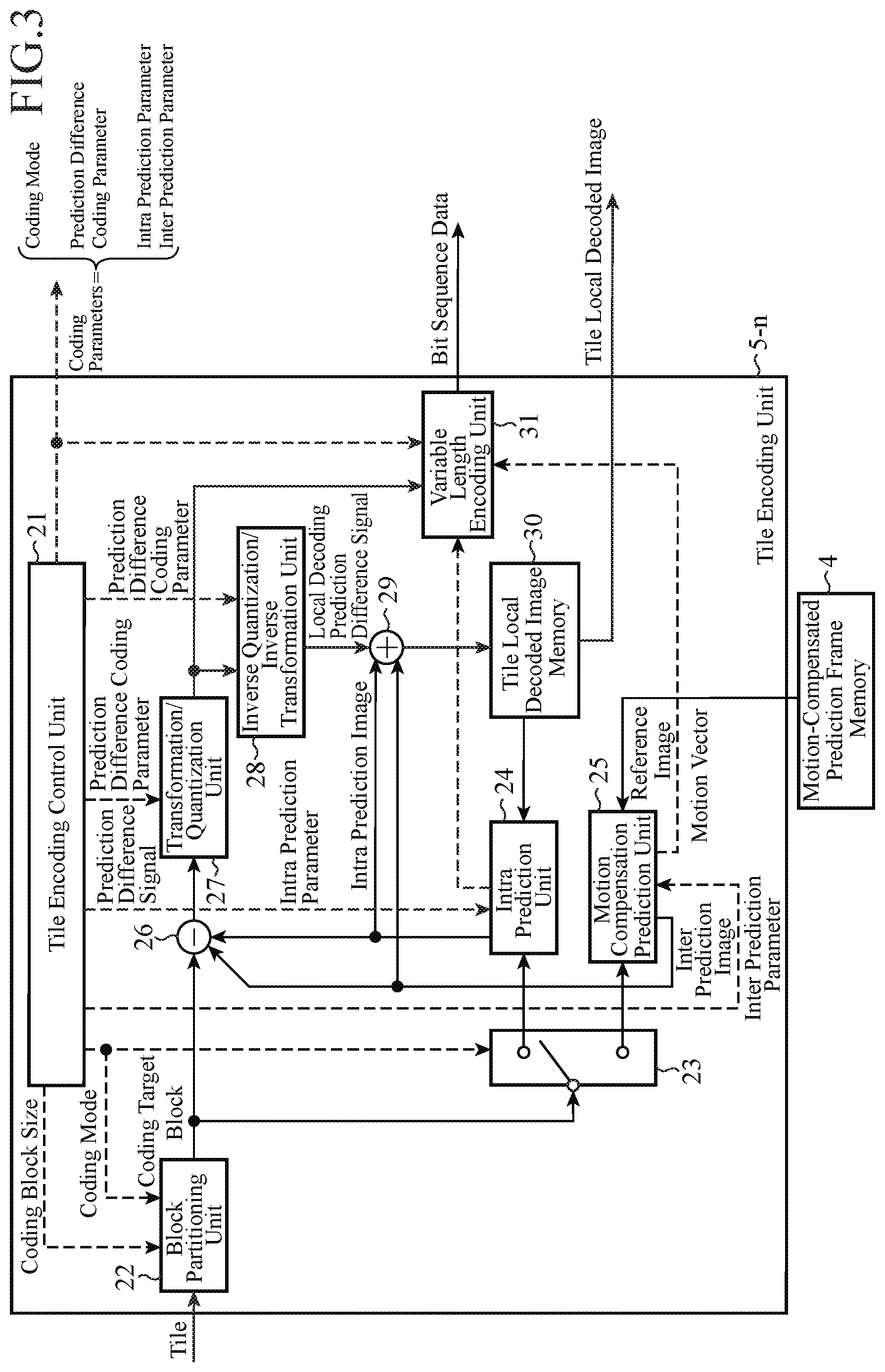

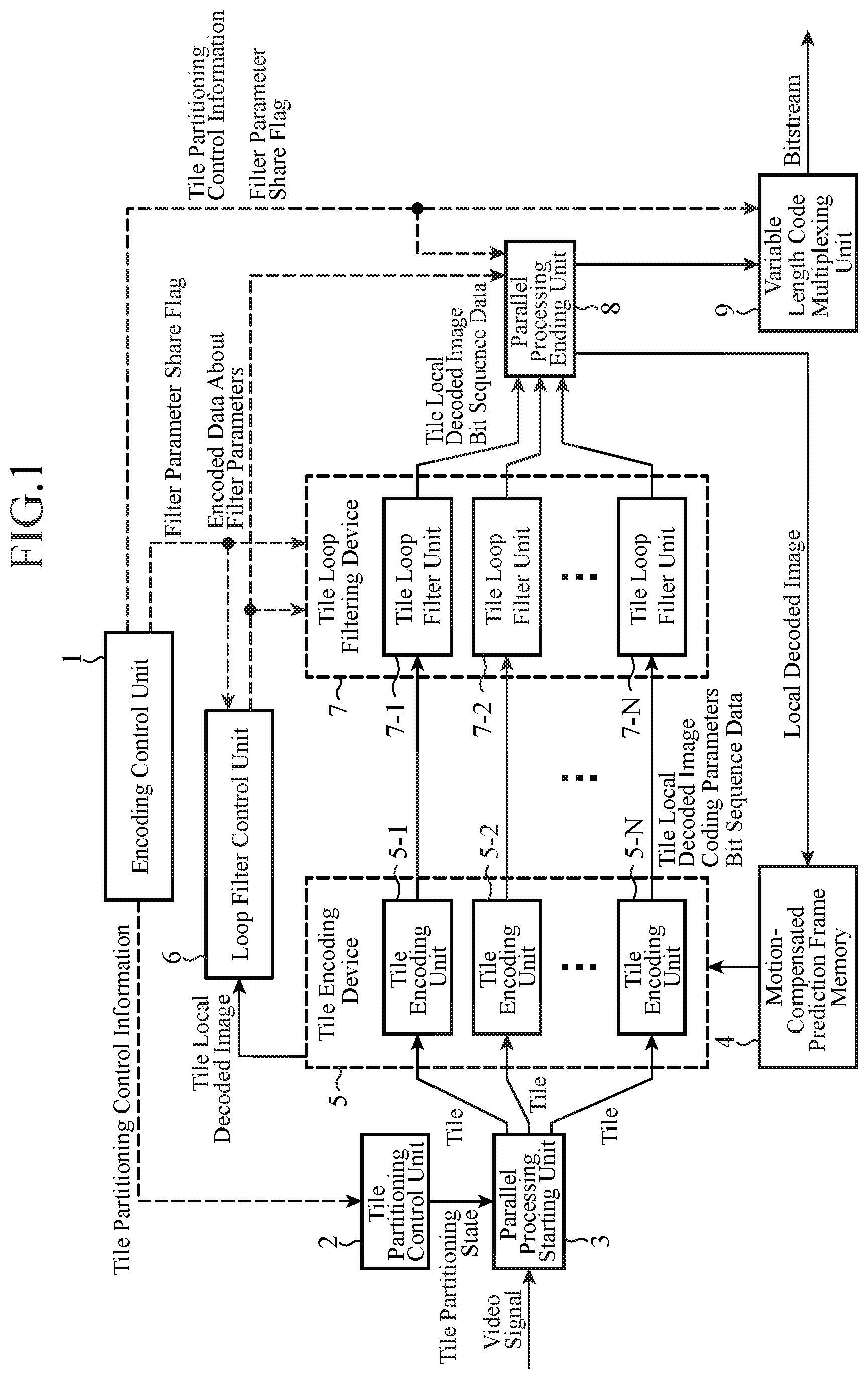

FIG. 2 is a flow chart showing processing (video encoding method) carried out by the video encoding device in accordance with Embodiment 1 of the present invention;

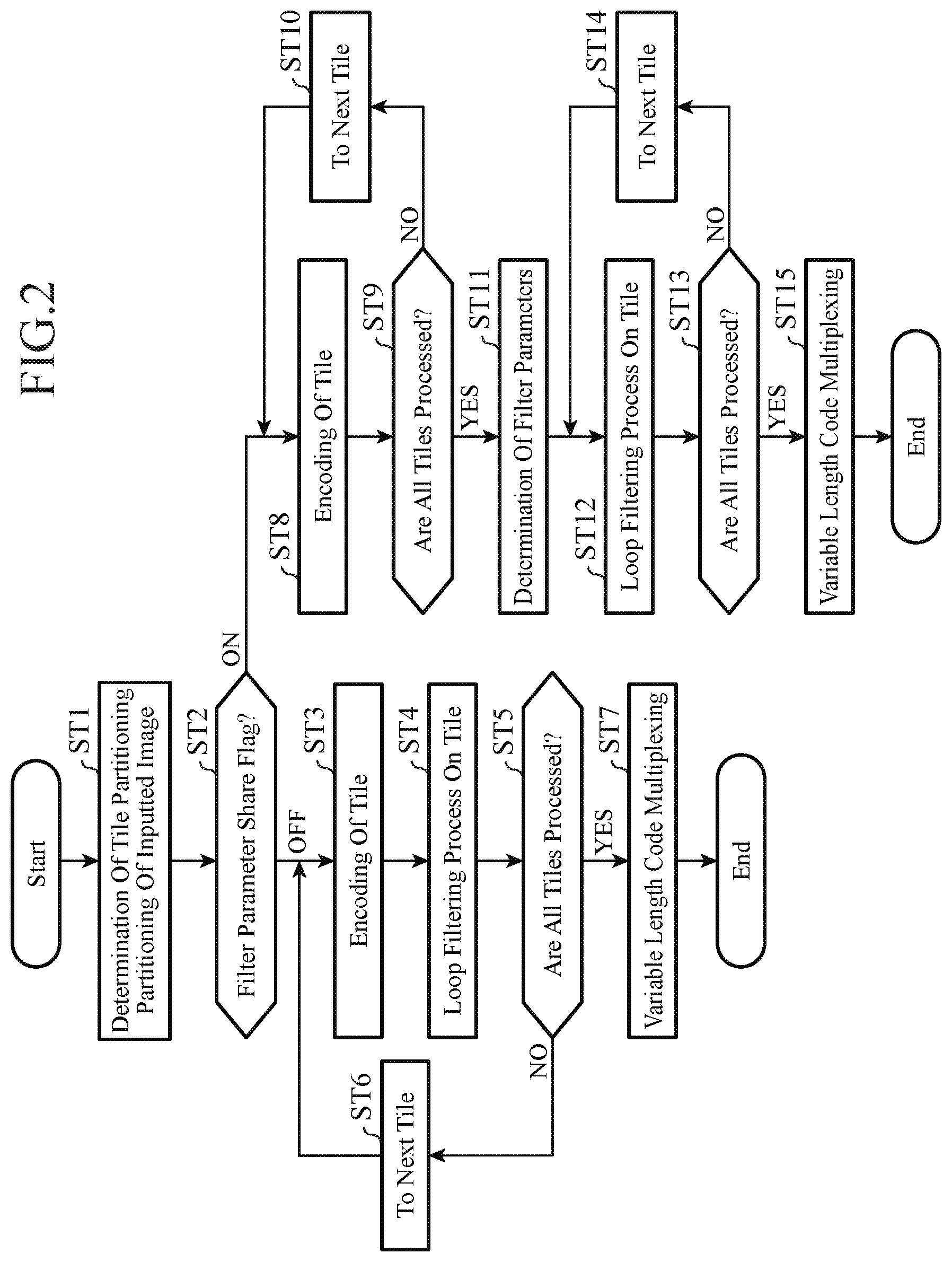

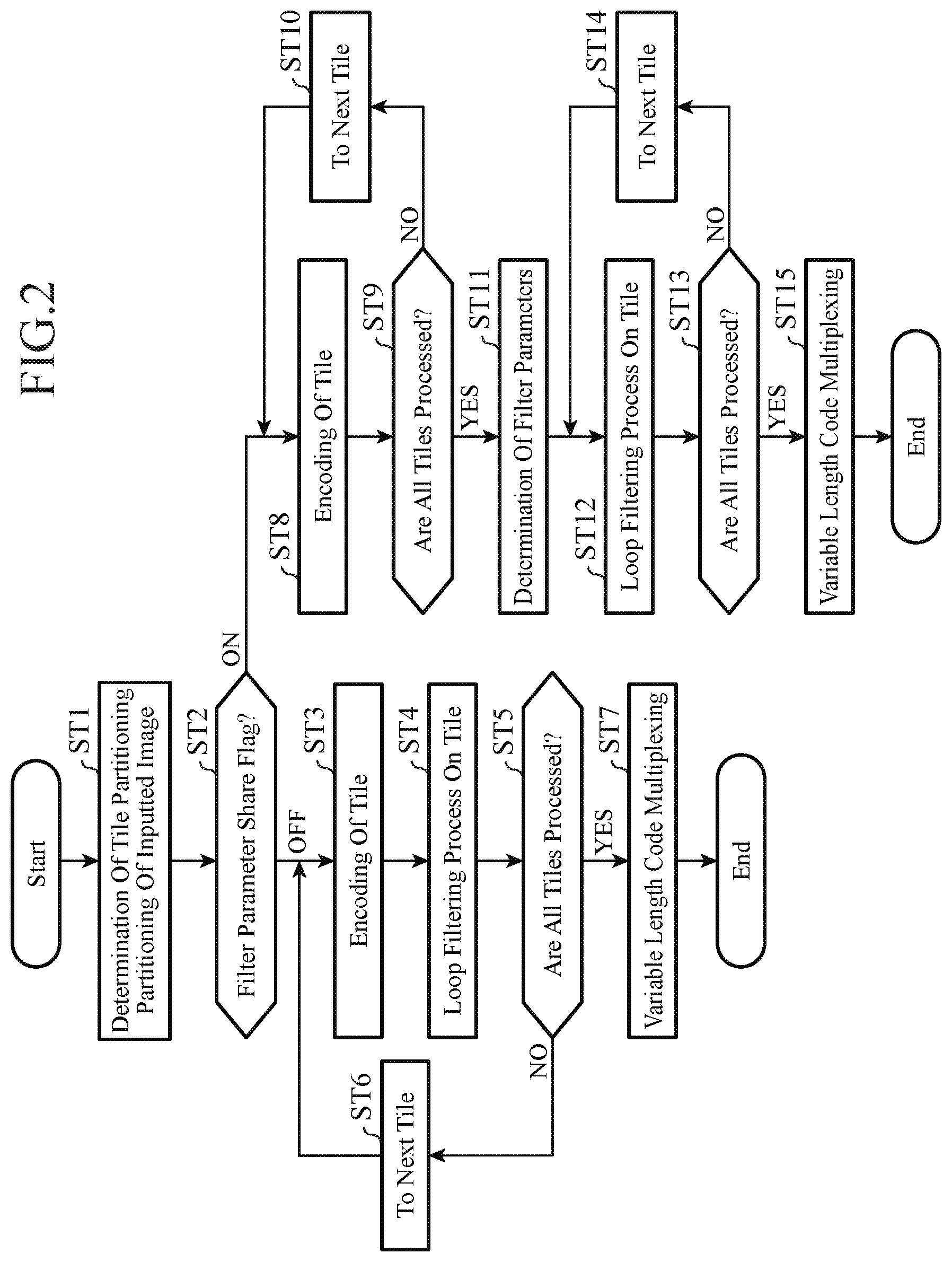

FIG. 3 is a block diagram showing each tile encoding unit 5-n (n=1, 2, . . . , N) of the video encoding device in accordance with Embodiment 1 of the present invention;

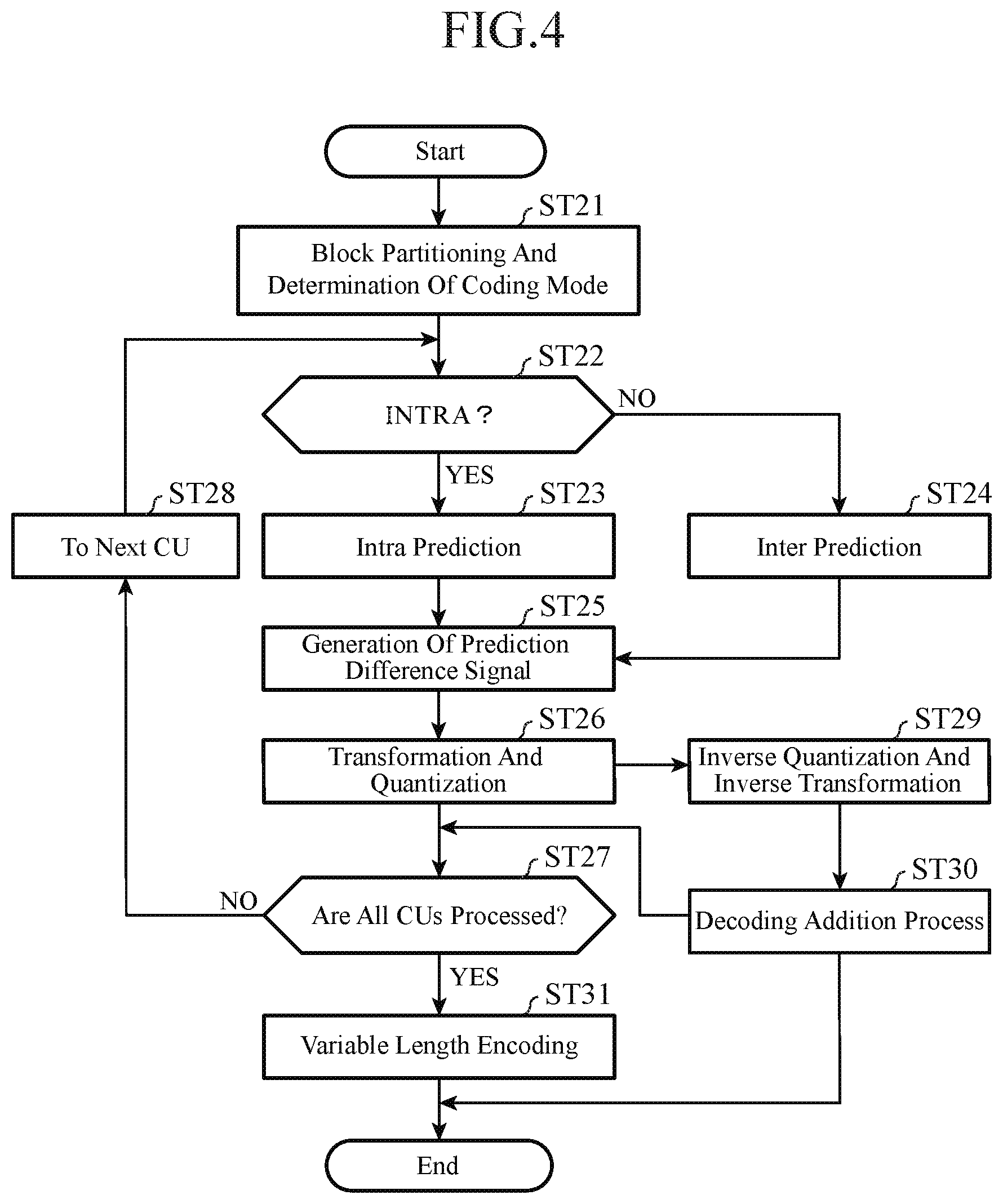

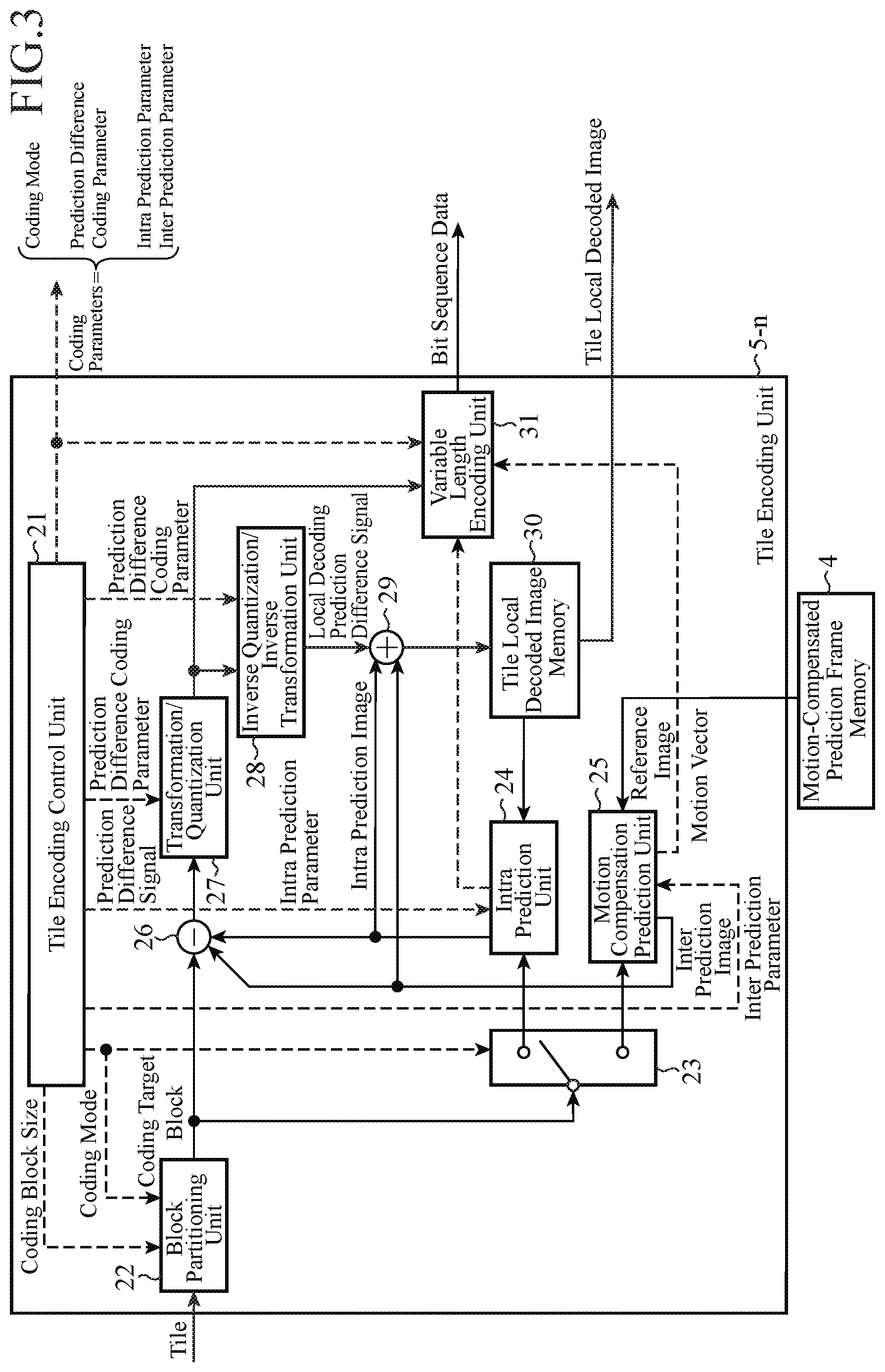

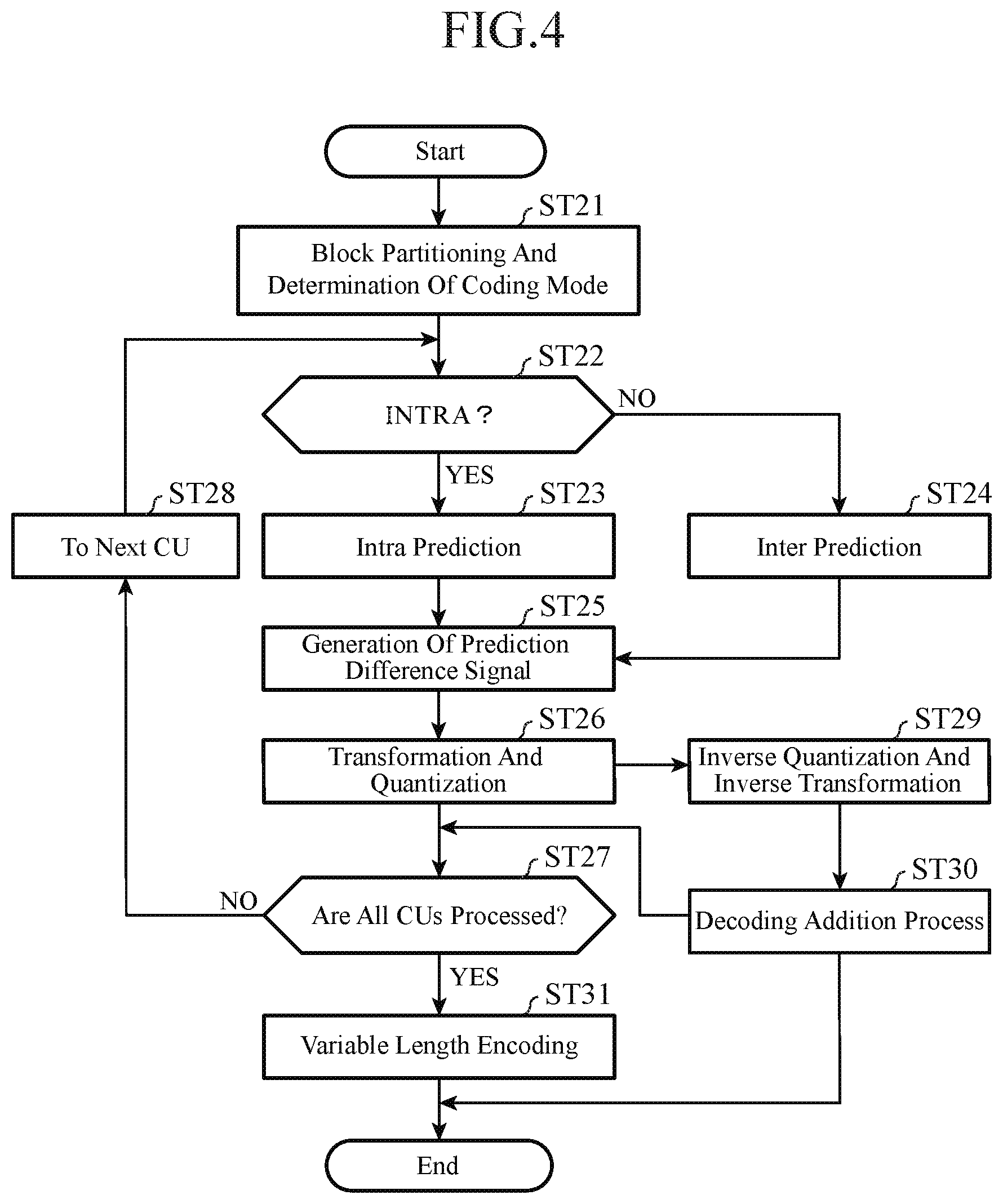

FIG. 4 is a flow chart showing a process carried out by each tile encoding unit 5-n (n=1, 2, . . . , N) of the video encoding device in accordance with Embodiment 1 of the present invention;

FIG. 5 is a block diagram showing each tile loop filter unit 7-n (n=1, 2, . . . , N) of the video encoding device in accordance with Embodiment 1 of the present invention;

FIG. 6 is a block diagram showing a video decoding device in accordance with Embodiment 1 of the present invention;

FIG. 7 is a flow chart showing processing (video decoding method) carried out by the video decoding device in accordance with Embodiment 1 of the present invention;

FIG. 8 is a block diagram showing each tile decoding unit 54-n (n=1, 2, . . . , N) of the video decoding device in accordance with Embodiment 1 of the present invention;

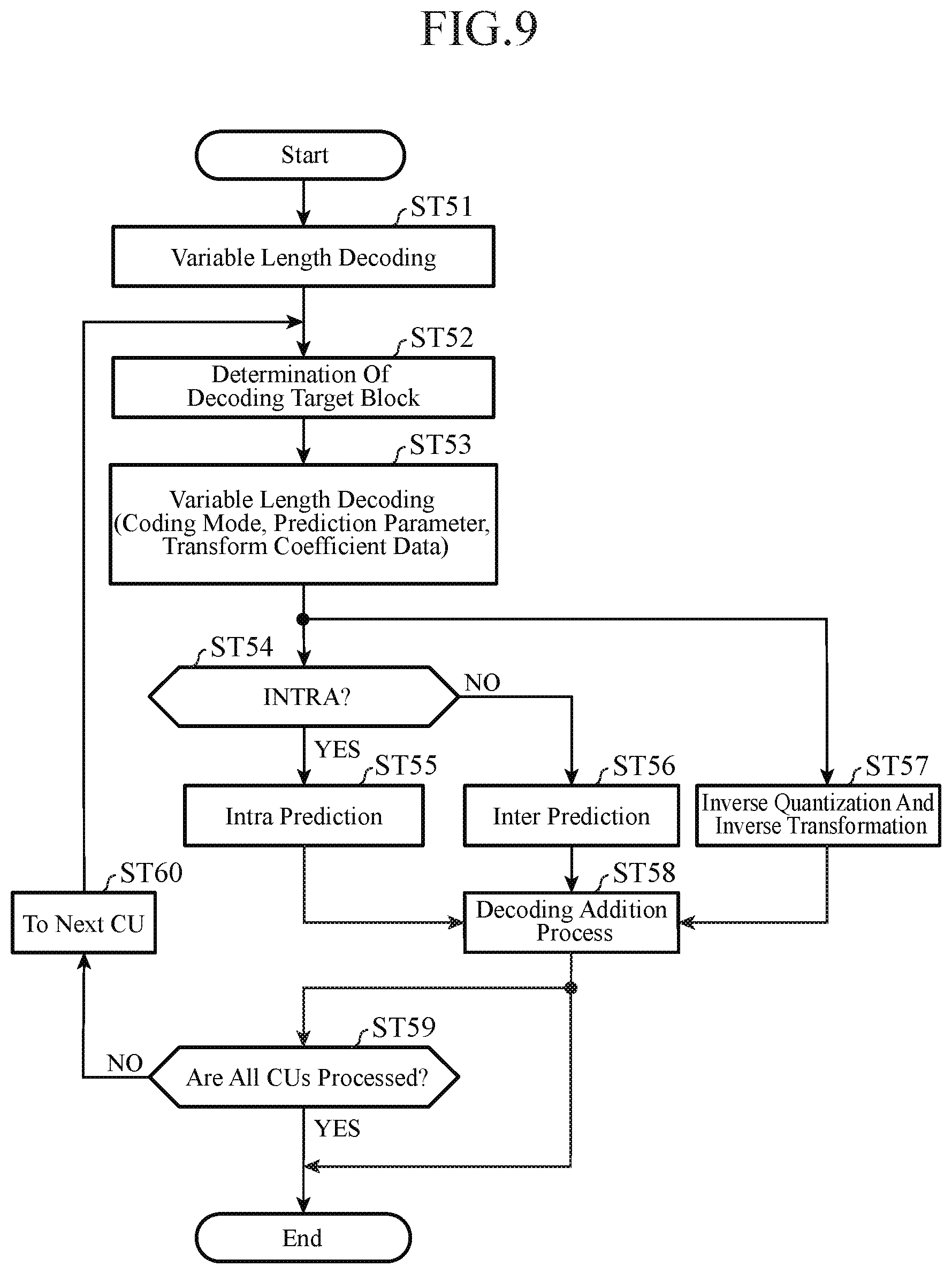

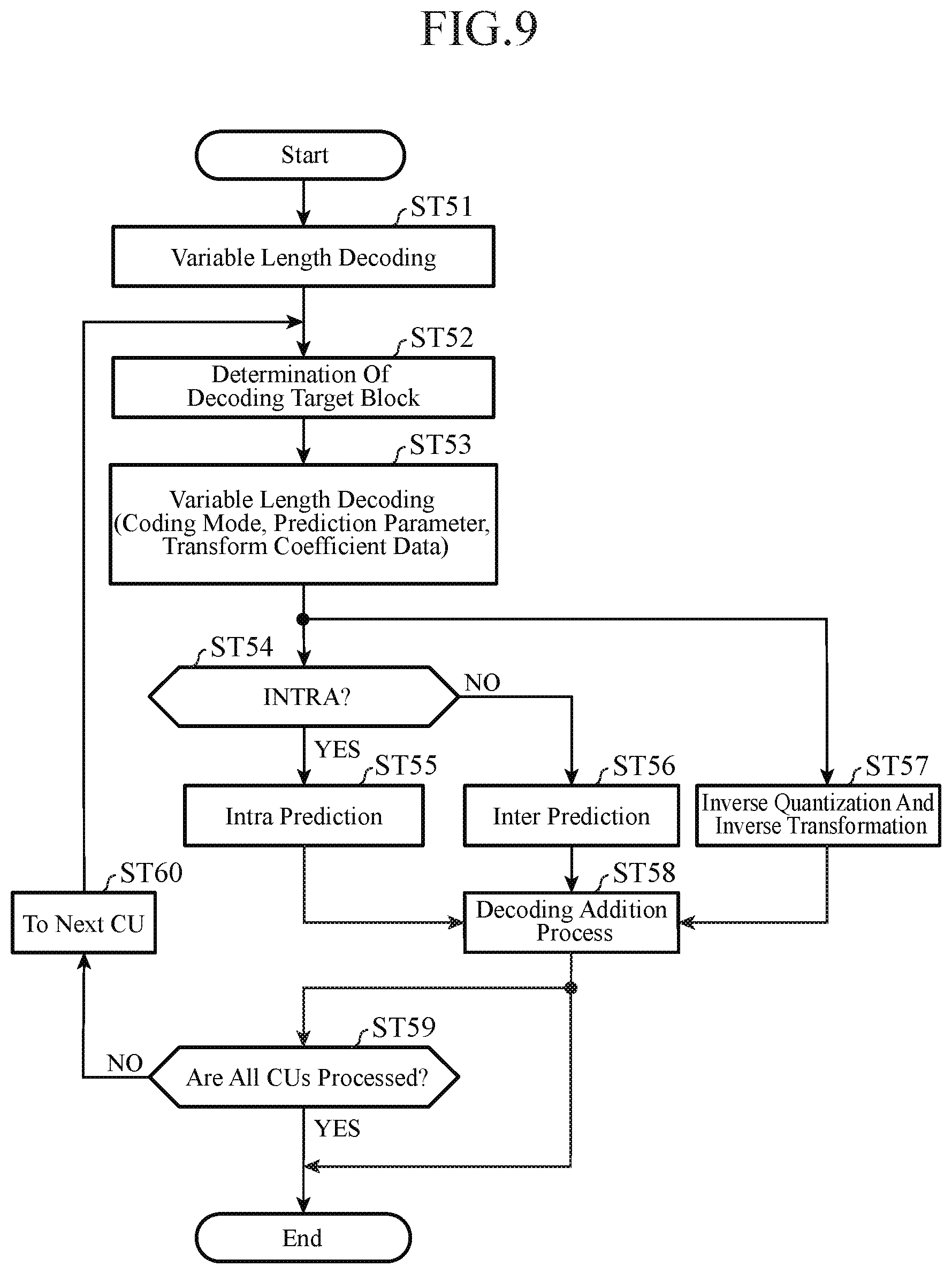

FIG. 9 is a flow chart showing a process carried out by each tile decoding unit 54-n (n=1, 2, . . . , N) of the video decoding device in accordance with Embodiment 1 of the present invention;

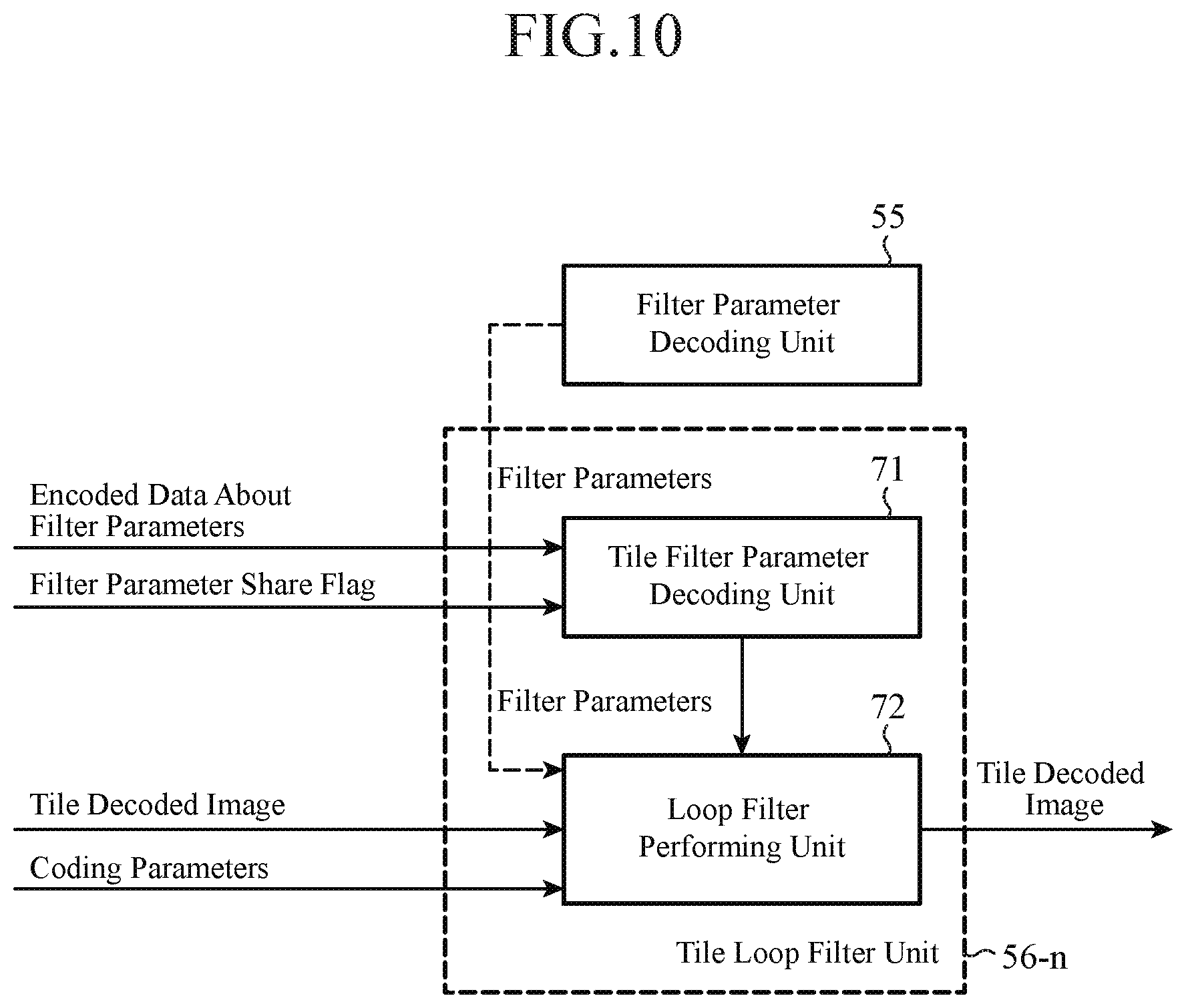

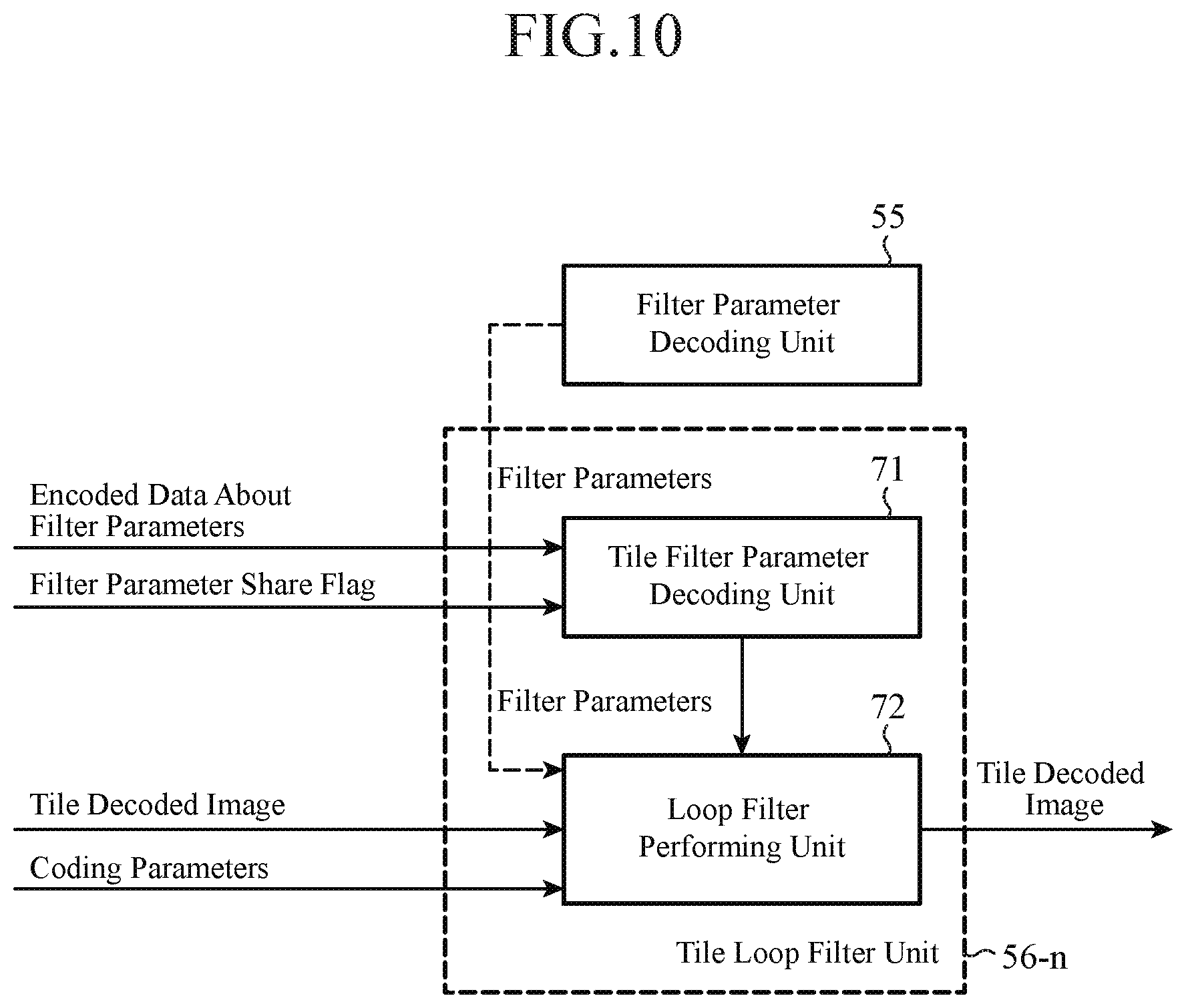

FIG. 10 is a block diagram showing each tile loop filter unit 56-n (n=1, 2, . . . , N) of the video decoding device in accordance with Embodiment 1 of the present invention;

FIG. 11 is an explanatory drawing showing an example of partitioning of an image into LCUs and partitioning of an image into tiles;

FIG. 12 is an explanatory drawing showing an example in which each largest coding block is divided hierarchically into a plurality of coding target blocks;

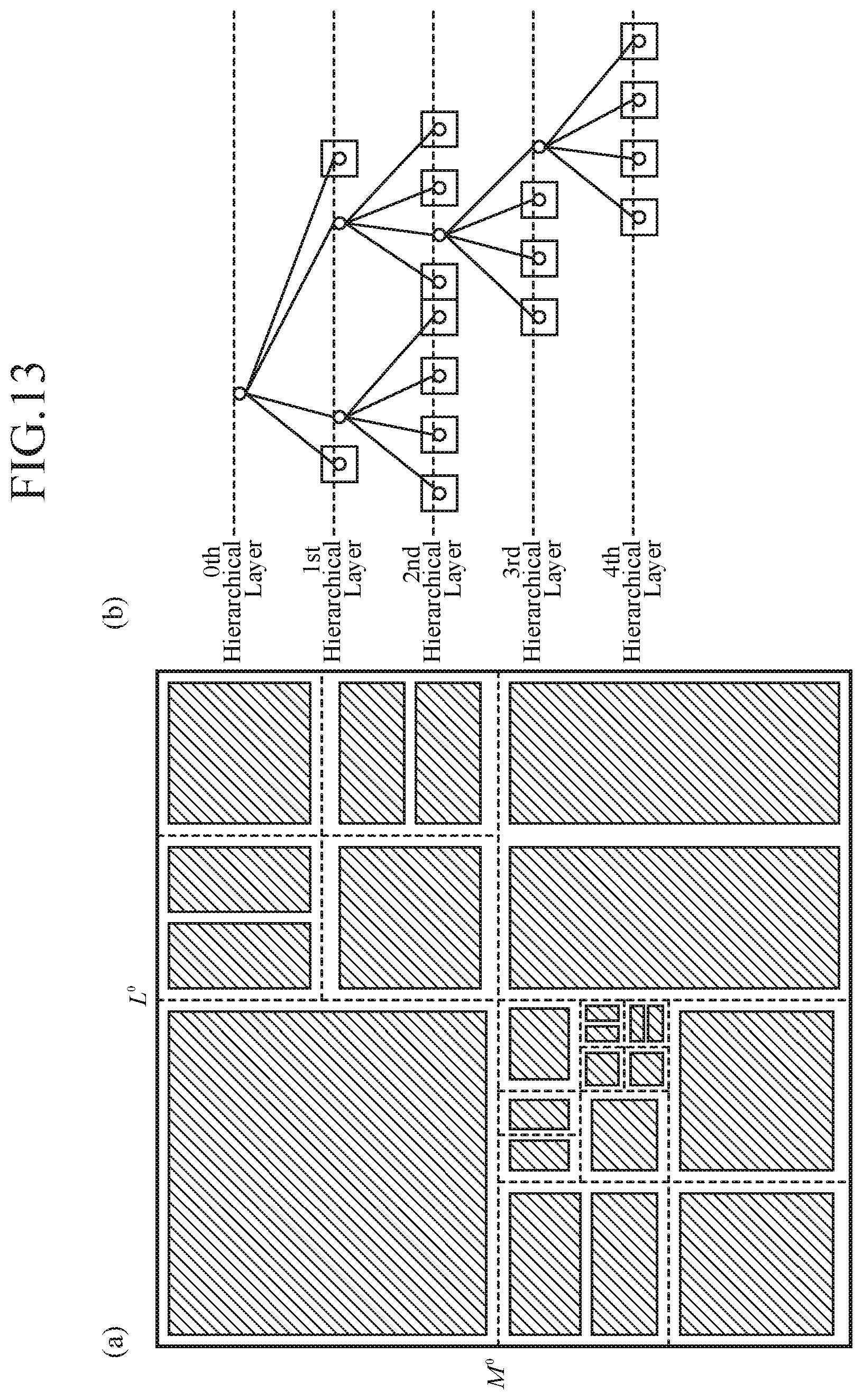

FIG. 13 is an explanatory drawing showing, with a quadtree graph, a distribution of partitions obtained through the partitioning, and a situation in which a coding mode m(B^n) is assigned to each partition obtained through the hierarchical layer partitioning;

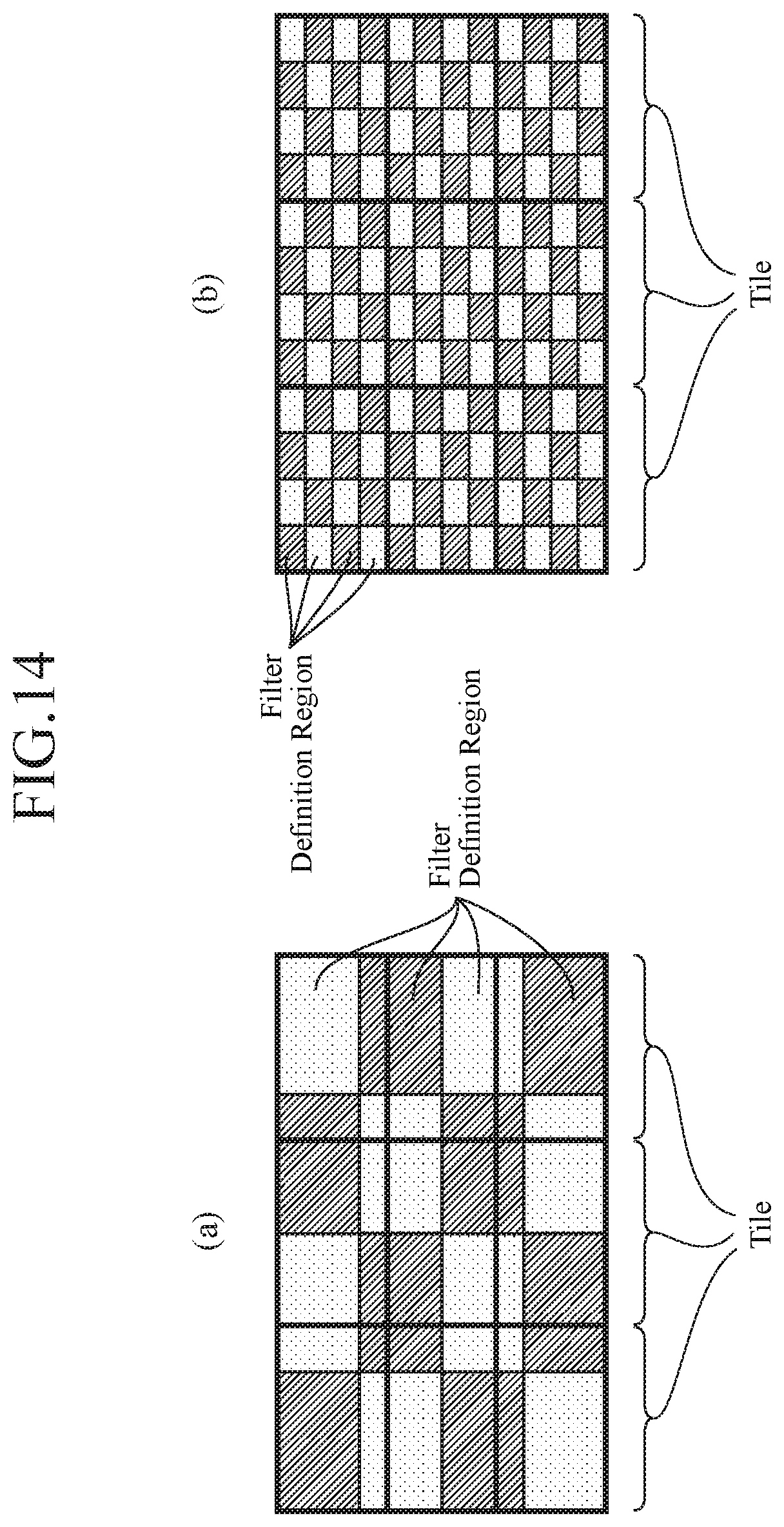

FIG. 14 is an explanatory drawing showing tiles and filter definition regions;

FIG. 15 is an explanatory drawing showing a bitstream into which filter parameters are multiplexed;

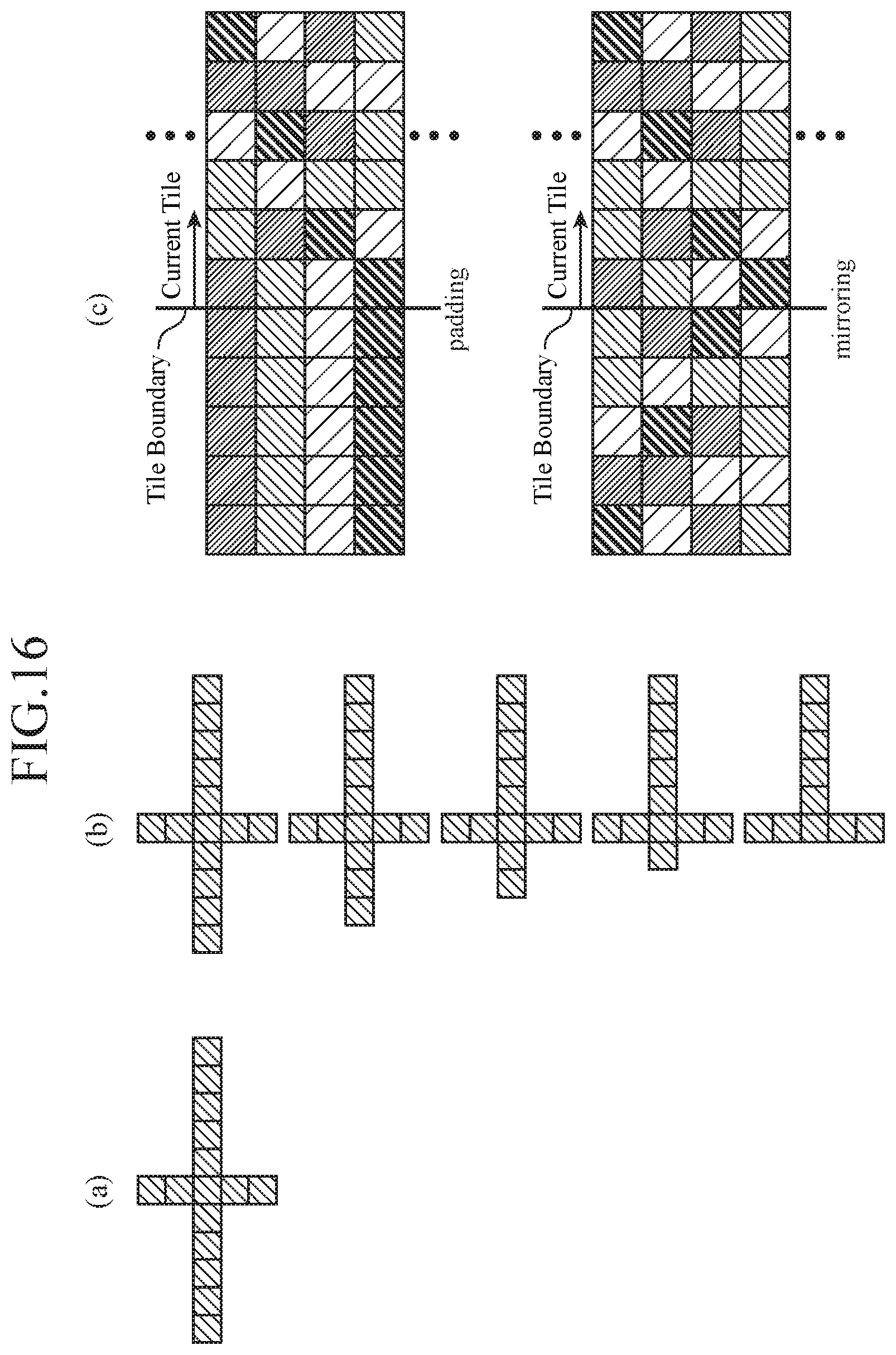

FIG. 16 is an explanatory drawing showing a filtering process on a filter boundary;

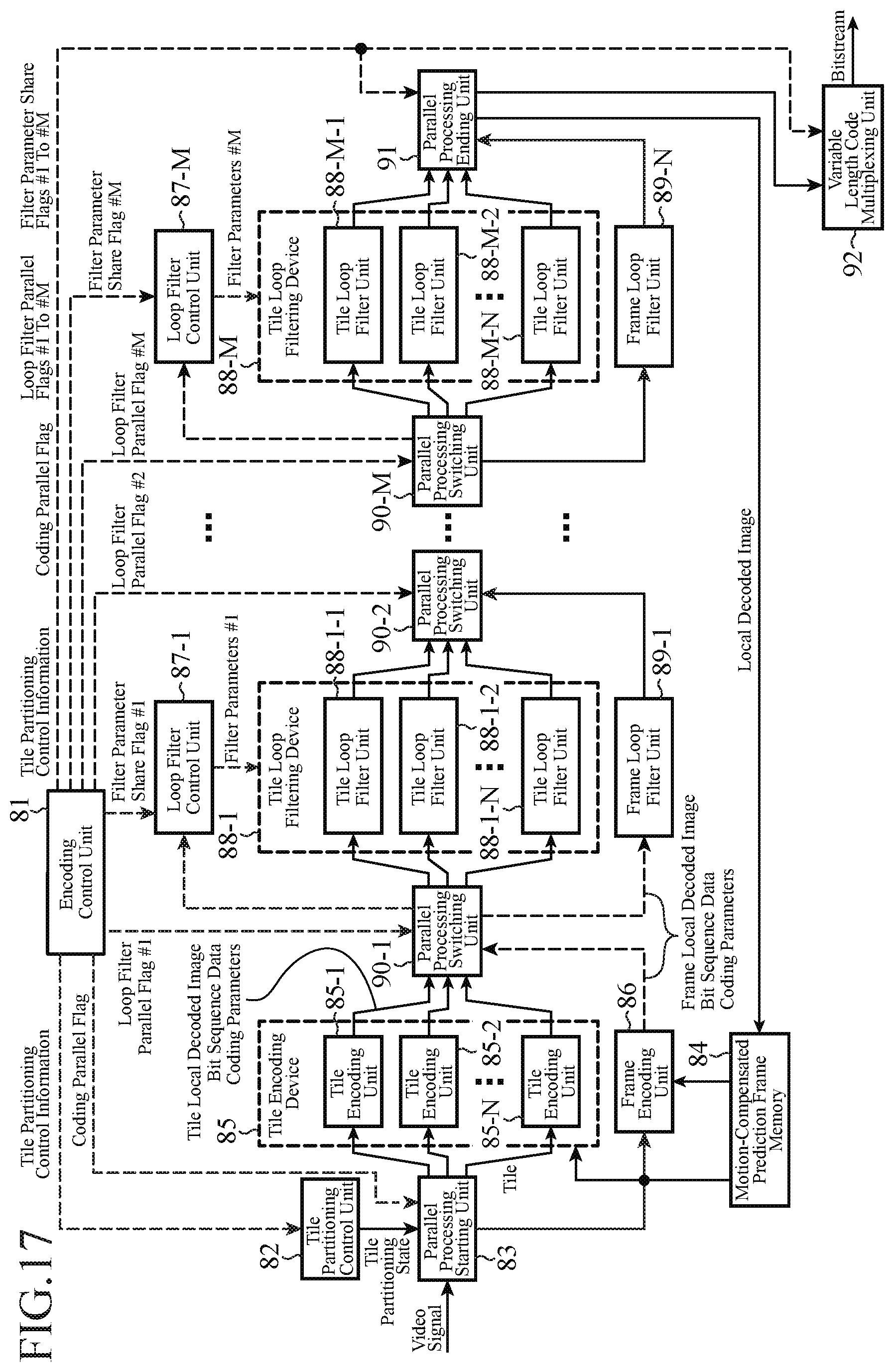

FIG. 17 is a block diagram showing a video encoding device in accordance with Embodiment 2 of the present invention;

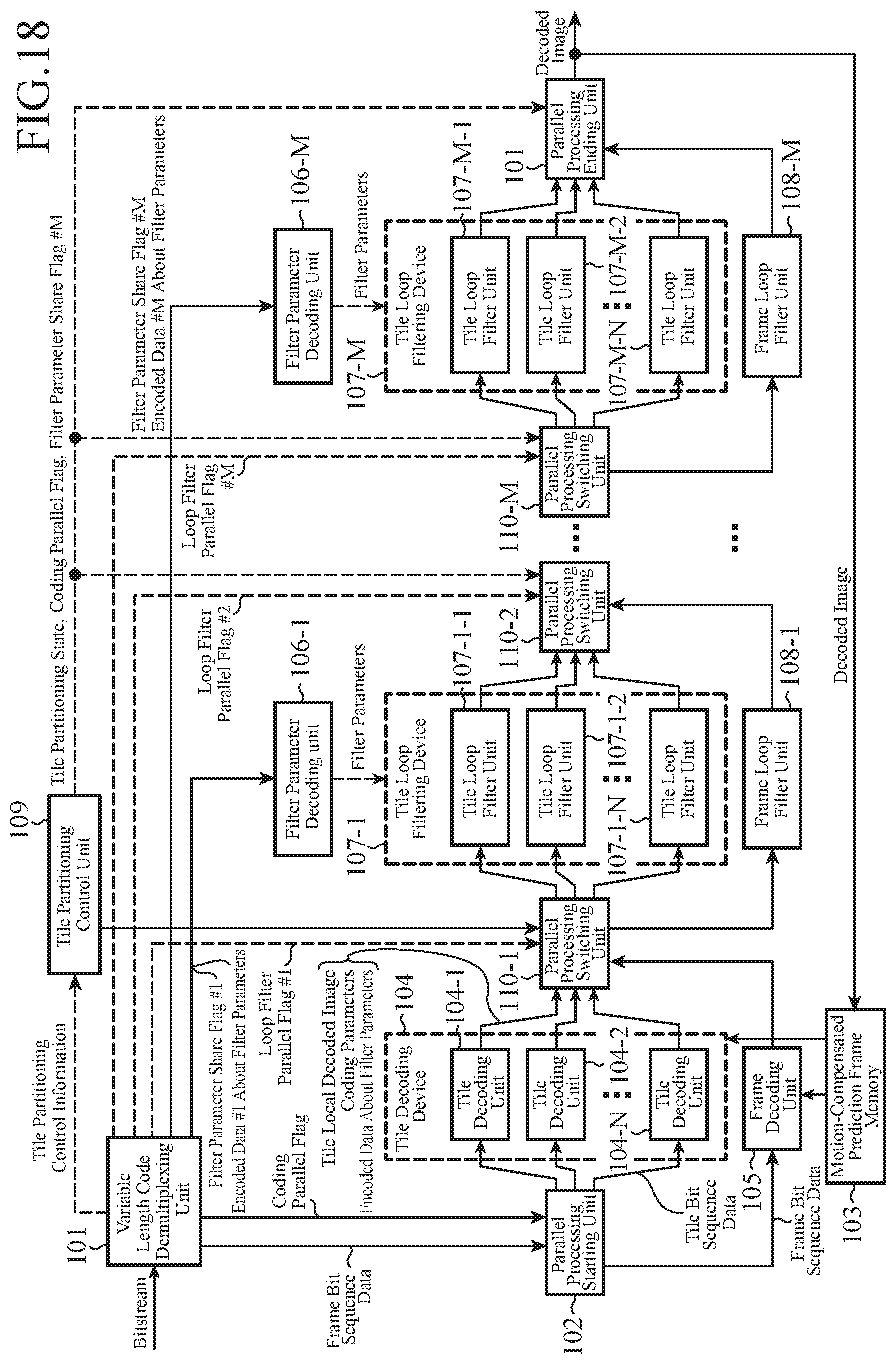

FIG. 18 is a block diagram showing a video decoding device in accordance with Embodiment 2 of the present invention; and

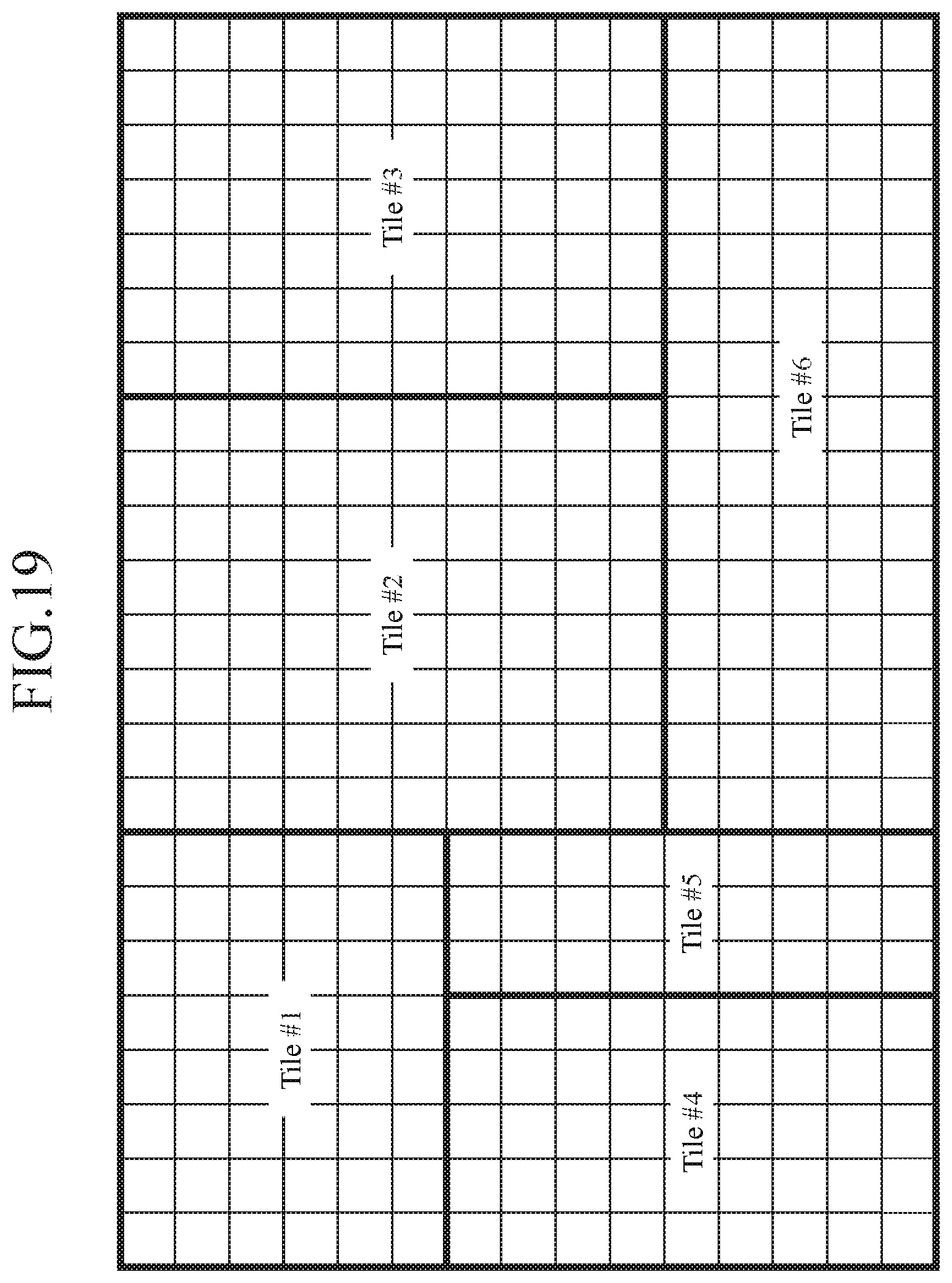

FIG. 19 is an explanatory drawing showing an example of freely partitioning an image into tiles that are not arranged in a grid.

EMBODIMENTS OF THE INVENTION

Hereafter, in order to explain this invention in greater detail, the preferred embodiments of the present invention will be described with reference to the accompanying drawings.

Embodiment 1

FIG. 1 is a block diagram showing a video encoding device in accordance with Embodiment 1 of the present invention. Referring to FIG. 1, an encoding control unit 1 outputs tile partitioning control information (partitioning control information) indicating how an inputted image shown by a video signal is partitioned into tiles, each of which is a rectangular region having a predetermined size. The encoding control unit 1 also outputs a filter parameter share flag (filter share flag) showing whether or not the filter used in the filtering process on the local decoded image per tile, which is generated by each of tile encoding units 5-1 to 5-N, is shared among the tiles. The encoding control unit 1 constitutes a partitioning controller.

A tile partitioning control unit 2 controls the partitioning of the inputted image by a parallel processing starting unit 3 so that the partitioning matches the partitioning state indicated by the tile partitioning control information outputted from the encoding control unit 1. The parallel processing starting unit 3 partitions the inputted image shown by the video signal into tiles each having a predetermined size according to a command from the tile partitioning control unit 2, and distributes the tiles obtained through the partitioning among the tile encoding units 5-1 to 5-N. The tile partitioning control unit 2 and the parallel processing starting unit 3 constitute a tile distributor.
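The partitioning and distribution performed by the parallel processing starting unit 3 can be sketched as follows. The sketch assumes the tile partitioning control information is given as hypothetical lists of tile column widths and row heights in pixels, and it hands tiles to encoders round-robin; the text does not specify a distribution policy, so that choice is an assumption.

```python
def partition_into_tiles(image, col_widths, row_heights):
    """Split a 2-D image (list of pixel rows) into rectangular tiles.

    col_widths / row_heights stand in for the tile partitioning control
    information (a hypothetical representation). Tiles are returned in
    raster order as (x, y, tile_pixels), where (x, y) is the top-left corner.
    """
    if sum(col_widths) != len(image[0]) or sum(row_heights) != len(image):
        raise ValueError("partitioning does not cover the image exactly")
    tiles = []
    y = 0
    for h in row_heights:
        x = 0
        for w in col_widths:
            tiles.append((x, y, [row[x:x + w] for row in image[y:y + h]]))
            x += w
        y += h
    return tiles


def distribute(tiles, n_encoders):
    """Assign tiles to n_encoders tile encoding units round-robin (assumed policy)."""
    return [tiles[i::n_encoders] for i in range(n_encoders)]
```

For a 3 x 5 image split with `col_widths=[3, 2]` and `row_heights=[2, 1]`, four tiles result, and `distribute(tiles, 2)` gives each of two encoders two tiles.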

A motion-compensated prediction frame memory 4 is a recording medium for storing a local decoded image on which a filtering process has been performed. The motion-compensated prediction frame memory 4 constitutes an image memory. A tile encoding device 5 is equipped with N tile encoding units 5-1 to 5-N, each of which carries out a prediction difference encoding process independently, where N is an integer equal to or larger than 1. Each of the tile encoding units 5-1 to 5-N carries out a prediction difference encoding process on the tile distributed to it by the parallel processing starting unit 3 by referring to the filtered local decoded image stored in the motion-compensated prediction frame memory 4. It outputs bit sequence data (encoded bit data), which is the result of the encoding process, and coding parameters (the parameters used when carrying out the prediction difference encoding process, including a coding mode, a prediction difference coding parameter, an intra prediction parameter, and an inter prediction parameter), and also generates a tile local decoded image (local decoded image). The tile encoding units 5-1 to 5-N constitute tile encoders.
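The independence of the tile encoding units can be illustrated with a deliberately tiny stand-in encoder. This is a hypothetical one-dimensional DPCM scheme, not the block-based encoder of FIG. 3: each pixel is predicted from the previous reconstructed pixel of the same tile, the prediction difference is quantized with an assumed step size, and the reconstructions play the role of the tile local decoded image. Because nothing outside the tile is read, one encoder per tile can run concurrently.

```python
from concurrent.futures import ThreadPoolExecutor


def encode_tile(pixels, step=4):
    """Toy prediction difference encoder for one tile given as a flat pixel list.

    Hypothetical scheme: predict each pixel from the previous *reconstructed*
    pixel of the same tile (the first one from 128), quantize the difference
    with `step`, and keep the reconstruction as the local decoded image.
    """
    levels, local_decoded = [], []
    prediction = 128
    for pixel in pixels:
        level = round((pixel - prediction) / step)                # quantized difference
        prediction = min(255, max(0, prediction + level * step))  # reconstruction
        levels.append(level)                # stands in for the bit sequence data
        local_decoded.append(prediction)    # stands in for the tile local decoded image
    return levels, local_decoded


def encode_tiles(tiles, step=4):
    """Run one toy encoder per tile concurrently; results come back in tile order."""
    with ThreadPoolExecutor() as pool:
        return list(pool.map(lambda t: encode_tile(t, step), tiles))
```

For instance, `encode_tiles([[136, 141], [100]])` returns `[([2, 1], [136, 140]), ([-7], [100])]`; the quantization error on the pixel 141 shows up in its local decoded value 140.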

When the filter parameter share flag outputted from the encoding control unit 1 shows that a filter is shared among the tiles, a loop filter control unit 6 obtains a local decoded image per frame from the tile local decoded images generated by the tile encoding units 5-1 to 5-N, determines a filter per frame suitable for that local decoded image, and outputs filter parameters showing the filter to a tile loop filtering device 7. The loop filter control unit 6 also variable-length-encodes the filter parameters showing the filter per frame and outputs the encoded data about the filter parameters to a parallel processing ending unit 8. The loop filter control unit 6 constitutes a per-frame filter determinator.

The tile loop filtering device 7 is equipped with N tile loop filter units 7-1 to 7-N, each of which carries out a filtering process independently, where N is an integer equal to or larger than 1. When the filter parameter share flag outputted from the encoding control unit 1 shows that a filter is not shared among the tiles, each of the tile loop filter units 7-1 to 7-N determines a filter per tile suitable for the filtering process on the tile local decoded image generated by the corresponding one of the tile encoding units 5-1 to 5-N, performs the filtering process on that tile local decoded image by using the filter, variable-length-encodes the filter parameters showing the filter per tile, and multiplexes the encoded data about the filter parameters into the bit sequence data outputted from the corresponding tile encoding unit. In contrast, when the filter parameter share flag shows that a filter is shared among the tiles, each of the tile loop filter units performs the filtering process on the tile local decoded image generated by the corresponding tile encoding unit by using the filter per frame shown by the filter parameters outputted from the loop filter control unit 6, and outputs the bit sequence data from the corresponding tile encoding unit as it is. The tile loop filter units 7-1 to 7-N constitute tile filters.
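The text does not say how a "suitable" filter is determined. As one hypothetical way to make the share flag concrete, the sketch below picks, from a small made-up set of 3-tap kernels, the one that minimises the sum of squared errors (SSE) between the filtered local decoded image and the original. With the flag set, a single kernel is chosen over the whole frame (the role of the loop filter control unit 6); otherwise each tile gets its own (the role of the tile loop filter units 7-n). Real loop filters such as an adaptive loop filter may instead derive coefficients by least squares. Tiles are reduced to single pixel rows for brevity.

```python
# Hypothetical candidate kernels (left, centre, right); the divisor is their sum.
CANDIDATES = [(0, 1, 0), (1, 2, 1), (1, 6, 1)]


def apply_filter(kernel, row):
    """Filter one tile row with edge replication, so no other tile is read."""
    a, b, c = kernel
    total = a + b + c
    n = len(row)
    return [(a * row[max(i - 1, 0)] + b * row[i] + c * row[min(i + 1, n - 1)] + total // 2) // total
            for i in range(n)]


def sse(a, b):
    """Sum of squared errors between two equally long rows."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def best_filter(pairs):
    """Candidate minimising the total SSE over (decoded, original) row pairs."""
    return min(CANDIDATES,
               key=lambda k: sum(sse(apply_filter(k, d), o) for d, o in pairs))


def choose_filters(decoded_tiles, original_tiles, share_flag):
    """Return the kernel used for each tile.

    share_flag True: one filter per frame, chosen over all tiles together.
    share_flag False: one filter per tile, chosen independently per tile.
    """
    pairs = list(zip(decoded_tiles, original_tiles))
    if share_flag:
        frame_kernel = best_filter(pairs)
        return [frame_kernel] * len(pairs)
    return [best_filter([pair]) for pair in pairs]
```

With a noisy tile `[10, 30, 10, 30]` (original `[20, 20, 20, 20]`) and a clean tile `[50, 52, 54, 56]`, per-tile selection smooths only the noisy tile and leaves the clean one unfiltered with `(0, 1, 0)`, while the shared choice applies `(1, 2, 1)` to both.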

The parallel processing ending unit 8 combines the tile local decoded images filtered by the tile loop filter units 7-1 to 7-N to generate a local decoded image per frame according to the tile partitioning control information outputted from the encoding control unit 1, stores the local decoded image in the motion-compensated prediction frame memory 4, and outputs the bit sequence data outputted from each of the tile loop filter units 7-1 to 7-N to a variable length code multiplexing unit 9. When the filter parameter share flag outputted from the encoding control unit 1 shows that a filter is shared among the tiles, the parallel processing ending unit 8 also outputs the encoded data about the filter parameters showing the filter per frame, outputted from the loop filter control unit 6, to the variable length code multiplexing unit 9. The parallel processing ending unit 8 constitutes a local decoded image storage.
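The combining step can be sketched as pasting each filtered tile back at its position. The `(x, y, pixels)` tile representation is a hypothetical stand-in for the positions that would be derived from the tile partitioning control information; storing the result in the frame memory is not shown.

```python
def combine_tiles(tiles, width, height):
    """Paste (x, y, pixels) tiles back into a height x width frame.

    (x, y) is each tile's top-left corner; pixels is a list of rows.
    Raises ValueError if the tiles leave any frame position uncovered.
    """
    frame = [[None] * width for _ in range(height)]
    for x, y, pixels in tiles:
        for dy, row in enumerate(pixels):
            frame[y + dy][x:x + len(row)] = row   # same-length slice assignment
    if any(p is None for row in frame for p in row):
        raise ValueError("tiles do not cover the frame")
    return frame
```

Two tiles `(0, 0, [[1, 2], [5, 6]])` and `(2, 0, [[3], [7]])` combine into the 3 x 2 frame `[[1, 2, 3], [5, 6, 7]]`.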

When the filter parameter share flag outputted from the encoding control unit 1 shows that a filter is not shared among the tiles, the variable length code multiplexing unit 9 multiplexes the bit sequence data outputted from the parallel processing ending unit 8 with the tile partitioning control information and the filter parameter share flag outputted from the encoding control unit 1 to generate a bitstream. In contrast, when the filter parameter share flag shows that a filter is shared among the tiles, the variable length code multiplexing unit 9 multiplexes the bit sequence data and the encoded data about the filter parameters showing the filter per frame, both outputted from the parallel processing ending unit 8, with the tile partitioning control information and the filter parameter share flag outputted from the encoding control unit 1 to generate a bitstream. The variable length code multiplexing unit 9 constitutes a multiplexer.
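The two bitstream layouts described above can be outlined as an ordered list of syntax elements. The element names and their order are assumptions made for this sketch; only the conditional presence of the per-frame filter parameters, and the fact that per-tile filter parameters travel inside each tile's bit sequence data, follow the text.

```python
def multiplex(partition_info, share_flag, tile_payloads, frame_filter_data=None):
    """Assemble a hypothetical bitstream as an ordered list of (element, value).

    tile_payloads: per-tile bit sequence data. When the filter is not shared,
    each payload is assumed to already carry that tile's encoded filter parameters.
    """
    stream = [("tile_partitioning_control_info", partition_info),
              ("filter_parameter_share_flag", share_flag)]
    if share_flag:
        if frame_filter_data is None:
            raise ValueError("a shared filter requires per-frame filter parameters")
        stream.append(("frame_filter_parameters", frame_filter_data))
    stream.extend(("tile_bit_sequence_data", payload) for payload in tile_payloads)
    return stream
```

A decoder would read the share flag first and then know whether to expect the per-frame filter parameters before the tile data.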

In the example shown in FIG. 1, it is assumed that each of the encoding control unit 1, the tile partitioning control unit 2, the parallel processing starting unit 3, the motion-compensated prediction frame memory 4, the tile encoding device 5, the loop filter control unit 6, the tile loop filtering device 7, the parallel processing ending unit 8, and the variable length code multiplexing unit 9, which are the components of the video encoding device, consists of dedicated hardware (e.g., a semiconductor integrated circuit equipped with a CPU, a one-chip microcomputer, or the like). When the video encoding device consists of a computer, a program describing the processes carried out by the encoding control unit 1, the tile partitioning control unit 2, the parallel processing starting unit 3, the tile encoding device 5, the loop filter control unit 6, the tile loop filtering device 7, the parallel processing ending unit 8, and the variable length code multiplexing unit 9 can be stored in a memory of the computer, and a CPU of the computer can be made to execute the program stored in the memory.

FIG. 2 is a flow chart showing processing (a video encoding method) carried out by the video encoding device in accordance with Embodiment 1 of the present invention.

FIG. 3 is a block diagram showing each tile encoding unit 5-n (n=1, 2, . . . , N) of the video encoding device in accordance with Embodiment 1 of the present invention. Referring to FIG. 3, a tile encoding control unit 21 carries out a process of determining a coding block size which is the size of a coding target block, and also determining a coding mode with the highest coding efficiency for a coding target block outputted from a block partitioning unit 22 from among one or more selectable intra coding modes and one or more selectable inter coding modes. The tile encoding control unit 21 also carries out a process of, when the coding mode with the highest coding efficiency is an intra coding mode, determining an intra prediction parameter which is used when an intra prediction process is carried out on the coding target block in the intra coding mode and, when the coding mode with the highest coding efficiency is an inter coding mode, determining an inter prediction parameter which is used when an inter prediction process is carried out on the coding target block in the inter coding mode. The tile encoding control unit 21 further carries out a process of determining a prediction difference coding parameter to be provided for a transformation/quantization unit 27, an inverse quantization/inverse transformation unit 28, and a variable length encoding unit 31. Hereafter, the coding mode, the prediction difference coding parameter, and the intra prediction parameter or the inter prediction parameter, which are determined by the tile encoding control unit 21, are collectively referred to as the coding parameters. The tile encoding control unit 21 carries out a process of outputting the coding parameters to the tile loop filter unit 7-n (n=1, 2, . . . , N).
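"Highest coding efficiency" is not defined further here. A common way to rank candidate modes, assumed for this sketch, is the Lagrangian rate-distortion cost J = D + λR, where D is a distortion measure and R the number of bits. The `Candidate` record and its numbers are hypothetical.

```python
from typing import NamedTuple


class Candidate(NamedTuple):
    mode: str          # e.g. "intra_dc" or "inter" (illustrative labels)
    distortion: float  # e.g. SSE between the coding target block and its reconstruction
    bits: int          # estimated bits for coding parameters and residual


def choose_coding_mode(candidates, lam):
    """Pick the candidate with the lowest Lagrangian cost J = D + lam * R."""
    return min(candidates, key=lambda c: c.distortion + lam * c.bits)
```

The weight λ trades bits against distortion: with candidates `("intra_dc", 900.0, 40)` and `("inter", 600.0, 70)`, λ = 5 selects the inter mode (cost 950 against 1100), whereas λ = 20 selects the intra mode (1700 against 2000).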

The block partitioning unit 22 partitions each tile distributed to it by the parallel processing starting unit 3 into blocks (each of which is a unit of prediction processing) having the coding block size determined by the tile encoding control unit 21, and outputs each coding target block. When the coding mode determined by the tile encoding control unit 21 is an intra coding mode, a select switch 23 outputs the coding target block from the block partitioning unit 22 to an intra prediction unit 24; when the coding mode is an inter coding mode, the select switch 23 outputs it to a motion-compensated prediction unit 25.
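A simplified sketch of the block partitioning step follows. It uses one fixed block size for the whole tile, whereas the embodiment determines the coding block size (and FIG. 12 shows hierarchical division); the handling of smaller blocks at the right and bottom edges is an assumption.

```python
def partition_blocks(tile, size):
    """Split a tile (list of pixel rows) into size x size coding target blocks.

    Blocks are returned in raster order as (x, y, pixels). Blocks on the right
    or bottom edge are smaller when the tile dimensions are not multiples of
    size (an assumed edge rule).
    """
    height, width = len(tile), len(tile[0])
    return [(x, y, [row[x:x + size] for row in tile[y:y + size]])
            for y in range(0, height, size)
            for x in range(0, width, size)]
```

A 3 x 5 tile with `size=2` yields six blocks, the last being the single pixel at (4, 2).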

The intra prediction unit 24 carries out a process of, while referring to a local decoded image stored in the tile local decoded image memory 30, performing an intra prediction process on the coding target block outputted from the select switch 23 by using the intra prediction parameter determined by the tile encoding control unit 21 to generate an intra prediction image. Because only the local decoded images of blocks belonging to the same tile are stored in the tile local decoded image memory 30, no reference is made to the local decoded image of any block belonging to a different tile in the intra prediction process by the intra prediction unit 24. When the block at the position referred to belongs to a different tile, an intra prediction process which does not have to refer to any pixel is carried out.
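The tile-restricted reference rule can be illustrated with a DC-style prediction. This is a minimal sketch, not the patent's intra prediction method. It assumes two hypothetical stand-ins for the tile local decoded image memory 30: a reconstructed-sample array `recon` addressed in frame coordinates and a lookup `tile_id_of(x, y)`. The patent does not specify what the "prediction which does not have to refer to any pixel" produces, so filling the block with the mid-level sample value is also an assumption.

```python
from typing import Callable, List

Image = List[List[int]]


def dc_intra_prediction(
    recon: Image,
    x0: int,
    y0: int,
    size: int,
    tile_id_of: Callable[[int, int], int],
    bit_depth: int = 8,
) -> Image:
    """DC-style intra prediction that never reads pixels of another tile.

    recon[y][x] holds reconstructed samples; tile_id_of(x, y) returns the tile
    containing pixel (x, y). Both are hypothetical stand-ins.
    """
    own_tile = tile_id_of(x0, y0)
    # Tiles consist of whole LCUs and a block never crosses an LCU boundary,
    # so checking one neighbouring pixel per edge is enough.
    above_ok = y0 > 0 and tile_id_of(x0, y0 - 1) == own_tile
    left_ok = x0 > 0 and tile_id_of(x0 - 1, y0) == own_tile

    refs: List[int] = []
    if above_ok:
        refs.extend(recon[y0 - 1][x0:x0 + size])
    if left_ok:
        refs.extend(recon[y][x0 - 1] for y in range(y0, y0 + size))

    if refs:
        dc = (sum(refs) + len(refs) // 2) // len(refs)  # rounded mean
    else:
        # Hypothetical fallback that refers to no pixel at all:
        # the mid-level value of the sample range.
        dc = 1 << (bit_depth - 1)
    return [[dc] * size for _ in range(size)]
```

For a block on the left edge of a tile, the left neighbour belongs to another tile and is skipped; a block with no usable neighbour in its own tile gets the fallback value.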

The motion-compensated prediction unit 25 carries out a process of comparing the coding target block outputted from the select switch 23 with the local decoded image which is stored in the motion-compensated prediction frame memory 4 and on which a filtering process is performed to search for a motion vector, and performing an inter prediction process (motion-compensated prediction process) on the coding target block by using both the motion vector and the inter prediction parameter determined by the tile encoding control unit 21 to generate an inter prediction image. A subtracting unit 26 carries out a process of subtracting the intra prediction image generated by the intra prediction unit 24 or the inter prediction image generated by the motion-compensated prediction unit 25 from the coding target block outputted from the block partitioning unit 22 to output a prediction difference signal (difference image) which is the result of the subtraction to the transformation/quantization unit 27.

The transformation/quantization unit 27 carries out a process of carrying out an orthogonal transformation process (e.g., a DCT (discrete cosine transform) or an orthogonal transformation process, such as a KL transform, in which bases are designed for a specific learning sequence in advance) on the prediction difference signal outputted from the subtracting unit 26 by referring to the prediction difference coding parameter determined by the tile encoding control unit 21 to calculate transform coefficients, and also quantizing the transform coefficients by referring to the prediction difference coding parameter and then outputting compressed data which are the transform coefficients quantized thereby (quantization coefficients of the difference image) to the inverse quantization/inverse transformation unit 28 and the variable length encoding unit 31.

The inverse quantization/inverse transformation unit 28 carries out a process of inverse-quantizing the compressed data outputted from the transformation/quantization unit 27 by referring to the prediction difference coding parameter determined by the tile encoding control unit 21, and also carrying out an inverse orthogonal transformation process on the transform coefficients which are the compressed data inverse-quantized thereby by referring to the prediction difference coding parameter to calculate a local decoded prediction difference signal corresponding to the prediction difference signal outputted from the subtracting unit 26. An adding unit 29 carries out a process of adding the local decoded prediction difference signal calculated by the inverse quantization/inverse transformation unit 28 and the intra prediction image generated by the intra prediction unit 24 or the inter prediction image generated by the motion-compensated prediction unit 25 to calculate a local decoded image corresponding to the coding target block outputted from the block partitioning unit 22.
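The local decoding loop formed by the subtracting unit 26, the transformation/quantization unit 27, the inverse quantization/inverse transformation unit 28, and the adding unit 29 can be sketched on a one-dimensional row of samples. This sketch assumes an orthonormal DCT-II as the orthogonal transformation and a uniform scalar quantizer whose step `qstep` stands in for the prediction difference coding parameter. Both choices are illustrative only.

```python
import math
from typing import List, Sequence, Tuple


def _basis(k: int, i: int, n: int) -> float:
    """Orthonormal DCT-II basis value for frequency k at sample i."""
    scale = math.sqrt(1 / n) if k == 0 else math.sqrt(2 / n)
    return scale * math.cos(math.pi * (2 * i + 1) * k / (2 * n))


def dct(x: Sequence[float]) -> List[float]:
    """Forward orthonormal 1-D DCT-II."""
    n = len(x)
    return [sum(x[i] * _basis(k, i, n) for i in range(n)) for k in range(n)]


def idct(c: Sequence[float]) -> List[float]:
    """Inverse of dct() (orthonormal DCT-III)."""
    n = len(c)
    return [sum(c[k] * _basis(k, i, n) for k in range(n)) for i in range(n)]


def encode_and_local_decode(
    block: Sequence[int], prediction: Sequence[int], qstep: float
) -> Tuple[List[int], List[int]]:
    """Return (quantized coefficients, local decoded samples)."""
    # Subtracting unit 26: prediction difference signal.
    residual = [b - p for b, p in zip(block, prediction)]
    # Transformation/quantization unit 27: compressed data.
    levels = [round(c / qstep) for c in dct(residual)]
    # Inverse quantization/inverse transformation unit 28.
    rec_residual = idct([level * qstep for level in levels])
    # Adding unit 29: local decoded image.
    local_decoded = [round(p + r) for p, r in zip(prediction, rec_residual)]
    return levels, local_decoded
```

With a very small step the local decoded row reproduces the input. With a step large enough to quantize every coefficient to zero, the local decoded row equals the prediction. The encoder therefore stores exactly what the decoder will reconstruct.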

The tile local decoded image memory 30 is a recording medium for storing the local decoded image calculated by the adding unit 29. When the encoding process on all the coding units (CUs) belonging to the tile is completed, the local decoded image about the single tile stored in the memory is outputted to the tile loop filter unit 7-n. The variable length encoding unit 31 carries out a process of variable-length-encoding the compressed data outputted from the transformation/quantization unit 27, the coding parameters outputted from the tile encoding control unit 21, and the motion vector outputted from the motion-compensated prediction unit 25 (when the coding mode is an inter coding mode), and outputting bit sequence data which are the results of the encoding to the tile loop filter unit 7-n. FIG. 4 is a flow chart showing a process carried out by each tile encoding unit 5-n (n=1, 2, . . . , N) of the video encoding device in accordance with Embodiment 1 of the present invention.

FIG. 5 is a block diagram showing each tile loop filter unit 7-n (n=1, 2, . . . , N) of the video encoding device in accordance with Embodiment 1 of the present invention. Referring to FIG. 5, a tile loop filter control unit 41 carries out a process of, when the filter parameter share flag outputted from the encoding control unit 1 shows that a filter is made not to be shared among the tiles, determining a filter per tile suitable for a filtering process to be performed on the tile local decoded image generated by the tile encoding unit 5-n, and outputting filter parameters showing the filter to a loop filter performing unit 42 and a variable length encoding unit 43.

When the filter parameter share flag outputted from the encoding control unit 1 shows that a filter is made not to be shared among the tiles, the loop filter performing unit 42 carries out a filtering process on the tile local decoded image outputted from the tile encoding unit 5-n by using both the filter per tile shown by the filter parameters outputted from the tile loop filter control unit 41 and the coding parameters outputted from the tile encoding unit 5-n. In contrast, when the filter parameter share flag shows that a filter is made to be shared among the tiles, the loop filter performing unit 42 carries out a filtering process on the tile local decoded image outputted from the tile encoding unit 5-n by using both the filter per frame shown by the filter parameters outputted from the loop filter control unit 6 and the coding parameters outputted from the tile encoding unit 5-n.

When the filter parameter share flag outputted from the encoding control unit 1 shows that a filter is made not to be shared among the tiles, the variable length encoding unit 43 carries out a process of variable-length-encoding the filter parameters showing the filter per tile, which are outputted from the tile loop filter control unit 41, multiplexing the encoded data about the filter parameters into the bit sequence data outputted from the tile encoding unit 5-n, and outputting the bit sequence data to the parallel processing ending unit 8. In contrast, when the filter parameter share flag shows that a filter is made to be shared among the tiles, the variable length encoding unit 43 outputs the bit sequence data outputted from the tile encoding unit 5-n to the parallel processing ending unit 8 as it is.
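The flag-dependent behaviour of the loop filter performing unit 42 and the variable length encoding unit 43 can be sketched as a single selection function. The filter representation (`FilterParams` with signed 8-bit coefficients) and the byte format produced by `encode_filter_params` are hypothetical. Appending the parameters to the end of the tile's bit sequence data is also an assumption, since the text only says they are multiplexed.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class FilterParams:
    coeffs: List[int]  # hypothetical: signed 8-bit filter coefficients


def encode_filter_params(params: FilterParams) -> bytes:
    """Hypothetical serialization: a count byte, then one two's-complement
    byte per coefficient. This is not the patent's bitstream syntax."""
    return bytes([len(params.coeffs)]) + bytes(c & 0xFF for c in params.coeffs)


def tile_loop_filter_output(
    share_flag: bool,
    tile_bits: bytes,
    tile_filter: Optional[FilterParams],
    frame_filter: Optional[FilterParams],
) -> Tuple[FilterParams, bytes]:
    """Return (filter to apply to this tile, bit sequence data to pass on)."""
    if not share_flag:
        # Flag OFF: per-tile filter from the tile loop filter control unit 41,
        # its parameters multiplexed into this tile's bit sequence data.
        if tile_filter is None:
            raise ValueError("a per-tile filter is required when the flag is OFF")
        return tile_filter, tile_bits + encode_filter_params(tile_filter)
    # Flag ON: per-frame filter from the loop filter control unit 6; the tile's
    # bit sequence data are passed to the parallel processing ending unit as is.
    if frame_filter is None:
        raise ValueError("a per-frame filter is required when the flag is ON")
    return frame_filter, tile_bits
```

Only the OFF branch grows the tile's bit sequence data. When the flag is ON, the shared parameters travel once per frame rather than once per tile.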

FIG. 6 is a block diagram showing a video decoding device in accordance with Embodiment 1 of the present invention. Referring to FIG. 6, when receiving the bitstream generated by the video encoding device shown in FIG. 1, a variable length code demultiplexing unit 51 carries out a process of demultiplexing the bitstream into the following pieces of information multiplexed into it: the bit sequence data per frame (data in which the bit sequence data per tile are united), the tile partitioning control information indicating the partitioning state of the inputted image, the filter parameter share flag showing whether or not a filter is made to be shared among the tiles, and the encoded data about the filter parameters showing a filter per frame (when the filter parameter share flag shows that a filter is made to be shared among the tiles). The variable length code demultiplexing unit 51 constructs a demultiplexer.

A parallel processing starting unit 52 carries out a process of dividing the bit sequence data per frame outputted from the variable length code demultiplexing unit 51 into a plurality of bit sequence data per tile, and distributing the bit sequence data per tile among tile decoding units 54-1 to 54-N. The parallel processing starting unit 52 constructs an encoded bit data distributor. A motion-compensated prediction frame memory 53 is a recording medium for storing a decoded image on which a filtering process is performed. The motion-compensated prediction frame memory 53 constructs an image memory.

A tile decoding device 54 is equipped with the N tile decoding units 54-1 to 54-N, and each of the N tile decoding units 54-1 to 54-N carries out a prediction difference decoding process independently. N is an integer equal to or larger than 1. Each of the tile decoding units 54-1 to 54-N carries out a process of carrying out a prediction difference decoding process on bit sequence data per tile distributed thereto by the parallel processing starting unit 52 by referring to a decoded image which is stored in the motion-compensated prediction frame memory 53 and on which a filtering process is performed, thereby generating a tile decoded image (decoded image per tile), and also outputting coding parameters which the tile decoding unit uses when carrying out the prediction difference decoding process (coding parameters multiplexed into the bit sequence data) to the corresponding one of tile loop filter units 56-1 to 56-N. Each of the tile decoding units also carries out a process of, when the encoded data about the filter parameters showing a filter per tile is multiplexed into the bit sequence data, outputting the encoded data about the filter parameters to the corresponding one of the tile loop filter units 56-1 to 56-N. The tile decoding units 54-1 to 54-N construct tile decoders.

A filter parameter decoding unit 55 carries out a process of, when the filter parameter share flag separated by the variable length code demultiplexing unit 51 shows that a filter is made to be shared among the tiles, decoding the encoded data outputted thereto from the variable length code demultiplexing unit 51 into the filter parameters showing the filter per frame, and outputting the filter parameters to the tile loop filter units 56-1 to 56-N.

A tile loop filtering device 56 is equipped with the N tile loop filter units 56-1 to 56-N, and each of the N tile loop filter units 56-1 to 56-N carries out a filtering process independently. N is an integer equal to or larger than 1. Each of the tile loop filter units 56-1 to 56-N carries out a process of, when the filter parameter share flag separated by the variable length code demultiplexing unit 51 shows that a filter is made not to be shared among the tiles, decoding the encoded data outputted from the corresponding one of the tile decoding units 54-1 to 54-N into the filter parameters showing a filter per tile, and performing a filtering process on the tile decoded image generated by the corresponding one of the tile decoding units 54-1 to 54-N by using the filter per tile shown by the filter parameters. In contrast, when the filter parameter share flag shows that a filter is made to be shared among the tiles, each of the tile loop filter units carries out a process of performing a filtering process on the tile decoded image generated by the corresponding one of the tile decoding units 54-1 to 54-N by using the filter per frame shown by the filter parameters outputted from the filter parameter decoding unit 55. The tile loop filter units 56-1 to 56-N construct tile filters.
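On the decoding side, each tile loop filter unit 56-n selects its filter from the flag and then filters only samples of its own tile. The sketch below assumes a hypothetical byte format for the per-tile filter parameters (a count byte followed by two's-complement coefficients) and a one-dimensional FIR filter applied row by row. It also assumes that positions beyond the tile edge are clamped to the nearest tile sample. None of these details come from the patent. The sketch only shows how the flag selects the filter and how filtering can stay inside one tile.

```python
from typing import List, Optional


def decode_filter_params(data: bytes) -> List[int]:
    """Inverse of a hypothetical format: count byte, then coefficients."""
    count = data[0]
    return [b - 256 if b >= 128 else b for b in data[1:1 + count]]


def select_filter(
    share_flag: bool,
    tile_filter_data: Optional[bytes],
    frame_filter: Optional[List[int]],
) -> List[int]:
    """Filter used by a tile loop filter unit 56-n for its tile."""
    if not share_flag:
        # Flag OFF: decode the per-tile parameters carried with this tile.
        if tile_filter_data is None:
            raise ValueError("per-tile filter data missing")
        return decode_filter_params(tile_filter_data)
    # Flag ON: per-frame filter from the filter parameter decoding unit 55.
    if frame_filter is None:
        raise ValueError("per-frame filter missing")
    return frame_filter


def filter_row(row: List[int], coeffs: List[int], shift: int) -> List[int]:
    """Apply an odd-length integer FIR filter to one row of a tile.

    Positions outside the tile are clamped to the nearest tile sample, so no
    pixel of a neighbouring tile is read (assumed boundary rule). The sum is
    rounded and normalised by a right shift of `shift` bits (shift >= 1).
    """
    half = len(coeffs) // 2
    n = len(row)
    out = []
    for i in range(n):
        acc = 0
        for k, c in enumerate(coeffs):
            j = min(max(i + k - half, 0), n - 1)
            acc += c * row[j]
        out.append((acc + (1 << (shift - 1))) >> shift)
    return out
```

Because `filter_row` reads nothing outside the row it is given, each tile can be filtered by a separate unit without synchronizing with its neighbours.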

A tile partitioning control unit 57 carries out a process of grasping the partitioning state of the tiles from the tile partitioning control information separated by the variable length code demultiplexing unit 51 and controlling the arrangement of each tile decoded image in a parallel processing ending unit 58 in such a way that a decoded image corresponding to the original inputted image is obtained. The parallel processing ending unit 58 carries out a process of combining the tile decoded images on which the filtering process is respectively performed by the tile loop filter units 56-1 to 56-N to generate a decoded image per frame under the control of the tile partitioning control unit 57, and storing the decoded image in the motion-compensated prediction frame memory 53. A decoded image storage consists of the tile partitioning control unit 57 and the parallel processing ending unit 58.
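The arrangement step performed by the parallel processing ending unit 58 can be sketched for the grid partitioning described by ColumnWidthArray[ ] and RowHeightArray[ ]. This is a minimal sketch that assumes the tile decoded images arrive in raster order (left to right, top to bottom) as plain 2-D lists. It also assumes that tiles on the right or bottom edge may be narrower or shorter than their LCU count times the LCU size when the image size is not a multiple of the LCU size.

```python
from typing import List

Image = List[List[int]]


def combine_tiles(
    tiles: List[Image],
    col_widths: List[int],
    row_heights: List[int],
    lcu_size: int,
    width: int,
    height: int,
) -> Image:
    """Place tile decoded images into one decoded image per frame.

    col_widths/row_heights are ColumnWidthArray[]/RowHeightArray[] in LCUs;
    tiles are assumed to be listed in raster order.
    """
    if len(tiles) != len(col_widths) * len(row_heights):
        raise ValueError("tile count does not match the partitioning")
    # Pixel offsets of the left edge of each tile column and top of each row.
    x_starts = [0]
    for w in col_widths[:-1]:
        x_starts.append(x_starts[-1] + w * lcu_size)
    y_starts = [0]
    for h in row_heights[:-1]:
        y_starts.append(y_starts[-1] + h * lcu_size)

    frame = [[0] * width for _ in range(height)]
    index = 0
    for ty in y_starts:
        for tx in x_starts:
            for dy, tile_row in enumerate(tiles[index]):
                frame[ty + dy][tx:tx + len(tile_row)] = tile_row
            index += 1
    return frame
```

For example, a 3x3 image with 2x2 LCUs and one LCU per tile column and row yields four tiles, where the right and bottom tiles are only one sample wide or high.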

In the example shown in FIG. 6, it is assumed that each of the variable length code demultiplexing unit 51, the parallel processing starting unit 52, the motion-compensated prediction frame memory 53, the tile decoding device 54, the filter parameter decoding unit 55, the tile loop filtering device 56, the tile partitioning control unit 57, and the parallel processing ending unit 58, which are the components of the video decoding device, consists of dedicated hardware (e.g., a semiconductor integrated circuit equipped with a CPU, a one-chip microcomputer, or the like). In a case in which the video decoding device consists of a computer, a program in which the processes carried out by the variable length code demultiplexing unit 51, the parallel processing starting unit 52, the tile decoding device 54, the filter parameter decoding unit 55, the tile loop filtering device 56, the tile partitioning control unit 57, and the parallel processing ending unit 58 are described can be stored in a memory of the computer, and a CPU of the computer can be made to execute the program stored in the memory. FIG. 7 is a flow chart showing processing (video decoding method) carried out by the video decoding device in accordance with Embodiment 1 of the present invention.

FIG. 8 is a block diagram showing each tile decoding unit 54-n (n=1, 2, . . . , N) of the video decoding device in accordance with Embodiment 1 of the present invention. Referring to FIG. 8, a variable length decoder 61 carries out a process of variable-length-decoding the compressed data associated with a decoding target block (a block corresponding to a coding target block) which is a unit for prediction process, the coding parameters (the coding mode, the intra prediction parameter (when the coding mode is an intra coding mode), the inter prediction parameter (when the coding mode is an inter coding mode), and the prediction difference coding parameter), and the motion vector (when the coding mode is an inter coding mode) from bit sequence data per tile distributed thereto by the parallel processing starting unit 52. The variable length decoder 61 also carries out a process of outputting the variable-length-decoded coding parameters, together with the encoded data about the filter parameters showing the filter per tile which are multiplexed into the bit sequence data per tile, to the tile loop filter unit 56-n.

A select switch 62 carries out a process of, when the coding mode variable-length-decoded by the variable length decoder 61 is an intra coding mode, outputting the intra prediction parameter variable-length-decoded by the variable length decoder 61 to an intra prediction unit 63, and, when the coding mode variable-length-decoded by the variable length decoder 61 is an inter coding mode, outputting the inter prediction parameter and the motion vector which are variable-length-decoded by the variable length decoder 61 to a motion compensator 64.

An intra prediction unit 63 carries out a process of performing an intra prediction process on the decoding target block by using the intra prediction parameter outputted from the select switch 62 while referring to a decoded image stored in a tile decoded image memory 67 to generate an intra prediction image. The motion compensator 64 carries out a process of performing an inter prediction process on the decoding target block by using the motion vector and the inter prediction parameter which are outputted from the select switch 62 while referring to a decoded image which is stored in the motion-compensated prediction frame memory 53 and on which a filtering process is performed to generate an inter prediction image.

An inverse quantization/inverse transformation unit 65 carries out a process of referring to the prediction difference coding parameter variable-length-decoded by the variable length decoder 61 and inverse-quantizing the compressed data variable-length-decoded by the variable length decoder 61, and also referring to the prediction difference coding parameter and performing an inverse orthogonal transformation process on the transform coefficients which are the inverse-quantized compressed data to calculate a decoded prediction difference signal. An adding unit 66 carries out a process of adding the decoded prediction difference signal calculated by the inverse quantization/inverse transformation unit 65 and the intra prediction image generated by the intra prediction unit 63 or the inter prediction image generated by the motion compensator 64 to calculate a decoded image. The tile decoded image memory 67 is a recording medium for storing the decoded image calculated by the adding unit 66. When the decoding process on all the coding units (CUs) belonging to the tile is completed, the decoded image about the single tile stored in the memory is outputted to the outside of each tile decoding unit 54-n. FIG. 9 is a flow chart showing the process carried out by each tile decoding unit 54-n (n=1, 2, . . . , N) of the video decoding device in accordance with Embodiment 1 of the present invention.

FIG. 10 is a block diagram showing each tile loop filter unit 56-n (n=1, 2, . . . , N) of the video decoding device in accordance with Embodiment 1 of the present invention. In FIG. 10, a tile filter parameter decoding unit 71 carries out a process of, when the filter parameter share flag separated by the variable length code demultiplexing unit 51 shows that a filter is made not to be shared among the tiles, decoding the encoded data about the filter parameters showing a filter per tile, the encoded data being separated by the variable length code demultiplexing unit 51, and outputting the filter parameters which are the result of the decoding to a loop filter performing unit 72.

The loop filter performing unit 72 carries out a filtering process on the tile decoded image outputted from the tile decoding unit 54-n by using both the filter per tile shown by the filter parameters decoded by the tile filter parameter decoding unit 71 and the coding parameters variable-length-decoded by the variable length decoder 61 of the tile decoding unit 54-n when the filter parameter share flag separated by the variable length code demultiplexing unit 51 shows that a filter is made not to be shared among the tiles. In contrast, when the filter parameter share flag shows that a filter is made to be shared among the tiles, the loop filter performing unit carries out a filtering process on the tile decoded image outputted from the tile decoding unit 54-n by using both the filter per frame shown by the filter parameters outputted from the filter parameter decoding unit 55 and the coding parameters variable-length-decoded by the variable length decoder 61 of the tile decoding unit 54-n.

Next, operations will be explained. In this Embodiment 1, a case will be explained in which the video encoding device receives each frame image of a video as an inputted image, carries out a motion-compensated prediction between adjacent frames, performs a compression process with an orthogonal transformation and quantization on the acquired prediction difference signal, and then carries out variable length encoding to generate a bitstream, and in which the video decoding device decodes the bitstream outputted from the video encoding device.

First, the operation of the video encoding device shown in FIG. 1 will be explained. The video encoding device shown in FIG. 1 is characterized in that it partitions the inputted image into rectangular regions called tiles and eliminates the interdependence among the tiles in the encoding and decoding processes, thereby making it possible to carry out parallel processing on a tile level in the encoding processing. At the same time, the video encoding device is characterized in that it outputs an encoded result which makes it possible to perform parallel processing on a tile level in the decoding processing.

In the encoding process on a video signal, the amount of information to be processed generally increases in proportion to the spatial resolution, the temporal resolution, and the luminance level resolution of the inputted image. The temporal, spatial, and luminance level resolutions of video signals tend to increase with the evolution of image capturing apparatus, storage apparatus, transmission apparatus, etc., and existing video encoding devices and existing video decoding devices may become unable to carry out an encoding process and a decoding process at a predetermined speed. As one solution to this problem, the inputted image can be spatially partitioned into parts, the parts can be provided to a plurality of encoding units respectively, and the plurality of encoding units can be operated in parallel, thereby reducing the processing time. In this case, on the decoding side as well, the processing time can be reduced by providing the plurality of encoded data obtained through the partitioning to different decoders respectively and causing the plurality of decoders to carry out decoding processes in parallel.

In this case, in order to perform the parallel processing at a higher speed, it is desirable to reduce as much as possible the frequency with which encoding units operating in parallel refer to information held by one another, and likewise the frequency with which decoders operating in parallel refer to information held by one another. Such references include a reference to a pixel for an intra-frame prediction, a reference to motion vector information for a motion vector prediction, and a reference to coding parameter information of an adjacent region for entropy encoding, and also include the state of a symbol occurrence probability estimation model in entropy encoding. When a certain region is encoded while adjacent regions are being processed in parallel by other encoding units, synchronization among the processes is needed in order to refer to these pieces of information. Such references are therefore undesirable for improving the parallel performance. Further, in addition to the above-mentioned pieces of information, pixels in an adjacent region need to be referred to when a filtering process is carried out on the screen.

Therefore, in order to improve the performance of the parallel processing including a filtering process, a pixel in an adjacent region currently being processed in parallel by another encoding unit or decoder should not be referred to in the filtering process either.

In this Embodiment 1, in order to implement a function required of such parallel processing for video encoding, the video encoding device is constructed in such a way as to partition the inputted image into rectangular regions called tiles, thereby enabling parallelization of a prediction difference encoding process on a tile level and also enabling parallelization of the determination of a filter coefficient and a filtering process, and to switch whether or not to parallelize the determination of a filter coefficient.

A video signal having a format which is to be processed by the video encoding device shown in FIG. 1 can be a YUV signal which consists of a luminance signal and two color difference signals, a color video signal in an arbitrary color space, such as an RGB signal, outputted from a digital image sensor, or an arbitrary video signal, such as a monochrome image signal or an infrared image signal, in which each video frame consists of a series of digital samples (pixels) in two dimensions, horizontal and vertical. The gradation of each pixel can be 8-bit, 10-bit, or 12-bit. In the following explanation, for convenience' sake, the video signal of the inputted image is a YUV signal unless otherwise specified. Further, a case will be described in which the two color difference components U and V are subsampled in the 4:2:0 format with respect to the luminance component Y. Further, a data unit to be processed which corresponds to each frame of the video signal is referred to as a "picture." In this Embodiment 1, although the explanation assumes that a "picture" is a video frame signal on which progressive scanning is carried out, a "picture" can alternatively be a field image signal, which is a unit constructing a video frame, when the video signal is an interlaced signal.
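The sample counts implied by the 4:2:0 format can be worked out directly. In 4:2:0, each color difference plane is half the luminance plane in both directions, so a picture carries 1.5 times as many samples as its luminance plane alone. The sketch assumes that odd luminance dimensions round up. Its byte count also assumes one byte per 8-bit sample and two bytes per 10-bit or 12-bit sample, which is a common in-memory layout rather than a requirement of the text.

```python
from typing import Tuple

Size = Tuple[int, int]


def yuv420_plane_sizes(width: int, height: int) -> Tuple[Size, Size, Size]:
    """(width, height) of the Y, U and V planes for 4:2:0 sampling.

    U and V are halved horizontally and vertically; rounding up for odd
    luminance dimensions is an assumption.
    """
    cw, ch = (width + 1) // 2, (height + 1) // 2
    return (width, height), (cw, ch), (cw, ch)


def samples_per_picture(width: int, height: int) -> int:
    """Total number of Y, U and V samples in one picture."""
    return sum(w * h for w, h in yuv420_plane_sizes(width, height))


def bytes_per_picture(width: int, height: int, bit_depth: int) -> int:
    """Raw size, assuming 1 byte per 8-bit sample and 2 bytes otherwise."""
    return samples_per_picture(width, height) * (1 if bit_depth <= 8 else 2)
```

For a 1920x1080 picture, the U and V planes are each 960x540, giving 3,110,400 samples in total.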

First, the encoding control unit 1 outputs tile partitioning control information, which indicates the partitioning state used when an inputted image shown by a video signal is partitioned into tiles each having a predetermined size, to the tile partitioning control unit 2, the parallel processing ending unit 8, and the variable length code multiplexing unit 9. The encoding control unit 1 also outputs a filter parameter share flag showing whether a filter for use in a filtering process on the local decoded image per tile generated by each of the tile encoding units 5-1 to 5-N is made to be shared among the tiles (the flag is set to ON when a filter is made to be shared among the tiles, and to OFF otherwise) to the tile partitioning control unit 2, the loop filter control unit 6, the tile loop filter units 7-1 to 7-N, and the variable length code multiplexing unit 9.

When receiving the tile partitioning control information from the encoding control unit 1, the tile partitioning control unit 2 controls the partitioning of the inputted image by the parallel processing starting unit 3 in such a way that the partitioning matches the partitioning state indicated by the tile partitioning control information. When the video signal showing the inputted image is inputted thereto, the parallel processing starting unit 3 partitions the inputted image into tiles each having the predetermined size according to a command from the tile partitioning control unit 2, and distributes the tiles obtained through the partitioning among the tile encoding units 5-1 to 5-N (step ST1 of FIG. 2). The information for controlling the tile partitioning can be expressed in various forms. For example, the tile partitioning can be specified by defining the CU having the maximum size (Largest CU: LCU) as the minimum unit of the tiles, and providing information showing how many LCUs each tile includes.

FIG. 11 is an explanatory drawing showing an example of the LCU partitioning and the tile partitioning of an image. The inputted image is partitioned into LCUs arranged in a grid with the upper left corner being set as the start point, as shown in FIG. 11. When the height and the width of the image and the length of one side of each LCU are specified, this partitioning is determined uniquely. Because the tile partitioning is carried out with each LCU being defined as a minimum unit, the width and the height of each tile can be expressed by the number of LCUs included in the horizontal side and the number of LCUs included in the vertical side respectively. Therefore, ColumnWidthArray[ ] and RowHeightArray[ ], which are two integer series, are provided as the tile partitioning control information. ColumnWidthArray[ ] is an integer series in which the widths of tiles arranged in a horizontal direction, each tile width being expressed by the number of LCUs, are listed in order starting with the width of the leftmost tile. RowHeightArray[ ] is an integer series in which the heights of tiles arranged in a vertical direction, each tile height being expressed by the number of LCUs, are listed in order starting with the height of the uppermost tile.
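The LCU grid and the lookup from an LCU to its tile can be sketched as follows. This sketch assumes that a partial LCU at the right or bottom edge counts as one LCU. It also numbers tiles in raster order starting from 0, a numbering chosen for illustration. The function names are hypothetical.

```python
import bisect
from itertools import accumulate
from typing import List, Tuple


def lcu_grid(width: int, height: int, lcu_size: int) -> Tuple[int, int]:
    """(columns, rows) of LCUs laid out from the upper left corner.

    A partial LCU at the right or bottom edge counts as one LCU (assumption).
    """
    return -(-width // lcu_size), -(-height // lcu_size)


def tile_of_lcu(
    lcu_x: int, lcu_y: int, col_widths: List[int], row_heights: List[int]
) -> int:
    """Raster-order index of the tile containing the LCU at (lcu_x, lcu_y).

    col_widths and row_heights play the roles of ColumnWidthArray[] and
    RowHeightArray[], both in units of LCUs.
    """
    col_ends = list(accumulate(col_widths))   # exclusive right edges, in LCUs
    row_ends = list(accumulate(row_heights))  # exclusive bottom edges, in LCUs
    col = bisect.bisect_right(col_ends, lcu_x)
    row = bisect.bisect_right(row_ends, lcu_y)
    if col >= len(col_widths) or row >= len(row_heights):
        raise ValueError("LCU lies outside the tile partitioning")
    return row * len(col_widths) + col
```

With the FIG. 11 arrays {4, 3, 6, 4, 6} and {3, 3, 4, 5}, the grid is 23 LCUs wide and 15 LCUs high and contains 20 tiles. The LCU at (4, 3) is the first LCU of the second tile column and second tile row.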

For example, when the tile partitioning is specified as ColumnWidthArray[ ]={4, 3, 6, 4, 6} and RowHeightArray[ ]={3, 3, 4, 5}, the tile partitioning is carried out as shown in FIG. 11. Further, because the LCU partitioning of the inputted image is determined uniquely from the image size and the length of one side of an LCU, the width of the rightmost column and the height of the lowermost row can be derived from the information about the other columns and rows even if they are not specified, so the tile partitioning is still determined uniquely. Therefore, these pieces of information can be omitted. In that case, the tile partitioning can be simply specified as ColumnWidthArray[ ]={4, 3, 6, 4} and RowHeightArray[ ]={3, 3, 4}. Although the example of partitioning a frame into tiles arranged in a grid as shown in FIG. 11 is explained above, more flexible tile partitioning, in which the tiles are arranged in a pattern other than a grid as shown in FIG. 19, can alternatively be carried out. In such a case, the tile partitioning control information must also be able to express such a partitioning state. For example, the tile partitioning control information can be expressed by the coordinates of the pixels or the LCUs at the upper left ends of the tiles, and the pieces of information about the widths and the heights of the tiles. Further, the tile partitioning state can be kept the same throughout the entire sequence, or can alternatively be changed on a per-frame basis or on a per-picture basis (for example, depending on whether the picture is an intra picture or an inter picture). When the same tile partitioning state is used throughout the entire sequence, the tile partitioning information only needs to be multiplexed into the sequence header. When the tile partitioning state is changed in the course of the sequence, the tile partitioning information only needs to be multiplexed into the picture header of the picture which is to be processed immediately after the tile partitioning state is changed.
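The recovery of an omitted last entry can be sketched as a small helper. Given the image width (or height) in pixels and the LCU side length, the total number of LCUs along that axis is fixed. The last tile column (or row) therefore takes whatever LCUs the listed entries do not cover. The function name is hypothetical, and the sketch counts a partial edge LCU as one LCU.

```python
from typing import List


def complete_sizes(partial: List[int], image_extent: int, lcu_size: int) -> List[int]:
    """Restore the omitted last entry of ColumnWidthArray[] or RowHeightArray[].

    image_extent is the image width (or height) in pixels; a partial LCU at
    the edge is counted as one LCU.
    """
    total_lcus = -(-image_extent // lcu_size)  # ceiling division
    remaining = total_lcus - sum(partial)
    if remaining <= 0:
        raise ValueError("the listed sizes already cover the whole image")
    return partial + [remaining]
```

For an image that is 23 LCUs wide and 15 LCUs high, {4, 3, 6, 4} expands to {4, 3, 6, 4, 6} and {3, 3, 4} to {3, 3, 4, 5}, matching FIG. 11.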

The video encoding device shown in FIG. 1 can also operate without carrying out any parallel processing on a tile level. In this case, the tile partitioning control information can be specified in such a way as to show that the number of tiles is one and the size of the tile matches the size of the inputted image, or all the parallel processing can be turned off according to a tile encoding parallel flag or a tile loop filter unit parallel flag. As an alternative, another flag can be multiplexed into the tile partitioning control information to switch whether or not to perform the parallel processing. Although a case of carrying out the parallel processing on a tile level will be explained hereafter, the operation in a case of not carrying out the parallel processing is equivalent to the operation in a case in which the entire frame is a single tile.

The parallel processing starting unit 3 partitions the inputted image into tiles each having the predetermined size and distributes the tiles obtained through the partitioning among the tile encoding units 5-1 to 5-N, as mentioned above. In this case, because the number of tile encoding units 5-1 to 5-N is an upper limit to the number of tiles which can be processed simultaneously in parallel, two or more tiles can be outputted to each tile encoding unit 5-n when the number of tiles is larger than the number of tile encoding units. For example, when the number of tiles is 7 and the number of tile encoding units is 3, a process on each of tiles 1 to 3 is assigned to the tile encoding unit 5-1, a process on each of tiles 4 and 5 is assigned to the tile encoding unit 5-2, and a process on each of tiles 6 and 7 is assigned to the tile encoding unit 5-3. Further, even when the number of tiles obtained through the partitioning is less than the number of tile encoding units, control can be carried out in such a way that two or more tiles are assigned to one tile encoding unit.
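One rule that reproduces the 7-tiles-on-3-units example is to give each unit a contiguous run of tiles and hand the remainder to the first units. The text gives only the example, not a general rule, so this sketch and its function name are assumptions.

```python
from typing import List


def assign_tiles(num_tiles: int, num_units: int) -> List[List[int]]:
    """Split tiles 1..num_tiles into contiguous runs, one run per unit.

    Each unit gets num_tiles // num_units tiles, and the first
    num_tiles % num_units units get one extra tile (assumed rule).
    """
    base, extra = divmod(num_tiles, num_units)
    assignment = []
    next_tile = 1
    for unit in range(num_units):
        count = base + (1 if unit < extra else 0)
        assignment.append(list(range(next_tile, next_tile + count)))
        next_tile += count
    return assignment
```

Here `assign_tiles(7, 3)` returns tiles [1, 2, 3] for the unit 5-1, [4, 5] for 5-2 and [6, 7] for 5-3. When there are fewer tiles than units, the trailing units simply receive empty lists under this rule.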

When the filter parameter share flag outputted from the encoding control unit 1 is OFF (step ST2), the process of each tile encoding unit 5-n and the process of the corresponding tile loop filter unit 7-n are carried out one after the other (steps ST3 and ST4). These two processes are repeated as many times as there are tiles (steps ST5 and ST6), and each iteration of the loop is independent of the others. Therefore, as many iterations as there are tile encoding units 5-n can be carried out in parallel. Because the encoding and the loop filtering of each tile can be parallelized together when the filter parameter share flag is OFF, the parallelism of the encoding process is further improved. Further, because the filter parameters are localized, image quality improves when the properties of the picture vary greatly from one image region to another. The details of the processes carried out by each tile encoding unit 5-n and by the corresponding tile loop filter unit 7-n are described below.
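The independence of the per-tile loop when the share flag is OFF can be illustrated with a thread pool in which each worker runs the whole encode-then-filter chain for one tile. This is a toy sketch: `encode_tile` (a crude quantizer), the single-offset "filter" and `process_tiles_share_off` are hypothetical stand-ins, not the actual prediction difference encoding or loop filter. Python threads only demonstrate the dependency structure, not real speed-up.

```python
from concurrent.futures import ThreadPoolExecutor


def encode_tile(tile):
    # Hypothetical stand-in for encoding + local decoding: crude quantization.
    return [s // 2 * 2 for s in tile]


def design_tile_filter(decoded, original):
    # Hypothetical per-tile "filter parameter": the rounded mean error.
    return round(sum(o - d for o, d in zip(original, decoded)) / len(decoded))


def encode_and_filter(tile):
    decoded = encode_tile(tile)                  # step ST3
    offset = design_tile_filter(decoded, tile)   # step ST4: filter chosen per tile
    return [d + offset for d in decoded], offset


def process_tiles_share_off(tiles, num_units):
    # Each tile's encode+filter chain depends on no other tile, so up to
    # num_units chains run concurrently with no synchronization point.
    with ThreadPoolExecutor(max_workers=num_units) as pool:
        return list(pool.map(encode_and_filter, tiles))


print(process_tiles_share_off([[1, 3, 5], [2, 4]], num_units=2))
# [([1, 3, 5], 1), ([2, 4], 0)]
```

Each tile carries its own filter parameter (the second element of each pair), which is what allows the filtering to adapt to local image properties.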

In contrast, when the filter parameter share flag outputted from the encoding control unit 1 is ON (step ST2), each tile encoding unit 5-n repeats the encoding process until all tiles have been encoded (steps ST8 to ST10). When the encoding of all the tiles is completed, the loop filter control unit 6 assembles a local decoded image per frame, covering the entire screen, from the tile local decoded images generated by the tile encoding units 5-n, determines a filter per frame suitable for that local decoded image, and outputs filter parameters showing the filter to the tile loop filter units 7-n and the parallel processing ending unit 8 (step ST11). The details of the process carried out by the loop filter control unit 6 are described below. When receiving the filter parameters from the loop filter control unit 6, each tile loop filter unit 7-n repeatedly carries out the filtering process on the tile local decoded images until all of them have been filtered (steps ST12 to ST14).
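With the share flag ON the flow splits into two parallel phases separated by a synchronization point, because the frame-level filter can only be designed after every tile has been locally decoded. The sketch below reuses the same hypothetical toy quantizer and single-offset "filter"; `process_tiles_share_on` is an illustrative name, not part of the described device.

```python
from concurrent.futures import ThreadPoolExecutor


def encode_tile(tile):
    # Hypothetical stand-in for encoding + local decoding: crude quantization.
    return [s // 2 * 2 for s in tile]


def process_tiles_share_on(tiles, num_units):
    with ThreadPoolExecutor(max_workers=num_units) as pool:
        # Steps ST8-ST10: encode all tiles, in parallel across units.
        decoded = list(pool.map(encode_tile, tiles))
        # Step ST11: synchronization point. The shared (per-frame) filter is
        # designed from the whole frame, so every tile must be finished first.
        errors = [o - d for t, dt in zip(tiles, decoded) for o, d in zip(t, dt)]
        frame_offset = round(sum(errors) / len(errors))
        # Steps ST12-ST14: apply the one shared filter to each tile in parallel.
        filtered = list(pool.map(lambda dt: [d + frame_offset for d in dt], decoded))
    return filtered, frame_offset


print(process_tiles_share_on([[1, 3], [5, 4]], num_units=2))
# ([[1, 3], [5, 5]], 1)
```

Only one filter parameter is produced for the whole frame, which is the source of the code-amount saving; the `decoded` barrier between the two `pool.map` calls is the source of the lost parallelism.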

Because the filter parameters are shared among all the tiles when the filter parameter share flag is ON, the code amount of the filter parameters can be reduced compared with the case in which the flag is OFF. Further, designing the filter parameters from the local decoded image of the entire frame can be more effective in improving image quality. However, when the filter parameter share flag is ON, the parallel performance of the encoding process drops, because the parallel processing of the tile encoding units 5-n and the tile loop filter units 7-n must be temporarily synchronized: the filter per frame cannot be designed until all tiles have been encoded. In the video decoding device described below, the parallel performance does not drop even when decoding a bitstream generated with the filter parameter share flag ON.

When the filtering processes by the tile loop filter units 7-1 to 7-N are completed, the parallel processing ending unit 8 combines the filtered tile local decoded images into a local decoded image per frame according to the tile partitioning control information outputted from the encoding control unit 1, and stores this local decoded image in the motion-compensated prediction frame memory 4. It also outputs the bit sequence data outputted from each of the tile loop filter units 7-1 to 7-N to the variable length code multiplexing unit 9. Further, when the filter parameter share flag is ON, the parallel processing ending unit 8 outputs to the variable length code multiplexing unit 9 the encoded data about the filter parameters that show the filter per frame, as outputted from the loop filter control unit 6.
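Reassembling the frame amounts to copying each filtered tile back to its position in the picture. The sketch below assumes, for illustration only, that the tile partitioning control information reduces to a uniform tile width and height with tiles in raster-scan order; the actual syntax of that information is not given here, and `combine_tiles` is a hypothetical name.

```python
def combine_tiles(tiles, tile_w, tile_h, frame_w, frame_h):
    """Stitch filtered tile images back into one frame.

    Assumptions: uniform tile size, raster-scan tile order, and tiles in the
    last column/row may be narrower/shorter when the frame size is not a
    multiple of the tile size. Each tile is a 2-D list of sample rows.
    """
    frame = [[0] * frame_w for _ in range(frame_h)]
    tiles_per_row = -(-frame_w // tile_w)  # ceiling division
    for index, tile in enumerate(tiles):
        x0 = (index % tiles_per_row) * tile_w
        y0 = (index // tiles_per_row) * tile_h
        for dy, row in enumerate(tile):
            frame[y0 + dy][x0:x0 + len(row)] = row  # copy one tile row in place
    return frame


# A 3x2 frame split into a 2x2 tile and a 1x2 edge tile.
print(combine_tiles([[[1, 2], [4, 5]], [[3], [6]]], 2, 2, 3, 2))
# [[1, 2, 3], [4, 5, 6]]
```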

When the filter parameter share flag outputted from the encoding control unit 1 is OFF, the variable length code multiplexing unit 9 uses a predetermined method to multiplex the bit sequence data outputted from the parallel processing ending unit 8 (the bit sequence data generated by the tile encoding units 5-1 to 5-N) together with the tile partitioning control information and the filter parameter share flag outputted from the encoding control unit 1, thereby generating a bitstream (step ST7). In contrast, when the filter parameter share flag is ON, the variable length code multiplexing unit 9 additionally multiplexes the encoded data about the filter parameters that show the filter per frame, outputted from the loop filter control unit 6, to generate a bitstream (step ST15).
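The difference between steps ST7 and ST15 is only which syntax elements enter the bitstream. Since the text specifies only "a predetermined method", the element names and their order below are purely hypothetical; the sketch lists elements rather than writing real bits.

```python
def multiplex(tile_partitioning_info, share_flag, tile_payloads, frame_filter_params=None):
    """Return one picture's syntax elements in a hypothetical order."""
    elements = [
        ("tile_partitioning_info", tile_partitioning_info),
        ("filter_parameter_share_flag", share_flag),
    ]
    if share_flag:
        # Step ST15: the per-frame filter parameters are present only when shared.
        if frame_filter_params is None:
            raise ValueError("share flag ON requires frame-level filter parameters")
        elements.append(("frame_filter_parameters", frame_filter_params))
    # Bit sequence data from the tile units (internal layout not modeled here).
    elements.extend(("tile_data", payload) for payload in tile_payloads)
    return elements


print([name for name, _ in multiplex("2x2", False, [b"t0", b"t1"])])
# ['tile_partitioning_info', 'filter_parameter_share_flag', 'tile_data', 'tile_data']
```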

Next, the process carried out by each of the tile encoding units 5-1 to 5-N in the tile encoding device 5 will be explained in detail. The tile encoding device 5 is equipped with the N tile encoding units 5-1 to 5-N, each of which independently carries out a prediction difference encoding process, that is, without referring to any information about other tiles.

The tile encoding control unit 21 of each tile encoding unit 5-n determines the size of a largest coding block (LCU) and an upper limit on the number of hierarchical layers for partitioning, and the processes of steps ST21 to ST31 of FIG. 4 are carried out on the image region of each LCU in a specific order. First, the tile encoding control unit 21 outputs to the block partitioning unit 22 a command to hierarchically partition the tile into coding target blocks having a coding block size, until the number of layers reaches the upper limit determined above, and also determines a coding mode for each of the coding target blocks (step ST21 of FIG. 4). According to this command, the block partitioning unit 22 partitions each tile distributed thereto by the parallel processing starting unit 3 into blocks having the coding block size (each block being a unit for prediction process), and outputs each coding target block. FIG. 12 is an explanatory drawing showing an example in which each largest coding block is hierarchically partitioned into a plurality of coding target blocks. In FIG. 12, each largest coding block is the coding target block of the "0th hierarchical layer", whose luminance component has a size of $(L^0, M^0)$.

The coding target blocks are acquired by hierarchically partitioning each LCU-sized block, taken as the starting point, according to a quadtree structure until the depth of the hierarchy reaches a separately set predetermined depth. At depth $n$, each coding target block is an image region having a size of $(L^n, M^n)$. Although $L^n$ and $M^n$ can be equal to or differ from each other, FIG. 12 shows the case $L^n = M^n$. Hereafter, the coding block size determined by the tile encoding control unit 21 is defined as the size $(L^n, M^n)$ of the luminance component of each coding target block. Because quadtree partitioning is carried out, $(L^{n+1}, M^{n+1}) = (L^n/2, M^n/2)$ always holds.
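The quadtree partitioning can be sketched as a recursion that halves the block side at each level, so a block at depth $n$ has side $L^0/2^n$. The split decision `should_split` is a hypothetical callback standing in for the encoder's actual choice, and square blocks ($L^n = M^n$, as in FIG. 12) are assumed.

```python
def quadtree_blocks(x, y, size, depth, max_depth, should_split):
    """Recursively partition a square block; return leaves as (x, y, size, depth).

    should_split(x, y, size, depth) is a hypothetical stand-in for the
    encoder's split decision. Each split halves the side, so the side at
    depth n equals the LCU side divided by 2**n.
    """
    if depth < max_depth and should_split(x, y, size, depth):
        half = size // 2
        leaves = []
        for dy in (0, half):          # visit the four quadrants
            for dx in (0, half):
                leaves += quadtree_blocks(x + dx, y + dy, half, depth + 1,
                                          max_depth, should_split)
        return leaves
    return [(x, y, size, depth)]      # leaf = one coding target block


# Always splitting a 64x64 LCU down to depth 2 yields sixteen 16x16 blocks.
print(len(quadtree_blocks(0, 0, 64, 0, 2, lambda *args: True)))  # 16
```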

In the case of a color video signal in 4:4:4 format, such as an RGB signal, in which all the color components have the same number of samples, all the color components have a size of $(L^n, M^n)$, while in the case of a 4:2:0 format, the corresponding color difference components have a coding block size of $(L^n/2, M^n/2)$. Hereafter, each coding target block in the $n$th hierarchical layer is expressed as $B^n$, and a coding mode selectable for each coding target block $B^n$ is expressed as $m(B^n)$. In the case of a color video signal consisting of a plurality of color components, the coding mode $m(B^n)$ can be configured so that an individual mode is used for each color component, or so that a common mode is used for all the color components. Hereafter, unless otherwise specified, the coding mode is assumed to refer to the luminance component of a coding block in a 4:2:0-format YUV signal.
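The relation between the luminance coding block size and the color difference block size for the two formats named above can be written as a small helper. Only 4:4:4 and 4:2:0 are covered because those are the formats the text discusses; `chroma_block_size` is a hypothetical name.

```python
def chroma_block_size(luma_w, luma_h, chroma_format):
    """Color difference coding block size for a luma block of (luma_w, luma_h)."""
    if chroma_format == "4:4:4":
        return luma_w, luma_h              # every component has the same size
    if chroma_format == "4:2:0":
        return luma_w // 2, luma_h // 2    # halved horizontally and vertically
    raise ValueError(f"format not covered by the text: {chroma_format}")


print(chroma_block_size(32, 32, "4:2:0"))  # (16, 16)
```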

The coding mode $m(B^n)$ can be one of one or more intra coding modes (generically referred to as "INTRA") or one of one or more inter coding modes (generically referred to as "INTER"), and the tile encoding control unit 21 selects, for each coding target block $B^n$, the coding mode with the highest coding efficiency from among all the coding modes available in the picture currently being processed, or from a subset of them. As shown in FIG. 13, the block partitioning unit 22 further partitions each coding target block $B^n$ into one or more units for prediction process (partitions). Hereafter, each partition belonging to a coding target block $B^n$ is expressed as $P_i^n$, where $i$ is the partition number in the $n$th hierarchical layer. Information on how each coding target block $B^n$ is partitioned into partitions is included in the coding mode $m(B^n)$. A prediction process is carried out on every partition $P_i^n$ according to the coding mode $m(B^n)$, and a prediction parameter is selected for each coding target block $B^n$ or for each partition $P_i^n$.
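The text does not say how "highest coding efficiency" is measured. A common choice, assumed here purely for illustration, is the Lagrangian rate-distortion cost $J = D + \lambda R$, with the mode of minimum $J$ selected. The candidate distortions and rates in the example are made-up inputs, not measured values.

```python
def select_coding_mode(candidates, lam):
    """Pick the mode with the lowest rate-distortion cost J = D + lam * R.

    candidates: dict mapping mode name -> (distortion, rate_in_bits); pass
    only the modes allowed in the current picture (possibly a subset).
    The RD-cost criterion itself is an assumption, not taken from the text.
    """
    return min(candidates, key=lambda m: candidates[m][0] + lam * candidates[m][1])


modes = {"INTRA": (100, 40), "INTER": (120, 10)}
print(select_coding_mode(modes, lam=1.0))  # INTER  (cost 130 vs 140)
print(select_coding_mode(modes, lam=0.0))  # INTRA  (distortion only)
```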

The tile encoding control unit 21 generates a block partitioning state such as the one shown in FIG. 13 for each largest coding block, and thereby determines the coding target blocks. The hatched portions in FIG. 13(a) show the distribution of partitions after the partitioning, and FIG. 13(b) uses a quadtree graph to show how coding modes $m(B^n)$ are assigned to the partitions produced by the hierarchical partitioning. Each node enclosed by □ in FIG. 13(b) is a node (coding target block) to which a coding mode $m(B^n)$ is assigned.

When the coding mode $m(B^n)$ determined by the tile encoding control unit 21 is an intra coding mode ($m(B^n) \in \mathrm{INTRA}$), the select switch 23 outputs the coding target block $B^n$ received from the block partitioning unit 22 to the intra prediction unit 24. In contrast, when the coding mode $m(B^n)$ is an inter coding mode ($m(B^n) \in \mathrm{INTER}$), the select switch 23 outputs the coding target block $B^n$ to the motion-compensated prediction unit 25.
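The select switch 23 is a simple dispatch on the class of the coding mode. In the sketch below the concrete mode names in `INTRA_MODES` and `INTER_MODES` are hypothetical placeholders for the INTRA and INTER mode sets, and the two prediction units are passed in as callables.

```python
INTRA_MODES = {"INTRA_DC", "INTRA_PLANAR"}  # hypothetical members of INTRA
INTER_MODES = {"INTER_SKIP", "INTER_MV"}    # hypothetical members of INTER


def route_block(mode, block, intra_unit, inter_unit):
    """Model of select switch 23: forward the block by its coding mode class."""
    if mode in INTRA_MODES:
        return intra_unit(block)   # to intra prediction unit 24
    if mode in INTER_MODES:
        return inter_unit(block)   # to motion-compensated prediction unit 25
    raise ValueError(f"unknown coding mode: {mode}")


print(route_block("INTER_MV", "B", lambda b: ("intra", b), lambda b: ("inter", b)))
# ('inter', 'B')
```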

When the coding mode $m(B^n)$ determined by the tile encoding control unit 21 is an intra coding mode ($m(B^n) \in \mathrm{INTRA}$) and the intra prediction unit 24 receives the coding target block $B^n$ from the select switch 23 (step ST22), the intra prediction unit 24 carries out an intra prediction process on each partition $P_i^n$ in the coding target block $B^n$, using the intra prediction parameter determined by the tile encoding control unit 21 and referring to the local decoded image stored in the tile local decoded image memory 30, to generate an intra prediction image $P_{\mathrm{INTRA}i}^n$ (step ST23).

Because the tile local decoded image memory 30 stores only the local decoded images of blocks belonging to the current tile, intra prediction at the edge of a tile is carried out in the same way as at a screen edge, without referring to adjacent pixels outside the tile. Further, because the video decoding device shown in FIG. 6 needs to generate an intra prediction image exactly identical to $P_{\mathrm{INTRA}i}^n$, the intra prediction parameter used to generate $P_{\mathrm{INTRA}i}^n$ is outputted from the tile encoding control unit 21 to the variable length encoding unit 31 and multiplexed into the bitstream.
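Treating a tile edge like a screen edge means that neighbouring samples outside the current tile are simply marked unavailable. The sketch uses DC prediction as the example mode; the choice of DC mode and the mid-grey default `1 << (bit_depth - 1)` when no neighbour is available are assumptions borrowed from common codec practice, not details given in this text. `dc_prediction` is a hypothetical name.

```python
def dc_prediction(recon, x, y, size, tile_x0, tile_y0, bit_depth=8):
    """DC intra prediction for a size x size block at (x, y) inside a tile.

    recon holds local decoded samples in frame coordinates. The row above and
    the column to the left are used only when they lie inside the current
    tile (origin tile_x0, tile_y0); otherwise they count as unavailable,
    just as at a picture edge.
    """
    refs = []
    if y > tile_y0:                                      # row above inside tile
        refs += recon[y - 1][x:x + size]
    if x > tile_x0:                                      # left column inside tile
        refs += [recon[y + i][x - 1] for i in range(size)]
    if refs:
        dc = (sum(refs) + len(refs) // 2) // len(refs)   # rounded mean
    else:
        dc = 1 << (bit_depth - 1)                        # assumed default: mid-grey
    return [[dc] * size for _ in range(size)]


recon = [[100] * 4 for _ in range(4)]
print(dc_prediction(recon, 2, 2, 2, tile_x0=2, tile_y0=2))  # [[128, 128], [128, 128]]
print(dc_prediction(recon, 2, 2, 2, tile_x0=0, tile_y0=0))  # [[100, 100], [100, 100]]
```

The same block gets a different prediction depending only on where the tile boundary lies, which is why the encoder and decoder must agree on the tile partitioning.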

When the coding mode $m(B^n)$ determined by the tile encoding control unit 21 is an inter coding mode ($m(B^n) \in \mathrm{INTER}$) and the motion-compensated prediction unit 25 receives the coding target block $B^n$ from the select switch 23 (step ST22), the motion-compensated prediction unit 25 searches for a motion vector by comparing each partition $P_i^n$ in the coding target block $B^n$ with the filtered local decoded image stored in the motion-compensated prediction frame memory 4, and carries out the inter prediction process on each partition $P_i^n$ by using both the motion vector and the inter prediction parameter determined by the tile encoding control unit 21, to generate an inter prediction image $P_{\mathrm{INTER}i}^n$ (step ST24). Because the video decoding device shown in FIG. 6 needs to generate an inter prediction image exactly identical to $P_{\mathrm{INTER}i}^n$, the inter prediction parameter used to generate it is outputted from the tile encoding control unit 21 to the variable length encoding unit 31, the motion vector is outputted from the motion-compensated prediction unit 25 to the variable length encoding unit 31, and both are multiplexed into the bitstream.
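The text does not specify the motion search algorithm. As one concrete possibility, the sketch below uses exhaustive (full-search) block matching with the sum of absolute differences (SAD) as the matching criterion; both choices are assumptions. Note that the reference is the whole filtered frame from the frame memory, not just the current tile.

```python
def full_search(cur, ref, bx, by, size, search_range):
    """Exhaustive block-matching motion search minimizing SAD.

    cur, ref: 2-D lists (current picture and filtered reference picture).
    Returns (mvx, mvy, sad) for the size x size block at (bx, by); candidate
    positions falling outside the reference picture are skipped.
    """
    h, w = len(ref), len(ref[0])
    best = None
    for mvy in range(-search_range, search_range + 1):
        for mvx in range(-search_range, search_range + 1):
            rx, ry = bx + mvx, by + mvy
            if rx < 0 or ry < 0 or rx + size > w or ry + size > h:
                continue  # candidate block not fully inside the reference
            sad = sum(abs(cur[by + j][bx + i] - ref[ry + j][rx + i])
                      for j in range(size) for i in range(size))
            if best is None or sad < best[2]:
                best = (mvx, mvy, sad)
    return best


# The current picture is the reference shifted by (+2, +1), so the block at
# (1, 1) is found exactly at motion vector (2, 1).
ref = [[y * 6 + x for x in range(6)] for y in range(6)]
cur = [[ref[y + 1][x + 2] if y + 1 < 6 and x + 2 < 6 else 0 for x in range(6)]
       for y in range(6)]
print(full_search(cur, ref, 1, 1, 2, 2))  # (2, 1, 0)
```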