Information processing apparatus, information processing method, and information processing system

U.S. patent number 10,489,929 [Application Number 15/539,718] was granted by the patent office on 2019-11-26 for information processing apparatus, information processing method, and information processing system. This patent grant is currently assigned to SONY CORPORATION. The grantee listed for this patent is SONY CORPORATION. Invention is credited to Koji Ozaki.

| United States Patent | 10,489,929 |

| Ozaki | November 26, 2019 |

Abstract

Provided is an information processing apparatus, including a position estimating unit configured to estimate a position of a second imaging apparatus on the basis of a first captured image captured by a first imaging apparatus whose position is specified and a second captured image captured at a time corresponding to the first captured image by the second imaging apparatus serving as a position estimation target.

| Inventors: | Ozaki; Koji (Kanagawa, JP) |
|---|---|
| Applicant: | |
| Assignee: | SONY CORPORATION (Tokyo, JP) |
| Family ID: | 56355772 |
| Appl. No.: | 15/539,718 |
| Filed: | October 9, 2015 |
| PCT Filed: | October 9, 2015 |
| PCT No.: | PCT/JP2015/078843 |
| 371(c)(1),(2),(4) Date: | June 26, 2017 |
| PCT Pub. No.: | WO2016/111066 |
| PCT Pub. Date: | July 14, 2016 |

Prior Publication Data

| Document Identifier | Publication Date |
|---|---|
| US 20180012373 A1 | Jan 11, 2018 |

Foreign Application Priority Data

| Jan 7, 2015 [JP] | 2015-001409 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06K 9/00805 (20130101); G08G 1/005 (20130101); G06K 9/00771 (20130101); G01B 11/00 (20130101); G06T 7/73 (20170101); G08G 1/166 (20130101); G06T 7/70 (20170101); H04N 5/247 (20130101); G06T 2207/30244 (20130101); G06T 2207/30232 (20130101) |

| Current International Class: | G06T 7/70 (20170101); G06T 7/73 (20170101); G08G 1/16 (20060101); H04N 5/247 (20060101); G06K 9/00 (20060101); G01B 11/00 (20060101); G08G 1/005 (20060101) |

References Cited

U.S. Patent Documents

| Document No. | Date | Inventor |
|---|---|---|
| 2003/0156189 | August 2003 | Utsumi et al. |
| 2006/0115117 | June 2006 | Nagaoka et al. |
| 2017/0256042 | September 2017 | Torma |

Foreign Patent Documents

| Document No. | Date | Country |
|---|---|---|
| 103975221 | Aug 2014 | CN |
| 1197294 | Jan 2015 | HK |
| 2003-279315 | Oct 2003 | JP |
| 2005-098927 | Apr 2005 | JP |
| 2005-241323 | Sep 2005 | JP |
| 2005241323 | Sep 2005 | JP |
| 2006-153768 | Jun 2006 | JP |
| 2014-137321 | Jul 2014 | JP |
| 2014236426 | Dec 2014 | JP |
| 2013/088626 | Jun 2013 | WO |
| WO-2013088626 | Jun 2013 | WO |

Other References

International Search Report and Written Opinion of PCT Application No. PCT/JP2015/078843, dated Jan. 12, 2016, 02 pages of English Translation and 08 pages of ISRWO. cited by applicant.

Primary Examiner: Bernardi; Brenda C

Attorney, Agent or Firm: Chip Law Group

Claims

The invention claimed is:

1. An information processing apparatus, comprising: at least one processor configured to: determine a position of a subject in at least one of a first image or a second image; and determine a position of a first imaging apparatus based on the determined position of the subject and a specified position of a second imaging apparatus, wherein the second image is captured by the second imaging apparatus, and the first image is captured by the first imaging apparatus at a time that is same as a time of capture of the second image.

2. The information processing apparatus according to claim 1, wherein the at least one processor is further configured to determine the position of the first imaging apparatus as the specified position of the second imaging apparatus, based on the subject included in each of the first image and the second image.

3. The information processing apparatus according to claim 2, wherein the at least one processor is further configured to determine the position of the first imaging apparatus as the specified position of the second imaging apparatus, based on the second image that includes the first imaging apparatus.

4. The information processing apparatus according to claim 2, wherein the at least one processor is further configured to determine the position of the first imaging apparatus as the specified position of the second imaging apparatus, based on the first image that includes the second imaging apparatus.

5. The information processing apparatus according to claim 2, wherein the at least one processor is further configured to determine the position of the first imaging apparatus as one of the position of the subject or the specified position of the second imaging apparatus.

6. The information processing apparatus according to claim 1, wherein the at least one processor is further configured to: determine that the subject is included in the first image and the second image; and determine the position of the first imaging apparatus based on the determination that the subject is included in the first image and the second image, first relative position information indicating a relative position of the subject with respect to the first imaging apparatus, and second relative position information indicating a relative position of the subject with respect to the second imaging apparatus.

7. The information processing apparatus according to claim 6, wherein the at least one processor is further configured to determine the position of the first imaging apparatus as a relative position of the first imaging apparatus with respect to the specified position of the second imaging apparatus.

8. The information processing apparatus according to claim 6, wherein the at least one processor is further configured to determine the position of the first imaging apparatus as an absolute position of the first imaging apparatus, based on the specified position of the second imaging apparatus and based on a relative position of the first imaging apparatus with respect to the specified position of the second imaging apparatus.

9. The information processing apparatus according to claim 1, wherein the at least one processor is further configured to: determine that the subject is included in the first image and the second image; determine that the second image includes the first imaging apparatus; and determine the position of the first imaging apparatus based on: the determination that the subject is included in the first image and the second image, the determination that the second image includes the first imaging apparatus, and third relative position information indicating a relative position of the first imaging apparatus with respect to the second imaging apparatus.

10. The information processing apparatus according to claim 1, wherein the at least one processor is further configured to: determine that the subject is included in the first image and the second image; determine that the first image includes the second imaging apparatus; and determine the position of the first imaging apparatus based on: the determination that the subject is included in the first image and the second image, the determination that the first image includes the second imaging apparatus, and fourth relative position information indicating a relative position of the second imaging apparatus with respect to the first imaging apparatus.

11. The information processing apparatus according to claim 1, wherein the at least one processor is further configured to: determine a narrowed-down position corresponding to the first imaging apparatus based on information associated with the first imaging apparatus; and determine the position of the first imaging apparatus based on the second image captured by the second imaging apparatus, wherein the second imaging apparatus is at a position corresponding to the narrowed-down position.

12. The information processing apparatus according to claim 1, wherein the processor is further configured to transmit information to an external device based on the determined position of the first imaging apparatus.

13. The information processing apparatus according to claim 1, further comprising an imaging device configured to generate a captured image, wherein the at least one processor is further configured to utilize the captured image as one of the first image or the second image.

14. The information processing apparatus according to claim 1, wherein the first imaging apparatus is in a vehicle.

15. The information processing apparatus according to claim 6, wherein the at least one processor is further configured to determine the position of the first imaging apparatus based on the first relative position information stored in a recording medium.

16. The information processing apparatus according to claim 1, wherein the at least one processor is further configured to detect the subject from the first image.

17. The information processing apparatus according to claim 6, wherein the at least one processor is further configured to determine: a distance between the first imaging apparatus and the subject based on a magnitude of an imaging range of the first imaging apparatus, and further based on information of a lens of the first imaging apparatus, a direction associated with the subject with respect to the first imaging apparatus, based on a shape of the imaging range, and the relative position of the subject with respect to the first imaging apparatus based on the distance between the first imaging apparatus and the subject, and the direction associated with the subject.

18. An information processing method, comprising: in an information processing apparatus: determining a position of a subject in at least one of a first image or a second image; and determining a position of a first imaging apparatus based on the determined position of the subject and a specified position of a second imaging apparatus, wherein the second image is captured by the second imaging apparatus, and the first image is captured by the first imaging apparatus at a time that is same as a time of capture of the second image.

19. An information processing system, comprising: a first imaging apparatus having a specified position, wherein the first imaging apparatus is configured to capture a first image; a second imaging apparatus that is a position estimation target, wherein the second imaging apparatus is configured to capture a second image; and an information processing apparatus that includes at least one processor configured to: determine a position of a subject in at least one of the first image or the second image; and determine a position of the second imaging apparatus based on the determined position of the subject and the specified position of the first imaging apparatus, wherein the first image is captured by the first imaging apparatus at a time that is same as a time of capture of the second image.

20. An information processing apparatus, comprising: at least one processor configured to: determine a position of a first imaging apparatus based on a first image and a second image, wherein the second image is captured by a second imaging apparatus having a specified position, the first image is captured by the first imaging apparatus at a time that is same as a time of capture of the second image, and the position of the first imaging apparatus is determined as the specified position of the second imaging apparatus, based on one of: the second image that includes the first imaging apparatus, or the first image that includes the second imaging apparatus.

Description

CROSS REFERENCE TO RELATED APPLICATIONS

This application is a U.S. National Phase of International Patent Application No. PCT/JP2015/078843 filed on Oct. 9, 2015, which claims priority benefit of Japanese Patent Application No. JP 2015-001409 filed in the Japan Patent Office on Jan. 7, 2015. Each of the above-referenced applications is hereby incorporated herein by reference in its entirety.

TECHNICAL FIELD

The present disclosure relates to an information processing apparatus, an information processing method, and an information processing system.

BACKGROUND ART

Techniques of comparing an image captured by an imaging apparatus (hereinafter referred to as a "captured image") with other images and estimating a position at which the captured image is captured have been developed. As a technique of comparing a captured image with other images and estimating the position at which the captured image is captured, for example, a technique described in the following Patent Literature 1 is known.

CITATION LIST

Patent Literature

Patent Literature 1: JP 2011-113245A

DISCLOSURE OF INVENTION

Technical Problem

When the technique described in Patent Literature 1 is used, a captured image is compared with images stored in a database. In that technique, whether to perform a comparison is determined using either or both of a date and time and weather conditions as conditions, and the priority with which the comparison is performed is changed. It is therefore possible to set, as a comparison target image, an image among those stored in the database that is close to the position at which the captured image was captured, and thus the position at which the captured image was captured may be able to be estimated.

However, when the technique described in Patent Literature 1 is used, the images compared with the captured image are not images captured at the same time as the captured image or at a time that can be regarded as the same time. For this reason, although the comparison is performed while changing the priority, the time and weather conditions under which the compared images were captured may differ from those of the captured image. Consequently, even if the database includes an image captured at the same location as the captured image, it is difficult to compare that image with the captured image because, for example, shadows fall differently, occlusion by other objects differs even when imaging is performed in the same direction, or an object is included in one image but not in the other even when the angles of view are the same. The technique described in Patent Literature 1 therefore does not necessarily improve the accuracy of estimating the position at which the captured image is captured.

The present disclosure proposes an information processing apparatus, an information processing method, and an information processing system, which are novel and improved and capable of estimating a position on the basis of a captured image.

Solution to Problem

According to the present disclosure, there is provided an information processing apparatus including a position estimating unit configured to estimate a position of a second imaging apparatus on the basis of a first captured image captured by a first imaging apparatus whose position is specified and a second captured image captured at a time corresponding to the first captured image by the second imaging apparatus serving as a position estimation target.

In addition, according to the present disclosure, there is provided an information processing method that is performed by an information processing apparatus, the information processing method including a step of estimating a position of a second imaging apparatus on the basis of a first captured image captured by a first imaging apparatus whose position is specified and a second captured image captured at a time corresponding to the first captured image by the second imaging apparatus serving as a position estimation target.

In addition, according to the present disclosure, there is provided an information processing system including: a first imaging apparatus whose position is specified; a second imaging apparatus that serves as a position estimation target; and an information processing apparatus that includes a position estimating unit configured to estimate a position of the second imaging apparatus on the basis of a first captured image captured by the first imaging apparatus and a second captured image captured at a time corresponding to the first captured image by the second imaging apparatus.

Advantageous Effects of Invention

According to the present disclosure, it is possible to estimate a position on the basis of a captured image.

Note that the effects described above are not necessarily limitative. With or in the place of the above effects, there may be achieved any one of the effects described in this specification or other effects that may be grasped from this specification.

BRIEF DESCRIPTION OF DRAWINGS

FIG. 1 is an explanatory diagram for describing an information processing method according to the present embodiment.

FIGS. 2A and 2B are explanatory diagrams for describing an information processing method according to the present embodiment.

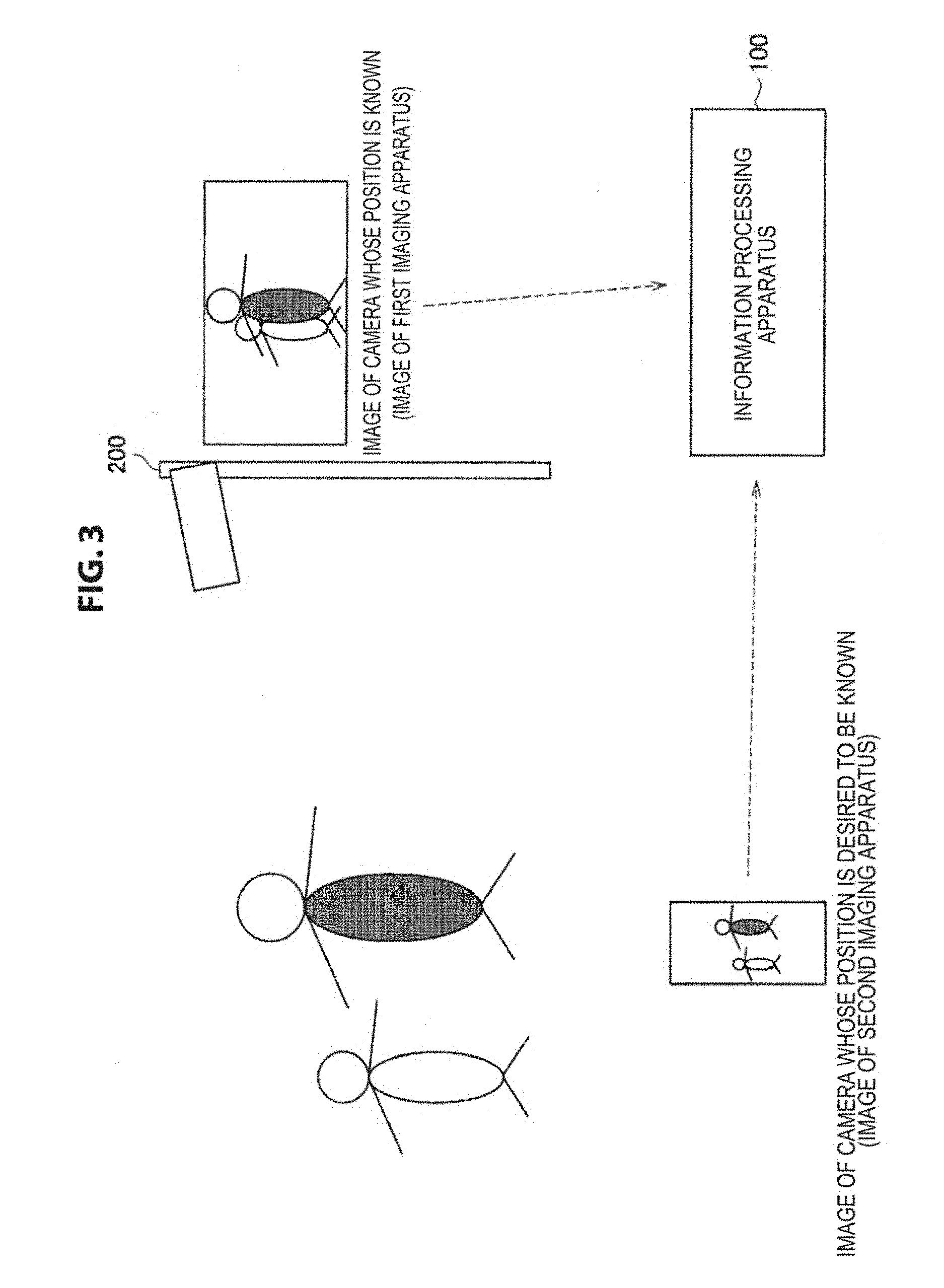

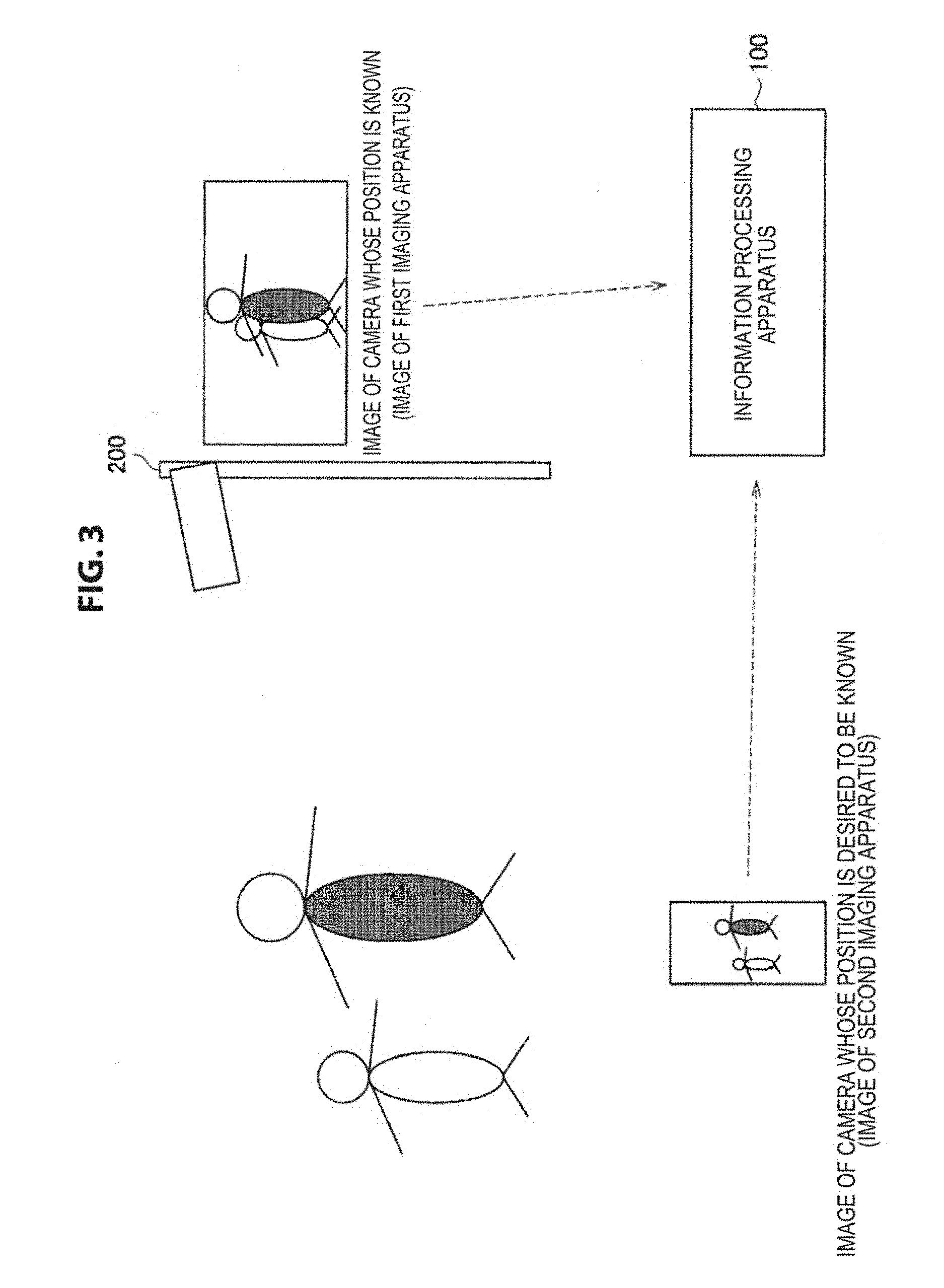

FIG. 3 is an explanatory diagram for describing an example of a process related to an information processing method according to the present embodiment.

FIGS. 4A, 4B, and 4C are explanatory diagrams for describing an example of a process related to an information processing method according to the present embodiment.

FIGS. 5A, 5B, 5C, 5D, and 5E are explanatory diagrams illustrating an example of a method of estimating a relative position with an object in a first imaging apparatus according to the present embodiment.

FIGS. 6A and 6B are explanatory diagrams illustrating an example of a method of estimating a relative position with an object in a second imaging apparatus according to the present embodiment.

FIGS. 7A, 7B, and 7C are explanatory diagrams for describing an example of a process related to an information processing method according to the present embodiment.

FIG. 8 is an explanatory diagram for describing another application example of an information processing method according to the present embodiment.

FIGS. 9A, 9B, and 9C are explanatory diagrams illustrating an example of a process in an information processing system to which the information processing method according to the present embodiment is applied.

FIGS. 10A(A), 10A(B), 10A(C), and 10A(D) are explanatory diagrams for describing an example of a process related to an information processing method according to the present embodiment.

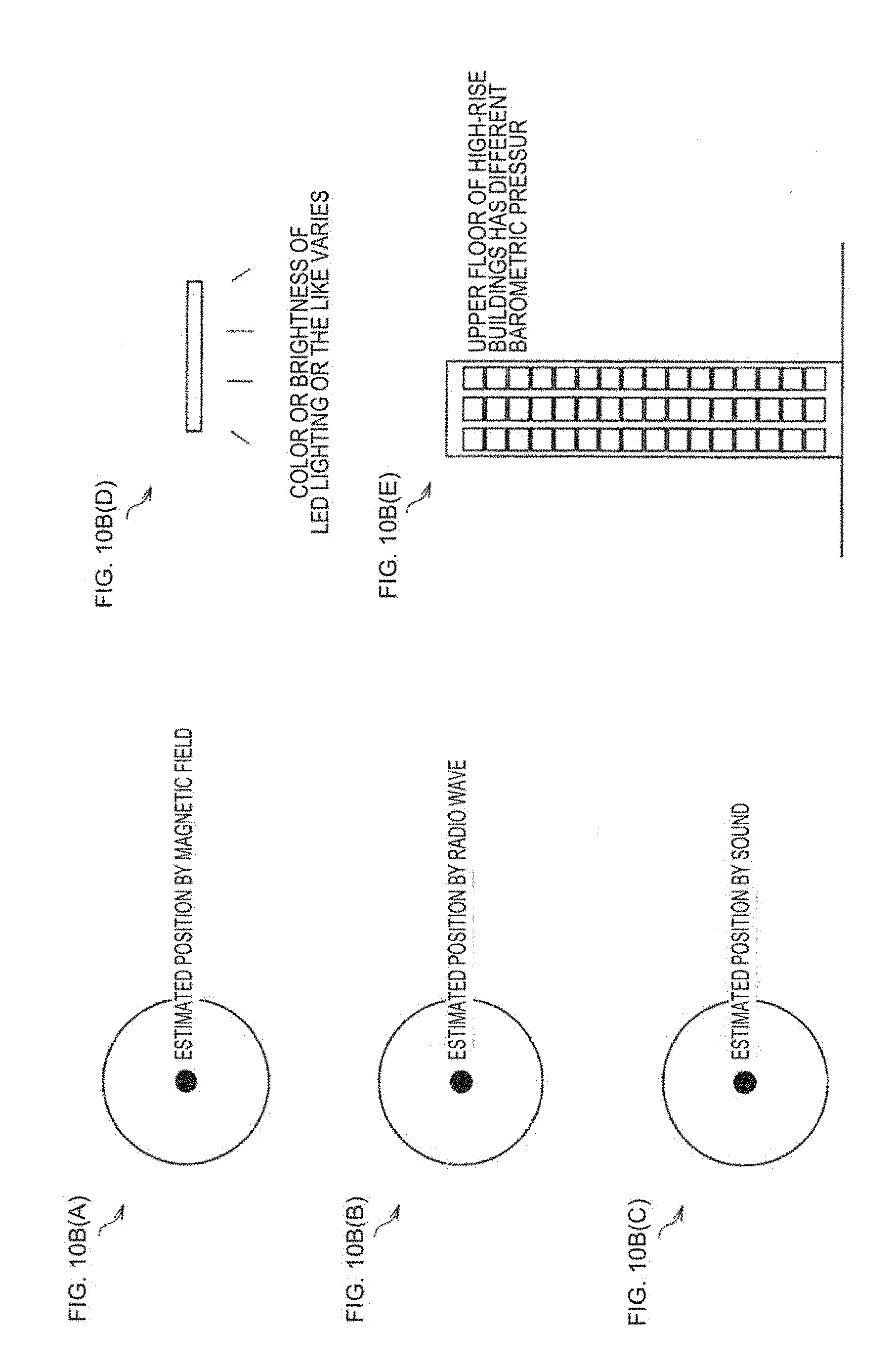

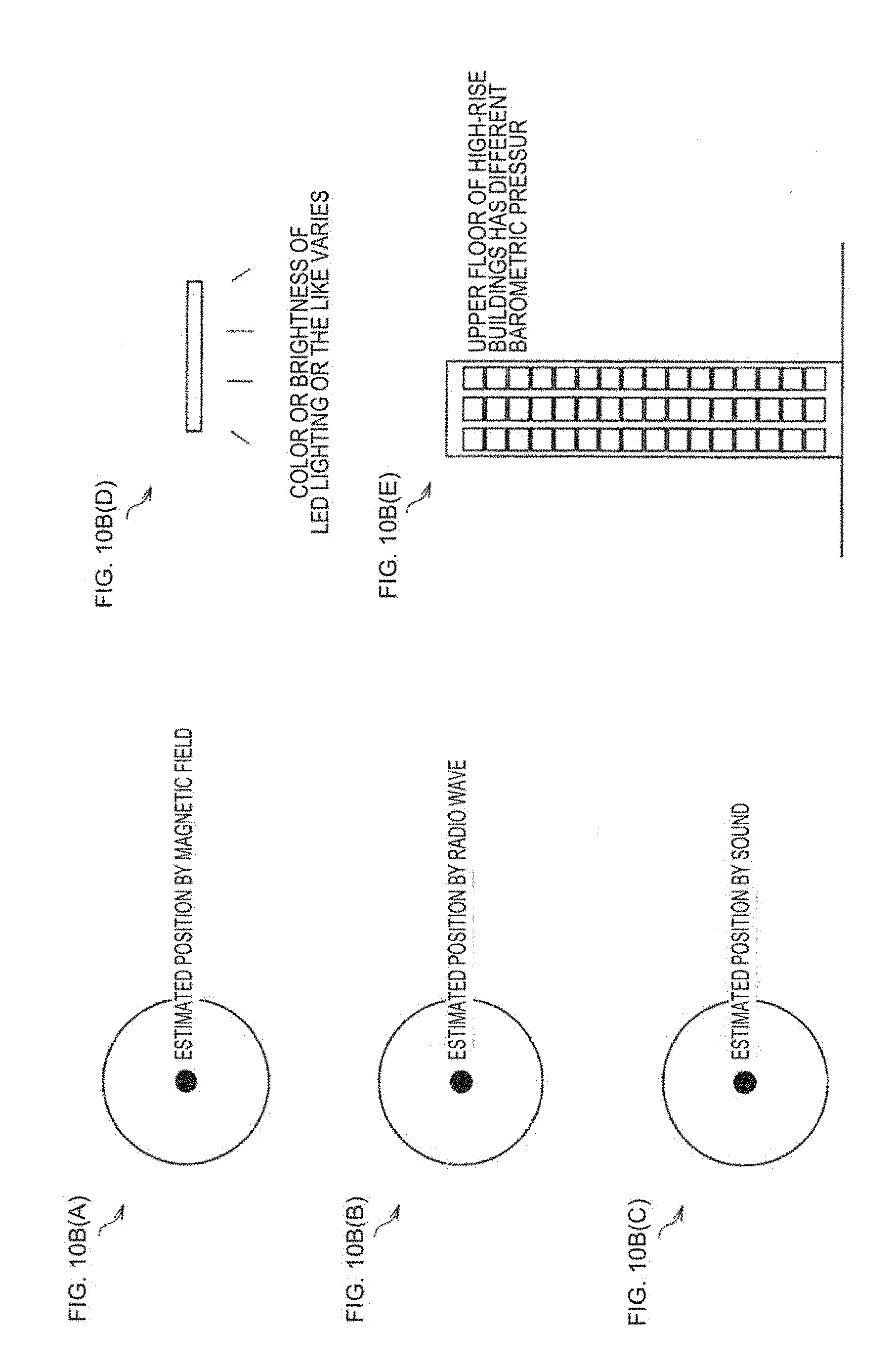

FIGS. 10B(A), 10B(B), 10B(C), 10B(D), and 10B(E) are explanatory diagrams for describing an example of a process related to an information processing method according to the present embodiment.

FIGS. 11A, 11B, and 11C are explanatory diagrams for describing an example of a process related to an information processing method according to the present embodiment.

FIG. 12 is an explanatory diagram for describing an example of a process related to an information processing method according to the present embodiment.

FIG. 13 is an explanatory diagram for describing an example of a process related to an information processing method according to the present embodiment.

FIG. 14 is an explanatory diagram for describing an example of a process related to an information processing method according to the present embodiment.

FIGS. 15A and 15B are explanatory diagrams for describing a first example of a use case implemented by a process using an estimated position of a second imaging apparatus related to an information processing method according to the present embodiment.

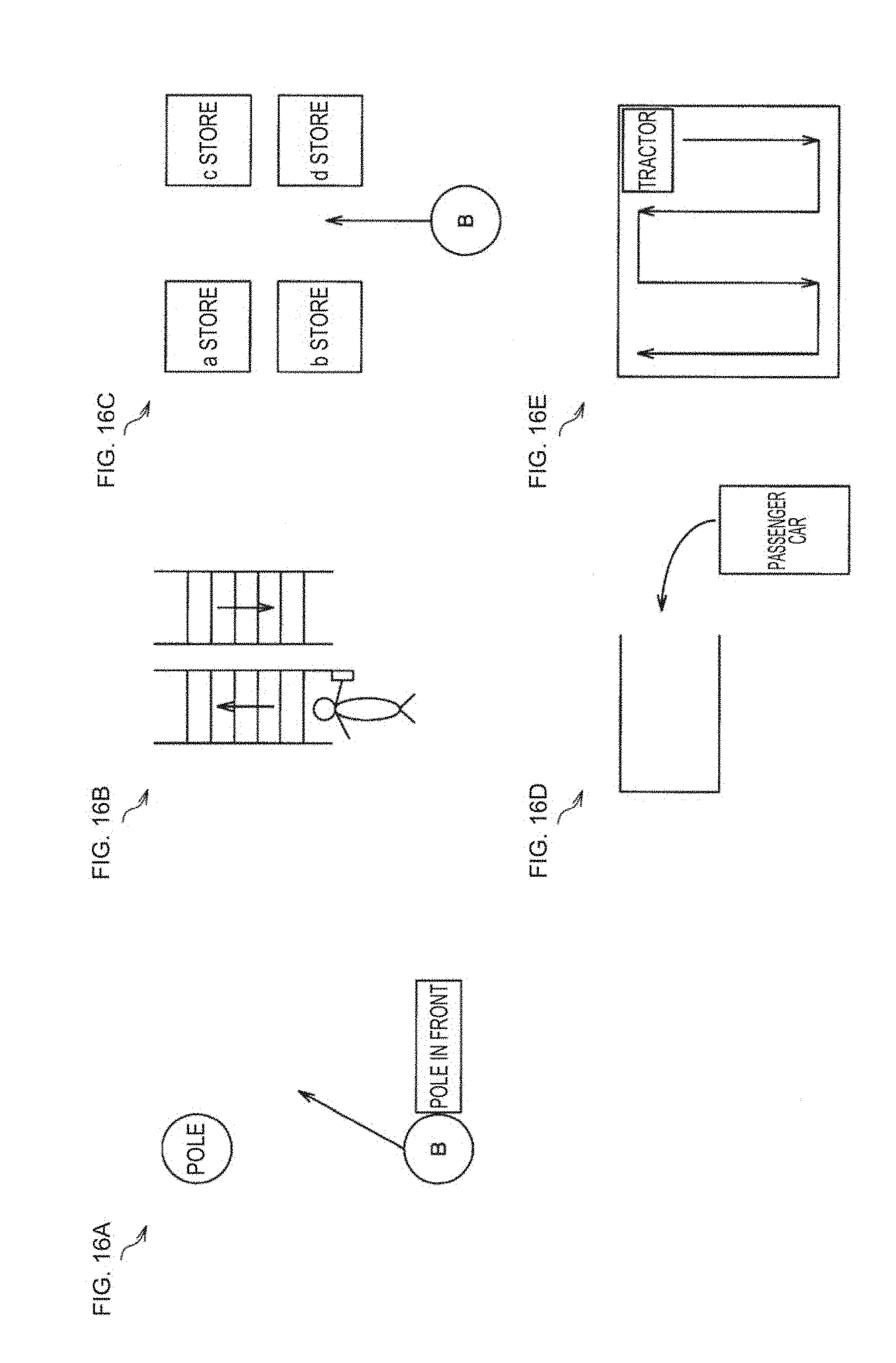

FIGS. 16(A), 16(B), 16(C), 16(D), and 16(E) are explanatory diagrams for describing a second example of a use case implemented by a process using an estimated position of a second imaging apparatus related to an information processing method according to the present embodiment.

FIGS. 17A, 17B, and 17C are explanatory diagrams for describing a second example of a use case implemented by a process using an estimated position of a second imaging apparatus related to an information processing method according to the present embodiment.

FIG. 18 is an explanatory diagram for describing a second example of a use case implemented by a process using an estimated position of a second imaging apparatus related to an information processing method according to the present embodiment.

FIG. 19 is an explanatory diagram for describing a second example of a use case implemented by a process using an estimated position of a second imaging apparatus related to an information processing method according to the present embodiment.

FIGS. 20A and 20B are explanatory diagrams for describing a third example of a use case implemented by a process using an estimated position of a second imaging apparatus related to an information processing method according to the present embodiment.

FIGS. 21A and 21B are explanatory diagrams for describing a fourth example of a use case implemented by a process using an estimated position of a second imaging apparatus related to an information processing method according to the present embodiment.

FIG. 22 is an explanatory diagram for describing a fourth example of a use case implemented by a process using an estimated position of a second imaging apparatus related to an information processing method according to the present embodiment.

FIG. 23 is an explanatory diagram for describing a fourth example of a use case implemented by a process using an estimated position of a second imaging apparatus related to an information processing method according to the present embodiment.

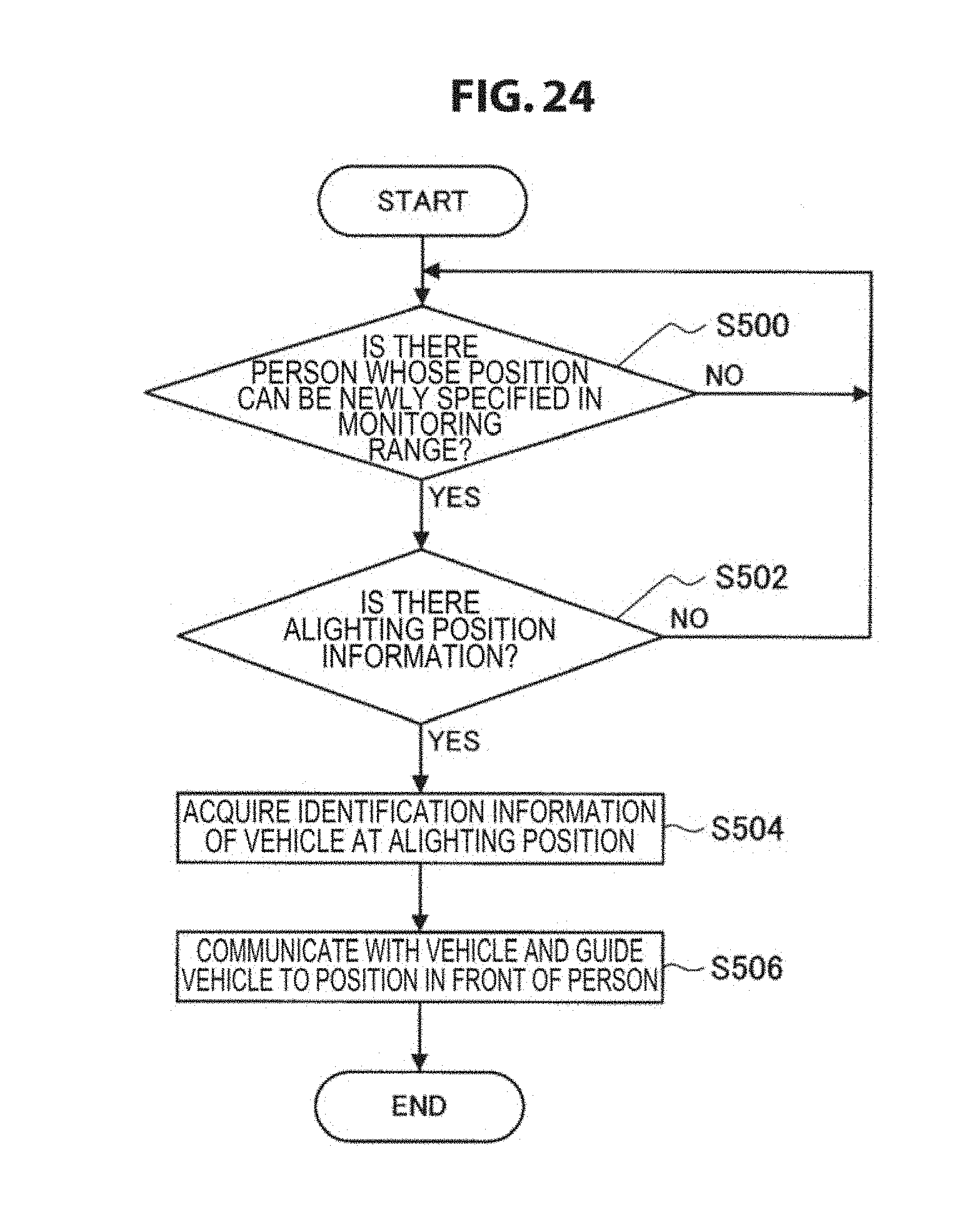

FIG. 24 is an explanatory diagram for describing a fourth example of a use case implemented by a process using an estimated position of a second imaging apparatus related to an information processing method according to the present embodiment.

FIGS. 25A and 25B are explanatory diagrams for describing a fifth example of a use case implemented by a process using an estimated position of a second imaging apparatus related to an information processing method according to the present embodiment.

FIG. 26 is an explanatory diagram for describing a fifth example of a use case implemented by a process using an estimated position of a second imaging apparatus related to an information processing method according to the present embodiment.

FIG. 27 is an explanatory diagram for describing a sixth example of a use case implemented by a process using an estimated position of a second imaging apparatus related to an information processing method according to the present embodiment.

FIG. 28 is an explanatory diagram for describing a sixth example of a use case implemented by a process using an estimated position of a second imaging apparatus related to an information processing method according to the present embodiment.

FIG. 29 is an explanatory diagram for describing a sixth example of a use case implemented by a process using an estimated position of a second imaging apparatus related to an information processing method according to the present embodiment.

FIG. 30 is an explanatory diagram for describing a sixth example of a use case implemented by a process using an estimated position of a second imaging apparatus related to an information processing method according to the present embodiment.

FIG. 31 is an explanatory diagram for describing a seventh example of a use case implemented by a process using an estimated position of a second imaging apparatus related to an information processing method according to the present embodiment.

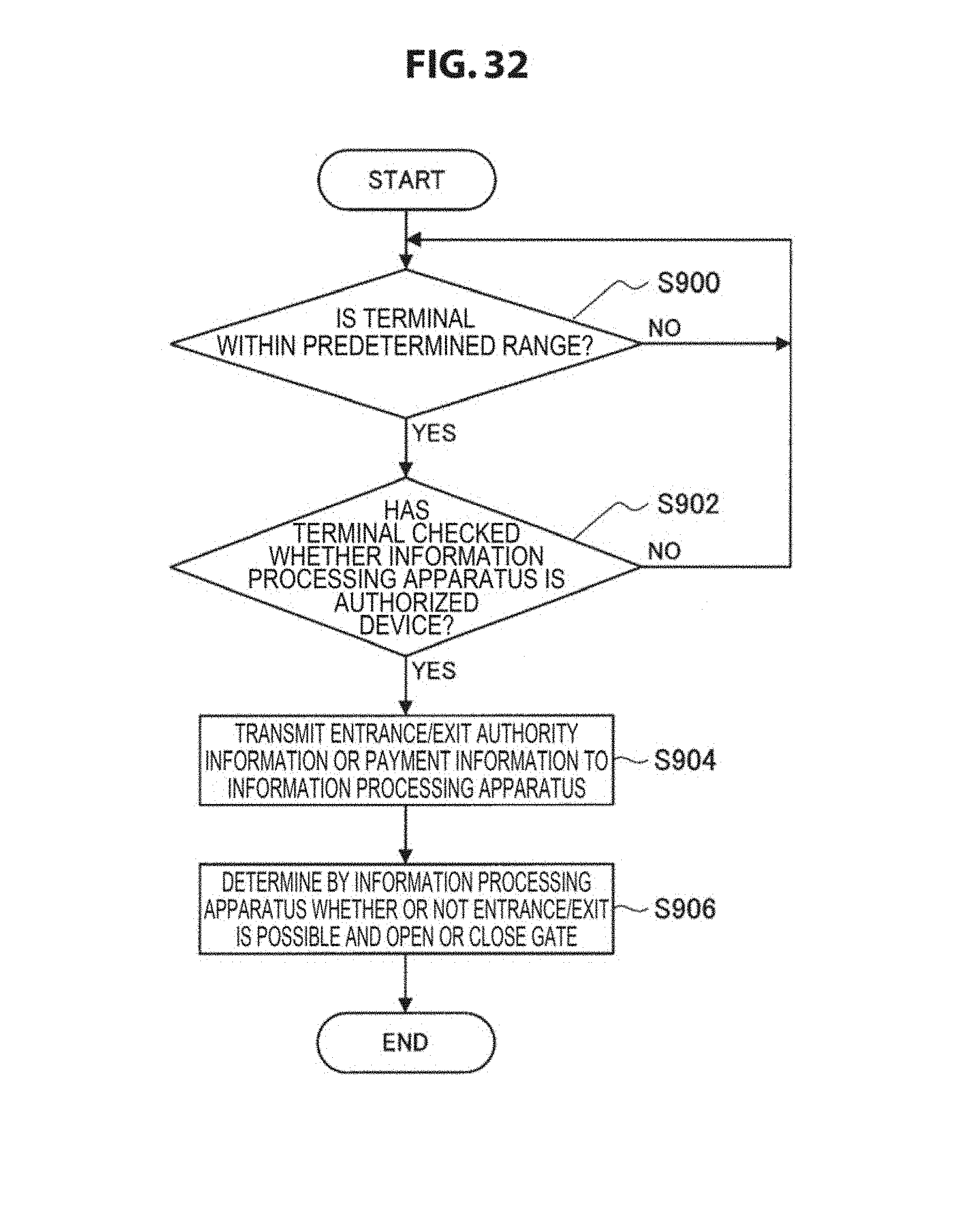

FIG. 32 is an explanatory diagram for describing a seventh example of a use case implemented by a process using an estimated position of a second imaging apparatus related to an information processing method according to the present embodiment.

FIGS. 33A(A), 33A(B), 33A(C), 33A(D), 33A(E), 33A(F), 33A(G), 33A(H), 33A(I), 33A(J), 33A(K), 33A(L), 33A(M), 33A(N), 33A(O), 33A(P), 33A(Q), 33A(R), 33A(S), 33A(T), and 33A(U) are explanatory diagrams illustrating an example of a method of displaying information transmitted through an information transmission control process related to an information processing method according to the present embodiment.

FIGS. 33B(A), 33B(B), 33B(C), 33B(D), 33B(E), and 33B(F) are explanatory diagrams illustrating an example of a method of displaying information transmitted through an information transmission control process related to an information processing method according to the present embodiment.

FIGS. 33C(A), 33C(B), 33C(C), and 33C(D) are explanatory diagrams illustrating an example of a method of displaying information transmitted through an information transmission control process related to an information processing method according to the present embodiment.

FIGS. 33D(A) and 33D(B) are explanatory diagrams illustrating an example of a method of displaying information transmitted through an information transmission control process related to an information processing method according to the present embodiment.

FIGS. 33E(A) and 33E(B) are explanatory diagrams illustrating an example of a method of displaying information transmitted through an information transmission control process related to an information processing method according to the present embodiment.

FIG. 34 is a block diagram illustrating an example of a configuration of an information processing apparatus according to the present embodiment.

FIG. 35 is an explanatory diagram illustrating an example of a hardware configuration of an information processing apparatus according to the present embodiment.

FIG. 36 is an explanatory diagram illustrating an example of a hardware configuration of the first imaging apparatus according to the present embodiment.

MODE(S) FOR CARRYING OUT THE INVENTION

Hereinafter, one or more preferred embodiments of the present disclosure will be described in detail with reference to the appended drawings. In this specification and the appended drawings, structural elements that have substantially the same function and structure are denoted with the same reference numerals, and repeated explanation of these structural elements is omitted.

Further, description will proceed in the following order.

1. Information processing method according to present embodiment

2. Information processing system according to present embodiment

3. Program according to present embodiment

Information Processing Method According to Present Embodiment

Before a configuration of apparatuses constituting an information processing system according to the present embodiment is described, first, an information processing method according to the present embodiment will be described. Hereinafter, an information processing method according to the present embodiment will be described in connection with an example in which the information processing apparatus according to the present embodiment performs a process according to the information processing method of the present embodiment.

[1] Overview of Information Processing Method According to Present Embodiment

As described above, even if the priority of the image compared with the captured image is changed using either or both of a date and time and weather conditions as conditions, it is not necessarily possible to improve the estimation accuracy of the position at which the captured image is captured.

In this regard, the information processing apparatus according to the present embodiment estimates the position of an imaging apparatus whose position is to be estimated on the basis of two images: an image captured by an imaging apparatus whose position is specified (hereinafter referred to as a "first captured image"), and an image captured by the imaging apparatus whose position is to be estimated, at a time corresponding to the time at which the first captured image is captured (hereinafter referred to as a "second captured image"). In the following description, the imaging apparatus whose position is specified is referred to as a "first imaging apparatus," and the imaging apparatus whose position is to be estimated is referred to as a "second imaging apparatus." In the following description, an imaging apparatus is also referred to as a "camera."
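As a rough illustration only, and not the procedure set out in the detailed description, suppose that both cameras observe the same subject and that the relative position of the subject with respect to each camera is already available as a 2D offset in a shared, world-aligned coordinate frame. Under those assumptions, the position of the second imaging apparatus follows from the specified position of the first imaging apparatus by vector arithmetic. The names `Vec2` and `estimate_second_camera_position` are hypothetical and do not appear in the disclosure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vec2:
    """2D point or offset in a shared, world-aligned frame (an assumption)."""
    x: float
    y: float

    def __add__(self, other: "Vec2") -> "Vec2":
        return Vec2(self.x + other.x, self.y + other.y)

    def __sub__(self, other: "Vec2") -> "Vec2":
        return Vec2(self.x - other.x, self.y - other.y)


def estimate_second_camera_position(
    first_camera_position: Vec2,
    subject_from_first: Vec2,
    subject_from_second: Vec2,
) -> Vec2:
    """Estimate the second camera's position from a commonly observed subject.

    first_camera_position: specified (known) position of the first camera.
    subject_from_first:    offset of the subject as seen from the first camera.
    subject_from_second:   offset of the subject as seen from the second camera.
    """
    # Absolute position of the subject, anchored on the known first camera.
    subject_position = first_camera_position + subject_from_first
    # Step back from the subject along the second camera's offset.
    return subject_position - subject_from_second


# Hypothetical numbers: the first camera is at (10, 5); the subject lies
# (3, 4) away from the first camera and (1, 2) away from the second camera.
estimated = estimate_second_camera_position(
    Vec2(10.0, 5.0), Vec2(3.0, 4.0), Vec2(1.0, 2.0)
)
```

The sketch assumes that both offsets are expressed along the same axes; in practice each camera's orientation would also have to be accounted for before the offsets can be combined.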

Here, examples of the captured image according to the present embodiment include a still image or a frame image constituting a moving image.

Further, examples of the time corresponding to the time at which the first captured image according to the present embodiment is captured include the same time as the time at which the first captured image is captured and a time included in a predetermined period including the time at which the first captured image is captured. The predetermined period according to the present embodiment is, for example, a period that can be regarded as the same time as the time at which the first captured image is captured. The predetermined period may be, for example, a fixed period set in advance, such as a period within 1 second before and after the time at which the first captured image is captured. Alternatively, the predetermined period may be a variable period that can be set as appropriate on the basis of, for example, an operation by the user of the information processing apparatus 100.
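The time correspondence described above can be sketched as a simple predicate. This is a minimal illustration that assumes both capture times are available as timestamps on a common clock. The function name `captured_at_corresponding_time` and its `window` parameter are hypothetical, and the 1-second default mirrors the fixed period given as an example above.

```python
from datetime import datetime, timedelta


def captured_at_corresponding_time(
    first_time: datetime,
    second_time: datetime,
    window: timedelta = timedelta(seconds=1),
) -> bool:
    """Return True if second_time falls within `window` before or after first_time.

    A window of zero reduces the check to "captured at the same time".
    """
    return abs(second_time - first_time) <= window


# Hypothetical capture times for a first and a second captured image.
t_first = datetime(2015, 10, 9, 12, 0, 0)
t_second = t_first + timedelta(milliseconds=500)
same_moment = captured_at_corresponding_time(t_first, t_second)
```

Passing a different `window` corresponds to the variable period that the user may set.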

FIG. 1 is an explanatory diagram for describing an information processing method according to the present embodiment and illustrates an example of an information processing system 1000 including the information processing apparatus 100 according to the present embodiment that performs a process according to the information processing method of the present embodiment.

The information processing system 1000 includes the information processing apparatus 100, a first imaging apparatus 200, and a second imaging apparatus 300.

FIG. 1 illustrates the information processing system including one first imaging apparatus and one second imaging apparatus, but the information processing system according to the present embodiment is not limited to the example illustrated in FIG. 1. For example, the information processing system according to the present embodiment may include a plurality of first imaging apparatuses or a plurality of second imaging apparatuses. Further, the information processing system according to the present embodiment may include a plurality of information processing apparatuses according to the present embodiment.

Further, FIG. 1 illustrates an example in which the information processing apparatus 100 is a separate device from the first imaging apparatus and the second imaging apparatus, but the information processing system according to the present embodiment is not limited to the example illustrated in FIG. 1. For example, in the information processing system according to the present embodiment, the information processing apparatus 100 and the first imaging apparatus may be implemented as a single apparatus, or the information processing apparatus 100 and the second imaging apparatus may be implemented as a single apparatus.

The first imaging apparatus 200 may be an imaging apparatus with a fixed imaging position such as a security camera. The first imaging apparatus 200 generates the first captured image, for example, by performing imaging periodically or aperiodically. Here, the periodic imaging in the first imaging apparatus 200 may be, for example, imaging of moving images or intermittent imaging of still images.

In the first imaging apparatus 200, the first captured image is generated in an imaging device of the first imaging apparatus 200 or an imaging device connected to the first imaging apparatus 200. For example, the first imaging apparatus 200 may be equipped with a plurality of imaging devices or may be connected with a plurality of imaging devices. Further, imaging directions of the plurality of imaging devices may be the same or different.

Further, the first imaging apparatus 200 is not limited to an imaging apparatus with a fixed imaging position. For example, the first imaging apparatus 200 may have a variable imaging position as long as it is possible to specify a position using an arbitrary technique capable of specifying a position such as positioning using the Global Positioning System (GPS).

Further, the first captured image according to the present embodiment is not limited to the above example. For example, the first captured image according to the present embodiment may be an image which is captured on the basis of the user operation of the user of the first imaging apparatus 200 or an imaging command transmitted from an external device of the first imaging apparatus 200.

Examples of the second imaging apparatus 300 include an imaging apparatus with a variable imaging position, such as an imaging apparatus mounted on an arbitrary vehicle such as a car (an imaging apparatus installed in a vehicle), or a portable device such as a mobile phone, a smartphone, or a tablet type device. The second imaging apparatus 300 is not limited to the above example. For example, the second imaging apparatus 300 may be an arbitrary imaging apparatus with a variable imaging position, such as a wearable device worn and used by the user (for example, a watch type device or a glasses type device) or an unmanned aircraft such as a radio control helicopter. The second imaging apparatus 300 generates the second captured image by performing imaging, for example, periodically or aperiodically or by performing imaging on the basis of the user operation of the user of the second imaging apparatus 300. Here, the periodic imaging in the second imaging apparatus 300 may be, for example, imaging of moving images or intermittent imaging of still images.

In the second imaging apparatus 300, the second captured image is generated in an imaging device included in the second imaging apparatus 300 or an imaging device connected to the second imaging apparatus 300. For example, the second imaging apparatus 300 may be equipped with a plurality of imaging devices or may be connected with a plurality of imaging devices. Further, imaging directions of the plurality of imaging devices may be the same or different.

Further, the second imaging apparatus 300 is not limited to an imaging apparatus with a variable imaging position. For example, the second imaging apparatus 300 may be an imaging apparatus with a fixed imaging position.

Further, the second captured image according to the present embodiment is not limited to the above example. For example, the second captured image according to the present embodiment may be an image which is captured on the basis of an imaging command transmitted from an external device of the second imaging apparatus 300.

The position of the second imaging apparatus 300 is estimated by performing a process according to the information processing method of the present embodiment which will be described later on the basis of the first captured image captured by the first imaging apparatus 200 and the second captured image captured at the time corresponding to the first captured image by the second imaging apparatus 300. The information processing apparatus 100 acquires the first captured image and the second captured image via communication with an external device by a communication unit (which will be described later) or an external communication unit connected thereto, and estimates the position of the second imaging apparatus 300 on the basis of the acquired first captured image and the second captured image. For example, the information processing apparatus 100 may acquire the first captured image and the second captured image by direct communication with the first imaging apparatus 200 and the second imaging apparatus 300 or may acquire the first captured image and the second captured image by indirect communication via another device.

In the information processing system 1000, the information processing apparatus 100 estimates the position of the second imaging apparatus 300 which has captured the second captured image on the basis of the first captured image and the second captured image captured at the time corresponding to the first captured image.

FIGS. 2A and 2B are explanatory diagrams for describing the information processing method according to the present embodiment. FIG. 2A illustrates an example of the first captured image, and FIG. 2B illustrates an example of the second captured image captured at the time corresponding to the first captured image illustrated in FIG. 2A.

For example, as illustrated in FIGS. 2A and 2B, when the first captured image and the second captured image captured at the time corresponding to the first captured image are used, it is possible to regard the imaged time and the weather conditions as the same. Here, when the first captured image and the second captured image are images captured at the same place, for example, shadows fall on an object in a similar way, the hiding relation with another object is similar when imaging is performed in the same direction, and, if there is no hiding relation, no object within both angles of view is included in one image but not in the other. Therefore, the information processing apparatus 100 can more easily estimate the position of the second imaging apparatus 300 by performing the process according to the information processing method of the present embodiment and can improve the estimation accuracy of the position of the second imaging apparatus 300.

Further, the information processing apparatus 100 estimates the position of the second imaging apparatus 300 using the first captured image and the second captured image captured at the time corresponding to the first captured image. Therefore, the information processing apparatus 100 can estimate the position of the second imaging apparatus 300 even when the second imaging apparatus 300 is positioned indoors or on high-rise building streets in which it is difficult to obtain high position estimation accuracy through positioning using an existing position specifying method such as a position specifying method using GPS.

[2] Process Related to Information Processing Method According to Present Embodiment

Next, the process according to the information processing method of the present embodiment will be described in further detail. Hereinafter, an example in which the process according to the information processing method of the present embodiment is performed by the information processing apparatus 100 constituting the information processing system 1000 illustrated in FIG. 1 will be described.

The information processing apparatus 100 performs, for example, a process (1) to be described below (a position estimation process) as the process according to the information processing method of the present embodiment.

(1) Position Estimation Process

The information processing apparatus 100 estimates the position of the second imaging apparatus 300 on the basis of the first captured image captured by the first imaging apparatus 200 whose position is specified and the second captured image captured at the time corresponding to the first captured image by the second imaging apparatus 300 whose position is to be estimated.

(1-1) First Example of Position Estimation Process

For example, when an object contained in the first captured image is included in the second captured image, the information processing apparatus 100 estimates the position of the first imaging apparatus 200 as the position of the second imaging apparatus 300. Hereinafter, an object included in a captured image is also referred to as a "subject."

For example, the information processing apparatus 100 specifies the position of the first imaging apparatus 200 using the "identification information of the first imaging apparatus 200" acquired together with the first captured image and a table (or a database) in which the identification information is associated with a position.
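The table lookup described above can be sketched as follows. This is only a minimal illustration: the identifiers, the coordinate values, the `(latitude, longitude)` representation, and the function name `specify_first_camera_position` are all hypothetical and do not appear in the embodiment.

```python
from typing import Dict, Optional, Tuple

# (latitude, longitude); this representation is an assumption for illustration.
Position = Tuple[float, float]

# Hypothetical table in which identification information (an ID or an IP
# address here) is associated with a position.
CAMERA_POSITIONS: Dict[str, Position] = {
    "camera-001": (35.6586, 139.7454),
    "192.0.2.10": (35.6595, 139.7005),
}


def specify_first_camera_position(identification: str) -> Optional[Position]:
    """Return the position registered for the identification information.

    Returns None when the identification information is not in the table.
    """
    return CAMERA_POSITIONS.get(identification)
```

A database query could stand in for the dictionary without changing the idea; the dictionary is used here only to keep the sketch self-contained.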

Here, the identification information related to the present embodiment may be, for example, data capable of specifying each of the first imaging apparatus and the second imaging apparatus such as an ID, an Internet protocol (IP) address, or a cookie.

The method of specifying the position of the first imaging apparatus according to the present embodiment is not limited to the above example. For example, the information processing apparatus 100 may specify a position which is set in advance as the position of the first imaging apparatus 200 or may specify a position indicated by position information (data) acquired together with the first captured image from the first imaging apparatus 200 as the position of the first imaging apparatus 200.

Further, when the position of the object is specified, the information processing apparatus 100 may estimate the position of the first imaging apparatus 200 or the position of the object as the position of the second imaging apparatus 300.

For example, the information processing apparatus 100 specifies the position of the object corresponding to the first imaging apparatus 200 with reference to the table (or the database) in which the position of the first imaging apparatus 200 is associated with the position of the object. The method of specifying the position of the object is not limited to the above example. For example, the information processing apparatus 100 may analyze a feature of an object extracted from the captured image and specify the position of the object using an arbitrary method of specifying the position of the object, for example, by specifying the position of the object with reference to a table (or a database) in which the features are associated with the positions.

FIG. 3 is an explanatory diagram for describing an example of the process according to the information processing method of the present embodiment. In FIG. 3, a security camera is illustrated as an example of the first imaging apparatus 200.

As illustrated in FIG. 3, when the same object (person, in the example illustrated in FIG. 3) is extracted from the first captured image ("image of camera whose position is known" in FIG. 3) and the second captured image ("image of camera whose position is desired to be known" in FIG. 3), the information processing apparatus 100 estimates the position of the first imaging apparatus 200 or the position of the object as the position of the second imaging apparatus 300.

FIGS. 4A, 4B, and 4C are explanatory diagrams for describing an example of the process according to the information processing method of the present embodiment, and illustrate an example of a position estimation process according to the present embodiment. Each of FIGS. 4A, 4B, and 4C illustrates an example of the position estimation process according to the first example.

In the example illustrated in FIGS. 4A, 4B, and 4C, a "security camera" corresponds to the first imaging apparatus 200, and a "moving camera" illustrated in FIGS. 4A, 4B, and 4C corresponds to the second imaging apparatus 300. Further, "→" illustrated in FIGS. 4A, 4B, and 4C indicates the direction in which the position of the second imaging apparatus 300 is estimated, and as illustrated in FIGS. 4A, 4B, and 4C, the position of the first imaging apparatus 200 or the position of the object is estimated as the position of the second imaging apparatus 300.

(1-1-1) Process Illustrated in FIG. 4A

The information processing apparatus 100 looks for the same object in both the first captured image and the second captured image.

If the same object is found, the information processing apparatus 100 estimates the position of the first imaging apparatus 200 or the position of the object whose position is specified as the position of the second imaging apparatus 300. Further, when the same object is not found, the information processing apparatus 100 does not estimate the position of the second imaging apparatus 300.
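The decision described above can be sketched as follows. This is a sketch under stated assumptions rather than the method of the embodiment: the objects are assumed to have already been extracted from each image and reduced to comparable labels, and the function name, the label strings, and the rule of preferring an object position when one is specified are all hypothetical.

```python
from typing import Dict, Optional, Set, Tuple

Position = Tuple[float, float]  # assumed coordinate representation


def estimate_position_first_example(
    first_objects: Set[str],
    second_objects: Set[str],
    first_camera_position: Position,
    object_positions: Optional[Dict[str, Position]] = None,
) -> Optional[Position]:
    """Estimate the second camera position from objects shared by both images.

    first_objects / second_objects are hypothetical labels of the objects
    extracted from the first and second captured images.
    """
    common = first_objects & second_objects
    if not common:
        # The same object is not found: no estimation is performed.
        return None
    if object_positions:
        # Assumed preference: use an object position when one is specified.
        for label in sorted(common):
            if label in object_positions:
                return object_positions[label]
    # Otherwise use the specified position of the first imaging apparatus.
    return first_camera_position
```

For example, `estimate_position_first_example({"person"}, {"person", "car"}, (1.0, 2.0))` returns the first camera position, while disjoint label sets return `None`.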

(1-1-2) Process Illustrated in FIG. 4B

For example, when the first imaging apparatus 200 and the second imaging apparatus 300 have a positional relation illustrated in FIG. 4B, the second imaging apparatus 300 is further included in the first captured image in addition to the object. When the second imaging apparatus 300 is further included in the first captured image as described above, the information processing apparatus 100 may estimate the position of the first imaging apparatus 200 or the position of the object whose position is specified as the position of the second imaging apparatus 300.

For example, the information processing apparatus 100 determines whether or not the second imaging apparatus 300 is included in the first captured image by analyzing the feature of the object extracted from the first captured image and matching the feature with data indicating the feature of the second imaging apparatus 300. Here, examples of the data indicating the feature of the second imaging apparatus 300 include image data indicating an external appearance of the second imaging apparatus 300 and data indicating a feature of the second imaging apparatus 300 obtained by analyzing the image data.

(1-1-3) Process Illustrated in FIG. 4C

For example, when the first imaging apparatus 200 and the second imaging apparatus 300 have a positional relation illustrated in FIG. 4C, the first imaging apparatus 200 is further included in the second captured image in addition to the object. When the first imaging apparatus 200 is further included in the second captured image as described above, the information processing apparatus 100 may estimate the position of the first imaging apparatus 200 or the position of the object whose position is specified as the position of the second imaging apparatus 300.

For example, the information processing apparatus 100 determines whether or not the first imaging apparatus 200 is included in the second captured image by analyzing the feature of the object extracted from the second captured image and matching the feature with data indicating the feature of the first imaging apparatus 200. Here, examples of the data indicating the feature of the first imaging apparatus 200 include image data indicating an external appearance of the first imaging apparatus 200 and data indicating a feature of the first imaging apparatus 200 obtained by analyzing the image data.

The information processing apparatus 100 estimates the position of the first imaging apparatus 200 or the position of the object whose position is specified as the position of the second imaging apparatus 300 using the first captured image and the second captured image captured at the time corresponding to the first captured image, for example, as illustrated in FIGS. 4A, 4B, and 4C.

(1-2) Second Example of Position Estimation Process

In the position estimation process according to the first example, the position of the first imaging apparatus 200 or the position of the object whose position is specified is estimated as the position of the second imaging apparatus 300, but the position estimation process according to the present embodiment is not limited to the above example. For example, the information processing apparatus 100 is also able to estimate the position of the second imaging apparatus 300 on the basis of an estimation result of the relative position of the object with respect to the first imaging apparatus 200 estimated from the first captured image and an estimation result of the relative position of the object with respect to the second imaging apparatus 300 estimated from the second captured image.

For example, the information processing apparatus 100 estimates a relative position indicated by first relative position information (data) indicating the relative position of the object with respect to the first imaging apparatus 200 as the relative position of the object with respect to the first imaging apparatus 200.

Further, the information processing apparatus 100 estimates a relative position indicated by second relative position information (data) indicating the relative position of the object with respect to the second imaging apparatus 300 as the relative position of the object with respect to the second imaging apparatus 300.

Here, when the same object is included in the first captured image and the second captured image captured at the time corresponding to the first captured image, the position of the object is consistent. Therefore, the relative position of the second imaging apparatus 300 with respect to the first imaging apparatus 200 is decided by estimating the relative position of the object with respect to the first imaging apparatus 200 and the relative position of the object with respect to the second imaging apparatus 300.

Therefore, the information processing apparatus 100 can estimate the position of the second imaging apparatus 300 on the basis of, for example, the first relative position information and the second relative position information.

For example, the information processing apparatus 100 estimates the relative position of the second imaging apparatus 300 with respect to the position of the first imaging apparatus 200 as the position of the second imaging apparatus 300.

Since the position of the first imaging apparatus 200 is specified, it is also possible to estimate an absolute position of the second imaging apparatus 300 by estimating the relative position of the second imaging apparatus 300 with respect to the position of the first imaging apparatus 200. Therefore, the information processing apparatus 100 may estimate, for example, the absolute position of the second imaging apparatus 300 as the position of the second imaging apparatus 300.
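Because the object occupies a single position at the corresponding time, the two relative positions can be chained. The following is a minimal sketch of that arithmetic, assuming that positions are two-dimensional and that both relative positions are expressed as offsets along the same world-aligned axes; the function and parameter names are hypothetical.

```python
from typing import Tuple

Vec = Tuple[float, float]  # assumed 2-D coordinates in a common frame


def estimate_second_camera_position(
    first_camera_position: Vec,
    object_rel_first: Vec,
    object_rel_second: Vec,
) -> Vec:
    """Chain two relative positions into an absolute position.

    object_rel_first:  offset of the object as seen from the first camera.
    object_rel_second: offset of the object as seen from the second camera.
    Both offsets are assumed to share the axes of first_camera_position.
    """
    # Absolute position of the object, from the specified first camera position.
    object_x = first_camera_position[0] + object_rel_first[0]
    object_y = first_camera_position[1] + object_rel_first[1]
    # Stepping back from the object along the second offset gives the camera.
    return (object_x - object_rel_second[0], object_y - object_rel_second[1])
```

If the offsets were instead reported in each camera's own coordinate frame, they would first have to be rotated into the common frame using each camera's orientation, which this sketch does not do.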

For example, the relative position of the object with respect to the first imaging apparatus 200 and the relative position of the object with respect to the second imaging apparatus 300 are estimated as follows. It will be appreciated that the estimation method of the relative position of the object with respect to the first imaging apparatus 200 and the relative position of the object with respect to the second imaging apparatus 300 is not limited to an example to be described below.

The relative position of the object with respect to the first imaging apparatus 200 is estimated, for example, by estimating a direction in which the object is located and a distance from the object in the first imaging apparatus 200 as will be described below.

FIGS. 5A, 5B, 5C, 5D, and 5E are explanatory diagrams illustrating an example of an estimation method of the relative position with respect to the object in the first imaging apparatus 200 according to the present embodiment.

Since an angle of view and a direction are known in the first imaging apparatus 200, the first imaging apparatus 200 can estimate the direction of the object included in the first captured image (FIG. 5A).
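One way to turn a known angle of view and a known camera direction into a direction for the object is sketched below. It assumes an ideal pinhole camera with no lens distortion, a horizontal-only calculation, and a heading measured in degrees; these assumptions and all names are illustrative and are not taken from the embodiment.

```python
import math


def object_bearing_deg(
    pixel_x: float,
    image_width: float,
    horizontal_fov_deg: float,
    camera_heading_deg: float,
) -> float:
    """Return the bearing of an object from its horizontal pixel coordinate.

    Assumes an ideal pinhole camera whose optical axis passes through the
    image center and points along camera_heading_deg.
    """
    # Focal length in pixels implied by the horizontal angle of view.
    focal_px = (image_width / 2.0) / math.tan(math.radians(horizontal_fov_deg) / 2.0)
    # Horizontal offset of the object from the image center.
    offset_px = pixel_x - image_width / 2.0
    offset_deg = math.degrees(math.atan2(offset_px, focal_px))
    return (camera_heading_deg + offset_deg) % 360.0
```

An object at the image center lies along the camera heading, and an object at the image edge lies half the angle of view away from it.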

Further, the first imaging apparatus 200 estimates the distance from the object, for example, by using any of the following methods.

A focused position is estimated as the distance of the object using contrast auto focus (AF) or the like (FIG. 5B).

The distance of the object is estimated from a degree of blur when an optical zoom magnification is changed, using the fact that a depth of field changes when the optical zoom magnification changes (FIG. 5C).

The distance of the object is estimated from an amount of deviation of the object in a plurality of captured images captured at substantially the same time through a plurality of imaging devices whose imaging position deviation is known (or an imaging device including a plurality of optical systems) (FIG. 5D).

The distance of the object is estimated on the basis of a distance measurement value of a distance sensor (a phase difference sensor) using a phase difference AF or the like, or the distance of the object is estimated using a captured image captured by a light field camera (FIG. 5E).
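For the method that uses a plurality of imaging devices with a known imaging position deviation (FIG. 5D), a common textbook formulation is the rectified stereo relation, in which distance equals focal length times baseline divided by disparity. The sketch below uses that relation as an assumption; the embodiment does not state which formula is used, and the names are hypothetical.

```python
def distance_from_disparity(
    focal_length_px: float,
    baseline_m: float,
    disparity_px: float,
) -> float:
    """Estimate distance with the rectified stereo relation Z = f * B / d.

    focal_length_px: focal length in pixels (assumed equal for both devices).
    baseline_m:      known deviation between the two imaging positions, in meters.
    disparity_px:    deviation of the object between the two images, in pixels.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a finite distance")
    return focal_length_px * baseline_m / disparity_px
```

A larger deviation of the object between the images corresponds to a nearer object, which is why the disparity appears in the denominator.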

For example, as described above, the first imaging apparatus 200 estimates the relative position with respect to the object by estimating the direction in which the object is located and the distance from the object. Then, the first imaging apparatus 200 causes a communication device with which the first imaging apparatus 200 is equipped or a connected external communication device to transmit the first relative position information indicating the estimation result to the external device such as the information processing apparatus 100. When the relative position with respect to the object is fixed, the first imaging apparatus 200 may transmit the first relative position information stored in a recording medium to the external device.

Further, the process related to the estimation of the relative position between the first imaging apparatus 200 and the object is not limited to an example in which the process is performed in the first imaging apparatus 200. For example, the process related to the estimation of the relative position between the first imaging apparatus 200 and the object may be performed by the first imaging apparatus 200 and the information processing apparatus 100 in cooperation.

The relative position of the object with respect to the second imaging apparatus 300 is estimated, for example, by estimating the direction in which the object is located and the distance from the object in the second imaging apparatus 300 as will be described below.

FIGS. 6A and 6B are explanatory diagrams illustrating an example of the estimation method of the relative position with respect to the object in the second imaging apparatus 300 according to the present embodiment. FIGS. 6A and 6B illustrate an example of an imaging range when a plane such as a wall (an example of an object) is imaged. FIG. 6A illustrates an example of the imaging range when a plane such as a wall (an example of an object) is imaged from the front, and FIG. 6B illustrates an example of the imaging range when a flat surface such as a wall (an example of an object) is obliquely imaged.

Here, when the same object is included in the first captured image and the second captured image, since the position of the first imaging apparatus 200 is specified, the information processing apparatus 100 is able to estimate the position of the object on the basis of the first relative position information. In other words, FIGS. 6A and 6B illustrate an example of an estimation method in which the second imaging apparatus 300 estimates the relative position with respect to the object whose position is specified. Examples of the first relative position information used when the information processing apparatus 100 estimates the position of the object include first relative position information acquired through communication by the communication unit (which will be described later) or a connected external imaging device and first relative position information stored in a storage medium such as a storage unit (which will be described later) or a connected external recording medium. For example, the first relative position information stored in the recording medium may be information which is stored in the recording medium in advance or the first relative position information which is acquired through communication and stored in the recording medium.

The second imaging apparatus 300 estimates the distance from the angle of view using, for example, known lens information. The second imaging apparatus 300 estimates the distance from the object, for example, in accordance with the magnitude of the imaging range using the fact that the angle of view is known.
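The relation between the angle of view, the size of the imaging range, and the distance can be sketched as follows for the frontal case of FIG. 6A. The sketch assumes that the real-world width of the imaging range on the plane is known (for example, from the known dimensions of the wall) and that the plane is imaged from directly in front; both assumptions and all names are illustrative.

```python
import math


def distance_from_imaging_range(
    imaging_range_width_m: float,
    horizontal_fov_deg: float,
) -> float:
    """Estimate the distance to a plane imaged from the front.

    The imaging range of width W on the plane subtends the horizontal angle
    of view, so the distance is (W / 2) / tan(angle_of_view / 2).
    """
    half_angle = math.radians(horizontal_fov_deg) / 2.0
    return (imaging_range_width_m / 2.0) / math.tan(half_angle)
```

With a fixed angle of view, a wider imaging range on the plane means the camera is farther away, which matches the dependence on the magnitude of the imaging range described above.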

Further, the second imaging apparatus 300 estimates the direction in which the object is located by estimating the angle of view with the object from a shape of the imaging range.

For example, as described above, the second imaging apparatus 300 estimates the relative position with respect to the object by estimating the direction in which the object is located and the distance from the object. The second imaging apparatus 300 can estimate the direction in which the object is located and the distance from the object using a similar method to that of the first imaging apparatus 200 described above.

Then, the second imaging apparatus 300 causes the communication device with which the second imaging apparatus 300 is equipped or a connected external communication device to transmit the second relative position information indicating the estimation result to an external device such as the information processing apparatus 100.

Further, the process related to the estimation of the relative position between the second imaging apparatus 300 and the object is not limited to an example in which it is performed in the second imaging apparatus 300. For example, the process related to the estimation of the relative position between the second imaging apparatus 300 and the object may be performed by the second imaging apparatus 300 and the information processing apparatus 100 in cooperation.

Hereinafter, an example of the position estimation process according to the second example will be described. FIGS. 7A, 7B, and 7C are explanatory diagrams for describing an example of a process according to the information processing method of the present embodiment and illustrate an example of the position estimation process according to the present embodiment. Each of FIGS. 7A, 7B, and 7C illustrates an example of the position estimation process according to the second example.

In the example illustrated in FIGS. 7A, 7B, and 7C, a "security camera" corresponds to the first imaging apparatus 200, and a "moving camera" illustrated in FIGS. 7A, 7B, and 7C corresponds to the second imaging apparatus 300. Further, "→" illustrated in FIGS. 7A, 7B, and 7C indicates the direction in which the position of the second imaging apparatus 300 is estimated.

(1-2-1) Process Illustrated in FIG. 7A

The information processing apparatus 100 looks for the same object in both the first captured image and the second captured image captured at the time corresponding to the first captured image.

When the same object is found, the information processing apparatus 100 estimates the position of the second imaging apparatus 300 on the basis of the first relative position information corresponding to the first captured image and the second relative position information corresponding to the second captured image. For example, the information processing apparatus 100 estimates, as the position of the second imaging apparatus 300, the relative position of the second imaging apparatus 300 with respect to the position of the first imaging apparatus 200 or the absolute position of the second imaging apparatus 300. Further, when the same object is not found, the information processing apparatus 100 does not estimate the position of the second imaging apparatus 300.

(1-2-2) Process Illustrated in FIG. 7B

For example, when the first imaging apparatus 200 and the second imaging apparatus 300 have a positional relation illustrated in FIG. 7B, the second imaging apparatus 300 is further included in the first captured image in addition to the object. When the second imaging apparatus 300 is further included in the first captured image as described above, the information processing apparatus 100 may estimate the position of the second imaging apparatus 300 on the basis of third relative position information (data) indicating the relative position of the second imaging apparatus with respect to the first imaging apparatus 200.

The information processing apparatus 100 determines whether or not the second imaging apparatus 300 is included in the first captured image, for example, similarly to the process of (1-1-2).

Further, the information processing apparatus 100 estimates the relative position indicated by the third relative position information indicating the relative position of the second imaging apparatus 300 with respect to the first imaging apparatus 200 as the relative position of the second imaging apparatus 300 with respect to the first imaging apparatus 200. Further, since the position of the first imaging apparatus 200 is specified, the information processing apparatus 100 can estimate the absolute position of the second imaging apparatus 300 using the estimated position.

Here, the relative position of the second imaging apparatus 300 with respect to the first imaging apparatus 200 is estimated in the first imaging apparatus 200, for example, by a method similar to the method for estimating the relative position of the object with respect to the first imaging apparatus 200. Then, the information processing apparatus 100 estimates the position of the second imaging apparatus 300 using, for example, the third relative position information indicating the estimation result acquired from the first imaging apparatus 200. Further, similarly to the method of estimating the relative position of the object with respect to the first imaging apparatus 200, the process related to the estimation of the relative position of the second imaging apparatus 300 with respect to the first imaging apparatus 200 may be performed by the first imaging apparatus 200 and the information processing apparatus 100 in cooperation.

Further, the process illustrated in FIG. 7B is not limited to the process described above.

For example, the information processing apparatus 100 may estimate the absolute position of the second imaging apparatus 300 through a similar process to the process (1-2-1).

Further, the information processing apparatus 100 may estimate the absolute position of the second imaging apparatus 300, for example, using both the process based on the third relative position information and the process of (1-2-1). For example, when both of the processes are used, the information processing apparatus 100 complementarily uses the result of the process based on the third relative position information.

(1-2-3) Process Illustrated in FIG. 7C

For example, when the first imaging apparatus 200 and the second imaging apparatus 300 have a positional relation illustrated in FIG. 7C, the first imaging apparatus 200 is further included in the second captured image in addition to the object. When the first imaging apparatus 200 is further included in the second captured image as described above, the information processing apparatus 100 may estimate the position of the second imaging apparatus 300 on the basis of fourth relative position information (data) indicating the relative position of the first imaging apparatus 200 with respect to the second imaging apparatus 300.

The information processing apparatus 100 determines whether or not the first imaging apparatus 200 is included in the second captured image, similarly to the process of (1-1-3).

Further, the information processing apparatus 100 estimates the relative position of the second imaging apparatus 300 with respect to the first imaging apparatus 200, for example, using the relative position indicated by the fourth relative position information indicating the relative position of the first imaging apparatus 200 with respect to the second imaging apparatus 300. Further, since the position of the first imaging apparatus 200 is specified, the information processing apparatus 100 can estimate the absolute position of the second imaging apparatus 300 using the estimated position.
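Since the fourth relative position information describes the first imaging apparatus as seen from the second, it has to be reversed before it is combined with the specified position of the first imaging apparatus. A minimal sketch, assuming two-dimensional positions and an offset expressed along the same world-aligned axes as the first camera position (names are hypothetical):

```python
from typing import Tuple

Vec = Tuple[float, float]  # assumed 2-D coordinates in a common frame


def second_camera_position_from_fourth(
    first_camera_position: Vec,
    first_rel_second: Vec,
) -> Vec:
    """Estimate the absolute position of the second camera.

    first_rel_second is the offset of the first camera as seen from the
    second camera (the fourth relative position information), assumed to
    share the axes of first_camera_position.
    """
    # Offset of the second camera as seen from the first is the negation.
    second_rel_first = (-first_rel_second[0], -first_rel_second[1])
    return (
        first_camera_position[0] + second_rel_first[0],
        first_camera_position[1] + second_rel_first[1],
    )
```

For example, if the first camera is at (10, 20) and appears at an offset of (2, -3) from the second camera, the second camera is at (8, 23).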

Here, the relative position of the second imaging apparatus 300 with respect to the first imaging apparatus 200 is estimated in the second imaging apparatus 300, for example, by a method similar to the method of estimating the relative position of the object with respect to the second imaging apparatus 300. Then, the information processing apparatus 100 estimates the position of the second imaging apparatus 300, for example, using the fourth relative position information indicating the estimation result acquired from the second imaging apparatus 300. As in the method of estimating the relative position of the object with respect to the second imaging apparatus 300, the process related to the estimation of the relative position of the first imaging apparatus 200 with respect to the second imaging apparatus 300 may be performed by the second imaging apparatus 300 and the information processing apparatus 100 in cooperation.

Further, the process illustrated in FIG. 7C is not limited to the process described above.

For example, the information processing apparatus 100 may estimate the absolute position of the second imaging apparatus 300 through a similar process to the process (1-2-1).

Further, the information processing apparatus 100 may estimate the absolute position of the second imaging apparatus 300, for example, using both the process based on the fourth relative position information and the process of (1-2-1). For example, when both of the processes are used, the information processing apparatus 100 complementarily uses a result of the process based on the fourth relative position information.
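The description above does not specify how the two results are combined. One possible reading, given here only as a sketch, is that the result of the process of (1-2-1) is used as the primary estimate and the result based on the fourth relative position information fills in when the primary estimate is unavailable, or is blended with it otherwise. The blending weight and all names below are assumptions introduced for illustration.

```python
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


def combine_estimates(
    primary: Optional[Vec3],
    complementary: Optional[Vec3],
    weight: float = 0.5,
) -> Optional[Vec3]:
    """Combine two position estimates of the second imaging apparatus.

    primary: result of the process of (1-2-1), or None if it failed.
    complementary: result based on the fourth relative position information,
        or None if it failed.
    weight: assumed share given to the complementary result when both exist.
    """
    # If only one estimate is available, use it as-is.
    if primary is None:
        return complementary
    if complementary is None:
        return primary
    # Otherwise blend the two estimates component by component.
    return tuple((1.0 - weight) * a + weight * b for a, b in zip(primary, complementary))


if __name__ == "__main__":
    print(combine_estimates((0.0, 0.0, 0.0), (2.0, 4.0, 6.0)))
```

Other combinations, such as weighting each result by an estimated reliability, would fit the same description equally well.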

For example, the information processing apparatus 100 performs the position estimation process according to the first example described in (1-1) and the position estimation process according to the second example described in (1-2) as the position estimation process.

Further, the position estimation process according to the present embodiment is not limited to the position estimation process according to the first example described in (1-1) and the position estimation process according to the second example described in (1-2).

For example, when the information processing system according to the present embodiment includes a plurality of first imaging apparatuses, there may be a plurality of first captured images captured at the same time. Here, the information processing apparatus 100 can estimate the position of the second imaging apparatus by performing the position estimation process using both the second captured image captured by a certain second imaging apparatus and all of a plurality of first captured images. However, when the position estimation process is performed using all of the plurality of first captured images, a processing load may be increased.

In this regard, in the position estimation process according to the present embodiment, the information processing apparatus 100 may narrow down the first captured image used for the process and estimate the position of the second imaging apparatus on the basis of the narrowed first captured image and the second captured image captured at the time corresponding to the first captured image.

For example, the information processing apparatus 100 narrows down the position at which the second imaging apparatus is likely to be located on the basis of information related to the second imaging apparatus. Then, the information processing apparatus 100 estimates the position of the second imaging apparatus on the basis of the first captured image captured by the first imaging apparatus located at the position corresponding to the narrowed position.

The information related to the second imaging apparatus according to the present embodiment is data used for narrowing down the position at which the second imaging apparatus is likely to be located. The information related to the second imaging apparatus according to the present embodiment includes, for example, the following information. An example of the position estimation process using the information related to the second imaging apparatus according to the present embodiment will be described later.

Position information indicating the position of the second imaging apparatus

Information indicating motion of the second imaging apparatus (for example, data indicating a change in acceleration or angular velocity)

Tracking information indicating a history obtained by tracking the second imaging apparatus (tracking according to the present embodiment will be described later)

Information related to a magnetic field around the second imaging apparatus (for example, data indicating a strength and a direction of the magnetic field)

Information related to radio waves near the second imaging apparatus (for example, data indicating a strength and a delay time of radio waves)

Information related to a sound near the second imaging apparatus (for example, data indicating a strength and a delay time of a sound)

Information related to light near the second imaging apparatus (for example, data indicating luminance and color)

Information indicating barometric pressure around the second imaging apparatus
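The narrowing-down described above can be sketched as follows for the case in which the information related to the second imaging apparatus is rough position information. The example keeps only those first imaging apparatuses whose specified positions lie within a given distance of the rough position, so that only their first captured images are used in the position estimation process. The two-dimensional coordinates, the radius threshold, and the identifiers are assumptions made for illustration.

```python
import math
from typing import Dict, List, Tuple

Vec2 = Tuple[float, float]


def narrow_first_cameras(
    rough_position: Vec2,
    first_cameras: Dict[str, Vec2],
    radius: float,
) -> List[str]:
    """Select the first imaging apparatuses located near a rough position.

    rough_position: position at which the second imaging apparatus is likely
        to be located (for example, from position information).
    first_cameras: mapping from a hypothetical camera identifier to the
        specified position of that first imaging apparatus.
    radius: assumed distance threshold, in the same unit as the positions.
    """
    # Keep only cameras within the radius; sort for a deterministic order.
    return sorted(
        camera_id
        for camera_id, position in first_cameras.items()
        if math.dist(position, rough_position) <= radius
    )


if __name__ == "__main__":
    cameras = {"cam_a": (0.0, 0.0), "cam_b": (30.0, 40.0), "cam_c": (300.0, 400.0)}
    print(narrow_first_cameras((0.0, 0.0), cameras, radius=60.0))
```

Only the first captured images of the selected cameras then need to be compared with the second captured image, which reduces the processing load compared with using all of the first captured images.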

When the position estimation process is performed, the position of the second imaging apparatus 300 is estimated on the basis of the first captured image and the second captured image captured at the time corresponding to the first captured image. Therefore, the information processing apparatus 100 can estimate the position on the basis of the captured image by performing the position estimation process.

Further, when the position estimation process is performed, the information processing apparatus 100 can obtain the effect that is obtained by performing the process according to the information processing method of the present embodiment.

The above description is in connection with the example in which the roles of the imaging apparatuses (the first imaging apparatus and the second imaging apparatus) constituting the information processing system are fixed, but an application example of the information processing method according to the present embodiment is not limited to the above example.

For example, in the information processing system to which the information processing method according to the present embodiment is applied, the roles of the imaging apparatuses constituting the information processing system may change.

FIG. 8 is an explanatory diagram for describing another application example of the information processing method according to the present embodiment. The information processing system illustrated in FIG. 8 includes, for example, a security camera, a camera installed in a helicopter (a "helicopter camera" illustrated in FIG. 8), and a camera mounted on a smartphone (a "smartphone camera" illustrated in FIG. 8). Here, the security camera illustrated in FIG. 8 corresponds to the first imaging apparatus whose position is specified.

In the information processing system illustrated in FIG. 8, the information processing apparatus according to the present embodiment estimates the position of the camera mounted on the helicopter using a captured image captured by the security camera as the first captured image and a captured image captured by the camera mounted on the helicopter as the second captured image. In other words, the camera mounted on the helicopter plays the role of the second imaging apparatus when the security camera is used as a reference.

When the position of the camera mounted on the helicopter is estimated, the position of the camera mounted on the helicopter is specified. Therefore, the information processing apparatus according to the present embodiment can perform the position estimation process using the captured image captured by the camera mounted on the helicopter as the first captured image.

Therefore, in the information processing system illustrated in FIG. 8, the information processing apparatus according to the present embodiment estimates the position of the camera mounted on the smartphone using the captured image captured by the camera mounted on the helicopter as the first captured image and a captured image captured by the camera mounted on the smartphone as the second captured image. In other words, the camera mounted on the helicopter plays the role of the first imaging apparatus when the camera mounted on the smartphone is used as a reference.

For example, when the role of an imaging apparatus constituting the information processing system changes, as with the camera mounted on the helicopter illustrated in FIG. 8, the information processing apparatus according to the present embodiment can estimate the position of the camera mounted on the smartphone even when that position cannot be estimated directly from the captured image captured by the security camera, which is originally the first imaging apparatus, and the captured image captured by the camera mounted on the smartphone, for example, due to a wall or the like illustrated in FIG. 8.
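The role change illustrated in FIG. 8 can be sketched as a traversal in which each imaging apparatus whose position has been estimated subsequently serves as a first imaging apparatus for others. The example below only determines the order in which (reference, target) pairs can be processed; the position estimation process itself is not modeled. The `usable_pairs` mapping, which states which apparatuses can be used together for estimation (for example, because no wall blocks the view), is a hypothetical input introduced for illustration.

```python
from collections import deque
from typing import Dict, List, Set, Tuple


def estimation_order(
    specified: Set[str],
    usable_pairs: Dict[str, Set[str]],
) -> List[Tuple[str, str]]:
    """Return (first apparatus, second apparatus) pairs in a feasible order.

    specified: apparatuses whose positions are specified from the start.
    usable_pairs: for each apparatus, the apparatuses whose positions could
        be estimated with it acting as the first imaging apparatus.
    """
    known = set(specified)
    queue = deque(sorted(specified))
    order: List[Tuple[str, str]] = []
    while queue:
        reference = queue.popleft()
        for target in sorted(usable_pairs.get(reference, ())):
            if target not in known:
                # The target plays the role of the second imaging apparatus
                # here; once estimated, it can act as a first imaging
                # apparatus for the remaining apparatuses.
                order.append((reference, target))
                known.add(target)
                queue.append(target)
    return order


if __name__ == "__main__":
    pairs = {
        "security": {"helicopter"},  # a wall blocks the smartphone
        "helicopter": {"security", "smartphone"},
        "smartphone": {"helicopter"},
    }
    print(estimation_order({"security"}, pairs))
```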

The process according to the information processing method of the present embodiment is not limited to the process (position estimation process) of (1).

For example, the information processing apparatus according to the present embodiment can further perform the process using the estimated position of the second imaging apparatus. As the process using the estimated position of the second imaging apparatus, for example, the following process (an information transmission control process) of (2) may be used.

(2) Information Transmission Control Process

The information processing apparatus according to the present embodiment transmits information based on the estimated position of the second imaging apparatus to the external device. The information processing apparatus according to the present embodiment causes the communication unit (which will be described later) with which the information processing apparatus is equipped or a connected external communication device to transmit the information on the basis of the position of the second imaging apparatus to the external device.

An example of the information based on the position of the second imaging apparatus according to the present embodiment and an example of a use case implemented by transmitting the information based on the position of the second imaging apparatus will be described later.

The process using the estimated position of the second imaging apparatus is not limited to the process (information transmission control process) of (2). For example, the information processing apparatus according to the present embodiment can perform an arbitrary process which can be performed using the estimated position of the second imaging apparatus such as a "process of recording the estimated position of the second imaging apparatus in a recording medium as a log" or a "process of estimating the second imaging apparatus, a user who carries the second imaging apparatus, or a vehicle on which the second imaging apparatus is mounted from the estimated position of the second imaging apparatus." An example of the use case implemented by the process using the estimated position of the second imaging apparatus according to the present embodiment will be described later.

For example, the information processing apparatus according to the present embodiment performs "the process (position estimation process) of (1)" or "the process (position estimation process) of (1) and the process using the estimated position of the second imaging apparatus" as the process related to the information processing method according to the present embodiment.

The information processing apparatus according to the present embodiment can achieve the following effects, for example, by performing the process according to the information processing method of the present embodiment.

The position is estimated using the first captured image and the second captured image which are captured at a corresponding time, and thus the time and weather conditions are the same (or substantially the same), which makes the position easy to estimate.

Since the position of the second imaging apparatus is estimated using the first captured image and the second captured image, it is possible to estimate the position of the second imaging apparatus with a high degree of accuracy even indoors or on streets lined with high-rise buildings, where it is difficult to obtain high accuracy through existing positioning methods such as positioning using GPS.

A subject corresponding to the second imaging apparatus is specified on the basis of the first captured image, and the subject is tracked, and thus the position can be continuously estimated without repeating the process (position estimation process) of (1).

The process (information transmission control process) of (2) is performed as the process using the estimated position of the second imaging apparatus, and thus it is possible to deliver various kinds of information to the second imaging apparatus, the user of the second imaging apparatus, and the like.

"The process (position estimation process) of (1)" or "the process (position estimation process) of (1) and the process using the estimated position of the second imaging apparatus" are processes obtained by dividing the process according to the information processing method of the present embodiment for the sake of convenience. Therefore, in the process according to the information processing method of the present embodiment, for example, "the process (position estimation process) of (1)" may be regarded as two or more processes (by an arbitrary division method). Further, in the process according to the information processing method of the present embodiment, "the process (position estimation process) of (1) and the process using the estimated position of the second imaging apparatus" may be regarded as one process, or "the process (position estimation process) of (1) and the process using the estimated position of the second imaging apparatus" may be regarded as two or more processes (by an arbitrary division method).

[3] Specific Example of Process Related to Information Processing Method According to Present Embodiment

A specific example of the process according to the information processing method of the present embodiment will be described below.