Digital therapeutic corrective spectacles

Abou Shousha A

U.S. patent number 10,386,645 [Application Number 16/144,995] was granted by the patent office on 2019-08-20 for digital therapeutic corrective spectacles. This patent grant is currently assigned to UNIVERSITY OF MIAMI. The grantee listed for this patent is UNIVERSITY OF MIAMI. Invention is credited to Mohamed Abou Shousha.


| United States Patent | 10,386,645 |

| Abou Shousha | August 20, 2019 |

Digital therapeutic corrective spectacles

Abstract

Devices for testing, identifying, and compensating for ocular pathologies affecting the vision of a patient are provided in the form of digital therapeutic corrective spectacles that provide personalized, customized visual field correction/enhancement. The devices include wearable spectacles with one or more digital monitors that are used to recreate an entire visual field as a digitized corrected image or that include custom-reality glasses that can be used to overlay a visual scene with a generated image to correct or enhance the visual field of the subject.

| Inventors: | Abou Shousha; Mohamed (Pembroke Pines, FL) |
|---|---|
| Applicant: | |
| Assignee: | UNIVERSITY OF MIAMI (Miami, FL) |
| Family ID: | 65807407 |
| Appl. No.: | 16/144,995 |
| Filed: | September 27, 2018 |

Prior Publication Data

| Document Identifier | Publication Date |
|---|---|
| US 20190094552 A1 | Mar 28, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Issue Date |
|---|---|---|---|
| 62563770 | Sep 27, 2017 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G02B 27/017 (20130101); A61B 3/0091 (20130101); G02B 27/0179 (20130101); A61B 3/024 (20130101); A61B 3/113 (20130101); G02B 27/0172 (20130101); G02B 2027/0141 (20130101); G02B 2027/0178 (20130101); G02B 2027/0138 (20130101); G02B 2027/0187 (20130101); G02B 2027/014 (20130101); G02B 2027/0123 (20130101) |

| Current International Class: | G02B 27/01 (20060101); A61B 3/113 (20060101); A61B 3/00 (20060101) |

References Cited [Referenced By]

U.S. Patent Documents

| 5359675 | October 1994 | Siwoff |

| 5589897 | December 1996 | Sinclair et al. |

| 5831667 | November 1998 | Siminou |

| 5841511 | November 1998 | D'Souza et al. |

| 6152565 | November 2000 | Liu et al. |

| 7195353 | September 2007 | Blum et al. |

| 7686450 | March 2010 | Heiberger |

| 8135227 | March 2012 | Lewis et al. |

| 8494298 | July 2013 | Lewis et al. |

| 9516283 | December 2016 | Hilkes et al. |

| 9618748 | April 2017 | Munger et al. |

| 9952434 | April 2018 | Jiao et al. |

| 9955862 | May 2018 | Freeman et al. |

| 10058454 | August 2018 | Chayet et al. |

| 10111583 | October 2018 | Freeman et al. |

| 10127706 | November 2018 | Jones et al. |

| 10129520 | November 2018 | Munger et al. |

| 2003/0174284 | September 2003 | Stewart |

| 2009/0153796 | June 2009 | Rabner |

| 2010/0149073 | June 2010 | Chaum et al. |

| 2012/0200595 | August 2012 | Lewis et al. |

| 2013/0169929 | July 2013 | Fateh |

| 2013/0215147 | August 2013 | Hilkes et al. |

| 2013/0329190 | December 2013 | Lewis et al. |

| 2014/0003762 | January 2014 | Macnamara |

| 2014/0132629 | May 2014 | Pandey et al. |

| 2014/0198017 | July 2014 | Lamb et al. |

| 2014/0210970 | July 2014 | Dalal et al. |

| 2015/0193984 | July 2015 | Bar-Zeev et al. |

| 2015/0277121 | October 2015 | Fridental |

| 2015/0355481 | December 2015 | Hilkes et al. |

| 2016/0104453 | April 2016 | Borenstein et al. |

| 2016/0262608 | September 2016 | Krueger |

| 2016/0270656 | September 2016 | Samec et al. |

| 2017/0000345 | January 2017 | Samec et al. |

| 2017/0001032 | January 2017 | Samec et al. |

| 2017/0007111 | January 2017 | Samec et al. |

| 2017/0007115 | January 2017 | Samec et al. |

| 2017/0007116 | January 2017 | Samec et al. |

| 2017/0010470 | January 2017 | Samec et al. |

| 2017/0017083 | January 2017 | Samec et al. |

| 2017/0092007 | March 2017 | Goldberg et al. |

| 2017/0273552 | September 2017 | Leung et al. |

| 2018/0012414 | January 2018 | Lewis et al. |

| 2018/0088323 | March 2018 | Bao et al. |

| 2018/0125716 | May 2018 | Cho et al. |

Other References

International Search Report and Written Opinion from International Application No. PCT/US18/52313 dated Nov. 20, 2018. cited by applicant.

Notification of the International Search Report and the Written Opinion of the International Searching Authority, dated Nov. 20, 2018, in corresponding International Application No. PCT/2018/053213. cited by applicant.

"Augmented-View for Restricted Visual Field: Multiple Device Implementations," by Vargas-Martin, et al., Optometry and Vision Science, Nov. 2002, vol. 79, No. 11, pp. 715-723. cited by applicant.

"Clinical Performance of Electronic, Head-mounted, Low-vision Devices," by Culham, et al., Ophthalmic and Physiological Optics 2004, vol. 24, pp. 281-290.

"Conformal and Other Image Warpings for Reading with Field Defect," by Juday, et al., SPIE vol. 2239 Visual Information Processing III (1994), pp. 92-102. cited by applicant.

"Evaluation of a Prototype Minified Augmented-View Device for Patients with Impaired Night Vision," by Bowers, et al., Ophthalmic and Physiological Optics 2004, vol. 24, pp. 296-312. cited by applicant.

"The Programmable Remapper: Clinical Applications for Patients with Field Defects," by Loshin, et al., Optometry and Vision Science 1989, vol. 66, No. 6, pp. 389-395. cited by applicant.

Non-Final Office Action dated May 10, 2019 in related U.S. Appl. No. 16/367,687, 12 pages. cited by applicant.

Non-Final Office Action dated May 16, 2019 in related U.S. Appl. No. 16/367,751, 13 pages. cited by applicant.

Primary Examiner: McDowell, Jr.; Maurice L.

Attorney, Agent or Firm: Pillsbury Winthrop Shaw Pittman LLP

Parent Case Text

CROSS-REFERENCE TO RELATED APPLICATIONS

The present application claims the benefit of U.S. Provisional Application No. 62/563,770, entitled "Digital Therapeutic Corrective Spectacles", filed on Sep. 27, 2017, which is hereby incorporated by reference herein in its entirety.

Claims

What is claimed:

1. A system for facilitating increased field of view of a scene via a wearable device, the system comprising: a computer system that comprises one or more processors executing computer program instructions that, when executed, cause the computer system to: obtain, via a wearable device, a plurality of images of a scene, the wearable device comprising one or more monitors to display one or more images to an eye of a user; determine a central region common to the plurality of images; for each image of the plurality of images, determine a peripheral region of the image divergent from a corresponding peripheral region of at least another image of the plurality of images; generate a combined image based on the common central region and the divergent peripheral regions such that the combined image comprises (i) a first region comprising a representation of the common central region and (ii) a second region comprising representations of the divergent peripheral regions, the second region being around the first region; and cause the combined image to be displayed on the one or more monitors of the wearable device.

2. The system of claim 1, wherein the computer system is caused to: perform shifting of each image of the plurality of images such that a size of the common central region is decreased and a size of at least one of the divergent peripheral regions is increased, wherein generating the combined image comprises generating the combined image based on the common central region and the divergent peripheral regions subsequent to the performance of the shifting.

3. The system of claim 1, wherein the computer system is caused to: perform resizing of one or more regions of the plurality of images such that an extent of any resizing of the common central region is different than an extent of any resizing of at least one of the divergent peripheral regions, wherein generating the combined image comprises generating the combined image based on the common central region and the divergent peripheral regions subsequent to the performance of the resizing.

4. The system of claim 3, wherein performing the resizing comprises performing the resizing of one or more regions of the plurality of images such that a percentage change in size of the common central region represented in the first region of the combined image is greater than or less than a percentage change in size of at least one of the divergent peripheral regions represented in the second region of the combined image.

5. The system of claim 4, wherein the percentage change in size of at least one of the divergent peripheral regions is zero, and wherein the percentage change in size of the common central region is greater than zero.

6. The system of claim 4, wherein the percentage change in size of at least one of the divergent peripheral regions is greater than zero, and wherein the percentage change in size of the common central region is zero.

7. The system of claim 1, wherein generating the combined image comprises generating the combined image based on the common central region, the divergent peripheral regions, and a peripheral region common to the plurality of images such that (i) the first region of the combined image comprises the representation of the common central region and a representation of the common peripheral region and (ii) the second region of the combined image comprises representations of the divergent peripheral regions.

8. The system of claim 1, wherein the wearable device comprises first and second cameras, and wherein obtaining the plurality of images comprises obtaining at least one of the plurality of images via the first camera of the wearable device and obtaining at least another one of the plurality of images via the second camera of the wearable device.

9. The system of claim 1, wherein the one or more monitors of the wearable device comprises first and second monitors, and wherein causing the combined image to be displayed comprises causing the combined image to be displayed via the first and second monitors.

10. The system of claim 1, wherein the computer system is a wearable computer system comprising the one or more processors executing the computer program instructions that, when executed, cause the wearable computer system to perform all the foregoing operations.

11. The system of claim 1, wherein the wearable device comprises a wearable spectacles device.

12. The system of claim 11, wherein the wearable spectacles device comprises the one or more processors executing the computer program instructions that, when executed, cause the wearable spectacles device to perform all the foregoing operations.

13. A method being implemented by one or more processors executing computer program instructions that, when executed, perform the method, the method comprising: obtaining, via a wearable device, a plurality of images of a scene; determining a central region common to the plurality of images; for each image of the plurality of images, determining a peripheral region of the image divergent from a corresponding peripheral region of at least another image of the plurality of images; generating an enhanced image based on the common central region and the divergent peripheral regions such that the enhanced image comprises (i) a first region comprising a representation of the common central region and (ii) a second region comprising representations of the divergent peripheral regions, the second region being around the first region; and causing the enhanced image to be displayed via the wearable device.

14. The method of claim 13, further comprising: performing shifting of each image of the plurality of images such that a size of the common central region is decreased and a size of at least one of the divergent peripheral regions is increased, wherein generating the enhanced image comprises generating the enhanced image based on the common central region and the divergent peripheral regions subsequent to the performance of the shifting.

15. The method of claim 13, further comprising: performing resizing of one or more regions of the plurality of images such that an extent of any resizing of the common central region is different than an extent of any resizing of at least one of the divergent peripheral regions, wherein generating the enhanced image comprises generating the enhanced image based on the common central region and the divergent peripheral regions subsequent to the performance of the resizing.

16. The method of claim 15, wherein performing the resizing comprises performing the resizing of one or more regions of the plurality of images such that a percentage change in size of the common central region represented in the first region of the enhanced image is greater than or less than a percentage change in size of at least one of the divergent peripheral regions represented in the second region of the enhanced image.

17. The method of claim 16, wherein the percentage change in size of at least one of the divergent peripheral regions is zero, and wherein the percentage change in size of the common central region is greater than zero.

18. The method of claim 16, wherein the percentage change in size of at least one of the divergent peripheral regions is greater than zero, and wherein the percentage change in size of the common central region is zero.

19. The method of claim 13, wherein generating the enhanced image comprises generating the enhanced image based on the common central region, the divergent peripheral regions, and a peripheral region common to the plurality of images such that (i) the first region of the enhanced image comprises the representation of the common central region and a representation of the common peripheral region and (ii) the second region of the enhanced image comprises representations of the divergent peripheral regions.

20. The method of claim 13, wherein the wearable device comprises first and second cameras, and wherein obtaining the plurality of images comprises obtaining at least one of the plurality of images via the first camera of the wearable device and obtaining at least another one of the plurality of images via the second camera of the wearable device.

21. The method of claim 13, wherein causing the enhanced image to be displayed comprises causing the enhanced image to be displayed via first and second monitors of the wearable device.

22. The method of claim 13, wherein a wearable computer system comprising the one or more processors executing the computer program instructions that, when executed, perform the method.

23. The method of claim 13, wherein the wearable device comprises a wearable spectacles device.

24. One or more non-transitory computer-readable media comprising instructions that, when executed by one or more processors, cause operations comprising: obtaining, via a wearable device, a plurality of images of a scene; determining a central region common to the plurality of images; for each image of the plurality of images, determining a peripheral region of the image divergent from a corresponding peripheral region of at least another image of the plurality of images; generating an enhanced image based on the common central region and the divergent peripheral regions such that the enhanced image comprises (i) a first region comprising a representation of the common central region and (ii) a second region comprising representations of the divergent peripheral regions, the second region being around the first region; and causing the enhanced image to be displayed via the wearable device.

25. The one or more non-transitory computer-readable media of claim 24, wherein the operations further comprise: performing shifting of each image of the plurality of images such that a size of the common central region is decreased and a size of at least one of the divergent peripheral regions is increased, wherein generating the enhanced image comprises generating the enhanced image based on the common central region and the divergent peripheral regions subsequent to the performance of the shifting.

26. The one or more non-transitory computer-readable media of claim 24, wherein the operations further comprise: performing resizing of one or more regions of the plurality of images such that an extent of any resizing of the common central region is different than an extent of any resizing of at least one of the divergent peripheral regions, wherein generating the enhanced image comprises generating the enhanced image based on the common central region and the divergent peripheral regions subsequent to the performance of the resizing.

27. The one or more non-transitory computer-readable media of claim 26, wherein performing the resizing comprises performing the resizing of one or more regions of the plurality of images such that a percentage change in size of the common central region represented in the first region of the enhanced image is greater than or less than a percentage change in size of at least one of the divergent peripheral regions represented in the second region of the enhanced image.

28. The one or more non-transitory computer-readable media of claim 27, wherein the percentage change in size of at least one of the divergent peripheral regions is zero, and wherein the percentage change in size of the common central region is greater than zero.

29. The one or more non-transitory computer-readable media of claim 27, wherein the percentage change in size of at least one of the divergent peripheral regions is greater than zero, and wherein the percentage change in size of the common central region is zero.

30. The one or more non-transitory computer-readable media of claim 24, wherein generating the enhanced image comprises generating the enhanced image based on the common central region, the divergent peripheral regions, and a peripheral region common to the plurality of images such that (i) the first region of the enhanced image comprises the representation of the common central region and a representation of the common peripheral region and (ii) the second region of the enhanced image comprises representations of the divergent peripheral regions.

Description

FIELD OF THE INVENTION

The present disclosure relates to techniques for compensating for visual impairments in the visual field, visual aberrations, and visual alignment errors of a user, and, more particularly, to wearable devices that correct for the aforementioned visual impairments and supply corrections to the users.

BACKGROUND

The background description provided herein is for the purpose of generally presenting the context of the disclosure. Work of the presently named inventors, to the extent it is described in this background section, as well as aspects of the description that may not otherwise qualify as prior art at the time of filing, are neither expressly nor impliedly admitted as prior art against the present disclosure.

Patients with ocular pathologies such as optic nerve pathologies and/or retinal pathologies (e.g., patients with glaucoma) have variable localized reduction in visual sensitivity of their visual field. That means that in some areas of their visual field the image is dimmer than other areas. This dimming within the visual field results because more intense illumination is required to stimulate the eye in the affected areas compared to unaffected areas, and is the result of the eye pathology. Patients will describe this dimming as having a cloud or blur over a part of their visual field. When the pathology progresses, the affected areas of the visual field can lose more and more of their ability to see and may eventually become totally blind.

Visual field diagnostic devices have been used to test the visual field sensitivity of a patient by projecting a light that is initially faint; if the patient does not indicate seeing it, the intensity is increased until the patient indicates that he/she sees the light. The sensitivity of the projected area is then recorded. If the patient does not see the light even at the maximum illumination intensity, that area of the visual field is identified as blind.
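The ascending-intensity procedure described above can be sketched in a few lines. This is only an illustration under stated assumptions: the function name `ascending_threshold`, the `patient_sees` callback, and the intensity units and step size are all hypothetical and do not come from any particular diagnostic device.

```python
from typing import Callable, Optional


def ascending_threshold(
    patient_sees: Callable[[float], bool],
    start: float = 1.0,
    step: float = 1.0,
    max_intensity: float = 10.0,
) -> Optional[float]:
    """Return the first intensity the patient reports seeing, or None if blind.

    The intensity scale and step size are illustrative assumptions.
    """
    intensity = start
    while intensity <= max_intensity:
        if patient_sees(intensity):
            return intensity          # sensitivity recorded for this location
        intensity += step             # stimulus not seen: make it brighter
    return None                       # not seen even at maximum: marked blind


# Simulated responses: this location needs an intensity of at least 4.0.
threshold = ascending_threshold(lambda i: i >= 4.0)
# Simulated blind location: the stimulus is never reported.
blind_spot = ascending_threshold(lambda i: False)
```

Repeating the loop at each tested location would give a map of per-location sensitivities, with `None` marking locations identified as blind.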

Refractive errors negatively affect vision. Those refractive errors are caused by irregularities in the refractive elements of the eye. They result in blurry vision that is partly correctable by glass spectacles and contact lenses. That is the reason why some subjects see more than others and some have better quality of vision than others. Spectacles made out of glass as well as contact lenses only come in certain increments and would only correct regular errors of refraction e.g. regular astigmatism. Those regular errors of refraction are called lower order aberrations. Higher order aberrations are errors of refraction that are not correctable by spectacles or by contact lenses. Additionally, higher order aberrations are dynamic and not fixed. They change according to the pupil size, the accommodation state of the eye and direction of gaze.

Current techniques for treating presbyopia include single vision, bifocal and multifocal reading spectacles, and multifocal contact lenses. With the multifocal or bifocal spectacles, the patient will look through specific areas of the glass to get the required correction. With multifocal contact lenses, the light is diffracted into multiple focal points, improving the depth of focus but at the expense of decreasing the quality of vision. All those techniques are not very convenient and limit the near vision.

Double vision results from misalignment of the line of vision of the patient. Double vision is dynamic and not static, meaning that it increases and decreases towards one or multiple gazes. So, if the subject has a limitation in bringing the right eye outwards, then the double vision will increase when the subject is looking to the right and might decrease when the subject is looking to the left.

Anisometropia (unequal refractive power of the two eyes of a subject) is not uncommon, especially after eye surgery or trauma. It is one of the indications for cataract surgery per Medicare. Corrective glass spectacles are unable to correct for anisometropia because they produce two images, one for each eye, of unequal sizes (aniseikonia), and the brain cannot fuse those two images into binocular single vision. The problem arises because the lenses of glass spectacles are either convex, which magnifies the image, or concave, which minifies it. The amount of magnification or minification depends on the amount of correction.

Because the lenses of glass spectacles either magnify or minify the image, they also affect the visual field of subjects. Glass spectacles correct the refractive error of the patient but also produce distortion in the image being viewed.

Subjects with anisocoria have unequal pupil size and that can be congenital, acquired from an eye disease or following surgery or trauma. Those subjects have light sensitivity from a single eye and that eye cannot tolerate the light brightness tolerated by the healthy eye.

There is a need for an optical device that can compensate for the aforementioned visual impairments.

SUMMARY

In exemplary embodiments, the present techniques provide devices for testing, identifying, and/or compensating for one or more ocular pathologies affecting the vision of a patient. These ocular pathologies include, for example, pathologies of the optic nerve such as glaucoma, optic neuritis, and optic neuropathies; pathologies of the retina such as macular degeneration and retinitis pigmentosa; pathologies of the visual pathway such as microvascular strokes and tumors; and other conditions such as presbyopia, strabismus, high and low optical aberrations, monocular vision, anisometropia and aniseikonia, light sensitivity, anisocoria, refractive errors, and astigmatism. In some exemplary embodiments, the present techniques provide devices for enhancing a field of view to a subject, such as modification of: a horizontal, vertical, and/or diagonal angle of view; light provided to one or more regions; size of objects in one or more regions; and/or location of objects in one or more regions.

In exemplary embodiments, the systems and devices described herein may include a wearable spectacles device configured to test, identify, compensate for visual impairments, and/or enhance aspects of a subject's vision or field of view. Some such embodiments may be configured to provide personalized customized visual correction to the subject using them. In one example, the spectacles device comprises digital therapeutic corrective spectacles (also termed herein "DTS"). Spectacles may also include, by way of example, glasses, sunglasses, and eyewear.

In an aspect, a vision system may include a wearable spectacle device. The system may further include an image processing device having a processor and a memory. The image processing device may store instructions on the memory, wherein the instructions, when executed, cause the processor to execute a testing mode and/or a vision mode.

In one example, the system may further include a pupil tracking sensor configured to track a pupil physical condition and/or line of sight of a subject. In a further example, the pupil tracking sensor comprises one or more inward directed image sensors. In the above or another example, the system may include a vision field sensor configured to capture a vision field in the vision mode.

In any of the above or another example, the instructions when executed by the processor may cause the processor to, in a testing mode, (i) instruct a display by the wearable spectacles device of a plurality of testing stimuli to the subject over one or more testing locations in a testing visual field, (ii) instruct the inward directed image sensor to capture position indications of the pupil physical condition and/or line of sight during the displaying of the plurality of testing stimuli over the one or more testing locations, and (iii) determine one or more affected regions in the testing visual field and determine one or more vision pathologies of the subject, wherein the plurality of stimuli differ in contrast levels with respect to each other and with respect to a baseline contrast level.

In any of the above or another example, the instructions when executed by the processor may cause the processor to, in the visioning mode, correct the image of the vision field to enhance a field of view and/or compensate for the one or more affected regions and instruct a display by the wearable spectacles device of the corrected image to the subject using the wearable spectacle device.

In any of the above or another example, the image processing device stores instructions that, when executed, cause the processor to: in the visioning mode, instruct the vision field camera to capture the image of the visual field, process the image in response to the determined one or more affected regions in the testing visual field, correct the image to compensate for the one or more affected regions, and instruct a display by the wearable spectacles device of the corrected image to the subject as a digital image.

In any of the above or another example, the digital spectacles may further comprise a first digital monitor and a second digital monitor each configured to display one of the plurality of stimuli to a respective eye of the subject in the testing mode. In any of the above or another example, the vision field camera comprises a first vision field camera and second vision field camera, the first vision field camera corresponding to the first digital monitor and the second vision field camera corresponding to the second digital monitor. In any of the above or another example, the pupil physical condition is selected from one or more of (i) pupil movement of one or more pupils, (ii) a limbus, (iii) a line of sight, and/or (iv) a visual axis of the subject. In any of the above or another example, the vision field camera comprises at least one vision field camera that extends inwardly from an outer surface of the wearable spectacle. In any of the above or another example, the vision field camera comprises at least one vision field camera that extends outwardly from an outer surface of the wearable spectacle. In any of the above or another example, in the visioning mode, the vision field camera captures continuous images of the visual field.

In any of the above or another example, the plurality of testing stimuli comprise at least one testing image of text or of an object. In any of the above or another example, the one or more affected regions comprises regions of reduced vision sensitivity or higher or lower optical aberrations. In any of the above or another example, the one or more affected regions comprises regions of reduced brightness. In any of the above or another example, the plurality of stimuli differ in contrast levels with respect to each other and with respect to a baseline contrast level by at least 20 dB. In any of the above or another example, the plurality of stimuli differ in contrast levels with respect to each other and with respect to a baseline contrast level by at least 30 dB. In any of the above or another example, the image processing device stores instructions that, when executed, cause the processor to: in the testing mode, instruct a display by the wearable spectacles device of the plurality of testing stimuli to the subject in a descending or ascending contrast.

In another aspect, a vision system includes a wearable spectacle device, at least one digital monitor, at least one vision field camera, and an image processing device.

In some examples, the at least one digital monitor is configured to display an image to an eye of the subject. In one example, the at least one vision field camera may be configured to capture a plurality of monocular images of a scene, each monocular image being shifted from each other monocular image. In one example, the image processing device may include a processor and a memory, and be coupled to the at least one digital monitor. The image processing device may store instructions on the memory that, when executed, cause the processor to combine the plurality of monocular images into a combined image having a field of view greater than a field of view of any one of the plurality of monocular images. In any of the above or another embodiment, the instructions may cause the processor to display the combined image on the at least one digital monitor for presenting the subject with a widened field of view of the scene.

In any of the above or another example, the image processing device stores instructions on the memory that, when executed, cause the processor to: combine the plurality of monocular images into the combined image by performing selective field shifting on at least one of the plurality of monocular images relative to the others of the plurality of monocular images to generate a widened peripheral region for the combined image. In any of the above or another example, the image processing device stores instructions on the memory that, when executed, cause the processor to: combine the plurality of monocular images into the combined image by performing peripheral selective field manipulation on at least one of the plurality of monocular images relative to the others of the plurality of monocular images.

In any of the above or another example, the peripheral selective field manipulation comprises performing a shrinking or an enlarging on a peripheral region or a central macular region of the plurality of monocular images. In any of the above or another example, the image processing device stores instructions on the memory that, when executed, cause the processor to: combine the plurality of monocular images into the combined image by identifying a defect field region in at least one of the plurality of monocular images, capturing the defect field region, and transferring the captured defect field region to a non-defect field region and forming the combined image to include the transferred captured defect field region for display to the subject.
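As a rough illustration of transferring a captured defect field region to a non-defect field region, the sketch below copies a rectangular block of pixels to a new location in the image. It assumes the defect region is an axis-aligned rectangle whose position is already known (for example, from a prior testing step); the function `transfer_region`, its parameters, and the choice to simply overwrite the destination pixels are all hypothetical simplifications rather than the patented method.

```python
from typing import List

Image = List[List[int]]  # rows of pixel values


def transfer_region(
    image: Image,
    src_top: int,
    src_left: int,
    height: int,
    width: int,
    dst_top: int,
    dst_left: int,
) -> Image:
    """Copy a rectangular block from a defect area to a non-defect area.

    Returns a new image; the input is left unchanged. Destination pixels
    are overwritten, which is an illustrative simplification.
    """
    out = [row[:] for row in image]
    for dy in range(height):
        for dx in range(width):
            out[dst_top + dy][dst_left + dx] = image[src_top + dy][src_left + dx]
    return out


# Assume the subject cannot see the two left-hand columns of this image.
scene = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
]
# Re-present the unseen content in the two right-hand (seeing) columns.
remapped = transfer_region(scene, 0, 0, 2, 2, 0, 2)
```

A practical system would more likely resize or blend the transferred content so that the scene already present in the destination area is not lost.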

In any of the above or another example, the image processing device stores instructions on the memory that, when executed, cause the processor to: combine the plurality of monocular images into the combined image by identifying a common central region of each of the plurality of monocular images and identifying divergent peripheral regions of the plurality of monocular images; and form the combined image to have a first region corresponding to the common central region and a second region formed by combining the divergent peripheral regions into a widened peripheral region that surrounds the first region. In any of the above or another example, the image processing device stores instructions on the memory that, when executed, cause the processor to: form the combined image such that the second region corrects for visual field defect and aberrations of an eye of the subject. In any of the above or another example, the at least one digital monitor comprises a first digital monitor and a second digital monitor each configured for displaying the combined image to a respective eye of the subject.
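As a minimal sketch of combining shifted monocular images into a common central region flanked by divergent peripheral regions, the following treats each image as a single row of pixel values. The function name `combine_rows`, the fixed horizontal `shift`, and the averaging of the overlap are illustrative assumptions, not details taken from this disclosure.

```python
def combine_rows(left, right, shift):
    """Combine two equally wide image rows, with the right row offset by `shift` pixels.

    Columns seen by both rows (the common central region) are averaged;
    columns seen by only one row (the divergent peripheral regions) are
    copied through, so the result is wider than either input.
    """
    width = len(left)
    if len(right) != width or not 0 <= shift <= width:
        raise ValueError("rows must match in width and shift must be in [0, width]")
    combined = []
    for x in range(width + shift):
        in_left = x < width
        in_right = x >= shift
        if in_left and in_right:
            combined.append((left[x] + right[x - shift]) / 2)  # common central region
        elif in_left:
            combined.append(left[x])           # left-only periphery
        else:
            combined.append(right[x - shift])  # right-only periphery
    return combined
```

A full implementation would operate on two-dimensional frames and would need image registration rather than a known fixed shift.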

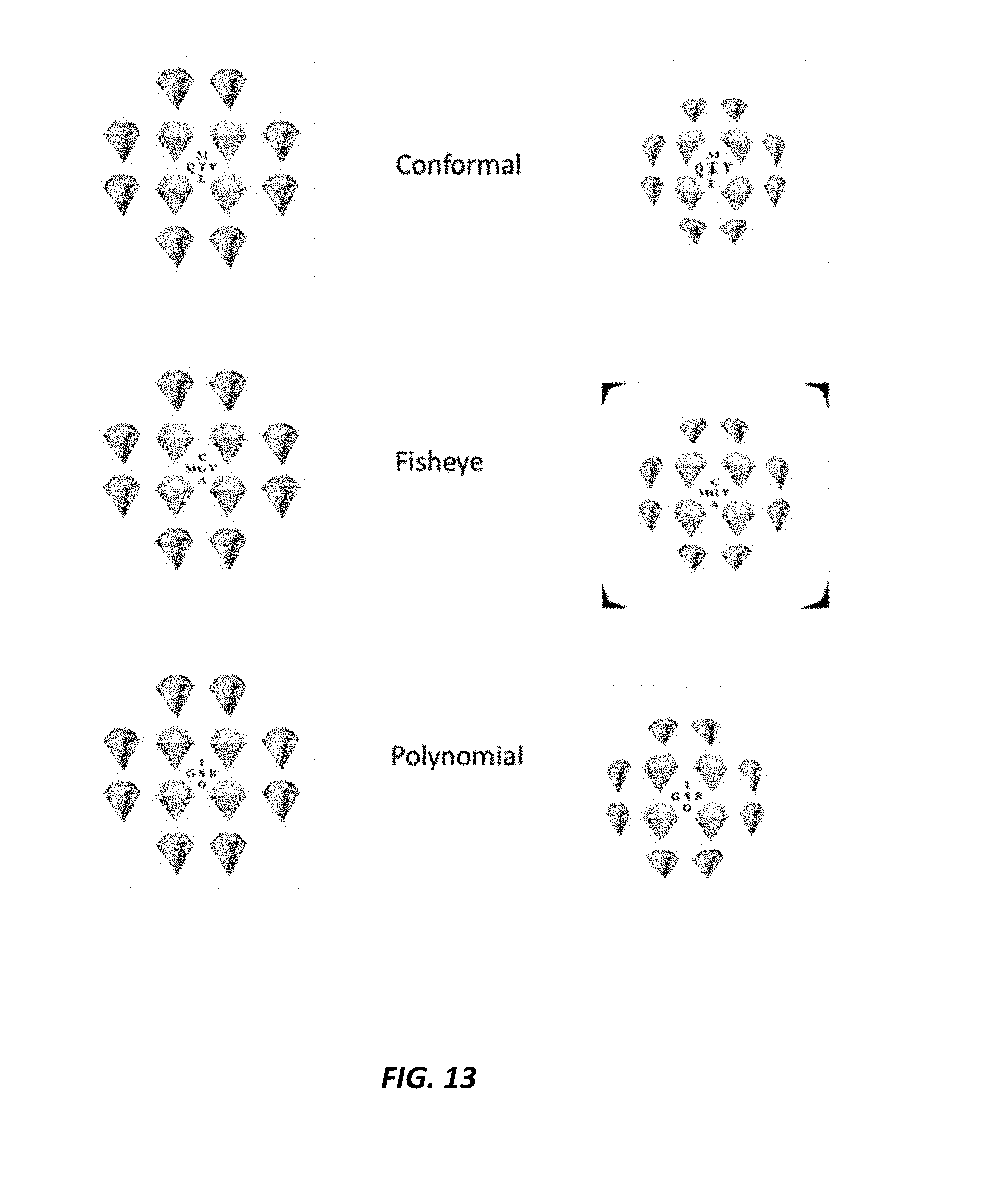

In any of the above or another example, the image processing device stores instructions on the memory that, when executed, cause the processor to perform a fisheye transformation on a first region of the plurality of monocular images to modify a radial component of the plurality of monocular images, according to: $r_{\text{new}} = r + a r^{3}$, where $a$ is a constant.
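The cubic radial relation of the fisheye transformation can be illustrated with a short sketch. The function names and the convention that coordinates are measured from the image center are assumptions made for illustration only.

```python
import math


def fisheye_radius(r, a):
    """Return the new radius r + a * r**3 for radius r and constant a."""
    return r + a * r ** 3


def fisheye_point(x, y, a):
    """Apply the radial map to a point (x, y) measured from the image center.

    The angle of the point is preserved; only its distance from the
    center changes.
    """
    r = math.hypot(x, y)
    if r == 0.0:
        return (0.0, 0.0)  # the center maps to itself
    scale = fisheye_radius(r, a) / r
    return (x * scale, y * scale)
```

With a positive constant, points are pushed outward by an amount that grows with the cube of the radius, so the center is nearly unchanged while the periphery is stretched.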

In any of the above or another example, the image processing device stores instructions on the memory that, when executed, cause the processor to perform a conformal mapping transformation on the plurality of monocular images to modify the radial component according to: $r_{\text{new}} = r^{\beta}$, where $\beta$ is a constant power of the radial component and $\beta > 1$.
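A small sketch of the power-law radial mapping follows. It assumes, for illustration, that the radius is normalized so that 0 is the image center and 1 is the image edge; the function name is hypothetical.

```python
def power_radius(r, beta):
    """Return r ** beta for a normalized radius r in [0, 1] and beta > 1."""
    if beta <= 1:
        raise ValueError("beta must be greater than 1")
    if not 0.0 <= r <= 1.0:
        raise ValueError("r is assumed to be normalized to [0, 1]")
    return r ** beta
```

Under this normalization the center and the edge stay fixed, while every intermediate radius moves toward the center, which compresses more of the scene into the inner part of the image.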

In any of the above or another embodiment, the image processing device may store instructions on the memory that, when executed, cause the processor to perform a polynomial transformation to map points from a wider annulus around a center of the plurality of monocular images to a thinner annulus, for forming the combined image.

In still another aspect, an apparatus may include a wearable spectacle having a housing. The wearable spectacle may have a controllable projector configured to project a patterned image onto the retina of the subject. The apparatus may further include an image processing device having a processor, memory, and an input device. The image processing device may be coupled to the controllable projector.

In some examples, the image processing device is configured to: (A) receive to the input device a visual scoring signal indicative of the patterned image experienced at the retina of the subject; (B) analyze the visual scoring signal, determine if a distortion experienced at the retina is present based on the visual scoring signal, and when a distortion is present, determine a pattern adjustment for the patterned image based on the visual scoring signal; and (C) adjust the patterned image based on the pattern adjustment to form a revised patterned image and project the revised patterned image onto the retina and repeat (A).
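Steps (A) through (C) form a feedback loop, which may be sketched as follows. This is a simplified illustration: the visual scoring signal is modeled as a single signed number, and the callback `project_and_score`, the tolerance, and the round limit are hypothetical names and values not taken from this disclosure.

```python
def refine_pattern(project_and_score, adjustment=0.0, tolerance=1e-3, max_rounds=20):
    """Iteratively adjust a projected pattern until no distortion is reported.

    `project_and_score(adjustment)` stands in for projecting the adjusted
    pattern and receiving the subject's scoring signal, modeled here as the
    residual distortion perceived by the subject.
    """
    for _ in range(max_rounds):
        distortion = project_and_score(adjustment)  # (A) receive the scoring signal
        if abs(distortion) <= tolerance:            # (B) is a distortion present?
            break
        adjustment -= distortion                    # (C) revise the pattern, then repeat (A)
    return adjustment
```

For a simulated subject who perceives a constant offset of 0.3, the loop settles on an adjustment of -0.3 after one correction.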

In yet another aspect, an apparatus may include a wearable spectacle device, the wearable spectacle device may include at least one optical element for passing an image of a visible scene to the subject. The wearable spectacle device may further include at least one digital monitor corresponding to the at least one optical element, the at least one digital monitor being configured to overlay a corrective imaging element over an image of the visible scene of the at least one optical element. The apparatus may also include an image processing device having a processor and a memory. The image processing device may be coupled to the at least one digital monitor.

In one example, the image processing device is configured to generate the corrective imaging element as a peripheral element of the image of the visible scene to correct for a peripheral visual field defect or generate the corrective imaging element as a central element of the image of the visible scene to correct for a central visual field defect. In any of the above or another example, the image processing device may be configured to display the corrective imaging element over the visible scene to the subject.

In any of the above or another example, the corrective imaging element is an adjusted intensity of the peripheral element relative to a central image region of the visible scene or an adjusted intensity of the central element relative to a peripheral image region of the visible scene. In any of the above or another example, the image processing device is configured to: adjust the position and/or composition of the corrective imaging element in response to detected movement of the eye of the subject. In any of the above or another example, the image processing device is configured to: identify one or more affected regions of one or both eyes of the subject; and determine the corrective imaging element that compensates for the one or more affected regions.

In any of the above or another example, the image processing device is configured to: in a testing mode, (i) instruct the at least one digital monitor to display a plurality of testing stimuli to the subject over one or more testing locations in a testing visual field, (ii) instruct an image sensor of the apparatus to capture position indications of the pupil physical condition and/or line of sight during the displaying of the plurality of testing stimuli over the one or more testing locations, and (iii) determine the one or more affected regions in the testing visual field and determine one or more vision pathologies of the subject. In any of the above or another example, the plurality of stimuli differ in contrast levels with respect to each other and with respect to a baseline contrast level.

In any of the above or another example, the at least one digital monitor is contained within a layer of the at least one optical element. In any of the above or another example, the layer is an inner layer or an outer layer of the at least one optical element.

BRIEF DESCRIPTION OF THE DRAWINGS

The figures described below depict various aspects of the system and methods disclosed herein. It should be understood that each figure depicts an example of aspects of the present systems and methods.

FIGS. 1A-1C illustrate views of an example spectacles device according to various embodiments described herein;

FIG. 2 schematically illustrates an example vision system according to various embodiments described herein;

FIG. 3 schematically illustrates a device with a vision correction framework implemented on an image processing device and a wearable spectacles device according to various embodiments described herein;

FIG. 4 illustrates an example process including a testing mode and a visioning mode according to various embodiments described herein;

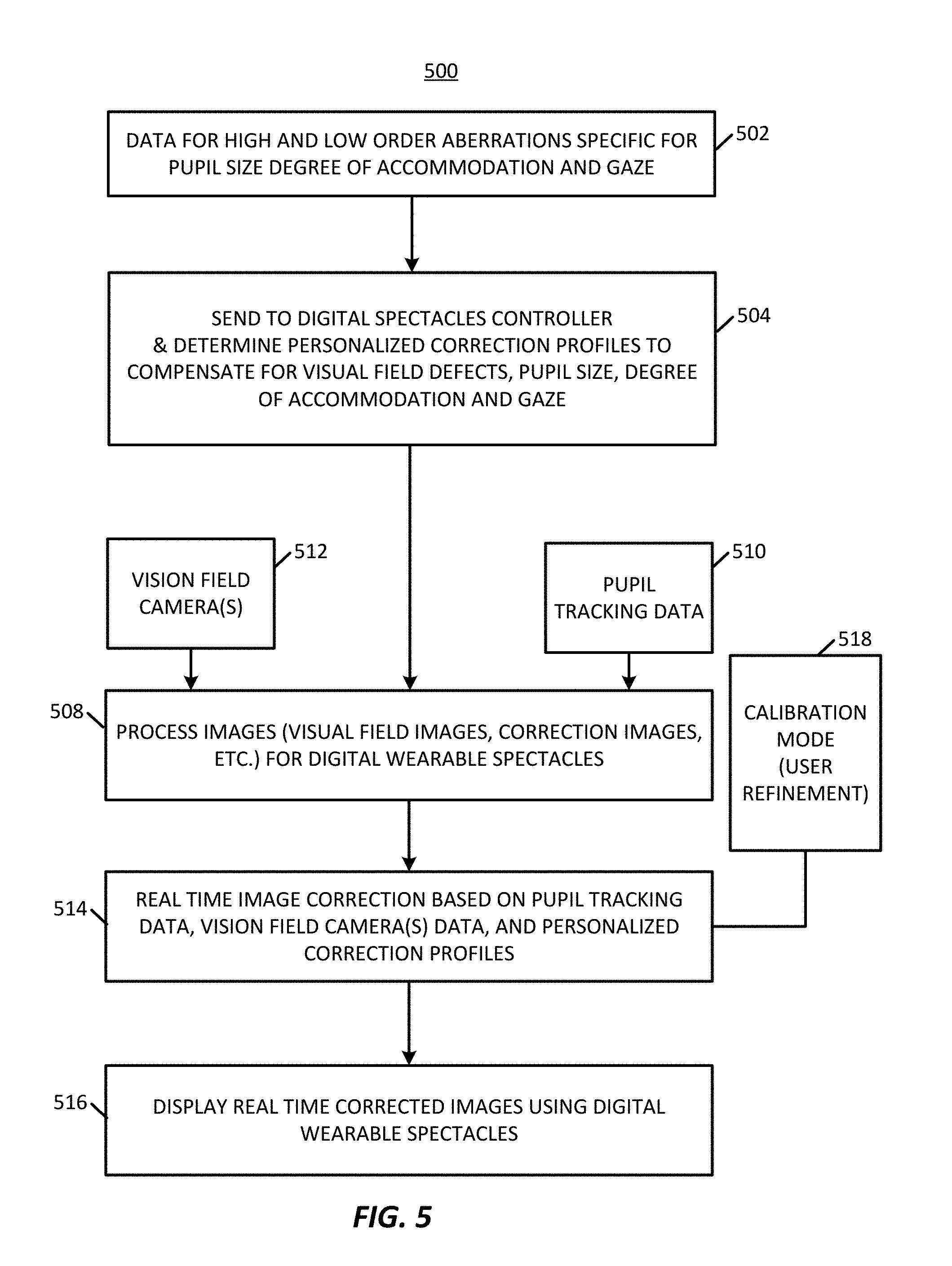

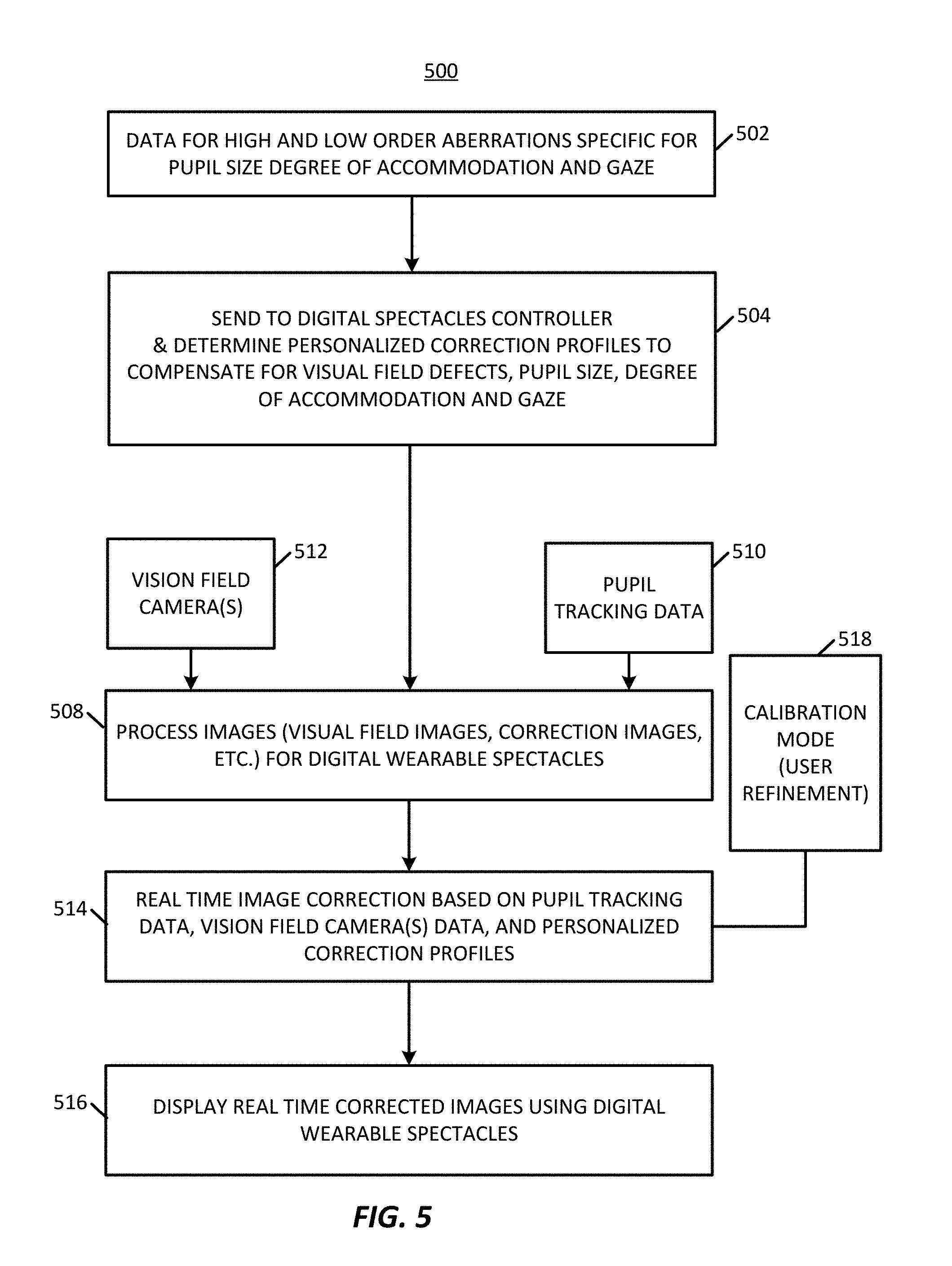

FIG. 5 illustrates an example process including a testing mode and a visioning mode according to various embodiments described herein;

FIGS. 6A-6C illustrate an example assessment protocol for a testing mode process including pupil tracking according to various embodiments described herein;

FIGS. 7A-7C illustrate an example assessment protocol for a testing mode process including pupil tracking according to various embodiments described herein;

FIG. 8 schematically illustrates a workflow including a testing module that generates and presents a plurality of visual stimuli to a user through a wearable spectacles device according to various embodiments described herein;

FIG. 9 illustrates a testing mode process according to various embodiments described herein;

FIG. 10 illustrates a process for an artificial intelligence corrective algorithm mode that may be implemented as part of the testing mode according to various embodiments described herein;

FIG. 11 shows a test image according to various embodiments described herein;

FIG. 12 illustrates development of a simulated vision image including overlaying an impaired visual field on a test image for presentation to a subject according to various embodiments described herein;

FIG. 13 illustrates examples of different correction transformations that may be applied to an image and presented to a subject according to various embodiments described herein;

FIG. 14 illustrates example translation methods according to various embodiments described herein;

FIG. 15 schematically illustrates an example of a machine learning framework according to various embodiments described herein;

FIG. 16 illustrates a process of an AI system of a machine learning framework according to various embodiments described herein;

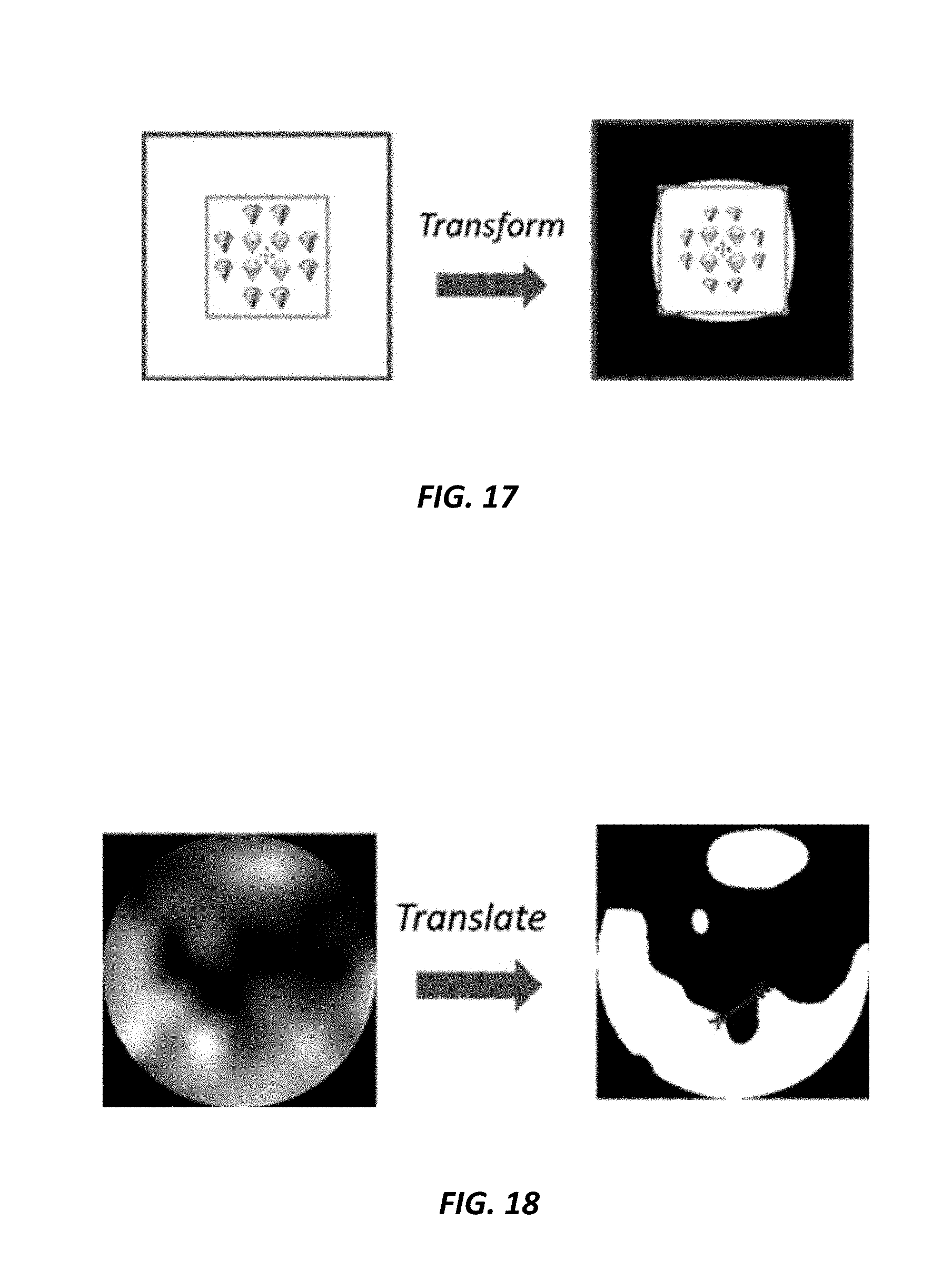

FIG. 17 illustrates an example transformation of a test image according to various embodiments described herein;

FIG. 18 illustrates an example translation of a test image according to various embodiments described herein;

FIG. 19 is a graphical user interface illustrating various aspects of an implementation of an AI system according to various embodiments described herein;

FIG. 20 schematically illustrates a framework for an AI system including a feed-forward neural network according to various embodiments described herein;

FIGS. 21 & 22 illustrate example testing mode processes of an AI system including an AI neural network and an AI algorithm optimization process, respectively, according to various embodiments described herein;

FIG. 23 illustrates an example process implementing testing and visioning modes according to various embodiments described herein;

FIG. 24 illustrates a wearable spectacles device comprising custom reality wearable spectacles that allow an image from the environment to pass through a portion thereof wherein a peripheral field of a viewer is allowed to pass through and a central region is blocked according to various embodiments described herein;

FIG. 25 illustrates a wearable spectacles device comprising custom reality wearable spectacles that allow an image from the environment to pass through a portion thereof wherein a central region of a viewer is allowed to pass through and a peripheral field region is blocked according to various embodiments described herein;

FIG. 26 illustrates a normal binocular vision for a subject where a monocular image from the left eye and from the right eye are combined into a single perceived image having a macular central area and a peripheral visual field area surrounding the central area;

FIG. 27 illustrates a tunnel vision condition wherein a peripheral area is not visible to a subject;

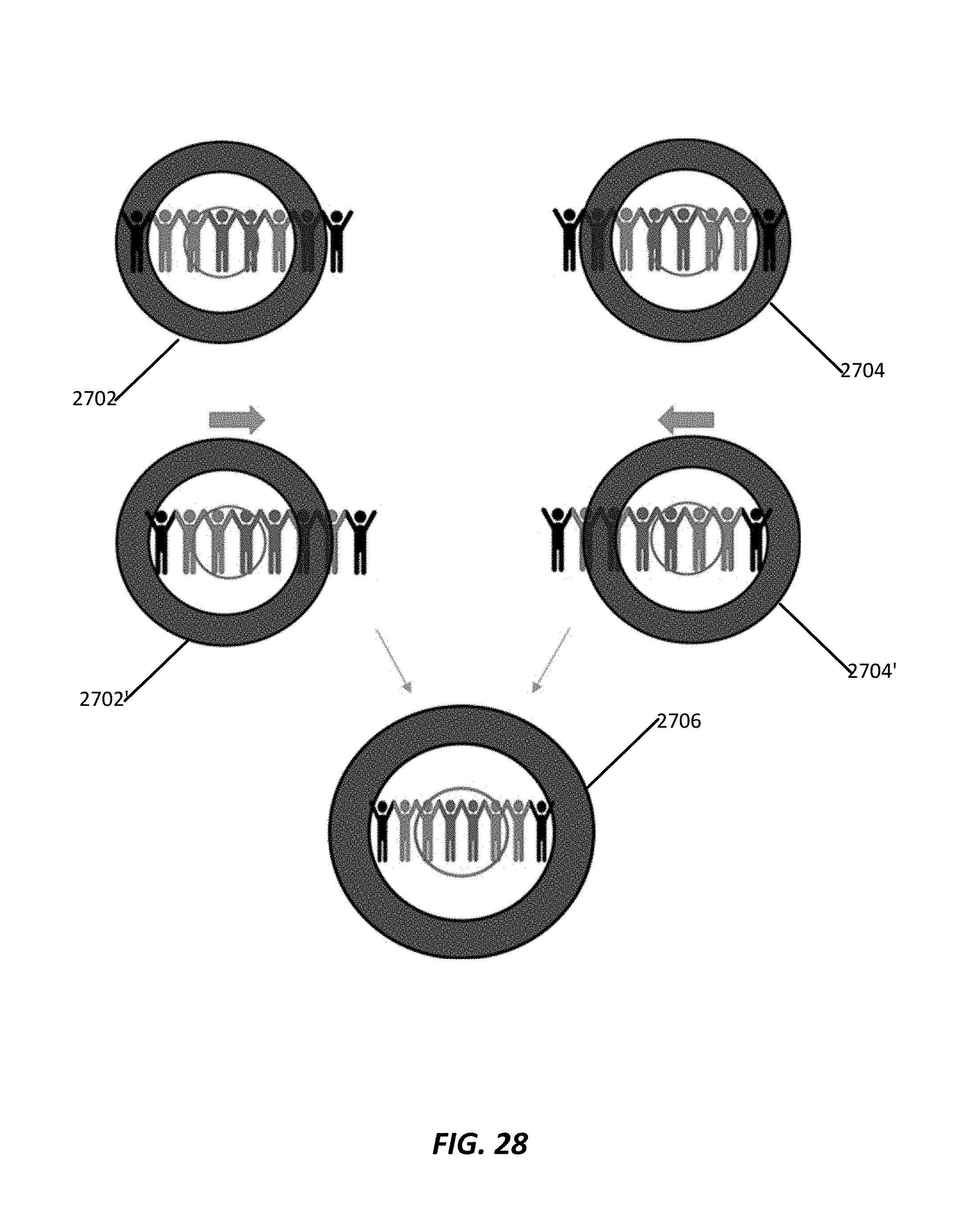

FIG. 28 illustrates an image shifting technique to enhance vision or to correct a tunnel vision condition according to various embodiments described herein;

FIG. 29 illustrates an image resizing transformation technique to enhance vision or preserve central visual acuity while expanding the visual field according to various embodiments described herein;

FIG. 30 illustrates a binocular view field expansion technique according to various embodiments described herein;

FIG. 31A illustrates a technique for assessing dry eye and corneal irregularities including projecting a pattern onto the corneal surface and imaging the corneal surface reflecting the pattern according to various embodiments described herein;

FIG. 31B schematically illustrates presentation of a reference image comprising a grid displayed to a subject or projected onto a cornea or retina of the subject via wearable spectacles according to various embodiments described herein;

FIG. 31C illustrates an example grid for manipulation by a subject according to various embodiments described herein;

FIG. 31D illustrates an example manipulation of the grid illustrated in FIG. 31C according to various embodiments described herein;

FIG. 31E illustrates a scene as it should be perceived by the subject according to various embodiments described herein;

FIG. 31F illustrates an example corrected visual field that, when provided to a subject with a visual distortion determined by the grid technique, results in that subject perceiving the visual field as shown in FIG. 31E according to various embodiments described herein;

FIG. 31G illustrates a display including a manipulable grid onto which a subject may communicate distortions within a visual field according to various embodiments described herein;

FIG. 32 is an image of a corneal surface reflecting a pattern projected onto the corneal surface according to various embodiments described herein;

FIG. 33 illustrates an example of a normal pattern reflection according to various embodiments described herein;

FIG. 34 illustrates an example of an abnormal pattern reflection according to various embodiments described herein;

FIG. 35A illustrates a fast thresholding strategy for a testing mode including four contrast staircase stimuli covering a central 40 degree radius using 52 stimuli sequences at predetermined locations according to various embodiments described herein;

FIG. 35B shows a timing diagram showing five steps (a-e) of a testing sequence at one stimulus location according to various embodiments described herein;

FIG. 36 illustrates calculation of widths and heights of pixels bounding the largest bright field according to various embodiments described herein;

FIG. 37 illustrates a width map and height map according to various embodiments described herein;

FIG. 38 illustrates test images used to test four main quadrants of a visual field according to various embodiments described herein;

FIG. 39A illustrates an example visual field view prior to remapping according to various embodiments described herein;

FIG. 39B illustrates an example visual field view following remapping according to various embodiments described herein; and

FIGS. 40A-40C illustrate an example custom reality spectacles device according to various embodiments described herein.

DETAILED DESCRIPTION

The present application provides techniques and devices for testing, identifying, and compensating for ocular pathologies affecting the visual field of a patient. These ocular pathologies include, for example, pathologies of the optic nerve such as glaucoma, optic neuritis, and optic neuropathies; pathologies of the retina such as macular degeneration and retinitis pigmentosa; pathologies of the visual pathway such as microvascular strokes and tumors; and other conditions such as presbyopia, strabismus, high and low optical aberrations, monocular vision, anisometropia and aniseikonia, light sensitivity, anisocoria, refractive errors, and astigmatism.

The techniques herein provide vision systems, spectacle devices, and associated systems and devices thereof, for testing, enhancing, and/or correcting vision or a perception of a visual field.

One or more devices of the vision system may be configured for use within one or more of the systems described herein or may be configured for separate use. For example, in various embodiments, a vision system comprises a spectacle device. It will be appreciated that devices described herein may include one or more systems comprising one or more devices. Thus devices may include one or more associated systems or devices.

The vision system may include an image processing device (which may also be referred to as an image processor, computing device, or the like) configured to perform the herein described image processing operations of the vision system. As described herein, the image processing device may be fully or partially integrated with the spectacles device or may be fully or partially external, e.g., remote, to the spectacles device. Such external image processing devices may be configured for wired or wireless communication with the spectacles device.

Exemplary embodiments of the spectacle device include a wearable spectacles device. Some embodiments of the spectacle device may employ digital aspects with respect to one or more of imaging, image processing, communication, display, or other functionalities described here. Various embodiments of the spectacles device, either alone or together with other systems or devices, may be configured to provide a personalized, customized visually corrected vision field to a subject. In some examples, a spectacles device may comprise digital therapeutic corrective spectacles (also termed herein "DTS"). One exemplary spectacles device may comprise wearable digital spectacles for use by individuals for purposes other than therapeutic correction. For example, the spectacles device may be configured to enhance normal vision, field of view, or perception thereof, of a subject, e.g., by increasing or decreasing field of view, modification of a horizontal, vertical, and/or diagonal angle of view, modification of light provided to one or more regions, modification of a size of an object or region within one or more regions of a field of view, and/or relocation of an object or region to another region of the field of view. The spectacle devices herein may be activated by voice activation, remote control (e.g., cellular phone) or body movement (e.g., winks or hard double blinks), in some examples.

Embodiments of vision systems or spectacle devices may include one or more digital monitors. Vision systems or spectacle devices may also include one or more image sensors. In some embodiments, image sensors may include one or more outward directed image sensors for imaging a viewing environment of the subject (which may also be referred to as a user, wearer, or patient), which may typically correspond to a field of view originating from the eyes of a subject, but which may be taken from other origination points in some configurations. Outward directed image sensors may comprise, for example, one or more cameras positioned to capture all or a portion of one or more fields of view, which may include more or less of a field of view relative to a human. In these or other embodiments, one or more image sensors may include one or more inward directed image sensors for imaging aspects of a subject such as a physical state of a pupil of the subject. For example, a spectacles device may include inward directed image sensors such as cameras (visible, infrared, etc.) that capture and track line of sight, limbus, pupil data for a subject, corneal data for a subject, a retinal image, or an image of a pattern reflected on the cornea or the retina. Line of sight, also known as the visual axis, may be determined by tracking the pupil, the limbus (which is the edge between the cornea and the sclera), or even blood vessels on the surface of the eye or inside the eye. Thus, image sensors may be used to image the limbus and blood vessels, as well as the pupil.

Some vision systems or spectacle devices may include one or more displays, which may be referred to as digital monitors. Digital monitors may include a monitor for generating a display on a screen, which may include projection onto a screen such as a heads-up display, or a monitor for projection of the display onto one or both eyes of a subject. For example, a spectacles device may include one or more digital monitors for display of images to the subject. These or other vision systems or spectacle devices may include projectors configured to display images to a subject by projecting images on a monitor, e.g., a screen such as a glass, or onto an eye of the subject, e.g., retinal projection. In some examples, the devices include a headset with two miniature external viewfinder cameras. Headsets may include, for example, a wearable spectacles device as described herein. In some examples, spectacle devices may include a spectacles device configured to recreate an entire visual field as a digitized corrected image to provide an optimized rendition of the visual field. In some examples, the vision systems or spectacle devices may include a spectacle device comprising an augmented reality (AR) or virtual reality (VR) headset. In these or other examples, the systems and devices may include spectacle devices wherein the visual field may be viewed by a user, but the visual field has been corrected by the introduction of a corrected image.

In some examples, a vision system or spectacles device may be configured to process and/or display images to correct lower and/or higher order aberrations and/or refractive errors and thus provide improved customized personalized vision to the subject. In some examples, systems or devices including a spectacles device may be configured to treat a myriad of ocular anomalies. Ocular anomalies include, for example, various classes of diagnosable conditions related to one or more of visual field defects, decreased vision effects, field of vision distortions, secondary effects, and double vision. The ocular anomalies that can be corrected through the operation of the systems or devices described herein may include, but are not limited to, one or more of presbyopia, double vision caused by strabismus, glaucoma, age related macular degeneration, monocular vision, anisometropia and aniseikonia, light sensitivity, and anisocoria; pathologies of the optic nerve such as glaucoma, optic neuritis, and optic neuropathies; pathologies of the retina such as macular degeneration and retinitis pigmentosa; pathologies of the visual pathway such as microvascular strokes and tumors; and other conditions such as presbyopia, strabismus, high and low optical aberrations, refractive errors, and astigmatism.

In exemplary embodiments, a vision system or spectacles device may be configured to provide an enhanced and/or corrected image displayed to a subject, either through digital recreation or through augmenting the visual field. In exemplary embodiments, the spectacles device may include one or more projectors configured to project a digital recreated or augmented image into the eye of the subject, projecting onto the retina, via retinal projection.

In exemplary embodiments, a vision system or spectacles devices may be configured to correct or enhance the field of view of the subject, e.g., correcting or increasing the angle of vision of the subject. In some examples, the central and peripheral view regions are affected differently (e.g., through zooming in or zooming out the images displayed or projected to the subject eye) to enhance the view angle of the subject or to increase the detail perceived by the subject.

In exemplary embodiments, a vision system or spectacles device may be configured to compensate for changes in the localized brightness of the visual field for a patient, e.g., as determined from visual field test results, which may be performed together with the spectacles devices or separately. The spectacles devices may be configured to compensate by providing increased brightness to areas of the visual field with lower sensitivity as compared to areas with normal sensitivity. In some examples, spectacle devices or associated systems are configured to register and track these lower sensitivity areas using the pupil and visual axes. The spectacle devices or associated systems herein employ compensation techniques for these lower sensitivity regions to provide a homogeneous image from the perception of the subject. These compensation techniques remove the localized clouding perceived by the subject in the low sensitivity areas to improve visual performance and increase the functional visual field of the subject.
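The brightness compensation described above may be sketched as a per-region gain map. The representation of sensitivity as a relative value between 0 and 1, the inverse-sensitivity gain rule, the gain cap `max_gain`, and the function names are all assumptions chosen for illustration; the disclosure does not specify them.

```python
def brightness_gains(relative_sensitivity, max_gain=4.0):
    """Return a gain per region: 1.0 where sensitivity is normal, higher where it is reduced.

    `relative_sensitivity` is a grid of values in [0, 1], where 1.0 means
    normal sensitivity. Gains are capped at `max_gain`.
    """
    return [
        [min(max_gain, 1.0 / s) if s > 0 else max_gain for s in row]
        for row in relative_sensitivity
    ]


def compensate(image, gains, max_value=255):
    """Scale each pixel by its regional gain, clipping to the display range."""
    return [
        [min(max_value, round(p * g)) for p, g in zip(image_row, gain_row)]
        for image_row, gain_row in zip(image, gains)
    ]
```

In a wearable device the gain map would also have to be re-registered to the tracked pupil position so that it follows the low sensitivity areas as the eye moves.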

In exemplary embodiments, a vision system or spectacles device may include a testing mode, e.g., to identify and test aspects of a subject's vision or functional visual field. In this or other embodiments, spectacle devices may include a visioning mode, e.g., to provide enhanced or corrected vision or visual field, which may be in real time and/or personalized to the subject. In some embodiments, spectacle devices or associated systems include both a testing mode and visioning mode, which may be configured to utilize follow-up or maintenance testing procedures for streamlined reprogramming of visioning mode processing as the subject's vision changes. Some embodiments of the spectacles device may include a programming interface configured to receive updates with respect to testing mode operations and/or visioning mode operations. For example, the programming interface may include a wired or wireless communication port including a receiver or transceiver. In some embodiments, the spectacles device may be configured to receive updates comprising testing results performed by a testing mode of the system or another system or device for integration with the visioning mode operations. In some embodiments, updates may include data or instructions provided by the subject, such as via a user interface in signal communication with the programming interface via the communication port. The data or instructions may be conveyed by the user via interactions with a user interface comprising a tablet, smart phone, computer, or a peripheral device in a testing mode, which may include a feedback mode, as described herein or during operation of a visioning mode, which may similarly include a feedback mode. Some embodiments may include a user interface mounted on a spectacle device such as a switch, touch sensor, capacitance sensor, or other interface through which a user may convey or adjust parameters with respect to the vision or corrective profile by which the visioning mode processes and presents images to the subject.

In exemplary embodiments, a vision system or spectacles device may include one or more outward directed image sensors, e.g., cameras, positioned to image a field of vision of the subject and, after processing the image, display images on a monitor, e.g., display screen, glass of the spectacles, or project the images into an eye of the subject wearing the spectacles device. The processing of the image may comprise customizing the image to treat and/or correct for the aforementioned conditions or to enhance vision or functional visual field. As introduced above, spectacles devices may include or associate with one or more inward directed image sensors, e.g., cameras, that observe the subject's eye, line of sight, pupil size, and/or position of the limbus to register and/or adjust for the aforementioned corrections or enhancements.

In exemplary embodiments, a vision system or spectacles device may be configured to correct for lower and/or higher order visual aberrations in a dynamic manner. The techniques may detect the size of the pupil, accommodative status, and change in line of sight and thus change the visual aberration corrective profile accordingly. The higher and/or lower order aberrations may be captured in relation to the pupil size, state of accommodation, and direction of gaze using an aberrometer to allow the spectacles device to create such a dynamic corrective profile. The image projected to the subject by the techniques herein may be inversely distorted according to actual aberrations of the subject so that his/her own aberrations are re-inversed to provide the best vision. Some embodiments may implement techniques to detect the state of accommodation by detecting the signs of the near reflex, namely miosis (decrease in the size of the pupil) and convergence (inward crossing of the pupil). For example, spectacles devices may include a pupil tracker to detect pupil size and/or a line of sight tracker to detect direction of gaze. Those inputs allow the techniques to determine the correction profile to be displayed.

In exemplary embodiments, the present techniques may be implemented to provide vision correction that automatically focuses images displayed via the one or more monitors to provide near vision. To further augment and enhance near vision, the inward directed image sensors, e.g., cameras, may detect if the subject is trying to look at a near target by detecting signs of the near reflex, miosis (decrease in pupil size) and convergence (inward movement of the eye), and autofocus to provide better near vision. Near correction for reading a newspaper is different than that for reading from a computer monitor, for instance. Example spectacle devices and/or associated systems described herein may be configured to determine how far away an object is by quantifying the amount of the near reflex exerted by the subject and thus provide a corresponding focusing correction.
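One way to quantify the convergence component of the near reflex is simple triangulation, sketched below. The symmetric-fixation geometry, the use of interpupillary distance, and the function names are illustrative assumptions; the disclosure does not state how the distance is computed.

```python
import math


def fixation_distance_m(ipd_m, vergence_deg):
    """Estimate the distance to a target fixated straight ahead.

    `ipd_m` is the interpupillary distance in meters and `vergence_deg`
    is the total angle between the two lines of sight in degrees.
    Parallel lines of sight (no convergence) are treated as distance vision.
    """
    if vergence_deg <= 0:
        return math.inf
    return (ipd_m / 2) / math.tan(math.radians(vergence_deg) / 2)


def focus_demand_diopters(distance_m):
    """Return the focusing demand in diopters, the reciprocal of distance in meters."""
    return 0.0 if math.isinf(distance_m) else 1.0 / distance_m
```

For example, a target at 0.4 m corresponds to a focusing demand of 2.5 diopters, while a target at 0.67 m, closer to a typical monitor distance, corresponds to about 1.5 diopters.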

In exemplary embodiments, a vision system or spectacle device may be configured to correct for double vision secondary to strabismus in a dynamic manner. For example, pupil and line of sight tracking may operatively cooperate with inward directed image sensors to track pupil, limbus or eye structure such as blood vessels of the subject and line of sight. This tracking may be utilized to inform the displacement of images displayed to the subject, e.g., projected or displayed on one or more monitors or projected onto the eyes of the subject, in a dynamic way to compensate for the strabismus and to prevent double vision in all gazes.

In exemplary embodiments, a vision system or spectacle device may be configured to improve vision and safety of patients with visual field defects, such as glaucoma patients. Such subjects may have missing parts of visual fields. For instance, if a car or person is in a blind part of the subject's vision, then that car or person is invisible to that subject. The vision systems and spectacles devices described herein may be implemented to correct for these blind spots. For example, the visual field defect may be detected using a visual field testing mode of the vision system or spectacle device. In some examples, software executed by example systems and devices herein may be configured to redistribute images captured by an outward directed image sensor, e.g., camera, to the subject's actual functional visual field. The actual visual field may be dynamically projected in reference to the pupil or line of sight, e.g., utilizing data obtained by pupil and line of sight tracking. In other words, the present techniques may bring the picture of the car or person that is within the subject's blind spot to a position outside of the subject's blind spot, thereby improving safety and functionality of those subjects.
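The redistribution of a captured scene into the functional visual field may be sketched for a single image row as follows. The boolean `seeing` mask (assumed to come from a visual field test), the nearest-neighbor resampling, and the function name are illustrative assumptions rather than the disclosed method.

```python
def remap_row(scene_row, seeing):
    """Squeeze a full scene row into only the columns the subject can see.

    `seeing[i]` is True where column i lies in the functional visual field.
    The whole row is resampled (nearest neighbor) across the seeing columns;
    blind columns are left at 0.
    """
    width = len(scene_row)
    targets = [i for i, ok in enumerate(seeing) if ok]
    out = [0] * width
    for k, col in enumerate(targets):
        src = (k * width) // len(targets)  # sample evenly across the full row
        out[col] = scene_row[src]
    return out
```

Content that originally fell in the blind columns is thereby sampled into seeing columns, at the cost of reduced resolution across the row.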

In patients with age related macular degeneration or other conditions that affect the macula of the eye, who have a central blind spot, the vision system or spectacle device may be configured to distribute an image or portion thereof to the peripheral or paracentral part of their functional visual field. The present techniques may project parts of the image of interest to healthy parts of the retina, for example, and avoid the unhealthy parts of the retina. In some examples, a vision system or spectacle device may include a testing mode to delineate seeing and blind parts of the visual field that is used during modification of the image to direct its distribution.

In monocular patients or patients having poor vision in one eye, the vision system or spectacles device may capture a normal binocular visual field and distribute the normal binocular visual field to the actual functional visual field of both eyes to provide the patient with the widest possible field of view. Indeed, these spectacles devices may be implemented to augment the visual field of a normal subject, for military engagement and other applications, to provide a subject with an enhanced visual field. For example, the spectacles device may be implemented to enhance a visual field of a subject in athletic applications, physician applications, driving applications, etc.

Anisometropia results from unequal refractive power between the two eyes of a subject. In various embodiments, the vision system or spectacle device may be configured to correct for anisometropia by modifying the image size to create images of equal size and displaying or projecting them to both eyes to avoid visual disturbances.
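The size equalization described above can be sketched as simple arithmetic. The example below is illustrative only and assumes that a relative image magnification has already been measured for each eye (for instance, 1.05 meaning the image appears 5% larger in that eye); the function names `equalizing_scales` and `scaled_size`, and the choice to shrink the larger image rather than enlarge the smaller one, are hypothetical.

```python
def equalizing_scales(mag_left, mag_right):
    """Return (scale_left, scale_right) such that
    scale_left * mag_left == scale_right * mag_right (up to rounding)."""
    if mag_left <= 0 or mag_right <= 0:
        raise ValueError("magnifications must be positive")
    # Assumption: match both eyes to the smaller perceived size,
    # so no image is ever upscaled beyond the display resolution.
    target = min(mag_left, mag_right)
    return target / mag_left, target / mag_right


def scaled_size(width, height, scale):
    """Pixel dimensions of an image after applying `scale`."""
    return max(1, round(width * scale)), max(1, round(height * scale))


if __name__ == "__main__":
    s_left, s_right = equalizing_scales(1.0, 1.05)
    print(scaled_size(1000, 800, s_left), scaled_size(1000, 800, s_right))
```

With these scale factors, each eye's displayed image would be resized before display so that the perceived sizes agree.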

Unlike the lenses of glass spectacles, which distort the visual field, for example by minification or magnification of the image of interest, the present techniques may operate independently of corrective lenses so as not to affect the visual field of subjects.

In some examples, the vision system or spectacle device may be configured to display or project light independent from the brightness of the surrounding environment. In one example, displayed or projected light may be adjusted automatically according to a size of a pupil as detected by the systems and/or devices or manually, e.g., via a user interface coupled to, e.g., in signal communication with, the spectacles device, as a patient requires. The pupil tends to constrict more in a bright environment and dilate in a less bright environment. As introduced above, the systems and devices herein may be configured to detect the degree of constriction/dilation and adjust for brightness accordingly, which may be in a personalized and customized manner. Subjects with anisocoria, for example, may use the present techniques to allow for adjustment of brightness for each eye separately. In some examples, this is done automatically by the system or device, as it detects the pupil size.
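One possible way to picture the automatic adjustment is a small feedback step that nudges display brightness according to the measured pupil diameter, applied to each eye independently. The sketch below is an assumption-laden illustration, not the described implementation: the text does not specify the control law, so the proportional rule, the target diameter of 4.0 mm, the gain, the normalized 0.0 to 1.0 brightness range, and the function names are all hypothetical.

```python
def adjust_brightness(current, pupil_mm, target_mm=4.0, gain=0.05):
    """One proportional control step for a single eye.

    Assumption: a pupil smaller than `target_mm` (constricted) suggests the
    display is too bright, so brightness is lowered; a larger pupil raises it.
    The result is clamped to the normalized range [0.0, 1.0].
    """
    error_mm = pupil_mm - target_mm
    return max(0.0, min(1.0, current + gain * error_mm))


def adjust_both_eyes(levels, pupils_mm, **kwargs):
    """Apply the step per eye, e.g. for a subject with anisocoria."""
    return {eye: adjust_brightness(levels[eye], pupils_mm[eye], **kwargs)
            for eye in levels}


if __name__ == "__main__":
    levels = {"left": 0.5, "right": 0.5}
    pupils = {"left": 3.0, "right": 5.0}  # unequal pupils
    print(adjust_both_eyes(levels, pupils))
```

Because each eye is handled separately, unequal pupil sizes lead to different brightness levels for the left and right displays.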

FIG. 1A illustrates an example spectacles device 100 forming a wearable device for a subject. In some embodiments, the spectacles device 100 may be a part of a visioning system as described herein. The spectacles device 100 includes a left eyepiece 102 and a right eyepiece 104. Each eyepiece 102 and 104 may contain and/or associate with a digital monitor configured to display (or project) recreated images to a respective eye of the subject. In various embodiments, digital monitors may include a display screen, projectors, and/or hardware to generate the image display on the display screen. It will be appreciated that digital monitors comprising projectors may be positioned at other locations to project images onto an eye of the subject or onto an eyepiece comprising a screen, glass, or other surface onto which images may be projected. In one embodiment, the left eyepiece 102 and right eyepiece 104 may be positioned with respect to a housing 106 to fit an orbital area on the subject such that each eyepiece 102, 104 is able to collect data and display/project image data, which in a further example includes displaying/projecting image data to a different eye.

Each eyepiece 102, 104 may further include one or more inward directed sensors 108, 110, which may be inward directed image sensors. In an example, inward directed sensors 108, 110 may include infrared cameras, photodetectors, or other infrared sensors, configured to track pupil movement and to determine and track visual axes of the subject. The inward directed sensors 108, 110, e.g., comprising infrared cameras, may be located in lower portions relative to the eyepieces 102, 104, so as to not block the visual field of the subject, whether their real visual field or a visual field displayed or projected to the subject. The inward directed sensors 108, 110 may be directionally aligned to point toward a presumed pupil region for better pupil and/or line of sight tracking. In some examples, the inward directed sensors 108, 110 may be embedded within the eyepieces 102, 104 to provide a continuous interior surface.

FIG. 1B illustrates a front view of the spectacles device 100, showing the front view of the eyepieces 102, 104, where respective outward directed image sensors 112, 114 comprising field of vision cameras are positioned. In other embodiments, fewer or additional outward directed image sensors 112, 114 may be provided. The outward directed image sensors 112, 114 may be configured to capture continuous images. The spectacles device 100 or associated vision system may be further configured to then correct and/or enhance the images, which may be in a customized manner based on the optical pathologies of the subject. The spectacles device 100 may further be configured to display the corrected and/or enhanced image to the subject via the monitors in a visioning mode. For example, the spectacles device may generate the corrected and/or enhanced image on a display screen associated with the eyepiece or adjacent region, project the image onto a display screen associated with the eyepiece or adjacent region, or project the image onto one or more eyes of the subject.

FIG. 1C is an image of an example constructed spectacles device 100 comprising eyepieces 102, 104 including two digital monitors, with focusing lenses 116, 118. In this example, only one inward directed optical sensor 110 is included for pupil and line of sight tracking; however, in other examples, multiple inward directed optical sensors 110 may be provided.

In exemplary embodiments, the spectacles device 100 may include a testing mode. In an example testing mode, the inward directed sensors 108, 110 track pupil movement and perform visual axis tracking (e.g., line of sight) in response to a testing protocol. In this or another example, the inward directed sensors 108, 110 may be configured to capture a reflection of a pattern reflected on the cornea and/or retina to detect distortions and irregularities of the cornea or the ocular optical system.

Testing mode may be used to perform visual assessments to identify ocular pathologies, such as high and/or low order aberrations; pathologies of the optic nerve such as glaucoma, optic neuritis, and optic neuropathies; pathologies of the retina such as macular degeneration and retinitis pigmentosa; pathologies of the visual pathway such as microvascular strokes and tumors; and other conditions such as presbyopia, strabismus, high and low optical aberrations, monocular vision, anisometropia and aniseikonia, light sensitivity, anisocoria, refractive errors, and astigmatism. In the testing mode, data may be collected for the particular subject and used to correct captured images before those images are displayed, which may include being projected as described herein, to the subject by the monitors.

In some examples, external sensors may be used to provide further data for assessing the visual field of the subject. For example, data used to correct the captured image may be obtained from external testing devices such as visual field testing devices, aberrometers, electro-oculograms, or visual evoked potential devices. Data obtained from those devices may be combined with pupil or line of sight tracking for visual axis determinations to create the corrective profile used to correct the images being projected or displayed to the viewer.

The spectacles device 100 may include a visioning mode, which may be in addition to or instead of a testing mode. In visioning mode, one or more outward directed image sensors 112, 114 capture images that are transmitted to an imaging processor for real-time image processing. The imaging processor may be embedded within, e.g., integrated or attached to, the spectacles device 100 or may be external thereto, such as associated with an external image processing device. The imaging processor may be a component of a visioning module and/or include a scene processing module as described elsewhere herein.

The spectacles device 100 may be communicatively coupled with one or more imaging processors through wired or wireless communications, such as through a wireless transceiver embedded within the spectacles device 100. An external imaging processor may include a computer such as a laptop computer, tablet, mobile phone, network server, or other computer processing devices, centralized or distributed, and may be characterized by one or more processors and one or more memories. In the discussed example, the captured images are processed in this external image processing device; however, in other examples, the captured images may be processed by an imaging processor embedded within the digital spectacles. The processed images, e.g., enhanced to improve functional visual field or other vision aspects and/or enhanced to correct for the visual field pathologies of the subject, are then transmitted to the spectacles device 100 and displayed by the monitors for viewing by the subject.

In an example operation of a vision system including the spectacles device, real-time image processing of captured images may be executed by an imaging processor, e.g., using a custom-built MATLAB (MathWorks, Natick, Mass.) code, that runs on a miniature computer embedded in the spectacles device. In other examples, the code may be run on an external image processing device or other computer wirelessly networked to communicate with the spectacles device. In one embodiment, the vision system, including the spectacles device, image processor, and associated instructions for executing visioning and/or testing modes, which may be embodied on the spectacles device alone or in combination with one or more external devices, e.g., laptop computer, may be operated in two modes, a visioning mode and a separate testing mode.

FIG. 2 illustrates an example vision system 200 including a spectacles device 202 communicatively coupled to a network 204 for communicating with a server 206, mobile cellular phone 208, or personal computer 210, any of which may contain a vision correction framework 212 for implementing the processing techniques herein, such as image processing techniques, which may include those with respect to the testing mode and/or visioning mode. In the illustrated example, the vision correction framework 212 includes a processor and a memory storing an operating system and applications for implementing the techniques herein, along with a transceiver for communicating with the spectacles device 202 over the network 204. The framework 212 contains a testing module 214, which includes a machine learning framework in the present example. The machine learning framework may be used along with a testing protocol executed by the testing module, to adaptively adjust the testing mode to more accurately assess ocular pathologies, in either a supervised or unsupervised manner. The result of the testing module operation may include development of a customized vision correction model 216 for a subject 218. A visioning module 220, which in some embodiments may also include a machine learning framework, may access customized vision correction models to generate corrected visual images for display by the spectacles device 202. The vision correction framework 212 may also include a scene processing module which may process images for use during testing mode and/or visioning mode operations and may include operations described above and elsewhere herein with respect to a processing module. As described above and elsewhere herein, in some embodiments, the spectacles device 202 may include all or a portion of the vision correction framework 212.

In the testing mode, the spectacles device 100 or 202, and in particular the one or more inward directed image sensors comprising tracking cameras, which may be positioned along an interior of the spectacles device 100 or 202, may be used to capture pupil and visual axis tracking data that is used to accurately register the processed images on the subject's pupil and visual axis.
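To make the idea of registering a processed image to the tracked visual axis more concrete, the sketch below translates a frame by a gaze offset so that its centre follows the line of sight. It is a minimal illustration under assumptions: the gaze offset is taken to be already converted from tracker output into whole display pixels (`dx`, `dy`), the frame is a plain list of rows, and the function name `register_to_gaze` is hypothetical.

```python
def register_to_gaze(frame, dx, dy, fill=0):
    """Translate `frame` by (dx, dy) pixels; positive dx moves content right,
    positive dy moves content down. Uncovered cells are set to `fill`."""
    height = len(frame)
    width = len(frame[0])
    out = [[fill] * width for _ in range(height)]
    for r in range(height):
        for c in range(width):
            src_r = r - dy
            src_c = c - dx
            # Copy only where the source pixel exists in the original frame.
            if 0 <= src_r < height and 0 <= src_c < width:
                out[r][c] = frame[src_r][src_c]
    return out


if __name__ == "__main__":
    frame = [[1, 2, 3],
             [4, 5, 6],
             [7, 8, 9]]
    # The subject looks one pixel to the right of the display centre.
    print(register_to_gaze(frame, dx=1, dy=0))
```

A practical system would likely use sub-pixel interpolation and account for rotation and per-eye calibration; the integer translation here only shows where the tracking data enters the pipeline.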

FIG. 3 illustrates a vision system 300 comprising a vision correction framework 302. The vision correction framework 302 may be implemented on an image processing device 304 and a spectacles device 306 for placing on a subject. The image processing device 304 may be contained entirely in an external image processing device or other computer, while in other examples all or part of the image processing device 304 may be implemented within the spectacles device 306.

The image processing device 304 may include a memory 308 storing instructions 310 for executing the testing and/or visioning modes described herein, which may include instructions for collecting high-resolution images of a subject from the spectacles device 306. In the visioning mode, the spectacles device 306 may capture real-time vision field image data as raw data, processed data, or pre-processed data. In the testing mode, the spectacles device may project testing images (such as the letters "text" or images of a vehicle or other object) for testing aspects of a vision field of a subject.

The spectacles device 306 may be communicatively connected to the image processing device 304 through a wired or wireless link. The wired link may be through a Universal Serial Bus (USB), IEEE 1394 (FireWire), Ethernet, or other wired communication protocol device. The wireless connection can be through any suitable wireless communication protocol, such as Wi-Fi, NFC, iBeacon, Bluetooth, Bluetooth Low Energy, etc.