Dynamic adjustment of frame rate conversion settings

Choudhury et al. July 16, 2019

U.S. patent number 10,354,394 [Application Number 15/703,764] was granted by the patent office on 2019-07-16 for dynamic adjustment of frame rate conversion settings. This patent grant is currently assigned to Dolby Laboratories Licensing Corporation. The grantee listed for this patent is Dolby Laboratories Licensing Corporation. Invention is credited to Robin Atkins, Tao Chen, Anustup Kumar Atanu Choudhury, Samir N. Hulyalkar.

| United States Patent | 10,354,394 |
|---|---|
| Choudhury et al. | July 16, 2019 |


Abstract

Motion characteristics related to the images are determined. A motion characteristics metadata portion is generated based on the motion characteristics, and is to be used for determining an optimal FRC operational mode with a downstream device for the images. The images are encoded into a video stream. The motion characteristics metadata portion is encoded into the video stream as a part of image metadata. The video stream is transmitted to the downstream device. The downstream device receives the video stream and operates the optimal FRC operational mode to generate, based on the images, additional images. The images and the additional images are rendered on a display device at an image refresh rate different from an input image refresh rate represented by images encoded in the video stream.

| Inventors: | Choudhury; Anustup Kumar Atanu (Palo Alto, CA), Chen; Tao (Palo Alto, CA), Atkins; Robin (San Jose, CA), Hulyalkar; Samir N. (Los Gatos, CA) |
|---|---|
| Applicant: | |
| Assignee: | Dolby Laboratories Licensing Corporation (San Francisco, CA) |
| Family ID: | 61620448 |
| Appl. No.: | 15/703,764 |
| Filed: | September 13, 2017 |

Prior Publication Data

| Document Identifier | Publication Date |
|---|---|
| US 20180082429 A1 | Mar 22, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Issue Date |
|---|---|---|---|
| 62395617 | Sep 16, 2016 | | |

| Current U.S. Class: | 1/1 |
|---|---|
| Current CPC Class: | H04N 21/2343 (20130101); H04N 21/234381 (20130101); H04N 21/2401 (20130101); G06T 7/207 (20170101); G06T 7/248 (20170101); H04N 7/01 (20130101); H04N 21/2353 (20130101); G06T 2207/10016 (20130101); G06T 2207/10024 (20130101); H04N 7/0127 (20130101) |
| Current International Class: | H04N 19/102 (20140101); H04N 19/137 (20140101); H04N 19/139 (20140101); H04N 19/157 (20140101); G06T 7/207 (20170101); H04N 19/114 (20140101); H04N 19/11 (20140101); H04N 21/235 (20110101); H04N 21/24 (20110101); H04N 7/01 (20060101); G06T 7/246 (20170101); H04N 21/2343 (20110101) |
| Field of Search: | 345/240.02 |


Primary Examiner: Saltarelli; Dominic D

Claims

The invention claimed is:

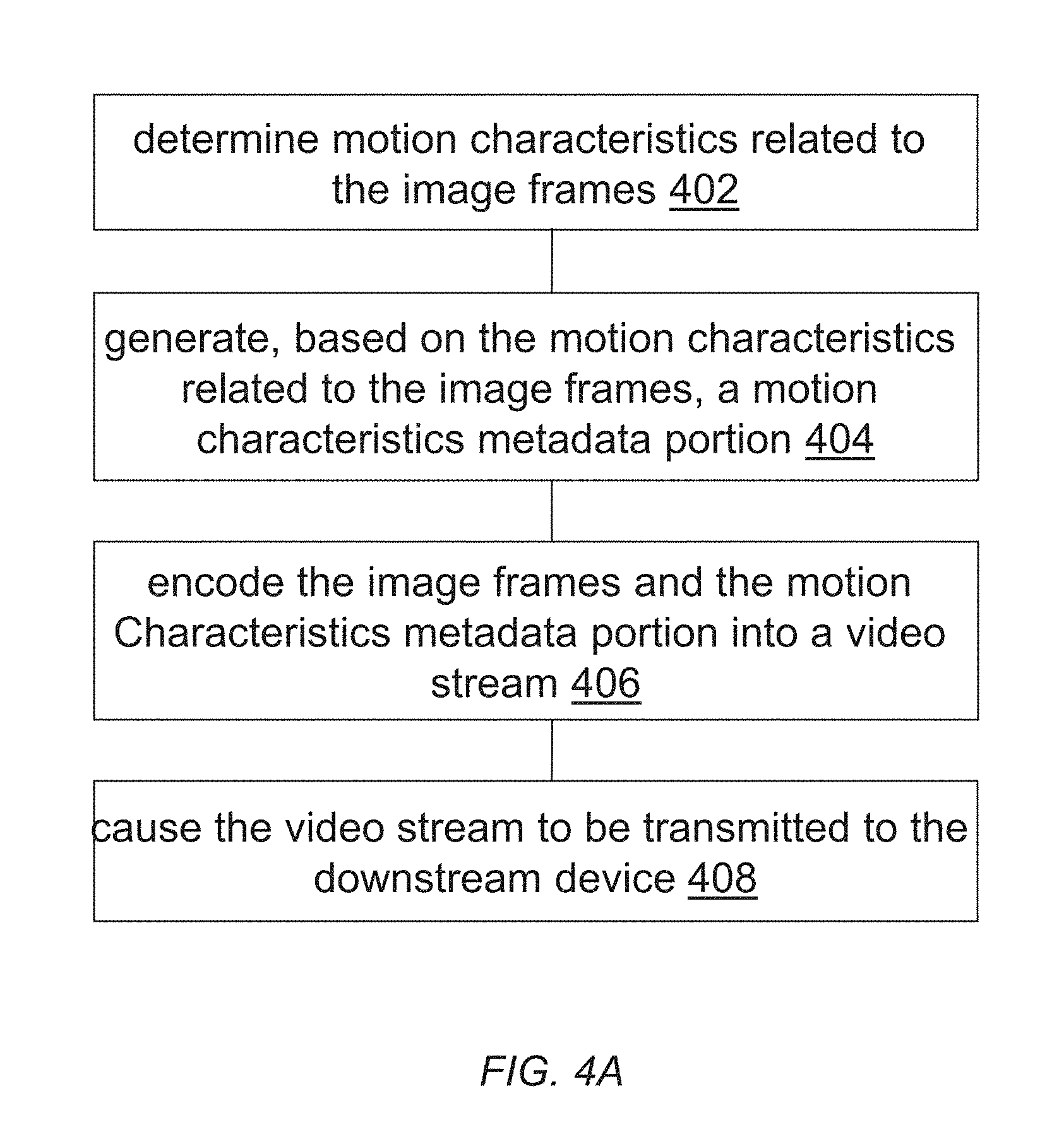

1. A method, comprising: determining one or more motion characteristics related to the one or more images; generating, based at least in part on the one or more motion characteristics related to the one or more images, a motion characteristics metadata portion, wherein the motion characteristics metadata portion is to be used for determining an optimal frame rate conversion (FRC) operational mode with a downstream device for the one or more images; encoding the one or more images into a video stream, wherein the motion characteristics metadata portion is encoded into the video stream as a part of image metadata wherein the motion characteristics metadata portion encoded in the video stream indicates whether the one or more motion characteristics are of a random motion type; causing the video stream to be transmitted to the downstream device.

2. The method of claim 1, wherein the one or more images comprises a current image and one or more reference images other than the current image.

3. The method of claim 1, further comprising: counting a total number of objects depicted in the one or more images; determining whether the total number of objects exceeds a component number threshold; in response to determining that the total number of objects does not exceed a component number threshold, generating the motion characteristics metadata portion for the one or more images to indicate avoiding generating additional images using image interpolation of the one or more images in the optimal FRC operational mode.

4. The method of claim 1, wherein the one or more motion characteristics comprise one or more of: histograms of components of motion vectors, standard deviations of components of motion vectors, kurtoses of components of motion vectors, or skews of components of motion vectors.

5. The method of claim 1, further comprising: comparing at least one motion characteristic in the one or more motion characteristics of the one or more images with a variance threshold; based on results of comparing the at least one motion characteristic with the variance threshold, determining whether image content visually depicted in the one or more images comprises relatively random motions.

6. The method of claim 1, further comprising: determining one or more relationships between one or more peaks of a histogram of components of motion vectors represented in the one or more motion characteristics of the one or more images and one or more other portions of the histogram; based on the one or more relationships, determining whether image content visually depicted in the one or more images comprises relatively random motions.

7. The method of claim 1, wherein the one or more images comprise a plurality of spatial regions; wherein the plurality of spatial regions respectively corresponds to a plurality of sets of motion characteristics; wherein each spatial region in the plurality of spatial regions corresponds to a respective set of motion characteristics; wherein the optimal FRC operational mode represents a FRC operational mode optimally selected from a plurality of FRC operational modes for a specific spatial region in the plurality of spatial regions of the one or more images; and wherein the motion characteristics metadata portion is to be used to determine a second optimal FRC operational mode with the downstream device that represents a second different FRC operational mode optimally selected from the plurality of FRC operational modes for a second specific spatial region in the plurality of spatial regions of the one or more images.

8. The method of claim 1, wherein at least one of the one or more motion characteristics related to the one or more images is determined based on image content visually depicted in one or more images.

9. The method of claim 1, wherein at least one of the one or more motion characteristics related to the one or more images is determined based on motion vectors in one or more images, and wherein the motion vectors are already pre-computed by an upstream device.

10. One or more non-transitory computer-readable media storing a sequence of instructions, which when executed by one or more computing processors, cause the one or more computing processors to perform the method as recited in claim 1.

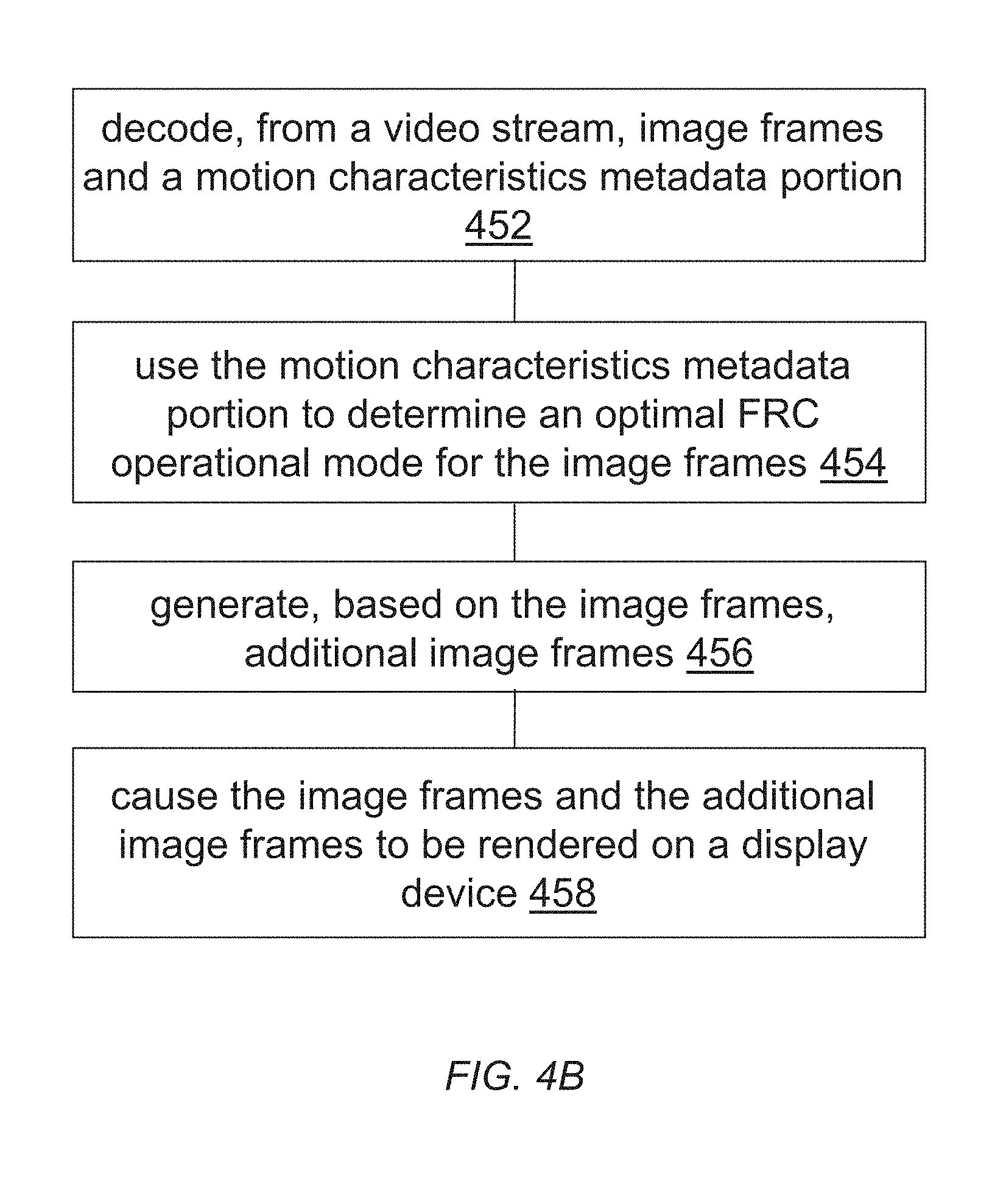

11. A method, comprising: decoding, from a video stream, one or more images and a motion characteristics metadata portion, wherein the motion characteristics metadata portion is generated by an upstream device for the one or more images based at least in part on one or more motion characteristics related to the one or more images, wherein the one or more motion characteristics are determined based on image content visually depicted in one or more images, wherein the motion characteristics metadata portion encoded in the video stream indicates whether the one or more motion characteristics are of a random motion type; using the motion characteristics metadata portion to determine an optimal frame rate conversion (FRC) operational mode for the one or more images; operating the optimal FRC operational mode to generate, based on the one or more images, one or more additional images in addition to the one or more images; causing the one or more images and the one or more additional images to be rendered on a display device.

12. The method of claim 11, wherein the optimal FRC operational mode represents a specific FRC operational mode selected from a plurality of FRC operational modes for the one or more images.

13. One or more non-transitory computer-readable media storing a sequence of instructions, which when executed by one or more computing processors, cause the one or more computing processors to perform the method as recited in claim 11.

14. An apparatus comprising one or more computing processors and one or more non-transitory computer-readable media storing a sequence of instructions, which when executed by the one or more computing processors, cause the one or more computing processors to perform the method as recited in claim 1.

15. An apparatus comprising one or more computing processors and one or more non-transitory computer-readable media storing a sequence of instructions, which when executed by the one or more computing processors, cause the one or more computing processors to perform the method as recited in claim 11.

Description

TECHNOLOGY

The present invention relates generally to images. More particularly, an embodiment of the present invention relates to dynamic adjustment of frame rate conversion settings.

BACKGROUND

Image interpolation, which computes a set of plausible interpolated images using two or more adjacent images, has varied applications including but not limited to frame rate conversion (FRC) between different broadcast standards, synthesis of virtual views, animating still images and so on.

Some TV manufacturing companies incorporate built-in motion interpolation technology in their products to perform FRC. A mechanism for FRC can be as simple as merely replicating received images to achieve the desired frame rate. For example, a TV running at an image refresh rate of 120 Hz and receiving a 30 Hz image sequence may simply display each image four consecutive times. The advantage of this solution is that the complexity of the system is very low, at the expense of possibly resulting in motion judder.
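By way of illustration only, the replication mechanism described above can be sketched as follows. The function name `replicate_frames` and its parameters are hypothetical and are introduced here only for this sketch, which assumes the display refresh rate is an integer multiple of the input frame rate.

```python
def replicate_frames(frames, input_hz, display_hz):
    """Repeat each input frame to reach the display refresh rate (no interpolation)."""
    if display_hz % input_hz != 0:
        # Assumption of this sketch: only integer rate ratios are handled.
        raise ValueError("display_hz must be an integer multiple of input_hz")
    repeat = display_hz // input_hz
    return [frame for frame in frames for _ in range(repeat)]


# A 30 Hz sequence shown on a 120 Hz display: each image appears four times.
output = replicate_frames(["A", "B"], input_hz=30, display_hz=120)
print(output)  # ['A', 'A', 'A', 'A', 'B', 'B', 'B', 'B']
```

Because no new image content is synthesized, this approach has very low complexity, but the repeated images are what may be perceived as motion judder.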

Complicated systems can be designed for motion interpolation. However, computational costs of such techniques can be quite high and can even result in noticeable lags in viewing image sequences involving motions.

The approaches described in this section are approaches that could be pursued, but not necessarily approaches that have been previously conceived or pursued. Therefore, unless otherwise indicated, it should not be assumed that any of the approaches described in this section qualify as prior art merely by virtue of their inclusion in this section. Similarly, issues identified with respect to one or more approaches should not be assumed to have been recognized in any prior art on the basis of this section, unless otherwise indicated.

BRIEF DESCRIPTION OF DRAWINGS

The present invention is illustrated by way of example, and not by way of limitation, in the figures of the accompanying drawings and in which like reference numerals refer to similar elements and in which:

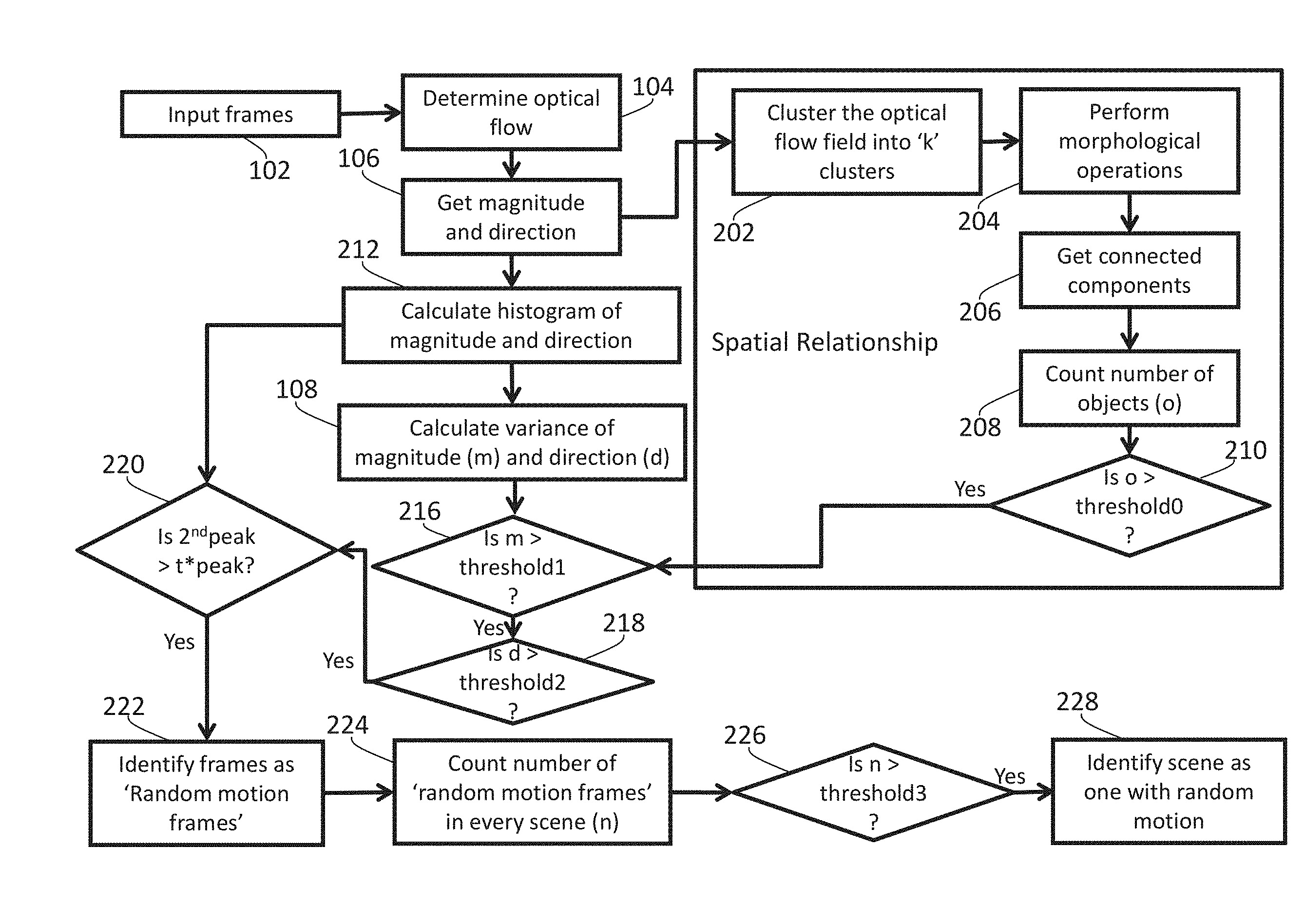

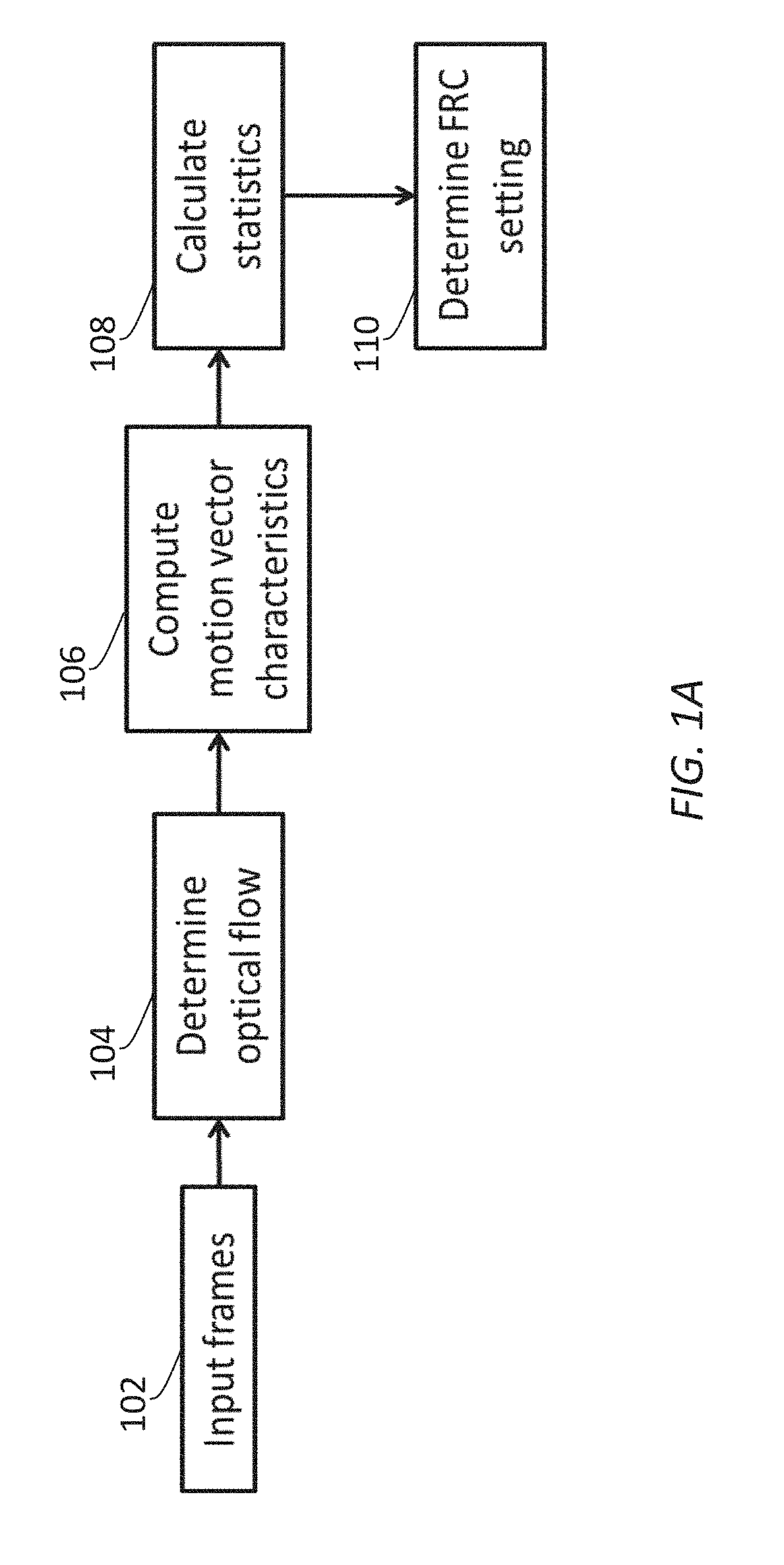

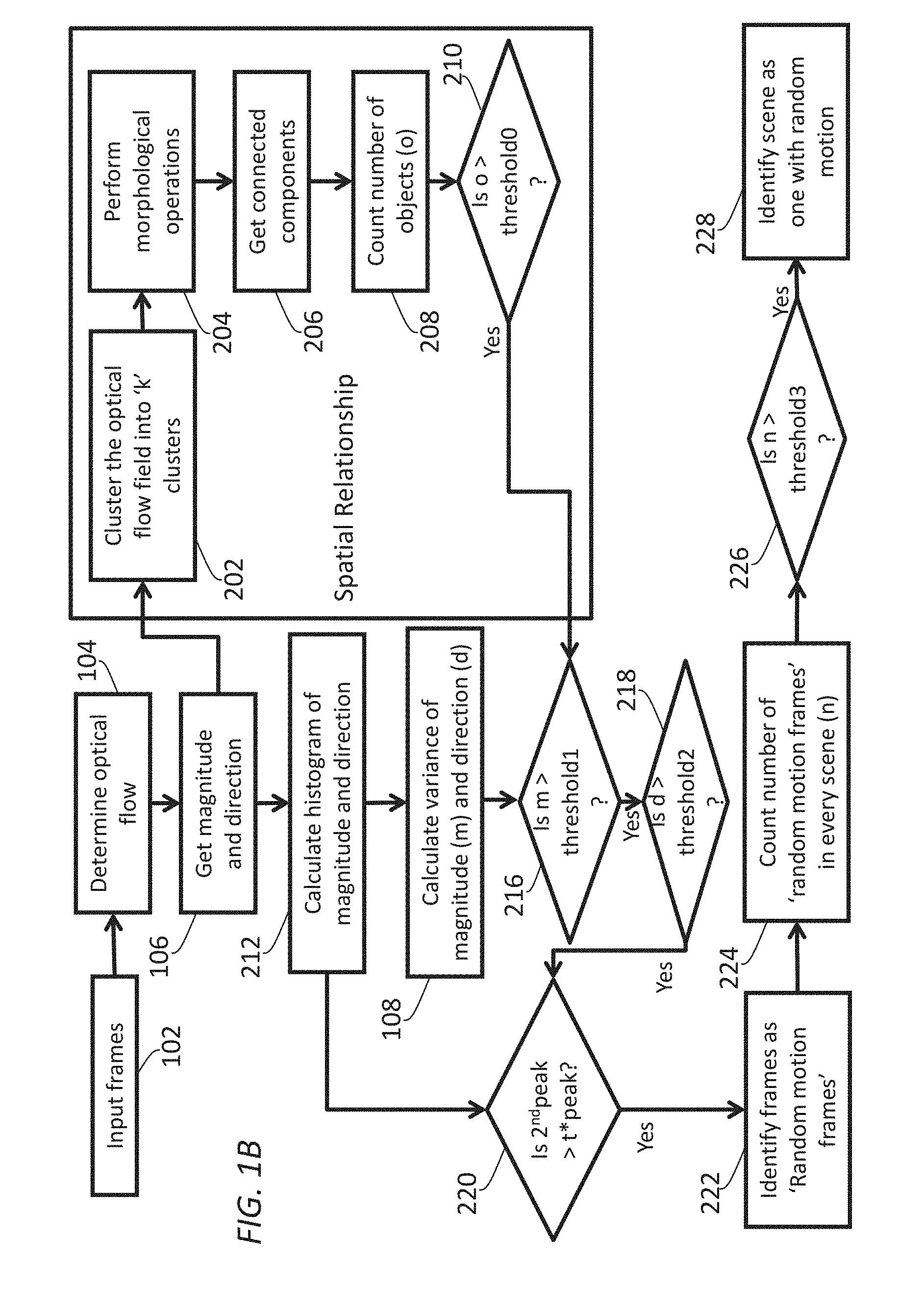

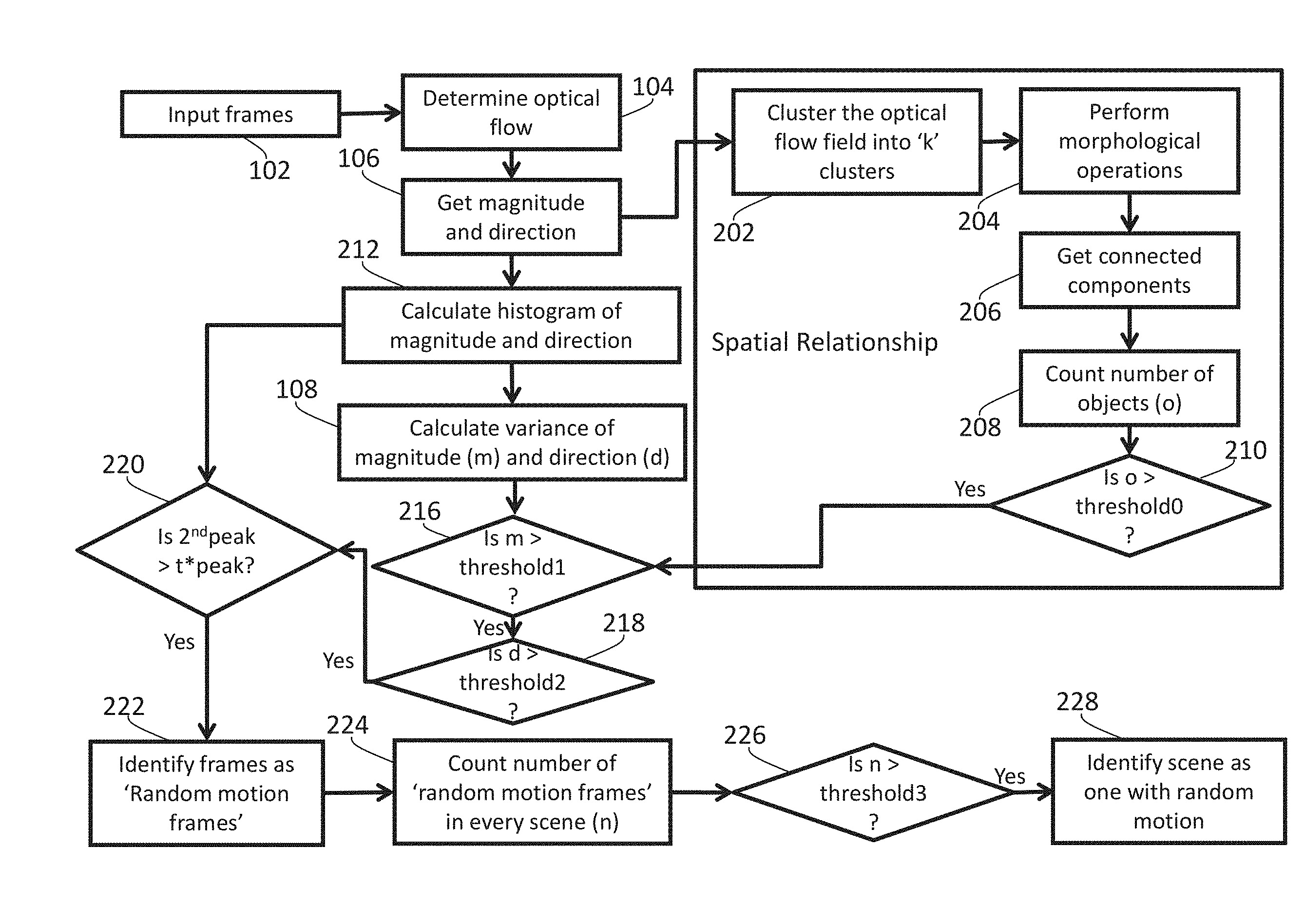

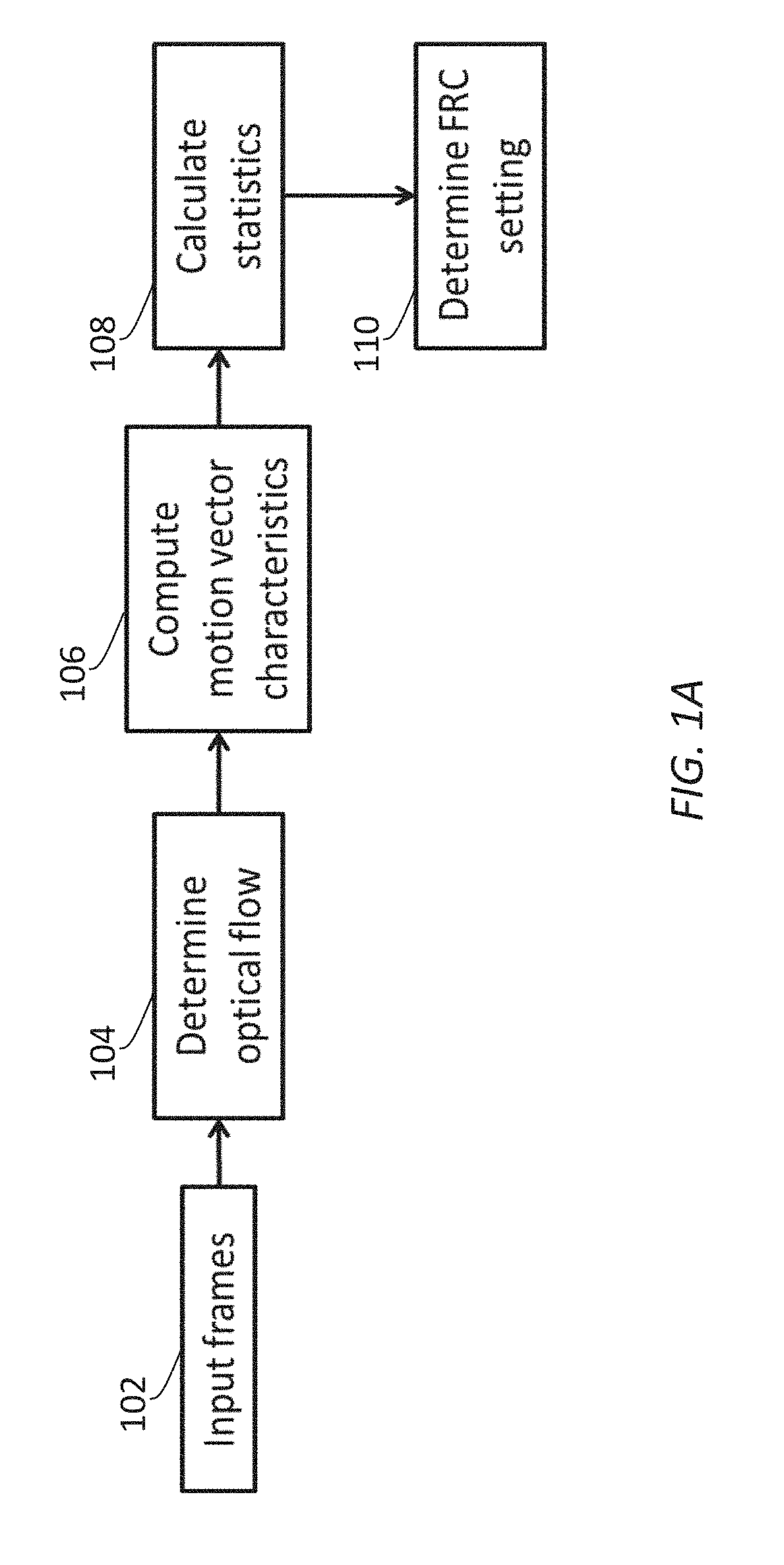

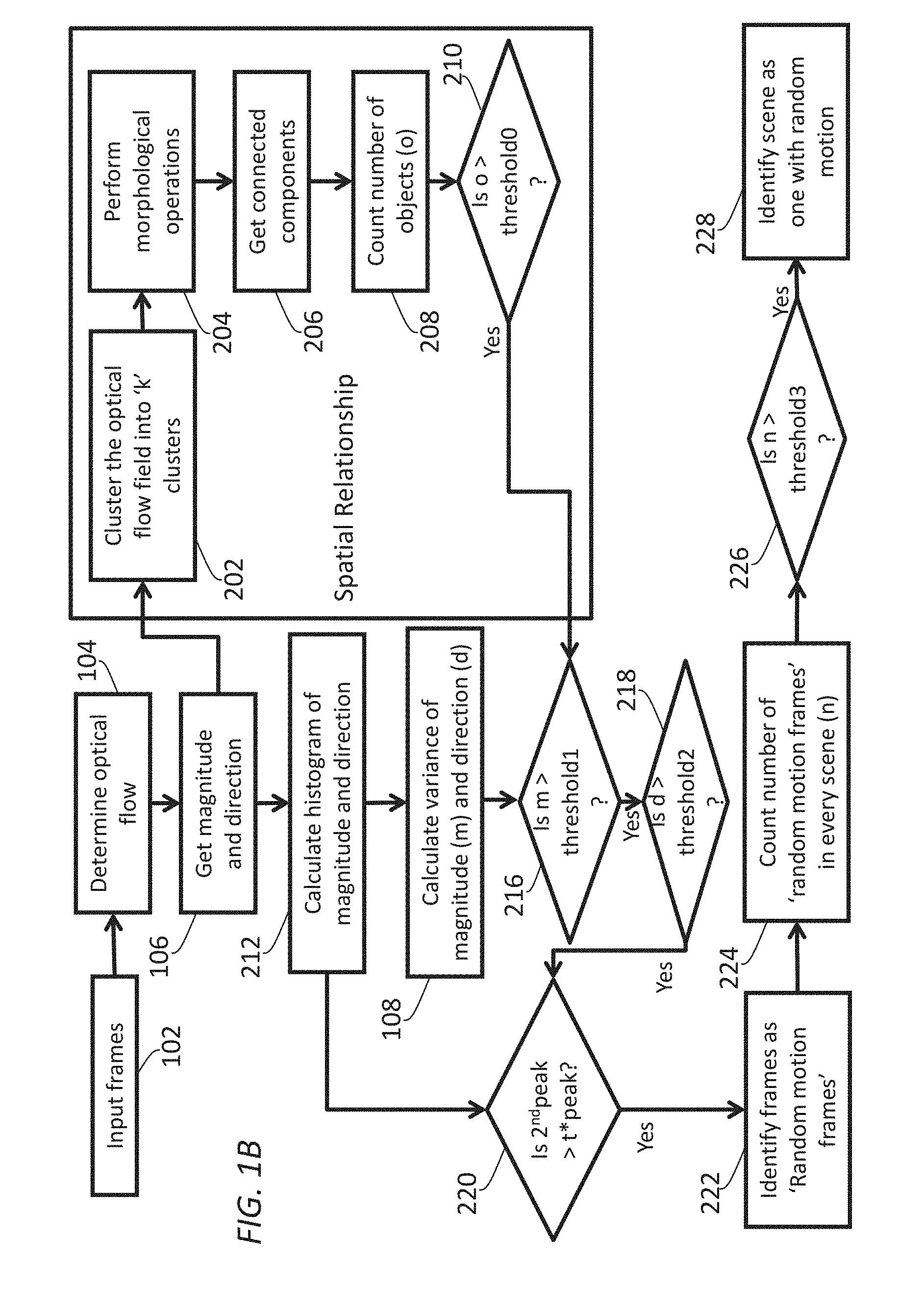

FIG. 1A and FIG. 1B illustrate example process flows for determining motion characteristics of content (e.g., used in determining optimal FRC operational modes, used in other operations, etc.);

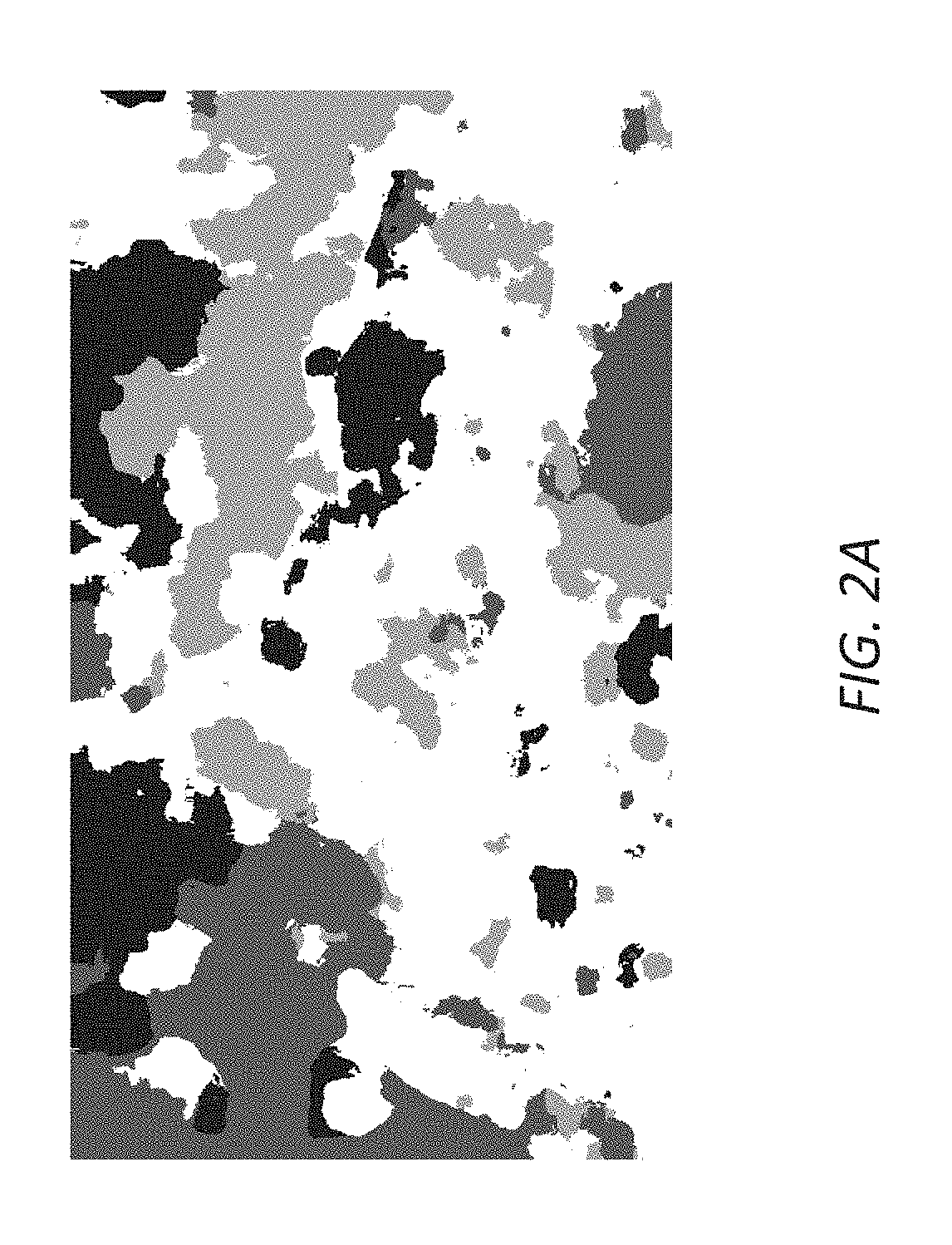

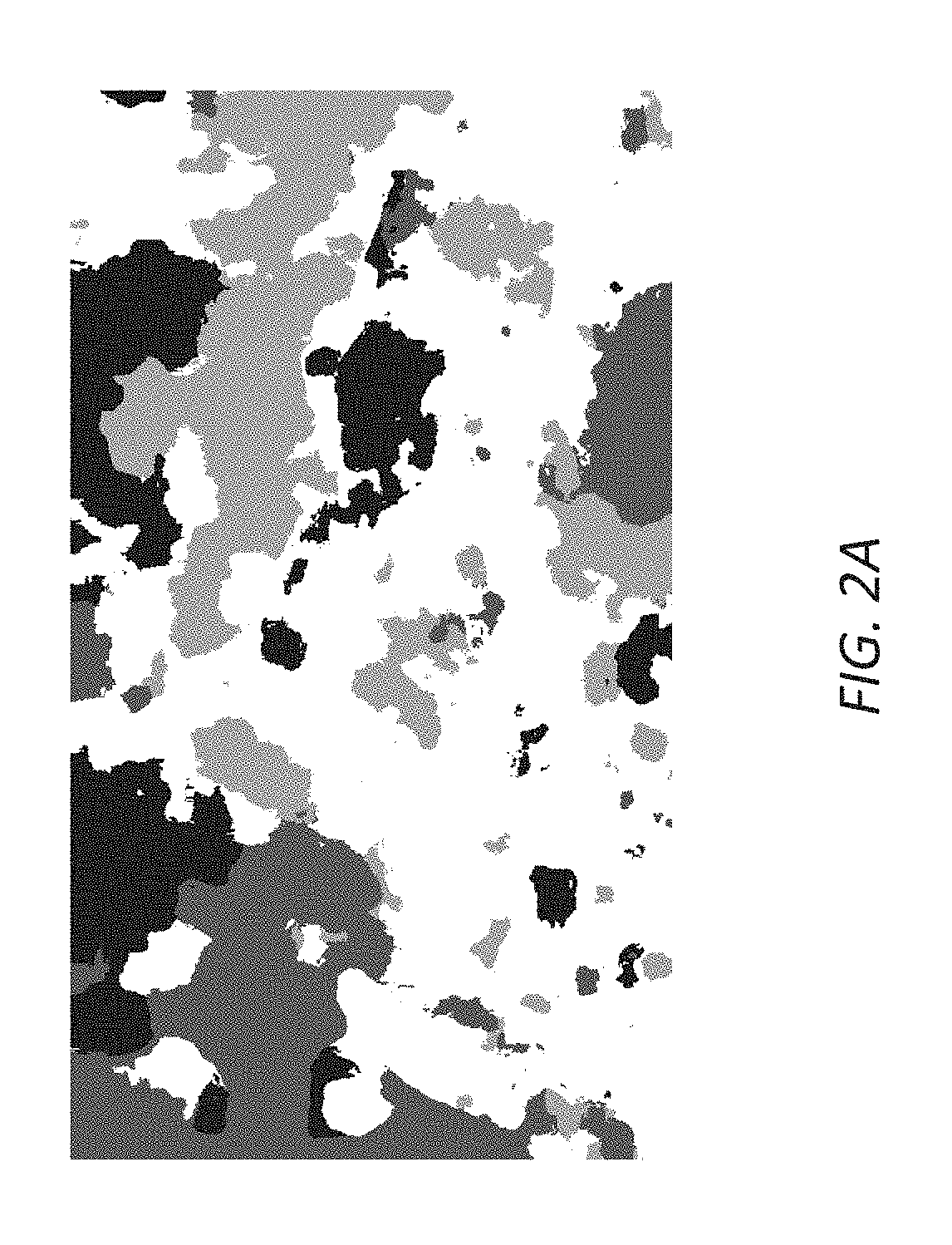

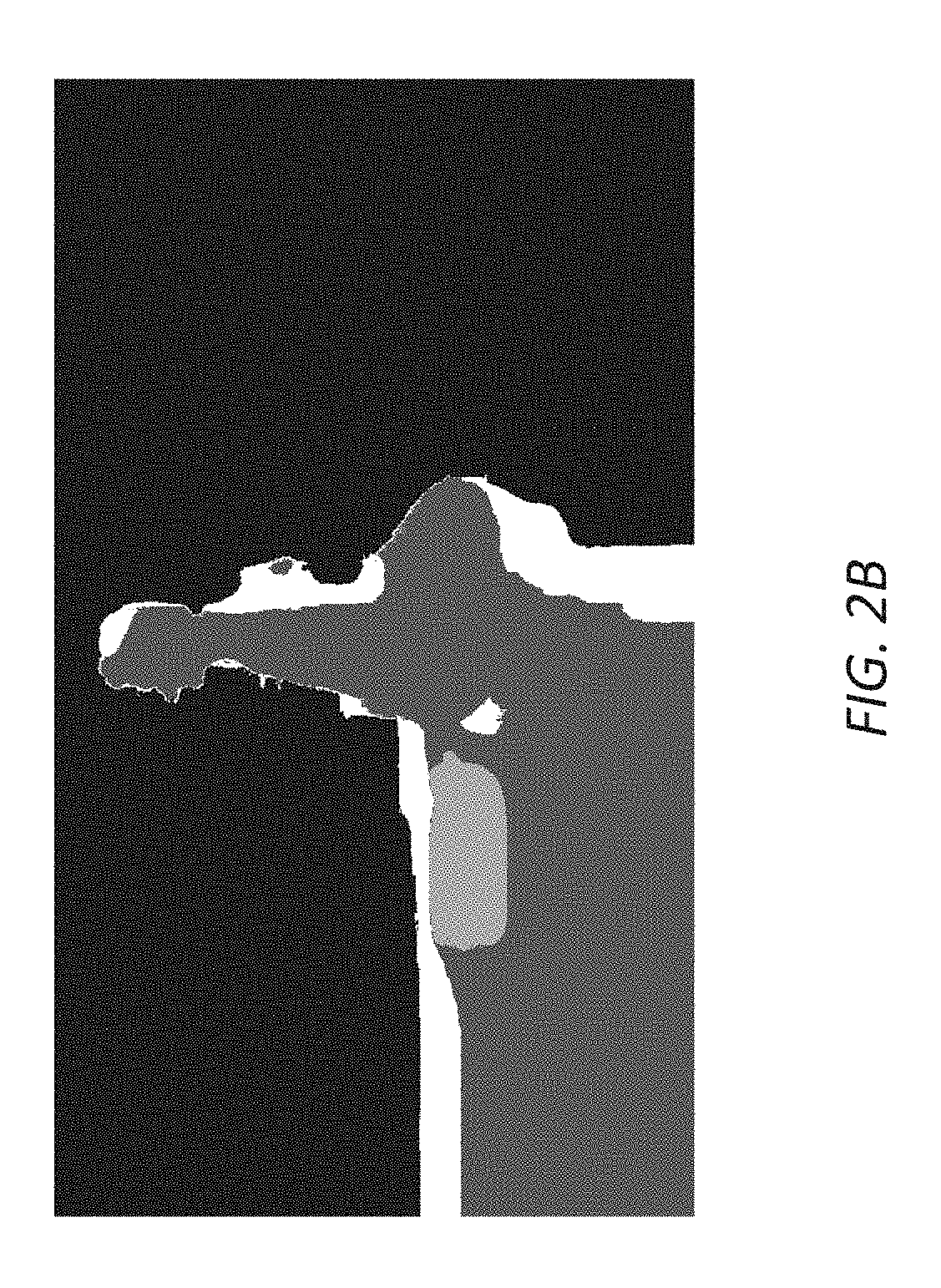

FIG. 2A and FIG. 2B illustrate example cluster maps in which different numbers of components/objects are detected;

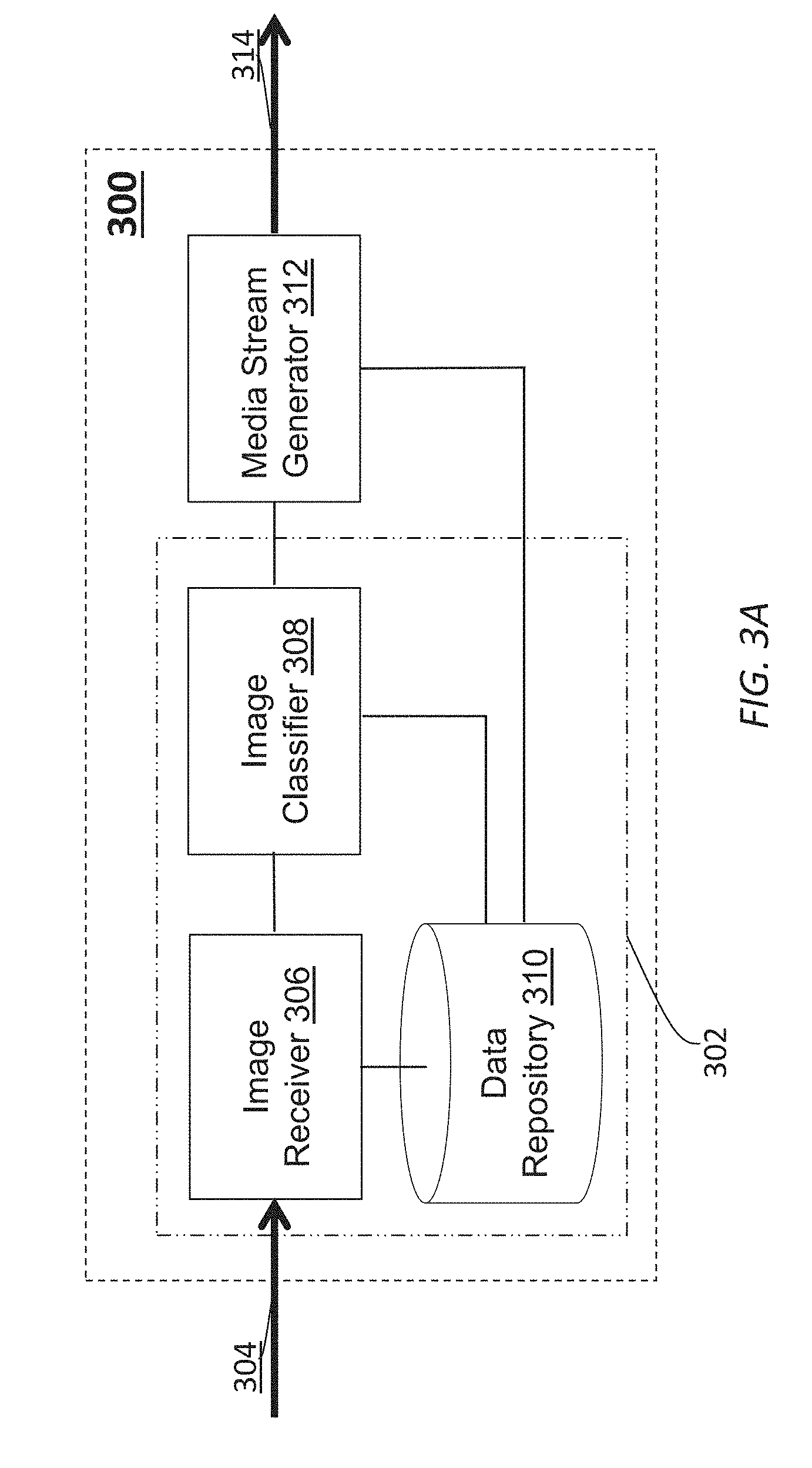

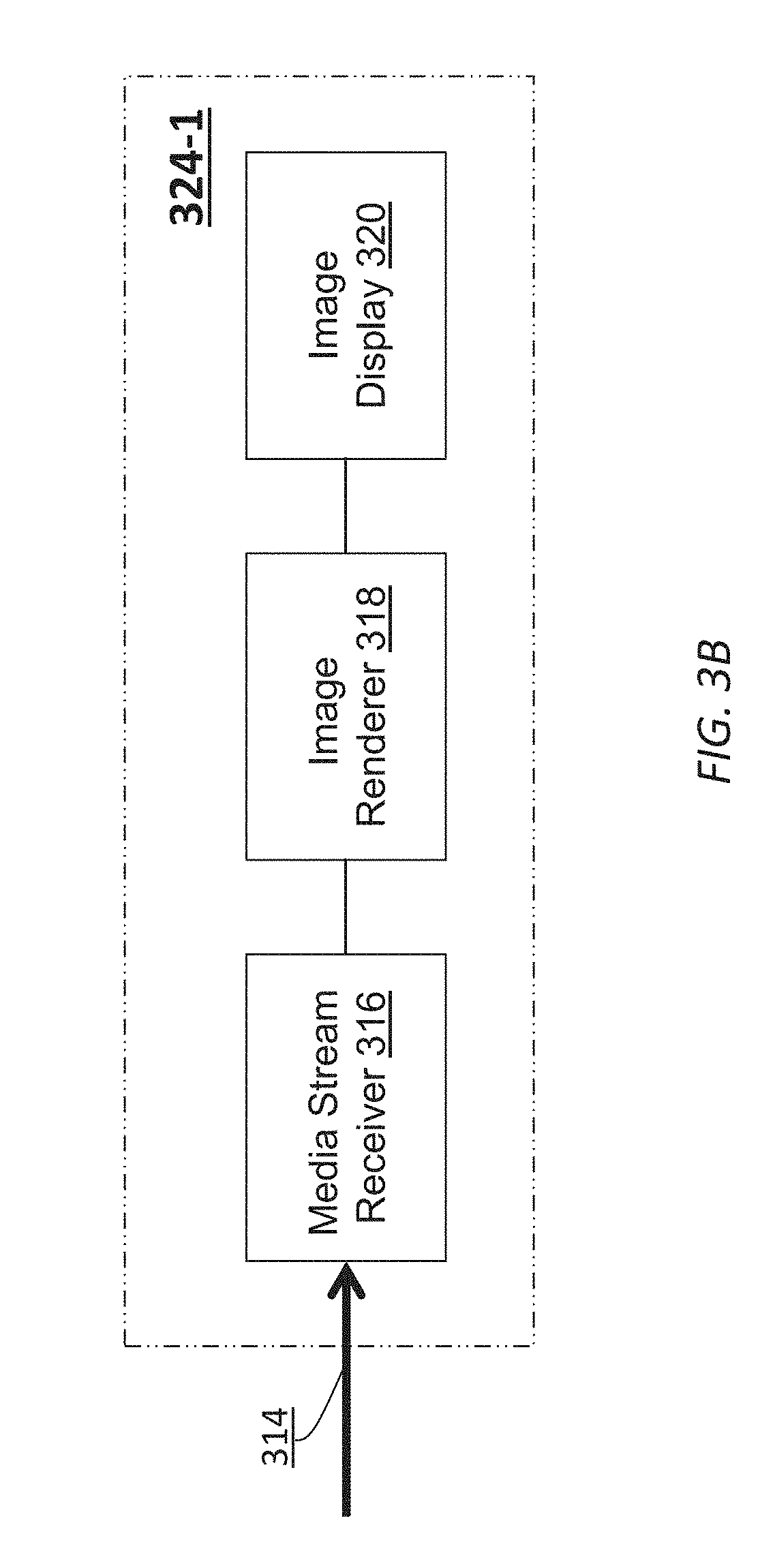

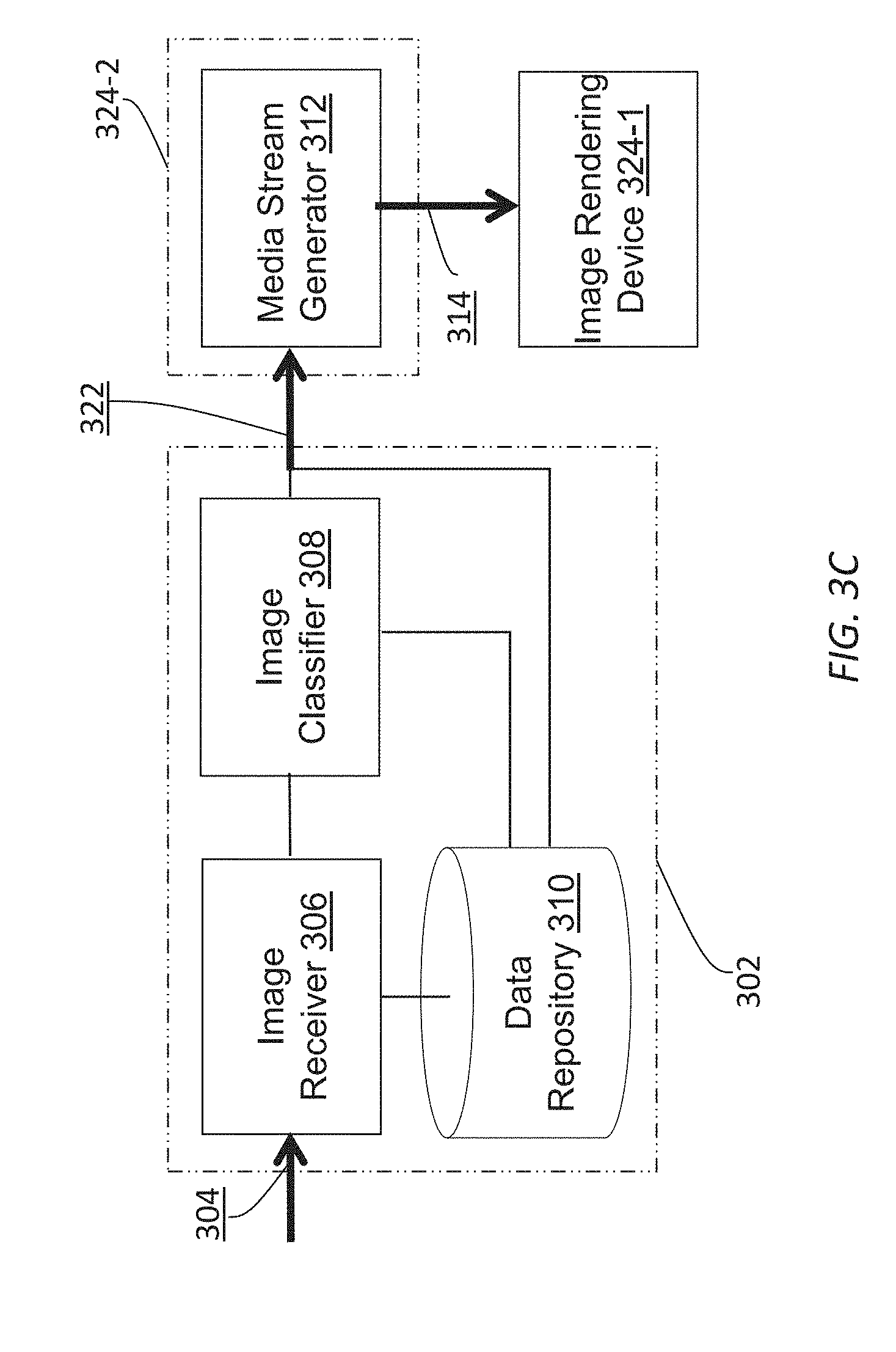

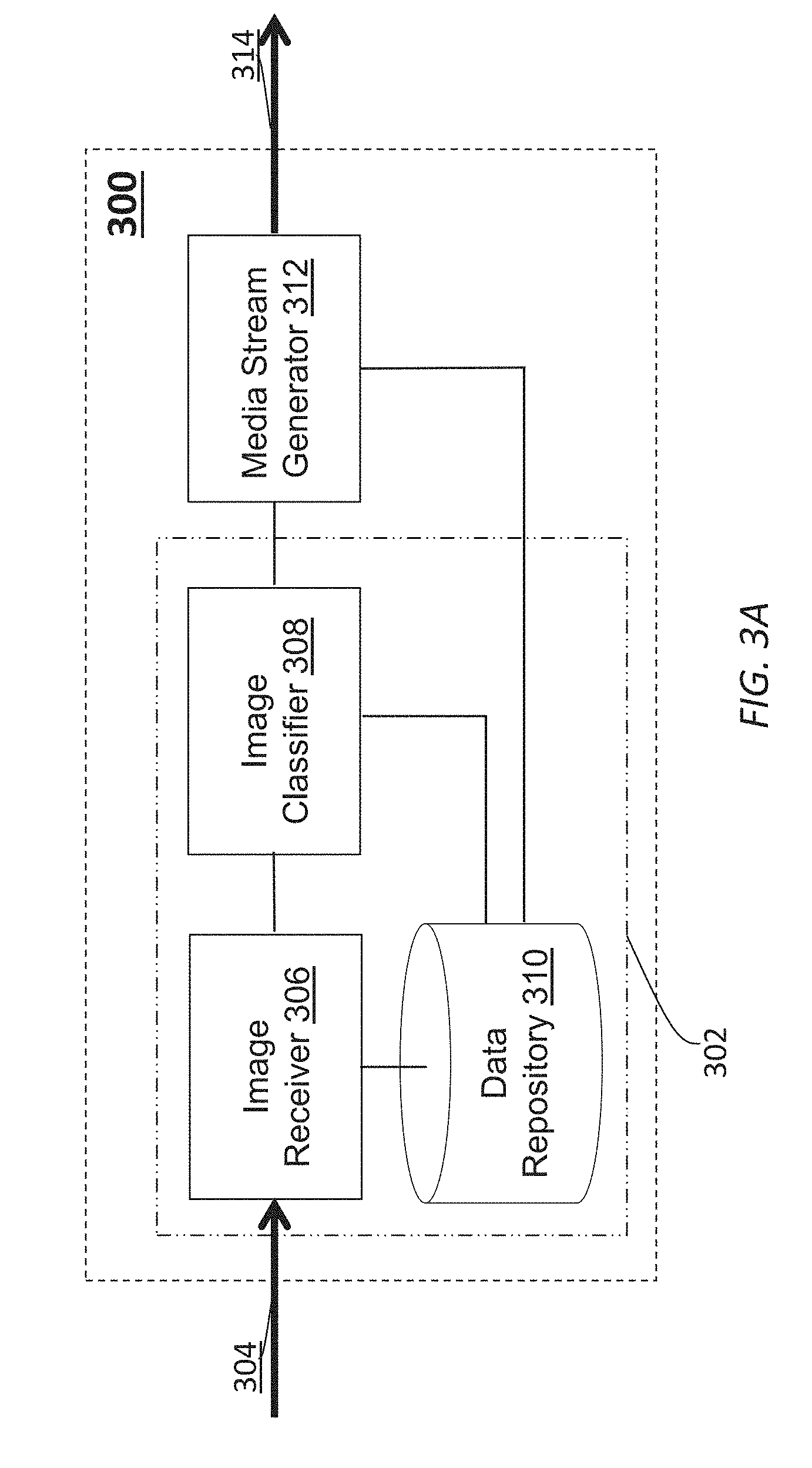

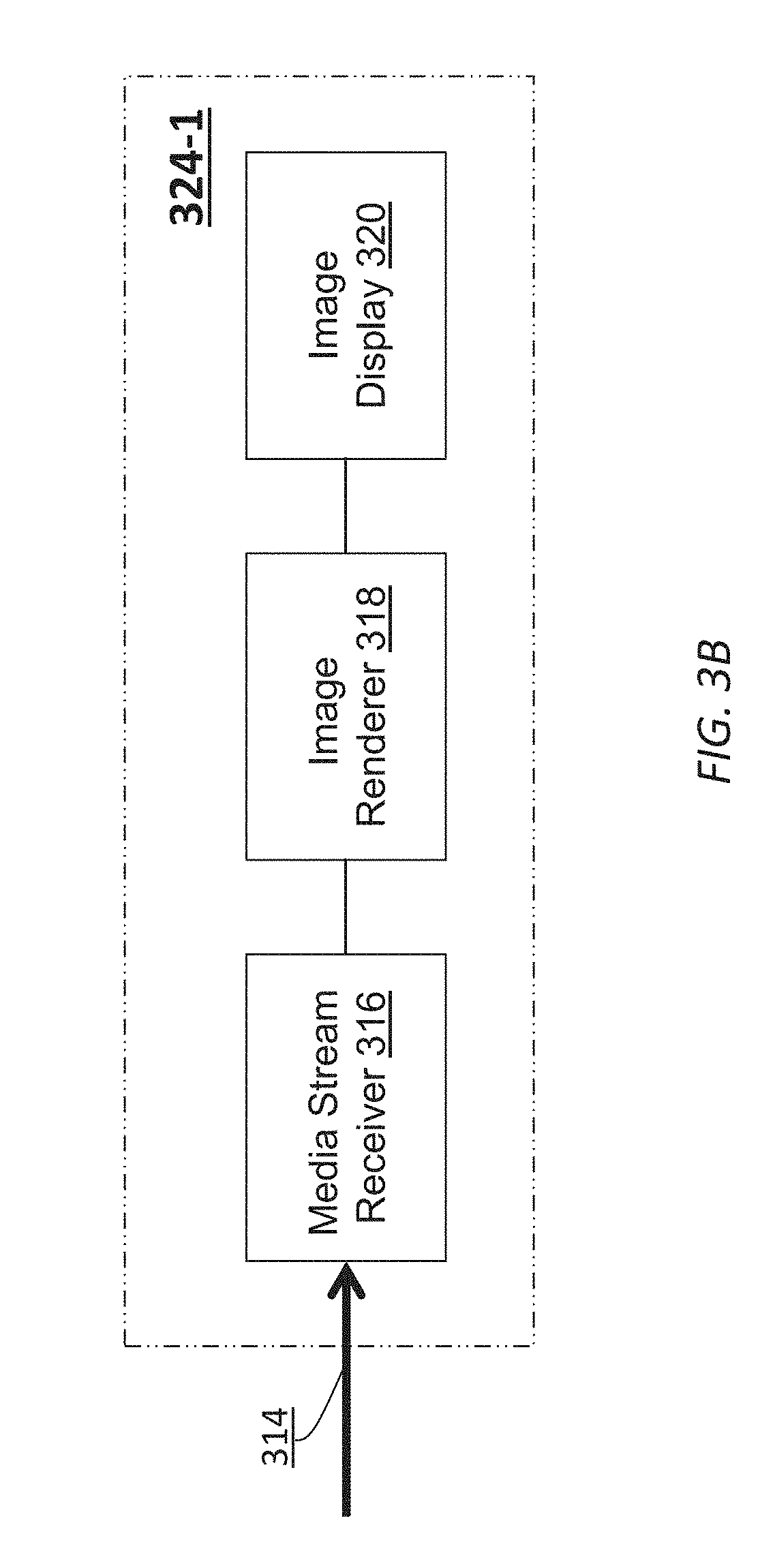

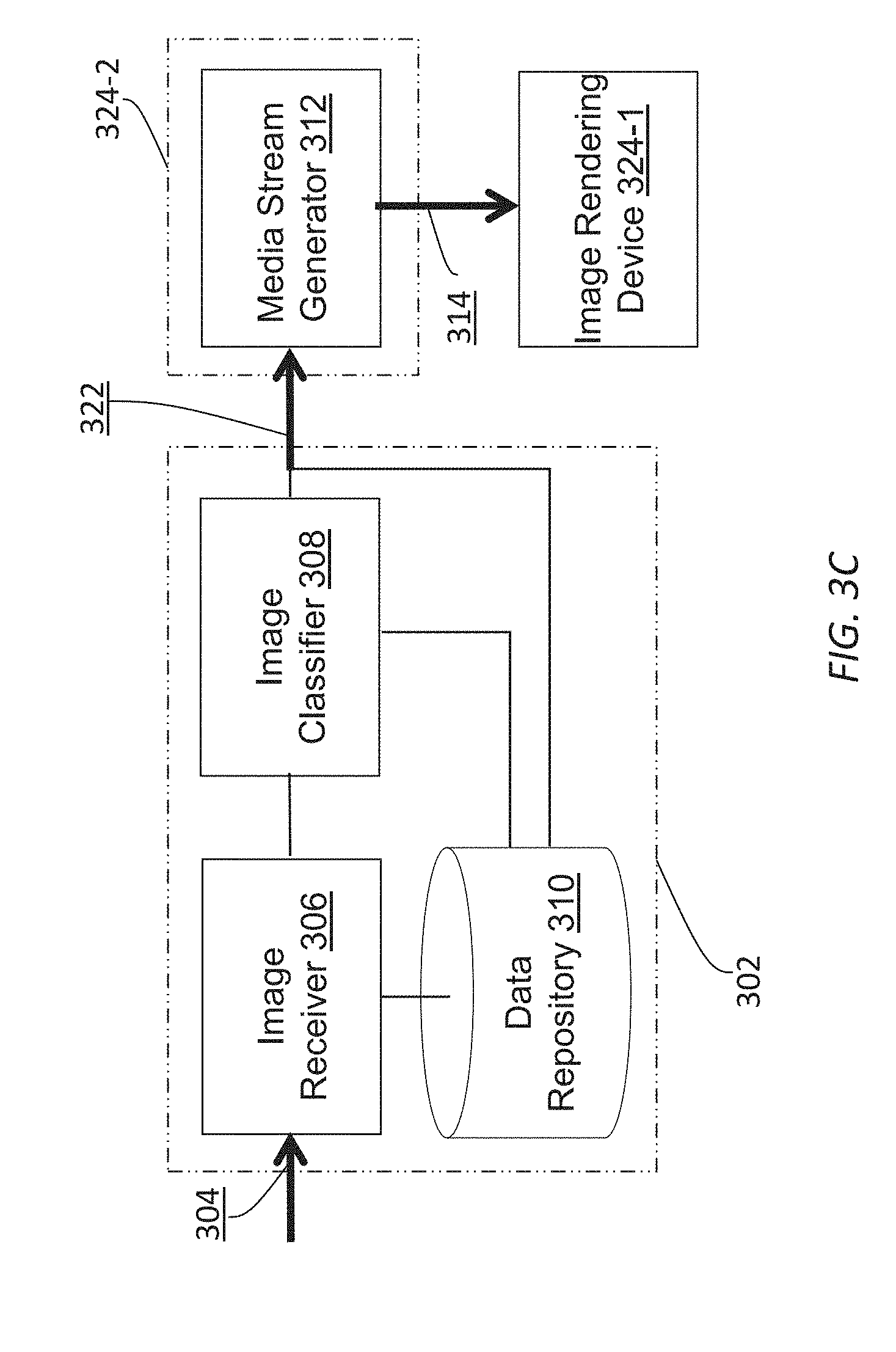

FIG. 3A-FIG. 3C illustrate example spherical video encoders and clients;

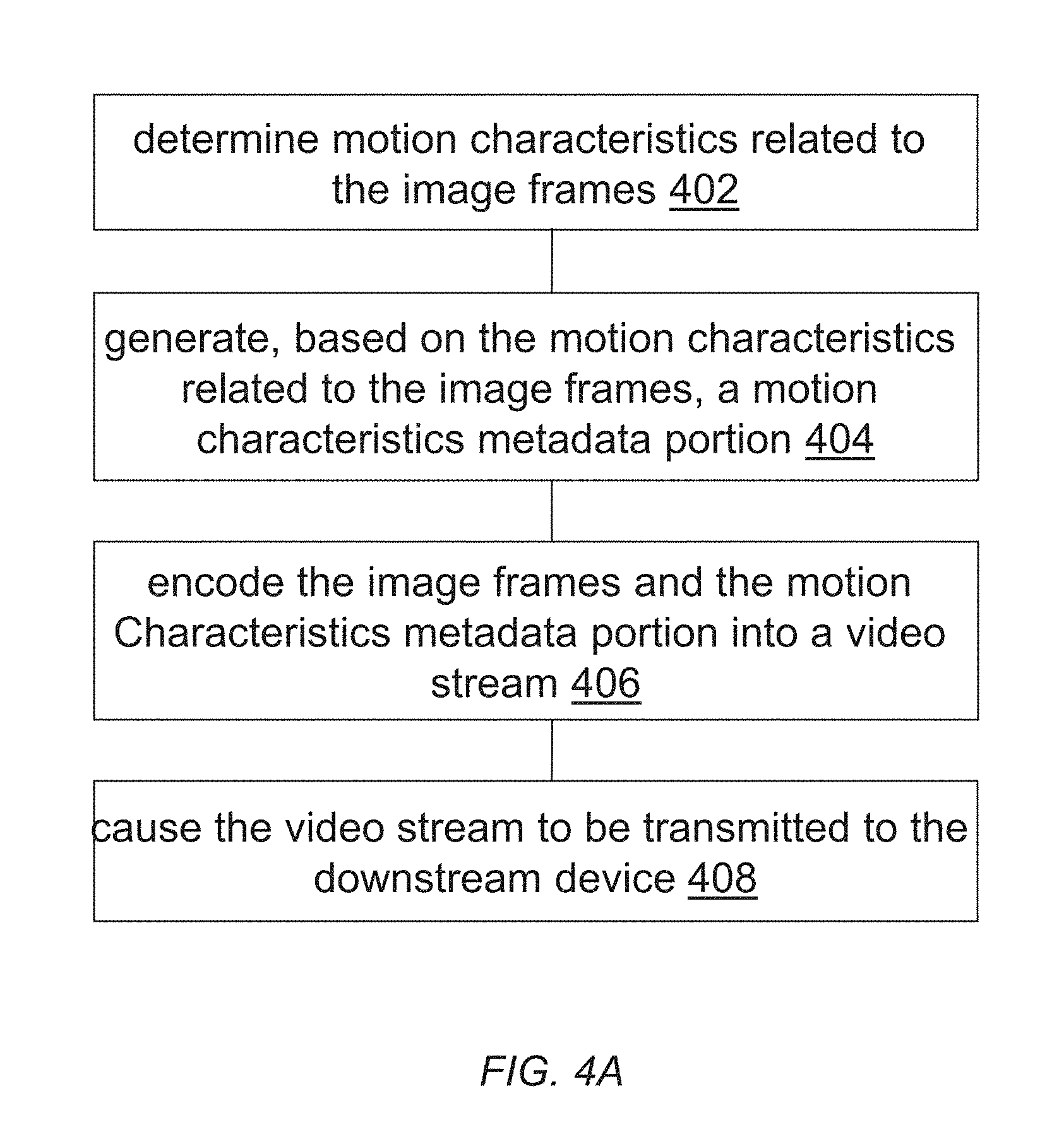

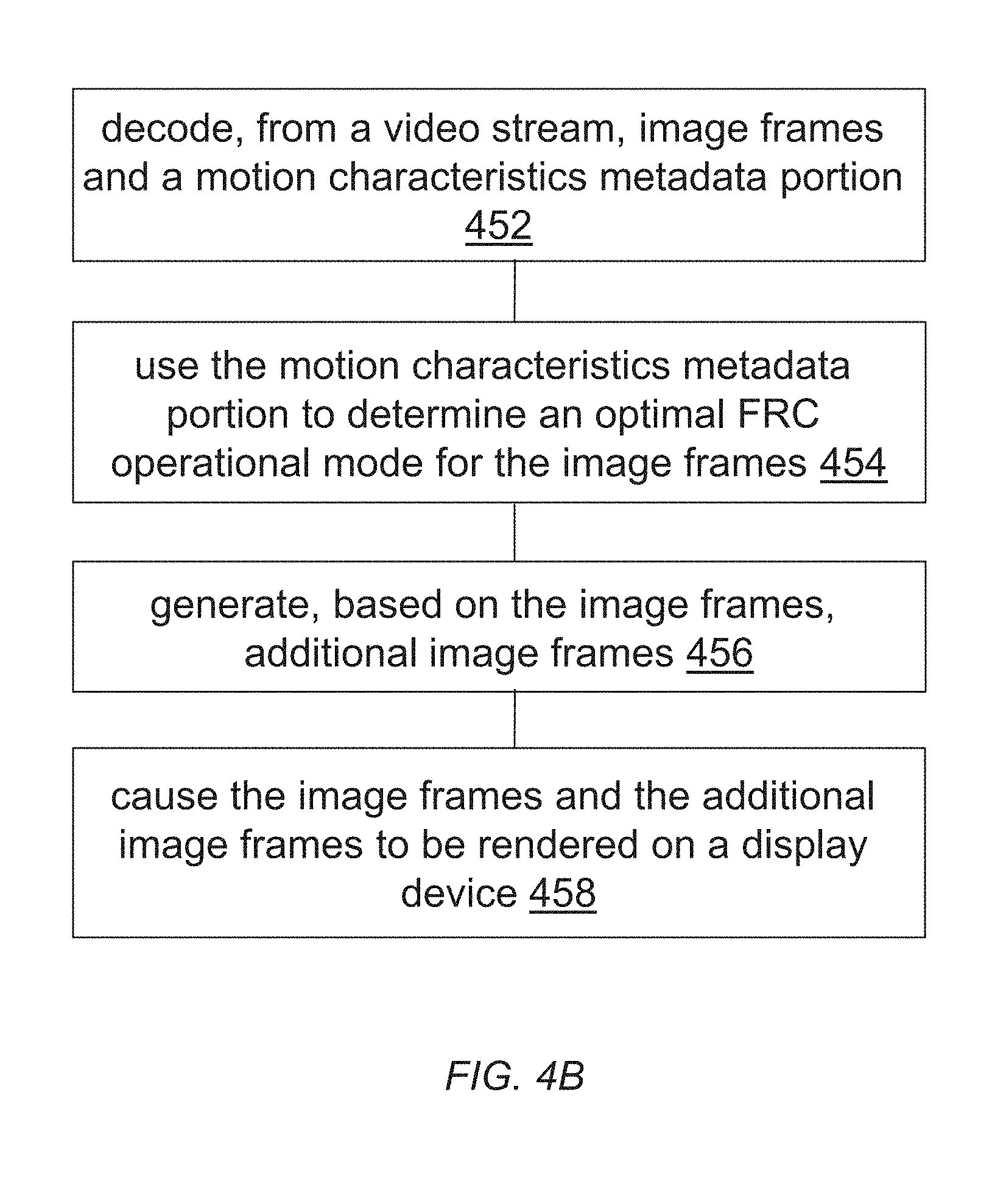

FIG. 4A and FIG. 4B illustrate example process flows; and

FIG. 5 illustrates an example hardware platform on which a computer or a computing device as described herein may be implemented.

DESCRIPTION OF EXAMPLE EMBODIMENTS

Example embodiments, which relate to dynamic adjustment of frame rate conversion settings, are described herein. In the following description, for the purposes of explanation, numerous specific details are set forth in order to provide a thorough understanding of the present invention. It will be apparent, however, that the present invention may be practiced without these specific details. In other instances, well-known structures and devices are not described in exhaustive detail, in order to avoid unnecessarily occluding, obscuring, or obfuscating the present invention.

Example embodiments are described herein according to the following outline:

1. GENERAL OVERVIEW
2. FRAME RATE CONVERSION
3. DETERMINING OPTIMAL FRC MODES BASED ON IMAGE CONTENT ANALYSIS
4. EXAMPLE VIDEO ENCODERS AND DECODERS
5. EXAMPLE PROCESS FLOWS
6. IMPLEMENTATION MECHANISMS--HARDWARE OVERVIEW
7. EQUIVALENTS, EXTENSIONS, ALTERNATIVES AND MISCELLANEOUS

1. General Overview

This overview presents a basic description of some aspects of an example embodiment of the present invention. It should be noted that this overview is not an extensive or exhaustive summary of aspects of the example embodiment. Moreover, it should be noted that this overview is not intended to be understood as identifying any particularly significant aspects or elements of the example embodiment, nor as delineating any scope of the example embodiment in particular, nor the invention in general. This overview merely presents some concepts that relate to the example embodiment in a condensed and simplified format, and should be understood as merely a conceptual prelude to a more detailed description of example embodiments that follows below. Note that, although separate embodiments are discussed herein, any combination of embodiments and/or partial embodiments discussed herein may be combined to form further embodiments.

Example embodiments described herein relate to encoding video data with motion characteristics of the video data. The motion characteristics of the video data can be used in a variety of image processing/rendering operations, including but not necessarily limited to only, any of: FRC operations, operations that guide two-dimensional (2D) to three-dimensional (3D) depth extraction, virtual reality (VR) anti-nausea algorithms, power saving algorithms, etc. By way of example but not limitation, based on image content visually depicted in one or more images, one or more motion characteristics related to the one or more images are determined. Based at least in part on the one or more motion characteristics related to the one or more images, a motion characteristics metadata portion is generated. The motion characteristics metadata portion is to be used for determining an optimal FRC operational mode with a downstream device for the one or more images. The one or more images are encoded into a video stream. The motion characteristics metadata portion is encoded into the video stream as a part of image metadata. The video stream is caused to be transmitted to the downstream device.

Example embodiments described herein relate to performing FRC operations using motion characteristics metadata. One or more images and a motion characteristics metadata portion are decoded from a video stream. The motion characteristics metadata portion is generated by an upstream device for the one or more images based at least in part on one or more motion characteristics related to the one or more images. The one or more motion characteristics are determined based on image content visually depicted in one or more images. The motion characteristics metadata portion is used to determine an optimal FRC operational mode for the one or more images. The optimal FRC operational mode is operated by the downstream device to generate, based on the one or more images, one or more additional images in addition to the one or more images. The one or more images and the one or more additional images are caused to be rendered on a display device.

In some example embodiments, mechanisms as described herein form a part of a media processing system, including but not limited to any of: cloud-based server, mobile device, encoding device, transcoding device, decoding device, media device, CAVE-type system or wall-sized display, video game device, display device, media player, media server, media production system, camera systems, home-based systems, communication devices, video processing system, video codec system, studio system, streaming server, content service system, handheld device, game machine, television, cinema display, laptop computer, netbook computer, tablet computer, cellular radiotelephone, electronic book reader, point of sale terminal, desktop computer, computer workstation, computer server, computer kiosk, or various other kinds of terminals and media processing units.

Various modifications to the preferred embodiments and the generic principles and features described herein will be readily apparent to those skilled in the art. Thus, the disclosure is not intended to be limited to the embodiments shown, but is to be accorded the widest scope consistent with the principles and features described herein.

2. Frame Rate Conversion

Image interpolation techniques that are based on optical flow can have varying levels of complexity depending on how an optical flow field is generated such as whether the optical flow field is dense, pixel-based, block-based, how many adjacent images in the past are used, how many adjacent images in the future are used, and so on. As used herein, adjacent images used for image interpolation and/or image replication may refer to (e.g., non-interpolated, non-replicated, etc.) images that are not generated from other images by image interpolation and/or image replication.

In some operational scenarios, correspondences can be computed between adjacent images in a video stream based on optical flow computations. Warping can be performed on the adjacent images to get estimates for the interpolated images. Due to potential ambiguities in computing correspondences in optical flow, however, some of these techniques may need to rely on computationally expensive optimization that involves tuning numerous parameters and thus takes long computational times to find optimal FRC settings. While using a large amount of information for computing optical flow may lead to better image interpolation results (e.g., less motion judder, less motion blur, etc.), the economic cost and the computational cost in such an approach can be high. Furthermore, in practice the high cost computation may result in perceptible time lags in viewing image sequences involving relatively high motion image content.

In some embodiments, a display system may implement different frame rate conversion (FRC) operational modes, any of which may be selected by the display system automatically without user input, by a user manually, according to the user's personal preferences, etc. In a first FRC operational mode (e.g., OFF), the display system may operate frame rate conversion without performing image interpolation and merely replicate received images. In one or more second FRC operational modes (e.g., LOW, MEDIUM, HIGH, etc.), the display system may operate frame rate conversion with various degrees of complexity. For example, in an FRC LOW mode, the display system may operate frame rate conversion by replicating most received images (3 out of 4 frames while converting a 30 Hz input frame rate to a 120 Hz display-device-specific frame rate) and applying image interpolation only to a small subset of received images (1 out of 4 frames while converting a 30 Hz input frame rate to a 120 Hz display-device-specific frame rate). On the other hand, in an FRC HIGH mode, the display system may operate frame rate conversion by applying image interpolation to most received images, replicating none of the received images or replicating only a small subset of the received images.
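By way of illustration only, the split between replicated and interpolated frames in each mode can be sketched as a per-group output plan. The names `FrcMode`, `INTERPOLATED_PER_GROUP` and `output_plan` are hypothetical. Only the LOW split (1 interpolated frame out of 4) is stated above; the MEDIUM and HIGH counts are assumptions chosen for this sketch.

```python
from enum import Enum


class FrcMode(Enum):
    OFF = "off"
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Interpolated frames per group of output frames when converting 30 Hz to 120 Hz.
# Only the LOW value is given in the text; MEDIUM and HIGH are assumed here.
INTERPOLATED_PER_GROUP = {
    FrcMode.OFF: 0,
    FrcMode.LOW: 1,
    FrcMode.MEDIUM: 2,
    FrcMode.HIGH: 3,
}


def output_plan(mode, input_hz=30, display_hz=120):
    """List how each output frame in one group is produced for the given mode."""
    if display_hz % input_hz != 0:
        raise ValueError("this sketch only handles integer rate ratios")
    slots = display_hz // input_hz
    # At least one slot always shows a received (non-interpolated) image.
    interpolated = min(INTERPOLATED_PER_GROUP[mode], slots - 1)
    return ["replicate"] * (slots - interpolated) + ["interpolate"] * interpolated


print(output_plan(FrcMode.OFF))  # ['replicate', 'replicate', 'replicate', 'replicate']
print(output_plan(FrcMode.LOW))  # ['replicate', 'replicate', 'replicate', 'interpolate']
```

A table-driven plan of this kind keeps the mode definitions in one place, which may make it easier to add intermediate modes with other replicate/interpolate ratios.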

In some embodiments, FRC operational modes as described herein may further comprise FRC LOW, intermediate FRC operational modes, etc., in which image interpolations and image replications are applied to (e.g., static, fixed, dynamically settable, etc.) various numbers of received images.

In many operational scenarios, when the display system operates in a mode other than the FRC OFF mode, such as the FRC HIGH mode, worse results can be produced than when the display system operates in the FRC OFF mode. Although this sounds counter-intuitive, such worse results can occur with relatively high probabilities. This is because in the FRC HIGH mode, the display system performs complex operations (e.g., computing optical flow using multiple adjacent images, etc.) to determine correspondences. However, if input image content has random motions, then it may become very difficult to estimate correspondences between pixels, pixel blocks, etc., of different received images. Example random motions may include, but are not limited to only, any of: rain drops, snow falling, dust storm, sand storm, wind blowing leaves away, fast fight sequences, etc.

In addition, optimization techniques used for computing optical flow (e.g., calculating the correspondences, etc.) may not converge properly, and thus may produce irregular and incorrect estimated motions of pixels/blocks of interpolated images. The irregular and incorrect estimated motions may cause unwanted visual artifacts to be produced in rendering the interpolated images.

Thus, for input image content containing random motions, it is desirable to watch a time sequence of received images mixed with replicated images generated in the FRC OFF mode, as the replicated images would be relatively free of objectionable visual artifacts that could otherwise be produced in interpolated images due to the irregular and incorrect estimated motions.

Techniques as described herein can be dynamically applied to avoid/reduce performing image interpolation operations for input image content that is susceptible to generating objectionable visual artifacts. Even in operational scenarios in which image interpolation operations are suitable (or input image content that is not susceptible to generating objectionable visual artifacts), these techniques can be dynamically applied to select the best FRC operational mode possible (e.g., with the best strength/level for the image interpolation operations, etc.) for performing the image interpolation operations.

In some embodiments, a display system under techniques as described herein can implement a mechanism that dynamically adjusts FRC operational modes depending on actual motion types found in the input image content. Motion statistics including but not limited to magnitudes and directions pertaining to motion vectors between adjacent images may be computed and/or collected, and may be used to predict and/or select the best FRC operational mode.

For example, in operational scenarios in which relatively smooth motions (including but not limited to relatively static or stationary scenes/images) are detected from two or more adjacent images, based on motion statistics indicating the relatively smooth motions, the FRC HIGH mode may be predicted and/or selected. In some embodiments, motions may be determined to be relatively smooth when motion vectors computed from adjacent images in the input image content have similar, relatively uniform, and/or converging magnitudes and directions with variances that do not exceed corresponding random motion thresholds. As a result, the display system can operate with relatively strong image interpolation that generates interpolated images around or in between the adjacent images, thereby providing a better viewing experience while watching the input image content associated with accurate and fast motion estimation for the relatively smooth motions.

On the other hand, in operational scenarios in which relatively random motions are detected from two or more adjacent images, based on motion statistics indicating the relatively random motions, the FRC OFF mode may be predicted and/or selected. In some embodiments, motions may be determined to be relatively random when the motion vectors have different magnitudes and directions with variances that exceed the corresponding random motion thresholds. As a result, the display system can operate without image interpolation and avoid generating interpolated images around or in between the adjacent images, thereby avoiding generating unwanted visual artifacts associated with incorrect motion estimation, which are likely to be generated under other approaches that blindly rely on (e.g., relatively strong, etc.) image interpolation for frame rate conversion.
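By way of illustration only, the threshold comparison described above can be sketched as follows. The function name `select_frc_mode` and both threshold values are hypothetical. As an assumption of this sketch, the spread of directions is measured with the circular variance (one minus the mean resultant length of the unit direction vectors), and the spread of magnitudes with the population standard deviation.

```python
import math
import statistics


def select_frc_mode(motion_vectors, magnitude_std_threshold=2.0,
                    direction_var_threshold=0.5):
    """Return "HIGH" for relatively smooth motion and "OFF" for random motion.

    `motion_vectors` is a list of (dx, dy) pairs. Both thresholds are
    hypothetical stand-ins for the "random motion thresholds" in the text.
    """
    if not motion_vectors:
        return "HIGH"  # no motion at all is treated as smooth (static scene)
    magnitudes = [math.hypot(dx, dy) for dx, dy in motion_vectors]
    magnitude_std = statistics.pstdev(magnitudes)

    # Circular variance of direction, ignoring vectors with zero length.
    units = [(dx / m, dy / m) for (dx, dy), m in zip(motion_vectors, magnitudes) if m > 0]
    if units:
        mean_cos = sum(u[0] for u in units) / len(units)
        mean_sin = sum(u[1] for u in units) / len(units)
        direction_var = 1.0 - math.hypot(mean_cos, mean_sin)
    else:
        direction_var = 0.0

    is_random = (magnitude_std > magnitude_std_threshold
                 or direction_var > direction_var_threshold)
    return "OFF" if is_random else "HIGH"


pan = [(3.0, 0.1)] * 50                       # uniform camera pan
scatter = [(5, 0), (-5, 0), (0, 5), (0, -5)]  # motion in opposing directions
print(select_frc_mode(pan))      # HIGH
print(select_frc_mode(scatter))  # OFF
```

A circular measure is used for direction because angles wrap around, so the ordinary variance of raw angles near the wrap point would overstate the spread.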

3. Determining Optimal FRC Modes Based on Image Content Analysis

FIG. 1A illustrates an example process flow that can be used to determine motion characteristics of content. The motion characteristics of content may be used in determining optimal FRC operational modes, used in other operations, etc., in a display system with respect to a given image sequence. In some embodiments, one or more computing devices or components (e.g., an encoding device/module, a transcoding device/module, a decoding device/module, a media device/module, etc.) may perform this process flow. Example display systems may include, but are not necessarily limited to only, any combination of: video encoders, video decoders, video streaming servers, video streaming clients, set-top devices, display devices, storage devices, etc.

In block 102, the display system receives the image sequence comprising one or more sets of images ("input frames") that support an input frame rate. Each set of images may represent two or more adjacent images (or two or more adjacent input frames) along a common time reference (e.g., a normal playing time, etc.) represented in the image sequence. As used herein, adjacent images refer to non-interpolated, non-replicated images that are timewise next (e.g., consecutive, sequential, immediately following, immediately preceding, etc.) to each other (or one another) in the image sequence. In some embodiments, a set of images as described herein may form a subdivision of a scene, a single scene, multiple scenes, a single group of pictures (GOP), multiple GOPs, etc.

In block 104, the display system calculates an optical flow between two or more adjacent images in a (e.g., each, etc.) set of images and generates an optical flow field to represent the optical flow of pixels, pixel blocks, etc. Correspondences among (or between) the adjacent images may be determined based on one or more optical flow equations. The optical flow field may be pixel based, pixel-block based, etc. The optical flow field may be used to estimate patterns of apparent motions of different objects, different clusters of objects, different spatial regions, etc., depicted in the adjacent images.

Any combination of one or more optical flow techniques may be used to determine the optical flow and/or to generate the corresponding optical flow field. Example optical flow techniques may include, but are not necessarily limited to only, any of: dense optical flow techniques, pixel-based techniques, block-based techniques, techniques that estimate the apparent motions by using only past images (relative to a currently decoded, processed and/or designated image), techniques that estimate the apparent motions by using only future images (relative to a currently decoded, processed and/or designated image), techniques that estimate the apparent motions by any combination or number of past and future images (relative to a currently decoded, processed and/or designated image), etc. Additionally, optionally, or alternatively, motion estimation as described herein can be performed from only individual (decoded) images, directly from a video bitstream (as received by the display system) that encodes the image sequence, or a combination of the foregoing. For example, motion estimation under techniques as described herein may be solely derived from individual images (e.g., uncompressed images, etc.). Additionally, optionally, or alternatively, at least a part of motion estimation under techniques as described herein may be based on motion information such as (e.g., block-based, etc.) GOP motion information decoded directly from a video stream (or one or more media data bitstreams) encoded with the images.
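By way of illustration only, one of the block-based techniques mentioned above can be sketched as exhaustive block matching using the sum of absolute differences (SAD). This is a simple stand-in chosen for this sketch, not necessarily the technique used in any embodiment. The names `block_matching` and `frame_with_square`, the block size, and the search range are all hypothetical.

```python
def block_matching(prev, curr, block=4, search=2):
    """Return {(bx, by): (dx, dy)} with one motion vector per block of `curr`.

    `prev` and `curr` are equally sized 2D lists of grayscale values. A vector
    (dx, dy) means the block content moved by dx columns and dy rows from
    `prev` to `curr`.
    """
    height, width = len(curr), len(curr[0])
    field = {}
    for by in range(0, height - block + 1, block):
        for bx in range(0, width - block + 1, block):
            best_cost, best_vector = None, (0, 0)
            for dy in range(-search, search + 1):
                for dx in range(-search, search + 1):
                    sy, sx = by - dy, bx - dx  # candidate source position in prev
                    if sy < 0 or sx < 0 or sy + block > height or sx + block > width:
                        continue  # candidate falls outside the image
                    sad = sum(
                        abs(curr[by + y][bx + x] - prev[sy + y][sx + x])
                        for y in range(block)
                        for x in range(block)
                    )
                    # Lower SAD wins; ties go to the smaller displacement.
                    cost = (sad, abs(dx) + abs(dy))
                    if best_cost is None or cost < best_cost:
                        best_cost, best_vector = cost, (dx, dy)
            field[(bx, by)] = best_vector
    return field


def frame_with_square(left):
    """Build a 12x12 black frame with a 2x2 white square at column `left`."""
    image = [[0] * 12 for _ in range(12)]
    for y in (5, 6):
        for x in (left, left + 1):
            image[y][x] = 255
    return image


prev_frame = frame_with_square(5)
curr_frame = frame_with_square(6)  # the square moved one pixel to the right
field = block_matching(prev_frame, curr_frame)
print(field[(4, 4)])  # (1, 0)
```

The resulting dictionary plays the role of a pixel-block based optical flow field: one estimated displacement per block, from which motion characteristics can then be computed.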

In block 106, the display system computes, based on motion vectors estimated from the patterns of the apparent motions of the different objects in the adjacent images, characteristics of motion vectors in the adjacent images.

In some embodiments, a motion vector can be computed using a directional offset (or displacement) of an object from the object's reference coordinates. The directional offset or displacement may be derived from coordinates of pixels representing the object in a first image (e.g., a currently decoded, processed and/or designated image, etc.) of the adjacent images relative to reference coordinates of corresponding pixels representing the same object in one or more second images (e.g., one or more reference images, etc.) of the adjacent images.

For example, the directional offset or displacement may be derived as a difference between (a center of mass in) the coordinates of the pixels representing the object in the first image (or the current image) and (a center of mass in) the reference coordinates of the corresponding pixels representing the same object in the one or more second images (or the reference images).

In some embodiments, characteristics of a motion vector as described herein may comprise a magnitude component and a direction component.
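The center-of-mass displacement and its magnitude and direction components can be sketched as follows. This is a minimal example that assumes an object is given as a list of (x, y) pixel coordinates in each image; the function names are hypothetical.

```python
import math


def centroid(pixels):
    """Center of mass of a non-empty list of (x, y) pixel coordinates."""
    n = len(pixels)
    return (sum(x for x, _ in pixels) / n, sum(y for _, y in pixels) / n)


def motion_vector(current_pixels, reference_pixels):
    """Displacement of the object's centroid from the reference image to the current image."""
    cx, cy = centroid(current_pixels)
    rx, ry = centroid(reference_pixels)
    return (cx - rx, cy - ry)


def magnitude_and_direction(vector):
    """Split a motion vector into a magnitude and a direction angle in radians."""
    dx, dy = vector
    return math.hypot(dx, dy), math.atan2(dy, dx)


# An object occupying a 2x2 patch that moves 3 pixels in x and 4 pixels in y.
reference = [(10, 10), (11, 10), (10, 11), (11, 11)]
current = [(13, 14), (14, 14), (13, 15), (14, 15)]
mv = motion_vector(current, reference)
mag, direction = magnitude_and_direction(mv)
```

Here `mv` is the displacement (3.0, 4.0), whose magnitude component is 5.0.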

In block 108, the display system, based on the characteristics of the motion vectors in the adjacent images, determines/calculates statistics of the motion vectors in the adjacent images. Example motion vector statistics may include, but are not necessarily limited to only, any of: distributions, mean values, standard deviations, group values, kurtoses, skews, etc., in or among magnitude components and/or direction components of the motion vectors. In some embodiments, for adjacent images that have relatively high standard deviation values in motions (as represented by the motion vectors), the motions in the adjacent images have a relatively high likelihood of being random, as the motions are relatively non-consistent among themselves. Conversely, for adjacent images that have relatively low standard deviation values in motions, the motions in the adjacent images have a relatively low likelihood of being random, as the motions are relatively consistent among themselves. Apart from standard deviations, other characteristics can be used in motion vector characteristics analysis and/or motion vector statistics analysis. These other characteristics may include, but are not necessarily limited to only, any of: histograms or distributions, peaks in histograms or distributions, higher order moments of statistical distributions such as kurtoses, skews, etc.
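The statistics named above can be computed directly from a list of magnitude (or direction) components. The sketch below uses population moments and excess kurtosis; which normalization an embodiment would use is not specified in the description, so these choices are assumptions.

```python
import math


def moments(values):
    """Return (mean, standard deviation, skew, excess kurtosis) of a non-empty list.

    Assumption: population (not sample) moments are used, and a constant
    input is reported with zero skew and zero kurtosis.
    """
    n = len(values)
    mean = sum(values) / n
    variance = sum((v - mean) ** 2 for v in values) / n
    std = math.sqrt(variance)
    if std == 0.0:
        return mean, 0.0, 0.0, 0.0
    skew = sum(((v - mean) / std) ** 3 for v in values) / n
    kurtosis = sum(((v - mean) / std) ** 4 for v in values) / n - 3.0
    return mean, std, skew, kurtosis
```

A low standard deviation of the magnitudes suggests consistent motion, and a high one suggests motion that is more likely random. Direction components are angles, so an implementation might prefer circular statistics to avoid wraparound effects; that choice is likewise an assumption.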

For example, in some embodiments, the display system optionally or alternatively computes, for the adjacent images, other statistics such as a histogram of the magnitude components of the motion vectors and/or a histogram of the direction components of the motion vectors. The display system can further analyze the histograms to determine the patterns of the apparent motions of the objects depicted in the adjacent images. For instance, if the histogram of the direction components of the motion vectors is relatively uniform in counts across bins of the histogram, then it may be inferred by the display system that the motions depicted in the adjacent images are relatively random because the motion vectors in the adjacent images exhibit a relatively uniform distribution of different directions. Conversely, if the histogram of the direction components of the motion vectors has a significant peak in counts across bins of the histogram, then it may be inferred by the display system that the motions are not random but relatively smooth (or regular) because the motion vectors exhibit a certain relatively narrow directionality such as caused by camera panning.
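The histogram-based inference can be sketched as follows: bin the direction components, then test whether a single bin dominates. The bin count of 8 and the dominance share of 0.5 are hypothetical values chosen only for illustration.

```python
import math


def direction_histogram(directions, bins=8):
    """Histogram of direction angles (radians) over equal-width bins covering a full turn."""
    counts = [0] * bins
    width = 2 * math.pi / bins
    for theta in directions:
        # Wrap the angle into [0, 2*pi); the final modulo guards against rounding up to bins.
        index = int((theta % (2 * math.pi)) / width) % bins
        counts[index] += 1
    return counts


def looks_random(counts, peak_share=0.5):
    """Treat the motion as random when no single bin holds at least peak_share of the vectors."""
    total = sum(counts)
    return total > 0 and max(counts) / total < peak_share
```

A camera pan concentrates most vectors in one bin, so `looks_random` returns False; vectors spread evenly over all bins make it return True.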

In block 110, the display system dynamically adjusts/determines the best FRC operational mode (or the best FRC setting) for the set of images based at least in part on statistics of motion vectors and/or histograms of magnitude components and/or direction components of motion vectors in all adjacent images of the set of images.

In operational scenarios in which the set of images comprises a large number (e.g., exceeding a random motion frame number threshold, etc.) of relatively random motions (e.g., in a scene, in a GOP, etc.) and in which motion estimation in FRC operational modes that perform image interpolation is not reliable, the display system (e.g., dynamically, etc.) adjusts/determines that the FRC OFF mode is the best FRC operational mode to be used to generate additional images in addition to the set of images for the purpose of converting from the input frame rate in the set of images to a device-specific frame rate as appropriate in the display system. Although the FRC OFF mode may create motion blur, such effects may not be noticeable, especially in high motion sequences (e.g., random motion sequences, etc.). In fact, it is desirable to render such high motion sequences in the FRC OFF mode, rather than in the FRC HIGH mode, in order to avoid or reduce unwanted visual artifacts associated with incorrect motion estimation.

In other operational scenarios in which the set of images does not comprise a large number (e.g., not exceeding the random motion frame number threshold, etc.) of relatively random motions (e.g., in a scene, in a GOP, etc.) and in which motion estimation in FRC operational modes that perform image interpolation is reliable, the display system (e.g., dynamically, etc.) adjusts/determines that a FRC operational mode such as the FRC HIGH mode, the FRC MED mode, the FRC LOW mode, etc., is the best FRC operational mode to be used to generate additional images in addition to the set of images for the purpose of converting from the input frame rate in the set of images to a device-specific frame rate as appropriate in the display system.

FIG. 1B illustrates an example process flow that can be used to determine whether a set of images should be classified as containing random motions. In some embodiments, one or more computing devices or components (e.g., an encoding device/module, a transcoding device/module, a decoding device/module, a media device/module, etc.) may perform this process flow.

In block 102 (which may be the same as block 102 in FIG. 1A), a display system receives an image sequence comprising one or more sets of images ("input frames") that support an input frame rate. Each set of images may comprise two or more adjacent images along a common time reference represented in the image sequence.

In block 104 (which may be the same as block 104 in FIG. 1A), the display system calculates an optical flow in two or more adjacent images in a set of images in the one or more sets of images and generates an optical flow field to represent the optical flow in the adjacent images.

In block 106 (which may be the same as block 106 in FIG. 1A), the display system computes, based on motion vectors estimated from patterns of apparent motions of different objects in the optical flow field, characteristics of motion vectors in the adjacent images. In some embodiments, characteristics of a motion vector as described herein may comprise a magnitude component and a direction component.

In some embodiments, the process flow of FIG. 1B may use a sub-process to analyze spatial relationships of pixels, blocks, etc., in the optical flow field of the adjacent images. This sub-process may be implemented as either a part (e.g., in block 106) of the process flow of FIG. 1B or a separate process flow operating in addition to or in conjunction with the process flow (e.g., in block 106) of FIG. 1B.

As illustrated in FIG. 1B, in block 202, the display system uses the optical flow field to generate a cluster map that comprises a plurality of clusters (denoted as `K` clusters). The optical flow field may comprise a (e.g., two-dimensional, spherical, etc.) field of motion vectors computed from pixel values of adjacent images based on one or more optical flow equations. By way of example but not limitation, the field of motion vectors in the optical flow field may comprise a respective motion vector at each pixel position of a set of pixel positions spanning an image. The display system can apply optical flow analysis tools to the field of motion vectors as represented in the optical flow field to recognize the plurality of clusters from the field of motion vectors, thereby generating the cluster map that comprises the plurality of clusters. In some embodiments, each cluster in the plurality of clusters (or in the cluster map), as detected from the optical flow field, may comprise one or more (e.g., connected, spatially distributed, etc.) components or objects that are originally depicted in the images.

In block 204, the display system performs morphological operations on the plurality of clusters in the cluster map. The cluster map may comprise a (e.g., two-dimensional, spherical, etc.) field of mapped values derived from the field of motion vectors in the optical flow field. By way of example but not limitation, the field of mapped values in the cluster map may comprise a respective map value at each pixel position of a set of pixel positions spanning an image. In some embodiments, these morphological operations may be (e.g., non-grayscale, grayscale, etc.) operations acting on the field of mapped values in the cluster map at an individual pixel level, at an individual pixel sub-block level, at an individual pixel block level, etc. Example morphological operations may include, but are not necessarily limited to only, any of: erosion operations, dilation operations, opening operations, closing operations, etc.
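The four example morphological operations can be sketched on a small map of values as follows. This is a minimal illustration assuming a 3x3 structuring element and windows clipped at the image border; both choices, and the function names, are hypothetical.

```python
def _filter3x3(grid, combine):
    """Apply combine (min or max) over each pixel's 3x3 neighborhood, clipped at borders."""
    rows, cols = len(grid), len(grid[0])
    out = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            window = [
                grid[rr][cc]
                for rr in range(max(0, r - 1), min(rows, r + 2))
                for cc in range(max(0, c - 1), min(cols, c + 2))
            ]
            out[r][c] = combine(window)
    return out


def erode(grid):
    """Erosion: each pixel takes the minimum of its neighborhood."""
    return _filter3x3(grid, min)


def dilate(grid):
    """Dilation: each pixel takes the maximum of its neighborhood."""
    return _filter3x3(grid, max)


def opening(grid):
    """Opening (erosion then dilation) removes specks smaller than the structuring element."""
    return dilate(erode(grid))


def closing(grid):
    """Closing (dilation then erosion) fills small holes."""
    return erode(dilate(grid))
```

Because the operations use minimum and maximum, the same sketch applies to binary and grayscale maps. Opening a map that holds a 3x3 region plus one isolated pixel keeps the region and discards the isolated pixel.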

In block 206, the display system uses the cluster map as having been operated with the morphological operations to identify individual (e.g., connected, spatially distributed, etc.) components or objects ("connected components") in each cluster in the plurality of clusters in the cluster map.

In block 208, the display system counts (e.g., connected, spatially distributed, etc.) components/objects, as detected from the optical flow field using the foregoing operations, to generate a total number of components/objects in the adjacent images. FIG. 2A illustrates a first example cluster map in which a relatively high number (e.g., 187, etc.) of components/objects are detected, whereas FIG. 2B illustrates a second example cluster map in which a relatively low number (e.g., 23, etc.) of components/objects are detected.

In block 210, the display system determines whether the total number of components/objects, as detected from the optical flow field computed from the adjacent images, exceeds a specific (e.g., connected, spatially distributed, etc.) component number threshold (e.g., denoted as "threshold 0," 30, 40, 50, 100, etc.).
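Blocks 206 through 210 can be sketched as a flood fill that counts connected regions of equal cluster label and compares the count with the component number threshold. The sketch assumes that label 0 marks pixels belonging to no cluster and that 4-connectivity is used; neither assumption comes from the description.

```python
from collections import deque


def count_components(cluster_map):
    """Count 4-connected regions of equal, non-zero cluster label in a 2-D list."""
    rows, cols = len(cluster_map), len(cluster_map[0])
    seen = [[False] * cols for _ in range(rows)]
    total = 0
    for r in range(rows):
        for c in range(cols):
            label = cluster_map[r][c]
            if label == 0 or seen[r][c]:
                continue
            # A new, unvisited labeled pixel starts a new component.
            total += 1
            seen[r][c] = True
            queue = deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and not seen[ny][nx]
                            and cluster_map[ny][nx] == label):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
    return total


def exceeds_component_threshold(cluster_map, threshold0):
    """Block 210: does the component count exceed the component number threshold?"""
    return count_components(cluster_map) > threshold0
```

In the sketch, two regions that share a cluster label but do not touch are counted as separate components.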

In some embodiments, in response to determining that the total number of components/objects in the optical flow field of the adjacent images exceeds the specific component number threshold, the display system proceeds to perform further statistical analyses on motion vectors represented in the optical flow field to determine whether the adjacent images are to be classified as containing random motions or not.

In some embodiments, in response to determining that the total number of components/objects in the optical flow field of the adjacent images does not exceed the specific component number threshold, the display system avoids performing statistical analyses on the motion vectors represented in the optical flow field and directly determines that the adjacent images are to be classified as not containing random motions but rather are to be classified as containing smooth (or non-random) motions. As a result of this determination, in some embodiments, operations in blocks 212, 108, 216, 218, 220, 222, etc., may be omitted for the adjacent images in response to determining that the total number of components/objects in the optical flow field of the adjacent images does not exceed the specific component number threshold. However, in some other embodiments, some or all operations in blocks 212, 108, 216, 218, 220, 222, etc., may still be performed for the adjacent images in response to determining that the total number of components/objects in the optical flow field of the adjacent images does not exceed the specific component number threshold.

In block 212, the display system computes, based on the characteristics of the motion vectors (e.g., as represented in the optical flow field, etc.) in the adjacent images, a histogram of the magnitude components of the motion vectors and/or a histogram of the direction components of the motion vectors.

In block 108, the display system, based on the characteristics of the motion vectors (e.g., as represented in the optical flow field, etc.) in the adjacent images, further determines/calculates statistics (e.g., variances, etc.) of the motion vectors in the adjacent images.

In block 216, the display system determines whether a variance of magnitude components of motion vectors in the optical flow field of the adjacent images exceeds a specific motion magnitude variance threshold (e.g., denoted as "threshold 1," etc.).

The variance may be represented by any combination of standard deviations, kurtoses, skews, etc. Different types of variance values may be compared with different types of thresholds. For example, the standard deviations of the magnitude components may be compared with the specific motion magnitude variance threshold (or a component value thereof) in the form of a magnitude standard deviation threshold. Additionally, optionally, or alternatively, the kurtoses of the magnitude components may be compared with the specific motion magnitude variance threshold (or a component value thereof) in the form of a magnitude kurtosis threshold. Additionally, optionally, or alternatively, the skews of the magnitude components may be compared with the specific motion magnitude variance threshold (or a component value thereof) in the form of a magnitude skew threshold. A threshold as described herein may be single valued or multi-valued in various embodiments.

In some embodiments, in response to determining that the variance of magnitude components of motion vectors in the optical flow field of the adjacent images exceeds the specific motion magnitude variance threshold, the display system proceeds to perform further statistical analyses on the motion vectors represented in the optical flow field to determine whether the adjacent images are to be classified as containing random motions or not.

In some embodiments, in response to determining that the variance of magnitude components of motion vectors in the optical flow field of the adjacent images does not exceed the specific motion magnitude variance threshold, the display system avoids performing further statistical analyses on the motion vectors represented in the optical flow field and directly determines that the adjacent images are to be classified as not containing random motions but rather are to be classified as containing smooth (or non-random) motions. As a result of this determination, in some embodiments, operations in blocks 202, 204, 206, 208, 210, 212, 218, 220, 222, etc., may be omitted for the adjacent images in response to determining that the variance of magnitude components of motion vectors in the optical flow field of the adjacent images does not exceed the specific motion magnitude variance threshold. However, in some other embodiments, some or all operations in blocks 202, 204, 206, 208, 210, 212, 218, 220, 222, etc., may still be performed for the adjacent images in response to determining that the variance of magnitude components of motion vectors in the optical flow field of the adjacent images does not exceed the specific motion magnitude variance threshold.

In block 218, the display system determines whether a variance of direction components of motion vectors in the optical flow field of the adjacent images exceeds a specific motion direction variance threshold (e.g., denoted as "threshold 2," etc.).

For example, the standard deviations of the direction components may be compared with the specific motion direction variance threshold (or a component value thereof) in the form of a direction standard deviation threshold. Additionally, optionally, or alternatively, the kurtoses of the direction components may be compared with the specific motion direction variance threshold (or a component value thereof) in the form of a direction kurtosis threshold. Additionally, optionally, or alternatively, the skews of the direction components may be compared with the specific motion direction variance threshold (or a component value thereof) in the form of a direction skew threshold.

In some embodiments, in response to determining that the variance of direction components of motion vectors in the optical flow field of the adjacent images exceeds the specific motion direction variance threshold, the display system proceeds to perform further statistical analyses on the motion vectors represented in the optical flow field to determine whether the adjacent images are to be classified as containing random motions or not.

In some embodiments, in response to determining that the variance of direction components of motion vectors in the optical flow field of the adjacent images does not exceed the specific motion direction variance threshold, the display system avoids performing further statistical analyses on the motion vectors represented in the optical flow field and directly determines that the adjacent images are to be classified as not containing random motions but rather are to be classified as containing smooth (or non-random) motions. As a result of this determination, in some embodiments, operations in blocks 202, 204, 206, 208, 210, 212, 216, 220, 222, etc., may be omitted for the adjacent images in response to determining that the variance of direction components of motion vectors in the optical flow field of the adjacent images does not exceed the specific motion direction variance threshold. However, in some other embodiments, some or all operations in blocks 202, 204, 206, 208, 210, 212, 216, 220, 222, etc., may still be performed for the adjacent images in response to determining that the variance of direction components of motion vectors in the optical flow field of the adjacent images does not exceed the specific motion direction variance threshold.
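For the embodiments in which a failed check ends the analysis early, the three threshold tests of blocks 210, 216, and 218 form a cascade. A minimal sketch follows, assuming the variance is represented by a standard deviation; the function name and any threshold values passed to it are hypothetical.

```python
def needs_peak_analysis(component_count, magnitude_std, direction_std,
                        threshold0, threshold1, threshold2):
    """Return True only if every early check points toward possibly random motion.

    A False result corresponds to directly classifying the adjacent images
    as containing smooth (non-random) motions.
    """
    if component_count <= threshold0:   # block 210
        return False
    if magnitude_std <= threshold1:     # block 216
        return False
    if direction_std <= threshold2:     # block 218
        return False
    return True
```

Ordering the cheaper checks first lets clearly smooth image pairs skip the histogram peak analysis altogether.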

In block 220, the display system identifies two or more magnitude peaks in the histogram of the magnitude components of the motion vectors and/or two or more direction peaks in the histogram of the direction components of the motion vectors. The two or more magnitude peaks may comprise the highest magnitude peak and the second highest magnitude peak. The highest magnitude peak may correspond to the highest count over a single bin, over two bins, etc., of the magnitude component histogram, whereas the second highest magnitude peak may correspond to the second highest count over the same number of bin(s) of the magnitude component histogram over which the highest magnitude peak is measured. Additionally, optionally, or alternatively, the two or more direction peaks may comprise the highest direction peak and the second highest direction peak. The highest direction peak may correspond to the highest count over a single bin, over two bins, etc., of the direction component histogram, whereas the second highest direction peak may correspond to the second highest count over the same number of bin(s) of the direction component histogram over which the highest direction peak is measured.

In some embodiments, the display system determines whether the second highest magnitude peak exceeds a specific peak threshold factor times the highest magnitude peak. Additionally, optionally, or alternatively, the display system determines whether the second highest direction peak exceeds a specific peak threshold factor times the highest direction peak.

In some embodiments, in response to determining either that the second highest magnitude peak exceeds the specific peak threshold factor times the highest magnitude peak or that the second highest direction peak exceeds the specific peak threshold factor times the highest direction peak in block 220, the display system proceeds to block 222 and identifies/classifies the adjacent images as images that contain random motions (or random motion images/frames). Otherwise, in block 220, in response to determining both that the second highest magnitude peak does not exceed the specific peak threshold factor times the highest magnitude peak and that the second highest direction peak does not exceed the specific peak threshold factor times the highest direction peak, the display system identifies/classifies the adjacent images as images that contain smooth motions (or non-random motion images/frames).

In some embodiments, in response to determining both that the second highest magnitude peak exceeds the specific peak threshold factor times the highest magnitude peak and that the second highest direction peak exceeds the specific peak threshold factor times the highest direction peak in block 220, the display system proceeds to block 222 and identifies/classifies the adjacent images as images that contain random motions (or random motion images/frames). Otherwise, in block 220, in response to determining either that the second highest magnitude peak does not exceed the specific peak threshold factor times the highest magnitude peak or that the second highest direction peak does not exceed the specific peak threshold factor times the highest direction peak, the display system identifies/classifies the adjacent images as images that contain smooth motions (or non-random motion images/frames).
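The peak comparison of block 220 can be sketched as follows. As a simplification, the sketch measures each peak over a single bin and takes the two largest bin counts as the two peaks, without requiring them to be local maxima; the factor of 0.5 and the function names are hypothetical.

```python
def top_two_peaks(counts):
    """Return the highest and second highest bin counts (needs at least two bins)."""
    ordered = sorted(counts, reverse=True)
    return ordered[0], ordered[1]


def second_peak_is_significant(counts, factor):
    """True when the second highest peak exceeds factor times the highest peak."""
    highest, second = top_two_peaks(counts)
    return second > factor * highest


def is_random_pair(magnitude_hist, direction_hist, factor=0.5, require_both=False):
    """Classify adjacent images as random-motion from the two histograms.

    require_both=False models the embodiments in which either test suffices;
    require_both=True models the embodiments in which both tests must pass.
    """
    magnitude_test = second_peak_is_significant(magnitude_hist, factor)
    direction_test = second_peak_is_significant(direction_hist, factor)
    if require_both:
        return magnitude_test and direction_test
    return magnitude_test or direction_test
```

With a sharply peaked magnitude histogram and a nearly flat direction histogram, the either-test variant classifies the pair as random while the both-tests variant classifies it as smooth.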

The display system may repeat the operations from block 104 through block 222 for all other adjacent images in the set of images. In some embodiments, some or all of these operations may be performed with respect to a currently decoded, processed and/or designated image (or a current image) in relation to one or more images adjacent to the current image.

In block 224, the display system counts all random motion images/frames in the set of images to generate a total number of random motion images/frames (denoted as "n") in the set of images. In some embodiments, the total number of random motion images/frames may be computed as an absolute number. In some embodiments, the total number of random motion images/frames may be computed as a relative number, for example, as a ratio of the total number of random motion images/frames over the total number of images in the set of images.

In block 226, the display system determines whether the total number of random motion images/frames in the set of images exceeds a specific random motion frame number threshold (e.g., denoted as "threshold 3," etc.). In some embodiments, the specific random motion frame number threshold may be specified as an absolute number. In some embodiments, the specific random motion frame number threshold may be specified as a relative number, for example, as a ratio of a threshold number of random motion images/frames over the total number of images in the set of images.

In some embodiments, in response to determining that the total number of random motion images/frames in the set of images exceeds the specific random motion frame number threshold in block 226, the display system proceeds to block 228 and identifies/classifies the set of images as (e.g., a scene, a GOP, etc.) containing random motions (or random motion images/frames). Otherwise, in block 228, in response to determining that the total number of random motion images/frames in the set of images does not exceed the specific random motion frame number threshold in block 226, the display system identifies/classifies the set of images as (e.g., a scene, a GOP, etc.) containing smooth motions (or non-random motion images/frames).
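Blocks 224 through 228 reduce to counting per-image results and comparing the count, as an absolute number or as a ratio, with the random motion frame number threshold. A minimal sketch follows; the function name and the string labels are hypothetical.

```python
def classify_set(frame_is_random, threshold3, relative=False):
    """Classify a set of images as 'random' or 'smooth'.

    frame_is_random: one boolean per image (the block 222 result).
    relative: when True, compare the ratio n / total with threshold3
    instead of the absolute count n.
    """
    n = sum(1 for flag in frame_is_random if flag)
    if relative:
        total = len(frame_is_random)
        score = n / total if total else 0.0
    else:
        score = n
    return "random" if score > threshold3 else "smooth"
```

For a set of ten images in which six are random motion images, an absolute threshold of 5 yields "random", whereas a relative threshold of 0.75 yields "smooth".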

In some embodiments, some or all of the foregoing operations may be performed with respect to a currently decoded, processed and/or designated set of images (a current set of images). The display system may repeat the foregoing operations for other received sets of images.

Under techniques as described herein, selecting/adjusting FRC operational modes (or FRC settings) based on motion statistics derived from (pixel values of) images can also be categorized as a classification problem in which a classifier may be used to determine the best FRC operational mode for any given set of images. The inputs to the classifier may be motion statistics (or statistics of motion vectors) computed from each of one or more sets of images, whereas the outputs of the classifier may be respective FRC operational modes (or respective FRC settings) for the one or more sets of images. These respective FRC operational modes may comprise two or more different FRC operational modes (e.g., FRC OFF, FRC LOW, FRC MED, FRC HIGH, etc.) where some of the sets of images are classified as containing smooth motions and some others of the sets of images are classified as containing random motions.

For the purpose of illustration only, it has been described that FRC related operational modes supported by techniques as described herein include an FRC OFF mode, an FRC HIGH mode, an FRC MED mode, and an FRC LOW mode. It should be noted that in various embodiments, more or fewer FRC related operational modes may be determined and used per spatial region, per image, per scene, etc., based on FRC-related analyses on image content in an image or a spatial region thereof, in a scene, etc. In an example, in some embodiments, the FRC related modes supported by techniques as described herein may include only an FRC OFF mode and an FRC ON mode. In another example, in some embodiments, the FRC related modes supported by techniques as described herein may include only an FRC OFF mode, an FRC HIGH mode, and an FRC LOW mode. In yet another example, in some embodiments, the FRC related modes supported by techniques as described herein may include additional FRC related modes in addition to an FRC OFF mode, an FRC HIGH mode, an FRC MED mode, and an FRC LOW mode.

Any combination of one or more of a wide variety of classification techniques such as decision tree, support vector machine (SVM), random forest classifier, etc., may be used to classify a given set of images into a respective FRC operational mode optimally selected/determined for the set of images.

In some embodiments, an upstream device (e.g., a video encoder, a studio-based system, a broadcast system, a media streaming server, a cloud-based system, a set-top box, etc.) may classify (or pre-classify) an image sequence comprising sets of images in a media program before transmitting the images to downstream devices. For example, in a pre-computing stage (e.g., offline, during encoding, before encoding, etc.), the upstream device may determine complexity of motions in the image sequence and generate motion characteristics metadata for the image sequence based on the complexity of motions determined in content depicted in the image sequence. The complexity of motions in the image sequence can be estimated with optical flow techniques and/or other motion estimation techniques. In some embodiments, the upstream device determines an optimal FRC operational mode per image. Additionally, optionally, or alternatively, in some embodiments, the upstream device determines or maintains an optimal (constant) FRC operational mode for an entire scene comprising multiple (e.g., consecutive, sequential, etc.) images; changes in FRC operational modes may be allowed to occur only at scene cuts or scene boundaries between two different adjacent scenes (or at the beginning of a media program comprising the scenes).

The upstream device may compute only a single value (e.g., a single-valued FRC flag, etc.) representing the complexity of motion per image, per scene, etc. Additionally, optionally, or alternatively, the upstream device may compute a multi-valued complexity factor (e.g., a multi-valued FRC flag, etc.) per image, per scene, etc. By way of example but not limitation, the multi-valued complexity factor may be a set of vectors describing the complexity of the motion along multiple axes or dimensions, which may include, but are not necessarily limited to only, any of: translation, rotation, number of different motion clusters, etc.

Some or all of optical flow fields, cluster maps with and/or without morphological operations, number of objects directly or indirectly detected from the optical flow fields, histograms and/or distributions of motion vectors, histograms and/or distributions of characteristics (e.g., standard deviations, kurtoses, skews, etc.) of motion vectors, optimal FRC operational modes determined by one or more classifying processes or classifiers, etc., can be used to generate the motion characteristics metadata at the encoder side (e.g., by the upstream device).

In some embodiments, the motion characteristics metadata may include an FRC flag, an FRC data field, etc., that can directly or indirectly indicate whether a particular FRC operational mode such as an FRC OFF mode, an FRC HIGH mode, an FRC LOW mode, an FRC intermediate mode, etc., is to be used by a downstream device and/or a display device operating in conjunction with the downstream device for a given set of images.

In some embodiments, the motion characteristics metadata includes an FRC flag for each image to indicate the best FRC operational mode for the image. In various embodiments, a downstream device may implement an algorithm to set FRC operational modes per image, per scene, per GOP, per fixed number of images, etc. For example, the downstream device may set a particular FRC operational mode for a set of images in response to determining that a plurality of images (e.g., exceeding a certain threshold number, etc.) in the set of images has the particular FRC operational mode predetermined (e.g., through a per-image flag, etc.) at the encoder side as the best FRC operational mode.
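A downstream device's aggregation of per-image flags into one mode for a set of images might look like the following sketch. The mode strings, the fallback mode used when no mode is flagged often enough, and the function name are all hypothetical; the description does not specify what happens when the threshold is not met.

```python
from collections import Counter


def mode_for_set(per_image_flags, min_count, fallback="FRC_OFF"):
    """Pick the mode flagged on more than min_count images, else the fallback.

    per_image_flags: one FRC mode string per image, as carried in the
    motion characteristics metadata (illustrative representation only).
    """
    if not per_image_flags:
        return fallback
    mode, count = Counter(per_image_flags).most_common(1)[0]
    return mode if count > min_count else fallback
```

Holding one mode for the whole set avoids switching FRC behavior from image to image within a scene.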

In some embodiments, the motion characteristics metadata includes an FRC flag (e.g., the single-valued FRC flag, etc.) for each set of images (e.g., representing a scene, etc.) to indicate the best FRC operational mode for the set of images. The downstream device may set a particular FRC operational mode for the set of images in response to determining that the set of images has the particular FRC operational mode predetermined (e.g., through a per-set flag, etc.) at the encoder side as the best FRC operational mode.

In some embodiments, the motion characteristics metadata may comprise an overall value/factor or multiple component values/factors (e.g., the multi-valued FRC flag, etc.) per image, per scene, per GOP, etc. Some or all of the motion characteristics metadata may be carried in one or more tables. For example, the motion characteristics metadata may comprise a FRC data field or flag per image, per scene, per GOP, etc., to indicate one or more of: a random motion type, a smooth motion type, a panning motion type (which is considered as a smooth motion type), a random translational motion type, a smooth translational motion type, a random rotational motion type, a smooth rotational motion type, etc.

In some embodiments, instead of or in addition to generating the motion characteristics metadata that explicitly determines/sets the best FRC operational modes, the upstream device may generate one or more portions of the motion characteristics metadata that can be used to guide downstream devices to select the best FRC operational mode among various FRC operational modes respectively implemented by different downstream devices. For example, the motion characteristics metadata may be used to indicate that there are primarily translational motions in an image or a spatial region thereof, in a scene, etc. Additionally, optionally, or alternatively, the motion characteristics metadata may be used to indicate that there are two or more layers or spatial regions of different types of motions in an image or a spatial region thereof, in a scene, etc. For example, the two or more layers of different types of motions may include a first layer or a first spatial region of rain (or raining motions), a second layer or a second spatial region of (e.g., relatively static, relatively stationary, etc.) background, etc. The motion characteristics metadata may be used by a downstream device to guide its own selection of the best FRC operational mode among a plurality of FRC operational modes implemented by the downstream device or a display device operating in conjunction with the downstream device in an image or a spatial region thereof, in a scene, etc.

The motion characteristics metadata can be generated in real time, in offline processing, in time-delay processing, etc. The motion characteristics metadata may be included by (e.g., as a part of, as supplemental metadata to, etc.) overall image metadata embedded (e.g., as sub-bitstreams, as one or more data fields, as one or more flags, etc.) in one or more media data bitstreams that are used to transmit the images to the downstream devices.

In some embodiments, some or all of the motion characteristics metadata may be generated based at least in part on the knowledge of future images relative to one or more currently decoded, processed and/or designated images, where the future images are available, for example, as a part of a non-live media program, as a part of offline processing of a media program, in a peek-ahead buffer of a live or non-live media program, etc.

In some embodiments, a downstream device (e.g., a set-top box, a TV, a mobile device, a tablet computer, a laptop computer, a PC, etc.) may receive the image sequence comprising the sets of images in the media program with the motion characteristics metadata generated by the upstream device.

For example, in a decoding/playback stage (e.g., in a television, in a set-top box, etc.), the downstream device may read or decode the complexity of motion (for the image sequence) from the motion characteristics metadata that is encoded and carried in one or more media data bitstreams from which the sets of images can be decoded. Based at least in part on the decoded complexity of motion, the downstream device can decide on the best FRC operational mode (or the best FRC settings). In some embodiments, the downstream device can decide on the best FRC operational mode (or the best FRC settings) further based on FRC capabilities of the downstream device and/or a display device operating in conjunction with the downstream device. For example, the downstream device and/or the display device may have relatively strong FRC capabilities in interpolating translational motions, but relatively weak FRC capabilities in interpolating rotational motions. In response to determining that the complexity of motion indicates relatively predominant translational motions in an image, in a scene, etc., the downstream device and/or the display device may set FRC HIGH or FRC MED as the best FRC operational mode for the image, for the scene, etc. On the other hand, in response to determining that the complexity of motion indicates relatively predominant rotational motions in an image, in a scene, etc., the downstream device and/or the display device may set FRC LOW or even FRC OFF as the best FRC operational mode for the image, for the scene, etc.
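The capability-dependent selection described above might be sketched as follows. This is an illustrative example only: the function name `select_frc_mode`, the string labels for motion types and capability strengths, and the specific choice of FRC HIGH for strong capabilities and FRC LOW for weak capabilities are assumptions, since the description permits FRC MED or FRC OFF in the same situations.

```python
from enum import Enum
from typing import Mapping


class FrcMode(Enum):
    OFF = "FRC OFF"
    LOW = "FRC LOW"
    MED = "FRC MED"
    HIGH = "FRC HIGH"


def select_frc_mode(dominant_motion: str,
                    capabilities: Mapping[str, str]) -> FrcMode:
    """Pick an FRC mode from the signaled dominant motion type and the
    device's (hypothetical) self-declared interpolation strength."""
    strength = capabilities.get(dominant_motion)
    if strength == "strong":
        return FrcMode.HIGH   # FRC MED would also fit the description
    if strength == "weak":
        return FrcMode.LOW    # FRC OFF would also fit the description
    # Motion type the device has no declared capability for:
    # conservatively disable FRC (an assumption of this sketch).
    return FrcMode.OFF
```

For a device declaring `{"translational": "strong", "rotational": "weak"}`, a scene signaled as predominantly translational would map to FRC HIGH and a predominantly rotational scene to FRC LOW.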

In some embodiments, the downstream device may be freed from performing some or all of the operations used to classify the sets of images in the media program after receiving the images directly or indirectly from the upstream device. Some or all of optical flow fields, cluster maps with and/or without morphological operations, number of objects directly or indirectly detected from the optical flow fields, histograms and/or distributions of motion vectors, histograms and/or distributions of characteristics (e.g., standard deviations, kurtoses, skews, etc.) of motion vectors, optimal FRC operational modes determined by one or more classifying processes or classifiers, etc., can be derived at the decoder side (e.g., by the downstream device) from the motion characteristics metadata. In some embodiments, some or all of the motion characteristics metadata may be used to determine or select a respective (optimal) FRC operational mode for each of the sets of images.

Using the motion characteristics metadata generated at the encoder side, the downstream device can avoid or reduce buffering the images and analyzing motion statistics of the images at the decoder side, thereby significantly avoiding or reducing delays in rendering the images. Motion characteristics metadata can be used in a flexible manner in various embodiments. For example, in some embodiments, the motion characteristics metadata including but not limited to the size/amount of metadata overheads may be specifically optimized for deploying to one or more of: particular FRC related frameworks, particular platforms, particular manufacturers/vendors, particular types of display devices and/or computing devices, etc. In some other embodiments, the motion characteristics metadata including but not limited to the size/amount of metadata overheads may be based on standards, proprietary specifications, enhancements to standards and/or proprietary specifications, etc., and may be deployed to a wide variety of FRC related frameworks, platforms, manufacturers/vendors, types of display devices and/or computing devices, etc.

Additionally, optionally, or alternatively, a downstream device may be configured to perform adaptive operations to adapt the motion characteristics metadata for optimally selecting/determining optimal FRC operational modes (or optimal FRC settings) for images to be rendered with the downstream device. For example, the motion characteristics metadata may include one or more metadata portions that specify, or that can be used to determine, a set of more or fewer FRC operational modes than device-specific FRC operational modes actually implemented with the downstream device or with a display device operating in conjunction with the downstream device. The downstream device and/or the display device may be configured to map the FRC operational modes in the set that can be specified/determined based on the motion characteristics metadata to the device-specific FRC operational modes.
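One possible way to perform such a mapping, shown here purely as a sketch, is to assign each mode a normalized interpolation strength and choose the device-specific mode whose strength is nearest to the signaled one. The strength values, the mode names, and the nearest-value rule are all assumptions introduced for illustration.

```python
from typing import Mapping


def map_to_device_mode(signaled_strength: float,
                       device_modes: Mapping[str, float]) -> str:
    """Return the device-specific mode whose (hypothetical) normalized
    strength in [0, 1] is closest to the strength signaled in metadata.
    On a tie, the mode listed first in device_modes is returned."""
    if not device_modes:
        raise ValueError("device implements no FRC operational modes")
    return min(device_modes,
               key=lambda name: abs(device_modes[name] - signaled_strength))
```

With a device that implements only `{"OFF": 0.0, "ON": 1.0}`, a signaled strength of 0.33 would map to "OFF" and a signaled strength of 0.66 would map to "ON"; a device with more modes than the metadata signals would use the same rule.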

In some embodiments, the motion characteristics metadata may include one or more metadata portions that specify, or that can be used to determine, multiple sets of FRC operational modes and/or multiple sets of FRC operational parameters. Each of the multiple sets of FRC operational modes and/or the multiple sets of FRC operational parameters may be applicable to one or more of: respective FRC related frameworks, respective platforms, respective manufacturers/vendors, particular types of display devices and/or computing devices, etc. The downstream device or a display device operating in conjunction with the downstream device may be configured to select a particular set of FRC operational modes from the multiple sets of FRC operational modes and/or a particular set of FRC operational parameters from the multiple sets of FRC operational parameters that is appropriate to the downstream device and/or the display device, for example, based on FRC capabilities of the downstream device and/or the display device.

In some embodiments, different FRC operational modes may be selected or determined for different spatial regions of images. A spatial region as described herein may refer to a set of contiguous pixels in (e.g., a frame of, etc.) an image. For example, a display system as described herein may be configured to partition/segment an image (or two or more adjacent images) into one or more spatial regions based on respective motion characteristics in the one or more spatial regions of the image (or the two or more adjacent images). In some embodiments, the one or more spatial regions collectively span an entire image. In some embodiments, the one or more spatial regions may be non-overlapping. In some embodiments, at least two of the one or more spatial regions may overlap with each other.

The different motion characteristics in the different spatial regions may be determined with an optical flow field or motion vectors therein as generated from two or more adjacent images including but not necessarily limited to the (current) image. In an example, relatively high motions may be detected in a first portion of the image, whereas relatively low motions may be detected in a second portion of the image. In another example, relatively random motions may be detected in a third portion of the image, whereas relatively smooth motions may be detected in a fourth portion of the image. In yet another example, relatively predominant rotational motions may be detected in a fifth portion of the image, whereas relatively predominant translational motions may be detected in a sixth portion of the image.

Additionally, optionally, or alternatively, the different motion characteristics in the different spatial regions may be determined with a cluster map derived from the optical flow field. For example, mapped values in the cluster map with or without morphological operations may be used to partition/segment the image (or the two or more images) into the different spatial regions.

In some embodiments, techniques as described herein may be applied at a spatial region level. For example, one or both of the processes of FIG. 1A and FIG. 1B may be performed at the spatial region level. An individual optimal FRC operational mode (or FRC settings) may be specifically selected or determined for an individual spatial region of the different spatial regions. Thus, in some embodiments, instead of applying a single FRC operational mode (or FRC settings), multiple different FRC operational modes may be selected or determined for an image or different spatial regions therein.
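A region-level selection of this kind could be sketched as below. The `Region` structure, the motion labels, and the policy that maps smooth motion to a stronger FRC mode and random motion to FRC OFF are hypothetical choices made for the example; an actual embodiment may apply any of the classification processes described herein per region.

```python
from dataclasses import dataclass
from typing import Dict, List, Mapping, Tuple


@dataclass(frozen=True)
class Region:
    name: str
    bbox: Tuple[int, int, int, int]  # (x, y, width, height) in pixels
    motion: str                      # e.g., "smooth" or "random"


def modes_per_region(regions: List[Region],
                     policy: Mapping[str, str],
                     default: str = "FRC OFF") -> Dict[str, str]:
    """Select one FRC mode per spatial region instead of a single mode
    for the whole image. Regions with an unlisted motion label fall
    back to the default mode."""
    return {r.name: policy.get(r.motion, default) for r in regions}


# Hypothetical policy: interpolate smooth regions, leave random ones alone.
EXAMPLE_POLICY = {"smooth": "FRC HIGH", "random": "FRC OFF"}
```

For an image with a relatively static background region labeled "smooth" and a rain region labeled "random", this sketch would assign FRC HIGH to the former and FRC OFF to the latter.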

For the purpose of illustration only, it has been described that some or all of the number of objects, the relationship of the variance of the magnitude components to a certain magnitude variance threshold, the relationship of the variance of the direction components to a certain direction variance threshold, the relationship of the second peak of a histogram (e.g., a magnitude component histogram, a direction component histogram, a standard deviation histogram, a higher moment histogram such as a kurtosis or skew histogram, etc.) to the first peak of the same histogram, etc., may be used to determine or classify one or more images as containing relatively random motions, as containing relatively smooth motions, etc. Additionally, optionally, or alternatively, types of motions such as random translations, random rotations, smooth translations, smooth rotations, panning motions, or a combination of two or more different types of motions in images or spatial regions therein can be determined based on some or all of these parameters relating to motion characteristics in the images or the spatial regions therein.
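A minimal sketch of a classifier built from these parameters is given below. The threshold values, the bin count, the rule that any single exceeded threshold yields a "random" classification, and the use of the second-largest histogram bin as a stand-in for the second peak are all assumptions of this example. The direction variance is also computed without handling angular wraparound, which a practical implementation would need to address.

```python
import math
from statistics import pvariance
from typing import List, Sequence, Tuple


def classify_motion(vectors: Sequence[Tuple[float, float]],
                    mag_var_thresh: float = 4.0,
                    dir_var_thresh: float = 0.5,
                    peak_ratio_thresh: float = 0.5,
                    bins: int = 8) -> str:
    """Classify a set of motion vectors (dx, dy) as "random" or "smooth"."""
    if not vectors:
        raise ValueError("at least one motion vector is required")
    mags = [math.hypot(dx, dy) for dx, dy in vectors]
    dirs = [math.atan2(dy, dx) for dx, dy in vectors]  # radians in [-pi, pi]

    # Direction component histogram over [-pi, pi].
    hist: List[int] = [0] * bins
    for d in dirs:
        idx = min(int((d + math.pi) / (2 * math.pi) * bins), bins - 1)
        hist[idx] += 1
    ranked = sorted(hist, reverse=True)
    # Simplification: second-largest bin stands in for the second peak.
    peak_ratio = ranked[1] / ranked[0] if bins > 1 else 0.0

    if (pvariance(mags) > mag_var_thresh
            or pvariance(dirs) > dir_var_thresh
            or peak_ratio > peak_ratio_thresh):
        return "random"
    return "smooth"
```

A uniform pan, in which every vector is identical, has zero variance in both components and a single populated histogram bin, so this sketch classifies it as "smooth"; vectors pointing in several unrelated directions exceed the direction variance threshold and are classified as "random".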

It should be noted that in various embodiments, in addition to, or in place of, these parameters, other parameters relating to motion characteristics in the images or the spatial regions therein may be used. For example, parameters such as the number of clusters, the relationship of the first peak to the entire histogram (e.g., the entire magnitude component histogram, the entire direction component histogram, the entire standard deviation histogram, the entire higher moment histogram such as the entire kurtosis or skew histogram, etc.), the relationship of the first two or more peaks to the entire histogram, etc., may be used to determine or classify the one or more images as containing relatively random motions, as containing relatively smooth motions, etc. Additionally, optionally, or alternatively, types of motions such as random translations, random rotations, smooth translations, smooth rotations, panning motions, or a combination of two or more different types of motions in images or spatial regions therein can be determined based at least in part on some or all of these additional parameters relating to motion characteristics in the images or the spatial regions therein.

In some embodiments, non-motion characteristics metadata can be generated by upstream devices (e.g., video encoding devices, etc.) to guide operations in downstream devices (e.g., video decoding devices, etc.). By way of example but not limitation, noise level metadata can be generated by upstream devices to indicate a level of noise in an image or a spatial region thereof, in a scene, etc., in order to guide application of sharpening filters, noise reduction filters, etc., implemented in downstream devices or display devices operating in conjunction with the downstream devices. For images or spatial regions therein that are sensitive to visual artifacts (e.g., halos, noise, etc.) caused by applying a sharpening filter, a noise reduction filter, etc., noise level metadata can be generated by an upstream device and used by downstream devices to avoid applying, or to reduce the strength of, the sharpening filter, the noise reduction filter, etc. This avoids or reduces the visual artifacts that would otherwise arise in such images if the application or the strength (e.g., set by a user, set by default, set programmatically, set without the benefit of the noise level metadata, etc.) of the sharpening filter, the noise reduction filter, etc., were maintained or applied.
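As one illustrative possibility, a downstream device could scale a configured sharpening strength by the signaled noise level and disable the filter entirely above a cutoff. The normalization of the noise level to the range 0 to 1, the linear scaling, and the cutoff value are assumptions of this sketch, not values specified herein.

```python
def effective_sharpening(configured_strength: float,
                         noise_level: float,
                         disable_above: float = 0.9) -> float:
    """Reduce (or avoid) sharpening according to noise level metadata.

    configured_strength: strength set by a user, by default, etc.
    noise_level: hypothetical normalized noise level in [0, 1].
    disable_above: hypothetical cutoff at which the filter is skipped.
    """
    noise = min(max(noise_level, 0.0), 1.0)  # clamp to [0, 1]
    if noise >= disable_above:
        return 0.0                           # avoid applying the filter
    return configured_strength * (1.0 - noise)
```

Under these assumptions a configured strength of 0.8 is left unchanged for a noise-free image, halved at a noise level of 0.5, and reduced to zero at a noise level of 0.95.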

For example, in a pre-computing stage (e.g., offline, during encoding, before encoding, etc.), the upstream device may determine noise levels in the image sequence and generate noise level metadata for the image sequence based on the noise levels determined in the image sequence.

The noise level metadata can be generated in real time, in offline processing, time-delay processing, etc. The noise level metadata may be included by (e.g., as a part of, as supplemental metadata to, etc.) overall image metadata embedded (e.g., as sub-bitstreams, as one or more data fields, as one or more flags, etc.) in one or more media data bitstreams that are used to transmit the images to the downstream devices.

In some embodiments, a downstream device (e.g., a set-top box, a TV, a mobile device, a tablet computer, a laptop computer, a PC, etc.) may receive the image sequence comprising the sets of images in the media program with the noise level metadata generated by the upstream device.

In some embodiments, the downstream device may be freed from performing some or all of the operations used to determine the noise levels in the sets of images in the media program after receiving the images directly or indirectly from the upstream device.