Touch-based gesture recognition and application navigation

Amerige, et al.

U.S. patent number 10,324,619 [Application Number 15/250,686] was granted by the patent office on 2019-06-18 for touch-based gesture recognition and application navigation. This patent grant is currently assigned to FACEBOOK, INC. The grantee listed for this patent is Facebook, Inc. Invention is credited to Brian Daniel Amerige, Benjamin Grady Cunningham.


| United States Patent | 10,324,619 |
|---|---|
| Amerige, et al. | June 18, 2019 |

Touch-based gesture recognition and application navigation

Abstract

A method is performed at an electronic device that includes a display, a touch-sensitive surface, one or more processors, and memory storing one or more programs. The device displays a user interface of a software application, wherein the user interface includes a plurality of user-interface elements. A first gesture is detected on the touch-sensitive surface while displaying the first user interface, and an initial direction of movement is determined for the first gesture. The device recognizes that the initial direction corresponds to one of a first predefined direction on the touch-sensitive surface or a second predefined direction on the touch-sensitive surface, wherein the first predefined direction is distinct from the second predefined direction. Display of one or more user-interface elements of the plurality of user-interface elements is manipulated in accordance with the corresponding one of the first or second predefined direction.

| Inventors: | Amerige; Brian Daniel (Palo Alto, CA), Cunningham; Benjamin Grady (San Francisco, CA) |
|---|---|
| Applicant: | |
| Assignee: | FACEBOOK, INC. (Menlo Park, CA) |
| Family ID: | 55074603 |
| Appl. No.: | 15/250,686 |
| Filed: | August 29, 2016 |

Prior Publication Data

| Document Identifier | Publication Date |
|---|---|
| US 20160370987 A1 | Dec 22, 2016 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Issue Date |
|---|---|---|---|
| 14334588 | Jul 17, 2014 | 9430142 | |

| Current U.S. Class: | 1/1 |
|---|---|
| Current CPC Class: | G06F 3/04817 (20130101); G06F 3/04883 (20130101); G06F 3/0485 (20130101); G06F 3/04845 (20130101) |
| Current International Class: | G06F 3/0488 (20130101); G06F 3/0481 (20130101); G06F 3/0485 (20130101); G06F 3/0484 (20130101) |
| Field of Search: | 715/863 |

References Cited

U.S. Patent Documents

| 8368723 | February 2013 | Grossweiler, III et al. |

| 8416217 | April 2013 | Eriksson et al. |

| 8527909 | September 2013 | Mullany |

| 8552999 | October 2013 | Dale |

| 8566044 | October 2013 | Shaffer |

| 8682602 | March 2014 | Moore |

| 8826178 | September 2014 | Zhang |

| 8847992 | September 2014 | Kornmann et al. |

| 9229632 | January 2016 | Welkin et al. |

| 9235321 | January 2016 | Matas |

| 9245312 | January 2016 | Matas |

| 9310992 | April 2016 | Kornmann |

| 9311112 | April 2016 | Shaffer |

| 9430142 | August 2016 | Amerige |

| 9575652 | February 2017 | Rossi |

| 9639260 | May 2017 | Blumenberg |

| 9678943 | June 2017 | Bi |

| 9684521 | June 2017 | Shaffer |

| 9710453 | July 2017 | Ouyang |

| 9733716 | August 2017 | Shaffer |

| 9798459 | October 2017 | Williamson |

| 9870141 | January 2018 | Ansell |

| 9965177 | May 2018 | Moore |

| 2006/0271867 | November 2006 | Wang et al. |

| 2010/0045666 | February 2010 | Kornmann et al. |

| 2010/0309147 | December 2010 | Fleizach et al. |

| 2011/0078560 | March 2011 | Weeldreyer |

| 2011/0126148 | May 2011 | Krishnaraj et al. |

| 2011/0164058 | July 2011 | Lemay |

| 2011/0181526 | July 2011 | Shaffer |

| 2012/0131514 | May 2012 | Ansell |

| 2012/0327009 | December 2012 | Fleizach |

| 2013/0016039 | January 2013 | Moore |

| 2013/0016103 | January 2013 | Grossweiler, III et al. |

| 2013/0016129 | January 2013 | Grossweiler, III et al. |

| 2013/0067390 | March 2013 | Kwiatkowski |

| 2013/0067398 | March 2013 | Pittappilly et al. |

| 2013/0073932 | March 2013 | Migos et al. |

| 2013/0198661 | August 2013 | Matas |

| 2013/0263029 | October 2013 | Rossi |

| 2014/0002494 | January 2014 | Cunningham |

| 2014/0082497 | March 2014 | Chalouhi et al. |

| 2014/0215386 | July 2014 | Song et al. |

| 2014/0237415 | August 2014 | Amerige |

| 2014/0327677 | November 2014 | Walker |

| 2014/0362056 | December 2014 | Zambetti |

| 2014/0365945 | December 2014 | Karunamuni et al. |

| 2015/0067581 | March 2015 | Wu et al. |

| 2015/0082250 | March 2015 | Wagner et al. |

| 2015/0113480 | April 2015 | Krikke et al. |

Other References

Amerige, Notice of Allowance, U.S. Appl. No. 14/334,588, dated Apr. 21, 2016, 8 pgs. Cited by applicant.

Matas, Office Action, U.S. Appl. No. 14/334,604, dated Jul. 28, 2016, 23 pgs. Cited by applicant.

Matas, Final Office Action, U.S. Appl. No. 14/334,604, dated Feb. 1, 2017, 22 pgs. Cited by applicant.

Matas, Office Action, U.S. Appl. No. 14/334,604, dated Jul. 13, 2017, 24 pgs. Cited by applicant.

Matas, Notice of Allowance, U.S. Appl. No. 14/334,604, dated Mar. 14, 2018, 9 pgs. Cited by applicant.

Event Handling Guide for iOS, Developer, Copyright © 2013 Apple Inc., 74 pgs. Cited by applicant.

UIPanGestureRecognizer-Only vertical or Horizontal, Aug. 17, 2011, http://stackoverflow.com/questions/7100884/uipangesturerecognizer-only-vertical-or-horizontal, 7 pgs. Cited by applicant.

UISwipeGestureRecognizer-Configuring the gesture, Mar. 23, 2010, https://developer.apple.com/library/ios/documentation/uikit/reference/UISwipeGestureRecognizer_Class/Reference/Reference.html, 2 pgs. Cited by applicant.

UIPanGestureRecognizer-Configuring the Gesture Recognizer, Mar. 23, 2010, https://developer.apple.com/library/ios/documentation/uikit/reference/UIPanGestureRecognizer_Class/Reference/Reference.html, 2 pgs. Cited by applicant.

How can I capture which direction is being panned using UIPanGestureRecognizer?, Dec. 18, 2013, http://stackoverflow.com/questions/5187502/how-can-i-capture-which-direction-is-being-panned-using-uipangesturerecognizer, 2 pgs. Cited by applicant.

UIGestureRecognizer-Tutorial-in-IOS 5: Pinches, Pans, and More!, Nov. 30, 2011, http://www.raywenderlich.com/6567/uigesturerecognizer-tutorial-in-ios-5-pinches-pans-and-more, 14 pgs. Cited by applicant.

Event-Handling Guide for iOS: GestureRecognizers, Jan. 28, 2013, https://developer.apple.com/library/ios/documentation/EventHandling/Conceptual/EventHandlingiPhoneOS/GestureRecognizer_basics/GestureRecognizer_basics.html, 16 pgs. Cited by applicant.

UISwipeGestureRecognizer Class Reference, May 1, 2014, https://developer.apple.com/library/ios/documentation/UIKit/Reference/UISwipeGestureRecognizer_Class/Reference/Reference.html#//apple_ref/occ/cl/UISwipeGestureRecognizer, 3 pgs. Cited by applicant.

SimpleGestureRecognizers/APLGestureRecognizerViewController.m, Apr. 29, 2014, https://developer.apple.com/library/ios/samplecode/SimpleGestureRecognizers/Listings/SimpleGestureRecognizers_APLGestureRecognizerViewController_m.html, 6 pgs. Cited by applicant.

Primary Examiner: Titcomb; William D

Attorney, Agent or Firm: Morgan, Lewis & Bockius LLP

Parent Case Text

RELATED APPLICATION

This application is a continuation of U.S. application Ser. No. 14/334,588, filed Jul. 17, 2014, entitled "Touch-Based Gesture Recognition and Application Navigation," and is related to U.S. application Ser. No. 14/334,604, filed Jul. 17, 2014, entitled "Touch-Based Gesture Recognition and Application Navigation," all of which are incorporated by reference herein in their entirety.

Claims

What is claimed is:

1. A non-transitory computer-readable storage medium storing one or more programs, the one or more programs comprising instructions, which, when executed by an electronic device with a display, a touch-sensitive surface, and one or more processors, cause the device to: display a user interface of a social networking application, wherein the user interface includes a plurality of displayed user-interface elements; detect a first gesture on the touch-sensitive surface while displaying the first user interface; determine an initial direction of movement for the first gesture; recognize that the initial direction corresponds to one of a first predefined direction on the touch-sensitive surface or a second predefined direction on the touch-sensitive surface, wherein the first predefined direction is distinct from the second predefined direction; and manipulate display of one or more user-interface elements of the plurality of displayed user-interface elements in accordance with the corresponding one of the first or second predefined direction.

2. The computer-readable storage medium of claim 1, wherein the instructions include: instructions for a first gesture recognizer to recognize gestures in the first predefined direction; and instructions for a second gesture recognizer to recognize gestures in the second predefined direction.

3. The computer-readable storage medium of claim 2, wherein the social networking application includes the instructions for the first gesture recognizer and the instructions for the second gesture recognizer.

4. The computer-readable storage medium of claim 2, wherein an operating system of the electronic device includes the instructions for the first gesture recognizer and the instructions for the second gesture recognizer.

5. The computer-readable storage medium of claim 2, wherein the one or more programs further comprise instructions, which, when executed by the electronic device, cause the electronic device to: based on the corresponding one of the first or second predefined direction, send a message from a corresponding one of the first gesture recognizer or the second gesture recognizer to a view controller, wherein the message indicates that the corresponding one of the first or second predefined direction has been recognized for the first gesture.

6. The computer-readable storage medium of claim 5, wherein: the message includes information specifying a location of the detected first gesture with respect to the user interface; and the specified location corresponds to the one or more user-interface elements.

7. The computer-readable storage medium of claim 1, wherein manipulating display of the one or more user-interface elements comprises moving the one or more user-interface elements in the corresponding one of the first or second predefined direction on the display.

8. The computer-readable storage medium of claim 1, wherein the displayed user interface is a first user interface, and the one or more programs further comprise instructions, which, when executed by the electronic device, cause the electronic device to: in response to detecting the first gesture on a first user-interface element of the one or more user-interface elements and recognizing that the initial direction of the first gesture corresponds to the first direction, display a second user interface distinct from the first user interface; and in response to detecting the first gesture on the first user-interface element and recognizing that the initial direction of the first gesture corresponds to the second direction, display a third user interface distinct from the first user interface and the second user interface.

9. The computer-readable storage medium of claim 1, wherein the instructions that cause the electronic device to recognize that the initial direction corresponds to one of the first predefined direction or the second predefined directions comprise instructions that cause the electronic device to determine whether a component of the initial direction of the first gesture satisfies a threshold value.

10. An electronic device, comprising: a display; a touch-sensitive surface; one or more processors; and memory storing one or more programs that are configured to be executed by the one or more processors, the one or more programs including instructions for: displaying a user interface of a social networking application, wherein the user interface includes a plurality of displayed user-interface elements; detecting a first gesture on the touch-sensitive surface while displaying the first user interface; determining an initial direction of movement for the first gesture; recognizing that the initial direction corresponds to one of a first predefined direction on the touch-sensitive surface or a second predefined direction on the touch-sensitive surface, wherein the first predefined direction is distinct from the second predefined direction; and manipulating display of one or more user-interface elements of the plurality of displayed user-interface elements in accordance with the corresponding one of the first or second predefined direction.

11. The device of claim 10, wherein the instructions include: instructions for a first gesture recognizer to recognize gestures in the first predefined direction; and instructions for a second gesture recognizer to recognize gestures in the second predefined direction.

12. The device of claim 11, wherein the social networking application includes the instructions for the first gesture recognizer and the instructions for the second gesture recognizer.

13. The device of claim 11, wherein an operating system of the electronic device includes the instructions for the first gesture recognizer and the instructions for the second gesture recognizer.

14. The device of claim 11, wherein the one or more programs further comprise instructions for: based on the corresponding one of the first or second predefined direction, sending a message from a corresponding one of the first gesture recognizer or the second gesture recognizer to a view controller, wherein the message indicates that the corresponding one of the first or second predefined direction has been recognized for the first gesture.

15. The device of claim 14, wherein: the message includes information specifying a location of the detected first gesture with respect to the user interface, and the specified location corresponds to the one or more user-interface elements.

16. A method, comprising: at an electronic device having a display, a touch-sensitive surface, one or more processors, and memory storing one or more programs for execution by the one or more processors: displaying a user interface of a social networking application, wherein the user interface includes a plurality of displayed user-interface elements; detecting a first gesture on the touch-sensitive surface while displaying the first user interface; determining an initial direction of movement for the first gesture; recognizing that the initial direction corresponds to one of a first predefined direction on the touch-sensitive surface or a second predefined direction on the touch-sensitive surface, wherein the first predefined direction is distinct from the second predefined direction; and manipulating display of one or more user-interface elements of the plurality of displayed user-interface elements in accordance with the corresponding one of the first or second predefined direction.

17. The method of claim 16, wherein the one or more programs comprise instructions for a first gesture recognizer and instructions for a second gesture recognizer, the method further comprising: using the first gesture recognizer to recognize gestures in the first predefined direction; and using the second gesture recognizer to recognize gestures in the second predefined direction.

18. The method of claim 16, wherein manipulating display of the one or more user-interface elements comprises moving the one or more user-interface elements in the corresponding one of the first or second predefined direction on the display.

19. The method of claim 16, wherein the displayed user interface is a first user interface, the method further comprising: in response to detecting the first gesture on a first user-interface element of the one or more user-interface elements and recognizing that the initial direction of the first gesture corresponds to the first direction, displaying a second user interface distinct from the first user interface; and in response to detecting the first gesture on the first user-interface element and recognizing that the initial direction of the first gesture corresponds to the second direction, displaying a third user interface distinct from the first user interface and the second user interface.

20. The method of claim 16, wherein recognizing that the initial direction corresponds to one of the first predefined direction or the second predefined directions comprises determining whether a component of the initial direction of the first gesture satisfies a threshold value.

Description

TECHNICAL FIELD

This relates generally to touch-based gesture recognition and application navigation, including but not limited to recognizing pan gestures and navigating in a software application that has a hierarchy of user interfaces.

BACKGROUND

Touch-sensitive devices, such as tablets and smart phones with touch screens, have become popular in recent years. Many touch-sensitive devices are configured to detect touch-based gestures (e.g., tap, pan, swipe, pinch, depinch, and rotate gestures). Touch-sensitive devices often use the detected gestures to manipulate user interfaces and to navigate between user interfaces in software applications on the device.

However, there is an ongoing challenge to properly recognize and respond to touch-based gestures. Incorrect recognition of a touch gesture typically requires a user to undo any actions performed in response to the misinterpreted gesture and to repeat the touch gesture, which can be tedious, time-consuming, and inefficient. In addition, there is an ongoing challenge to make navigation in software applications via touch gestures more efficient and intuitive for users.

SUMMARY

Accordingly, there is a need for more efficient devices and methods for recognizing touch-based gestures. Such devices and methods optionally complement or replace conventional methods for recognizing touch-based gestures.

Moreover, there is a need for more efficient devices and methods for navigating in software applications via touch gestures. Such devices and methods optionally complement or replace conventional methods for navigating in software applications via touch gestures.

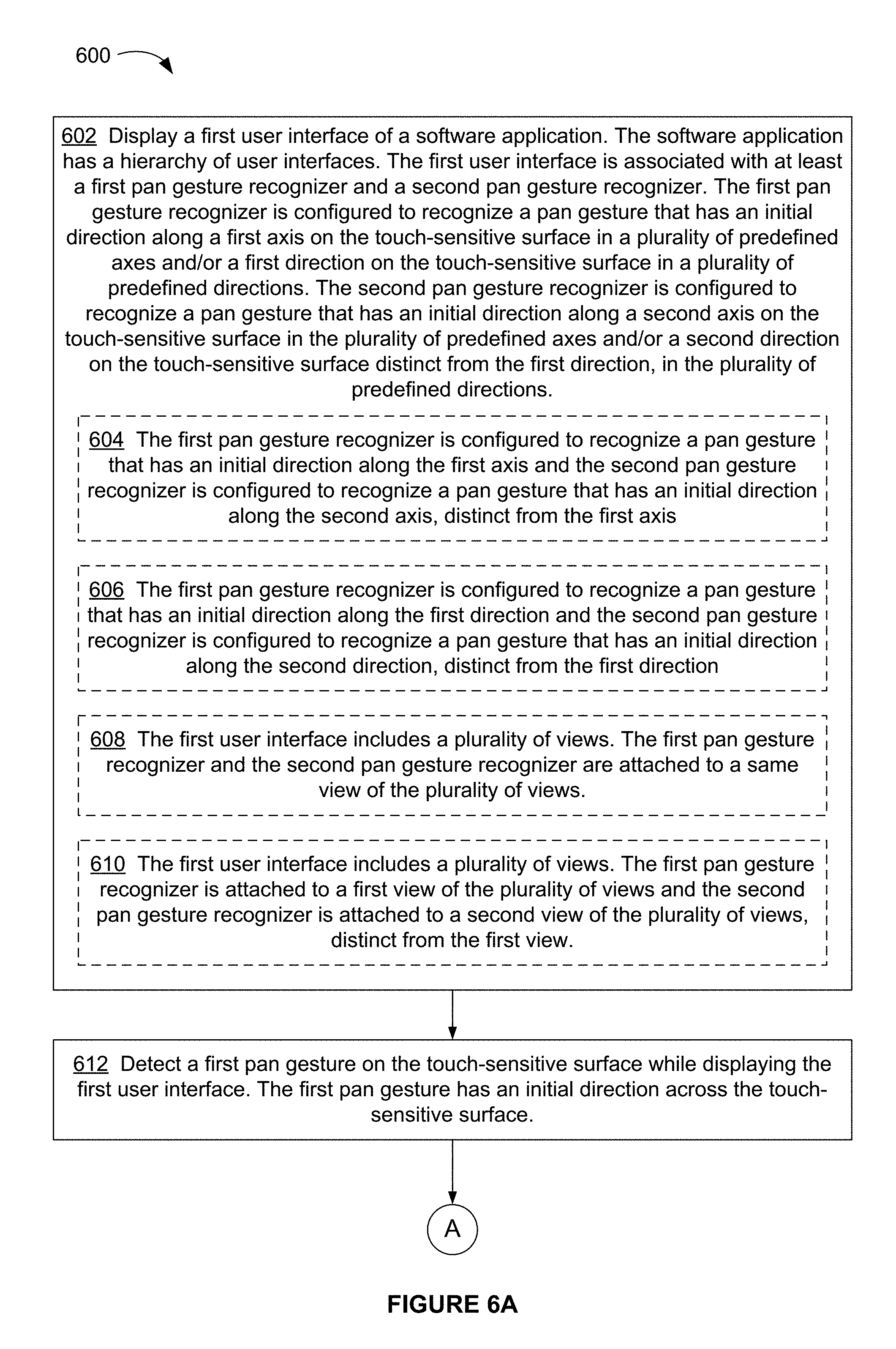

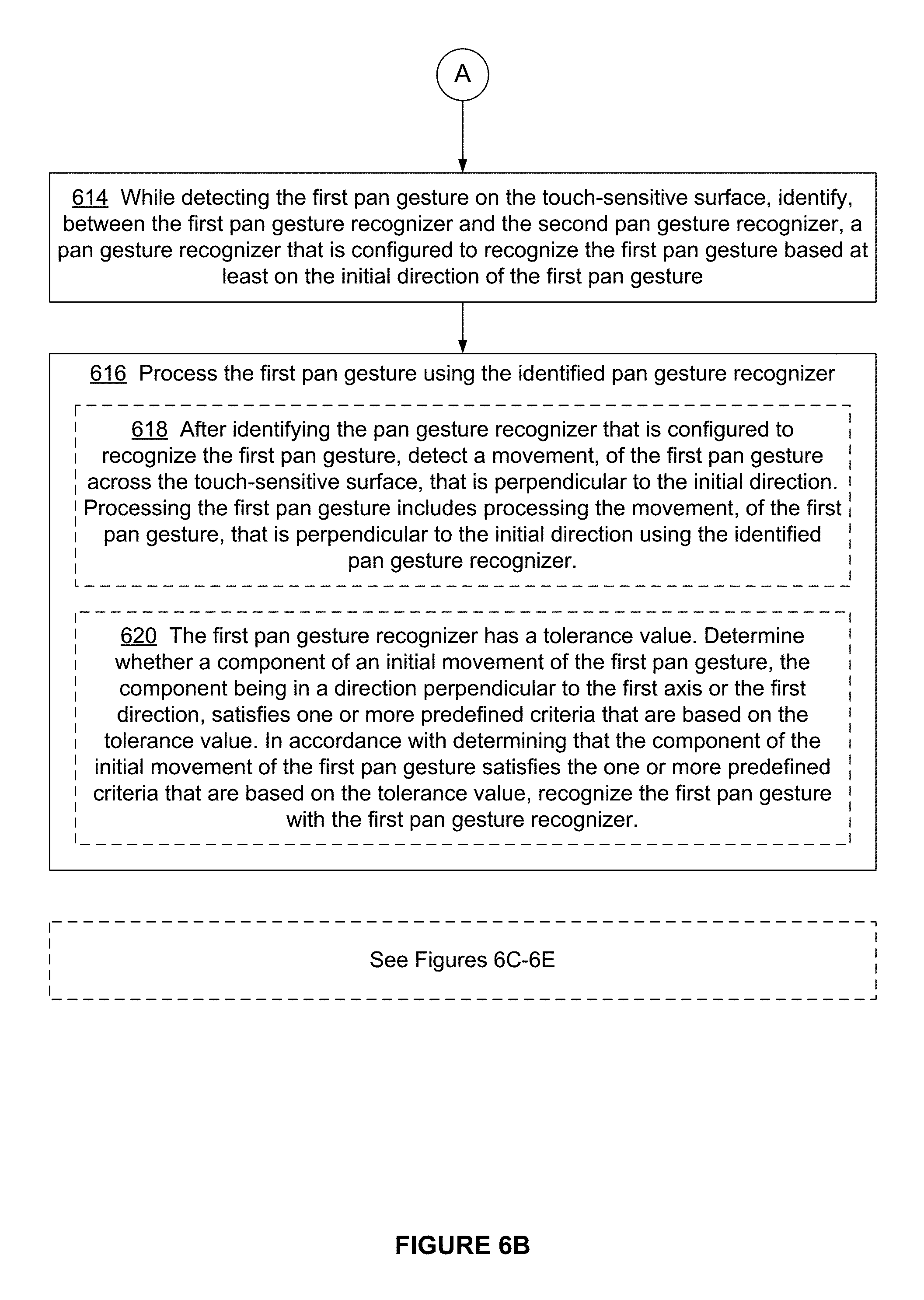

In accordance with some embodiments, a method is performed at an electronic device having a display, a touch-sensitive surface, one or more processors, and memory. The memory stores one or more programs for execution by the one or more processors. The method includes displaying a first user interface of a software application. The software application has a hierarchy of user interfaces. The first user interface is associated with at least a first pan gesture recognizer and a second pan gesture recognizer. The first pan gesture recognizer is configured to recognize a pan gesture that has an initial direction along a first axis on the touch-sensitive surface in a plurality of predefined axes and/or a first direction on the touch-sensitive surface in a plurality of predefined directions. The second pan gesture recognizer is configured to recognize a pan gesture that has an initial direction along a second axis on the touch-sensitive surface in the plurality of predefined axes and/or a second direction on the touch-sensitive surface distinct from the first direction, in the plurality of predefined directions. The method also includes detecting a first pan gesture on the touch-sensitive surface while displaying the first user interface. The first pan gesture has an initial direction across the touch-sensitive surface. The method further includes, while detecting the first pan gesture on the touch-sensitive surface, identifying, between the first pan gesture recognizer and the second pan gesture recognizer, a pan gesture recognizer that is configured to recognize the first pan gesture based at least on the initial direction of the first pan gesture; and processing the first pan gesture using the identified pan gesture recognizer.

In accordance with some embodiments, an electronic device includes a display, a touch-sensitive surface, one or more processors, memory, and one or more programs; the one or more programs are stored in the memory and configured to be executed by the one or more processors and the one or more programs include instructions for performing the operations of the method described above. In accordance with some embodiments, a graphical user interface on an electronic device with a display, a touch-sensitive surface, a memory, and one or more processors to execute one or more programs stored in the memory includes one or more of the elements displayed in the method described above, which are updated in response to processes, as described in the method described above. In accordance with some embodiments, a computer readable storage medium has stored therein instructions, which, when executed by an electronic device with a display, a touch-sensitive surface, and one or more processors, cause the device to perform the operations of the method described above. In accordance with some embodiments, an electronic device includes: a display, a touch-sensitive surface, and means for performing the operations of the method described above.

Thus, electronic devices with displays, touch-sensitive surfaces, and one or more processors are provided with more efficient methods for recognizing touch-based gestures, thereby increasing the effectiveness, efficiency, and user satisfaction of and with such devices. Such methods and interfaces may complement or replace conventional methods for recognizing touch-based gestures.
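The recognizer-identification step of the method summarized above can be illustrated with a minimal Python sketch. It is not the claimed implementation: the `PanRecognizer` class, the `initial_axis` and `identify_recognizer` functions, and the rule of comparing the horizontal and vertical components of the first movement are all assumptions introduced for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class PanRecognizer:
    """Hypothetical recognizer that accepts pans whose initial direction lies along one axis."""
    name: str
    axis: str  # "horizontal" or "vertical"


def initial_axis(dx: float, dy: float) -> Optional[str]:
    """Classify the first movement of a pan by its dominant component.

    Assumption: an exact tie (including no movement at all) matches no axis.
    """
    if abs(dx) == abs(dy):
        return None
    return "horizontal" if abs(dx) > abs(dy) else "vertical"


def identify_recognizer(
    recognizers: Sequence[PanRecognizer], dx: float, dy: float
) -> Optional[PanRecognizer]:
    """Return the recognizer configured for the pan's initial axis, if any."""
    axis = initial_axis(dx, dy)
    for recognizer in recognizers:
        if recognizer.axis == axis:
            return recognizer
    return None


recognizers = [
    PanRecognizer("first", "horizontal"),
    PanRecognizer("second", "vertical"),
]
chosen = identify_recognizer(recognizers, dx=12.0, dy=-3.0)
print(chosen.name)  # first
```

In this sketch only the identified recognizer would go on to process the gesture, so the two recognizers never compete for the same pan.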

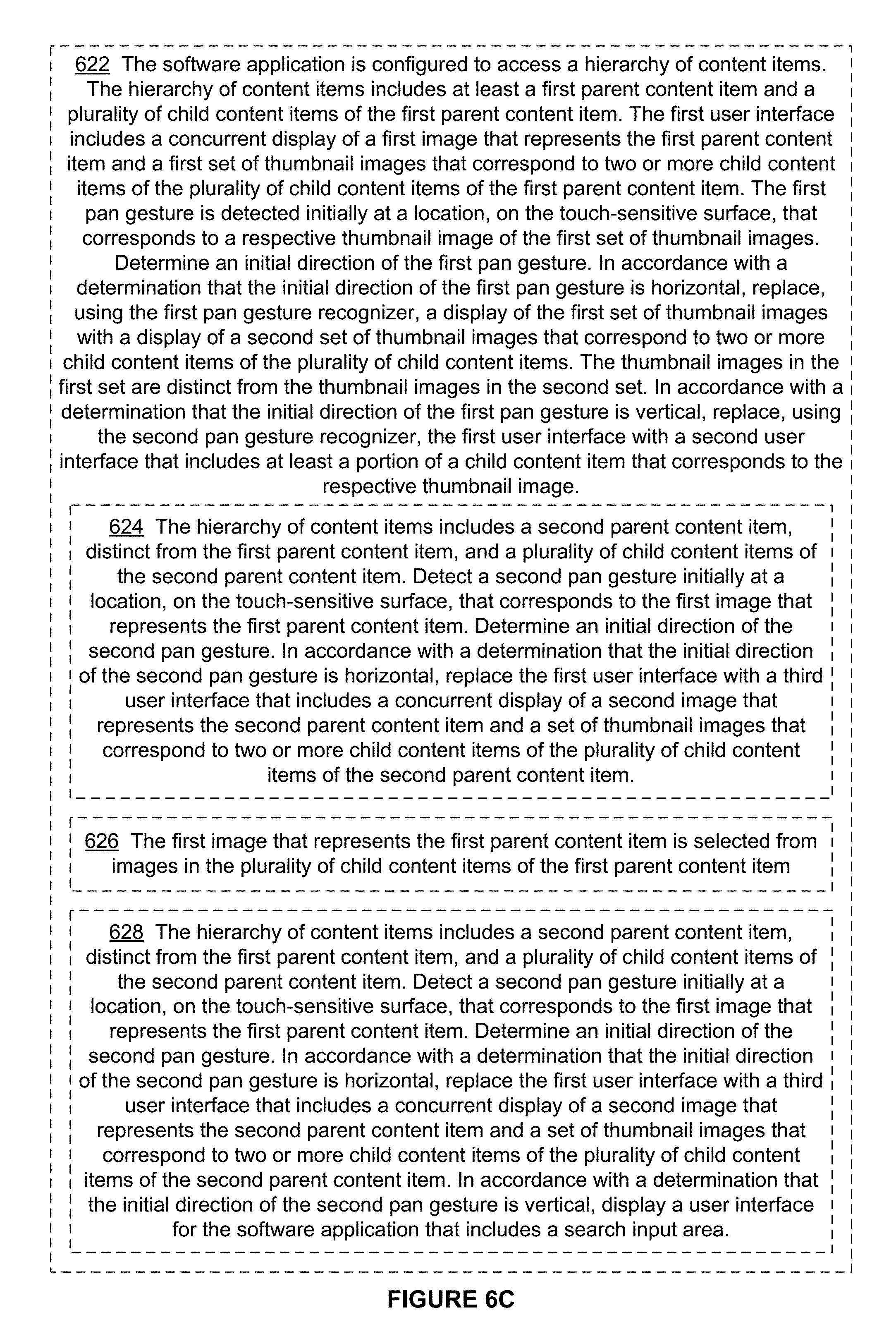

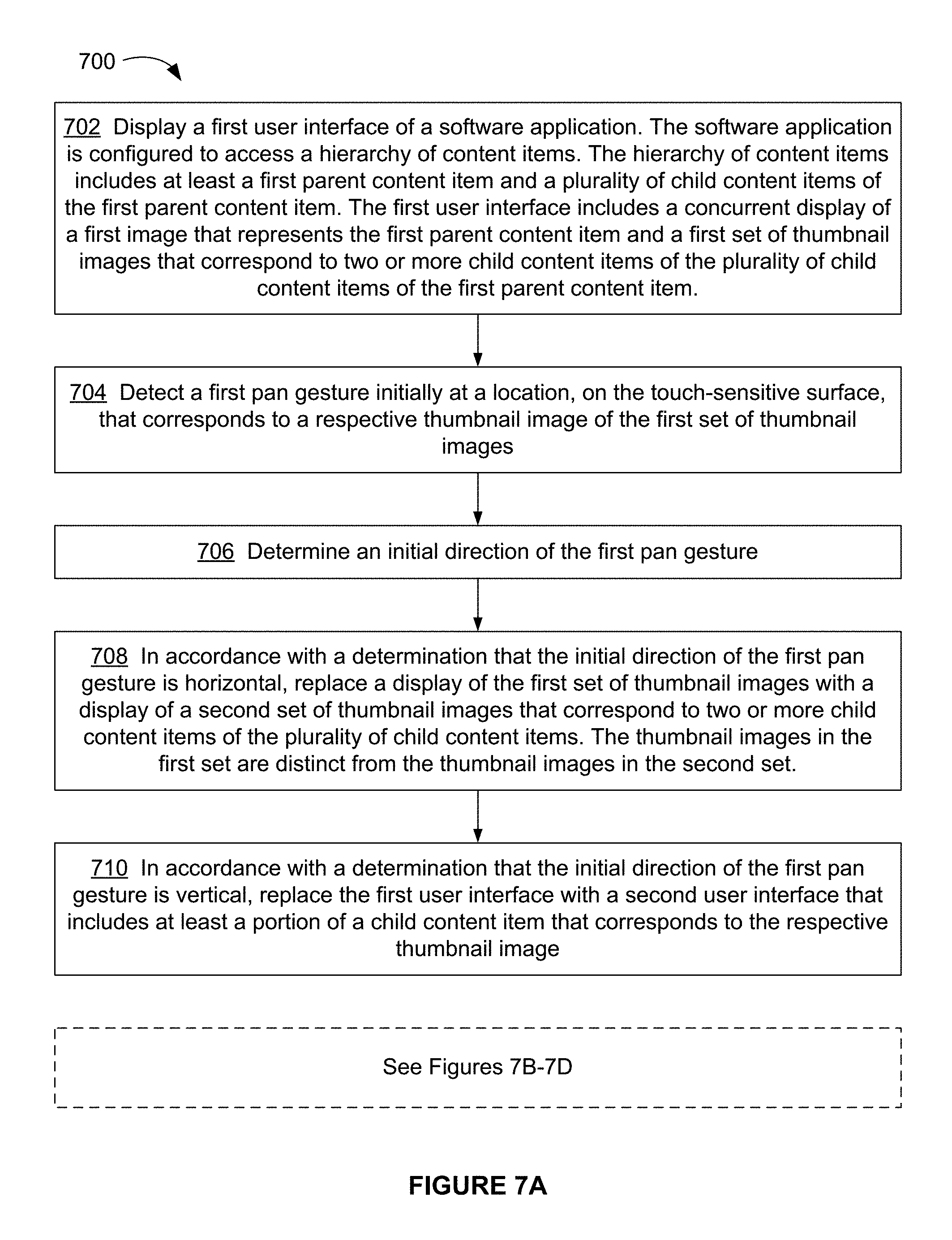

In accordance with some embodiments, a method is performed at an electronic device having a display, a touch-sensitive surface, one or more processors, and memory. The memory stores one or more programs for execution by the one or more processors. The method includes displaying a first user interface of a software application. The software application is configured to access a hierarchy of content items. The hierarchy of content items includes at least a first parent content item and a plurality of child content items of the first parent content item. The first user interface includes a concurrent display of a first image that represents the first parent content item and a first set of thumbnail images that correspond to two or more child content items of the plurality of child content items of the first parent content item. The method also includes detecting a first pan gesture initially at a location, on the touch-sensitive surface, that corresponds to a respective thumbnail image of the first set of thumbnail images; and determining an initial direction of the first pan gesture. The method further includes, in accordance with a determination that the initial direction of the first pan gesture is horizontal, replacing a display of the first set of thumbnail images with a display of a second set of thumbnail images that correspond to two or more child content items of the plurality of child content items, wherein the thumbnail images in the first set are distinct from the thumbnail images in the second set; and, in accordance with a determination that the initial direction of the first pan gesture is vertical, replacing the first user interface with a second user interface that includes at least a portion of a child content item that corresponds to the respective thumbnail image.

In accordance with some embodiments, an electronic device includes a display, a touch-sensitive surface, one or more processors, memory, and one or more programs; the one or more programs are stored in the memory and configured to be executed by the one or more processors and the one or more programs include instructions for performing the operations of the method described above. In accordance with some embodiments, a graphical user interface on an electronic device with a display, a touch-sensitive surface, a memory, and one or more processors to execute one or more programs stored in the memory includes one or more of the elements displayed in the method described above, which are updated in response to processes, as described in the method described above. In accordance with some embodiments, a computer readable storage medium has stored therein instructions, which, when executed by an electronic device with a display, a touch-sensitive surface, and one or more processors, cause the device to perform the operations of the method described above. In accordance with some embodiments, an electronic device includes: a display, a touch-sensitive surface, and means for performing the operations of the method described above.

Thus, electronic devices with displays, touch-sensitive surfaces, and one or more processors are provided with more efficient methods for navigating in a software application that has a hierarchy of user interfaces, thereby increasing the effectiveness, efficiency, and user satisfaction of and with such devices. Such methods and interfaces may complement or replace conventional methods for navigating in a software application that has a hierarchy of user interfaces.
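The branch on initial pan direction in the navigation method summarized above can be sketched as follows. The `SectionView` class, the `handle_pan` function, the page-at-a-time thumbnail replacement, and the choice that a leftward pan advances to the next set are hypothetical simplifications, not details taken from the described embodiments. The sketch also assumes it is called only after an initial direction has been determined, and it treats a tie between components as vertical.

```python
from dataclasses import dataclass
from typing import List, Tuple, Union


@dataclass
class SectionView:
    """Hypothetical first user interface: a parent image plus a row of child thumbnails."""
    section_image: str
    stories: List[str]
    page_size: int = 3
    first_visible: int = 0

    def thumbnails(self) -> List[str]:
        """Return the set of thumbnails currently displayed."""
        return self.stories[self.first_visible:self.first_visible + self.page_size]


def handle_pan(
    view: SectionView, touched: str, dx: float, dy: float
) -> Tuple[str, Union[str, List[str]]]:
    """Dispatch a pan that started on the thumbnail `touched`."""
    if abs(dx) > abs(dy):
        # Initially horizontal: replace the displayed set of thumbnails.
        step = view.page_size if dx < 0 else -view.page_size
        candidate = view.first_visible + step
        if 0 <= candidate < len(view.stories):
            view.first_visible = candidate
        return ("thumbnails", view.thumbnails())
    # Initially vertical: replace the interface with the touched child item.
    return ("story", touched)


view = SectionView("cover.png", ["s1", "s2", "s3", "s4", "s5", "s6"])
print(handle_pan(view, "s2", dx=-40.0, dy=5.0))  # ('thumbnails', ['s4', 's5', 's6'])
print(handle_pan(view, "s5", dx=3.0, dy=-60.0))  # ('story', 's5')
```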

BRIEF DESCRIPTION OF THE DRAWINGS

For a better understanding of the various described embodiments, reference should be made to the Description of Embodiments below, in conjunction with the following drawings in which like reference numerals refer to corresponding parts throughout the figures.

FIG. 1 is a block diagram illustrating an exemplary network architecture of a social network in accordance with some embodiments.

FIG. 2 is a block diagram illustrating an exemplary social network system in accordance with some embodiments.

FIG. 3 is a block diagram illustrating an exemplary client device in accordance with some embodiments.

FIGS. 4A-4CC illustrate exemplary user interfaces in a software application with a hierarchy of user interfaces, in accordance with some embodiments.

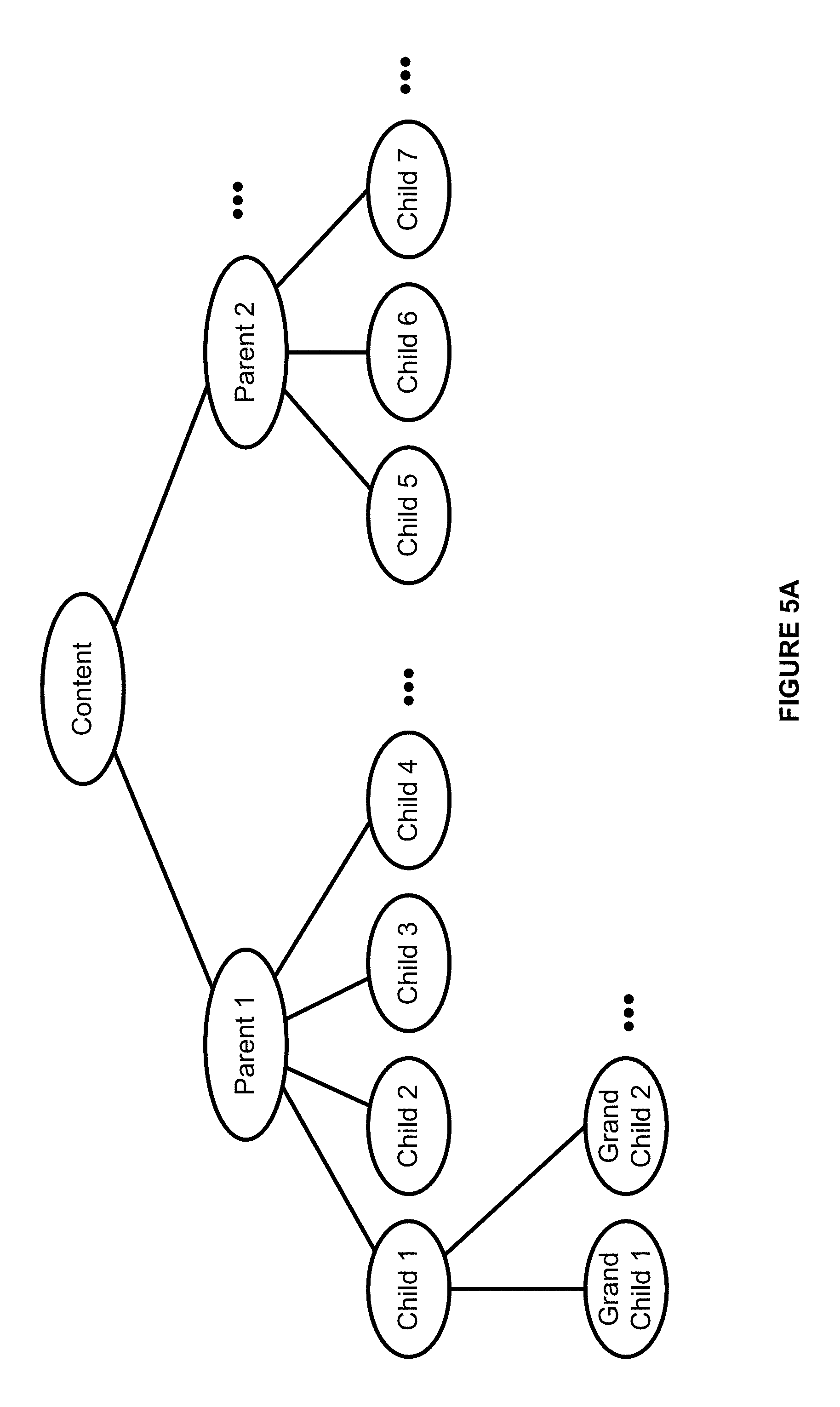

FIG. 5A is a diagram illustrating an exemplary hierarchy of content items in accordance with some embodiments.

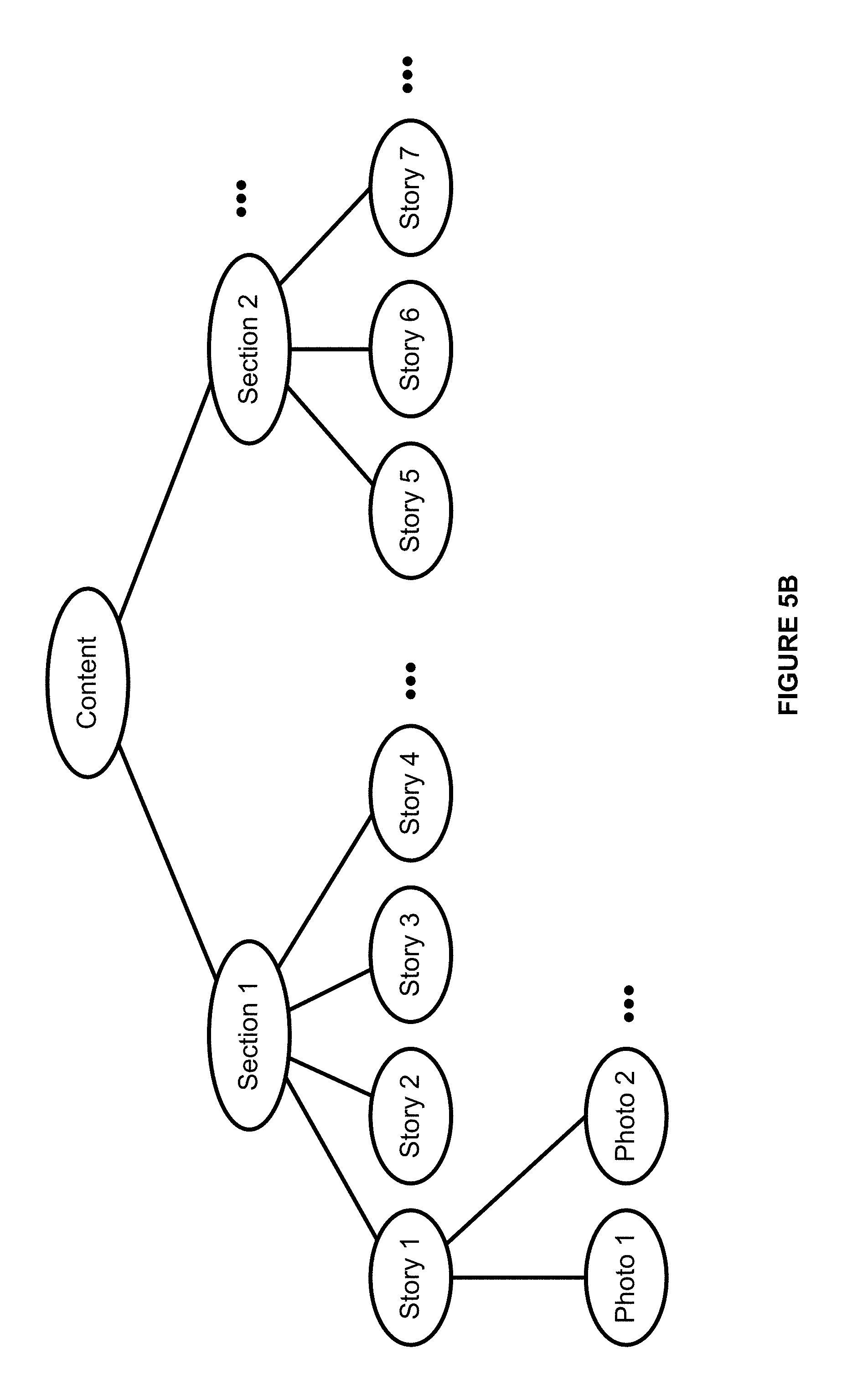

FIG. 5B is a diagram illustrating an exemplary hierarchy of content items in accordance with some embodiments.

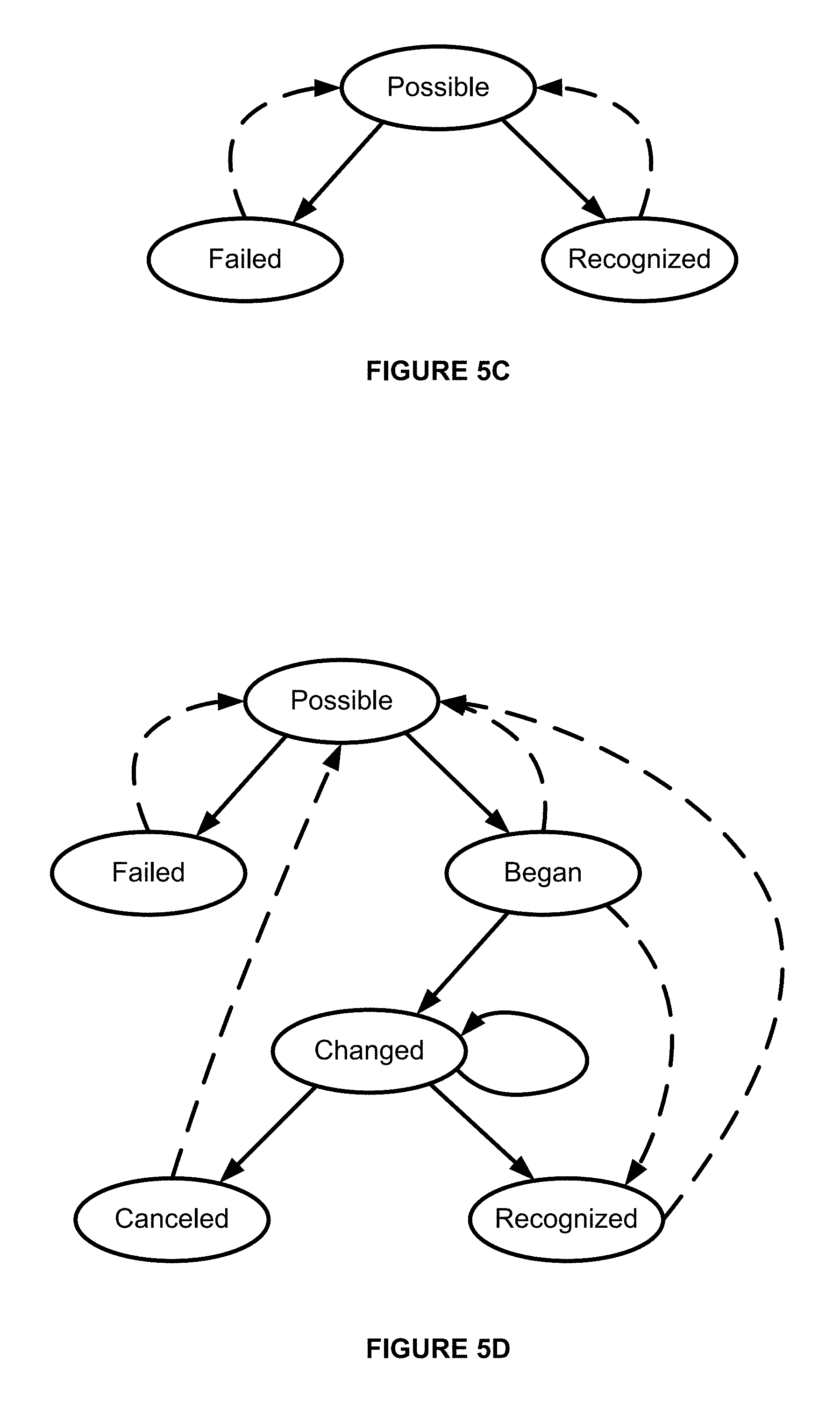

FIG. 5C illustrates an exemplary state machine for discrete gesture recognizers in accordance with some embodiments.

FIG. 5D illustrates an exemplary state machine for continuous gesture recognizers in accordance with some embodiments.

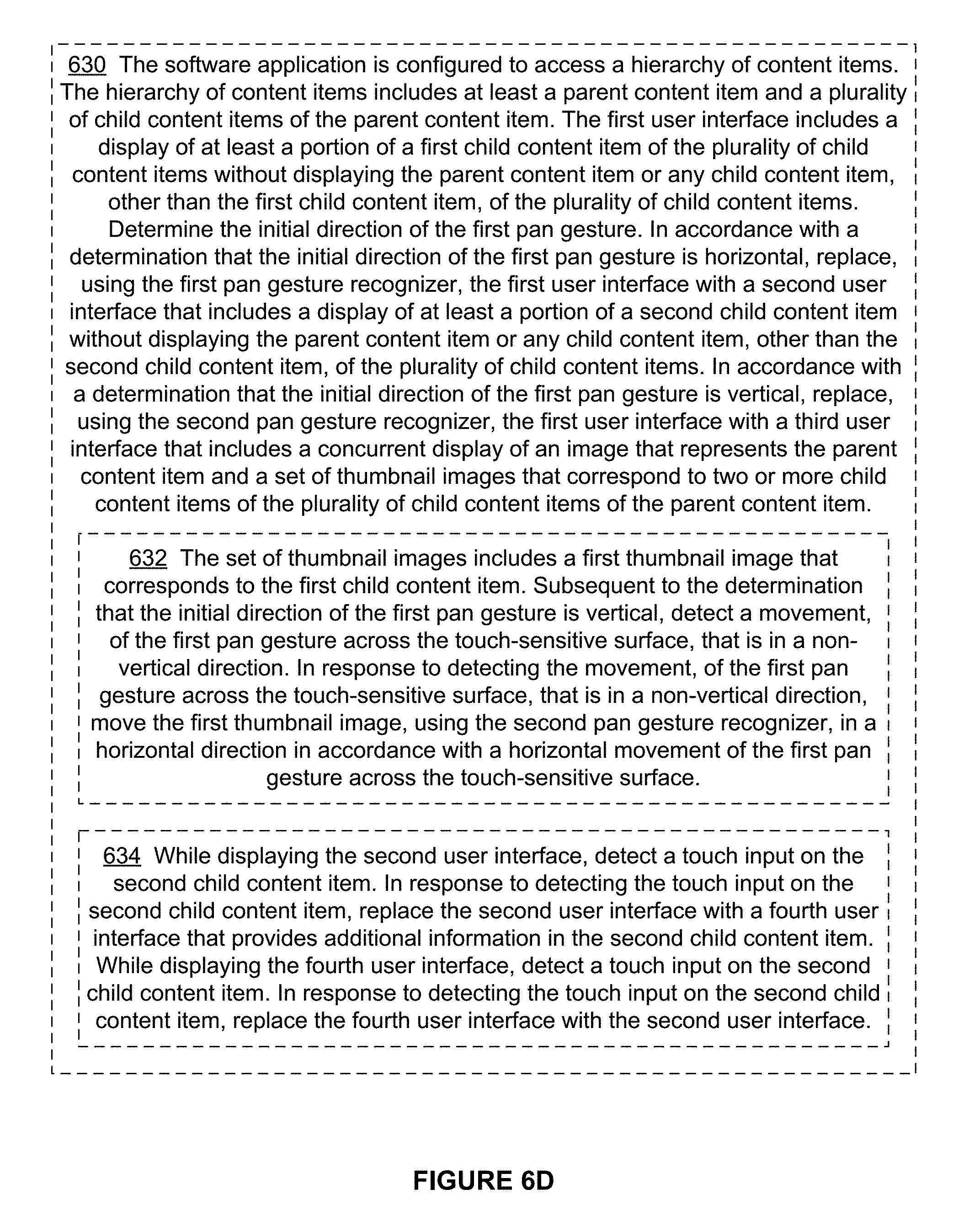

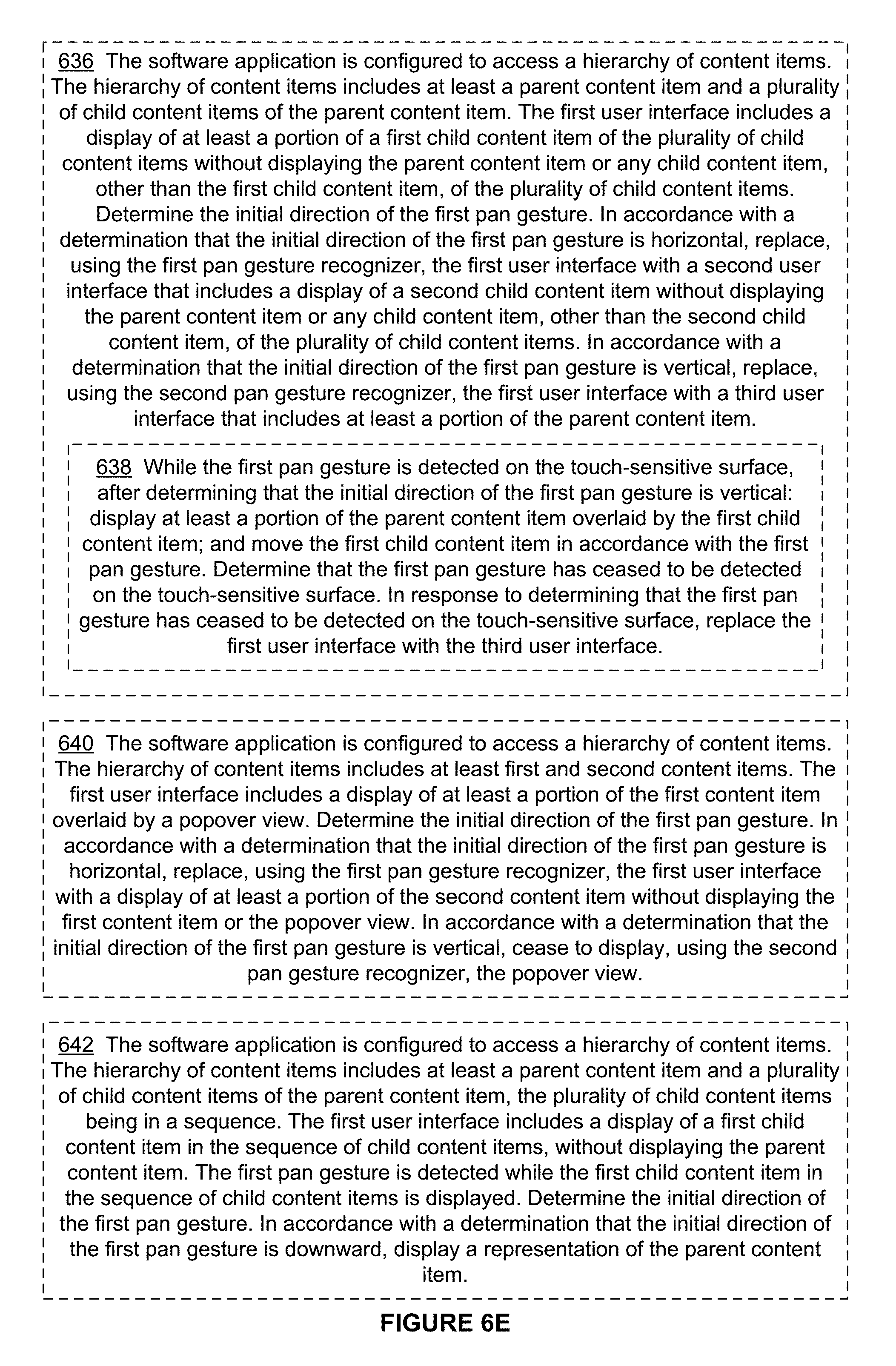

FIGS. 6A-6E are flow diagrams illustrating a method of processing touch-based gestures in accordance with some embodiments.

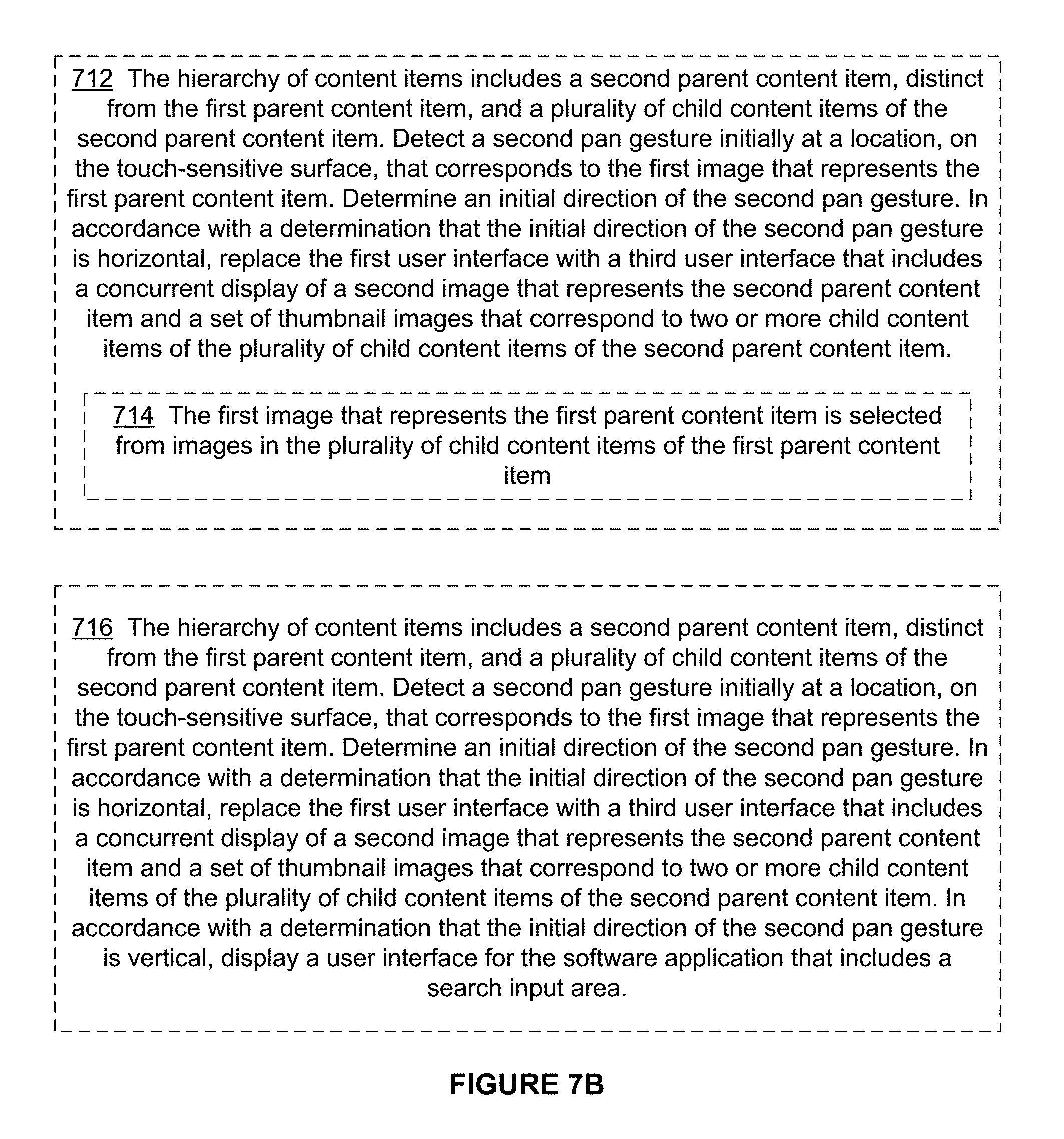

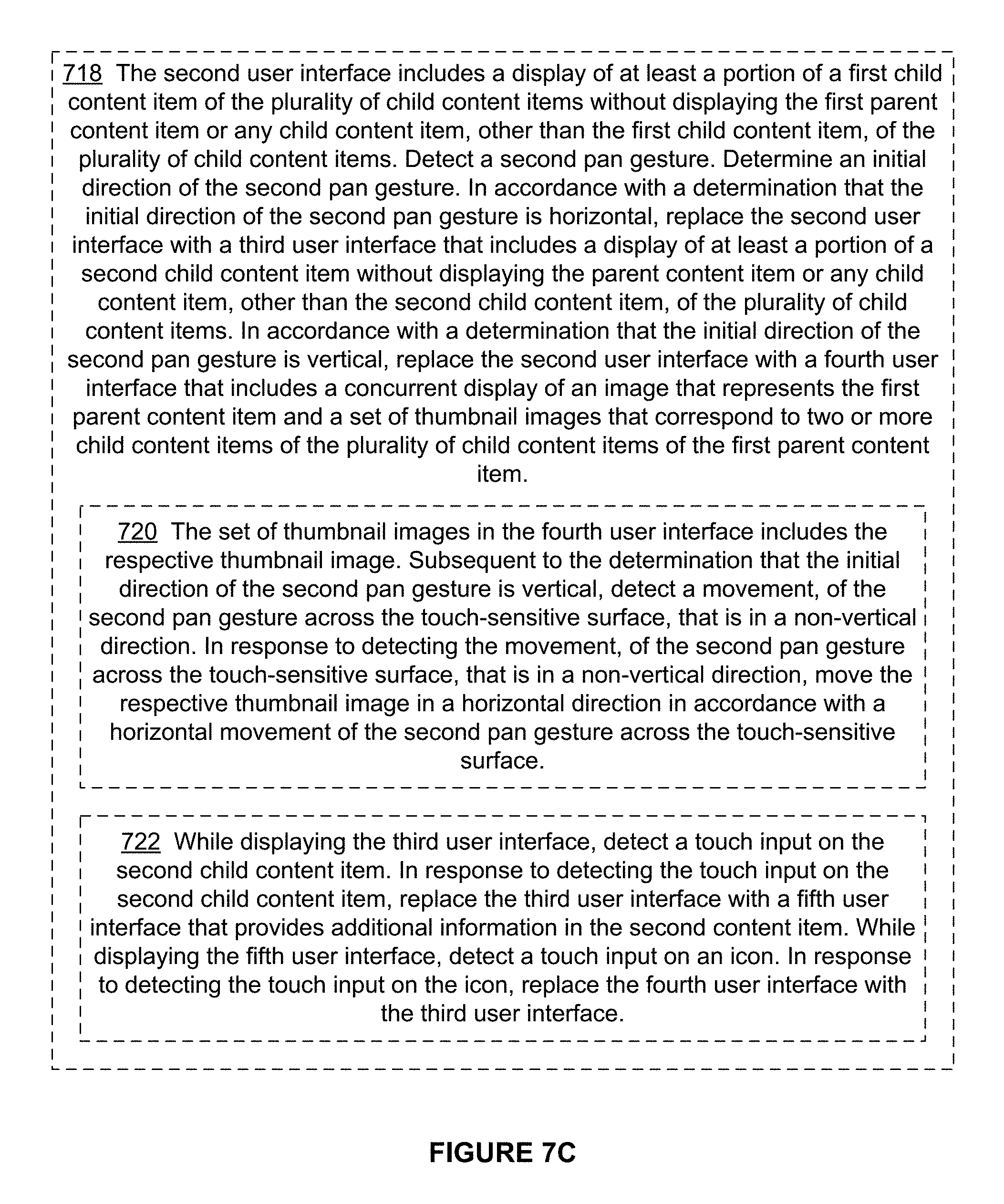

FIGS. 7A-7D are flow diagrams illustrating a method of navigating in a software application via touch gestures in accordance with some embodiments.

DESCRIPTION OF EMBODIMENTS

The devices and methods described herein improve recognition of pan gestures and use pan gestures to easily and efficiently navigate in a software application (e.g., a social networking application) that has a hierarchy of user interfaces.

A pan gesture may include movements of a finger touch input in both horizontal and vertical directions. Thus, it may be difficult to distinguish two different pan gestures using pan gesture recognizers. For example, two pan gesture recognizers, located in the same view, may conflict with each other in trying to recognize a pan gesture in that view.

To address this problem, methods are described herein to identify the correct pan gesture recognizer to process a particular pan gesture based on the initial direction of the particular pan gesture. For example, when a user at an electronic device provides a pan gesture that is initially horizontal, the initially-horizontal pan gesture can be distinguished from a pan gesture that is initially vertical. Because the initial direction of the pan gesture is used to determine which gesture recognizer processes the pan gesture, after the initial direction of the pan gesture is identified, subsequent movements in the pan gesture may be provided in any direction (e.g., an initially-horizontal pan gesture may include a subsequent horizontal or diagonal movement). Thus, the combination of a constrained initial direction and subsequent free movement (e.g., movement in any direction on a touch screen) in a pan gesture provides the flexibility associated with pan gestures while allowing the use of multiple pan gesture recognizers, because a matching (e.g., a recognizing) gesture recognizer can be identified based on the initial direction.
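The combination of a constrained initial direction and free subsequent movement can be sketched in Python as follows. The `PanSession` class, the `SLOP` travel threshold, and the rule that a tie resolves to horizontal are assumptions made for this illustration only. Once the axis is locked, every later movement is delivered to the same handler regardless of its direction.

```python
import math
from typing import List, Optional, Tuple

SLOP = 10.0  # assumed travel, in points, required before the initial direction is decided


class PanSession:
    """Hypothetical tracker for one pan gesture, from touch-down to lift-off."""

    def __init__(self, start: Tuple[float, float]) -> None:
        self.start = start
        self.locked_axis: Optional[str] = None
        # Offsets from the start point routed to the locked recognizer.
        self.delivered: List[Tuple[float, float]] = []

    def move(self, point: Tuple[float, float]) -> None:
        dx = point[0] - self.start[0]
        dy = point[1] - self.start[1]
        if self.locked_axis is None:
            if math.hypot(dx, dy) < SLOP:
                return  # not enough travel yet to decide the initial direction
            # Decide once, from the first significant movement (tie: horizontal).
            self.locked_axis = "horizontal" if abs(dx) >= abs(dy) else "vertical"
        # After the lock, movement in any direction goes to the same recognizer.
        self.delivered.append((dx, dy))


session = PanSession((100.0, 100.0))
for p in [(103.0, 100.0), (115.0, 102.0), (120.0, 140.0), (90.0, 180.0)]:
    session.move(p)
print(session.locked_axis)     # horizontal
print(len(session.delivered))  # 3
```

The third and fourth samples move mostly vertically, yet they remain with the recognizer chosen for the initially horizontal movement.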

Methods are also described herein that use pan gestures to navigate in a software application that has a hierarchy of user interfaces. For example, a user can use various pan gestures to easily move between content items (e.g., stories) in a single content section, between individual stories and multiple stories and a representative image of the content section, and between different content sections. These methods reduce the time spent navigating through a large number of content items to find the desired content, thereby providing a more efficient and intuitive human-machine interface and reducing power consumption by the electronic device.

Below, FIGS. 1-3 provide a description of devices used for providing social network content (e.g., client devices and social network servers). FIGS. 4A-4CC illustrate exemplary user interfaces in a software application with a hierarchy of user interfaces (e.g., a social networking application). FIGS. 5A-5B are diagrams illustrating exemplary hierarchies of content items. FIG. 5C illustrates an exemplary state machine for discrete gesture recognizers in accordance with some embodiments. FIG. 5D illustrates an exemplary state machine for continuous gesture recognizers in accordance with some embodiments. FIGS. 6A-6E are flow diagrams illustrating a method of processing touch-based gestures on an electronic device. FIGS. 7A-7D are flow diagrams illustrating a method of navigating in a software application via touch gestures. The user interfaces in FIGS. 4A-4CC, the diagrams in FIGS. 5A-5B, and the state machines in FIGS. 5C-5D are used to illustrate the processes in FIGS. 6A-6E and 7A-7D.

Reference will now be made to embodiments, examples of which are illustrated in the accompanying drawings. In the following description, numerous specific details are set forth in order to provide an understanding of the various described embodiments. However, it will be apparent to one of ordinary skill in the art that the various described embodiments may be practiced without these specific details. In other instances, well-known methods, procedures, components, circuits, and networks have not been described in detail so as not to unnecessarily obscure aspects of the embodiments.

It will also be understood that, although the terms first, second, etc. are, in some instances, used herein to describe various elements, these elements should not be limited by these terms. These terms are used only to distinguish one element from another. For example, a first user interface could be termed a second user interface, and, similarly, a second user interface could be termed a first user interface, without departing from the scope of the various described embodiments. The first user interface and the second user interface are both user interfaces, but they are not the same user interface.

The terminology used in the description of the various described embodiments herein is for the purpose of describing particular embodiments only and is not intended to be limiting. As used in the description of the various described embodiments and the appended claims, the singular forms "a," "an," and "the" are intended to include the plural forms as well, unless the context clearly indicates otherwise. It will also be understood that the term "and/or" as used herein refers to and encompasses any and all possible combinations of one or more of the associated listed items. It will be further understood that the terms "includes," "including," "comprises," and/or "comprising," when used in this specification, specify the presence of stated features, integers, steps, operations, elements, and/or components, but do not preclude the presence or addition of one or more other features, integers, steps, operations, elements, components, and/or groups thereof.

As used herein, the term "if" is, optionally, construed to mean "when" or "upon" or "in response to determining" or "in response to detecting" or "in accordance with a determination that," depending on the context. Similarly, the phrase "if it is determined" or "if [a stated condition or event] is detected" is, optionally, construed to mean "upon determining" or "in response to determining" or "upon detecting [the stated condition or event]" or "in response to detecting [the stated condition or event]" or "in accordance with a determination that [a stated condition or event] is detected," depending on the context.

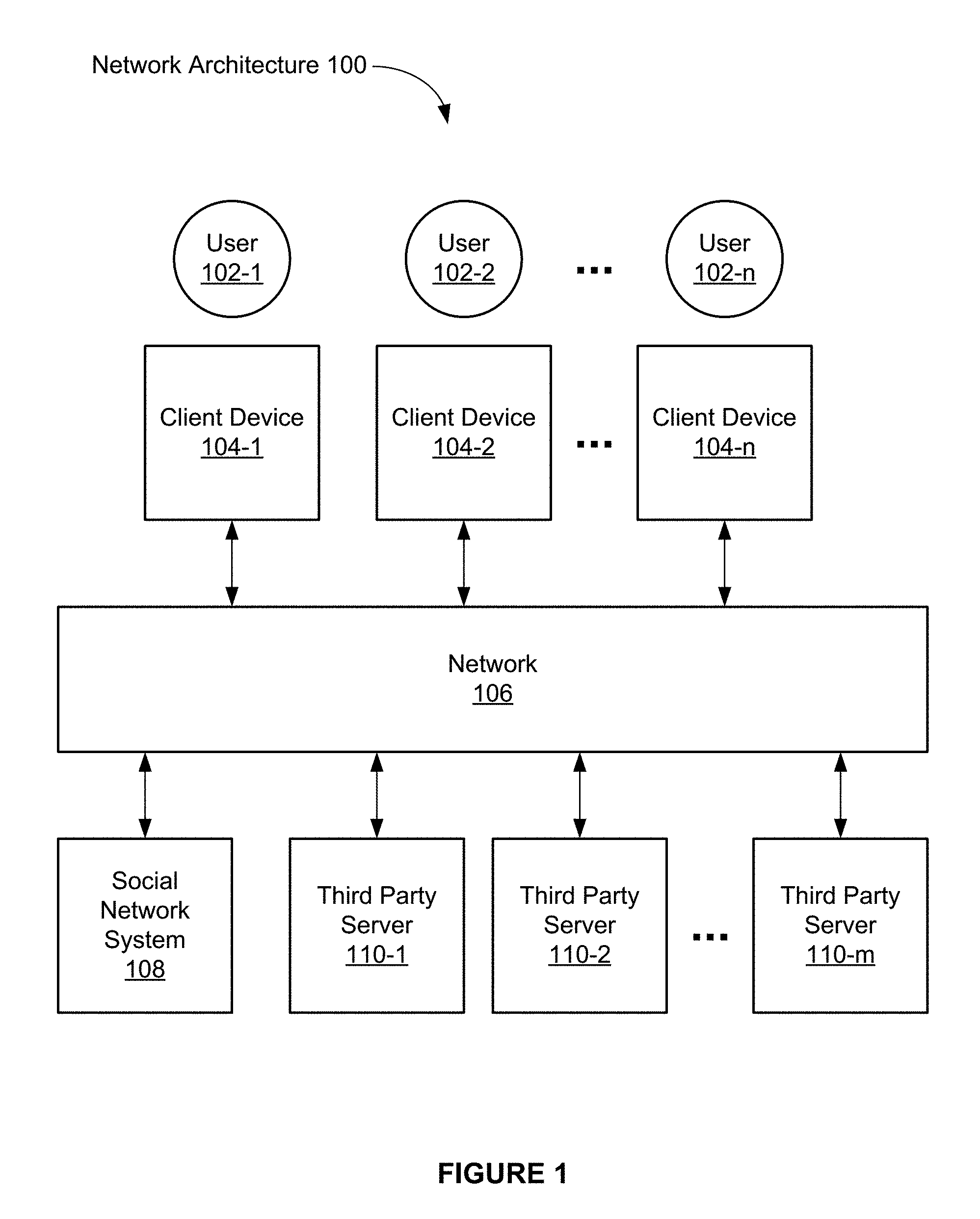

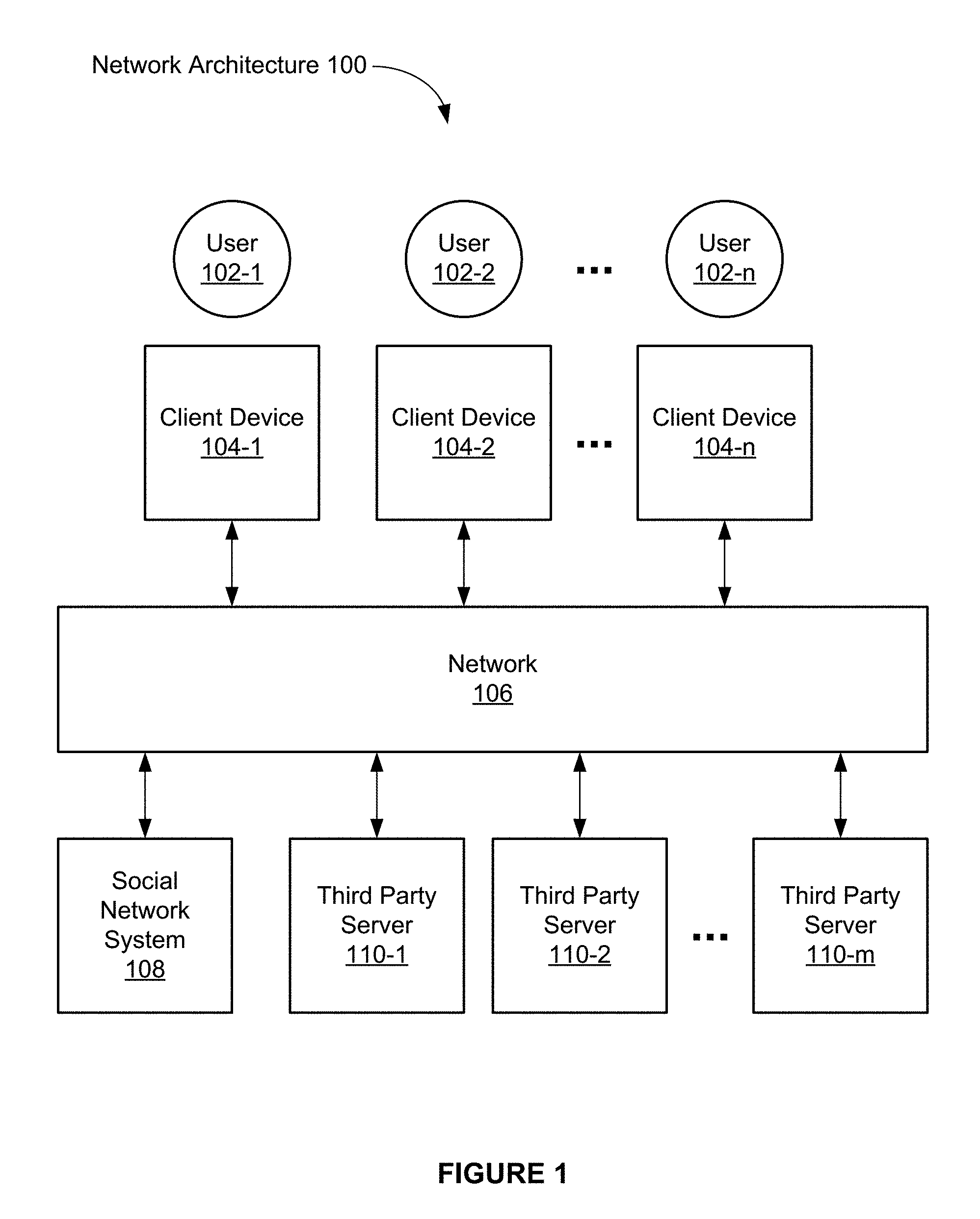

FIG. 1 is a block diagram illustrating an exemplary network architecture of a social network in accordance with some embodiments. The network architecture 100 includes a number of client devices (also called "client systems," "client computers," or "clients") 104-1, 104-2, . . . 104-n communicably connected to a social network system 108 by one or more networks 106.

In some embodiments, the client devices 104-1, 104-2, . . . 104-n are computing devices such as smart watches, personal digital assistants, portable media players, smart phones, tablet computers, 2D gaming devices, 3D (e.g., virtual reality) gaming devices, laptop computers, desktop computers, televisions with one or more processors embedded therein or coupled thereto, in-vehicle information systems (e.g., an in-car computer system that provides navigation, entertainment, and/or other information), or other appropriate computing devices that can be used to communicate with an electronic social network system. In some embodiments, the social network system 108 is a single computing device such as a computer server, while in other embodiments, the social network system 108 is implemented by multiple computing devices working together to perform the actions of a server system (e.g., cloud computing). In some embodiments, the network 106 is a public communication network (e.g., the Internet or a cellular data network) or a private communications network (e.g., private LAN or leased lines) or a combination of such communication networks.

Users 102-1, 102-2, . . . 102-n employ the client devices 104-1, 104-2, . . . 104-n to access the social network system 108 and to participate in a social networking service. For example, one or more of the client devices 104-1, 104-2, . . . 104-n execute web browser applications that can be used to access the social networking service. As another example, one or more of the client devices 104-1, 104-2, . . . 104-n execute software applications that are specific to the social network (e.g., social networking "apps" running on smart phones or tablets, such as a Facebook social networking application running on an iPhone, Android, or Windows smart phone or tablet).

Users interacting with the client devices 104-1, 104-2, . . . 104-n can participate in the social networking service provided by the social network system 108 by posting information, such as text comments (e.g., updates, announcements, replies), digital photos, videos, audio files, links, and/or other electronic content. Users of the social networking service can also annotate information posted by other users of the social networking service (e.g., endorsing or "liking" a posting of another user, or commenting on a posting by another user). In some embodiments, information can be posted on a user's behalf by systems and/or services external to the social network or the social network system 108. For example, the user may post a review of a movie to a movie review website, and with proper permissions that website may cross-post the review to the social network on the user's behalf. In another example, a software application executing on a mobile client device, with proper permissions, may use global positioning system (GPS) or other geo-location capabilities (e.g., Wi-Fi or hybrid positioning systems) to determine the user's location and update the social network with the user's location (e.g., "At Home," "At Work," or "In San Francisco, Calif."), and/or update the social network with information derived from and/or based on the user's location. Users interacting with the client devices 104-1, 104-2, . . . 104-n can also use the social network provided by the social network system 108 to define groups of users. Users interacting with the client devices 104-1, 104-2, . . . 104-n can also use the social network provided by the social network system 108 to communicate and collaborate with each other.

In some embodiments, the network architecture 100 also includes third-party servers 110-1, 110-2, . . . 110-m. In some embodiments, a given third-party server is used to host third-party websites that provide web pages to client devices 104, either directly or in conjunction with the social network system 108. In some embodiments, the social network system 108 uses iframes to nest independent websites within a user's social network session. In some embodiments, a given third-party server is used to host third-party applications that are used by client devices 104, either directly or in conjunction with the social network system 108. In some embodiments, the social network system 108 uses iframes to enable third-party developers to create applications that are hosted separately by a third-party server 110, but operate within a social networking session of a user and are accessed through the user's profile in the social network system. Exemplary third-party applications include applications for books, business, communication, contests, education, entertainment, fashion, finance, food and drink, games, health and fitness, lifestyle, local information, movies, television, music and audio, news, photos, video, productivity, reference material, security, shopping, sports, travel, utilities, and the like. In some embodiments, a given third-party server is used to host enterprise systems that are used by client devices 104, either directly or in conjunction with the social network system 108. In some embodiments, a given third-party server is used to provide third-party content (e.g., news articles, reviews, message feeds, etc.).

In some embodiments, a given third-party server 110 is a single computing device, while in other embodiments, a given third-party server 110 is implemented by multiple computing devices working together to perform the actions of a server system (e.g., cloud computing).

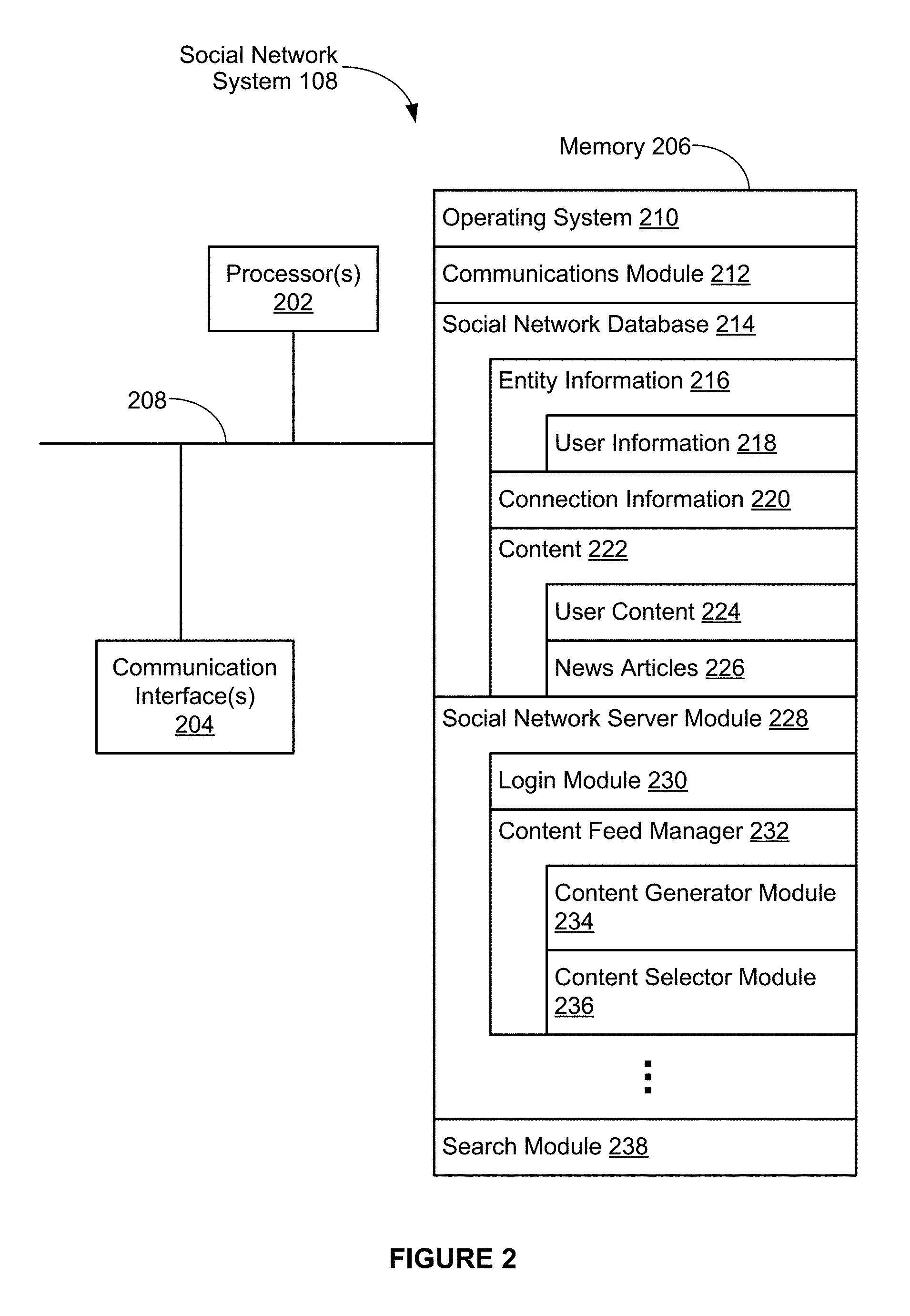

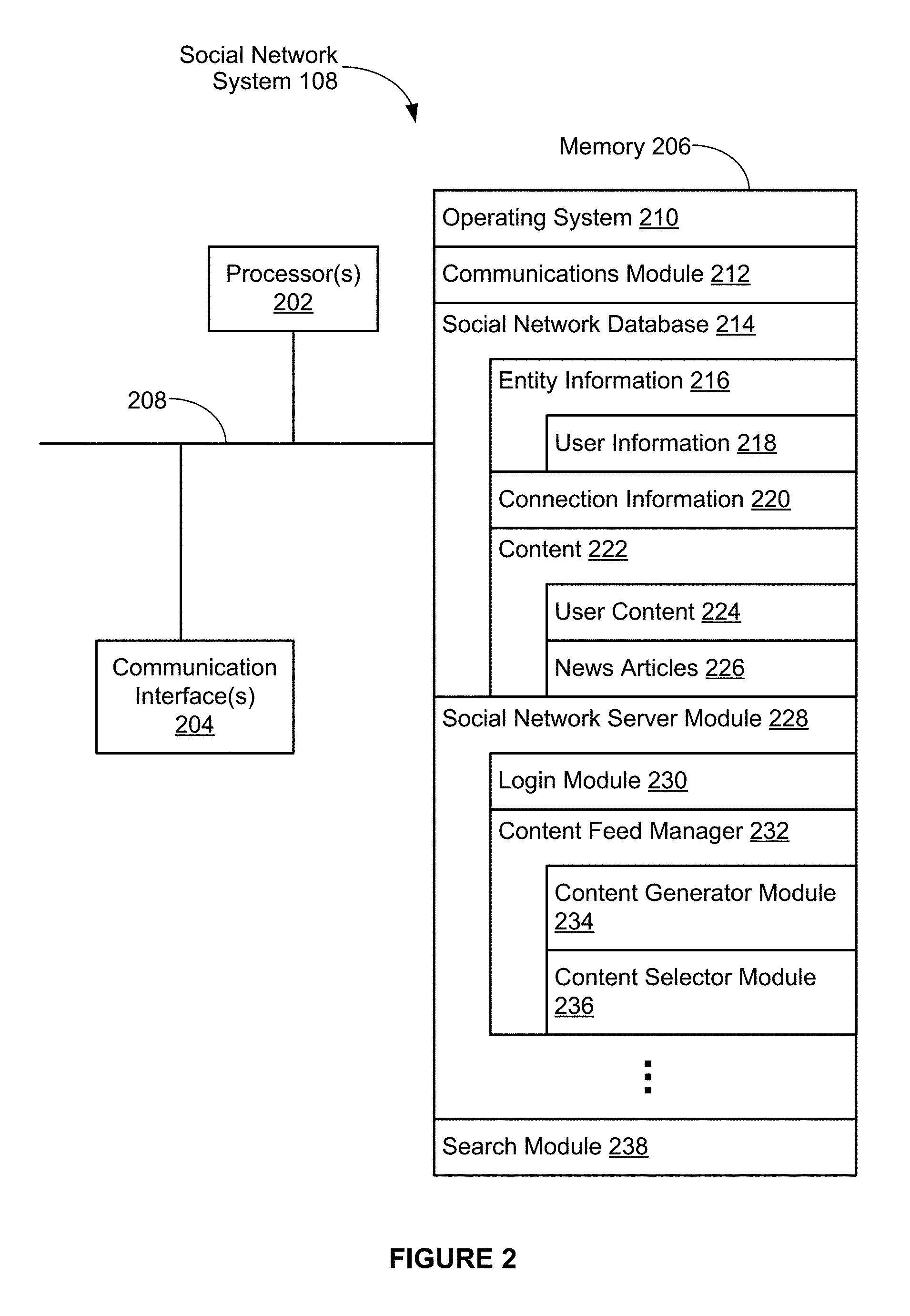

FIG. 2 is a block diagram illustrating an exemplary social network system 108 in accordance with some embodiments. The social network system 108 typically includes one or more processing units (processors or cores) 202, one or more network or other communications interfaces 204, memory 206, and one or more communication buses 208 for interconnecting these components. The communication buses 208 optionally include circuitry (sometimes called a chipset) that interconnects and controls communications between system components. The social network system 108 optionally includes a user interface (not shown). The user interface, if provided, may include a display device and optionally includes inputs such as a keyboard, mouse, trackpad, and/or input buttons. Alternatively or in addition, the display device includes a touch-sensitive surface, in which case the display is a touch-sensitive display.

Memory 206 includes high-speed random access memory, such as DRAM, SRAM, DDR RAM or other random access solid state memory devices; and may include non-volatile memory, such as one or more magnetic disk storage devices, optical disk storage devices, flash memory devices, or other non-volatile solid state storage devices. Memory 206 may optionally include one or more storage devices remotely located from the processor(s) 202. Memory 206, or alternately the non-volatile memory device(s) within memory 206, includes a non-transitory computer readable storage medium. In some embodiments, memory 206 or the computer readable storage medium of memory 206 stores the following programs, modules and data structures, or a subset or superset thereof:

- an operating system 210 that includes procedures for handling various basic system services and for performing hardware dependent tasks;
- a network communication module 212 that is used for connecting the social network system 108 to other computers via the one or more communication network interfaces 204 (wired or wireless) and one or more communication networks, such as the Internet, cellular telephone networks, mobile data networks, other wide area networks, local area networks, metropolitan area networks, and so on;
- a social network database 214 for storing data associated with the social network, such as:
  - entity information 216, such as user information 218;
  - connection information 220; and
  - content 222, such as user content 224 and/or news articles 226;
- a social network server module 228 for providing social networking services and related features, in conjunction with browser module 338 or social network client module 340 on the client device 104, which includes:
  - a login module 230 for logging a user 102 at a client 104 into the social network system 108; and
  - a content feed manager 232 for providing content to be sent to clients 104 for display, which includes:
    - a content generator module 234 for describing objects in the social network database 214, such as images, videos, audio files, comments, status messages, links, applications, and/or other entity information 216, connection information 220, or content 222; and
    - a content selector module 236 for choosing the information/content to be sent to clients 104 for display; and
- a search module 238 for enabling users of the social network system to search for content and other users in the social network.

The social network database 214 stores data associated with the social network in one or more types of databases, such as graph, dimensional, flat, hierarchical, network, object-oriented, relational, and/or XML databases.

In some embodiments, the social network database 214 includes a graph database, with entity information 216 represented as nodes in the graph database and connection information 220 represented as edges in the graph database. The graph database includes a plurality of nodes, as well as a plurality of edges that define connections between corresponding nodes. In some embodiments, the nodes and/or edges themselves are data objects that include the identifiers, attributes, and information for their corresponding entities, some of which are rendered at clients 104 on corresponding profile pages or other pages in the social networking service. In some embodiments, the nodes also include pointers or references to other objects, data structures, or resources for use in rendering content in conjunction with the rendering of the pages corresponding to the respective nodes at clients 104.

Entity information 216 includes user information 218, such as user profiles, login information, privacy and other preferences, biographical data, and the like. In some embodiments, for a given user, the user information 218 includes the user's name, profile picture, contact information, birth date, sex, marital status, family status, employment, education background, preferences, interests, and/or other demographic information.

In some embodiments, entity information 216 includes information about a physical location (e.g., a restaurant, theater, landmark, city, state, or country), real or intellectual property (e.g., a sculpture, painting, movie, game, song, idea/concept, photograph, or written work), a business, a group of people, and/or a group of businesses. In some embodiments, entity information 216 includes information about a resource, such as an audio file, a video file, a digital photo, a text file, a structured document (e.g., web page), or an application. In some embodiments, the resource is located in the social network system 108 (e.g., in content 222) or on an external server, such as third-party server 110.

In some embodiments, connection information 220 includes information about the relationships between entities in the social network database 214. In some embodiments, connection information 220 includes information about edges that connect pairs of nodes in a graph database. In some embodiments, an edge connecting a pair of nodes represents a relationship between the pair of nodes.

In some embodiments, an edge includes or represents one or more data objects or attributes that correspond to the relationship between a pair of nodes. For example, when a first user indicates that a second user is a "friend" of the first user, the social network system 108 transmits a "friend request" to the second user. If the second user confirms the "friend request," the social network system 108 creates and stores an edge connecting the first user's user node and the second user's user node in a graph database as connection information 220 that indicates that the first user and the second user are friends. In some embodiments, connection information 220 represents a friendship, a family relationship, a business or employment relationship, a fan relationship, a follower relationship, a visitor relationship, a subscriber relationship, a superior/subordinate relationship, a reciprocal relationship, a non-reciprocal relationship, another suitable type of relationship, or two or more such relationships.
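
By way of a non-limiting illustration, the friend-request flow described above may be sketched as follows. The class name `SocialGraph`, its method names, and the in-memory sets are hypothetical stand-ins for the graph database and connection information 220, and normalizing the node order is an assumption made here so that the stored friendship is reciprocal.

```python
from dataclasses import dataclass, field


@dataclass
class SocialGraph:
    """Toy stand-in for a graph database holding connection information."""

    edges: set = field(default_factory=set)    # stored (kind, node_a, node_b) edges
    pending: set = field(default_factory=set)  # unconfirmed (sender, recipient) requests

    def send_friend_request(self, sender: str, recipient: str) -> None:
        # The request is transmitted first; no edge exists yet.
        self.pending.add((sender, recipient))

    def confirm_friend_request(self, sender: str, recipient: str) -> bool:
        # An edge is created and stored only once the recipient confirms.
        if (sender, recipient) not in self.pending:
            return False
        self.pending.discard((sender, recipient))
        # Assumption: friendship is reciprocal, so node order is normalized.
        a, b = sorted((sender, recipient))
        self.edges.add(("friend", a, b))
        return True

    def are_friends(self, x: str, y: str) -> bool:
        a, b = sorted((x, y))
        return ("friend", a, b) in self.edges
```

In this sketch, no edge is stored until `confirm_friend_request` succeeds, which mirrors the ordering described above (request first, edge only upon confirmation).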

In some embodiments, an edge between a user node and another entity node represents connection information about a particular action or activity performed by a user of the user node towards the other entity node. For example, a user may "like," "attended," "played," "listened," "cooked," "worked at," or "watched" the entity at the other node. The page in the social networking service that corresponds to the entity at the other node may include, for example, a selectable "like," "check in," or "add to favorites" icon. After the user clicks one of these icons, the social network system 108 may create a "like" edge, a "check in" edge, or a "favorites" edge in response to the corresponding user action. As another example, the user may listen to a particular song using a particular application (e.g., an online music application). In this case, the social network system 108 may create a "listened" edge and a "used" edge between the user node that corresponds to the user and the entity nodes that correspond to the song and the application, respectively, to indicate that the user listened to the song and used the application. In addition, the social network system 108 may create a "played" edge between the entity nodes that correspond to the song and the application to indicate that the particular song was played by the particular application.
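
As one possible illustration of the song example above, the three edges may be represented as simple triples. The function name `edges_for_listen` and the (source, edge type, target) tuple layout are hypothetical, and the orientation chosen for the "played" edge is an assumption, since the description only states that the edge lies between the song node and the application node.

```python
def edges_for_listen(user: str, song: str, app: str) -> list:
    """Return the (source, edge_type, target) triples for one listening action."""
    return [
        (user, "listened", song),  # the user listened to the song
        (user, "used", app),       # the user used the application
        (song, "played", app),     # the song was played by the application (orientation assumed)
    ]
```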

In some embodiments, content 222 includes text (e.g., ASCII, SGML, HTML), images (e.g., jpeg, tiff and gif), graphics (vector-based or bitmap), audio, video (e.g., mpeg), other multimedia, and/or combinations thereof. In some embodiments, content 222 includes executable code (e.g., games executable within a browser window or frame), podcasts, links, and the like.

In some embodiments, the social network server module 228 includes web or Hypertext Transfer Protocol (HTTP) servers, File Transfer Protocol (FTP) servers, as well as web pages and applications implemented using Common Gateway Interface (CGI) script, PHP Hyper-text Preprocessor (PHP), Active Server Pages (ASP), Hyper Text Markup Language (HTML), Extensible Markup Language (XML), Java, JavaScript, Asynchronous JavaScript and XML (AJAX), XHP, Javelin, Wireless Universal Resource File (WURFL), and the like.

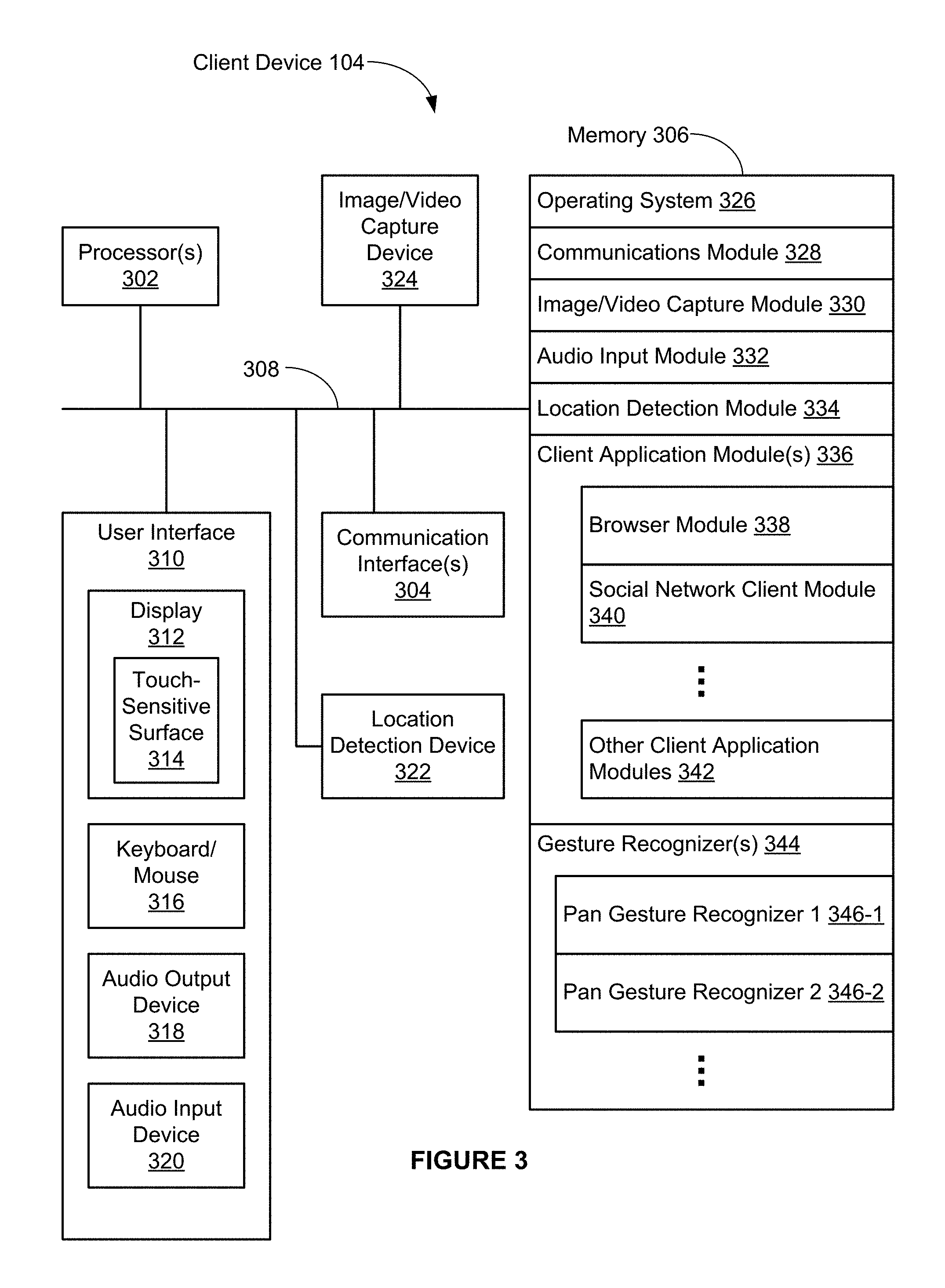

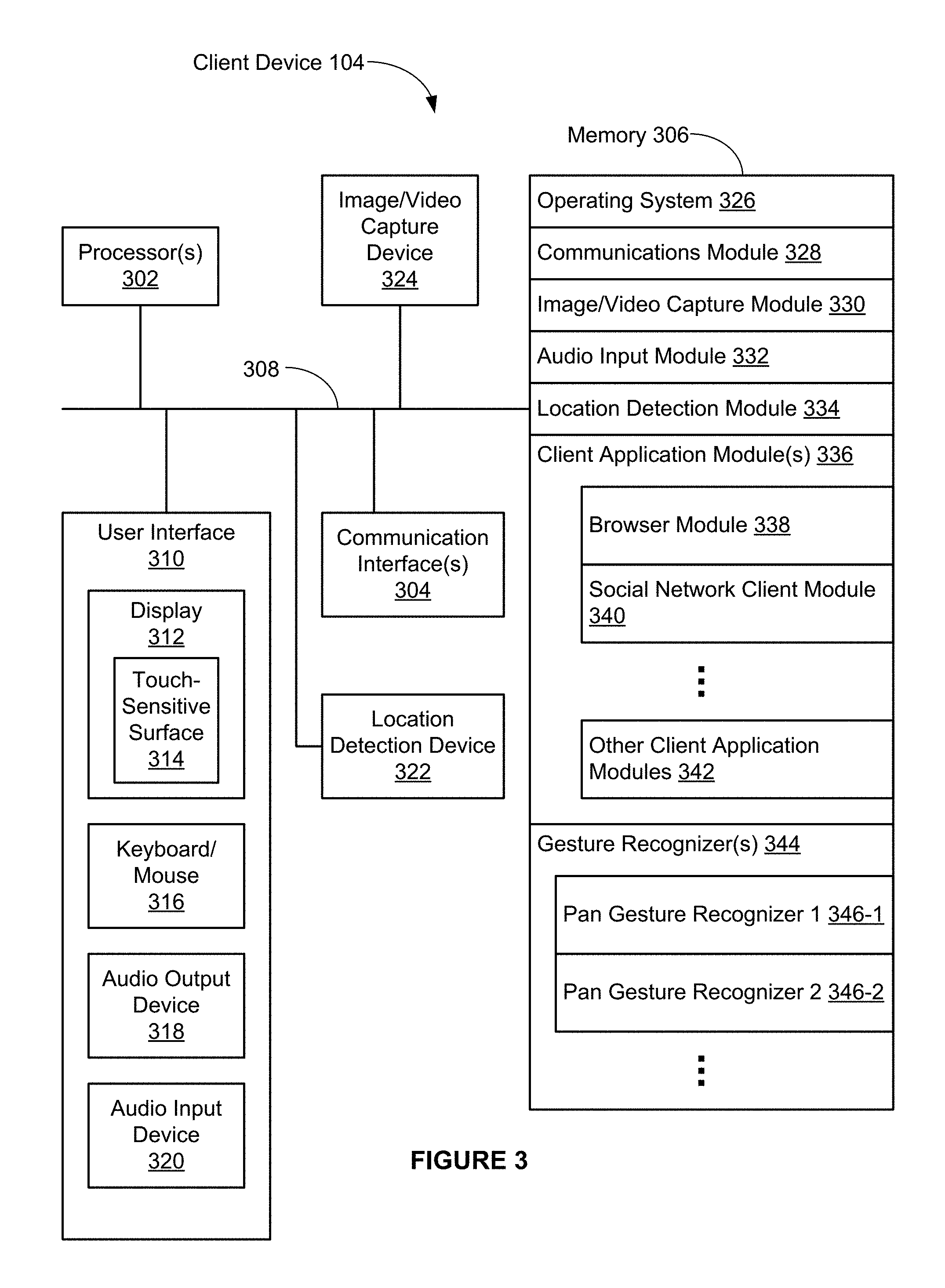

FIG. 3 is a block diagram illustrating an exemplary client device 104 in accordance with some embodiments. The client device 104 typically includes one or more processing units (processors or cores) 302, one or more network or other communications interfaces 304, memory 306, and one or more communication buses 308 for interconnecting these components. The communication buses 308 optionally include circuitry (sometimes called a chipset) that interconnects and controls communications between system components. The client device 104 includes a user interface 310. The user interface 310 typically includes a display device 312. In some embodiments, the client device includes inputs such as a keyboard, mouse, other input buttons 316, and/or a touch-sensitive surface 314 (e.g., a touch pad). In some embodiments, the display device 312 includes a touch-sensitive surface 314, in which case the display device 312 is a touch-sensitive display. In client devices that have a touch-sensitive display 312, a physical keyboard is optional (e.g., a soft keyboard may be displayed when keyboard entry is needed). The user interface 310 also includes an audio output device 318, such as speakers or an audio output connection connected to speakers, earphones, or headphones. Furthermore, some client devices 104 use a microphone and voice recognition to supplement or replace the keyboard. Optionally, the client device 104 includes an audio input device 320 (e.g., a microphone) to capture audio (e.g., speech from a user). Optionally, the client device 104 includes a location detection device 322, such as a GPS (global positioning system) or other geo-location receiver, for determining the location of the client device 104. The client device 104 also optionally includes an image/video capture device 324, such as a camera or web cam.

Memory 306 includes high-speed random access memory, such as DRAM, SRAM, DDR RAM or other random access solid state memory devices; and may include non-volatile memory, such as one or more magnetic disk storage devices, optical disk storage devices, flash memory devices, or other non-volatile solid state storage devices. Memory 306 may optionally include one or more storage devices remotely located from the processor(s) 302. Memory 306, or alternately the non-volatile memory device(s) within memory 306, includes a non-transitory computer readable storage medium. In some embodiments, memory 306 or the computer readable storage medium of memory 306 stores the following programs, modules and data structures, or a subset or superset thereof:

- an operating system 326 that includes procedures for handling various basic system services and for performing hardware dependent tasks;
- a network communication module 328 that is used for connecting the client device 104 to other computers via the one or more communication network interfaces 304 (wired or wireless) and one or more communication networks, such as the Internet, cellular telephone networks, mobile data networks, other wide area networks, local area networks, metropolitan area networks, and so on;
- an image/video capture module 330 (e.g., a camera module) for processing a respective image or video captured by the image/video capture device 324, where the respective image or video may be sent or streamed (e.g., by a client application module 336) to the social network system 108;
- an audio input module 332 (e.g., a microphone module) for processing audio captured by the audio input device 320, where the respective audio may be sent or streamed (e.g., by a client application module) to the social network system 108;
- a location detection module 334 (e.g., a GPS, Wi-Fi, or hybrid positioning module) for determining the location of the client device 104 (e.g., using the location detection device 322) and providing this location information for use in various applications (e.g., social network client module 340);
- one or more client application modules 336, including the following modules (or sets of instructions), or a subset or superset thereof:
  - a web browser module 338 (e.g., Internet Explorer by Microsoft, Firefox by Mozilla, Safari by Apple, or Chrome by Google) for accessing, viewing, and interacting with web sites (e.g., a social networking web site such as www.facebook.com);
  - a social network client module 340 (e.g., Paper by Facebook) for providing an interface to a social network (e.g., a social network provided by social network system 108) and related features; and/or
  - other optional client application modules 342, such as applications for word processing, calendaring, mapping, weather, stocks, time keeping, virtual digital assistant, presenting, number crunching (spreadsheets), drawing, instant messaging, e-mail, telephony, video conferencing, photo management, video management, a digital music player, a digital video player, 2D gaming, 3D (e.g., virtual reality) gaming, electronic book reader, and/or workout support; and
- one or more gesture recognizers 344 for processing gestures (e.g., touch gestures, such as pan, swipe, pinch, depinch, rotate, etc.), including the following gesture recognizers, or a subset or superset thereof:
  - a pan gesture recognizer 1 (346-1), which is also called herein a first pan gesture recognizer, that is configured to recognize a pan gesture;
  - a pan gesture recognizer 2 (346-2), which is also called herein a second pan gesture recognizer, that is configured to recognize a pan gesture; and
  - one or more other gesture recognizers (not shown), each of which is configured to recognize a respective gesture (e.g., tap, swipe, pan, pinch, depinch, rotate, etc.).

Each of the above-identified modules and applications corresponds to a set of executable instructions for performing one or more functions described above and the methods described in this application (e.g., the computer-implemented methods and other information processing methods described herein). These modules (i.e., sets of instructions) need not be implemented as separate software programs, procedures or modules, and thus various subsets of these modules are, optionally, combined or otherwise re-arranged in various embodiments. For example, in some embodiments, at least a subset of the gesture recognizers 344 (e.g., pan gesture recognizer 1 (346-1) and pan gesture recognizer 2 (346-2)) is included in the operating system 326. In some other embodiments, at least a subset of the gesture recognizers 344 (e.g., pan gesture recognizer 1 (346-1) and pan gesture recognizer 2 (346-2)) is included in the social network client module 340. In some embodiments, memory 206 and/or 306 store a subset of the modules and data structures identified above. Furthermore, memory 206 and/or 306 optionally store additional modules and data structures not described above.

Attention is now directed towards embodiments of user interfaces ("UI") and associated processes that may be implemented on a client device (e.g., client device 104 in FIG. 3).

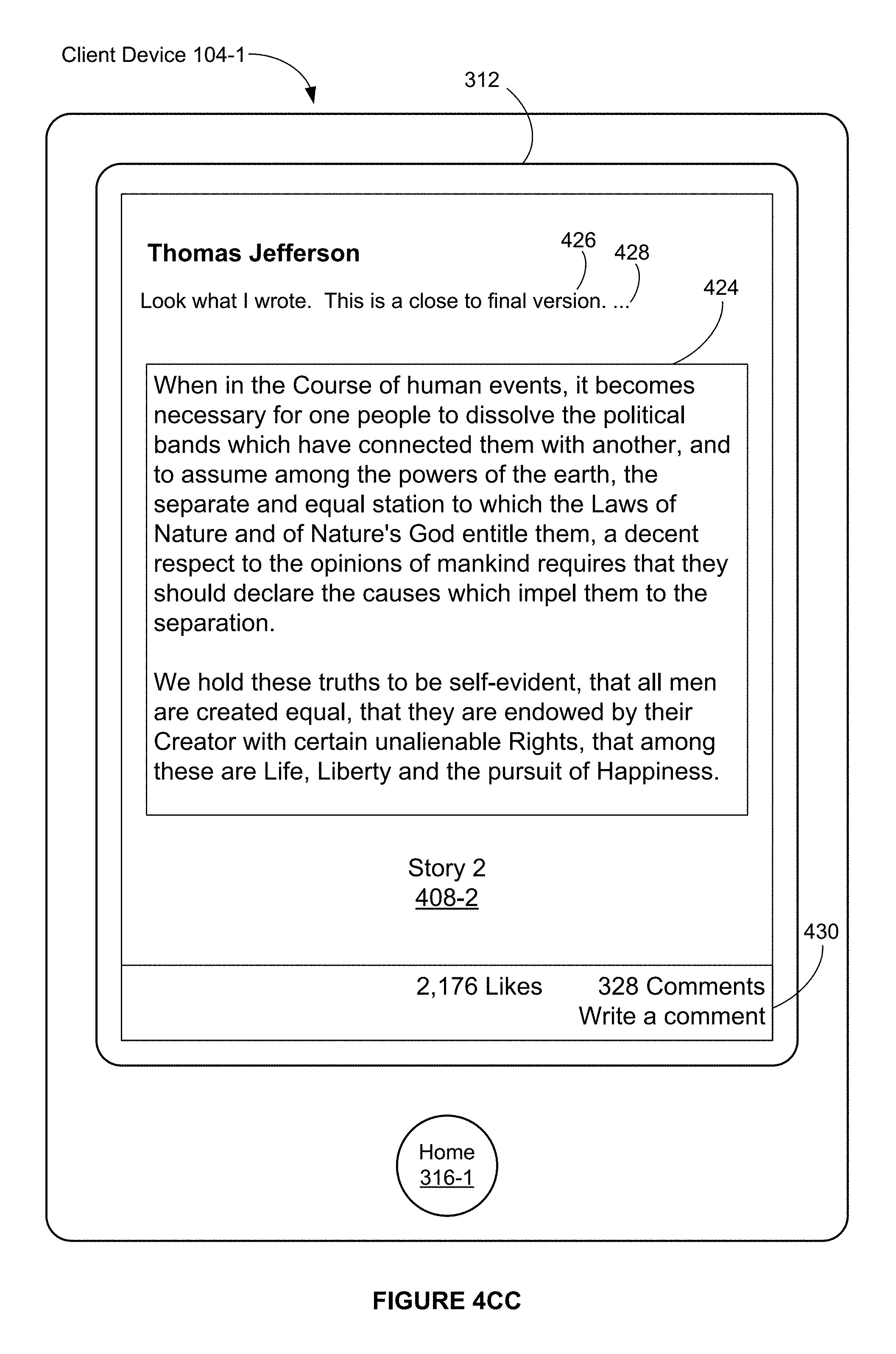

FIGS. 4A-4CC illustrate exemplary user interfaces in a software application with a hierarchy of user interfaces, in accordance with some embodiments. The user interfaces in these figures are used to illustrate the processes described below, including the processes in FIGS. 6A-6E and 7A-7D.

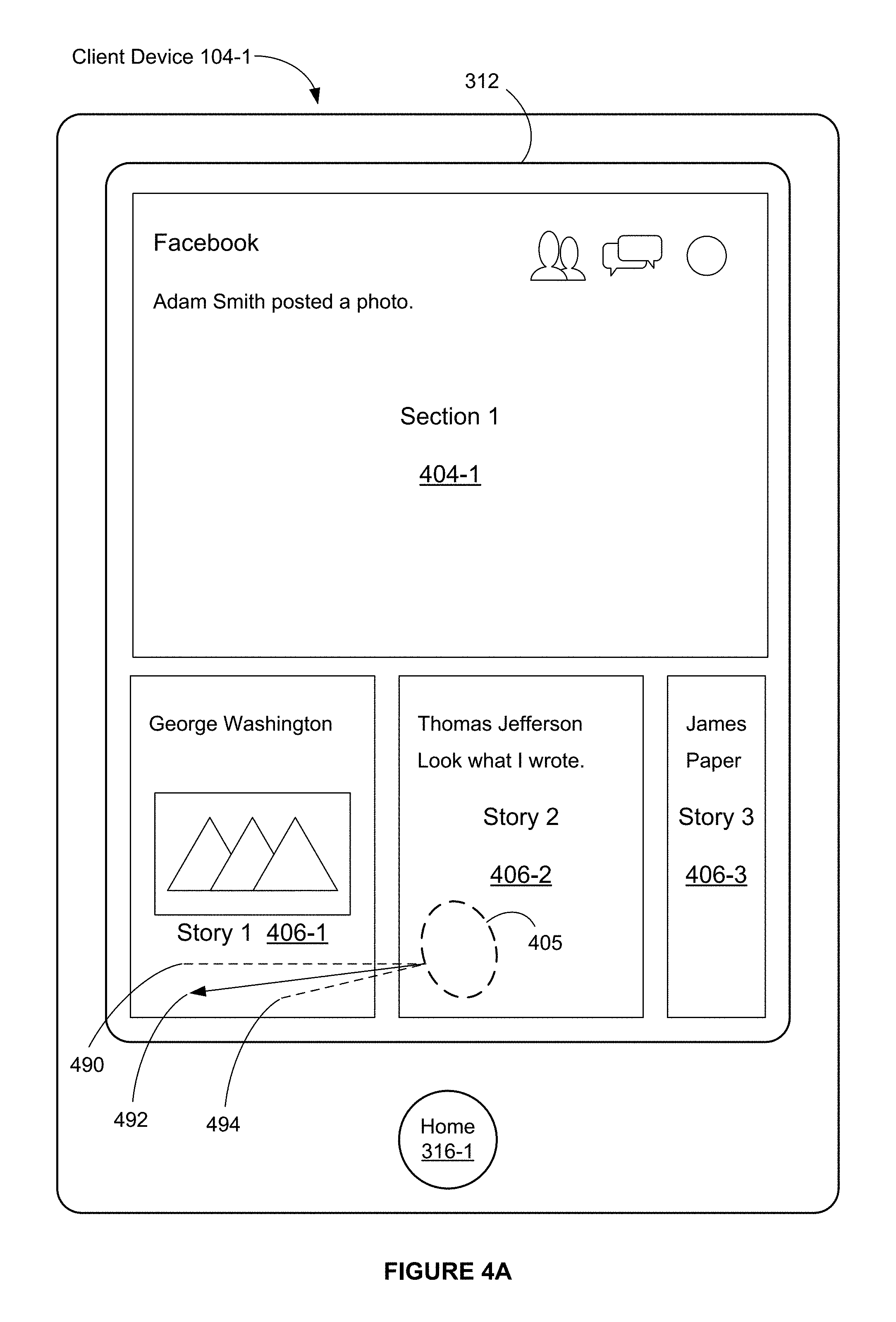

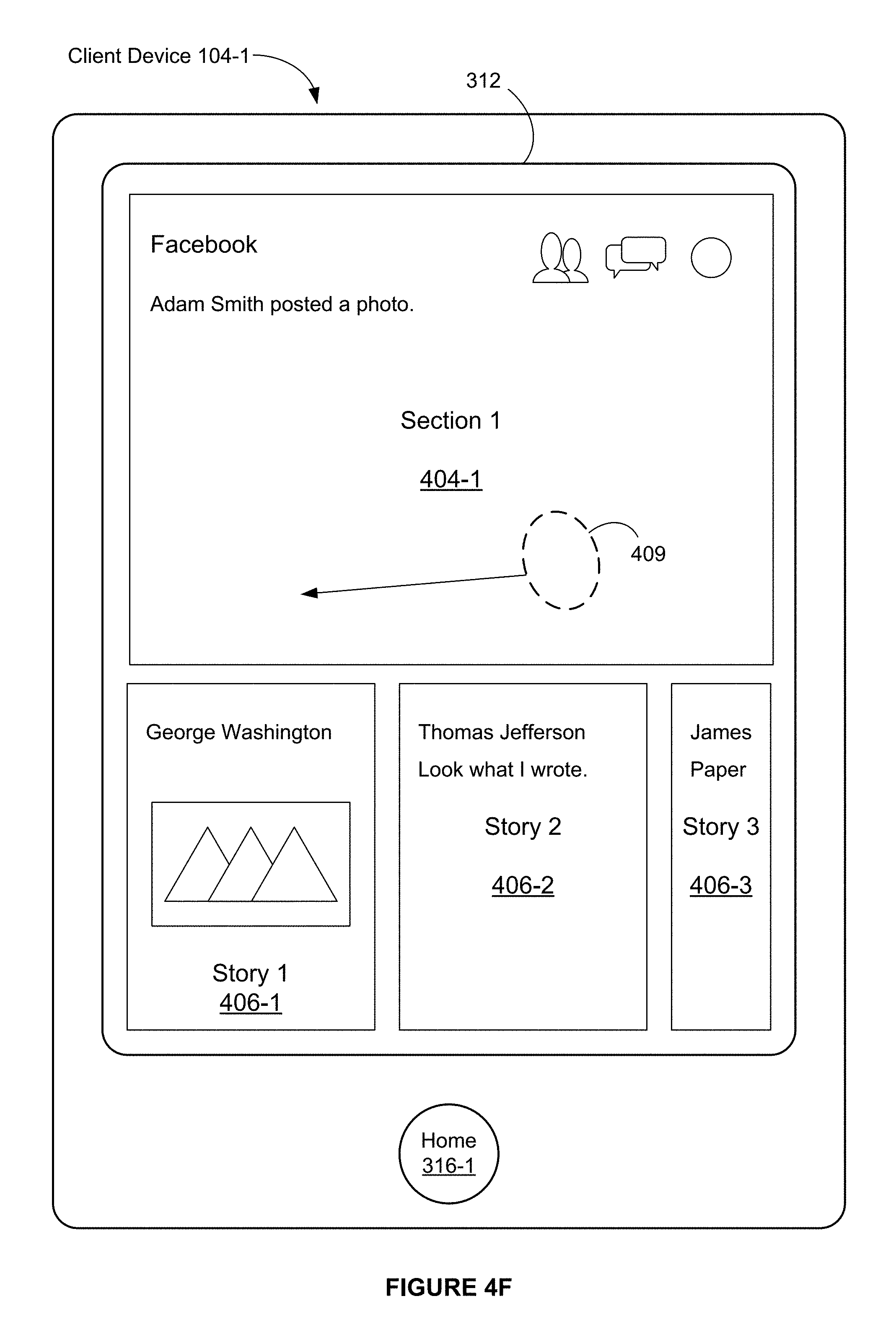

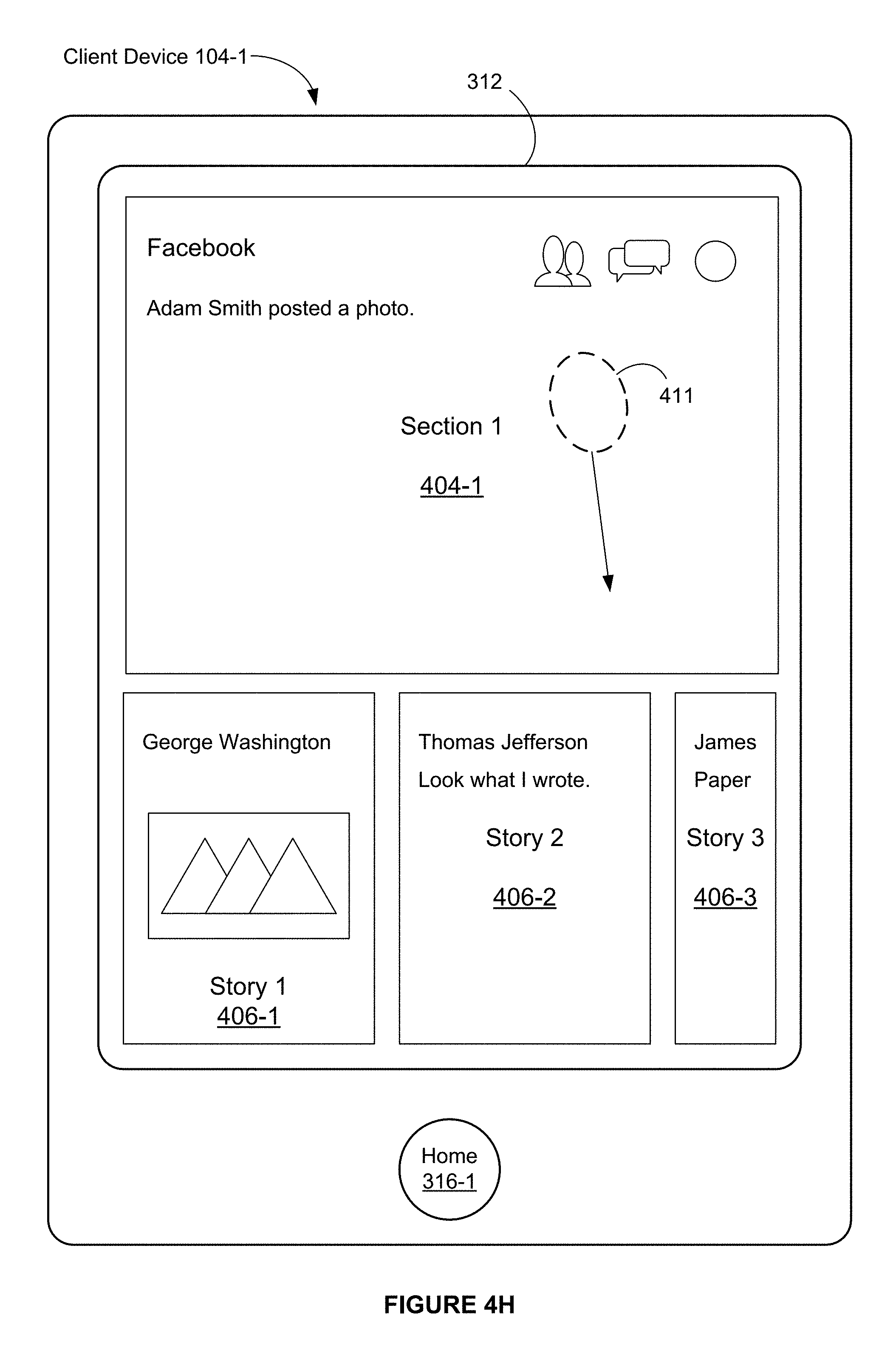

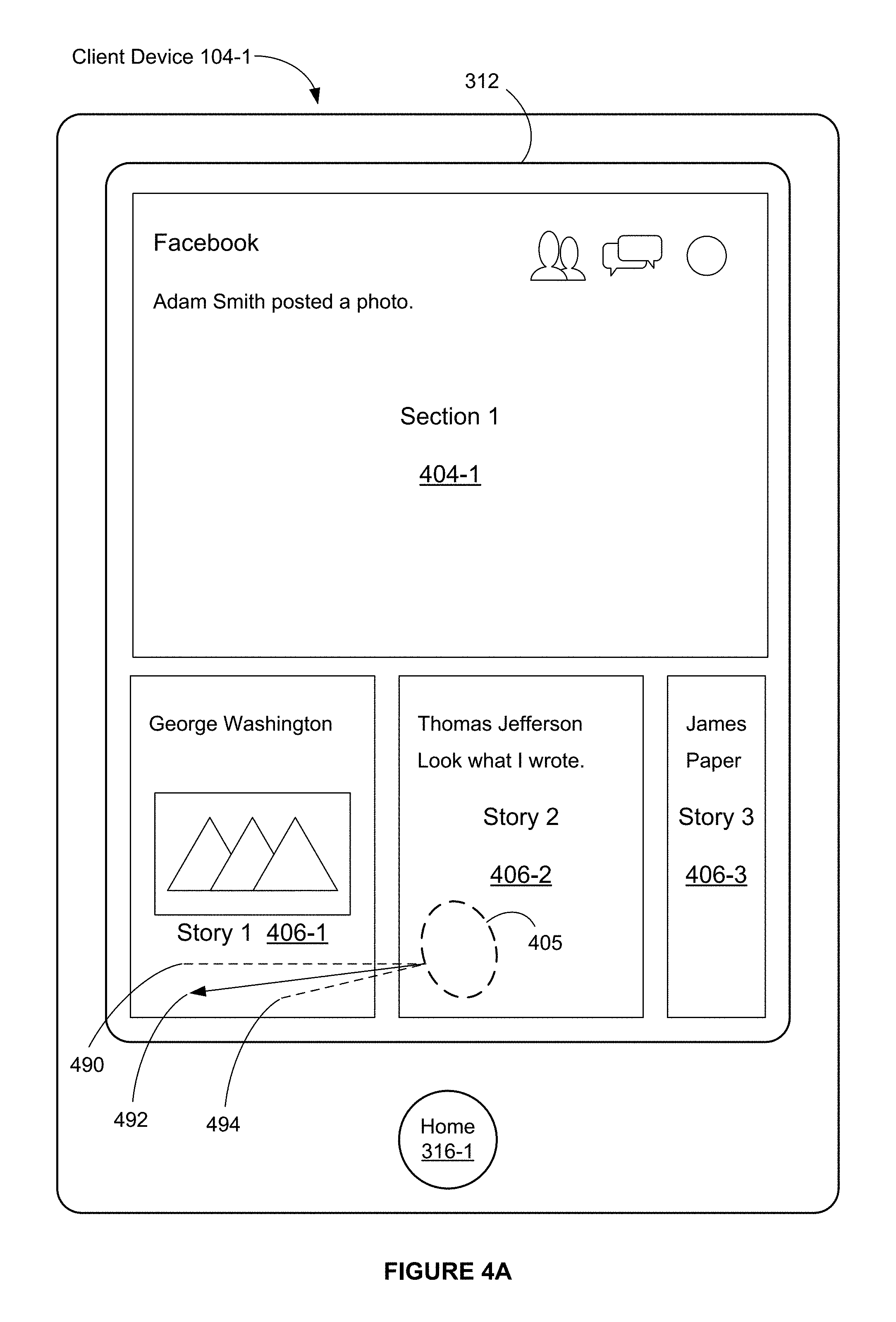

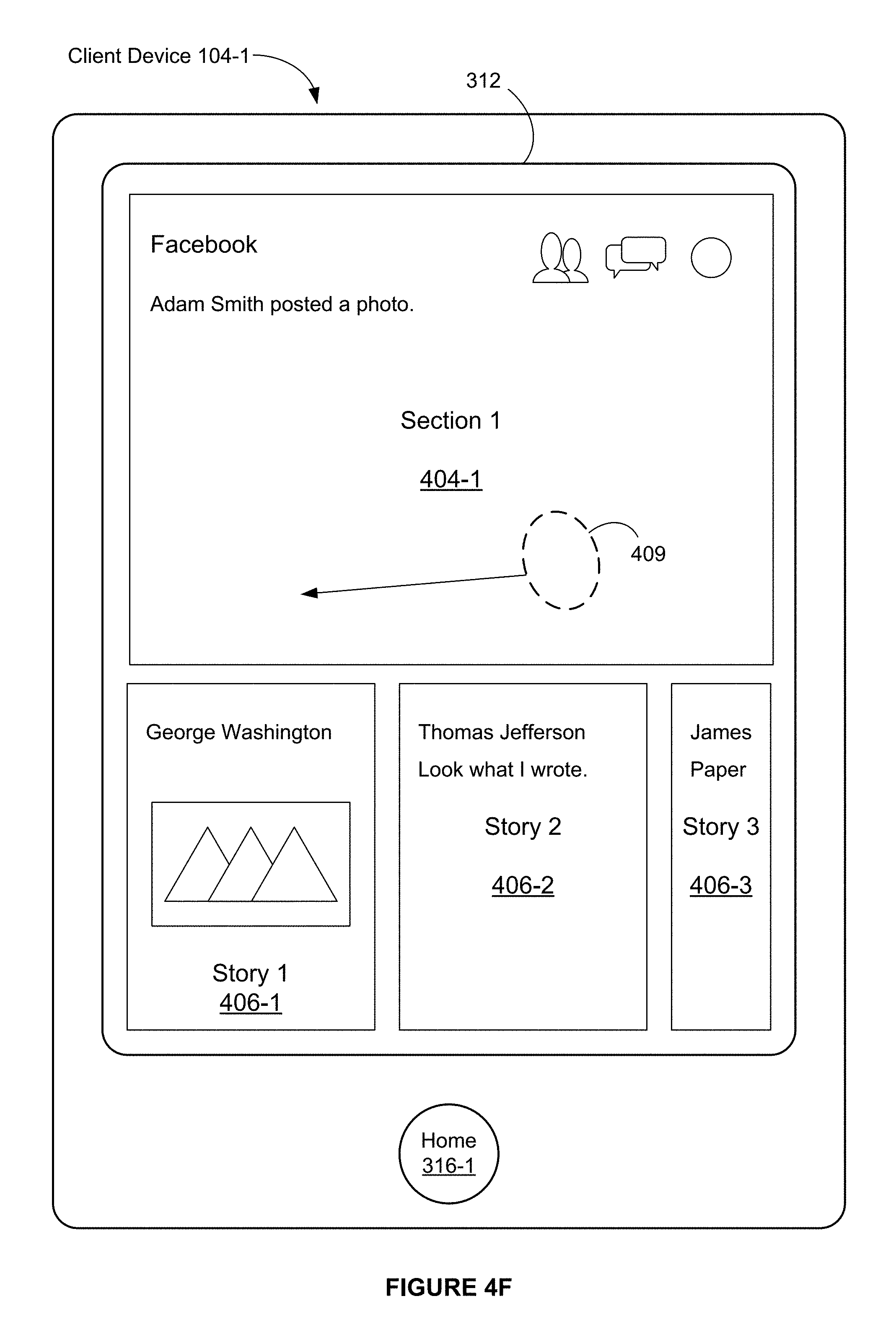

FIGS. 4A-4B and 4F-4G illustrate exemplary user interfaces associated with a section view mode in accordance with some embodiments.

FIGS. 4A-4B illustrate exemplary user interfaces associated with a horizontal pan gesture on a story thumbnail image in accordance with some embodiments.

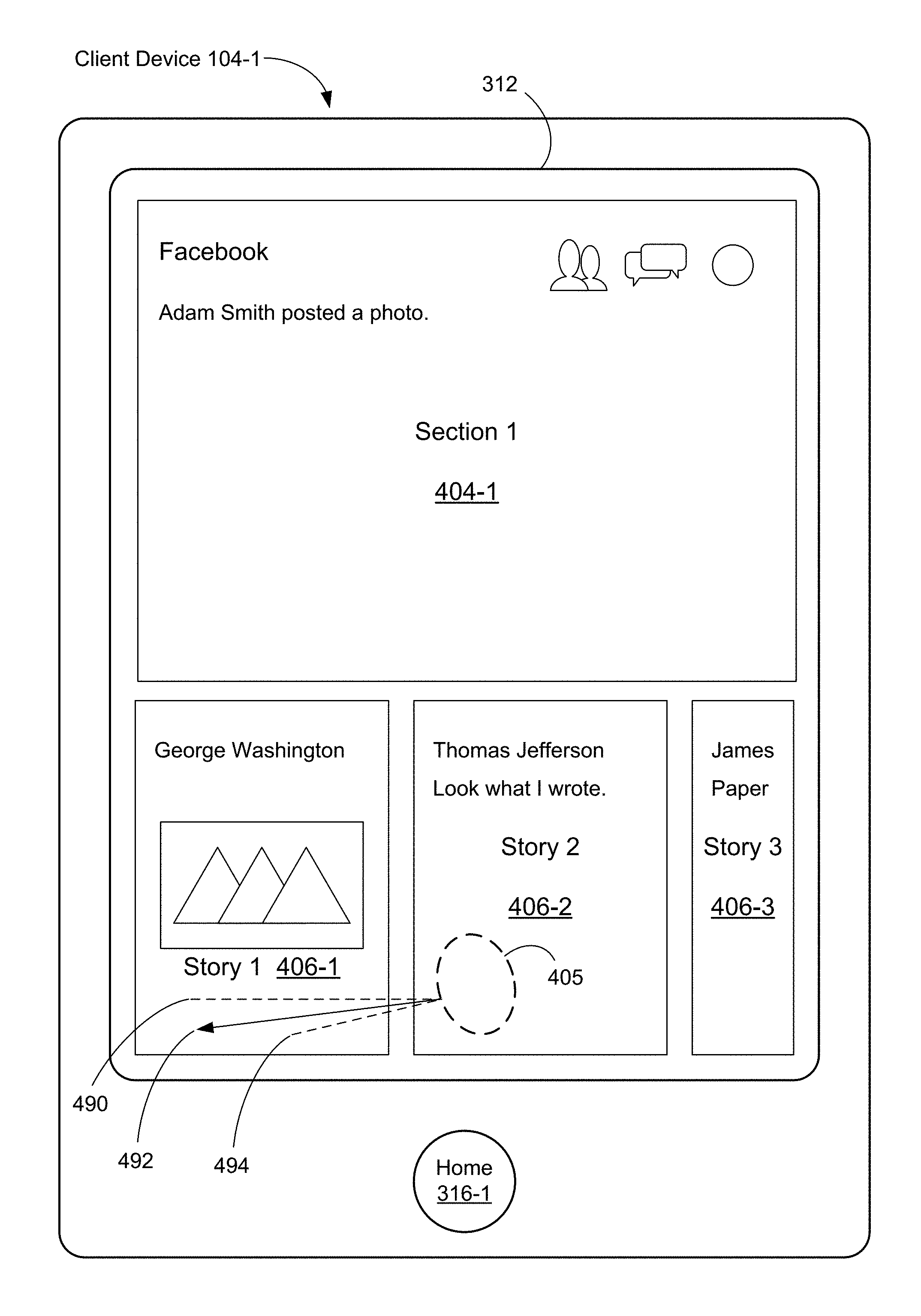

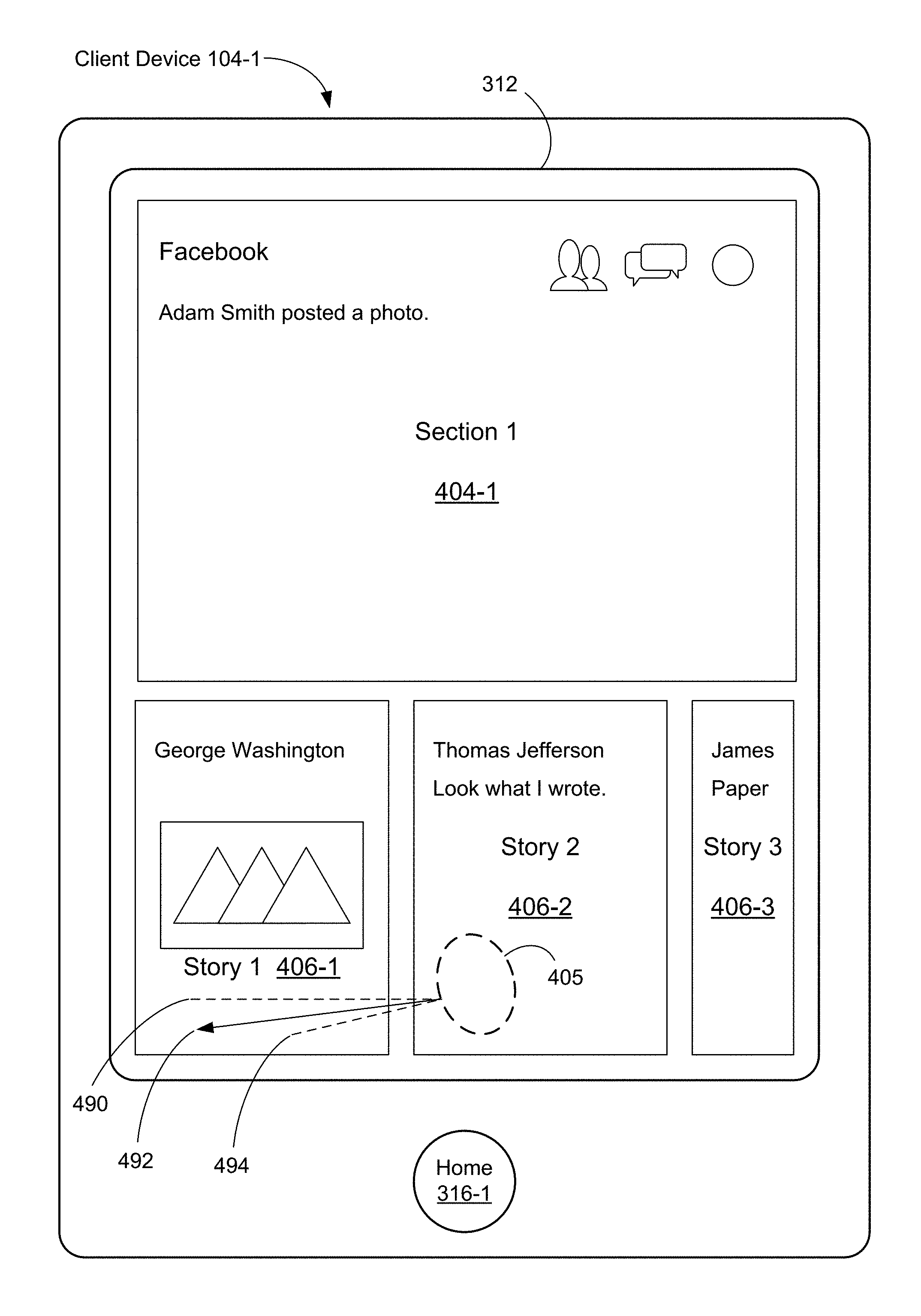

FIG. 4A illustrates that the client device 104-1 includes a display 312. The client device 104-1 includes a touch-sensitive surface. In some embodiments, the display 312 includes the touch-sensitive surface, and the display 312 is a touch-sensitive display (also called herein a touch screen). In some embodiments, the client device 104-1 also includes one or more physical buttons (e.g., a home button 316-1, etc.).

In FIG. 4A, the display 312 displays a user interface that includes a plurality of user interface elements, including a plurality of images. In some embodiments, the plurality of images includes a first section image 404-1 that represents a first section (e.g., a social networking content section in the social network client module 340, which also contains other content sections). The plurality of images also includes thumbnail images 406-1, 406-2, and 406-3 that correspond to stories in the first section (e.g., comments or postings by users who are connected to a user of the client device 104-1 in a social networking service (so-called "friends" of the user)).

FIG. 4A also illustrates that a horizontal pan gesture 405 (e.g., a leftward pan gesture) is detected initially at a location on the touch-sensitive surface that corresponds to the thumbnail image 406-2 (which corresponds to Story 2) on the display 312.

As shown in FIG. 4A, the horizontal pan gesture 405 includes a substantially horizontal movement of a touch. In other words, the horizontal pan gesture 405 need not be perfectly horizontal, and need not be perfectly linear. Regardless, in some cases, the horizontal pan gesture 405 is recognized as a horizontal gesture in accordance with a determination that the horizontal pan gesture 405 satisfies predefined criteria. In some embodiments, the predefined criteria include that an angle formed by an initial direction 492 of the horizontal pan gesture 405 and a reference axis 490 (e.g., a horizontal axis on the display 312) is within a predefined angle. In some embodiments, the predefined angle corresponds to an angle formed by a predefined threshold direction 494 and the reference axis 490. In some other embodiments, the predefined criteria include that a ratio of a vertical component of an initial movement of the horizontal pan gesture 405 (e.g., vertical distance traversed by the horizontal pan gesture 405) and a horizontal component of the initial movement of the horizontal pan gesture 405 (e.g., horizontal distance traversed by the horizontal pan gesture 405) is less than a predefined threshold value. In some embodiments, an initial velocity of the horizontal pan gesture 405 is used instead of, or in addition to, an initial distance traversed by the pan gesture.

Although FIG. 4A is used to describe a horizontal pan gesture, a vertical pan gesture can be recognized in a similar manner. For example, a vertical pan gesture need not be perfectly vertical and need not be perfectly linear. For brevity, such details are not repeated here.
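
By way of a non-limiting illustration, the two kinds of predefined criteria described above (an angle measured against a reference axis, and a ratio of the vertical and horizontal components) may be sketched as follows. The function names, the 30-degree default, the 0.5 default ratio, and the "unrecognized" result for movements that fall between the two thresholds are all hypothetical choices made for this sketch; the description does not specify particular values. Initial velocity components could be passed in place of distances.

```python
import math


def classify_initial_direction(dx: float, dy: float, threshold_deg: float = 30.0) -> str:
    """Classify the initial movement of a touch as 'horizontal', 'vertical', or 'unrecognized'.

    dx, dy: initial displacement along the horizontal and vertical axes.
    threshold_deg: hypothetical predefined angle measured from each reference axis.
    """
    if dx == 0 and dy == 0:
        return "unrecognized"  # no movement yet
    # Angle between the movement and the horizontal reference axis, in [0, 90] degrees.
    angle = math.degrees(math.atan2(abs(dy), abs(dx)))
    if angle <= threshold_deg:
        return "horizontal"
    if 90.0 - angle <= threshold_deg:
        return "vertical"
    return "unrecognized"


def is_horizontal_by_ratio(dx: float, dy: float, max_ratio: float = 0.5) -> bool:
    """Alternative criterion: vertical-to-horizontal component ratio below a threshold."""
    if dx == 0:
        return False  # purely vertical (or no) movement cannot satisfy the ratio test
    return abs(dy) / abs(dx) < max_ratio
```

Under these assumptions, a leftward movement of 100 units with 10 units of vertical drift satisfies both criteria, even though it is neither perfectly horizontal nor perfectly linear.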

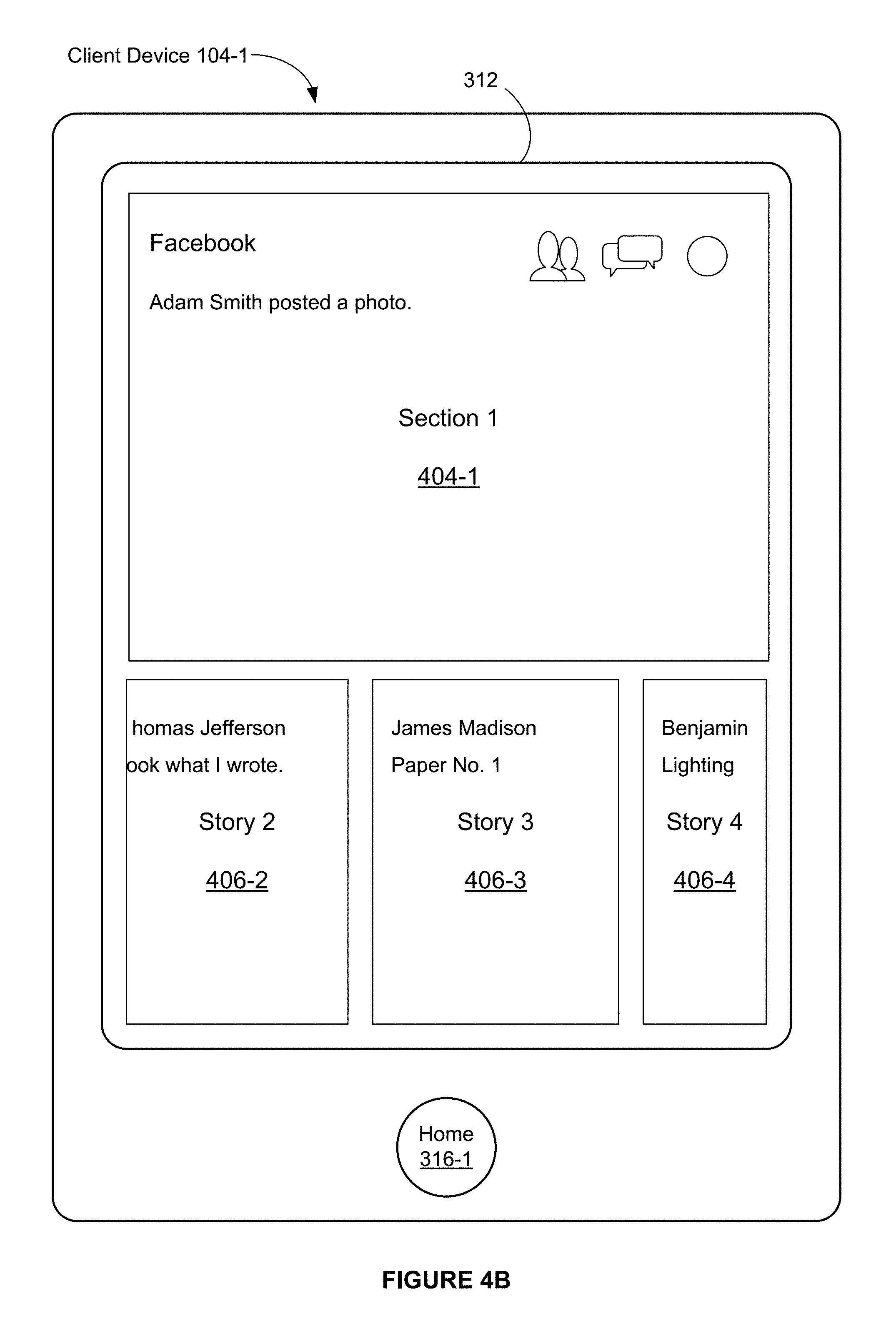

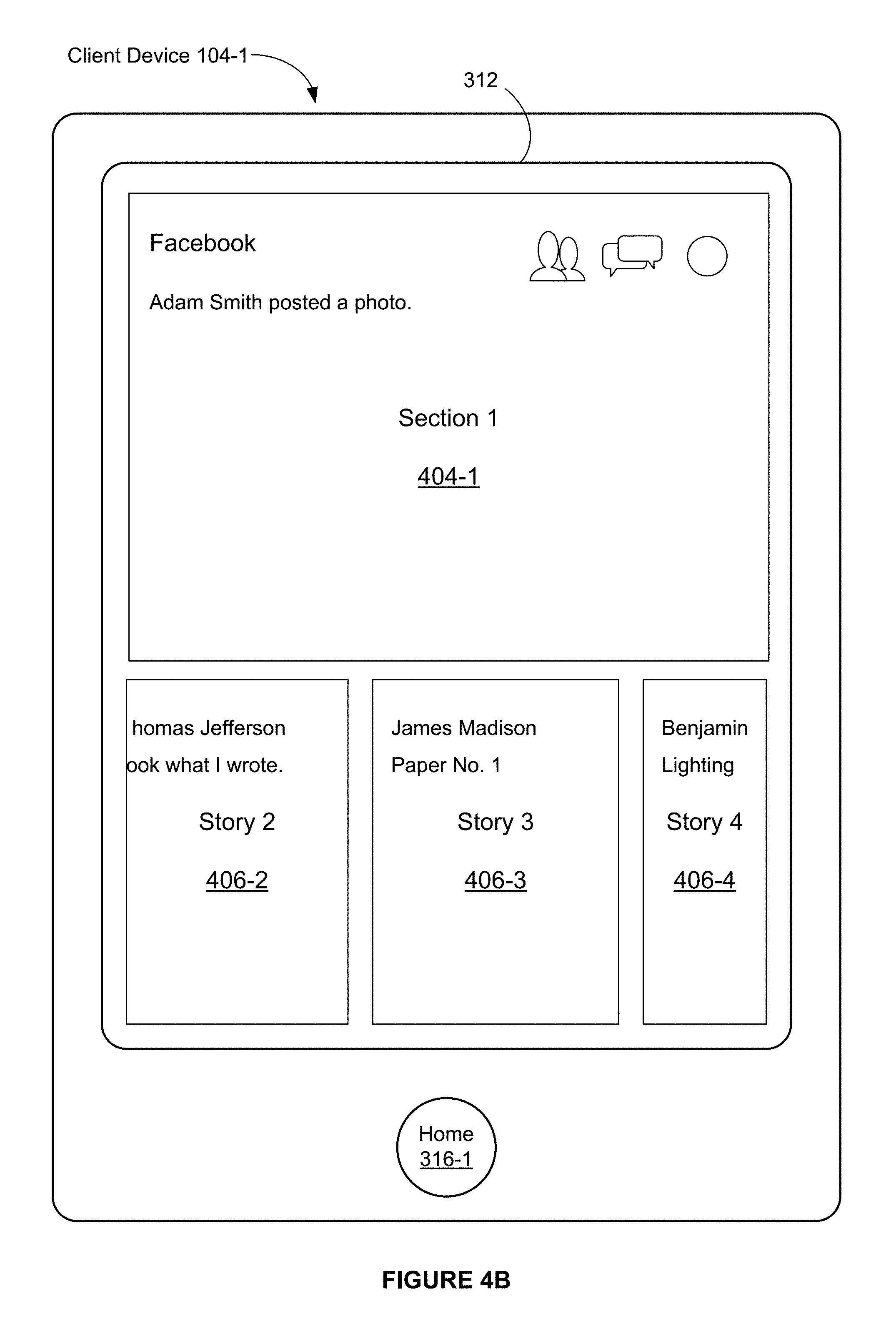

FIG. 4B illustrates that, in response to the horizontal pan gesture 405, the thumbnail images are scrolled. For example, the thumbnail images 406-1, 406-2, and 406-3 illustrated in FIG. 4A are scrolled horizontally so that the thumbnail image 406-1 ceases to be displayed. The thumbnail image 406-3, which is partially included in the user interface illustrated in FIG. 4A, is included in its entirety in the user interface illustrated in FIG. 4B. FIG. 4B also illustrates that the user interface includes at least a portion of a new thumbnail image 406-4.
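
As one possible illustration of the scrolling shown in FIGS. 4A-4B, the set of thumbnail images that remain at least partly on the display can be computed from a scroll offset. The function name `visible_thumbnails`, the assumption of equal-width thumbnails laid out in a single row, and the numeric widths used in the usage note are hypothetical.

```python
def visible_thumbnails(count: int, thumb_width: float, display_width: float, offset: float) -> list:
    """Return (index, visible_fraction) for every thumbnail at least partly on screen.

    offset: how far the row has been scrolled leftward, in the same units as the widths.
    """
    visible = []
    for i in range(count):
        left = i * thumb_width - offset           # left edge in display coordinates
        right = left + thumb_width                # right edge in display coordinates
        overlap = min(right, display_width) - max(left, 0.0)
        if overlap > 0:
            visible.append((i, overlap / thumb_width))
    return visible
```

For example, with four thumbnails of width 100 on a display of width 250, an offset of 0 shows the first two thumbnails in full and half of the third, while an offset of 100 removes the first thumbnail, shows the third in full, and brings half of the fourth into view.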

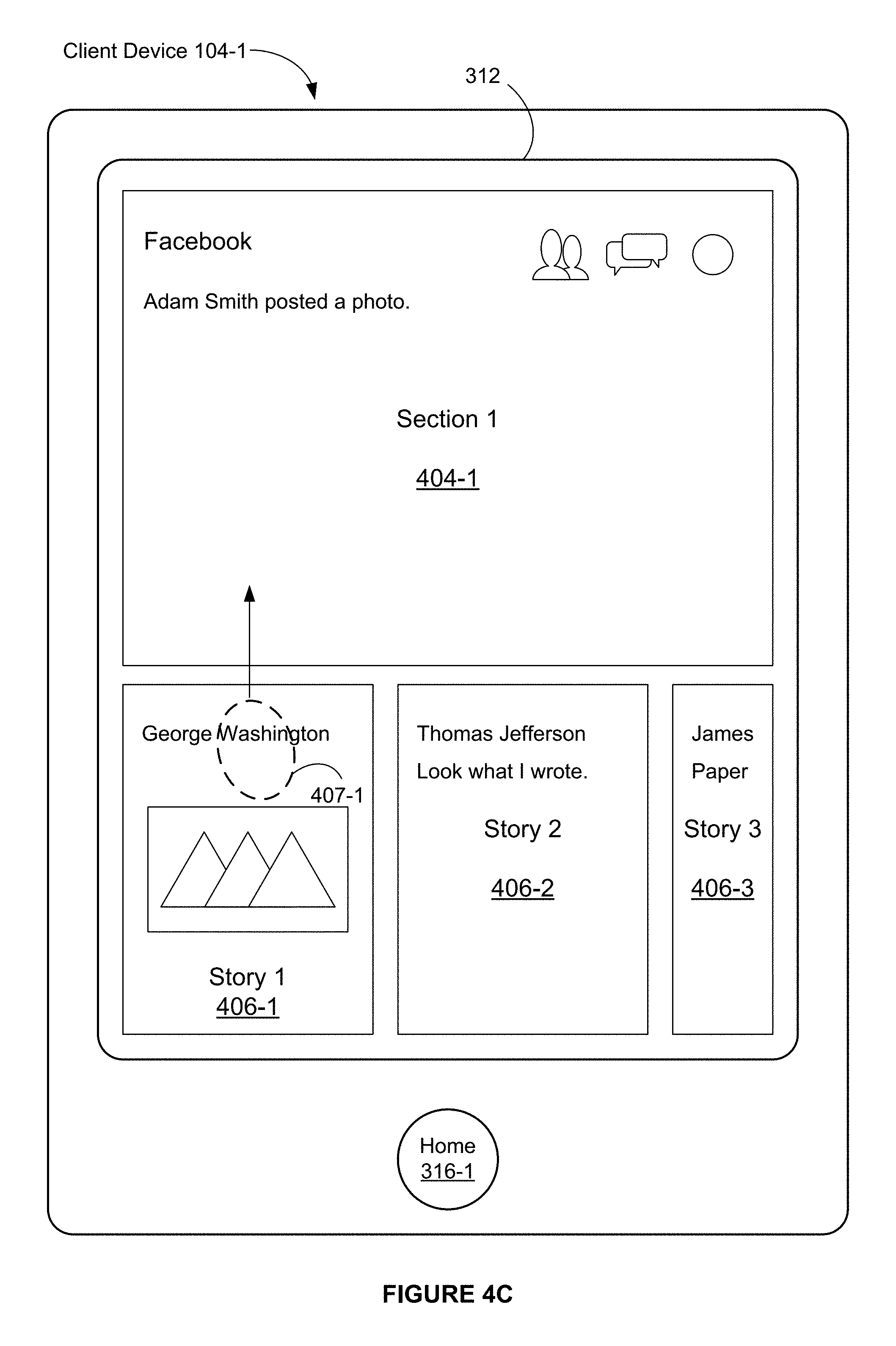

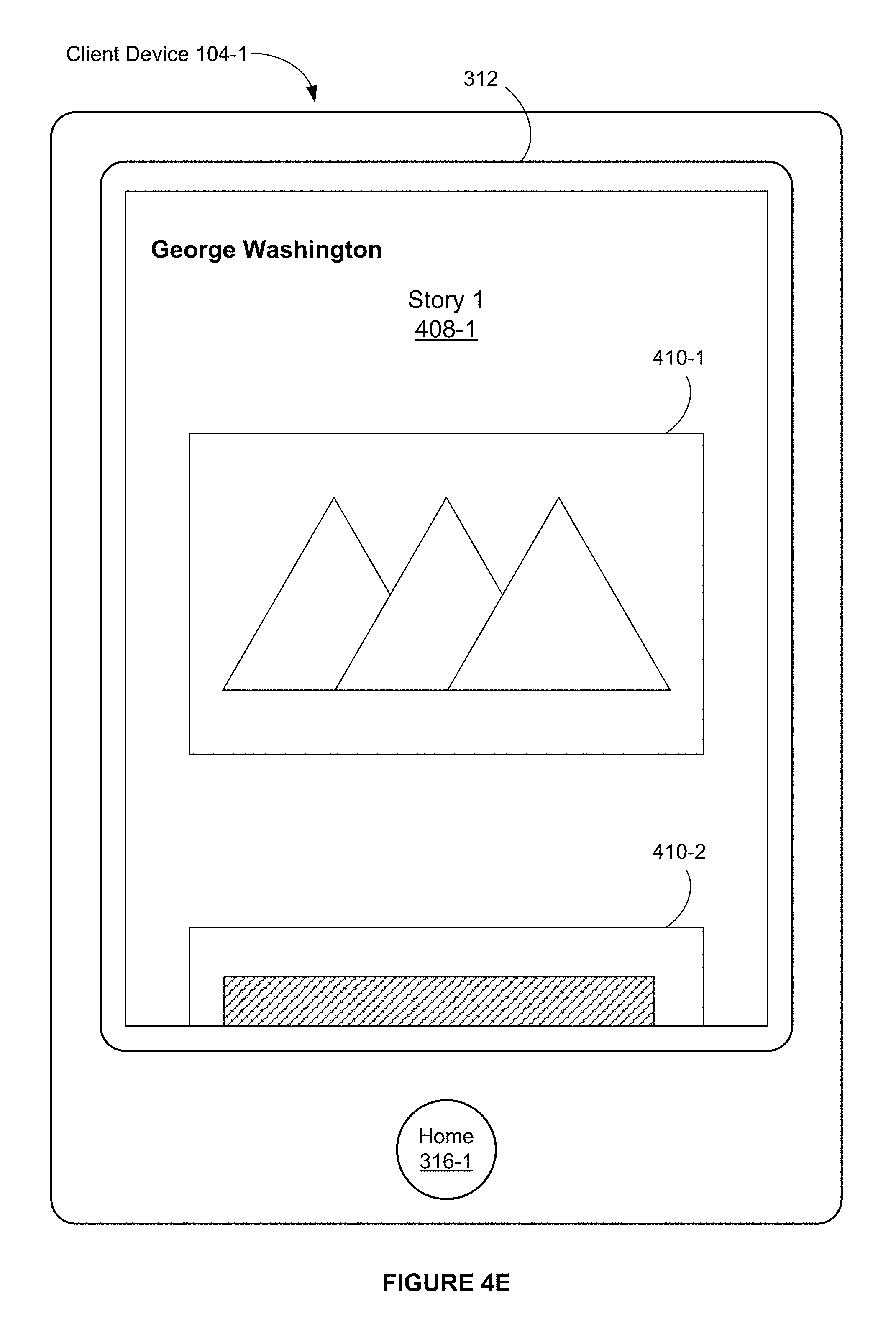

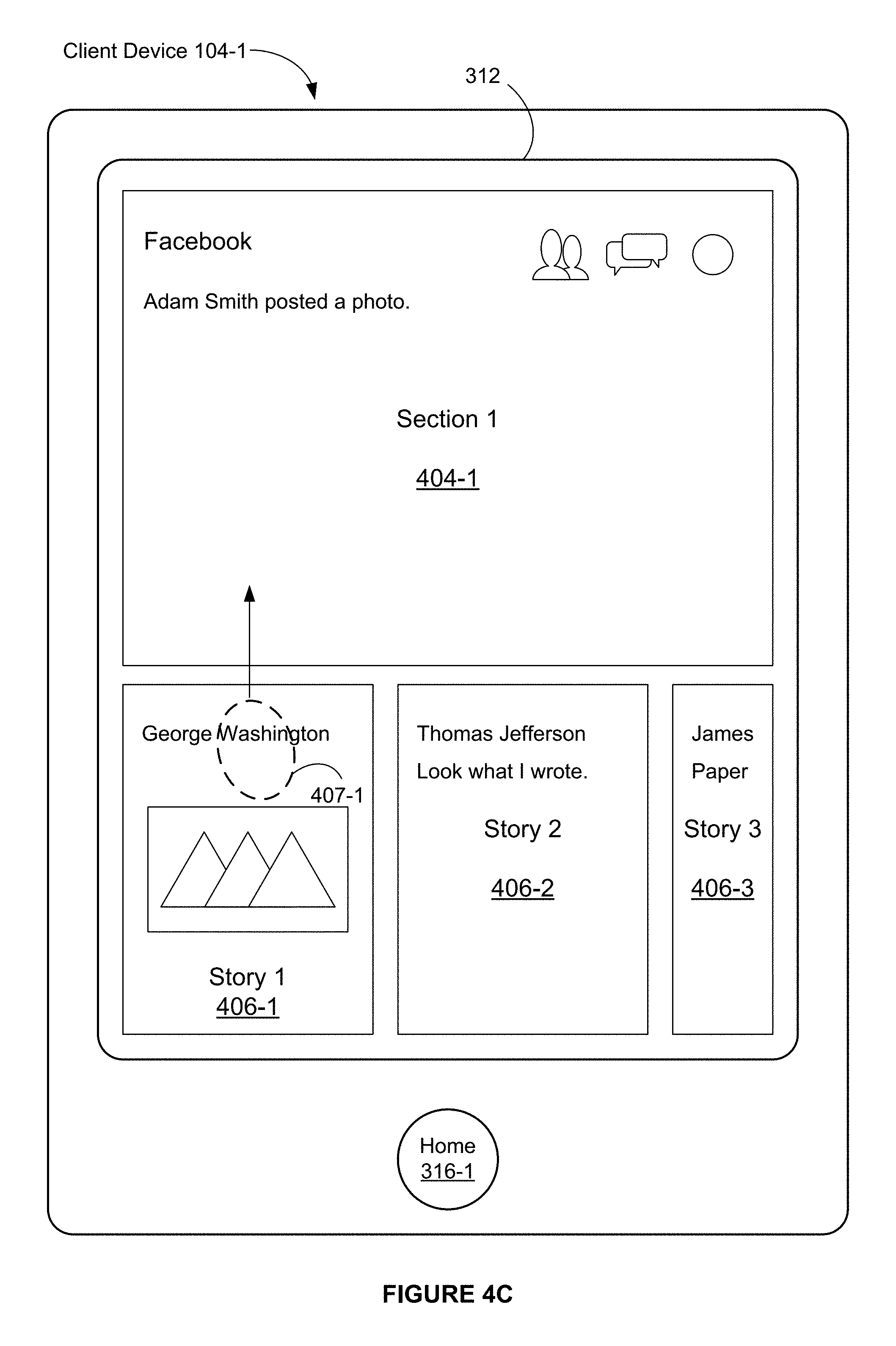

FIGS. 4C-4E illustrate exemplary user interfaces associated with a vertical pan gesture on a story thumbnail image in accordance with some embodiments.

FIG. 4C illustrates that a vertical pan gesture (e.g., an upward pan gesture) is detected initially at a location 407-1 on the touch-sensitive surface that corresponds to the thumbnail image 406-1 (which corresponds to Story 1) on the display 312.

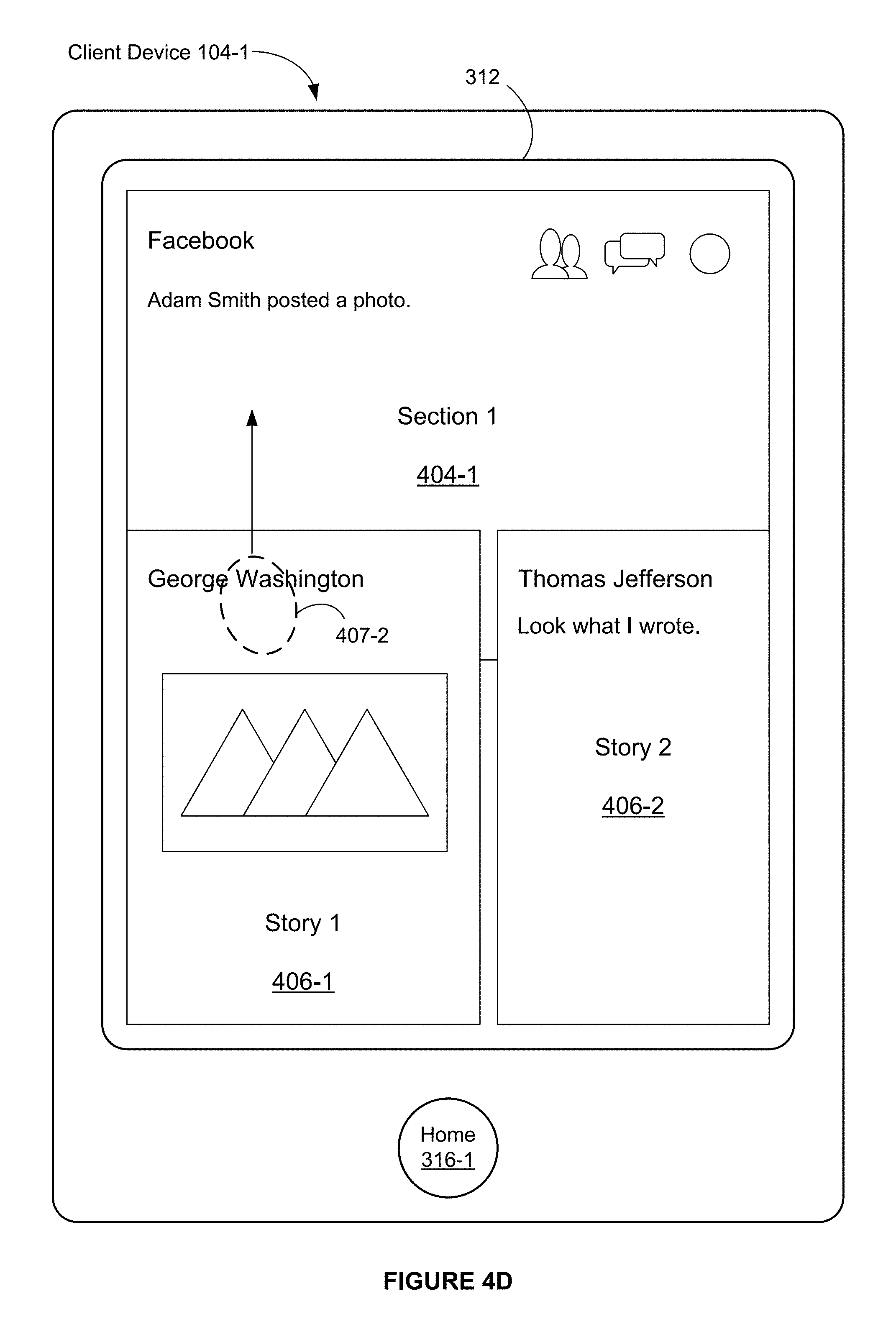

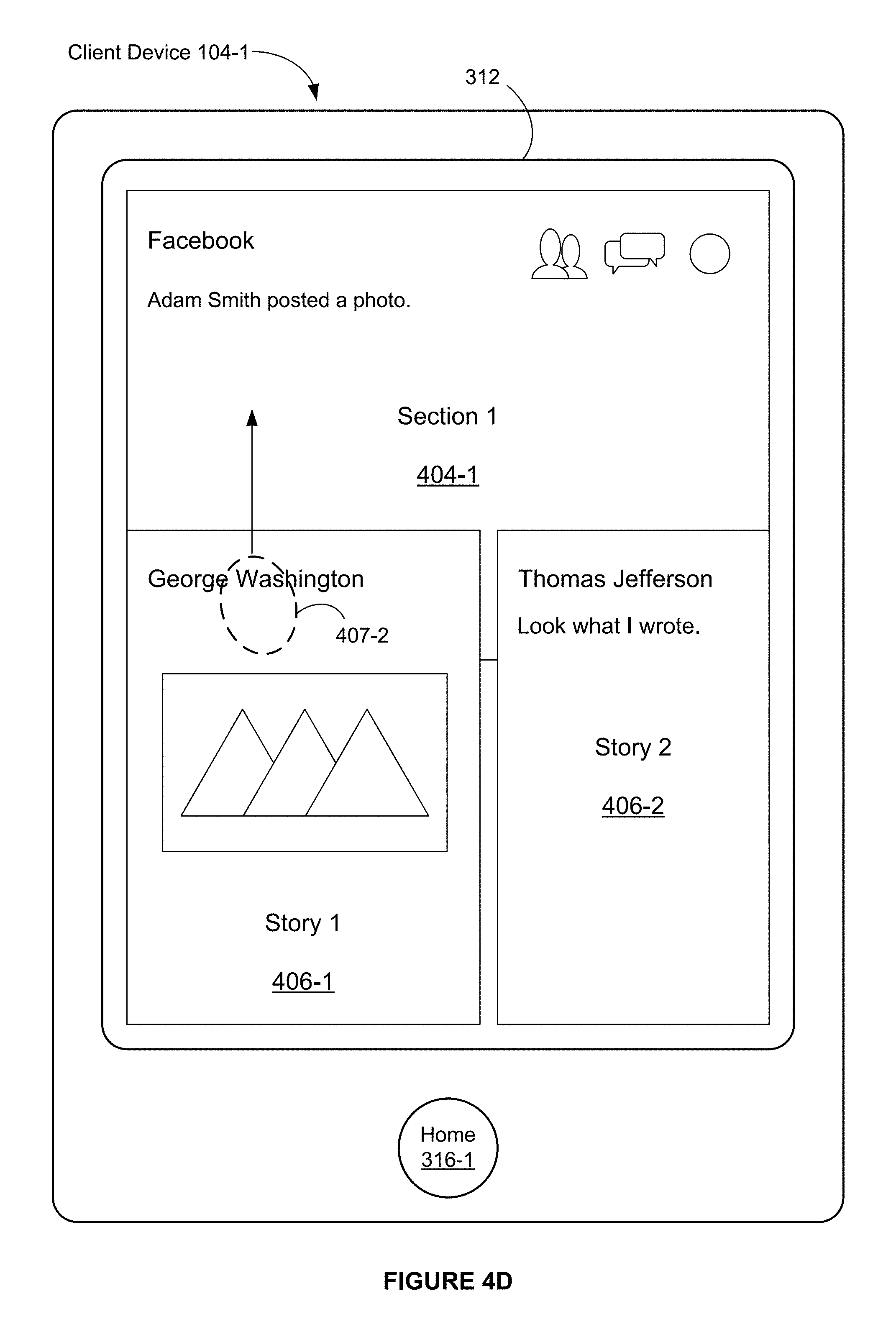

FIG. 4D illustrates that the vertical pan gesture remains in contact with the display 312 and has moved to a location 407-2. FIG. 4D also illustrates that, in response to the movement of a touch in the vertical pan gesture from the location 407-1 (FIG. 4C) to the location 407-2 (FIG. 4D), one or more thumbnail images are enlarged. For example, in FIG. 4D, the thumbnail images 406-1 and 406-2 have been enlarged in accordance with the movement of the touch from the location 407-1 to the location 407-2 (e.g., a vertical distance from the location 407-1 to the location 407-2). In FIG. 4D, the thumbnail image 406-3 (FIG. 4C) ceases to be displayed.

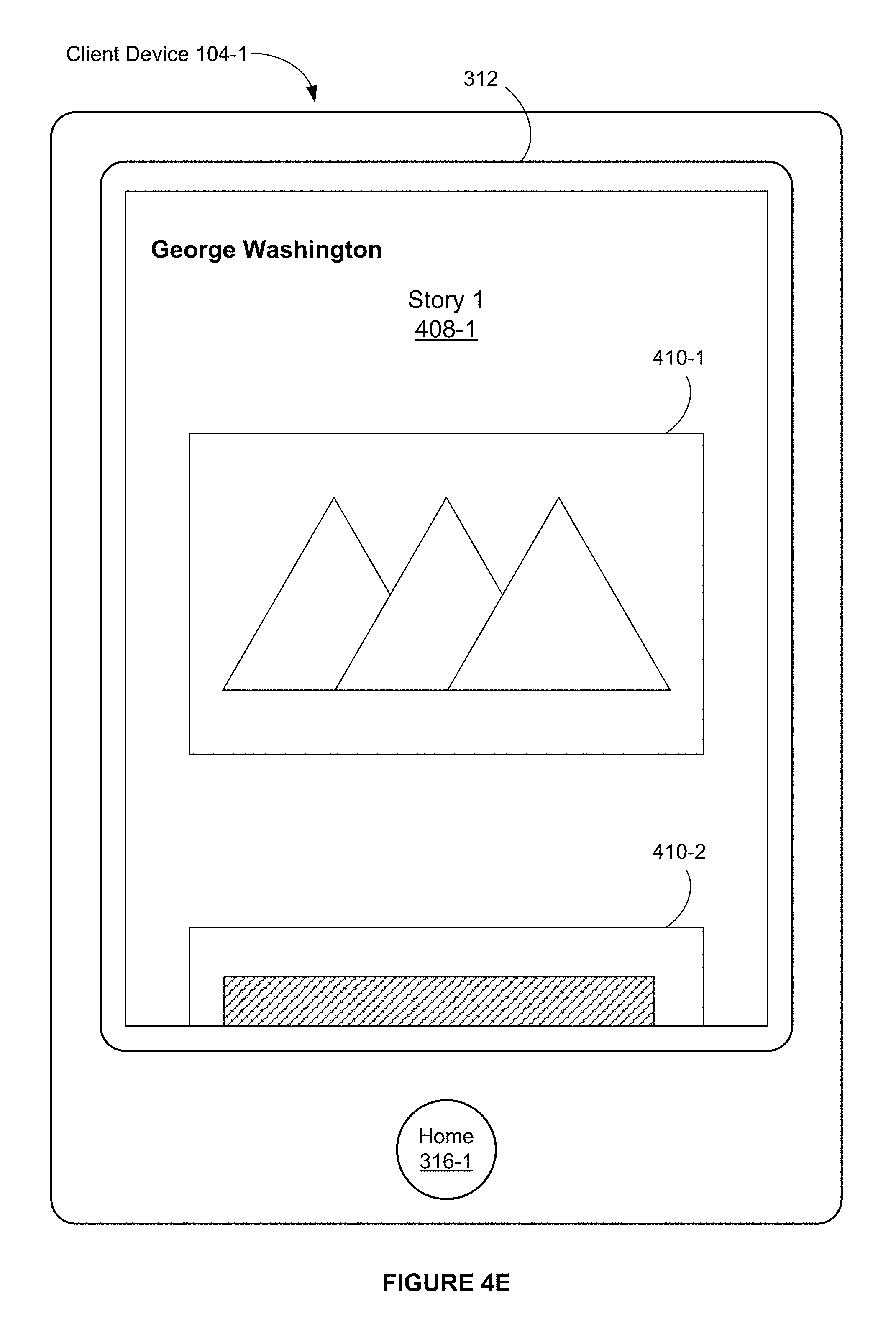

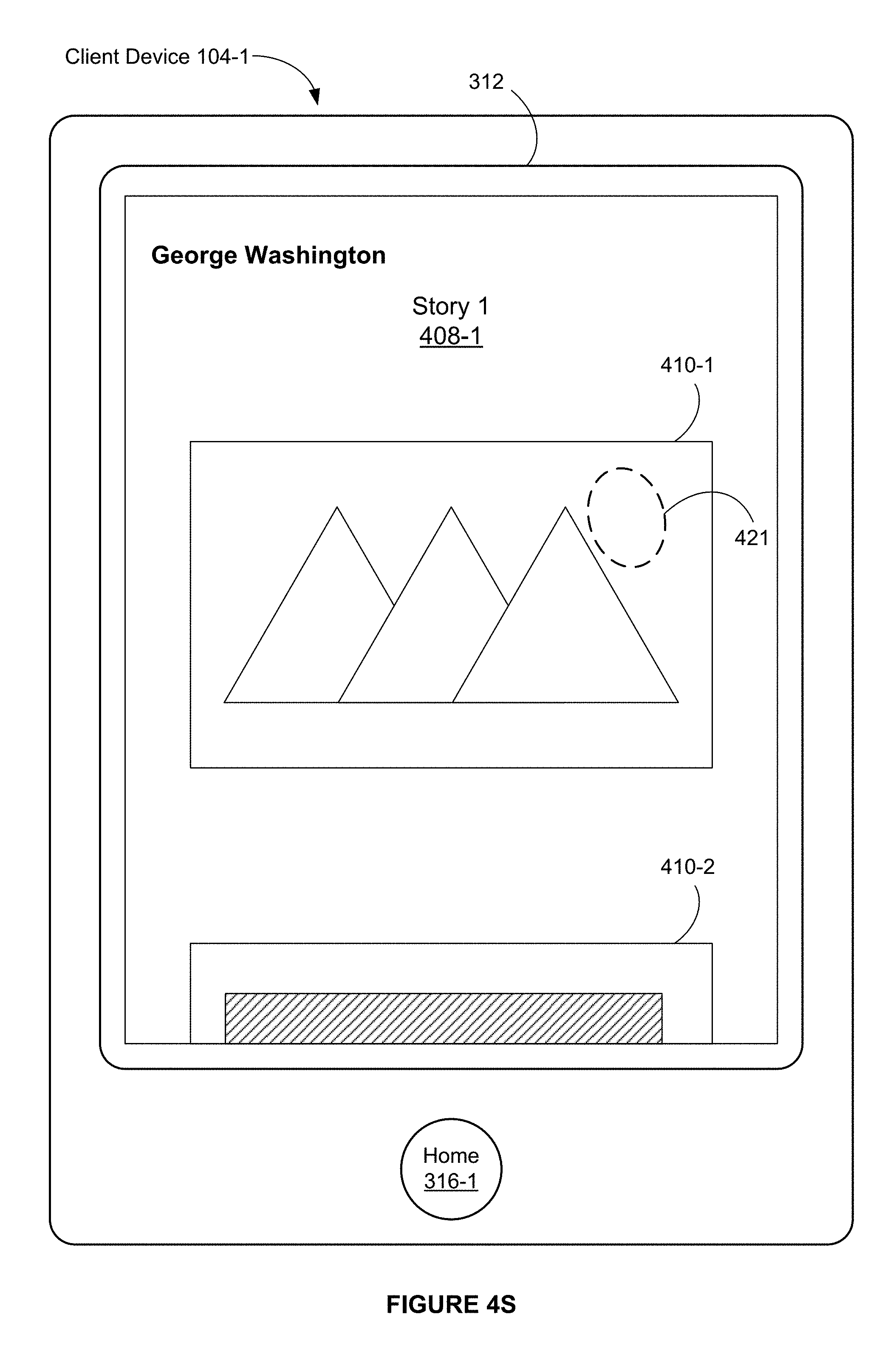

FIG. 4E illustrates that, in response to a further upward movement of the vertical pan gesture or in response to a release of the vertical pan gesture from the display 312 (e.g., the vertical pan gesture ceases to be detected on the display 312), the thumbnail image 406-1 is further enlarged to become a full-screen-width image 408-1 that corresponds to Story 1. In FIG. 4E, a width of the image 408-1 corresponds substantially to a full width of the display 312 (e.g., the width of the image 408-1 corresponds to 70, 75, 80, 90 or 95 percent or more of the full width of the display 312). As used herein, a display that includes a full-screen-width image that corresponds to a story is called a story view.
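
By way of a non-limiting illustration, the enlargement described for FIGS. 4C-4E may be sketched as a width that tracks the vertical distance traveled by the touch and that becomes the full-screen width upon release. The function name `displayed_width`, the parameter `full_drag`, and the use of linear interpolation are hypothetical; the description states only that the enlargement is in accordance with the movement of the touch.

```python
def displayed_width(start_width: float, full_width: float,
                    drag_distance: float, full_drag: float,
                    released: bool) -> float:
    """Width of the enlarged thumbnail image.

    drag_distance: upward distance traveled since the touch began.
    full_drag: hypothetical drag distance at which the image reaches full width.
    released: True once the touch ceases to be detected.
    """
    if released:
        # On release, the image is further enlarged to the full-screen width.
        return full_width
    # Assumption: width grows linearly with the drag, clamped to [start, full].
    progress = max(0.0, min(1.0, drag_distance / full_drag))
    return start_width + (full_width - start_width) * progress
```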

FIG. 4E also illustrates that the full-screen-width image 408-1 includes one or more photos, such as photos 410-1 and 410-2.

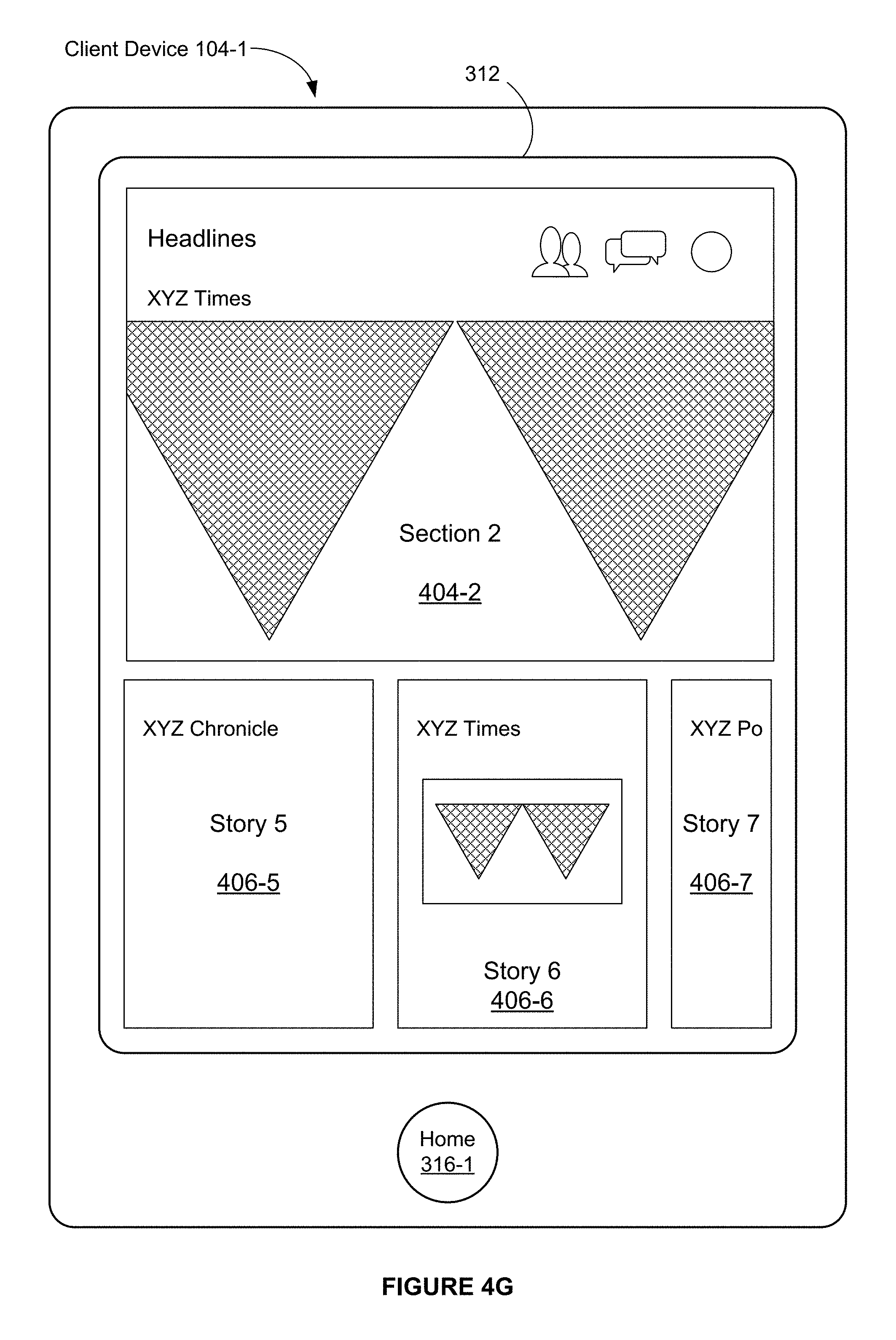

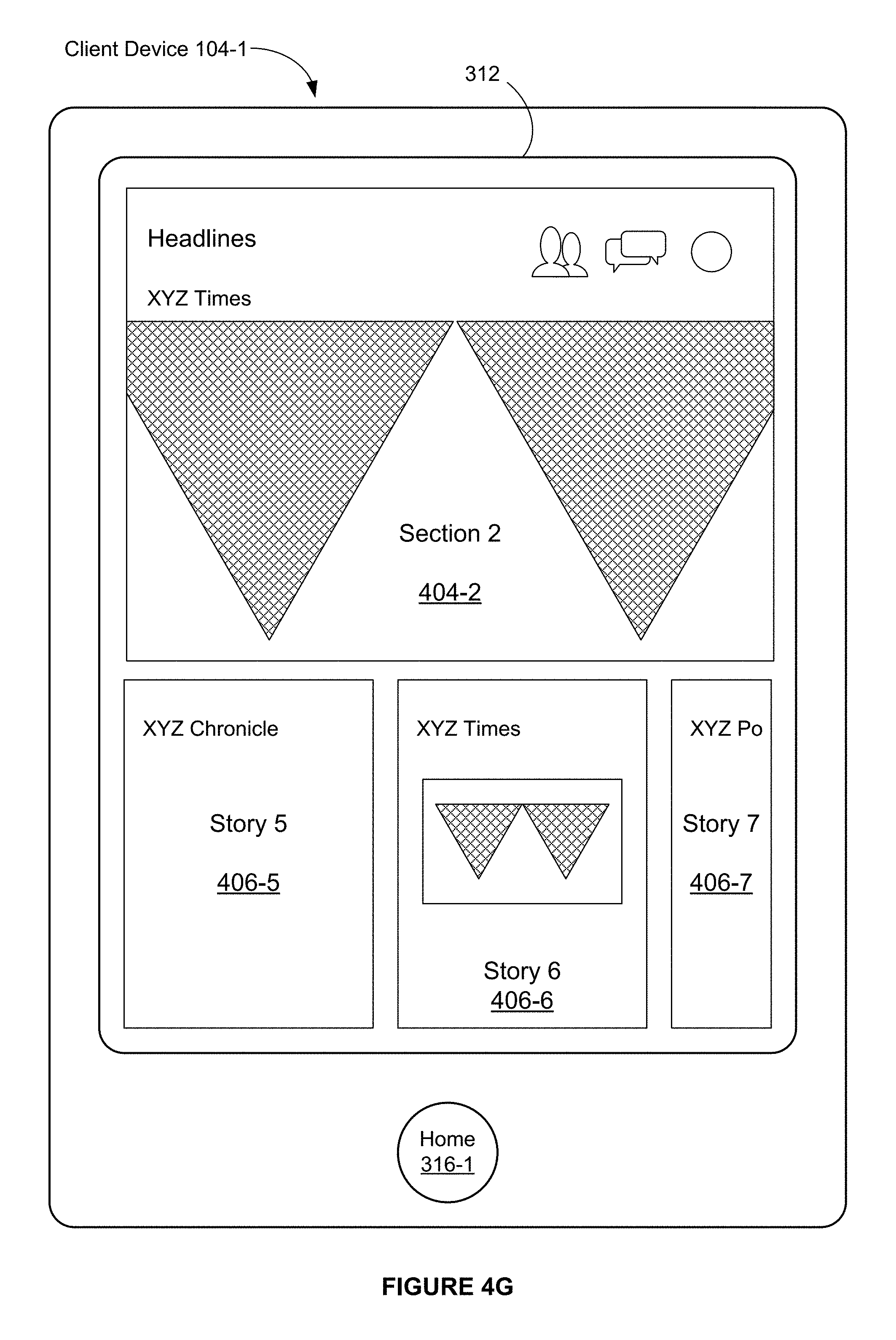

FIGS. 4F-4G illustrate exemplary user interfaces associated with a horizontal pan gesture on a section image in accordance with some embodiments.

In FIG. 4F, a horizontal pan gesture 409 (e.g., a leftward pan gesture) is detected initially at a location that corresponds to the first section image 404-1.

FIG. 4G illustrates that, in response to the horizontal pan gesture 409, the first section image 404-1 is scrolled and replaced with a second section image 404-2 that corresponds to a second section (e.g., a headline news section). For example, the first section image 404-1 is scrolled horizontally in the same direction as the horizontal pan gesture 409, and the second section image 404-2 is scrolled into view horizontally in the same direction to replace the first section image 404-1.

FIG. 4G also illustrates that, in response to the horizontal pan gesture 409, the thumbnail images 406-1, 406-2, and 406-3 (FIG. 4F) that correspond to stories in the first section are replaced with thumbnail images that correspond to stories in the second section (e.g., news articles). For example, the thumbnail images 406-1, 406-2, and 406-3 are scrolled horizontally in the same direction as the horizontal pan gesture 409, and thumbnail images 406-5, 406-6, and 406-7 are scrolled into view horizontally in the same direction to replace the thumbnail images 406-1, 406-2, and 406-3.
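
As one possible illustration of the section change shown in FIGS. 4F-4G, a section view may be modeled as an ordered list of sections, each pairing a section image with its thumbnail images, so that a pan on the section image replaces both together. The class name `SectionView`, the string labels, the handling of a rightward pan, and the clamping at the ends of the list are hypothetical; the figures show only a leftward pan from the first section to the second.

```python
from dataclasses import dataclass


@dataclass
class SectionView:
    sections: list      # each entry: (section_image, [thumbnail, ...])
    current: int = 0    # index of the section currently displayed

    def pan_on_section_image(self, direction: str) -> None:
        """A leftward pan advances to the next section; any other value goes back (assumed)."""
        step = 1 if direction == "left" else -1
        # Assumption: panning past either end leaves the current section displayed.
        self.current = max(0, min(len(self.sections) - 1, self.current + step))

    def displayed(self) -> tuple:
        """Section image and thumbnail images currently shown."""
        return self.sections[self.current]
```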

In some embodiments, a section image for a particular section is selected from images in stories in the particular section. For example, in FIG. 4G, the second section image 404-2 corresponds to an image in Story 2, which is shown in the thumbnail image 406-6.

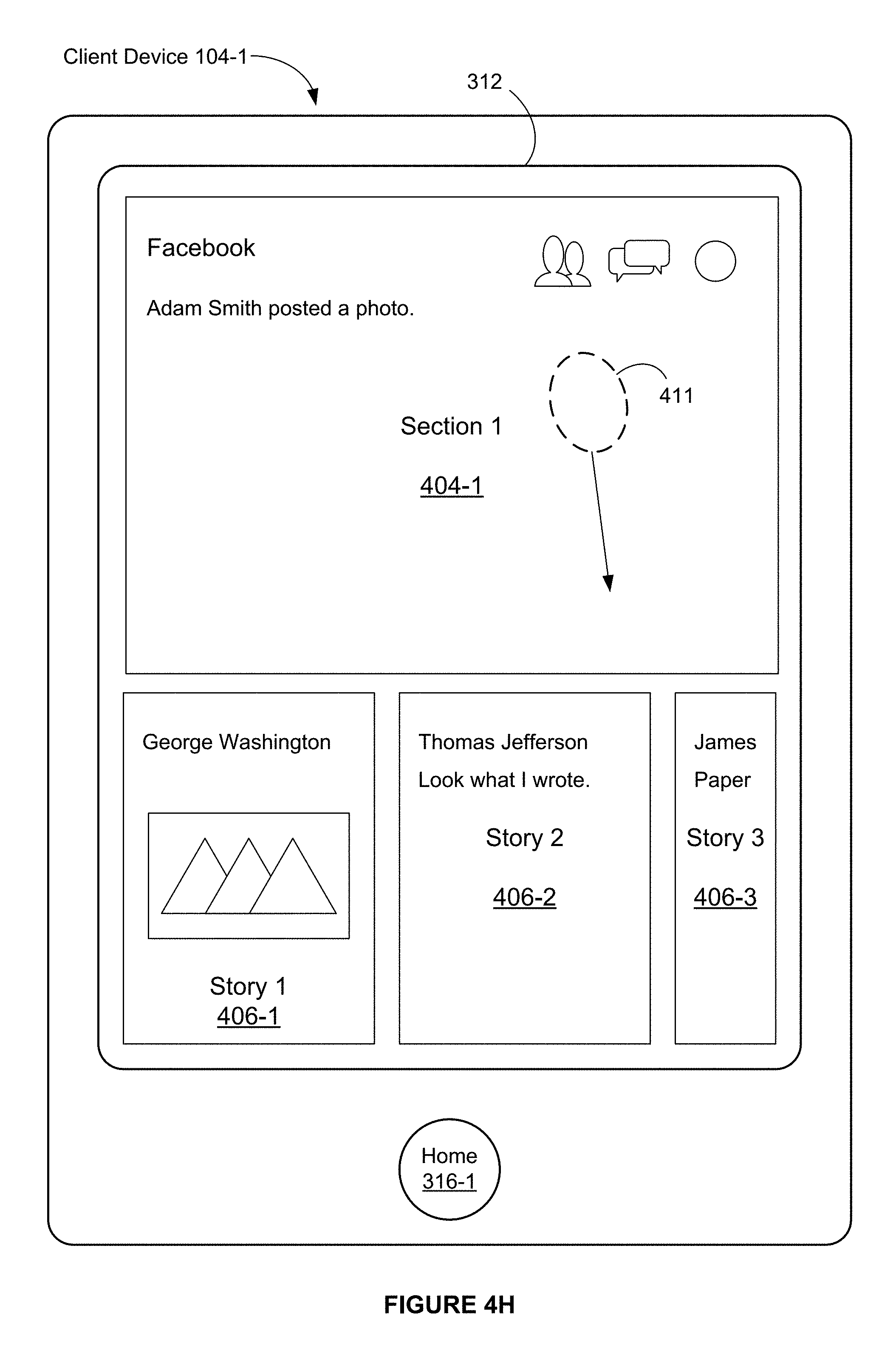

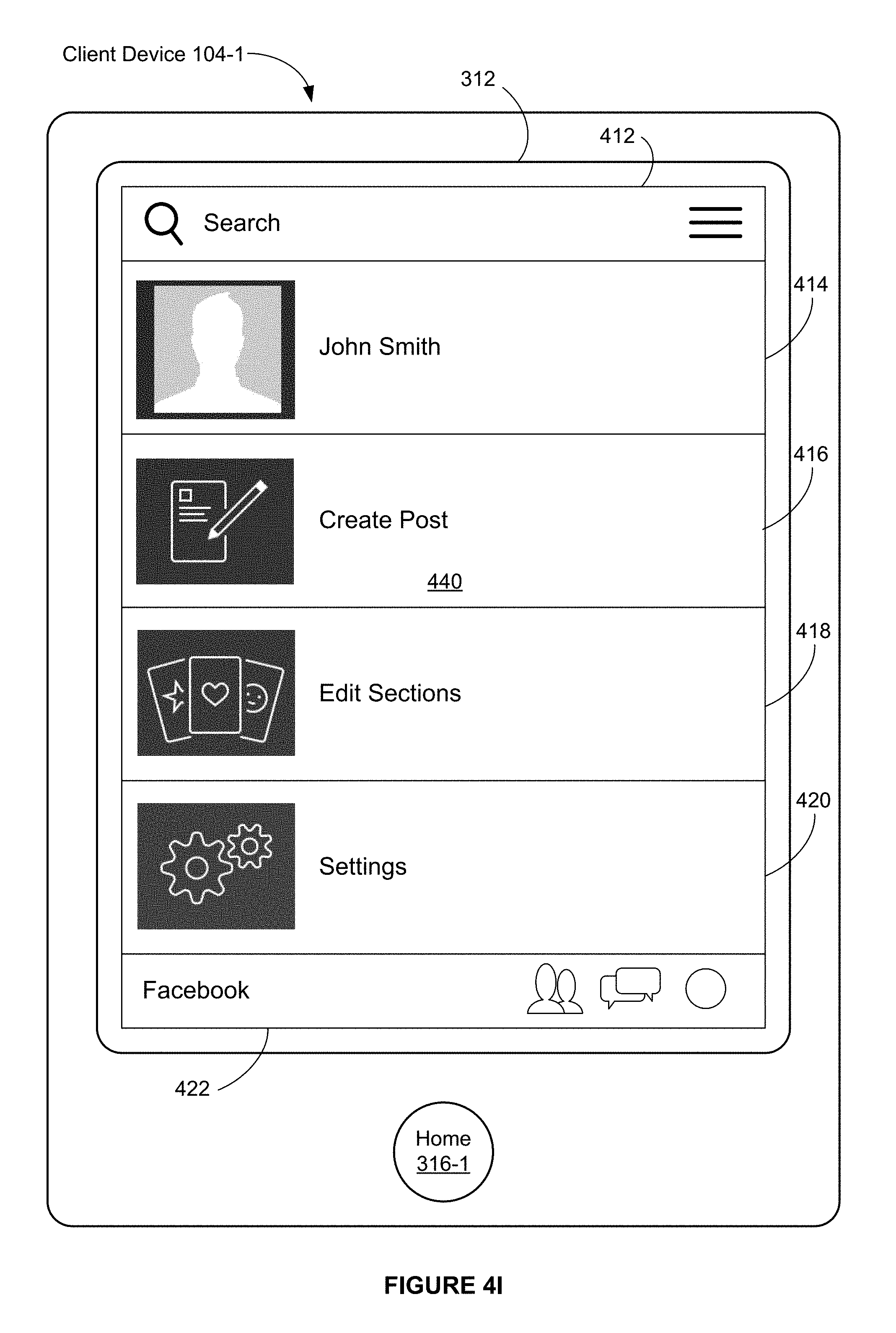

FIGS. 4H-4I illustrate exemplary user interfaces associated with a vertical pan gesture on a section image in accordance with some embodiments.

In FIG. 4H, a vertical pan gesture 411 (a downward pan gesture) is detected initially at a location that corresponds to the first section image 404-1.

FIG. 4I illustrates that, in response to the vertical pan gesture 411, the user interface illustrated in FIG. 4H is scrolled (e.g., downward) and a user interface 440 for the social networking application is displayed. The user interface 440 illustrated in FIG. 4I includes a search input area 412. In some embodiments, the user interface 440 for the social networking application includes a user interface icon 414, which, when selected (e.g., with a tap gesture), initiates display of a home page of a user associated with the client device 104-1. In some embodiments, the user interface 440 for the social networking application includes a user interface icon 416, which, when selected (e.g., with a tap gesture), initiates display of a user interface for composing a message for the social networking application. In some embodiments, the user interface 440 for the social networking application includes a user interface icon 418, which, when selected (e.g., with a tap gesture), initiates display of a user interface for selecting and/or deselecting sections for the social networking application. In some embodiments, the user interface 440 for the social networking application includes a user interface icon 420, which, when selected (e.g., with a tap gesture), initiates display of a user interface for adjusting settings in the social networking application.

FIG. 4I also illustrates that a portion 422 of the user interface illustrated in FIG. 4H remains on the display 312. In some embodiments, the portion 422, when selected (e.g., with a tap gesture on the portion 422), causes the client device 104-1 to cease to display the user interface 440 for the social networking application and redisplay the user interface illustrated in FIG. 4H in its entirety.

FIGS. 4J-4R illustrate exemplary user interfaces associated with a story view mode in accordance with some embodiments.

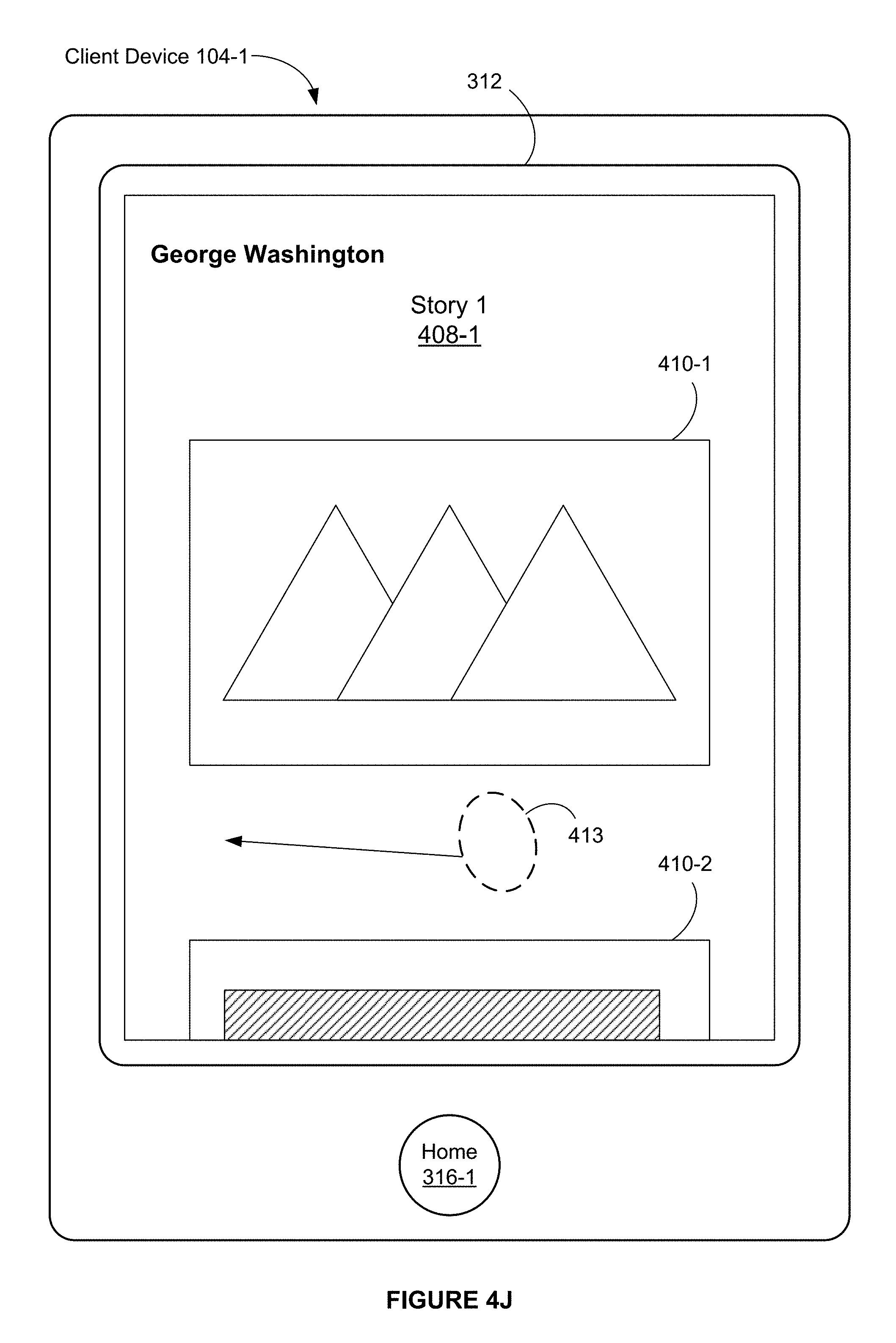

FIGS. 4J-4K illustrate exemplary user interfaces associated with a horizontal pan gesture on an image that corresponds to a story (e.g., a full-screen-width image) in accordance with some embodiments.

FIG. 4J illustrates that a horizontal pan gesture 413 (e.g., a leftward pan gesture) is detected at a location on the touch-sensitive surface that corresponds to the image 408-1 on the display 312.

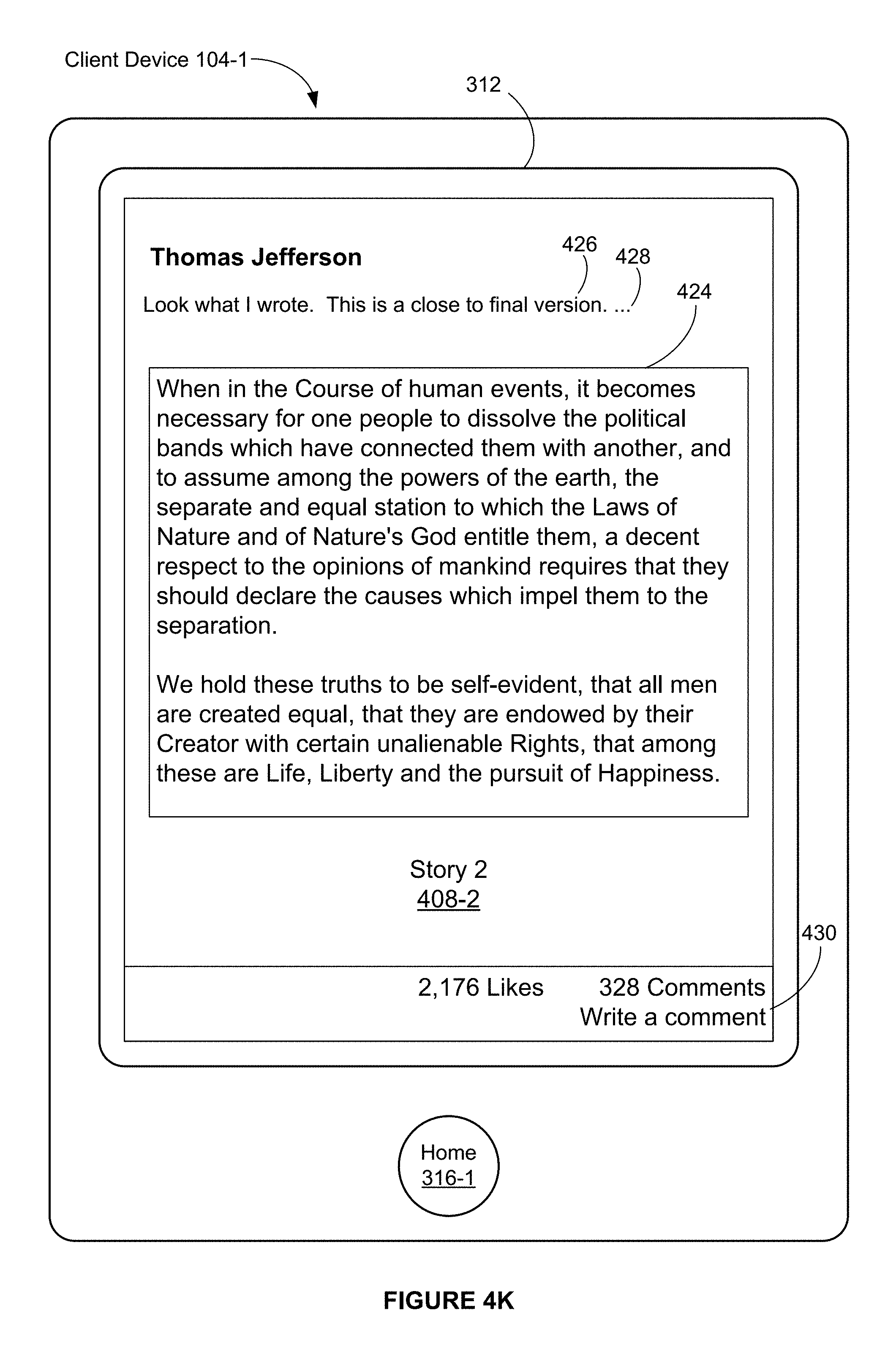

FIG. 4K illustrates that, in response to the horizontal pan gesture 413, a full-screen-width image 408-2 that corresponds to Story 2 is displayed. In FIG. 4K, a width of the image 408-2 corresponds substantially to a full width of the display 312 (e.g., the width of the image 408-2 corresponds to 70, 75, 80, 90, or 95 percent or more of the full width of the display 312).

In some embodiments, Story 1 and Story 2 are adjacent to each other in a sequence of stories in the social networking content section. In some embodiments, Story 2 is subsequent to Story 1 in the sequence of stories.
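
The relationship between a horizontal pan and the sequence of stories can be sketched as a simple index lookup. This is a minimal illustration only: the function name `adjacent_story`, the string direction labels, and the choice to return `None` at either end of the sequence are assumptions made for the example and are not taken from the described embodiments.

```python
from typing import Optional, Sequence


def adjacent_story(stories: Sequence[str], current: int, pan_direction: str) -> Optional[int]:
    """Return the index of the story to display after a horizontal pan.

    Assumption: a leftward pan reveals the subsequent story (as in FIGS. 4J-4K)
    and a rightward pan reveals the preceding story. Returns None when there is
    no adjacent story in that direction (a hypothetical boundary behavior).
    """
    if pan_direction == "left":
        target = current + 1
    elif pan_direction == "right":
        target = current - 1
    else:
        raise ValueError("pan_direction must be 'left' or 'right'")
    return target if 0 <= target < len(stories) else None


stories = ["Story 1", "Story 2", "Story 3"]
next_index = adjacent_story(stories, 0, "left")  # index of Story 2
```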

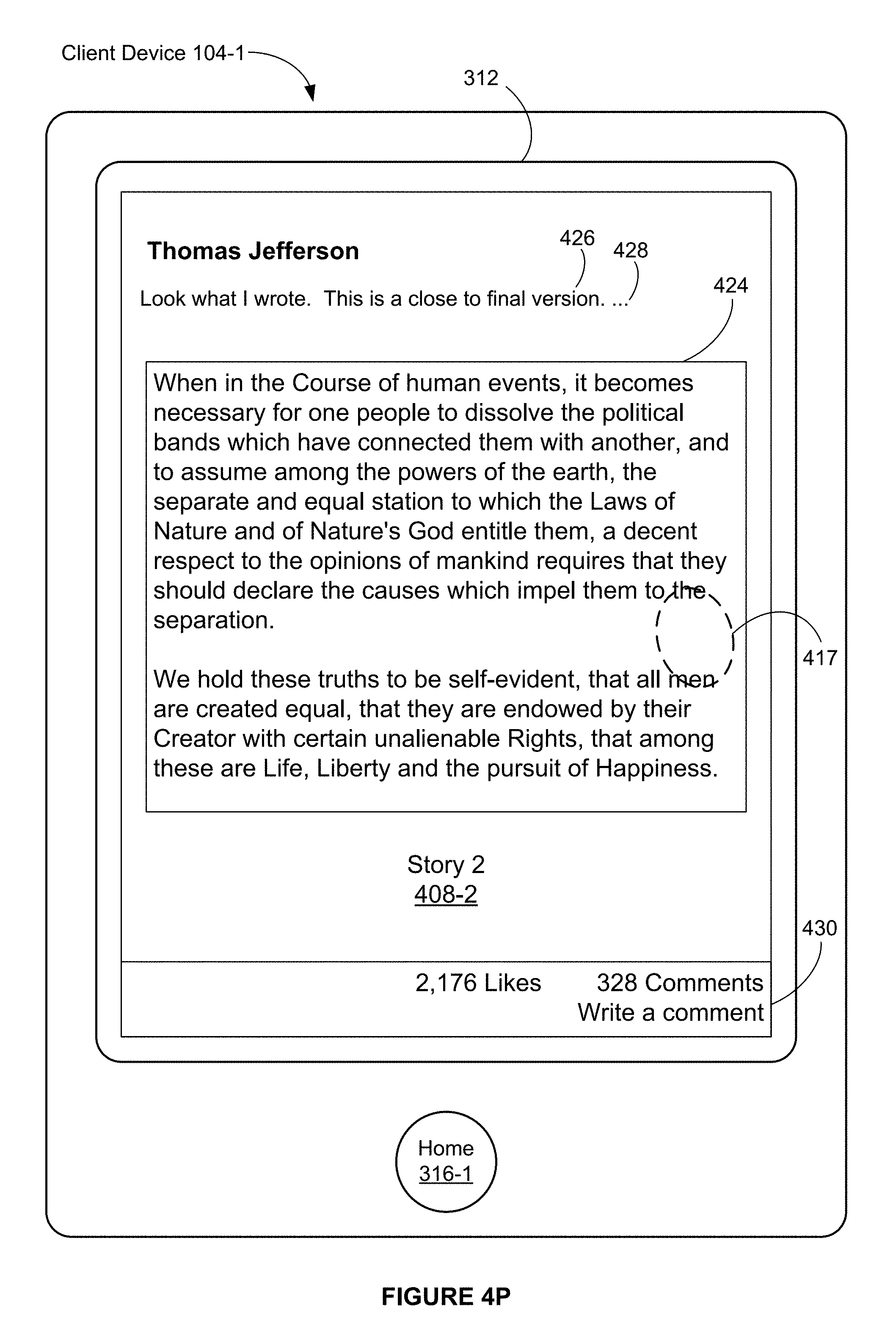

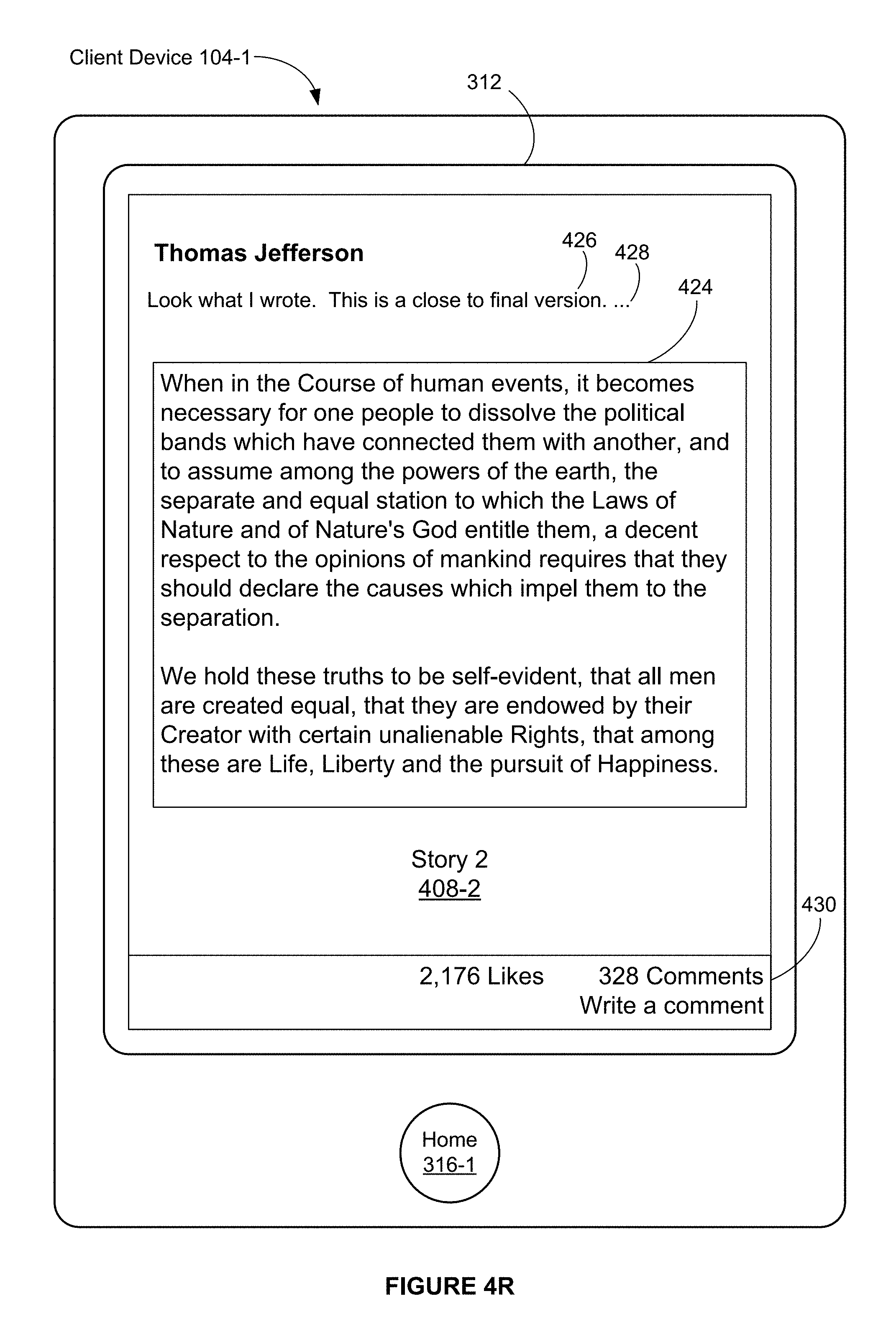

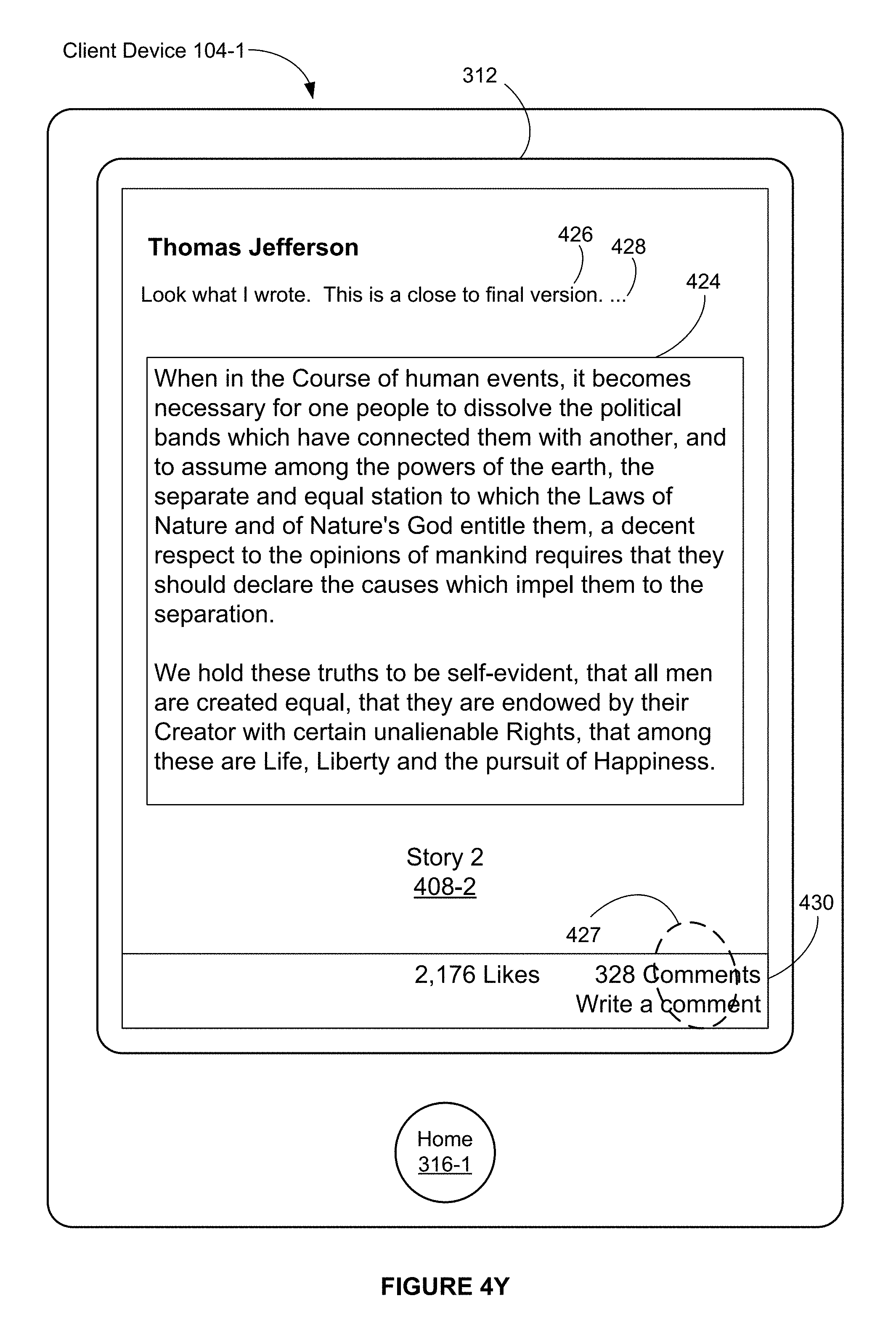

In FIG. 4K, the image 408-2 includes a user interface icon 424 for displaying an embedded content item.

FIG. 4K also illustrates that the image 408-2 includes a comment 426 from an author of Story 2 (e.g., Thomas Jefferson). In FIG. 4K, a portion of the comment 426 is truncated and displayed with a user interface icon 428 (e.g., ellipsis), which, when selected (e.g., with a tap gesture), initiates display of the truncated portion of the comment 426. In some embodiments, in response to selecting the user interface icon 428, the user interface icon 428 is replaced with the truncated portion of the comment 426.
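
One way to model the truncated comment and its ellipsis icon is shown below. This is a sketch under stated assumptions: the class name `CommentView`, the character budget `max_chars`, and the use of a three-dot string to stand in for the user interface icon 428 are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class CommentView:
    text: str
    max_chars: int          # hypothetical display budget, in characters
    expanded: bool = False  # becomes True once the ellipsis icon is selected

    def displayed(self) -> str:
        """Return the text currently shown for the comment."""
        if self.expanded or len(self.text) <= self.max_chars:
            return self.text
        # The "..." stands in for the ellipsis icon that replaces the hidden portion.
        return self.text[: self.max_chars] + "..."

    def tap_ellipsis(self) -> None:
        """Replace the ellipsis with the previously truncated portion."""
        self.expanded = True


view = CommentView("We hold these truths to be self-evident", max_chars=13)
collapsed = view.displayed()
view.tap_ellipsis()
full = view.displayed()
```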

In FIG. 4K, the image 408-2 is displayed with a user interface icon 430 that includes one or more feedback indicators. For example, the user interface icon 430 indicates how many users like Story 2 (e.g., 2,176 users) and how many comments have been made for Story 2 (e.g., 328 comments).

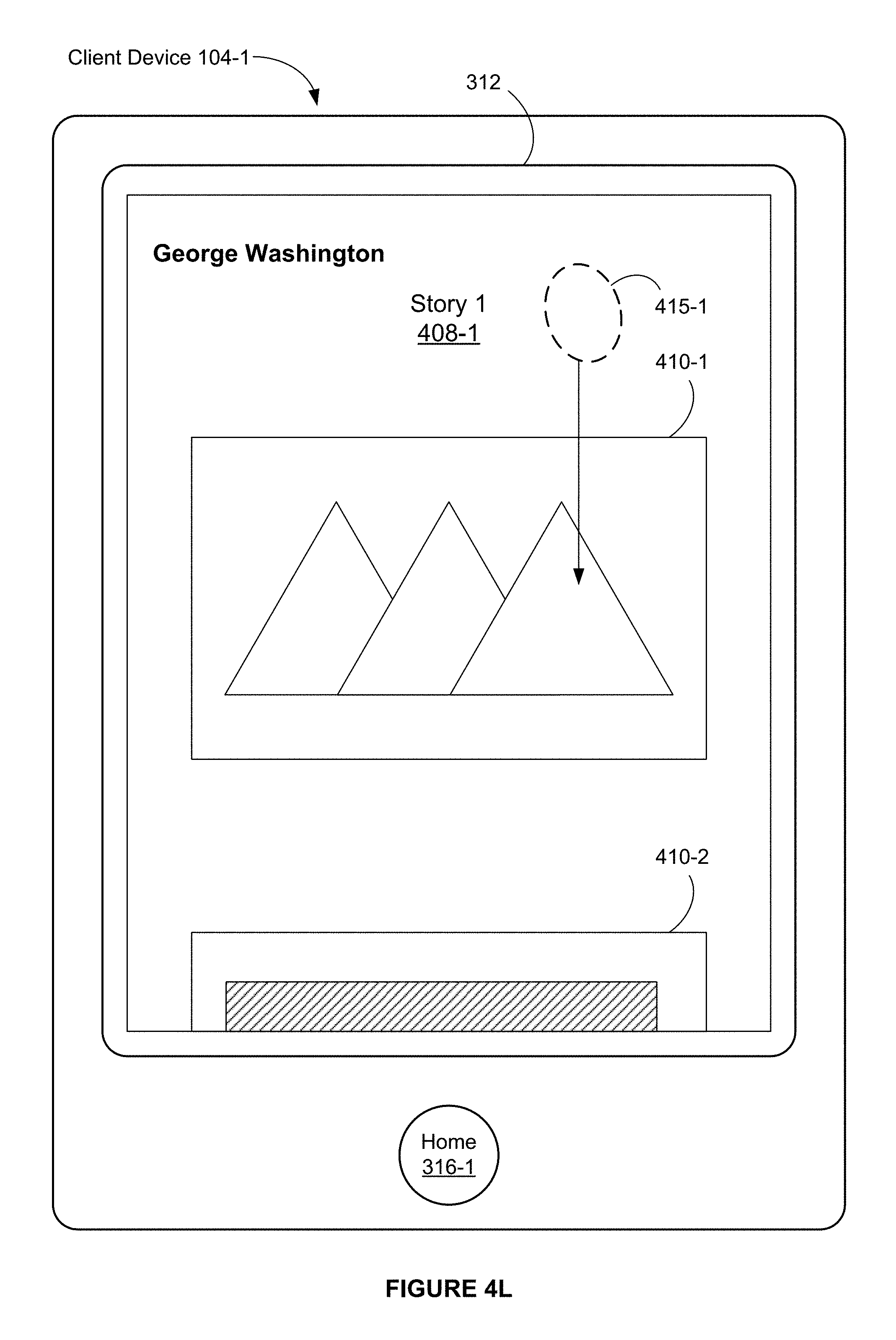

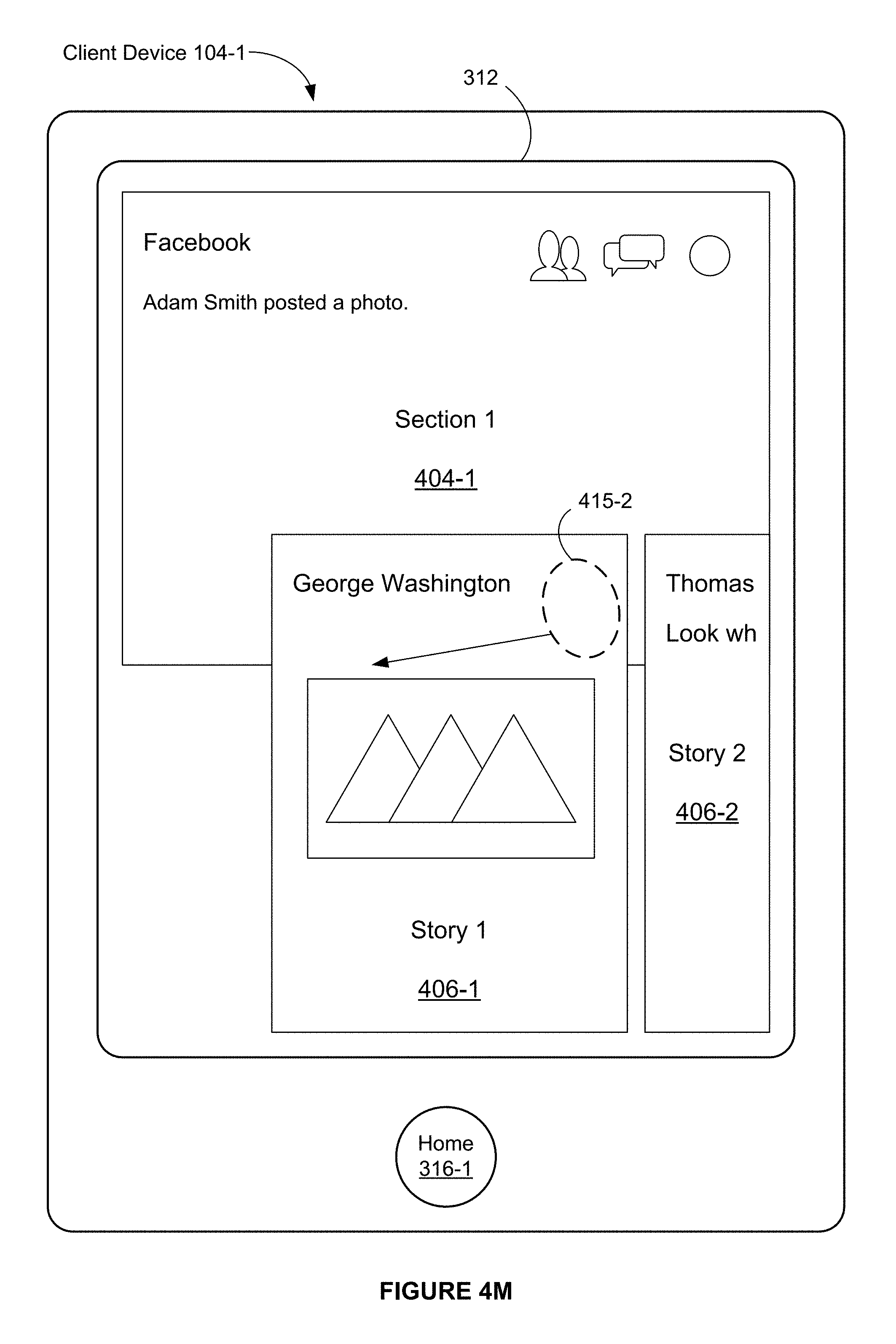

FIGS. 4L-4O illustrate exemplary user interfaces associated with a vertical pan gesture on an image that corresponds to a story (e.g., a full-screen-width image) in accordance with some embodiments. The vertical pan gesture in this instance results in a story view mode being changed to a section view mode.

FIG. 4L illustrates that a vertical pan gesture is detected at a location 415-1 on the touch-sensitive surface that corresponds to the full-screen-width image 408-1 of Story 1.

FIG. 4M illustrates that the vertical pan gesture remains in contact with the display 312 and has moved to a location 415-2. FIG. 4M also illustrates that, in response to the movement of a touch in the vertical pan gesture from the location 415-1 (FIG. 4L) to the location 415-2 (FIG. 4M), the full-screen-width image 408-1 is reduced to become the thumbnail image 406-1. In addition, at least a portion of the thumbnail image 406-2 is displayed. In FIG. 4M, the thumbnail images 406-1 and 406-2 at least partially overlay the image 404-1.

FIG. 4M also illustrates that the vertical pan gesture continues to move in a direction that is not perfectly vertical (e.g., diagonal).

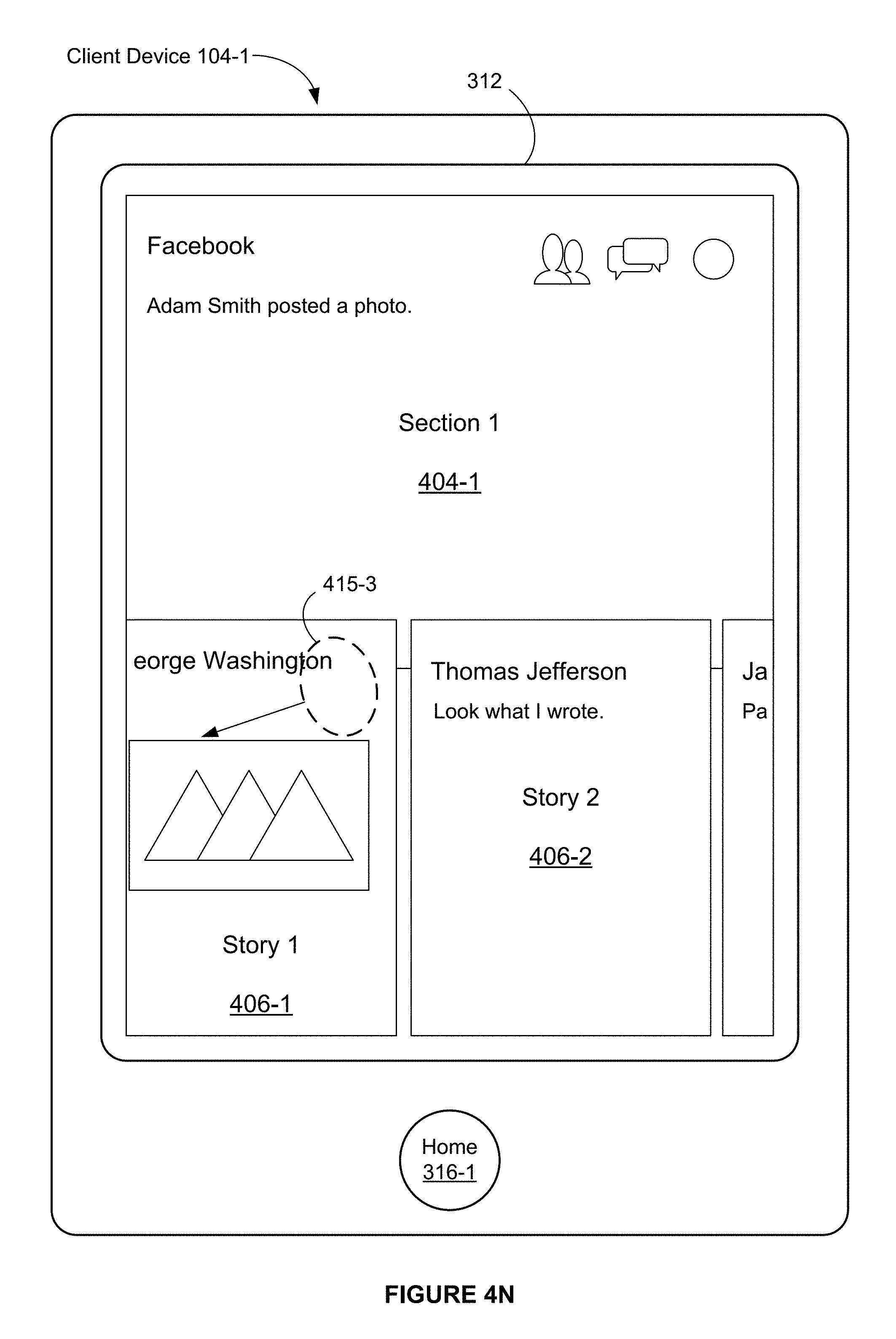

FIG. 4N illustrates that the vertical pan gesture remains in contact with the display 312 and has moved to a location 415-3. FIG. 4N also illustrates that, in response to the movement of the touch from the location 415-2 (FIG. 4M) to the location 415-3 (FIG. 4N), the thumbnail image 406-1 and the thumbnail image 406-2 are further reduced. In addition, in response to the movement of the touch from the location 415-2 (FIG. 4M) to the location 415-3 (FIG. 4N), which includes a horizontal movement of the touch, the thumbnail image 406-1 and the thumbnail image 406-2 are scrolled horizontally in accordance with the horizontal movement of the touch. In FIG. 4N, the thumbnail images 406-1 and 406-2 at least partially overlay the image 404-1.
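
The behavior in FIGS. 4L-4N, in which downward movement reduces the image and horizontal movement scrolls the thumbnails, can be sketched as a mapping from touch displacement to a scale factor and a horizontal offset. The linear mapping, the parameter `full_height`, and the lower bound `min_scale` are assumptions for illustration; the embodiments do not specify a particular formula.

```python
from typing import Tuple

Point = Tuple[float, float]  # (x, y) in display coordinates, y increasing downward


def thumbnail_transform(start: Point, current: Point,
                        full_height: float, min_scale: float = 0.3) -> Tuple[float, float]:
    """Map a touch displacement to (scale, horizontal_offset).

    Assumption: scale shrinks linearly from 1.0 to min_scale as the touch moves
    downward by full_height; the horizontal offset tracks the touch one-to-one.
    """
    dx = current[0] - start[0]
    dy = current[1] - start[1]
    progress = min(max(dy / full_height, 0.0), 1.0)  # clamp to [0, 1]
    scale = 1.0 - progress * (1.0 - min_scale)
    return scale, dx


# A touch that has moved 400 units down and 40 units left on an 800-unit-tall display.
scale, offset = thumbnail_transform((100.0, 100.0), (60.0, 500.0), full_height=800.0)
```

In this sketch a diagonal movement affects both outputs at once, which mirrors how the thumbnails in FIG. 4N are both further reduced and scrolled horizontally.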

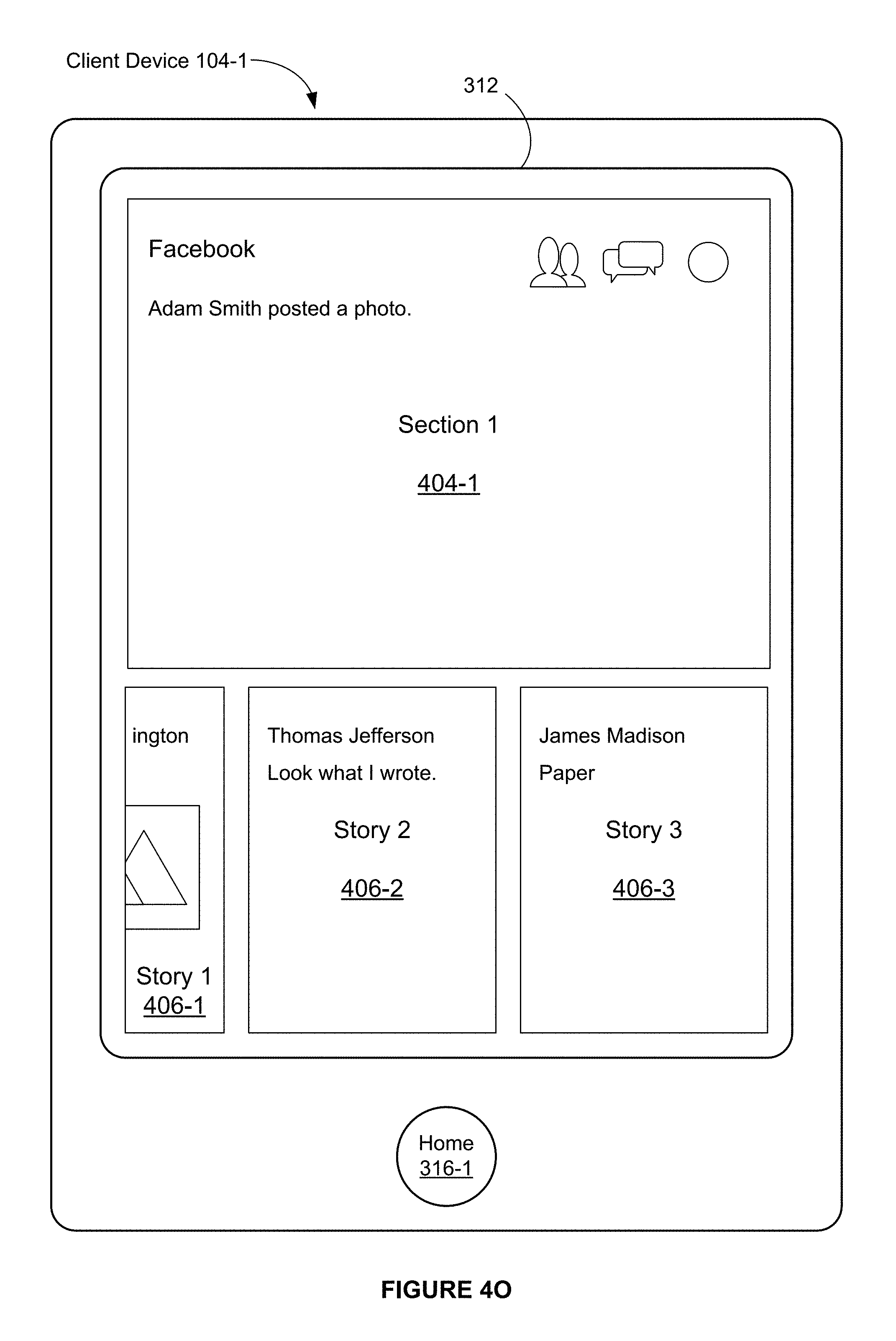

FIG. 4O illustrates that, in response to a further downward movement of the touch or in response to a release of the touch from the touch-sensitive surface (e.g., the touch ceases to be detected on the touch-sensitive surface), the thumbnail images 406-1, 406-2, and 406-3 are further reduced so that the thumbnail images 406-1, 406-2, and 406-3 cease to overlay the image 404-1.

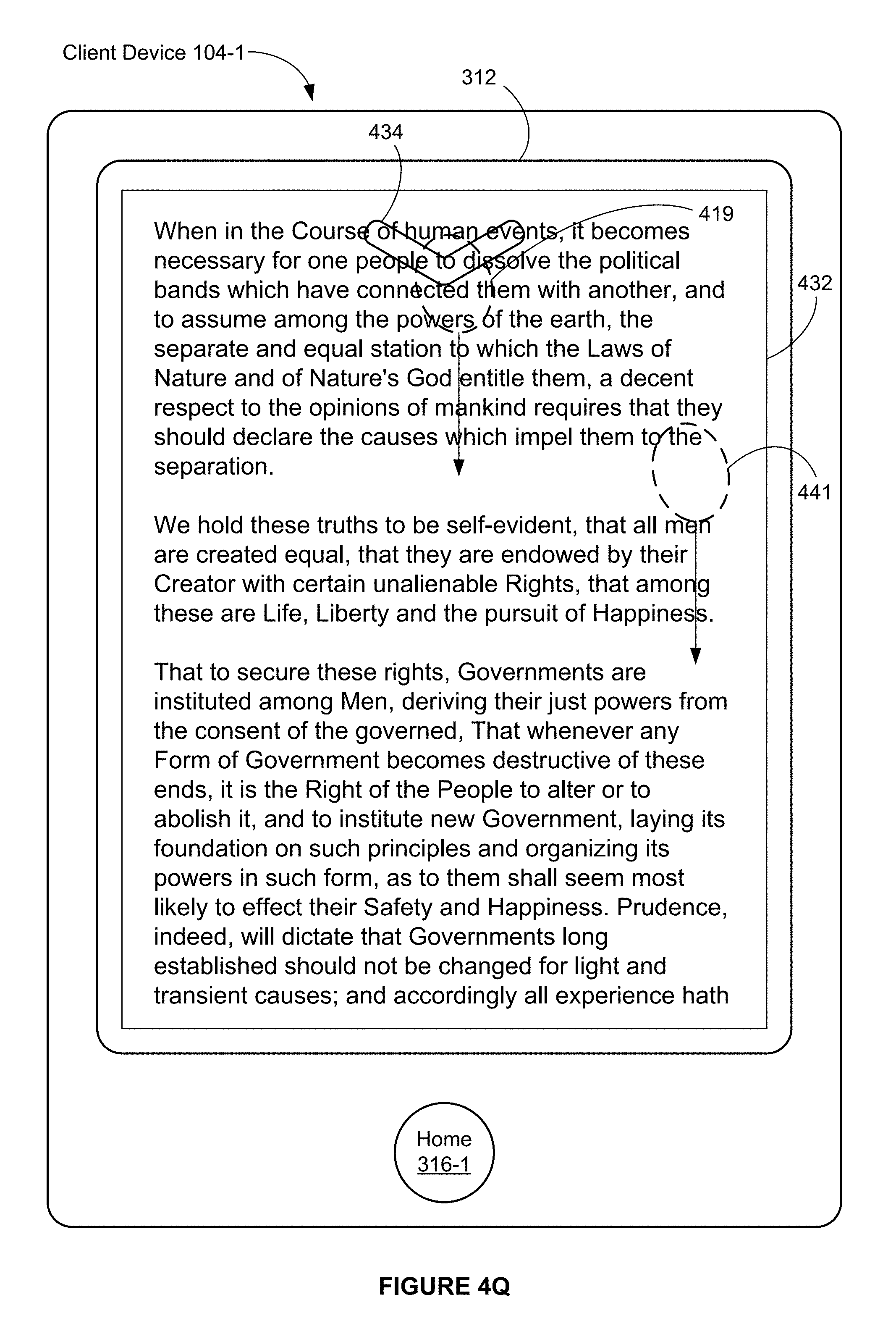

FIGS. 4P-4Q illustrate exemplary user interfaces associated with a user interface icon for displaying an embedded content item in accordance with some embodiments.

FIG. 4P illustrates that a touch input 417 (e.g., a tap gesture) is detected at a location on the touch-sensitive surface that corresponds to the user interface icon 424 for displaying an embedded content item.

FIG. 4Q illustrates that, in response to detecting the touch input 417, the user interface illustrated in FIG. 4P is replaced with a user interface that includes a full-screen-width display 432 of additional information embedded in Story 2. In some embodiments, an animation in which the user interface icon 424 expands, opens, and/or unfolds, to become the full-screen-width display 432 of the additional information is displayed in replacing the user interface illustrated in FIG. 4P with the user interface that includes the full-screen-width display 432 of additional information embedded in Story 2.

In FIG. 4Q, a user interface icon 434 (e.g., a downward arrow icon) is displayed, overlaying the full-screen-width display 432 of additional information. The user interface icon 434, when selected (e.g., with a tap gesture on the icon 434 or a downward swipe or pan gesture 419 that starts on icon 434), initiates replacing the full-screen-width display 432 of the additional information with the user interface that includes the user interface icon 424 (FIG. 4P). In some embodiments, the user interface icon 434 is transparent or translucent. In some embodiments, the user interface icon 434 is displayed in accordance with a determination that the full-screen-width display 432 of the additional information is scrolled (e.g., downward) at a speed that exceeds a predetermined speed. In some embodiments, the user interface icon 434 is displayed in accordance with a determination that the full-screen-width display 432 of the additional information includes a display of a last portion (e.g., a bottom portion) of the additional information. In some embodiments, an upward arrow, instead of a downward arrow, is displayed over the additional information. In some embodiments, the downward arrow is displayed adjacent to a top portion of the display 312. In some embodiments, the user interface icon 434 is displayed in accordance with a determination that the full-screen-width display 432 of the additional information includes a display of an initial portion (e.g., a top portion) of the additional information.
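
The alternative display conditions for the user interface icon 434 can be summarized in a small predicate. This is only a sketch: the function name, the `rule` selector, the default `speed_threshold`, and the way scroll position is represented are all assumptions, and each rule corresponds to a different embodiment rather than to a single combined policy.

```python
def should_show_icon(rule: str, scroll_speed: float, scroll_offset: float,
                     content_height: float, viewport_height: float,
                     speed_threshold: float = 1000.0) -> bool:
    """Decide whether to display the dismiss icon under one of three hypothetical rules.

    "speed":  the content is scrolled faster than a predetermined speed.
    "bottom": the last (bottom) portion of the content is on screen.
    "top":    the initial (top) portion of the content is on screen.
    """
    if rule == "speed":
        return scroll_speed > speed_threshold
    if rule == "bottom":
        return scroll_offset + viewport_height >= content_height
    if rule == "top":
        return scroll_offset <= 0
    raise ValueError("unknown rule: " + rule)


# Content 3000 units tall in a 1000-unit viewport, scrolled to the very end.
at_end = should_show_icon("bottom", scroll_speed=0.0, scroll_offset=2000.0,
                          content_height=3000.0, viewport_height=1000.0)
```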

FIG. 4Q also illustrates that a downward gesture 419 (e.g., a downward swipe gesture or a downward pan gesture that starts on icon 434 or an activation region for icon 434) is detected while the additional information is displayed. Alternatively, FIG. 4Q also illustrates that a downward gesture 441 (e.g., a downward swipe gesture or a downward pan gesture that starts on the additional information, but away from icon 434 or an activation region for icon 434) is detected while the additional information is displayed.

FIG. 4R illustrates that, in response to detecting the downward gesture 419, the full-screen-width display 432 of the additional information (FIG. 4Q) is replaced with the user interface that includes the user interface icon 424. In some embodiments, an animation in which the full-screen-width display 432 of the additional information shrinks, closes, and/or folds, to become the user interface icon 424 is displayed in replacing the full-screen-width display 432 of the additional information with the user interface that includes the user interface icon 424.

If the gesture 441 were detected in FIG. 4Q instead of the gesture 419, the additional information would be scrolled (not shown) rather than ceasing to be displayed. Thus, depending on where the swipe or pan gesture starts on the additional information, the additional information either scrolls or ceases to be displayed. This provides an easy way to navigate with swipe or pan gestures within the application.
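
The distinction between the gesture 419 and the gesture 441 amounts to a hit test on the starting location of the gesture. The sketch below assumes a rectangular activation region for the icon 434; the `Rect` type, the function name, and the returned action labels are hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Rect:
    x: float
    y: float
    width: float
    height: float

    def contains(self, px: float, py: float) -> bool:
        return (self.x <= px < self.x + self.width
                and self.y <= py < self.y + self.height)


def action_for_downward_gesture(start: Tuple[float, float], icon_region: Rect) -> str:
    """Return "dismiss" if the gesture starts on the icon's activation region,
    otherwise "scroll" (the gesture starts elsewhere on the additional information)."""
    return "dismiss" if icon_region.contains(*start) else "scroll"


icon_region = Rect(x=150.0, y=20.0, width=60.0, height=60.0)  # assumed placement
on_icon = action_for_downward_gesture((170.0, 40.0), icon_region)     # like gesture 419
off_icon = action_for_downward_gesture((170.0, 400.0), icon_region)   # like gesture 441
```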

FIGS. 4S-4X illustrate exemplary user interfaces associated with a photo view mode in accordance with some embodiments.

FIG. 4S illustrates that a touch input 421 (e.g., a tap gesture) is detected at a location on the touch-sensitive surface that corresponds to a photo 410-1 in the full-screen-width image 408-1 on the display 312.

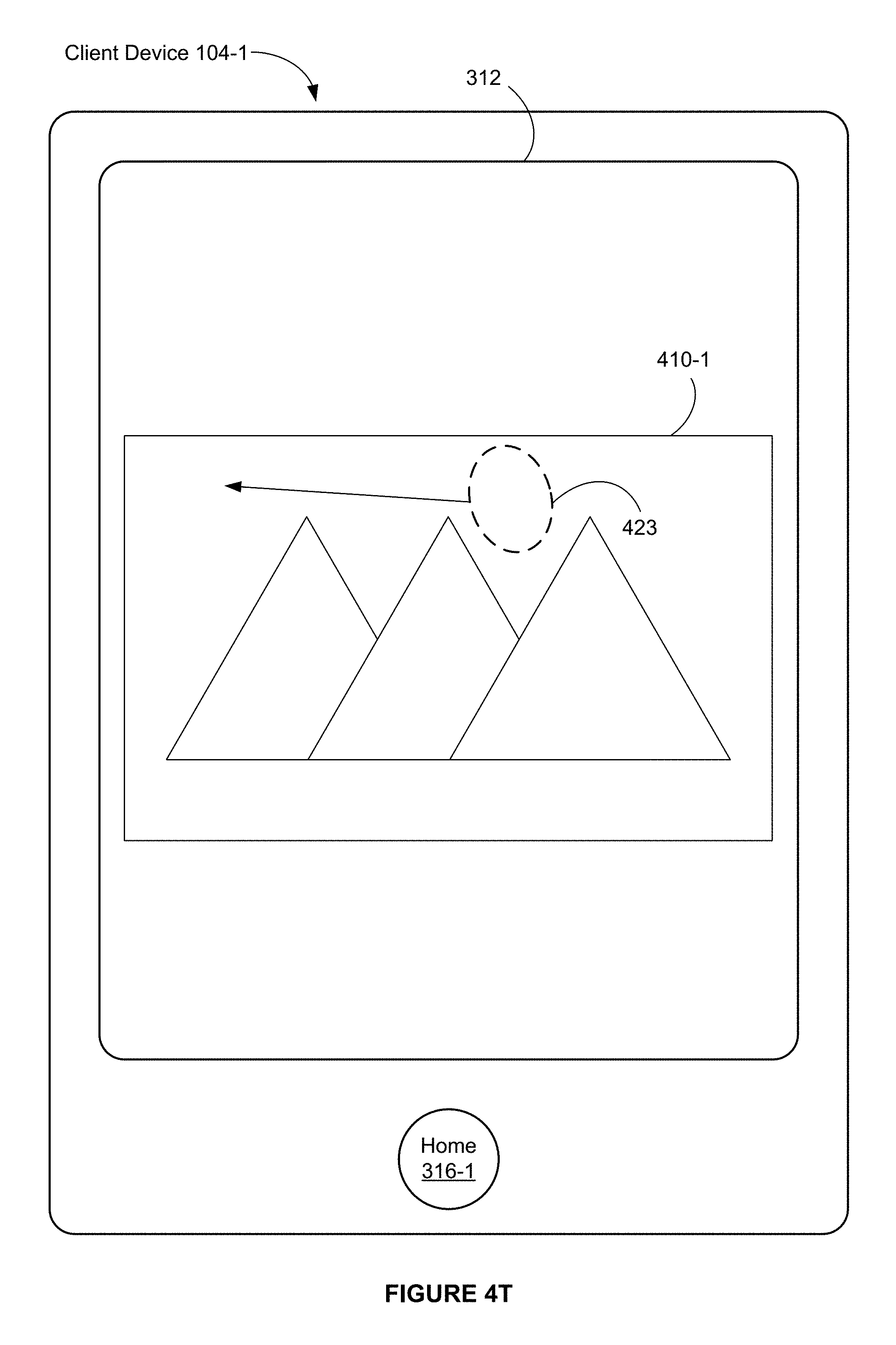

FIG. 4T illustrates that, in response to detecting the touch input 421, the photo 410-1 is displayed in a photo view mode. In FIG. 4T, the photo 410-1 is displayed without any other photo or text in the full-screen-width image 408-1. In FIG. 4T, a width of the photo 410-1 corresponds substantially to a full width of the display 312 (e.g., the width of the photo 410-1 corresponds to 70, 75, 80, 90, or 95 percent or more of the full width of the display 312). As used herein, a user interface that includes a full-screen-width image of a single photo is called a photo view.
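
The notion of a width that "corresponds substantially to a full width of the display" can be expressed as a ratio check. In the sketch below, the function name and the default `min_fraction` of 0.9 are assumptions; the description lists several example percentages, and any of them could be passed in instead.

```python
def is_substantially_full_width(image_width: float, display_width: float,
                                min_fraction: float = 0.9) -> bool:
    """Return True if the image spans at least min_fraction of the display width.

    min_fraction is a hypothetical parameter; 0.70, 0.75, 0.80, 0.90, and 0.95
    are the example values given in the description.
    """
    if display_width <= 0:
        raise ValueError("display_width must be positive")
    return image_width / display_width >= min_fraction


photo_fills_screen = is_substantially_full_width(720.0, 750.0)  # ratio 0.96
```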

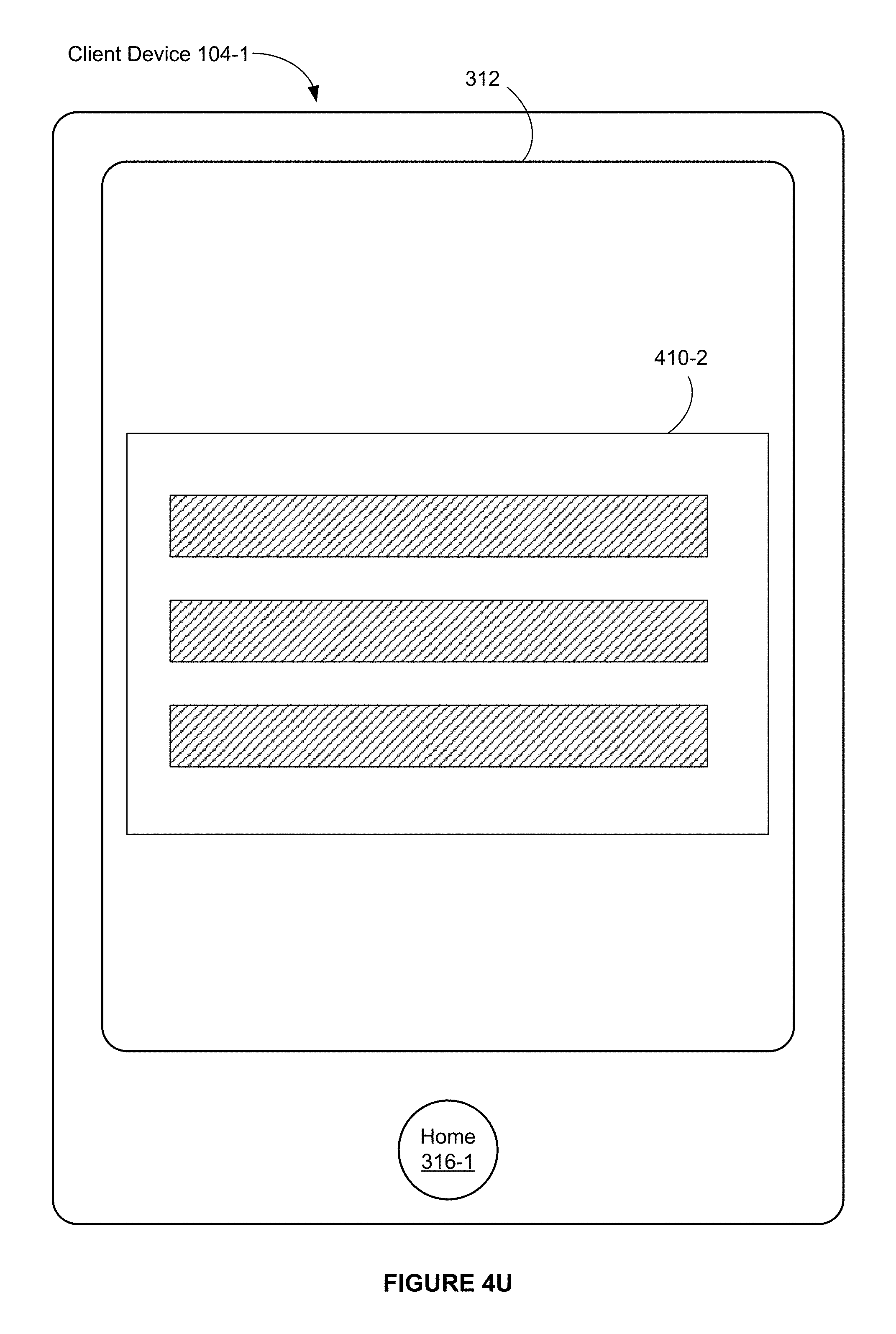

FIGS. 4T-4U illustrate exemplary user interfaces associated with a horizontal pan gesture on a photo in a photo view in accordance with some embodiments.

FIG. 4T illustrates that a horizontal pan gesture 423 (e.g., a leftward pan gesture) is detected at a location on the touch-sensitive surface that corresponds to the photo 410-1.

FIG. 4U illustrates that, in response to the horizontal pan gesture 423, the photo 410-1 is replaced with the photo 410-2. In some embodiments, in Story 1, the photo 410-1 and the photo 410-2 are adjacent to each other in a sequence of photos (e.g., the photo 410-1 and the photo 410-2 are displayed adjacent to each other in FIG. 4S). In some embodiments, the photo 410-2 is subsequent to the photo 410-1 in the sequence of photos.

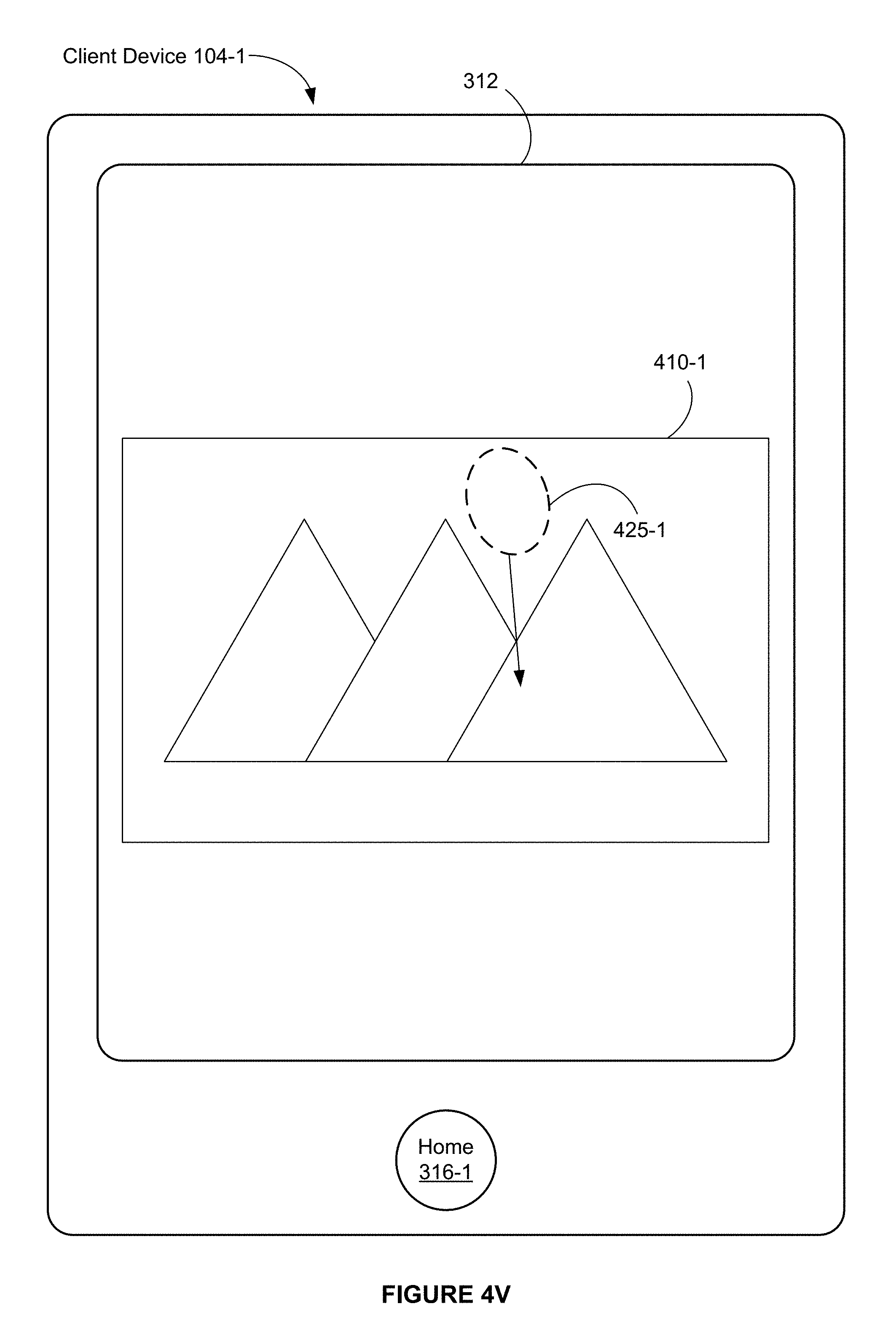

FIGS. 4V-4X illustrate exemplary user interfaces associated with an initially vertical pan gesture on a photo in a photo view that changes direction in accordance with some embodiments.

FIG. 4V illustrates that an initially vertical pan gesture 425 (e.g., a downward pan gesture that is vertical or substantially vertical) is detected at a location 425-1 on the touch-sensitive surface that corresponds to the photo 410-1.

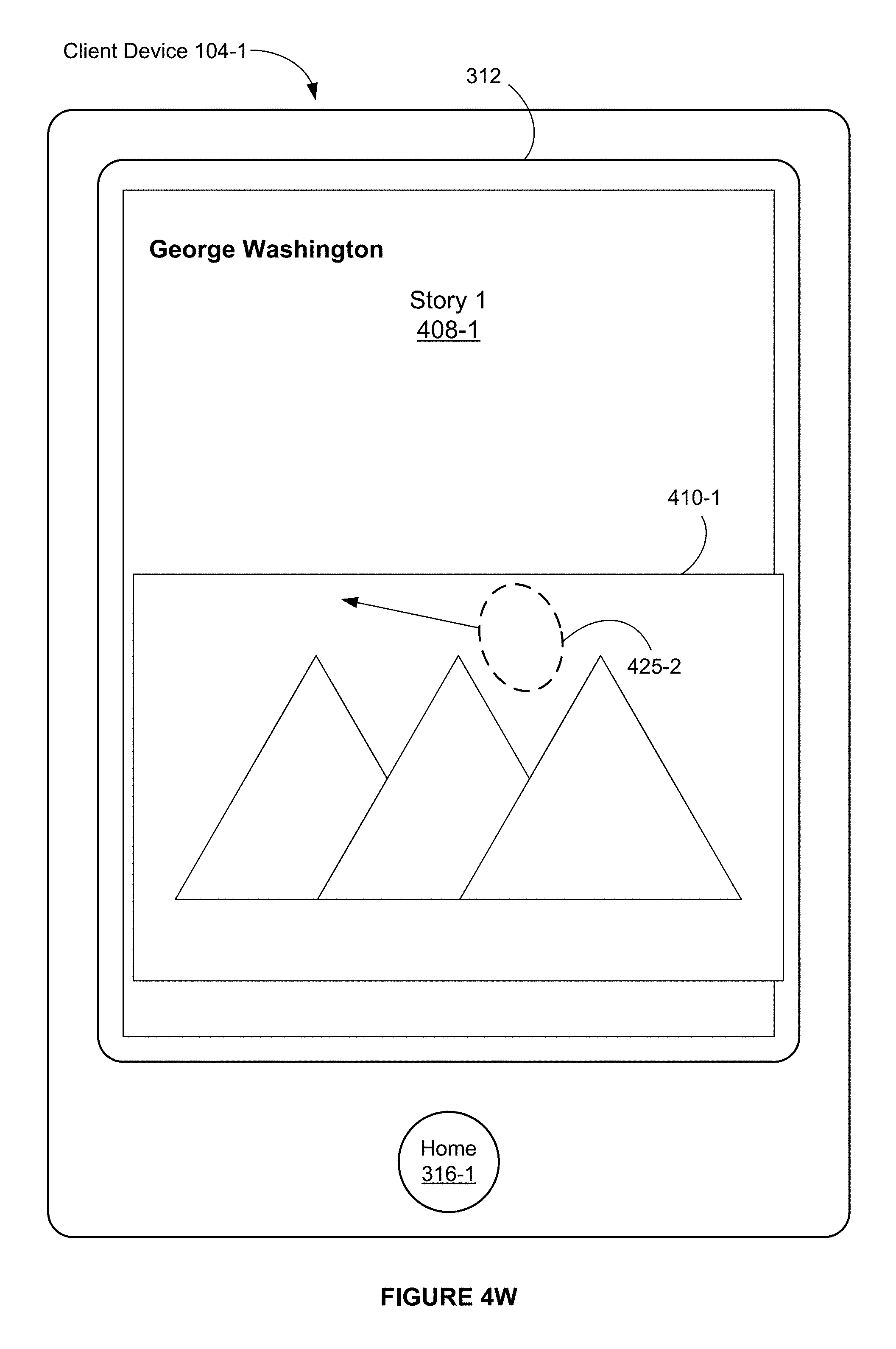

FIG. 4W illustrates that the pan gesture 425 remains in contact with the touch-sensitive surface and has moved to a location 425-2. FIG. 4W also illustrates that, in response to the movement of a touch in the pan gesture from the location 425-1 (FIG. 4V) to the location 425-2 (FIG. 4W), the photo 410-1 moves in accordance with the movement of the touch from the location 425-1 to the location 425-2, and the full-screen-width image 408-1 reappears and is overlaid with the photo 410-1.

In addition, FIG. 4W illustrates that the touch in the pan gesture 425 continues to move on display 312, but changes direction (e.g., from vertical to horizontal or diagonal). In some embodiments, the photo 410-1 moves in accordance with the touch in the pan gesture (e.g., when the touch moves horizontally, the photo 410-1 moves horizontally).
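
A gesture that is "initially vertical" but later changes direction can be modeled by classifying the direction once, from the first movement, and not re-evaluating it afterward. The following is a minimal sketch under assumptions: the angular tolerance `tolerance_deg`, the `PanTracker` class, and the rule of leaving the direction undecided for ambiguous diagonals are illustrative choices, not details from the described embodiments.

```python
import math
from typing import Optional, Tuple

Point = Tuple[float, float]


def initial_direction(start: Point, sample: Point, tolerance_deg: float = 30.0) -> Optional[str]:
    """Classify the first movement of a touch as "vertical" or "horizontal".

    The angle is measured from the vertical axis: 0 is purely vertical and
    90 is purely horizontal. Returns None if the movement is zero or too diagonal.
    """
    dx = sample[0] - start[0]
    dy = sample[1] - start[1]
    if dx == 0 and dy == 0:
        return None
    angle = math.degrees(math.atan2(abs(dx), abs(dy)))
    if angle <= tolerance_deg:
        return "vertical"
    if angle >= 90.0 - tolerance_deg:
        return "horizontal"
    return None


class PanTracker:
    """Remembers the initial direction so later movement does not reclassify the gesture."""

    def __init__(self, start: Point) -> None:
        self.start = start
        self.direction: Optional[str] = None

    def move(self, point: Point) -> Optional[str]:
        if self.direction is None:
            self.direction = initial_direction(self.start, point)
        return self.direction


tracker = PanTracker((0.0, 0.0))
first = tracker.move((2.0, 40.0))    # substantially vertical first movement
later = tracker.move((80.0, 45.0))   # touch has since veered horizontally
```

Here the second call still reports a vertical gesture, while the current touch location remains available to move the displayed photo in accordance with the touch.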

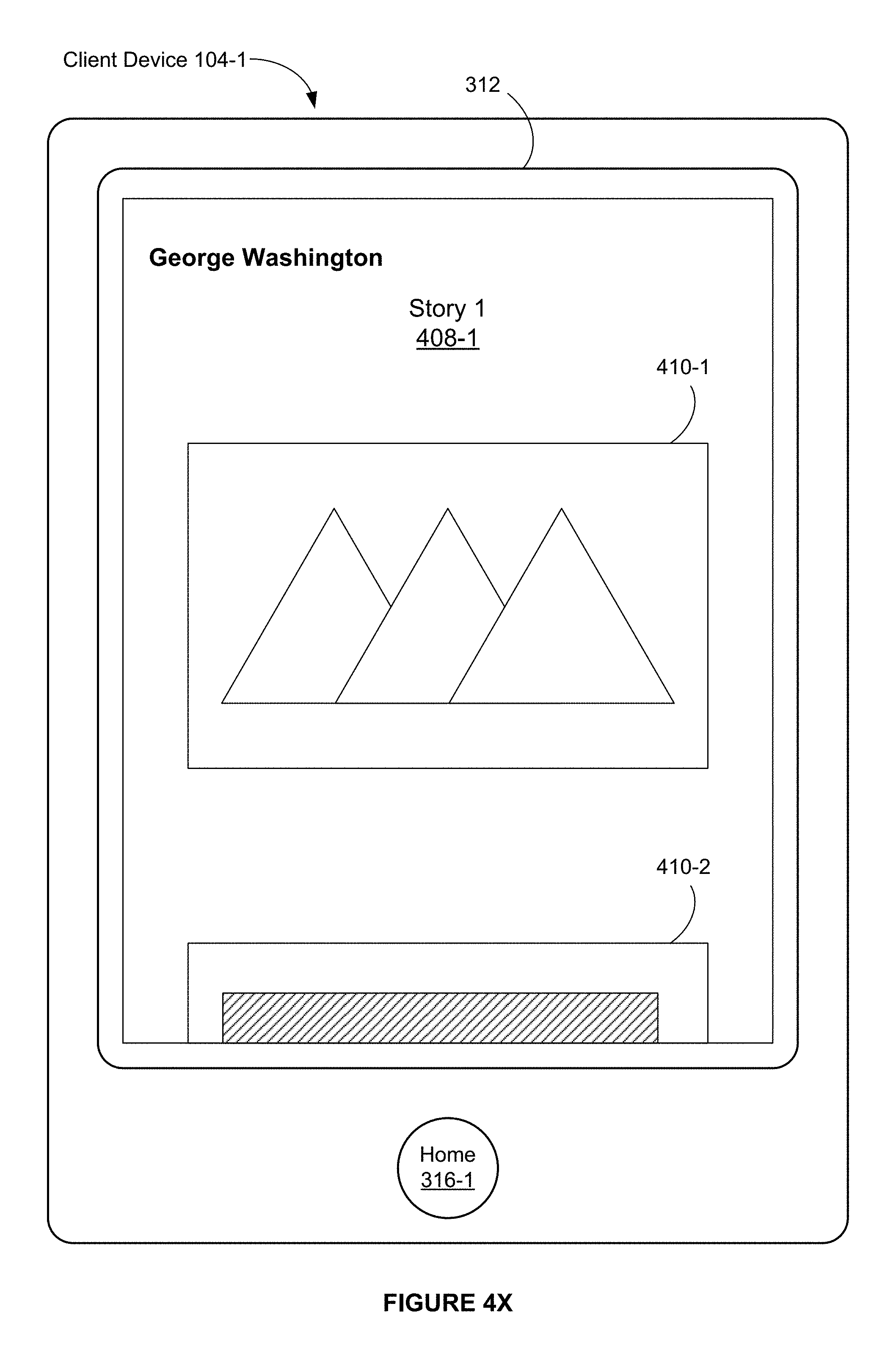

FIG. 4X illustrates that the pan gesture 425 ceases to be detected on the touch-sensitive surface. FIG. 4X also illustrates that, in response to the pan gesture 425 ceasing to be detected on the touch-sensitive surface, the full-screen-width image 408-1 is displayed without an overlay.

FIGS. 4Y-4CC illustrate exemplary user interfaces associated with a comments popover view in accordance with some embodiments.

FIG. 4Y illustrates that a touch input 427 (e.g., a tap gesture) is detected at a location that corresponds to the user interface icon 430 that includes one or more feedback indicators.

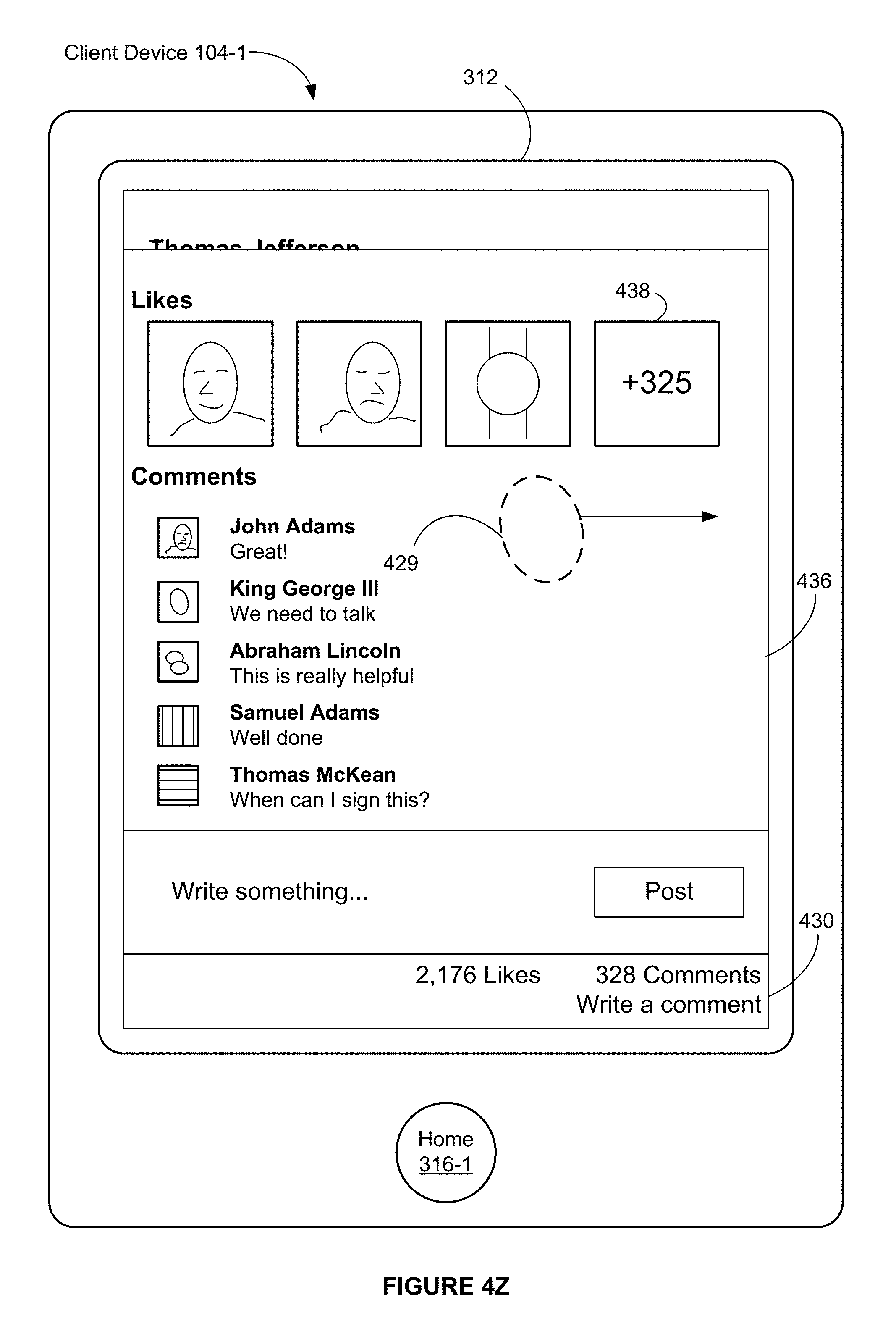

FIG. 4Z illustrates that, in response to detecting the touch input 427, a comments popover view 436 is displayed. In FIG. 4Z, the comments popover view 436 is overlaid over the full-screen-width image 408-2 that corresponds to Story 2 (FIG. 4Y). The comments popover view 436 includes user identifiers (e.g., user photos) that correspond to users who liked Story 2. The comments popover view 436 also includes user identifiers for users who left comments on Story 2, along with their comments on Story 2.

In FIG. 4Z, the comments popover view 436 includes a user interface icon 438 for displaying additional users (who liked Story 2). The user interface icon 438 indicates a number of additional users who liked Story 2. In some embodiments, the user interface icon 438, when selected (e.g., with a tap gesture), initiates rearranging the displayed user identifiers and displaying additional user identifiers that correspond to additional users who liked Story 2. For example, selecting the user interface icon 438 displays an animation in which the horizontally arranged user identifiers are rearranged to a vertically arranged scrollable list of user identifiers.

FIG. 4Z also illustrates that a horizontal gesture 429 (e.g., a rightward pan gesture or a rightward swipe gesture) is detected at a location on the touch-sensitive surface that corresponds to the comments popover view 436.

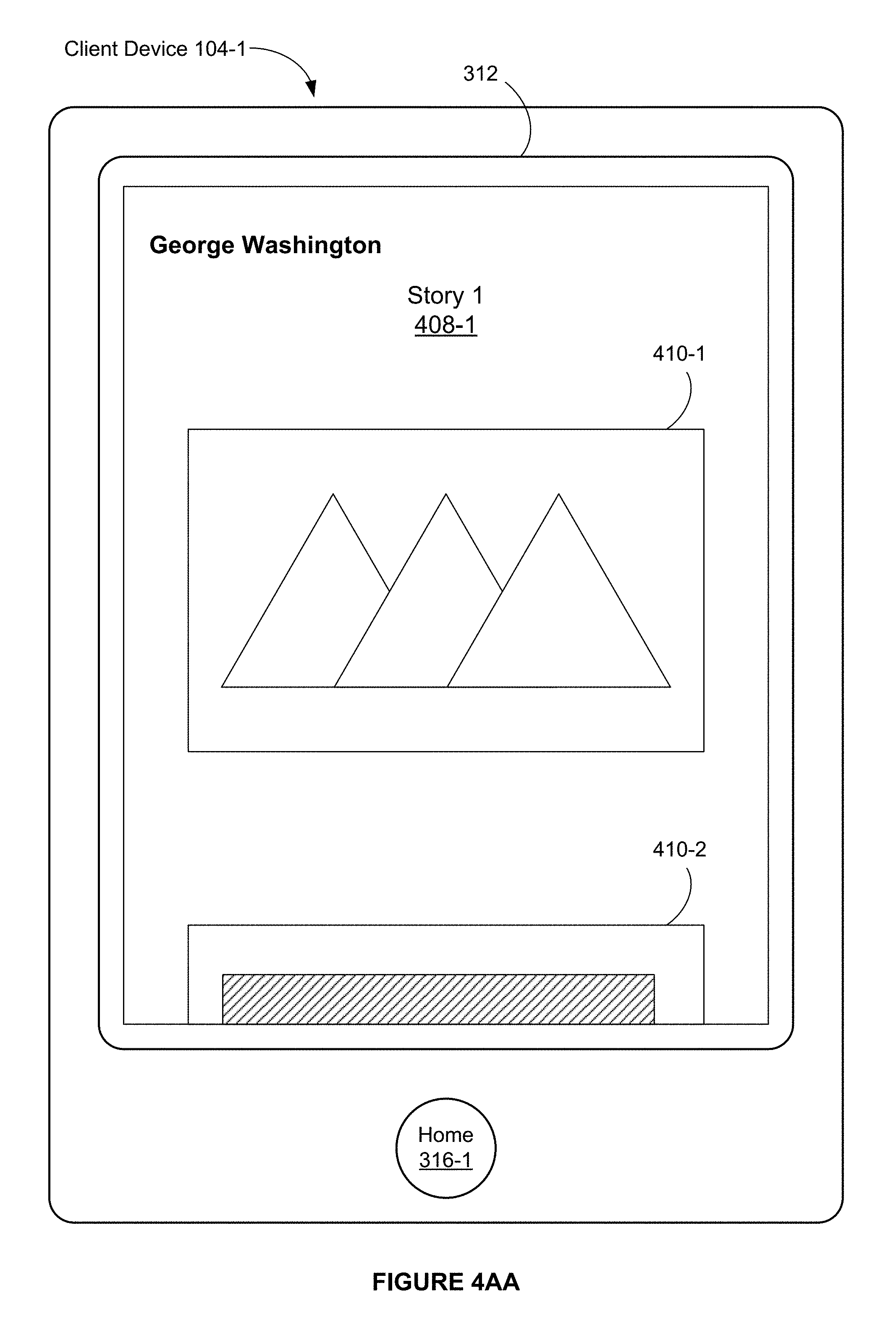

FIG. 4AA illustrates that, in response to the horizontal pan gesture 429, the display of the full-screen-width image 408-2 overlaid by the comments popover view 436 is replaced with the full-screen-width image 408-1.

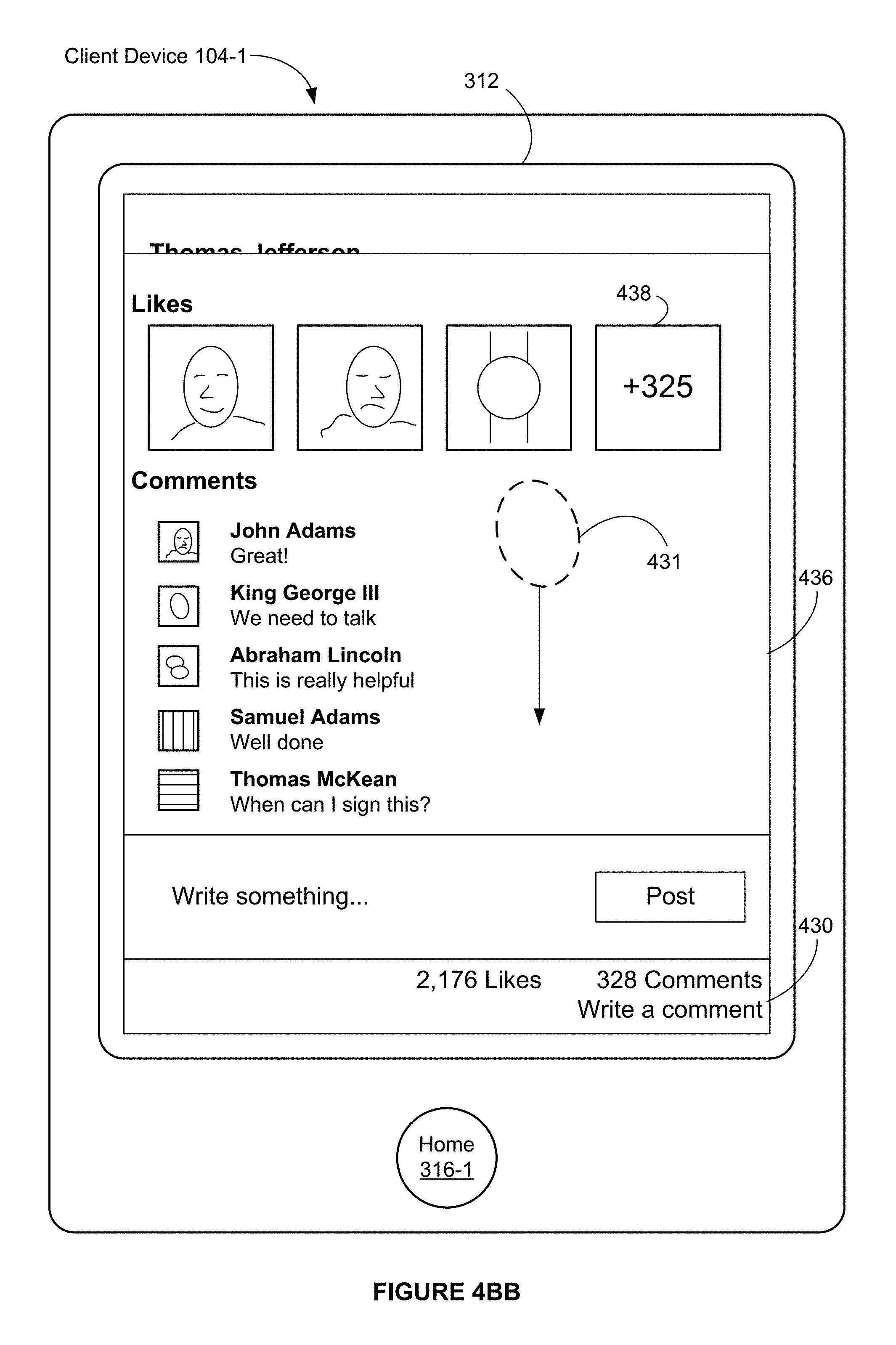

FIG. 4BB illustrates that a vertical gesture 431 (e.g., a downward pan gesture or a downward swipe gesture) is detected at a location on the touch-sensitive surface that corresponds to the comments popover view 436.