Analysis And Deep Learning Modeling Of Sensor-based Object Detection Data For Organic Motion Determination In Bounded Aquatic Environments Using Underwater Powered Systems

Barton; Chris ; et al.

U.S. patent application number 17/084616 was filed with the patent office on 2020-10-29 and published on 2022-04-21 for analysis and deep learning modeling of sensor-based object detection data for organic motion determination in bounded aquatic environments using underwater powered systems. This patent application is currently assigned to Guard, Inc. The applicant listed for this patent is Guard, Inc. Invention is credited to Chris Barton, Garrett J. Burkitt, III, Edsel P. Hamilton, III, Nigel Morris, Srinivasa Narasimhan.

| Application Number | 20220122431 17/084616 |

| Family ID | 1000005427982 |

| Filed Date | 2020-10-29 |

| United States Patent Application | 20220122431 |

| Kind Code | A1 |

| Barton; Chris ; et al. | April 21, 2022 |

ANALYSIS AND DEEP LEARNING MODELING OF SENSOR-BASED OBJECT DETECTION DATA FOR ORGANIC MOTION DETERMINATION IN BOUNDED AQUATIC ENVIRONMENTS USING UNDERWATER POWERED SYSTEMS

Abstract

Techniques for analysis and deep learning modeling of sensor-based object detection data for organic motion determination in bounded aquatic environments using underwater powered systems are described, including a light disposed substantially within a recess of a boundary wall, the light being disposed substantially underwater and configured to receive power using a conduit, and a spacer ring disposed circumferentially about an opening associated with the recess, the spacer ring being configured to secure the light within the recess and to provide a channel formed in the spacer ring, the channel being configured to receive the conduit.

| Inventors: | Barton; Chris (San Francisco, CA); Narasimhan; Srinivasa (McDonald, PA); Morris; Nigel (Toronto, CA); Hamilton, III; Edsel P. (Pflugerville, TX); Burkitt, III; Garrett J. (Simi Valley, CA) |
|---|---|
| Applicant: | Guard, Inc. |
| Assignee: | Guard, Inc., San Francisco, CA |
| Family ID: | 1000005427982 |
| Appl. No.: | 17/084616 |
| Filed: | October 29, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 16443866 | Jun 17, 2019 | |
| 17084616 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G08B 21/08 20130101; G06T 7/215 20170101; G06N 3/08 20130101; G06T 2207/20084 20130101; G06T 2207/10052 20130101; G06T 2207/20081 20130101; G06T 7/80 20170101; G08B 1/08 20130101; G06T 2207/30196 20130101 |

| International Class: | G08B 1/08 20060101 G08B001/08; G08B 21/08 20060101 G08B021/08; G06N 3/08 20060101 G06N003/08; G06T 7/80 20060101 G06T007/80; G06T 7/215 20060101 G06T007/215 |

Claims

1. A system, comprising: a sensor disposed in a recess proximate to a waterline associated with a body of water; and a processor configured to capture an image from the sensor, the sensor being housed in a structure disposed in the recess and electrically coupled to a light, the recess being configured to receive a spacer ring configured to detachably couple to a hook adapter secured to a boundary wall associated with the body of water, the spacer ring also being configured to provide a channel and the processor also being configured to convert the image into data digitally encoded by the processor, to evaluate the data to separate background data from foreground data, to generate tracking data from the data after the background data is removed, the tracking data being evaluated to determine whether a head or a body are detected by comparing the tracking data to classifier data, to track the head or the body relative to the waterline if the head or the body are detected in the tracking data, and to determine a state associated with the head or the body, if the head or the body is detected, the state being associated with state data, the state data being used to determine a drowning state.

2. The system of claim 1, wherein the channel is configured to receive a plurality of conduits, at least one of the plurality of conduits having one or more wires configured to transmit an electrical signal.

3. The system of claim 1, wherein the channel is configured to provide access between an inner area of the structure and an outer area of the structure.

4. The system of claim 1, wherein the channel is configured to receive a plurality of conduits, at least one of the plurality of conduits having one or more cables configured to transmit a voltage.

5. The system of claim 1, wherein the channel is configured to receive a plurality of conduits, at least one of the plurality of conduits having one or more cables configured to transmit data.

6. The system of claim 1, wherein the spacer ring is configured to electrically bond the structure when the spacer ring is secured to the boundary wall using the hook adapter.

7. The system of claim 1, wherein the spacer ring is configured to electrically bond the structure when the spacer ring is secured to the hook adapter using a fastener substantially comprised of an electrically conductive alloy.

8. The system of claim 1, wherein the spacer ring is configured to be disposed between the recess and a bezel securing the structure within the recess.

9. The system of claim 1, wherein the spacer ring is disposed circumferentially around an opening to the recess using the hook adapter.

10. The system of claim 1, wherein the channel is configured to receive a plurality of conduits, at least one of the plurality of conduits having one or more wires configured to transmit an electrical signal.

11. The system of claim 1, wherein the conduit channel is formed in the spacer ring.

12. An apparatus, comprising: a light disposed substantially within a recess of a boundary wall, the light being disposed substantially underwater and configured to receive power using a conduit; and a spacer ring disposed circumferentially about an opening associated with the recess, the spacer ring being configured to secure the light within the recess and to provide a channel formed in the spacer ring, the channel being configured to receive the conduit.

13. The apparatus of claim 12, wherein the recess is formed in the boundary wall.

14. The apparatus of claim 12, wherein the channel is partially formed in the outer edge of the spacer ring.

15. The apparatus of claim 12, wherein the channel is formed in the spacer ring, the channel being configured to permit the conduit to provide an electrically contiguous path between an endpoint in the recess and another endpoint substantially outside of the recess and the spacer ring.

16. The apparatus of claim 12, wherein the light is an endpoint configured to be electrically coupled by the conduit to another endpoint.

17. The apparatus of claim 12, wherein the conduit is configured to provide an electrical coupling to the light and a data coupling to a sensor disposed within the recess.

18. The apparatus of claim 12, wherein the conduit is configured to provide an electrical coupling to the light and a data coupling to a sensor disposed substantially outside of the recess.

19. The apparatus of claim 12, wherein the spacer ring has a plurality of tabs formed substantially in an inner periphery of the spacer ring, one or more of the plurality of tabs being configured to secure the spacer ring to the boundary wall when the one or more of the plurality of tabs is structurally interlocked with a hook adapter.

20. A system, comprising: a structure configured to house within a recess an electrical element; a sensor configured to capture an image; a light electrically coupled to the structure; and a spacer ring configured to detachably couple to a hook adapter and to provide a channel configured to pass a conduit from the electrical element to another electrical element outside the recess.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation-in-part application of U.S. patent application Ser. No. 16/443,866, filed Jun. 17, 2019 and entitled, "Analysis And Deep Learning Modeling Of Sensor-Based Object Detection Data In Bounded Aquatic Environments," all of which is herein incorporated by reference in its entirety for all purposes.

FIELD

[0002] The present invention relates generally to computer science, data science, databases, sensor management, and electronic safety and security systems. More specifically, techniques for analysis and deep learning modeling of sensor-based object detection data for organic motion determination in bounded aquatic environments using underwater powered systems are described.

BACKGROUND

[0003] As the fields of data networking, computer science, and data science expand, various computer programs including operating systems, client and server applications, online Internet platforms, distributed and cloud computing systems, and various other types of applications are being developed and deployed in increasing complexity, design, and scale across a wide variety of industries and sectors. There is widespread utility for applications in various fields, including those promoting human health and safety. For example, thousands of people die each year in drowning-related accidents, whether in natural (e.g., oceans, seas, lakes, rivers, streams, ponds, or the like) or artificial bodies of water (e.g., reservoirs, swimming pools, diving tanks, wading pools and ponds, or the like). Yet, conventional techniques have not prevented the occurrence of such incidents, which often remain unnoticed or undetected until after someone has drowned.

[0004] Conventional techniques for detecting drowning have not been particularly successful due to a variety of factors. Problematically, conventional techniques are often technically limited, inaccurate, inferior due to limitations of implemented equipment, or impractically expensive to deploy. While some conventional techniques use sensor-based approaches, these systems are unable to distinguish fixed features such as pool walls, steps, bottom contours, or other fixed objects from those that are temporary or transient, such as moving bodies in a pool. Moreover, tracking the motion of individuals is very difficult with conventional techniques, and conventional sensor-based solutions are easily defeated. Further, current sensor-based approaches are often technically limited because sensor input is often neither processed accurately nor passed to systems sophisticated enough to perform data-processing functions that can accurately identify in-water objects. Conventional techniques are often unable to detect and classify in-water objects accurately or consistently, which can lead to unfortunate exceptions and low drowning detection rates. Still further, conventional techniques can be physically difficult and expensive to deploy, often requiring additional construction for retrofitting pre-existing pools and artificially enclosed bodies of water. Further, conventional techniques suffer from numerous other defects such as equipment expense, limited ability to recognize features in a waterborne environment using sensors, and power distribution, among other problems that characterize conventional drowning detection as flawed and problematic.

[0005] Thus, what is needed is a solution for detecting objects in aquatic environments in order to identify and prevent drowning incidents without the limitations of conventional techniques.

BRIEF DESCRIPTION OF THE DRAWINGS

[0006] Various embodiments or examples ("examples") of the invention are disclosed in the following detailed description and the accompanying drawings:

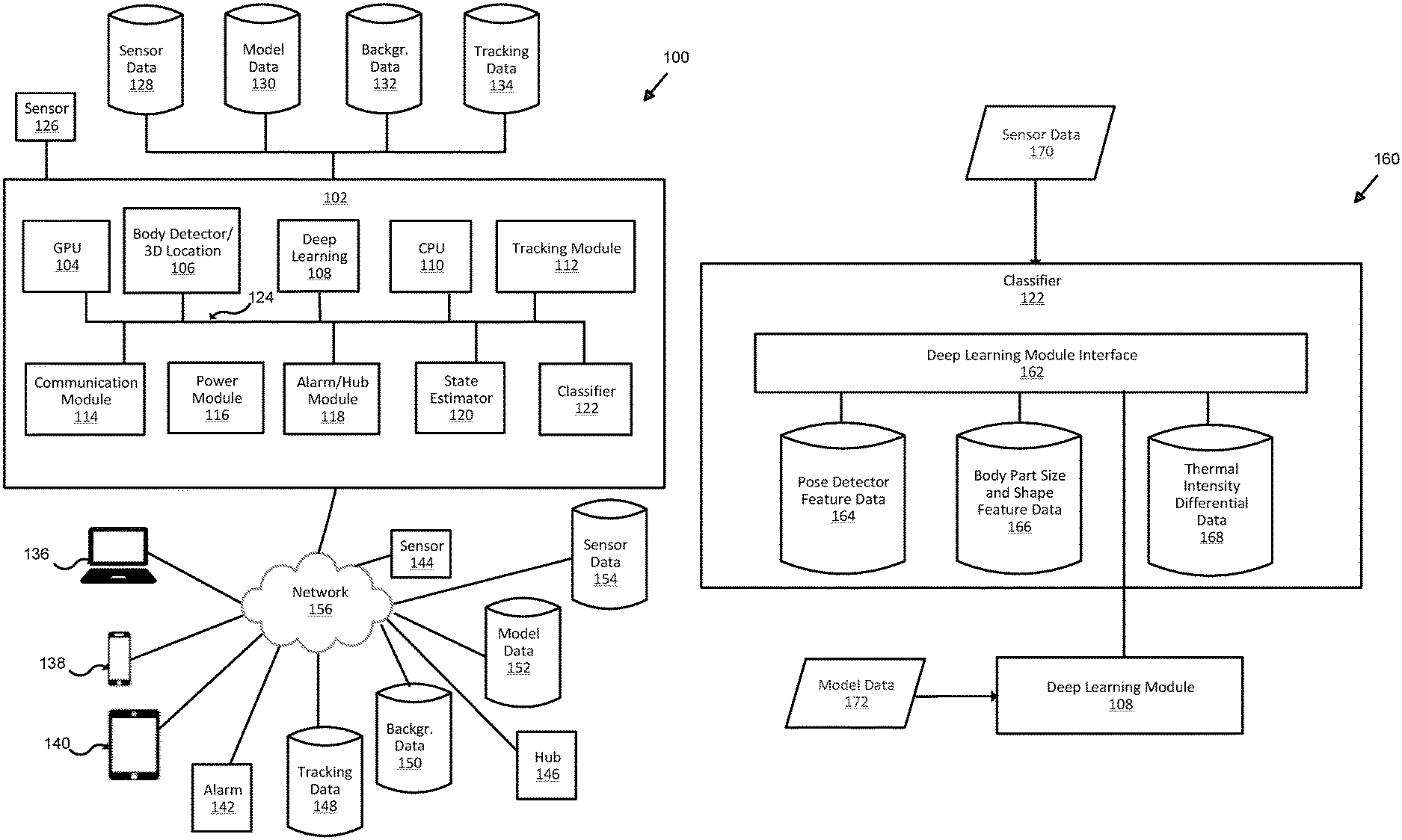

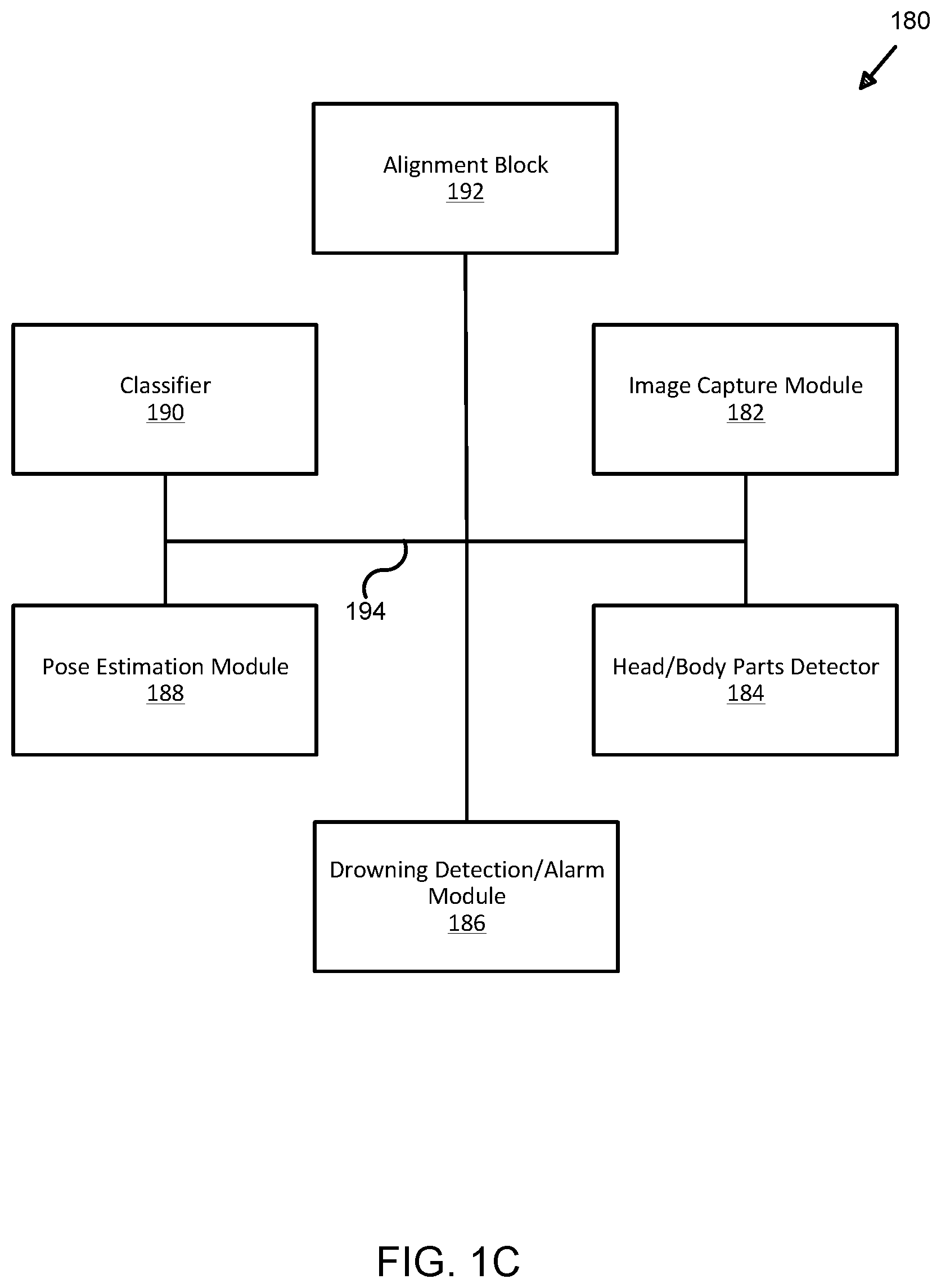

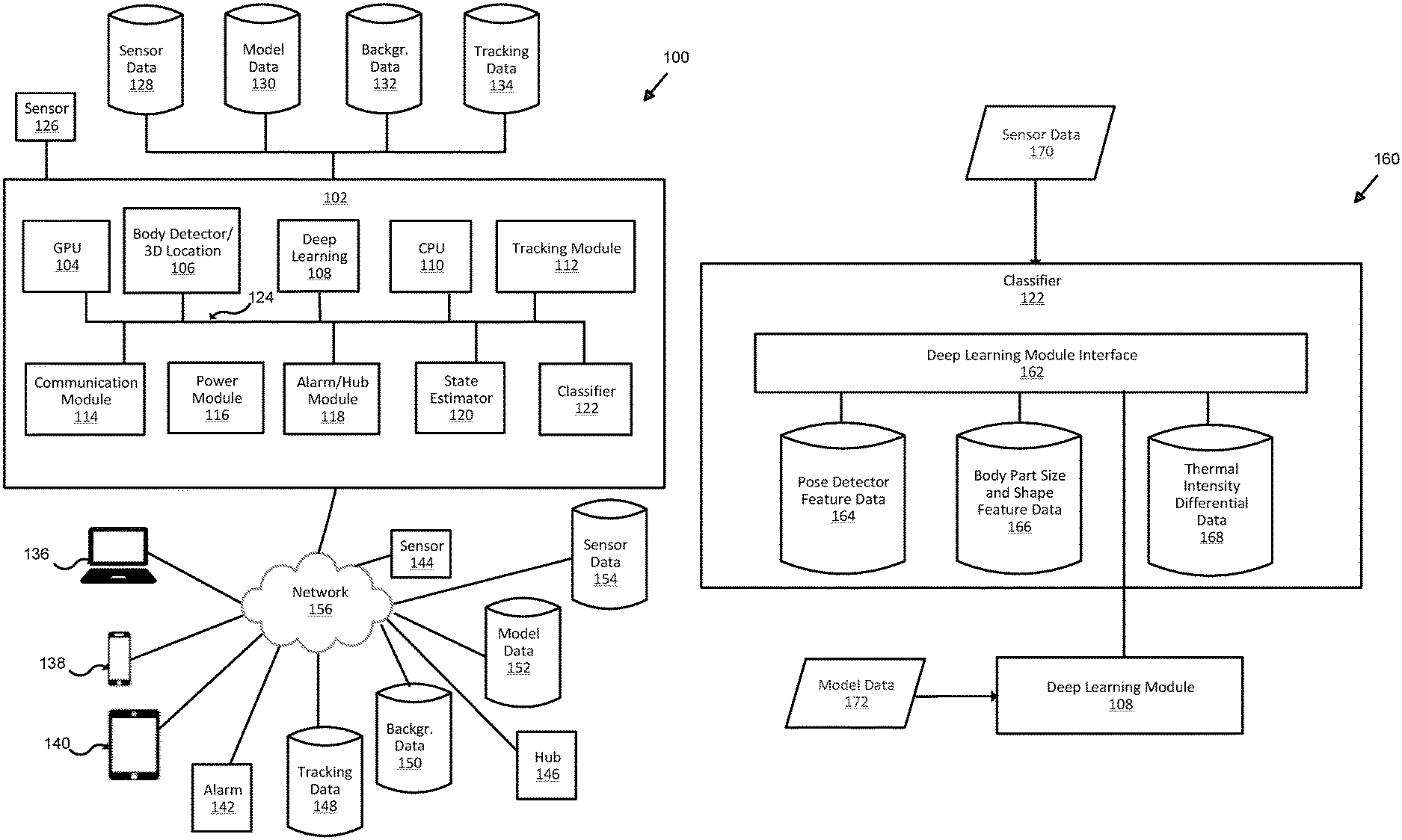

[0007] FIG. 1A illustrates an exemplary system for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

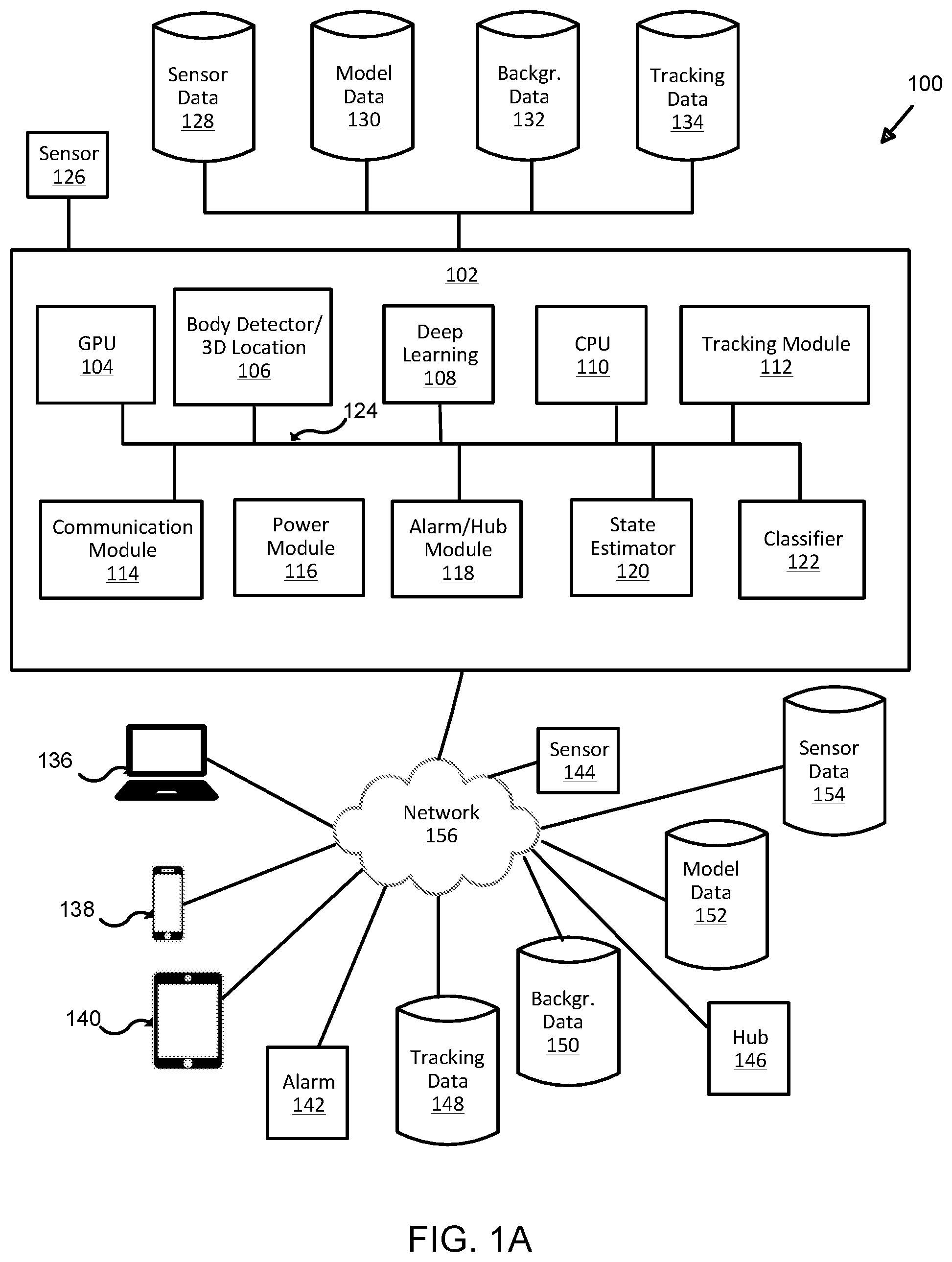

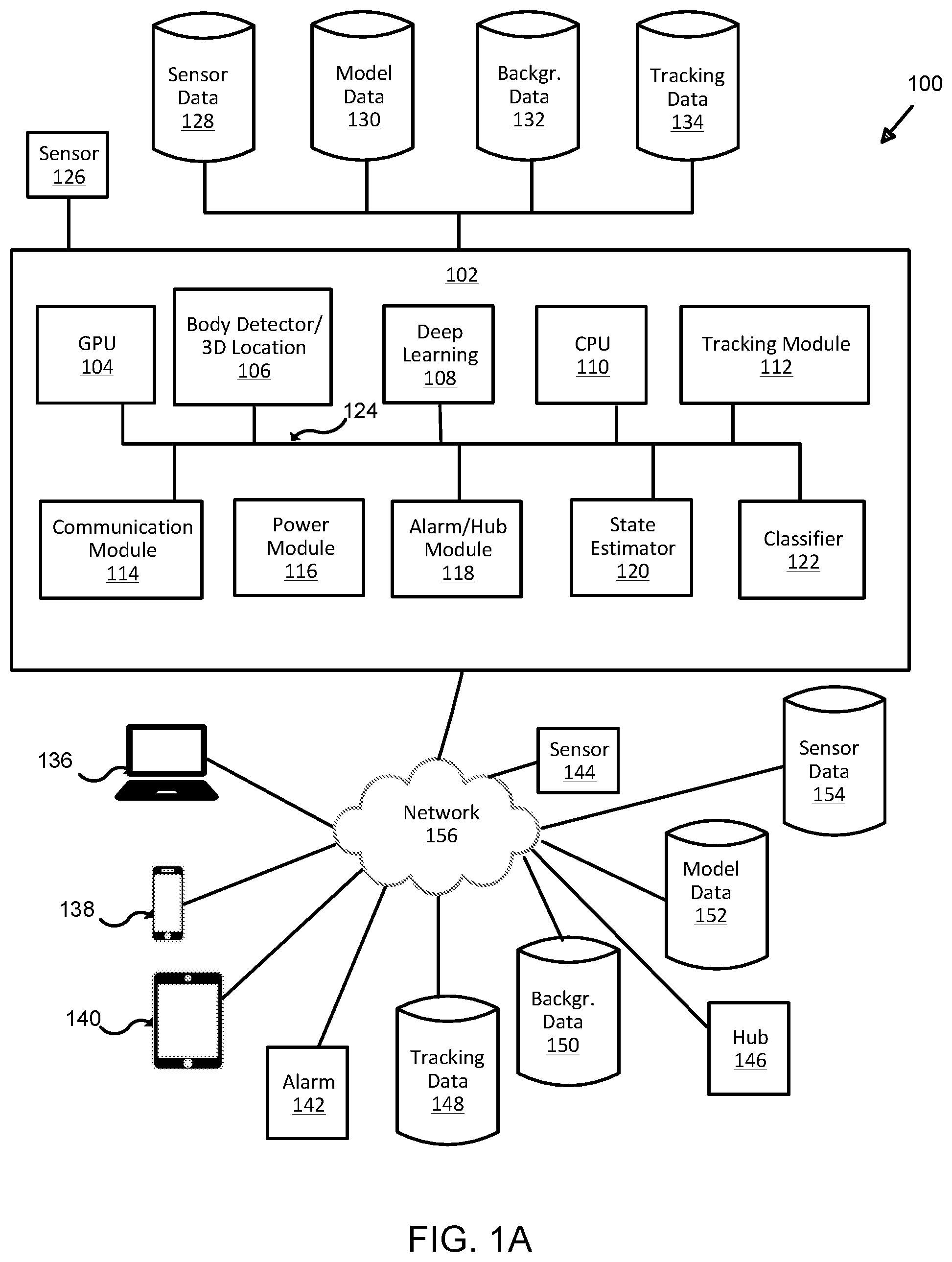

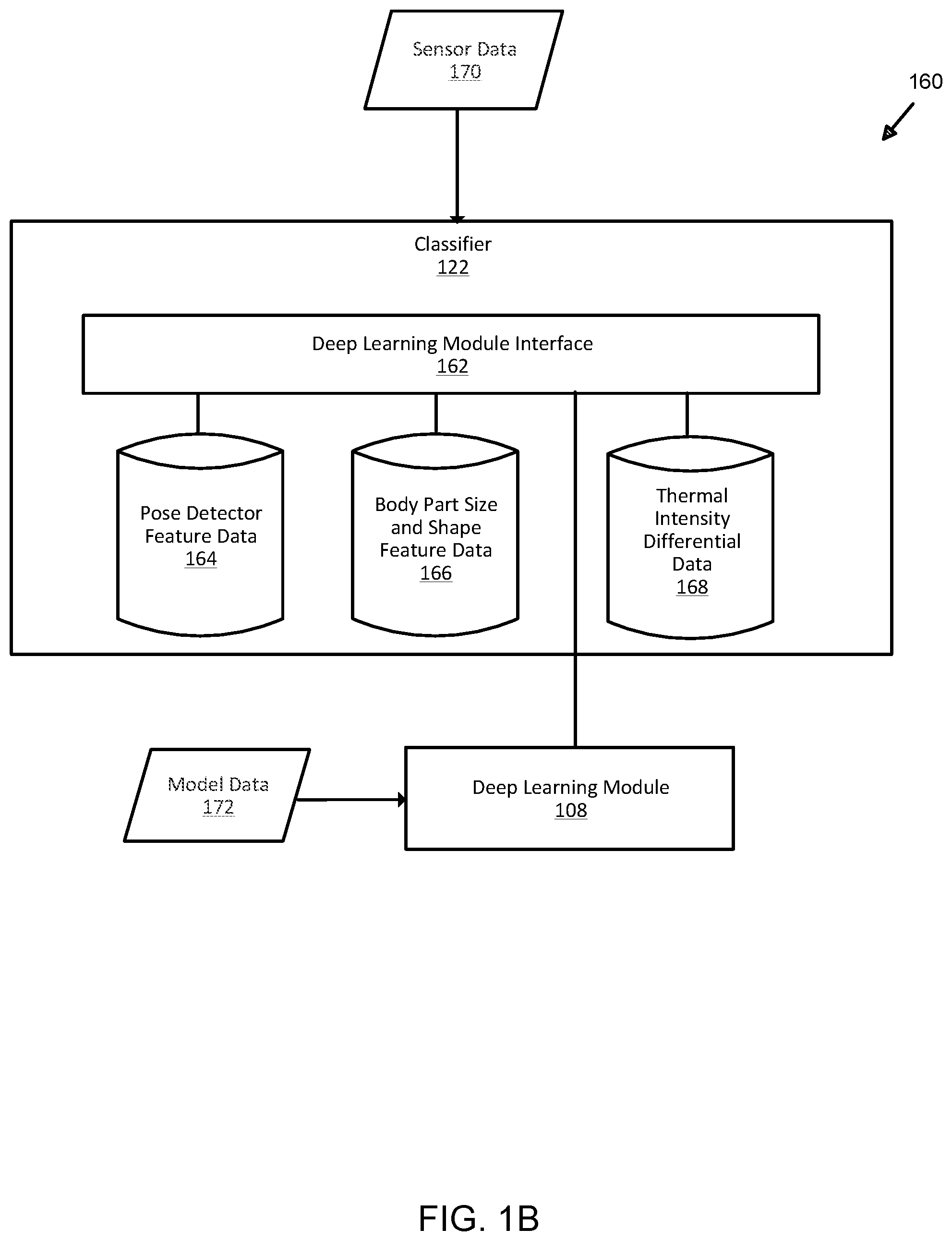

[0008] FIG. 1B illustrates an exemplary classifier module for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

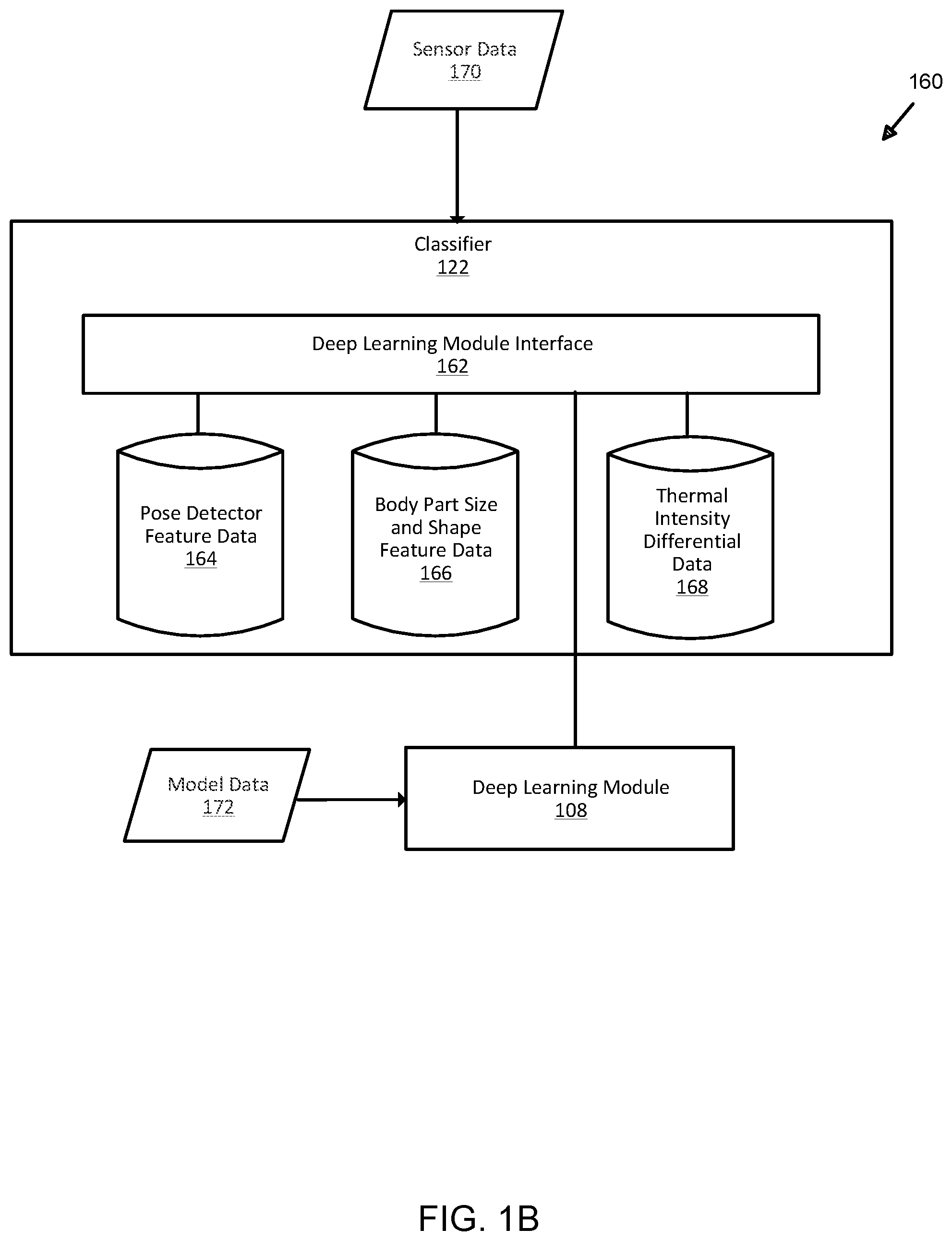

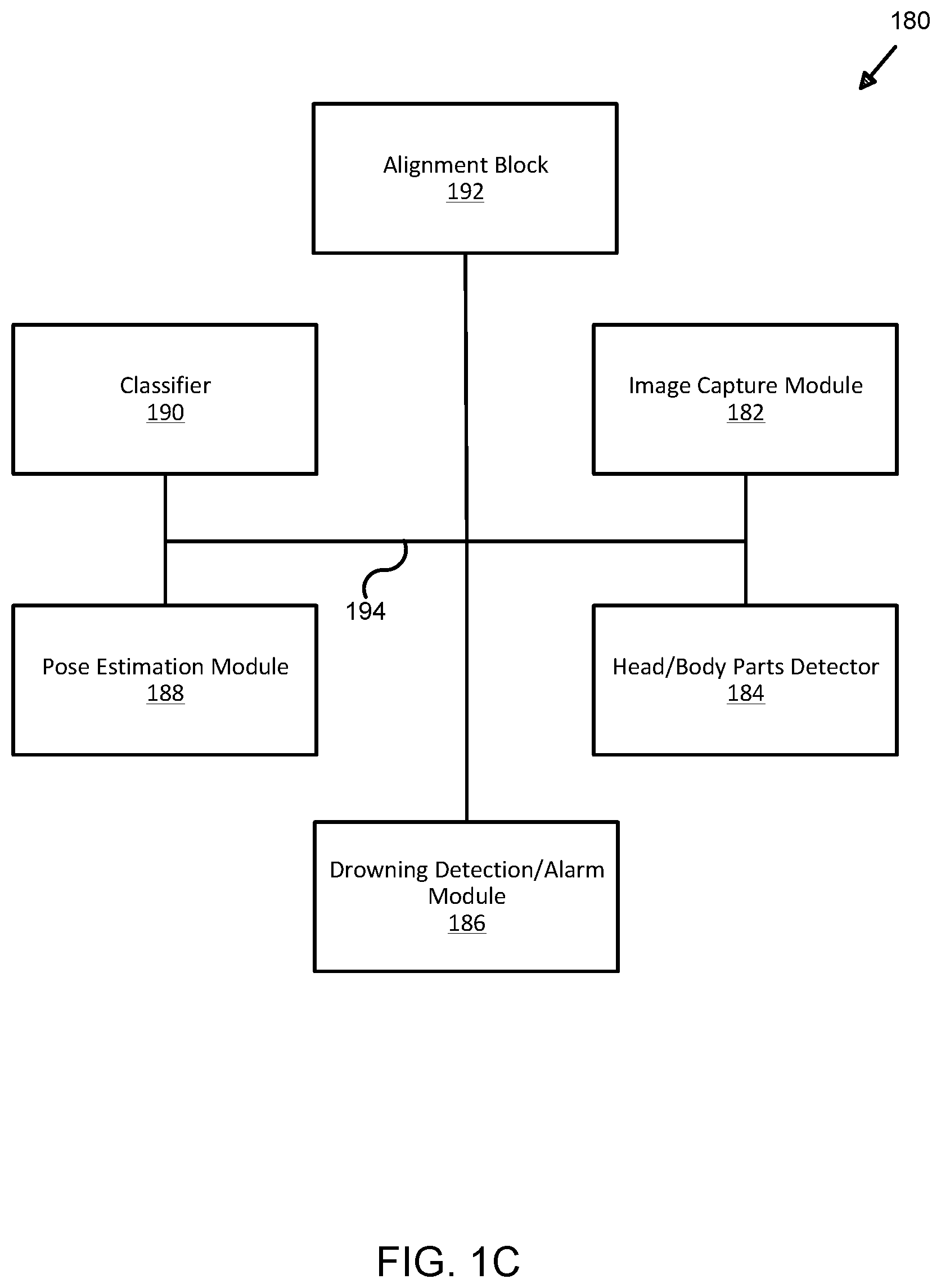

[0009] FIG. 1C illustrates an exemplary block modular architecture for analysis and deep learning modeling of sensor-based detection data for organic motion determination in bounded aquatic environments;

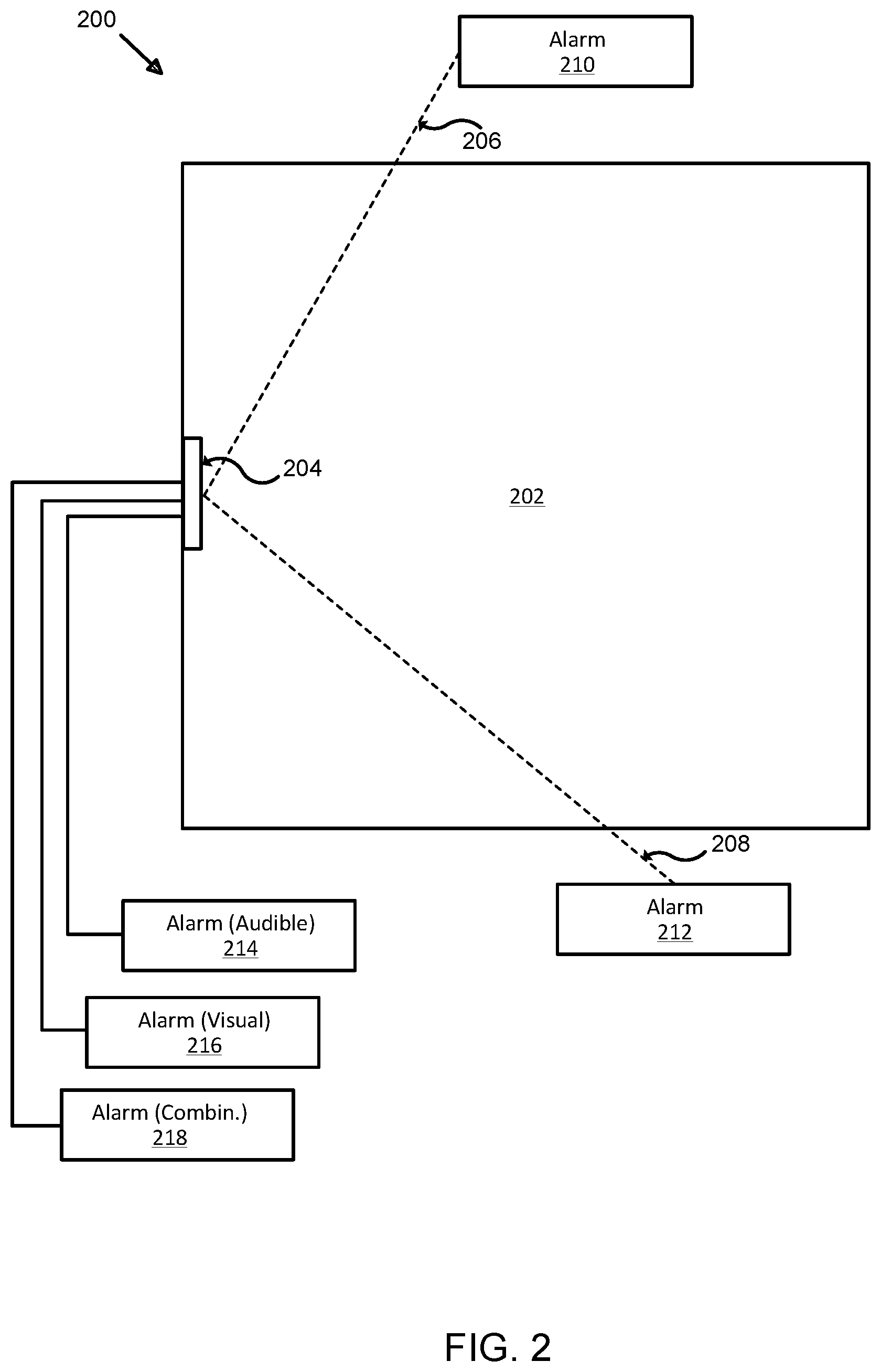

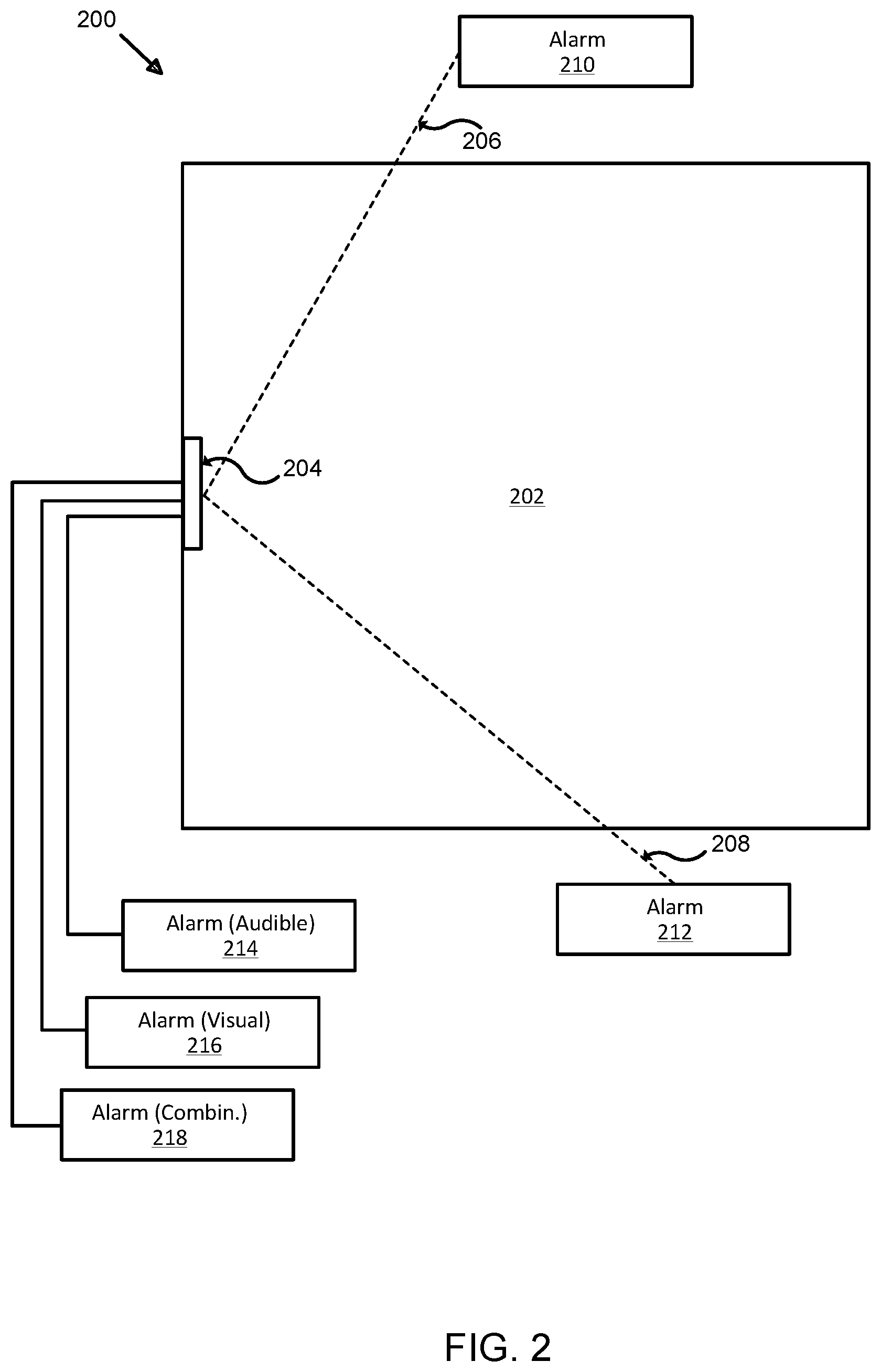

[0010] FIG. 2 illustrates another exemplary system for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

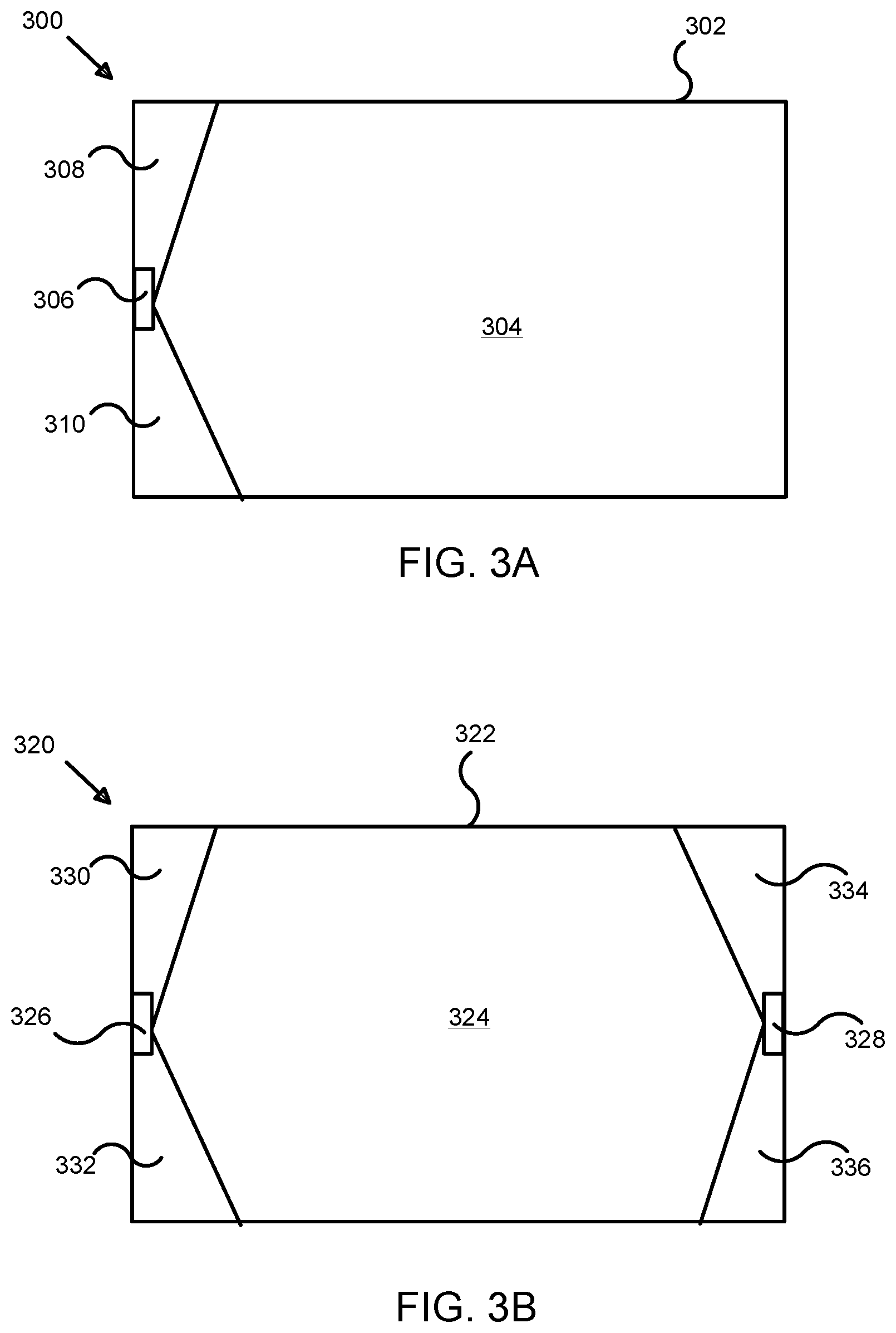

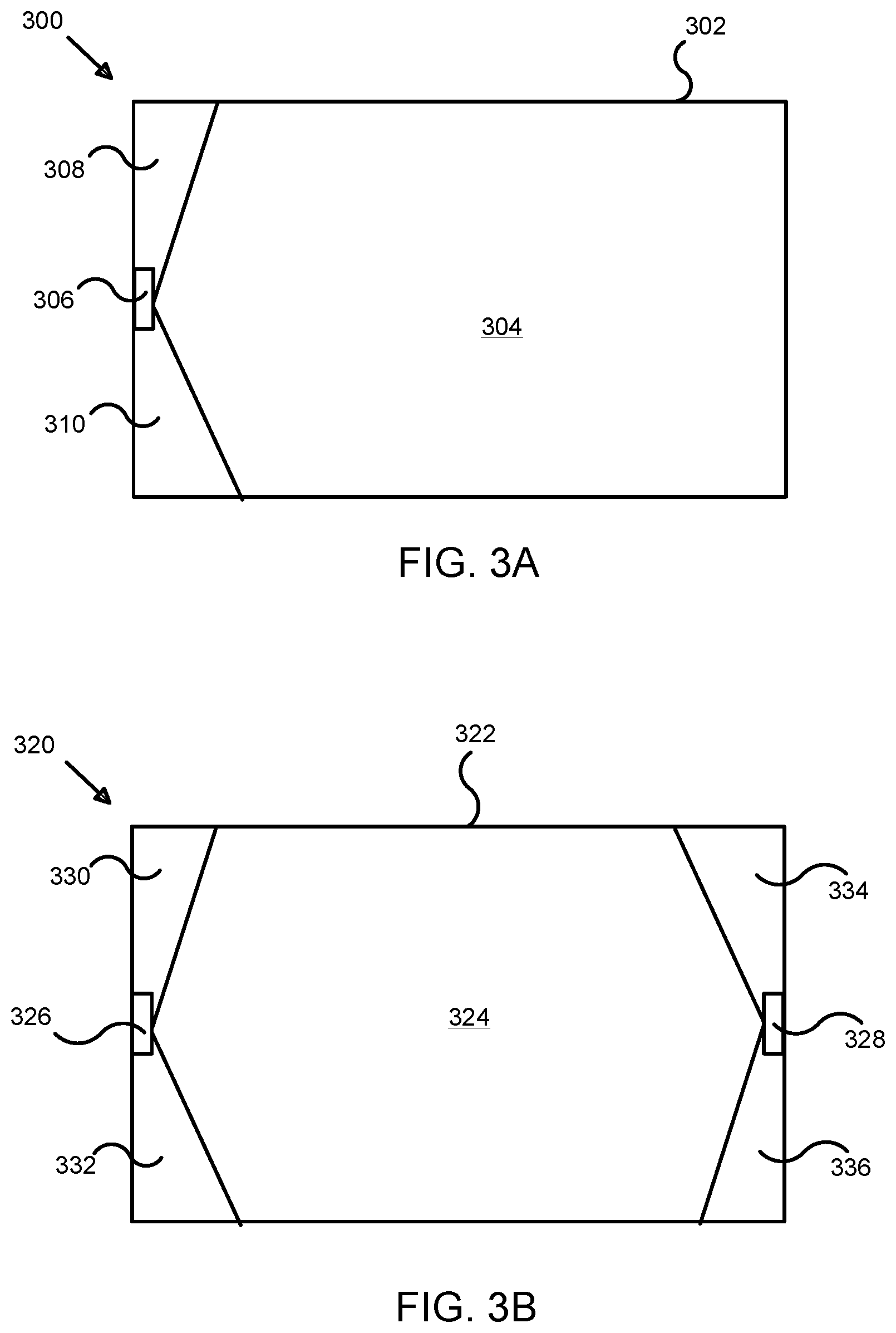

[0011] FIG. 3A illustrates an exemplary sensor arrangement in a system for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

[0012] FIG. 3B illustrates an alternative exemplary sensor arrangement in a system for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

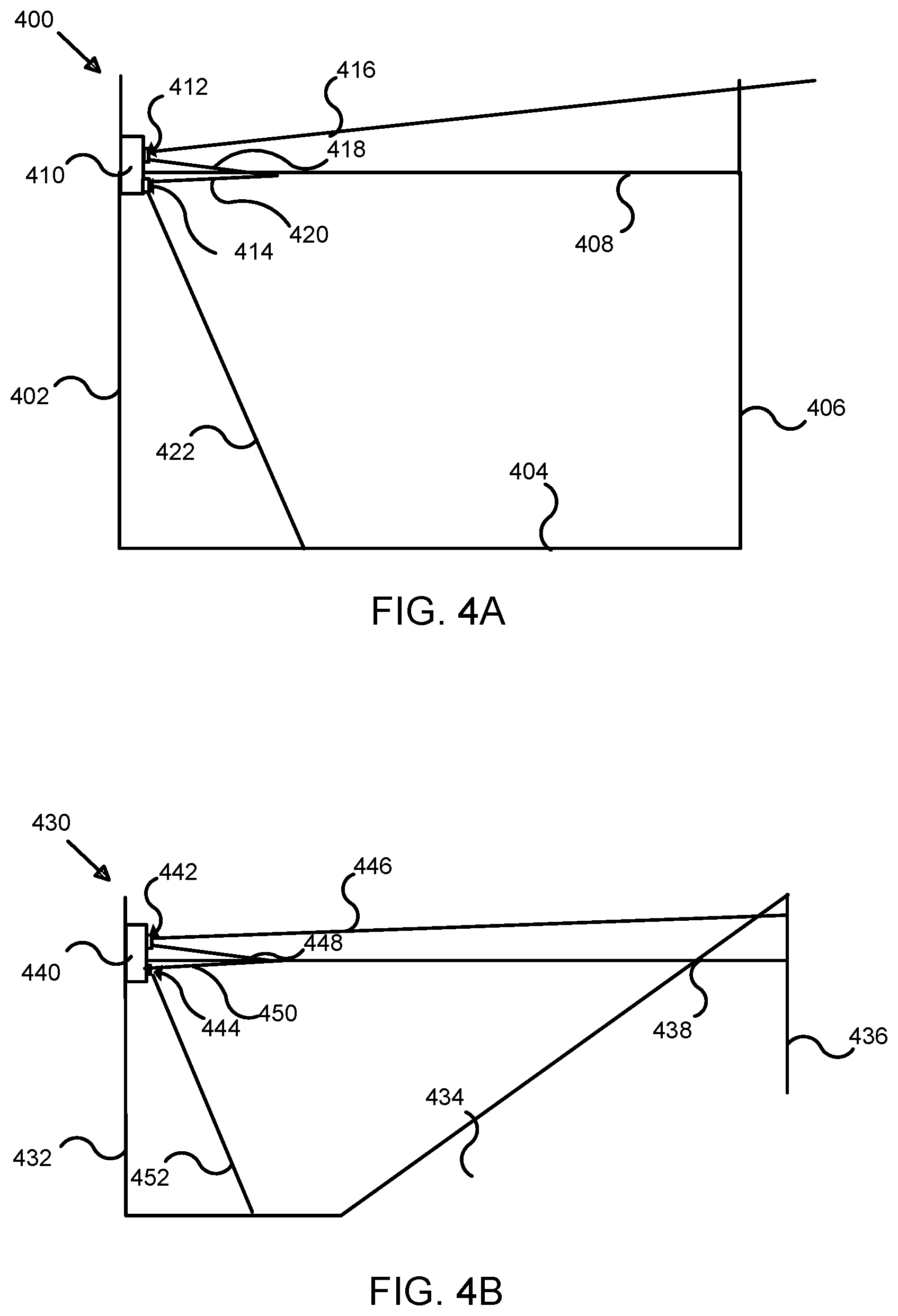

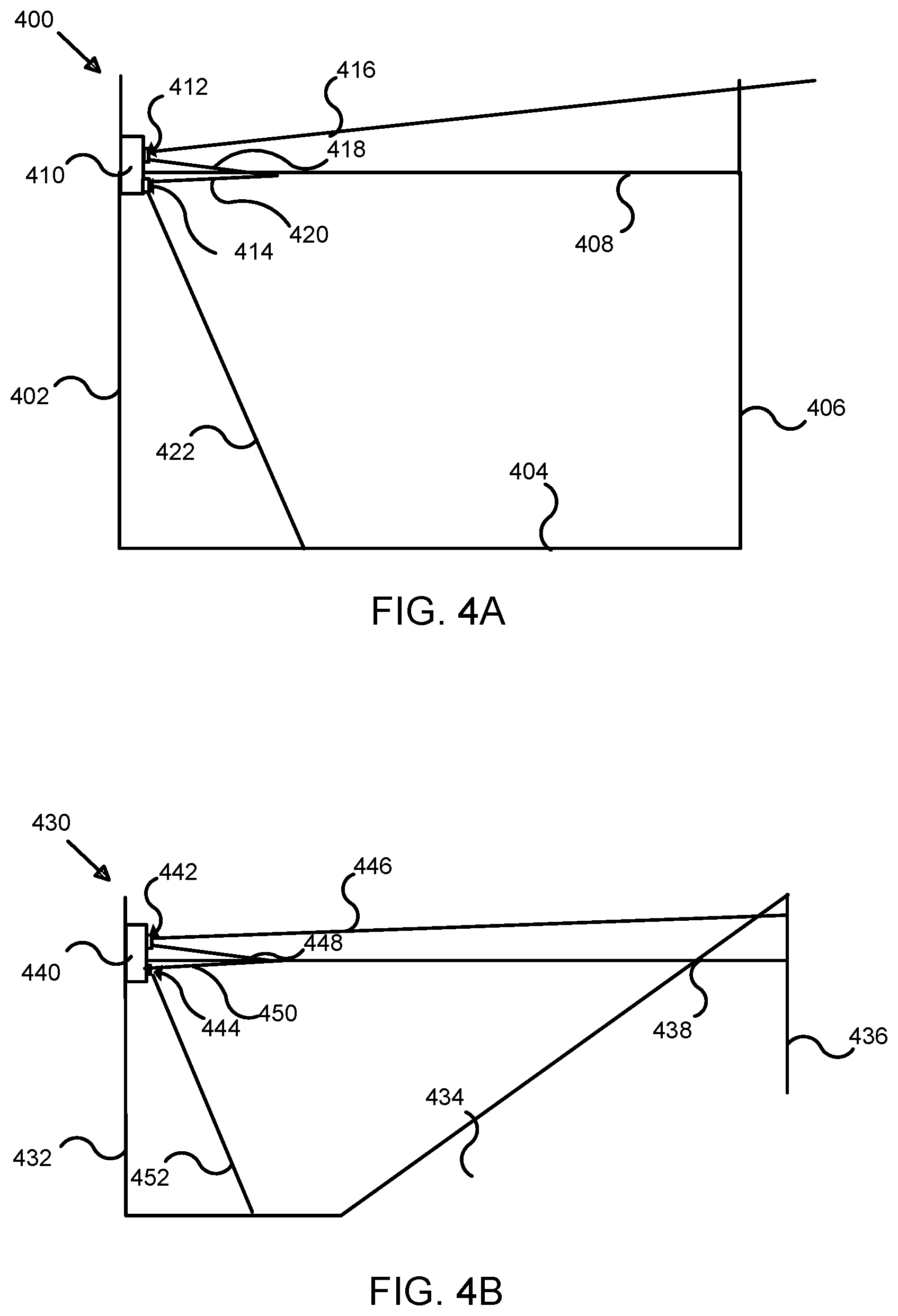

[0013] FIG. 4A illustrates a cross-sectional view of an exemplary system for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

[0014] FIG. 4B illustrates an alternative cross-sectional view of an exemplary system for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

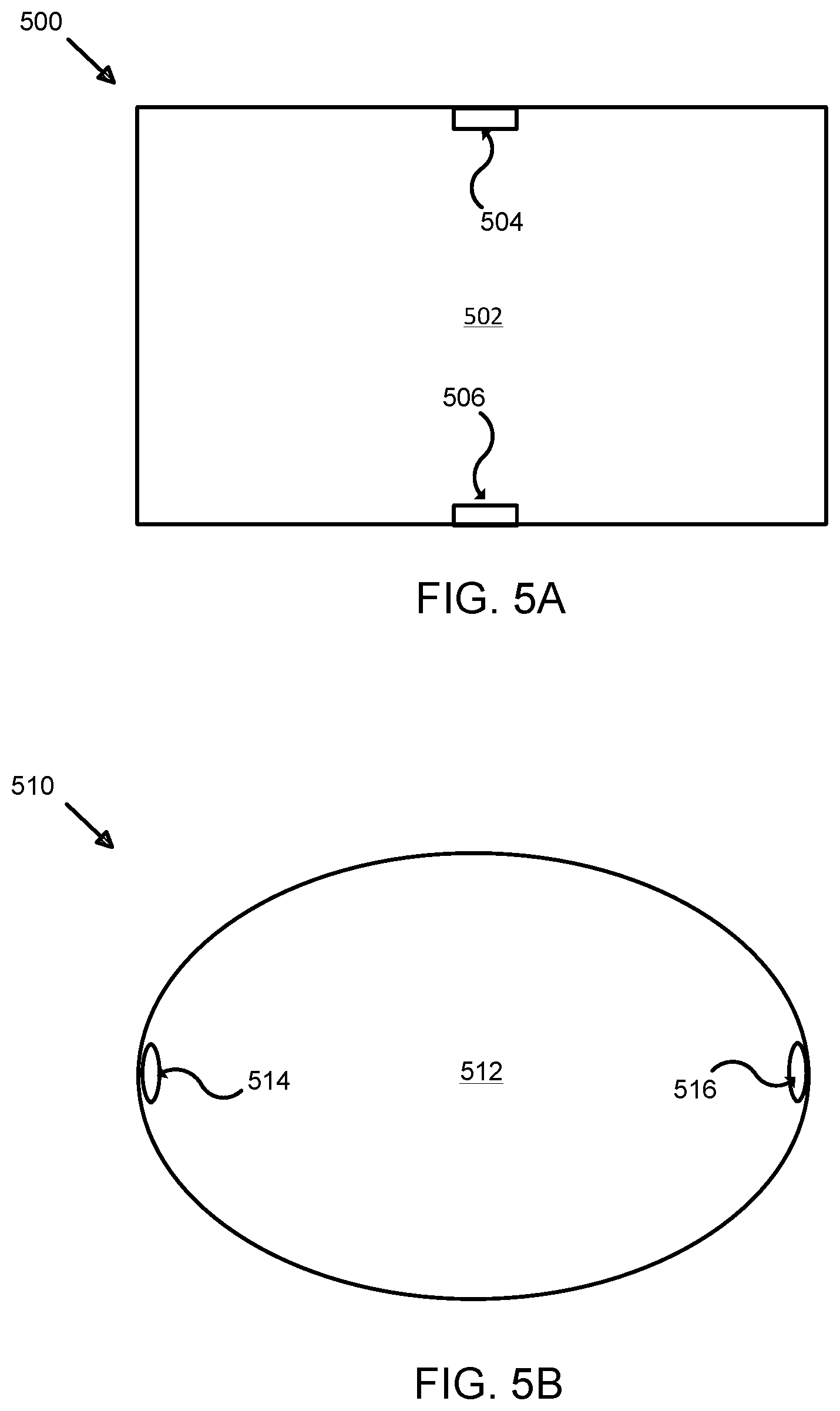

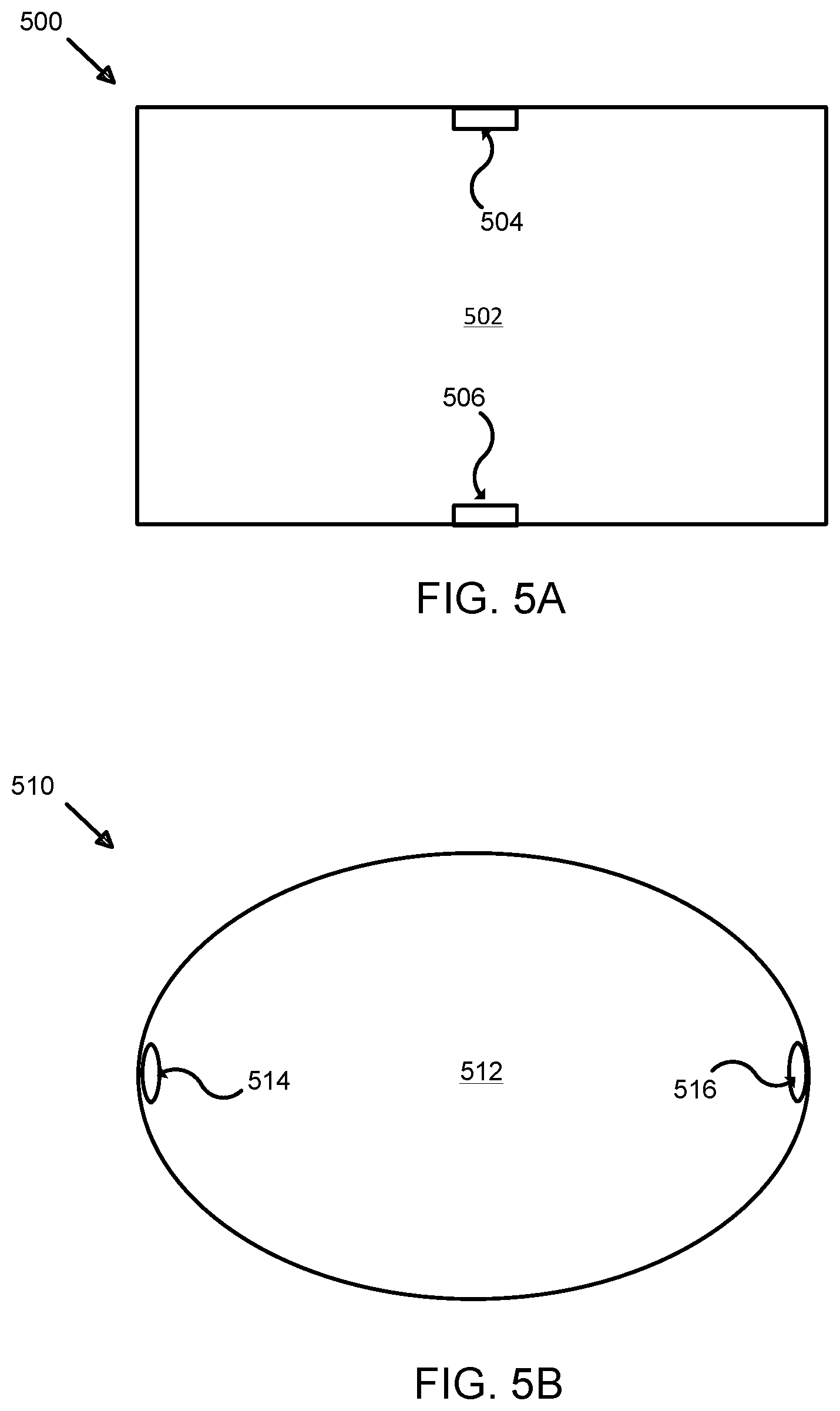

[0015] FIG. 5A illustrates a top view of an exemplary system for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

[0016] FIG. 5B illustrates an alternative top view of an exemplary system for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

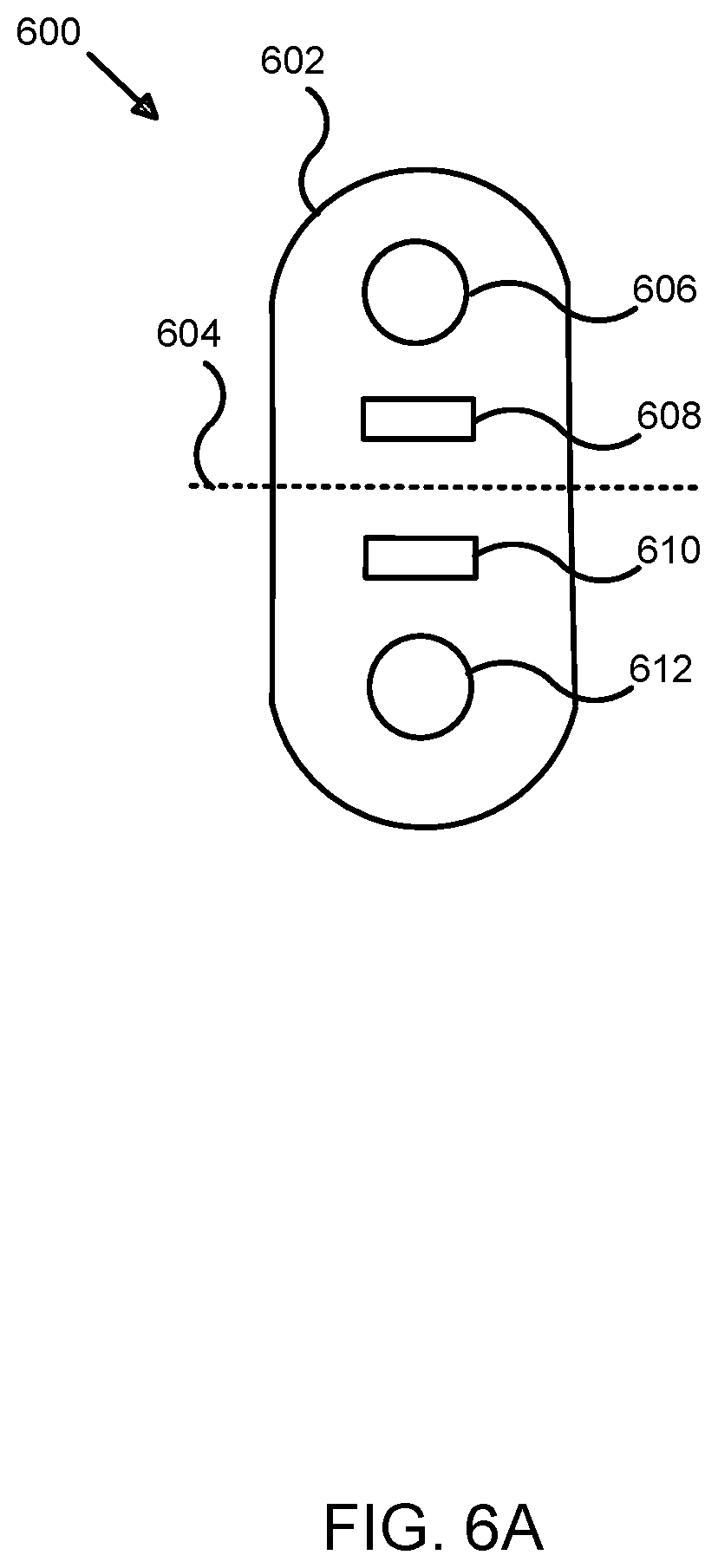

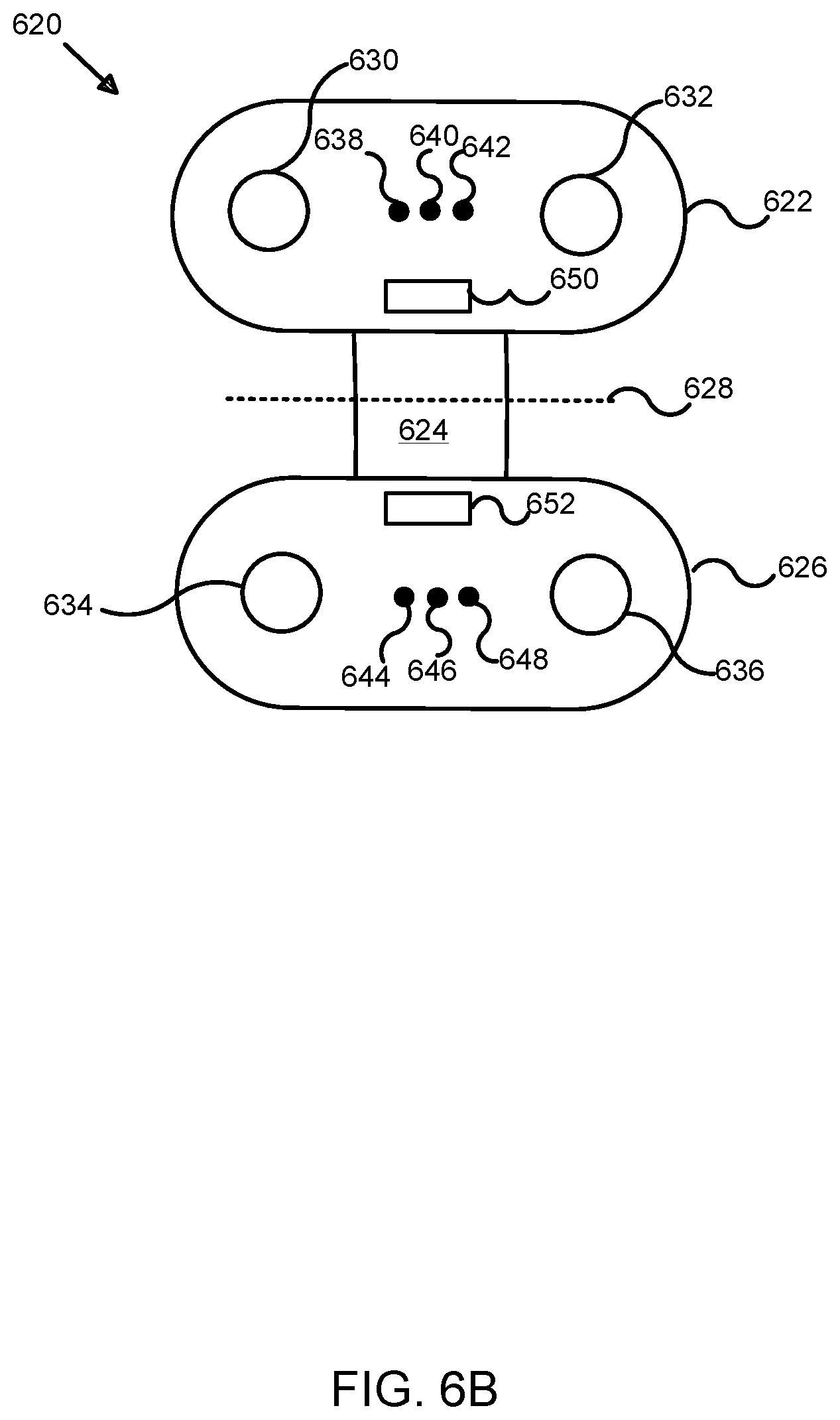

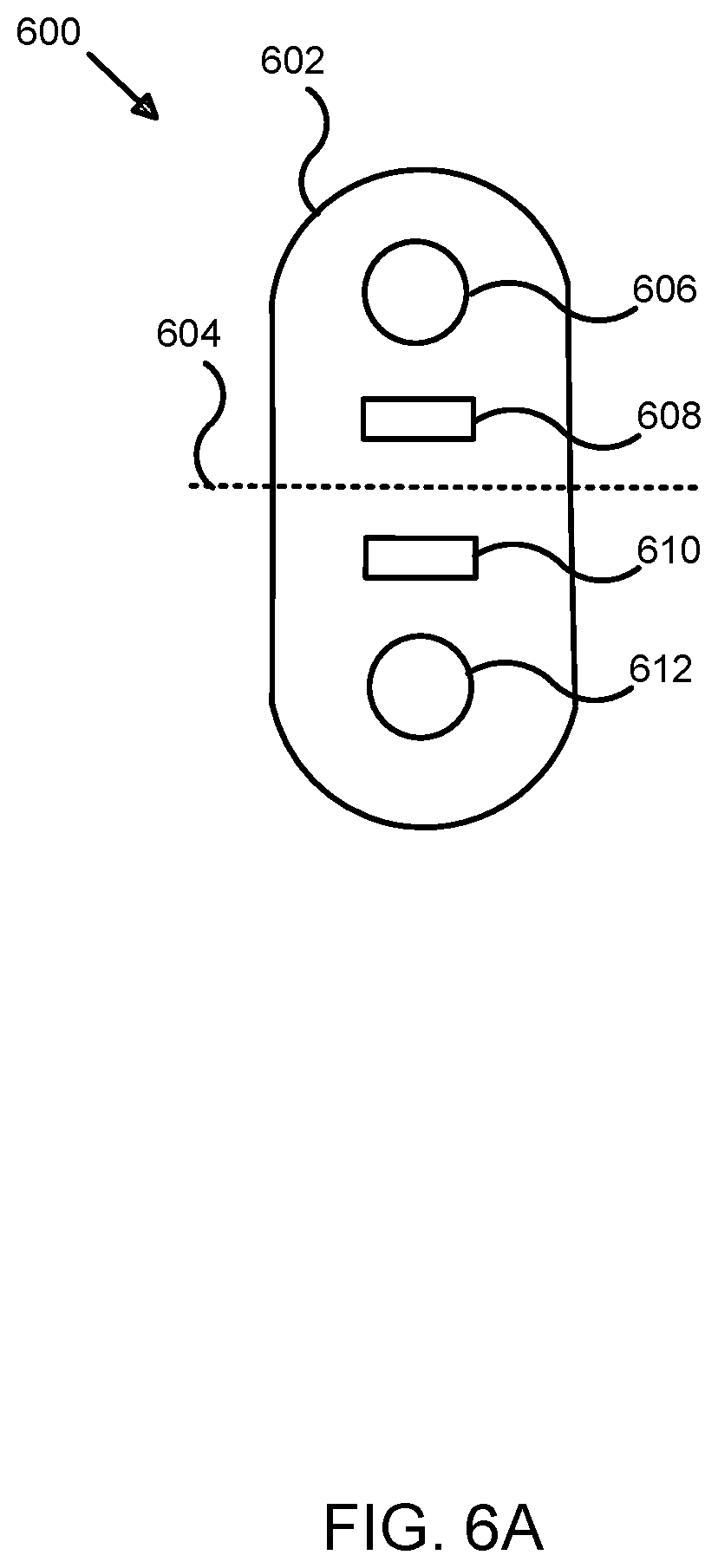

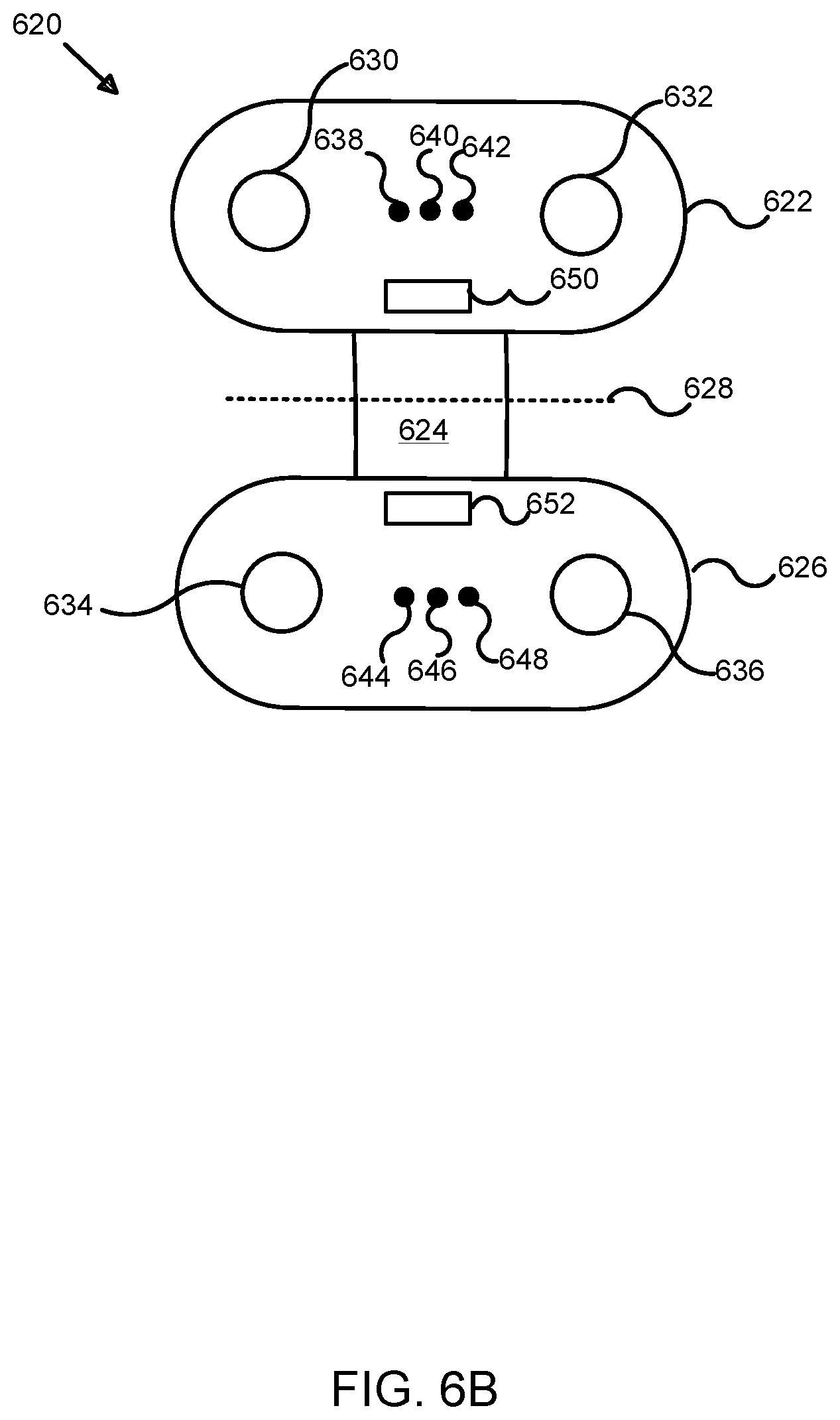

[0017] FIG. 6A illustrates an exemplary sensor array for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

[0018] FIG. 6B illustrates an alternative exemplary sensor array for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

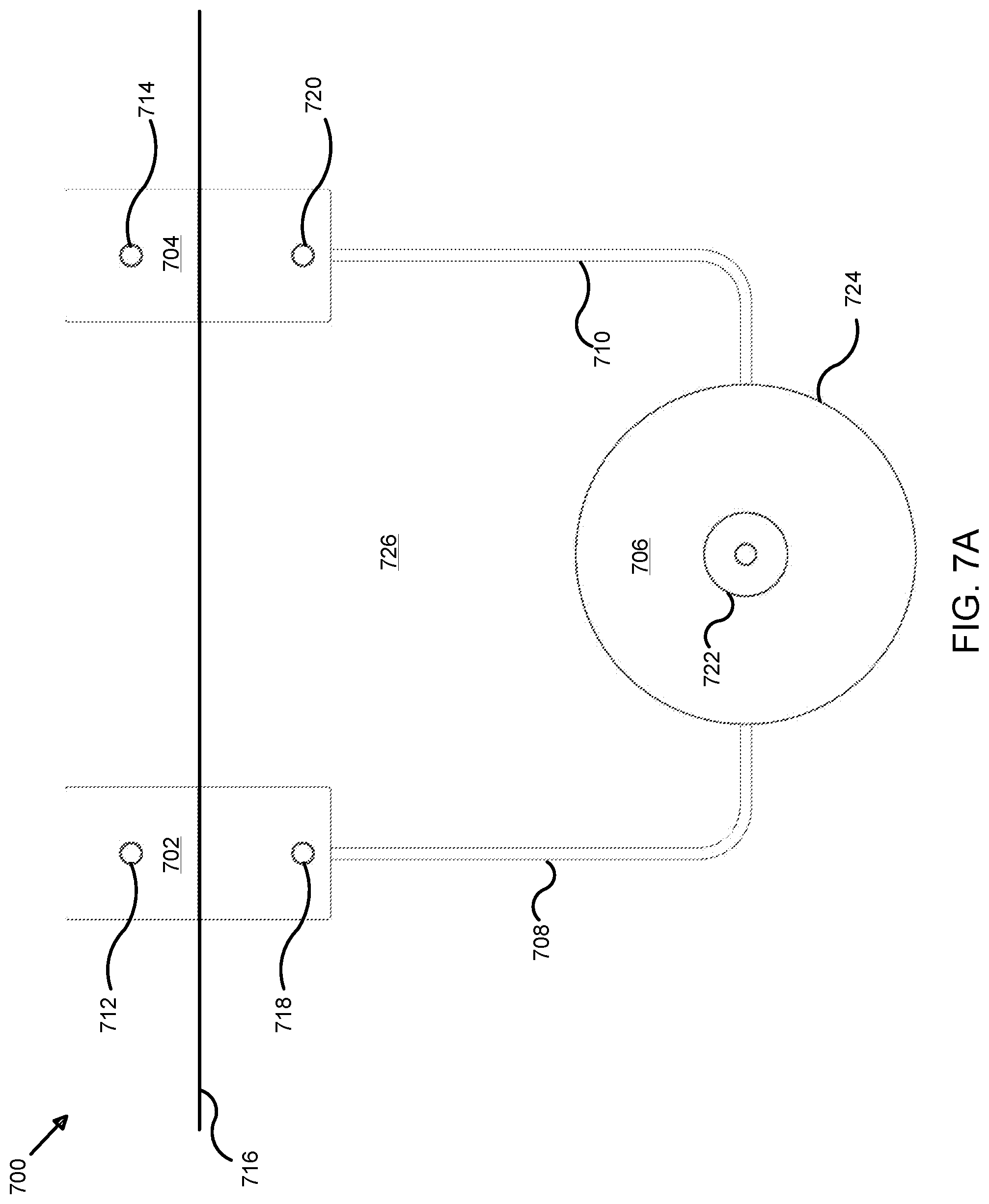

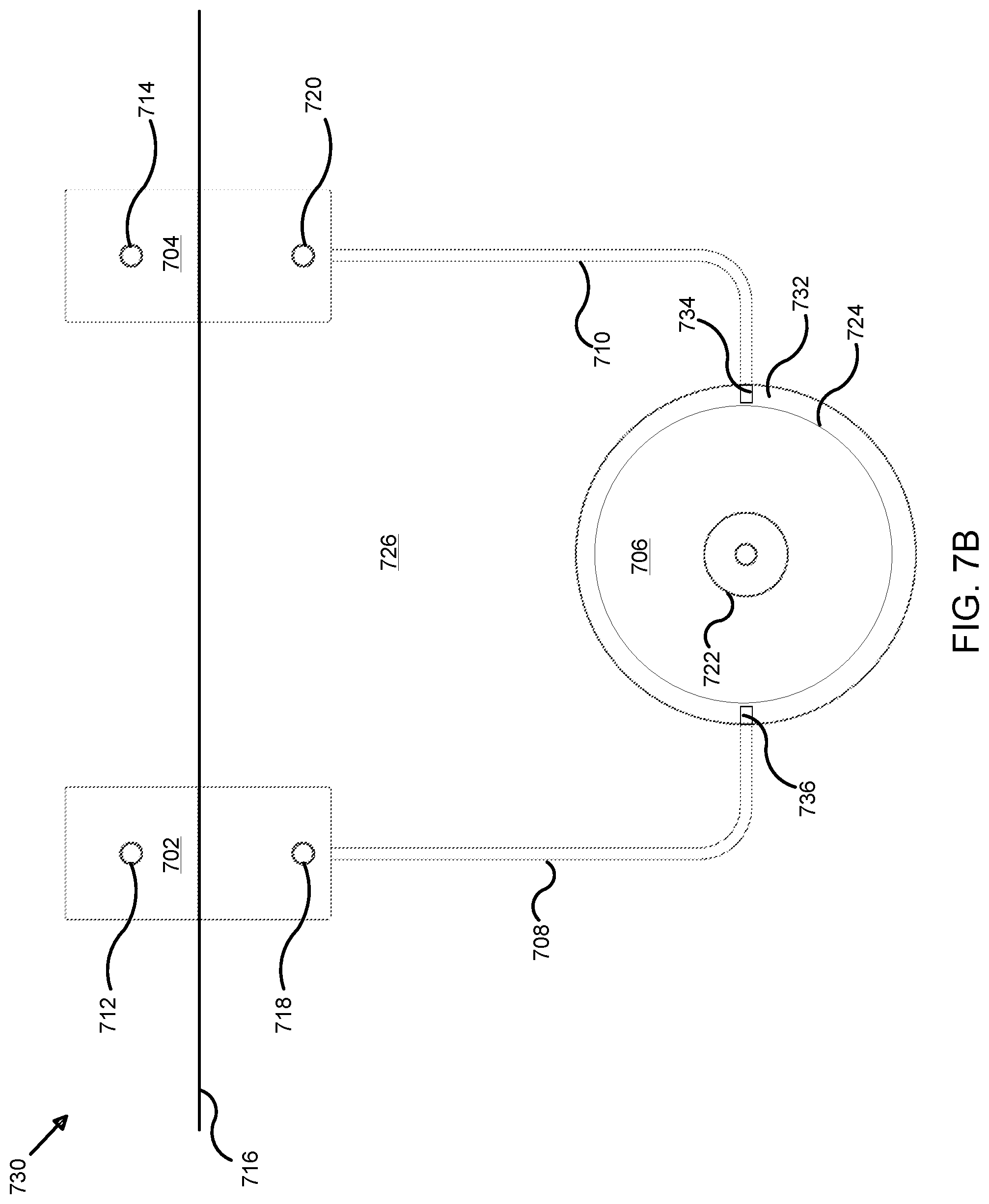

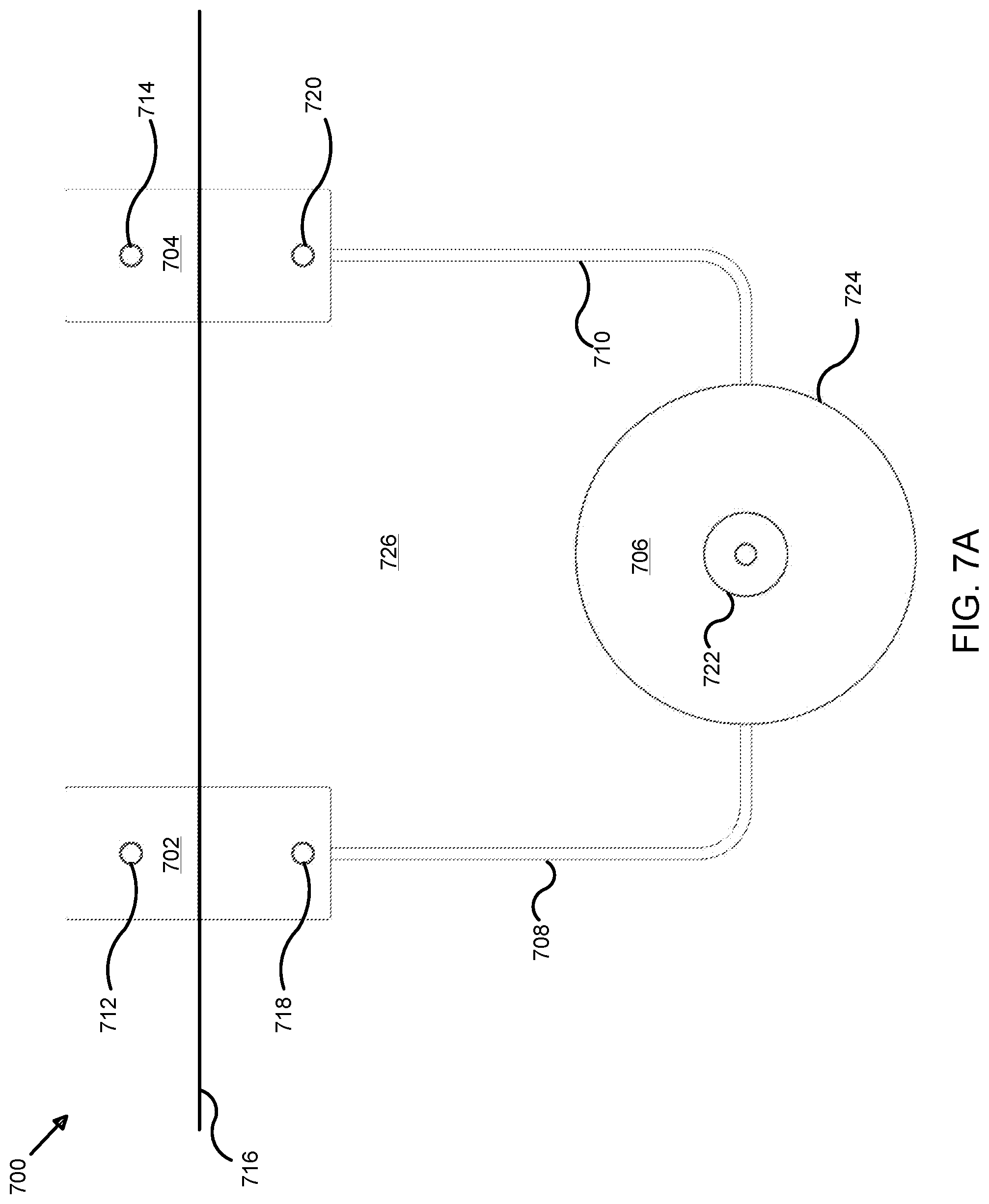

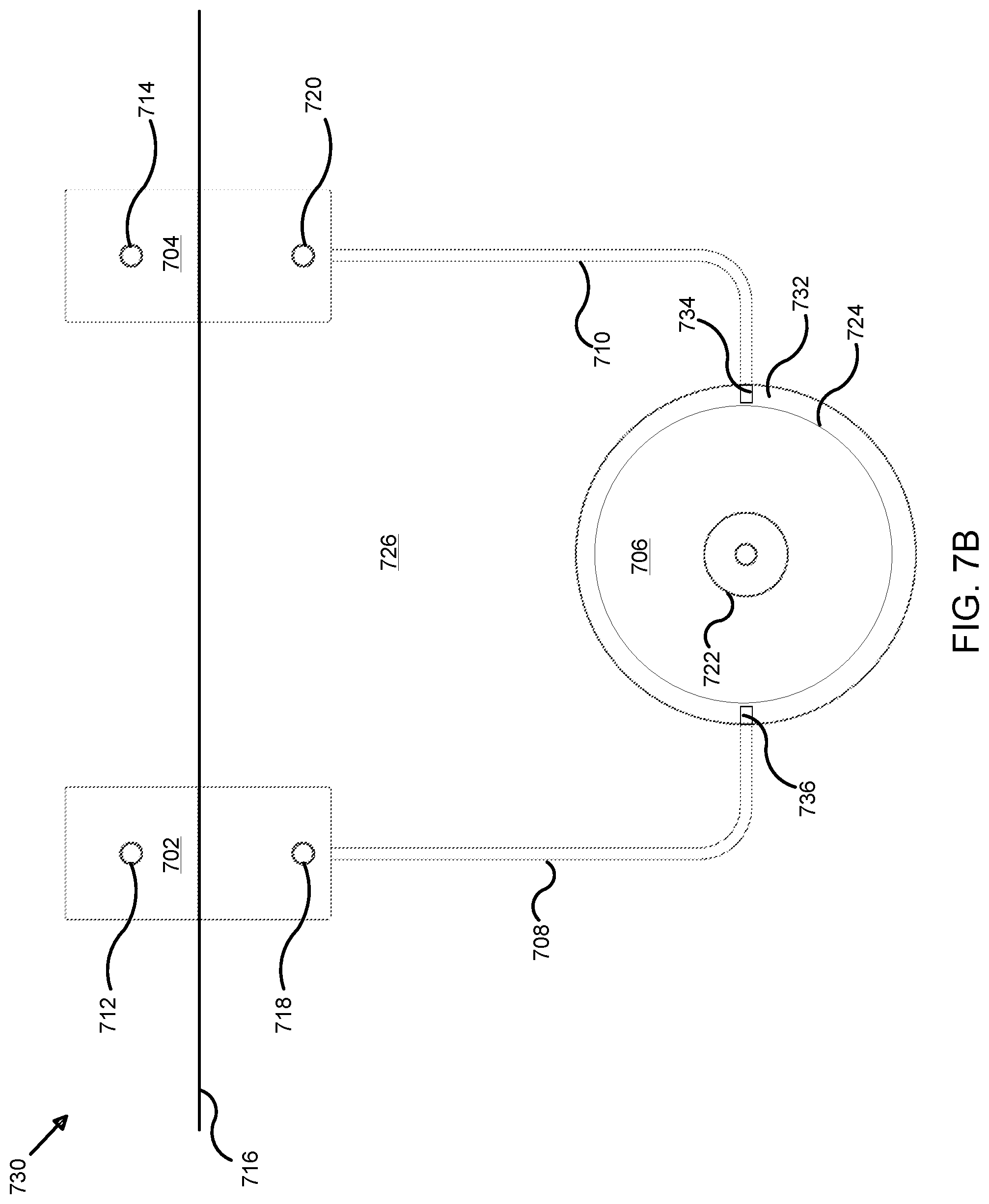

[0019] FIG. 7A illustrates an exemplary local system for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

[0020] FIG. 7B illustrates an alternative exemplary local system for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

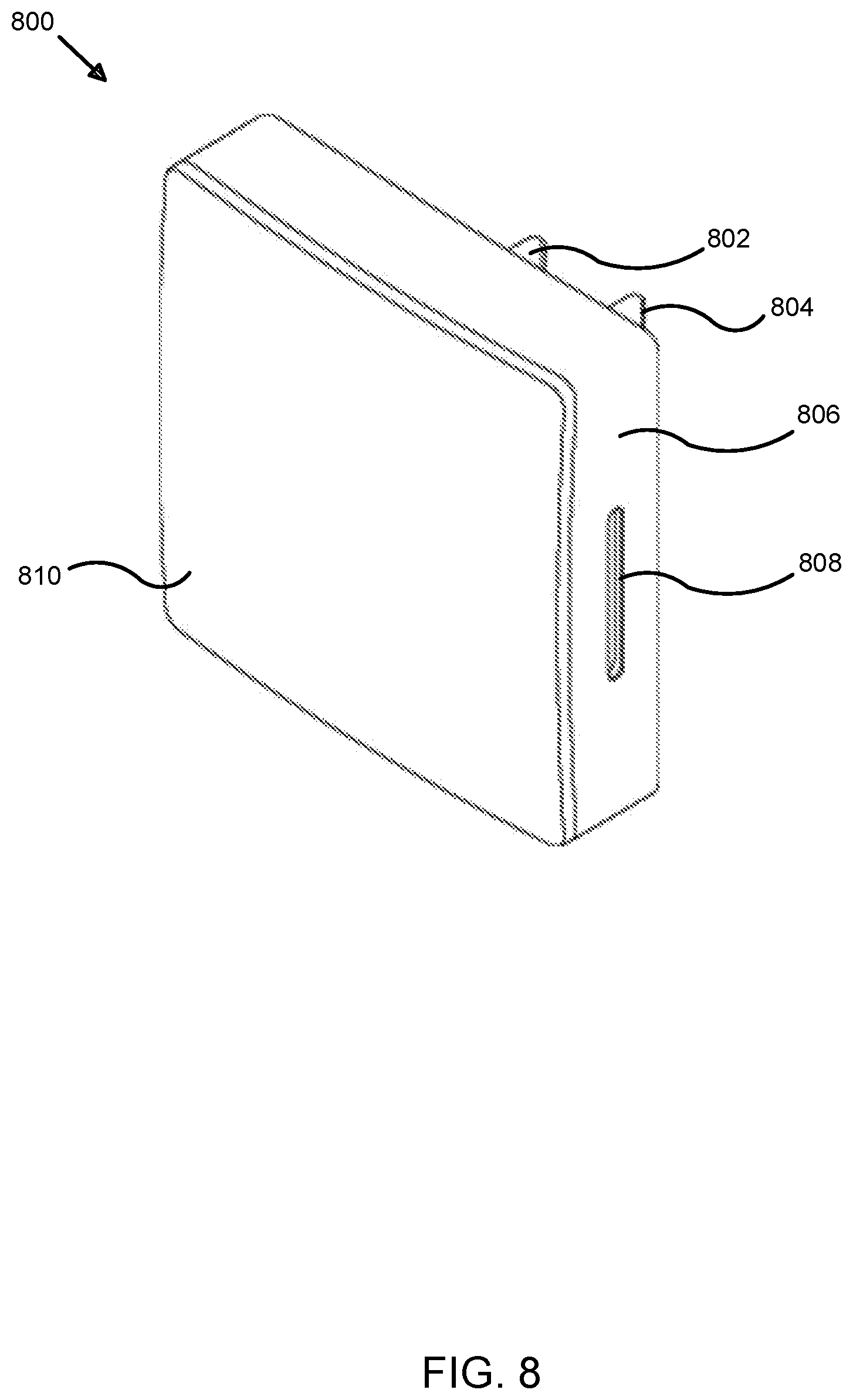

[0021] FIG. 8 illustrates a perspective view of an exemplary hub for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

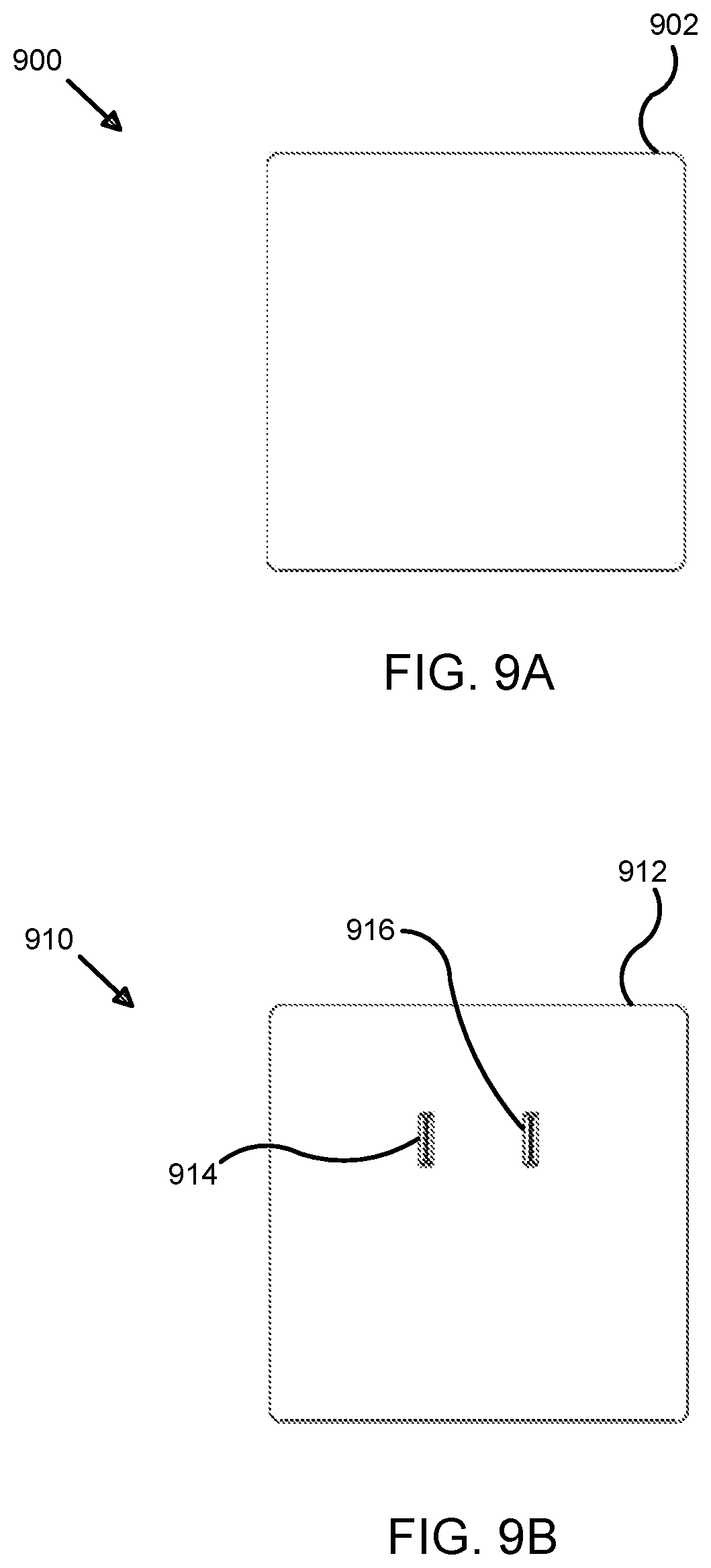

[0022] FIG. 9A illustrates a front view of an exemplary hub for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

[0023] FIG. 9B illustrates a rear view of an exemplary hub for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

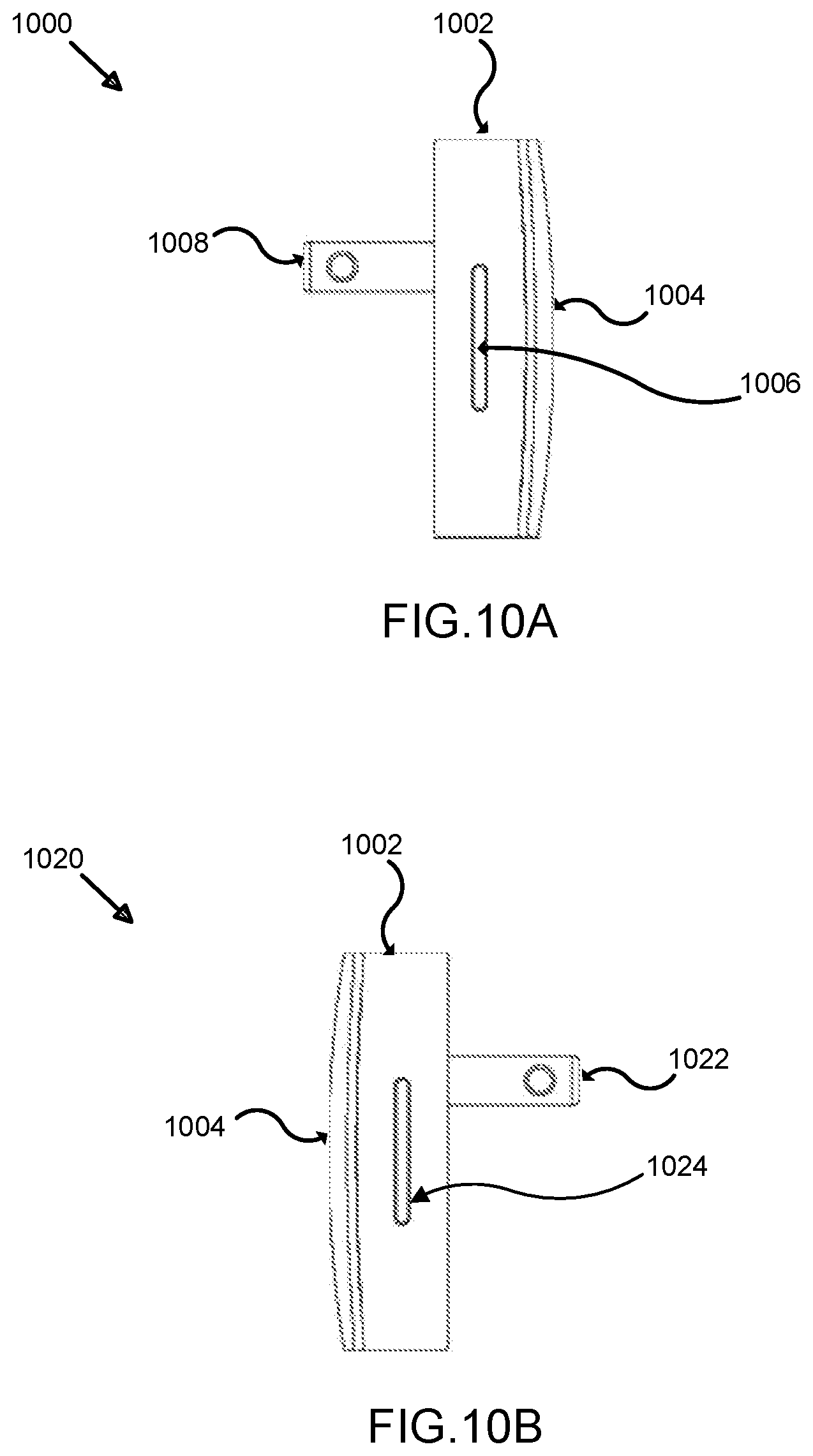

[0024] FIG. 10A illustrates a right side view of an exemplary hub for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

[0025] FIG. 10B illustrates a left side view of an exemplary hub for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

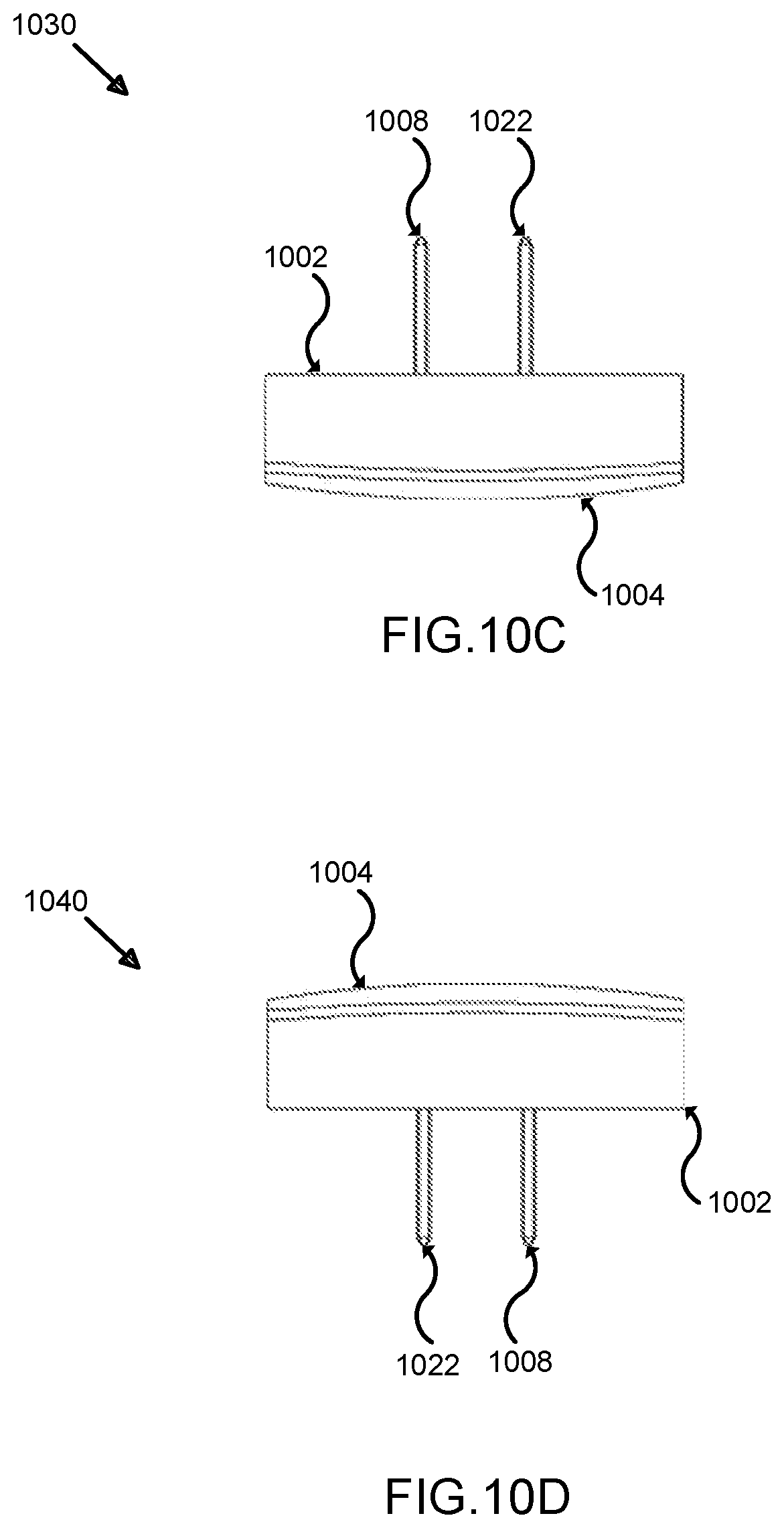

[0026] FIG. 10C illustrates a top view of an exemplary hub for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

[0027] FIG. 10D illustrates a bottom view of an exemplary hub for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

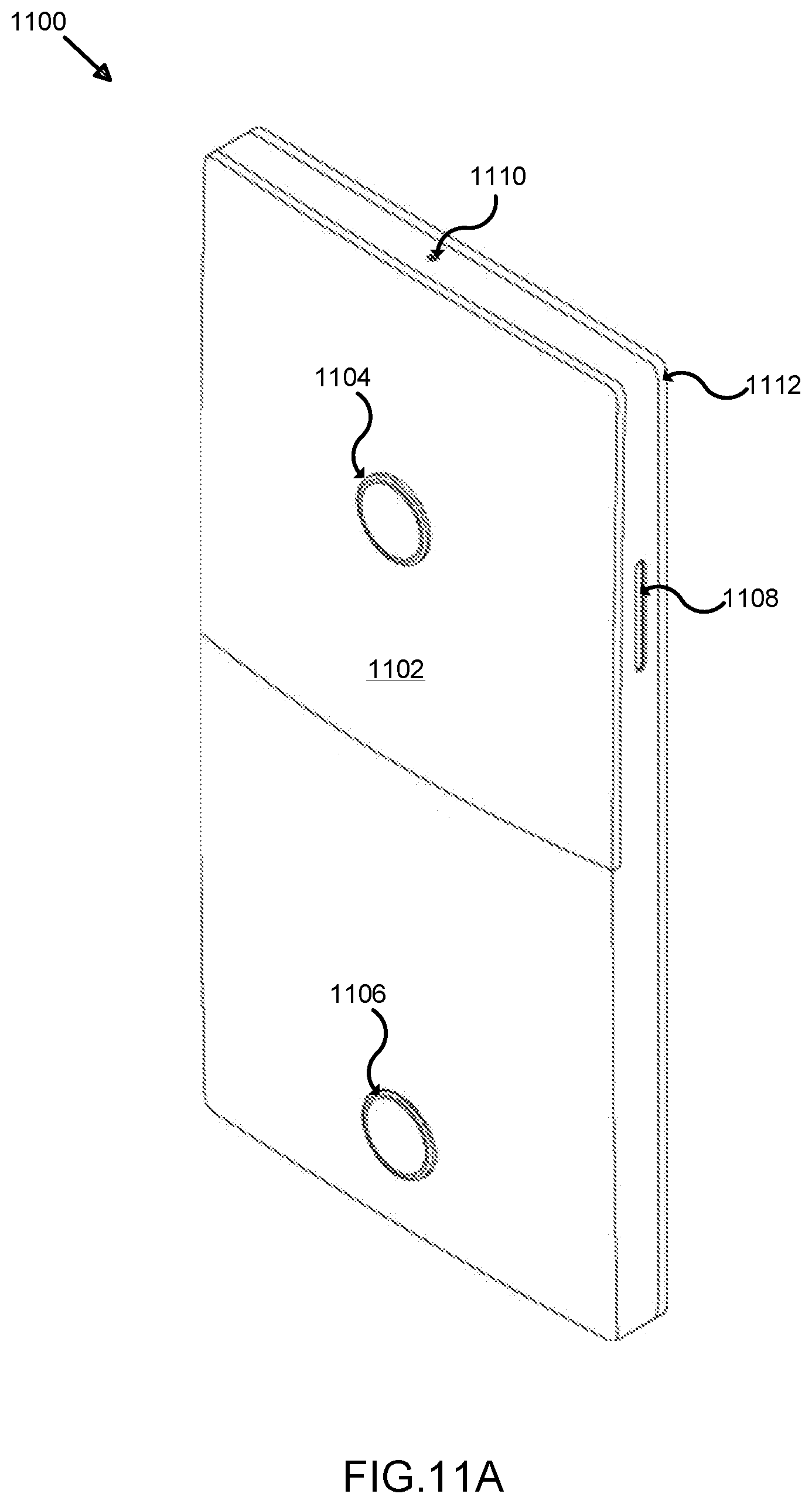

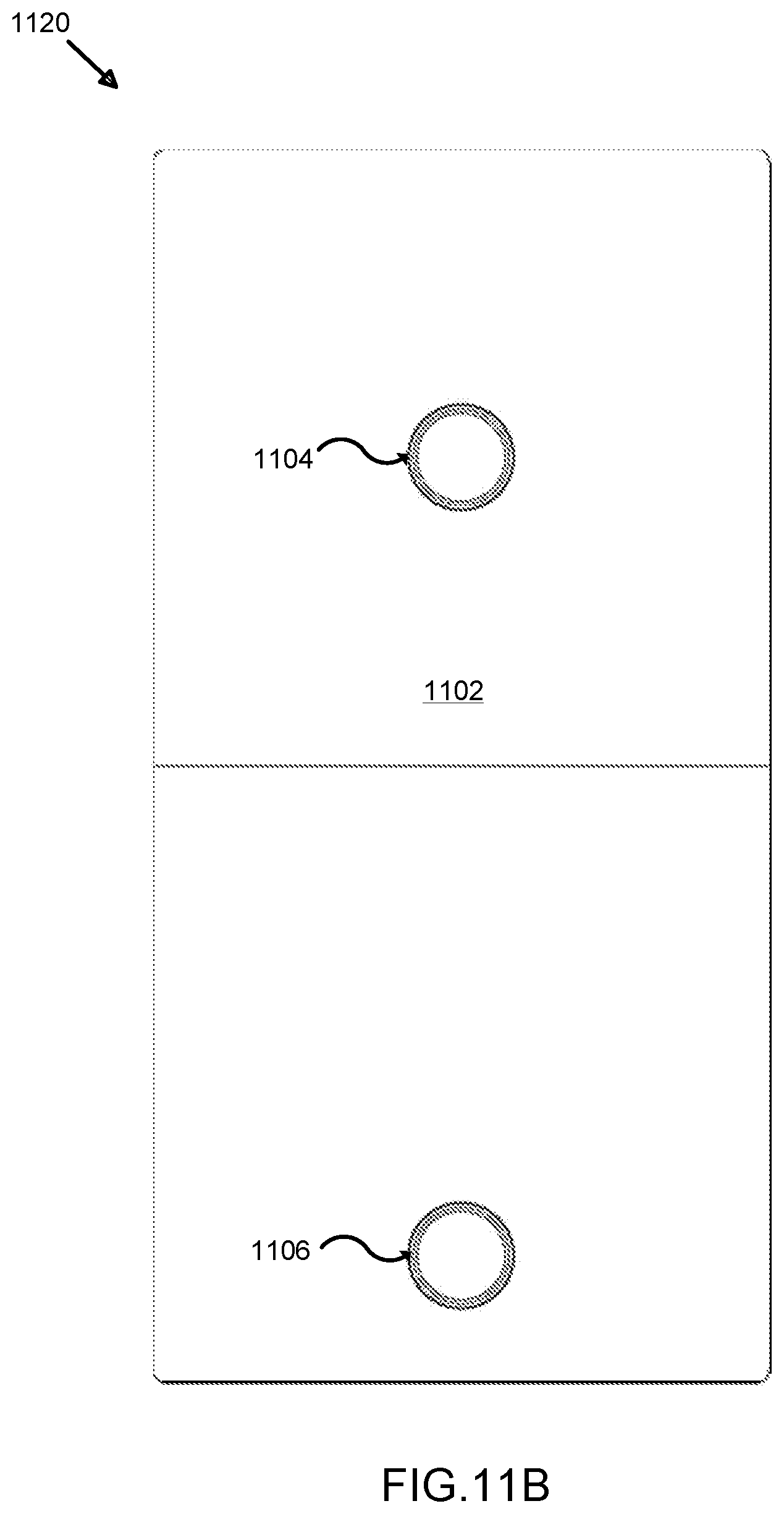

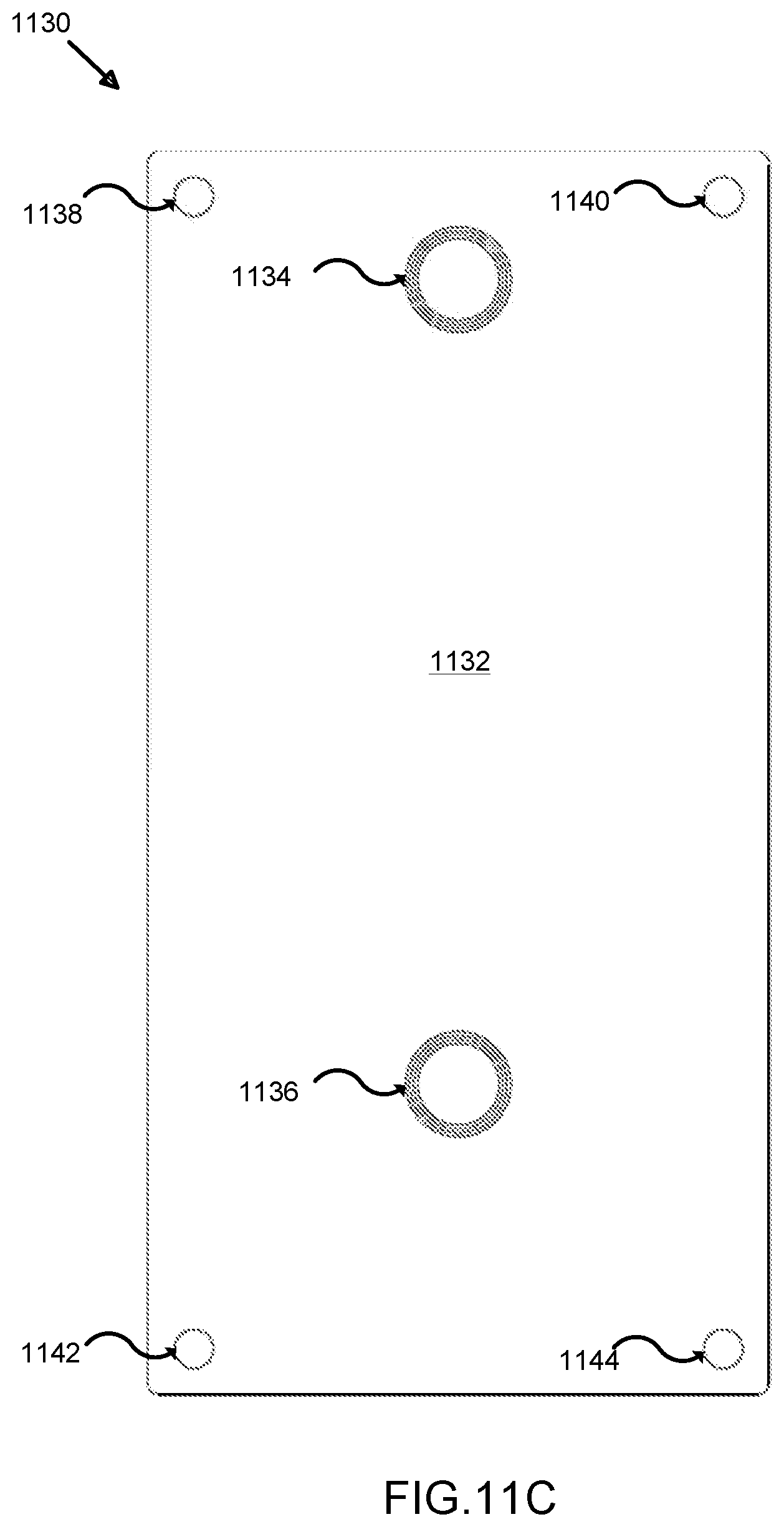

[0028] FIG. 11A illustrates a perspective view of an exemplary tile unit for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

[0029] FIG. 11B illustrates a front view of an exemplary tile unit for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

[0030] FIG. 11C illustrates a rear view of an exemplary tile unit for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

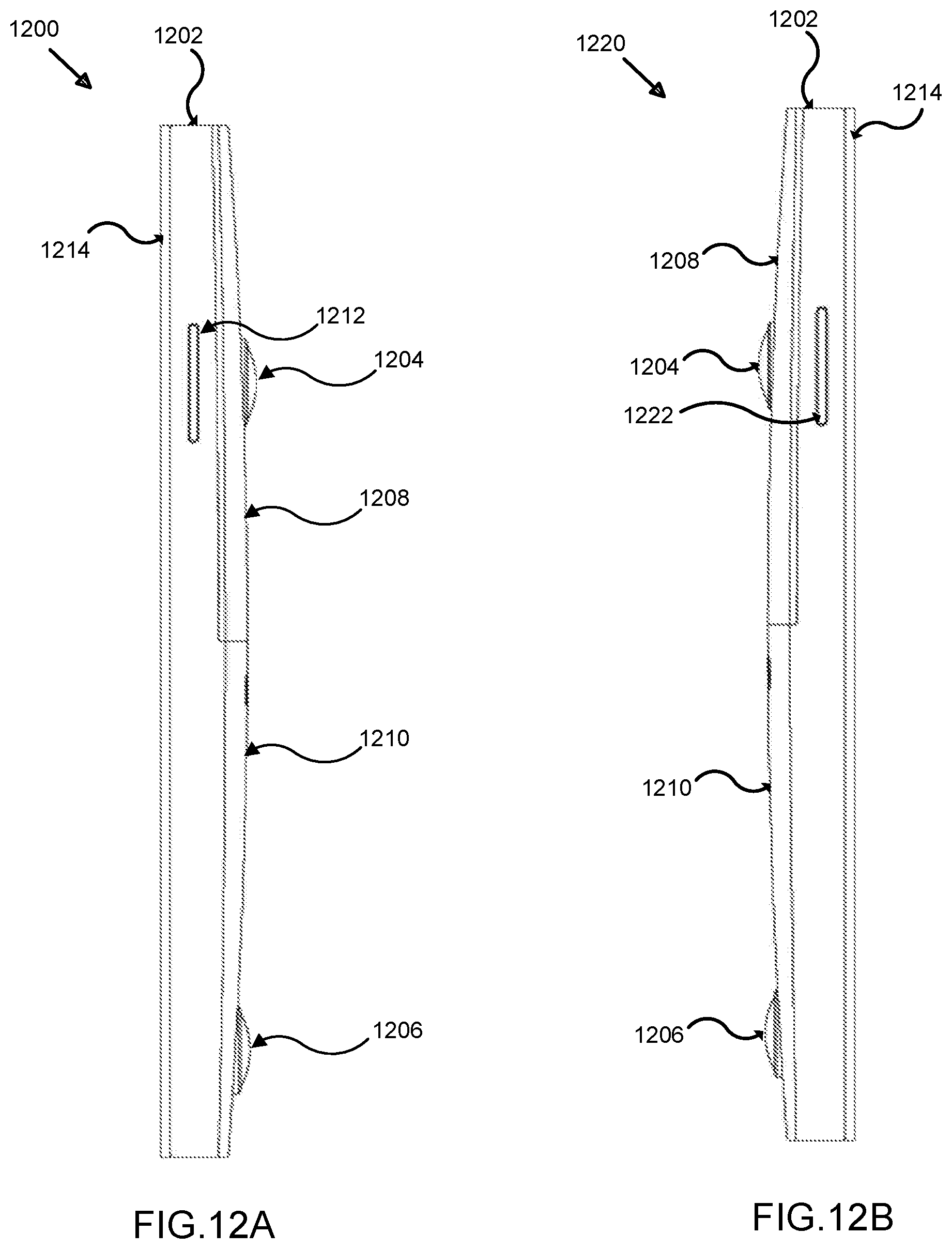

[0031] FIG. 12A illustrates a right side view of an exemplary tile unit for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

[0032] FIG. 12B illustrates a left side view of an exemplary tile unit for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

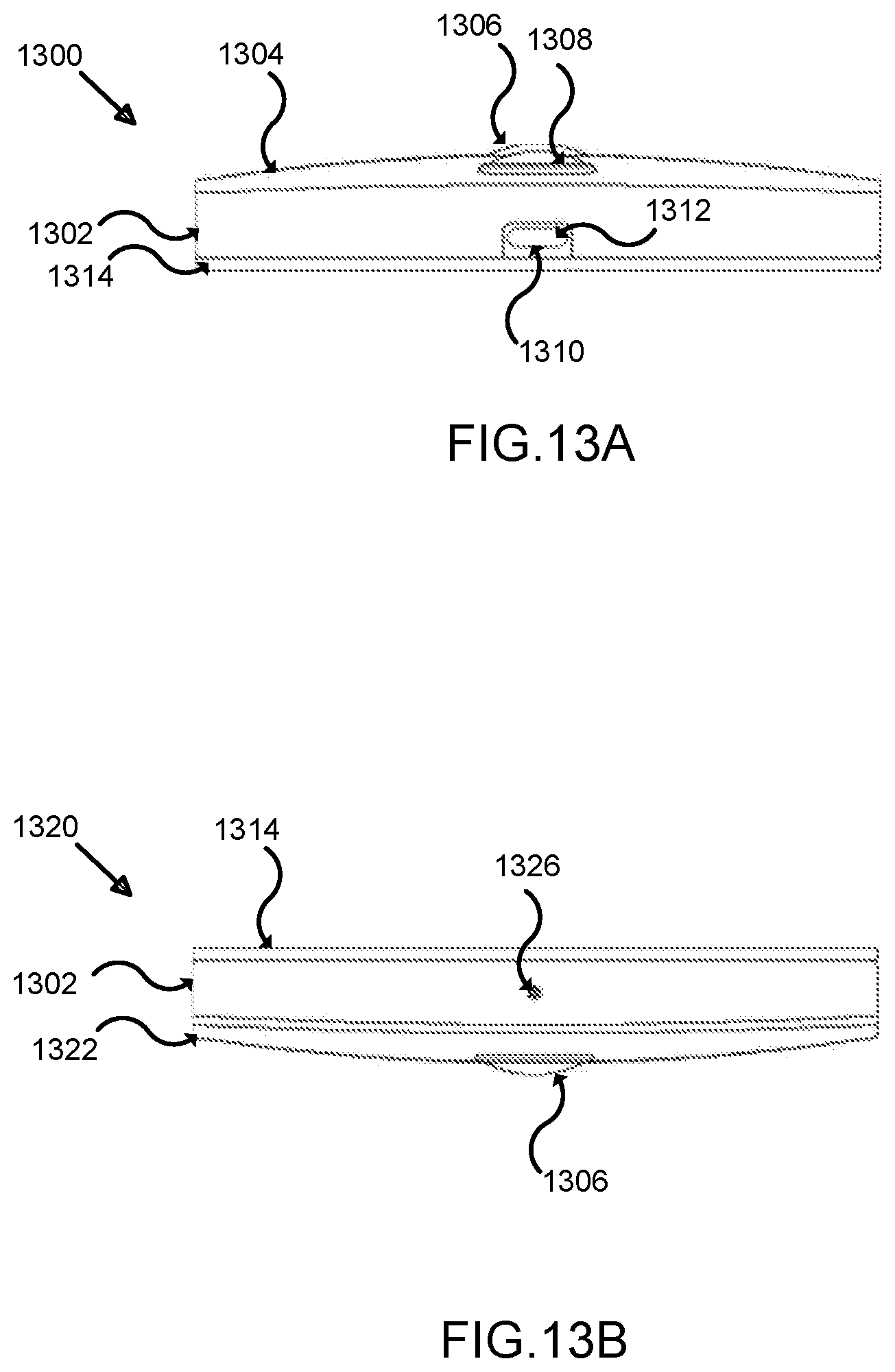

[0033] FIG. 13A illustrates a bottom view of an exemplary tile unit for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

[0034] FIG. 13B illustrates a top view of an exemplary tile unit for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

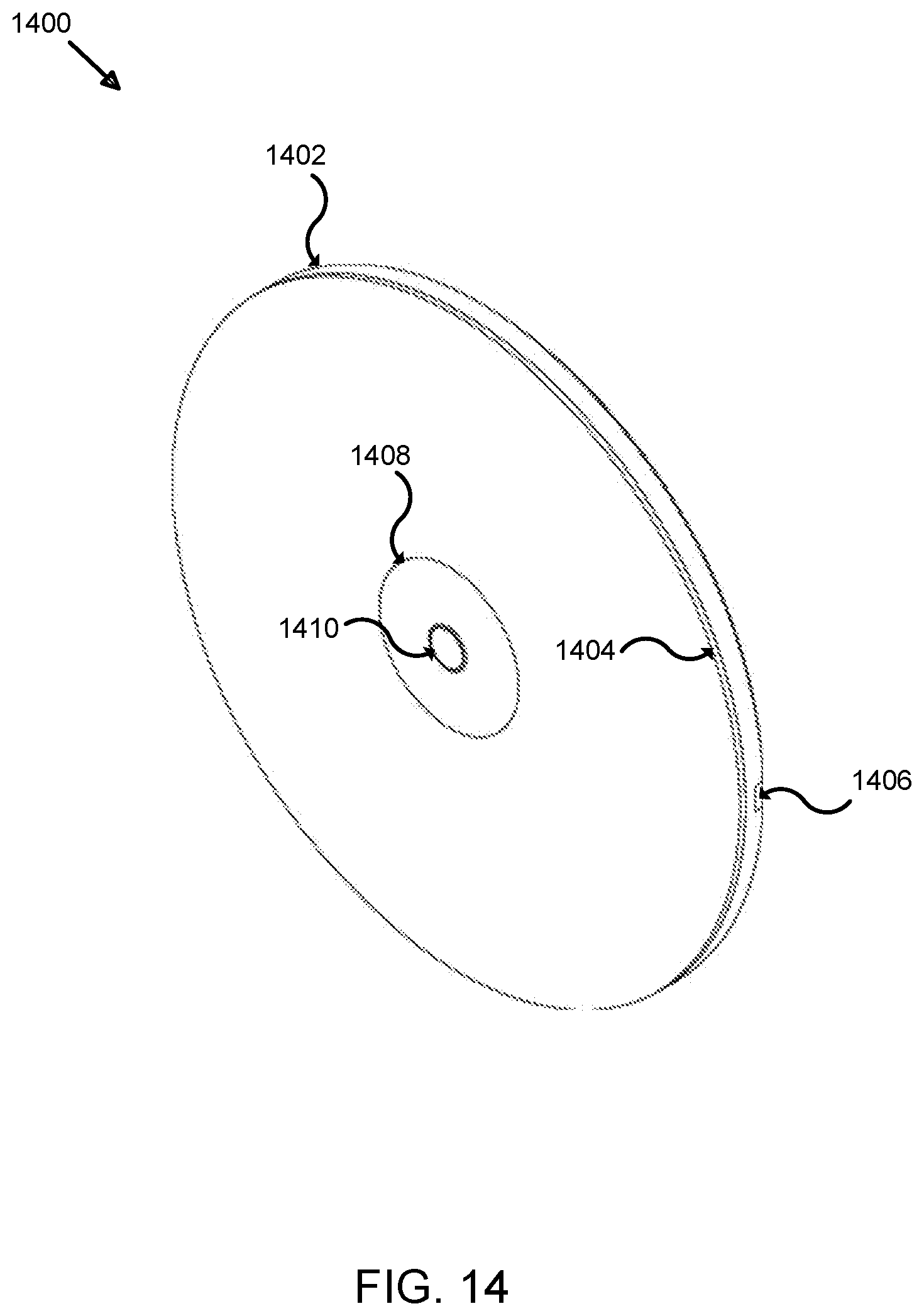

[0035] FIG. 14 illustrates a perspective view of a light unit for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

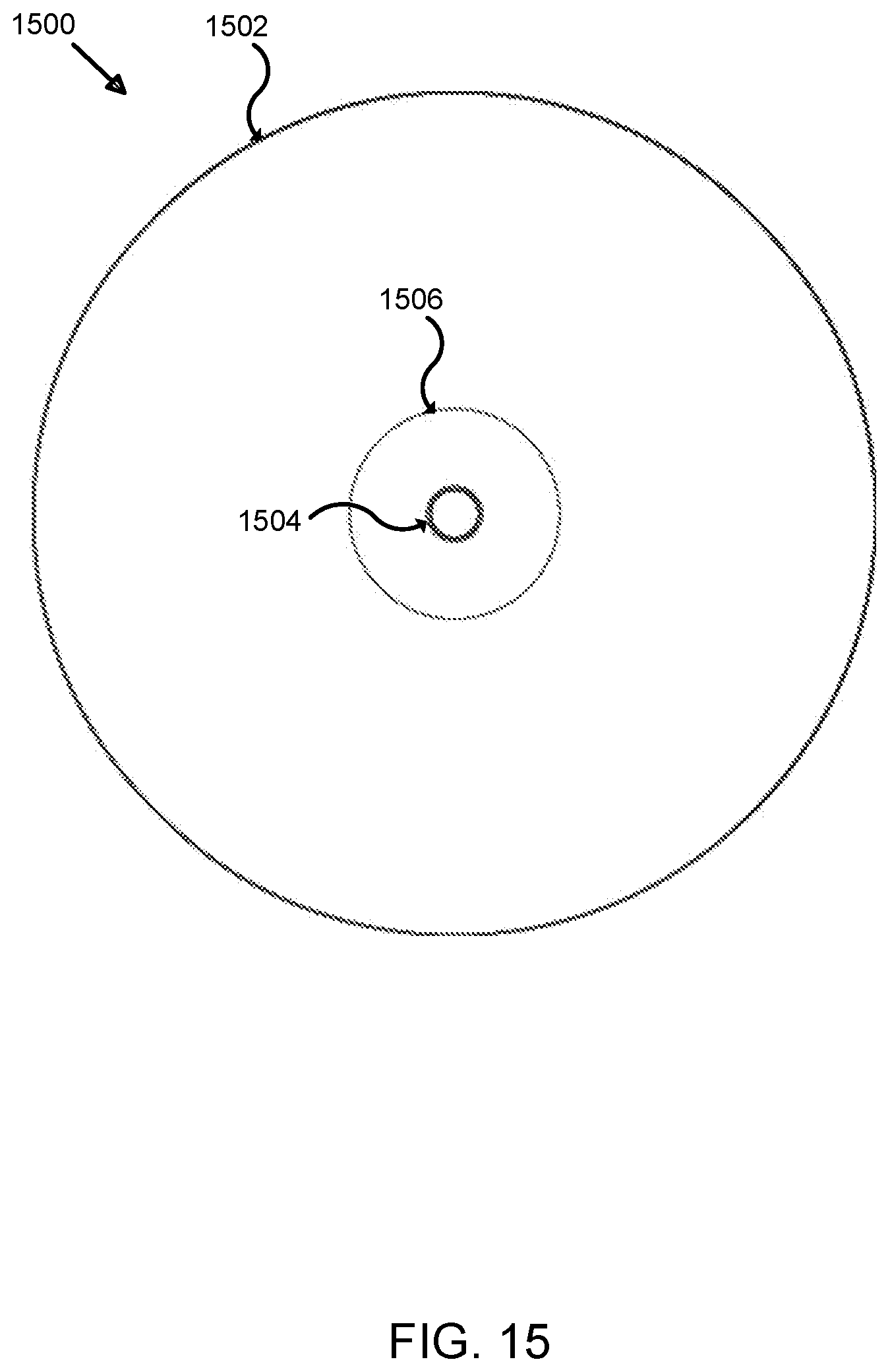

[0036] FIG. 15 illustrates a front view of a light unit for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

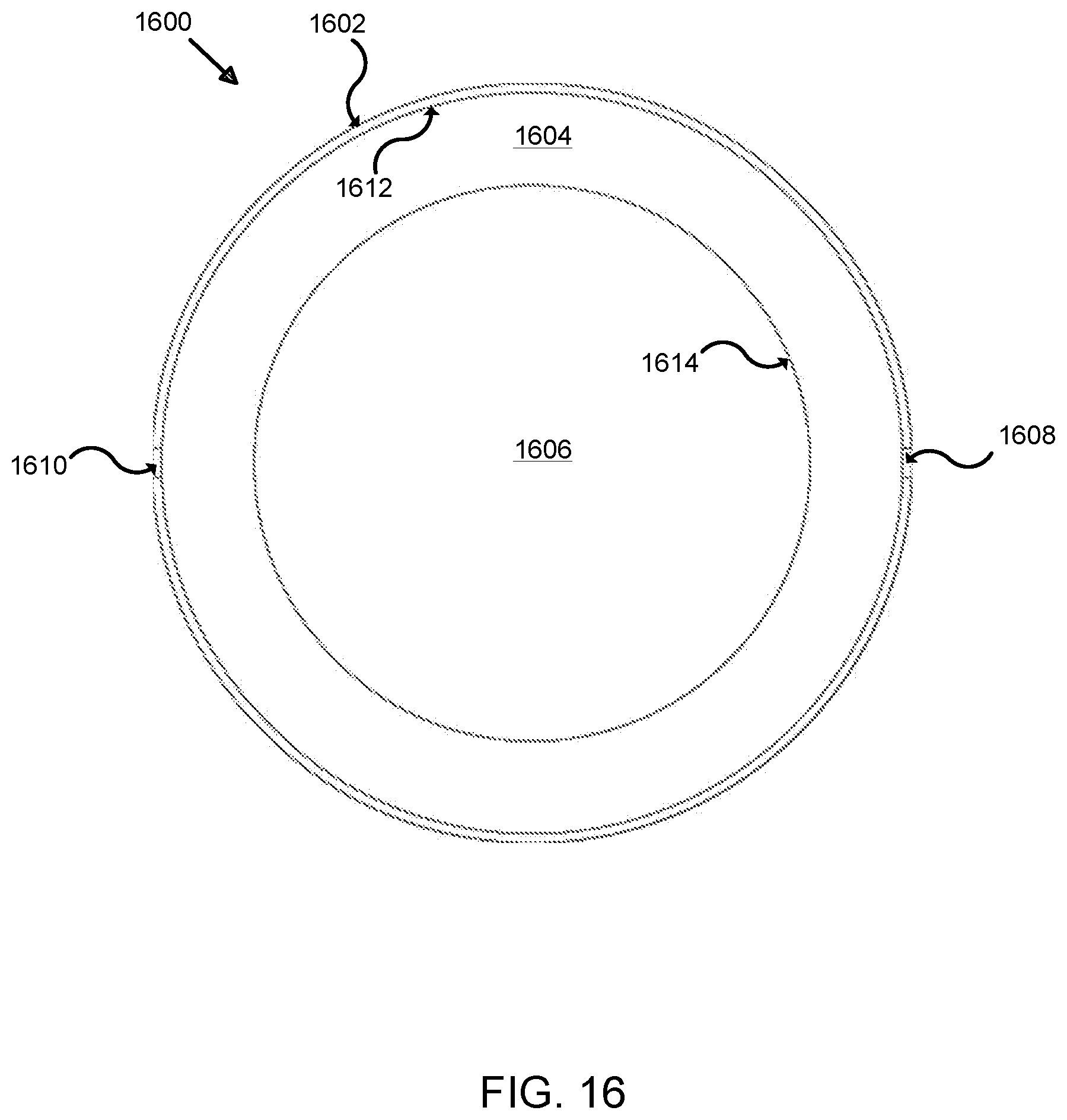

[0037] FIG. 16 illustrates a rear view of a light unit for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

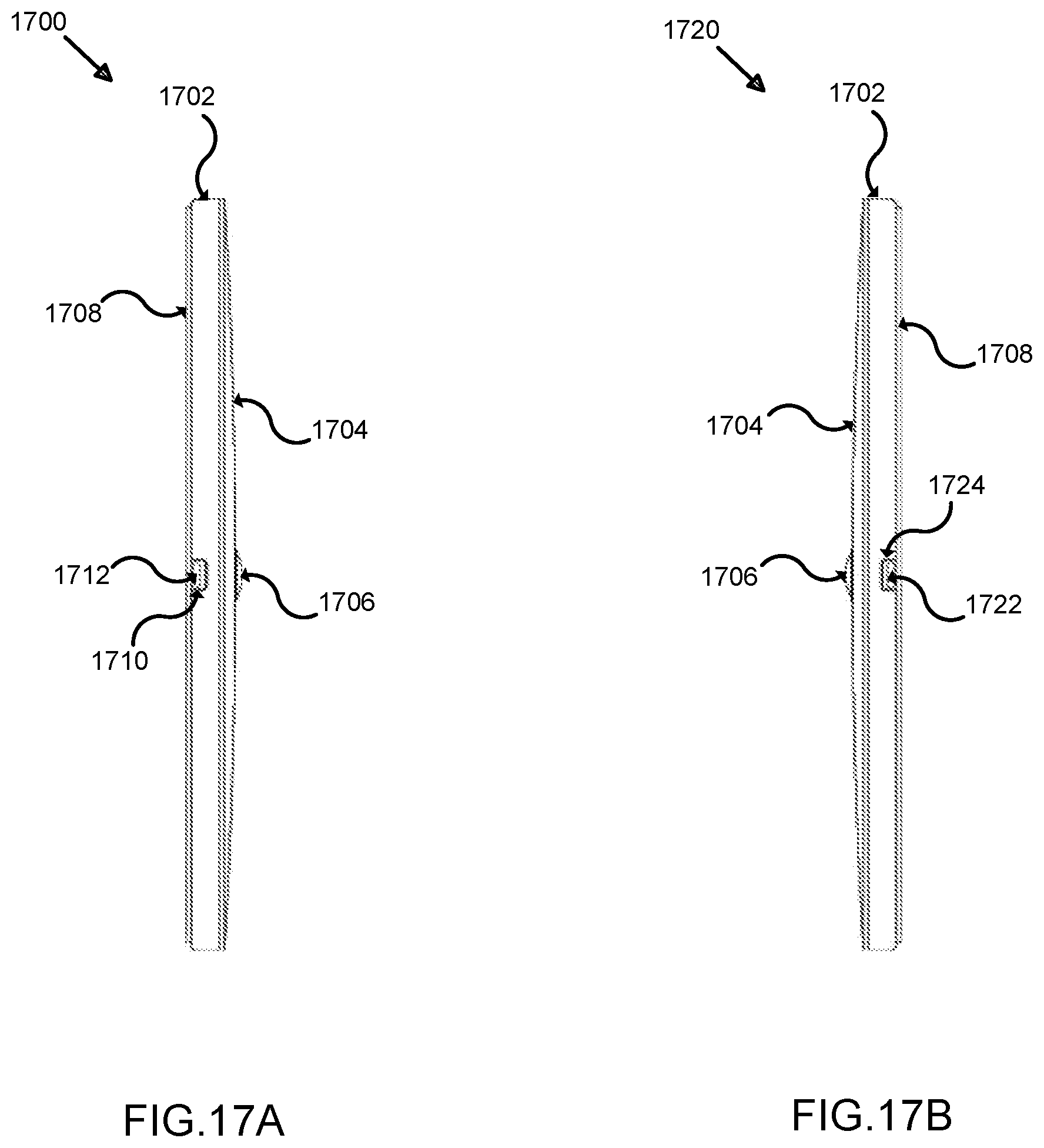

[0038] FIG. 17A illustrates a right side view of a light unit for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

[0039] FIG. 17B illustrates a left side view of a light unit for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

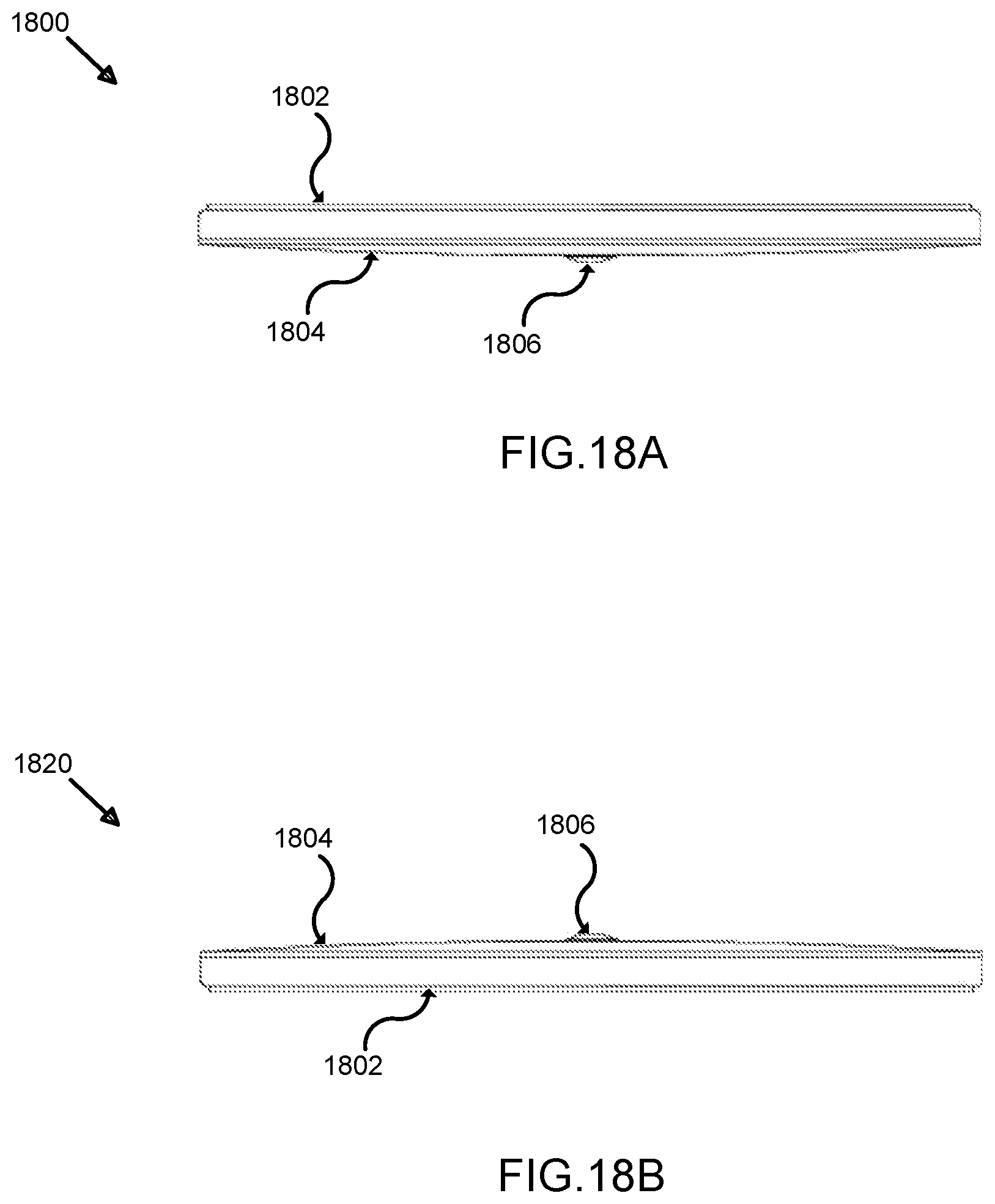

[0040] FIG. 18A illustrates a top view of a light unit for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

[0041] FIG. 18B illustrates a bottom view of a light unit for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

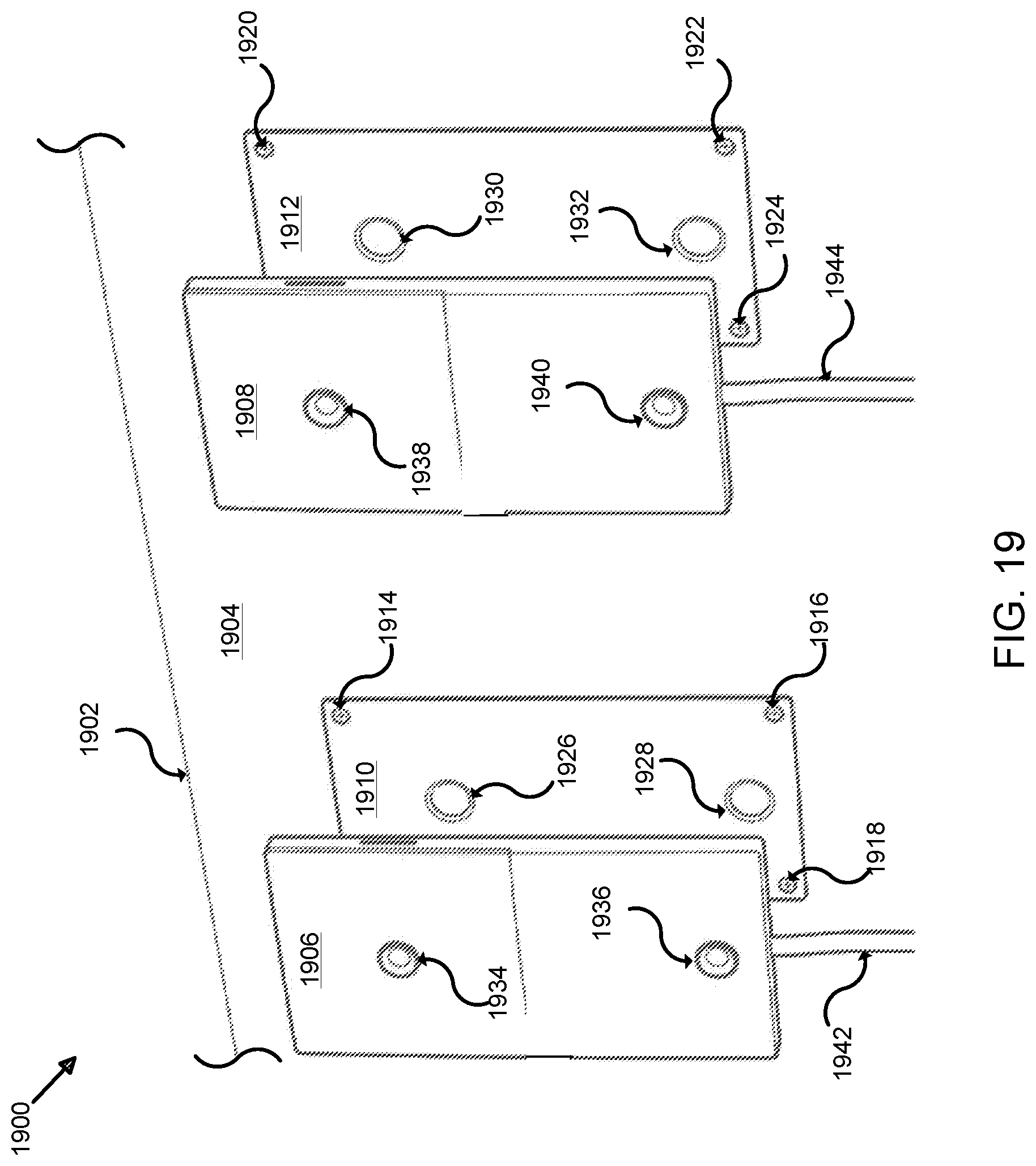

[0042] FIG. 19 illustrates a perspective view of a dual exemplary tile unit for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

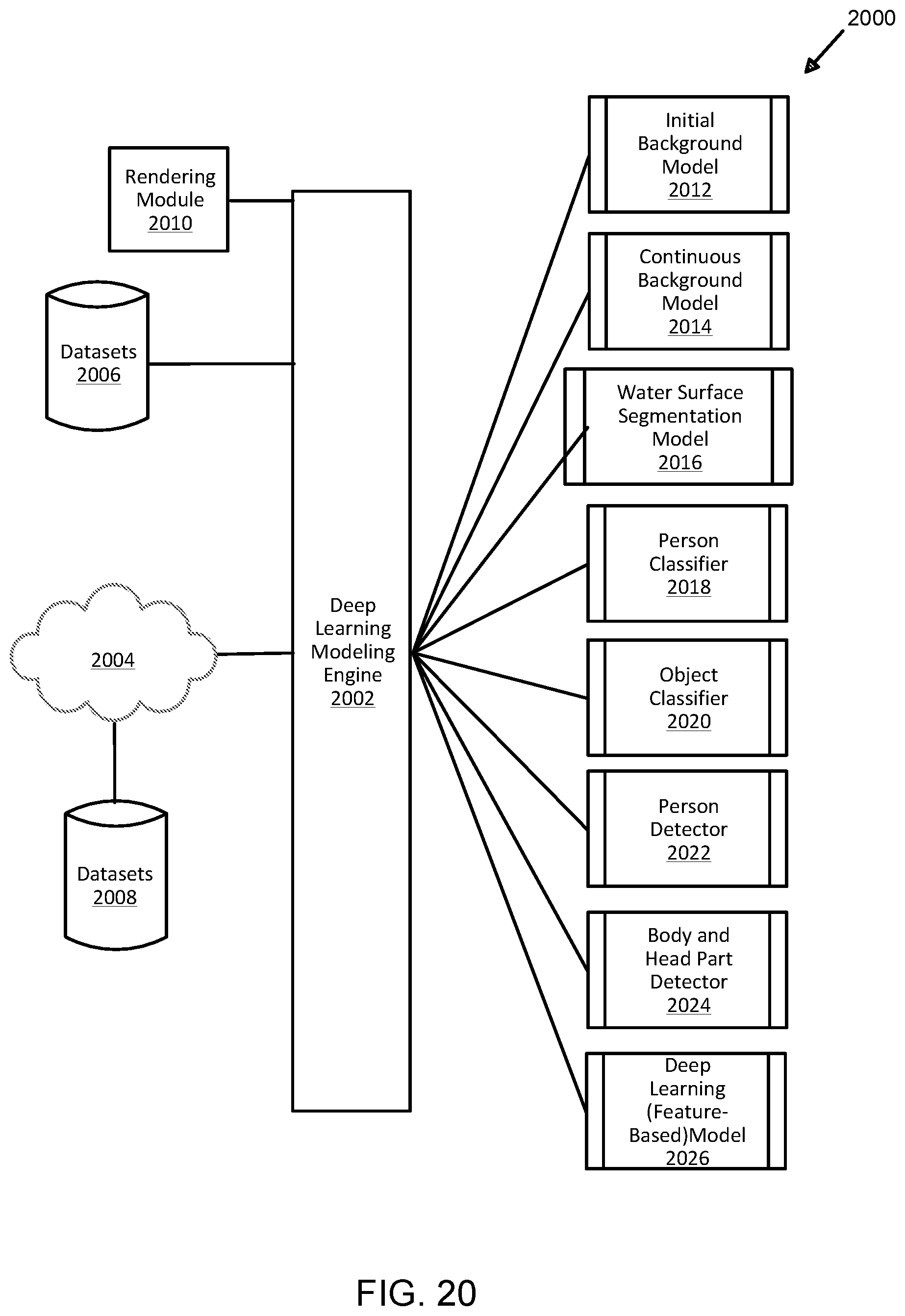

[0043] FIG. 20 illustrates an exemplary application architecture for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

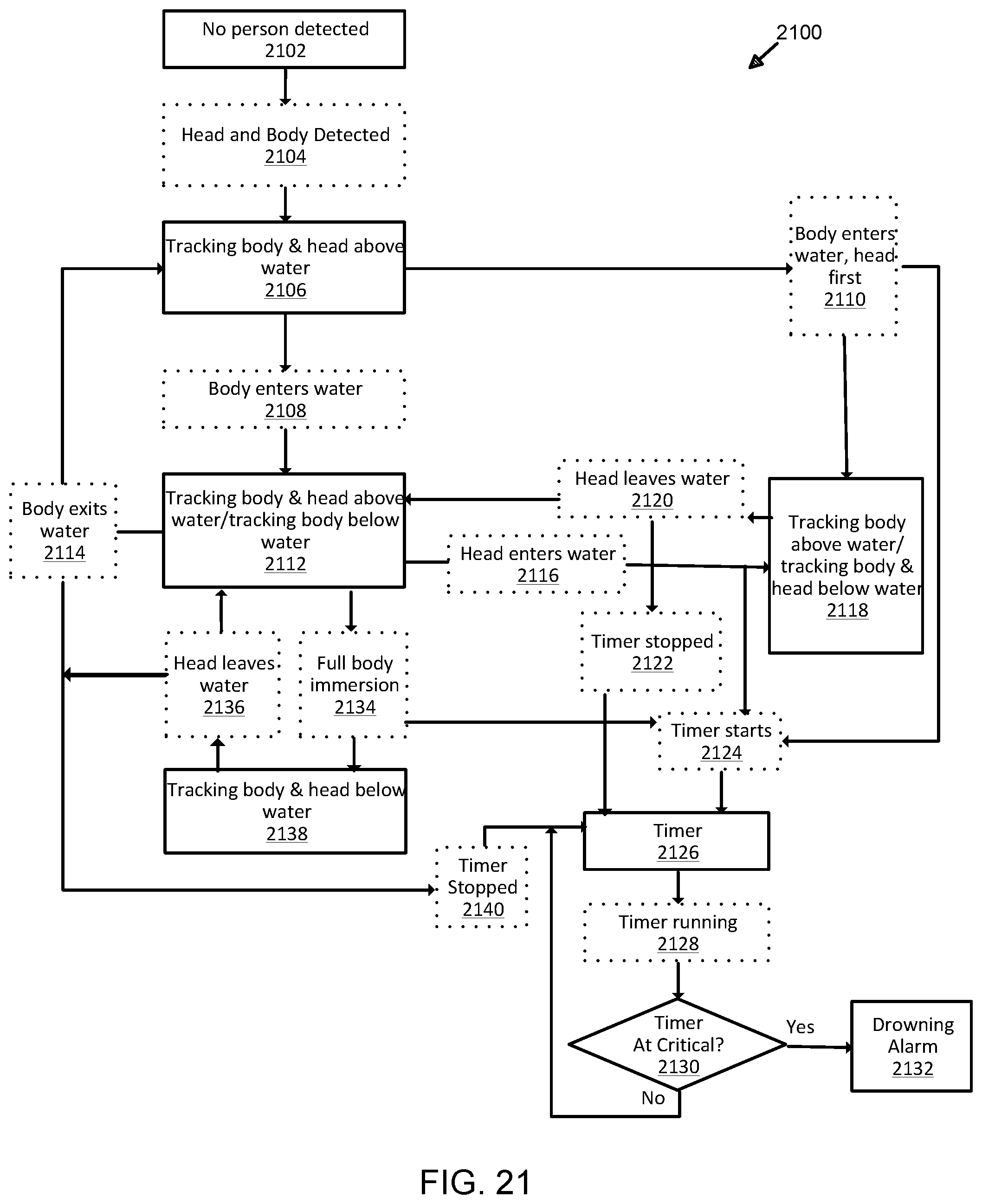

[0044] FIG. 21 illustrates an exemplary state diagram for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

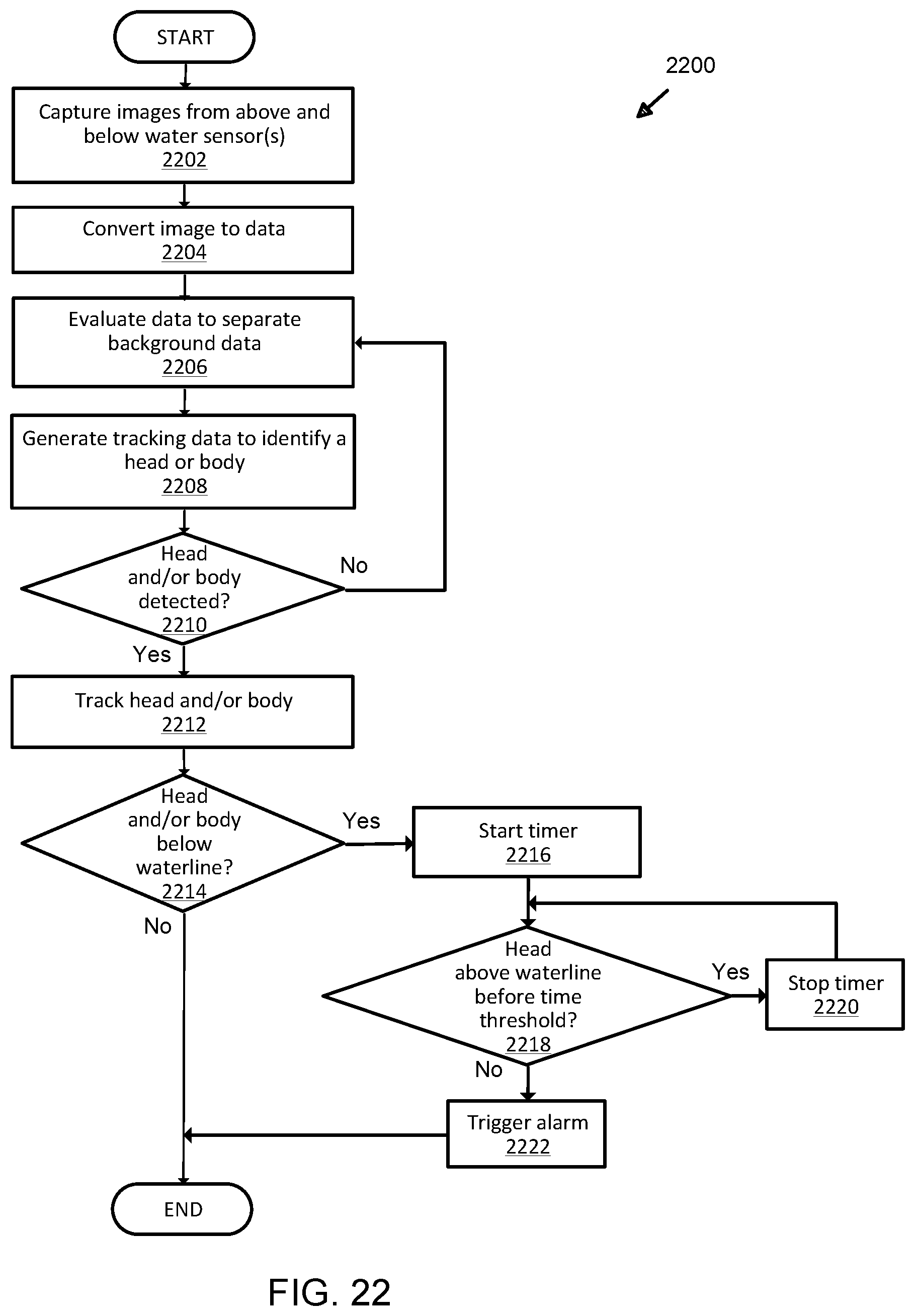

[0045] FIG. 22 illustrates an exemplary process for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

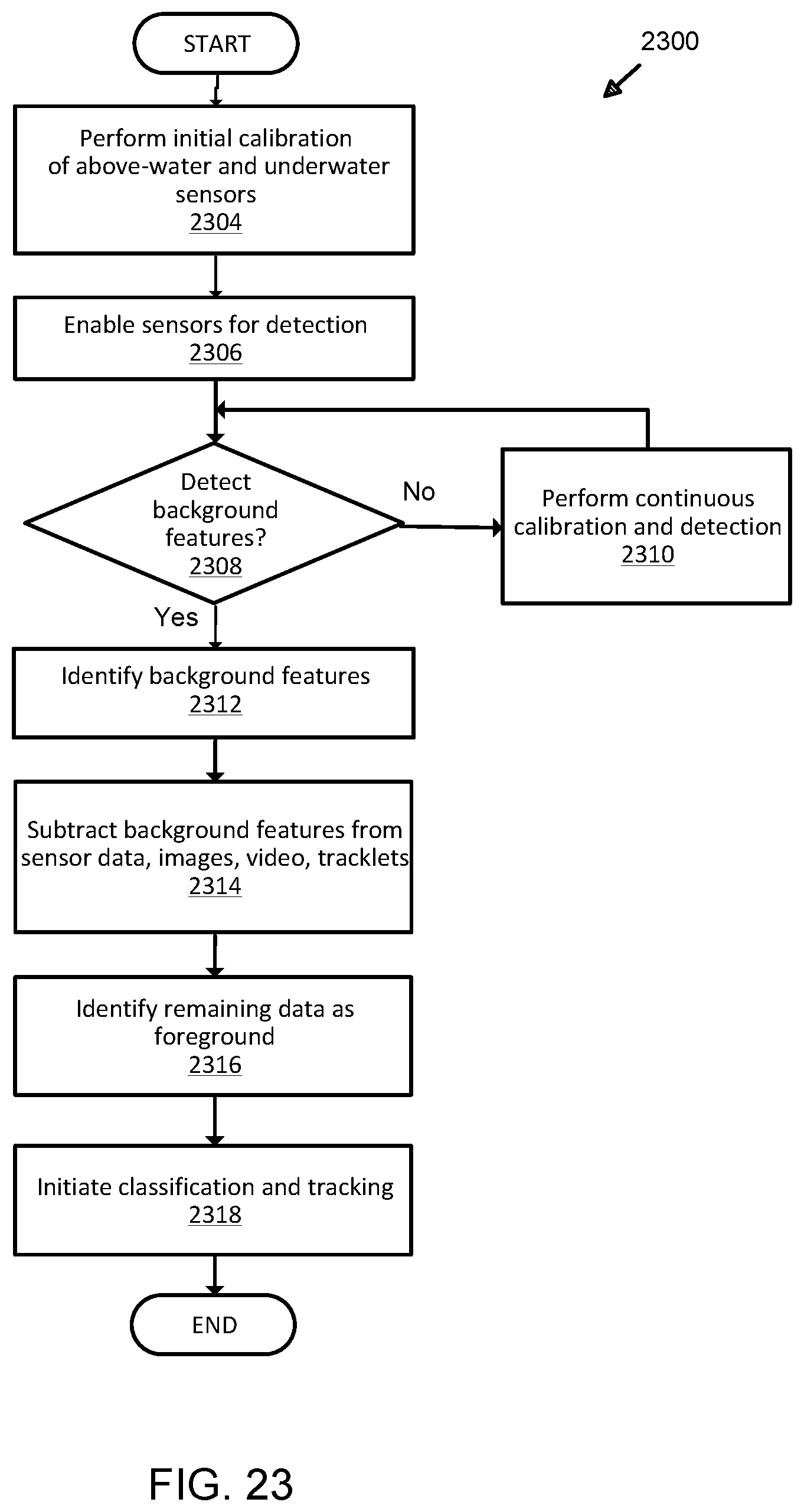

[0046] FIG. 23 illustrates an alternative exemplary process for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

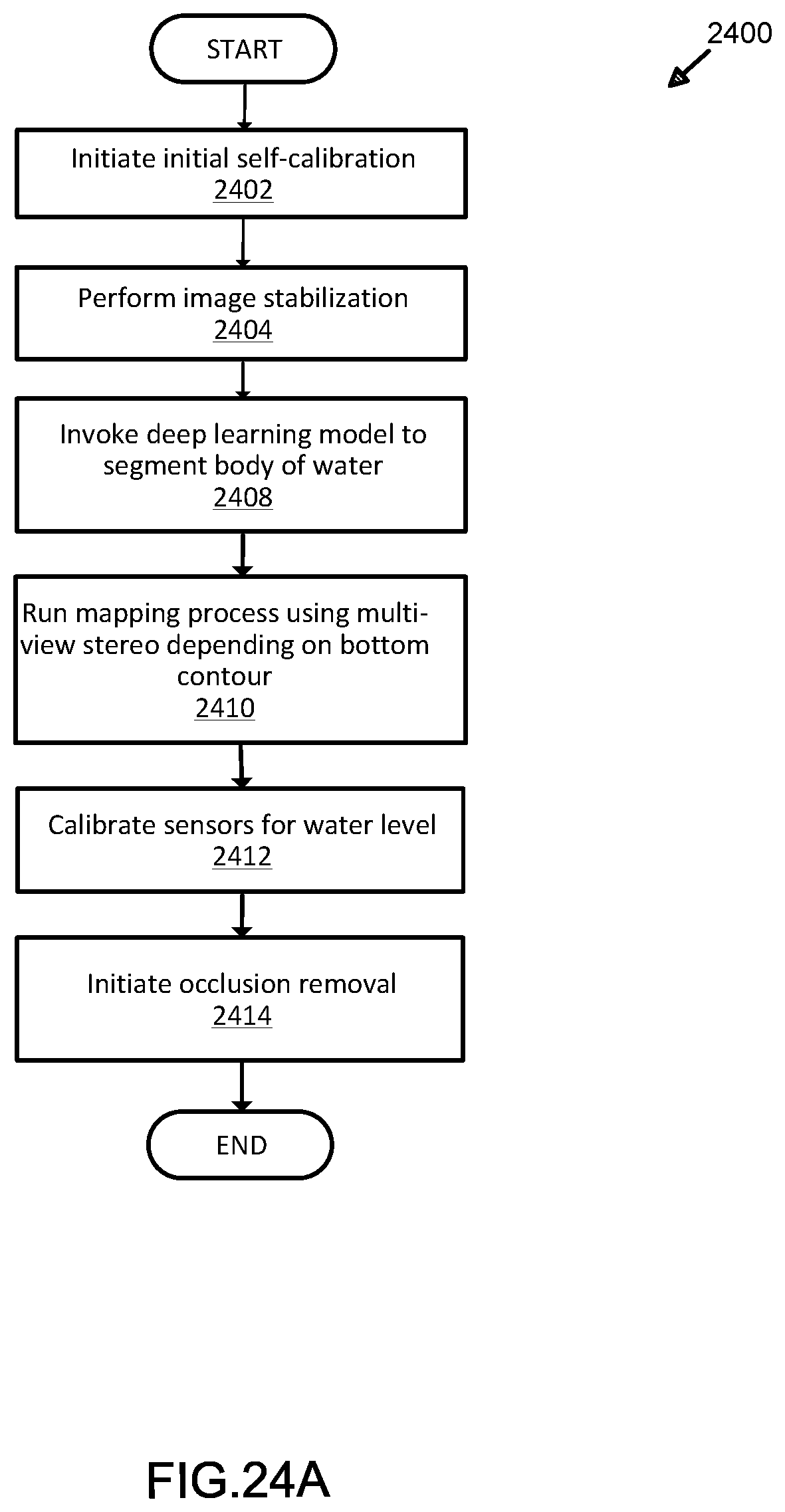

[0047] FIG. 24A illustrates an exemplary calibration process for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

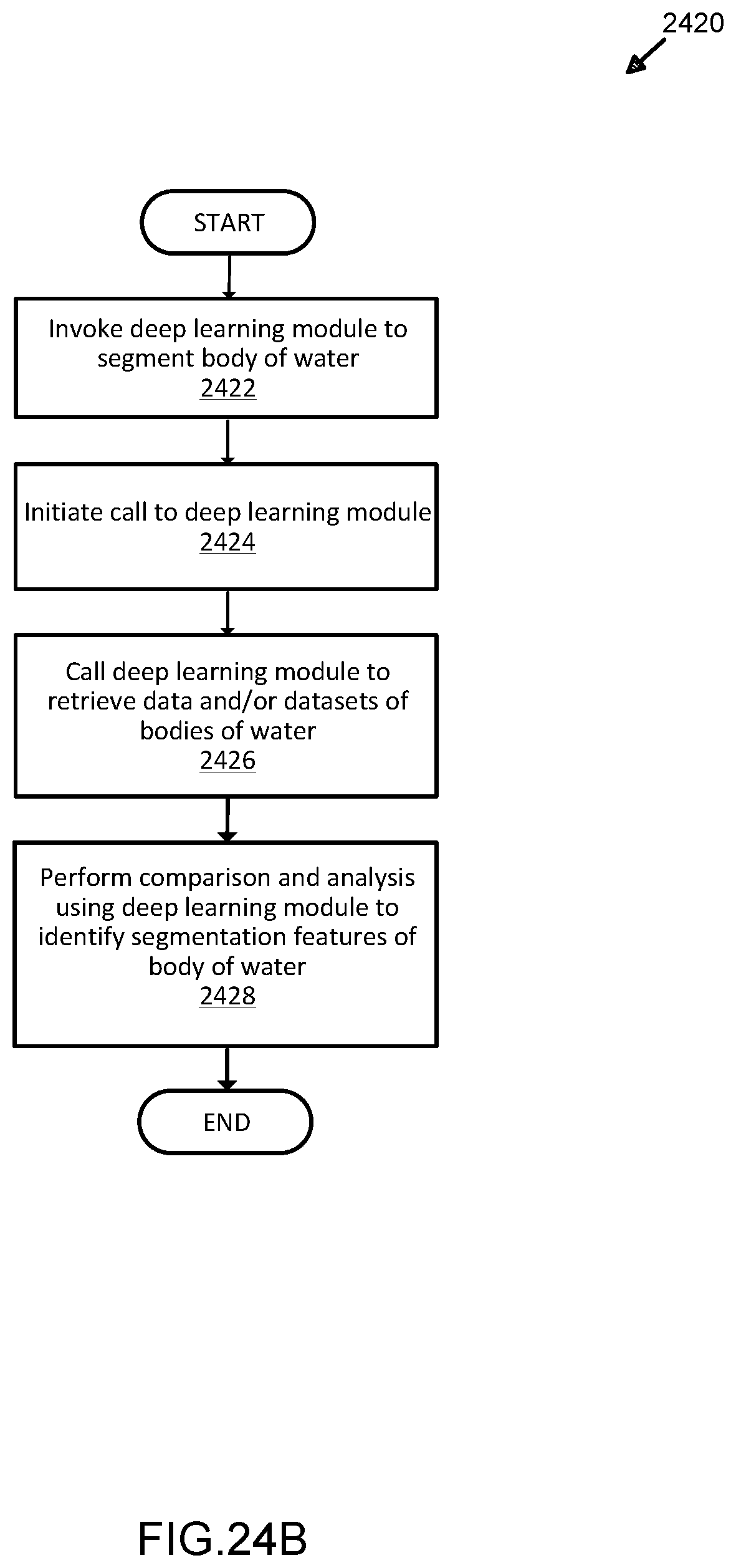

[0048] FIG. 24B illustrates an exemplary process for segmentation of bodies of water for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

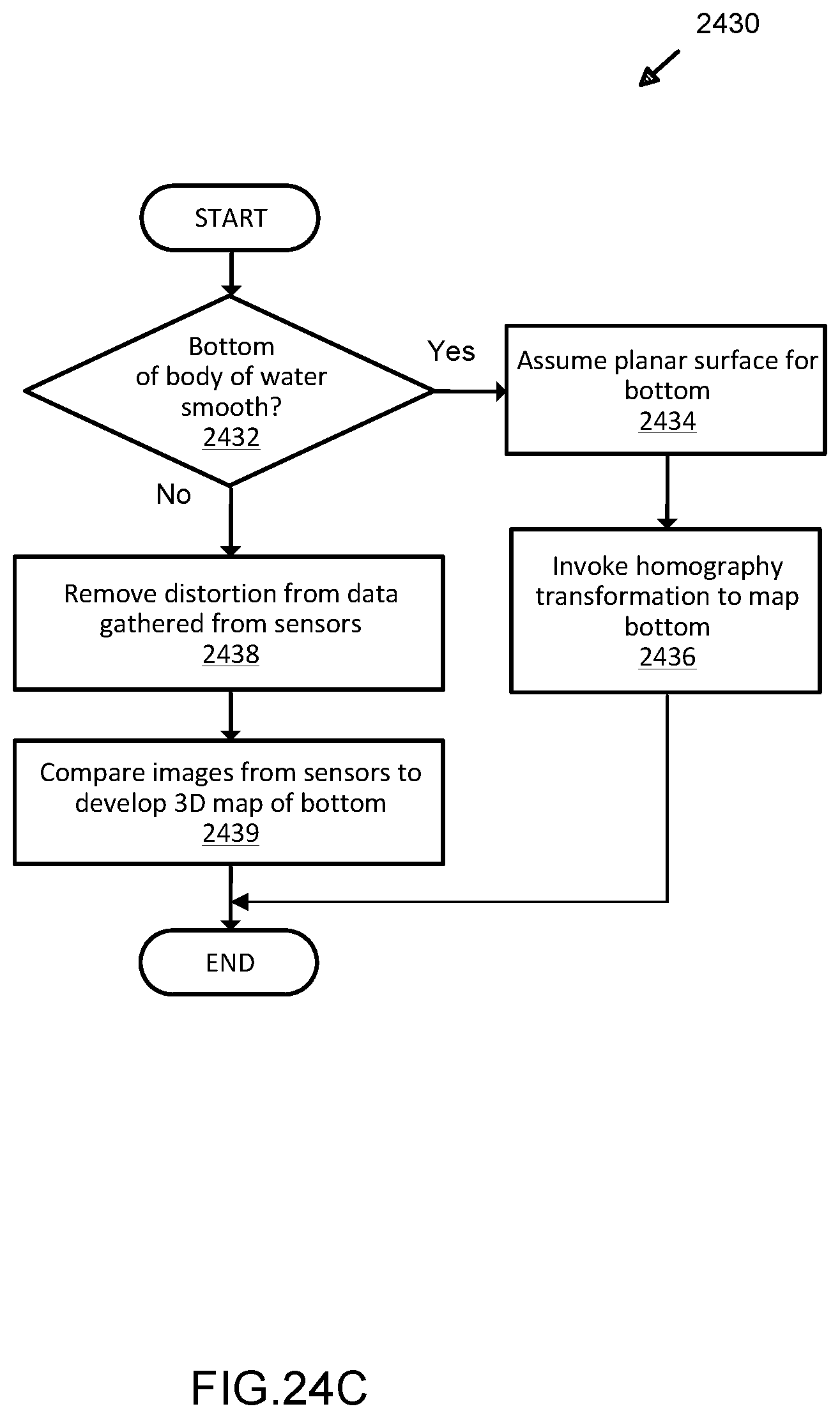

[0049] FIG. 24C illustrates an exemplary process for mapping aquatic environments for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

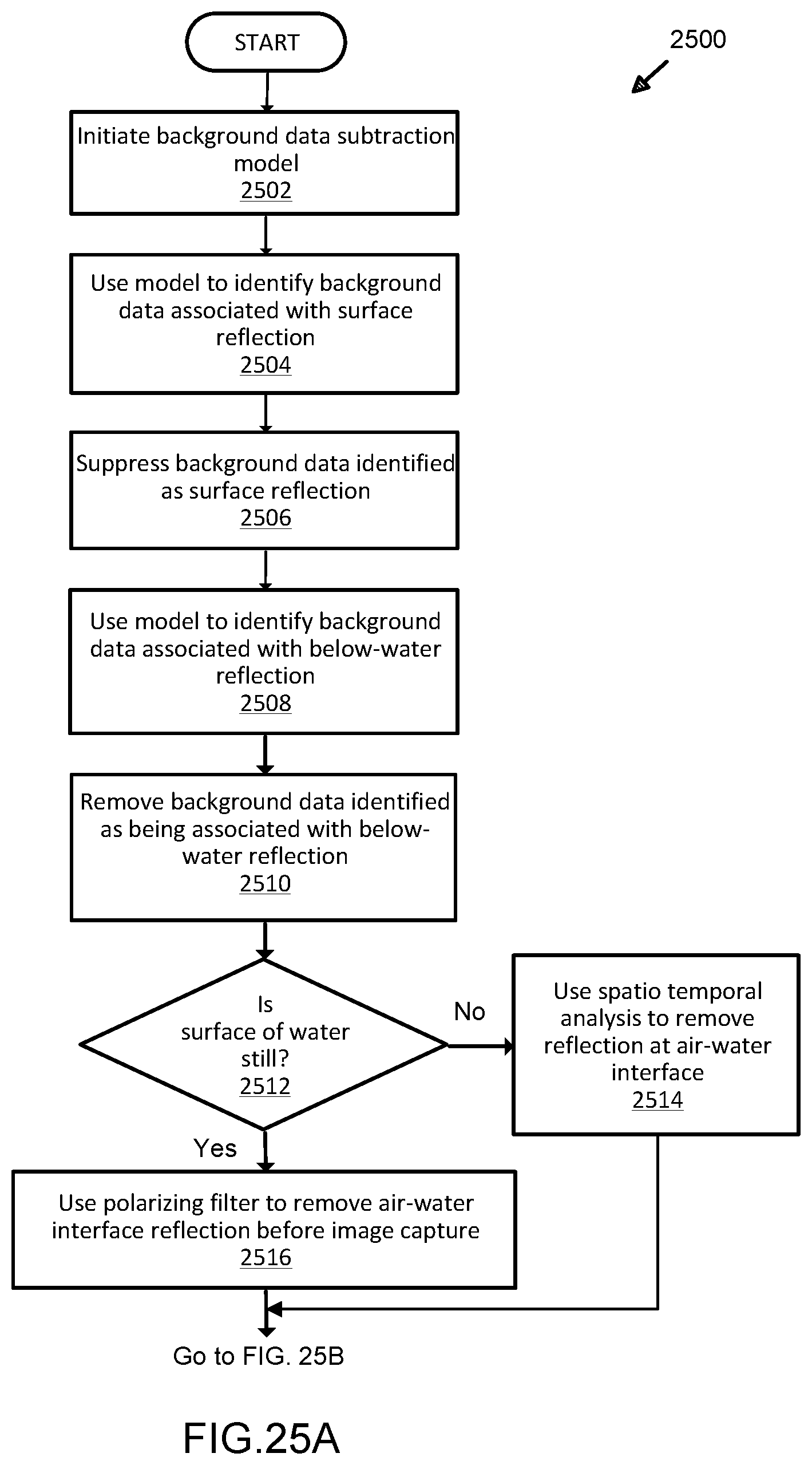

[0050] FIG. 25A illustrates an exemplary process for background subtraction in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

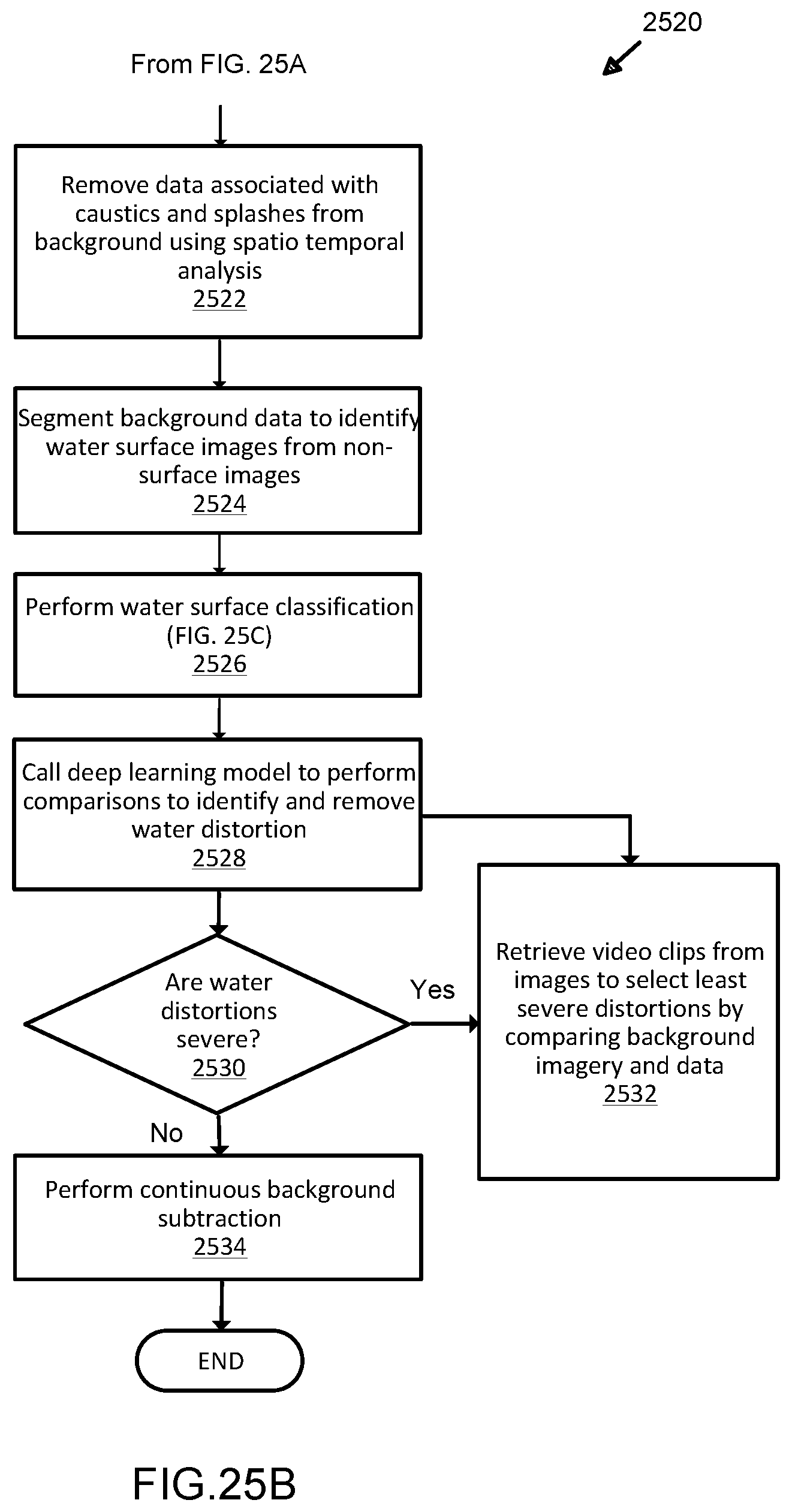

[0051] FIG. 25B illustrates a further exemplary process for background subtraction in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

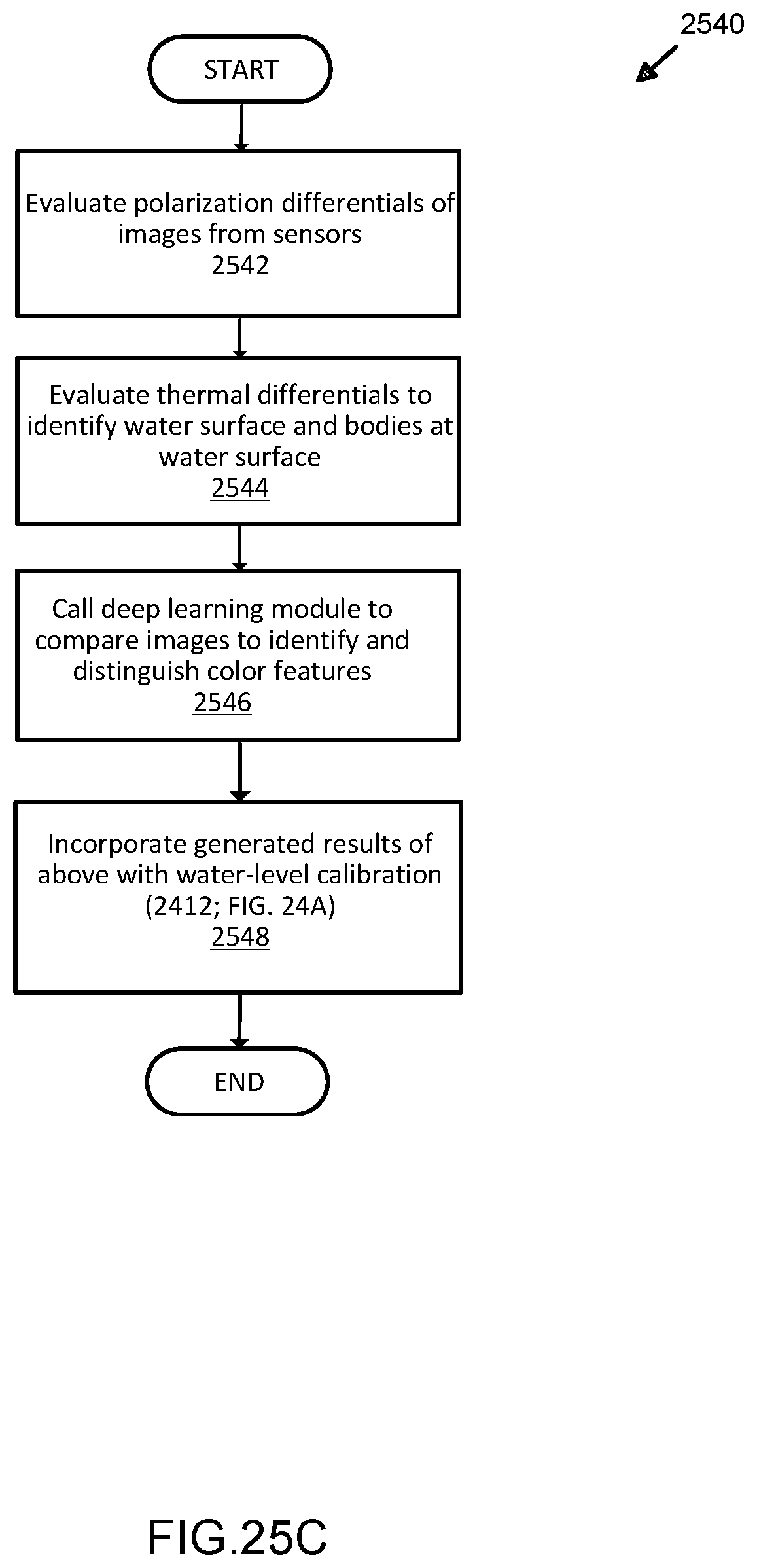

[0052] FIG. 25C illustrates an exemplary process for water surface classification in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

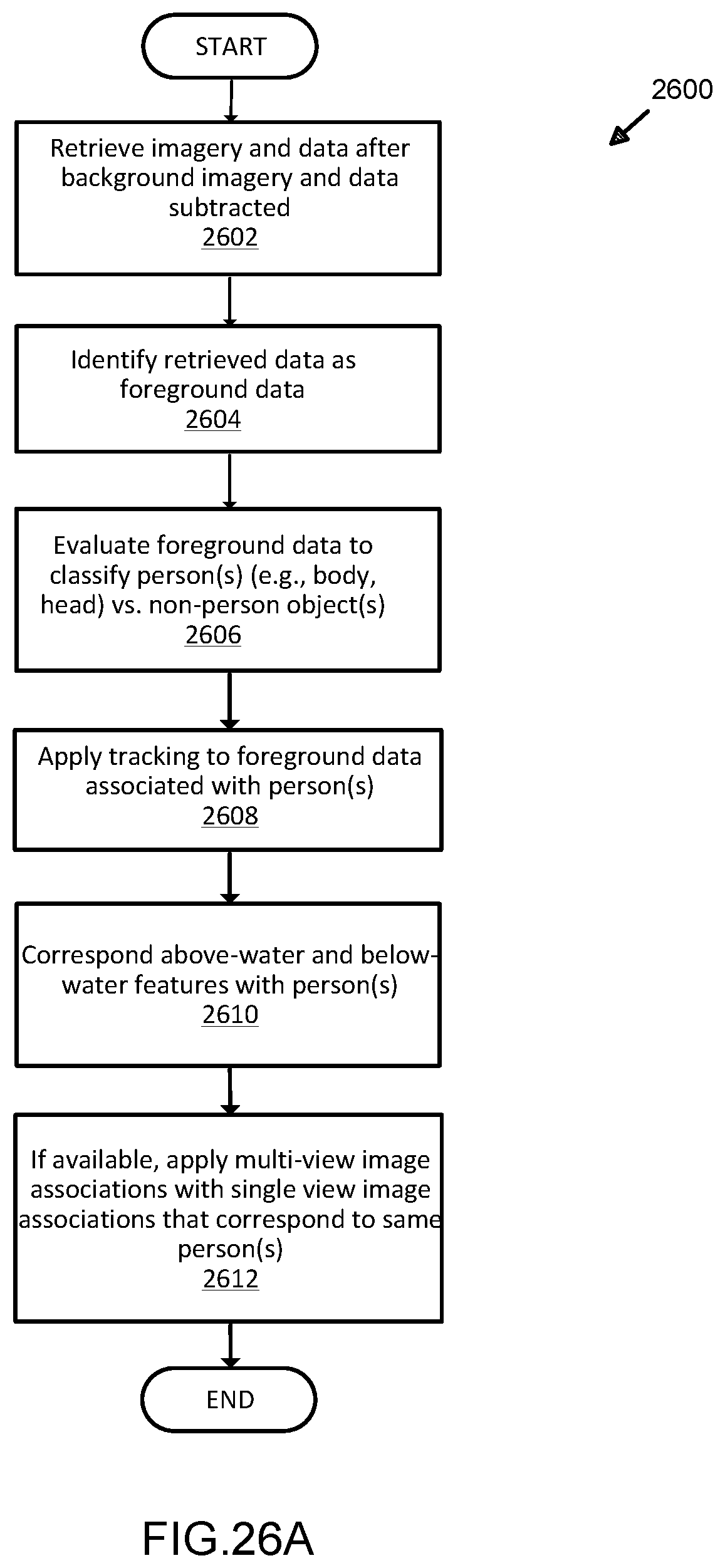

[0053] FIG. 26A illustrates an exemplary process for detection and classification of bodies in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

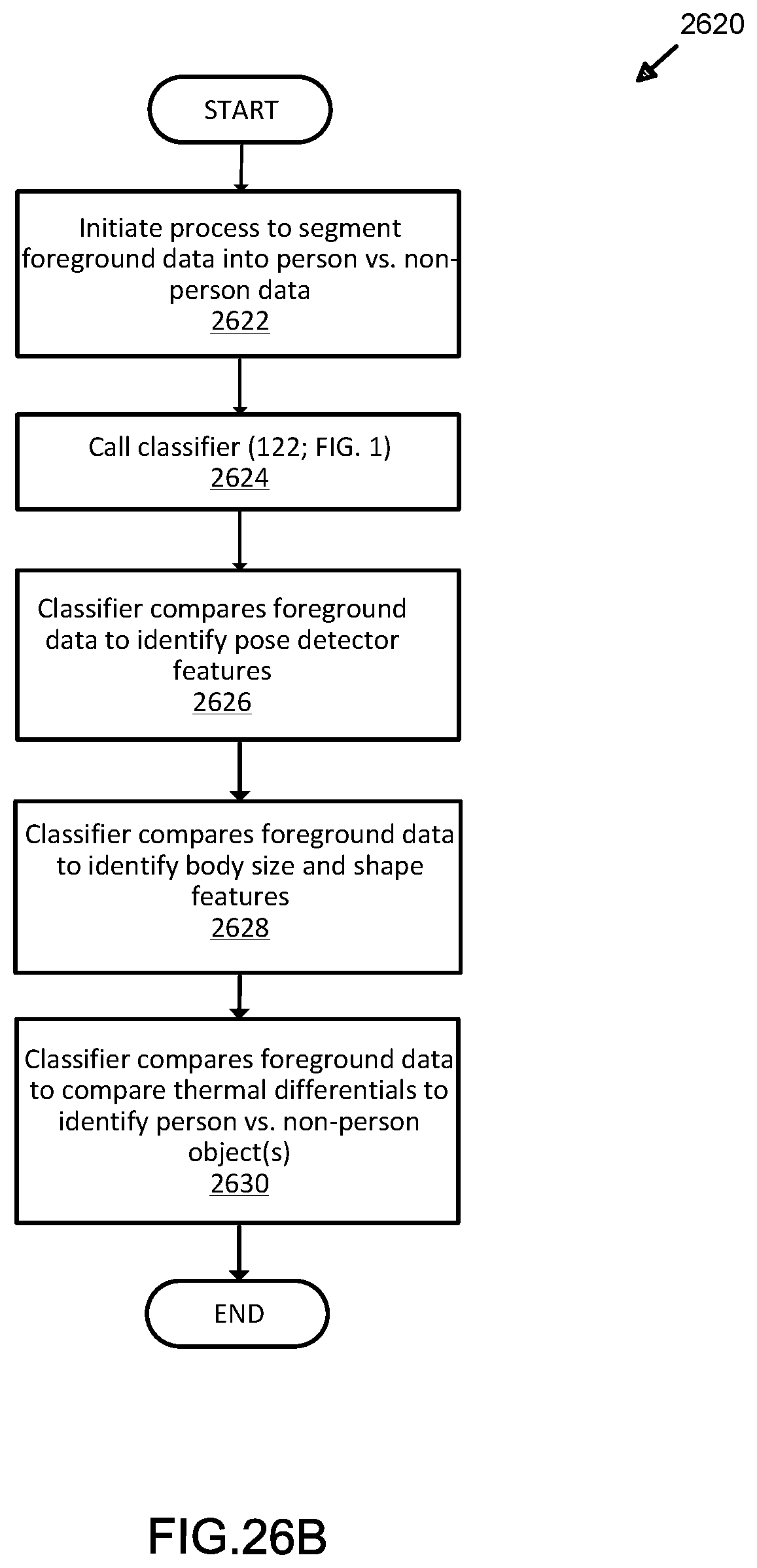

[0054] FIG. 26B illustrates a further exemplary process for classification in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

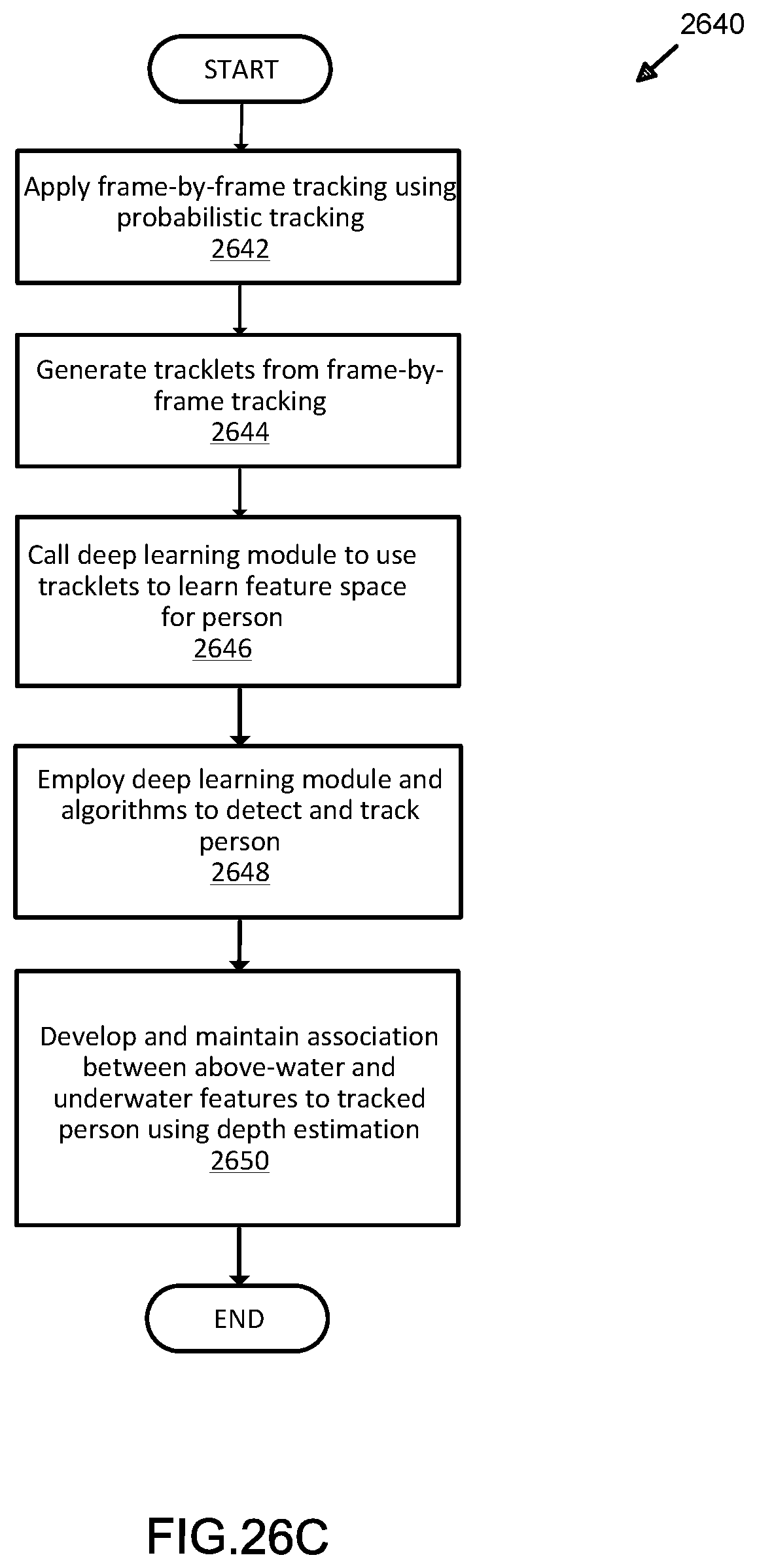

[0055] FIG. 26C illustrates yet another exemplary process for classification in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

[0056] FIG. 27A illustrates an exemplary graphical representation of motion features generated in analysis and deep learning modeling of sensor-based detection data for organic motion determination in bounded aquatic environments;

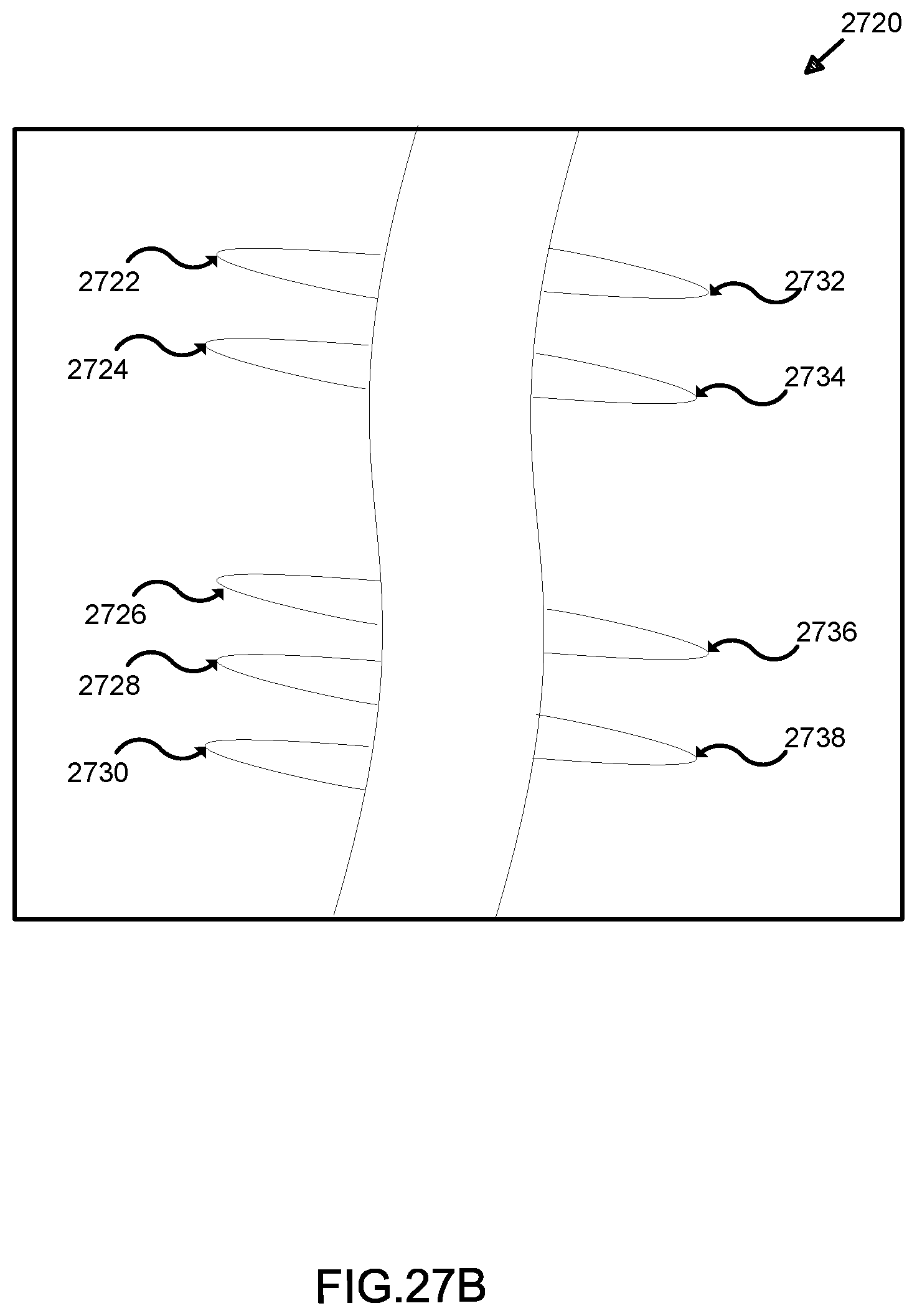

[0057] FIG. 27B illustrates another exemplary graphical representation of irregular motion features depicting organic motion as generated using techniques for analysis and deep learning modeling of sensor-based detection data for organic motion determination in bounded aquatic environments;

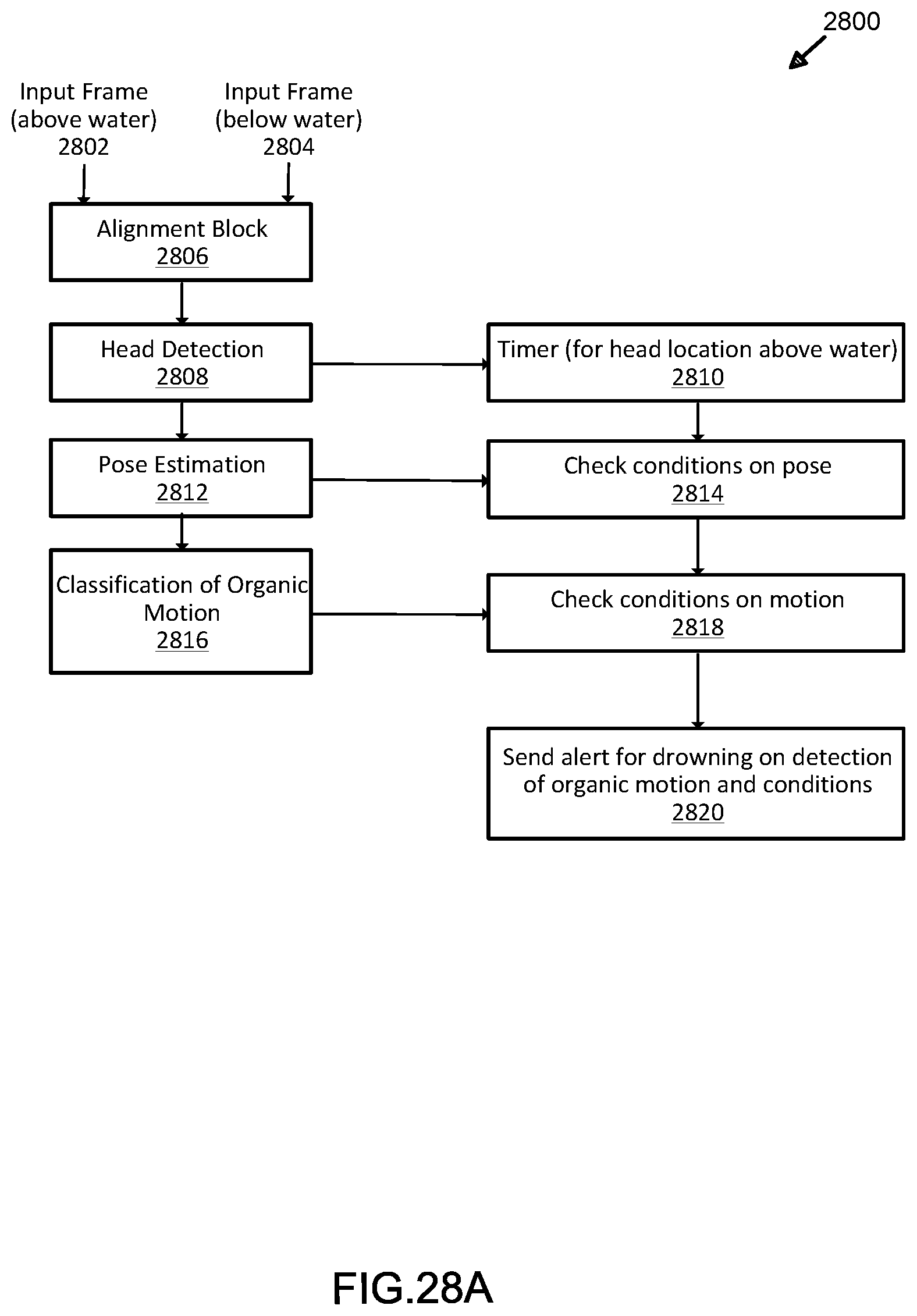

[0058] FIG. 28A illustrates an exemplary process for deep learning classification in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

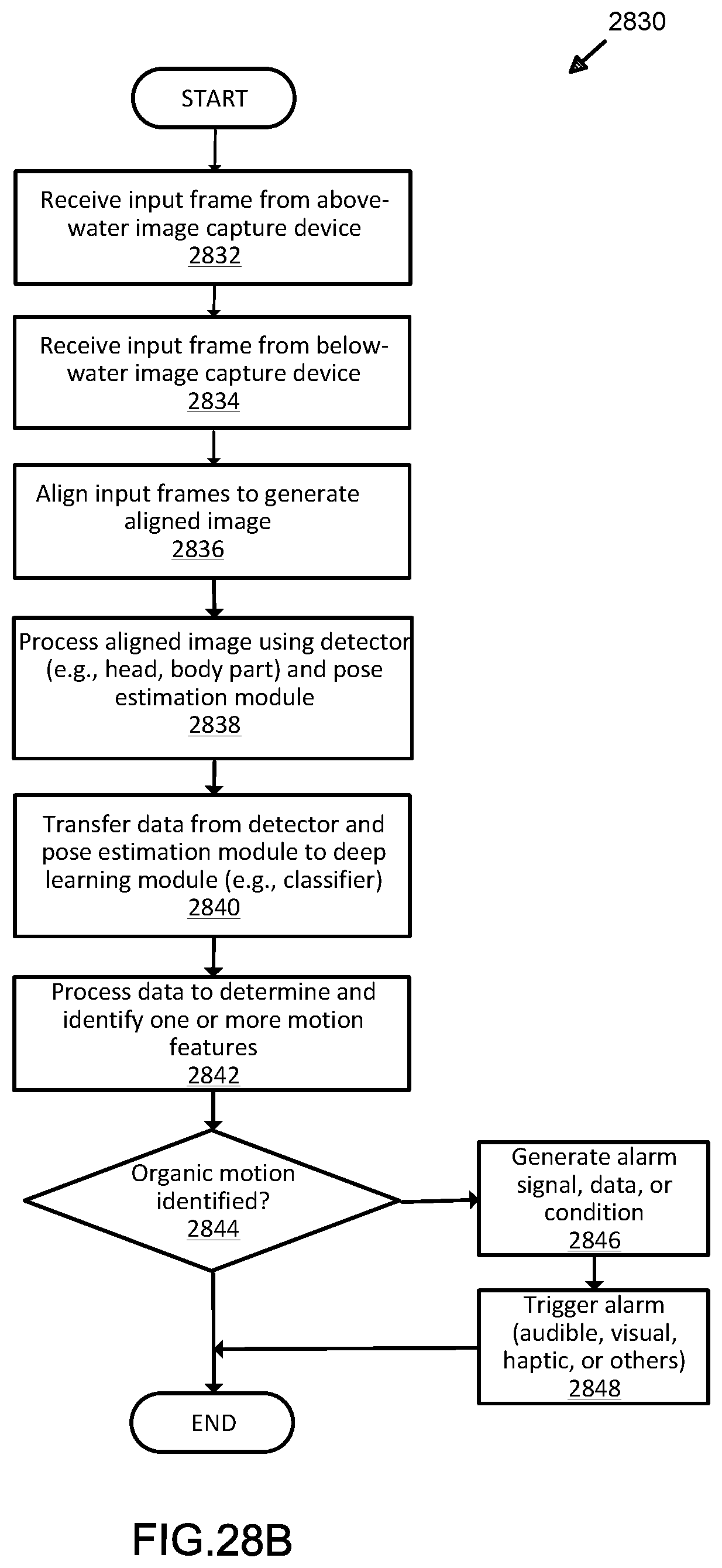

[0059] FIG. 28B illustrates another exemplary process for deep learning classification in analysis and deep learning modeling of sensor-based detection data for organic motion determination in bounded aquatic environments;

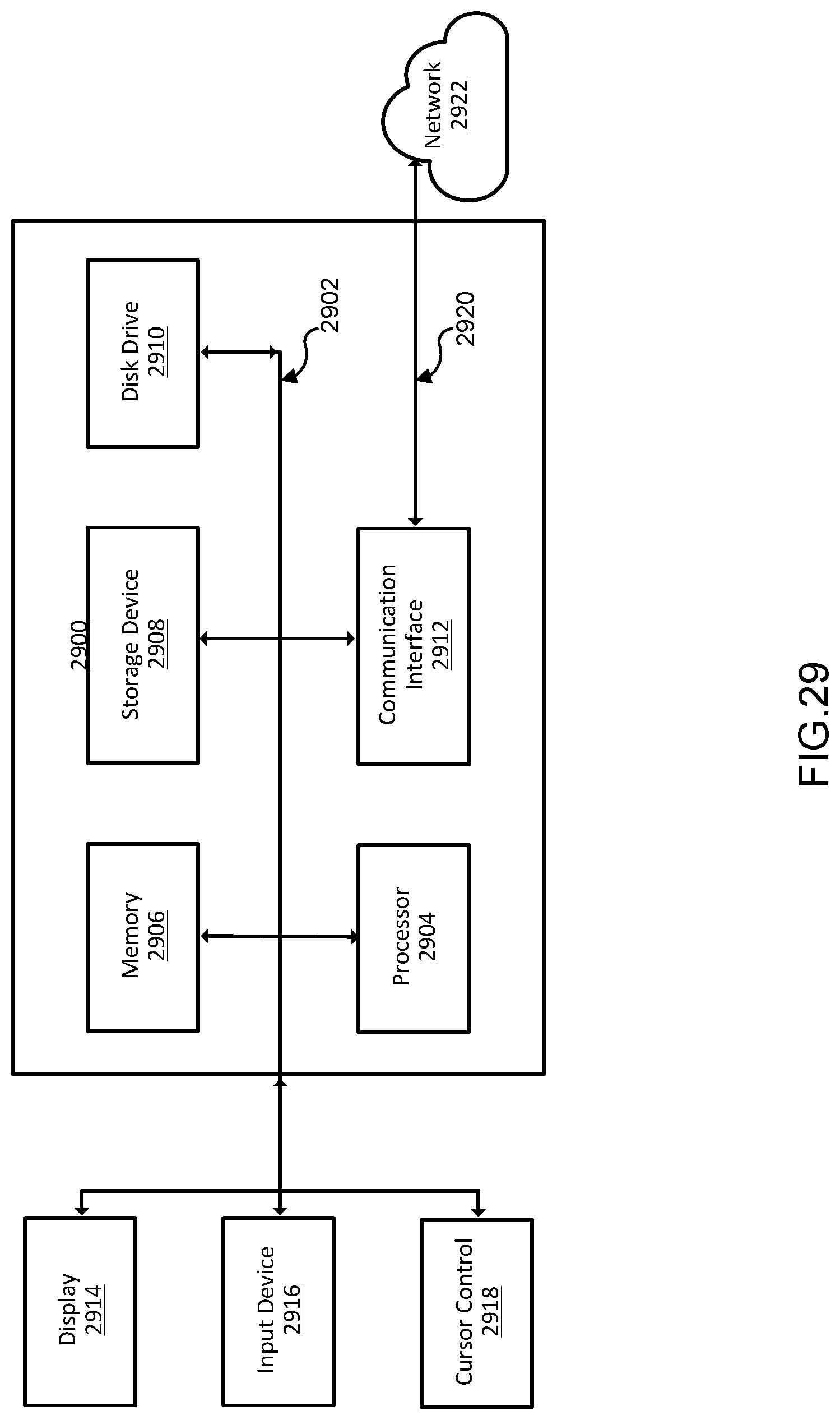

[0060] FIG. 29 illustrates an exemplary computing system suitable for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments;

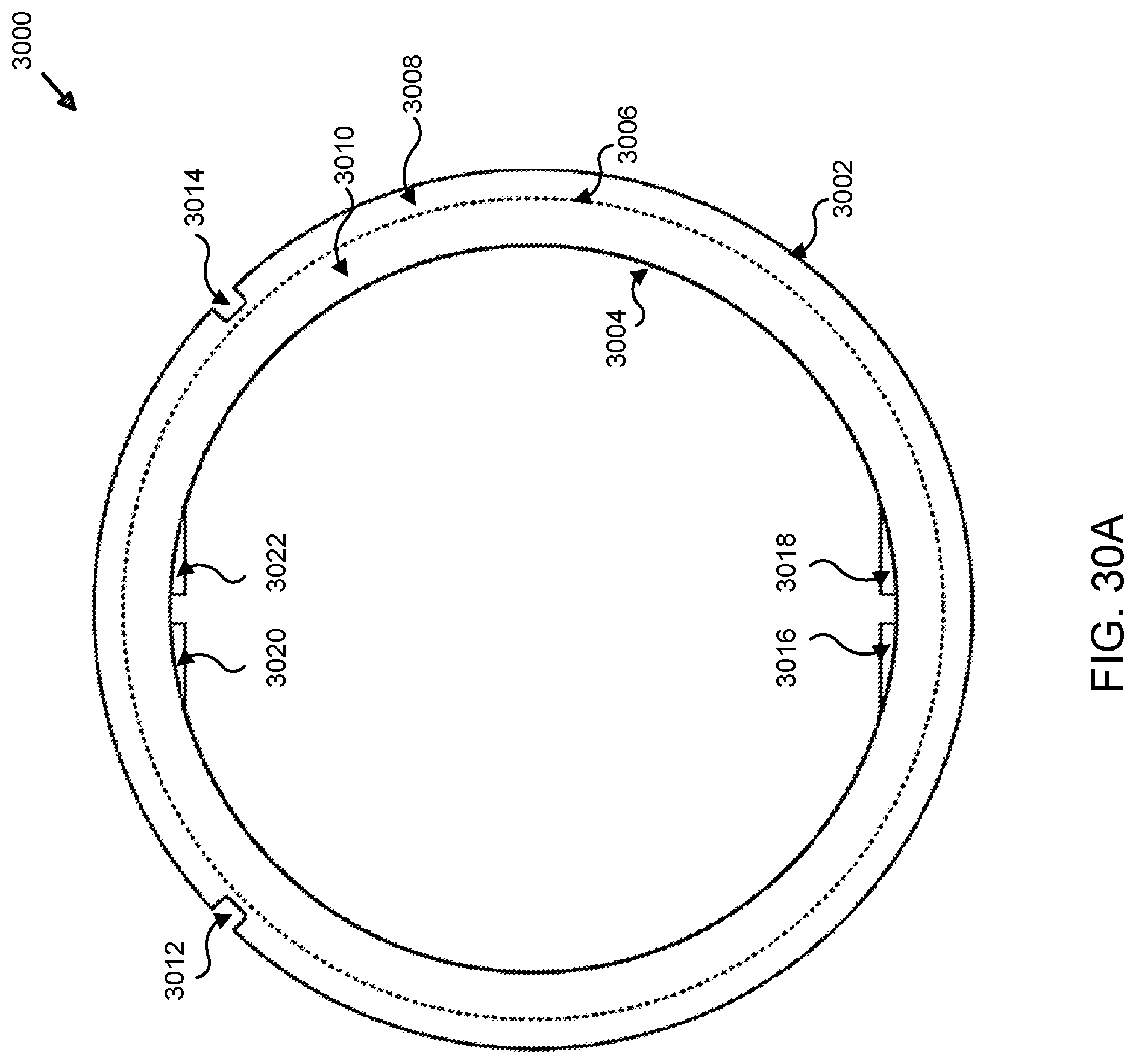

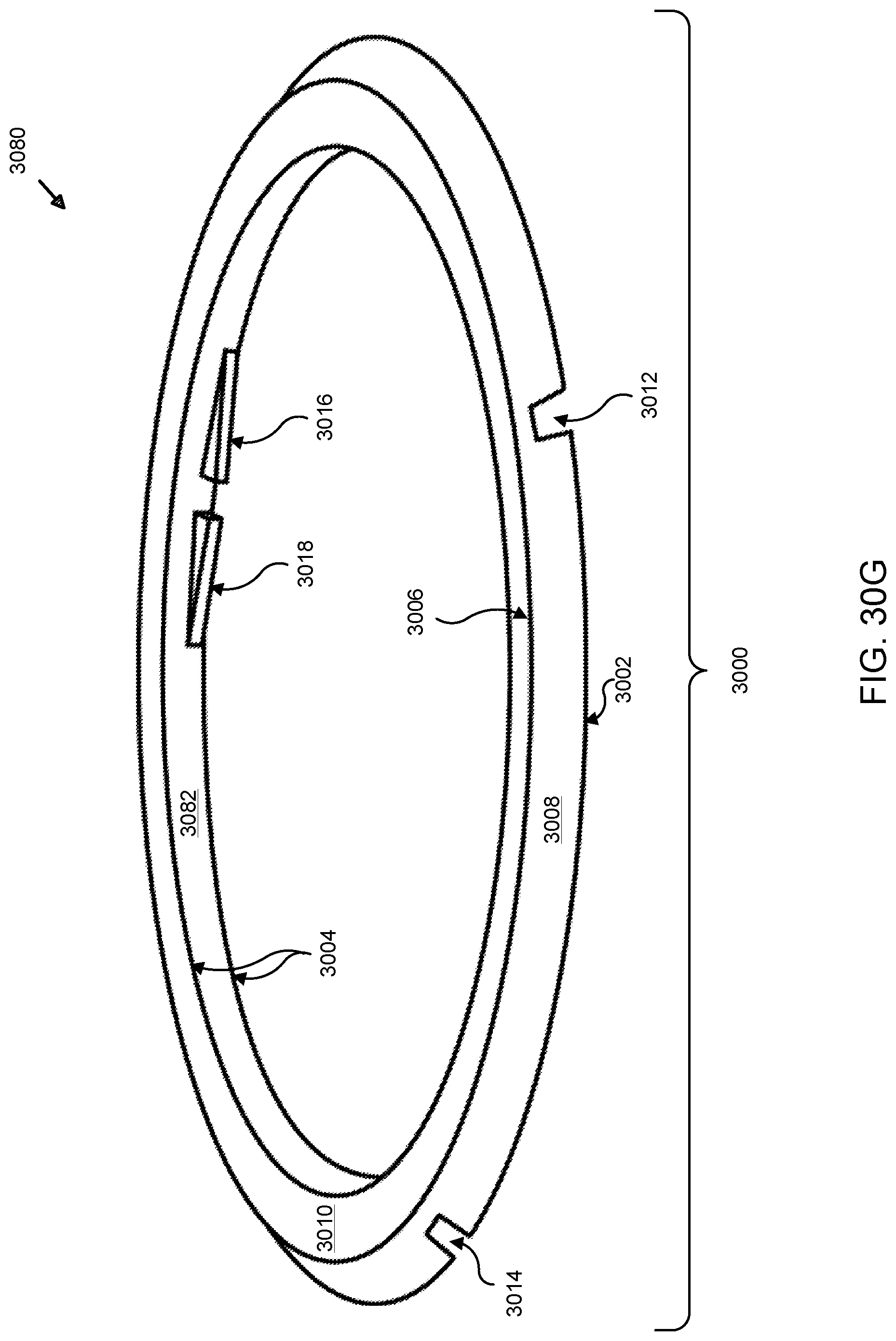

[0061] FIG. 30A illustrates a front view of an exemplary spacer ring used in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments using underwater powered systems;

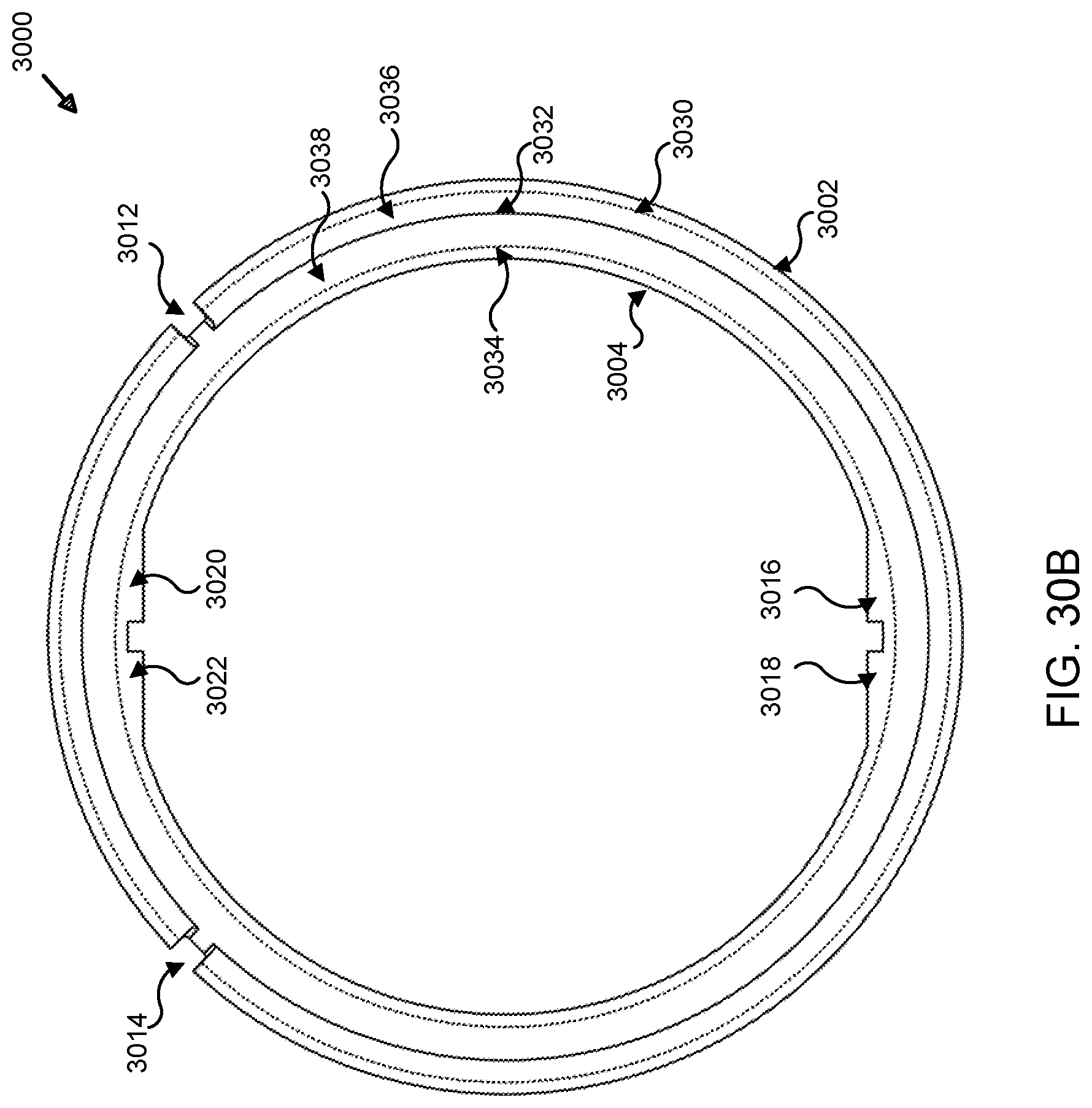

[0062] FIG. 30B illustrates a rear view of an exemplary spacer ring used in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments using underwater powered systems;

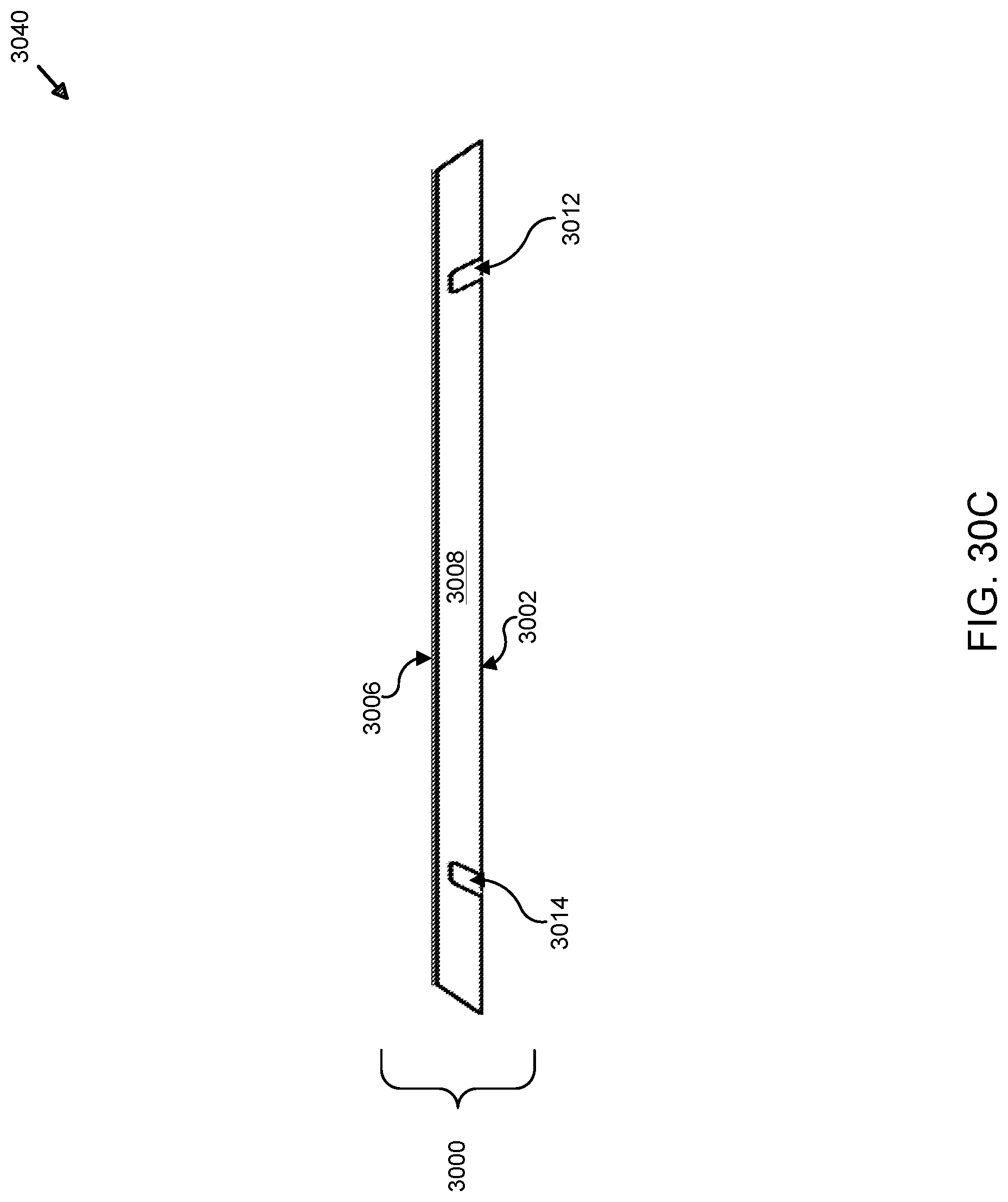

[0063] FIG. 30C illustrates a top view of an exemplary spacer ring used in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments using underwater powered systems;

[0064] FIG. 30D illustrates a bottom view of an exemplary spacer ring used in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments using underwater powered systems;

[0065] FIG. 30E illustrates a right view of an exemplary spacer ring used in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments using underwater powered systems;

[0066] FIG. 30F illustrates a left view of an exemplary spacer ring used in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments using underwater powered systems;

[0067] FIG. 30G illustrates a perspective view of an exemplary spacer ring used in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments using underwater powered systems;

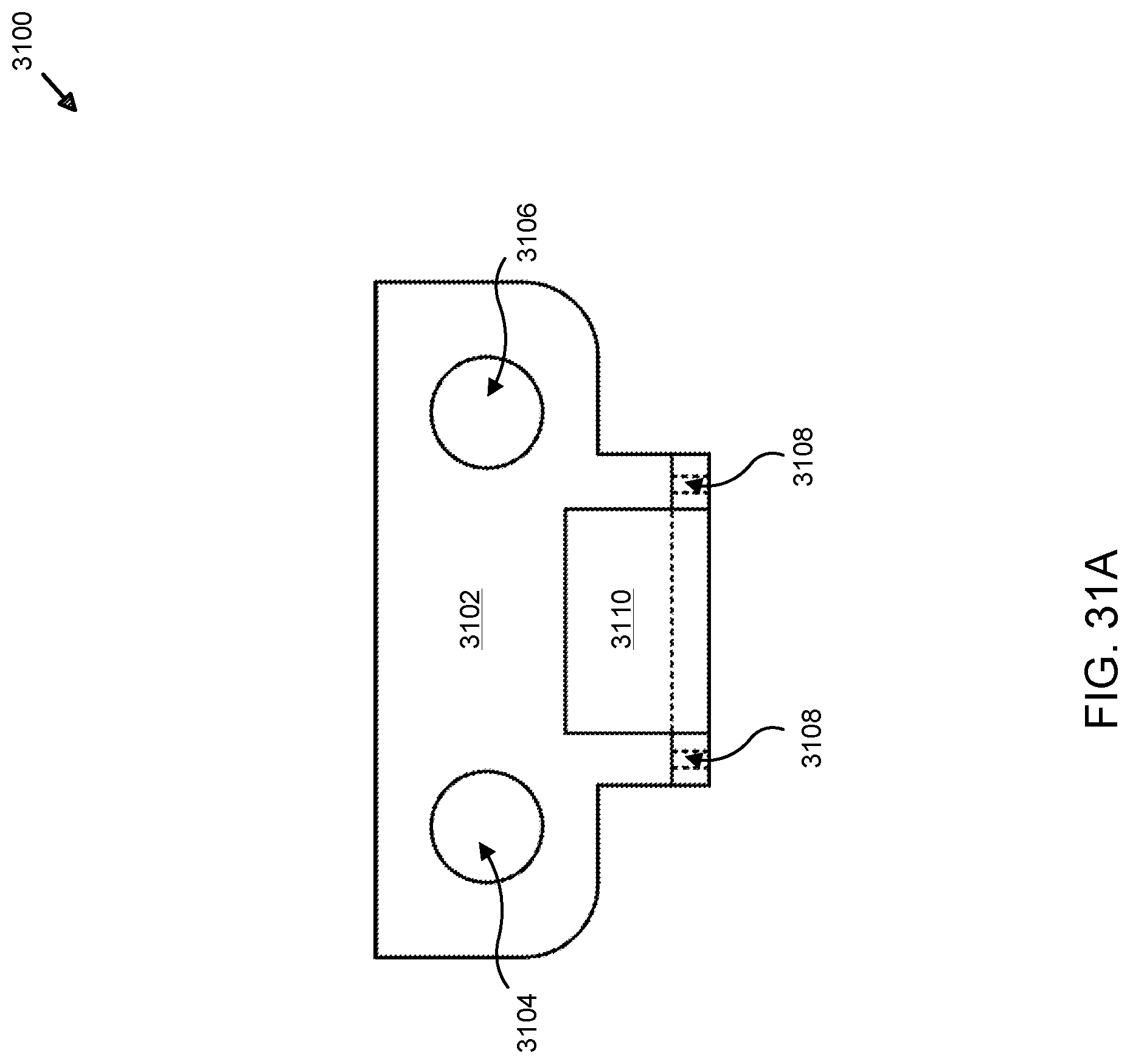

[0068] FIG. 31A illustrates a front view of an exemplary hook adapter used in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments using underwater powered systems;

[0069] FIG. 31B illustrates a rear view of an exemplary spacer ring mounting bracket used in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments using underwater powered systems;

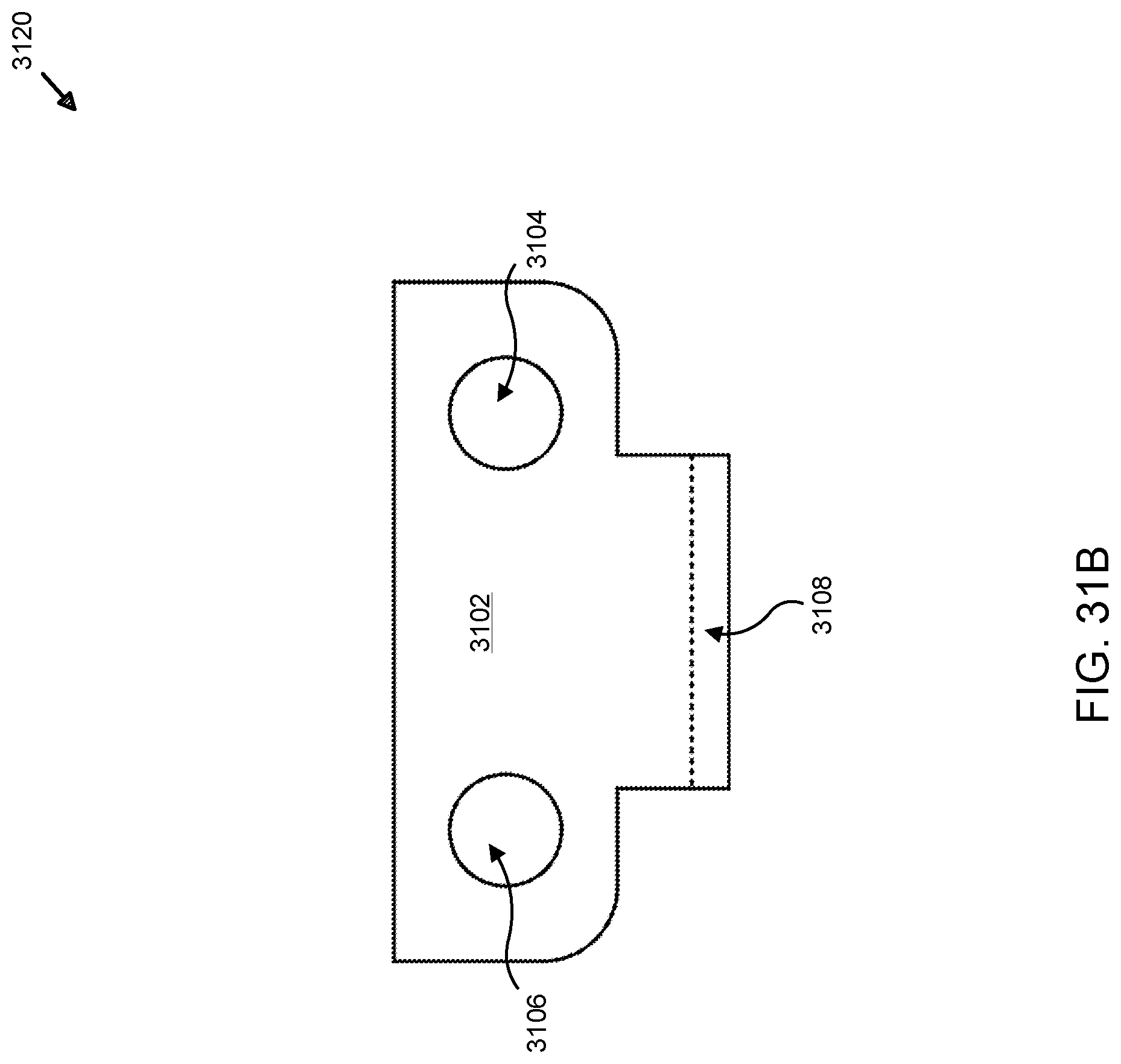

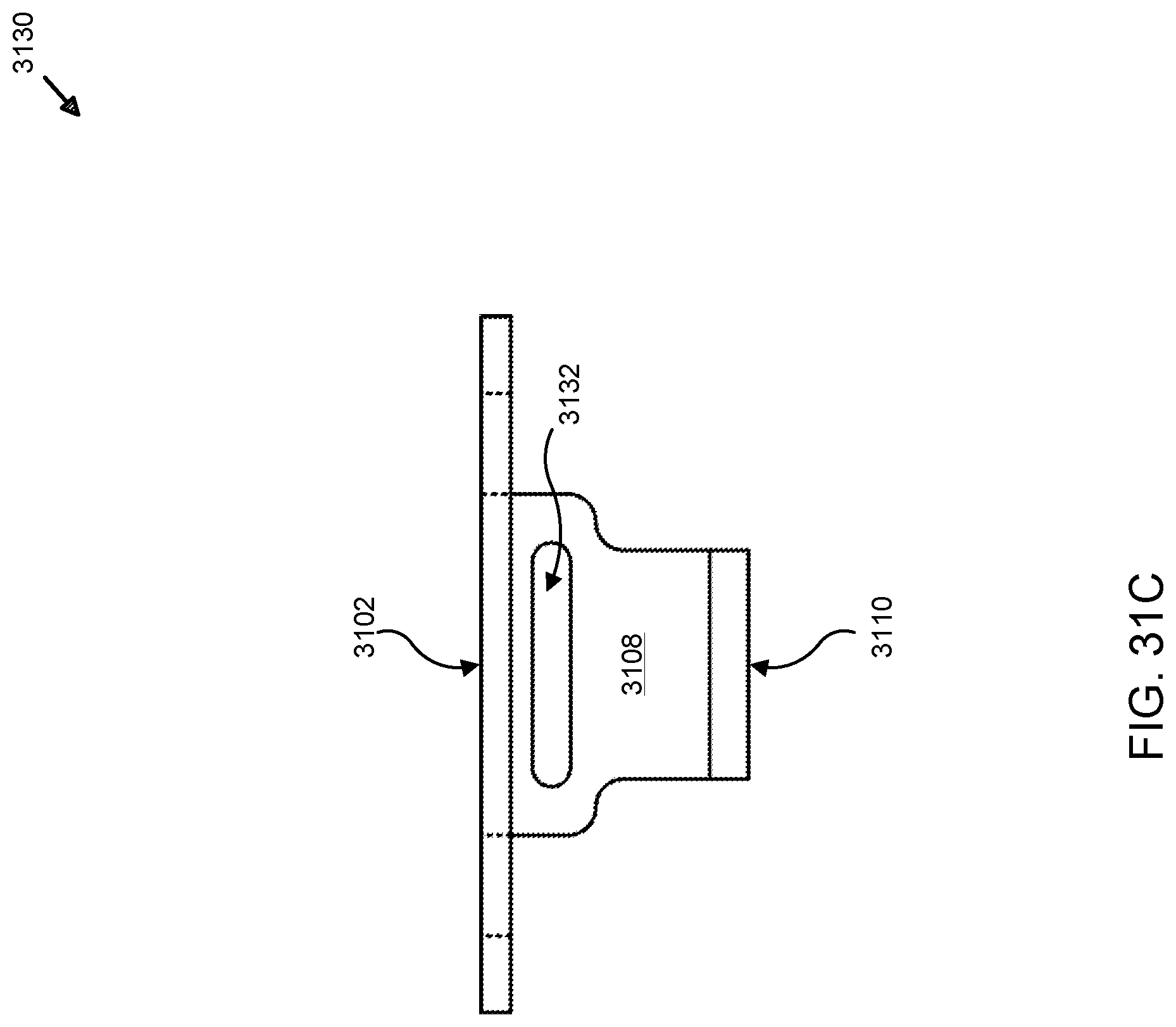

[0070] FIG. 31C illustrates a top view of an exemplary hook adapter used in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments using underwater powered systems;

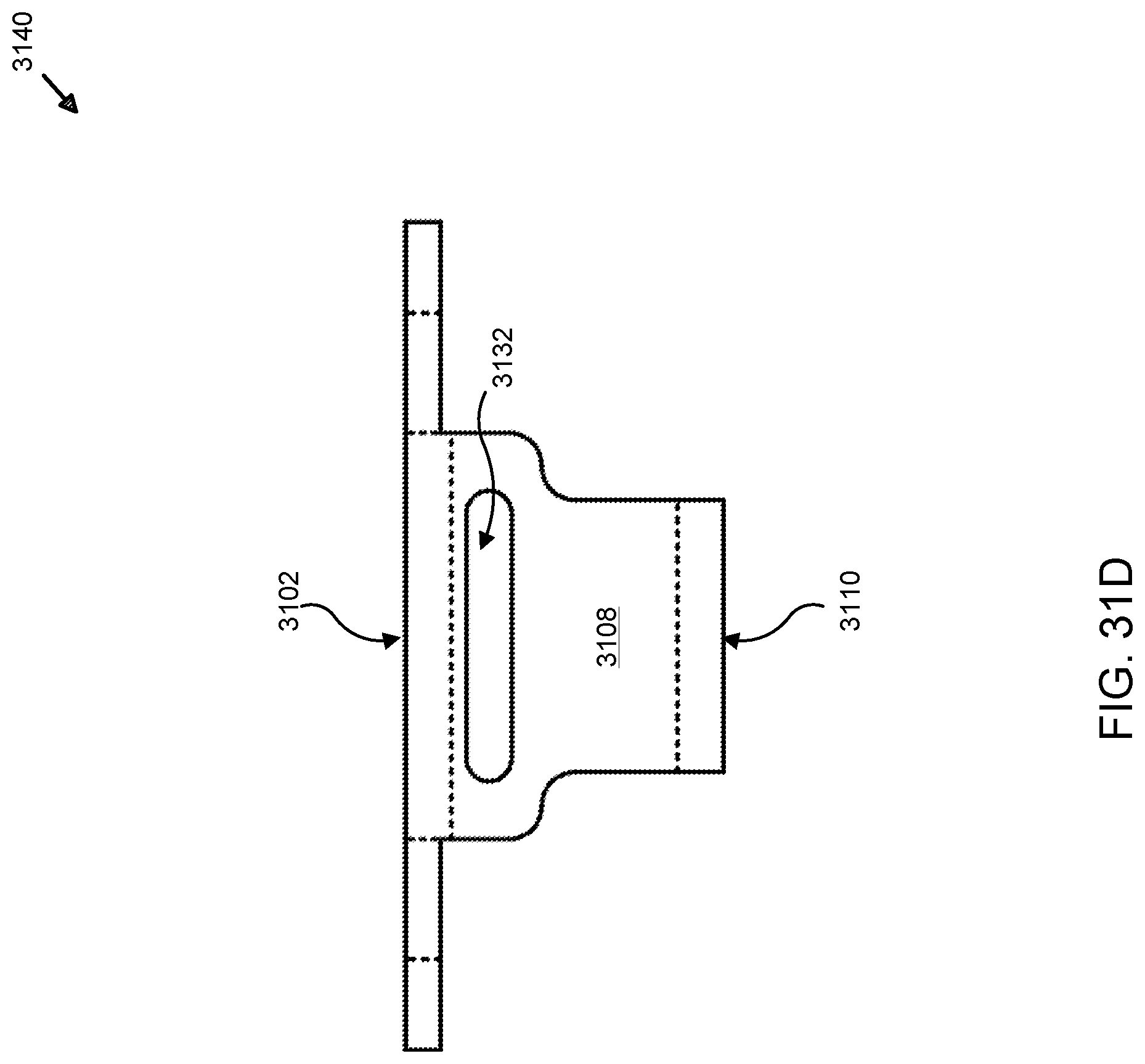

[0071] FIG. 31D illustrates a bottom view of an exemplary hook adapter used in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments using underwater powered systems;

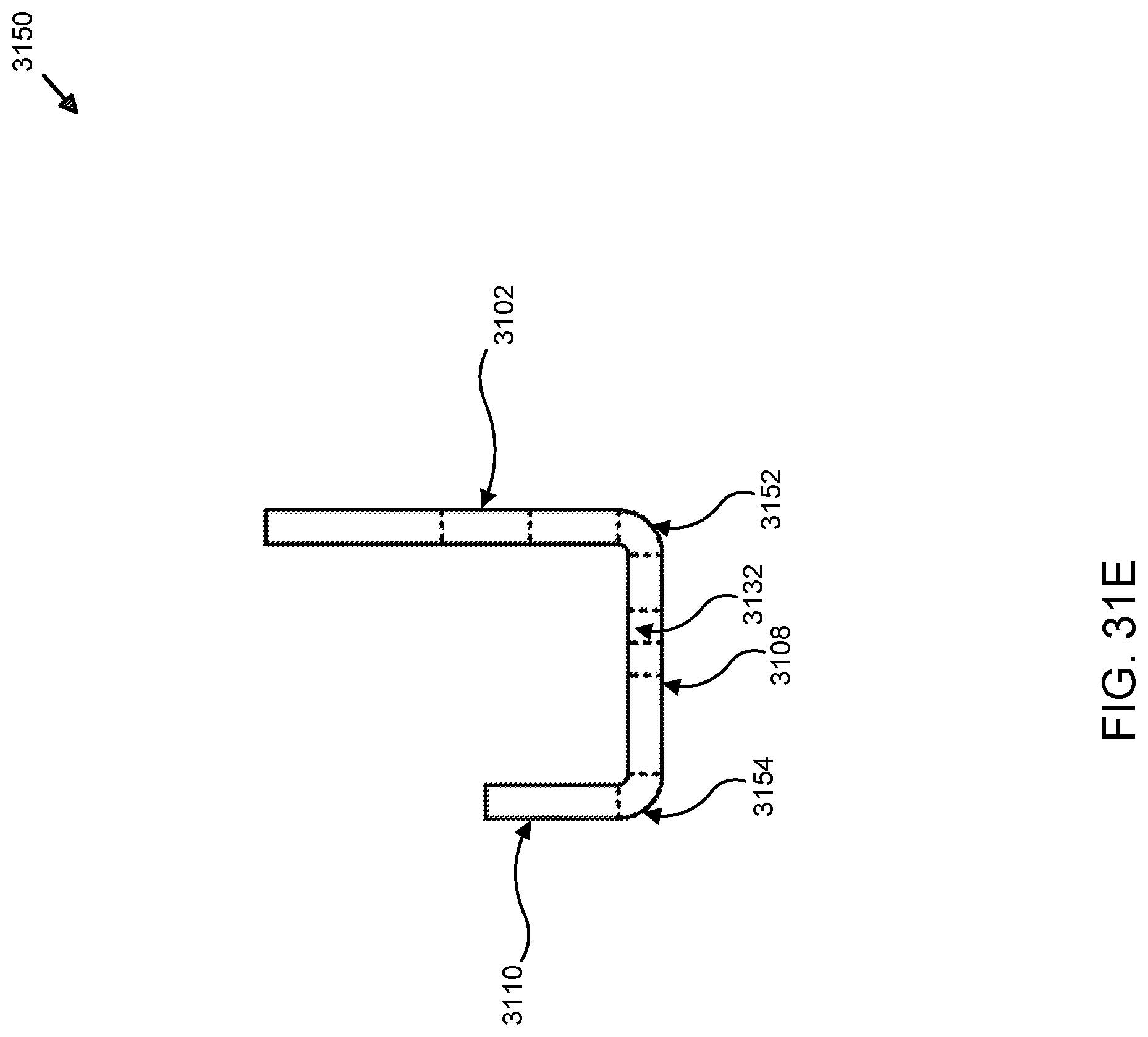

[0072] FIG. 31E illustrates a right view of an exemplary hook adapter used in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments using underwater powered systems;

[0073] FIG. 31F illustrates a left view of an exemplary hook adapter used in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments using underwater powered systems;

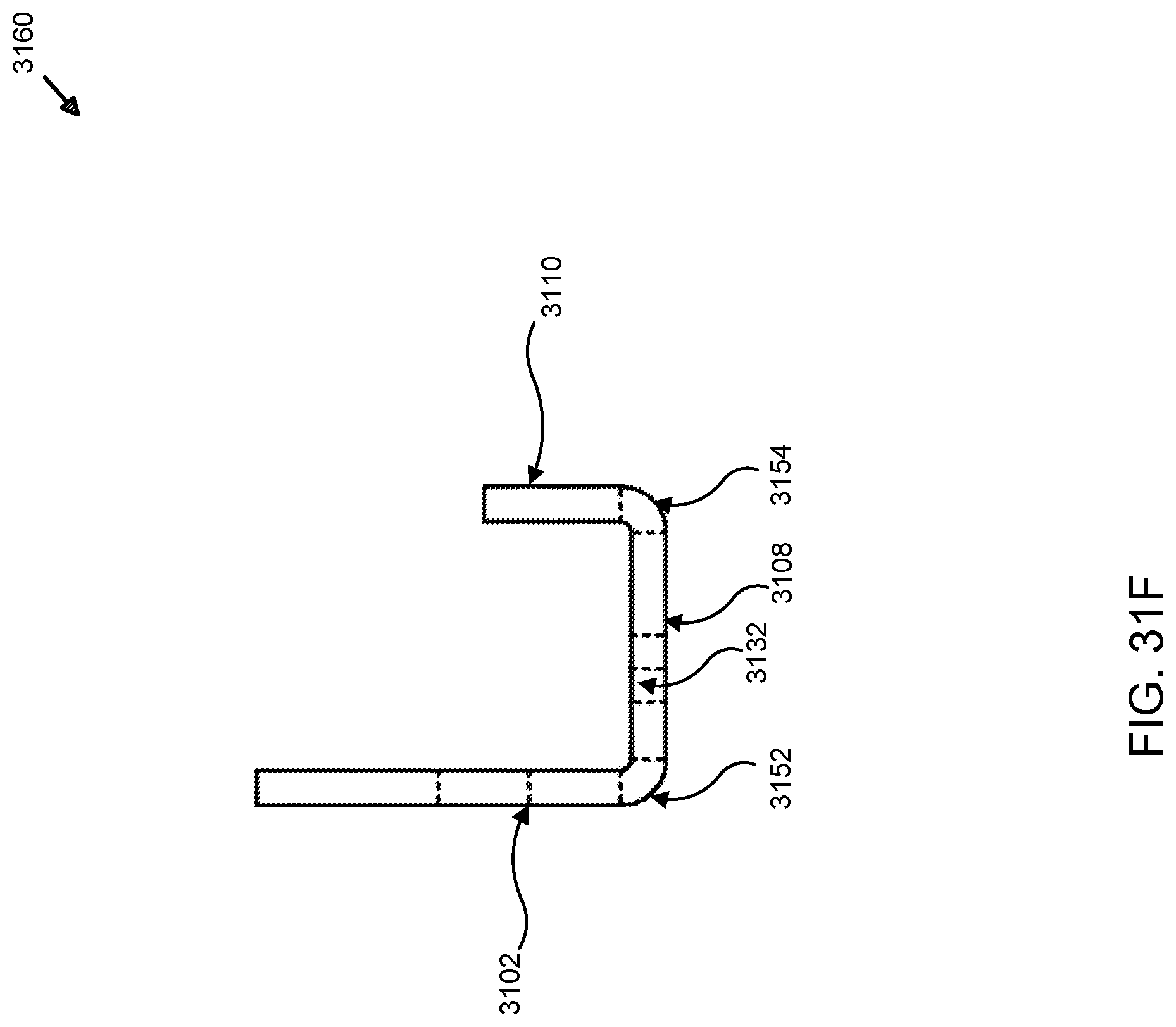

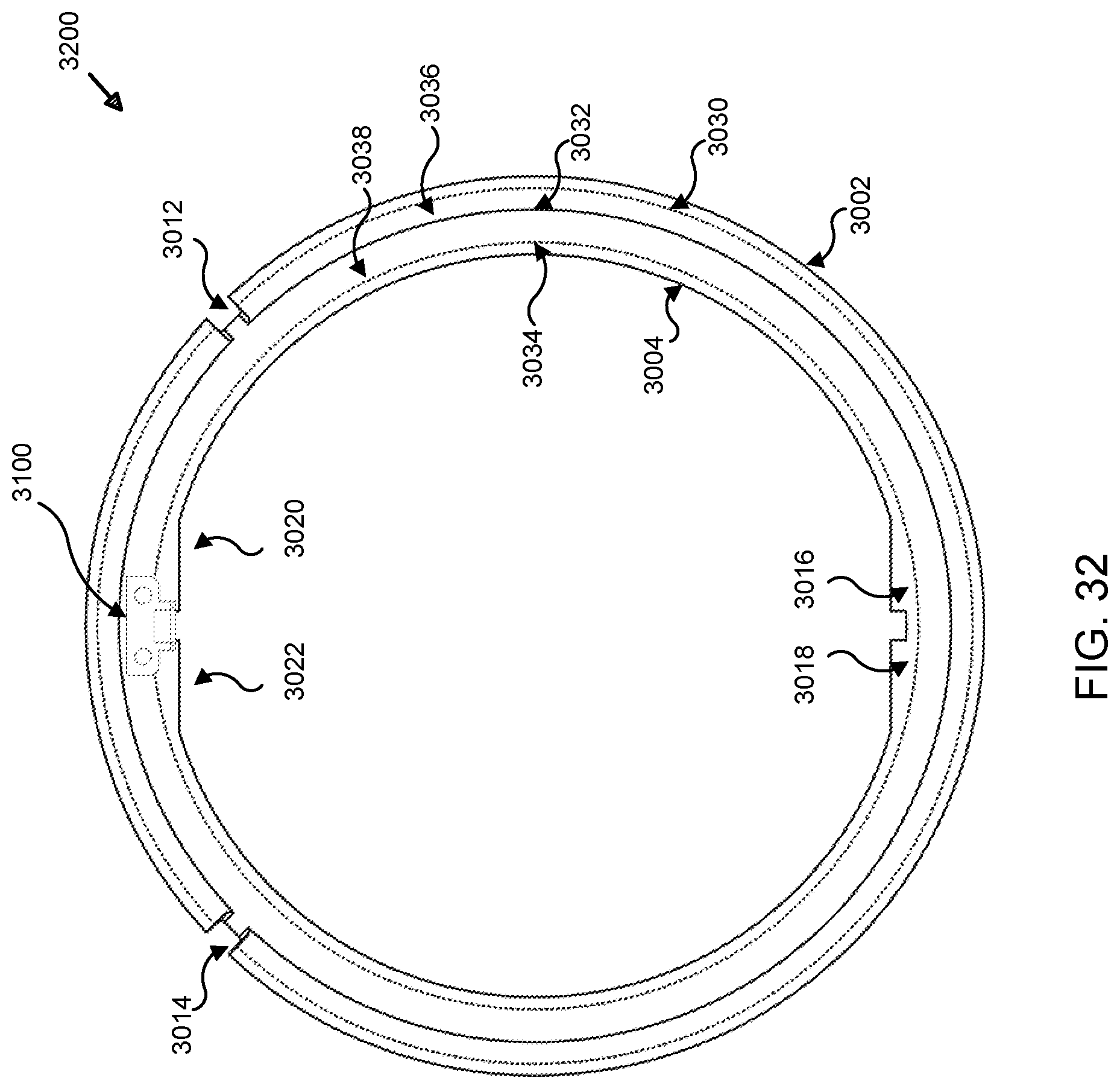

[0074] FIG. 31G illustrates a perspective view of an exemplary hook adapter used in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments using underwater powered systems; and

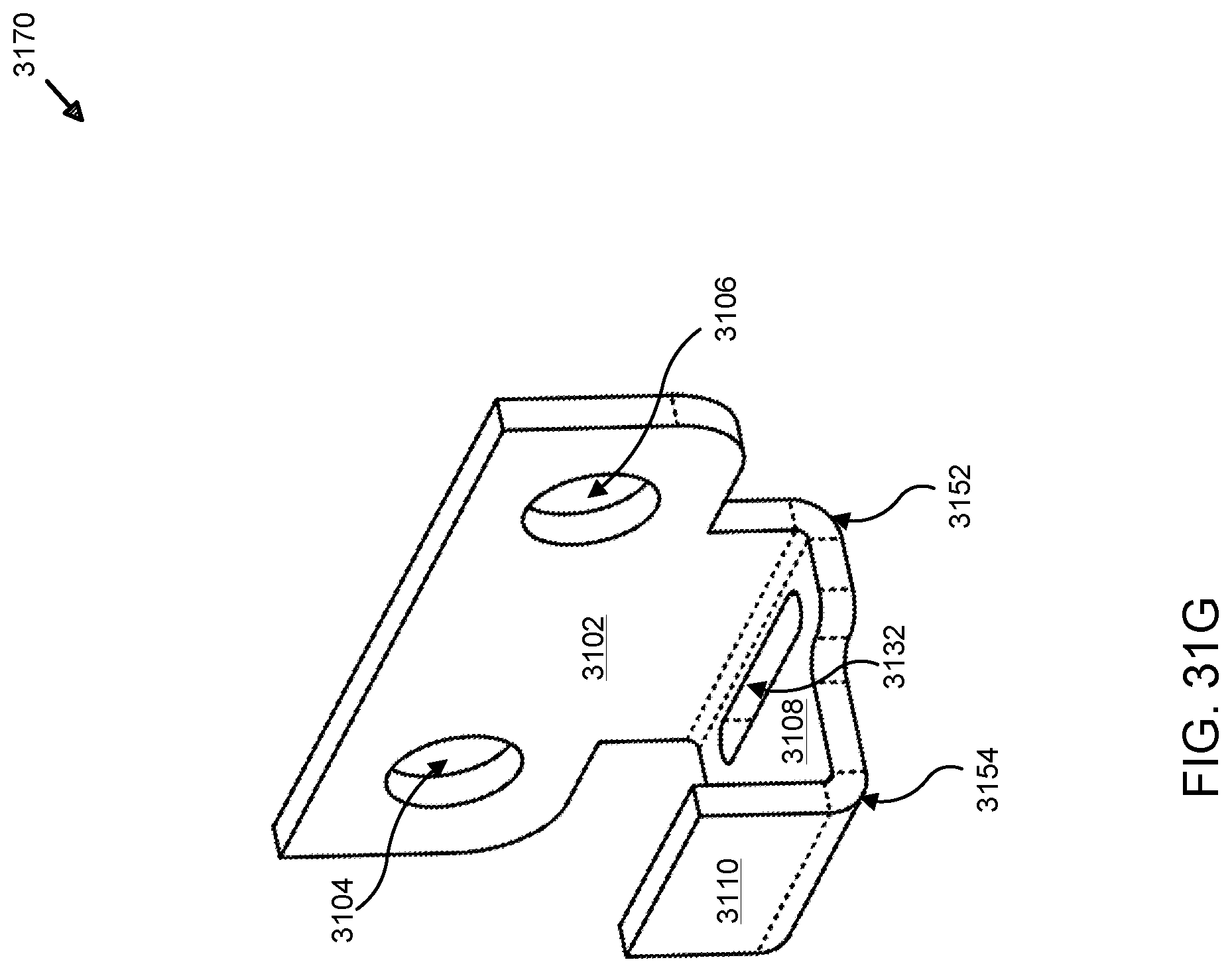

[0075] FIG. 32 illustrates a rear view of an exemplary spacer ring coupled to a hook adapter for use in analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments using underwater powered systems.

DETAILED DESCRIPTION

[0076] Various embodiments or examples may be implemented in numerous ways, including as a system, a process, an apparatus, a user interface, or a series of program code or instructions on a computer readable medium such as a storage medium or a computer network including program instructions that are sent over optical, electronic, electrical, chemical, wired, or wireless communication links. In general, individual operations or sub-operations of disclosed processes may be performed in an arbitrary order, unless otherwise provided in the claims.

[0077] A detailed description of one or more examples is provided below along with accompanying figures. This detailed description is provided in connection with such examples, but is not limited to any particular example. The scope is limited only by the claims and numerous alternatives, modifications, and equivalents. Numerous specific details are set forth in the following description in order to provide a thorough understanding. These details are provided for the purpose of illustrating various examples and the described techniques may be practiced according to the claims without some or all of these specific details. For clarity, technical material that is known in the technical fields and related to the examples has not been described in detail to avoid unnecessarily obscuring the description or providing unnecessary details that may be already known to those of ordinary skill in the art.

[0078] As used herein, "system" may refer to or include the description of a computer, network, or distributed computing system, topology, or architecture using various computing resources that are configured to provide computing features, functions, processes, elements, components, or parts, without any particular limitation as to the type, make, manufacturer, developer, provider, configuration, programming or formatting language, service, class, resource, specification, protocol, or other computing or network attributes. As used herein, "software" or "application" may also be used interchangeably or synonymously with, or refer to a computer program, software, program, firmware, or any other term that may be used to describe, reference, or refer to a logical set of instructions that, when executed, performs a function or set of functions within a computing system or machine, regardless of whether physical, logical, or virtual and without restriction or limitation to any particular implementation, design, configuration, instance, or state. Further, "platform" may refer to any type of computer hardware (hereafter "hardware") and/or software using one or more local, remote, distributed, networked, or computing cloud (hereafter "cloud")-based computing resources (e.g., computers, clients, servers, tablets, notebooks, smart phones, cell phones, mobile computing platforms or tablets, and the like) to provide an application, operating system, or other computing environment, such as those described herein, without restriction or limitation to any particular implementation, design, configuration, instance, or state. 
Distributed resources such as cloud computing networks (also referred to interchangeably as "computing clouds," "storage clouds," "cloud networks," or, simply, "clouds," without restriction or limitation to any particular implementation, design, configuration, instance, or state) may be used for processing and/or storage of varying quantities, types, structures, and formats of data, without restriction or limitation to any particular implementation, design, or configuration.

[0079] As described herein, structured and unstructured data may be stored in various types of data structures including, but not limited to databases, data repositories, data warehouses, data stores, or other data structures and facilities configured to manage, store, retrieve, process calls for/to, copy, modify, or delete data or sets of data (i.e., "datasets") in various computer programming languages and formats in accordance with various types of structured and unstructured database schemas such as SQL, MySQL, NoSQL, DynamoDB™ or others, such as those developed by proprietary and open source providers like Amazon® Web Services, Inc. of Seattle, Wash., Microsoft®, Oracle®, Salesforce.com, Inc., and others, without limitation or restriction to any particular schema, instance, or implementation. Further, references to databases, data structures, or any type of data storage facility may include any embodiment as a local, remote, distributed, networked, cloud-based, or combined implementation thereof. In some examples, data may be formatted and transmitted (i.e., transferred over one or more data communication protocols) between computing resources using various types of wired and wireless data communication and transfer protocols such as Hypertext Transfer Protocol (HTTP), Transmission Control Protocol (TCP)/Internet Protocol (IP), Internet Relay Chat (IRC), SMS, text messaging, instant messaging (IM), WiFi, WiMax, or others, without limitation. As described herein, disclosed processes implemented as software may be programmed using Java®, JavaScript®, Scala, Perl, Python™, XML, HTML, and other data formats and programs, without limitation. As used in this Detailed Description, references to layers of an application architecture (e.g., application layer or data layer) may refer to a stacked layer application architecture such as the Open Systems Interconnect (OSI) model or others.

[0080] FIG. 1A illustrates an exemplary system for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments. Here, system 100 includes application 102, graphics processing unit (GPU) 104, body detector/3D (i.e., 3-dimensional) location module 106, deep learning module 108, central processing unit (CPU) 110, tracking module 112, communication module 114, power module 116, alarm/hub module 118, state estimator 120, classifier 122, data bus 124, sensor 126, sensor data 128, model data 130, background data 132, tracking data 134, clients 136-140, alarm 142, sensor 144, hub 146, tracking data 148, background data 150, model data 152, sensor data 154, and network 156. In some examples, application 102 may be implemented as a software-based program configured to implement one or more of the processes described herein. Application 102 may also be implemented as a standalone or distributed application, for example, with one or more of sub-modules 104-122 being hosted locally, remotely, or in a distributed topology (e.g., software-as-a-service (i.e., "SaaS"), using a computing cloud, networked servers, or any other type of topology using different computing components on which one or more of sub-modules 104-122 are hosted, served, or otherwise made available to application 102). Here, sub-modules 104-122 of application 102 are in data communication with each other using data bus 124, which may be direct, local, remote, wired, wireless, distributed, virtual, or any other type of connection that permits data communication between sub-modules 104-122.
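As a purely illustrative aid, the data communication between sub-modules over data bus 124 can be sketched as a small in-process publish/subscribe bus. The class names `DataBus`, `TrackingModule`, and `AlarmModule`, the topic strings, and the payload fields are hypothetical and do not appear in the application; the disclosure permits any direct, remote, wired, wireless, or virtual connection, so this is only one possible arrangement.

```python
from collections import defaultdict
from typing import Any, Callable, DefaultDict, List


class DataBus:
    """Hypothetical in-process stand-in for data bus 124 (publish/subscribe)."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Callable[[Any], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[Any], None]) -> None:
        # Register a handler to be called for every payload published on topic.
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, payload: Any) -> None:
        # Deliver the payload to each handler subscribed to topic, in order.
        for handler in list(self._subscribers[topic]):
            handler(payload)


class TrackingModule:
    """Illustrative stand-in for tracking module 112: records detections."""

    def __init__(self, bus: DataBus) -> None:
        self.tracks: List[dict] = []
        self._bus = bus
        bus.subscribe("detections", self.on_detection)

    def on_detection(self, detection: dict) -> None:
        # Store the detection and announce the updated track list on the bus.
        self.tracks.append(detection)
        self._bus.publish("tracks", {"count": len(self.tracks), "latest": detection})


class AlarmModule:
    """Illustrative stand-in for alarm/hub module 118: counts track updates."""

    def __init__(self, bus: DataBus) -> None:
        self.updates = 0
        bus.subscribe("tracks", self.on_track)

    def on_track(self, track: dict) -> None:
        self.updates += 1


bus = DataBus()
tracker = TrackingModule(bus)
alarm = AlarmModule(bus)
# A hypothetical detection payload; field names are assumptions.
bus.publish("detections", {"label": "head", "x": 1.0, "y": 2.0})
```

In this sketch the modules never call each other directly, which mirrors the statement that sub-modules may be hosted locally, remotely, or in a distributed topology: only the bus implementation would need to change.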

[0081] Here, graphics processing unit ("GPU") 104 may be implemented to perform various processes (executed as software, firmware, circuitry, or a combination thereof) such as those described herein. For example, GPU 104 may be configured to perform processes for detection and association (i.e., correspondence between detected objects (e.g., head and body (i.e., for an adult, child, or animal) corresponding or associated with a given individual person, above and below water detected elements that are associated with the same object, or the like)) of objects using the techniques described herein. In some examples, one or more deep learning (i.e., of various types based on neural networks, probabilistic, inference-based, and other types of algorithms, without limitation or restriction) and/or machine learning algorithms, software, or the like may be implemented to develop and reference (i.e., during processing of sensor data 128 and/or 154 received from sensors 126 and/or 144) models that may be used by GPU 104 to perform various processes such as detection, association, background subtraction, identification of foreground features or objects, and analysis or processing to identify particular features, surfaces, objects, structures, motions, or the like in order to detect (i.e., sense) various above and below water detected objects. Application 102 may be configured to perform various types of data analysis using, for example, deep or machine learning algorithms or models (collectively referred to as "models" herein). Data analysis or data analytics may refer to any type of process performed on any data (e.g., sensor data 128 and/or 154) accessible or input to application 102, in some examples. In other examples, data analytics may be provided by using data retrieved from sources external to application 102 and is not limited to the examples shown and described.
As used herein, "image" may refer to a still, moving, video, three dimensional ("3D"), or any other type of image, regardless of form, format, or media. Also as used herein, "objects" may refer to person and non-person items that are detected by sensors 126 and/or 144, for example, in aquatic environments such as those described herein. As used herein, any type of deep learning algorithm may be used and, in some examples, multiple or different deep learning algorithms may be used and trained to perform processes such as detection, association, identification, or others, by referencing data found in one or more of sensor data 128 or 154, model data 130 or 152, background data 132 or 150, and/or data retrieved from one or more of clients 136-140. As shown and described herein, various resources (e.g., clients 136-140 (e.g., desktop 136, mobile devices such as smart phone ("smart phone") 138 or computing tablet ("tablet") 140), alarm 142, sensor 144, and hub 146) may also be networked resources (i.e., resources that are coupled to or in data communication with one or more elements of system 100) using a data network such as network 156. Any type of data communication links, wired, wireless, chemical, optical, line-of-sight, radiating, or the like, may be used and are not limited to any specific implementation. In some examples, machine learning algorithms may be used along with or in addition to deep learning algorithms in order to develop a large dataset that may be used by detection, association, identification, or other processes such as those described herein. 
In other examples, sensors 126 and 144 placed in-water, above-water, or below-water (i.e., "placed" may be used interchangeably with "disposed," "positioned," "emplaced," or "implemented," without limitation) may also sense or detect (these terms may be used interchangeably) images, video, data, or any other type of input, including, but not limited to, electrical, thermal, acoustic, audio, optical, haptic, or other types, without limitation. Sensors 126 and/or 144 may be implemented using various types of technologies such as RGB (i.e., Red, Green, Blue color-channel sensing), NIR (i.e., Near Infrared), infrared, thermal, thermal infrared, or others, without limitation or restriction. Due to differences in above-water and underwater (i.e., gaseous and liquid) environments or media in which sensors 126 and/or 144 may be placed, different types of sensing technology may be implemented. For example, sensors 126 and/or 144 may be implemented, above and underwater, solely above water, or solely underwater, using a wide-angle lens, aperture, filter, polarizer, and, for sensing (i.e., detecting) at night or in other low-light environments, various types of light sources for night illumination such as incandescent, halogen, chemical, light emitting diode-based (hereafter "LED") lighting such as blue LEDs for night illumination for underwater (i.e., liquid) sensors and infrared LEDs for night illumination for above-water (i.e., gaseous or non-liquid) illumination. In some examples, these features may be referred to, individually or collectively, as an "optical feature" or "optical features," respectively.
As used herein, "camera" may refer to any type of image capture device using, in some examples, one, some, or none of the immediately described features (e.g., wide-angle lens, aperture, filter, polarizer, and, for sensing (i.e., detecting) at night or in other low-light environments, various types of light sources for night illumination such as incandescent, halogen, chemical, light emitting diode-based (hereafter "LED") lighting such as blue LEDs for night illumination for underwater (i.e., liquid) sensors and infrared LEDs for night illumination for above-water (i.e., gaseous or non-liquid) illumination, or others, without limitation or restriction). In some examples, the use of multiple cameras may be implemented to capture not only a single image, but also video and 3D data. In other examples, multiple systems 100 in data communication with each other (not shown) may be implemented to capture video and 3D data and configured to process and function collaboratively. As an example, a large body of water such as a large, irregularly shaped hotel or resort pool may require the use of multiple systems (e.g., application 102) to ensure drowning detection capabilities over the entire body. Other colors and color-channels may be used and are not limited to the examples provided herein. In some examples, sensors 126 and/or 144 may be implemented to capture still images, continuous video feeds, short video clips (i.e., tracklets), motion-activated video or still images (i.e., the sensors may be using optical cameras, as an example, to capture still images or videos when motion is detected in an aquatic environment, whether above-water or underwater), and others. Further, mechanisms may be implemented with sensors 126 and/or 144 (either or both of which may be implemented above-water or underwater) to rotate filters, polarizers, imagers, or the like to control exposure, sensitivity, or other photographic attributes of captured signals, data, images, video, or the like.
As used herein, video may include images that consist of still images and/or video-based captured imagery, which could include continuous video, motion-activated video capture, or tracklets, as described above. Sensors 126 and/or 144, in some examples, may also be configured using one or more implementations and system 100 is not limited to solely one or two sensors. While sensors 126 and 144 are shown as having direct and indirect (e.g., networked via network 156), respectively, data communication links with application 102, the example shown is not intended to limit the number, type, or topology in which application 102 is coupled (i.e., directly or indirectly, wired or wireless, or the like) to sensors 126 and/or 144. Further, sensors 126 and/or 144 may also be implemented as sensor arrays (e.g., a sensor body may include multiple types of sensors or multiple sensors of a single type, or the like). For example, multiple sensors, sensor arrays, or mechanisms for rotating polarizers, filters, imagers, or the like, may be implemented to adjust the field of view of each sensor (e.g., sensors 126 and/or 144) in order to capture more fully a body of water such as a swimming pool, as an example. As another example, multiple optical sensors (e.g., cameras) may be used to implement sensors 126 and/or 144 as a single virtual camera with a large aperture configured to sense a large area of an aquatic environment, as discussed in greater detail below. In other examples, sensors 126 and/or 144 may be implemented as cameras having wide-angle lenses and apertures that permit wide fields of view (e.g., 160 to 180 degrees relative to an axis of a surface on which sensors 126 and/or 144 are placed). Placement, calibration, implementation, and other aspects and attributes of sensors 126 and/or 144 are discussed in greater detail below.

[0082] Here, data obtained from sensors 126 or 144 may be in various forms and formats such as analog, wave, optical, digital, or the like, without limitation or restriction to any particular type of implementation. In some examples, sensor 126 or 144 may be configured to obtain optical images using variable or wide angle aperture cameras that capture still, video, moving, or other types of optical input that may be processed by GPU 104 and central processing unit ("CPU") 110 for various tasks such as those described above and others (e.g., object tracking, image reconstruction, background subtraction, foreground identification of objects (i.e., persons vs. non-persons), and others (which may be stored as tracking data 134 or 148)). Input captured by sensors 126 and/or 144 may be in analog forms that are converted into data (e.g., analog or digital) locally or transmitted to other modules managed and/or arbitrated by communication module 114. In other examples, active illumination-based sensors may be implemented for sensors 126 and/or 144 using techniques such as light detection and ranging (hereafter "LIDAR"), those that generate light curtains (i.e., breaking a light curtain generated by one or more of sensors 126 and/or 144 can result in detecting various objects when one or more beams of light projected within a light curtain are intercepted or interrupted by an object(s)), and others, without limitation. Examples of techniques that may be used include programmable triangulating light curtains, light sheet fluorescence microscopy, rotating light sheets configured to move in synchrony with designated rows in one or more cameras implemented as sensors 126 and/or 144, among other techniques for propagating light in patterns, waves, beams, dwells, or the like where interruptions of these propagation techniques can be used by sensors 126 and/or 144 to detect objects.
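
As a minimal illustration of detecting objects from interrupted beams of a light curtain, the following Python sketch compares a per-beam baseline intensity with the currently measured intensity and reports the beams whose signal has dropped. The function name `interrupted_beams`, the normalized intensity values, and the `tolerance` parameter are hypothetical and are not taken from this description; an actual sensor would supply its own measurement format.

```python
def interrupted_beams(baseline, observed, tolerance=0.2):
    """Return indices of beams whose intensity fell by more than `tolerance`.

    `baseline` and `observed` are equally long sequences of per-beam
    intensities (hypothetical, normalized units). `tolerance` is the
    fractional drop treated as an interruption (an assumed default).
    """
    hits = []
    for index, (expected, measured) in enumerate(zip(baseline, observed)):
        if expected <= 0:
            continue  # no usable reference signal for this beam
        drop = (expected - measured) / expected
        if drop > tolerance:
            hits.append(index)
    return hits


baseline = [1.0, 1.0, 1.0, 1.0]
observed = [1.0, 0.5, 0.95, 0.1]
print(interrupted_beams(baseline, observed))  # prints [1, 3]
```

Beams 1 and 3 are reported because their measured intensity fell well below the baseline, while the small fluctuation on beam 2 stays within the assumed tolerance.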

[0083] Other modules that may receive sensor input, regardless of form or format, and convert to data include GPU 104, CPU 110, or other elements of system 100. For example, GPU 104 or CPU 110 may be implemented on one or more of clients 136-140 or another (e.g., a remotely hosted server or computing cloud (hereafter "cloud") comprised of one or more physical or virtual computing resources). Regardless, data converted from inputs detected or received by sensors 126 and/or 144 may be stored in sensor data 128 and/or 154 and used by GPU 104, CPU 110, or other modules such as body detector/3D location module 106, deep learning module 108, tracking module 112, state estimator 120, or classifier 122 to perform other processes such as those described herein and below in greater detail. Sensor data 128 may be further processed to identify background data (e.g., data associated with background features of an aquatic environment such as walls, bottom surfaces and contours, steps, underwater obstructions, or other features) that is stored in a database for background data 132. Identifying background features and subtracting these from captured images and/or video enable system 100 to further identify foreground features and objects such as floating inanimate objects, persons, animals, or the like by transferring data to other modules configured to perform various processing functions.
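
The background identification and subtraction described above can be sketched, under simplifying assumptions, as a per-pixel median over several frames followed by a thresholded difference. This is only one common approach and is not stated to be the technique used by system 100; the function names, the grayscale list-of-lists frame format, and the `threshold` value are assumptions made for illustration.

```python
from statistics import median


def estimate_background(frames):
    """Per-pixel median over equally sized grayscale frames (lists of rows)."""
    rows, cols = len(frames[0]), len(frames[0][0])
    return [
        [median(frame[r][c] for frame in frames) for c in range(cols)]
        for r in range(rows)
    ]


def foreground_mask(frame, background, threshold=25):
    """True where a pixel differs from the background by more than `threshold`."""
    return [
        [abs(pixel - ref) > threshold for pixel, ref in zip(frame_row, bg_row)]
        for frame_row, bg_row in zip(frame, background)
    ]


# Three tiny 2x2 frames; the last one contains a bright foreground pixel.
frames = [
    [[10, 10], [10, 10]],
    [[10, 10], [10, 10]],
    [[10, 200], [10, 10]],
]
background = estimate_background(frames)
print(foreground_mask(frames[2], background))
# prints [[False, True], [False, False]]
```

The median keeps static features (e.g., walls or steps) in the background estimate even when an object briefly occupies a pixel, so the remaining differences mark candidate foreground objects for later modules.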

[0084] For example, body detector/3D location module 106 may be configured to use input (e.g., data from sensor data 128 and/or 154) to detect and identify bodies, body parts, and the positioning thereof in a three-dimensional ("3D") space such as within a body of water. As used herein, a body of water may refer to any type of aquatic environment. In some examples, aquatic environments may include oceans, seas, lakes, fjords, channels, canals, ponds, pools, or any other type of natural or artificial structure containing water, aqueous liquids, or the like. As used herein, aquatic environments can refer to swimming pools and the techniques described can be used for practical applications such as detecting persons within the environments and tracking them with the eventual goal of detecting a drowning state and activating (i.e., triggering, sounding, enabling, turning on, or otherwise causing) an alarm, which may be audible, optical, light-based, vibration-based, haptic, or of any other type, without limitation or restriction.

[0085] Referring back to the body detector/3D location module 106, input from sensors 126 and/or 144 may be input to one or more deep learning algorithms developed as software and/or firmware-related components of application 102 and managed by deep learning module 108. For example, body detector/3D location module 106 may include other algorithms, software, or firmware that, working cooperatively with deep learning module 108 when instructed or called by GPU 104, is used to detect whether a body is present in a body of water and, if so, what body parts have been detected and the location of said body and/or body parts within the 3D space of an aquatic environment. Different body parts may also be associated to a given person by body detector/3D location module 106. In other examples, body detector/3D location module 106 may also be used to detect and associate above-water with underwater parts of non-person objects that may be within a bounded aquatic environment (e.g., pool floats or toys, chlorinating pool floats, debris skimmers such as those found in swimming pools, flotsam, jetsam, partially-submerged or partially-immersed objects, or the like). If the aquatic environment is bounded (i.e., "bounded" may refer to a fully or partially enclosed space in which an aquatic environment is provided or found, such as a swimming pool, canal, diving tank, wading pool, or the like), body detector/3D location module 106 may, for example, detect when bodies have entered a body of water (as used herein, "body of water" may be used and refer to "aquatic environment" interchangeably without limitation or restriction) and at what 3D position of said body of water the object has been detected relative to the positions and fields of view sensed by sensors 126 and/or 144. Deep learning module 108 may be called to compare images, for example, from model data 130 or 152 to analyze input from sensors 126 and/or 144 to provide further data input to body detector/3D location module 106.
If a body has been detected and a position determined by body detector/3D location module 106, then CPU 110, tracking module 112, and classifier 122 (as described in greater detail below) may be called or otherwise used to identify and track bodies or non-person objects. As used herein, bodies may refer to any size, shape, or type of living, animate, ambulating organism such as a human or animal (e.g., cat, dog, bird, goat, cow, deer, wolf, and others, without limitation or restriction).

[0086] In some examples, tracking may be established and managed by CPU 110 and tracking module 112 of detected person and non-person objects for uses by one or more of sub-modules 104-122 of application 102. In other examples, data from tracking module 112 may be stored in one or more of sensor data 128 or 154, model data 130 or 152, background data 132 or 150, tracking data 134 or 148, or other databases. For example, other databases, local or remote (e.g., cloud-based), may be provided to exclusively store tracking data from CPU 110 and tracking module 112. In some examples, tracking module 112 may be configured to manage tracking data that is processed by or generated from GPU 104 or CPU 110. As an example, when persons enter or leave a pool, tracking module 112 may be configured to delete a tracking record associated with said person or create a new tracking record for a person entering a pool. Tracking module 112 may also be configured, in some examples, to manage historical data associated with tracking detected persons and objects and sharing copies of said data with model data 130 and/or 152, which can be used to improve the accuracy of deep learning algorithms used and implemented by deep learning module 108. Data associated with tracking multiple individuals can be combined to track multiple individuals in a given space (i.e., an appearance space) by fusing (i.e., combining) processed tracklets from sensors 126 and/or 144, from multiple fields of view and, using 3D feature detection algorithms, size changes due to refraction of tracked body parts of individuals underwater can be estimated. 
Various types and sources of data may be used by deep learning module 108 (which may also be implemented with or replaced by other types of machine learning algorithms) to "train" or "learn" to identify features such as those discussed herein by using, for example, different types of comparative analysis (e.g., probabilistic, statistical, and others, without limitation or restrictions). Data of detected and classified items may then be tracked and data associated with tracking may be stored in tracking data 134 or remote, cloud-based, distributed, or otherwise networked databases such as tracking data 148. Tracking data may also be used, in some examples, to invoke state estimator 120, which may be implemented as a software module used to determine various states associated with given bodies (i.e., persons, animals, or the like) in a body of water, as described in greater detail below in connection with FIG. 21. In some examples, a drowning state may be estimated when state estimator 120 receives data indicating sensors 126 and/or 144 have detected a motionless body or head, a body or head partially or fully submerged for a time period, motionless eyelids/nose/mouth (i.e., eyelids are not moving or fluttering, mouth is open, but underwater, or the like) that are motionless or submerged for a time period, a body or head lacking detected breathing (i.e., no rise or fall of a chest or torso region), or any other configuration of drowning that may be learned by application 102 using various types of models, rules, or sensor data, without limitation or restriction. As used herein, "motionless" may refer to the complete or partial lack of motion originating from a detected body, head, or portion thereof, regardless of whether motion is imparted to the detected body, head, or portion thereof by the surrounding environment. In other words, "motionless" may refer to the lack of ambulatory or voluntary motion originating from a detected head, body, or portion thereof. 
In still other examples, drowning state estimation may be achieved by state estimator 120 for other types of drowning configurations and are not limited to the examples described herein.
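
The creation and deletion of tracking records as persons enter or leave a pool, together with retention of historical data, may be sketched as follows. The class names `TrackRecord` and `TrackingRegistry`, the method names, and the (x, y, z) position tuples are hypothetical and serve only to illustrate one possible bookkeeping scheme; they do not describe an actual interface of tracking module 112.

```python
from dataclasses import dataclass, field


@dataclass
class TrackRecord:
    track_id: int
    positions: list = field(default_factory=list)  # (x, y, z) samples


class TrackingRegistry:
    """Keeps one active record per tracked person and archives finished ones."""

    def __init__(self):
        self._next_id = 1
        self.active = {}   # track_id -> TrackRecord for persons in the pool
        self.history = []  # records of persons who have left

    def on_enter(self, position):
        """Create a new tracking record when a person enters the pool."""
        record = TrackRecord(self._next_id, [position])
        self.active[record.track_id] = record
        self._next_id += 1
        return record.track_id

    def on_update(self, track_id, position):
        """Append a newly observed position to an active record."""
        self.active[track_id].positions.append(position)

    def on_leave(self, track_id):
        """Remove the active record and keep a copy as historical data."""
        record = self.active.pop(track_id)
        self.history.append(record)
        return record


registry = TrackingRegistry()
swimmer = registry.on_enter((0.0, 0.0, 0.0))
registry.on_update(swimmer, (0.5, 0.0, -0.2))
registry.on_leave(swimmer)
print(len(registry.active), len(registry.history))  # prints 0 1
```

Archiving the record on exit, rather than discarding it, reflects the description of sharing historical tracking data with model data 130 and/or 152.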

[0087] Referring back to FIG. 1A, classifier 122 may be invoked to aid in identifying objects detected by sensors 126 and/or 144 in a body of water. In some examples, classifier 122 may be configured to implement segmentation of captured images and videos from sensors 126 and/or 144. For example, classifier 122 may be configured to classify features of detected objects into person and non-person classes, which may include pose detector features, body part size and shape features, and thermal intensity differentials. By using deep or machine learning algorithms such as those implemented by deep learning module 108 (which can be trained against various types and groups of data (e.g., model data 130)), non-person objects can be identified apart from persons detected in an aquatic environment such as a swimming pool by segmenting captured images and video. As used herein, "deep learning" may include machine learning models, both of which may refer to data models and algorithms that are used to process various types of input data to perform other processes and functions such as those described herein or others. Collectively, "deep learning" and "machine learning" may be referred to as "models."

[0088] In some examples, segmented images and video may be referred to as tracklets and can be used to learn (i.e., using deep learning module 108) to associate certain detected features with a given person. In further examples, classifier 122 may rely upon modeling provided by deep learning module 108 to determine whether a detected object should be classified as a person or non-person object, is moving or still, is fully or partially submerged or immersed, if a person, whether a head of said person is below or above water, and the like.

[0089] State estimator 120, in some examples, in data communication with one or more of body detector/3D location module 106, tracking module 112, classifier 122, and alarm/hub module 118, may be configured to assign, correspond, and track various states of a detected object. If state estimator 120 indicates that a detected object is a person and a head corresponding to a body of said person is below water and has been submerged for a given period of time (e.g., 20-30 seconds), it may be configured to send (using a wired, wireless, optical, or other type of data communication link (e.g., data bus 124)) a control signal or data to alarm/hub module 118 to initiate a timer that, upon reaching a given threshold, activates an alarm (e.g., audible, visual, haptic, or others) to alert nearby personnel to a potential drowning event. Due to the extensive data available to deep learning module 108 using model data 130 and/or 152, patterns of behavior can be imaged, analyzed, and recognized to provide early warning of drowning activities, so that the possibility of cessation or interruption of a drowning event and the survivability of drowning persons could be substantially increased.
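
The submersion timing described above may be illustrated with the following simplified Python sketch, which collapses the state check and the alarm timer into a single object. The class name `SubmersionTimer`, the `update` method, and the 20-second default threshold (taken from the low end of the example range above) are assumptions for illustration and do not represent the actual logic of state estimator 120 or alarm/hub module 118.

```python
class SubmersionTimer:
    """Tracks how long a detected head has been continuously submerged."""

    def __init__(self, threshold_s=20.0):
        self.threshold_s = threshold_s  # assumed alarm threshold in seconds
        self._submerged_since = None    # timestamp when submersion began

    def update(self, timestamp_s, head_submerged):
        """Return True when the alarm condition is met at `timestamp_s`."""
        if not head_submerged:
            self._submerged_since = None  # surfacing resets the timer
            return False
        if self._submerged_since is None:
            self._submerged_since = timestamp_s
        return timestamp_s - self._submerged_since >= self.threshold_s


timer = SubmersionTimer(threshold_s=20.0)
for t, submerged in [(0, True), (10, True), (20, True), (21, False), (22, True)]:
    print(t, timer.update(t, submerged))
# prints:
# 0 False
# 10 False
# 20 True
# 21 False
# 22 False
```

Resetting on any above-water observation is a deliberate simplification; a deployed system would likely tolerate brief detection dropouts before clearing the timer.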

[0090] As discussed above, hub 146 may be implemented as a local or remote unit that may be configured to provide one or more of the features, functions, and processes of application 102 as described herein. For example, hub 146 may be used to implement an alarm (such as those types described herein) that can be triggered when a timer (not shown) initiated by state estimator 120 has met or exceeded a given threshold (i.e., a pre-specified time limit measured in hours, minutes, seconds, or fractions thereof) for a state during which a head of a body associated with a person has been fully or partially submerged. In some examples, power supplied to hub 146 may come from a variety of sources, including electrical power driven over various voltages and amperages of direct or alternating current (e.g., 110-115 VAC, 220 VAC, 12 VDC, 24 VDC, or others, without limitation or restriction). Power may be generated for system 100, application 102, and the elements shown within FIG. 1A from various sources and is neither limited nor restricted to any particular form or type. Regardless, power module 116 may be implemented to manage and control power distribution to one or more of the elements shown in system 100 such as GPU 104, CPU 110, sensors 126 and/or 144, hub 146, clients 136-140, alarm 142, or others, without limitation or restriction. Power module 116 may also be implemented as software, firmware, circuitry, or a combination thereof to distribute, manage, enable/disable, or otherwise control the distribution of electrical power to the above-referenced elements, among others. In other examples, the quantity, type, configuration, function, or structure of elements 102-156 of system 100 may be varied and are not limited to any specific implementation.

[0091] FIG. 1B illustrates an exemplary classifier module for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments. Here, subsystem 160 includes classifier 122 (FIG. 1A), deep learning module interface 162, pose detector feature data 164, body part size and shape feature data 166, and thermal intensity differential data 168. In some examples, classifier 122 receives input in the form of sensor data 170 from one or more of sensors 126 and/or 144 (FIG. 1A (not shown)). As used herein, sensor data 170 may be data that is generated from processing received input to sensors 126 and/or 144. In some examples, sensors 126 and/or 144 may receive optical signals in the form of light waves received, detected and processed by optical sensors. In other examples, sensors 126 and/or 144 may be acoustic sensors configured to detect objects based on sound (i.e., acoustic, sonar, ultrasound, and others), regardless of frequency, wavelength, and propagation source and which may be ultimately converted to sound data. In still other examples, sensors 126 and/or 144 may be configured to receive electrical signals that are converted from analog to digital data using, for example, an analog-digital converter (i.e., ADC). In still other examples, different types of data may be input as sensor data 170, which is not limited to any specific data type, format, or schema and may include streaming data and datasets from various media. When sensor data 170 is received by classifier 122, deep learning module interface 162 invokes deep learning module 108 (FIG. 1A) in order to classify sensor data 170 into pose detector features, body part size and shape features, and thermal intensity differentials. 
As an example, video captured by sensors 126 and/or 144 (above water and/or underwater) is segmented by deep learning module 108 by processing sensor data 170 into pose detector feature data 164, body part size and shape feature data 166, and/or thermal intensity differential data 168. Deep learning module 108 may be trained against model data 172 in order to identify classifications for sensor data 170 that is received by classifier 122. As shown, deep learning module interface 162 may also be configured to interface with other modules such as GPU 104 (FIG. 1A) or CPU 110 (FIG. 1A) to perform other processes using sensor data 170, such as those described herein. In other examples, the quantity, type, configuration, function, or structure of subsystem 160, classifier 122, and the elements shown may be varied and are not limited to the descriptions provided.

[0092] FIG. 1C illustrates an exemplary deep learning system architecture for analysis and deep learning modeling of sensor-based detection data for organic motion determination in bounded aquatic environments. In some examples, system 180 includes image capture module 182, head/body parts detector 184, drowning detection and alarm module 186, pose estimation module 188, classifier 190, and alignment block 192, all of which are configured to transfer data, including signals, voltages, or any other means, type, or format of transmitting and receiving information between elements 182-192, over or using data bus 194. Here, system 180 may be designed, implemented, and configured to perform various functions associated with drowning detection by using deep learning algorithms to process data resulting from other processes such as, for example, head detection, body part detection, pose estimation, point estimation (of points determined by coordinate determination of detected body parts such as a head underwater), among others, without limitation or restriction. As shown, system 180 includes image capture module 182, which may include cameras, apertures, lenses, or any type of image capturing device (not shown).

[0093] In some examples, image capture module 182 may refer to a single or multiple camera system in which cameras may be placed at various points about a bounded aquatic environment (e.g., swimming pool, lake, pond, harbor, bay, or the like, without limitation), including above a waterline (i.e., above water), below a waterline (i.e., underwater), or at a waterline. A multi-camera implementation of image capture module 182 may include cameras placed not only at various points around a bounded aquatic environment, but also above and underwater in order to capture images of objects, persons, bodies, animals, or other inanimate or animate objects that may exhibit organic or inorganic motion that are floating or partially or fully submerged. As used herein, "organic motion" may refer to motion that originates or emanates from an animate object such as a human or other animal that, as discussed below, when partially or fully submerged, may be imaged by image capture module 182 and processed using one or more deep learning algorithms to determine whether irregular motion or movement is detected and whether other conditions are present so as to enable system 180 to determine a drowning condition exists and, if so, to trigger an alarm (such as those described herein). "Inorganic motion," in some examples, may refer to motion associated with an inanimate object (i.e., non-human, non-animal, non-organic, or the like) that, in some examples, does not exhibit irregular motion such as that originated or emanated from an animate object such as a person. In other examples, organic or inorganic motion may be described differently and is not limited to the descriptions provided.

[0094] Here, image capture module 182 (as well as one, some, all, or none of elements 182-192) may be implemented using software, firmware, hardware, circuitry, or a combination thereof and configured to capture images, video, audio, or multimedia imagery, signals (e.g., electric, electronic, light/optical, and others, without limitation or restriction), and data. Data captured may be in various image formats such as red-green-blue (i.e., RGB), infrared, ultraviolet, and others, without limitation or restriction. As shown, image capture module 182 may be implemented to generate data that may be transferred between any of elements 182-192 over data bus 194. Techniques such as computer vision may be used to not only capture, but process data (e.g., audio, video, multimedia, still imagery, and others) from cameras placed above water, underwater, about a waterline, or otherwise disposed around and about a bounded body of water. Further, image capture module 182 may be designed, implemented, configured, or function differently than as shown and is not limited to the examples provided.

[0095] Once captured, in some examples, images may be converted or translated into data that may be transmitted (i.e., sent) to one or more of elements 184-192 over data bus 194, the latter of which may be implemented using wired, wireless, optical, or other data transmission media, without limitation or restriction. For example, images captured by cameras or other imaging devices (not shown) may be processed by image capture module 182 and converted into data that is transmitted to classifier 190, which may be implemented to identify one or more motion features using, in some examples, a single or multiple deep learning algorithms. As used herein, "motion features" may refer to any aspect, attribute, feature, function, or characteristic of data that, when analyzed, indicates regular or irregular motion associated with animate, inanimate, organic, or inorganic objects or persons above or underwater. Examples of motion features are described in additional detail below in connection with FIGS. 27A-27B.

[0096] Referring back to FIG. 1C, deep learning algorithms may be used to implement the described techniques and there are no limitations or restrictions as to any particular instances. For example, deep learning algorithms such as those that implement not only self-learning techniques (i.e., evaluating input data to the algorithm against databases, data lakes, or other data gathered or collected that can be used to identify, perform rapid algorithm calculations to support machine-made determinations about input data, or otherwise classify input data based on rules, conditions, or other logic provided to support computing operations performed by deep or machine learning algorithms), but also those that can be used for image processing using neural networks (e.g., artificial, convolutional, recurrent, and others) are among a few examples of algorithmic techniques that may be used to implement classifier 190. The types of deep learning algorithms used by classifier 190 are not intended to be limiting and may be configured to process data received from image capture module 182 and head/body parts detector 184 for various purposes ranging from head detection to pose estimation to others, without limitation or restriction.

[0097] In some examples, image data may be generated by processing data received from image capture devices (e.g., cameras, charge-coupled devices, etc.) that are in direct or indirect data communication with image capture module 182. The image data is transferred to head/body parts detector 184, which evaluates the image data using computational determinations to identify body parts in the image data received. When processing and evaluating image data (i.e., data from image capture module 182), head/body parts detector 184, in some examples, identifies body parts and assigns coordinates (relative to a given frame of reference or bounded body (e.g., a bounded aquatic environment such as a swimming pool, dive tank, pool, pond, lake, bay, harbor, or the like, although any aquatic environment could use the techniques described herein)) to the identified parts. Once identified, the coordinates may be transmitted by head/body parts detector 184 as data (e.g., using any type of programming, formatting, or data processing language such as Java, JavaScript, MATLAB, Python, HTML, HTML5, and many others, without limitation or restriction to any particular language, format, or protocol) to any of elements 182-192.

[0098] For example, head/body parts detector 184 processes image data received from image capture module 182 and identifies one or more bodies that are partially above water and partially underwater. Head/body parts detector 184 then identifies parts of the body and assigns coordinates (e.g., using any type of coordinate system for reference during computing processes performed by elements 182-192 of system 180), which are then transmitted to classifier 190. In other examples, alignment block 192 may also be used to process data from image capture module 182 in order to align images captured of bodies above and below water.
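As a minimal sketch of how the coordinate data described above might be represented before it is transmitted to classifier 190, the following Python example groups detected parts by body. The class name `BodyPart`, the function `to_classifier_payload`, the field names, and the convention that a negative `z` value means "underwater" are all assumptions introduced for illustration and are not taken from the described system.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class BodyPart:
    """One detected body part in a pool-fixed frame of reference (hypothetical layout)."""
    body_id: int   # identifier of the detected body this part belongs to
    name: str      # part label, e.g. "head" or "torso"
    x: float       # metres along the pool length
    y: float       # metres along the pool width
    z: float       # metres relative to the waterline; negative means underwater


def to_classifier_payload(parts: List[BodyPart]) -> Dict[int, Dict[str, Tuple[float, float, float]]]:
    """Group part coordinates by body so that one record per body is passed downstream."""
    payload: Dict[int, Dict[str, Tuple[float, float, float]]] = {}
    for part in parts:
        payload.setdefault(part.body_id, {})[part.name] = (part.x, part.y, part.z)
    return payload


# A body that is partially above water (head) and partially underwater (torso).
parts = [
    BodyPart(1, "head", 3.0, 2.0, 0.15),
    BodyPart(1, "torso", 3.0, 2.0, -0.40),
]
payload = to_classifier_payload(parts)
```

Any coordinate system could be substituted; the only point illustrated is that each identified part carries coordinates that remain associated with its body.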

[0099] In some examples, alignment block 192 may be used to account for various imagery effects on captured images in an aquatic environment, above and underwater. Effects such as distortion (e.g., lens, perspective, optical, and others, without limitation or restriction), scattering, absorption, and others, without limitation or restriction, may be taken into account when aligning images in order to present a complete object (e.g., a contiguous body or shape belonging to an object, person, animal, insect, animate or inanimate object) using the techniques described herein. An aligned image (not shown) may then be processed by head/body parts detector 184 and pose estimation module 188. In some examples, pose estimation module 188 may be another computing process implemented using software, firmware, hardware, circuitry, or a combination thereof.

[0100] As described herein, pose estimation may refer to various types of data processing techniques (e.g., deep learning, machine learning, or other algorithmic techniques, without limitation or restriction) in which data (e.g., image data from image capture module 182, aligned image data from alignment block 192, detected part data from head/body parts detector 184, or others) may be received and processed by pose estimation module 188. For example, deep learning or machine learning algorithms, frameworks, or libraries such as TensorFlow™, Torch, OpenPose, and others, without limitation or restriction, may be used to determine poses associated with various detected body(s) and part(s), as determined by head/body parts detector 184. Using models trained against one or more datasets (e.g., MPII, SURREAL, or others, without limitation or restriction), pose estimation may be performed in two or three dimensions (i.e., "2D" or "3D") and, by evaluating coordinates associated with joints and parts of a body, various types of determinations such as poses, movement, and features (i.e., of facial or other body parts) may be determined by pose estimation module 188. Data associated with these determinations may be input to classifier 190, which may be used during processing to generate various computations associated with pose estimation, joint and body part coordinates, movement, location of body parts relative to a waterline (i.e., above, below, or at a waterline of a bounded aquatic environment), and others, without limitation or restriction.
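The determination of a body part's location relative to a waterline (i.e., above, below, or at) can be illustrated with a short sketch. The function names, the tolerance band used to decide "at", and the use of a vertical coordinate measured in metres are hypothetical choices made for this example only.

```python
from typing import Dict, Tuple


def waterline_position(z: float, waterline_z: float = 0.0, tolerance: float = 0.05) -> str:
    """Label a vertical coordinate as 'above', 'below', or 'at' the waterline.

    The tolerance (metres) is an assumed band around the waterline, not a value
    taken from the described system.
    """
    if z > waterline_z + tolerance:
        return "above"
    if z < waterline_z - tolerance:
        return "below"
    return "at"


def label_joints(joints: Dict[str, Tuple[float, float, float]],
                 waterline_z: float = 0.0) -> Dict[str, str]:
    """Apply waterline_position to the z component of every (x, y, z) joint."""
    return {name: waterline_position(xyz[2], waterline_z) for name, xyz in joints.items()}


labels = label_joints({
    "head": (0.0, 0.0, 0.20),
    "shoulder": (0.0, 0.0, 0.02),
    "hip": (0.0, 0.0, -0.50),
})
```

In this sketch the head is labeled above, the shoulder at, and the hip below the waterline; labels of this kind are one possible form of the data that may be input to classifier 190.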

[0101] In some examples, classifier 190 (as well as other elements 182-188, 192) may be implemented using various types of software, hardware, firmware, circuitry, or combination thereof. For example, deep learning, machine learning, and other types of algorithms, without limitation or restriction, may be implemented as computing software to transform a generic computer, computing device, or computing environment into classifier 190, which is configured to receive input from elements 182-188 and 192 in order to process and generate computational determinations as to body and part detection, image alignment, movement, location, pose estimation, among others, and to generate a further determination as to whether the computed data indicates a detected body (i.e., a contiguous object or shape detected within a bounded aquatic environment) exhibits organic movement (i.e., movement emanating from the detected body and not from the surrounding environment imparting physical forces acting upon an object or body). In other words, classifier 190 is not a generic computing device, module, or computer, but instead a specialized element of system 180 that utilizes deep learning, machine learning, or other algorithmic techniques to receive data input (e.g., digital data, analog signals, or the like) regarding captured images (i.e., from image capture module 182 and alignment block 192), detected body parts (i.e., from head/body parts detector 184), and estimated poses (i.e., from pose estimation module 188), to analyze these inputs using machine or deep learning algorithms to generate a coordinate-based determination that organic motion is detected and, when the determination is compared to criteria (e.g., rules, thresholds, or the like), to also determine whether a drowning condition exists.
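The distinction between organic movement and movement imparted by the surrounding environment can be illustrated with a simple, non-learned heuristic. This is only a stand-in for the deep learning or machine learning techniques described above, not the classifier itself: it assumes that environmental forces mostly translate a body as a whole, so it removes the frame-to-frame drift of the body centroid and measures the joint displacement that remains. The function names and the threshold value are hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def centroid(points: List[Point]) -> Point:
    """Mean position of a set of 2D joint coordinates."""
    n = len(points)
    return (sum(p[0] for p in points) / n, sum(p[1] for p in points) / n)


def articulation_score(frames: List[List[Point]]) -> float:
    """Mean per-joint displacement between consecutive frames after removing centroid drift."""
    total = 0.0
    count = 0
    for prev, curr in zip(frames, frames[1:]):
        prev_cx, prev_cy = centroid(prev)
        curr_cx, curr_cy = centroid(curr)
        for (px, py), (cx, cy) in zip(prev, curr):
            # Express each joint relative to its frame's centroid, then compare.
            dx = (cx - curr_cx) - (px - prev_cx)
            dy = (cy - curr_cy) - (py - prev_cy)
            total += math.hypot(dx, dy)
            count += 1
    return total / count if count else 0.0


def is_organic(frames: List[List[Point]], threshold: float = 0.05) -> bool:
    """Assumed rule: residual joint motion above the threshold is treated as organic."""
    return articulation_score(frames) > threshold


# A body drifting as a whole (every joint shifted by the same amount).
drifting = [[(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)],
            [(0.5, 0.2), (1.5, 0.2), (0.5, 1.2)]]
# A body in which one joint moves relative to the others.
articulated = [[(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)],
               [(0.0, 0.0), (1.0, 0.0), (0.6, 1.0)]]
```

Under these assumptions, `is_organic(drifting)` is false and `is_organic(articulated)` is true. The heuristic removes translation only, so rotation imparted by currents or waves would still register as residual motion; a trained model, as described above, would be expected to handle such cases.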

[0102] In some examples, if classifier 190 runs deep learning and/or machine learning processing and, after application of criteria (e.g., rules, quantitative or qualitative thresholds, or the like), determines a drowning condition exists, further data or signals may be generated and sent by classifier 190 to drowning detection/alarm module 186. In some examples, classifier 190 may determine whether a drowning condition exists or may output data to drowning detection/alarm module 186, which may instead generate said determination, either in combination or as a standalone output. Regardless of whether classifier 190 or drowning detection/alarm module 186, either in combination or individually, generates a data-driven determination that a drowning condition exists, an output signal (not shown) may be generated from drowning detection/alarm module 186 that triggers an alarm (not shown) such as those described herein (e.g., visual, audible, haptic, or other sensory-perceptible alarm to alert nearby persons or personnel of a potential or actual drowning condition within a bounded aquatic environment such as a swimming pool, lake, pond, bay, harbor, or other body of water (or other fluid or liquid) having at least one non-liquid boundary or bounded side). In other examples, system 180 and elements 182-194 may be designed, configured, or implemented differently and are not limited to the examples shown and described.

[0103] FIG. 2 illustrates another exemplary system for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments. Here, top view 200 shows a bounded area (e.g., an aquatic environment such as a body of water) 202 in which system 204 is implemented (partially above water, partially underwater, as described in greater detail below) with at least one sensor (not shown). In some examples, one or more elements of application 102 (FIG. 1A) may be implemented with system 204. In other examples, system 204 may be an implementation of application 102 (FIG. 1A). As shown, system 204 may be coupled via data communication links 206-208 to alarms 210-212, respectively. Data communication links 206-208 may be implemented using wired, wireless, optical, radiating, or other data communication technologies in order for signals and/or data to be transferred between system 204 and alarms 210-212. System 204 may also, in some examples, include one or more sensors (e.g., sensors 126 and/or 144 (FIG. 1A)) that are configured to detect objects within bounded area 202. Sensors (not shown) may be implemented and detected objects may be classified as person or non-person objects. Classifier 122 may be configured to classify detected objects based on pose features, body part sizes or shapes, and/or thermal intensity (i.e., the thermal image of a person is configured to be read, detected, or sensed differently than inanimate, colder non-person objects). Once detected, classified, associated, and tracked, a determination may be made as to whether to trigger one, some, or all of alarms 210-218. In some examples, one or more of alarms 210-218 may be triggered when a timer activated by system 204 reaches or exceeds a given threshold. As described in greater detail below, a timer may be set when sensor(s) (not shown) of system 204 provide input that, when processed, classifies body parts such as a body (e.g., torso, trunk, legs, appendages, and the like) and head as being associated with a person and said head is detected and tracked underwater (i.e., below a waterline) for an amount of time at or after which a drowning state is assumed to exist. A drowning state may be one of several states that system 204 can transition into or through, which is described in greater detail below in connection with FIG. 21.
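The timer behavior described above (a timer that starts when a tracked head is detected underwater and that triggers an alarm when a threshold is reached or exceeded) can be sketched as follows. The class name `SubmersionTimer`, its fields, and the default threshold of 20 seconds are hypothetical; no threshold value is given in this description.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SubmersionTimer:
    """Tracks how long a tracked head has been continuously underwater (illustrative only)."""
    threshold_s: float = 20.0                 # assumed value for illustration
    submerged_since: Optional[float] = None   # timestamp at which the head went under

    def update(self, head_underwater: bool, now_s: float) -> bool:
        """Return True when the head has been underwater for at least threshold_s seconds."""
        if not head_underwater:
            # The head resurfaced, so the timer is reset.
            self.submerged_since = None
            return False
        if self.submerged_since is None:
            # First observation below the waterline starts the timer.
            self.submerged_since = now_s
        return (now_s - self.submerged_since) >= self.threshold_s


timer = SubmersionTimer(threshold_s=10.0)
observations = [(True, 0.0), (True, 5.0), (True, 10.0), (False, 11.0)]
alarm_flags = [timer.update(underwater, t) for underwater, t in observations]
```

Here the alarm flag becomes true only at the third observation, once the head has been tracked underwater for the full threshold, and is cleared when the head resurfaces.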

[0104] Referring back to FIG. 2, alarms 210-218 may be of various types. In some examples, one or more of alarms 210-218 may be directly coupled to system 204 using wired electrical connections that, when a drowning state is detected for one or more tracked persons, trigger an audible, visual, or other perceptible alarm to alert nearby individuals to a potential drowning. Alarms may also be, in some examples, automatically generated messages such as text (e.g., short message service ("SMS"), Internet Relay Chat ("IRC"), iMessage®, or others), electronic mail ("email"), voice calls, or the like. Further, alarms may also be triggered and generate automatic calls, messages, or other signals to be sent to emergency services for medical, paramedical, fire, police, or other similar services to respond to the location of a prospective victim detected as being in a drowning state. In other examples, one or more of alarms 210-218 may also be in data or electrical communication with system 204 using wireless links to transfer data and/or power (e.g., inductive, magnetic, or the like) and, likewise, are configured to provide an audible, visual, haptic, pyrotechnic, thermal, vibrational, or otherwise perceptible alarm when triggered (i.e., when a drowning state activates a timer that reaches a pre-determined threshold). In still other examples, a combination of different types of alarms may be implemented with system 204 in order to alert nearby personnel when a person is detected in bounded area 202, that person's classified body parts are tracked, and a condition (i.e., a drowning state) is detected that results in sending a signal from system 204 to activate a timer as a condition precedent to triggering an alarm. In other examples, the quantity, type, configuration, function, or structure of system 204 and the elements shown may be varied and are not limited to the descriptions provided.

[0105] FIG. 3A illustrates an exemplary sensor arrangement in a system for analysis and deep learning modeling of sensor-based detection data in bounded aquatic environments. Here, view 300 is an "aerial" or top view illustrating bounded area 302 within which field of view 304 is configured to be sensed (passively (i.e., awaiting detection of one or more input stimuli, conditions, attributes, or the like) or actively (i.e., by generating from an originating sensor an output that may be used to measure or sense a return input (e.g., sonar, radar, lidar, or the like))) by tile unit 306. Regions 308-310 fall outside of field of view 304, but can be covered by overlapping fields of view provided by other sensors (not shown) implemented with tile unit 306, which may be placed on different sides or surfaces of bounded area 302. "Tile unit" (e.g., tile unit 306) may refer to a unit, chassis, package, substrate, or housing configured to include one or more sensors and one or more elements of application 102 (FIG. 1A) that are configured to perform processes as described herein, without limitation or restriction. In some examples, tile unit 306 may be integrated within the structure of bounded area 302 or may be placed after a bounded area (e.g., a swimming pool) has been constructed, as described in greater detail below. In other examples, tile unit 306 may be implemented and configured differently in function and/or structure.

[0106] As an example, bounded area 302 may be a swimming pool that is rectangular in shape. A wide angle sensor or sensor array (not shown) may be implemented and co-located with tile unit 306, which is configured to detect objects within field of view 304. Regions 308 and 310 remain outside of field of view 304. However, placing another system, as described in greater detail below in connection with FIG. 3B, enables regions 308 and 310 to also be sensed by one or more sensors placed elsewhere in order to provide overlapping sensor coverage. In other examples, the quantity, type, configuration, function, or structure of the elements shown may be varied and are not limited to the descriptions provided.
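The coverage gap described above, and the way a second sensor placement may close it, can be illustrated geometrically. The sketch below models each sensor as a two-dimensional wedge defined by a position, a heading, and an angular field of view. The pool dimensions (10 m by 5 m), the 120-degree field of view, and the sensor positions are assumptions chosen for illustration and do not correspond to dimensions in the figures.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Sensor:
    """A wall-mounted sensor modeled as a 2D wedge (hypothetical model)."""
    x: float            # position along the pool length, in metres
    y: float            # position along the pool width, in metres
    heading_deg: float  # direction of the centre of the field of view
    fov_deg: float      # full angular width of the field of view


def sees(sensor: Sensor, px: float, py: float) -> bool:
    """Return True if point (px, py) lies within the sensor's angular field of view."""
    bearing = math.degrees(math.atan2(py - sensor.y, px - sensor.x))
    # Wrap the bearing difference into [-180, 180) before comparing.
    diff = (bearing - sensor.heading_deg + 180.0) % 360.0 - 180.0
    return abs(diff) <= sensor.fov_deg / 2.0


# Assumed 10 m x 5 m rectangular pool with sensors at the midpoints of opposite long walls.
sensor_a = Sensor(x=5.0, y=0.0, heading_deg=90.0, fov_deg=120.0)
sensor_b = Sensor(x=5.0, y=5.0, heading_deg=270.0, fov_deg=120.0)

corner = (0.5, 0.2)  # a point near the wall on which sensor_a is mounted
covered_by_a = sees(sensor_a, *corner)
covered_by_b = sees(sensor_b, *corner)
```

In this configuration the corner point lies outside the wedge of the first sensor but inside the wedge of the sensor on the opposite wall, which mirrors the overlapping coverage described for regions 308 and 310.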