Graphical User Interface For Real-time Management Of An Earth Shaping Vehicle

Ready-Campbell; Noah Austen; et al.

U.S. patent application number 17/563972, for a graphical user interface for real-time management of an earth shaping vehicle, was filed with the patent office on December 28, 2021 and published on April 21, 2022. The applicant listed for this patent is Built Robotics Inc. The invention is credited to Lucas Allen Bruder, Edward Jiacheng Cai, Gaurav Jitendra Kikani, Joonhyun Kim, Andrew Xiao Liang, Elizabeth Maitland Murdoch, Thomas Joel Pech, Noah Austen Ready-Campbell.

| Application Number | 17/563972 |
|---|---|
| Publication Number | 20220120060 |
| Publication Date | 2022-04-21 |


| United States Patent Application | 20220120060 |
|---|---|
| Kind Code | A1 |
| Ready-Campbell; Noah Austen; et al. | April 21, 2022 |

GRAPHICAL USER INTERFACE FOR REAL-TIME MANAGEMENT OF AN EARTH SHAPING VEHICLE

Abstract

A computing device generates a graphical user interface displaying a three-dimensional representation of the site comprising a position of a vehicle capable of moving material within the site and a target location within the site for the vehicle to move material. The computing device transmits a set of instructions for the vehicle to move a volume of material from the target location. The computing device receives sensor data describing a depth of the target location, a current volume of material moved from the target location, and a position of the vehicle. The graphical user interface displayed on the computing device is modified to display the current depth of the target location and the position of the vehicle relative to the target location. The computing device modifies the set of instructions based on the received sensor data and provides the modified set of instructions to the vehicle.

| Inventors: | Ready-Campbell; Noah Austen; (San Francisco, CA); Liang; Andrew Xiao; (San Francisco, CA); Cai; Edward Jiacheng; (San Francisco, CA); Murdoch; Elizabeth Maitland; (San Francisco, CA); Pech; Thomas Joel; (San Francisco, CA); Bruder; Lucas Allen; (San Francisco, CA); Kikani; Gaurav Jitendra; (San Francisco, CA); Kim; Joonhyun; (San Francisco, CA) |
|---|---|
| Applicant: | Built Robotics Inc. |
| Appl. No.: | 17/563972 |
| Filed: | December 28, 2021 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 17002658 | Aug 25, 2020 | 11236492 |
| 17563972 | | |

| International Class: | E02F 9/26 (2006.01); G06F 3/04815 (2006.01); E02F 9/20 (2006.01) |

Claims

1. A method for managing a material moving routine in a site, the method comprising: generating, by a computing device, a graphical user interface displaying a three-dimensional representation of the site comprising a position of a vehicle capable of moving material within the site, a target location within the site for the vehicle to move material, and a representation of a planned movement of a tool overlaying the representation of the site, the representation of the planned movement divided into a plurality of segments, wherein each segment of the plurality of segments represents a portion of the planned movement of the tool; transmitting, by the computing device, a set of instructions to cause the vehicle to move a volume of material from the target location; as the vehicle moves material from the target location based on the set of instructions: receiving, at the computing device, data recorded by one or more sensors mounted to the vehicle describing a current state of the site; modifying, based on the data recorded by the one or more sensors, the graphical user interface to display the current state of the site; and modifying, by the computing device, the set of instructions based on the received data recorded by the one or more sensors and providing the modified set of instructions to the vehicle.

2. The method of claim 1, wherein the computing device continuously receives the data recorded by the one or more sensors as the vehicle moves material from the target location and modifies the graphical user interface continuously as the vehicle moves material from the target location.

3. The method of claim 1, wherein the graphical user interface further displays a side-profile view of the three-dimensional representation of the site, the method further comprising: receiving data recorded by the one or more sensors mounted to the vehicle describing a current position of the vehicle and a material moving tool coupled to the vehicle in the site; and as the vehicle moves material from the target location, modifying the graphical user interface to display the current position of the vehicle and the material moving tool based on the data describing the current position of the vehicle and material moving tool coupled to the vehicle in the site.

4. The method of claim 1, wherein the computing device transmits a second set of instructions for the vehicle to move material from a second target location, the method further comprising: receiving second data recorded by the one or more sensors indicating that the vehicle has navigated to the second target location; modifying the graphical user interface to display a position of the vehicle at the second target location; and as the vehicle moves material from the second target location, modifying, based on the second data recorded by the one or more sensors, the graphical user interface to display the second target location, a current depth of the second target location, a position of the vehicle relative to the second target location, and an updated height of the pile of material, wherein the height of the pile of material is updated based on the volume of material moved from the second target location.

5. The method of claim 1, wherein the graphical user interface further displays a representation of a ground surface of the site and one or more of the following features: a representation of a range of motion of a material moving tool coupled to the vehicle and the vehicle overlaying the representation of the ground surface; a three-dimensional representation of a previous target location overlaying the representation of the ground surface; and a representation illustrating continuous movement of the material moving tool as the vehicle executes the set of instructions to move material from the target location.

6. The method of claim 1, further comprising: responsive to receiving data recorded by the one or more sensors mounted to the vehicle as the vehicle moves material, generating a notification comprising one or more of: a warning message; an error message; a fatal error alert; an informational message; and an interactive message, wherein the interactive message displays a prompt for an operator to provide additional information regarding the set of instructions.

7. The method of claim 1, further comprising: computing one or more material moving metrics based on the volume of material moved from the target location and an amount of time that the vehicle has spent moving material from the target location; and generating graphic elements of the graphical user interface to display the computed material moving metrics using a first subset of graphical representations and a second set of numerical metrics.

8. The method of claim 1, further comprising: accessing a set of instructions to be executed by the vehicle, wherein the set of instructions define operations for moving material at the target location; and generating the representation of the planned movement of the tool by determining a visual representation of the accessed set of instructions.

9. A non-transitory computer readable medium storing instructions for managing a material moving routine in a site encoded thereon that, when executed by a processor, cause the processor to: generate a graphical user interface displaying a three-dimensional representation of the site comprising a position of a vehicle capable of moving material within the site, a target location within the site for the vehicle to move material, and a representation of a planned movement of a tool overlaying the representation of the site, the representation of the planned movement divided into a plurality of segments, wherein each segment of the plurality of segments represents a portion of the planned movement of the tool; transmit, to a controller of the vehicle, a set of instructions to cause the vehicle to move a volume of material from the target location; as the vehicle moves material from the target location based on the set of instructions: receive data recorded by one or more sensors mounted to the vehicle describing a current state of the site; modify, based on the data recorded by the one or more sensors, the graphical user interface to display the current state of the site; and modify the set of instructions based on the received data recorded by the one or more sensors and provide the modified set of instructions to the vehicle.

10. The non-transitory computer readable medium of claim 9, wherein the processor continuously receives the data recorded by the one or more sensors as the vehicle moves material from the target location and modifies the graphical user interface continuously as the vehicle moves material from the target location.

11. The non-transitory computer readable medium of claim 9, wherein the graphical user interface displays a side-profile view of the three-dimensional representation of the site, the instructions further causing the processor to: receive data recorded by the one or more sensors mounted to the vehicle describing a current position of the vehicle and a material moving tool coupled to the vehicle in the site; and as the vehicle moves material from the target location, modify the graphical user interface to display the current position of the vehicle and the material moving tool based on the data describing the current position of the vehicle and material moving tool coupled to the vehicle in the site.

12. The non-transitory computer readable medium of claim 9, wherein the processor transmits a second set of instructions for the vehicle to move material from a second target location, the instructions further causing the processor to: receive second data recorded by the one or more sensors indicating that the vehicle has navigated to the second target location; modify the graphical user interface to display a position of the vehicle at the second target location; and as the vehicle moves material from the second target location, modify, based on the second data recorded by the one or more sensors, the graphical user interface to display the second target location, a current depth of the second target location, a position of the vehicle relative to the second target location, and an updated height of the pile of material, wherein the height of the pile of material is updated based on the volume of material moved from the second target location.

13. The non-transitory computer readable medium of claim 9, further comprising instructions that cause the graphical user interface to display a representation of a ground surface of the site and one or more of the following features: a representation of a range of motion of a material moving tool coupled to the vehicle and the vehicle overlaying the representation of the ground surface; a three-dimensional representation of a previous target location overlaying the representation of the ground surface; and a representation illustrating continuous movement of the material moving tool as the vehicle executes the set of instructions to move material from the target location.

14. The non-transitory computer readable medium of claim 9, further comprising instructions that cause the processor to: responsive to receiving data recorded by the one or more sensors mounted to the vehicle as the vehicle moves material, generate a notification comprising one or more of: a warning message; an error message; a fatal error alert; an informational message; and an interactive message, wherein the interactive message displays a prompt for an operator to provide additional information regarding the set of instructions.

15. The non-transitory computer readable medium of claim 9, further comprising instructions that cause the processor to: compute one or more material moving metrics based on the volume of material moved from the target location and an amount of time that the vehicle has spent moving material from the target location; and generate graphic elements of the graphical user interface to display the computed material moving metrics using a first subset of graphical representations and a second set of numerical metrics.

16. A system for managing a material moving routine in a site, the system comprising: a vehicle capable of moving material within the site positioned within the site and comprising one or more sensors mounted to the vehicle and a controller of the vehicle, wherein the one or more sensors record data describing the site; and a computing device communicatively coupled to the vehicle, the computing device comprising a processor and a non-transitory computer readable medium storing instructions encoded thereon that, when executed by the processor, cause the processor to: generate a graphical user interface displaying a three-dimensional representation of the site comprising a position of a vehicle capable of moving material within the site, a target location within the site for the vehicle to move material, and a representation of a planned movement of a tool overlaying the representation of the site, the representation of the planned movement divided into a plurality of segments, wherein each segment of the plurality of segments represents a portion of the planned movement of the tool; transmit, to the controller of the vehicle, a set of instructions to cause the vehicle to move a volume of material from the target location; as the vehicle moves material from the target location based on the set of instructions: receive data recorded by one or more sensors mounted to the vehicle describing a current state of the site; modify, based on the data recorded by the one or more sensors, the graphical user interface to display the current state of the site; and modify the set of instructions based on the received data recorded by the one or more sensors and provide the modified set of instructions to the vehicle.

17. The system of claim 16, wherein the processor continuously receives the data recorded by the one or more sensors as the vehicle moves material from the target location and modifies the graphical user interface continuously as the vehicle moves material from the target location.

18. The system of claim 16, wherein the graphical user interface displays a side-profile view of the three-dimensional representation of the site, the instructions further causing the processor to: receive data recorded by the one or more sensors mounted to the vehicle describing a current position of the vehicle and a material moving tool coupled to the vehicle in the site; and as the vehicle moves material from the target location, modify the graphical user interface to display the current position of the vehicle and the material moving tool based on the data describing the current position of the vehicle and material moving tool coupled to the vehicle in the site.

19. The system of claim 16, wherein the processor transmits a second target tool path with operations for the vehicle to perform to excavate a volume of earth from a second target location, the instructions further causing the processor to: receive second data recorded by the one or more sensors indicating that the vehicle has navigated to the second target location; modify the graphical user interface to display a position of the vehicle at the second target location; and as the vehicle moves material from the second target location, modify, based on the second data recorded by the one or more sensors, the graphical user interface to display the second target location, a current depth of the second target location, a position of the vehicle relative to the second target location, and an updated height of the pile of material, wherein the height of the pile of material is updated based on the volume of material moved from the second target location.

20. The system of claim 16, further comprising instructions that cause the graphical user interface to display a representation of a ground surface of the site and one or more of the following features: a representation of a range of motion of a material moving tool coupled to the vehicle and the vehicle overlaying the representation of the ground surface; a three-dimensional representation of a previous target location overlaying the representation of the ground surface; and a representation illustrating continuous movement of the material moving tool as the vehicle executes the set of instructions to move material from the target location.

21. The system of claim 16, further comprising instructions that cause the processor to: responsive to receiving data recorded by the one or more sensors mounted to the vehicle as the vehicle moves material, generate a notification comprising one or more of: a warning message; an error message; a fatal error alert; an informational message; and an interactive message, wherein the interactive message displays a prompt for an operator to provide additional information regarding the set of instructions.

22. The system of claim 16, further comprising instructions that cause the processor to: compute one or more material moving metrics based on the volume of material moved from the target location and an amount of time that the vehicle has spent moving material from the target location; and generate graphic elements of the graphical user interface to display the computed material moving metrics using a first subset of graphical representations and a second set of numerical metrics.
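Claims 7, 15, and 22 compute material moving metrics from the volume moved and the time spent, then display them as both graphical elements and numbers. The Python sketch below shows one way such a computation could look. It is a minimal illustration under stated assumptions, not the claimed implementation: the `MovingMetrics` class, the `render_metrics` helper, the planned-volume field, the text progress bar standing in for a graphical element, and the units (cubic metres, hours) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass
class MovingMetrics:
    """Hypothetical container for the inputs named in claims 7, 15, and 22."""
    volume_moved_m3: float   # volume moved from the target location so far
    elapsed_hours: float     # time the vehicle has spent moving material
    target_volume_m3: float  # assumed planned volume for the target location

    @property
    def rate_m3_per_hour(self) -> float:
        # Guard against division by zero before any time has elapsed.
        if self.elapsed_hours <= 0:
            return 0.0
        return self.volume_moved_m3 / self.elapsed_hours

    @property
    def percent_complete(self) -> float:
        if self.target_volume_m3 <= 0:
            return 0.0
        return min(100.0, 100.0 * self.volume_moved_m3 / self.target_volume_m3)

    def estimated_hours_remaining(self) -> Optional[float]:
        # None means no estimate is possible yet (nothing moved so far).
        rate = self.rate_m3_per_hour
        if rate == 0:
            return None
        remaining = max(0.0, self.target_volume_m3 - self.volume_moved_m3)
        return remaining / rate


def render_metrics(m: MovingMetrics, bar_width: int = 20) -> Tuple[str, Dict[str, str]]:
    """Return a graphical element (a text progress bar) and a set of numeric metrics."""
    filled = round(bar_width * m.percent_complete / 100.0)
    bar = "[" + "#" * filled + "-" * (bar_width - filled) + "]"
    numeric = {
        "volume moved": f"{m.volume_moved_m3:.1f} m3",
        "rate": f"{m.rate_m3_per_hour:.1f} m3/h",
        "complete": f"{m.percent_complete:.0f}%",
    }
    return bar, numeric
```

For example, 30 m3 moved in 2 hours against a 120 m3 plan gives a rate of 15 m3/h, 25% completion, and an estimated 6 hours remaining.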

Description

CROSS REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of U.S. application Ser. No. 17/002,658, filed Aug. 25, 2020, which is incorporated by reference in its entirety.

BACKGROUND

Field of Art

[0002] The disclosure relates generally to methods and systems for managing the operation of an autonomous or semi-autonomous vehicle, and more specifically to a graphical user interface for managing an autonomous or semi-autonomous vehicle performing an earth shaping routine in a site.

Description of the Related Art

[0003] Vehicles such as backhoes, loaders, and excavators, generally categorized as earth shaping vehicles, are used to move earth from locations in a site. Currently, operation of these earth shaping vehicles is very expensive, as each vehicle requires that a manual operator be available and present during operation. Further complicating the industry, there is an insufficient labor force skilled enough to meet the demand for operating these vehicles. Because these vehicles must be operated manually, earth moving can only be performed during the day, extending the duration of earth moving tasks and further increasing overall costs. The dependence of current earth shaping vehicles on manual operators increases the risk of human error during earth moving processes and reduces the quality of work done at the site.

[0004] Additionally, for operators to effectively track and monitor the progress of a routine performed by an autonomous or semi-autonomous vehicle, the operator should be positioned within the site overseeing the progress of the vehicle. However, such situations expose the operator to unnecessary risks, for example injuries caused by crossing paths with the vehicle, interrupting operation of the vehicle, or stepping through loose earth moved by the vehicle. Accordingly, there exists a need for systems and techniques that allow operators to manage an autonomous or semi-autonomous vehicle from a remote location outside of the site.

SUMMARY

[0005] Described is an autonomous or semi-autonomous earth shaping system that unifies an earth shaping vehicle with a sensor system for moving earth within a site. The earth shaping system controls and navigates an earth shaping vehicle through an earth shaping routine of a site. The earth shaping system uses a combination of sensors integrated into the earth shaping vehicle to record the positions and orientations of the various components of the earth shaping vehicle and/or the conditions of the surrounding earth. Data recorded by the sensors may be aggregated or processed in various ways, for example, to determine and control the actuation of the vehicle's controls, to generate representations of the current state of the site, to perform measurements and generate analyses based on those measurements, and to perform other tasks described herein.
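Paragraph [0005] notes that sensor data may be aggregated to generate representations of the current state of the site. One common way to do this, assumed here rather than taken from the disclosure, is a grid height map: each cell stores the latest measured elevation, and depth and excavated volume follow from comparing it with the initial surface. The `HeightMap` class, its cell size, and the flat initial ground level are hypothetical.

```python
from typing import Iterable, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in site coordinates, metres


class HeightMap:
    """Hypothetical grid representation of the site surface."""

    def __init__(self, cols: int, rows: int, cell_size_m: float, ground_z_m: float = 0.0):
        self.cell_size_m = cell_size_m
        # Surface elevation before work began, assumed flat for simplicity.
        self.initial = [[ground_z_m] * cols for _ in range(rows)]
        # Latest measured elevation per cell; starts equal to the initial surface.
        self.current = [row[:] for row in self.initial]

    def _cell(self, x: float, y: float) -> Tuple[int, int]:
        return int(y // self.cell_size_m), int(x // self.cell_size_m)

    def _in_bounds(self, row: int, col: int) -> bool:
        return 0 <= row < len(self.current) and 0 <= col < len(self.current[0])

    def update(self, points: Iterable[Point]) -> None:
        """Overwrite each cell with the most recent elevation measured inside it."""
        for x, y, z in points:
            row, col = self._cell(x, y)
            if self._in_bounds(row, col):  # points outside the grid are ignored
                self.current[row][col] = z

    def depth_at(self, x: float, y: float) -> float:
        row, col = self._cell(x, y)
        if not self._in_bounds(row, col):
            raise ValueError("point lies outside the height map")
        return self.initial[row][col] - self.current[row][col]

    def volume_removed_m3(self) -> float:
        """Sum of (initial - current) over lowered cells, times the cell area."""
        area = self.cell_size_m ** 2
        return sum(
            max(0.0, z0 - z) * area
            for row0, row in zip(self.initial, self.current)
            for z0, z in zip(row0, row)
        )
```

With 0.5 m cells, two measurements 1.0 m and 0.5 m below a flat ground level give a removed volume of (1.0 + 0.5) x 0.25 = 0.375 m3.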

[0006] According to a first embodiment, a computing device used by an operator generates a graphical user interface that displays a three-dimensional representation of the site. The three-dimensional representation of the site comprises a position of an earth shaping vehicle within the site and a target location within the site where the earth shaping vehicle is deployed to move or shape earth. The computing device transmits a target tool path to the earth shaping vehicle that includes operations for the vehicle to perform to shape or move a volume of earth from the target location. The target tool path may alternatively include operations for the earth shaping vehicle to perform an alternate earth-shaping task. As the earth shaping vehicle moves earth from the target location based on the target tool path, the computing device receives data recorded by one or more sensors mounted to the earth shaping vehicle. The received sensor data describes a current depth of the target location, a current volume of earth excavated from the target location, a position of the earth shaping vehicle relative to the target location, a location of a dump pile where the earth shaping vehicle deposits excavated earth, and a height of the dump pile. Based on the received sensor data, the graphical user interface displayed on the computing device is modified to display the current depth of the target location, the position of the vehicle relative to the target location, the location of the dump pile, and the height of the dump pile. Additionally, the target tool path is modified based on the received sensor data, and the modified target tool path is transmitted to the earth shaping vehicle.
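The update cycle in [0006] (receive sensor data, refresh the display, modify the target tool path, send it to the vehicle) can be sketched as a single handler. This is a hypothetical illustration: the data classes, the `send_to_vehicle` callback, and the replanning rule, which splits the remaining depth into equal passes no deeper than an assumed maximum pass depth, are chosen for clarity and are not described in the disclosure.

```python
import math
from dataclasses import dataclass
from typing import Callable, List, Tuple

XY = Tuple[float, float]


@dataclass
class SensorUpdate:
    """Hypothetical message carrying the quantities listed in [0006]."""
    depth_m: float             # current depth of the target location
    volume_moved_m3: float     # volume excavated from the target location so far
    vehicle_xy: XY             # vehicle position in site coordinates
    dump_pile_xy: XY           # location where excavated earth is deposited
    dump_pile_height_m: float  # current height of the dump pile


@dataclass
class GuiState:
    """Values the graphical user interface redraws after each update."""
    current_depth_m: float = 0.0
    vehicle_offset_m: XY = (0.0, 0.0)  # vehicle position relative to the target
    dump_pile_xy: XY = (0.0, 0.0)
    dump_pile_height_m: float = 0.0


def replan_passes(target_depth_m: float, current_depth_m: float,
                  max_pass_depth_m: float) -> List[float]:
    """Assumed replanning rule: split the remaining depth into equal passes."""
    remaining = max(0.0, target_depth_m - current_depth_m)
    if remaining == 0.0:
        return []
    n_passes = math.ceil(remaining / max_pass_depth_m)
    return [remaining / n_passes] * n_passes


def on_sensor_update(update: SensorUpdate, target_xy: XY, target_depth_m: float,
                     max_pass_depth_m: float, gui: GuiState,
                     send_to_vehicle: Callable[[List[float]], None]) -> List[float]:
    # 1. Refresh what the operator sees.
    gui.current_depth_m = update.depth_m
    gui.vehicle_offset_m = (update.vehicle_xy[0] - target_xy[0],
                            update.vehicle_xy[1] - target_xy[1])
    gui.dump_pile_xy = update.dump_pile_xy
    gui.dump_pile_height_m = update.dump_pile_height_m
    # 2. Modify the tool path from the measured depth and send it to the vehicle.
    passes = replan_passes(target_depth_m, update.depth_m, max_pass_depth_m)
    send_to_vehicle(passes)
    return passes
```

Passing the transport as a callback keeps the sketch independent of how the computing device actually communicates with the vehicle.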

[0007] According to a second embodiment, a computing device used by an operator generates an initial geofence around a target location in response to a first user input to a graphical user interface displayed on the computing device. The computing device transmits operations for an earth shaping vehicle to perform while navigating within the initial geofence. The computing device receives an indication of an obstacle within the initial geofence detected by the earth shaping vehicle. The indication of the obstacle may be displayed on the graphical user interface. In response to a second user input via the graphical user interface, the computing device generates an updated geofence that includes the target location but excludes the obstacle. The computing device transmits the updated geofence to the earth shaping vehicle, and the earth shaping vehicle performs the operations while navigating within the updated geofence.
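The geofence update in [0007] can be illustrated with a polygon boundary plus exclusion zones. In this hypothetical sketch the initial geofence is a polygon, the detected obstacle is excluded by a circular clearance zone, and the update is rejected if that zone would cover the target location; the circular zone, the clearance parameter, and all names are assumptions. Containment uses the standard even-odd ray casting test.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

XY = Tuple[float, float]


def point_in_polygon(p: XY, poly: List[XY]) -> bool:
    """Even-odd ray casting: count edge crossings of a ray cast in the +x direction."""
    x, y = p
    inside = False
    for i in range(len(poly)):
        x1, y1 = poly[i]
        x2, y2 = poly[(i + 1) % len(poly)]
        if (y1 > y) != (y2 > y):  # edge straddles the ray's horizontal line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


@dataclass
class Geofence:
    boundary: List[XY]
    # Hypothetical exclusion zones as (centre, radius) circles around obstacles.
    exclusions: List[Tuple[XY, float]] = field(default_factory=list)

    def allows(self, p: XY) -> bool:
        if not point_in_polygon(p, self.boundary):
            return False
        return all((p[0] - c[0]) ** 2 + (p[1] - c[1]) ** 2 > r * r
                   for c, r in self.exclusions)


def exclude_obstacle(fence: Geofence, obstacle_xy: XY, clearance_m: float,
                     target_xy: XY) -> Geofence:
    """Return an updated geofence that keeps the target but excludes the obstacle."""
    updated = Geofence(list(fence.boundary),
                       list(fence.exclusions) + [(obstacle_xy, clearance_m)])
    if not updated.allows(target_xy):
        raise ValueError("clearance zone would exclude the target location")
    return updated
```

The original geofence is left unchanged, so the interface could show the initial and updated geofences side by side before the update is transmitted.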

[0008] The described graphical user interfaces enable operators to remotely monitor and manage semi-autonomous and autonomous vehicles operating in a site, which improves the overall efficiency with which the vehicles can operate and reduces risks to both the vehicles and the operators managing the vehicles.

BRIEF DESCRIPTION OF DRAWINGS

[0009] FIG. 1 shows an earth shaping system for moving earth, according to an embodiment.

[0010] FIG. 2A illustrates an example placement of sensors on a track trencher configured to excavate earth from a target location, according to an embodiment.

[0011] FIG. 2B illustrates an example placement of sensors for a skid-steer loader configured to excavate earth from a target location, according to an embodiment.

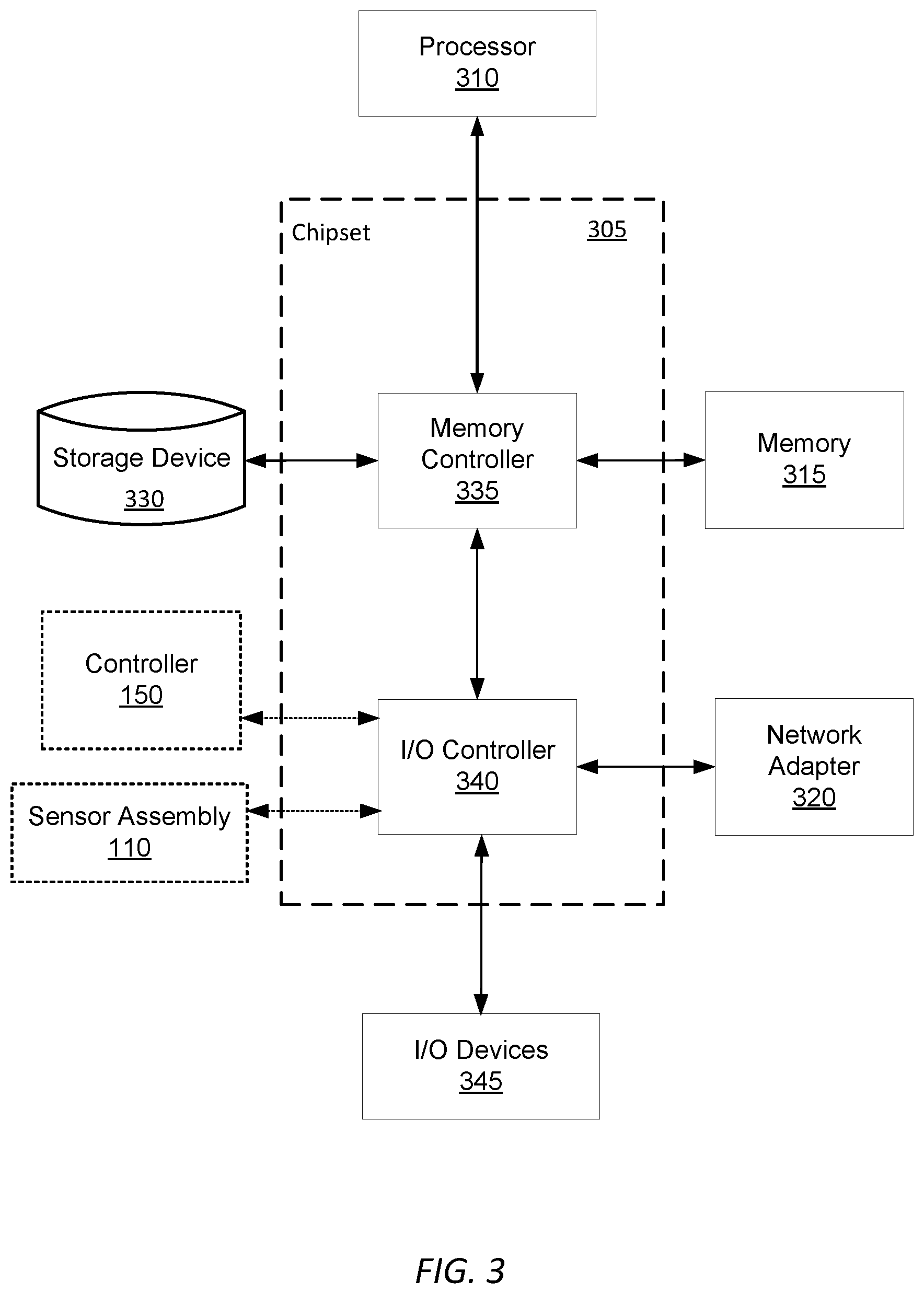

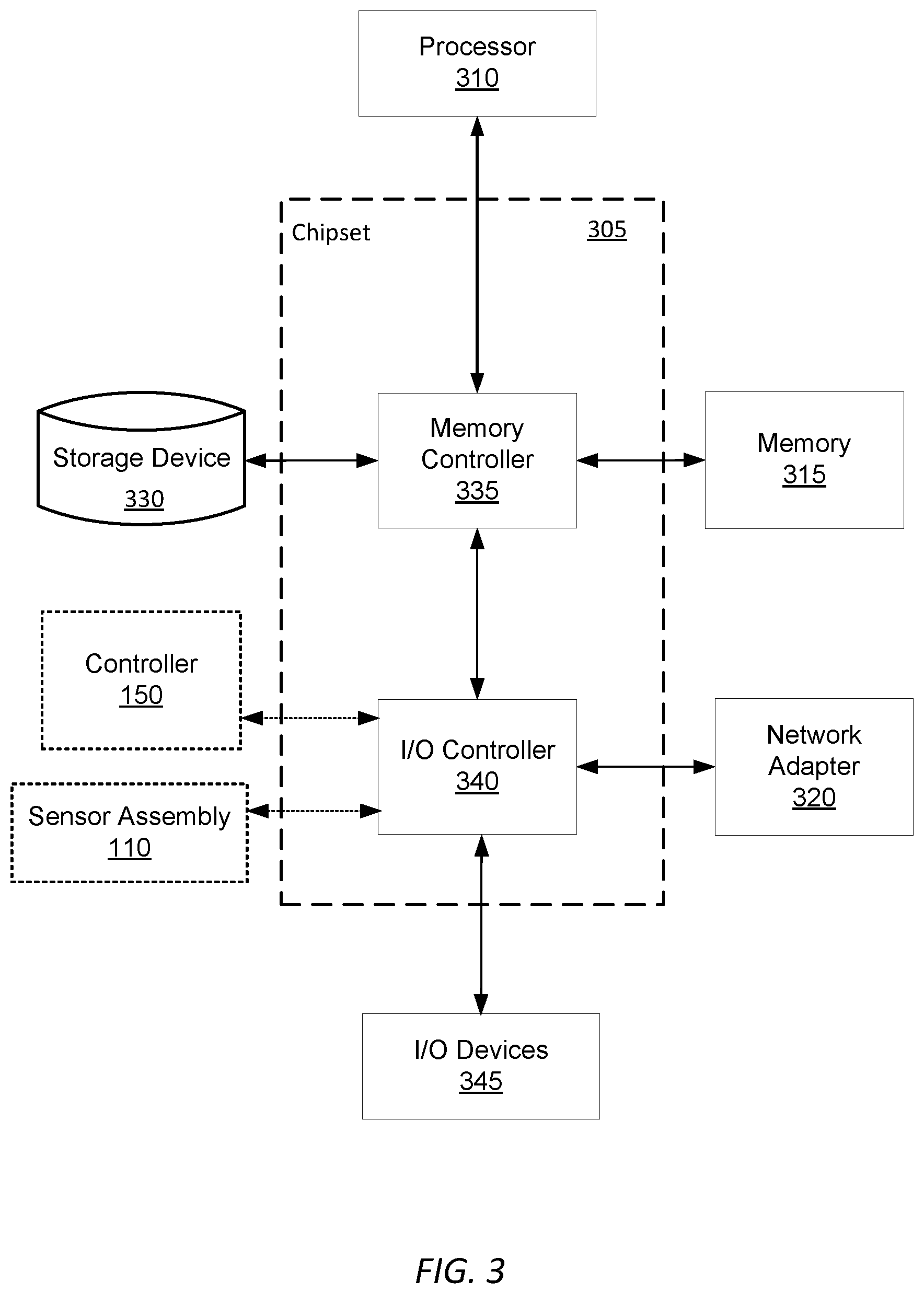

[0012] FIG. 3 is a high-level block diagram illustrating an example of a computing device used in an on-unit computer, off-unit computer, and/or database server, according to an embodiment.

[0013] FIG. 4 is a system architecture diagram for controlling an earth shaping vehicle, according to an embodiment.

[0014] FIG. 5A is a system architecture diagram for a preparation engine, according to an embodiment.

[0015] FIG. 5B is a flowchart describing a process for a controller to prepare a digital terrain model for a site, according to one embodiment.

[0016] FIG. 6A is a system architecture diagram for an earth removal engine, according to an embodiment.

[0017] FIG. 6B is a flowchart describing a process for an earth shaping vehicle to perform an excavation routine, according to one embodiment.

[0018] FIG. 6C is a flowchart describing a process for an earth shaping vehicle to perform a fill estimate routine, according to one embodiment.

[0019] FIG. 7A is a system architecture diagram for a volume check engine, according to an embodiment.

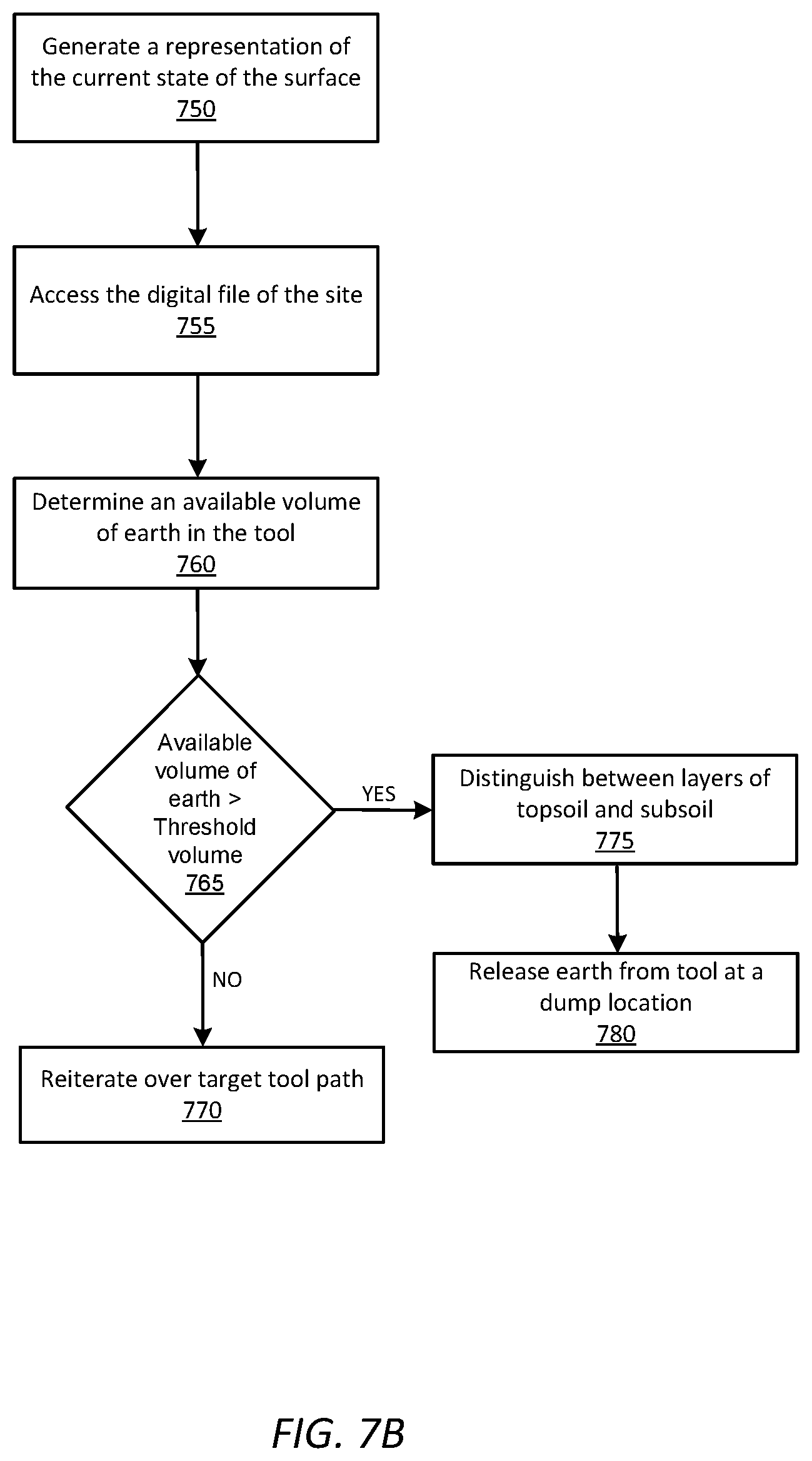

[0020] FIG. 7B is a flowchart describing a process for an earth shaping vehicle to perform a volume check routine, according to an embodiment.

[0021] FIG. 8 is a system architecture diagram for an operations interface engine, according to an embodiment.

[0022] FIGS. 9A-9F are illustrations of graphical user interfaces presented to an operator to initialize a target tool path, according to an embodiment.

[0023] FIG. 10A is an illustration of an example coordinate space in which an earth shaping vehicle updates a computing device while performing an earth shaping routine, according to an embodiment.

[0024] FIGS. 10B-10H are illustrations of graphical user interfaces presented to an operator to monitor an earth-shaping routine and an earth shaping vehicle, according to an embodiment.

[0025] FIG. 11 is an illustration of a graphical user interface presented to an operator to monitor actuation of an earth shaping tool on an earth shaping vehicle, according to an embodiment.

[0026] FIGS. 12A-12D are illustrations of a graphical user interface presented to an operator to analyze metrics of an earth-shaping routine and an earth shaping vehicle, according to an embodiment.

[0027] FIG. 13A is an illustration of an example coordinate space in which a geofence is updated based on an indication from an earth shaping vehicle navigating within the geofence, according to an embodiment.

[0028] FIG. 13B is an illustration of a graphical user interface presented to an operator to update a geofence, according to an embodiment.

[0029] The figures depict various embodiments of the presented invention for purposes of illustration only. One skilled in the art will readily recognize from the following discussion that alternative embodiments of the structures and methods illustrated herein may be employed without departing from the principles described herein.

DETAILED DESCRIPTION

I. Excavation System

[0030] FIG. 1 shows an earth shaping system 100 for moving earth autonomously or semi-autonomously from a dig site using a suite of one or more sensors 170 mounted on an earth shaping vehicle 115 to record data describing the state of the earth shaping vehicle 115 and the site as earth is moved within the site. As examples, FIGS. 2A and 2B illustrate the example placement of sensors for a track trencher and a skid steer loader, respectively, according to example embodiments. FIGS. 1-2B are discussed together in the following section for clarity.

[0031] The earth shaping system 100 includes a set of components physically coupled to the earth shaping vehicle 115. These include a sensor assembly 110, the earth shaping vehicle 115 itself, a digital or analog electrical controller 150, and an on-unit computer 120a. The sensor assembly 110 includes one or more of any of the following types of sensors: measurement sensors 125, spatial sensors 130, imaging sensors 135, and position sensors 145.

[0032] Each of these components will be discussed further below in the remaining sub-sections of FIG. 1. Although FIG. 1 illustrates only a single instance of most of the components of the earth shaping system 100, in practice more than one of each component may be present, and more or fewer components than those described herein may be used.

[0033] I.A. Earth Shaping Vehicle

[0034] The earth shaping vehicle 115 may be an excavation vehicle. Excavation vehicles are items of heavy equipment designed to move earth from beneath the ground surface within a dig site. As described herein, a dig site may also be referred to, more generally, as a site. Excavation vehicles are outfitted with a tool 175 that is large and capable of excavating large volumes of earth at a single time, particularly relative to what an individual human can move by hand. Generally, excavation vehicles excavate earth by scraping or digging earth from beneath the ground surface. Examples of excavation vehicles within the scope of this description include, but are not limited to, loaders such as backhoe loaders, track loaders, wheel loaders, skid steer loaders, scrapers, graders, bulldozers, compactors, excavators, mini-excavators, trenchers, skip loaders.

[0035] In implementations involving excavation vehicles, the tool 175 is an excavation tool including not only an instrument collecting earth, such as a bucket or shovel, but also any articulated elements for positioning the instrument for the collection, measurement, and dumping of dirt. For example, in an excavator or loader the excavation tool refers not only to the bucket but also the multi-element arm that adjusts the position and orientation of the tool.
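Because the excavation tool includes the articulated arm, the position of the bucket depends on every element of the arm. A minimal planar forward-kinematics sketch shows how a tool tip pose could be computed, assuming a side-profile plane, joint angles measured relative to the previous link (for example by the position sensors), and hypothetical link lengths; the function name and conventions are illustrative only. A fuller model would also need the cab swing and the vehicle's pitch and roll, which this sketch ignores.

```python
import math
from typing import Sequence, Tuple


def tool_tip_pose(base_xz: Tuple[float, float],
                  link_lengths_m: Sequence[float],
                  joint_angles_rad: Sequence[float]) -> Tuple[float, float, float]:
    """Planar forward kinematics for a serial arm such as boom, stick, and bucket.

    Assumes each joint angle is measured relative to the previous link, with the
    first angle measured from the horizontal. Returns (x, z, heading_rad).
    """
    if len(link_lengths_m) != len(joint_angles_rad):
        raise ValueError("need one joint angle per link")
    x, z = base_xz
    heading = 0.0
    for length, angle in zip(link_lengths_m, joint_angles_rad):
        heading += angle                 # accumulate relative joint angles
        x += length * math.cos(heading)  # advance along the current link
        z += length * math.sin(heading)
    return x, z, heading
```

For instance, a 2 m link followed by a 3 m link, both horizontal, places the tip 5 m ahead of the base at the same height.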

[0036] In addition to excavation vehicles, earth shaping vehicles may additionally refer to hauling vehicles, compacting vehicles, or any other vehicles deployed within a dig site to assist and optimize the performance of various earth shaping tasks. For example, an excavation vehicle may excavate earth from below the surface of a dig site and deposit the excavated earth into a hauling vehicle. The hauling vehicle transports the earth from a first location in the dig site to a second location, for example a fill location. At the fill location, the hauling vehicle empties the contents of a hauling tool to fill earth into a hole, and a compacting vehicle compacts the filled earth. Alternatively, in place of the hauling vehicle filling earth at a fill location, another excavation vehicle may transfer earth from the hauling vehicle 115b to the fill location. In implementations for which multiple vehicles perform tasks, instructions are communicated to each vehicle in the dig site via the network 105. As described herein, excavation vehicles, hauling vehicles, and compacting vehicles may be broadly referred to as "earth shaping vehicles."

[0037] Whereas excavation vehicles are configured with an excavation tool for moving earth from beneath the ground surface, hauling vehicles are large and capable of moving large volumes of earth above the surface from a first location to a second location some distance away. Typically, hauling vehicles are configured with hauling tools capable of transporting larger volumes of earth over larger distances than the excavation tool of an excavation vehicle. Examples of hauling vehicles include on-road or off-road trucks, for example dump trucks, articulated dump trucks, or belly dumps; self-loading trucks, for example scrapers, scraper-tractors, high-speed dozers, or other wheeled or tracked equipment configured to tow a scraper attachment.

[0038] In implementations involving hauling vehicles 115, the tool 175 is a hauling tool that is an instrument for securely transporting earth over a distance. The hauling tool may additionally refer to actuation elements, which, when actuated by a hydraulic system, adjust the orientation and position of the hauling tool to fill earth into a location on the dig site.

[0039] Compacting vehicles are designed with a compacting tool for compacting earth that has been filled into a fill location, earth that has been loosened by the navigation of other vehicles through the dig site, earth that was excavated and deposited at a previous time, or a combination thereof. Examples of compacting vehicles include, but are not limited to, smooth drum rollers, wheeled rollers, sheepsfoot rollers, pneumatic rollers, tandem vibratory compactors, rammers, vibratory plate compactors, wheeled dozers, and landfill compactors.

[0040] Additionally, in implementations involving compacting vehicles, the tool 175 is a compaction tool that is an instrument for improving the compactness of loose earth in the dig site. As described herein, the compaction tool may refer not only to the tool in contact with loose earth, but also to the element that adjusts the position and orientation of the tool.

[0041] Among other components, earth shaping vehicles 115 generally include a chassis (not shown), a drive system (not shown), an earth shaping tool 175, an engine (not shown), an on-board sensor assembly 110, and a controller 150. The chassis is the frame upon which all other components are physically mounted. The drive system gives the earth shaping vehicle 115 mobility through the site.

[0042] The engine powers both the drive system and the earth shaping tool 175. The engine may be an internal combustion engine or an alternative power source, such as an electric motor or battery. In many earth shaping vehicles 115, the engine powers both the drive system and the earth shaping tool through a single hydraulic system, although other means of actuation may also be used. A common property of the hydraulic systems used in earth shaping vehicles 115 is that the hydraulic capacity of the vehicle 115 is shared between the drive system and the tool. In some embodiments, the instructions and control logic that allow the earth shaping vehicle 115 to operate autonomously or semi-autonomously include instructions for determining how, and under what circumstances, to allocate the hydraulic capacity of the hydraulic system.
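One way to picture the allocation decision described above is a small arbitration function. The following is a minimal sketch only: it assumes capacity is expressed as hydraulic flow in liters per minute and introduces a hypothetical `tool_priority` parameter that reserves a share of capacity for the tool when combined demand exceeds supply. Neither the units nor this policy is taken from the description.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class HydraulicDemand:
    drive_lpm: float  # flow requested by the drive system (liters per minute)
    tool_lpm: float   # flow requested by the earth shaping tool (liters per minute)


def allocate_hydraulic_flow(demand: HydraulicDemand, capacity_lpm: float,
                            tool_priority: float = 0.5) -> Tuple[float, float]:
    """Split a shared hydraulic capacity between the drive system and the tool.

    Returns (drive_lpm, tool_lpm). If total demand fits within capacity, both
    requests are granted in full. Otherwise each consumer is first limited to
    its reserved share (tool_priority * capacity for the tool, the remainder
    for the drive), and any share one consumer leaves unused goes to the other.
    """
    if capacity_lpm <= 0:
        return 0.0, 0.0
    if demand.drive_lpm + demand.tool_lpm <= capacity_lpm:
        return demand.drive_lpm, demand.tool_lpm
    tool = min(demand.tool_lpm, tool_priority * capacity_lpm)
    drive = min(demand.drive_lpm, capacity_lpm - tool_priority * capacity_lpm)
    # Hand any leftover capacity to whichever consumer is still short.
    leftover = capacity_lpm - tool - drive
    tool += min(leftover, demand.tool_lpm - tool)
    leftover = capacity_lpm - tool - drive
    drive += min(leftover, demand.drive_lpm - drive)
    return drive, tool
```

With 100 L/min available, a drive request of 20 L/min, and a tool request of 150 L/min, the sketch grants the drive its full 20 L/min and gives the remaining 80 L/min to the tool. A real controller would also weigh pressure limits and the phase of the earth shaping routine.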

[0043] Although particular embodiments throughout the description are described with reference to an earth shaping vehicle, a person having ordinary skill in the art would recognize that the described techniques, systems, and embodiments may also be applied to earth shaping routines involving hauling vehicles, compacting vehicles, or other earth shaping vehicles deployed autonomously or semi-autonomously to perform an earth shaping routine.

[0044] I.B. Sensor Assembly

[0045] As introduced above, the sensor assembly 110 includes a combination of one or more of: measurement sensors 125, spatial sensors 130, imaging sensors 135, and position sensors 145. The sensor assembly 110 is configured to collect data related to the earth shaping vehicle 115 and environmental data surrounding the earth shaping vehicle 115. The controller 150 is configured to receive the data from the assembly 110 and carry out the instructions and operations to perform an earth shaping routine provided by the computers 120 based on the recorded data. This includes operations to control the drive system to move the position of the tool based on the environmental data, a location of the earth shaping vehicle 115, and the earth shaping routine.

[0046] Each sensor 170 is either removably mounted to the earth shaping vehicle 115 without impeding the operation of the earth shaping vehicle 115, or is an integrated component that is a native part of the earth shaping vehicle 115 as made available by its manufacturer. Each sensor transmits its data automatically, in real time or as soon as a network connection is established, without input from the earth shaping vehicle 115 or a human operator. Data recorded by the sensors 170 is used by the controller 150 and/or the on-unit computer 120a to analyze, generate, and carry out earth shaping routines, among other tasks.

[0047] Position sensors 145 provide a position of the earth shaping vehicle 115. This may be a localized position within a dig site, or a global position with respect to latitude/longitude or some other external reference system. In one embodiment, a position sensor is a global positioning system interfacing with a static local ground-based GPS node mounted to the earth shaping vehicle 115 to output a position of the earth shaping vehicle 115. In one embodiment, position sensors 145 comprise at least one transmitter/receiver pair, one of which is mounted to the earth shaping vehicle and the other of which is positioned away from the vehicle 115, for example a GPS satellite. In implementations in which a computer 120 determines a position of features or obstacles within a dig site relative to the position of the earth shaping vehicle 115, the position sensors 145 comprise a single transmitter/receiver pair mounted to the earth shaping vehicle 115. Based on recorded data, the position sensors 145 produce a signal representative of the position and orientation of the earth shaping vehicle relative to the site. The produced signal is processed by the controller 150.
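When the position sensors 145 report a global latitude/longitude but the controller works in a localized site frame, the global fix must be converted. The sketch below assumes a hypothetical site origin expressed in latitude/longitude and uses a simple equirectangular approximation with a spherical Earth, which is reasonable only over a few kilometers; a production system would more likely use a proper map projection.

```python
import math
from typing import Tuple

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius; spherical-Earth assumption


def global_to_site(lat_deg: float, lon_deg: float,
                   origin_lat_deg: float, origin_lon_deg: float) -> Tuple[float, float]:
    """Approximate (east, north) offsets in meters from a site origin.

    Uses an equirectangular projection: longitude differences are scaled by
    the cosine of the mean latitude so that east offsets stay metric.
    """
    d_lat = math.radians(lat_deg - origin_lat_deg)
    d_lon = math.radians(lon_deg - origin_lon_deg)
    mean_lat = math.radians((lat_deg + origin_lat_deg) / 2.0)
    east = EARTH_RADIUS_M * d_lon * math.cos(mean_lat)
    north = EARTH_RADIUS_M * d_lat
    return east, north
```

The site origin and the approximation are illustrative choices; the description leaves the external reference system open.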

[0048] Spatial sensors 130 output a three-dimensional map in the form of a three-dimensional point cloud representing distances (for example, between one meter and fifty meters) between the spatial sensors 130 and the ground surface or any objects within the field of view of the spatial sensor 130, in some cases once per rotation of the spatial sensor 130. In one embodiment, spatial sensors 130 include a set of light emitters (e.g., infrared (IR)) configured to project structured light into a field near the earth shaping vehicle 115, a set of detectors (e.g., IR cameras), and a processor configured to transform data received by the infrared detectors into a point cloud representation of the three-dimensional volume captured by the detectors, as measured by structured light reflected by the environment. In one embodiment, the spatial sensor 130 is a LIDAR sensor having a scan cycle that sweeps through an angular range, capturing some or all of the volume of space surrounding the earth shaping vehicle 115. Other types of spatial sensors 130 may be used, including time-of-flight sensors, ultrasonic sensors, and radar sensors.
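To illustrate how a sweeping LIDAR produces a point cloud, the following minimal sketch converts individual returns into Cartesian points in the sensor frame. It assumes each return is a hypothetical `(range_m, azimuth_rad, elevation_rad)` tuple and uses the one-to-fifty-meter span mentioned above as a validity window; real sensor drivers expose their own formats.

```python
import math
from typing import Iterable, List, Tuple

Return = Tuple[float, float, float]  # (range_m, azimuth_rad, elevation_rad)
Point = Tuple[float, float, float]   # (x, y, z) in meters, sensor frame


def scan_to_point_cloud(returns: Iterable[Return], min_range_m: float = 1.0,
                        max_range_m: float = 50.0) -> List[Point]:
    """Convert spherical LIDAR returns to Cartesian points.

    x points forward, y to the left, z up. Returns outside
    [min_range_m, max_range_m] are discarded as unreliable.
    """
    points: List[Point] = []
    for r, az, el in returns:
        if not (min_range_m <= r <= max_range_m):
            continue
        horizontal = r * math.cos(el)  # projection of the ray onto the x-y plane
        points.append((horizontal * math.cos(az),
                       horizontal * math.sin(az),
                       r * math.sin(el)))
    return points
```

Registering these sensor-frame points into the site frame would additionally require the vehicle pose from the position sensors 145.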

[0049] Imaging sensors 135 capture still or moving-video representations of the ground surface, objects, and environment surrounding the earth shaping vehicle 115. In one embodiment, each camera can output a video feed containing a sequence of digital photographic images at a rate of 20 Hz. In one embodiment, multiple imaging sensors 135 are mounted such that each imaging sensor captures some portion of the entire 360-degree angular range around the vehicle. For example, front, rear, left lateral, and right lateral imaging sensors may be mounted to capture the entire angular range around the earth shaping vehicle 115. In another embodiment, the imaging sensors 135 comprise a plurality of sensors configured to record a field of view in all directions in which the machine is capable of moving. Example imaging sensors 135 include, but are not limited to, stereo RGB cameras, structure from motion cameras, monocular RGB cameras, LIDAR sensors, radar sensors, cameras, an alternative imaging sensor, or a combination thereof. The sensor assembly 110 may include a second set of imaging sensors 135 configured to record the interaction of the earth shaping vehicle 115 with features within the environment, for example excavating earth from a hole, depositing earth at a dump pile, or navigating over a target tool path to excavate earth from a hole. Based on the recorded data, the imaging sensors 135 produce at least one signal describing one or more features of the site based on the position of the earth shaping vehicle 115 within the site. The produced signal is processed by the controller 150.

[0050] Measurement sensors 125 generally measure properties of the ambient environment or properties of the earth shaping vehicle 115 itself. These properties may include tool position/orientation, relative articulation of the various joints of the arm supporting the tool, vehicle 115 speed, ambient temperature, and hydraulic pressure (either relative to capacity or absolute), including how much hydraulic capacity is being used separately by the drive system and by the earth shaping tool. A variety of possible measurement sensors 125 may be used, including end-effector sensors, hydraulic pressure sensors, linear encoders, radial encoders, inertial measurement unit sensors, incline sensors, accelerometers, strain gauges, gyroscopes, and string encoders. For example, an end-effector sensor is coupled at each joint at which the earth shaping tool experiences a change in angle relative to the ground surface, a change in height relative to the ground surface, or both. Based on recorded data, the measurement sensors 125 produce a signal representative of a position and orientation of the corresponding joint relative to the site. The produced signal is processed by a controller, for example the controller 150, to determine the orientation and/or position of the earth shaping tool and the earth shaping vehicle 115. Data gathered by measurement sensors 125 may also be used to determine derivatives of position information, such as velocity and acceleration.
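As one way to turn per-joint angle measurements into a tool position, the following sketch applies planar forward kinematics to a boom, arm, and tool chain. It assumes each measured angle is relative to the previous link, that link lengths are known, and that positive angles rotate upward; cab swing and joint offsets, which a real excavator model would include, are omitted.

```python
import math
from typing import Sequence, Tuple


def tool_tip_position(joint_angles_rad: Sequence[float],
                      link_lengths_m: Sequence[float],
                      base: Tuple[float, float] = (0.0, 0.0)) -> Tuple[float, float, float]:
    """Planar forward kinematics for a serial chain of links.

    Returns (x, z, heading): the horizontal reach and height of the tool tip
    relative to the base pivot, plus the absolute angle of the last link.
    Each joint angle is measured relative to the preceding link.
    """
    x, z = base
    heading = 0.0
    for angle, length in zip(joint_angles_rad, link_lengths_m):
        heading += angle                   # accumulate relative joint angles
        x += length * math.cos(heading)   # horizontal contribution of this link
        z += length * math.sin(heading)   # vertical contribution of this link
    return x, z, heading
```

Differencing successive outputs over time gives the velocity estimates mentioned above as derivatives of position information.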

[0051] Using the track trencher of FIG. 2A as an example, the representations with diagonal crosshatching represent example placements of a set of measurement sensors 125, the representations with diamond crosshatching represent example placements of a set of spatial sensors 130, and the representations with grid crosshatching represent example placements of a set of position sensors 145. Using the skid-steer loader of FIG. 2B as another example, diagonal crosshatchings represent measurement sensors 125, diamond crosshatchings represent spatial sensors 130, and grid crosshatchings represent position sensors 145. Additionally, vertical crosshatchings near the drive system represent example placements for a linear encoder 210, and horizontal crosshatchings near the roof represent imaging sensors 135, for example RGB cameras.

[0052] Generally, individual sensors as well as the sensor assembly 110 itself range in complexity from simple measurement devices that output analog or electrical signals over a network bus or other communicative network to which they are electrically coupled, to more complicated devices that include their own onboard computer processors, memory, and communications adapters (similar to the on-unit computer 120a). Regardless of construction, the sensors and/or sensor assembly together function to record, store, and report information to the computers 120. Any given sensor may record, or the sensor assembly may append to recorded data, a time stamp indicating when the data was recorded.

[0053] The sensor assembly 110 may include its own network adapter (not shown) that communicates with the computers 120 through either a wired or a wireless connection. For wireless connections, the network adapter may be a Bluetooth Low Energy (BTLE) wireless transmitter, an infrared transmitter, or an 802.11-based connection. For wired connections, a wide variety of communications standards and related architectures may be used, including Ethernet, a Controller Area Network (CAN) bus, or similar.

[0054] In the case of a BTLE connection, after the sensor assembly 110 and the on-unit computer 120a have been paired with each other using a BTLE passkey, the sensor assembly 110 automatically synchronizes and communicates information relating to the shaping or moving of earth in a site to the on-unit computer 120a. If the sensor assembly 110 has not been paired with the on-unit computer 120a prior to the deployment of the vehicle, the information is stored locally until such a pairing occurs. Upon pairing, the sensor assembly 110 communicates any stored data to the on-unit computer 120a.
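The store-and-forward behavior described above, together with the time stamps appended to recorded data, can be sketched as follows. `StoreAndForwardLink`, its injectable clock, and the bounded buffer that drops the oldest readings when full are hypothetical choices for illustration; the description does not say what happens if local storage fills before pairing, and no actual BTLE stack is modeled.

```python
import time
from collections import deque
from dataclasses import dataclass
from typing import Callable, Deque, Optional


@dataclass
class Reading:
    sensor_id: str
    value: float
    timestamp: float  # seconds, taken from the link's clock when recorded


class StoreAndForwardLink:
    """Hypothetical sensor-side link that buffers readings until paired."""

    def __init__(self, clock: Callable[[], float] = time.time,
                 max_buffer: int = 10_000) -> None:
        self._clock = clock
        # Bounded buffer: if it fills, the oldest readings are discarded.
        self._buffer: Deque[Reading] = deque(maxlen=max_buffer)
        self._send: Optional[Callable[[Reading], None]] = None

    def record(self, sensor_id: str, value: float) -> None:
        reading = Reading(sensor_id, value, self._clock())  # append time stamp
        if self._send is None:
            self._buffer.append(reading)  # not yet paired: store locally
        else:
            self._send(reading)           # paired: forward immediately

    def pair(self, send: Callable[[Reading], None]) -> None:
        """Register the paired receiver and flush readings stored before pairing."""
        self._send = send
        while self._buffer:
            send(self._buffer.popleft())
```

Injecting the clock keeps the sketch deterministic in tests; a deployed assembly might instead use a synchronized time source so that readings from different vehicles can be aligned.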

[0055] The sensor assembly 110 may be configured to communicate received data to any of the controller 150 of the earth shaping vehicle 115, the on-unit computer 120a, and the off-unit computer 120b. For example, if the network adapter of the sensor assembly 110 is configured to communicate via a wireless standard such as 802.11 or LTE, the adapter may exchange data with a wireless access point such as a wireless router, which may in turn communicate with both the off-unit computer 120b and the on-unit computer 120a. This type of transmission may be redundant, but it can help ensure that recorded data arrives at the off-unit computer 120b for consumption and decision making by a manual operator, while also providing the data to the on-unit computer 120a for autonomous or semi-autonomous decision making in carrying out the earth shaping routine.

[0056] I.C. On-Unit Computer

[0057] Data collected by the sensors 170 is communicated to the on-unit computer 120a to assist in the design or carrying out of an earth shaping routine. Generally, earth shaping routines are sets of computer program instructions that, when executed, control the various controllable inputs of the earth shaping vehicle 115 to carry out an earth shaping task. The controllable inputs of the earth shaping vehicle 115 may include the joystick controlling the drive system and earth shaping tool and any directly controllable articulable elements, or some input associated with the controller 150 for those controllable elements, such as an analog or electrical circuit that responds to joystick inputs.

[0058] Generally, earth shaping routines are broadly defined to include any task that can be feasibly carried out by an earth shaping vehicle 115. Examples include, but are not limited to: dig site preparation routines, excavation routines, fill estimate routines, volume check routines, dump routines, wall cutback routines, backfill/compaction routines. Examples of these routines are described further below. In addition to instructions, earth shaping routines include data characterizing the site and the amount and locations of earth to be excavated. Examples of such data include, but are not limited to, a digital file, sensor data, a digital terrain model, and one or more target tool paths. Examples of such data are further described below.

[0059] The earth shaping vehicle 115 is designed to perform the operations outlined in a set of instructions for an earth shaping routine either entirely autonomously or semi-autonomously. Here, semi-autonomous refers to an earth shaping vehicle 115 that responds not only to the instructions but also to a manual operator. Manual operators of the earth shaping vehicle 115 may monitor the earth shaping routine from inside the earth shaping vehicle 115 using the on-unit computer 120a, or remotely using an off-unit computer 120b from outside the earth shaping vehicle, either on-site or off-site. Manual operation may take the form of manual input to the joystick, for example. Sensor data is received by the on-unit computer 120a and assists in carrying out those instructions, for example by modifying exactly which inputs are provided to the controller 150 in order to accomplish the instructions of the earth shaping routine.

[0060] The on-unit computer 120a may also exchange information with the off-unit computer 120b and/or other earth shaping vehicles (not shown) connected through the network 105. For example, an earth shaping vehicle 115 may communicate data it has recorded to a fleet of additional earth shaping vehicles 115 that may be used at the same site. Similarly, through the network 105, the computers 120 may deliver data regarding a specific site to a central location, for example a computing device controlled by a remote operator. This may involve the earth shaping vehicle 115 exchanging data with the off-unit computer 120b, which in turn can initiate a process to generate the set of instructions defining operations for an earth shaping vehicle 115 to perform to excavate the earth. The generated set of instructions may be delivered to one or more earth shaping vehicles 115. Similarly, the earth shaping vehicle 115 may also receive data sent by the sensor assemblies 110 of other earth shaping vehicles 115, as communicated between computers 120 over the network 105.

[0061] The on-unit computer 120a may also process the data received from the sensor assembly 110. Processing generally takes sensor data that may not be directly usable in its "raw" format and converts it into a form that is useful for another type of processing. For example, the on-unit computer 120a may fuse data from the various sensors into a real-time scan of the ground surface of the site around the earth shaping vehicle 115. This may comprise fusing the point clouds of various spatial sensors 130, stitching images from multiple imaging sensors 135, and registering images and point clouds relative to each other or relative to an external reference frame provided by the position sensors 145 or other data. Processing may also include upsampling, downsampling, interpolation, filtering, smoothing, or other related techniques.
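A simple form of the fusion and downsampling described above is to bin points from several clouds into a grid over the ground plane and keep one height per cell. The sketch assumes the clouds are already registered to a common site frame and uses a hypothetical `cell_size_m`; averaging the heights in each cell is an illustrative choice, and a real pipeline might prefer a median or lowest-point filter to reject outliers.

```python
import math
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in a common site frame, meters
Cell = Tuple[int, int]              # grid indices along x and y


def fuse_to_height_map(point_clouds: Iterable[List[Point]],
                       cell_size_m: float = 0.5) -> Dict[Cell, float]:
    """Fuse registered point clouds into a downsampled ground-height grid.

    Every point is binned by its (x, y) position; each occupied cell stores
    the mean z of the points that fall into it.
    """
    sums: Dict[Cell, List[float]] = defaultdict(lambda: [0.0, 0.0])
    for cloud in point_clouds:
        for x, y, z in cloud:
            key = (math.floor(x / cell_size_m), math.floor(y / cell_size_m))
            acc = sums[key]
            acc[0] += z    # running sum of heights in this cell
            acc[1] += 1.0  # running count of points in this cell
    return {key: total / count for key, (total, count) in sums.items()}
```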

[0062] In implementations involving cooperation between multiple earth shaping vehicles in a dig site, the on-unit computer 120a coupled to a primary vehicle tasked with performing an earth shaping routine may communicate instructions, including a request, to the on-unit computers of one or more secondary vehicles so that each secondary vehicle assists the primary vehicle with the performance of the earth shaping routine. For example, in the embodiment of FIG. 1, the earth shaping vehicle 115 may be the primary vehicle, and the on-unit computer 120a generates instructions for the earth shaping vehicle 115 to perform an earth shaping routine that requires earth to be hauled over a distance and compacted. Accordingly, the on-unit computer 120a communicates a request via the network 105 to an on-unit computer coupled to each of a hauling vehicle and a compacting vehicle. Upon receipt of the request, each on-unit computer generates instructions for the hauling vehicle and the compacting vehicle to assist the earth shaping vehicle with performing the earth shaping routine.

[0063] I.D. Off-Unit Computer

[0064] The off-unit computer 120b includes a software architecture for supporting access and use of the earth shaping system 100 by many different earth shaping vehicles 115 through network 105, and thus at a high level can be generally characterized as a cloud-based system. Any operations or processing performed by the on-unit computer 120a may also be performed similarly by the off-unit computer 120b.

[0065] In some instances, the operation of the earth shaping vehicle 115 is monitored by a human operator. Human operators, when necessary, may halt or override the automated earth shaping process and manually operate the earth shaping vehicle 115 in response to observations made regarding the features or the properties of the site. Monitoring by a human operator may include remote oversight of the whole earth shaping routine or a portion of it. Such monitoring by a remote human operator will be further discussed with reference to FIGS. 9A-13B. Human operation of the earth shaping vehicle 115 may also include manual or remote control of the joysticks of the earth shaping vehicle 115 for portions of the earth shaping routine (e.g., a preparation routine, an excavation routine, etc.). Additionally, when appropriate, human operators may override all or a part of the set of instructions and/or earth shaping routine carried out by the on-unit computer 120a.

[0066] In implementations involving cooperation between multiple earth shaping vehicles in a dig site, the off-unit computer 120b may operate as a central control system, generating instructions for a combination of earth shaping vehicles to cooperatively perform an earth shaping routine. During the generation of those instructions, the off-unit computer 120b may generate a separate set of instructions for each earth shaping vehicle involved in the performance of the routine and communicate a specific set of instructions to each vehicle via the network 105. For example, in the embodiment illustrated in FIG. 1, the off-unit computer 120b may generate a set of instructions for each of the earth shaping vehicle 115, a compacting vehicle, and a hauling vehicle. In another embodiment, the off-unit computer 120b may generate a single set of complete instructions and communicate vehicle-specific subsets of the instructions to each of the vehicles. As described herein, such an off-unit computer 120b may also be referred to as a "central computer 120."
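The embodiment in which the central computer generates a single complete set of instructions and sends each vehicle its own subset can be sketched as a grouping step. The `Instruction` record, its `sequence` field, and the vehicle identifiers are hypothetical; the description does not define an instruction format.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class Instruction:
    vehicle_id: str  # vehicle expected to execute this step
    sequence: int    # position of the step within the complete routine
    action: str      # e.g. "excavate", "haul", "compact"


def split_by_vehicle(instructions: Iterable[Instruction]) -> Dict[str, List[Instruction]]:
    """Partition a complete instruction set into per-vehicle subsets.

    Steps are sorted by their global sequence number first, so each vehicle's
    subset preserves the order of the overall routine.
    """
    subsets: Dict[str, List[Instruction]] = defaultdict(list)
    for instr in sorted(instructions, key=lambda i: i.sequence):
        subsets[instr.vehicle_id].append(instr)
    return dict(subsets)
```

Keeping the global sequence number on each step would also let the central computer express cross-vehicle dependencies, for example a hauling step that waits on an excavation step, although that coordination is not modeled here.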

[0067] I.E. General Computer Structure

[0068] The on-unit 120a and off-unit 120b computers may be generic or special purpose computers. A simplified example of the components of an example computer according to one embodiment is illustrated in FIG. 3.

[0069] FIG. 3 is a high-level block diagram illustrating physical components of an example off-unit computer 120b from FIG. 1, according to one embodiment. Illustrated is a chipset 305 coupled to at least one processor 310. Coupled to the chipset 305 are volatile memory 315, a network adapter 320, one or more input/output (I/O) devices 325, and a storage device 330 representing a non-volatile memory. In one implementation, the functionality of the chipset 305 is provided by a memory controller 335 and an I/O controller 340. In another embodiment, the memory 315 is coupled directly to the processor 310 instead of the chipset 305. In some embodiments, memory 315 includes high-speed random access memory (RAM), such as DRAM, SRAM, DDR RAM, or other random access solid state memory devices.

[0070] The storage device 330 is any non-transitory computer-readable storage medium, such as a hard drive, compact disk read-only memory (CD-ROM), DVD, or a solid-state memory device. The memory 315 holds instructions and data used by the processor 310. The I/O controller 340 is coupled to receive input from the machine controller 150 and the sensor assembly 110, as described in FIG. 1, and displays data using the I/O devices 345. The I/O device 345 may be a touch input surface (capacitive or otherwise), a mouse, track ball, or other type of pointing device, a keyboard, or another form of input device. The network adapter 320 couples the off-unit computer 120b to the network 105.

[0071] As is known in the art, a computer 120 can have different and/or other components than those shown in FIG. 3. In addition, the computer 120 can lack certain illustrated components. In one embodiment, a computer 120 acting as a server may lack a dedicated I/O device 345. Moreover, the storage device 330 can be local to and/or remote from the computer 120 (such as embodied within a storage area network (SAN)), and, in one embodiment, the storage device 330 is not a CD-ROM device or a DVD device.

[0072] Generally, the exact physical components used in the on-unit 120a and off-unit 120b computers will vary. For example, the on-unit computer 120a will be communicatively coupled to the controller 150 and sensor assembly 110 differently than the off-unit computer 120b.

[0073] Typically, the off-unit computer 120b will be a server class system that uses more powerful processors, larger memory, and faster network components than the on-unit computer 120a, although this is not necessarily the case. Such a server computer typically has large secondary storage, for example using a RAID (redundant array of independent disks) array and/or by establishing a relationship with an independent content delivery network (CDN) contracted to store, exchange, and transmit data. Additionally, the computing system includes an operating system, for example a UNIX, LINUX, or WINDOWS operating system. The operating system manages the hardware and software resources of the off-unit computer 120b and also provides various services, for example process management, input/output of data, management of peripheral devices, and so on. The operating system provides various functions for managing files stored on a device, for example creating a new file, moving or copying files, transferring files to a remote system, and so on.

[0074] As is known in the art, the computer 120 is adapted to execute computer program engines for providing the functionality described herein. An engine can be implemented in hardware, firmware, and/or software. In one embodiment, program engines are stored on the storage device 330, loaded into the memory 315, and executed by the processor 310.

[0075] I.F. Network

[0076] The network 105 represents the various wired and wireless communication pathways between the computers 120, the sensor assembly 110, and the earth shaping vehicle 115. The network 105 uses standard Internet communications technologies and/or protocols. Thus, the network 105 can include links using technologies such as Ethernet, IEEE 802.11, integrated services digital network (ISDN), asynchronous transfer mode (ATM), etc. Similarly, the networking protocols used on the network 105 can include the transmission control protocol/Internet protocol (TCP/IP), the hypertext transfer protocol (HTTP), the simple mail transfer protocol (SMTP), the file transfer protocol (FTP), etc. The data exchanged over the network 105 can be represented using technologies and/or formats including the hypertext markup language (HTML), the extensible markup language (XML), etc. In addition, all or some links can be encrypted using conventional encryption technologies such as the secure sockets layer (SSL), Secure HTTP (HTTPS), and/or virtual private networks (VPNs). In another embodiment, the entities can use custom and/or dedicated data communications technologies instead of, or in addition to, the ones described above.

II. Earth Shaping Vehicle Operation Overview

[0077] FIG. 4 is a diagram of the system architecture for the control logic 400 of an earth shaping vehicle 115, according to an embodiment. The control logic 400, an embodiment of the controller 150, is implemented by software within a central computer, for example an on-unit computer 120a or the off-unit computer 120b, and is executed by providing inputs to the controller 150 to control the control inputs of the vehicle 115, such as the joystick. The system architecture of the control logic 400 comprises a navigation engine 410, a preparation engine 420, an earth moving engine 430, a volume check engine 440, and an operator interface engine 450. In other embodiments, the control logic 400 may include more or fewer components. Functionality indicated as being performed by a particular engine may be performed by other engines instead.

[0078] The navigation engine 410 provides mapping and orientation instructions to the drivetrain 210 of the earth shaping vehicle 115 to navigate the vehicle through the coordinate space of the site and along target tool paths to perform earth shaping routines. The preparation engine 420 creates and/or converts a digital file describing a target state of the site into a set of target tool paths. In combination, the set of target tool paths describes an earth shaping routine and an organizational layout of the site along with any other instructions needed to carry out the earth shaping routine (e.g., a location of earth to be moved, a location at which earth is to be filled, and a location of other vehicles relative to a primary vehicle). The preparation engine is further described with reference to FIGS. 5A and 5B.

[0079] The earth moving engine 430 executes instructions (e.g., instructions encoded as a set of target tool paths) to actuate a tool 175 and the drive train to perform an earth shaping routine, for example an excavation routine to excavate earth from a location in a dig site, a filling routine to fill earth at a location in the dig site, or a hauling routine to move earth from one location to another in the dig site. The earth moving engine 430 will be further discussed with reference to FIGS. 6A-6C. The volume check engine 440 measures the amount of earth in an earth shaping tool 175, for example an excavation tool coupled to an excavation vehicle or a hauling tool coupled to a hauling vehicle, and makes a determination regarding whether or not the earth shaping vehicle should release the contents of the tool or continue performing an earth shaping routine. The volume check engine 440 will be further discussed with reference to FIGS. 7A and 7B.
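The release-or-continue determination made by the volume check engine 440 can be sketched as a threshold test. The fill threshold of 90% and the use of the volume still to be moved from the target location are assumptions for illustration; the description does not state the criteria the engine uses.

```python
def volume_check(measured_volume_m3: float, tool_capacity_m3: float,
                 remaining_target_m3: float, fill_threshold: float = 0.9) -> str:
    """Decide whether the vehicle should release the tool contents.

    Returns "release" when the tool is at least fill_threshold full, or when
    it already holds at least the volume still to be moved from the target
    location; otherwise returns "continue".
    """
    if tool_capacity_m3 <= 0:
        raise ValueError("tool capacity must be positive")
    fill_fraction = measured_volume_m3 / tool_capacity_m3
    if fill_fraction >= fill_threshold or measured_volume_m3 >= remaining_target_m3:
        return "release"
    return "continue"
```

The second condition keeps a nearly finished routine from continuing to dig just to top off the tool; whether the actual engine behaves this way is not stated.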

[0080] The operator interface engine 450 generates a graphical user interface for presentation to a remote operator on a computing device. The operator interface engine 450 generates various graphical user interfaces or graphic elements that provide the remote operator with insight into the progress of an earth shaping routine, the condition of the earth shaping vehicle 115 performing the routine, and the surrounding area and ground surface of the site. The operator interface engine 450 may additionally enable the remote operator to manually initialize a target tool path and communicate the initialized target tool path to an earth shaping vehicle. Based on feedback from the vehicle 115 performing operations outlined by a target tool path, the operator interface engine 450 may generate graphical user interfaces for the operator to modify the tool path in real time. In any of the implementations described above, the operator interface engine 450 receives interactive input from the remote operator, for example touch input directly on a screen of the computing device or keystrokes via a keyboard of the computing device. Inputs from the operator cause the operator interface engine 450 to perform processing functions including, but not limited to, generating a new graphical user interface, updating an existing graphical user interface, and displaying the graphical user interface on the computing device. In alternate embodiments, the operator interface engine 450 may be a component of the computing device controlled by the operator. In such embodiments, the operator interface engine 450 may be communicatively coupled with the controller 150 on board the vehicle 115. The operator interface engine is further described with reference to FIGS. 9A-13B.
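The feedback loop described above can be illustrated by two small functions: one derives the values a GUI might refresh on each telemetry update (current depth at the target, vehicle position relative to the target, volume moved), and one applies an operator edit to produce a modified instruction set. The `Telemetry` and `ExcavationInstructions` records and the choice of goal depth as the editable field are hypothetical; rendering and network transport are not modeled.

```python
import math
from dataclasses import dataclass, replace
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class Telemetry:
    vehicle_xy: Tuple[float, float]  # vehicle position in site coordinates (m)
    depth_m: float                   # measured depth at the target location (m)
    volume_moved_m3: float           # volume moved from the target so far (m^3)


@dataclass(frozen=True)
class ExcavationInstructions:
    target_xy: Tuple[float, float]   # target location in site coordinates (m)
    goal_depth_m: float              # depth the routine should reach (m)


def display_fields(t: Telemetry, instr: ExcavationInstructions) -> Dict[str, float]:
    """Values a GUI could refresh each time new sensor data arrives."""
    dx = instr.target_xy[0] - t.vehicle_xy[0]
    dy = instr.target_xy[1] - t.vehicle_xy[1]
    return {
        "current_depth_m": t.depth_m,
        "remaining_depth_m": max(instr.goal_depth_m - t.depth_m, 0.0),
        "volume_moved_m3": t.volume_moved_m3,
        "distance_to_target_m": math.hypot(dx, dy),
    }


def apply_operator_edit(instr: ExcavationInstructions,
                        new_goal_depth_m: Optional[float] = None) -> ExcavationInstructions:
    """Return a modified instruction set if the operator changed the goal depth."""
    if new_goal_depth_m is None:
        return instr                 # no edit: keep the current instructions
    if new_goal_depth_m <= 0:
        raise ValueError("goal depth must be positive")
    return replace(instr, goal_depth_m=new_goal_depth_m)
```

Using frozen records means each edit yields a new instruction object, which makes it straightforward to compare the modified set against the one the vehicle is currently executing before sending it.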

[0081] For the sake of simplicity, functionality of the control logic 400 is described within the context of an excavation vehicle; however, such functionality may be applied to any earth shaping vehicle 115, for example a compacting vehicle or a hauling vehicle.

III. Preparing Instructions for an Earth-Shaping Routine

[0082] Before an earth shaping vehicle 115 performs operations to navigate through the site and excavate earth from a dig location, the controller 150 generates the operations to be performed by the vehicle 115, also referred to as a target tool path, based on a known target state of the site and contextual data describing the initial state of the site. FIG. 5A is a diagram of the system architecture for the preparation engine 420 of a central computer 120, according to an embodiment. The preparation engine 420 generates a digital terrain model including one or more target tool paths that can be followed by the earth shaping vehicle 115. The system architecture of the preparation engine 420 comprises a digital file store 510, a sensor data store 520, a digital mapping engine 530, and a target tool path generator 540. In other embodiments, the preparation engine 420 may include more or fewer components. Functionality indicated as being performed by a particular engine may be performed by other engines instead. Some of the engines of the preparation engine 420 may be stored in the control logic 400.
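Comparing the initial state of the site with its target state is what tells the preparation engine how much earth must be moved. The sketch assumes both states are available as elevation grids keyed by the same hypothetical cell indices with a known cell area; it sums cut (current above target) and fill (current below target) volumes. The actual digital terrain model format is not specified in the description.

```python
from typing import Dict, Tuple

Cell = Tuple[int, int]


def cut_fill_volumes(current: Dict[Cell, float], target: Dict[Cell, float],
                     cell_area_m2: float) -> Tuple[float, float]:
    """Return (cut_m3, fill_m3) between current and target elevation grids.

    Cells present in only one grid are skipped. A positive height difference
    (current above target) is earth to excavate; a negative one is earth
    to fill.
    """
    cut = fill = 0.0
    for key, z_now in current.items():
        z_goal = target.get(key)
        if z_goal is None:
            continue
        diff = z_now - z_goal
        if diff > 0:
            cut += diff * cell_area_m2
        else:
            fill += -diff * cell_area_m2
    return cut, fill
```

Per-cell differences of this kind could also seed the target tool paths, for example by ordering excavation passes from the deepest cut outward, though the description does not prescribe such a strategy.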

[0083] As described herein, a target tool path represents operations for an earth shaping vehicle 115 to perform to move or shape a volume of earth in the dig site. Examples of such operations include, but are not limited to, routines for excavating earth from a location in the dig site, hauling earth from one location to another in the dig site, filling or depositing earth excavated from one location at another, and compacting or grading earth in the dig site. The operations may also include instructions for actuating an earth shaping tool 175 to move earth in the dig site. For example, a target tool path for an excavation operation includes instructions to actuate an excavation tool beneath a ground surface and to maintain the position of the tool beneath the ground surface until the tool is filled with earth. That same target tool path may additionally include instructions to raise the tool above the ground surface to measure the volume of earth. Additionally, a target tool path may include instructions to adjust a position and an orientation of an earth shaping tool 175 before, after, or during the performance of an earth shaping operation. For example, a target tool path for an excavation operation may include instructions to adjust an orientation of an excavation tool to effectively penetrate the ground surface, to maximize the volume of earth collected in the tool, to achieve a breakout angle, or a combination thereof. The target tool path may additionally include navigation instructions, for example a set of coordinates in a coordinate space representing the dig site, for the earth shaping vehicle 115 to navigate along a route between a start point and an end point in the dig site.
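The contents of a target tool path listed above (an operation type, tool position and orientation adjustments, and navigation coordinates from a start point to an end point) can be pictured as a simple record. The following minimal Python sketch is illustrative only and is not taken from the application; the names `TargetToolPath`, `ToolAdjustment`, and `OperationType`, and all of their fields, are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional, Tuple

# (x, y, z) in the coordinate space of the dig site; z < 0 is below the ground surface.
Coordinate = Tuple[float, float, float]


class OperationType(Enum):
    """Hypothetical operation categories drawn from the examples in the text."""
    EXCAVATE = "excavate"
    HAUL = "haul"
    FILL = "fill"
    COMPACT = "compact"
    GRADE = "grade"


@dataclass
class ToolAdjustment:
    """Hypothetical instruction setting the tool pose around an operation."""
    phase: str            # "before", "during", or "after" the operation
    position: Coordinate  # target position of the tool
    pitch_deg: float      # target tool orientation, e.g. for penetration or breakout


@dataclass
class TargetToolPath:
    operation: OperationType
    tool_adjustments: List[ToolAdjustment] = field(default_factory=list)
    route: List[Coordinate] = field(default_factory=list)  # waypoints, start to end

    def start(self) -> Optional[Coordinate]:
        return self.route[0] if self.route else None

    def end(self) -> Optional[Coordinate]:
        return self.route[-1] if self.route else None


# Usage: an excavation path that lowers the tool below grade, then raises it
# above the surface (e.g. so the collected volume can be measured).
path = TargetToolPath(
    operation=OperationType.EXCAVATE,
    tool_adjustments=[
        ToolAdjustment("before", (10.0, 5.0, -0.5), pitch_deg=-30.0),
        ToolAdjustment("after", (10.0, 5.0, 1.0), pitch_deg=45.0),
    ],
    route=[(0.0, 0.0, 0.0), (10.0, 5.0, 0.0)],
)
```

Keeping tool adjustments separate from the navigation route mirrors the text's distinction between actuating the tool and moving the vehicle, but the actual data layout used by the controller 150 is not described.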

[0084] The digital file store 510 maintains one or more digital files, which may be accessed from a remote database. In some instances, the controller 150 may access these digital files from the central computer 120b and subsequently store them in the digital file store 510. Digital files may be image files describing the geographic layout of the site as a function of location within a coordinate space of the site, with different images representing a dig location, fill location, an entry ramp, etc. Geographic locations in the coordinate space may be represented as one or more two-dimensional points or three-dimensional points. The digital file may also include data describing how the earth shaping vehicle 115 ought to interact with each location discussed in the digital file. The digital files stored in the digital file store 510 may also include a digital file representing a target state of the site once all earth shaping routines have been completed. Digital files may be constructed using known computer programs and file types, such as a Computer Aided Design (CAD) file or a Building Information Modeling (BIM) file.

[0085] For example, a dig location may be characterized by a set of target volume dimensions which should be achieved upon the conclusion of an earth shaping routine. At a boundary of the dig location, the digital file may also include a ramp. Geometrically, the width of the ramp is generally greater than the maximum width of the combination of the vehicle 115 and the tool 175 coupled to the vehicle. Additionally, the fill location may be extracted from the digital file or received manually from a human operator. Alternatively, the position of the fill location within the site may be based on the estimated maximum size of the fill location and a specified relative distance between the fill location, the dig location, and other equipment in the site. The placement of the fill location may also be determined based on several considerations including, but not limited to: the risk of excavated earth caving in above the dig location or the fill location, the volume of excavated earth required to form the planned hole, the estimated compaction factor of the excavated earth, and the estimated swell factor of the excavated earth.
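Two of the considerations above reduce to simple arithmetic: the ramp must be wider than the widest of the vehicle and its tool, and excavated earth occupies more volume once loosened, so the fill location must accommodate the hole volume scaled by the swell factor. The sketch below assumes the common earthwork convention that loose volume equals bank volume times (1 + swell fraction); the function names and the 25% swell value are hypothetical and are not taken from the application.

```python
def ramp_is_wide_enough(ramp_width: float, vehicle_width: float, tool_width: float) -> bool:
    """The ramp should be wider than the widest part of the vehicle-plus-tool combination."""
    return ramp_width > max(vehicle_width, tool_width)


def loose_fill_volume(bank_volume: float, swell_factor: float) -> float:
    """Volume the excavated earth occupies at the fill location after loosening.

    swell_factor is a fraction; 0.25 means the earth bulks up by 25%
    (an assumed convention, not stated in the application).
    """
    return bank_volume * (1.0 + swell_factor)


# Usage: a 4 x 3 x 2 hole (24 cubic units) with an assumed 25% swell.
print(loose_fill_volume(4 * 3 * 2, 0.25))  # 30.0
print(ramp_is_wide_enough(4.0, 3.0, 3.5))  # True
```

A fuller placement routine would also weigh the compaction factor, cave-in risk, and distance to other equipment, which this sketch omits.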

[0086] When appropriate, the digital file may also describe the location of fiducials representing technical pieces of equipment previously placed at the site such as stakes with active emitters and grade stakes. In alternate instances, the locations of the fiducials may be manually input to a central computer 120 based on the records of a human operator.

[0087] The preparation engine 420 generates a representation of the initial state of the site using sensor 170 data, stored within the sensor data store 520. As the navigation engine 410 maneuvers the earth shaping vehicle 115 through the site, sensors 170 gather contextual information on the site which is aggregated into a representation of the current state of the site. More specifically, spatial sensors 130 record spatial data in the form of point cloud representations, imaging sensors 135 gather imaging data, and depth sensors 145 gather data describing relative locations. More generally, the sensor data store 520 stores contextual information describing the current state of the site which refers to a physical landscape of the site and physical properties of soil, or earth, within the site. The navigation engine 410 navigates within the geospatial boundaries defined by the digital file to record contextual information describing the current state of the site.

[0088] When recording data via one or more spatial sensors, the spatial sensors 130 record one or more photographic images of various portions of the site. Based on the photographic images, the preparation engine 420 generates a representation of a current physical state of the site by stitching the recorded images into point clouds of data representing the portions of the site. Additionally, for each of the recorded images, the preparation engine 420 records and translates the position and orientation of features within the site into the point cloud representations with respect to the coordinate space of the digital file. In alternative implementations, the sensor assembly 110 uses an imaging sensor 135 to record the contextual information as photographic images of portions of the site and, for each of those images, stores the associated positions and orientations of the relevant features within the photographed portion of the site. Alternatively, the earth shaping vehicle 115 includes sensors and a software assembly that generates a digital terrain model of the site using simultaneous localization and mapping (SLAM).
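Translating features recorded in each capture into the coordinate space of the digital file amounts to applying the sensor's pose (position and orientation) to every point, then merging the results into one cloud. The following minimal sketch assumes each capture provides points in the sensor frame plus a sensor position and a yaw angle only (i.e., roll and pitch are ignored, which is a simplifying assumption); `sensor_to_site` and `stitch` are hypothetical names, and real stitching would typically also refine overlaps between captures.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def sensor_to_site(points: Sequence[Point], sensor_position: Point, yaw_rad: float) -> List[Point]:
    """Rotate sensor-frame points about the vertical axis by yaw, then translate into site coordinates."""
    cos_y, sin_y = math.cos(yaw_rad), math.sin(yaw_rad)
    px, py, pz = sensor_position
    return [
        (px + cos_y * x - sin_y * y, py + sin_y * x + cos_y * y, pz + z)
        for x, y, z in points
    ]


def stitch(captures: Sequence[Tuple[Sequence[Point], Point, float]]) -> List[Point]:
    """Merge several captures, each (points, sensor_position, yaw_rad), into one site-frame cloud."""
    cloud: List[Point] = []
    for points, position, yaw in captures:
        cloud.extend(sensor_to_site(points, position, yaw))
    return cloud


# Usage: the same sensor-frame point seen from two headings at one sensor position.
cloud = stitch([
    ([(1.0, 0.0, 0.0)], (10.0, 20.0, 0.0), 0.0),
    ([(1.0, 0.0, 0.0)], (10.0, 20.0, 0.0), math.pi / 2),
])
```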

[0089] Using the representation of a current physical state of the site generated based on the sensor data and the representation of the target state of the site, the digital mapping engine 530 generates a digital terrain model of the site. By aligning points in the target state of the site with the initial state of the site in the coordinate space, the digital mapping engine 530, or alternatively the central computer 120, identifies differences between the two representations. For example, the digital mapping engine 530 may determine a volume of earth to be excavated to form the planned hole from the digital file. In one embodiment, the digital mapping engine 530 aligns (or registers) the two representations (the digital file and the contextual data) using the known locations of fiducials and other locations within the site common to both representations. Position data from a position sensor 145 such as a GPS, or the boundaries of the site provided by both representations, may also be used by the digital mapping engine 530 to perform the alignment. The digital mapping engine 530 may additionally use algorithms such as Iterative Closest Point (ICP) to align the two representations. In one embodiment, for every point pair in the actual and target representations, if the difference in elevation (e.g., along the Z-axis relative to the ground plane) is greater than a threshold, the digital mapping engine 530 multiplies the difference in elevation by the resolution of the representation to calculate a voxel volume, and the voxel volumes are then summed. The digital mapping engine may perform such a technique at multiple points to determine how the two representations should be adjusted relative to each other along an axis to align them.
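The thresholded voxel-volume summation described above can be sketched over two already-aligned elevation grids. In this illustration, "multiplying by the resolution" is interpreted as multiplying by the footprint area of one grid cell (resolution squared), and only cells where the current surface lies above the target (earth still to be removed) are counted; both are assumptions, as is the function name `cut_volume`.

```python
from typing import Dict, Tuple

Cell = Tuple[int, int]


def cut_volume(current: Dict[Cell, float], target: Dict[Cell, float],
               resolution: float, threshold: float) -> float:
    """Sum voxel volumes where current elevation exceeds target elevation by more than threshold.

    current / target map a grid cell (i, j) to an elevation; both grids are assumed
    to be registered to the same coordinate space already.
    """
    cell_area = resolution * resolution  # assumed footprint of one cell
    total = 0.0
    for cell, z_current in current.items():
        z_target = target.get(cell)
        if z_target is None:
            continue  # no matching point in the target representation
        dz = z_current - z_target
        if dz > threshold:
            total += dz * cell_area  # volume of this column of earth
    return total


# Usage: two of four 0.5 x 0.5 cells must be lowered by 1.0; one differs by less than the threshold.
current = {(0, 0): 1.0, (0, 1): 1.0, (1, 0): 1.0, (1, 1): 1.0}
target = {(0, 0): 0.0, (0, 1): 0.0, (1, 0): 0.99, (1, 1): 1.0}
print(cut_volume(current, target, resolution=0.5, threshold=0.05))  # 0.5
```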

[0090] In some implementations, the preparation engine 420, or alternatively the central computer 120, uses the digital terrain model to determine the difference in volume between the two representations, which translates into the volume of earth to be excavated from the hole. Incorporating the considerations described above, including the physical layout of the site, the volume of earth to be excavated, and the creation of cutbacks and slope backs, the preparation engine 420 generates one or more target tool paths.

[0091] Using the digital terrain model, the target tool path generator 540 generates one or more target tool paths for the earth shaping vehicle 115 to move a tool 175, or for a combination of earth shaping vehicles 115 to move multiple tools 175, to perform an earth shaping routine, for example excavating a volume of earth, filling a volume of earth, or navigating the earth shaping vehicle 115 within the site. Tool paths provide instructions for a semi-autonomous vehicle to perform an earth shaping routine in the form of geographical steps and corresponding coordinates for the earth shaping vehicle 115 and/or coupled tool to traverse within the site. In implementations where the site is represented in the digital terrain model as a coordinate space, for example the implementations described above, a target tool path includes a set of coordinates within the coordinate space. A target tool path may further represent a measure of volume relative to the volume of the planned hole. For example, if a hole is 4'' wide, 3'' long, and 2'' deep, a single target tool path includes coordinates within the 12-square-inch area of the coordinate space and, at each coordinate, places the tool at a depth of 2'' in order to excavate the hole using a single target tool path. Target tool paths may describe a variety of shapes representing a variety of earth shaping techniques, for example substantially rectangular pathways in two dimensions, substantially triangular pathways in two dimensions, hyperrectangular pathways in three dimensions, elliptic pathways in two dimensions, hyperelliptic pathways in three dimensions, or curved lines along the plane of the ground surface.
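The single-pass example above (a 4 by 3 area with the tool placed at a depth of 2 at every coordinate) can be sketched as a rectangular grid of coordinates. This is an illustration under stated assumptions: the grid origin sits at one corner of the hole, depth below the ground surface is encoded as a negative z value, the grid spacing is a free parameter, and rows are visited in alternating (serpentine) order so the tool does not jump back across the hole. The function name `single_pass_tool_path` is hypothetical.

```python
from typing import List, Tuple

Coordinate = Tuple[float, float, float]


def single_pass_tool_path(width: float, length: float, depth: float, spacing: float) -> List[Coordinate]:
    """Coordinates covering a width x length rectangle, each with the tool at the given depth."""
    nx = int(round(width / spacing))   # intervals across the width
    ny = int(round(length / spacing))  # intervals along the length
    coords: List[Coordinate] = []
    for i in range(ny + 1):
        y = i * spacing
        row = [(j * spacing, y, -depth) for j in range(nx + 1)]
        if i % 2 == 1:
            row.reverse()  # serpentine ordering: alternate direction on every other row
        coords.extend(row)
    return coords


# Usage: the 4 x 3 x 2 example with unit spacing gives a 5 x 4 grid of 20 coordinates.
path = single_pass_tool_path(4, 3, 2, spacing=1.0)
print(len(path), path[0], path[5])  # 20 (0.0, 0.0, -2) (4.0, 1.0, -2)
```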

[0092] For holes of greater volumes or requiring a graded excavation, the target tool path generator 540 may generate multiple target tool paths at different offsets from the finish tool path. For example, if three target tool paths are required to excavate a 6'' deep hole, the first may be performed at a depth of 3'', the second at a depth of 2'', and the third at a depth of 1''. As a result, a target tool path may represent instructions for excavating only a fraction of the total volume of earth to be excavated. The last tool path used at the conclusion of the excavation of the hole may be referred to as a finish tool path, which digs minimal to no volume but is primarily intended to even the surface of the bottom of the dug hole. While moving through the finish tool path, the tool excavates less earth from the hole than in previous tool paths by adjusting the depth of the leading edge or the angle of the tool beneath the ground surface. To conclude the excavation of the hole, the earth shaping vehicle 115 adjusts a non-leading edge of the tool and reduces the speed of the drive. In some implementations, the instructions included in each target tool path may be executed by a different earth shaping vehicle 115, resulting in a fleet of earth shaping vehicles 115 operating cooperatively to complete a task.

[0093] In one embodiment, the target tool path generator 540 calculates the number of tool paths by dividing the target depth of the hole by the maximum depth that each tool path is capable of excavating. In some instances, the maximum depth that each tool path is capable of excavating is also defined by the dimensions of the tool 175 attached to the earth shaping vehicle 115. In other embodiments, the tool paths may be manually generated using the off-unit computer 120b as the central computer 120.
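The pass-count calculation can be sketched as a ceiling division of the target depth by the maximum depth per pass, so that a remainder still gets its own pass. This sketch assumes a simple schedule in which every pass but the last cuts the maximum depth and the last pass takes the remainder; it does not reproduce the decreasing 3'', 2'', 1'' schedule in the example of paragraph [0092]. The small tolerance guards against floating-point results such as 1.1 / 0.1 evaluating to slightly more than 11. The name `plan_pass_depths` is hypothetical.

```python
import math
from typing import List


def plan_pass_depths(target_depth: float, max_depth_per_pass: float, tol: float = 1e-9) -> List[float]:
    """Split a target depth into passes no deeper than max_depth_per_pass."""
    if target_depth <= 0 or max_depth_per_pass <= 0:
        raise ValueError("depths must be positive")
    # Number of tool paths: target depth divided by per-pass capability, rounded up.
    n_passes = max(1, math.ceil(target_depth / max_depth_per_pass - tol))
    depths = [max_depth_per_pass] * (n_passes - 1)
    depths.append(target_depth - max_depth_per_pass * (n_passes - 1))  # remainder pass
    return depths


# Usage: a 6-deep hole with a tool limited to 2.5 per pass needs three passes.
print(plan_pass_depths(6, 2.5))  # [2.5, 2.5, 1.0]
```

In practice, `max_depth_per_pass` would come from the tool 175 dimensions and soil conditions mentioned in the text.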

[0094] In some implementations, tool paths may not describe the shape of the hole in three dimensions, instead omitting the depth measurement to specify only a two-dimensional pathway or two-dimensional plane in the three- or two-dimensional coordinate system. In such instances, the depth instructions specifying how deep to dig along a tool path may be provided to the controller 150 as a separate set of instructions.
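When a tool path carries only two-dimensional coordinates, the separately supplied depth instructions must be combined with it before execution. The sketch below assumes the depth instructions can be expressed as a function of position, which also covers a sloped or graded bottom; `apply_depth` and the lambda-based depth instruction are hypothetical illustrations, not a format described in the application.

```python
from typing import Callable, List, Sequence, Tuple

Point2D = Tuple[float, float]
Point3D = Tuple[float, float, float]


def apply_depth(path_2d: Sequence[Point2D], depth_for: Callable[[float, float], float]) -> List[Point3D]:
    """Attach a dig depth (negative z below the surface) to each 2D tool path coordinate."""
    return [(x, y, -depth_for(x, y)) for x, y in path_2d]


# Usage: the same 2D pathway with a constant depth, then with a bottom sloping along x.
path_2d = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
flat = apply_depth(path_2d, lambda x, y: 2.0)
sloped = apply_depth(path_2d, lambda x, y: 1.0 + 0.5 * x)
print(sloped)  # [(0.0, 0.0, -1.0), (1.0, 0.0, -1.5), (2.0, 0.0, -2.0)]
```

Separating the pathway from the depth lets one 2D path be reused for every pass in a multi-pass excavation, with only the depth instruction changing.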

[0095] The target tool path generator 540 may define tool paths based on several factors including, but not limited to, the composition of the soil, the properties of the tool being used to excavate the hole, the properties of the drive system moving the tool, and the properties of the earth shaping vehicle 115. Example properties of the earth shaping tool 175 and earth shaping vehicle 115 include the size of the tool, the weight of the earth shaping tool 175, and the force exerted on the earth shaping tool 175 in contact with the ground surface of the site.

[0096] When performed in reverse or in alternative sequences, the processes described above and below, using trenching and drilling as specific examples, may also be used to perform other earth shaping routines including, but not limited to, digging, grading, filling, trenching, compacting, aerating, ripping, stripping, spreading, and smoothing.