Methods And Systems For Providing Context Based Information

White; John M. ; et al.

U.S. patent application number 17/560463 was filed with the patent office on 2021-12-23 and published on 2022-04-14 for methods and systems for providing context based information. The applicant listed for this patent is 1904038 ALBERTA LTD. o/a SMART ACCESS. Invention is credited to Kyle Jeske, Timothy Regnier, Serguei Roupassov, Jonathan Smelquist, John M. White.

| Application Number | 20220116737 17/560463 |

| Document ID | / |

| Family ID | 1000006103846 |

| Filed Date | 2022-04-14 |


| United States Patent Application | 20220116737 |

| Kind Code | A1 |

| White; John M. ; et al. | April 14, 2022 |

METHODS AND SYSTEMS FOR PROVIDING CONTEXT BASED INFORMATION

Abstract

Methods and systems are described for providing content based on context. An example method may comprise receiving, from a user device, a request comprising data indicative of a location of the user device. The method may comprise determining, based on receiving the request, user information associated with a user of the user device. The method may comprise generating, based on the user information and the data indicative of the location, an information profile relevant to a context of the user at the location. The method may comprise transmitting, to the user device, the information profile.

| Inventors: | White; John M. (Edmonton, CA); Regnier; Timothy (Edmonton, CA); Smelquist; Jonathan (Edmonton, CA); Jeske; Kyle (Edmonton, CA); Roupassov; Serguei (Edmonton, CA) |
|---|---|
| Applicant: | 1904038 ALBERTA LTD. o/a SMART ACCESS |
| Family ID: | 1000006103846 |
| Appl. No.: | 17/560463 |
| Filed: | December 23, 2021 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| PCT/IB2020/056979 | Jul 23, 2020 | |
| 17560463 | | |
| 62877607 | Jul 23, 2019 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04W 4/021 20130101; H04W 8/20 20130101; G06F 16/9537 20190101 |

| International Class: | H04W 4/021 20060101 H04W004/021; H04W 8/20 20060101 H04W008/20; G06F 16/9537 20060101 G06F016/9537 |

Claims

1. A method comprising: receiving, from a mobile user device, a request comprising data indicative of a location of the user device, wherein the request is generated in response to the user device interacting with a trigger associated with one or more of the location, a service, or an asset, and interacting with the trigger occurs via at least one of: scanning a trigger, capturing an image of the trigger or causing the mobile user device to be within a predetermined range of the trigger, and wherein the trigger is one of: a near-field communication (NFC) tag, a radio frequency identification (RFID) tag, a Quick-Response (QR) code, a barcode or a beacon; determining, based on receiving the request, user information associated with a user of the mobile user device; generating, based on the user information and the data indicative of the location, an information profile relevant to a context of the user at the location; determining, based on the data indicative of the location, an asset at the location; and transmitting, to the mobile user device, the information profile, wherein the information profile provides one or more actions or information related to the asset, service or location.

2. The method of claim 1, wherein the data indicative of the location comprises data generated based on a positioning system of the user device, wherein the positioning system comprises one or more of a global positioning system, a wireless signal positioning system, an ultrawide band positioning system, a sound based positioning system, a beacon based positioning system, a Bluetooth beacon positioning system, an inertial positioning system, an accelerometer, or a device or tag that indicates the location.

3. The method of claim 1, wherein the user information comprises one or more of a user name, a user identifier, user experience level, user skills, a user type, a user role, a user function, a user affiliation, a user employer, a user team, or a user organization.

4. The method of claim 1, wherein the data indicative of the location comprises one or more of an asset location at a premises, a premises location, a global positioning coordinate, a geospatial location within a premises, a location history, a shelf location, a premises zone, a container, an aisle, a department of a premises, a location range, or a tagged location.

5. The method of claim 1, further comprising determining service information based on one or more of the data indicative of the location, the request, or the user information.

6. The method of claim 1, further comprising storing a history of events associated with the user, wherein one or more of the events are associated with one or more of the location, a corresponding action performed at the location, or a corresponding asset at the location.

7. The method of claim 6, further comprising determining a pattern based on the history of events, wherein the pattern is determined based on one or more of the history of events associated with the user or a history of events associated with a plurality of users.

8. The method of claim 7, wherein the information profile comprises information selected for the information profile based on the pattern.

9. The method of claim 1, further comprising generating one or more models configured to predict relevance of an information module of one or more information modules to a context, wherein the one or more information modules are selected based on the one or more models.

10. The method of claim 9, wherein the context comprises one or more of a location characteristic, a user characteristic, or an asset characteristic of an asset at the location, wherein the location characteristic comprises a location identifier, a location category, a location within a premises, a shelf location, or a geographic boundary, wherein the user characteristic comprises one or more of a user role, a user experience level, a prior event associated with a user, a user permission level, or occurrence of a set of events being associated with a user, wherein the asset characteristic comprises a prior event associated with the asset, a scheduled event associated with the asset, an action associated with managing the asset, or an asset category.

11. The method of claim 10, further comprising tracking one or more interactions of the user with the information profile and training the one or more models based on the one or more interactions.

12. An apparatus comprising: a network interface configured to enable network communications; a memory; and one or more processors coupled to the network interface and the memory, wherein the one or more processors are configured to perform operations including: receiving, from a mobile user device, a request comprising data indicative of a location of the user device, wherein the request is generated in response to the user device interacting with a trigger associated with one or more of the location, a service, or an asset, and interacting with the trigger occurs via at least one of: scanning a trigger, capturing an image of the trigger or causing the mobile user device to be within a predetermined range of the trigger, and wherein the trigger is one of: a near-field communication (NFC) tag, a radio frequency identification (RFID) tag, a Quick-Response (QR) code, a barcode or a beacon; determining, based on receiving the request, user information associated with a user of the mobile user device; generating, based on the user information and the data indicative of the location, an information profile relevant to a context of the user at the location; determining, based on the data indicative of the location, an asset at the location; and transmitting, to the mobile user device, the information profile, wherein the information profile provides one or more actions or information related to the asset, service or location.

13. The apparatus of claim 12, wherein the data indicative of the location comprises data generated based on a positioning system of the user device, wherein the positioning system comprises one or more of a global positioning system, a wireless signal positioning system, an ultrawide band positioning system, a sound based positioning system, a beacon based positioning system, a Bluetooth beacon positioning system, an inertial positioning system, an accelerometer, or a device or tag that indicates the location.

14. The apparatus of claim 12, wherein the one or more processors are further configured to store a history of events associated with the user, wherein one or more of the events are associated with one or more of the location, a corresponding action performed at the location, or a corresponding asset at the location.

15. The apparatus of claim 14, wherein the one or more processors are further configured to determine a pattern based on the history of events, wherein the pattern is determined based on one or more of the history of events associated with the user or a history of events associated with a plurality of users.

16. The apparatus of claim 12, wherein the one or more processors are further configured to generate one or more models configured to predict relevance of an information module of one or more information modules to a context, wherein the one or more information modules are selected based on the one or more models, wherein the context comprises one or more of a location characteristic, a user characteristic, or an asset characteristic of an asset at the location, wherein the location characteristic comprises a location identifier, a location category, a location within a premises, a shelf location, or a geographic boundary, wherein the user characteristic comprises one or more of a user role, a user experience level, a prior event associated with a user, a user permission level, or occurrence of a set of events being associated with a user, wherein the asset characteristic comprises a prior event associated with the asset, a scheduled event associated with the asset, an action associated with managing the asset, or an asset category.

17. One or more non-transitory computer-readable storage media encoded with instructions that, when executed by a processor, cause the processor to perform operations including: receiving, from a mobile user device, a request comprising data indicative of a location of the user device, wherein the request is generated in response to the user device interacting with a trigger associated with one or more of the location, a service, or an asset, and interacting with the trigger occurs via at least one of: scanning a trigger, capturing an image of the trigger or causing the mobile user device to be within a predetermined range of the trigger, and wherein the trigger is one of: a near-field communication (NFC) tag, a radio frequency identification (RFID) tag, a Quick-Response (QR) code, a barcode or a beacon; determining, based on receiving the request, user information associated with a user of the mobile user device; generating, based on the user information and the data indicative of the location, an information profile relevant to a context of the user at the location; determining, based on the data indicative of the location, an asset at the location; and transmitting, to the mobile user device, the information profile, wherein the information profile provides one or more actions or information related to the asset, service or location.

18. The non-transitory computer-readable storage media of claim 17, wherein the data indicative of the location comprises data generated based on a positioning system of the user device, wherein the positioning system comprises one or more of a global positioning system, a wireless signal positioning system, an ultrawide band positioning system, a sound based positioning system, a beacon based positioning system, a Bluetooth beacon positioning system, an inertial positioning system, an accelerometer, or a device or tag that indicates the location.

19. The non-transitory computer-readable storage media of claim 17, further comprising instructions that, when executed by the processor, cause the processor to store a history of events associated with the user, wherein one or more of the events are associated with one or more of the location, a corresponding action performed at the location, or a corresponding asset at the location.

20. The non-transitory computer-readable storage media of claim 19, further comprising instructions that, when executed by the processor, cause the processor to determine a pattern based on the history of events, wherein the pattern is determined based on one or more of the history of events associated with the user or a history of events associated with a plurality of users.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of International Patent Application No. PCT/IB2020/056979, filed Jul. 23, 2020, which in turn claims priority to U.S. Provisional Application No. 62/877,607 filed Jul. 23, 2019, both of which are hereby incorporated by reference in their entirety for any and all purposes.

BACKGROUND

[0002] The body of electronically stored information continues to expand at a fast pace. It can be difficult to find information quickly. Typical search interfaces are not optimized for providing information in a specific location. A user at a location or performing a specific task may have different needs than another user at the same location. Thus, there is a need for more sophisticated techniques for delivering information.

SUMMARY

[0003] Methods and systems are described for providing content based on context. An example method may comprise receiving, from a user device, a request comprising data indicative of a location of the user device. The method may comprise determining, based on receiving the request, user information associated with a user of the user device. The method may comprise generating, based on the user information and the data indicative of the location, an information profile relevant to a context of the user at the location. The method may comprise transmitting, to the user device, the information profile.

[0004] An example method may comprise determining a triggering event associated with an environment. The method may comprise determining, based on the triggering event, user information and data indicative of a location of a user device associated with the environment. The method may comprise generating, based on the user information and the data indicative of the location, an information profile relevant to a context of a user at the location. The method may comprise transmitting, to the user device, the information profile.

[0005] An example method may comprise determining, based on data associated with a user device, one or more triggering features. The one or more triggering features may be features associated with processing imaging data comprising one or more images of an environment in which the user device is located. The method may comprise determining, based on an association of the one or more triggering features and location information associated with the environment, an inferred location of the user device in the environment. The method may comprise determining, based on the inferred location and user information associated with the user device, an information profile. The method may comprise causing the information profile to be output via the user device.

[0006] An example method may comprise generating, by a user device, imaging data associated with an image sensor and comprising one or more images of an environment in which the user device is located. The method may comprise determining, based on processing the imaging data, one or more triggering features. The method may comprise sending, to a computing device, a request for information associated with the one or more triggering features. The method may comprise receiving, based on sending the request, an information profile. The information profile may be based on an inferred location of the user device in the environment and user information associated with the user device. The inferred location may be based on an association of the one or more triggering features and location information associated with the environment. The method may comprise causing, based on receiving the information profile, output of the information profile.

[0007] An example method may comprise storing location information associated with an environment and determining an association of one or more triggering features with corresponding portions of the location information. The one or more triggering features may comprise a feature associated with processing an image of the environment. The method may comprise determining an association of asset information with the one or more triggering features and the location information. The method may comprise causing, based on determining data indicative of the one or more triggering features and associated with imaging data of a user device, output, via the user device, of an information profile comprising the asset information. The information profile may be based on an inferred location associated with the user device and user information associated with the user device.

[0008] This Summary is provided to introduce a selection of concepts in a simplified form that are further described below in the Detailed Description. This Summary is not intended to identify key features or essential features of the claimed subject matter, nor is it intended to be used to limit the scope of the claimed subject matter. Furthermore, the claimed subject matter is not limited to limitations that solve any or all disadvantages noted in any part of this disclosure.

BRIEF DESCRIPTION OF THE DRAWINGS

[0009] The accompanying drawings, which are incorporated in and constitute a part of this specification, illustrate embodiments and together with the description, serve to explain the principles of the methods and systems.

[0010] FIG. 1 is a block diagram of an example system in accordance with the present disclosure.

[0011] FIG. 2 is a block diagram of an example content platform in accordance with the present disclosure.

[0012] FIG. 3A is a flowchart showing an example process for deploying a plurality of triggers.

[0013] FIG. 3B shows an example user interface image.

[0014] FIG. 3C shows an example user interface image.

[0015] FIG. 3D shows an example user interface image.

[0016] FIG. 3E shows an example user interface image.

[0017] FIG. 3F shows an example user interface image.

[0018] FIG. 3G shows an example user interface image.

[0019] FIG. 3H shows an example user interface image.

[0020] FIG. 3I shows an example user interface image.

[0021] FIG. 3J shows an example user interface image.

[0022] FIG. 3K shows an example user interface image.

[0023] FIG. 3L shows an example user interface image.

[0024] FIG. 3M shows an example user interface image.

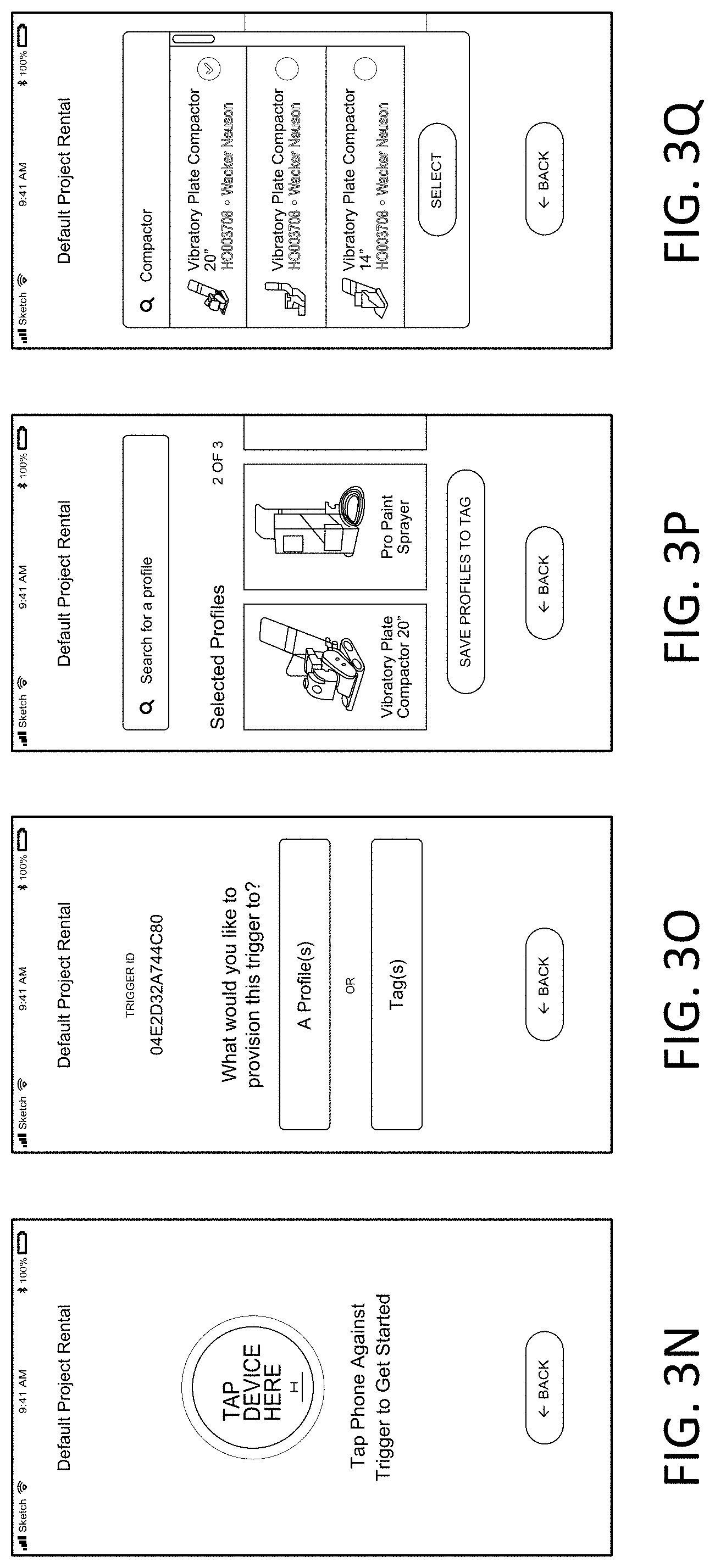

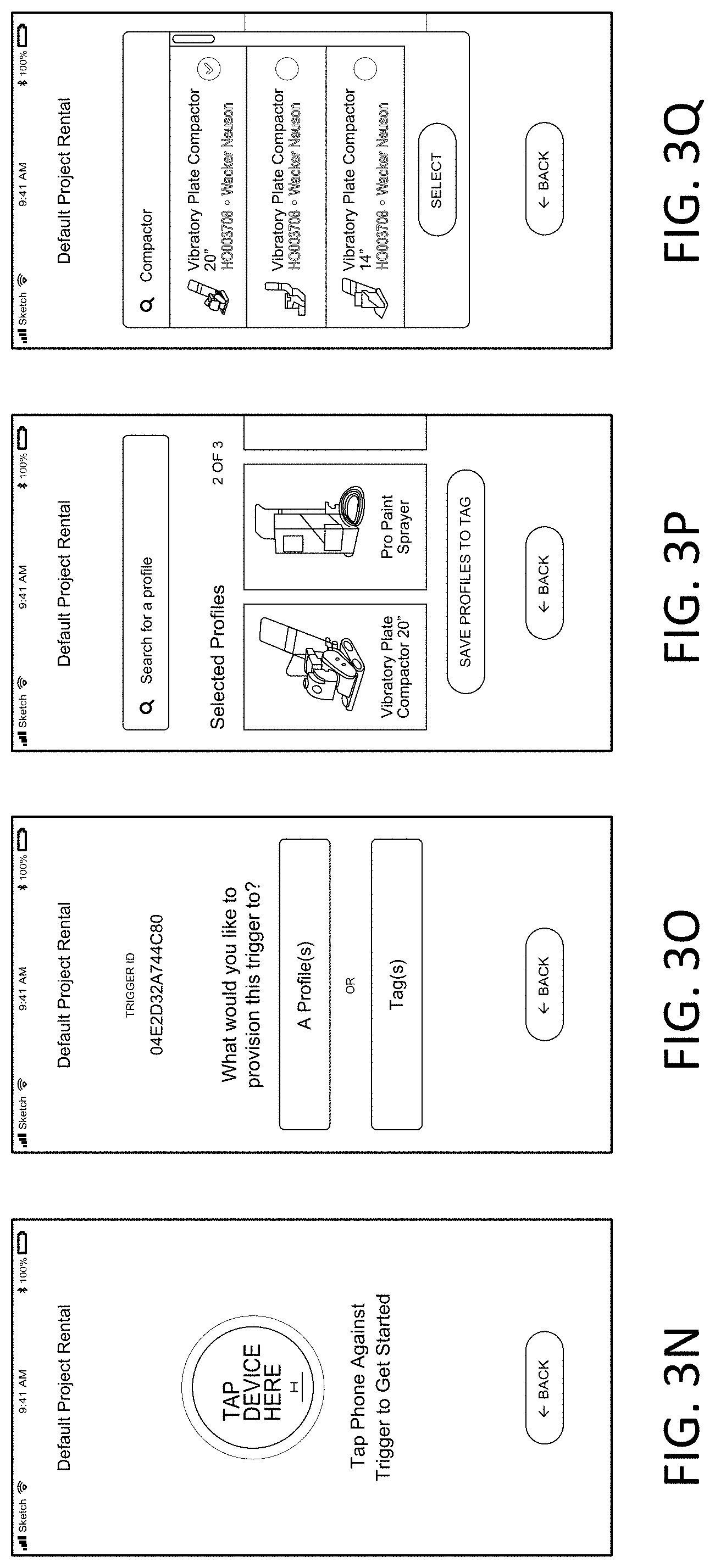

[0025] FIG. 3N shows an example user interface image.

[0026] FIG. 3O shows an example user interface image.

[0027] FIG. 3P shows an example user interface image.

[0028] FIG. 3Q shows an example user interface image.

[0029] FIG. 3R shows an example user interface image.

[0030] FIG. 3S shows an example user interface image.

[0031] FIG. 4 is an example user interface for an application for accessing information relevant to a context.

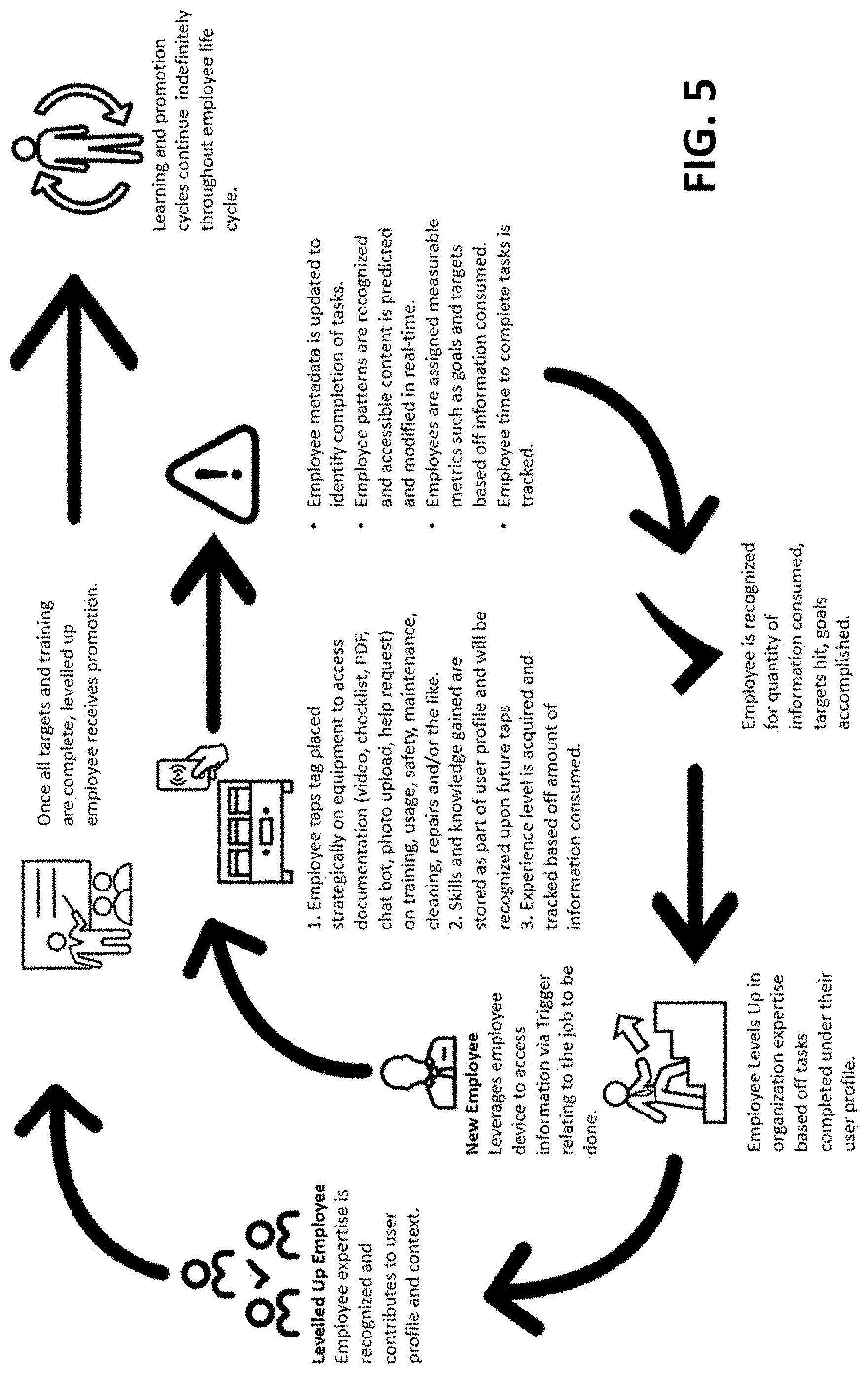

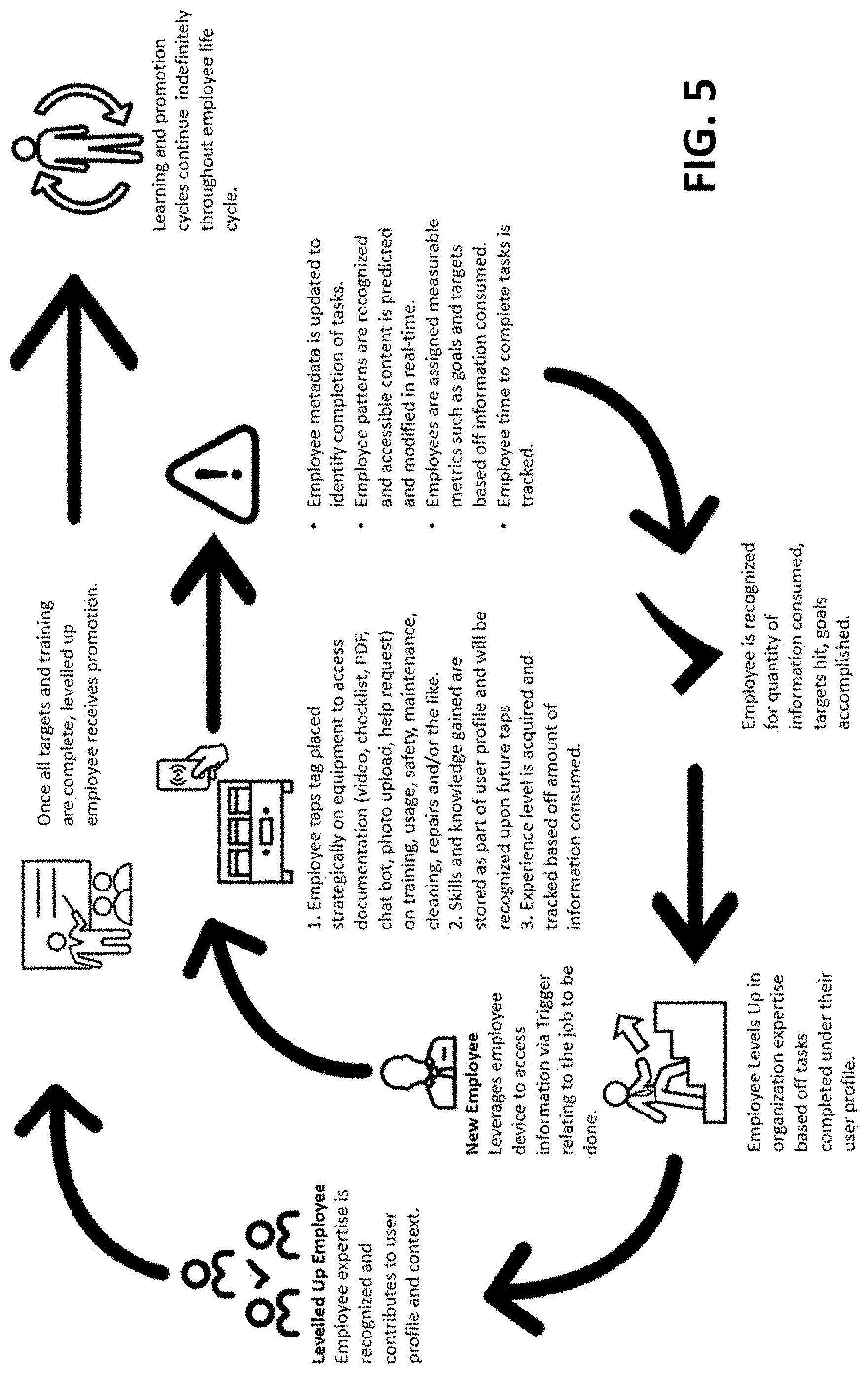

[0032] FIG. 5 is a diagram showing an example cycle of accessing information relevant to a context.

[0033] FIG. 6 is a block diagram illustrating an example computing device.

[0034] FIG. 7 shows an example system for providing information.

[0035] FIG. 8 shows an example of location information represented as a layout of an environment.

[0036] FIG. 9 shows another example of location information represented as a planogram of a rack.

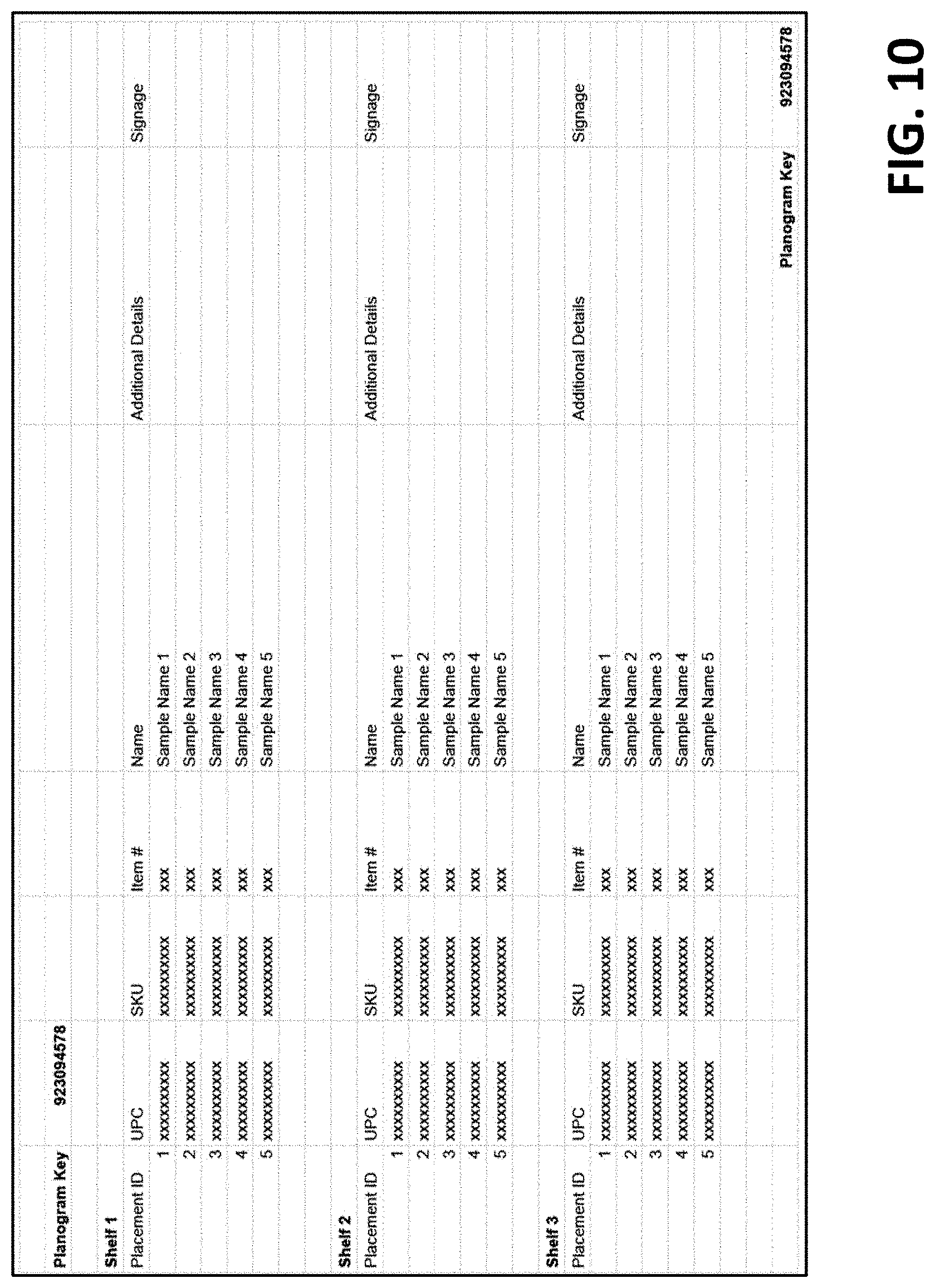

[0037] FIG. 10 shows another example of location information represented in a data structure.

[0038] FIG. 11 shows another example of location information represented as a layout of an environment.

DETAILED DESCRIPTION OF ILLUSTRATIVE EMBODIMENTS

[0039] Disclosed herein are methods and systems for more efficiently providing information associated with an environment. The disclosed methods and systems provide more efficient user interfaces that eliminate the need for individual users with different roles in the environment to use different applications and/or repeat the same searches for information. The disclosed methods and systems provide for obtaining information specific to various locations in the environment. Assets, structures, equipment and other features may be associated with different information. This information may also be different for different types of users. The disclosed methods and systems configure a user device to more efficiently deliver the right information for the right user, specific to a location, asset, structure, time of day, and/or the like. This increase in efficiency configures user devices (e.g., which are likely battery powered) to use less power and bandwidth because a user may spend less time browsing for information relevant to their role and/or relevant to a specific location.

[0040] The disclosed methods and systems allow for integration of learning, such as machine learning and other artificial intelligence, to identify gaps in information and/or other problems with procedures, training, operations, and/or the like. User profiles may also be modeled and used to customize the information in a specific information module. The disclosed methods and systems also allow for integration of business logic (e.g., from enterprise resource planning software, business process management data) into an information system to more efficiently trigger users to perform actions (e.g., while also providing at the same time the most up to date and relevant information for these actions). The disclosed methods and systems also configure user devices to more efficiently discover location specific information by configuring the user devices to scan, image, or otherwise determine location specific features that are associated with location, information, and/or the like. These efficiencies and others described herein provide for improved system efficiency, device efficiency, less battery usage, less usage of bandwidth (e.g., as less searching is required), as well as the increased leverage of information and connectivity between conventionally disparate systems.

[0041] FIG. 1 is a block diagram of an example system 100 in accordance with the present disclosure. The system 100 may comprise a content platform 102. The content platform 102 may comprise one or more computing devices, such as servers. The content platform 102 may be configured to receive requests for content from one or more user devices 104. The content platform 102 may be configured to communicate with an organization platform 106.

[0042] The system 100 may comprise a network 108. The network 108 may be configured to communicatively couple one or more of the content platform 102, the organization platform 106, the one or more user devices 104, and/or the like. The network 108 may comprise a plurality of network devices, such as routers, switches, access points, hubs, repeaters, modems, gateways, and/or the like. The network 108 may comprise wireless links, wired links, a combination thereof, and/or the like.

[0043] The one or more user devices 104 may comprise a computing device, such as a mobile device, a smart device (e.g., smart watch, smart glasses, smart phone, hand held device), a computing station, a laptop, a tablet device, and/or the like. The one or more user devices 104 may be configured to output one or more user interfaces, such as a user interface associated with the content platform 102, the organization platform 106, and/or the like. The one or more user interfaces may be caused to be output by an application.

[0044] The one or more user devices 104 may be configured to determine location information. The location information may comprise one or more of an asset location at a premises, a premises location, a global positioning coordinate, a geospatial location within a premises, a location history, a shelf location, a premises zone, a container, an aisle, a department of a premises, a common space (e.g., a park, landmark or parking lot), a vehicle, an automobile, a location range, a tagged location, a combination thereof, and/or the like. The location information may be determined based on a positioning system of the user device 104. The positioning system may comprise one or more of a global positioning system, a wireless signal positioning system, an ultrawide band positioning system, a sound based positioning system, a beacon based positioning system, a Bluetooth beacon positioning system, an antenna/receiver based system, an inertial positioning system, an accelerometer, a device (e.g., tag) that advertises its location (e.g., and other data) to the user, a combination thereof, and/or the like.

[0045] The location information may be determined based on triangulation using one or more signals, such as wireless signals, satellite signals, Bluetooth signals, and/or ultrawide band signals. The location information may be received by the user device 104 from a positioning system.
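As an illustration of how a position might be derived from signal measurements, the following minimal sketch estimates a two-dimensional position from ranges to three fixed transmitters (a range-based approach often called trilateration, which is swapped in here for illustration). The function name `trilaterate`, the anchor coordinates, and the assumption that distances have already been estimated from the signals are hypothetical and are not taken from the disclosure.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def trilaterate(anchors: Sequence[Point], distances: Sequence[float]) -> Point:
    """Estimate (x, y) from three anchor positions and measured ranges.

    Hypothetical helper: subtracting the first circle equation from the
    other two yields a 2x2 linear system, solved here with Cramer's rule.
    """
    (x1, y1), (x2, y2), (x3, y3) = anchors
    r1, r2, r3 = distances

    # Coefficients of the linearized system  a*x + b*y = c,  d*x + e*y = f
    a, b = 2.0 * (x2 - x1), 2.0 * (y2 - y1)
    c = r1**2 - r2**2 + x2**2 - x1**2 + y2**2 - y1**2
    d, e = 2.0 * (x3 - x1), 2.0 * (y3 - y1)
    f = r1**2 - r3**2 + x3**2 - x1**2 + y3**2 - y1**2

    det = a * e - b * d
    if math.isclose(det, 0.0, abs_tol=1e-12):
        # Collinear anchors do not determine a unique 2D position.
        raise ValueError("anchors must not be collinear")

    return ((c * e - b * f) / det, (a * f - c * d) / det)
```

In practice, measured ranges are noisy, so a deployed system would more likely use more than three anchors with a least-squares fit; this sketch only shows the geometric idea.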

[0046] The location information may comprise data indicative of a location. The data indicative of a location may comprise an identifier associated with a location, such as a location identifier. The location identifier may be received from a trigger or tag, a near field communication (NFC) tag, a code, bar code, quick response (QR) code, a beacon, a signal, a combination thereof, and/or the like.

[0047] The user device 104 (e.g., or the application) may be configured to determine (e.g., detect) a triggering event. The triggering event may comprise receipt of location information, data indicative of a location, a location identifier, and/or the like. The triggering event may comprise detecting that the user device has entered a location, a premises, a premises location, a global positioning coordinate, a geospatial location within a premises, a location history, a shelf location, a premises zone, a container, an aisle, a common space, a department of a premises, a location range, and/or a tagged location.

[0048] The triggering event may comprise scanning an identifier physically located at the location. The identifier may be stored in a tag (e.g., NFC or RFID tag), a QR code, a bar code, or other physical identifier. As an example, a plurality of identifiers (e.g., tags or triggers) may be disposed (e.g., or positioned) at corresponding locations within a premises. An identifier may be disposed (e.g., or positioned) on a shelf. The identifier may be disposed (e.g., or positioned) proximate to (e.g., or onto) an asset (e.g., object, equipment, station, product). The identifier may be scanned by the user or may be scanned automatically if the user is in range.

[0049] The user device 104 (e.g., application) may be configured to send a request based on the triggering event. The request may comprise the location information (e.g., or data indicative of the location). The request may comprise timing information (e.g., a current time, time stamp), user information (e.g., user skill level, user account identifier), identifying information (e.g., user identifier, device identifier, location identifier, tag identifier, universal unique identifier, collision resistant unique identifier, globally unique identifier), event information (e.g., prior events or interactions of the user and/or associated with a location), predictive interactions (e.g., based on logic rule and/or automated machine created response), a combination thereof, and/or the like. The request may comprise or be associated with a request for information. The request may be sent to one or more of the content platform 102 or the organization platform 106. The organization platform 106 may be managed by an organization that manages the location. The organization may manage a premises, asset, service, product, and/or the like at the location. The organization platform 106 may query the content platform 102 to determine information to respond to the request.
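The request described above can be pictured as a small structured payload. The following is a minimal sketch, assuming a JSON encoding; the class name `ContextRequest`, the field names, and the helper `build_request` are hypothetical and are not specified by the disclosure.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class ContextRequest:
    """Hypothetical request payload sent after a triggering event."""
    location_id: str  # data indicative of the location (e.g., a tag identifier)
    user_id: str      # user information (here, only an account identifier)
    device_id: str    # identifying information for the user device
    # Timing information: current time in UTC, ISO 8601 formatted.
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )
    # Universally unique identifier for the request itself.
    request_id: str = field(default_factory=lambda: str(uuid.uuid4()))


def build_request(tag_payload: str, user_id: str, device_id: str) -> str:
    """Serialize a request once a trigger (e.g., an NFC tag) has been read."""
    request = ContextRequest(
        location_id=tag_payload, user_id=user_id, device_id=device_id
    )
    return json.dumps(asdict(request))
```

For example, `build_request("tag-42", "user-7", "device-3")` would return a JSON string that an application could send to the content platform 102 or the organization platform 106.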

[0050] The content platform 102 may be configured to determine information based on the request. The determined information may comprise business information, such as know how, operational information, service information, maintenance information, sales information, loyalty information, inventory, supply chain information, human resource information, chain of custody, payment information, a combination thereof, and/or the like. The business information may be relevant to a business associate, such as an employee, supervisor, manager, owner, sales representative, cashier, vendor, operator, trainer, dispatcher, inspector, official, and/or the like. The determined information may be relevant to or comprise customer information, customer profile, customer data, such as product information, price information, store information, distribution information, and/or the like. The customer information may be relevant to a customer at the location.

[0051] The content platform 102 may comprise a request service 110 configured to receive requests from the one or more user devices 104. The request service 110 may be configured to determine information related to the request, such as a context of the request. The request service 110 may analyze the location information to determine an asset. The term asset as used herein may comprise any interest (e.g., tangible or intangible business interest) or concern. The asset may comprise an object, product, service, fixture, equipment, and/or the like. Both physical assets and virtual assets (e.g., abstract quantity, data point) may be associated with the request. The term asset may comprise an action, such as a service, a sales pitch, information delivery, an operation, a repair, movement, data collection, consumer tracking, and/or the like.

[0052] The request service 110 may be configured to query the storage service 112 to determine the asset. The location information (e.g., an identifier) may be associated with the asset in a data store managed by the storage service 112. The storage service 112 may be any data store, such as a database, distributed data partition, data structure, and/or the like. The storage service 112 may retrieve asset information associated with the asset. The asset information may comprise an install date, a usage date, a part number, a service history, a location, a builder, a creator, list of questions (e.g., frequently asked questions and answers), a decommission date, decommission circumstances (e.g., rules for decommissioning the asset), an interaction history, task information (e.g., completed tasks of attendance), installation instructions, maintenance information (e.g., maintenance protocols), operational information (e.g., instructions for operating), safety information, a combination thereof, and/or the like.
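The association between a location identifier and an asset can be sketched as a simple keyed lookup. The example below is a minimal in-memory illustration only; the class names `Asset` and `StorageService`, the method names, and the chosen fields are assumptions, and a real storage service could equally be a database or a distributed data partition.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Asset:
    """Hypothetical subset of the asset information listed above."""
    asset_id: str
    part_number: str
    install_date: str
    maintenance_info: str


class StorageService:
    """Hypothetical in-memory stand-in for the storage service."""

    def __init__(self) -> None:
        # Maps a location identifier (e.g., a tag identifier) to an asset.
        self._asset_by_location: Dict[str, Asset] = {}

    def register(self, location_id: str, asset: Asset) -> None:
        """Associate an asset with a location identifier."""
        self._asset_by_location[location_id] = asset

    def find_asset(self, location_id: str) -> Optional[Asset]:
        """Return the asset at the location, or None if none is registered."""
        return self._asset_by_location.get(location_id)
```

A request service could call `find_asset` with the identifier carried in a request and then use the returned record when assembling an information profile.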

[0053] The request service 110 may be configured to query the storage service 112 to determine user information. The user information may comprise a user identifier, a user profile, a user characteristic, a user experience level, a user skill level, a user permission level, a user role, a user type, an event or behavior associated with a user, metadata associated with a user, and/or the like. The storage service 112 may store a user profile (e.g., for each user of a plurality of users) comprising one or more user characteristics. A user characteristic may comprise one or more of a user role, a user experience level, a prior event associated with a user, a user permission level, user attribute(s) or occurrence of a set of events or attributes, and/or the like.

[0054] A profile service 114 may be configured to collect user information and/or store the user information in the storage service 112. The user information may comprise user metadata. The user metadata may comprise any data point, such as an event or behavior, associated with a user. The profile service 114 may store the user information as a profile (e.g., a collection of data associated with a user identifier). The user information may comprise a user identifier, a user type (e.g., customer, employee, vendor, administrator), a user role (e.g., associate, salesperson, manager, supervisor, owner), a user demographic (e.g., gender, age, race, language), user contact information, and/or the like.

[0055] The profile service 114 may be configured to track user events (e.g., or other metadata, data points). The profile service 114 may cause storage of the events in the storage service 112. The user events (e.g., or other user metadata) tracked for a user may be different for different types of users (e.g., or different user roles). For an employee, events may comprise achievement events, training events, sales events, maintenance events, break events, sick events, injury events, project start events, project completion events, and/or the like. For a customer, events may comprise purchase events, inquiry events (e.g., user requests information), service events (e.g., service performed for a user), action events (e.g., premises arrive/leave, zone arrive/leave, department arrive/leave, aisle arrive/leave, container arrive/leave, bin or container arrive/leave, asset arrive/leave, object arrive/leave), and/or the like.

[0056] The profile service 114 may be configured to track location events. A location event may comprise an event associated with a location, an asset at the location, an object at the location, a service at the location, an action at the location, and/or the like. The location events may be associated with a location identifier, such as a tag or other trigger disposed at a location, or an identifier associated with a precise physical location. A premises (e.g., or environment) may be associated with a plurality of physical locations at the premises. The premises (e.g., or environment) may be logically subdivided into a plurality of physical points, a plurality of zones, a plurality of regions, and/or the like. For example, each asset (e.g., product, fixture, door, wall, machine) may have an associated zone (e.g., or location area) assigned to the asset. An asset may be assigned a zone large enough (e.g., within 3 feet of the asset's location on a shelf) to determine if a user is interacting with (e.g., looking at it, picking it up, learning about) the asset. If a user enters the zone, the profile service 114 may track the event. The user device 104 may send an indication of the location (e.g., or of the user entering the zone) to the profile service 114. The profile service 114 may store the indication as an event. If tags are used, a user device 104 may interact with the tag. The user device 104 may send an indication of the interaction with the tag to the content platform 102. The content platform 102 may cause the profile service 114 to track the indication as a location event.
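
As a hedged illustration of the zone check described above, the sketch below treats a zone as a circle around the asset and records an event when the user's position falls inside it. The circular zone shape, the two-dimensional coordinates in feet, and the names `in_zone` and `track_location_event` are assumptions made for the example; the 3-foot radius is the example value from the text.

```python
import math

ZONE_RADIUS_FEET = 3.0  # example zone size from the text


def in_zone(user_xy, asset_xy, radius=ZONE_RADIUS_FEET):
    """True when the user is within the asset's (assumed circular) zone."""
    return math.dist(user_xy, asset_xy) <= radius


def track_location_event(events, user_id, asset_id, user_xy, asset_xy):
    """Append a zone-entry event to `events` if the user is inside the zone."""
    if in_zone(user_xy, asset_xy):
        events.append({"user": user_id, "asset": asset_id, "type": "zone_enter"})
        return True
    return False
```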

[0057] The content platform 102 may comprise a learning service 116. The learning service 116 may be configured to determine user characteristics, trends, patterns, and/or the like. The learning service 116 may comprise one or more rules for determining user characteristics. The rules may map events, sequences of events, and/or the like with corresponding user characteristics (e.g., which may be stored as part of the user's profile). For example, if a customer purchases products at least once a month from a business, the customer may be given a characteristic of frequent shopper. A rule may associate achievement of a goal (e.g., or value of a metric) with a user characteristic, such as user skill, user achievement, user experience level, and/or the like. A goal may comprise a number of occurrences of an event, a count of a metric, and/or the like. The goal may be based on any measurable metric. An organization may define different goals for users to achieve to qualify to be assigned different user characteristics. The goal and/or metric may be associated with a location, location identifier, asset, service, and/or the like.
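
The frequent shopper example above might be expressed as a rule along the following lines. This is a sketch under stated assumptions: the `RULES` table, the function names, and the choice to pass the months to check as explicit `(year, month)` pairs are all illustrative and are not taken from the disclosure.

```python
from datetime import date


def is_frequent_shopper(purchase_dates, months):
    """True when every (year, month) pair in `months` has at least one purchase."""
    purchased = {(d.year, d.month) for d in purchase_dates}
    return all(m in purchased for m in months)


# Hypothetical rule table: characteristic name -> predicate over purchase events.
RULES = {"frequent shopper": is_frequent_shopper}


def derive_characteristics(purchase_dates, months):
    """Return the characteristics whose rule is satisfied by the events."""
    return [name for name, rule in RULES.items() if rule(purchase_dates, months)]


dates = [date(2021, 1, 5), date(2021, 2, 10), date(2021, 3, 1)]
window = [(2021, 1), (2021, 2), (2021, 3)]
print(derive_characteristics(dates, window))  # ['frequent shopper']
```

Keeping each rule as a plain predicate makes it straightforward for an organization to add or replace rules without changing the code that applies them.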

[0058] The learning service 116 may be configured to identify patterns based on metadata, events, metrics, goals, and/or the like. The learning service 116 may determine patterns for a single user, for multiple users, for a group of users, a combination thereof, and/or the like. The learning service 116 may determine patterns associated with a location (e.g., physical or virtual), a group of locations, an asset, a service, and/or the like. For example, the learning service 116 may determine a pattern in which a user visits a first location followed by a visit to a second location. For a first pattern, if the user is of a first type, the user may visit the first location and second location. For a second pattern, if the user is of a second type, the user may visit the first location and a third location.
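
One simple way to surface the visit patterns described above is to count, per user type, which location most often follows a given starting location. The sketch below does only that; it is not presented as the learning service's actual method, and the function name and input shapes are hypothetical.

```python
from collections import Counter, defaultdict


def most_common_next(visits_by_user, user_types, start):
    """For each user type, return the location most often visited right after `start`.

    visits_by_user: user id -> ordered list of visited locations
    user_types:     user id -> user type
    """
    counts = defaultdict(Counter)
    for user, visits in visits_by_user.items():
        # Walk consecutive pairs of visits (here, next).
        for here, nxt in zip(visits, visits[1:]):
            if here == start:
                counts[user_types[user]][nxt] += 1
    return {utype: c.most_common(1)[0][0] for utype, c in counts.items()}


visits = {"a": ["L1", "L2"], "b": ["L1", "L2"], "c": ["L1", "L3"]}
types = {"a": "customer", "b": "customer", "c": "employee"}
print(most_common_next(visits, types, "L1"))
```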

[0059] The learning service 116 may analyze metadata associated with each location and/or the pattern. The metadata may comprise a textual description of the location, of an asset at the location, of a service at the location, and/or the like. Keywords in the textual description (e.g., whether indicated or automatically recognized) may be compared for different locations to determine similarity, differences, and/or associations between the different keywords. The patterns may be analyzed to determine behaviors. For example, if a user is an associate (e.g., sales associate, supervisor, manager), the user may walk from a department location to a break room. A pattern may emerge that the associate always stops at a certain location between the break room and department. For example, customers may stop the associate in an area that has poorly defined product explanations. The learning service may recognize the pattern and store the pattern in the storage service 112. A pattern may comprise an association between a location (e.g., or asset/service at a location) and relevant information.

[0060] The learning service 116 may generate one or more models associated with users, locations, assets, services, information profiles, content, and/or the like. The model may be generated based on machine learning, artificial intelligence, and/or the like. The model may be generated based on supervised learning, unsupervised learning, decision trees, feature learning, anomaly detection, association rules, neural networks, support vector machines, Bayesian networks, genetic algorithms, a combination thereof, and/or the like. The one or more models may be predictive models. The one or more models may predict associations between locations, users and locations, users and services, users and assets, users and information profiles, users and content, assets and services, and/or the like. The one or more models may be trained by a data set, such as prior data. The one or more models may be trained and/or updated as new data is obtained about a location, asset, service, user, and/or the like.

[0061] The learning service 116 may be configured to predict (e.g., or learn) information relevant to a context. The context may comprise any information associated with a location, asset, service, premises, user, content, and/or the like. The learning service 116 may predict the information relevant to the context based on the one or more rules, pattern recognition, the one or more models, and/or the like. User information, asset information, service information, premise information, profile information, content, and/or the like may be correlated using the one or more rules to corresponding information relevant to the context. The one or more rules may be updated and/or changed based on pattern recognition, machine learning, manual adjustment, and/or the like.

[0062] The content platform 102 may comprise an analytics service 118. The analytics service 118 may comprise an interface for manually entering the one or more rules. The analytics service 118 may be configured to output learned patterns, information from the one or more rules, suggestions to add rules, suggestions to update rules, and/or the like. The analytics service 118 may be configured to output a suggestion to update an information profile. A user can approve the suggestion. If approved, the content platform 102 may update and/or add the rule.

[0063] The content platform 102 may comprise an information service 120. The information service 120 may be configured to provide information, such as information relevant to a context (e.g., asset, location, user information). The information may be provided in an information profile. An information profile may comprise a data structure (e.g., or a representation of data) comprising the information. The information profile may comprise one or more information modules. An information module may comprise a set of information, such as an information page. An information module may comprise a widget, functional code block, applet, scripting module, coded module configured to deliver a specific type of information (e.g., according to a specifically programmed format or functionality), combination thereof, and/or the like.

[0064] The request service 110 may call the information service 120 to provide information relevant to a request associated with the user. The request service may provide a context to the information service 120. The context may comprise user information, location information, asset information, service information, and/or the like. The information service 120 may be configured to use the one or more rules, patterns, one or more models, and/or the like to determine the information relevant to the context. The one or more rules, patterns, one or more models, and/or the like may be used to determine one or more information modules. The information service 120 may manage information profile generation rules associating specific context information with corresponding information modules. An information module may comprise a video module, image module, media module, comment module, a summary module, a checklist module, a training module, a certification module, an application module, a custom code module, a payment module and/or the like.

[0065] A location, an asset associated with a location, a service associated with the location, and/or the like may have a prior defined information profile. For example, a location may be associated with an asset, such as a product. The asset may have a first set of information modules associated with a first type of user (e.g., consumer or the public), a second set of information modules associated with a second type of user (e.g., employee), a third type of information associated with a third type of user (e.g., supervisor, vendor), a combination thereof, and/or the like. The first set of information modules may be different than the second set of information modules and the third set of information modules. The information service 120 may be configured to receive user information, such as a type of the user associated with a request. The information service 120 may use the type of user in the context to determine which set of modules to provide for a particular request.
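
Selecting a set of information modules by user type, as described above, can be sketched with a simple mapping. The module names, the `MODULE_SETS` table, and the fallback to the consumer set for an unrecognized user type are assumptions made for illustration.

```python
# Hypothetical per-asset mapping: user type -> information modules.
MODULE_SETS = {
    "consumer": ["summary", "video"],
    "employee": ["summary", "checklist", "training"],
    "supervisor": ["summary", "checklist", "certification"],
}


def modules_for(user_type, default="consumer"):
    """Return a copy of the module set for a user type.

    Falling back to the consumer set for unknown types is an assumption,
    chosen so that unrecognized users see only public information.
    """
    return list(MODULE_SETS.get(user_type, MODULE_SETS[default]))
```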

[0066] The information service 120 may be configured to remove and/or add additional information modules based on the context. A default set of information modules may be associated with a location, an asset associated with a location, a service associated with the location, a user associated with a location, a combination thereof, and/or the like. For example, the information service 120 may be configured to add one or more information modules to the default set of information modules and/or remove one or more information modules from the default set when they are not required in the particular user context.

[0067] The information service 120 may be configured to customize information in an information module based on the context. An information module may comprise an executable code set, such as a client-side script, a server-side script, a plugin, an executable module of computer readable code, and/or the like. The information module may comprise media, such as text, image, video, and/or the like. The information module may be configured to receive one or more parameters to modify the information in the information module. The information service 120 may directly modify information in the information module. Modifying information may comprise adding information, removing information, emphasizing information, deemphasizing information, and/or the like. The information service 120 may be configured to customize information in the information module based on the one or more rules, the patterns, the one or more models, and/or the like. If the context indicates that the user has a first experience level (e.g., or skill level), the information service 120 may provide technical information. If the context indicates that the user has a second experience level (e.g., lower than the first experience level), the information service 120 may add information indicating another associate with the first experience level (e.g., along with a location, contact info of the other associate) and/or may provide additional information (e.g., training, safety, and/or the like) in order to up-skill the user to an appropriate experience level. The user's interaction with this other content can then be used by the learning service 116 or analytics service 118 to adjust the context of that user, asset, location, and/or the like.
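
The experience-level behavior described above could look roughly like the following. This is a sketch only: numeric experience levels, the `customize` function, and the module names are hypothetical and stand in for whatever rules, patterns, or models an implementation uses.

```python
def customize(modules, user_level, required_level, expert_contact):
    """Return a new module list adjusted for the user's experience level.

    Levels are assumed to be comparable integers; this is an illustrative
    simplification, not something specified by the disclosure.
    """
    result = list(modules)  # never mutate the default set
    if user_level >= required_level:
        result.append("technical_details")
    else:
        # Point a less experienced user to a qualified associate and to
        # material intended to up-skill them.
        result.append({"ask_expert": expert_contact})
        result.extend(["training", "safety"])
    return result
```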

[0068] The content platform 102 may be configured to optimize the relevancy of information provided by the information service over time. The information relevant to the context may be output to the user via a user interface (e.g., on the user device 104). The user interface may communicate with a user interface service 122. The user interface may send data indicative of a user's response to the information relevant to the context. The data may indicate which information modules the user interacted with, a duration of time viewing an information module, interactions with interface elements (e.g., video control, checklist), and/or the like. The data indicative of the user's response may be used to generate a rule, change a rule, determine a pattern, update a model, and/or the like. If an information module comprises a video, the data from several users may indicate a pattern that the users typically fast forward to one part of the video. The information module may generate a link to cause a video module to start at the relevant part of the video. The data may be specific to a user type (e.g., or user role). A customer may typically watch the entire video, while a salesperson may only access a part of the video. The link may be provided for the salesperson, but the entire video may be provided to the customer.
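
The video example above can be illustrated with a small sketch that derives a suggested start time from the positions users seek to. Using the median, requiring a minimum number of samples, and the function names are assumptions; the `#t=` suffix follows the temporal fragment syntax of the W3C Media Fragments URI recommendation, which a given player may or may not honor.

```python
from statistics import median


def suggested_start(seek_targets, min_samples=3):
    """Median seek position in seconds, or None when there is too little data."""
    if len(seek_targets) < min_samples:
        return None
    return median(seek_targets)


def video_link(base_url, seek_targets):
    """Return a link that starts at the suggested position when one exists."""
    start = suggested_start(seek_targets)
    if start is None:
        return base_url  # e.g., a customer who watches the entire video
    return f"{base_url}#t={int(start)}"
```

Seek positions would be collected separately per user type or role, so that a salesperson's link can start partway through while a customer's link plays the full video.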

[0069] The information may be accessed and/or generated based on the training level of the worker, the responsibility level of the worker, what information has been updated into the system, and/or the like. Example information that is accessed and/or generated may comprise work procedure information, employee tasks, tasks to be completed, tasks to be repeated, issues to be reported on, daily checklists, weekly checklists, asset status, alerts/notifications/call-button, safety procedures, safety checklist, lock out procedures, lock out reporting, cleaning instructions, hazardous material management, live training, feedback portal, community learning, best practices, "newsfeed", challenges, internal messaging, message boards, a combination thereof, and/or the like.

[0070] The information may be generated based on the training level of the worker, the responsibility level of the worker, what information has been updated into the system, a combination thereof, and/or the like. The information provided by the information module may comprise a task to be completed, a task to be repeated, issues to be reported on, daily checklists, weekly checklists, asset status, an alert, a notification, a call-button, a safety procedure, a safety checklist, a lock out procedure, a lock out reporting, cleaning instructions, hazardous material management information, live training (e.g., or recorded video training), a feedback portal, community learning information (e.g., posts by users, best practices, a newsfeed, a challenge, internal messaging, message boards), a link to any of these items, a combination thereof, and/or the like.

[0071] The content platform 102 may comprise an integration service 124. The integration service 124 may be configured to communicate with external services, such as the organization platform 106. The integration service 124 may comprise an application programming interface (e.g., a set of accessible functions) for communicating with the content platform 102. The organization platform 106 may comprise an application service 126. The application service 126 may comprise any application managed by the organization. The application service 126 may comprise an application for managing assets, asset locations, and/or the like. The request service 110 may be configured to query the application service 126 to determine an asset and/or service associated with a location identifier. In some scenarios, the organization platform 106 may communicate with the user device. A request for information relevant to a context may be received by the organization platform 106 from a user device. The organization platform 106 may be configured to request information relevant to the context by querying the content platform 102.

[0072] FIG. 2 is a block diagram of an example content platform in accordance with the present disclosure. The cloud platform as-a-service may comprise an instance of the organization platform. The Administration & Access Control may comprise user management and authentication services. The Mobile Applications may comprise tools for managing provisioning of triggers. The Mobile Applications may comprise user-facing applications for interacting with the content platform (e.g., content platform 102). The Profile Builder and Content Management System may comprise functionality to create, curate, and manage content and profiles (e.g., created by the content platform 102 or organization platform). The Module-based Applications may comprise a video module (e.g., configured to show videos), image module (e.g., configured to show images), media module (e.g., configured to show media), comment module (e.g., configured to allow users to share comments), a summary module (e.g., configured to show a summary of the information profile or other information), a checklist module (e.g., configured to show one or more checklists), a training module (e.g., configured to provide user training), a certification module (e.g., configured to show and/or update certification information, e.g., a manager may update certifications, an employee may view tasks to complete certifications), an application module (e.g., configured to provide an application), a custom code module (e.g., configured to run custom code specific to an implementation of the platform), a payment module (e.g., configured to allow and/or receive payments from users), and/or the like.

[0073] The Trigger and Redirect Service may comprise functionality that allows for creating, managing, and deploying a plurality of triggers with dynamic routing to the appropriate profile, content, and/or information profile. The Learning & Analytics may comprise functionality to create and report on models associated with users, locations, assets, services, information profiles, content, and/or the like. The Developer Tools may comprise integration, SDK, API and user interface design functionality to allow for third-party developer use of the platform. The Integrations may comprise pre-defined or custom integrations with third-party enterprise resource planning (ERP), user management, location management, asset management, content management or other integration with any of the aforementioned modules, profiles, applications, developer tools, learning and analytics tools, trigger and redirect service tools, administrative tools and/or the like.

[0074] FIG. 3A is a flowchart showing an example process for deploying a plurality of triggers or tags. FIGS. 3B-3S show user interface images associated with the flow chart of FIG. 3A. FIGS. 3B-3F show example user interface representations of a splash screen, dashboard, and/or the like. FIGS. 3G-3L show example user interface representations of accessing an information profile, viewing an information profile, viewing product features, viewing checklists, and/or the like. FIGS. 3M-3S show example user interface representations of associating a trigger (e.g., a logical trigger) with one or more of a triggering feature (e.g., tag, imaging feature, shape, identifier, UPC, SKU, image, text, label), an asset, an information profile, a location, and/or the like. An organization may deploy a plurality of triggering features or tags at a premises (e.g., or environment). Each triggering feature may be disposed at a physical location (e.g., next to an asset, on an asset). Each triggering feature may be registered using an application. The triggering feature may be associated with a corresponding asset.
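
Registering a triggering feature and associating it with an asset, as described above, might be modeled as follows. The `TriggerRegistry` class, its method names, and the decision to reject duplicate registrations are hypothetical choices made for this sketch.

```python
class TriggerRegistry:
    """Hypothetical registry associating triggering features with assets."""

    def __init__(self):
        self._records = {}

    def register(self, feature_id, asset_id, location):
        # Refuse to silently re-point a feature that is already deployed.
        if feature_id in self._records:
            raise ValueError(f"feature {feature_id!r} is already registered")
        self._records[feature_id] = {"asset_id": asset_id, "location": location}

    def resolve(self, feature_id):
        """Return the registration record, or None for an unknown feature."""
        return self._records.get(feature_id)


registry = TriggerRegistry()
registry.register("qr-17", "shelf-unit-3", "aisle 4")
```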

[0075] FIG. 4 is an example user interface for an application for accessing information relevant to a context. The example user interface may be a mobile device user interface. The mobile device may determine location information (e.g., based on a trigger, tag, signal). The user interface may be updated based on the location information. The user interface may comprise a plurality of information modules, such as information modules associated with job functions, information modules associated with management of assets (e.g., products, equipment, inventory), information modules associated with safety, information modules associated with performing actions in a business process, information associated with usage of assets after purchase, information modules associated with training, information modules with information for employees, or information modules with information not available to customers (e.g., information different than and/or supplemental to the product information displayed to customers).

[0076] The plurality of information modules may comprise a safety information module, a standard operating procedures module, a parts ordering and information module, a service and/or maintenance request module, an installation guide module, a product information module, a product information and history module, a training module (e.g., training videos and walk through), a checklist module (e.g., for safety and integrity checklists), a photo upload module (e.g., for condition and maintenance reports), a decommissioning module (e.g., for decommissioning assets and/or removing assets from inventory). The plurality of

[0077] FIG. 5 is a diagram showing an example cycle of accessing information relevant to a context. A new employee (e.g., front-line worker) may engage with a strategically placed trigger to access training documentation relating to usage of a point of sale system (e.g., or other asset). Training may include log in/out, basic cash handling procedures, gift card authorization, returns, split payment, printer, debit/credit transactions, and/or the like.

[0078] The employee may gain knowledge and skills related to the POS system through video, checklist, PDF, chatbot, photo upload, help request and/or the like. Employee knowledge, completion, and ranking may be stored in a user profile. The user profile may be processed (e.g., analyzed using rules, patterns, models, artificial intelligence, machine learning) to determine metadata associated with POS knowledge and generate an experience level based on amount and quality of information consumed.

[0079] As the employee completes training related to the point of sale system, other training and certification (e.g., cash training, supervisor, key owner, cash manager certification, and/or the like) may be made available on the user profile. A user profile may have varying experience levels for different trainings and/or certifications. The employee may be at a beginner level for point of sale training but at an advanced level for stocking. The training and/or experience levels may be used to trigger sending of information to user devices. Updates to certification and training may be flagged on the employee profile, and all future information and training consumed may be based off said certification. Flags could be displayed via alert notification, content curation, and/or the like.

[0080] The employee may be assigned new privileges based off training completion (e.g., or role, experience level). Privileges may include the ability to make price changes, assigning discounts, completing cash in/out procedures at the beginning and end of day, and/or the like. New privileges may be displayed in new content format as access to content is acquired. The employee may be assigned daily challenges related to products sold and transactions completed. The user profile may recognize completed targets in real-time.

[0081] Once all targets and training are complete, the employee may level up in the system (e.g., be associated with the next highest level) and gain access to more advanced training knowledgebases. This cycle may continue indefinitely throughout the employee life cycle.
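
The level-up step in this cycle can be sketched as a small function. Integer levels, the dictionary inputs, and the choice not to advance a user who has nothing assigned yet are assumptions made for the example.

```python
def updated_level(level, targets, trainings):
    """Return the next level once every target and training is complete.

    targets / trainings: dicts mapping a name to True when complete.
    """
    if not targets or not trainings:
        return level  # nothing assigned yet, so nothing has been completed
    if all(targets.values()) and all(trainings.values()):
        return level + 1
    return level
```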

[0082] Concurrently, other users of the system may engage with the same POS trigger to get contextual information about that store area or their job role areas of focus (e.g., custodial or maintenance staff would receive content about POS maintenance, cleaning procedures, parts re-order information, manufacturer help, and/or the like).

[0083] Over time, a learning service (e.g., learning service 116) may be configured to identify patterns based on metadata, events, metrics, goals, and/or the like. The learning service may determine patterns for a single user, for multiple users, for a group of users, for a single asset, for multiple assets, a group of assets, for a single location, for multiple locations, a group of locations, a combination thereof, and/or the like. For example, the learning service may determine that users typically get stuck on a portion of a training or task in an information module. A delay in completing the task may be determined. It may be determined that the user navigates to a browser to find more information. The information may automatically be added to the information profile and/or provided as a suggestion to a manager to add the information. A flag may be associated with the task to notify a manager of the content platform 102 to re-evaluate an information module. The user device may also prompt the user to flag a task or information module to indicate a problem.
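
Detecting that users get stuck on a task, as described above, can be approximated by comparing observed completion times against an expected duration. The sketch below is one illustrative heuristic, not the disclosed method: the use of the median, the `factor` threshold, and the function name are assumptions.

```python
from statistics import median


def flag_slow_steps(durations_by_step, expected_seconds, factor=2.0):
    """Return steps whose median completion time exceeds factor x the expected time.

    durations_by_step: step name -> list of observed durations in seconds
    expected_seconds:  step name -> expected duration in seconds
    """
    flagged = []
    for step, durations in durations_by_step.items():
        if durations and median(durations) > factor * expected_seconds[step]:
            flagged.append(step)
    return flagged


observed = {"login": [30, 40, 35], "split_payment": [300, 280, 400]}
expected = {"login": 30, "split_payment": 60}
print(flag_slow_steps(observed, expected))  # ['split_payment']
```

A flagged step could then be surfaced to a manager as a suggestion to re-evaluate the corresponding information module.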

[0084] The disclosed methods and systems may be used to implement a tool for providing know how, operational procedures and other information for an employee or member of an organization. The tool may comprise a digital trigger and content curation platform that provides in-store and post-sale engagement, brand connectivity, and access to extended services through the user's smartphone (e.g., or other device) engagement with a trigger (e.g., a near field communication tag, a code, bar code, quick response (QR) code, a beacon, a signal, visual feature, a combination thereof, and/or the like). The services, engagement, and connectivity will help customers get the most out of a purchase or other transaction and allow an organization (e.g., company, store, restaurant) the ability to get closer to the customer post-sale. Digital content could include product how-to videos, specs and safety information, product sale price, installation instructions, installation booking, repair information, repair booking, accessory products, product or sales resources, pricing, warranty and extended warranty pricing, extended use pricing, and/or the like. These pieces of data and resources encourage a more efficient associate in the workplace leading to a more informed customer (e.g., in- and out-of-store).

[0085] By engaging with the trigger affixed to a product, price label, store rack, fixture, and/or the like, associates may be taken to a user interface (e.g., an organization branded user interface) that will allow them to see specifics of that product, and pertinent operational information; for example: product knowledge content, consultation, help, and/or installation; required attachment or accessory products; product up-sells, cross-sells, bundles; safe use information; return care; other SKU information & pricing. The information may allow the associate to assist another user with a different role (e.g., customer, service person). The content platform may be used by customers, the general public, employees, managers, and/or the like.

[0086] By engaging with the trigger affixed to a product, price label, store rack, fixture, and/or the like, customers are taken to a user interface (e.g., an organization branded user interface) that will allow them to see specifics of that product, and pertinent operational information, such as product knowledge content, consultation, help, and/or installation, required attachments, accessory products, product up-sells, cross-sells, bundles, safe use information, return care, other SKU information, pricing, a combination thereof, and/or the like.

[0087] The methods and systems disclosed may be used for implementing a digital manual for installing or managing fixtures and/or other assets at stores. An organization (e.g., company, store) may deploy new fixtures (e.g., or other assets) and in-store merchandising programs regularly. These assets are often custom to the organization and may have unique installation or merchandising instructions. As new assets are rolled out, and feedback from the field comes in from these teams, iterations to the instruction may be needed. Getting up to date installation instructions into the hands of those that need it most can be challenging.

[0088] Other issues can arise during installation: paper instructions can be misplaced, installers may need to dig through multiple boxes to find a part(s), instructions may not be followed precisely resulting in rework, and parts that seem extra can be thrown away when they are to be used for other installations. All of these issues can result in costly "one off" replacements.

[0089] Conventionally, the organization can create installation instructions and distribute the content through email or within the kits. A more convenient and efficient system can be implemented as disclosed herein.

[0090] An example disclosed system can comprise a Digital Instruction Management Tool. The system can be accessed through different triggers. These triggers may be used to load content in a responsive web application and/or native application. The content may comprise up to date assembly instructions for installation or set up of purchased products (e.g. a bookshelf, desk, patio furniture, dishwasher, car stereo, toilet, chair and/or the like), eliminating lost install manuals, knowing what is in each box, and/or parts lists so the user knows what goes where. The Digital Installation Management Tool can be configured in a step by step format to guide the user through the installation process. A final audit photo can be uploaded for verification or compliance.

[0091] The different triggers for the system can be NFC tags, QR codes, SMS and URL labels per kit, and/or other item identifier.

[0092] A digital assembly guide may be developed with step-by-step instructions, including text and various media (e.g., images/video). Content may be accessed via a responsive webpage and/or native app. The content may be device agnostic (e.g., web or mobile browser). Content can be edited throughout the project lifecycle by any authorized user.

[0093] The content platform may be triggered in several ways. A provisioning and management service may be provided for NFC tags, QR codes, SMS, and email of instructions and associated step URLs.

[0094] The methods and systems disclosed may be used for communicating safety and other health considerations at a location. An organization (e.g., company, store, factory) may provide communication of safe use and other health considerations of a particular location, asset or job function. Communicating this information in an effective way may be enhanced through contextual awareness of the user, the user's job function, the user's role, prior training data, the user's location, and/or the like.
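
For illustration only, selecting safety content based on the context of the user may be sketched as a filter over a content catalog. The Python sketch below assumes hypothetical `UserContext` and `SafetyItem` records and a hypothetical rule that an item is skipped when the user has already completed the training that covers it; none of these names or rules are specified by the disclosure.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class UserContext:
    role: str
    location: str
    completed_training: FrozenSet[str] = frozenset()


@dataclass(frozen=True)
class SafetyItem:
    title: str
    location: str
    roles: FrozenSet[str]   # empty set means the item applies to every role
    satisfied_by: str = ""  # training id that makes the item unnecessary


def select_items(ctx: UserContext, catalog: List[SafetyItem]) -> List[SafetyItem]:
    """Keep items that match the user's location and role and are not already covered."""
    selected = []
    for item in catalog:
        if item.location != ctx.location:
            continue
        if item.roles and ctx.role not in item.roles:
            continue
        if item.satisfied_by and item.satisfied_by in ctx.completed_training:
            continue
        selected.append(item)
    return selected


operator = UserContext("forklift_operator", "warehouse-3", frozenset({"forklift-cert"}))
catalog = [
    SafetyItem("Forklift pre-use checklist", "warehouse-3", frozenset({"forklift_operator"})),
    SafetyItem("Forklift basics", "warehouse-3", frozenset({"forklift_operator"}), "forklift-cert"),
    SafetyItem("HAZMAT spill response", "hazmat-bay", frozenset()),
    SafetyItem("Emergency exits", "warehouse-3", frozenset()),
]
titles = [item.title for item in select_items(operator, catalog)]
```

Under these assumptions, the certified forklift operator at the warehouse would receive the pre-use checklist and the emergency exit information, but not the basics course or the content for another location.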

[0095] Conventionally, the organization can create health and safety materials and distribute the content through existing communication channels and/or place them in various portals. A more convenient and efficient system can be implemented as disclosed herein whereby the user is directed to content through engagement with a trigger (e.g., location based trigger) placed in context of the health or safety area (e.g., placed inside a semi-truck, on a forklift, in a HAZMAT area, and/or the like).

[0096] The methods and systems disclosed may be used for on the job training at a particular location. An organization (e.g., company, store, factory) may require training on various services, technology, tools, or other organizational programs regularly. This training is often custom to the organization and may have requirements based on context like the user's job role and function, the location and/or the like.

[0097] Conventionally, the organization can create training materials and distribute the content through email, presentations or place them in various portals. A more convenient and efficient system can be implemented as disclosed herein whereby the user is directed to location and job-specific training content through engagement with a trigger placed in context of the training area.

[0098] FIG. 6 depicts a computing device that may be used in various aspects, such as the servers, platforms, and/or devices depicted in FIG. 1, FIG. 2, and FIG. 7. With regard to the example architecture of FIG. 1, the content platform 102, the user device 104, and the organization platform 106 may each be implemented as one or more instances of a computing device 600 of FIG. 6. With regard to the example architecture of FIG. 7, the user device 702, the interface service 706, the integration service 712, the management device 704, and the association service 708 may each be implemented as one or more instances of computing device 600 of FIG. 6. The computer architecture shown in FIG. 6 shows a conventional server computer, workstation, desktop computer, laptop, tablet, network appliance, PDA, e-reader, digital cellular phone, or other computing node, and may be utilized to execute any aspects of the computers described herein, such as to implement the methods described herein.

[0099] The computing device 600 may include a baseboard, or "motherboard," which is a printed circuit board to which a multitude of components or devices may be connected by way of a system bus or other electrical communication paths. One or more central processing units (CPUs) 604 may operate in conjunction with a chipset 606. The CPU(s) 604 may be standard programmable processors that perform arithmetic and logical operations necessary for the operation of the computing device 600.

[0100] The CPU(s) 604 may perform the necessary operations by transitioning from one discrete physical state to the next through the manipulation of switching elements that differentiate between and change these states. Switching elements may generally include electronic circuits that maintain one of two binary states, such as flip-flops, and electronic circuits that provide an output state based on the logical combination of the states of one or more other switching elements, such as logic gates. These basic switching elements may be combined to create more complex logic circuits including registers, adders-subtractors, arithmetic logic units, floating-point units, and the like.

[0101] The CPU(s) 604 may be augmented with or replaced by other processing units, such as GPU(s) 605. The GPU(s) 605 may comprise processing units specialized for but not necessarily limited to highly parallel computations, such as graphics and other visualization-related processing.

[0102] A chipset 606 may provide an interface between the CPU(s) 604 and the remainder of the components and devices on the baseboard. The chipset 606 may provide an interface to a random access memory (RAM) 608 used as the main memory in the computing device 600. The chipset 606 may further provide an interface to a computer-readable storage medium, such as a read-only memory (ROM) 620 or non-volatile RAM (NVRAM) (not shown), for storing basic routines that may help to start up the computing device 600 and to transfer information between the various components and devices. ROM 620 or NVRAM may also store other software components necessary for the operation of the computing device 600 in accordance with the aspects described herein.

[0103] The computing device 600 may operate in a networked environment using logical connections to remote computing nodes and computer systems through local area network (LAN) 616. The chipset 606 may include functionality for providing network connectivity through a network interface controller (NIC) 622, such as a gigabit Ethernet adapter. A NIC 622 may be capable of connecting the computing device 600 to other computing nodes over a network 616. It should be appreciated that multiple NICs 622 may be present in the computing device 600, connecting the computing device to other types of networks and remote computer systems.

[0104] The computing device 600 may be connected to a mass storage device 628 that provides non-volatile storage for the computer. The mass storage device 628 may store system programs, application programs, other program modules, and data, which have been described in greater detail herein. The mass storage device 628 may be connected to the computing device 600 through a storage controller 624 connected to the chipset 606. The mass storage device 628 may consist of one or more physical storage units. A storage controller 624 may interface with the physical storage units through a serial attached SCSI (SAS) interface, a serial advanced technology attachment (SATA) interface, a fiber channel (FC) interface, or other type of interface for physically connecting and transferring data between computers and physical storage units.

[0105] The computing device 600 may store data on a mass storage device 628 by transforming the physical state of the physical storage units to reflect the information being stored. The specific transformation of a physical state may depend on various factors and on different implementations of this description. Examples of such factors may include, but are not limited to, the technology used to implement the physical storage units and whether the mass storage device 628 is characterized as primary or secondary storage and the like.

[0106] For example, the computing device 600 may store information to the mass storage device 628 by issuing instructions through a storage controller 624 to alter the magnetic characteristics of a particular location within a magnetic disk drive unit, the reflective or refractive characteristics of a particular location in an optical storage unit, or the electrical characteristics of a particular capacitor, transistor, or other discrete component in a solid-state storage unit. Other transformations of physical media are possible without departing from the scope and spirit of the present description, with the foregoing examples provided only to facilitate this description. The computing device 600 may further read information from the mass storage device 628 by detecting the physical states or characteristics of one or more particular locations within the physical storage units.

[0107] In addition to the mass storage device 628 described above, the computing device 600 may have access to other computer-readable storage media to store and retrieve information, such as program modules, data structures, or other data. It should be appreciated by those skilled in the art that computer-readable storage media may be any available media that provides for the storage of non-transitory data and that may be accessed by the computing device 600.

[0108] By way of example and not limitation, computer-readable storage media may include volatile and non-volatile, transitory computer-readable storage media and non-transitory computer-readable storage media, and removable and non-removable media implemented in any method or technology. Computer-readable storage media includes, but is not limited to, RAM, ROM, erasable programmable ROM ("EPROM"), electrically erasable programmable ROM ("EEPROM"), flash memory or other solid-state memory technology, compact disc ROM ("CD-ROM"), digital versatile disk ("DVD"), high definition DVD ("HD-DVD"), BLU-RAY, or other optical storage, magnetic cassettes, magnetic tape, magnetic disk storage, other magnetic storage devices, or any other medium that may be used to store the desired information in a non-transitory fashion.

[0109] A mass storage device, such as the mass storage device 628 depicted in FIG. 6, may store an operating system utilized to control the operation of the computing device 600. The operating system may comprise a version of the LINUX operating system. The operating system may comprise a version of the WINDOWS SERVER operating system from the MICROSOFT Corporation. According to further aspects, the operating system may comprise a version of the UNIX operating system. Various mobile phone operating systems, such as IOS and ANDROID, may also be utilized. It should be appreciated that other operating systems may also be utilized. The mass storage device 628 may store other system or application programs and data utilized by the computing device 600.

[0110] The mass storage device 628 or other computer-readable storage media may also be encoded with computer-executable instructions, which, when loaded into the computing device 600, transform the computing device from a general-purpose computing system into a special-purpose computer capable of implementing the aspects described herein. These computer-executable instructions transform the computing device 600 by specifying how the CPU(s) 604 transition between states, as described above. The computing device 600 may have access to computer-readable storage media storing computer-executable instructions, which, when executed by the computing device 600, may perform the methods described herein.

[0111] A computing device, such as the computing device 600 depicted in FIG. 6, may also include an input/output controller 632 for receiving and processing input from a number of input devices, such as a keyboard, a mouse, a touchpad, a touch screen, an electronic stylus, or other type of input device. Similarly, an input/output controller 632 may provide output to a display, such as a computer monitor, a flat-panel display, a digital projector, a printer, a plotter, or other type of output device. It will be appreciated that the computing device 600 may not include all of the components shown in FIG. 6, may include other components that are not explicitly shown in FIG. 6, or may utilize an architecture completely different than that shown in FIG. 6.

[0112] As described herein, a computing device may be a physical computing device, such as the computing device 600 of FIG. 6. A computing node may also include a virtual machine host process and one or more virtual machine instances. Computer-executable instructions may be executed by the physical hardware of a computing device indirectly through interpretation and/or execution of instructions stored and executed in the context of a virtual machine.

[0113] Additional aspects of the disclosure are described below. Any of the following aspects may be implemented via the systems described above. The following aspects may be combined in any manner with each other and/or the aspects described elsewhere herein. The content platform 102, the organization platform 106, or a combination thereof may be configured to perform any of the features described below. Any of the features below may be used for determining user information, determining information profiles, determining location information, and/or the like.

[0114] Smart Triggering

[0115] The systems described herein may be configured to perform smart triggering, such as triggering a request for information or the automatic sending of information without requiring user interaction. Normally a user would manually interact with a triggering feature (e.g., by tapping the trigger, by capturing an image), thereby invoking content on demand. However, a trigger can also be automatic (e.g., passive), occurring without the user having to actively invoke it. Automatic triggering can be based on a user device entering/leaving a geofenced location, a user device being in proximity (e.g., within a threshold distance) of other users (e.g., perhaps of certain type or performing certain actions), a user device being in a location within a specific timeframe, a combination thereof, and/or the like. Automatic triggering can be performed by a user device. The user device can be configured based on a triggering process. The triggering process may be based on rules, pattern recognition, artificial intelligence, machine learning (e.g., if no external signal is required), and/or the like. The triggering process may be based on a context provided by a server. The context may comprise information about other users, business information (e.g., inventory), business process information and/or logic (e.g., from an ERP).
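
As a non-limiting sketch, automatic triggering based on a user device entering or leaving a geofenced location may be implemented by comparing successive position fixes against a fence. The Python example below assumes a circular geofence and uses the haversine great-circle distance; the `Geofence` and `GeofenceMonitor` names, the circular fence shape, and the choice to emit no event on the first fix are assumptions of this sketch rather than features stated in the disclosure.

```python
import math
from dataclasses import dataclass
from typing import Optional

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in meters


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in meters between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


@dataclass(frozen=True)
class Geofence:
    lat: float
    lon: float
    radius_m: float

    def contains(self, lat: float, lon: float) -> bool:
        return haversine_m(self.lat, self.lon, lat, lon) <= self.radius_m


class GeofenceMonitor:
    """Turns a stream of position fixes into 'enter' and 'leave' events."""

    def __init__(self, fence: Geofence) -> None:
        self.fence = fence
        self._inside: Optional[bool] = None  # unknown until the first fix

    def update(self, lat: float, lon: float) -> Optional[str]:
        inside = self.fence.contains(lat, lon)
        previous, self._inside = self._inside, inside
        if previous is None or previous == inside:
            return None  # first fix, or no boundary crossing
        return "enter" if inside else "leave"
```

A user device could call `update` with each new position fix and, on an `"enter"` or `"leave"` result, send a request to the content platform without any user interaction. The same distance function could be reused to test whether the device is within a threshold distance of other users.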

[0116] The triggering process may be based on geofencing, timing information, event information, other user information, business rules, a combination thereof, and/or the like. Triggering based on geofencing may comprise an associate passing near a product shelf. If an area around the product shelf is geofenced, the triggering process may trigger a product inventory check to physically verify whether it is time to refill a product on the product shelf. Example timing information may comprise a time of day. A trigger may be disabled during off-hours, when an associate is on a break, and/or the like.
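
By way of example and not limitation, a rule-based triggering process that combines geofencing with timing information can be sketched as follows. The Python sketch assumes hypothetical business hours of 08:00 to 20:00 and a hypothetical `TriggerContext` record; the field names and the hours are illustrative assumptions only.

```python
from dataclasses import dataclass
from datetime import time

# Assumed business hours; a real deployment would obtain these from configuration.
OPEN_TIME = time(8, 0)
CLOSE_TIME = time(20, 0)


@dataclass(frozen=True)
class TriggerContext:
    in_shelf_geofence: bool  # associate is within the geofenced area around the shelf
    local_time: time         # current local time of day
    on_break: bool           # associate is currently on a break


def should_trigger_inventory_check(ctx: TriggerContext) -> bool:
    """Fire the inventory check only inside the geofence, on shift, during open hours."""
    if ctx.on_break:
        return False  # trigger disabled while the associate is on a break
    if not (OPEN_TIME <= ctx.local_time < CLOSE_TIME):
        return False  # trigger disabled during off-hours
    return ctx.in_shelf_geofence
```

For instance, an associate passing the shelf at 10:30 while on shift would trigger the check, whereas the same position at 22:00 or during a break would not.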