Secure Digital Media Capture And Analysis

Speasl; Jerry ; et al.

U.S. patent application number 17/556071 was filed with the patent office on 2021-12-20 and published on 2022-04-14 for secure digital media capture and analysis. The applicant listed for this patent is IMAGEKEEPER LLC. Invention is credited to Mike Patterson, Marc Roberts, Jerry Speasl.

| Application Number | 20220116511 17/556071 |

| Document ID | / |

| Family ID | 1000006039890 |

| Filed Date | 2021-12-20 |

| United States Patent Application | 20220116511 |

| Kind Code | A1 |

| Speasl; Jerry ; et al. | April 14, 2022 |

SECURE DIGITAL MEDIA CAPTURE AND ANALYSIS

Abstract

Systems and methods for generating certified images, annotations, and incident reports are disclosed. A media capture device can be used to capture a media asset and related metadata. The image and its metadata can then be certified upon capture so that it can be verified as authentic and unaltered after certification. The certified media asset can then be included in or as a part of an incident report, which may optionally align multiple media assets along a path based on location and time of capture. The report may itself be certified and synchronized with a cloud server system.

| Inventors: | Speasl; Jerry; (Las Vegas, NV) ; Roberts; Marc; (St. Louis, MO) ; Patterson; Mike; (Sherman, TX) |
|---|---|
| Applicant: | IMAGEKEEPER LLC |
| Family ID: | 1000006039890 |
| Appl. No.: | 17/556071 |
| Filed: | December 20, 2021 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 16505305 | Jul 8, 2019 | 11212416 |
| 17556071 | | |
| 62694528 | Jul 6, 2018 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 2201/3253 20130101; H04N 2201/0084 20130101; H04N 1/32128 20130101 |

| International Class: | H04N 1/32 20060101 H04N001/32 |

Claims

1. A method of media processing, the method comprising: receiving, from one or more sensors, a plurality of media assets captured by the one or more sensors during a time period; receiving a plurality of timestamps, each timestamp associated with capture of one of the plurality of media assets by the one or more sensors, wherein each of the plurality of timestamps falls within the time period; receiving a plurality of locations, each location associated with capture of one of the plurality of media assets by the one or more sensors; receiving a plurality of elevations, each elevation associated with capture of one of the plurality of media assets by the one or more sensors; generating a path of captures of the plurality of media assets, the path connecting the plurality of locations based on the plurality of timestamps, wherein the path includes a graphical representation of a respective elevation for each location of the plurality of locations; and rendering an interface for display using a display, the interface graphically aligning the plurality of media assets and the plurality of elevations along the path.

2. The method of claim 1, wherein each of the plurality of elevations is graphically represented in the path by a respective color from a plurality of colors along a color spectrum.

3. The method of claim 1, wherein each of the plurality of elevations is graphically represented in the path by a respective shape from a plurality of shapes.

4. The method of claim 1, wherein each of the plurality of elevations is graphically represented in the path by a respective position along an elevation axis.

5. The method of claim 1, wherein each of the plurality of elevations is graphically represented in the path by a respective number.

6. The method of claim 1, further comprising: displaying the interface on the display.

7. The method of claim 1, wherein the one or more sensors include an altimeter, wherein the plurality of elevations are received from the altimeter.

8. The method of claim 1, wherein the one or more sensors include an image sensor, wherein the plurality of media assets include one or more images captured by the image sensor.

9. The method of claim 1, further comprising: receiving a plurality of directions, each direction identifying where the one or more sensors was facing during capture of one of the plurality of media assets, wherein the path includes a graphical representation of a respective direction of the plurality of directions for each location of the plurality of locations.

10. The method of claim 1, further comprising: verifying that the plurality of media assets are unaltered since capture based on one or more certification datasets.

11. The method of claim 1, further comprising: identifying that a first subset of the path is in a first group and that a second subset of the path is in a second group, wherein the path includes a representation of the first group and the second group.

12. The method of claim 11, wherein the first group indicates an indoor area and the second group indicates an outdoor area.

13. A system for media processing, the system comprising: one or more sensors that capture a plurality of media assets during a time period; a memory storing instructions; and a processor that executes the instructions, wherein execution of the instructions by the processor causes the processor to: receive, from the one or more sensors, a plurality of media assets captured by the one or more sensors during a time period; receive a plurality of timestamps, each timestamp associated with capture of one of the plurality of media assets by the one or more sensors, wherein each of the plurality of timestamps falls within the time period; receive a plurality of locations, each location associated with capture of one of the plurality of media assets by the one or more sensors; receive a plurality of elevations, each elevation associated with capture of one of the plurality of media assets by the one or more sensors; generate a path of captures of the plurality of media assets, the path connecting the plurality of locations based on the plurality of timestamps, wherein the path includes a graphical representation of a respective elevation for each location of the plurality of locations; and render an interface for display using a display, the interface graphically aligning the plurality of media assets and the plurality of elevations along the path.

14. The system of claim 13, wherein each of the plurality of elevations is graphically represented in the path by at least one of a respective color from a plurality of colors along a color spectrum, a respective shape from a plurality of shapes, a respective position along an elevation axis, or a respective number.

15. The system of claim 13, further comprising: a display that displays the interface.

16. The system of claim 13, wherein the one or more sensors include an altimeter, wherein the plurality of elevations are received from the altimeter.

17. The system of claim 13, wherein the one or more sensors include an image sensor, wherein the plurality of media assets include one or more images captured by the image sensor.

18. The system of claim 13, further comprising: receiving a plurality of directions, each direction identifying where the one or more sensors was facing during capture of one of the plurality of media assets, wherein the path includes a graphical representation of a respective direction of the plurality of directions for each location of the plurality of locations.

19. The system of claim 13, further comprising: verifying that the plurality of media assets are unaltered since capture based on one or more certification datasets.

20. The system of claim 13, further comprising: identifying that a first subset of the path is in a first group and that a second subset of the path is in a second group, wherein the path includes a representation of the first group and the second group.

Description

CROSS-REFERENCE TO RELATED APPLICATION

[0001] This application is a continuation and claims the priority benefit of U.S. patent application Ser. No. 16/505,305 filed Jul. 8, 2019 and set to issue as U.S. Pat. No. 11,212,416, which claims the priority benefit of U.S. Provisional Patent Application No. 62/694,528 filed Jul. 6, 2018, the disclosures of which are incorporated herein by reference.

BACKGROUND

Field of the Invention

[0002] The present invention generally relates to digital media capture and synchronization. More specifically, the present invention relates to certification of digital media captured by electronic media capture devices and generation of reports using the resulting certified media.

Description of the Related Art

[0003] User devices such as smartphones or tablets can take photos using camera software applications designed to interact with camera hardware embedded in the user device. Some of these camera applications store photo metadata along with the photo. Examples of metadata include the identity of the user device from which the photo was taken, the latitude and longitude at which the photo was taken, and information concerning use of filters or other applications that may alter the digital image. The type, format, and details of such metadata are typically inconsistent between camera software applications, impossible to verify as unchanged, and incomplete in terms of what data is captured versus what data could potentially be captured and tied together. A user of one software application cannot rely on a specific set of metadata to be present along with the same photograph should it be taken in another application, and the user also traditionally cannot rely on data from such applications being secure or verifiably not tampered with.

[0004] Some types of positional, sensor, and other software or hardware data, while available for use, are often not stored as photo metadata or in a manner that allows for pairing of the data with a particular image. This data could be used in the context of other software applications or in the review of certain photographs. As a result, users may not be able to determine the exact positioning of a user device, the position of an object being photographed, or the output of other sensors at the time the photo was taken.

[0005] Photographs are also often used in creating an incident report. Creating an incident report usually involves transcribing details of an incident, such as a car accident or structural damage to a home, from personal or third-party observation to handwritten form. Those handwritten notes are then entered into a computer or program operating thereon. Photographs related to the incident and showing the accident or damage are usually scanned or uploaded into the aforementioned computer or program by way of a physical or network connection. Traditionally, these images would have to be--as a matter of course and necessity--accepted at face value with no reliable way to ascertain if the images were authentic or unaltered. The transcribed information, such as the location and physics of an incident, was also not always accurate due to human transcription or data entry error or a misreading of image data.

[0006] There is a need in the art to more completely collect available sources of metadata as they pertain to digital imagery--both still and moving. Further, there is a need in the art to better correlate such metadata to files or documents that may be associated with a digital image. Finally, there is a need in the art to be able to verify the accuracy of a digital image, the metadata therein, as well as any data that might be based on, related to, or otherwise derived from that image.

BRIEF DESCRIPTION OF THE DRAWINGS

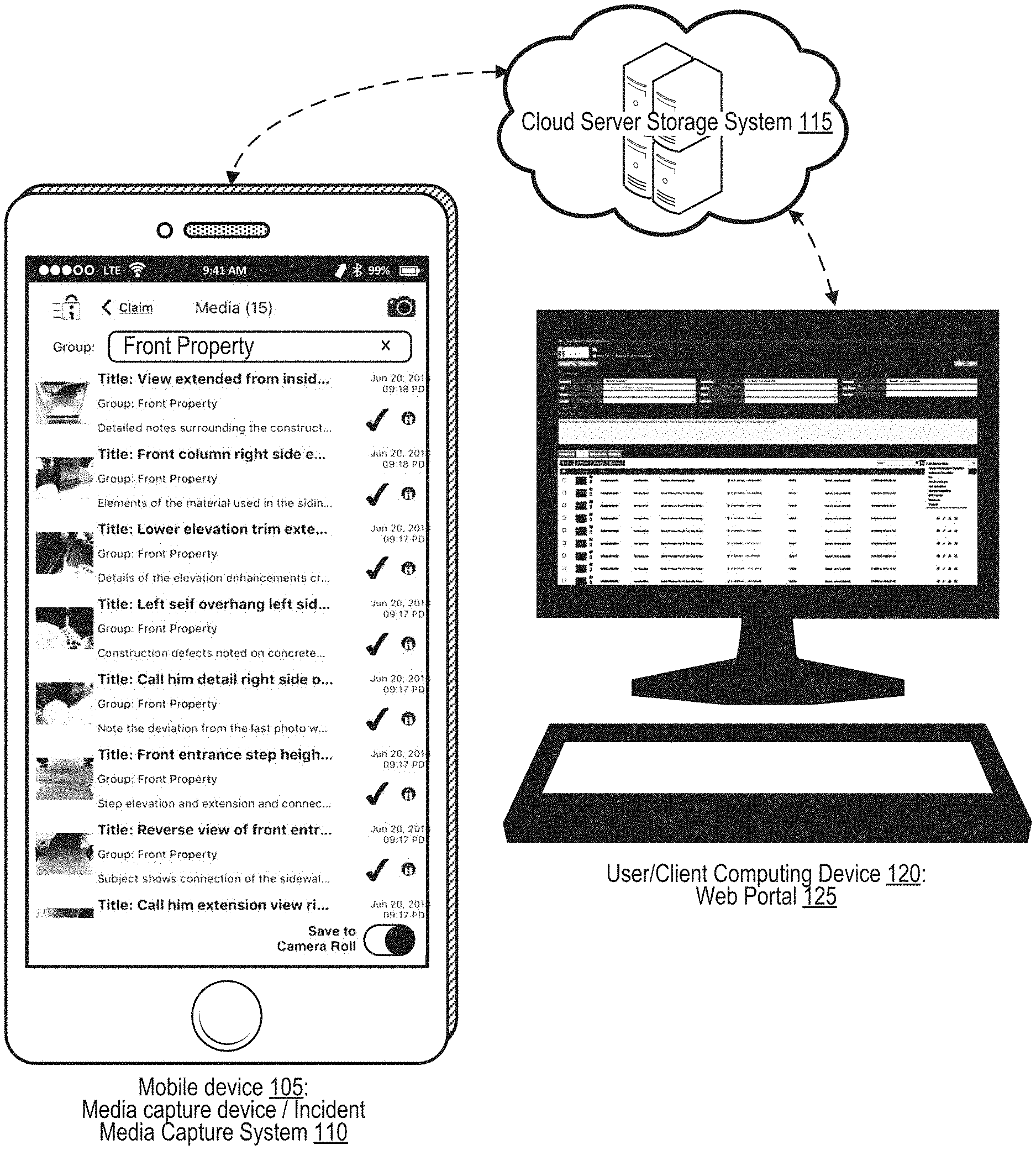

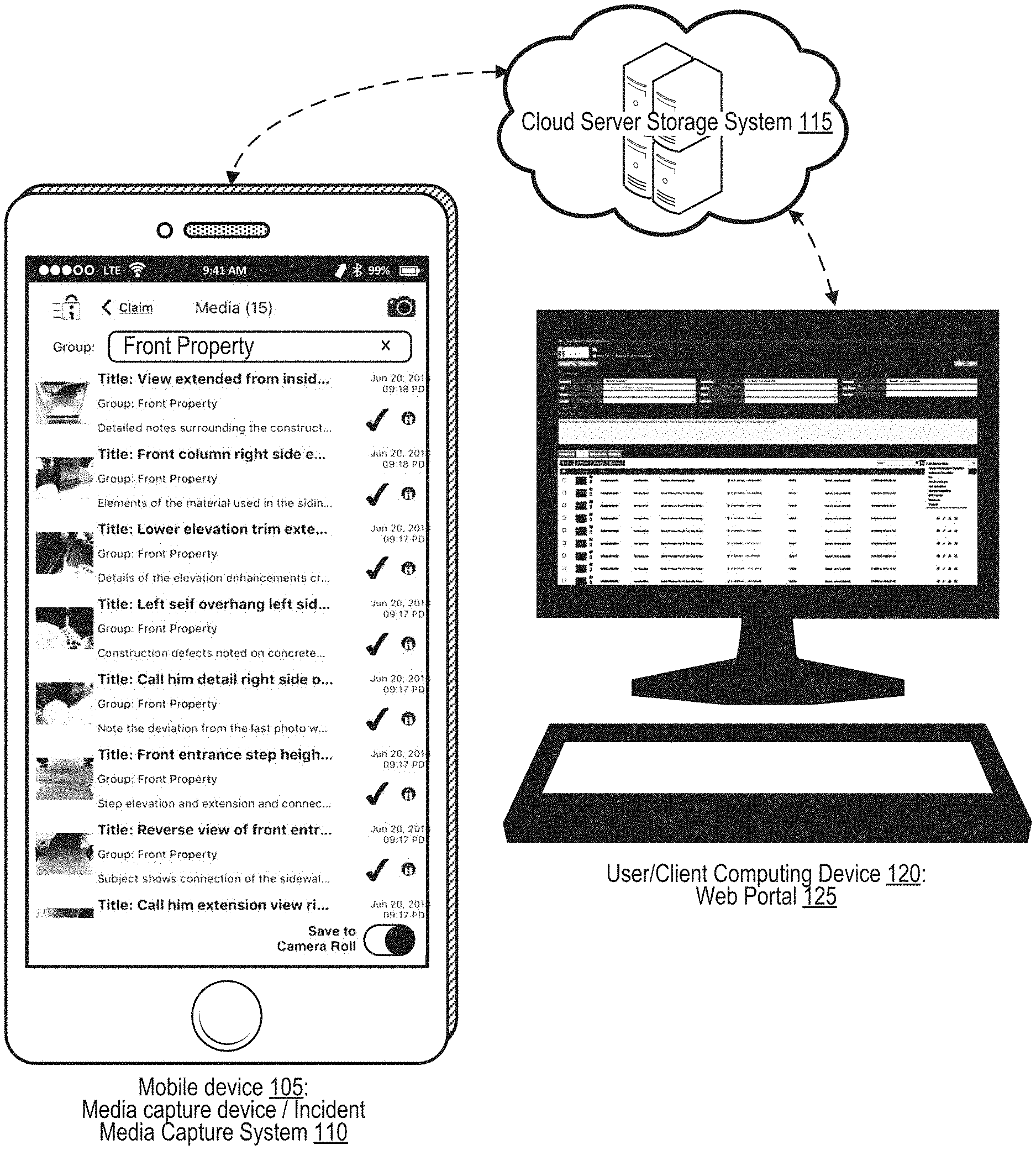

[0007] FIG. 1 illustrates an overview of a cloud server storage system that provides secure synchronization between a mobile device media capture system and a server-based web service accessible through a web portal.

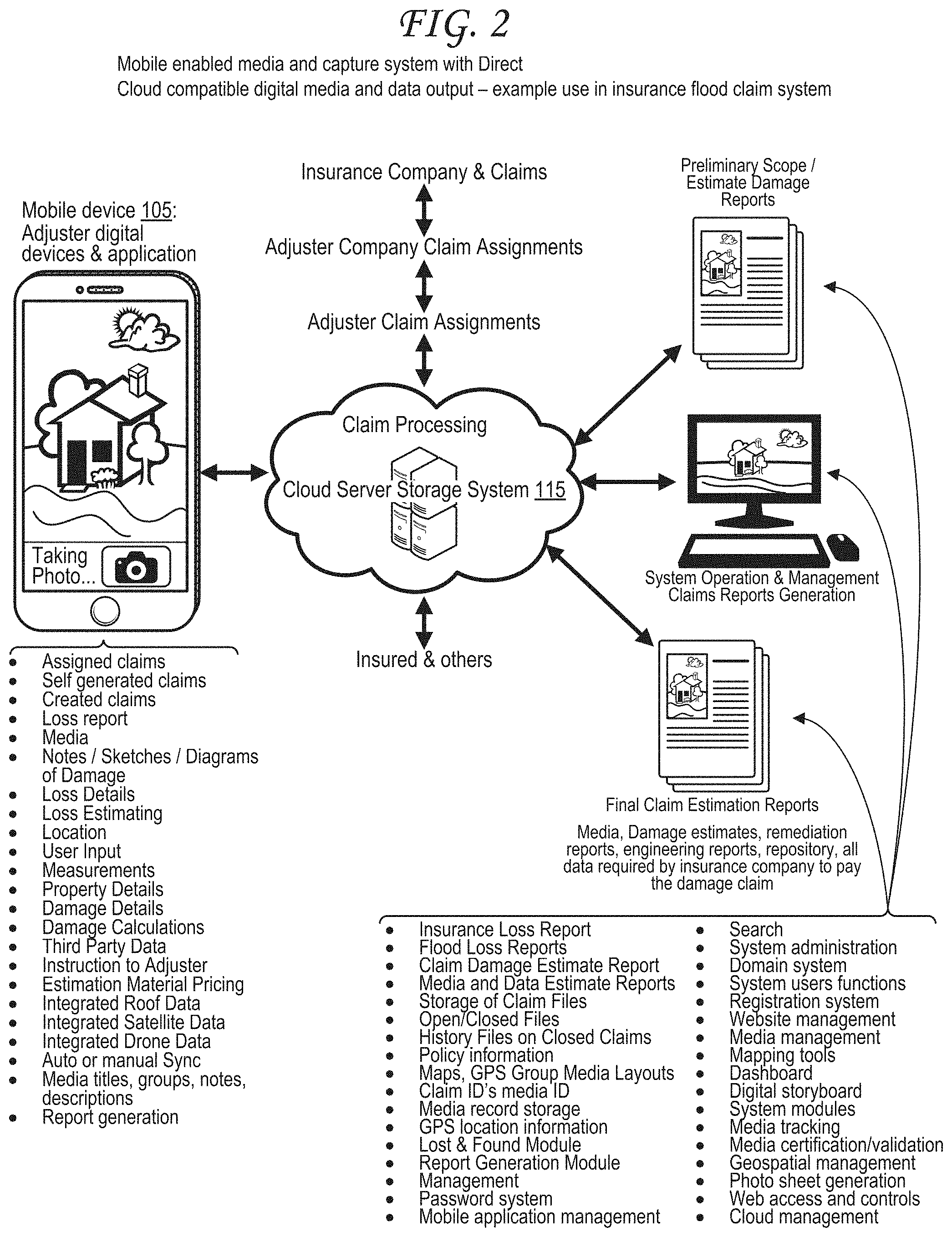

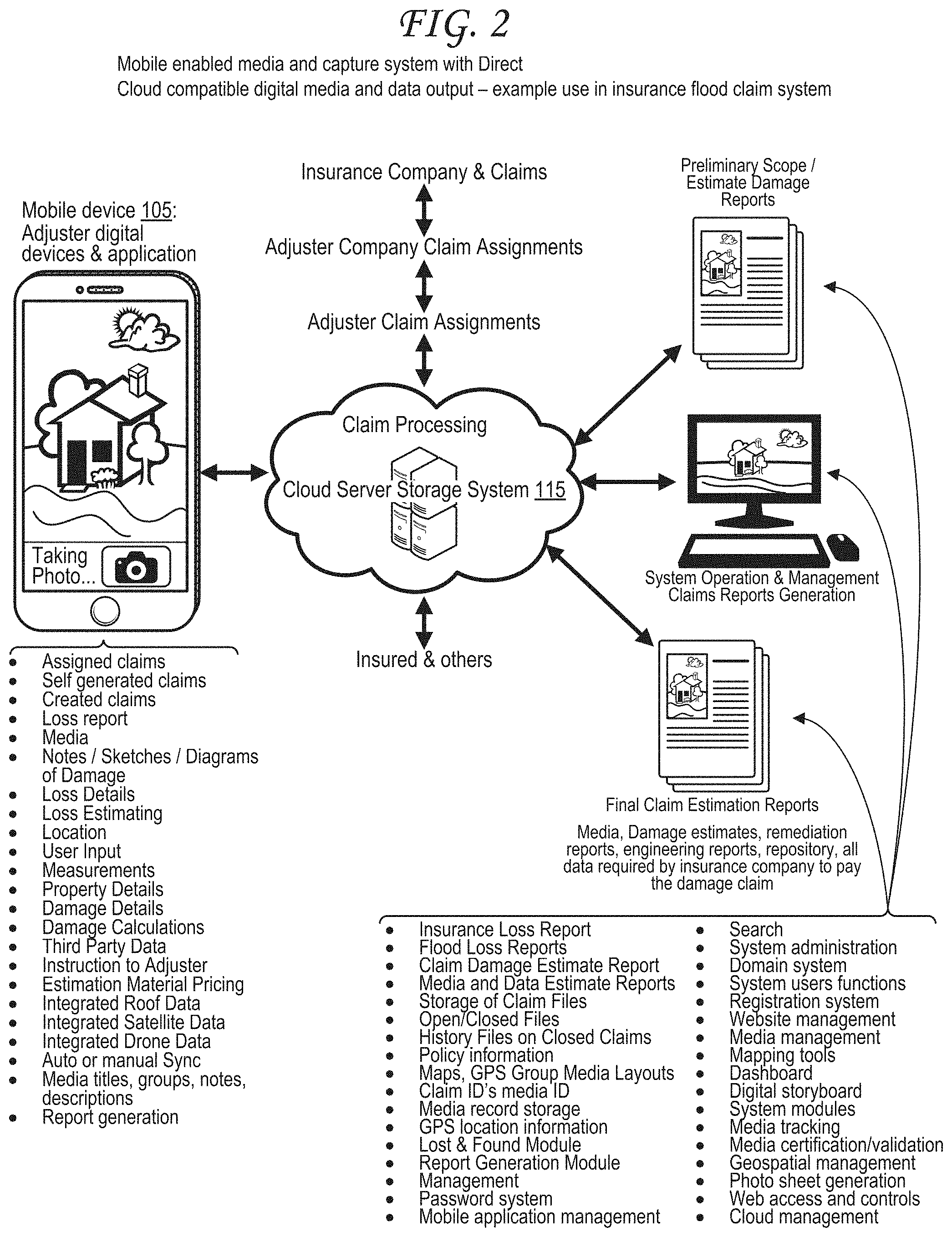

[0008] FIG. 2 illustrates secure synchronization using the cloud server storage system in the context of digital media concerning an insurance claim.

[0009] FIG. 3 illustrates stacked interfaces corresponding to an incident and incident media capture process.

[0010] FIG. 4 is a flow diagram illustrating procedures for creating a new incident record and for editing an existing incident record.

[0011] FIG. 5A illustrates a user interface for creating or editing a title, category, or comment for an entire incident.

[0012] FIG. 5B illustrates a user interface for viewing and searching through incident details for an entire incident or for any of a set of incident media items corresponding to the incident.

[0013] FIG. 5C illustrates a user interface for editing incident details for an entire incident or for any of a set of incident media items corresponding to the incident.

[0014] FIG. 6 illustrates a topographical map tracking locations, directions, altitudes, and sensor data corresponding to media captured at different points along a route.

[0015] FIG. 7 is a table illustrating metadata for three digital media assets captured at different times.

[0016] FIG. 8 illustrates application of directional and location tracking alongside media capture using sensors on or connected to the mobile device and algorithms run on the mobile device and/or on a cloud server.

[0017] FIG. 9 illustrates an interface with multiple types of media asset and metadata analyses, including mapping of media assets and metadata, focused analyses, and elevation analyses.

[0018] FIG. 10 illustrates an incident report generated using the analyses of FIG. 9.

[0019] FIG. 11 illustrates an exemplary media capture system and media certification system interfacing with different types of user devices and camera devices.

[0020] FIG. 12 illustrates a media capture device with an intelligent image/video capture system that combines a camera image/video with a sensor data set from a sensor system.

[0021] FIG. 13 is a flow diagram illustrating an exemplary method for security certification and verification of digital media.

[0022] FIG. 14 is a flow diagram illustrating generation of a path of a capture device aligned to media captured by the capture device.

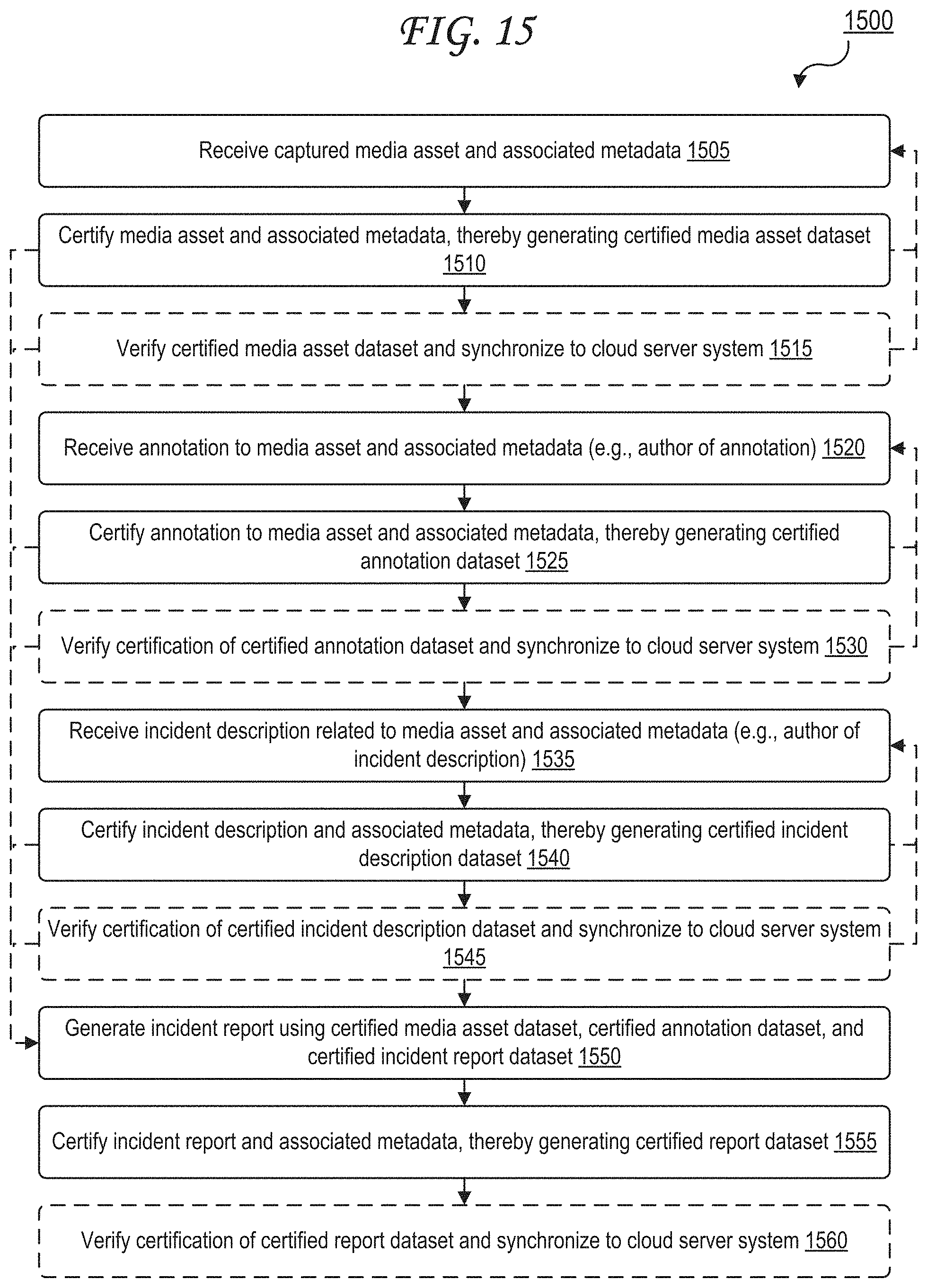

[0023] FIG. 15 is a flow diagram illustrating certification of media, annotations of media, and reports.

[0024] FIG. 16 is a block diagram of an exemplary computing device that may be used to implement some aspects of the subject technology.

DETAILED DESCRIPTION

[0025] Systems and methods for generating certified images, annotations, and incident reports are disclosed. A media capture device can be used to capture a media asset and related metadata. The image and its metadata can then be certified upon capture so that it can be verified as authentic and unaltered after certification. The certified media asset can then be included in or as a part of an incident report (e.g., for social media, news, or insurance), which may optionally align multiple media assets along a path based on location and time of capture. The report may itself be certified on a mobile device and synchronized with a cloud server system.
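
As an illustration only, certification upon capture can be sketched as computing a cryptographic digest over the media bytes together with their metadata, then recomputing that digest later to confirm that neither has changed. The choice of SHA-256, the JSON encoding of the metadata, and the function names `certify` and `verify` are assumptions made for this sketch; they are not taken from the disclosure.

```python
import hashlib
import hmac
import json


def certify(media_bytes: bytes, metadata: dict) -> str:
    """Return a hex digest binding the media bytes to their metadata.

    Assumption for this sketch: SHA-256 over a length-prefixed copy of the
    media followed by a canonical JSON encoding of the metadata.
    """
    # Canonical JSON so the same metadata always produces the same bytes.
    canonical = json.dumps(
        metadata, sort_keys=True, separators=(",", ":")
    ).encode("utf-8")
    digest = hashlib.sha256()
    # The length prefix keeps the media/metadata boundary unambiguous.
    digest.update(len(media_bytes).to_bytes(8, "big"))
    digest.update(media_bytes)
    digest.update(canonical)
    return digest.hexdigest()


def verify(media_bytes: bytes, metadata: dict, certificate: str) -> bool:
    """Recompute the digest and compare it with the stored certificate."""
    return hmac.compare_digest(certify(media_bytes, metadata), certificate)
```

A digest of this kind only detects alteration if the certificate itself is stored or signed somewhere an editor of the media cannot reach, which is one reason a sketch like this would be paired with a separate trusted store.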

[0026] FIG. 1 illustrates an overview of a cloud server storage system that provides secure synchronization between a mobile device media capture system and a server-based web service accessible through a web portal.

[0027] In particular, the architecture illustrated in FIG. 1 includes a mobile device 105 that represents a media capture device, also referred to as an incident media capture system 110. A touchscreen display (or other display) of the mobile device 105 illustrates a graphical user interface (GUI) listing different certified media assets--that is, certified images and/or videos and/or audio--of a front of a property. Certified media assets may also include various sensor readings as discussed further herein. Each listed item in the GUI includes a thumbnail of a certified media asset that can be expanded into the full certified media asset, a title describing the certified media asset, a group (these are all grouped as "front property"), a timestamp and date of capture, an icon representing directional and location data, a momentary spinning and numbered icon denoting that data is being transmitted to the cloud, a checkmark icon representing confirmation that the data arrived and was stored in the cloud, and a note or description.

[0028] The data is captured by the media capture device (mobile device 105) and sensor hardware that connects to the mobile device 105 in a wired or wireless fashion as described further herein. The mobile device 105 and any sensor hardware it is connected to represent the incident media capture system 110. The sensor hardware may include manned vehicles or unmanned vehicles that are autonomous, self-driving, remote controlled, and/or semi-autonomous. Such manned or unmanned vehicles include aerial, aquatic, or land vehicles. Sensors on such vehicles may capture data as part of the incident media capture system 110 as per FIG. 11.

[0029] The web portal 125 may be a website hosted at the cloud server system 115 or hosted elsewhere on a host server or directly in a data center. The web portal 125 provides access to reports generated by the cloud server system 115 based on incidents identified via the mobile device 105 or the web portal 125. The web portal 125 provides access to media assets associated with those incidents and included in those reports.

[0030] The cloud server storage system 115 receives the certified media along with any other relevant data from the mobile device 105 through the Internet or through a local area network/Intranet. Data relevant to the certified media may include, for example, a title describing the certified media, type of media (e.g., "visual, 3D, IR, Time-Lapse, Slow Motion, Square, Panoramic, Lidar"), a group (e.g., "front property"), a note, a timestamp and date of capture, directional and location data, additional sensor data, and a note or description. This information is then made accessible by the cloud server storage system 115 to a user computing device 120 via a web portal 125 through the Internet or through a local area network/Intranet.
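
The data accompanying a certified media asset, as listed above, can be pictured as a simple record. The following is a minimal sketch in which the class name `MediaRecord`, its field names, and the `to_payload` method are hypothetical and chosen only to mirror the items named in the paragraph.

```python
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class MediaRecord:
    """Hypothetical record of the data sent along with a certified media asset."""
    title: str
    media_type: str           # e.g. "visual", "3D", "IR", "Panoramic"
    group: str                # e.g. "front property"
    note: str
    captured_at: datetime     # timestamp and date of capture
    latitude: float           # location data
    longitude: float
    heading_degrees: float    # directional data
    sensor_data: dict = field(default_factory=dict)  # additional sensor data

    def to_payload(self) -> dict:
        """Return a JSON-friendly dictionary for transmission."""
        payload = asdict(self)
        # datetime objects are not JSON serializable, so use ISO 8601 text.
        payload["captured_at"] = self.captured_at.isoformat()
        return payload


record = MediaRecord(
    title="Front door",
    media_type="visual",
    group="front property",
    note="Cracked frame",
    captured_at=datetime(2021, 12, 20, 15, 30, tzinfo=timezone.utc),
    latitude=36.17,
    longitude=-115.14,
    heading_degrees=90.0,
)
payload = record.to_payload()
```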

[0031] The user computing device 120 may be any computing device. The user computing device 120 may be the mobile device 105 or may be a different computing device.

[0032] FIG. 2 illustrates secure synchronization using the cloud server storage system in the context of digital media concerning an insurance claim.

[0033] The system provides incident, media, and data capture systems and methods with bi-directional, cloud-compatible digital output, comprising mobile digital devices with a lens, a shutter, application code, and data-capable cellular, transceiver, or wireless cloud connectivity. The system can be fully cloud-centralized, or it can converge platforms to be used with a single computer or transceiver-equipped digital camera. This multi-disciplinary collection and processing system allows for quick recognition, action, and processing, and for the recording, preservation, and storage of vital media and data as unalterable, irrefutable media, reports, and documents. The system can process many types of incidents, such as insurance claims, flood claims, catastrophe events, accidents, and law enforcement events, more rapidly than the manual transcription workflows described above. The system produces claim loss reports with certified media, including media titles, labels, captions, notes, and groups, all with precision GPS information and all integrated into a PDF or other computer format for increased efficiency when an adjuster prepares an on-site claims loss report. Additional key elements of the system include a fully integrated documentation and multimedia report generation system with dynamic, GPS-enabled visuals and titled, categorized, descriptive media dialog and notes, incorporating a programmable estimating system with preservation features.

[0034] Additional features integrated into the system include dynamic sketching tools that allow users to draw or sketch a property detail with measurements. As an adjuster generates an on-site damage report, the adjuster can add an incident title, its category, and comments entered by voice-to-text, by dynamic forms for data block inputs, or by keyboard. These inputs, along with the incident's media and subsequent media details (including media title, media group(s), and media description), are fully integrated into the web-based dynamic report assembly process and into the reports. When the reports function is activated, a report is generated, saved (for example, in PDF format), and sent or transmitted via text, SMS, or email, or added to an assemblage of a larger report.

[0035] This capability includes a media, data, audio transcription, and report processing center (photo sheet development system) where media are sorted, rearranged, and organized using user controls, either by group or by individual media asset, with each media asset accompanied by its geospatial data, title, notes/description, and groups.

[0036] Additionally, the system can utilize digital processing of the media to determine damages and create loss and replacement estimates from data extracted from the media. Details that can be measured include walls, basements, floors, roofs, eaves, and roof damage by hail, wind, tornado, etc.

[0037] The system uses secure log-in by users. As the user receives claims to adjust, the adjuster logs into the system using the adjuster's system credentials. User management (i.e., the system manager) manages all users through a password system with permissions for groups of users or individual users. The media data, loss data, contents data, and adjuster labor data for the on-site loss investigation are all integrated and processed with the continually updated cost estimating portion of the system to provide all parties involved in the loss (the insured, the adjuster, the adjuster company, and the insurance company) with an insurance estimate along with the media, loss report, documents, witness statements, prior media taken during the event, policy information, purchase receipts, owner verification data, adjuster credentials, advance payment forms, engineering reports, and any other document to prove the validity of the claim, including any past damages associated with the claim. All of this data, including the final estimate, is preserved in the cloud system and stored with access provided through the secure password system, user management, dashboard, third-party data access, and all associated APIs (Application Programming Interfaces).

[0038] All claims/incident data is associated with 3D media and precise GPS location, using modern cellular devices, cellular antenna systems, RTK, and GPS L5 signals to provide precise geographic location, land features, rivers, streams, roads, bridges, buildings, topographic data, etc. The system will utilize the GPS claims data for integration into computer-generated maps such as Google Earth, Digital Globe, or other sources of satellite data. This data is also integrated into the digital device system in real time if required.

[0039] That is, the adjuster can capture media of the property while at the same time downloading remote satellite image data from a satellite or other third-party suppliers to incorporate information on roof damage or solar panels, e.g., damage from hail, wind, tornado, wildfire, or earthquake, associated with a particular event, incident, or claim. The precision GPS and media processing of both the satellite data and adjuster-collected data can form an extremely precise map of, for example, hail damage location on a roof, and show individual hail damage per shingle while indicating depth of the damage, percentage of damaged area, and replacement costs to remove the damage and restore the original condition. The system includes an integrated claim configuration software process that can configure the claim by type dynamically (e.g., fire, flood, accident, storm, earthquake, hurricane) so the adjuster has access to the multiple tools necessary to perform measurements and collect media and facts with which to create loss and damage estimate reports for distinctly different types of events, all using the same system.

[0040] The system also has a dynamic function that distinguishes assigned claims from created claims. With an assigned claim, the adjuster has limited ability to modify the claim data, media, or loss data. A created claim allows the owner/creator to manage all aspects of the claim, with edit functions covering the claim's data, media, estimate, loss forms, loss data, etc. The system can function as an integrated insurance and insurance claims system for providers, insurance adjuster firms, or adjusters, in which insurance claims are digitally provided over the network to all parties for processing. The system allows a choice of whether or not to provide total transparency to the insurance company, claims company, adjuster, insured, and, if required, the government in the case of a government-backed NFIP flood claim. The system provides domain security, initiation, and separation so that data is not compromised. Claims can be assigned to a particular adjuster, and can be assigned automatically in specific groups or in a mass intelligent assignment process. The digital device receives assignments through a preprogrammed or manual synchronization. Menus on the adjuster's digital device support workflow and provide a visual or audio status indicator for all claims, such as new, in process, waiting for follow-up, completed, or waiting for approval. This allows the transaction to proceed through the use of fill-in prompts, voice prompts, and tone prompts for data and media collected at the site of the loss, or when capturing media of the insured individual's home contents, such as furniture or valuables, with labels indicating value, age, and condition and with a summed total value.

[0041] The real-time GNSS (e.g., GPS) and media capture system plays a role both by being part of the media and by indicating the current location of the adjuster doing the work. The GPS allows anyone having access to the system to view the time, location, and travel path of collected media to determine the real-time adjuster location, time on job, time spent doing the damage assessment, and time to complete the overall damage inspection and estimate. The system features allow adjusters and insured parties to schedule the damage inspection and to communicate and make appointments electronically or through other means, such as the internet, a mobile app interface, text, or email, with confirmations sent to confirm the time, when the adjuster is en route, and when the adjuster is on-site and ready to begin the property adjustment. Other technologies, including certified real-time video and certified streaming video with GPS and other attributes called out in this document, will also be part of the video, whether in one-way or two-way real-time video communication. The system will additionally accept Apple's FaceTime, Skype, or any commercial video conferencing or internet meeting system, such as GoToMeeting, as a usable plug-in to make the video or computer connection for real-time customer communications. Certified digital photos, video, and audio can be captured, shared, transferred, and stored between the parties during a real-time video teleconference.
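
The travel path and time-on-job figures mentioned above follow directly from the timestamps and locations attached to each capture. A minimal sketch, assuming each capture is reduced to a timestamp plus latitude and longitude; the `Capture` type and both function names are hypothetical.

```python
from datetime import datetime, timedelta
from typing import List, NamedTuple, Sequence, Tuple


class Capture(NamedTuple):
    """Hypothetical capture record: when and where a media asset was taken."""
    timestamp: datetime
    latitude: float
    longitude: float


def travel_path(captures: Sequence[Capture]) -> List[Tuple[float, float]]:
    """Connect capture locations in order of capture time."""
    ordered = sorted(captures, key=lambda c: c.timestamp)
    return [(c.latitude, c.longitude) for c in ordered]


def time_on_job(captures: Sequence[Capture]) -> timedelta:
    """Elapsed time between the earliest and the latest capture."""
    if not captures:
        return timedelta(0)
    times = [c.timestamp for c in captures]
    return max(times) - min(times)


captures = [
    Capture(datetime(2021, 12, 20, 10, 45), 36.1702, -115.1401),
    Capture(datetime(2021, 12, 20, 10, 0), 36.1700, -115.1400),
    Capture(datetime(2021, 12, 20, 10, 20), 36.1701, -115.1403),
]
path = travel_path(captures)
elapsed = time_on_job(captures)
```

Sorting by timestamp rather than by arrival order matters because media may reach the cloud out of sequence during synchronization.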

[0042] Further, the digital device, using the application code, reviews an insurance claim assigned to the claims adjuster on the digital device. There are certain steps required to adjust property for an insurance claim of any kind. The system will perform the actions required for the selected type of insurance claim. The digital device is capable of capturing 3D media with centimeter-accurate GPS location, documenting the property loss/damage, recording that data, and integrating all of it into a report for insurance claim validation and proof of the loss, which supports payment by the carrier.

[0043] Claims are maintained in a repository with all communications, claim records, documents, media, GPS data, initial notice of loss communication notes, engineering reports, policy data, advance payment records, property data, prior property loss records, contents, audit records, and required business processes. The system completes multiple claims assignments and produces the documents for different insurance carriers simultaneously using hierarchical domain computer software and password controls with a super management user, with all data synchronized between the mobile device and the cloud system. The system allows hundreds of mobile device users to be connected to various different domains simultaneously, transmitting and syncing between the digital device and the cloud system. The system will accept digital images, audio recordings, video in multiple resolutions, digital policy information, word processor documents, spreadsheets, etc. The system has multiple drop-down menus and drop-down lists, including drop-downs that create a secondary drop-down list, as cited in the tracking features shown in the displayed layer section. The system also allows the user to select various measurement features, such as conversion from fractions to decimals, from feet to yards, etc. Measurements can be made using media-embedded software solutions that measure within the media or process outside of the media. The measurement system can also utilize the digital device's onboard GPS system integrated with the media system to complete measurement of items in the digital media. The user can create 3D reference points in the media and, with a finger or user tools, fix a point in the image and draw lines, circles, squares, reference points, and boxes to create measurements. The user can also use integrated measurement tools to calculate the area, length, width, volume, square footage, etc., of the damage along with the replacement values. This data is associated with the damage estimation of the loss, whereby the loss damage report information is integrated, completed, stored, and delivered.
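
The measurement features described above (fraction-to-decimal conversion, feet-to-yards conversion, and area calculation from user-placed points) can be sketched as follows. The sketch assumes the reference points have already been projected onto a flat plane with coordinates in feet, and it uses the shoelace formula for area; the function names are hypothetical and the disclosure does not specify a particular formula.

```python
from fractions import Fraction
from typing import Sequence, Tuple


def fraction_to_decimal(text: str) -> float:
    """Convert a measurement such as '3 1/2' or '5/8' to a decimal value."""
    # Each whitespace-separated part is a whole number or a fraction.
    return float(sum(Fraction(part) for part in text.split()))


def feet_to_yards(feet: float) -> float:
    """Convert feet to yards (3 feet per yard)."""
    return feet / 3.0


def polygon_area(points: Sequence[Tuple[float, float]]) -> float:
    """Area of a simple polygon from its corner points (shoelace formula)."""
    total = 0.0
    count = len(points)
    for i in range(count):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % count]  # wrap around to close the outline
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


# A 4 ft by 3 ft damaged area outlined by four reference points.
damaged_area_sq_ft = polygon_area([(0, 0), (4, 0), (4, 3), (0, 3)])
```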

[0044] There is a method for validating and authenticating the user of the portable device based on the registration and/or profile information of the user by the system management administration process and user.

[0045] The system's mobile application code (the uploaded Incident Media Capture System) supports incident establishment and initiation, including incident titles, incident categorization, insertion of comments, storage, and transmission, and provides for synchronization from the mobile device to the cloud and the cloud web portal, and back to the mobile devices. All incidents, incident media, and associated data (i.e., titles, categories, comments, etc.) are synchronized end to end, together with the group of media captured for each incident using the user-selectable, on-screen choice of multiple media capture sources.

[0046] FIG. 3 illustrates stacked interfaces corresponding to an incident and incident media capture process.

[0047] The user logs in to the mobile device 105 via interface 305, can create a new incident via "new+" button 310, and can save this incident via "save" button 315.

[0048] The user then inputs a title, category, and comments for the incident via interface 320. The user can add photographic media via a photo button 325. The camera icon button expands to open the camera screen and expose operator-selectable controls and features, including mode selection among photo, video, and audio. Additionally, photo capture features and operational modes such as groups, general, standard, new, and reports are user selectable and provide media location. A voice-operated camera trigger is included as an additional mode for hands-free capture, using voice activation to capture photo, video, and audio.

[0049] Once the incident is created via the interface 320 and the user saves via save button 325, an incident view interface 330 is opened, showing an incident media list 340 with a number of incident media assets (here, four), including the media thumbnails, titles, groups, and notes corresponding to each media asset. These are certified media assets, meaning they passed through the verification/certification process after being selected by the user and before being associated with the incident media list 340 of the incident viewed via incident view interface 330. The incident view interface 330 also includes a search bar 335, which can be used to search for a particular incident, group, word, or media asset in the incident media list 340. Alternately or additionally, the search bar 335 can be used to search for a different incident.
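
The search bar behavior described above can be pictured as a case-insensitive filter over the titles, groups, and notes in the incident media list. This is a minimal sketch; the function name `search_media` and the dictionary keys are hypothetical.

```python
from typing import Dict, List


def search_media(media_list: List[Dict[str, str]], query: str) -> List[Dict[str, str]]:
    """Return the media items whose title, group, or note contains the query."""
    needle = query.strip().lower()
    if not needle:
        # An empty search shows the whole list.
        return list(media_list)
    return [
        item
        for item in media_list
        if any(
            needle in item.get(key, "").lower()
            for key in ("title", "group", "note")
        )
    ]


incident_media = [
    {"title": "Front door", "group": "front property", "note": "cracked frame"},
    {"title": "Roof", "group": "exterior", "note": "hail damage"},
    {"title": "Garage", "group": "front property", "note": ""},
]
front_items = search_media(incident_media, "Front")
```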

[0050] Users capture media in each incident along with recorded material about that incident, with scripted aspects regarding each media asset. Each media asset is certified internally by the application code at the instant of acquisition and carries the user-inserted media title, media group, media notes/description, and other media attributes, all electronically integrated.

[0051] The system maintains a continuous media chain of custody. The chain begins the instant the on-screen camera media trigger or the selectable voice-activated capture is activated and media is captured (the system has a separate on-screen camera trigger control because it has a separate camera controller). It continues throughout the entire transmission process and for the total time the certified media and associated data are preserved in the cloud and web portal system.
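One common way to make later alteration of a media asset detectable is to compute a cryptographic digest over the media and its metadata at capture time and re-check it afterwards. The sketch below assumes that approach purely for illustration; this passage does not specify the certification mechanism, and the function names `certify` and `verify` and the sample metadata are hypothetical.

```python
import hashlib
import json


def certify(media_bytes: bytes, metadata: dict) -> str:
    """Return a SHA-256 digest binding the media bytes to their metadata."""
    # Serialize metadata deterministically so equal dicts hash identically.
    canonical = json.dumps(metadata, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256()
    # A length prefix keeps the media/metadata boundary unambiguous.
    digest.update(len(media_bytes).to_bytes(8, "big"))
    digest.update(media_bytes)
    digest.update(canonical.encode("utf-8"))
    return digest.hexdigest()


def verify(media_bytes: bytes, metadata: dict, expected: str) -> bool:
    """Recompute the digest and compare it with the one stored at capture."""
    return certify(media_bytes, metadata) == expected


# Illustrative values only.
stamp = certify(b"raw image bytes", {"title": "Roof, north side"})
```

Any change to either the media bytes or the metadata yields a different digest, which is what lets a stored record be checked at each later point in the chain of custody.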

[0052] FIG. 4 is a flow diagram illustrating procedures for creating a new incident record and for editing an existing incident record.

[0053] At step 405, the user logs in to the mobile device 105.

[0054] At step 410, the user selects "Create New Incident." New incident creation provides a number of possible processes at step 415, including adding incident title, adding emoji/icons to incident title, selecting/adding incident category, selecting/adding incident comment, searching incidents by title, synchronizing all incidents, adding MM/DD/YYYY date, adding timestamp and time zone (e.g., 11:22:45 PM Pacific Time), scrolling incident menu, and viewing application menu (home, synch, etc.).

[0055] At step 420, the user adds media to the incident. Adding media provides a number of possible processes, including selecting media type (e.g., photo, audio, video), selecting camera (e.g., photo, audio, video (including various selectable media resolutions and flash)), selecting subject then trigger, grouping, camera, trigger est. group, media transmission indicator, media audible alert transmission, selecting photo, selecting media selector, adding media title, caption, legend, annotate media (select tool), validating media, adding notes to media, adding media groups, archiving, searching, group selector, group list view, initiating group media, capturing initial group media, and group keyboard/voice to text.

[0056] At step 425, additional context can be added to the media, including incident, media, data management, forms; population of forms and reports; estimation, property data, assigned claims, appointments, location; contact lists, calendar, diary and secure electronic signatures with date and time along with printed name inserted into certain forms, title etc.

[0057] At step 430, the user selects "Edit Incident." Incident editing provides a number of possible processes at step 435, including editing the incident title, editing the incident emoji/icons, editing the incident category, editing the comment/note, searching the incident title, validating a media asset (the user selects the square with an up arrow located in the top left of the screen, whereupon a validation screen appears and indicates certified or non-certified status once the validation process is initiated), editing, adding an identifier, synching the edited incidents, and archiving the incident in the web portal.

[0058] At step 440, the user edits media for the incident. Editing media provides a number of possible processes, including editing incident media title, editing incident media group, search, selecting "enter a new group," selecting from group list view, editing text via keyboard/voice to text, editing media notes, selecting and moving media from one group to another, and archiving the media (e.g., in the web portal).

[0059] At step 445, additional functionality is listed, including mobile application, tracking system, synchronization, third party data interface, sketches, drawings, photos, video, audio, and other media.

[0060] FIG. 5A illustrates a user interface for creating or editing a title, category, or comment for an entire incident.

[0061] The user interface of FIG. 5A is an example of the interface 320 of FIG. 3 and of the process operations 415 and 435 of FIG. 4. In particular, while the interface of FIG. 5A is an "edit incident" interface as in step 435 of FIG. 4, it should be understood that a "create new incident" interface as in step 415 of FIG. 4 may look the same or similar.

[0062] In particular, the interface of FIG. 5A includes a title input form 505, which is filled out with a boat icon/emoji as well as the text "May 16-26 Alaska Inside Passage." The interface of FIG. 5A also includes a category input form 510, which is filled out with the text "Recreation." The interface of FIG. 5A also includes a comment input form 515, which is filled out with the text "Grand Princess boarded Pier 27, San Francisco, Alaska Inside Passage; San Francisco, Ketchikan, Juneau, Skagway, Mendenhall Glacier, Tracy Arm Fjords, Vancouver, SFO."

[0063] FIG. 5B illustrates a user interface for viewing and searching through incident details for an entire incident or for any of a set of incident media items corresponding to the incident.

[0064] The user interface of FIG. 5B is an example of the interface 330 of FIG. 3 and of the process operations 415/425 and 435/440 of FIG. 4. The interface of FIG. 5B includes incident details 520, which include the incident title 505, incident category 510, and incident comments 515 from FIG. 5A. The incident details 520 also include the search bar 335 described in FIG. 3.

[0065] The interface of FIG. 5B also includes incident media details 525 for each listed media asset, including a media title 530, a media group 535 (all of the media assets visible in FIG. 5B are in the "SFO Arrival" group), and media descriptions/notes 540.

[0066] FIG. 5C illustrates a user interface for editing incident details for an entire incident or for any of a set of incident media items corresponding to the incident.

[0067] The user interface of FIG. 5C is the user interface of FIG. 5B, but with certain editing functions activated. That is, one of the media items identifying a media asset and its corresponding incident media details 525 (title 530, group 535, description/notes 540) are swiped to the left via the touchscreen of the mobile device 105 to reveal four buttons for editing the incident media 440. These four buttons read "edit group" for editing the group 535, "edit notes" for editing the description/notes 540, "edit title" for editing the title 530, and "archive" for archiving the media asset.

[0068] An "edit incident" button 435 is also present, which when pressed, takes the user back to the interface of FIG. 5A, allowing the user to edit the incident details 520.

[0069] FIG. 6 illustrates a topographical map tracking locations, directions, altitudes, sensor data, and directional data corresponding to media captured at different points along a route.

[0070] In particular, a path 605 is illustrated overlaid over the map 600 of FIG. 6. The path 605 includes a first location 610 associated with a timestamp reading 12:01:01 PM, a second location 615 associated with a timestamp reading 12:05:16 PM, a third location 620 associated with a timestamp reading 12:09:32 PM, a fourth location 625 associated with a timestamp reading 12:11:56 PM, a fifth location 630 associated with a timestamp reading 12:12:15 PM, and a sixth location 635 associated with a timestamp reading 12:14:27 PM.

[0071] The path 605 represents a path of travel of a media capture device through a mountainous region, the media capture device capturing various media assets at each location along the path. In particular, each location includes information overlaid over the map 600 representing media captured by the media capture device at that location, in particular a box with a photographic image captured by the media capture device at that location, a strip of video frames of a video captured by the media capture device at that location, or a combination of the two. A number of icons are also illustrated beside each location, the icons including a compass representing a direction that the media capture device is facing (and/or a compass reading of a compass of the media capture device), an altitude indicator indicating altitude or elevation as captured by an altimeter of the media capture device, a location indicator indicating GNSS geolocation or other geolocation data captured via one or more positioning receivers of the media capture device, a metadata icon representing image and video metadata captured by the media capture device, a timer icon representing a time of capture and/or a duration of capture (e.g., for a video), a lens icon indicating media capture settings (e.g., aperture, ISO, exposure time, gain), a certification icon indicating certification of the media and other data collected by the media capture device as discussed with respect to FIG. 13, a cloud icon reflecting synchronization with servers 115 and/or other user computing devices 120 as discussed with respect to FIG. 1 and FIG. 2, a map icon reflecting map data that can optionally be pulled from internet-accessible map information databases or other sources and optionally including satellite or aerial or street-view photography from such sources, and a tri-arrow and angle icon representing pitch and roll and yaw captured by the media capture device.

[0072] The media capture system maintains collected media and associated media data if operated off grid, outside cellular or Wi-Fi service areas. Once a signal is detected, the media capture system automatically (or by manual process) uploads media and associated information to the cloud. If any data issues are indicated, the upload signal is shown. The user can, at any time, complete a system synchronization, and all data will be synchronized across both the cloud and the digital device, within or outside a domain structure. This includes (a) the INCIDENT title, category, and comments/notes, and (b) the MEDIA title, group, group title description, location, time, date, heading, altitude, GPS, certification, cloud confirmation, metadata, device type, device model, and device user. The media capture system tracks information regarding each media asset, including the start (initiation) time, location, look angle, orientation, compass heading, stop time, movement time to the next media capture, the next media capture, velocity/acceleration, and time from the last capture to the latest capture, and continues tracking data until the final capture is completed. Further, it generates a map of the data collected and stores both the data and the map in the system. All of this data is also transmitted to the cloud and synchronized during the collection process. Using RTK signals from suppliers of such signals, and from others who generate corrections to standard GPS signals, the media capture system can also utilize miniature GPS receivers to produce precise, centimeter-accuracy positions that are incorporated into the media metadata anywhere on the earth, underwater, or in space. This accurate media position data is collected and transmitted within the system to the cloud for more precise media tracking and precise media elevation reporting. The map 600 of FIG. 6 is an example of a topography map with a path 605 having a starting point 610. 
A media capture device is shown at point 610 at a time, location, pointing angle of the lens, elevation, compass heading, and 3-axis orientation of the lens, capturing a media asset. The system then moves to point 615. From point 610 to point 615, the time, velocity, elevation, acceleration, compass heading, lens look angle, map location, metadata, and media certification are all processed to determine the outcome and transmitted to the cloud. The system then continues on to 620, 625, 630, and on to 635, which is the end of the path 605. The system can be integrated directly as a stand-alone digital device or into a standard digital camera, 3D camera, any type of autonomous vehicle camera system, auto/truck dashcam, police body camera, aircraft portable or fixed camera system, UAV camera, UAV dashcam, helicopter, boat, motorcycle, or drone, can be attached to a delivery driver such as for Amazon, FedEx, United Parcel Post, or the US Post Office, or can be integrated into Uber or Lyft dash camera systems.
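The off-grid behavior described above (hold captures locally, then upload once a signal is detected) can be sketched as a first-in, first-out queue. This is a minimal sketch under stated assumptions: the `PendingUploads` class, its method names, and the `upload` callback are hypothetical, and the callback is assumed to return True when the cloud confirms receipt.

```python
from collections import deque


class PendingUploads:
    """Hypothetical local store for captures made without connectivity."""

    def __init__(self):
        self._queue = deque()

    def capture(self, record):
        """Keep a new capture locally until it can be uploaded."""
        self._queue.append(record)

    def sync(self, has_signal, upload):
        """Upload queued records in capture order; return how many were sent.

        `upload` is an assumed callback returning True on confirmed receipt.
        A record leaves the queue only after the upload is confirmed.
        """
        if not has_signal:
            return 0
        sent = 0
        while self._queue:
            if not upload(self._queue[0]):
                break  # keep this record and the rest for the next attempt
            self._queue.popleft()
            sent += 1
        return sent

    def __len__(self):
        return len(self._queue)
```

Removing a record only after confirmation means an interrupted upload leaves the data on the device, which matches the requirement that collected media be maintained while off grid.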

[0073] FIG. 7 is a table illustrating metadata for three digital media assets captured at different times.

[0074] In particular, FIG. 7 is an example of metadata that can be captured by the media capture device alongside capture of media assets, for example along a path such as the path 605 of FIG. 6. The metadata may include timestamp and location data associated with each media asset (i.e., each image, video, and/or audio recording captured by the media capture device) as illustrated in FIG. 6. The metadata for each location, timestamp, and media asset may also include additional information, such as the information identified in the table 700 of FIG. 7, including Media identifier (ID) 705 of the media asset, media asset Title 710, Group 715 that the media asset is in (and optionally title of the group), Location 725 (optionally including elevation), orientation 730 (including roll, pitch, and/or yaw), direction (of movement or of capture) and optionally acceleration in the direction (or in any other direction) 735, distance and/or time and/or elevation and/or direction since last capture 740, user identifier (ID) 745, device model and type 750, angle that the media capture device is facing 755 (in degrees from a defined direction such as north or east or west or south) during capture of the media asset, media type 760 (e.g., image, 3D, video, audio, RADAR, LIDAR, SONAR/SODAR), a distance and/or bearing to a target object that is depicted in the media asset 765, order of location within the set of locations in the path 770, and additional mapping data 775 such as digital elevation model mapping. Additional metadata may also include Direction Traveled and Dwell time at a particular point. The metadata illustrated in the table 700 that relates to motion, direction, and orientation may be determined using one or more inertial measurement units (IMUs), one or more accelerometers, one or more gyroscopes, one or more positioning receivers (GNSS and/or otherwise), or some combination thereof. 
Metadata may then be incorporated into charts or graphs, reports and may be overlaid over maps such as the map 600 of FIG. 6.
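A per-asset metadata row like those in the table 700 can be modeled as a simple record type. The sketch below is illustrative only: the `MediaMetadata` class and its field names are hypothetical, it covers only a subset of the columns listed above, and the sample values are placeholders except for the media ID and coordinates, which are the ones quoted for media asset 915 of FIG. 9.

```python
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class MediaMetadata:
    """Hypothetical record covering a subset of the table 700 columns."""
    media_id: str                  # Media identifier (ID) 705
    title: str                     # Title 710
    group: str                     # Group 715
    latitude: float                # Location 725
    longitude: float
    elevation_ft: Optional[float]  # elevation is optional in Location 725
    roll_deg: float                # orientation 730
    pitch_deg: float
    yaw_deg: float
    user_id: str                   # user identifier (ID) 745
    device_model: str              # device model and type 750
    facing_deg: float              # facing angle 755
    media_type: str                # media type 760
    path_order: int                # order within the path 770

    def to_row(self) -> dict:
        """Flatten the record for a table, chart, or report."""
        return asdict(self)


record = MediaMetadata(
    media_id="MDI2016467029", title="Example title", group="Example group",
    latitude=37.65127716, longitude=-121.85468246, elevation_ft=None,
    roll_deg=0.0, pitch_deg=0.0, yaw_deg=65.0,
    user_id="user-1", device_model="example-device",
    facing_deg=65.0, media_type="image", path_order=1,
)
row = record.to_row()
```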

[0075] FIG. 8 illustrates application of directional and location tracking alongside media capture using sensors on or connected to the mobile device and algorithms run on the mobile device and/or on a cloud server.

[0076] In particular, FIG. 8 is an example of path 800 of a media capture device from a start point 802 to an end point 828, with media captured at various times and locations along that path. The media capture device captures a media asset at location 802 at 65 degrees at 9:25:10 AM (the start point), then moves to location 804 and captures media at 60 degrees at 9:25:14 AM, then captures a media asset at location 806 at 330 degrees at 9:25:16 AM, with these three captures (802, 804, 806) being in a first group 830. The media capture device then captures a media asset at location 808 at 280 degrees at 9:25:23 AM, then moves to location 810 and captures media at 330 degrees at 9:25:33 AM, then captures a media asset at location 812 at 240 degrees at 9:25:45 AM, with these three captures (808, 810, 812) being in a second group 832. The media capture device then captures a media asset at location 814 at 165 degrees at 9:26:10 AM, then moves to location 816 and captures media at 150 degrees at 9:26:20 AM, then captures a media asset at location 816 at 135 degrees at 9:26:30 AM, then captures a media asset at location 818 at 120 degrees at 9:26:40 AM, with these four captures (814, 816, 816, 818) being in a third group 834. The media capture device then captures a media asset at location 820 at 80 degrees at 9:27:12 AM, then moves to location 822 and captures media at 90 degrees at 9:27:30 AM, then captures a media asset at location 824 at 90 degrees at 9:28:09 AM, with these three captures (820, 822, 824) being in a fourth group 836. The media capture device then captures a media asset at location 826 at 225 degrees at 9:28:52 AM, then moves to location 828 and captures a final media asset of the path 800 at 185 degrees at 9:29:03 AM, with these two captures (826, 828) being in a fifth group 838. The path 800 is illustrated from a top-down view, with the locations plotted along latitude and longitude, with a compass 860 showing relative direction within the path 800. 
Direction of the media capture device during capture of each media asset (e.g., image, video, audio) is also captured and shown along the path 800 via the arrow and field of view indicator extending from the circle.
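The ordering and grouping of captures along the path 800 can be sketched as a sort by timestamp followed by grouping of consecutive captures. The sample data reuses the location numerals, timestamps, facing angles, and group numerals given above for the first two groups; the tuple layout and the function names `parse_time`, `build_path`, and `group_path` are assumptions made for illustration.

```python
from datetime import datetime
from itertools import groupby

# (location numeral, capture time, facing angle in degrees, group numeral),
# deliberately listed out of order to show the sort.
captures = [
    (808, "9:25:23 AM", 280, 832),
    (802, "9:25:10 AM", 65, 830),
    (812, "9:25:45 AM", 240, 832),
    (806, "9:25:16 AM", 330, 830),
    (804, "9:25:14 AM", 60, 830),
    (810, "9:25:33 AM", 330, 832),
]


def parse_time(text):
    """Parse a 12-hour clock time such as '9:25:10 AM'."""
    return datetime.strptime(text, "%I:%M:%S %p")


def build_path(items):
    """Order captures by capture time to form the path."""
    return sorted(items, key=lambda c: parse_time(c[1]))


def group_path(path):
    """Collect consecutive captures that share a group numeral."""
    return [
        (group, [capture[0] for capture in members])
        for group, members in groupby(path, key=lambda c: c[3])
    ]


path = build_path(captures)
groups = group_path(path)
```

Because `groupby` only merges adjacent items, a group that the device returns to later in the path would appear as a second entry rather than being merged with its earlier captures.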

[0077] Images representing the media captured at each location and timestamp illustrated along the path 800 (and described above) are shown in callout boxes extending from each location along the path 800. In particular, the locations illustrated in the first group 830 and second group 832 represent locations outside of a building, and the corresponding images are illustrated showing the exterior of the building from different angles representing the view of the building from those locations. The locations illustrated in the third group 834, the fourth group 836, and the fifth group 838 represent locations inside of the building, and the corresponding images are illustrated showing various portions and angles within the interior of the building, with the images of the third group 834 showing a first room (an entrance room), the images of the fourth group 836 showing a second room (a bedroom), and the images of the fifth group 838 showing a third room (a bathroom).

[0078] In some cases, additional data may be collected by the media capture device, such as location, elevation, altitude, velocity, speed, roll, pitch, and yaw. Velocity or speed may alternately be calculated, for example based on the various locations and associated timestamps along the path 800. Distances between the different locations of the path 800 may be determined after or during movement of the media capture device along the path 800. In some uses, a predetermined location may be established. For example, 3D certified images that are collected can be directly inserted into a CAD program to produce a 3D model of the house.
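Calculating speed from two locations and their timestamps can be sketched with the haversine great-circle distance. This is one reasonable choice rather than a method stated in the application: the function names, the spherical-earth radius constant, and the decision to ignore elevation change are all assumptions.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_M = 6_371_000.0  # mean earth radius; spherical approximation


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two latitude/longitude points."""
    phi1, phi2 = radians(lat1), radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = radians(lon2 - lon1)
    a = sin(d_phi / 2) ** 2 + cos(phi1) * cos(phi2) * sin(d_lambda / 2) ** 2
    return 2 * EARTH_RADIUS_M * asin(sqrt(a))


def average_speed_mps(lat1, lon1, t1_s, lat2, lon2, t2_s):
    """Average ground speed between two captures, in meters per second."""
    elapsed = t2_s - t1_s
    if elapsed <= 0:
        raise ValueError("second capture must be later than the first")
    return haversine_m(lat1, lon1, lat2, lon2) / elapsed


# One degree of latitude covered in one hour, as a sanity check.
distance = haversine_m(0.0, 0.0, 1.0, 0.0)
speed = average_speed_mps(0.0, 0.0, 0.0, 1.0, 0.0, 3600.0)
```

Over the short distances typical of an inspection path, GNSS error can exceed the distance moved, so speeds computed this way are best treated as rough estimates.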

[0079] Ultimately, all of the media asset data and related metadata (the location of the media capture device during capture, the timestamp corresponding to time of capture, and the direction that the media capture device is facing during capture) are transmitted to the cloud as discussed with respect to FIG. 1 and FIG. 2, and analyses can be generated. The analysis may include a path such as the path 800 shown in FIG. 8, which may be generated after the media assets and metadata are all captured to align media assets and directional data to the path at the various locations, which are arranged into the path 800 based on the timestamps corresponding to the locations. In some cases, altitude or elevation data (or any other metadata as discussed with respect to the table 700) may be aligned to the path beside a particular location and media asset to which that particular data pertains. In some cases, metadata may be represented using colors along a color spectrum coloring a dot/circle/point/shape/icon representing a particular location. For example, if the metadata represented by color is altitude, red may represent sea level (or another lowest level of altitude in a range), orange may represent a higher altitude, yellow may represent a still higher altitude, green may represent a still higher altitude, turquoise may represent a still higher altitude, blue may represent a still higher altitude, indigo may represent a still higher altitude, and violet may represent a highest altitude in a range. The analysis may also include analyses such as the analyses 900 illustrated in FIG. 9 and included in the report of FIG. 10. Certified media captured from the media capture device, which may be a 3D camera, from known locations/positions can be inserted into CAD software to generate a 3D view of damage to roofs, exteriors, interiors, etc., and converted into insurance reports to document damage along with mapping the location of an inspector traveling around a property.
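The altitude-to-color example can be sketched as a mapping from an altitude range onto the eight named colors, lowest to highest. The color order comes from the paragraph above; the equal-width bands, the clamping of out-of-range values, and the names `SPECTRUM` and `altitude_color` are assumptions.

```python
# Color order from lowest to highest altitude, as listed in the text.
SPECTRUM = ["red", "orange", "yellow", "green",
            "turquoise", "blue", "indigo", "violet"]


def altitude_color(altitude, lowest, highest):
    """Map an altitude to one of eight equal-width color bands."""
    if highest <= lowest:
        # Degenerate range: every point gets the lowest-band color.
        return SPECTRUM[0]
    fraction = (altitude - lowest) / (highest - lowest)
    fraction = min(max(fraction, 0.0), 1.0)  # clamp out-of-range readings
    index = min(int(fraction * len(SPECTRUM)), len(SPECTRUM) - 1)
    return SPECTRUM[index]


# Illustrative range of 0 to 800 units, giving 100-unit bands.
colors = [altitude_color(a, 0, 800) for a in (0, 150, 400, 800)]
```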

[0080] FIG. 9 illustrates an interface with multiple types of media asset and metadata analyses, including mapping of media assets and metadata, focused analyses, and elevation analyses.

[0081] In particular, FIG. 9 provides an interface 900 with three analyses--a map interface 910 that plots multiple media assets and corresponding metadata on a satellite view map, a focused analysis 920 that focuses on a particular media asset and related metadata, and an elevation analysis 930 that plots elevation. The map 910 shows multiple certified media assets captured at different locations that are close to one another, with a particular media asset 915 highlighted with a callout box. The media asset 915 is a photo captured on 7/12/2016 at 11:02:16 AM at latitude 37.65127716 and longitude -121.85468246, with a media asset ID of MDI2016467029. An address in the corner of the map 910 identifies that the map 910 is centered around 341 Dove Drive, 94566 USA.

[0082] A focused analysis 920 focuses on a particular location or area with an address of 341 Dove Drive, 94566 USA that is a target of one or more media assets captured around a particular location and time. Metadata is presented in the focus analysis 920, including a job number (416812) associated with capture of the media assets, a GNSS point associated with a center of the property (GPS address property center point), an identifier of a closest fiduciary survey marker (Bingo 38), an identifier of a nearest cellular tower (87654 at bearing 330 degrees), a nearest cross street (Dove Drive and Grant Court), and a nearest Wi-Fi source (network Xfinity 663). A mini-map is also illustrated showing the relative positions of a cellular antenna 1.812 miles away at 286 degrees, a fiduciary survey point 1.765 miles away at 330 degrees, a nearby fault line that is 2.3 miles away at 120 degrees, and a nearby river water hazard that is 2.8 miles away at 245 degrees.

[0083] The elevation analysis 930 illustrates elevations at which various media assets were captured relative to a base elevation of 620 feet. The media assets are arranged from left to right in the order they were captured. The media assets include a first media asset 942 captured at elevation 619 feet and 0.5 inches with the media capture device facing an angle of 65 degrees, a second media asset 944 captured 360 degrees away from the first media asset 942 at elevation 616 feet with the media capture device facing an angle of 60 degrees, a third media asset 946 captured 10 degrees away from the second media asset 944 at elevation 619 feet with the media capture device facing an angle of 120 degrees, a fourth media asset 948 captured 50 degrees away from the third media asset 946 at elevation 618 feet with the media capture device facing an angle of 165 degrees, a fifth media asset 950 captured 88 degrees away from the fourth media asset 948 at elevation 618 feet and 30 inches with the media capture device facing an angle of 120 degrees, a sixth media asset 952 captured 91 degrees away from the fifth media asset 950 at elevation 618 feet and 10 inches with the media capture device facing an angle of 210 degrees, a seventh media asset 954 captured 115 degrees away from the sixth media asset 952 at elevation 619 feet with the media capture device facing an angle of 240 degrees, and a final eighth media asset 956 captured 180 degrees away from the seventh media asset 954 at elevation 618 feet and 10 inches with the media capture device facing an angle of 0 degrees. The GPS property center lies along the path between the fourth media asset 948 and the fifth media asset 950. The distance covered between each location corresponding to each media asset is denoted with a rightward arrow regardless of direction along a latitude-longitude plane (or globe), with direction along a latitude-longitude plane (or globe) denoted instead by the angle written above the arrow. 
The relative distances between the locations may be denoted by the relative lengths of these arrows, so that longer arrows denote further distances between locations while shorter arrows denote shorter distances between locations. Alternately, the relative times between capture of each media asset may be denoted by the relative lengths of these arrows, so that longer arrows denote more time elapsed between capture of two media assets while shorter arrows denote less time elapsed between capture of two media assets. In some cases, the distances and/or timestamps may be included in the elevation analysis 930.
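Two pieces of arithmetic behind the elevation analysis 930 can be sketched briefly: the offset of a capture elevation from the 620-foot base, and arrow lengths scaled to the relative distance (or elapsed time) between captures. The function names, the 100-unit maximum arrow length, and the sample distances are assumptions for illustration.

```python
def elevation_offset_ft(feet, inches=0.0, base_ft=620.0):
    """Elevation relative to the base elevation, in feet."""
    return feet + inches / 12.0 - base_ft


def arrow_lengths(values, max_length=100.0):
    """Scale distances or elapsed times so the largest gets max_length."""
    if not values:
        return []
    longest = max(values)
    if longest <= 0:
        return [0.0 for _ in values]
    return [max_length * value / longest for value in values]


# First media asset 942: 619 feet and 0.5 inches against the 620-foot base.
first_offset = elevation_offset_ft(619, 0.5)
# Hypothetical distances between consecutive captures.
lengths = arrow_lengths([10, 20, 5])
```

The same `arrow_lengths` function covers both alternatives in the text, since it only needs one non-negative number per segment, whether that number is a distance or a time difference.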

[0084] FIG. 10 illustrates an incident report generated using the analyses of FIG. 9.

[0085] The incident report 1000 includes the map analysis 910, focus analysis 920, and elevation analysis 930 of FIG. 9 as well as a media mapping path 1010, a metadata table 700, an incident description 1020, a second area map 1030, and a street view image 1040. The incident description 1020 may include, for example, incident titles, categories, descriptions, and dates and times of the incident and/or of when the report was automatically generated by the cloud server system. The incident description 1020 also identifies multiple media assets relevant to the incident and corresponding incident media titles, groups, dates of capture, timestamps of capture, authors, locations, certification statuses, media identifiers, electronic signatures and notes.

[0086] Reports may be automatically generated to include media sheets--that is, grids, lists, or other arrangements of media assets and related data. Where the media is entirely photos, these may be photo sheets. Where the media is entirely videos, these may be video sheets, for example with multiple frames of the video displayed.
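Arranging media assets into a grid for a media sheet can be sketched as splitting an ordered list into fixed-width rows. The function name `media_sheet`, the column count, and the sample file names are hypothetical.

```python
def media_sheet(assets, columns):
    """Split an ordered list of assets into rows of at most `columns` items."""
    if columns < 1:
        raise ValueError("columns must be at least 1")
    return [assets[i:i + columns] for i in range(0, len(assets), columns)]


# Five illustrative photo names laid out two per row.
sheet = media_sheet(["p1.jpg", "p2.jpg", "p3.jpg", "p4.jpg", "p5.jpg"], 2)
```

For a video sheet, the same layout could be applied to a list of frames sampled from a single video.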

[0087] This new capability provides the technology and associated software to create accurate ground truth waypoints in real time, with accuracy and detail never before accomplished. The application uses dead reckoning algorithms to determine a position 2 location relative to a position 1 location from real-time 6-axis measurements, including dwell times.
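The basic dead reckoning step, estimating a second position from a first position plus heading, speed, and elapsed time, can be sketched in a local flat east/north frame. This is a simplified illustration and not the application's 6-axis algorithm: the function name, the flat-ground assumption, and the convention that heading is measured clockwise from north are assumptions.

```python
import math


def dead_reckon(east_m, north_m, heading_deg, speed_mps, elapsed_s):
    """Estimate the next position in a local east/north frame, in meters.

    Heading is measured clockwise from north (0 = north, 90 = east).
    Time spent dwelling at a point contributes no movement, so it should
    be excluded from elapsed_s.
    """
    distance = speed_mps * elapsed_s
    heading = math.radians(heading_deg)
    return (east_m + distance * math.sin(heading),
            north_m + distance * math.cos(heading))


# Moving due east at 2 m/s for 10 s from the origin.
position_2 = dead_reckon(0.0, 0.0, 90.0, 2.0, 10.0)
```

Errors accumulate with every step in dead reckoning, so a practical system would periodically correct the estimate against a GNSS fix when one is available.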

[0088] Machine and user insertions into the metadata regarding the concurrently captured media details and facts about each media asset are also processed in the digital media capture device and autoloaded at the time of capture. The media information data is editable upon capture and later editable by numerous means, via voice or keyboard, including attributes such as Incident Title, Incident Category, and Comments about an Incident. Upon saving the Incident, using the media capture system to capture images, video, or audio into the system, and saving the Incident again, the Incident detail page appears. This page already contains the previously inserted Incident title, category, and comments, and it shows a Group search bar along with the media thumbnails of the Incident's captured media. If the group system is utilized, each media asset is shown in its individual identified group and can be searched by using the group search function. Each thumbnail contains the individual media Search, Title, Group Identifier, and Media Notes, along with other icons showing cloud receipt, time, date, and time zone, and a selectable map tool that, when selected, brings up a map of the media asset capture location and other metadata information. Media can also be transferred from group to group using the "edit group" feature. Notes may be edited by using the "edit notes" feature. Titles can be edited by using the "edit title" feature.

[0089] The identical information is synchronized between the mobile device and the cloud website and vice versa. It is available to the user after logging into the secure website. A similar set of tools are available in the web portal to identify each incident and each media associated with each incident. Here is a list of some of those attributes. Each Incident includes a unique Incident ID number (generated by the mobile application code), Incident number (generated by the mobile application code), Title, Domain, Category, Location, Description, Media, Certification, Authentication, Validity, icon with metadata indicating Certification, Authentication and Validity, Media icons for photo, video, audio, Assigned Group, assigned to, Priority, Status, Media Qty, Reports, Create On, Create By, Archived, Action; Edit incident, Open Quick view, Open Storyboard, GPS location, time, date, Plot Geo Tags, Mapping Tools, Report Development System, Search, Archive Incident, icons for mapping media, storyboard access, other actions, such as edit, dashboard, participants; personnel, subjects, contacts, management, configuration, members, audit, system reports, remote mobile application settings for download, revisions, controlling features, media resolution, accesses, default settings as example, setting selectable categories on mobile device and security parameters for the certification system.

[0090] Interface screens on the digital device provide user information integrated with work product and reports, including, to name a few, mapping, GPS, and media, along with interactive control features (pop-up menus, slide-up menus, slide-across menus, or grey areas) that highlight editable text for visual effect in both the camera system and the information display menus. Additionally, an insurance claim documenting process can be performed using the initial claim information, including the claim number, the insurance policy number, the adjuster firm procedure process, assignment of claims, and the processing of the claim by an adjuster who uses a digital device to create the loss information. This can be done by a menu structure, by filling in blanks, or by audio prompting using a user selection, inserted information, a tone, or a voice. Every item will be collected or driven by the mobile computer application and immediately transmitted to the cloud and received on the other side of the cloud. This data can then be used to write formal reports, estimates, drawings, and sketches and to complete an insurance claim, or to virtually desk adjust the claim using the certified media, the loss report generated by the mobile device with the media, adjuster credentials, and other forms required to satisfy the insurance carrier's requirements to pay funds to the insured for their loss. Various screens also include metadata layers, such as an image layer in which touching the screen brings up a map. Touching an image can also play a voice note for that image: the voice describes the title, notes, group, and media description of the image, whether the image is located on the mobile device or on the cloud-connected computer. Each image can play back its image number, title, group, claim number, insurer, policy number, notes, description, and location, and has a volume control and a feature for sending the image to others.

[0091] Machine and user insertion into the metadata regarding the concurrent captured media details and facts about each media are also processed in the digital device and autoloaded at the time of capture. The media information data is editable upon capture and later editable by numerous means of media attributes via voice or keyboard; such as Incident Title, Incident Category, Comments about an Incident. Upon saving the Incident and using the media capture system for capturing images, video or audio into the system, and saving the Incident, the Incident detail page which already contains the previously inserted Incident title, category and comments appears and shows a Group search bar along with the media thumbnails of the Incident captured media. If the group system is utilized, each media is shown in its individual identified group, and can be searched by using the group search function. Each thumbnail contains the individual media Search, Title, Group Identifier, and Media Notes along with other icons showing cloud receipt, time date, time zone, and selectable map tool when selected brings up a map and maps its media location. Media can also be transferred from group to group using the "edit group" feature. Notes may be edited by using the "edit notes" feature. Titles can be edited by using "edit title" feature.

[0092] The identical information is synchronized between the mobile device and the cloud website, and is available to the user after logging into the secure website. A similar set of tools is available in the web portal to identify each incident and each media item associated with it. Some of the attributes are listed here. Each Incident includes: a unique Incident identifier (ID) number (generated by the mobile application code); an Incident number (generated by the mobile application code); Cloud website Authentication, Verification, Certification System status (the system verifies each media item and marks it accordingly); Title; Domain; Category; Location; Description; Assigned Group; Assigned To; Priority; Status; Media Qty; Media Type; Reports; Create On; Create By; Archived; and Action (Edit Incident, Open Quick View, Open Storyboard, Plot Geo Tags, Archive Incident).
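The incident attributes listed above can be pictured as a simple record type. The following is a minimal sketch only, not the disclosed implementation: the class names `Incident` and `MediaItem`, the field defaults, and the use of a random UUID for the Incident identifier are all assumptions made for illustration (the disclosure states only that the identifier is generated by the mobile application code).

```python
import uuid
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass
class MediaItem:
    """One captured media item attached to an incident (hypothetical shape)."""
    media_id: str
    title: str = ""
    group: str = ""
    notes: str = ""
    certified: bool = False  # would be set by the cloud verification step


@dataclass
class Incident:
    """A subset of the incident attributes named in paragraph [0092]."""
    title: str
    category: str = ""
    description: str = ""
    priority: str = "normal"
    status: str = "open"
    archived: bool = False
    created_by: str = ""
    # Assumption: a random UUID stands in for the app-generated identifier.
    incident_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    created_on: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )
    media: List[MediaItem] = field(default_factory=list)

    @property
    def media_qty(self) -> int:
        """Number of media items currently attached to the incident."""
        return len(self.media)

    def media_in_group(self, group: str) -> List[MediaItem]:
        """Return the media items whose group identifier matches exactly."""
        return [m for m in self.media if m.group == group]
```

Under these assumptions, an incident titled "May 16-26 Alaska Inside Passage" could hold a `MediaItem` in the group "SFO Arrival", and `media_in_group("SFO Arrival")` would act as a simplified stand-in for the group search function.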

[0093] Interface screens on the digital device present user information integrated with work product and reports, including, to name a few, mapping, GPS, and media, along with interactive control features (pop-up menus, slide-up menus, slide-across menus, or grey areas) that highlight editable text for visual effect in both the camera system and the information display menus. Additionally, an insurance claim documenting process can be performed using the initial claim information, including the claim number, the insurance policy number, the adjuster firm's procedure, the assignment of claims, and the processing of the claim by an adjuster who uses a digital device to create the loss information. This can be done through a menu structure, through fill-in-the-blank fields, or through audio prompting using a tone or a voice. Every item is collected or driven by the mobile computer application, immediately transmitted to the cloud, and received on the other side of the cloud. This data can then be used to write formal reports, estimates, drawings, and sketches and to complete an insurance claim or a virtual claim, or to desk adjust the claim, by using the certified media, the loss report generated by the mobile device with the media, the adjuster credentials, and any other forms required to satisfy the insurance carrier's requirements to pay funds to the insured for their loss. Various screens also include metadata layers, such as an image layer in which touching the screen brings up a map. Touching an image also plays a voice note for that image: the voice describes the title, notes, group, and media description, whether the image is on the mobile device or on the cloud-connected computer. Each image can play back its title, group, notes, and description, and has a volume control and a "send image to other" feature.

[0094] Additional details, such as situational awareness, are displayed on various types of base maps, satellite photos, or computer-generated national, state, city, county, or town maps. These details include the quantity of incidents, the quantity of media per event, the quantity of groups, and the quantity of media in each group. All are appended with the date and with various icons representing time, GPS, media location, orientation, heading, elevation, magnetic north indication, and media watermarks. A digital control unit in the camera provides certification/validation. Also shown are editable individual media titles, categories, and notes; a map; a cloud receipt marker; an in-process status indicator; and an audible/visual transmission verification mark. These are shared through bi-directional communication and synchronization with the cloud portion of the system, whose role covers system management, mobile application management, and electronic digital media organization, control, preservation, processing, storage, and dissemination.

[0095] The system is made up of two major elements tied together with software and bi-directional communication. The first element is a secure mobile application loaded on a digital device capable of media collection and transmission. The second element is a secure web and cloud ecosystem that communicates bi-directionally with the mobile digital system and preserves, processes, stores, reports, and provides secure access to users. The system can include an unlimited number of mobile devices and users, together with a secure, interactive cloud data system that communicates in real time. It captures, collects, and certifies media simultaneously using the secure mobile application on a digital device, while organizing and using the information from the collection process and the details contained within the media, such as who, where, why, and how, and other metadata: location, orientation, time/date, elevation, camera heading, acceleration, velocities, and encryption. It also uses other integrated data forms, such as editable titled events and incidents with editable groups of group-identified media, whether captured now or added in the future to previously established groups, each with category, title, captions, descriptions, notes, and media title. The metadata integrated within each individual media item provides data used to organize, maintain, and store data in a media information system, so that it can be interacted with at a moment's notice on either a desktop or a remote digital device. The media information system provides the framework to organize and keep track of the collection, processing, storage, dissemination, viewing, reporting, transmission, interaction, interrogation, auditing, and tracking of the media data.

[0096] One example is the process a media item goes through in its digital life. A human using a computer, software, and a computer process may add, change, modify, edit, or remove a voice-to-text entry, a voice-to-text title, a note, a description, or a caption to or from a media item. Additionally, the media may be altered or modified, for example using photo editing software, video editing software, and/or audio editing software. The media information system must keep track of each of the permutations and revisions that the media has undergone during its digital life while residing in the media information system. The media information system creates a permanent record, stored at capture or later as modified by the system, in the cloud with all of the associated data. The system has an integral audit function that shows the user a detailed record of each time the media has been moved, organized, edited, printed, or changed in any way. This audit feature is an artificial intelligence process that allows learning and digital reading of the words, notes, and captions associated with the media, and provides system outputs upon human request.
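The audit function described above can be illustrated with an append-only event log. This is a hedged sketch under stated assumptions, not the disclosed design: the names `AuditEvent` and `AuditLog`, the choice of fields, and the in-memory list used for storage are hypothetical, and the learning and text-reading aspects of the audit feature are not modeled.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class AuditEvent:
    """One immutable record of something that happened to a media item."""
    media_id: str
    action: str          # e.g. "moved", "edited", "printed"
    user: str
    timestamp: datetime
    detail: str = ""


class AuditLog:
    """Append-only log; events can be added and read but never changed."""

    def __init__(self) -> None:
        self._events: List[AuditEvent] = []

    def record(
        self,
        media_id: str,
        action: str,
        user: str,
        detail: str = "",
        timestamp: Optional[datetime] = None,
    ) -> AuditEvent:
        """Append a new event, stamping it with the current UTC time if none is given."""
        event = AuditEvent(
            media_id=media_id,
            action=action,
            user=user,
            timestamp=timestamp or datetime.now(timezone.utc),
            detail=detail,
        )
        self._events.append(event)
        return event

    def history(self, media_id: str) -> List[AuditEvent]:
        """Return every recorded event for one media item, in insertion order."""
        return [e for e in self._events if e.media_id == media_id]
```

Because `AuditEvent` is frozen, a recorded entry cannot be mutated after the fact; a production system would presumably persist these records in the cloud rather than in memory.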

[0097] Each media capture has group, category, title, note, caption, description, metadata, GPS, compass heading, relationship to magnetic/true north, elevation, orientation, certification, validation, image identification number/code, and time and date attributes, which allow users to understand the information contained in the media itself. Some photos and videos have a date printed on the image itself by the photo processor or on the film stock. Other details surrounding the photo are lost or forgotten over time. The Media Information System overcomes this limitation by incorporating all the details of the media over its life, and it continues to expand those details as the media travels through its digital life. Although the conversion from analog to digital media is complete, little has been done to organize media beyond albums, face views, and search on Apple or Microsoft web platforms. Those offerings are complex and hard to use, and they are not a system in the sense used here, because they upload media from many sources. The media system described here loads images specifically from the mobile application only, which maintains the process. The system will allow other media to be uploaded; however, those media will not be certified, and the originality of their data can be neither proven nor trusted. There is a need for a system that incorporates the collection, processing, and storage of media together with the totality of data associated with the media at capture, so that the information contained in the image is not lost through failure to preserve it and nurture its digital life.

[0098] The system provides benefits and improvements in efficiency (through automated generation of paths and mapping interfaces) and speed to technologies used in many industries. It can be used for quality assurance; for litigation media evidence and preservation; and for lifesaving, such as locating a user from the last media captured on their digital device before going missing, or following the media trail as this application teaches. Many recently lost or missing people captured media in the area from which they went missing, and this invention gives first responders and police a new tool to locate a missing or lost person in a timely manner. Other uses include law enforcement certified media evidence collection and preservation; fire marshal arson investigations; and transportation, for example documenting airline issues such as parts failures from globally remote locations, where the system allows real-time collaboration with media of the issues available on each attendee's computer or mobile device with 100% availability. A new recreational photo system can integrate drone media, and aerial trips can be documented along with weather observations and aerial phenomena. In the medical community, hospitals can document procedures, accidents, gunshots, and claimed conditions, including x-rays and witness audio recordings made at the time of the incident, with certified media, possibly lowering medical insurance policy fees. The hospitality industry can create historical documented records of status, quality, maintenance issues, theft, fraud, guest damage, water damage, accidents, and incidents by capturing media that remains in the system with title, notes, descriptions, location, orientation, heading, elevation, and metadata providing detailed information about the digital device that captured the media, such as model number and version number, among many other attributes.
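The automated path generation and the "media trail" use mentioned above can be sketched as ordering captures by timestamp and connecting consecutive locations. The following is an illustrative sketch only: the `Capture` type and the function names are hypothetical, and the haversine great-circle formula is swapped in here as one common way to measure the distance between consecutive captures; the disclosure does not specify a distance calculation.

```python
import math
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass
class Capture:
    """Location, elevation, and time recorded for one media capture."""
    media_id: str
    timestamp: datetime
    lat: float          # degrees
    lon: float          # degrees
    elevation_m: float  # metres


def build_path(captures: List[Capture]) -> List[Capture]:
    """Order captures by timestamp so consecutive entries form the path."""
    return sorted(captures, key=lambda c: c.timestamp)


def haversine_km(a: Capture, b: Capture) -> float:
    """Great-circle distance between two captures, in kilometres."""
    radius_km = 6371.0  # mean Earth radius
    phi1, phi2 = math.radians(a.lat), math.radians(b.lat)
    d_phi = phi2 - phi1
    d_lambda = math.radians(b.lon - a.lon)
    h = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * radius_km * math.asin(math.sqrt(h))


def path_length_km(captures: List[Capture]) -> float:
    """Total length of the time-ordered path through all captures."""
    path = build_path(captures)
    return sum(haversine_km(p, q) for p, q in zip(path, path[1:]))


def last_known(captures: List[Capture]) -> Optional[Capture]:
    """Most recent capture, e.g. as a starting point for a search."""
    return max(captures, key=lambda c: c.timestamp, default=None)
```

In the missing-person scenario, `last_known` would return the final capture on the trail, and `build_path` would give responders the sequence of locations leading up to it.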

[0099] System operation can begin on either the website or the mobile application, which can be downloaded from the website or from a third party such as an application store or marketplace. The website has built-in configuration control and tracks the version history of every mobile application tethered to the system. After login, a number of interfaces will appear. Stepping through the various interfaces of the software application that runs on the media capture device provides the actions, functions, transmissions, search, media review, and other activities discussed herein.

[0100] After downloading the application, a user can enter the username and password that were provided previously upon system registration to sign into the system. Another interface may appear listing a number of incidents contained in the system that were created by, or are otherwise accessible to, the logged-in user. In one example, the user may have a total of 792 incident records in the cloud system 115. The user may select, for example, the fourth incident down on the incident list page. This is an incident that has been previously captured and stored in the system. Using the system information, the user can see the information about the incident, as in the example below:

[0101] INCIDENT Title: May 16-26 Alaska Inside Passage; (269) Media; Media Group: SFO Arrival (note time/date/zone/lat/long/Heading/Elevation); Media Notes: "Under the Golden Gate Bridge". Added to the incident is an emoji of a boat for quick visual reference.

[0102] The user may request generation of a new incident, and can add a title, category/group, and notes/description to the new incident. A media asset may be captured then, or may be selected from previously captured media assets. The media asset is certified as discussed with respect to FIG. 13. Additional textual, graphical, or voice-based notes or annotations may be added to the media asset and/or to the incident report. The incident report is then created based on the media asset, optionally its annotations, and the incident notes or descriptions input by the user. The incident report is then transmitted to the cloud server storage system 115.
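The incident creation flow above can be sketched end to end. This is a simplified illustration under loud assumptions: the certification procedure itself is described with respect to FIG. 13 and is not reproduced here, so a SHA-256 digest over the media bytes and their metadata is used purely as a stand-in. The function names `certify`, `verify`, and `build_incident_report` and the report layout are hypothetical, and transmission to the cloud server storage system 115 is not shown.

```python
import hashlib
import hmac
import json
from typing import Dict, List, Optional


def certify(media_bytes: bytes, metadata: Dict[str, object]) -> str:
    """Stand-in certification: a digest binding the media to its metadata.

    Assumption: SHA-256 over the media hash plus canonical JSON metadata.
    """
    media_hash = hashlib.sha256(media_bytes).hexdigest()
    canonical_meta = json.dumps(metadata, sort_keys=True)
    return hashlib.sha256((media_hash + canonical_meta).encode("utf-8")).hexdigest()


def verify(media_bytes: bytes, metadata: Dict[str, object], digest: str) -> bool:
    """Check that neither the media nor its metadata changed since certification."""
    return hmac.compare_digest(certify(media_bytes, metadata), digest)


def build_incident_report(
    title: str,
    category: str,
    notes: str,
    media_bytes: bytes,
    metadata: Dict[str, object],
    annotations: Optional[List[str]] = None,
) -> Dict[str, object]:
    """Assemble a report from the incident fields, the media digest, and annotations."""
    return {
        "title": title,
        "category": category,
        "notes": notes,
        "media": {
            "metadata": metadata,
            "digest": certify(media_bytes, metadata),
            "annotations": list(annotations or []),
        },
    }
```

With this stand-in, altering either the media bytes or any metadata field after certification causes `verify` to return `False`, which mirrors the goal of showing that a certified asset is unaltered.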

[0103] FIG. 11 illustrates an exemplary media capture system and media certification system interfacing with different types of user devices and camera devices.

[0104] The image capture device collects an image as well as sensor data (as seen on the left side of FIG. 11). The data is then autonomously sent to the internet/cloud system, where the digital data is filed, stored, and accessed through the web in a systematic or serialized format consistent with the image identification formed with the image capture device (as seen on the right side of FIG. 11). This data can be transferred over a wired or a wireless connection. In some embodiments, the image capture device can first synchronize its image and/or sensor data with a second device. For example, a camera device (e.g., a digital point-and-shoot camera) may first be required to synchronize its data with a user device such as a smartphone or wearable device, which can then form a connection to the internet/cloud system. In the future, such devices (e.g., handheld digital cameras, body cameras, and binoculars) can contain the certified media capture and transmission system and interact directly with the cloud as well as with the second device.

[0105] The internet/cloud system can include one or more server systems, which may be connected to each other. In one embodiment, this internet/cloud system is a wireless multiplexed system for securely storing digital data and transferring it to and from mobile digital devices. In another embodiment, the digital data (e.g., images, reports) is securely held in one central place, such as a hardware memory device, a server, or a data center.