Auto-documentation For Application Program Interfaces Based On Network Requests And Responses

Palladino; Marco ; et al.

U.S. patent application number 16/933287 was filed with the patent office on 2020-07-20 and published on 2022-03-31 for auto-documentation for application program interfaces based on network requests and responses. The applicant listed for this patent is Kong Inc. Invention is credited to Augusto Marietti, Marco Palladino.

| Application Number | 20220103613 16/933287 |

| Document ID | / |

| Family ID | 1000006207995 |

| Filed Date | 2020-07-20 |


| United States Patent Application | 20220103613 |

| Kind Code | A9 |

| Palladino; Marco ; et al. | March 31, 2022 |

AUTO-DOCUMENTATION FOR APPLICATION PROGRAM INTERFACES BASED ON NETWORK REQUESTS AND RESPONSES

Abstract

Disclosed embodiments are directed at systems, methods, and architecture for providing auto-documentation to APIs. The auto documentation plugin is architecturally placed between an API and a client thereof and parses API requests and responses in order to generate auto-documentation. In some embodiments, the auto-documentation plugin is used to update preexisting documentation after updates. In some embodiments, the auto-documentation plugin accesses an on-line documentation repository. In some embodiments, the auto-documentation plugin makes use of a machine learning model to determine how and which portions of an existing documentation file to update.

| Inventors: | Palladino; Marco (San Francisco, CA); Marietti; Augusto (San Francisco, CA) |
|---|---|
| Applicant: | Kong Inc. |
| Family ID: | 1000006207995 |
| Appl. No.: | 16/933287 |
| Filed: | July 20, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 16254788 | Jan 23, 2019 | |
| 16933287 | | |
| 15974532 | May 8, 2018 | 10225330 |
| 16254788 | | |
| 15899529 | Feb 20, 2018 | 10097624 |
| 15974532 | | |
| 15662539 | Jul 28, 2017 | 9936005 |
| 15899529 | | |
| 16714662 | Dec 13, 2019 | 11171842 |
| 15662539 | | |
| 62896412 | Sep 5, 2019 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04L 67/10 20130101; G06F 8/73 20130101; H04L 67/1004 20130101; H04L 67/42 20130101 |

| International Class: | H04L 29/08 20060101 H04L029/08; G06F 8/73 20060101 G06F008/73 |

Claims

1. A system for securing, managing, and extending functionalities of Application Programming Interfaces (APIs) in a microservices architecture, the system comprising: a plurality of APIs organized into a microservices application architecture; a processor operated control plane communicatively coupled to the plurality of APIs via data plane proxies, wherein the control plane is configured to: receive an incoming proxied request from a first API of the plurality of APIs directed to a second API of the plurality of APIs, the proxied request relayed to the control plane via a first data plane proxy associated with the first API; receive a proxied response from the second API, the proxied response relayed to the control plane via a second data plane proxy associated with the second API; parse the proxied request and the proxied response and extract current data; in response to a transaction that includes the request and the response, execute an auto-documentation plugin, wherein the auto-documentation plugin is configured to generate documentation based on the current data; and a data store configured to store the control plane and the documentation.

2. The system of claim 1, further comprising: a first gateway node and a second gateway node, the first gateway node and second gateway node configured as external end-points of the microservices application, wherein the one or more gateway nodes are further configured to: save, by the first gateway node, software code in the data store, wherein the software code is associated with a plugin included in a plurality of plugins; retrieving, by the second gateway node, the software code from the data store; and installing, by the second gateway node, the plugin at the second gateway node, using the retrieved software code associated with the plugin.

3. The system of claim 1, further comprising: a memory architecturally separate from the plurality of APIs, the memory including a program code library configured to execute functionalities common to execution of the plurality of APIs on the node in communication with the memory.

4. The system of claim 2, further comprising: an administration API for configuring and installing the plurality of plugins, wherein the administration API is used for provisioning the plurality of APIs.

5. The system of claim 1, wherein the request is parsed subsequent to the receiving the response from the API.

6. The system of claim 1, wherein the request is parsed independently from delivery to the second API.

7. The system of claim 1, wherein the auto-documentation is in the form of a Swagger file, a RAML file, or an API Blueprint file.

8. The system of claim 1, wherein the auto-documentation includes one or more endpoints, one or more parameters, one or more methods of the transaction.

9. The system of claim 1, wherein the request and the response is associated with an endpoint, wherein executing the auto-documentation plugin for the transaction includes: retrieving previously generated documentation for the endpoint; comparing the previously generated auto-documentation with the current data to determine a difference; and upon determining a difference, generating documentation for the transaction.

10. The system of claim 9, wherein the previously generated documentation is associated with the endpoint and includes at least one of: a body, one or more headers, or one or more parameters.

11. The system of claim 9, wherein executing the auto-documentation plugin for the transaction includes: upon determining a difference does not exist, entering a state associated with not generating documentation for the transaction.

12. The system of claim 9, wherein executing the auto-documentation plugin for the transaction includes: upon determining the difference, electronically generating a notification for alerting the difference to a user.

13. A system comprising: a processor operated node architecturally positioned to receive proxied communications between a plurality of application program interfaces (APIs) that operate a microservices application, wherein the node parses API requests and responses in between the plurality of APIs and extracts current data and executes an auto-documentation program function, wherein the auto-documentation program function is configured to generate auto-documentation in response to a transaction that includes the request and the response, the auto-documentation based on the transaction; and a memory architecturally separate from the microservices application, the memory including a program code library configured to execute functionalities common to execution of the plurality of APIs on the node in communication with the memory.

14. The system of claim 13, wherein the node further comprises: a gateway that receives client requests, executes the program code library, and performs load balancing; and a data store that stores the program code library.

15. The system of claim 14, the node further comprising: a gateway cache that stores portions or all of the functionalities common to execution of the plurality of APIs and improves execution time over accessing the auto-documentation program function via the data store.

16. The system of claim 13, wherein the functionalities common to execution of the plurality of APIs comprise any of: authentication; logging; caching; transformations; rate-limiting; or any combination thereof.

17. The system of claim 13, wherein the functionalities common to execution of the plurality of APIs includes a first program function and a second program function.

18. The system of claim 17, the node further comprising: an administration API programmed to configure and install the first and second program functions, and further programmed to provision the API.

19. The system of claim 17, wherein the plurality of APIs cannot perform the first or second program functions without code from the program code library on a proxy server.

20. A method for securing, managing, and extending functionalities of a plurality of Application Programming Interfaces (APIs) in a microservices architecture, the method comprising: receiving, by a control plane, an incoming proxied request from a first API of the plurality of APIs directed to a second API of the plurality of APIs, the proxied request relayed to the control plane via a first data plane proxy associated with the first API; receiving, by the control plane, a proxied response from the second API, the proxied response relayed to the control plane via a second data plane proxy associated with the second API; parsing the proxied request and the proxied response and extracting current data; and in response to a transaction that includes the request and the response, executing an auto-documentation plugin, wherein the auto-documentation plugin is configured to generate documentation based on the current data between the first API and the second API.

21. The method of claim 20, further comprising: identifying, by the control plane, a set of APIs of the plurality of APIs that belong to a service group.

22. The method of claim 21, further comprising: parsing requests and responses between the set of APIs belonging to the service group; identify similar or matching objects across the requests and responses of multiple APIs; in response to said identification, executing the auto-documentation plugin, wherein the auto-documentation plugin is configured to generate service group level documentation based on requests and responses within the service group.

23. The method of claim 21, wherein the set of APIs that belong to the service group are along a same execution path.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation-in-part of U.S. patent application Ser. No. 16/254,788, entitled "AUTO-DOCUMENTATION FOR APPLICATION PROGRAM INTERFACES BASED ON NETWORK REQUESTS AND RESPONSES," filed Jan. 23, 2019, which is a continuation of U.S. patent application Ser. No. 15/974,532, entitled "AUTO-DOCUMENTATION FOR APPLICATION PROGRAM INTERFACES BASED ON NETWORK REQUESTS AND RESPONSES," filed May 8, 2018, now U.S. Pat. No. 10,225,330, issued Mar. 5, 2019, which is a continuation-in-part of U.S. patent application Ser. No. 15/899,529, entitled "SYSTEMS AND METHODS FOR DISTRIBUTED INSTALLATION OF API AND PLUGINS," filed on Feb. 20, 2018, now U.S. Pat. No. 10,097,624, issued Oct. 9, 2018 that is, in turn, a continuation application of U.S. patent application Ser. No. 15/662,539 entitled "SYSTEMS AND METHODS FOR DISTRIBUTED API GATEWAYS," filed on Jul. 28, 2017, now U.S. Pat. No. 9,936,005, issued Apr. 3, 2018. The aforementioned applications are incorporated herein by reference in their entirety.

BACKGROUND

[0002] Application programming interfaces (APIs) are specifications primarily used as an interface platform by software components to enable communication with each other. For example, APIs can include specifications for clearly defined routines, data structures, object classes, and variables. Thus, an API defines what information is available and how to send or receive that information.

[0003] Setting up multiple APIs is a time-consuming challenge because deploying an API requires tuning the configuration or settings of each API individually. The functionalities of each individual API are confined to that specific API, and servers hosting multiple APIs are individually set up for hosting the APIs. This makes it very difficult to build new APIs or even to scale and maintain existing APIs. The challenge grows when there are tens of thousands of APIs and millions of clients requesting API-related services per day. These same tens of thousands of APIs are updated regularly, so updating the documentation associated with these APIs is a tedious and cumbersome activity that reduces system productivity.

BRIEF DESCRIPTION OF THE DRAWINGS

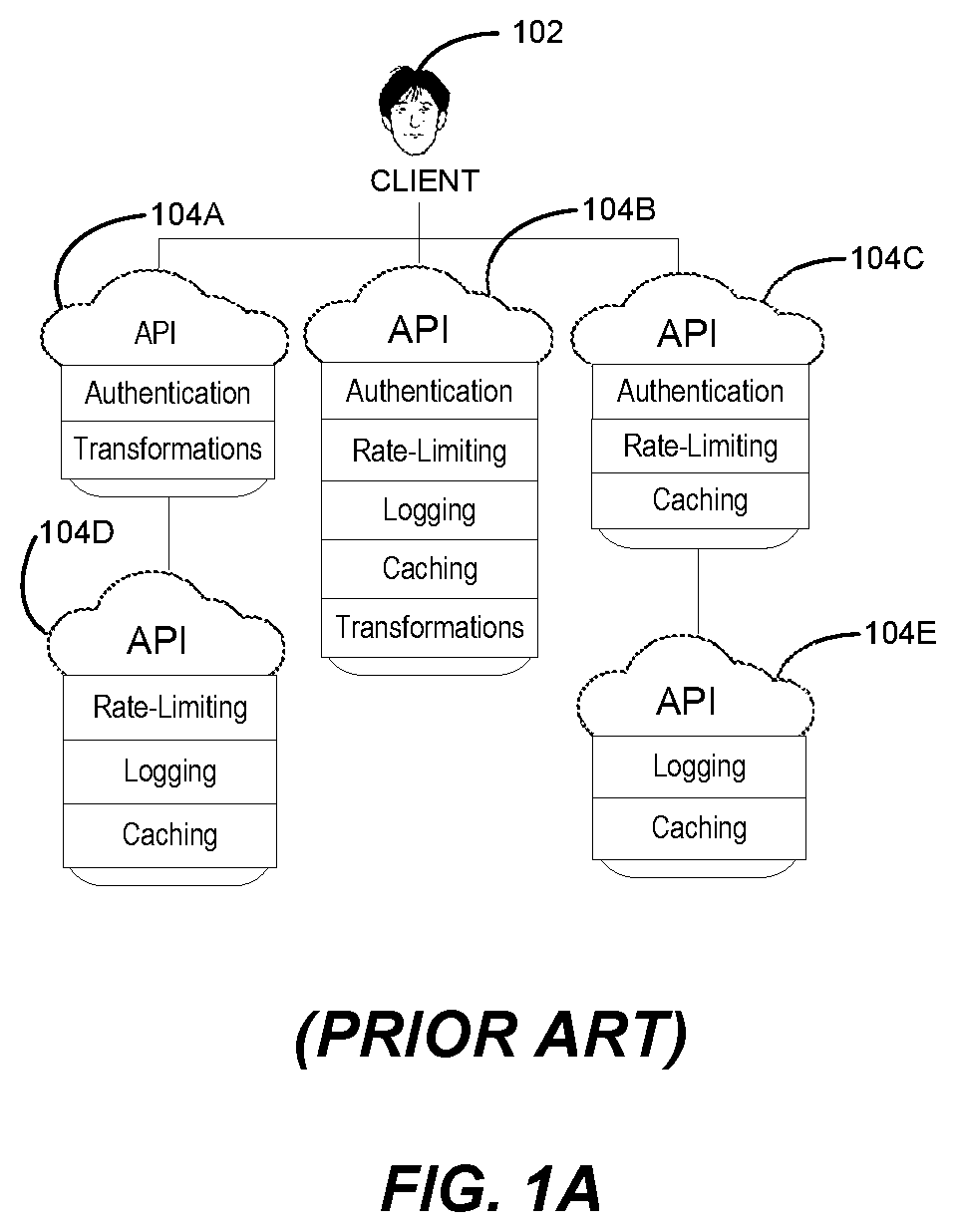

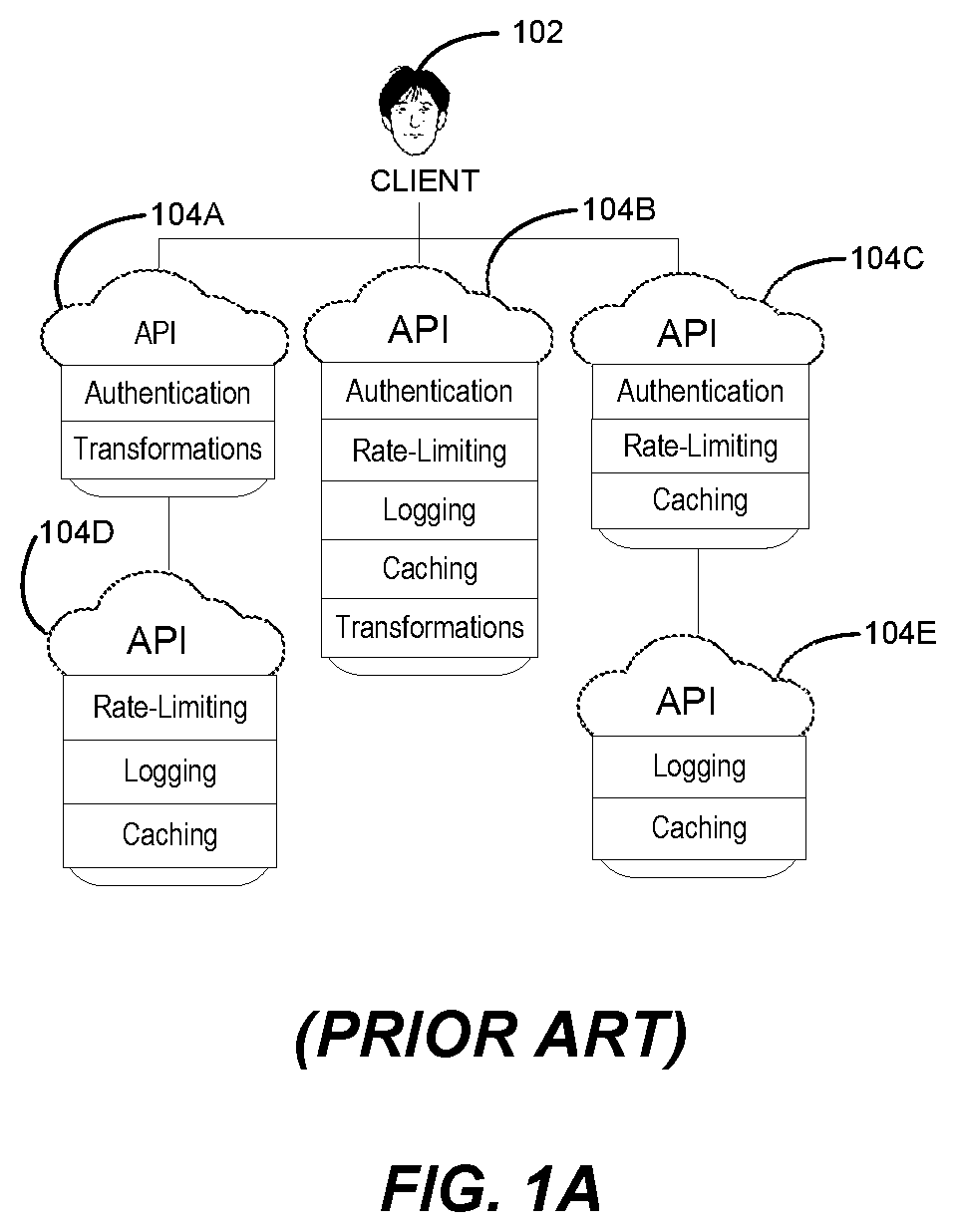

[0004] FIG. 1A illustrates a prior art approach with multiple APIs having functionalities common to one another;

[0005] FIG. 1B illustrates a distributed API gateway architecture, according to an embodiment of the disclosed technology;

[0006] FIG. 2 illustrates a block diagram of an example environment suitable for functionalities provided by a gateway node, according to an embodiment of the disclosed technology;

[0007] FIG. 3A illustrates a block diagram of an example environment with a cluster of gateway nodes in operation, according to an embodiment of the disclosed technology;

[0008] FIG. 3B illustrates a schematic of a data store shared by multiple gateway nodes, according to an embodiment of the disclosed technology;

[0009] FIG. 4A and FIG. 4B illustrate example ports and connections of a gateway node, according to an embodiment of the disclosed technology;

[0010] FIG. 5 illustrates a flow diagram showing steps involved in the installation of a plugin at a gateway node, according to an embodiment of the disclosed technology;

[0011] FIG. 6 illustrates a sequence diagram showing components and associated steps involved in loading configurations and code at runtime, according to an embodiment of the disclosed technology;

[0012] FIG. 7 illustrates a sequence diagram of a use-case showing components and associated steps involved in generating auto-documentation, according to an embodiment of the disclosed technology;

[0013] FIG. 8 illustrates a sequence diagram of another use-case showing components and associated steps involved in generating auto-documentation according to an embodiment of the disclosed technology;

[0014] FIG. 9 illustrates a flow diagram showing steps involved in generating auto-documentation, according to an embodiment of the disclosed technology;

[0015] FIG. 10 is a block diagram of a control plane system for a service mesh in a microservices architecture;

[0016] FIG. 11 is a block diagram illustrating communication between APIs resulting in updated documentation;

[0017] FIG. 12 is a block diagram illustrating service groups and features associated with identification thereof; and

[0018] FIG. 13 depicts a diagrammatic representation of a machine in the example form of a computer system within which a set of instructions, for causing the machine to perform any one or more of the methodologies discussed herein, can be executed.

DETAILED DESCRIPTION

[0019] The disclosed technology describes how to automatically generate or update documentation for an API by monitoring, parsing, and sniffing requests/responses to/from the API through network nodes such as proxy servers, gateways, and control planes. In network routing and microservices applications, the control plane is the part of the router architecture that is concerned with drawing the network topology, or the routing table that defines what to do with incoming packets. Control plane logic also can define certain packets to be discarded, as well as preferential treatment of certain packets for which a high quality of service is defined by such mechanisms as differentiated services.

[0020] In a monolithic application architecture, a control plane operates outside the core application. In a microservices architecture, the control plane operates between the APIs that make up the microservice architecture. A proxy is linked to each API; the proxy attached to each API is referred to as a "data plane proxy." Examples of a data plane proxy include sidecar proxies such as Envoy proxies.

[0021] The generation or updates of documentation are implemented in a number of ways and based on a number of behavioral indicators described herein.
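The following is a minimal sketch, not the disclosed implementation, of how a node sitting between a client and an API might turn one observed request/response transaction into a documentation entry and regenerate that entry only when the transaction differs from what was previously generated. The dictionary layout of `request` and `response`, the function names, and the in-memory `docs` mapping are all assumptions made for illustration.

```python
import json
from urllib.parse import parse_qsl, urlsplit


def response_fields(body):
    """Return the top-level field names of a JSON object body, else an empty list."""
    try:
        parsed = json.loads(body) if body else None
    except ValueError:
        return []
    return sorted(parsed) if isinstance(parsed, dict) else []


def extract_current_data(request, response):
    """Collect the documentable facts of one transaction (hypothetical schema)."""
    url = urlsplit(request["url"])
    return {
        "endpoint": url.path,
        "method": request["method"].upper(),
        "parameters": sorted({name for name, _ in parse_qsl(url.query)}),
        "headers": sorted(h.lower() for h in request.get("headers", {})),
        "status": response["status"],
        "response_fields": response_fields(response.get("body")),
    }


def update_documentation(docs, current):
    """Store `current` under its (method, endpoint) key if it differs.

    Returns True when documentation was generated or updated, and False
    when the stored entry already matches the observed transaction.
    """
    key = (current["method"], current["endpoint"])
    if docs.get(key) == current:
        return False
    docs[key] = current
    return True
```

A production plugin would presumably serialize such entries to a format such as a Swagger, RAML, or API Blueprint file rather than keep them in a Python dictionary; the comparison step is the part this sketch is meant to show.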

[0022] The following description and drawings are illustrative and are not to be construed as limiting. Numerous specific details are described to provide a thorough understanding of the disclosure. However, in certain instances, well-known or conventional details are not described in order to avoid obscuring the description. References to an embodiment in the present disclosure can be, but not necessarily are, references to the same embodiment; and, such references mean at least one of the embodiments.

[0023] Reference in this specification to "one embodiment" or "an embodiment" means that a particular feature, structure, or characteristic described in connection with the embodiment is included in at least one embodiment of the disclosure. The appearances of the phrase "in one embodiment" in various places in the specification are not necessarily all referring to the same embodiment, nor are separate or alternative embodiments mutually exclusive of other embodiments. Moreover, various features are described which may be exhibited by some embodiments and not by others. Similarly, various requirements are described which may be requirements for some embodiments but not other embodiments.

[0024] The terms used in this specification generally have their ordinary meanings in the art, within the context of the disclosure, and in the specific context where each term is used. Certain terms that are used to describe the disclosure are discussed below, or elsewhere in the specification, to provide additional guidance to the practitioner regarding the description of the disclosure. For convenience, certain terms may be highlighted, for example using italics and/or quotation marks. The use of highlighting has no influence on the scope and meaning of a term; the scope and meaning of a term is the same, in the same context, whether or not it is highlighted. It will be appreciated that the same thing can be said in more than one way.

[0025] Consequently, alternative language and synonyms may be used for any one or more of the terms discussed herein, nor is any special significance to be placed upon whether or not a term is elaborated or discussed herein. Synonyms for certain terms are provided. A recital of one or more synonyms does not exclude the use of other synonyms. The use of examples anywhere in this specification including examples of any terms discussed herein is illustrative only, and is not intended to further limit the scope and meaning of the disclosure or of any exemplified term. Likewise, the disclosure is not limited to various embodiments given in this specification.

[0026] Without intent to further limit the scope of the disclosure, examples of instruments, apparatus, methods and their related results according to the embodiments of the present disclosure are given below. Note that titles or subtitles may be used in the examples for convenience of a reader, which in no way should limit the scope of the disclosure. Unless otherwise defined, all technical and scientific terms used herein have the same meaning as commonly understood by one of ordinary skill in the art to which this disclosure pertains. In the case of conflict, the present document, including definitions will control.

[0027] Embodiments of the present disclosure are directed at systems, methods, and architecture for providing microservices and a plurality of APIs to requesting clients. The architecture is a distributed cluster of gateway nodes that jointly provide microservices and the plurality of APIs. Providing the APIs includes providing a plurality of plugins that implement the APIs. As a result of a distributed architecture, the task of API management can be distributed across a cluster of gateway nodes. Every request being made to an API hits a gateway node first, and then the request is proxied to the target API. The gateway nodes effectively become the entry point for every API-related request. The disclosed embodiments are well-suited for use in mission critical deployments at small and large organizations. Aspects of the disclosed technology do not impose any limitation on the type of APIs. For example, these APIs can be proprietary APIs, publicly available APIs, or invite-only APIs.

[0028] FIG. 1A illustrates a prior art approach with multiple APIs having functionalities common to one another. As shown in FIG. 1A, a client 102 is associated with APIs 104A, 104B, 104C, 104D, and 104E. Each API has a standard set of features or functionalities associated with it. For example, the standard set of functionalities associated with API 104A are "authentication" and "transformations." The standard set of functionalities associated with API 104B are "authentication," "rate-limiting," "logging," "caching," and "transformations." Thus, "authentication" and "transformations" are functionalities that are common to APIs 104A and 104B. Similarly, several other APIs in FIG. 1A share common functionalities. However, it is noted that having each API handle its own functionalities individually causes duplication of efforts and code associated with these functionalities, which is inefficient. This problem becomes significantly more challenging when there are tens of thousands of APIs and millions of clients requesting API-related services per day.

[0029] FIG. 1B illustrates a distributed API gateway architecture according to an embodiment of the disclosed technology. To address the challenge described in connection with FIG. 1A, the disclosed technology provides a distributed API gateway architecture as shown in FIG. 1B. Specifically, disclosed embodiments implement common API functionalities by bundling the common API functionalities into a gateway node 106 (also referred to herein as an API Gateway). Gateway node 106 implements common functionalities as a core set of functionalities that runs in front of APIs 108A, 108B, 108C, 108D, and 108E. The core set of functionalities include rate limiting, caching, authentication, logging, transformations, and security. It will be understood that the above-mentioned core set of functionalities are for examples and illustrations. There can be other functionalities included in the core set of functionalities besides those discussed in FIG. 1B. In some applications, gateway node 106 can help launch large-scale deployments in a very short time at reduced complexity and is therefore an inexpensive replacement for expensive proprietary API management systems. The disclosed technology includes a distributed architecture of gateway nodes with each gateway node bundled with a set of functionalities that can be extended depending on the use-case or applications.

[0030] FIG. 2 illustrates a block diagram of an example environment suitable for functionalities provided by a gateway node according to an embodiment of the disclosed technology. In some embodiments, a core set of functionalities are provided in the form of "plugins" or "add-ons" installed at a gateway node. (Generally, a plugin is a component that allows modification of what a system can do usually without forcing a redesign/compile of the system. When an application supports plug-ins, it enables customization. The common examples are the plug-ins used in web browsers to add new features such as search-engines, virus scanners, or the ability to utilize a new file type such as a new video format.)

[0031] As an example, a set of plugins 204 shown in FIG. 2 are provided by gateway node 206 positioned between a client 202 and one or more HTTP APIs. Electronic devices operated by client 202 can include, but are not limited to, a server desktop, a desktop computer, a computer cluster, a mobile computing device such as a notebook, a laptop computer, a handheld computer, a mobile phone, a smart phone, a PDA, a BlackBerry™ device, a Treo™, and/or an iPhone or Droid device, etc. Gateway node 206 and client 202 are configured to communicate with each other via network 207. Gateway node 206 and one or more APIs 208 are configured to communicate with each other via network 209. In some embodiments, the one or more APIs reside in one or more API servers, API data stores, or one or more API hubs. Various combinations of configurations are possible.

[0032] Networks 207 and 209 can be any collection of distinct networks operating wholly or partially in conjunction to provide connectivity to/from client 202 and one or more APIs 208. In one embodiment, networks 207 and 209 can be a telephonic network, an open network, such as the Internet, or a private network, such as an intranet and/or an extranet. For example, the Internet can provide file transfer, remote login, email, news, RSS, and other services through any known or convenient protocol, such as, but not limited to, the TCP/IP protocol, Open System Interconnections (OSI), FTP, UPnP, iSCSI, NFS, ISDN, PDH, RS-232, SDH, SONET, etc.

[0033] Client 202 and one or more APIs 208 can be coupled to the network 150 (e.g., Internet) via a dial-up connection, a digital subscriber loop (DSL, ADSL), cable modem, wireless connections, and/or other types of connection. Thus, the client devices 102A-N, 112A-N, and 122A-N can communicate with remote servers (e.g., API servers 130A-N, hub servers, mail servers, instant messaging servers, etc.) that provide access to user interfaces of the World Wide Web via a web browser, for example.

[0034] The set of plugins 204 includes authentication, logging, rate-limiting, and custom plugins, of which authentication, logging, traffic control, and rate-limiting can be considered the core set of functionalities. An authentication functionality can allow an authentication plugin to check for valid login credentials such as usernames and passwords. A logging functionality of a logging plugin logs data associated with requests and responses. A traffic control functionality of a traffic control plugin manages, throttles, and restricts inbound and outbound API traffic. A rate limiting functionality can allow managing, throttling, and restricting inbound and outbound API traffic. For example, a rate limiting plugin can determine how many HTTP requests a developer can make in a given period of seconds, minutes, hours, days, months or years.
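As one illustration of the rate limiting functionality, the sketch below counts requests per developer within fixed time windows. The fixed-window strategy, the class name, and the injectable `clock` parameter are assumptions chosen for brevity; the disclosure does not specify which counting algorithm a rate limiting plugin uses.

```python
import time


class FixedWindowRateLimiter:
    """Allow at most `limit` requests per consumer in each time window."""

    def __init__(self, limit, window_seconds, clock=time.monotonic):
        self.limit = limit
        self.window = window_seconds
        self.clock = clock          # injectable so the limiter can be tested
        self.counters = {}          # consumer -> (window index, request count)

    def allow(self, consumer):
        """Return True and count the request if the consumer is under the limit."""
        current_window = int(self.clock() // self.window)
        window, count = self.counters.get(consumer, (current_window, 0))
        if window != current_window:
            # A new window has started, so the previous count no longer applies.
            window, count = current_window, 0
        if count >= self.limit:
            self.counters[consumer] = (window, count)
            return False
        self.counters[consumer] = (window, count + 1)
        return True
```

For example, `FixedWindowRateLimiter(limit=1000, window_seconds=3600)` would model a limit of 1,000 requests per hour per developer. A fixed window permits short bursts at window boundaries; a sliding window or token bucket would smooth these at the cost of more state.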

[0035] A plugin can be regarded as a piece of stand-alone code. After a plugin is installed at a gateway node, it is available to be used. For example, gateway node 206 can execute a plugin in between an API-related request and providing an associated response to the API-related request. One advantage of the disclosed system is that the system can be expanded by adding new plugins. In some embodiments, gateway node 206 can expand the core set of functionalities by providing custom plugins. Custom plugins can be provided by the entity that operates the cluster of gateway nodes. In some instances, custom plugins are developed (e.g., built from "scratch") by developers or any user of the disclosed system. It can be appreciated that plugins, used in accordance with the disclosed technology, facilitate centralizing one or more common functionalities that would otherwise be distributed across the APIs, which would make the APIs harder to build, scale, and maintain.
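The idea of executing plugins between receiving a request and returning its response can be sketched as a simple chain. The `Plugin` hook names (`on_request`, `on_response`), the `apikey` header, and the dictionary-shaped requests and responses are hypothetical and serve only to show the control flow.

```python
class Plugin:
    """Base class with no-op hooks; real plugins override one or both."""

    def on_request(self, request):
        return None  # returning a response here short-circuits the chain

    def on_response(self, request, response):
        pass


class AuthPlugin(Plugin):
    def __init__(self, valid_keys):
        self.valid_keys = set(valid_keys)

    def on_request(self, request):
        if request.get("headers", {}).get("apikey") not in self.valid_keys:
            return {"status": 401, "body": "unauthorized"}
        return None


class LoggingPlugin(Plugin):
    def __init__(self):
        self.entries = []

    def on_response(self, request, response):
        self.entries.append((request["path"], response["status"]))


class Gateway:
    """Runs installed plugins around a call to the upstream API."""

    def __init__(self, upstream):
        self.upstream = upstream  # callable standing in for the target API
        self.plugins = []

    def install(self, plugin):
        self.plugins.append(plugin)

    def handle(self, request):
        for plugin in self.plugins:
            early = plugin.on_request(request)
            if early is not None:
                return early      # e.g. authentication failed
        response = self.upstream(request)
        for plugin in reversed(self.plugins):
            plugin.on_response(request, response)
        return response
```

Because authentication and logging live in the gateway, the upstream callable contains only business logic, which is the centralization benefit the paragraph above describes.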

[0036] Other examples of plugins can be a security plugin, a monitoring and analytics plugin, and a transformation plugin. A security functionality can be associated with the system restricting access to an API by whitelisting or blacklisting one or more consumers identified, for example, in one or more Access Control Lists (ACLs). In some embodiments, the security plugin requires an authentication plugin to be enabled on an API. In some use cases, a request sent by a client can be transformed or altered before being sent to an API. A transformation plugin can apply a transformations functionality to alter the request sent by a client. In many use cases, a client might wish to monitor request and response data. A monitoring and analytics plugin can allow monitoring, visualizing, and inspecting APIs and microservices traffic.
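A security check of the kind described could look roughly like the sketch below. The function name, the precedence of the blacklist over the whitelist, and the assumption that the consumer has already been identified by an authentication plugin are illustrative choices, not details taken from the disclosure.

```python
def acl_allows(consumer, whitelist=None, blacklist=None):
    """Decide whether an authenticated consumer may reach the API.

    Assumed policy: a blacklisted consumer is always rejected; if a
    whitelist is configured, only listed consumers pass; with neither
    list configured, every consumer passes.
    """
    if blacklist and consumer in blacklist:
        return False
    if whitelist:
        return consumer in whitelist
    return True
```

For instance, `acl_allows("partner-a", whitelist={"partner-a", "partner-b"})` returns `True`, while any consumer outside that whitelist is rejected.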

[0037] In some embodiments, a plugin is Lua code that is executed during the life-cycle of a proxied request and response. Through plugins, functionalities of a gateway node can be extended to fit any custom need or integration challenge. For example, if a consumer of the disclosed system needs to integrate their API's user authentication with a third-party enterprise security system, it can be implemented in the form of a dedicated (custom) plugin that is run on every request targeting that given API. One advantage, among others, of the disclosed system is that the distributed cluster of gateway nodes is scalable by simply adding more nodes, implying that the system can handle virtually any load while keeping latency low.

[0038] One advantage of the disclosed system is that it is platform agnostic, which implies that the system can run anywhere. In one implementation, the distributed cluster can be deployed in multiple data centers of an organization. In some implementations, the distributed cluster can be deployed as multiple nodes in a cloud environment. In some implementations, the distributed cluster can be deployed as a hybrid setup involving physical and cloud computers. In some other implementations, the distributed cluster can be deployed as containers.

[0039] FIG. 3A illustrates a block diagram of an example environment with a cluster of gateway nodes in operation. In some embodiments, a gateway node is built on top of NGINX. NGINX is a high-performance, highly-scalable, highly-available web server, reverse proxy server, and web accelerator (combining the features of an HTTP load balancer, content cache, and other features). In an example deployment, a client 302 communicates with one or more APIs 312 via load balancer 304, and a cluster of gateway nodes 306. The cluster of gateway nodes 306 can be a distributed cluster. The cluster of gateway nodes 306 includes gateway nodes 308A-308H and data store 310. The functions represented by the gateway nodes 308A-308H and/or the data store 310 can be implemented individually or in any combination thereof, partially or wholly, in hardware, software, or a combination of hardware and software.

[0040] Load balancer 304 provides functionalities for load balancing requests to multiple backend services. In some embodiments, load balancer 304 can be an external load balancer. In some embodiments, the load balancer 304 can be a DNS-based load balancer. In some embodiments, the load balancer 304 can be a Kubernetes® load balancer integrated within the cluster of gateway nodes 306.

[0041] Data store 310 stores all the data, routing information, plugin configurations, etc. Examples of a data store can be Apache Cassandra or PostgreSQL. In accordance with disclosed embodiments, multiple gateway nodes in the cluster share the same data store, e.g., as shown in FIG. 3A. Because multiple gateway nodes in the cluster share the same data store, there is no requirement to associate a specific gateway node with the data store: data from each gateway node 308A-308H is stored in data store 310 and retrieved by the other nodes (e.g., even in complex multiple data center setups). In some embodiments, the data store shares configurations and software code associated with a plugin that is installed at a gateway node. In some embodiments, the plugin configuration and code can be loaded at runtime.
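To make the shared data store concrete, the sketch below has one gateway node save a plugin's code and configuration and a second node retrieve and load them at runtime. An in-memory dictionary stands in for a database such as Cassandra or PostgreSQL, Python source stands in for plugin code, and the class names, method names, and the `handle(request, config)` entry point are all hypothetical.

```python
class DataStore:
    """In-memory stand-in for the data store shared by the cluster."""

    def __init__(self):
        self.plugins = {}  # plugin name -> {"code": str, "config": dict}


class GatewayNode:
    def __init__(self, name, store):
        self.name = name
        self.store = store
        self.installed = {}  # plugin name -> (handler, config)

    def save_plugin(self, plugin_name, code, config):
        """Publish plugin code and configuration for every node to use."""
        self.store.plugins[plugin_name] = {"code": code, "config": dict(config)}

    def install_plugin(self, plugin_name):
        """Fetch the plugin from the shared store and load it at runtime."""
        record = self.store.plugins[plugin_name]
        namespace = {}
        # exec() is used only to illustrate runtime loading; never execute
        # code from an untrusted store this way.
        exec(record["code"], namespace)
        self.installed[plugin_name] = (namespace["handle"], record["config"])

    def run(self, plugin_name, request):
        handler, config = self.installed[plugin_name]
        return handler(request, config)
```

Neither node needs to know about the other: the node that installs the plugin only reads from the store, which is why no gateway node has to be specifically associated with the data.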

[0042] FIG. 3B illustrates a schematic of a data store shared by multiple gateway nodes, according to an embodiment of the disclosed technology. For example, FIG. 3B shows data store 310 shared by gateway nodes 308A-308H arranged as part of a cluster.

[0043] One advantage of the disclosed architecture is that the cluster of gateway nodes allows the system to be scaled horizontally by adding more gateway nodes to handle a larger load of incoming API-related requests. Each of the gateway nodes shares the same data since they all point to the same data store. The cluster of gateway nodes can be created in one datacenter, or in multiple datacenters distributed across different geographical locations, in both cloud and on-premise environments. In some embodiments, gateway nodes (e.g., arranged according to a flat network topology) between the datacenters communicate over a Virtual Private Network (VPN) connection. The system can automatically handle a new gateway node joining a cluster or leaving a cluster. Once a gateway node communicates with another gateway node, it will automatically discover all the other gateway nodes due to an underlying gossip protocol.

[0044] In some embodiments, each gateway includes an administration API (e.g., internal RESTful API) for administration purposes. Requests to the administration API can be sent to any node in the cluster. The administration API can be a generic HTTP API. Upon set up, each gateway node is associated with a consumer port and an admin port that manages the API-related requests coming into the consumer port. For example, port number 8001 is the default port on which the administration API listens and 8444 is the default port for HTTPS (e.g., admin_listen_ssl) traffic to the administration API.

[0045] In some instances, the administration API can be used to provision plugins. After a plugin is installed at a gateway node, it is available to be used, e.g., by the administration API or a declarative configuration.

[0046] In some embodiments, the administration API identifies a status of a cluster based on a health state of each gateway node. For example, a gateway node can be in one of the following states:

[0047] active: the node is active and part of the cluster.

[0048] failed: the node is not reachable by the cluster.

[0049] leaving: a node is in the process of leaving the cluster.

[0050] left: the node has left the cluster.
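The four node states listed above can be modeled as a small enumeration. The following is a minimal sketch only: the `cluster_status` function and its "healthy"/"degraded"/"down" labels are hypothetical and are not part of the disclosed administration API.

```python
from enum import Enum


class NodeState(Enum):
    ACTIVE = "active"    # node is active and part of the cluster
    FAILED = "failed"    # node is not reachable by the cluster
    LEAVING = "leaving"  # node is in the process of leaving the cluster
    LEFT = "left"        # node has left the cluster


def cluster_status(states):
    """Summarize a cluster from per-node states (hypothetical rule)."""
    # Nodes that have left are no longer members and are ignored.
    members = [s for s in states.values() if s is not NodeState.LEFT]
    if members and all(s is NodeState.ACTIVE for s in members):
        return "healthy"
    if any(s is NodeState.ACTIVE for s in members):
        return "degraded"
    return "down"


nodes = {"308A": NodeState.ACTIVE, "308B": NodeState.FAILED, "308C": NodeState.LEFT}
print(cluster_status(nodes))
```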

[0051] In some embodiments, the administration API is an HTTP API available on each gateway node that allows the user to perform create, read, update, and delete (CRUD) operations on items (e.g., plugins) stored in the data store. For example, the administration API can provision APIs on a gateway node, provision plugin configuration, create consumers, and provision their credentials. In some embodiments, the administration API can also read, update, or delete the data. Generally, the administration API can configure a gateway node and the data associated with the gateway node in the data store.

[0052] In some applications, it is possible that the data store only stores the configuration of a plugin and not the software code of the plugin. That is, for installing a plugin at a gateway node, the software code of the plugin is stored on that gateway node. This can result in inefficiencies because the user needs to update his or her deployment scripts to include the new instructions that would install the plugin at every gateway node. The disclosed technology addresses this issue by storing both the plugin and the configuration of the plugin. By leveraging the administration API, each gateway node can not only configure the plugins, but also install them. Thus, one advantage of the disclosed system is that a user does not have to install plugins at every gateway node. Rather, the administration API associated with one of the gateway nodes automates the task of installing the plugins at gateway nodes by installing the plugin in the shared data store, such that every gateway node can retrieve the plugin code and execute the code for installing the plugins. Because the plugin code is also saved in the shared data store, the code is effectively shared across the gateway nodes by leveraging the data store, and does not have to be individually installed on every gateway node.

[0053] FIG. 4A and FIG. 4B illustrate example block diagrams 400 and 450 showing ports and connections of a gateway node, according to an embodiment of the disclosed technology. Specifically, FIG. 4A shows a gateway node 1 and gateway node 2. Gateway node 1 includes a proxy module 402A, a management and operations module 404A, and a cluster agent module 406A. Gateway node 2 includes a proxy module 402B, a management and operations module 404B, and a cluster agent module 406B. Gateway node 1 receives incoming traffic at ports denoted as 408A and 410A. Ports 408A and 410A are coupled to proxy module 402A. Gateway node 1 listens for HTTP traffic at port 408A. The default port number for port 408A is 8000. API-related requests are typically received at port 408A. Port 410A is used for proxying HTTPS traffic. The default port number for port 410A is 8443. Gateway node 1 exposes its administration API (alternatively referred to as management API) at port 412A that is coupled to management and operations module 404A. The default port number for port 412A is 8001. The administration API allows configuration and management of a gateway node, and is typically kept private and secured. Gateway node 1 allows communication within itself (i.e., intra-node communication) via port 414A that is coupled to cluster agent module 406A. The default port number for port 414A is 7373. Because the traffic (e.g., TCP traffic) here is local to a gateway node, this traffic does not need to be exposed. Cluster agent module 406A of gateway node 1 enables communication between gateway node 1 and other gateway nodes in the cluster. For example, ports 416A and 416B coupled with cluster agent module 406A at gateway node 1 and cluster agent module 406B at gateway node 2 allow intra-cluster or inter-node communication. Intra-cluster communication can involve UDP and TCP traffic. Both ports 416A and 416B have the default port number set to 7946. In some embodiments, a gateway node automatically (e.g., without human intervention) detects its ports and addresses. In some embodiments, the ports and addresses are advertised (e.g., by setting the cluster_advertise property/setting to a port number) to other gateway nodes. It will be understood that the connections and ports (denoted with the letter "B") of gateway node 2 are similar to those in gateway node 1, and hence are not discussed herein.

[0054] FIG. 4B shows cluster agent 1 coupled to port 456 and cluster agent 2 coupled to port 458. Cluster agent 1 and cluster agent 2 are associated with gateway node 1 and gateway node 2 respectively. Ports 456 and 458 are communicatively connected to one another via a NAT-layer 460. In accordance with disclosed embodiments, gateway nodes are communicatively connected to one another via a NAT-layer. In some embodiments, there is no separate cluster agent but the functionalities of the cluster agent are integrated into the gateway nodes. In some embodiments, gateway nodes communicate with each other using the explicit IP address of the nodes.

[0055] FIG. 5 illustrates a flow diagram showing steps of a process 500 involved in installation of a plugin at a gateway node, according to an embodiment of the disclosed technology. At step 502, the administration API of a gateway node receives a request to install a plugin. An example of a request is provided below:

TABLE-US-00001
POST /plugins/install
name=OPTIONAL_VALUE
code=VALUE
archive=VALUE

[0056] The administration API of the gateway node determines (at step 506) if the plugin exists in the data store. If the gateway node determines that the plugin exists in the data store, then the process returns (step 510) an error. If the gateway node determines that the plugin does not exist in the data store, then the process stores the plugin. (In some embodiments, the plugin can be stored in an external data store coupled to the gateway node, a local cache of the gateway node, or a third party storage. For example, if the plugin is stored at some other location besides the data store, then different policies can be implemented for accessing the plugin.) Because the plugin is now stored in the database, it is ready to be used by any gateway node in the cluster.
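The existence check and store step of process 500 can be sketched as follows. This is an illustration under stated assumptions: a plain dictionary stands in for the shared data store, the names `install_plugin` and `PluginExistsError` are hypothetical, and the plugin name is required here for simplicity even though the example request marks it optional.

```python
class PluginExistsError(Exception):
    """Raised when the plugin is already present in the data store."""


def install_plugin(data_store, name, code, archive=None):
    """Store a plugin once in the shared data store (hypothetical helper)."""
    # If the plugin already exists, the process returns an error.
    if name in data_store:
        raise PluginExistsError(name)
    # Otherwise the plugin is stored and becomes available to every
    # gateway node that reads from the same data store.
    data_store[name] = {"code": code, "archive": archive}
    return data_store[name]


shared_store = {}
install_plugin(shared_store, "auto-doc", code="<plugin source>")
try:
    install_plugin(shared_store, "auto-doc", code="<plugin source>")
except PluginExistsError as err:
    print(f"plugin already installed: {err}")
```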

[0057] When a new API request goes through a gateway node (in the form of network packets), the gateway node determines (among other things) which plugins are to be loaded. Therefore, a gateway node sends a request to the data store to retrieve the plugin(s) that has/have been configured on the API and that need(s) to be executed. The gateway node communicates with the data store using the appropriate database driver (e.g., Cassandra or PostgreSQL) over a TCP connection. In some embodiments, the gateway node retrieves both the plugin code to execute and the plugin configuration to apply for the API, and then executes them at runtime on the gateway node (e.g., as explained in FIG. 6).

[0058] FIG. 6 illustrates a sequence diagram 600 showing components and associated steps involved in loading configurations and code at runtime, according to an embodiment of the disclosed technology. The components involved in the interaction are client 602, gateway node 604 (including an ingress port 606 and a gateway cache 608), data store 610, and an API 612. At step 1, a client makes a request to gateway node 604. At step 2, ingress port 606 of gateway node 604 checks with gateway cache 608 to determine if the plugin information and the information to process the request have already been cached in gateway cache 608. If the plugin information and the information to process the request are cached in gateway cache 608, then the gateway cache 608 provides such information to the ingress port 606. If, however, the gateway cache 608 informs the ingress port 606 that the plugin information and the information to process the request are not cached in gateway cache 608, then the ingress port 606 loads (at step 3) the plugin information and the information to process the request from data store 610. In some embodiments, ingress port 606 caches (for subsequent requests) the plugin information and the information to process the request (retrieved from data store 610) at gateway cache 608. At step 5, for each plugin configuration, ingress port 606 of gateway node 604 retrieves the plugin code from the cache and executes the plugin. However, if the plugin code is not cached at the gateway cache 608, the gateway node 604 retrieves (at step 6) the plugin code from data store 610 and caches (step 7) it at gateway cache 608. The gateway node 604 executes the plugins for the request and the response (e.g., by proxying the request to API 612 at step 7), and at step 8, the gateway node 604 returns a final response to the client.
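The cache-then-data-store lookup described for FIG. 6 resembles a cache-aside pattern. The sketch below is illustrative only: dictionaries stand in for gateway cache 608 and data store 610, and the function name, key layout, and record shapes are assumptions rather than the disclosed implementation.

```python
def load_plugins(api_name, cache, data_store):
    """Return (config, code) pairs for an API, filling the cache on a miss."""
    # Check the gateway cache for the plugin configurations first.
    configs = cache.get(("config", api_name))
    if configs is None:
        # Cache miss: load from the data store and cache for later requests.
        configs = data_store["configs"][api_name]
        cache[("config", api_name)] = configs

    loaded = []
    for cfg in configs:
        key = ("code", cfg["plugin"])
        code = cache.get(key)
        if code is None:
            # Plugin code not cached: retrieve it from the data store.
            code = data_store["code"][cfg["plugin"]]
            cache[key] = code
        loaded.append((cfg, code))
    return loaded


data_store = {
    "configs": {"hello-api": [{"plugin": "auto-doc", "enabled": True}]},
    "code": {"auto-doc": "<plugin source>"},
}
gateway_cache = {}
first = load_plugins("hello-api", gateway_cache, data_store)
```

A second call with the same cache does not need the data store at all, which is the point of caching for subsequent requests.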

Auto-Documentation Embodiment

[0059] When releasing an API, documentation is a requisite in order for developers to learn how to consume the API. Documentation for an API is an informative text document that describes what functionality the API provides, the parameters it takes as input, the output of the API, how the API operates, and other such information. Documenting APIs can be a tedious and extensive task. In conventional systems, developers create an API and draft the documentation for the API. This approach to drafting documentation for the API is human-driven. That is, the documentation is changed only when human developers make changes to the documentation.

[0060] Any time the API is updated, the documentation needs to be revised. In many instances, because of pressures in meeting deadlines, developers are not able to edit the documentation at the same pace as the changes to the API. This results in the documentation not being updated which leads to frustrations because of an API having unsupported/incorrect documentation. In some unwanted scenarios, the documentation does not match the implementation of the API. The issue of documentation is exacerbated in a microservices application that includes a large number of APIs that are independently updated and developed.

[0061] The generic concept of procedurally generating documentation from source code emerged recently, though it has some inherent issues that are solved herein. Procedurally generated documentation is often limited to activation by the programmer who writes the source code or updates thereto. Techniques taught herein enable the auto-documentation of code that a user does not necessarily have access to. Further, the auto-documentation is performed passively by a network node and does not burden the machine that is executing the API code; thus, a processing advantage is achieved.

[0062] In some embodiments, the disclosed system includes a specialized plugin that automatically generates documentation for an API endpoint (e.g., input and output parameters of the API endpoint) without human intervention. By parsing the stream of requests and responses passing through a gateway node, the plugin generates the documentation automatically. In some embodiments, the auto-documentation plugin is linked to an online repository of documentation, such as GitHub, and documentation files stored thereon are updated directly using provided login credentials where necessary. As an example, if a client sends a request to /hello, and the API associated with /hello responds successfully, then the plugin determines that /hello is an endpoint based on the behavioral indicator of the manner of the response. Further behavioral indicators are discussed below. In some embodiments, an API and a client may have a certain order or series of requests and responses. The API or client will first request one set of parameters, and then, based on the response, another request is sent based on the values of those parameters.
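The /hello example can be sketched as a simple rule that records a path as an endpoint once a successful response is observed for it. Treating any 2xx status code as "successful" is an assumption made for this illustration, and the `observe` function is hypothetical.

```python
def observe(endpoints, method, path, status):
    """Record a path as an endpoint when the API answers successfully."""
    # Assumption: a 2xx status is the behavioral indicator of success.
    if 200 <= status < 300:
        endpoints.setdefault(path, set()).add(method)
    return endpoints


seen = {}
observe(seen, "GET", "/hello", 200)    # recorded as an endpoint
observe(seen, "GET", "/missing", 404)  # not recorded
print(seen)
```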

[0063] In some embodiments, the plugin can parse the parameters involved in a request/response and identify those parameters in the generated auto-documentation. In some embodiments, the plugin can generate a response to a client's request. In some embodiments, the API itself can provide additional response headers (e.g., specifying additional information about the fields, parameters, and endpoints) to generate a more comprehensive auto-documentation. For example, a client makes a request to /hello with the parameters name, age, and id. The parameters are titled such that a semantic analysis of the collection of parameter titles indicates that they identify a person.

[0064] In some embodiments the auto-documentation plugin can build a model using machine learning to predict what a field in the response means or that a sequence of request/responses has changed. By generating auto-documentation for one or more APIs, the auto-documentation plugin can learn to deal with fields and data that are not necessarily intuitive and compare to historical models. The plugin could therefore build a machine learning or neural net model that can be leveraged to be more accurate over time, and document more accurately. The machine learning model could be hosted locally within a gateway node, or can be sent to a remote (e.g., physical or cloud) server for further refinements.

[0065] According to the disclosed auto-documentation plugin, the API provides an endpoint for the plugin to consume so that the auto-documentation plugin can obtain specific information about fields that are not obvious. For example, a "name of an entity" field that is associated with the API may be obvious, but some other fields may not be obvious. Hypothetically, a response includes an "abcd_id" field whose meaning may not be automatically inferred by a gateway node or control plane/data plane proxy, or which might be of interest for documentation purposes. In some embodiments, the auto-documentation generated can be specifically associated with the "abcd_id" field. The "abcd_id" field-specific documentation can be created when the user configures the auto-documentation plugin the first time. In some embodiments, the generated auto-documentation can be retrieved by a third-party source (e.g., another API). In some embodiments, the generated auto-documentation can be retrieved by a custom response header that the API endpoint returns to a gateway node or control plane/data plane proxy.

[0066] The purpose of the "abcd_id" field can be inferred based on both a history of response values to the parameter, and a history of many APIs that use a similarly named parameter. For example, if responses consistently include values such as "Main St.", "74th Ln.", "Page Mill Rd.", and "Crossview Ct.", it can be inferred that "abcd_id" is being used to pass names of streets to and from the related API. This history may be observed across multiple APIs. For example, while "abcd_id" may not be intuitively determined, a given programmer or team of programmers may always use the parameters named as such for particular input types (such as street names). Thus, the auto-documentation plugin can update documentation for an API receiving a new (or updated) parameter based on what that parameter means to other APIs.

[0067] Where the response values to the request change to "John Smith", "Jane Doe", and "Barack Obama", then the model infers that the use of "abcd_id" has changed from names of streets to names of people. The auto-documentation plugin locates the portion of the documentation that refers to the parameter and updates the description of the use of the parameter.
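The inference described for the "abcd_id" field can be illustrated with a deliberately simple stand-in for the machine learning model. The regular expressions, the labels, and the function names below are assumptions chosen for this sketch; the disclosure does not specify how the model classifies values.

```python
import re

# Hypothetical heuristics standing in for a learned model.
STREET = re.compile(r"\b(St|Ln|Rd|Ct|Ave|Blvd)\.?$")
PERSON = re.compile(r"^[A-Z][a-z]+ [A-Z][a-z]+$")


def infer_meaning(values):
    """Guess what a field carries from a history of observed values."""
    if values and all(STREET.search(v) for v in values):
        return "street name"
    if values and all(PERSON.match(v) for v in values):
        return "person name"
    return "unknown"


def updated_description(field, old_values, new_values):
    """Return a new description if the inferred use of a field changed."""
    before, after = infer_meaning(old_values), infer_meaning(new_values)
    if before != after:
        return f"{field}: {after} (previously {before})"
    return None


history = ["Main St.", "74th Ln.", "Page Mill Rd.", "Crossview Ct."]
recent = ["John Smith", "Jane Doe", "Barack Obama"]
print(updated_description("abcd_id", history, recent))
```

A returned description signals that the portion of the documentation referring to the parameter should be rewritten; a return value of `None` means the inferred use is unchanged.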

[0068] Where an API and a client have a certain order or series of requests and responses, a machine learning model is constructed based on the order of requests/responses, using the values provided for the parameters to develop the model. For example, a historical model of a request/response schema shows 3 types of requests. The first is a request with a string parameter "petType". If the response to the first request is "dog", the subsequent request asks for the string parameter "favToy". If the response to the first request is "cat", the subsequent request asks for a Boolean parameter "isViolent" instead.

[0069] If a newly observed series of requests/responses instead subsequently requests for the string parameter "favToy" after the response to the first request is "cat", then the auto-documentation plugin determines that a method that evaluates the first request has changed and that the related documentation needs to be updated.
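The "petType" example can be sketched with a lookup table in place of a trained model. The table maps each observed response value to the follow-up parameters seen historically; both function names are hypothetical and the approach is a simplification for illustration.

```python
def build_model(history):
    """Map each first-response value to the follow-up parameters seen so far."""
    model = {}
    for response_value, next_param in history:
        model.setdefault(response_value, set()).add(next_param)
    return model


def sequence_changed(model, response_value, next_param):
    """True when a follow-up request deviates from the historical pattern."""
    expected = model.get(response_value)
    # Unknown response values give no basis for declaring a change.
    return expected is not None and next_param not in expected


model = build_model([("dog", "favToy"), ("cat", "isViolent"), ("dog", "favToy")])
print(sequence_changed(model, "cat", "favToy"))
```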

[0070] The auto-generated documentation is in a human-readable format so that developers can understand and consume the API. When the API undergoes changes or when the request/response (e.g., parameters included in the request/response) to the API undergoes changes, the system not only auto-generates documentation but also detects changes to the request/response. Detecting the changes enables the plugin to be able to alert/notify developers when API-related attributes change (e.g., in an event when the API is updated so that a field is removed from the API's response or a new field is added in the API's response) and send the updated auto-documentation. Thus, the documentation continually evolves over time.

[0071] In some embodiments, auto-documentation for an API is generated dynamically in real-time by monitoring/sniffing/parsing traffic related to requests (e.g., sent by one or more clients) and responses (e.g., received from the API). In some embodiments, the client can be a testing client. The client might have a test suite that the client intends to execute. If the client executes the test suite through a gateway node that runs the auto-documentation plugin, then the plugin can automatically generate the documentation for the test suite.

[0072] The auto-documentation output, for example, can be a Swagger file that includes each endpoint, each parameter, each method/class and other API-related attributes. (A Swagger file is typically in JSON.) The auto-documentation can also be in other suitable formats, e.g., RAML and API Blueprint. In some embodiments, the auto-documentation functionality is implemented as a plugin (that runs as middleware) at a gateway node.
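A Swagger 2.0 document can be assembled from observed transactions roughly as follows. This is a sketch under simplifying assumptions: every parameter is treated as an optional string query parameter, the version string is a placeholder, and the shape of the `observations` records is hypothetical.

```python
import json


def to_swagger(title, observations):
    """Build a minimal Swagger 2.0 document from observed transactions."""
    paths = {}
    for obs in observations:
        operation = {
            # Assumption: all observed parameters are optional query strings.
            "parameters": [
                {"name": name, "in": "query", "type": "string", "required": False}
                for name in obs["params"]
            ],
            "responses": {
                str(obs["status"]): {"description": "Observed response"}
            },
        }
        paths.setdefault(obs["path"], {})[obs["method"].lower()] = operation
    return {
        "swagger": "2.0",
        "info": {"title": title, "version": "0.0.1"},
        "paths": paths,
    }


doc = to_swagger(
    "Observed API",
    [{"method": "GET", "path": "/hello", "params": ["name", "age", "id"], "status": 200}],
)
print(json.dumps(doc, indent=2))
```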

[0073] In a microservices architecture, each microservice typically exposes a set of what are typically fine-grained endpoints, as opposed to a monolithic application where there is just one set of (typically replicated, load-balanced) endpoints. An endpoint can be considered to be a URL pattern used to communicate with an API.

[0074] In some instances, the auto-documentation can be stored or appended to an existing documentation, in-memory, on disk, in a data store or into a third-party service. In some instances, the auto-documentation can be analyzed and compared with previous versions of the same documentation to generate DIFF (i.e., difference) reports, notifications and monitoring alerts if something has changed or something unexpected has been documented.
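A DIFF report between two versions of the generated documentation can be produced with a standard text diff over a canonical serialization. The sketch below uses Python's `difflib`; the `doc_diff` name and the documentation shape are assumptions for illustration.

```python
import difflib
import json


def doc_diff(old_doc, new_doc):
    """Return unified-diff lines between two documentation versions."""
    # Sorting keys makes the serialization stable, so only real changes show.
    old = json.dumps(old_doc, indent=2, sort_keys=True).splitlines()
    new = json.dumps(new_doc, indent=2, sort_keys=True).splitlines()
    return list(difflib.unified_diff(old, new, "previous", "current", lineterm=""))


previous = {"/hello": {"params": ["name"]}}
current = {"/hello": {"params": ["name", "age"]}}
for line in doc_diff(previous, current):
    print(line)
```

An empty result means nothing changed, so no notification or monitoring alert would be needed.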

[0075] In some embodiments, the plugin for automatically generating the documentation can artificially provoke or induce traffic (e.g., in the form of requests and responses) directed at an API so that the plugin can learn how to generate the auto-documentation for that API.

[0076] FIG. 7 illustrates a sequence diagram 700 of a use-case showing components and associated steps involved in generating auto-documentation, according to an embodiment of the disclosed technology. Specifically, FIG. 7 corresponds to the use-case when the auto-documentation is generated based on pre-processing a request (e.g., sent by one or more clients) and post-processing a response (e.g., received from the API). The components involved in the interaction are a client 702, a gateway node 704, and an API 706. At step 1, a client 702 makes a request to gateway node 704. At step 2, the gateway node 704 parses the request (e.g., the headers and body of the request) and generates auto-documentation associated with the request. (The request can be considered as one part of a complete request/response transaction.)

[0077] At step 3, the gateway node 704 proxies/load-balances the request to API 706, which returns a response. At step 4, the gateway node 704 parses the response (e.g., the headers and body of the response) returned by the API 706, and generates auto-documentation associated with the response. In some embodiments, the auto-documentation associated with the response is appended to the auto-documentation associated with the request. At step 5, the gateway node 704 proxies the response back to the client 702. At step 6, the resulting documentation is stored on-disk, in a data store coupled with the gateway node 704, submitted to a third-party service, or kept in-memory. In some embodiments, notifications and monitoring alerts can be submitted directly by gateway node 704, or leveraging a third-party service, to communicate changes in the generated auto-documentation or a status of the parsing process. In some embodiments, if parsing fails or the API transaction is not understood by the auto-documentation plugin, an error notification can also be sent.

[0078] FIG. 8 illustrates a sequence diagram of another use-case showing components and associated steps involved in generating auto-documentation, according to an embodiment of the disclosed technology. Specifically, FIG. 8 corresponds to the use-case when the auto-documentation is generated based on post-processing a request (e.g., sent by one or more clients) and post-processing a response (e.g., received from the API). The components involved in the interaction are a client 802, a gateway node 804, and an API 806. At step 1, a client 802 makes a request to gateway node 804. At step 2, the gateway node 804 executes all of its functionalities but does not parse the request at this point. At step 3, the gateway node 804 proxies/load-balances the request to API 806, which returns a response.

[0079] At step 4, the gateway node 804 parses the request and the response, and generates auto-documentation associated with the request and the response. At step 5, the gateway node 804 proxies the response back to the client 802. At step 6, the resulting documentation is stored on-disk, in a data store coupled with the gateway node 804, submitted to a third-party service, or kept in-memory. In some embodiments, notifications and monitoring alerts can be submitted directly by gateway node 804, or leveraging a third-party service, to communicate changes in the generated auto-documentation or a status of the parsing process. In some embodiments, pre-processing a request and post-processing a response is preferred over post-processing a request and post-processing a response. Such a scenario can arise when a user wishes to document a request, even if the resulting response returns an error or fails. Typically, pre-processing a request and post-processing a response is used to partially document an endpoint. In some embodiments, the reverse is preferred. Such a scenario doesn't allow for partial documentation and is used to document the entire transaction of the request and the end response.
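The difference between the two use-cases (FIG. 7 and FIG. 8) can be sketched as a single handler with a mode switch. This is an illustration only: the `handle` function, the `"pre"`/`"post"` mode names, and the record shapes are hypothetical, and an upstream failure is modeled as a raised exception.

```python
def handle(request, call_api, docs, mode):
    """Proxy a request and document it in 'pre' or 'post' mode."""
    path = request["path"]
    if mode == "pre":
        # Pre-processing: the request is documented before it is proxied,
        # so a partial entry survives even if the API call fails.
        docs[path] = {"request": sorted(request["params"])}
    response = call_api(request)  # may raise if the upstream call fails
    if mode == "post":
        # Post-processing: the request is documented only after a response
        # arrives, so the entry always covers the whole transaction.
        docs[path] = {"request": sorted(request["params"])}
    docs[path]["response"] = sorted(response)
    return response


def failing_api(request):
    raise RuntimeError("upstream failure")


request = {"path": "/hello", "params": {"name": "a"}}
docs_pre, docs_post = {}, {}
for mode, docs in (("pre", docs_pre), ("post", docs_post)):
    try:
        handle(request, failing_api, docs, mode)
    except RuntimeError:
        pass
print(docs_pre, docs_post)
```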

[0080] FIG. 9 illustrates a flow diagram 900 showing steps involved in generating auto-documentation at a gateway node, according to an embodiment of the disclosed technology. The flow diagram in FIG. 9 corresponds to the use-case when the auto-documentation is generated based on post-processing a request and post-processing a response. At step 902, a gateway node receives a client request. At step 906, the gateway node proxies the request and receives a response from the API. The response is sent back to the client. At step 910, the gateway node parses both the request and the response. At step 914, the gateway node retrieves (from local storage and remote storage of file system) the documentation for the endpoint requested. Retrieving the documentation for the endpoint is possible when the plugin has already auto-documented the same endpoint before.

[0081] Upon retrieving the prior documentation, the gateway node can compare the prior documentation with the current request to identify differences. At step 918, the gateway node determines whether the endpoint exists. If the gateway node determines that the endpoint exists, then the gateway node compares (at step 922) prior documented auto-documentation (in the retrieved documentation) with the current request and response data (e.g., headers, parameters, body, and other aspects of the request and response data). If the gateway node determines that there is no difference between the prior documented auto-documentation (in the retrieved documentation) and the current request and response data, then the gateway node enters (at step 930) a "nothing to do" state in which the gateway node doesn't take any further action, and continues monitoring requests/responses to/from the API. If the gateway node determines (at step 926) that there is a difference between the prior documented auto-documentation (in the retrieved documentation) and the current request and response data, then the gateway node alerts/notifies (optionally, at step 934) a user that different auto-documentation is detected. The gateway node can notify the user via an internal alert module, by sending an email to the user, or by using a third-party notification service such as PagerDuty.

[0082] At step 938, the gateway node determines whether the auto-documentation is to be updated. If the gateway node determines that the auto-documentation does not need to be updated, then the gateway node enters (at step 942) a "nothing to do" state in which the gateway node doesn't take any further action, and continues monitoring requests/responses to/from the API. If the gateway node determines that the auto-documentation needs to be updated, then the gateway node generates (step 946) auto-documentation for the current API transaction and stores the request and response meta-information (e.g., headers, parameters, body, etc.) in a data store or local cache. In some embodiments, if the gateway node determines at step 918 that the endpoint does not exist, then the gateway node generates auto-documentation at step 946 which includes information about the endpoint (which is newly created). If the documentation for a specific endpoint is missing, the reason could be that the endpoint is unique and has not been requested before.
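The decision flow of FIG. 9 can be condensed into one function. The sketch below is an assumption-laden illustration: a dictionary stands in for the stored documentation, `notify` and `should_update` are hypothetical callbacks, and the returned strings are labels invented for this example.

```python
def process_transaction(docs, endpoint, observed, notify,
                        should_update=lambda old, new: True):
    """Compare an observed transaction with prior documentation and act."""
    prior = docs.get(endpoint)
    if prior is None:
        # Endpoint not documented before: generate new documentation.
        docs[endpoint] = observed
        return "created"
    if prior == observed:
        # No difference: keep monitoring without further action.
        return "nothing to do"
    # A difference was detected: alert the user (optional in the flow).
    notify(endpoint, prior, observed)
    if not should_update(prior, observed):
        return "nothing to do"
    docs[endpoint] = observed
    return "updated"


alerts = []
docs = {}
record = lambda endpoint, old, new: alerts.append(endpoint)
print(process_transaction(docs, "/hello", {"params": ["name"]}, record))
print(process_transaction(docs, "/hello", {"params": ["name"]}, record))
print(process_transaction(docs, "/hello", {"params": ["name", "age"]}, record))
```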

[0083] An example of a request (e.g., sent by one or more clients) is provided below:

TABLE-US-00002
POST /do/something HTTP/1.1
Host: server
Accept: application/json
Content-Length: 25
Content-Type: application/x-www-form-urlencoded

param1=value&param2=value

[0084] An example of a response (e.g., received from the API) is provided below:

TABLE-US-00003
HTTP/1.1 200 OK
Connection: keep-alive
Date: Wed, 07 Jun 2017 18:14:12 GMT
Content-Type: application/json
Content-Length: 33

{"created":true, "param1":"value"}
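Parsing the example transaction into a documentation entry might look like the following sketch. It assumes the request body is the form-encoded string `param1=value&param2=value` and uses only the Python standard library; the `document` function and the entry layout are hypothetical.

```python
import json
from urllib.parse import parse_qs

request_body = "param1=value&param2=value"
response_body = '{"created":true, "param1":"value"}'


def document(path, method, request_body, response_body):
    """Derive a documentation entry from one request/response pair."""
    params = parse_qs(request_body)    # form-encoded request parameters
    fields = json.loads(response_body)  # JSON response fields
    return {
        "endpoint": path,
        "method": method,
        "request_params": sorted(params),
        # Record the observed type of each response field.
        "response_fields": {k: type(v).__name__ for k, v in fields.items()},
    }


entry = document("/do/something", "POST", request_body, response_body)
print(entry)
```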

[0085] In other embodiments, the auto-documentation functionality can be integrated with an application server or a web server, and not necessarily a gateway node. In such embodiments, the application server (or the web server) can host the API application and be an entry point for an endpoint provided by the API.

[0086] FIG. 10 is a block diagram of a control plane system 1000 for a service mesh in a microservices architecture. A service mesh data plane is controlled by a control plane. In a microservices architecture, each microservice typically exposes a set of what are typically fine-grained endpoints, as opposed to a monolithic application where there is just one set of (typically replicated, load-balanced) endpoints. An endpoint can be considered to be a URL pattern used to communicate with an API.

[0087] Service mesh data plane: Touches every packet/request in the system. Responsible for service discovery, health checking, routing, load balancing, authentication/authorization, and observability.

[0088] Service mesh control plane: Provides policy and configuration for all of the running data planes in the mesh. Does not touch any packets/requests in the system but collects the packets in the system. The control plane turns all the data planes into a distributed system.

[0089] A service mesh such as Linkerd, NGINX, HAProxy, or Envoy co-locates service instances with a data plane network proxy. Network traffic (HTTP, REST, gRPC, Redis, etc.) from an individual service instance flows via its local data plane proxy to the appropriate destination. Thus, the service instance is not aware of the network at large and only knows about its local proxy. In effect, the distributed system network has been abstracted away from the service programmer. In a service mesh, the data plane proxy performs a number of tasks. Example tasks disclosed herein include service discovery, update discovery, health checking, routing, load balancing, authentication and authorization, and observability.

[0090] Service discovery identifies each of the upstream/backend microservice instances used by the relevant application. Health checking refers to detection of whether upstream service instances returned by service discovery are ready to accept network traffic. The detection may include both active (e.g., out-of-band pings to an endpoint) and passive (e.g., using 3 consecutive 5xx responses as an indication of an unhealthy state) health checking. The service mesh is further configured to route requests from local service instances to desired upstream service clusters.
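Passive health checking based on consecutive 5xx responses can be sketched as a small counter. The class name and its interface are hypothetical; the default threshold of 3 follows the example given above.

```python
class PassiveHealthCheck:
    """Mark an upstream unhealthy after N consecutive 5xx responses."""

    def __init__(self, threshold=3):
        self.threshold = threshold
        self.consecutive_errors = 0

    def record(self, status):
        """Record one observed response status and return current health."""
        if 500 <= status <= 599:
            self.consecutive_errors += 1
        else:
            # Any non-5xx response resets the streak.
            self.consecutive_errors = 0
        return self.healthy

    @property
    def healthy(self):
        return self.consecutive_errors < self.threshold


check = PassiveHealthCheck()
for status in (500, 503, 200, 500, 502, 504):
    check.record(status)
print(check.healthy)
```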

[0091] Load balancing: Once an upstream service cluster has been selected during routing, a service mesh is configured to load balance. Load balancing includes determining to which upstream service instance the request should be sent, with what timeout, with what circuit breaking settings, and whether the request should be retried if it fails.

[0092] The service mesh further authenticates and authorizes incoming requests cryptographically using mTLS or some other mechanism. Data plane proxies enable observability features, including detailed statistics, logging, and distributed tracing data, so that operators can understand distributed traffic flow and debug problems as they occur.

[0093] In effect, the data plane proxy is the data plane. Said another way, the data plane is responsible for conditionally translating, forwarding, and observing every network packet that flows to and from a service instance.

[0094] The network abstraction that the data plane proxy provides does not inherently include instructions or built-in methods to control the associated service instances in any of the ways described above. The control features are enabled by a control plane. The control plane takes a set of isolated stateless data plane proxies and turns them into a distributed system.

[0095] A service mesh and control plane system 1000 includes a user 1002 who interfaces with a control plane UI 1004. The UI 1004 might be a web portal, a CLI, or some other interface. Through the UI 1004, the user 1002 has access to the control plane core 1006. The control plane core 1006 serves as a central point that other control plane services operate through in connection with the data plane proxies 1008. Ultimately, the goal of a control plane is to set policy that will eventually be enacted by the data plane. More advanced control planes will abstract more of the system from the operator and require less handholding.

[0096] Control plane services may include global system configuration settings such as deploy control 1010 (blue/green and/or traffic shifting), authentication and authorization settings 1012, route table specification 1014 (e.g., when service A requests a command, what happens), load balancer settings 1016 (e.g., timeouts, retries, circuit breakers, etc.), a workload scheduler 1018, and a service discovery system 1020. The scheduler 1018 is responsible for bootstrapping a service along with its data plane proxy 1008.

[0097] Services 1022 are run on an infrastructure via some type of scheduling system (e.g., Kubernetes or Nomad). Typical control planes operate in control of control plane services 1010-1020 that in turn control the data plane proxies 1008. Thus, in typical examples, the control plane services 1010-1020 are intermediaries to the services 1022 and associated data plane proxies 1008. An auto-documentation unit 1023 is responsible for parsing copied packets originating from the data plane proxies 1008 and associated with each service instance 1022. Data plane proxies 1008 catch requests and responses that are delivered in between services 1022 in addition to those requests and responses that originate from outside of the microservices architecture (e.g., from external clients).

[0098] The auto-documentation unit 1023 updates documentation 1024 relevant to the associated service instances 1022 as identified by the auto-documentation unit 1023. Documentation 1024 may be present in source code for the services 1022 or in a separate document.

[0099] As depicted in FIG. 10, the control plane core 1006 is the intermediary between the control plane services 1010-1020 and the data plane proxies 1008. Acting as the intermediary, the control plane core 1006 removes dependencies that exist in other control plane systems and enables the control plane core 1006 to be platform agnostic. The control plane services 1010-1020 act as managed stores. With managed stores in a cloud deployment, scaling and maintaining the control plane core 1006 involves fewer updates. The control plane core 1006 can be split into multiple modules during implementation.

[0100] The control plane core 1006 passively monitors each service instance 1022 via the data plane proxies 1008 using live traffic. However, the control plane core 1006 may also perform active checks to determine the status or health of the overall application.

[0101] The control plane core 1006 supports multiple control plane services 1010-1020 at the same time by defining which one is more important through priorities. Employing a control plane core 1006 as disclosed aids control plane service 1010-1020 migration. Where a user wishes to change the control plane service provider (e.g., switching service discovery from ZooKeeper-based discovery to Consul-based discovery), a control plane core 1006 that receives the output of the control plane services 1010-1020 from various providers can configure each regardless of provider. Conversely, a control plane that merely directs control plane services 1010-1020 includes no such configuration store.

[0102] Another feature provided by the control plane core 1006 is static service addition. For example, a user may run Consul but want to add another service/instance (e.g., for debugging). The user may not want to add the additional service on the Consul cluster. Using a control plane core 1006, the user may plug in a file-based source with a custom definition. A further feature is multi-datacenter support. The user may expose the state held in the control plane core 1006 as an HTTP endpoint and plug in the control plane core 1006 from other datacenters as a source with lower priority. This provides fallback to instances in the other datacenters when instances from the local datacenter are unavailable.
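
The priority-based handling of multiple sources described above may, in one hypothetical sketch, be expressed as follows. The `Source` type, the `resolve` function, and the convention that a larger number means a higher priority are assumptions introduced for illustration only.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Source:
    """One provider of service instances, e.g. Consul, a file, or a remote datacenter."""

    name: str
    priority: int  # assumption: a larger number means a higher priority
    instances: Dict[str, List[str]] = field(default_factory=dict)


def resolve(service: str, sources: List[Source]) -> List[str]:
    """Return instances from the highest-priority source that has any for `service`."""
    for source in sorted(sources, key=lambda s: s.priority, reverse=True):
        found = source.instances.get(service, [])
        if found:
            return found
    return []
```

For example, a local Consul source with priority 10 and a remote datacenter source with priority 1 would resolve to the local instances whenever any exist, and fall back to the remote instances otherwise.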

[0103] FIG. 11 is a block diagram illustrating communication between APIs resulting in updated documentation. In a microservices application architecture a plurality of APIs 1122A, B communicate with one another to produce desired results of a larger application. A control plane 1106 is communicatively coupled to the plurality of APIs 1122A, B via data plane proxies 1108A, B.

[0104] The control plane 1106 is configured to receive an incoming proxied request of a first API 1122A, via a respective data plane proxy 1108A, directed to a second API 1122B. The control plane 1106 receives the proxied response from the second API 1122B, via a respective second data plane proxy 1108B. In practice, there are many more APIs 1122 and respective proxies 1108 than the two pictured in the figure. Because of the microservices architecture, where each API is a "service," the client/server relationship may exist in between many of the APIs that are intercommunicating within the microservices application.

[0105] Once received by the control plane 1106, auto-documentation unit 1113 of the control plane 1106 parses the proxied requests/responses and extracts current data similarly as described by other auto-documentation embodiments. The auto-documentation plugin is configured to generate auto-documentation in response to a transaction that includes the request and the response. The auto-documentation may be newly generated or may be an update to existing documentation. A data store stores the newly created or updated documentation 1124.
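
As a minimal sketch of generating or updating documentation from a single transaction, the following example infers field types from JSON request and response bodies and records them under the endpoint. The functions `infer_schema` and `document_transaction`, the dictionary layout, and the assumption that bodies are JSON are all hypothetical and are not the only form the auto-documentation may take.

```python
import json
from typing import Any, Dict, Optional


def infer_schema(value: Any) -> Any:
    """Map a parsed JSON value to a nested description of its type names."""
    if isinstance(value, dict):
        return {key: infer_schema(item) for key, item in value.items()}
    if isinstance(value, list):
        # Assumption: the first element is representative of the whole list.
        return [infer_schema(value[0])] if value else []
    return type(value).__name__


def document_transaction(
    docs: Dict[str, Dict[str, Any]],
    method: str,
    path: str,
    request_body: Optional[str],
    response_status: int,
    response_body: Optional[str],
) -> Dict[str, Dict[str, Any]]:
    """Create or update the documentation entry for one request/response pair."""
    entry = docs.setdefault(f"{method.upper()} {path}", {"request": {}, "responses": {}})
    if request_body:
        entry["request"] = infer_schema(json.loads(request_body))
    if response_body:
        entry["responses"][str(response_status)] = infer_schema(json.loads(response_body))
    return docs
```

Calling `document_transaction` repeatedly with the same `docs` dictionary updates existing entries in place, which loosely mirrors updating existing documentation rather than generating it anew.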

[0106] In some embodiments, a set of APIs 1122 may operate together as a service group. A service group may have an additional documentation repository that refers to the functions/operations of methods within the service group at a higher level than a granular description of each API. Because the control plane 1106 has visibility on all requests and responses in the microservices architecture, the auto-documentation module 1113 may identify similar or matching objects across the requests/responses of multiple APIs. Similar objects are those that are semantically similar or those that reference matching object or class titles (though other portions of the object name may differ, e.g., "user" and "validatedUser" are similar as they both refer to the user). In some embodiments, similar objects may also call for the same data type (e.g., string, int, float, Boolean, custom object classes, etc.) while in the same service group.
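
One simple, non-limiting way to detect objects that reference matching titles is to split each name into lowercase word tokens and test for a shared token. The helpers `name_tokens` and `are_similar` below are hypothetical, and this heuristic covers only name matching; it does not attempt the semantic similarity also contemplated above.

```python
import re
from typing import Set


def name_tokens(name: str) -> Set[str]:
    """Split a camelCase, snake_case, or kebab-case name into lowercase tokens."""
    tokens: Set[str] = set()
    for part in re.split(r"[_\-\s]+", name):
        # Match capitalized or lowercase words, or runs of capitals such as "ID".
        for word in re.findall(r"[A-Z]?[a-z0-9]+|[A-Z]+(?![a-z])", part):
            tokens.add(word.lower())
    return tokens


def are_similar(first: str, second: str) -> bool:
    """Treat two object names as similar when they share at least one token."""
    return bool(name_tokens(first) & name_tokens(second))
```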

[0107] Thus, documentation 1124 that results may include a description of an execution path and multiple stages of input before reaching some designated output.

[0108] FIG. 12 is a block diagram illustrating service groups 1202 and features associated with identification thereof. A service group 1202 is a group of services 1204 that together perform an identifiable application purpose or business flow. For example, a set of microservices may be responsible for the ticketing portion of an airline's website. Other examples may include "customer experience," "sign up," "login," "payment processing," etc. Using a control plane 1206 with an associated service discovery 1208 feature, packets are monitored as they filter through the overall application (e.g., the whole website).

[0109] Given a starting point of a given service group 1202, the control plane 1206 may run a trace on packets having a known ID and follow where those packets (with the known ID) go in the microservice architecture as tracked by data plane proxies. In that way, the system can then automatically populate a service group 1202 using the trace. The trace is enabled via the shared execution path of the data plane proxies. Along each step 1210 between services 1204, the control plane 1206 measures latency and discovers services. The trace may operate on live traffic corresponding to end users 1212, or alternatively on test traffic.
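
By way of illustration only, populating a service group from a trace may be sketched as a reachability walk over the hops that carry the known ID. The `Hop` record, its field names, and the function `populate_service_group` are assumptions made for this example; an actual implementation would obtain hop records from the data plane proxies.

```python
from typing import Dict, Iterable, NamedTuple, Set


class Hop(NamedTuple):
    """One step between services as reported by a data plane proxy (hypothetical)."""

    trace_id: str
    source: str
    destination: str
    latency_ms: float


def populate_service_group(hops: Iterable[Hop], trace_id: str, start: str) -> Set[str]:
    """Collect every service reachable from `start` along hops carrying `trace_id`."""
    edges: Dict[str, Set[str]] = {}
    for hop in hops:
        if hop.trace_id == trace_id:
            edges.setdefault(hop.source, set()).add(hop.destination)
    group, frontier = {start}, [start]
    while frontier:
        current = frontier.pop()
        for nxt in edges.get(current, ()):
            if nxt not in group:
                group.add(nxt)
                frontier.append(nxt)
    return group
```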

[0110] As output, the control plane generates a dependency graph of the given service group 1202 business flow and reports via a GUI. Using the dependency graph, a backend operator is provided insight into bottlenecks in the service group 1202. For example, in a given service group 1202, a set of services 1204 may run on multiple servers that are operated by different companies (e.g., AWS, Azure, Google Cloud, etc.). The latency between these servers may slow down the service group 1202 as a whole. Greater observability into the service group 1202 via a dependency graph enables backend operators to improve the capabilities and throughput of the service group 1202.
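
As a hedged sketch of how such a dependency graph might surface a bottleneck, the following example averages the measured latency for each pair of services and reports the slowest edge. The function names `build_dependency_graph` and `slowest_edge`, the tuple layout of the input, and the use of a mean as the latency statistic are assumptions for illustration.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List, Optional, Tuple


def build_dependency_graph(
    hops: Iterable[Tuple[str, str, float]],
) -> Dict[str, Dict[str, float]]:
    """Build {source: {destination: mean latency in ms}} from observed hops."""
    samples: Dict[Tuple[str, str], List[float]] = defaultdict(list)
    for source, destination, latency_ms in hops:
        samples[(source, destination)].append(latency_ms)
    graph: Dict[str, Dict[str, float]] = {}
    for (source, destination), latencies in samples.items():
        graph.setdefault(source, {})[destination] = mean(latencies)
    return graph


def slowest_edge(graph: Dict[str, Dict[str, float]]) -> Optional[Tuple[str, str, float]]:
    """Return the (source, destination, latency) edge with the highest mean latency."""
    edges = [(s, d, lat) for s, targets in graph.items() for d, lat in targets.items()]
    return max(edges, key=lambda edge: edge[2]) if edges else None
```

A GUI report could then highlight the edge returned by `slowest_edge`, for instance a hop that crosses between servers operated by different companies.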

[0111] Exemplary Computer System

[0112] FIG. 12 shows a diagrammatic representation of a machine in the example form of a computer system 1200, within which a set of instructions for causing the machine to perform any one or more of the methodologies discussed herein may be executed.

[0113] In alternative embodiments, the machine operates as a standalone device or may be connected (networked) to other machines. In a networked deployment, the machine may operate in the capacity of a server or a client machine in a client-server network environment, or as a peer machine in a peer-to-peer (or distributed) network environment.

[0114] The machine may be a server computer, a client computer, a personal computer (PC), a tablet PC, a set-top box (STB), a personal digital assistant (PDA), a cellular telephone or smart phone, a web appliance, a point-of-sale device, a network router, switch or bridge, or any machine capable of executing a set of instructions (sequential or otherwise) that specify actions to be taken by that machine.

[0115] While the machine-readable (storage) medium is shown in an exemplary embodiment to be a single medium, the term "machine-readable (storage) medium" should be taken to include a single medium or multiple media (a centralized or distributed database, and/or associated caches and servers) that store the one or more sets of instructions. The term "machine-readable medium" or "machine readable storage medium" shall also be taken to include any medium that is capable of storing, encoding or carrying a set of instructions for execution by the machine and that cause the machine to perform any one or more of the methodologies of the present invention.

[0116] In general, the routines executed to implement the embodiments of the disclosure, may be implemented as part of an operating system or a specific application, component, program, object, module or sequence of instructions referred to as "computer programs." The computer programs typically comprise one or more instructions set at various times in various memory and storage devices in a computer, and that, when read and executed by one or more processors in a computer, cause the computer to perform operations to execute elements involving the various aspects of the disclosure.

[0117] Moreover, while embodiments have been described in the context of fully functioning computers and computer systems, those skilled in the art will appreciate that the various embodiments are capable of being distributed as a program product in a variety of forms, and that the disclosure applies equally regardless of the particular type of machine or computer-readable media used to actually effect the distribution.

[0118] Further examples of machine or computer-readable media include, but are not limited to, recordable type media such as volatile and non-volatile memory devices, floppy and other removable disks, hard disk drives, optical disks (e.g., Compact Disk Read-Only Memory (CD-ROMs), Digital Versatile Discs (DVDs), etc.), among others, and transmission type media such as digital and analog communication links.

[0119] Unless the context clearly requires otherwise, throughout the description and the claims, the words "comprise," "comprising," and the like are to be construed in an inclusive sense, as opposed to an exclusive or exhaustive sense; that is to say, in the sense of "including, but not limited to." As used herein, the terms "connected," "coupled," or any variant thereof, mean any connection or coupling, either direct or indirect, between two or more elements; the coupling or connection between the elements can be physical, logical, or a combination thereof. Additionally, the words "herein," "above," "below," and words of similar import, when used in this application, shall refer to this application as a whole and not to any particular portions of this application. Where the context permits, words in the above Detailed Description using the singular or plural number may also include the plural or singular number respectively. The word "or," in reference to a list of two or more items, covers all of the following interpretations of the word: any of the items in the list, all of the items in the list, and any combination of the items in the list.