Personalized Tailored Air Interface

MA; JIANGLEI ; et al.

U.S. patent application number 16/854329 was filed with the patent office on 2020-04-21 and published on 2021-05-27 for personalized tailored air interface. This patent application is currently assigned to HUAWEI TECHNOLOGIES CO., LTD. The applicant listed for this patent is MING JIA, JIANGLEI MA, WEN TONG, PEIYING ZHU. Invention is credited to MING JIA, JIANGLEI MA, WEN TONG, PEIYING ZHU.

| Application Number | 16/854329 (Publication No. 20210160149) |

| Family ID | 1000004793694 |

| Filed Date | 2020-04-21 |

| United States Patent Application | 20210160149 |

| Kind Code | A1 |

| MA; JIANGLEI ; et al. | May 27, 2021 |

PERSONALIZED TAILORED AIR INTERFACE

Abstract

Methods and devices utilizing artificial intelligence (AI) or machine learning (ML) for customization of a device specific air interface configuration in a wireless communication network are provided. An over the air information exchange to facilitate the training of one or more AI/ML modules involves the exchange of AI/ML capability information identifying whether a device supports AI/ML for optimization of the air interface.

| Inventors: | MA; JIANGLEI (OTTAWA, CA); ZHU; PEIYING (OTTAWA, CA); TONG; WEN (OTTAWA, CA); JIA; MING (OTTAWA, CA) |

| Assignee: | HUAWEI TECHNOLOGIES CO., LTD. (SHENZHEN, CN) |

| Family ID: | 1000004793694 |

| Appl. No.: | 16/854329 |

| Filed: | April 21, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62939284 | Nov 22, 2019 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04L 41/0823 20130101; H04W 92/10 20130101; H04L 41/16 20130101 |

| International Class: | H04L 12/24 20060101 H04L012/24; H04W 92/10 20060101 H04W092/10 |

Claims

1. A method in a wireless communication network, the method comprising: transmitting, by a first device, information regarding an artificial intelligence or machine learning (AI/ML) capability of the first device to a second device over an air interface between the first device and the second device, the information regarding an AI/ML capability of the first device identifying whether the first device supports AI/ML for optimization of at least one air interface component over the air interface.

2. The method of claim 1, wherein the information regarding an AI/ML capability of the first device comprises information indicating at least one of the following: the first device is capable of supporting a type and/or level of complexity of AI/ML; whether the first device assists with an AI/ML training process for optimization of the at least one air interface component; at least one component of the at least one air interface component for which the first device supports AI/ML optimization.

3. The method of claim 2, wherein the at least one component of the at least one air interface component includes at least one of a coding component, a modulation component and a waveform component.

4. The method of claim 2, wherein the information indicating at least one component of the at least one air interface component for which the first device supports AI/ML optimization further comprises information indicating whether the first device supports joint optimization of two or more air interface components.

5. The method of claim 1, wherein transmitting the information regarding an AI/ML capability of the first device comprises at least one of: transmitting the information in response to receiving an enquiry; and transmitting the information as part of an initial network access procedure.

6. A method in a wireless communication network, the method comprising: receiving, by a second device, information regarding an artificial intelligence or machine learning (AI/ML) capability of a first device over an air interface between the first device and the second device, the information regarding an AI/ML capability of the first device identifying whether the first device supports AI/ML for optimization of at least one air interface component over the air interface; and transmitting an AI/ML training request to the first device based at least in part on the information regarding the AI/ML capability of the first device.

7. The method of claim 6, wherein the information regarding an AI/ML capability of the first device comprises information indicating at least one of the following: the first device is capable of supporting a type and/or level of complexity of AI/ML; whether the first device assists with an AI/ML training process for optimization of the at least one air interface component; at least one component of the at least one air interface component for which the first device supports AI/ML optimization.

8. The method of claim 7, wherein the at least one component of the at least one air interface component includes at least one of a coding component, a modulation component and a waveform component.

9. The method of claim 7, wherein the information indicating at least one component of the at least one air interface component for which the first device supports AI/ML optimization further comprises information indicating whether the first device supports joint optimization of two or more components of the at least one air interface component.

10. The method of claim 6, wherein receiving the information regarding an AI/ML capability of the first device comprises receiving the information as part of an initial network access procedure for the first device.

11. The method of claim 6, wherein transmitting the AI/ML training request comprises transmitting the AI/ML training request through downlink control information (DCI) on a downlink control channel or RRC signaling or the combination of the DCI and RRC signaling.

12. The method of claim 11, further comprising, receiving a training request response from the device confirming that the device has transitioned to an AI/ML training mode.

13. The method of claim 6, further comprising: transmitting a training termination signal to the first device to indicate that a training phase has finished.

14. An apparatus comprising: at least one processor; and a computer readable storage medium operatively coupled to the at least one processor, the computer readable storage medium storing programming for execution by the at least one processor, the programming comprising instructions to: transmit, from the apparatus, information regarding an artificial intelligence or machine learning (AI/ML) capability of the apparatus to a network device over an air interface between the apparatus and the network device, the information regarding an AI/ML capability of the apparatus identifying whether the apparatus supports AI/ML for optimization of at least one air interface component over the air interface.

15. The apparatus of claim 14, wherein the information regarding an AI/ML capability of the apparatus comprises information indicating at least one of the following: the apparatus is capable of supporting a type and/or level of complexity of AI/ML; whether the apparatus assists with an AI/ML training process for optimization of the at least one air interface component; at least one component of the at least one air interface component for which the apparatus supports AI/ML optimization.

16. The apparatus of claim 15, wherein the at least one component of the at least one air interface component includes at least one of a coding component, a modulation component and a waveform component.

17. The apparatus of claim 15, wherein the information indicating at least one component of the at least one air interface component for which the apparatus supports AI/ML optimization further comprises information indicating whether the apparatus supports joint optimization of two or more components of the at least one air interface component.

18. A network apparatus comprising: at least one processor; and a computer readable storage medium operatively coupled to the at least one processor, the computer readable storage medium storing programming for execution by the at least one processor, the programming comprising instructions to: receive, by the network apparatus, information regarding an artificial intelligence or machine learning (AI/ML) capability of a first device over an air interface between the first device and the network apparatus, the information regarding an AI/ML capability of the first device identifying whether the first device supports AI/ML for optimization of at least one air interface component over the air interface; and transmit an AI/ML training request to the first device based at least in part on the information regarding the AI/ML capability of the first device.

19. The network apparatus of claim 18, wherein the information regarding an AI/ML capability of the first device comprises information indicating at least one of the following: the first device is capable of supporting a type and/or level of complexity of AI/ML; whether the first device assists with an AI/ML training process for optimization of the at least one air interface component; at least one component of the at least one air interface component for which the first device supports AI/ML optimization.

20. The network apparatus of claim 19, wherein the at least one component of the at least one air interface component includes at least one of a coding component, a modulation component and a waveform component.

21. The network apparatus of claim 19, wherein the information indicating at least one component of the at least one air interface component for which the first device supports AI/ML optimization further comprises information indicating whether the first device supports joint optimization of two or more components of the at least one air interface component.

Description

CROSS-REFERENCE TO RELATED APPLICATION

[0001] This application claims the benefit of U.S. Provisional Patent Application No. 62/939,284 entitled "PERSONALIZED TAILORED AIR INTERFACE" filed Nov. 22, 2019, the entire contents of which is incorporated herein by reference.

FIELD

[0002] The present disclosure relates to wireless communication generally, and, in particular embodiments, to methods and apparatuses for air interface customization.

BACKGROUND

[0003] An air interface is the wireless communications link between two or more communicating devices, such as an evolved NodeB (also commonly referred to as a NodeB, a base station, NR base station, a transmit point, a remote radio head, a communications controller, a controller, and the like) and a user equipment (UE) (also commonly referred to as a mobile station, a subscriber, a user, a terminal, a phone, and the like). Typically, both communicating devices need to know the air interface in order to successfully transmit and receive a transmission.

[0004] In many wireless communication systems, the air interface definition is a one-size-fits-all concept. The components within the air interface cannot be changed or adapted once the air interface is defined. In some implementations, only limited parameters or modes of an air interface, such as a cyclic prefix (CP) length or multiple input multiple output (MIMO) mode, can be configured. In some modern wireless systems, a configurable air interface concept has been adopted to provide a framework for a more flexible air interface. It is intended to provide adaptation of different components within the air interface, and to address the potential requirements of future applications. Some modern wireless systems, such as fifth generation (5G) or new radio (NR) network systems, support network slicing, which is a network architecture that enables the multiplexing of virtualized and independent logical networks on the same physical network infrastructure. In such systems, each network slice is an isolated end-to-end network tailored to fulfill diverse requirements requested by a particular service or application. A configurable air interface has been proposed for NR networks that allows for service or slice based optimization of the air interface to allow the air interface to be configured based on a service or application that will be supported by the air interface or the network slice over which the service or application will be provided.

SUMMARY

[0005] Different pairs of communicating devices (i.e., a transmission sending device and a transmission receiving device) may have different transmission capabilities and/or transmission requirements. The different transmission capabilities and/or transmission requirements typically cannot be met optimally by a single air interface or air interface configuration.

[0006] The configurable air interface proposed for NR networks allows service or slice based optimization based on selecting from a predetermined subset of parameters or technologies for a predetermined subset of air interface components. If the service and/or network slice over which the service is provided changes, the configurations of the components of the transmit and receive chains of the communicating devices may be changed to match a new predetermined service or slice specific air interface corresponding to the new service or network slice.
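The slice-based selection described in the preceding paragraph can be pictured as a simple table lookup. The following is a minimal sketch only: the slice names, the parameter values and the names `AirInterfaceConfig`, `SLICE_CONFIGS` and `config_for_slice` are hypothetical and are not taken from this disclosure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AirInterfaceConfig:
    """One predetermined choice per air interface component."""
    waveform: str
    modulation: str
    coding: str


# Hypothetical predetermined table: slice names and parameter values are
# illustrative assumptions, not values taken from this disclosure.
SLICE_CONFIGS = {
    "slice_broadband": AirInterfaceConfig("OFDM", "64QAM", "LDPC"),
    "slice_low_latency": AirInterfaceConfig("OFDM", "QPSK", "Polar"),
}


def config_for_slice(slice_name: str) -> AirInterfaceConfig:
    """Look up the slice-specific configuration; raises KeyError if unknown."""
    return SLICE_CONFIGS[slice_name]


# Two different devices on the same slice receive the identical configuration,
# regardless of their individual channel conditions or capabilities.
ue_a = config_for_slice("slice_low_latency")
ue_b = config_for_slice("slice_low_latency")
print(ue_a == ue_b)
```

In this sketch the lookup key is the slice alone, so nothing about an individual device can influence the result.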

[0007] However, for each service, the transmission conditions, capabilities and requirements can still be quite different for each device, which means, for example, that an air interface configuration that may be optimal for delivering a service to one device, for example one UE, may not necessarily be optimal for delivering the same service to another UE.

[0008] The present disclosure provides methods and apparatuses that may be used to implement new air interfaces for wireless communication that are tailored or personalized on a device-specific basis, for example using artificial intelligence and/or machine learning to provide device-specific air interface optimization. For example, embodiments of the present disclosure include new air interfaces that go beyond a network slice/service specific air interface to a personalized tailored air interface that includes a personalized service type and a personalized air interface setting. Thus, using artificial intelligence and/or machine learning to optimize a device-specific air interface can achieve a new air interface configuration that satisfies the requirements of each UE on an individual basis.

[0009] One broad aspect of the present disclosure provides a method in a wireless communication network in which a first device transmits information regarding an artificial intelligence or machine learning (AI/ML) capability of the first device to a second device over an air interface between the first device and the second device. For example, the information regarding an AI/ML capability of the first device may identify whether the first device supports AI/ML for optimization of at least one air interface component over the air interface. Thus, the exchange of AI/ML capability between two communicating devices is used to optimize one or more air interface components to accomplish device-specific air interface optimization.
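As an illustration of what such capability information might carry, the sketch below models it as a small record whose fields follow the items recited in claims 1 to 4 (AI/ML support, type or level of complexity, training assistance, supported components, joint optimization). The class name `AimlCapability`, the field names, the integer complexity scale and the dictionary encoding are all assumptions made for illustration; the disclosure does not define a message format.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet


class Component(Enum):
    """Air interface components named in the claims."""
    CODING = "coding"
    MODULATION = "modulation"
    WAVEFORM = "waveform"


@dataclass(frozen=True)
class AimlCapability:
    """Hypothetical capability record; field names are illustrative."""
    supports_aiml: bool
    complexity_level: int = 0          # assumed scale, 0 = none
    assists_training: bool = False
    components: FrozenSet[Component] = frozenset()
    joint_optimization: bool = False

    def to_message(self) -> dict:
        """Encode the record as a plain dict (assumed wire representation)."""
        return {
            "supports_aiml": self.supports_aiml,
            "complexity_level": self.complexity_level,
            "assists_training": self.assists_training,
            "components": sorted(c.value for c in self.components),
            "joint_optimization": self.joint_optimization,
        }


# Example: a device supporting joint optimization of coding and modulation.
cap = AimlCapability(
    supports_aiml=True,
    complexity_level=2,
    assists_training=True,
    components=frozenset({Component.CODING, Component.MODULATION}),
    joint_optimization=True,
)
msg = cap.to_message()
print(msg["components"])
```

A device without AI/ML support would send `AimlCapability(supports_aiml=False)`, leaving every other field at its default.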

[0010] Another broad aspect of the present disclosure provides a method in a wireless communication network in which a second device receives information regarding an artificial intelligence or machine learning (AI/ML) capability of a first device over an air interface between the first device and the second device. For example, the information regarding an AI/ML capability of the first device may identify whether the first device supports AI/ML for optimization of at least one air interface component over the air interface. In some embodiments, the second device may transmit an AI/ML training request to the first device based at least in part on the information regarding the AI/ML capability of the first device. Thus, the exchange of AI/ML capability between two communicating devices is used to optimize one or more air interface components to accomplish device-specific air interface optimization.
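The receiving side of this exchange can be sketched as a small decision function. This is an assumption-laden illustration: the disclosure only states that the training request is based at least in part on the reported capability, so the specific policy below (request training only when the device supports AI/ML, assists with training and lists at least one component), the dictionary keys and the function name `build_training_request` are hypothetical.

```python
from typing import Optional


def build_training_request(capability: dict) -> Optional[dict]:
    """Return a training request for the reporting device, or None.

    `capability` is an assumed dict-shaped capability report; the keys used
    here are illustrative and not defined by the disclosure.
    """
    if not capability.get("supports_aiml", False):
        return None  # device cannot use AI/ML for the air interface
    if not capability.get("assists_training", False):
        return None  # assumed policy: skip devices that do not assist training
    components = sorted(capability.get("components", []))
    if not components:
        return None  # nothing to optimize
    return {"type": "AIML_TRAINING_REQUEST", "components": components}


legacy_report = {"supports_aiml": False}
capable_report = {
    "supports_aiml": True,
    "assists_training": True,
    "components": ["modulation", "coding"],
}
print(build_training_request(legacy_report))
print(build_training_request(capable_report))
```

Returning `None` for a device that lacks AI/ML support models the case in which the network simply keeps a conventional air interface for that device.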

[0011] Yet another broad aspect of the present disclosure provides an apparatus that includes at least one processor and a computer readable storage medium operatively coupled to the at least one processor. The computer readable storage medium stores programming for execution by the at least one processor. The programming includes instructions to transmit, from the apparatus, information regarding an artificial intelligence or machine learning (AI/ML) capability of the apparatus to a network device over an air interface between the apparatus and the network device. For example, the information regarding an AI/ML capability of the apparatus may identify whether the apparatus supports AI/ML for optimization of at least one air interface component over the air interface.

[0012] Still another broad aspect of the present disclosure provides a network apparatus that includes at least one processor and a computer readable storage medium operatively coupled to the at least one processor. The computer readable storage medium stores programming for execution by the at least one processor. The programming includes instructions to receive, by the network apparatus, information regarding an artificial intelligence or machine learning (AI/ML) capability of a first device over an air interface between the first device and the network apparatus. For example, the information regarding an AI/ML capability of the first device may identify whether the first device supports AI/ML for optimization of at least one air interface component over the air interface. In some embodiments, the programming further comprises instructions to transmit an AI/ML training request to the first device based at least in part on the information regarding the AI/ML capability of the first device.

BRIEF DESCRIPTION OF THE DRAWINGS

[0013] Reference will now be made, by way of example, to the accompanying drawings which show example embodiments of the present application, and in which:

[0014] FIG. 1 is a schematic diagram of an example communication system suitable for implementing examples described herein;

[0015] FIGS. 2 and 3 are block diagrams illustrating example devices that may implement the methods and teachings according to this disclosure;

[0016] FIG. 4 is a block diagram of an example computing system that may implement the methods and teachings according to this disclosure;

[0017] FIG. 5 illustrates an example air interface and components thereof;

[0018] FIG. 6 is a block diagram of a transmit chain of a network device and a receive chain of a user equipment device that are configured to communicate over an air interface;

[0019] FIG. 7A is a block diagram of a first example of a transmit chain of a network device and a receive chain of a user equipment device that include machine learning components enabling device-specific tailoring/customization of an air interface, in accordance with a first embodiment of this disclosure;

[0020] FIG. 7B is a block diagram of a second example of a transmit chain of a network device and a receive chain of a user equipment device that include machine learning components enabling device-specific tailoring/customization of an air interface, in accordance with the first embodiment of this disclosure;

[0021] FIG. 8A is a block diagram of a first example of a transmit chain of a network device and a receive chain of a user equipment device that include machine learning components enabling device-specific tailoring/customization of an air interface, in accordance with a second embodiment of this disclosure;

[0022] FIG. 8B is a block diagram of a second example of a transmit chain of a network device and a receive chain of a user equipment device that include machine learning components enabling device-specific tailoring/customization of an air interface, in accordance with the second embodiment of this disclosure;

[0023] FIG. 9 is a block diagram of an example of a transmit chain of a network device and a receive chain of a user equipment device that include machine learning components enabling device-specific tailoring/customization of an air interface, in accordance with a third embodiment of this disclosure;

[0024] FIG. 10 is a block diagram of an example of a transmit chain of a network device and a receive chain of a user equipment device that include machine learning components enabling device-specific tailoring/customization of an air interface, in accordance with a fourth embodiment of this disclosure;

[0025] FIG. 11 is a block diagram of an example of a transmit chain of a network device and a receive chain of a user equipment device that include machine learning components enabling device-specific tailoring/customization of an air interface, in accordance with a fifth embodiment of this disclosure;

[0026] FIG. 12 illustrates an example of an over the air information exchange procedure for a training phase of machine learning components enabling device-specific tailoring/customization of an air interface, in accordance with an embodiment of this disclosure;

[0027] FIG. 13 illustrates another example of an over the air information exchange procedure for a training phase of machine learning components enabling device-specific tailoring/customization of an air interface, in accordance with an embodiment of this disclosure;

[0028] FIG. 14 illustrates an example of an over the air information exchange procedure for a normal operations phase of machine learning components enabling device-specific tailoring/customization of an air interface, in accordance with an embodiment of this disclosure; and

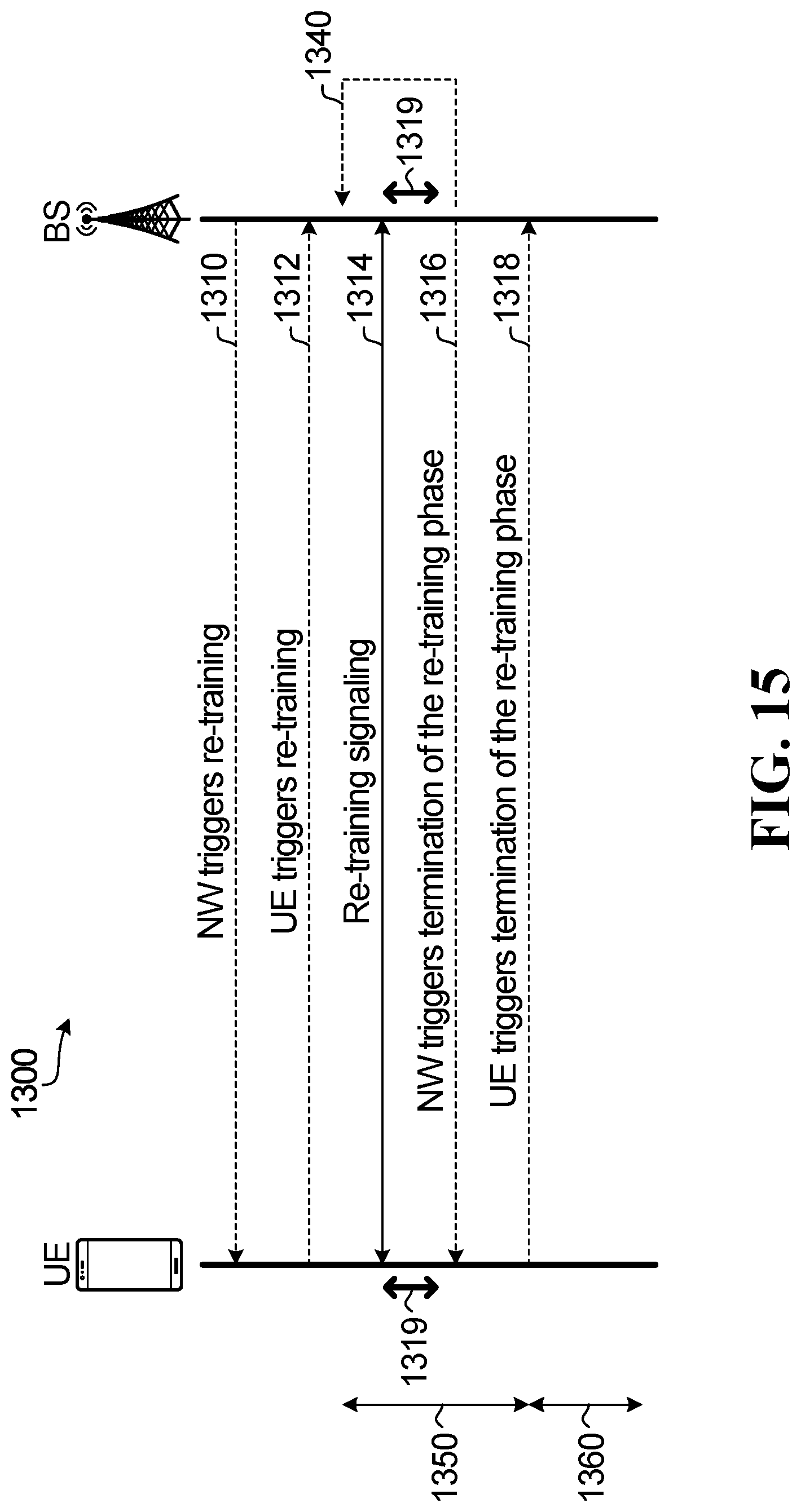

[0029] FIG. 15 illustrates an example of an over the air information exchange procedure for a re-training phase of machine learning components enabling device-specific tailoring/customization of an air interface, in accordance with an embodiment of this disclosure.

[0030] Similar reference numerals may have been used in different figures to denote similar components.

DESCRIPTION OF EXAMPLE EMBODIMENTS

[0031] To assist in understanding the present disclosure, an example wireless communication system is described below.

[0032] FIG. 1 illustrates an example wireless communication system 100 (also referred to as wireless system 100) in which embodiments of the present disclosure could be implemented. In general, the wireless system 100 enables multiple wireless or wired elements to communicate data and other content. The wireless system 100 may enable content (e.g., voice, data, video, text, etc.) to be communicated (e.g., via broadcast, narrowcast, user device to user device, etc.) among entities of the system 100. The wireless system 100 may operate by sharing resources such as bandwidth. The wireless system 100 may be suitable for wireless communications using 5G technology and/or later generation wireless technology (e.g., 6G or later). In some examples, the wireless system 100 may also accommodate some legacy wireless technology (e.g., 3G or 4G wireless technology).

[0033] In the example shown, the wireless system 100 includes electronic devices (ED) 110a-110c (generically referred to as ED 110), radio access networks (RANs) 120a-120b (generically referred to as RAN 120), a core network 130, a public switched telephone network (PSTN) 140, the internet 150, and other networks 160. In some examples, one or more of the networks may be omitted or replaced by a different type of network. Other networks may be included in the wireless system 100. Although certain numbers of these components or elements are shown in FIG. 1, any reasonable number of these components or elements may be included in the wireless system 100.

[0034] The EDs 110 are configured to operate, communicate, or both, in the wireless system 100. For example, the EDs 110 may be configured to transmit, receive, or both via wireless or wired communication channels. Each ED 110 represents any suitable end user device for wireless operation and may include such devices (or may be referred to) as a user equipment/device (UE), a wireless transmit/receive unit (WTRU), a mobile station, a fixed or mobile subscriber unit, a cellular telephone, a station (STA), a machine type communication (MTC) device, a personal digital assistant (PDA), a smartphone, a laptop, a computer, a tablet, a wireless sensor, or a consumer electronics device, among other possibilities. Future generation EDs 110 may be referred to using other terms.

[0035] In FIG. 1, the RANs 120 include base stations (BSs) 170a-170b (generically referred to as BS 170), respectively. Each BS 170 is configured to wirelessly interface with one or more of the EDs 110 to enable access to any other BS 170, the core network 130, the PSTN 140, the internet 150, and/or the other networks 160. For example, the BSs 170 may include (or be) one or more of several well-known devices, such as a base transceiver station (BTS), a radio base station, a Node-B (NodeB), an evolved NodeB (eNodeB), a Home eNodeB, a gNodeB (sometimes called a next-generation Node B), a transmission point (TP), a transmit and receive point (TRP), a site controller, an access point (AP), or a wireless router, among other possibilities. Future generation BSs 170 may be referred to using other terms. Any ED 110 may be alternatively or additionally configured to interface, access, or communicate with any other BS 170, the internet 150, the core network 130, the PSTN 140, the other networks 160, or any combination of the preceding. The wireless system 100 may include RANs, such as RAN 120b, wherein the corresponding BS 170b accesses the core network 130 via the internet 150, as shown.

[0036] The EDs 110 and BSs 170 are examples of communication equipment that can be configured to implement some or all of the functionality and/or embodiments described herein. In the embodiment shown in FIG. 1, the BS 170a forms part of the RAN 120a, which may include other BSs, base station controller(s) (BSC), radio network controller(s) (RNC), relay nodes, elements, and/or devices. Any BS 170 may be a single element, as shown, or multiple elements, distributed in the corresponding RAN, or otherwise. Also, the BS 170b forms part of the RAN 120b, which may include other BSs, elements, and/or devices. Each BS 170 transmits and/or receives wireless signals within a particular geographic region or area, sometimes referred to as a "cell" or "coverage area". A cell may be further divided into cell sectors, and a BS 170 may, for example, employ multiple transceivers to provide service to multiple sectors. In some embodiments there may be established pico or femto cells where the radio access technology supports such. A macro cell may encompass one or more smaller cells. In some embodiments, multiple transceivers could be used for each cell, for example using multiple-input multiple-output (MIMO) technology. The number of RANs 120 shown is exemplary only. Any number of RANs may be contemplated when devising the wireless system 100.

[0037] The BSs 170 communicate with one or more of the EDs 110 over one or more air interfaces 190a using wireless communication links (e.g. radio frequency (RF), microwave, infrared (IR), etc.). The EDs 110 may also communicate directly with one another via one or more sidelink air interfaces 190b. The interfaces 190a and 190b may be generally referred to as air interfaces 190. BS-ED communications over interfaces 190a and ED-ED communications over interfaces 190b may use similar communication technology. The air interfaces 190 may utilize any suitable radio access technology. For example, the wireless system 100 may implement one or more channel access methods, such as code division multiple access (CDMA), time division multiple access (TDMA), frequency division multiple access (FDMA), orthogonal FDMA (OFDMA), or single-carrier FDMA (SC-FDMA) in the air interfaces 190. The air interfaces 190 may utilize other higher dimension signal spaces, which may involve a combination of orthogonal and/or non-orthogonal dimensions.

[0038] The RANs 120 are in communication with the core network 130 to provide the EDs 110 with various services such as voice, data, and other services. The RANs 120 and/or the core network 130 may be in direct or indirect communication with one or more other RANs (not shown), which may or may not be directly served by core network 130, and may or may not employ the same radio access technology as RAN 120a, RAN 120b or both. The core network 130 may also serve as a gateway access between (i) the RANs 120 or EDs 110 or both, and (ii) other networks (such as the PSTN 140, the internet 150, and the other networks 160). In addition, some or all of the EDs 110 may include functionality for communicating with different wireless networks over different wireless links using different wireless technologies and/or protocols. Instead of wireless communication (or in addition thereto), the EDs 110 may communicate via wired communication channels to a service provider or switch (not shown), and to the internet 150. PSTN 140 may include circuit switched telephone networks for providing plain old telephone service (POTS). Internet 150 may include a network of computers and subnets (intranets) or both, and incorporate protocols, such as Internet Protocol (IP), Transmission Control Protocol (TCP), User Datagram Protocol (UDP). EDs 110 may be multimode devices capable of operation according to multiple radio access technologies, and incorporate multiple transceivers necessary to support such.

[0039] FIGS. 2 and 3 illustrate example devices that may implement the methods and teachings according to this disclosure. In particular, FIG. 2 illustrates an example ED 110, and FIG. 3 illustrates an example base station 170. These components could be used in the communication system 100 or in any other suitable system.

[0040] As shown in FIG. 2, the ED 110 includes at least one processing unit 200. The processing unit 200 implements various processing operations of the ED 110. For example, the processing unit 200 could perform signal coding, data processing, power control, input/output processing, or any other functionality enabling the ED 110 to operate in the communication system 100. The processing unit 200 may also be configured to implement some or all of the functionality and/or embodiments described in more detail elsewhere herein. Each processing unit 200 includes any suitable processing or computing device configured to perform one or more operations. Each processing unit 200 could, for example, include a microprocessor, microcontroller, digital signal processor, field programmable gate array, or application specific integrated circuit.

[0041] The ED 110 also includes at least one transceiver 202. The transceiver 202 is configured to modulate data or other content for transmission by at least one antenna or Network Interface Controller (NIC) 204. The transceiver 202 is also configured to demodulate data or other content received by the at least one antenna 204. Each transceiver 202 includes any suitable structure for generating signals for wireless or wired transmission and/or processing signals received wirelessly or by wire. Each antenna 204 includes any suitable structure for transmitting and/or receiving wireless or wired signals. One or multiple transceivers 202 could be used in the ED 110. One or multiple antennas 204 could be used in the ED 110. Although shown as a single functional unit, a transceiver 202 could also be implemented using at least one transmitter and at least one separate receiver.

[0042] The ED 110 further includes one or more input/output devices 206 or interfaces (such as a wired interface to the internet 150 in FIG. 1). The input/output devices 206 permit interaction with a user or other devices in the network. Each input/output device 206 includes any suitable structure for providing information to or receiving information from a user, such as a speaker, microphone, keypad, keyboard, display, or touch screen, including network interface communications.

[0043] In addition, the ED 110 includes at least one memory 208. The memory 208 stores instructions and data used, generated, or collected by the ED 110. For example, the memory 208 could store software instructions or modules configured to implement some or all of the functionality and/or embodiments described herein and that are executed by the processing unit(s) 200. Each memory 208 includes any suitable volatile and/or non-volatile storage and retrieval device(s). Any suitable type of memory may be used, such as random access memory (RAM), read only memory (ROM), hard disk, optical disc, subscriber identity module (SIM) card, memory stick, secure digital (SD) memory card, and the like.

[0044] As shown in FIG. 3, the base station 170 includes at least one processing unit 250, at least one transmitter 252, at least one receiver 254, one or more antennas 256, at least one memory 258, and one or more input/output devices or interfaces 266. A transceiver, not shown, may be used instead of the transmitter 252 and receiver 254. A scheduler 253 may be coupled to the processing unit 250. The scheduler 253 may be included within or operated separately from the base station 170. The processing unit 250 implements various processing operations of the base station 170, such as signal coding, data processing, power control, input/output processing, or any other functionality. The processing unit 250 can also be configured to implement some or all of the functionality and/or embodiments described in more detail herein. Each processing unit 250 includes any suitable processing or computing device configured to perform one or more operations. Each processing unit 250 could, for example, include a microprocessor, microcontroller, digital signal processor, field programmable gate array, or application specific integrated circuit.

[0045] Each transmitter 252 includes any suitable structure for generating signals for wireless or wired transmission to one or more EDs or other devices. Each receiver 254 includes any suitable structure for processing signals received wirelessly or by wire from one or more EDs or other devices. Although shown as separate components, at least one transmitter 252 and at least one receiver 254 could be combined into a transceiver. Each antenna 256 includes any suitable structure for transmitting and/or receiving wireless or wired signals. Although a common antenna 256 is shown here as being coupled to both the transmitter 252 and the receiver 254, one or more antennas 256 could be coupled to the transmitter(s) 252, and one or more separate antennas 256 could be coupled to the receiver(s) 254. Each memory 258 includes any suitable volatile and/or non-volatile storage and retrieval device(s) such as those described above in connection to the ED 110 in FIG. 2. The memory 258 stores instructions and data used, generated, or collected by the base station 170. For example, the memory 258 could store software instructions or modules configured to implement some or all of the functionality and/or embodiments described herein and that are executed by the processing unit(s) 250.

[0046] Each input/output device 266 permits interaction with a user or other devices in the network. Each input/output device 266 includes any suitable structure for providing information to or receiving/providing information from a user, including network interface communications.

[0047] It should be appreciated that one or more steps of the embodiment methods provided herein may be performed by corresponding units or modules, according to FIG. 4. For example, a signal may be transmitted by a transmitting unit or a transmitting module. A signal may be received by a receiving unit or a receiving module. A signal may be processed by a processing unit or a processing module. Other steps may be performed by an artificial intelligence (AI) or machine learning (ML) module. The respective units/modules may be implemented using hardware, one or more components or devices that execute software, or a combination thereof. For instance, one or more of the units/modules may be an integrated circuit, such as field programmable gate arrays (FPGAs) or application-specific integrated circuits (ASICs). It will be appreciated that where the modules are implemented using software for execution by a processor for example, they may be retrieved by a processor, in whole or part as needed, individually or together for processing, in single or multiple instances, and that the modules themselves may include instructions for further deployment and instantiation.

[0048] Additional details regarding the EDs such as 110 and base stations such as 170 are known to those of skill in the art. As such, these details are omitted here.

[0049] Referring back to FIG. 1, different pairs of communicating devices (i.e., a transmission sending device and a transmission receiving device), such as ED 110a communicating with BS 170a or ED 110b communicating with BS 170a, may have different transmission capabilities and/or transmission requirements. The different transmission capabilities and/or transmission requirements typically cannot be met optimally by a single air interface or air interface configuration.

[0050] As discussed above, a configurable air interface has been proposed to address this issue. FIG. 5 illustrates a diagram of an example of a configurable air interface 300. Air interface 300 comprises a number of building blocks that collectively specify how a transmission is to be made and/or received. The building blocks of air interface 300 may include waveform building block 305, frame structure building block 310, multiple access scheme building block 315, a protocols building block 320, a coding and modulation building block 325, and an antenna array processing building block 330.

[0051] Frame structure building block 310 may specify a configuration of a frame or group of frames. Non-limiting examples of frame structure options include a configurable multi-level transmission time interval (TTI), a fixed TTI, a configurable single-level TTI, a co-existence configuration, or configurable slot, mini slot, or configurable symbol duration block (SDB) and the like. The lengths of a TTI, slot, mini slot or SDB may also be specified. Frame structure building block 310 may also or instead specify timing parameters for DL and/or UL transmission, such as a transmission period for DL and/or UL, and/or a time switch gap between DL and UL transmissions. The frame structure can be for various duplexing schemes, such as time division duplexing (TDD), frequency division duplexing (FDD) and full duplex operation.

[0052] Multiple access scheme building block 315 may specify how access to a channel is scheduled or configured for one or more users. Non-limiting examples of multiple access technique options include scheduled access, grant-free access, dedicated channel resource (no sharing between multiple users), contention based shared channel resource, non-contention based shared channel resource, cognitive radio based access, and the like.

[0053] Protocols building block 320 may specify how a transmission and/or a re-transmission are to be made. Non-limiting examples of transmission and/or re-transmission mechanism options include those that specify a scheduled data pipe size, a signaling mechanism for transmission and/or re-transmission, a re-transmission mechanism, and the like.

[0054] Coding and modulation building block 325 may specify how information being transmitted may be encoded (decoded) and modulated (demodulated) for transmission (reception) purposes. Non-limiting examples of coding and/or modulation technique options include low density parity check (LDPC) codes, polar codes, turbo trellis codes, turbo product codes, fountain codes, rateless codes, network codes, binary phase shift keying (BPSK), π/2-BPSK, quadrature phase shift keying (QPSK), quadrature amplitude modulation (QAM) such as 16QAM, 64QAM, 256QAM, hierarchical modulation, low PAPR modulation, non-linear modulation, non-QAM based modulation, and the like.

[0055] Waveform building block 305 may specify a shape and form of a signal being transmitted. Non-limiting examples of waveform options include Orthogonal Frequency Division Multiplexing (OFDM) based waveform such as filtered OFDM (f-OFDM), Wavelet Packet Modulation (WPM), Faster Than Nyquist (FTN) Waveform, low Peak to Average Power Ratio Waveform (low PAPR WF such as DFT spread OFDM waveform), Filter Bank Multicarrier (FBMC) Waveform, Single Carrier Frequency Division Multiple Access (SC-FDMA), and the like. For OFDM-based waveforms, the waveform building block 305 may specify the associated waveform parameters such as sub-carrier spacings and cyclic prefix (CP) overhead.
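The relationship between sub-carrier spacing and CP overhead mentioned above can be illustrated with a short calculation. The following is a minimal sketch only: the function name `ofdm_symbol_timing` and the numeric values (15 kHz spacing, a CP equal to 144/2048 of the useful symbol) are assumptions chosen for illustration and are not taken from the present disclosure.

```python
def ofdm_symbol_timing(subcarrier_spacing_hz, cp_duration_s):
    """Return (useful symbol time, total symbol time, CP overhead fraction)."""
    # The useful OFDM symbol duration is the reciprocal of the sub-carrier spacing.
    useful = 1.0 / subcarrier_spacing_hz
    total = useful + cp_duration_s
    # CP overhead: share of each transmitted symbol occupied by the cyclic prefix.
    return useful, total, cp_duration_s / total


# Illustrative values (assumptions): 15 kHz spacing, CP = 144/2048 of the useful symbol.
spacing_hz = 15_000.0
cp_s = (1.0 / spacing_hz) * 144 / 2048
useful_s, total_s, overhead = ofdm_symbol_timing(spacing_hz, cp_s)
print(f"useful symbol: {useful_s * 1e6:.2f} us, CP overhead: {overhead:.2%}")
```

A wider sub-carrier spacing shortens the useful symbol, so a CP of fixed duration consumes a larger share of each symbol; this is one reason the two parameters may be specified together.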

[0056] Antenna array processing building block 330 may specify parameters for antenna array signal processing for channel acquisition and precoding/beamforming generation. In some embodiments, the functionality of the waveform building block 305 and the antenna array processing building block 330 may be combined as a multiple antenna waveform generator block.

[0057] Since the air interface 300 comprises a plurality of building blocks, and each building block may have a plurality of candidate technologies, it may be possible to configure a large number of different air interface profiles, where each air interface profile defines a respective air interface configuration option.
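To make the combinatorial growth concrete, the sketch below enumerates air interface profiles as the Cartesian product of candidate options per building block. The option names are borrowed from the examples above where possible, but the particular shortlist, the placeholder protocol names, and the identifiers `BUILDING_BLOCKS` and `enumerate_profiles` are hypothetical and serve only as an illustration.

```python
from itertools import product

# Hypothetical shortlist of candidate technologies per building block.
# The protocol entries are placeholders, not options named in the disclosure.
BUILDING_BLOCKS = {
    "waveform": ["f-OFDM", "FBMC", "SC-FDMA"],
    "frame_structure": ["fixed TTI", "configurable single-level TTI"],
    "multiple_access": ["scheduled access", "grant-free access"],
    "protocol": ["retransmission option A", "retransmission option B"],
    "coding_modulation": ["LDPC + 16QAM", "polar + QPSK"],
}


def enumerate_profiles(blocks):
    """Return every profile as a dict mapping building block name to one option."""
    names = list(blocks)
    return [dict(zip(names, combo)) for combo in product(*blocks.values())]


profiles = enumerate_profiles(BUILDING_BLOCKS)
print(len(profiles))  # 3 * 2 * 2 * 2 * 2 = 48 profiles
print(profiles[0])
```

Even with two or three candidates per block, the number of profiles multiplies quickly, which is why selecting among them per device is a natural target for automated optimization.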

[0058] For example, the configurable air interface proposed for new radio (NR) networks allows service or slice based optimization, which can be advantageous because the potential application requirements for air interface technologies can be complex and diverse. Similar to the air interface 300 shown in FIG. 5, the configurable air interface proposed for 5G networks supports adaptive waveform, adaptive protocols, adaptive frame structure, adaptive coding and modulation family and adaptive multiple access schemes. With such mechanisms, the air interface can potentially accommodate a wide variety of user services, spectrum bands and traffic levels.

[0059] FIG. 6 illustrates an example of components in a transmit chain 400 of a base station 170 and components of a receive chain 450 of a UE 110 that may be configurable as part of a configurable air interface to allow the base station 170 and the UE 110 to communicate.

[0060] The components of the transmit chain 400 of the base station 170 include a source encoder 402, a channel encoder 404 and a modulator 406. Source encoder 402, channel encoder 404 and modulator 406 may each be implemented as a specific hardware block, or may be implemented in part as software modules executing in a processor, such as a microprocessor, a digital signal processor, a custom application specific integrated circuit, or a custom compiled logic array of a field programmable logic array.

[0061] The components of the receive chain 450 of the UE 110 include a demodulator 452 and a channel decoder 454. Demodulator 452 and channel decoder 454 may each be implemented as a specific hardware block, or may be implemented in part as software modules executing in a processor, such as a microprocessor, a digital signal processor, a custom application specific integrated circuit, or a custom compiled logic array of a field programmable logic array.

[0062] In operation, source encoder 402 encodes uncompressed raw data to generate compressed information bits, which are in turn encoded by channel encoder 404 to generate channel coded information bits, which are then modulated by modulator 406 to generate modulated signals. In this example, the modulation performed by modulator 406 includes quadrature amplitude modulation (QAM) mapping and waveform generation. The modulated signals generated by modulator 406 are transmitted from base station 170 to UE 110 over one or more wireless channels. A base station can have multiple transmit antennas, in which case a waveform may be generated for each of the antennas. In such cases, the generated waveforms may contain different contents for each of the multiple transmit antennas, e.g., in a MIMO mode transmission. At UE 110, the received signals from base station 170 are demodulated by demodulator 452 to generate demodulated signals. A UE can have multiple receive antennas, in which case demodulator 452 may be configured to process waveforms received from multiple receive antennas as part of the waveform recovery process. The demodulated signals generated by demodulator 452 are decoded by channel decoder 454 to generate recovered compressed information bits. Source decoder 456 decodes the recovered compressed information bits to generate recovered uncompressed raw data.
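The sequence of operations described above can be sketched end to end with toy stand-ins for each component. This is an illustrative sketch under stated assumptions, not the disclosed implementation: zlib compression stands in for the source encoder, a rate-1/3 repetition code stands in for the channel encoder, 4-QAM mapping stands in for the modulator (waveform generation is omitted), and the channel impairment is a single sign flip. All function names are hypothetical.

```python
import zlib

REPEAT = 3  # repetition factor of the toy channel code (assumption)


def source_encode(raw):
    """Compress raw bytes and return the result as a list of bits (MSB first)."""
    return [(byte >> i) & 1 for byte in zlib.compress(raw) for i in range(7, -1, -1)]


def channel_encode(bits):
    """Repeat every bit REPEAT times."""
    return [b for b in bits for _ in range(REPEAT)]


def modulate(bits):
    """Map bit pairs to 4-QAM symbols: bit 0 -> +1, bit 1 -> -1 on each axis."""
    return [complex(1 - 2 * bits[i], 1 - 2 * bits[i + 1]) for i in range(0, len(bits), 2)]


def demodulate(symbols):
    """Hard-decision demapping of 4-QAM symbols back to bits."""
    bits = []
    for s in symbols:
        bits.append(0 if s.real >= 0 else 1)
        bits.append(0 if s.imag >= 0 else 1)
    return bits


def channel_decode(bits):
    """Majority vote over each group of REPEAT received bits."""
    return [1 if sum(bits[i:i + REPEAT]) > REPEAT // 2 else 0
            for i in range(0, len(bits), REPEAT)]


def source_decode(bits):
    """Pack bits back into bytes and decompress."""
    packed = bytes(int("".join(map(str, bits[i:i + 8])), 2) for i in range(0, len(bits), 8))
    return zlib.decompress(packed)


raw = b"personalized air interface " * 4
tx_symbols = modulate(channel_encode(source_encode(raw)))
rx_symbols = list(tx_symbols)
# Toy channel impairment: flip the in-phase component of one symbol (one bit error).
rx_symbols[5] = complex(-rx_symbols[5].real, rx_symbols[5].imag)
recovered = source_decode(channel_decode(demodulate(rx_symbols)))
print(recovered == raw)
```

The single bit error introduced by the toy channel is removed by the majority vote before source decoding, mirroring the role of channel decoder 454 ahead of source decoder 456.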

[0063] Waveform here in the embodiment of FIG. 6 or the following embodiments, may specify a shape and form of a signal being transmitted. Non-limiting examples of waveform options include Orthogonal Frequency Division Multiplexing (OFDM) based waveform such as filtered OFDM (f-OFDM), Wavelet Packet Modulation (WPM), Faster Than Nyquist (FTN) Waveform, low Peak to Average Power Ratio Waveform (low PAPR WF such as DFT spread OFDM waveform), Filter Bank Multicarrier (FBMC) Waveform, Single Carrier Frequency Division Multiple Access (SC-FDMA), and the like. For OFDM-based waveforms, the waveform may specify the associated waveform parameters such as sub-carrier spacings and cyclic prefix (CP) overhead.

[0064] The coding and modulation performed by the components of the transmit chain 400 and the corresponding demodulation and decoding performed by the components of the receive chain 450 may be configured according to a modulation and coding scheme (MCS) corresponding to a service or slice specific air interface in order to support delivery of a service or application to UE 110 according to the selected code scheme and modulation scheme. If the service and/or network slice over which the service is provided changes, the configurations of the components of the transmit and receive chains of the base station 170 and UE 110 may be changed to match a new predetermined service or slice specific air interface corresponding to the new service or network slice. As noted above, a service or slice specific air interface such as this, which is based on selecting from a predetermined subset of parameters or technologies for a predetermined subset of air interface components, can potentially accommodate a wide variety of user services, spectrum bands and traffic levels.

[0065] However, for each service, the transmission conditions and requirements can still be quite different for each UE/device, which means, for example, that an air interface configuration that may be optimal for delivering a service to one UE/device may not necessarily be optimal for delivering the same service to another UE/device. Therefore, it would be desirable to provide further optimization of a UE/device specific air interface configuration.

[0066] Machine learning (ML) and artificial intelligence (AI) approaches have been used for solving many difficult and complex problems. To assist in understanding the present disclosure, some background discussion of ML and AI is now provided. AI is an emerging and fast-growing field thanks to the advances made in the field of computer architecture and in particular general purpose graphics processing units (GP-GPUs). A neural network, which is a form of ML, may be considered as a type of fitting function. Deep learning is one realization of a neural network, which contains more than one interconnected layer of artificial neurons. To train a deep neural network to fit a function (e.g., training using a great amount of input samples and output samples), the weight and threshold of each neuron are updated iteratively, so as to minimize an overall loss function or maximize an overall reward function. The iteration may be achieved by a gradient-descent or ascent back-propagation algorithm over training samples, which may require that the deep neural network architecture and the loss or reward function be mathematically differentiable.

[0067] Trainability typically requires: a function set (the neural network architecture) that defines an exploration space boundary within which a gradient-descent algorithm may traverse; and one or more loss (or reward) function(s) being differentiable with respect to each neuron's coefficient (for gradient-ascent or descent training) on that neural network architecture.
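The iterative update described above can be illustrated with the smallest possible case: a single linear neuron whose weight and bias (threshold) are adjusted by gradient descent to minimize a mean squared error loss. This is a minimal sketch for illustration; the target function, learning rate, epoch count and the name `train_neuron` are assumptions, and a practical deep network would use back-propagation through many layers.

```python
def train_neuron(samples, learning_rate=0.05, epochs=2000):
    """Fit y ~ w * x + b by gradient descent on the mean squared error."""
    w, b = 0.0, 0.0
    n = len(samples)
    for _ in range(epochs):
        # Gradients of the loss L = mean((w*x + b - y)^2) with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in samples) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in samples) / n
        # Step against the gradient to reduce the loss.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Training samples drawn from the (assumed) target function y = 2x + 1.
samples = [(x / 10, 2 * (x / 10) + 1) for x in range(-10, 11)]
w, b = train_neuron(samples)
print(round(w, 3), round(b, 3))  # converges to approximately 2.0 and 1.0
```

The loss here is differentiable in both coefficients, which is the property that makes the gradient-based update possible.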

[0068] A deep neural network is often used for performing feature capture, and for performing prediction. Feature capture serves to extract useful information from a large amount of complex data, and this may be considered a form of dimension reduction. Prediction involves interpolation or extrapolation, to generate new data (generally referred to as predicted or estimated data) from sample data. Both these tasks may assume that the input data possess an intrinsic autoregression characteristic. For example, a pixel of an image usually has some relationship with its neighboring pixels. A convolutional neural network (CNN) may be developed to use this relationship to reduce the dimension of the data.

[0069] The present disclosure describes examples that may be used to implement new air interfaces for wireless communication that are tailored or personalized on a device-specific basis using AI/ML to provide device-specific air interface optimization. For example, embodiments of the present disclosure include new air interfaces that go beyond a network slice/service specific air interface to a personalized tailored air interface that includes a personalized service type and a personalized air interface setting. Examples of such personalized air interface settings may include one or more of the following: customized code scheme and modulation scheme; customized transmission scheme such as MIMO beamforming (BF), including channel acquisition/reconstruction and precoding; customized waveform type and associated parameters such as customized pulse shapes and parameters such as roll-off factors of an RRC pulse; customized frame structure; customized transmission/retransmission scheme and associated parameters such as product-code or inter-codebook or inter-TB 2D joint coding based retransmission and parameters such as incremental parity bit size and interleavers used; UE cooperation based retransmission and/or customized transmit-receive point (TRP) layer/type.

[0070] In some embodiments, the personalized tailored air interface parameters may be determined using AI/ML based on the physical speed/velocity at which the device is moving, a link budget of the device, the channel conditions of the device, one or more device capabilities and/or a service type that is to be supported. In some embodiments, the service type itself can be customized with UE-specific service parameters, such as quality of service (QoS) requirement(s), traffic pattern, etc.

[0071] In some embodiments, the personalized tailored air interface parameters may be optimized on the fly with minimal signaling overhead. For example, for 5G network implementations, the parameters may be configured from predefined candidate parameter sets. For next generation network implementations, e.g., for sixth generation (6G) networks, the parameters may be adapted in a more flexible manner with real time or near real time optimization.

[0072] As will be discussed later, the level or type of air interface optimization available to a device may depend on the AI/ML capability of the device. If a user equipment has some AI/ML capability, the UE can work together with network device(s) to optimize its air interface (i.e., both sides of the air interface apply AI/ML to optimize the air interface). A UE that has no AI/ML capability may still help a network device to optimize an air interface during a training phase and/or during a normal operation phase by providing some types of measurement results to the network device for use in training AI/ML component(s) of the network device. For example, a high end AI/ML capable device may be able to benefit from full scale self-optimization of each component of an air interface (e.g., optimization of coding, modulation and waveform generation, MIMO operation optimization). A lower end AI/ML capable device may only be able to benefit from partial self-optimization of less than all components of an air interface. In some cases, a device may be dependent on centralized learning/training (e.g., all learning is done centrally in the network, such as at a base station). In other cases, learning/training may be based on federated learning, which is a machine learning technique that trains an algorithm across multiple decentralized edge devices or servers holding local data samples, without exchanging their data samples. In still other cases, learning/training may also or instead involve device cooperative learning.
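The dependence of the optimization level on device capability can be sketched as a simple mapping. The capability levels, component names, dictionary layout and the function `plan_optimization` below are all hypothetical and introduced only for illustration; the disclosure does not define this data structure.

```python
from enum import Enum


class AimlCapability(Enum):
    """Hypothetical capability levels a device might report."""
    NONE = "none"
    PARTIAL = "partial"
    FULL = "full"


# Hypothetical labels for optimizable air interface components.
ALL_COMPONENTS = ("coding", "modulation", "waveform", "mimo")


def plan_optimization(capability, supported=()):
    """Return which components the UE side optimizes and the UE's role."""
    if capability is AimlCapability.FULL:
        # High end device: self-optimization of every component.
        return {"ue_side_ai": True, "components": ALL_COMPONENTS,
                "ue_role": "joint optimization with the network"}
    if capability is AimlCapability.PARTIAL:
        # Lower end device: only the subset it supports.
        subset = tuple(c for c in ALL_COMPONENTS if c in supported)
        return {"ue_side_ai": True, "components": subset,
                "ue_role": "partial self-optimization"}
    # No AI/ML capability: the UE can still assist by reporting measurements.
    return {"ue_side_ai": False, "components": (),
            "ue_role": "report measurements for network-side training"}


print(plan_optimization(AimlCapability.PARTIAL, supported=("coding", "modulation")))
print(plan_optimization(AimlCapability.NONE))
```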

[0073] As discussed above, an air interface generally includes a number of components and associated parameters that collectively specify how a transmission is to be made and/or received over a wireless communications link between two or more communicating devices. For example, an air interface may include one or more components defining the waveform(s), frame structure(s), multiple access scheme(s), protocol(s), coding scheme(s) and/or modulation scheme(s) for conveying data over a wireless communications link. The methods and devices disclosed herein provide a mechanism of AI/ML enabled/assisted air interface personalized optimization that supports different levels of per-UE/device based optimization. The disclosed examples also provide over the air signaling mechanisms to support per-UE/device based air interface function optimization.

[0074] FIG. 7A illustrates a first example of a transmit chain 500 of a base station 170 and a receive chain 550 of a UE 110 that each include an AI/ML module 502,552 that is trainable in order to provide a tailored personalized air interface between the base station 170 and UE 110, in accordance with an embodiment of the present disclosure. AI/ML components as referenced herein are intended to be modules or blocks based on an implementation of ML mechanisms. One example of an ML implementation is a neural network implemented in hardware, one or more components that execute software, or a combination thereof.

[0075] The AI/ML module 502 of the base station 170 includes a joint source and channel encoder component 504, a modulator component 506 and a waveform generator component 508.

[0076] The AI/ML module 552 of the UE 110 includes a joint waveform recovery, demodulator and source and channel decoder component 554.

[0077] The AI/ML module 502 provides AI/ML based autonomous optimization of all basic baseband signal processing functions including channel coding (or source coding plus channel coding) via encoding component 504, modulation via modulation component 506 and waveform generation via waveform generator 508. The base station 170 may have multiple transmit antennas, and in such embodiments the waveform generator 508 may be configured to generate a waveform for each of the transmit antennas. The AI/ML module 552 at the UE 110 provides the reciprocal baseband processing functionality in order to recover information bits/raw data from signals received from the base station 170. The UE 110 may have multiple receive antennas, and in such embodiments the AI/ML module 552 may be configured to process waveforms received from multiple receive antennas as part of the waveform recovery process.

[0078] The coding, modulation and waveform generation may be optimized individually or two or more may be jointly optimized.

[0079] Several options are possible for individual optimization of the various components of the AI/ML modules 502, 552. Some non-limiting examples of these options are described below.

[0080] For example, for individual optimization of channel coding without a predefined coding scheme and parameters, self-learning/training and optimization may be used to determine an optimal coding scheme and parameters. For example, in some embodiments, a forward error correction (FEC) scheme is not predefined and AI/ML is used to determine a UE specific customized FEC scheme. In such embodiments, autoencoder based ML may be used as part of an iterative training process during a training phase in order to train an encoder component at a transmitting device and a decoder component at a receiving device. For example, during such a training process, an encoder at a base station and a decoder at a UE may be iteratively trained by exchanging a training sequence/updated training sequence. In general, the more trained cases/scenarios, the better performance. After training is done, the trained encoder component at the transmitting device and the trained decoder component at the receiving device can work together based on changing channel conditions to provide encoded data that may outperform results generated from a non-AI/ML based FEC scheme. In some embodiments, the AI/ML algorithms for self-learning/training and optimization may be downloaded by the UE from a network/server/other device.

[0081] For individual optimization of channel coding with predefined coding schemes, such as low density parity check (LDPC) code, Reed-Muller (RM) code, polar code or other coding scheme, the parameters for the coding scheme can be optimized.

[0082] The parameters for channel coding can be signaled to UE from time to time (periodically or event triggered), e.g., via radio resource control (RRC) signaling or dynamically through downlink control information (DCI) in a dynamic downlink control channel or the combination of the RRC signaling and DCI, or group DCI, or other new physical layer signaling. Training can be done all on the network side or assisted by UE side training or mutual training between the network side and the UE side.

[0083] In the example illustrated in FIG. 7A, the input to AI/ML module 502 is uncompressed raw data and source coding and channel coding are done jointly by AI/ML component 504. An alternative example is illustrated in FIG. 7B, in which source coding is done separately by a source encoder 501 to generate compressed information bits that are then received by AI/ML module 502 where they are channel coded by AI/ML component 504. Similarly, in the example illustrated in FIG. 7B, the output of the AI/ML module 552 at the UE 110 is recovered compressed information bits that are then decoded by a source decoder 555 to generate recovered raw data, whereas the AI/ML module 552 in FIG. 7A outputs recovered raw data.

[0084] For individual optimization of modulation without a predefined constellation, modulation may be done by an AI/ML module, the optimization targets and/or algorithms of which (e.g., the AI/ML component 506) are understood by both the transmitter and the receiver (e.g., the base station 170 and UE 110, respectively, in the example scenario shown in FIG. 7A). For example, the AI/ML algorithm may be configured to maximize Euclidean or non-Euclidean distance between constellation points.
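One kind of objective such an algorithm might maximize is the minimum Euclidean distance between constellation points at a fixed average power. The sketch below only evaluates that metric for two well-known four-point constellations; it does not perform any learning, and the function names `normalize` and `min_distance` are assumptions made for illustration.

```python
from itertools import combinations
from math import sqrt


def normalize(points):
    """Scale a constellation to unit average power so candidates are comparable."""
    avg_power = sum(abs(p) ** 2 for p in points) / len(points)
    return [p / sqrt(avg_power) for p in points]


def min_distance(points):
    """Minimum Euclidean distance between any two points after normalization."""
    scaled = normalize(points)
    return min(abs(a - b) for a, b in combinations(scaled, 2))


# Two four-point candidates: points on a line (4-PAM) and on a square (QPSK).
pam4 = [complex(-3, 0), complex(-1, 0), complex(1, 0), complex(3, 0)]
qpsk = [complex(1, 1), complex(1, -1), complex(-1, 1), complex(-1, -1)]

print(f"4-PAM: {min_distance(pam4):.3f}")
print(f"QPSK:  {min_distance(qpsk):.3f}")
```

At equal average power, the square arrangement separates its points further than the linear one, so an optimizer driven by this metric would prefer it; a trained module could search over arbitrary point placements using the same kind of score.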

[0085] For individual optimization of modulation with a predefined non-linear modulator, the parameters for the modulation may be determined by self-optimization.

[0086] For individual optimization of waveform generation without a predefined waveform type, without a predefined pulse shape and without predefined waveform parameters, self-learning/training and optimization may be used to determine optimal waveform type, pulse shape and waveform parameters. In some embodiments, the AI/ML algorithms for self-learning/training and optimization may be downloaded by the UE from a network/server/other device.

[0087] In some embodiments, there may be a finite set of predefined waveform types, and selection of a predefined waveform type from the finite set and determination of the pulse shape and other waveform parameters may be done through self-optimization.

[0088] Several options are also possible for joint optimization of two or more of the components of the AI/ML modules 502, 552. Some non-limiting examples of these options are described below.

[0089] For example, in some embodiments, the coding via component 504 (channel coding or joint source and channel coding) and the modulation implemented via component 506 may be jointly optimized with AI/ML, and the waveform generation via component 508 may be optimized separately. Multi-dimensional modulation, which is conceptually similar to trellis-coded modulation, is one example of a combined coding and modulation scheme that may be used in some embodiments of the present disclosure. For example, in some embodiments, AI/ML may be used to create a customized multi-dimensional modulation scheme for a pair of communicating devices, e.g., a base station and a UE.

[0090] In other embodiments, the modulation via component 506 and the waveform generation via component 508 may be jointly optimized with AI/ML, and the coding via component 504 may be optimized separately. In other embodiments, the coding, modulation and waveform generation may all be jointly optimized with AI/ML.

[0091] FIGS. 8A and 8B illustrate examples of a transmit chain 600 of a base station 170 and a receive chain 650 of a UE 110 that each include an AI/ML module 602,652 that is trainable in order to realize UE specific optimization and/or provide a tailored or personalized air interface between the base station 170 and UE 110, in accordance with a second embodiment of the present disclosure. In the example shown in FIG. 8A, the transmit chain 600 of base station 170 includes an AI/ML module 602 and a waveform generator 605. AI/ML module 602 of the base station 170 includes a joint source and channel encoder and modulation component 604. Similarly, in this example the receive chain 650 of UE 110 includes a waveform processor 651 and an AI/ML module 652, which includes a joint demodulator and source and channel decoder component 654.

[0092] Unlike the examples shown in FIGS. 7A and 7B, in which the AI/ML modules 502,552 provide AI/ML based autonomous optimization of all basic baseband signal processing functions including coding/decoding, modulation/demodulation and waveform generation/processing, in the example shown in FIG. 8A the AI/ML module 602 provides AI/ML based autonomous optimization of coding and modulation via component 604, and non-AI/ML based waveform generation is managed independently via waveform generator 605. The base station 170 may have multiple transmit antennas, and in such embodiments the waveform generator 605 may be configured to generate a waveform for each of the transmit antennas. The AI/ML module 652 at the UE 110 provides the reciprocal optimized baseband processing functionality on modulated signals recovered by waveform processor 651. The UE 110 may have multiple receive antennas, and in such embodiments the waveform processor 651 may be configured to process waveforms received from multiple receive antennas as part of the waveform recovery process.

[0093] In the example illustrated in FIG. 8A, the input to AI/ML module 602 is uncompressed raw data and joint source and channel coding and modulation are done by AI/ML component 604. The example illustrated in FIG. 8B differs from the example illustrated in FIG. 8A in that in FIG. 8B source coding is done separately by a source encoder 601 to generate information bits that are then received by AI/ML module 602 where they are jointly channel coded and modulated by AI/ML component 604. Similarly, in the example illustrated in FIG. 8B, the output of the AI/ML module 652 at the UE 110 is recovered compressed information bits that are then decoded by a source decoder 655 to generate recovered raw data, whereas the AI/ML module 652 in FIG. 8A outputs recovered raw data.

[0094] In the examples shown in FIGS. 8A and 8B, training of the AI/ML modules 602 and 652 may be done by self-learning/training optimization. Coding and modulation may be optimized by AI/ML separately or jointly, as discussed earlier.

[0095] As mentioned above, in the examples shown in FIGS. 8A and 8B, waveform generation via waveform generator 605 at base station 170 and waveform processing via waveform processor 651 at UE 110 may be managed without AI/ML. For example, waveform types and waveform parameters may be predefined and a waveform may be selected from a predefined set of candidate waveforms according to transmission requirements, such as peak to average power ratio (PAPR), frequency band, frequency localization, and the like. Alternatively, the waveform type and waveform parameters may be dynamically signaled to a UE via, for example, downlink control information (DCI) or radio resource control (RRC) signaling. In some embodiments, the predefined set of candidate waveforms may include single-carrier waveforms and multi-carrier waveforms. Furthermore, the predefined set of candidate waveforms may include multiple candidate waveforms that differ in terms of one or more parameters. For example, there may be multiple candidate single-carrier waveforms predefined, such as single carrier offset QAM (OQAM) waveforms with a root-raised cosine pulse and predefined roll-off factors.
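
The non-AI/ML selection described in this paragraph can be pictured as a lookup over a predefined candidate set. The following is a minimal sketch only: the class name, the candidate names, and the PAPR figures are hypothetical placeholders introduced for illustration and are not taken from the disclosure.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class CandidateWaveform:
    """One entry in a hypothetical predefined set of candidate waveforms."""
    name: str
    multi_carrier: bool
    typical_papr_db: float           # placeholder figure, not a measured value
    rolloff: Optional[float] = None  # roll-off factor for pulse-shaped single-carrier candidates


# Hypothetical candidate set: two single-carrier OQAM candidates that differ
# only in roll-off factor, plus one multi-carrier candidate.
CANDIDATES = (
    CandidateWaveform("sc-oqam-rrc-0.2", False, 4.0, 0.2),
    CandidateWaveform("sc-oqam-rrc-0.5", False, 3.0, 0.5),
    CandidateWaveform("multi-carrier", True, 10.0),
)


def select_waveform(candidates: Sequence[CandidateWaveform],
                    max_papr_db: float,
                    require_multi_carrier: bool = False) -> Optional[CandidateWaveform]:
    """Return the first candidate that meets the transmission requirements, or None."""
    for wf in candidates:
        if wf.typical_papr_db > max_papr_db:
            continue  # violates the PAPR requirement
        if require_multi_carrier and not wf.multi_carrier:
            continue  # a multi-carrier waveform was requested
        return wf
    return None
```

Under these assumptions, a PAPR requirement of 3.5 dB would select the candidate with roll-off factor 0.5, and a requirement that no candidate meets would return `None`, at which point the waveform could instead be signaled dynamically via DCI or RRC.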

[0096] FIG. 9 illustrates an example of a transmit chain 700 of a base station 170 and a receive chain 750 of a UE 110 that each include an AI/ML module 702,752 that is trainable in order to provide a tailored personalized air interface between the base station 170 and UE 110, in accordance with a third embodiment of the present disclosure.

[0097] In the example shown in FIG. 9, the transmit chain 700 of base station 170 includes a source encoder 701, a channel encoder 703 and an AI/ML module 702 that includes a modulation component 704 and a waveform generator component 706. In this example the receive chain 750 of UE 110 includes an AI/ML module 752, which includes a waveform processor component 756 and a demodulator component 754, a channel decoder 755 and a source decoder 757.

[0098] Unlike the previous examples shown in FIGS. 7A, 7B, 8A and 8B, the example shown in FIG. 9 utilizes non-AI/ML based source and channel coding/decoding and AI/ML based modulation/demodulation and waveform generation/processing. At UE 110, the waveform processor component 756 and the demodulator component 754 of the AI/ML module 752 provide the reciprocal optimized modulated signal recovery and demodulation functionality to recover modulated information bits. The recovered modulated information bits are decoded by channel decoder 755 to generate recovered compressed information bits, which are in turn decoded by source decoder 757 to generate recovered raw data.

[0099] In the example shown in FIG. 9, training of the AI/ML modules 702 and 752 may be done by self-learning/training optimization. Modulation and waveform generation may be optimized by AI/ML separately or jointly, as discussed earlier. As mentioned above, in the example shown in FIG. 9, source and channel coding via source encoder 701 and channel encoder 703 at base station 170 and channel and source decoding via channel decoder 755 and source decoder 757 at UE 110 may be managed without AI/ML. For example, coding schemes and associated parameters may be predefined and a coding scheme may be selected from a predefined set of coding schemes according to a transmission requirement. Alternatively, the coding scheme and associated parameters may be dynamically signaled to a UE via, for example, downlink control information (DCI) or radio resource control (RRC) signaling.

[0100] FIG. 10 illustrates an example of a transmit chain 800 of a base station 170 and a receive chain 850 of a UE 110 that each include an AI/ML module 802,852 that is trainable in order to provide a tailored personalized air interface between the base station 170 and UE 110, in accordance with a fourth embodiment of the present disclosure.

[0101] In the example shown in FIG. 10, the transmit chain 800 of base station 170 includes a source encoder 801, a channel encoder 803, an AI/ML module 802, which includes a modulation component 804, and a waveform generator 805. In this example the receive chain 850 of UE 110 includes a waveform processor 851, an AI/ML module 852, a channel decoder 855 and a source decoder 857. The AI/ML module 852 includes a demodulator component 854.

[0102] Unlike the previous examples, the example shown in FIG. 10 utilizes non-AI/ML based source and channel coding/decoding and waveform generation/processing and AI/ML based modulation/demodulation. At UE 110, the waveform processor 851, channel decoder 855 and source decoder 857 provide non-AI/ML based signal recovery, channel decoding and source decoding, respectively, and the demodulator component 854 of the AI/ML module 852 provides optimized demodulation functionality that is the reciprocal of the modulation functionality performed by the modulation component 804 at base station 170.

[0103] For optimization of modulation without a predefined constellation, an AI/ML algorithm implemented by modulation component 804 may be configured to maximize Euclidean or non-Euclidean distance between constellation points.
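
As an illustration of the distance objective mentioned in this paragraph, the sketch below computes the minimum Euclidean distance between constellation points after scaling to unit average power. The power normalization, the function names, and the two sample constellations are assumptions made for this example. The search over point positions that an AI/ML algorithm would perform is not shown.

```python
import itertools
import math


def normalize(points):
    """Scale constellation points to unit average power (an assumed constraint)."""
    avg_power = sum(abs(p) ** 2 for p in points) / len(points)
    scale = math.sqrt(avg_power)
    return [p / scale for p in points]


def min_distance(points):
    """Smallest Euclidean distance between any two distinct constellation points."""
    return min(abs(a - b) for a, b in itertools.combinations(points, 2))


# Two four-point constellations compared at equal average power.
qpsk = normalize([1 + 1j, 1 - 1j, -1 + 1j, -1 - 1j])
pam4 = normalize([-3 + 0j, -1 + 0j, 1 + 0j, 3 + 0j])

# An optimizer that maximizes this metric would prefer the square layout
# over the points placed on a line.
better = "qpsk" if min_distance(qpsk) > min_distance(pam4) else "pam4"
```

With four points at unit average power, the square layout has a minimum distance of sqrt(2), whereas the four points on a line have a minimum distance of 2/sqrt(5), so the metric ranks the square layout higher.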

[0104] For optimization of modulation with a predefined non-linear modulator, the parameters for the modulation may be determined by self-optimization, e.g., to optimize the distance of modulated symbols. In some scenarios, non-AI/ML based optimization of modulation may also or instead be utilized. As mentioned above, in the example shown in FIG. 10, source and channel coding via source encoder 801 and channel encoder 803 and waveform generation via waveform generator 805 at base station 170, and waveform processing via waveform processor 851 and channel and source decoding via channel decoder 855 and source decoder 857 at UE 110, may be managed without AI/ML. For example, waveform types and associated parameters as well as coding schemes and associated parameters may be predefined and a waveform type and a coding scheme may be selected from predefined sets according to a transmission requirement, as discussed previously. Alternatively, the coding scheme and associated parameters and/or the waveform type and waveform parameters may be dynamically signaled to a UE via, for example, downlink control information (DCI) or radio resource control (RRC) signaling.

[0105] FIG. 11 illustrates an example of a transmit chain 900 of a base station 170 and a receive chain 950 of a UE 110 that each include an AI/ML module 902,952 that is trainable in order to provide a tailored personalized air interface between the base station 170 and UE 110, in accordance with a fifth embodiment of the present disclosure.

[0106] In the example shown in FIG. 11, the transmit chain 900 of base station 170 includes a source encoder 901, a channel encoder 903, a QAM mapping component 905 and an AI/ML module 902 that includes a waveform generation component 904. In this example the receive chain 950 of UE 110 includes an AI/ML module 952, a QAM demapping component 953, a channel decoder 955 and a source decoder 957. The AI/ML module 952 includes a waveform processing component 954.

[0107] Unlike the previous examples, the example shown in FIG. 11 utilizes non-AI/ML based source and channel coding/decoding and modulation/demodulation and AI/ML based or assisted waveform generation. The AI/ML based or assisted waveform generation may enable per UE based optimization of one or more waveform parameters, such as pulse shape, pulse width, subcarrier spacing (SCS), cyclic prefix, pulse separation, sampling rate, PAPR and the like.

[0108] For optimization of waveform generation without a predefined waveform type, without a predefined pulse shape and without predefined waveform parameters, self-learning/training and optimization may be used to determine optimal waveform type, pulse shape and waveform parameters. In some embodiments, the AI/ML algorithms for self-learning/training and optimization may be downloaded by the UE from a network/server/other device. In some embodiments, there may be a finite set of predefined waveform types, and selection of a predefined waveform type from the finite set and determination of the pulse shape and other waveform parameters may be done through self-optimization. In some scenarios, non-AI/ML based optimization of waveform generation may also or instead be utilized.
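
The case in which a waveform type is selected from a finite predefined set and the remaining parameters are determined by self-optimization can be sketched as a search over a small grid. In the sketch below, exhaustive search stands in for whatever self-learning procedure would actually be used, and the cost function, the waveform type names, and the roll-off grid are made up purely for illustration.

```python
import itertools


def search_waveform(waveform_types, rolloffs, cost):
    """Return the (waveform_type, rolloff) pair with the lowest cost.

    `cost` is a caller-supplied function. Exhaustive search is used here
    only as a stand-in for a self-learning/training procedure.
    """
    return min(itertools.product(waveform_types, rolloffs),
               key=lambda pair: cost(*pair))


def toy_cost(waveform_type, rolloff):
    """Made-up cost: a per-type penalty plus the distance from an arbitrary target roll-off."""
    base = {"single-carrier": 1.0, "multi-carrier": 2.0}[waveform_type]
    return base + abs(rolloff - 0.3)


best = search_waveform(["single-carrier", "multi-carrier"],
                       [0.1, 0.3, 0.5],
                       toy_cost)
```

In practice the cost would be derived from measurements for a particular UE, which is what would make the resulting waveform configuration device specific.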

[0109] As mentioned above, in the example shown in FIG. 11, source and channel coding via source encoder 901 and channel encoder 903 and modulation via QAM mapping component 905 at base station 170, and demodulation via QAM demapping component 953 and channel and source decoding via channel decoder 955 and source decoder 957 at UE 110, may be managed without AI/ML. For example, a modulation and coding scheme and associated parameters may be selected from a predefined set of modulation and coding schemes according to a transmission requirement, as discussed previously. Alternatively, the modulation and coding scheme and associated parameters may be dynamically signaled to a UE via, for example, downlink control information (DCI) or radio resource control (RRC) signaling.

[0110] Examples of over the air information exchange procedures that may facilitate training of ML components of communicating devices, such as various ML components of the base stations 170 and UEs 110 of the examples shown in FIGS. 7A to 11, will now be described with reference to FIGS. 12 to 14.

[0111] FIG. 12 is a signal flow diagram 1000 of an example of an over the air information exchange procedure for a training phase of machine learning components enabling device-specific tailoring/customization of an air interface, in accordance with an embodiment of this disclosure.

[0112] In the signal flow diagram 1000, a UE and a BS or other network device are involved in an information exchange for an AI/ML training phase 1050. Although only one UE and one BS are shown in FIG. 12 to avoid congestion in the drawing, data collection or information sharing during training, and similarly operation of a communication network, are expected to involve more than one UE and more than one BS. For example, in some embodiments training may be done with the joint efforts of multiple network devices and multiple UEs, and air interface optimization may be done on a per UE basis.

[0113] The information exchange procedure begins with the UE sending information indicating an AI/ML capability of the UE to the BS at 1010. The information indicating an AI/ML capability of the UE may indicate whether or not the UE supports AI/ML for optimization of an air interface. If the UE is capable of supporting AI/ML optimization, the information may also or instead indicate what type and/or level of complexity of AI/ML the UE is capable of supporting, e.g., which function/operation AI/ML can be supported, what kind of AI/ML algorithm can be supported (for example, autoencoder, reinforcement learning, neural network (NN) or deep neural network (DNN)), how many layers of NN can be supported, etc. In some embodiments, the information indicating an AI/ML capability of the UE may also or instead include information indicating whether the UE can assist with training.

[0114] In some embodiments, the information sent at 1010 may include information indicating an AI/ML capability type of the UE. The AI/ML capability type may identify whether the UE supports AI/ML optimization of one or more components of the air interface of the device. For example, the AI/ML capability type may be one of a plurality of AI/ML capability types, where each AI/ML capability type corresponds to support for a different level of AI/ML capability. For example, the plurality of AI/ML capability types may include an AI/ML capability type that indicates the UE supports deep learning. As another example, the plurality of AI/ML capability types may include different types that indicate different combinations of air interface components that are optimizable by AI/ML. For example, the plurality of AI/ML capability types may include one or more of the following types:

[0115] a type corresponding to support for AI/ML based optimization of all baseband signal processing components, such as coding (channel coding or joint source and channel coding), modulation and waveform generation (e.g., similar to the examples shown in FIGS. 7A and 7B);

[0116] a type corresponding to support for AI/ML based optimization of coding and modulation, but not waveform generation (e.g., similar to the examples shown in FIGS. 8A and 8B);

[0117] a type corresponding to support for AI/ML based optimization of modulation and waveform generation, but not coding (e.g., similar to the example shown in FIG. 9);

[0118] a type corresponding to support for AI/ML based optimization of modulation, but not coding and waveform generation (e.g., similar to the example shown in FIG. 10);

[0119] a type corresponding to support for AI/ML based optimization of waveform generation, but not coding and modulation (e.g., similar to the example shown in FIG. 11).
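
One way to picture the capability types listed above is as named combinations of AI/ML-optimizable components. The following sketch is illustrative only: the type names, the `Component` flag, and the `supports` helper are hypothetical and do not reflect any signaling format defined in the disclosure.

```python
from enum import Flag, auto


class Component(Flag):
    """Air interface components that may be optimized by AI/ML."""
    NONE = 0
    CODING = auto()
    MODULATION = auto()
    WAVEFORM = auto()


# Hypothetical names for the five capability types listed above.
CAPABILITY_TYPES = {
    "all-baseband": Component.CODING | Component.MODULATION | Component.WAVEFORM,  # cf. FIGS. 7A, 7B
    "coding+modulation": Component.CODING | Component.MODULATION,                 # cf. FIGS. 8A, 8B
    "modulation+waveform": Component.MODULATION | Component.WAVEFORM,             # cf. FIG. 9
    "modulation-only": Component.MODULATION,                                      # cf. FIG. 10
    "waveform-only": Component.WAVEFORM,                                          # cf. FIG. 11
}


def supports(capability_type: str, component: Component) -> bool:
    """True if the named capability type covers AI/ML optimization of `component`."""
    return bool(CAPABILITY_TYPES[capability_type] & component)
```

For instance, `supports("coding+modulation", Component.WAVEFORM)` evaluates to `False`, matching the type in which waveform generation is handled without AI/ML.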

[0120] In some embodiments, the information sent by the UE to the BS at 1010 may be sent as part of an initial access procedure to access the network. In other embodiments, the information may also or instead be sent by the UE in response to a capability enquiry from the BS (not shown).

[0121] After receiving AI/ML capability information from the UE indicating that the UE supports AI/ML and can assist with training, the BS sends a training request to the UE at 1012 to trigger a training phase 1050. In some embodiments, the training request may be sent to the UE through DCI (dynamic signaling) on a downlink control channel or on a data channel. For example, in some embodiments the training request may be sent to the UE as UE specific or UE common DCI. For example, UE common DCI may be used to send a training request to all UEs or a group of UEs.
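
The first two steps of the exchange (capability information at 1010, training request at 1012) can be sketched from the BS side as follows. The message classes and field names are hypothetical, introduced only to make the decision logic concrete. The actual content and encoding of these messages are not specified here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CapabilityReport:
    """Hypothetical container for the information sent by the UE at 1010."""
    supports_ai_ml: bool
    can_assist_training: bool = False


@dataclass(frozen=True)
class TrainingRequest:
    """Hypothetical training request sent by the BS at 1012."""
    ue_specific: bool  # True for UE specific DCI, False for UE common DCI


def handle_capability_report(report: CapabilityReport,
                             ue_specific: bool = True) -> Optional[TrainingRequest]:
    """Return a training request only if the UE supports AI/ML and can assist with training."""
    if report.supports_ai_ml and report.can_assist_training:
        return TrainingRequest(ue_specific=ue_specific)
    return None  # no training phase is triggered for this UE
```

A report from a UE that supports AI/ML but cannot assist with training yields `None` in this sketch, so no training request would be sent to that UE.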