Methods and Systems for Detecting Spurious Data Patterns

Louizos; Louizos Alexandros ; et al.

U.S. patent application number 17/100243 was filed with the patent office on 2020-11-20 and published on 2021-05-27 for methods and systems for detecting spurious data patterns. The applicant listed for this patent is Fraud.net, Inc. Invention is credited to R. Whitney Anderson, Ayaan Chaudhry, Oliver Clark, Louizos Alexandros Louizos, Gary Plunkett, Cathy Ross.

| Application Number | 20210158161 17/100243 |


| Family ID | 1000005277425 |

| Filed Date | 2020-11-20 |


| United States Patent Application | 20210158161 |

| Kind Code | A1 |

| Louizos; Louizos Alexandros ; et al. | May 27, 2021 |

Methods and Systems for Detecting Spurious Data Patterns

Abstract

Disclosed are implementations that include a method for detecting anomalous data, including converting a set of data values representative of a multi-dimensional item into a nodes-and-edges graph representation of the item, applying a graph convolution process to the graph representation to generate a transformed graph representation for the item comprising a resultant transformed configuration of the nodes and edges representing the item, and determining, based on the transformed configuration, a probability that the item is anomalous. Another example method includes receiving input data at a neural network circuit comprising a plurality of node layers, with each of the plurality of node layers comprising respective one or more nodes, with the neural network circuit further comprising adjustable weighted connections connecting at least some nodes in different layers of the plurality of node layers. The method further includes removing one or more of the weighted connections at one or more time instances.

| Inventors: | Louizos; Louizos Alexandros (New York, NY); Chaudhry; Ayaan (Toronto, CA); Plunkett; Gary (Bellingham, WA); Clark; Oliver (New York, NY); Ross; Cathy (New York, NY); Anderson; R. Whitney (New York, NY) |
|---|---|
| Applicant: | Fraud.net, Inc. |
| Family ID: | 1000005277425 |
| Appl. No.: | 17/100243 |
| Filed: | November 20, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62939236 | Nov 22, 2019 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06N 3/04 20130101; G06N 3/08 20130101; G06F 16/285 20190101 |

| International Class: | G06N 3/08 20060101 G06N003/08; G06F 16/28 20060101 G06F016/28; G06N 3/04 20060101 G06N003/04 |

Claims

1. A method for detection and classification of data, the method comprising: receiving input data at a neural network circuit comprising a plurality of node layers, with each of the plurality of node layers comprising respective one or more nodes, the neural network circuit further comprising adjustable weighted connections connecting at least some nodes in different layers of the plurality of node layers; and removing one or more of the weighted connections at one or more time instances.

2. The method of claim 1, wherein the neural network circuit is a feed-forward neural network circuit.

3. The method of claim 1, wherein removing the one or more of the weighted connections comprises: selecting the one or more of the weighted connections randomly; and removing the randomly selected one or more of the weighted connections.

4. The method of claim 1, wherein removing the one or more of the weighted connections comprises: selecting a set of multiple connections from the weighted connections based, at least in part, on output of the neural network circuit; and selecting randomly the one or more of the weighted connections from the selected set of multiple connections.

5. The method of claim 4, wherein selecting the set of multiple connections comprises: selecting one or more pairs of node layers of the neural network circuit according to the output of the neural network circuit; and removing at least one weighted connection between node layers of the selected one or more pairs of node layers.

6. The method of claim 4, wherein selecting the set of multiple connections comprises: selecting the set of multiple connections according to output values produced by elements of an output node layer of the neural network circuit and a plurality of output ranges defined for possible values produced by the output node layer.

7. The method of claim 1, further comprising: configuring at least some of the weighted connections according to a biasing factor in response to output of the neural network resulting from an input data record, of the received input data, processed by the neural network.

8. The method of claim 7, wherein the biasing factor is a multiplication factor applied to the at least some of the weighted connections through a back-propagation operation in response to a determination that the neural network correctly identified the input data record as being anomalous.

9. The method of claim 1, further comprising: performing preprocessing on a received raw data record to produce an input data record provided to the neural network circuit, including performing one or more of: Gaussian normalization applied to the raw data record, or removing one or more data elements of the raw data record based on at least one of: entropy associated with the one or more data elements, sparseness associated with the one or more data elements, a p-value associated with the one or more data elements, or a low-effect value associated with the one or more data elements.

10. The method of claim 9, wherein removing one or more data elements comprises: identifying a particular data element as a rare element in response to determining, based on training data to train a learning engine implementation for performing the preprocessing, that the particular data element is present in fewer than an adjustable threshold number of data records comprising the training data, wherein the adjustable threshold number is adjusted based on likelihood of occurrence of anomalous values for the particular data element; and removing from runtime data records the particular data element identified as the rare element.

11. A system comprising: an input stage to receive one or more input data records; and a controller, implementing one or more learning engines, in communication with a memory device to store programmable instructions, to: receive input data at a neural network circuit comprising a plurality of node layers, with each of the plurality of node layers comprising respective one or more nodes, the neural network circuit further comprising adjustable weighted connections connecting at least some nodes in different layers of the plurality of node layers; and remove one or more of the weighted connections at one or more time instances.

12. The system of claim 11, wherein the neural network circuit includes a feed-forward neural network circuit.

13. The system of claim 11, wherein the controller configured to remove the one or more of the weighted connections is configured to: select the one or more of the weighted connections randomly; and remove the randomly selected one or more of the weighted connections.

14. The system of claim 11, wherein the controller configured to remove the one or more of the weighted connections is configured to: select a set of multiple connections from the weighted connections based, at least in part, on output of the neural network circuit; and select randomly the one or more of the weighted connections from the selected set of multiple connections.

15. The system of claim 14, wherein the controller configured to select the set of multiple connections is configured to: select one or more pairs of node layers of the neural network circuit according to the output of the neural network circuit; and remove at least one weighted connection between node layers of the selected one or more pairs of node layers.

16. The system of claim 14, wherein the controller configured to select the set of multiple connections is configured to: select the set of multiple connections according to output values produced by elements of an output node layer of the neural network circuit and a plurality of output ranges defined for possible values produced by the output node layer.

17. The system of claim 11, wherein the controller is further configured to: configure at least some of the weighted connections according to a biasing factor in response to output of the neural network resulting from an input data record, of the received input data, processed by the neural network.

18. The system of claim 17, wherein the biasing factor is a multiplication factor applied to the at least some of the weighted connections through a back-propagation operation in response to a determination that the neural network correctly identified the input data record as being anomalous.

19. The system of claim 11, wherein the controller is further configured to: perform preprocessing on a received raw data record to produce an input data record provided to the neural network circuit, including performing one or more of: Gaussian normalization applied to the raw data record, or removing one or more data elements of the raw data record based on at least one of: entropy associated with the one or more data elements, sparseness associated with the one or more data elements, a p-value associated with the one or more data elements, or a low-effect value associated with the one or more data elements.

20. A non-transitory computer readable media storing a set of instructions, executable on at least one programmable device, to: receive input data at a neural network circuit comprising a plurality of node layers, with each of the plurality of node layers comprising respective one or more nodes, the neural network circuit further comprising adjustable weighted connections connecting at least some nodes in different layers of the plurality of node layers; and remove one or more of the weighted connections at one or more time instances.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application claims priority to, and the benefit of, U.S. Provisional Application No. 62/939,236 entitled "METHODS AND SYSTEMS FOR DETECTING SPURIOUS DATA PATTERNS," and filed Nov. 22, 2019, the content of which is incorporated herein by reference in its entirety.

BACKGROUND

[0002] The ever-growing volume of electronic business and economic activity has been accompanied by a similar sharp increase in fraudulent and harmful electronic activity. Being able to robustly detect rare data patterns is beneficial in cases where anomalous behavior needs to be detected (e.g., through detection of data outliers) to prevent damage to devices or fraud in financial transactions.

SUMMARY

[0003] There is a need for robust detectors of spurious data patterns in a stream of data, whether the data source is sensors, financial transactions, or server logs. The present disclosure describes a method and an apparatus for robust, fast, real-time detection of spurious signals in data, using a graph network methodology and/or a novel neural network topology. An analytical description of the preprocessing applied to the data before it is fed as input to the system is also provided.

[0004] Disclosed are systems, methods, and other implementations to identify outlier data records from a set of records processed by a learning machine. Examples of such records may be transaction records (e.g., credit card records), with respect to which a learning system is configured to detect anomalous (outlying) activity or behavior. Such anomalous activity may be indicative of possible fraudulent activity.

[0005] In the present disclosure, methods are described for using a neural network, or other types of learning machines, with specific configurations structured to robustly detect outliers in data streams. The novel neural network architectures of the present disclosure can be combined with a unique data preprocessing methodology to reduce the dimensionality of the input data based on specific data filters that maximize the entropy of the input data.

[0006] In some embodiments, the implementations described herein use graph network topologies and processing to identify outliers or anomalous data. A method is thus provided to combine graph networks topology of the processed data with a neural network to automatically cluster data in a topological way that separates the spurious data patterns from normal data flow. The example implementations also include apparatus comprising the neural networks (or other types of learning machines), the topological graphs, and the neural network filters described herein. The example implementations additionally include non-transitory computer-readable medium having program code recorded thereon for filtering the input streaming data according to the preprocessing parameters and forwarding this data to the filtering neural network and the graph based topological calculator and neural network. The medium may include program code to, when executed by a processor, select at least one moment of an input of the data, along with the execution of the neural networks and graph topological transformers.

[0007] The methods and apparatus of the present disclosure include engineered features that are created/generated from the base streaming data. The engineered features measure many aspects of the data instance and may or may not be interesting or germane to human-based analysis. Inputting these features to the neural network modules might or might not supply them with data relationships humans intuitively find interesting. The methods and apparatus include a methodology, device and code to flag specific patterns in data for potential review by a human reviewer. For example, in the case of a financial transaction, if a feature such as the distance between the billing and shipping addresses for the transaction is above a certain threshold, the transaction will be automatically flagged for potential review.

[0008] In some variations, a method for robust detection and classification of data outliers is provided. The method includes converting a set of data values representative of a multi-dimensional item into a graph representation of the multi-dimensional item, with the graph representation comprising nodes and edges, applying a graph convolution process to the graph representation of the multi-dimensional item to generate a transformed graph representation for the multi-dimensional items comprising a resultant transformed configuration of the nodes and edges representing the multi-dimensional item, and determining, based on the transformed configuration of the nodes and edges representing the multi-dimensional item, a probability that the multi-dimensional item is anomalous.

[0009] Embodiments of the method may include at least some of the features described in the present disclosure, including one or more of the following features.

[0010] Determining the probability that the multi-dimensional item is anomalous may include processing the transformed configuration of the nodes and edges representing the multi-dimensional item with a global attention module to generate a resultant vector of values, and applying a softmax module to the resultant vector of values to derive the probability that the multi-dimensional item is anomalous.
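The read-out described in the preceding paragraph can be illustrated with a minimal Python sketch. The disclosure does not define the internals of the global attention module at this point, so the attention-weighted sum below is an assumption, and the names `gate`, `transform`, and `anomalous_index` are hypothetical. The sketch also assumes that the resultant vector has one entry per class (for example, normal and anomalous).

```python
import math

def softmax(values):
    """Numerically stable softmax over a list of floats."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def global_attention(node_vectors, gate, transform):
    """Attention-weighted sum of transformed node vectors.

    gate(x) returns one score per node; transform(x) returns a vector.
    Both are hypothetical stand-ins for trained sub-networks.
    """
    weights = softmax([gate(x) for x in node_vectors])
    transformed = [transform(x) for x in node_vectors]
    dim = len(transformed[0])
    return [sum(w * t[d] for w, t in zip(weights, transformed)) for d in range(dim)]

def anomaly_probability(node_vectors, gate, transform, anomalous_index=1):
    """Softmax over the pooled vector; assumes one entry per class."""
    resultant = global_attention(node_vectors, gate, transform)
    return softmax(resultant)[anomalous_index]
```

With a gate that scores every node equally, the pooled vector is the plain average of the node vectors, so the attention weights matter only once the gate has been trained to emphasize particular nodes.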

[0011] Converting the set of data values representative of the multi-dimensional item may include transforming values comprising the multi-dimensional item into a plurality of respective multi-dimensional vectors by a plurality of trained multi-layer perceptrons applied to the respective values.

[0012] The method may further include generating, for the plurality of respective multi-dimensional vectors, a graph representation of nodes with interconnecting edges connecting at least some of the nodes, with positions and orientations of the interconnected nodes in the graph representation relative to each other being indicative of potential anomalous relationships between the set of data values of the multi-dimensional item.

[0013] Applying the graph convolution process may include generating, for a particular edge of the edges of the graph representation, an edge composite value based on an edge value representing the particular edge, node values representative of a respective source node and destination node of the particular edge, and a global state value associated with the graph representation, and providing the edge composite value to an edge multi-layer perceptron unit to generate a resultant transformed edge corresponding to the particular edge.

[0014] Applying the graph convolution process may include generating, for a particular node of the nodes of the graph representation, a node composite value based on an average of intermediate values, computed using one or more node multi-layer perceptrons, based on a respective one of incoming edge values representing incoming edges directed to the particular node and a value of the particular node.

[0015] Applying the graph convolution process may include averaging values of the nodes of the graph representation to generate an average node value, generating a global composite value based on the average node value and a global state value associated with the graph representation, and providing the global composite value to a global multi-layer perceptron unit to generate a resultant transformed global state value corresponding to the global state value associated with the graph representation.
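The edge, node, and global updates described in the three preceding paragraphs can be sketched as one pass over a small graph. This is an illustrative sketch under stated assumptions rather than the disclosed implementation: composite values are formed by list concatenation, the node update reads the already-updated edge values, nodes without incoming edges are left unchanged, and `edge_mlp`, `node_mlp`, and `global_mlp` are hypothetical callables standing in for trained multi-layer perceptrons.

```python
def mean_vectors(vectors):
    """Element-wise mean of equally sized vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]

def graph_block(nodes, edges, global_state, edge_mlp, node_mlp, global_mlp):
    """One update pass over a graph.

    nodes: list of node vectors (lists of floats)
    edges: list of (source_index, destination_index, edge_vector)
    global_state: vector associated with the whole graph
    """
    # Edge update: composite of edge value, source node, destination node, global state.
    new_edges = []
    for src, dst, value in edges:
        composite = value + nodes[src] + nodes[dst] + global_state
        new_edges.append((src, dst, edge_mlp(composite)))

    # Node update: average of per-incoming-edge intermediate values.
    new_nodes = []
    for i, node in enumerate(nodes):
        incoming = [vec for (_, dst, vec) in new_edges if dst == i]
        if incoming:
            intermediates = [node_mlp(vec + node) for vec in incoming]
            new_nodes.append(mean_vectors(intermediates))
        else:
            new_nodes.append(list(node))  # assumption: keep isolated targets as-is

    # Global update: average node value combined with the global state.
    global_composite = mean_vectors(new_nodes) + global_state
    return new_nodes, new_edges, global_mlp(global_composite)
```

Passing the perceptrons in as callables keeps the data flow visible; in a trained system each callable would be a learned network, and the pass could be repeated for several iterations.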

[0016] Applying the graph convolution process may include applying the graph convolution process using at least one graph neural network system.

[0017] The method may further include performing preprocessing on a received raw data record to produce the multi-dimensional item, including performing one or more of, for example, Gaussian normalization applied to the received raw data record, and/or removing one or more data elements of the received raw data record. Such removing may be based on at least one of, for example, entropy associated with the one or more data elements, sparseness associated with the one or more data elements, a p-value associated with the one or more data elements, and/or a low-effect size associated with the one or more data elements.

[0018] Removing one or more data elements may include identifying a particular data element as a rare element in response to determining, based on training data to train a learning engine implementation for performing the preprocessing, that the particular data element is present in fewer than an adjustable threshold number of data records comprising the training data, with the adjustable threshold number being adjusted based on likelihood of occurrence of anomalous values for the particular data element, and removing from runtime data records the particular data element identified as the rare element.

[0019] Applying the graph convolution process to the graph representation of the multi-dimensional item may include applying a learning-engine implementation of a graph-convolution process.

[0020] In some variations, a system is provided that includes an input stage to receive one or more input data records, and a controller, implementing one or more learning engines, in communication with a memory device to store programmable instructions. The controller is configured to convert a set of data values representative of a multi-dimensional item into a graph representation of the multi-dimensional item, with the graph representation comprising nodes and edges, apply a graph convolution process to the graph representation of the multi-dimensional item to generate a transformed graph representation for the multi-dimensional item comprising a resultant transformed configuration of the nodes and edges representing the multi-dimensional item, and determine, based on the resultant transformed configuration of the nodes and edges representing the multi-dimensional item, a probability that the multi-dimensional item is anomalous.

[0021] In some variations, a non-transitory computer readable media is provided, for storing a set of instructions, executable on at least one programmable device, to convert a set of data values representative of a multi-dimensional item into a graph representation of the multi-dimensional item, with the graph representation comprising nodes and edges, apply a graph convolution process to the graph representation of the multi-dimensional item to generate a transformed graph representation for the multi-dimensional items comprising a resultant transformed configuration of the nodes and edges representing the multi-dimensional item, and determine, based on the resultant transformed configuration of the nodes and edges representing the multi-dimensional item, a probability that the multi-dimensional item is anomalous.

[0022] Embodiments of the system and the non-transitory computer readable media may include at least some of the features described in the present disclosure, including any one or more of the features described above in relation to the method.

[0023] In some variations, another method is provided for detection and classification of data. The method includes receiving input data at a neural network circuit comprising a plurality of node layers, with each of the plurality of node layers comprising respective one or more nodes, and with the neural network circuit further comprising adjustable weighted connections connecting at least some nodes in different layers of the plurality of node layers. The method also includes removing one or more of the weighted connections at one or more time instances.

[0024] Embodiments of the other method may include at least some of the features described in the present disclosure, including one or more of the following features.

[0025] The neural network circuit may be a feed-forward neural network circuit.

[0026] Removing the one or more of the weighted connections may include selecting the one or more of the weighted connections randomly, and removing the randomly selected one or more of the weighted connections.

[0027] Removing the one or more of the weighted connections may include selecting a set of multiple connections from the weighted connections based, at least in part, on output of the neural network circuit, and selecting randomly the one or more of the weighted connections from the selected set of multiple connections.

[0028] Selecting the set of multiple connections may include selecting one or more pairs of node layers of the neural network circuit according to the output of the neural network circuit, and removing at least one weighted connection between node layers of the selected one or more pairs of node layers.

[0029] Selecting the set of multiple connections may include selecting the set of multiple connections according to output values produced by elements of an output node layer of the neural network circuit and a plurality of output ranges defined for possible values produced by the output node layer.
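The output-dependent removal of connections described in the preceding paragraphs can be sketched as follows. The table mapping output ranges to layer pairs, the `drop_fraction` parameter, and the 0/1 mask representation are assumptions made for illustration; the disclosure does not fix these details at this point.

```python
import random

def select_layer_pairs(output_value, range_to_pairs):
    """Return the layer pairs associated with the range containing output_value.

    range_to_pairs: list of ((low, high), [layer_pair, ...]) entries,
    a hypothetical lookup table defined for the output node layer.
    """
    for (low, high), pairs in range_to_pairs:
        if low <= output_value < high:
            return pairs
    return []

def remove_connections(masks, layer_pairs, drop_fraction, rng):
    """Randomly zero a fraction of the remaining connections between selected layers.

    masks: {layer_pair: 2-D list of 0/1 flags}, where 1 means the connection is active.
    Returns the list of removed connections as (layer_pair, row, column).
    """
    removed = []
    for pair in layer_pairs:
        mask = masks[pair]
        candidates = [(i, j) for i, row in enumerate(mask)
                      for j, flag in enumerate(row) if flag == 1]
        count = int(len(candidates) * drop_fraction)
        for i, j in rng.sample(candidates, count):
            mask[i][j] = 0
            removed.append((pair, i, j))
    return removed
```

A caller would evaluate the network on a record, look up the layer pairs for the observed output value, and then call `remove_connections` with a seeded `random.Random` instance so that removals are reproducible.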

[0030] The method may further include configuring at least some of the weighted connections according to a biasing factor in response to output of the neural network resulting from an input data record, of the received input data, processed by the neural network.

[0031] The biasing factor may be a multiplication factor applied to the at least some of the weighted connections through a back-propagation operation in response to a determination that the neural network correctly identified the input data record as being anomalous.

[0032] The method may further include performing preprocessing on a received raw data record to produce an input data record provided to the neural network circuit, including performing one or more of, for example, Gaussian normalization applied to the raw data record, and/or removing one or more data elements of the raw data record. Such removing may be based on at least one of, for example, entropy associated with the one or more data elements, sparseness associated with the one or more data elements, a p-value associated with the one or more data elements, and/or a low-effect value associated with the one or more data elements.

[0033] Removing one or more data elements may include identifying a particular data element as a rare element in response to determining, based on training data to train a learning engine implementation for performing the preprocessing, that the particular data element is present in fewer than an adjustable threshold number of data records comprising the training data, the adjustable threshold number being adjusted based on likelihood of occurrence of anomalous values for the particular data element, and removing from runtime data records the particular data element identified as the rare element.

[0034] In some variations, another system is provided that includes an input stage to receive one or more input data records, and a controller, implementing one or more learning engines, in communication with a memory device to store programmable instructions, to receive input data at a neural network circuit comprising a plurality of node layers, with each of the plurality of node layers comprising respective one or more nodes, and with the neural network circuit further comprising adjustable weighted connections connecting at least some nodes in different layers of the plurality of node layers, and remove one or more of the weighted connections at one or more time instances.

[0035] In some variations, another non-transitory computer readable media is provided, for storing a set of instructions, executable on at least one programmable device, to receive input data at a neural network circuit comprising a plurality of node layers, with each of the plurality of node layers comprising respective one or more nodes, and with the neural network circuit further comprising adjustable weighted connections connecting at least some nodes in different layers of the plurality of node layers, and remove one or more of the weighted connections at one or more time instances.

[0036] Embodiments of the other system, and the other computer readable media may include at least some of the features described in the present disclosure, including at least some of the various features described above in relation to any of the different methods, systems, and media.

[0037] Other features and advantages of the invention are apparent from the following description, and from the claims.

BRIEF DESCRIPTION OF THE DRAWINGS

[0038] These and other aspects will now be described in detail with reference to the following drawings.

[0039] FIG. 1 is a flow diagram illustrating operations/stages to perform data pre-processing for numerical data.

[0040] FIG. 2 is a flow diagram showing preprocessing operations for categorical data.

[0041] FIG. 3 is a flow diagram illustrating an example data preprocessing procedure for input data (e.g., post-training data).

[0042] FIG. 4 is a flow diagram showing a procedure to identify anomalous data using graph neural networks.

[0043] FIG. 5 is a diagram of a topology of an example detector neural network.

[0044] FIG. 6 is a diagram of a features-to-nodes module that converts vector data into graph representation data.

[0045] FIG. 7 is a diagram illustrating transformation of an initial graph representation into a resultant transformed representation.

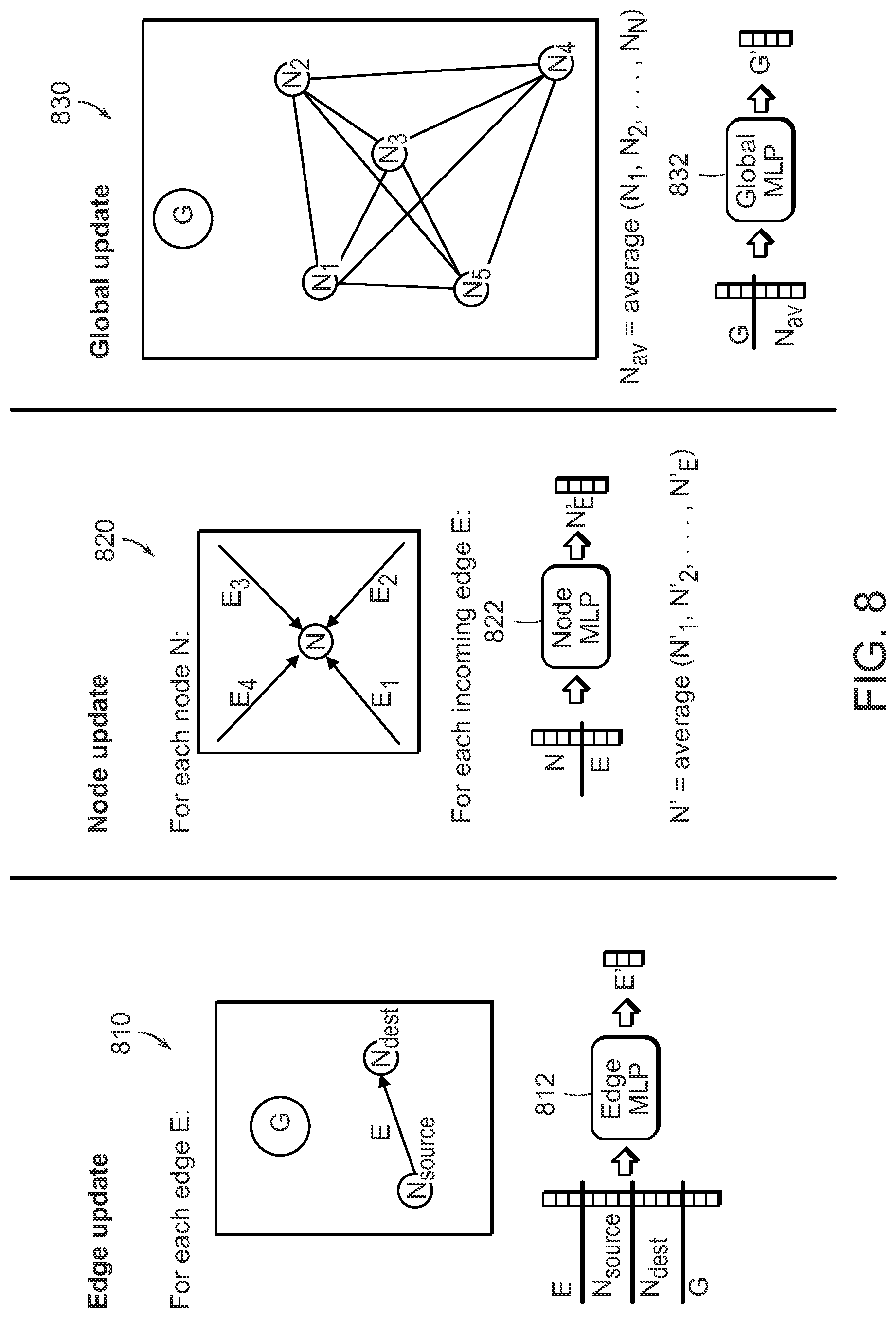

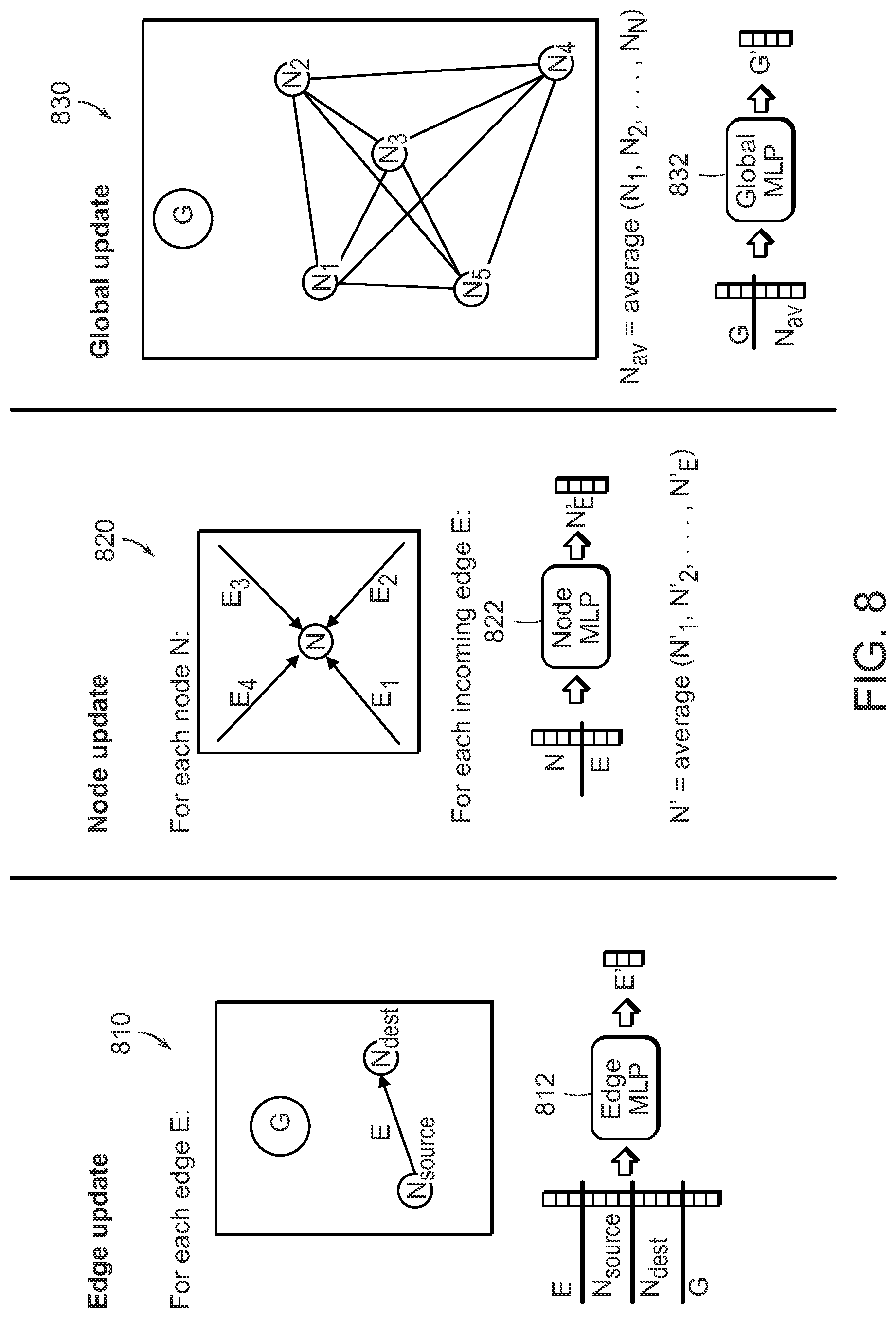

[0046] FIG. 8 includes diagrams showing iterative updating of edges, nodes and a global state in a graph representation.

[0047] FIG. 9 is a flowchart of an example procedure to detect and classify.

[0048] FIG. 10 is a flowchart of an example procedure to detect and classify.

[0049] FIG. 11 is a schematic diagram of a computing system.

[0050] Like reference symbols in the various drawings indicate like elements.

DESCRIPTION

[0051] Described herein are systems, methods, devices, media, and other implementations, including implementations based on learning machines (such as neural networks) to detect anomalous data (e.g., outliers). Two example implementations are described herein. A first implementation detects anomalous data based on a graph representation in which data (after optionally being pre-processed to remove certain features, to normalize the data to be represented as a vector of a pre-determined dimensionality that can be input into a learning machine, etc.) is converted into a graph representation comprising a resultant configuration of nodes connected by weighted edges. A graph neural network (GNN), trained to generate a resultant transformed graph that organizes the nodes and edges into a resultant representation (in which the nodes' clustering can be indicative of their relevance or anomality), is applied. The transformed graph representation is then processed by a post-transformation stage to generate an output vector, based on which an output determination (e.g., suspected transaction, or valid transaction) can be made. In a second example implementation, a feed-forward neural network, with an adjustable configuration controlled through a dropout operation (as will be discussed in greater detail below), is provided. In the second example implementation, the input data may be pre-processed (similarly to the pre-processing applied for the input data provided to the first example implementation, with such pre-processing including culling unimportant, redundant, or non-impactful features and values, normalizing the data, etc.). The pre-processed data is then provided to a multi-layer feed-forward neural network, for which the various connections interconnecting the network's nodes (elements) can be controllably removed or adjusted (in some examples, based on the determined output of the feed-forward network).

[0052] With reference to FIG. 1, a flow diagram 100 illustrating operations/stages to perform data pre-processing for numerical data is shown. The use of preprocessing helps to reduce dimensionality of the data (thus reducing the computation effort required for operating the neural networks of the system, and making the data conform to what the receiving system can handle as input), and to make the neural networks more sensitive to anomalous data (e.g., outliers). As noted, before being used as input data to an outlier detection system (e.g., implemented as a neural network), the input data may be optionally preprocessed to facilitate and/or optimize neural network performance. Neural network training data can be used to determine the preprocessing parameters. Numerical features (e.g., certain fields within transaction records) are Gaussian normalized according to, for example, the distribution of training data, and dropped altogether if the entropy of that feature exceeds some threshold (as illustrated in FIG. 1). More particularly, and as depicted in FIG. 1, a training set 110 of numerical features is used to determine parameters for Gaussian normalization, which are reused when inputting test data to the neural network. First, the entropy of each numerical column is determined, and columns with an entropy above or below a defined threshold are dropped (at block 120 of FIG. 1). Then, input data is Gaussian normalized (at block 130) according to the mean and standard deviation of the data column (i.e., the mean and standard deviation generated for a particular feature or field in the records of the training data). The means and standard deviations of each column may be saved on a computer-readable medium, and are used when more data is input to the model (140).
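The numerical preprocessing of FIG. 1 can be sketched in a few lines of Python. The sketch makes several assumptions that are not stated in the disclosure: entropy is estimated from a fixed-width histogram, the thresholds `min_entropy` and `max_entropy` have placeholder values, and the function names are hypothetical.

```python
import math
from collections import Counter

def column_entropy(values, bins=10):
    """Shannon entropy (in bits) of a numeric column, estimated from a histogram."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return 0.0  # a constant column carries no information
    width = (hi - lo) / bins
    # The maximum value is clamped into the last bin.
    counts = Counter(min(int((v - lo) / width), bins - 1) for v in values)
    n = len(values)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())

def fit_numeric_preprocessor(train_columns, min_entropy=0.5, max_entropy=3.2):
    """Drop columns whose entropy is out of range; store mean/std for the rest.

    The threshold values are placeholders, not values from the disclosure.
    """
    params = {}
    for name, values in train_columns.items():
        h = column_entropy(values)
        if h < min_entropy or h > max_entropy:
            continue  # column dropped (block 120)
        mean = sum(values) / len(values)
        std = math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))
        params[name] = (mean, std if std > 0 else 1.0)
    return params

def normalize_record(record, params):
    """Gaussian-normalize one record with the stored training parameters (block 130)."""
    return {name: (record[name] - mean) / std for name, (mean, std) in params.items()}
```

The dictionary returned by `fit_numeric_preprocessor` plays the role of the saved means and standard deviations: it is computed once from training data and reused unchanged for every later record.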

[0053] To force a reduction in the dimensionality of the input data, categorical features are dropped if in the training set the feature is too sparse, the p-value is too high (the p-value is a measure of the probability that an observed difference could have occurred by random chance, with a low p-value being indicative of meaningful statistical significance of such an observed difference), or the effect size is too low. Training data can thus be processed to identify and drop sparse columns (e.g., corresponding to data fields that might not provide meaningful training input). Low frequency categories (fields in the records of the remaining data) may be classified as rare if their frequency is below a threshold frequency. The threshold frequency for rare classification may be lowered depending on the fraud likelihood of the category, or on whether the feature has a set number of categories or an unrestricted number of categories. Subsequent to the rare encoding, columns with high p-values or low effect size can be removed/discarded. In some embodiments, when the processed training data is provided to train a feed-forward network, columns or fields of data records corresponding to categories (e.g., descriptive data from a finite set of values or descriptions, such as a month field, purchase type field, etc.) are replaced with, for example, one-hot columns for each column category (in one-hot encoding, a vector representation may include, for example, one element that is `1` with other elements of the vector being `0`). When the resultant data is to be used to train a graph neural network, category data represented as alpha-numerical strings may be replaced with integer indices.
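The two encodings mentioned at the end of the preceding paragraph can be compared side by side in a short sketch. The helper names are hypothetical, and the choice to index categories in sorted order is an assumption made so that the mapping is deterministic.

```python
def fit_categories(train_values):
    """Map each category seen in training to a stable integer index."""
    return {category: i for i, category in enumerate(sorted(set(train_values)))}

def one_hot(value, index):
    """Feed-forward network input: one column per category, a single 1."""
    vector = [0] * len(index)
    vector[index[value]] = 1
    return vector

def integer_label(value, index):
    """Graph neural network input: the column count is unchanged, strings become integers."""
    return index[value]

months = ["jan", "feb", "jan", "mar"]
index = fit_categories(months)          # {'feb': 0, 'jan': 1, 'mar': 2}
encoded_for_ffn = one_hot("jan", index)       # [0, 1, 0]
encoded_for_gnn = integer_label("mar", index)  # 2
```

One-hot encoding widens the record by one column per category, while integer labels keep the record width fixed and leave it to a later stage to map each index to a vector.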

[0054] FIG. 2 is a flow diagram 200 showing preprocessing operations for categorical data (e.g., descriptive data rather than numerical data). The training set of categorical features (210) is used to determine which features to use, which categories to use, and which categories to classify as "rare." First, columns below a certain sparsity level are dropped (at block 220). Next, categories for each column are classified as "rare" if the category occurs some number of times below a "rare" threshold (as determined at block 230). An exception to this rule is if the category is below the cutoff, but is still one of the top three (3), or some other number of categories, most frequent categories. Next, the p-value and Cramer's Corrected Statistic, or "effect size," are calculated for each categorical column. Columns with a p-value above a threshold, and columns below an effect size threshold, are dropped/discarded (at block 240). Next the categorical columns are encoded for input to the neural network modules. For the feed-forward neural network, categorical features are one-hot encoded, such that each categorical entry may be replaced by a number of columns equal to the number of categories for that categorical column (at blocks 250 and 260). For the graph neural network, the number of columns stays the same, but categorical strings in each column are replaced by an integer label (at blocks 270 and 280).
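
For illustration, the rare-category classification (block 230) and the two encodings (blocks 250-280) might be sketched as below. This is a hedged sketch: the names `fit_categories`, `one_hot`, `integer_index`, and the `RARE` label are hypothetical, and the sparsity and p-value/effect-size filters (blocks 220 and 240) are omitted.

```python
from collections import Counter

RARE = "rare"  # hypothetical label used for pooled low-frequency categories

def fit_categories(train_values, rare_threshold, keep_top=3):
    """Return the vocabulary for one categorical column.
    A category is kept if it occurs at least rare_threshold times, or if it
    is among the keep_top most frequent categories (the stated exception)."""
    counts = Counter(train_values)
    top = {cat for cat, _ in counts.most_common(keep_top)}
    kept = sorted(cat for cat, c in counts.items()
                  if c >= rare_threshold or cat in top)
    has_rare = len(kept) < len(counts)
    return kept + [RARE] if has_rare else kept

def _label(value, vocab):
    # Map a raw value onto the vocabulary, pooling non-kept values as RARE.
    return value if value in vocab else RARE

def one_hot(value, vocab):
    """Feed-forward network encoding: one column per category."""
    label = _label(value, vocab)
    return [1 if cat == label else 0 for cat in vocab]

def integer_index(value, vocab):
    """Graph neural network encoding: one integer label per category.
    Returns None if the value cannot be mapped onto the vocabulary."""
    label = _label(value, vocab)
    return vocab.index(label) if label in vocab else None
```

With a vocabulary that contains no `RARE` entry, `one_hot` returns an all-zero vector for an unknown value, which is one way to represent "no information" for that column.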

[0055] With reference to FIG. 3, a flow diagram 300 illustrating an example data preprocessing procedure for input data (e.g., post-training data) is shown. A data record 310 (depicted as a column with entries corresponding to fields or features) includes numerical features and categorical features (i.e., populated by descriptive categories from a finite dictionary or set of values). Input numerical features are Gaussian-normalized (at block 320) according to the distribution determined by the training data. Thus, numerical values may be normalized (e.g., based on a Gaussian normalization process) according to mean and standard deviation values (μ and σ) that may have been determined during the training phase. In some examples, categorical features (e.g., descriptive features populated based on a finite dictionary of values/terms) of the input data (310) are translated (at block 330) to "rare" if either the category was "rare" during training, or if the category was not seen in the training data. Categorical features which saw no "rare" categories during training (e.g., all categories for this column in training data were present with high frequency), but are input with a category not present in the training set, will ignore the new category input and instead use no information for this column. Following the pre-processing performed on the input data record 310 (according to operations 320 and 330), a resultant transaction record 340 is generated.
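
The runtime handling of a single record (operations 320 and 330) can be illustrated with the following sketch. The function name, the dictionary-based record layout, the `RARE` label, and the use of `None` to denote "no information" are assumptions made for the example only.

```python
RARE = "rare"  # hypothetical label for categories pooled during training

def preprocess_record(record, numeric_params, category_vocab):
    """numeric_params: {field: (mu, sigma)} saved from training.
    category_vocab: {field: set of categories seen in training, including
    RARE only if some category of that field was classified as rare}."""
    out = {}
    # Operation 320: normalize with the training-time mean and deviation.
    for name, (mu, sigma) in numeric_params.items():
        out[name] = (record[name] - mu) / sigma
    # Operation 330: translate rare or unseen categories.
    for name, vocab in category_vocab.items():
        value = record.get(name)
        if value in vocab and value != RARE:
            out[name] = value   # known, frequent category
        elif RARE in vocab:
            out[name] = RARE    # rare in training, or never seen
        else:
            out[name] = None    # unseen, and no rare bucket: no information
    return out
```

For example, a record with an unseen payment method maps to `"rare"` when that field had rare categories in training, while an unseen value in a field with no rare categories yields `None`.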

[0056] As noted, one example learning-based processing applied to preprocessed data is based on graph neural networks. FIG. 4 is a flow diagram 400 showing a procedure to identify anomalous data using graph neural networks. A graph neural network module (as depicted in FIG. 4) of the present disclosure turns the input data 410 (which may correspond to the resultant transaction record 340 of FIG. 3) into a graph representation, then outputs (at the "Linear+Softmax" module 480) the probability that the record is anomalous (e.g., whether a transaction, represented by the record, is fraudulent or legitimate). Each of the individual data features of the transaction data is translated into a high-dimensional graph node representation using, for example, a features-to-nodes module 420.

[0057] FIG. 6 is a diagram showing an example implementation of a features-to-nodes module (such as the module 420) which turns/converts vector data (representative of a data record, such as a transaction record) into a graph representation for input into the graph neural network module. Each individual feature included in the input transaction data (depicted as record 610) can be mapped from 1-dimensional space to a high dimensional space (e.g., d>16) by a multi-layer perceptron (MLP) arrangement (depicted as the structure 620 in FIG. 6). In some embodiments, the MLP arrangement may be implemented as an artificial neural network (ANN), such as a feedforward ANN, but other types of neural networks (as discussed herein), and/or other types of learning machines, may be used to implement the MLP arrangement of FIG. 6 or the other MLP arrangements discussed herein (e.g., with respect to FIG. 8, as more particularly detailed below). In some embodiments, a separate MLP is trained for each individual input feature. The output of an individual MLP is a resultant multi-dimensional vector (such as vector 630 in FIG. 6) that can be represented as a node within a graph representation of the input data record. In some examples, the resultant output vectors, representing nodes, provide not only data representative of the feature information (that was input into the respective MLPs) but also their positional/orientational relationship, in the graph representation, to other resultant nodes in the graph representation. Such graphical representation of data can be used to determine if there are abnormal relationships between various nodes in a graph representation (e.g., if the orientation between, for example, a group of several (e.g., 3) particular nodes is such that the angles between straight lines passing between them are unusually large).
The nodes of the node-based graphical representation of the input data records are made into a fully connected graph, using a learned initial edge representation. The interconnected edge elements in the resultant graph representation of the node representations for the output of the MLP structures may share an initial weight vector, which is determined by the neural network training process.
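
As a rough illustration of the features-to-nodes conversion, the sketch below maps each scalar feature through its own small MLP and builds a fully connected directed graph whose edges all start from one shared vector. The weights here are random stand-ins for trained parameters, and the layer sizes, function names, and edge dictionary layout are assumptions.

```python
import random

def make_mlp(sizes, rng):
    """Random (untrained) weights for an MLP with the given layer sizes."""
    return [([[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)],
             [0.0] * n_out)
            for n_in, n_out in zip(sizes, sizes[1:])]

def mlp_forward(layers, x):
    """Apply each linear layer, with ReLU on all but the last layer."""
    for i, (weights, biases) in enumerate(layers):
        x = [sum(w * v for w, v in zip(row, x)) + b
             for row, b in zip(weights, biases)]
        if i < len(layers) - 1:
            x = [max(0.0, v) for v in x]
    return x

def features_to_graph(features, mlps, initial_edge):
    """One MLP per feature maps a scalar to a d-dimensional node vector.
    Every ordered pair of distinct nodes gets a copy of the shared
    initial edge vector (learned during training in the disclosure)."""
    nodes = [mlp_forward(mlp, [f]) for mlp, f in zip(mlps, features)]
    edges = {(s, t): list(initial_edge)
             for s in range(len(nodes))
             for t in range(len(nodes)) if s != t}
    return nodes, edges

rng = random.Random(0)
d = 32  # node dimensionality, chosen to satisfy d > 16
features = [0.5, -1.2, 2.0]
mlps = [make_mlp([1, 8, d], rng) for _ in features]
nodes, edges = features_to_graph(features, mlps, initial_edge=[0.1] * 4)
```

For three features this yields three 32-dimensional nodes and six directed edges.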

[0058] With continued reference to FIG. 4, the graph representation is provided to the GNN module (440), which may be implemented using a neural network or some other learning machine, and which transforms the initial graphical representation 430 of the input data record into a transformed graph representation resulting from the learned behavior/configuration of the GNN to identify anomalous data. For example, the transformed graph (represented as a graph 450 in FIG. 4) may have been transformed (by updating the edge and node representations of the graph) so that important nodes are clustered into a configuration that can be indicative of the existence or lack of anomalous behavior. The resultant graph representation 450 is input to a global attention layer 460, which outputs a vector representation 470 of the graph. The global node attention operation can thus generate a composite vector representation based on the individual nodes. For example, nodes of the graph representation 450 are input to the global attention module 460, which provides a node weight for each node. The node representations are multiplied by their weights, and averaged. The global node attention operation can be represented according to

$$V_{\text{output}}(v_1, v_2, \ldots, v_d) = \frac{w_a (a_1, a_2, \ldots, a_d) + w_b (b_1, b_2, \ldots, b_d) + \cdots + w_n (n_1, n_2, \ldots, n_d)}{n},$$

where V_output is the output vector 470, each of a, b, . . . , n is one of the individual nodes of the transformed graph representation 450, and w_a, . . . , w_n are the respective weights applied to the d-dimensional vector representation of the nodes, with the weighted sum divided by the number of nodes to produce the average. Other global node attention operations (to generate a composite vector from the graph representation) may be used.
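
A minimal sketch of this weighted averaging is given below. The node weights are taken as given inputs, since the manner in which the global attention module 460 computes them is not detailed here; the function name is hypothetical.

```python
def global_node_attention(nodes, weights):
    """Multiply each d-dimensional node vector by its scalar weight,
    sum the results element-wise, and divide by the number of nodes."""
    n = len(nodes)
    d = len(nodes[0])
    return [sum(w * node[k] for w, node in zip(weights, nodes)) / n
            for k in range(d)]

# Two 2-dimensional nodes with weights 0.5 and 1.0.
v_output = global_node_attention([[1.0, 2.0], [3.0, 4.0]], [0.5, 1.0])
# v_output == [1.75, 2.5]
```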

[0059] The final weighted, averaged node representation may next be operated on by a module 480 that applies a single linear layer to transform the representation to, for example, 2 dimensions, with the result then input to a softmax layer to produce class probabilities, quantifying the probability of the data as being anomalous (e.g., the transaction is erroneous/fraudulent) or as being within normal data patterns (e.g., the transaction is not suspected to be abnormal/suspicious). Other filtering or processing operations may be applied to the composite vector representation 470.
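
The "Linear+Softmax" stage can be sketched as follows, assuming a 2-class output. The weight matrix and bias shown are arbitrary placeholders rather than trained values, and the function names are hypothetical.

```python
import math

def linear(x, weight, bias):
    """Single linear layer: one output per row of the weight matrix."""
    return [sum(w * v for w, v in zip(row, x)) + b
            for row, b in zip(weight, bias)]

def softmax(logits):
    """Numerically stable softmax producing class probabilities."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Placeholder parameters mapping a 2-dimensional composite vector to 2 classes.
weight = [[1.0, 0.0], [0.0, 1.0]]
bias = [0.0, 0.0]
probs = softmax(linear([1.0, 2.0], weight, bias))
```

The two entries of `probs` sum to one and can be read as the probabilities of the record being normal or anomalous, depending on the class ordering chosen at training time.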

[0060] Further details of the graph neural network module are provided in FIG. 7, comprising a diagram 700 illustrating the transformation of an initial graph representation (e.g., generated by the array of MLP structures 620 depicted in FIG. 6). The Graph Neural Network module 720 takes as input a graph 710 (where each node and edge may be represented by a vector), and outputs a graph 730 with updated values for each node and edge.

[0061] An example process for calculating output graph node and edge representations is detailed herein. The graph neural network module functions by iteratively updating representations of the edges, then nodes, then global state of the graph. This process is illustrated by FIG. 8 providing diagrams depicting the various operations performed by the graph neural network modules (such as the GNN module 720 depicted in FIG. 7). The process of updating graph state is referred to as "message-passing" or "graph convolution." In the implementations described herein, message-passing is implemented as follows. First edges are updated as shown in diagram 810. For each edge, the edge representation, source node representation, destination node representation, and global representation are consolidated (e.g., concatenated) into a single vector. This vector is used as input to an edge-MLP (such as edge MLP 812 depicted in FIG. 8), which outputs a new edge representation of the same length as the original edge representation. Second, node representations are updated as shown in diagram 820. For each node, a new representation is created for each of that node's incoming edges. The final node representation used is the average of each of these representations. The node representation for each incoming edge is created by, for example, concatenating the original node representation with the incoming edge representation, and using that as input to a node-MLP (such as node-MLP 822 depicted in FIG. 8), which outputs a new node representation. Finally, the graph global state is updated as shown in diagram 830. In an example embodiment, first, the node representations for all nodes are averaged. Then, the global state vector is concatenated with the average node vector, and used as input to a global-MLP (such as global-MLP 832 depicted in FIG. 8), which outputs a new global state representation.
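
One message-passing iteration, as described above, might be sketched as follows. Each "MLP" is a random single-layer stand-in for a trained network, and the sketch assumes that the node update consumes the already-updated edges and that the global update consumes the already-updated nodes; the disclosure does not state which versions are used. All names are hypothetical.

```python
import random

def make_linear_relu(n_in, n_out, rng):
    """Stand-in for a trained MLP: one random linear layer followed by ReLU."""
    w = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    def apply(x):
        assert len(x) == n_in
        return [max(0.0, sum(a * b for a, b in zip(row, x))) for row in w]
    return apply

def mean_vectors(vectors):
    """Element-wise average of a non-empty list of equal-length vectors."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]

def message_passing_step(nodes, edges, global_state,
                         edge_mlp, node_mlp, global_mlp):
    # 1. Edge update: concatenate edge, source node, destination node, global.
    new_edges = {(s, t): edge_mlp(e + nodes[s] + nodes[t] + global_state)
                 for (s, t), e in edges.items()}
    # 2. Node update: one candidate per incoming edge, then average them.
    new_nodes = []
    for i, node in enumerate(nodes):
        per_edge = [node_mlp(node + e)
                    for (s, t), e in new_edges.items() if t == i]
        new_nodes.append(mean_vectors(per_edge) if per_edge else list(node))
    # 3. Global update: concatenate global state with the average node vector.
    new_global = global_mlp(global_state + mean_vectors(new_nodes))
    return new_nodes, new_edges, new_global

rng = random.Random(1)
nodes = [[rng.random() for _ in range(4)] for _ in range(3)]
edges = {(s, t): [0.1, 0.1, 0.1] for s in range(3) for t in range(3) if s != t}
global_state = [0.0, 0.0]
edge_mlp = make_linear_relu(3 + 4 + 4 + 2, 3, rng)  # output keeps edge length
node_mlp = make_linear_relu(4 + 3, 4, rng)
global_mlp = make_linear_relu(2 + 4, 2, rng)
new_nodes, new_edges, new_global = message_passing_step(
    nodes, edges, global_state, edge_mlp, node_mlp, global_mlp)
```

The step can be applied repeatedly, feeding its outputs back in, to iterate the graph state.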

[0062] With reference to FIG. 9, a flowchart of an example procedure 900 to detect and classify data (e.g., identifying data with anomalous behavior) is shown. The procedure 900 includes converting 910 a set of data values representative of a multi-dimensional item into a graph representation of the multi-dimensional item, with the graph representation comprising nodes and edges. In some examples, converting the set of data values representative of the multi-dimensional item may include transforming values comprising the multi-dimensional item into a plurality of respective multi-dimensional vectors by a plurality of trained multi-layer perceptrons applied to the respective values. In such examples, the procedure may also include generating, for the plurality of respective multi-dimensional vectors, a graph representation of nodes with interconnecting edges connecting at least some of the nodes, with positions and orientations of the interconnected nodes in the graph representation relative to each other being indicative of potential anomalous relationships between the set of data values of the multi-dimensional item. For example, unusually skewed orientations can be indicative of abnormal (anomalous) relationships between different features of a multi-dimensional data item, which can indicate some oddity or inconsistency in the relationship between the features (which, in turn, can suggest an increased likelihood of unnatural or fraudulent behavior).

[0063] The procedure 900 further includes applying 920 a graph convolution process to the graph representation of the multi-dimensional item to generate a transformed graph representation for the multi-dimensional item comprising a resultant transformed configuration of the nodes and edges representing the multi-dimensional item. In some examples, applying the graph convolution process may include generating, for a particular edge of the edges of the graph representation, an edge composite value based on an edge value representing the particular edge, node values representative of a respective source node and destination node of the particular edge, and a global state value associated with the graph representation, and providing the edge composite value to an edge multi-layer perceptron unit to generate a resultant transformed edge corresponding to the particular edge. In another example, applying the graph convolution process may include generating, for a particular node of the nodes of the graph representation, a node composite value based on an average of intermediate values, computed using one or more node multi-layer perceptrons, with each intermediate value based on a respective one of incoming edge values representing incoming edges directed to the particular node and a value of the particular node. In yet another example, applying the graph convolution process may include averaging values of the nodes of the graph representation to generate an average node value, generating a global composite value based on the average node value and a global state value associated with the graph representation, and providing the global composite value to a global multi-layer perceptron unit to generate a resultant transformed global state value corresponding to the global state value associated with the graph representation.
In some embodiments, the various operations performed with respect to the edge transformation, the node transformation, and the global state value transformation may be performed together or independently of each other. In some examples, applying the graph convolution process may include applying the graph convolution process using at least one graph neural network system.

[0064] With continued reference to FIG. 9, the procedure 900 additionally includes determining 930, based on the transformed configuration of the nodes and edges representing the multi-dimensional item, a probability that the multi-dimensional item is anomalous. Determining the probability that the multi-dimensional item is anomalous may include processing the transformed configuration of the nodes and edges representing the multi-dimensional item with a global attention module to generate a resultant vector of values, and applying a softmax module to the resultant vector of values to derive the probability that the multi-dimensional item is anomalous.

[0065] In some embodiments, the procedure 900 further includes performing preprocessing on a received raw data record to produce the multi-dimensional item, including performing one or more of, for example, Gaussian normalization applied to the received raw data record, and/or removing one or more data elements of the received raw data record based on at least one of, for example, entropy associated with the one or more data elements, sparseness associated with the one or more data elements, a p-value associated with the one or more data elements, and/or a low-effect value associated with the one or more data elements. In some embodiments, removing the one or more data elements may include identifying a particular data element as a rare element in response to determining, based on training data to train a learning engine implementation for performing the preprocessing, that the particular data element is present in fewer than an adjustable threshold number of data records comprising the training data, with the adjustable threshold number being adjusted based on likelihood of occurrence of anomalous values for the particular data element. For example, for a data element that is determined to include, at a higher relative frequency, anomalous values, its associated threshold may be increased so that the data element is not removed from data records, and may thus be captured by the anomalous data detection engine. The procedure may also include removing from runtime data records the particular data element identified as the rare element.

[0066] As noted, another example implementation for detecting anomalous behavior (e.g., the existence of outliers) is based on a feed-forward neural network. FIG. 5 is a diagram of an example implementation of a feed-forward neural network 500, configured to increase sensitivity of the neural network to the presence of outliers in the input data. A feed-forward neural network module converts a set of transaction information into a numerical array and outputs the probability that the data input is normal or spurious. The input information can include both numerical data (for example, in a financial transaction use case, the numerical data can include payment total or days since the last order) and categorical data (for example, payment method or country of origin). The model consists of a series of vectors (layers), where each node in a layer may be connected to some or all the nodes in the previous and subsequent layers. An input layer 510 is (or receives) the array created from the numerical and categorical variables. The values in the input layer are multiplied by the weight values in the connections to create the array for a first hidden layer 520 of one or more hidden layers (FIG. 5 shows multiple hidden layers). This process of multiplying each layer by the connection weights to the next layer is repeated until a last layer 530 (in the example of FIG. 5, the last layer 530 includes 2 nodes). The values in the nodes of the last layer represent the probability the model predicts for the specific transaction to be erroneous. As further depicted in FIG. 5, a dropout module 540 is connected to the neural network, and is configured to cut or remove one or more of the connections between nodes of different layers.
In some embodiments, the dropout module may randomly cut connections between one or more of the layers, and may do so either at random instances, or in response to a certain event (e.g., the determination, at the output stage layer of the network, that the generated probability of the existence of an anomalous event exceeds a threshold). Instead of a probability exceeding a threshold, the trigger event may be a determination, at the output, of the existence of an anomalous event (e.g., according to a yes/no determination with respect to existence of an outlier or some aspect of the data rendering the data anomalous). In some examples, the dropout module 540 may be configured to select the connection of the neural network at least partly based on deterministic criteria. For example, selection of the layers from which connections are to be (randomly) removed may be based, in part, on the output value produced by the network (e.g., selecting a connection between the first and second layer if the output is in some output range). The specific connection to be removed between the selected layers may then be picked randomly (or, at least partly, deterministically). The use of the dropout module 540 facilitates controlled structuring of the interconnections of the neural network in a way that increases sensitivity of the network to outlier data.
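
The partly deterministic selection described above might look like the following sketch, in which the pair of layers is chosen from the network output according to predefined output ranges and the specific connection is then picked at random. The mask representation, the range table, and the function names are assumptions introduced for the example.

```python
import random

def select_layer_pair(output_prob, ranges):
    """Deterministic part: map the network output to a layer-pair index.
    ranges is a list of (low, high, layer_index) with low <= value < high."""
    for low, high, layer_index in ranges:
        if low <= output_prob < high:
            return layer_index
    return None

def remove_connection(masks, layer_index, rng):
    """Random part: cut one active connection between the selected layers.
    masks[layer_index][j][i] == 1 means the connection from node i of one
    layer to node j of the next layer is active; 0 means it was removed."""
    mask = masks[layer_index]
    active = [(j, i) for j, row in enumerate(mask)
              for i, m in enumerate(row) if m == 1]
    if not active:
        return None
    j, i = rng.choice(active)
    mask[j][i] = 0
    return (j, i)

# Two layer pairs: 2x2 connections, then 1x2 connections into the output.
masks = [[[1, 1], [1, 1]], [[1, 1]]]
ranges = [(0.0, 0.5, 0), (0.5, 1.01, 1)]
layer = select_layer_pair(0.9, ranges)
removed = remove_connection(masks, layer, random.Random(0))
```

During a forward pass, each weight matrix would be multiplied element-wise by its mask so that removed connections contribute nothing.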

[0067] In some embodiments, increasing sensitivity of the neural network 500 to anomalous data (such as outlier data) may be achieved by applying a bias to weights of the neural network connections in response to, for example, a correct identification of a classification category (e.g., outlier/normal classification). Thus, for example, if during training the neural network produces a correct output in response to an input data record (e.g., correctly identifying, as defined in the ground truths for the training data, a particular record as corresponding to an outlier), a bias value (e.g., a multiplication factor to increase the strength of at least some of the connections' weight) is applied. The application of biasing can be performed through the use of a biasing factor to modify the weights of each output class through a weight (float) value, used for weighting the loss function during training based on a certain bias factor. This biasing scheme allows the sensitivity of the neural network to anomalous data (upon a correct identification of an input data record as being anomalous) to increase. It has been observed that use of a biasing procedure is more effective (i.e., to increase sensitivity of the network) when used in conjunction with the dropout module 540.
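
One possible reading of weighting the loss function by a per-class float value is a class-weighted cross-entropy, sketched below. This is offered as an assumption about how such a biasing factor could enter training, not as the specific scheme of the disclosure; the function name and the example weights are hypothetical.

```python
import math

def weighted_cross_entropy(probs, label, class_weights):
    """Cross-entropy for one record, scaled by the weight of its true class.
    probs: predicted class probabilities; label: index of the true class."""
    return -class_weights[label] * math.log(probs[label])

# Class 1 (anomalous) is given five times the weight of class 0 (normal),
# so errors on anomalous records contribute more to the training loss.
class_weights = [1.0, 5.0]
loss_anomalous = weighted_cross_entropy([0.9, 0.1], 1, class_weights)
loss_normal = weighted_cross_entropy([0.1, 0.9], 0, class_weights)
```

Both records above are misclassified with the same confidence, yet the anomalous record incurs five times the loss of the normal one.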

[0068] Thus, with reference to FIG. 10, a flowchart of an example procedure 1000 to detect and classify data (e.g., as anomalous or as being an outlier, etc.) is shown. The procedure 1000 may be used in conjunction with other anomalous data detection procedures (such as the procedure 900 depicted in FIG. 9). The procedure 1000 includes receiving 1010 input data at a neural network circuit comprising a plurality of node layers, with each of the plurality of node layers comprising respective one or more nodes, with the neural network circuit further comprising adjustable weighted connections connecting at least some nodes in different layers of the plurality of node layers. In some examples, the neural network circuit may be a feed-forward neural network circuit.

[0069] The procedure 1000 further includes removing 1020 one or more of the weighted connections at one or more time instances. In some examples, removing the one or more of the weighted connections may include selecting the one or more of the weighted connections randomly, and removing the randomly selected one or more of the weighted connections. In some embodiments, part of the connection-selection process may be deterministic. For example, the layers between which one of the connections is to be removed may be selected based on output of the neural network circuit. In such examples, removing the one or more of the weighted connections may include selecting a set of multiple connections from the weighted connections based, at least in part, on output of the neural network circuit, and selecting randomly the one or more of the weighted connections from the selected set of multiple connections. In some embodiments, selecting the set of multiple connections may include selecting one or more pairs of node layers of the neural network circuit according to the output of the neural network circuit, and removing at least one weighted connection between node layers of the selected one or more pairs of node layers. Selecting the set of multiple connections may include selecting the set of multiple connections according to output values produced by elements of an output node layer of the neural network circuit and a plurality of output ranges defined for possible values produced by the output node layer.

[0070] In some embodiments, the procedure 1000 may further include configuring at least some of the weighted connections according to a biasing factor in response to output of the neural network resulting from an input data record, of the received input data, processed by the neural network. In such embodiments, the biasing factor is a multiplication factor applied to the output of the feed forward neural network in response to a determination that the neural network correctly identified the input data record as being anomalous.

[0071] In some implementations, the procedure 1000 may further include performing preprocessing on a received raw data record to produce an input data record provided to the neural network circuit, including performing one or more of, for example, Gaussian normalization applied to the raw data record, and/or removing one or more data elements of the raw data record based on at least one of, for example, entropy associated with the one or more data elements, sparseness associated with the one or more data elements, a p-value associated with the one or more data elements, and/or a low-effect value associated with the one or more data elements. In some examples, removing one or more data elements may include identifying a particular data element as a rare element in response to determining, based on training data to train a learning engine implementation for performing the preprocessing, that the particular data element is present in fewer than an adjustable threshold number of data records comprising the training data, with the adjustable threshold number being adjusted based on likelihood of occurrence of anomalous values for the particular data element, and removing from runtime data records the particular data element identified as the rare element.

[0072] As noted, implementation of the anomalous behavior detection systems and methods described herein may be realized using one or more learning machines such as neural networks. Neural networks are in general composed of multiple layers of linear transformations (multiplications by a "weight" matrix), each followed by a nonlinear function (e.g., a rectified linear activation function, or ReLU, etc.). The linear transformations are learned during training by making small changes to the weight matrices that progressively make the transformations more helpful to the final classification task. A multilayer network is adapted to analyze data (such as transaction data for normal and suspicious transactions, or other types of data), taking into account the dimensionality or resolution of the data (e.g., a preprocessing stage may be applied to the data to normalize and/or cull some of the fields). The layered network may include convolutional processes which are followed by pooling processes along with intermediate connections between the layers to enhance the sharing of information between the layers. Several examples of learning engine approaches/architectures that may be used include generating an auto-encoder and using a dense layer of the network to correlate with probability for a future event through a support vector machine, or constructing a regression or classification neural network model that predicts a specific output from data records (based on training reflective of correlation between similar records and the output that is to be predicted).

[0073] Examples of neural networks include convolutional neural networks (CNN), feed-forward neural networks, recurrent neural networks (RNN, implemented, for example, using long short-term memory (LSTM) structures), etc. Feed-forward networks include one or more layers of perceptrons (the learning nodes/elements) with connections to one or more portions of the input data. In a feed-forward network, the connectivity of the inputs and layers of perceptrons is such that input data and intermediate data propagate in a forward direction towards the network's output. There are typically no feedback loops or cycles in the configuration/structure of the feed-forward network. Convolutional layers allow a network to efficiently learn features by applying the same learned transformation to subsections of the data. In some embodiments, the various learning processes implemented through use of the learning machines may be realized using Keras (an open-source neural network library) building blocks and/or NumPy (an open-source programming library useful for realizing modules to process arrays) building blocks.

[0074] In some embodiments, the various learning engine implementations may include a trained learning engine (e.g., a neural network) and a corresponding coupled learning engine controller/adapter configured to determine and/or adapt the parameters (e.g., neural network weights) of the learning engine that would produce output representative of determined anomalous data (e.g., corresponding to potential fraudulent transactions). In such implementations, training data includes sets of input records (similar to the types of transaction input data that would be provided as input during runtime operations of the learning engines constituting the anomalous data detection systems described herein) along with corresponding data defining the ground truth for the input training data. After initial training of the various learning engines comprising the systems described herein, subsequent training may be intermittently performed (at regular or irregular periods). For example, upon the acquisition of new data corresponding to different population groups or geographical regions that may be associated with different transaction behaviors or characteristics (e.g., for systems configured to detect anomalous transactions), the learning engine adapters/controllers may perform additional training cycles to configure the learning engines to generate appropriate output consistent with the old types of data that the learning engines had previously been adapted for, and also consistent with the new types of data (e.g., corresponding to the new population groups or geographical regions). Upon completion of a training cycle by the adapter/controller coupled to a particular learning engine, the adapter provides data representative of updates/changes (e.g., in the form of parameter values/weights to be assigned to links of a neural-network-based learning engine) to the particular learning engine to cause the learning engine to be updated in accordance with the training cycle(s) completed.

[0075] Performing the various operations described herein may be facilitated by a controller system (e.g., a processor-based controller system). Particularly, at least some of the various devices/systems described herein, including any neural network systems, may be implemented, at least in part, using one or more processor-based devices.

[0076] Thus, with reference to FIG. 11, a schematic diagram of a computing system 1100 is shown. The computing system 1100 includes a processor-based device (also referred to as a controller device) 1110 such as a personal computer, a server, a specialized computing device, and so forth, that typically includes a central processor unit 1112, or some other type of controller (or a plurality of such processor/controller units). In addition to the CPU 1112, the system includes main memory, cache memory and bus interface circuits (not shown in FIG. 11). The processor-based device 1110 may include a mass storage element 1114, such as a hard drive (realized as magnetic discs or solid state (semiconductor) memory devices), a flash drive associated with the computer system, etc. The computing system 1100 may further include a keyboard 1116, or keypad, or some other user input interface, and a monitor 1120, e.g., an LCD (liquid crystal display) monitor, that may be placed where a user can access them. The computing system 1100 may also include one or more sensors 1130 (e.g., an image-capture device, inertial sensors, environmental sensors, etc.) to obtain data to be analyzed.

[0077] The processor-based device 1110 is configured to facilitate, for example, the implementation of detection of anomalous behavior in data (e.g., detection of fraudulent activity in financial transaction data), through implementation (using the computing system 1100) of trained learning machines, and according to the procedures and operations described herein. The storage device 1114 may thus include a computer program product that when executed on the processor-based device 1110 causes the processor-based device to perform operations to facilitate the implementation of procedures and operations described herein. The processor-based device may further include peripheral devices to enable input/output functionality. Such peripheral devices may include, for example, a CD-ROM drive and/or flash drive (e.g., a removable flash drive), or a network connection (e.g., implemented using a USB port and/or wireless transceiver(s)), for downloading related content to the connected system. Such peripheral devices may also be used for downloading software containing computer instructions to enable general operation of the respective system/device. Alternatively or additionally, in some embodiments, the computing system 1100 may include one or more graphics processing units (GPUs, such as NVIDIA GPUs), and may also include special purpose logic circuitry, e.g., an FPGA (field programmable gate array), an ASIC (application-specific integrated circuit), a DSP processor, accelerated processing units (APUs), application processing units, etc., that may be used in the implementation of the system 1100 in order to implement the learning engine including the neural networks. Other modules that may be included with the processor-based device 1110 are speakers, a sound card, and a pointing device, e.g., a mouse or a trackball, by which the user can provide input to the computing system 1100. The processor-based device 1110 may include an operating system, e.g., Windows XP® Microsoft Corporation operating system, Ubuntu operating system, etc.

[0078] Computer programs (also known as programs, software, software applications or code) include machine instructions for a programmable processor, and may be implemented in a high-level procedural and/or object-oriented programming language, and/or in assembly/machine language. As used herein, the term "machine-readable medium" refers to any non-transitory computer program product, apparatus and/or device (e.g., magnetic discs, optical disks, memory, Programmable Logic Devices (PLDs)) used to provide machine instructions and/or data to a programmable processor, including a non-transitory machine-readable medium that receives machine instructions as a machine-readable signal.

[0079] In some embodiments, any suitable computer readable media can be used for storing instructions for performing the processes/operations/procedures described herein. For example, in some embodiments computer readable media can be transitory or non-transitory. For example, non-transitory computer readable media can include media such as magnetic media (such as hard disks, floppy disks, etc.), optical media (such as compact discs, digital video discs, Blu-ray discs, etc.), semiconductor media (such as flash memory, electrically programmable read only memory (EPROM), electrically erasable programmable read only memory (EEPROM), etc.), any suitable media that is not fleeting or not devoid of any semblance of permanence during transmission, and/or any suitable tangible media. As another example, transitory computer readable media can include signals on networks, in wires, conductors, optical fibers, circuits, any suitable media that is fleeting and devoid of any semblance of permanence during transmission, and/or any suitable intangible media.

[0080] Unless defined otherwise, all technical and scientific terms used herein have the same meaning as commonly or conventionally understood. As used herein, the articles "a" and "an" refer to one or to more than one (i.e., to at least one) of the grammatical object of the article. By way of example, "an element" means one element or more than one element. "About" and/or "approximately" as used herein when referring to a measurable value such as an amount, a temporal duration, and the like, encompasses variations of ±20% or ±10%, ±5%, or ±0.1% from the specified value, as such variations are appropriate in the context of the systems, devices, circuits, methods, and other implementations described herein. "Substantially" as used herein when referring to a measurable value such as an amount, a temporal duration, a physical attribute (such as frequency), and the like, also encompasses variations of ±20% or ±10%, ±5%, or ±0.1% from the specified value, as such variations are appropriate in the context of the systems, devices, circuits, methods, and other implementations described herein.
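By way of illustration only, the percentage variations recited above may be read as symmetric bands around the specified value. The following minimal Python sketch assumes that reading; the function names `within_tolerance` and `tightest_tolerance` and the constant `TOLERANCES` are hypothetical and do not appear elsewhere in this disclosure.

```python
# Hypothetical illustration: each listed percentage is treated as a
# symmetric band (plus or minus) around the specified value. That reading
# is an assumption of this sketch.
TOLERANCES = (20.0, 10.0, 5.0, 0.1)


def within_tolerance(measured: float, specified: float, percent: float) -> bool:
    """Return True if `measured` deviates from `specified` by at most `percent` percent."""
    allowed = abs(specified) * percent / 100.0
    return abs(measured - specified) <= allowed


def tightest_tolerance(measured: float, specified: float):
    """Return the smallest listed band containing `measured`, or None if no band does."""
    for percent in sorted(TOLERANCES):
        if within_tolerance(measured, specified, percent):
            return percent
    return None
```

For instance, a measured value of 108 against a specified value of 100 falls outside the 5% band but inside the 10% band, so `tightest_tolerance(108, 100)` would report the 10% band under these assumptions.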

[0081] As used herein, including in the claims, "or" as used in a list of items prefaced by "at least one of" or "one or more of" indicates a disjunctive list such that, for example, a list of "at least one of A, B, or C" means A or B or C or AB or AC or BC or ABC (i.e., A and B and C), or combinations with more than one feature (e.g., AA, AAB, ABBC, etc.). Also, as used herein, unless otherwise stated, a statement that a function or operation is "based on" an item or condition means that the function or operation is based on the stated item or condition and may be based on one or more items and/or conditions in addition to the stated item or condition.
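By way of illustration only, the disjunctive reading of "at least one of A, B, or C" can be sketched as an enumeration of every non-empty selection of the listed items. The helper name `at_least_one_of` is hypothetical, and the sketch covers only selections of distinct items; selections with repeated features (e.g., AA, AAB) are also encompassed by the definition above but are not enumerated here.

```python
from itertools import combinations


def at_least_one_of(items):
    """List every non-empty selection of distinct items, smallest selections first."""
    items = list(items)
    return [
        "".join(selection)
        for size in range(1, len(items) + 1)
        for selection in combinations(items, size)
    ]


# Enumerate the selections for the three-item list A, B, C.
selections = at_least_one_of("ABC")
print(selections)
```

For a list of n items this yields 2^n - 1 selections, which for A, B, and C gives the seven combinations recited above.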

[0082] Although particular embodiments have been disclosed herein in detail, this has been done by way of example for purposes of illustration only, and is not intended to be limiting with respect to the scope of the appended claims, which follow. Features of the disclosed embodiments can be combined, rearranged, etc., within the scope of the invention to produce more embodiments. Some other aspects, advantages, and modifications are considered to be within the scope of the claims provided below. The claims presented are representative of at least some of the embodiments and features disclosed herein. Other unclaimed embodiments and features are also contemplated.

* * * * *
