System, Apparatus And Method For Extracting Three-dimensional Information Of An Object From Received Electromagnetic Radiation

ROSEN; Joseph; et al.

U.S. patent application number 17/114421 was published by the patent office on 2021-04-22 for system, apparatus and method for extracting three-dimensional information of an object from received electromagnetic radiation. The applicant listed for this patent is CELLOPTIC, INC. The invention is credited to Gary BROOKER and Joseph ROSEN.

| Application Number | 17/114421 |
|---|---|
| Publication Number | 20210116865 |
| Family ID | 1000005305881 |
| Publication Date | 2021-04-22 |


| United States Patent Application | 20210116865 |
|---|---|
| Kind Code | A1 |
| ROSEN; Joseph; et al. | April 22, 2021 |

SYSTEM, APPARATUS AND METHOD FOR EXTRACTING THREE-DIMENSIONAL INFORMATION OF AN OBJECT FROM RECEIVED ELECTROMAGNETIC RADIATION

Abstract

An apparatus and method to produce a hologram of an object includes an electromagnetic radiation assembly configured to receive a received electromagnetic radiation, such as light, from the object. The electromagnetic radiation assembly is further configured to diffract the received electromagnetic radiation and transmit a diffracted electromagnetic radiation. An image capture assembly is configured to capture an image of the diffracted electromagnetic radiation and produce the hologram of the object from the captured image.

| Inventors: | ROSEN; Joseph; (Omer, IL); BROOKER; Gary; (Rockville, MD) |
|---|---|
| Applicant: | CELLOPTIC, INC. |
| Family ID: | 1000005305881 |
| Appl. No.: | 17/114421 |
| Filed: | December 7, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 16459888 | Jul 2, 2019 | 10859977 |
| 17114421 | | |
| 15704890 | Sep 14, 2017 | 10379493 |
| 16459888 | | |
| 15014742 | Feb 3, 2016 | 9804563 |
| 15704890 | | |
| 14727342 | Jun 1, 2015 | |
| 15014742 | | |
| 13970103 | Aug 19, 2013 | |
| 14727342 | | |
| 12515343 | Feb 18, 2010 | 8542421 |
| PCT/US2007/085094 | Nov 19, 2007 | |
| 13970103 | | |
| 60869022 | Dec 7, 2006 | |
| 60866358 | Nov 17, 2006 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G03H 2001/0436 20130101; G03H 1/0402 20130101; G03H 2240/23 20130101; G03H 1/0443 20130101; G03H 2240/24 20130101; G03H 2001/0224 20130101; G03H 2240/13 20130101; G03H 2240/21 20130101; G03H 1/0841 20130101; G03H 2225/33 20130101; G03B 35/02 20130101; G03H 2001/0452 20130101; G03H 1/06 20130101; G03H 2001/085 20130101; G03H 2001/0458 20130101; G03H 2223/23 20130101; G03H 1/041 20130101; H04N 1/00827 20130101; G03H 1/08 20130101 |

| International Class: | G03H 1/08 20060101 G03H001/08; G03H 1/04 20060101 G03H001/04; G03B 35/02 20060101 G03B035/02; H04N 1/00 20060101 H04N001/00; G03H 1/06 20060101 G03H001/06 |

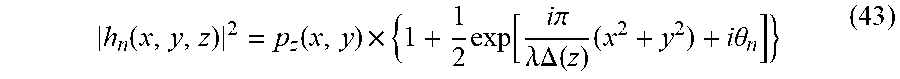

Claims

1. (canceled)

2. An apparatus configured to produce a hologram of an object, said apparatus comprising: an electromagnetic radiation assembly configured to receive a received electromagnetic radiation from the object and transmit a transmitted electromagnetic radiation based on the received electromagnetic radiation; capture an image of the transmitted electromagnetic radiation; and produce the hologram of the object from the captured image, wherein the electromagnetic radiation assembly is configured to simultaneously control more than one phase of the transmitted electromagnetic radiation.

Description

CROSS REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of U.S. patent application Ser. No. 14/727,342, filed Jun. 1, 2015, which is a continuation of U.S. patent application Ser. No. 13/970,103, filed Aug. 19, 2013 (now abandoned), which is a continuation of U.S. patent application Ser. No. 12/515,343, filed Feb. 18, 2010 (U.S. Pat. No. 8,542,421, issued Sep. 24, 2013), which is a national stage of PCT/US07/85094, filed Nov. 19, 2007, and claims the benefit of priority under 35 U.S.C. § 119(e) of U.S. Provisional Patent Application No. 60/869,022, filed Dec. 7, 2006, and U.S. Provisional Patent Application No. 60/866,358, filed Nov. 17, 2006. The contents of these applications are incorporated herein by reference in their entirety.

BACKGROUND OF THE INVENTION

Field of the Invention

[0002] This invention relates to an apparatus for capturing electromagnetic radiation, such as light or other forms of electromagnetic radiation, from an object and extracting object geometric information from the received radiation, in the field of three-dimensional imaging and holography. The invention also relates to a system and method of performing those functions.

Discussion of the Background

[0003] Conventional techniques for capturing three-dimensional information from physical objects include holography, range-finding, and tomography. However, these techniques may disadvantageously require an active illumination source; place limitations on the light source (e.g., require coherent light, a point light source, or bandwidth-limited light); place limitations on movement of the object or the sensing apparatus (e.g., require that the object and sensing device be stationary, or that they be moved in a predetermined fashion); require complex electromagnetic radiation assemblies (e.g., complex arrangements of mirrors and lenses); and produce poor-quality three-dimensional images having low resolution or low fidelity.

SUMMARY OF THE INVENTION

[0004] Accordingly, one object of this invention is to provide an apparatus configured to produce a hologram of an object, said apparatus comprising: an electromagnetic radiation assembly configured to receive a received electromagnetic radiation from the object, diffract the received electromagnetic radiation, and transmit a diffracted electromagnetic radiation; and an image capture assembly configured to capture an image of the diffracted electromagnetic radiation, and produce the hologram of the object from the captured image.

[0005] Another object of this invention is to provide a novel apparatus, wherein the electromagnetic radiation includes light.

[0006] Another object of this invention is to provide a novel apparatus, wherein the electromagnetic radiation apparatus includes only one radiation propagation axis and is configured to propagate electromagnetic radiation only along the radiation propagation axis in only one direction.

[0007] Another object of this invention is to provide a novel apparatus, wherein the electromagnetic radiation assembly includes plural electromagnetic radiation elements each having an axis of symmetry arranged along a same straight line.

[0008] Another object of this invention is to provide a novel apparatus, wherein the electromagnetic radiation assembly includes plural electromagnetic radiation elements each having a geometric center arranged along a same straight line.

[0009] Another object of this invention is to provide a novel apparatus, wherein the electromagnetic radiation received from the object and the electromagnetic radiation diffracted by the electromagnetic radiation assembly have a same radiation propagation axis.

[0010] Another object of this invention is to provide a novel apparatus, wherein the electromagnetic radiation received from the object includes incoherent light.

[0011] Another object of this invention is to provide a novel apparatus, wherein the electromagnetic radiation received from the object is produced by the object.

[0012] Another object of this invention is to provide a novel apparatus, wherein the electromagnetic radiation received from the object does not interfere with an electromagnetic radiation that is not received from the object to produce the hologram of the object.

[0013] Another object of this invention is to provide a novel apparatus, wherein the object and the apparatus are configured to remain stationary during the capture of the image.

[0014] Another object of this invention is to provide a novel apparatus, wherein each portion of the electromagnetic radiation assembly is configured to remain stationary during the capture of the image.

[0015] Another object of this invention is to provide a novel apparatus, wherein at least one of the object or the apparatus is configured to be in motion during the capture of the image.

[0016] Another object of this invention is to provide a novel apparatus, wherein the hologram is produced from a single captured image.

[0017] Another object of this invention is to provide a novel apparatus, wherein the hologram is produced from plural captured images.

[0018] Another object of this invention is to provide a novel apparatus, wherein the hologram includes a Fresnel hologram.

[0019] Another object of this invention is to provide a novel apparatus, wherein the hologram includes an image hologram.

[0020] Another object of this invention is to provide a novel apparatus, wherein the phase and intensity of the diffracted electromagnetic radiation are described by a convolution of the received electromagnetic radiation and a Fresnel Zone Plate.
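
Because the received light is treated as spatially incoherent, each object point produces its own zone pattern and these patterns add in intensity, so the recorded result is the object intensity convolved with a zone-plate response. The 1-D Python sketch below illustrates that reading of [0020] under stated assumptions: the idealized response 0.5[1 + cos(πx²/(λf) + θ)], the names `fzp` and `incoherent_hologram`, and all parameter values are hypothetical and are not taken from the application.

```python
import math

# Hypothetical 1-D model; wavelength, f and theta are illustrative values only.


def fzp(x, wavelength, f, theta=0.0):
    """Idealized zone-plate intensity response 0.5*(1 + cos(pi*x^2/(wavelength*f) + theta))."""
    return 0.5 * (1.0 + math.cos(math.pi * x * x / (wavelength * f) + theta))


def incoherent_hologram(points, xs, wavelength, f, theta=0.0):
    """Convolve point-source intensities with the zone-plate response.

    points is a list of (x_position, intensity) pairs. Incoherent points add in
    intensity, so the result is a brightness-weighted sum of shifted zone patterns.
    """
    return [
        sum(intensity * fzp(x - x0, wavelength, f, theta) for x0, intensity in points)
        for x in xs
    ]


xs = [i * 1e-6 for i in range(-200, 201)]   # 401 detector samples, 1 µm pitch
points = [(-50e-6, 1.0), (80e-6, 0.5)]      # two hypothetical point sources
holo = incoherent_hologram(points, xs, wavelength=0.5e-6, f=0.02)
```

Changing `theta` produces phase-shifted versions of the same pattern, which is what gets combined when several frames are recorded.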

[0021] Another object of this invention is to provide a novel apparatus, wherein the hologram includes geometric information of the object, and the geometric information includes, for each electromagnetic radiation radiating surface of the object, (i) a range distance between the electromagnetic radiation radiating surface of the object and the electromagnetic radiation assembly, (ii) a horizontal offset distance of the electromagnetic radiation radiating surface of the object, and (iii) a vertical offset distance of the electromagnetic radiation radiating surface of the object.

[0022] Another object of this invention is to provide a novel apparatus, wherein the electromagnetic radiation assembly is configured to transmit the electromagnetic radiation including a convolution of the received electromagnetic radiation and a complex transmission function including a linear summation of a first transformed pattern, a second transformed pattern and a third transformed pattern, the first transformed pattern including a first shifted concentric ring pattern, the second transformed pattern including a second shifted concentric ring pattern, and the third transformed pattern including a third shifted concentric ring pattern.

[0023] Another object of this invention is to provide a novel apparatus, wherein the first, second and third shifted concentric ring patterns are shifted away from one another in a same plane of the electromagnetic radiation assembly.

[0024] Another object of this invention is to provide a novel apparatus, wherein each of the first, second and third shifted concentric ring patterns includes a Fresnel Zone Pattern or a portion of a Fresnel Zone Pattern.

[0025] Another object of this invention is to provide a novel apparatus, wherein the portion of the Fresnel Zone Pattern includes a Fresnel Zone Pattern having one or more rings removed, one or more extra rings added, one or more rings having a varied width, or one or more rings having a portion of the ring removed.

[0026] Another object of this invention is to provide a novel apparatus, wherein a phase of the Fresnel Zone Pattern or the portion of the Fresnel Zone Pattern is different in each of the first, second and third shifted concentric ring patterns.
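
Paragraphs [0022] through [0026] describe one mask whose transmission is a linear sum of three concentric-ring patterns shifted apart in the same plane, each with a different phase. The sketch below builds such a mask on a small grid, assuming equal weights of 1/3, phases of 0, 2π/3 and 4π/3, and hypothetical centers and zone parameters; the application does not give these values in this section, and the sketch models only the real-valued zone patterns.

```python
import math

PHASES = [0.0, 2 * math.pi / 3, 4 * math.pi / 3]        # assumed phase of each pattern
CENTERS = [(-40e-6, 0.0), (0.0, 0.0), (40e-6, 0.0)]    # hypothetical shifts in one plane


def zone_pattern(x, y, cx, cy, wavelength, f, phase):
    """Concentric-ring (Fresnel zone) pattern centred at (cx, cy), values in [0, 1]."""
    r2 = (x - cx) ** 2 + (y - cy) ** 2
    return 0.5 * (1.0 + math.cos(math.pi * r2 / (wavelength * f) + phase))


def composite_mask(n, pitch, centers, phases, wavelength, f):
    """n x n grid holding the equally weighted sum of the shifted zone patterns."""
    weight = 1.0 / len(centers)
    half = n // 2
    mask = []
    for j in range(n):
        y = (j - half) * pitch
        row = []
        for i in range(n):
            x = (i - half) * pitch
            row.append(sum(weight * zone_pattern(x, y, cx, cy, wavelength, f, p)
                           for (cx, cy), p in zip(centers, phases)))
        mask.append(row)
    return mask


mask = composite_mask(65, 2e-6, CENTERS, PHASES, wavelength=0.5e-6, f=0.005)
```

Weights that sum to one keep every value between 0 and 1, which a passive transmission mask requires.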

[0027] Another object of this invention is to provide a novel apparatus, wherein a predetermined thickness and coefficients of absorption or reflectance of the electromagnetic radiation assembly are configured to control the phase and intensity of the diffracted light.

[0028] Another object of this invention is to provide a novel apparatus, wherein the electromagnetic radiation assembly is configured to control at least one of the phase or intensity of the transmitted electromagnetic radiation by varying a thickness of a material through which electromagnetic radiation passes.
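
Paragraphs [0027] and [0028] control phase and intensity through the thickness and absorption of the material. Under the usual thin-element approximation, a layer of refractive index n and thickness t in air adds a phase of 2π(n − 1)t/λ relative to free space, and Beer-Lambert absorption with coefficient α transmits a fraction exp(−αt) of the intensity. The helper names below are hypothetical; the sketch converts a desired phase (taken modulo 2π) into a relief thickness.

```python
import math


def thickness_for_phase(phase, wavelength, n):
    """Thickness that adds `phase` radians (mod 2*pi) relative to air (thin-element model)."""
    wrapped = phase % (2 * math.pi)
    return wrapped * wavelength / (2 * math.pi * (n - 1.0))


def phase_for_thickness(t, wavelength, n):
    """Phase delay of a layer of thickness t and refractive index n relative to air."""
    return 2 * math.pi * (n - 1.0) * t / wavelength


def intensity_transmission(t, alpha):
    """Beer-Lambert intensity transmittance for absorption coefficient alpha (1/length)."""
    return math.exp(-alpha * t)


# Example: a pi phase step at 500 nm in a material with n = 1.5 needs 0.5 µm of relief.
t_pi = thickness_for_phase(math.pi, 500e-9, 1.5)
```

Applying `thickness_for_phase` pixel by pixel to a zone-pattern phase map gives a thickness profile for the whole element.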

[0029] Another object of this invention is to provide a novel apparatus, wherein the electromagnetic radiation assembly further comprises: a first electromagnetic radiation assembly configured to receive the received electromagnetic radiation from the object and transmit a first transformed electromagnetic radiation; a complex mask assembly configured to receive the first transformed electromagnetic radiation from the first electromagnetic radiation assembly, and transmit a complex masked electromagnetic radiation according to a complex transmission function; and a second electromagnetic radiation assembly configured to receive the complex masked electromagnetic radiation from the mask assembly, and transmit a second transformed electromagnetic radiation as the diffracted electromagnetic radiation.

[0030] Another object of this invention is to provide a novel apparatus, wherein the complex mask assembly further comprises: a mask controller configured to vary the complex transmission function of the electromagnetic radiation assembly over time, said mask controller configured to vary the complex transmission function to be based on a Fourier transform of a first Fresnel Zone Pattern at a first time, a Fourier transform of a second Fresnel Zone Pattern at a second time, and a Fourier transform of a third Fresnel Zone Pattern at a third time.
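
Paragraphs [0029] and [0030] describe a correlator: a first assembly Fourier-transforms the incoming radiation, the mask multiplies it by the Fourier transform of a Fresnel Zone Pattern, and a second assembly transforms back. By the convolution theorem this yields the input convolved with the zone pattern. The 1-D sketch below checks that identity numerically with a plain discrete Fourier transform; the sampled signals are hypothetical, and the DFT stands in for the lenses while ignoring coordinate inversion and scaling.

```python
import cmath
import math


def dft(values, inverse=False):
    """Plain O(N^2) discrete Fourier transform; the inverse includes the 1/N factor."""
    n = len(values)
    sign = 1 if inverse else -1
    out = [sum(values[k] * cmath.exp(sign * 2j * math.pi * k * m / n) for k in range(n))
           for m in range(n)]
    return [v / n for v in out] if inverse else out


def circular_convolution(a, b):
    """Direct circular convolution, used as the reference result."""
    n = len(a)
    return [sum(a[k] * b[(m - k) % n] for k in range(n)) for m in range(n)]


def fourier_mask_system(signal, zone):
    """Idealized correlator: transform, multiply by the mask DFT(zone), transform back."""
    mask = dft(zone)                      # mask based on a Fourier transform of the zone pattern
    spectrum = dft(signal)                # first assembly
    return dft([s * h for s, h in zip(spectrum, mask)], inverse=True)  # second assembly


N = 64
# Hypothetical zone pattern centred at index 0 and wrapped periodically.
zone = [0.5 * (1 + math.cos(math.pi * min(k, N - k) ** 2 / 200.0)) for k in range(N)]
signal = [0.0] * N
signal[10], signal[30] = 1.0, 0.4        # two incoherent point sources (intensities)
out = fourier_mask_system(signal, zone)
ref = circular_convolution(signal, zone)
```

Swapping `zone` for phase-shifted versions of the pattern reproduces the time-multiplexed masks of [0030].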

[0031] Another object of this invention is to provide a novel apparatus, wherein the image capture assembly further comprises: a timing controller configured to capture a first partial image at the first time, a second partial image at the second time, and a third partial image at the third time; and a summing unit configured to produce the hologram as a sum of the first partial image captured at the first time, the second partial image captured at the second time, and the third partial image captured at the third time.
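
Paragraph [0031] combines the three partial images captured at the three times into one hologram. In the phase-shifting form commonly used for incoherent Fresnel holography, the sum is weighted by complex phase factors so that the constant bias and the twin-image term cancel, consistent with the complex-valued Fresnel hologram described in [0065]. The sketch assumes mask phases of 0, 2π/3 and 4π/3, models each partial image as A + B cos(φ + θ_k), and uses weights e^(−iθ_k)/3, which return (B/2)e^(iφ). These weights are one standard choice, not necessarily the exact ones used by the inventors.

```python
import cmath
import math

THETAS = (0.0, 2 * math.pi / 3, 4 * math.pi / 3)   # assumed mask phase factors


def partial_image(phi, bias, amplitude, theta):
    """Model of one captured frame: bias + amplitude * cos(phi + theta) per pixel."""
    return [bias + amplitude * math.cos(p + theta) for p in phi]


def combine_three(frames, thetas=THETAS):
    """Weighted sum of the frames with weights exp(-1j*theta)/N.

    For three equally spaced phases the bias and the conjugate (twin-image)
    term cancel, leaving (amplitude/2) * exp(1j*phi) at every pixel.
    """
    weights = [cmath.exp(-1j * t) / len(thetas) for t in thetas]
    return [sum(w * frame[i] for w, frame in zip(weights, frames))
            for i in range(len(frames[0]))]


# Hypothetical 1-D Fresnel phase of an on-axis point source (wavelength 0.5 µm, f = 0.05 m).
phi = [math.pi * (x * 5e-6) ** 2 / (0.5e-6 * 0.05) for x in range(-100, 101)]
frames = [partial_image(phi, bias=2.0, amplitude=1.0, theta=t) for t in THETAS]
hologram = combine_three(frames)
```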

[0032] Another object of this invention is to provide a novel apparatus, further comprising: an electromagnetic radiation separating assembly configured to separate the electromagnetic radiation received from the object into three object electromagnetic radiation portions each including a different frequency range; said first electromagnetic radiation assembly including three first electromagnetic radiation subassemblies each configured to receive one of the three object electromagnetic radiation portions, and respectively transmit first, second and third portions of the first transformed electromagnetic radiation; said mask assembly including first, second and third mask subassemblies respectively configured to receive the first, second and third portions of the first transformed electromagnetic radiation, and respectively transmit first, second and third complex mask transformed electromagnetic radiation; and said second electromagnetic radiation assembly including three second electromagnetic radiation subassemblies respectively configured to receive first, second and third complex mask transformed electromagnetic radiation, and respectively transmit first, second and third portions of transmitted electromagnetic radiation.

[0033] Another object of this invention is to provide a novel apparatus, wherein the first mask subassembly is configured to transmit the first complex mask transformed electromagnetic radiation based on a Fourier transform of a first Fresnel Zone Pattern, the second mask subassembly is configured to transmit the second complex mask transformed electromagnetic radiation based on a Fourier transform of a second Fresnel Zone Pattern, and the third mask subassembly is configured to transmit the third complex mask transformed electromagnetic radiation based on a Fourier transform of a third Fresnel Zone Pattern.

[0034] Another object of this invention is to provide a novel apparatus, wherein the image capture assembly includes at least one of a CCD, a CMOS light sensitive device, another electronic camera, a light sensitive emulsion, or another photosensitive device.

[0035] Another object of this invention is to provide a novel apparatus, wherein the electromagnetic radiation assembly consists of i) one diffractive electromagnetic radiation element and ii) one converging lens or mirror.

[0036] Another object of this invention is to provide a novel apparatus, wherein the electromagnetic radiation assembly consists of i) one diffractive electromagnetic radiation element and ii) two converging lenses or two mirrors.

[0037] Another object of this invention is to provide a novel apparatus, further comprising: an objective assembly arranged between the object and the electromagnetic radiation assembly and configured to collimate, focus, invert or modify the electromagnetic radiation from the object, prior to the received electromagnetic radiation being received at the electromagnetic radiation assembly.

[0038] Another object of this invention is to provide a novel apparatus, wherein the objective assembly includes at least one of an objective lens, a zoom lens, a macro lens, a microscope, a telescope, a prism, a filter, a monochromatic filter, a dichroic filter, a complex objective lens, a wide-angle lens, a camera, a pin-hole, a light slit, or a mirror.

[0039] Another object of this invention is to provide a novel apparatus, wherein the electromagnetic radiation apparatus includes a diffractive electromagnetic radiation element or two lenses configured to produce an off-axis Fresnel Zone pattern when the two lenses are illuminated by a coherent light.

[0040] Another object of this invention is to provide a novel apparatus, wherein the two lenses are arranged in a same plane perpendicular to a radiation propagation axis of the received electromagnetic radiation and the two lenses have different focal lengths.

[0041] Another object of this invention is to provide a novel apparatus, wherein the two lenses are arranged in different planes perpendicular to a radiation propagation axis of the received electromagnetic radiation.
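
Paragraphs [0039] through [0041] describe two lenses that, under coherent illumination, produce an off-axis Fresnel zone pattern. In a simplified paraxial model, the two lenses send the detector two spherical waves whose apparent centers sit at lateral positions x1, x2 and signed curvature distances z1, z2. Their interference is 2 + 2cos(Δψ) with Δψ quadratic in x, so the fringes form a zone pattern centered where Δψ is stationary, at x_c = (x1·z2 − x2·z1)/(z2 − z1). The sketch assumes unit-amplitude waves and a hypothetical geometry, and compares that analytic center with a numerical search.

```python
import math


def phase_difference(x, wavelength, x1, z1, x2, z2):
    """Paraxial phase difference between two spherical waves centred at (x1, z1) and (x2, z2)."""
    return math.pi / wavelength * ((x - x1) ** 2 / z1 - (x - x2) ** 2 / z2)


def fringe_intensity(x, wavelength, x1, z1, x2, z2):
    """Intensity of two interfering unit-amplitude waves: 2 + 2*cos(phase difference)."""
    return 2.0 + 2.0 * math.cos(phase_difference(x, wavelength, x1, z1, x2, z2))


def zone_center(x1, z1, x2, z2):
    """Analytic centre of the ring pattern: where the phase difference is stationary."""
    return (x1 * z2 - x2 * z1) / (z2 - z1)


def numeric_center(xs, wavelength, x1, z1, x2, z2):
    """Grid point where the central-difference slope of the phase difference is smallest."""
    def slope(i):
        left = phase_difference(xs[i - 1], wavelength, x1, z1, x2, z2)
        right = phase_difference(xs[i + 1], wavelength, x1, z1, x2, z2)
        return right - left
    best = min(range(1, len(xs) - 1), key=lambda i: abs(slope(i)))
    return xs[best]


# Hypothetical geometry: lens 1 on axis, lens 2 offset by 1 mm, different curvatures.
params = dict(wavelength=0.5e-6, x1=0.0, z1=0.10, x2=1e-3, z2=0.15)
xs = [(i - 5000) * 1e-6 for i in range(10001)]   # 10 mm detector line, 1 µm pitch
center = zone_center(params["x1"], params["z1"], params["x2"], params["z2"])
found = numeric_center(xs, **params)
pattern = [fringe_intensity(x, **params) for x in xs]
```

With this geometry the ring center lands 2 mm off axis; setting `x2` equal to `x1` puts it back at `x1`.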

[0042] Another object of this invention is to provide a novel apparatus, wherein the electromagnetic radiation includes at least one of an x-ray radiation, a microwave radiation, an infrared light, a radio frequency signal or an ultraviolet light.

[0043] Another object of this invention is to provide a novel apparatus, wherein the electromagnetic radiation assembly and the image capture assembly do not include any reflective electromagnetic radiation elements.

[0044] Another object of this invention is to provide a novel apparatus configured to produce a hologram of an object, said apparatus comprising: an electromagnetic radiation assembly configured to receive a received electromagnetic radiation from the object along a radiation axis in an electromagnetic radiation receiving direction, transmit a transmitted electromagnetic radiation along the radiation axis in the electromagnetic radiation receiving direction, and interfere a first portion of the transmitted electromagnetic radiation with a second portion of the transmitted electromagnetic radiation; and an image capture assembly configured to capture an image of the transmitted electromagnetic radiation transmitted along the radiation axis in the electromagnetic radiation receiving direction, and produce the hologram of the object from the captured image, wherein the radiation axis is a straight line.

[0045] Another object of this invention is to provide a novel apparatus configured to produce a hologram of an object, said apparatus comprising: an electromagnetic radiation assembly configured to receive a received electromagnetic radiation from the object and transmit a transmitted electromagnetic radiation based on the received electromagnetic radiation, the transmitted electromagnetic radiation including the hologram of the object; and an image capture assembly configured to capture an image of the transmitted electromagnetic radiation and produce the hologram of the object from the captured image.

[0046] Another object of this invention is to provide a novel apparatus configured to produce a hologram of an object, said apparatus comprising: an electromagnetic radiation assembly configured to receive a received electromagnetic radiation from the object, transmit a transmitted electromagnetic radiation based on the received electromagnetic radiation, and interfere a first portion of the transmitted electromagnetic radiation with a second portion of the transmitted electromagnetic radiation; and an opaque image capture assembly configured to capture an image of the transmitted electromagnetic radiation produced by the interference of at least the first and second portions of the transmitted electromagnetic radiation, and produce the hologram of the object from the captured image, wherein a center of the electromagnetic radiation assembly and a center of the image capture assembly are arranged along a same straight line.

[0047] Another object of this invention is to provide a novel apparatus configured to produce a hologram of an object, said apparatus comprising: an electromagnetic radiation assembly consisting of one diffractive electromagnetic radiation element and configured to receive a received electromagnetic radiation from the object and transmit a transmitted electromagnetic radiation based on the received electromagnetic radiation; and an image capture assembly configured to capture an image of the transmitted electromagnetic radiation, and produce the hologram of the object from the captured image.

[0048] Another object of this invention is to provide a novel apparatus configured to produce a hologram of an object, said apparatus comprising: an electromagnetic radiation assembly configured to receive a received electromagnetic radiation from the object, perform a transformation of the received electromagnetic radiation, and transmit the transformed received electromagnetic radiation, the transformation including a convolution of a function representing an intensity distribution of the received electromagnetic radiation and a concentric ring function; and an image capture assembly configured to capture an image of the transmitted electromagnetic radiation, and produce the hologram of the object from the captured image.

[0049] Another object of this invention is to provide a novel apparatus configured to produce a hologram of an object, said apparatus comprising: an electromagnetic radiation assembly configured to receive a received electromagnetic radiation from the object, perform a transformation of the received electromagnetic radiation, and transmit the transformed received electromagnetic radiation, the transformation including a convolution of i) an intensity distribution of the received electromagnetic radiation and ii) a function having regions of positive slope and negative slope when evaluated between a center of the optical assembly and an outer edge of the optical assembly; and an image capture assembly configured to capture an image of the transmitted electromagnetic radiation, and produce the hologram of the object from the captured image.

[0050] Another object of this invention is to provide a novel apparatus configured to produce a hologram of an object, said apparatus comprising: an electromagnetic radiation assembly configured to convolve i) a received electromagnetic radiation received from the object and ii) a curve having plural inflection points between a center of the optical assembly and an edge of the optical assembly, and transmit the convolved electromagnetic radiation; and an image capture assembly configured to capture an image of the convolved electromagnetic radiation, and produce the hologram of the object from the captured image.
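
Paragraphs [0049] and [0050] characterize the convolving function by its shape: between the center and the edge it has regions of positive and negative slope and several inflection points. An idealized zone-plate radial profile 0.5[1 + cos(πr²/(λf))] has both properties. The sketch below counts sign changes of its first and second finite differences along a sampled radius; the sampling and the values of λ and f are hypothetical.

```python
import math


def radial_profile(r, wavelength, f):
    """Radial transmission of an idealized Fresnel zone pattern."""
    return 0.5 * (1.0 + math.cos(math.pi * r * r / (wavelength * f)))


def sign_changes(values):
    """Number of sign changes in a sequence, ignoring exact zeros."""
    signs = [v > 0 for v in values if v != 0]
    return sum(1 for a, b in zip(signs, signs[1:]) if a != b)


def slope_and_inflection_counts(samples):
    """Count slope reversals (1st differences) and inflection points (2nd differences)."""
    d1 = [b - a for a, b in zip(samples, samples[1:])]
    d2 = [b - a for a, b in zip(d1, d1[1:])]
    return sign_changes(d1), sign_changes(d2)


rs = [i * 1e-6 for i in range(0, 501)]          # centre to a 500 µm edge
profile = [radial_profile(r, 0.5e-6, 0.05) for r in rs]
slope_changes, inflections = slope_and_inflection_counts(profile)
```

A monotonic curve such as r² gives zero for both counts, which is what distinguishes it from a concentric-ring profile.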

[0051] Another object of this invention is to provide a novel apparatus configured to produce a hologram of an object, said apparatus comprising: an electromagnetic radiation assembly configured to receive a received electromagnetic radiation from the object, perform a transformation of the received electromagnetic radiation, and transmit the transformed received electromagnetic radiation, the transformation including a convolution of i) an intensity distribution of the received electromagnetic radiation and ii) a transformation function that is a linear combination of three partial transformation functions, each including a concentric ring pattern; and an image capture assembly configured to capture an image of the transmitted electromagnetic radiation, and produce the hologram of the object from the captured image.

[0052] Another object of this invention is to provide a novel apparatus configured to produce a hologram of a chemiluminescent object, said apparatus comprising: an electromagnetic radiation assembly configured to receive a received chemiluminescent radiation from the object, and transmit a transmitted electromagnetic radiation including the hologram of the object; and an image capture assembly configured to capture an image of the transmitted electromagnetic radiation, and produce the hologram of the object from the captured image.

[0053] Another object of this invention is to provide a novel apparatus configured to produce a hologram of an object, said apparatus comprising: an electromagnetic radiation assembly configured to receive a scattered electromagnetic radiation scattered by the object, which scatters a source electromagnetic radiation, and transmit a transmitted electromagnetic radiation based on the received scattered electromagnetic radiation, the transmitted electromagnetic radiation being independent of any source electromagnetic radiation that is not scattered by the object; and an image capture assembly configured to capture an image of the transmitted electromagnetic radiation and produce the hologram of the object from the captured image.

[0054] Another object of this invention is to provide a novel apparatus configured to produce a hologram of an object, said apparatus comprising: an electromagnetic radiation assembly configured to diffract an electromagnetic radiation received from the object; and an image capture assembly configured to capture an image of the diffracted electromagnetic radiation and produce the hologram of the object from the captured image.

[0055] Another object of this invention is to provide a novel apparatus configured to produce a hologram of an object, said apparatus comprising: plural electromagnetic radiation sources configured to radiate the object with plural electromagnetic radiation signals; an electromagnetic radiation assembly configured to receive a received electromagnetic radiation from the object and transform the received electromagnetic radiation, the received electromagnetic radiation including portions of the plural source electromagnetic radiation signals scattered by the object; and a capture assembly configured to capture an image of the transformed electromagnetic radiation and produce the hologram of the object from the captured image.

[0056] Another object of this invention is to provide a novel apparatus configured to produce a hologram of a fluorescent object, said apparatus comprising: an electromagnetic radiation assembly configured to receive a received fluorescent radiation from the object and transmit a transmitted electromagnetic radiation based on the received fluorescent radiation; and an image capture assembly configured to capture an image of the transmitted electromagnetic radiation and produce the hologram of the object from the captured image.

[0057] Another object of this invention is to provide a novel apparatus configured to produce a hologram of a black body radiation radiating object, said apparatus comprising: an electromagnetic radiation assembly configured to receive a received black body electromagnetic radiation from the object, and transmit a transmitted electromagnetic radiation based on the received black body electromagnetic radiation from the object; and an image capture assembly configured to capture an image of the transmitted electromagnetic radiation and produce the hologram of the object from the captured image.

[0058] Another object of this invention is to provide a novel apparatus configured to produce a hologram of an object, said apparatus comprising: an electromagnetic radiation assembly configured to receive a received electromagnetic radiation from the object, transmit a transmitted electromagnetic radiation based only on the received electromagnetic radiation from the object, and interfere a first portion of the transmitted electromagnetic radiation with a second portion of the transmitted electromagnetic radiation; and an image capture assembly configured to capture a fringe pattern produced by the interference of at least the first and second portions of the transmitted electromagnetic radiation and produce the hologram of the object from the fringe pattern.

[0059] Another object of this invention is to provide a novel electromagnetic radiation apparatus configured to produce a hologram of an object, said apparatus configured to receive a received electromagnetic radiation from the object, diffract the received electromagnetic radiation, and transmit a diffracted electromagnetic radiation including the hologram of the object.

[0060] Another object of this invention is to provide a novel apparatus configured to produce a hologram of a scene, said apparatus comprising: an electromagnetic radiation assembly configured to receive a received electromagnetic radiation from the scene, diffract the received electromagnetic radiation, and transmit a diffracted electromagnetic radiation; and an image capture assembly configured to capture an image of the diffracted electromagnetic radiation, and produce the hologram of the scene from the captured image.

[0061] Another object of this invention is to provide a novel apparatus configured to produce a hologram of an object, said apparatus comprising: an electromagnetic radiation assembly configured to receive a received electromagnetic radiation from the object, transform the received electromagnetic radiation, and transmit the transformed electromagnetic radiation including a fringe pattern; and an image capture assembly configured to capture an image of the fringe pattern and produce the hologram of the object from the captured fringe pattern.

[0062] Another object of this invention is to provide a novel apparatus configured to produce a hologram of an object, said apparatus comprising: an electromagnetic radiation assembly configured to receive a received electromagnetic radiation from the object and transform the received electromagnetic radiation; and an image capture assembly configured to capture the transformed electromagnetic radiation including the hologram of the object, said hologram including fringe patterns produced by an interference of the received electromagnetic radiation with itself, and not including fringe patterns produced by an interference of the received electromagnetic radiation with any other electromagnetic radiation.

[0063] Another object of this invention is to provide a novel method for producing a hologram of an object, said method comprising steps of receiving a received electromagnetic radiation from the object; transmitting a diffracted electromagnetic radiation based on the received electromagnetic radiation; capturing an image of the diffracted electromagnetic radiation; and producing the hologram of the object from the captured image.

[0064] Another object of this invention is to provide a novel apparatus, wherein the received electromagnetic radiation does not include coherent light.

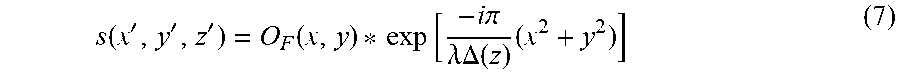

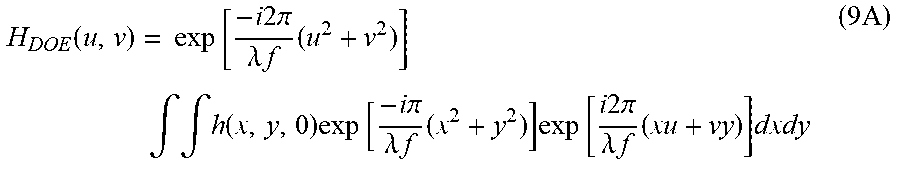

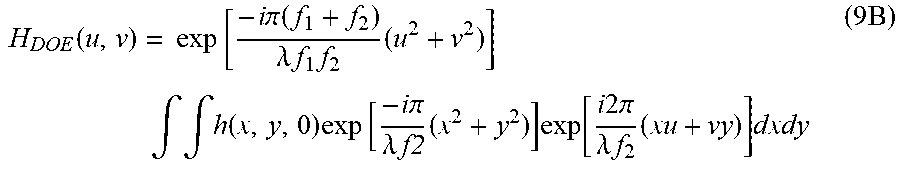

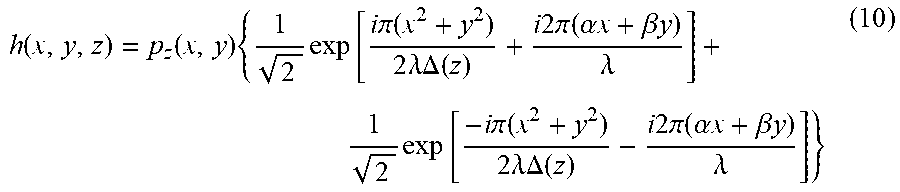

[0065] In a new method of recording digital holograms under incoherent illumination, light reflected from a three-dimensional (3-D) object propagates through a diffractive optical element (DOE) and is recorded by a digital camera. Three holograms are recorded sequentially, each for a different phase factor of the DOE. The three holograms are superposed in a computer such that the result is a complex-valued Fresnel hologram. When this hologram is reconstructed in the computer, the 3-D properties of the object are revealed.
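
Reconstructing the complex hologram reveals the object's 3-D properties, which [0021] lists as a range and two lateral offsets. The 1-D sketch below assumes the complex hologram of a single point is a quadratic-phase chirp exp(iπ(x − x0)²/(λ·z0)) over a finite aperture, and reconstructs it by correlating with the conjugate chirp at several trial distances, a discrete paraxial Fresnel back-propagation with constant factors dropped. The trial distance giving the brightest peak estimates the range parameter z0, and the peak position estimates x0. All values are hypothetical, and the mapping from z0 to a physical object distance depends on optics the sketch does not model.

```python
import cmath
import math


def point_hologram(xs, x0, z0, wavelength):
    """Assumed complex hologram of one point: a chirp centred at x0 with range parameter z0."""
    return [cmath.exp(1j * math.pi * (x - x0) ** 2 / (wavelength * z0)) for x in xs]


def reconstruct(hologram, xs, z, wavelength):
    """Paraxial 1-D Fresnel back-propagation to trial distance z (constant factors dropped)."""
    return [abs(sum(h * cmath.exp(-1j * math.pi * (x - u) ** 2 / (wavelength * z))
                    for h, x in zip(hologram, xs)))
            for u in xs]


def focus_search(hologram, xs, trial_distances, wavelength):
    """Return (best_z, best_u): the distance and position of the brightest reconstruction peak."""
    best = (-1.0, None, None)
    for z in trial_distances:
        image = reconstruct(hologram, xs, z, wavelength)
        peak = max(range(len(xs)), key=image.__getitem__)
        if image[peak] > best[0]:
            best = (image[peak], z, xs[peak])
    return best[1], best[2]


wavelength = 0.5e-6
xs = [(i - 100) * 5e-6 for i in range(201)]        # 1 mm aperture, 5 µm pitch
holo = point_hologram(xs, x0=100e-6, z0=0.05, wavelength=wavelength)
z_est, x_est = focus_search(holo, xs, [0.03, 0.04, 0.05, 0.06, 0.07], wavelength)
```

In 2-D the same search returns both lateral offsets; for an extended object each bright point comes into focus at its own trial distance.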

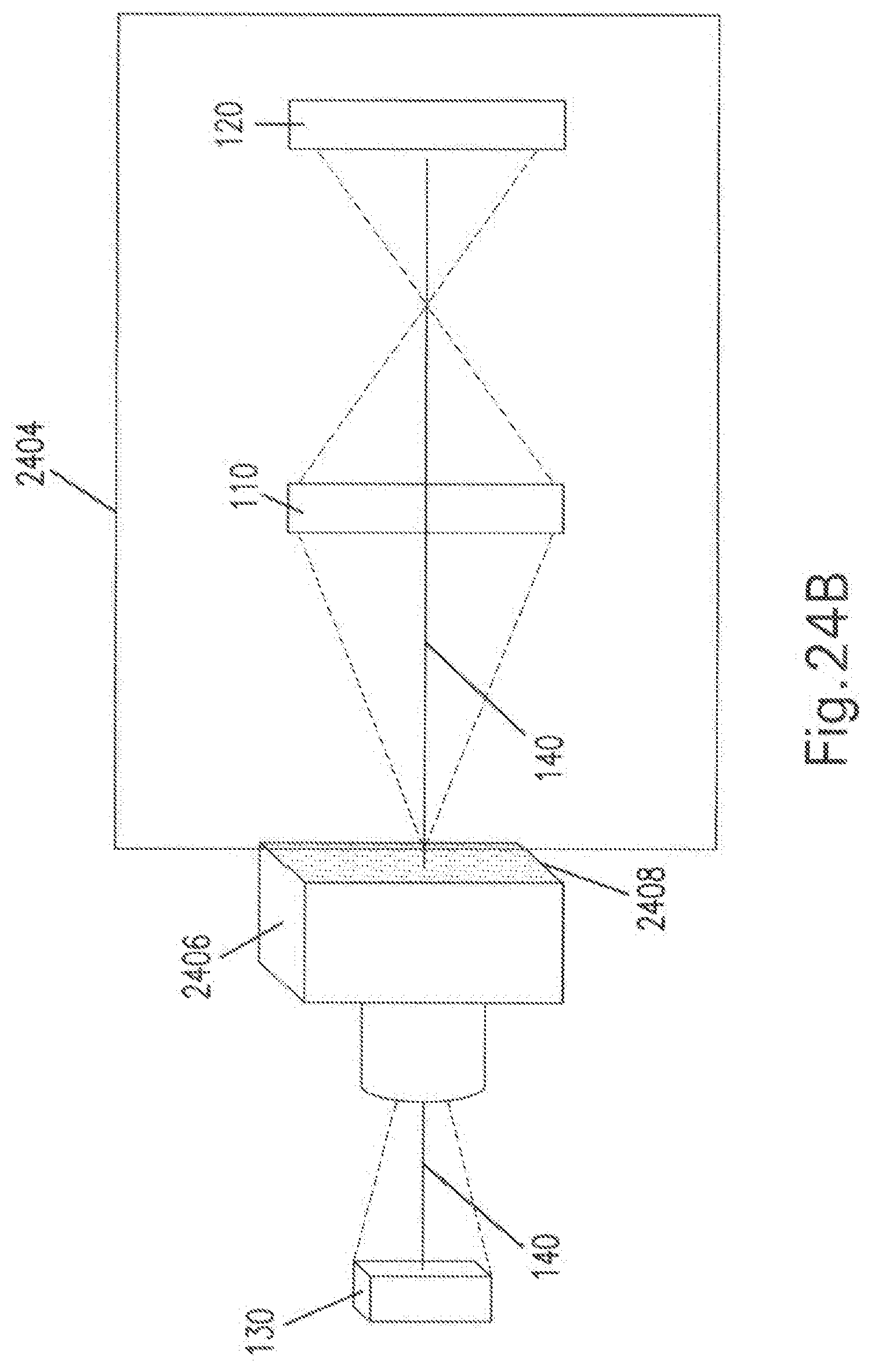

[0066] Another new imaging method records multicolor digital holograms from objects emitting fluorescent light. After excitation of a three-dimensional (3-D) object, the fluorescent light at the emission wavelength of each of various fluorescent dyes is reflected from a diffractive optical element (DOE) and recorded on a digital monochrome camera. For each wavelength of fluorescent emission, the camera sequentially records three holograms reflected from the DOE, each with a different phase factor of the DOE's function. The three holograms are superposed in a computer to create a complex-valued Fresnel hologram of each fluorescent emission. The holograms for each fluorescent color are further combined in a computer to produce a multicolor fluorescence hologram and a 3-D color image.
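
To illustrate how per-wavelength complex holograms could be turned into one 3-D color image, the sketch below propagates each hologram numerically with a paraxial (Fresnel) transfer function and stacks the normalized reconstructed intensities as color channels. The reconstruction distance, pixel pitch, channel order and the helper names `fresnel_reconstruct` and `compose_color` are assumptions for illustration; the application does not specify this particular algorithm.

```python
import numpy as np


def fresnel_reconstruct(hologram, wavelength, pitch, z):
    """Propagate a complex field by distance z with the paraxial (Fresnel) transfer function.

    A negative z propagates backward. The constant factor exp(i*2*pi*z/wavelength)
    is omitted because it does not change the reconstructed intensity.
    """
    ny, nx = hologram.shape
    fx = np.fft.fftfreq(nx, d=pitch)
    fy = np.fft.fftfreq(ny, d=pitch)
    FX, FY = np.meshgrid(fx, fy)
    transfer = np.exp(-1j * np.pi * wavelength * z * (FX**2 + FY**2))
    return np.fft.ifft2(np.fft.fft2(hologram) * transfer)


def compose_color(holograms, wavelengths, pitch, z):
    """Reconstruct one hologram per emission wavelength and stack them as color channels."""
    channels = []
    for holo, wl in zip(holograms, wavelengths):
        intensity = np.abs(fresnel_reconstruct(holo, wl, pitch, z)) ** 2
        peak = intensity.max()
        # Normalize each channel to [0, 1]; an empty channel stays all zeros.
        channels.append(intensity / peak if peak > 0 else intensity)
    return np.stack(channels, axis=-1)
```

Normalizing each channel separately keeps a weak emission visible next to a strong one; a real system might instead weight channels by dye brightness or camera sensitivity.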

BRIEF DESCRIPTION OF THE DRAWINGS

[0067] A more complete appreciation of the invention and many of the attendant advantages thereof will be readily obtained as the same becomes better understood by reference to the following detailed description when considered in connection with the accompanying drawings, wherein:

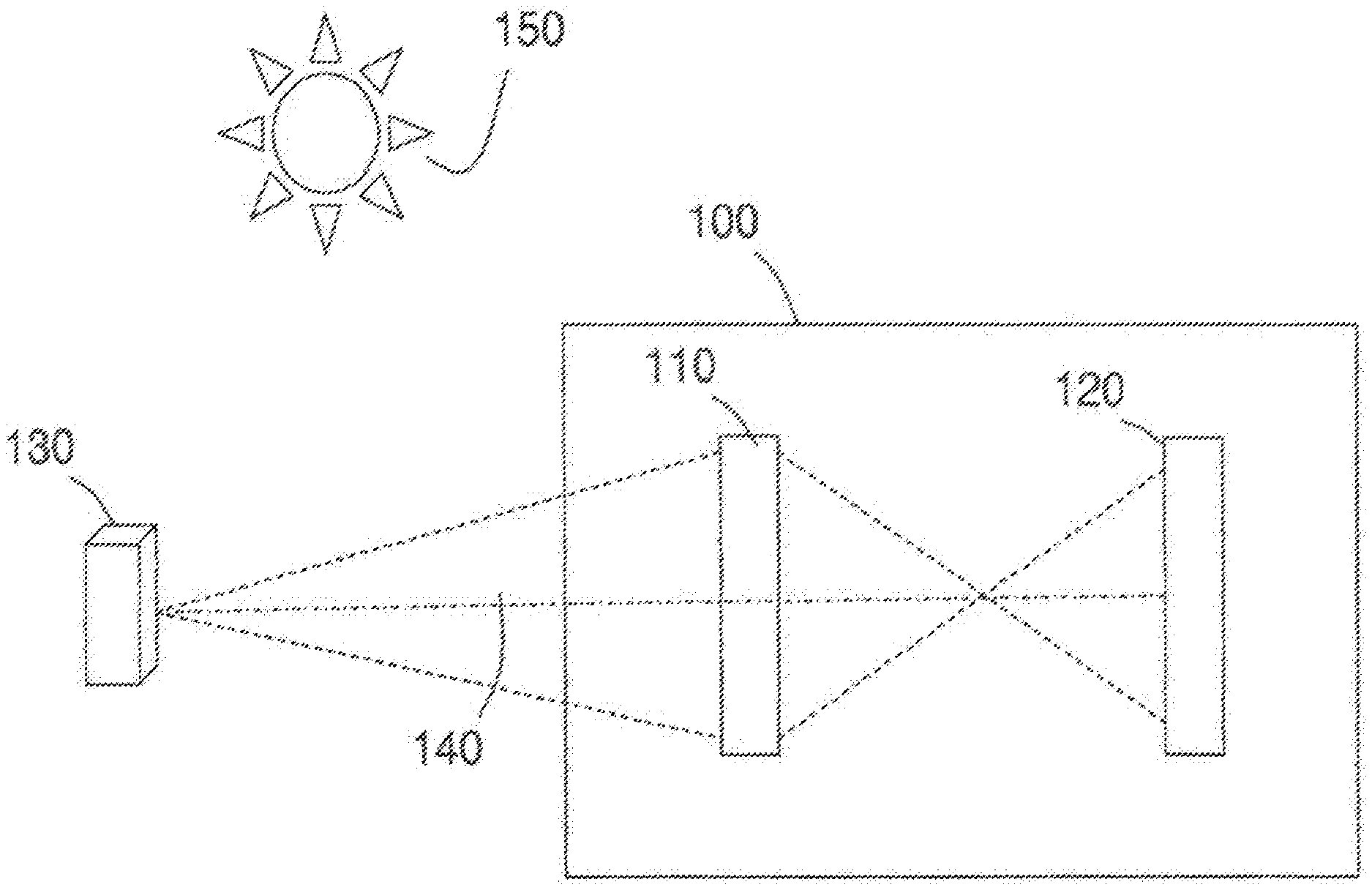

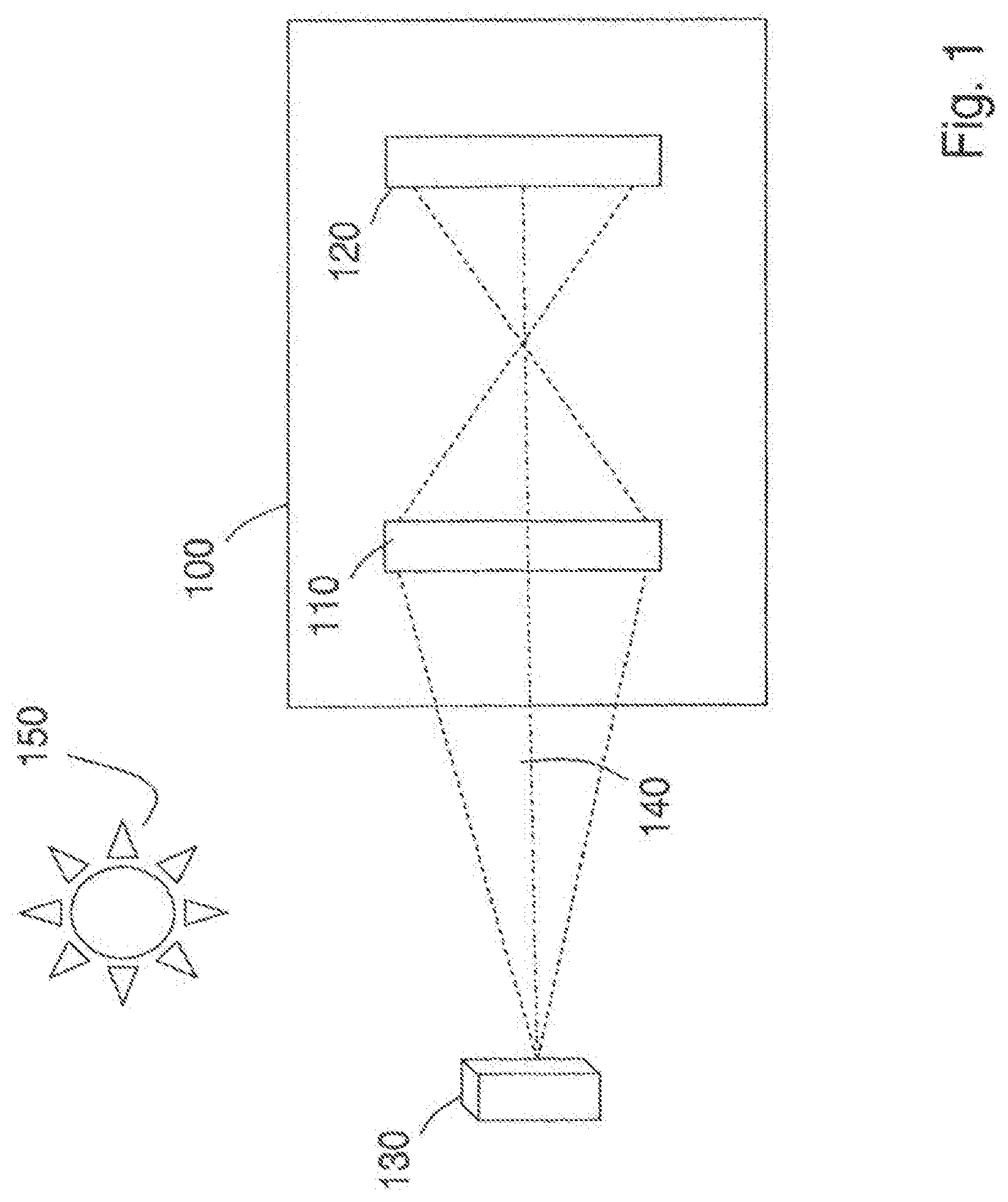

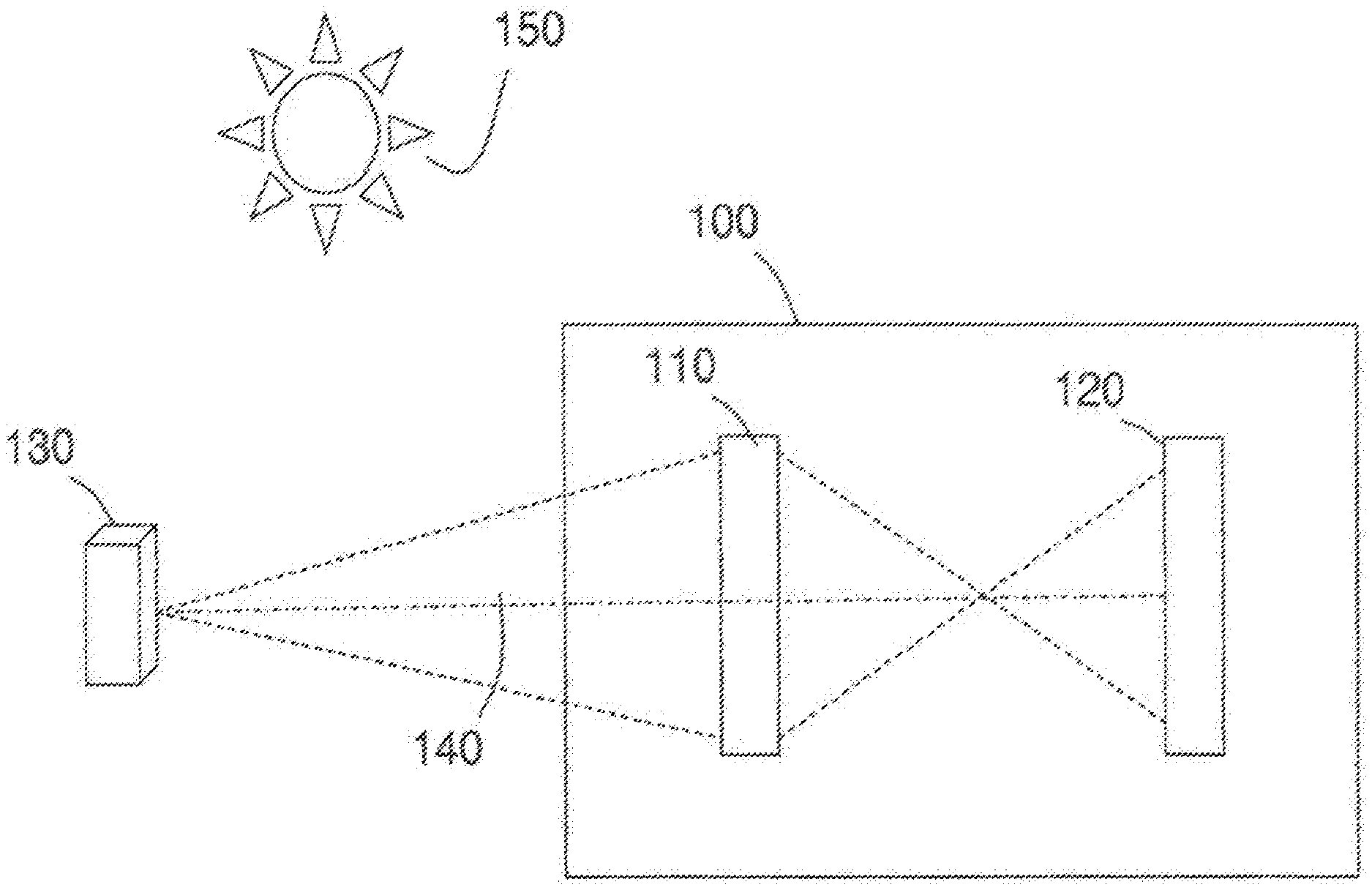

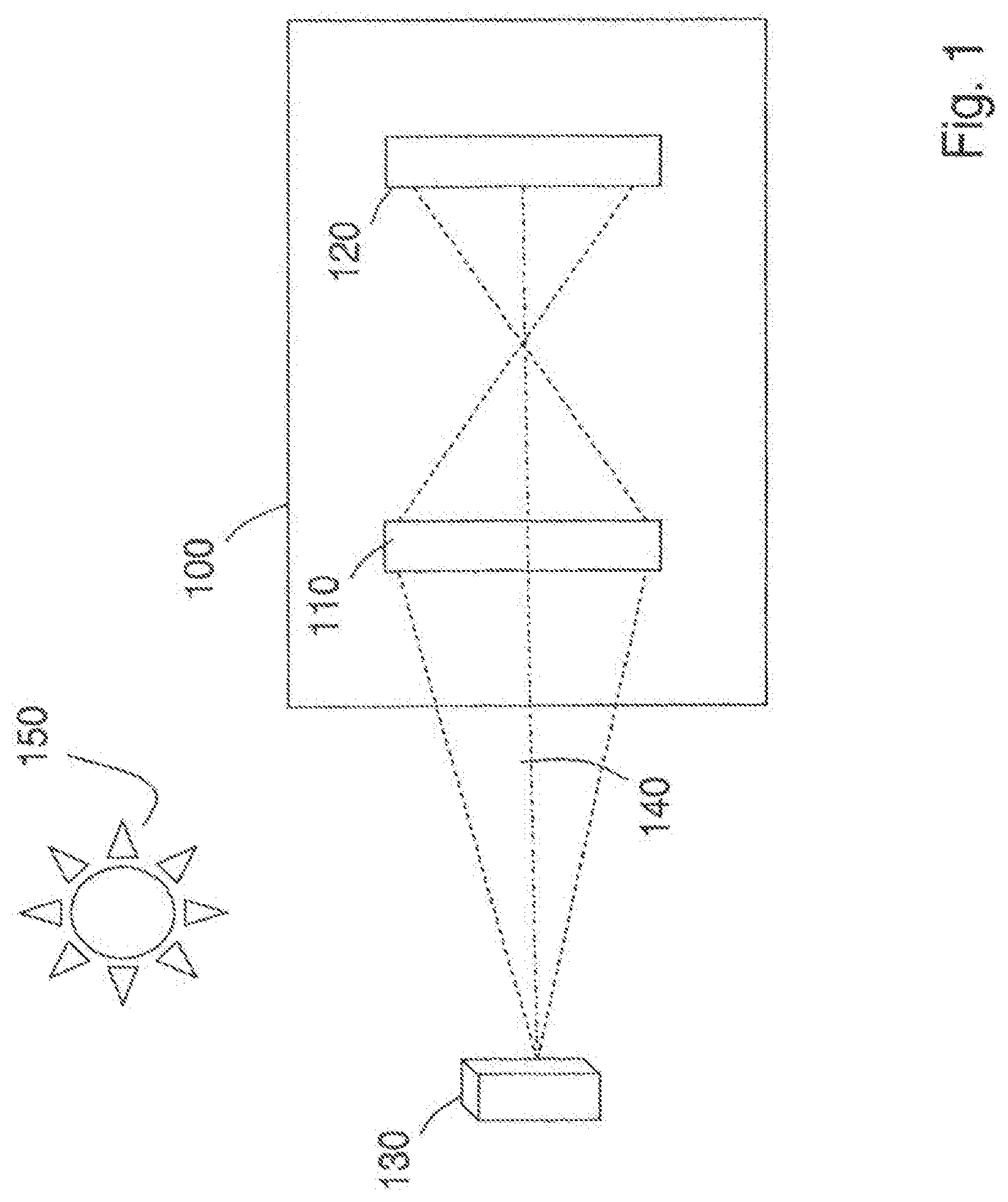

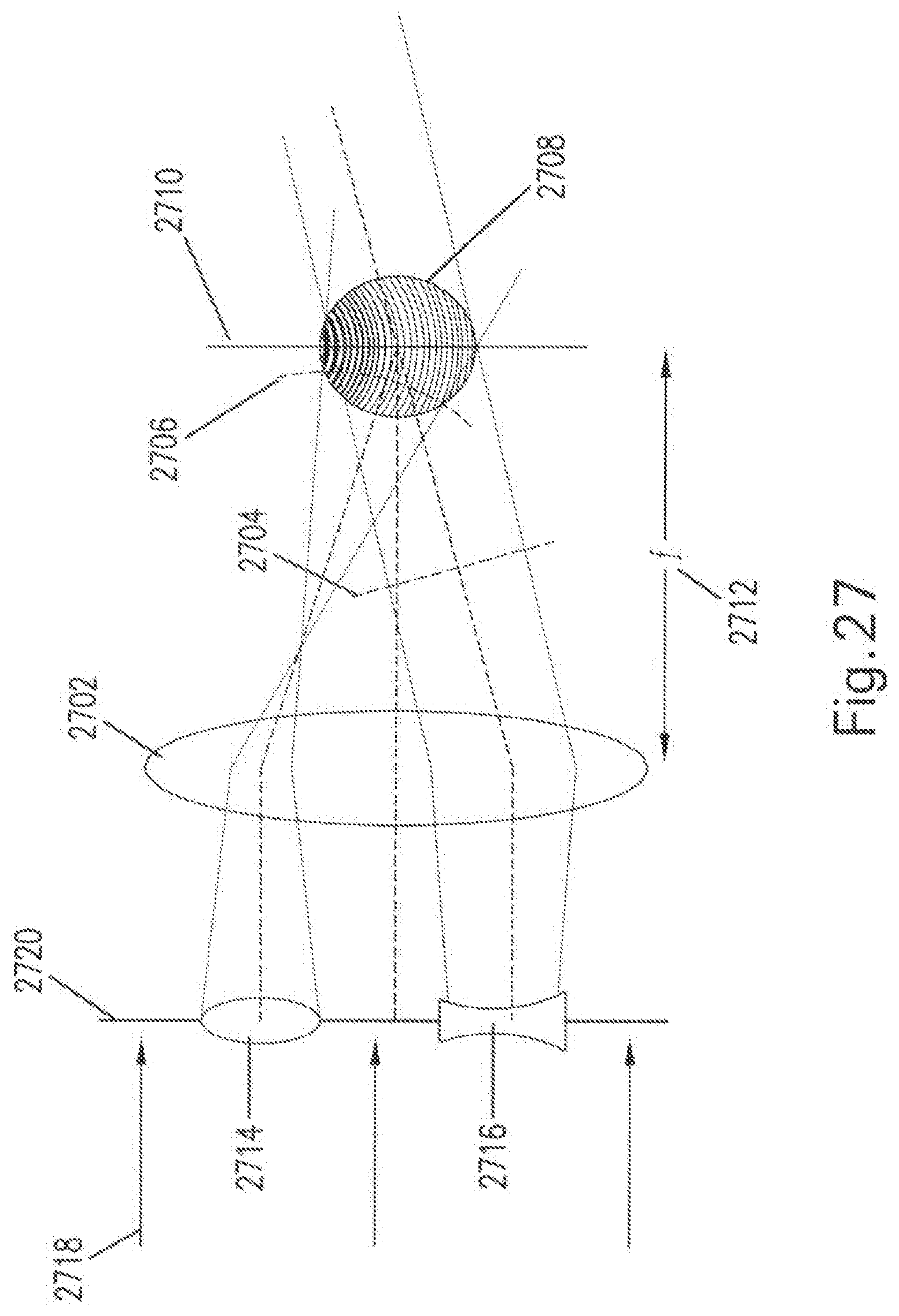

[0068] FIG. 1 is a block diagram of an optical apparatus according to an embodiment of the present invention;

[0069] FIG. 2 is a block diagram that illustrates an example of captured geometric information according to embodiments of the present invention;

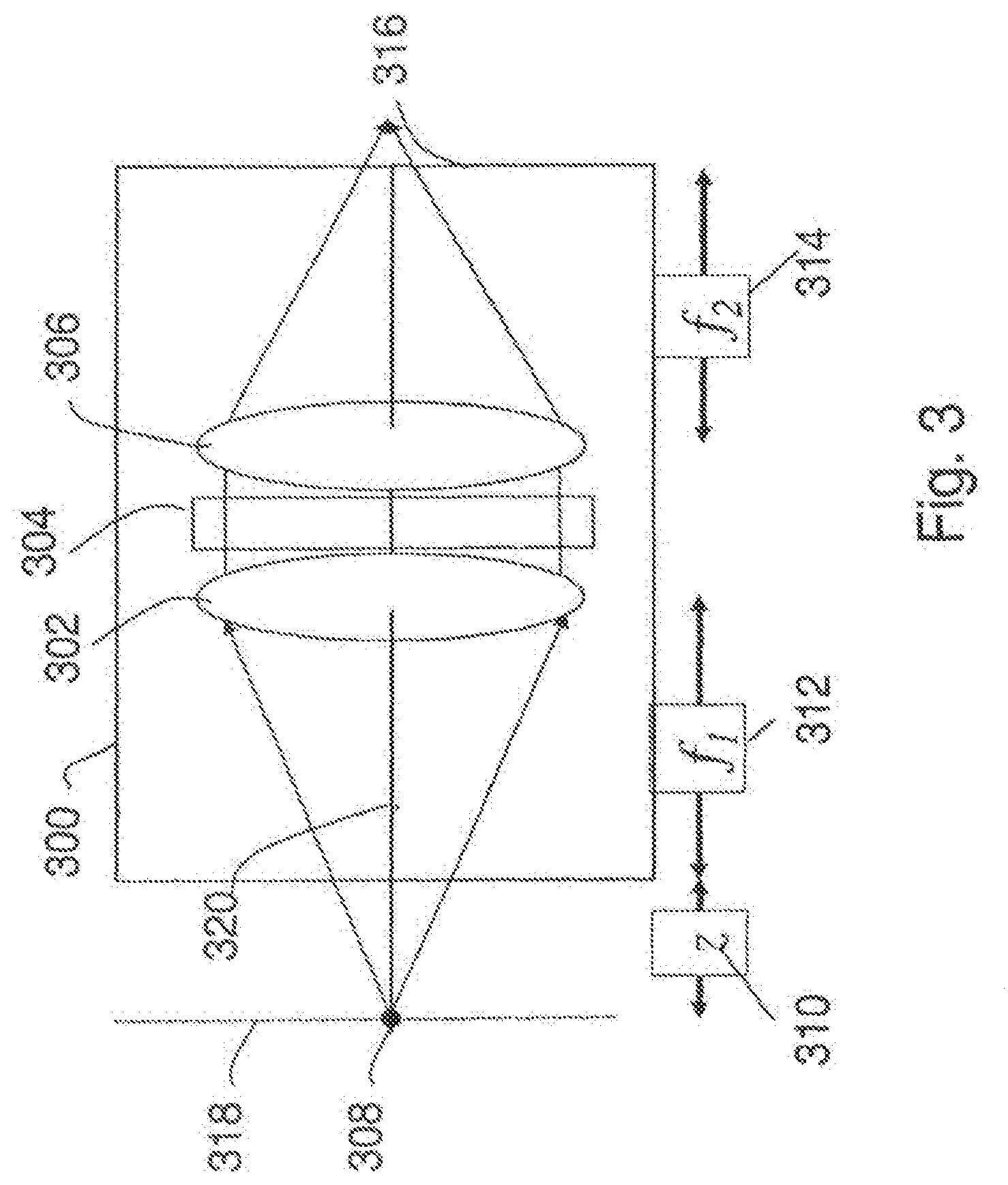

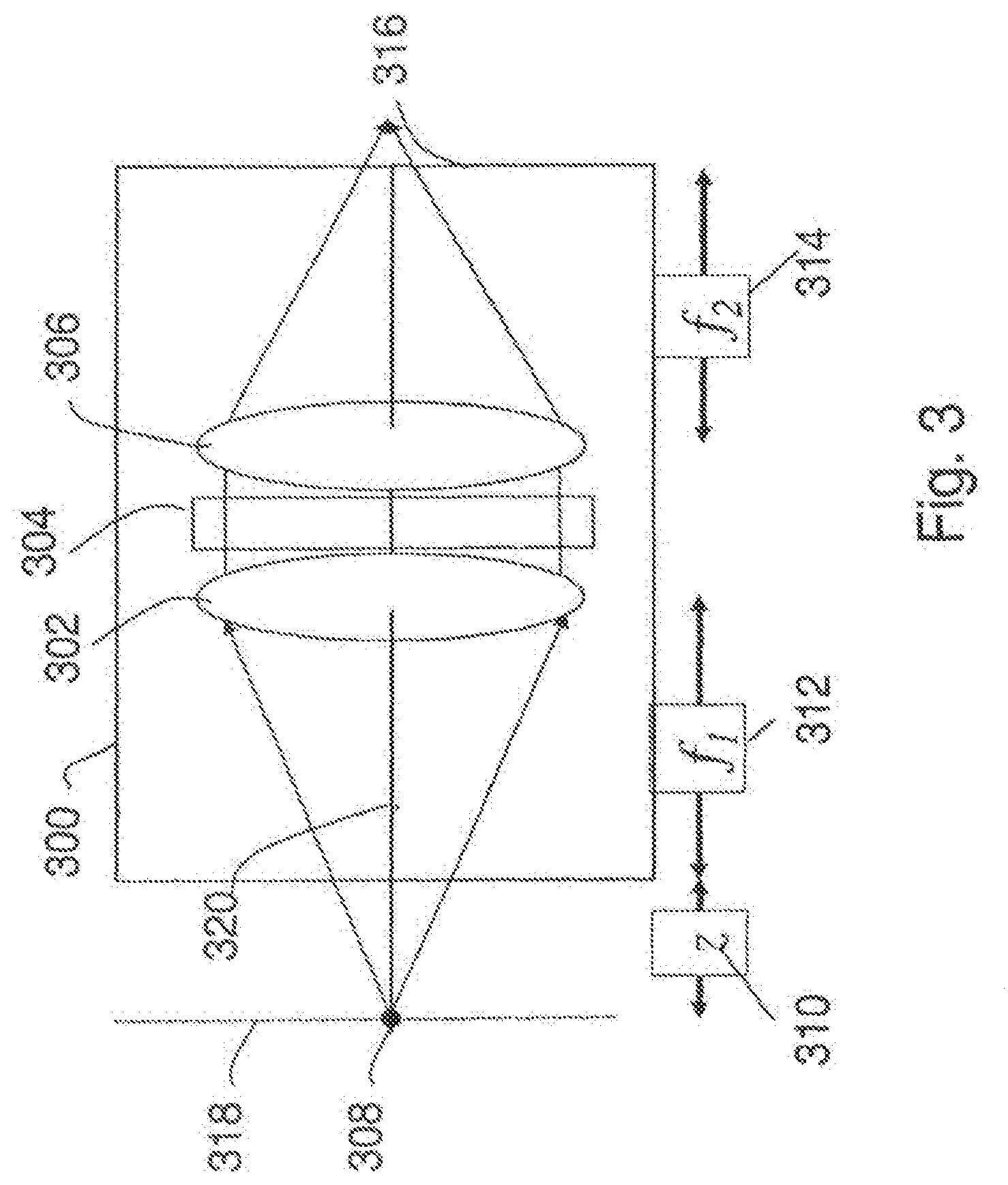

[0070] FIG. 3 is a block diagram of an incoherent correlator that may be used as the optical assembly in the optical apparatus of FIG. 1;

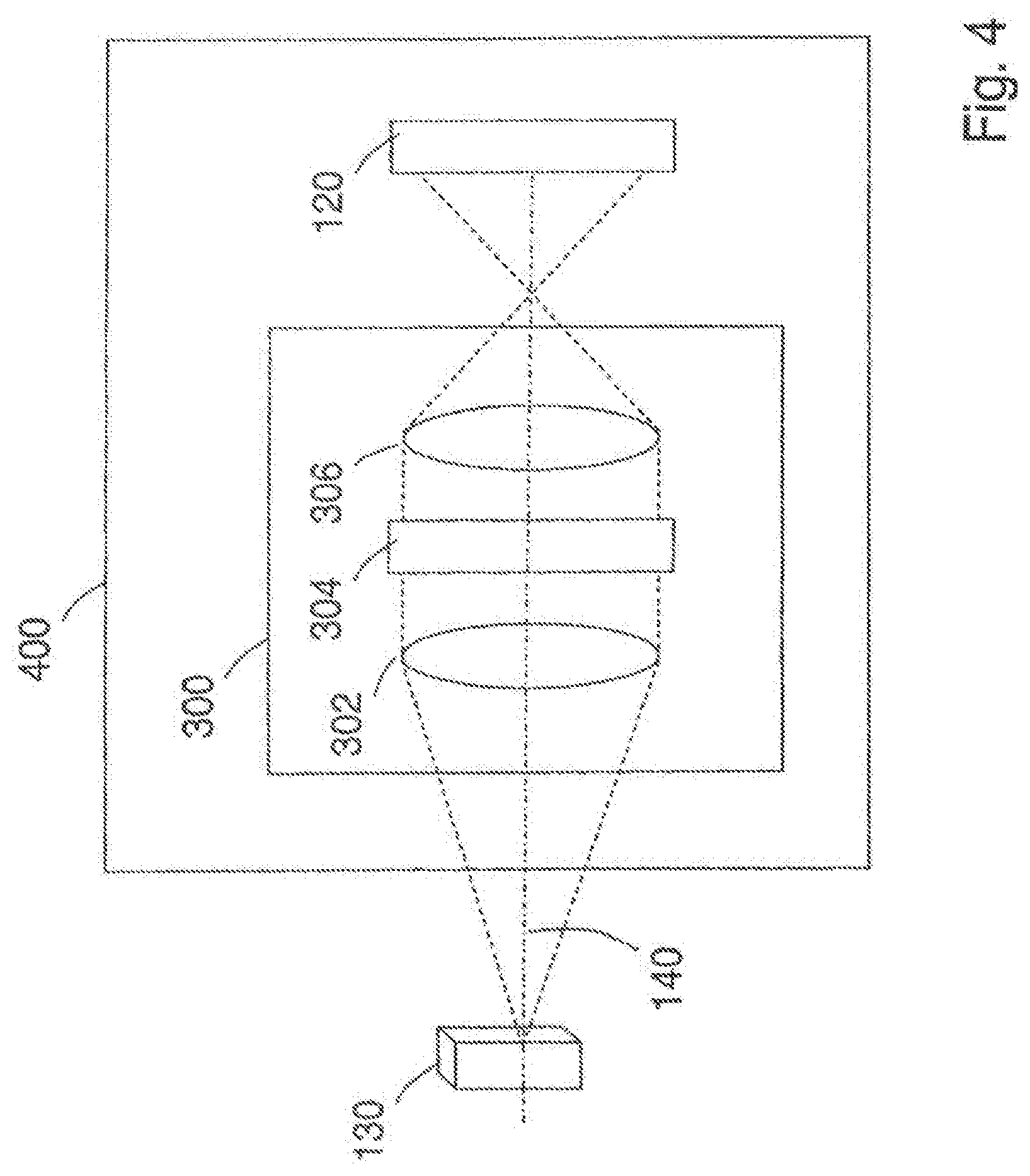

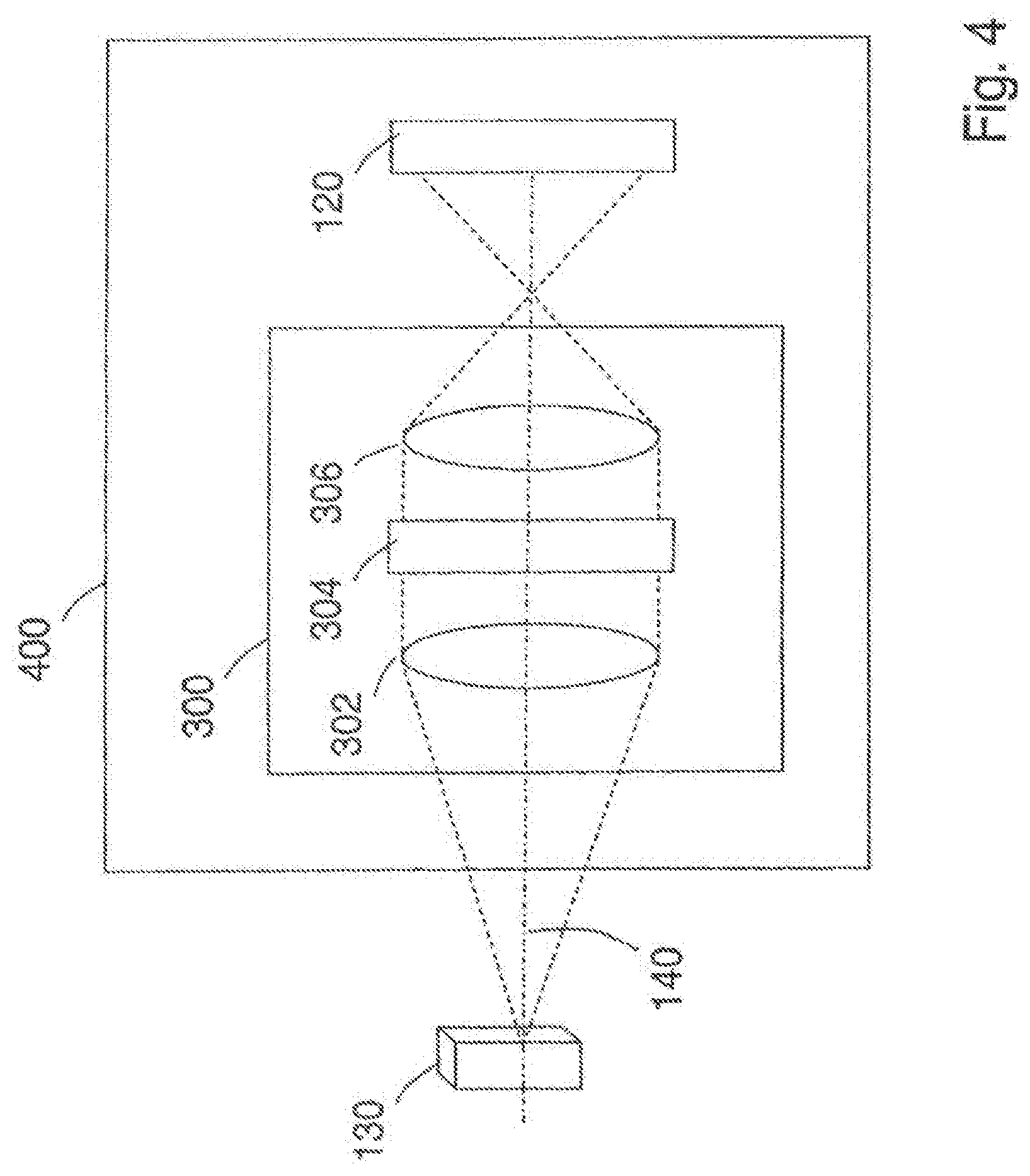

[0071] FIG. 4 is a block diagram of an embodiment of an optical apparatus that includes an optical assembly;

[0072] FIG. 5A is a detailed front view of an embodiment of a mask that includes a DOE having an array of plural transform regions;

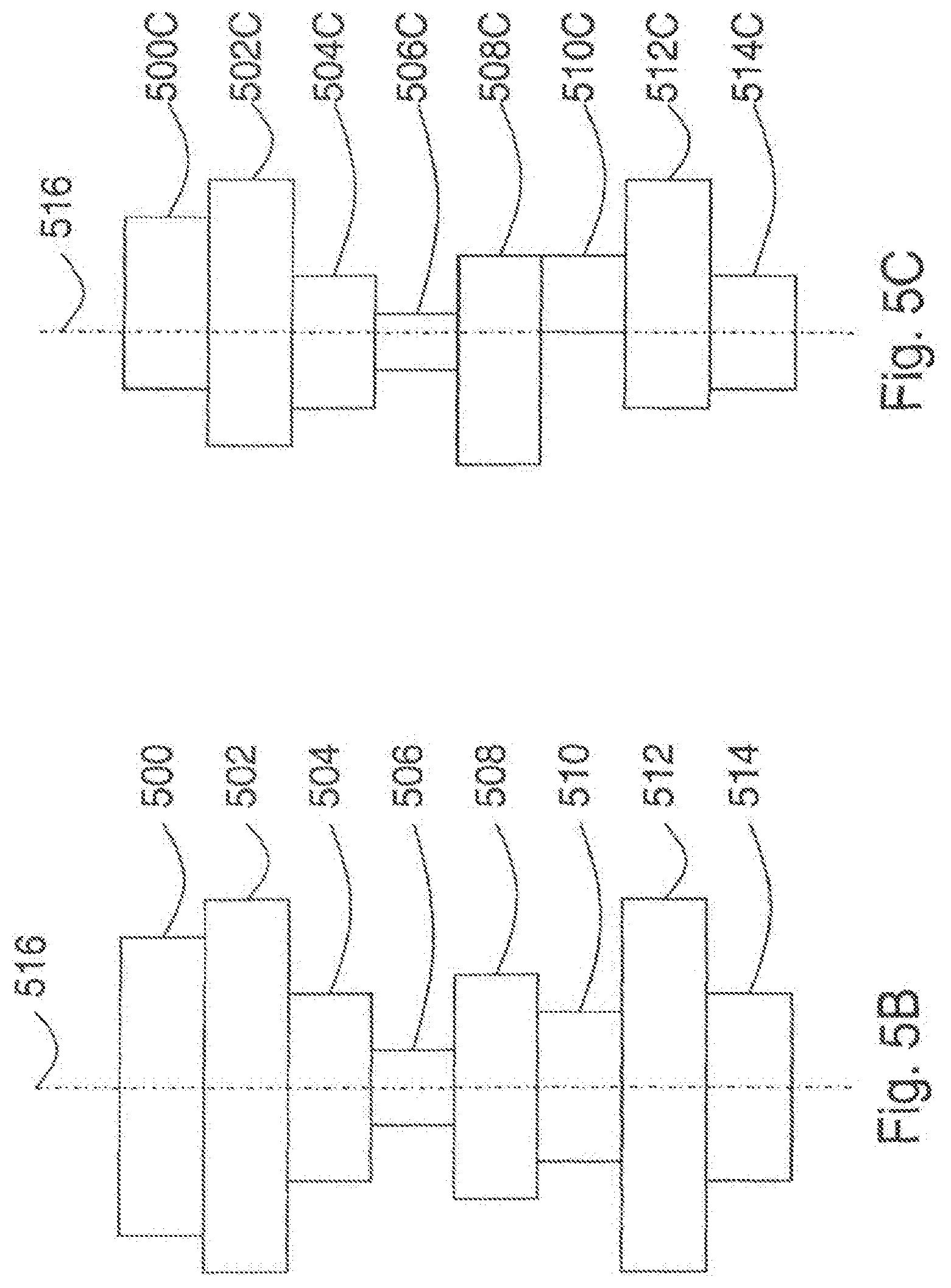

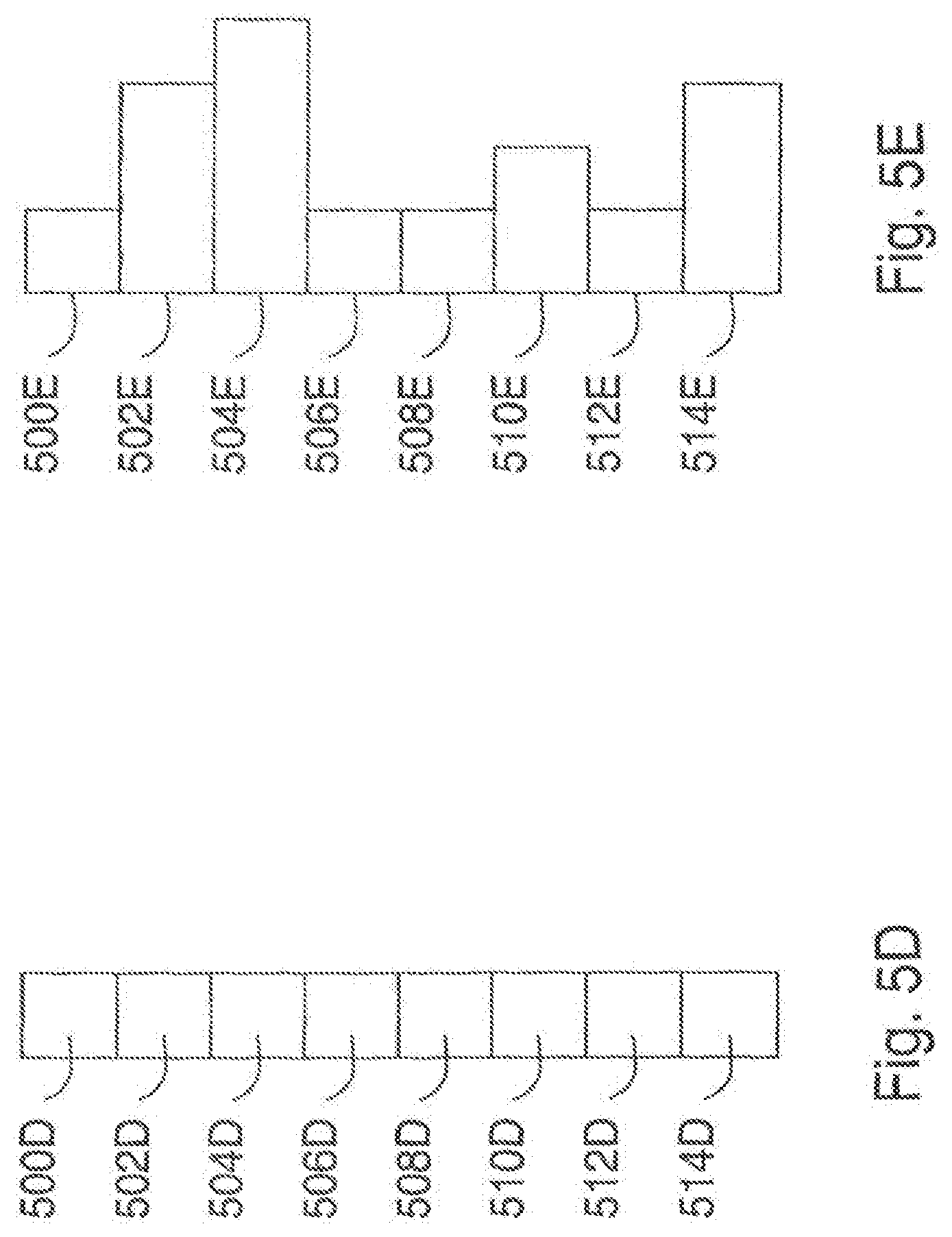

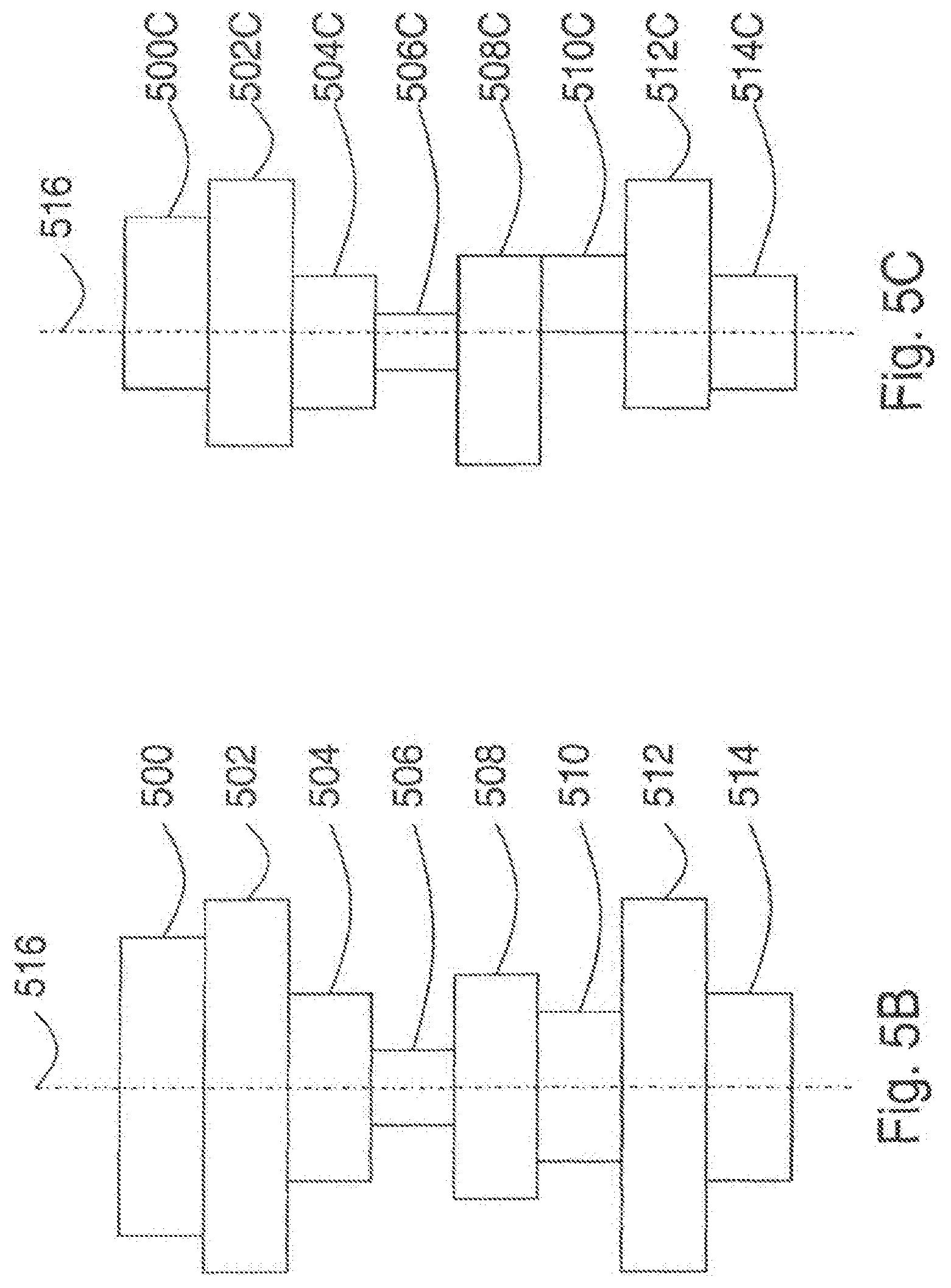

[0073] FIG. 5B is a cross-section view of a symmetrically arranged volume modulated diffractive optical element structure;

[0074] FIG. 5C is a cross-section view of a volume modulated diffractive optical element structure;

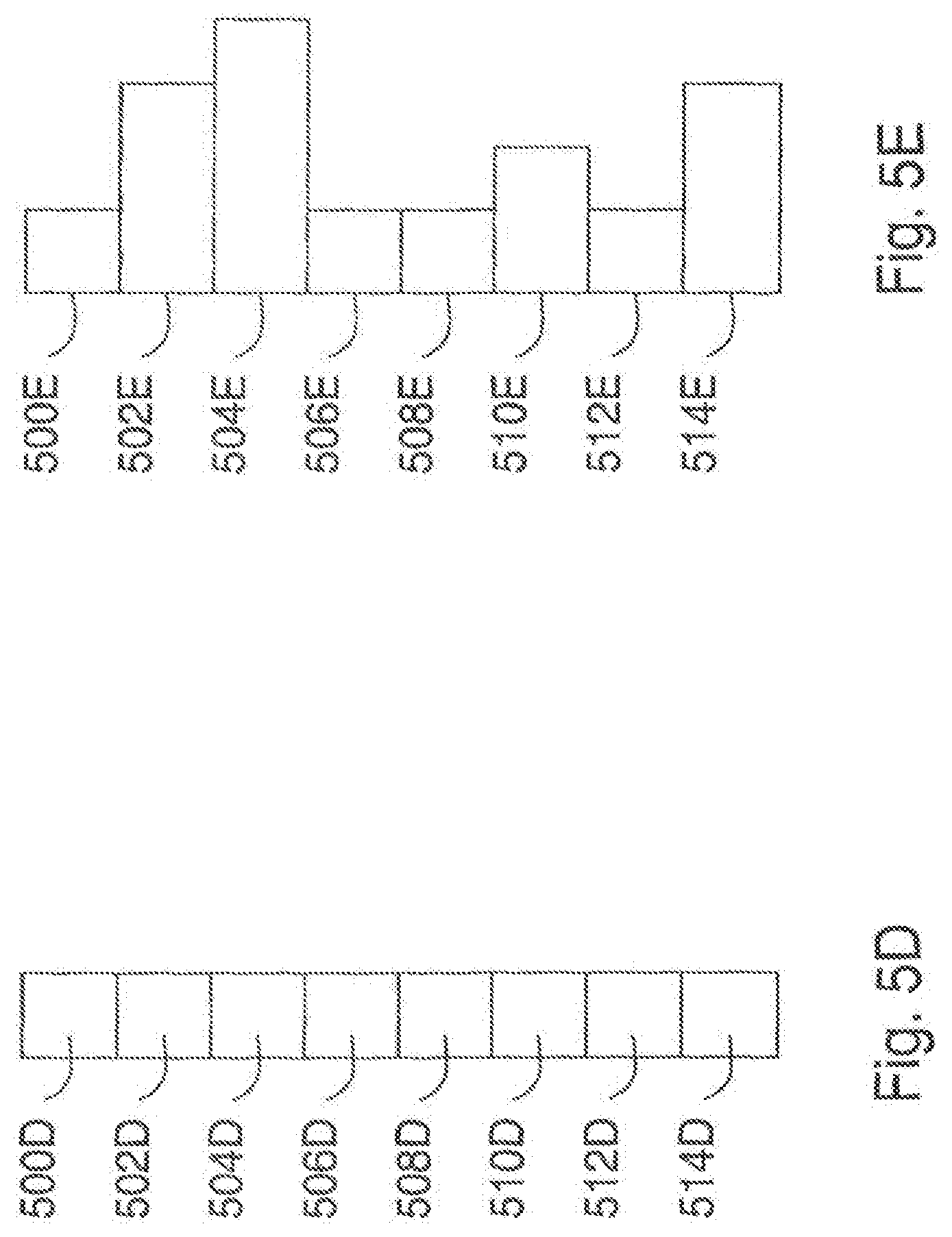

[0075] FIG. 5D is a cross-section view of an index modulated diffractive optical element structure;

[0076] FIG. 5E is a cross-section view of a mixed mode diffractive optical element structure;

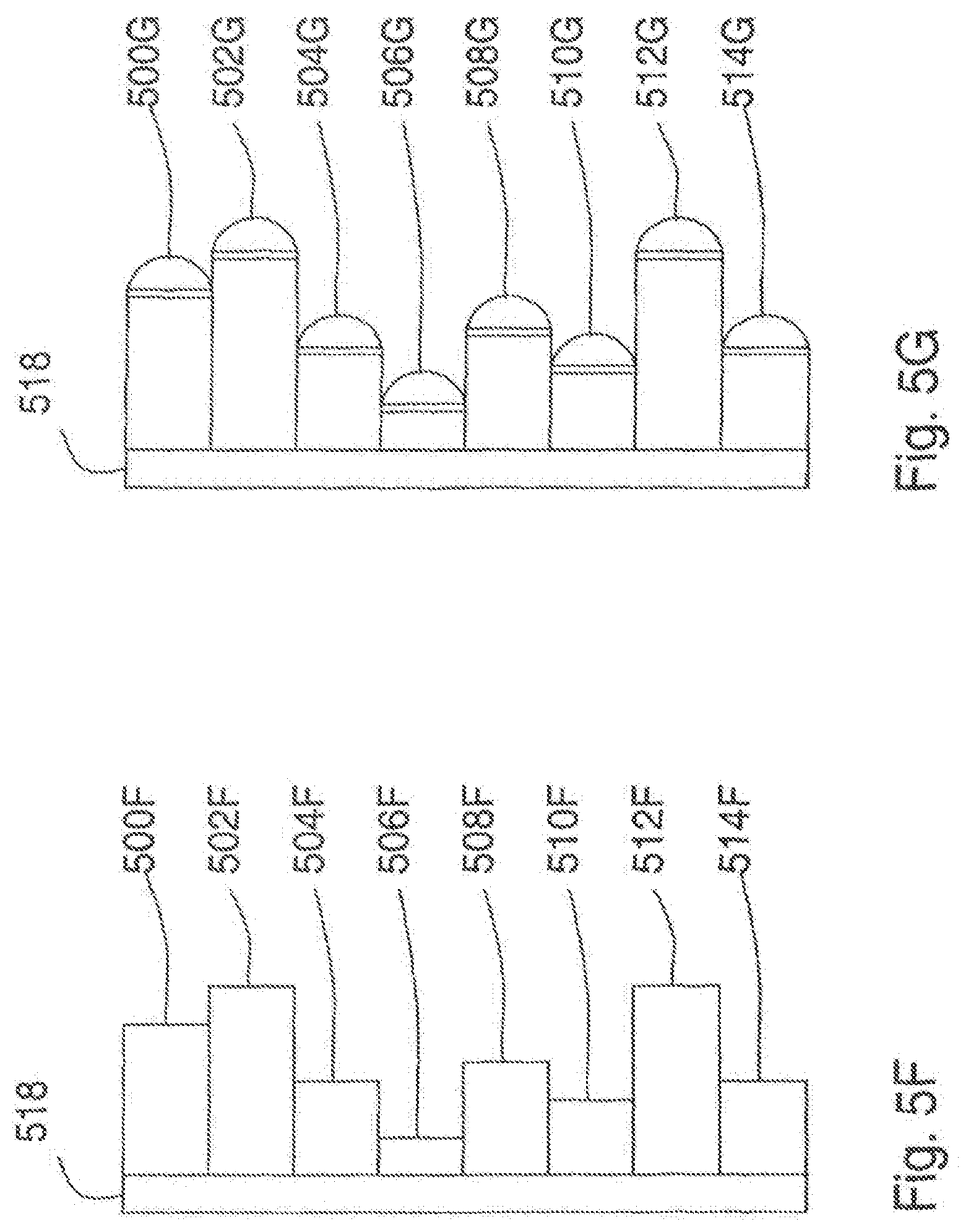

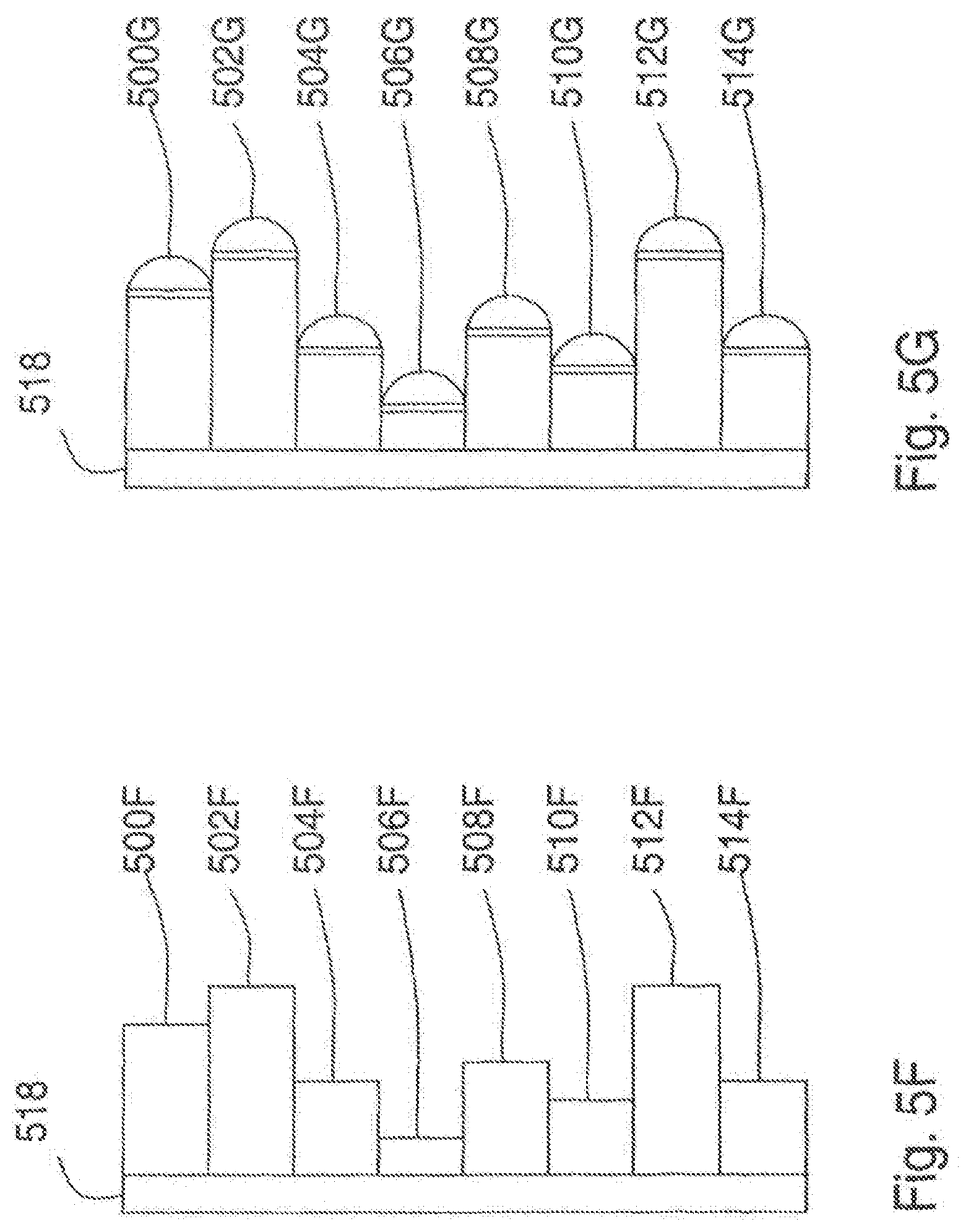

[0077] FIG. 5F is a cross-section view of a reflective volume modulated diffractive optical element; FIG. 5G is a cross-section view of a reflective volume modulated diffractive optical element;

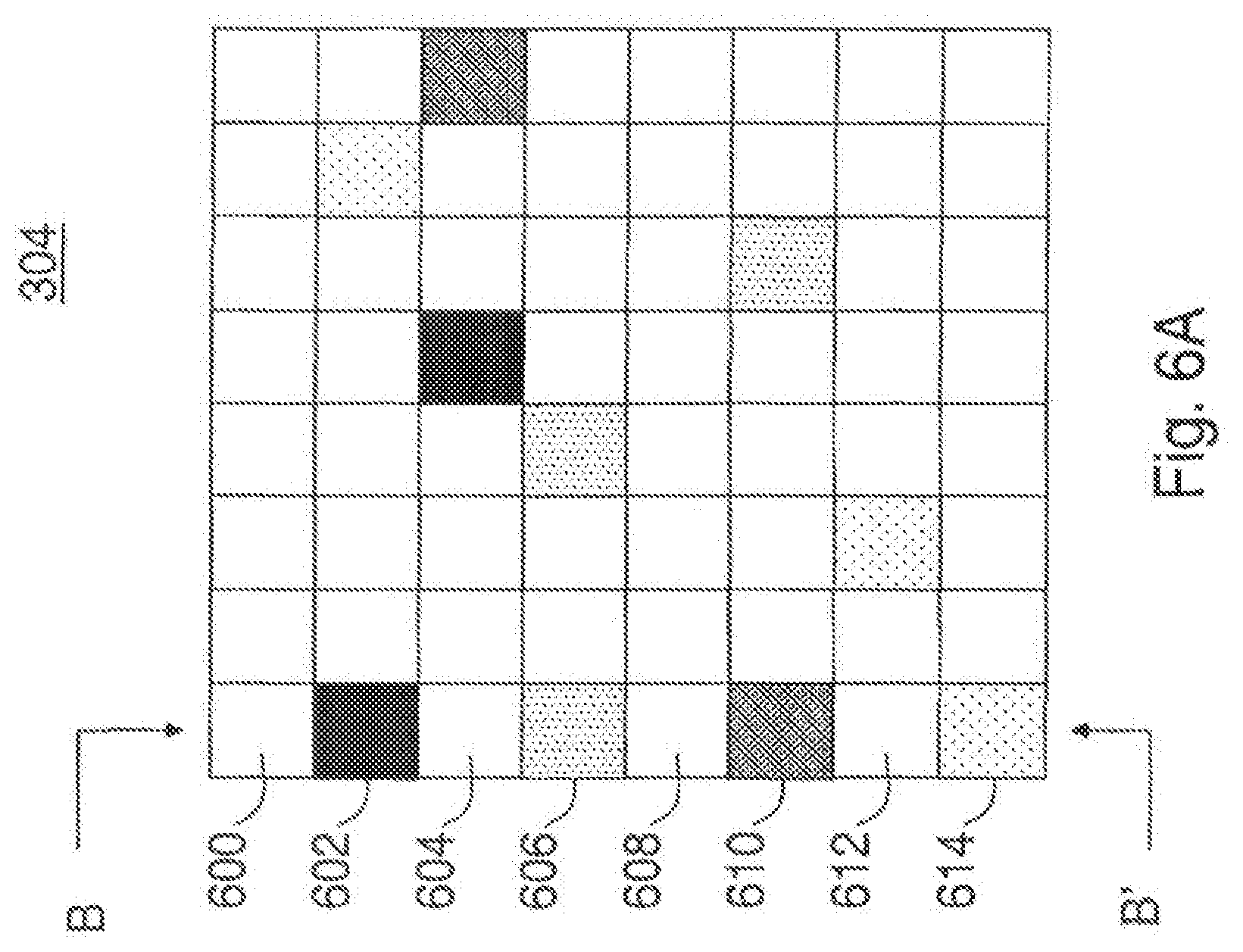

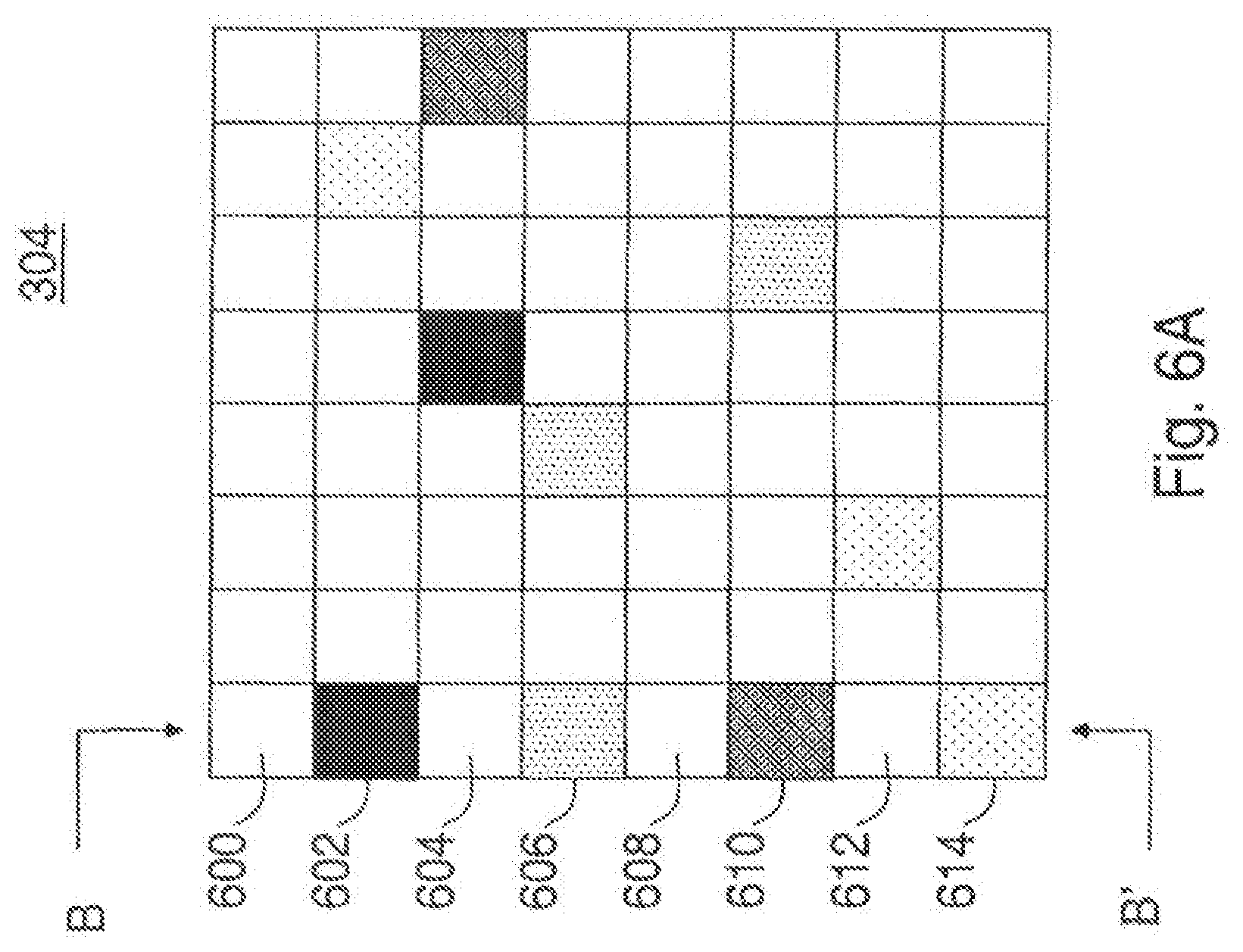

[0078] FIG. 6A is a detailed front view of another embodiment of a mask that includes a DOE having an array of plural transform regions;

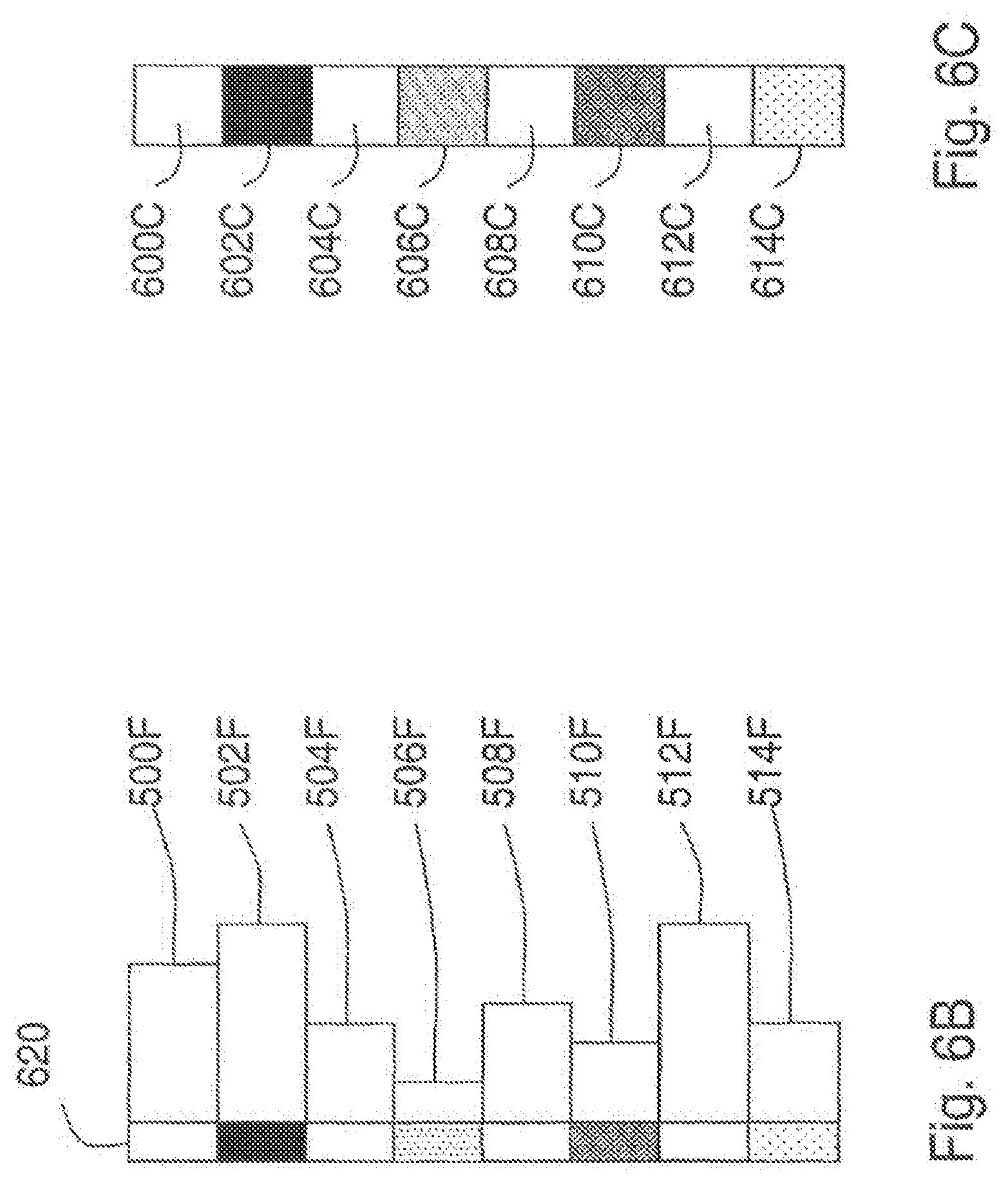

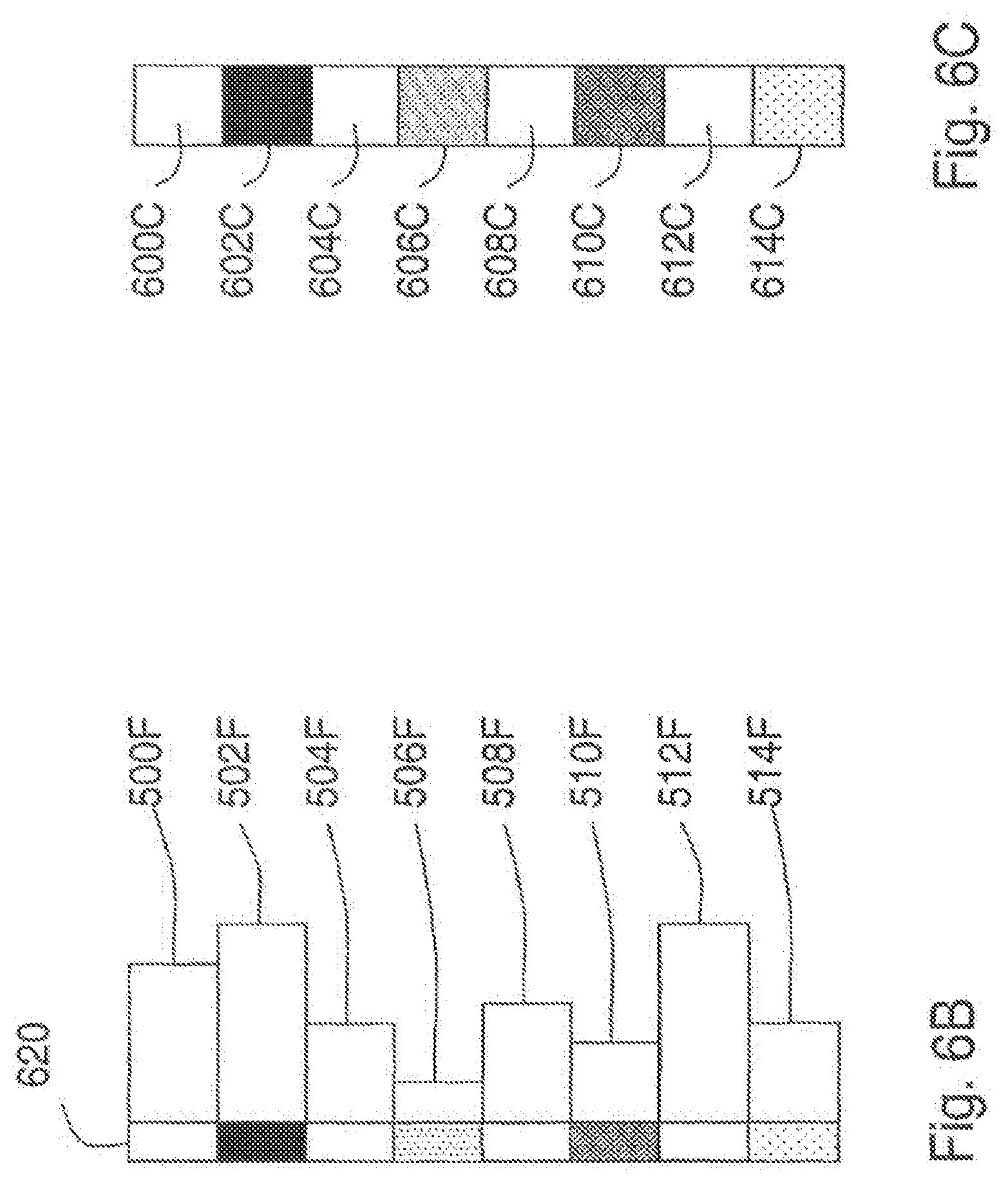

[0079] FIG. 6B is a cross-section view of a mask including a transmission layer having varied transmissivity for regions adjacent to corresponding transform regions;

[0080] FIG. 6C is a cross-section view of a mask having transform regions configured to vary an amplitude of the received light;

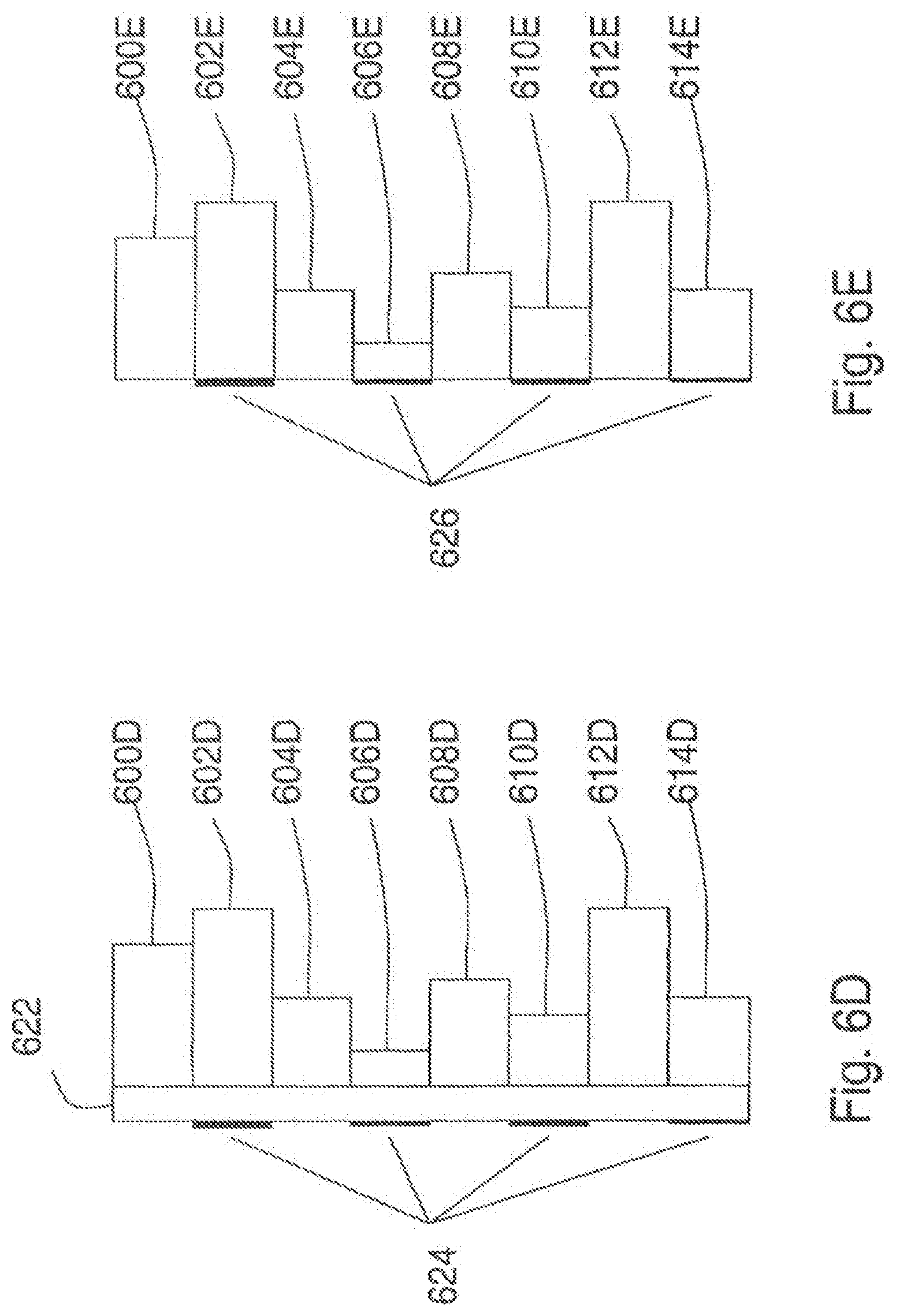

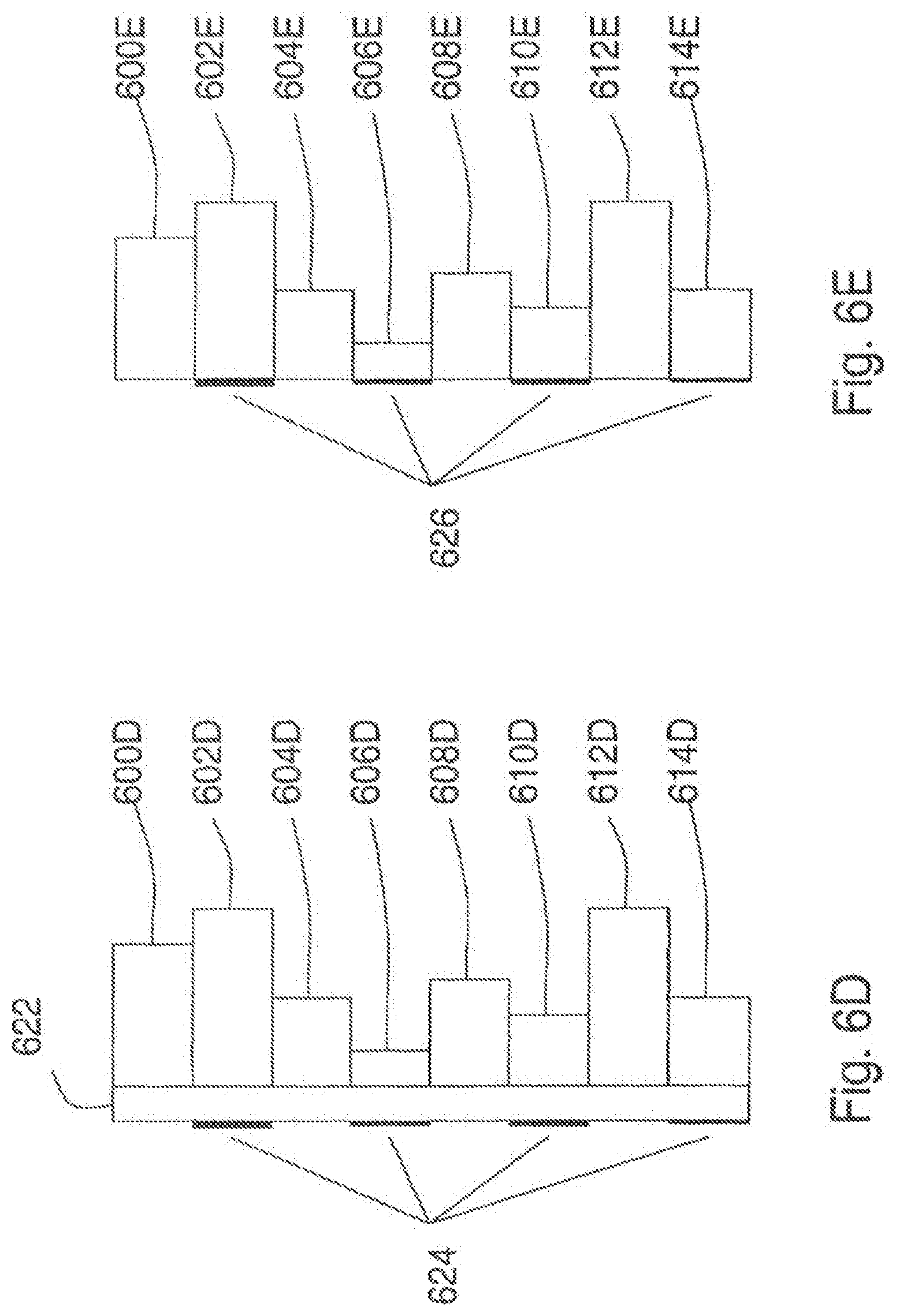

[0081] FIG. 6D is a cross-section view of a mask having a printed overlay;

[0082] FIG. 6E is another embodiment of a mask configured to vary an amplitude of the received light;

[0083] FIG. 6F is a block diagram of an embodiment of a mask including SLMs;

[0084] FIG. 6G is a block diagram of another embodiment of a mask including SLMs;

[0085] FIG. 7 is an example of a binary Fresnel Zone Pattern;

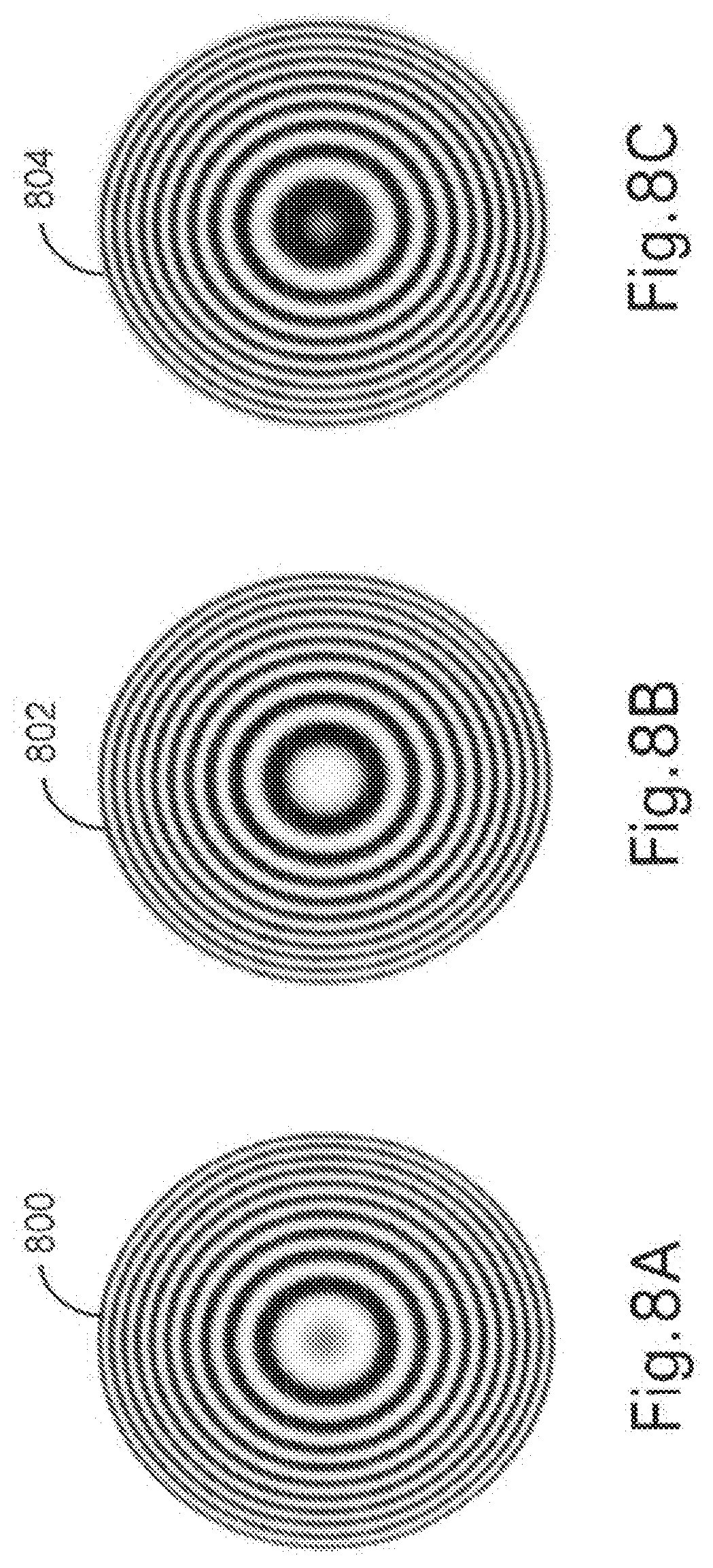

[0086] FIG. 8A is an example of a sinusoidal FZP;

[0087] FIG. 8B is an example of another sinusoidal FZP;

[0088] FIG. 8C is an example of another sinusoidal FZP;

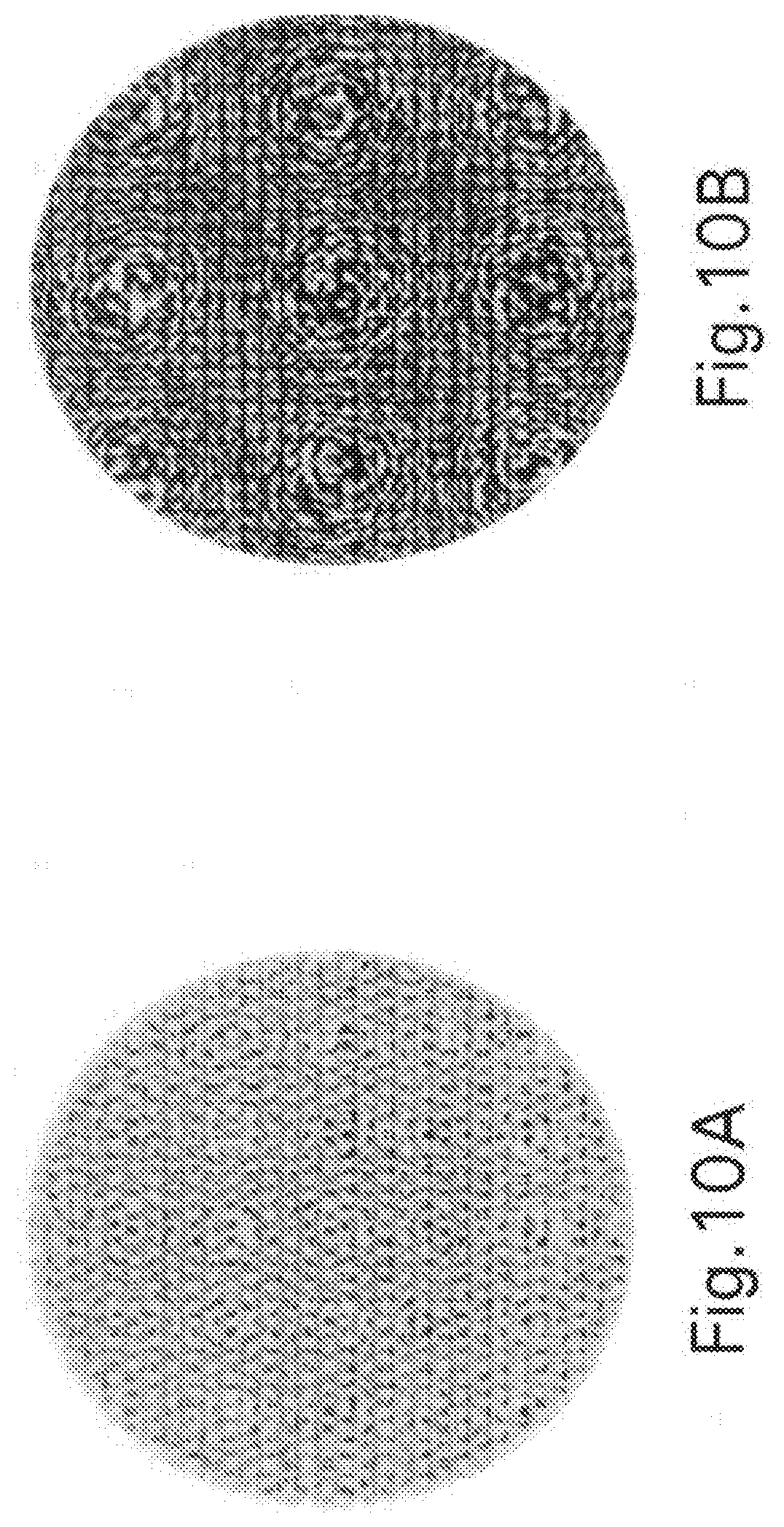

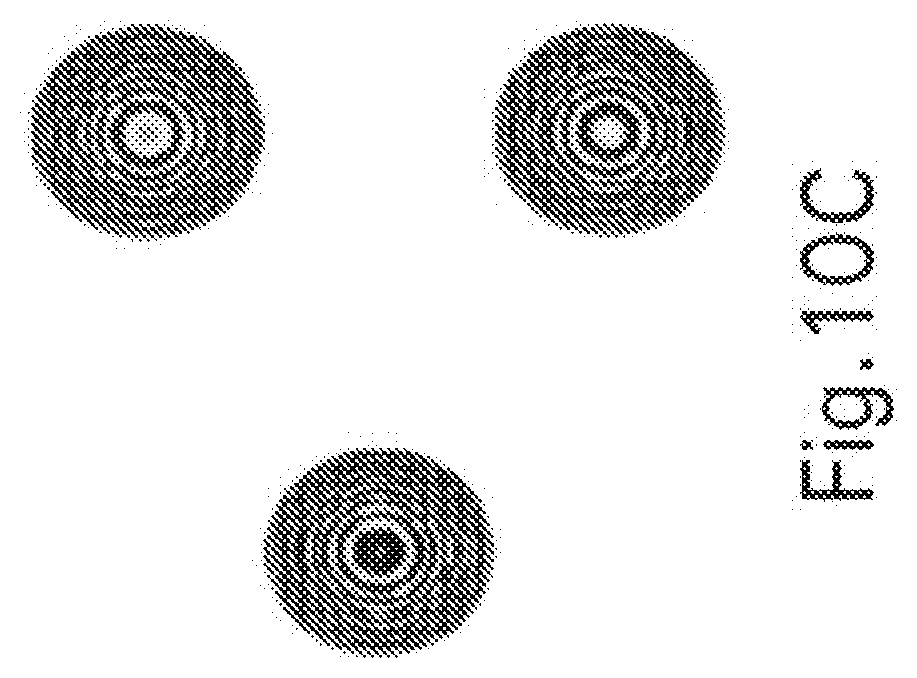

[0089] FIG. 9A is an example of a Fourier Transformed FZP pattern;

[0090] FIG. 9B is another example of a Fourier Transformed FZP pattern;

[0091] FIG. 9C is another example of a Fourier Transformed FZP pattern;

[0092] FIG. 10A is the amplitude portion of a complex transmission function that is a Fourier Transform of a linear combination of three mask functions;

[0093] FIG. 10B is the phase portion of a complex transmission function that is a Fourier Transform of a linear combination of mask functions;

[0094] FIG. 10C is an example of a pattern on a CCD when a point object is present at the input;

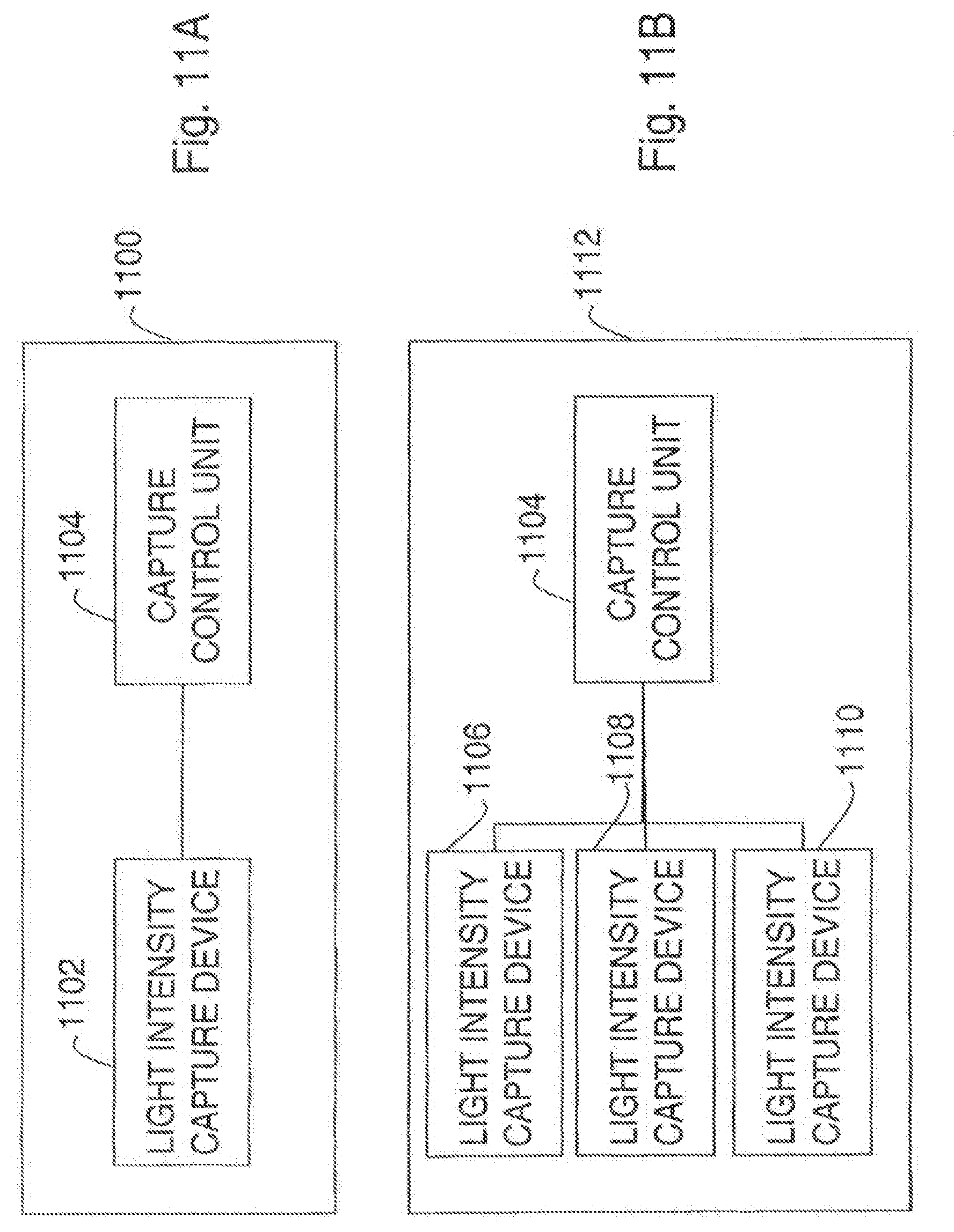

[0095] FIG. 11A is a block diagram of an embodiment of an image capture assembly;

[0096] FIG. 11B is a block diagram of another embodiment of an image capture assembly;

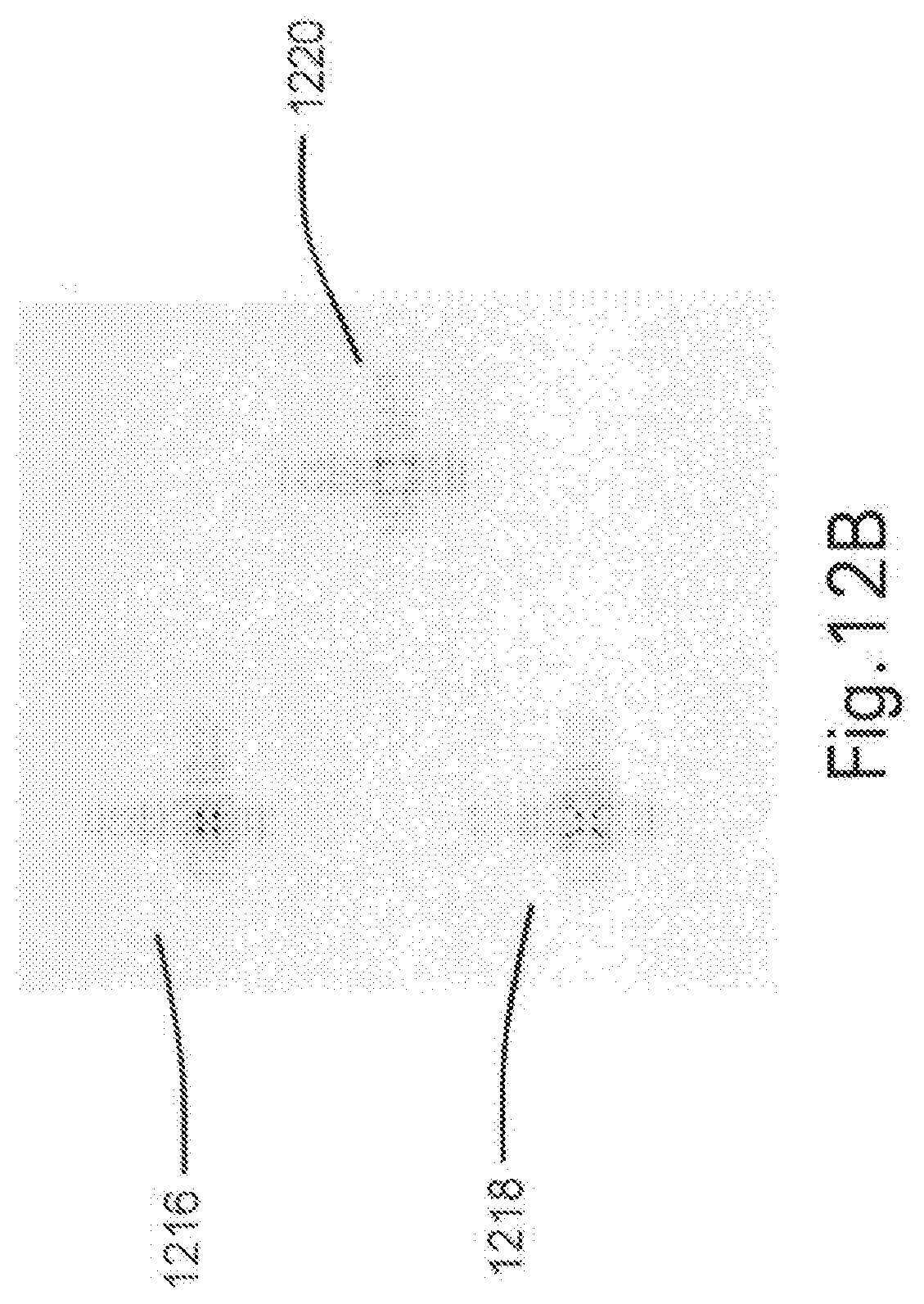

[0097] FIG. 12A is a view of an embodiment of a light intensity capture device that includes a charge coupled device having three distinct regions;

[0098] FIG. 12B is an example of a two-dimensional intensity image including three partial images;

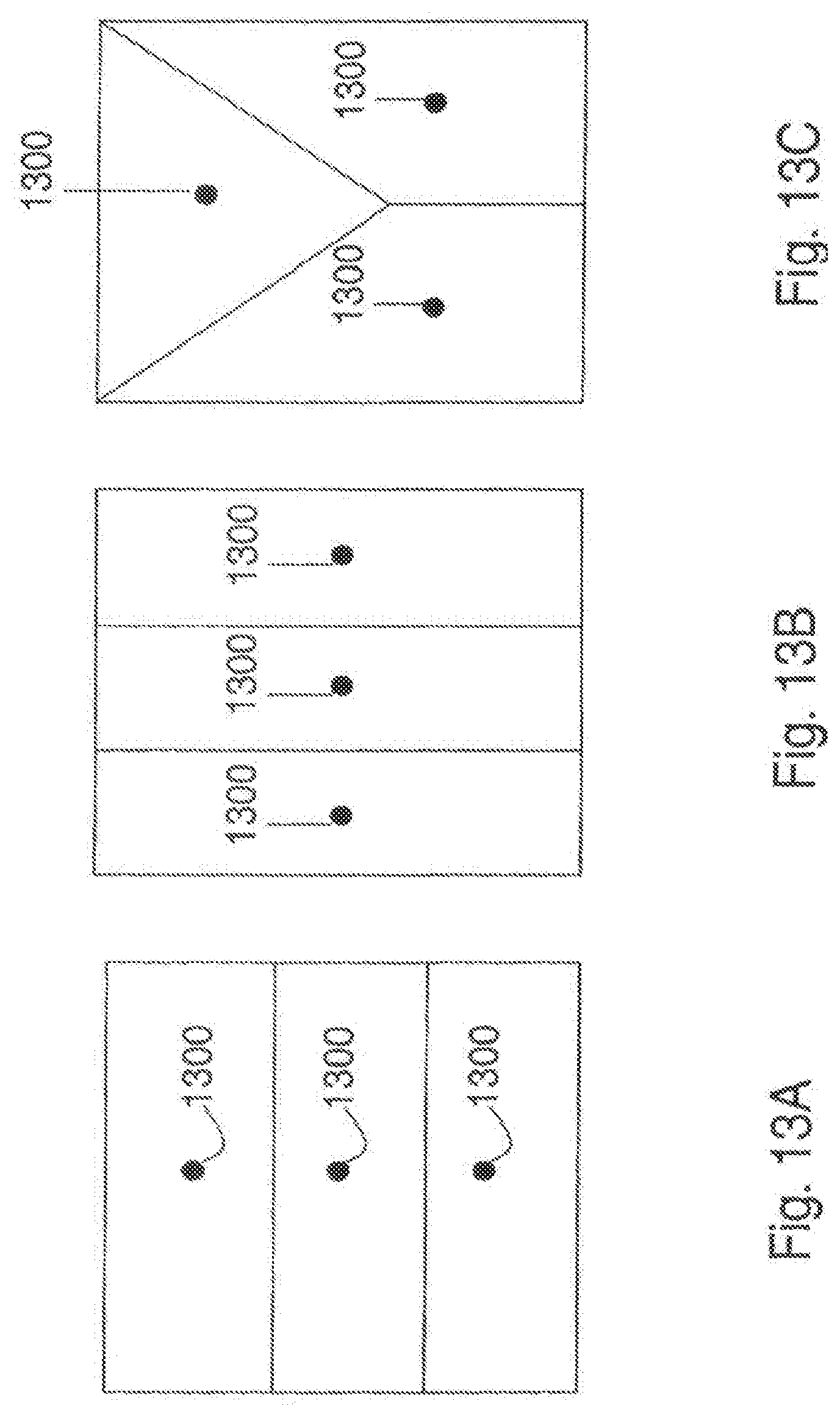

[0099] FIG. 13A is an example of an arrangement of distinct regions in an embodiment of a light capturing device;

[0100] FIG. 13B is another example of an arrangement of distinct regions in an embodiment of a light capturing device;

[0101] FIG. 13C is another example of an arrangement of distinct regions in an embodiment of a light capturing device;

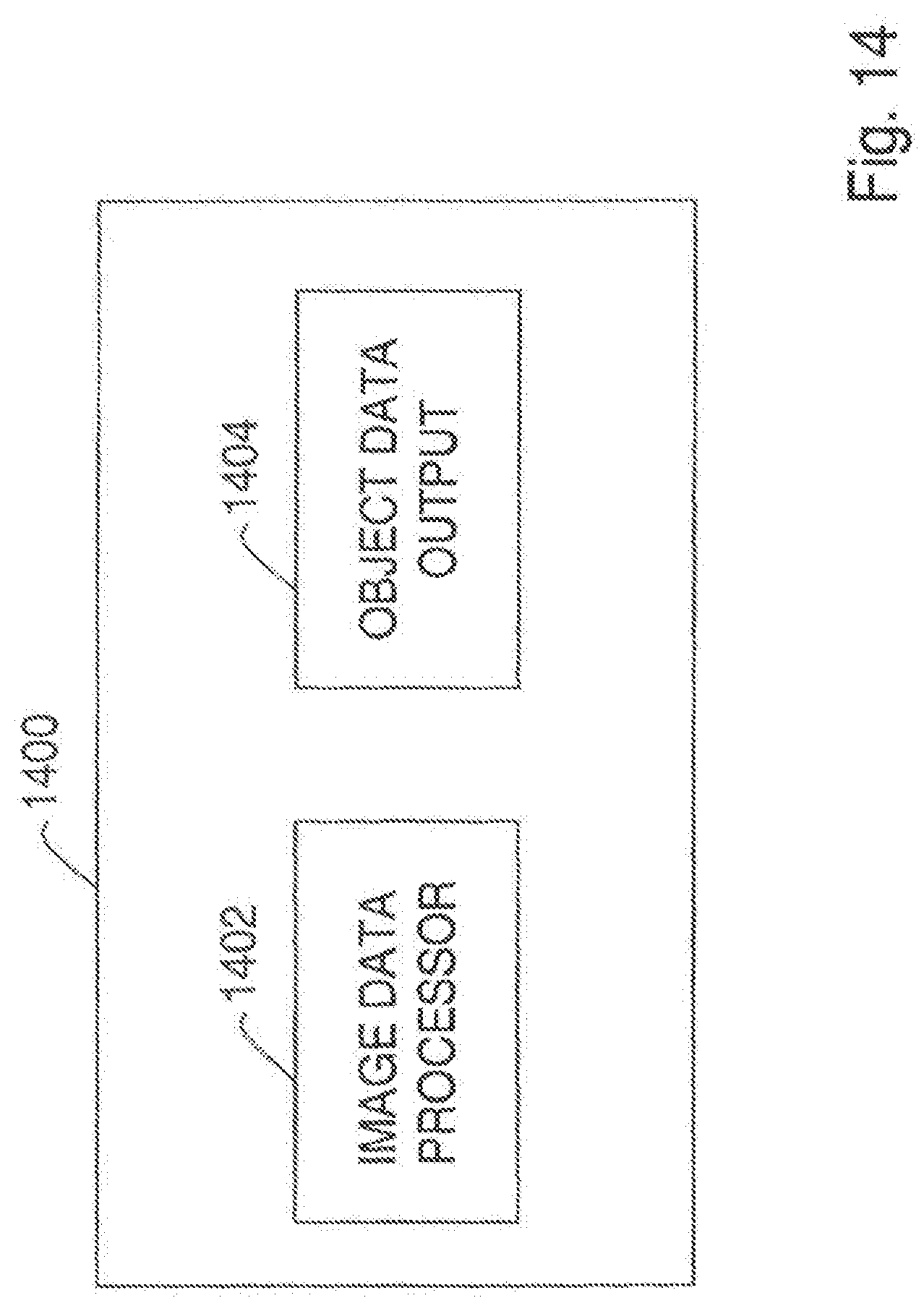

[0102] FIG. 14 is a block diagram of an embodiment of a capture control unit that includes an image data processor that combines the electronic image data;

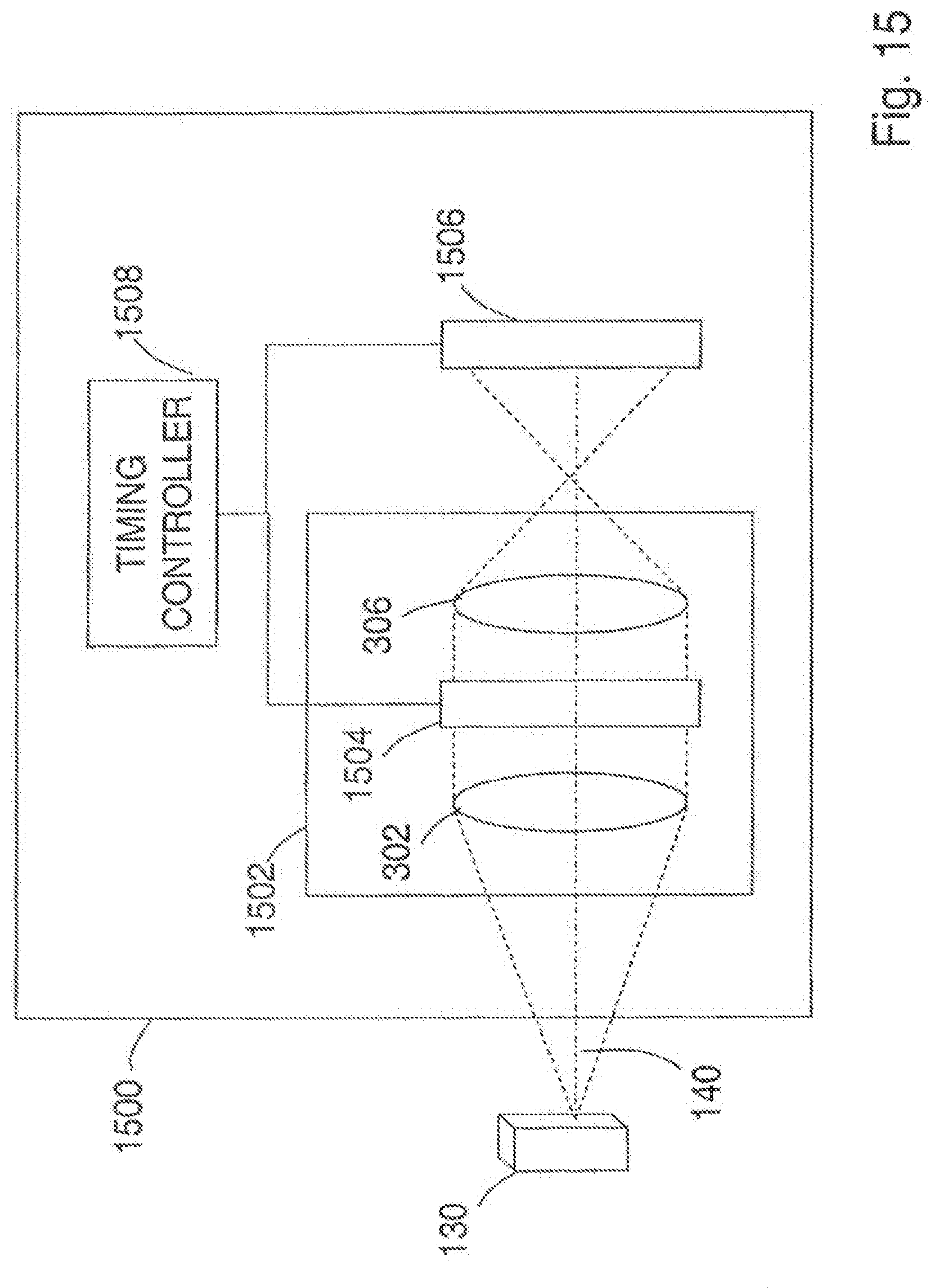

[0103] FIG. 15 is a block diagram of an embodiment of an optical apparatus that varies the mask over time;

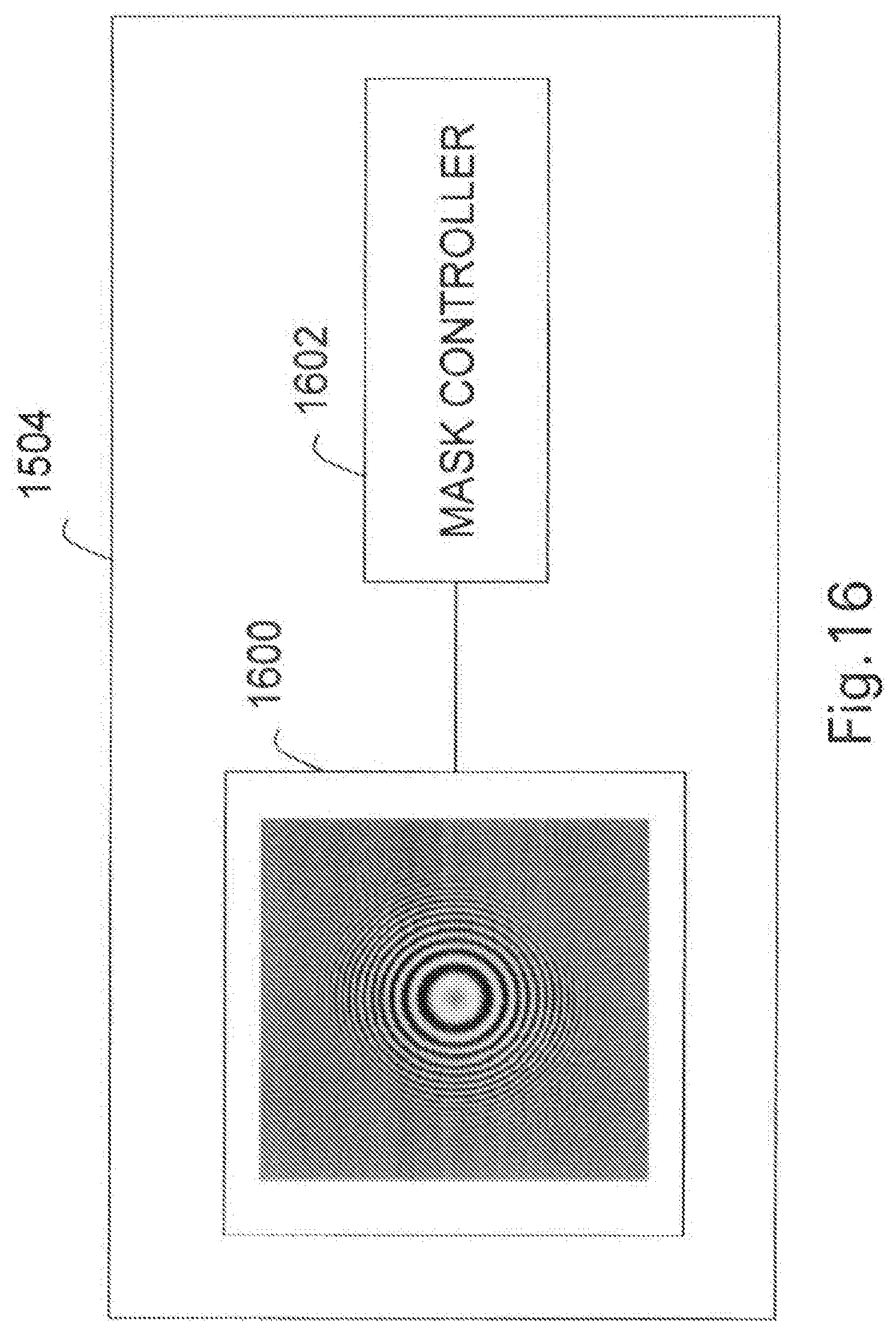

[0104] FIG. 16 is a block diagram of a controllable mask that includes a spatial light modulator under the control of a mask controller;

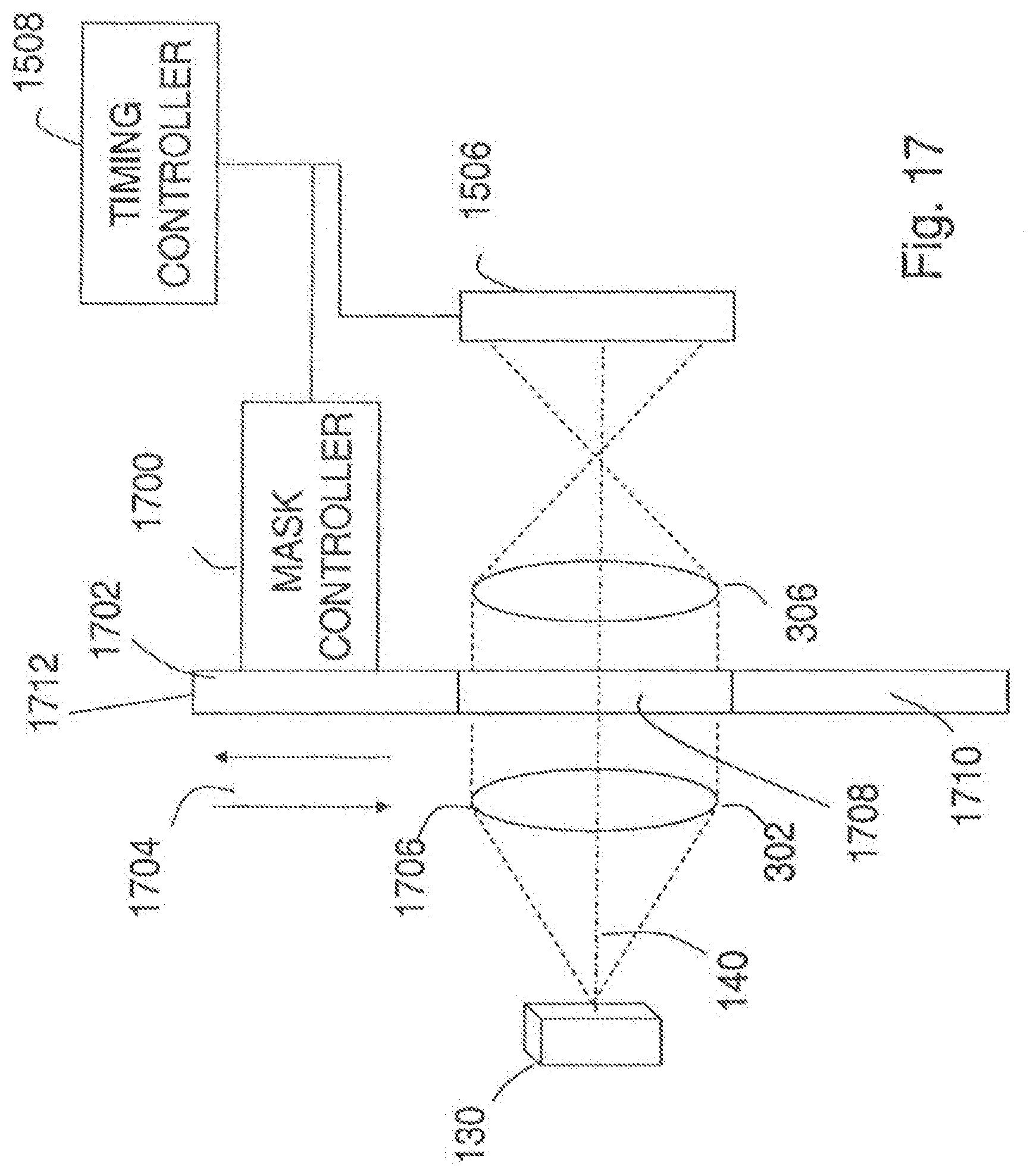

[0105] FIG. 17 is a block diagram of another embodiment of an optical apparatus in which the mask is varied over time;

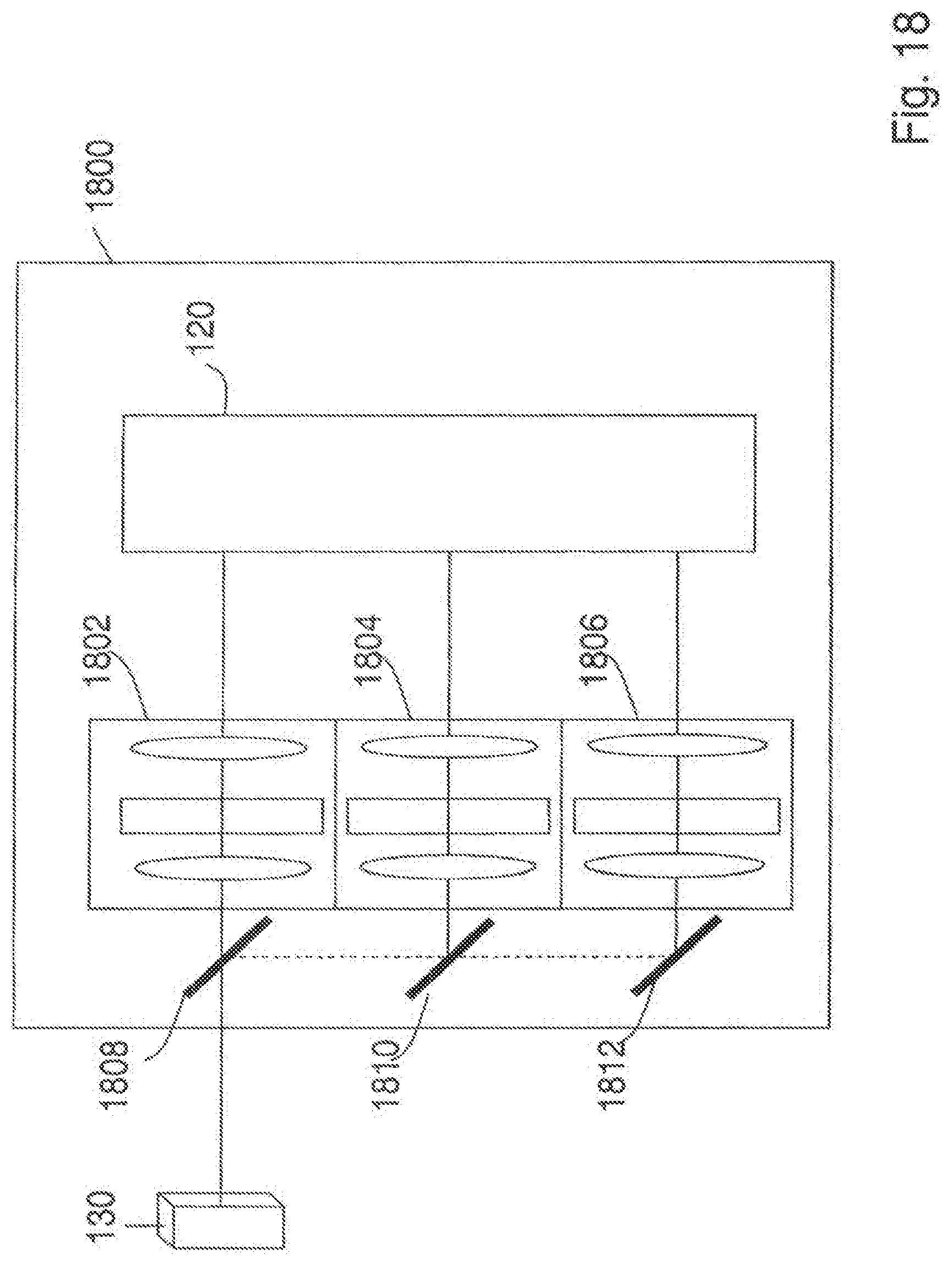

[0106] FIG. 18 is a block diagram of another embodiment of an optical apparatus;

[0107] FIG. 19 is a block diagram of another embodiment of an optical apparatus;

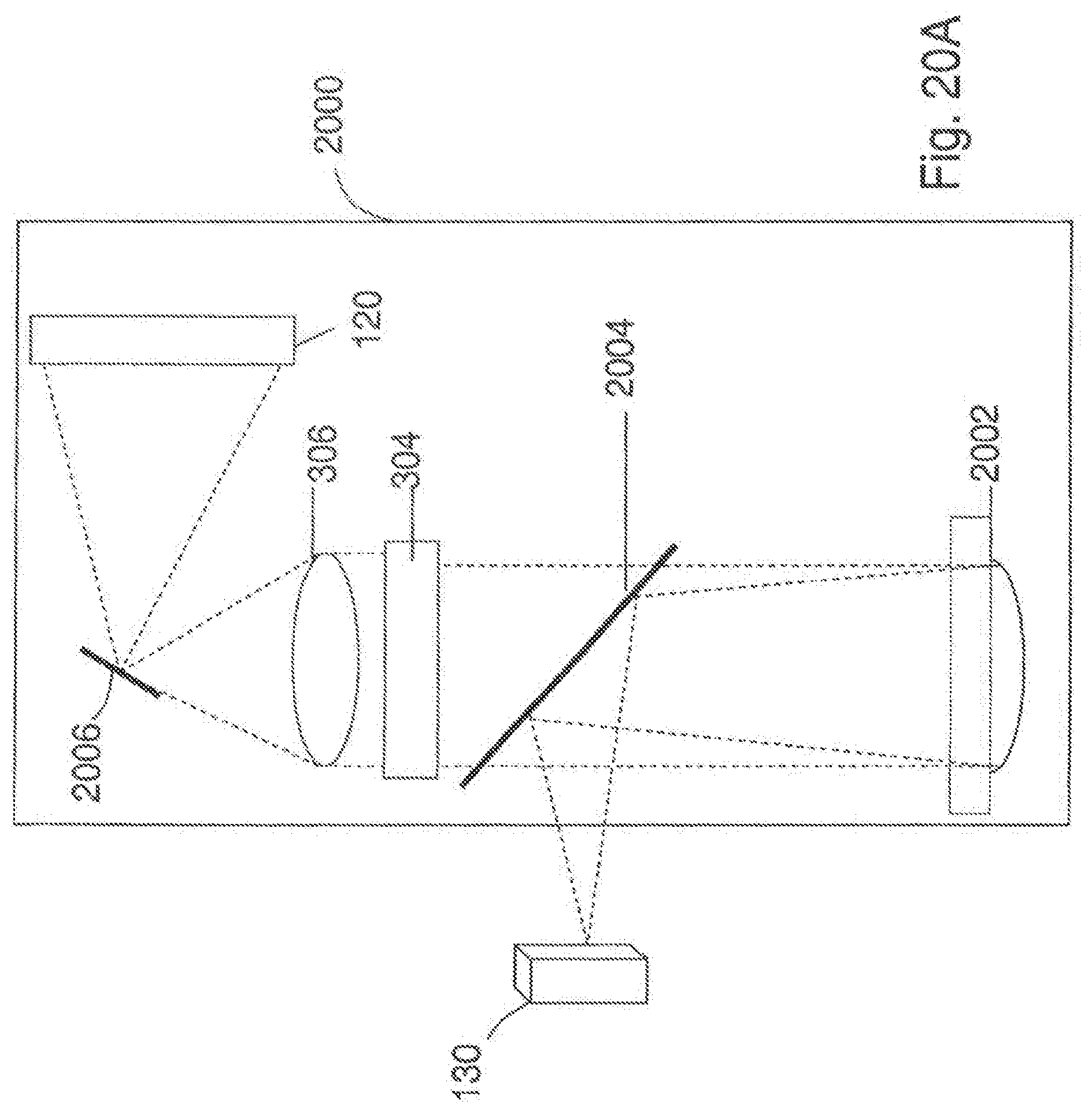

[0108] FIG. 20A is a block diagram of an embodiment of an optical apparatus having a first transforming optical assembly including a reflective optical assembly;

[0109] FIG. 20B is a block diagram of another embodiment of an optical apparatus having a first transforming optical assembly including a reflective optical assembly;

[0110] FIG. 21A is a block diagram of another embodiment of an optical apparatus;

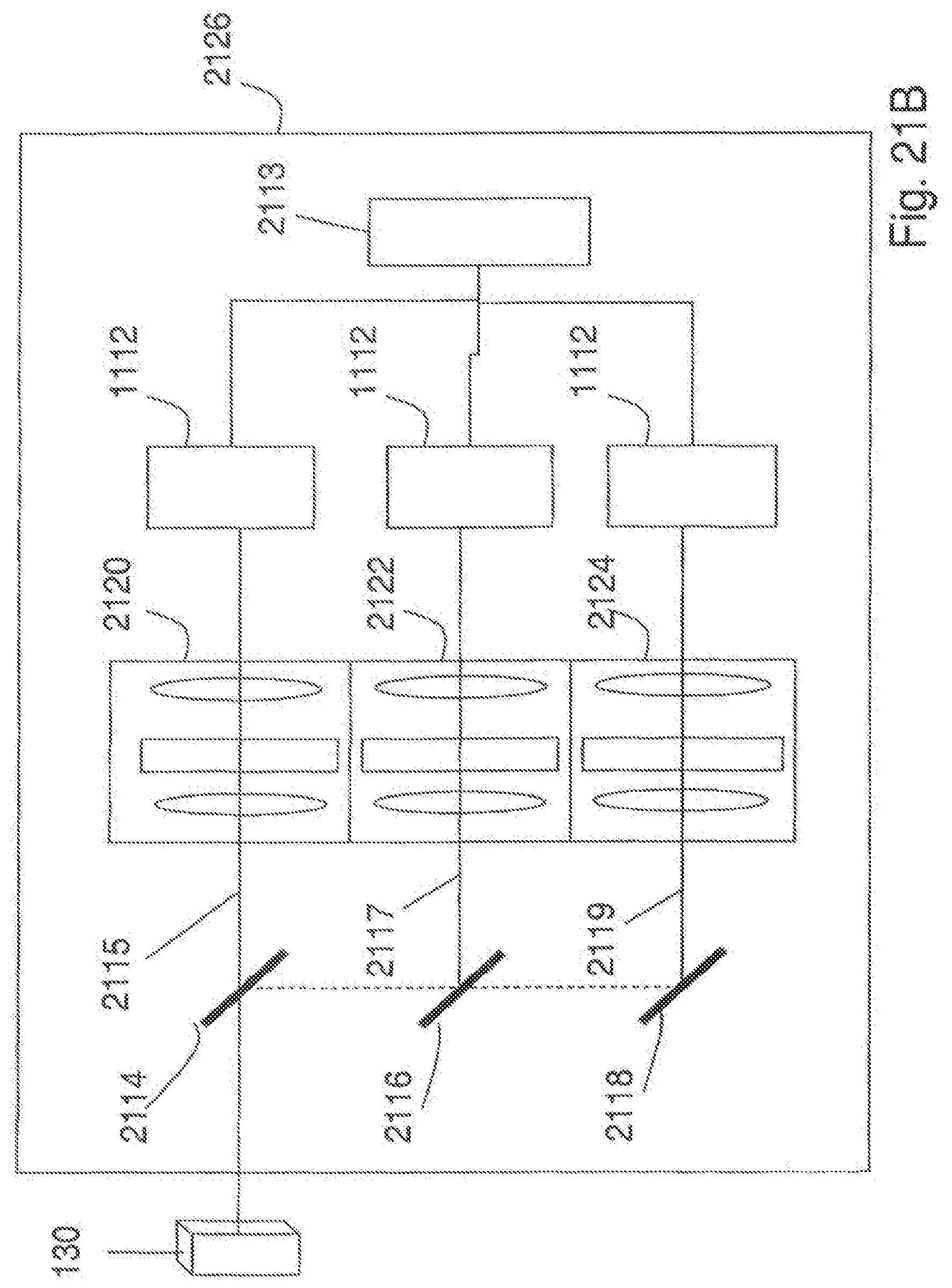

[0111] FIG. 21B is a block diagram of another embodiment of an optical apparatus;

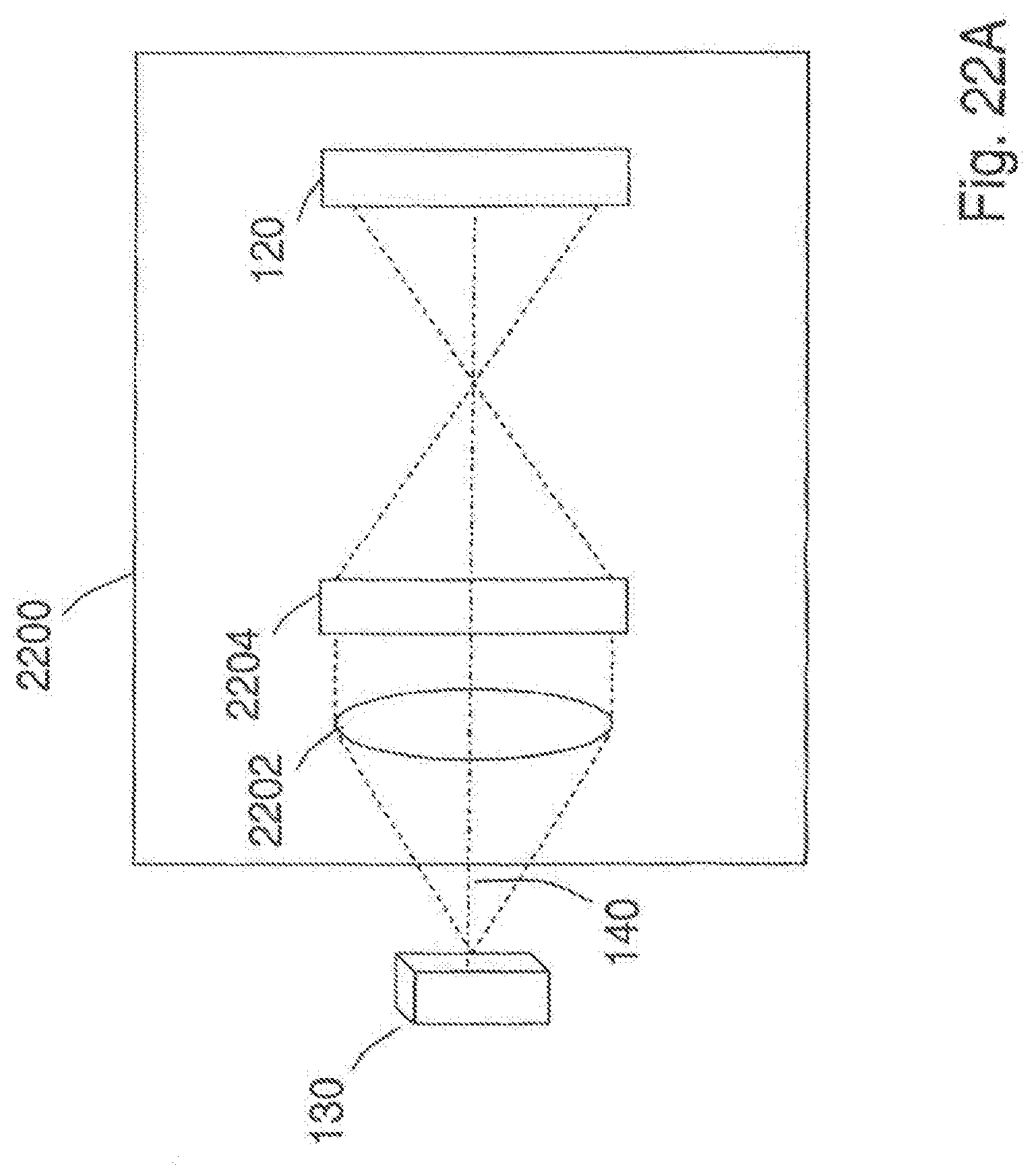

[0112] FIG. 22A is a block diagram of an example of an optical apparatus that does not require a second transforming optical element;

[0113] FIG. 22B is a block diagram of an example of an optical apparatus that does not require a first transforming optical element;

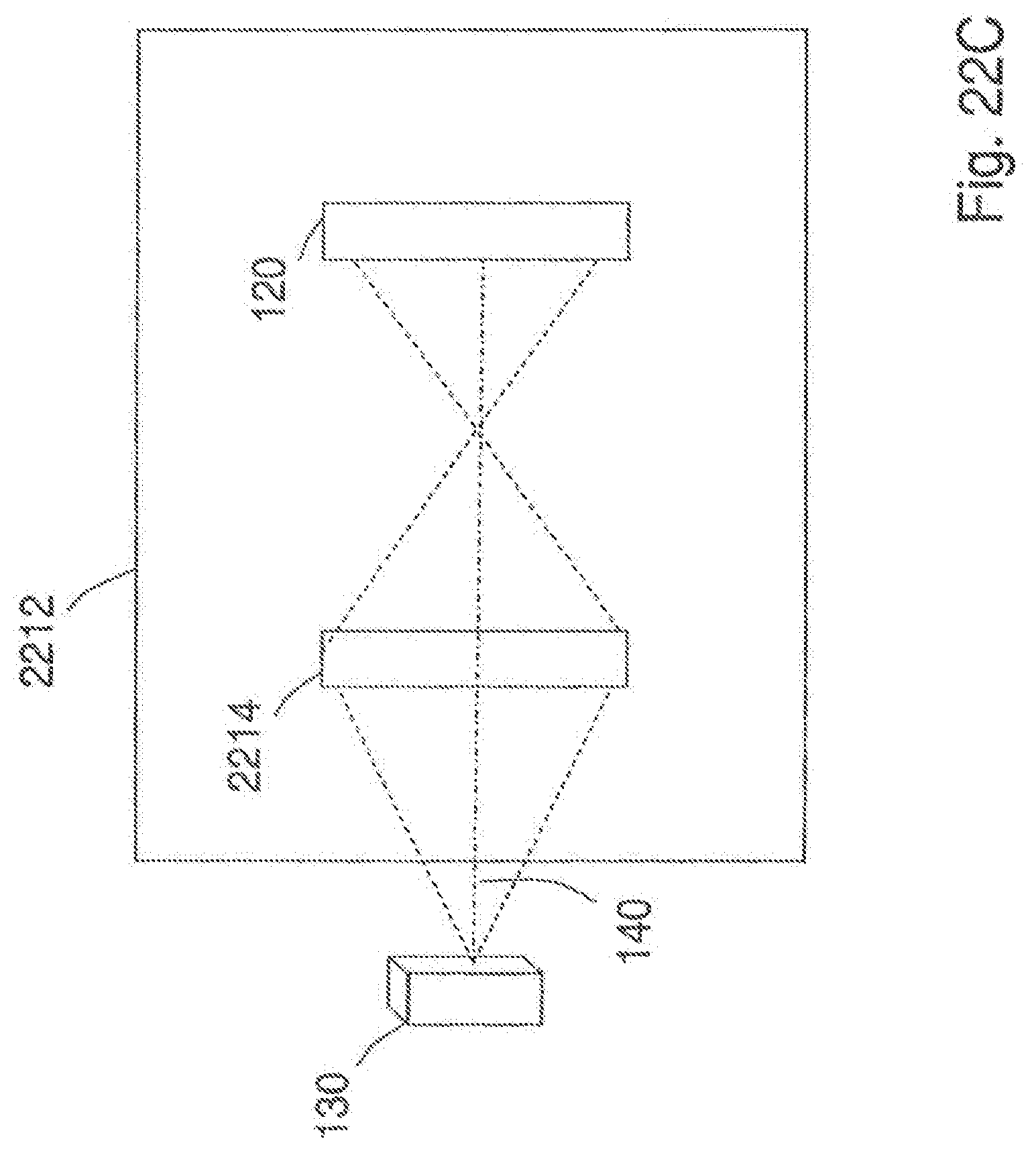

[0114] FIG. 22C is a block diagram of an example of an optical apparatus that does not require first and second transforming optical elements;

[0115] FIG. 23 is a block diagram of an embodiment of an optical apparatus including a reflective type diffractive optical element;

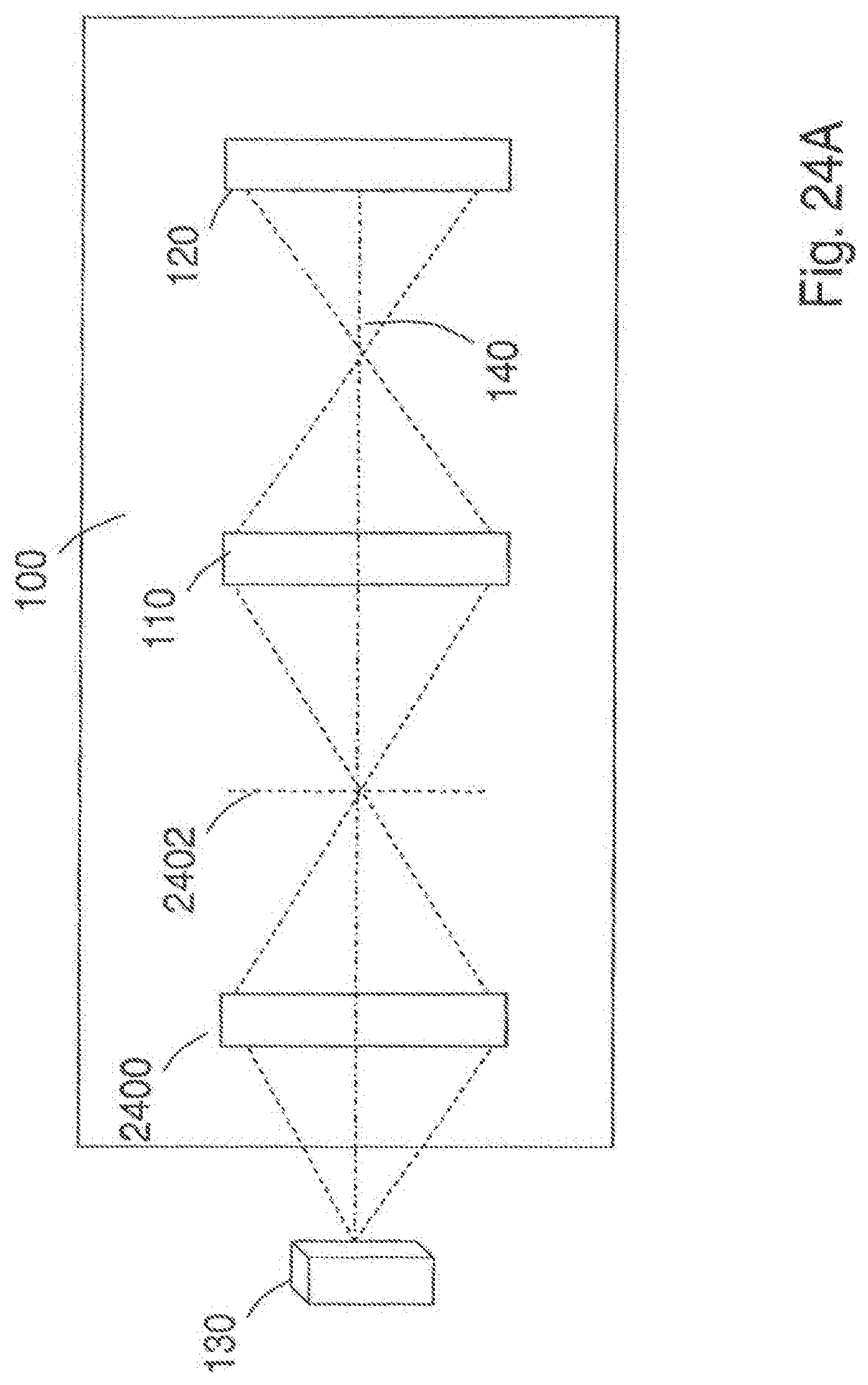

[0116] FIG. 24A is a block diagram of another embodiment of an optical apparatus;

[0117] FIG. 24B is a block diagram of another embodiment of an optical apparatus;

[0118] FIG. 25 is a block diagram of another embodiment of an optical apparatus;

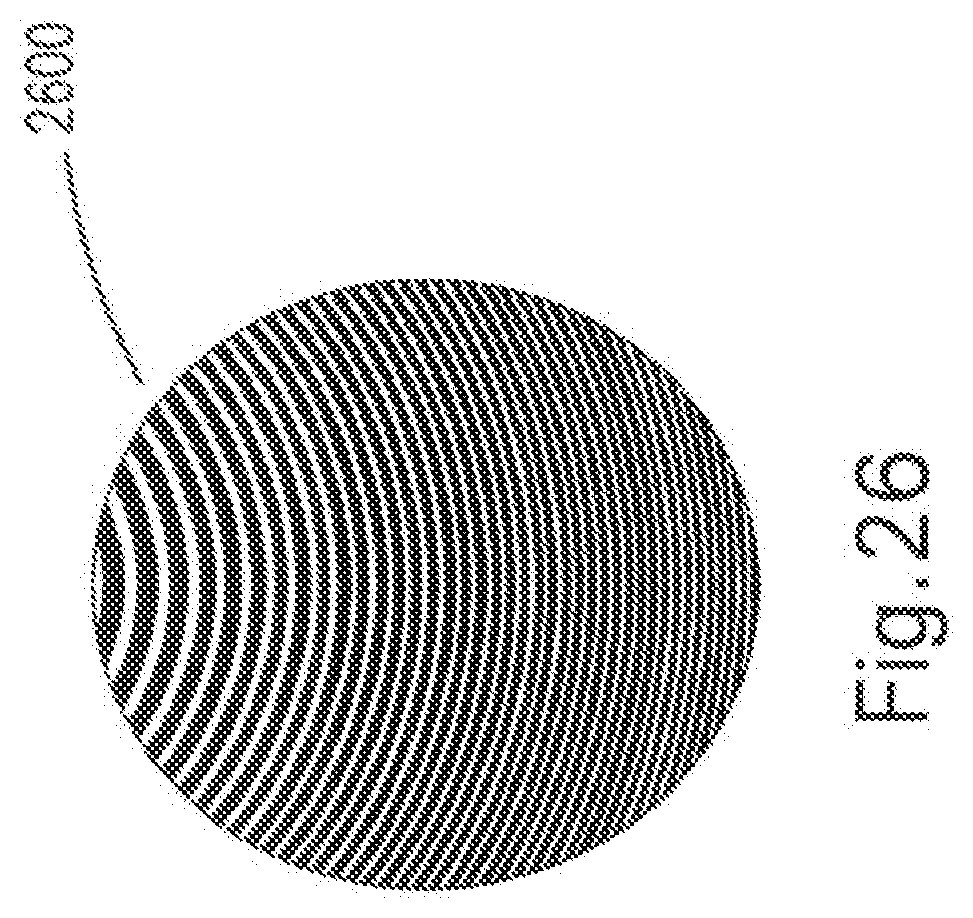

[0119] FIG. 26 is an example of an off-axis Fresnel Zone Pattern;

[0120] FIG. 27 is a block diagram of a portion of an optical apparatus including a composite mask having lenses;

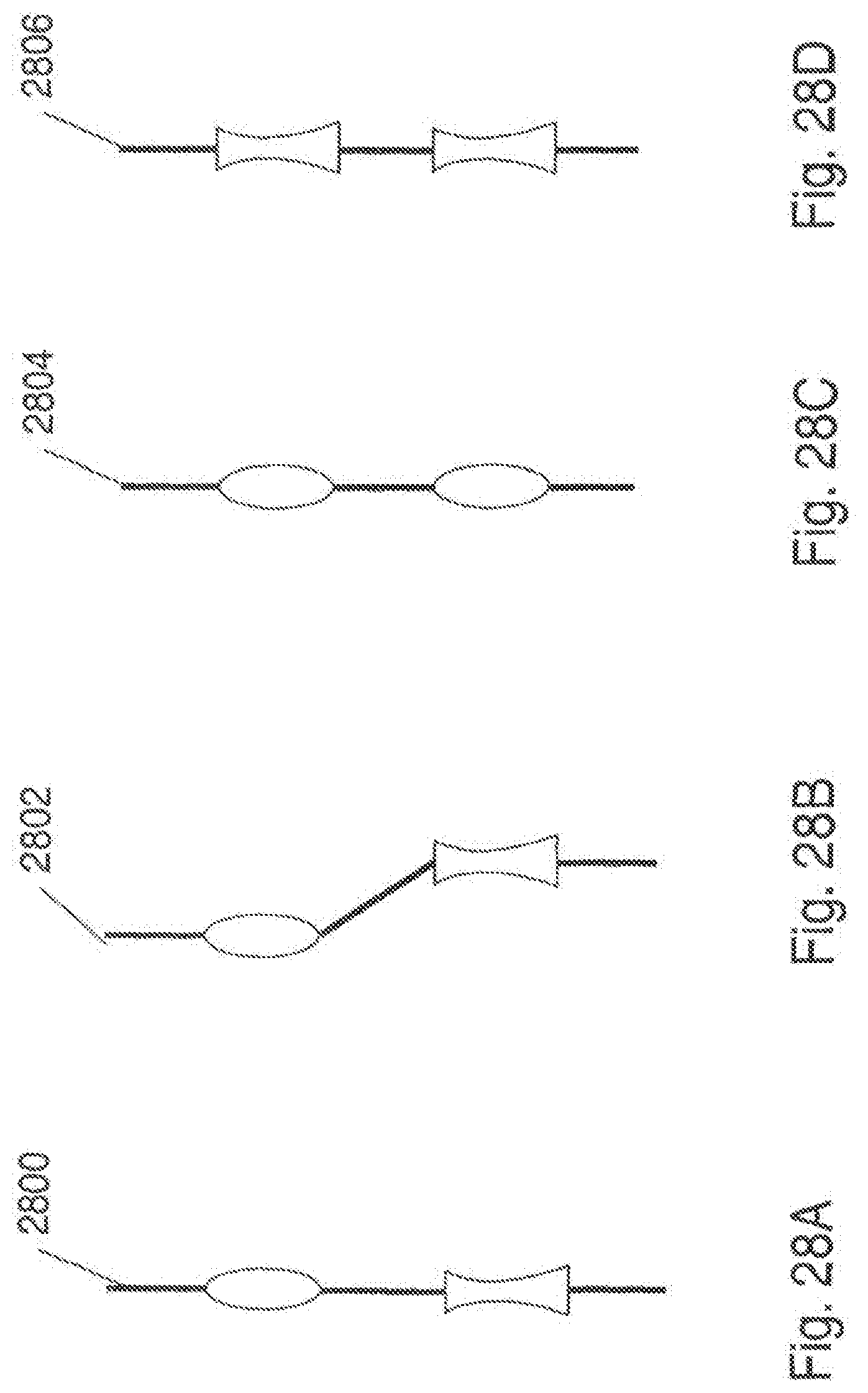

[0121] FIG. 28A is a side view of an embodiment of a composite mask;

[0122] FIG. 28B is a side view of another embodiment of a composite mask;

[0123] FIG. 28C is a side view of another embodiment of a composite mask;

[0124] FIG. 28D is a side view of another embodiment of a composite mask;

[0125] FIG. 29 is a block diagram of another embodiment of an optical apparatus;

[0126] FIG. 30 is a detailed view of an embodiment of a grating having low and high transmissivity regions;

[0127] FIG. 31A is a block diagram of an embodiment of an optical apparatus that may be used with a lined transparency or grating;

[0128] FIG. 31B is a block diagram of another embodiment of an optical apparatus;

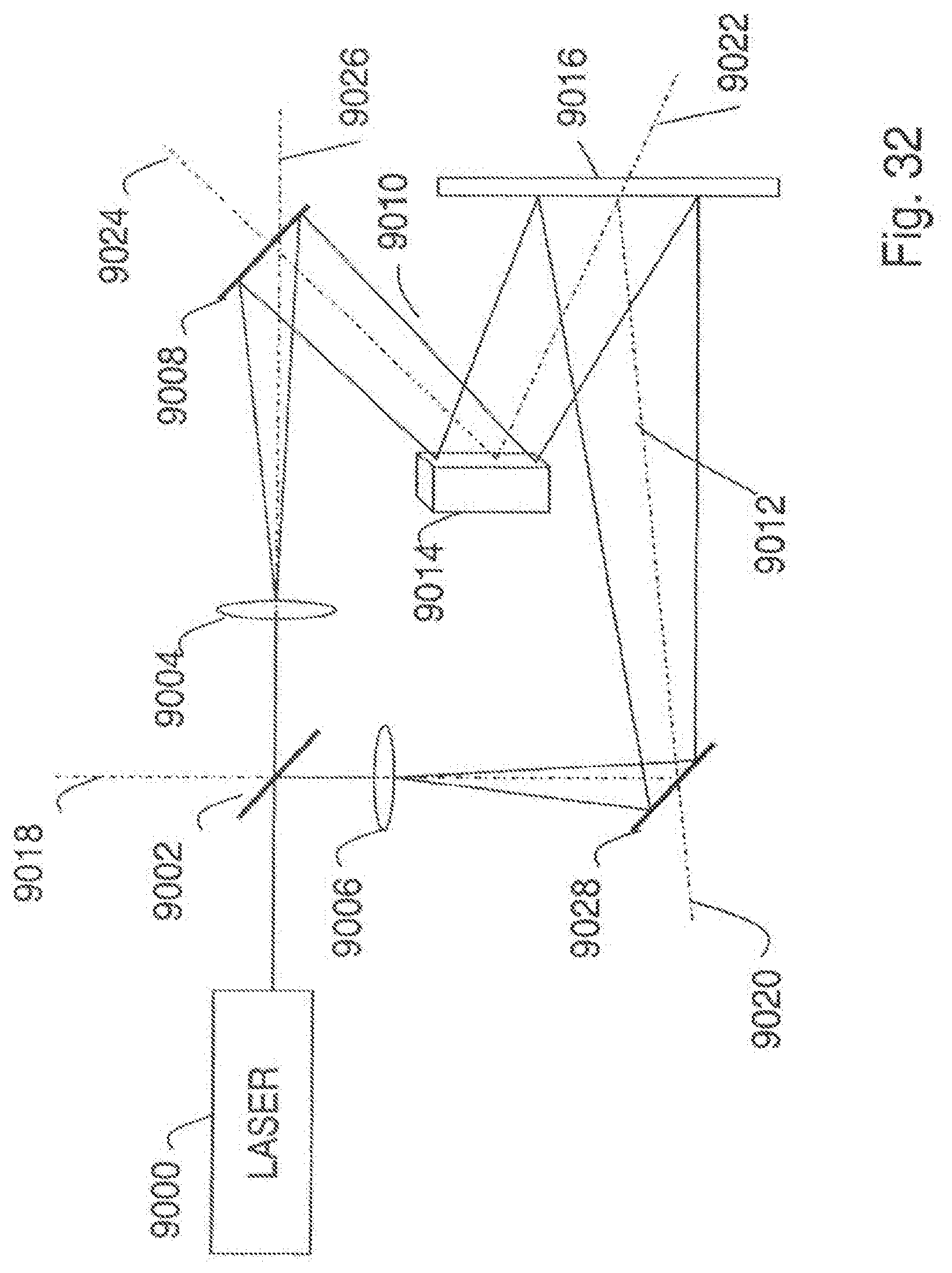

[0129] FIG. 32 is a block diagram of a conventional holographic system;

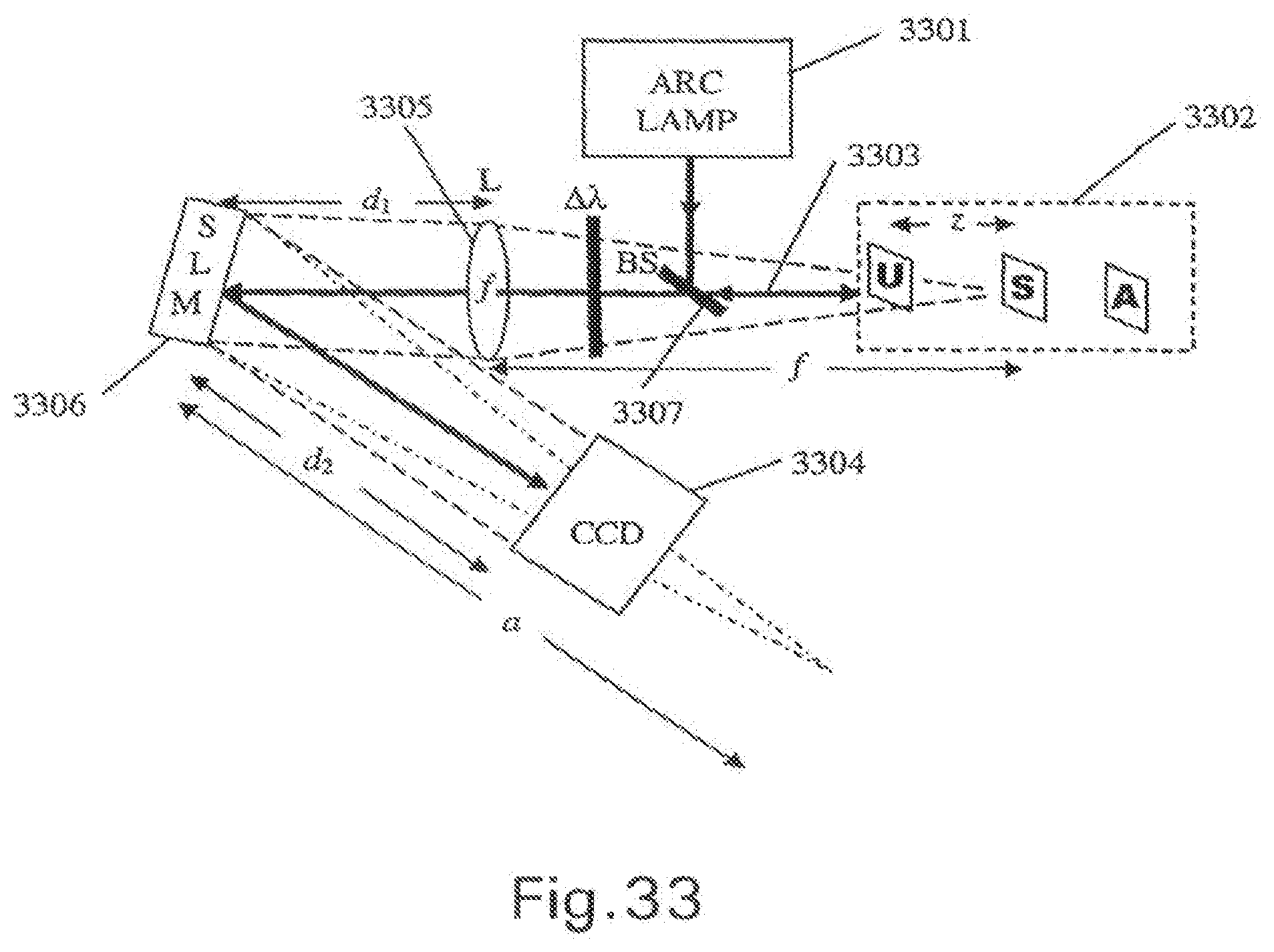

[0130] FIG. 33 is a block diagram of another embodiment of an optical apparatus;

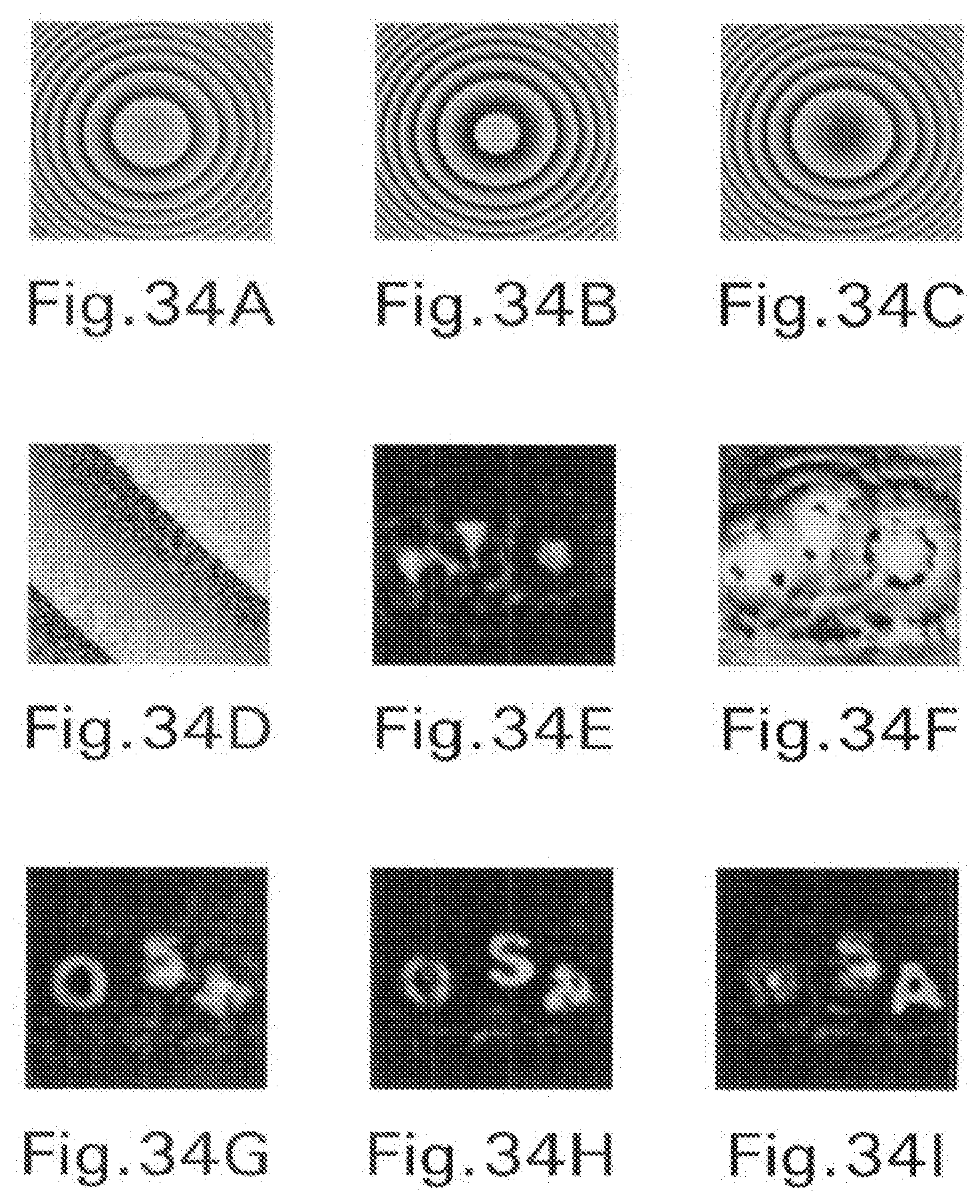

[0131] FIG. 34A shows a phase distribution of the reflection masks displayed on the SLM with θ = 0°;

[0132] FIG. 34B shows a phase distribution of the reflection masks displayed on the SLM with θ = 120°;

[0133] FIG. 34C shows a phase distribution of the reflection masks displayed on the SLM with θ = 240°;

[0134] FIG. 34D shows an enlarged portion of the reflection mask in FIG. 34A indicating that half of the SLM's pixels (randomly chosen) modulate light with constant phase;

[0135] FIG. 34E shows an enlarged portion of the reflection mask in FIG. 34A indicating that half of the SLM's pixels (randomly chosen) modulate light with constant magnitude;

[0136] FIG. 34F shows an enlarged portion of the reflection mask in FIG. 34A indicating that half of the SLM's pixels (randomly chosen) modulate light with the phase of the final on-axis digital hologram;

[0137] FIG. 34G shows a reconstruction of the hologram of the three letters at the best focus distance of 'O';

[0138] FIG. 34H shows a reconstruction of the hologram of the three letters at the best focus distance of 'S';

[0139] FIG. 34I shows a reconstruction of the hologram of the three letters at the best focus distance of 'A';

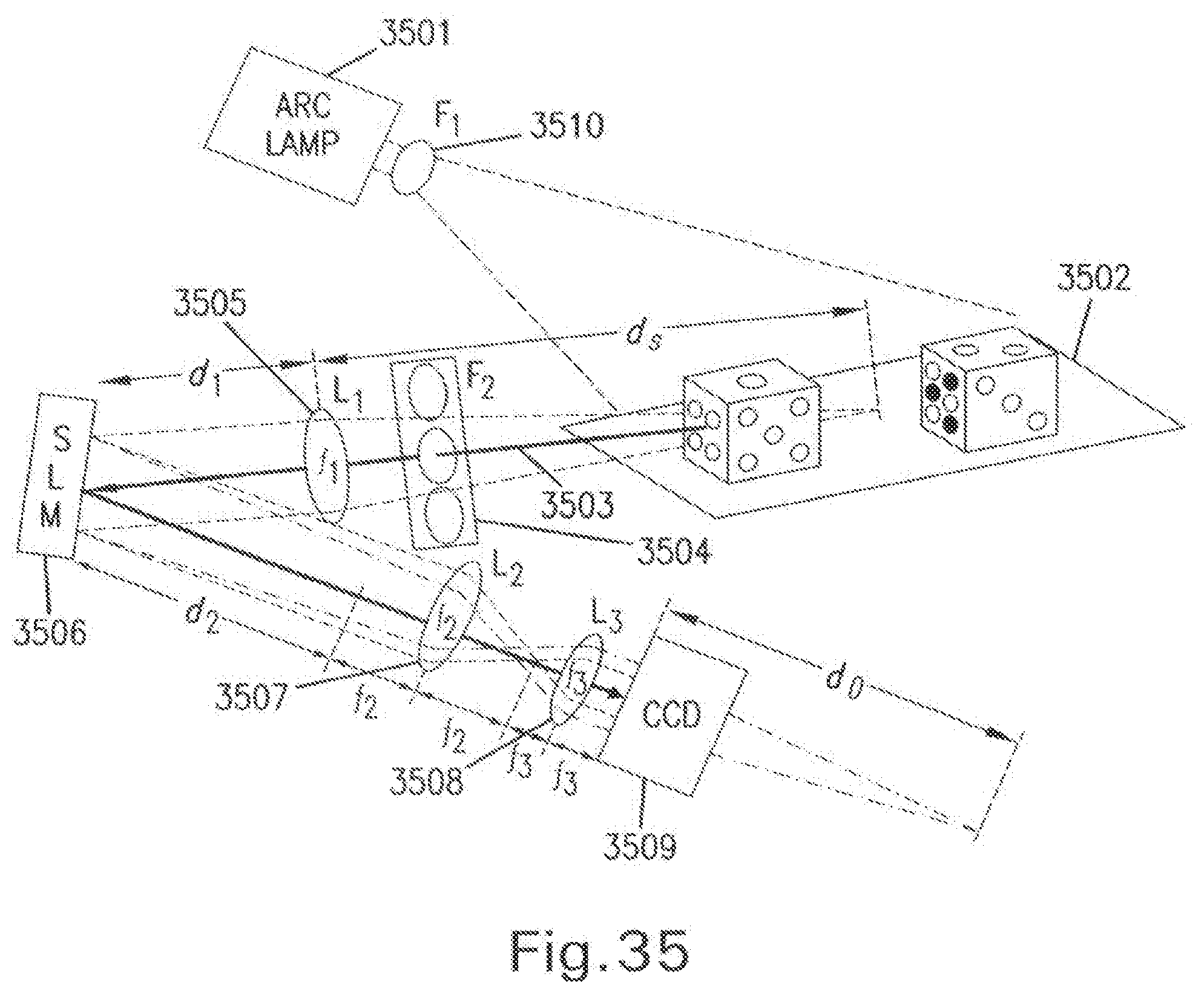

[0140] FIG. 35 is a block diagram of another embodiment of an optical apparatus;

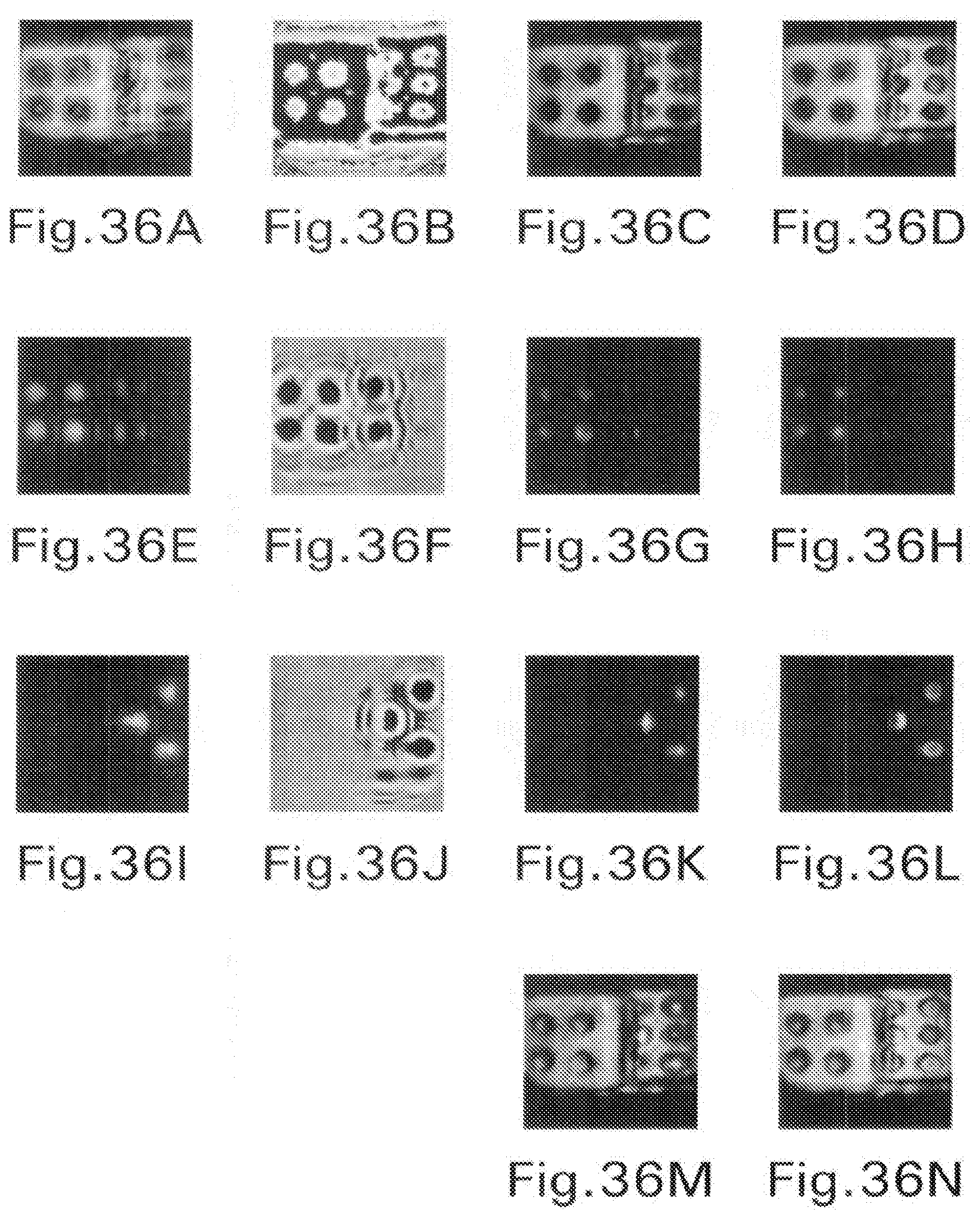

[0141] FIG. 36A shows magnitude of a complex Fresnel hologram of the dice;

[0142] FIG. 36B shows phase of a complex Fresnel hologram of the dice;

[0143] FIG. 36C shows a digital reconstruction of a non-fluorescence hologram at the face of the red dots on the die;

[0144] FIG. 36D shows a digital reconstruction of a non-fluorescence hologram at the face of the green dots on the die;

[0145] FIG. 36E shows magnitude of a complex Fresnel hologram of the red dots;

[0146] FIG. 36F shows phase of a complex Fresnel hologram of the red dots;

[0147] FIG. 36G shows a digital reconstruction of the red fluorescence hologram at the face of the red dots on the die;

[0148] FIG. 36H shows a digital reconstruction of the red fluorescence hologram at the face of the green dots on the die;

[0149] FIG. 36I shows magnitude of a complex Fresnel hologram of the green dots;

[0150] FIG. 36J shows phase of the complex Fresnel hologram of the green dots;

[0151] FIG. 36K shows a digital reconstruction of a green fluorescence hologram at the face of the red dots on the die;

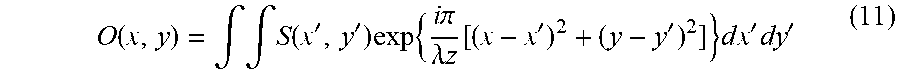

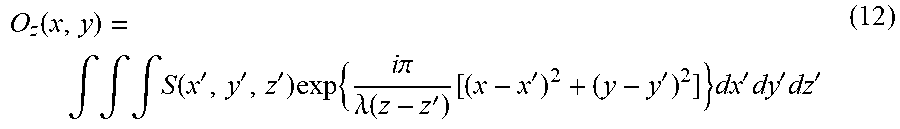

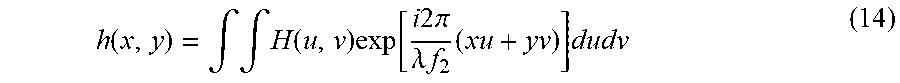

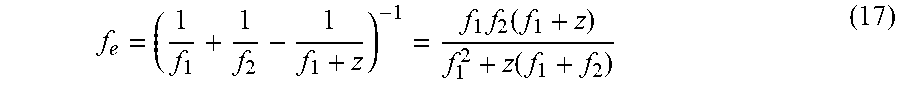

[0152] FIG. 36L shows a digital reconstruction of a green fluorescence hologram at the face of the green dots on the die;

[0153] FIG. 36M shows a composition of the digital reconstructions in FIGS. 36C, 36G, and 36K; and

[0154] FIG. 36N shows a composition of the digital reconstructions in FIGS. 36D, 36H, and 36L.

DETAILED DESCRIPTION OF THE PREFERRED EMBODIMENTS

[0155] Conventional holographic techniques may include methods of capturing a hologram of an object by capturing an interference pattern that results when a first portion of a coherent laser light beam (i.e., a reference beam) interferes with a second portion of the laser light beam reflected off the object (i.e., an object beam). A three-dimensional image of the object may be viewed by appropriately illuminating the recorded interference pattern with the reference beam.
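
In the usual textbook notation (assumed here, not taken from the application), with $O$ the object wave and $R$ the reference wave at the recording plane, the recorded intensity and, assuming a recording medium whose transmittance is proportional to that intensity, its re-illumination by the reference beam are:

$$I = |O + R|^2 = |O|^2 + |R|^2 + O R^{*} + O^{*} R$$

$$R\,I = R\left(|O|^2 + |R|^2\right) + |R|^2 O + R^2 O^{*}$$

The term $|R|^2 O$ reproduces the object wave up to a constant factor, which is what makes the three-dimensional image viewable, while $R^2 O^{*}$ is the conjugate (twin) image. Because the cross terms depend on the relative phase of $O$ and $R$, any change in the path difference between the two beams shifts the recorded fringes.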

[0156] FIG. 32 is a block diagram of a conventional holographic system including a laser 9000 that shines a coherent laser light beam along a first optical axis 9026 through a partially reflective and transmissive mirror, such as a beam splitter 9002. A first portion of the split laser beam is guided by lens 9004 and mirror 9008 to illuminate the object 9014 with the object beam 9010 along a second optical axis 9024. A second portion of the split laser beam is reflected by the beam splitter 9002 along a third optical axis 9018 and guided by lens 9006 and mirror 9028 to direct a reference beam 9012 along a fourth optical axis 9020 to an image capture device 9016, such as a photographic plate, a charge-coupled device (CCD), or a complementary metal-oxide-semiconductor (CMOS) sensor. The reference beam 9012 and the object beam 9010 reflected from the object 9014 along a fifth optical axis 9022 interfere with each other, producing an interference pattern that may be recorded as a hologram on the image capture device 9016.

[0157] Conventional holography solutions that produce a hologram by interfering two parts of a light source along different optical paths or optical axes may be very sensitive to any change in alignment of the optical paths or axes, because even minor changes in the length or direction of the optical paths or axes will change the phase relationship of the portions of light that interfere. Such a change will result in a change to the resulting interference pattern and hologram, and yield a distorted resulting image.

[0158] For example, in the holography system of FIG. 32, a factor such as motion, vibration, component deterioration or distortion, or thermal expansion may cause a slight change in the length or direction of one or more of the first, second, third, fourth, or fifth optical axes 9026, 9024, 9018, 9020, and 9022, respectively. Even a slight change in one of the axes may cause a corresponding change in the phase relationship of the reference beam 9012 and the light reflected from the object 9014 along the fifth optical axis 9022, thereby causing a significant change in the resulting interference pattern and hologram captured at the image capture device 9016.
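
A rough number shows why even a slight change matters. The sketch below converts a path-length change into the fringe phase shift Δφ = 2πΔL/λ. It assumes a single-pass change in one arm, propagation in air with refractive index 1, a 633 nm laser, and a 10 cm aluminum arm with a linear expansion coefficient of about 23 × 10^-6 per kelvin; all of these values and the helper names are illustrative assumptions, not taken from the application.

```python
import math


def fringe_phase_shift(path_change_m: float, wavelength_m: float) -> float:
    """Phase shift (radians) produced by a change in one beam's optical path length."""
    return 2.0 * math.pi * path_change_m / wavelength_m


def thermal_path_change(length_m: float, alpha_per_k: float, delta_t_k: float) -> float:
    """Linear thermal expansion of one optical arm (refractive index assumed to be 1)."""
    return length_m * alpha_per_k * delta_t_k


wavelength = 633e-9                               # assumed HeNe wavelength
delta_l = thermal_path_change(0.10, 23e-6, 1.0)   # assumed 10 cm aluminum arm, +1 K
phase = fringe_phase_shift(delta_l, wavelength)
print(f"path change {delta_l * 1e6:.2f} um -> phase shift {phase:.1f} rad "
      f"({phase / (2 * math.pi):.2f} fringes)")
```

Under these assumptions a 1 K temperature rise lengthens the arm by 2.3 µm and shifts the fringes by about 22.8 rad, roughly 3.6 fringe periods; a change of only half a wavelength (about 0.32 µm) already swaps bright and dark fringes.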

[0159] Such a sensitivity to axial variation in conventional holographic systems may result in reduced resolution in the resulting three-dimensional information.

[0160] Various conventional attempts to address such a sensitivity to axial variation have had limited success. For example, attempts have included using massive platforms and shock absorbers to dampen vibration, high tolerance mechanical optical stages to reduce positioning errors, and optical and structural materials having reduced coefficients of thermal expansion to reduce thermal expansion effects. However, these attempts generally increase the cost, size and mass of conventional holography systems, and reduce system portability and availability.

[0161] In addition, conventional holography systems may require an active light source to illuminate the object and produce the reference and object beams. Active solutions that require illumination of the object by a particular light source may limit the applicability and usefulness of conventional holography systems. For example, an active light source would not be useful in stealth military targeting holographic systems, where it would be undesirable for the targeting device to give away its position by producing light or other electromagnetic radiation. Likewise, an active radiation source would not be applicable in holographic systems that observe objects that produce their own light, such as chemiluminescent, black-body, or infrared-emitting objects. A passive holographic technique may also be useful for observing objects such as ships, which block the chemiluminescent background emission of certain bodies of water, such as the Red Sea.

[0162] In addition, conventional holographic systems that rely on a coherent light source, such as a monochromatic laser, may be unable to capture color information from the object unless multiline lasers or multiple lasers of different wavelengths are used. Systems such as those are likely to be very complex. Further, such systems may not be suitable for capturing three-dimensional information regarding objects that should not be illuminated with laser light (e.g., sensitive biological material).

[0163] Conventional holographic techniques using incoherent light to illuminate an object rely on the simplifying assumption that an incoherent source object may be considered to be composed of many individual point sources, each of which is self-coherent and can therefore create an interference pattern with its mirrored image. For the purposes of this document, incoherent light is any temporally or spatially incoherent light for which any two electromagnetic fields emitted from the same location at two different times (in the case of temporal incoherence) or emitted from two different locations at the same time (in the case of spatial incoherence) do not create an interference grating or pattern when the two fields are summed together. Various methods of incoherent holography have been proposed using this principle, such as the methods described in A. W. Lohmann, "Wavefront Reconstruction for Incoherent Objects," J. Opt. Soc. Am. 55, 1555-1556 (1965), G. Cochran, "New method of making Fresnel transforms," J. Opt. Soc. Am. 56, 1513-1517 (1966), P. J. Peters, "Incoherent holography with mercury light source," Appl. Phys. Lett. 8, 209-210 (1966), H. R. Worthington, Jr., "Production of holograms with incoherent illumination," J. Opt. Soc. Am. 56, 1397-1398 (1966), A. S. Marathay, "Noncoherent-object hologram: its reconstruction and optical processing," J. Opt. Soc. Am. A 4, 1861-1868 (1987), and G. Sirat, D. Psaltis, "Conoscopic holography," Optics Letters 10, 4-6 (1985), each of which is incorporated herein by reference.

[0164] However, the conventional incoherent holographic techniques may require impractically high levels of light intensity. Thus, conventional incoherent holographic systems require active illumination of objects, and therefore may exhibit the resulting problems and limitations described above.

[0165] In addition, conventional incoherent holographic systems may rely on illuminating the object with a bandwidth-limited source to reduce sensitivity to length differences among the plural optical paths. For example, in a conventional incoherent holographic system, acceptable variations in the relative length of the optical paths may be limited to the coherence length of the light, i.e., the light velocity multiplied by the inverse of the bandwidth. Thus, a predetermined light source having a limited bandwidth may be required, and extraneous illumination may need to be eliminated in conventional incoherent holographic systems.
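
The path-length tolerance described above is the coherence length, L_c ≈ c/Δν, which for a source of center wavelength λ and wavelength bandwidth Δλ can be written L_c ≈ λ²/Δλ. The minimal sketch below evaluates both forms; the example bandwidths are assumptions, and the order-of-unity prefactor that depends on the exact spectral shape is ignored.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def coherence_length_from_frequency(bandwidth_hz: float) -> float:
    """Coherence length L_c ~ c / delta_nu (spectral-shape prefactor ignored)."""
    return C / bandwidth_hz


def coherence_length_from_wavelength(center_m: float, bandwidth_m: float) -> float:
    """Equivalent form L_c ~ lambda**2 / delta_lambda, valid for delta_lambda << lambda."""
    return center_m**2 / bandwidth_m


# Assumed examples: a broad 100 nm visible band versus a 1 nm filtered line at 550 nm.
for label, bw in (("100 nm band", 100e-9), ("1 nm line", 1e-9)):
    lc = coherence_length_from_wavelength(550e-9, bw)
    print(f"{label}: L_c ~ {lc * 1e6:.1f} um")
```

A 100 nm band around 550 nm tolerates only about 3 µm of path mismatch (an approximate figure, since that band is not small compared with λ), while narrowing the band to 1 nm raises the tolerance to about 0.3 mm, which is why such systems restrict the source bandwidth.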

[0166] Further, conventional incoherent holographic systems may require optical arrangements having plural optical axes similar to the example shown in FIG. 32. Thus, conventional incoherent holographic systems may also be susceptible to variations in direction or length of the optical axes, and attendant problems, as described above.

[0167] In addition, conventional holographic techniques involve splitting light into two channels using mirrors, which may have a low transfer efficiency, and then recombining the split light. The efficiency may be particularly low in the recombination step, where more than 50% of the power may be lost.

[0168] Further, in a conventional Fourier hologram, each object point is transformed to a linear grating throughout the entire image plane (e.g., throughout an entire CCD plane). Thus, in conventional incoherent methods of producing Fourier holograms, light from each object point must disadvantageously be intense enough to establish a high contrast grating or pattern over the entire image plane.

[0169] Tomographic methods have been proposed to overcome the limitations of conventional holographic techniques described above. Such tomographic methods may involve capturing plural images of an object from different points of view, for example by moving the object, or the camera, or both, in between successive images, and extracting three-dimensional object information by processing the successive images. Conventional tomographic methods are described in Y. Li, D. Abookasis and J. Rosen, "Computer-generated holograms of three-dimensional realistic objects recorded without wave interference," Appl. Opt. 40, 2864-2870 (2001), and Y. Sando, M. Itoh, and T. Yatagai, "Holographic three-dimensional display synthesized from three-dimensional Fourier spectra of real existing objects," Opt. Lett. 28, 2518-2520 (2003), each of which is incorporated herein by reference.

[0170] Tomographic methods may be slow, however, as they may require plural images to be captured before and after a relative perspective between the object and camera is changed and thus may not be able to capture objects which change during the time it takes to capture the multiple images. Alternatively, tomographic methods may require more expensive or physically large equipment that includes the ability to simultaneously capture images of the object from more than one perspective. Further, the methods may be impractical for distant objects or immovable objects for which it may be difficult to change a relative perspective from the camera. In addition, if the object is moving in an unpredictable way, it may be difficult to extract information from successive images without having another source of information regarding the shape or the movements of the object.

[0171] Range-finding methods involve measuring the distance between an apparatus and various points on a surface of an object, and constructing an image or model of the object based on the distances. Further, some range-finding methods may include a predetermined or controlled movement of the object or the apparatus to predictably change the view of the object from the apparatus over time. Such range-finding methods may then use the predictable change in the view of the object to determine an exterior three-dimensional shape of the object. Conventional range-finding methods include systems that illuminate points on an object with a laser and measure the amount of time required for the laser light to travel between the object and the laser source, to determine a distance to the object based on the travel time. Related methods include "painting" an object with a laser stripe or grid and examining the deformation of the observed grid to determine geometric information of the object.
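
The travel-time measurement reduces to d = c·t/2 for a round trip. The sketch below assumes the measured time covers the full out-and-back path and uses the vacuum speed of light (ignoring the small refractive-index correction in air); the function name is hypothetical.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def range_from_round_trip(time_s: float) -> float:
    """Distance to the target from a measured round-trip (out-and-back) travel time."""
    if time_s < 0:
        raise ValueError("travel time must be non-negative")
    return C * time_s / 2.0


print(f"{range_from_round_trip(1e-6):.3f} m")   # prints 149.896 m for a 1 microsecond round trip
```

The factor of two is the main design point: a 1 µs round trip corresponds to roughly 150 m, and resolving 1 mm in range requires timing the return to about 6.7 ps.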

[0172] However, such range-finding methods require active illumination of the object by a coherent laser, and therefore are not suitable for incoherently illuminated objects or for fluorescent or luminescent emitting objects. When coherent light sources can be used, they have the attendant problems and limitations of active illumination and coherent light sources described above. Further, the methods may be difficult to perform if the object is moving in an unpredictable way, or if the object is very close to the laser source.

[0173] Other range-finding methods may include using a camera with a lens having a narrow depth of field and a calibrated automatic focusing system. The automatic focus system automatically adjusts the lens to focus on portions of the object, for example, by maximizing contrast in resulting images. Then, a range to the object is determined based on a mechanical position of the calibrated lens. However, such a calibrated focus technique may not be useful for objects having minimal optical contrast, or when the object or the apparatus is in motion. Further, the accuracy of such a system may be limited by the mechanical tolerances of the calibrated lens.
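
The contrast-maximizing step can be sketched as follows. The normalized-variance focus metric, the calibration table that maps each lens position to an object distance, and the helper names are assumptions for illustration; a real system would use its own metric and its own lens calibration.

```python
import numpy as np


def normalized_variance(image: np.ndarray) -> float:
    """Contrast metric: intensity variance divided by mean intensity."""
    mean = float(image.mean())
    return float(image.var()) / mean if mean > 0 else 0.0


def range_from_focus(images, calibrated_distances):
    """Return the calibrated distance of the lens position giving the highest-contrast image."""
    scores = [normalized_variance(img) for img in images]
    best = int(np.argmax(scores))
    return calibrated_distances[best], scores


# Hypothetical focus stack: defocus modeled as reduced checkerboard contrast.
checker = np.indices((8, 8)).sum(axis=0) % 2          # 0/1 checkerboard
stack = [np.full((8, 8), 0.5), 0.25 + 0.5 * checker, checker.astype(float)]
distance, scores = range_from_focus(stack, [1.0, 1.5, 2.0])  # hypothetical distances in metres
```

The sketch also shows the limitation noted above: for an object with little optical contrast, every lens position yields a nearly equal score, and the argmax becomes unreliable.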

[0174] Another conventional method includes extracting an object distance from shadows in an image. For example, a conventional shadow method includes capturing an image of shadows produced when electromagnetic radiation (e.g., x-ray radiation or light) from an object is blocked by a mask with a concentric ring pattern, such as a Fresnel Zone Pattern (FZP, also known as a transmission zone plate, zone plate, zone pattern, or Fresnel zone plate), placed between the object and an image plane.

[0175] For the purposes of this application, an FZP may be understood to be a two-dimensional pattern of alternating light and dark concentric rings in which a thickness (e.g., radial width) of successive rings is inversely proportional to the distance from the center of the rings. For example, the $n$-th ring of an FZP may transition (i.e., from dark to light or light to dark) at a radius $r_n$ described by the following equation (or by an approximation thereof):

$$r_n = \sqrt{n f \lambda}$$

where $n$ is an integer, $\lambda$ is the wavelength of the applied light and $f$ is the focal length of the FZP.
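
The ring-radius relation can be checked numerically. The sketch below computes the transition radii r_n = sqrt(n f λ) and a binary FZP mask, and confirms that ring widths shrink inversely with radius: since (r_{n+1} − r_n)(r_{n+1} + r_n) = fλ, each width equals fλ/(2·r_mid), where r_mid is the ring's mid-radius. The focal length, wavelength, grid size, pitch and helper names are illustrative assumptions.

```python
import numpy as np


def zone_radii(n_max: int, focal_length: float, wavelength: float) -> np.ndarray:
    """Transition radii r_n = sqrt(n * f * lambda) for n = 1..n_max."""
    n = np.arange(1, n_max + 1)
    return np.sqrt(n * focal_length * wavelength)


def binary_fzp(size: int, pitch: float, focal_length: float, wavelength: float) -> np.ndarray:
    """Binary FZP: 1 (clear) in even-numbered zones, 0 (opaque) in odd zones, centred on the grid."""
    coords = (np.arange(size) - size // 2) * pitch
    x, y = np.meshgrid(coords, coords)
    zone_index = np.floor((x**2 + y**2) / (focal_length * wavelength))
    return (zone_index % 2 == 0).astype(np.uint8)


radii = zone_radii(10, focal_length=0.2, wavelength=550e-9)
widths = np.diff(radii)
# Exact identity: width = f*lambda / (r_n + r_{n+1}) = f*lambda / (2 * mid-radius)
midpoints = 0.5 * (radii[:-1] + radii[1:])
estimate = 0.2 * 550e-9 / (2 * midpoints)
```

The binary mask uses the same transition rule as the equation above: a pixel's zone number is the integer part of r²/(fλ), so its boundaries fall exactly at the radii r_n.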

[0176] When used with scattered point light sources, such as stars, the relative positions of the centers of the shadows in the image may be extracted from the image, and distances to the corresponding point light sources may be calculated from the shadow center locations in the image. Such a method is described in L. Mertz and N. O. Young, "Fresnel transformations of images," in Proceedings of Conference on Optical Instruments and Techniques, K. J. Habell, ed. (Chapman and Hall, London 1961) p. 305, incorporated herein by reference.

[0177] However, such conventional shadow ranging methods are of limited use when the captured electromagnetic radiation, such as visible light, has a wavelength comparable to the spacing between the rings of the FZP. For example, visible light may be diffracted by the edges of the rings in the FZP, causing shadows in the image to have poorly defined or smeared edges, thereby making it difficult or impossible to isolate the centers of the resulting shadow patterns (e.g., see Mertz and Young at FIG. 2).

[0178] Conventional scanning holographic methods involve scanning an object by illuminating a surface of the object with a moving pattern of Fresnel Zone Plates (FZPs), serially sensing the intensity of reflected or transmitted light from the object (i.e., a one-dimensional intensity signal) as the pattern moves across the object, and integrating and processing the serially sensed light intensities to generate three-dimensional information of the object. In particular, a convolution between the object and the moving Fresnel Zone Patterns is used to extract three-dimensional information regarding the object. Conventional scanning holographic methods are described in T.-C. Poon, "Three-dimensional image processing and optical scanning holography," Advances in Imaging and Electron Physics 126, 329-350 (2003), and G. Indebetouw, A. El Maghnouji, R. Foster, "Scanning holographic microscopy with transverse resolution exceeding the Rayleigh limit and extended depth of focus," J. Opt. Soc. Am. A 22, 892-898 (2005), each of which is incorporated herein by reference.

[0179] However, conventional scanning holographic methods require that a pattern be moved across the object while the location of the object is fixed, thereby limiting the usefulness of the method. Alternatively, the pattern may be fixed and the object moved across the pattern, resulting in similar limitations.

[0180] In addition, the scanning process may be a relatively slow process requiring mechanical movements. Thus, scanning is susceptible to problems produced by mechanical deterioration and inaccuracy, such as reduced resolution as described above.

[0181] Further, since scanning holographic methods serially capture a one-dimensional light intensity signal from the object and integrate the serial signal to extract three-dimensional information, such systems are highly susceptible to variations in the relative positions of the scanning apparatus and the object over the duration of the scan. For example, if such a system captures a first intensity during a first part of the scan, and a second intensity during a second part of the scan, any variation in the relative positions of the object and the scanning apparatus (or even minor changes in the internal arrangement of elements of the scanning apparatus) may adversely introduce variations into the captured second intensity, thereby reducing an accuracy, resolution and usefulness of the system.

[0182] In addition, scanning holographic systems are generally large and complex; therefore, they may not be suitable for applications requiring portability or low cost. Further, conventional scanning holographic systems may require an object to be illuminated by an interference pattern produced by interfering laser light, may involve a very slow recording process that could take several minutes or more for each object capture, and may disadvantageously require significant mechanical movement of recording device components and/or the object during the recording process.

[0183] Holograms recorded by incoherent light open many new applications, such as outdoor and astronomical holography (J. B. Breckinridge, "Two-Dimensional White Light Coherence Interferometer," Appl. Opt. 13, 2760 (1974)) and fluorescence holographic microscopy (G. Indebetouw, A. El Maghnouji, R. Foster, "Scanning holographic microscopy with transverse resolution exceeding the Rayleigh limit and extended depth of focus," J. Opt. Soc. Am. A 22, 892-898 (2005)). The oldest methods of recording incoherent holograms have made use of the property that every incoherent object is composed of many source points, each of which is spatially self-coherent and can therefore create an interference pattern with light coming from the point's mirrored image. Under this general principle there are various types of holograms (J. B. Breckinridge, "Two-Dimensional White Light Coherence Interferometer," Appl. Opt. 13, 2760 (1974)) (A. W. Lohmann, "Wavefront Reconstruction for Incoherent Objects," J. Opt. Soc. Am. 55, 1555-1556 (1965)) (G. Sirat, D. Psaltis, "Conoscopic holography," Optics Letters 10, 4-6 (1985)), including Fourier holograms (J. B. Breckinridge, "Two-Dimensional White Light Coherence Interferometer," Appl. Opt. 13, 2760 (1974)) (G. W. Stroke and R. C. Restrick, "Holography with Spatially Incoherent Light," Appl. Phys. Lett. 7, 229 (1965)) and Fresnel holograms (G. Cochran, "New method of making Fresnel transforms," J. Opt. Soc. Am. 56, 1513-1517 (1966)) (P. J. Peters, "Incoherent holography with mercury light source," Appl. Phys. Lett. 8, 209-210 (1966)). The beam interference process demands high levels of light intensity, extreme stability of the optical setup, and a relatively narrow-bandwidth light source. These limitations have prevented holograms from becoming widely used in many practical applications.

[0184] More recently, two groups of researchers have proposed computing holograms of 3-D incoherently illuminated objects from a set of images taken from different points of view (Y. Li, D. Abookasis and J. Rosen, "Computer-generated holograms of three-dimensional realistic objects recorded without wave interference," Appl. Opt. 40, 2864-2870 (2001)) (Y. Sando, M. Itoh, and T. Yatagai, "Holographic three-dimensional display synthesized from three-dimensional Fourier spectra of real existing objects," Opt. Lett. 28, 2518-2520 (2003)). This method, although promising, is relatively slow since it is based on capturing tens of images of the scene from different view angles.

[0185] Another method is called scanning holography (G. Indebetouw, A. El Maghnouji, R. Foster, "Scanning holographic microscopy with transverse resolution exceeding the Rayleigh limit and extended depth of focus," J. Opt. Soc. Am. A 22, 892-898 (2005)) (T.-C. Poon, "Three-dimensional image processing and optical scanning holography," Adv. in Imag. & Elec. Phys. 126, 329-350 (2003)), in which a Fresnel zone plate (FZP) pattern scans the object and, at each scanning position, a point detector integrates the light intensity. The overall process yields a Fresnel hologram obtained as a convolution between the object and the FZP pattern. However, the scanning process is relatively slow and relies on mechanical movement. A similar convolution is also performed in the present work; however, unlike scanning holography, we propose here a convolution without movement.
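As a minimal one-dimensional sketch of the convolution described above (an illustration of the mathematics, not of the apparatus), the following Python computes a hologram as the discrete convolution of sampled object intensities with a sinusoidal FZP intensity pattern. The kernel form 0.5·(1 + cos(β·x²)), the constant `beta`, and the helper names `fzp_kernel` and `convolve` are illustrative assumptions. Because the object intensities and the kernel are both non-negative, the result is a real, non-negative intensity distribution, and a single object point simply reproduces a shifted copy of the FZP.

```python
import math


def fzp_kernel(half_width, beta):
    """Sampled sinusoidal FZP intensity 0.5 * (1 + cos(beta * x**2)) for x = -half_width..half_width.

    `beta` is a hypothetical zone-plate constant standing in for pi / (wavelength * z).
    """
    return [0.5 * (1.0 + math.cos(beta * x * x)) for x in range(-half_width, half_width + 1)]


def convolve(obj, kernel):
    """Full discrete 1-D convolution of non-negative object intensities with the kernel."""
    out = [0.0] * (len(obj) + len(kernel) - 1)
    for i, o in enumerate(obj):
        if o == 0.0:
            continue  # dark object samples contribute nothing
        for j, k in enumerate(kernel):
            out[i + j] += o * k  # each object point adds a shifted, weighted FZP
    return out


# Hypothetical object: two incoherent points of different brightness.
kernel = fzp_kernel(half_width=20, beta=0.05)
obj = [0.0] * 40
obj[10] = 1.0
obj[25] = 0.5
hologram = convolve(obj, kernel)
print(min(hologram) >= 0.0, len(hologram))
```

Incoherent object points add in intensity rather than in complex amplitude, which is why a plain linear convolution of intensities is the appropriate model in this sketch.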

[0186] Mertz and Young (L. Mertz and N. O. Young, "Fresnel transformations of images," in Proceedings of Conference on Optical Instruments and Techniques, K. J. Habell, ed. (Chapman and Hall, London, 1961), p. 305) already proposed holographic photography based on a convolution without movement between the object and FZPs. However, their process relies on geometrical optics, which cannot yield good imaging results in the optical regime. In contrast, the correlator suggested here for implementing the holographic recording is valid in the optical regime, since its operating principle is based on diffraction theory (J. Goodman, Introduction to Fourier Optics, 2nd ed., McGraw-Hill, New York, 1996, pp. 63-95 (Chapter 4)).

[0187] Referring now to the drawings, like reference numerals designate identical or corresponding parts throughout the several views.

[0188] FIG. 1 is a block diagram of an optical apparatus 100 according to a first embodiment of the present invention. The optical apparatus 100 is configured to capture three-dimensional information of an object 130 and includes an optical assembly 110 and an image capture assembly 120. In particular, the optical assembly 110 receives light from the object 130 along a receiving optical axis 140. For example, the optical assembly 110 may receive light from the sun 150 that is reflected or scattered by reflecting surfaces on the object 130. The received light may be polychromatic and incoherent, such as reflected sunlight, or may include monochromatic or coherent light. In addition, the light from the object may be fluorescent or chemiluminescent light emitted by the object. In this embodiment, the optical apparatus 100 does not illuminate the object but passively receives light from the object.

[0189] The optical assembly 110 transforms the received light according to a transformation described below, and transmits the transformed light along the receiving optical axis 140. The image capture assembly 120 receives the transformed light from the optical assembly 110, and captures a two-dimensional intensity image of the transformed light. The captured two-dimensional intensity image includes three-dimensional or geometric information regarding the portions of the object 130 from which light is received at the optical assembly 110. The three-dimensional or geometric information is encoded in the captured two-dimensional intensity image as a Fresnel hologram. In other words, a hologram, as discussed in this specification, is a two-dimensional image that encodes three-dimensional information. In addition, the present invention also applies to capturing a volume hologram, which is a three-dimensional intensity image that encodes three-dimensional or geometric information of an object. The image capture assembly 120 may extract the three-dimensional information from the captured image. The image capture assembly may be an opaque light capturing device. An opaque device is understood to mean a device that is not transparent or translucent to electromagnetic radiation of relevant frequencies and intensities, and therefore such a device does not allow such electromagnetic radiation to pass through.

[0190] A Fresnel hologram is a real, positive light-intensity distribution that encodes a complex-valued wavefront distribution, including three-dimensional information regarding the light-scattering surface of the object. Further, in a Fresnel hologram, each point on the object is encoded into a portion of a sinusoidal Fresnel zone plate with an entire range of spatial frequency components present, as noted by Goodman, "Introduction to Fourier Optics," 3rd Ed., Roberts & Company Publishers, 2005, incorporated herein by reference. Thus, a three-dimensional image of the object may be recreated optically, by appropriately illuminating a transparency containing the Fresnel hologram, or computationally, by a computer using electronic image data of the Fresnel hologram. The recreated three-dimensional image of the object includes three-dimensional information regarding the shape and distance of an observable surface of the object.
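How a sinusoidal zone plate carries distance information can be illustrated with an idealized model, which is an assumption and not the exact transfer function of the apparatus: in the paraxial approximation, a point source at distance z interfering with an equal-amplitude on-axis plane wave gives an intensity proportional to 1 + cos(πr²/(λz)), a sinusoidal Fresnel zone plate whose n-th dark ring lies at r_n = √(nλz). The sketch below (helper names `point_hologram`, `zone_radius` and `depth_from_first_zone` are hypothetical) evaluates that pattern and inverts the first-ring radius to recover z, showing that the zone spacing encodes the point's distance.

```python
import math


def point_hologram(r, wavelength, z):
    """Normalized paraxial intensity 0.5 * (1 + cos(pi * r**2 / (wavelength * z))).

    Assumed model: point source at distance z interfering with an
    equal-amplitude on-axis plane wave.
    """
    return 0.5 * (1.0 + math.cos(math.pi * r * r / (wavelength * z)))


def zone_radius(n, wavelength, z):
    """Radius at which the phase pi * r**2 / (wavelength * z) equals n * pi."""
    return math.sqrt(n * wavelength * z)


def depth_from_first_zone(r1, wavelength):
    """Invert r1 = sqrt(wavelength * z) to recover the source distance z."""
    return r1 * r1 / wavelength


wavelength = 550e-9  # assumed wavelength in metres
for z in (0.1, 0.4):  # assumed source distances in metres
    r1 = zone_radius(1, wavelength, z)
    print(f"z = {z} m: first dark ring at r = {r1 * 1e3:.3f} mm, "
          f"recovered z = {depth_from_first_zone(r1, wavelength):.3f} m")
```

In this model, quadrupling the distance doubles the ring radius, so nearer points produce finer zone plates than farther ones.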

[0191] The optical apparatus 100 may advantageously capture the three-dimensional object information without moving or being moved (i.e., the spatial relationship between the optical apparatus 100 and the object 130 may remain unchanged from a time before an image is captured to a time after the three-dimensional information is extracted from the captured image by the image capture assembly 120). In addition, the optical apparatus 100 may advantageously capture the three-dimensional object information while one or both of the object and the optical apparatus 100 are in motion.

[0192] Moreover, the optical apparatus 100 does not project any pattern onto the object, as is done in conventional or scanning holographic methods. Further, the optical apparatus 100 does not include any parts that are required to move while light is being received from the object, such as the scanning aperture used in scanning holography. Without parts that move during image capture, the optical apparatus 100 may be, for example, less expensive to produce and use, more reliable, quieter, and faster than an apparatus used for scanning holography. Further, compared with conventional holographic systems that require active illumination (for example, illumination by a laser), the present invention advantageously has a simpler design that may be applied to more types of imaging.

[0193] In addition, the present invention does not require interference between light from the illumination source (i.e., light not scattered by the object) and light scattered by the object. Instead, the current approach diffracts the light scattered by the object, which may be understood as a mutual interference between portions of the electromagnetic radiation wavefronts coming from the object itself, rather than an interference between such scattered light and other light from the source. Because this mutual interference may be performed by a few collinear electromagnetic elements (e.g., lenses and masks, or DOEs, as described below), or even by a single electromagnetic element (e.g., a single DOE, as described below), all of the interfering wavefronts pass through the same electromagnetic elements, and the variations between the lengths of their optical paths may therefore be more easily controlled and minimized.

[0194] Further, the optical apparatus 100 may advantageously capture the three-dimensional object information in a single image (e.g., a single exposure).

[0195] Moreover, the optical apparatus 100 may advantageously capture images at very low light intensity levels. Conventional holographic systems may require beam splitters and/or mirrors that cause some of the received light to be lost or wasted. In contrast, the optical apparatus 100 does not require beam splitters or mirrors, and therefore may be able to capture images at low light intensity levels.

[0196] Further, conventional holographic systems may produce Fourier holograms, in which the interference fringes contributed by each object point are spread over the entire image plane. Such conventional systems may require greater light intensity than the optical apparatus 100, which encodes each object point as a Fresnel zone pattern; the fringe pattern of a particular object point may thus occupy only a portion of the image plane, advantageously allowing for lower light intensities.

[0197] In addition, the optical apparatus 100 advantageously receives and transmits light along only a single axis, thereby reducing susceptibility to axial variation and simplifying the design, manufacture and use of the optical apparatus 100. Further, the optical apparatus 100 is coaxial and self-interfering. In particular, in the present embodiment, light from separate light paths is not interfered to produce an interference pattern or hologram. Instead, the hologram is produced by diffraction of light in the optical assembly 110. Although diffraction may be understood as being produced by interference between portions of a light wavefront (i.e., each point along the wavefront being considered a point source of light according to Huygens' wave theory of light), diffraction produced by a single coaxial assembly, as in the present embodiment, is much less sensitive to variations in optical paths between interfering light sources. Light is self-interfered in the present embodiment because the only interference is between light waves passing through various portions of the same optical element (e.g., the optical assembly 110, or the mask 304 in FIG. 3, described below). It is much easier to minimize path length and angle variations for paths passing through the same optical element, as in the present embodiment, than to control path variations between separate optical paths passing through separate optical elements along separate optical axes, as in conventional holography systems. In addition, the optical apparatus 100 may advantageously be used to capture polychromatic incoherent light received from the object. Therefore, a full-color three-dimensional image may be recreated from the Fresnel hologram recorded by the apparatus.

[0198] Although embodiments within this document are described as transmitting and receiving light, capturing light images and including optical assemblies, the invention is also applicable to other types of electromagnetic radiation. Thus, the invention also includes an electromagnetic radiation apparatus that includes an electromagnetic radiation assembly that receives a received electromagnetic radiation from an object.

[0199] FIG. 2 is a block diagram that illustrates an example of captured geometric information according to embodiments of the present invention. According to the example of FIG. 2, an object 200 is illuminated by a light source or sources (e.g., the sun 150) causing light to be scattered or reflected by various light radiating portions of the object 200. Three example light radiating portions 206, 208 and 210 scatter light rays 216, 218 and 220, respectively. These example light rays travel towards the optical apparatus 100 (shown in FIG. 2 without the detail of FIG. 1). Light captured by the optical apparatus 100, according to the present invention, includes geometric information regarding the distance between the object and the optical apparatus as well as the shape of observable surfaces of the object 200 from which light is received at the optical apparatus 100. For example, the captured light includes information regarding a distance traveled by the ray of light 218, and in particular, includes the distance between the light radiating portion 208 and the optical apparatus 100. Further, the captured light also includes geometric information regarding a horizontal distance of the light radiating portion 208, for example, a horizontal distance 212 between an edge of the object 200 and the light radiating portion 208. In addition, the captured light also includes geometric information regarding a vertical distance of the light radiating portion 208, for example, a vertical distance 214 between an edge of the object 200 and the light radiating portion 208. In this example, horizontal distance 212 and vertical distance 214 are distances measured in a measurement plane 204 that passes through radiating portion 208.

[0200] Thus, an optical apparatus according to the present embodiment may be configured to capture light that includes geometric information regarding each portion of each object from which the light is received at the optical apparatus. From this geometric information, the size, shape and location of the visible portions of each object may be determined. For example, in FIG. 2, if light is scattered by each external surface of the object 200, and at least a portion of the scattered light is received at the optical apparatus 100, then the apparatus 100 may capture light including geometric information regarding the dimensions (e.g., height, width and depth) of each visible surface of the object 200, as well as information regarding the distance between the object 200 and the front surface of the optical apparatus 100.

[0201] Although light is scattered by external surfaces in FIG. 2, one of skill in the art will understand that such an optical apparatus is also capable of capturing light received from an internal surface of the object 130 that radiates light to the optical apparatus 100 through a translucent or transparent exterior portion of the object 130. In that case, the captured geometric information may include geometric information regarding an interior portion of the object.

[0202] The optical assembly 110 may be any optical assembly configured to control the complex amplitude of the transmitted light according to the complex transformation function described below. For example, the optical assembly 110 may include one or more refractive lenses, one or more diffractive optical elements (DOEs), or one or more spatial light modulators (SLMs).

[0203] In the regime of diffraction theory, an incoherent correlator may include any system that, when illuminated by a single point at some position on the input plane, produces at the output plane a pattern of the Fourier transform of the mask transparency on the system's aperture, centered around a point whose location is linearly related to the input point's location. Because the input points are mutually incoherent, their patterns add in intensity; thus, the incoherent correlator produces an output image that includes a contribution from every point in the input plane.
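A one-dimensional sketch of this definition follows, under assumptions that are not taken from the specification: the mask is taken to be a rectangular aperture, so the pattern is the normalized sinc² intensity of its Fourier transform; the linear relation between input and output positions is a single hypothetical factor `magnification`; and `scale` is a hypothetical width parameter. The helper names `sinc_sq` and `correlator_output` are likewise illustrative. Each input point contributes the same pattern centered at `magnification * x_s`, and the contributions of mutually incoherent points are summed in intensity.

```python
import math


def sinc_sq(u):
    """Normalized sinc^2: intensity of the Fourier transform of a 1-D rectangular mask."""
    if u == 0.0:
        return 1.0
    s = math.sin(math.pi * u) / (math.pi * u)
    return s * s


def correlator_output(points, magnification, xs, scale=1.0):
    """Incoherent superposition on the output plane.

    Each input point (x_s, intensity) contributes
    intensity * sinc_sq((x - magnification * x_s) / scale),
    i.e. the same pattern centred at a position linearly related to x_s.
    """
    return [
        sum(intensity * sinc_sq((x - magnification * x_s) / scale) for x_s, intensity in points)
        for x in xs
    ]


xs = [k * 0.1 for k in range(-100, 101)]  # output-plane sample positions (arbitrary units)
single = correlator_output([(2.0, 1.0)], magnification=-1.5, xs=xs)
peak_x = xs[single.index(max(single))]
print(f"single point at x_s = 2.0 -> pattern centred near x = {peak_x:.2f}")
```

Changing `magnification` moves every contribution by the same linear rule, which is the property the definition relies on; sinc² is only a stand-in for whatever pattern the actual mask produces.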