System And Method For Transforming Authored Haptic Data To Fit Into Haptic Bandwidth

Weber; Maximilian ; et al.

U.S. patent application number 17/069644 was filed with the patent office on 2020-10-13 and published on 2021-04-15 for system and method for transforming authored haptic data to fit into haptic bandwidth. This patent application is currently assigned to Lofelt GmbH. The applicant listed for this patent is Lofelt GmbH. Invention is credited to Gwydion ap Dafydd, Daniel Buttner, James Mazur, Maximilian Weber.

| Publication Number | 20210110841 |
|---|---|
| Application Number | 17/069644 |
| Family ID | 1000005162095 |
| Kind Code | A1 |
| Publication Date | April 15, 2021 |
| Filed Date | October 13, 2020 |


Abstract

A computer implemented method and system of transforming an audio signal into haptic data to fit into a haptic perceptual bandwidth of an electronic device having at least one actuator are disclosed. The method and system receive the audio signal; filter the audio signal into one or more frequency bands, each frequency band having a center frequency and time-amplitude values; author the one or more frequency bands by changing, appending or deleting one or more time-amplitude values to create authored audio descriptor data; calculate an available bandwidth and the haptic perceptual bandwidth of the electronic device having at least one embedded actuator; and fit the authored audio descriptor data into the haptic perceptual bandwidth of the electronic device to create a haptic data file.

| Inventors: | Weber, Maximilian (Berlin, DE); Mazur, James (Berlin, DE); ap Dafydd, Gwydion (Berlin, DE); Buttner, Daniel (Berlin, DE) |
|---|---|
| Applicant: | Lofelt GmbH |
| Assignee: | Lofelt GmbH, Berlin, DE |
| Family ID: | 1000005162095 |
| Appl. No.: | 17/069644 |
| Filed: | October 13, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62914876 | Oct 14, 2019 | |

| Current U.S. Class: | 1/1 |
|---|---|
| Current CPC Class: | G10L 21/06 20130101 |
| International Class: | G10L 21/06 20060101 G10L021/06 |

Claims

1. A computer implemented method of transforming an audio signal into haptic data to fit into a haptic perceptual bandwidth of an electronic device having at least one actuator, the method comprising: receiving the audio signal; filtering the audio signal into one or more frequency bands, wherein each of the one or more frequency bands has a center frequency; authoring the one or more frequency bands at the center frequency to modify one or more time amplitude values to create an authored audio descriptor data; calculating an available bandwidth and the haptic perceptual bandwidth of the electronic device having at least one actuator; and fitting the authored audio descriptor data into the haptic perceptual bandwidth of the electronic device having at least one actuator to create a haptic data file.

2. The computer implemented method of claim 1, wherein the available bandwidth of the electronic device having at least one actuator is determined based on device specific information and actuator specific information.

3. The computer implemented method of claim 2, wherein the device specific information includes device mass, device type, and device operating characteristics.

4. The computer implemented method of claim 2, wherein the actuator specific information includes mass of actuator, type of actuator, and operating characteristics of the actuator.

5. The computer implemented method of claim 1, wherein the haptic perceptual bandwidth is determined based upon the available bandwidth and the available bandwidth is the combined bandwidth of the electronic device having at least one actuator.

6. The computer implemented method of claim 1, wherein fitting the authored audio descriptor data into the haptic perceptual bandwidth includes determining if the center frequency of each of the one or more frequency bands can be accommodated within the haptic perceptual bandwidth and if so, passing the authored audio descriptor data to create the haptic data file.

7. The computer implemented method of claim 1, wherein fitting the authored audio descriptor data into the haptic perceptual bandwidth further comprises receiving frequency band ranking data for each of the one or more frequency bands, and, if the frequency band ranking data has been provided by a user, then fitting each of the one or more frequency bands into the haptic perceptual bandwidth based on the frequency band ranking data.

8. An audio to haptic transformation system for transforming an audio signal into haptic data to fit into a haptic perceptual bandwidth of an electronic device comprising at least one actuator, the system comprising: an audio analysis module configured to receive and filter the audio signal into one or more frequency bands, wherein each of the one or more frequency bands has a center frequency; an authoring tool configured to author the one or more frequency bands at the center frequency to modulate one or more haptic data points to create authored audio descriptor data; a transformation module to calculate an available bandwidth and the haptic perceptual bandwidth of the electronic device comprising at least one actuator, wherein the transformation module transforms the authored audio descriptor data to fit into the haptic perceptual bandwidth to create a haptic data file; and a resynthesis module for parsing and executing the haptic data file in real time to provide an immersive haptic experience.

9. The audio to haptic transformation system of claim 8, wherein the transformation module calculates the available bandwidth of the electronic device comprising at least one actuator based on device specific information and actuator specific information.

10. The audio to haptic transformation system of claim 9, wherein the device specific information includes device mass, device type, and device operating characteristics.

11. The audio to haptic transformation system of claim 9, wherein the actuator specific information includes mass of actuator, type of actuator, and operating characteristics of the actuator.

12. The audio to haptic transformation system of claim 8, wherein the transformation module determines the haptic perceptual bandwidth based upon the available bandwidth and the available bandwidth is the combined bandwidth of the electronic device having at least one actuator.

13. The audio to haptic transformation system of claim 8, wherein the transformation module further determines if all of the one or more frequency bands in the authored audio descriptor data can fit into the haptic perceptual bandwidth and if so, transforms the authored audio descriptor data to create the haptic data file.

14. The audio to haptic transformation system of claim 8, wherein the transformation module fits the authored audio descriptor data into the haptic perceptual bandwidth based on frequency band ranking data for each of the one or more frequency bands.

15. The audio to haptic transformation system of claim 13, wherein the transformation module further determines if all of the one or more frequency bands of the authored audio descriptor data cannot fit into the haptic perceptual bandwidth and if so, fits the one or more frequency bands of the authored audio descriptor data into the haptic perceptual bandwidth based on frequency band ranking.

16. The audio to haptic transformation system of claim 14, wherein the transformation module further determines if all of the one or more frequency bands of the authored audio descriptor data cannot be accommodated into the haptic perceptual bandwidth and if so, passes the center frequency of the one or more frequency bands according to frequency band ranking and discards the one or more frequency bands of the authored audio descriptor data that do not fit into the haptic perceptual bandwidth.

17. A computer readable medium having encoded instructions that, when executed by a processor, perform the following steps for transforming an audio signal into haptic data to fit into a haptic perceptual bandwidth of an electronic device having at least one actuator: receiving the audio signal; filtering the audio signal into one or more frequency bands, wherein each of the one or more frequency bands has a center frequency; authoring the one or more frequency bands at the center frequency to edit or append one or more haptic data points to create authored audio descriptor data; calculating an available bandwidth and the haptic perceptual bandwidth of the electronic device having at least one actuator; transforming the authored audio descriptor data to fit into the haptic perceptual bandwidth to create a haptic data file; and executing the haptic data file to provide an immersive haptic experience.

18. The computer readable medium of claim 17, wherein the available bandwidth of the electronic device having at least one actuator is calculated based on device specific information and actuator specific information.

19. The computer readable medium of claim 18, wherein the device specific information includes device mass, device type, and device operating characteristics.

20. The computer readable medium of claim 18, wherein the actuator specific information includes mass of actuator, type of actuator, and operating characteristics of the actuator.

21. The computer readable medium of claim 17, wherein the haptic perceptual bandwidth is determined based upon the available bandwidth of the electronic device having at least one actuator.

22. The computer readable medium of claim 17, wherein the encoded instructions, when executed by the processor, further perform the step of determining if the center frequency of each of the one or more frequency bands can be accommodated within the haptic perceptual bandwidth and if so, transforming the one or more frequency bands to create the haptic data file.

23. The computer readable medium of claim 17, wherein the encoded instructions, when executed by the processor, further perform the step of determining if the authored audio descriptor data cannot fit into the haptic perceptual bandwidth at the center frequency of the one or more frequency bands and if so, fitting the center frequency of the one or more frequency bands into the haptic perceptual bandwidth based on frequency band ranking and discarding the center frequency of the one or more frequency bands that cannot fit into the haptic perceptual bandwidth.

Description

FIELD OF THE INVENTION

[0001] The present invention relates to a haptic processing system for generating haptic data from an audio signal or audio data. More specifically, the invention relates to transforming an audio signal into haptic data that fits into a haptic perceptual bandwidth of an electronic device having an actuator.

BACKGROUND

[0002] Haptics refers to the sense of touch, or to a perception provided to a user as a feedback force or vibration on a user interface of an electronic device or headphones. The feedback force can provide information to the user or create a sense of perception for an enhanced user experience. With technological advancement, user interfaces are now integrated with haptic interfaces that provide haptic feedback based on different parameters. These parameters vary according to user applications and embedded haptic devices. To provide a fulfilling user experience, the audio signal is converted into haptic data, which can then be authored and enhanced; doing this efficiently requires a complex process of filtering, transformation and editing. The haptic experience is delivered using haptic actuators such as Linear Resonant Actuators (LRA), wideband or high-definition actuators, piezoelectric actuators, etc. The delivery of the haptic experience depends on, among other factors, the audio-to-haptic conversion of the signal, the response characteristics of the haptic actuator, and device specific data. Therefore, the haptic data must be properly matched to the actuator type and its response characteristics to enhance the user experience.

[0003] A user perceives an impressive haptic experience when the vibration can be felt over a wide range of frequencies. Furthermore, for an immersive haptic experience, the user should be able to distinguish the perception across different frequency ranges. When vibrations cannot be differentiated over a wide range of frequencies, the experience is passive: only undifferentiated vibration is felt, which gives an unrealistic experience.

[0004] To create an immersive haptic experience, a method and system are described. The novel method allows the user to edit the audio signal over a range of frequencies. By editing the different frequency ranges, the user can modify existing haptic points, append new haptic points, and remove haptic points. In this way, the user can tune any type of electronic device with an actuator, and different such devices, according to their performance characteristics.

SUMMARY OF THE INVENTION

[0005] A computer implemented method and system of transforming an audio signal into haptic data to fit into a haptic perceptual bandwidth of an electronic device having at least one actuator are disclosed. The method and system receive the audio signal; filter the audio signal into one or more frequency bands, each frequency band having a center frequency and time-amplitude values; author/edit the one or more frequency bands by changing, appending or deleting one or more time-amplitude values to create authored audio descriptor data; calculate the available bandwidth and the haptic perceptual bandwidth of the electronic device having at least one embedded actuator; and fit the authored audio descriptor data into the haptic perceptual bandwidth of the electronic device having at least one embedded actuator to create a haptic data file.

[0006] In embodiments, the available bandwidth is the combined bandwidth of the electronic computing device and the embedded actuator. In embodiments, the haptic perceptual bandwidth is the difference between the highest frequency and the lowest frequency over which the haptic vibration can be experienced by a user and lies within the available bandwidth. In embodiments, the haptic perceptual bandwidth is determined based upon the available bandwidth.
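The relationship between the two bandwidths can be illustrated with a minimal Python sketch. It assumes that the available bandwidth and the range of frequencies a user can feel are each modeled as a simple (lowest, highest) interval in hertz, and that the haptic perceptual bandwidth is their overlap; the function names and the numeric values are hypothetical and not taken from this disclosure.

```python
from typing import Optional, Tuple

Band = Tuple[float, float]  # (lowest_hz, highest_hz)


def haptic_perceptual_range(available: Band, perceivable: Band) -> Optional[Band]:
    """Return the part of the available band that a user can also feel.

    The perceptual range must lie within the available bandwidth, so it is
    modeled here (an assumption) as the intersection of the two intervals.
    """
    low = max(available[0], perceivable[0])
    high = min(available[1], perceivable[1])
    if low >= high:
        return None  # no overlap: nothing the device produces can be felt
    return (low, high)


def bandwidth_hz(band: Optional[Band]) -> float:
    """Bandwidth as the difference between the highest and lowest frequency."""
    if band is None:
        return 0.0
    return band[1] - band[0]


# Illustrative numbers only: a device/actuator combination that vibrates from
# 40 Hz to 1500 Hz, and an assumed perceivable range of 20 Hz to 1000 Hz.
perceptual = haptic_perceptual_range((40.0, 1500.0), (20.0, 1000.0))
print(perceptual, bandwidth_hz(perceptual))  # (40.0, 1000.0) 960.0
```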

[0007] In embodiments, the available bandwidth of the electronic device having at least one embedded actuator is determined based on device specific information and actuator specific information. The device specific information includes device mass, device type, and device operating characteristics. Further, the actuator specific information includes the mass of the actuator, the type of actuator, and the operating characteristics of the actuator.

[0008] In embodiments, the transformation performed by the computer implemented method and system involves fitting the center frequency of each of the frequency bands into the haptic perceptual bandwidth. This includes determining whether the center frequency of each of the frequency bands can be accommodated within the haptic perceptual bandwidth and, if so, passing the authored audio descriptor data on to create the haptic data file.
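The pass-through check described above can be sketched as follows. This is a minimal sketch, assuming the haptic perceptual bandwidth is given as a lowest and highest frequency in hertz; the `FrequencyBand` class and the function names are hypothetical illustrations, not part of the disclosure.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class FrequencyBand:
    center_hz: float
    # (time_s, amplitude) points of the authored time-amplitude envelope
    envelope: List[Tuple[float, float]] = field(default_factory=list)


def all_centers_fit(bands: List[FrequencyBand], low_hz: float, high_hz: float) -> bool:
    """True if every band's center frequency lies inside the perceptual range."""
    return all(low_hz <= band.center_hz <= high_hz for band in bands)


def fit_or_pass(bands: List[FrequencyBand], low_hz: float,
                high_hz: float) -> Optional[List[FrequencyBand]]:
    """Pass the authored bands through unchanged when they all fit.

    Returns None otherwise, signalling that a further fitting step
    (for example, one based on frequency band ranking) is needed.
    """
    if all_centers_fit(bands, low_hz, high_hz):
        return list(bands)
    return None


bands = [FrequencyBand(80.0), FrequencyBand(250.0)]
print(fit_or_pass(bands, 40.0, 1000.0) is not None)  # True
```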

[0009] In embodiments, fitting the authored audio descriptor data into the haptic perceptual bandwidth comprises receiving frequency band ranking data for each of the frequency bands and, if the frequency band ranking has been provided by the user, fitting the center frequency of each of the frequency bands into the haptic perceptual bandwidth based on the frequency band ranking.
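The disclosure does not specify how the ranking is applied, so the following is only one possible greedy interpretation, written as a Python sketch. It assumes that rank 1 is the most important band, that each band has a width in hertz (the authored descriptor data carries a bandwidth per band), and that a band is kept only if its center frequency lies inside the perceptual range and the summed widths of the kept bands do not exceed the perceptual bandwidth; bands that do not fit are discarded. `RankedBand` and `fit_by_rank` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class RankedBand:
    center_hz: float
    width_hz: float
    rank: int  # assumed convention: 1 = most important


def fit_by_rank(bands: List[RankedBand], low_hz: float,
                high_hz: float) -> Tuple[List[RankedBand], List[RankedBand]]:
    """Greedy rank-ordered fitting (one possible interpretation).

    Bands are visited from highest to lowest priority. A band is kept if its
    center frequency lies inside [low_hz, high_hz] and the total width of the
    kept bands still fits within the perceptual bandwidth; otherwise it is
    discarded.
    """
    budget = high_hz - low_hz
    used = 0.0
    kept: List[RankedBand] = []
    discarded: List[RankedBand] = []
    for band in sorted(bands, key=lambda b: b.rank):
        inside = low_hz <= band.center_hz <= high_hz
        if inside and used + band.width_hz <= budget:
            kept.append(band)
            used += band.width_hz
        else:
            discarded.append(band)
    return kept, discarded


bands = [
    RankedBand(center_hz=60.0, width_hz=40.0, rank=2),
    RankedBand(center_hz=150.0, width_hz=100.0, rank=1),
    RankedBand(center_hz=300.0, width_hz=250.0, rank=3),
]
kept, dropped = fit_by_rank(bands, low_hz=50.0, high_hz=400.0)
print([b.rank for b in kept], [b.rank for b in dropped])  # [1, 2] [3]
```

With a 350 Hz budget, the two highest-ranked bands use 140 Hz; adding the 250 Hz wide rank-3 band would exceed the budget, so it is discarded.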

[0010] In one implementation, the transformation method and system implement a bandwidth fitting algorithm. The algorithm receives the authored audio descriptor data and other authored data comprising time-amplitude values and a time-amplitude envelope for one or more frequency bands. Each frequency band has a center frequency and time-amplitude values represented by a time-amplitude envelope. In an alternative implementation, the audio analysis module can implement spectrogram analysis. The audio analysis module receives a preprocessed audio signal from the audio preprocessor module and calculates a Short Time Fourier Transform (STFT) for a fixed window size having a fixed number of frames. Alternatively, the window size can be variable, with a variable number of frames, which may depend upon at least one of the previously analysed spectrograms. The STFT provides a frequency-magnitude spectrogram over time.
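A fixed-window magnitude STFT of the kind described above can be sketched in Python with NumPy. The Hann window, the 1024-sample window size and the 256-sample hop are assumptions chosen for illustration; the disclosure does not specify them, and the variable-window alternative is not shown. `stft_magnitude` is a hypothetical name.

```python
import numpy as np


def stft_magnitude(signal: np.ndarray, window_size: int = 1024,
                   hop: int = 256) -> np.ndarray:
    """Magnitude spectrogram |STFT| using a fixed window size.

    Returns an array of shape (window_size // 2 + 1, n_frames):
    rows are frequency bins, columns are time frames.
    """
    if len(signal) < window_size:
        # Zero-pad very short signals so at least one frame exists.
        signal = np.pad(signal, (0, window_size - len(signal)))
    window = np.hanning(window_size)
    n_frames = 1 + (len(signal) - window_size) // hop
    # Each column is one windowed frame of the signal.
    frames = np.stack([signal[i * hop:i * hop + window_size] * window
                       for i in range(n_frames)], axis=1)
    # Real FFT along the frame axis gives non-negative frequency bins only.
    return np.abs(np.fft.rfft(frames, axis=0))


sr = 8000
t = np.arange(sr) / sr
tone = np.sin(2 * np.pi * 100.0 * t)  # one second of a 100 Hz sine
mag = stft_magnitude(tone)
peak_hz = np.argmax(mag.mean(axis=1)) * sr / 1024
print(mag.shape, peak_hz)  # peak lies within one bin (7.8 Hz) of 100 Hz
```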

[0011] In some embodiments, after the frequency-magnitude spectrogram is calculated, median filtering is performed twice, once in the horizontal (time) direction of the spectrogram and once in the vertical (frequency) direction, to calculate a harmonic component and a percussive component. To determine the harmonic component, each row of the magnitude spectrogram is analysed and frequency peaks are marked. Likewise, the magnitude spectrogram is analyzed for each frequency to determine the percussive component. The harmonic component and the percussive component are then converted into the time-amplitude domain by performing the Inverse Short Time Fourier Transform (ISTFT), which yields a time-amplitude envelope having time-amplitude values for each of the harmonic component and the percussive component. The percussive component represents the transients in the received signal. The time-amplitude values related to transients are provided to the authoring tool, which provides a user interface for modifying, editing or changing the transient values.
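The median-filtering step can be illustrated with the widely used median-filtering harmonic/percussive separation technique (Fitzgerald's method), sketched here in Python with NumPy as a stand-in rather than as the disclosed implementation. It assumes a magnitude spectrogram with frequency bins as rows and time frames as columns, uses binary masks and 17-bin filter lengths chosen for illustration, and omits both the row-wise peak marking and the ISTFT step mentioned above; `median_along` and `hpss_masks` are hypothetical names.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def median_along(spec: np.ndarray, length: int, axis: int) -> np.ndarray:
    """Running median of odd `length` along one axis, edges padded by repetition."""
    pad = length // 2
    pad_width = [(0, 0), (0, 0)]
    pad_width[axis] = (pad, pad)
    padded = np.pad(spec, pad_width, mode="edge")
    # Window dimension is appended last; take the median over it.
    windows = sliding_window_view(padded, length, axis=axis)
    return np.median(windows, axis=-1)


def hpss_masks(mag: np.ndarray, harm_len: int = 17, perc_len: int = 17):
    """Split a (n_freq_bins, n_frames) magnitude spectrogram into two parts.

    Median filtering along time keeps steady, tonal (harmonic) energy;
    median filtering along frequency keeps broadband, transient (percussive)
    energy. Each bin is assigned to whichever enhanced version is larger.
    """
    harmonic_enh = median_along(mag, harm_len, axis=1)    # horizontal: time
    percussive_enh = median_along(mag, perc_len, axis=0)  # vertical: frequency
    harmonic_mask = harmonic_enh >= percussive_enh        # binary mask
    return mag * harmonic_mask, mag * ~harmonic_mask


# Synthetic spectrogram: a steady tone in bin 10 and a click at frame 30.
mag = np.zeros((64, 64))
mag[10, :] = 1.0
mag[:, 30] = 1.0
harmonic, percussive = hpss_masks(mag)
print(harmonic[10, 5], percussive[40, 30])
```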

[0012] The harmonic component can be processed separately for each frequency comprising the time amplitude values and/or time-amplitude-frequency values.

[0013] In one embodiment, the peak time-amplitude values at each frequency within a specific frequency band are marked and saved. The peak time-amplitude values can then be modified, edited or changed using the user interface of the authoring tool.
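Marking and editing peaks in a time-amplitude envelope can be sketched as below. It assumes the envelope is a list of (time in seconds, amplitude) pairs and that a peak is a simple local maximum; the disclosure does not define the peak criterion, and `mark_peaks` and `edit_peak` are hypothetical names.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (time_s, amplitude)


def mark_peaks(envelope: List[Point], min_amplitude: float = 0.0) -> List[Point]:
    """Return the local maxima of a time-amplitude envelope.

    A point is a peak if it is strictly greater than its left neighbour,
    at least as large as its right neighbour, and above min_amplitude.
    The first and last points are never marked.
    """
    peaks = []
    for i in range(1, len(envelope) - 1):
        t, a = envelope[i]
        if a > envelope[i - 1][1] and a >= envelope[i + 1][1] and a > min_amplitude:
            peaks.append((t, a))
    return peaks


def edit_peak(peaks: List[Point], index: int, new_amplitude: float) -> List[Point]:
    """Return a copy of peaks with one peak's amplitude changed (an authoring edit)."""
    edited = list(peaks)
    t, _ = edited[index]
    edited[index] = (t, new_amplitude)
    return edited


env = [(0.00, 0.1), (0.01, 0.6), (0.02, 0.3), (0.03, 0.2), (0.04, 0.9), (0.05, 0.4)]
peaks = mark_peaks(env)
print(peaks)                     # [(0.01, 0.6), (0.04, 0.9)]
print(edit_peak(peaks, 1, 0.5))  # [(0.01, 0.6), (0.04, 0.5)]
```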

BRIEF DESCRIPTION OF THE DRAWINGS

[0014] FIG. 1 illustrates an overview of an operating environment of a haptic processing system in an embodiment of the present invention;

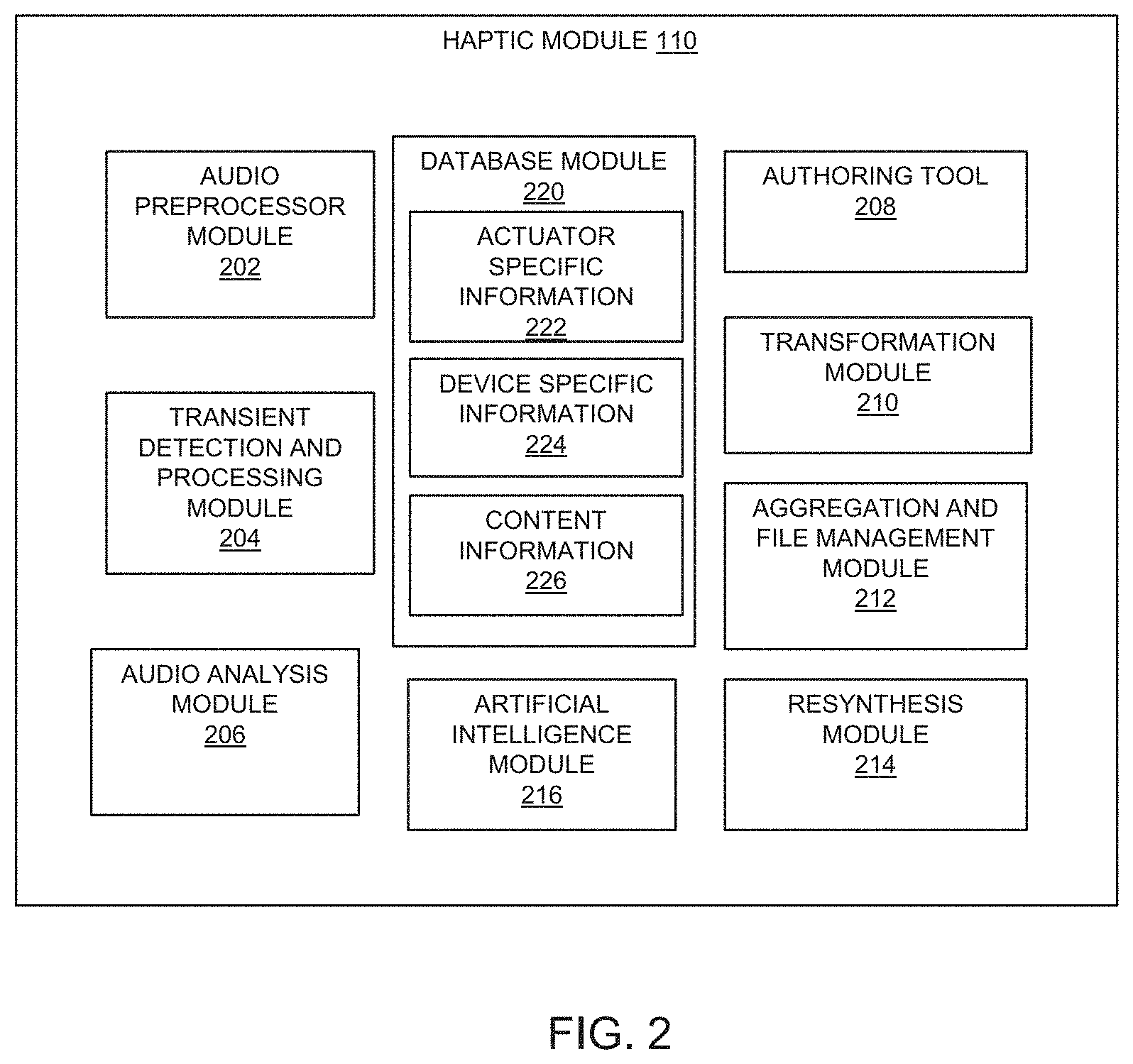

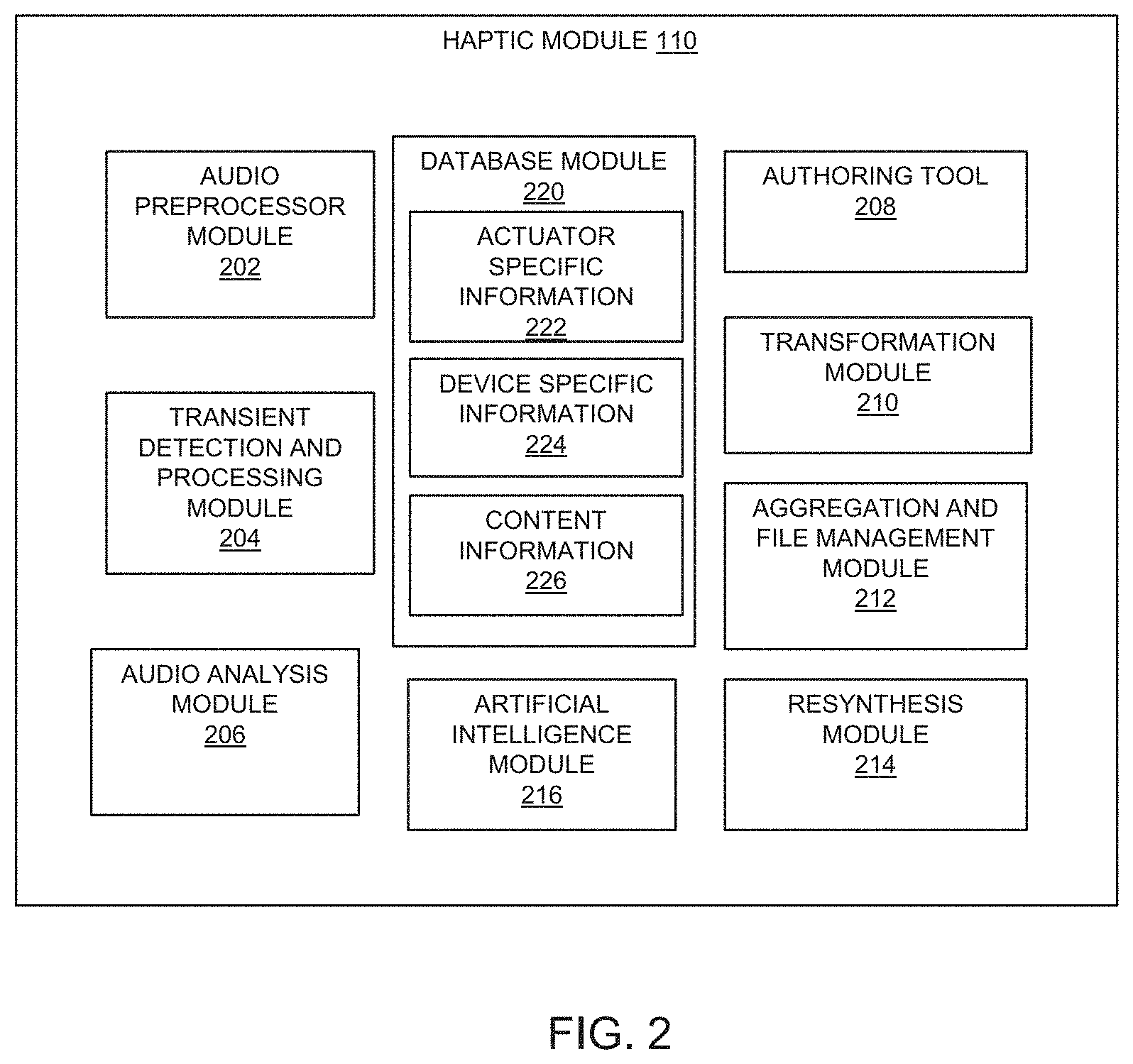

[0015] FIG. 2 illustrates different parts of the haptic module in an embodiment of the present invention;

[0016] FIG. 3 illustrates the haptic module operating in a distributed environment in an embodiment of the present invention;

[0017] FIG. 4 illustrates the different parts of the haptic processing system in an embodiment of the present invention;

[0018] FIG. 4A illustrates the haptic processing system with multiple actuators configuration in an embodiment of the present invention;

[0019] FIG. 5 illustrates different parts of a preprocessing module in an embodiment of the present invention;

[0020] FIG. 6 illustrates different parts of the audio analysis module in an embodiment of the present invention;

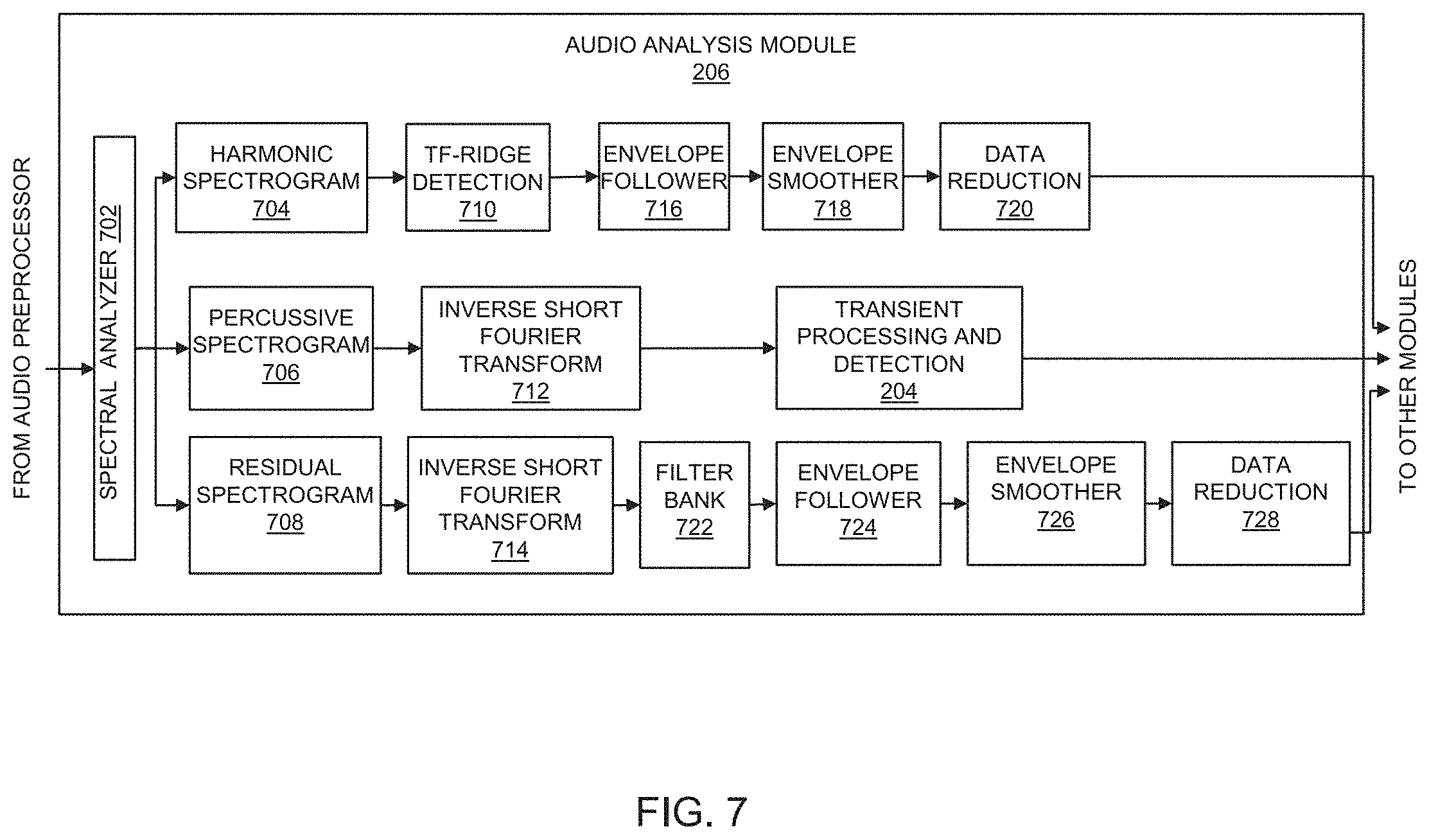

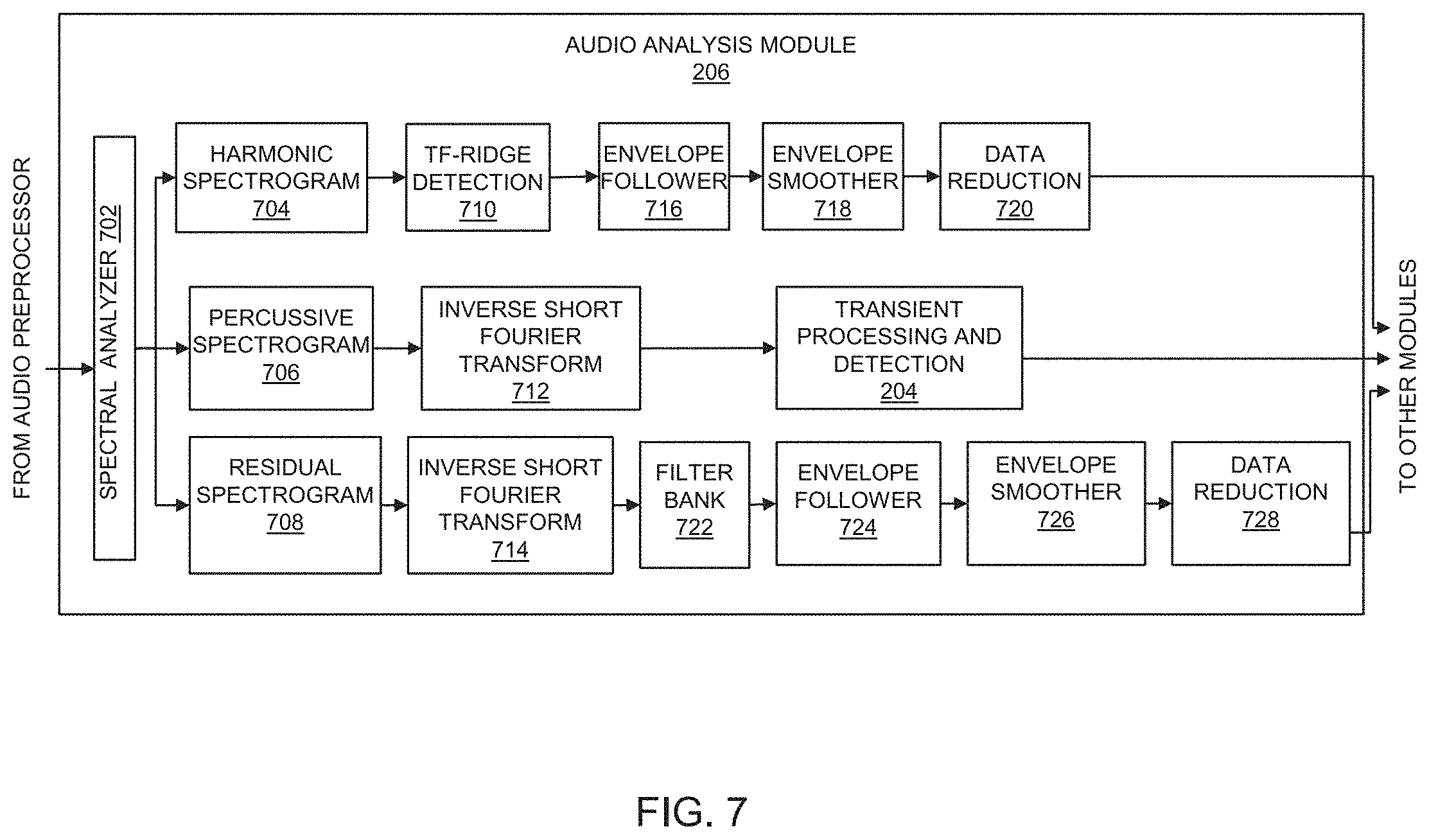

[0021] FIG. 7 illustrates different parts of the audio analysis module in an alternate embodiment of the present invention;

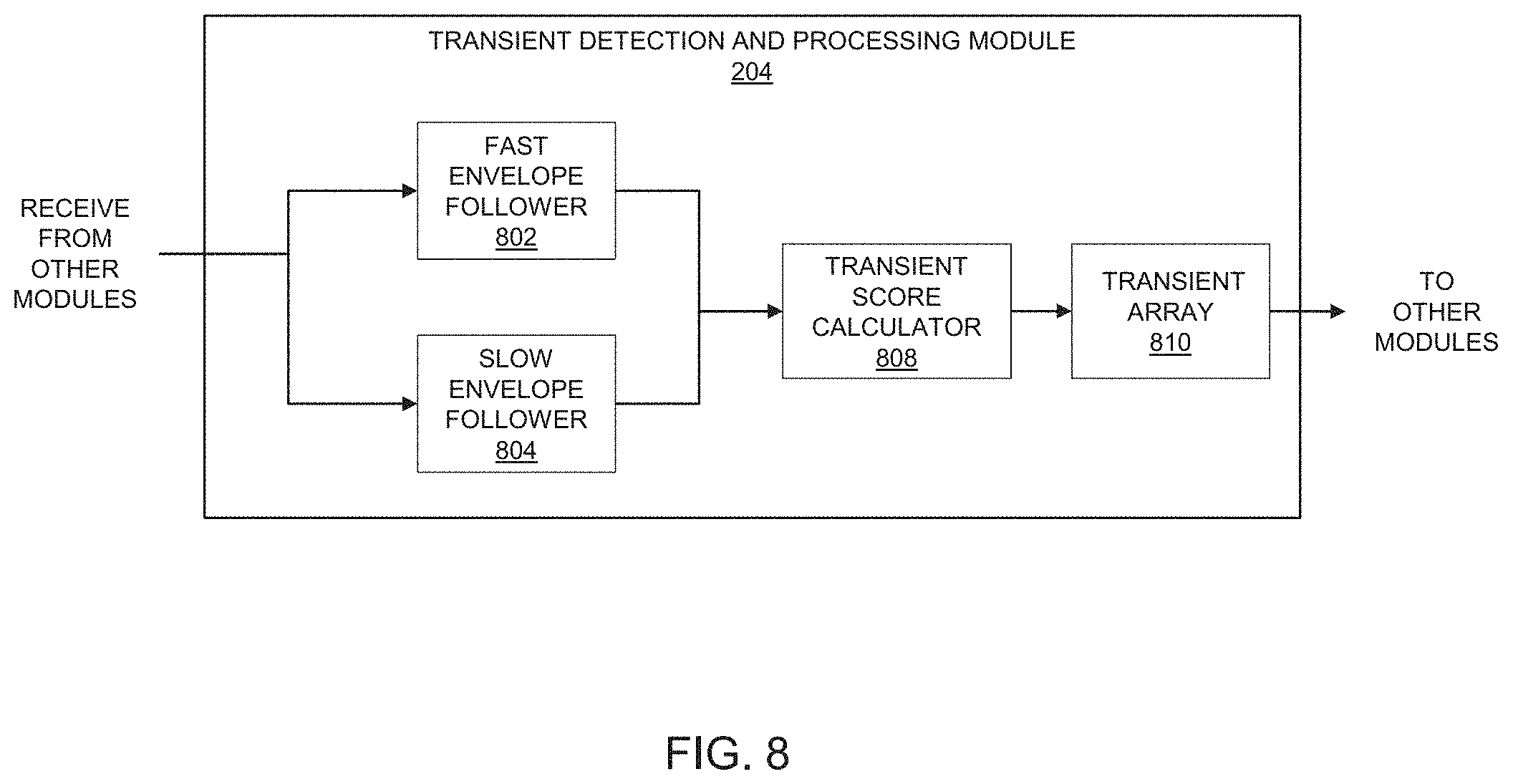

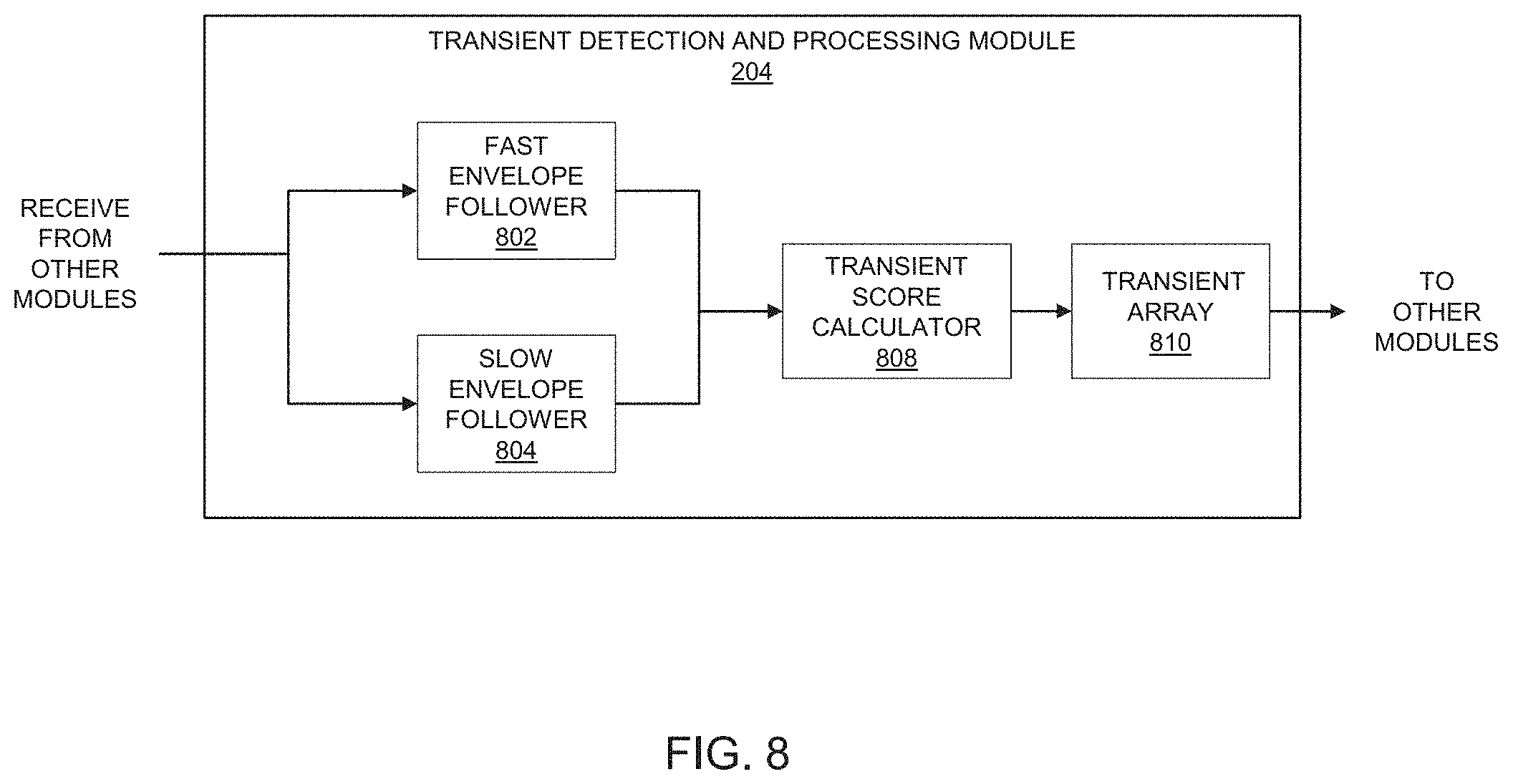

[0022] FIG. 8 illustrates different parts of a transient detection and processing module in an embodiment of the present invention;

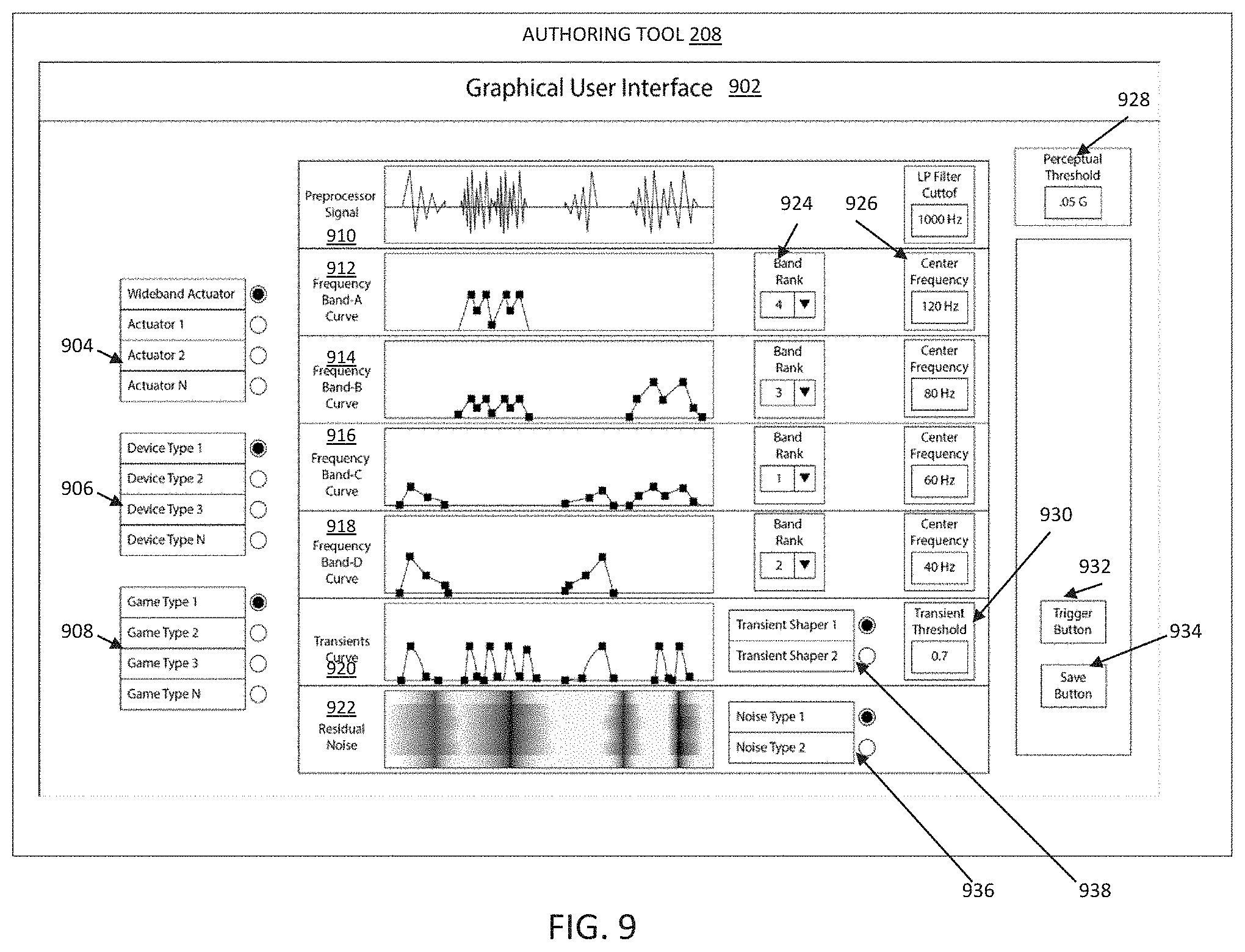

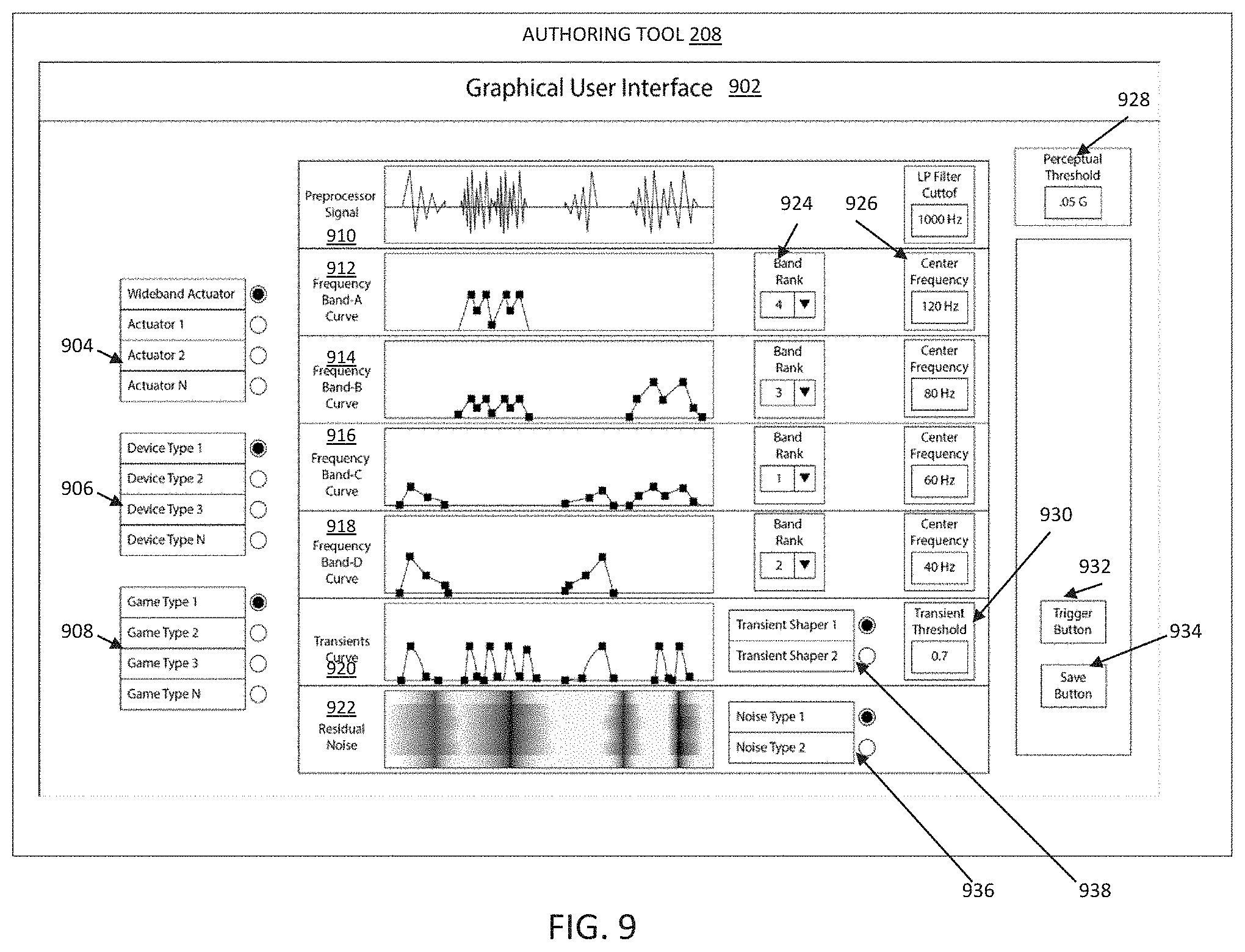

[0023] FIG. 9 illustrates a graphical user interface in the authoring tool in an embodiment of the present invention;

[0024] FIG. 10 illustrates different parts of a transformation module in an embodiment of the present invention;

[0025] FIG. 11 illustrates different parts of an aggregation and file management module in an embodiment of the present invention;

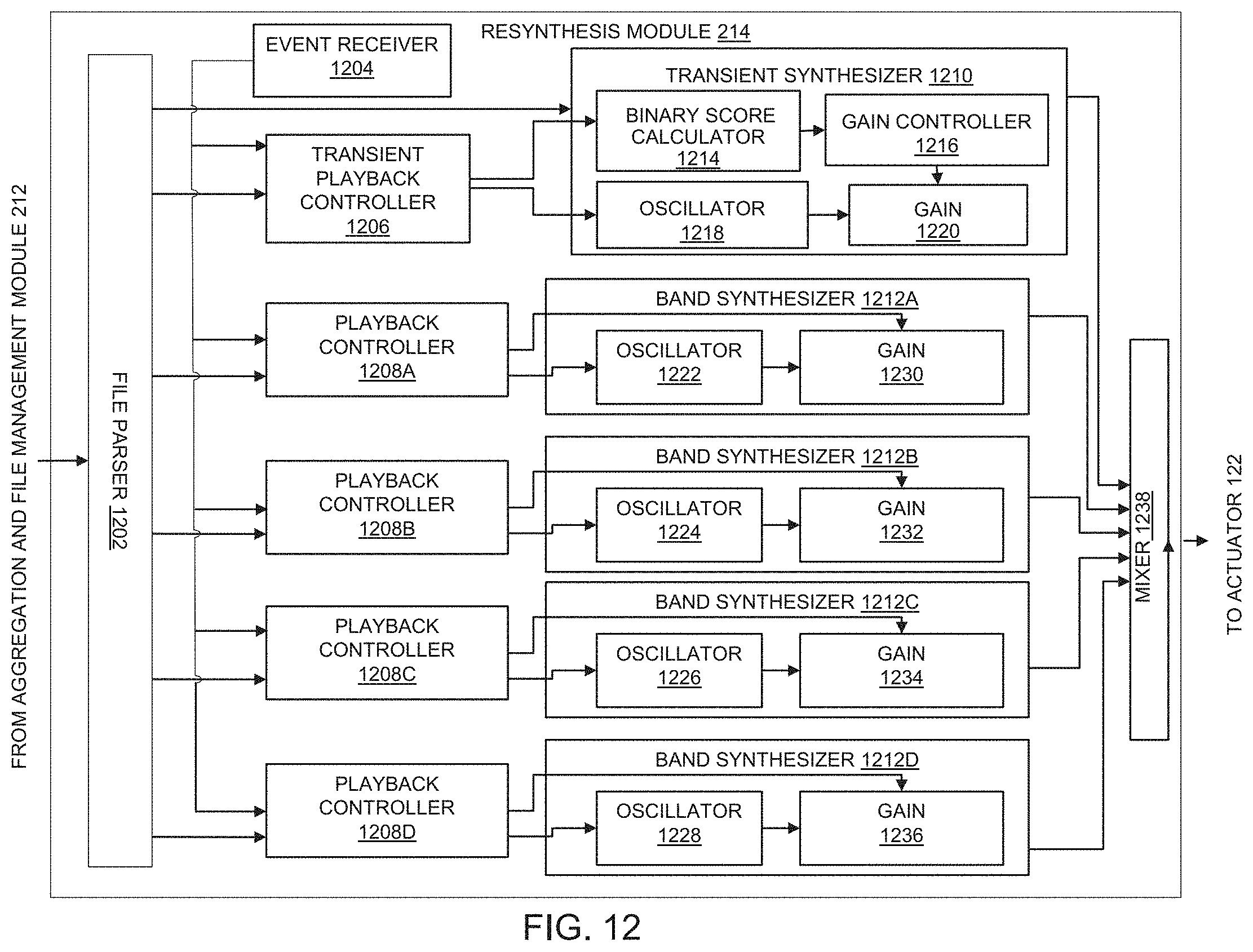

[0026] FIG. 12 illustrates different parts of a resynthesis module in an embodiment of the present invention;

[0027] FIG. 13 illustrates a process flow diagram for converting an audio signal into a haptic signal in an embodiment of the present invention;

[0028] FIG. 14 illustrates a process flow diagram for converting the audio signal into analyzed audio descriptor data in an embodiment of the present invention;

[0029] FIG. 15 illustrates the process flow diagram for converting the audio signal into the analyzed audio descriptor data in an alternate embodiment of the present invention;

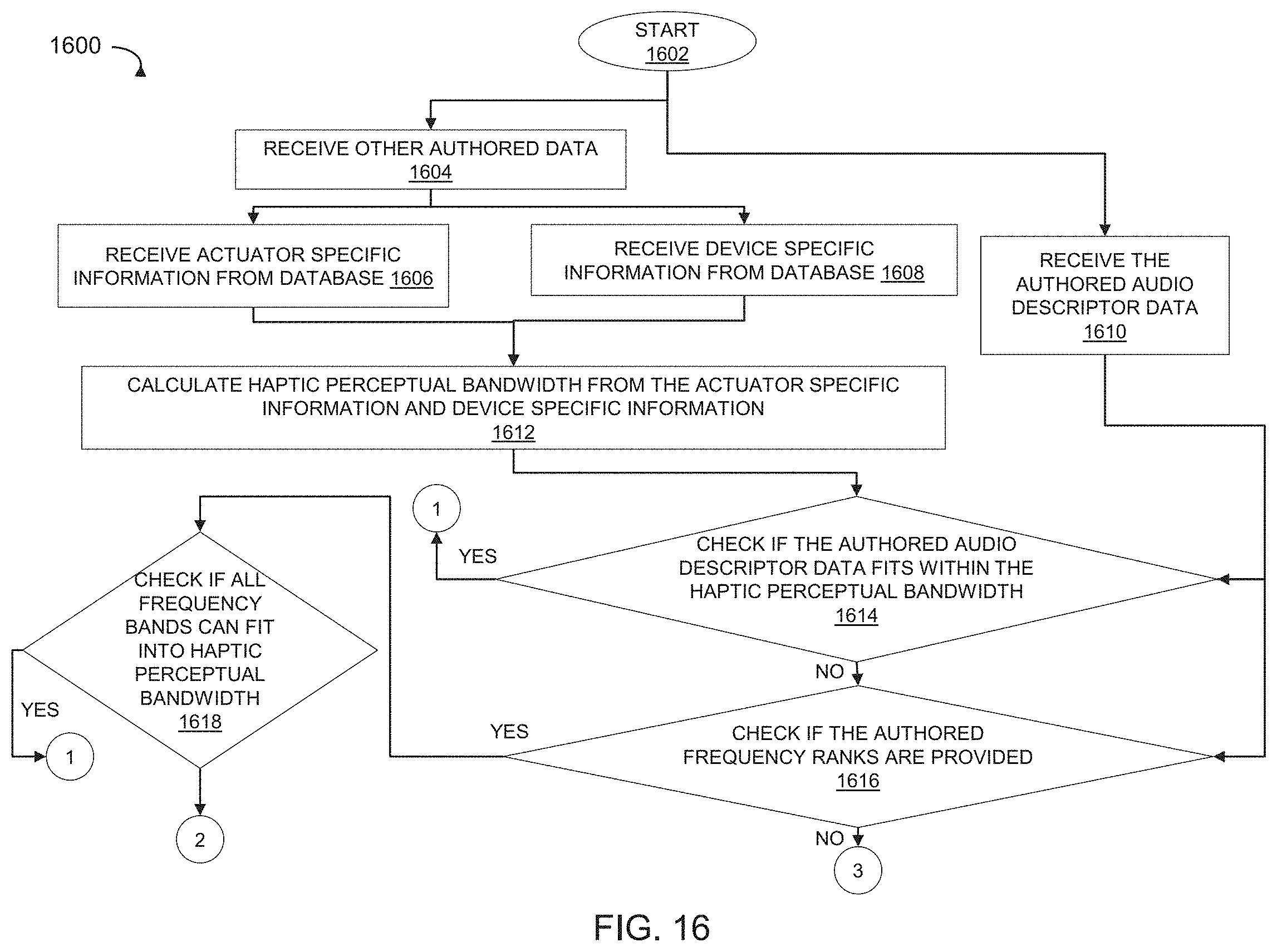

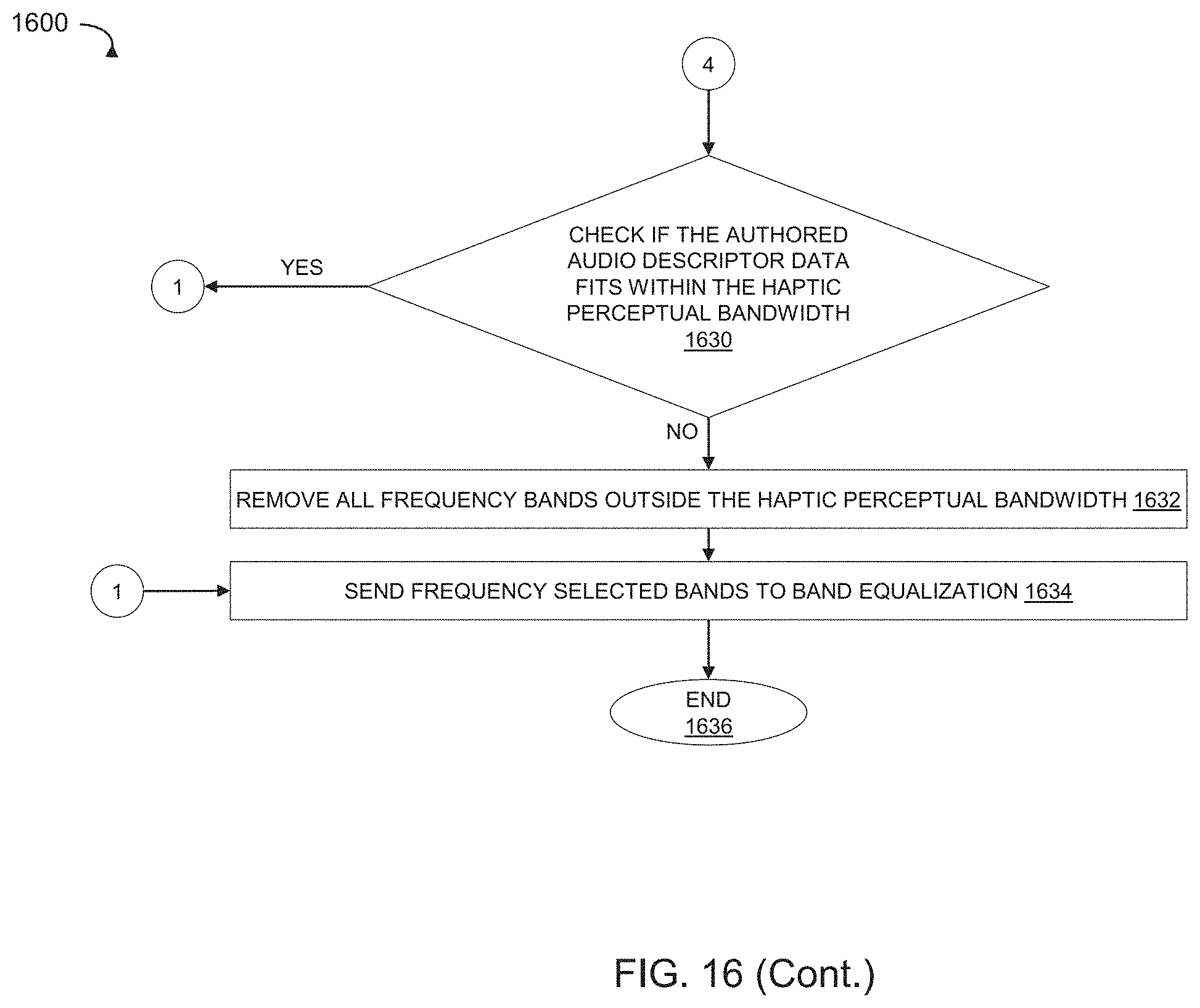

[0030] FIG. 16 illustrates the process of transforming authored audio descriptor data to fit within a haptic perceptual bandwidth in an embodiment of the present invention.

DETAILED DESCRIPTION OF THE INVENTION

Audio Signal

[0031] As used herein, the term "audio signal" is intended to broadly encompass all types of audio signals, including analog audio signals, digital audio signals, digital audio data, and audio signals embedded in video or media streams.

Haptic Output

[0032] The term "haptic output" as used herein includes a haptic signal derived from audio signals by digital signal processing.

Analyzed Audio Descriptor Data

[0033] The term "analyzed audio descriptor data", as used herein includes one or more frequency band descriptor data and a transient descriptor data. The transient descriptor data as used herein includes the transient score envelope, which is a series or array of time-transient score data points.

Authored Audio Descriptor Data

[0034] The term "authored audio descriptor data" includes one or more authored frequency band descriptor data, which have been edited and/or modified and/or to which additional data points have been added. Each authored frequency band descriptor data includes a center frequency, a bandwidth for that frequency band, a time-amplitude envelope comprising an array of time-amplitude data points, and a frequency band rank for that frequency band. Additionally, the authored audio descriptor data includes authored transient descriptor data. The authored transient descriptor data includes a transient threshold value and the transient score envelope, which is a series or array of time-transient score data points.
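The structure of the authored audio descriptor data defined above can be sketched as Python dataclasses. The class and field names, keeping envelope points ordered by time, and using the transient threshold as a simple score cutoff are all assumptions for illustration, not a file format defined by the disclosure.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

TimeAmplitude = Tuple[float, float]  # (time_s, amplitude)
TimeScore = Tuple[float, float]      # (time_s, transient_score)


@dataclass
class AuthoredFrequencyBand:
    center_hz: float
    bandwidth_hz: float
    envelope: List[TimeAmplitude] = field(default_factory=list)
    rank: int = 0  # frequency band rank; 0 = unranked (assumed convention)

    def add_point(self, time_s: float, amplitude: float) -> None:
        """Append an authored data point and keep the envelope ordered by time."""
        self.envelope.append((time_s, amplitude))
        self.envelope.sort(key=lambda p: p[0])


@dataclass
class AuthoredTransients:
    threshold: float
    score_envelope: List[TimeScore] = field(default_factory=list)

    def above_threshold(self) -> List[TimeScore]:
        """Points whose transient score exceeds the threshold (assumed use)."""
        return [p for p in self.score_envelope if p[1] > self.threshold]


@dataclass
class AuthoredAudioDescriptor:
    bands: List[AuthoredFrequencyBand] = field(default_factory=list)
    transients: Optional[AuthoredTransients] = None


band = AuthoredFrequencyBand(center_hz=80.0, bandwidth_hz=40.0,
                             envelope=[(0.0, 0.2), (0.1, 0.4)], rank=1)
band.add_point(0.05, 0.7)
desc = AuthoredAudioDescriptor(
    bands=[band],
    transients=AuthoredTransients(threshold=0.5,
                                  score_envelope=[(0.1, 0.3), (0.2, 0.8)]))
print(desc.bands[0].envelope)             # [(0.0, 0.2), (0.05, 0.7), (0.1, 0.4)]
print(desc.transients.above_threshold())  # [(0.2, 0.8)]
```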

Other Authored Data

[0035] The term "other authored data" includes: (a) actuator specific data such as actuator type, actuator ID, actuator bandwidth and other actuator specific information; (b) device specific data such as a device type and a device ID; and (c) perceptual data such as a perceptual threshold value, perceptual bandwidth and other information, which has been edited or changed by the user through a user interface.

Haptic Perceptual Bandwidth

[0036] The combined bandwidth of the electronic computing device along with the embedded actuator(s) over which vibrations are produced is referred to as the "available bandwidth". Not all of the vibrations in the available bandwidth can be experienced by humans through sensory stimulus. The combined bandwidth of the electronic computing device along with the embedded actuator(s) over which the vibrations can be felt by humans is referred to as the "haptic perceptual bandwidth".

Transformed Audio Descriptor Data

[0037] The term "transformed audio descriptor data", as used herein, includes the authored audio descriptor data comprising one or more frequency band descriptor data and a transient descriptor data that has been transformed to fit into the haptic perceptual bandwidth.

[0038] The present invention and its advantages are best understood by referring to the illustrated embodiments depicted in the accompanying drawings, in which like numbers designate like parts. The present invention may, however, be embodied in numerous devices for haptic signal processing and should not be construed as being limited to the exemplary embodiments set forth herein. Exemplary embodiments are described below to illustrate the present invention by referring to the figures.

[0039] FIG. 1 illustrates an overview of a haptic processing system in an embodiment of the present invention. A haptic processing system 100 includes an electronic computing device 102 connected to a cloud 140, a server 160, and a distributed system 150.

[0040] The cloud 140 may be a cloud computing environment having computing resources and storage. The storage comprises one or more databases, for example, centralised database, distributed database, personal database, end-user database, commercial database, NoSQL database, operational database, relational database, cloud database, object-oriented database, graph database or some other type of database with at least one database having information about different actuators, devices in which actuators are embedded, haptic hardware, haptic game specific data, haptic preferences of users, and content information such as gaming information including game type.

[0041] The server 160 is a multi-processor, multi-threaded server with a repository of databases, which holds at least one database having actuator specific information, device specific information, and content information. The distributed system 150 has distributed databases that hold actuator specific information, device specific information, and content information. Furthermore, the cloud 140, the server 160 and the distributed system 150 allow several developers to use authoring tools concurrently, share information, share feedback, and communicate with each other.

[0042] The electronic computing device 102 includes a memory 104, a coprocessor 114, at least one processor 116, a communication system 118, an input/output controller 120, and one or more haptic actuators 126 apart from other software and/or hardware. For example, the electronic computing device 102 includes in the memory 104, one or more applications 108 for authoring different types of software games. An interface bus 112 provides power supply and also enables data communication between the memory 104, the processor 116, the coprocessor 114, the input/output controller or the I/O controller 120, the communication system 118 and one or more actuators 122. The I/O controller 120 interfaces with devices such as a display 130, at least one speaker 124, one or more haptic actuators 126, and at least one input device 128 such as a keyboard, a mouse, a gamepad, a joystick, a touch panel, or a microphone. The I/O controller 120 provides power supply, control information, and enables data communication between the display 130, the speaker 124, the one or more haptic actuators 126 and the input device 128. Alternatively, the display 130, the speaker 124, the one or more haptic actuators 126, and the input device 128 can receive power supply from an external source.

[0043] The memory 104 comprises an operating system 106, one or more applications 108, and a haptic module 110. In some embodiments, at least one application 108 for authoring the software games may reside in the haptic module 110. For example, the applications 108 include a game authoring application and/or a game editing application with a user interface that allows a user to edit time-amplitude values and time-frequency values of the audio signal to derive and/or modulate haptic output. The haptic module 110, which may be a combination of hardware and software in some embodiments, includes executable instructions to produce a haptic signal from an audio signal for providing a haptic experience.

[0044] In an alternate implementation, the haptic module 110 is implemented as software on the electronic computing device 102 having one or more embedded actuators 122. The haptic module 110 communicates with the cloud 140, the server 160, the distributed system 150 through the communication system 118. In another implementation, the haptic module 110 is a separate module with a dedicated processor and a memory. The haptic module 110 controls the haptic output of at least one actuator 126.

[0045] The memory 104 can be a Read-Only Memory (ROM), Random-Access Memory (RAM), digital storage, magnetic tape storage, flash storage, solid-state device storage or some other type of storage device. The memory 104 can store encrypted instructions, source code, binary code, object code, encrypted compiled code, encoded executable code, executable instructions, assembly language code or some other type of computer readable instructions.

[0046] The processor 116 and the coprocessor 114 support hyper-threading, multi-tasking, and multi-processing. Alternatively, the processor 116 can be a special purpose processor or some other type of microprocessor capable of processing analog or digitized audio signals. The processor 116 and the coprocessor 114 can implement special hardware that is designed for digital signal processing, for example, MMX technology provided by Intel®. MMX technology provides an additional instruction set to manipulate audio, video, and multimedia. The processor 116 can be any type of processor supporting technologies such as MMX, SSE, SSE2 (Streaming SIMD Extensions 2), SSE3 (Streaming SIMD Extensions 3), SSSE3 (Supplemental Streaming SIMD Extensions 3), SSE4 (Streaming SIMD Extensions 4) including the variants SSE4.1 and SSE4.2, AVX (Advanced Vector Extensions), AVX2 (Haswell New Instructions), FMA (Fused Multiply-Add) including FMA3, SGX (Software Guard Extensions), MPX (Memory Protection Extensions), Enhanced Intel SpeedStep Technology (EIST), Intel® 64, XD bit (an NX bit implementation), Intel® VT-x, Intel® VT-d, Turbo Boost, Hyper-threading, AES-NI, Intel® TSX-NI, Intel® vPro, Intel® TXT, Smart Cache or some other type of implementation for a processor. The processor 116 or the coprocessor 114 can be a soft processor such as the Xilinx MicroBlaze® processor that can include at least one microcontroller, real-time processor, an application processor and the like.

[0047] The communication system 118 can interface with external devices/applications via wired or wireless communication. For example, the communication system 118 can connect to a server 160 via a wired cable. The communication system 118 has an encoder and a decoder, and provides a standard interface for connecting to wired and/or wireless networks. Examples of interfaces include, but are not limited to, the Ethernet RJ-45 interface, the thin coaxial cable BNC interface, the thick coaxial AUI interface, the FDDI interface, the ATM interface and other network interfaces.

[0048] In some embodiments, the haptic module 110 for authoring software games may be implemented in the cloud 140, the distributed system 150 or the server 160. When the haptic module 110, which includes the authoring software, is implemented in the cloud 140, the distributed system 150 or the server 160, a haptic output may be provided to the electronic computing device 102 having the embedded actuator 122 in the form of a haptic file, a real-time data stream or a stored data stream. The haptic file may be parsed by the haptic module 110 to provide an immersive haptic experience.

[0049] FIG. 2 illustrates different components of the haptic module 110 in an embodiment of the present invention. The haptic module 110 resides in the memory 104 of the electronic computing device 102 and includes an audio preprocessor module 202, a transient detection and processing module 204, an audio analysis module 206, a database module 220, an artificial intelligence module 216, an authoring tool 208, a transformation module 210, an aggregation and file management module 212, and a resynthesis module 214.

[0050] In one variation of this implementation, the audio preprocessor module 202 is embedded within the audio analysis module 206. In another variation of this implementation, the resynthesis module 214 may include the aggregation and file management module 212.

[0051] The database module 220 comprises actuator specific information 222, device specific information 224, and content information 226. The actuator specific information 222 stores technical information related to different actuators. For example, the actuator specific information 222 includes the make, the type of actuator (LRA, piezoelectric actuator, wideband actuator, etc.), the resonant frequency of the actuator, the mass of the actuator, the acceleration of the actuator, and other technical information related to actuators.

[0052] The device specific information 224 may include, but is not limited to, the type of electronic computing device (mobile phone, gamepad, tablet, etc.), the type of actuator embedded in the electronic computing device, the mass of the electronic computing device, the weight of the electronic computing device with the embedded actuator, and other parameters related to the electronic computing device. In different embodiments, the electronic computing device 102 can be a desktop computer, a laptop, a gaming console, a mobile computing device such as a phone or a tablet, a gaming controller such as a joystick, gamepad, flight yoke, gaming mouse, gaming keyboard, keyboard wrist rest, mouse pad, or headphones, or some other type of electronic computing device.

[0053] The content information 226 includes information that will be analyzed for producing haptic effects. The content information 226 may include the type of content, for example, a game, a multimedia file, a song, or some other type of content. In some embodiments, the type of content may be categorised according to the haptic experience, for example, content with high haptic experience, content with normal haptic experience, and content with low haptic experience. In addition, in some embodiments, the content may include user specific information, user characteristics, user experience with haptics, and the like.

[0054] FIG. 3 illustrates a distributed haptic module implementation in an embodiment of the invention. In this implementation, some modules of the haptic module 110 may reside in the cloud 140, some may reside on the server 160, and some may reside in the distributed system 150 within a network 302. FIG. 3 shows one possible distribution of the haptic module 110 among different devices; in other implementations, the haptic module 110 may include additional or fewer modules, distributed over the distributed system 150 in the network 302.

[0055] In an exemplary implementation, the audio preprocessor module 202, the transient detection and processing module 204, the audio analysis module 206, the artificial intelligence module 216, the transformation module 210, the aggregation and file management module 212, and the resynthesis module 214 all reside on the cloud 140. The database module 220 resides as a distributed database in the network 302. The database module 220 may include a processor 318 and a memory. The database module 220 may also be implemented over a distributed system 150 as a distributed database.

[0056] Each module of the haptic module 110 can have a dedicated processor and memory. For example, the audio preprocessor module 202 has a processor 304 and an associated memory, the transient detection and processing module 204 has a processor 306 and an associated memory, the audio analysis module 206 has a processor 308 and an associated memory, the artificial intelligence module 216 has a processor 310 and an associated memory, the transformation module 210 has a processor 312 and an associated memory, the aggregation and file management module 212 has a processor 314 and an associated memory, and the resynthesis module 214 has a processor 316 and an associated memory.

[0057] The authoring tool 208, which resides in the electronic computing device 102, has the processor 116 and the memory 104.

[0058] In another exemplary variation of this implementation, the audio preprocessor module 202, the transient detection and processing module 204, the audio analysis module 206, the artificial intelligence module 216, the transformation module 210, the aggregation and file management module 212, the resynthesis module 214, and the authoring tool 208 can reside on the server 160. The database module 220 can be a distributed database, a standalone database, a cloud database, or a network-implemented database residing within the network 302, and may be associated with the server 160. Other variations and implementations are possible for deploying the different modules, such as the audio preprocessor module 202, the transient detection and processing module 204, the audio analysis module 206, the artificial intelligence module 216, the transformation module 210, the aggregation and file management module 212, the resynthesis module 214, the authoring tool 208, and the database module 220, over the cloud 140, the server 160, and the distributed system 150 in the network 302.

[0059] FIG. 2 and FIG. 3 are exemplary illustrations and should not be construed as limiting for the implementation of the haptic module 110 in the network 302.

[0060] FIG. 4 illustrates the process of analysis of an audio signal in different modules in an embodiment of the present invention. The audio preprocessor module 202 receives an audio signal 402. The audio preprocessor module 202 down-samples and conditions the audio signal 402 to remove unwanted high frequency signal components and noise. The preprocessed audio signal is passed simultaneously to the transient detection and processing module 204, the audio analysis module 206, and the authoring tool 208. In some embodiments, the audio preprocessor module 202 may be combined with the audio analysis module 206.

[0061] The transient detection and processing module 204 detects the presence of transients in the preprocessed audio signal. If transients are detected, the transient detection and processing module 204 analyzes the transients in the preprocessed audio signal. The analyzed transients are converted into transient descriptor data, which is passed simultaneously to the transformation module 210 and the authoring tool 208. The transient descriptor data includes a transient score, which is calculated from the preprocessed audio signal; the resulting time-transient scores are stored in a transient array.
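The paragraph above does not say how the transient score is computed. A minimal sketch, assuming a simple energy-based onset measure (the log-ratio of consecutive frame energies, one common choice and not necessarily the method of this application), shows how a time-ordered transient array could be produced. The names `transient_scores` and `frame_size` are hypothetical.

```python
import math
from typing import List, Tuple

def transient_scores(signal: List[float], sample_rate: float,
                     frame_size: int = 256) -> List[Tuple[float, float]]:
    """Hypothetical transient score: rise in frame energy versus the previous frame.

    Returns a "transient array" of (time_s, score) pairs. Only energy
    increases count, so a sudden attack scores high and a decay scores 0.
    """
    energies = []
    for start in range(0, len(signal) - frame_size + 1, frame_size):
        frame = signal[start:start + frame_size]
        energies.append(sum(x * x for x in frame) / frame_size)  # mean power
    eps = 1e-12  # avoids log(0) and division by zero on silent frames
    scores = []
    for i in range(1, len(energies)):
        rise = math.log10((energies[i] + eps) / (energies[i - 1] + eps))
        scores.append((i * frame_size / sample_rate, max(0.0, rise)))
    return scores
```

A threshold on the score (also an assumption) would then decide whether a transient is "detected" at a given time.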

[0062] In some embodiments, the transient detection and processing module 204 may directly receive the audio signal 402.

[0063] The audio analysis module 206 breaks up the received preprocessed audio signal into different frequency bands using one or more filter banks. Alternatively, in another embodiment, the audio analysis module 206 may implement spectrogram analysis for determining a harmonic component and a percussive component. In yet another embodiment, the spectrogram analysis of the received signal determines the harmonic component, the percussive component and a residual component.

[0064] When the audio analysis module 206 implements one or more filter banks, the preprocessed audio signal received from the audio preprocessor module 202 is passed to the one or more filter banks. The number of filter banks is decided based on a number of factors, such as, but not limited to, audio signal characteristics, the desired haptic experience, and the resonant frequency of the electronic computing device having an embedded actuator. Each filter bank can be a digital filter, an analog filter, or a combination of a digital filter and an analog filter, tuned to a specific frequency bandwidth. In one implementation, one or more filter banks can be utilised for separating the preprocessed audio signal into different frequency bands. For example, the frequency bands can be of fixed linear-scale bandwidth, that is, 30 Hz-70 Hz, 70 Hz-110 Hz, 110 Hz-150 Hz, etc. Alternatively, the frequency bands can be of fixed logarithmic-scale bandwidth, such as 30 Hz-51 Hz, 51 Hz-87 Hz, 87 Hz-150 Hz, etc. Other mathematical functions can be used to generate fixed bandwidth scales. Alternatively, the frequency bands can have unequal bandwidths, such as 30 Hz-60 Hz, 60 Hz-115 Hz, 115 Hz-200 Hz, etc., and may be chosen to correspond with different haptic perception ranges. Each frequency band is analyzed to derive a time-amplitude envelope. The time-amplitude envelope of each frequency band has a center frequency, which is preferably the average of the upper frequency and the lower frequency of the frequency band. In a variation of this implementation, the center frequency is calculated on a logarithmic scale. The time-amplitude envelope is filtered to reduce abrupt signal changes and create a smooth time-amplitude envelope. Additionally, in a data reduction process, the smooth time-amplitude envelope is reproduced using a minimum number of time-amplitude values while preserving the essential signal information.
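The linear and logarithmic band layouts described above can be generated from a frequency range and a band count. In this sketch `linear_bands` and `log_bands` are hypothetical helpers. The logarithmic version uses a constant ratio between successive edges, which approximately reproduces the 30 Hz, 51 Hz, 87 Hz, 150 Hz edges quoted in the text (the exact edges are about 30, 51.3, 87.7, and 150 Hz).

```python
from typing import List, Tuple

Band = Tuple[float, float]  # (lower_hz, upper_hz)

def linear_bands(f_low: float, f_high: float, n_bands: int) -> List[Band]:
    """Equal-width bands: every band spans (f_high - f_low) / n_bands Hz."""
    step = (f_high - f_low) / n_bands
    return [(f_low + i * step, f_low + (i + 1) * step) for i in range(n_bands)]

def log_bands(f_low: float, f_high: float, n_bands: int) -> List[Band]:
    """Equal-ratio bands: each upper edge is a constant multiple of its lower edge."""
    ratio = (f_high / f_low) ** (1.0 / n_bands)
    return [(f_low * ratio ** i, f_low * ratio ** (i + 1)) for i in range(n_bands)]

print(linear_bands(30.0, 150.0, 3))  # [(30.0, 70.0), (70.0, 110.0), (110.0, 150.0)]
print(log_bands(30.0, 150.0, 3))
```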
In one variation of this implementation, some time-amplitude values are discarded to reduce the number of time-amplitude data points, which results in a smooth time amplitude envelope. However, while reducing or discarding the time-amplitude points, the information loss of the original signal is minimized.
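The application does not name a data reduction algorithm. One well-known way to discard time-amplitude points while bounding the information loss is the Ramer-Douglas-Peucker algorithm; the sketch below swaps in a variant of it that measures the vertical (amplitude) error against the straight line between kept breakpoints. `simplify_envelope` and `tolerance` are hypothetical names, and strictly increasing breakpoint times are assumed.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (time_s, amplitude)

def simplify_envelope(points: List[Point], tolerance: float) -> List[Point]:
    """Drop breakpoints whose removal changes the envelope by at most `tolerance`.

    Ramer-Douglas-Peucker variant using vertical (amplitude) deviation from the
    chord between the first and last breakpoint. Assumes strictly increasing times.
    """
    if len(points) < 3:
        return list(points)
    (t0, a0), (t1, a1) = points[0], points[-1]
    max_dev, split = -1.0, 0
    for i in range(1, len(points) - 1):
        t, a = points[i]
        on_chord = a0 + (a1 - a0) * (t - t0) / (t1 - t0)
        dev = abs(a - on_chord)
        if dev > max_dev:
            max_dev, split = dev, i
    if max_dev <= tolerance:
        # Every interior point is close enough to the chord: keep only the ends.
        return [points[0], points[-1]]
    # Otherwise keep the worst-fitting point and simplify each half recursively.
    left = simplify_envelope(points[: split + 1], tolerance)
    right = simplify_envelope(points[split:], tolerance)
    return left[:-1] + right
```

A larger `tolerance` yields fewer points and a coarser envelope; the maximum amplitude error introduced is bounded by the tolerance at the original breakpoint times.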

[0065] In another implementation, the audio analysis module 206 may use a spectrogram analysis to calculate the harmonic component and the percussive component. The audio analysis module 206 receives the preprocessed audio signal, which is analyzed using a spectrogram. The spectrogram produces a time-frequency representation of the signal by converting the audio signal into the frequency domain using a Fourier transform. The frequency-domain signal is analyzed and processed in the frequency domain. Thereafter, an inverse Fourier transform is performed on the time-frequency signal to convert it back into the time domain. In some embodiments, the spectrogram analysis of the preprocessed audio signal can also be performed in the time domain by first converting the audio signal into the frequency domain and then converting the plot of the intensity of the frequency content of the signal back into the time domain for further analysis.
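The spectrogram step can be sketched as a short-time Fourier transform: the signal is cut into overlapping windowed frames and each frame is transformed into the frequency domain. This minimal sketch assumes a periodic Hann window and uses a naive DFT so that it needs only the standard library; a real implementation would use an FFT library, and the inverse transform (which also needs the phase) is omitted. `stft_magnitude`, `frame_size`, and `hop` are hypothetical names.

```python
import cmath
import math
from typing import List

def stft_magnitude(signal: List[float], frame_size: int = 64,
                   hop: int = 32) -> List[List[float]]:
    """Magnitude spectrogram: Hann-windowed frames, naive O(N^2) DFT per frame.

    Returns one list per frame holding frame_size // 2 + 1 bin magnitudes
    (DC up to Nyquist); bin k corresponds to k * sample_rate / frame_size Hz.
    """
    # Periodic Hann window.
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / frame_size) for n in range(frame_size)]
    frames = []
    for start in range(0, len(signal) - frame_size + 1, hop):
        chunk = [signal[start + n] * window[n] for n in range(frame_size)]
        bins = []
        for k in range(frame_size // 2 + 1):
            acc = sum(chunk[n] * cmath.exp(-2j * math.pi * k * n / frame_size)
                      for n in range(frame_size))
            bins.append(abs(acc))
        frames.append(bins)
    return frames
```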

[0066] In yet another implementation, the audio analysis module 206 may use a spectrogram analysis to calculate the harmonic component, the percussive component, and the residual component. The audio analysis module 206 receives the preprocessed audio signal, which is analyzed using a spectrogram to derive the harmonic component and the percussive component. The sum of the harmonic component and the percussive component is subtracted from the spectrogram to produce the residual component. The spectrogram produces a time-frequency representation of the signal by converting the audio signal into the frequency domain using a Fourier transform. The frequency-domain signal is analyzed and processed in the frequency domain. Thereafter, an inverse Fourier transform is performed on the time-frequency signal to convert it back into the time domain.
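The application does not specify the separation algorithm. A common technique for harmonic-percussive-residual separation, swapped in here as an assumption, median-filters the magnitude spectrogram along time (harmonic energy forms horizontal lines) and along frequency (percussive energy forms vertical lines), then assigns each cell with binary masks controlled by a separation factor `beta`. With binary masks the residual equals the spectrogram minus the sum of the harmonic and percussive parts, matching the subtraction described above. `hpr_separate`, `kernel`, and `beta` are hypothetical names.

```python
from statistics import median
from typing import List, Tuple

Spec = List[List[float]]  # spec[t][k]: magnitude of frame t, frequency bin k

def _median_filter(values: List[float], size: int) -> List[float]:
    """1-D median filter with a window of `size` samples, truncated at the edges."""
    half = size // 2
    n = len(values)
    return [median(values[max(0, i - half):min(n, i + half + 1)]) for i in range(n)]

def hpr_separate(spec: Spec, kernel: int = 5, beta: float = 2.0) -> Tuple[Spec, Spec, Spec]:
    """Split a magnitude spectrogram into harmonic, percussive and residual parts.

    beta must be >= 1 so that the harmonic and percussive masks never overlap.
    """
    n_t, n_k = len(spec), len(spec[0])
    # Harmonic estimate: median along time, separately for every frequency bin.
    cols = [_median_filter([spec[t][k] for t in range(n_t)], kernel) for k in range(n_k)]
    h_med = [[cols[k][t] for k in range(n_k)] for t in range(n_t)]
    # Percussive estimate: median along frequency, separately for every frame.
    p_med = [_median_filter(list(row), kernel) for row in spec]
    eps = 1e-12
    harm, perc, resid = [], [], []
    for t in range(n_t):
        h_row, p_row, r_row = [], [], []
        for k in range(n_k):
            s = spec[t][k]
            is_h = h_med[t][k] / (p_med[t][k] + eps) > beta
            is_p = p_med[t][k] / (h_med[t][k] + eps) >= beta
            h = s if is_h else 0.0
            p = s if is_p else 0.0
            h_row.append(h)
            p_row.append(p)
            r_row.append(s - h - p)  # residual = spectrogram - (harmonic + percussive)
        harm.append(h_row)
        perc.append(p_row)
        resid.append(r_row)
    return harm, perc, resid
```

Cells that are neither clearly harmonic nor clearly percussive (here, where the two median estimates are within a factor of `beta`) end up in the residual.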

[0067] The audio analysis module 206 passes the analyzed audio signal to the authoring tool 208. The authoring tool 208 also receives the preprocessed audio signal from the audio preprocessor module 202 and the transient descriptor data from the transient detection and processing module 204. Additionally, the database module 220 passes the actuator specific information 222, the device specific information 224, and the content information 226 to the authoring tool 208. A query processor executes queries received from the authoring tool 208. The actuator specific information 222 includes parameters related to the haptic actuator, such as, but not limited to, the type of actuator (e.g. eccentric rotating mass, piezo, voice coil motor, linear resonant actuator), a universally unique identifier (UUID), model number, serial number, manufacturer details, mass, resonant frequency or frequencies of the actuator, acceleration-over-frequency response curves for different attached masses, rise and fall times of the actuator with different masses, direction of vibration, and other operating parameters such as impedance, sound pressure level, rub and buzz, and input power.

[0068] The device specific information 224 stored in the database module 220 includes parameters related to device(s) in which the actuator(s) can be embedded such as, but not limited to, mass of the device, a UUID of the device, the center of gravity of the device, geometric shape of the device, placement location of the actuator within the geometric shape of the device, attachment characteristic of the actuator in the device such as attachment stiffness and viscosity, and inherent resonances of the device.

[0069] In some embodiments, the device specific information 224 and actuator specific information 222 can be related to each other. The device specific information 224 can include the actuator specific information 222. For example, the resonant frequency of the computing device 102 can be measured with the actuator 122. Likewise, the resonant frequency can be measured by inserting a different actuator, that is, the actuator 126 in the computing device 102. The combined resonant frequency of the electronic computing device 102 with different actuators can be stored in the database module 220. For example, the combined resonant frequency of the electronic computing device 102 measured with different types of actuators such as but not limited to LRA, piezoelectric actuators, or wideband actuators may be determined and stored in the database module 220. The database module 220 also holds data related to resonant frequency and other haptic parameters for the computing device 102 with one or more different actuators, such as the actuator 120 and/or the actuator.
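The actuator records, device records, and combined device-plus-actuator resonances described in the last three paragraphs could be held in a structure like the one below. This is a minimal in-memory sketch; all class, field, and method names are hypothetical, the units (grams, hertz) are assumptions, and a real database module 220 would sit behind a query processor rather than Python dictionaries.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

@dataclass
class ActuatorInfo:
    uuid: str
    actuator_type: str                 # e.g. "LRA", "piezo", "VCM", "ERM"
    manufacturer: str = ""
    model_number: str = ""
    mass_g: Optional[float] = None
    resonant_frequencies_hz: List[float] = field(default_factory=list)

@dataclass
class DeviceInfo:
    uuid: str
    device_type: str                   # e.g. "mobile phone", "gamepad"
    mass_g: Optional[float] = None
    inherent_resonances_hz: List[float] = field(default_factory=list)

@dataclass
class HapticDatabase:
    actuators: Dict[str, ActuatorInfo] = field(default_factory=dict)
    devices: Dict[str, DeviceInfo] = field(default_factory=dict)
    # Resonance measured for a device fitted with a particular actuator.
    combined_resonance_hz: Dict[Tuple[str, str], float] = field(default_factory=dict)

    def add_measurement(self, device_uuid: str, actuator_uuid: str, resonance_hz: float) -> None:
        self.combined_resonance_hz[(device_uuid, actuator_uuid)] = resonance_hz

    def resonance(self, device_uuid: str, actuator_uuid: str) -> Optional[float]:
        """Combined resonance if measured, else the actuator's own first resonance."""
        key = (device_uuid, actuator_uuid)
        if key in self.combined_resonance_hz:
            return self.combined_resonance_hz[key]
        actuator = self.actuators.get(actuator_uuid)
        if actuator and actuator.resonant_frequencies_hz:
            return actuator.resonant_frequencies_hz[0]
        return None
```

Keying the combined measurements on the (device, actuator) pair reflects the point above that the same device resonates differently depending on which actuator is inserted.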

[0070] The contextual information related to games includes, but is not limited to, the type of game (simulation, real-time strategy, adventure, massively multiplayer online, etc.), gaming activities, gaming content, gaming logs and previous gaming activities, a specific profile, a microphone and/or camera, face recognition technology, eye tracking data, and user stress levels. In addition, the contextual information can also be related to user psychology, such as user preferences with respect to haptic experience, for example, strong vibration versus weak vibration, frequency vibrational experience versus moderate vibrational experience, and other variables such as the current user state, the current game, the current game level, and the related psychological parameters. The contextual information can be employed to determine a user's current focus of attention and thus which types of haptic feedback will be most appropriate for the user.

[0071] In some embodiments, the contextual information related to the electronic computing device 102 can be utilised to create customized haptics in real time based on the characteristics of the electronic computing device 102. For example, if the electronic computing device 102 is a mobile phone, the vibrational feedback is customised based on its location, position, placement, and direction. In another example, the mobile phone may vibrate differently when held in the hand than when placed on a table.

[0072] The authoring tool 208 has a user interface for changing the different parameters of the processed audio signal received from the audio analysis module 206, the database module 220, and the transient detection and processing module 204. For example, the user interface allows changing and/or editing the time-amplitude values of the received audio signal for one or more frequency bands. In some embodiments, editing or changing the time-amplitude values of the received audio signal for one or more frequency bands may alter the time-amplitude envelope of those frequency bands. Likewise, the user interface allows changing and/or editing of the transient descriptor data to provide an immersive haptic experience. In different embodiments, the received audio signal parameters can be time-amplitude values, time-frequency-amplitude values, center frequency values (one center frequency for each frequency band), or other values representing audio signal characteristics. In other variations of this implementation, the user can change the center frequency of one or more frequency bands to a new value. Optionally, the edited center frequencies of the one or more bands can also be ranked.

[0073] The user interface may allow editing/changing or adding time-amplitude values to the transient descriptor data, which is received from the transient detection and processing module 204.

[0074] In different embodiments, the process of editing, changing, or appending the time-amplitude values or time-frequency-amplitude values may involve: [0075] (a) editing the analyzed audio descriptor data, [0076] (b) adding time-amplitude values to the analyzed audio descriptor data, [0077] (c) deleting one or more time-amplitude values from the analyzed audio descriptor data, [0078] (d) tweaking one or more time-amplitude values to redraw the time-amplitude curve into a desired haptic curve, [0079] (e) shifting the center frequency of the one or more frequency bands, [0080] (f) changing the rankings of one or more frequency bands, and [0081] (g) editing or changing transients and/or noise-shaping time-amplitude values to achieve a desired haptic curve.
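Operations (b) to (f) above can be sketched as methods on a per-band record holding a center frequency, a rank, and a sorted list of time-amplitude breakpoints. This is a minimal sketch; `Band`, its methods, and `rerank` are hypothetical names, and amplitudes are assumed to be normalised to the range 0 to 1.

```python
import bisect
from dataclasses import dataclass, field
from typing import List, Tuple

@dataclass
class Band:
    center_hz: float
    rank: int
    # Sorted (time_s, amplitude) breakpoints; amplitudes assumed normalised to 0..1.
    envelope: List[Tuple[float, float]] = field(default_factory=list)

    def add_point(self, t: float, a: float) -> None:
        """(b) Insert a breakpoint while keeping the envelope sorted by time."""
        bisect.insort(self.envelope, (t, a))

    def delete_point(self, t: float) -> None:
        """(c) Remove every breakpoint at time t."""
        self.envelope = [p for p in self.envelope if p[0] != t]

    def scale_range(self, t_start: float, t_end: float, gain: float) -> None:
        """(d) Redraw part of the curve by scaling amplitudes in [t_start, t_end], clipped to 0..1."""
        self.envelope = [
            (t, min(1.0, max(0.0, a * gain))) if t_start <= t <= t_end else (t, a)
            for t, a in self.envelope
        ]

    def shift_center(self, delta_hz: float) -> None:
        """(e) Move the band's center frequency."""
        self.center_hz += delta_hz

def rerank(bands: List[Band], new_order: List[int]) -> List[Band]:
    """(f) Re-rank bands; new_order lists band indices from highest to lowest priority."""
    for rank, idx in enumerate(new_order):
        bands[idx].rank = rank
    return sorted(bands, key=lambda b: b.rank)
```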

[0082] In some embodiments, the time-amplitude values of the processed audio signal received from the audio analysis module 206 can be modified to create additional frequency bands. In some embodiments, the frequency bands may be merged. In some embodiments, the frequency bands may be deleted or changed to match the haptic perceptual bandwidth. The creation of additional frequency bands or merging of the frequency bands can either be performed by the haptic processing system 100 or can be manually performed by the user.
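The application does not define how two bands are merged. As one hypothetical rule, the sketch below builds the merged envelope from the louder of the two bands at every breakpoint time of either band (using linear interpolation between breakpoints) and places the merged center frequency at the midpoint of the two centers. `interp` and `merge_bands` are hypothetical names, and breakpoint times are assumed to be strictly increasing.

```python
from typing import List, Tuple

Envelope = List[Tuple[float, float]]  # sorted (time_s, amplitude) breakpoints

def interp(env: Envelope, t: float) -> float:
    """Linear interpolation between breakpoints; held constant outside the envelope."""
    if t <= env[0][0]:
        return env[0][1]
    if t >= env[-1][0]:
        return env[-1][1]
    for (t0, a0), (t1, a1) in zip(env, env[1:]):
        if t0 <= t <= t1:
            return a0 + (a1 - a0) * (t - t0) / (t1 - t0)
    return env[-1][1]  # not reached for sorted, strictly increasing times

def merge_bands(center_a: float, env_a: Envelope,
                center_b: float, env_b: Envelope) -> Tuple[float, Envelope]:
    """Merge two bands into one (hypothetical rule: louder band wins, midpoint center)."""
    times = sorted({t for t, _ in env_a} | {t for t, _ in env_b})
    merged = [(t, max(interp(env_a, t), interp(env_b, t))) for t in times]
    return (center_a + center_b) / 2.0, merged
```

Taking the maximum rather than the sum keeps the merged amplitude within the range of the inputs; summing would be an equally plausible choice that needs clipping afterwards.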

[0083] The authoring tool 208 passes a query to the database module 220 to extract the actuator specific information 222, the device specific information 224, and the content information 226. The information received from the database module 220 is utilized to adjust the analyzed audio descriptor data and the transient descriptor data for producing optimal haptic experience for different types of actuators 410-418. By way of example and not a limitation, the actuator 410 can be a VCM (Voice Coil Motor) wideband actuator, the actuator 412 can be a piezo-electric actuator and the actuator 414 can be an LRA (Linear Resonant Actuator).

[0084] The preprocessed audio signal received from the audio preprocessor module 202, the transient descriptor data received from the transient detection and processing module 204, and the analyzed audio descriptor data received from the audio analysis module 206 are displayed in the user interface of the authoring tool 208. The user interface also displays the modified time-amplitude values and the center frequency for one or more frequency bands, as well as the time-transient values, the noise entropy, and/or the noise shaping curves. The user can modify the information received from the various modules; for example, the user can select a specific frequency band as a primary frequency band from the one or more frequency bands during editing and processing of the analyzed audio descriptor data received from the audio analysis module 206. Furthermore, the user can rank the different frequency bands according to user preferences, for example, by changing the ordering provided by the audio analysis module 206. Additionally, the user can edit the time-amplitude values of the time-amplitude envelope of each frequency band or edit the time-transient score values of the transient descriptor data before passing the authored audio descriptor data to the transformation module 210.

[0085] In some embodiments, the authoring tool 208 can utilize the artificial intelligence module 216 to automate the process of analysing and changing the signal and/or data from the audio preprocessor module 202, the transient descriptor data received from the transient detection and processing module 204, and the analyzed audio descriptor data from the audio analysis module 206, and the actuator specific information 222 and device specific information 224 from the database module 220 to manipulate the analyzed audio descriptor data into authored audio descriptor data.

[0086] In an exemplary implementation, the artificial intelligence module 216 learns from the modifications made by the user through the authoring tool 208 and subsequently suggests modifications for the next window of the analyzed audio signal. The analyzed audio signal may be passed using a fixed window size or, in an alternate embodiment, a variable window size. In some embodiments, the window size can be user defined. Each window can include a fixed number of audio packets or, in other embodiments, a variable number of audio packets.
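Grouping audio packets into fixed-size or variable-size windows can be sketched as follows. `fixed_windows` and `variable_windows` are hypothetical helpers; the sketch assumes a trailing partial window is kept rather than dropped, and that packets beyond the user-supplied sizes form one final window.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")

def fixed_windows(packets: Sequence[T], window_size: int) -> List[List[T]]:
    """Consecutive windows of `window_size` packets; the last window may be shorter."""
    if window_size < 1:
        raise ValueError("window_size must be >= 1")
    return [list(packets[i:i + window_size]) for i in range(0, len(packets), window_size)]

def variable_windows(packets: Sequence[T], sizes: Sequence[int]) -> List[List[T]]:
    """Windows whose sizes are given per window (e.g. chosen by the user)."""
    windows, start = [], 0
    for size in sizes:
        if start >= len(packets):
            break
        windows.append(list(packets[start:start + size]))
        start += size
    if start < len(packets):
        windows.append(list(packets[start:]))  # leftover packets form a final window
    return windows
```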

[0087] In some embodiments, the audio packets contain audio data corresponding to one or more frequency bands.

[0088] In other embodiments using a spectrogram, the audio packets contain audio data corresponding to the harmonic component and the percussive component.

[0089] In some other embodiments using a spectrogram, the audio packets contain audio data corresponding to the harmonic component, the percussive component, and the residual component.

[0090] The transformation module 210 receives and analyzes the authored audio descriptor data from the authoring tool 208, the transient descriptor data from the transient detection and processing module 204, the analyzed audio descriptor data from the audio analysis module 206, and other authored data comprising the actuator specific information 222 and device specific information 224 from the database module 220 to transform the authored audio descriptor data to fit into the haptic perceptual bandwidth of the electronic computing device 102 having embedded actuator 122.

[0091] The process of transformation involves executing algorithms for fitting the authored audio descriptor data to derive the transformed audio descriptor data. The transformed audio descriptor data is the optimised transformed data that fits into haptic perceptual bandwidth of the electronic computing device 102 having embedded actuator 122 for producing immersive haptic experience. The transformed audio descriptor data is passed to the aggregation and file management module 212. In a variation of this implementation, the transformation module 210 can be implemented in a distributed environment as a standalone device, wherein the transformation module 210 includes a transformation processor and/or a memory and other modules such as the communication system 118, the I/O controller 120 and the display 130.
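The fitting algorithm itself is not disclosed. One hypothetical way to make authored band center frequencies "fit" a device's haptic perceptual bandwidth is to map the authored frequency range onto the device range on a logarithmic frequency scale, which keeps the band ordering and their relative log-scale spacing. `fit_to_bandwidth`, `f_min`, and `f_max` are hypothetical names; amplitude adjustment based on the actuator response is not shown.

```python
import math
from typing import List

def fit_to_bandwidth(centers_hz: List[float], f_min: float, f_max: float) -> List[float]:
    """Hypothetical fitting step: map authored band centers into [f_min, f_max].

    The authored range [min(centers), max(centers)] is mapped onto the device's
    haptic perceptual bandwidth on a log-frequency scale, preserving band order.
    """
    if not 0 < f_min < f_max:
        raise ValueError("need 0 < f_min < f_max")
    lo, hi = min(centers_hz), max(centers_hz)
    if lo <= 0:
        raise ValueError("band centers must be positive")
    if hi == lo:
        # A single authored frequency: place it at the log-center of the bandwidth.
        return [math.sqrt(f_min * f_max)] * len(centers_hz)
    span = math.log(hi / lo)
    fitted = []
    for c in centers_hz:
        pos = math.log(c / lo) / span  # 0.0 at the lowest band, 1.0 at the highest
        fitted.append(f_min * (f_max / f_min) ** pos)
    return fitted
```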

[0092] The aggregation and file management module 212 performs the data reduction, aggregation, and file management on the transformed audio descriptor data received from the transformation module 210 to generate a haptic data file in the form of a computer readable file. In one variation of this implementation, the aggregation and file management module 212 is embedded within the transformation module 210 and performs the function of the transformation module 210 and the aggregation and file management module 212. For example, when the transformation module 210 includes the aggregation and file management module 212, the transformation module 210 performs the function of executing algorithms for combining data to create the transformed audio descriptor data and further performs the function of the data reduction, aggregation, and file management. The haptic data file is a computer readable file, which may be a JSON, an XML, a CSV, a text file or some other type of computer readable file format.
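Since JSON is one of the listed file formats, a haptic data file could be written as sketched below. The schema (keys such as `"bands"`, `"center_hz"`, `"envelope"`, `"transients"`) is entirely hypothetical and is not the format used by any particular product; `write_haptic_json` is likewise a hypothetical name.

```python
import json
from typing import List, Optional, Tuple

Envelope = List[Tuple[float, float]]

def write_haptic_json(bands: List[Tuple[float, Envelope]],
                      transients: List[Tuple[float, float]],
                      path: Optional[str] = None) -> str:
    """Serialise transformed band data and transients as JSON (hypothetical schema).

    bands: (center_hz, [(time_s, amplitude), ...]) per frequency band.
    transients: (time_s, score) pairs from the transient array.
    """
    doc = {
        "version": 1,
        "bands": [
            {"center_hz": center,
             "envelope": [{"time": t, "amplitude": a} for t, a in env]}
            for center, env in bands
        ],
        "transients": [{"time": t, "score": s} for t, s in transients],
    }
    text = json.dumps(doc, indent=2)
    if path is not None:
        with open(path, "w", encoding="utf-8") as fh:
            fh.write(text)
    return text
```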

[0093] The haptic data file is passed to the resynthesis module 214, which parses the computer readable file to extract the transformed audio descriptor data. The resynthesis module 214 includes one or more synthesizers for generating the haptic output from the computer readable file on the electronic computing device 102 having embedded actuator 122 or on one or more actuators such as the actuator 410, the actuator 412, the actuator 414, the actuator 416, and the actuator 418.

[0094] The resynthesis module 214, in some embodiments, includes one or more band synthesizers for generating a haptic output on one or more actuators, such as the actuator 410, the actuator 412, the actuator 414, the actuator 416, and the actuator 418. In this implementation, the different actuators may be embedded in different devices such as a headphone, a vest, or a game controller.
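A band synthesizer can be sketched as a sine oscillator at each band's center frequency whose amplitude follows that band's time-amplitude envelope, with all bands summed into one drive signal. This is an assumption about how such a synthesizer might work, not the disclosed design; `synthesize` and `sample_rate` are hypothetical, and the summed output is simply clipped to the range -1 to 1.

```python
import math
from typing import List, Tuple

Envelope = List[Tuple[float, float]]  # sorted (time_s, amplitude) breakpoints

def _interp(env: Envelope, t: float) -> float:
    """Linear interpolation between breakpoints; held constant outside the envelope."""
    if t <= env[0][0]:
        return env[0][1]
    if t >= env[-1][0]:
        return env[-1][1]
    for (t0, a0), (t1, a1) in zip(env, env[1:]):
        if t0 <= t <= t1:
            return a0 + (a1 - a0) * (t - t0) / (t1 - t0)
    return env[-1][1]

def synthesize(bands: List[Tuple[float, Envelope]], duration_s: float,
               sample_rate: int = 8000) -> List[float]:
    """Sum of envelope-modulated sines, one per (center_hz, envelope) band."""
    n = int(round(duration_s * sample_rate))
    out = [0.0] * n
    for center_hz, env in bands:
        phase_step = 2.0 * math.pi * center_hz / sample_rate
        for i in range(n):
            out[i] += _interp(env, i / sample_rate) * math.sin(phase_step * i)
    return [max(-1.0, min(1.0, x)) for x in out]  # clip to the actuator drive range
```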

[0095] FIG. 4A illustrates the synthesis process in an embodiment of the present invention. In this embodiment, the haptic data file is authored and transformed separately, and the electronic computing device 102 has the resynthesis module 214 stored in the memory 104. The haptic data file has been authored and transformed according to the combined frequency bandwidth of the electronic computing device 102 and the actuator 122. In addition, the actuators 416-418 are embedded in a haptic headphone 440, and a haptic vest 430 includes the actuator 410, the actuator 412, and the actuator 414. The haptic data file is synthesized by the resynthesis module 214, and the haptic experience is produced by the electronic computing device 102 through the actuator 122. The haptic headphone 440 and the haptic vest 430 also produce haptic experiences through their embedded actuators.

[0096] The haptic data file is authored and transformed for different devices associated with the haptic processing system 100. For example, the haptic processing system 100 may be connected to the electronic computing device 102 having the embedded actuator 122, the haptic vest 430, and the haptic headphone 440. In this implementation, the haptic modules may provide different channels for producing haptic output from each of the connected devices, as shown in FIG. 4A. In other embodiments, additional devices may be attached to the haptic processing system 100. Furthermore, in this implementation, the resonant frequency of each connected device is determined and mapped to the closest frequency band, and the haptic data is subsequently authored and transformed according to the frequency band with which that resonant frequency is associated.
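Mapping each connected device to the frequency band closest to its resonant frequency reduces to a nearest-neighbour search over the band center frequencies. In this sketch `nearest_band` and `route_devices` are hypothetical names; distance is measured in hertz by default, with an optional log-ratio distance as an assumption for layouts where bands are spaced logarithmically. The device names and resonances in the test are placeholders, not measured values.

```python
import math
from typing import Dict, List

def nearest_band(resonance_hz: float, band_centers_hz: List[float],
                 log_scale: bool = False) -> int:
    """Index of the band whose center frequency is closest to a device resonance."""
    def distance(center: float) -> float:
        if log_scale:
            return abs(math.log(center / resonance_hz))  # distance as a frequency ratio
        return abs(center - resonance_hz)                # distance in Hz
    return min(range(len(band_centers_hz)), key=lambda i: distance(band_centers_hz[i]))

def route_devices(resonances_hz: Dict[str, float],
                  band_centers_hz: List[float]) -> Dict[str, int]:
    """Assign each connected device (by name) to the band nearest its resonance."""
    return {name: nearest_band(f, band_centers_hz) for name, f in resonances_hz.items()}
```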

[0097] In some embodiments, the electronic computing device 102 may be a gamepad integrated with the haptic vest 430 and the haptic headphone 440. In one variation of this implementation, the haptic perceptual bandwidth of the haptic headphone 440 and that of the haptic vest 430 may be calculated separately and provided to the authoring tool 208.

[0098] In some embodiments, the authoring tool 208 is implemented as software. However, in other variations of this implementation, the authoring tool 208 can also be implemented as a combination of software and hardware. Furthermore, the authoring tool 208 can be implemented on the distributed system 150, or as standalone software on the server 160 or the electronic computing device 102.

[0099] FIG. 5 illustrates the different parts of an audio preprocessor module in an embodiment of the present invention. The audio preprocessor module 202 comprises a signal conditioner 502, an audio down-sampler 504, and a low pass filter 506. The signal conditioner 502 reduces unwanted noise, distortion, and non-linearity, which creeps into the audio signal 402 due to electronic components, electromagnetic interference, and other reasons. If no signal conditioning is required, the signal conditioner 502 can be bypassed or removed from the audio preprocessor module 202. The audio down-sampler 504 receives and down-samples the audio signal 402 to a lower acceptable sampling rate, preferably below 22 kHz to reduce unwanted information in the audio signal 402 for haptic processing by other modules. For example, if the audio signal 402 is sampled at 44.1 kHz, the audio down-sampler 504 will down-sample the received audio signal 402 to a sampling rate of 22.05 kHz or less. If the audio signal 402 does not require down-sampling, the process of down-sampling is bypassed.
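Down-sampling by an integer factor (for example, factor 2 for 44.1 kHz to 22.05 kHz) requires a low-pass (anti-aliasing) filter before samples are dropped. The sketch below uses a moving average as a deliberately crude low-pass filter to keep it short; a production resampler would use a properly designed FIR or polyphase filter. `downsample` and `taps` are hypothetical names.

```python
from typing import List, Optional

def downsample(signal: List[float], factor: int, taps: Optional[int] = None) -> List[float]:
    """Integer-factor down-sampling, e.g. factor=2 for 44.1 kHz -> 22.05 kHz.

    A moving average over `taps` samples (default: `factor`) acts as a crude
    anti-aliasing low-pass filter before every `factor`-th sample is kept.
    """
    if factor < 1:
        raise ValueError("factor must be >= 1")
    if factor == 1:
        return list(signal)  # bypass: no down-sampling required
    taps = taps or factor
    filtered = []
    for i in range(len(signal)):
        window = signal[max(0, i - taps + 1): i + 1]
        filtered.append(sum(window) / len(window))
    return filtered[::factor]
```

With `factor=2` the two-tap average has a zero at the original Nyquist frequency, so a tone there (which would otherwise alias) is removed, as the test input alternating between 1 and -1 illustrates.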

[0100] The audio preprocessor module 202 also includes the low pass filter 506 to filter out the high frequency components in the audio signal 402. The preprocessed audio signal is passed to the transient detection and processing module 204, the audio analysis module 206, and the authoring tool 208.

[0101] In one variation of this implementation, the audio preprocessor module 202 is embedded within the audio analysis module 206.

[0102] FIG. 6 illustrates the different parts of an audio analysis module in an embodiment of the present invention. The audio analysis module 206 receives the preprocessed audio signal from the audio preprocessor module 202. Alternatively, the audio analysis module 206 can directly receive the audio signal 402, when the preprocessor module 202 is embedded within the audio analysis module 206.

[0103] The audio analysis module 206 can perform the analysis of the preprocessed audio signal by implementing (a) a filter bank analysis method, (b) a harmonic-percussive method, or (c) a harmonic-percussive-residual method.

[0104] Referring to FIG. 6, the audio analysis module 206 comprises a filter bank 602, an envelope follower 604, an envelope smoother 606, and a data reduction 608. The preprocessed audio signal received from the audio preprocessor module 202 is separated into different frequency bands by the filter bank 602. The preprocessed audio signal is processed using a window, each window having a predefined number of audio packets. The audio analysis module 206 analyzes the audio packets in each window before processing and analysing the audio packets in the next window.

[0105] In one variation of this implementation, each window has a fixed number of audio packets. Alternatively, in another variation of this implementation, the window may comprise a variable number of audio packets. Each frequency band has a center frequency, an upper cut-off frequency, and a lower cut-off frequency. The difference between the upper cut-off frequency and the lower cut-off frequency of a frequency band is the bandwidth of that frequency band. The center frequency is preferably the average of the upper and lower cut-off frequencies of the frequency band. In some embodiments, the center frequency can be a median value, a modal value, or a logarithmic mean. A selection button in the graphical user interface may be used for changing the center frequencies of the one or more frequency bands.
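The bandwidth and two of the center-frequency choices can be written directly. The sketch interprets a center "calculated on a logarithmic scale" as the geometric mean of the cut-off frequencies (the midpoint on a log-frequency axis); that interpretation is an assumption, and median and modal centers are not shown because they depend on a distribution of values that the text does not specify. The function names are hypothetical.

```python
import math

def bandwidth(f_lower: float, f_upper: float) -> float:
    """Bandwidth = upper cut-off minus lower cut-off."""
    return f_upper - f_lower

def center_frequency(f_lower: float, f_upper: float, method: str = "arithmetic") -> float:
    """Band center: arithmetic mean, or geometric mean (midpoint on a log axis)."""
    if method == "arithmetic":
        return (f_lower + f_upper) / 2.0
    if method == "geometric":
        if f_lower <= 0:
            raise ValueError("a log-scale center needs f_lower > 0")
        return math.sqrt(f_lower * f_upper)
    raise ValueError(f"unknown method: {method}")
```

For a 60-120 Hz band the arithmetic center is 90 Hz, while the geometric center is about 84.9 Hz; the geometric center is undefined for a band starting at 0 Hz.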

[0106] The filter bank 602 includes one or more band pass filters, such as a band filter 6022, a band filter 6024, a band filter 6026, and a band filter 6028. Although only four band filters are shown in the filter bank 602, in other variations the filter bank 602 can have a higher or lower number of band filters.

[0107] The center frequency of each band pass filter can be evenly spaced over a frequency range on a linear or a logarithmic scale. Alternatively, the user can set the center frequency of each band pass filter, such as the band filter 6022, the band filter 6024, the band filter 6026, and the band filter 6028. The lower frequency and the upper frequency of the band filters 6022-6028 can be predefined or can be defined by the user. For example, the first band pass filter 6022 has a lower frequency of 0 Hz, an upper frequency of 60 Hz, and a bandwidth of 60 Hz. Likewise, the band filter 6024, the band filter 6026, and the band filter 6028 each have a bandwidth of 60 Hz, with lower and upper frequencies of 60-120 Hz, 120-180 Hz, and 180-240 Hz, respectively.
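Each digital band filter can be sketched as a second-order (biquad) band-pass filter. This sketch swaps in the constant 0 dB peak-gain band-pass from Robert Bristow-Johnson's "Audio EQ Cookbook", with the center at the arithmetic mean of the band edges and Q = center / bandwidth, which only approximates the band edges after digital frequency warping. `bandpass_coeffs` and `biquad` are hypothetical names. A band that starts at 0 Hz, like the first band above, would more naturally be a low-pass filter.

```python
import math
from typing import List, Tuple

def bandpass_coeffs(f_low: float, f_high: float,
                    sample_rate: float) -> Tuple[Tuple[float, float, float], Tuple[float, float]]:
    """RBJ cookbook band-pass (0 dB peak gain), normalised so that a0 == 1.

    Returns (b0, b1, b2) and (a1, a2).
    """
    f0 = (f_low + f_high) / 2.0
    q = f0 / (f_high - f_low)
    w0 = 2.0 * math.pi * f0 / sample_rate
    alpha = math.sin(w0) / (2.0 * q)
    a0 = 1.0 + alpha
    b = (alpha / a0, 0.0, -alpha / a0)
    a = (-2.0 * math.cos(w0) / a0, (1.0 - alpha) / a0)
    return b, a

def biquad(signal: List[float], b, a) -> List[float]:
    """Direct Form I: y[n] = b0 x[n] + b1 x[n-1] + b2 x[n-2] - a1 y[n-1] - a2 y[n-2]."""
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for x in signal:
        y = b[0] * x + b[1] * x1 + b[2] * x2 - a[0] * y1 - a[1] * y2
        x2, x1 = x1, x
        y2, y1 = y1, y
        out.append(y)
    return out
```

A filter bank is then one `bandpass_coeffs` call per band and one `biquad` pass per band over the same input.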

[0108] In another variation of this implementation, each band filter 6022-6028 has a variable bandwidth. For example, the band filter 6022 has a lower frequency of 0 Hz and an upper frequency of 40 Hz, giving a bandwidth of 40 Hz. Similarly, the band filter 6024, the band filter 6026, and the band filter 6028 have frequency bands of 20-60 Hz, 60-120 Hz and 120-200 Hz, respectively.

[0109] The output of the filter bank 602 is the audio signal separated into different frequency bands by the band filters 6022-6028. The band-separated audio signals are passed to the envelope follower 604. The envelope follower 604 includes a band envelope approximation 6042, a band envelope approximation 6044, a band envelope approximation 6046, and a band envelope approximation 6048. In the current implementation, the band envelope approximation 6042 receives the audio signal filtered by the band filter 6022 into a specific frequency range. Likewise, the band envelope approximation 6044, the band envelope approximation 6046, and the band envelope approximation 6048 receive the signals filtered into specific frequency bands by the band filter 6024, the band filter 6026, and the band filter 6028, respectively.
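One way to realize the band filters 6022-6028 in software is a bank of second-order IIR sections, one per band, each producing a band-limited copy of the input. The sketch below assumes coefficients from the widely used RBJ "Audio EQ Cookbook" formulas. It uses a low-pass section for a band whose lower frequency is 0 Hz, and otherwise a band-pass section centered on the geometric mean of the band edges. The filter order, topology and function names are assumptions, not details taken from this application.

```python
import math


def _biquad(x, b, a):
    """Direct-form I second-order IIR filter; b, a are normalized so a[0] == 1."""
    y, x1, x2, y1, y2 = [], 0.0, 0.0, 0.0, 0.0
    for xn in x:
        yn = b[0] * xn + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        x2, x1, y2, y1 = x1, xn, y1, yn
        y.append(yn)
    return y


def band_coefficients(lower_hz, upper_hz, fs):
    """RBJ cookbook coefficients for one band of the filter bank."""
    if lower_hz <= 0:
        # Band starting at 0 Hz: Butterworth-style low-pass (Q = 1/sqrt(2)).
        w0 = 2 * math.pi * upper_hz / fs
        alpha = math.sin(w0) / (2 / math.sqrt(2))
        cw = math.cos(w0)
        b = [(1 - cw) / 2, 1 - cw, (1 - cw) / 2]
    else:
        # Band-pass with 0 dB peak gain; Q = center / bandwidth.
        f0 = math.sqrt(lower_hz * upper_hz)
        q = f0 / (upper_hz - lower_hz)
        w0 = 2 * math.pi * f0 / fs
        alpha = math.sin(w0) / (2 * q)
        cw = math.cos(w0)
        b = [alpha, 0.0, -alpha]
    a0 = 1 + alpha
    return [c / a0 for c in b], [1.0, -2 * cw / a0, (1 - alpha) / a0]


def filter_bank(signal, bands, fs):
    """Return one band-limited copy of `signal` per (lower_hz, upper_hz) band."""
    return [_biquad(signal, *band_coefficients(lo, hi, fs)) for lo, hi in bands]


fs = 8000
tone = [math.sin(2 * math.pi * 90 * n / fs) for n in range(fs)]  # 1 s at 90 Hz
outputs = filter_bank(tone, [(0, 60), (60, 120), (120, 180), (180, 240)], fs)
energies = [sum(v * v for v in out[fs // 2:]) for out in outputs]  # skip start-up
print(energies.index(max(energies)))  # the 60-120 Hz band should dominate
```

Second-order sections have gentle skirts, so neighboring bands still carry some energy from a 90 Hz tone. Higher-order or cascaded sections would sharpen the band separation.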

[0110] The envelope follower 604 approximates the time-amplitude values of the time-amplitude envelope of each frequency band using the band envelope approximations 6042-6048.

[0111] In one variation of this implementation, each band envelope approximation 6042-6048 includes at least one envelope follower, a memory bank, and an optional processor.
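The application does not specify the internal envelope follower. A common choice, assumed here for illustration, is a rectifying one-pole follower with separate attack and release time constants. It rises quickly when the band signal gets louder and decays slowly when it gets quieter. The 5 ms and 50 ms defaults are arbitrary.

```python
import math


def envelope_follower(x, fs, attack_ms=5.0, release_ms=50.0):
    """Rectify the band signal and track it with a one-pole attack/release filter."""
    attack = math.exp(-1.0 / (fs * attack_ms / 1000.0))
    release = math.exp(-1.0 / (fs * release_ms / 1000.0))
    level, envelope = 0.0, []
    for sample in x:
        rectified = abs(sample)
        # Fast coefficient while the input rises above the level, slow while it falls.
        coeff = attack if rectified > level else release
        level = coeff * level + (1.0 - coeff) * rectified
        envelope.append(level)
    return envelope


fs = 8000
band_signal = [0.5 * math.sin(2 * math.pi * 100 * n / fs) for n in range(fs)]
env = envelope_follower(band_signal, fs)
print(round(env[-1], 3))  # settles somewhat below the 0.5 peak amplitude
```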

[0112] In another variation of this implementation, the envelope follower 604 generates the time-amplitude values for each frequency band by applying a Hilbert transform in the band envelope approximations 6042-6048.
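The Hilbert-transform variant can be sketched by forming the analytic signal in the frequency domain and taking its magnitude as the envelope. Negative-frequency bins are zeroed and positive ones doubled. The naive O(N^2) DFT below keeps the example dependency-free. A practical implementation would use an FFT, for example `scipy.signal.hilbert`, which returns the analytic signal.

```python
import cmath
import math


def _dft(values, inverse=False):
    """Naive discrete Fourier transform (the inverse includes the 1/N factor)."""
    n = len(values)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        acc = sum(v * cmath.exp(sign * 2j * math.pi * k * t / n) for t, v in enumerate(values))
        out.append(acc / n if inverse else acc)
    return out


def hilbert_envelope(x):
    """Magnitude of the analytic signal of a real band signal x."""
    n = len(x)
    weights = [0.0] * n
    weights[0] = 1.0  # keep DC once
    if n % 2 == 0:
        weights[n // 2] = 1.0  # keep the Nyquist bin once
        positive = range(1, n // 2)
    else:
        positive = range(1, (n + 1) // 2)
    for k in positive:
        weights[k] = 2.0  # double positive frequencies; negative ones stay zero
    spectrum = _dft(x)
    analytic = _dft([s * w for s, w in zip(spectrum, weights)], inverse=True)
    return [abs(z) for z in analytic]


tone = [0.7 * math.cos(2 * math.pi * 8 * t / 128) for t in range(128)]
print(max(hilbert_envelope(tone)))  # close to the 0.7 amplitude
```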

[0113] The band-filtered audio signal is approximated in the band envelope approximations 6042-6048 to form a time-amplitude envelope for each of the frequency bands. Each time-amplitude envelope is an array of time-amplitude data values representing the amplitude over time of its frequency band. For example, the band envelope approximation 6042 approximates the time-amplitude envelope of the output of the band filter 6022. Likewise, the band envelope approximation 6044 approximates the time-amplitude envelope for the band filter 6024, the band envelope approximation 6046 approximates the time-amplitude envelope for the band filter 6026, and the band envelope approximation 6048 approximates the time-amplitude envelope for the band filter 6028. To summarize, the band envelope approximations 6042-6048 produce approximate time-amplitude values that represent the changes in the received audio signal as a smooth envelope of time-amplitude data values.

[0114] The output of the envelope follower 604 is passed to the envelope smoother 606. The envelope smoother 606 includes a band envelope smoother 6062, a band envelope smoother 6064, a band envelope smoother 6066, and a band envelope smoother 6068. Each band envelope approximation 6042-6048 passes the approximated time-amplitude envelope to the corresponding band envelope smoother 6062-6068. In the current implementation, the band envelope smoother 6062 receives the time-amplitude envelope from the band envelope approximation 6042. Likewise, the band envelope smoother 6064, the band envelope smoother 6066, and the band envelope smoother 6068 receive the time-amplitude envelope from the band envelope approximation 6044, the band envelope approximation 6046, and the band envelope approximation 6048, respectively. The envelope smoother 606 smooths the time-amplitude values by removing outliers. For example, it removes extraneous time-amplitude data points, outlying time-amplitude data points, or other points that do not contribute directly or indirectly to the generation of haptic output, and thereby generates the smooth time-amplitude envelope.
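The application does not name an outlier-removal rule. One assumed possibility is a Hampel filter, which replaces any envelope point lying more than a few scaled median absolute deviations (MAD) from its local median with that median. The window size and threshold below are illustrative.

```python
import statistics


def hampel_filter(values, half_window=3, n_sigmas=3.0):
    """Replace points far from their local median with that median."""
    scale = 1.4826  # makes the MAD comparable to a standard deviation for Gaussian noise
    n = len(values)
    cleaned = list(values)
    for i in range(n):
        window = values[max(0, i - half_window):min(n, i + half_window + 1)]
        med = statistics.median(window)
        mad = scale * statistics.median(abs(v - med) for v in window)
        if abs(values[i] - med) > n_sigmas * mad:
            cleaned[i] = med  # treat the point as an outlier
    return cleaned


envelope = [0.10, 0.11, 0.12, 0.90, 0.13, 0.14, 0.15]
print(hampel_filter(envelope))  # the 0.90 spike is replaced by the local median
```

Because it uses medians rather than averages, a single spike does not drag its neighbors upward, which suits the goal of discarding points that do not contribute to the haptic output.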

[0115] For example, the envelope smoother 606 smooths the time-amplitude envelope to reduce abrupt signal changes and generates a smoothed time-amplitude envelope at the center frequency for each of the frequency bands. Large variations in the amplitude values produce abrupt signal changes, which the envelope smoother 606 smooths out. The smoothing process eliminates outliers, clips sharp peaks, and produces a smoothed time-amplitude envelope for each frequency band. The envelope smoother 606 has multiple band smoothers, one for each of the frequency bands, such as the band envelope smoothers 6062-6068, each having at least one digital filter, a memory bank, and an optional processor. The envelope smoother can be a filter such as a low-pass Butterworth filter with a cut-off frequency of 250 Hz. However, in other implementations, different types of filters can be used, with cut-off frequencies ranging from 30 Hz to 1000 Hz. In a different implementation, the audio analysis module 206 has an audio analysis processor and memory to store and execute envelope smoothing algorithms, for example, numerical analysis, B-splines, AI algorithms and other known curve smoothing techniques.
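A minimal sketch of the 250 Hz low-pass Butterworth smoother follows. It assumes a second-order design obtained by the bilinear transform (the RBJ cookbook low-pass with Q = 1/sqrt(2)) and an envelope sampled at the audio rate `fs`. Both the filter order and the 48 kHz default are assumptions.

```python
import math


def butterworth_lowpass(x, cutoff_hz=250.0, fs=48000.0):
    """Second-order Butterworth low-pass applied to an envelope sequence."""
    w0 = 2.0 * math.pi * cutoff_hz / fs
    cw = math.cos(w0)
    alpha = math.sin(w0) / (2.0 / math.sqrt(2.0))  # sin(w0) / (2Q), Q = 1/sqrt(2)
    a0 = 1.0 + alpha
    b0 = b2 = (1.0 - cw) / (2.0 * a0)
    b1 = (1.0 - cw) / a0
    a1, a2 = -2.0 * cw / a0, (1.0 - alpha) / a0
    y, x1, x2, y1, y2 = [], 0.0, 0.0, 0.0, 0.0
    for xn in x:
        yn = b0 * xn + b1 * x1 + b2 * x2 - a1 * y1 - a2 * y2
        x2, x1, y2, y1 = x1, xn, y1, yn
        y.append(yn)
    return y


fs = 48000.0
# Envelope at 1.0 with a fast 5 kHz ripple that the smoother should remove.
rippled = [1.0 + 0.5 * math.sin(2 * math.pi * 5000 * n / fs) for n in range(48000)]
smoothed = butterworth_lowpass(rippled, 250.0, fs)
tail = smoothed[-1000:]
print(round(max(tail) - min(tail), 4))  # residual ripple is a few thousandths
```

Lowering `cutoff_hz` toward the 30 Hz end of the range smooths more aggressively but also blurs fast attacks in the envelope.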

[0116] The output of the envelope smoother 606 is passed to the data reduction 608. The data reduction 608 includes a band data reduction 6082, a band data reduction 6084, a band data reduction 6086, and a band data reduction 6088. The band data reduction 6082 receives the smoothed time-amplitude envelope from the band envelope smoother 6062. Likewise, the band data reduction 6084, the band data reduction 6086, and the band data reduction 6088 receive the smoothed time-amplitude envelope from the band envelope smoother 6064, the band envelope smoother 6066, and the band envelope smoother 6068, respectively.

[0117] The data reduction 608 reduces the number of time-amplitude data points of the smoothed time-amplitude envelope and produces a reduced time-amplitude envelope. The reduced time-amplitude envelope is substantially similar to the smoothed time-amplitude envelope but has fewer time-amplitude data points. A reduced time-amplitude band envelope is created for each frequency band and includes the center frequency value and a series or array of reduced time-amplitude data points. Each band data reduction 6082-6088 of the data reduction 608 implements data reduction algorithms.

[0118] In one variation of this implementation, the audio analysis module 206 has a memory and a processor. The data reduction 608 reduces the smoothed time-amplitude envelope of each frequency band to a minimum number of time-amplitude data points by removing unwanted time-amplitude data points.

[0119] In one implementation, the data reduction 608 uses the Ramer-Douglas-Peucker data reduction algorithm to reduce the time-amplitude data points to a manageable number. In different implementations, the data reduction algorithms can implement piecewise linear approximation methods such as, but not limited to, recursive least squares (RLS), Visvalingam-Whyatt, differential evolution, Broyden-Fletcher-Goldfarb-Shanno (BFGS), gradient descent and other known techniques.
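A compact recursive sketch of Ramer-Douglas-Peucker applied to (time, amplitude) points follows. The algorithm keeps the endpoints and finds the interior point farthest from the chord between them. If that distance exceeds `epsilon`, it splits there and recurses; otherwise it drops every interior point. Because time and amplitude are in different units, `epsilon` implicitly weights them against each other. Rescaling time before reduction is a design choice left open here.

```python
import math


def rdp(points, epsilon):
    """Ramer-Douglas-Peucker simplification of a list of (time, amplitude) points."""
    if len(points) < 3:
        return list(points)
    (t0, a0), (t1, a1) = points[0], points[-1]
    dt, da = t1 - t0, a1 - a0
    chord = math.hypot(dt, da)
    max_dist, index = -1.0, 0
    for i in range(1, len(points) - 1):
        t, a = points[i]
        if chord == 0.0:
            dist = math.hypot(t - t0, a - a0)  # degenerate chord: distance to endpoint
        else:
            dist = abs(da * (t - t0) - dt * (a - a0)) / chord  # perpendicular distance
        if dist > max_dist:
            max_dist, index = dist, i
    if max_dist > epsilon:
        left = rdp(points[:index + 1], epsilon)
        right = rdp(points[index:], epsilon)
        return left[:-1] + right  # avoid duplicating the split point
    return [points[0], points[-1]]


ramp = [(t, 0.5 * t) for t in range(10)]
peak = [(0, 0.0), (1, 0.5), (2, 1.0), (3, 0.5), (4, 0.0)]
print(rdp(ramp, 0.01))  # a straight line keeps only its endpoints
print(rdp(peak, 0.1))   # a triangle keeps its apex
```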

[0120] In some embodiments, the envelope smoother 606 and the data reduction 608 can be combined into a single envelope smoothing and data reduction stage implementing algorithms that perform both smoothing and data reduction simultaneously.

[0121] The audio analysis module 206 produces analyzed audio descriptor data, which includes frequency band descriptor data derived from each frequency band. The frequency band descriptor data for each band comprises the center frequency, the bandwidth, and the reduced time-amplitude envelope having time-amplitude values.
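The analyzed audio descriptor data could be held in a structure like the following. The class names, field names and the `duration_s` helper are hypothetical. They are chosen only to mirror the items listed above, since the application does not specify an in-memory layout or file format.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class FrequencyBandDescriptor:
    """Hypothetical container for one frequency band descriptor."""
    center_hz: float
    bandwidth_hz: float
    # Reduced time-amplitude envelope as (time in seconds, amplitude) points.
    envelope: List[Tuple[float, float]] = field(default_factory=list)


@dataclass
class AnalyzedAudioDescriptor:
    """Hypothetical container for the per-band descriptors of one audio signal."""
    bands: List[FrequencyBandDescriptor] = field(default_factory=list)

    def duration_s(self) -> float:
        """Latest time stamp across all band envelopes (0.0 if there are none)."""
        return max((t for b in self.bands for t, _ in b.envelope), default=0.0)


descriptor = AnalyzedAudioDescriptor(bands=[
    FrequencyBandDescriptor(30.0, 60.0, [(0.0, 0.0), (0.12, 0.8), (0.5, 0.1)]),
    FrequencyBandDescriptor(90.0, 60.0, [(0.0, 0.2), (0.4, 0.0)]),
])
print(descriptor.duration_s())
```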

[0122] FIG. 7 illustrates the different parts of the audio analysis module implementing spectrogram analysis using a harmonic component and a percussive component. In this embodiment, the residual component is not used and the audio analysis module uses only the harmonic component and the percussive component to produce an output.

[0123] The preprocessed audio signal is provided to a spectral analyzer 702, which produces a frequency power spectrogram. The spectral analyzer 702 converts the received signal into the frequency domain by performing a Fourier transform, such as a Short Time Fourier Transform (STFT), to generate a power spectrogram. The power spectrogram created by the spectral analyzer 702 is then passed through a median filtering process that separates the filtered harmonic spectrogram and the filtered percussive spectrogram, which are used to compute the binary masks needed to derive the harmonic spectrogram 704 and the percussive spectrogram 706. The spectrogram shows frequency along the vertical axis (y-axis) and time along the horizontal axis (x-axis). The power at each time-frequency point is indicated by the density of the black shading.
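The median-filtering step can be sketched as follows, assuming the standard median-filtering approach to harmonic/percussive separation. Harmonic content is smooth along time, so each frequency bin is median-filtered over time. Percussive content is smooth along frequency, so each frame is median-filtered over frequency. Here a bin goes to the harmonic mask when its harmonic-filtered value is at least its percussive-filtered value; the kernel length and tie rule are assumptions. Multiplying the power spectrogram by each mask gives the harmonic and percussive spectrograms.

```python
import statistics


def _median_filter(values, kernel):
    """Centered running median; the window shrinks at the edges."""
    half, n = kernel // 2, len(values)
    return [statistics.median(values[max(0, i - half):min(n, i + half + 1)]) for i in range(n)]


def hpss_masks(power, kernel=17):
    """power[t][f] is a power spectrogram (frames x bins); returns 0/1 masks."""
    n_t, n_f = len(power), len(power[0])
    # Harmonic-enhanced: median over time within each frequency bin (stored per bin).
    harm_cols = [_median_filter([power[t][f] for t in range(n_t)], kernel) for f in range(n_f)]
    # Percussive-enhanced: median over frequency within each frame.
    perc = [_median_filter(frame, kernel) for frame in power]
    harmonic_mask = [[1 if harm_cols[f][t] >= perc[t][f] else 0 for f in range(n_f)]
                     for t in range(n_t)]
    percussive_mask = [[1 - m for m in row] for row in harmonic_mask]
    return harmonic_mask, percussive_mask


# Toy spectrogram: a steady tone in bin 5 plus a click in frame 10.
power = [[1.0 if f == 5 or t == 10 else 0.0 for f in range(20)] for t in range(20)]
h_mask, p_mask = hpss_masks(power, kernel=5)
harmonic = [[p * m for p, m in zip(pr, mr)] for pr, mr in zip(power, h_mask)]
print(h_mask[3][5], p_mask[10][12])  # tone bin -> harmonic, click bin -> percussive
```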

[0124] In another variation of this implementation, the center frequency value is not calculated; instead, the whole array of time-frequency values is stored and provided to the authoring tool 208 for signal processing through the user interface. For each frequency band, the array of time-amplitude values is converted into a time-amplitude envelope by an envelope follower 716. The time-amplitude envelope is converted into a smoothed time-amplitude envelope by an envelope smoother 718. The number of time-amplitude values of the smoothed time-amplitude envelope is reduced by a data reduction 720 without losing signal information. The data reduction 720 produces a reduced time-amplitude envelope. Finally, the data reduction 720, which holds information for multiple frequency bands, produces audio descriptor data comprising a series of frequency band descriptor data, one for each frequency band. Each frequency band descriptor data includes information about the center frequency, the reduced time-amplitude envelope, the time-amplitude values, and the bandwidth.

[0125] In one variation of this implementation, the audio analysis module 206 has a memory and a processor.

[0126] In a variation of this implementation, the audio analysis module 206 calculates a Short Time Fourier Transform (STFT) over a fixed window of audio packets to calculate the spectrogram. In another variation, the audio analysis module 206 calculates the STFT over a variable window of audio packets. The spectrogram is used to derive the harmonic component and the percussive component.
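A fixed-window STFT power spectrogram can be sketched with a naive DFT to stay dependency-free. In practice an FFT routine such as `numpy.fft.rfft` would be used. The periodic Hann window, frame length and hop size are assumptions. A variable-window variant would change `frame_len` (and `hop`) from frame to frame.

```python
import cmath
import math


def power_spectrogram(x, frame_len=256, hop=128):
    """Return power[frame][bin] for bins 0..frame_len//2 of a Hann-windowed STFT."""
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / frame_len) for n in range(frame_len)]
    frames = []
    for start in range(0, len(x) - frame_len + 1, hop):
        segment = [x[start + n] * window[n] for n in range(frame_len)]
        row = []
        for k in range(frame_len // 2 + 1):  # non-negative frequencies only
            coeff = sum(s * cmath.exp(-2j * math.pi * k * n / frame_len)
                        for n, s in enumerate(segment))
            row.append(abs(coeff) ** 2)
        frames.append(row)
    return frames


# Sine placed exactly on bin 8 of a 64-sample frame.
signal = [math.sin(2 * math.pi * 8 * n / 64) for n in range(1024)]
spec = power_spectrogram(signal, frame_len=64, hop=32)
print(len(spec), len(spec[0]), max(range(33), key=lambda k: spec[0][k]))
```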

[0127] The harmonic spectrogram 704 and the percussive spectrogram 706 can be processed separately. Alternatively, in another implementation, the harmonic spectrogram 704, the percussive spectrogram 706 and the residual spectrogram can be separately processed and provided to the authoring tool 208 for further analysis.

[0128] The percussive spectrogram 706, which is derived from the median filtering of the power spectrogram, is passed to the Inverse Short Time Fourier Transform (ISTFT) to determine the transients. The transients are then passed to the transient processing and detection module 204, which analyzes them, and are provided to the user interface in the authoring tool 208. In some embodiments, the transient processing and detection module 204 may determine a transient binary score from the percussive spectrogram 706.

[0129] The analysis of each frequency band, comprising time-amplitude values, time-frequency values or time-amplitude-frequency values, can be performed in either the time domain or the frequency domain. In addition, the noise component can be converted into a time-amplitude envelope, which in one variation can be displayed in the authoring tool 208 as residual noise. In some embodiments, the residual noise can be shaped through the authoring tool 208.

[0130] Subsequently, the analyzed audio descriptor data from the audio analysis module 206 is provided to the authoring tool 208 and the transformation module 210.

[0131] In another embodiment, the audio analysis module may implement the harmonic component, the percussive component, and a residual component, as shown in FIG. 7. In this implementation, the audio analysis module 206 calculates the residual component by subtracting the harmonic component and the percussive component from the original spectrogram. Performing the Inverse Short Time Fourier Transform (ISTFT) on the resulting residual spectrogram yields the residual component in the time domain. The residual component is the noise, which can be provided separately to the authoring tool 208. The authoring tool 208 can modify and re-shape the noise component.
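A sketch of the residual computation in the spectrogram domain follows. As an illustrative assumption, the masks use a separation factor `beta`. A bin counts as harmonic only if its harmonic-filtered power exceeds `beta` times its percussive-filtered power, and as percussive only in the opposite case. Bins that are clearly neither stay in the residual, which is the original minus the harmonic and percussive parts. Larger `beta` values move more energy into the residual. The ISTFT back to the time domain is omitted.

```python
def separate_hpr(power, harm_filtered, perc_filtered, beta=2.0, eps=1e-12):
    """Split power[t][f] into harmonic, percussive and residual spectrograms."""
    if beta < 1.0:
        raise ValueError("beta must be >= 1 so the two masks cannot overlap")
    harmonic, percussive, residual = [], [], []
    for s_row, h_row, p_row in zip(power, harm_filtered, perc_filtered):
        h_out, p_out, r_out = [], [], []
        for s, h, p in zip(s_row, h_row, p_row):
            m_h = 1.0 if h / (p + eps) > beta else 0.0   # clearly harmonic bin
            m_p = 1.0 if p / (h + eps) >= beta else 0.0  # clearly percussive bin
            h_out.append(s * m_h)
            p_out.append(s * m_p)
            r_out.append(s - s * m_h - s * m_p)  # original minus both components
        harmonic.append(h_out)
        percussive.append(p_out)
        residual.append(r_out)
    return harmonic, percussive, residual


H, P, R = separate_hpr([[1.0, 1.0, 1.0]], [[1.0, 1.0, 0.1]], [[0.9, 0.1, 1.0]])
print(H, P, R)  # first bin is ambiguous, so it ends up in the residual
```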

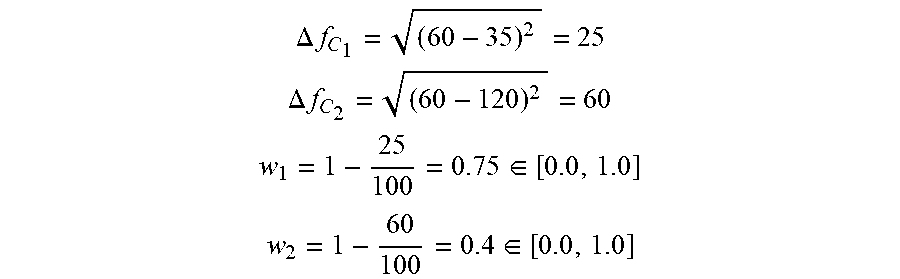

[0132] The residual path comprises the residual spectrogram 708, an inverse short time Fourier transform 714, an envelope follower 724, an envelope smoother 726, and a data reduction 728. The preprocessed audio signal is provided to a spectral analyzer 702. The spectral analyzer 702 converts the received signal into the frequency domain by performing a Fourier transform, such as a Short Time Fourier Transform (STFT), to generate a power spectrogram. The power spectrogram calculated by the spectral analyzer 702 is then passed through a median filtering process that separates a harmonic component and a percussive component, from which the binary masks needed to derive the harmonic spectrogram 704 and the percussive spectrogram 706 are computed. Alternatively, the harmonic spectrogram 704 can be derived from the median filtering of the power spectrogram and used directly for time-frequency ridge (TF-ridge) detection. The harmonic spectrogram 704 is passed through a TF-Ridge detection 710, which analyzes the harmonic spectrogram 704 to create an array of time-amplitude envelopes comprising time-amplitude values for one or more frequency bands. The frequency bands are predefined, such as 20-60 Hz, 60-120 Hz, 120-200 Hz, and 200 Hz-1 kHz. For each band, the TF-Ridge detection 710 analyzes all frequencies of that band within the harmonic spectrogram 704 and extracts a single maximum-energy time-frequency ridge, which is an array of time-frequency data points and time-amplitude values.
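A simplified reading of the TF-Ridge detection 710, assumed for illustration, takes the highest-power bin of the harmonic spectrogram within each predefined band in every frame. This gives a per-band track of frequency and amplitude over time. Published ridge-detection methods usually add continuity constraints between frames, which this sketch omits. The function name `tf_ridges` is hypothetical.

```python
def tf_ridges(power, freqs_hz, bands):
    """power[t][k]: harmonic power spectrogram; freqs_hz[k]: bin center frequencies.
    Returns {(lower, upper): [(frame, freq_hz, power), ...]} for each band."""
    ridges = {}
    for lower, upper in bands:
        bins = [k for k, f in enumerate(freqs_hz) if lower <= f < upper]
        track = []
        if bins:
            for t, frame in enumerate(power):
                peak = max(bins, key=lambda k: frame[k])  # maximum-energy bin in band
                track.append((t, freqs_hz[peak], frame[peak]))
        ridges[(lower, upper)] = track
    return ridges


freqs = [10 * k for k in range(101)]  # 0 Hz to 1 kHz in 10 Hz bins
frames = [[5.0 if f == 40 else 3.0 if f == 150 else 0.1 for f in freqs] for _ in range(3)]
bands = [(20, 60), (60, 120), (120, 200), (200, 1000)]
result = tf_ridges(frames, freqs, bands)
print(result[(20, 60)])  # the 40 Hz component in every frame
```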