Summarization Of Group Chat Threads

Wang; Dakuo ; et al.

U.S. patent application number 16/595550 was filed with the patent office on 2021-04-08 for summarization of group chat threads. The applicant listed for this patent is International Business Machines Corporation. Invention is credited to Chuang Gan, Ming Tan, Dakuo Wang, Haoyu Wang.

| Application Number | 20210103636 16/595550 |

| Document ID | / |

| Family ID | 1000004423491 |

| Filed Date | 2021-04-08 |

| United States Patent Application | 20210103636 |

| Kind Code | A1 |

| Wang; Dakuo ; et al. | April 8, 2021 |

SUMMARIZATION OF GROUP CHAT THREADS

Abstract

Systems and methods provide for automated messaging summarization and ranking. The systems and methods may use an integrated machine learning model to perform thread detection, thread summarization, and summarization ranking. The messages may be received from a team chat application, organized, summarized and ranked by the machine learning model, and the results may be returned to the team chat application. In some cases, the ranking may be different for different users of the team chat application.

| Inventors: | Wang; Dakuo; (Cambridge, MA) ; Tan; Ming; (Malden, MA) ; Gan; Chuang; (Cambridge, MA) ; Wang; Haoyu; (Somerville, MA) |

| Applicant: | International Business Machines Corporation |

| Family ID: | 1000004423491 |

| Appl. No.: | 16/595550 |

| Filed: | October 8, 2019 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04L 51/16 20130101; G06N 3/08 20130101; G06N 3/0445 20130101; G06F 40/30 20200101; H04L 65/403 20130101 |

| International Class: | G06F 17/27 20060101 G06F017/27; H04L 29/06 20060101 H04L029/06; H04L 12/58 20060101 H04L012/58; G06N 3/04 20060101 G06N003/04; G06N 3/08 20060101 G06N003/08 |

Claims

1. A method for communication technology, comprising: categorizing a set of messages into a plurality of message threads; generating a thread summary for each of the plurality of message threads; ordering the thread summaries; and transmitting the ordered thread summaries to one or more users.

2. The method of claim 1, further comprising: calculating a feature vector for a new message of the set of messages; calculating a candidate vector for each of a set of existing message threads and for an empty thread; comparing the feature value to each of the candidate vectors; and categorizing the new message into the one of the existing message threads or the empty thread based on the comparison.

3. The method of claim 2, further comprising: determining that the match value is closest to the candidate value for the empty thread, wherein the new message is categorized into the empty thread based on the determination; and generating a new empty thread based on the determination.

4. The method of claim 2, wherein: the candidate vectors are calculated based on a single direction long short-term memory (LSTM) model.

5. The method of claim 2, wherein: the feature vector includes word count information, vocabulary information, message author information, message time information, or any combination thereof.

6. The method of claim 1, further comprising: encoding each of the plurality of message threads to produce an encoded thread vector; and decoding the encoded thread vector to produce the thread summary for each of the plurality of message threads.

7. The method of claim 6, wherein: the encoding and decoding are based on an LSTM model, a gated recurrent unit (GRU) model, a transformer model, or any combination thereof.

8. The method of claim 1, further comprising: identifying a set of rank orderings for the plurality of message threads; and generating a ranking value for each of the set of rank orderings, wherein the ordering is based on the ranking values.

9. The method of claim 8, further comprising: identifying user information for each of the one or more users; and selecting a plurality of rank orderings from the set of rank orderings based on the user information, wherein one of the plurality of rank orderings is selected for each of the one or more users.

10. The method of claim 1, further comprising: receiving the set of messages from a team chat application, wherein the ordered thread summaries are transmitted to the one or more users via the team chat application.

11. The method of claim 1, further comprising: training an integrated machine learning model using a set of training messages and a target set of ranked summaries, wherein categorizing the set of messages, generating the thread summaries, and ordering the thread summaries are all performed using the integrated machine learning model.

12. An apparatus for summarization of group chat threads, comprising: a processor and a memory storing instructions and in electronic communication with the processor, the processor being configured to execute the instructions to: receive a set of messages from a team chat application; categorize the set of messages into a plurality of message threads; generate a thread summary for each of the plurality of message threads; order the thread summaries; and transmit the ordered thread summaries to one or more users via the team chat application.

13. The apparatus of claim 12, the processor being further configured to execute the instructions to: calculate a feature vector for a new message of the set of messages; calculate a candidate vector for each of a set of existing message threads and for an empty thread; compare the feature value to each of the candidate vectors; and categorize the new message into the one of the existing message threads or the empty thread based on the comparison.

14. The apparatus of claim 12, the processor being further configured to execute the instructions to: encode each of the plurality of message threads to produce an encoded thread vector; and decode the encoded thread vector to produce the thread summary for each of the plurality of message threads.

15. The apparatus of claim 12, the processor being further configured to execute the instructions to: identify a set of rank orderings for the plurality of message threads; and generate a ranking value for each of the set of rank orderings, wherein the ordering is based on the ranking values.

16. The apparatus of claim 15, the processor being further configured to execute the instructions to: identify user information for each of the one or more users; and select a plurality of rank orderings from the set of rank orderings based on the user information, wherein one of the plurality of rank orderings is selected for each of the one or more users.

17. A non-transitory computer readable medium storing code for summarization of group chat threads, the code comprising instructions executable by a processor to: train an integrated machine learning model using a set of training messages and a target set of ranked summaries; categorize a set of messages into a plurality of message threads using the integrated machine learning model; generate a thread summary for each of the plurality of message threads using the integrated machine learning model; order the thread summaries using the integrated machine learning model; and transmit the ordered thread summaries to one or more users.

18. The non-transitory computer readable medium of claim 17, the code further comprising instructions executable by the processor to: calculate a feature vector for a new message of the set of messages; calculate a candidate vector for each of a set of existing message threads and for an empty thread; compare the feature value to each of the candidate vectors; and categorize the new message into the one of the existing message threads or the empty thread based on the comparison.

19. The non-transitory computer readable medium of claim 17, the code further comprising instructions executable by the processor to: encode each of the plurality of message threads to produce an encoded thread vector; and decode the encoded thread vector to produce the thread summary for each of the plurality of message threads.

20. The non-transitory computer readable medium of claim 17, the code further comprising instructions executable by the processor to: identify a set of rank orderings for the plurality of message threads; and generate a ranking value for each of the set of rank orderings, wherein the ordering is based on the ranking values.

Description

BACKGROUND

[0001] The following relates generally to communication technology, and more specifically to personalized summarization of group chat threads.

[0002] A group chat is a digital conference room where information is shared via text or instant message to multiple people at the same time. Users in a group chat are connected to each other via an internet connection, or the like. When a user receives a chat update, the user's device may provide an alert regarding the new text.

[0003] The popularity of the group chat has led to the possibility that a user may be simultaneously connected in multiple different chats with many other users. The amount of information that a user digests in each chat can be substantial, with a multitude of group chats available to a user.

[0004] Since group chat conversations may move quickly, the likelihood of a user missing important information is high. This missed information leads to problems such as scheduling conflicts, missed opportunities, or missed updates from other users. Therefore, a system is needed to automatically digest group chat information for a user and summarize the information for quick and easy comprehension.

SUMMARY

[0005] A method, apparatus, non-transitory computer readable medium, and system for personalized summarization of group chat threads are described. Embodiments of the method, apparatus, non-transitory computer readable medium, and system may categorize a set of messages into a plurality of message threads, generate a thread summary for each of the plurality of message threads, order the thread summaries, and transmit the ordered thread summaries to one or more users.

BRIEF DESCRIPTION OF THE DRAWINGS

[0006] FIG. 1 shows an example of a process for conversation thread detection according to aspects of the present disclosure.

[0007] FIG. 2 shows an example of a process for summarization of group chat threads according to aspects of the present disclosure.

[0008] FIG. 3 shows an example of a thread detection model according to aspects of the present disclosure.

[0009] FIG. 4 shows an example of a process for categorizing a set of messages into a plurality of message threads according to aspects of the present disclosure.

[0010] FIG. 5 shows an example of a summarization model according to aspects of the present disclosure.

[0011] FIG. 6 shows an example of a process for generating a thread summary for each of a plurality of message threads according to aspects of the present disclosure.

[0012] FIG. 7 shows an example of a ranking model according to aspects of the present disclosure.

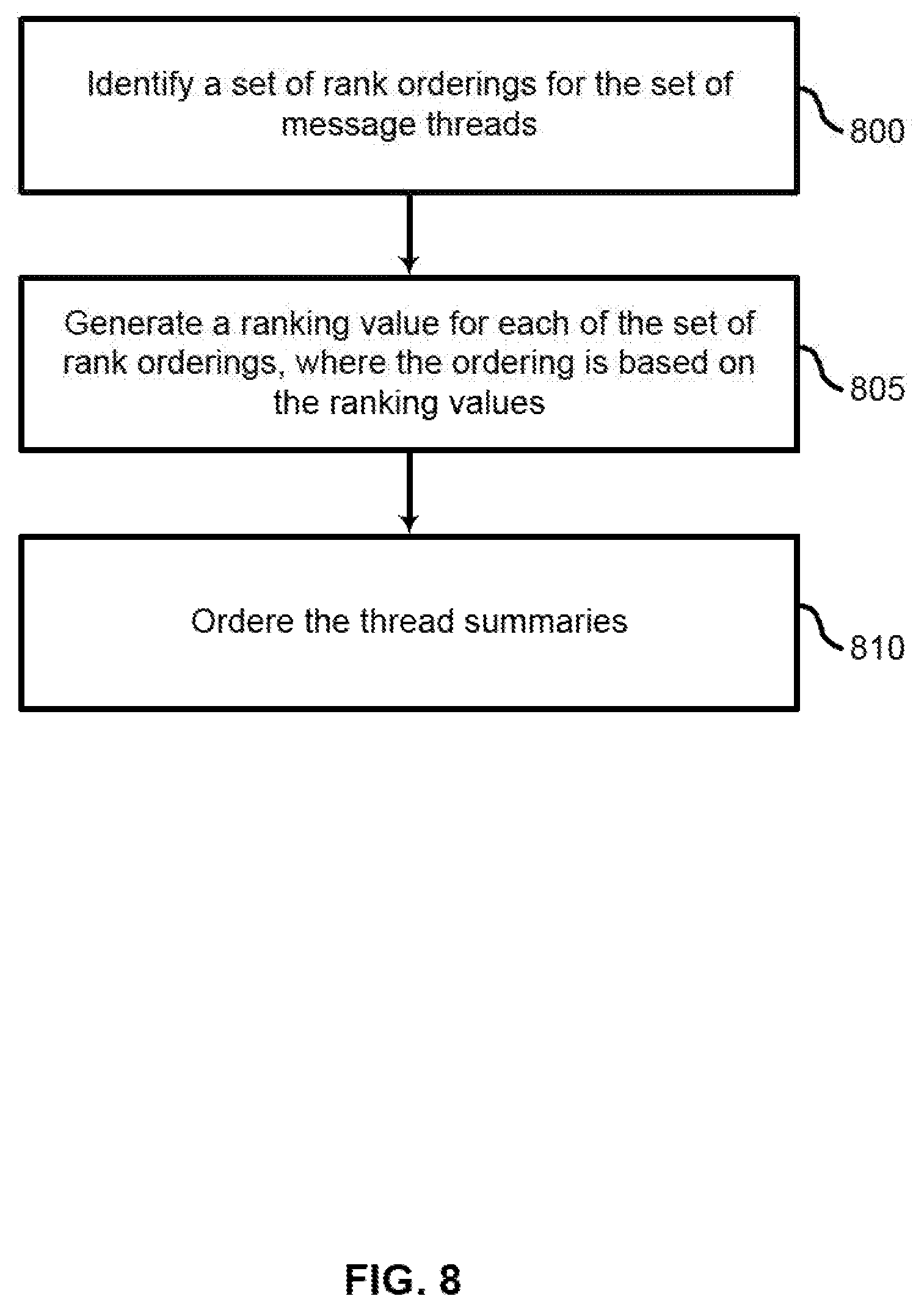

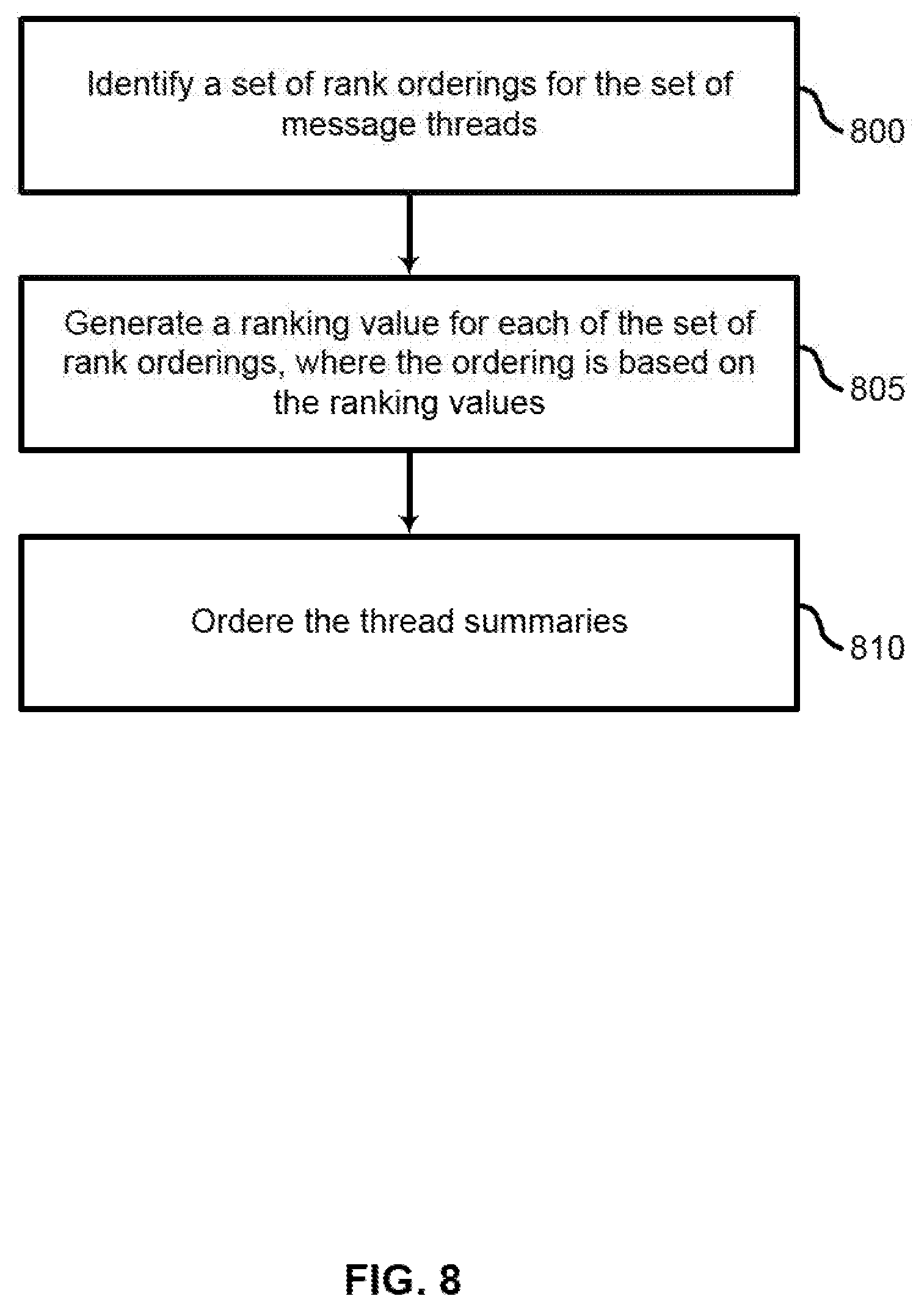

[0013] FIG. 8 shows an example of a process for ordering thread summaries according to aspects of the present disclosure.

[0014] FIG. 9 shows an example of a group chat summarization system according to aspects of the present disclosure.

[0015] FIG. 10 shows an example of a server according to aspects of the present disclosure.

DETAILED DESCRIPTION

[0016] Systems and methods provide for automated message summarization using a machine learning model. The machine learning model may include several integrated sub-models for performing thread detection, thread summarization, and summarization ranking. Messages may be received from a team chat application, then organized, summarized, and ranked by the machine learning model, and the results may be returned to the team chat application. In some cases, the ranking may be different for different users of the team chat application.

[0017] A chat channel may include messages from multiple users, and may include a number of informal conversation threads. Users often separate these different conversations mentally, but when the number of conversations grows large, this task may become difficult and important information may be missed.

[0018] Thus, according to embodiments of the present disclosure, messages are input into a thread detection model. The thread detection model assigns each message a thread ID. New messages are compared to existing messages, and either grouped with an existing conversational thread (represented by a unique thread ID) or used to create a new conversation.

[0019] Once the messages are grouped into threads, the threads are then output to a summarization model. The summarization model creates a human-readable summarization for each thread. The summaries are then output to a ranking model. The ranking model creates a ranked list of the summaries. The ranked lists of summaries are then output to a user interface for a chat application. Users may then read the ranked summarizations of the messages in the chat.
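The three-stage flow described above (thread detection, then summarization, then ranking) can be sketched as a simple composition of functions. This is a minimal illustration only: the names `summarize_channel`, `toy_detect`, `toy_summarize`, and `toy_rank` are hypothetical, and the toy stand-ins use trivial rules in place of the trained models that the disclosure describes.

```python
from typing import Callable, Dict, List

Message = str
Thread = List[Message]


def summarize_channel(
    messages: List[Message],
    detect_threads: Callable[[List[Message]], Dict[int, Thread]],
    summarize: Callable[[Thread], str],
    rank: Callable[[Dict[int, str]], List[int]],
) -> List[str]:
    """Run thread detection, then summarization, then ranking."""
    threads = detect_threads(messages)  # thread ID -> messages
    summaries = {tid: summarize(t) for tid, t in threads.items()}
    ordered_ids = rank(summaries)  # thread IDs in presentation order
    return [summaries[tid] for tid in ordered_ids]


# Toy stand-ins (assumptions for illustration, not the disclosed models).
def toy_detect(messages: List[Message]) -> Dict[int, Thread]:
    ids: Dict[str, int] = {}  # "topic:" prefix -> thread ID
    threads: Dict[int, Thread] = {}
    for m in messages:
        topic = m.split(":", 1)[0]
        tid = ids.setdefault(topic, len(ids))
        threads.setdefault(tid, []).append(m)
    return threads


def toy_summarize(thread: Thread) -> str:
    return f"{len(thread)} message(s), starting with: {thread[0]}"


def toy_rank(summaries: Dict[int, str]) -> List[int]:
    # Most recently created thread first (an arbitrary toy rule).
    return sorted(summaries, reverse=True)


messages = ["lunch: pizza?", "release: build is red", "lunch: sure"]
result = summarize_channel(messages, toy_detect, toy_summarize, toy_rank)
for line in result:
    print(line)
```

Passing the three stages in as callables keeps the sketch independent of any particular model; in the integrated system described here, all three would be sub-models trained together.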

[0020] Training three separate models (i.e., thread detection, summarization, and ranking) may require different training data for each model. In some cases obtaining this training data for each model may be difficult or costly. Therefore, the present disclosure describes an integrated system in which all three sub-models may be trained together. Thus, the system may be trained using a single set of training data.

[0021] FIG. 1 shows an example of a process for conversation thread detection 105 according to aspects of the present disclosure. The example shown includes conversation channel 100, thread detection 105, summarization 110, ranking 115, team chat application 120, and users 125.

[0022] Conversation channel 100 may represent messages from a team chat application 120 (either text or audio), and may include multiple conversations being carried out by a variety of different people. Once the messages from the conversation channel 100 are received, thread detection 105 may be performed. Thread detection 105 may be performed on conversational data using a neural coherence model for automatically identifying the underlying thread structure of a forum discussion.

[0023] Once the threads are identified, summarization 110 may be performed to create a single, human-readable summary of the conversation thread. Lastly, ranking 115 organizes the summaries in order of importance to the users 125. The ranked summaries may be transmitted to users 125 via team chat application 120.

[0024] Team chat application 120 may be an example of, or include aspects of, the corresponding element or elements described with reference to FIG. 9. Thread detection 105, summarization 110, and ranking 115 can be implemented as an artificial neural network (ANN) as described below with reference to FIG. 10.

[0025] Thus, messages are input to a thread detection model where they are assigned to threads. The threads are output to the summarization model. The summarization model then uses an encoder to embed the threads in a vector space. Then the summarization model outputs the embedded threads to a decoder where thread summarization occurs. Each summary is then packaged into ranked summarizations in a ranking model.

[0026] Manually generated ranked summarizations may be input to a learning module to train an integrated network including the thread detection model, the summarization model, and the ranking model.

[0027] FIG. 2 shows an example of a process for summarization of group chat threads according to aspects of the present disclosure. In some examples, these operations may be performed by a system including a processor executing a set of codes to control functional elements of an apparatus. Additionally or alternatively, the processes may be performed using special-purpose hardware. Generally, these operations may be performed according to the methods and processes described in accordance with aspects of the present disclosure. For example, the operations may be composed of various substeps, or may be performed in conjunction with other operations described herein.

[0028] At operation 200, the system categorizes a set of messages into a set of message threads. In some cases, the operations of this step may refer to, or be performed by, a thread detection component as described with reference to FIG. 10. An embodiment of operation 200 is described in further detail with reference to FIG. 3 and FIG. 4.

[0029] At operation 205, the system generates a thread summary for each of the set of message threads. In some cases, the operations of this step may refer to, or be performed by, a summarization component as described with reference to FIG. 10. An embodiment of operation 205 is described in further detail with reference to FIG. 5 and FIG. 6.

[0030] At operation 210, the system orders the thread summaries. In some cases, the operations of this step may refer to, or be performed by, a ranking component as described with reference to FIG. 10. An embodiment of operation 210 is described in further detail with reference to FIG. 7 and FIG. 8.

[0031] At operation 215, the system transmits the ordered thread summaries to one or more users. In some cases, the operations of this step may refer to, or be performed by, a communication component as described with reference to FIG. 10. An embodiment of operation 215 is described in further detail with reference to FIG. 7 and FIG. 8.

[0032] FIG. 3 shows an example of a thread detection model according to aspects of the present disclosure. The example shown includes new message 300, feature vector 305, existing threads 310, candidate vectors 320, new thread 325, default candidate vector 330, and a combined set of conversation threads 335. Each existing thread 310 may include existing messages 315.

[0033] When a new message is received, it may be converted into a feature vector 305 including features such as word count, verb use, author, and time. In some cases, each vector may have a large number of dimensions (e.g., 500 or more). In some examples, the conversion may be performed using a single directional LSTM encoder, or any other suitable text encoder.

[0034] Once the feature vector 305 is generated, it may be compared to candidate vectors 320 representing existing threads 310, as well as a default candidate vector 330 representing a new thread 325 (i.e., a "dummy" thread that does not yet have any messages associated with it). For example, the comparison may include determining the Euclidean distance between the vectors. Based on the comparison, an existing thread 310 (or the new thread 325) will be selected and the new message 300 will be associated with that thread (e.g., by assigning a thread ID). If the new thread 325 is selected, the new thread 325 may be replaced by a subsequent new thread so that there is always the possibility of creating a new thread.
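The comparison step can be illustrated with a small nearest-candidate search. The sketch below assumes two-dimensional vectors with made-up values and a hypothetical `NEW_THREAD` marker for the "dummy" thread; in the disclosure the vectors would come from a learned encoder such as an LSTM and would have many more dimensions.

```python
import math
from typing import Dict, Sequence

NEW_THREAD = -1  # hypothetical marker meaning "start a new thread"


def assign_thread(
    feature: Sequence[float],
    candidates: Dict[int, Sequence[float]],
    default_candidate: Sequence[float],
) -> int:
    """Return the ID of the closest existing thread, or NEW_THREAD if the
    default candidate vector is at least as close as every existing thread."""
    best_id = NEW_THREAD
    best_dist = math.dist(feature, default_candidate)  # Euclidean distance
    for thread_id, vector in candidates.items():
        d = math.dist(feature, vector)
        if d < best_dist:
            best_id, best_dist = thread_id, d
    return best_id


# Made-up candidate vectors for two existing threads and the dummy thread.
candidates = {0: [0.0, 0.0], 1: [5.0, 5.0]}
default_candidate = [10.0, 10.0]

joined = assign_thread([5.0, 4.0], candidates, default_candidate)
started = assign_thread([9.0, 11.0], candidates, default_candidate)
print(joined, started)
```

When `NEW_THREAD` is returned, a caller would allocate a fresh thread ID for the message and keep the default candidate available, so that a later message can again start a new thread.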

[0035] Once a set of messages has been categorized, a set of conversation threads 335 may be created that includes all of the threads that were created during the categorization process.

[0036] FIG. 4 shows an example of a process for categorizing a set of messages into a plurality of message threads according to aspects of the present disclosure. In some examples, these operations may be performed by a system including a processor executing a set of codes to control functional elements of an apparatus. Additionally or alternatively, the processes may be performed using special-purpose hardware. Generally, these operations may be performed according to the methods and processes described in accordance with aspects of the present disclosure. For example, the operations may be composed of various substeps, or may be performed in conjunction with other operations described herein.

[0037] At operation 400, the system calculates a feature vector for a new message of the set of messages. In some cases, the operations of this step may refer to, or be performed by, a thread detection component as described with reference to FIG. 10.

[0038] At operation 405, the system calculates a candidate vector for each of a set of existing message threads and for an empty thread. In some cases, the operations of this step may refer to, or be performed by, a thread detection component as described with reference to FIG. 10.

[0039] At operation 410, the system compares the feature value to each of the candidate vectors. In some cases, the operations of this step may refer to, or be performed by, a thread detection component as described with reference to FIG. 10.

[0040] At operation 415, the system categorizes the new message into the one of the existing message threads or the empty thread based on the comparison. In some cases, the operations of this step may refer to, or be performed by, a thread detection component as described with reference to FIG. 10.

[0041] FIG. 5 shows an example of a summarization model according to aspects of the present disclosure. The example shown includes thread 500, encoding 510, encoded thread 515, decoding 520, and summary 525. The thread 500 may include a plurality of messages 505.

[0042] After a set of messages have been categorized, the resulting threads may be processed by the summarization model. The summarization model encodes a thread 500 (at encoding 510) to produce encoded thread 515. Then a decoding model (at decoding 520) processes the encoded thread to produce a summary 525. In various embodiments, the threads may be encoded and decoded using an LSTM model, a GRU model, a Transformer model, or any suitable other model or algorithm.

[0043] FIG. 6 shows an example of a process for generating a thread summary for each of a plurality of message threads according to aspects of the present disclosure. In some examples, these operations may be performed by a system including a processor executing a set of codes to control functional elements of an apparatus. Additionally or alternatively, the processes may be performed using special-purpose hardware. Generally, these operations may be performed according to the methods and processes described in accordance with aspects of the present disclosure. For example, the operations may be composed of various substeps, or may be performed in conjunction with other operations described herein.

[0044] At operation 600, the system encodes each of the set of message threads to produce an encoded thread vector. In some cases, the operations of this step may refer to, or be performed by, a summarization component as described with reference to FIG. 10.

[0045] At operation 605, the system decodes the encoded thread vector to produce the thread summary for each of the set of message threads. In some cases, the operations of this step may refer to, or be performed by, a summarization component as described with reference to FIG. 10.

[0046] FIG. 7 shows an example of a ranking model according to aspects of the present disclosure. The example shown includes candidate orderings 700, ranking model 705, and selected orderings 710.

[0047] The ranking model may generate a plurality of candidate orderings 700. For example, the candidate orderings 700 may correspond to each possible ordering of the threads identified by the thread detection model. The ranking model 705 takes one or more candidate orderings 700 as input and outputs a ranking value for each of the candidate orderings 700. In some cases, meta-data, such as information about a user is also used to order the candidate orderings 700.

[0048] A selected ordering 710 is the output of the ranking model 705. For example, the candidate ordering 700 that results in the highest output value may be selected as the selected ordering 710. In some cases, a different selected ordering 710 is provided for each user. Thus, each selected ordering 710 may include the summaries created by the summarization model in an order that is most likely to present relevant information to a particular user. In some cases, the selected ordering 710 is then passed to a team chat application to be shown to the various users.
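A minimal sketch of the select-the-highest-scoring-ordering idea follows. It assumes that every permutation of the thread IDs is a candidate ordering and that a per-user scoring function stands in for the ranking model; the function names, the `interest` weights, and the position-discounted score are all hypothetical and are not taken from the disclosure.

```python
from itertools import permutations
from typing import Callable, Dict, Sequence, Tuple

Ordering = Tuple[int, ...]


def select_ordering(
    thread_ids: Sequence[int],
    score: Callable[[Ordering], float],
) -> Ordering:
    """Score every candidate ordering and return the highest-scoring one."""
    candidate_orderings = permutations(thread_ids)
    return max(candidate_orderings, key=score)


def make_user_score(interest: Dict[int, float]) -> Callable[[Ordering], float]:
    """Toy stand-in for the ranking model: threads a user cares about more
    contribute more to the score when they appear earlier in the ordering."""
    def score(ordering: Ordering) -> float:
        return sum(interest[tid] / (pos + 1) for pos, tid in enumerate(ordering))
    return score


thread_ids = [0, 1, 2]
alice = make_user_score({0: 0.1, 1: 0.9, 2: 0.5})  # made-up user metadata
bob = make_user_score({0: 0.8, 1: 0.2, 2: 0.3})

alice_order = select_ordering(thread_ids, alice)
bob_order = select_ordering(thread_ids, bob)
print(alice_order, bob_order)
```

Enumerating every permutation grows factorially with the number of threads, so this exhaustive form is only practical for a handful of threads; the text also allows the ranking model to take only "one or more" candidate orderings as input.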

[0049] A predictive ranking for the ranked summarizations is output. For each user, the ranking equation may be represented as:

$$\log P(R \mid M) = \log \sum_{S} P(R, S \mid M) = \log \sum_{S} \sum_{T} P(R, S, T \mid M), \qquad (1)$$

where P is the probability, R represents the "correct" ordering, M represents an index of a candidate ordering 700, S represents the thread summary, and T represents a thread.
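Equation (1) obtains the probability of an ordering by summing a joint probability over the possible summaries S and threads T. The short numeric sketch below shows that marginalization for a single fixed R; the joint probabilities and the labels `s1`, `s2`, `t1`, `t2` are invented purely for illustration.

```python
import math
from typing import Dict, Tuple

# Hypothetical values of P(R, S, T | M) for one fixed ordering R,
# keyed by (summary, thread). The numbers are made up.
joint: Dict[Tuple[str, str], float] = {
    ("s1", "t1"): 0.10,
    ("s1", "t2"): 0.05,
    ("s2", "t1"): 0.20,
    ("s2", "t2"): 0.15,
}


def log_marginal(joint_probs: Dict[Tuple[str, str], float]) -> float:
    """Sum over every (S, T) pair, then take the log, as in Equation (1)."""
    return math.log(sum(joint_probs.values()))


# The inner sum over T alone gives P(R, S | M) for each summary S.
p_r_s: Dict[str, float] = {}
for (s, _t), p in joint.items():
    p_r_s[s] = p_r_s.get(s, 0.0) + p

# Summing P(R, S | M) over S gives the same total as the double sum.
log_p = log_marginal(joint)
print(p_r_s, log_p)
```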

[0050] FIG. 8 shows an example of a process for ordering thread summaries according to aspects of the present disclosure. In some examples, these operations may be performed by a system including a processor executing a set of codes to control functional elements of an apparatus. Additionally or alternatively, the processes may be performed using special-purpose hardware. Generally, these operations may be performed according to the methods and processes described in accordance with aspects of the present disclosure. For example, the operations may be composed of various substeps, or may be performed in conjunction with other operations described herein.

[0051] At operation 800, the system identifies a set of rank orderings for the set of message threads. In some cases, the operations of this step may refer to, or be performed by, a ranking component as described with reference to FIG. 10.

[0052] At operation 805, the system generates a ranking value for each of the set of rank orderings, where the ordering is based on the ranking values. In some cases, the operations of this step may refer to, or be performed by, a ranking component as described with reference to FIG. 10.

[0053] At operation 810, the system orders the thread summaries. In some cases, the operations of this step may refer to, or be performed by, a ranking component as described with reference to FIG. 10.

[0054] FIG. 9 shows an example of a group chat summarization system according to aspects of the present disclosure. The example shown includes server 900, network 905, team chat application 910, and users 915. The users 915 may communicate with each other via the team chat application 910, and the team chat application 910 may then transmit messages to server 900 via network 905.

[0055] Once the server 900 has received the messages, it may categorize them into different threads, generate a summary for each thread, and rank the threads. Then the server 900 may transmit the ranked threads (including the summaries) back to the team chat application 910. In some cases, different users 915 may be presented with different rankings or summarizations.

[0056] Server 900 may be an example of, or include aspects of, the corresponding element or elements described with reference to FIG. 10. Team chat application 910 may be an example of, or include aspects of, the corresponding element or elements described with reference to FIG. 1.

[0057] FIG. 10 shows an example of a server 1000 according to aspects of the present disclosure. Server 1000 may include thread detection component 1005, summarization component 1015, ranking component 1025, communication component 1035, training component 1045, processor unit 1050, and memory unit 1055.

[0058] A server 1000 may provide one or more functions to requesting users linked by way of one or more of the various networks. In some cases, the server 1000 may include a single microprocessor board, which may include a microprocessor responsible for controlling all aspects of the server 1000. In some cases, a server 1000 may use a microprocessor and protocols to exchange data with other devices/users on one or more of the networks via hypertext transfer protocol (HTTP) and simple mail transfer protocol (SMTP), although other protocols may also be used, such as file transfer protocol (FTP) and simple network management protocol (SNMP). A server 1000 may be a general-purpose computing device, a personal computer, a laptop computer, a mainframe computer, a super computer, or any other suitable processing apparatus. Server 1000 may be an example of, or include aspects of, the corresponding element or elements described with reference to FIG. 9.

[0059] Thread detection component 1005 may categorize a set of messages into a set of message threads. Thread detection component 1005 may first calculate a feature vector for a new message of the set of messages. In some examples, the feature vector includes word count information, vocabulary information, message author information, message time information, or any combination thereof. Thread detection component 1005 may then calculate a candidate vector for each of a set of existing message threads and for an empty thread and compare the feature value to each of the candidate vectors.

[0060] Thread detection component 1005 may categorize the new message into the one of the existing message threads or the empty thread based on the comparison. In some cases, thread detection component 1005 may determine that a match value is closest to a candidate value for an existing thread or an empty thread, and the new message may be categorized into the corresponding thread based on the determination. Thread detection component 1005 may also generate a new empty thread if a new message is categorized to the empty (new) thread.

[0061] Thread detection component 1005 may include thread detection model 1010. In some examples, the thread detection model 1010 is based on a single direction long short-term memory (LSTM) model.

[0062] Summarization component 1015 may generate a thread summary for each of the set of message threads. Summarization component 1015 may also encode each of the set of message threads to produce an encoded thread vector. Summarization component 1015 may also decode the encoded thread vector to produce the thread summary for each of the set of message threads.

[0063] Summarization component 1015 may include summarization model 1020. In some examples, the summarization model 1020 is based on an LSTM model, a gated recurrent unit (GRU) model, a transformer model, or any combination thereof.

[0064] Ranking component 1025 may order the thread summaries. Ranking component 1025 may also identify a set of rank orderings for the set of message threads. Ranking component 1025 may also generate a ranking value for each of the set of rank orderings, where the ordering is based on the ranking values. Ranking component 1025 may also identify user information for each of the one or more users. Ranking component 1025 may also select a set of rank orderings from the set of rank orderings based on the user information, where one of the set of rank orderings is selected for each of the one or more users. Ranking component 1025 may include ranking model 1030.

[0065] Communication component 1035 may transmit the ordered thread summaries to one or more users. Communication component 1035 may also receive the set of messages from the team chat application, where the ordered thread summaries are transmitted to the one or more users via the team chat application. Communication component 1035 may include team chat application interface 1040.

[0066] Training component 1045 may train an integrated machine learning model using a set of training messages and a target set of ranked summaries, where categorizing the set of messages, generating the thread summaries, and ordering the thread summaries are all performed using the integrated machine learning model.

[0067] A processor unit 1050 may include an intelligent hardware device (e.g., a general-purpose processing component, a digital signal processor (DSP), a central processing unit (CPU), a graphics processing unit (GPU), a microcontroller, an application specific integrated circuit (ASIC), a field programmable gate array (FPGA), a programmable logic device, a discrete gate or transistor logic component, a discrete hardware component, or any combination thereof). In some cases, the processor may be configured to operate a memory array using a memory controller. In other cases, a memory controller may be integrated into the processor. The processor may be configured to execute computer-readable instructions stored in a memory to perform various functions. In some examples, a processor may include special purpose components for modem processing, baseband processing, digital signal processing, or transmission processing. In some examples, the processor may comprise a system-on-a-chip.

[0068] A memory unit 1055 may store information for various programs and applications on a computing device. For example, the storage may include data for running an operating system. The memory may include both volatile memory and non-volatile memory. Volatile memory may be random access memory (RAM), and non-volatile memory may include read-only memory (ROM), flash memory, electrically erasable programmable read-only memory (EEPROM), digital tape, a hard disk drive (HDD), and a solid-state drive (SSD). The memory may include any combination of readable and/or writable volatile memories and/or non-volatile memories, along with other possible storage devices.

[0069] In some examples, the thread detection component 1005, summarization component 1015, and ranking component 1025 may be implemented using an artificial neural network (ANN). An ANN is a hardware or a software component that includes a number of connected nodes (a.k.a., artificial neurons), which may loosely correspond to the neurons in a human brain. Each connection, or edge, may transmit a signal from one node to another (like the physical synapses in a brain). When a node receives a signal, it can process the signal and then transmit the processed signal to other connected nodes. In some cases, the signals between nodes comprise real numbers, and the output of each node may be computed by a function of the sum of its inputs. Each node and edge may be associated with one or more node weights that determine how the signal is processed and transmitted.

[0070] During the training process, these weights may be adjusted to improve the accuracy of the result (i.e., by minimizing a loss function which corresponds in some way to the difference between the current result and the target result). The weight of an edge may increase or decrease the strength of the signal transmitted between nodes. In some cases, nodes may have a threshold below which a signal is not transmitted at all. The nodes may also be aggregated into layers. Different layers may perform different transformations on their inputs. The initial layer may be known as the input layer and the last layer may be known as the output layer. In some cases, signals may traverse certain layers multiple times.
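The node computation and weight adjustment described above can be illustrated with a minimal sketch of a single node. The sigmoid activation, the squared-error loss, the learning rate, and the names `node_output`, `train_step`, and `loss` are assumptions chosen for illustration only; the disclosure does not specify them.

```python
import math

def node_output(inputs, weights, bias):
    """Output of one node: an activation applied to the weighted sum of its inputs."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1.0 / (1.0 + math.exp(-total))  # sigmoid activation (assumed)

def loss(inputs, target, weights, bias):
    """Squared-error loss between the current result and the target result (assumed)."""
    return 0.5 * (node_output(inputs, weights, bias) - target) ** 2

def train_step(inputs, target, weights, bias, lr=0.5):
    """One gradient-descent update of the weights and bias."""
    out = node_output(inputs, weights, bias)
    # Gradient of the loss with respect to the weighted sum,
    # for a sigmoid activation with squared-error loss.
    delta = (out - target) * out * (1.0 - out)
    new_weights = [w - lr * delta * x for w, x in zip(weights, inputs)]
    new_bias = bias - lr * delta
    return new_weights, new_bias

weights, bias = [0.1, -0.2], 0.0
inputs, target = [1.0, 2.0], 1.0
before = loss(inputs, target, weights, bias)
for _ in range(100):
    weights, bias = train_step(inputs, target, weights, bias)
after = loss(inputs, target, weights, bias)
print(before, after)  # the loss after training is lower than before
```

A practical network would stack many such nodes into layers and compute gradients with a library; the sketch only shows how adjusting weights reduces the loss for one node.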

[0071] Accordingly, the present disclosure includes the following embodiments.

[0072] A method for personalized summarization of group chat threads is described. Embodiments of the method may include categorizing a set of messages into a plurality of message threads, generating a thread summary for each of the plurality of message threads, ordering the thread summaries, and transmitting the ordered thread summaries to one or more users.

[0073] An apparatus for the summarization of group chat threads is described. The apparatus may include a processor, memory in electronic communication with the processor, and instructions stored in the memory. The instructions may be operable to cause the processor to categorize a set of messages into a plurality of message threads, generate a thread summary for each of the plurality of message threads, order the thread summaries, and transmit the ordered thread summaries to one or more users.

[0074] A non-transitory computer readable medium storing code for summarization of group chat threads is described. In some examples, the code comprises instructions executable by a processor to: categorize a set of messages into a plurality of message threads, generate a thread summary for each of the plurality of message threads, order the thread summaries, and transmit the ordered thread summaries to one or more users.

[0075] A system for personalized summarization of group chat threads is described. Embodiments of the system may provide means for categorizing a set of messages into a plurality of message threads, means for generating a thread summary for each of the plurality of message threads, means for ordering the thread summaries, and means for transmitting the ordered thread summaries to one or more users.
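The four operations shared by the embodiments above (categorizing, summarizing, ordering, transmitting) can be sketched as a pipeline of pluggable steps. This is only an illustrative outline: `run_pipeline` and the toy stand-ins `by_prefix`, `first_message`, `alphabetical`, and `to_outbox` are hypothetical names, and the stand-ins replace the machine learning model of the disclosure with trivial rules.

```python
from typing import Callable, Dict, List

Threads = Dict[str, List[str]]

def run_pipeline(messages: List[str],
                 categorize: Callable[[List[str]], Threads],
                 summarize: Callable[[List[str]], str],
                 order: Callable[[Dict[str, str]], List[str]],
                 transmit: Callable[[str, List[str]], None],
                 users: List[str]) -> List[str]:
    threads = categorize(messages)                     # messages -> message threads
    summaries = {tid: summarize(msgs) for tid, msgs in threads.items()}
    ordered = order(summaries)                         # ordered thread summaries
    for user in users:
        transmit(user, ordered)                        # deliver to each user
    return ordered

# Toy stand-ins (assumptions for illustration, not the disclosed model).
def by_prefix(messages: List[str]) -> Threads:
    threads: Threads = {}
    for m in messages:
        topic, _, text = m.partition(": ")
        threads.setdefault(topic, []).append(text)
    return threads

def first_message(msgs: List[str]) -> str:
    return msgs[0]

def alphabetical(summaries: Dict[str, str]) -> List[str]:
    return [summaries[tid] for tid in sorted(summaries)]

outbox: Dict[str, List[str]] = {}

def to_outbox(user: str, ordered: List[str]) -> None:
    outbox[user] = ordered

result = run_pipeline(
    ["release: ship friday?", "lunch: tacos?", "release: yes"],
    by_prefix, first_message, alphabetical, to_outbox, ["alice", "bob"])
print(result)  # ['tacos?', 'ship friday?']
```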

[0076] Some examples of the method, apparatus, non-transitory computer readable medium, and system described above may further include calculating a feature vector for a new message of the set of messages. Some examples may further include calculating a candidate vector for each of a set of existing message threads and for an empty thread. Some examples may further include comparing the feature vector to each of the candidate vectors. Some examples may further include categorizing the new message into one of the existing message threads or the empty thread based on the comparison.

[0077] Some examples of the method, apparatus, non-transitory computer readable medium, and system described above may further include determining that the match value is closest to the candidate value for the empty thread, wherein the new message is categorized into the empty thread based on the determination. Some examples may further include generating a new empty thread based on the determination.
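As a rough sketch of the comparison described above, a new message may be assigned to whichever candidate (an existing thread or the empty thread) its feature vector matches most closely. Cosine similarity, the hard-coded vectors, the `EMPTY` key, and the names `cosine` and `categorize` are assumptions for illustration; in the disclosure the vectors would come from a trained model.

```python
import math

EMPTY = "<empty>"  # hypothetical key for the empty-thread candidate

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

def categorize(message, feature, threads, candidates):
    """Place `message` in the thread whose candidate vector best matches `feature`.

    If the empty thread is the best match, a new thread is created for the message.
    The caller is expected to recompute candidate vectors afterwards.
    """
    best = max(candidates, key=lambda tid: cosine(feature, candidates[tid]))
    if best == EMPTY:
        best = f"thread-{len(threads) + 1}"
        threads[best] = []
    threads[best].append(message)
    return best

threads = {"thread-1": ["deploy at 5pm?"]}
candidates = {"thread-1": [1.0, 0.0], EMPTY: [0.0, 1.0]}

first = categorize("yes, 5pm works", [0.9, 0.1], threads, candidates)
second = categorize("lunch anyone?", [0.1, 0.9], threads, candidates)
print(first, second)  # thread-1 thread-2
```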

[0078] In some examples, the candidate vectors are calculated based on a single direction long short-term memory (LSTM) model. In some examples, the feature vector includes word count information, vocabulary information, message author information, message time information, or any combination thereof.

[0079] Some examples of the method, apparatus, non-transitory computer readable medium, and system described above may further include encoding each of the plurality of message threads to produce an encoded thread vector. Some examples may further include decoding the encoded thread vector to produce the thread summary for each of the plurality of message threads. In some examples, the encoding and decoding are based on an LSTM model, a gated recurrent unit (GRU) model, a transformer model, or any combination thereof.

[0080] Some examples of the method, apparatus, non-transitory computer readable medium, and system described above may further include identifying a set of rank orderings for the plurality of message threads. Some examples may further include generating a ranking value for each of the set of rank orderings, wherein the ordering is based on the ranking values.

[0081] Some examples of the method, apparatus, non-transitory computer readable medium, and system described above may further include identifying user information for each of the one or more users. Some examples may further include selecting a plurality of rank orderings from the set of rank orderings based on the user information, wherein one of the plurality of rank orderings is selected for each of the one or more users.
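The ranking steps above (identifying rank orderings, generating a ranking value for each, and selecting one ordering per user) may be sketched as follows. The position-discounted score, the per-user interest weights standing in for user information, and the names `ranking_value`, `select_ordering`, and `orderings_for_users` are all assumptions for illustration; the disclosure leaves the ranking model unspecified here.

```python
from itertools import permutations

def ranking_value(ordering, interest):
    """Score an ordering: threads with higher interest score more when placed earlier."""
    return sum(interest.get(tid, 0.0) / (pos + 1) for pos, tid in enumerate(ordering))

def select_ordering(thread_ids, interest):
    """Enumerate every rank ordering and keep the one with the highest ranking value."""
    return max(permutations(thread_ids), key=lambda o: ranking_value(o, interest))

def orderings_for_users(thread_ids, user_info):
    """Select one rank ordering for each user based on that user's information."""
    return {user: select_ordering(thread_ids, interest)
            for user, interest in user_info.items()}

thread_ids = ["release", "lunch", "bug"]
user_info = {
    "alice": {"release": 0.9, "bug": 0.5, "lunch": 0.1},
    "bob": {"lunch": 0.8, "release": 0.3, "bug": 0.2},
}
selected = orderings_for_users(thread_ids, user_info)
print(selected["alice"])  # ('release', 'bug', 'lunch')
print(selected["bob"])    # ('lunch', 'release', 'bug')
```

Exhaustive enumeration grows factorially with the number of threads, so it is only practical for a handful of threads; a learned ranking model would typically score candidates without enumerating every ordering.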

[0082] Some examples of the method, apparatus, non-transitory computer readable medium, and system described above may further include receiving the set of messages from a team chat application, wherein the ordered thread summaries are transmitted to the one or more users via the team chat application.

[0083] Some examples of the method, apparatus, non-transitory computer readable medium, and system described above may further include training an integrated machine learning model using a set of training messages and a target set of ranked summaries, wherein categorizing the set of messages, generating the thread summaries, and ordering the thread summaries are all performed using the integrated machine learning model.

[0084] The description and drawings described herein represent example configurations and do not represent all the implementations within the scope of the claims. For example, the operations and steps may be rearranged, combined or otherwise modified. Also, structures and devices may be represented in the form of block diagrams to represent the relationship between components and avoid obscuring the described concepts. Similar components or features may have the same name but may have different reference numbers corresponding to different figures.

[0085] Some modifications to the disclosure may be readily apparent to those skilled in the art, and the principles defined herein may be applied to other variations without departing from the scope of the disclosure. Thus, the disclosure is not limited to the examples and designs described herein but is to be accorded the broadest scope consistent with the principles and novel features disclosed herein.

[0086] The described methods may be implemented or performed by devices that include a general-purpose processor, a digital signal processor (DSP), an application specific integrated circuit (ASIC), a field programmable gate array (FPGA) or other programmable logic device, discrete gate or transistor logic, discrete hardware components, or any combination thereof. A general-purpose processor may be a microprocessor, a conventional processor, controller, microcontroller, or state machine. A processor may also be implemented as a combination of computing devices (e.g., a combination of a DSP and a microprocessor, multiple microprocessors, one or more microprocessors in conjunction with a DSP core, or any other such configuration). Thus, the functions described herein may be implemented in hardware or software and may be executed by a processor, firmware, or any combination thereof. If implemented in software executed by a processor, the functions may be stored in the form of instructions or code on a computer-readable medium.

[0087] Computer-readable media includes both non-transitory computer storage media and communication media including any medium that facilitates the transfer of code or data. A non-transitory storage medium may be any available medium that can be accessed by a computer. For example, non-transitory computer-readable media can comprise random access memory (RAM), read-only memory (ROM), electrically erasable programmable read-only memory (EEPROM), compact disk (CD) or other optical disk storage, magnetic disk storage, or any other non-transitory medium for carrying or storing data or code.

[0088] Also, connecting components may be properly termed as computer-readable media. For example, if code or data is transmitted from a website, server, or other remote source using a coaxial cable, fiber optic cable, twisted pair, digital subscriber line (DSL), or wireless technology such as infrared, radio, or microwave signals, then the coaxial cable, fiber optic cable, twisted pair, DSL, or wireless technology are included in the definition of medium. Combinations of media are also included within the scope of computer-readable media.

[0089] In this disclosure and the following claims, the word "or" indicates an inclusive list such that, for example, the list of X, Y, or Z means X or Y or Z or XY or XZ or YZ or XYZ. Also the phrase "based on" is not used to represent a closed set of conditions. For example, a step that is described as "based on condition A" may be based on both condition A and condition B. In other words, the phrase "based on" shall be construed to mean "based at least in part on."

* * * * *
