Notifying Entities Of Relevant Events Removing Private Information

Guereca-Pinuelas; Armando ; et al.

U.S. patent application number 17/102418 was filed with the patent office on 2020-11-23 and published on 2021-04-01 for notifying entities of relevant events removing private information. The applicant listed for this patent is safeXai, Inc. Invention is credited to Christian Gratton, Armando Guereca-Pinuelas, Damien Patton.

| Application Number | 17/102418 |
|---|---|
| Publication Number | 20210096935 |
| Family ID | 1000005266253 |
| Publication Date | 2021-04-01 |
| Kind Code | A1 |
| Inventors | Guereca-Pinuelas; Armando ; et al. |

NOTIFYING ENTITIES OF RELEVANT EVENTS REMOVING PRIVATE INFORMATION

Abstract

The present invention extends to systems and methods for notifying entities of relevant events removing private information. A privacy infrastructure can apply data privacy operations to user information prior to, during, or after any of signal ingestion, event detection, or event notification. An entity defines a rule formula, including one or more event types and one or more location types, that is triggered when one or more detected events match the rule formula. One or more events are detected. User information is detected in one of the events. The privacy infrastructure applies a data privacy operation on the user information. It is determined that the one or more events satisfy the rule formula subsequent to applying the data privacy operation.

| Inventors: | Guereca-Pinuelas; Armando; (Cottonwood Heights, UT) ; Patton; Damien; (Park City, UT) ; Gratton; Christian; (Las Vegas, NV) |
|---|---|
| Applicant: | safeXai, Inc. |
| Family ID: | 1000005266253 |
| Appl. No.: | 17/102418 |
| Filed: | November 23, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Child Application |
|---|---|---|---|
| 16850172 | Apr 16, 2020 | 10846151 | 17102418 |
| 16751105 | Jan 23, 2020 | 10691512 | 16850172 |
| 16536452 | Aug 9, 2019 | 10585724 | 16751105 |
| 16353212 | Mar 14, 2019 | 10423688 | 16536452 |
| 62859941 | Jun 11, 2019 | | |
| 62657695 | Apr 13, 2018 | | |
| 62657705 | Apr 13, 2018 | | |
| 62660934 | Apr 20, 2018 | | |
| 62660924 | Apr 20, 2018 | | |
| 62660929 | Apr 20, 2018 | | |
| 62664001 | Apr 27, 2018 | | |
| 62667616 | May 7, 2018 | | |
| 62669540 | May 10, 2018 | | |
| 62686791 | Jun 19, 2018 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G08B 21/0423 20130101; G06F 9/542 20130101; G08B 21/182 20130101 |

| International Class: | G06F 9/54 20060101 G06F009/54; G08B 21/18 20060101 G08B021/18; G08B 21/04 20060101 G08B021/04 |

Claims

1. A method comprising: receiving an indication of a location type, a boundary geometry, a user event truthfulness preference, a first event type, and a second event type; receiving an indication of an area including a first location of the location type and a second location of the location type; combining the location type, the boundary geometry, the area, the user event truthfulness preference, the first event type, and the second event type, into a rule formula; monitoring the area for events occurring within a first boundary surrounding the first location or occurring within a second boundary surrounding the second location, the first boundary and the second boundary defined in accordance with the boundary geometry; accessing first event characteristics including a first event type and a first event truthfulness corresponding to a first detected event; identifying user information contained within the first event characteristics; applying a data privacy operation to the user information; accessing second event characteristics including a second event type and a second event truthfulness corresponding to a second detected event; determining that a combination of the first characteristics and the second characteristics satisfies the rule formula subsequent to applying the data privacy operation, including determining that the first event type and the second event type occurred in combination within the first boundary and that the first event truthfulness and the second event truthfulness both satisfy the user event truthfulness preference; and automatically electronically notifying an entity in accordance with notification preferences that the rule formula was satisfied.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of U.S. patent application Ser. No. 16/850,172, entitled "NOTIFYING ENTITIES OF RELEVANT EVENTS REMOVING PRIVATE INFORMATION," filed Apr. 16, 2020, which is herein incorporated by reference in its entirety. That application is a continuation-in-part of U.S. patent application Ser. No. 16/751,105, entitled "NOTIFYING ENTITIES OF RELEVANT EVENTS," filed Jan. 23, 2020, which is herein incorporated by reference in its entirety. That application is a continuation of U.S. patent application Ser. No. 16/536,452, now U.S. Pat. No. 10,585,724, entitled "NOTIFYING ENTITIES OF RELEVANT EVENTS," filed Aug. 9, 2019, which is incorporated herein in its entirety. That application is a continuation-in-part of U.S. patent application Ser. No. 16/353,212, now U.S. Pat. No. 10,423,688, entitled "NOTIFYING ENTITIES OF RELEVANT EVENTS," filed Mar. 14, 2019, which is incorporated herein in its entirety.

[0002] U.S. patent application Ser. No. 16/353,212 claims the benefit of U.S. Provisional Patent Application Ser. No. 62/657,695, entitled "Event Identification And Notification Based On Entity Selected Event Notification Preferences," filed Apr. 13, 2018, which is incorporated herein in its entirety. U.S. patent application Ser. No. 16/353,212 claims the benefit of U.S. Provisional Patent Application Ser. No. 62/657,705, entitled "Pushing Event Notifications Based On Current or Predicted Entity Location," filed Apr. 13, 2018, which is incorporated herein in its entirety. U.S. patent application Ser. No. 16/353,212 claims the benefit of U.S. Provisional Patent Application Ser. No. 62/660,934, entitled "Event Identification And Notification Based On Entity Selected Event Notification Preferences," filed Apr. 20, 2018, which is incorporated herein in its entirety. U.S. patent application Ser. No. 16/353,212 claims the benefit of U.S. Provisional Patent Application Ser. No. 62/660,924, entitled "Pushing Event Notifications Based On Current or Predicted Entity Location," filed Apr. 20, 2018, which is incorporated herein in its entirety. U.S. patent application Ser. No. 16/353,212 claims the benefit of U.S. Provisional Patent Application Ser. No. 62/660,929, entitled "Determining Event Truthfulness From Multiple Input Signals," filed Apr. 20, 2018, which is incorporated herein in its entirety. U.S. patent application Ser. No. 16/353,212 claims the benefit of U.S. Provisional Patent Application Ser. No. 62/664,001, entitled "Normalizing Different Types Of Ingested Signals Into A Common Format," filed Apr. 27, 2018, which is incorporated herein in its entirety. U.S. patent application Ser. No. 16/353,212 claims the benefit of U.S. Provisional Patent Application Ser. No. 62/667,616, entitled "Normalizing Different Types Of Ingested Signals Into A Common Format," filed May 7, 2018, which is incorporated herein in its entirety. U.S. patent application Ser. No. 16/353,212 claims the benefit of U.S. Provisional Patent Application Ser. No. 62/669,540, entitled "Determining Event Severity From Multiple Input Signals," filed May 10, 2018, which is incorporated herein in its entirety. U.S. patent application Ser. No. 16/353,212 claims the benefit of U.S. Provisional Patent Application Ser. No. 62/686,791, entitled "Normalizing Signals," filed Jun. 19, 2018, which is incorporated herein in its entirety.

[0003] U.S. patent application Ser. No. 16/850,172 claims the benefit of U.S. Provisional Patent Application Ser. No. 62/859,941, entitled "CUSTOMIZING EVENT NOTIFICATIONS," filed Jun. 11, 2019, which is incorporated herein in its entirety.

BACKGROUND

1. Background and Relevant Art

[0004] Entities (e.g., parents, guardians, friends, relatives, teachers, social workers, first responders, hospitals, delivery services, media outlets, government entities, etc.) may desire to be made aware of relevant events (e.g., fires, accidents, police presence, shootings, etc.) as close as possible to the events' occurrence. However, entities typically are not made aware of an event until after a person observes the event (or the event aftermath) and calls authorities.

[0005] Some techniques to automate event detection have been attempted. However, in general, automated event detection techniques are unreliable. Some techniques attempt to mine social media data to detect events and forecast when events might occur. However, events can occur without prior planning and/or may not be detectable using social media data. Further, these techniques are not capable of meaningfully processing available data, nor are they capable of identifying false data (e.g., hoax social media posts).

[0006] Further, data provided to computer systems can come from any number of different sources, such as, for example, user input, files, databases, applications, sensors, social media systems, cameras, emergency communications, etc. In some environments, computer systems receive (potentially large volumes of) data from a variety of different domains and/or verticals in a variety of different formats. When data is received from different sources and/or in different formats, it can be difficult to efficiently and effectively derive intelligence from the data.

[0007] Extract, transform, and load (ETL) refers to a technique that extracts data from data sources, transforms the data to fit operational needs, and loads the data into an end target. ETL systems can be used to integrate data from multiple varied sources, such as, for example, from different vendors, hosted on different computer systems, etc.

[0008] ETL is essentially an extract-then-store process. Prior to implementing an ETL solution, a user defines what data (e.g., which subset of the data) is to be extracted from a data source and a schema describing how the extracted data is to be stored. During the ETL process, the defined data is extracted, transformed to the form of the schema (i.e., the schema is applied on write), and loaded into a data store. To access different data from the data source, the user has to redefine what data is to be extracted. To change how data is stored, the user has to define a new schema.

[0009] ETL is beneficial because it allows a user to access a desired portion of data in a desired format. However, ETL can be cumbersome as data needs evolve. Each change to the extracted data and/or the data storage results in the ETL process having to be restarted. As such, ETL is marginally practical, at best, for automated event detection. When using ETL, measures can be taken to reduce the possibility of introducing errors or inconsistencies into event detection and notification processes. However, inevitably errors and/or inconsistencies occur at least from time to time.

[0010] Unfortunately, many events are related to human suffering and possibly even human death, such as, for example, accidents, shootings, natural disasters, etc. Entities being notified of such events (e.g., drivers, first responders, disaster relief organizations, etc.) attempt to tailor their response based on circumstances of an event. Thus, entities can rely on event notification when allocating and expending resources. Errors or inconsistencies in event detection and notification may cause entities to respond inappropriately (e.g., insufficiently), waste resources, etc.

BRIEF SUMMARY

[0011] Examples extend to methods, systems, and computer program products for notifying entities of relevant events removing private information.

[0012] A privacy infrastructure spans other modules used for signal ingestion, event detection, and event notification. The privacy infrastructure can apply data privacy operations to user information in any of raw signals, normalized signals, events, or event notifications prior to, during, or after any of signal ingestion, event detection, or event notification.

[0013] An indication of a location type, a boundary geometry, a user event truthfulness preference, a first event type, and a second event type is received. An indication of an area including a first location of the location type and a second location of the location type is received. The location type, the boundary geometry, the area, the user event truthfulness preference, the first event type, and the second event type are combined into a rule formula.

[0014] The area is monitored for events occurring within a first boundary surrounding the first location or occurring within a second boundary surrounding the second location, the first boundary and the second boundary defined in accordance with the boundary geometry. First event characteristics including a first event type and a first event truthfulness corresponding to a first detected event are accessed. User information contained within the first event characteristics is identified. A data privacy operation is applied to the user information.

[0015] Second event characteristics including a second event type and a second event truthfulness corresponding to a second detected event are accessed. It is determined that a combination of the first characteristics and the second characteristics satisfies the rule formula subsequent to applying the data privacy operation. The determination includes determining that the first event type and the second event type occurred in combination within the first boundary and that the first event truthfulness and the second event truthfulness both satisfy the user event truthfulness preference. An entity is automatically electronically notified in accordance with notification preferences that the rule formula was satisfied.
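The rule evaluation described in [0013] through [0015] can be illustrated with a minimal Python sketch. It assumes the boundary geometry is a circle of fixed radius around each location, measures distance with the haversine formula, and treats truthfulness as a score between 0 and 1. `RuleFormula`, `Event`, and `rule_satisfied` are hypothetical names, not part of the described system. The events passed in are assumed to have already had the data privacy operation applied, so they carry only a type, a truthfulness score, and a location.

```python
from dataclasses import dataclass
from math import asin, cos, radians, sin, sqrt


def haversine_km(a, b):
    """Great-circle distance in kilometers between two (lat, lon) points."""
    lat1, lon1 = map(radians, a)
    lat2, lon2 = map(radians, b)
    h = sin((lat2 - lat1) / 2) ** 2 + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2
    return 2 * 6371.0 * asin(sqrt(h))


@dataclass(frozen=True)
class RuleFormula:
    location_type: str        # e.g. "school"
    radius_km: float          # assumed circular boundary geometry
    min_truthfulness: float   # user event truthfulness preference
    event_types: frozenset    # event types that must occur in combination
    locations: tuple          # (lat, lon) of each location of location_type in the area


@dataclass(frozen=True)
class Event:
    event_type: str
    truthfulness: float
    lat_lon: tuple


def rule_satisfied(rule: RuleFormula, events) -> bool:
    """True if every required event type occurs, with sufficient truthfulness,
    inside the boundary of one and the same location."""
    for loc in rule.locations:
        seen = {
            e.event_type
            for e in events
            if e.event_type in rule.event_types
            and e.truthfulness >= rule.min_truthfulness
            and haversine_km(loc, e.lat_lon) <= rule.radius_km
        }
        if seen == rule.event_types:
            return True
    return False
```

Checking each boundary separately means the event types count only when they occur "in combination" near the same location. A fire near one school and police presence near another do not satisfy the rule.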

[0016] In one aspect, other user information contained within the second event characteristics is identified. Another data privacy operation is applied to the other user information. It is determined that the combination of the first characteristics and the second characteristics satisfies the rule formula subsequent to applying the other data privacy operation.

[0017] In general, user information can include confidential information, patient information, personally identifiable information (PII), personal health information (PHI), sensitive personal information (SPI), Payment Card Industry information (PCI), or other private information. Data privacy operations can include removing user information (e.g., stripping, scrubbing, etc.), obscuring user information, anonymizing user information, encrypting user information, segregating user information, or applying access controls.

[0018] This summary is provided to introduce a selection of concepts in a simplified form that are further described below in the Detailed Description. This Summary is not intended to identify key features or essential features of the claimed subject matter, nor is it intended to be used as an aid in determining the scope of the claimed subject matter.

[0019] Additional features and advantages will be set forth in the description which follows, and in part will be obvious from the description, or may be learned by practice. The features and advantages may be realized and obtained by means of the instruments and combinations particularly pointed out in the appended claims. These and other features and advantages will become more fully apparent from the following description and appended claims, or may be learned by practice as set forth hereinafter.

BRIEF DESCRIPTION OF THE DRAWINGS

[0020] In order to describe the manner in which the above-recited and other advantages and features can be obtained, a more particular description will be rendered by reference to specific implementations thereof which are illustrated in the appended drawings. Understanding that these drawings depict only some implementations and are not therefore to be considered to be limiting of its scope, implementations will be described and explained with additional specificity and detail through the use of the accompanying drawings in which:

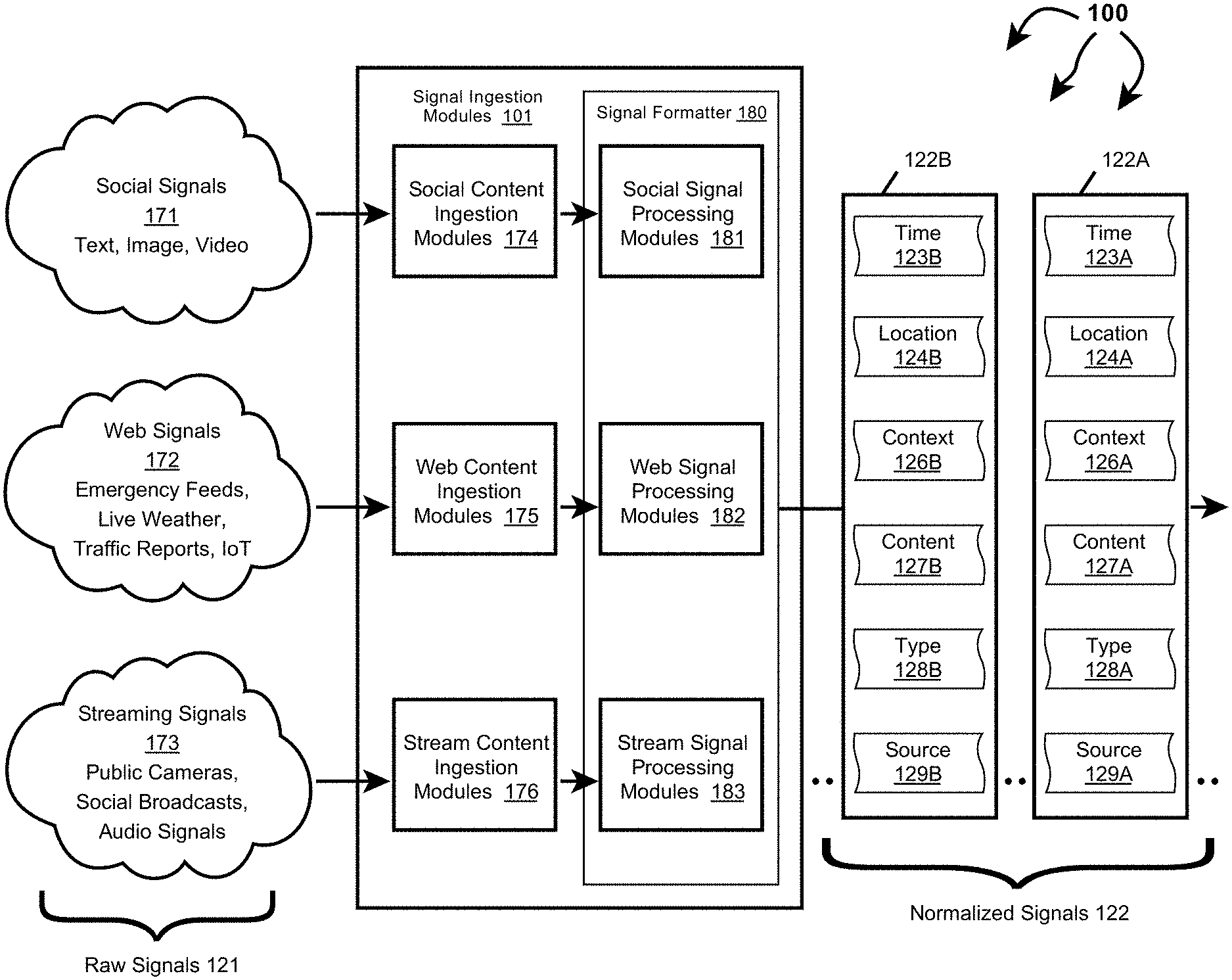

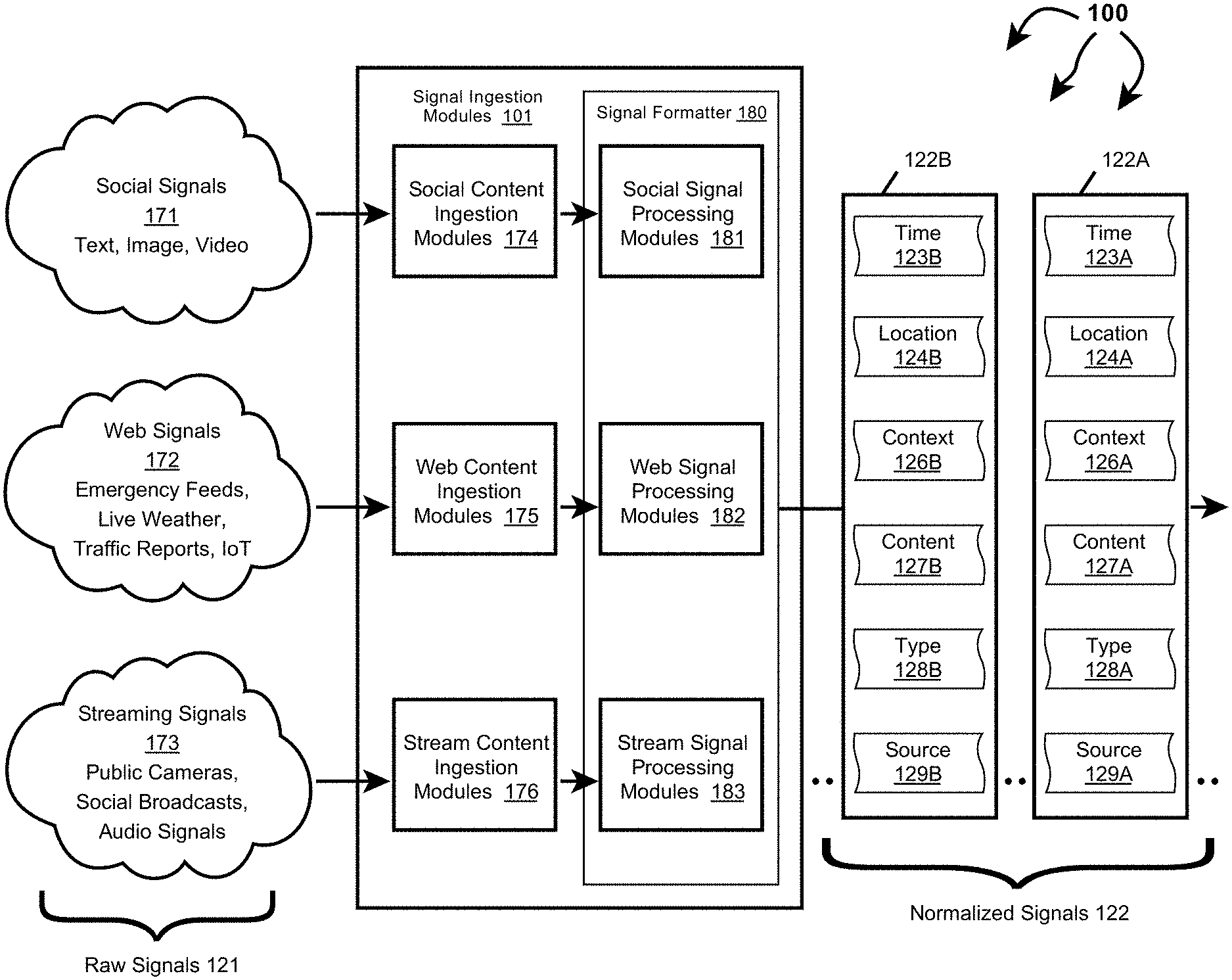

[0021] FIG. 1A illustrates an example computer architecture that facilitates normalizing ingested signals.

[0022] FIG. 1B illustrates an example computer architecture that facilitates detecting events from normalized signals.

[0023] FIG. 1C illustrates the example computer architecture of FIG. 1B and includes a privacy infrastructure.

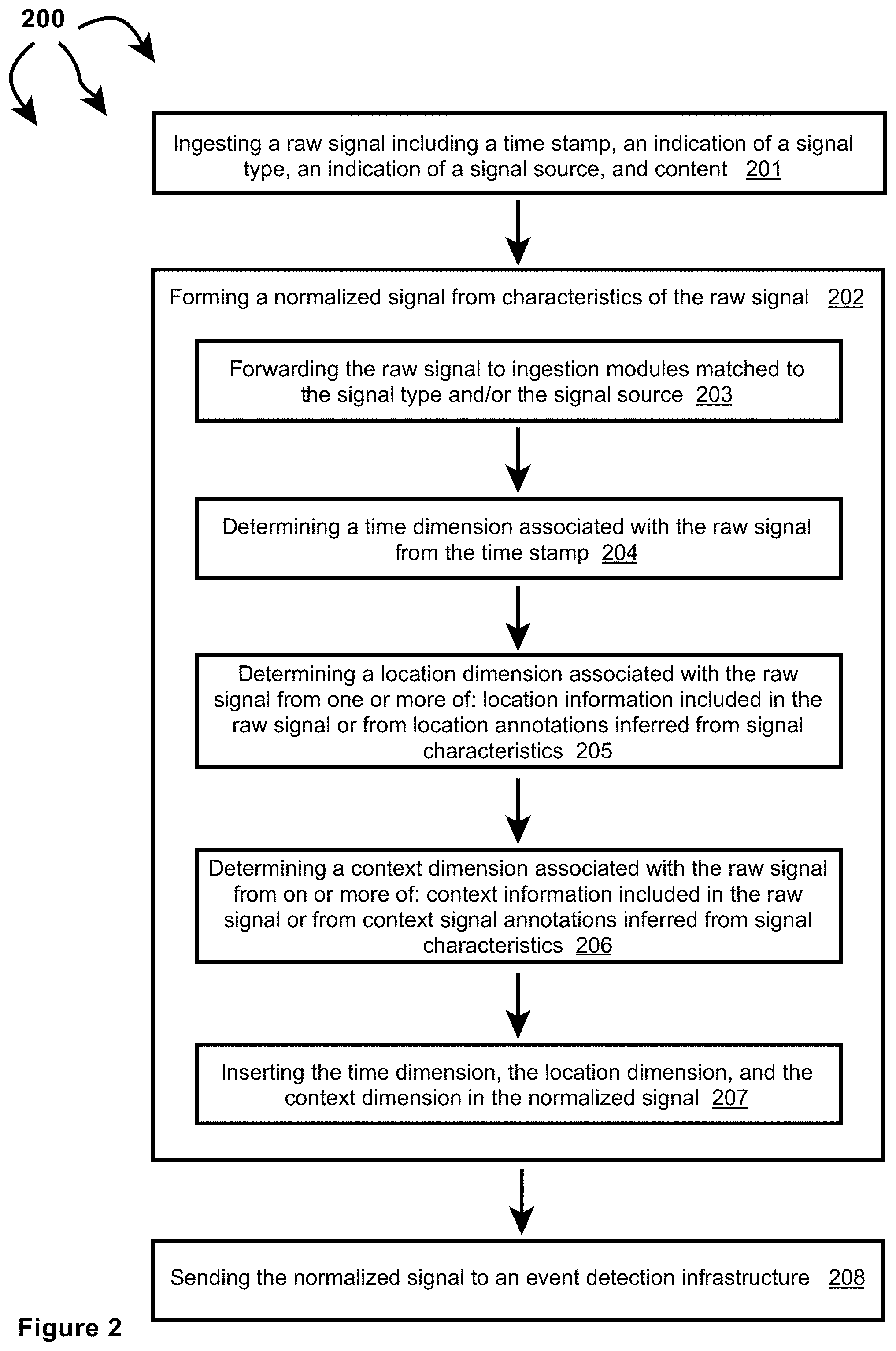

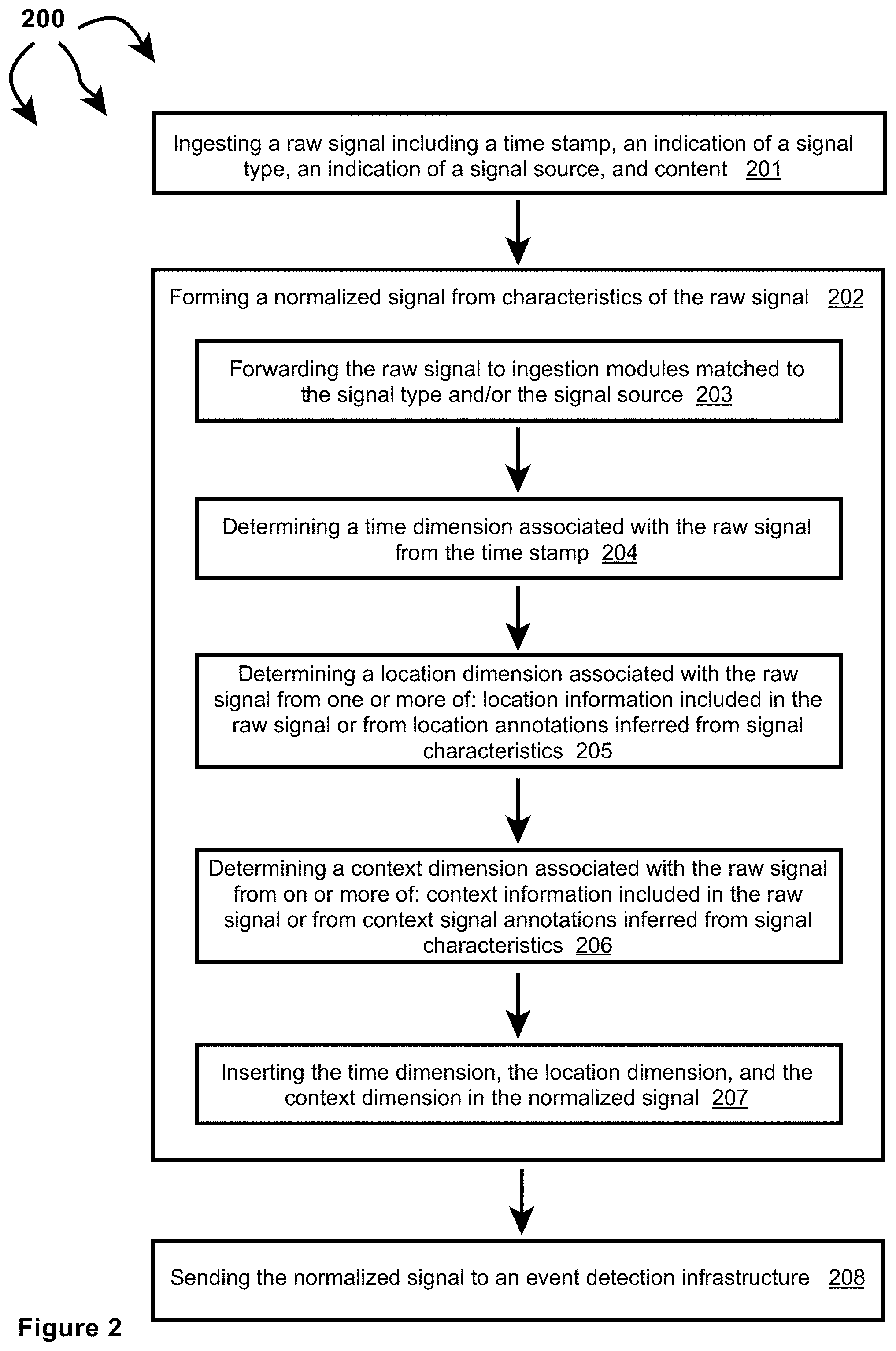

[0024] FIG. 2 illustrates a flow chart of an example method for normalizing ingested signals.

[0025] FIGS. 3A, 3B, and 3C illustrate other example components that can be included in signal ingestion modules.

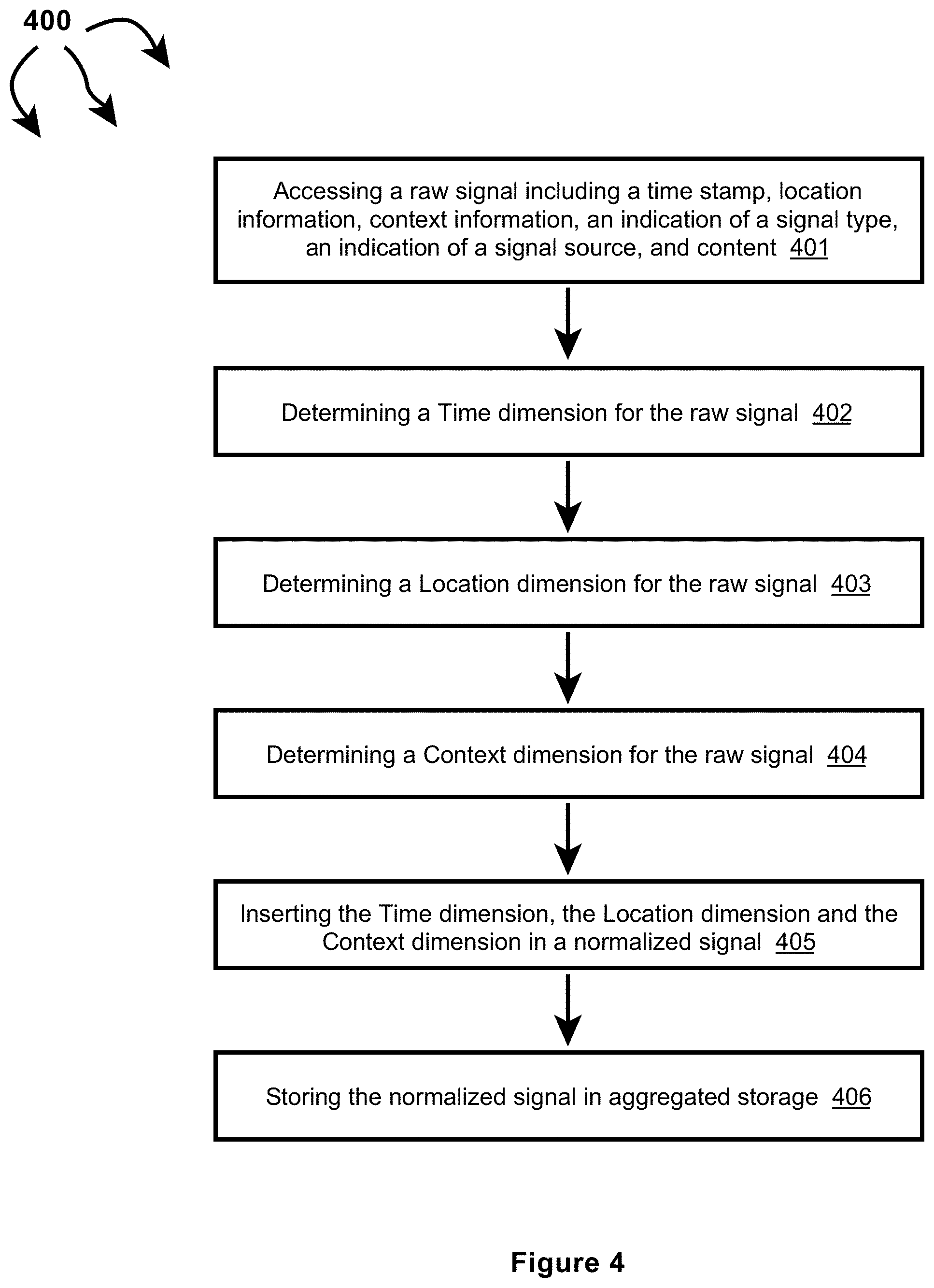

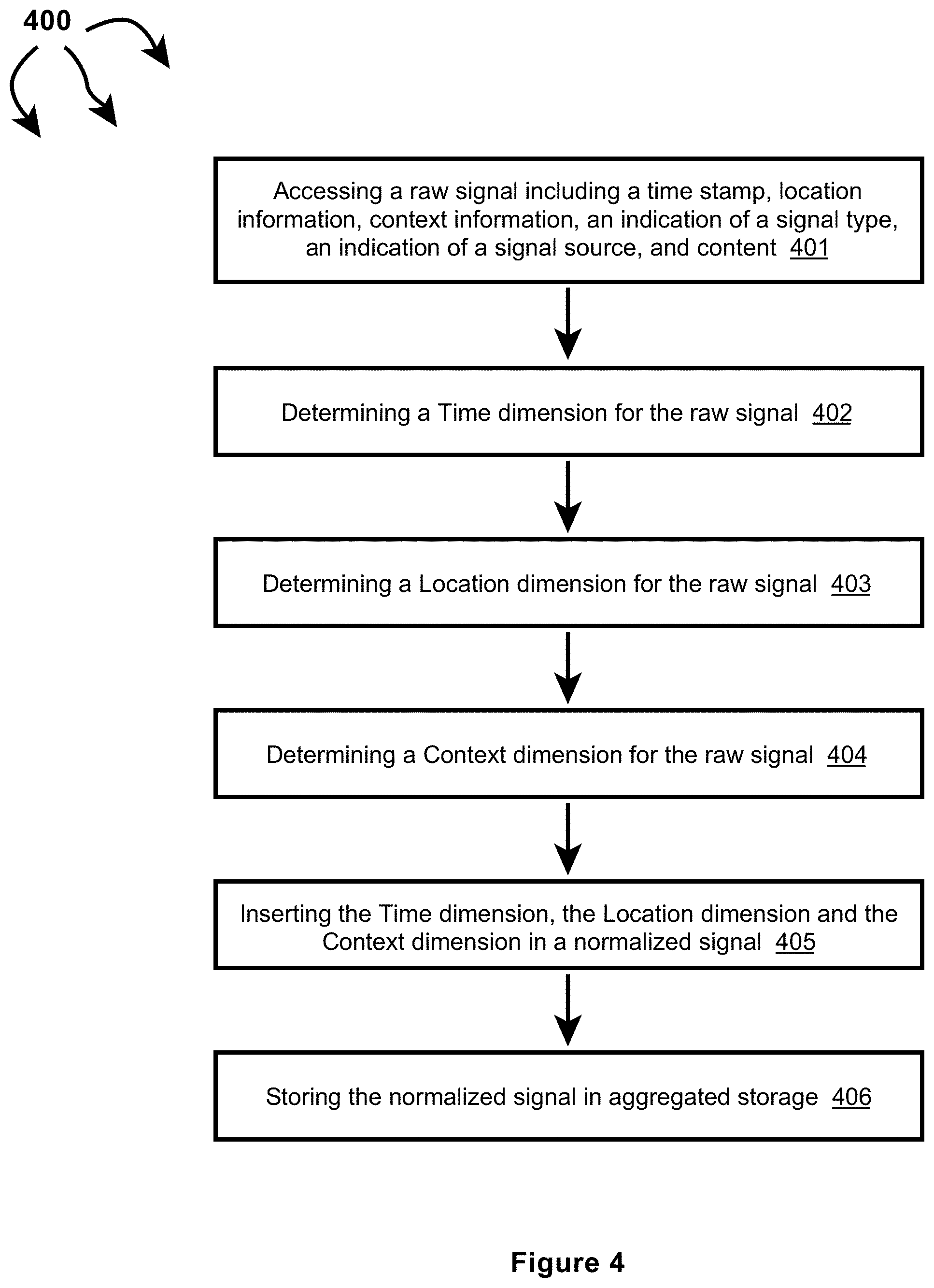

[0026] FIG. 4 illustrates a flow chart of an example method for normalizing an ingested signal including time information, location information, and context information.

[0027] FIG. 5 illustrates a flow chart of an example method for normalizing an ingested signal including time information and location information.

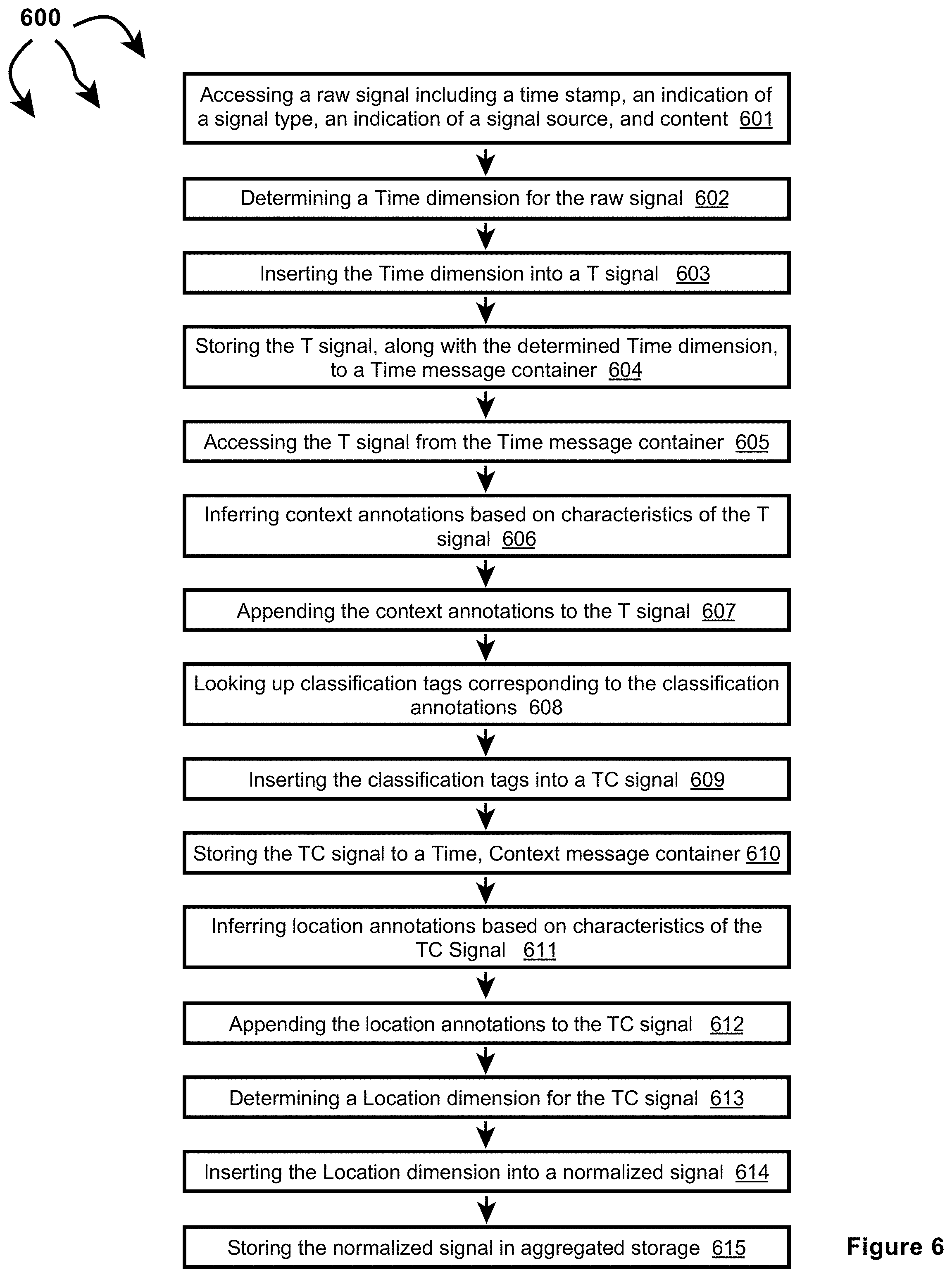

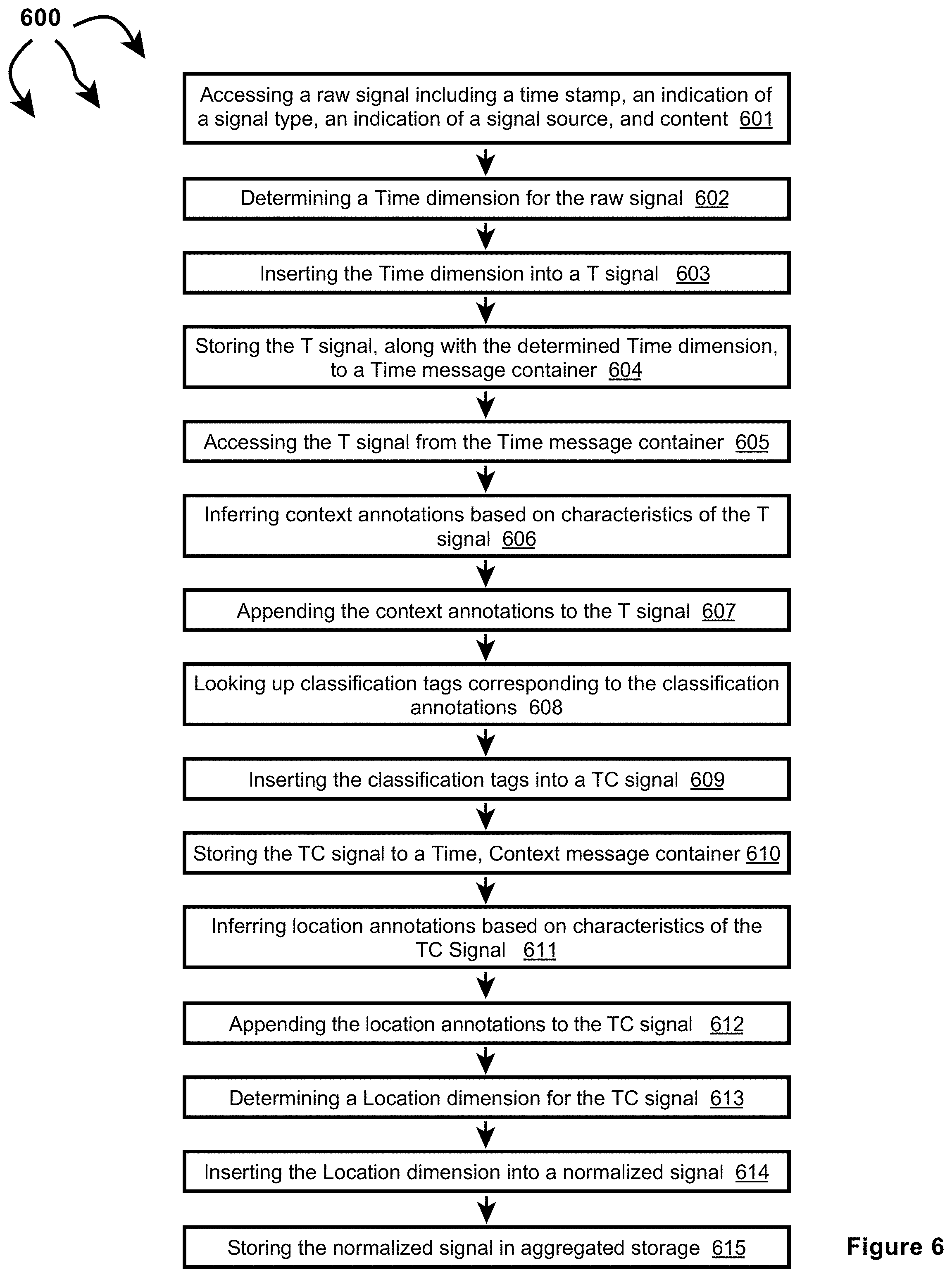

[0028] FIG. 6 illustrates a flow chart of an example method for normalizing an ingested signal including time information.

[0029] FIG. 7 illustrates a more detailed view of a truthfulness determination module.

[0030] FIG. 8 illustrates a flow chart of an example method for determining event truthfulness.

[0031] FIG. 9 illustrates a more detailed view of a severity determination module.

[0032] FIG. 10 illustrates a flow chart of an example method for determining event severity.

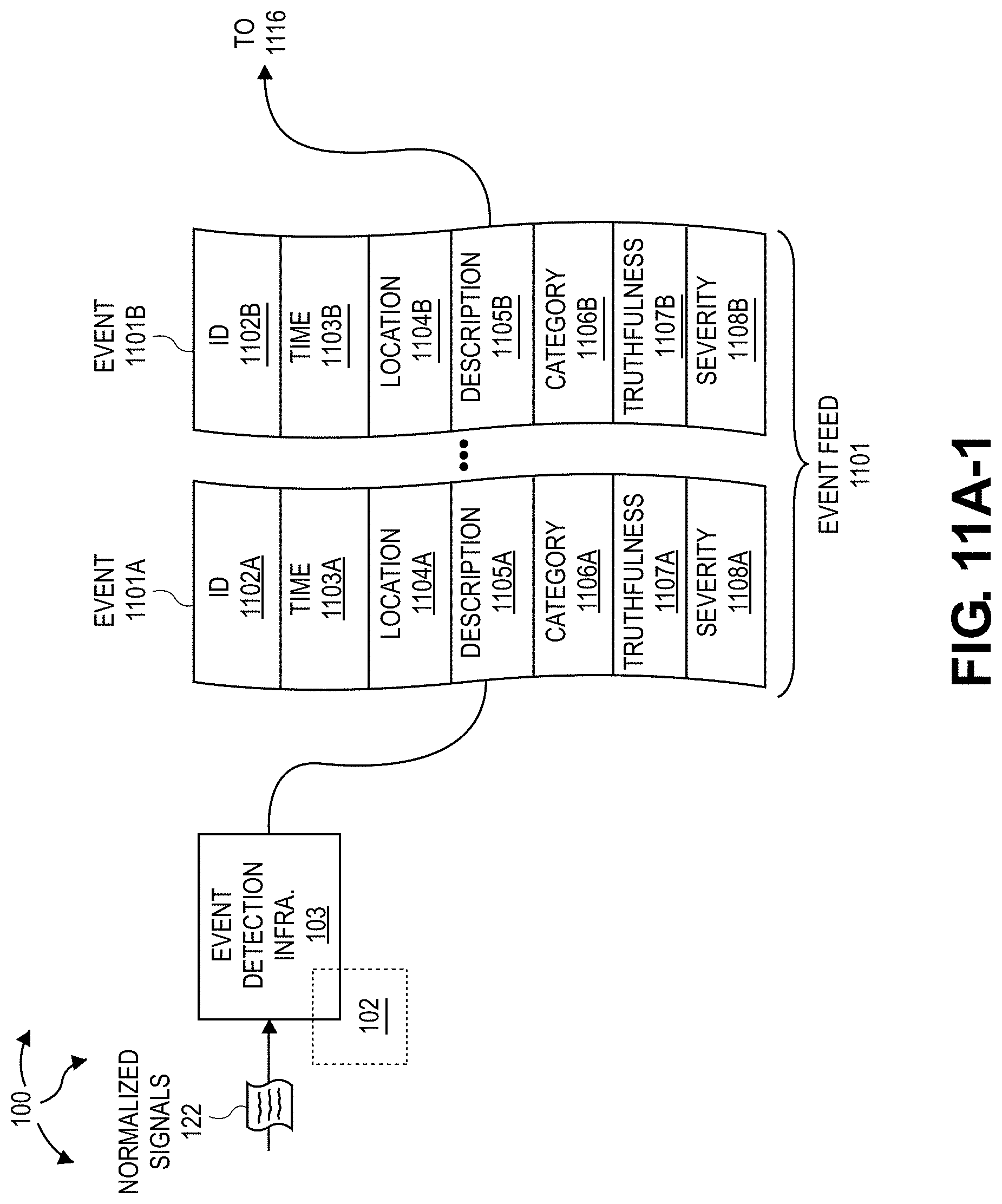

[0033] FIGS. 11A-1 and 11A-2 illustrate a computer architecture that facilitates identifying relevant events and notifying entities of relevant events.

[0034] FIG. 11B illustrates a computer architecture that facilitates identifying relevant events and notifying entities of relevant events.

[0035] FIG. 12A illustrates a flow chart of an example method for identifying relevant events and notifying entities of relevant events.

[0036] FIG. 12B illustrates a flow chart of an example method for identifying relevant events and notifying entities of relevant events.

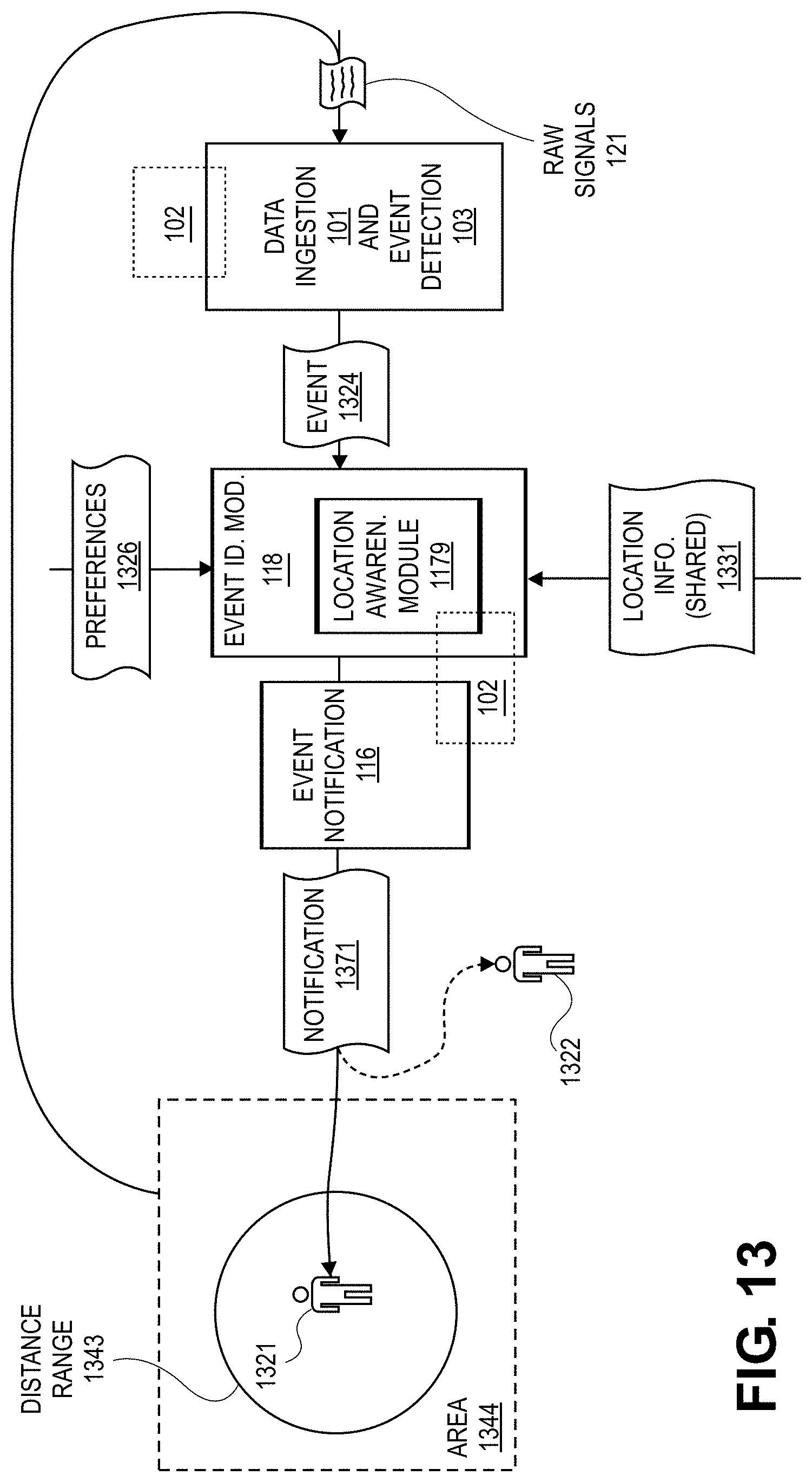

[0037] FIG. 13 illustrates a computer architecture that facilitates notifying of an event at or near the current location of an entity.

[0038] FIG. 14 illustrates a computer architecture that facilitates notifying of an event at or near a predicted future location of an entity.

[0039] FIG. 15 depicts an example user interface that facilitates selecting event notification preferences.

[0040] FIG. 16 illustrates a computer architecture that facilitates predicting event impact and notifying relevant entities.

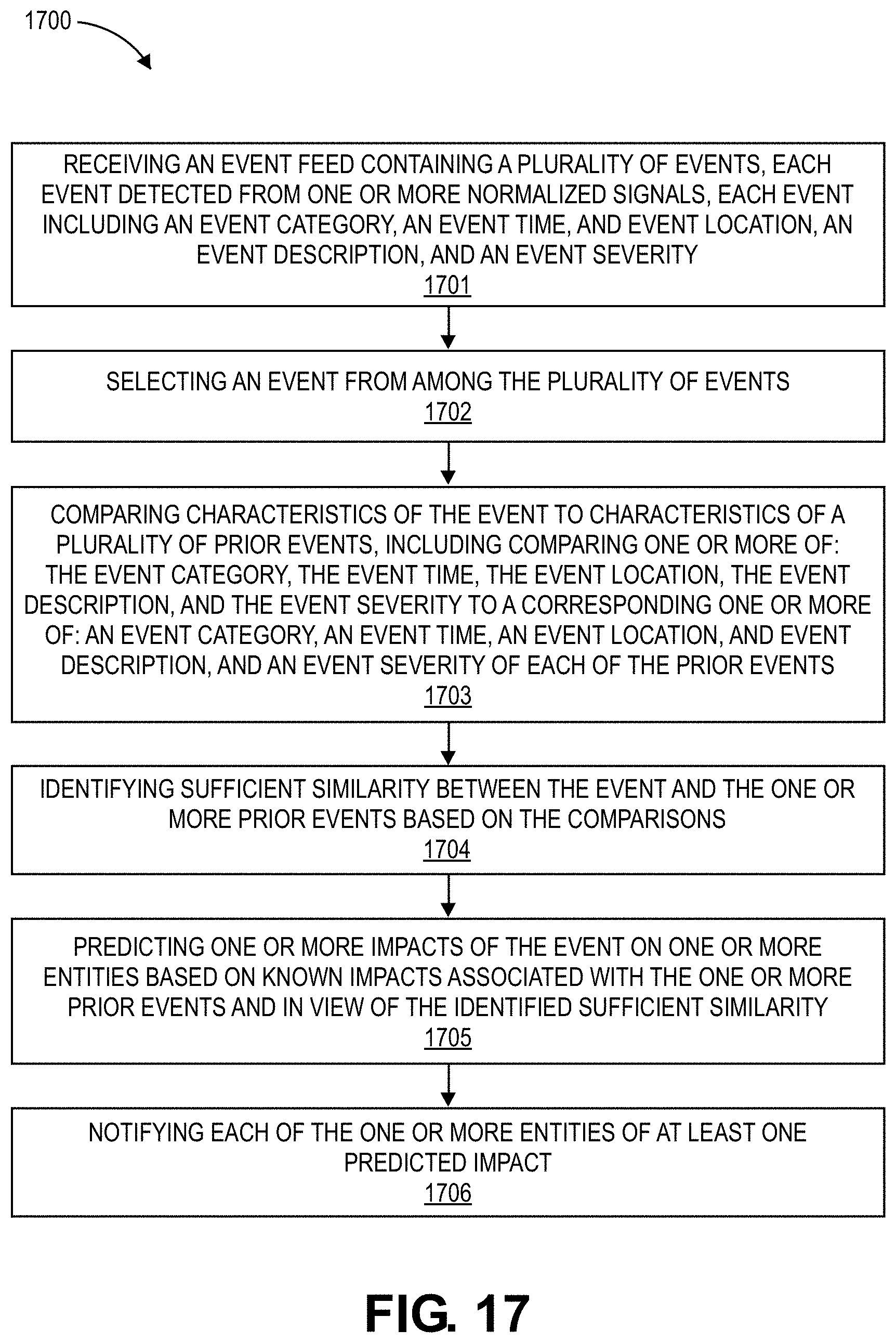

[0041] FIG. 17 illustrates a flow chart of an example method for predicting event impact and notifying relevant entities.

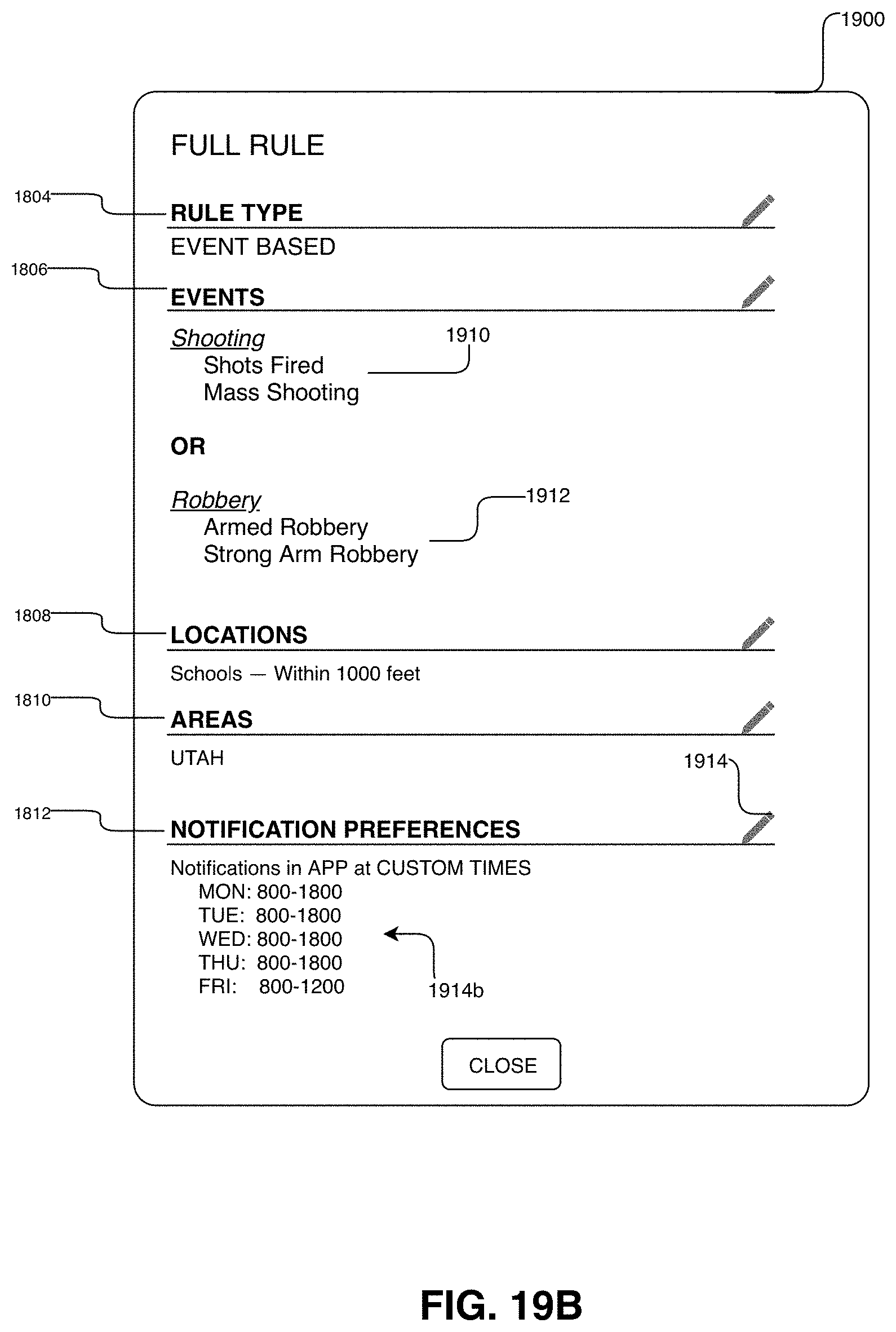

[0042] FIG. 18 illustrates a user interface element for viewing a user generated rule configuration.

[0043] FIGS. 19A and 19B illustrate user interfaces for modifying a user generated rule configuration.

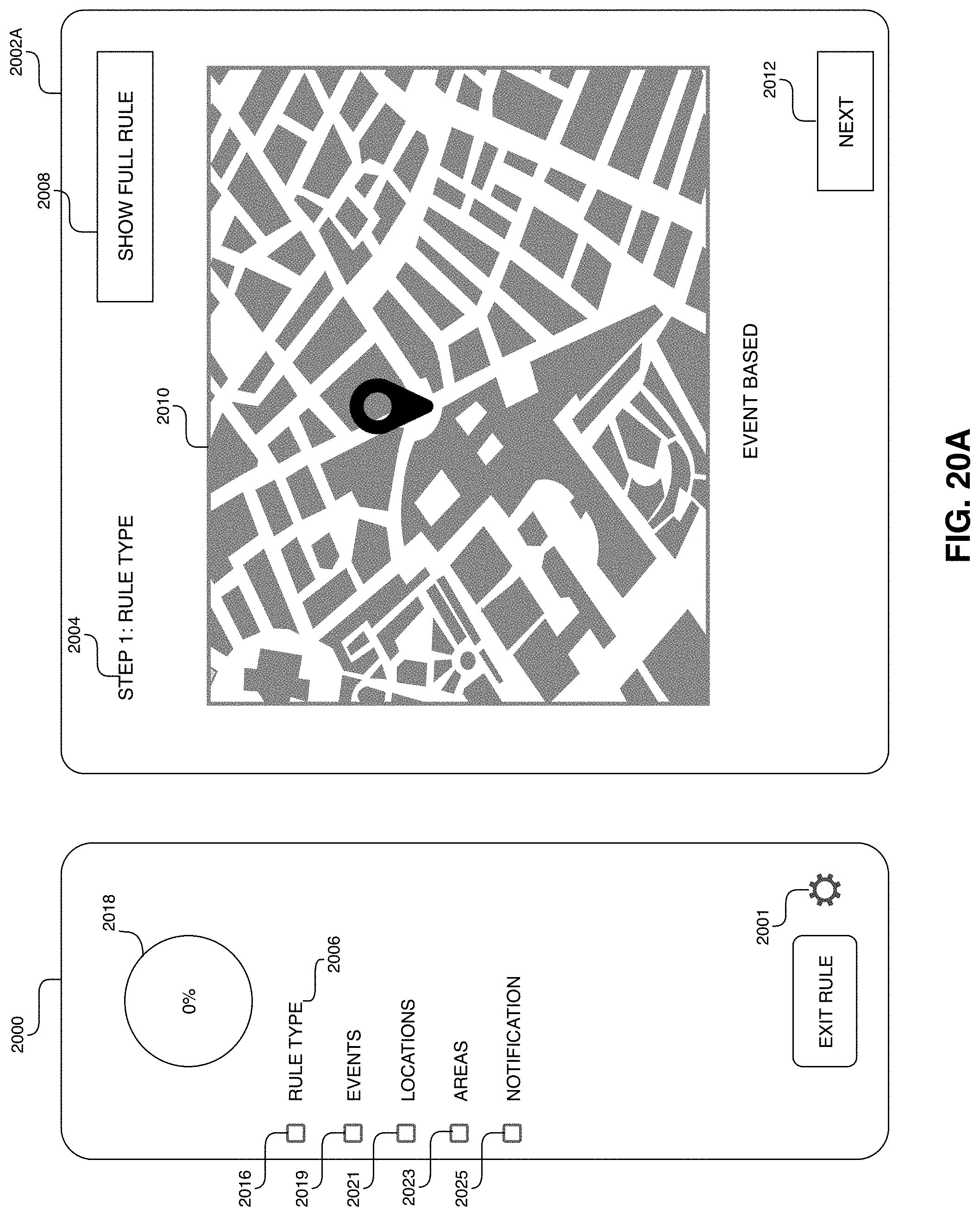

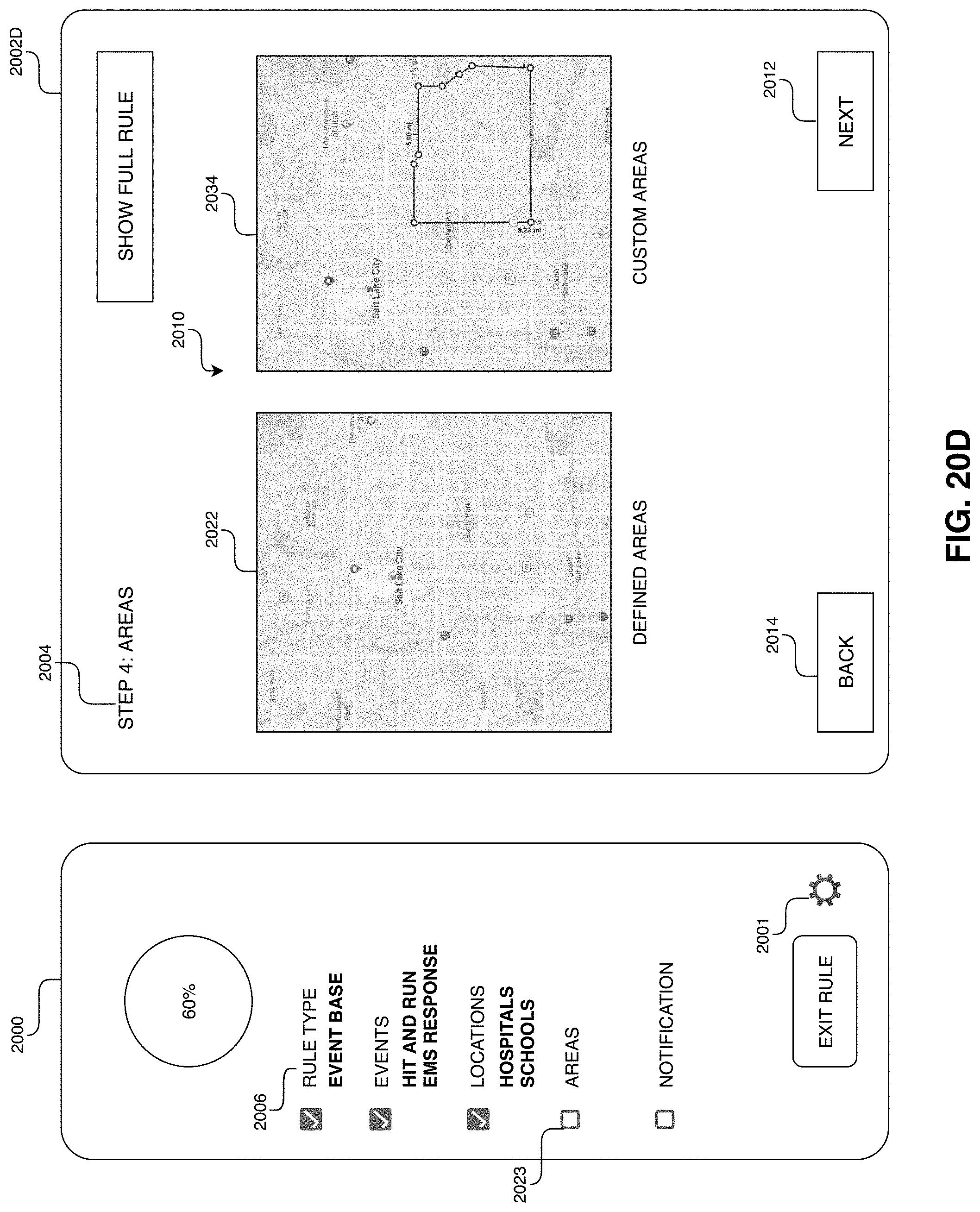

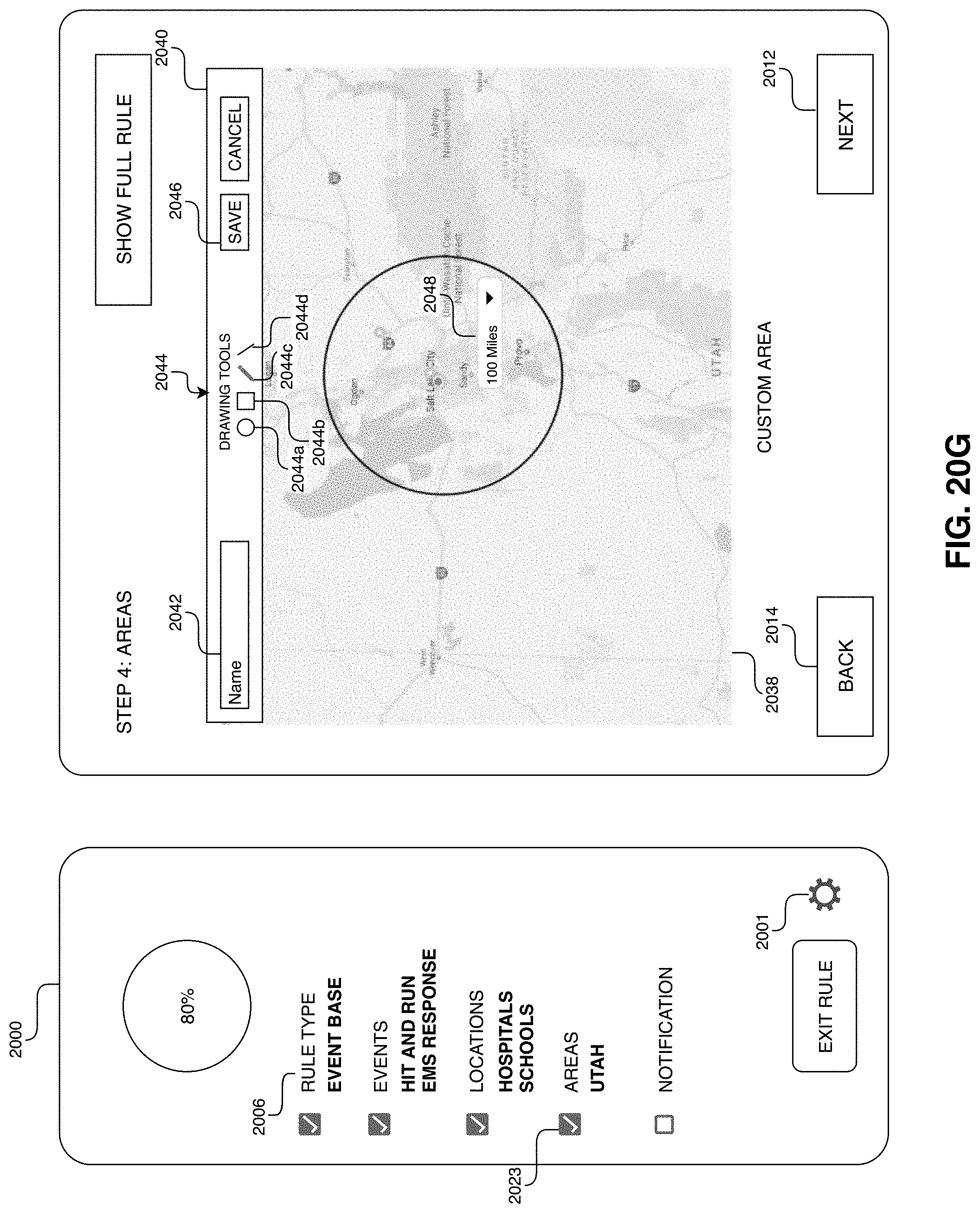

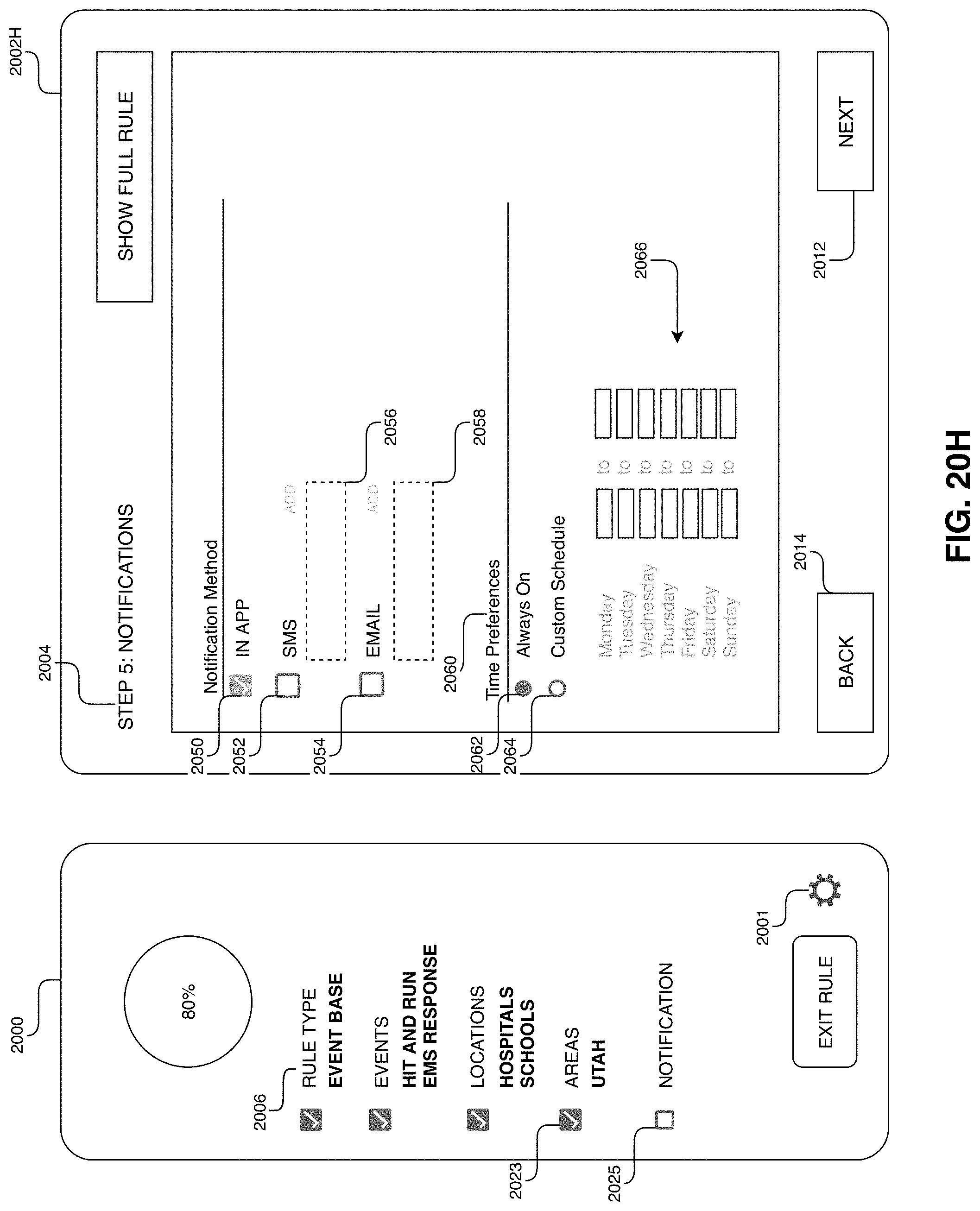

[0044] FIGS. 20A through 20I illustrate a wizard for user generated rule configuration.

[0045] FIG. 21 illustrates a user interface for viewing user generated rules.

[0046] FIG. 22 illustrates an embodiment for receiving event notifications on a mobile device.

[0047] FIG. 23 illustrates a user interface for generating weather related rule configurations.

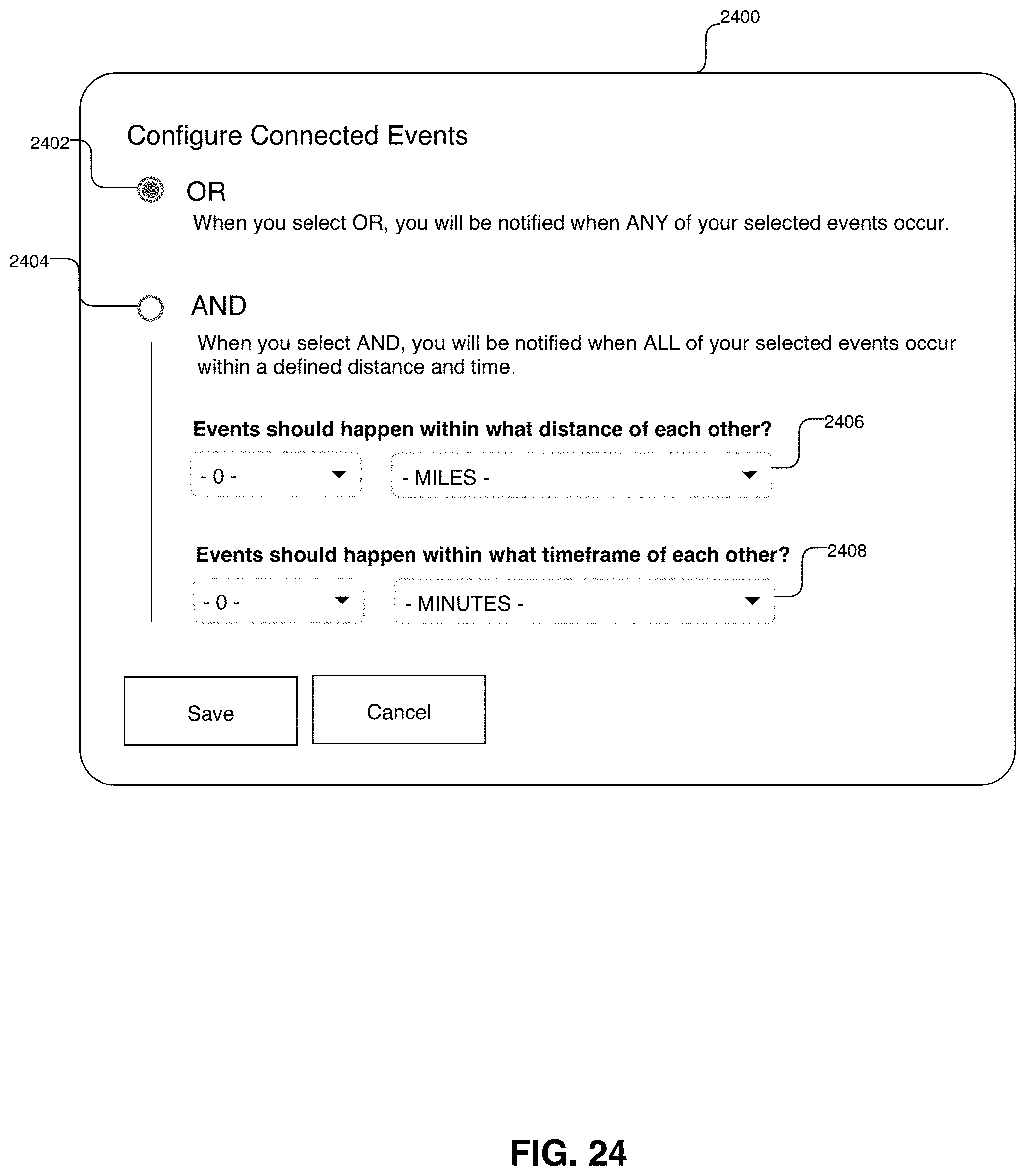

[0048] FIG. 24 illustrates a user interface for providing logic configurations for connecting event types in a user generated rule configuration system.

[0049] FIGS. 25 and 26 illustrate an app interface for user notification and exploration of triggered events.

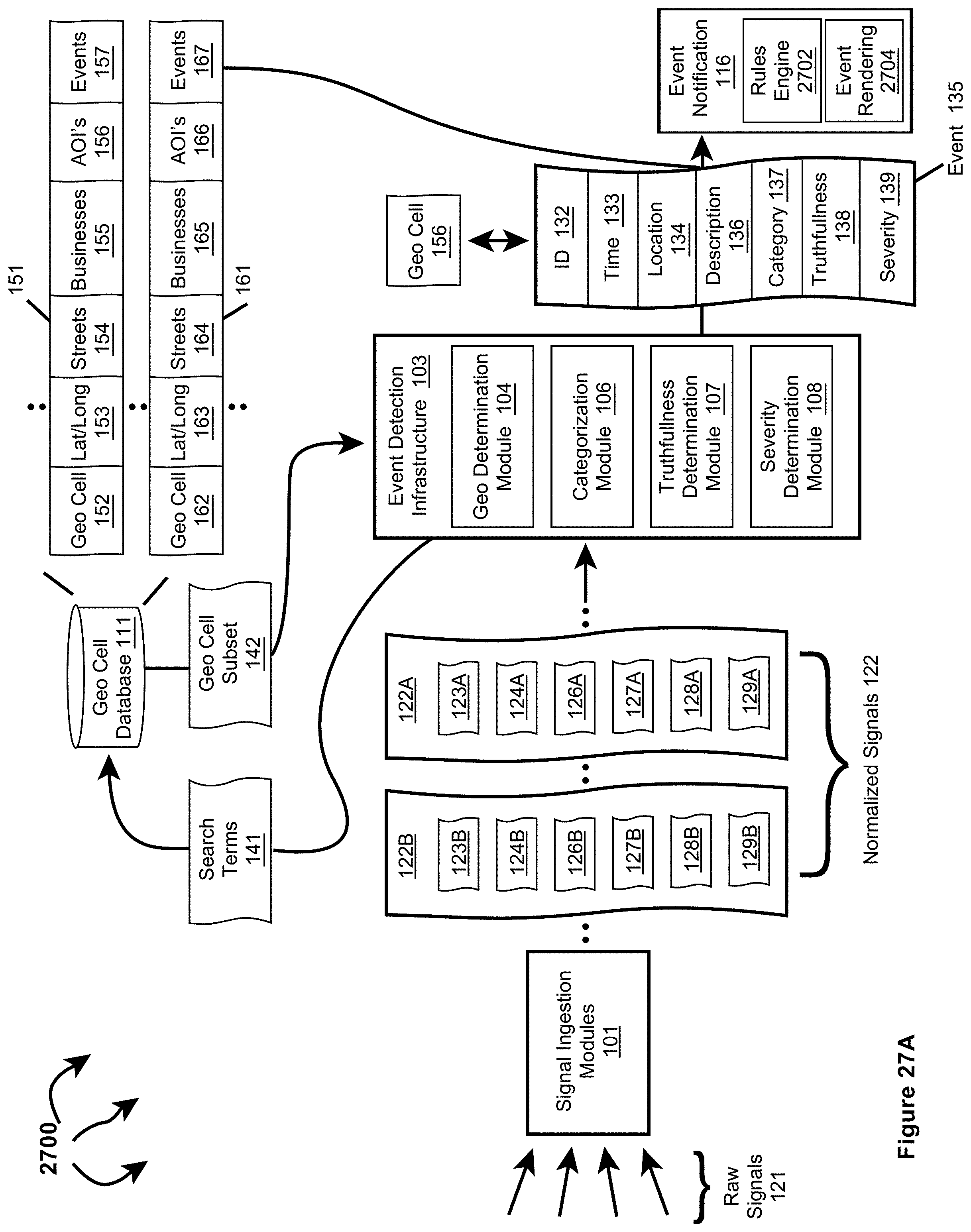

[0050] FIG. 27A illustrates an example computer architecture that facilitates normalizing ingested signals and generating event notifications based on user generated rules.

[0051] FIG. 27B illustrates the example computer architecture of FIG. 27A and includes a privacy infrastructure.

[0052] FIG. 28 depicts an example computer architecture for analyzing normalized events against user generated rules.

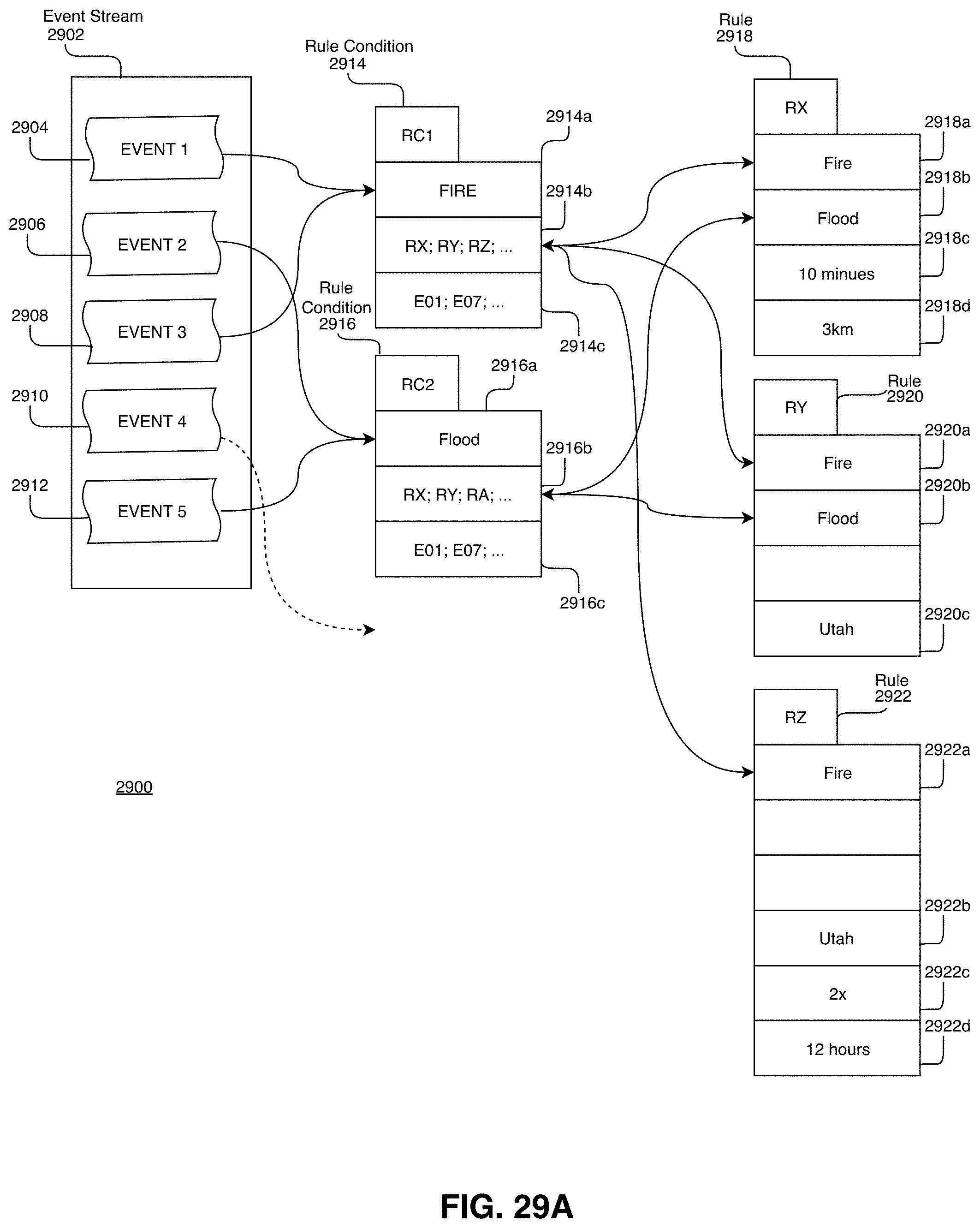

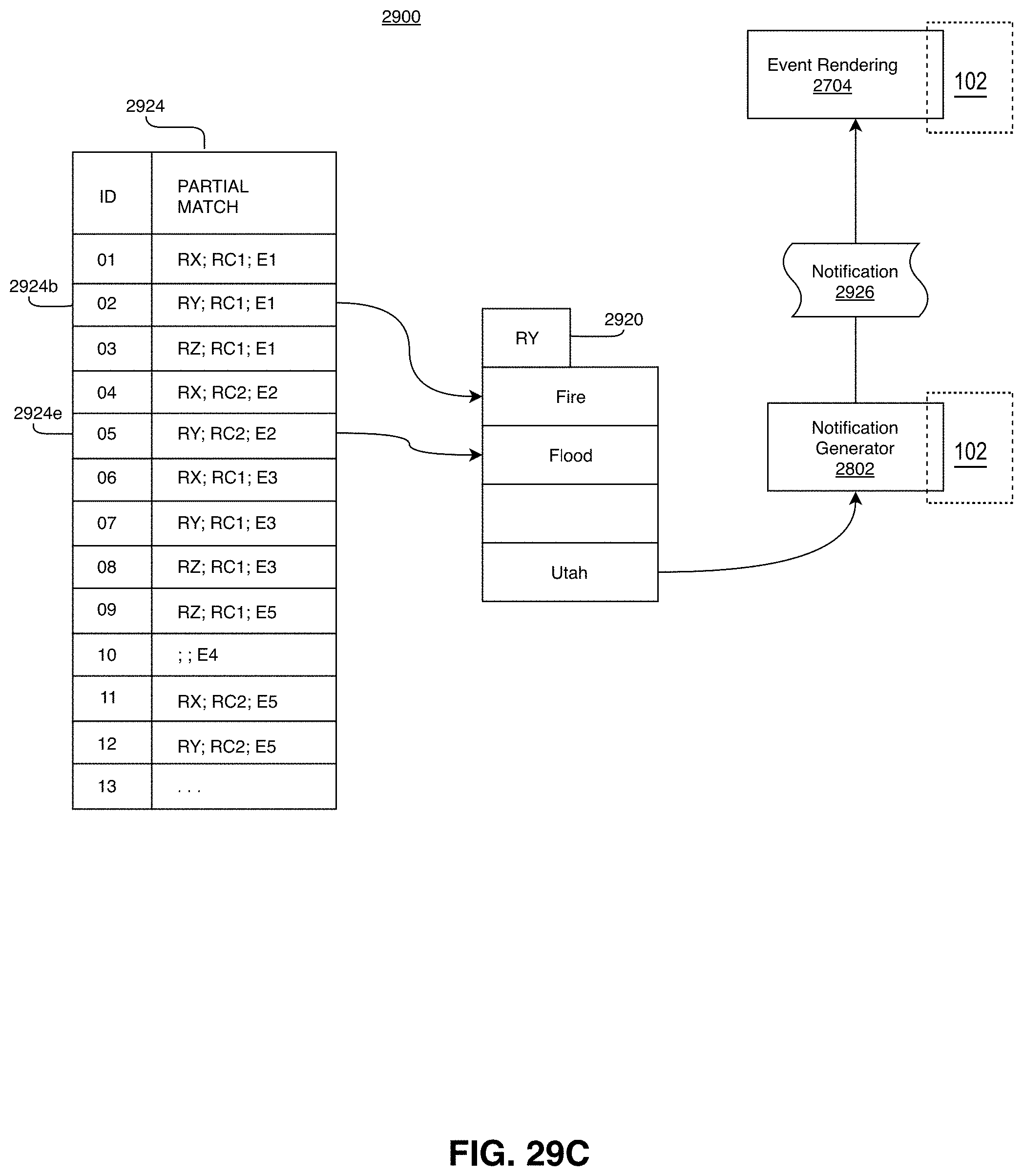

[0053] FIGS. 29A through 29E depict an embodiment for processing, tracking, and determining rule condition components from disparate events across a time window.

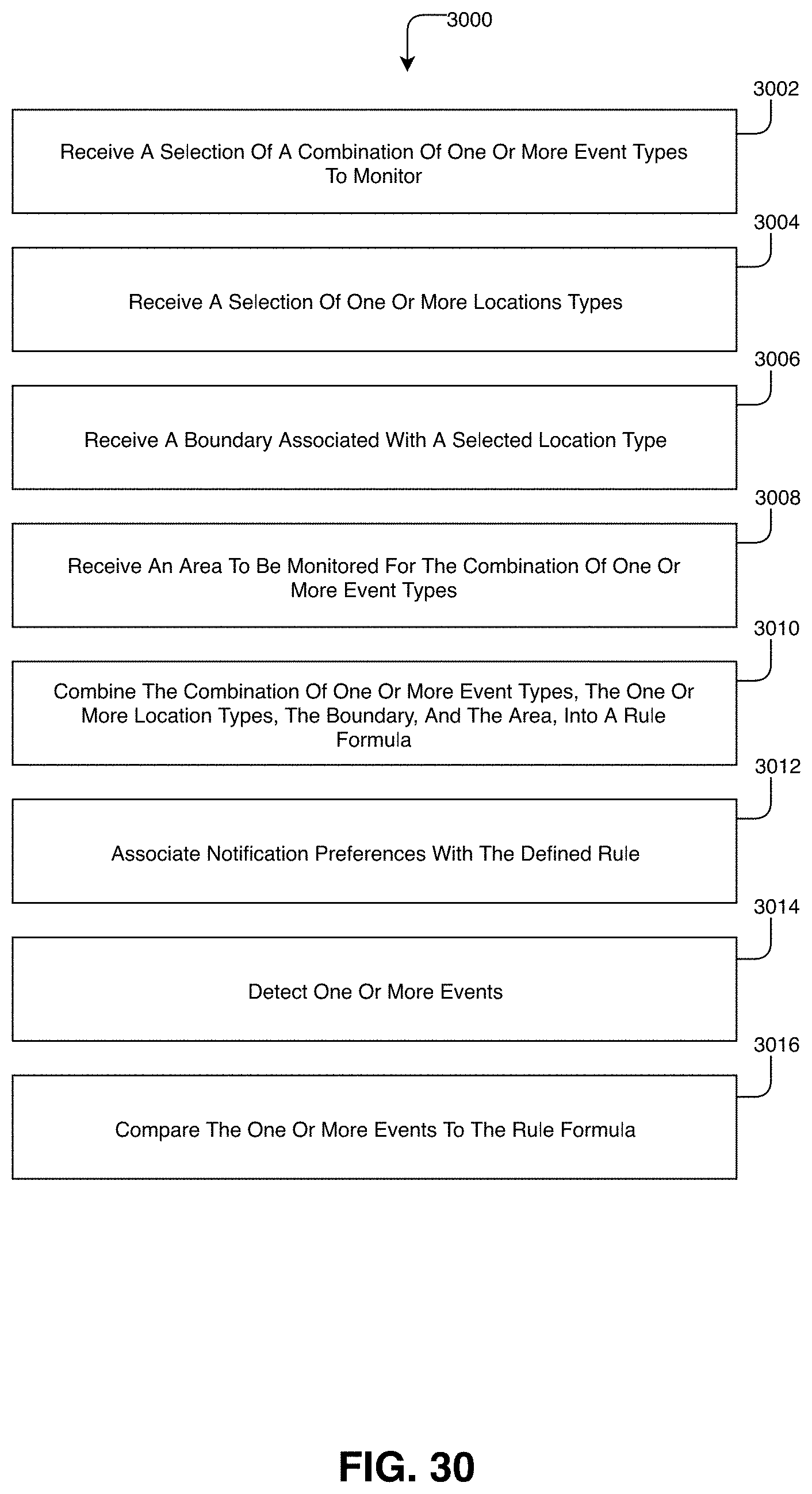

[0054] FIG. 30 depicts a method for generating notifications based on user generated rules according to an embodiment.

DETAILED DESCRIPTION

[0055] Examples extend to methods, systems, and computer program products for notifying entities of relevant events removing private information.

[0056] Entities (e.g., parents, other family members, guardians, friends, teachers, social workers, first responders, hospitals, delivery services, media outlets, government entities, security personnel, etc.) may desire to be made aware of relevant events as close as possible to the events' occurrence (i.e., as close as possible to "moment zero"). Different types of ingested signals (e.g., social media signals, web signals, and streaming signals) can be used to detect events. Event relevancy can be determined from entity selectable notification preferences including but not limited to event categories, event location, a computed event truth, a computed event severity, event impact, etc. Entities can also select notification preferences indicating a minimal notification delay. The minimal notification delay defines a minimum time after a relevant event is detected that an entity desires notification of the relevant event.

[0057] In general, signal ingestion modules ingest different types of raw structured and/or raw unstructured signals on an ongoing basis. Different types of signals can include different data media types and different data formats. Data media types can include audio, video, image, and text. Different formats can include text in XML, text in JavaScript Object Notation (JSON), text in RSS feed, plain text, video stream in Dynamic Adaptive Streaming over HTTP (DASH), video stream in HTTP Live Streaming (HLS), video stream in Real-Time Messaging Protocol (RTMP), other Multipurpose Internet Mail Extensions (MIME) types, etc. Handling different types and formats of data introduces inefficiencies into subsequent event detection processes, including when determining if different signals relate to the same event.

[0058] Accordingly, the signal ingestion modules can normalize raw signals across multiple data dimensions to form normalized signals. Each dimension can be a scalar value or a vector of values. In one aspect, raw signals are normalized into normalized signals having Time, Location, and Context (or "TLC") dimensions.

[0059] A Time (T) dimension can include a time of origin or, alternatively, an "event time" of a signal. A Location (L) dimension can include a location anywhere across a geographic area, such as, a country (e.g., the United States), a State, a defined area, an impacted area, an area defined by a geo cell, an address, etc.

[0060] A Context (C) dimension indicates circumstances surrounding formation/origination of a raw signal in terms that facilitate understanding and assessment of the raw signal. The Context (C) dimension of a raw signal can be derived from express as well as inferred signal features of the raw signal.
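As an illustration only, a normalized signal could be represented as a small record with one field per dimension. In this sketch the Location (L) dimension is simplified to a latitude/longitude pair and the Context (C) dimension to a dictionary produced by some classifier. `NormalizedSignal`, `normalize`, and the raw field names (`created_at`, `lat`, `lon`, `text`, `source`) are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class NormalizedSignal:
    """Hypothetical container for a normalized ("TLC") signal."""
    time: datetime                                # T: time of origin ("event time")
    location: tuple                               # L: here simplified to (lat, lon)
    context: dict = field(default_factory=dict)   # C: e.g. single source probabilities
    source: str = ""


def normalize(raw: dict, classify: Callable[[str], dict]) -> NormalizedSignal:
    """Map one hypothetical raw record onto the T, L, and C dimensions."""
    return NormalizedSignal(
        time=datetime.fromtimestamp(raw["created_at"], tz=timezone.utc),
        location=(raw["lat"], raw["lon"]),
        context=classify(raw["text"]),
        source=raw.get("source", ""),
    )
```

Passing the classifier in as a parameter reflects the point that different ingestion modules can be tailored to different signal types while still producing the same TLC shape.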

[0061] Signal ingestion modules can include one or more single source classifiers. A single source classifier can compute a single source probability for a raw signal from features of the raw signal. A single source probability can reflect a mathematical probability or approximation of a mathematical probability (e.g., a percentage between 0%-100%) of an event actually occurring. A single source classifier can be configured to compute a single source probability for a single event type or to compute a single source probability for each of a plurality of different event types. A single source classifier can compute a single source probability using artificial intelligence, machine learning, neural networks, logic, heuristics, etc.
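A single source classifier can be as simple as a heuristic. The sketch below is a hypothetical keyword-based classifier that returns a probability for each of several event types, expressed as a fraction between 0 and 1 (equivalent to 0%-100%). The keyword lists and the saturating formula `1 - 0.5 ** hits` are assumptions chosen for illustration; a production classifier would more likely use a trained model.

```python
import re

# Hypothetical keyword lists, one per event type.
KEYWORDS = {
    "fire": {"fire", "smoke", "flames", "burning"},
    "shooting": {"shots", "gunfire", "shooter"},
}


def single_source_probabilities(text: str) -> dict:
    """Compute a per-event-type probability from one raw signal's text."""
    tokens = set(re.findall(r"[a-z]+", text.lower()))
    probs = {}
    for event_type, words in KEYWORDS.items():
        hits = len(tokens & words)
        # Each matching keyword halves the remaining doubt; zero hits gives 0.0.
        probs[event_type] = round(1 - 0.5 ** hits, 3)
    return probs
```

For example, "Smoke and flames near the school!" matches two fire keywords and no shooting keywords, giving `{"fire": 0.75, "shooting": 0.0}`.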

[0062] As such, single source probabilities and corresponding probability details can represent a Context (C) dimension. Probability details can indicate (e.g., can include a hash field indicating) a probabilistic model and (express and/or inferred) signal features considered in a single source probability calculation.

[0063] Thus, per signal type, signal ingestion modules determine Time (T), Location (L), and Context (C) dimensions associated with a signal. Different ingestion modules can be utilized/tailored to determine T, L, and C dimensions associated with different signal types. Normalized (or "TLC") signals can be forwarded to an event detection infrastructure. When signals are normalized across common dimensions, subsequent event detection is more efficient and more effective.

[0064] Normalization of ingestion signals can include dimensionality reduction. Generally, "transdimensionality" transformations can be structured and defined in a "TLC" dimensional model. Signal ingestion modules can apply the "transdimensionality" transformations to generic source data in raw signals to re-encode the source data into normalized data having lower dimensionality. Dimensionality reduction can include reducing dimensionality (e.g., hundreds, thousands, or even more signal features (dimensions)) of a raw signal into a normalized signal including a T vector, an L vector, and a C vector. At lower dimensionality, the complexity of measuring "distances" between dimensional vectors across different normalized signals is reduced.
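The following sketch shows, under assumed encodings, how a raw feature dictionary might be reduced to three short vectors and how a distance between two normalized signals could then be computed. The encodings (hours since the epoch for T, latitude/longitude for L, per-event-type probabilities for C) and the per-dimension weights are hypothetical; any other raw features are simply dropped.

```python
import math


def tlc_vector(signal: dict, event_types: list) -> tuple:
    """Reduce a raw feature dict to (T, L, C) vectors; unlisted features are discarded."""
    t = (signal["epoch_seconds"] / 3600.0,)                       # T: hours since epoch
    l = (signal["lat"], signal["lon"])                             # L: coordinates
    c = tuple(signal["probs"].get(e, 0.0) for e in event_types)    # C: per-type probabilities
    return t, l, c


def tlc_distance(a: tuple, b: tuple, weights=(1.0, 10.0, 1.0)) -> float:
    """Weighted sum of per-dimension Euclidean distances between two TLC signals."""
    return sum(w * math.dist(x, y) for w, x, y in zip(weights, a, b))
```

Because each signal collapses to a handful of numbers, comparing two signals no longer depends on how many raw features they started with.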

[0065] Concurrently with signal ingestion, an event detection infrastructure considers features of different combinations of normalized signals to attempt to identify events. For example, the event detection infrastructure can determine that features of multiple different normalized signals collectively indicate an event. Alternately, the event detection infrastructure can determine that features of one or more normalized signals indicate a possible event. The event detection infrastructure then determines that features of one or more other normalized signals validate the possible event. Signal features can include: signal type, signal source, signal content, Time (T) dimension, Location (L) dimension, Context (C) dimension, other circumstances of signal creation, etc.
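One way to realize the "possible event, then validate" pattern is sketched below. It is an assumption-laden illustration, not the detection algorithm of this disclosure. A signal whose single source probability meets a threshold marks a possible event. The event is reported only if a signal from a different source, with the same event type, falls within assumed time and distance windows. `Signal`, `detect_events`, and all thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Signal:
    source: str          # e.g. "social", "cad"
    event_type: str
    probability: float   # single source probability, 0..1
    t: float             # seconds since some epoch
    lat: float
    lon: float


def detect_events(signals, threshold=0.6, max_dt=600.0, max_deg=0.01):
    """Report a possible event only when a signal from another source corroborates it."""
    events, used = [], set()
    for s in signals:
        if id(s) in used or s.probability < threshold:
            continue  # already consumed, or too weak to suggest a possible event
        validators = [
            o for o in signals
            if o is not s
            and o.source != s.source
            and o.event_type == s.event_type
            and abs(o.t - s.t) <= max_dt
            and abs(o.lat - s.lat) <= max_deg   # crude bounding box in degrees
            and abs(o.lon - s.lon) <= max_deg
        ]
        if validators:
            used.add(id(s))
            used.update(id(o) for o in validators)
            events.append((s.event_type, s.t, s.lat, s.lon, 1 + len(validators)))
    return events
```

Here a weak signal (for example, a low-probability CAD record) can still validate a strong social media signal, which mirrors the idea that features of other normalized signals can confirm a possible event.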

[0066] The event detection infrastructure can send detected events to an event relevancy module. The event relevancy module can compare event characteristics to entity selected notification preferences. Based on the comparisons, the event relevancy module can determine a detected event is relevant to one or more entities. Relevant events can be forwarded to an event notification module along with entity identifiers for the one or more entities. The event notification module can use the entity identifiers to notify the one or more entities of the relevant event.
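The comparison performed by the event relevancy module might look like the following minimal sketch, which matches an event's category, truthfulness, and severity against each entity's preferences. The preference fields and the event dictionary keys are hypothetical, and location matching is omitted for brevity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Preferences:
    entity_id: str
    categories: frozenset   # event categories of interest
    min_truth: float        # minimum computed event truth (0..1)
    min_severity: int       # minimum computed event severity


def relevant_entities(event: dict, prefs: list) -> list:
    """Return identifiers of entities whose notification preferences the event satisfies."""
    return [
        p.entity_id
        for p in prefs
        if event["category"] in p.categories
        and event["truth"] >= p.min_truth
        and event["severity"] >= p.min_severity
    ]
```

The returned identifiers are what would be forwarded, together with the event, to the event notification module.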

[0067] In one aspect, an entity identifier includes information for communicating with an entity, such as, for example, an email address, mobile telephone number, social media name, etc. In another aspect, an entity identifier is specific to the event relevancy module. Upon receiving an entity identifier, the event notification module refers to a database, list, mapping table, etc. that matches entity identifiers to corresponding information for communicating with an entity.
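Both kinds of entity identifier can be handled by a small resolver. The sketch below assumes that identifiers containing "@" are email addresses, identifiers starting with "+" are mobile numbers, and anything else is looked up in a mapping table. `CONTACTS` and `resolve_channels` are hypothetical, and the contact details are placeholders.

```python
# Hypothetical mapping table from module-specific identifiers to contact channels.
CONTACTS = {
    "ent-001": [("email", "alerts@example.org"), ("sms", "+15555550100")],
}


def resolve_channels(entity_id: str, table: dict = CONTACTS) -> list:
    """Return (channel, address) pairs for an entity identifier."""
    if "@" in entity_id:            # identifier is itself an email address
        return [("email", entity_id)]
    if entity_id.startswith("+"):   # identifier is itself a mobile number
        return [("sms", entity_id)]
    return list(table.get(entity_id, []))  # module-specific id: look it up
```

An unknown module-specific identifier resolves to an empty list, leaving the caller to decide whether that is an error.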

[0068] The event notification module notifies the one or more entities that the relevant event occurred and/or is occurring in accordance with entity notification preferences. The event notification module can use one or more communication mechanisms, such as, for example, email, text, social media direct message, etc., to attempt to notify an entity of a relevant event. In one aspect, an entity is notified of a relevant event within a period of time less than a selected minimal notification delay.

[0069] In some aspects, raw signals (or portions thereof), normalized signals (or portions thereof), events (or portions thereof), or event notifications (or portions thereof) may include information (private information, user information, etc.) deemed inappropriate for further propagation. A privacy infrastructure can span other modules used for signal ingestion, signal normalization, event detection, and event notification. The privacy infrastructure can use various data privacy operations to prevent other modules from inappropriately propagating information. For example, the privacy infrastructure can remove or otherwise (temporarily or permanently) obscure information in any of: raw signals, normalized signals, events, or event notifications prior to, during, or after any of: signal ingestion, event detection, or event notification.

[0070] Thus, signals, including raw signals and/or normalized signals, may include information deemed inappropriate for propagation. Similarly, detected events and event notifications may include information deemed inappropriate for propagation. The privacy infrastructure can apply data privacy operations to prevent the information from being inappropriately propagated prior to, during, or after event detection. Information deemed inappropriate for propagation can include: confidential information, patient information, personally identifiable information (PII), personal health information (PHI), sensitive personal information (SPI), Payment Card Industry information (PCI), or other private information, etc. (collectively, "user information"). Preventing propagation of user information can include removing (e.g., scrubbing or stripping) the user information from ingested signals. Removal of user information prior to event detection allows events to be detected while significantly increasing the privacy of any entities (e.g., individuals, businesses, etc.) referenced within the user information.

[0071] More specifically, for example, user information can include one or more portions of data that, when considered individually or in the aggregate, relate to the identity of a natural person or can be used to identify a natural person. Alternately, user information can be any information that can be used on its own or with other information to identify, contact, or locate a single person, or to identify an individual in context, including but not limited to: name, first name, last name, home address (or portions thereof), email address, national identification number, passport number, vehicle registration plate, driver's license, face, fingerprints, handwriting, credit card numbers, digital identity, date of birth, birthplace, login name, social media identifier, mobile telephone number, nickname, age, gender, employer, school name, criminal record, job position, etc.
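Pattern matching can find some kinds of user information, particularly structured identifiers. The sketch below flags email addresses, North American style phone numbers, and card-like numbers that pass the Luhn checksum. The patterns are simplified assumptions. Names, physical descriptions, and other free-text user information would need additional techniques (for example, named-entity recognition), which this sketch does not attempt.

```python
import re

# Simplified, hypothetical patterns for a few structured identifiers.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "phone": re.compile(r"(?<!\d)(?:\+1[ .-]?)?\(?\d{3}\)?[ .-]?\d{3}[ .-]?\d{4}(?!\d)"),
    "card": re.compile(r"(?<!\d)(?:\d[ -]?){13,19}(?!\d)"),
}


def luhn_ok(number: str) -> bool:
    """Luhn checksum, used to discard digit runs that cannot be card numbers."""
    digits = [int(d) for d in number if d.isdigit()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:      # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def find_user_information(text: str) -> list:
    """Return (kind, matched_text) pairs for user information found in text."""
    found = []
    for kind, pattern in PATTERNS.items():
        for m in pattern.finditer(text):
            if kind == "card" and not luhn_ok(m.group()):
                continue
            found.append((kind, m.group().strip()))
    return found
```

The Luhn check is a cheap way to cut false positives on long digit runs, such as order numbers or timestamps, that happen to match the card pattern.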

[0072] Data scrubbing or stripping can include the removal or permanent destruction of certain information. In contrast to data anonymization (another type of data privacy operation), which may involve complex methods of obfuscation, data scrubbing eliminates information from the system. That is, scrubbed data is not merely aggregated in a manner that delinks it from other data; rather, scrubbed data is permanently eliminated.
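The difference between scrubbing and other data privacy operations can be made concrete with three small functions. In this hypothetical sketch, `scrub` leaves fields out of the returned record entirely, `obscure` masks all but the last characters, and `pseudonymize` replaces values with keyed SHA-256 (HMAC) tokens. Keyed hashing is pseudonymization, a comparatively weak form of anonymization, because anyone holding the key can test guesses. It is shown only to contrast with scrubbing. Permanent elimination in a real system would also require deleting any stored originals, which this sketch does not model.

```python
import hashlib
import hmac


def scrub(record: dict, fields) -> dict:
    """Scrubbing: listed fields are left out of the returned copy entirely."""
    return {k: v for k, v in record.items() if k not in fields}


def obscure(record: dict, fields, keep: int = 2) -> dict:
    """Obscuring: mask all but the last `keep` characters of each listed field."""
    out = dict(record)
    for f in fields:
        if f in out:
            s = str(out[f])
            cut = max(len(s) - keep, 0)
            out[f] = "*" * cut + s[cut:]
    return out


def pseudonymize(record: dict, fields, key: bytes) -> dict:
    """Pseudonymization: replace values with stable keyed-hash tokens."""
    out = dict(record)
    for f in fields:
        if f in out:
            digest = hmac.new(key, str(out[f]).encode("utf-8"), hashlib.sha256)
            out[f] = digest.hexdigest()[:16]
    return out
```

Pseudonymized records can still be linked to one another (the same name always maps to the same token), which is exactly the residual linkage that scrubbing avoids.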

[0073] A signal source may include user information in a raw signal when the raw signal is generated. During normalization, user information included in a raw signal may be retained in a corresponding normalized signal. During event detection, user information in one or more normalized signals can be retained in a detected event. During event notification, user information included in a detected event can be retained in an event notification.

[0074] As such, and when appropriate, the privacy infrastructure can be configured to actively attempt to identify user information in one or more of: ingested raw signals, normalized signals, detected events, or event notifications. For example, the privacy infrastructure can parse one or more of: (attributes/characteristics of) an ingested raw signal (including signal content), (attributes/characteristics of) a normalized signal (including signal content), (attributes/characteristics of) a detected event, or (attributes/characteristics of) an event notification, searching for user information, such as names, birthdates, physical characteristics, etc. When appropriate, the privacy infrastructure can also actively attempt to identify user information in any intermediate data structures utilized during signal ingestion, signal normalization, event detection, notification, etc. The privacy infrastructure can apply data privacy operations (e.g., scrubbing or otherwise removing) to any user information identified in raw signals, normalized signals, events, and event notifications, as well as to user information identified in intermediate data structures utilized during signal ingestion, signal normalization, event detection, or notification. For example, the privacy infrastructure can identify and scrub PII included in a Computer Aided Dispatch (CAD) signal prior to utilizing the CAD signal for event detection.
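The identify-and-scrub step for free-text signal content (such as the CAD example) can be sketched as follows. This is a minimal illustration only, assuming regular-expression detectors for two kinds of user information (email addresses and North American style phone numbers); the patterns, the `scrub_text` function, and the `[REDACTED]` placeholder are hypothetical. A practical privacy infrastructure would likely combine pattern matching with NLP-based named entity recognition to catch names, birthdates, and physical characteristics.

```python
import re

# Hypothetical detectors for two kinds of user information. A real
# deployment would need many more (names, addresses, ID numbers, ...).
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "phone": re.compile(r"\(?\b\d{3}\)?[-.\s]?\d{3}[-.\s]?\d{4}\b"),
}


def scrub_text(text: str, placeholder: str = "[REDACTED]") -> tuple[str, list[str]]:
    """Replace every detected user-information span with a placeholder.

    Returns the scrubbed text plus the kinds of user information found, so
    later stages can record that scrubbing happened without keeping the
    removed values.
    """
    found = []
    for kind, pattern in PII_PATTERNS.items():
        text, count = pattern.subn(placeholder, text)
        if count:
            found.append(kind)
    return text, found


cad_text = "Caller jane.doe@example.com at 555-867-5309 reports smoke on 4th St"
clean, kinds = scrub_text(cad_text)
print(clean)  # Caller [REDACTED] at [REDACTED] reports smoke on 4th St
print(kinds)  # ['email', 'phone']
```

Because the event-relevant text ("reports smoke on 4th St") survives, the scrubbed signal can still be used for event detection.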

[0075] In one aspect, user information is included in metadata within an ingested raw signal. The privacy infrastructure can apply data privacy operations (e.g., scrubbing) to the metadata prior to event detection and/or storage of the raw signal. For example, the privacy infrastructure can remove associated account information from a social media post. The privacy infrastructure can also scrub (or otherwise remove) geocoded information included in the metadata of an ingested raw signal.
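Metadata scrubbing can be sketched as dropping designated keys from a signal's metadata before the signal is stored or passed to event detection. The key names below (`user_id`, `screen_name`, `coordinates`, and so on) are illustrative assumptions; each real signal source has its own schema.

```python
from copy import deepcopy

# Hypothetical metadata keys treated as user information.
ACCOUNT_KEYS = {"user_id", "screen_name", "account_email"}
GEO_KEYS = {"geo", "coordinates", "place"}


def scrub_metadata(raw_signal: dict, drop_geo: bool = True) -> dict:
    """Return a copy of raw_signal with account (and optionally geocoded)
    metadata removed. The caller is expected to discard the original."""
    cleaned = deepcopy(raw_signal)
    metadata = cleaned.get("metadata", {})
    banned = ACCOUNT_KEYS | (GEO_KEYS if drop_geo else set())
    for key in list(metadata):  # copy keys so we can delete while iterating
        if key in banned:
            del metadata[key]
    return cleaned


post = {
    "content": "Huge fire near the stadium!",
    "metadata": {"user_id": "12345", "screen_name": "@someone",
                 "coordinates": [40.76, -111.89], "lang": "en"},
}
print(scrub_metadata(post)["metadata"])  # {'lang': 'en'}
```

The `drop_geo` flag reflects that geocoded metadata is sometimes needed to derive a Location dimension, so whether to strip it is a policy choice.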

[0076] Certain types of data may be inherently personal but are also used for event detection. For example, in an emergency situation involving a suspected perpetrator, it may be appropriate (and even beneficial) to propagate identifying physical characteristics (or other user information) included in a signal to law enforcement. The physical characteristics (or other user information) may remain with the signal but the signal may be tagged to indicate the presence of the physical characteristics. The privacy infrastructure may apply various data privacy operations on signals tagged as including user information. Data privacy operations can include segregating the tagged signal from other signals, applying encryption (or higher encryption) to the tagged signal, applying access controls (e.g., user-based, entity-based, purpose-based, time-based, warrant-based, etc.) to the tagged signal, or otherwise implementing rules regarding activities that are authorized/appropriate for the tagged signal.
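Tagging a signal rather than scrubbing it can be sketched as a flag plus access rules checked at read time. The sketch below combines a purpose-based rule with a time-based rule; the `TaggedSignal` class, its fields, and the purpose strings are hypothetical, and encryption and segregation are not shown.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class TaggedSignal:
    """A signal that keeps user information but is tagged so that access
    rules can be enforced. Field names are hypothetical."""
    content: str
    contains_user_info: bool
    allowed_purposes: set[str] = field(default_factory=set)
    access_expires: Optional[datetime] = None


def may_access(signal: TaggedSignal, purpose: str, now: datetime) -> bool:
    """Illustrative purpose-based plus time-based access check."""
    if not signal.contains_user_info:
        return True  # untagged signals carry no extra restriction in this sketch
    if purpose not in signal.allowed_purposes:
        return False
    return signal.access_expires is None or now < signal.access_expires


suspect = TaggedSignal(
    content="Suspect: male, red jacket, approx. 6 ft",
    contains_user_info=True,
    allowed_purposes={"law_enforcement"},
    access_expires=datetime(2021, 1, 2, tzinfo=timezone.utc),
)
now = datetime(2021, 1, 1, 12, tzinfo=timezone.utc)
print(may_access(suspect, "law_enforcement", now))  # True
print(may_access(suspect, "marketing", now))        # False
```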

[0077] The privacy infrastructure can apply data privacy operations to remove (or otherwise obscure) user information in accordance with one or more of: time-domain, expiry, or relevance-based rules. In one aspect, some user information may be appropriate to retain for a (e.g., relatively short) period of time. However, after the period of time, retention of the user information is no longer appropriate. The privacy infrastructure can implement a time-based rule to remove (or otherwise obscure) the user information when the time period expires. For example, in a healthcare setting, it may be appropriate to know the identity of a person who tests positive for a communicable disease during the time in which the disease is communicable to others. However, once the person is no longer contagious, the identity loses relevance, and the privacy infrastructure can scrub the identity while maintaining other, non-user-identifiable information about the case.
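The healthcare example can be sketched as a relevance-based expiry rule applied to case records. The `CaseRecord` fields (including the coarse `zip3` location) and `apply_expiry_rule` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class CaseRecord:
    """Hypothetical case record: identity plus non-identifying case data."""
    patient_name: Optional[str]
    disease: str
    zip3: str              # coarse location kept after scrubbing
    contagious_until: date


def apply_expiry_rule(records: list[CaseRecord], today: date) -> None:
    """Scrub the identity from every case whose contagious period has ended,
    keeping the remaining (non-user-identifiable) fields. A real system
    would also have to erase the value from every store and backup."""
    for rec in records:
        if rec.patient_name is not None and today > rec.contagious_until:
            rec.patient_name = None  # identity no longer relevant


cases = [
    CaseRecord("A. Smith", "measles", "841", date(2021, 3, 1)),
    CaseRecord("B. Jones", "measles", "840", date(2021, 3, 20)),
]
apply_expiry_rule(cases, today=date(2021, 3, 10))
print([c.patient_name for c in cases])  # [None, 'B. Jones']
```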

[0078] In another aspect, the privacy infrastructure can retain information over a rolling window of time, for example, 24 hours. For example, an access log for a resource (e.g., a building, a file, a computer, etc.) may be retained for a set period of time. Once the period of time has expired for a specific record, user information may be scrubbed from the access record while maintaining non-identifiable information (e.g., an indication that the resource was accessed).
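A rolling-window rule for an access log can be sketched as a periodic pass that clears the user field of any record older than the window while keeping the record itself. The 24-hour `RETENTION` value comes from the example above; the `AccessRecord` type and `scrub_expired` function are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

RETENTION = timedelta(hours=24)  # example window from the text


@dataclass
class AccessRecord:
    resource: str
    accessed_at: datetime
    user: Optional[str]  # user information; scrubbed after RETENTION


def scrub_expired(log: list[AccessRecord], now: datetime) -> int:
    """Remove user information from records older than the rolling window.
    The record itself stays, preserving the fact that the resource was
    accessed. Returns the number of records scrubbed on this pass."""
    scrubbed = 0
    for rec in log:
        if rec.user is not None and now - rec.accessed_at > RETENTION:
            rec.user = None
            scrubbed += 1
    return scrubbed


now = datetime(2021, 4, 1, 12, 0)
log = [
    AccessRecord("server-room", now - timedelta(hours=30), "badge-0042"),
    AccessRecord("server-room", now - timedelta(hours=2), "badge-0077"),
]
print(scrub_expired(log, now))  # 1
print([r.user for r in log])    # [None, 'badge-0077']
```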

[0079] In a further aspect, the privacy infrastructure can obscure user information at multiple layers to further protect a user's privacy, even during a period of time in which their user information is retained. For example, a data provider may hide, modify, encrypt, hash, or otherwise obscure user information prior to transfer into a system. The event detection algorithms previously described may be employed to identify similarities among signal characteristics even when the data within the signals has been arbitrarily assigned. That is, event detection may still be possible based on a uniform obfuscation of data prior to ingestion within the system. In this way, user data within the event detection system may not be traceable back to a user without also having access to the entirely separate system operated by the entity providing the signal. This may improve user privacy.

[0080] To further improve user privacy, the privacy infrastructure can combine receiving pre-obscured data from a signal provider with a process of applying an additional local obfuscation. For example, a signal source may provide only a hashed version of a user identifier to the signal ingestion system. The user identifier may be hashed according to a method unknown to the signal ingestion system (e.g., a private key, salt, or the like). Upon receipt, the privacy infrastructure may apply an additional obfuscation (e.g., a second private key, salt, or the like) to the received data using a method unknown to the signal provider. As described, the privacy infrastructure may then scrub, cancel, or delete any connection between the received (already obfuscated) data and the secondary local modification according to time-window, expiry, relevance, or other rules.
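The two-layer scheme can be sketched with keyed hashes. Here both the provider's method and the local method are assumed to be HMAC-SHA256 with separate secret keys, and "deleting the connection" is assumed to mean discarding the local key when a window expires; the source names keys or salts generically and does not fix either choice. Equal inputs still produce equal tokens through both layers, which is what lets event detection correlate signals without seeing the raw identifier.

```python
import hashlib
import hmac
import secrets

# Hypothetical key held only by the signal provider.
PROVIDER_KEY = b"provider-secret-unknown-to-ingestion"


def provider_obscure(user_id: str) -> str:
    """Runs at the signal provider: keyed hash of the raw identifier."""
    return hmac.new(PROVIDER_KEY, user_id.encode(), hashlib.sha256).hexdigest()


class LocalObfuscator:
    """Second, local obfuscation layer applied on ingestion. Its key is
    unknown to the provider."""

    def __init__(self) -> None:
        self._key = secrets.token_bytes(32)

    def obscure(self, provider_token: str) -> str:
        return hmac.new(self._key, provider_token.encode(), hashlib.sha256).hexdigest()

    def rotate(self) -> None:
        """Discard the current key (e.g., at the end of a time window) so
        earlier tokens can no longer be reproduced or linked to new ones."""
        self._key = secrets.token_bytes(32)


local = LocalObfuscator()
a = local.obscure(provider_obscure("alice"))
b = local.obscure(provider_obscure("alice"))
c = local.obscure(provider_obscure("bob"))
print(a == b, a == c)  # True False
local.rotate()
print(local.obscure(provider_obscure("alice")) == a)  # False
```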

[0081] Implementations can comprise or utilize a special purpose or general-purpose computer including computer hardware, such as, for example, one or more computer and/or hardware processors (including any of Central Processing Units (CPUs), and/or Graphical Processing Units (GPUs), general-purpose GPUs (GPGPUs), Field Programmable Gate Arrays (FPGAs), application specific integrated circuits (ASICs), Tensor Processing Units (TPUs)) and system memory, as discussed in greater detail below. Implementations also include physical and other computer-readable media for carrying or storing computer-executable instructions and/or data structures. Such computer-readable media can be any available media that can be accessed by a general purpose or special purpose computer system. Computer-readable media that store computer-executable instructions are computer storage media (devices). Computer-readable media that carry computer-executable instructions are transmission media. Thus, by way of example, and not limitation, implementations can comprise at least two distinctly different kinds of computer-readable media: computer storage media (devices) and transmission media.

[0082] Computer storage media (devices) includes RAM, ROM, EEPROM, CD-ROM, Solid State Drives ("SSDs") (e.g., RAM-based or Flash-based), Shingled Magnetic Recording ("SMR") devices, Flash memory, phase-change memory ("PCM"), other types of memory, other optical disk storage, magnetic disk storage or other magnetic storage devices, or any other medium which can be used to store desired program code means in the form of computer-executable instructions or data structures and which can be accessed by a general purpose or special purpose computer.

[0083] In one aspect, one or more processors are configured to execute instructions (e.g., computer-readable instructions, computer-executable instructions, etc.) to perform any of a plurality of described operations. The one or more processors can access information from system memory and/or store information in system memory. The one or more processors can (e.g., automatically) transform information between different formats, such as, for example, between any of: raw signals, normalized signals, signal features, single source probabilities, times, time dimensions, locations, location dimensions, geo cells, geo cell entries, designated market areas (DMAs), contexts, location annotations, context annotations, classification tags, context dimensions, events, truth values, truth scores, truth factors, geo fences, time decay functions, severity values, severity scores, severity ranks, signal groups, signal bursts, entity input, event notification preferences, event notifications, entity location data, entity locations, predicted impacts, impact notifications, etc.

[0084] System memory can be coupled to the one or more processors and can store instructions (e.g., computer-readable instructions, computer-executable instructions, etc.) executed by the one or more processors. The system memory can also be configured to store any of a plurality of other types of data generated and/or transformed by the described components, such as, for example, raw signals, normalized signals, signal features, single source probabilities, times, time dimensions, locations, location dimensions, geo cells, geo cell entries, designated market areas (DMAs), contexts, location annotations, context annotations, classification tags, context dimensions, events, truth values, truth scores, truth factors, geo fences, time decay functions, severity values, severity scores, severity ranks, signal groups, signal bursts, entity input, event notification preferences, event notifications, entity location data, entity locations, predicted impacts, impact notifications, etc.

[0085] A "network" is defined as one or more data links that enable the transport of electronic data between computer systems and/or modules and/or other electronic devices. When information is transferred or provided over a network or another communications connection (either hardwired, wireless, or a combination of hardwired and wireless) to a computer, the computer properly views the connection as a transmission medium. Transmission media can include a network and/or data links which can be used to carry desired program code means in the form of computer-executable instructions or data structures and which can be accessed by a general purpose or special purpose computer. Combinations of the above should also be included within the scope of computer-readable media.

[0086] Further, upon reaching various computer system components, program code means in the form of computer-executable instructions or data structures can be transferred automatically from transmission media to computer storage media (devices) (or vice versa). For example, computer-executable instructions or data structures received over a network or data link can be buffered in RAM within a network interface module (e.g., a "NIC"), and then eventually transferred to computer system RAM and/or to less volatile computer storage media (devices) at a computer system. Thus, it should be understood that computer storage media (devices) can be included in computer system components that also (or even primarily) utilize transmission media.

[0087] Computer-executable instructions comprise, for example, instructions and data which, in response to execution at a processor, cause a general purpose computer, special purpose computer, or special purpose processing device to perform a certain function or group of functions. The computer executable instructions may be, for example, binaries, intermediate format instructions such as assembly language, or even source code. Although the subject matter has been described in language specific to structural features and/or methodological acts, it is to be understood that the subject matter defined in the appended claims is not necessarily limited to the described features or acts described above. Rather, the described features and acts are disclosed as example forms of implementing the claims.

[0088] Those skilled in the art will appreciate that the described aspects may be practiced in network computing environments with many types of computer system configurations, including, personal computers, desktop computers, laptop computers, message processors, hand-held devices, wearable devices, multicore processor systems, multi-processor systems, microprocessor-based or programmable consumer electronics, network PCs, minicomputers, mainframe computers, mobile telephones, PDAs, tablets, routers, switches, and the like. The described aspects may also be practiced in distributed system environments where local and remote computer systems, which are linked (either by hardwired data links, wireless data links, or by a combination of hardwired and wireless data links) through a network, both perform tasks. In a distributed system environment, program modules may be located in both local and remote memory storage devices.

[0089] Further, where appropriate, functions described herein can be performed in one or more of: hardware, software, firmware, digital components, or analog components. For example, one or more Field Programmable Gate Arrays (FPGAs) and/or one or more application specific integrated circuits (ASICs) and/or one or more Tensor Processing Units (TPUs) can be programmed to carry out one or more of the systems and procedures described herein. Hardware, software, firmware, digital components, or analog components can be specifically tailor-designed for a higher speed detection or artificial intelligence that can enable signal processing. In another example, computer code is configured for execution in one or more processors, and may include hardware logic/electrical circuitry controlled by the computer code. These example devices are provided herein for purposes of illustration, and are not intended to be limiting. Embodiments of the present disclosure may be implemented in further types of devices.

[0090] The described aspects can also be implemented in cloud computing environments. In this description and the following claims, "cloud computing" is defined as a model for enabling on-demand network access to a shared pool of configurable computing resources. For example, cloud computing can be employed in the marketplace to offer ubiquitous and convenient on-demand access to the shared pool of configurable computing resources (e.g., compute resources, networking resources, and storage resources). The shared pool of configurable computing resources can be provisioned via virtualization and released with low effort or service provider interaction, and then scaled accordingly.

[0091] A cloud computing model can be composed of various characteristics such as, for example, on-demand self-service, broad network access, resource pooling, rapid elasticity, measured service, and so forth. A cloud computing model can also expose various service models, such as, for example, Software as a Service ("SaaS"), Platform as a Service ("PaaS"), and Infrastructure as a Service ("IaaS"). A cloud computing model can also be deployed using different deployment models such as private cloud, community cloud, public cloud, hybrid cloud, and so forth. In this description and in the following claims, a "cloud computing environment" is an environment in which cloud computing is employed.

[0092] In this description and the following claims, a "geo cell" is defined as a piece or "cell" of a spatial grid in any form. In one aspect, geo cells are arranged in a hierarchical structure. Cells of different geometries can be used.

[0093] A "geohash" is an example of a "geo cell".

[0094] In this description and the following claims, "geohash" is defined as a geocoding system which encodes a geographic location into a short string of letters and digits. Geohash is a hierarchical spatial data structure which subdivides space into buckets of grid shape (e.g., a square). Geohashes offer properties like arbitrary precision and the possibility of gradually removing characters from the end of the code to reduce its size (and gradually lose precision). As a consequence of the gradual precision degradation, nearby places will often (but not always) present similar prefixes. The longer a shared prefix is, the closer the two places are. Geo cells can be used as a unique identifier and to approximate point data (e.g., in databases).

[0095] In one aspect, a "geohash" is used to refer to a string encoding of an area or point on the Earth. The area or point on the Earth may be represented (among other possible coordinate systems) as a latitude/longitude or Easting/Northing, the choice of which depends on the coordinate system chosen to represent an area or point on the Earth. A geo cell can refer to an encoding of this area or point, where the geo cell may be a binary string composed of 0s and 1s corresponding to the area or point, or a string composed of 0s, 1s, and a ternary character (such as X), which is used to refer to a don't-care character (0 or 1). A geo cell can also be represented as a string encoding of the area or point; for example, one possible encoding is base-32, where every 5 binary characters are encoded as an ASCII character.
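The base-32 encoding described above can be sketched with the public geohash bit-interleaving scheme: the longitude and latitude intervals are bisected alternately (starting with longitude), each bisection emits one bit, and every 5 bits become one character of the alphabet `0123456789bcdefghjkmnpqrstuvwxyz`. The function and parameter names are illustrative.

```python
BASE32 = "0123456789bcdefghjkmnpqrstuvwxyz"  # geohash alphabet (no a, i, l, o)


def geohash_encode(lat: float, lon: float, precision: int = 9) -> str:
    """Encode a latitude/longitude pair as a geohash of `precision` characters."""
    lat_lo, lat_hi = -90.0, 90.0
    lon_lo, lon_hi = -180.0, 180.0
    chars = []
    bits = 0
    bit_count = 0
    use_lon = True  # geohash starts with a longitude bit
    while len(chars) < precision:
        if use_lon:
            mid = (lon_lo + lon_hi) / 2
            if lon >= mid:
                bits = (bits << 1) | 1
                lon_lo = mid
            else:
                bits <<= 1
                lon_hi = mid
        else:
            mid = (lat_lo + lat_hi) / 2
            if lat >= mid:
                bits = (bits << 1) | 1
                lat_lo = mid
            else:
                bits <<= 1
                lat_hi = mid
        use_lon = not use_lon
        bit_count += 1
        if bit_count == 5:  # every 5 bits -> one base-32 character
            chars.append(BASE32[bits])
            bits = 0
            bit_count = 0
    return "".join(chars)


print(geohash_encode(57.64911, 10.40744, precision=5))  # u4pru
```

Because each additional character only refines the intervals already chosen, truncating a longer geohash yields the enclosing, less precise cell.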

[0096] Depending on latitude, the size of an area defined at a specified geo cell precision can vary. When geohash is used for spatial indexing, the areas defined at various geo cell precisions are approximately:

TABLE 1: Example Areas at Various Geohash Precisions

| Geohash Length/Precision | Width × Height |
|---|---|
| 1 | 5,009.4 km × 4,992.6 km |
| 2 | 1,252.3 km × 624.1 km |
| 3 | 156.5 km × 156 km |
| 4 | 39.1 km × 19.5 km |
| 5 | 4.9 km × 4.9 km |
| 6 | 1.2 km × 609.4 m |
| 7 | 152.9 m × 152.4 m |
| 8 | 38.2 m × 19 m |
| 9 | 4.8 m × 4.8 m |
| 10 | 1.2 m × 59.5 cm |
| 11 | 14.9 cm × 14.9 cm |
| 12 | 3.7 cm × 1.9 cm |

Other geo cell geometries, such as, hexagonal tiling, triangular tiling, etc. are also possible. For example, the H3 geospatial indexing system is a multi-precision hexagonal tiling of a sphere (such as the Earth) indexed with hierarchical linear indexes.

[0097] In another aspect, geo cells are a hierarchical decomposition of a sphere (such as the Earth) into representations of regions or points based on a Hilbert curve (e.g., the S2 hierarchy or other hierarchies). Regions/points of the sphere can be projected onto a cube, and each face of the cube includes a quad-tree into which the sphere point is projected. After that, transformations can be applied and the space discretized. The geo cells are then enumerated on a Hilbert curve (a space-filling curve that converts multiple dimensions into one dimension and preserves approximate locality).
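The locality-preserving enumeration step can be illustrated on a single square grid with the classic Hilbert-curve index computation. This sketch assumes an n-by-n grid where n is a power of two; it is not the S2 library and omits the cube projection and per-face transformations. Consecutive indices always land on edge-adjacent cells, which is the locality property the text relies on.

```python
def _rotate(n: int, x: int, y: int, rx: int, ry: int) -> tuple[int, int]:
    """Rotate/flip a quadrant so the sub-curve has the canonical orientation."""
    if ry == 0:
        if rx == 1:
            x, y = n - 1 - x, n - 1 - y
        x, y = y, x
    return x, y


def hilbert_index(n: int, x: int, y: int) -> int:
    """Map cell (x, y) of an n-by-n grid (n a power of two) to its distance
    along the Hilbert curve."""
    d = 0
    s = n // 2
    while s > 0:
        rx = 1 if x & s else 0
        ry = 1 if y & s else 0
        d += s * s * ((3 * rx) ^ ry)  # which quadrant, in curve order
        x, y = _rotate(n, x, y, rx, ry)
        s //= 2
    return d


n = 4
order = sorted((hilbert_index(n, x, y), (x, y)) for x in range(n) for y in range(n))
print([cell for _, cell in order][:5])  # [(0, 0), (1, 0), (1, 1), (0, 1), (0, 2)]
```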

[0098] Due to the hierarchical nature of geo cells, any signal, event, entity, etc., associated with a geo cell of a specified precision is by default associated with any less precise geo cells that contain the geo cell. For example, if a signal is associated with a geo cell of precision 9, the signal is by default also associated with corresponding geo cells of precisions 1, 2, 3, 4, 5, 6, 7, and 8. Similar mechanisms are applicable to other tiling and geo cell arrangements. For example, S2 has a cell level hierarchy ranging from level zero (85,011,012 km²) to level 30 (between 0.48 cm² and 0.96 cm²).
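For geohash-based geo cells, the default association with less precise cells reduces to prefix truncation. A minimal sketch, assuming a simple in-memory index from cell to signal IDs; `ancestor_cells`, `index_signal`, and the example geohash are hypothetical.

```python
def ancestor_cells(geohash: str) -> list[str]:
    """Return every less precise geohash cell that contains `geohash`,
    from precision 1 up to (but excluding) the cell's own precision."""
    return [geohash[:k] for k in range(1, len(geohash))]


def index_signal(index: dict[str, set[str]], signal_id: str, geohash: str) -> None:
    """Associate a signal with its own cell and, by default, all ancestors."""
    for cell in ancestor_cells(geohash) + [geohash]:
        index.setdefault(cell, set()).add(signal_id)


index: dict[str, set[str]] = {}
index_signal(index, "sig-1", "9x0qz8h4m")  # precision 9
print(len(ancestor_cells("9x0qz8h4m")))    # 8
print(sorted(index["9x0q"]))               # ['sig-1']
```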

[0099] Signal Ingestion and Normalization

[0100] Signal ingestion modules ingest a variety of raw structured and/or raw unstructured signals on an ongoing basis and in essentially real-time. Raw signals can include social posts, live broadcasts, traffic camera feeds, other camera feeds (e.g., from other public cameras or from CCTV cameras), listening device feeds, 911 calls, weather data, planned events, IoT device data, crowd sourced traffic and road information, satellite data, air quality sensor data, smart city sensor data, public radio communication (e.g., among first responders and/or dispatchers, between air traffic controllers and pilots), subscription data services, etc. The content of raw signals can include images, video, audio, text, etc.

[0101] In general, signal normalization can prepare (or pre-process) raw signals into normalized signals to increase efficiency and effectiveness of subsequent computing activities, such as event detection, event notification, etc., that utilize the normalized signals. For example, signal ingestion modules can normalize raw signals into normalized signals having Time, Location, and Context (TLC) dimensions. An event detection infrastructure can use the Time, Location, and Context dimensions to more efficiently and effectively detect events.

[0102] Per signal type and signal content, different normalization modules can be used to extract, derive, infer, etc. Time, Location, and Context dimensions from/for a raw signal. For example, one set of normalization modules can be configured to extract/derive/infer Time, Location and Context dimensions from/for social signals. Another set of normalization modules can be configured to extract/derive/infer Time, Location and Context dimensions from/for Web signals. A further set of normalization modules can be configured to extract/derive/infer Time, Location and Context dimensions from/for streaming signals.

[0103] Normalization modules for extracting/deriving/inferring Time, Location, and Context dimensions can include text processing modules, NLP modules, image processing modules, video processing modules, etc. The modules can be used to extract/derive/infer data representative of Time, Location, and Context dimensions for a signal. Time, Location, and Context dimensions for a signal can be extracted/derived/inferred from metadata and/or content of the signal.

[0104] For example, NLP modules can analyze metadata and content of a sound clip to identify a time, location, and keywords (e.g., fire, shooter, etc.). An acoustic listener can also interpret the meaning of sounds in a sound clip (e.g., a gunshot, vehicle collision, etc.) and convert to relevant context. Live acoustic listeners can determine the distance and direction of a sound. Similarly, image processing modules can analyze metadata and pixels in an image to identify a time, location and keywords (e.g., fire, shooter, etc.). Image processing modules can also interpret the meaning of parts of an image (e.g., a person holding a gun, flames, a store logo, etc.) and convert to relevant context. Other modules can perform similar operations for other types of content including text and video.

[0105] Per signal type, each set of normalization modules can differ but may include at least some similar modules or may share some common modules. For example, similar (or the same) image analysis modules can be used to extract named entities from social signal images and public camera feeds. Likewise, similar (or the same) NLP modules can be used to extract named entities from social signal text and web text.

[0106] In some aspects, an ingested signal includes sufficient expressly defined time, location, and context information upon ingestion. The expressly defined time, location, and context information is used to determine Time, Location, and Context dimensions for the ingested signal. In other aspects, an ingested signal lacks expressly defined location information or expressly defined location information is insufficient (e.g., lacks precision) upon ingestion. In these other aspects, Location dimension or additional Location dimension can be inferred from features of an ingested signal and/or through references to other data sources. In further aspects, an ingested signal lacks expressly defined context information or expressly defined context information is insufficient (e.g., lacks precision) upon ingestion. In these further aspects, Context dimension or additional Context dimension can be inferred from features of an ingested signal and/or through reference to other data sources.

[0107] In further aspects, time information may not be included, or included time information may not be given with high enough precision, and the Time dimension is inferred. For example, a user may post to a social network an image that was taken some indeterminate time earlier.

[0108] Normalization modules can use named entity recognition and reference to a geo cell database to infer Location dimension. Named entities can be recognized in text, images, video, audio, or sensor data. The recognized named entities can be compared to named entities in geo cell entries. Matches indicate possible signal origination in a geographic area defined by a geo cell.
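Matching recognized named entities against geo cell entries can be sketched as a reverse lookup that counts matches per cell. The geo cell database contents, cell identifiers, and entity names below are made up for illustration, and entity recognition itself is assumed to have already run.

```python
# Hypothetical geo cell database: geo cell -> named entities located there.
GEO_CELL_ENTRIES = {
    "cell-17": {"city hall", "central library"},
    "cell-18": {"riverside arena"},
    "cell-42": {"convention center"},
}


def infer_location(recognized_entities: list[str]) -> dict[str, int]:
    """Count, per geo cell, how many recognized named entities match that
    cell's entries. Cells with more matches are likelier signal origins."""
    matches: dict[str, int] = {}
    for entity in (e.lower() for e in recognized_entities):
        for cell, names in GEO_CELL_ENTRIES.items():
            if entity in names:
                matches[cell] = matches.get(cell, 0) + 1
    return matches


print(infer_location(["City Hall", "Central Library", "Main St"]))  # {'cell-17': 2}
```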

[0109] As such, a normalized signal can include a Time dimension, a Location dimension, a Context dimension (e.g., single source probabilities and probability details), a signal type, a signal source, and content.
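The fields listed above can be sketched as a simple record type. The class name, field names, and types are assumptions for illustration (for example, the Location dimension is represented here as a geohash string and the Context dimension as a map of event type to single source probability); they are not a schema defined in the source.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class NormalizedSignal:
    """Illustrative shape of a normalized signal; names and types are assumptions."""
    time: datetime                  # Time (T) dimension
    location: str                   # Location (L) dimension, e.g., a geo cell
    context: dict[str, float]       # Context (C): single source probabilities
    signal_type: str                # e.g., "social", "web", "streaming"
    source: str                     # e.g., "twitter", "traffic camera"
    content: str
    probability_details: dict[str, str] = field(default_factory=dict)


sig = NormalizedSignal(
    time=datetime(2020, 11, 23, 14, 5),
    location="9x0qz8h",
    context={"fire": 0.82, "accident": 0.03},
    signal_type="social",
    source="twitter",
    content="Smoke pouring out of the warehouse on 5th",
)
print(max(sig.context, key=sig.context.get))  # fire
```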

[0110] A single source probability can be calculated by single source classifiers (e.g., machine learning models, artificial intelligence, neural networks, statistical models, etc.) that consider hundreds, thousands, or even more signal features (dimensions) of a signal. Single source classifiers can be based on binary models and/or multi-class models.

[0111] FIG. 1A depicts part of computer architecture 100 that facilitates ingesting and normalizing signals. As depicted, computer architecture 100 includes signal ingestion modules 101, social signals 171, Web signals 172, and streaming signals 173. Signal ingestion modules 101, social signals 171, Web signals 172, and streaming signals 173 can be connected to (or be part of) a network, such as, for example, a system bus, a Local Area Network ("LAN"), a Wide Area Network ("WAN"), and even the Internet. Accordingly, signal ingestion modules 101, social signals 171, Web signals 172, and streaming signals 173 as well as any other connected computer systems and their components can create and exchange message related data (e.g., Internet Protocol ("IP") datagrams and other higher layer protocols that utilize IP datagrams, such as, Transmission Control Protocol ("TCP"), Hypertext Transfer Protocol ("HTTP"), Simple Mail Transfer Protocol ("SMTP"), Simple Object Access Protocol (SOAP), etc. or using other non-datagram protocols) over the network.

[0112] Signal ingestion module(s) 101 can ingest raw signals 121, including social signals 171, web signals 172, and streaming signals 173, on an ongoing basis and in essentially real-time. Raw signals 121 can include social posts, traffic camera feeds, other camera feeds, listening device feeds, 911 calls, weather data, planned events, IoT device data, crowd sourced traffic and road information, satellite data, air quality sensor data, smart city sensor data, public radio communication, subscription data service data, etc. As such, potentially thousands, millions, or even billions of unique raw signals, each with unique characteristics, can be ingested and used to determine event characteristics, such as event truthfulness, event severity, event category or categories, etc.

[0113] Signal ingestion module(s) 101 include social content ingestion modules 174, web content ingestion modules 176, stream content ingestion modules 176, and signal formatter 180. Signal formatter 180 further includes social signal processing module 181, web signal processing module 182, and stream signal processing modules 183.

[0114] For each type of signal, a corresponding ingestion module and signal processing module can interoperate to normalize the signal into Time, Location, and Context (TLC) dimensions. For example, social content ingestion modules 174 and social signal processing module 181 can interoperate to normalize social signals 171 into TLC dimensions. Similarly, web content ingestion modules 176 and web signal processing module 182 can interoperate to normalize web signals 172 into TLC dimensions. Likewise, stream content ingestion modules 176 and stream signal processing modules 183 can interoperate to normalize streaming signals 173 into TLC dimensions.

[0115] In one aspect, signal content exceeding specified size requirements (e.g., audio or video) is cached upon ingestion. Signal ingestion modules 101 include a URL or other identifier to the cached content within the context for the signal.

[0116] In one aspect, signal formatter 180 includes modules for determining a single source probability as a ratio of signals turning into events based on the following signal properties: (1) event class (e.g., fire, accident, weather, etc.), (2) media type (e.g., text, image, audio, etc.), (3) source (e.g., twitter, traffic camera, first responder radio traffic, etc.), and (4) geo type (e.g., geo cell, region, or non-geo). Probabilities can be stored in a lookup table for different combinations of the signal properties. Features of a signal can be derived and used to query the lookup table. For example, the lookup table can be queried with terms ("accident", "image", "twitter", "region"). The corresponding ratio (probability) can be returned from the table.
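The lookup-table approach can be sketched in two steps: build the table of signal-to-event ratios from labeled history, then query it with derived signal properties. The `build_probability_table` function, the `Props` alias, and the history below are hypothetical; the 0.5 result follows directly from the made-up history (2 of 4 matching signals became events).

```python
from collections import Counter

Props = tuple[str, str, str, str]  # (event class, media type, source, geo type)


def build_probability_table(history: list[tuple[Props, bool]]) -> dict[Props, float]:
    """For each property combination, compute the ratio of signals that
    turned into events."""
    totals: Counter = Counter()
    hits: Counter = Counter()
    for props, became_event in history:
        totals[props] += 1
        hits[props] += int(became_event)
    return {props: hits[props] / totals[props] for props in totals}


key = ("accident", "image", "twitter", "region")
history = [
    (key, True), (key, False), (key, False), (key, True),
    (("fire", "text", "twitter", "geo cell"), False),
]
table = build_probability_table(history)
print(table.get(key, 0.0))  # 0.5
```

Using `table.get(..., 0.0)` gives unseen property combinations a default probability; a real system might prefer a smoothed prior instead.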

[0117] In another aspect, signal formatter 180 includes a plurality of single source classifiers (e.g., artificial intelligence, machine learning modules, neural networks, etc.). Each single source classifier can consider hundreds, thousands, or even more signal features (dimensions) of a signal. Signal features of a signal can be derived and submitted to a single source classifier. The single source classifier can return a probability that a signal indicates a type of event. Single source classifiers can be binary classifiers or multi-class classifiers.

[0118] Raw classifier output can be adjusted to more accurately represent a probability that a signal is a "true positive". For example, 1,000 signals whose raw classifier output is 0.9 may include 80% as true positives. Thus, probability can be adjusted to 0.8 to reflect true probability of the signal being a true positive. "Calibration" can be done in such a way that for any "calibrated score" this score reflects the true probability of a true positive outcome.
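One common way to perform such calibration is histogram binning: group raw scores into bins and replace each score with the observed true-positive rate of its bin. The source does not name a calibration method, so binning here is an assumed stand-in (Platt scaling or isotonic regression are alternatives), and the function names are hypothetical. The example reproduces the text's numbers: 1,000 signals scored 0.9, of which 800 are true positives, calibrate to 0.8.

```python
def fit_binned_calibration(scores: list[float], labels: list[bool],
                           n_bins: int = 10) -> list[float]:
    """Histogram-binning calibration: for each score bin, record the observed
    fraction of true positives. Empty bins fall back to the bin midpoint."""
    counts = [0] * n_bins
    positives = [0] * n_bins
    for s, y in zip(scores, labels):
        b = min(int(s * n_bins), n_bins - 1)  # clamp score 1.0 into last bin
        counts[b] += 1
        positives[b] += int(y)
    return [positives[b] / counts[b] if counts[b] else (b + 0.5) / n_bins
            for b in range(n_bins)]


def calibrate(score: float, table: list[float]) -> float:
    """Map a raw classifier score to its calibrated probability."""
    n_bins = len(table)
    return table[min(int(score * n_bins), n_bins - 1)]


scores = [0.9] * 1000
labels = [True] * 800 + [False] * 200
table = fit_binned_calibration(scores, labels)
print(calibrate(0.9, table))  # 0.8
```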

[0119] Signal ingestion modules 101 can insert one or more single source probabilities and corresponding probability details into a normalized signal to represent a Context (C) dimension. Probability details can indicate a probabilistic model and features used to calculate the probability. In one aspect, a probabilistic model and signal features are contained in a hash field.

[0120] Signal ingestion modules 101 can access "transdimensionality" transformations structured and defined in a "TLC" dimensional model. Signal ingestion modules 101 can apply the "transdimensionality" transformations to generic source data in raw signals to re-encode the source data into normalized data having lower dimensionality. Dimensionality reduction can include reducing dimensionality (e.g., hundreds, thousands, or even more signal features (dimensions)) of a raw signal into a normalized signal including a T vector, an L vector, and a C vector. At lower dimensionality, the complexity of measuring "distances" between dimensional vectors across different normalized signals is reduced.

[0121] Thus, in general, any received raw signals can be normalized into normalized signals including a Time (T) dimension, a Location (L) dimension, a Context (C) dimension, signal source, signal type, and content. Signal ingestion modules 101 can send normalized signals 122 to event detection infrastructure 103.

[0122] For example, signal ingestion modules 101 can send normalized signal 122A, including time 123A, location 124A, context 126A, content 127A, type 128A, and source 129A to event detection infrastructure 103. Similarly, signal ingestion modules 101 can send normalized signal 122B, including time 123B, location 124B, context 126B, content 127B, type 128B, and source 129B to event detection infrastructure 103.

[0123] Event Detection

[0124] Turning back to FIG. 1B, computer architecture 100 also includes components that facilitate detecting events. As depicted, computer architecture 100 includes geo cell database 111 and event notification 116. Geo cell database 111 and event notification 116 can be connected to (or be part of) a network with signal ingestion modules 101 and event detection infrastructure 103. As such, geo cell database 111 and event notification 116 can create and exchange message-related data over the network.

[0125] As described, in general, on an ongoing basis, concurrently with signal ingestion (and also essentially in real-time), event detection infrastructure 103 detects different categories of (planned and unplanned) events (e.g., fire, police response, mass shooting, traffic accident, natural disaster, storm, active shooter, concerts, protests, etc.) in different locations (e.g., anywhere across a geographic area, such as, the United States, a State, a defined area, an impacted area, an area defined by a geo cell, an address, etc.), at different times from Time, Location, and Context dimensions included in normalized signals. Since normalized signals include Time, Location, and Context dimensions, event detection infrastructure 103 can handle normalized signals more uniformly, increasing event detection efficiency and effectiveness.

[0126] Event detection infrastructure 103 can also determine an event truthfulness, event severity, and an associated geo cell. In one aspect, a Context dimension in a normalized signal increases the efficiency and effectiveness of determining truthfulness, severity, and an associated geo cell.

[0127] Generally, an event truthfulness indicates how likely a detected event is actually an event (vs. a hoax, fake, misinterpreted, etc.). Truthfulness can range from less likely to be true to more likely to be true. In one aspect, truthfulness is represented as a numerical value, such as, for example, from 1 (less truthful) to 10 (more truthful) or as a percentage, such as, for example, from 0% (less truthful) to 100% (more truthful). Other truthfulness representations are also possible. For example, truthfulness can be a dimension or represented by one or more vectors.

[0128] Generally, an event severity indicates how severe an event is (e.g., what degree of badness, what degree of damage, etc. is associated with the event). Severity can range from less severe (e.g., a single-vehicle accident without injuries) to more severe (e.g., a multi-vehicle accident with multiple injuries and a possible fatality). As another example, a shooting event can also range from less severe (e.g., one victim without life-threatening injuries) to more severe (e.g., multiple injuries and multiple fatalities). In one aspect, severity is represented as a numerical value, such as, for example, from 1 (less severe) to 5 (more severe). Other severity representations are also possible. For example, severity can be a dimension or represented by one or more vectors.

[0129] In general, event detection infrastructure 103 can include a geo determination module including modules for processing different kinds of content including location, time, context, text, images, audio, and video into search terms. The geo determination module can query a geo cell database with search terms formulated from normalized signal content. The geo cell database can return any geo cells having matching supplemental information. For example, if a search term includes a street name, a subset of one or more geo cells including the street name in supplemental information can be returned to the event detection infrastructure.

[0130] Event detection infrastructure 103 can use the subset of geo cells to determine a geo cell associated with an event location. Events associated with a geo cell can be stored back into an entry for the geo cell in the geo cell database. Thus, over time an historical progression of events within a geo cell can be accumulated.

[0131] As such, event detection infrastructure 103 can assign an event ID, an event time, an event location, an event category, an event description, an event truthfulness, and an event severity to each detected event. Detected events can be sent to relevant entities, including to mobile devices, to computer systems, to APIs, to data storage, etc.

[0132] Event detection infrastructure 103 detects events from information contained in normalized signals 122. Event detection infrastructure 103 can detect an event from a single normalized signal 122 or from multiple normalized signals 122. In one aspect, event detection infrastructure 103 detects an event based on information contained in one or more normalized signals 122. In another aspect, event detection infrastructure 103 detects a possible event based on information contained in one or more normalized signals 122. Event detection infrastructure 103 then validates the potential event as an event based on information contained in one or more other normalized signals 122.
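The two-stage aspect (detect a possible event, then validate it with other normalized signals) can be sketched in Python as follows. The validation rule used here, requiring at least two distinct sources reporting the same category in the same geo cell within a 15-minute window, is an assumption for illustration only; the `Signal` fields and both function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    source: str     # e.g., "social_media", "cad_dispatch"
    time: float     # epoch seconds
    geo_cell: str   # e.g., a geohash
    category: str   # category inferred from signal content


def detect_possible_event(signal: Signal) -> dict:
    """Stage 1: treat a single categorized signal as a possible event."""
    return {"category": signal.category, "geo_cell": signal.geo_cell,
            "time": signal.time, "sources": {signal.source}}


def validate(possible: dict, others, window_s=900.0, min_sources=2) -> bool:
    """Stage 2: confirm with corroborating signals from other sources."""
    sources = set(possible["sources"])  # copy so the possible event is not mutated
    for s in others:
        if (s.category == possible["category"]
                and s.geo_cell == possible["geo_cell"]
                and abs(s.time - possible["time"]) <= window_s):
            sources.add(s.source)
    return len(sources) >= min_sources
```

Counting distinct sources, rather than signals, keeps a burst of posts from a single source from validating an event on its own.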

[0133] As depicted, event detection infrastructure 103 includes geo determination module 104, categorization module 106, truthfulness determination module 107, and severity determination module 108.

[0134] Generally, geo determination module 104 can include NLP modules, image analysis modules, etc. for identifying location information from a normalized signal. Geo determination module 104 can formulate (e.g., location) search terms 141 by using NLP modules to process audio, using image analysis modules to process images, etc. Search terms can include street addresses, building names, landmark names, location names, school names, image fingerprints, etc. Event detection infrastructure 103 can use a URL or identifier to access cached content when appropriate.

[0135] Generally, categorization module 106 can categorize a detected event into one of a plurality of different categories (e.g., fire, police response, mass shooting, traffic accident, natural disaster, storm, active shooter, concerts, protests, etc.) based on the content of normalized signals used to detect and/or otherwise related to an event.

[0136] Generally, truthfulness determination module 107 can determine the truthfulness of a detected event based on one or more of: source, type, age, and content of normalized signals used to detect and/or otherwise related to the event. Some signal types may be inherently more reliable than other signal types. For example, video from a live traffic camera feed may be more reliable than text in a social media post. Some signal sources may be inherently more reliable than others. For example, a social media account of a government agency may be more reliable than a social media account of an individual. The reliability of a signal can decay over time.
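One way to combine signal type, source, and age into a truthfulness value is sketched below in Python. The reliability tables, the one-hour half-life for exponential decay, and the noisy-OR combination across signals are all assumptions chosen for illustration, not values from the description; the result is expressed on the 0% to 100% scale described above.

```python
# Hypothetical reliability weights; unknown types/sources default to 0.3.
TYPE_RELIABILITY = {"traffic_camera_video": 0.9, "social_media_text": 0.5}
SOURCE_RELIABILITY = {"government_agency": 0.9, "individual": 0.6}


def signal_reliability(sig_type, source_kind, age_s, half_life_s=3600.0):
    """Type and source reliability, halved for every half-life of signal age."""
    base = TYPE_RELIABILITY.get(sig_type, 0.3) * SOURCE_RELIABILITY.get(source_kind, 0.3)
    return base * 0.5 ** (age_s / half_life_s)


def event_truthfulness(signals):
    """Noisy-OR: the event is judged false only if every signal is false.

    `signals` is an iterable of (signal type, source kind, age in seconds).
    Returns a percentage in the range 0 to 100.
    """
    p_all_false = 1.0
    for sig_type, source_kind, age_s in signals:
        p_all_false *= 1.0 - signal_reliability(sig_type, source_kind, age_s)
    return round(100 * (1.0 - p_all_false), 1)
```

Under these assumptions, a fresh traffic camera video from a government agency scores 81%, and each additional corroborating signal can only raise the combined value.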

[0137] Generally, severity determination module 108 can determine the severity of a detected event based on one or more of: location, content (e.g., dispatch codes, keywords, etc.), and volume of normalized signals used to detect and/or otherwise related to an event. Events at some locations may be inherently more severe than events at other locations. For example, an event at a hospital is potentially more severe than the same event at an abandoned warehouse. Event category can also be considered when determining severity. For example, an event categorized as a "Shooting" may be inherently more severe than an event categorized as "Police Presence" since a shooting implies that someone has been injured.
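The factors above can be combined into the 1 to 5 severity scale in many ways. The following Python sketch uses an additive score with hypothetical category base values, a hypothetical set of sensitive location types, an assumed volume threshold of 50 signals, and a single example keyword; none of these values come from the description.

```python
# Hypothetical base severities per category and sensitive location types.
CATEGORY_BASE = {"police_presence": 1, "traffic_accident": 2, "shooting": 4}
SENSITIVE_LOCATIONS = {"hospital", "school"}
HIGH_VOLUME = 50  # assumed signal count indicating a larger incident


def event_severity(category, location_type, signal_volume, keywords=()):
    """Additive severity score clamped to the 1 (less) to 5 (more) range."""
    score = CATEGORY_BASE.get(category, 1)
    if location_type in SENSITIVE_LOCATIONS:
        score += 1  # e.g., a hospital rather than an abandoned warehouse
    if signal_volume >= HIGH_VOLUME:
        score += 1
    if "fatality" in keywords:
        score += 1  # content such as keywords or dispatch codes
    return max(1, min(5, score))
```

A shooting at a hospital scores 5, while police presence at a warehouse scores 1.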

[0138] Geo cell database 111 includes a plurality of geo cell entries. Each geo cell entry includes a geo cell defining an area and corresponding supplemental information about things included in the defined area. The corresponding supplemental information can include latitude/longitude, street names in the area defined by and/or beyond the geo cell, businesses in the area defined by the geo cell, other Areas of Interest (AOIs) (e.g., event venues, such as, arenas, stadiums, theaters, concert halls, etc.) in the area defined by the geo cell, image fingerprints derived from images captured in the area defined by the geo cell, and prior events that have occurred in the area defined by the geo cell. For example, geo cell entry 151 includes geo cell 152, lat/lon 153, streets 154, businesses 155, AOIs 156, and prior events 157. Each event in prior events 157 can include a location (e.g., a street address), a time (event occurrence time), an event category, an event truthfulness, an event severity, and an event description. Similarly, geo cell entry 161 includes geo cell 162, lat/lon 163, streets 164, businesses 165, AOIs 166, and prior events 167. Each event in prior events 167 can include a location (e.g., a street address), a time (event occurrence time), an event category, an event truthfulness, an event severity, and an event description.

[0139] Other geo cell entries can include the same or different (more or less) supplemental information, for example, depending on infrastructure density in an area. For example, a geo cell entry for an urban area can contain more diverse supplemental information than a geo cell entry for an agricultural area (e.g., in an empty field).

[0140] Geo cell database 111 can store geo cell entries in a hierarchical arrangement based on geo cell precision. As such, geo cell information of more precise geo cells is included in the geo cell information for any less precise geo cells that include the more precise geo cell.
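When geo cells are geohashes (as in the level 7 or level 9 precision mentioned for geo cell 162), the hierarchy falls out of the encoding: a less precise geohash is a prefix of every more precise geohash inside it. The Python sketch below encodes a location with the standard geohash algorithm and records an event under every enclosing prefix. Using a plain dictionary as the database and the `index_event` name are assumptions for illustration.

```python
from collections import defaultdict

BASE32 = "0123456789bcdefghjkmnpqrstuvwxyz"  # geohash alphabet


def geohash_encode(lat, lon, precision):
    """Standard geohash: interleave longitude/latitude bisection bits, 5 per char."""
    lat_range, lon_range = [-90.0, 90.0], [-180.0, 180.0]
    chars, bits, bit_count, even = [], 0, 0, True  # even bits refine longitude
    while len(chars) < precision:
        rng, val = (lon_range, lon) if even else (lat_range, lat)
        mid = (rng[0] + rng[1]) / 2
        if val >= mid:
            bits = (bits << 1) | 1
            rng[0] = mid
        else:
            bits <<= 1
            rng[1] = mid
        even = not even
        bit_count += 1
        if bit_count == 5:
            chars.append(BASE32[bits])
            bits, bit_count = 0, 0
    return "".join(chars)


def index_event(db, event, lat, lon, precision=7):
    """Store the event in its cell and in every less precise enclosing cell."""
    cell = geohash_encode(lat, lon, precision)
    for level in range(1, precision + 1):
        db[cell[:level]].append(event)
    return cell


db = defaultdict(list)
cell = index_event(db, "evt-1", 42.6, -5.6)
```

Here `cell` starts with `ezs42`, and the event is visible both in the level 7 cell and in coarser cells such as `ezs4` and `e`.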

[0141] Geo determination module 104 can query geo cell database 111 with search terms 141. Geo cell database 111 can identify any geo cells having supplemental information that matches search terms 141. For example, if search terms 141 include a street name and a business name, geo cell database 111 can identify geo cells having the street name and business name in the area defined by the geo cell. Geo cell database 111 can return any identified geo cells to geo determination module 104 in geo cell subset 142.
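The query can be sketched in Python as a match of search terms against each entry's supplemental information. The `GeoCellEntry` fields mirror the entry contents described above (image fingerprints omitted), but the class itself, the case-insensitive comparison, and the requirement that every search term match are assumptions for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class GeoCellEntry:
    geo_cell: str
    lat_lon: tuple
    streets: set = field(default_factory=set)
    businesses: set = field(default_factory=set)
    aois: set = field(default_factory=set)
    prior_events: list = field(default_factory=list)

    def terms(self) -> set:
        """All searchable supplemental information, lower-cased."""
        return {t.lower() for t in self.streets | self.businesses | self.aois}


def query_geo_cells(entries, search_terms):
    """Return the subset of entries whose supplemental info contains every term."""
    wanted = {t.lower() for t in search_terms}
    return [e for e in entries if wanted <= e.terms()]


a = GeoCellEntry("9x0qpb2", (40.76, -111.89), streets={"Main St"}, businesses={"Joe's Diner"})
b = GeoCellEntry("9x0qpb3", (40.77, -111.88), streets={"Main St"})
```

Querying with `["Main St", "Joe's Diner"]` returns only entry `a`, while `["Main St"]` alone returns both, so adding terms narrows the geo cell subset.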

[0142] Geo determination module 104 can use geo cell subset 142 to determine the location of event 135 and/or a geo cell associated with event 135. As depicted, event 135 includes event ID 132, time 133, location 134, description 136, category 137, truthfulness 138, and severity 139.

[0143] Event detection infrastructure 103 can also determine that event 135 occurred in an area defined by geo cell 162 (e.g., a geohash having precision of level 7 or level 9). For example, event detection infrastructure 103 can determine that location 134 is in the area defined by geo cell 162. As such, event detection infrastructure 103 can store event 135 in prior events 167 (i.e., historical events that have occurred in the area defined by geo cell 162).

[0144] Event detection infrastructure 103 can also send event 135 to event notification module 116. Event notification module 116 can notify one or more entities about event 135.

[0145] Privacy Infrastructure

[0146] Referring now to FIG. 1C, privacy infrastructure 102 spans signal ingestion modules 101, event detection infrastructure 103, and event notification 116. Privacy infrastructure 102 can implement any described user information data privacy operations (e.g., removal, scrubbing, stripping, obfuscation, access rule application, etc.) within and/or through interoperation with one or more of signal ingestion modules 101, event detection infrastructure 103, and event notification 116. As such, privacy infrastructure 102 may be configured to apply data privacy operations, including data scrubbing, before, during, or after signal ingestion, event detection, and/or event notification.
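A privacy layer that spans several stages can be modeled as hooks registered around each stage. The Python sketch below is one possible arrangement, assuming records are dictionaries and stages are plain functions; the `PrivacyInfrastructure` class, the stage names, and the "before"/"after" phases are hypothetical. Operations applied "during" a stage would instead be called from inside the stage function itself.

```python
from collections import defaultdict
from typing import Callable, Dict

PrivacyOp = Callable[[Dict], Dict]


class PrivacyInfrastructure:
    """Runs registered data privacy operations before and after pipeline stages."""

    def __init__(self):
        self._ops = defaultdict(list)  # (stage, phase) -> ordered list of operations

    def register(self, stage: str, phase: str, op: PrivacyOp) -> None:
        self._ops[(stage, phase)].append(op)

    def run_stage(self, stage: str, record: Dict, stage_fn: Callable[[Dict], Dict]) -> Dict:
        for op in self._ops[(stage, "before")]:
            record = op(record)
        record = stage_fn(record)
        for op in self._ops[(stage, "after")]:
            record = op(record)
        return record


privacy = PrivacyInfrastructure()
# Hypothetical removal operation: strip a user identifier before ingestion.
privacy.register("ingestion", "before",
                 lambda r: {k: v for k, v in r.items() if k != "user_id"})
out = privacy.run_stage("ingestion", {"user_id": "u123", "text": "smoke"},
                        lambda r: {**r, "normalized": True})
```

Keeping the operations outside the stage functions lets the same removal or obfuscation step be attached to ingestion, detection, or notification without changing those modules.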

[0147] In some aspects, one or more of raw signals 121 (or portions thereof) can include user information. Privacy infrastructure 102 can implement/apply data privacy operations on the user information through interaction and/or interoperation with signal ingestion modules 101 (e.g., prior to, during, or after signal ingestion and/or signal normalization). For example, while normalizing one of raw signals 121, privacy infrastructure 102 may apply one or more data privacy operations to alter an aspect of the raw signal 121 (e.g., user information) relating to the Time dimension. One way this may be done is to round a timestamp to the nearest second, minute, hour, etc. By reducing the precision associated with a timestamp, privacy can be increased (e.g., by making it impossible to directly link the altered aspect to the original aspect). However, the reduced timestamp precision may cause little, if any, reduction in the ability to identify an event based on the raw signal 121. Depending on signal type, the level of precision may be more or less important to event detection and may also be more or less helpful in eliminating user information. Thus, heuristics may be applied to different signal types to determine the relevancy of precision and/or the relevancy of reducing the user information footprint.
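Timestamp rounding with a per-signal-type precision can be sketched in Python as follows. The precision table is a hypothetical stand-in for the signal-type heuristics described above, and the sketch assumes timezone-aware UTC timestamps.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)

# Hypothetical heuristics: how much time precision each signal type keeps.
TIME_PRECISION = {"social_post": timedelta(minutes=1), "cad_dispatch": timedelta(seconds=1)}


def round_timestamp(ts: datetime, unit: timedelta) -> datetime:
    """Round an aware timestamp to the nearest multiple of `unit` (ties round up)."""
    units, remainder = divmod(ts - EPOCH, unit)
    if remainder * 2 >= unit:
        units += 1
    return EPOCH + units * unit


def anonymize_time(signal_type: str, ts: datetime) -> datetime:
    """Apply the precision heuristic for the signal type (default: one hour)."""
    return round_timestamp(ts, TIME_PRECISION.get(signal_type, timedelta(hours=1)))
```

For example, 14:37:42 UTC becomes 14:38:00 for a social post and 15:00:00 for an unlisted signal type. The discarded seconds cannot be recovered from the rounded value.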

[0148] Privacy infrastructure 102 can also apply one or more data privacy operations to modify location information (e.g., user information) associated with a signal in a manner that irreversibly increases privacy with little, if any, reduction in event detection capabilities. For example, privacy infrastructure 102 can reduce or eliminate GPS precision. Depending on the signal type, location information may not benefit event detection. In such cases, signal specific rules may be implemented to reduce or eliminate the unnecessary information prior to event detection processing.
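Reducing or eliminating GPS precision with signal-specific rules might look like the Python sketch below. The rule table is hypothetical: rounding latitude to 3 decimal places keeps roughly 110 m of precision and 2 decimal places roughly 1.1 km, while `None` marks a signal type whose location is assumed not to help event detection and is dropped entirely.

```python
# Hypothetical signal-specific rules: decimal places kept, or None to drop location.
LOCATION_DECIMALS = {"vehicle_telematics": 3, "social_post": 2, "weather_sensor": None}


def reduce_location(signal: dict, default_decimals: int = 2) -> dict:
    """Return a copy of the signal with coarsened or removed coordinates."""
    out = dict(signal)
    decimals = LOCATION_DECIMALS.get(signal.get("type"), default_decimals)
    if decimals is None:
        out.pop("lat", None)
        out.pop("lon", None)
    else:
        for key in ("lat", "lon"):
            if key in out:
                out[key] = round(out[key], decimals)
    return out
```

Rounding discards the low-order digits, so the original coordinates cannot be reconstructed from the reduced signal.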

[0149] Privacy infrastructure 102 can also apply one or more data privacy operations to modify different types of contextual information (e.g., user information) associated with a signal. For example, vehicle telematics information may include metadata identifying the make/model of a vehicle. However, if such telematics information is used to detect events, such as car accidents, the exact make/model of the automobile may not be necessary and can be eliminated from the signal during normalization. In another example, content from a social media post may be scrubbed to eliminate extraneous information. This may be accomplished through natural language processing configured to eliminate content such as names, locations, or other sensitive information.
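Both examples can be sketched in Python. Field removal is straightforward; for text, the sketch substitutes simple regular expressions and a caller-supplied list of names as a deliberately crude stand-in for the natural language processing described above (a real system would more likely use named-entity recognition). The field names, patterns, and placeholders are all assumptions for illustration.

```python
import re

# Hypothetical per-signal-type fields that are unnecessary for event detection.
DROP_FIELDS = {"vehicle_telematics": {"make", "model", "vin"}}
MENTION = re.compile(r"@\w+")
PHONE = re.compile(r"\b\d{3}[-.\s]?\d{3}[-.\s]?\d{4}\b")


def scrub_context(signal: dict, known_names=()) -> dict:
    """Drop unneeded fields and mask handles, phone numbers, and known names."""
    drop = DROP_FIELDS.get(signal.get("type"), set())
    out = {k: v for k, v in signal.items() if k not in drop}
    text = out.get("text")
    if isinstance(text, str):
        text = MENTION.sub("[USER]", text)
        text = PHONE.sub("[PHONE]", text)
        for name in known_names:
            text = re.sub(re.escape(name), "[NAME]", text, flags=re.IGNORECASE)
        out["text"] = text
    return out
```

For instance, the post "Crash on Main St, call Jane Doe at 801-555-0123 @janed", scrubbed with `known_names=("Jane Doe",)`, becomes "Crash on Main St, call [NAME] at [PHONE] [USER]". The street name is kept here because it may still be useful for event detection.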