Learning From Estimated High-dynamic Range All Weather Lighting Parameters

Zhang; Jinsong ; et al.

U.S. patent application number 16/564398 was published by the patent office on 2021-03-11 for learning from estimated high-dynamic range all weather lighting parameters. The applicant listed for this patent is ADOBE INC. Invention is credited to Jonathan Eisenmann, Sunil Hadap, Yannick Hold-Geoffroy, Jean-Francois Lalonde, Kalyan K. Sunkavalli, Jinsong Zhang.

| Field | Value |
|---|---|
| Publication Number | 20210073955 |
| Application Number | 16/564398 |
| Family ID | 1000004334594 |
| Publication Date | 2021-03-11 |


| United States Patent Application | 20210073955 |

| Kind Code | A1 |

| Zhang; Jinsong ; et al. | March 11, 2021 |

LEARNING FROM ESTIMATED HIGH-DYNAMIC RANGE ALL WEATHER LIGHTING PARAMETERS

Abstract

Methods and systems are provided for determining high-dynamic range lighting parameters for input low-dynamic range images. A neural network system can be trained to estimate high-dynamic range lighting parameters for input low-dynamic range images. The high-dynamic range lighting parameters can be based on sky color, sky turbidity, sun color, sun shape, and sun position. Such input low-dynamic range images can be low-dynamic range panorama images or low-dynamic range standard images. Such a neural network system can apply the estimated high-dynamic range lighting parameters to objects added to the low-dynamic range images.

| Field | Value |
|---|---|
| Inventors: | Zhang; Jinsong; (Quebec, CA); Sunkavalli; Kalyan K.; (San Jose, CA); Hold-Geoffroy; Yannick; (San Jose, CA); Hadap; Sunil; (Dublin, CA); Eisenmann; Jonathan; (San Francisco, CA); Lalonde; Jean-Francois; (Quebec City, CA) |
| Applicant: | ADOBE INC. |
| Family ID: | 1000004334594 |
| Appl. No.: | 16/564398 |
| Filed: | September 9, 2019 |
| Current U.S. Class: | 1/1 |
| Current CPC Class: | G06T 5/009 20130101; G06T 2207/20081 20130101; G06T 2207/20084 20130101; G06T 2207/20208 20130101 |
| International Class: | G06T 5/00 20060101 G06T005/00 |

Claims

1. A computer-implemented method for determining high-dynamic range ("HDR") lighting parameters for a low-dynamic range ("LDR") image, the method comprising: receiving, by a neural network system, a LDR panorama image; estimating HDR lighting parameters for the LDR panorama image, wherein the HDR lighting parameters are extrapolated from a latent representation generated from the LDR panorama image by a panoramic lighting parameter neural network of the neural network system, wherein the HDR lighting parameters are based on at least one of sky color, sky turbidity, sun color, sun shape, and sun position; and outputting, based on the estimated HDR lighting parameters from the panoramic lighting parameter neural network, substantially accurate HDR lighting parameters for the LDR panorama image.

2. The computer-implemented method of claim 1, further comprising: applying the substantially accurate HDR lighting parameters to an object added to the LDR panorama image.

3. The computer-implemented method of claim 1, further comprising: providing the substantially accurate HDR lighting parameters to a user device, wherein the substantially accurate HDR lighting parameters are provided as one of the substantially accurate HDR lighting parameters or as the substantially accurate HDR lighting parameters applied to an object added to the LDR panorama image.

4. The computer-implemented method of claim 1, further comprising: training the panoramic lighting parameter neural network, wherein the training is based on loss including at least one of panorama loss, sun elevation loss, panorama render loss, domain adaptation loss, sun parameter render loss, and sky parameter render loss.

5. The computer-implemented method of claim 1, further comprising: using the substantially accurate HDR lighting parameters as ground-truth lighting parameters to train a standard image lighting parameter neural network of the neural network system.

6. The computer-implemented method of claim 5, wherein the standard image lighting parameter neural network is trained using loss based on at least one of the sky color, the sky turbidity, the sun color, the sun shape, and the sun position, sun parameter render loss, and sky parameter render loss.

7. The computer-implemented method of claim 5, further comprising: receiving, by the neural network system, a LDR standard image; determining, using the neural network system, estimated HDR lighting parameters for the LDR standard image, wherein the estimated HDR lighting parameters are based on the ground-truth lighting parameters; and outputting the estimated HDR lighting parameters.

8. The computer-implemented method of claim 7, further comprising: applying the estimated HDR lighting parameters to an object added to the LDR standard image.

9. The computer-implemented method of claim 7, further comprising: providing the estimated HDR lighting parameters to a user device, wherein the estimated HDR lighting parameters are provided as one of the estimated HDR lighting parameters or as the estimated HDR lighting parameters applied to an object added to the LDR standard image.

10. One or more non-transitory computer-readable media having a plurality of executable instructions embodied thereon, which, when executed by one or more processors, cause the one or more processors to perform steps comprising: receiving, by a neural network system, a LDR image; generating a latent representation using the neural network system; estimating, by the neural network system, HDR lighting parameters for the LDR image, wherein the HDR lighting parameters are estimated from the latent representation generated by the neural network system, wherein the HDR lighting parameters are based on at least one of sky color, sky turbidity, sun color, sun shape, and sun position; and outputting, based on the estimated HDR lighting parameters, substantially accurate HDR lighting parameters for the LDR image.

11. The media of claim 10, wherein the LDR image is one of a LDR panorama image or a LDR standard image.

12. The media of claim 10, the steps further comprising: applying the substantially accurate HDR lighting parameters to an object added to the LDR image.

13. The media of claim 10, the steps further comprising: providing the substantially accurate HDR lighting parameters to a user device, wherein the substantially accurate HDR lighting parameters are provided as one of the substantially accurate HDR lighting parameters or as the substantially accurate HDR lighting parameters applied to an object added to the LDR image.

14. The media of claim 10, the steps further comprising: training a panoramic lighting parameter neural network of the neural network system, wherein the training is based on loss including panorama loss, sun elevation loss, panorama render loss, domain adaptation loss, sun parameter render loss, and sky parameter render loss.

15. The media of claim 14, the steps further comprising: using the trained panoramic lighting parameter neural network to estimate the HDR lighting parameters; applying the HDR lighting parameters as ground-truth lighting parameters to train a standard image lighting parameter neural network of the neural network system.

16. The media of claim 15, wherein the standard image lighting parameter neural network is trained based on the loss.

17. A computing system comprising: means for estimating HDR lighting parameters for an LDR image, wherein the HDR lighting parameters are estimated based on a latent representation generated from the LDR image, wherein the HDR lighting parameters are based on sky color, sky turbidity, sun color, sun shape, and sun position; and means for outputting substantially accurate HDR lighting parameters for the LDR image based on the estimated HDR lighting parameters.

18. The computing system of claim 17, wherein the LDR image is a LDR panorama image and the HDR lighting parameters are estimated using a panoramic lighting parameter neural network.

19. The computing system of claim 17, wherein the LDR image is a LDR standard image and the HDR lighting parameters are estimated using a standard image lighting parameter neural network.

20. The computing system of claim 17, further comprising: means for applying the substantially accurate HDR lighting parameters to an object added to the LDR image.

Description

BACKGROUND

[0001] When using graphics applications, users often desire to manipulate images by compositing objects into the images or performing scene reconstruction or modeling. Creating realistic results depends on determining accurate lighting related to the original image. In particular, when compositing objects into an image, understanding the lighting conditions in the image is important to ensure that the objects added to the image are illuminated appropriately so the composite looks realistic. Determining lighting, however, is complicated by scene geometry and material properties. Further, outdoor scenes often contain additional complicating factors that affect lighting such as clouds in the sky, exposure, and tone mapping. Conventional methods of determining lighting in outdoor scenes have had limited success in attempting to solve these problems. In particular, conventional methods do not accurately determine lighting for use in scenes when such complicating factors are present (e.g., generating accurate composite images where the sky has clouds).

SUMMARY

[0002] Embodiments of the present disclosure are directed towards a lighting estimation system trained to estimate high-dynamic range (HDR) lighting from a single low-dynamic range (LDR) image of an outdoor scene. Such a LDR image can be a panoramic image or a standard image. In accordance with embodiments of the present disclosure, such a system can be created using one or more neural networks. In this regard, the neural networks can be trained to assist in estimating HDR lighting parameters by leveraging the overall attributes of HDR lighting. In particular, the HDR parameters can be based on the Lalonde-Matthews (LM) model. Specifically, the HDR parameters can be LM lighting parameters that include sky color, turbidity, sun color, shape of the sun, and the sun position.

[0003] The lighting estimation system can estimate HDR lighting parameters from a single LDR image. In particular, the lighting estimation system can use a panoramic lighting parameter neural network to estimate HDR lighting parameters from a single LDR panoramic image. To run the panoramic lighting parameter neural network, a LDR panorama image can be input into the lighting estimation neural network system. Upon receiving the LDR panorama image, the panoramic lighting parameter neural network can estimate the LM lighting parameters for the LDR panorama image. The panoramic lighting parameter neural network can also be used to generate a dataset for training a standard image lighting parameter neural network to estimate HDR lighting parameters from a single LDR standard image. A standard image can be a limited field-of-view image (e.g., when compared to a panorama). The panoramic lighting parameter neural network can be used to obtain estimated LM lighting parameters for a set of LDR panorama images. These estimated LM lighting parameters can be treated as ground-truth parameters for a set of LDR standard images (e.g., generated from the set of LDR panorama images) used to train the standard image lighting parameter neural network. The trained standard image lighting parameter neural network can be used to determine HDR lighting parameters from a single LDR standard image. To run the standard image lighting parameter neural network, a LDR standard image can be input into the lighting estimation neural network system. Upon receiving the LDR standard image, the standard image lighting parameter neural network can estimate the LM lighting parameters for the LDR standard image.

[0004] This summary is provided to introduce a selection of concepts in a simplified form that are further described below in the Detailed Description. This summary is not intended to identify key features or essential features of the claimed subject matter, nor is it intended to be used as an aid in determining the scope of the claimed subject matter.

BRIEF DESCRIPTION OF THE DRAWINGS

[0005] FIG. 1A depicts an example configuration of an operating environment in which some implementations of the present disclosure can be employed, in accordance with various embodiments.

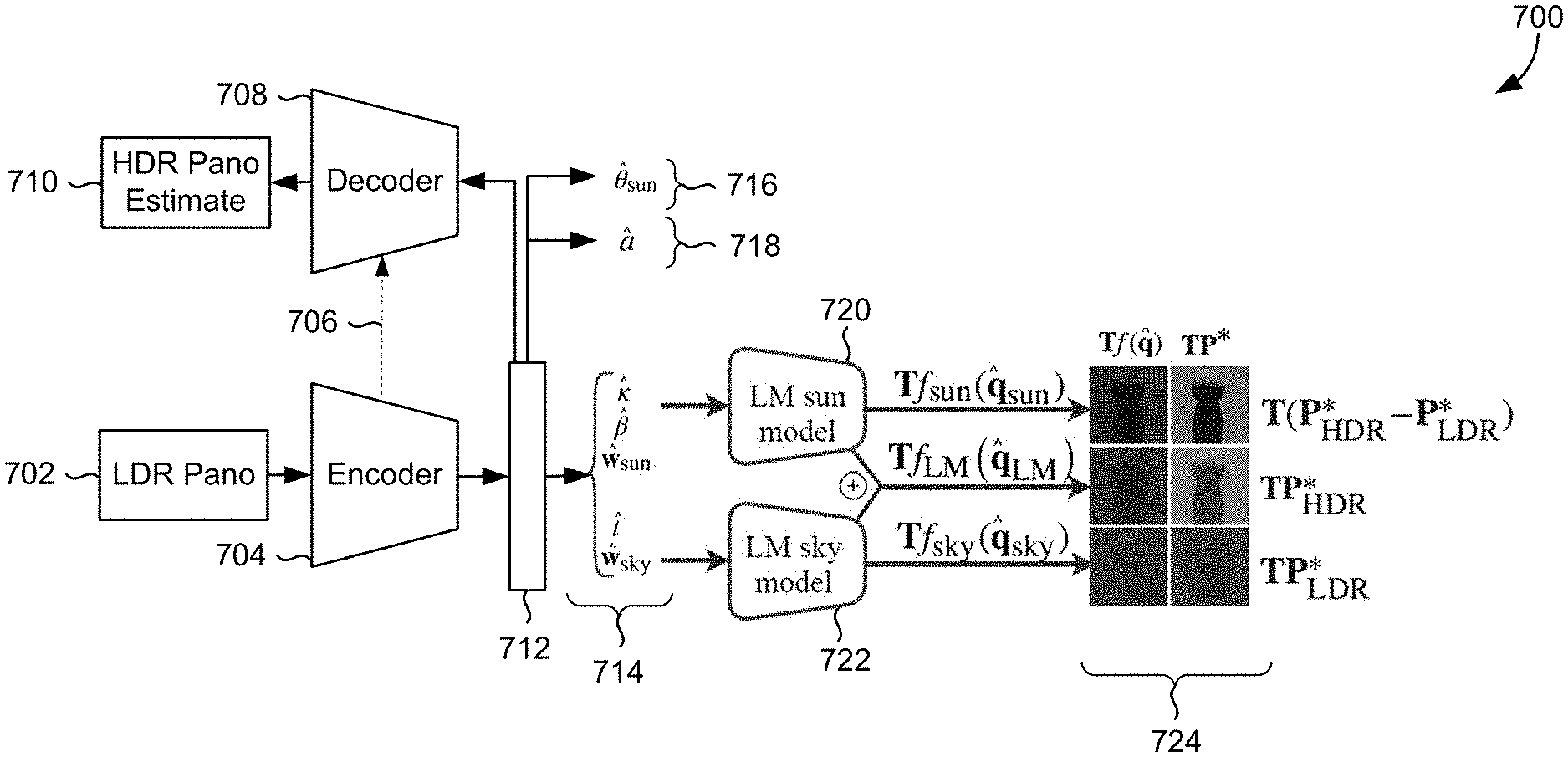

[0006] FIG. 1B depicts an example configuration of an operating environment in which some implementations of the present disclosure can be employed, in accordance with various embodiments.

[0007] FIG. 2 depicts an example configuration of an operating environment in which some implementations of the present disclosure can be employed, in accordance with various embodiments of the present disclosure.

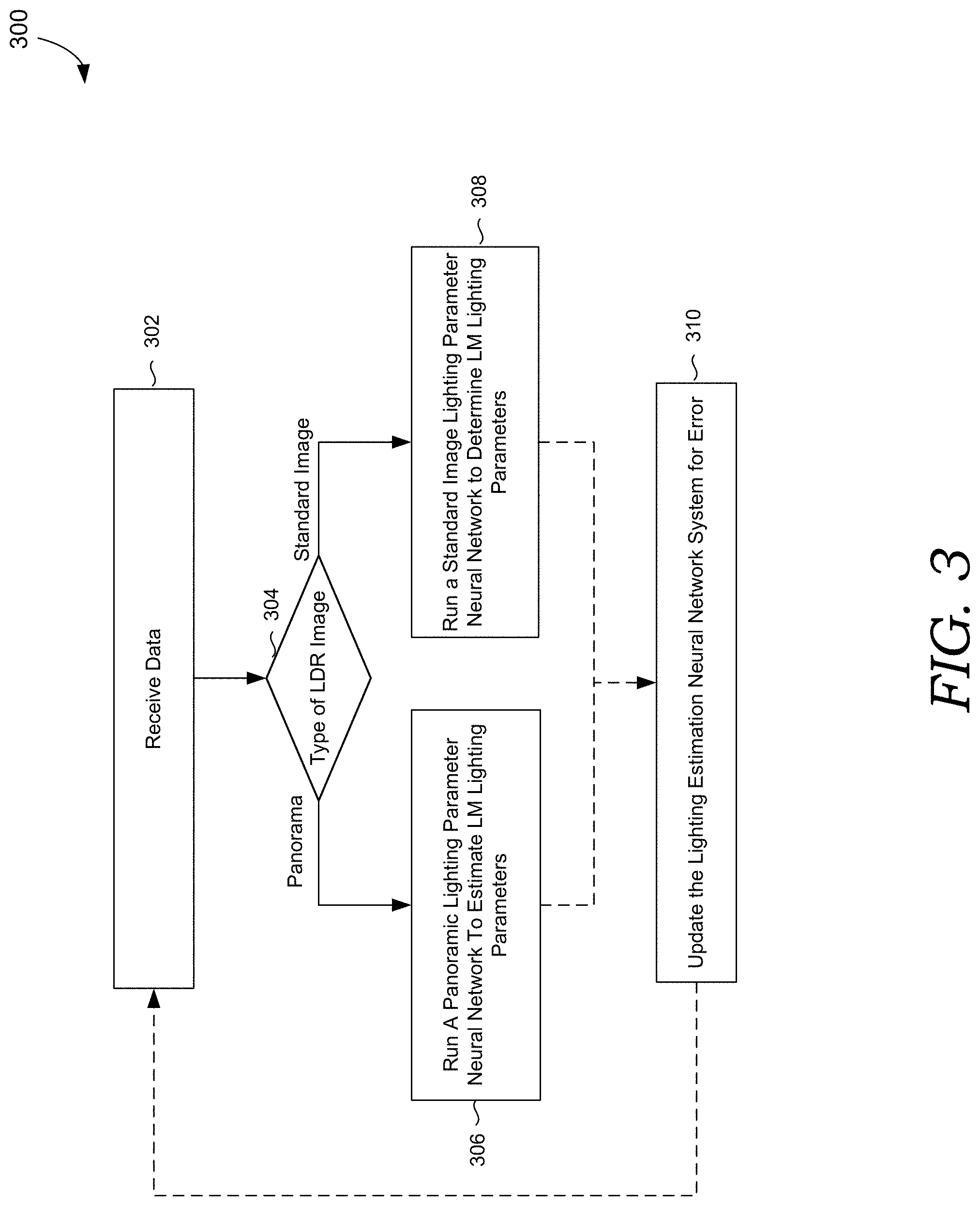

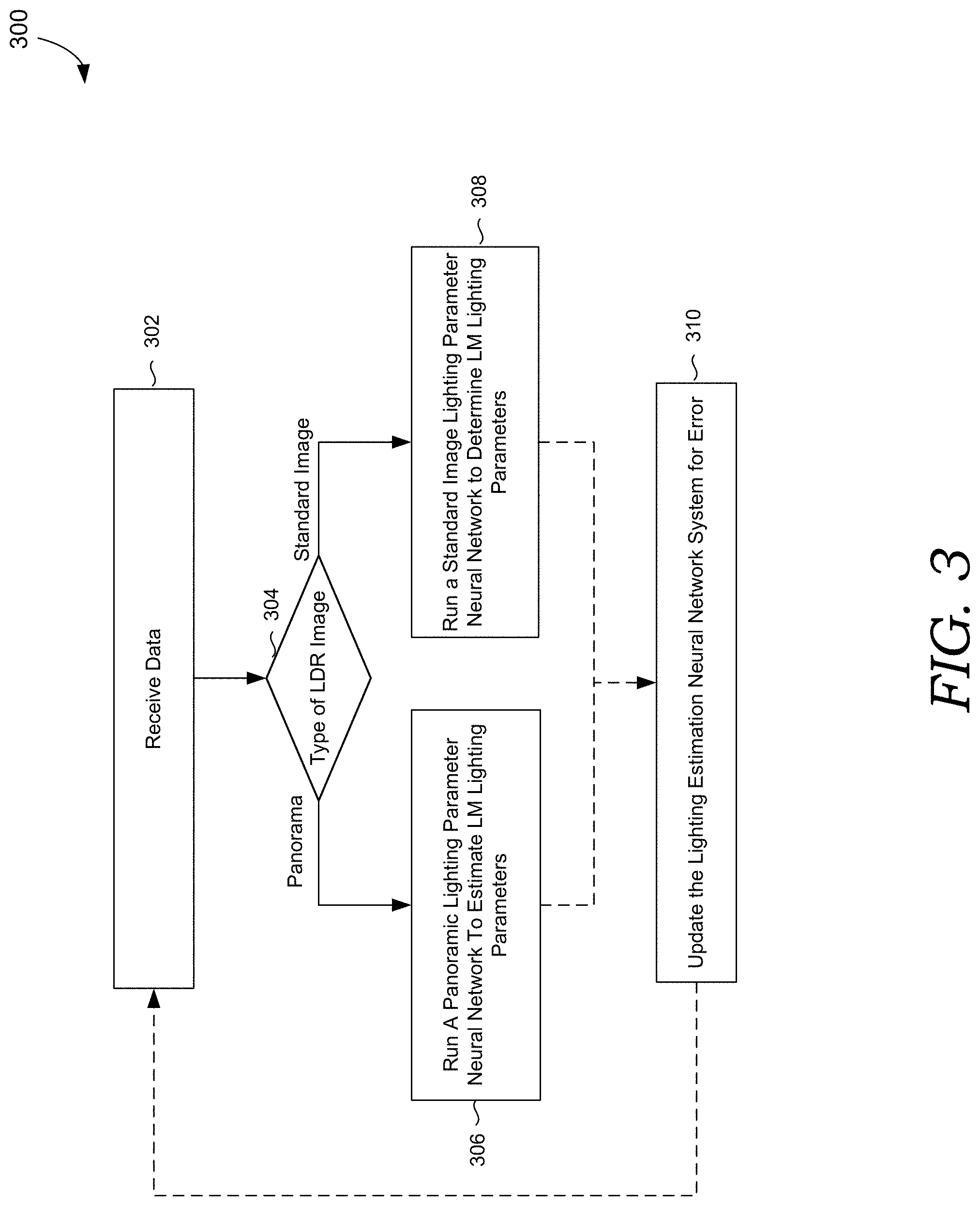

[0008] FIG. 3 depicts a process flow showing an embodiment of training and/or running a lighting estimation system, in accordance with embodiments of the present disclosure.

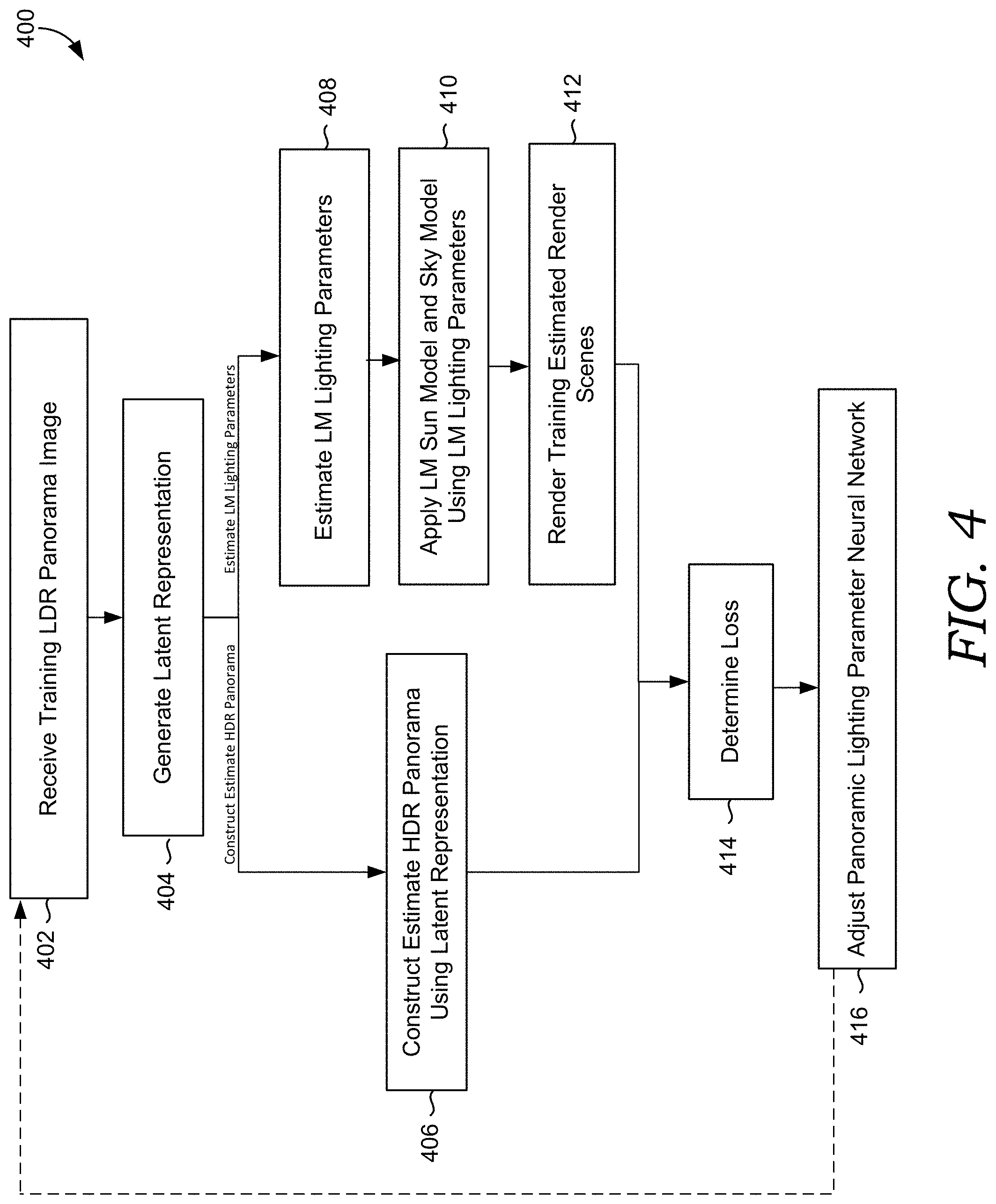

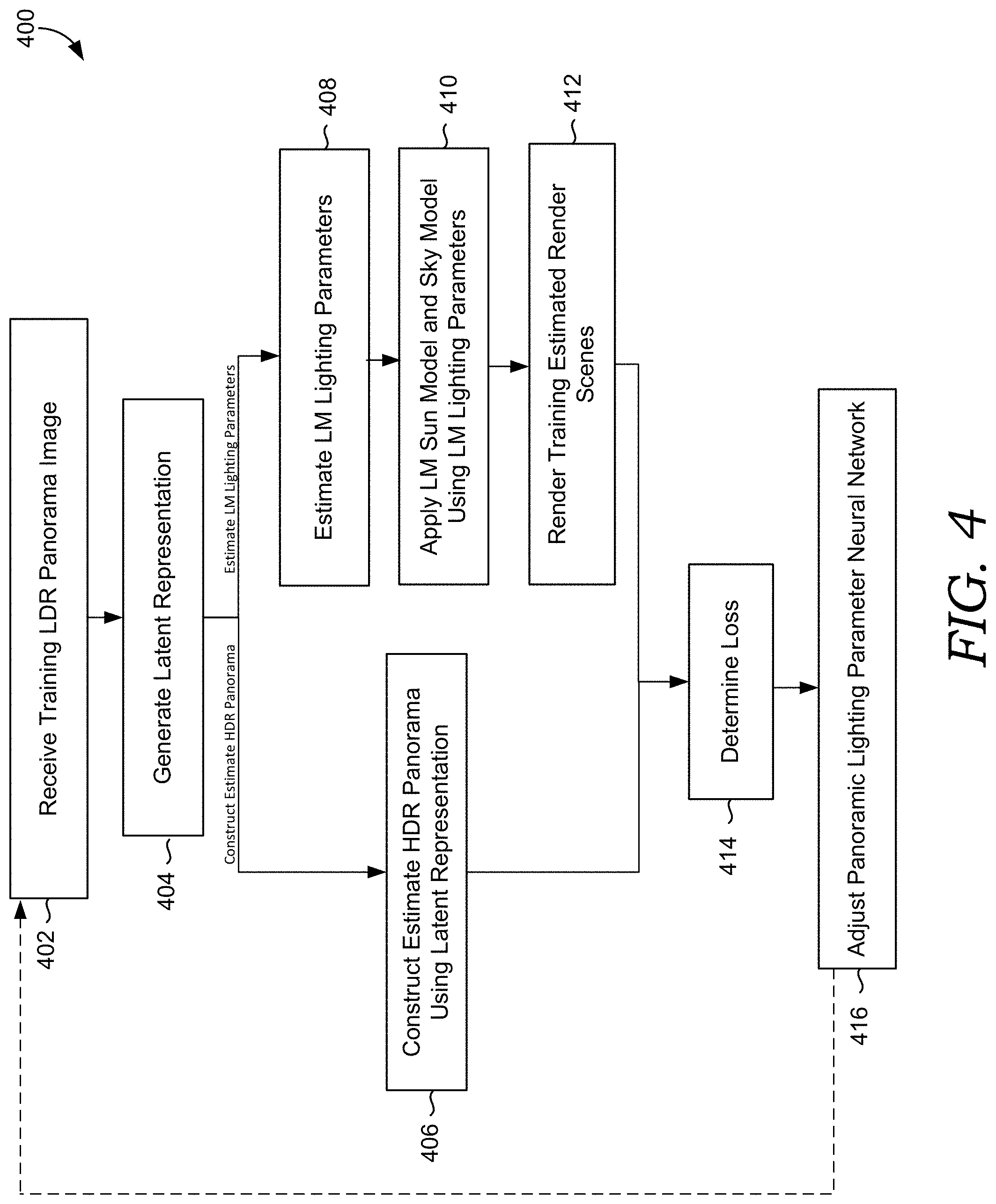

[0009] FIG. 4 depicts a process flow showing an embodiment for training a panorama lighting parameter neural network of a lighting estimation neural network system.

[0010] FIG. 5 depicts a process flow showing an embodiment for training a standard image lighting parameter neural network of a lighting estimation neural network system, from a LDR image, in accordance with embodiments of the present disclosure.

[0011] FIG. 6 depicts a process flow showing an embodiment for using a trained lighting estimation neural network system to estimate HDR lighting parameters from a LDR image, in accordance with embodiments of the present disclosure.

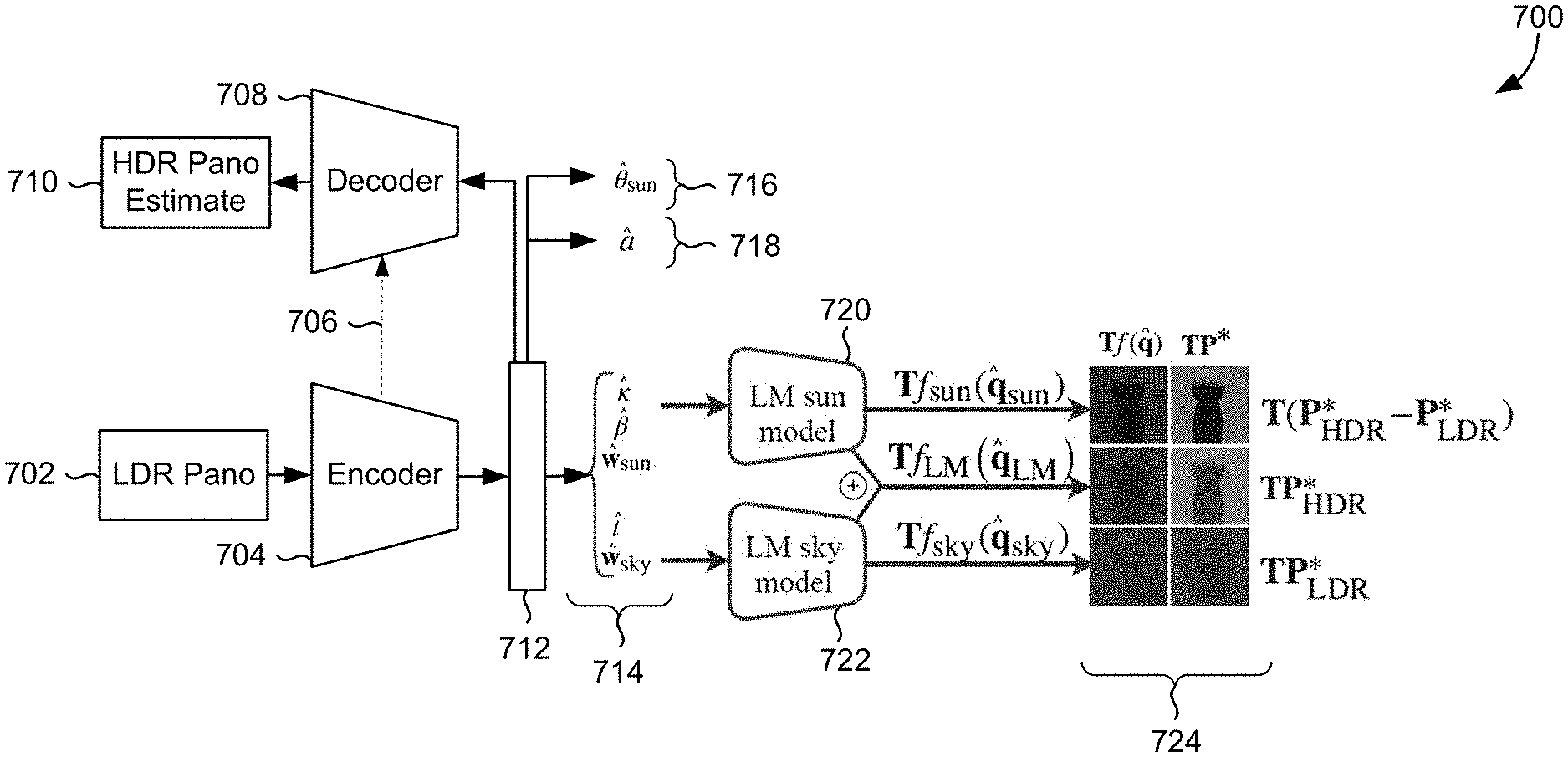

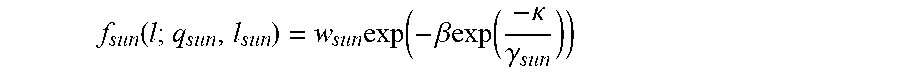

[0012] FIG. 7 depicts an example environment that can be used for a panorama lighting parameter neural network to estimate HDR parameters, in accordance with embodiments of the present disclosure.

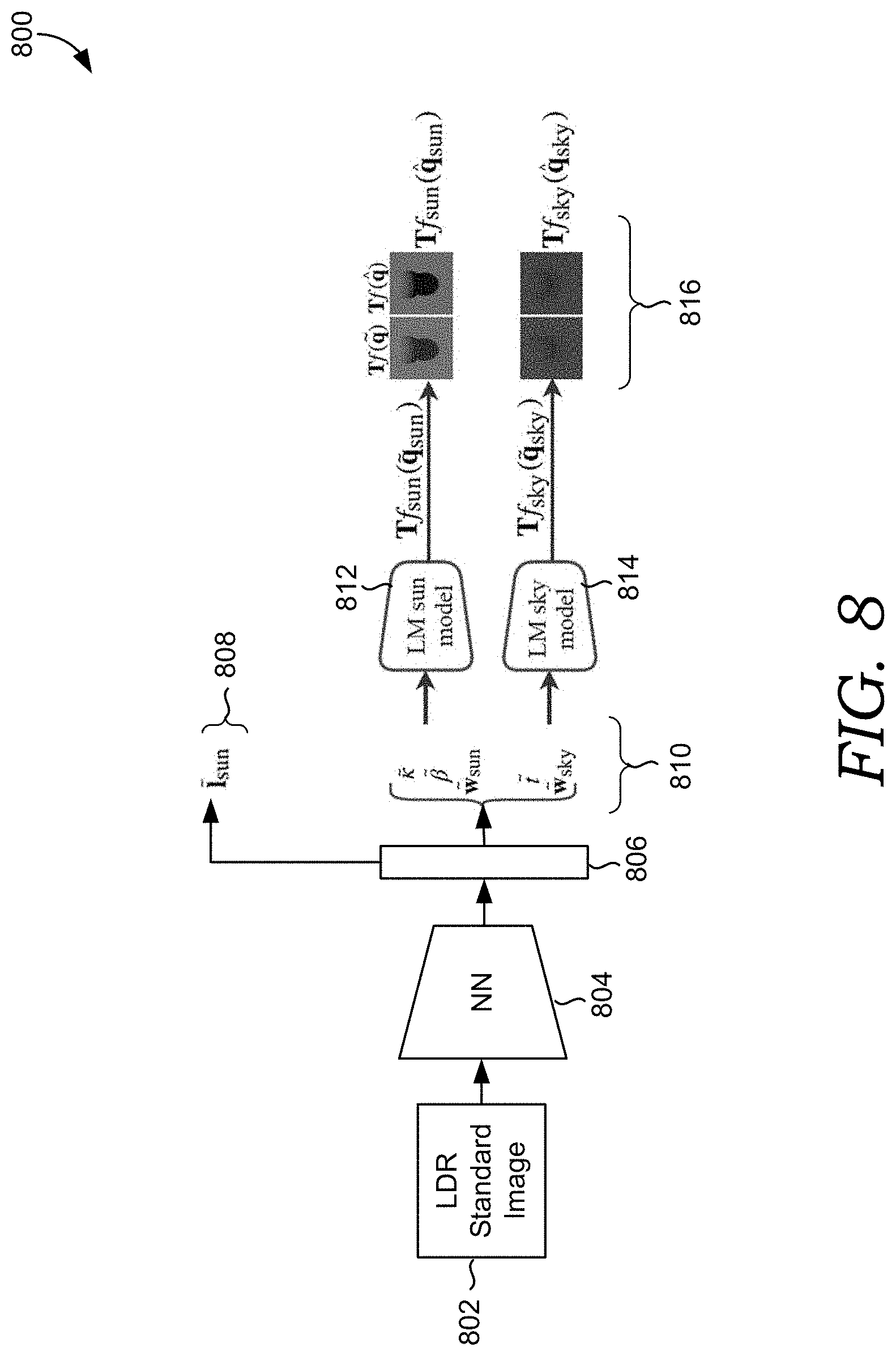

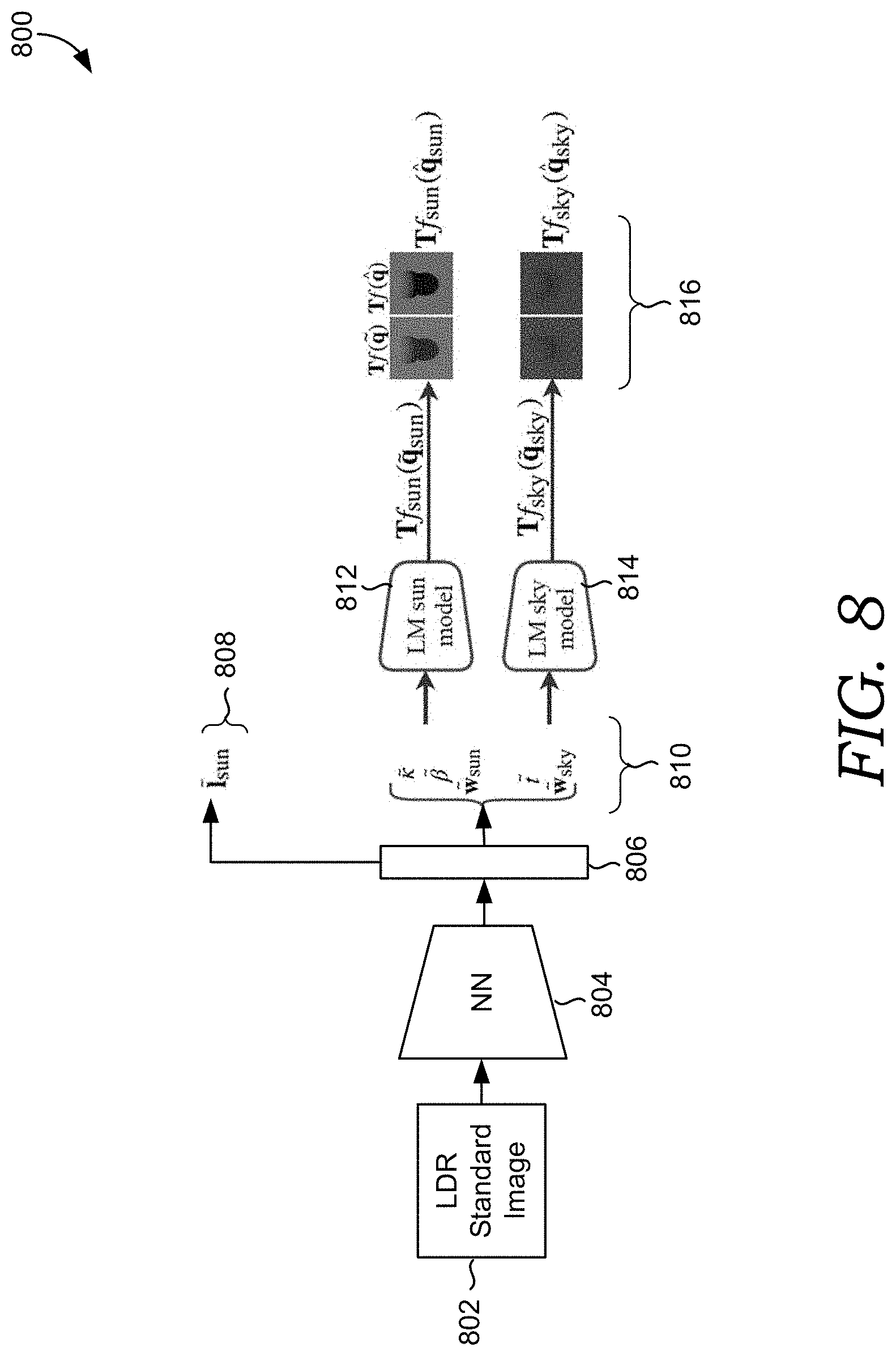

[0013] FIG. 8 illustrates an example environment that can be used for a standard image lighting parameter neural network to estimate HDR parameters, in accordance with embodiments of the present disclosure.

[0014] FIG. 9 is a block diagram of an example computing device in which embodiments of the present disclosure may be employed.

DETAILED DESCRIPTION

[0015] The subject matter of the present disclosure is described with specificity herein to meet statutory requirements. However, the description itself is not intended to limit the scope of this patent. Rather, the inventors have contemplated that the claimed subject matter might also be embodied in other ways, to include different steps or combinations of steps similar to the ones described in this document, in conjunction with other present or future technologies. Moreover, although the terms "step" and/or "block" may be used herein to connote different elements of methods employed, the terms should not be interpreted as implying any particular order among or between various steps herein disclosed unless and except when the order of individual steps is explicitly described.

[0016] Oftentimes, users desire to manipulate images, for example, by performing image composition, scene reconstruction, and/or three-dimensional modeling. To achieve realistic results when compositing an object into an image, the object added to the image should be lit similarly to the rest of the image (e.g., the shadow cast by the object should match the other shadows in the image). As such, it is important to accurately determine the lighting affecting objects depicted in the original image.

[0017] Some conventional systems rely on hand-crafted features extracted from an image to estimate lighting for the image. In particular, contrast and shadows can be determined for the features to try to estimate the lighting. However, this approach is brittle; lighting determined from hand-designed features fails in many cases.

[0018] Other conventional methods have attempted to determine more detailed lighting information for images to increase lighting accuracy. Such detailed lighting information can be based on the dynamic range of an image. The dynamic range of an image refers to the ratio between the brightest and darkest parts of a scene or an image. Images taken with conventional cameras are often low-dynamic range (LDR) images, which have a limited range of pixel values. For example, a LDR image has a dynamic range of 255:1 or less. Often, this limited range of pixel values means that lighting determined from such images cannot be applied to objects added to the image in a manner that accurately reflects the lighting of the scene in the image. High-dynamic range (HDR) images, on the other hand, often contain a broad dynamic range of lighting information for an image. For example, a HDR image may have a dynamic range greater than 255:1, up to 100,000:1. Because a larger range of pixel values is available in HDR images, lighting determined from such images is much more accurate. Objects added to an image using lighting determined from HDR images can be rendered to accurately imitate the lighting of the overall image. As such, conventional methods have attempted to convert LDR images to HDR images to determine lighting parameters (e.g., HDR lighting parameters) that can be used to more accurately light objects added to the LDR images.
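To make the 255:1 versus 100,000:1 contrast concrete, the following Python sketch maps linear HDR radiance values to 8-bit LDR code values. It assumes a simple exposure-and-gamma camera response with hard clipping; real camera pipelines apply more elaborate tone mapping, and the function names and radiance values are hypothetical.

```python
def to_ldr_8bit(radiance: float, exposure: float = 1.0, gamma: float = 2.2) -> int:
    """Map a linear HDR radiance value to an 8-bit LDR code value in [0, 255].

    Assumed camera response: scale by exposure, apply a gamma curve, then clip.
    Any radiance whose exposed value exceeds 1.0 saturates at 255.
    """
    exposed = max(radiance * exposure, 0.0)
    encoded = exposed ** (1.0 / gamma)
    return min(255, round(encoded * 255))


def dynamic_range_ratio(values) -> float:
    """Ratio between the brightest and darkest non-zero values."""
    nonzero = [v for v in values if v > 0]
    return max(nonzero) / min(nonzero)


# Hypothetical linear radiances: a shadow, a bright sky, and two suns 10x apart.
shadow, sky, dim_sun, bright_sun = 0.5, 0.8, 5_000.0, 50_000.0

ldr_codes = [to_ldr_8bit(v) for v in (shadow, sky, dim_sun, bright_sun)]
print("LDR codes:", ldr_codes)
print("8-bit range:", dynamic_range_ratio([1, 255]))
print("HDR range:", dynamic_range_ratio([shadow, bright_sun]))
```

Both suns saturate at code value 255, so their tenfold difference in intensity is lost in the LDR encoding, while the HDR values they came from span a 100,000:1 range.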

[0019] Unlike lighting determined from a LDR image, HDR lighting captures the entire dynamic range of lighting present in an image. For instance, some methods based on HDR lighting fit a HDR parametric lighting model to a large-scale dataset of low-dynamic range 360 degree panoramas using pure optimization. This optimization can then be used to label LDR panoramas with HDR parameters. Such conventional optimization methods can often take into account sun position, haziness, and intensity. However, while these methods are generally successful at locating the sun in a 360 degree panorama, they are not robust at determining accurate lighting when the sky contains clouds, which leads to erroneous lighting estimates for such images. Further, LDR panoramas often have reduced sun intensity values due to the inherent pixel value constraints in a LDR image. Such pixel value constraints impair the ability of the optimization to find good HDR sunlight parameters, regardless of the presence of clouds.

[0020] Other methods have attempted to directly predict pixel values for HDR lighting for an entire panorama, for instance, by converting LDR panoramas to HDR panoramas and fitting a parametric lighting model to the HDR panoramas. However, such methods focus on determining HDR values for every pixel in the panorama, which is computationally expensive and inefficient. Further, such methods are not able to generate robust estimates of intensity and cloudiness for images with cloudy skies. Such methods do not always determine realistic high-dynamic range lighting properties and require significant computational resources.

[0021] Attempts have also been made to estimate HDR lighting from LDR panorama images. These approaches have relied on using the Hošek-Wilkie model to represent the sky and sun in making HDR lighting estimations. In such a model, the sky and sun are correlated such that the sky parameters can be fit to a LDR panorama and a HDR sun model can be extrapolated (that can be used to apply HDR lighting parameters to objects added to the image). However, such a model does not always accurately determine lighting in scenes in all lighting conditions (e.g., where the sky has clouds).

[0022] Accordingly, embodiments of the present disclosure are directed to a lighting estimation system capable of facilitating more accurate HDR lighting estimation from LDR images. In particular, the lighting estimation system can robustly estimate HDR lighting parameters for a wide variety of outdoor lighting conditions. In addition, the lighting estimation system can estimate HDR lighting parameters from a single LDR panorama image or a single LDR standard image.

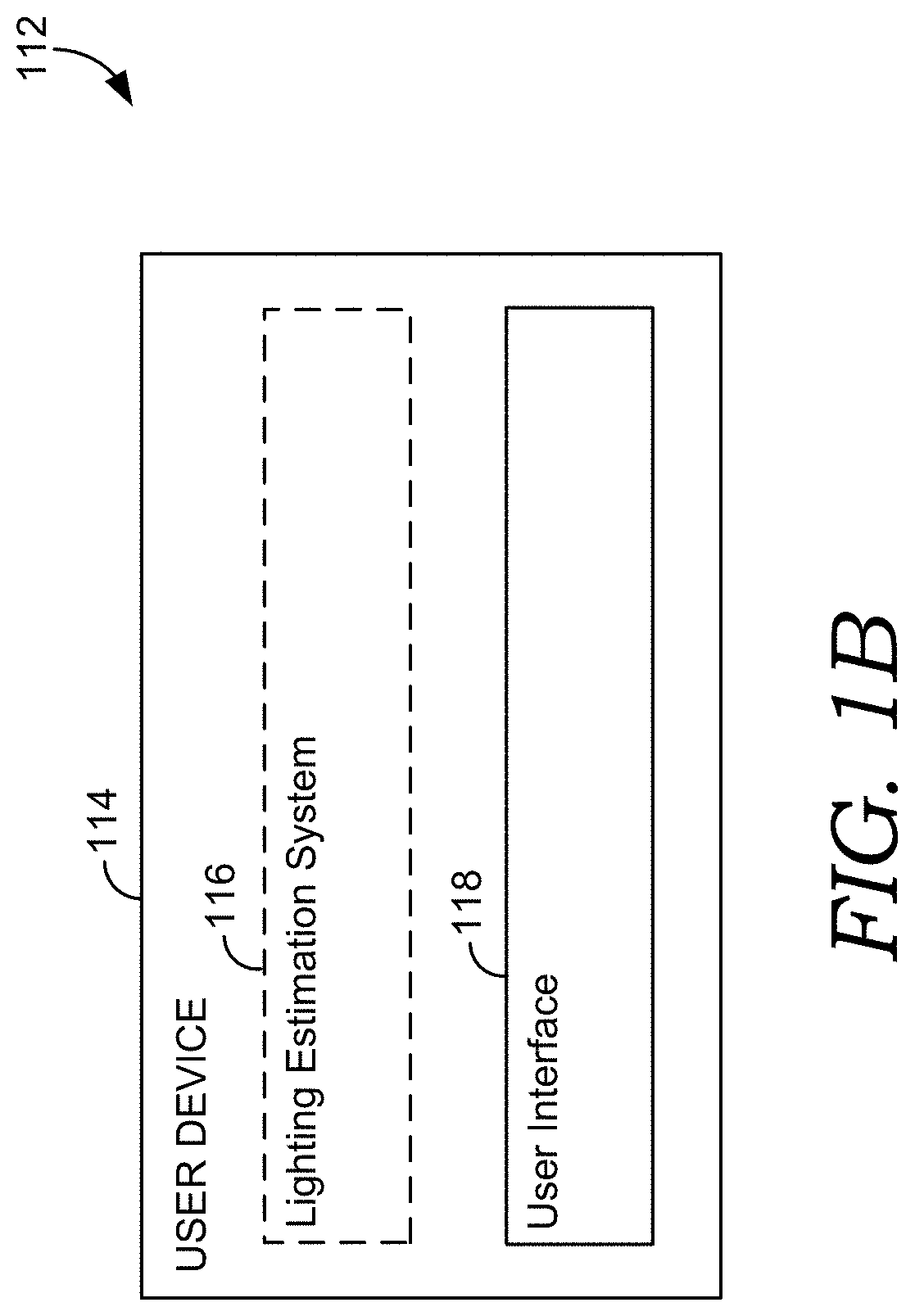

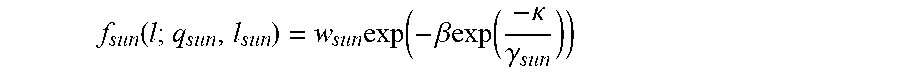

[0023] To more robustly estimate HDR lighting parameters, the lighting estimation system uses a model that provides more expressive HDR lighting parameters. These more expressive HDR lighting parameters allow lighting to be predicted more accurately under a wider set of lighting conditions. Generally, the lighting estimation system can use a model that represents the sky and sun in an image to determine HDR lighting parameters. In particular, the present disclosure leverages the Lalonde-Matthews sky model ("LM model") to represent the sky and sun in an image. The LM model can represent a wide range of lighting conditions for outdoor scenes (e.g., completely overcast to fully sunny). The LM model is more expressive than other available models and consists of two uncorrelated components: a sky component (e.g., represented using a LM sky model) and a sun illumination component (e.g., represented using a LM sun model). The sky component can be based on a Preetham sky model, which takes into account the angle between a sky direction and the sun position as well as sky turbidity, multiplied by an average sky color. The sun component can take into account the shape of the sun (e.g., from the perspective of the earth) and the color of the sun. Such a model can also take into account atmospheric turbidity and sparse aerosols in the air, as well as simulate smaller and denser occluders, like small clouds passing over the sun, that affect the effective visible size of the sun.
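The application describes the LM model only in words. The Python sketch below assumes the form published by Lalonde and Matthews: sky radiance is an average RGB sky color times a Perez distribution with Preetham's turbidity-dependent luminance coefficients, and sun radiance is an RGB sun color times exp(-β exp(-κ/γ)), where γ is the angle to the sun and β and κ are the global and local scattering parameters named later in this description. The function names, the z-up convention, and the omission of normalization constants are assumptions.

```python
import math

Vec3 = tuple[float, float, float]


def _angle_between(a: Vec3, b: Vec3) -> float:
    """Angle in radians between two vectors (normalized internally)."""
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(x * x for x in b))
    cos_ang = sum(x * y for x, y in zip(a, b)) / (na * nb)
    return math.acos(max(-1.0, min(1.0, cos_ang)))


def preetham_luminance(theta: float, gamma: float, turbidity: float) -> float:
    """Perez sky distribution with Preetham's luminance (Y) coefficients.

    theta: zenith angle of the sky direction; gamma: angle to the sun (radians).
    The coefficients are intended for turbidity roughly in [1.7, 10].
    """
    a = 0.1787 * turbidity - 1.4630
    b = -0.3554 * turbidity + 0.4275
    c = -0.0227 * turbidity + 5.3251
    d = 0.1206 * turbidity - 2.5771
    e = -0.0670 * turbidity + 0.3703
    cos_theta = max(math.cos(theta), 1e-3)  # guard the division at the horizon
    return (1.0 + a * math.exp(b / cos_theta)) * (
        1.0 + c * math.exp(d * gamma) + e * math.cos(gamma) ** 2
    )


def lm_sun_shape(gamma: float, beta: float, kappa: float) -> float:
    """Sun falloff exp(-beta * exp(-kappa / gamma)); equals 1 at the sun center."""
    if gamma <= 0.0:
        return 1.0
    return math.exp(-beta * math.exp(-kappa / gamma))


def lm_radiance(direction: Vec3, sun_dir: Vec3, sky_rgb: Vec3, turbidity: float,
                sun_rgb: Vec3, beta: float, kappa: float) -> Vec3:
    """RGB radiance = sky color * Preetham term + sun color * sun-shape term (z is up)."""
    theta = _angle_between(direction, (0.0, 0.0, 1.0))
    gamma = _angle_between(direction, sun_dir)
    sky = preetham_luminance(theta, gamma, turbidity)
    sun = lm_sun_shape(gamma, beta, kappa)
    return tuple(ws * sky + wsun * sun for ws, wsun in zip(sky_rgb, sun_rgb))


# Hypothetical parameters: sun 45 degrees above the horizon.
sun_dir = (math.cos(math.radians(45)), 0.0, math.sin(math.radians(45)))
params = dict(sky_rgb=(0.3, 0.4, 0.6), turbidity=3.0,
              sun_rgb=(900.0, 850.0, 800.0), beta=100.0, kappa=0.05)
toward_sun = lm_radiance(sun_dir, sun_dir, **params)
away_from_sun = lm_radiance((-sun_dir[0], 0.0, sun_dir[2]), sun_dir, **params)
```

With β = 100 and κ = 0.05, the sun term is essentially 1 at the sun center and negligible 90 degrees away; lowering β or raising κ broadens the falloff, which is presumably how the model expresses a sun whose effective visible size is changed by thin clouds.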

[0024] At a high level, lighting estimations by the lighting estimation system can be determined using a neural network system (e.g., a lighting estimation neural network system). A neural network system can be comprised of one or more neural networks. A neural network is a computational approach loosely based on how the brain solves problems using large clusters of connected neurons. Neural networks are self-learning and trained to generate output reflecting a desired result. As described herein, the lighting estimation neural network system can be trained using at least one neural network (e.g., panoramic lighting parameter neural network, standard image lighting parameter neural network, etc.). In such a system, a first neural network (e.g., panoramic lighting parameter neural network) can be trained to estimate HDR lighting parameters (e.g., based on estimated LM lighting parameters) from an input LDR panorama image (e.g., a 360 degree panorama). Further, in such a system, a second neural network (e.g., standard image lighting parameter neural network) can be trained to determine HDR lighting parameters (e.g., based on determined LM lighting parameters) from an input LDR standard image. Although generally described as two separate neural networks, any number of neural networks can be trained in accordance with embodiments described herein.

[0025] By training and utilizing a lighting estimation neural network system in accordance with the systems and methods described herein, the lighting estimation system more accurately estimates HDR lighting for LDR images using the LM lighting parameters. Whereas conventional systems have difficulty predicting lighting for scenes with cloudy skies, the lighting estimation system can accurately estimate HDR lighting parameters that take into consideration how these lighting conditions affect a scene in an image. Determining more accurate estimations of lighting parameters further enables more accurate digital image alterations, better (e.g., more realistic) virtual object rendering, etc. Advantageously, the lighting estimation neural network system can be used to determine HDR lighting for LDR panoramic images and/or LDR standard images.

[0026] As mentioned, the lighting estimation neural network system can use a panoramic lighting parameter neural network to estimate HDR lighting parameters from a single LDR panoramic image. In particular, the panoramic lighting parameter neural network can estimate LM lighting parameters for an input LDR panorama image. For instance, a latent representation in the panoramic lighting parameter neural network can be used to estimate the LM lighting parameters. Such LM lighting parameters can include sky color, turbidity, sun color, shape of the sun, and the sun position. To run the panoramic lighting parameter neural network, a LDR panorama image can be input into the lighting estimation neural network system. The panoramic lighting parameter neural network can receive the input LDR panorama image. Upon receiving the LDR panorama image, the panoramic lighting parameter neural network can estimate the LM lighting parameters for the LDR panorama image (e.g., based on a latent representation).
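The paragraph above says the panoramic network maps a latent representation to the LM lighting parameters. The following Python sketch shows one possible way to package those parameters as a fixed-length regression target and to decode unconstrained network outputs into valid values. The class `LMParams`, the 11-value layout, and the softplus/sigmoid/tanh decoding are illustrative assumptions, not details given in the application.

```python
import math
from dataclasses import dataclass

# Hypothetical layout: 3 sky RGB + turbidity + 3 sun RGB + beta + kappa + elevation + azimuth
LM_VECTOR_SIZE = 11


@dataclass
class LMParams:
    """Hypothetical container for the LM lighting parameters regressed by a network."""
    sky_rgb: tuple[float, float, float]  # average sky color
    turbidity: float                     # sky turbidity t
    sun_rgb: tuple[float, float, float]  # mean sun color
    beta: float                          # sun shape: global scattering
    kappa: float                         # sun shape: local scattering
    sun_elevation: float                 # radians above the horizon
    sun_azimuth: float                   # radians

    def to_vector(self) -> list[float]:
        return [*self.sky_rgb, self.turbidity, *self.sun_rgb,
                self.beta, self.kappa, self.sun_elevation, self.sun_azimuth]

    @classmethod
    def from_vector(cls, v: list[float]) -> "LMParams":
        if len(v) != LM_VECTOR_SIZE:
            raise ValueError(f"expected {LM_VECTOR_SIZE} values, got {len(v)}")
        return cls(tuple(v[0:3]), v[3], tuple(v[4:7]), v[7], v[8], v[9], v[10])


def softplus(x: float) -> float:
    """Smooth map to non-negative values; returns x directly for large x to avoid overflow."""
    return x if x > 30.0 else math.log1p(math.exp(x))


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0.0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def decode_raw_outputs(raw: list[float]) -> LMParams:
    """Turn unconstrained network outputs into valid LM parameters (hypothetical head)."""
    if len(raw) != LM_VECTOR_SIZE:
        raise ValueError(f"expected {LM_VECTOR_SIZE} values, got {len(raw)}")
    positive = [softplus(x) for x in raw[:9]]        # colors, turbidity, beta, kappa
    elevation = (math.pi / 2) * sigmoid(raw[9])      # sun above the horizon: (0, pi/2)
    azimuth = math.pi * math.tanh(raw[10])           # (-pi, pi)
    return LMParams.from_vector(positive + [elevation, azimuth])
```

Decoding through bounded activations keeps every prediction physically meaningful (non-negative colors and scattering terms, a sun above the horizon), so the losses compare valid parameter sets.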

[0027] In embodiments, the panoramic lighting parameter neural network can undergo training to learn to estimate the LM lighting parameters for LDR panorama images. During training, a set of LDR panoramic images can be used. For example, the network can be trained using a set of outdoor LDR panoramic images generated from a corresponding set of outdoor HDR panoramic images. The lighting estimation neural network system can use the set of outdoor HDR panoramic images to provide ground-truth information for each of the outdoor LDR panoramic images. For example, the ground-truth information can be used to determine the accuracy of the estimated LM parameters by the panoramic lighting parameter neural network. The ground-truth information can be based on a desired optimal output by the network. In particular, after LM lighting parameters are estimated for an input LDR panorama image, the estimated LM lighting parameters can be compared with a variety of corresponding ground-truth information to determine any error in the panoramic lighting parameter neural network. During training, the lighting estimation system can minimize loss based on the estimated LM lighting parameters as compared to the corresponding ground-truth information such that the network learns to estimate accurate LM lighting parameters.

[0028] In more detail, the accuracy of estimated LM lighting parameters can be analyzed to determine error in the panoramic lighting parameter neural network. Error can be based on a variety of losses. Loss can be based on comparing an output from the panoramic lighting parameter neural network with a known ground-truth. In particular, a latent representation in the panoramic lighting parameter neural network can be used to generate an estimated HDR panorama image (having the estimated LM lighting parameters). This estimated HDR panorama image can be compared to a corresponding ground-truth HDR panorama image to calculate loss (e.g., panorama loss). In addition, the estimated sun elevation can be compared with a ground-truth sun elevation to calculate loss (e.g., sun elevation loss).
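A minimal sketch of the two losses named in this paragraph, assuming a grayscale equirectangular HDR panorama stored as a list of rows (row 0 at the zenith), the brightest pixel as a stand-in for the ground-truth sun position, an L1 sun elevation loss, and a mean squared error on log(1 + radiance) for the panorama loss. These choices and all function names are assumptions; the application names the losses but not their formulas.

```python
import math


def row_to_elevation(row: int, height: int) -> float:
    """Elevation (radians) of the center of a row in an equirectangular panorama (row 0 = top)."""
    return math.pi / 2 - math.pi * (row + 0.5) / height


def brightest_pixel_elevation(panorama: list[list[float]]) -> float:
    """Approximate the sun elevation as the elevation of the row holding the brightest pixel."""
    height = len(panorama)
    best_row = max(range(height), key=lambda r: max(panorama[r]))
    return row_to_elevation(best_row, height)


def sun_elevation_loss(estimated: float, ground_truth: float) -> float:
    """L1 difference between estimated and ground-truth sun elevation (radians)."""
    return abs(estimated - ground_truth)


def panorama_loss(estimated: list[list[float]], ground_truth: list[list[float]]) -> float:
    """Mean squared error on log(1 + radiance), which compresses the sun's very large values."""
    total, count = 0.0, 0
    for est_row, gt_row in zip(estimated, ground_truth):
        for e, g in zip(est_row, gt_row):
            total += (math.log1p(e) - math.log1p(g)) ** 2
            count += 1
    return total / count
```

Working in log space keeps a few saturated sun pixels from dominating the panorama loss, which is one common reason for choosing it with HDR targets.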

[0029] Further, the estimated LM lighting parameters can be compared with the ground-truth lighting parameters by applying the lighting parameters to a synthetic scene to calculate loss. Such a scene can be a basic three-dimensional scene to which lighting parameters can easily be applied (e.g., a scene of geometric primitives, such as spheres, with varying surface material properties). The scenes can then be compared to determine the accuracy of the estimated LM lighting parameters. This accuracy can indicate errors in the panoramic lighting parameter neural network. Using such a basic rendered scene to correct errors in the panoramic lighting parameter neural network is advantageous because rendering a complex three-dimensional scene with HDR lighting (e.g., using the LM lighting parameters) is very computationally expensive. Loss can be calculated using rendered scenes based on estimated LM parameters compared to ground-truth rendered scenes. Such rendered scenes can include a rendered scene based on estimated LM sky parameters (e.g., using a LM sky model), a rendered scene based on estimated LM sun parameters (e.g., using a LM sun model), and a rendered scene based on the combination of estimated LM sky parameters and estimated LM sun parameters (e.g., using the combined LM sky model and LM sun model). In addition, estimated LM parameters from the estimated HDR panorama image can be used to render a scene. Errors determined from comparing the scene(s) rendered with estimated LM lighting parameters against the scene(s) rendered with ground-truth lighting parameters can be used to update the panoramic lighting parameter neural network.
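To illustrate a render loss on a simple synthetic scene, the following sketch shades a diffuse (Lambertian) sphere at six normals under two lighting functions and compares the results. It assumes grayscale lighting, a single albedo, and brute-force lat-long quadrature; the scene in the application includes spheres with varying surface material properties, which this sketch does not model. Any callable radiance function can be passed in, for example one built from LM sky and sun components; the names are hypothetical.

```python
import math
from typing import Callable, Sequence

Vec3 = tuple[float, float, float]
EnvFn = Callable[[Vec3], float]  # grayscale radiance arriving from a unit direction

# Sample points on the sphere: the six axis-aligned surface normals.
SPHERE_NORMALS: list[Vec3] = [
    (0.0, 0.0, 1.0), (0.0, 0.0, -1.0), (1.0, 0.0, 0.0),
    (-1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, -1.0, 0.0),
]


def diffuse_irradiance(env: EnvFn, normal: Vec3, n_theta: int = 64, n_phi: int = 128) -> float:
    """Integrate env(w) * max(0, n.w) over the sphere with a midpoint rule on a lat-long grid."""
    d_theta = math.pi / n_theta
    d_phi = 2.0 * math.pi / n_phi
    total = 0.0
    for i in range(n_theta):
        theta = (i + 0.5) * d_theta
        sin_t, cos_t = math.sin(theta), math.cos(theta)
        for j in range(n_phi):
            phi = (j + 0.5) * d_phi
            w = (sin_t * math.cos(phi), sin_t * math.sin(phi), cos_t)
            cos_term = normal[0] * w[0] + normal[1] * w[1] + normal[2] * w[2]
            if cos_term > 0.0:
                # sin(theta) * d_theta * d_phi is the solid angle of this grid cell
                total += env(w) * cos_term * sin_t * d_theta * d_phi
    return total


def render_loss(env_est: EnvFn, env_gt: EnvFn, albedo: float = 0.8,
                normals: Sequence[Vec3] = SPHERE_NORMALS) -> float:
    """Mean squared difference of Lambertian shading (albedo / pi * irradiance) over normals."""
    errors = []
    for n in normals:
        shade_est = albedo / math.pi * diffuse_irradiance(env_est, n)
        shade_gt = albedo / math.pi * diffuse_irradiance(env_gt, n)
        errors.append((shade_est - shade_gt) ** 2)
    return sum(errors) / len(errors)
```

Because irradiance is linear in the lighting, the render under combined sky-plus-sun lighting equals the sum of the sky-only and sun-only renders, so separate sky and sun render losses can be computed by passing each component in on its own.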

[0030] In one embodiment, the trained panoramic lighting parameter neural network can be used to generate a dataset for training a standard image lighting parameter neural network to estimate HDR lighting parameters from a single LDR standard image. A standard image can be a limited field-of-view image (e.g., when compared to a panorama). In particular, the trained panoramic lighting parameter neural network can be used to obtain estimated LM lighting parameters for a set of LDR panorama images. These estimated LM lighting parameters can be treated as ground-truth parameters for a set of LDR standard images (e.g., generated from the set of LDR panorama images). Training the standard image lighting parameter neural network to estimate HDR lighting parameters from a single LDR standard image is discussed further below.
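This dataset-generation step follows a teacher-student (pseudo-labeling) pattern: the trained panoramic network labels each panorama once, and every crop taken from it inherits that label. The Python sketch below assumes `panorama_net` and `crop_fn` are supplied by the caller (both hypothetical placeholders) and uses seven evenly spaced camera azimuths. As an additional assumption not stated in the application, it re-expresses the sun azimuth relative to each crop's viewing direction so the label matches what the crop shows.

```python
import math
from typing import Any, Callable, Sequence


def wrap_angle(angle: float) -> float:
    """Wrap an angle in radians to [-pi, pi)."""
    return (angle + math.pi) % (2 * math.pi) - math.pi


def build_standard_image_dataset(
    panoramas: Sequence[Any],
    panorama_net: Callable[[Any], dict],       # hypothetical: panorama -> LM parameter dict
    crop_fn: Callable[[Any, float], Any],      # hypothetical: (panorama, yaw) -> standard image
    crops_per_panorama: int = 7,
) -> list[tuple[Any, dict]]:
    """Pair each limited field-of-view crop with the teacher's LM parameters as ground truth."""
    dataset = []
    for pano in panoramas:
        teacher_params = panorama_net(pano)  # estimated once per panorama
        for k in range(crops_per_panorama):
            camera_azimuth = 2 * math.pi * k / crops_per_panorama
            label = dict(teacher_params)     # copy so crops do not share one dict
            # Express the sun azimuth relative to the camera that produced this crop.
            label["sun_azimuth"] = wrap_angle(teacher_params["sun_azimuth"] - camera_azimuth)
            dataset.append((crop_fn(pano, camera_azimuth), label))
    return dataset
```

The expensive panorama network runs once per panorama rather than once per crop, and the standard image network then trains against these labels exactly as it would against measured ground truth.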

[0031] The lighting estimation neural network system can use a standard image lighting parameter neural network to determine HDR lighting parameters from a single LDR standard image. In particular, the standard image lighting parameter neural network can determine LM lighting parameters for an input LDR standard image. For instance, a latent representation in the standard image lighting parameter neural network can be used to determine the LM lighting parameters. To run the standard image lighting parameter neural network, a LDR standard image can be input into the lighting estimation neural network system. The standard image lighting parameter neural network can receive the input LDR standard image. Upon receiving the LDR standard image, the standard image lighting parameter neural network can determine LM lighting parameters for the LDR standard image (e.g., based on a latent representation). The LM lighting parameters determined by the standard image lighting parameter neural network can include sky color, sky turbidity, sun color, sun shape, and sun position (e.g., the same LM lighting parameters learned by the panoramic lighting parameter neural network).

[0032] More particularly, the standard image lighting parameter neural network can undergo training to learn to determine LM lighting parameters for a LDR standard image. During training, the lighting estimation neural network system can train the standard image lighting parameter neural network using a set of LDR standard images. The set of LDR standard images can be generated by cropping a set of outdoor LDR panoramic images (e.g., cropping a LDR panoramic image into seven limited field-of-view images). Such outdoor LDR panoramic images can have estimated LM lighting parameters (e.g., estimated using the trained panoramic lighting parameter neural network) that can be used as ground-truth LM lighting parameters. The ground-truth LM lighting parameters can be used to determine the accuracy of the LM parameters determined by the standard image lighting parameter neural network. In particular, after LM lighting parameters are determined for an input LDR standard image, the determined LM lighting parameters can be compared with the ground-truth LM lighting parameters. During training, the lighting estimation system can minimize loss based on the determined LM lighting parameters and the ground-truth LM lighting parameters such that the system learns to determine accurate lighting parameters.
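Cropping a panorama into limited field-of-view images amounts to casting rays from a virtual pinhole camera and looking them up in the equirectangular panorama. The sketch below assumes a level camera (zero pitch and roll), a caller-chosen vertical field of view, and nearest-neighbor sampling; the application does not specify the crop geometry, so these choices and the function names are illustrative.

```python
import math


def perspective_ray_to_equirect(x: int, y: int, width: int, height: int, vfov: float,
                                yaw: float, pano_w: int, pano_h: int) -> tuple[float, float]:
    """Map pixel (x, y) of a level pinhole camera to continuous panorama coordinates (u, v)."""
    focal = (height / 2) / math.tan(vfov / 2)
    # Camera-frame ray: +cx is right, +cy is up, +focal is forward.
    cx = (x + 0.5) - width / 2
    cy = height / 2 - (y + 0.5)
    azimuth = yaw + math.atan2(cx, focal)             # yaw only shifts azimuth
    elevation = math.atan2(cy, math.hypot(cx, focal))
    u = ((azimuth + math.pi) / (2 * math.pi)) % 1.0 * pano_w
    v = (math.pi / 2 - elevation) / math.pi * pano_h  # row 0 is the zenith
    return u, v


def extract_crop(pano: list[list[float]], width: int, height: int,
                 vfov: float, yaw: float) -> list[list[float]]:
    """Render a width x height perspective crop from the panorama with nearest-neighbor lookup."""
    pano_h, pano_w = len(pano), len(pano[0])
    crop = []
    for y in range(height):
        row = []
        for x in range(width):
            u, v = perspective_ray_to_equirect(x, y, width, height, vfov, yaw, pano_w, pano_h)
            row.append(pano[min(int(v), pano_h - 1)][min(int(u), pano_w - 1)])
        crop.append(row)
    return crop
```

Seven crops per panorama could use yaws `[2 * math.pi * k / 7 for k in range(7)]`; bilinear sampling would reduce aliasing at the cost of a few more lines.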

[0033] In more detail, the accuracy of the determined LM lighting parameters can be analyzed to determine error in the standard image lighting parameter neural network. Error can be based on a variety of losses, each calculated by comparing an output of the standard image lighting parameter neural network with a known ground truth (e.g., LM lighting parameters estimated by the trained panoramic lighting parameter neural network). In particular, the determined LM average sky color parameter can be compared to a corresponding ground-truth LM average sky color parameter to calculate a loss (e.g., sky loss). In addition, the determined LM mean sun color parameter can be compared to a corresponding ground-truth LM mean sun color parameter to calculate a loss (e.g., sun loss). The determined LM global scattering lighting parameter (i.e., β) can be compared to a corresponding ground-truth LM global scattering lighting parameter to calculate a loss (e.g., β loss). The determined LM local scattering lighting parameter (i.e., κ) can be compared to a corresponding ground-truth LM local scattering lighting parameter to calculate a loss (e.g., κ loss). The determined LM turbidity lighting parameter (i.e., t) can be compared to a corresponding ground-truth LM turbidity lighting parameter to calculate a loss (e.g., t loss). Further, the determined LM sun position can be compared to a corresponding ground-truth LM sun position to calculate a loss (e.g., sun position loss).
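The per-parameter losses described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the disclosed training code: it assumes squared-error losses for the continuous parameters, three-component (RGB) sky and sun colors, a negative log-likelihood for the sun position probability distribution, and an unweighted sum of all terms. The function name `lm_parameter_losses` and the dictionary keys are hypothetical.

```python
import numpy as np


def lm_parameter_losses(pred, gt, sun_pos_probs, gt_sun_bin, eps=1e-8):
    """Hypothetical per-parameter losses for the standard image network.

    pred, gt: dicts with keys 'sky_color' (3,), 'sun_color' (3,),
              'beta', 'kappa', 't' (scalars).
    sun_pos_probs: predicted probability distribution over discretized
                   sun positions (1-D array summing to one).
    gt_sun_bin: index of the ground-truth sun position bin.
    """
    losses = {}
    # Squared error on the color parameters (L2 is an assumption here).
    losses["sky"] = float(np.sum((np.asarray(pred["sky_color"]) - np.asarray(gt["sky_color"])) ** 2))
    losses["sun"] = float(np.sum((np.asarray(pred["sun_color"]) - np.asarray(gt["sun_color"])) ** 2))
    # Squared error on the scalar shape/turbidity parameters.
    for name in ("beta", "kappa", "t"):
        losses[name] = float((pred[name] - gt[name]) ** 2)
    # Negative log-likelihood of the ground-truth sun position bin.
    losses["sun_position"] = float(-np.log(sun_pos_probs[gt_sun_bin] + eps))
    # Unweighted total; per-term weights are not specified in the text.
    losses["total"] = sum(losses.values())
    return losses
```

In practice each term would likely carry its own weight; they are left equal here only because no weighting is given.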

[0034] Further, the determined LM lighting parameters can be compared with the ground-truth LM lighting parameters by applying both sets of lighting parameters to a scene and comparing the results to calculate loss. Such a scene can be the same basic three-dimensional scene previously discussed (e.g., a scene of geometric primitives, such as spheres, with varying surface material properties). The scene rendered with the estimated LM lighting parameters can then be compared with the scene rendered with the ground-truth LM lighting parameters to determine the accuracy of the estimated parameters, and this accuracy can indicate errors in the standard image lighting parameter neural network. Using such a basic rendered scene to correct errors in the standard image lighting parameter neural network is advantageous because rendering a full three-dimensional scene with HDR lighting (e.g., using the LM lighting parameters) is very computationally expensive. The rendered scenes can include a scene rendered from the determined LM sky parameters (e.g., using an LM sky model) and a scene rendered from the determined LM sun parameters (e.g., using an LM sun model), each compared with the corresponding ground-truth rendered scene. Errors determined from these comparisons can be used to update the standard image lighting parameter neural network.
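The render-based comparison above can be sketched under the assumption that the basic scene is rendered with a linear, pre-computed light-transport matrix, an approach the disclosure also describes for the panoramic network. The sun-only and sky-only environment maps are taken as inputs; generating them from LM parameters is outside this sketch. The names `render` and `render_loss` and the mean-squared-error metric are hypothetical choices.

```python
import numpy as np


def render(transport, env_map):
    """Render a basic scene with a linear light-transport operator.

    transport: (P, E) matrix mapping E environment-map texels to P pixels.
    env_map:   (E, 3) RGB radiance per environment-map texel.
    Returns a (P, 3) array of rendered pixel radiance.
    """
    return transport @ env_map


def render_loss(transport, est_sun, est_sky, gt_sun, gt_sky):
    """Sum of MSEs between sun-only and sky-only renders (estimated vs. ground truth)."""
    sun_err = np.mean((render(transport, est_sun) - render(transport, gt_sun)) ** 2)
    sky_err = np.mean((render(transport, est_sky) - render(transport, gt_sky)) ** 2)
    return float(sun_err + sky_err)
```

Because the render is a single matrix product, evaluating it inside a training loop is cheap compared with path tracing a full scene.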

[0035] FIG. 1A depicts an example configuration of an operating environment in which some implementations of the present disclosure can be employed, in accordance with various embodiments. It should be understood that this and other arrangements described herein are set forth only as examples. Other arrangements and elements (e.g., machines, interfaces, functions, orders, and groupings of functions, etc.) can be used in addition to or instead of those shown, and some elements may be omitted altogether for the sake of clarity. Further, many of the elements described herein are functional entities that may be implemented as discrete or distributed components or in conjunction with other components, and in any suitable combination and location. Various functions described herein as being performed by one or more entities may be carried out by hardware, firmware, and/or software. For instance, some functions may be carried out by a processor executing instructions stored in memory as further described with reference to FIG. 9.

[0036] It should be understood that operating environment 100 shown in FIG. 1A is an example of one suitable operating environment. Among other components not shown, operating environment 100 includes a number of user devices, such as user devices 102a and 102b through 102n, network 104, and server(s) 108. Each of the components shown in FIG. 1A may be implemented via any type of computing device, such as one or more of computing device 900 described in connection to FIG. 9, for example. These components may communicate with each other via network 104, which may be wired, wireless, or both. Network 104 can include multiple networks, or a network of networks, but is shown in simple form so as not to obscure aspects of the present disclosure. By way of example, network 104 can include one or more wide area networks (WANs), one or more local area networks (LANs), one or more public networks such as the Internet, and/or one or more private networks. Where network 104 includes a wireless telecommunications network, components such as a base station, a communications tower, or even access points (as well as other components) may provide wireless connectivity. Networking environments are commonplace in offices, enterprise-wide computer networks, intranets, and the Internet. Accordingly, network 104 is not described in significant detail.

[0037] It should be understood that any number of user devices, servers, and other components may be employed within operating environment 100 within the scope of the present disclosure. Each may comprise a single device or multiple devices cooperating in a distributed environment.

[0038] User devices 102a through 102n can be any type of computing device capable of being operated by a user. For example, in some implementations, user devices 102a through 102n are the type of computing device described in relation to FIG. 9. By way of example and not limitation, a user device may be embodied as a personal computer (PC), a laptop computer, a mobile device, a smartphone, a tablet computer, a smart watch, a wearable computer, a personal digital assistant (PDA), an MP3 player, a global positioning system (GPS) device, a video player, a handheld communications device, a gaming device or system, an entertainment system, a vehicle computer system, an embedded system controller, a remote control, an appliance, a consumer electronic device, a workstation, any combination of these delineated devices, or any other suitable device.

[0039] The user devices can include one or more processors, and one or more computer-readable media. The computer-readable media may include computer-readable instructions executable by the one or more processors. The instructions may be embodied by one or more applications, such as application 110 shown in FIG. 1A. Application 110 is referred to as a single application for simplicity, but its functionality can be embodied by one or more applications in practice. As indicated above, the other user devices can include one or more applications similar to application 110.

[0040] The application(s) may generally be any application capable of facilitating the exchange of information between the user devices and the server(s) 108 in carrying out HDR lighting estimation for a LDR image (e.g., of an outdoor panoramic scene and/or an outdoor standard scene). In some implementations, the application(s) comprises a web application, which can run in a web browser, and could be hosted at least partially on the server-side of environment 100. In addition, or instead, the application(s) can comprise a dedicated application, such as an application having image processing functionality. In some cases, the application is integrated into the operating system (e.g., as a service). It is therefore contemplated herein that "application" be interpreted broadly.

[0041] In accordance with embodiments herein, the application 110 can facilitate HDR lighting estimation from a single LDR image. In some embodiments, the single LDR image can be a 360-degree panorama scene. In other embodiments, the single LDR image can be a limited field-of-view scene. An LDR image can be a still image or a frame taken from a video. The LDR image can be selected or input in any manner. For example, a user may take a picture using a camera function on a device. As another example, a desired LDR image can be selected from a repository, for example, a repository stored in a data store accessible by a network or stored locally at the user device 102a. In other cases, an image may be automatically selected or detected. Based on the input LDR image (e.g., provided via a user device or server), HDR lighting parameters can be estimated for the input LDR image. The HDR lighting parameters can be output to a user, for example, via the user device 102a. For instance, in one embodiment, the HDR lighting parameters can be displayed via a display screen of the user device. In other embodiments, the HDR lighting parameters can be automatically applied to objects composited with the input LDR image. As an example, application 110 can be ADOBE DIMENSION (e.g., utilizing a Match Image Sunlight feature).

[0042] As described herein, server 108 can facilitate HDR lighting estimation from a LDR image via lighting estimation system 106. Server 108 includes one or more processors, and one or more computer-readable media. The computer-readable media includes computer-readable instructions executable by the one or more processors. The instructions may optionally implement one or more components of lighting estimation system 106, described in additional detail below.

[0043] Lighting estimation system 106 can train and operate a lighting estimation neural network system in order to estimate HDR lighting parameters from a single LDR image. Such a neural network system can be comprised of one or more neural networks trained to generate a designated output. Once trained, the neural network system can estimate HDR lighting parameters for an input LDR scene. Such an LDR scene can be a panoramic scene or a limited field-of-view scene.

[0044] For cloud-based implementations, the instructions on server 108 may implement one or more components of lighting estimation system 106, and application 110 may be utilized by a user to interface with the functionality implemented on server(s) 108. In some cases, application 110 comprises a web browser. In other cases, server 108 may not be required, as further discussed with reference to FIG. 1B. For example, the components of lighting estimation system 106 may be implemented completely on a user device, such as user device 102a. In this case, lighting estimation system 106 may be embodied at least partially by the instructions corresponding to application 110.

[0045] Referring to FIG. 1B, aspects of an illustrative lighting estimation system are shown, in accordance with various embodiments of the present disclosure. FIG. 1B depicts a user device 114, in accordance with an example embodiment, configured to allow for estimating HDR lighting from an LDR image using a lighting estimation system. The user device 114 may be the same as or similar to user devices 102a-102n and may be configured to support the lighting estimation system 116 (as a standalone or networked device). For example, the user device 114 may store and execute software/instructions to facilitate interactions between a user and the lighting estimation system 116 via the user interface 118 of the user device.

[0046] A user device can be utilized by a user to perform lighting estimation. In particular, a user can select and/or input an LDR image to identify HDR lighting for the image utilizing user interface 118. An image can be selected or input in any manner. The user interface may facilitate the user accessing one or more stored images on the user device (e.g., in a photo library) and/or importing images from remote devices and/or applications. As can be appreciated, images can be input without specific user selection. Images can include frames from a video. Based on the input and/or selected image, lighting estimation system 116 can be used to perform HDR lighting estimation of the image using various techniques, some of which are further discussed below. User device 114 can also be utilized for displaying the determined lighting parameters.

[0047] FIG. 2 depicts an example configuration of an operating environment in which some implementations of the present disclosure can be employed, in accordance with various embodiments of the present disclosure. It should be understood that this and other arrangements described herein are set forth only as examples. Other arrangements and elements (e.g., machines, interfaces, functions, orders, and groupings of functions, etc.) can be used in addition to or instead of those shown, and some elements may be omitted altogether for the sake of clarity. Further, many of the elements described herein are functional entities that may be implemented as discrete or distributed components or in conjunction with other components, and in any suitable combination and location. Various functions described herein as being performed by one or more entities may be carried out by hardware, firmware, and/or software. For instance, some functions may be carried out by a processor executing instructions stored in memory as further described with reference to FIG. 9. It should be understood that operating environment 200 shown in FIG. 2 is an example of one suitable operating environment. Among other components not shown, operating environment 200 includes a number of user devices, networks, and server(s).

[0048] Lighting estimation system 204 includes panoramic lighting parameter engine 206 and standard image lighting parameter engine 208. The foregoing engines of lighting estimation system 204 can be implemented, for example, in operating environment 100 of FIG. 1A and/or operating environment 112 of FIG. 1B. In particular, those engines may be integrated into any suitable combination of user devices 102a and 102b through 102n and server(s) 108 and/or user device 114. While the various engines are depicted as separate engines, it should be appreciated that a single engine can perform the functionality of all engines. Additionally, in implementations, the functionality of the engines can be performed using additional engines and/or components. Further, it should be appreciated that the functionality of the engines can be provided by a system separate from the lighting estimation system.

[0049] As shown, a lighting estimation system can operate in conjunction with data store 202. Data store 202 can store computer instructions (e.g., software program instructions, routines, or services), data, and/or models used in embodiments described herein. In some implementations, data store 202 can store information or data received via the various engines and/or components of lighting estimation system 204 and provide the engines and/or components with access to that information or data, as needed. Although depicted as a single component, data store 202 may be embodied as one or more data stores. Further, the information in data store 202 may be distributed in any suitable manner across one or more data stores for storage (which may be hosted externally). In embodiments, data stored in data store 202 can include images used for training the lighting estimation system. Such images can be input into data store 202 from a remote device, such as from a server or a user device.

[0050] The data stored in data store 202 can include training data. Training data generally refers to data used to train a neural network, or portion thereof. As such, the training data can include ground-truth HDR panorama images, training LDR images, ground-truth render images, estimated render images, or the like. In some cases, data store 202 receives data from user devices (e.g., an input image received by user device 102a or another device associated with a user, via, for example, application 110). In other cases, data is received from one or more data stores in the cloud.

[0051] Data store 202 can also be used to store a lighting estimation neural network system. Such a neural network system may be comprised of one or more neural networks, such as a neural network trained to estimate HDR lighting parameters for an input panoramic LDR image (e.g., panoramic lighting parameter neural network 218) and/or a neural network trained to estimate HDR lighting parameters for an input standard LDR image (e.g., standard image lighting parameter neural network 220). One implementation can employ a convolutional neural network architecture for the one or more neural networks.

[0052] Panoramic lighting parameter engine 206 may be used to train and/or run the panoramic lighting parameter neural network to estimate HDR lighting parameters for an LDR panorama image. As depicted in FIG. 2, panoramic lighting parameter engine 206 includes panorama image component 210 and parameter estimate component 212. The foregoing components of panoramic lighting parameter engine 206 can be implemented, for example, in operating environment 100 of FIG. 1A and/or operating environment 112 of FIG. 1B. In particular, these components may be integrated into any suitable combination of user devices 102a and 102b through 102n and server(s) 108 and/or user device 114. While the various components are depicted as separate components, it should be appreciated that a single component can perform the functionality of all components. Additionally, in implementations, the functionality of the components can be performed using additional components and/or engines. Further, it should be appreciated that the functionality of the components can be provided by an engine separate from the panoramic lighting parameter engine. Although these components are illustrated separately, it can be appreciated that the functionality described in association therewith can be performed by any number of components.

[0053] Panorama image component 210 can generally be used to generate and/or modify any image utilized in relation to the panoramic lighting parameter neural network. Images generated by the panorama image component can include HDR panorama images, training LDR panorama images, estimated HDR panorama images, ground-truth render scenes, and estimated render scenes.

[0054] In implementations, the panorama image component 210 can generate LDR panorama images from HDR panorama images. Such an HDR panorama image has known LM illumination/lighting properties, which can provide ground-truth information during training of the panoramic lighting parameter neural network. The panorama image component 210 can convert the HDR panorama images into LDR panorama images for use in training the panoramic lighting parameter neural network. To convert an HDR panorama image into an LDR panorama image, a random exposure factor can be applied, the maximum value can be clipped at one, and the image can be quantized to eight bits.
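The three conversion steps above (random exposure, clipping at one, 8-bit quantization) can be sketched as below. The exposure range is a hypothetical choice, and no camera response curve or gamma is applied because none is mentioned; `hdr_to_ldr` is an illustrative name.

```python
import numpy as np


def hdr_to_ldr(hdr, rng=None, exposure_range=(0.2, 2.0)):
    """Simulate an 8-bit LDR capture of a linear HDR panorama.

    hdr: float array of non-negative linear radiance values.
    exposure_range: hypothetical bounds for the random exposure factor.
    Returns (ldr_uint8, exposure).
    """
    rng = np.random.default_rng() if rng is None else rng
    exposure = rng.uniform(*exposure_range)       # random exposure factor
    clipped = np.clip(hdr * exposure, 0.0, 1.0)   # clip the maximum value at one
    ldr = np.round(clipped * 255.0).astype(np.uint8)  # quantize to eight bits
    return ldr, exposure
```

Passing a seeded `np.random.Generator` makes the augmentation reproducible across training runs.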

[0055] Parameter estimate component 212 can be used to run a panoramic lighting parameter neural network 218. The panoramic lighting parameter neural network can generally use a convolutional neural network architecture. In particular, the convolutional neural network can receive an input LDR panorama image. From the input LDR panorama image, the convolutional neural network can use an encoder (e.g., an auto-encoder) with skip-links to regress an HDR panorama. In this regression, an equirectangular format can be used under the assumption that the panorama is rotated so that the sun is at the center.
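Assuming "the sun is at the center" refers to the horizontal (azimuthal) center, the rotation above reduces to a circular column shift in the equirectangular format. This minimal sketch takes the sun's column index as given; the function name is hypothetical, and recentering the sun's elevation would require spherical resampling, which is not shown.

```python
import numpy as np


def center_sun_azimuth(pano, sun_col):
    """Rotate an equirectangular panorama (H, W, C) about the vertical axis
    so that column `sun_col` lands at the horizontal center.

    In the equirectangular format a pure azimuthal rotation is a circular
    shift of columns, so no interpolation is needed.
    """
    width = pano.shape[1]
    shift = width // 2 - sun_col
    return np.roll(pano, shift, axis=1)
```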

[0056] The convolutional neural network can have a path from a latent vector (e.g., z) to two fully connected (FC) layers that can estimate the sun elevation for the input LDR panorama image. Advantageously, estimating the sun elevation can add robustness to the convolutional neural network in estimating LM lighting parameters. Another path from the latent vector (e.g., z) can connect to an unsupervised domain adaptation branch. Advantageously, having a domain adaptation branch in the convolutional neural network can help the network generalize to real data. A further path from the latent vector (e.g., z) can be added that predicts the LM parameters (e.g., based on the LM sun model and the LM sky model). In this way, the network can learn to estimate the sun and sky colors, the sun shape, and the sky turbidity from the latent vector. This path can have a structure of two consecutive FC layers with sizes of 512 and 25 neurons, where the output layer has 9 neurons corresponding to the nine LM lighting parameters.

[0057] In one embodiment, parameter estimate component 212 can be used to train the panoramic lighting parameter neural network. In particular, parameter estimate component 212 may select a training LDR panorama image for use in training the panoramic lighting parameter neural network. Such a training LDR panorama image can be an LDR panoramic image generated by the panorama image component 210. From a training LDR panorama image, the panoramic lighting parameter neural network can estimate LM lighting parameters.

[0058] Parameter estimate component 212 can construct an estimated HDR panorama image from the LDR panorama image. In particular, a latent vector generated by the panoramic lighting parameter neural network (e.g., z) can be used to generate an estimated HDR panorama image using a decoder network. In addition, the estimated LM lighting parameters generated by the panoramic lighting parameter neural network can be used to generate an estimated HDR panorama image. During training of the panoramic lighting parameter neural network, the estimated HDR panorama image may be compared to the corresponding ground-truth HDR panorama image to determine errors. The estimated HDR panorama image can also be multiplied with a pre-computed transport matrix of a synthetic scene and then compared with the corresponding ground-truth HDR panorama image multiplied with the same transport matrix to determine errors. In addition, the estimated sun elevation can be compared with a ground-truth sun elevation to determine errors. Based on such comparisons, parameter estimate component 212 may adjust or modify the panoramic lighting parameter neural network so that it performs more accurately on real LDR panorama images. The process of training the panoramic lighting parameter neural network is discussed further with respect to FIGS. 3 and 4.
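The three error terms listed above (panorama error, transport-matrix render error, and sun elevation error) can be combined into a single training objective. This sketch assumes mean squared error for every term and user-chosen weights; both the metric and the weights are hypothetical, as is the name `panorama_training_loss`. HDR panoramas are often compared in log space to tame the sun's dynamic range, but that is not shown here.

```python
import numpy as np


def panorama_training_loss(est_hdr, gt_hdr, transport, est_elev, gt_elev,
                           w_pano=1.0, w_render=1.0, w_elev=1.0):
    """Hypothetical weighted sum of the panoramic network's error terms.

    est_hdr, gt_hdr: (E, 3) HDR panoramas flattened to E texels.
    transport:       (P, E) pre-computed transport matrix of a synthetic scene.
    est_elev, gt_elev: sun elevation in radians.
    """
    pano_err = np.mean((est_hdr - gt_hdr) ** 2)
    # Multiply both panoramas with the same transport matrix, then compare.
    render_err = np.mean((transport @ est_hdr - transport @ gt_hdr) ** 2)
    elev_err = (est_elev - gt_elev) ** 2
    return float(w_pano * pano_err + w_render * render_err + w_elev * elev_err)
```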

[0059] Parameter estimate component 212 can also generate rendered scenes using the LM lighting parameters. In particular, the estimated LM lighting parameters generated by the panoramic lighting parameter neural network can be used to generate an estimated render scene. For instance, the estimated LM lighting parameters can be used to generate separate HDR environment maps based on the LM sun model and the LM sky model. These environment maps can then be used to render a scene that can be used to determine error in the panoramic lighting parameter neural network. The scene can be generated using a pre-computed transport matrix; for instance, the transport matrix can be used to render a scene at 64×64 resolution. This estimated render scene may be compared to the corresponding ground-truth render scene to determine errors. Based on such comparisons, parameter estimate component 212 may adjust or modify the panoramic lighting parameter neural network so that it performs more accurately on real LDR panorama images.

[0060] Upon completion of training, the panoramic lighting parameter neural network of the lighting estimation neural network system can estimate HDR lighting for LDR panorama images input into the system. The HDR lighting can be based on LM lighting parameters. HDR lighting estimation may be performed using parameter estimate component 212. The method of estimating HDR lighting from input LDR panorama images may be similar to the process described for training the neural network system; however, at execution time the network is not evaluated for error or updated. Accordingly, although the HDR lighting parameters for the input LDR panorama images may be unknown, the trained neural network system can nevertheless estimate HDR lighting for the images.

[0061] In embodiments, parameter estimate component 212 may run a trained panoramic lighting parameter neural network of the lighting estimation neural network system to estimate HDR lighting parameters for an input LDR panorama image. The input LDR panorama image may be received from a user at a user device. The user may select or input an image in any available manner. For example, a user may take a picture using a camera on a device, for example, user device 102a-102n and/or user device 114 of FIGS. 1A-1B. As another example, a user may select a desired image from a repository stored in a data store accessible by a network or stored locally at the user device 102a-102n and/or user device 114 of FIGS. 1A-1B. In other embodiments, a user can input the image by inputting a link or URL to an image. Based on the input LDR panorama image, HDR lighting parameters can be estimated by parameter estimate component 212.

[0062] The HDR lighting parameters may be provided directly to a user via a user device, for example, user device 102a-102n and/or user device 114. In other aspects, the HDR lighting parameters can be used to automatically adjust lighting of a selected image or object within an image to reflect the HDR lighting parameters estimated from the input LDR panorama image.

[0063] In one embodiment, parameter estimate component 212 can be used to estimate LM lighting parameters for a dataset of LDR images that can be used to train a standard image lighting parameter neural network. For instance, the dataset (e.g., the SUN360 database) can be run through the trained panoramic lighting parameter neural network. For the LDR images in the dataset, the trained panoramic lighting parameter neural network can estimate sun and sky LM parameters (e.g., q̂_sun and q̂_sky) and the sun position (e.g., I_sun). The sun LM parameters can be based on sun color and sun shape (e.g., {w_sun, β, κ}). The sky LM parameters can be based on sky color and turbidity (e.g., {w_sky, t}). Sun position can be estimated by finding the center of mass of the largest saturated region in the sky.
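The sun-position heuristic above (center of mass of the largest saturated sky region) can be sketched on an equirectangular LDR panorama. Several details are assumptions rather than the disclosed method: "saturated" means a maximum channel value at or above a threshold, "the sky" is approximated by the upper half of the panorama, regions are 4-connected with horizontal wrap-around, and the azimuth of the centroid uses a circular mean so regions crossing the 360-degree seam are handled. The function name is hypothetical.

```python
import numpy as np
from collections import deque


def estimate_sun_position(ldr, threshold=0.99):
    """Estimate (azimuth, elevation) in radians from an equirectangular LDR
    panorama shaped (H, W) or (H, W, C) with values in [0, 1].

    Returns None when no saturated sky pixel exists.
    """
    gray = ldr.max(axis=2) if ldr.ndim == 3 else ldr
    h, w = gray.shape
    mask = gray >= threshold
    mask[h // 2:, :] = False  # assumption: sky = rows above the horizon

    visited = np.zeros((h, w), dtype=bool)
    largest = []
    for r0, c0 in zip(*np.nonzero(mask)):
        if visited[r0, c0]:
            continue
        # Breadth-first flood fill of one 4-connected saturated region.
        visited[r0, c0] = True
        queue, region = deque([(r0, c0)]), []
        while queue:
            r, c = queue.popleft()
            region.append((r, c))
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                rr, cc = r + dr, (c + dc) % w  # columns wrap around 360 degrees
                if 0 <= rr < h and mask[rr, cc] and not visited[rr, cc]:
                    visited[rr, cc] = True
                    queue.append((rr, cc))
        if len(region) > len(largest):
            largest = region

    if not largest:
        return None
    rows = np.array([p[0] for p in largest], dtype=float)
    cols = np.array([p[1] for p in largest], dtype=float)
    # Pixel centers -> spherical angles for an equirectangular layout.
    azimuths = (cols + 0.5) / w * 2.0 * np.pi - np.pi
    elevations = np.pi / 2.0 - (rows + 0.5) / h * np.pi
    # Circular mean of azimuth; arithmetic mean of elevation.
    azimuth = float(np.arctan2(np.sin(azimuths).mean(), np.cos(azimuths).mean()))
    return azimuth, float(elevations.mean())
```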

[0064] Standard image lighting parameter engine 208 may be used to train the standard image lighting parameter neural network to estimate HDR lighting parameters for an LDR standard image. As depicted in FIG. 2, standard image lighting parameter engine 208 includes standard image component 214 and parameter learning component 216. The foregoing components of standard image lighting parameter engine 208 can be implemented, for example, in operating environment 100 of FIG. 1A and/or operating environment 112 of FIG. 1B. In particular, these components may be integrated into any suitable combination of user devices 102a and 102b through 102n and server(s) 108 and/or user device 114. While the various components are depicted as separate components, it should be appreciated that a single component can perform the functionality of all components. Additionally, in implementations, the functionality of the components can be performed using additional components and/or engines. Further, it should be appreciated that the functionality of the components can be provided by an engine separate from the standard image lighting parameter engine. Although these components are illustrated separately, it can be appreciated that the functionality described in association therewith can be performed by any number of components.

[0065] Standard image component 214 can generally be used to generate and/or modify any image utilized in relation to the standard image lighting parameter neural network. Images generated by the standard image component can include HDR panorama images, LDR panorama images, LDR standard images, ground-truth render scenes, and estimated render scenes.

[0066] In implementations, the standard image component 214 can generate LDR standard images from LDR panorama images. For instance, standard image component 214 can take an LDR panorama image (e.g., from the SUN360 database) and crop the LDR panorama image to generate LDR standard images (e.g., seven limited field-of-view images). The LM lighting parameters estimated for such an LDR panorama image by the trained panoramic lighting parameter neural network can be used as ground truth during training of the standard image lighting parameter neural network.
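Cropping limited field-of-view images from an equirectangular panorama amounts to a perspective (gnomonic) reprojection. The sketch below is one way to do it, assuming a pinhole camera with zero pitch, a 60-degree horizontal field of view, nearest-neighbor sampling, and seven evenly spaced yaw angles; the actual crop layout, field of view, and output size are not specified in the text, and the function names are hypothetical. The angle convention matches a panorama whose columns span azimuth [-π, π) and whose rows span elevation from +π/2 (top) to -π/2 (bottom).

```python
import numpy as np


def crop_perspective(pano, yaw, fov=np.deg2rad(60.0), out_hw=(96, 128)):
    """Nearest-neighbor perspective crop of an equirectangular panorama
    (H, W, C) for a camera with the given yaw (radians), zero pitch,
    and horizontal field of view `fov`."""
    H, W = pano.shape[:2]
    h, w = out_hw
    focal = (w / 2.0) / np.tan(fov / 2.0)
    jj, ii = np.meshgrid(np.arange(w), np.arange(h))  # column, row indices
    # Camera-space ray directions through pixel centers (z points forward).
    x = (jj + 0.5 - w / 2.0) / focal
    y = (h / 2.0 - (ii + 0.5)) / focal
    z = np.ones_like(x)
    # Rotate rays about the vertical axis by the yaw angle.
    dx = x * np.cos(yaw) + z * np.sin(yaw)
    dz = -x * np.sin(yaw) + z * np.cos(yaw)
    azimuth = np.arctan2(dx, dz)
    elevation = np.arctan2(y, np.hypot(dx, dz))
    # Spherical angles -> panorama pixel indices (wrap columns, clamp rows).
    cols = np.round((azimuth + np.pi) / (2 * np.pi) * W - 0.5).astype(int) % W
    rows = np.clip(np.round((np.pi / 2 - elevation) / np.pi * H - 0.5).astype(int), 0, H - 1)
    return pano[rows, cols]


def seven_crops(pano, **kwargs):
    """Seven crops at evenly spaced yaw angles (a hypothetical layout)."""
    yaws = np.linspace(-np.pi, np.pi, 7, endpoint=False)
    return [crop_perspective(pano, yaw, **kwargs) for yaw in yaws]
```

Bilinear sampling would reduce aliasing; nearest-neighbor is used only to keep the sketch short.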

[0067] Parameter learning component 216 can be used to run a standard image lighting parameter neural network 220. The standard image lighting parameter neural network can generally use a convolutional neural network architecture. In particular, a standard LDR image can be input into the convolutional neural network, which can estimate the LM lighting parameters from the standard LDR image (e.g., I_sun, q̂_sun, and q̂_sky). The architecture of the convolutional neural network can be comprised of five convolutional layers followed by two consecutive FC layers. Each convolutional layer can be followed by a sub-sampling step, batch normalization, and an ELU activation function. A sun position branch of the convolutional neural network can output a probability distribution over a discretized sun position. For sun position, 64 bins can be used for azimuth and 16 bins for elevation.
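The discretized sun position above (64 azimuth bins by 16 elevation bins) can be illustrated with a bin encoder and decoder. The bin counts come from the text; the angular ranges are assumptions (azimuth over [-π, π), elevation over [0, π/2], i.e., the sun above the horizon), as are the flat row-major bin layout and the function names. Decoding picks the most probable bin after a softmax over the branch's logits.

```python
import numpy as np

N_AZ, N_EL = 64, 16  # azimuth and elevation bin counts stated in the text


def sun_position_to_bin(azimuth, elevation):
    """Map a sun direction to a flat bin index (elevation-major layout).

    Assumes azimuth in [-pi, pi) and elevation in [0, pi/2].
    """
    a = int(np.floor((azimuth + np.pi) / (2 * np.pi) * N_AZ)) % N_AZ
    e = min(max(int(np.floor(elevation / (np.pi / 2) * N_EL)), 0), N_EL - 1)
    return e * N_AZ + a


def bin_to_sun_position(index):
    """Return (azimuth, elevation) at the center of a flat bin index."""
    e, a = divmod(index, N_AZ)
    azimuth = (a + 0.5) / N_AZ * 2 * np.pi - np.pi
    elevation = (e + 0.5) / N_EL * (np.pi / 2)
    return azimuth, elevation


def decode_sun_position(logits):
    """Softmax over N_EL * N_AZ logits, then take the most likely bin."""
    probs = np.exp(logits - logits.max())  # subtract max for numerical stability
    probs /= probs.sum()
    return bin_to_sun_position(int(np.argmax(probs))), probs
```

A distribution over bins, rather than a direct regression of angles, lets the network express uncertainty (e.g., several plausible sun directions under overcast skies).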

[0068] Parameter learning component 216 can be used to train the standard image lighting parameter neural network. In particular, parameter learning component 216 can select a training LDR standard image for training the standard image lighting parameter neural network. Such a training LDR standard image can be a standard image generated by the standard image component 214. From a training LDR standard image, the standard image lighting parameter neural network may output LM lighting parameters. The LM lighting parameters can be based on sun and sky LM lighting parameter models.

[0069] Parameter learning component 216 can then generate a rendered scene using the LM lighting parameters. In particular, the estimated LM lighting parameters generated by the standard image lighting parameter neural network can be used to generate an estimated render scene. In some implementations, this estimated render scene may be compared to the corresponding ground-truth render scene to determine errors. In some other implementations, this estimated render scene may be evaluated for realism to determine errors. Based on such comparisons, parameter learning component 216 may adjust or modify the standard image lighting parameter neural network so that it performs more accurately on real LDR standard images. The process of training the standard image lighting parameter neural network is discussed further with respect to FIGS. 3 and 5.

[0070] The standard image lighting parameter neural network of the lighting estimation neural network system may be used to estimate HDR lighting for LDR standard images input into the system. The HDR lighting can be based on LM lighting parameters. HDR lighting estimation may be performed using parameter learning component 216. The method of estimating LM lighting parameters from input LDR standard images may be similar to the process described for training the standard image lighting parameter neural network; however, at execution time the network is not evaluated for error or updated. Accordingly, although the LM lighting parameters for the input LDR standard images may be unknown, the trained standard image lighting parameter neural network can nevertheless estimate LM lighting for the images.

[0071] In embodiments, parameter learning component 216 may run a trained standard image lighting parameter neural network of the lighting estimation neural network system to estimate LM lighting parameters for an input LDR standard image. The input LDR standard image may be received from a user at a user device. The user may select or input an image in any available manner. For example, a user may take a picture using a camera on a device such as user device 102a-102n and/or user device 114 of FIGS. 1A-1B. As another example, a user may select a desired image from a repository stored in a data store accessible by a network or stored locally at user device 102a-102n and/or user device 114 of FIGS. 1A-1B. In other embodiments, a user can input the image by inputting a link or URL to an image. Based on the input LDR standard image, LM lighting parameters can be estimated by parameter learning component 216.

[0072] The determined LM lighting parameters may be provided directly to a user via a user device, for example, user device 102a-102n and/or user device 114. In other aspects, the LM lighting parameters can be used to automatically adjust lighting of a selected image or object within an image to reflect the HDR lighting parameters estimated from the input LDR standard image.
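As a hedged illustration of using estimated lighting to adjust an object inserted into an image, the sketch below shades a single surface point from an estimated sun position and colors. It assumes a y-up coordinate frame with azimuth measured from +z toward +x, a unit-length surface normal, a purely diffuse (Lambertian) surface, and a constant ambient color standing in for the sky; `sun_direction` and `shade_lambertian` are hypothetical helpers, and a full renderer would instead integrate the complete estimated sky and sun model.

```python
import math

Vec3 = tuple[float, float, float]


def sun_direction(elevation: float, azimuth: float) -> Vec3:
    """Unit vector pointing toward the sun (y up, azimuth from +z toward +x; assumed convention)."""
    c = math.cos(elevation)
    return (c * math.sin(azimuth), math.sin(elevation), c * math.cos(azimuth))


def shade_lambertian(normal: Vec3, albedo: Vec3, sun_rgb: Vec3, sky_rgb: Vec3,
                     elevation: float, azimuth: float) -> Vec3:
    """Diffuse RGB shading: albedo * (sun_rgb * max(0, n . l) + sky_rgb)."""
    l = sun_direction(elevation, azimuth)
    n_dot_l = max(0.0, sum(n * s for n, s in zip(normal, l)))
    return tuple(a * (s * n_dot_l + k) for a, s, k in zip(albedo, sun_rgb, sky_rgb))


# An upward-facing grey point under an overhead sun, then with the sun just below the horizon.
up = (0.0, 1.0, 0.0)
grey = (0.5, 0.5, 0.5)
print(shade_lambertian(up, grey, (10.0, 9.0, 8.0), (1.0, 1.0, 1.0), math.pi / 2, 0.0))
print(shade_lambertian(up, grey, (10.0, 9.0, 8.0), (1.0, 1.0, 1.0), -0.1, 0.0))
```

When the sun drops below the horizon, the clamped cosine term goes to zero and only the ambient sky contribution remains.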

[0073] With reference to FIG. 3, a process flow is provided showing an embodiment of method 300 for training and/or running a lighting estimation system, in accordance with embodiments of the present disclosure. Such a lighting estimation system can be implemented using, for example, a lighting estimation neural network system. The lighting estimation neural network system can comprise a panoramic lighting parameter neural network and a standard image lighting parameter neural network. Aspects of method 300 can be performed, for example, by panoramic lighting parameter engine 206 and/or standard image lighting parameter engine 208, as discussed with reference to FIG. 2.

[0074] At block 302, data can be received. In some embodiments, the data can be received from an online repository. In other embodiments, the data can be received from a local system. Such received data can be selected or input into the lighting estimation neural network system in any manner (e.g., by a user). For example, a user can access one or more stored images on a device (e.g., in a photo library) and/or select an image from remote devices and/or applications for import into the lighting estimation neural network system.

[0075] In some instances, received data can be data used to train the lighting estimation neural network system. Such data can include datasets for training the panoramic lighting parameter neural network. One dataset used for training the panoramic lighting parameter neural network can include over 44,000 synthetic HDR panoramas created by lighting a virtual three-dimensional city model (e.g., from the Unity Store) with over 9,500 HDR sky panoramas (e.g., from the Laval HDR sky database). Another dataset used for training the panoramic lighting parameter neural network can contain around 150 daytime outdoor panoramas from a database of HDR outdoor panoramas.

[0076] LDR datasets can also be used for training the panoramic lighting parameter neural network. For instance, an LDR dataset of over 19,500 LDR panorama images (e.g., from the SUN360 dataset) can be used for training the panoramic lighting parameter neural network. Further, another LDR dataset of almost 5,000 images from a real-world map (e.g., Google Street View) can be used for training the panoramic lighting parameter neural network. Such LDR datasets can be used for domain adaptation (e.g., via a domain adaptation loss) when training the panoramic lighting parameter neural network. The data can also include datasets for training the standard image lighting parameter neural network. For instance, the LDR dataset of over 19,500 LDR panorama images (e.g., from the SUN360 dataset) can be used for training the standard image lighting parameter neural network. In particular, the LDR panorama images can be cropped into LDR standard images (e.g., seven standard images for each panorama image). Data associated with such an LDR dataset can also be received, including ground-truth LM lighting parameters for the LDR dataset. These ground-truth LM lighting parameters can be estimated using, for example, the trained panoramic lighting parameter neural network.
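Cropping each LDR panorama into several standard images (e.g., seven) can be sketched as follows. This is a minimal illustration under stated assumptions, not the disclosed procedure: it assumes an equirectangular panorama stored as a list of rows, seven crops at evenly spaced yaw angles on the horizon, a pinhole camera with a hypothetical 60-degree horizontal field of view, and nearest-neighbor sampling. The function names `crop_yaws`, `crop_pixel_to_pano`, and `crop_panorama` are hypothetical.

```python
import math


def crop_yaws(n: int = 7) -> list[float]:
    """Evenly spaced camera yaw angles (radians) around the horizon."""
    return [2.0 * math.pi * k / n for k in range(n)]


def crop_pixel_to_pano(x: int, y: int, width: int, height: int, hfov: float,
                       yaw: float, pano_w: int, pano_h: int) -> tuple[float, float]:
    """Map crop pixel (x, y) to continuous equirectangular coordinates (u, v)."""
    f = (width / 2.0) / math.tan(hfov / 2.0)  # pinhole focal length in pixels
    # Camera-space ray through the pixel center: x right, y up, z forward.
    dx = (x + 0.5) - width / 2.0
    dy = height / 2.0 - (y + 0.5)
    dz = f
    # Rotate the ray about the vertical axis by the crop's yaw (y is unchanged).
    wx = dx * math.cos(yaw) + dz * math.sin(yaw)
    wz = -dx * math.sin(yaw) + dz * math.cos(yaw)
    lon = math.atan2(wx, wz)                  # longitude in (-pi, pi]
    lat = math.atan2(dy, math.hypot(wx, wz))  # latitude in [-pi/2, pi/2]
    u = (lon / (2.0 * math.pi) + 0.5) * pano_w
    v = (0.5 - lat / math.pi) * pano_h
    return u, v


def crop_panorama(pano: list[list[float]], width: int, height: int,
                  hfov: float = math.radians(60.0), n: int = 7) -> list[list[list[float]]]:
    """Cut n perspective crops from an equirectangular panorama (nearest-neighbor sampling)."""
    pano_h, pano_w = len(pano), len(pano[0])
    crops = []
    for yaw in crop_yaws(n):
        crop = []
        for y in range(height):
            row = []
            for x in range(width):
                u, v = crop_pixel_to_pano(x, y, width, height, hfov, yaw, pano_w, pano_h)
                col = math.floor(u) % pano_w                  # wrap around horizontally
                r = min(max(math.floor(v), 0), pano_h - 1)    # clamp vertically
                row.append(pano[r][col])
            crop.append(row)
        crops.append(crop)
    return crops


# Toy panorama whose pixel value equals its column index.
pano = [[float(c) for c in range(200)] for _ in range(100)]
crops = crop_panorama(pano, width=5, height=5)
print(len(crops), crops[0][2][2])
```

Using the column index as the pixel value makes it easy to see which longitude each crop is looking at; the first crop's center samples the panorama's center column.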

[0077] At block 304, a type of LDR image can be determined. The LDR image can be a panorama image or a standard image. When the LDR image is a panorama image, the method proceeds to block 306. When the LDR image is a standard image, the method proceeds to block 308. In embodiments related to training, the lighting estimation neural network system can first be trained using LDR panorama images (e.g., to train the panoramic lighting parameter neural network) and then the lighting estimation neural network system can be trained using LDR standard images (e.g., to train the standard image lighting parameter neural network).
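Block 304 states only that the image type selects the branch. The sketch below assumes, purely for illustration, that an image is treated as a panorama when it has the 2:1 aspect ratio typical of equirectangular panoramas; `is_panorama`, `estimate_lighting`, and the stub networks are hypothetical and stand in for the panoramic and standard image lighting parameter neural networks.

```python
from typing import Callable, Sequence

# A lighting estimator takes an image (rows of pixel values) and returns lighting parameters.
Estimator = Callable[[Sequence[Sequence[float]]], dict]


def is_panorama(width: int, height: int, rel_tol: float = 0.01) -> bool:
    """Assumed heuristic: equirectangular panoramas have a 2:1 aspect ratio."""
    return abs(width / height - 2.0) <= 2.0 * rel_tol


def estimate_lighting(image: Sequence[Sequence[float]],
                      panoramic_net: Estimator, standard_net: Estimator) -> dict:
    """Route an LDR image to block 306 (panorama) or block 308 (standard image)."""
    height, width = len(image), len(image[0])
    net = panoramic_net if is_panorama(width, height) else standard_net
    return net(image)


# Stub networks that only report which branch was taken.
pano_stub = lambda img: {"block": 306}
std_stub = lambda img: {"block": 308}
print(estimate_lighting([[0.0] * 8 for _ in range(4)], pano_stub, std_stub))
print(estimate_lighting([[0.0] * 4 for _ in range(3)], pano_stub, std_stub))
```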

[0078] At block 306, a panoramic lighting parameter neural network of the lighting estimation neural network system can be run using data. The data can be, for example, the data received at block 302. In an embodiment where the lighting estimation neural network system is undergoing training, the data can be data for training the system (e.g., images and ground-truth lighting information). In an embodiment where a trained lighting estimation neural network system is being implemented, the data can be LDR images (e.g., panorama images). For instance, the data can be an LDR panorama image input into the panoramic lighting parameter neural network to estimate LM lighting parameters. Such estimated lighting parameters can be LM lighting parameters including: sky color, turbidity, sun color, shape of the sun, and the sun position. For example, such estimated lighting parameters can be extrapolated from a latent representation of the panoramic lighting parameter neural network. Such a latent representation can be a latent vector (e.g., z).
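The "shape of the sun" parameters can be made concrete under an assumption: if LM denotes the Lalonde-Matthews outdoor illumination model, its sun term is commonly written as f_sun(l) = w_sun * exp(-beta * exp(-kappa / gamma_l)), where gamma_l is the angle between direction l and the sun position, w_sun is the sun color, and beta and kappa are the scattering parameters (β and κ) referred to in [0085]. The sketch below evaluates only this sun term; the sky term, which depends on sky color and turbidity, is omitted, and the function names are hypothetical.

```python
import math

Vec3 = tuple[float, float, float]


def angle_between(a: Vec3, b: Vec3) -> float:
    """Angle in radians between two (not necessarily unit) direction vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return math.acos(max(-1.0, min(1.0, dot / norm)))


def lm_sun_radiance(direction: Vec3, sun_dir: Vec3, w_sun: Vec3,
                    beta: float, kappa: float) -> Vec3:
    """Assumed LM sun term: w_sun * exp(-beta * exp(-kappa / gamma)), per RGB channel."""
    gamma = angle_between(direction, sun_dir)
    if gamma == 0.0:
        # Limit as gamma -> 0+ (for kappa > 0): exp(-kappa / gamma) -> 0, so the falloff -> 1.
        falloff = 1.0
    else:
        falloff = math.exp(-beta * math.exp(-kappa / gamma))
    return tuple(c * falloff for c in w_sun)


sun = (0.0, 1.0, 0.0)
for angle in (0.0, 0.05, 0.2):
    d = (math.sin(angle), math.cos(angle), 0.0)
    print(angle, lm_sun_radiance(d, sun, (100.0, 90.0, 80.0), beta=10.0, kappa=0.05))
```

Radiance equals the sun color at the sun's center and falls off with angular distance; larger beta or smaller kappa makes the falloff sharper, which is how these parameters encode the apparent shape of the sun.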

[0079] In embodiments where the panoramic lighting parameter neural network is undergoing training, an image from training data can be input such that the panoramic lighting parameter neural network estimates the LM lighting parameters at block 306. In such embodiments, the method can then proceed to block 310. At block 310, the panoramic lighting parameter neural network of the lighting estimation neural network system can be updated using determined error. Errors based on the output (e.g., determined lighting parameters) can be fed back through the panoramic lighting parameter neural network to appropriately train the network.

[0080] Error can be based on a variety of losses. Loss can be based on comparing an output from the panoramic lighting parameter neural network with a known ground-truth. In particular, the estimated LM lighting parameters generated by the panoramic lighting parameter neural network can be used to generate an estimated HDR panorama image (e.g., having the estimated LM lighting parameters). This estimated HDR panorama image may be compared to a corresponding ground-truth HDR panorama image to calculate loss (e.g., panorama loss). In addition, the estimated sun elevation can be compared with a ground-truth sun elevation to calculate loss (e.g., sun elevation loss). The estimated HDR panorama image can also be multiplied with a pre-computed transport matrix of a synthetic scene and then compared with the corresponding ground-truth HDR panorama image multiplied with the pre-computed transport matrix of the synthetic scene to calculate loss (e.g., render loss).

[0081] Further, the estimated LM lighting parameters can be compared with the ground-truth lighting parameters by applying both sets of lighting parameters to a scene to calculate loss. The rendered scenes can then be compared to determine the accuracy of the estimated LM lighting parameters. Such rendered scenes can include a rendered scene based on estimated LM sky parameters, a rendered scene based on estimated LM sun parameters, and a rendered scene based on the combination of estimated LM sky parameters and estimated LM sun parameters. Errors determined from comparing the scene(s) rendered with the estimated LM lighting parameters with the scene(s) rendered with the ground-truth lighting parameters can be used to update the panoramic lighting parameter neural network.
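The panoramic training losses described in [0080] and [0081] can be sketched with a linear light-transport model. The code assumes single-channel panoramas flattened into lists, a precomputed transport matrix `T` with one row per rendered pixel of a synthetic scene, mean squared error for every term, and unit weights by default; the function names, the use of MSE, and the weighting are assumptions rather than details from the disclosure. Because rendering with `T` is linear, the scene lit by combined sky and sun equals the sum of the sky-only and sun-only renders, so in this sketch the combined render doubles as the render loss.

```python
from typing import Optional

Vector = list[float]
Matrix = list[list[float]]


def mat_vec(T: Matrix, x: Vector) -> Vector:
    """Render a scene: each output pixel is a transport-weighted sum of panorama pixels."""
    return [sum(t * v for t, v in zip(row, x)) for row in T]


def mse(a: Vector, b: Vector) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def panoramic_losses(est_sky: Vector, est_sun: Vector, gt_sky: Vector, gt_sun: Vector,
                     est_elev: float, gt_elev: float, T: Matrix) -> dict[str, float]:
    """Hypothetical per-term losses for the panoramic lighting parameter network."""
    est_pano = [s + u for s, u in zip(est_sky, est_sun)]  # HDR panorama = sky + sun terms
    gt_pano = [s + u for s, u in zip(gt_sky, gt_sun)]
    return {
        "panorama": mse(est_pano, gt_pano),
        "sun_elevation": (est_elev - gt_elev) ** 2,
        "render": mse(mat_vec(T, est_pano), mat_vec(T, gt_pano)),    # sky + sun combined
        "render_sky": mse(mat_vec(T, est_sky), mat_vec(T, gt_sky)),  # sky-only scene
        "render_sun": mse(mat_vec(T, est_sun), mat_vec(T, gt_sun)),  # sun-only scene
    }


def total_loss(losses: dict[str, float], weights: Optional[dict[str, float]] = None) -> float:
    """Weighted sum of the per-term losses (missing weights default to 1.0)."""
    weights = weights or {}
    return sum(weights.get(name, 1.0) * value for name, value in losses.items())


T = [[1.0, 0.0], [0.5, 0.5]]
losses = panoramic_losses([1.0, 1.0], [0.0, 2.0], [1.0, 1.0], [0.0, 0.0], 0.5, 0.3, T)
print(losses)
print(total_loss(losses))
```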

[0082] In some embodiments, upon completion of training of the lighting estimation neural network system, the system can be utilized to output estimated LM lighting parameters for an image at block 306 (for panoramic images). For instance, upon receiving an image, the trained lighting estimation neural network system can be run to determine lighting parameters for an LDR panorama image.

[0083] At block 308, a standard image lighting parameter neural network of a lighting estimation neural network system can be run using data. The data can be, for example, the data received at block 302. In an embodiment where the lighting estimation neural network system is undergoing training, the data can be data for training the system (e.g., images and ground-truth LM lighting information). In an embodiment where a trained lighting estimation neural network system is being implemented, the data can be LDR images (e.g., standard images). For instance, the data can be an LDR image input into the standard image lighting parameter neural network to determine LM lighting parameters. To run the lighting estimation neural network system during training, an image from training data can be input such that the standard image lighting parameter neural network learns to estimate lighting parameters at block 308. Such lighting parameters can be LM lighting parameters including: sky color, turbidity, sun color, shape of the sun, and the sun position. For example, such estimated lighting parameters can be extrapolated from a latent representation of the standard image lighting parameter neural network. Such a latent representation can be a latent vector (e.g., z).

[0084] In embodiments where the standard image lighting parameter neural network is undergoing training, the method can proceed to block 310. At block 310, the standard image lighting parameter neural network of the lighting estimation neural network system can be updated using determined error. Errors in the output (e.g., determined lighting parameters) can be fed back through the standard image lighting parameter neural network of the lighting estimation neural network system to appropriately train the network.

[0085] Error can be based on a variety of losses. Loss can be based on comparing an output from the standard image lighting parameter neural network with a known ground-truth. In particular, the LM sky color lighting parameter determined by the standard image lighting parameter neural network can be compared to a corresponding ground-truth LM sky color lighting parameter to calculate loss (e.g., sky loss). In addition, the LM global scattering parameter determined by the standard image lighting parameter neural network can be compared to a corresponding ground-truth LM global scattering parameter to calculate loss (e.g., sun loss). The LM scattering of the global scattering parameter (i.e., β) determined by the standard image lighting parameter neural network can be compared to a corresponding ground-truth LM scattering of the global scattering parameter to calculate loss (e.g., β loss). The LM local scattering lighting parameter (i.e., κ) determined by the standard image lighting parameter neural network can be compared to a corresponding ground-truth LM local scattering lighting parameter to calculate loss (e.g., κ loss). The LM turbidity lighting parameter (i.e., t) determined by the standard image lighting parameter neural network can be compared to a corresponding ground-truth LM turbidity lighting parameter to calculate loss (e.g., t loss).
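The per-parameter losses for the standard image lighting parameter neural network can be sketched as follows. The sketch assumes squared-error losses, RGB triples for the sky term and for the term compared by the sun loss, scalars for β, κ, and t, and unit weights by default. The `LMParams` container, its field names (`w_sky`, `w_sun`, `beta`, `kappa`, `t`), and the weighting scheme are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Union

RGB = tuple[float, float, float]


@dataclass
class LMParams:
    """Hypothetical container for the LM parameters compared during training."""
    w_sky: RGB    # sky color (sky loss)
    w_sun: RGB    # sun term (sun loss)
    beta: float   # β (β loss)
    kappa: float  # κ (κ loss)
    t: float      # turbidity (t loss)


def _sq_err(a: Union[RGB, float], b: Union[RGB, float]) -> float:
    """Mean squared error for RGB triples, squared error for scalars."""
    if isinstance(a, tuple):
        return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)
    return (a - b) ** 2


def standard_image_losses(est: LMParams, gt: LMParams) -> dict[str, float]:
    return {
        "sky": _sq_err(est.w_sky, gt.w_sky),
        "sun": _sq_err(est.w_sun, gt.w_sun),
        "beta": _sq_err(est.beta, gt.beta),
        "kappa": _sq_err(est.kappa, gt.kappa),
        "t": _sq_err(est.t, gt.t),
    }


def weighted_sum(losses: dict[str, float], weights: Optional[dict[str, float]] = None) -> float:
    """Combine the per-parameter losses; missing weights default to 1.0."""
    weights = weights or {}
    return sum(weights.get(name, 1.0) * value for name, value in losses.items())


gt = LMParams((0.8, 0.9, 1.0), (20.0, 18.0, 16.0), 2.0, 0.5, 3.0)
est = LMParams((0.8, 0.9, 1.0), (20.0, 18.0, 16.0), 2.0, 0.5, 4.0)
losses = standard_image_losses(est, gt)
print(losses)
print(weighted_sum(losses, {"t": 0.5}))
```

Keeping each term separate before weighting makes it straightforward to rebalance parameters with very different numeric ranges, such as HDR sun intensities versus turbidity.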

[0086] Further, the determined LM lighting parameters can be compared with the ground-truth LM lighting parameters by applying both sets of lighting parameters to a scene to calculate loss. The rendered scenes can then be compared to determine the accuracy of the estimated LM lighting parameters. Such rendered scenes can include a rendered scene based on determined LM sky parameters and a rendered scene based on determined LM sun parameters. Errors determined from comparing the scene(s) rendered with the estimated LM lighting parameters with the scene(s) rendered with the ground-truth lighting parameters can be used to update the standard image lighting parameter neural network.

[0087] In some embodiments, upon completion of training of the lighting estimation neural network system, the system can be utilized to output determined lighting parameters for an image at block 308 (for standard images). For instance, upon receiving an image, the trained lighting estimation neural network system can be run to determine lighting parameters for an LDR standard image.

[0088] Turning now to FIG. 4, a process flow is provided showing an embodiment of method 400 for training a panoramic lighting parameter neural network of a lighting estimation neural network system. In particular, the panoramic lighting parameter neural network can be trained to estimate HDR lighting parameters from an LDR panorama image, in accordance with embodiments of the present disclosure. Method 400 can be performed, for example, by lighting estimation system 204, as illustrated in FIG. 2.