Interactive Animated Character Head Systems And Methods

Vyas; Anisha ; et al.

U.S. patent application number 16/952899 was filed with the patent office on 2020-11-19 and published on 2021-03-11 for interactive animated character head systems and methods. The applicant listed for this patent is Universal City Studios LLC. Invention is credited to Travis Jon Cossairt, Anisha Vyas, Wei Cheng Yeh.

| Application Number | 16/952899 (Publication No. 20210072888) |


| Family ID | 1000005227255 |

| Filed Date | 2020-11-19 |

| United States Patent Application | 20210072888 |

| Kind Code | A1 |

| Vyas; Anisha ; et al. | March 11, 2021 |

INTERACTIVE ANIMATED CHARACTER HEAD SYSTEMS AND METHODS

Abstract

An interactive system includes one or more processors that are configured to receive a first signal indicative of an activity of a user within an environment and to receive a second signal indicative of the user approaching an animated character head. The one or more processors are also configured to provide information related to the activity of the user to a base station control system associated with the animated character head in response to receipt of the second signal to facilitate a personalized interaction between the animated character head and the user.

| Inventors: | Vyas; Anisha (Orlando, FL); Cossairt; Travis Jon (Celebration, FL); Yeh; Wei Cheng (Orlando, FL) |

| Applicant: | Universal City Studios LLC |

| Family ID: | 1000005227255 |

| Appl. No.: | 16/952899 |

| Filed: | November 19, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15939887 | Mar 29, 2018 | 10845975 |
| 16952899 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | A63J 19/006 20130101; G06F 3/04847 20130101; A63F 13/79 20140902; A63G 31/00 20130101; A63F 13/216 20140902; G06K 19/0723 20130101; A63F 13/24 20140902; G06K 7/10366 20130101; A63H 13/005 20130101; G06F 3/013 20130101; A63F 13/428 20140902; A63F 13/28 20140902; G08C 17/02 20130101; A63F 13/67 20140902; G08C 2201/34 20130101; A63F 13/95 20140902; H04W 4/023 20130101 |

| International Class: | G06F 3/0484 20060101 G06F003/0484; H04W 4/02 20060101 H04W004/02; G08C 17/02 20060101 G08C017/02; A63J 19/00 20060101 A63J019/00; A63F 13/79 20060101 A63F013/79; A63F 13/428 20060101 A63F013/428; A63H 13/00 20060101 A63H013/00; A63F 13/28 20060101 A63F013/28; A63F 13/95 20060101 A63F013/95; A63F 13/216 20060101 A63F013/216; A63F 13/24 20060101 A63F013/24; A63G 31/00 20060101 A63G031/00; A63F 13/67 20060101 A63F013/67 |

Claims

1. An interactive system, comprising one or more processors configured to: receive data related to prior activity of a user within an amusement park; generate an inventory of phrases based on the prior activity of the user and instruct display of the inventory of phrases at a base station control system associated with an animated character head to facilitate a personalized interaction between the animated character head and the user; and remove a phrase from the inventory of phrases to generate an updated inventory of phrases in response to the phrase being spoken by the animated character head during the personalized interaction with the user such that the phrase is not available to be spoken by the animated character head during a future personalized interaction with the user.

2. The interactive system of claim 1, wherein the prior activity of the user comprises an attraction visited by the user.

3. The interactive system of claim 1, wherein the one or more processors are configured to: receive a signal indicative of the user approaching the animated character head; and instruct display of the inventory of phrases at the base station control system in response to receiving the signal.

4. The interactive system of claim 1, wherein the data indicates that the user has exited an attraction of the amusement park, and the one or more processors are configured to: receive a signal that indicates that the user is approaching the animated character head after the user has exited the attraction of the amusement park; and instruct display of the inventory of phrases at the base station control system in response to receiving the signal, wherein at least one phrase in the inventory of phrases relates to the attraction.

5. The interactive system of claim 1, comprising an identification device positioned within an attraction of the amusement park, wherein the identification device is configured to generate the data in response to detection of an identifier supported by a wearable device of the user.

6. The interactive system of claim 5, wherein the identification device comprises a radio-frequency identification (RFID) reader, and the identifier comprises a RFID tag.

7. The interactive system of claim 1, wherein the one or more processors are configured to determine a recommended phrase in the inventory of phrases based on the prior activity, and to highlight the recommended phrase in the display of the inventory of phrases at the base station control system for visualization by a handler.

8. The interactive system of claim 1, comprising the base station control system and the animated character head, wherein the base station control system and the animated character head comprise respective audio communication devices that enable a handler operating the base station control system to verbally communicate with a performer wearing the animated character head.

9. The interactive system of claim 1, comprising the base station control system, wherein the base station control system is configured to receive, from a handler, a selection input to select the phrase and the base station control system is configured to communicate the selection input to the animated character head.

10. The interactive system of claim 9, wherein the selection input to select the phrase is configured to cause a speaker of the animated character head to output the phrase as audio.

11. An interactive system, comprising: one or more identification devices configured to detect an identifier supported by a wearable device of a user; one or more processors configured to: monitor activities of the user within an amusement park based on respective signals received from the one or more identification devices; access an inventory of phrases available to an animated character head in response to respective signals from at least one identification device of the one or more identification devices indicating that the user is approaching the animated character head; modify the inventory of phrases to generate a personalized inventory of phrases based on the monitored activities of the user; and instruct display of the personalized inventory of phrases on a display screen to facilitate a personalized interaction between the animated character head and the user.

12. The interactive system of claim 11, wherein the one or more processors are configured to: identify one or more previously spoken phrases that were previously spoken by the animated character head to the user during one or more previous interactions between the animated character head and the user; and modify the inventory of phrases to generate the personalized inventory of phrases by excluding the one or more previously spoken phrases.

13. The interactive system of claim 11, wherein the one or more processors are configured to: identify one or more previously spoken phrases that were previously spoken by the animated character head to the user during one or more previous interactions between the animated character head and the user; and instruct display of the personalized inventory of phrases with the one or more previously spoken phrases highlighted at the display screen.

14. The interactive system of claim 11, wherein the one or more identification devices comprise one or more radio-frequency identification (RFID) readers, and the identifier comprises a RFID tag.

15. The interactive system of claim 11, wherein the monitored activities comprise an achievement within an attraction of the amusement park.

16. The interactive system of claim 15, wherein the one or more processors are configured to instruct display of information that indicates the achievement within the attraction at the display screen.

17. A method, comprising: receiving, at one or more processors, a signal indicative of a user approaching an animated character head; accessing, using the one or more processors, data related to a prior activity of the user; identifying one or more previously spoken phrases that were previously spoken by the animated character head to the user during one or more previous interactions between the animated character head and the user; generating a list of phrases based on the data related to the prior activity of the user and that excludes the one or more previously spoken phrases; and instructing display of the list of phrases via a display screen to facilitate a personalized interaction between the animated character head and the user.

18. The method of claim 17, comprising receiving the signal indicative of the user approaching the animated character head from an identification device that is configured to detect an identifier supported by a wearable device of the user.

19. The method of claim 17, wherein the prior activity of the user comprises a ride attraction visited by the user.

20. The method of claim 17, comprising accessing, using the one or more processors, a preferred language of the user, wherein the list of phrases comprises one or more phrases in the preferred language of the user.
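By way of non-limiting illustration, the phrase inventory behavior recited in claims 1, 12, and 17 (generating an inventory from prior activity and excluding phrases already spoken to the same user) might be sketched as follows. The class `PhraseInventory`, the table `PHRASES_BY_ACTIVITY`, and the example phrases are hypothetical names chosen for this sketch and do not appear in the disclosure.

```python
from typing import Dict, List, Set

# Hypothetical phrases keyed by a prior activity of the user (assumed data).
PHRASES_BY_ACTIVITY: Dict[str, List[str]] = {
    "roller coaster": [
        "How was the roller coaster?",
        "You were brave on that coaster!",
    ],
    "ring toss": ["Congratulations on winning the ring toss!"],
}


class PhraseInventory:
    """Tracks which phrases have already been spoken to each user."""

    def __init__(self) -> None:
        self._spoken: Dict[str, Set[str]] = {}

    def inventory_for(self, user_id: str, prior_activities: List[str]) -> List[str]:
        """Build the list of phrases to display, skipping spoken ones."""
        spoken = self._spoken.get(user_id, set())
        phrases: List[str] = []
        for activity in prior_activities:
            for phrase in PHRASES_BY_ACTIVITY.get(activity, []):
                if phrase not in spoken and phrase not in phrases:
                    phrases.append(phrase)
        return phrases

    def mark_spoken(self, user_id: str, phrase: str) -> None:
        """Record that the phrase was spoken so it is excluded next time."""
        self._spoken.setdefault(user_id, set()).add(phrase)


inventory = PhraseInventory()
first_visit = inventory.inventory_for("guest-1", ["roller coaster"])
inventory.mark_spoken("guest-1", first_visit[0])
next_visit = inventory.inventory_for("guest-1", ["roller coaster"])
```

In this sketch the spoken phrases are tracked per user, so a phrase removed for one guest remains available for interactions with other guests.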

Description

CROSS-REFERENCE TO RELATED APPLICATION

[0001] This application is a continuation of U.S. Non-Provisional application Ser. No. 15/939,887, entitled "INTERACTIVE ANIMATED CHARACTER HEAD SYSTEMS AND METHODS," filed on Mar. 29, 2018, which is hereby incorporated by reference in its entirety for all purposes.

BACKGROUND

[0002] The present disclosure relates generally to amusement parks. More specifically, embodiments of the present disclosure relate to systems and methods utilized to provide amusement park experiences.

[0003] Amusement parks and other entertainment venues contain, among many other attractions, animated characters that interact with guests. For example, the animated characters may walk around the amusement park, provide entertainment, and speak to the guests. Certain animated characters may include a performer in a costume with an animated character head that covers the performer's face. With the increasing sophistication and complexity of attractions, and the corresponding increase in expectations among guests, more creative animated character head systems and methods are needed to provide an interactive and personalized experience for guests.

SUMMARY

[0004] Certain embodiments commensurate in scope with the originally claimed subject matter are summarized below. These embodiments are not intended to limit the scope of the disclosure, but rather these embodiments are intended only to provide a brief summary of certain disclosed embodiments. Indeed, the present disclosure may encompass a variety of forms that may be similar to or different from the embodiments set forth below.

[0005] In an embodiment, an interactive system includes one or more processors that are configured to receive a first signal indicative of an activity of a user within an environment and to receive a second signal indicative of the user approaching an animated character head. The one or more processors are also configured to provide information related to the activity of the user to a base station control system associated with the animated character head in response to receipt of the second signal to facilitate a personalized interaction between the animated character head and the user.

[0006] In an embodiment, an interactive system includes one or more identification devices configured to detect an identifier supported by a wearable device of a user. The interactive system also includes one or more processors configured to monitor activities of the user within an environment based on respective signals received from the one or more identification devices. The one or more processors are further configured to output a respective signal based on the activities of the user to an animated character head, thereby causing the animated character head to present an animation that is relevant to the activities of the user to facilitate a personalized interaction between the animated character head and the user.

[0007] In an embodiment, a method includes receiving, at one or more processors, a signal indicative of a user approaching an animated character head. The method also includes accessing, using the one or more processors, information related to a prior activity of the user. The method further includes providing, using the one or more processors, the information related to the prior activity of the user to a base station control system associated with the animated character head in response to receipt of the signal to facilitate a personalized interaction between the animated character head and the user.

BRIEF DESCRIPTION OF THE DRAWINGS

[0008] These and other features, aspects, and advantages of the present disclosure will become better understood when the following detailed description is read with reference to the accompanying drawings in which like characters represent like parts throughout the drawings, wherein:

[0009] FIG. 1 is a block diagram of an interactive system having an animated character head, in accordance with an embodiment;

[0010] FIG. 2 is a schematic view of an amusement park including the interactive system of FIG. 1, in accordance with an embodiment;

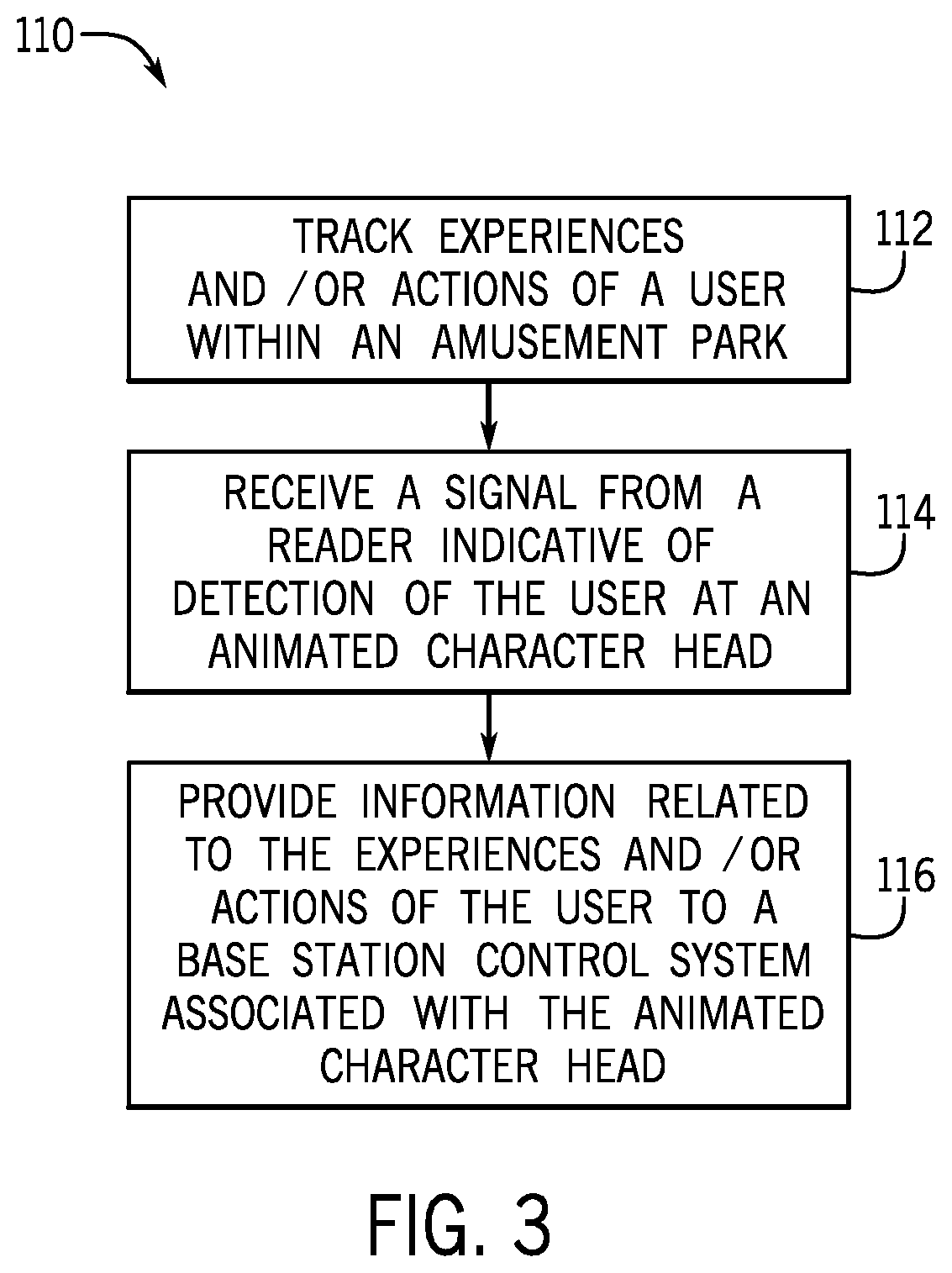

[0011] FIG. 3 is a flow diagram of a method of operating the interactive system of FIG. 1, in accordance with an embodiment; and

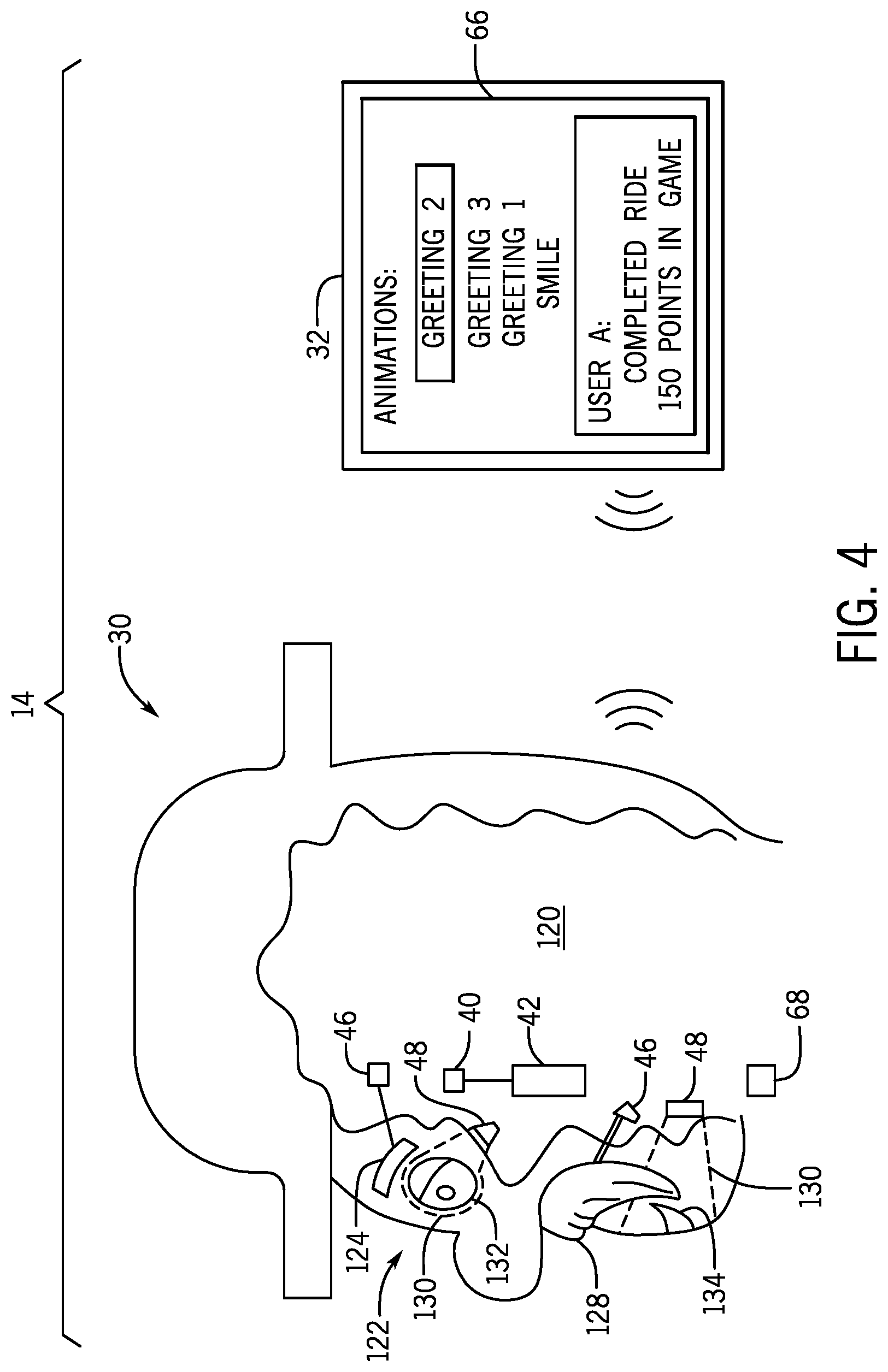

[0012] FIG. 4 is a cross-sectional side view of the animated character head and a front view of a base station control system that may be used in the interactive system of FIG. 1, in accordance with an embodiment.

DETAILED DESCRIPTION

[0013] One or more specific embodiments of the present disclosure will be described below. In an effort to provide a concise description of these embodiments, all features of an actual implementation may not be described in the specification. It should be appreciated that in the development of any such actual implementation, as in any engineering or design project, numerous implementation-specific decisions must be made to achieve the developers' specific goals, such as compliance with system-related and business-related constraints, which may vary from one implementation to another. Moreover, it should be appreciated that such a development effort might be complex and time consuming, but would nevertheless be a routine undertaking of design, fabrication, and manufacture for those of ordinary skill having the benefit of this disclosure.

[0014] Amusement parks feature a wide variety of entertainment, such as amusement park rides, games, performance shows, and animated characters. However, many of the forms of entertainment do not vary based upon a guest's previous activities (e.g., experiences and/or actions). For example, an animated character may greet each guest in a similar manner. Some guests may prefer a unique or customized interaction with the animated character that is different for each guest, different during each interaction, and/or that indicates recognition of the guest's previous activities. Accordingly, the present embodiments relate to an interactive system that monitors a guest's activities within an amusement park and provides an output to control or influence an animated character's interaction with the guest based at least in part on the guest's previous activities.

[0015] More particularly, the present disclosure relates to an interactive system that uses an identification system, such as a radio-frequency identification (RFID) system, to monitor a guest's activities within an amusement park. In an embodiment, the guest may wear or carry a device that supports an identifier, such as an RFID tag. When the guest brings the device within a range of a reader (e.g., RFID transceiver) positioned within the amusement park, the reader may detect the identifier and provide a signal to a computing system to enable the computing system to monitor and to record (e.g., in a database) the guest's activities within the amusement park. For example, the reader may be positioned at an exit of a ride (e.g., a roller coaster or other similar attraction), and the reader may detect the identifier in the device as the guest exits the ride. The reader may provide a signal to the computing system indicating that the device was detected proximate to the exit of the ride, the computing system may then determine that the guest completed the ride based on the signal, and the computing system may then store the information (e.g., that the guest completed the ride) in the database.
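As a minimal sketch of the recording step described above, the following example infers an activity from the reader that detected the identifier and stores it against that identifier. The `ActivityLog` class, the `READER_ACTIVITIES` mapping, and the reader and tag names are assumptions made for illustration; an in-memory dictionary stands in for the database.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ActivityLog:
    """In-memory stand-in for the database of guest activities."""

    records: Dict[str, List[str]] = field(default_factory=dict)

    def record(self, tag_id: str, activity: str) -> None:
        self.records.setdefault(tag_id, []).append(activity)

    def activities_for(self, tag_id: str) -> List[str]:
        return list(self.records.get(tag_id, []))


# Hypothetical mapping from a reader's location to the activity it implies.
READER_ACTIVITIES: Dict[str, str] = {
    "coaster_exit": "completed roller coaster",
}


def handle_reader_signal(log: ActivityLog, reader_id: str, tag_id: str) -> None:
    """Determine the activity implied by the reader and store it."""
    activity = READER_ACTIVITIES.get(reader_id)
    if activity is not None:
        log.record(tag_id, activity)


log = ActivityLog()
handle_reader_signal(log, "coaster_exit", "tag-001")
```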

[0016] Subsequently, the guest may visit an animated character, which may be located in another portion of the amusement park. In an embodiment, the animated character includes an animated character head worn by a performer. An additional reader (e.g., RFID transceiver) positioned proximate to the animated character (e.g., coupled to the animated character, carried by the performer, coupled to a base station control system, or coupled to a stationary structure or feature within the amusement park) may detect the identifier in the device as the guest approaches the animated character. The additional reader may provide a signal to the computing system indicating that the device was detected proximate to the animated character, the computing system may then determine that the guest is approaching the animated character based on the signal, the computing system may then access the information stored in the database, and the computing system may then provide an output to control or influence the animated character's interaction with the guest based on the information. For example, the computing system may be in communication with the base station control system, which may be a tablet or other computing device (e.g., mobile phone) operated by a handler who travels with and/or provides support to the performer wearing the animated character head. In some such cases, the computing system may provide an output to the base station control system that causes display of the information (e.g., the guest's score in a game, the rides completed by the guest) and/or a recommended interaction (e.g., congratulate the guest on winning a game) on a display screen of the base station control system. The handler may then select an appropriate phrase and/or gesture for the animated character, such as by providing an input at the base station control system (e.g., making a selection on a touch screen) that causes the animated character to speak the phrase and/or to perform the gesture. 
In an embodiment, the handler may suggest an appropriate phrase and/or gesture for the animated character, such as by speaking (e.g., via a two-way wireless communication system) to the performer wearing the animated character head.
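The approach-triggered lookup described in this paragraph might be sketched as follows, purely for illustration. The stored activities, the `recommend_interaction` rule, and the shape of the returned dictionary are hypothetical; the disclosure does not specify how a recommended interaction is chosen.

```python
from typing import Dict, List

# Hypothetical stored activities keyed by identifier (stand-in for the database).
STORED_ACTIVITIES: Dict[str, List[str]] = {
    "tag-001": ["completed roller coaster", "won ring toss game"],
}


def recommend_interaction(activities: List[str]) -> str:
    """Pick a recommended interaction using a simple assumed rule."""
    for activity in activities:
        if activity.startswith("won "):
            return "Congratulate the guest: " + activity
    if activities:
        return "Ask the guest about: " + activities[-1]
    return "Offer a general greeting"


def on_guest_approach(tag_id: str) -> Dict[str, object]:
    """Build the output sent to the base station when a guest approaches."""
    activities = STORED_ACTIVITIES.get(tag_id, [])
    return {
        "guest": tag_id,
        "activities": activities,
        "recommended": recommend_interaction(activities),
    }


payload = on_guest_approach("tag-001")
```

Here the handler would see both the raw activity list and a single recommendation, and remains free to select a different phrase or gesture.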

[0017] Each guest may have had different, respective experiences and/or carried out different, respective actions in the amusement park. For example, one guest may experience a ride, earn virtual points by playing a game, and eat at a restaurant, while another guest may experience a different ride, earn a different number of virtual points by playing the game, and eat at a different restaurant. The disclosed interactive system may enable the animated character to carry out a unique, personalized interaction with each guest by speaking or gesturing based on each guest's particular activities. To facilitate discussion, a user of the interactive system is described as being a guest at an amusement park and the interactive system is described as being implemented in the amusement park; however, it should be appreciated that the interactive system may be implemented in other environments. Furthermore, the disclosed embodiments refer to an animated character head worn by a performer; however, it should be appreciated that the interactive system may additionally or alternatively include and affect operation of other components, such as objects (e.g., cape, hat, glasses, armor, sword, button) held, worn, or carried by the performer.

[0018] With the foregoing in mind, FIG. 1 is a block diagram of an interactive system 10 that may be utilized in an environment, such as an amusement park. As illustrated in FIG. 1, the interactive system 10 includes an identification system 12 (e.g., an RFID system) and an animated character system 14. In an embodiment, the identification system 12 includes a computing system 16 (e.g., a cloud-based computing system), one or more databases 18, and one or more readers 20 (e.g., RFID readers or transceivers) that are configured to read an identifier 22 (e.g., RFID tag) supported in a wearable device 24 (e.g., wearable or portable device, such as a bracelet, necklace, charm, pin, or toy), which may be worn or carried by a user 26. As discussed in more detail below, the identification system 12 may monitor activities of the user 26 as the user 26 travels through the amusement park, and the identification system 12 may provide an output indicative of previous activities of the user 26 to the animated character system 14, thereby facilitating a unique, personalized interactive experience for the user 26.

[0019] In one embodiment, the animated character system 14 includes an animated character head 30 that may be worn by a performer and that may be configured to emit sounds (e.g., speak phrases) and/or carry out various gestures (e.g., eye blinks, jaw motions, lip shapes). The animated character system 14 may also include a base station control system 32 (e.g., remote control system) that may be operated by a handler who travels with and/or provides support to the performer wearing the animated character head 30. In an embodiment, the animated character head 30 and the base station control system 32 are communicatively coupled, such that an input by the handler at the base station control system 32 causes the animated character head 30 to emit a certain sound or perform a certain gesture.

[0020] More particularly, in one embodiment, the animated character head 30 may include a controller 34 (e.g., electronic controller) with one or more processors 36 and one or more memory devices 38. In an embodiment, the memory 38 may be configured to store instructions, data, and/or information, such as a library of animations (e.g., database of available animations, including sounds and/or gestures, and corresponding control instructions for effecting the animations) for the animated character head 30. In an embodiment, the processor 36 may be configured to receive an input (e.g., signal from the base station control system 32), to identify an appropriate animation from the library of animations (e.g., a selected animation) based on the received input, and/or to provide one or more appropriate control signals to a display 42, a speaker 44, an actuator 46, and/or a light source 48 based on the received input and/or in accordance with the selected animation. In this way, the animated character head 30 may enable the handler to control the speech and/or gestures of the animated character head 30. It should be appreciated that the library of animations may include separate sounds or small sound clips (e.g., single word, beep, buzz), separate gestures (e.g., smile, frown, eye blink), and/or combinations of multiple sounds and gestures (e.g., a greeting that includes multiple words in combination with a motion profile that includes smile and eye movements). For example, the base station control system 32 may present the handler with a selection menu of available animations for the animated character head 30, and the handler may be able to provide an input at the base station control system 32 to select a smile and then select a particular greeting. 
Subsequently, the processor 36 of the animated character head 30 may receive a signal indicative of the handler's input from the base station control system 32, access the selected animations from the library, and control the actuators 46 to effect the smile and the particular greeting.
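One way to picture the library of animations and the resulting control signals is the sketch below. The `ANIMATION_LIBRARY` contents, the component labels, and the command strings are hypothetical placeholders; the disclosure does not define a data format for control instructions.

```python
from typing import Dict, List, Tuple

# Hypothetical library: animation name -> ordered (component, command) pairs.
ANIMATION_LIBRARY: Dict[str, List[Tuple[str, str]]] = {
    "smile": [("actuator", "lips_up")],
    "greeting": [("speaker", "hello.wav"), ("actuator", "jaw_cycle")],
}


def control_signals(selected: List[str]) -> List[Tuple[str, str]]:
    """Look up each selected animation and collect its control signals in order."""
    signals: List[Tuple[str, str]] = []
    for name in selected:
        if name not in ANIMATION_LIBRARY:
            raise KeyError(f"unknown animation: {name}")
        signals.extend(ANIMATION_LIBRARY[name])
    return signals


# The handler selects a smile and then a particular greeting.
signals = control_signals(["smile", "greeting"])
```

In this sketch a combined animation such as the greeting simply expands to signals for more than one component (a speaker and an actuator), mirroring the combinations of sounds and gestures described above.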

[0021] The animated character head 30 may include various features to facilitate the techniques disclosed herein. For example, the animated character head 30 may include one or more sensors 40 that are configured to monitor the performer and/or to receive inputs from the performer. The one or more sensors 40 may include eye tracking sensors that are configured to monitor eye movement of the performer, machine vision sensors that are configured to monitor movement of the performer's face, microphones or audio sensors that are configured to receive spoken inputs or other audible inputs from the performer, physical input sensors (e.g., switch, button, motion sensors, foot controls, or wearable input device, such as a myo input, ring input, or gesture gloves) that are configured to receive a physical or manual input from the performer, or any combination thereof. The inputs may be processed by the processor 36 to select an animation from the library of animations stored in the memory 38 and/or to otherwise affect the animations presented via the animated character head 30. For example, certain inputs via the one or more sensors 40 may veto or cancel a selection made by the handler and/or certain inputs may initiate a particular animation.

[0022] The actuators 46 may be any suitable actuators, such as electromechanical actuators (e.g., linear actuator, rotary actuator). The actuators 46 may be located inside the animated character head 30 and be configured to adjust certain features or portions of the animated character head 30 (e.g., the eyes, eyebrows, cheeks, mouth, lips, ears, light features). For example, a rotary actuator may be positioned inside the animated character head 30 along the outer cusps of the lips of the animated character head 30 to cause the face of the animated character head 30 to smile in response to a control signal (e.g., from the processor 36). As a further example, the animated character head 30 may contain an electric linear actuator that drives the position of the eyebrows (e.g., to frown) of the animated character head 30 in response to a control signal (e.g., from the processor 36).

[0023] As shown, the animated character head 30 may include the light source 48, and the duration, brightness, color, and/or polarity of the light emitted from the light source 48 may be controlled based on a control signal (e.g., from the processor 36). In an embodiment, the light source 48 may be configured to project light onto a screen or other surface of the animated character head 30, such as to display a still image, a moving image (e.g., a video), or other visible representation of facial features or gestures on the animated character head 30. In some embodiments, the actuators 46 and/or the light source 48 may enable the animated character head 30 to provide any of a variety of projected facial features or gestures, animatronic facial features or gestures, or combinations thereof.

[0024] In an embodiment, the processor 36 may instruct the display 42 to show an indication of available animations (e.g., a list of animations stored in the library in the memory 38), an indication of the selected animation (e.g., selected by the processor 36 from the library in the memory 38 based on an input from the base station control system 32), and/or other information (e.g., information about the user 26, such as prior activities; recommended animations) for visualization by the performer wearing the animated character head 30. For example, in operation, the display 42 may provide a list of available animations, and the one or more sensors 40 may obtain an input from the performer (e.g., an eye tracking sensor may enable the performer to provide the input with certain eye movements) to enable the performer to scroll through the list of available animations and/or to select an animation from the list of available animations. In an embodiment, a selected animation may be shown on the display 42, and the selected animation may be confirmed, changed, modified, switched, delayed, or deleted by the performer via various inputs to the one or more sensors 40 (e.g., by speaking into a microphone or actuating a physical input sensor), thereby enabling efficient updates by the performer during interactions with guests. It should be appreciated that the performer may not have control over the selections, and thus, may not be able to input a selection or change the selection made by the handler via the one or more sensors 40, for example.

[0025] The display 42 may be utilized to provide various other information. For example, in some embodiments, a camera 50 (e.g., coupled to or physically separate from the animated character head 30) may be provided to obtain images (e.g., still or moving images, such as video) of the user 26, the surrounding environment, and/or the currently playing animation (e.g., current movements or features of the animated character head 30), which may be relayed to the animated character head 30 (e.g., via wireless communication devices, such as transceivers) for display via the display 42 to provide information and/or feedback to the performer. In an embodiment, the display 42 may be part of augmented or virtual reality glasses worn by the performer.

[0026] In an embodiment, the animated character head 30 may include one or more status sensors 52 configured to monitor a component status and/or a system status (e.g., to determine whether a performed animation does not correspond to the selected animation), and an indication of the status may be provided to the performer via the display 42 and/or to the handler via the base station control system 32. For example, a status sensor 52 may be associated with each actuator 46 and may be configured to detect a position and/or movement of the respective actuator 46, which may be indicative of whether the actuator 46 is functioning properly (e.g., moving in an expected way based on the selected animation).
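The status check described above could, under simple assumptions, be sketched as a comparison between the position each actuator is expected to reach for the selected animation and the position reported by its status sensor. The function names, the normalized position values, and the tolerance of 0.05 are assumptions for illustration only.

```python
from typing import Dict


def actuator_status(expected: float, measured: float, tolerance: float = 0.05) -> str:
    """Return "ok" when the measured position is within tolerance of the expected one."""
    return "ok" if abs(expected - measured) <= tolerance else "fault"


def system_status(expected: Dict[str, float], measured: Dict[str, float]) -> Dict[str, str]:
    """Check every actuator named in `expected`; a missing reading counts as a fault."""
    report: Dict[str, str] = {}
    for name, target in expected.items():
        if name not in measured:
            report[name] = "fault"
        else:
            report[name] = actuator_status(target, measured[name])
    return report


report = system_status(
    {"lip": 1.0, "eyebrow": 0.5},
    {"lip": 0.98, "eyebrow": 0.1},
)
```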

[0027] The processor 36 may execute instructions stored in the memory 38 to perform the operations disclosed herein. As such, in an embodiment, the processor 36 may include one or more general purpose microprocessors, one or more application specific processors (ASICs), one or more field programmable logic arrays (FPGAs), or any combination thereof. Additionally, the memory 38 may be a tangible, non-transitory, computer-readable medium that stores instructions executable by and data to be processed by the processor 36. Thus, in some embodiments, the memory 38 may include random access memory (RAM), read only memory (ROM), rewritable non-volatile memory, flash memory, hard drives, optical discs, and the like.

[0028] The base station control system 32 may include various features to facilitate the techniques disclosed herein. In an embodiment, the handler may utilize an input device 60 (e.g., a touch screen) at the base station control system 32 to provide an input and/or to select animations. In such cases, the handler's selections and/or other data may be transmitted wirelessly or through a wired connection to the animated character head 30 via the communication devices 62, 64. In an embodiment, the handler receives system status information (e.g., an indication of component failure as detected by the status sensors 52, completed animations, images from the camera 50) from the animated character head 30. In an embodiment, if a particular actuator 46 is not functioning properly, animation selections that rely on the particular actuator 46 may be removed from the list of available animations and/or otherwise made inaccessible for selection by the handler.
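As an illustrative sketch of removing animations that rely on a malfunctioning actuator, the example below filters a selection menu against a set of faulty actuators. The `ANIMATION_ACTUATORS` mapping and the actuator names are hypothetical and are not taken from the disclosure.

```python
from typing import Dict, List, Set

# Hypothetical mapping from each animation to the actuators it relies on.
ANIMATION_ACTUATORS: Dict[str, Set[str]] = {
    "smile": {"lip_left", "lip_right"},
    "frown": {"eyebrow"},
    "blink": {"eyelid"},
}


def available_animations(faulty: Set[str]) -> List[str]:
    """Return animations (sorted by name) that use no faulty actuator."""
    return sorted(
        name
        for name, needed in ANIMATION_ACTUATORS.items()
        if not (needed & faulty)
    )


# With the eyebrow actuator reported as faulty, "frown" drops off the menu.
menu = available_animations({"eyebrow"})
```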

[0029] In an embodiment, the animated character head 30 and the base station control system 32 may include audio communication devices 68, 70 (e.g., a headset or other devices having a microphone and/or a speaker) that enable the performer and the handler to communicate (e.g., verbally communicate via one-way or two-way communication). In such cases, the handler may be able to verbally inform the performer of the handler's current selection, the handler's next selection, information about the user 26, or the like. Additionally, the performer may be able to request a particular animation, indicate a preference to cancel a selected animation, or the like.

[0030] In the depicted embodiment, a controller 72 of the base station control system 32 contains a processor 74 that may execute instructions stored in the memory 76 to perform operations, such as receiving, accessing, and/or displaying a selection menu of available animations for the animated character head 30 on a display 66 (which may also operate as the input device 60), providing a signal indicative of a selected animation to the animated character head 30, or the like. As such, in an embodiment, the processor 74 may include one or more general purpose microprocessors, one or more application specific processors (ASICs), one or more field programmable logic arrays (FPGAs), or any combination thereof. Additionally, the memory 76 may be a tangible, non-transitory, computer-readable medium that stores instructions executable by and data to be processed by the processor 74. Thus, in some embodiments, the memory 76 may include random access memory (RAM), read only memory (ROM), rewritable non-volatile memory, flash memory, hard drives, optical discs, and the like.

[0031] Furthermore, the communication devices 62, 64 may enable the controllers 34, 72 to interface with one another and/or with various other electronic devices, such as the components in the identification system 12. For example, the communication devices 62, 64 may enable the controllers 34, 72 to communicatively couple to a network, such as a personal area network (PAN), a local area network (LAN), and/or a wide area network (WAN). As noted above, the base station control system 32 may also include the display 66 to enable display of information, such as the selection menu of animations, completed animations, the system status as detected by the status sensors 52, and/or external images obtained by the camera 50, or the like.

[0032] In an embodiment, the animated character system 14 is configured to operate independently of or without the identification system 12. For example, at least at certain times, the handler and/or the performer may provide inputs to play various animations on the animated character head 30 to interact with the user 26 without any information regarding the user's 26 previous activities within the amusement park. In an embodiment, at least at certain other times, the handler and/or the performer may receive information about the user's 26 previous activities within the amusement park from the identification system 12 (e.g., information that the user 26 completed a ride, earned points in a game, or visited an attraction), and the information may be utilized to provide a unique, personalized interactive experience for the user 26. For example, the identification system 12 may provide the information for visualization on one or both of the displays 42, 66, or the identification system 12 may provide the information for visualization by the handler on the display 66 and the handler may be able to verbally communicate the information to the performer using the audio communication devices 68, 70. The information may enable the handler to select a more appropriate animation for the user 26, such as a greeting in which the animated character head 30 congratulates the user 26 on an achievement in a game or in which the animated character head 30 asks the user 26 if the user 26 enjoyed a recent ride.

[0033] Additionally or alternatively, the identification system 12 and/or another processing component of the interactive system 10 (e.g., the processor 74) may determine one or more relevant animations for the user 26 based on the information about the user's 26 previous activities within the amusement park. In some such cases, the identification system 12 and/or the other processing component may provide a recommendation to select or to play the one or more relevant animations. The recommendation may be provided by highlighting (e.g., with color, font size or style, position on the screen or in the menu) the one or more relevant animations on one or both of the displays 42, 66, thereby facilitating selection of the one or more relevant animations. Additionally or alternatively, the identification system 12 may cause the base station control system 32 to select and/or the animated character head 30 to play a particular animation (e.g., if the user 26 recently completed the ride, the signal from the identification system 12 received at the processor 74 may cause selection of a greeting related to the ride, and the selection may or may not be overridden or changed by the handler and/or the performer).
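
By way of non-limiting illustration, a recommendation provided by highlighting and by position in the menu may be sketched as follows. The function name `build_menu`, the activity labels, and the mapping from activities to relevant animations are hypothetical and serve only to illustrate one possible approach.

```python
def build_menu(animations, recent_activities, relevance):
    """Return (animation, highlighted) pairs with relevant animations listed first.

    `relevance` maps an activity label to the animations considered relevant
    to it (an assumed lookup table, not taken from the disclosure).
    """
    relevant = {
        name
        for activity in recent_activities
        for name in relevance.get(activity, [])
    }
    menu = [(name, name in relevant) for name in animations]
    # Stable sort: highlighted entries move to the top, original order otherwise kept.
    menu.sort(key=lambda item: not item[1])
    return menu


relevance = {
    "completed_ride": ["ask_about_ride"],
    "earned_points": ["congratulate_game"],
}
animations = ["wave", "ask_about_ride", "congratulate_game"]
menu = build_menu(animations, ["completed_ride"], relevance)
```

In this sketch the handler remains free to select any entry, which is consistent with a recommendation that may be overridden or changed.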

[0034] More particularly, the identification system 12 operates to monitor the user's 26 activities within an amusement park. In an embodiment, the user 26 may wear or carry the wearable device 24 that supports the identifier 22. When the user 26 brings the wearable device 24 within an area proximate to the reader 20 (e.g., within a reading range of the reader 20), the reader 20 may detect the identifier 22 and provide a signal to the computing system 16 to enable the computing system 16 to monitor and to record (e.g., in the one or more databases 18) the user's 26 activities within the amusement park. For example, one reader 20 may be positioned at an exit of a ride (e.g., a roller coaster or other similar attraction), and the reader may detect the identifier 22 in the wearable device 24 as the user 26 exits the ride. The reader 20 may provide a signal indicating that the wearable device 24 was detected proximate to the exit of the ride to the computing system 16, the computing system 16 may then determine that the user completed the ride based on the signal, and the computing system 16 may then store the information (e.g., that the user completed the ride) in the one or more databases 18. In this way, the identification system 12 may monitor the various activities of the user 26 as the user 26 travels through the amusement park.
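
By way of non-limiting illustration, the monitoring and recording described above may be sketched with an in-memory stand-in for the one or more databases 18. The class name `ActivityTracker`, the reader IDs, the identifier strings, and the activity labels are assumptions made for this example.

```python
from collections import defaultdict


class ActivityTracker:
    """Records activities inferred from reader detections (in-memory sketch)."""

    def __init__(self, reader_activities):
        # Maps a reader ID to the activity implied by a detection at that reader,
        # e.g., a reader at a ride exit implies that the ride was completed.
        self._reader_activities = dict(reader_activities)
        self._log = defaultdict(list)  # stand-in for the one or more databases 18

    def on_detection(self, reader_id, identifier):
        """Handle a signal from a reader that detected an identifier."""
        activity = self._reader_activities.get(reader_id)
        if activity is not None:
            self._log[identifier].append(activity)
        return activity

    def activities_for(self, identifier):
        """Return a copy of the activities recorded for an identifier."""
        return list(self._log.get(identifier, []))


tracker = ActivityTracker({"ride_exit_reader": "completed_ride"})
tracker.on_detection("ride_exit_reader", "id-001")
recorded = tracker.activities_for("id-001")
```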

[0035] Subsequently, the user 26 may visit the animated character head 30, which may be located in another portion of the amusement park. Another reader 20 positioned proximate to the animated character head 30 (e.g., coupled to and/or inside the animated character head 30, carried by the performer, coupled to the base station control system 32, or coupled to a stationary structure or feature within the amusement park) may detect the identifier 22 in the wearable device 24 as the user 26 approaches the animated character head 30. The other reader 20 may provide a signal indicating that the wearable device 24 was detected proximate to the animated character head 30 to the computing system 16 (e.g., via a wireless or wired connection), the computing system 16 may then determine that the user 26 is approaching the animated character head 30 based on the signal, the computing system 16 may then access the information stored in the one or more databases 18, and the computing system 16 may then provide an output to control or influence the animated character head's 30 interaction with the user 26 based on the information. For example, the computing system 16 may be in communication with the base station control system 32 (e.g., via communication devices 64, 80). In some such cases, the computing system 16 may provide an output to the base station control system 32 that causes display of the information and/or a recommended interaction on the display 66 of the base station control system 32. The handler may then select an appropriate animation for the animated character head 30, such as by providing an input at the base station control system 32 that causes the animated character head 30 to speak a particular phrase and/or to perform a particular gesture (e.g., relevant to the information about the user 26). In some such cases, the computing system 16 may provide an output to the animated character head 30 that causes display of the information and/or a recommended interaction on the display 42 of the animated character head 30. In an embodiment, the handler may convey the information and/or provide a recommendation based on the information to the performer, such as by speaking (e.g., via a two-way wireless communication system) to the performer.

[0036] In this manner, the interactive system 10 may provide a unique, personalized interactive experience between the user 26 and the animated character head 30. The interactive experience may be different for each user 26 and/or different each time the user 26 visits the animated character head 30. It should be appreciated that any of the features, functions, and/or techniques disclosed herein may be distributed between the identification system 12, the animated character head 30, and the base station control system 32 in any suitable manner. As noted above, the animated character system 14 may be able to operate independently of or without the identification system 12. Similarly, in an embodiment, the animated character head 30 may be able to operate independently of or without the base station control system 32. Thus, it should be appreciated that, in some such cases, the identification system 12 may provide outputs directly to the animated character head 30 (e.g., the processor 36 of the animated character head 30 may process signals received directly from the identification system 12 to select and play an animation from the library).

[0037] Certain examples disclosed herein relate to activities that involve interaction with attractions (e.g., rides, restaurants, characters) within the amusement park. In an embodiment, the interactive system 10 may receive and utilize information about the user 26 other than activities within the amusement park to provide the unique, personalized interaction. For example, the interactive system 10 may receive and utilize information about the user's 26 performance in a video game at a remote location (e.g., other than within the amusement park, such as at a home video console or computing system), the user's 26 name, age, or other information provided by the user 26 (e.g., during a registration process or ticket purchasing process), or the like. In an embodiment, the interactive system 10 may receive and utilize information related to the user's preferred language, any unique conditions of the user (e.g., limited mobility, limited hearing, sensitivity to loud sounds), or the like. For example, the user 26 may complete a registration process or otherwise have the opportunity to input preferences or other information that is associated with the wearable device 24. When the user 26 approaches the animated character head 30, the preferences or other information may be presented to the handler and/or the performer, may be used to select an animation, and/or may be used to determine a recommended animation. In this way, the animated character head 30 may speak to the user 26 in a language that the user 26 understands and/or interact with the user 26 in a manner that is appropriate for the user 26. Additionally, it should be understood that the illustrated interactive system 10 is merely intended to be exemplary, and that certain features and components may be omitted and various other features and components may be added to facilitate performance, in accordance with the disclosed embodiments.
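
By way of non-limiting illustration, use of a preferred language and other registered information to tailor a greeting may be sketched as follows. The function name `personalize_greeting`, the profile layout, the language codes, and the fallback to a default language are hypothetical choices made for this example and are not described in the disclosure.

```python
def personalize_greeting(greetings, profile, default_language="en"):
    """Pick a greeting in the user's preferred language, falling back to a default.

    `greetings` maps a language code to a phrase; `profile` holds preferences
    entered during registration (both layouts are assumed for illustration).
    """
    preferred = profile.get("language", default_language)
    language = preferred if preferred in greetings else default_language
    return {
        "text": greetings[language],
        "language": language,
        # Conditions are passed through so that they can be shown to the
        # handler and/or the performer rather than acted on automatically.
        "handler_notes": list(profile.get("conditions", [])),
    }


greetings = {"en": "Hello!", "es": "Hola!"}
profile = {"language": "es", "conditions": ["sensitivity to loud sounds"]}
result = personalize_greeting(greetings, profile)
```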

[0038] As shown, the computing system 16 may include a processor 82 configured to execute instructions stored in a memory 84 to perform the operations disclosed herein. As such, in an embodiment, the processor 82 may include one or more general purpose microprocessors, one or more application specific processors (ASICs), one or more field programmable logic arrays (FPGAs), or any combination thereof. Additionally, the memory 84 may be a tangible, non-transitory, computer-readable medium that stores instructions executable by and data to be processed by the processor 82. Thus, in some embodiments, the memory 84 may include random access memory (RAM), read only memory (ROM), rewritable non-volatile memory, flash memory, hard drives, optical discs, and the like.

[0039] FIG. 2 is a schematic view of an amusement park 100 including the interactive system 10, in accordance with an embodiment. As shown, multiple users 26A, 26B travel through the amusement park 100 and wear respective wearable devices 24A, 24B that support respective identifiers 22A, 22B. The identification system 12 monitors the multiple users 26A, 26B in the manner discussed above with respect to FIG. 1. The animated character system 14 includes the animated character head 30 worn by the performer and the base station control system 32 operated by the handler, and the animated character system 14 may receive information from the identification system 12 to provide a unique, personalized experience for each user 26A, 26B.

[0040] With reference to FIG. 2, in operation, the identification system 12 may detect a first user 26A at a restaurant 102 via a first reader 20A and a second user 26B at a ride 104 via a second reader 20B. More particularly, each reader 20A, 20B may read a unique identification code or number from the identifier 22A, 22B supported in each wearable device 24A, 24B. The readers 20A, 20B may provide respective signals indicating detection of the users 26A, 26B to the computing system 16, which determines and records the activities in the one or more databases 18.

[0041] At a later time, each user 26A, 26B may approach the animated character head 30. When the first user 26A is within range of a third reader 20C positioned proximate to the animated character head 30, the third reader 20C may provide a signal indicating detection of the first user 26A to the computing system 16. In response, the computing system 16 may provide information regarding the first user's 26A previous activities within the amusement park 100 to the base station control system 32. For example, the computing system 16 may provide information indicating that the first user 26A recently visited the restaurant 102, and the base station control system 32 may provide the information on the display 66 for visualization by the handler. Thus, the handler may be led to select an animation related to the first user's 26A visit to the restaurant, such as to ask the first user 26A whether the first user 26A enjoyed the meal at the restaurant 102. As noted above, the information may be communicated and/or utilized in various other ways to provide the unique, customized interactive experience for the first user 26A. For example, in an embodiment, the computing system 16 may additionally or alternatively determine and provide a recommended animation to the base station control system 32, or the base station control system 32 may determine one or more relevant animations to facilitate selection by the handler.

[0042] Similarly, when the second user 26B is within range of the third reader 20C positioned proximate to the animated character head 30, the third reader 20C may provide a signal indicating detection of the second user 26B to the computing system 16. In response, the computing system 16 may provide information regarding the second user's 26B previous activities within the amusement park 100 to the base station control system 32. For example, the computing system 16 may provide information indicating that the second user 26B recently visited the ride 104, and the base station control system 32 may provide the information on the display 66 for visualization by the handler. Thus, the handler may be led to select an animation related to the second user's 26B visit to the ride, such as to ask the second user 26B whether the second user 26B enjoyed the ride 104. As noted above, the information may be communicated and/or utilized in various other ways to provide the unique, customized interactive experience for the second user 26B. For example, in an embodiment, the computing system 16 may additionally or alternatively determine and provide a recommended animation, or the base station control system 32 may determine one or more relevant animations to facilitate selection by the handler.

[0043] It should also be appreciated that the third reader 20C may provide respective signals indicating detection of the users 26A, 26B to the computing system 16, which determines and records the interaction with the animated character head 30 in the one or more databases 18. Accordingly, subsequent activities (e.g., at the restaurant 102, the ride 104, or at other attractions, including interactions with other animated character heads) may be varied based on the user's 26A, 26B interaction with the animated character head 30. For example, a game attraction may adjust game elements based on the user's 26A, 26B achievements, including the user's 26A, 26B interaction with the animated character head 30. In an embodiment, another handler may be led to select an animation for another animated character head based on the user's 26A, 26B previous interaction with the animated character head 30. Similarly, should the users 26A, 26B revisit the animated character head 30 (e.g., in the same day or at any later time, including one or more later years), the animated character system 14 may operate in a manner that avoids repeating the same phrase(s), builds off of a prior interaction, or indicates recognition of the user 26 (e.g., states "it is nice to see you again," or "I have not seen you since last year"). For example, some or all of the phrases that were previously spoken may be removed from inventory (e.g., the handler is not given the option to play the phrases), will not be presented to the handler on an initial screen that is viewable to the handler via the base station control system 32 as the user 26 approaches the animated character head 30, and/or will be marked or highlighted as being previously spoken.
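
By way of non-limiting illustration, the handling of previously spoken phrases on a return visit may be sketched with two of the options noted above, namely removing the phrases from inventory or marking them as previously spoken. The function name `phrases_for_revisit`, the `mode` parameter, and the phrase labels are assumptions made for this example.

```python
def phrases_for_revisit(all_phrases, previously_spoken, mode="remove"):
    """Return (phrase, previously_spoken) pairs for a returning user.

    mode="remove": previously spoken phrases are dropped from the inventory.
    mode="mark":   every phrase is kept and flagged if it was spoken before.
    """
    spoken = set(previously_spoken)
    if mode == "remove":
        return [(p, False) for p in all_phrases if p not in spoken]
    if mode == "mark":
        return [(p, p in spoken) for p in all_phrases]
    raise ValueError(f"unknown mode: {mode!r}")


phrases = ["welcome", "ask_about_ride", "nice_to_see_you_again"]
removed = phrases_for_revisit(phrases, ["welcome"], mode="remove")
marked = phrases_for_revisit(phrases, ["welcome"], mode="mark")
```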

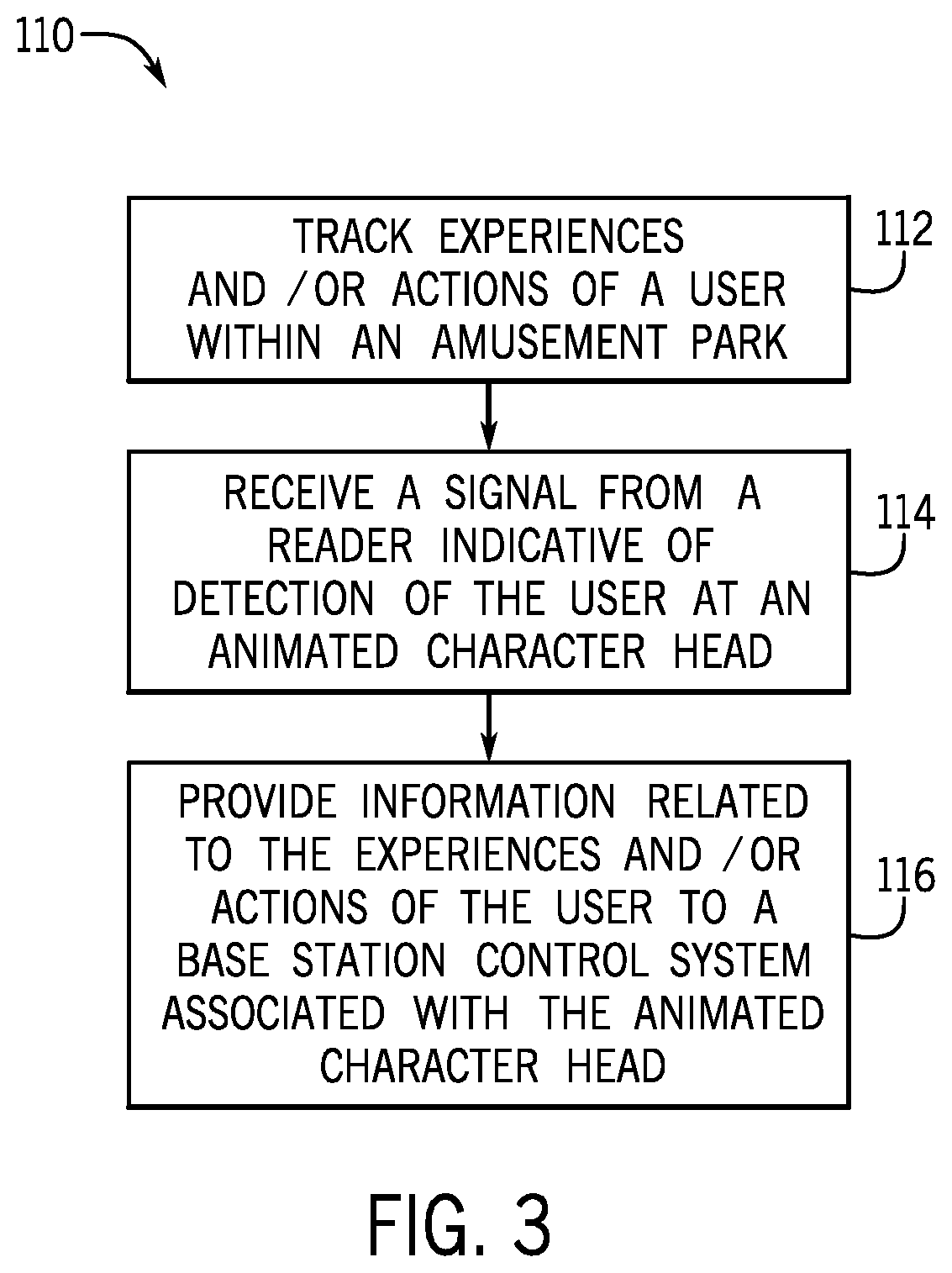

[0044] FIG. 3 is a flow diagram of a method 110 of operating the interactive system 10, in accordance with an embodiment. The method 110 includes various steps represented by blocks and references components shown in FIG. 1. Certain steps of the method 110 may be performed as an automated procedure by a system, such as the computing system 16 that may be used within the interactive system 10. Although the flow chart illustrates the steps in a certain sequence, it should be understood that the steps may be performed in any suitable order, certain steps may be carried out simultaneously, and/or certain steps may be omitted, and other steps may be added, where appropriate. Further, certain steps or portions of the method 110 may be performed by separate devices.

[0045] In step 112, the computing system 16 tracks (e.g., detects and records) the user's 26 activities within an amusement park. In an embodiment, the user 26 may wear or carry the wearable device 24 that supports the identifier 22. When the user 26 brings the wearable device 24 within range of the reader 20, the reader 20 may detect the identifier 22 and provide a signal to the computing system 16 to enable the computing system 16 to detect and to record (e.g., in the one or more databases 18) the user's 26 activities within the amusement park.

[0046] In step 114, the computing system 16 receives a signal indicative of detection of the identifier 22 in the wearable device 24 of the user 26 from one or more readers 20 proximate to the animated character head 30. The computing system 16 may determine that the user 26 is approaching the animated character head 30 based on the signal.

[0047] In step 116, in response to receipt of the signal at step 114, the computing system 16 may then access and provide information related to the activities of the user to the base station control system 32 to control or influence the animated character head's 30 interaction with the user 26. For example, the computing system 16 may provide an output to the base station control system 32 that causes display of the information and/or a recommended interaction on the display 66 of the base station control system 32. The handler may then select an appropriate animation for the animated character head 30, such as by providing an input at the base station control system 32 that causes the animated character head 30 to speak a particular phrase and/or to perform a particular gesture (e.g., relevant to the information about the user 26). In this manner, the interactive system 10 may provide a unique, personalized interactive experience between the user 26 and the animated character head 30.
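
By way of non-limiting illustration, steps 114 and 116 may be sketched as a single handler that runs when a reader proximate to the animated character head reports a detection. The function names, the dictionary used in place of the one or more databases 18, the output layout, and the simple most-recent-activity recommendation rule are hypothetical and are not taken from the disclosure.

```python
def recommend_latest(activities):
    """Suggest an animation for the most recent recognized activity (assumed rule)."""
    suggestions = {
        "completed_ride": "ask_about_ride",
        "visited_restaurant": "ask_about_meal",
    }
    for activity in reversed(activities):
        if activity in suggestions:
            return suggestions[activity]
    return "generic_greeting"


def handle_character_reader_signal(identifier, database, recommend=None):
    """Build the output for the base station when a user approaches (steps 114, 116)."""
    # Step 114: the signal implies that the user is approaching the character head.
    # Step 116: access the recorded activities and package them for display.
    activities = list(database.get(identifier, []))
    output = {"identifier": identifier, "activities": activities}
    if recommend is not None:
        output["recommended_animation"] = recommend(activities)
    return output


database = {"id-001": ["visited_restaurant", "completed_ride"]}
output = handle_character_reader_signal("id-001", database, recommend_latest)
```

In this sketch the output only informs the display; the selection of the animation is still left to the handler.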

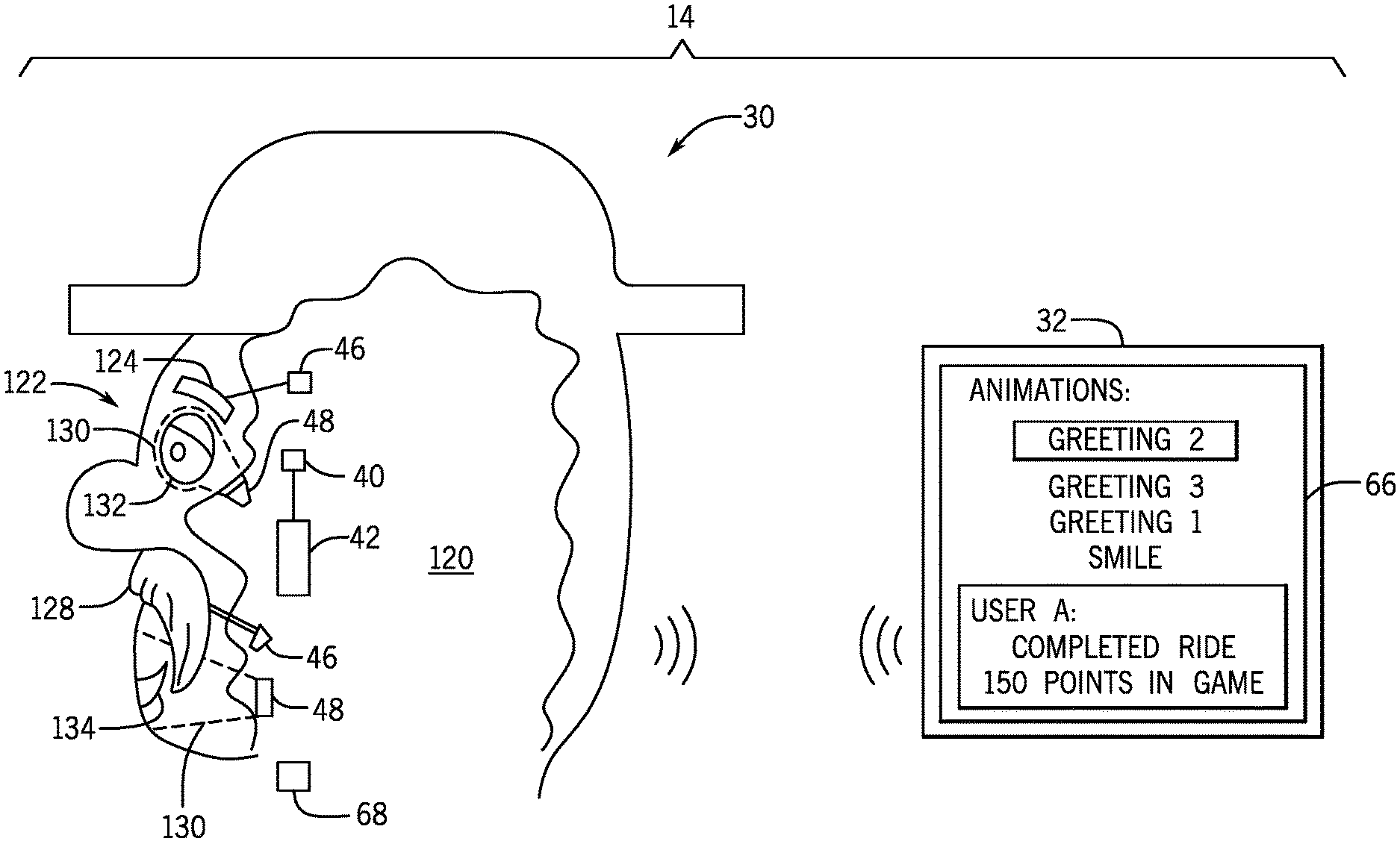

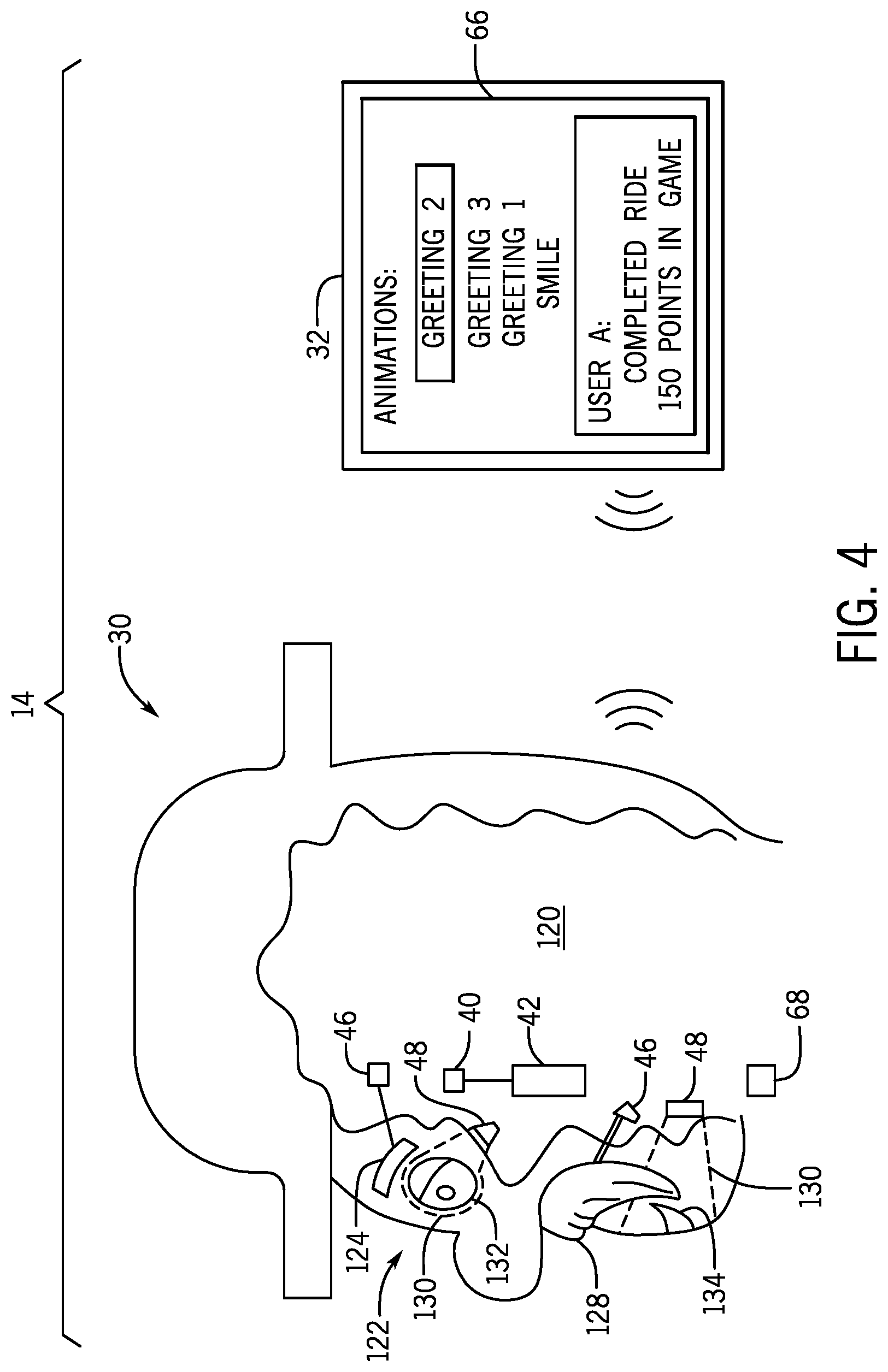

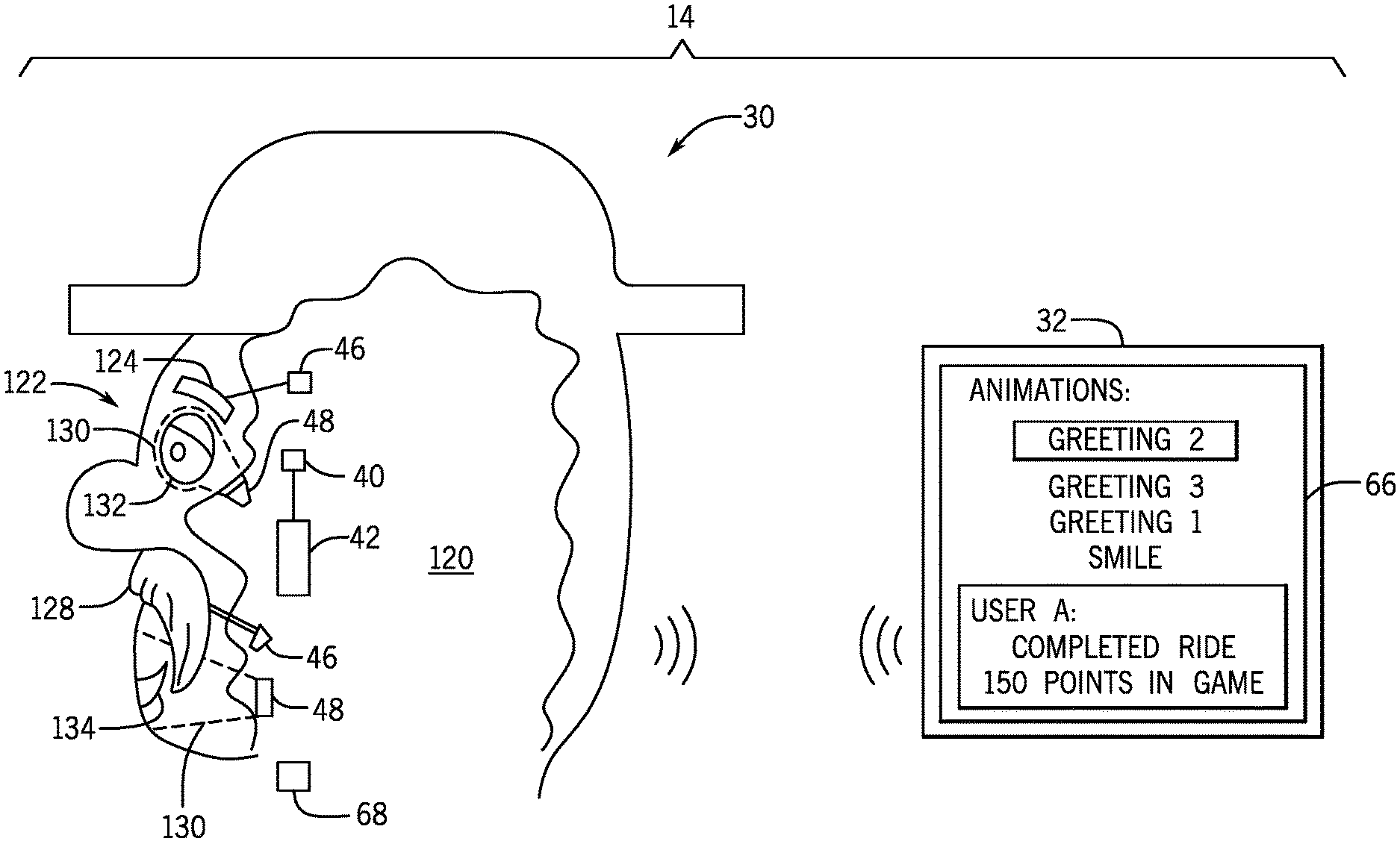

[0048] FIG. 4 is a cross-sectional side view of the animated character head 30 and a front view of the base station control system 32 that may be used in the interactive system 10 (FIG. 1), in accordance with an embodiment. As shown, the animated character head 30 includes an opening 120 configured to receive and/or surround a performer's head, and an outer surface 122 (e.g., face) that is visible to the user 26 (FIG. 1). The outer surface 122 may support various features, such as an eyebrow 124 and a mustache 128, which may be actuated via respective actuators 46 based on a control signal (e.g., received from the processor 36, FIG. 1). In an embodiment, screens 130 may be positioned about the animated character head 30 to enable display of certain gestures and/or features, such as an eye 132 and a mouth 134, via light projection onto the screens 130. As discussed above, light sources 48 may be provided to project light onto the screens 130 to display such gestures and/or features in response to receipt of a control signal (e.g., received from the processor 36, FIG. 1). As shown, the animated character head 30 may include the display 42, the one or more sensors 40, and the audio communication device 68, among other components.

[0049] The animated character head 30 may be used with the base station control system 32. As shown, the display 66 of the base station control system 32 shows a selection menu of available animations for the animated character head 30. In an embodiment, the animations may be arranged in order of relevance to the user 26 (FIG. 1) that is interacting with the animated character head 30 and/or certain relevant animations are highlighted to assist the handler in creating a unique, personalized interaction for the user 26 (FIG. 1). The display 66 may also present information related to the user 26 (FIG. 1), such as previous activities of the user 26 (FIG. 1). The handler may make a selection (e.g., by touching a corresponding region of the display 66), and the selection may be communicated to the animated character head 30 to effect play of a particular animation. It should be appreciated that the elements shown on the display 66 of the base station control system 32 in FIG. 4 may additionally or alternatively be shown on the display 42 of the animated character head 30.

[0050] While the identification system is disclosed as a radio-frequency identification (RFID) system to facilitate discussion, it should be appreciated that the identification system may be or include any of a variety of tracking or identification technologies, such as a Bluetooth system (e.g., Bluetooth low energy [BLE] system), that enable an identification device (e.g., transceiver, receiver, sensor, scanner) positioned within an environment (e.g., the amusement park) to detect the identifier in the device of the user. Additionally, while only certain features of the present disclosure have been illustrated and described herein, many modifications and changes will occur to those skilled in the art. Further, it should be understood that components of various embodiments disclosed herein may be combined or exchanged with one another. It is, therefore, to be understood that the appended claims are intended to cover all such modifications and changes as fall within the true spirit of the disclosure.

[0051] The techniques presented and claimed herein are referenced and applied to material objects and concrete examples of a practical nature that demonstrably improve the present technical field and, as such, are not abstract, intangible or purely theoretical. Further, if any claims appended to the end of this specification contain one or more elements designated as "means for [perform]ing [a function] . . . " or "step for [perform]ing [a function] . . . ", it is intended that such elements are to be interpreted under 35 U.S.C. 112(f). However, for any claims containing elements designated in any other manner, it is intended that such elements are not to be interpreted under 35 U.S.C. 112(f).

* * * * *
