Data Preparation Context Navigation

Levy; Amnon ; et al.

U.S. patent application number 16/883036 was filed with the patent office on 2020-05-26 and published on 2021-02-18 for data preparation context navigation. The applicant listed for this patent is DR HoldCo 2, Inc. Invention is credited to Nenshad Dinshaw Bardoliwalla, Dave Brewster, Jing Chen, Lilia Gutnik, Amnon Levy, Krupa Natarajan, Pakshi Rajan.

| Application Number | 16/883036 (Publication No. 20210049163) |

| Family ID | 1000005190521 |

| Publication Date | 2021-02-18 |

| United States Patent Application | 20210049163 |

| Kind Code | A1 |

| Levy; Amnon ; et al. | February 18, 2021 |

DATA PREPARATION CONTEXT NAVIGATION

Abstract

Navigating to a data preparation context is disclosed. A set of data preparation operations is performed on one or more data sets to generate a set of answer sets in a first application. A final answer set in the set of answer sets is provided to a second application. In response to a user specification of a source-related query, a reference associated with the source-related query is obtained. A corresponding subset of the set of answer sets associated with one or more corresponding or relevant data preparation operations is determined according to the obtained reference. The corresponding subset of the set of answer sets associated with the one or more data preparation operations is presented in the first application according to the obtained reference.

| Inventors: | Amnon Levy (Redwood City, CA); Dave Brewster (Redwood City, CA); Pakshi Rajan (Redwood City, CA); Nenshad Dinshaw Bardoliwalla (Castro Valley, CA); Jing Chen (Redwood City, CA); Lilia Gutnik (Redwood City, CA); Krupa Natarajan (Redwood City, CA) |
|---|---|
| Applicant: | DR HoldCo 2, Inc. |
| Family ID: | 1000005190521 |
| Appl. No.: | 16/883036 |
| Filed: | May 26, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15294605 | Oct 14, 2016 | 10698916 |
| 16883036 | | |
| 62242820 | Oct 16, 2015 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 16/245 20190101; G06T 1/20 20130101; G06F 16/248 20190101; G06T 1/60 20130101 |

| International Class: | G06F 16/248 20060101 G06F016/248; G06T 1/20 20060101 G06T001/20; G06T 1/60 20060101 G06T001/60; G06F 16/245 20060101 G06F016/245 |

Claims

1-20. (canceled)

21. A method, comprising: obtaining, using a visualization application, a final answer set from a set of answer sets generated by a data preparation application, wherein the answer sets were generated by performing a set of data preparation operations on one or more data sets in the data preparation application, and wherein each answer set comprises a result of transforming at least a portion of the one or more data sets using at least some of the set of data preparation operations; and receiving, using the visualization application, a user specification of a source related query, wherein, in response to the source related query, a reference associated with the source related query is obtained by the data preparation application, wherein the data preparation application uses the reference to determine a corresponding subset of the set of answer sets associated with one or more corresponding data preparation operations, and wherein the data preparation application presents the corresponding subset of the set of answer sets associated with the one or more data preparation operations according to the obtained reference.

22. The method of claim 21, wherein the visualization application is configured to render a visualization of data associated with the final answer set.

23. The method of claim 21, wherein the set of data preparation operations comprises a sequence of data preparation operations.

24. The method of claim 21, wherein obtaining the final answer set comprises obtaining the reference.

25. The method of claim 21, wherein receiving the user specification comprises composing the reference using the visualization application.

26. The method of claim 21, wherein the reference comprises a link for navigating a user from the visualization application to a data preparation context in the data preparation application, and wherein the data preparation context is associated with the data associated with the final answer set.

27. The method of claim 21, wherein the data preparation context comprises a histogram of filtered data associated with the final answer set.

28. The method of claim 21, wherein the data preparation context comprises a project step that affected at least some data associated with the final answer set.

29. The method of claim 21, wherein the data preparation context comprises a lineage of at least some data associated with the final answer set.

30. The method of claim 21, wherein the data preparation context comprises a data quality summary of at least some data associated with the final answer set.

31. A system, comprising: one or more computer processors programmed to perform operations comprising: obtaining, using a visualization application, a final answer set from a set of answer sets generated by a data preparation application, wherein the answer sets were generated by performing a set of data preparation operations on one or more data sets in the data preparation application, and wherein each answer set comprises a result of transforming at least a portion of the one or more data sets using at least some of the set of data preparation operations; and receiving, using the visualization application, a user specification of a source related query, wherein, in response to the source related query, a reference associated with the source related query is obtained by the data preparation application, wherein the data preparation application uses the reference to determine a corresponding subset of the set of answer sets associated with one or more corresponding data preparation operations, and wherein the data preparation application presents the corresponding subset of the set of answer sets associated with the one or more data preparation operations according to the obtained reference.

32. The system of claim 31, wherein the visualization application is configured to render a visualization of data associated with the final answer set.

33. The system of claim 31, wherein the set of data preparation operations comprises a sequence of data preparation operations.

34. The system of claim 31, wherein obtaining the final answer set comprises obtaining the reference.

35. The system of claim 31, wherein receiving the user specification comprises composing the reference using the visualization application.

36. The system of claim 31, wherein the reference comprises a link for navigating a user from the visualization application to a data preparation context in the data preparation application, and wherein the data preparation context is associated with the data associated with the final answer set.

37. The system of claim 31, wherein the data preparation context comprises a histogram of filtered data associated with the final answer set.

38. The system of claim 31, wherein the data preparation context comprises a project step that affected at least some data associated with the final answer set.

39. The system of claim 31, wherein the data preparation context comprises at least one of a lineage or a data quality summary of at least some data associated with the final answer set.

40. An article, comprising: a non-transitory computer-readable medium having instructions stored thereon that, when executed by one or more computer processors, cause the one or more computer processors to perform operations comprising: obtaining, using a visualization application, a final answer set from a set of answer sets generated by a data preparation application, wherein the answer sets were generated by performing a set of data preparation operations on one or more data sets in the data preparation application, and wherein each answer set comprises a result of transforming at least a portion of the one or more data sets using at least some of the set of data preparation operations; and receiving, using the visualization application, a user specification of a source related query, wherein, in response to the source related query, a reference associated with the source related query is obtained by the data preparation application, wherein the data preparation application uses the reference to determine a corresponding subset of the set of answer sets associated with one or more corresponding data preparation operations, and wherein the data preparation application presents the corresponding subset of the set of answer sets associated with the one or more data preparation operations according to the obtained reference.

Description

CROSS REFERENCE TO OTHER APPLICATIONS

[0001] This application is a continuation of U.S. patent application Ser. No. 15/294,605, filed Oct. 14, 2016, which claims the benefit of U.S. Provisional Patent Application No. 62/242,820, filed Oct. 16, 2015, the entire contents of each of which are incorporated by reference herein.

BACKGROUND OF THE INVENTION

[0002] Data processing tools such as data visualization applications can be used to provide answers facilitating data driven decisions. Typically, however, such tools only provide a final set of results and do not provide underlying data, and thus, it can be difficult for users of such tools to understand how those results were arrived at.

BRIEF DESCRIPTION OF THE DRAWINGS

[0003] Various embodiments of the invention are disclosed in the following detailed description and the accompanying drawings.

[0004] FIG. 1 is a functional diagram illustrating a programmed computer system for using a step editor for data preparation in accordance with some embodiments.

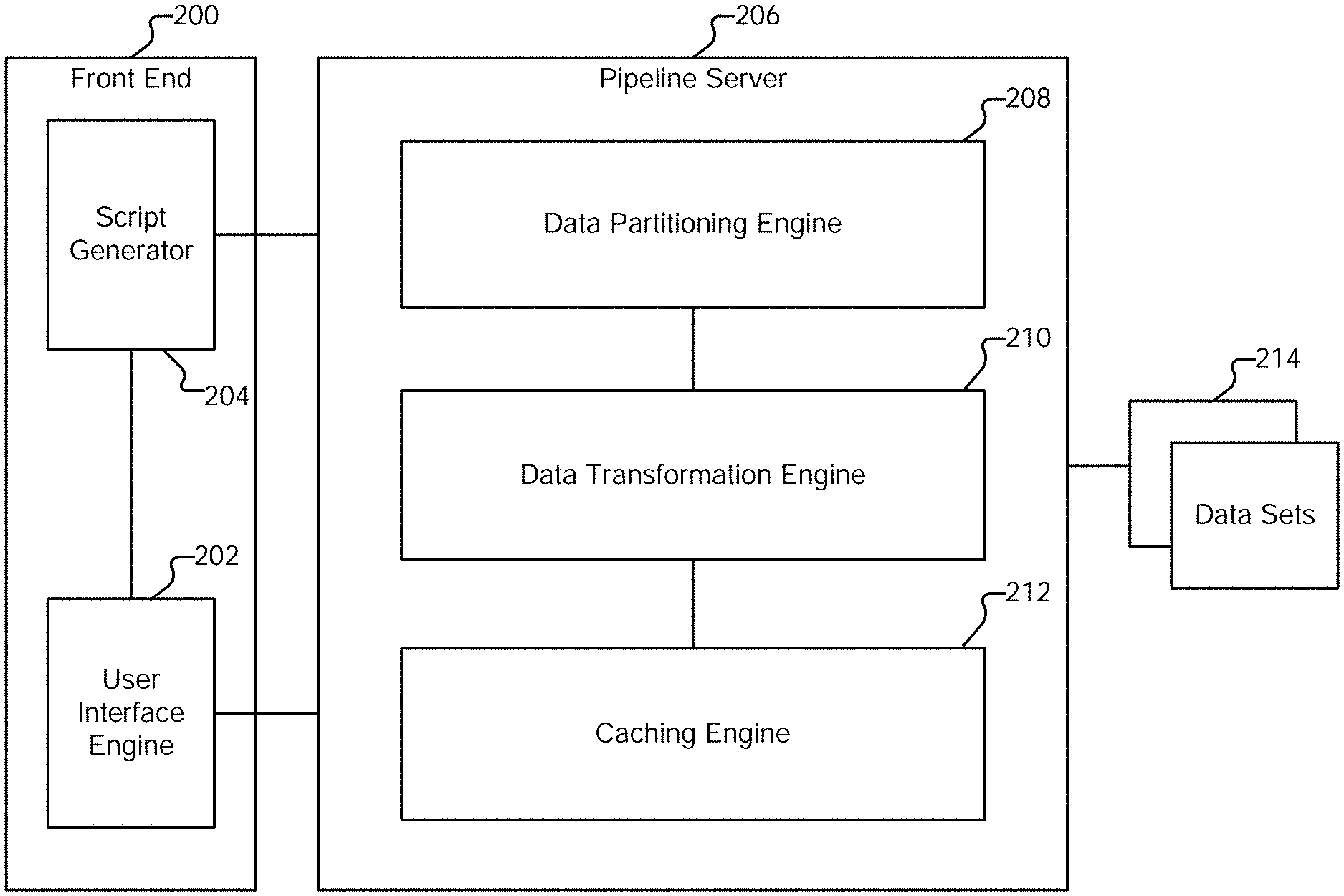

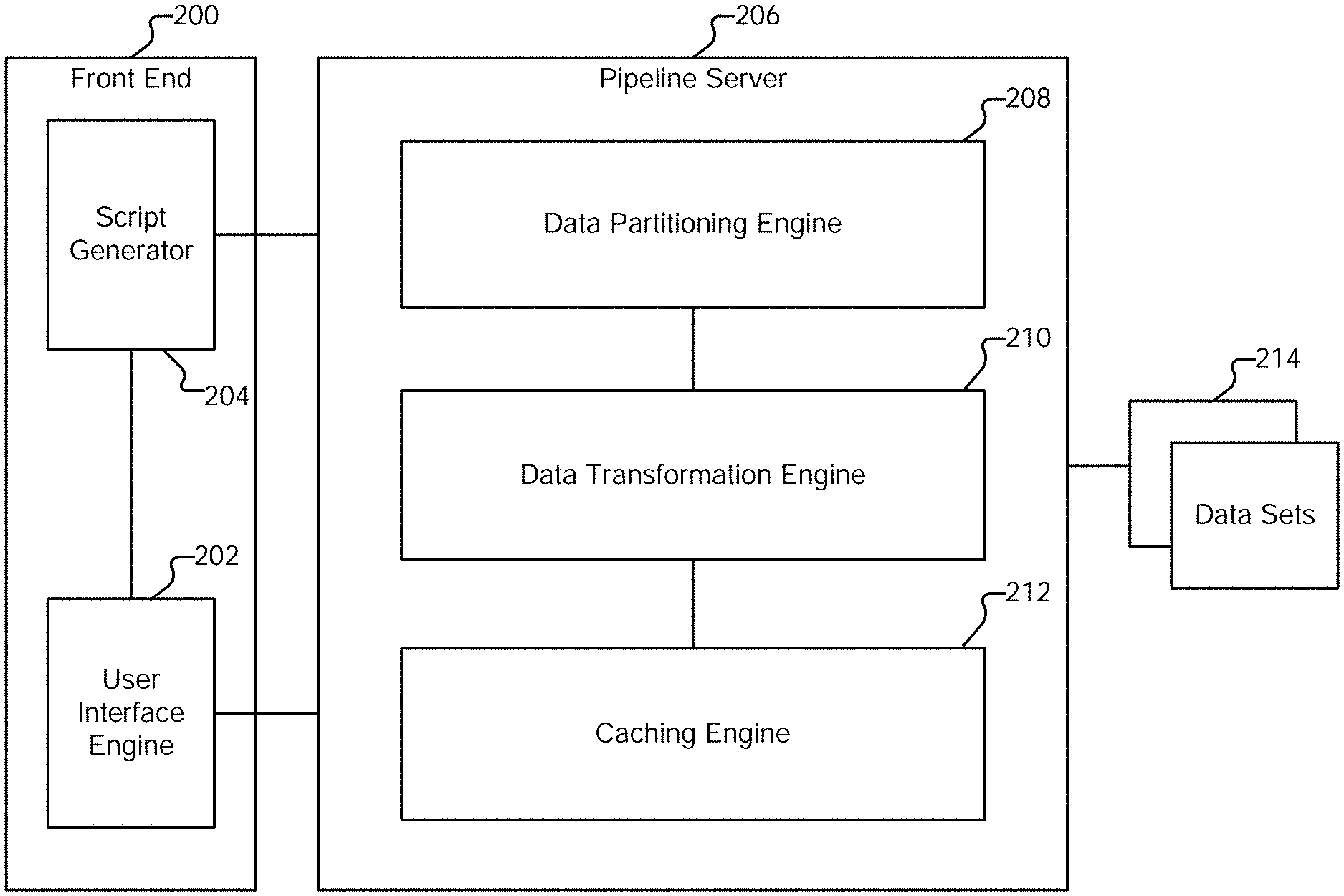

[0005] FIG. 2 is a system diagram illustrating an embodiment of a system for data preparation.

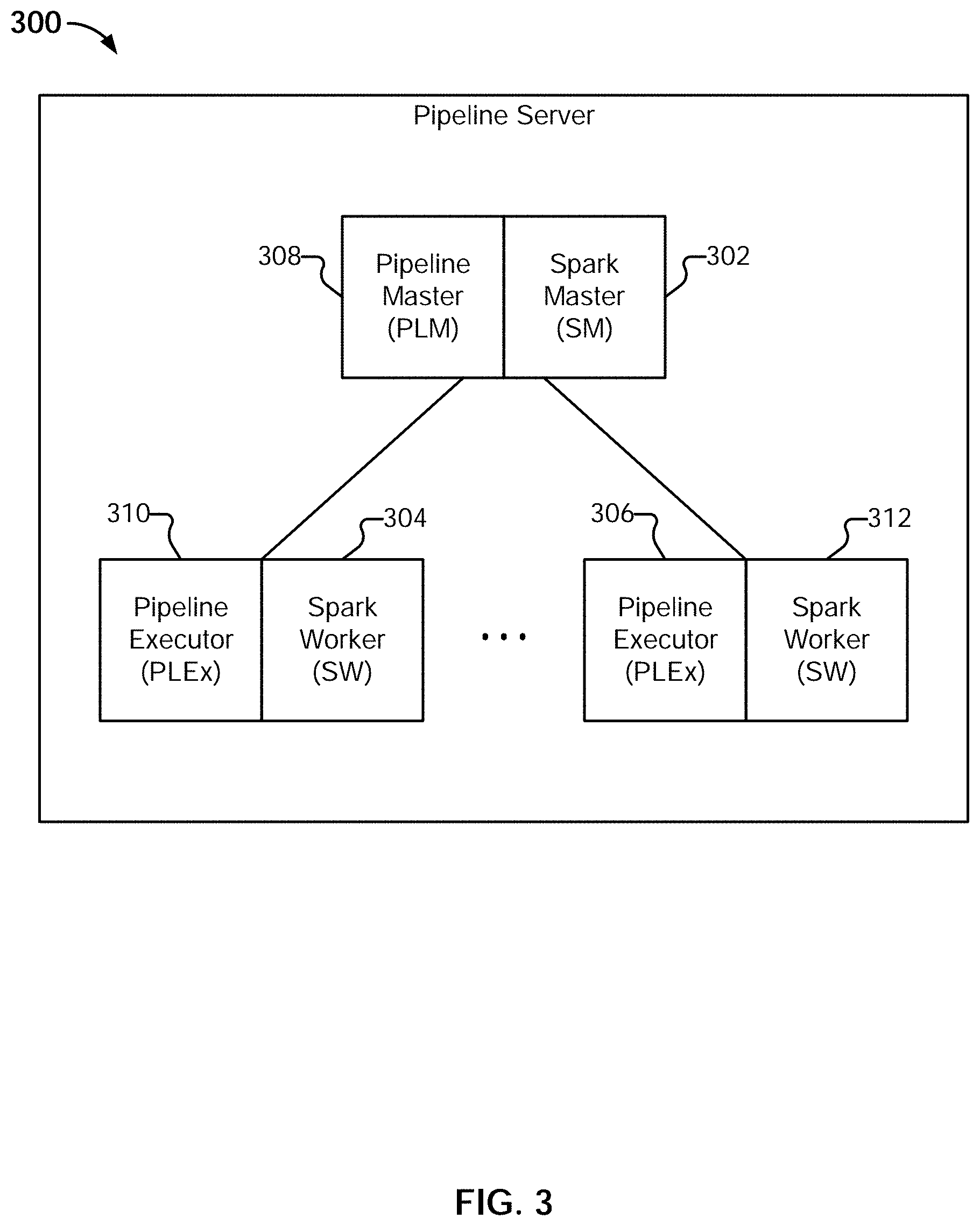

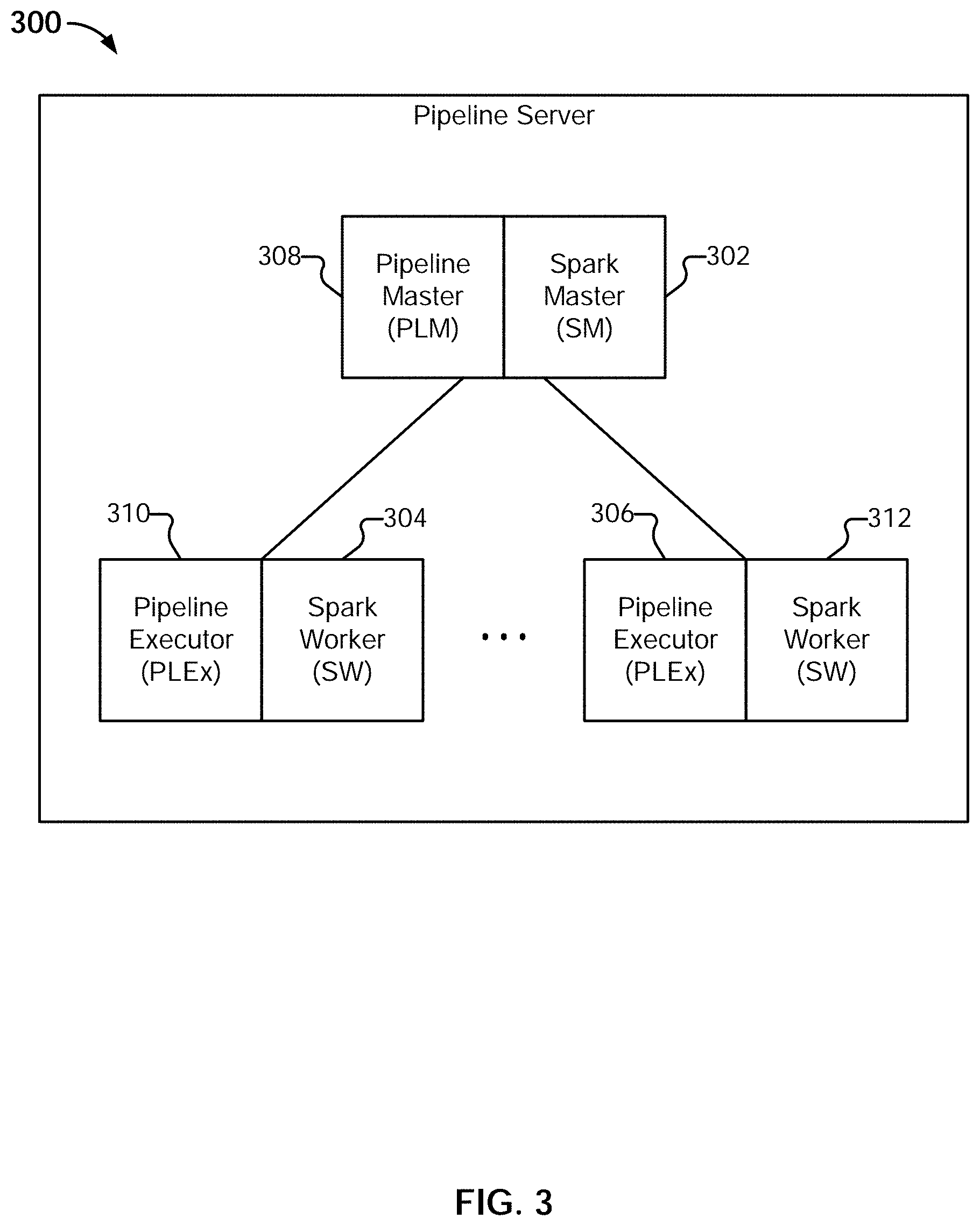

[0006] FIG. 3 is a system diagram illustrating an embodiment of a pipeline server.

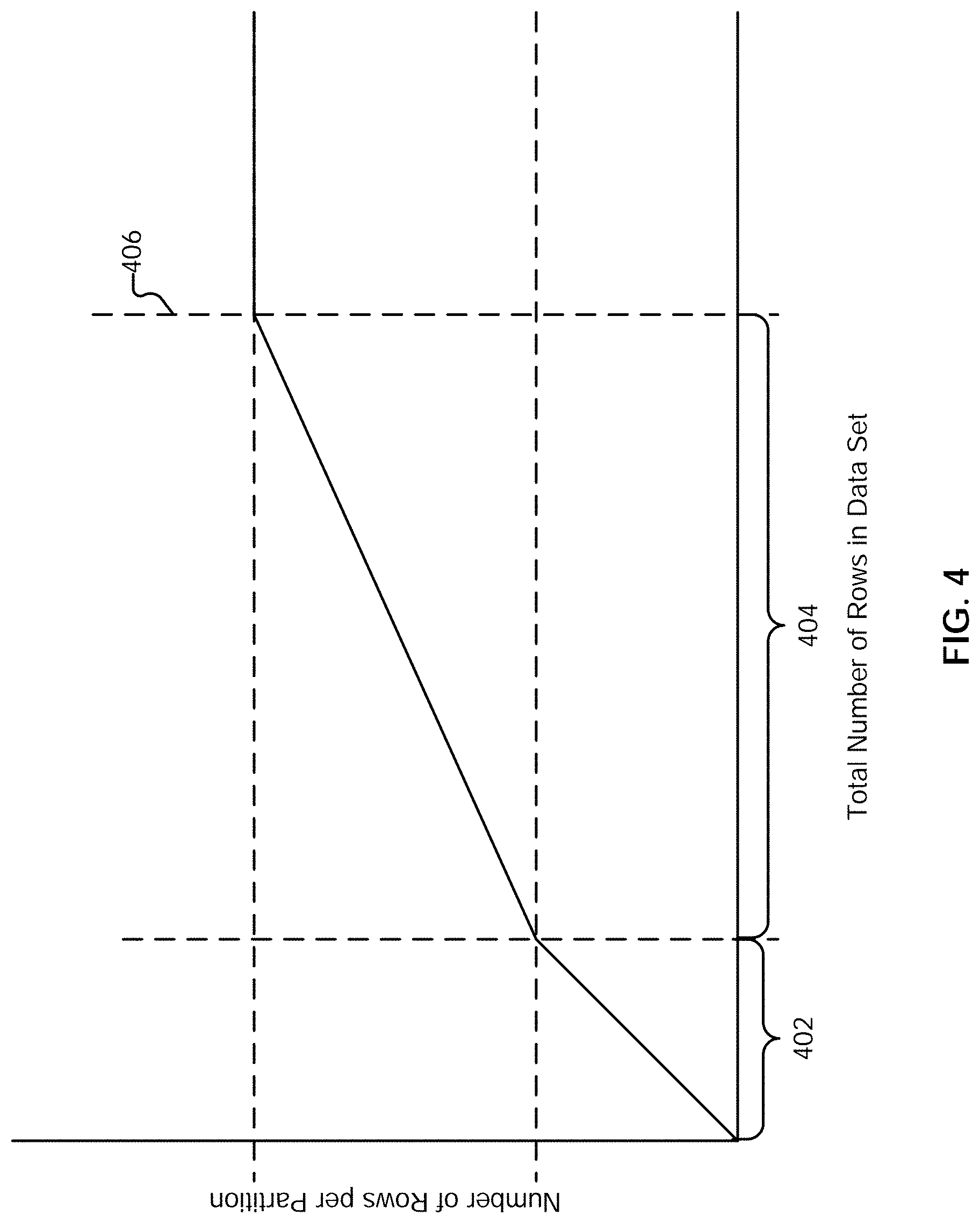

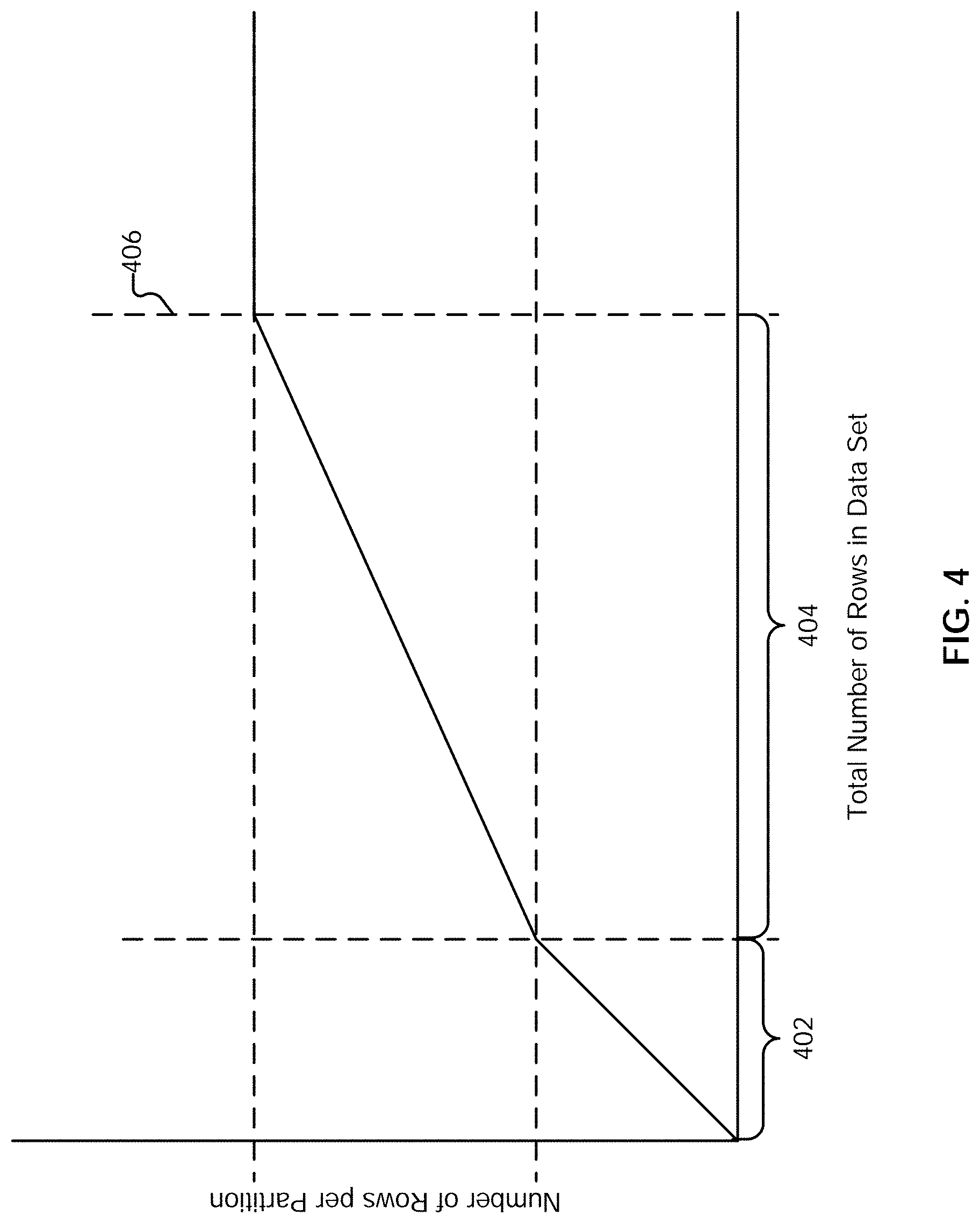

[0007] FIG. 4 illustrates an example embodiment of a three-part function.

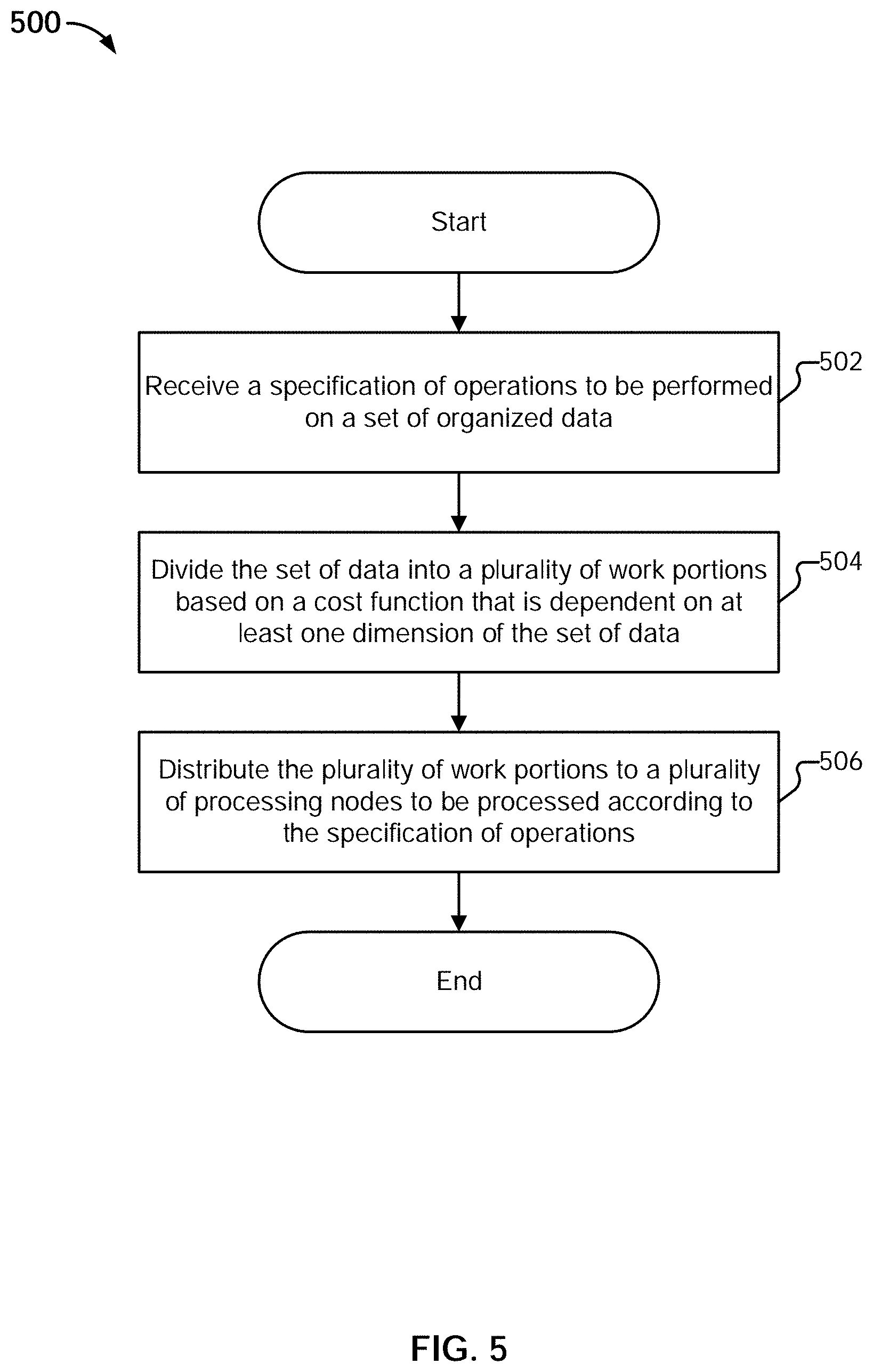

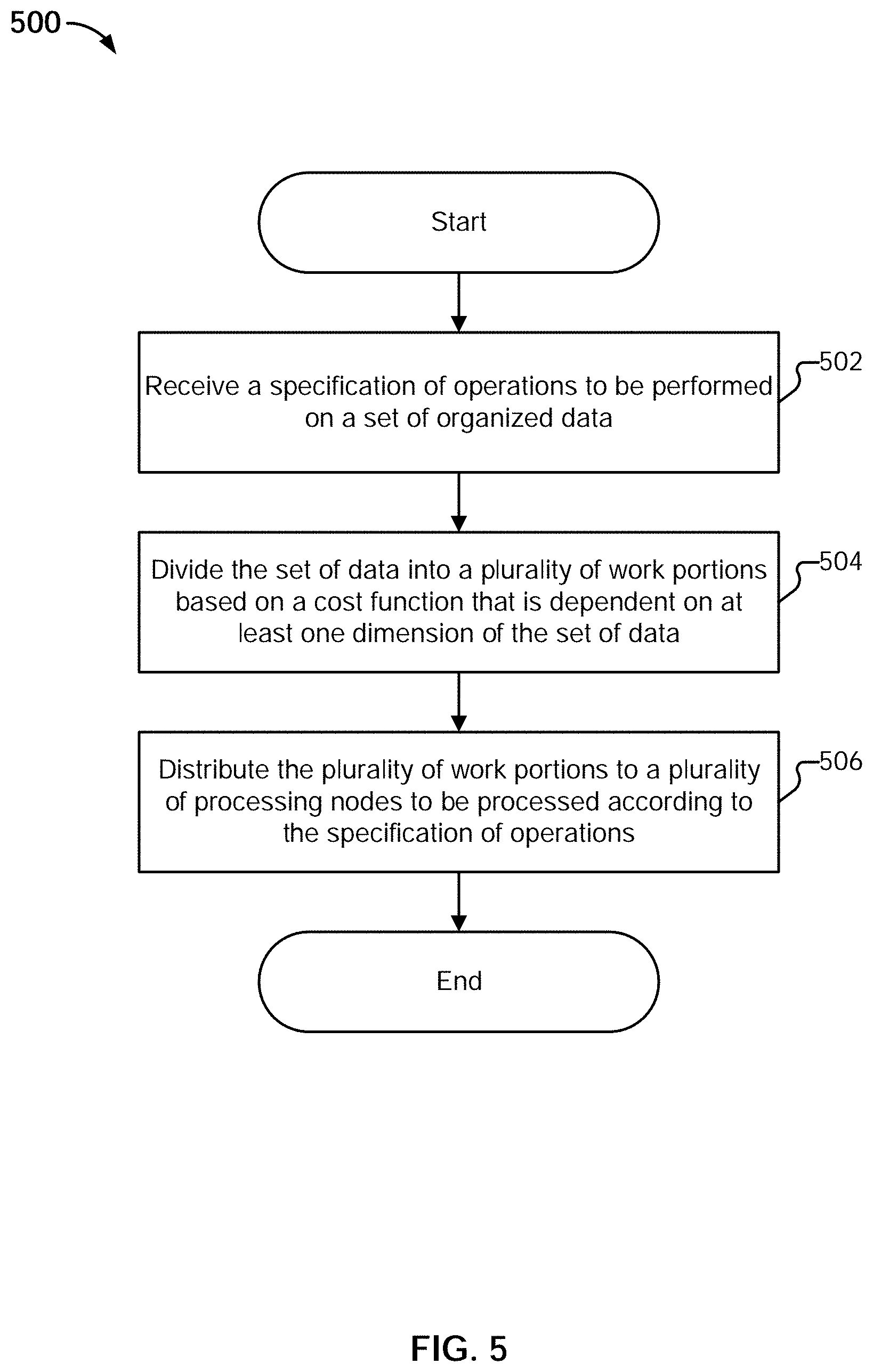

[0008] FIG. 5 is a flow diagram illustrating an example embodiment of a process for partitioning.

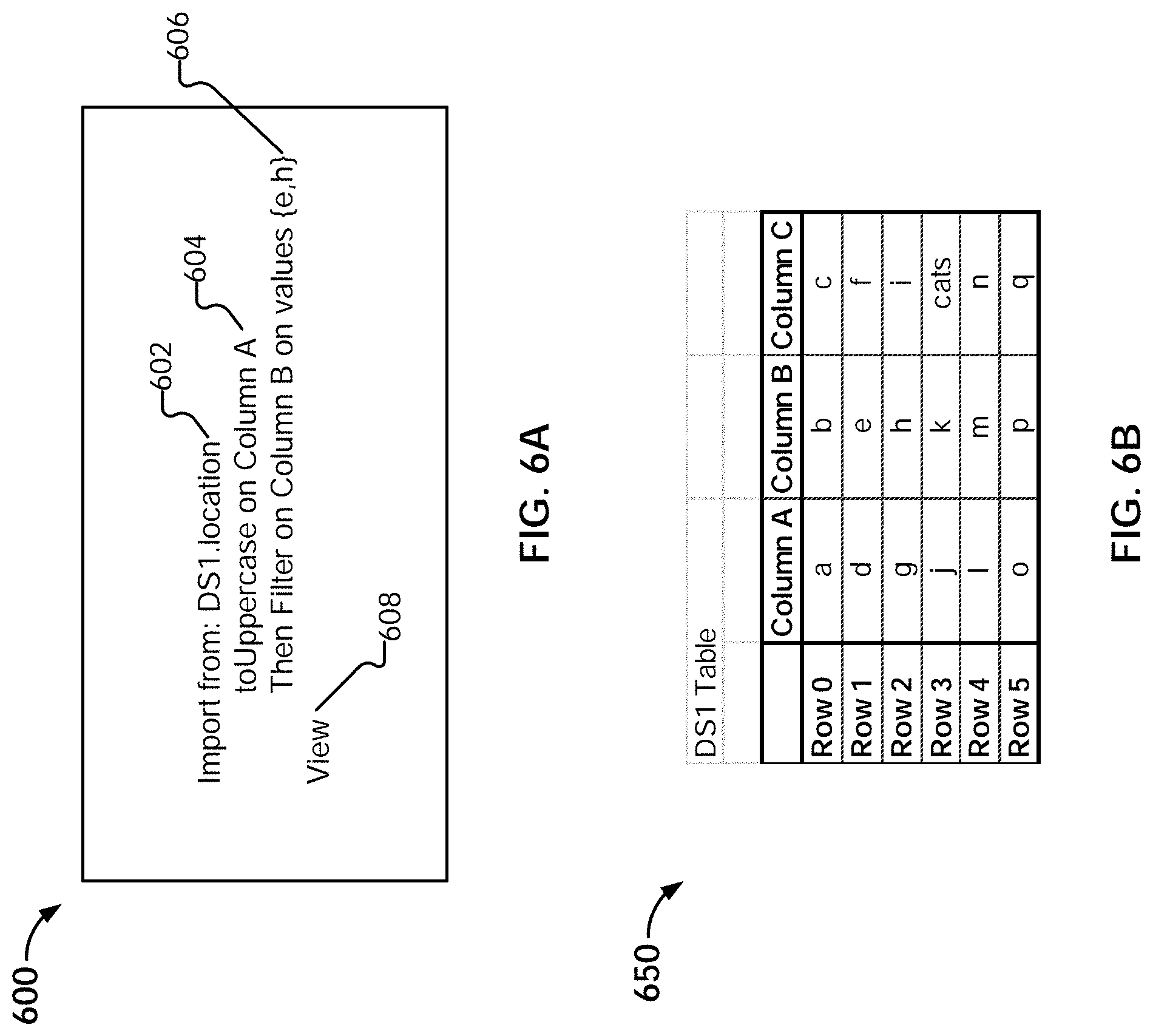

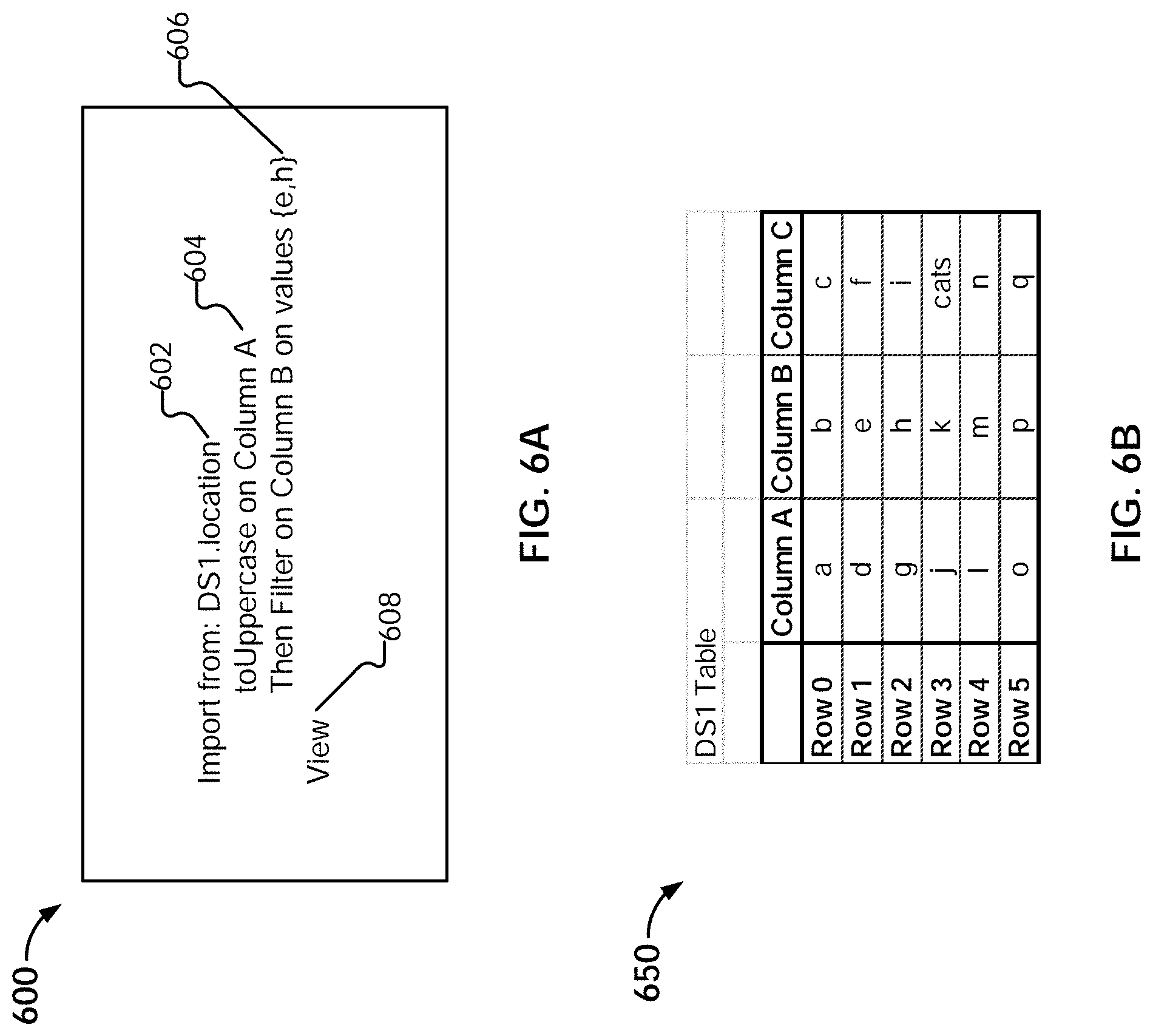

[0009] FIG. 6A illustrates an example embodiment of a script.

[0010] FIG. 6B illustrates an example embodiment of a data set to be processed.

[0011] FIG. 7A illustrates an example embodiment of data structures generated during an import operation.

[0012] FIG. 7B illustrates an example embodiment of executing a data traversal program.

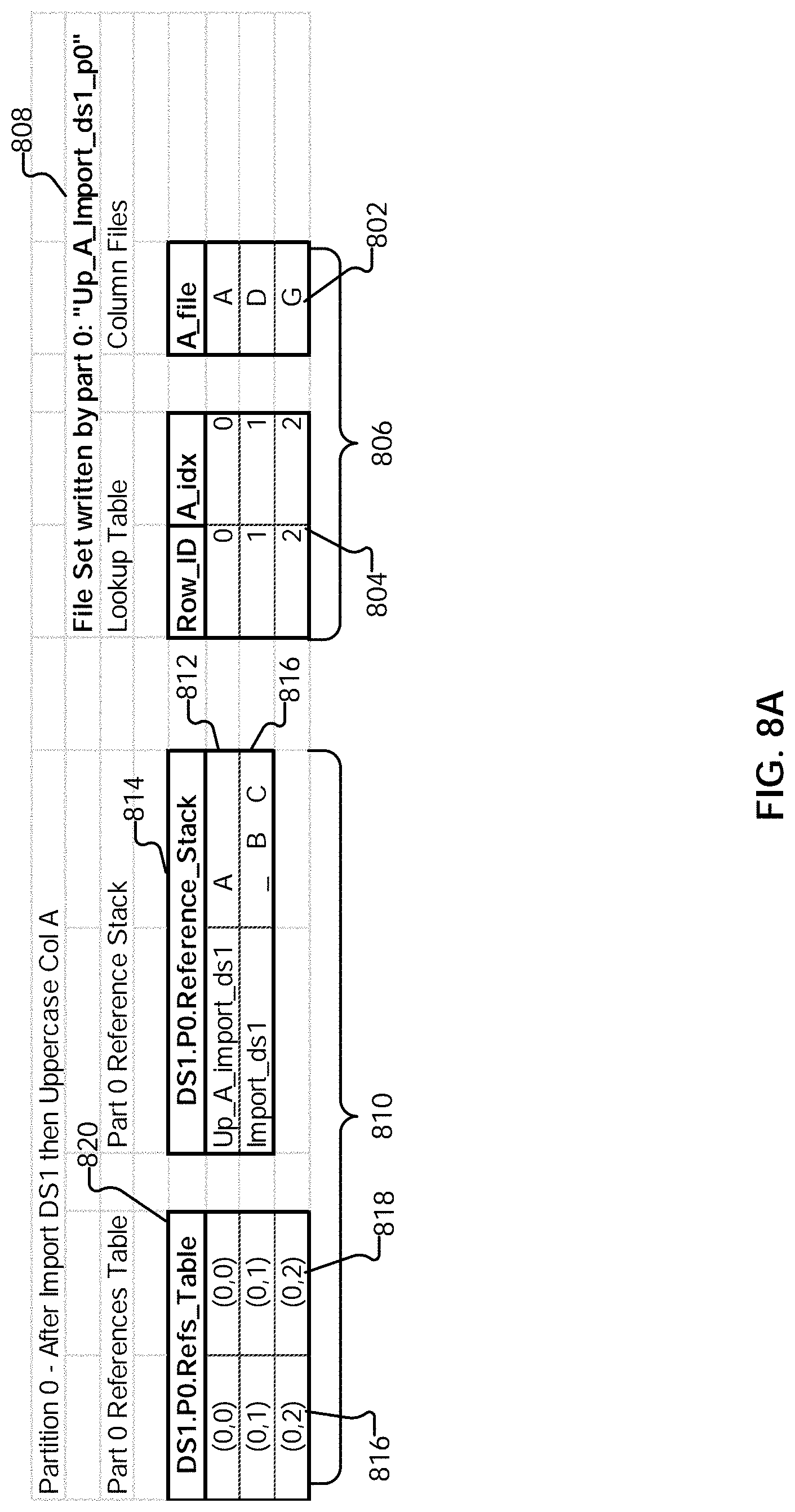

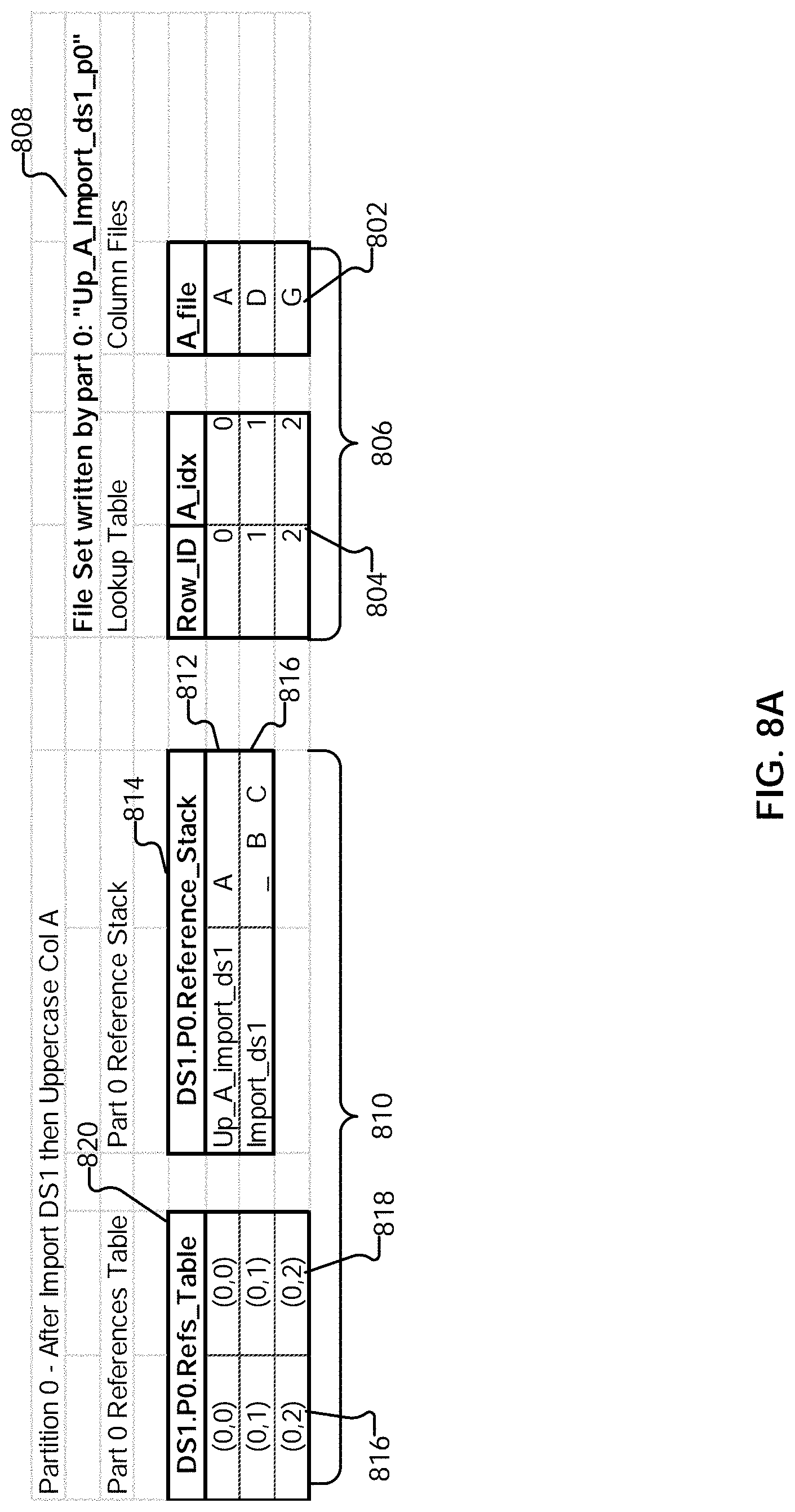

[0013] FIG. 8A illustrates an example embodiment of an updated data traversal program.

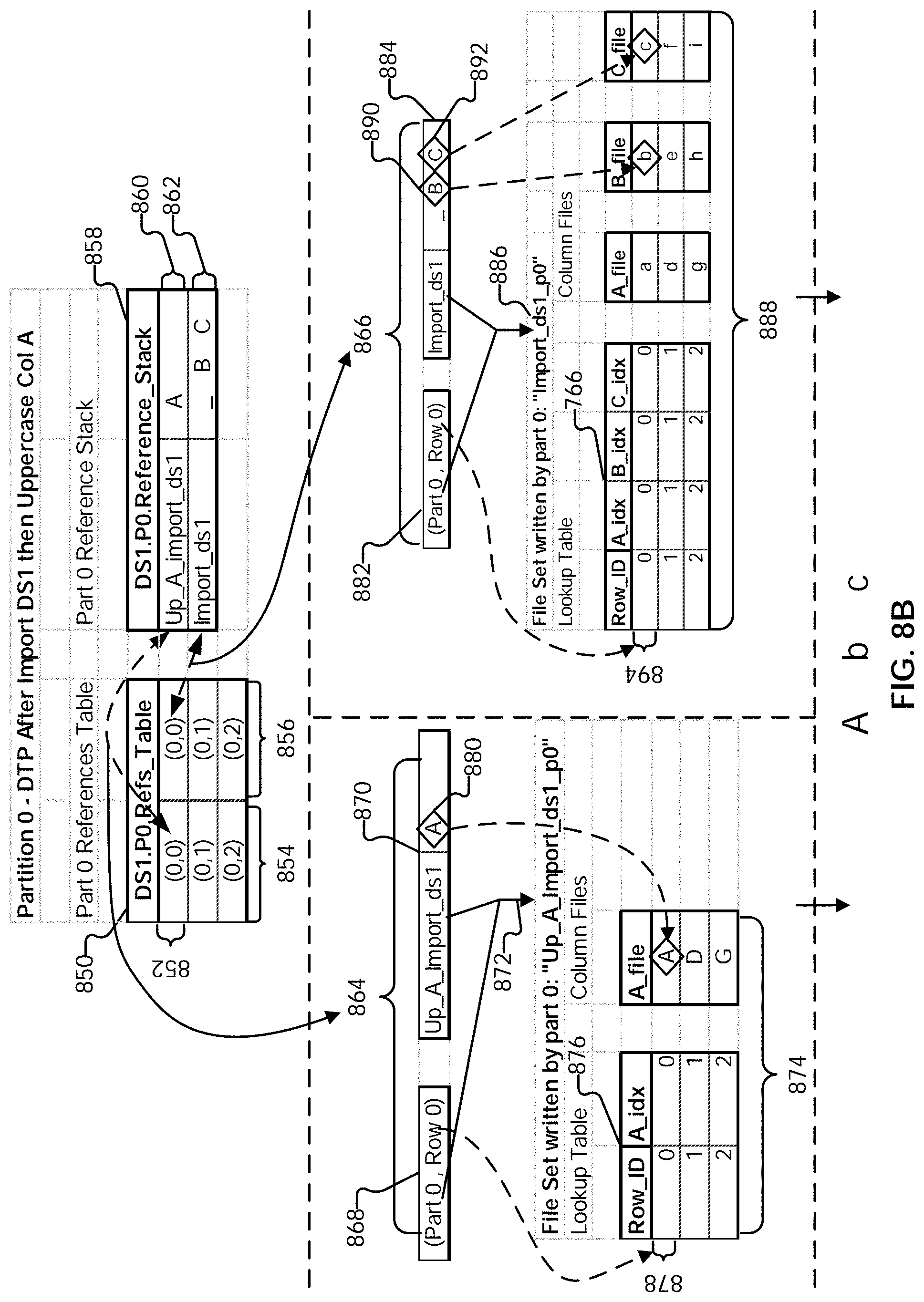

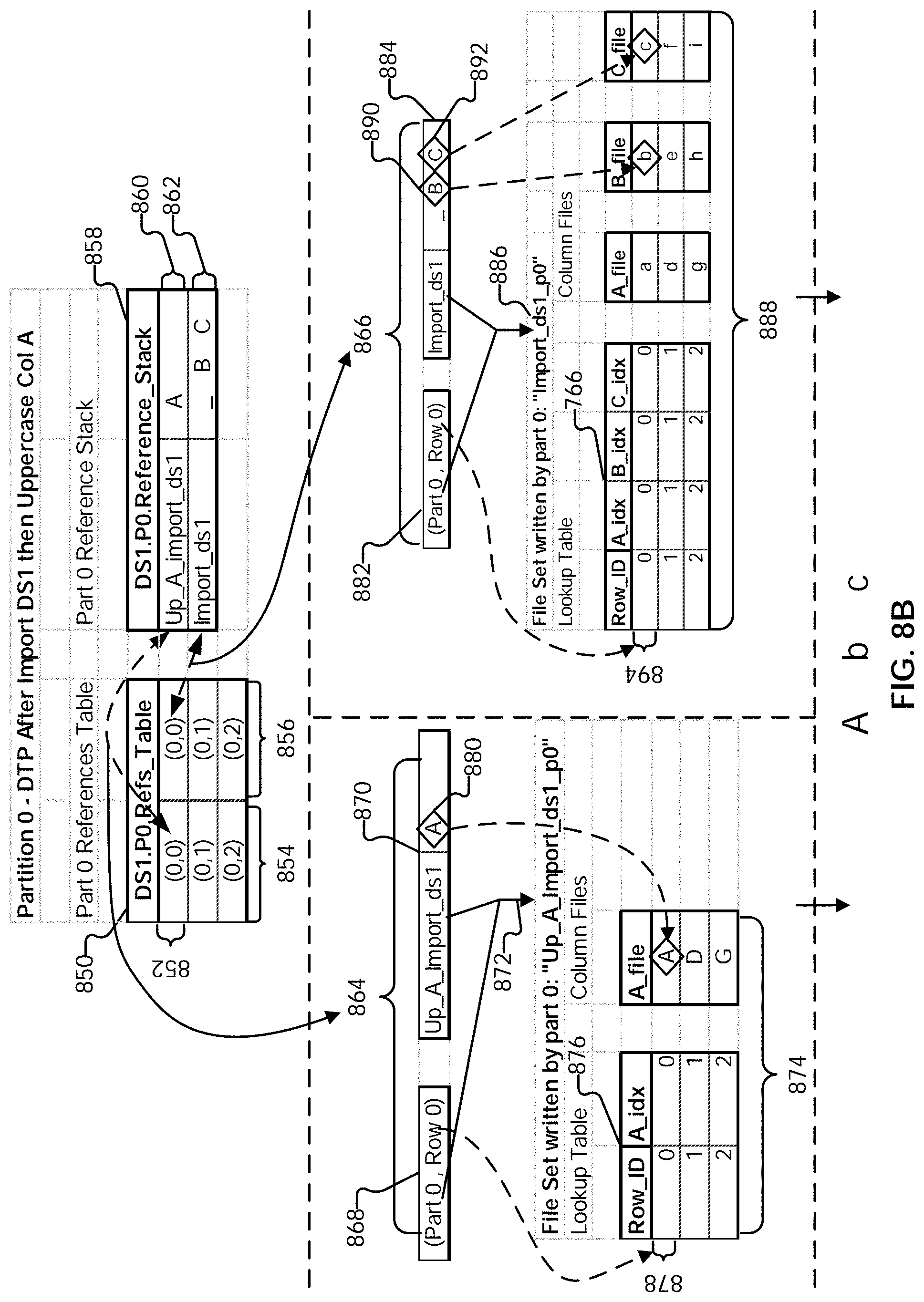

[0014] FIG. 8B illustrates an example embodiment of executing a data traversal program.

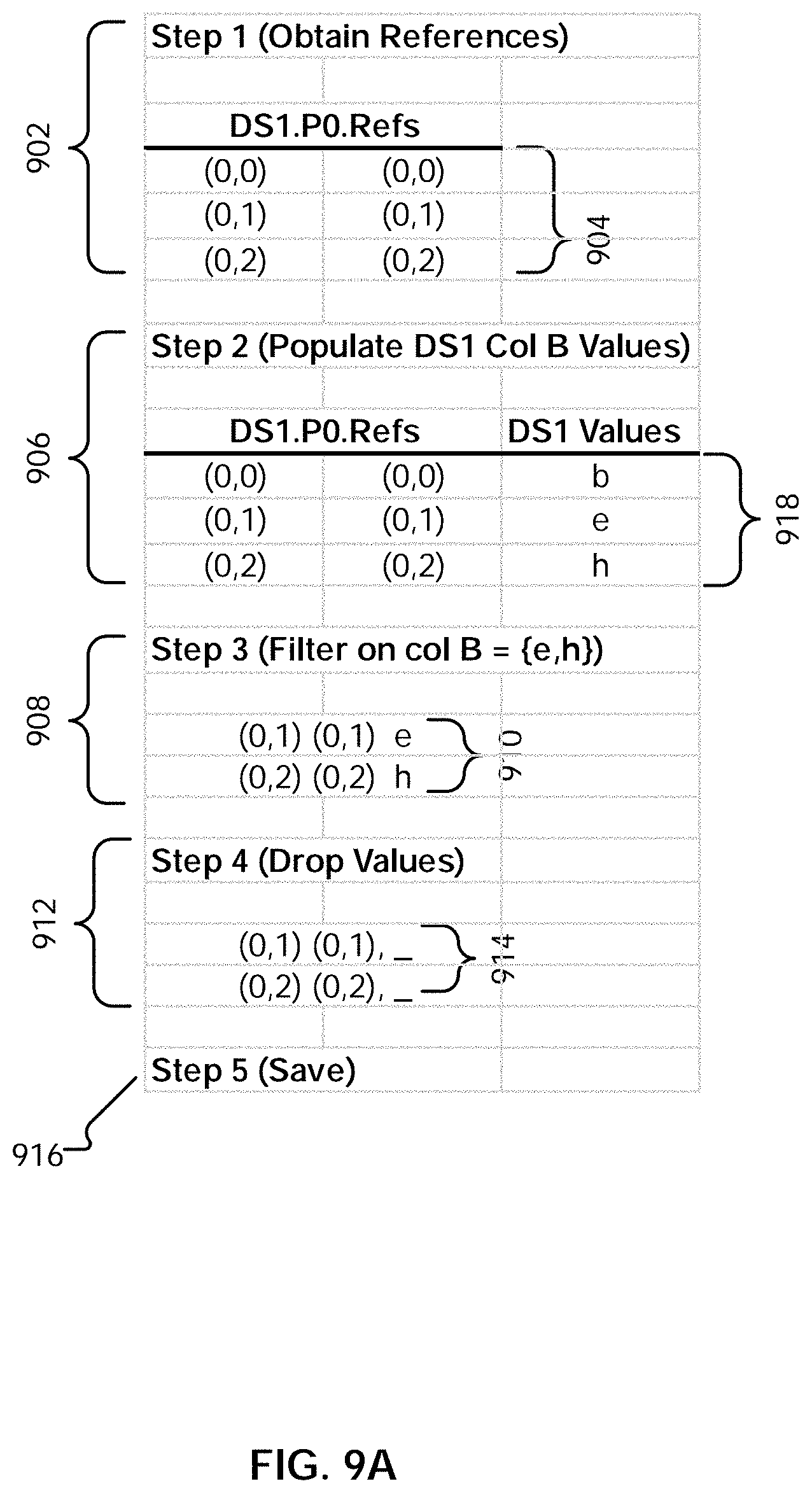

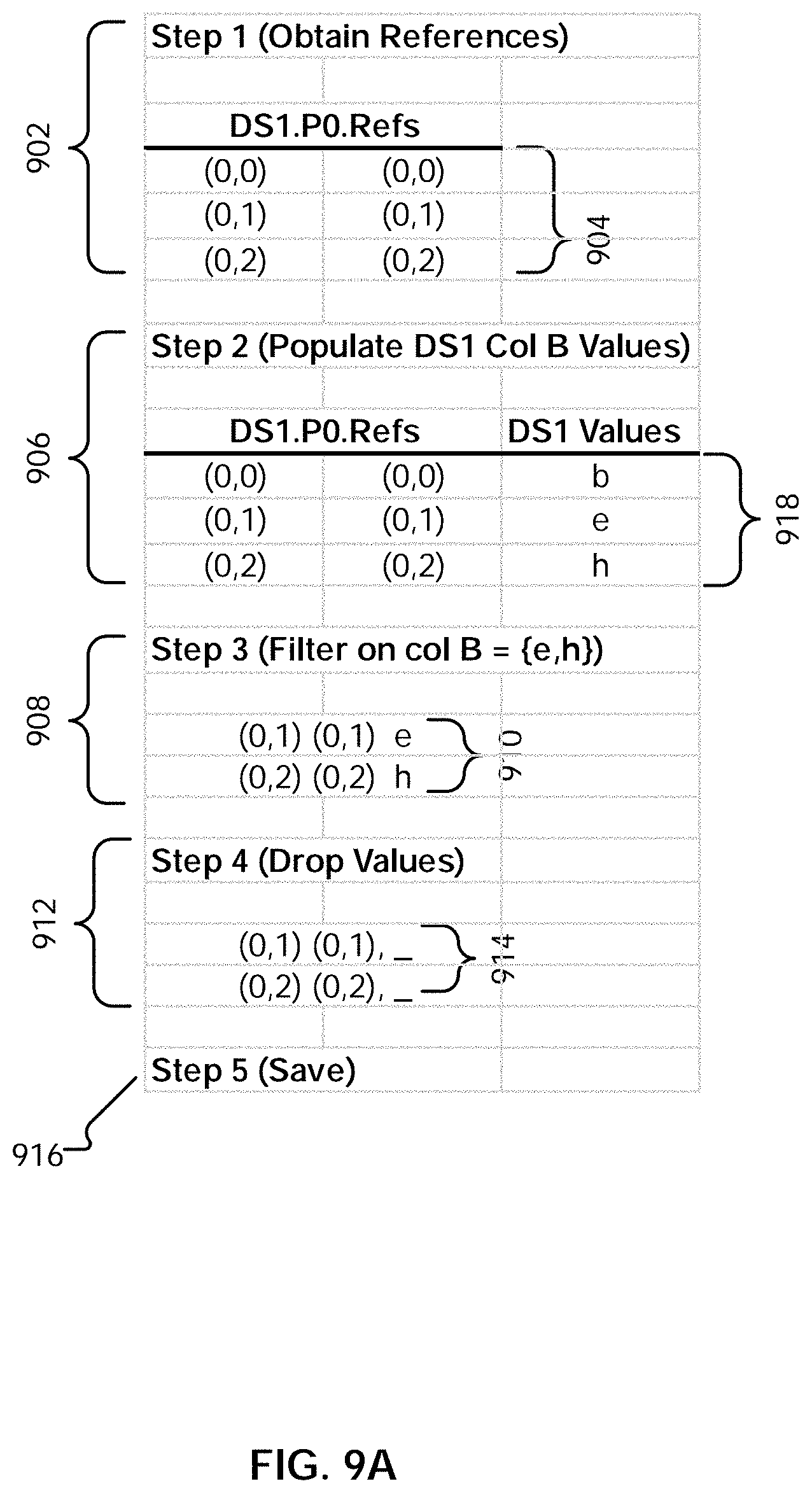

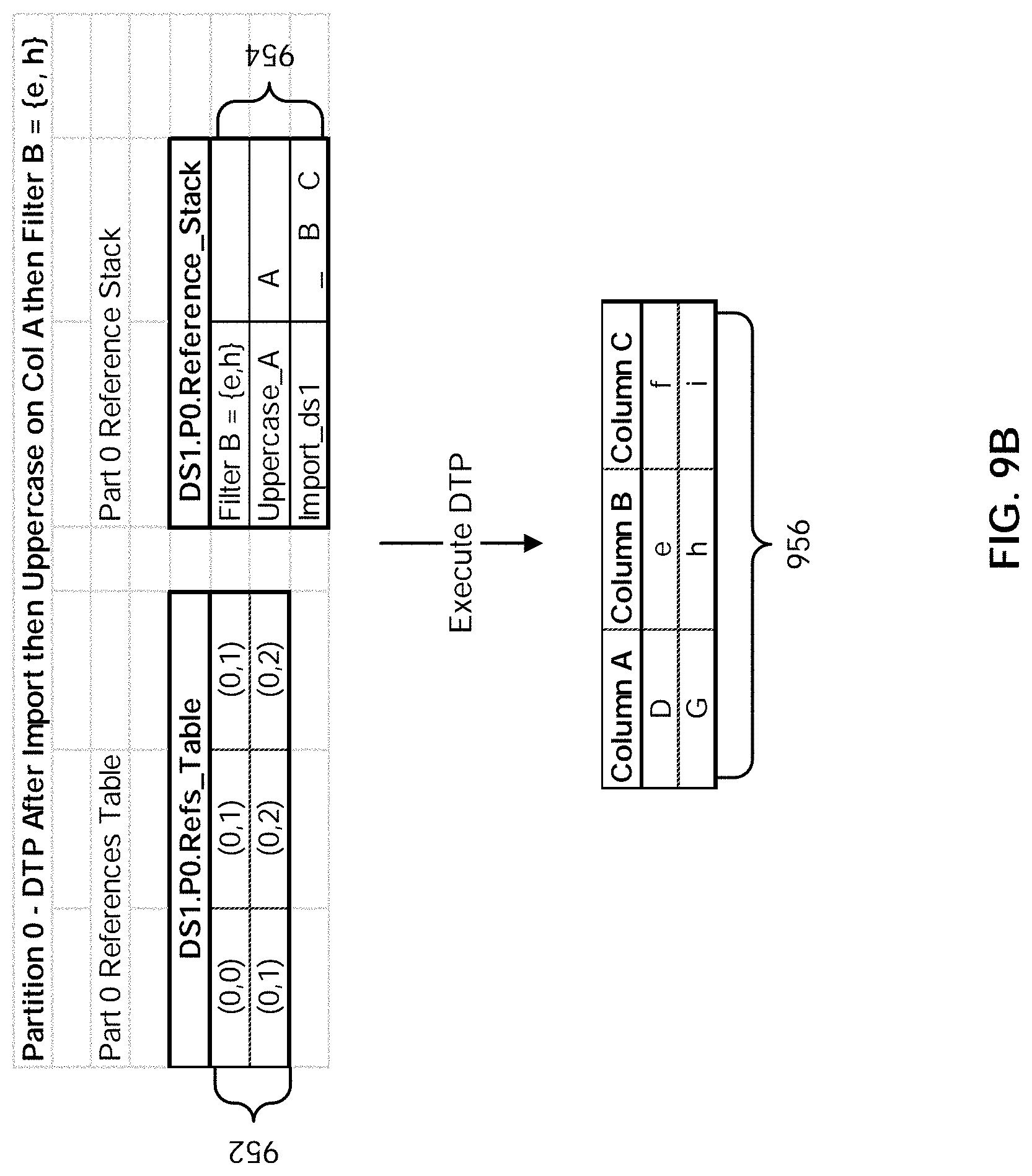

[0015] FIG. 9A illustrates an embodiment of a process for updating a data traversal program to reflect the results of a filter operation.

[0016] FIG. 9B illustrates an example embodiment of a data traversal program.

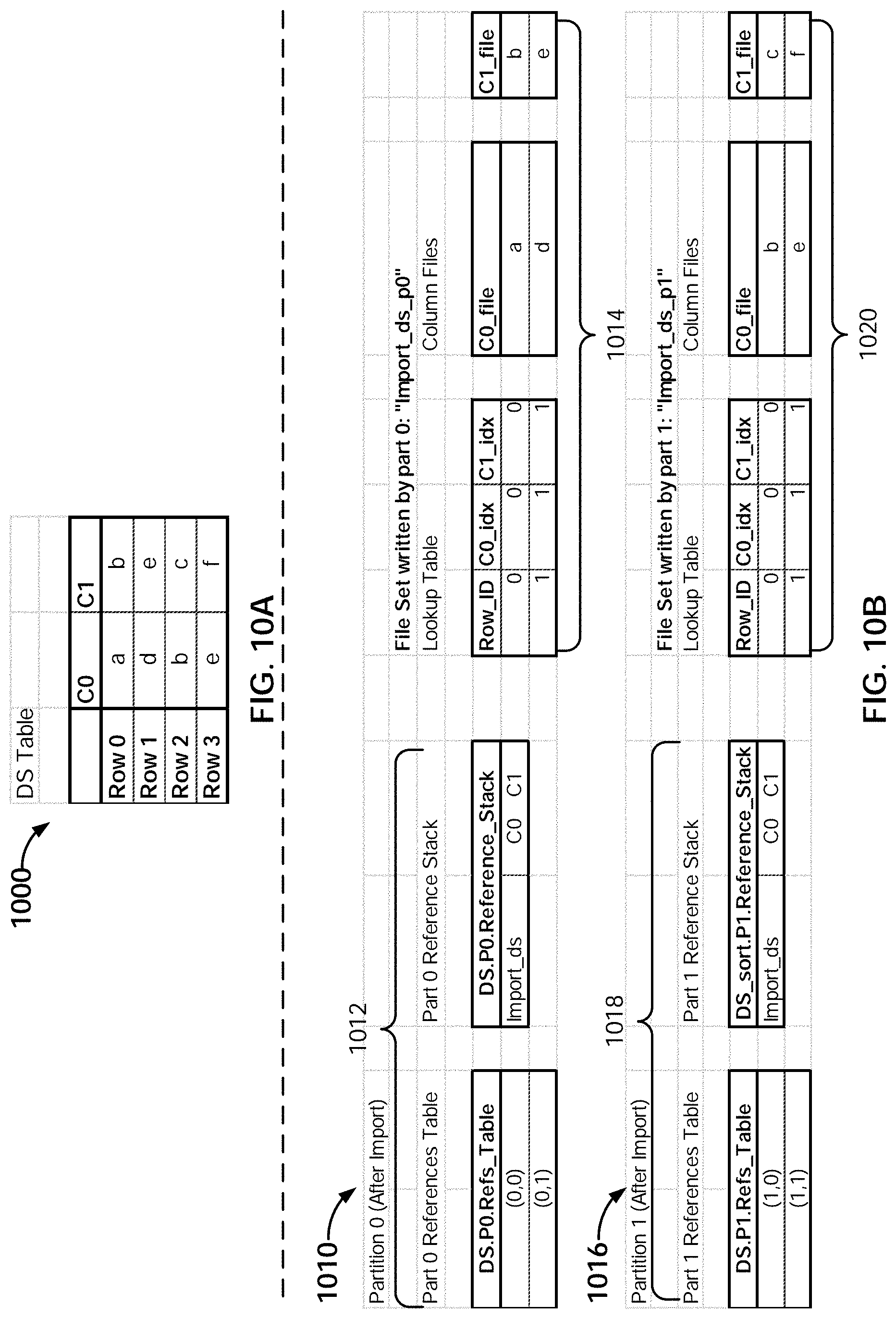

[0017] FIG. 10A is a diagram illustrating an embodiment of a data set to be sorted.

[0018] FIG. 10B is a diagram illustrating an embodiment of data traversal programs and file sets.

[0019] FIG. 10C illustrates an example of a sorted result.

[0020] FIG. 10D is a diagram illustrating an embodiment of a process for performing a sort operation.

[0021] FIG. 10E illustrates an example embodiment of data traversal programs.

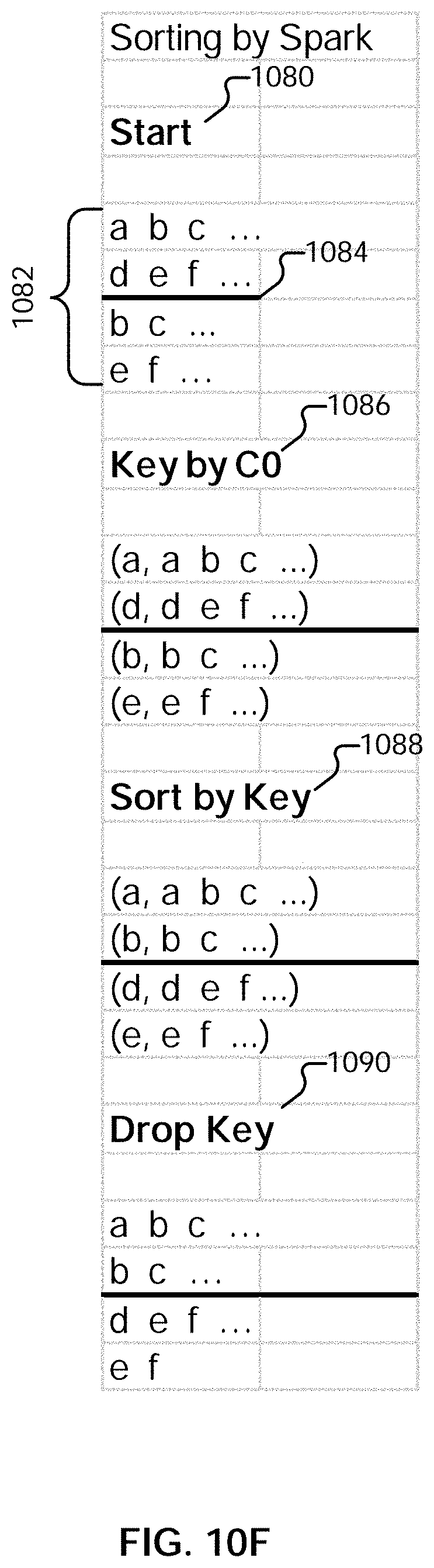

[0022] FIG. 10F illustrates an example embodiment of a native Spark sort.

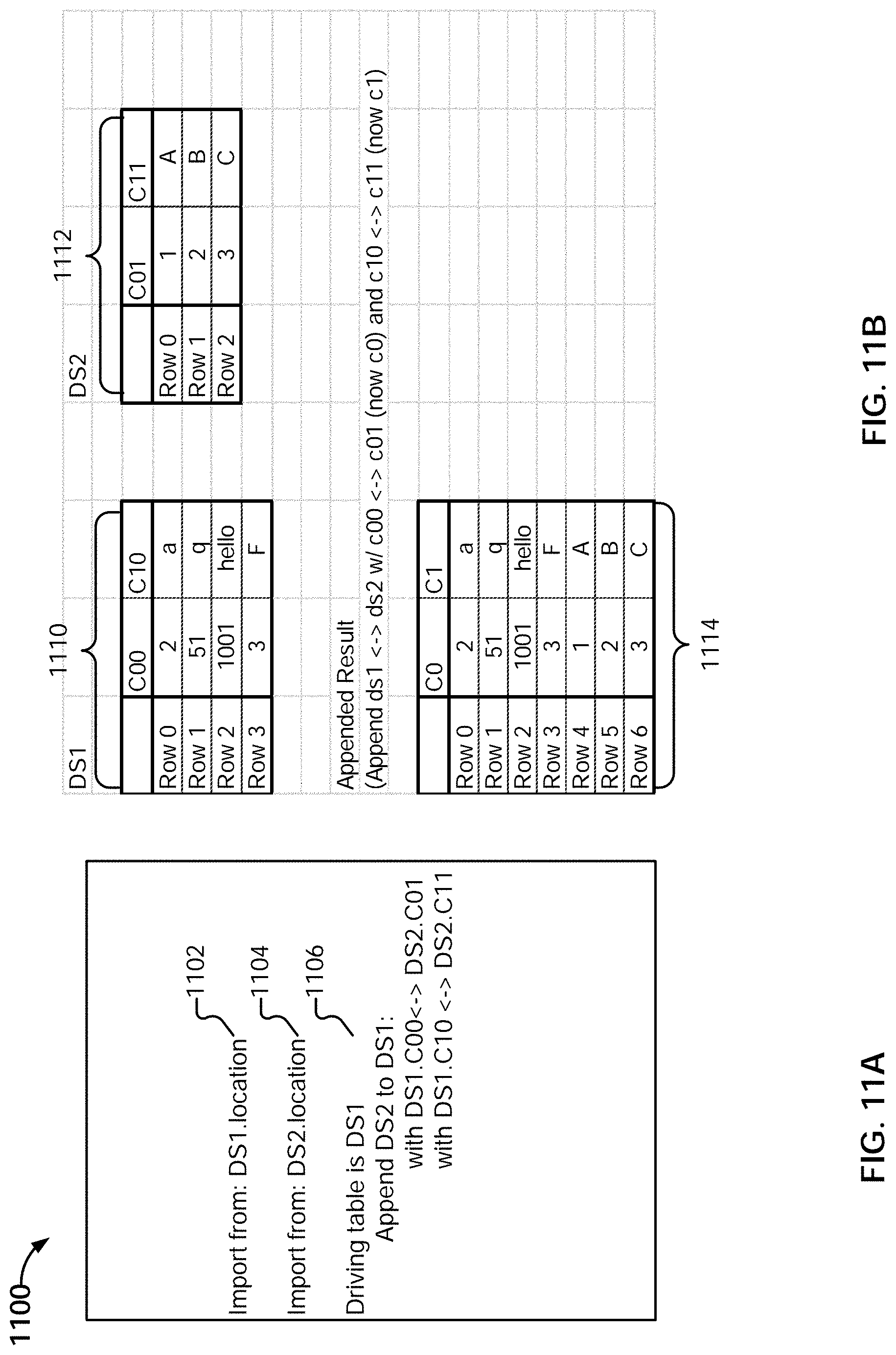

[0023] FIG. 11A illustrates an example embodiment of a script including an append operation.

[0024] FIG. 11B illustrates an example embodiment of data sets to be appended.

[0025] FIG. 11C illustrates an example embodiment of logical file/name spaces associated with pipelines for two different data sets.

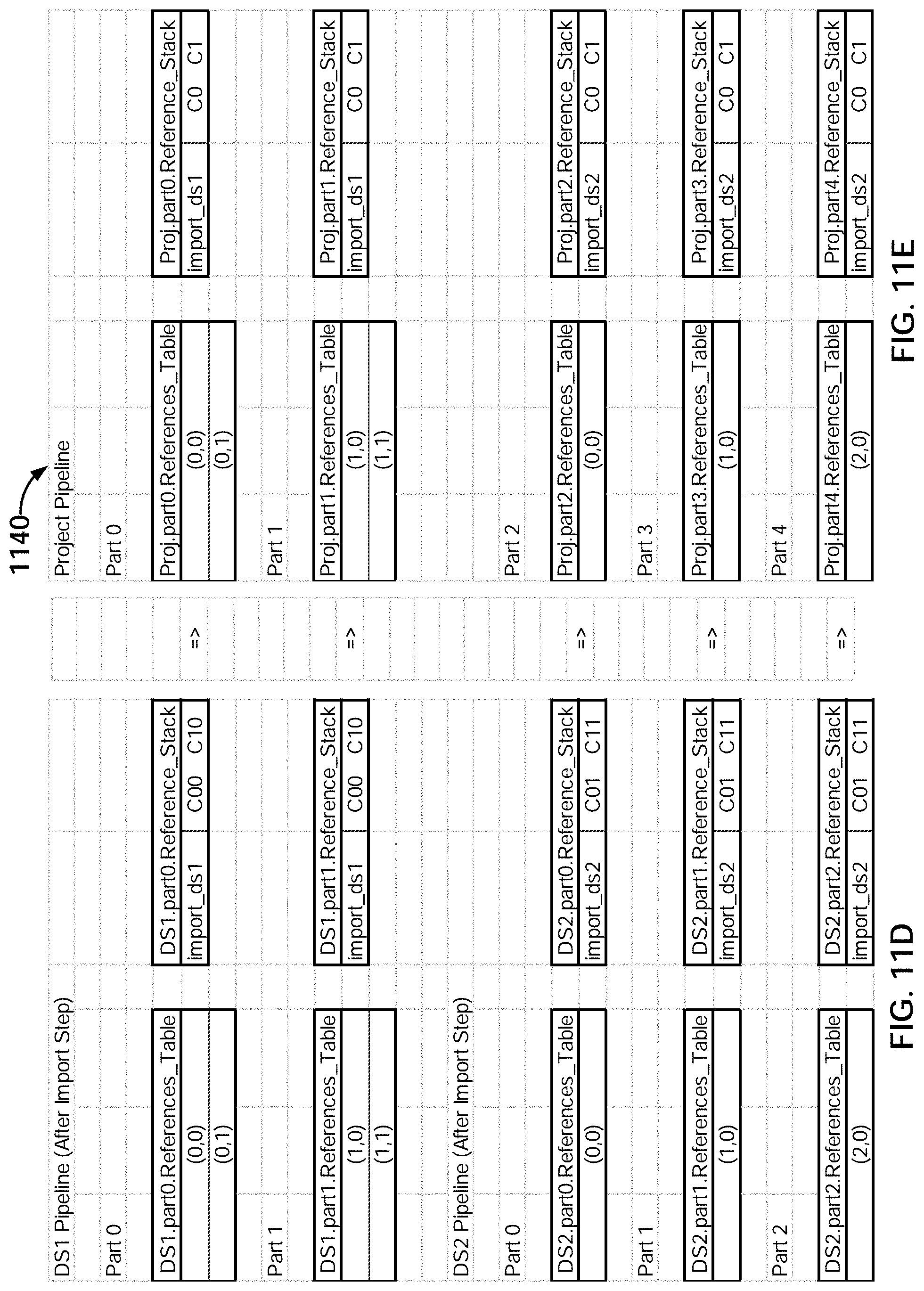

[0026] FIG. 11D illustrates an example embodiment of data traversal programs prior to an append.

[0027] FIG. 11E illustrates an example embodiment of data traversal programs subsequent to an append.

[0028] FIG. 11F illustrates an example embodiment of partitions and data traversal programs.

[0029] FIG. 11G illustrates an example embodiment of data traversal programs prior to an append.

[0030] FIG. 11H illustrates an example embodiment of data traversal programs subsequent to an append.

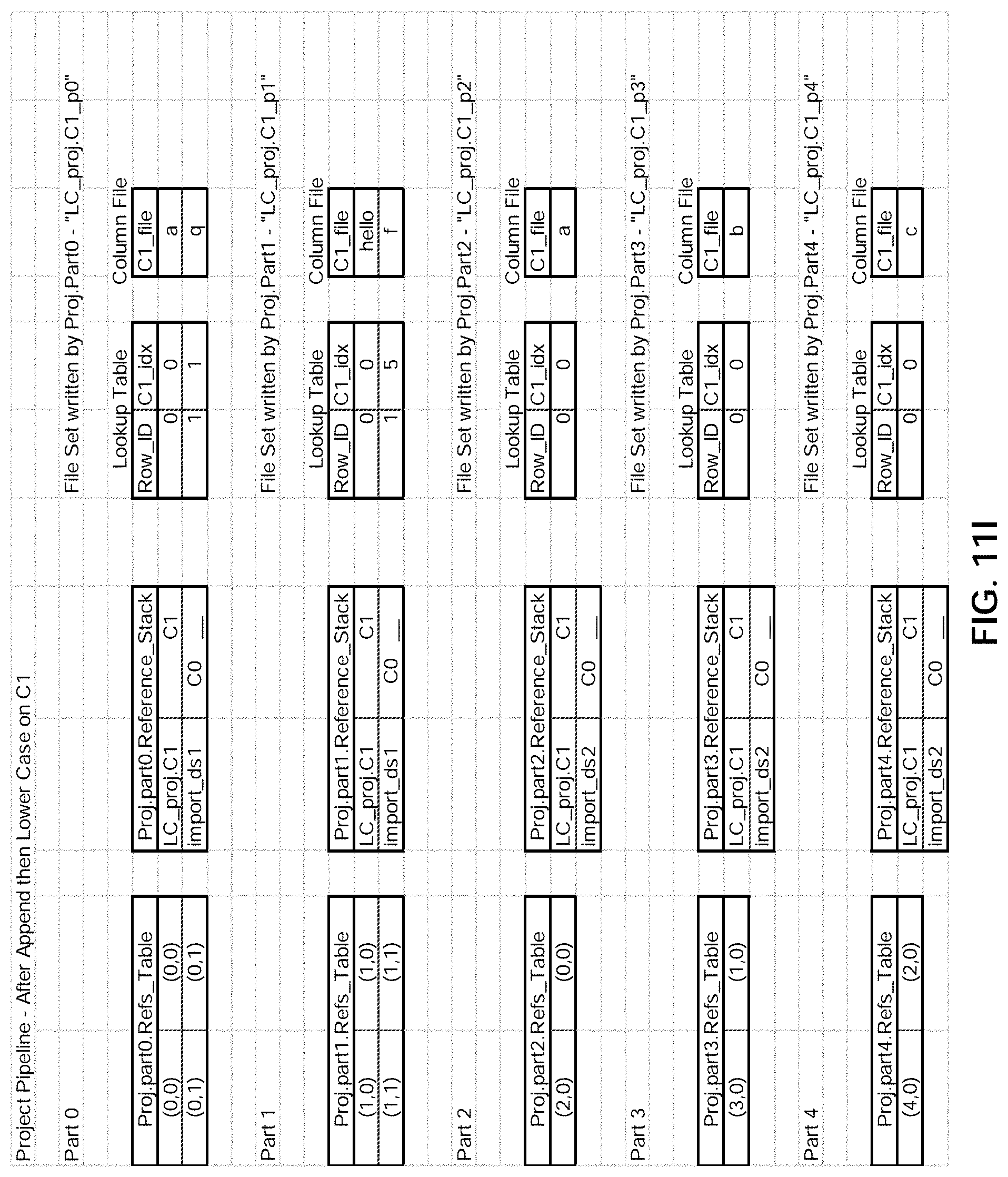

[0031] FIG. 11I illustrates an example embodiment of data traversal programs and file sets.

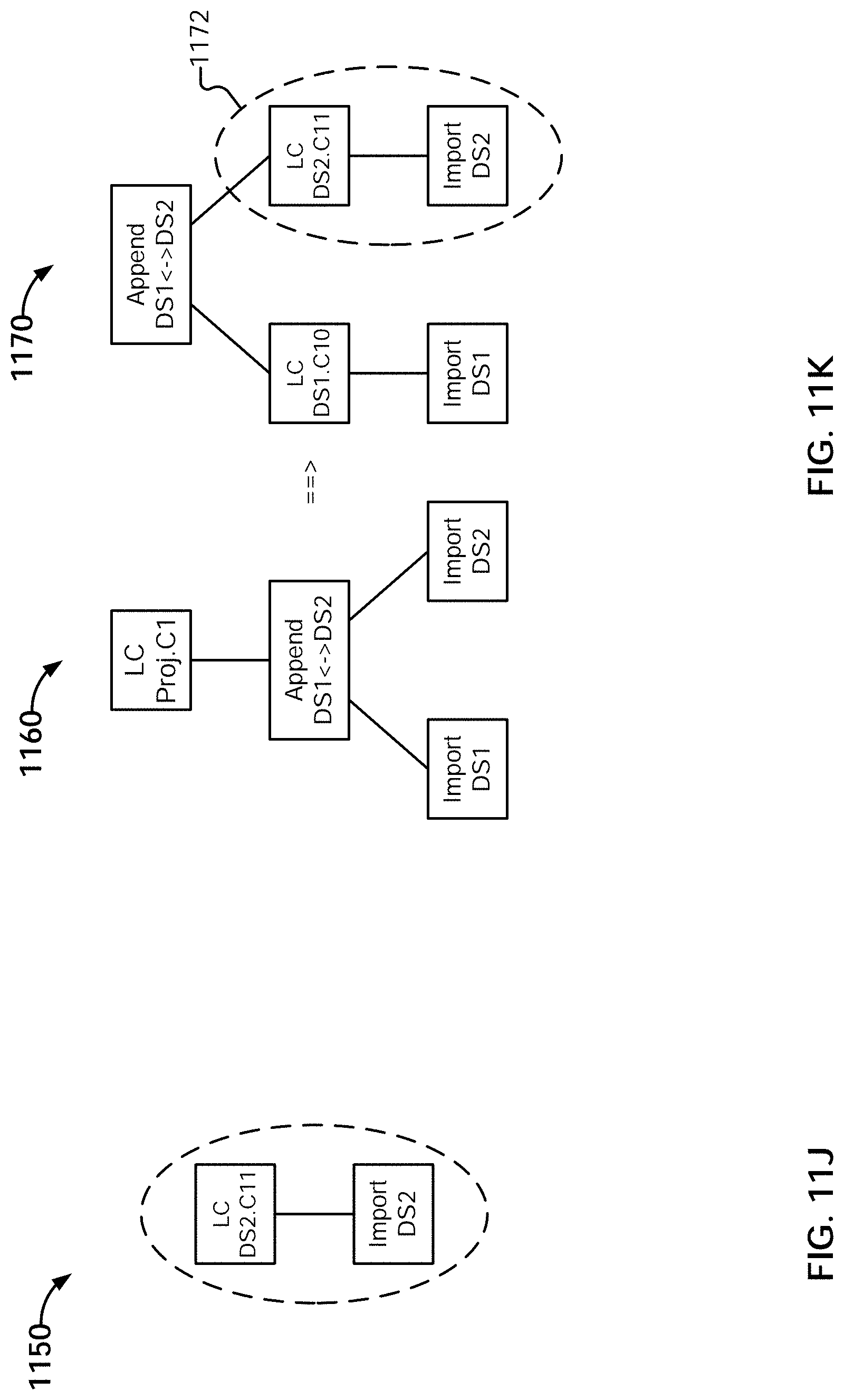

[0032] FIG. 11J illustrates an example embodiment of a tree representation of a set of sequenced operations.

[0033] FIG. 11K illustrates an example embodiment of a tree representation of a set of sequenced operations.

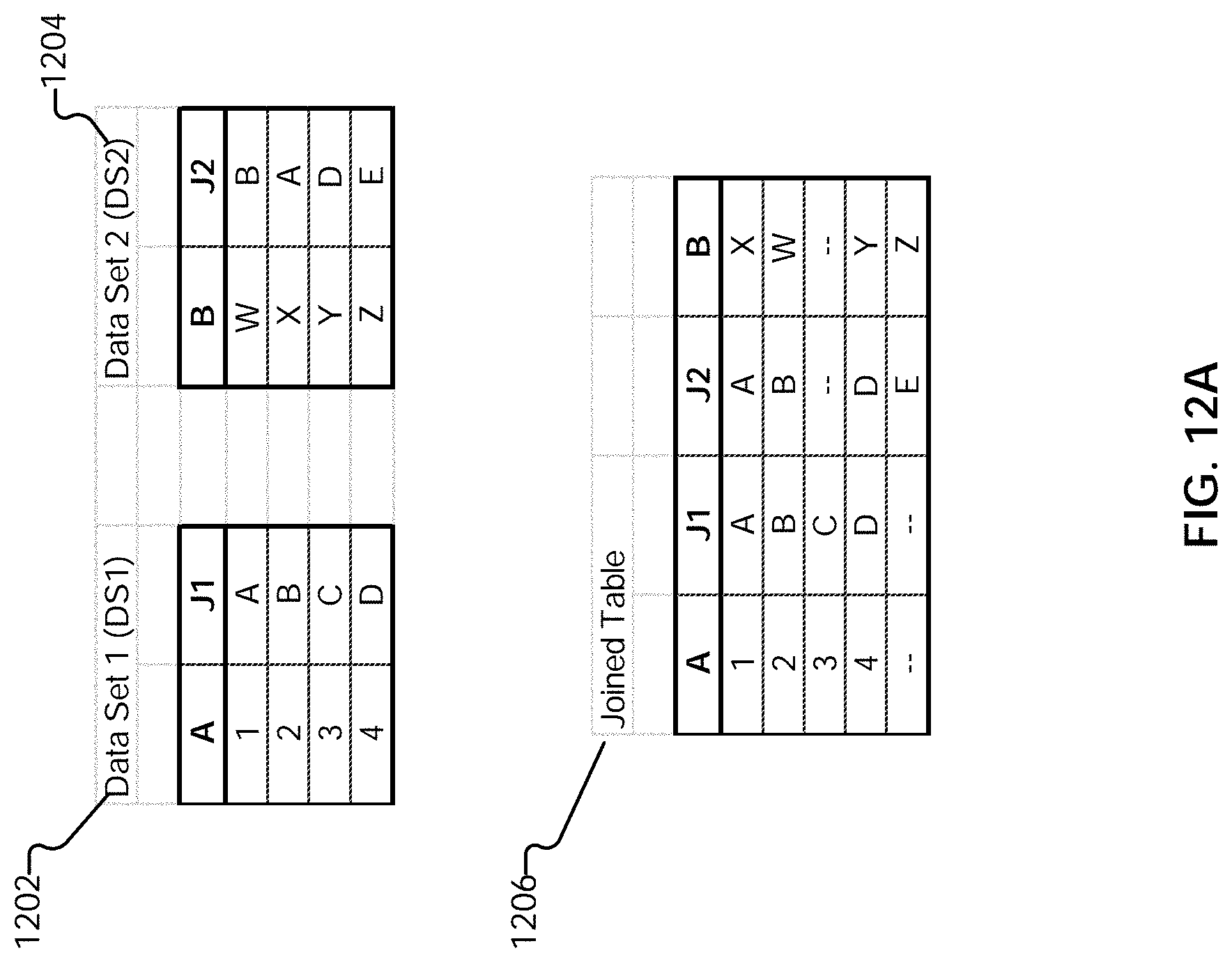

[0034] FIG. 12A illustrates an example of data sets to be joined.

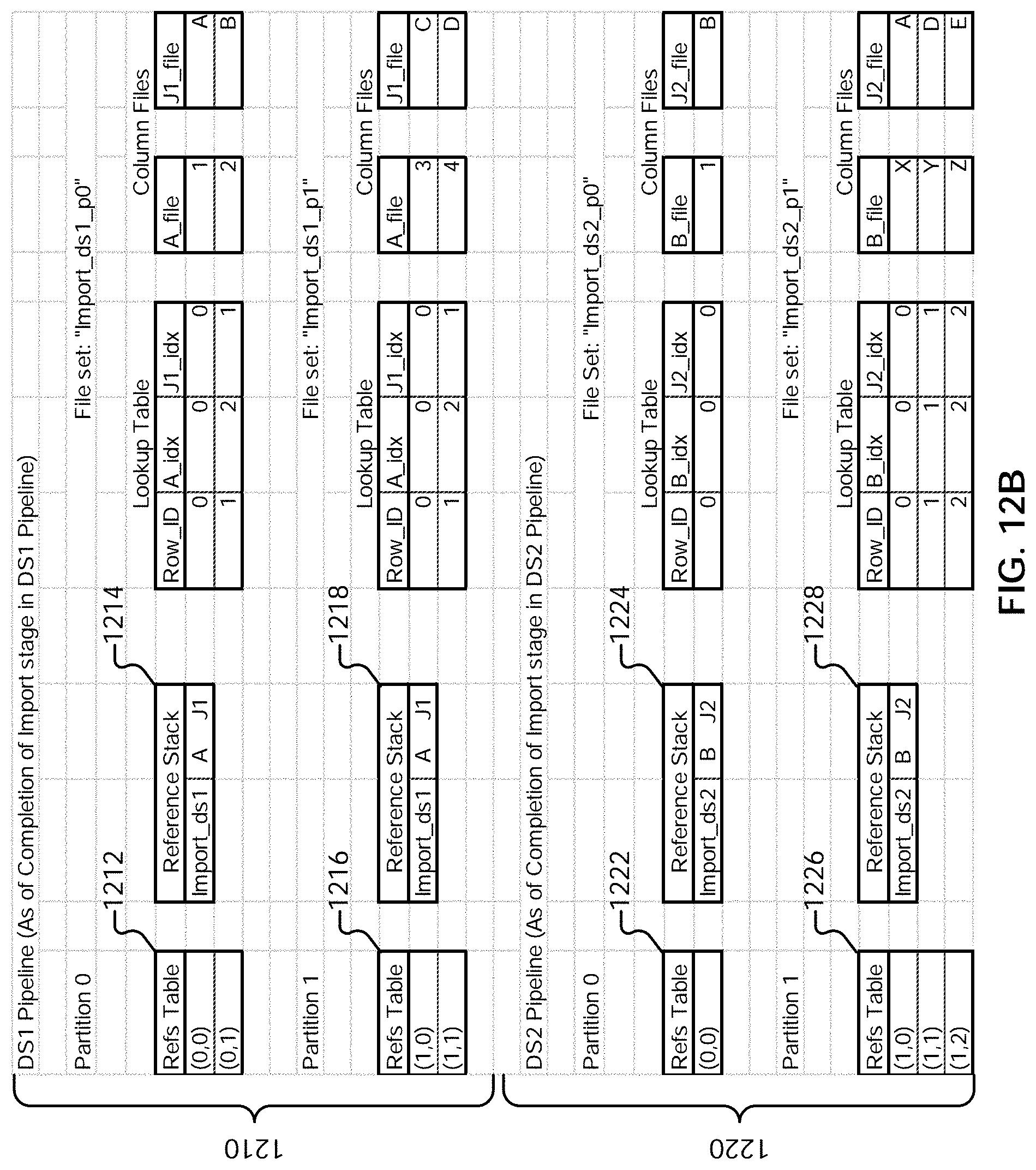

[0035] FIG. 12B illustrates an example of data traversal programs and file sets generated for imported data.

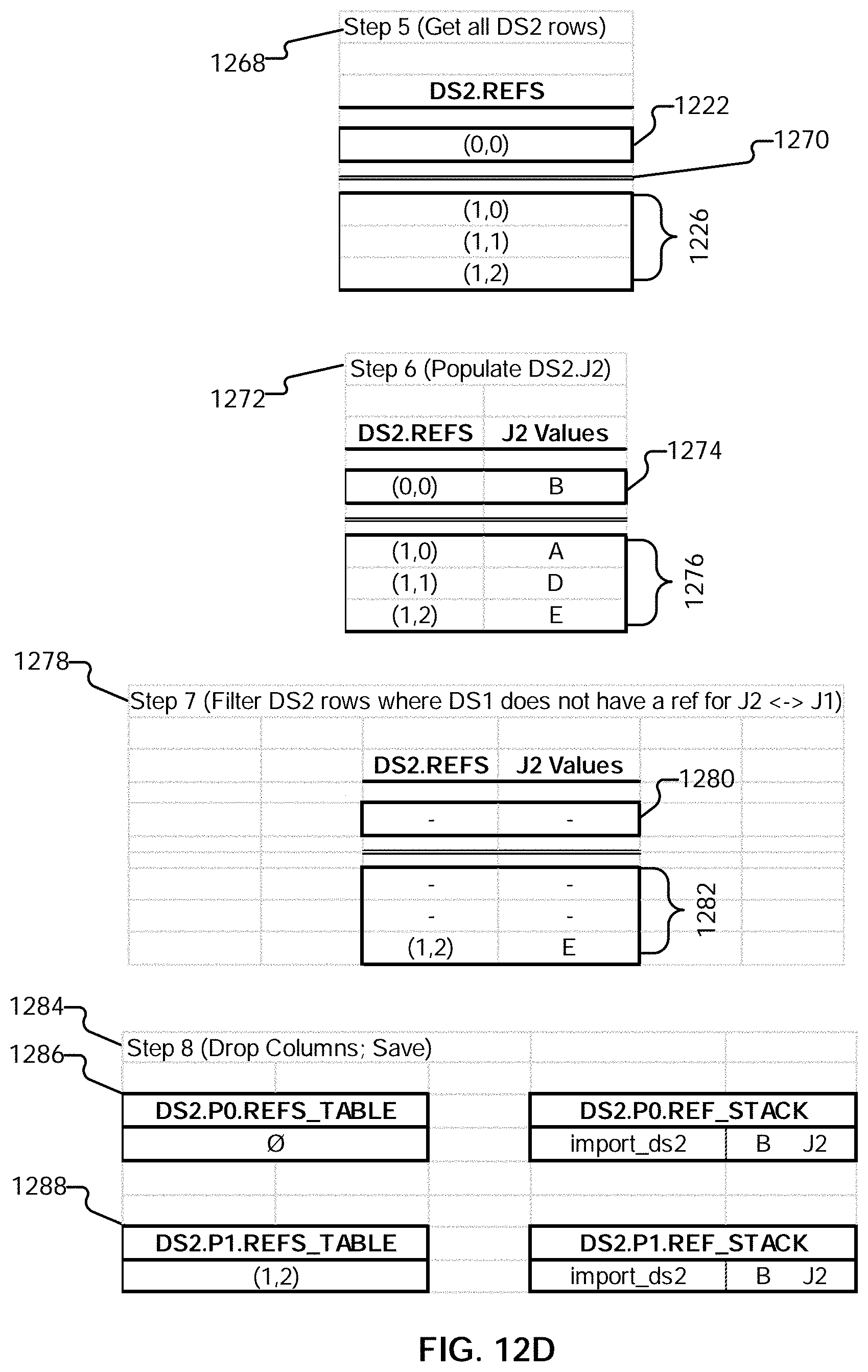

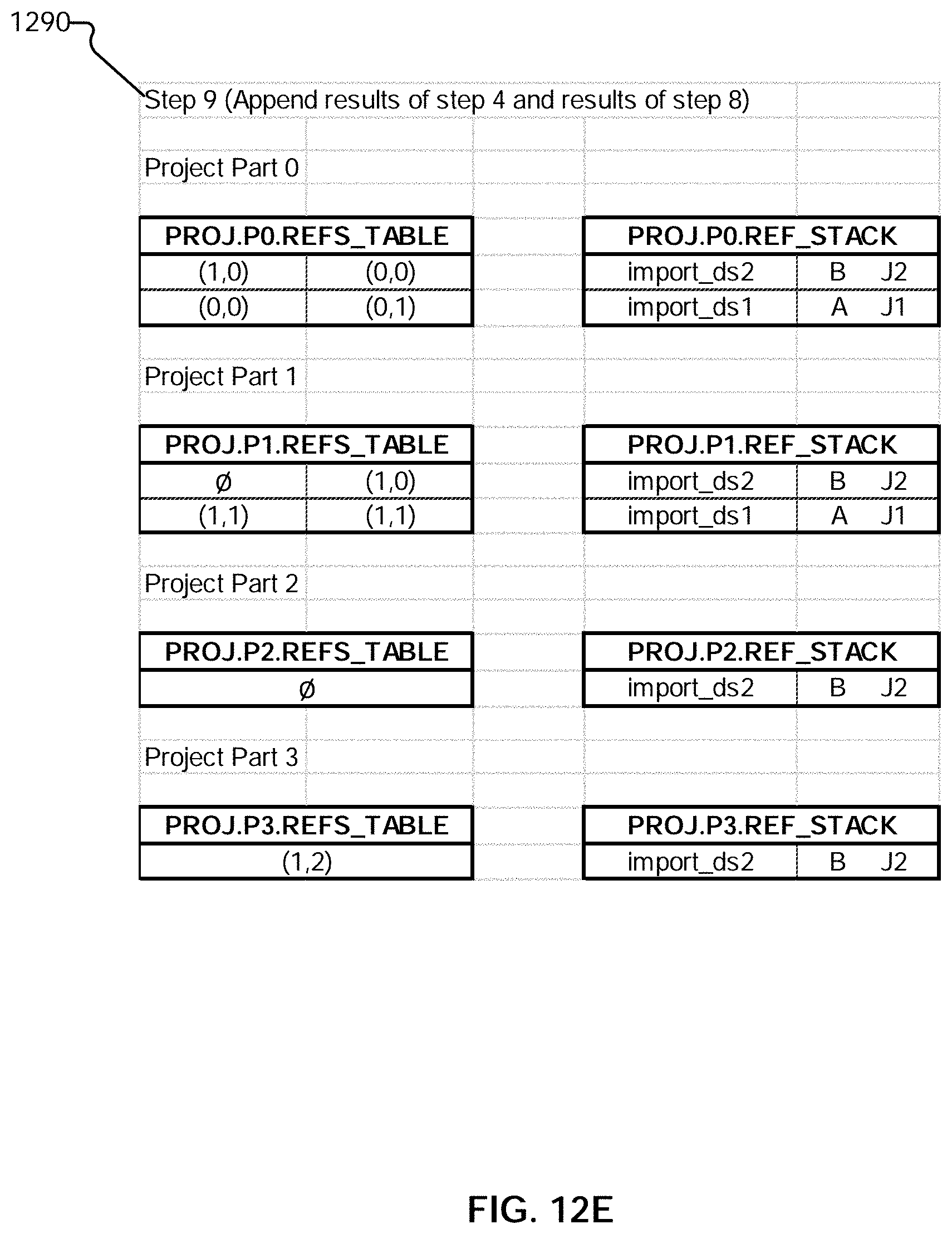

[0036] FIGS. 12C-E illustrate an example embodiment of a process for performing a join.

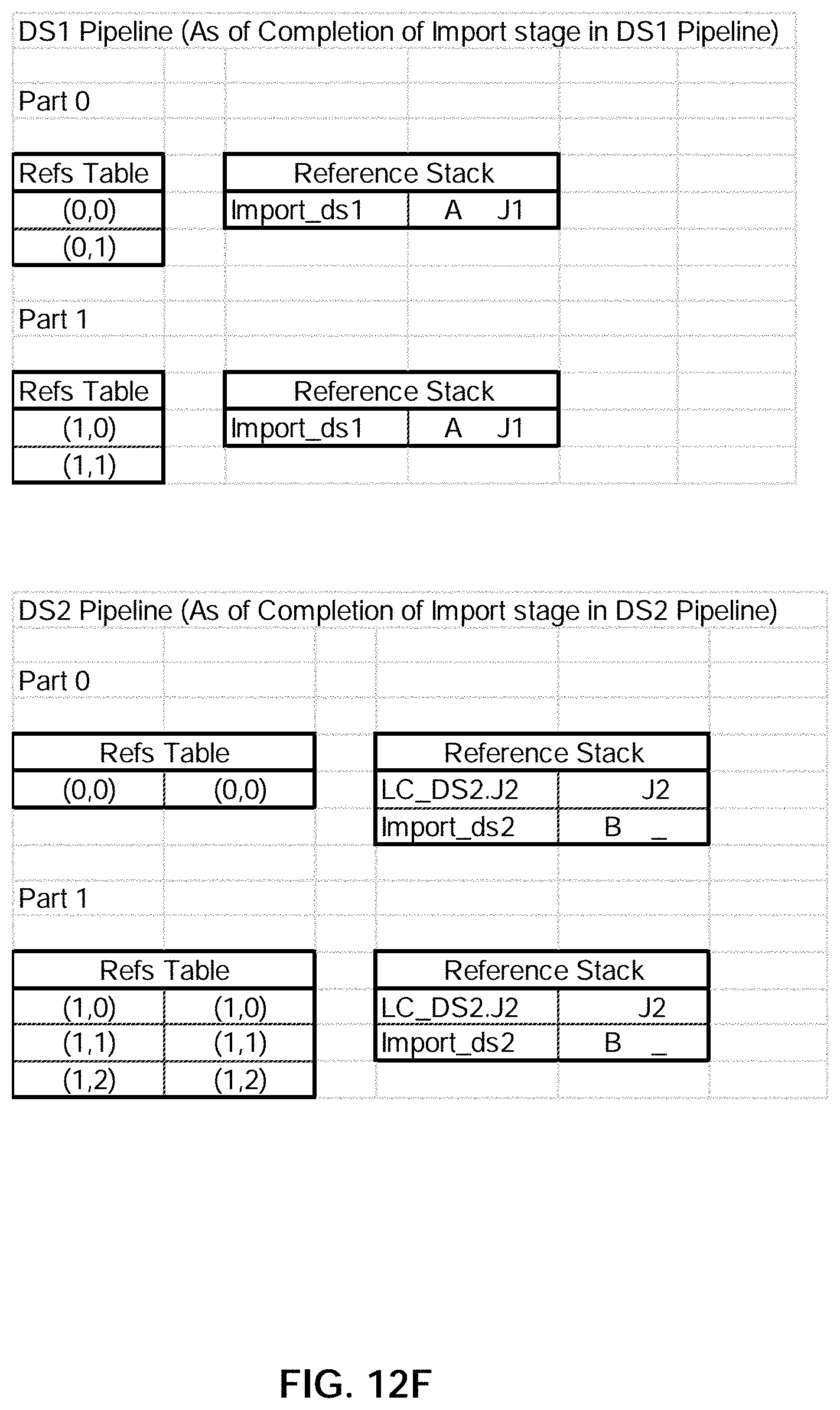

[0037] FIG. 12F illustrates an example embodiment of data traversal programs prior to a join.

[0038] FIG. 12G illustrates an example embodiment of data traversal programs subsequent to a join.

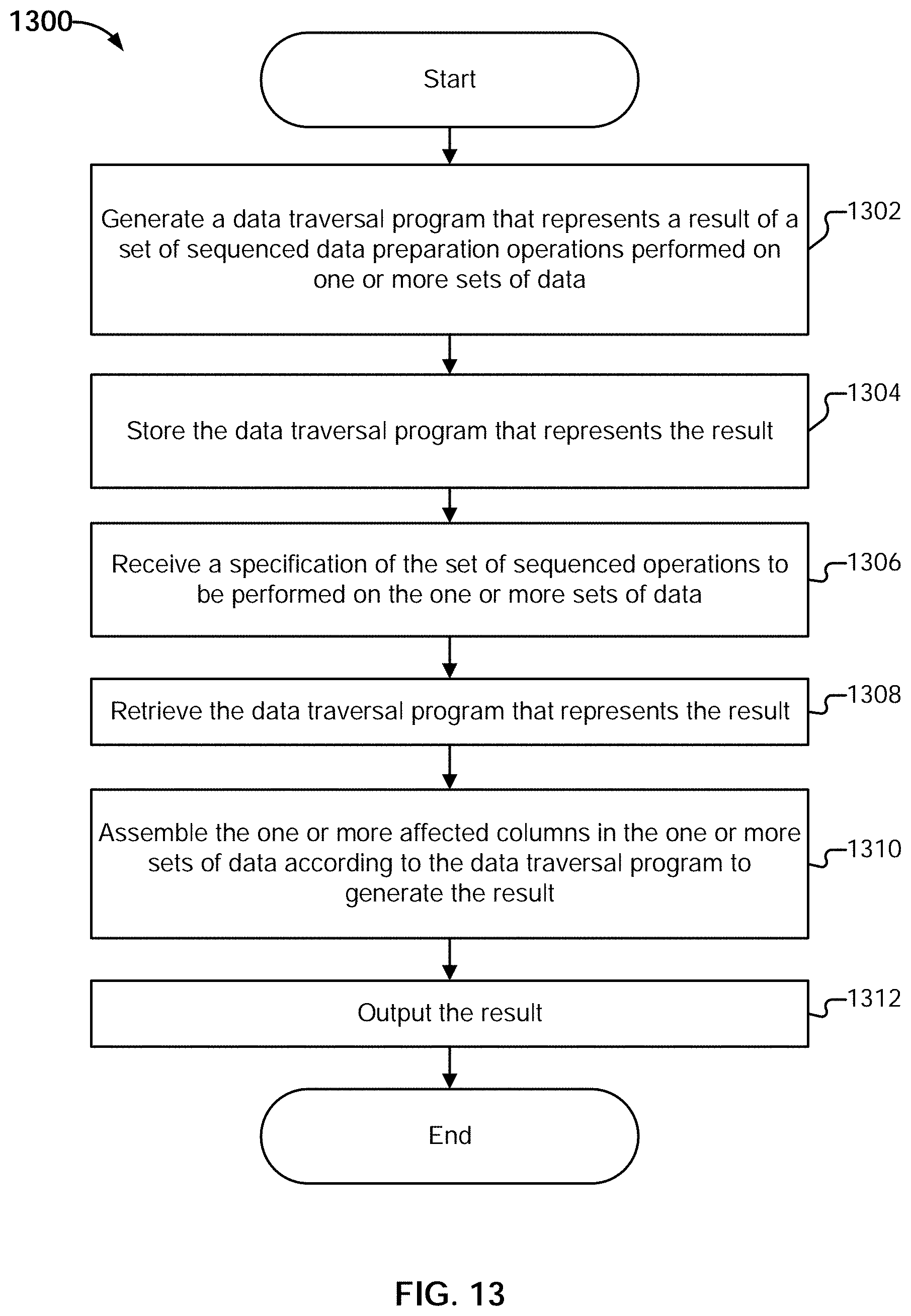

[0039] FIG. 13 is a flow diagram illustrating an embodiment of a process for caching transformation results.

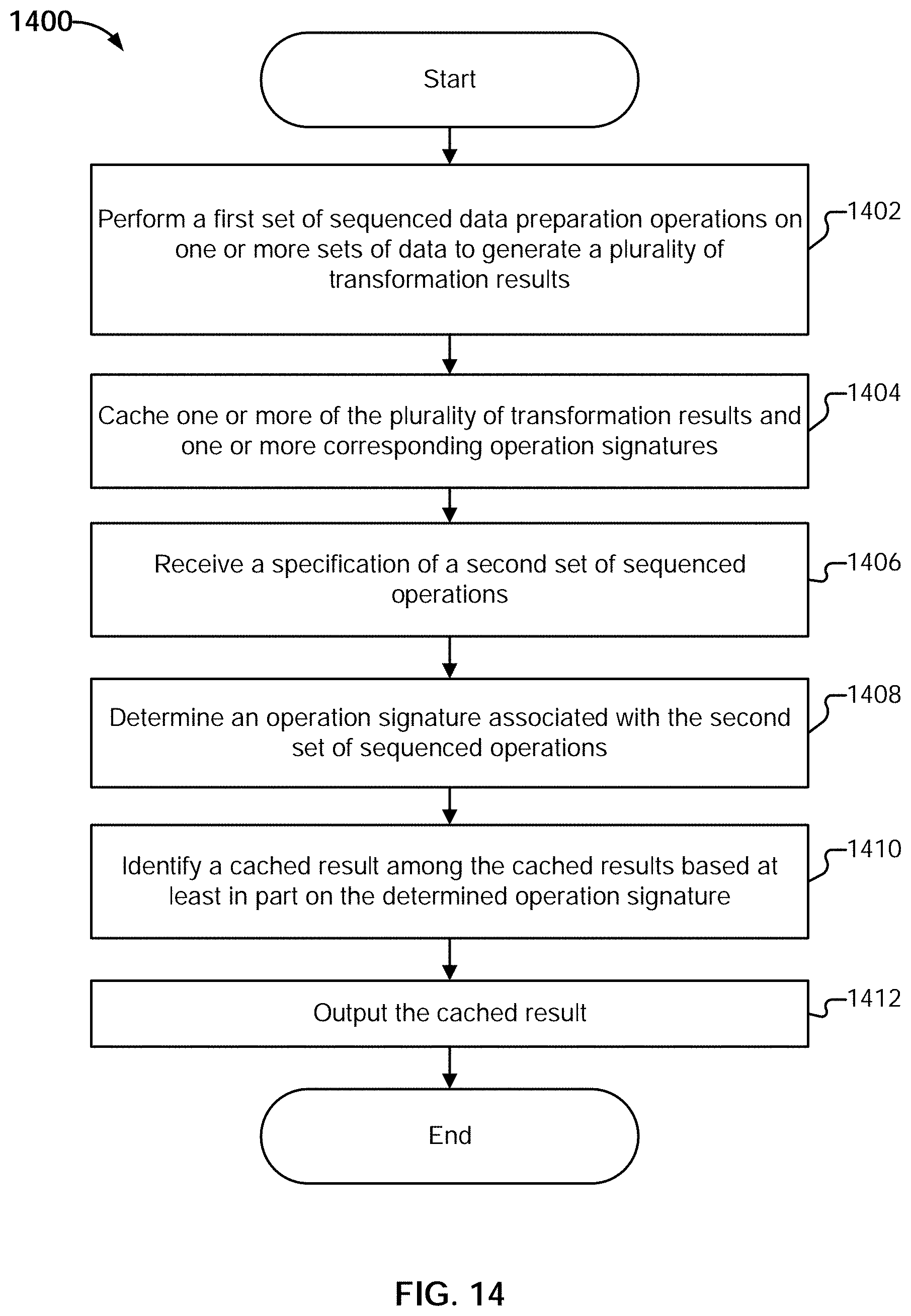

[0040] FIG. 14 is a flow diagram illustrating an embodiment of a process for cache reuse.

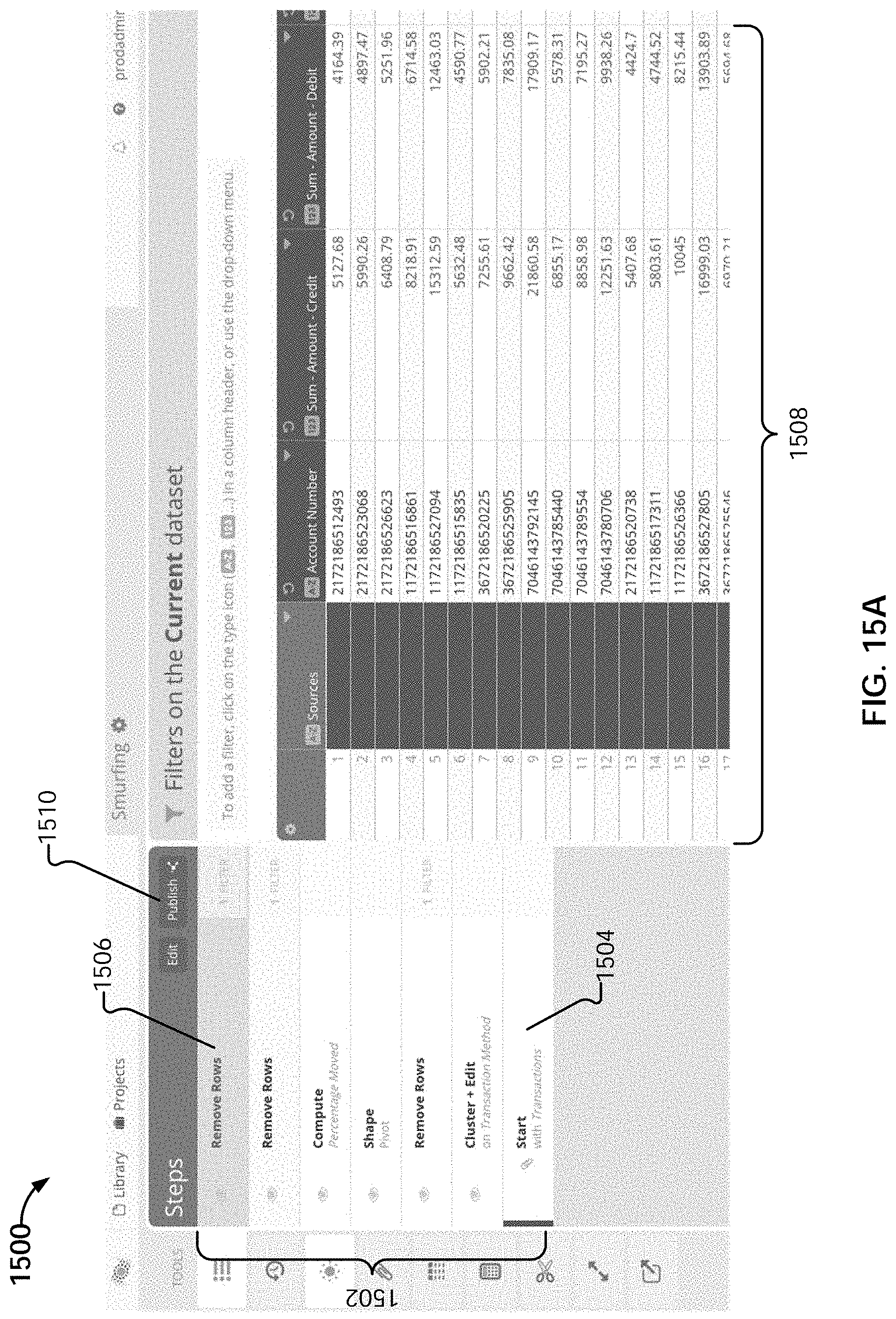

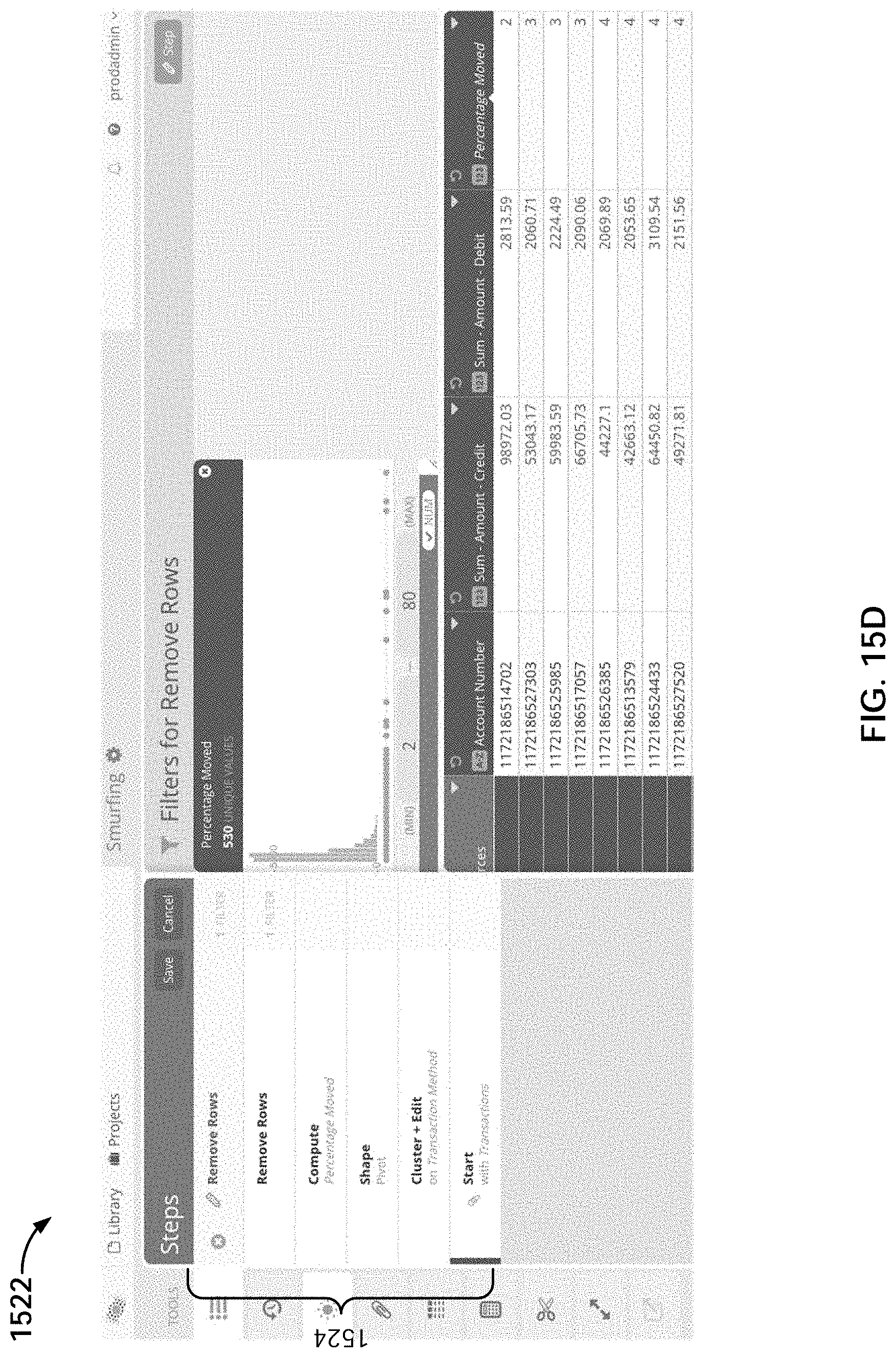

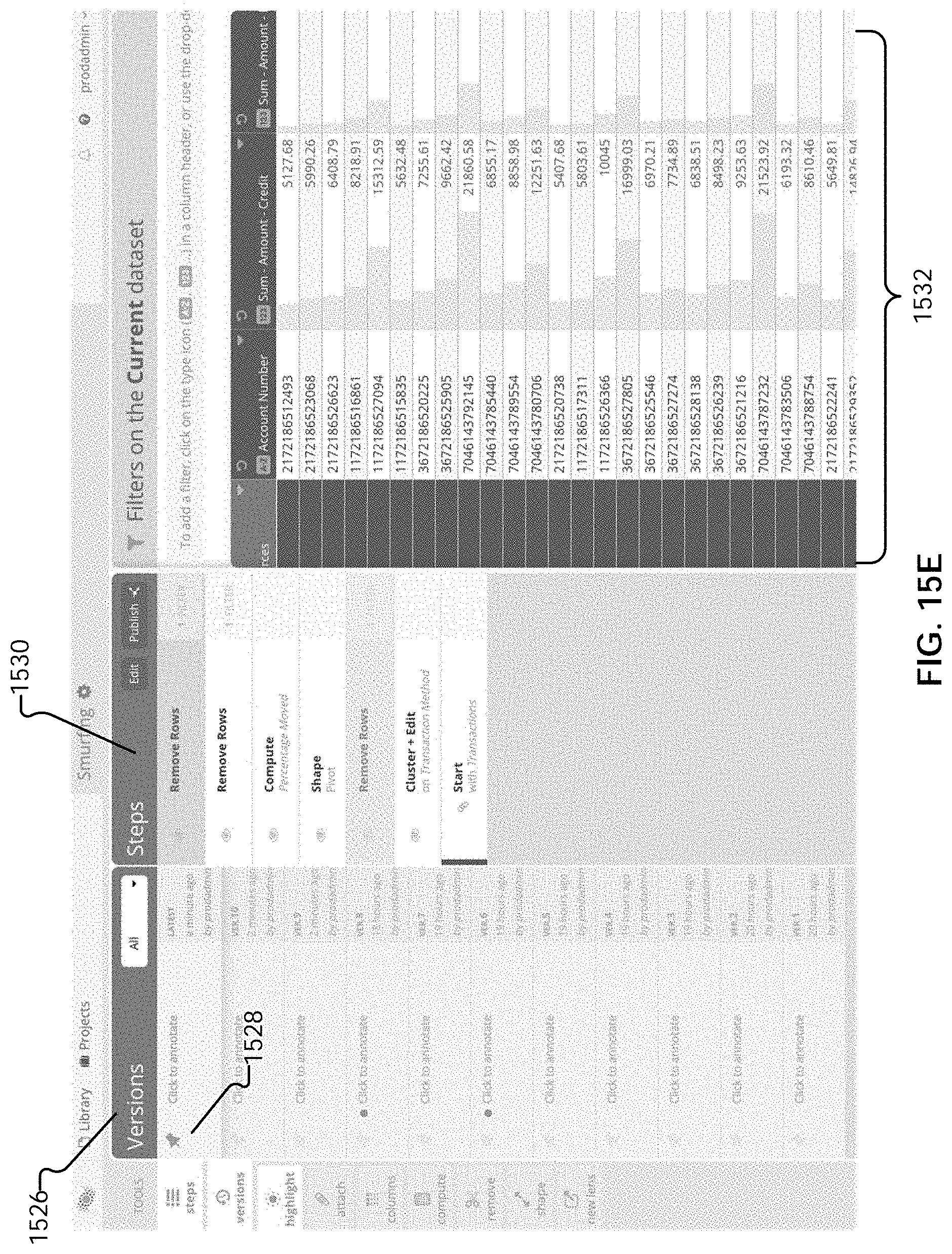

[0041] FIGS. 15A-E illustrate example embodiments of user interfaces of a step editor.

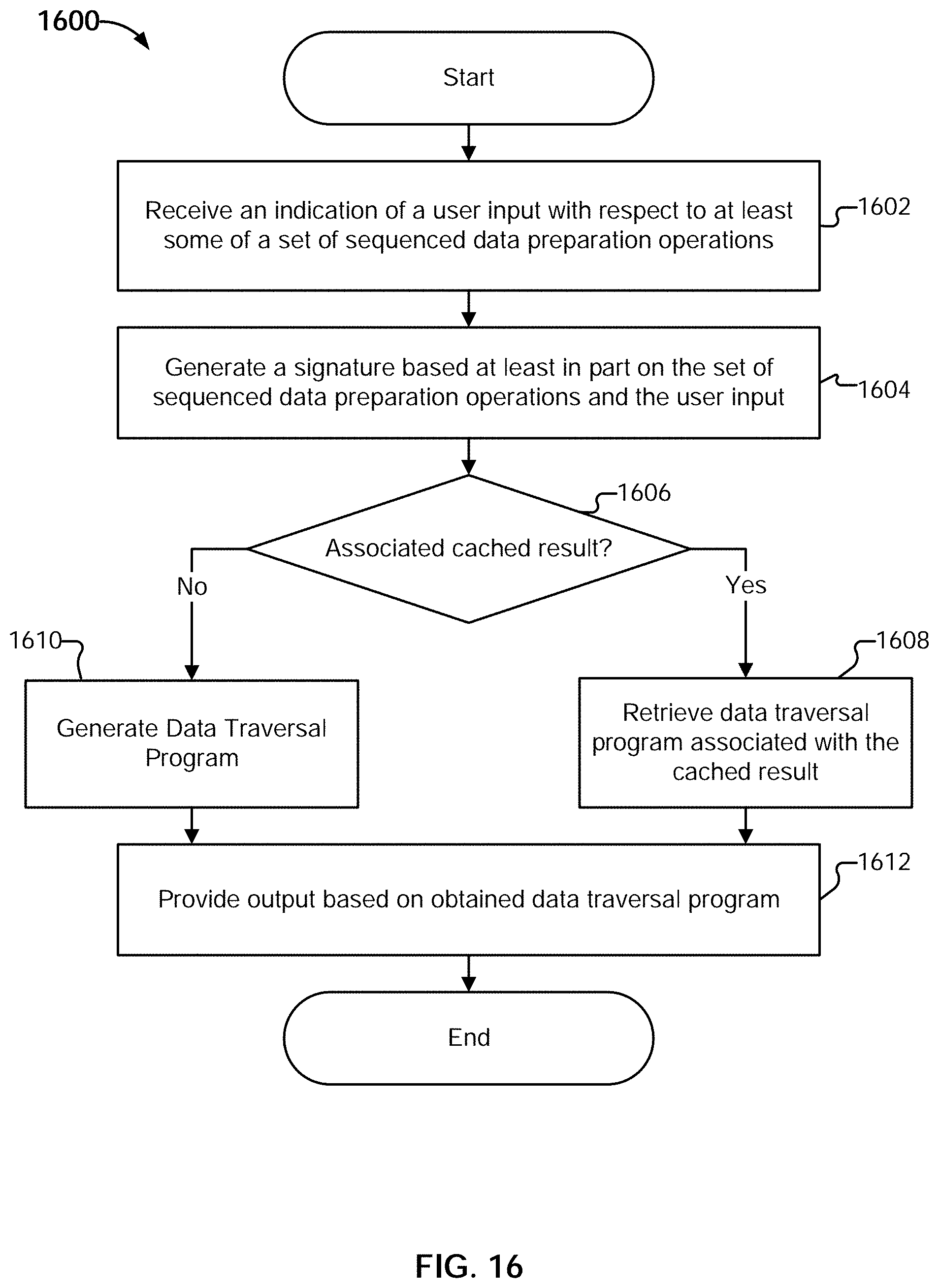

[0042] FIG. 16 is a flow diagram illustrating an embodiment of a process for using a step editor for data preparation.

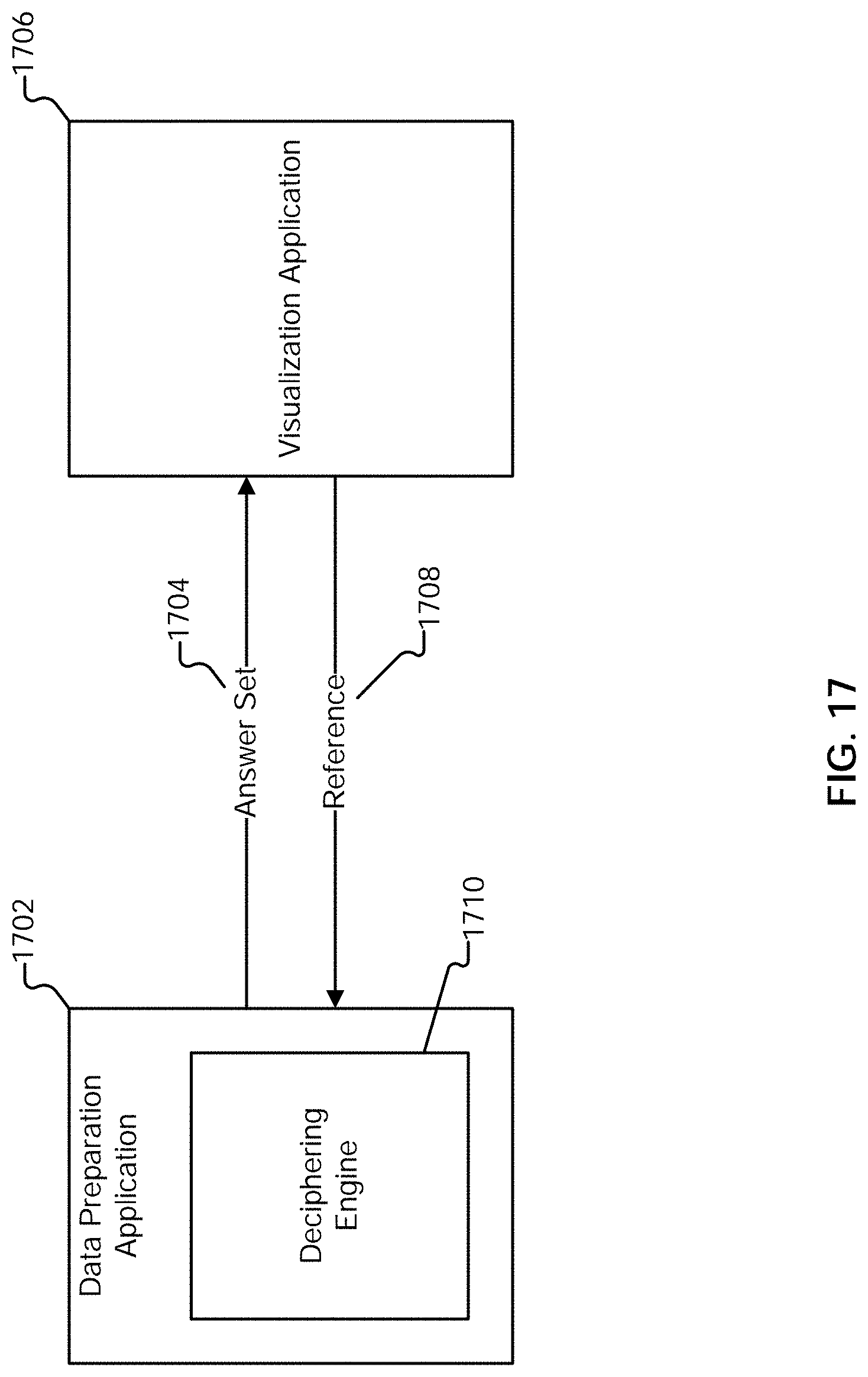

[0043] FIG. 17 illustrates an embodiment of an environment in which linking back or returning to a data preparation application is facilitated.

[0044] FIG. 18 illustrates an example embodiment of a bar chart.

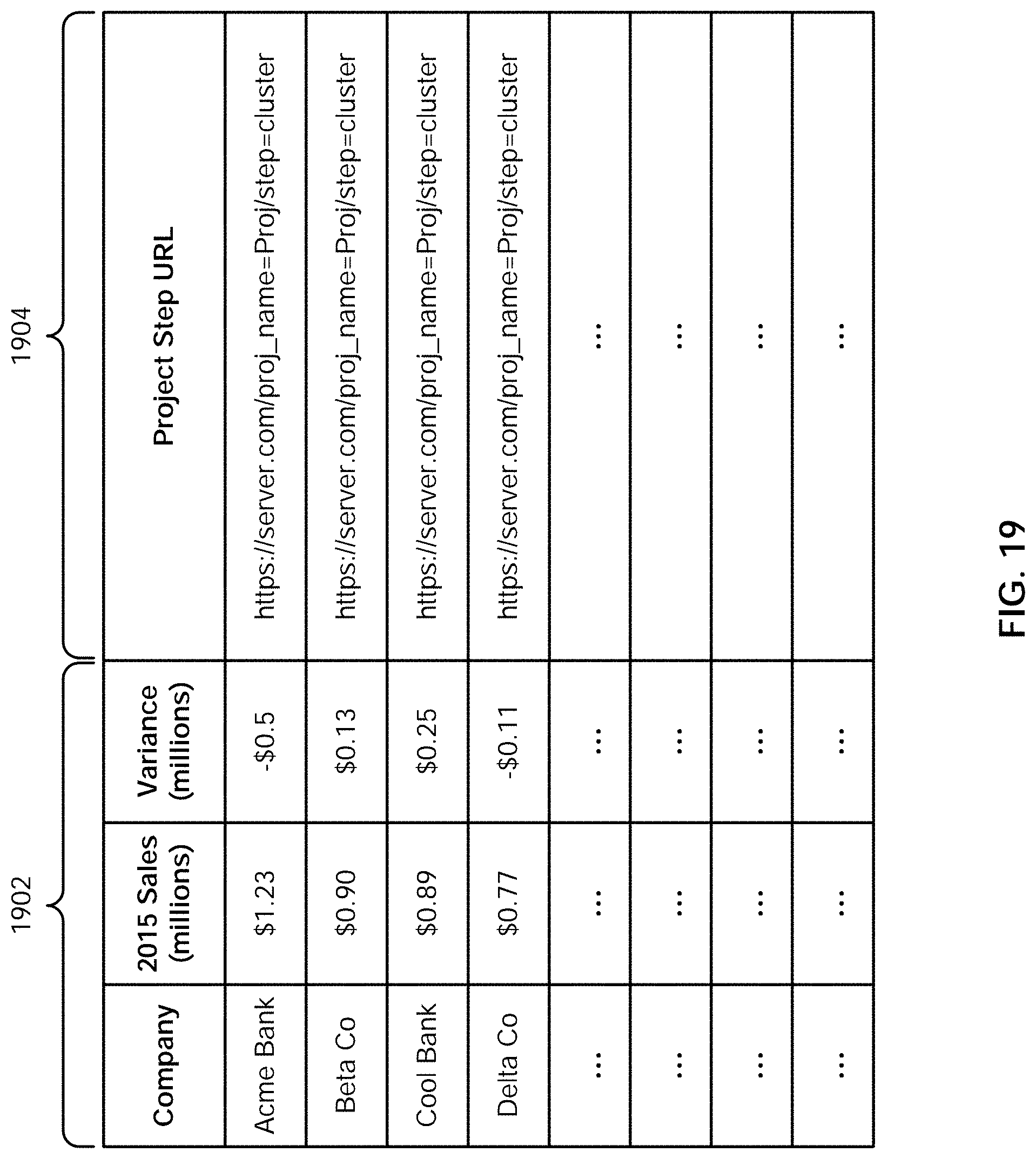

[0045] FIG. 19 is an example embodiment of a portion of an answer set including reference metadata.

[0046] FIG. 20 illustrates an example embodiment of a process for navigating to a data preparation context.

[0047] FIG. 21 illustrates an example embodiment of a filtergram.

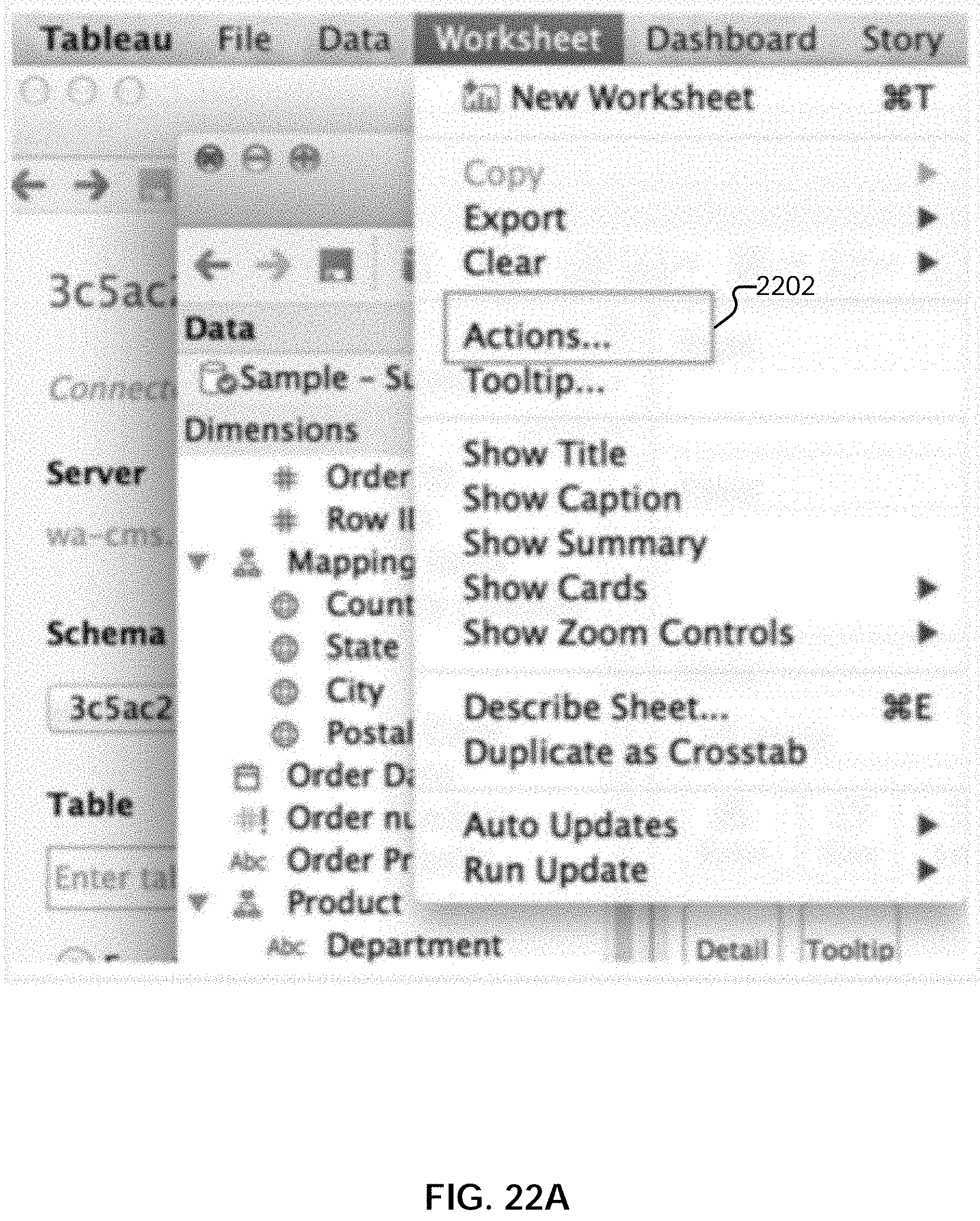

[0048] FIG. 22A illustrates an embodiment of an interface for creating a click-to-prep link for a project filtergram in a visualization tool.

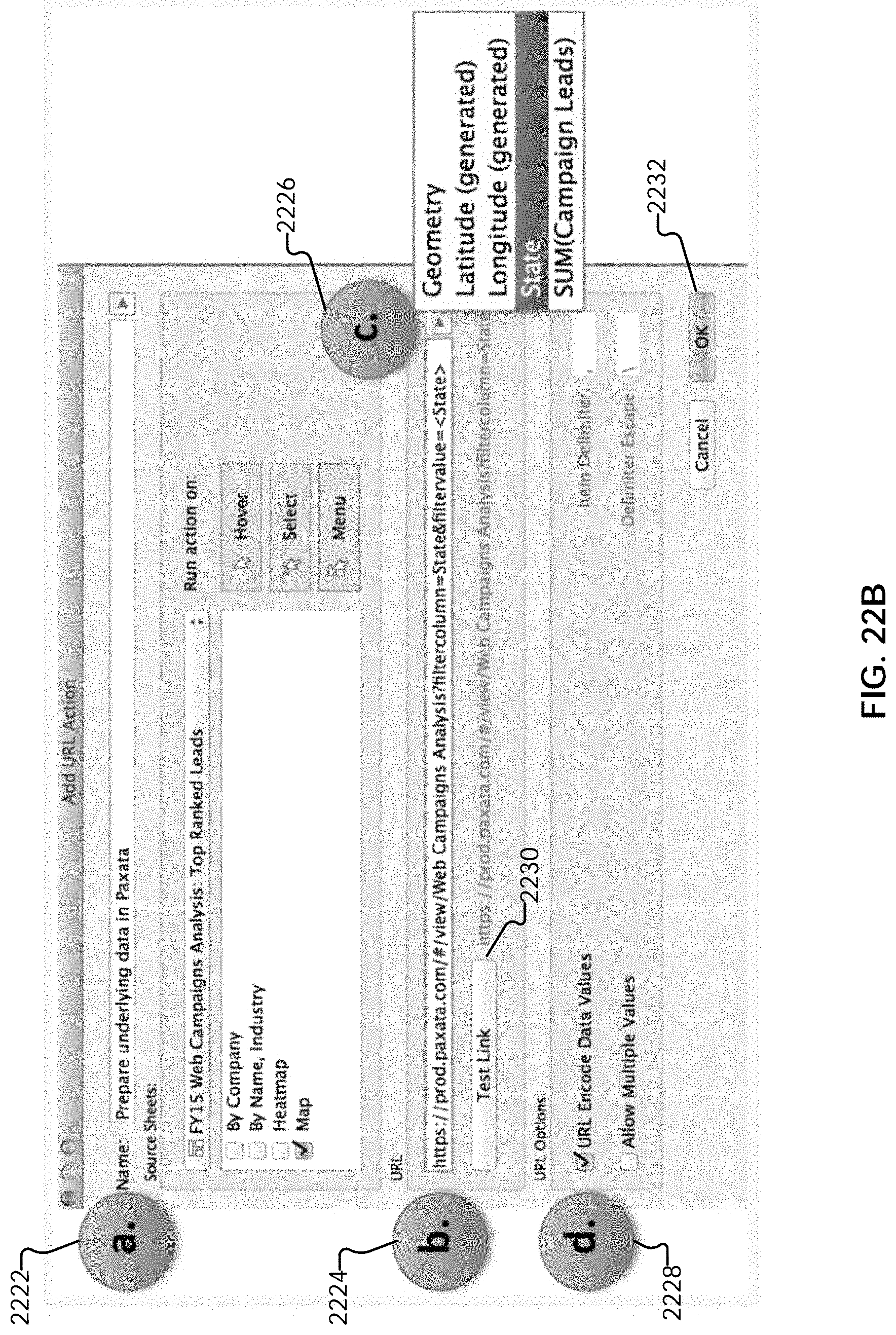

[0049] FIG. 22B illustrates an embodiment of an interface for creating a click-to-prep link for a project filtergram in a visualization tool.

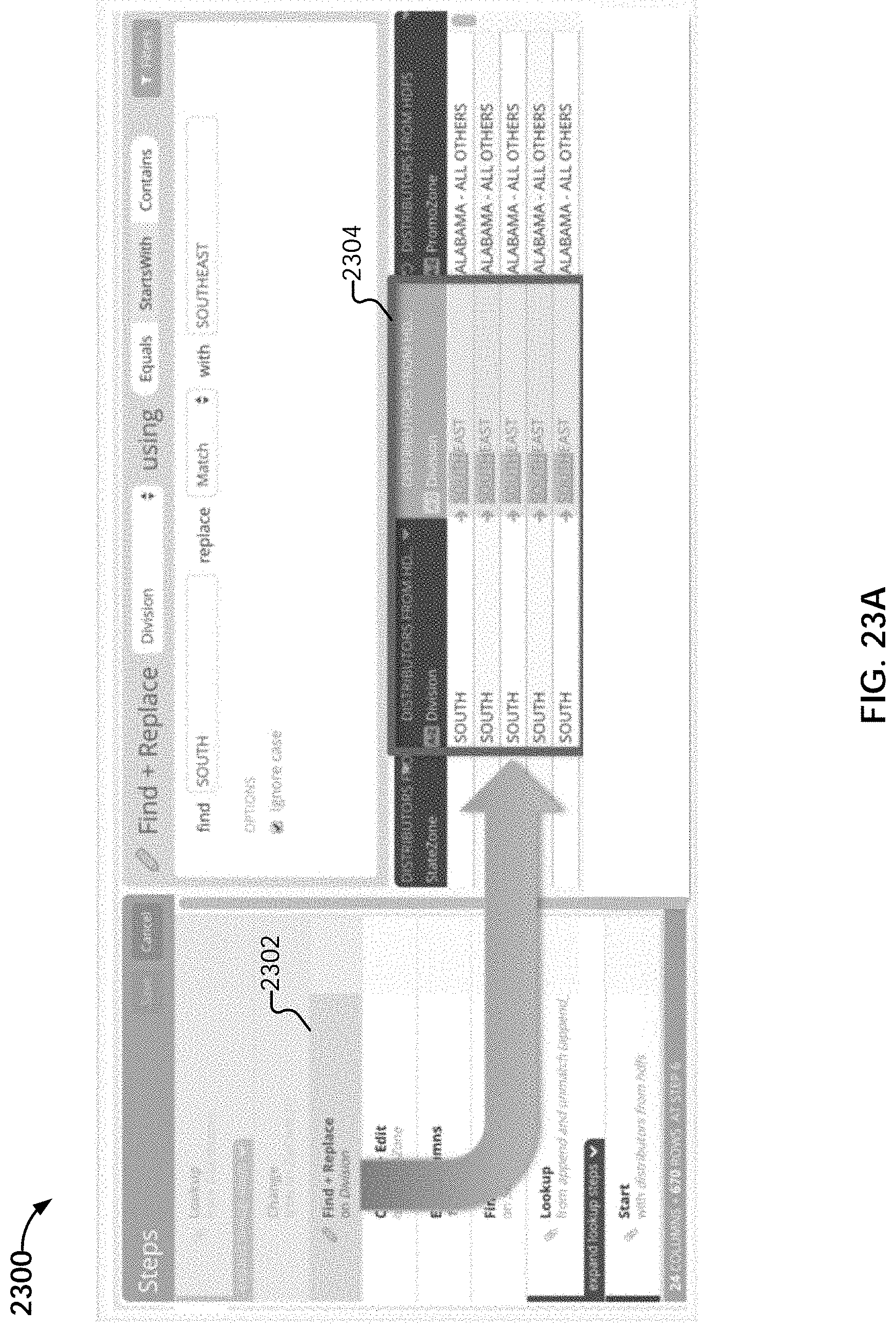

[0050] FIG. 23A illustrates an embodiment of navigating to a last step in a project that affected or modified data in a column.

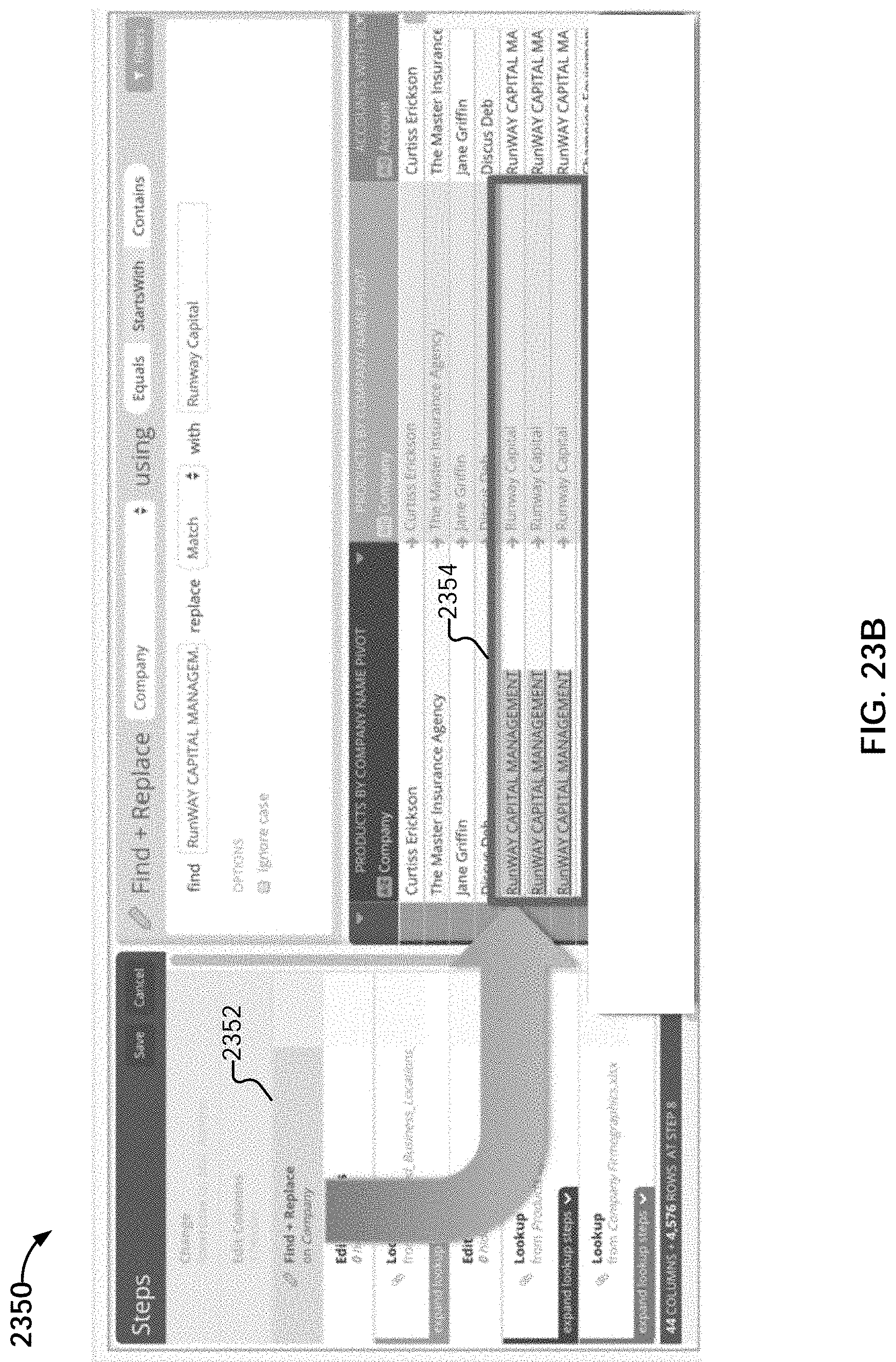

[0051] FIG. 23B illustrates an embodiment of navigating to a project where the last step of a particular type was made on a column.

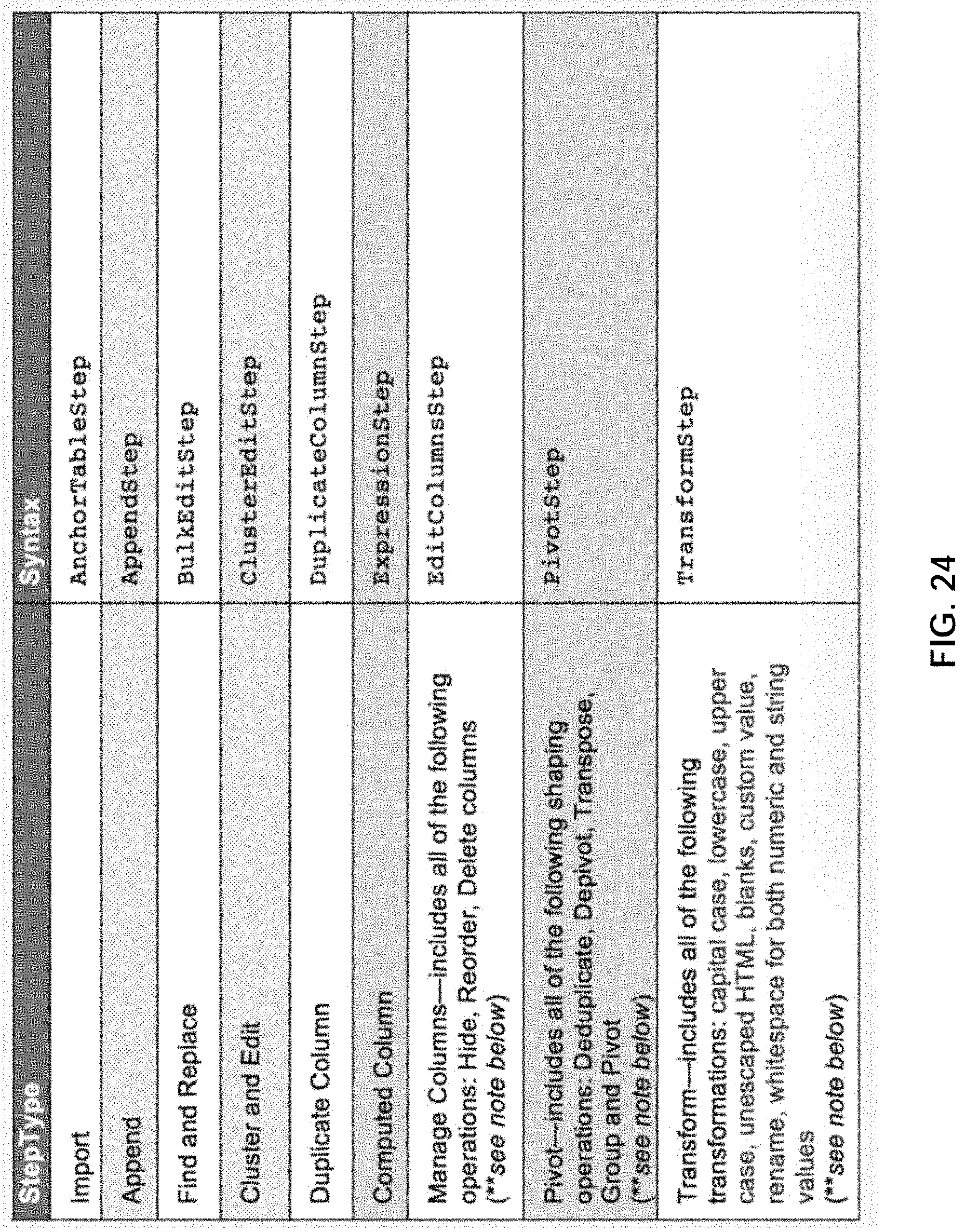

[0052] FIG. 24 illustrates example step types.

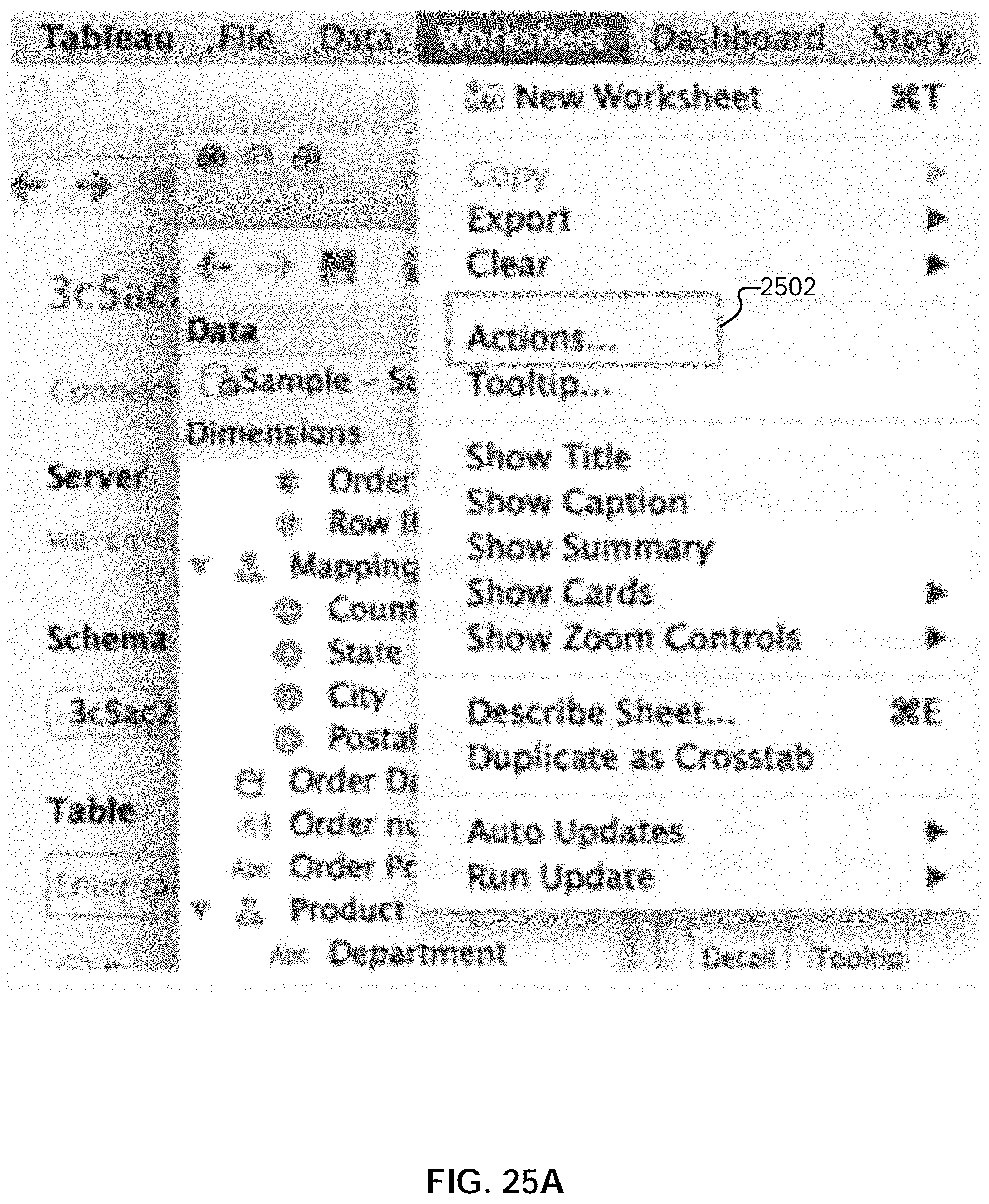

[0053] FIG. 25A illustrates an embodiment of an interface for creating a click-to-prep link for a project step in a visualization tool.

[0054] FIG. 25B illustrates an embodiment of an interface for creating a click-to-prep link for a project step in a visualization tool.

DETAILED DESCRIPTION

[0055] The invention can be implemented in numerous ways, including as a process; an apparatus; a system; a composition of matter; a computer program product embodied on a computer readable storage medium; and/or a processor, such as a processor configured to execute instructions stored on and/or provided by a memory coupled to the processor. In this specification, these implementations, or any other form that the invention may take, may be referred to as techniques. In general, the order of the steps of disclosed processes may be altered within the scope of the invention. Unless stated otherwise, a component such as a processor or a memory described as being configured to perform a task may be implemented as a general component that is temporarily configured to perform the task at a given time or a specific component that is manufactured to perform the task. As used herein, the term `processor` refers to one or more devices, circuits, and/or processing cores configured to process data, such as computer program instructions.

[0056] A detailed description of one or more embodiments of the invention is provided below along with accompanying figures that illustrate the principles of the invention. The invention is described in connection with such embodiments, but the invention is not limited to any embodiment. The scope of the invention is limited only by the claims and the invention encompasses numerous alternatives, modifications and equivalents. Numerous specific details are set forth in the following description in order to provide a thorough understanding of the invention. These details are provided for the purpose of example and the invention may be practiced according to the claims without some or all of these specific details. For the purpose of clarity, technical material that is known in the technical fields related to the invention has not been described in detail so that the invention is not unnecessarily obscured.

[0057] Using the techniques described herein, a distributed computing platform such as Apache Spark™ can be efficiently utilized to perform sequenced data preparation operations (i.e., a set of operations that are applied in sequential order) on data sets to generate transformation results. As used herein, a data preparation operation refers to an operation used to transform/mutate input data. The input data is accessible dynamically upon execution of a set of sequenced operations, where the data is not necessarily stored, but may be computed on-the-fly, as needed. This is in contrast to operating against data stored at a fixed and known location, and is performed without the advantages of prior indexing and partitioning. The input data includes data that is organized (e.g., into rows and columns). Various examples of data preparation operations include clustering, joining, appending, sorting, uppercase, lowercase, filtering, deduplicating, grouping by, adding or removing columns, adding or removing rows, pivoting, depivoting, order dependent operations, etc. The representation of the transformation results is referred to herein as a "data traversal program," which indicates how to assemble one or more affected columns in the input data to derive a transformation result. The representation of the transformation results can be stored for reuse along with corresponding operation signatures, allowing cached results to be identified and obtained for reuse.
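
By way of illustration only, the notion of sequenced data preparation operations can be sketched in a few lines of Python. The sketch below is not the disclosed implementation: it works eagerly on a small in-memory table rather than on a distributed platform, and the helper names (`uppercase`, `filter_equals`, `run_sequenced`) are hypothetical. It shows only that operations are applied in a fixed order and that each step yields its own intermediate result.

```python
from typing import Callable, Dict, List

Row = Dict[str, str]
Table = List[Row]
Operation = Callable[[Table], Table]


def uppercase(column: str) -> Operation:
    """Return an operation that uppercases every value in one column."""
    def apply(table: Table) -> Table:
        return [{**row, column: row[column].upper()} for row in table]
    return apply


def filter_equals(column: str, value: str) -> Operation:
    """Return an operation that keeps only rows whose column equals value."""
    def apply(table: Table) -> Table:
        return [row for row in table if row[column] == value]
    return apply


def run_sequenced(table: Table, operations: List[Operation]) -> List[Table]:
    """Apply the operations in order and keep the result of every step."""
    results: List[Table] = []
    current = table
    for operation in operations:
        current = operation(current)
        results.append(current)
    return results


data = [{"name": "ann", "state": "ca"}, {"name": "bob", "state": "ny"}]
steps = run_sequenced(data, [uppercase("state"), filter_equals("state", "CA")])
final_result = steps[-1]
```

In this sketch the order matters: running the filter before the uppercase step would match no rows, because the stored values are still lowercase at that point.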

[0058] Navigation to a relevant data preparation context is disclosed. In some embodiments, a set of data preparation operations is performed on one or more data sets to generate a set of answer sets in a first application. A final answer set in the set of answer sets is provided to a second application. In response to a user specification of a source-related query, a reference associated with the source-related query is obtained. A corresponding subset of the set of answer sets associated with one or more corresponding data preparation operations is determined according to the obtained reference. The determined corresponding subset of the set of answer sets is presented in the first application.
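
As a hedged illustration of the flow just described, the following Python sketch composes a reference in one application and resolves it to a subset of answer sets in another. Everything specific here is an assumption made for the example: the URL `https://prep.example.com/context`, the query parameter names `project` and `column`, and the dictionary layout of an answer set are hypothetical and are not taken from the specification.

```python
from urllib.parse import parse_qs, urlencode, urlparse


def compose_reference(base_url, project_id, column):
    """Build a link that identifies a project and a column of interest."""
    query = urlencode({"project": project_id, "column": column})
    return f"{base_url}?{query}"


def resolve_reference(reference, answer_sets):
    """Return the answer sets whose operation touched the referenced column."""
    params = parse_qs(urlparse(reference).query)
    project_id = params["project"][0]
    column = params["column"][0]
    return [
        answer_set
        for answer_set in answer_sets
        if answer_set["project"] == project_id
        and column in answer_set["affected_columns"]
    ]


# Hypothetical record of the answer set produced by each step of a project.
answer_sets = [
    {"project": "p1", "step": 1, "operation": "import", "affected_columns": ["name", "state"]},
    {"project": "p1", "step": 2, "operation": "uppercase", "affected_columns": ["state"]},
    {"project": "p1", "step": 3, "operation": "filter", "affected_columns": ["state"]},
    {"project": "p1", "step": 4, "operation": "lowercase", "affected_columns": ["name"]},
]

link = compose_reference("https://prep.example.com/context", "p1", "name")
subset = resolve_reference(link, answer_sets)
```

The link carries only identifiers, so the application that resolves it remains the sole holder of the intermediate answer sets.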

[0059] FIG. 1 is a functional diagram illustrating a programmed computer system for navigating to a relevant data preparation context in accordance with some embodiments. As will be apparent, other computer system architectures and configurations can be used to perform automated join detection. Computer system 100, which includes various subsystems as described below, includes at least one microprocessor subsystem (also referred to as a processor or a central processing unit (CPU)) 102. For example, processor 102 can be implemented by a single-chip processor or by multiple processors. In some embodiments, processor 102 is a general purpose digital processor that controls the operation of the computer system 100. Using instructions retrieved from memory 110, the processor 102 controls the reception and manipulation of input data, and the output and display of data on output devices (e.g., display 118). In some embodiments, processor 102 includes and/or is used to provide front end 200 of FIG. 2, pipeline server 206 of FIG. 2, and data preparation application 1702 of FIG. 17, and/or executes/performs process 500, 1300, 1400, 1600, and/or 2000.

[0060] Processor 102 is coupled bi-directionally with memory 110, which can include a first primary storage, typically a random access memory (RAM), and a second primary storage area, typically a read-only memory (ROM). As is well known in the art, primary storage can be used as a general storage area and as scratch-pad memory, and can also be used to store input data and processed data. Primary storage can also store programming instructions and data, in the form of data objects and text objects, in addition to other data and instructions for processes operating on processor 102. Also as is well known in the art, primary storage typically includes basic operating instructions, program code, data, and objects used by the processor 102 to perform its functions (e.g., programmed instructions). For example, memory 110 can include any suitable computer-readable storage media, described below, depending on whether, for example, data access needs to be bi-directional or uni-directional. For example, processor 102 can also directly and very rapidly retrieve and store frequently needed data in a cache memory (not shown).

[0061] A removable mass storage device 112 provides additional data storage capacity for the computer system 100, and is coupled either bi-directionally (read/write) or uni-directionally (read only) to processor 102. For example, storage 112 can also include computer-readable media such as magnetic tape, flash memory, PC-CARDS, portable mass storage devices, holographic storage devices, and other storage devices. A fixed mass storage 120 can also, for example, provide additional data storage capacity. The most common example of mass storage 120 is a hard disk drive. Mass storages 112, 120 generally store additional programming instructions, data, and the like that typically are not in active use by the processor 102. It will be appreciated that the information retained within mass storages 112 and 120 can be incorporated, if needed, in standard fashion as part of memory 110 (e.g., RAM) as virtual memory.

[0062] In addition to providing processor 102 access to storage subsystems, bus 114 can also be used to provide access to other subsystems and devices. As shown, these can include a display monitor 118, a network interface 116, a keyboard 104, and a pointing device 106, as well as an auxiliary input/output device interface, a sound card, speakers, and other subsystems as needed. For example, the pointing device 106 can be a mouse, stylus, track ball, or tablet, and is useful for interacting with a graphical user interface.

[0063] The network interface 116 allows processor 102 to be coupled to another computer, computer network, or telecommunications network using a network connection as shown. For example, through the network interface 116, the processor 102 can receive information (e.g., data objects or program instructions) from another network or output information to another network in the course of performing method/process steps. Information, often represented as a sequence of instructions to be executed on a processor, can be received from and outputted to another network. An interface card or similar device and appropriate software implemented by (e.g., executed/performed on) processor 102 can be used to connect the computer system 100 to an external network and transfer data according to standard protocols. For example, various process embodiments disclosed herein can be executed on processor 102, or can be performed across a network such as the Internet, intranet networks, or local area networks, in conjunction with a remote processor that shares a portion of the processing. Additional mass storage devices (not shown) can also be connected to processor 102 through network interface 116.

[0064] An auxiliary I/O device interface (not shown) can be used in conjunction with computer system 100. The auxiliary I/O device interface can include general and customized interfaces that allow the processor 102 to send and, more typically, receive data from other devices such as microphones, touch-sensitive displays, transducer card readers, tape readers, voice or handwriting recognizers, biometrics readers, cameras, portable mass storage devices, and other computers.

[0065] In addition, various embodiments disclosed herein further relate to computer storage products with a computer readable medium that includes program code for performing various computer-implemented operations. The computer-readable medium is any data storage device that can store data which can thereafter be read by a computer system. Examples of computer-readable media include, but are not limited to, all the media mentioned above: magnetic media such as hard disks, floppy disks, and magnetic tape; optical media such as CD-ROM disks; magneto-optical media such as optical disks; and specially configured hardware devices such as application-specific integrated circuits (ASICs), programmable logic devices (PLDs), and ROM and RAM devices. Examples of program code include both machine code, as produced, for example, by a compiler, or files containing higher level code (e.g., script) that can be executed using an interpreter.

[0066] The computer system shown in FIG. 1 is but an example of a computer system suitable for use with the various embodiments disclosed herein. Other computer systems suitable for such use can include additional or fewer subsystems. In addition, bus 114 is illustrative of any interconnection scheme serving to link the subsystems. Other computer architectures having different configurations of subsystems can also be utilized.

[0067] FIG. 2 is a system diagram illustrating an embodiment of a system for data preparation. The system includes front end server (or application) 200 and pipeline server 206. Each server can be implemented using a system such as 100.

[0068] Front end 200 is configured to provide an interface for configuring data preparation. Front end 200 interacts with the pipeline server 206. In various embodiments, front end 200 can be implemented as a standalone application and/or a browser-based client application executing on a client device and communicating with the pipeline server, as a J2EE application server such as Tomcat or Jetty, or a combination thereof. Front end 200 includes user interface engine 202 and script generator 204.

[0069] User interface engine 202 is configured to interact with pipeline server 206 to present table data, configuration options, results of sequenced operations, and any other appropriate information to the user in user interface screens and receive user input from user interface components. For example, user interface engine 202 is configured to provide editor user interfaces by which users can specify a sequence of data preparation operations to be performed on one or more sets of data to generate one or more transformation results. The specified sequenced set of operations, which are to be applied in a specified order, forms a pipeline through which one or more sets of data are processed. The data sets include tables of data that include data records organized in rows and columns. Examples of user interfaces provided by user interface engine 202 are described in conjunction with FIGS. 15A-E.

[0070] Script generator 204 is configured to generate a script based on the data sets and sequence of operations specified by a user using the one or more user interfaces provided by user interface engine 202. The script includes a formatted set of instructions that includes a specification of the one or more data sets to be operated on and the sequenced set of operations specified to be performed on the one or more data sets. In some embodiments, the pipeline specified in the script is referred to as an application. An example of a script generated using script generator 204 is described in conjunction with FIG. 6A.
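
For illustration, a script of this kind could be represented as a small JSON document that names the data sets and lists the operations in order. The format below is an assumption made for this sketch and is not the script format of FIG. 6A; the function name `generate_script`, the keys `data_sets` and `operations`, and the file name `customers.csv` are all hypothetical.

```python
import json


def generate_script(data_sets, operations):
    """Serialize the data sets and the ordered operations into one script."""
    script = {
        "data_sets": list(data_sets),
        # A JSON array preserves order, which carries the operation sequence.
        "operations": list(operations),
    }
    return json.dumps(script, indent=2)


script = generate_script(
    ["customers.csv"],
    [
        {"op": "uppercase", "column": "state"},
        {"op": "filter", "column": "state", "equals": "CA"},
    ],
)

# A receiving server could parse the script and walk the steps in order.
parsed = json.loads(script)
operation_names = [step["op"] for step in parsed["operations"]]
```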

[0071] Pipeline server 206 is configured to perform data preparation. In some embodiments, the pipeline server receives a script from script generator 204, and performs a sequenced set of data preparation operations (which form a pipeline) on one or more input data sets (e.g., data sets 214) according to the script. A data set can be stored in a memory (e.g., a random access memory), read or streamed from a storage (e.g., a local disk, a network storage, a distributed storage server, etc.), or obtained from any other appropriate sources. Pipeline server 206 can be implemented on one or more servers in a network-based/cloud-based environment, a client device (e.g., a computer, a smartphone, a wearable device, or other appropriate device with communication capabilities), or a combination. In some embodiments, the pipeline server is deployed as an application. The pipeline server can be implemented using a system such as 100. In some embodiments, the pipeline server is implemented using a distributed computing platform, such as Apache Spark™. While example embodiments involving Apache Spark™ are described below, any other distributed computing platform/architecture can be used, with the techniques described herein adapted accordingly. Pipeline server 206 includes data partitioning engine 208, data transformation engine 210, and caching engine 212.

[0072] Data partitioning engine 208 is configured to partition input data sets (e.g., data sets 214) and distribute them to a cluster of processing nodes in a distributed computing environment. In some embodiments, the data partitioning engine is configured to pre-process the input data so that it can be translated into a form that can be provided to a distributed computing platform such as Apache Spark™. Determining the distribution of the data in a data set includes determining how obtained data sets should be divided/partitioned into logical partitions/work portions, and includes determining how many partitions should be generated, as well as the load to assign each partition. In some embodiments, the partition determination is based on various cost functions. The operations of the data partitioning engine are described in greater detail below.
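
One simple way to picture the partition determination is a function that chooses a partition count from a target load and then spreads the rows evenly. The sketch below is an assumption made for illustration: the cost function (a fixed target number of rows per partition) and the name `plan_partitions` are hypothetical, and the cost functions actually used are not specified in this paragraph.

```python
import math


def plan_partitions(total_rows, target_rows_per_partition):
    """Return half-open (start, end) row ranges with near-equal load."""
    if total_rows <= 0:
        return []
    if target_rows_per_partition <= 0:
        raise ValueError("target_rows_per_partition must be positive")
    # Number of partitions needed so that none exceeds the target load.
    count = math.ceil(total_rows / target_rows_per_partition)
    base, extra = divmod(total_rows, count)
    ranges = []
    start = 0
    for index in range(count):
        # The first `extra` partitions take one additional row each.
        size = base + (1 if index < extra else 0)
        ranges.append((start, start + size))
        start += size
    return ranges


plan = plan_partitions(10, 4)
```

With 10 rows and a target of 4 rows per partition, the sketch produces three partitions of 4, 3, and 3 rows rather than 4, 4, and 2, so no single work portion is markedly smaller or larger than the others.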

[0073] Data transformation engine 210 is configured to perform data preparation. Performing data preparation includes determining transformation results by performing a sequenced set of data preparation operations on one or more sets of data. In some embodiments, the data transformation engine is a columnar data transformation engine. In some embodiments, the data transformation engine is also configured to perform caching of results, as well as lookups of existing cached results for reuse.

[0074] As will be described below, the data transformation engine is configured to efficiently perform the sequenced data preparation operations by generating a compact representation (referred to herein as a "data traversal program") of the transformation results of a set of sequenced operations on one or more sets of data. The data traversal program includes references and reference stacks which, when used in conjunction with column files, indicate how to assemble one or more affected columns in the one or more sets of data that were operated on to derive a transformation result. The operations of the data transformation engine are described in greater detail below.
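
The idea of a compact representation that refers to column files, instead of copying the data, can be sketched as follows. This is a simplified illustration and not the disclosed data traversal program: it omits reference stacks entirely, and the structures and names used (`column_files`, the `columns` and `rows` keys, `apply_uppercase`, `apply_filter`, `assemble`) are hypothetical.

```python
# Column files hold the actual values, one list per column version.
column_files = {
    "name_v0": ["ann", "bob", "cy"],
    "state_v0": ["ca", "ny", "ca"],
}

# The program records which file backs each column and which rows survive.
program = {"columns": {"name": "name_v0", "state": "state_v0"}, "rows": [0, 1, 2]}


def apply_uppercase(program, column_files, column):
    """Write a new file for the affected column only; reuse all others."""
    source_id = program["columns"][column]
    new_id = f"{column}_v{len(column_files)}"
    column_files[new_id] = [value.upper() for value in column_files[source_id]]
    return {"columns": {**program["columns"], column: new_id}, "rows": list(program["rows"])}


def apply_filter(program, column_files, column, value):
    """Narrow the row references without rewriting any column file."""
    values = column_files[program["columns"][column]]
    kept = [index for index in program["rows"] if values[index] == value]
    return {"columns": dict(program["columns"]), "rows": kept}


def assemble(program, column_files):
    """Materialize the transformation result by following the references."""
    return [
        {name: column_files[file_id][index] for name, file_id in program["columns"].items()}
        for index in program["rows"]
    ]


after_upper = apply_uppercase(program, column_files, "state")
after_filter = apply_filter(after_upper, column_files, "state", "CA")
result = assemble(after_filter, column_files)
```

In this sketch the `name` column is never rewritten: both operations leave it pointing at `name_v0`, and only the column affected by the uppercase step receives a new file.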

[0075] Caching engine 212 is configured to perform caching and cache identification. For example, the data traversal program/representation of the results determined using data transformation engine 210 can be cached at various points (e.g., after a particular subset of sequenced data preparation operations) for reuse. The data being cached can be stored in a cache layer, for example in memory (e.g., random access memory), stored on a local or networked storage device (e.g., a disk or a storage server), and/or any other appropriate devices. The results can be cached, for example, based on an explicit request from a user (e.g., via an interaction with a step editor user interface provided by user interface engine 202). The results can also be cached automatically, for example, based on factors such as the complexity of operations that were performed to arrive at the result. The cached representations can be identified based on corresponding signatures. For example, the caching engine can take as input a set of sequenced operations (e.g., received in a script generated from user input via step editor user interfaces provided by user interface engine 202), derive an operation signature, and compare it to the signatures associated with existing cached results. The operations of the caching engine are described in greater detail below.

[0076] The engines described above can be implemented as software components executing on one or more processors, as hardware components such as programmable logic devices (e.g., microprocessors, field-programmable gate arrays (FPGAs), digital signal processors (DSPs), etc.), Application Specific Integrated Circuits (ASICs) designed to perform certain functions, or a combination thereof. In some embodiments, the engines can be embodied by a form of software products which can be stored in a nonvolatile storage medium (such as optical disk, flash storage device, mobile hard disk, etc.), including a number of instructions for making a computer device (such as personal computers, servers, network equipment, etc.) implement the methods described in the embodiments of the present application. The engines may be implemented on a single device or distributed across multiple devices. The functions of the engines may be merged into one another or further split into multiple sub-engines.

[0077] FIG. 3 is a system diagram illustrating an embodiment of a pipeline server. In some embodiments, pipeline server 300 is an example of pipeline server 206 of FIG. 2. In this example, pipeline server 300 is implemented using a distributed computing platform. In some embodiments, the distributed computing platform of pipeline server 300 is used to implement data partitioning engine 208, data transformation engine 210, and caching engine 212 of FIG. 2.

[0078] Shown in pipeline server 300 is an example embodiment of a Spark cluster. The cluster includes a Spark master (302) and Spark workers (304 and 312). In some embodiments, the Spark cluster is implemented using a master-slave architecture. In some embodiments, the Spark master is configured to coordinate all work that is to be executed (in possibly a distributed manner). In some embodiments, the Spark workers are responsible for receiving and executing pieces of work that point at some data with instructions as to the operations to perform. The Spark master and workers can be implemented, for example, as Java applications.

[0079] In some embodiments, the Spark master is configured to receive requests (e.g., jobs) from external clients. The Spark master is configured to break down the job into smaller chunks (work portions) and distribute the work to the various Spark workers. When a Spark worker completes its portion of the work, it returns the results to the Spark master. Once all of the workers return their respective results, the Spark master compiles all of the worker results and returns the final result to the requesting client.
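
The request flow described above (split a job into work portions, process each portion, then combine the partial results) can be illustrated with a minimal Python sketch. This is not Spark's API: threads from the standard library stand in for workers, and the names `split_into_chunks` and `run_job` are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(rows, num_chunks):
    """Divide rows into at most num_chunks contiguous work portions."""
    if not rows:
        return []
    chunk_size = -(-len(rows) // num_chunks)  # ceiling division
    return [rows[i:i + chunk_size] for i in range(0, len(rows), chunk_size)]


def run_job(rows, work, num_workers=2):
    """Play the role of the master: distribute chunks, then combine results."""
    chunks = split_into_chunks(rows, num_workers)
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        # pool.map returns results in the same order as the input chunks.
        partial_results = list(pool.map(work, chunks))
    combined = []
    for part in partial_results:
        combined.extend(part)
    return combined
```

For example, `run_job(["a", "b", "c"], lambda chunk: [s.upper() for s in chunk])` processes two chunks and returns the combined rows in their original order.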

[0080] In some embodiments, when run in a standalone mode, the Spark master is configured to track the health/status of the workers and manage work scheduling.

[0081] In some embodiments, both the Spark master and workers use a companion application (e.g., a purpose-built Spark application) to perform the actual work. In some embodiments, the companion application runs on all of the machines that run a Spark process (both master and workers). The run-time instance of the companion application (also referred to herein as a "pipeline" application) that runs on the worker machine is referred to herein as a Spark "pipeline executor." A Spark worker is configured to perform its job through the executor application.

[0082] In this example, while two Spark workers are shown, any number of Spark workers may be established in the cluster. In some embodiments, an application (e.g., data preparation application initiated by a front end such as front end 200) provisions the cluster of nodes to perform a set of sequenced operations comprising a pipeline through which data sets are pushed. In some embodiments, each Spark master or worker is a node comprising either a physical or virtual computer, implemented in various embodiments as a device, a processor, a server, etc.

[0083] In this example, the Spark master is designated to communicate with a "pipeline master" (308), and the Spark workers are designated to communicate with pipeline executors (310 and 306). The pipeline masters/executors connect with Spark software residing on their corresponding nodes.

[0084] As described above, the pipeline server receives a script that specifies one or more input data sets and a set of sequenced data preparation operations that form a pipeline through which the input data sets are to be processed. The pipeline server, using the distributed computing platform, processes the input data according to the received script.

Data Partitioning

[0085] In this example, the pipeline master is configured to perform partitioning of the input data sets. In some embodiments, the pipeline master is used to implement data partitioning engine 208 of FIG. 2. Partitioning includes dividing a data set into smaller chunks (e.g., dividing a data set with one hundred rows into five partitions with twenty rows each). In some embodiments, the set of data is divided into work portions, or pieces of work that are to be performed. The pipeline master is also configured to distribute the partitions to the various established pipeline executors in the provisioned cluster for processing. In a Spark implementation, a division/partition (also referred to as a "portion of work" or "work portion") of the data set is represented as a Resilient Distributed Dataset (RDD). Other partition formats are possible for other distributed platform implementations.

[0086] When partitioning data, various tradeoffs exist when determining how many partitions to create and/or how many rows/how much to include in each partition. For example, while an increase in the number of slices of data can lead to an increase in parallelism and computation speed, the increased number of partitions also results in increased overhead and increased communication bandwidth requirement, due to data having to be communicated back and forth between an increasing number of nodes. This can result in inefficiencies. Using the techniques described herein, partitioning can be optimized. For example, an optimal number of partitions and/or an optimal size/number of rows per partition can be determined.

[0087] The master node is configured to devise or consume an intelligent strategy to partition a data set by taking into consideration various pieces of information. In various embodiments, the considered information includes information about the data being operated on, the data preparation operations to be performed, the topology/performance characteristics of the distributed computing environment, etc. By considering such information, a partitioning strategy can be devised that optimizes, for example, for reliable throughput throughout the nodes of a cluster so that the nodes can complete processing at approximately the same time. Thus, for example, straggling in the distributed computing environment can be reduced (e.g., where some workers are spending more time performing their portion of the work as compared to other workers, and must be waited upon).

[0088] The information about the data being operated on includes metadata information about the data. In one example embodiment, the Spark (pipeline) master queries an input data set (e.g., obtained from a source location described in a received script). The pipeline master probes the data set to determine metadata describing the data set. In various embodiments, the metadata includes the number of rows that are in the data set, the number of columns that are in the data set, etc. In some embodiments, the metadata that is determined/generated includes statistical information, such as histogram information about how data is distributed within the data set. For example, it may be determined that some rows in the data set are denser than others. The metadata determined as a result of the analysis (e.g., statistical analysis) is used in part by the pipeline master to devise an intelligent partitioning strategy.

[0089] Example embodiments of partitioning strategies are described below.

Example Strategy 1: Partitioning Based on Row Count

[0090] In this example strategy, a data set is divided based on row count, so that in this context-free approach (e.g., where metadata information about the rows or other information is not utilized), each Spark worker/pipeline executor is given a fixed (e.g., same) number of rows. In some embodiments, an assumption is made that each row will take the same amount of resources and time to process.

Example Strategy 2: Partitioning Based on a Size of Rows/Amount of Data

[0091] In this example strategy, a data set is divided in part based on the sizes of the rows in the data set. A statistical analysis is performed on the data to determine the density and/or amount of the data in the rows of the data set (e.g., the amount of data may vary from row to row). For example, metadata indicating the amount of space that a row takes is determined. The data set is divided in a manner such that each partition includes the same amount of data (but may include varying numbers of rows).

[0092] In some embodiments, the number of rows is utilized as a secondary criterion in addition to the size of the rows. For example, a number of rows that has a data size of a given amount is determined for a partition. If the number of rows exceeds a threshold number of rows (or is more than a threshold number of deviations away from a mean number of rows), then the number of rows in the partitions is trimmed, and capped at the threshold. For example, each partition is assigned 100 MB of data or 200,000 rows, whichever produces fewer rows.
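
As a minimal sketch of strategy 2 with the row count as a secondary criterion, the following Python function closes a partition as soon as adding another row would exceed either a data-size cap or a row-count cap. The function name, the greedy approach, and the use of a list of per-row sizes are assumptions made for illustration; the source does not specify an algorithm.

```python
from typing import List, Tuple


def partition_by_size(row_sizes: List[int], max_bytes: int,
                      max_rows: int) -> List[Tuple[int, int]]:
    """Return contiguous (start, end) row ranges, with end exclusive.

    A partition is closed when adding the next row would exceed max_bytes
    or when it already holds max_rows rows, whichever happens first.
    A single row larger than max_bytes is placed in a partition by itself.
    """
    partitions = []
    start = 0
    current_bytes = 0
    for i, size in enumerate(row_sizes):
        rows_in_partition = i - start
        over_size = current_bytes + size > max_bytes
        over_rows = rows_in_partition >= max_rows
        if rows_in_partition > 0 and (over_size or over_rows):
            partitions.append((start, i))
            start = i
            current_bytes = 0
        current_bytes += size
    if start < len(row_sizes):
        partitions.append((start, len(row_sizes)))
    return partitions
```

With ten rows of 10 bytes each, a 40-byte cap alone yields partitions of four, four, and two rows; adding a three-row cap yields partitions of three, three, three, and one row.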

[0093] The use of the number of rows as a secondary criterion is based in part on the columnar nature of the data transformation, where data is transformed based on data preparation operations performed with respect to a particular column or columns, and it is those columns which are affected by the data preparation operations which determine the amount of computational effort needed to perform an operation. However, a row includes data cells in every column of a data set, and the size of the row may be concentrated in data cells that are in columns that do not materially contribute to the cost of an operation. By using a number of rows as a secondary criterion, columns that have outlier distributions in terms of size can be eliminated (assuming that most common data preparations are operating on data that is fairly uniform in distribution). This provides a limiter for how much data will ultimately be processed in the distributed computing system.

[0094] In some embodiments, the limit/maximum number of rows per partition is determined as a function of the total number of rows for an entire data set. An example plot illustrating an embodiment of a three-part function is shown in FIG. 4. The slopes and transition points of the line segments shown in the figure are empirically determined and may vary in different embodiments. In this example, for data sets whose number of rows falls within range 402, partitions are loaded with a larger proportion of the total number of rows of the data set. For example, for very small data sets, a single partition is loaded with all of the data. By doing so, data will not need to be distributed across partitions (and potentially to different nodes), reducing resource overhead. Thus, in this first region 402, for smaller input data sets, it is more efficient to divide the data set into fewer partitions; in other words, the partitioning technique favors putting more rows into a single partition.

[0095] For data sets with a total number of rows in range 404, new partitions are steadily added as the total number of rows is increased, where the size of each partition grows steadily. In comparison to region 402, in region 404, the rate at which rows are added to partitions is slower. For example, in this range, the addition of new partitions is favored over adding rows to those partitions. While rows are still added to partitions steadily, which may sacrifice some partitions' performance on a node (as the node will have to process more row data), they are added at a rate such that the number of partitions to be processed does not expand too much.

[0096] For data sets whose total number of rows exceeds threshold 406, the number of rows that can be included in a partition is frozen and does not grow, where the addition of more partitions is favored. Thus, an upper bound on the number of rows that can be included in a single partition is established, allowing for the knowledge that each partition will be able to process a limited (upper-bounded) amount of data in a relatively fixed amount of time.
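
The three-part function described for regions 402 and 404 and threshold 406 can be sketched as a piecewise function. The breakpoints and the growth rate below are hypothetical placeholders, since the source states only that the slopes and transition points are empirically determined. The first region is simplified here to place all rows in a single partition.

```python
def max_rows_per_partition(total_rows: int,
                           small_limit: int = 10_000,
                           cap_threshold: int = 1_000_000,
                           mid_divisor: int = 10) -> int:
    """Row limit per partition as a function of the data set's total rows.

    All default values are illustrative assumptions, not values from the source.
    """
    if total_rows <= small_limit:
        # First region: small data sets fit in one partition.
        return total_rows
    # Second region: the limit grows by one row per mid_divisor extra rows.
    # Third region: growth stops once total_rows passes cap_threshold.
    effective_rows = min(total_rows, cap_threshold)
    return small_limit + (effective_rows - small_limit) // mid_divisor


def partition_count(total_rows: int) -> int:
    """Number of partitions implied by the row limit (ceiling division)."""
    if total_rows == 0:
        return 0
    limit = max_rows_per_partition(total_rows)
    return -(-total_rows // limit)
```

Under these placeholder values, a 5,000-row data set maps to one partition, while the per-partition limit stays at 109,000 rows for any data set larger than one million rows.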

Example Strategy 3: Partitioning Based on a Size of Active Portions of Rows

[0097] In this strategy, as in strategy 2, an amount of data to include in a partition is considered. However, only the data in those columns that are involved (i.e., active) in (or affected by) an operation (or set of sequenced operations) is considered. For example, if, out of four total columns, only three of those columns are involved in a data preparation operation (e.g., a join operation that uses these three columns), then only the data in those three columns is determined. The data set is then partitioned according to the amount of data in the active columns (e.g., as described above in strategy 2). In some embodiments, a density of data in the active portions of rows is used as another factor to determine partitioning.
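
A minimal sketch of measuring only the active portion of each row follows. It assumes rows are represented as dictionaries keyed by column name and uses the character length of each cell as a stand-in for its data size; both choices, and the function name, are illustrative assumptions.

```python
def active_row_sizes(rows, active_columns):
    """Size of each row counting only the columns involved in the operation."""
    return [
        sum(len(str(row[column])) for column in active_columns)
        for row in rows
    ]


# Hypothetical data: column "D" is large but not involved in the operation.
rows = [
    {"A": "ab", "B": "c", "C": "def", "D": "x" * 1000},
    {"A": "a", "B": "bc", "C": "d", "D": "y" * 5},
]
sizes = active_row_sizes(rows, ["A", "B", "C"])
```

The resulting per-row sizes could then be fed to a size-based partitioning routine in place of full row sizes, so that the large inactive column does not influence the partition boundaries.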

[0098] In some embodiments, strategies 2 and 3 are context aware, and take into account attributes and characteristics of the data set to be processed (e.g., metadata information determined about the rows of the data set). In some embodiments, the context aware strategies also take into account the physical characteristics of the cluster, such as the amount of memory that a partition will require and the amount of memory that a pipeline executor working on a partition can accommodate. For example, the amount (memory size) of data that can be in a partition can be set so that it does not exceed the memory that an executor is allocated to use. Other physical characteristics of the cluster that are taken into account include performance metrics such as an amount of processing power, network bandwidth metrics, etc., as will be described in further detail below.

[0099] The nodes in a cluster may be physical machines with varying performance characteristics. For example, suppose that a cluster includes two computing nodes. The first has 8 processor cores, with 10 GB of memory per core (i.e., a total of 80 GB of memory), while a second node has 16 processor cores, also with 10 GB of memory per core (i.e., a total of 160 GB of memory). Based on these memory/processing characteristics of the nodes, and using a heuristic in which a worker is allocated 10 GB per processor core, a number of workers that is a multiple of three should perform the work across the two nodes. This is because the first node has one-third of the total memory, while the second node has two-thirds of the total memory (i.e., the ratio of memory for the two nodes is 1:2), and having a number of workers that is a multiple of three will ensure that the total amount of memory in the cluster is fully utilized.

[0100] However, given that the nodes of the cluster may vary in performance characteristics, and that the cluster structure may change, in some embodiments, the creation of partitions is done without explicit knowledge of the actual processing capabilities of the cluster. Rather, each partition is allocated a pre-specified amount of computing resources, such as an amount of memory (e.g., 10 GB) per core. The data set is then divided according to the performance heuristic/characteristic (e.g., into chunks that are some multiple of 10 GB). Thus, for example, if a partition is allocated a maximum of 10 GB of memory per core, then the first node, with 80 GB of total memory across 8 cores can support 8 partitions/workers (where one partition corresponds to one worker). In this example, the property of an amount of RAM per core has been reduced down to a principle/heuristic that can be applied to tasks (and without explicit knowledge of the actual hardware of the cluster).
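
The arithmetic in the two-node example can be checked with a short sketch that applies the 10 GB per worker heuristic to each node's total memory. The function name and the dictionary representation of the cluster are hypothetical.

```python
def workers_per_node(node_memory_gb, memory_per_worker_gb=10):
    """Number of workers (one partition each) that each node can support."""
    return {
        name: memory // memory_per_worker_gb
        for name, memory in node_memory_gb.items()
    }


# The two nodes from the example: 8 cores and 16 cores, 10 GB per core.
cluster = {"node1": 8 * 10, "node2": 16 * 10}
supported = workers_per_node(cluster)
total_workers = sum(supported.values())
```

Here `supported` is 8 workers for the first node and 16 for the second, for a total of 24, which is a multiple of three and matches the 1:2 memory ratio of the two nodes.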

[0101] In some embodiments, a partition is processed by one worker, and the amount of resources that can be allocated to a partition/worker is embodied in an atomic computing unit, which defines the performance characteristics of a worker unit that can work on a partition. The atomic computing unit is associated with a set of performance metrics whose values indicate the amount of resources that a worker/pipeline executor has to process the partition. In addition to an amount of memory per core, as described above, other properties that can be reduced down into this higher level form include network bandwidth, latency, and core performance. By defining a higher level view of the amount of resources available to a single worker unit (working on a partition), the cost in resources for adding partitions (and more worker units) can be determined. For example, a cost function can be used to determine, given a set of performance characteristics/heuristics, a cost of computing a result. In some embodiments, a unit of cost is computed (e.g., for a worker to process some number of rows/amount of data). The data is then divided based on the computed unit of cost to determine a number of workers needed to process the data.

[0102] Thus, using the higher level view of the performance characteristics of an atomic worker unit, a number of workers needed to work on a data set can be determined (i.e., the number of pieces of work/partitions into which the data should be divided). Additionally, the number of partitions/pieces of work to create versus the number of rows to add to a partition can be evaluated based on computation costs.

[0103] In some embodiments, the determination of how to partition a data set is based on the characteristics of an operation to be performed. For example, different types of operations will have different computational costs. As one example, a function that takes a single input and provides an output solely based on that input, such as an uppercase operation, has a constant cost. Other types of operations, such as sort, which may require partitions to communicate with each other, may have larger costs (e.g., order of log n divided by the number of partitions for sort). A data set can then be partitioned based in part on the cost to perform the operations specified in a received script.

[0104] Any combination of the strategies and techniques described above can be used to determine a strategy for partitioning a data set according to a cost function. In some embodiments, the partitions are contiguous and non-overlapping. As one example, suppose that a data set of 200 rows, indexed from 0 to 199, is divided equally into four logical partitions (e.g., using strategy 1 described above). A first partition will have rows 0-49, a second partition will have rows 50-99, a third partition will include rows 100-149, and a fourth partition will include rows 150-199. In some embodiments, the partitions are ordered as well, such that the rows obtained/read from partition N+1 follow the rows obtained/read from partition N. Thus, a data set can be read in row order by reading each partition in sequential order. The partitions are then distributed to the pipeline executors/Spark workers in the distributed computing deployment architecture. For example, a Spark scheduler determines where (e.g., node) a partition/piece of work is to be assigned and processed.
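
The 200-row example can be reproduced with a small sketch of row-count partitioning that yields ordered, contiguous, non-overlapping ranges. The function name is hypothetical, and the handling of a remainder (giving one extra row to the earliest partitions) is an assumption, since the source only covers the evenly divisible case.

```python
def equal_row_partitions(total_rows, num_partitions):
    """Return inclusive (first_row, last_row) ranges in partition order.

    Assumes total_rows >= num_partitions so that no partition is empty.
    """
    base, extra = divmod(total_rows, num_partitions)
    ranges = []
    start = 0
    for partition_number in range(num_partitions):
        size = base + (1 if partition_number < extra else 0)
        ranges.append((start, start + size - 1))
        start += size
    return ranges


def read_in_row_order(ranges):
    """Reading partitions sequentially visits every row index in order."""
    return [row for first, last in ranges for row in range(first, last + 1)]
```

Calling `equal_row_partitions(200, 4)` gives the ranges 0-49, 50-99, 100-149, and 150-199, and reading those partitions in sequence returns row indices 0 through 199 in order.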

[0105] FIG. 5 is a flow diagram illustrating an example embodiment of a process for partitioning. In some embodiments, process 500 is executed by data partitioning engine 208 of FIG. 2. The process begins at 502 when a specification of a set of sequenced operations to be performed on a set of organized data is received. In some embodiments, the sequenced operations include data preparation operations. As one example, the set of data can be organized into rows and columns, or any other appropriate dimensions. The specification of the set of sequenced operations to be performed on the set of organized data can be received in the form of a script (e.g., generated based on user input via a step editor user interface, imported from a file, etc.), as described above.

[0106] At 504, the set of data is divided into a plurality of work portions based on a cost function that is dependent on at least one dimension of the set of data. In some embodiments, the set of data is divided based on a cost function that takes into account a number of rows to include in a work portion. The cost function can take into account various factors, such as an amount of data to be processed, the computational cost of creating additional work portions/partitions, the cost to add rows to a partition/work portion, the computational cost of operations to be performed, etc. Examples of techniques and strategies for dividing a set of data into a plurality of work portions/partitions are described above. If multiple data sets are specified in the specification, the data sets can be divided into logical partitions in their own respective namespaces.

[0107] At 506, the plurality of work portions is distributed to a plurality of processing nodes to be processed according to the specification of operations. For example, a scheduler (e.g., Spark scheduler) distributes the determined work portions to processing nodes in a distributed computing cluster. In some embodiments, the determined work portions are sent to the processing nodes via a tree-structured description of dependent operations to be performed on some input data. An example of dependent operations is as follows: making a change to column A that depends on a change to column B that depends on a cache of columns A, B, and C.
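
One way to picture the tree-structured description of dependent operations is as nodes that each list the operations they depend on. The sketch below is an illustrative assumption, not the format used by the system; the `OpNode` class and `execution_order` function are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class OpNode:
    """An operation together with the operations it depends on."""
    description: str
    depends_on: List["OpNode"] = field(default_factory=list)


def execution_order(node: OpNode) -> List[str]:
    """List operations so that every dependency comes before its dependent."""
    order: List[str] = []
    for dependency in node.depends_on:
        order.extend(execution_order(dependency))
    order.append(node.description)
    return order


# The dependency chain from the example above.
cache = OpNode("cache of columns A, B, and C")
change_b = OpNode("change to column B", [cache])
change_a = OpNode("change to column A", [change_b])
```

Walking the tree from `change_a` resolves the cache first, then the change to column B, and finally the change to column A.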

[0108] The above described strategies and techniques for distributed pipeline optimization provide various benefits. For example, as described above, a data set can be distributed to workers in an intelligent manner that takes into consideration the characteristics of the data itself (e.g., the amount of data in a row, the active columns in the row, etc.). This allows workers, for example, to process similar amounts of data, reducing the amount of time needed to wait for stragglers (e.g., that are taking longer to compute their portion of work). As another example, by considering the physical characteristics of a cluster, work portions can be generated that efficiently utilize the resources of the cluster. As another example, using the strategies described above, an optimal number of work portions and/or number of rows/amount of data to include in a work portion can be determined to minimize additional overhead and maximize parallelism. Thus, distributed computing can be performed more efficiently and predictably.

Data Transformation and Cache Optimization

[0109] Once an input data set has been partitioned and distributed, a set of sequenced data preparation operations can be applied to the data set according to the specification of a received script. For example, the pipeline master 308, having divided one or more input data sets and distributed them to workers/nodes in a distributed computing cluster, is configured to cooperate with the pipeline executors to determine transformation results. In some embodiments, each pipeline executor working on a partition/work portion is configured to provide a subset of the overall results of performing a sequenced set of operations. The pipeline master has the responsibility of collating/combining the result subsets into the overall result. In some embodiments, the pipeline master of the cluster is used to implement data transformation engine 210 and caching engine 212 of FIG. 2.

[0110] In some cases, distributed computing platforms such as Spark include native functionality for performing various operations. However, the manner in which these platforms execute operations typically requires data to be replicated, which can be resource intensive and inefficient.

[0111] Using the techniques described herein, a set of sequenced operations can be performed without replicating data at each stage of the pipeline, thereby increasing the speed and efficiency with which the sequenced set of operations can be performed and data transformation results obtained. An example illustrating how a platform such as Spark replicates data when performing an operation, in contrast to the techniques described herein, will be shown with respect to the sort operation described below in conjunction with FIGS. 10A-10F.

[0112] As will be described in further detail below, data fragments including column files and data traversal programs can be generated and executed as data is processed through a pipeline. The data fragments are used to represent the cumulative results at various stages of the pipeline (e.g., the result of having performed a subset of the sequenced data preparation operations). The fragments representing the transformation results can be cached at various stages of the pipeline for reuse. For example, for a given piece of work that was operated on, the cumulative results (or representation of the results) of operations on the piece of work up to a particular stage in the pipeline can be saved to disk or stored to a cache layer. The cached representation can be later used to reconstruct the state of the data as of the particular stage in the sequence of operations. The data fragments/representation can be cached not only at the end of the pipeline, but in the middle as well. This allows for intermediary results at the various stages of a pipeline to be viewed. Further, edits to the sequenced set of data preparation operations defined in a script (e.g., using an editor interface provided by user interface engine 202 of FIG. 2) can reuse the same cached result without having to perform re-computation of the sequenced set of steps that led to the cached result. For example, in some embodiments, the cached representation is identified using a signature that is a function (e.g., hash function such as SHA hash function) of the (e.g., string) description of the sequenced set of operations that led to the results represented by the cached representation. When new data preparation scripts are received (e.g., as a user configures data preparation via an editor interface), signatures can be generated from the operations of the new script and used to determine whether there is an existing cached representation that can be leveraged.
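
The signature-based cache lookup can be sketched as follows, assuming SHA-256 as the hash function and a JSON encoding of the operation descriptions as the string being hashed. The prefix search, the dictionary used as the cache, and the function names are illustrative assumptions rather than details from the source.

```python
import hashlib
import json


def operation_signature(operations):
    """Hash an ordered list of operation descriptions into a signature."""
    description = json.dumps(list(operations))
    return hashlib.sha256(description.encode("utf-8")).hexdigest()


def longest_cached_prefix(operations, cache):
    """Find the longest leading run of operations with a cached result.

    Returns (number_of_operations_covered, cached_value), or (0, None)
    when no prefix of the sequence has been cached.
    """
    for end in range(len(operations), 0, -1):
        signature = operation_signature(operations[:end])
        if signature in cache:
            return end, cache[signature]
    return 0, None
```

If the result of an import followed by an uppercase step is cached, a new script that appends a filter step to those two operations would match on its first two operations, so only the filter would need to be computed.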

[0113] In some embodiments, the cached representation described herein is optimized for columnar workloads. The columnar workloads include data preparation operations that are used to perform columnar data transformations. In some embodiments, the data formats and structures used to generate cached representations are also optimized for speed and efficiency, for example, to limit the flow of data throughout a pipeline server so that as little data as is necessary is worked on as quickly as possible.

[0114] (Re)use of the columnar workload-optimized cache, including the generation and reuse of data traversal programs, will be described below in conjunction with various example data preparation operations. While example details of several data preparation operations are provided for illustrative purposes, the list is not exhaustive, and the techniques described herein can be adapted accordingly for any other data preparation operations as appropriate.

Data Preparation Operation Examples

[0115] Suppose that a user has specified a data set and a set of sequenced data preparation operations to perform on the data set via a user interface (e.g., provided by user interface engine 202 of front end 200 of FIG. 2), resulting in the script shown in FIG. 6A being generated (e.g., using script generator 204 of FIG. 2). The script is received by a pipeline server (e.g., pipeline server 300 of FIG. 3 from front end 200 of FIG. 2), implemented using a distributed computing platform such as Apache Spark.

[0116] FIG. 6A illustrates an example embodiment of a script. As shown, script 600 includes a description of the data set (referred to as "DS1" in this example) to be worked on (and imported) at 602. The contents of the data set to be processed are shown in conjunction with FIG. 6B. The script also includes a set of sequenced operations to perform on the data set. In this example, the set of sequenced operations includes an uppercase operation on column A of the data set (604) and a filter operation on column B of the data set (606) on the values "e" and "h." The sequenced set of operations forms a pipeline through which the data set will be processed. In this example, the logical sequence of the operations is also the physical execution sequence, but need not be (e.g., the physical execution sequence may be different, for example, in the presence of a smart optimization compiler). For example, suppose that a sequence of data preparation operations includes two operations, "f" and "g," in successive positions, in that order. A smart compiler may determine that performing "g" before "f" would result in exactly the same result, and would be faster to compute. For instance, in the example operations specified in script 600, the final result could also be obtained by swapping the uppercase and filter steps. Doing so would result in the uppercase operation being performed on far fewer rows, increasing the speed (and efficiency) of the computation.
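
The reordering argument can be illustrated with a short sketch. The rows below are hypothetical and are not the contents of FIG. 6B. The filter is assumed here to keep only rows whose column B value is in the given set; the equivalence of the two orderings holds equally if matching rows are dropped instead, because the uppercase step changes only column A and the filter reads only column B.

```python
def uppercase_column(rows, column):
    """Return new rows with the given column's value uppercased."""
    return [{**row, column: row[column].upper()} for row in rows]


def filter_column(rows, column, values):
    """Keep rows whose value in the given column is one of the values."""
    return [row for row in rows if row[column] in values]


# Hypothetical sample data.
rows = [
    {"A": "a", "B": "e"},
    {"A": "b", "B": "f"},
    {"A": "c", "B": "h"},
    {"A": "d", "B": "g"},
]

# Logical order: uppercase touches all four rows, then filter.
uppercase_first = filter_column(uppercase_column(rows, "A"), "B", {"e", "h"})

# Swapped order: filter first, so uppercase touches only two rows.
filtered = filter_column(rows, "B", {"e", "h"})
filter_first = uppercase_column(filtered, "A")
```

Both orderings produce the same two rows, but the swapped order applies the uppercase operation to half as many rows in this sample.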

[0117] As shown in this example, the data preparation operations are columnar in nature, where an operation to be performed on a data set is defined with respect to a particular column. For example, the uppercase operation is performed on column "A" of the data set, and the filter operation is performed based on particular values found in a specific column (column "B"). For such data preparation operations, how an entire data set is transformed is based on how particular columns are affected by an operation, or based on the characteristics of the particular columns implicated in an operation. This will be leveraged to provide techniques for optimized and efficient performance of data preparation operations, as will be described in further detail below.

[0118] At 608, the script indicates how the results of the data preparation operations are to be outputted. In this example, the results are to be viewed (e.g., presented to a user in a user interface provided by user interface engine 202 of FIG. 2). Another example of an option for outputting results is to publish the results (e.g., export them to another file).

[0119] FIG. 6B illustrates an example embodiment of a data set to be processed. In this example, data set 650 corresponds to the data set specified at 602 of script 600 of FIG. 6A.

[0120] The processing performed at each stage of the pipeline formed by the set of sequenced operations defined in script 600 will be described in further detail below. For illustrative purposes, the files written as of each step in the sequenced operations are saved (cached), but need not be.

Import/Start

[0121] The first operation of script 600 is Import/Start. After the decision on how rows should be divided and distributed is made (e.g., by data partitioning engine 208 of FIG. 2), the data assigned to the various partitions is imported. In some embodiments, importing the data includes preparing the data such that it can be quickly accessed sequentially (e.g., read a column of data quickly from top to bottom).

[0122] FIG. 7A illustrates an example embodiment of data structures generated during an import operation. In some embodiments, the example of FIG. 7A continues from the example of FIG. 6B. In some embodiments, the data being imported in FIG. 7A is the data from data set 650 (DS1) of FIG. 6B.

[0123] Suppose in this example that DS1 has been split into two logical partitions, partition zero (702) and partition one (704). The partitions are each processed by one or more workers (e.g., Spark workers/pipeline executors, as described above). As described above, each partition includes a subset of the rows of DS1, and collectively the two partitions comprise the entire data set. The subsets of rows among the partitions are non-overlapping and are contiguous.

[0124] With the work (data) having been partitioned, each row of DS1 is uniquely identified by a set of coordinates. In some embodiments, the coordinates indicate the partition in which the row can be found, and an identifier of the row within the partition. In the examples described herein, the coordinates are organized as follows: (partition number, row identifier). An example of the unique row identifiers is shown in references tables 706 and 708, which correspond to partitions zero and one, respectively.

[0125] As shown, data set DS1 has been equally divided into two partitions, with the top three rows of the data set assigned to partition zero, and the bottom three rows assigned to partition one.
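As a hedged illustration of the partitioning and coordinate scheme described above, the following sketch splits six placeholder rows into two contiguous partitions and assigns each row a (partition number, row identifier) coordinate. The function names and the ceiling-division split are assumptions made for illustration, not the partitioning policy of data partitioning engine 208.

```python
def partition_rows(rows, num_partitions):
    """Split rows into contiguous, non-overlapping partitions."""
    size = -(-len(rows) // num_partitions)  # ceiling division
    return [rows[i * size:(i + 1) * size] for i in range(num_partitions)]

def coordinates(partitions):
    """Assign each row a (partition number, row identifier) coordinate."""
    return [
        [(p, r) for r in range(len(part))]
        for p, part in enumerate(partitions)
    ]

# Six placeholder rows standing in for DS1.
ds1 = ["row0", "row1", "row2", "row3", "row4", "row5"]
partitions = partition_rows(ds1, 2)
coords = coordinates(partitions)
```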

[0126] In this example, each partition stores the data into sets of files corresponding to the columns, as shown at 710 and 712. For example, at 710, separate column files corresponding to the columns "A," "B," and "C," respectively, of data set DS1 are written (e.g., the contents of the data set DS1 are obtained from their source (specified in a script) and re-written into the column files). Each separate column sequentially describes the cells for all of the rows of DS1 that are in the partition. In some embodiments, the column values that are written are read from the source of the input data set (as specified in a script), and the original source data set is not modified (e.g., the values of the source data set are copied into the column files).

[0127] Accompanying column files 710 and 712 are lookup tables 714 and 716, respectively. Each row of the lookup table includes a row identifier ("Row ID") and indices into the column files (indicating the location of the data values for an identified row). In this example, the indices shown in the index columns are byte indices into their respective column files.

[0128] The structure of the lookup table and the column files are optimized for sequential access such that, for example, all of the data can be read down a column quickly. The structures shown also allow for efficient non-sequential row probes (e.g., random access probing of a row). For example, to access a specific value in a row of a column, a lookup of the table can be performed by using a row identifier of the row of interest and the column of interest. The index value corresponding to that (row, column) coordinate is obtained from the lookup table and used to access the corresponding column file. The value at the index of the column file can then be retrieved directly, without requiring other data not of interest to be loaded and read.

[0129] In this example, the values in the column file are stored sequentially and are indexed by byte order. As the values can be of different types (e.g., char, int, etc.) and can be of different sizes (e.g., in bytes), the indices in the lookup table indicate the location of a cell in a column file by its starting byte location in the file. For purposes of illustration, throughout this and other examples described herein, assume that a character has a size of one byte. The numeric values shown in the examples described herein are, also for illustrative purposes, integers with a size of two bytes.

[0130] Take for example the column file (718) corresponding to column "C" written by partition one as part of the import operation. The column file includes the values `cats,` `n,` and `q.` The corresponding byte indices for the column file are shown at 720 of lookup table 716. The starting byte in the "C_file" for the value `cats` is 0, as it is the initial data value written in the column file. The starting byte in the "C_file" for the value `n` is 4. This is because the value `cats,` which is a word including 4 characters, has a size of 4 bytes. Thus, byte 0 in column file 718 starts the value for the first row of the "C" column file (in partition one), byte 4 starts the second row, and byte 5 starts the third row of the column. Thus, data can be read from the column files by byte index.
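The byte indexing of the "C" column file can be sketched as follows, assuming one byte per character as stated above. The helpers `write_column` and `read_cell` are hypothetical, and the sketch further assumes that a cell ends where the next cell begins (or at the end of the file), which the description above does not specify.

```python
def write_column(values):
    """Pack values back to back; return (file bytes, starting byte of each value)."""
    data = bytearray()
    offsets = []
    for value in values:
        offsets.append(len(data))      # starting byte index of this cell
        data += value.encode("ascii")  # one byte per character
    return bytes(data), offsets

def read_cell(data, offsets, row):
    """Random-access read of one cell using its starting byte index."""
    start = offsets[row]
    # Assumption: a cell ends where the next one starts, or at end of file.
    end = offsets[row + 1] if row + 1 < len(offsets) else len(data)
    return data[start:end].decode("ascii")

c_file, c_index = write_column(["cats", "n", "q"])
second = read_cell(c_file, c_index, 1)
```

Here `c_index` is `[0, 4, 5]`, matching the starting bytes described for `cats`, `n`, and `q`, and the packed file occupies six bytes with no gaps between values.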

[0131] By using byte (or any other appropriate data unit of size) indexes, the column values can be tightly packed into a column file, without spaces/gaps between values. This allows for space-efficient storage of column values as well as efficient lookup of those values. As the column files are stored separately and compactly, if an operation requires operating on an entire particular column, the corresponding column file can be read directly (e.g., without indexing) and without reading values from any other columns that are not of interest. Thus, the data structures/formats shown are space-efficient, columnar, and optimized for specific column operations. As described above, the data format shown is optimized for both random and sequential access.

[0132] In some embodiments, the set of column files and corresponding lookup table are included together into a file set. In this example, lookup table 714 and column files 710 are included in file set 722. Lookup table 716 and column files 712 are included in file set 724. Each file set is associated with a file name/cache identifier, which can be used to locate the file set including the actual column values. In this example, the file set name/identifier is generated based on the name of the step that resulted in the column files being written, and the partition that wrote the file. For example, the file set 722 written by partition zero is called "import_ds1_p0," indicating that the file set was written by partition zero ("p0") for the step of importing ds1 ("import_ds1"). Similarly, the file set 724 written by partition one is called "import_ds1_p1," indicating that the file set was written by partition one ("p1") for the step of importing ds1 ("import_ds1"). When generating the file sets for an operation that is performed across all of the partitions, the handle/cache id that is generated is consistent across all of the partitions. In this example, for partitions zero and one participating in the import DS1 operation, the handle of the file sets ("import_ds1") written by the partitions is consistent across both partitions, with the difference being the partition number that is concatenated to the end of the file set name. In some embodiments, the file sets are written to a cache/storage and can be obtained using the identifiers described above. The use of such cache identifiers/file set names will be described in further detail below.
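The naming convention above can be sketched as a small helper. The function name `file_set_name` and the dictionary used as a stand-in for the cache/storage are assumptions for illustration; only the "handle, then `_p`, then partition number" pattern comes from the example names above.

```python
def file_set_name(handle: str, partition: int) -> str:
    """Build a file set name from a step handle and a partition number."""
    return f"{handle}_p{partition}"

# Hypothetical cache keyed by file set name; values stand in for file sets.
cache = {
    file_set_name("import_ds1", p): f"file set written by partition {p}"
    for p in range(2)
}

names = sorted(cache)
```

Because the handle is the same on every partition, any worker that knows the handle and a partition number can derive the name of the file set it needs without consulting the partition that wrote it.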

[0133] While a data set may have been divided across multiple partitions, as shown, the processing performed with respect to only one partition is shown for the remaining steps of script 600, as the specified set of sequenced operations do not require movement of information between partitions (i.e., rows will not move between partitions). Similar processing is performed in the other logical partition(s) into which the input data set has been divided. Examples of operations that result in transfer of rows between partitions will be described in further detail below.

[0134] In addition to the file sets that are written, each partition is associated with what is referred to herein as a "data traversal program" (DTP). The data traversal program includes a references table and a reference stack, which together provide information for how to read the state of a portion of the data as of a certain stage of a pipeline (e.g., how to read what is the cumulative result of having performed some portion of the sequenced set of operations on the input data set). A references table includes references of row transformations during a set of sequenced operations, and a reference stack includes a record of the sequenced operations and columns that are changed by the sequenced operations. In some embodiments, as each operation in a sequenced set of operations is performed, the references table and the reference stack of the data traversal program for the partition are updated to reflect the cumulative transformation result after having performed the sequenced set of operations up to a given operation. In some embodiments, the data traversal program is stored in a cache layer. This allows the data traversal program to be quickly accessed and updated as operations are performed, thereby allowing efficient access of the results of the operations (including intermediate results) without having to repeat the operations.

[0135] In some embodiments, a data traversal program of a partition, when executed, uses the references table and reference stack of the partition to obtain a sequenced set of rows that are a subset of the data set resulting from a sequenced set of operations having been performed on an input data set. The position of the sequenced subset of rows in the entire resulting data set is based on the position of the corresponding partition in the sequence of partitions. For example, the sequenced subset of rows obtained from the data traversal program for partition "N" is immediately followed by the sequenced subset of rows obtained from the data traversal program for partition "N+1." The sequenced subsets of rows from the various partitions are non-overlapping. The sequenced subsets of rows, when read in this order, collectively form the results of a sequenced set of data preparation operations performed on one or more input sets of data.

[0136] In some embodiments, the references table and the reference stack of the data traversal program are updated as each data preparation operation is performed to reflect the cumulative result of having performed the sequenced set of operations up to a given point in the pipeline. As the pipeline includes various stages and intermediary results, which, for example, a user may wish to revisit, in some embodiments, a copy of the data traversal program can be cached at a save point (e.g., before it is updated by the next step in the sequence of data preparation operations). The caching allows, for example, incremental saving of the data that is changing as the data progresses through various points of the pipeline/sequenced set of operations.

[0137] As shown in the example of FIG. 7A, partitions zero and one are each associated with their own data traversal programs, 726 and 728, respectively. Data traversal program 726 associated with partition zero includes the references table 706 and reference stack 730. Data traversal program 728 associated with partition one includes references table 708 and reference stack 732. In some embodiments, the data traversal programs (including corresponding references tables and reference stacks) are initialized (created) as a result of the import being performed. As will be described in further detail below, in some embodiments, the data traversal program represents a result of a set of sequenced data preparation operations and indicates how to assemble one or more affected columns to derive the result.

[0138] Reference stack 730 of partition zero is now described. In this example, the first row of reference stack 730 (which currently includes only one row after the import step) includes cache identifier ("cache id") 734. The cache identifier projects out the columns "A," "B," and "C," as indicated by the corresponding entry in the row at 736. Cache id 734, when combined with an indicator of the partition (partition 0), will result in a file name corresponding to file set 722 ("import_ds1_p0"). This indicates the location of the data that was written due to the import by partition zero. The reference stack is used in conjunction with the corresponding references table to read a sequenced set of rows that is a subset of the overall data set resulting from the import operation having been performed.
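One possible in-memory representation of a data traversal program after the import step is sketched below. The class name `StackEntry`, the function `read_rows`, and the cell values are hypothetical, and the file set is simplified to per-column lists indexed by row identifier rather than byte-indexed column files.

```python
from dataclasses import dataclass

@dataclass
class StackEntry:
    cache_id: str   # handle such as "import_ds1"
    columns: list   # columns projected out by this entry

# Simplified stand-in for cached file sets; cell values are hypothetical.
file_sets = {
    "import_ds1_p0": {
        "A": ["a", "b", "c"],
        "B": ["x", "e", "y"],
        "C": ["1", "2", "3"],
    },
}

# One column of (partition, row id) coordinates per reference stack row.
references_table = [[(0, 0)], [(0, 1)], [(0, 2)]]
reference_stack = [StackEntry("import_ds1", ["A", "B", "C"])]

def read_rows(references_table, reference_stack, file_sets):
    """Assemble rows by following each coordinate into its file set."""
    result = []
    for ref_row in references_table:
        row = {}
        for (partition, row_id), entry in zip(ref_row, reference_stack):
            file_set = file_sets[f"{entry.cache_id}_p{partition}"]
            for column in entry.columns:
                row[column] = file_set[column][row_id]
        result.append(row)
    return result

imported = read_rows(references_table, reference_stack, file_sets)
```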

[0139] An example of reading the result of importing DS1 is as follows. Suppose, for example, that a user would like to see the state of the data set DS1 after it has been imported (which should appear the same, as import does not make modifications to the data set). The files and data traversal programs shown in FIG. 7A can be used as follows to assemble DS1 (e.g., for viewing) as of the import step.

[0140] In order to read the imported data in its proper order, the data traversal programs of the partitions are executed in the order of the partitions to which they correspond. Thus, data traversal program 726 of partition zero is executed first (the data traversal programs of the partitions can also be executed in parallel, with the sub-results from each data traversal program placed in their correct order as they are obtained).
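A minimal sketch of executing the per-partition programs in parallel while keeping the sub-results in partition order is shown below. The function `run_traversal` is a hypothetical stand-in for executing one partition's data traversal program, and the use of a thread pool is an assumption for illustration rather than the described execution engine.

```python
from concurrent.futures import ThreadPoolExecutor

def run_traversal(partition_rows):
    """Stand-in for executing one partition's data traversal program."""
    return list(partition_rows)

def assemble(partitions):
    """Run all partitions in parallel and concatenate in partition order."""
    with ThreadPoolExecutor() as pool:
        # Executor.map yields results in input order, regardless of
        # which partition finishes first.
        sub_results = pool.map(run_traversal, partitions)
        return [row for sub in sub_results for row in sub]

# Placeholder rows for partitions zero and one.
result = assemble([["p0r0", "p0r1", "p0r2"], ["p1r0", "p1r1", "p1r2"]])
```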

[0141] Data traversal program 726 is executed as follows. References table 706 includes three rows. This indicates that the data traversal program (which is associated with partition zero), when executed, will provide the first three rows of the imported data set. The first row of the imported data set is obtained as follows. The value of the first (and as yet, only) column in the first row (738) of references table 706, the coordinates (0,0), is obtained. This column of the references table corresponds to the first (and as yet, only) row in the reference stack. The row includes cache identifier 734 and identifies columns "A," "B," and "C" at 736.