Mobile Computing Device Application Software Interacting with an Umbrella

Gharabegian; Armen Sevada

U.S. patent application number 16/792890, for mobile computing device application software interacting with an umbrella, was filed with the patent office on 2020-02-18 and published on 2021-02-11. This patent application is currently assigned to Shadecraft, Inc. The applicant listed for this patent is Shadecraft, Inc. The invention is credited to Armen Sevada Gharabegian.

| Publication Number | 20210042802 |
|---|---|
| Application Number | 16/792890 |
| Family ID | 1000005180844 |
| Publication Date | 2021-02-11 |


| United States Patent Application | 20210042802 |
|---|---|
| Kind Code | A1 |
| Gharabegian; Armen Sevada | February 11, 2021 |


Abstract

An intelligent umbrella includes a wireless communication transceiver to receive commands or messages from a mobile computing device and an integrated computing device, the integrated computing device including one or more memory devices, one or more processors and computer-readable instructions stored in the one or more memory devices. The computer-readable instructions are executable by the one or more processors to receive the commands or messages, via the wireless communication transceiver, from the mobile computing device and to generate instructions, signals, commands and/or messages based, at least in part, on the received commands or messages from the mobile computing device. The computer-readable instructions are executable by the one or more processors to communicate the generated instructions, signals, commands and/or messages to one or more assemblies of the intelligent umbrella to cause the one or more assemblies of the intelligent umbrella to move in a specified manner.
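The receive, generate and communicate flow described in the abstract can be illustrated with a minimal Python sketch. It is not the applicant's implementation: it assumes, hypothetically, that messages arrive as UTF-8 JSON with `assembly`, `action` and optional `value` fields, and the `Assembly` and `UmbrellaController` classes and the assembly names are illustrative only.

```python
import json
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional, Tuple


@dataclass
class Assembly:
    """Hypothetical stand-in for a motorized or electrical umbrella assembly."""

    name: str
    log: List[Tuple[str, Optional[Any]]] = field(default_factory=list)

    def apply(self, action: str, value: Optional[Any] = None) -> str:
        # A real assembly would drive a motor controller or relay;
        # this sketch only records the command it was given.
        self.log.append((action, value))
        return f"{self.name}:{action}:{value}"


class UmbrellaController:
    """Integrated computing device: turns received messages into assembly commands."""

    def __init__(self) -> None:
        # Assembly names are illustrative assumptions.
        self.assemblies: Dict[str, Assembly] = {
            "elevation": Assembly("elevation"),    # tilt upper vs. lower support
            "azimuth": Assembly("azimuth"),        # rotate about the vertical axis
            "deployment": Assembly("deployment"),  # open/close arm supports
        }

    def handle_message(self, raw: bytes) -> str:
        # Decode a message received via the wireless transceiver
        # (assumed here to be UTF-8 JSON).
        msg = json.loads(raw.decode("utf-8"))
        target = msg.get("assembly")
        if target not in self.assemblies:
            raise ValueError(f"unknown assembly: {target!r}")
        # Generate and forward a command to the selected assembly.
        return self.assemblies[target].apply(msg["action"], msg.get("value"))


if __name__ == "__main__":
    controller = UmbrellaController()
    print(controller.handle_message(
        b'{"assembly": "elevation", "action": "tilt", "value": 30}'))
```

Keeping one lookup table of assemblies means that a new assembly (for example, lighting) can be added without changing the message-handling logic.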

| Inventors: | Gharabegian; Armen Sevada; (Glendale, CA) |
|---|---|
| Applicant: | Shadecraft, Inc. |
| Assignee: | Shadecraft, Inc. |
| Family ID: | 1000005180844 |
| Appl. No.: | 16/792890 |
| Filed: | February 18, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Related Application |
|---|---|---|---|
| 16129244 | Sep 12, 2018 | 10565631 | 16792890 |
| 15273669 | Sep 22, 2016 | 10078856 | 16129244 |
| 15268199 | Sep 16, 2016 | | 15273669 |
| 15242970 | Aug 22, 2016 | 10455395 | 15268199 |
| 15225838 | Aug 2, 2016 | | 15242970 |
| 15219292 | Jul 26, 2016 | 10250817 | 15225838 |
| 15214471 | Jul 20, 2016 | | 15219292 |
| 15212173 | Jul 15, 2016 | 10159316 | 15214471 |
| 15160856 | May 20, 2016 | 9949540 | 15212173 |
| 15160822 | May 20, 2016 | 10813422 | 15160856 |
| 62333822 | May 9, 2016 | | |

| Current U.S. Class: | 1/1 |
|---|---|
| Current CPC Class: | A45B 2200/1009 20130101; A45B 25/10 20130101; A45B 2200/1018 20130101; A45B 25/165 20130101; H02S 40/38 20141201; Y02B 20/40 20130101; G06F 3/165 20130101; A45B 2025/003 20130101; H02S 20/32 20141201; G06Q 30/0601 20130101; A45B 2017/005 20130101; A45B 25/143 20130101; G08B 13/19697 20130101; F24S 30/452 20180501; F24S 50/20 20180501; G06F 3/04847 20130101; G06K 9/00288 20130101; G08B 21/12 20130101; A45B 3/04 20130101; G06F 3/167 20130101; G08B 21/182 20130101; G08B 21/22 20130101; G06F 3/04883 20130101; H02S 40/32 20141201; A45B 23/00 20130101; F24S 25/12 20180501; H05B 47/00 20200101; G08B 27/00 20130101; H02S 20/10 20141201; A45B 17/00 20130101; H04N 7/181 20130101; A45B 2023/0012 20130101; A45B 2200/1027 20130101; A45B 25/18 20130101; H05B 47/175 20200101; A45B 3/02 20130101 |
| International Class: | G06Q 30/06 20060101 G06Q030/06; A45B 17/00 20060101 A45B017/00; H02S 40/38 20060101 H02S040/38; H02S 40/32 20060101 H02S040/32; H02S 20/10 20060101 H02S020/10; H02S 20/32 20060101 H02S020/32; A45B 25/16 20060101 A45B025/16; H05B 47/00 20060101 H05B047/00; H05B 47/175 20060101 H05B047/175; A45B 3/02 20060101 A45B003/02; A45B 3/04 20060101 A45B003/04; A45B 23/00 20060101 A45B023/00; A45B 25/10 20060101 A45B025/10; A45B 25/14 20060101 A45B025/14; A45B 25/18 20060101 A45B025/18; G06F 3/0484 20060101 G06F003/0484; G06F 3/0488 20060101 G06F003/0488; H04N 7/18 20060101 H04N007/18 |

Claims

1. A mobile computing device to communicate with one or more umbrellas, comprising: a graphical user interface configured to receive input selections and to display results of operations of an umbrella; a wireless communication transceiver configured to communicate commands and/or messages to one or more wireless communication transceivers of the one or more umbrellas; one or more processors coupled to the wireless communication transceiver; and a computer-readable storage medium containing computer-readable instructions in the form of application software, the computer-readable instructions executable by the one or more processors to cause the one or more processors to perform actions including: receive an input selection corresponding to a selected tilting movement of the umbrella; communicate messages and/or commands, via the wireless communication transceiver, based on the selected tilting movement to the umbrella to cause an upper support assembly to tilt with respect to a lower support assembly about an elevation axis; and generate and communicate messages and/or commands to the graphical user interface to present, on a display of the mobile computing device, a representation of the selected elevation movement of the umbrella and the selected deployment movement of the umbrella.

2. The mobile computing device of claim 1, wherein the application software, when executed by the one or more processors, causes the one or more processors to further perform actions comprising: receive an input representing one or more operations to be performed on a lighting assembly of the umbrella; communicate messages and/or commands, via the wireless communication transceiver, to cause the one or more operations to be performed on the lighting assembly; and generate and communicate messages and/or commands to the graphical user interface to present, on a display of the mobile computing device, a representation of the performed one or more lighting assembly operations.

3. The mobile computing device of claim 1, wherein the application software, when executed by the one or more processors, causes the one or more processors to further perform actions comprising: receive an input representing activation or deactivation of one or more cameras of the umbrella; communicate messages and/or commands, via the wireless communication transceiver, based at least in part on the received input, to cause the one or more cameras of the umbrella to be activated and capture video or images or to be deactivated; receive, via the wireless communication transceiver, captured video or images from the one or more cameras of the umbrella; and generate and communicate messages and/or commands to the graphical user interface to present, on a display of the mobile computing device, the received video or images from the one or more cameras of the umbrella.

4. The mobile computing device of claim 1, wherein the application software, when executed by the one or more processors, causes the one or more processors to further perform actions comprising: receive an input representing selecting activation or deactivation of one or more environmental sensors of the umbrella; communicate messages and/or commands, via the wireless communication transceiver, based at least in part on the selected input, to cause the one or more environmental sensors of the umbrella to be activated and capture measurements in an area around the umbrella or to be deactivated; receive, via the wireless communication transceiver, captured measurements from the one or more environmental sensors of the umbrella; and generate and communicate messages and/or commands to the graphical user interface to present, on a display of the mobile computing device, the received captured measurements from the one or more environmental sensors of the umbrella.

5. The mobile computing device of claim 1, wherein the application software, when executed by the one or more processors, causes the one or more processors to further perform actions comprising: receive an input representing selecting activation of an audio system of the umbrella; communicate messages and/or commands, via the wireless communication transceiver, based at least in part on the selected input, to cause the audio system of the umbrella to be activated; communicate one or more audio files, via the wireless communication transceiver, to the audio system for playback on one or more speakers; and generate and communicate messages and/or commands to the graphical user interface to present, on a display of the mobile computing device, a representation of the one or more audio files being played on the one or more speakers of the umbrella.

6. The mobile computing device of claim 1, wherein the wireless communication transceiver is a personal area network (PAN) communication transceiver and the instructions and/or messages are communicated directly to a PAN communication transceiver of the umbrella.

7. A mobile computing device to communicate with one or more umbrellas, comprising: a graphical user interface configured to receive input selections and to display results of operations of an umbrella; a wireless communication transceiver configured to communicate commands and/or messages to one or more wireless communication transceivers of the one or more umbrellas; one or more processors coupled to the wireless communication transceiver; and a computer-readable storage medium containing computer-readable instructions in the form of application software, that, when executed by the one or more processors, cause the one or more processors to perform actions including: receive an input selection corresponding to a selected azimuth movement of the umbrella; communicate messages and/or commands, via the wireless communication transceiver, based on the selected azimuth movement to the umbrella to cause one or more arm support assemblies to deploy to an open position or to retract to a closed position; and generate and communicate messages and/or commands to the graphical user interface to present, on a display of the mobile computing device, a representation of the selected azimuth movement of the umbrella.

8. The mobile computing device of claim 7, wherein the application software, when executed by the one or more processors, causes the one or more processors to further perform actions comprising: receive an input representing one or more operations to be performed on a lighting assembly of the umbrella; communicate messages and/or commands, via the wireless communication transceiver, to cause the one or more operations to be performed on the lighting assembly; and generate and communicate messages and/or commands to the graphical user interface to present, on a display of the mobile computing device, a representation of the performed one or more lighting assembly operations.

9. The mobile computing device of claim 7, wherein the application software, when executed by the one or more processors, causes the one or more processors to further perform actions comprising: receive an input representing activation of one or more cameras of the umbrella; communicate messages and/or commands, via the wireless communication transceiver, based at least in part on the received input, to cause the one or more cameras of the umbrella to be activated and to capture video or images in an area surrounding the umbrella; receive captured video or images from the one or more cameras of the umbrella via the wireless communication transceiver; and generate and communicate messages and/or commands to the graphical user interface to present, on a display of the mobile computing device, the received video or images from the one or more cameras of the umbrella.

10. The mobile computing device of claim 7, wherein the application software, when executed by the one or more processors, causes the one or more processors to further perform actions comprising: receive an input representing selecting activation of one or more environmental sensors of the umbrella; communicate messages and/or commands, via the wireless communication transceiver, based at least in part on the selected input, to cause the one or more environmental sensors of the umbrella to be activated and capture measurements in an area around the umbrella; receive, via the wireless communication transceiver, captured measurements from the one or more environmental sensors of the umbrella; and generate and communicate messages and/or commands to the graphical user interface to present, on a display of the mobile computing device, the received captured measurements from the one or more environmental sensors of the umbrella.

11. The mobile computing device of claim 7, wherein the application software, when executed by the one or more processors, causes the one or more processors to further perform actions comprising: receive an input representing selecting activation of an audio system of the umbrella; communicate messages and/or commands, via the wireless communication transceiver, based at least in part on the selected input, to cause the audio system of the umbrella to be activated; communicate one or more audio files, via the wireless communication transceiver, to the audio system for playback on one or more speakers; and generate and communicate messages and/or commands to the graphical user interface to present, on a display of the mobile computing device, a representation of the one or more audio files being played on the one or more speakers of the umbrella.

12. The mobile computing device of claim 7, wherein the wireless communication transceiver is a personal area network (PAN) communication transceiver and the instructions and/or messages are communicated directly to a PAN communication transceiver of the umbrella.

13. A mobile computing device to communicate with one or more umbrellas, comprising: a graphical user interface configured to receive input selections and to display results of operations of an umbrella; a wireless communication transceiver configured to communicate commands and/or messages to one or more wireless communication transceivers of the one or more umbrellas; one or more processors coupled to the wireless communication transceiver; and a computer-readable storage medium containing computer-readable instructions in the form of application software, that, when executed by the one or more processors, cause the one or more processors to perform actions including: receive an input selection corresponding to activation of one or more cameras or deactivation of the one or more cameras in an area around the umbrella; communicate messages and/or commands, via the wireless communication transceiver, based on the selected input, to the umbrella to cause the one or more cameras to be activated and to capture video or image(s) in the area around the umbrella; receive the captured video or image(s) from the one or more cameras of the umbrella via the wireless communication transceiver; and generate and communicate messages and/or commands to the graphical user interface to present the captured video or image(s) from the one or more cameras of the umbrella on a display of the mobile computing device.

14. The mobile computing device of claim 13, wherein the wireless communication transceiver is a personal area network (PAN) transceiver and the instructions and/or messages are communicated directly to a PAN communication transceiver of the umbrella.

15. The mobile computing device of claim 13, wherein the application software, when executed by the one or more processors, causes the one or more processors to further perform actions comprising: receive an input selection corresponding to activation of one or more air quality sensors to capture air quality measurements in an area around the umbrella; communicate messages and/or commands, via the wireless communication transceiver, based on the selected input, to the umbrella to cause the one or more air quality sensors to be activated and to capture the air quality measurements in the area around the umbrella; receive, via the wireless communication transceiver, the captured air quality measurements from the air quality sensors in the umbrella; and generate and communicate messages and/or commands to the graphical user interface to present a representation of the captured air quality measurements from the air quality sensors of the umbrella on a display of the mobile computing device.

16. The mobile computing device of claim 13, wherein the application software, when executed by the one or more processors, causes the one or more processors to further perform actions comprising: receive input at a graphical user interface indicative of initiation of a social media application software; initiate the social media application software; receive commands and messages generated by the social media application software corresponding to assemblies and/or components of the umbrella to be utilized; and communicate, via the wireless communication transceiver, the commands and messages received from the social media application to the umbrella to cause activation of the assemblies and/or components.

17. The mobile computing device of claim 13, wherein the application software, when executed by the one or more processors, causes the one or more processors to further perform actions comprising: receive input indicative of initiation of an artificial intelligence (AI) software application; generate instructions initiating the AI software application; generate instructions initiating a speech recognition software process; receive voice commands or instructions; convert the received voice commands or instructions to commands and/or messages; execute the AI software application based, at least in part, on the converted commands and/or messages; receive commands and/or messages from the AI software application; generate audible stimuli from the received AI commands and/or messages; and communicate the audible stimuli to a speaker of the mobile computing device for playback or reproduction of the audible stimuli.

18. The mobile computing device of claim 13, wherein the application software, when executed by the one or more processors, causes the one or more processors to further perform actions comprising: receive input indicative of one or more alert thresholds and an alert notification method; store the alert thresholds and the alert notification method in one or more memory devices of the mobile computing device; communicate the alert thresholds to the umbrella via the wireless communication transceiver; receive, via the wireless communication transceiver, alert notifications from the umbrella when umbrella assemblies experience out-of-tolerance conditions; and communicate alert notifications via the alert notification method to a display or a speaker.

19. The mobile computing device of claim 13, wherein the application software, when executed by the one or more processors, causes the one or more processors to further perform actions comprising: receive input indicative of activation of a motion detector, a proximity detector, or an infrared sensor of the umbrella; generate messages and/or instructions to activate one or more of the motion detector, the proximity detector, or the infrared sensor; communicate, via the wireless communication transceiver, the generated messages and/or instructions to the umbrella to cause activation of the motion detector, the proximity detector or the infrared sensor; receive, via the wireless communication transceiver, alerts that the motion detector, the proximity detector, or the infrared sensor has detected movement in an area around the umbrella; receive, via the wireless communication transceiver, captured video or images from one or more cameras of the umbrella that were activated by the motion detector, the proximity detector or the infrared sensor; and present the alerts and/or the captured video or images via the graphical user interface on a display of the mobile computing device.
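Some of the mobile-side actions recited in claims 1, 7 and 18 can be sketched in Python. This is a minimal illustration under stated assumptions, not the applicant's software: the JSON message fields, the 0 to 90 degree tilt range and the sensor names (`wind_mph`, `temp_c`) are hypothetical, and the threshold check shows one way the umbrella could evaluate thresholds it has received from the mobile device.

```python
import json
from dataclasses import dataclass
from typing import Dict, List


def tilt_command(angle_deg: float) -> bytes:
    """Claim 1: encode a selected tilting (elevation) movement."""
    # The 0-90 degree range is an illustrative assumption.
    if not 0 <= angle_deg <= 90:
        raise ValueError("tilt angle out of range")
    msg = {"assembly": "elevation", "action": "tilt", "value": angle_deg}
    return json.dumps(msg).encode("utf-8")


def deployment_command(open_arms: bool) -> bytes:
    """Claim 7: deploy the arm support assemblies open or retract them closed."""
    msg = {"assembly": "deployment", "action": "open" if open_arms else "close"}
    return json.dumps(msg).encode("utf-8")


@dataclass
class AlertThreshold:
    """Claim 18: a hypothetical acceptable range for one measured quantity."""

    sensor: str
    low: float
    high: float


def out_of_tolerance(readings: Dict[str, float],
                     thresholds: List[AlertThreshold]) -> List[str]:
    """Return the sensors whose latest reading falls outside its range."""
    alerts = []
    for t in thresholds:
        value = readings.get(t.sensor)
        # A missing reading is ignored rather than treated as an alert.
        if value is not None and not (t.low <= value <= t.high):
            alerts.append(t.sensor)
    return alerts


if __name__ == "__main__":
    limits = [AlertThreshold("wind_mph", 0, 25), AlertThreshold("temp_c", -5, 40)]
    print(out_of_tolerance({"wind_mph": 35, "temp_c": 22}, limits))  # ['wind_mph']
```

Validating the tilt angle on the mobile device before transmission lets the graphical user interface reject an impossible selection immediately; the umbrella would still need its own limit checks.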

Description

RELATED APPLICATIONS

[0001] This application claims priority to and is a continuation of U.S. non-provisional patent application Ser. No. 16/129,244, filed Sep. 12, 2018, which is a continuation of U.S. non-provisional application Ser. No. 15/273,669, filed Sep. 22, 2016 and entitled "Mobile Computing Device Control of Shading Object, Intelligent Umbrella and Intelligent Shading Charging System," which is a continuation-in-part of U.S. non-provisional application Ser. No. 15/268,199, filed Sep. 16, 2016, entitled "Automatic Operation of Shading Object, Intelligent Umbrella and Intelligent Shading Charging System," which is a continuation-in-part of U.S. non-provisional application Ser. No. 15/242,970, filed Aug. 22, 2016, entitled "Shading Object, Intelligent Umbrella and Intelligent Shading Charging Security System and Method of Operation," which is a continuation-in-part of U.S. non-provisional application Ser. No. 15/225,838, filed Aug. 2, 2016, entitled "Remote Control of Shading Object and/or Intelligent Umbrella," which is a continuation-in-part of U.S. non-provisional patent application Ser. No. 15/219,292, filed Jul. 26, 2016, entitled "Shading Object, Intelligent Umbrella and Intelligent Shading Object Integrated Camera and Method of Operation," which is a continuation-in-part of U.S. non-provisional patent application Ser. No. 15/214,471, filed Jul. 20, 2016, entitled "Computer-Readable Instructions Executable by a Processor to Operate a Shading Object, Intelligent Umbrella and/or Intelligent Shading Charging System," which is a continuation-in-part of U.S. non-provisional patent application Ser. No. 15/212,173, filed Jul. 15, 2016, entitled "Intelligent Charging Shading Systems," which is a continuation-in-part of U.S. non-provisional patent application Ser. No. 15/160,856, filed May 20, 2016, entitled "Automated Intelligent Shading Objects and Computer-Readable Instructions for Interfacing With, Communicating With and Controlling a Shading Object," and is also a continuation-in-part of U.S. non-provisional patent application Ser. No. 15/160,822, filed May 20, 2016, entitled "Intelligent Shading Objects with Integrated Computing Device," both of which claim the benefit of U.S. provisional patent application Ser. No. 62/333,822, entitled "Automated Intelligent Shading Objects and Computer-Readable Instructions for Interfacing With, Communicating With and Controlling a Shading Object," filed May 9, 2016, the disclosures of which are all hereby incorporated by reference.

BACKGROUND

1. Field

[0002] The subject matter disclosed herein relates to mobile computing device control of a shading object, an intelligent umbrella and/or a shading charging system.

2. Information/Background of the Invention

[0003] Conventional sun shading devices usually comprise a supporting frame and an awning or fabric mounted on the supporting frame to cover a predefined area. For example, a conventional sun shading device may be an outdoor umbrella or an outdoor awning.

[0004] However, current sun shading devices do not appear to be flexible, modifiable, or able to adapt to changing environmental conditions or users' desires. Many current sun shading devices appear to require manual operation to change the inclination angle of the frame to more fully protect an individual from the environment. Further, current sun shading devices appear to have a single awning or fabric piece mounted to an interconnected unitary frame, and an interconnected unitary frame may not be able to be opened or deployed in many situations. Accordingly, alternative embodiments may be desired. Further, current sun shading devices may not have automated assemblies to allow a shading object to track movement of the sun and/or adjust to other environmental conditions. In addition, current sun shading devices do not communicate with external shading object related systems. Further, individuals utilizing current sun shading devices are limited in interactions with users. In addition, sun shading devices generally do not have software stored therein that controls and/or operates the sun shading device. Further, current sun shading devices do not interact with the environment in which they are installed and require manual intervention to operate and/or perform functions.

BRIEF DESCRIPTION OF DRAWINGS

[0005] Non-limiting and non-exhaustive aspects are described with reference to the following figures, wherein like reference numerals refer to like parts throughout the various figures unless otherwise specified.

[0006] FIGS. 1A and 1B illustrate a shading object or shading object device according to embodiments;

[0007] FIGS. 1C and 1D illustrate intelligent shading charging systems according to embodiments;

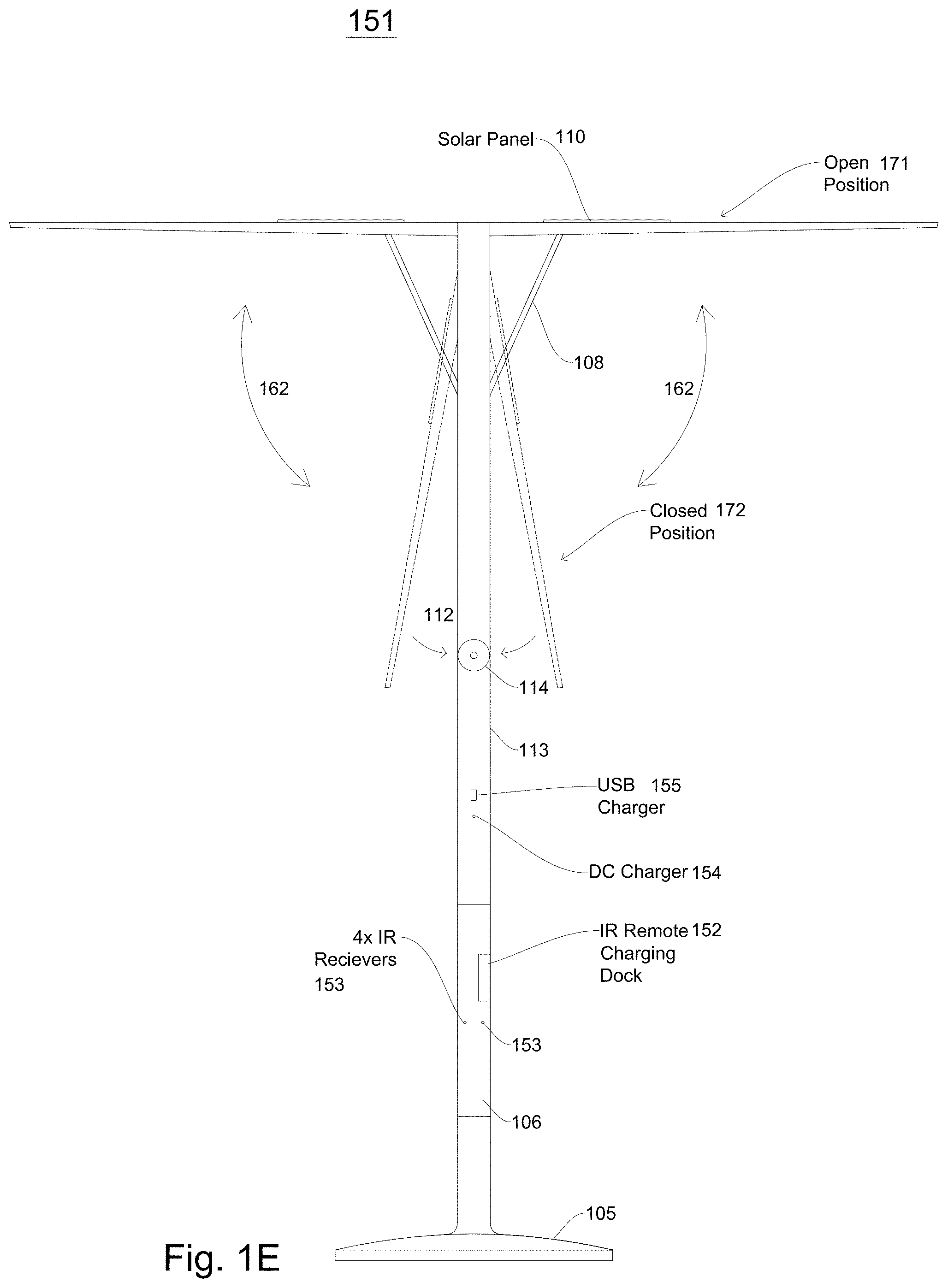

[0008] FIG. 1E illustrates a remote-controlled shading object or umbrella according to embodiments;

[0009] FIG. 1F illustrates a remote-controlled shading object or umbrella after an upper support assembly has moved according to embodiments;

[0010] FIG. 1G illustrates a block diagram of signal control in a remote-controlled shading object according to embodiments;

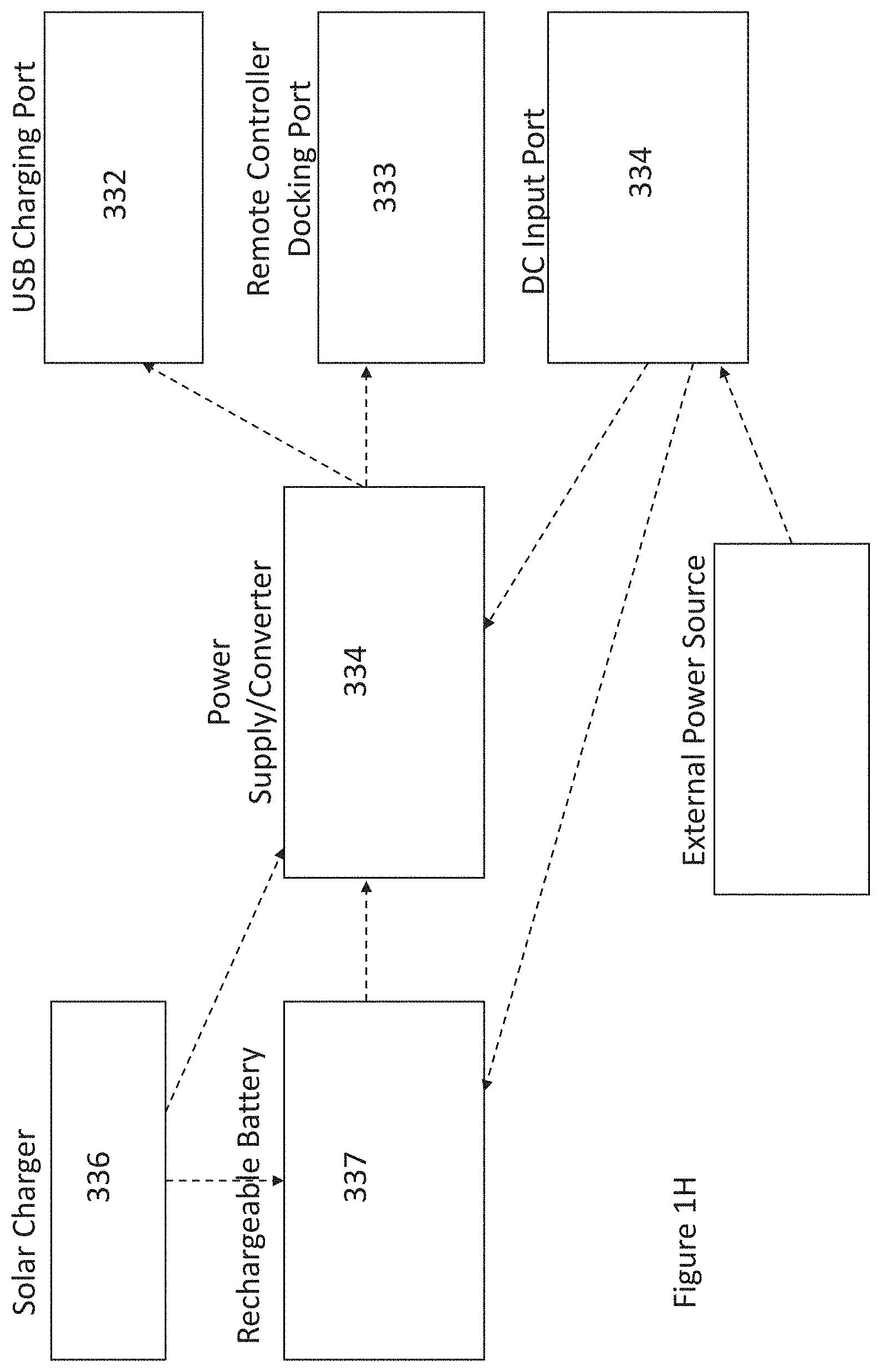

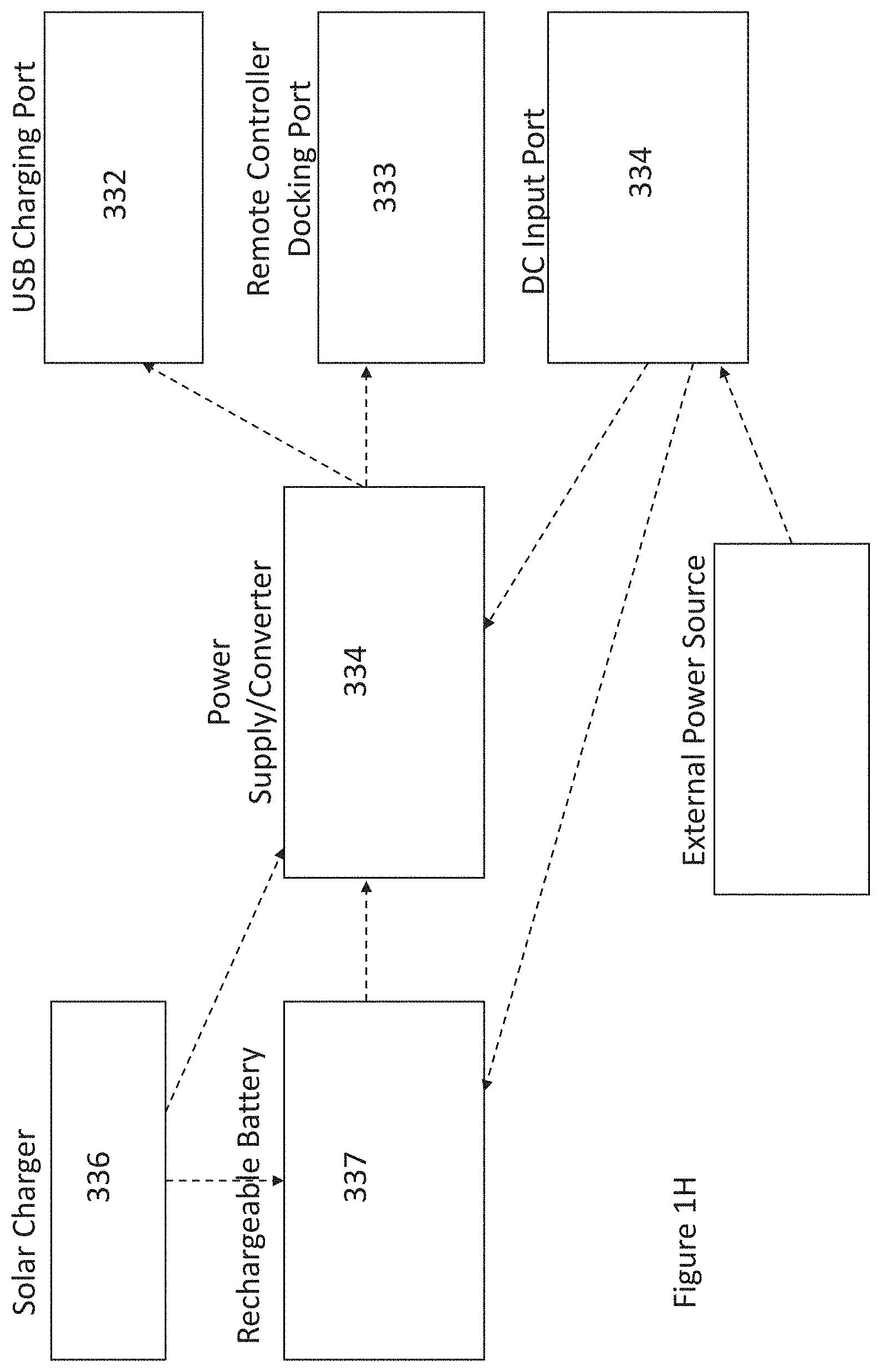

[0011] FIG. 1H illustrates a block diagram of power in a remote-controlled shading object according to embodiments;

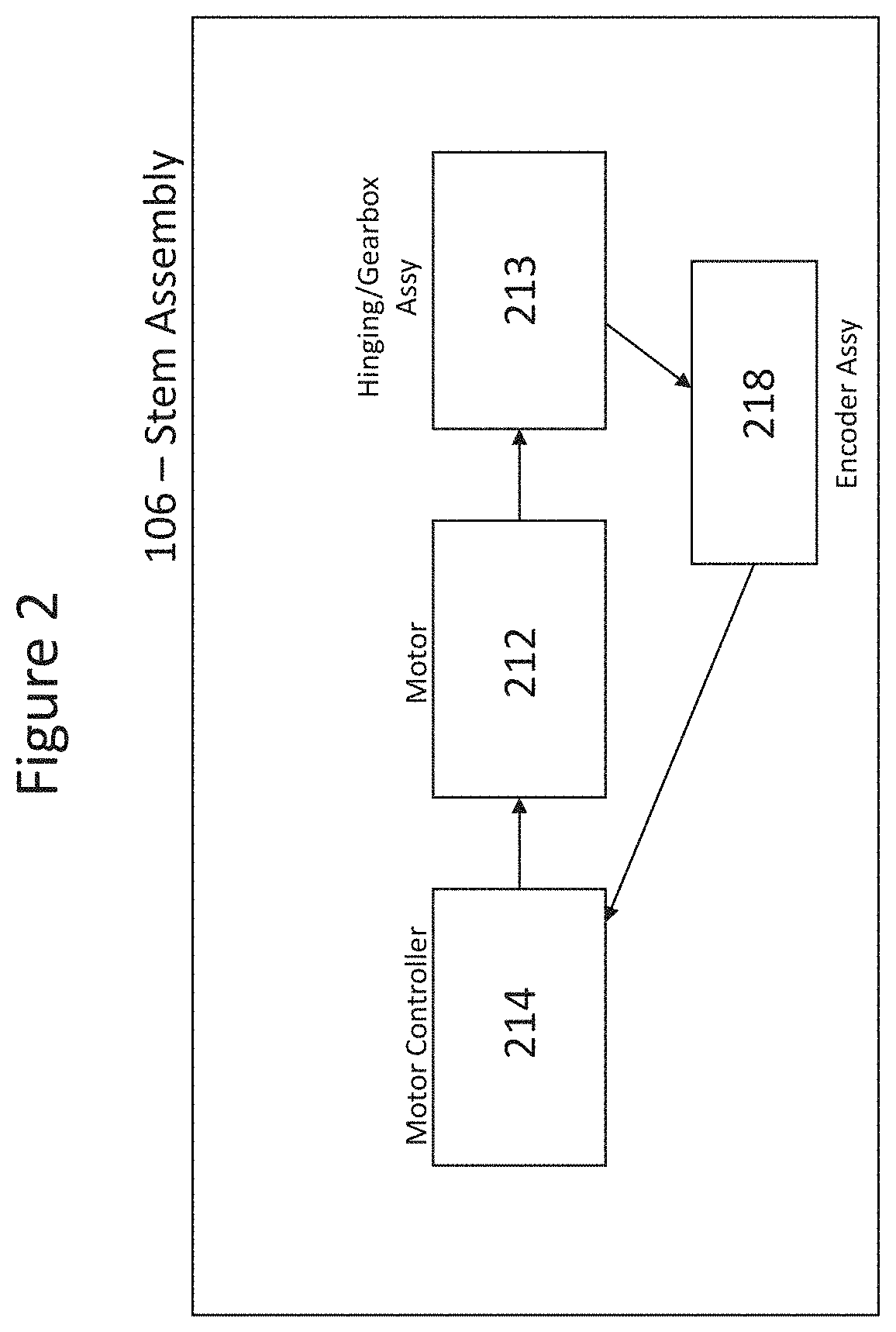

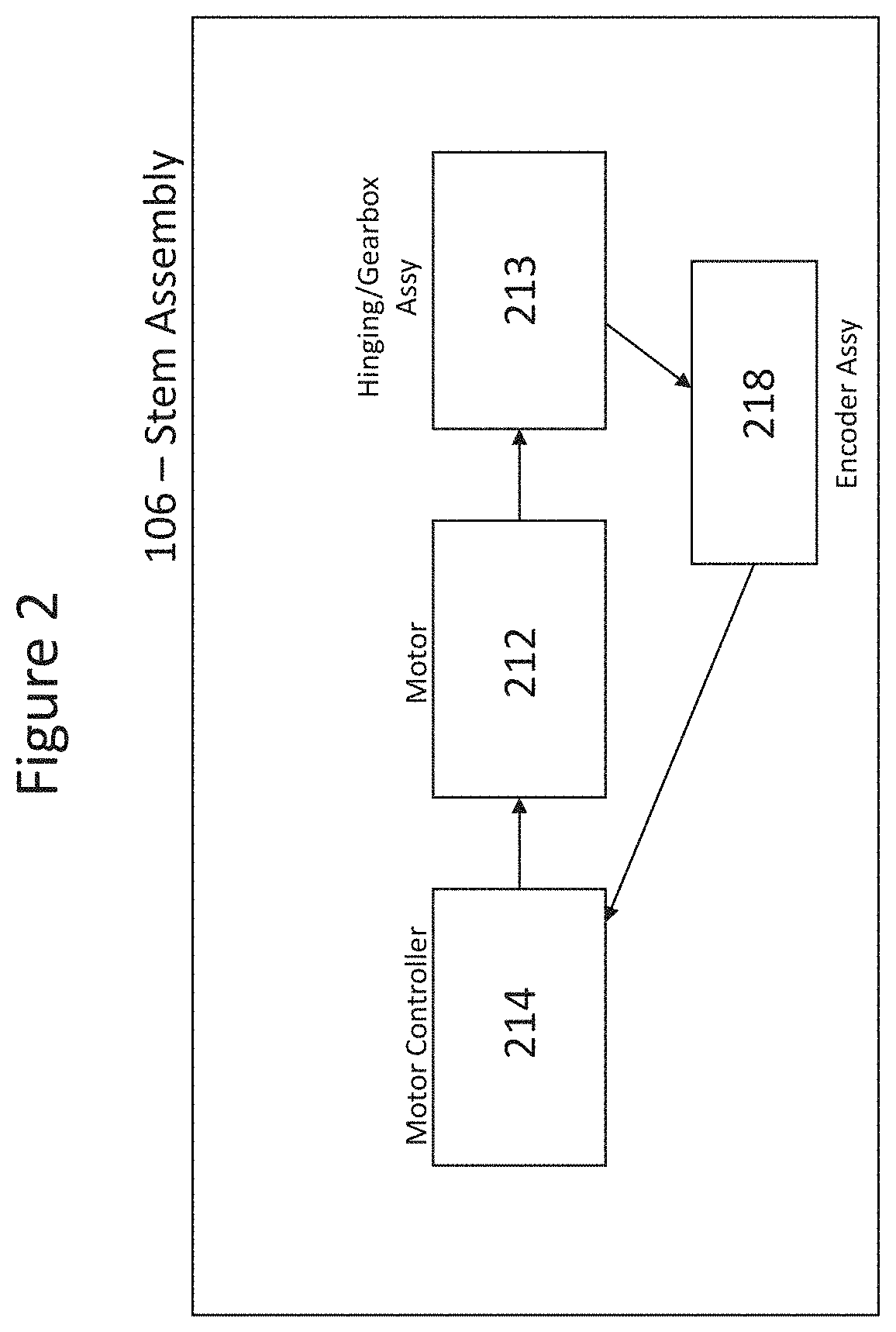

[0012] FIG. 2 illustrates a block diagram of a stem assembly according to embodiments;

[0013] FIG. 3A illustrates a base assembly according to embodiments;

[0014] FIG. 3B illustrates a housing and/or enclosure according to embodiments;

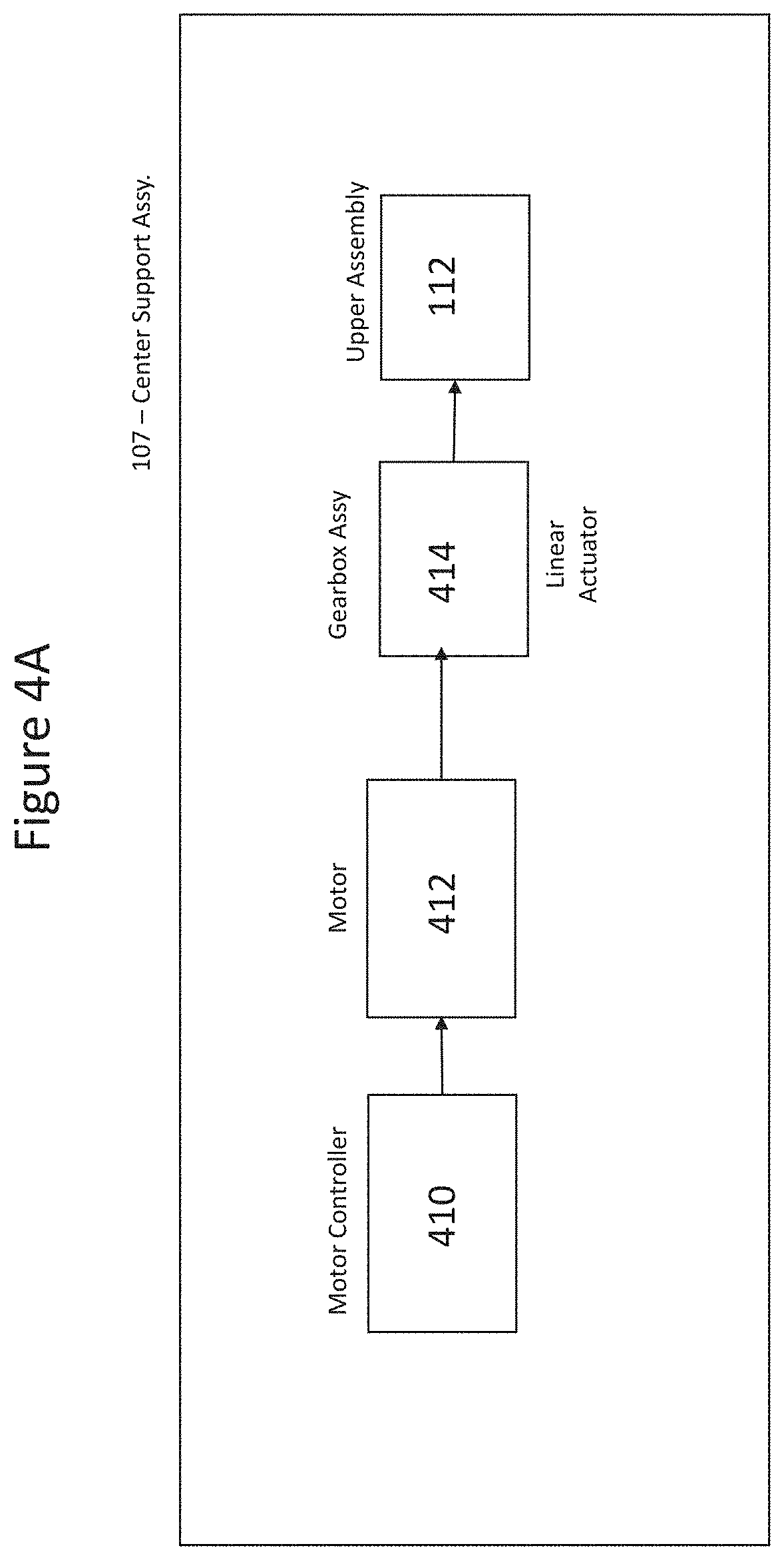

[0015] FIG. 4A illustrates a block diagram of a center support assembly motor control according to embodiments;

[0016] FIG. 4B illustrates a lower support motor assembly according to embodiments;

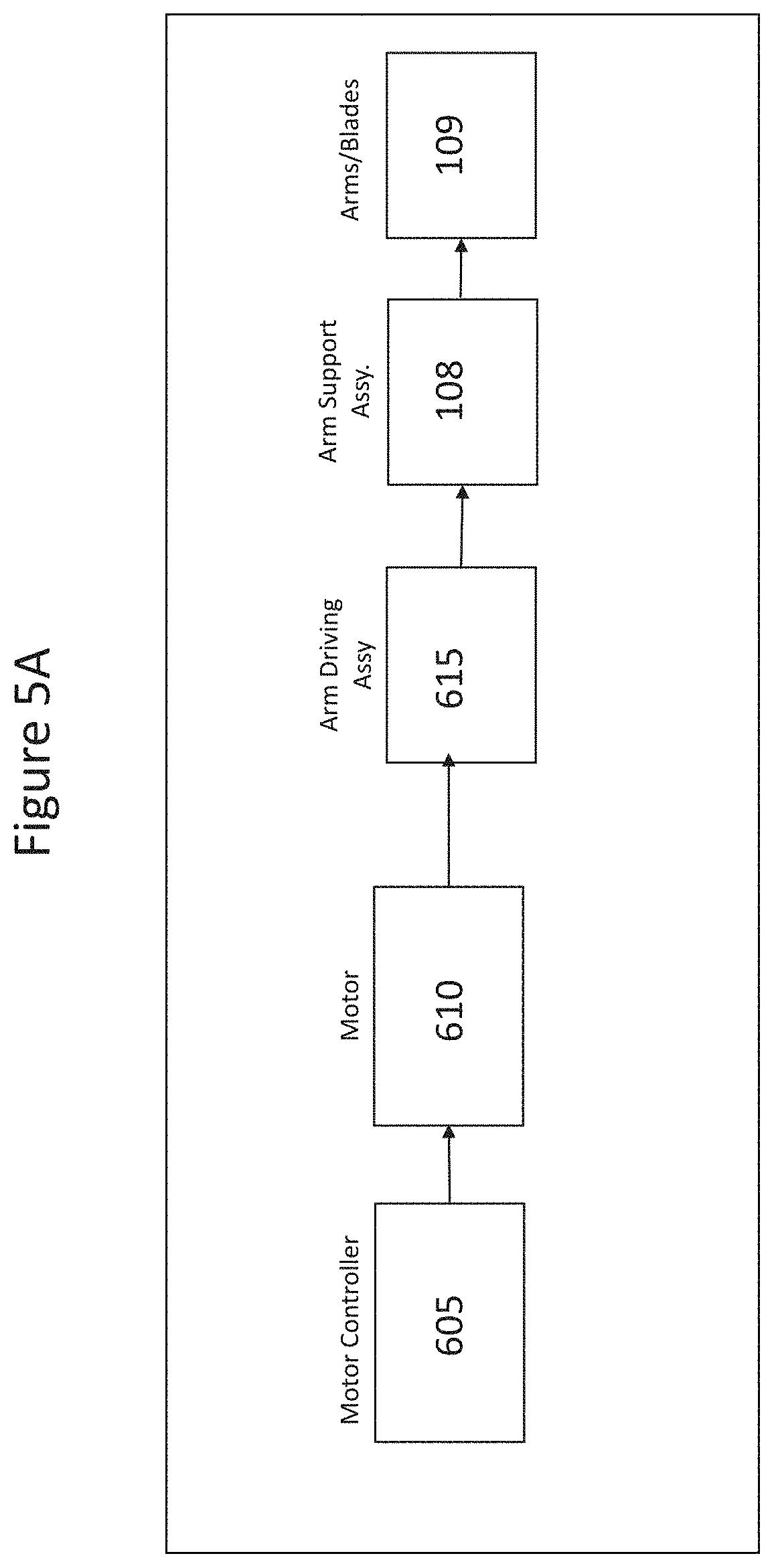

[0017] FIG. 5A illustrates a block diagram of an actuator or deployment motor in an intelligent umbrella or shading object according to embodiments;

[0018] FIG. 5B illustrates a block diagram of an actuator or deployment motor in an intelligent shading charging system according to embodiments;

[0019] FIG. 6A illustrates a shading object or intelligent umbrella with arm support assemblies and arms/blades in an open position and a closed position;

[0020] FIG. 6B illustrates an intelligent shading charging system with arm support assemblies and arms/blades in an open position and a closed position;

[0021] FIG. 7 illustrates assemblies to deploy arms and/or blades according to embodiments;

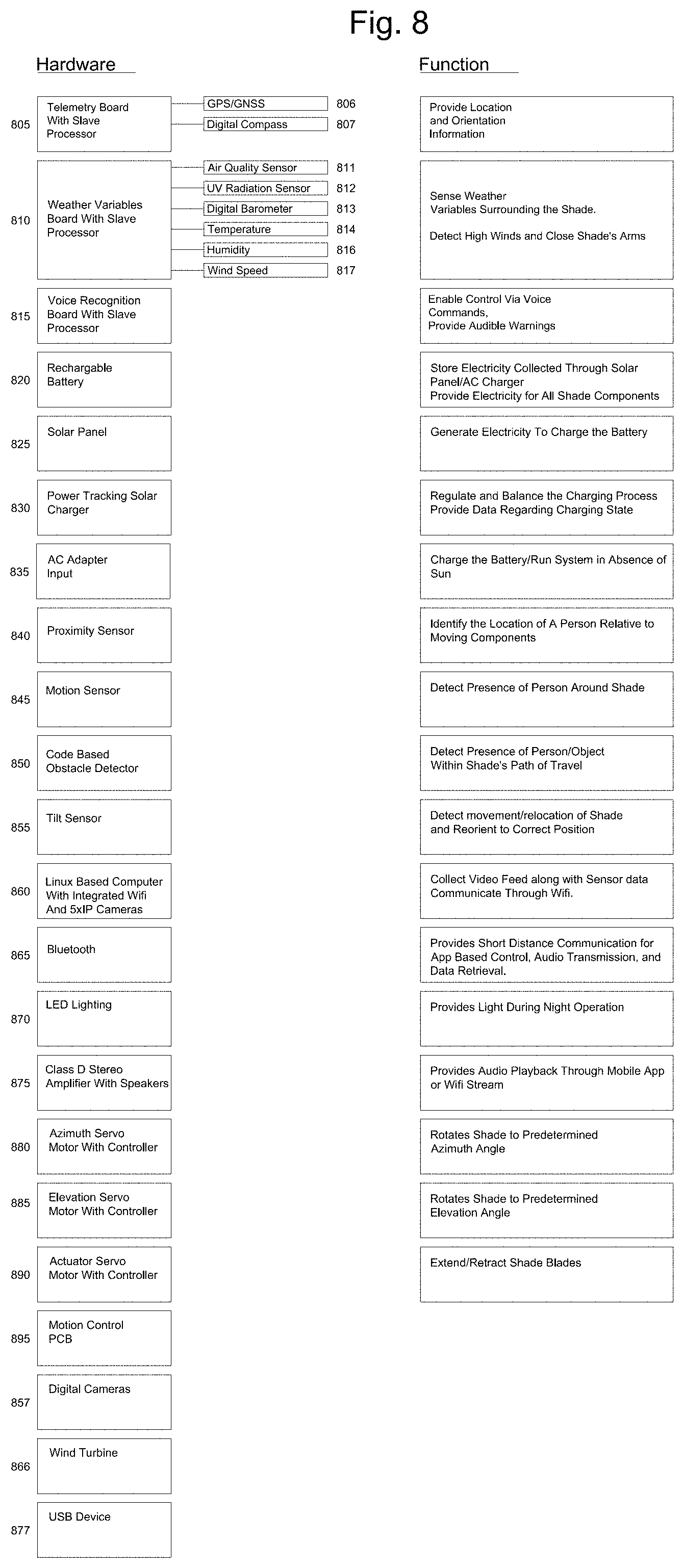

[0022] FIG. 8 illustrates a block diagram of a movement control PCB according to embodiments;

[0023] FIG. 9 illustrates a block diagram with data and command flow of a movement control PCB according to embodiments;

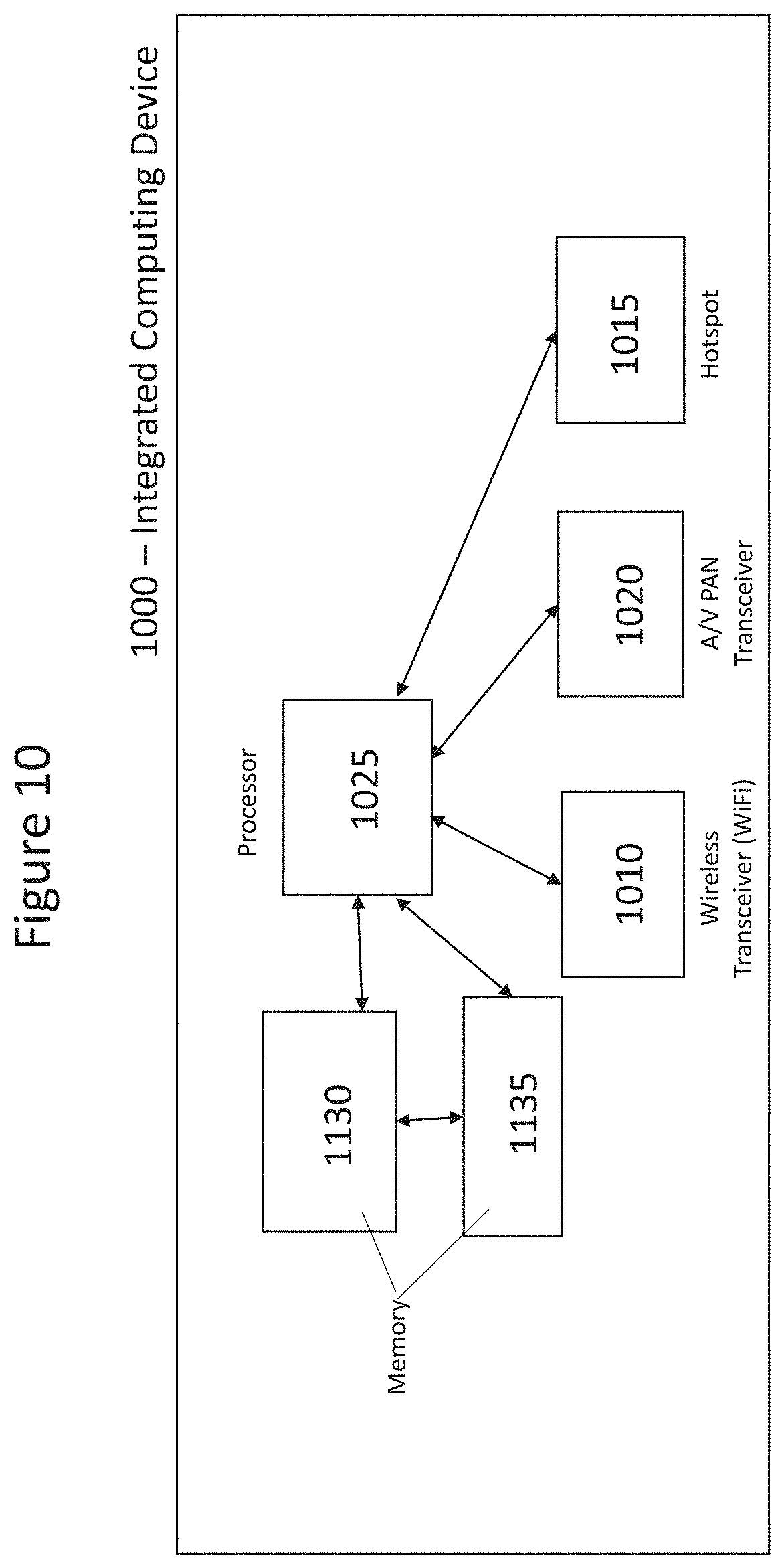

[0024] FIG. 10 illustrates a shading object or umbrella computing device according to embodiments;

[0025] FIG. 11 illustrates a lighting subsystem according to embodiments;

[0026] FIG. 12 illustrates a power subsystem according to embodiments;

[0027] FIG. 13 illustrates components and assemblies of a shading object umbrella according to embodiments;

[0028] FIGS. 13A and 13B illustrate placements of intelligent shading charging systems according to embodiments;

[0029] FIG. 14 is a block diagram of multiple assemblies and components of a shading object, intelligent umbrella, or intelligent shading charging system according to embodiments;

[0030] FIG. 15A illustrates an automated weather process according to embodiments;

[0031] FIG. 15B illustrates predicting weather conditions in a weather process according to embodiments;

[0032] FIG. 15C illustrates a weather data gathering process on a periodic basis according to embodiments;

[0033] FIG. 15D illustrates execution of a health process by a computing device in an intelligent umbrella or shading charging system according to embodiments;

[0034] FIG. 15E illustrates an energy process implemented in a shading object, intelligent umbrella, and/or intelligent shading charging system according to embodiments;

[0035] FIG. 15F illustrates energy generation and energy consumption in an energy process of an intelligent umbrella and/or intelligent shading charging assembly according to embodiments;

[0036] FIG. 15G illustrates energy gathering for a plurality of devices according to embodiments;

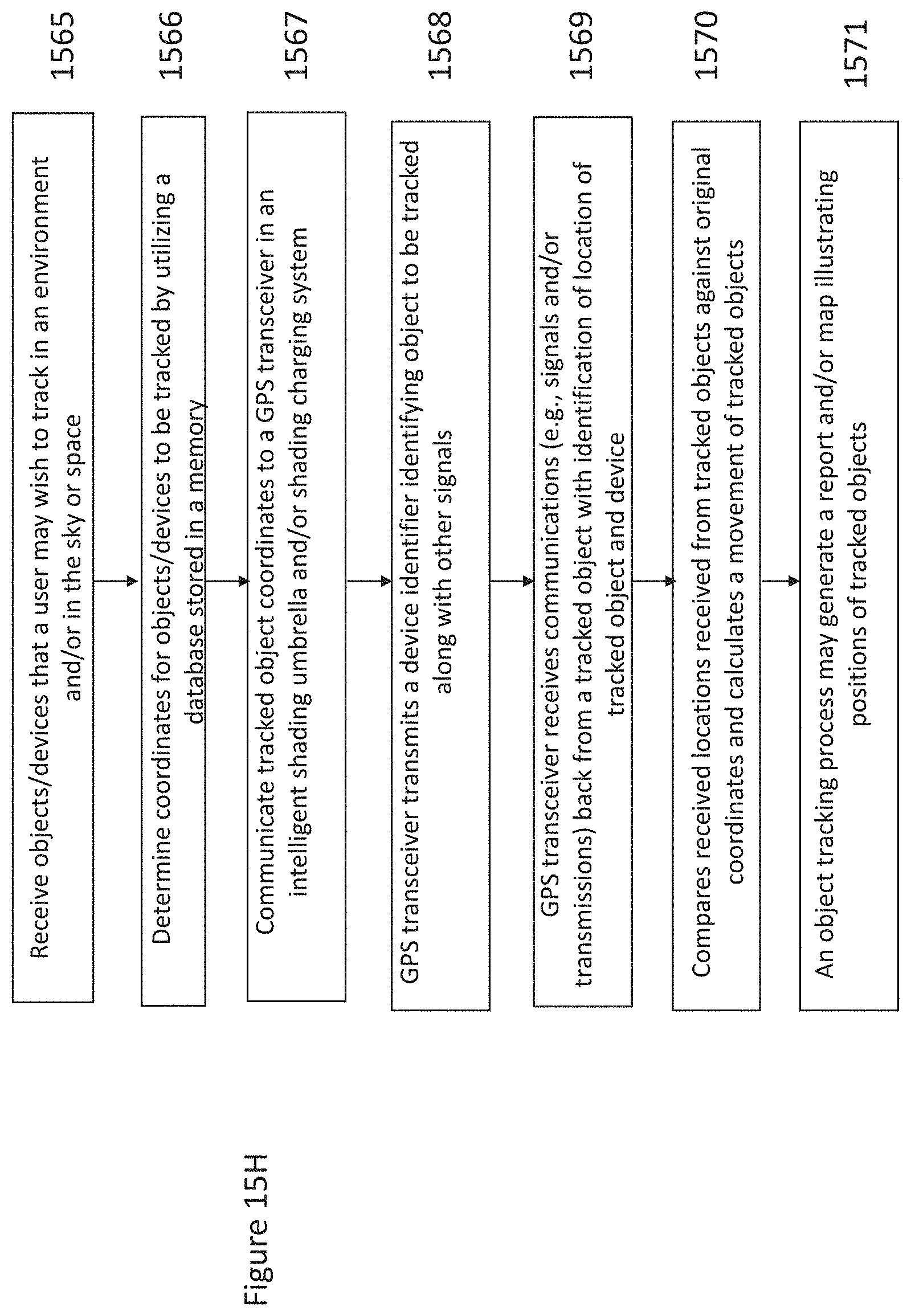

[0037] FIG. 15H illustrates object tracking in an energy process according to embodiments;

[0038] FIG. 15I illustrates a backup process for a shading object, an intelligent umbrella and/or shading charging system according to embodiments;

[0039] FIG. 16A is a flowchart of a facial recognition process according to an embodiment;

[0040] FIG. 16B illustrates an infrared detection process according to embodiments;

[0041] FIG. 16C illustrates a thermal detection process according to embodiments;

[0042] FIG. 16D illustrates a security process for an intelligent umbrella and/or intelligent shading charging systems according to embodiments;

[0043] FIG. 17A illustrates an intelligent umbrella comprising four cameras according to embodiments;

[0044] FIG. 17B illustrates an intelligent umbrella comprising two cameras according to embodiments;

[0045] FIG. 17C illustrates an intelligent umbrella comprising a camera at a first elevation and a camera at a second elevation;

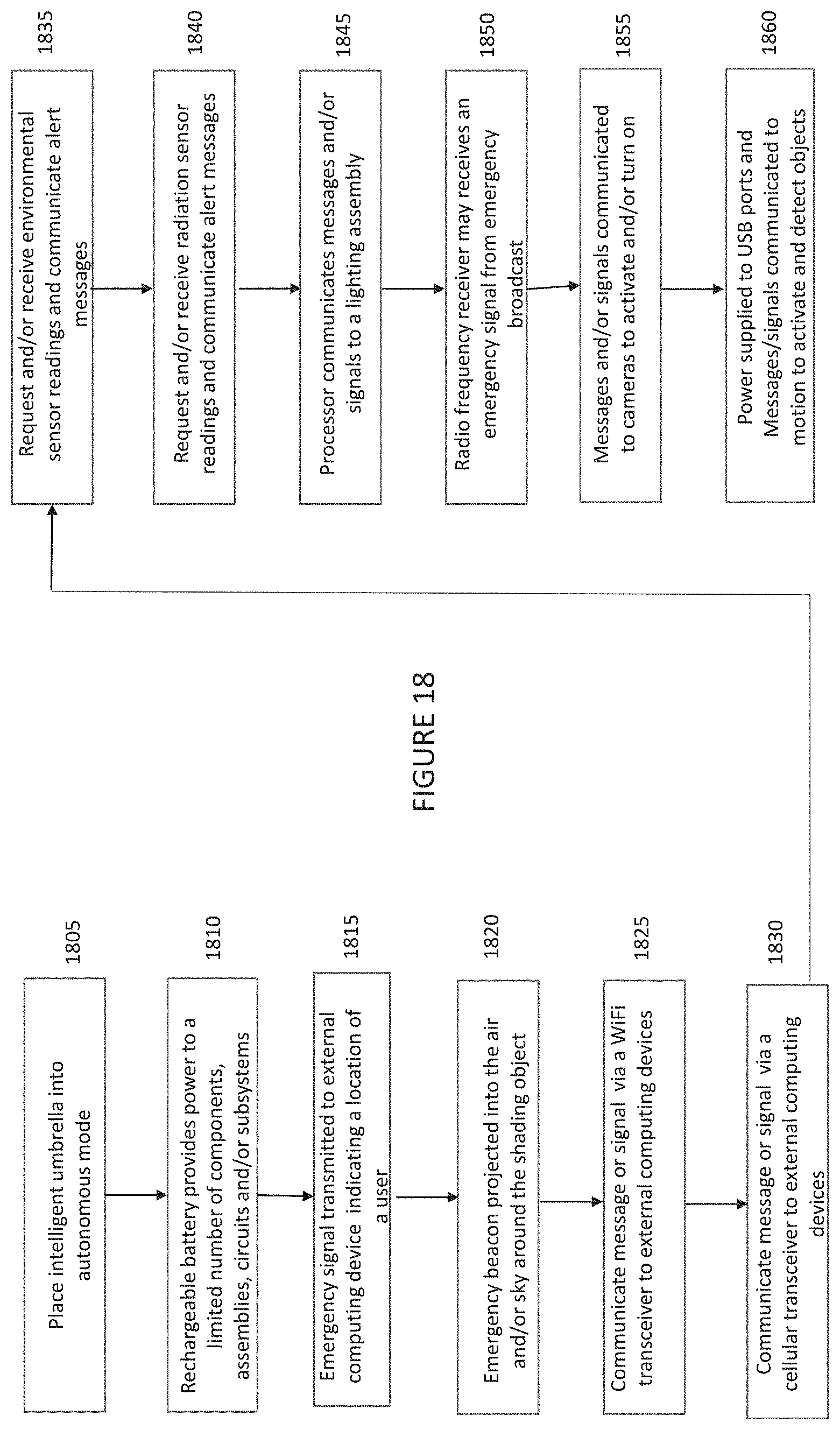

[0046] FIG. 18 illustrates operation of a shading object, intelligent umbrella and/or an intelligent shading charging system if no external power and/or solar power is available according to embodiments;

[0047] FIG. 18A illustrates a rechargeable battery and/or a backup rechargeable battery providing power to selected assemblies and/or components according to embodiments;

[0048] FIG. 19 illustrates a touch screen recognition component according to embodiments; and

[0049] FIG. 20 illustrates placement of icons and/or buttons on a user interface screen of SMARTSHADE and/or SHADECRAFT application software installed or resident on a mobile communication device (e.g., smartphone) according to embodiments.

DETAILED DESCRIPTION

[0050] In the following detailed description, numerous specific details are set forth to provide a thorough understanding of claimed subject matter. For purposes of explanation, specific numbers, systems and/or configurations are set forth, for example. However, it should be apparent to one skilled in the relevant art having the benefit of this disclosure that claimed subject matter may be practiced without these specific details. In other instances, well-known features may be omitted and/or simplified so as not to obscure claimed subject matter. While certain features have been illustrated and/or described herein, many modifications, substitutions, changes and/or equivalents may occur to those skilled in the art. It is, therefore, to be understood that the appended claims are intended to cover all such modifications and/or changes as fall within claimed subject matter.

[0051] References throughout this specification to one implementation, an implementation, implementations, examples, embodiments, one embodiment, an embodiment and/or the like mean that a particular feature, structure, and/or characteristic described in connection with a particular implementation and/or embodiment is included in at least one implementation and/or embodiment of claimed subject matter. Thus, appearances of such phrases, for example, in various places throughout this specification are not necessarily intended to refer to the same implementation and/or to any one particular implementation described. Furthermore, it is to be understood that particular features, structures, functions, and/or characteristics described are capable of being combined in various ways in one or more implementations and, therefore, are within intended claim scope, for example. In general, of course, these and other issues vary with context. Therefore, particular context of description and/or usage provides helpful guidance regarding inferences to be drawn.

[0052] With advances in technology, it has become more typical to employ distributed computing approaches in which portions of a problem, such as signal processing of signal samples, for example, may be allocated among computing devices, including one or more clients or client devices, and/or one or more servers, via a computing and/or communications network, for example. A network may comprise two or more network devices and/or may couple network devices so that signal communications, such as in the form of signal packets and/or frames (e.g., comprising one or more signal samples), for example, may be exchanged, such as between a server and a client device and/or other types of devices, for example, including between wireless devices coupled and/or connected via a wireless network.

[0053] In this context, the term network device refers to any device capable of communicating via and/or as part of a network and may comprise a computing device. While network devices may be capable of sending and/or receiving signals (e.g., signal packets and/or frames), such as via a wired and/or wireless network, they may also be capable of performing arithmetic and/or logic operations, processing and/or storing signals (e.g., signal samples), such as in memory as physical memory states, and/or may, for example, operate as a server in various embodiments. Network devices capable of operating as a server, or otherwise, may include, as examples, rack-mounted servers, desktop computers, cloud-based servers, laptop computers, set top boxes, tablets, netbooks, smart phones, wearable devices, integrated devices combining two or more features of the foregoing devices, the like, or any combination thereof. It is noted that the terms server, server device, server computing device, server computing platform and/or similar terms are used interchangeably. Similarly, the terms client, client device, client computing device, client computing platform and/or similar terms are also used interchangeably. While in some instances, for ease of description, these terms may be used in the singular, such as by referring to a "client device" or a "server device," the description is intended to encompass one or more client devices and/or one or more server devices, as appropriate. Along similar lines, references to a "database" are understood to mean one or more databases, database servers, and/or portions thereof, as appropriate.

[0054] It should be understood that for ease of description a network device and/or networking device may be embodied and/or described in terms of a computing device. However, it should further be understood that this description should in no way be construed that claimed subject matter is limited to one embodiment, such as a computing device or a network device, and, instead, may be embodied as a variety of devices or combinations thereof.

[0055] Operations and/or processing, such as in association with networks, such as computing and/or communications networks, for example, may involve physical manipulations of physical quantities. Typically, although not necessarily, these quantities may take the form of electrical and/or magnetic signals capable of, for example, being stored, transferred, combined, processed, compared and/or otherwise manipulated. It has proven convenient, at times, principally for reasons of common usage, to refer to these signals as bits, data, values, elements, symbols, characters, terms, numbers, numerals and/or the like. It should be understood, however, that all of these and/or similar terms are to be associated with appropriate physical quantities and are intended to merely be convenient labels.

[0056] Likewise, in this context, the terms "coupled", "connected," and/or similar terms are used generically. It should be understood that these terms are not intended as synonyms. Rather, "connected" is used generically to indicate that two or more components, for example, are in direct physical, including electrical, contact; while "coupled" is used generically to mean that two or more components are potentially in direct physical, including electrical, contact; however, "coupled" is also used generically to mean that two or more components are not necessarily in direct contact, but nonetheless are able to co-operate and/or interact. The term "coupled" is also understood generically to mean indirectly connected, for example, in an appropriate context. In the context of this application, if signals, instructions, and/or commands are transmitted from one component (e.g., a controller or processor) to another component (or assembly), it is understood that signals, instructions, and/or commands may be transmitted directly to a component, or may pass through a number of other components on the way to a destination component. For example, a signal transmitted from a motor controller and/or processor to a motor (or other driving assembly) may pass through glue logic, an amplifier, and/or another component. Similarly, a signal communicated through solar cells and/or arrays may pass through a solar charging assembly and/or an amplifier or converter or other component on the way to a rechargeable battery, and a signal communicated from any one or a number of sensors to a controller and/or processor may pass through a sensor module, a conditioning module, an analog-to-digital converter, and/or a comparison module.
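As an illustration of the sensor path described above (sensor, conditioning module, analog-to-digital converter, comparison module), the following minimal Python sketch models each stage as a method. The class name `SensorChain`, the amplifier gain, the 3.3 V reference, the 10-bit resolution and the threshold are all hypothetical values chosen for illustration; they are not taken from this specification.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class SensorChain:
    """Hypothetical sensor -> conditioning -> ADC -> comparison signal path."""
    gain: float = 2.0             # conditioning-module amplifier gain (assumed)
    v_ref: float = 3.3            # ADC reference voltage in volts (assumed)
    bits: int = 10                # ADC resolution in bits (assumed)
    threshold_counts: int = 512   # comparison-module threshold (assumed)

    def condition(self, sensor_volts: float) -> float:
        """Amplify the raw sensor voltage and clip it to the ADC input range."""
        return min(max(sensor_volts * self.gain, 0.0), self.v_ref)

    def digitize(self, volts: float) -> int:
        """Quantize a voltage in [0, v_ref] to an integer code in [0, 2**bits - 1]."""
        full_scale = (1 << self.bits) - 1
        return round(volts / self.v_ref * full_scale)

    def compare(self, counts: int) -> bool:
        """Report whether the digitized reading meets the threshold."""
        return counts >= self.threshold_counts

    def process(self, sensor_volts: float) -> Tuple[int, bool]:
        """Pass one reading through every stage and return (code, flag)."""
        counts = self.digitize(self.condition(sensor_volts))
        return counts, self.compare(counts)
```

For example, `SensorChain().process(1.0)` returns `(620, True)`: the 1.0 V reading is amplified to 2.0 V, which a 10-bit converter with a 3.3 V reference maps to code 620, above the assumed threshold of 512.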

[0057] The terms, "and", "or", "and/or" and/or similar terms, as used herein, include a variety of meanings that also are expected to depend at least in part upon the particular context in which such terms are used. Typically, "or" if used to associate a list, such as A, B or C, is intended to mean A, B, and C, here used in the inclusive sense, as well as A, B or C, here used in the exclusive sense. In addition, the term "one or more" and/or similar terms is used to describe any feature, structure, and/or characteristic in the singular and/or is also used to describe a plurality and/or some other combination of features, structures and/or characteristics. Likewise, the term "based on" and/or similar terms are understood as not necessarily intending to convey an exclusive set of factors, but to allow for existence of additional factors not necessarily expressly described. Of course, for all of the foregoing, particular context of description and/or usage provides helpful guidance regarding inferences to be drawn. It should be noted that the following description merely provides one or more illustrative examples and claimed subject matter is not limited to these one or more illustrative examples; however, again, particular context of description and/or usage provides helpful guidance regarding inferences to be drawn.

[0058] A network may also include now known, and/or to be later developed arrangements and/or improvements, including, for example, past, present and/or future mass storage, such as network attached storage (NAS), cloud storage, a storage area network (SAN), and/or other forms of computing and/or device readable media, for example. A network may include a portion of the Internet, one or more local area networks (LANs), one or more wide area networks (WANs), wire-line type connections, one or more personal area networks (PANs), wireless type connections, other network connections, or any combination thereof. Thus, a network may be worldwide in scope and/or extent.

[0059] The Internet and/or a global communications network may refer to a decentralized global network of interoperable networks that comply with the Internet Protocol (IP). It is noted that there are several versions of the Internet Protocol. Here, the term Internet Protocol, IP, and/or similar terms, is intended to refer to any version, now known and/or later developed, of the Internet Protocol. The Internet may include local area networks (LANs), wide area networks (WANs), wireless networks, and/or long haul public networks that, for example, may allow signal packets and/or frames to be communicated between LANs. The term World Wide Web (WWW or Web) and/or similar terms may also be used, although it refers to a part of the Internet that complies with the Hypertext Transfer Protocol (HTTP). For example, network devices and/or computing devices may engage in an HTTP session through an exchange of appropriately compatible and/or compliant signal packets and/or frames. Here, the term Hypertext Transfer Protocol, HTTP, and/or similar terms is intended to refer to any version, now known and/or later developed. It is likewise noted that in various places in this document substitution of the term Internet with the term World Wide Web ("Web") may be made without a significant departure in meaning and may, therefore, not be inappropriate in that the statement would remain correct with such a substitution.

[0060] Although claimed subject matter is not limited in scope to the Internet and/or the Web, the Internet and/or the Web may, without limitation, provide a useful example of an embodiment at least for purposes of illustration. As indicated, the Internet and/or the Web may comprise a worldwide system of interoperable networks, including interoperable devices within those networks. A content delivery server and/or the Internet and/or the Web, therefore, in this context, may comprise a service that organizes stored content, such as, for example, text, images, video, etc., through the use of hypermedia, for example. A HyperText Markup Language ("HTML"), for example, may be utilized to specify content and/or to specify a format for hypermedia type content, such as in the form of a file and/or an "electronic document," such as a Web page, for example. An Extensible Markup Language ("XML") may also be utilized to specify content and/or format of hypermedia type content, such as in the form of a file or an "electronic document," such as a Web page, in an embodiment. HTML and/or XML are merely example languages provided as illustrations and are intended to refer to any version, now known and/or developed at another time, and claimed subject matter is not intended to be limited to examples provided as illustrations, of course.

[0061] Also as used herein, one or more parameters may be descriptive of a collection of signal samples, such as one or more electronic documents, and exist in the form of physical signals and/or physical states, such as memory states. For example, one or more parameters, such as referring to an electronic document comprising an image, may include parameters such as the time of day at which an image was captured, or the latitude and longitude of an image capture device, such as a camera, for example, etc. Claimed subject matter is intended to embrace meaningful, descriptive parameters in any format, so long as the one or more parameters comprise physical signals and/or states, which may include, as parameter examples, a name of the collection of signals and/or states.

[0062] Some portions of the detailed description which follow are presented in terms of algorithms or symbolic representations of operations on binary digital signals stored within a memory of a specific apparatus or special purpose computing device or platform. In the context of this particular specification, the term specific apparatus or the like includes a general purpose computer once it is programmed to perform particular functions pursuant to instructions from program software. In embodiments, a shading object may comprise a shading object computing device installed and/or integrated within or as part of a shading object, intelligent umbrella and/or intelligent shading charging system. Algorithmic descriptions or symbolic representations are examples of techniques used by those of ordinary skill in the signal processing or related arts to convey the substance of their work to others skilled in the art. An algorithm is here, and generally, considered to be a self-consistent sequence of operations or similar signal processing leading to a desired result. In this context, operations or processing involve physical manipulation of physical quantities. Typically, although not necessarily, such quantities may take the form of electrical or magnetic signals capable of being stored, transferred, combined, compared or otherwise manipulated within a memory of a computing device within a shading object, umbrella and/or shading charging system.

[0063] It has proven convenient at times, principally for reasons of common usage, to refer to such signals as bits, data, values, elements, symbols, characters, terms, numbers, numerals or the like, and these are merely conventional labels. Unless specifically stated otherwise, it is appreciated that throughout this specification discussions utilizing terms such as "processing," "computing," "calculating," "determining" or the like may refer to actions or processes of a specific apparatus, such as a special purpose computer or a similar special purpose electronic computing device (e.g., a shading object, umbrella and/or shading charging computing device). In the context of this specification, therefore, a special purpose computer or a similar special purpose electronic computing device (e.g., a shading object, umbrella and/or shading charging computing device) may be capable of manipulating or transforming signals (electronic and/or magnetic) in memories (or components thereof), other storage devices, transmission devices, sound reproduction devices, and/or display devices.

[0064] In embodiments, a controller and/or a processor typically executes a series of instructions resulting in data manipulation. In embodiments, a microcontroller or microprocessor may be a compact microcomputer designed to govern the operation of embedded systems in electronic devices, e.g., intelligent, automated shading objects, umbrellas, and/or shading charging systems, and various other electronic and mechanical devices coupled thereto or installed thereon. Microcontrollers may include processors, microprocessors, and other electronic components. A controller may be a commercially available processor such as an Intel Pentium, Motorola PowerPC, SGI MIPS, Sun UltraSPARC, or Hewlett-Packard PA-RISC processor, but may be any type of application-specific and/or specifically designed processor or controller. In embodiments, a processor and/or controller may be connected to other system elements, including one or more memory devices, by a bus. Usually, a processor or controller may execute an operating system which may be, for example, a Windows-based operating system (Microsoft), a Mac OS X operating system (Apple Computer), one of many Linux-based operating system distributions (e.g., an open source operating system), a Solaris operating system (Sun), a portable electronic device operating system (e.g., mobile phone operating systems), and/or a UNIX operating system. Embodiments are not limited to any particular implementation of a controller and/or processor, and/or operating system.

[0065] The specification may refer to a shading object as an apparatus that provides shade to a user from weather elements such as sun, wind, rain, hail, and/or other environmental conditions. In embodiments, a shading object may be referred to as an intelligent shading object, an intelligent umbrella, and/or an intelligent shading charging system. In embodiments, a shading object may be referred to as an automated shading object, an automated umbrella, and/or an automated shading charging system. The automated intelligent shading object may also be referred to as a parasol, intelligent umbrella, sun shade, outdoor shade furniture, sun screen, sun shelter, awning, sun cover, sun marquee, brolly and other similar names, which may all be utilized interchangeably in this application. Shading objects which also have electric vehicle charging capabilities may also be referred to as intelligent shading charging systems. These terms may be utilized interchangeably throughout the specification. In embodiments, many features, functions, and/or operations may occur automatically, without input from a user and/or operator. In embodiments, many features, functions, and/or operations may occur via voice control and/or via computer-readable instructions stored in a memory and executable by a processor in response to voice control. In embodiments, many features, functions and/or operations may occur via a remote and/or separate computing device having computer-readable instructions stored in a memory and executable by a processor that communicates with a shading object and/or shading charging system to control operations. The shading objects, intelligent umbrellas and shading charging systems described herein comprise many novel and non-obvious features, which are described in detail in U.S. non-provisional patent application Ser. No. 15/212,173, filed Jul. 15, 2016, entitled "Intelligent Charging Shading Systems," U.S. patent application Ser. No. 14/810,380, entitled "Intelligent Shading Objects", inventor Armen Sevada Gharabegian, filed Jul. 27, 2015, and U.S. Provisional Patent Application Ser. No. 62/165,869, filed May 22, 2015, the disclosures of which are hereby incorporated by reference.

[0066] FIG. 1A illustrates an intelligent shading object according to embodiments. In embodiments, an intelligent shading object and/or umbrella may comprise a base assembly 105, a stem assembly 106, a central support assembly 107 (including a lower assembly, a hinge assembly and/or gearbox, and/or an upper assembly), arm support assemblies 108, arms/blades 109, and/or a shading fabric 715. In embodiments, a stem assembly 106 (and a coupled central support assembly, arm support assemblies, and/or blades) may rotate within a base assembly around a vertical axis. In embodiments, an upper assembly of a center support assembly 107 may rotate up to a right angle with respect to a lower assembly of the center support assembly 107 via a gearbox or hinging mechanism driven by a second motor. In embodiments, arm support assemblies 108 may deploy and/or extend from a center support assembly 107 to open a shading object. In embodiments, a stem assembly 106 may rotate automatically within a base assembly 105, an upper assembly may rotate automatically with respect to a lower assembly, and arm support assemblies 108 may automatically deploy and/or retract in response to commands initiated by a processor, controller and/or computing device. In embodiments, detachable arms/blades 109 may be attached or coupled to arm support assemblies 108. In embodiments, a detachable shading fabric 715 may be attached or coupled to arms/blades 109.

[0067] FIGS. 1A and 1B illustrate a shading object or shading object device according to embodiments. In embodiments, a shading object 100 may comprise a base assembly 105, a stem assembly 106, a center support assembly 107, one or more supporting arm assemblies 108, one or more arms/blades 109, solar panels and/or a shading fabric (not shown). In embodiments, a stem assembly 106, a center support assembly 107, one or more supporting arm assemblies 108, and/or one or more arms/blades 109 may be referred to as an umbrella support assembly, a shading system body and/or shading subsystem. In embodiments, a central support assembly 107 may comprise an upper assembly 112, a lower assembly 113 and a hinging assembly and/or gearbox 114, where the hinging assembly and/or gearbox assembly 114 may connect and/or couple the upper assembly 112 to the lower assembly 113. In embodiments, a base assembly 105 may rest on a ground surface in an outdoor environment. A ground surface may be a floor, a patio, grass, sand, or other outdoor environment surfaces. In embodiments, a stem assembly 106 may be placed into a top portion of a base assembly 105.

[0068] FIG. 3A illustrates a base assembly according to embodiments. A base assembly as illustrated in FIG. 3A and FIGS. 1A and 1B is described in detail in U.S. non-provisional patent application Ser. No. 15/160,856, filed May 20, 2016, entitled "Automated Intelligent Shading Objects and Computer-Readable Instructions for Interfacing With, Communicating With and Controlling a Shading Object," and U.S. non-provisional patent application Ser. No. 15/160,822, filed May 20, 2016, entitled "Intelligent Shading Objects with Integrated Computing Device," the disclosures of which are both hereby incorporated by reference.

[0069] In embodiments, a base assembly 105 may have an opening (e.g., a circular or oval opening) into which a stem assembly 106 may be placed. FIG. 2 illustrates a block diagram of a stem assembly according to embodiments. In embodiments, a stem assembly may be referred to as an automatic and/or motorized stem assembly. In embodiments, a stem assembly 106 may comprise a stem body 211 and a first motor assembly. In embodiments, a first motor assembly may comprise a first motor 212, a gearbox assembly and/or hinging assembly 213, and/or a first motor controller 214. Although a gearbox assembly and/or hinging assembly is discussed, other connecting assemblies, gearing assemblies, actuators, etc., may be utilized. In embodiments, a first motor controller 214 may also be referred to as a motor driver, and within this specification the terms "motor driver" and "motor controller" may be used interchangeably. In embodiments, a first motor controller 214 may receive commands, instructions and/or signals requesting movement of a shading system around an azimuth axis. In embodiments, a shading system body 211 may rotate (e.g., between 0 and 360 degrees about a vertical axis formed by a base assembly 105, a stem assembly 106, and/or a central support assembly 107). Reference number 140 (FIG. 1B) illustrates a rotation of a shading system body about a vertical axis according to embodiments. In embodiments, a shading object stem assembly 106 may rotate around a vertical axis, such as vertical axis 730 in FIG. 7. In embodiments, a shading object stem assembly may rotate 360 degrees about such a vertical axis. In embodiments, a shading object stem assembly 106 may rotate up to 270 degrees or up to 180 degrees about a vertical axis. In embodiments, rotation of a shading object stem assembly 106 may be limited by detents, stops and/or limiters in an opening of a base assembly 105.
In embodiments, a stem assembly encoder 218 may provide location and/or position feedback to a first motor controller 214. In other words, an encoder 218 may verify that a stem assembly 106 has moved a certain distance and/or to a certain position from an original position relative to a base assembly 105. In embodiments, encoders may be utilized in motor systems to feed back position and/or distance information to motor controllers and/or motors to verify that a correct position has been reached. In embodiments, encoders (which may be utilized with motors or motor controllers) may have a number of positions and/or steps and may measure how far an output shaft and/or gearbox assembly has moved in order to feed back this information to a motor controller. The embodiments described herein provide a benefit as compared to prior art umbrellas because the intelligent shading umbrella, due to its rotation (e.g., 360 degree rotation), may orient itself toward any position in a surrounding area. In embodiments, rotation may occur automatically in response to signals from a processor, controller and/or a component in a computing device (integrated within the umbrella and/or received from an external and/or separate computing device).
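A minimal Python sketch of the encoder feedback loop described above. It assumes a hypothetical incremental encoder reporting 4096 counts per full revolution of a stem assembly, and it stands in for a first motor controller 214 and encoder 218 with plain callables. The names `rotate_with_feedback`, `position_verified` and `SimulatedStem` are illustrative only and do not describe an actual controller firmware interface.

```python
from typing import Callable

COUNTS_PER_REV = 4096  # hypothetical encoder resolution per stem revolution


def degrees_to_counts(degrees: float, counts_per_rev: int = COUNTS_PER_REV) -> int:
    """Convert a commanded rotation in degrees to encoder counts."""
    return round(degrees * counts_per_rev / 360.0)


def counts_to_degrees(counts: int, counts_per_rev: int = COUNTS_PER_REV) -> float:
    """Convert an encoder count difference back to degrees of rotation."""
    return counts * 360.0 / counts_per_rev


def position_verified(start: int, current: int, commanded_deg: float,
                      tolerance_deg: float = 0.5) -> bool:
    """True if the encoder shows the stem moved the commanded angle, within tolerance."""
    moved = counts_to_degrees(current - start)
    return abs(moved - commanded_deg) <= tolerance_deg


def rotate_with_feedback(commanded_deg: float,
                         read_encoder: Callable[[], int],
                         step_motor: Callable[[int], None],
                         max_steps: int = 100_000) -> int:
    """Step the motor toward the target until the encoder reports it was reached."""
    start = read_encoder()
    target = start + degrees_to_counts(commanded_deg)
    direction = 1 if target >= start else -1
    for _ in range(max_steps):
        current = read_encoder()
        if (target - current) * direction <= 0:  # reached or passed the target
            return current
        step_motor(direction)
    raise RuntimeError("target not reached: possible stall, detent or encoder fault")


class SimulatedStem:
    """Toy stand-in for motor 212 plus encoder 218: each step advances 3 counts."""

    def __init__(self) -> None:
        self.counts = 0

    def read(self) -> int:
        return self.counts

    def step(self, direction: int) -> None:
        self.counts += 3 * direction
```

With the simulated stem, `rotate_with_feedback(90.0, stem.read, stem.step)` stops at 1026 counts (about 90.18 degrees), which `position_verified(0, 1026, 90.0)` accepts. The step cap is a design choice for the case where a detent or stop prevents the commanded rotation: the loop reports a fault instead of driving the motor indefinitely.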

[0070] In embodiments, a first motor controller 214 may communicate commands and/or signals to a first motor 212 to cause movement of an umbrella support assembly or shading system body (e.g., a stem assembly 106, central support assembly 107, shading arm supports 108, and/or arms/blades 109) about an azimuth axis. In this illustrative embodiment, a base assembly 105 may remain stationary while the shading system body rotates within the base assembly 105. In other words, a shading system body is placed in an opening of a base assembly 105 and rotates while the base assembly remains stationary. In embodiments, a first motor 212 may be coupled to a gearbox assembly 213. In embodiments, a gearbox assembly 213 may comprise a planetary gearbox assembly. A planetary gearbox assembly may comprise a central sun gear, a planet carrier with one or more planet gears, and an annulus (or outer ring). In embodiments, planet gears may mesh with a sun gear while an outer ring's teeth may mesh with the planet gears. In embodiments, a planetary gearbox assembly may use a sun gear as an input and an annulus as an output while a planet carrier (holding one or more planet gears) remains stationary. In embodiments, an input shaft may rotate a sun gear, and planet gears may rotate on their own axes and apply torque to the annulus, which in this case serves as the output and drives an output shaft. In embodiments, a planetary gearbox assembly and a first motor 212 may be connected and/or adhered to a stem assembly 106. In embodiments, an output shaft from a gearbox assembly 213 may be connected to a base assembly 105 (e.g., an opening of a base assembly). In embodiments, because a base assembly 105 is stationary, torque applied by a first motor 212 to an output shaft of a gearbox assembly 213 may cause a stem assembly 106 to rotate.
In embodiments, other gearbox assemblies and/or hinging assemblies may also be used to convert an output of a motor into rotation of a stem assembly 106 (and hence an umbrella support assembly) within a base assembly 105. In embodiments, a first motor 212 may comprise a pneumatic motor. In other embodiments, a first motor 212 may comprise a servo motor and/or a stepper motor.
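For a planetary arrangement in which the planet carrier is held stationary, the sun gear is the input and the annulus is the output, the speed relationship follows from the standard Willis equation for planetary gear trains, (w_s - w_c) / (w_r - w_c) = -Z_r / Z_s, where w denotes angular speed and Z denotes tooth count. Setting w_c = 0 gives w_r = -w_s * Z_s / Z_r, so the annulus turns more slowly than, and opposite to, the sun gear. The Python sketch below encodes these relationships; the function names are hypothetical, the tooth counts in the usage note are illustrative assumptions, and the torque figure is ideal (lossless) unless an efficiency factor is supplied.

```python
def ring_speed_fixed_carrier(sun_rpm: float, sun_teeth: int, ring_teeth: int) -> float:
    """Annulus speed with the planet carrier held stationary.

    From the Willis equation (w_s - w_c) / (w_r - w_c) = -Z_r / Z_s with w_c = 0.
    The negative sign means the annulus turns opposite to the sun gear.
    """
    return -sun_rpm * sun_teeth / ring_teeth


def planet_teeth(sun_teeth: int, ring_teeth: int) -> int:
    """Planet tooth count implied by Z_r = Z_s + 2 * Z_p (standard, unshifted gears)."""
    diff = ring_teeth - sun_teeth
    if diff <= 0 or diff % 2 != 0:
        raise ValueError("ring_teeth - sun_teeth must be positive and even")
    return diff // 2


def output_torque(input_torque: float, sun_teeth: int, ring_teeth: int,
                  efficiency: float = 1.0) -> float:
    """Annulus torque magnitude for a given sun torque; efficiency=1.0 is lossless."""
    return input_torque * ring_teeth / sun_teeth * efficiency
```

With a hypothetical 20-tooth sun gear and 80-tooth annulus, a sun speed of 3000 rpm gives an annulus speed of -750 rpm, each planet needs 30 teeth, and an input torque of 2.0 N·m becomes 8.0 N·m at the annulus (ideal case). This speed reduction and torque multiplication is why a compact motor can turn a comparatively heavy umbrella support assembly.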

[0071] In embodiments, a stem assembly 106 may be coupled and/or connected to a center support assembly 107. In embodiments, as mentioned above, a stem assembly 106 and a center support assembly 107 may both be part of an umbrella support assembly. In embodiments, a center support assembly 107 may comprise an upper assembly 112, a second gearbox assembly (or a linear actuator or hinging assembly) 114, a lower assembly 113, a second motor 121, and/or a second motor controller 122. In embodiments, a second motor assembly may comprise a second motor controller 122, a second motor 121, and optionally a second gearbox assembly or linear actuator 114. In embodiments, a center support assembly 107 may also comprise a motor control PCB on which a second motor controller 122 may be mounted and/or installed. In embodiments, an upper assembly 112 may be coupled or connected to a lower assembly 113 of the center support assembly 107 via a second gearbox assembly 114. In embodiments, a second gearbox assembly 114 and a second motor 121 connected thereto may be connected to a lower assembly 113. In embodiments, an output shaft of a second gearbox assembly 114 may be connected to an upper assembly 112. In embodiments, as a second motor 121 operates and/or rotates, a second gearbox assembly 114 rotates an output shaft, which causes an upper assembly 112 to rotate (either upwards or downwards) by up to a right angle with respect to a lower assembly 113. In embodiments, rotation of an output shaft which causes an upper assembly 112 to rotate with respect to a lower assembly may occur automatically in response to signals from a processor, controller and/or a component in a computing device (integrated within the umbrella and/or received from an external and/or separate computing device).

[0072] In embodiments utilizing a linear actuator as a hinging assembly 114, a steel rod may be coupled to an upper assembly 112 and/or a lower assembly 113, which permits free hinging between an upper assembly 112 and a lower assembly 113. In embodiments, a linear actuator 114 may be coupled, connected, and/or attached to an upper assembly 112 and/or a lower assembly 113. In embodiments, as a second motor 121 operates and/or rotates a steel rod, an upper assembly 112 moves in an upward or downward direction with respect to a hinged connection (or hinging assembly) 114. In embodiments, a direction of movement is illustrated by reference number 160 in FIG. 1B. In embodiments, movement may be limited to approximately a right angle (e.g., approximately 90 degrees). In embodiments, an upper assembly 112 may move from a position where it is an extension of a lower assembly 113 (e.g., forming a vertical center support assembly 107) to a position where an upper assembly 112 is at a right angle to a lower assembly 113 (and approximately parallel to a ground surface). In embodiments, movement may be limited by a right angle gearbox or right angle gearbox assembly 114. In embodiments, an upper assembly 112 and a lower assembly 113 may be perpendicular to a ground surface in one position (as is shown in FIG. 1A), but may move (as is shown by reference number 160) to track a solar light source, e.g., the sun, (depending on location and time of day) so that an upper assembly 112 moves from a perpendicular position with respect to a ground surface to an angular position with respect to a ground surface and/or with respect to a lower assembly 113. In embodiments, the position of an upper assembly tracking sun movement between a vertical location (sun at the top of the sky) and a horizontal location (sun at the horizon) may depend on time of day and geographic location.
Tracking of a solar light source provides a benefit, as compared to prior art umbrellas, of automatically orienting a shading object or umbrella to positions of the sun in the sky (e.g., directly overhead, or on a horizon as during sunrise and/or sunset).
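The sun tracking described above can be sketched by computing an approximate solar elevation and azimuth from latitude, day of year and local solar time, then mapping them to two set points: a stem rotation (azimuth) and a tilt of an upper assembly 112 away from vertical, with tilt = 90 degrees minus solar elevation, clamped to the approximately 90 degree range described above. This is a minimal Python sketch under stated assumptions: it uses Cooper's textbook approximation for solar declination, takes local apparent solar time (no longitude or equation-of-time correction), assumes the upper assembly should point toward the sun, and uses hypothetical function names (`solar_position`, `umbrella_targets`) that are not part of the specification. It is adequate for illustration, not for precision tracking.

```python
import math
from typing import Optional, Tuple


def solar_position(latitude_deg: float, day_of_year: int,
                   solar_hour: float) -> Tuple[float, float]:
    """Approximate (elevation, azimuth) of the sun in degrees.

    Azimuth is measured clockwise from true north. solar_hour is local apparent
    solar time, so 12.0 is solar noon.
    """
    phi = math.radians(latitude_deg)
    # Cooper's approximation for solar declination.
    decl = math.radians(23.45 * math.sin(math.radians(360.0 * (284 + day_of_year) / 365.0)))
    # Hour angle: 15 degrees per hour, negative before solar noon.
    h = math.radians(15.0 * (solar_hour - 12.0))
    sin_elev = (math.sin(phi) * math.sin(decl)
                + math.cos(phi) * math.cos(decl) * math.cos(h))
    elevation = math.degrees(math.asin(max(-1.0, min(1.0, sin_elev))))
    # Azimuth measured from south (westward positive), then re-referenced to north.
    az_from_south = math.atan2(math.sin(h),
                               math.cos(h) * math.sin(phi) - math.tan(decl) * math.cos(phi))
    azimuth = (math.degrees(az_from_south) + 180.0) % 360.0
    return elevation, azimuth


def umbrella_targets(latitude_deg: float, day_of_year: int, solar_hour: float,
                     max_tilt_deg: float = 90.0) -> Optional[Tuple[float, float]]:
    """Return (stem azimuth, upper-assembly tilt from vertical), or None at night.

    Assumes the upper assembly should point toward the sun: vertical (0 degrees)
    with the sun overhead, horizontal (90 degrees) with the sun on the horizon.
    """
    elevation, azimuth = solar_position(latitude_deg, day_of_year, solar_hour)
    if elevation <= 0.0:
        return None  # sun below the horizon; a controller might stow instead
    tilt = min(max(90.0 - elevation, 0.0), max_tilt_deg)
    return azimuth, tilt
```

For example, at latitude 34 degrees on day 81 (near the March equinox), `umbrella_targets(34.0, 81, 12.0)` gives an azimuth of 180 degrees (due south) and a tilt of about 34 degrees, while at solar midnight it returns `None`. A production controller would more likely use a higher-accuracy solar position algorithm and clock time corrected for longitude.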

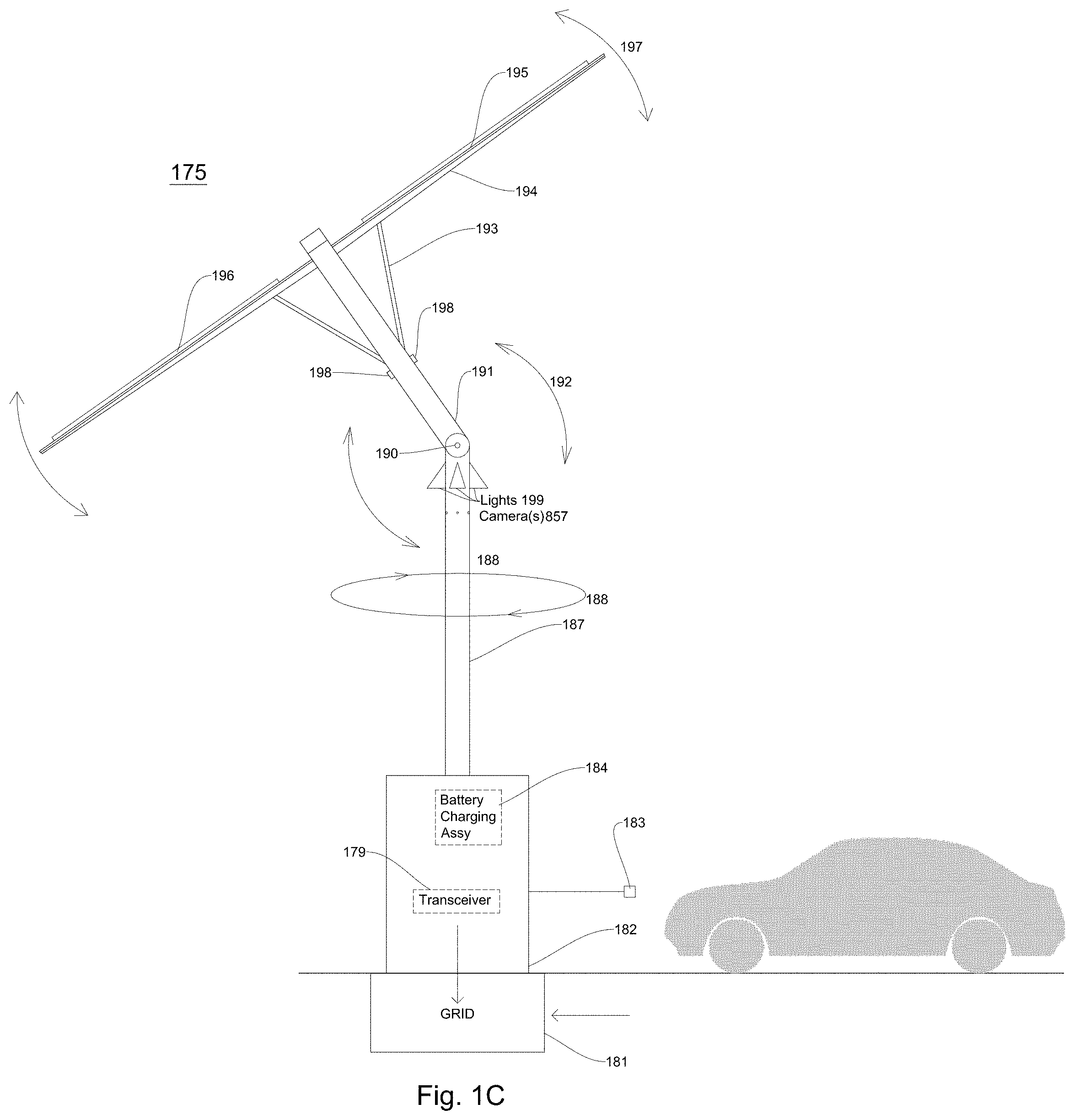

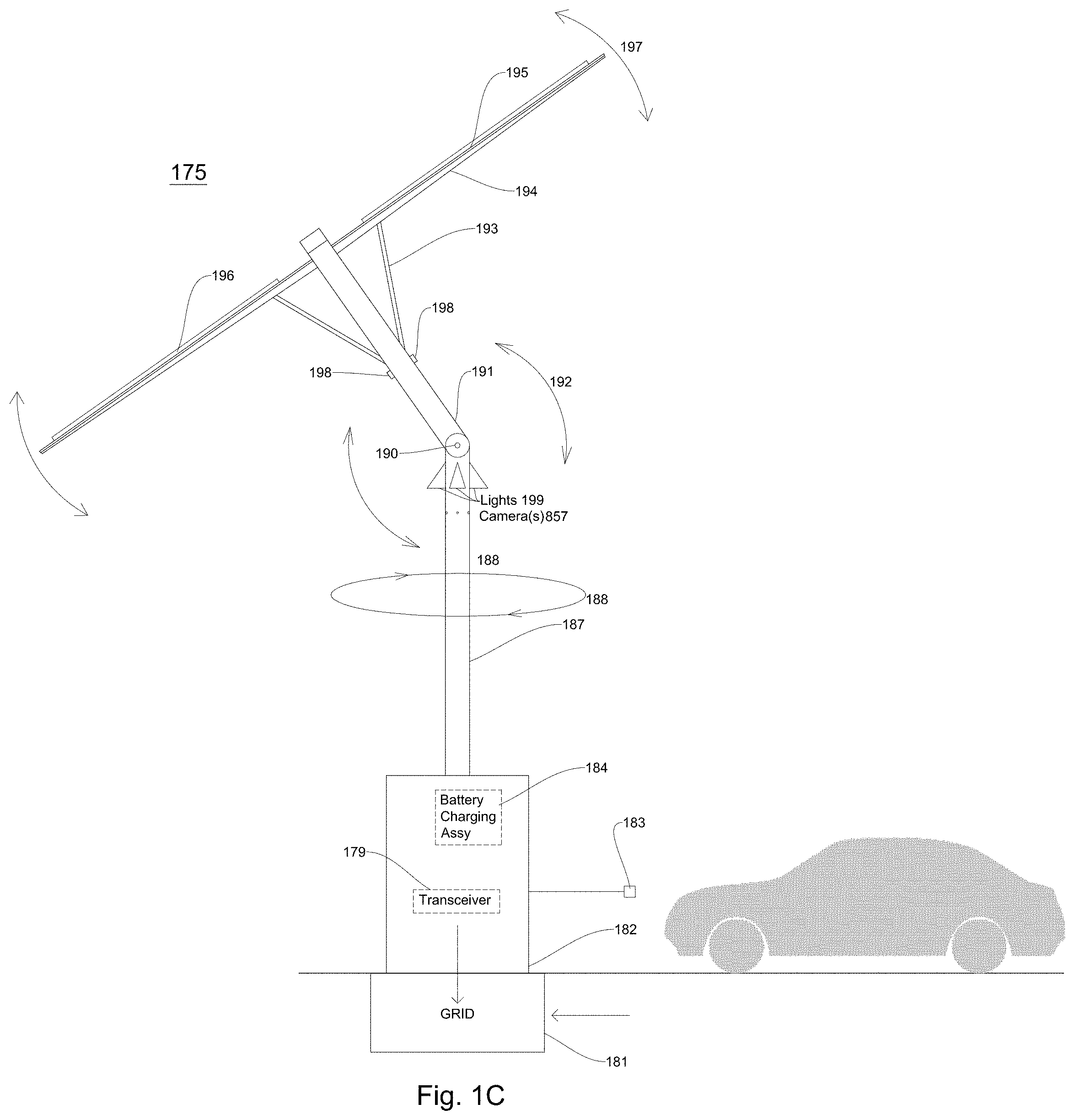

[0073] FIG. 1C illustrates an intelligent shading charging system according to embodiments. In embodiments, an intelligent shading charging system provides shade to a surrounding area, converts solar energy to electrical power, charges a rechargeable battery, and/or provides power to a rechargeable power supply in an electric vehicle. In embodiments, an intelligent shading charging system 175 may comprise a rechargeable battery connection interface (not shown), a housing and/or enclosure 182 including a rechargeable battery 184 and/or a transceiver 179, a lower support assembly 187, cameras 857, which are described in detail below, a hinging assembly or mechanism 190, and an upper support assembly 191. In embodiments, an intelligent shading charging system 175 may further comprise a base assembly (not shown). In embodiments, an intelligent shading charging system 175 may comprise one or more arm support assemblies 193, one or more arms and/or blades 194 and a shading fabric 195. In embodiments, a shading fabric 195, arms 194, and/or arm support assemblies 193 may have one or more solar cells and/or arrays 196 attached thereto, integrated therein, and/or placed thereon. In embodiments, many movements of an intelligent shading charging system may be automated and/or occur automatically. In embodiments, an intelligent shading charging system 175 may be connected and/or coupled to a power delivery system (e.g., a power grid or a power mains) 181.

[0074] In embodiments, an automated intelligent shading charging assembly or system may comprise an interface assembly, a rechargeable apparatus 184 (e.g., a rechargeable battery), a base assembly (not shown), a charging port and/or interface 183 for an electric vehicle, a lower support assembly 187, an upper support assembly 191, a hinging assembly and/or gearbox assembly 190, one or more arm support assemblies 193, one or more arms/blades 194, and/or a shading fabric 195. In embodiments, a lower support assembly 187 (and a coupled and/or connected hinging assembly 190, upper support assembly 191, one or more arm support assemblies 193, and/or arms/blades 194) may also rotate with respect to a housing and/or enclosure 182 around a vertical axis, as is illustrated by reference number 188 in FIG. 1C. In embodiments, an upper support assembly 191 may rotate up to a right angle (e.g., 90 degrees) with respect to a lower support assembly 187 via a gearbox or hinging mechanism 190. In embodiments, one or more arm support assemblies 193 may deploy and/or extend from an upper support assembly 191 to open an intelligent shading charging system 175. In embodiments, one or more detachable arms/blades 194 may be attached or coupled to one or more arm support assemblies 193. In embodiments, a detachable shading fabric 195 may be attached or coupled to one or more arms/blades 194. In embodiments, a rotation of a lower support assembly 187 with respect to an enclosure 182 around a vertical axis, a rotation of an upper support assembly 191 with respect to a lower support assembly 187, and/or deployment/retraction of one or more arm support assemblies 193 may occur or be initiated in response to signals from a processor, controller and/or a component in a computing device (integrated within the umbrella and/or received from an external and/or separate computing device).
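The paragraph above lists three motions (rotation of a lower support assembly 187 about the vertical axis 188, tilting at hinging mechanism 190, and deployment or retraction of arm support assemblies 193) that may be initiated by a processor or controller, including in response to signals from a separate computing device. A minimal Python sketch of how such commands might be routed to individual motor controllers follows. The `Command` names, the `MotorController` stand-in that merely logs requests, the `ShadingSystemController` class and the 0 to 90 degree tilt check are illustrative assumptions, not an interface defined in this specification.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Tuple


class Command(Enum):
    ROTATE = "rotate"    # rotate lower support assembly 187 about the vertical axis
    TILT = "tilt"        # pivot upper support assembly 191 at hinging mechanism 190
    DEPLOY = "deploy"    # extend arm support assemblies 193
    RETRACT = "retract"  # retract arm support assemblies 193


@dataclass
class MotorController:
    """Hypothetical stand-in for a motor controller; it only records requests."""
    name: str
    log: List[Tuple[str, float]] = field(default_factory=list)

    def drive(self, action: str, value: float = 0.0) -> None:
        self.log.append((action, value))


@dataclass
class ShadingSystemController:
    """Routes each received command to the controller for the assembly it moves."""
    rotation: MotorController = field(default_factory=lambda: MotorController("rotation"))
    hinge: MotorController = field(default_factory=lambda: MotorController("hinge"))
    arms: MotorController = field(default_factory=lambda: MotorController("arms"))

    def handle(self, command: Command, value: float = 0.0) -> MotorController:
        if command is Command.ROTATE:
            self.rotation.drive("rotate_to", value % 360.0)  # normalize heading
            return self.rotation
        if command is Command.TILT:
            if not 0.0 <= value <= 90.0:  # hinge travel limited to about a right angle
                raise ValueError("tilt must be between 0 and 90 degrees")
            self.hinge.drive("tilt_to", value)
            return self.hinge
        if command is Command.DEPLOY:
            self.arms.drive("deploy")
            return self.arms
        if command is Command.RETRACT:
            self.arms.drive("retract")
            return self.arms
        raise ValueError(f"unsupported command: {command}")
```

Validating ranges at the routing layer means an out-of-range request, for instance a 120 degree tilt received from a mobile device, is rejected before any motor is driven, while a 370 degree rotation request is normalized to a 10 degree heading.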

[0075] In embodiments, a housing/enclosure 182, which may comprise a rechargeable battery 184, an electric vehicle charging port 183, a transceiver 179, and/or a charging interface, may rest on or be inserted into a ground surface in an outdoor environment. In embodiments, a ground surface may be a floor, a patio, grass, sand, cement, an outdoor plaza, a parking garage surface, or another outdoor surface. In embodiments, a rechargeable battery interface may be integrated into a ground surface and a rechargeable battery 184 (or an enclosure or housing including a rechargeable battery) may rest on a ground surface.

[0076] In embodiments, a housing and/or enclosure 182 may comprise a rechargeable battery 184, a charging port 183, a wireless transceiver 179 and/or a base assembly. In embodiments, a rechargeable battery may be enclosed in a housing and/or enclosure 182. In embodiments, a base assembly may be enclosed in a housing and/or enclosure 182. In embodiments, a housing and/or enclosure 182 may be made of cement, wood, metal, stainless steel, and/or a hard plastic material.

[0077] In embodiments, a lower support assembly 187 may comprise one or more first lighting assemblies 199. In embodiments, one or more first lighting assemblies 199 may be integrated into a lower support assembly 187. In embodiments, one or more first lighting assemblies 199 may be connected, fastened, adhered, coupled, and/or attached to a lower support assembly 187. In embodiments, one or more first lighting assemblies 199 may direct light downward towards a housing and/or enclosure 182 as well as an area surrounding an intelligent shading charging system 175. This feature allows an intelligent shading charging system in a public environment to be utilized at night or in other dark conditions without drawing power from an electrical grid, so that electric vehicle users may still recharge their batteries. In alternate embodiments, one or more first lighting assemblies 199 may be installed in an upper support assembly 191 and/or a shading fabric 195.

[0078] In embodiments, an intelligent shading charging system may comprise a second lighting subsystem 198. In embodiments, an upper support assembly 191 of an intelligent shading charging system may comprise a second lighting subsystem 198 integrated therein and/or installed and/or mounted thereon. In embodiments, a second lighting subsystem 198 may be connected, fastened, adhered, coupled, and/or attached to an upper support assembly 191. In embodiments, a second lighting subsystem 198 may comprise a plurality of LED lights. In embodiments, a second lighting subsystem 198 may be integrated into and/or attached to arm support assemblies 193. In embodiments, a second lighting subsystem 198 may direct light downward, either directly towards or at a certain angle to a ground surface and/or an area where a charging electric vehicle is located. In embodiments, a second lighting subsystem 198 may direct light beams outward (e.g., in a horizontal direction) from an upper support assembly 191. In embodiments, for example, a second lighting subsystem 198 may direct light at a 90 degree angle from a vertical axis of an upper support assembly 191. In embodiments, a second lighting subsystem 198 (e.g., one or more LED lights) may be installed in a swiveling assembly, and the second lighting subsystem 198 may transmit and/or direct light (or light beams) at an angle of 5 to 185 degrees from a vertical axis of the upper support assembly 191. In embodiments, one or more LED lights in a second lighting subsystem 198 may be directed to shine light in an upward direction (e.g., a more vertical direction) towards arms/blades 194 and/or a shading fabric 195 of an intelligent shading charging system. In embodiments, a bottom surface of a shading fabric 195, arms 194 and/or arm support assemblies 193 may reflect light beams from one or more LED lights of a second lighting subsystem 198 back to an area surrounding an intelligent shading charging system. In an embodiment, a shading fabric 195, arms 194 and/or arm support assemblies 193 may have a reflective bottom surface to assist in reflecting light from the LED lights back to a shading area. In alternate embodiments, a second lighting subsystem 198 may be installed in or attached to a lower support assembly 187 and/or a shading fabric 195. In embodiments, a first lighting subsystem 199 and a second lighting subsystem 198 may be controlled independently by a controller or processor in an intelligent shading object, umbrella and/or shading charging system. In embodiments, a controller, processor and/or component in a computing device (integrated within the umbrella and/or received from an external and/or separate computing device) may automatically communicate a signal to a first lighting subsystem 199 and/or a second lighting subsystem 198 so that their operation is controlled automatically.
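Independent control of the two lighting subsystems, together with the 5 to 185 degree swivel range of the second lighting subsystem 198, could be modeled roughly as follows. This is a hypothetical sketch, not the applicant's implementation: the classes `LightingSubsystem` and `LightingController`, the method names, and the 90 degree default aim are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional

SWIVEL_MIN_DEG = 5.0    # swivel range stated for the second lighting subsystem 198,
SWIVEL_MAX_DEG = 185.0  # measured from the vertical axis of upper support assembly 191


@dataclass
class LightingSubsystem:
    """Hypothetical model of one lighting subsystem (199 or 198)."""
    name: str
    on: bool = False
    swivel_deg: Optional[float] = None  # None means the lights have no swiveling mount


class LightingController:
    """Hypothetical controller that switches the two subsystems independently."""

    def __init__(self) -> None:
        self.first = LightingSubsystem("first-199")
        # Assumed default: second subsystem aimed horizontally (90 degrees from vertical).
        self.second = LightingSubsystem("second-198", swivel_deg=90.0)

    def set_power(self, which: str, on: bool) -> None:
        # Each subsystem is switched on its own; changing one never touches the other.
        self._get(which).on = on

    def aim_second(self, angle_deg: float) -> float:
        # Clamp the requested beam angle to the swivel range and return the applied angle.
        clamped = min(max(angle_deg, SWIVEL_MIN_DEG), SWIVEL_MAX_DEG)
        self.second.swivel_deg = clamped
        return clamped

    def _get(self, which: str) -> LightingSubsystem:
        if which == "first":
            return self.first
        if which == "second":
            return self.second
        raise ValueError(f"unknown lighting subsystem: {which}")
```

For example, `LightingController().aim_second(200)` would apply 185.0 degrees, the upper end of the stated range.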

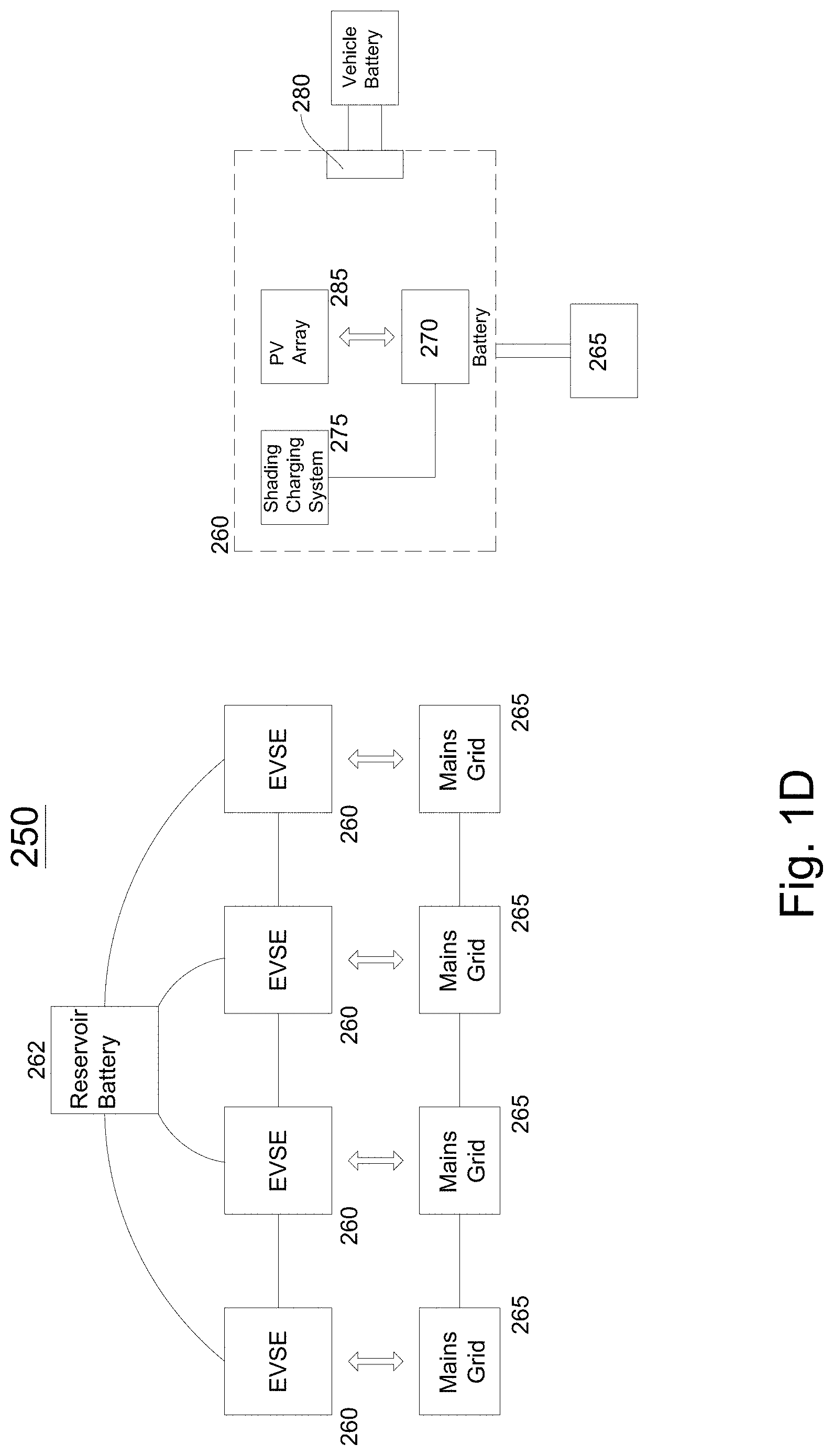

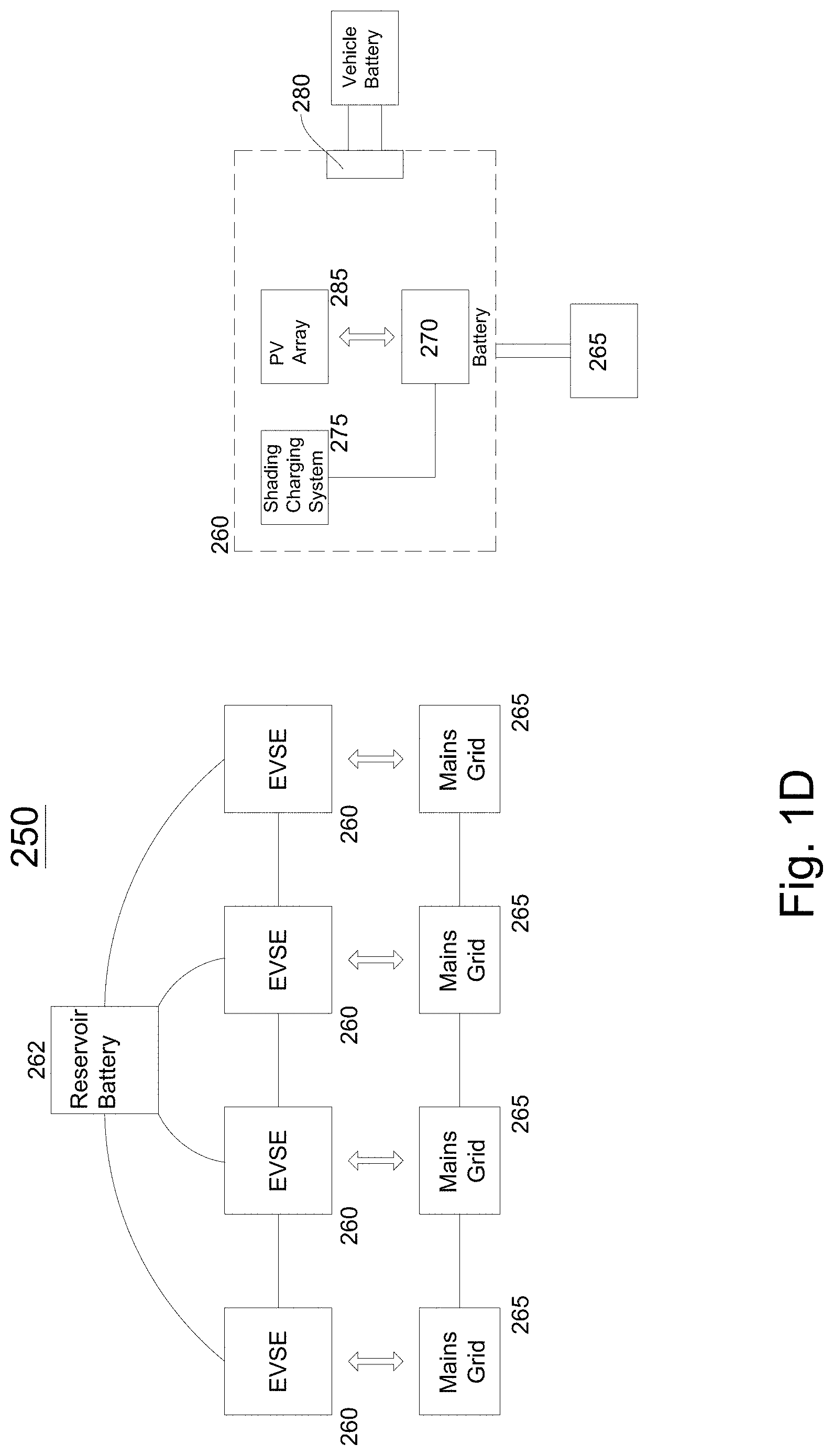

[0079] FIG. 1D illustrates a power charging station 250 comprising one or more automated intelligent shading charging systems installed in an outdoor or indoor environment according to embodiments. In embodiments, a power charging station 250 may comprise one or more intelligent shading charging systems 260 (or electric vehicle supply equipment (EVSE)) and one or more reservoir batteries 262 connected, attached and/or coupled to a power supply system 265 (e.g., a power mains grid). In embodiments, one or more intelligent shading charging systems 260 may comprise a rechargeable apparatus 270 (e.g., a rechargeable battery), an intelligent shading charging assembly or system 275 and a solar power system 285 (e.g., a photovoltaic (PV) array or a solar power array). In embodiments, an intelligent shading charging assembly or system 275 may be portable and/or detachable from an enclosure and/or housing 182 including a rechargeable apparatus 270 (e.g., a rechargeable battery). In embodiments, an intelligent shading charging assembly or system 275 may be portable and/or detachable from a base assembly, which is coupled, connected, and/or attached within a housing 182, which may also include a rechargeable apparatus 270 (e.g., a rechargeable battery).

[0080] As shown in FIG. 1D, an intelligent shading charging system 260 may be coupled, connected and/or interfaced with a power supply system 265, such as an electricity mains grid 265. In embodiments, a power supply company may transfer, transmit or communicate power to an electricity mains grid 265. In embodiments, an intelligent shading charging system 260 may include a car charging interface 280. In embodiments, an electric vehicle charging interface 280 may be coupled and/or connected to a vehicle battery (e.g., a rechargeable vehicle battery).

[0081] In embodiments, outdoor areas, such as a plaza, a parking garage, an open-air parking lot, an outdoor sports complex, a mall parking lot, a store parking lot, a school or university grounds and/or other large outdoor facilities may include one or more electric vehicle charging stations 250, where an electric vehicle charging station comprises a plurality of electric vehicle charging systems 260. FIG. 1D illustrates a station with four electric vehicle charging systems connected to one another. In embodiments, an electric vehicle charging system may be referred to as an EVSE (electric vehicle supply equipment) and/or an intelligent shading charging system. In embodiments, a computing device or a plurality of computing devices may control operation of one or more intelligent shading charging systems at an electric vehicle charging station in an outdoor facility. In embodiments, the electric vehicle charging station (e.g., electric vehicle charging systems) may provide shade for electric vehicles and/or wireless communication capabilities (via wireless transceivers 179), which may be utilized as interfaces to computing devices located in outdoor and/or indoor facilities having intelligent shading charging systems 260 and/or external computing devices.

[0082] In embodiments, for example, an operator of one or more intelligent shading charging systems 175 may charge users, electric vehicle users, or third parties for global communications network access (e.g., Internet usage access) as well as electric vehicle charging. In outdoor environments such as those discussed above, this may provide an additional revenue source (e.g., for a shopping mall). In addition, in embodiments, an intelligent shading charging system may comprise one or more cameras 857. In embodiments, cameras may provide images, videos and/or sounds of an outdoor area surrounding one or more intelligent shading charging systems. Therefore, an operator and/or user may also charge third parties for capturing images, videos, and/or sounds and communicating them to those third parties. Including such features on shading objects, intelligent umbrellas, and intelligent shading charging systems is a marked improvement for existing outdoor locations such as shopping center parking lots, other parking lots, outdoor sporting locations and event locations, which generally do not provide wireless communication capabilities, image/video/sound capture, and/or electric vehicle recharging capabilities, alone or in combination.

[0083] In embodiments, an intelligent shading charging system 260, when offline (e.g., not providing power to an electric vehicle), may feed and/or transfer power to a power supply system, such as a mains power grid 265. In embodiments, an intelligent shading charging system may transfer up to 2, 4, 6 or 8 kilowatt hours of energy to a mains power grid (e.g., becoming an energy source and/or provider). In embodiments, an electric vehicle charging station 250 may generate revenue by selling excess power back to the power company. In embodiments, current owners of outdoor facilities (e.g., parking lots, building plazas, athletic and/or event fields) having EVSEs must pay a power company for power utilized to charge electric vehicle(s) (e.g., $100 a month ($1,200 a year) or $200 a month ($2,400 a year)). However, because an intelligent shading charging system 260 may obtain power from a solar energy source, like the sun (e.g., it converts solar energy into solar power), recharging an electric vehicle's battery may cost an owner of an intelligent shading charging system 260 and/or station 250 nothing or only a minimal amount, because the power is self-generated and there is little or no need to obtain power from a mains power grid 265. Thus, the intelligent shading charging system 260 (and/or power station 250) may multiply revenue opportunities if an electric vehicle charging station owner has a plurality of intelligent shading charging systems at a location (e.g., any of the outdoor locations listed above).
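The cost reasoning above can be made concrete with a rough calculation: a grid bill of $100 a month is $1,200 a year, and solar charging avoids whatever share of that bill the solar array covers, while exported energy may add revenue. The sketch below is illustrative only; the function name `annual_station_benefit`, the solar fraction, the export price, and the export quantity are hypothetical planning inputs, not figures from the application.

```python
def annual_station_benefit(
    monthly_grid_cost_usd: float,
    solar_fraction: float,
    exported_kwh_per_year: float,
    export_price_usd_per_kwh: float,
    num_systems: int,
) -> float:
    """Rough yearly benefit for a station of intelligent shading charging systems.

    Benefit per system = grid cost avoided by solar charging
                         + revenue from energy exported to the mains grid.
    All inputs are hypothetical planning figures, not measured data.
    """
    if not 0.0 <= solar_fraction <= 1.0:
        raise ValueError("solar_fraction must be between 0 and 1")
    # Share of the annual grid bill no longer paid because solar supplies the charging energy.
    avoided_cost = 12 * monthly_grid_cost_usd * solar_fraction
    # Revenue from energy sold back to the power company at an assumed tariff.
    export_revenue = exported_kwh_per_year * export_price_usd_per_kwh
    return num_systems * (avoided_cost + export_revenue)
```

With full solar coverage, `annual_station_benefit(100, 1.0, 0, 0, 1)` gives 1200.0, matching the $1,200-a-year example; with an assumed 50% solar share, 1,000 kWh exported per system per year at an assumed $0.25/kWh, and four systems as in FIG. 1D, it gives 3400.0.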

[0084] In embodiments, an intelligent shading charging system may charge an electric vehicle in two, four and/or eight hours if an electric vehicle arrives with little or no charge/power in its rechargeable battery. In embodiments, if one intelligent shading charging system does not have enough power in its rechargeable battery 184 to charge an electric vehicle connected to its charging port 183, a rechargeable battery in another intelligent shading charging system 260 at the electric vehicle charging station 250 (such as the one illustrated in FIG. 1D) may provide power to the rechargeable battery in the initial intelligent shading charging system. In embodiments, in an electric vehicle charging station, one or more intelligent shading charging systems 260 (and thus one or more rechargeable batteries) may be connected in series with a capability of providing backup power for other intelligent shading charging systems to power electric vehicles connected to the intelligent shading charging systems. In embodiments, a reservoir battery (and/or reservoir charging assembly) 262 may be charged by and/or provide power to connected and/or coupled shading charging systems 260. In embodiments, a reservoir battery may be a rechargeable battery, a capacitor or similar rechargeable assemblies.
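The backup arrangement described above, in which another system's rechargeable battery covers a shortfall, can be sketched as an energy-allocation step. This is a bookkeeping sketch under stated assumptions, not an electrical model of the series connection: the class `ChargingSystem`, the function `plan_backup_transfer`, the per-donor reserve, and the greedy "largest spare first" rule are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ChargingSystem:
    """Hypothetical model of one intelligent shading charging system 260 (or a reservoir battery 262)."""
    system_id: str
    stored_kwh: float          # energy currently held in its rechargeable battery
    reserve_kwh: float = 0.0   # energy a donor keeps back for its own connected vehicle


def plan_backup_transfer(
    needy: ChargingSystem, required_kwh: float, peers: List[ChargingSystem]
) -> Dict[str, float]:
    """Plan how much energy each peer sends to a system that cannot cover a charge alone.

    Greedy sketch: draw from the peers with the most spare energy first.
    Returns {peer_id: kWh}; raises RuntimeError if the station cannot cover the shortfall.
    """
    shortfall = max(required_kwh - needy.stored_kwh, 0.0)
    plan: Dict[str, float] = {}
    donors = sorted(peers, key=lambda p: p.stored_kwh - p.reserve_kwh, reverse=True)
    for peer in donors:
        if shortfall <= 0.0:
            break
        if peer is needy:
            continue  # a system cannot donate to itself
        spare = peer.stored_kwh - peer.reserve_kwh
        if spare <= 0.0:
            continue
        take = min(spare, shortfall)
        plan[peer.system_id] = take
        shortfall -= take
    if shortfall > 1e-9:
        raise RuntimeError(f"station short by {shortfall:.2f} kWh")
    return plan
```

A reservoir battery 262 could be passed in as one more peer (for example, `ChargingSystem("reservoir-262", 30.0)`), so the same allocation step draws on it when the other systems have little spare energy.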

[0085] In embodiments, an intelligent shading charging system 260 may comprise a power conversion subsystem or a power converter. In embodiments, a power conversion subsystem may receive power from a power supply system 265 and may output DC power to a rechargeable battery 270. In embodiments, a power conversion subsystem may comprise an AC-to-DC converter, a DC-to-DC converter and/or regulator and a digital control system. In embodiments, an AC-to-DC converter may convert AC power from an electrical grid to DC power. In embodiments, converted power from the AC-to-DC converter may be regulated by a DC-to-DC converter. The power output from the DC-to-DC converter may be transferred or transmitted to a rechargeable battery 270. In embodiments, a digital control system may control operations of a DC-to-DC converter and an AC-to-DC converter.
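The conversion chain above (AC-to-DC conversion, DC-to-DC regulation, and a digital control system) can be illustrated with idealized textbook relations. The application does not name a converter topology; this sketch assumes an ideal full-wave rectifier whose DC output equals the AC peak (V_rms times the square root of 2, ignoring diode drops and ripple) and a step-down (buck) DC-to-DC converter in continuous conduction, where V_out = D * V_in. The function names, the 0.95 efficiency in the toy plant model, and the controller gain are hypothetical.

```python
import math


def rectified_dc_voltage(v_ac_rms: float) -> float:
    """Ideal AC-to-DC stage: DC level equals the sine-wave peak (no diode drop or ripple)."""
    return v_ac_rms * math.sqrt(2.0)


def buck_duty_cycle(v_in: float, v_out_target: float) -> float:
    """Ideal step-down DC-to-DC converter in continuous conduction: V_out = D * V_in."""
    if v_in <= 0.0:
        raise ValueError("input voltage must be positive")
    return min(max(v_out_target / v_in, 0.0), 1.0)


def control_step(duty: float, v_out_measured: float, v_out_target: float,
                 v_in: float, gain: float = 0.5) -> float:
    """One update by the digital control system.

    The duty cycle is nudged in proportion to the voltage error; because each
    correction accumulates on the previous duty cycle, the loop acts like an
    integral controller and removes steady-state error.
    """
    error = v_out_target - v_out_measured
    return min(max(duty + gain * error / v_in, 0.0), 1.0)


def simulate(v_in: float, v_target: float, efficiency: float = 0.95, steps: int = 30) -> float:
    """Run the loop against a toy plant V_out = efficiency * D * V_in; return the final V_out."""
    duty = buck_duty_cycle(v_in, v_target)  # feed-forward starting point from the ideal formula
    v_out = efficiency * duty * v_in
    for _ in range(steps):
        duty = control_step(duty, v_out, v_target, v_in)
        v_out = efficiency * duty * v_in
    return v_out
```

For an assumed 240 V RMS supply, the rectified level is about 339.4 V, and a hypothetical 48 V battery target gives an ideal duty cycle of roughly 0.14; the feedback loop then corrects for the assumed 5% loss that the ideal formula ignores.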

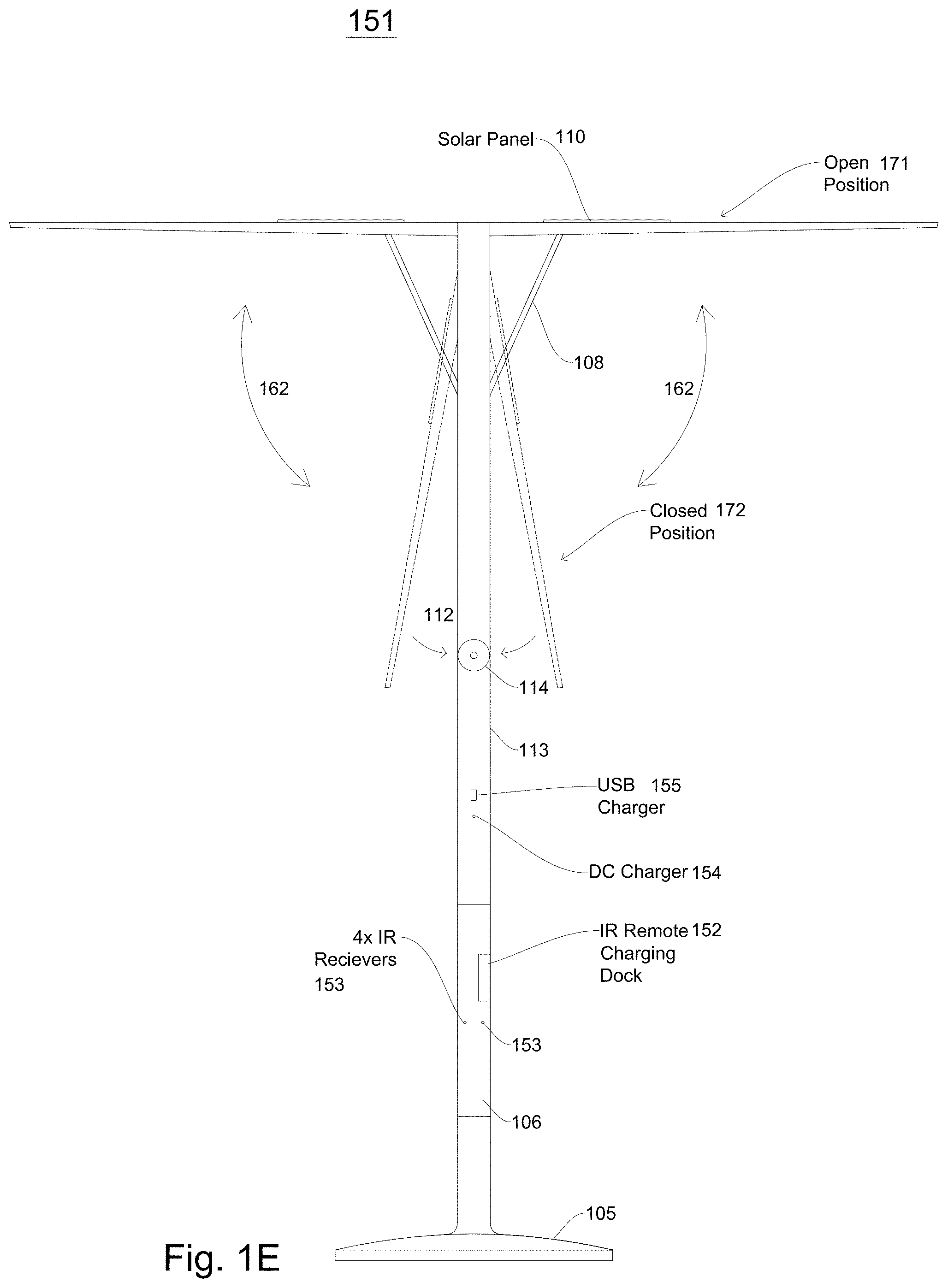

[0086] FIG. 1E illustrates a remote-controlled shading object or umbrella according to embodiments. In embodiments, a shading object or umbrella 151 comprises a base assembly 105, a stem assembly 106, a lower support assembly 113, an upper support assembly 112, a hinging assembly 114, one or more arm support assemblies 108, one or more arms 109, and/or one or more solar panels 110. In embodiments, shading object or umbrella 151 may comprise one or more infrared receivers 153, an infrared remote charging dock 152, a DC charger 155 and/or a universal serial bus (USB) charger 155.