Imaging Device And Method, Image Processing Device And Method, And Imaging Element

Mikami; Takuya ; et al.

U.S. patent application number 17/040956 was filed with the patent office on 2021-01-28 for imaging device and method, image processing device and method, and imaging element. The applicant listed for this patent is Sony Corporation. Invention is credited to Takuya Mikami, Yoshitaka Miyatani.

| Application Number | 17/040956 (Publication No. 20210029303) |

| Family ID | 1000005167602 |

| Filed Date | 2021-01-28 |

| United States Patent Application | 20210029303 |

| Kind Code | A1 |

| Mikami; Takuya ; et al. | January 28, 2021 |

IMAGING DEVICE AND METHOD, IMAGE PROCESSING DEVICE AND METHOD, AND IMAGING ELEMENT

Abstract

An imaging device and method with improved white balance control are disclosed. An image of an object is captured by an imaging element that includes a plurality of pixel output units that receives incident light that is incident without passing through either of an imaging lens and a pinhole. Each pixel output unit outputs one detection signal indicating an output pixel value modulated by an incident angle of the incident light, and on the basis of a detection signal acquired by the image capturing and acquired in the pixel output units, a white balance coefficient that is used for correcting a white balance of a restored image restored from the detection signal is set. The present disclosure can be applied to, for example, an imaging element, an imaging device, an image processing device, an electronic device, a system, or the like.

| Inventors: | Mikami; Takuya (Tokyo, JP); Miyatani; Yoshitaka (Tokyo, JP) |

| Applicant: | Sony Corporation |

| Family ID: | 1000005167602 |

| Appl. No.: | 17/040956 |

| Filed: | March 15, 2019 |

| PCT Filed: | March 15, 2019 |

| PCT NO: | PCT/JP2019/010765 |

| 371 Date: | September 23, 2020 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 5/243 20130101; H04N 5/23229 20130101 |

| International Class: | H04N 5/243 20060101 H04N005/243; H04N 5/232 20060101 H04N005/232 |

Foreign Application Data

| Date | Code | Application Number |

|---|---|---|

| Mar 30, 2018 | JP | 2018-068049 |

Claims

1. An imaging device comprising: an imaging element that includes a plurality of pixel output units that receives incident light that is incident without passing through either of an imaging lens and a pinhole and each outputs one detection signal indicating an output pixel value modulated by an incident angle of the incident light; and a coefficient setting unit that, on a basis of a detection signal acquired in the pixel output units of the imaging element, sets a white balance coefficient that is used for correcting a white balance of a restored image restored from the detection signal.

2. The imaging device according to claim 1, wherein the plurality of pixel output units has a configuration in which an incident angle directivity indicating a directivity of the output pixel value with respect to an incident angle of incident light from an object is independently settable in each of the pixel output units.

3. The imaging device according to claim 1, wherein the plurality of pixel output units has a configuration in which an incident angle directivity indicating a directivity with respect to an incident angle of incident light from an object is independently settable in each of the pixel output units.

4. The imaging device according to claim 1, wherein the coefficient setting unit sets the white balance coefficient on a basis of the detection signal acquired in a part of the pixel output units of the imaging element.

5. The imaging device according to claim 4, wherein the part of the pixel output units includes at least one pixel output unit of each color.

6. The imaging device according to claim 4, wherein the part of the pixel output units includes a single pixel output unit for each color.

7. The imaging device according to claim 4, wherein the part of the pixel output units includes a single pixel output unit or a plurality of pixel output units in which the detection signal is read out before other pixel output units of the plurality of pixel output units.

8. The imaging device according to claim 4, wherein the part of the pixel output units includes a plurality of pixel output units, and the coefficient setting unit sets the white balance coefficient on a basis of a value of the detection signal read from each of the part of the pixel output units.

9. The imaging device according to claim 4, wherein the part of the pixel output units is formed outside an effective pixel region where the detection signal is acquired.

10. The imaging device according to claim 9, wherein the part of the pixel output units is formed in a region around the effective pixel region.

11. The imaging device according to claim 4, wherein the part of the pixel output units includes a pixel output unit having a directivity in a predetermined direction.

12. The imaging device according to claim 11, wherein the part of the pixel output units includes a pixel output unit having a directivity in a forward direction in a center of the imaging element.

13. The imaging device according to claim 1, further comprising an association unit that associates the white balance coefficient set by the coefficient setting unit with the detection signal as metadata.

14. The imaging device according to claim 1, further comprising a restoration unit that restores the restored image with a corrected white balance from the detection signal using a restoration matrix.

15. The imaging device according to claim 14, wherein the restoration unit restores the restored image with a corrected white balance from the detection signal by using the restoration matrix with a coefficient corrected by the white balance coefficient.

16. The imaging device according to claim 14, wherein the restoration unit corrects using the white balance coefficient a pixel value of the restored image restored from the detection signal using the restoration matrix, to generate the restored image with a corrected white balance.

17. An imaging method comprising: capturing an image of an object by an imaging element that includes a plurality of pixel output units that receives incident light that is incident without passing through either of an imaging lens and a pinhole and each outputs one detection signal indicating an output pixel value modulated by an incident angle of the incident light; and on a basis of a detection signal acquired by the image capturing and acquired in the pixel output units of the imaging element, setting a white balance coefficient that is used for correcting a white balance of a restored image restored from the detection signal.

18. An image processing device comprising a coefficient setting unit that sets a white balance coefficient on a basis of a detection signal acquired by capturing an image of an object by an imaging element that includes a plurality of pixel output units that receives incident light that is incident without passing through either of an imaging lens and a pinhole and each outputs one detection signal indicating an output pixel value modulated by an incident angle of the incident light, in which the detection signal is acquired in the pixel output units, and the white balance coefficient is used for correcting a white balance of a restored image restored from the detection signal.

19. An image processing method comprising setting a white balance coefficient on a basis of a detection signal acquired by capturing an image of an object by an imaging element that includes a plurality of pixel output units that receives incident light that is incident without passing through either of an imaging lens and a pinhole and each outputs one detection signal indicating an output pixel value modulated by an incident angle of the incident light, in which the detection signal is acquired in the pixel output units, and the white balance coefficient is used for correcting a white balance of a restored image restored from the detection signal.

20. An imaging element comprising: a plurality of pixel output units that receives incident light that is incident without passing through either of an imaging lens and a pinhole and each outputs one detection signal indicating an output pixel value modulated by an incident angle of the incident light; and a coefficient setting unit that, on a basis of a detection signal acquired in the pixel output units, sets a white balance coefficient that is used for correcting a white balance of a restored image restored from the detection signal.

Description

TECHNICAL FIELD

[0001] The present technology relates to an imaging device and method, an image processing device and method, and an imaging element, and particularly to an imaging device and method, an image processing device and method, and an imaging element which enable white balance control to be performed more easily.

BACKGROUND ART

[0002] Conventionally, an imaging element is generally used in combination with an imaging lens that focuses light on the imaging element. The imaging lens guides light from an object plane to each pixel of the imaging element so as to reproduce a light intensity distribution of the object plane, so that the imaging element can acquire a detection signal of the level corresponding to the light intensity distribution in each pixel, and can acquire in its entirety a captured image of the object.

[0003] However, using an imaging lens increases the physical size of the device. Accordingly, an imaging element that does not use an imaging lens has been considered (see, for example, Patent Document 1, Patent Document 2, and Non-Patent Document 1). An imaging device to which such an imaging element is applied restores a restored image by performing, for example, a predetermined calculation on a detection image generated by the imaging element.

[0004] Incidentally, in the imaging device, in order to correct the color tone of a captured image, a process called white balance control is performed in some cases to correct a white reference in the captured image in consideration of the influence of the color of light in the imaging environment. The white balance control is performed by using, for example, a ratio of integrated values acquired by integrating RGB pixel values for every color over a reasonably wide range.

CITATION LIST

Patent Document

[0005] Patent Document 1: WO 2016/123529

[0006] Patent Document 2: Japanese Translation of PCT International Application Publication No. 2016-510910

Non-Patent Document

[0007] Non-Patent Document 1: M. Salman Asif and four others, "FlatCam: Replacing Lenses with Masks and Computation", 2015 IEEE International Conference on Computer Vision Workshop (ICCVW), 2015, pp. 663 to 666.

SUMMARY OF THE INVENTION

Problems to be Solved by the Invention

[0008] However, in a case where the white balance control is performed by such a method in an imaging device to which an imaging element not using an imaging lens is applied as described in Patent Document 1, Patent Document 2, and Non-Patent Document 1, a restored image must first be restored from a detection image acquired with the imaging element, and the white balance is then controlled by using a ratio of integrated values acquired by integrating RGB pixel values for every color over a reasonably wide range of the restored image. This requires complicated processing.

[0009] The present disclosure has been made in view of such circumstances, and is to make it possible to more easily perform white balance control.

Solutions to Problems

[0010] An imaging device according to one aspect of the present technology is an imaging device including an imaging element that includes a plurality of pixel output units that receives incident light that is incident without passing through either of an imaging lens and a pinhole and each outputs one detection signal indicating an output pixel value modulated by an incident angle of the incident light, and a coefficient setting unit that, on the basis of a detection signal acquired in the pixel output units of the imaging element, sets a white balance coefficient that is used for correcting a white balance of a restored image restored from the detection signal.

[0011] An imaging method according to one aspect of the present technology is an imaging method including capturing an image of an object by an imaging element that includes a plurality of pixel output units that receives incident light that is incident without passing through either of an imaging lens and a pinhole and each outputs one detection signal indicating an output pixel value modulated by an incident angle of the incident light, and on the basis of a detection signal acquired by the image capturing and acquired in the pixel output units of the imaging element, setting a white balance coefficient that is used for correcting a white balance of a restored image restored from the detection signal.

[0012] An image processing device according to another aspect of the present technology is an image processing device including a coefficient setting unit that sets a white balance coefficient on the basis of a detection signal acquired by capturing an image of an object by an imaging element that includes a plurality of pixel output units that receives incident light that is incident without passing through either of an imaging lens and a pinhole and each outputs one detection signal indicating an output pixel value modulated by an incident angle of the incident light, in which the detection signal is acquired in the pixel output units, and the white balance coefficient is used for correcting a white balance of a restored image restored from the detection signal.

[0013] An image processing method according to another aspect of the present technology is an image processing method including setting a white balance coefficient on the basis of a detection signal acquired by capturing an image of an object by an imaging element that includes a plurality of pixel output units that receives incident light that is incident without passing through either of an imaging lens and a pinhole and each outputs one detection signal indicating an output pixel value modulated by an incident angle of the incident light, in which the detection signal is acquired in the pixel output units, and the white balance coefficient is used for correcting a white balance of a restored image restored from the detection signal.

[0014] An imaging element according to still another aspect of the present technology is an imaging element including a plurality of pixel output units that receives incident light that is incident without passing through either of an imaging lens and a pinhole and each outputs one detection signal indicating an output pixel value modulated by an incident angle of the incident light, and a coefficient setting unit that, on the basis of a detection signal acquired in the pixel output units, sets a white balance coefficient that is used for correcting a white balance of a restored image restored from the detection signal.

[0015] In the imaging device and method according to one aspect of the present technology, an image of an object is captured by an imaging element that includes a plurality of pixel output units that receives incident light that is incident without passing through either of an imaging lens and a pinhole and each outputs one detection signal indicating an output pixel value modulated by an incident angle of the incident light, and on the basis of a detection signal acquired by the image capturing and acquired in the pixel output units of the imaging element, a white balance coefficient is set that is used for correcting a white balance of a restored image restored from the detection signal.

[0016] In the image processing device and method according to another aspect of the present technology, a white balance coefficient is set on the basis of a detection signal acquired by capturing an image of an object by an imaging element that includes a plurality of pixel output units that receives incident light that is incident without passing through either of an imaging lens and a pinhole and each outputs one detection signal indicating an output pixel value modulated by an incident angle of the incident light, in which the detection signal is acquired in the pixel output units, and the white balance coefficient is used for correcting a white balance of a restored image restored from the detection signal.

[0017] The imaging element according to still another aspect of the present technology includes a plurality of pixel output units that receives incident light that is incident without passing through either of an imaging lens and a pinhole and each outputs one detection signal indicating an output pixel value modulated by an incident angle of the incident light, and a coefficient setting unit that, on the basis of a detection signal acquired in the pixel output units, sets a white balance coefficient that is used for correcting a white balance of a restored image restored from the detection signal.

Effects of the Invention

[0018] According to the present technology, it is possible to capture an image of an object or process an image. Further, according to the present technology, white balance control can be performed more easily.

BRIEF DESCRIPTION OF DRAWINGS

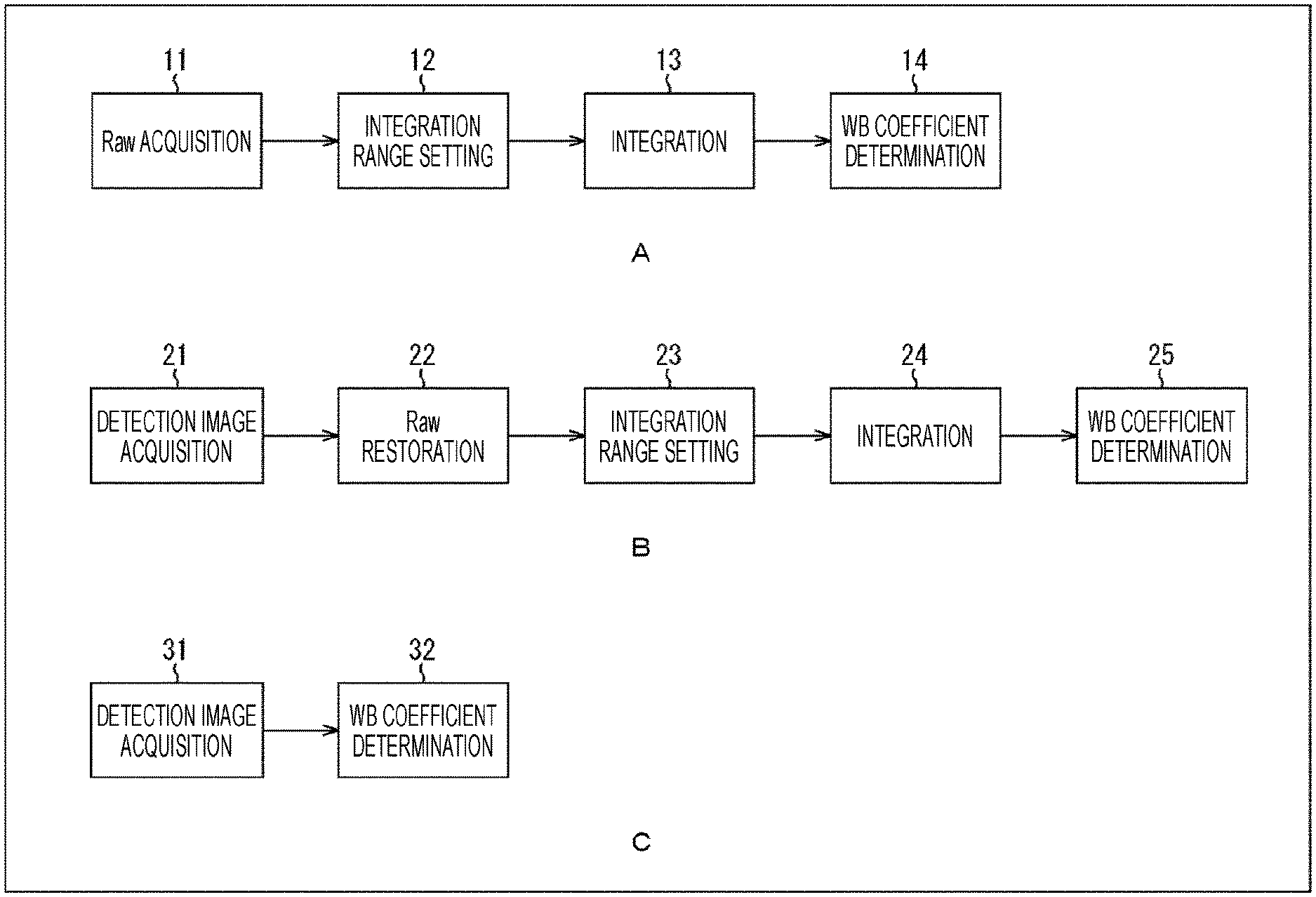

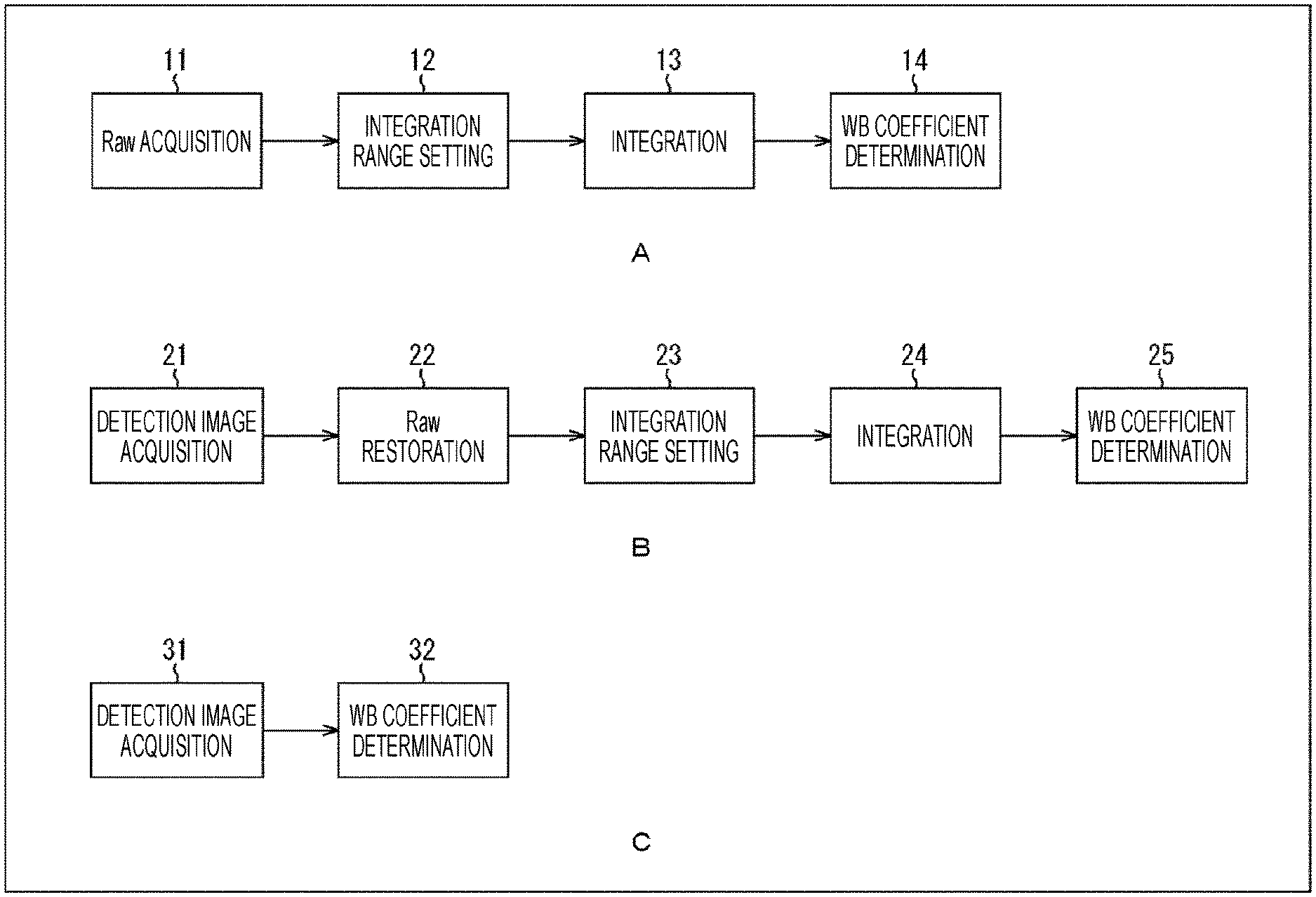

[0019] FIG. 1 is a diagram describing an example of a processing flow up to white balance control.

[0020] FIG. 2 is a block diagram illustrating a main configuration example of an imaging element to which a technique of the present disclosure is applied.

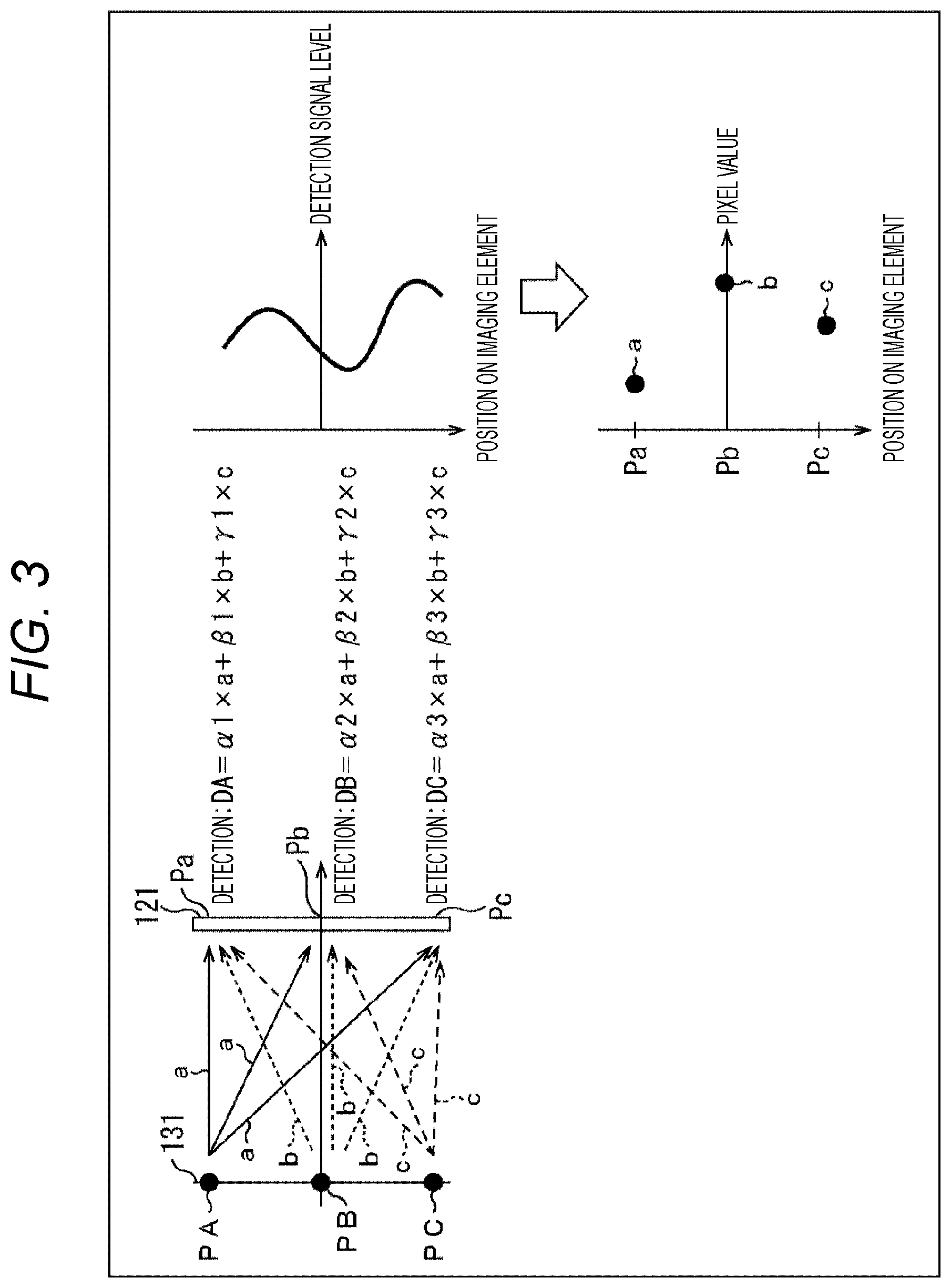

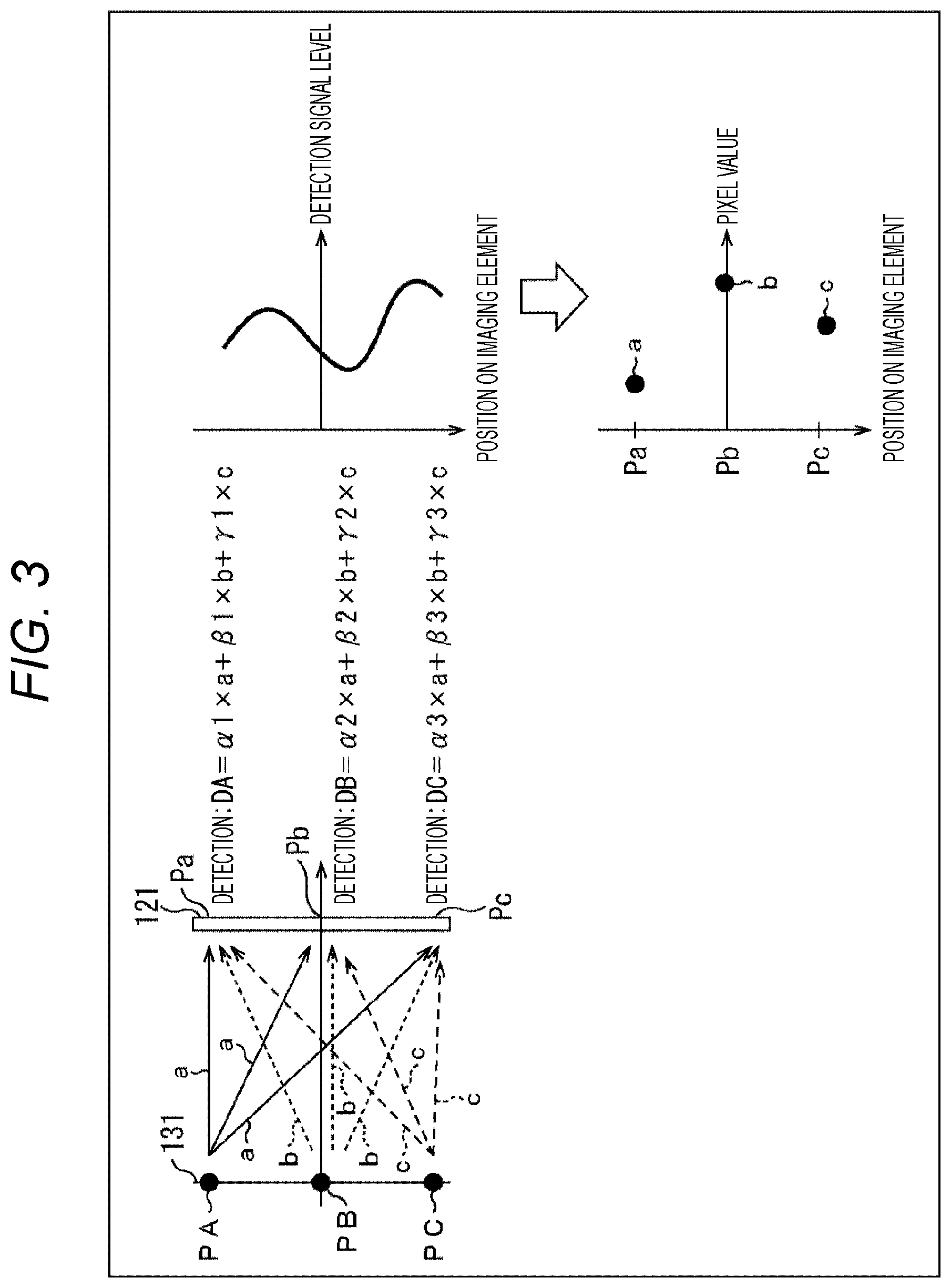

[0021] FIG. 3 is a diagram describing a principle of imaging by the imaging element.

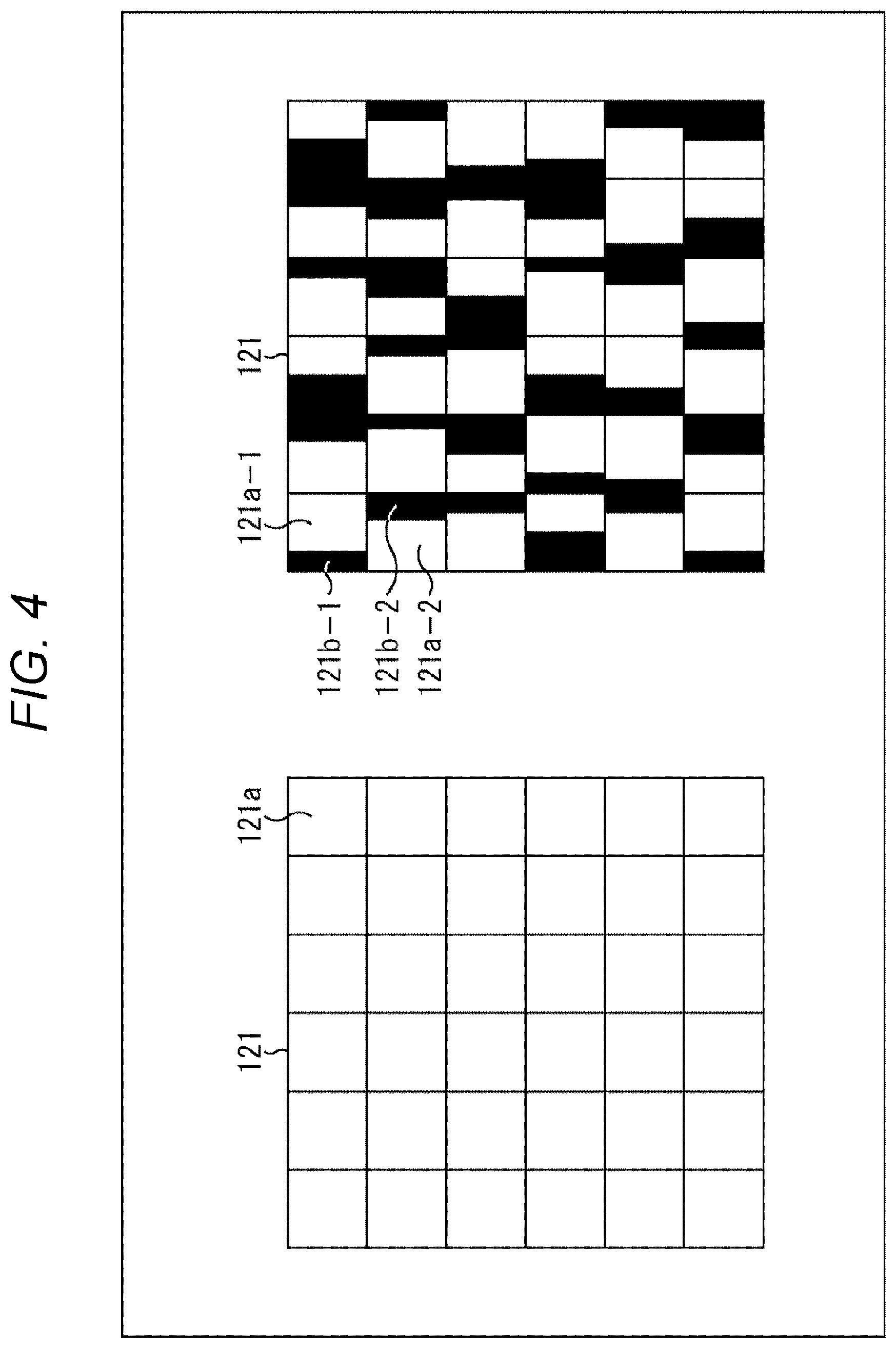

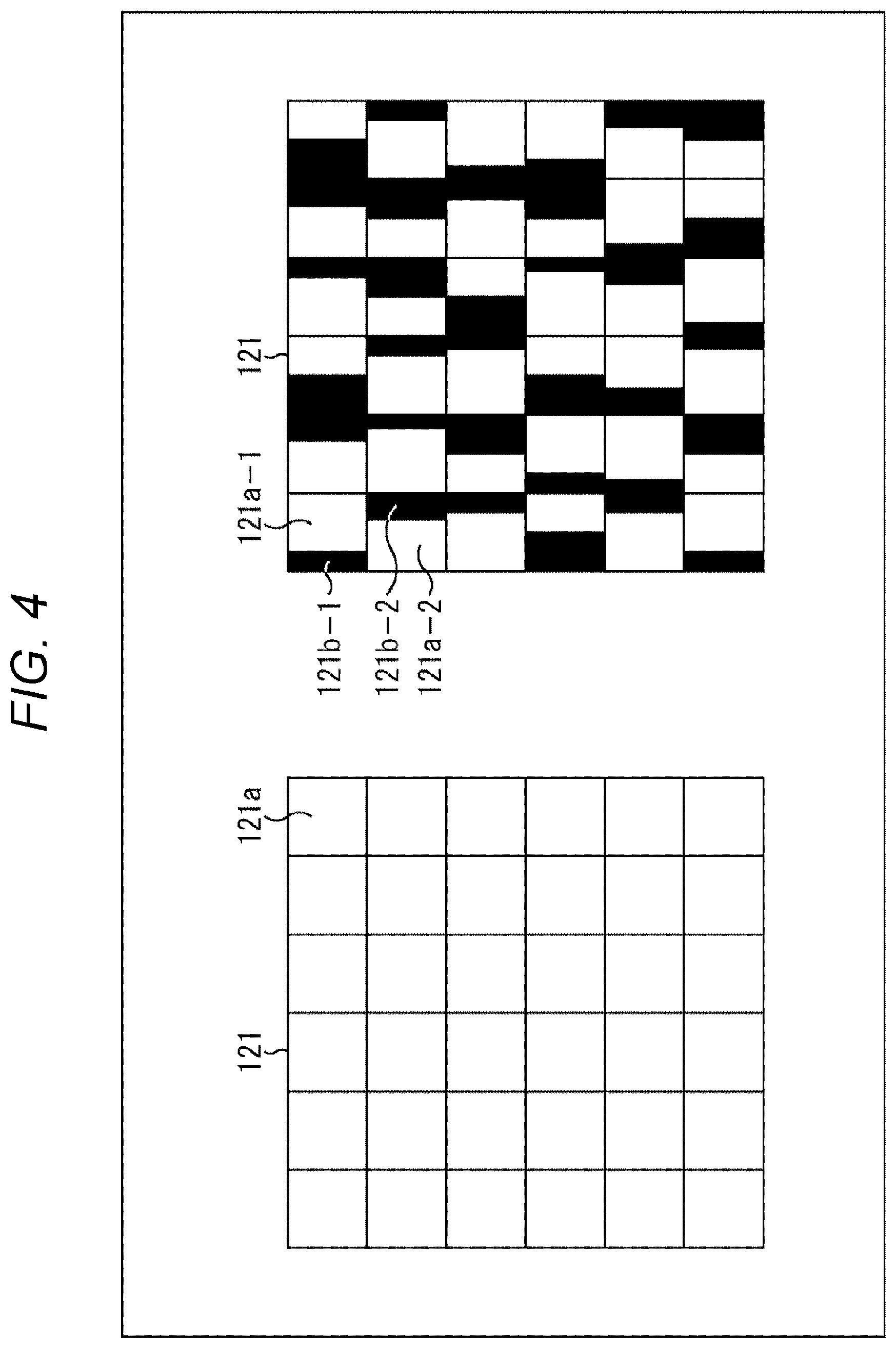

[0022] FIG. 4 is a diagram describing a difference in configuration between a conventional imaging element and the imaging element of the present disclosure.

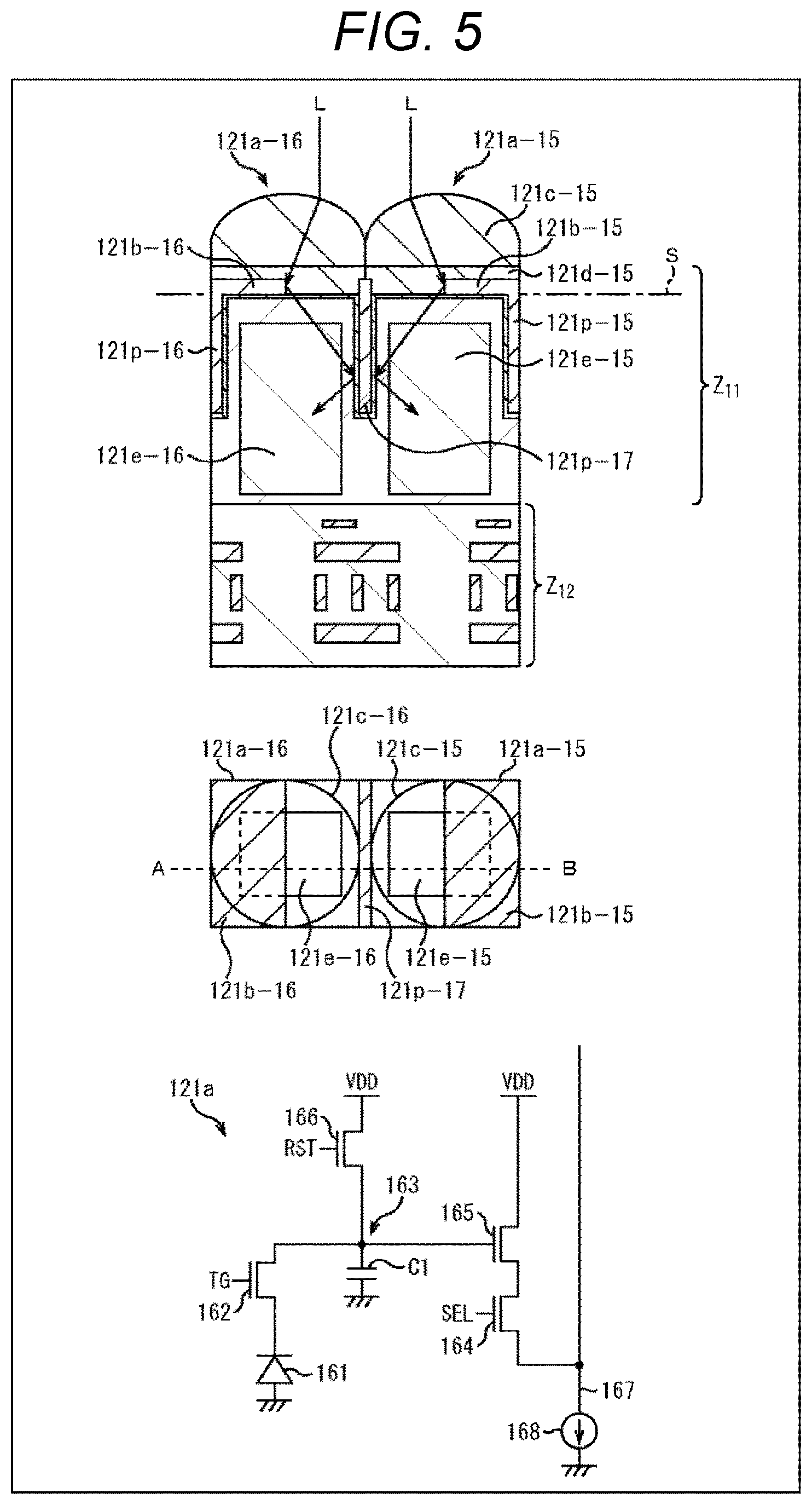

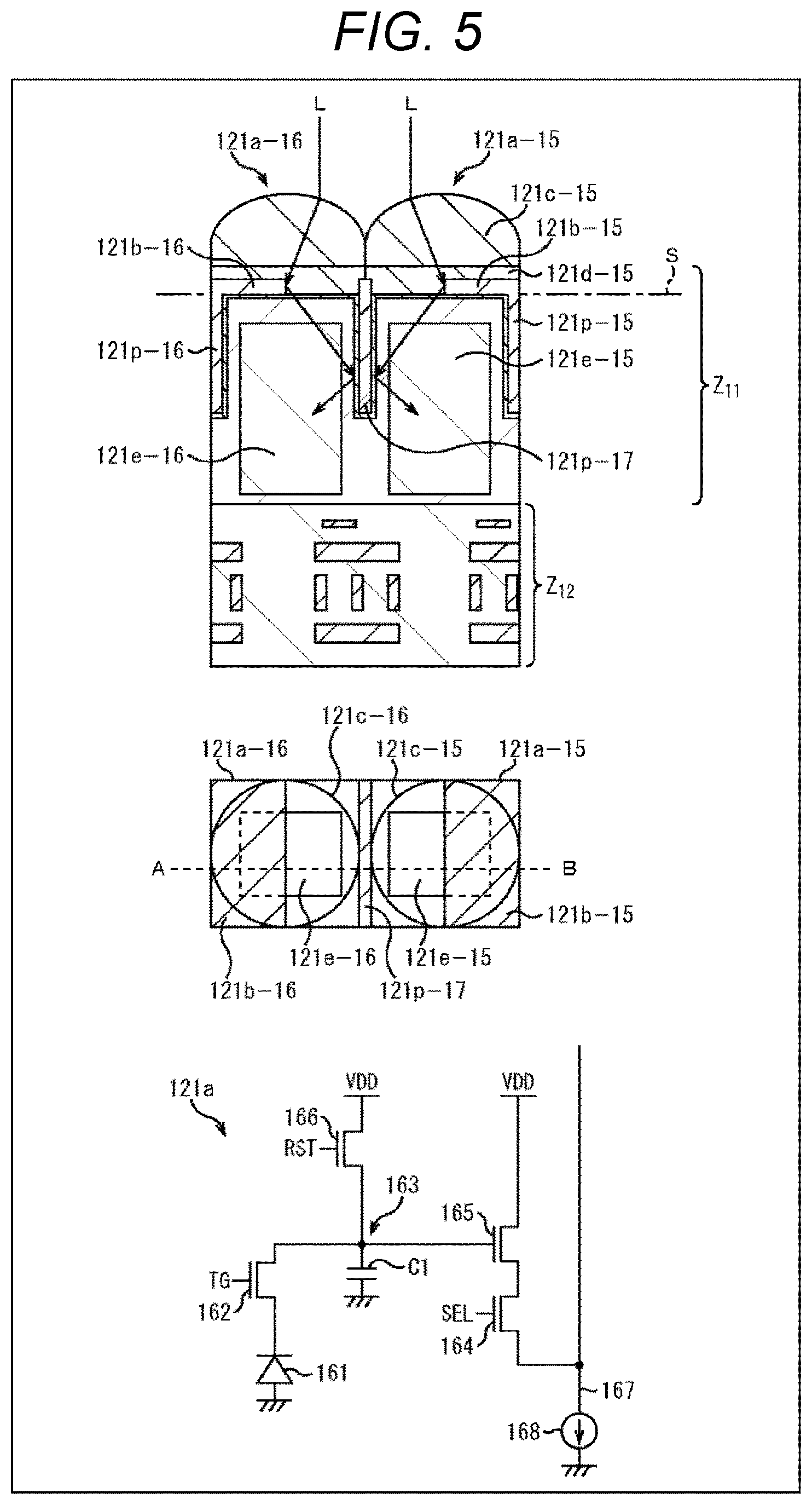

[0023] FIG. 5 is a diagram describing a first configuration example of the imaging element.

[0024] FIG. 6 is a diagram describing the first configuration example of the imaging element.

[0025] FIG. 7 is a diagram describing principles of generation of an incident angle directivity.

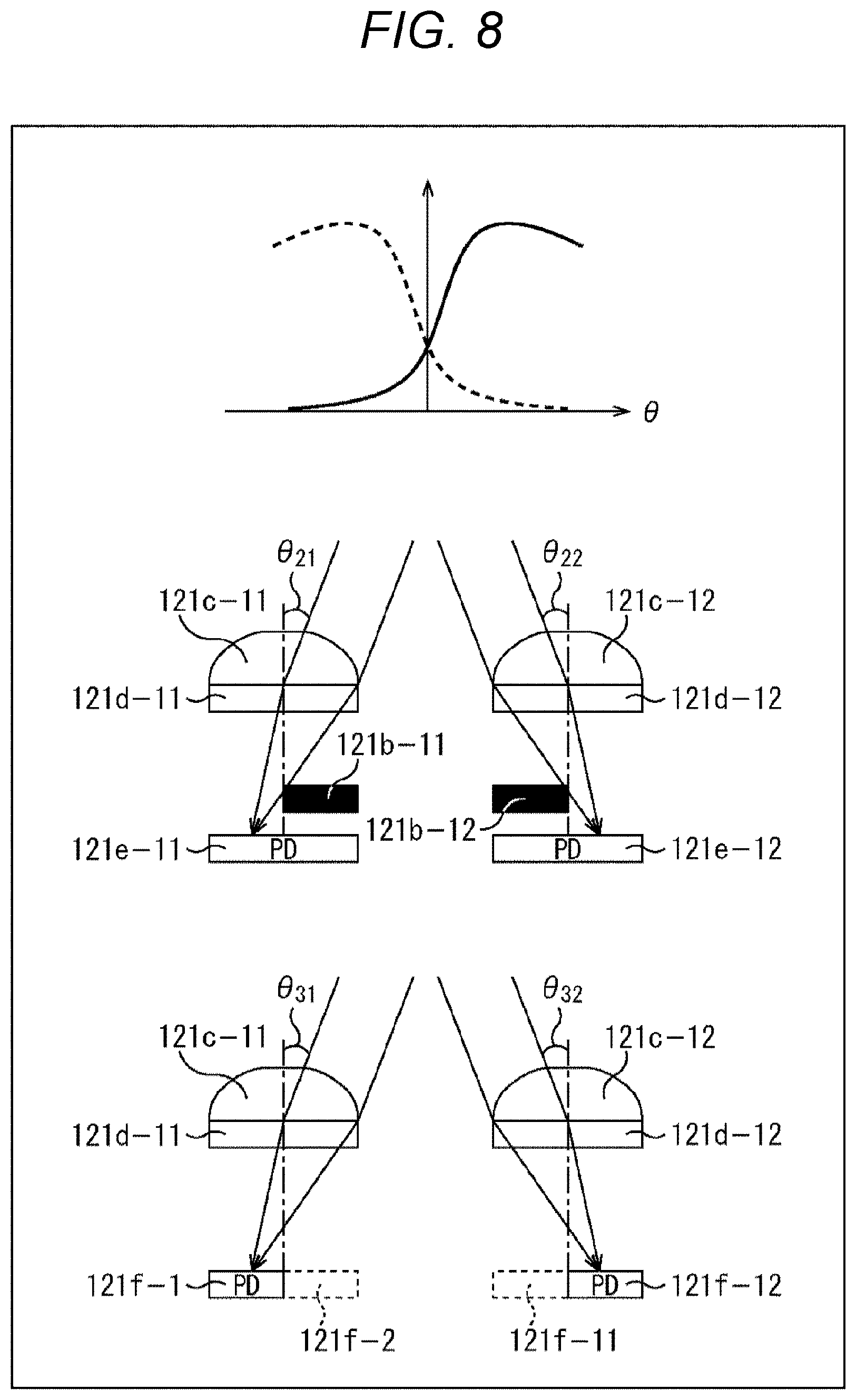

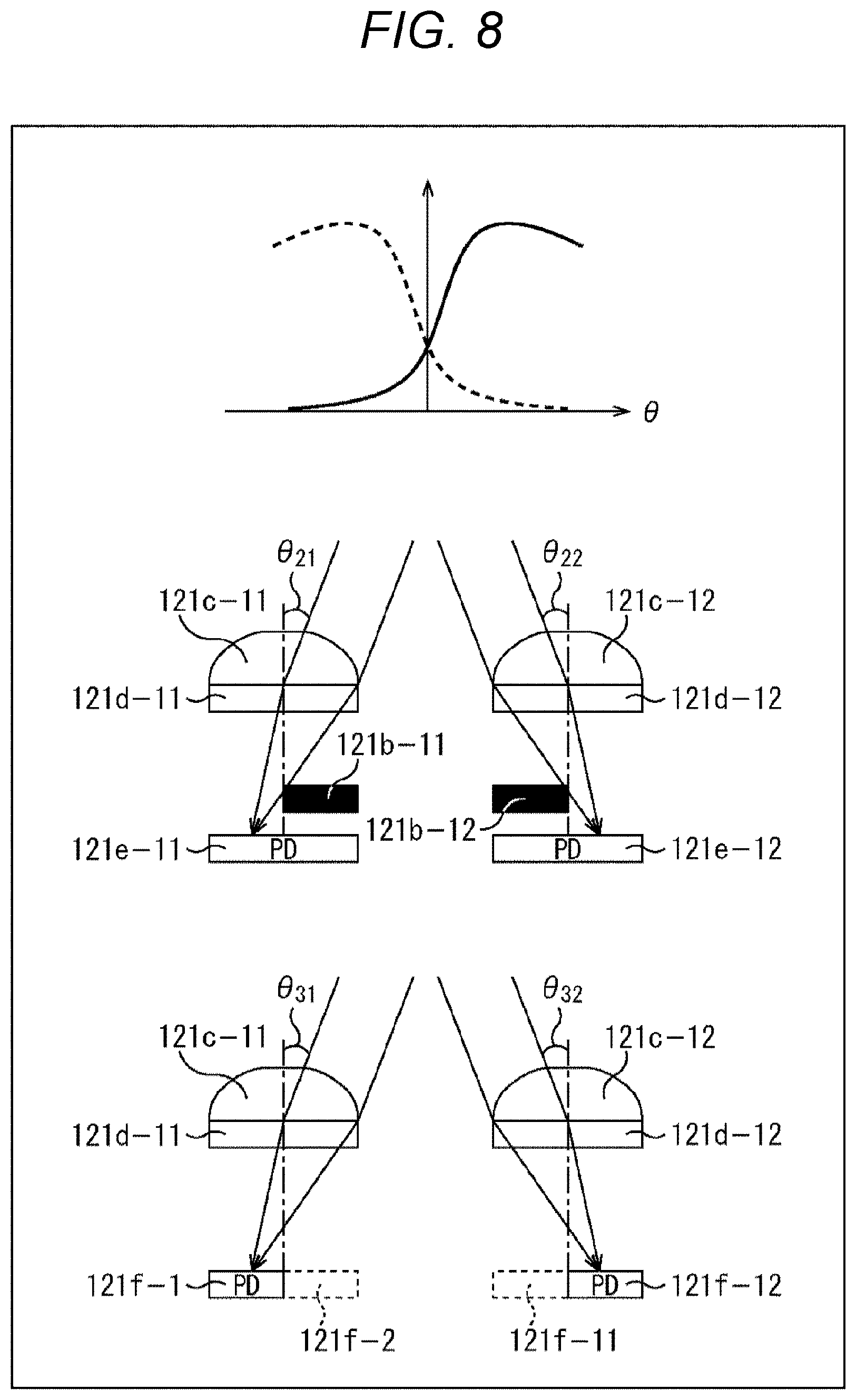

[0026] FIG. 8 is a diagram describing a change in the incident angle directivity using an on-chip lens.

[0027] FIG. 9 is a diagram describing a design of the incident angle directivity.

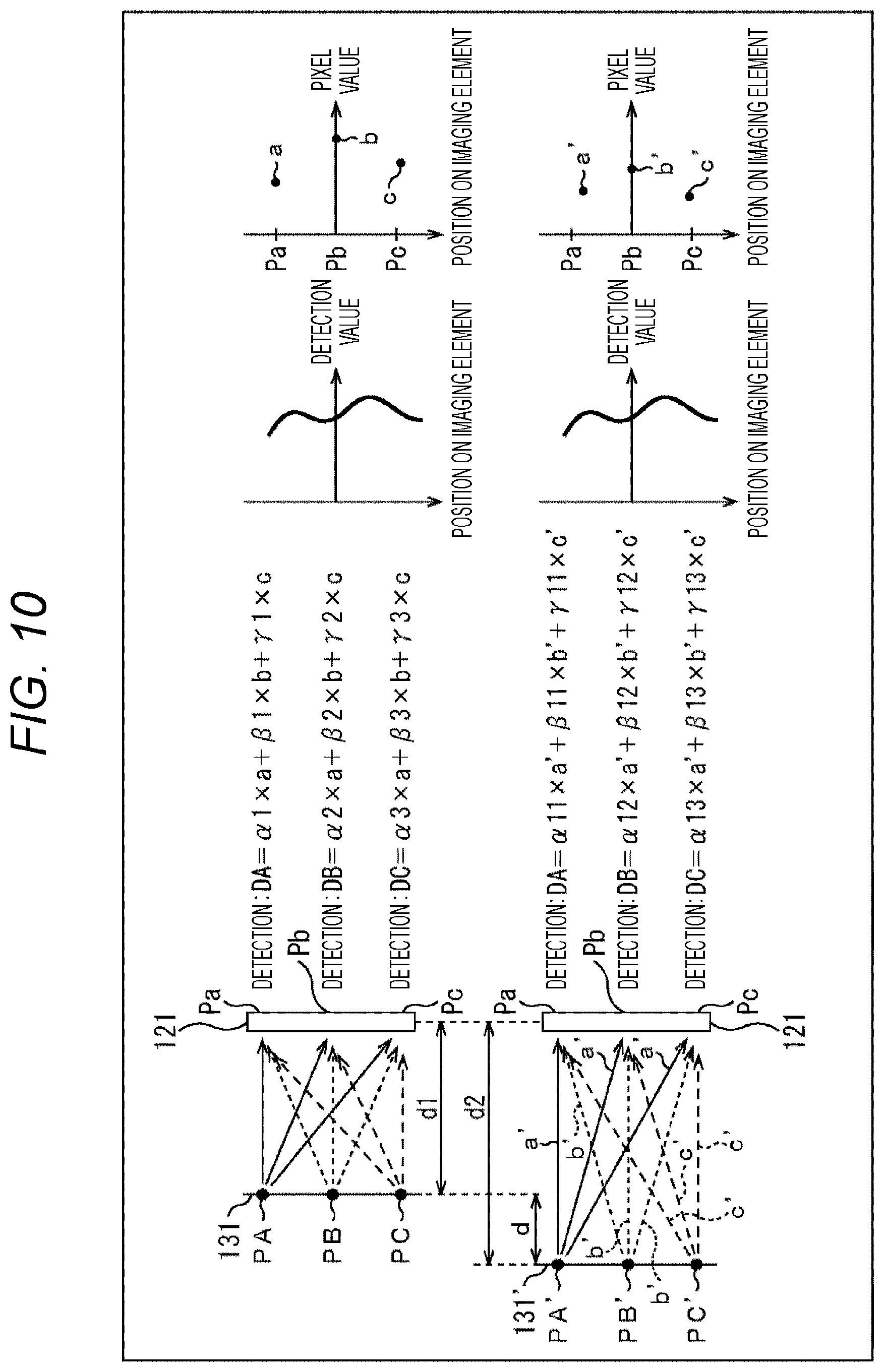

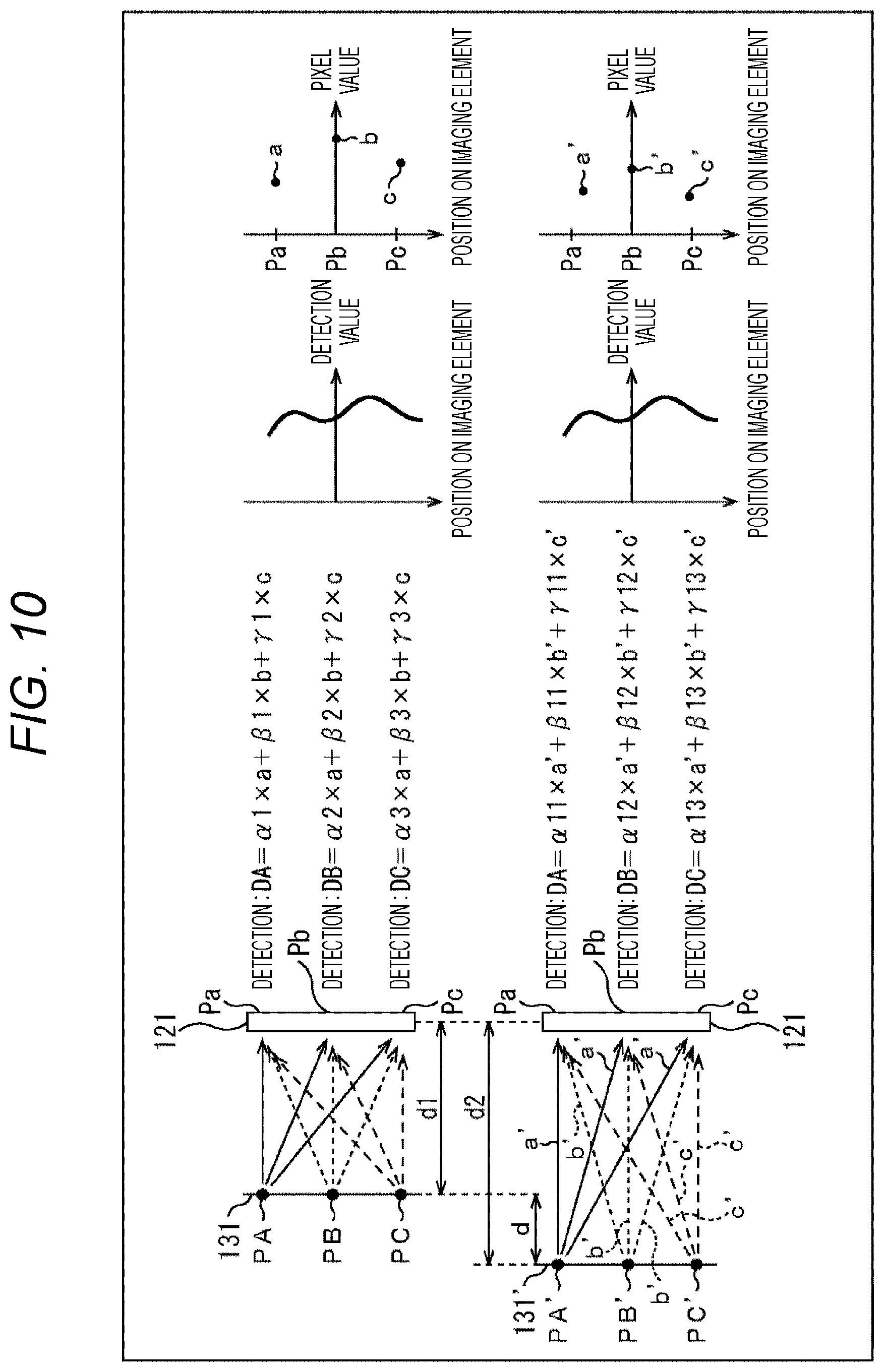

[0028] FIG. 10 is a diagram describing a relationship between an object distance and coefficients representing the incident angle directivity.

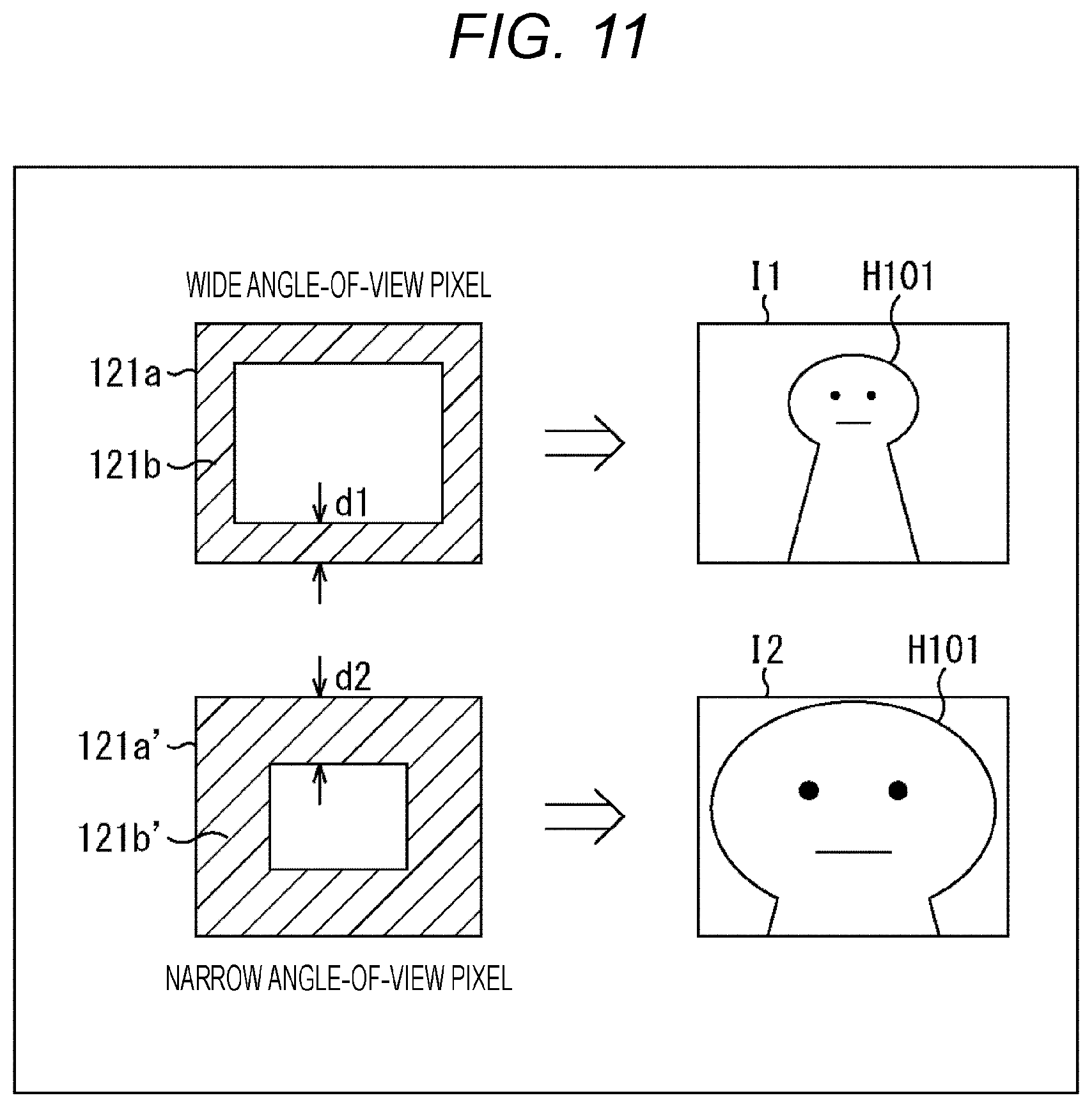

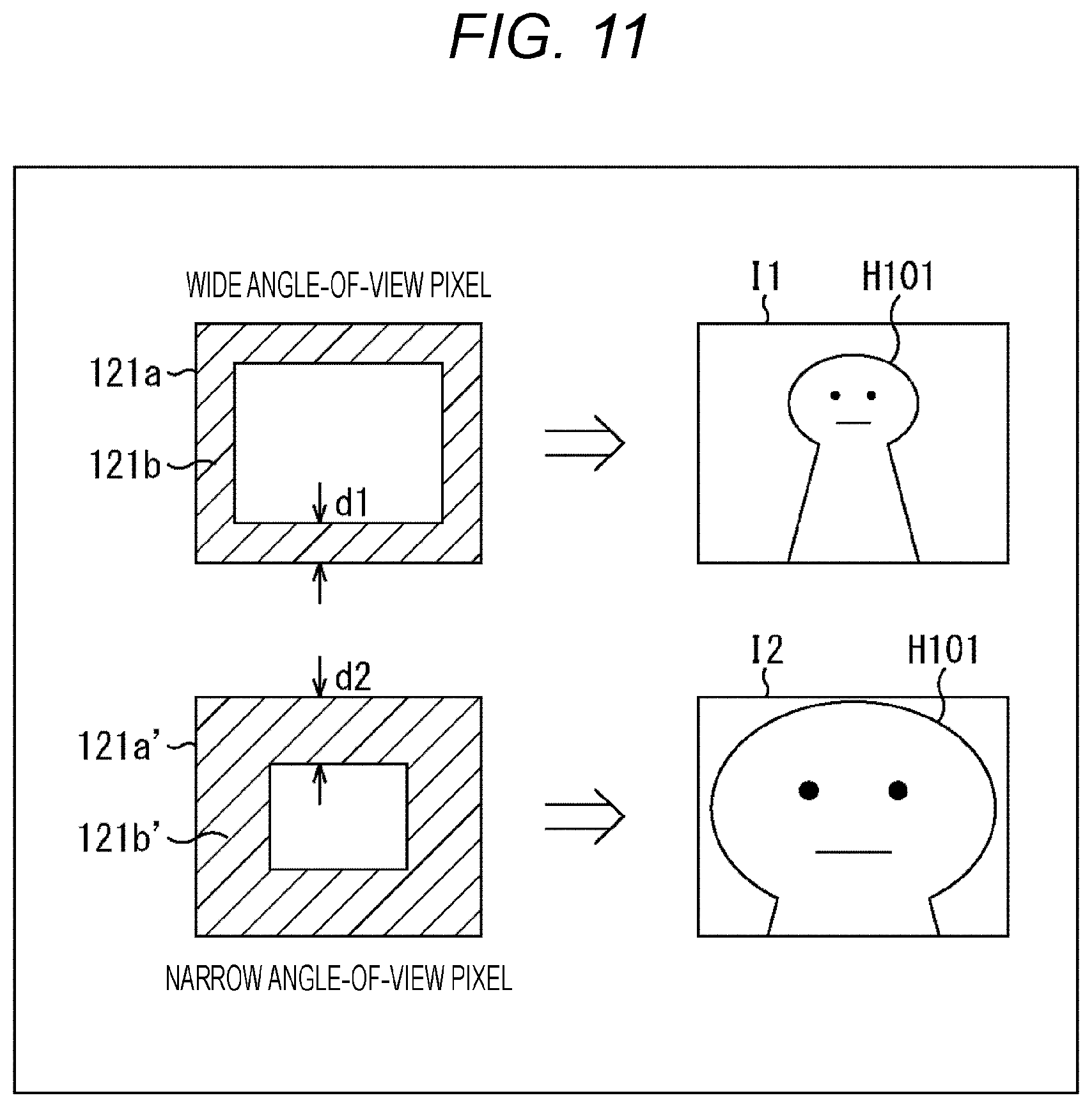

[0029] FIG. 11 is a diagram describing the relationship between narrow angle-of-view pixels and wide angle-of-view pixels.

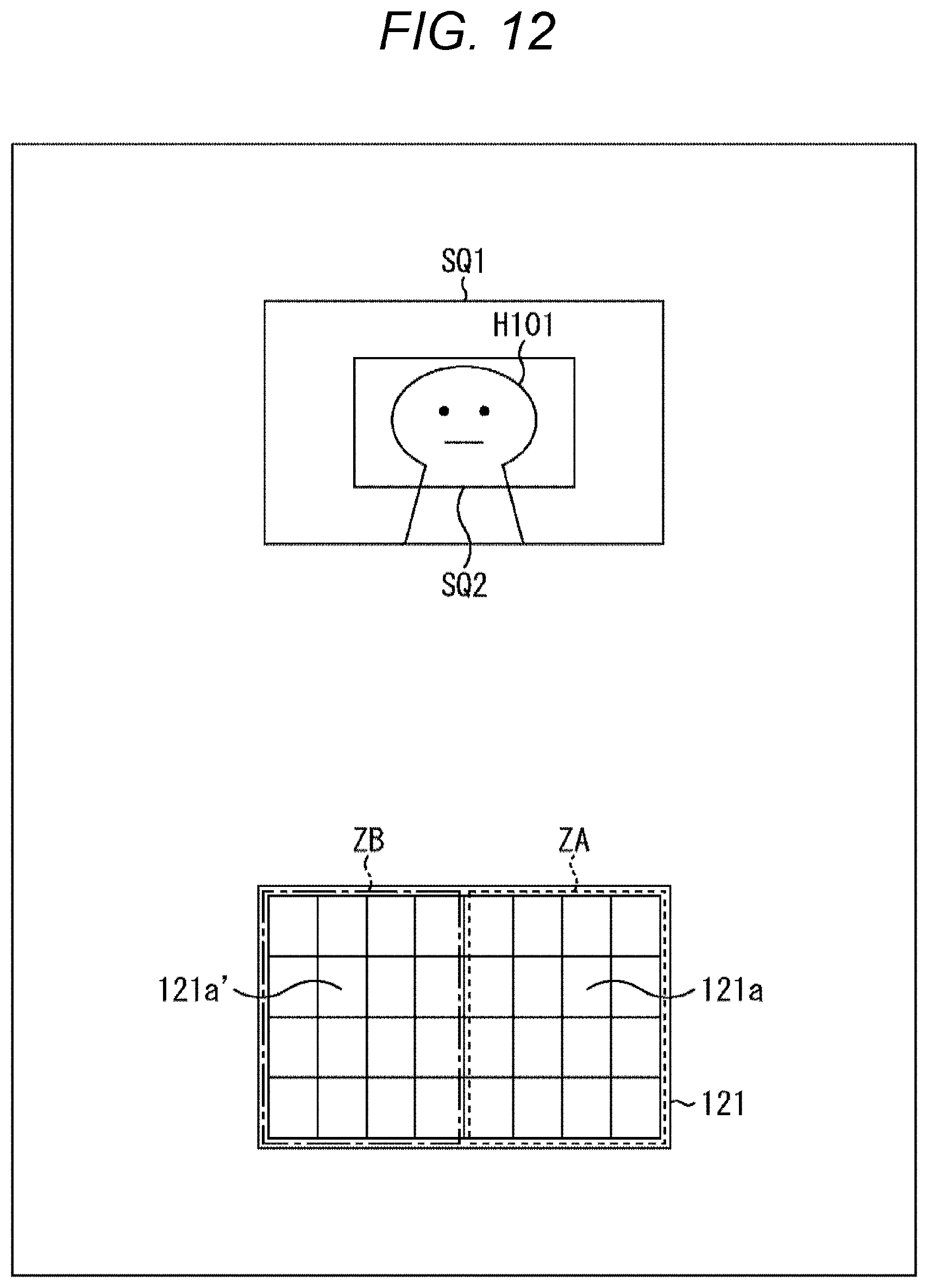

[0030] FIG. 12 is a diagram describing the relationship between the narrow angle-of-view pixels and the wide angle-of-view pixels.

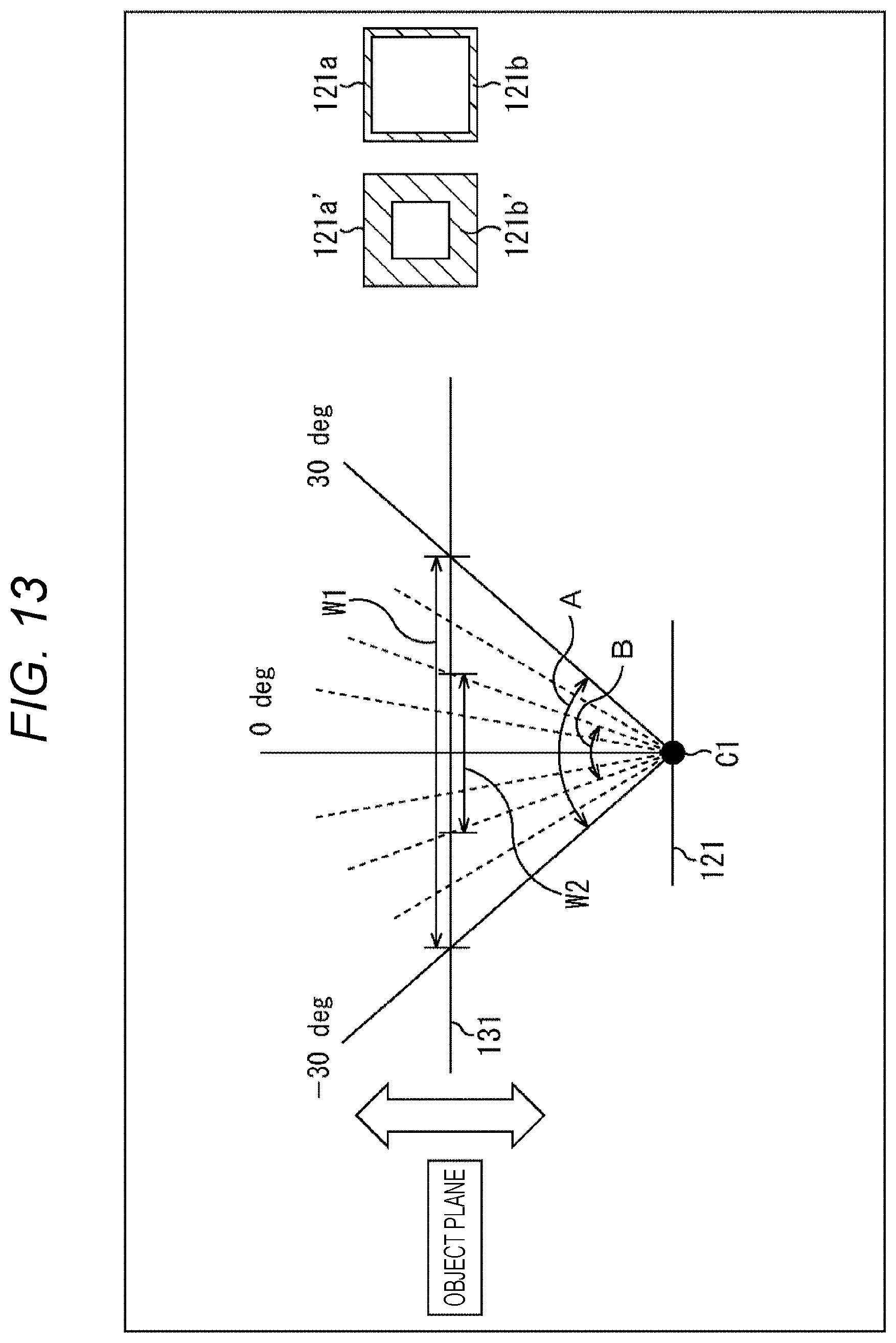

[0031] FIG. 13 is a diagram describing the relationship between the narrow angle-of-view pixels and the wide angle-of-view pixels.

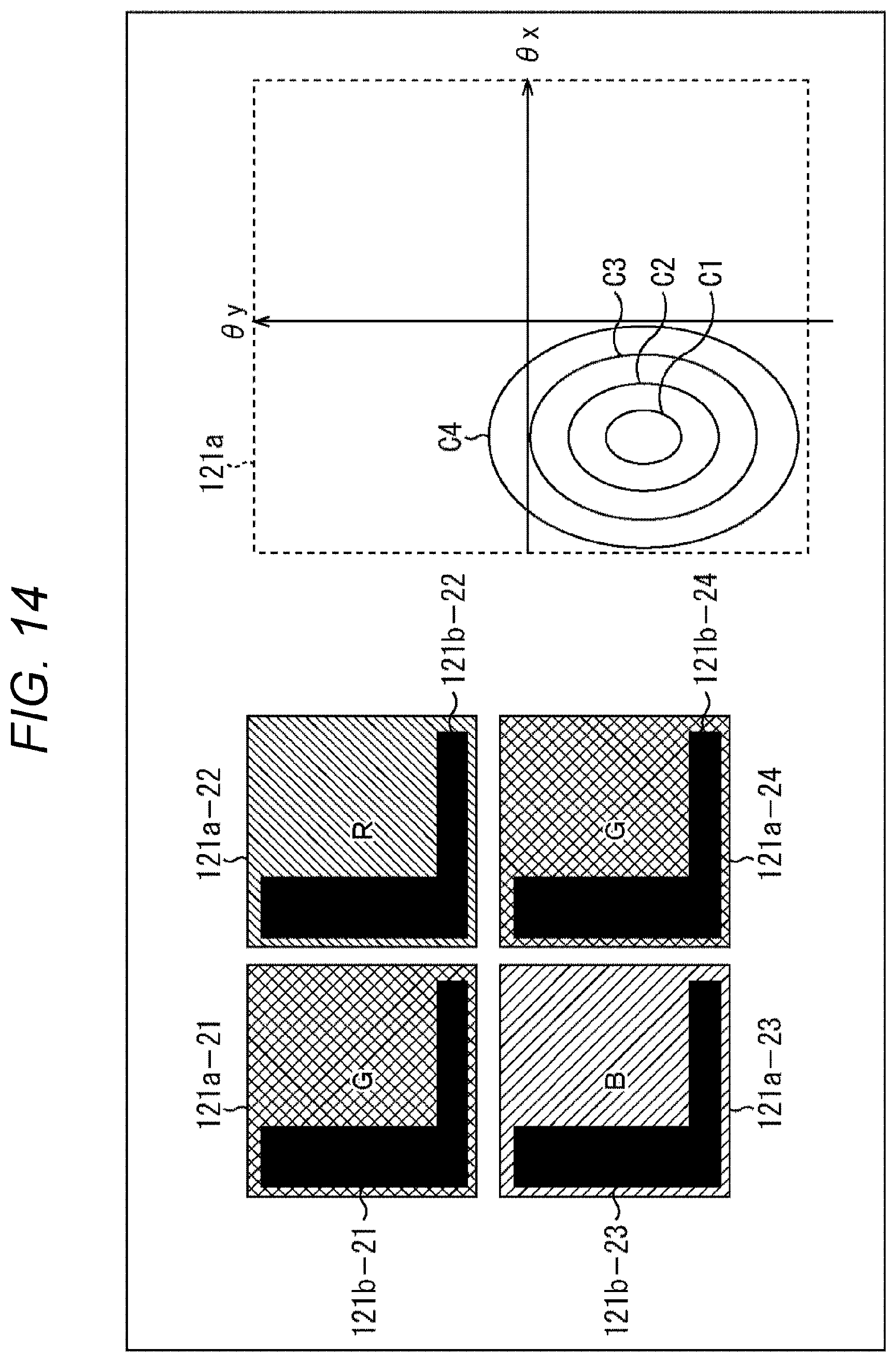

[0032] FIG. 14 is a diagram describing a modification example.

[0033] FIG. 15 is a diagram describing a modification example.

[0034] FIG. 16 is a diagram describing a modification example.

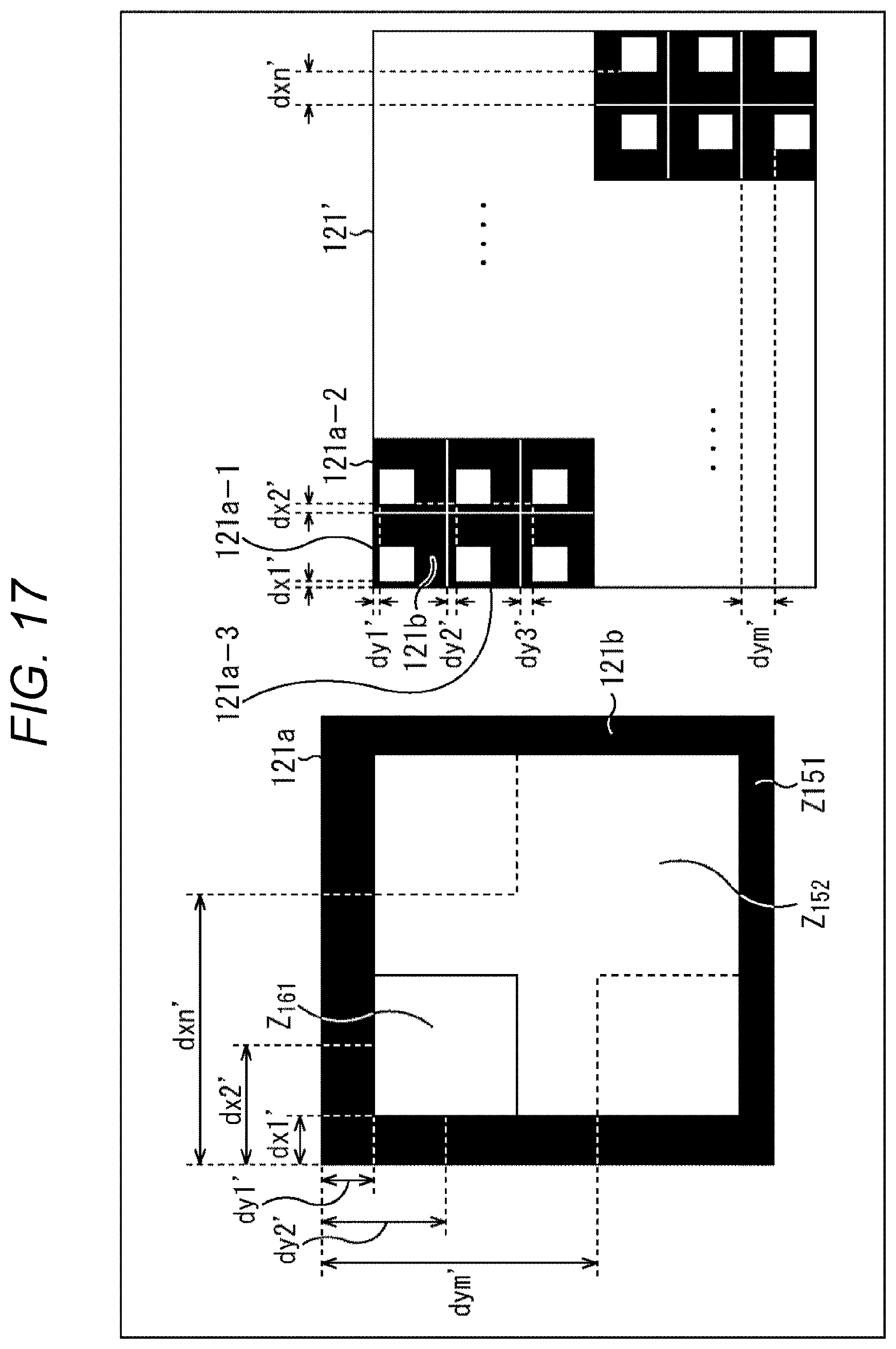

[0035] FIG. 17 is a diagram describing an example of changing an angle of view by applying a modification example.

[0036] FIG. 18 is a diagram describing an example of combining pixels of a plurality of angles of view when the angle of view is changed by applying the modification example.

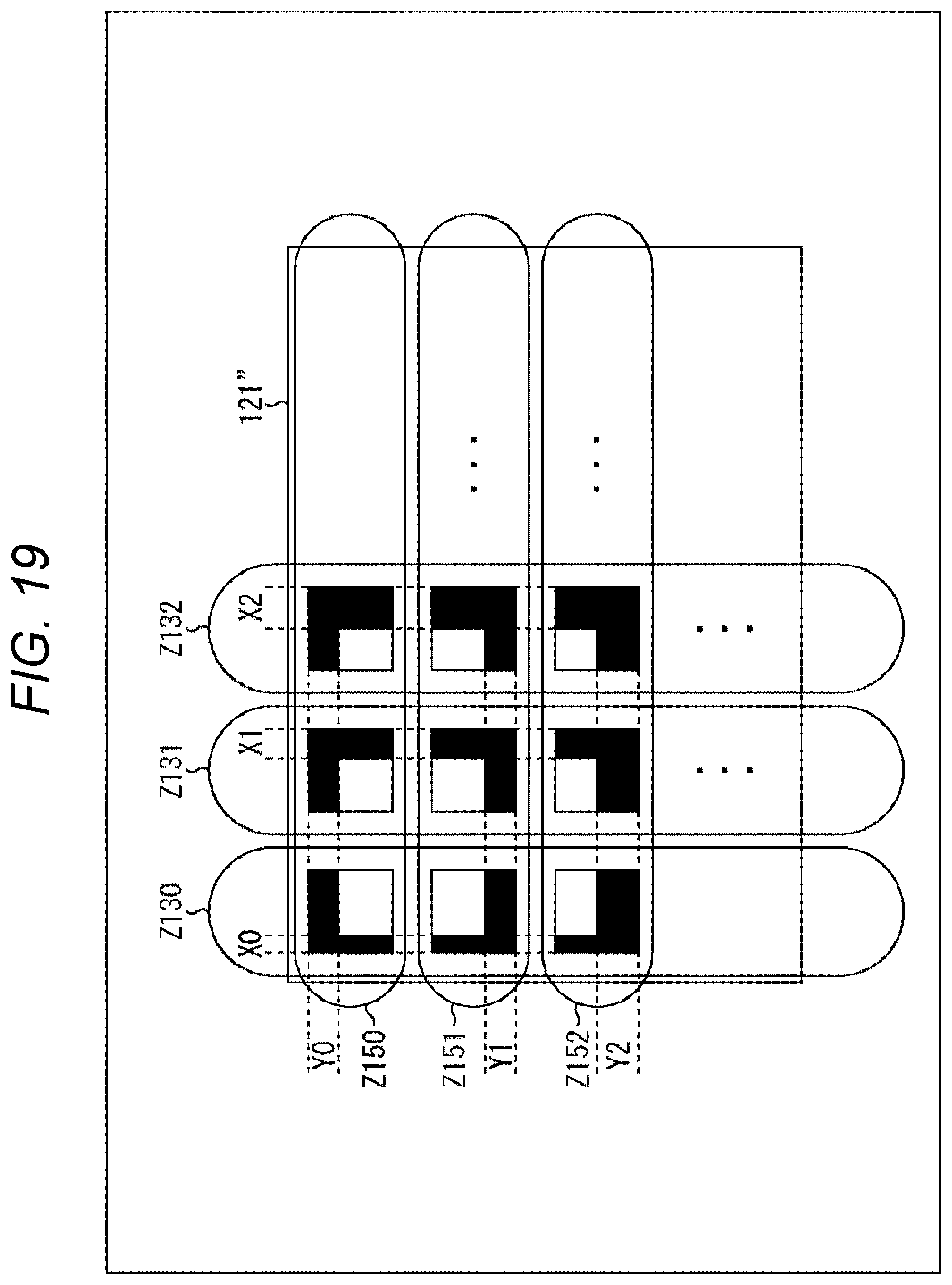

[0037] FIG. 19 is a diagram describing a modification example.

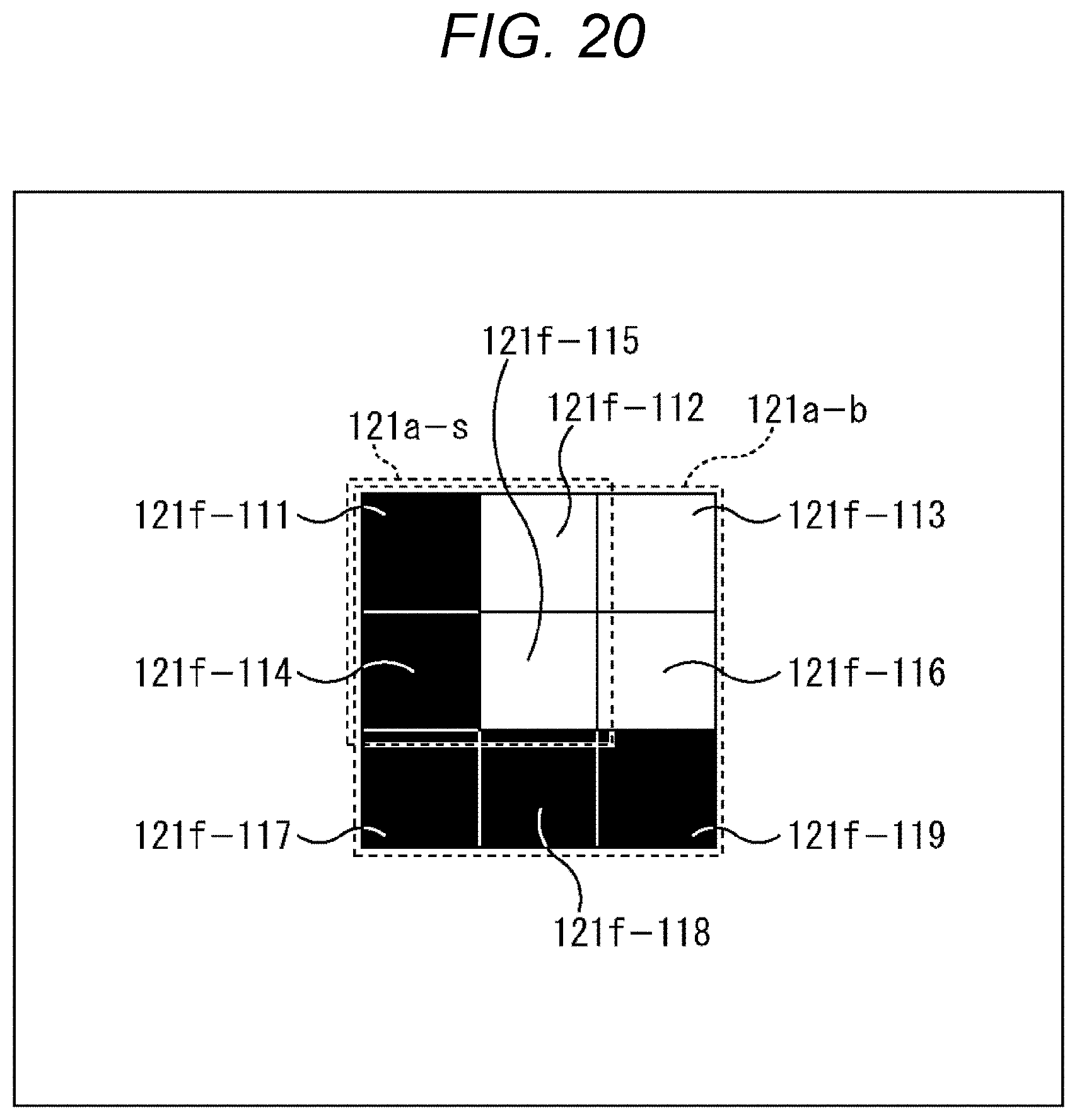

[0038] FIG. 20 is a diagram describing a modification example.

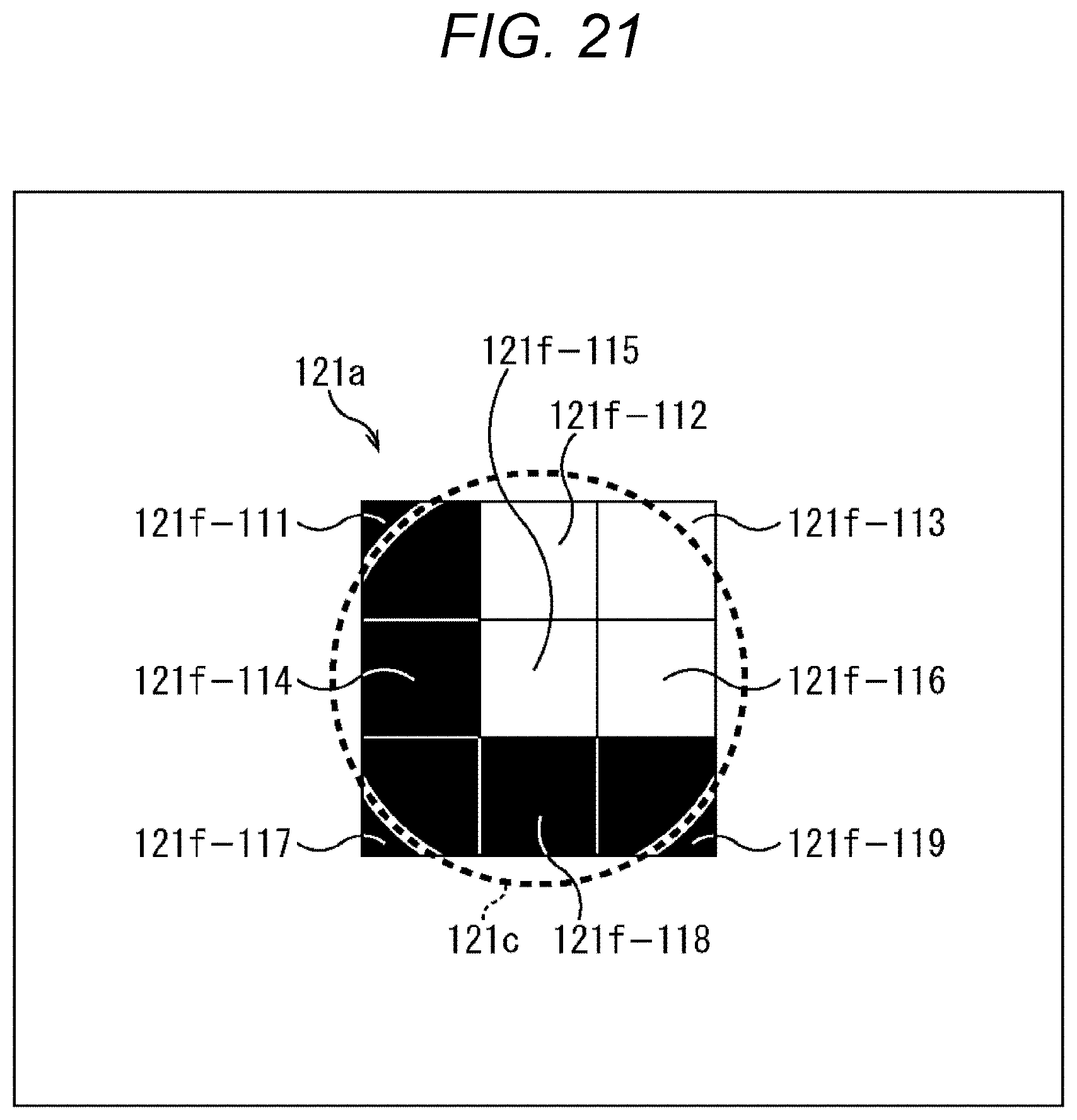

[0039] FIG. 21 is a diagram describing a modification example.

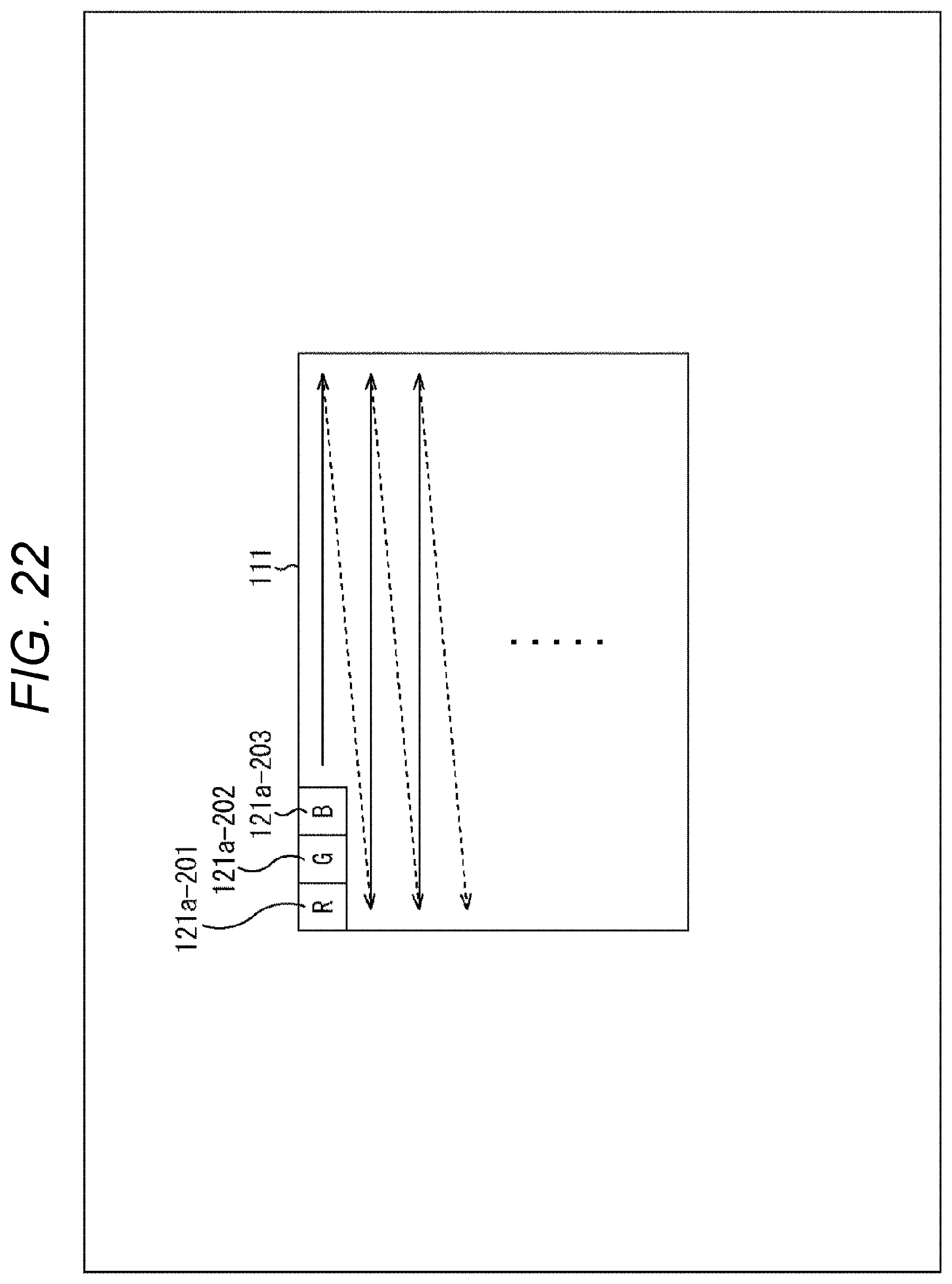

[0040] FIG. 22 is a diagram illustrating an arrangement example of pixel output units used for the white balance control.

[0041] FIG. 23 is a diagram illustrating an arrangement example of pixel output units used for the white balance control.

[0042] FIG. 24 is a diagram illustrating a main configuration example of output data.

[0043] FIG. 25 is a flowchart describing an example of a flow of an imaging process.

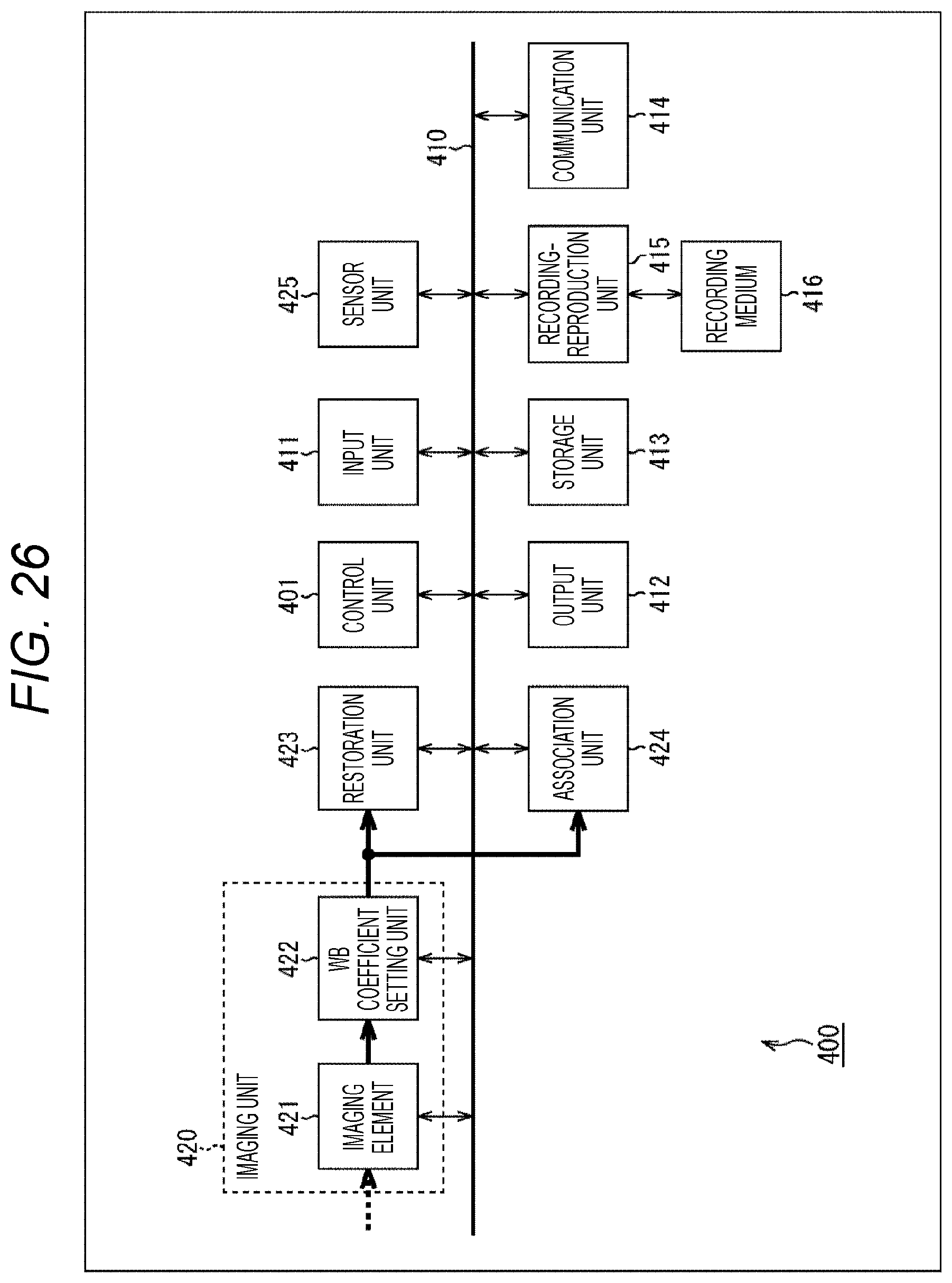

[0044] FIG. 26 is a block diagram illustrating a main configuration example of an imaging device.

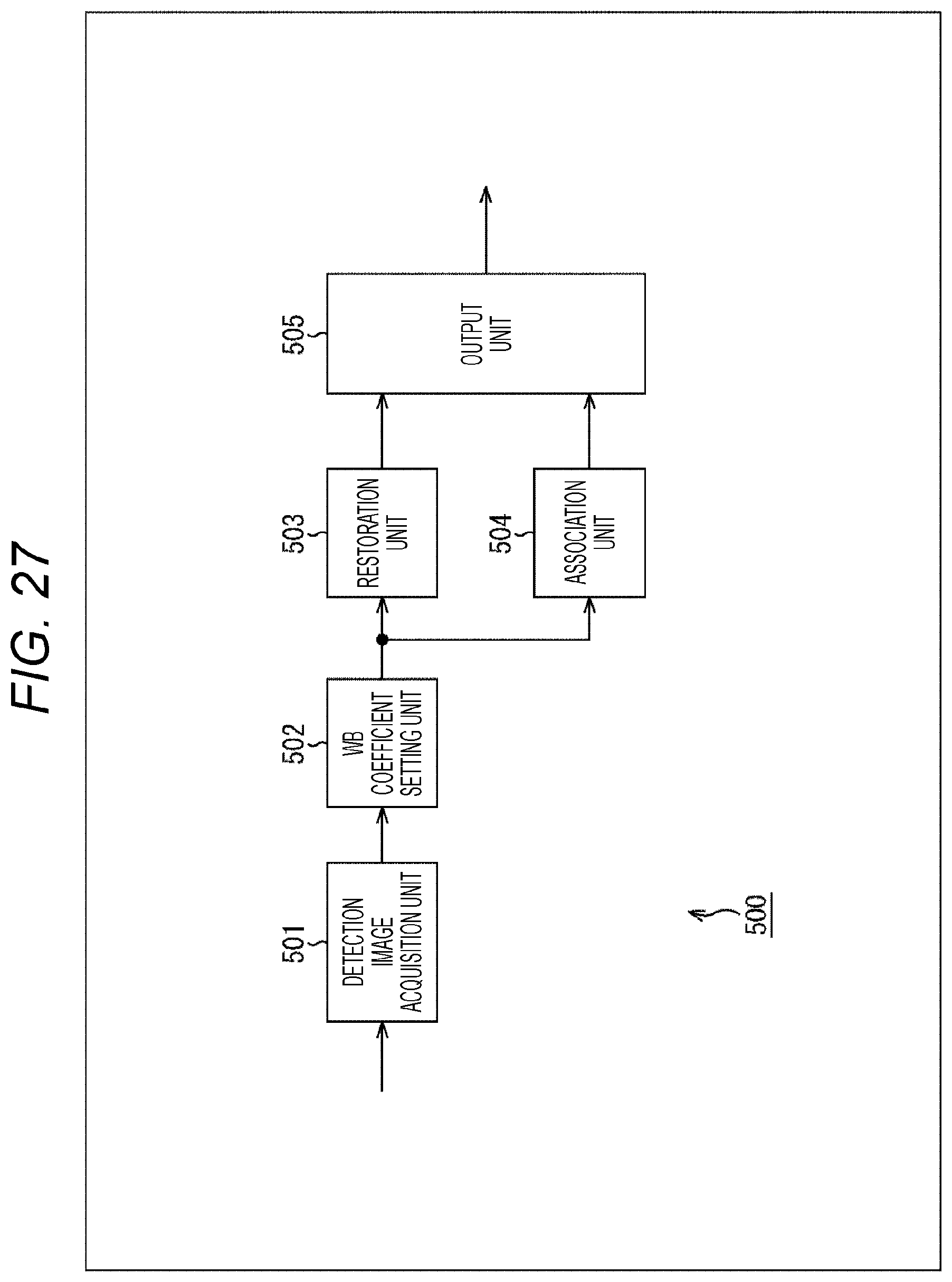

[0045] FIG. 27 is a block diagram illustrating a main configuration example of an image processing device.

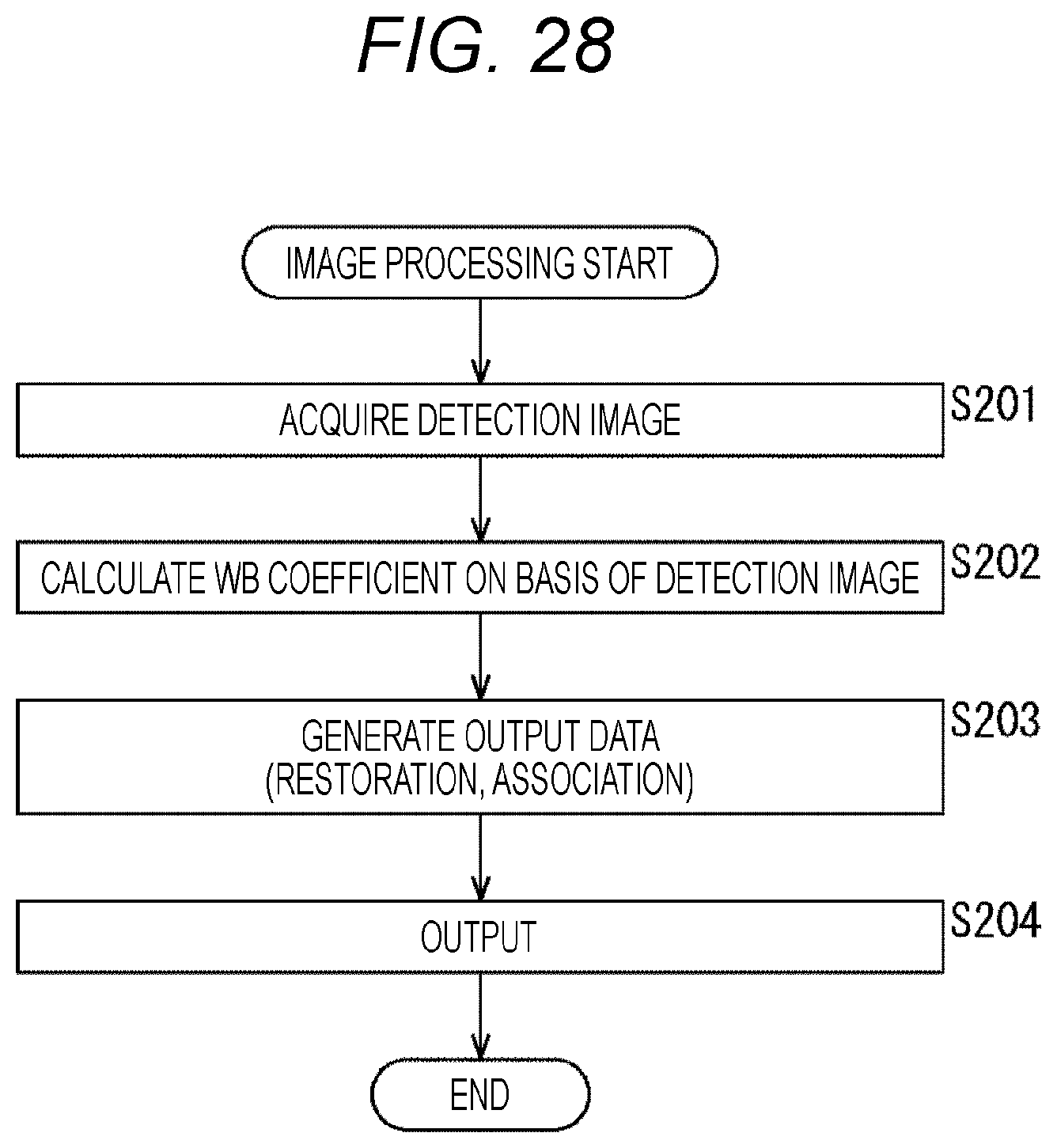

[0046] FIG. 28 is a flowchart describing an example of a flow of image processing.

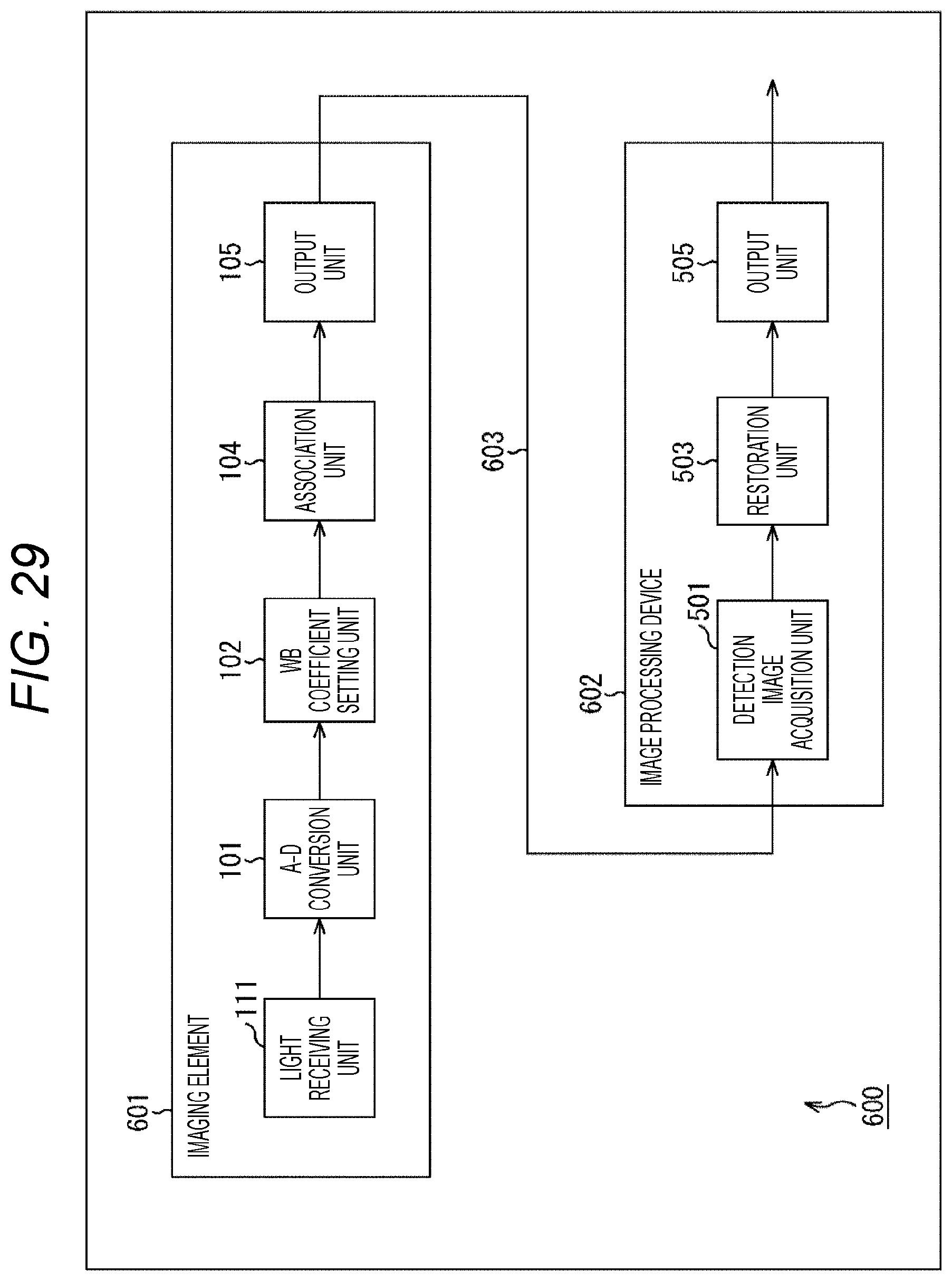

[0047] FIG. 29 is a block diagram illustrating a main configuration example of an image processing system.

[0048] FIG. 30 is a flowchart describing an example of a flow of image processing.

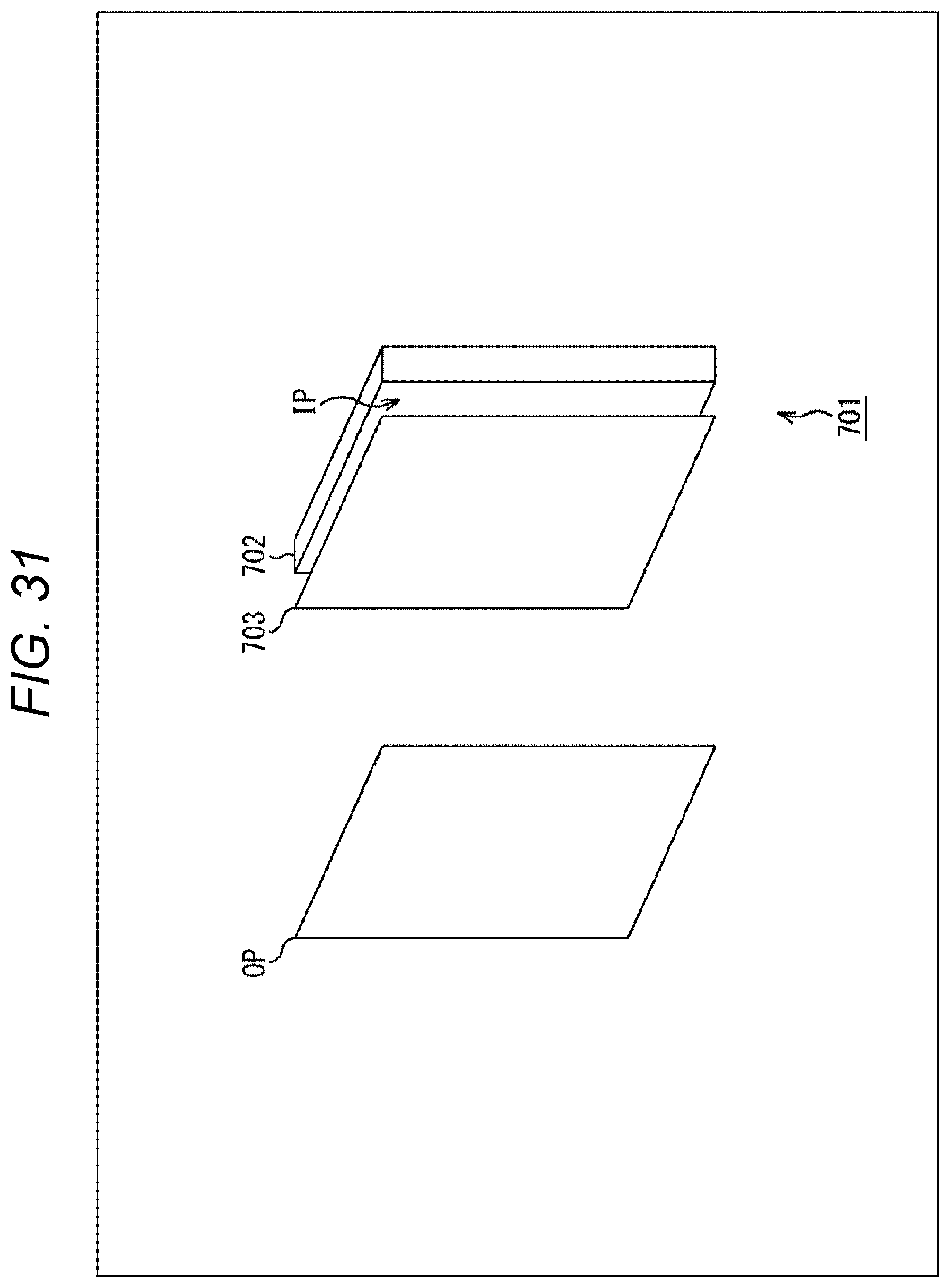

[0049] FIG. 31 is a diagram illustrating a main configuration example of an imaging element.

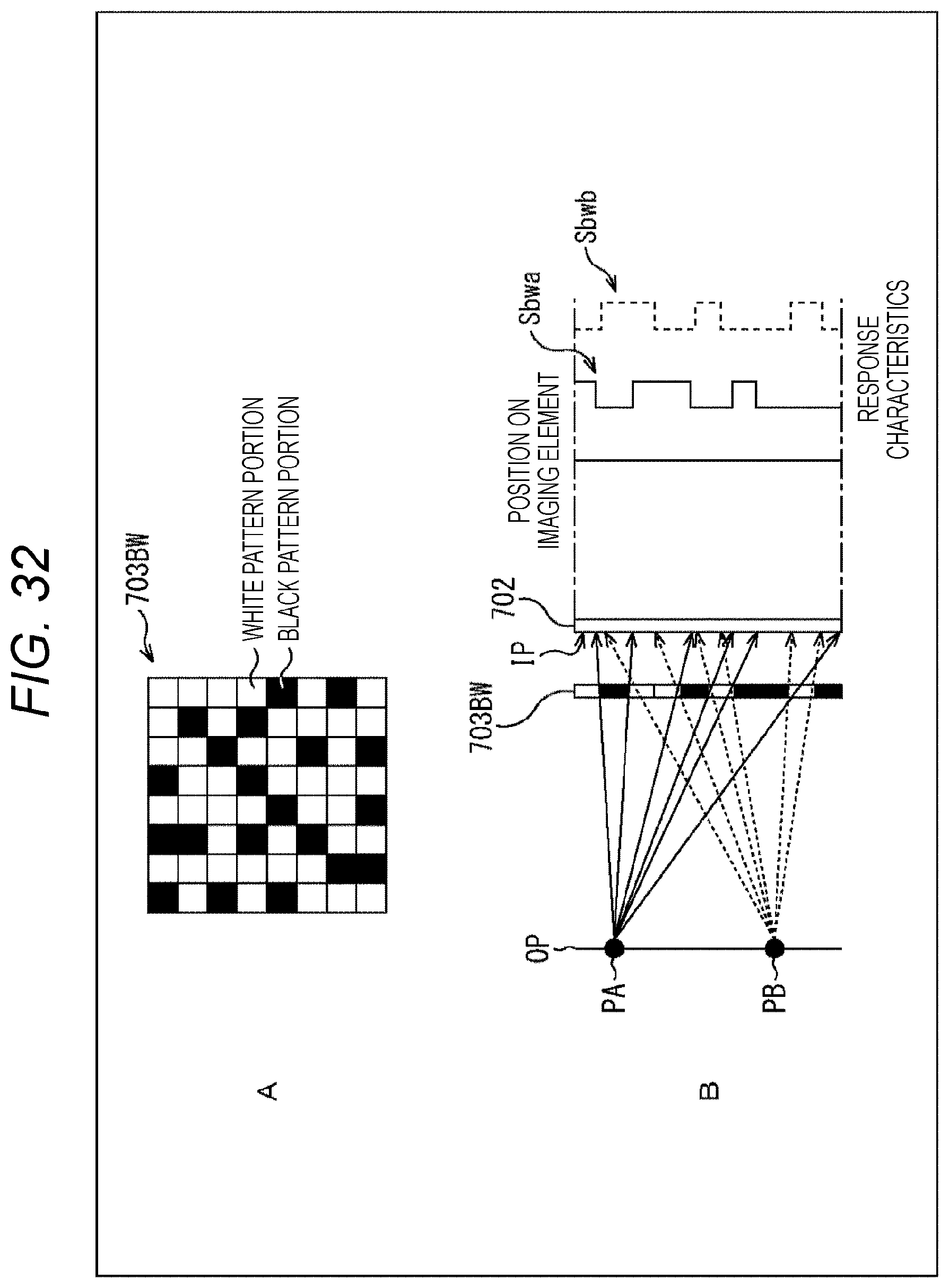

[0050] FIG. 32 is a diagram illustrating a case where a black-and-white pattern mask is used.

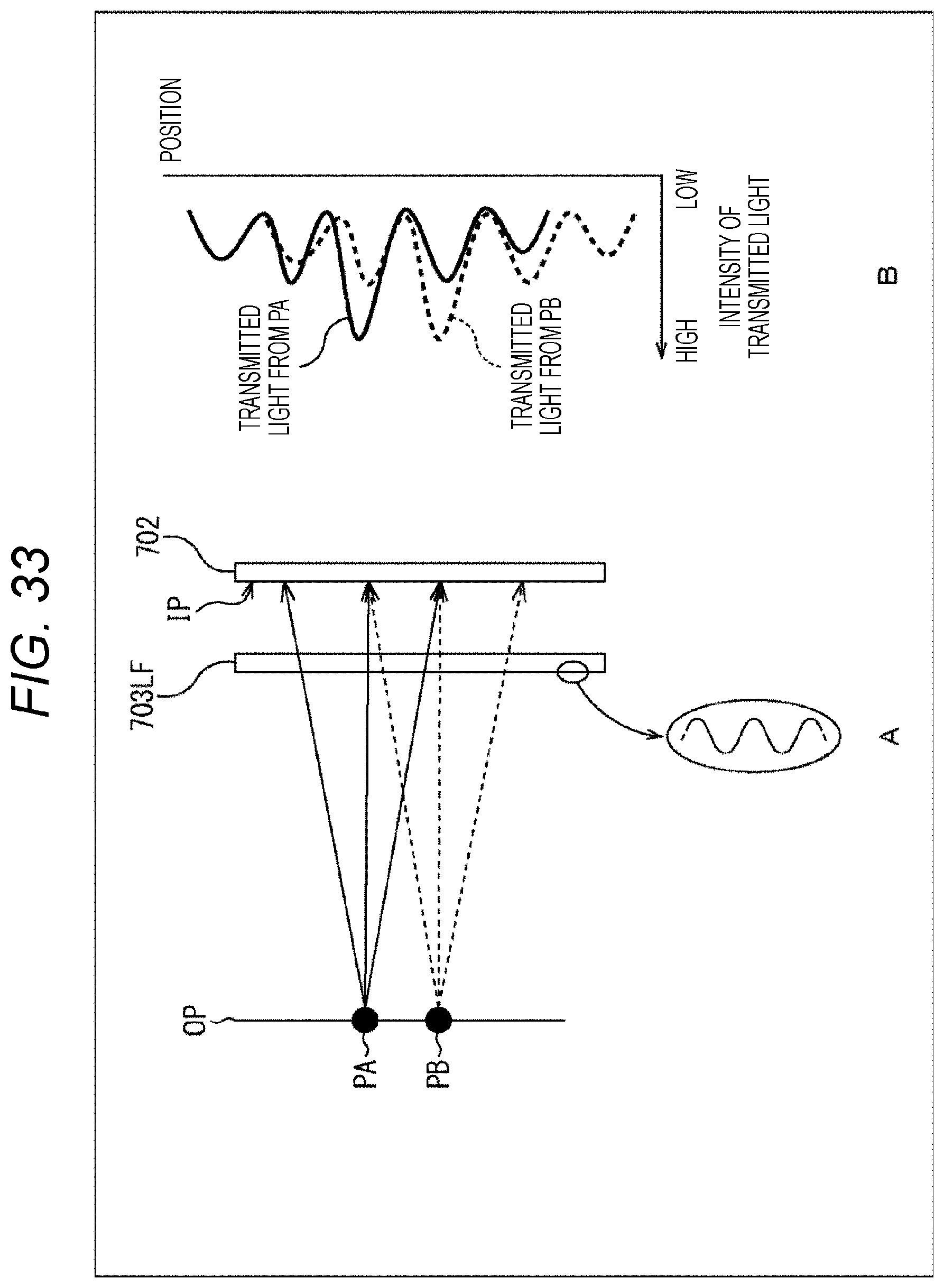

[0051] FIG. 33 is a diagram illustrating a case where a light interference mask is used.

[0052] FIG. 34 is a diagram illustrating a modification example of the imaging element.

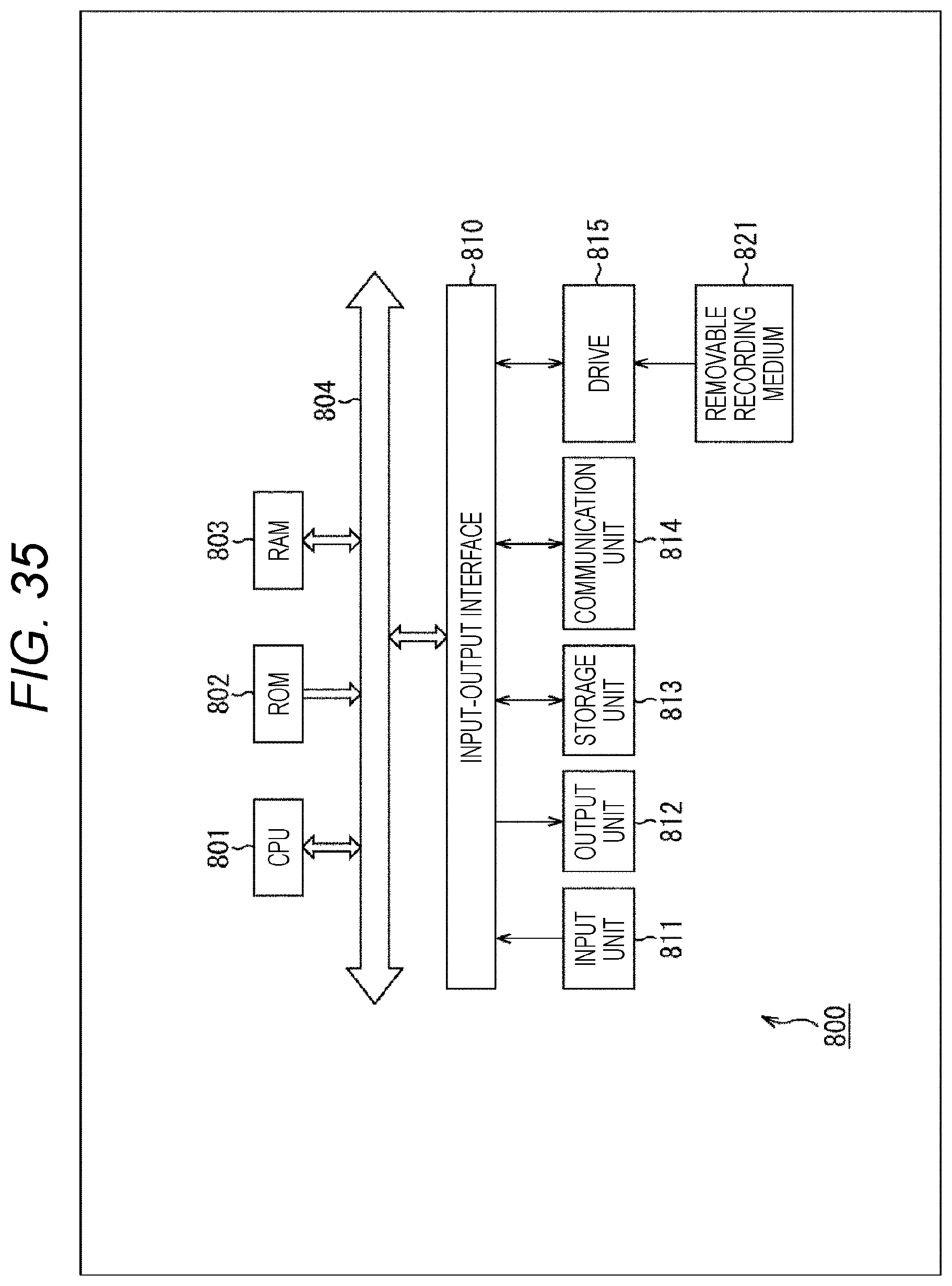

[0053] FIG. 35 is a block diagram illustrating a main configuration example of a computer.

MODE FOR CARRYING OUT THE INVENTION

[0054] Hereinafter, modes for carrying out the present disclosure (hereinafter referred to as embodiments) will be described. Note that the description will be made in the following order.

[0055] 1. White balance control

[0056] 2. First embodiment (imaging element)

[0057] 3. Second embodiment (imaging device)

[0058] 4. Third embodiment (image processing device)

[0059] 5. Fourth embodiment (image processing system)

[0060] 6. Fifth embodiment (another configuration example of imaging element or imaging device)

[0061] 7. Appendix

1. White Balance Control

[0062] <White Balance Control>

[0063] Conventionally, as described in Patent Document 1, Patent Document 2, and Non-Patent Document 1 for example, there have been imaging elements that do not use an imaging lens (also referred to as imaging lens-free imaging element). An imaging device to which such an imaging lens-free imaging element is applied restores a restored image by performing, for example, a predetermined calculation on a detection image generated by the imaging element.

[0064] Incidentally, in the imaging device, in order to correct the color tone of a captured image, a process called white balance control (adjustment) is performed in some cases to correct a white reference in the captured image in consideration of the influence of the color of light in the imaging environment. The white balance control is performed by using, for example, a ratio of integrated values acquired by integrating RGB pixel values for every color over a reasonably wide range.

[0065] For example, in a case of an imaging element using a conventional imaging lens, as illustrated in A of FIG. 1, when a Raw image is acquired by the imaging element (Raw acquisition 11), processes such as the following are performed on the Raw image: integration range setting 12, which is a process of setting a range for integrating pixel values; integration 13, which is a process of integrating pixel values for every color in the set range; and WB coefficient determination 14, which is a process of calculating a white balance coefficient (also referred to as WB coefficient) used for white balance control by using a ratio of the acquired integrated values. The white balance of the captured image (Raw image) is controlled using this WB coefficient.

[0066] On the other hand, in a case where the white balance control is performed by such a method in an imaging device to which the imaging lens-free imaging element is applied as described in Patent Document 1, Patent Document 2, and Non-Patent Document 1, a restored image must first be restored from a detection image acquired with the imaging element, and the white balance is then controlled by using a ratio of integrated values acquired by integrating RGB pixel values for every color over a reasonably wide range of the restored image. This requires complicated processing.

[0067] For example, as illustrated in B of FIG. 1, when a detection image is acquired by the imaging element (detection image acquisition 21), a restored image (Raw image) is restored from the detection image (Raw restoration 22), and processes such as an integration range setting 23 that is a process similar to the integration range setting 12, integration 24 that is a process similar to the integration 13, and WB coefficient determination 25 that is a process similar to the WB coefficient determination 14 are performed on this Raw image. The white balance of the restored image (Raw image) is controlled using this WB coefficient. That is, in this case, it has been necessary to restore the restored image.

[0068] <White Balance Control in Imaging Element that does not Use Imaging Lens>

[0069] Accordingly, on the basis of a detection signal, the white balance coefficient used to correct the white balance of a restored image restored from a detection image formed by the detection signal is set.

[0070] For example, an imaging device includes an imaging element that includes a plurality of pixel output units that receives incident light that is incident without passing through either of an imaging lens and a pinhole and each outputs one detection signal indicating an output pixel value modulated by an incident angle of the incident light, and a coefficient setting unit that, on the basis of a detection signal acquired in the pixel output units of the imaging element, sets a white balance coefficient that is used for correcting a white balance of a restored image restored from a detection image formed by the detection signal.

[0071] In order to calculate the WB coefficient, information of an object in a sufficiently wide range within the angle of view is required. In a case of the imaging element using the conventional imaging lens, light from the object in a narrow range is detected in each pixel, and thus it is necessary to integrate pixel values in a sufficiently wide range.

[0072] For example, a pixel value R of a red (R) pixel (R pixel), a pixel value G of a green (G) pixel (G pixel), and a pixel value B of a blue (B) pixel (B pixel) are used to calculate the WB coefficient for the pixel value R and the WB coefficient for the pixel value B as in the following formulas (1) and (2).

WB coefficient for pixel value R=integrated value of all pixel values G/integrated value of all pixel values R (1)

WB coefficient for pixel value B=integrated value of all pixel values G/integrated value of all pixel values B (2)

[0073] Then, as in the following formulas (3) and (4), white balance control is performed by multiplying the captured image (pixel value R and pixel value B) by these WB coefficients.

Pixel value R after white balance control=pixel value R×WB coefficient for pixel value R (3)

Pixel value B after white balance control=pixel value B×WB coefficient for pixel value B (4)
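Formulas (1) to (4) can be illustrated with a short sketch. It is a minimal example only, assuming that the Raw image has already been separated into one array per color and that the integration range is the whole array. The function names `wb_coefficients` and `apply_wb` and the sample pixel values are hypothetical and are not taken from the present disclosure.

```python
import numpy as np


def wb_coefficients(r, g, b):
    """Formulas (1) and (2): ratio of the integrated G value to the
    integrated R value and to the integrated B value."""
    sum_r, sum_g, sum_b = float(np.sum(r)), float(np.sum(g)), float(np.sum(b))
    if sum_r == 0.0 or sum_b == 0.0:
        raise ValueError("integrated R and B values must be non-zero")
    return sum_g / sum_r, sum_g / sum_b


def apply_wb(r, b, wb_r, wb_b):
    """Formulas (3) and (4): multiply the R and B pixel values by their
    WB coefficients (the G pixel values are left unchanged)."""
    return r * wb_r, b * wb_b


# Hypothetical 2x2 color planes captured under reddish light.
r = np.array([[200.0, 180.0], [220.0, 200.0]])
g = np.array([[100.0, 90.0], [110.0, 100.0]])
b = np.array([[50.0, 45.0], [55.0, 50.0]])

wb_r, wb_b = wb_coefficients(r, g, b)
r_bal, b_bal = apply_wb(r, b, wb_r, wb_b)
```

In this sketch the integrated R and B values after correction both match the integrated G value, which is the effect the ratio in formulas (1) and (2) is intended to produce.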

[0074] On the other hand, in a case of an imaging lens-free imaging element, light from almost all objects within the angle of view is detected at each pixel. That is, each detection signal includes information that is substantially equivalent to the integrated value of all pixel values of the imaging element using an imaging lens. Therefore, in the case of this imaging element, the WB coefficient can be calculated from this detection signal (pixel value of the detection image) without restoring the restored image.

[0075] That is, it is not necessary to restore the restored image. Furthermore, since it is possible to acquire information that is substantially equivalent to the integrated value of all pixel values of the imaging element using an imaging lens from each detection signal, it is not necessary to integrate the detection signal (pixel value), and it is not necessary to set the integration range. In other words, the processing required for calculating the WB coefficient is only processing for detection image acquisition 31 and WB coefficient determination 32, as in C of FIG. 1. Therefore, the WB coefficient can be calculated more easily. That is, the white balance control can be performed more easily.
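The flow in C of FIG. 1 can be sketched as follows. This is a minimal sketch under an assumption: the WB coefficients are taken to be formed from detection signals by the same kind of ratio that formulas (1) and (2) apply to integrated pixel values, using one detection signal per color. The function name `wb_from_detection_signals` and the sample signal values are hypothetical.

```python
def wb_from_detection_signals(det_r, det_g, det_b):
    """Return (WB coefficient for R, WB coefficient for B) from one
    detection signal per color.

    Assumption for illustration: each detection signal already stands in
    for the integrated value of its color, so no restoration, no
    integration, and no integration range setting is performed here.
    """
    if det_r <= 0 or det_b <= 0:
        raise ValueError("R and B detection signals must be positive")
    return det_g / det_r, det_g / det_b


# Hypothetical detection signals read from one R, one G, and one B
# pixel output unit.
wb_r, wb_b = wb_from_detection_signals(det_r=800.0, det_g=400.0, det_b=200.0)
```

Compared with the flows in A and B of FIG. 1, the only inputs are the detection signals themselves, which is why the coefficient can be available before any restored image exists.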

[0076] Therefore, it is possible to suppress an increase in processing load related to the white balance control. Thus, not only an increase in processing time and power consumption can be suppressed, but also a utilization rate of hardware resources can be reduced. Therefore, it is possible to suppress an increase in hardware manufacturing cost. Moreover, it can be expected to facilitate development and design of a device or the like to which the white balance control is applied. Therefore, it is possible to suppress an increase in development period and cost.

[0077] In addition, since it is unnecessary to restore the restored image, the WB coefficient can be calculated earlier and the white balance control can be performed earlier. For example, it is possible to respond (provide a control result) to a white balance control instruction from the user or the like at higher speed. Therefore, it is possible to suppress a decrease in user satisfaction due to a time lag from the input of an instruction to the response.

2. First Embodiment

[0078] <Imaging Element>

[0079] FIG. 2 is a block diagram illustrating a main configuration example of an imaging element to which the present technology as described above is applied. The imaging element 121 illustrated in FIG. 2 is configured to perform processing related to imaging of an object. For example, the imaging element 121 captures an image of an object and acquires data (electronic data) related to the captured image. At this time, the imaging element 121 can capture the image of the object without the incident light passing through an imaging lens, an optical filter such as a diffraction grating, a pinhole, or the like, and can acquire data with respect to the captured image. For example, the imaging element 121 captures the image of the object, and acquires data (detection signal or the like) from which data of the captured image can be acquired by a predetermined calculation.

[0080] Note that in the present description, the captured image is an image that is formed by pixel values with which an image of the object is formed and that can be visually observed and recognized as an image by the user. On the other hand, an image (referred to as a detection image) formed by a detection signal that is a detection result of incident light in a pixel output unit of the imaging element 121 is an image that cannot be recognized as an image when visually observed by the user (that is, the object cannot be visually recognized) because the image of the object is not formed. That is, the detection image is an image different from the captured image. However, as described above, by performing a predetermined calculation on data of the detection image, it is possible to restore the captured image, that is, the image in which an image of the object is formed and which can be visually observed and recognized as an image (that is, the object can be visually recognized) by the user. This restored captured image is referred to as a restored image. That is, the detection image is different from the restored image.
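The relationship between the detection image and the restored image can be illustrated with a toy example. This is only a sketch under a strong assumption that is not stated in this passage: each detection signal is modeled as a weighted sum of the light from every point of the object, and the predetermined calculation is modeled as solving the resulting linear system. The weight matrix `weights`, the scene values, and the use of `numpy.linalg.solve` are hypothetical choices made for illustration.

```python
import numpy as np

# Hypothetical scene: light intensity at four points of the object.
scene = np.array([10.0, 0.0, 5.0, 20.0])

# Hypothetical weights: row i gives how strongly pixel output unit i
# responds to each scene point. The rows are a permutation of a strictly
# diagonally dominant matrix, so the matrix is invertible.
weights = np.array([
    [0.2, 1.0, 0.3, 0.1],
    [0.3, 0.1, 0.2, 1.0],
    [1.0, 0.3, 0.2, 0.1],
    [0.1, 0.2, 1.0, 0.3],
])

# Detection image: every signal mixes light from all scene points, so it
# does not look like the scene.
detection = weights @ scene

# Assumed "predetermined calculation": solve the linear system to get
# the restored image back from the detection image.
restored = np.linalg.solve(weights, detection)
```

In this toy model the values in `detection` differ from the scene values, while `restored` reproduces them up to floating-point error, which mirrors the distinction drawn between the detection image and the restored image.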

[0081] Further, in the present description, an image that constitutes the restored image and is an image before synchronization processing, color separation processing, and the like (for example, demosaic processing or the like) is also referred to as a Raw image. This Raw image is also an image that can be visually observed and recognized as an image (that is, the object can be visually recognized) by the user, similarly to the captured image. On the other hand, the detection image is an image according to the color filter array, but is different from the Raw image.

[0082] However, in a case where the imaging element 121 has sensitivity only to invisible light such as infrared light or ultraviolet light for example, the restored image (Raw image or captured image) is an image that cannot be visually observed and recognized as an image (the object cannot be visually recognized) by the user. However, since this is due to the wavelength range of the detected light, the restored image can be changed to an image in which the object can be visually recognized by converting the wavelength range to a visible light range. On the other hand, in the detection image, since the image of the object is not formed, it is not possible to be changed to an image in which the object is visually recognizable by simply changing the wavelength range. Therefore, even when the imaging element 121 has sensitivity only to invisible light, an image acquired by performing a predetermined operation on the detection image as described above is referred to as a restored image. Note that in the following, unless otherwise specified, the present technology will be described using an example of a case where basically the imaging element 121 receives visible light.

[0083] That is, the imaging element 121 can capture the image of an object and acquire data related to the detection image.

[0084] In such an imaging element 121, there is provided a plurality of pixel output units that receives incident light that is incident without passing through either of an imaging lens and a pinhole and each outputs one detection signal indicating an output pixel value modulated by an incident angle of the incident light, and there is provided a coefficient setting unit that, on the basis of the detection signals acquired in the pixel output units, sets a white balance coefficient that is used for correcting a white balance of a restored image restored from a detection image formed by the detection signal.

[0085] In this manner, as described above, the white balance control can be performed more easily.

[0086] As illustrated in FIG. 2, the imaging element 121 has a light receiving unit 111 and another processing unit 112. The light receiving unit 111 and the other processing unit 112 may be formed on the same semiconductor substrate or may be formed on different semiconductor substrates from each other. For example, a semiconductor substrate on which the light receiving unit 111 is formed and a semiconductor substrate on which the other processing unit 112 is formed may be stacked.

[0087] The light receiving unit 111 has a pixel matrix formed by a plurality of pixels (pixel output units) and, in each pixel, receives light from an object and photoelectrically converts the light, accumulates charges according to the incident light, and outputs the charges as a detection signal at a predetermined timing. The plurality of pixels includes three types of pixels, for example, R pixels, G pixels, and B pixels.

[0088] As illustrated in FIG. 2, the other processing unit 112 includes an A-D conversion unit 101, a WB coefficient setting unit 102, a restoration unit 103, an association unit 104, and an output unit 105.

[0089] The A-D conversion unit 101 performs A-D conversion of the detection signal of an analog signal output from the light receiving unit 111 and supplies it to the WB coefficient setting unit 102 as a detection signal of digital data. Note that a configuration of this A-D conversion unit 101 is arbitrary. For example, the A-D conversion unit 101 may be configured by one A-D conversion unit, and the detection signals of all pixels of the light receiving unit 111 may be A-D converted by this one A-D conversion unit. Further, for example, the A-D conversion unit 101 may have an A-D conversion unit for every column or every row of the pixel matrix of the light receiving unit 111, and may perform A-D conversion of detection signals read from pixels in each column or each row by using the A-D conversion unit of the column or row. Further, for example, the A-D conversion unit 101 may have an A-D conversion unit for every area of the pixel matrix of the light receiving unit 111, and may perform A-D conversion of detection signals read from pixels in each area by using the A-D conversion unit of this area. Furthermore, for example, the A-D conversion unit 101 may have an A-D conversion unit for every pixel of the light receiving unit 111, and may perform A-D conversion of a detection signal read from each pixel by using the A-D conversion unit of this pixel.

[0090] The WB coefficient setting unit 102 sets a WB coefficient on the basis of the supplied detection signal. For example, using a detection signal R of an R pixel, a detection signal G of a G pixel, and a detection signal B of a B pixel, a WB coefficient WB_R for the pixel value R and a WB coefficient WB_B for the pixel value B are calculated as in the following formulas (5) and (6).

WB_R=detection signal G/detection signal R (5)

WB_B=detection signal G/detection signal B (6)

[0091] For example, when G=200, R=160, and B=100, WB_R=1.25 and WB_B=2. In this manner, the WB coefficient can be calculated from detection signals by a simple calculation.
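The calculation of formulas (5) and (6) can be sketched as follows. This is a minimal illustration rather than the implementation of the WB coefficient setting unit 102; the function name `wb_coefficients` and the check for non-positive signals are assumptions added for the example.

```python
from typing import Tuple


def wb_coefficients(det_r: float, det_g: float, det_b: float) -> Tuple[float, float]:
    """Return (WB_R, WB_B) from one detection signal per color.

    Hypothetical helper: the name and the input check are not from the source.
    """
    if det_r <= 0 or det_b <= 0:
        raise ValueError("detection signals R and B must be positive")
    wb_r = det_g / det_r  # formula (5)
    wb_b = det_g / det_b  # formula (6)
    return wb_r, wb_b


# Values from the example in the text: G=200, R=160, B=100.
wb_r, wb_b = wb_coefficients(det_r=160, det_g=200, det_b=100)
print(wb_r, wb_b)
```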

[0092] Further, as indicated in formulas (5) and (6), in this case, integration of the detection signals is unnecessary. That is, the WB coefficient can be calculated from a (single) detection signal of each pixel of each color (each of RGB).

[0093] Of course, the detection signals of a plurality of pixels for every color (that is, a plurality of detection signals R, a plurality of detection signals G, and a plurality of detection signals B) may be used for calculating the WB coefficient. In that case, it is only required to apply integrated values of these detection signals to the detection signals of the above-described formulas (5) and (6).
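A sketch of the multi-pixel case, in which integrated values per color are substituted into formulas (5) and (6). The function name `wb_from_integrated` and the sample values are illustrative assumptions; the sketch also assumes that the same number of pixels is integrated for every color, so that the ratio of the sums is not skewed by differing pixel counts.

```python
from typing import Sequence, Tuple


def wb_from_integrated(
    r_signals: Sequence[float],
    g_signals: Sequence[float],
    b_signals: Sequence[float],
) -> Tuple[float, float]:
    """Return (WB_R, WB_B) using integrated detection signals per color.

    Hypothetical helper; assumes equal pixel counts for R, G, and B.
    """
    if not (len(r_signals) == len(g_signals) == len(b_signals)):
        raise ValueError("this sketch assumes the same pixel count per color")
    sum_r, sum_g, sum_b = sum(r_signals), sum(g_signals), sum(b_signals)
    if sum_r <= 0 or sum_b <= 0:
        raise ValueError("integrated R and B signals must be positive")
    # Integrated values take the place of the single signals in (5) and (6).
    return sum_g / sum_r, sum_g / sum_b


# Illustrative values only: two pixels per color.
coeffs = wb_from_integrated([150, 170], [190, 210], [90, 110])
print(coeffs)
```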

[0094] That is, the WB coefficient setting unit 102 can set the white balance coefficient on the basis of the detection signal acquired in a part of the plurality of pixels (pixel output units) included in the imaging element 121 (light receiving unit 111). The pixels (pixel output units) of this part may include at least one pixel output unit for each color.

[0095] Further, for calculating this WB coefficient, detection signals of not only the RGB pixels but also pixels to which filters of other wavelengths such as cyan and yellow for example are applied may be used. Also in that case, similarly to the case of RGB described above, the WB coefficient for each color can be acquired using a ratio of detection signals for every color as in formula (5) and formula (6). That is, the WB coefficient can be calculated by a simple calculation. Note that in a case where the detection signals of a plurality of pixels for every color are used for calculating the WB coefficient, it is only required to calculate an integrated value of detection signals for every color, similarly to those of RGB, and apply the integrated value for every color to formula (5) and formula (6). That is, the WB coefficient can be calculated by a simple calculation using the ratio of the integrated value for every color. Further, in a case of using detection signals of pixels to which filters of a complementary color system such as cyan, magenta, and yellow are applied, the WB coefficients may be calculated using formula (5) or (6) after converting detection signals of respective colors of the complementary color system into detection signals of RGB.
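The complementary color case can be sketched as follows. The source does not specify how detection signals of the complementary color system are converted into detection signals of RGB; the conversion below assumes idealized filters for which C = G + B, M = R + B, and Y = R + G, which is an assumption made only for this illustration. The function names are hypothetical.

```python
from typing import Tuple


def cmy_to_rgb(c: float, m: float, y: float) -> Tuple[float, float, float]:
    """Convert complementary-color signals to RGB signals.

    Assumes idealized filters (C = G + B, M = R + B, Y = R + G);
    real filter responses would need a calibrated conversion.
    """
    r = (m + y - c) / 2
    g = (c + y - m) / 2
    b = (c + m - y) / 2
    return r, g, b


def wb_from_cmy(c: float, m: float, y: float) -> Tuple[float, float]:
    """Return (WB_R, WB_B) by applying formulas (5) and (6) after conversion."""
    r, g, b = cmy_to_rgb(c, m, y)
    if r <= 0 or b <= 0:
        raise ValueError("converted R and B signals must be positive")
    return g / r, g / b


# Illustrative values corresponding to R=160, G=200, B=100 under the assumption.
print(wb_from_cmy(c=300, m=260, y=360))
```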

[0096] The WB coefficient setting unit 102 sets a value calculated as described above as a WB coefficient, and supplies the set WB coefficient to the restoration unit 103 and the association unit 104 together with the detection signal (detection image).

[0097] The restoration unit 103 is configured to perform processing related to generation of the restored image. For example, the restoration unit 103 generates the restored image by performing a predetermined calculation on data (detection signal or the like) related to the supplied detection image, using a restoration matrix that is included in advance.

[0098] At that time, the restoration unit 103 uses the WB coefficient set by the WB coefficient setting unit 102 to perform white balance control of the restored image. For example, the restoration unit 103 may correct a coefficient of the restoration matrix using the WB coefficient set by the WB coefficient setting unit 102 (for example, multiply the coefficient of the restoration matrix by the WB coefficient), and may restore the restored image with a corrected white balance from the detection image by using the restoration matrix with the corrected coefficient.

[0099] Further, for example, the restoration unit 103 may restore the restored image from the detection image using the restoration matrix, and correct a pixel value of the restored image, which has been restored, using the WB coefficient set by the WB coefficient setting unit 102 (for example, multiply each pixel value of the restored image by the WB coefficient), to thereby perform the white balance control of the restored image.
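The two ways of applying the WB coefficient described above (correcting the coefficients of the restoration matrix, or correcting the pixel values after restoration) can be compared with a small sketch. The 2 x 2 matrix, the detection values, and the function names are illustrative assumptions; the sketch treats a single color channel whose restored pixel values are all multiplied by the same WB coefficient.

```python
from typing import List

Matrix = List[List[float]]


def mat_vec(matrix: Matrix, vector: List[float]) -> List[float]:
    """Multiply a matrix by a column vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def scale_matrix_then_restore(matrix: Matrix, detection: List[float], wb: float) -> List[float]:
    """Multiply every restoration matrix coefficient by wb, then restore."""
    corrected = [[wb * m for m in row] for row in matrix]
    return mat_vec(corrected, detection)


def restore_then_scale_pixels(matrix: Matrix, detection: List[float], wb: float) -> List[float]:
    """Restore first, then multiply each restored pixel value by wb."""
    return [wb * p for p in mat_vec(matrix, detection)]


# Hypothetical restoration matrix and R-channel detection signals.
restoration = [[1.0, -0.5], [-0.5, 1.0]]
detection_r = [8.0, 4.0]
wb_r = 1.25

via_matrix = scale_matrix_then_restore(restoration, detection_r, wb_r)
via_pixels = restore_then_scale_pixels(restoration, detection_r, wb_r)
print(via_matrix, via_pixels)
```

Because the restoration is linear, both routes give the same restored values; in this sketch the first route folds the white balance correction into the restoration calculation itself.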

[0100] The restoration unit 103 supplies the restored image on which the white balance control has been performed as output data to the output unit 105. Note that the restoration unit 103 may further perform, for example, arbitrary image processing such as gamma correction (γ correction) on the restored image. Further, the restoration unit 103 may convert the format of data of the restored image or, for example, compress the restored image by a predetermined compression method such as Joint Photographic Experts Group (JPEG), Tagged Image File Format (TIFF), or Graphics Interchange Format (GIF).

[0101] The association unit 104 is configured to perform processing related to data association. For example, the association unit 104 associates the WB coefficient supplied from the WB coefficient setting unit 102 with data (detection signal or the like) related to the detection image supplied from the WB coefficient setting unit 102.

[0102] Here, the term "associate" means that, for example, when one piece of information (data, command, program, or the like) is processed, the other piece of information can be used (linked). That is, the pieces of information associated with each other may be combined as one file or the like, or may each be individual information. For example, information B associated with information A may be transmitted on a transmission path different from that for the information A. Further, for example, information B associated with information A may be recorded in a recording medium different from that for the information A (or another recording area of the same recording medium). Note that the "association" may be for a part of information instead of the entire information. For example, an image and information corresponding to the image may be associated with each other in an arbitrary unit such as a plurality of frames, one frame, or a part of the frame.

[0103] The association unit 104 supplies the associated data as output data to the output unit 105. Note that the association unit 104 may further associate data related to the detection image with information used in a predetermined calculation for generating the restored image, such as the restoration matrix for example.
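As one illustration of "association" in the sense described above, the detection image and the WB coefficient can be kept as individual pieces of information and linked through a shared key, rather than being combined into one file. The stores, the frame identifier, and the function names below are hypothetical and stand in for separate recording media or transmission paths.

```python
from typing import Dict, List, Tuple

# Hypothetical separate stores: the two kinds of data are not merged.
detection_store: Dict[int, List[float]] = {}
wb_store: Dict[int, Tuple[float, float]] = {}


def associate(frame_id: int, detection_signals: List[float], wb_r: float, wb_b: float) -> None:
    """Link a detection image and its WB coefficients through frame_id."""
    detection_store[frame_id] = list(detection_signals)
    wb_store[frame_id] = (wb_r, wb_b)


def lookup(frame_id: int) -> Tuple[List[float], Tuple[float, float]]:
    """When one piece of information is processed, fetch the associated one."""
    return detection_store[frame_id], wb_store[frame_id]


associate(frame_id=7, detection_signals=[70.0, 80.0, 90.0], wb_r=1.25, wb_b=2.0)
print(lookup(7))
```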

[0104] The output unit 105 outputs the output data supplied from the restoration unit 103 or the output data supplied from the association unit 104 to the outside of the imaging element 121.

[0105] In this manner, the imaging element 121 can more easily acquire the WB coefficient, and can more easily perform the white balance control of the detection image.

[0106] Note that either one of the restoration unit 103 and the association unit 104 may be omitted. That is, only one of the restoration unit 103 and the association unit 104 may be formed in the imaging element 121.

[0107] <On Imaging Element>

[0108] Next, the imaging element 121 will be described with reference to FIGS. 3 to 21.

[0109] <Pixel and Pixel Output Unit>

[0110] In the present description, the present technology will be described using the term "pixel" (or "pixel output unit"). In the present description, a "pixel" (or a "pixel output unit") refers to a divided unit of a region (also referred to as a pixel region) in which a physical configuration for receiving incident light of the imaging element 121 is formed, the divided unit including at least one physical configuration capable of receiving light independent from other pixels. The physical configuration capable of receiving light is, for example, a photoelectric conversion element and is, for example, a photodiode (PD). The number of physical configurations (for example, photodiodes) formed in one pixel is arbitrary, and may be one or more. The type, size, shape, and the like thereof are also arbitrary.

[0111] Further, the physical configuration of this "pixel" unit includes not only the above-mentioned "physical configuration capable of receiving light" but also includes, for example, all physical configurations related to reception of incident light, such as an on-chip lens, a light shielding film, a color filter, a flattening film, an anti-reflection film, and the like. Moreover, a configuration such as a readout circuit may be included. That is, this physical configuration of the pixel unit may be any configuration.

[0112] Further, a detection signal read from the "pixel" (that is, the physical configuration of the pixel unit) may also be referred to as "detection signal of pixel unit (or pixel output unit)" or the like. Moreover, this detection signal of the pixel unit (or pixel output unit) will also be referred to as "pixel unit detection signal (or pixel output unit detection signal)". Further, this pixel unit detection signal will also be referred to as "pixel output". Furthermore, the value will also be referred to as "output pixel value".

[0113] The imaging element 121 includes a plurality of pixel output units that receives incident light that is incident without passing through either of an imaging lens and a pinhole, and each outputs one detection signal indicating an output pixel value modulated by an incident angle of the incident light. Therefore, the value of the detection signal of the pixel unit (output pixel value) of the imaging element 121 can have an incident angle directivity indicating a directivity with respect to an incident angle of incident light from an object independently of others. For example, each pixel unit (pixel output unit) of the imaging element 121 has a configuration in which the incident angle directivity indicating a directivity of the output pixel value with respect to an incident angle of incident light from an object is independently settable. In other words, in the imaging element 121, the incident angle directivities of the output pixel values of at least two pixel units can be different from each other.

[0114] Note that since the number of "physical configurations capable of receiving light" included in the "pixel (or pixel output unit)" is arbitrary as described above, the pixel unit detection signal may be a detection signal acquired by a single "physical configuration capable of receiving light" or may be detection signals acquired by a plurality of "physical configurations capable of receiving light".

[0115] Further, a plurality of the pixel unit detection signals (output pixel values) can be combined into one at an arbitrary stage. For example, the output pixel values of a plurality of pixels may be added in a state of an analog signal, or may be converted into a digital signal and then added.

[0116] Further, after this detection signal is read from the imaging element 121, that is, in the detection image, a plurality of detection signals can be combined into a single detection signal, or a single detection signal can be converted into a plurality of detection signals. That is, the resolution (the number of data) of the detection image is variable.
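A minimal sketch of combining a plurality of detection signals into a single detection signal after digital conversion, which reduces the number of data of the detection image. Combination by addition follows the description above; the grouping of neighboring signals, the function name, and the sample values are assumptions.

```python
from typing import List, Sequence


def combine_signals(signals: Sequence[float], group_size: int) -> List[float]:
    """Add each run of group_size consecutive detection signals into one.

    Hypothetical helper: the source does not specify which signals are grouped.
    """
    if group_size <= 0 or len(signals) % group_size != 0:
        raise ValueError("signal count must be a positive multiple of group_size")
    return [
        sum(signals[start:start + group_size])
        for start in range(0, len(signals), group_size)
    ]


# Six detection signals become three: the resolution of the detection image changes.
combined = combine_signals([10, 12, 7, 9, 4, 6], group_size=2)
print(combined)
```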

[0117] Incidentally, in the following description, for convenience of description, unless otherwise specified, the imaging element 121 will be described as having a pixel region (that is, a light receiving unit 111) in which a plurality of pixels is arranged in a matrix (a pixel array is formed). Note that the arrangement pattern of the pixels (or pixel output units) of the imaging element 121 is arbitrary, and is not limited to this example. For example, the pixels (or pixel output units) may be arranged in a honeycomb structure. Further, for example, the pixels (or pixel output units) may be arranged in one row (or one column). That is, the imaging element 121 may be a line sensor.

[0118] Note that the wavelength range to which (the pixels of) the imaging element 121 have sensitivity is arbitrary. For example, (the pixels of) the imaging element 121 may have sensitivity to visible light, or may have sensitivity to invisible light such as infrared light or ultraviolet light, or may have sensitivity to both visible light and invisible light. For example, in a case where the imaging element detects far-infrared light that is invisible light, a thermograph (an image representing a heat distribution) can be generated using a captured image acquired by the imaging element. However, in a case of an imaging element with an imaging lens, since it is difficult for glass to transmit far-infrared light, an expensive imaging lens including a special material is required, which may increase the manufacturing cost. Since the imaging element 121 can capture an image of an object without passing through an imaging lens or the like and can acquire data related to the captured image, by having the pixels capable of detecting far-infrared light, increase in the manufacturing cost can be suppressed. That is, far-infrared light imaging can be performed at lower cost (a thermograph can be acquired at lower cost). Note that in a case where (the pixels of) the imaging element 121 have sensitivity to invisible light, the restored image does not become an image in which the user can visually observe and recognize an object, but becomes an image in which the user cannot visually recognize the object. In other words, the restored image may be an image of visible light or an image of invisible light (for example, (far) infrared light, ultraviolet light, or the like).

[0119] <Incident Angle Directivity>

[0120] As described above, the imaging element 121 can acquire detection signals of a plurality of pixel output units (a plurality of pixel output unit detection signals). Then, the incident angle directivities of at least two pixel output unit detection signals may be different from each other.

[0121] Here, the "incident angle directivity" refers to light receiving sensitivity characteristics according to the incident angle of incident light, that is, detection sensitivity to the incident angle of incident light. For example, even if the incident light has the same light intensity, the detection sensitivity may change depending on the incident angle. Such a bias in the detection sensitivity (including cases where there is no bias) is referred to as "incident angle directivity".

[0122] For example, when incident lights having the same light intensity are incident on the physical configuration of the two pixel output units at the same incident angles as each other, the signal levels (detection signal levels) of the detection signals of respective pixel output units may be values different from each other depending on the respective incident angle directivities. The imaging element 121 (each pixel output unit thereof) has a physical configuration having such characteristics.
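The effect of differing incident angle directivities can be illustrated with a toy model. The triangular sensitivity curve below is purely hypothetical and is not the characteristic of any actual pixel; it only shows that the same light intensity at the same incident angle yields different detection signal levels when the directivities differ.

```python
def sensitivity(angle_deg: float, center_deg: float, half_width_deg: float = 30.0) -> float:
    """Hypothetical directivity: 1.0 at center_deg, falling linearly to 0.0."""
    return max(0.0, 1.0 - abs(angle_deg - center_deg) / half_width_deg)


def detection_level(intensity: float, angle_deg: float, center_deg: float) -> float:
    """Detection signal level = light intensity x angle-dependent sensitivity."""
    return intensity * sensitivity(angle_deg, center_deg)


# Same intensity and same incident angle, two pixels with different directivities.
level_pixel_1 = detection_level(intensity=100.0, angle_deg=10.0, center_deg=-5.0)
level_pixel_2 = detection_level(intensity=100.0, angle_deg=10.0, center_deg=10.0)
print(level_pixel_1, level_pixel_2)
```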

[0123] This incident angle directivity may be achieved by any method. For example, the incident angle directivity may be achieved by providing a light shielding film or the like on a front side (light incident side) of a photoelectric conversion element (photodiode or the like) of an imaging element having a basic structure similar to that of a general imaging element such as a complementary metal oxide semiconductor (CMOS) image sensor.

[0124] When an image is captured only by a general imaging element including pixels having the same incident angle directivities, light having substantially the same light intensity is incident on all pixels of the imaging element, and a formed image of an object cannot be acquired. Therefore, in general, an imaging lens or a pinhole is provided on a front side (light incident side) of the imaging element. For example, by providing an imaging lens, light from the object plane can be formed on the imaging plane of the imaging element. Therefore, the imaging element can acquire a detection signal of a level corresponding to the formed image of the object at each pixel (that is, the imaging element can acquire a captured image of the object whose image is formed). However, in this case, the size becomes physically large, and it may be difficult to reduce the size of the device. Further, in a case where the pinhole is provided, the size can be reduced as compared with a case where the imaging lens is provided. However, since the amount of light incident on the imaging element is reduced, measures such as elongating the exposure time or increasing the gain are necessary. As a result, blurring is likely to occur in high-speed imaging of an object, or color expression may become unnatural.

[0125] On the other hand, the imaging element 121 includes a plurality of pixel output units that receives incident light that is incident without passing through either of an imaging lens and a pinhole, and each outputs one detection signal indicating an output pixel value modulated by an incident angle of the incident light. Note that the incident angle directivities of respective pixel output units may be different from each other (the light receiving sensitivity characteristic according to the incident angle of incident light is different for every pixel output unit), or ones having the same light receiving sensitivity characteristics may be included in part of pixels, or part of pixels may have different light receiving sensitivity characteristics.

[0126] For example, in FIG. 3, assuming that a light source forming an object plane 131 is a point light source, in the imaging element 121, light rays of the same light intensity emitted from the same point light source are incident on all the pixels, but they are incident at different incident angles in every pixel. Then, since the respective pixels of the imaging element 121 have different incident angle directivities, the light rays having the same light intensity are detected with different sensitivity. That is, a detection signal having a different signal level is detected for every pixel.

[0127] More specifically, the sensitivity characteristic according to the incident angle of incident light received at each pixel of the imaging element 121, that is, the incident angle directivity according to the incident angle at each pixel is represented by a coefficient representing the light receiving sensitivity according to the incident angle, and the signal level of the detection signal according to the incident light at each pixel (also referred to as a detection signal level) is acquired by multiplying by a coefficient set according to the light receiving sensitivity according to the incident angle of the incident light.

[0128] More specifically, as illustrated in the upper left part of FIG. 3, detection signal levels DA, DB, and DC at positions Pa, Pb, and Pc are represented by the following formulas (7) to (9), respectively.

[Mathematical Formula 1]

DA = α1 × a + β1 × b + γ1 × c (7)

DB = α2 × a + β2 × b + γ2 × c (8)

DC = α3 × a + β3 × b + γ3 × c (9)

[0129] Here, α1 is a coefficient that is set according to the incident angle of a light ray from a point light source PA on the object plane 131 to be restored at the position Pa on the imaging element 121. Further, β1 is a coefficient that is set according to the incident angle of a light ray from a point light source PB on the object plane 131 to be restored at the position Pa on the imaging element 121. Moreover, γ1 is a coefficient that is set according to the incident angle of a light ray from a point light source PC on the object plane 131 to be restored at the position Pa on the imaging element 121.

[0130] As illustrated in formula (7), the detection signal level DA at the position Pa is represented by the sum (combined value) of the product of light intensity "a" of the light ray from the point light source PA at the position Pa and the coefficient α1, the product of light intensity "b" of the light ray from the point light source PB at the position Pa and the coefficient β1, and the product of light intensity "c" of the light ray from the point light source PC at the position Pa and the coefficient γ1. In the following, coefficients αx, βx, and γx (x is a natural number) are collectively referred to as a coefficient set.

[0131] Similarly, a coefficient set α2, β2, and γ2 of formula (8) is a coefficient set that is set according to the incident angles of light rays from the point light sources PA, PB, and PC on the object plane 131 to be restored at the position Pb on the imaging element 121. That is, the detection signal level DB at the position Pb is, as in the above formula (8), represented by the sum (combined value) of the product of light intensity "a" of the light ray from the point light source PA at the position Pb and the coefficient α2, the product of light intensity "b" of the light ray from the point light source PB at the position Pb and the coefficient β2, and the product of light intensity "c" of the light ray from the point light source PC at the position Pb and the coefficient γ2. Further, coefficients α3, β3, and γ3 of formula (9) are a coefficient set that is set according to the incident angles of light rays from the point light sources PA, PB, and PC on the object plane 131 to be restored at the position Pc on the imaging element 121. That is, the detection signal level DC at the position Pc is, as in the above formula (9), represented by the sum (combined value) of the product of light intensity "a" of the light ray from the point light source PA at the position Pc and the coefficient α3, the product of light intensity "b" of the light ray from the point light source PB at the position Pc and the coefficient β3, and the product of light intensity "c" of the light ray from the point light source PC at the position Pc and the coefficient γ3.
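Formulas (7) to (9) amount to multiplying the light intensities a, b, and c by the three coefficient sets and summing. A minimal sketch follows; the numerical coefficient values and light intensities are made up for illustration and do not come from the source.

```python
from typing import List, Sequence


def detection_levels(
    coefficient_sets: Sequence[Sequence[float]],
    intensities: Sequence[float],
) -> List[float]:
    """Return [DA, DB, DC] per formulas (7) to (9).

    coefficient_sets[i] holds (alpha, beta, gamma) for position Pa, Pb, or Pc;
    intensities holds (a, b, c) for point light sources PA, PB, and PC.
    """
    return [
        sum(coeff * light for coeff, light in zip(coeff_set, intensities))
        for coeff_set in coefficient_sets
    ]


# Hypothetical coefficient sets for positions Pa, Pb, and Pc.
coefficient_sets = [
    [0.5, 0.25, 0.25],   # alpha1, beta1, gamma1
    [0.25, 0.5, 0.25],   # alpha2, beta2, gamma2
    [0.25, 0.25, 0.5],   # alpha3, beta3, gamma3
]
levels = detection_levels(coefficient_sets, intensities=[40.0, 80.0, 120.0])
print(levels)
```

Each detection signal level mixes contributions from all three point light sources, which is why the detection image does not show a formed image of the object.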

[0132] As described above, these detection signal levels are different from those for an image in which an image of the object is formed because light intensities of the light rays emitted from the respective point light sources PA, PB, and PC are intermingled. That is, the detection signal level illustrated in an upper right part of FIG. 3 is not a detection signal level corresponding to an image (captured image) in which an image of the object is formed, and thus is different from that of a pixel value illustrated in a lower right part of FIG. 3 (generally the two do not match).

[0133] However, by forming a simultaneous equation using the coefficient set α1, β1, and γ1, the coefficient set α2, β2, and γ2, and the coefficient set α3, β3, and γ3 and the detection signal levels DA, DB, and DC, and solving a simultaneous equation of above-described formulas (7) to (9) using a, b, and c as variables, pixel values at the respective positions Pa, Pb, and Pc as illustrated in the lower right part of FIG. 3 can be acquired. Thus, the restored image (the image in which the image of the object is formed), which is a set of pixel values, is restored.
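Solving formulas (7) to (9) for a, b, and c can be sketched with ordinary Gaussian elimination. This is an illustrative solver, not the predetermined calculation or restoration matrix of the source; the coefficient values and detection signal levels are made-up numbers, and the function name `solve_linear` is an assumption.

```python
from typing import List, Sequence


def solve_linear(matrix: Sequence[Sequence[float]], rhs: Sequence[float]) -> List[float]:
    """Solve matrix * x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    # Work on an augmented copy so the inputs are left unchanged.
    aug = [[float(v) for v in row] + [float(r)] for row, r in zip(matrix, rhs)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("coefficient sets are not linearly independent")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for row in range(col + 1, n):
            factor = aug[row][col] / aug[col][col]
            for k in range(col, n + 1):
                aug[row][k] -= factor * aug[col][k]
    # Back substitution.
    solution = [0.0] * n
    for row in range(n - 1, -1, -1):
        known = sum(aug[row][k] * solution[k] for k in range(row + 1, n))
        solution[row] = (aug[row][n] - known) / aug[row][row]
    return solution


# Hypothetical coefficient sets (rows for Pa, Pb, Pc) and detection levels DA, DB, DC.
coefficient_sets = [
    [0.5, 0.25, 0.25],
    [0.25, 0.5, 0.25],
    [0.25, 0.25, 0.5],
]
restored = solve_linear(coefficient_sets, [70.0, 80.0, 90.0])
print(restored)  # approximately a=40, b=80, c=120
```

The solve succeeds only when the coefficient sets are linearly independent, which is one way to see why the pixels need differing incident angle directivities.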

[0134] With such a configuration, the imaging element 121 can output one detection signal indicating an output pixel value modulated by an incident angle of the incident light in each pixel without requiring an imaging lens, an optical filter including a diffraction grating or the like, a pinhole, or the like. As a result, an imaging lens, an optical filter including a diffraction grating or the like, a pinhole, or the like is not a necessary component, and thus it is possible to lower the height of the imaging device, that is, reduce the thickness in the light incident direction in a configuration for achieving an imaging function.

[0135] <Formation of Incident Angle Directivity>

[0136] A left part of FIG. 4 illustrates a front view of a portion of a pixel array unit of a general imaging element, and a right part of FIG. 4 illustrates a front view of a portion of the pixel array unit of the imaging element 121. Note that although FIG. 4 illustrates an example in which the pixel array unit has 6 pixels × 6 pixels in the horizontal direction × vertical direction, the configuration of the number of pixels is not limited to this.

[0137] The incident angle directivity can be formed by, for example, a light shielding film. In a general imaging element, pixels having the same incident angle directivity are arranged in an array, as in the example on the left part of FIG. 4. On the other hand, the imaging element 121 in the example on the right part of FIG. 4 is provided with a light shielding film 121b, which is one of modulation elements, so as to cover a part of a light receiving region of a photodiode in every pixel 121a. The incident light that is incident on each pixel 121a is optically modulated according to an incident angle. Then, for example, by providing the light shielding film 121b in a different range in every pixel 121a, light receiving sensitivity with respect to an incident angle of incident light is different in every pixel 121a, and each pixel 121a has a different incident angle directivity.

[0138] For example, the pixel 121a-1 and the pixel 121a-2 have different light-shielding ranges (at least either light-shielding regions (positions) or light shielding areas are different) due to the light shielding films 121b-1 and 121b-2 provided. That is, in the pixel 121a-1, the light shielding film 121b-1 is provided so as to shield a part of a left side in the light receiving region of the photodiode by a predetermined width, and in the pixel 121a-2, the light shielding film 121b-2 is provided so as to shield a part of a right side in the light receiving region by a width wider in the horizontal direction than the light shielding film 121b-1. In the other pixels 121a, similarly, the light shielding films 121b are provided so as to shield different ranges in the light receiving region in every pixel, and are randomly arranged in the pixel array.

[0139] Note that as the ratio at which the light shielding film 121b covers the light receiving region of each pixel becomes larger, the amount of light that can be received becomes smaller. The range of the light shielding film 121b therefore desirably has an area to a degree that a desired amount of light can be ensured, and the area of the light shielding film 121b may be limited to, for example, about 3/4 at the maximum of the entire light receivable range. In this manner, it is possible to secure a light amount equal to or more than a desired amount. However, if each pixel is provided with an unshielded range having a width corresponding to the wavelength of light to be received, it is possible to receive a minimum amount of light. That is, for example, in a case of a B pixel (blue pixel), the wavelength is about 500 nm, and a minimum amount of light can be received as long as an unshielded range with a width corresponding to this wavelength or more remains.
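A sketch of assigning a different, randomly chosen light-shielding range to every pixel of a 6 × 6 pixel array while keeping the shielded area at or below about 3/4 of the light receivable range. The representation of a shielding range as a (side, fraction) pair, the lower bound of 0.1, the function name, and the seed are all assumptions made for this illustration.

```python
import random
from typing import List, Tuple

MAX_SHIELDED_FRACTION = 0.75  # "about 3/4 at the maximum" in the description
MIN_SHIELDED_FRACTION = 0.1   # hypothetical lower bound for this sketch

Shield = Tuple[str, float]  # (shielded side, shielded fraction of the region)


def random_shielding(rows: int, cols: int, seed: int = 0) -> List[List[Shield]]:
    """Return a rows x cols layout of randomly chosen light-shielding ranges."""
    rng = random.Random(seed)  # seeded so the layout is reproducible
    layout: List[List[Shield]] = []
    for _ in range(rows):
        row: List[Shield] = []
        for _ in range(cols):
            side = rng.choice(["left", "right", "top", "bottom"])
            fraction = rng.uniform(MIN_SHIELDED_FRACTION, MAX_SHIELDED_FRACTION)
            # Guard against floating-point rounding at the upper bound.
            row.append((side, min(fraction, MAX_SHIELDED_FRACTION)))
        layout.append(row)
    return layout


layout = random_shielding(rows=6, cols=6)
for row in layout:
    print(" ".join(f"{side[0]}{fraction:.2f}" for side, fraction in row))
```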

Configuration Example of Imaging Element

[0140] A configuration example of the imaging element 121 in this case will be described with reference to FIG. 5. An upper part of FIG. 5 is a side cross-sectional view of the imaging element 121, and a middle part of FIG. 5 is a top view of the imaging element 121. Further, the side cross-sectional view in the upper part of FIG. 5 is an AB cross section in the middle part of FIG. 5. Moreover, a lower part of FIG. 5 is a circuit configuration example of the imaging element 121.

[0141] The imaging element 121 having the configuration illustrated in FIG. 5 has a plurality of pixel output units that receive incident light that is incident without passing through either of an imaging lens and a pinhole, and that each output one detection signal indicating an output pixel value modulated by an incident angle of the incident light. For example, the imaging element 121 has a configuration in which incident angle directivities indicating directivities of the output pixel values of at least two pixel output units of the plurality of pixel output units with respect to an incident angle of incident light from an object have characteristics different from each other. Furthermore, the imaging element 121 in this case has a configuration such that, in the plurality of pixel output units, an incident angle directivity indicating a directivity with respect to the incident angle of the incident light from the object can be set independently in every pixel output unit.

[0142] In the imaging element 121 in the upper part of FIG. 5, incident light is incident from an upper side to a lower side in the view. Adjacent pixels 121a-15 and 121a-16 are of what is called back-illuminated type in each of which a wiring layer Z12 is provided in a lowermost layer in the view and a photoelectric conversion layer Z11 is provided thereon.

[0143] Note that when it is not necessary to distinguish the pixels 121a-15 and 121a-16, they are simply referred to as a pixel 121a, and other configurations are also referred to in a similar manner. Further, although FIG. 5 illustrates a side view and a top view of two pixels forming the pixel array of the imaging element 121, it goes without saying that a larger number of pixels 121a are arranged but are omitted in the views.

[0144] Moreover, the pixels 121a-15 and 121a-16 include photodiodes 121e-15 and 121e-16 in the photoelectric conversion layer Z11, respectively. Further, on the photodiodes 121e-15 and 121e-16, on-chip lenses 121c-15 and 121c-16 and color filters 121d-15 and 121d-16 are respectively formed from above.

[0145] The on-chip lenses 121c-15 and 121c-16 focus incident light on the photodiodes 121e-15 and 121e-16, respectively.

[0146] The color filters 121d-15, 121d-16 are, for example, optical filters that transmit light having specific wavelengths such as red, green, blue, infrared, and white. Note that in a case of white, the color filters 121d-15 and 121d-16 may be transparent filters or may be omitted.

[0147] Light shielding films 121p-15 to 121p-17 are formed on a boundary between the respective pixels in the photoelectric conversion layer Z11 of the pixels 121a-15 and 121a-16, and suppress crosstalk between adjacent pixels.

[0148] Further, light shielding films 121b-15 and 121b-16, which are one of modulation elements, shield a part of a light receiving surface S from light, as illustrated in the upper and middle parts of FIG. 5. Since a part of the light receiving surface S is shielded from light by the light shielding film 121b, incident light that is incident on the pixel 121a is optically modulated according to the incident angle. Since the pixel 121a detects the optically modulated incident light, the pixel 121a has an incident angle directivity. On the light receiving surface S of the photodiodes 121e-15 and 121e-16 in the pixels 121a-15 and 121a-16, different ranges are shielded from light by the light shielding films 121b-15 and 121b-16, thereby setting incident angle directivities different for every pixel. However, the range shielded from light is not limited to a case of being different in each of all the pixels 121a of the imaging element 121, and pixels 121a in which the same ranges are shielded from light may be partially included.

[0149] With the configuration as illustrated in the upper part of FIG. 5, a right end of the light shielding film 121p-15 and an upper end of the light shielding film 121b-15 are connected, and a left end of the light shielding film 121b-16 and an upper end of the light shielding film 121p-16 are connected, forming an L shape when viewed from the side.

[0150] Moreover, the light shielding films 121b-15 to 121b-17 and the light shielding films 121p-15 to 121p-17 include metal, for example, tungsten (W), aluminum (Al), or an alloy of Al and copper (Cu). Further, the light shielding films 121b-15 to 121b-17 and the light shielding films 121p-15 to 121p-17 may be formed simultaneously with the wiring, from the same metal and in the same process as the process of forming the wiring in the semiconductor process. Note that the thicknesses of the light shielding films 121b-15 to 121b-17 and the light shielding films 121p-15 to 121p-17 do not need to be the same and may differ depending on the position.

[0151] Further, as illustrated in the lower part of FIG. 5, the pixel 121a includes a photodiode 161 (which corresponds to the photodiode 121e), a transfer transistor 162, a floating diffusion (FD) unit 163, a selection transistor 164, an amplification transistor 165, and a reset transistor 166, and is connected to a current source 168 via a vertical signal line 167.

[0152] The photodiode 161 has a configuration in which an anode electrode is grounded and a cathode electrode is connected to a gate electrode of the amplification transistor 165 via the transfer transistor 162.

[0153] The transfer transistor 162 is driven according to a transfer signal TG. For example, when the transfer signal TG supplied to a gate electrode of the transfer transistor 162 becomes high level, the transfer transistor 162 turns on. Thus, charges accumulated in the photodiode 161 are transferred to the FD unit 163 via the transfer transistor 162.

[0154] The amplification transistor 165 serves as an input unit of a source follower that is a reading circuit that reads out a signal acquired by photoelectric conversion in the photodiode 161, and outputs a pixel signal at a level corresponding to charges accumulated in the FD unit 163 to the vertical signal line 167. That is, the amplification transistor 165 has a drain terminal connected to a power supply voltage VDD and a source terminal connected to the vertical signal line 167 via the selection transistor 164, thereby forming a source follower with the current source 168 connected to one end of the vertical signal line 167.

[0155] The FD (Floating Diffusion) unit 163 is a floating diffusion region having a charge capacitance C1 provided between the transfer transistor 162 and the amplification transistor 165, and temporarily accumulates charges transferred from the photodiode 161 via the transfer transistor 162. The FD unit 163 is a charge detection unit that converts charges into a voltage, and the charges accumulated in the FD unit 163 are converted into a voltage in the amplification transistor 165.

[0156] The selection transistor 164 is driven according to a selection signal SEL and is turned on when the selection signal SEL supplied to the gate electrode becomes high level, and connects the amplification transistor 165 and the vertical signal line 167.

[0157] The reset transistor 166 is driven according to a reset signal RST. For example, the reset transistor 166 turns on when the reset signal RST supplied to the gate electrode becomes high level, and discharges the charges accumulated in the FD unit 163 to the power supply voltage VDD to reset the FD unit 163.

[0158] With the circuit configuration as described above, the pixel circuit illustrated in the lower part of FIG. 5 operates as follows.

[0159] That is, as a first operation, the reset transistor 166 and the transfer transistor 162 are turned on, and the charges accumulated in the photodiode 161 and the FD unit 163 are discharged to the power supply voltage VDD to reset the photodiode 161 and the FD unit 163.

[0160] As a second operation, the reset transistor 166 and the transfer transistor 162 are turned off, an exposure period starts, and the photodiode 161 accumulates charges according to the amount of incident light.

[0161] As a third operation, the reset transistor 166 is turned on and then turned off. By this operation, the FD unit 163 is reset and set to a reference potential.

[0162] As a fourth operation, a potential of the FD unit 163 in the reset state is output from the amplification transistor 165 as the reference potential.

[0163] As a fifth operation, the transfer transistor 162 is turned on, and the charges accumulated in the photodiode 161 are transferred to the FD unit 163.

[0164] As a sixth operation, the potential of the FD unit 163 to which the charges of the photodiode are transferred is output from the amplification transistor 165 as a signal potential.

[0165] By the above processing, the reference potential is subtracted from the signal potential by correlated double sampling (CDS), and the difference is output as a detection signal. The value of the detection signal (output pixel value) is modulated according to the incident angle of the incident light from the object, and has a different characteristic (directivity) depending on the incident angle (has an incident angle directivity).
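The first to sixth operations and the subtraction of the reference potential from the signal potential can be sketched as a small behavioral model. This is a simplified illustration under stated assumptions, not a circuit-accurate simulation: charges are plain numbers, the FD unit converts charge to a potential with a hypothetical linear `conversion_gain` (standing in for the charge capacitance C1), a fixed hypothetical `offset` stands in for whatever level the FD unit shows right after reset, and sign conventions of a real pixel are ignored. The class and function names are invented for this sketch.

```python
class PixelCircuit:
    """Behavioral toy model of the pixel circuit in the lower part of FIG. 5."""

    def __init__(self, conversion_gain=0.001, offset=0.37):
        self.conversion_gain = conversion_gain  # potential per unit charge (assumed)
        self.offset = offset                    # level after reset (assumed)
        self.pd_charge = 0.0                    # charge in the photodiode 161
        self.fd_charge = 0.0                    # charge in the FD unit 163

    def reset(self, transfer_on):
        """Reset transistor 166 on; with transfer on, the photodiode is cleared too."""
        self.fd_charge = 0.0
        if transfer_on:
            self.pd_charge = 0.0

    def expose(self, light_intensity, exposure_time):
        """Photodiode accumulates charge according to the amount of incident light."""
        self.pd_charge += light_intensity * exposure_time

    def transfer(self):
        """Transfer transistor 162 on: photodiode charge moves to the FD unit."""
        self.fd_charge += self.pd_charge
        self.pd_charge = 0.0

    def read(self):
        """Potential of the FD unit as output via the amplification transistor 165."""
        return self.offset + self.conversion_gain * self.fd_charge


def capture(pixel, light_intensity, exposure_time):
    pixel.reset(transfer_on=True)                  # first operation
    pixel.expose(light_intensity, exposure_time)   # second operation
    pixel.reset(transfer_on=False)                 # third operation
    reference = pixel.read()                       # fourth operation
    pixel.transfer()                               # fifth operation
    signal = pixel.read()                          # sixth operation
    return signal - reference                      # CDS: offset cancels


print(capture(PixelCircuit(offset=0.37), light_intensity=100.0, exposure_time=2.0))
print(capture(PixelCircuit(offset=0.90), light_intensity=100.0, exposure_time=2.0))
```

Because the reference potential is sampled from the same reset state that the signal potential is built on, the assumed offset drops out of the difference, and both calls yield approximately the same detection signal.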

[0166] As described above, in the pixel 121a in the case of FIG. 5, one photodiode 121e is provided for each pixel, a different range is shielded in every pixel 121a by the light shielding film 121b, and by optical modulation using the light shielding film 121b, the detection signal of one pixel of the detection image having the incident angle directivity can be expressed by one pixel 121a.

Another Configuration Example of Imaging Element

[0167] Further, the incident angle directivity can be formed by, for example, a position, a size, a shape, and the like of a light receiving element (for example, a photodiode) in a pixel. Pixels for which these parameters are different have different sensitivity to incident light having the same light intensity from the same direction. That is, by setting these parameters for every pixel, the incident angle directivity can be set to every pixel.

[0168] For example, a plurality of light receiving elements (for example, photodiodes) may be provided in a pixel, and these may be selectively used. In this manner, it becomes possible to set the incident angle directivity to every pixel by selecting the light receiving element.

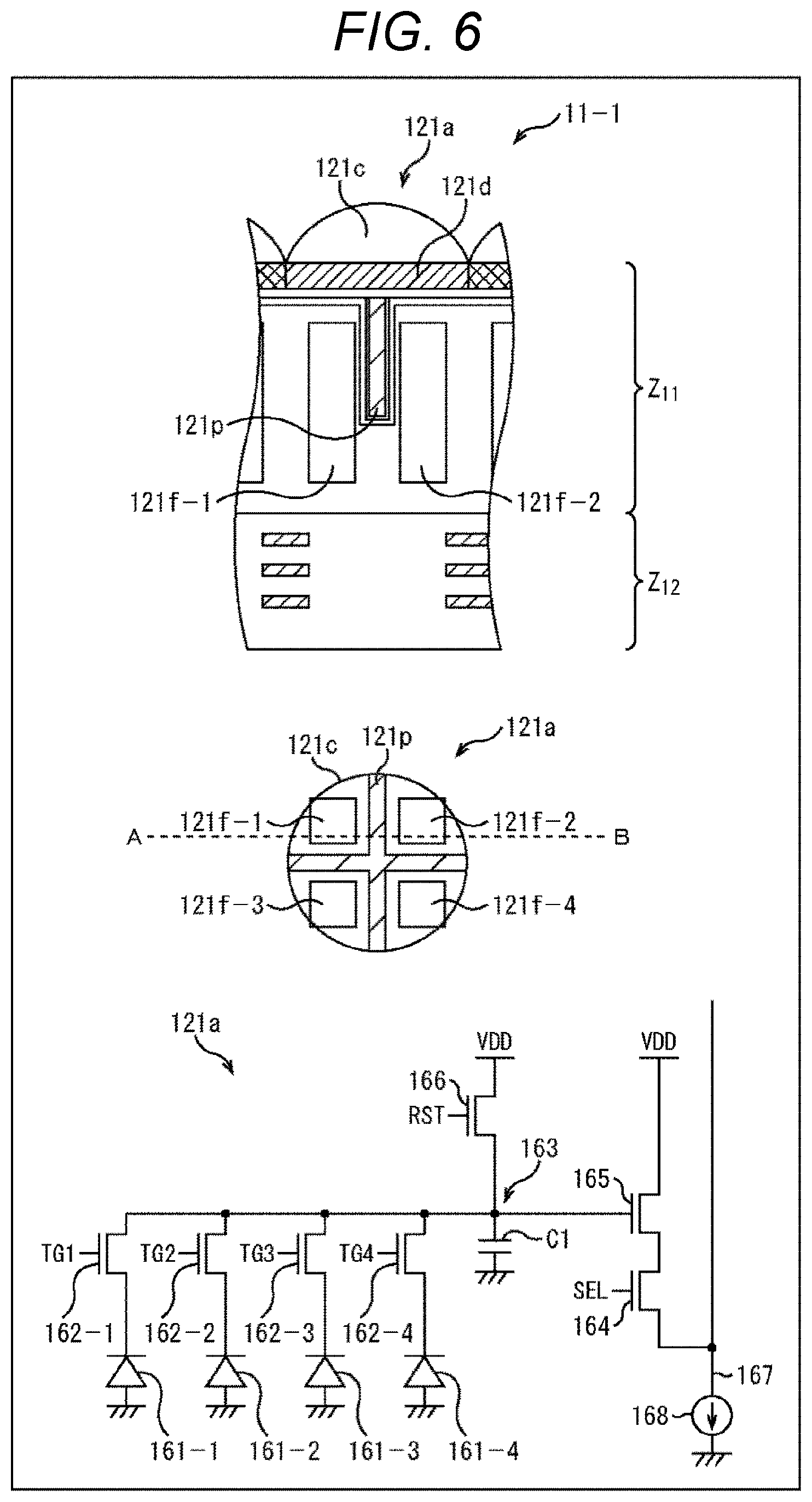

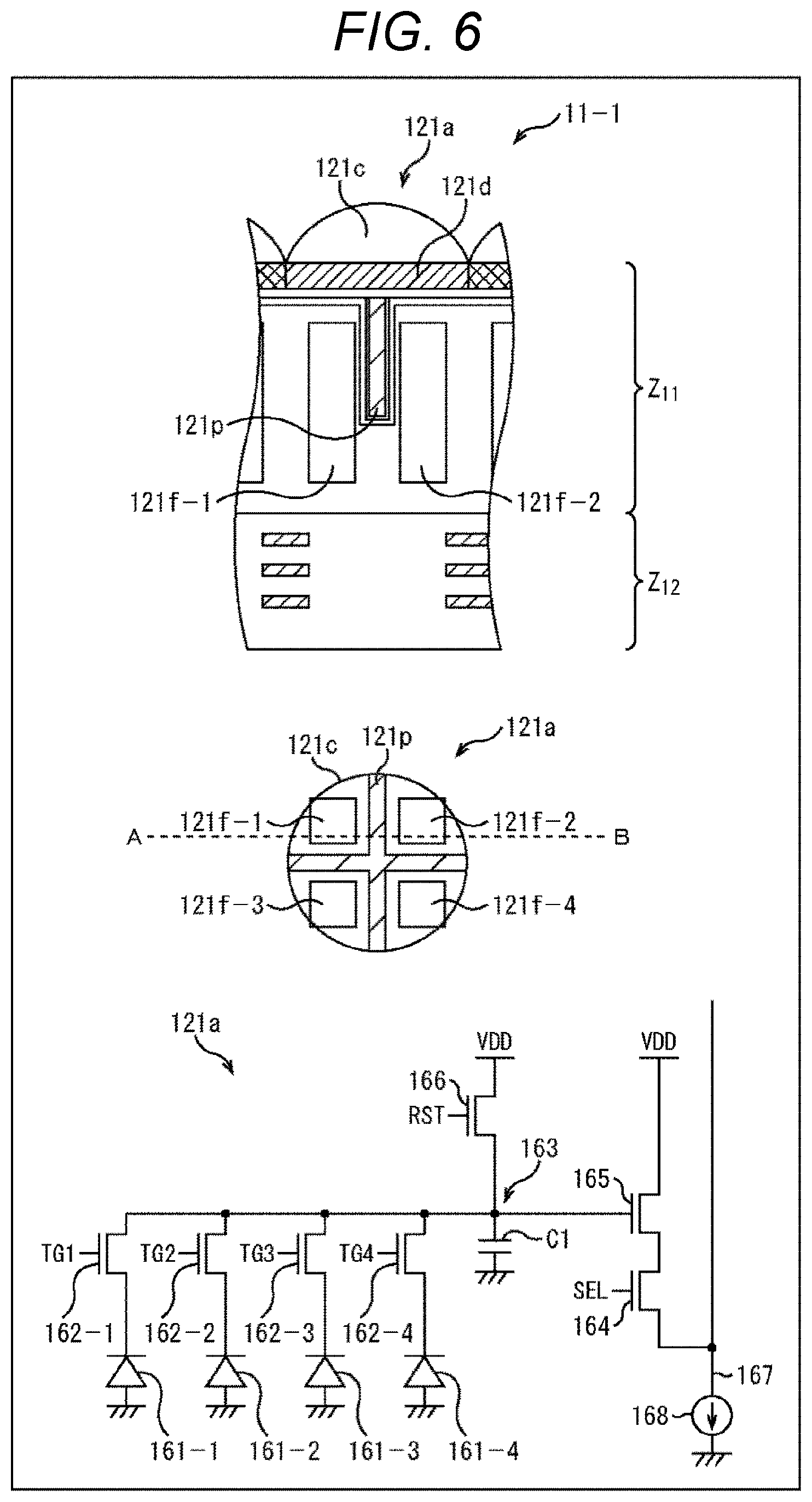

[0169] FIG. 6 is a diagram illustrating another configuration example of the imaging element 121. A side cross-sectional view of the pixel 121a of the imaging element 121 is illustrated in an upper part of FIG. 6, and a top view of the imaging element 121 is illustrated in a middle part of FIG. 6. Further, the side cross-sectional view of the upper part of FIG. 6 is an AB cross section in the middle part of FIG. 6. Moreover, a lower part of FIG. 6 is a circuit configuration example of the imaging element 121.

[0170] The imaging element 121 having the configuration illustrated in FIG. 6 has a plurality of pixel output units that receive incident light that is incident without passing through either of an imaging lens and a pinhole, and that each output one detection signal indicating an output pixel value modulated by an incident angle of the incident light. For example, the imaging element 121 has a configuration in which incident angle directivities indicating directivities of the output pixel values of at least two pixel output units of the plurality of pixel output units with respect to an incident angle of incident light from an object have characteristics different from each other. Furthermore, in the imaging element 121 in this case, by making the plurality of pixel output units have different photodiodes (PD) from each other that contribute to output, an incident angle directivity indicating a directivity of the output pixel value with respect to an incident angle of incident light from an object is independently settable in each of the pixel output units.

[0171] As illustrated in FIG. 6, the imaging element 121 differs in configuration from the imaging element 121 in FIG. 5 in that four photodiodes 121f-1 to 121f-4 are formed in the pixel 121a, and the light shielding film 121p is formed in a region that separates the photodiodes 121f-1 to 121f-4 from each other. That is, in the imaging element 121 of FIG. 6, the light shielding film 121p is formed in a "+" shape when viewed from the top surface. Note that their common components are denoted by the same reference numerals, and detailed description thereof is omitted.

[0172] In the imaging element 121 configured as illustrated in FIG. 6, by separating the photodiodes 121f-1 to 121f-4 by the light shielding film 121p, electrical and optical crosstalk among the photodiodes 121f-1 to 121f-4 can be prevented. That is, the light shielding film 121p in FIG. 6 is for preventing crosstalk as in the light shielding film 121p of the imaging element 121 in FIG. 5, and is not for giving incident angle directivity.

[0173] Although details will be described later, the photodiodes 121f-1 to 121f-4 have different incident angles at which the light receiving sensitivity characteristics increase. That is, the output pixel value of the pixel 121a can be given a desired incident angle directivity depending on which of the photodiodes 121f-1 to 121f-4 the charges are read from. In this manner, it is possible to control the incident angle directivity of the output pixel value of the pixel 121a.
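The idea of selecting which photodiodes contribute to the output can be sketched as follows. This is a minimal illustration only: the per-photodiode charge values for the two incident directions are hypothetical numbers and not measured data, and the function name `pixel_output` is invented for this sketch.

```python
def pixel_output(charges, selected):
    """Sum the charges of the photodiodes chosen to contribute to the output."""
    return sum(charges[name] for name in selected)

# Hypothetical charges accumulated in each photodiode for light of the same
# intensity arriving from two different directions (values are assumptions).
charges_direction_a = {"121f-1": 10.0, "121f-2": 30.0, "121f-3": 10.0, "121f-4": 30.0}
charges_direction_b = {"121f-1": 30.0, "121f-2": 10.0, "121f-3": 30.0, "121f-4": 10.0}

all_four = ["121f-1", "121f-2", "121f-3", "121f-4"]
subset = ["121f-1", "121f-3"]

# Reading all four photodiodes gives the same value for both directions,
# whereas reading only a subset makes the output depend on the direction.
print(pixel_output(charges_direction_a, all_four), pixel_output(charges_direction_b, all_four))
print(pixel_output(charges_direction_a, subset), pixel_output(charges_direction_b, subset))
```

In this toy example, choosing a different subset for each pixel 121a would give each output pixel value a different dependence on the incident direction.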

[0174] In the configuration example of the imaging element 121 of FIG. 6, one FD unit 163 is shared by the four photodiodes 121f-1 to 121f-4. The lower part of FIG. 6 illustrates a circuit configuration example in which the one FD unit 163 is shared by the four photodiodes 121f-1 to 121f-4. Note that in the lower part of FIG. 6, description of the same configurations as in the lower part of FIG. 5 is omitted.

[0175] The lower part of FIG. 6 differs from the lower part of the circuit configuration of FIG. 5 in that instead of the photodiode 161 and the transfer transistor 162, photodiodes 161-1 to 161-4 (corresponding to the photodiodes 121f-1 to 121f-4 in the upper part of FIG. 6) and transfer transistors 162-1 to 162-4 are provided, and the FD unit 163 is shared.