Computing Device

Solomon; Mark ; et al.

U.S. patent application number 16/869413 was filed with the patent office on 2020-05-07 and published on 2021-01-07 for computing device. The applicant listed for this patent is Tangible Play, Inc. Invention is credited to Jerome Scholler, Mark Solomon.

| Application Number | 16/869413 |
|---|---|
| Publication Number | 20210006730 |
| Filed Date | 2020-05-07 |
| Publication Date | 2021-01-07 |


| United States Patent Application | 20210006730 |

| Kind Code | A1 |

| Solomon; Mark ; et al. | January 7, 2021 |

COMPUTING DEVICE

Abstract

A computing device is described. In one embodiment, the computing device includes a display screen located on a front surface of a housing, a support located on a back surface of the housing, the support is configured to support the display screen in a first position relative to a surface when situated in a first orientation, and to support the display screen in a second position relative to the physical surface when situated in a second orientation, the computing device further includes a first camera located on a first peripheral side of the front surface, the first camera being configured to capture a first field of view, and a second camera located on a second peripheral side of the front surface, and the second camera is configured to capture a second field of view that is different from the first field of view.

| Inventors: | Solomon; Mark (San Jose, CA); Scholler; Jerome (San Francisco, CA) |
|---|---|
| Applicant: | Tangible Play, Inc. |
| Appl. No.: | 16/869413 |
| Filed: | May 7, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62871195 | Jul 7, 2019 | |

| Current U.S. Class: | 1/1 |

| International Class: | H04N 5/247 20060101 H04N005/247; H04N 5/225 20060101 H04N005/225 |

Claims

1. A computing device comprising: a display screen located on a front surface of a housing; a support located on a back surface of the housing, wherein: the support is configured to support the display screen in a first position relative to a physical surface when situated in a first orientation; and the support is configured to support the display screen in a second position relative to the physical surface when situated in a second orientation; a first camera located on a first peripheral side of the front surface, the first camera being configured to capture a first field of view; and a second camera located on a second peripheral side of the front surface, wherein: the first peripheral side is opposite the second peripheral side; and the second camera is configured to capture a second field of view that is different from the first field of view.

2. The computing device of claim 1, wherein: the second orientation and the first orientation are different; when the display screen is situated in the first orientation, the display screen is in a first viewing position and the first camera captures the first field of view that includes a portion of a surface in front of the housing and the second camera captures the second field of view that includes a portion of an area in front of the display screen; and when the display screen is situated in the second orientation, the display screen is in a second viewing position and the first camera captures the first field of view that includes a first portion of an area above the display screen and the second camera captures the second field of view that includes a second portion of an area above the display screen.

3. The computing device of claim 1, wherein: a tilt angle between the display screen and the physical surface when the display screen is positioned in the first orientation is greater than a tilt angle between the display screen and the physical surface when the display screen is positioned in the second orientation.

4. The computing device of claim 1, further comprising: an orientation sensor that detects when the display screen is situated in the first orientation and when the display screen is situated in the second orientation; and an activity application that executes a routine based on whether the orientation sensor detects the first orientation or the second orientation.

5. The computing device of claim 4, further comprising: when the orientation sensor detects that the display screen is positioned in the first orientation, the activity application identifies the first camera located on the first peripheral side as a top camera of the display screen and the second camera located on the second peripheral side as a bottom camera of the display screen; the first field of view of the top camera is directed downward towards the physical surface; and the second field of view of the bottom camera is directed upward towards a user facing the display screen.

6. The computing device of claim 5, wherein: the top camera is configured to capture a first video stream that includes an activity scene of the physical surface in the first field of view; and the bottom camera is configured to capture a second video stream that includes a face of the user in the second field of view.

7. The computing device of claim 4, wherein: when the orientation sensor detects that the display screen is positioned in the second orientation: an activity application identifies the first camera located on the first peripheral side as a bottom camera of the display screen and the second camera located on the second peripheral side as a top camera of the display screen; and the first field of view of the bottom camera and the second field of view of the top camera are directed upward.

8. The computing device of claim 7, wherein: the bottom camera is configured to capture a first video stream that includes a first portion of a face of a user in the first field of view; and the top camera is configured to capture a second video stream that includes a second portion of the face of the user in the second field of view.

9. The computing device of claim 7, wherein: the bottom camera is configured to capture a first video stream that includes a first user in the first field of view; and the top camera is configured to capture a second video stream that includes a second user in the second field of view.

10. The computing device of claim 1, wherein: the first peripheral side includes a protrusion that extends outwardly from the front surface of the housing; and the first camera is positioned on the protrusion towards the physical surface; and the first field of view of the first camera is configured to capture at least a portion of the physical surface.

11. The computing device of claim 1, wherein the support comprises: a supporting element that is integral with or coupled to the back surface of the housing; and the supporting element extends outwardly from the back surface of the housing and is situatable on a physical surface to support the display screen in the first orientation or the second orientation.

12. The computing device of claim 11, wherein: the computing device transitions from the first orientation to the second orientation by rotating the computing device.

13. A method comprising: determining that a computing device is positioned in a first orientation on a physical surface, wherein: the computing device includes a first camera configured to capture a first field of view and a second camera configured to capture a second field of view that is different from the first field of view; and the first field of view of the first camera is directed towards the physical surface and the second field of view of the second camera is directed towards a user facing the computing device when the computing device is situated in the first orientation; capturing, using the first camera of the computing device, a first video stream that includes an activity scene of the physical surface in the first field of view; capturing, using the second camera of the computing device, a second video stream that includes the user in the second field of view; determining, in an activity application of the computing device, an operation routine of the activity application based on the first video stream including the activity scene of the physical surface and the second video stream including the user; and executing the operation routine in the activity application.

14. The method of claim 13, wherein determining the operation routine of the activity application includes: detecting, in the first video stream, a tangible object in the activity scene of the physical surface; determining, in the second video stream, a user state of the user; and determining the operation routine of the activity application based on the tangible object in the activity scene and the user state of the user.

15. The method of claim 14, wherein: determining the user state of the user includes: determining a facial feature of the user in the second video stream; and determining the user state of the user based on the facial feature of the user; and the operation routine of the activity application includes adjusting one or more of a task complexity level and an instruction detail level associated with the user in the activity application based on the user state of the user.

16. The method of claim 13, wherein: the first camera is located on a first peripheral side of a front surface of a housing of a display screen; and the second camera is located on a second peripheral side of the front surface of the housing of the display screen, wherein the first peripheral side is opposite the second peripheral side.

17. The method of claim 13, further comprising: when the computing device is positioned in the first orientation on the physical surface: the first camera becomes a top camera of the computing device, the top camera being directed downward towards the physical surface; and the second camera becomes a bottom camera of the computing device, the bottom camera being directed upward towards the user facing the computing device.

18. The method of claim 13, further comprising: determining that the computing device is positioned in a second orientation on the physical surface, wherein the first field of view of the first camera is directed towards the user facing the computing device when the computing device is situated in the second orientation, and the second field of view of the second camera is directed towards the user facing the computing device when the computing device is situated in the second orientation; capturing, using the first camera of the computing device, a third video stream that includes a first portion of a face of the user in the first field of view; capturing, using the second camera of the computing device, a fourth video stream that includes a second portion of the face of the user in the second field of view; and adjusting the operation routine of the activity application based on one or more of the third video stream including the first portion of the face of the user and the fourth video stream including the second portion of the face of the user.

19. The method of claim 18, further comprising: when the computing device is positioned in the second orientation on the physical surface: the first camera becomes a bottom camera of the computing device, the bottom camera being directed upward towards the first portion of the face of the user in the first field of view; and the second camera becomes a top camera of the computing device, the top camera being directed upward towards the second portion of the face of the user in the second field of view.

20. The method of claim 13, wherein: the computing device in a second orientation has been rotated as compared to the computing device in the first orientation; and a tilt angle between the computing device and the physical surface when the computing device is positioned in the second orientation on the physical surface is smaller than a tilt angle between the computing device and the physical surface when the computing device is positioned in the first orientation on the physical surface.

21. A computing device comprising: a display screen located on a front surface of the computing device in a first orientation; a first camera located on a front surface of the computing device near a top edge of the computing device in the first orientation, the first camera being configured to capture a first field of view; and a second camera located on the front surface of the computing device near a bottom edge of the computing device in the first orientation, the second camera being configured to capture a second field of view different from the first field of view, and an orientation sensor configured to detect when the computing device is oriented into a second orientation, wherein in the second orientation the first camera is located near a bottom edge of the computing device in the second orientation and the second camera is now located near a top edge of the computing device in the second orientation.

Description

BACKGROUND

[0001] The present disclosure relates to a computing device.

[0002] An electronic device often has cameras for capturing videos or images. The cameras of an electronic device commonly include a front camera to capture objects in front of the electronic device and a back camera to capture objects behind the electronic device. To capture an image or a video of an object, a user often needs to manually select either the front camera or the back camera based on a location of the object relative to the electronic device. The selected camera may then capture the object and the non-selected camera may not be utilized. In addition, the electronic device often includes various input/output elements and the position of the input/output elements relative to the user may change when the electronic device is situated in different positions. Therefore, the electronic device often requires the user to memorize the functionality associated with each input/output element and accurately localize the desired input/output element to interact with the electronic device. As a result, the electronic device is usually inconvenient for the user to use, especially for young children and the elderly.

SUMMARY

[0003] According to one innovative aspect of the subject matter in this disclosure, a computing device is described. One general aspect includes a computing device that may include: a display screen located on a front surface of a housing; a support located on a back surface of the housing, where: the support is configured to support the display screen in a first position relative to a physical surface when situated in a first orientation; and the support is configured to support the display screen in a second position relative to the physical surface when situated in a second orientation; a first camera located on a first peripheral side of the front surface, the first camera being configured to capture a first field of view; and a second camera located on a second peripheral side of the front surface, where: the first peripheral side is opposite the second peripheral side; and the second camera is configured to capture a second field of view that is different from the first field of view.

[0004] Implementations may include one or more of the following features. The computing device where: the second orientation and the first orientation are different; when the display screen is situated in the first orientation, the display screen is in a first viewing position and the first camera captures the first field of view that includes a portion of a surface in front of the housing and the second camera captures the second field of view that includes a portion of an area in front of the display screen; and when the display screen is situated in the second orientation, the display screen is in a second viewing position and the first camera captures the first field of view that includes a first portion of an area above the display screen and the second camera captures the second field of view that includes a second portion of an area above the display screen. The computing device where: a tilt angle between the display screen and the physical surface when the display screen is positioned in the first orientation is greater than a tilt angle between the display screen and the physical surface when the display screen is positioned in the second orientation. The computing device may include: an orientation sensor that detects when the display screen is situated in the first orientation and when the display screen is situated in the second orientation; and an activity application that executes a routine based on whether the orientation sensor detects the first orientation or the second orientation. 
The computing device may include: when the orientation sensor detects that the display screen is positioned in the first orientation, the activity application identifies the first camera located on the first peripheral side as a top camera of the display screen and the second camera located on the second peripheral side as a bottom camera of the display screen; the first field of view of the top camera is directed downward towards the physical surface; and the second field of view of the bottom camera is directed upward towards a user facing the display screen. The computing device where: the top camera is configured to capture a first video stream that includes an activity scene of the physical surface in the first field of view; and the bottom camera is configured to capture a second video stream that includes a face of the user in the second field of view. The computing device where: when the orientation sensor detects that the display screen is positioned in the second orientation: an activity application identifies the first camera located on the first peripheral side as a bottom camera of the display screen and the second camera located on the second peripheral side as a top camera of the display screen; and the first field of view of the bottom camera and the second field of view of the top camera are directed upward. The computing device, where: the bottom camera is configured to capture a first video stream that includes a first portion of a face of a user in the first field of view; and the top camera is configured to capture a second video stream that includes a second portion of the face of the user in the second field of view. The computing device, where: the bottom camera is configured to capture a first video stream that includes a first user in the first field of view; and the top camera is configured to capture a second video stream that includes a second user in the second field of view. 
The computing device where: the first peripheral side includes a protrusion that extends outwardly from the front surface of the housing; and the first camera is positioned on the protrusion towards the physical surface; and the first field of view of the first camera is configured to capture at least a portion of the physical surface. The computing device where the support may include: a supporting element that is integral with or coupled to the back surface of the housing; and the supporting element extends outwardly from the back surface of the housing and is situatable on a physical surface to support the display screen in the first orientation or the second orientation. The computing device where: the computing device transitions from the first orientation to the second orientation by rotating the computing device.

[0005] One general aspect includes a method that may include: determining that a computing device is positioned in a first orientation on a physical surface, where: the computing device includes a first camera configured to capture a first field of view and a second camera configured to capture a second field of view that is different from the first field of view; and the first field of view of the first camera is directed towards the physical surface and the second field of view of the second camera is directed towards a user facing the computing device when the computing device is situated in the first orientation; capturing, using the first camera of the computing device, a first video stream that includes an activity scene of the physical surface in the first field of view; capturing, using the second camera of the computing device, a second video stream that includes the user in the second field of view; determining, in an activity application of the computing device, an operation routine of the activity application based on the first video stream including the activity scene of the physical surface and the second video stream including the user; and executing the operation routine in the activity application.

[0006] Implementations may include one or more of the following features. The method where determining the operation routine of the activity application includes: detecting, in the first video stream, a tangible object in the activity scene of the physical surface; determining, in the second video stream, a user state of the user; and determining the operation routine of the activity application based on the tangible object in the activity scene and the user state of the user. The method where: determining the user state of the user includes: determining a facial feature of the user in the second video stream; and determining the user state of the user based on the facial feature of the user; and the operation routine of the activity application includes adjusting one or more of a task complexity level and an instruction detail level associated with the user in the activity application based on the user state of the user. The method where: the first camera is located on a first peripheral side of a front surface of a housing of a display screen; and the second camera is located on a second peripheral side of the front surface of the housing of the display screen, where the first peripheral side is opposite the second peripheral side. The method may include: when the computing device is positioned in the first orientation on the physical surface: the first camera becomes a top camera of the computing device, the top camera being directed downward towards the physical surface; and the second camera becomes a bottom camera of the computing device, the bottom camera being directed upward towards the user facing the computing device. 
The method may include: determining that the computing device is positioned in a second orientation on the physical surface, where the first field of view of the first camera is directed towards the user facing the computing device when the computing device is situated in the second orientation, and the second field of view of the second camera is directed towards the user facing the computing device when the computing device is situated in the second orientation; capturing, using the first camera of the computing device, a third video stream that includes a first portion of a face of the user in the first field of view; capturing, using the second camera of the computing device, a fourth video stream that includes a second portion of the face of the user in the second field of view; and adjusting the operation routine of the activity application based on one or more of the third video stream including the first portion of the face of the user and the fourth video stream including the second portion of the face of the user. The method may include: when the computing device is positioned in the second orientation on the physical surface: the first camera becomes a bottom camera of the computing device, the bottom camera being directed upward towards the first portion of the face of the user in the first field of view; and the second camera becomes a top camera of the computing device, the top camera being directed upward towards the second portion of the face of the user in the second field of view. The method where: the computing device in a second orientation has been rotated as compared to the computing device in the first orientation; and a tilt angle between the computing device and the physical surface when the computing device is positioned in the second orientation on the physical surface is smaller than a tilt angle between the computing device and the physical surface when the computing device is positioned in the first orientation on the physical surface.
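As a rough illustration of the adjustment described in paragraph [0006], the following sketch changes a task complexity level and an instruction detail level in response to a user state. Everything here is an assumption made for illustration: the disclosure does not define the state labels ("frustrated", "bored"), the 1 to 5 scale, the step size, or the names `ActivitySettings` and `adjust_for_user_state`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ActivitySettings:
    """Hypothetical per-user settings; the 1..5 scale is an assumption."""
    task_complexity: int     # 1 (easiest) to 5 (hardest)
    instruction_detail: int  # 1 (terse) to 5 (most detailed)


def _clamp(value: int, low: int = 1, high: int = 5) -> int:
    """Keep a level inside the assumed 1..5 range."""
    return max(low, min(high, value))


def adjust_for_user_state(settings: ActivitySettings, user_state: str) -> ActivitySettings:
    """Return new settings for a user state inferred from the second video stream.

    The state labels are hypothetical; unknown states leave settings unchanged.
    """
    if user_state == "frustrated":
        # Make the task easier and the instructions more detailed.
        return ActivitySettings(
            _clamp(settings.task_complexity - 1),
            _clamp(settings.instruction_detail + 1),
        )
    if user_state == "bored":
        # Make the task harder and the instructions terser.
        return ActivitySettings(
            _clamp(settings.task_complexity + 1),
            _clamp(settings.instruction_detail - 1),
        )
    return settings
```

In this sketch the settings object is immutable, so each adjustment yields a new value and the activity application can compare the old and new levels before applying them.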

[0007] One general aspect includes a computing device that may include: a display screen located on a front surface of the computing device in a first orientation; a first camera located on a front surface of the computing device near a top edge of the computing device in the first orientation, the first camera being configured to capture a first field of view; and a second camera located on the front surface of the computing device near a bottom edge of the computing device in the first orientation, the second camera being configured to capture a second field of view different from the first field of view, and an orientation sensor configured to detect when the computing device is oriented into a second orientation, where in the second orientation the first camera is located near a bottom edge of the computing device in the second orientation and the second camera is now located near a top edge of the computing device in the second orientation.

[0008] Other implementations of one or more of these aspects and other aspects described in this document include corresponding systems, apparatus, and computer programs, configured to perform the actions of the methods, encoded on computer storage devices. The above and other implementations are advantageous in a number of respects as articulated through this document. Moreover, it should be understood that the language used in the present disclosure has been principally selected for readability and instructional purposes, and not to limit the scope of the subject matter disclosed herein.

BRIEF DESCRIPTION OF THE DRAWINGS

[0009] The disclosure is illustrated by way of example, and not by way of limitation in the figures of the accompanying drawings in which like reference numerals are used to refer to similar elements.

[0010] FIG. 1A is a front view of an example computing device.

[0011] FIGS. 1B and 1C respectively illustrate perspective views of an example computing device from a front perspective and a rear perspective.

[0012] FIG. 2A illustrates a side view of a computing device in a first orientation and depicts fields of view of a first camera and a second camera.

[0013] FIG. 2B illustrates a side view of a computing device in a second orientation and depicts fields of view of a first camera and a second camera.

[0014] FIG. 3 illustrates a field of view of a first camera and a field of view of a second camera of an example computing device when the example computing device is situated in a first orientation on an activity surface.

[0015] FIG. 4 is a block diagram illustrating an example computer system that includes one or more example computing devices.

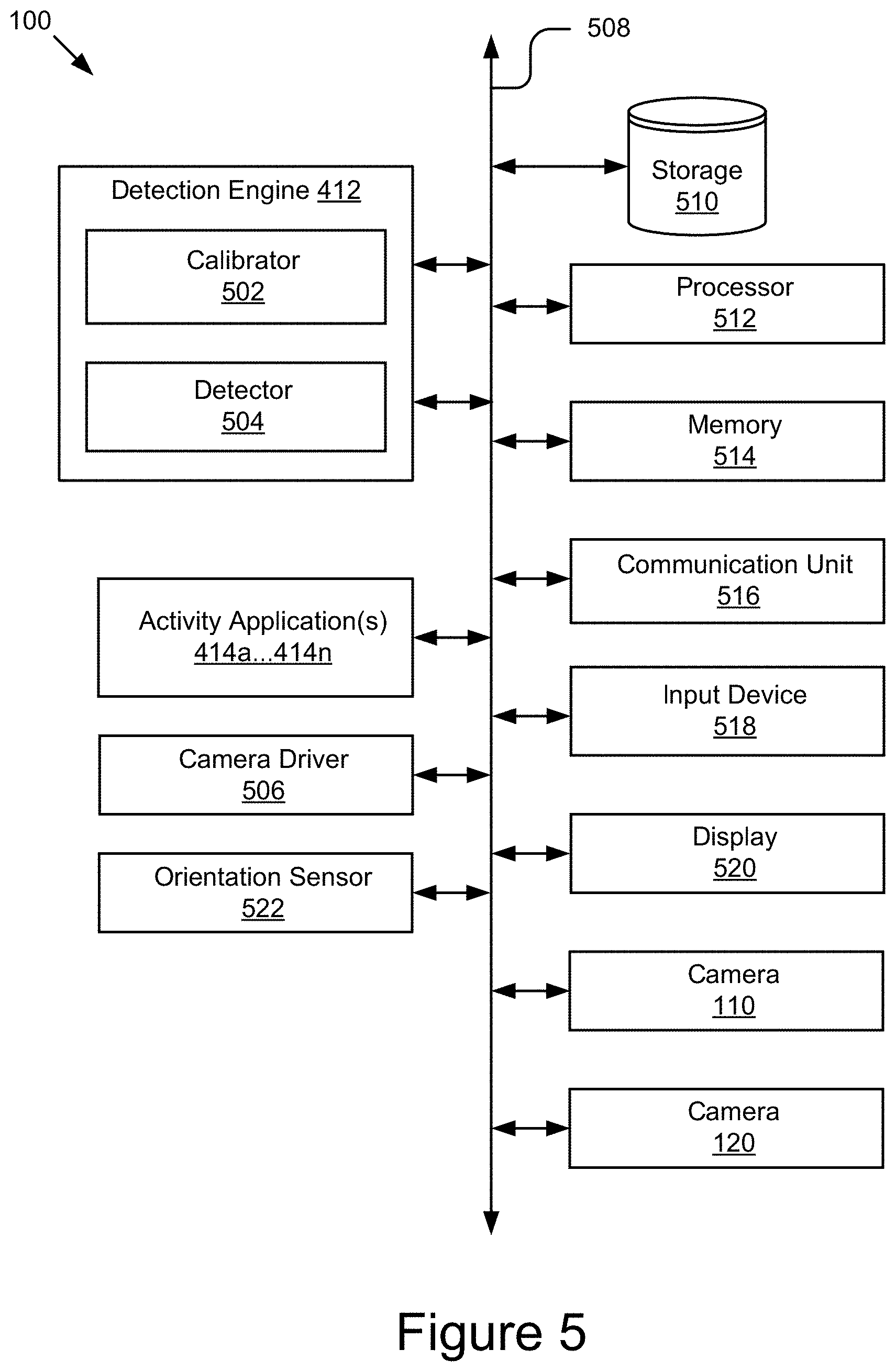

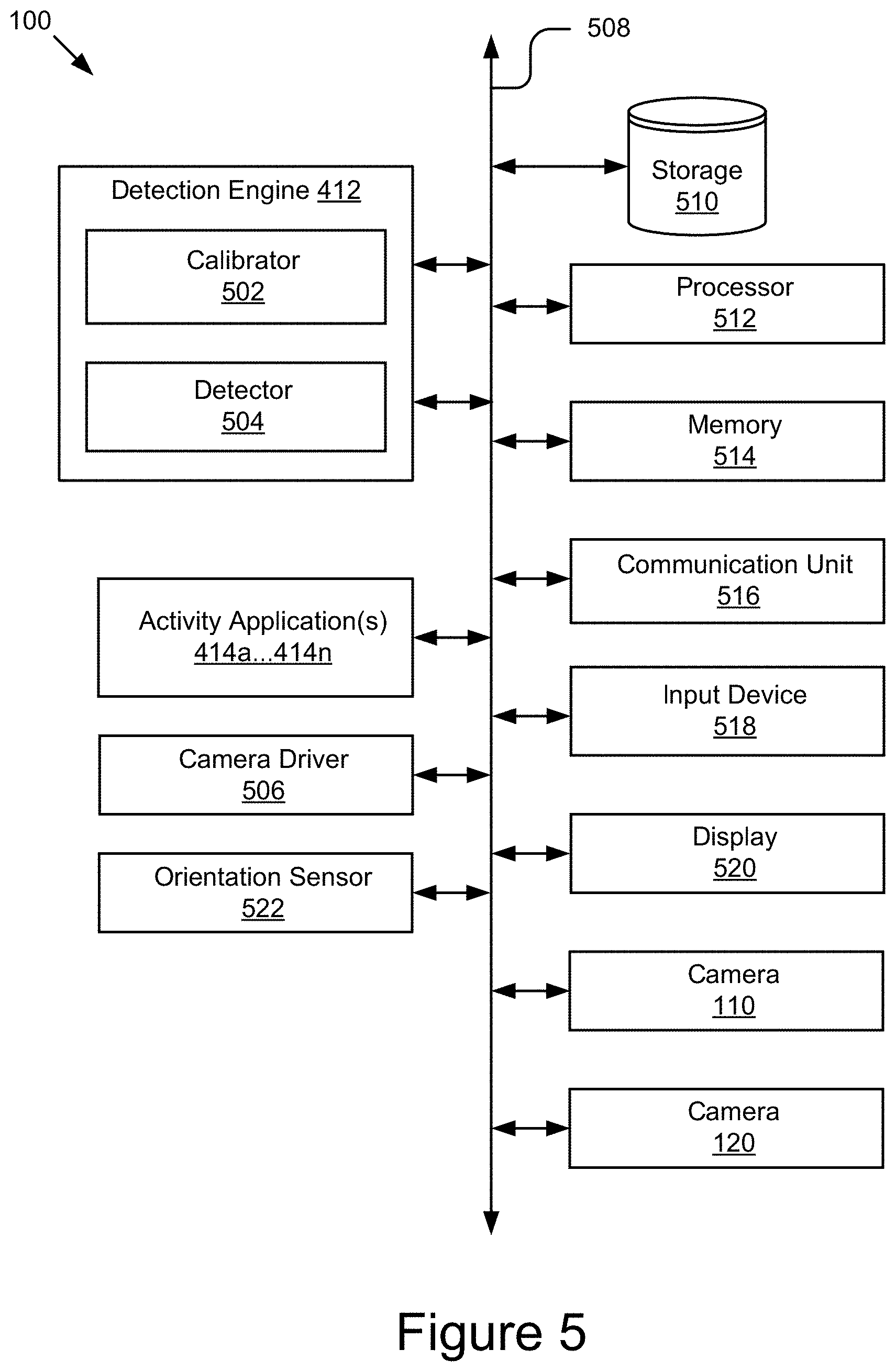

[0016] FIG. 5 is a block diagram of an example computing device.

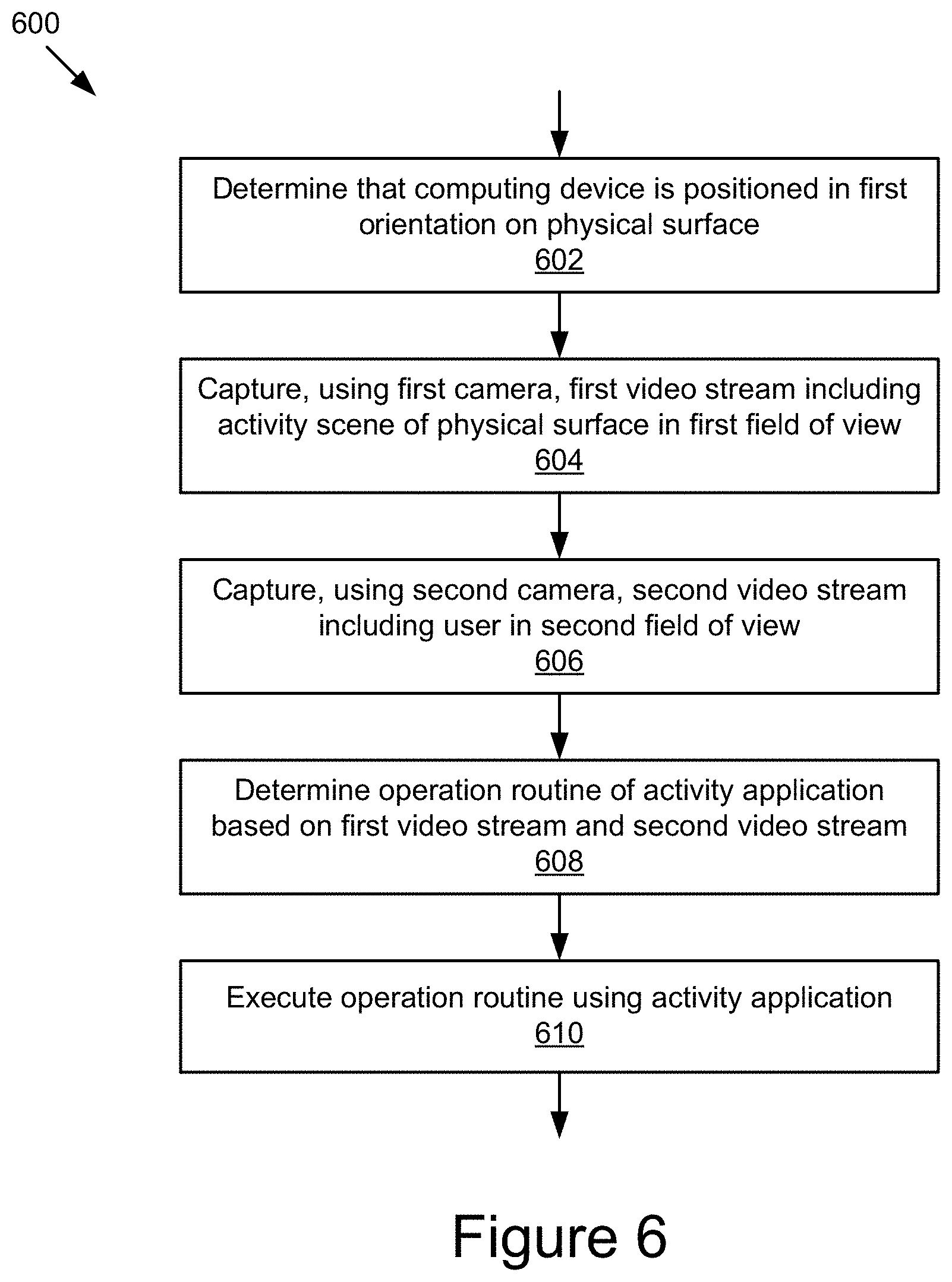

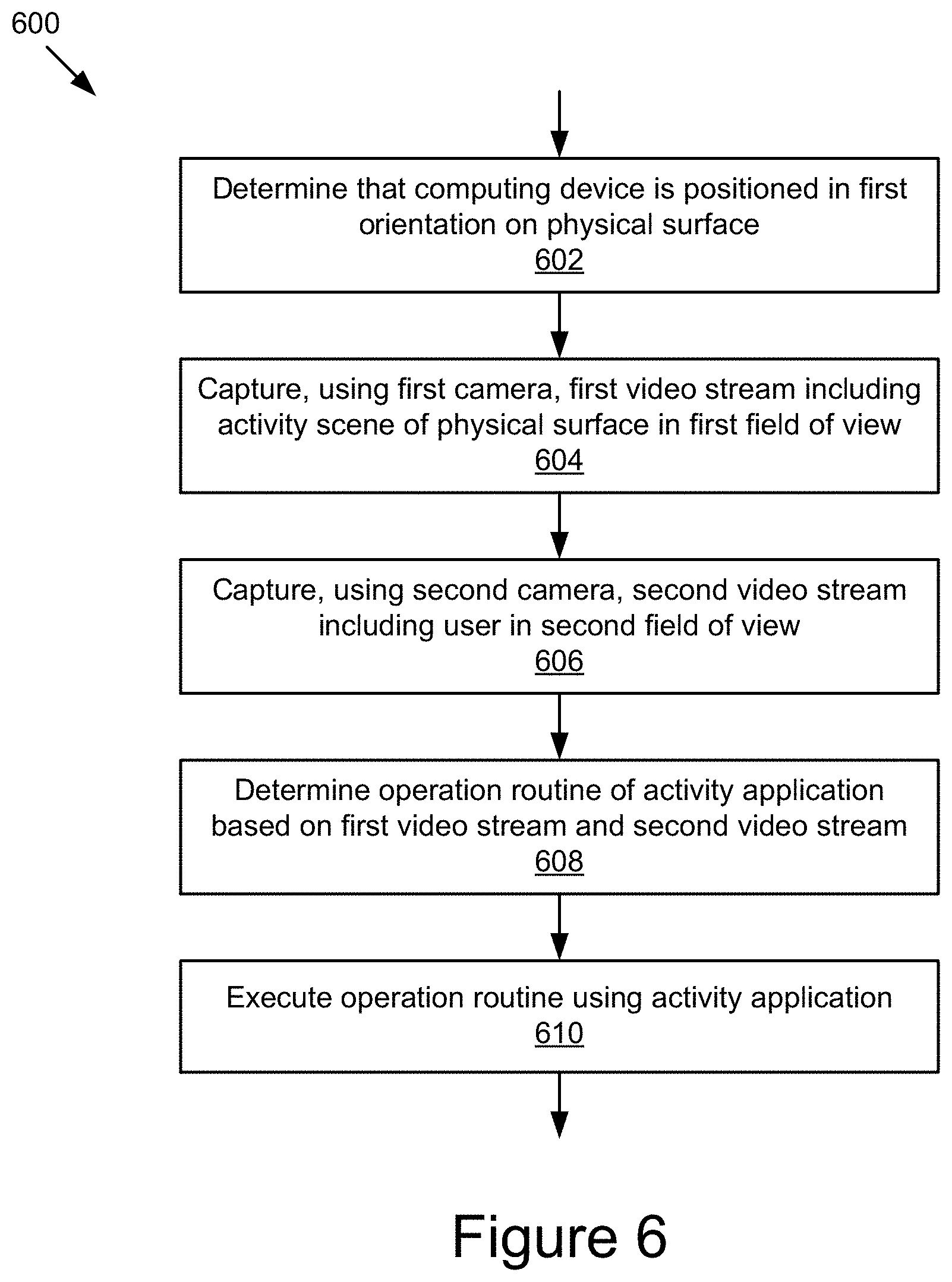

[0017] FIG. 6 is a flowchart of an example method for determining an orientation of a computing device.

[0018] FIG. 7 is a flowchart of an example method for capturing video streams in a second orientation.

[0019] FIGS. 8A and 8B illustrate different orientations of the computing device.

DETAILED DESCRIPTION

[0020] FIGS. 1A-1C illustrate an example computing device 100 that includes a first camera 110 and a second camera 120 that are capable of operating simultaneously to capture video streams of different fields of view. For example, a field of view of the first camera 110 may be pointing downward towards a physical surface in front of the computing device 100 where a user may be placing one or more tangible objects and the field of view of the second camera 120 may be directed outwards from a display screen 140 on the computing device 100 towards a user viewing the display screen 140. By using the first camera 110 and the second camera 120, the computing device 100 may be able to see both what objects a user places in front of the computing device 100 and what the user is doing at the same time (such as by capturing facial expressions, speech, gestures, etc.).

[0021] In some implementations, the computing device 100 may be positionable in different orientations and the field of view of the first camera 110 and the second camera 120 may be different relative to the physical surface the computing device 100 is positioned on in the different orientations. In some implementations, the computing device 100 may transition from the first orientation 101 to the second orientation 103. For example, a first orientation 101, as shown in FIGS. 1A-1C, may be a first viewing position of the display screen 140 and the display screen 140 may be in a substantially upright position and the field of view of the first camera 110 may be downwards towards a physical surface in front of the computing device 100. In another example, a second orientation 103, as shown in FIG. 2B, may be a second viewing position of the display screen 140 and the display screen 140 may be substantially flat or horizontal in position (e.g., resting substantially flat on a surface) and the field of view of the first camera 110 may be outwards towards a top area above the computing device 100.

[0022] As depicted in FIG. 1A, in some implementations, the computing device 100 may include a housing 105 that includes a front surface 107 in which a display screen 140 is positioned. The front surface 107 may be substantially flat and a display screen 140 and one or more cameras (e.g., a first camera 110 and/or a second camera 120) may be integrated into the front surface 107. In some implementations, the front surface 107 of the housing 105 of the computing device 100 may also include one or more audio outputs 150. In further implementations, the audio output 150 may be positioned on another surface of the computing device 100, such as a back surface and/or side surface.

[0023] The display screen 140 may be positioned on the front surface 107 of the housing 105 of the computing device 100 to allow for the display screen 140 to be easily viewed by a user. In some implementations, the display screen 140 may be viewable by a user in different orientations, such as a first orientation 101 where the display screen 140 is substantially vertical or a second orientation 103 where the display screen 140 is substantially horizontal as shown in more detail in FIGS. 2A and 2B.

[0024] In some implementations, the display screen 140 may be adapted to orient the content of the display screen 140 relative to the orientation of the computing device 100 in order to allow content to be presented to the user as being upright, based on the orientation of the computing device 100. For example, when the computing device 100 is rotated from the first orientation 101 to the second orientation 103, the top of the display screen 140 in the first orientation 101 becomes the bottom of the display screen 140 in the second orientation 103. The activity application(s) 414 may receive the orientation information from the orientation sensor 522 and the activity application(s) 414 may cause the content of the display screen 140 to be rotated 180 degrees in order to account for the change in orientation as described in more detail elsewhere herein.
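The content rotation described in paragraph [0024] can be sketched as follows. This is a minimal illustration under stated assumptions, not the disclosed implementation: the `Orientation` enum, the function names, and the convention that content is authored for the first orientation are all hypothetical.

```python
from enum import Enum
from typing import Tuple


class Orientation(Enum):
    """Hypothetical labels for the two orientations described above."""
    FIRST = "first"    # orientation 101: display screen substantially upright
    SECOND = "second"  # orientation 103: display screen substantially flat


def content_rotation_degrees(orientation: Orientation) -> int:
    """Return the rotation applied to content so it appears upright.

    Assumes content is laid out for the first orientation, so it is left
    unrotated there and rotated 180 degrees in the second orientation.
    """
    return 0 if orientation is Orientation.FIRST else 180


def rotate_point(x: int, y: int, width: int, height: int, degrees: int) -> Tuple[int, int]:
    """Map a pixel coordinate through a 0- or 180-degree screen rotation."""
    if degrees == 0:
        return x, y
    if degrees == 180:
        # A 180-degree rotation mirrors both axes about the screen center.
        return width - 1 - x, height - 1 - y
    raise ValueError("only 0 and 180 degrees are handled in this sketch")
```

For example, with an assumed 1920 by 1080 pixel screen, the pixel drawn at the top-left corner in the first orientation lands at the opposite corner after the 180-degree rotation, which is what makes the old top edge act as the new bottom edge.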

[0025] In some implementations, the display screen 140 may occupy a portion of the front surface 107 of the computing device 100 and the edges of the front surface 107 around the display screen 140 may be referred to as peripheral sides. The peripheral sides (such as the first peripheral side 102 and the second peripheral side 104) may occupy other portions of the front surface 107 of the housing 105 of the computing device 100 along the periphery of the side of the display screen 140 (such as the first side 142 of the display screen 140 and/or the second side 144 of the display screen 140). These other peripheral sides of the front surface 107 of the housing 105 may allow for other components of the computing device 100 to be positioned. For example, as depicted in FIG. 1A, the first camera 110 may be located on the first peripheral side 102 proximate to the first side 142 of the display screen 140. In FIG. 1A, the computing device 100 is positioned in the first orientation 101 and the first camera 110 may be identified as the top camera along the top peripheral side of the housing 105 of the computing device 100. As depicted in FIG. 1A, the second camera 120 may be located on the second peripheral side 104 proximate to the second side 144 of the display screen 140. In the first orientation 101, the second camera 120 may be identified as the bottom camera along the bottom peripheral side of the computing device 100.
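The role assignment described above, together with the second-orientation case stated in the Summary (where the first camera becomes the bottom camera and the second camera becomes the top camera), can be sketched as a simple lookup. This is only an illustration: the `CameraRoles` type, the string labels, and the function name are hypothetical and are not part of the disclosure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CameraRoles:
    """Which physical camera currently acts as the top and bottom camera."""
    top: str
    bottom: str


def identify_camera_roles(orientation: str) -> CameraRoles:
    """Map a detected orientation to top and bottom camera roles.

    `orientation` is a hypothetical label: "first" for orientation 101
    and "second" for orientation 103.
    """
    if orientation == "first":
        # First orientation: first camera 110 is on the top peripheral side.
        return CameraRoles(top="first_camera_110", bottom="second_camera_120")
    if orientation == "second":
        # Second orientation: the device has been rotated, so the roles swap.
        return CameraRoles(top="second_camera_120", bottom="first_camera_110")
    raise ValueError(f"unknown orientation: {orientation!r}")
```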

[0026] As depicted in FIG. 1A, the first camera 110, the second camera 120, and the display screen 140 are all located on the front surface 107 of the computing device 100. It should be understood that while the first camera 110, the second camera 120, and the display screen 140 are integrated into the front surface 107 of the housing 105 of the computing device 100 as shown, in other implementations, one or more of the first camera 110, the second camera 120, and/or the display screen 140 may be separate components that may be attachable to the front surface 107 or other portions of the housing 105 of the computing device 100 and may be positionable around the peripheral sides of the housing 105 of the computing device 100.

[0027] As depicted in FIG. 1A, the first camera 110 is positioned opposite the second camera 120 on the front surface 107 of the housing 105 of the computing device 100. As depicted in FIG. 1A, the first camera 110 is centered along the first peripheral side 102 and the second camera 120 is centered along the second peripheral side 104. In further implementations, additional cameras (not shown) may be positioned on other portions of the front surface 107 of the housing 105 of the computing device 100 and these additional cameras may be configured to capture additional fields of view separate from the fields of view of the first camera 110 and/or the second camera 120. In some implementations, the different fields of view may overlap and allow for stereo vision from the additional cameras, as described elsewhere herein.

[0028] In some implementations, the activity application(s) 414 may select which cameras (from the first camera 110, second camera 120, and/or additional cameras) to use to capture a video stream and may limit the number of cameras used to capture video streams to improve processing time. In further implementations, the activity application(s) 414 may select specific cameras (from the first camera 110, second camera 120, and/or additional cameras) based on the orientation of the computing device 100.
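As a minimal sketch of the camera selection described above, the following example orders the available cameras by a per-orientation priority and caps the number of active streams. The priority table, the camera identifiers, and the `max_streams` parameter are assumptions made for illustration; the disclosure does not specify a selection policy.

```python
def select_cameras(orientation, available, max_streams=2):
    """Pick camera ids to stream from, highest priority first.

    orientation: "first" or "second" (hypothetical labels).
    available: list of camera ids currently present on the device.
    max_streams: cap on simultaneous video streams, to bound processing time.
    """
    # Hypothetical priority tables; not taken from the disclosure.
    priority = {
        "first": ["first_camera", "second_camera"],
        "second": ["second_camera", "first_camera"],
    }
    preferred = [c for c in priority.get(orientation, []) if c in available]
    # Any additional cameras are appended after the prioritized ones.
    extras = [c for c in available if c not in preferred]
    return (preferred + extras)[:max_streams]
```

For example, with `max_streams=1` only the highest-priority camera for the current orientation would be streamed.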

[0029] In some implementations, the first camera 110 and the second camera 120 may be positioned on the same peripheral side of the front surface 107 (such as the first peripheral side 102 or the second peripheral side 104) and the first camera 110 and the second camera 120 may be positioned to capture different fields of view despite being positioned on the same peripheral side. For example, the first camera 110 and the second camera 120 may both be positioned on the first peripheral side 102 (such as adjacent to each other, or within a distance of a couple of inches but positioned on the first peripheral side 102, etc.). In the example, the first camera 110 may have a first field of view (such as forward looking to capture things in front of the computing device, such as a user, etc.) and the second camera 120 may have a second field of view (such as downward looking to capture things (such as tangible objects, etc.) on a physical surface the computing device 100 is resting on).

[0030] FIGS. 1B and 1C illustrate perspective views of the computing device 100 from a front perspective and a rear perspective, respectively. As depicted in FIGS. 1B and 1C, the computing device 100 may include a support to position the computing device 100 in the different orientations. In some implementations, the support may include a supporting element 130 for supporting and positioning the housing 105 of the computing device 100 on a physical surface in different orientations. In some embodiments, the supporting element 130 may rest against the physical surface on different surfaces, such as a bottom surface 133 of the supporting element 130 or a back surface 135 of the supporting element 130, in order to prop the housing 105 of the computing device 100 up in the different orientations, such as the first orientation 101 shown in FIGS. 1B and 1C.

[0031] In some implementations, the supporting element 130 may extend out from a back surface 150 of the housing 105 of the computing device 100. In these implementations, the back surface 150 of the housing 105 of the computing device 100 may be substantially flat, and the supporting element 130 may extend out from a portion of the back surface 150 of the housing 105 of the computing device 100. As shown in FIG. 1C, the supporting element 130 may extend out of a lower portion 152 of the back surface 150 of the housing 105 of the computing device 100. In some implementations, the supporting element 130 may extend along at least a portion of the lower portion 152 of the back surface 150 of the housing 105 of the computing device 100. In some implementations, the supporting element 130 may be integral to the housing 105 of the computing device 100. In these implementations, the computing device 100 may be a single unit and all of the components may be housed within the housing 105 for easy portability and use. In further implementations, the supporting element 130 may be a rod or protrusion that may be capable of supporting the computing device 100 while being minimal in size. In some implementations, the supporting element 130 may be detachable and may be removed from the back surface 150 of the housing 105 of the computing device 100 in order to allow the computing device 100 to lie flat on a surface. In further implementations, the supporting element 130 may be foldable and may be able to fold flat against the back surface 150 of the housing 105 of the computing device 100 in order to allow the computing device 100 to lie flat on a surface.

[0032] In some implementations, the supporting element 130 may house one or more components of the computing device 100. For example, a battery and/or other components of the computing device 100 may be positioned within the larger area of the supporting element 130 compared to the thinner area of the computing device 100 that includes the display screen 140. In some implementations, the supporting element 130 may include one or more access ports 132, such as a power button 132a, a memory card slot 132b, an input/output port 132c (such as a 3.5 mm port, etc.), etc. By positioning the access ports 132 on the supporting element 130, a user can interact with the various ports 132 without obscuring the fields of view of the first camera 110 and/or second camera 120, and/or the view of the display screen 140.

[0033] In some implementations, an additional supporting element 170 may be present on one or more surfaces of the housing 105 of the computing device 100, such as the back surface 150 of the housing 105 of the computing device 100. The additional supporting element 170 may be a protrusion on the back surface 150 of the housing 105 of the computing device 100 or a piece of plastic/rubber/metal/etc. that is positioned on the back surface 150. The additional supporting element 170 may be positioned on the back surface 150 of the housing 105 of the computing device 100, such that when the computing device 100 is placed in different orientations, such as the second orientation 103, the additional supporting element 170 comes into contact with a physical surface and assists in positioning and retaining the computing device 100 in the second orientation 103, as shown in FIG. 2B.

[0034] In some implementations, the computing device 100 may include additional hardware input/outputs, such as a volume control 180 and/or a screen lock button 182. These additional hardware input/outputs may be positioned on a side surface of the housing 105 of the computing device 100, as shown in FIGS. 1B and 1C, where the additional hardware input/outputs may be easily accessible by a user without obscuring the display screen 140 and/or the fields of view of the cameras. In some implementations, these additional hardware input/outputs may be configurable to provide similar functions independent of the orientation of the computing device 100 as described elsewhere herein with respect to FIGS. 8A and 8B.

[0035] In some implementations, a camera protrusion 160 may be included on the housing 105 of the computing device 100. The camera protrusion 160 may be a molded portion of one of the peripheral sides (such as the first peripheral side 102 or the second peripheral side 104) of the housing 105. The camera protrusion 160 may extend out from the front surface 107 and have a camera (such as the first camera 110 and/or the second camera 120) positioned within the camera protrusion 160 in order to increase what is included in the field of view of the camera as compared to a camera mounted flat on the front surface 107 of the housing 105 of the computing device 100. For example, the camera protrusion 160 may angle the camera to look down towards a physical surface in front of the display screen 140 and the computing device 100, whereas a camera mounted flat on the front surface 107 would look out towards the area in front of the computing device 100 rather than down towards the physical surface the computing device 100 is resting on. In some implementations, the camera protrusion 160 may direct the field of view of the camera to include at least a portion of the housing 105 of the computing device 100 and/or the display screen 140, and the information captured related to the display screen 140 and/or the housing 105 of the computing device 100 may be used by the activity application(s) 414 to change one or more routines being executed by the activity application(s) 414.

[0036] FIG. 2A illustrates the computing device 100 situated in the first orientation 101. As shown, in the first orientation 101, the computing device 100 is resting on a bottom side edge 190 of the housing 105 of the computing device 100 and the bottom surface 133 of the supporting element 130. In this position, the front of the computing device 100 and the display screen 140 are positioned in a substantially vertical position. In some implementations, a tilt angle between the display screen 140 or a back surface of the housing 105 and the physical surface on which the display screen 140 is positioned in the first orientation 101 is greater than a tilt angle between the display screen 140 or the back surface of the housing 105 and the physical surface when the display screen is positioned in the second orientation 103. In some implementations, the display screen 140 may be leaning slightly back in an angled vertical position, such that if a user sits in front of the computing device 100 while the computing device 100 is resting on a surface 112 in the angled vertical position, the user is looking forward and slightly down to view the display screen 140.

[0037] FIG. 2A shows a side view of the computing device 100. In the example, the first camera 110 may include a first field of view 230 and the second camera 120 may include a second field of view 240. As shown in this example, the first field of view 230 is angled downwards and includes the area immediately in front of the display screen 140 and the portion of the physical surface 112 proximate to the front of the computing device 100. As shown in this example, the second field of view 240 is angled outward and/or upward to capture the area in front of the computing device 100, which may include a user viewing the computing device 100 from approximately 2 feet away, etc. It should be understood that the field of view 230 and the field of view 240 are depicted as triangles with limited bounds, but that any shape of field of view can be depicted and the view is not limited to the shapes shown. Additionally, the field of view 230 and the field of view 240 may extend beyond the shapes shown and the distance of what can be captured is limited only by the constraints of what the camera can view. For example, special lenses, such as a fish-eye or telescopic lens may be used to adapt the field of view of the camera to capture specific portions of an area.

[0038] As shown in the example in FIG. 2A, the field of view 230 and the field of view 240 may overlap where both the first camera 110 and the second camera 120 may capture an object within the overlap but from a different perspective. This may allow the activity application(s) 414 to perform stereo vision, where an object, such as a user or physical object, may be captured in the two different video streams and the locations of the separate cameras (first camera 110 and second camera 120) may be known relative to the object being captured in the images. This may allow the activity application(s) 414 to track a position, an acceleration, a depth, or other information related to the object using the two different views.

[0039] In some implementations, this may allow the activity application(s) 414 to capture three-dimensional information using the two different two-dimensional captured video streams. It may allow the activity application(s) 414 to estimate the distance of the object, a range of the object, a depth, an acceleration of the object, etc. The activity application(s) 414 may perform stereopsis on the captured video streams to provide a three-dimensional visualization based on the captured video streams.
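As a simplified, non-limiting illustration of how two views from known camera locations can yield position and distance information, the following sketch intersects two viewing rays in a two-dimensional side view. It assumes that each camera's position and the direction of its ray toward the object are already known; the function name `triangulate` and its parameters are hypothetical, and this ray-intersection approach is a stand-in for illustration rather than the stereopsis technique used by the activity application(s) 414.

```python
import math


def triangulate(cam_a, angle_a, cam_b, angle_b):
    """Intersect two viewing rays in a 2D side view.

    cam_a, cam_b: (x, y) camera positions in a shared coordinate frame.
    angle_a, angle_b: ray directions in radians, measured from the +x axis.
    Returns the (x, y) point where the two rays cross.
    """
    ax, ay = cam_a
    bx, by = cam_b
    dax, day = math.cos(angle_a), math.sin(angle_a)
    dbx, dby = math.cos(angle_b), math.sin(angle_b)
    # 2D cross product of the two directions; zero means parallel rays.
    denom = dax * dby - day * dbx
    if abs(denom) < 1e-9:
        raise ValueError("rays are parallel; position cannot be recovered")
    # Solve cam_a + t * d_a == cam_b + s * d_b for t.
    t = ((bx - ax) * dby - (by - ay) * dbx) / denom
    return (ax + t * dax, ay + t * day)
```

Once the intersection point is known, the distance from either camera follows from the Euclidean distance between the camera position and that point.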

[0040] In further implementations, the activity application(s) 414 may account for when an object in one of the fields of view (such as field of view 230 and/or field of view 240) is obscured (such as by a hand moving the object, or another object being placed in front of the object) and the activity application(s) 414 may use the other field of view to continue to track the object that is obscured. It should be understood that by adding additional cameras along with the first camera 110 and the second camera 120, the stereo vision capabilities may be enhanced by the capturing of additional video streams showing additional fields of view.
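The fallback behavior described above may be sketched as follows, under the assumption that each camera reports either an object position or nothing when the object is obscured in its field of view. The function name `track_position` and the camera identifiers are hypothetical.

```python
def track_position(observations, preferred=("first_camera", "second_camera")):
    """Return (camera_id, position) from the first camera that still sees the object.

    observations: maps a camera id to an (x, y) position, or to None when the
    object is obscured in that camera's field of view.
    preferred: camera ids in the order they should be consulted.
    """
    for camera_id in preferred:
        position = observations.get(camera_id)
        if position is not None:
            return camera_id, position
    # The object is obscured in every field of view.
    return None, None
```

Additional cameras can be accommodated in this sketch by extending the `preferred` tuple.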

[0041] FIG. 2B illustrates the computing device 100 situated in the second orientation 103. As shown in FIG. 2B, in the second orientation 103, the computing device 100 may be positioned so that the supporting element 130 is angling the computing device 100, such as in an angled flat position, and the back surface 135 of the supporting element 130 is resting against the physical surface and the additional supporting element 170 is resting against the physical surface. The substantially flat position of the computing device 100 may be such that when a user is viewing the display screen 140 in this position, the bottom edge of the display screen 140 is lower than the top edge of the display screen 140 relative to the user and the surface on which the computing device 100 is resting. For example, in this position, a user 210 may be viewing the computing device 100 on their lap and the surface of their lap is where the computing device 100 is resting. The back surface of the housing 105 of the computing device 100 where the supporting element 130 is positioned may be higher than the back surface of the housing 105 of the computing device 100 with the additional supporting element 170, to tilt the screen slightly towards the user 210.

[0042] In some implementations, the computing device 100 may be oriented into the second orientation 103 by rotating the computing device 100 180 degrees around itself relative to the first orientation 101 and then resting the computing device 100 on the physical surface in the second orientation 103. In further implementations, the supporting element 130 may be slidable or detachably removable and may be raised from the first portion of the back surface of the housing 105 of the computing device 100 to a second portion of the back surface of the housing 105 of the computing device 100 without rotating the computing device 100, to position the computing device in the second orientation 103.

[0043] As shown in FIG. 2B, in the second orientation 103, the first camera 110 may be identified as a bottom camera as it is located at the bottom edge of the display screen 140 and the second camera 120 may be identified as a top camera as it is located at the top edge of the display screen 140 in the second orientation 103. The field of view 240 of the first camera 110 may be directed upward and out along the top edge of the computing device 100 in the second orientation 103. The field of view 230 of the second camera 120 may be directed up towards the top area above the display screen 140 of the computing device 100. As shown in the example in FIG. 2B, the field of view 230 may extend up and outward to capture a view of a user 210 viewing the display screen 140 from the bottom of the computing device 100 in the second orientation 103. The field of view 230 may capture at least a portion of a face of the user 210 from an angle below the face of the user 210. The second camera 120 may be able to include the face of the user 210 in the captured video streams and may provide the face of the user 210 to the activity application(s) 414 for facial analysis to detect facial expressions, such as confusion, disinterest, sleepiness, etc.

[0044] In some implementations, the second camera 120 may also be directed upwards above the computing device 100 and may be able to capture a field of view that includes objects such as a ceiling, a lighting array, etc. Using this field of view 230, the video stream of the second camera 120 may be analyzed to identify specific rooms or locations that the computing device 100 is located in. For example, the activity application(s) 414 may have a database of room profiles, such as a user's classroom, home, etc. and the activity application(s) 414 may compare the field of view 230 to the database of room profiles and determine which ceiling matches with what is being captured in the video stream of the second camera 120. This may allow the activity application(s) 414 to run specific applications in specific locations. For example, the activity application(s) 414 may block the request to run a game when the activity application(s) 414 determines that the computing device 100 is located in the user's classroom at the moment.
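A minimal sketch of the room-profile comparison described above is given below. It assumes that each video frame has already been reduced to a numeric feature vector and that each room profile in the database stores a vector of the same length; the feature extraction itself, the distance threshold, and the names `match_room`, `may_run`, and `BLOCKED` are hypothetical and not drawn from the disclosure.

```python
import math

# Hypothetical policy: (application kind, room) pairs that should be blocked.
BLOCKED = {("game", "classroom")}


def match_room(features, profiles, max_distance=0.5):
    """Return the name of the closest room profile, or None if none is close enough."""
    best_name, best_dist = None, float("inf")
    for name, profile in profiles.items():
        dist = math.dist(features, profile)  # Euclidean distance
        if dist < best_dist:
            best_name, best_dist = name, dist
    return best_name if best_dist <= max_distance else None


def may_run(app_kind, room):
    """Decide whether an application of the given kind may run in the matched room."""
    return (app_kind, room) not in BLOCKED
```

In this sketch, a request to run a game is refused only when the captured features match the classroom profile; an unmatched room (`None`) does not trigger the block.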

[0045] In some implementations, the second camera 120 may be configured to capture a field of view 230 that includes the first user 210 and the first camera 110 may be configured to capture a field of view 240 of a second user 220. The first user 210 and the second user 220 may be positioned on opposite sides of the computing device 100 and they may both be able to view the display screen 140 from their respective positions. The activity application(s) 414 may run an application that both users can participate in and the activity application(s) 414 may analyze objects, expressions, gestures, etc. that are presented by the first user 210 and the second user 220 in the respective fields of view. In another example, the first user 210 may be a student and the second user 220 may be a teacher. The activity application(s) 414 may capture the interactions between the teacher and the student, including the facial expressions and/or gestures of the teacher or student and execute an application based on the captured information.

[0046] FIG. 3 illustrates an example computing device 100 in the first orientation 101. As shown in this example, the first camera 110 includes a field of view 230 that extends downward towards the surface on which an activity object 310 (e.g., a book, etc.) is present. The second camera 120 includes a field of view 240 that extends outward towards the area in front of the computing device as shown. The computing device 100 may capture a video stream of the activity object 310 using the first camera 110 and a video stream of a user (not shown) interacting with the activity object 310 using the second camera 120. The activity application(s) 414 may generate a visualization of the activity object 310 for display on the display screen 140 and the user (not shown) may be able to simultaneously interact with the activity object 310 and the visualization on the display screen 140, while the second camera captures at least a portion of the user, such as a face or hands, as the user interacts. This allows for the user to experience an enriched interaction with both physical and virtual objects while the activity application(s) 414 may capture an image of the user for additional routines. For example, the user may be doing homework and learning about a topic in a textbook. The activity application(s) 414 may identify the topic from the textbook and provide additional curated information related to the topic on the display screen 140. The activity application(s) 414 may also capture one or more facial expressions of the user and based on the captured facial expressions, may remove or supplement the virtual information presented on the display screen 140. For example, if the user appears confused when viewing the virtual information, the activity application(s) 414 may present a broader overview of the virtual information in order to guide the user as to what the virtual information is presenting.

[0047] FIG. 4 is a block diagram illustrating an example computer system 400 that is used with the computing device 100. As depicted, the system 400 may include computing devices 100a . . . 100n and servers 402a . . . 402n communicatively coupled via a network 406. In FIG. 4 and the remaining figures, a letter after a reference number, e.g., "100a", represents a reference to the element having that particular reference number. A reference number in the text without a following letter, e.g., "100", represents a general reference to instances of the element bearing that reference number. It should be understood that the system 400 depicted in FIG. 4 is provided by way of example and that the system 400 and/or further systems contemplated by this present disclosure may include additional and/or fewer components, may combine components and/or divide one or more of the components into additional components, etc. For example, the system 400 may include any number of servers 402, computing devices 100, and/or networks 406. As depicted in FIG. 4, the computing device 100 may be coupled to the network 406 via the signal line 408 and the server 402 may be coupled to the network 406 via the signal line 404. The computing device 100 may be accessed by user 210.

[0048] The network 406 may include any number of networks and/or network types. For example, the network 406 may include, but is not limited to, one or more local area networks (LANs), wide area networks (WANs) (e.g., the Internet), virtual private networks (VPNs), mobile (cellular) networks, wireless wide area networks (WWANs), WiMAX® networks, Bluetooth® communication networks, peer-to-peer networks, other interconnected data paths across which multiple devices may communicate, various combinations thereof, etc.

[0049] The computing device 100 may be a computing device that has data processing and communication capabilities. In some embodiments, the computing device 100 may include a processor (e.g., virtual, physical, etc.), a memory, a power source, a network interface, and/or other software and/or hardware components, such as front and/or rear facing cameras, display screen, graphics processor, wireless transceivers, keyboard, firmware, operating systems, drivers, various physical connection interfaces (e.g., USB, HDMI, etc.). In some embodiments, the computing devices 100 may be coupled to and communicate with one another and with other entities of the system 400 via the network 406 using a wireless and/or wired connection. As discussed elsewhere herein, the system 400 may include any number of computing devices 100 and the computing devices 100 may be the same or different types of devices (e.g., tablets, mobile phones, desktop computers, laptop computers, etc.).

[0050] As depicted in FIG. 4, the computing device 100 may include the cameras 110 and 120, a detection engine 412, and one or more activity applications 414. The computing device 100 and/or the cameras 110 and 120. In some embodiments, the detection engine 412 may detect and/or recognize objects in a video stream, and cooperate with the activity application(s) 414 to provide the user 210 with a virtual experience that incorporates in substantially real-time the objects and the user manipulation of the objects in the physical environment. As an example, the detection engine 412 may process the video stream captured by the cameras 110 or 120 to detect and recognize an object created by the user. The activity application 414 may generate a visualization of the object created by the user, and display to the user a virtual scene on the display screen 140. The components and operations of the detection engine 412 and the activity application 414 are described in detail throughout.

[0051] The server 402 may include one or more computing devices that have data processing, storing, and communication capabilities. In some embodiments, the server 402 may include one or more hardware servers, server arrays, storage devices and/or storage systems, etc. In some embodiments, the server 402 may be a centralized, distributed and/or a cloud-based server. In some embodiments, the server 402 may include one or more virtual servers that operate in a host server environment and access the physical hardware of the host server (e.g., processor, memory, storage, network interfaces, etc.) via an abstraction layer (e.g., a virtual machine manager).

[0052] The server 402 may include software applications operable by one or more processors of the server 402 to provide various computing functionalities, services, and/or resources, and to send and receive data to and from the computing devices 100. For example, the software applications may provide the functionalities of internet searching, social networking, web-based email, blogging, micro-blogging, photo management, video/music/multimedia hosting/sharing/distribution, business services, news and media distribution, user account management, or any combination thereof. It should be understood that the server 402 may also provide other network-accessible services.

[0053] In some embodiments, the server 402 may include a search engine capable of retrieving results that match one or more search criteria from a data store. As an example, the search criteria may include an image and the search engine may compare the image to product images in its data store (not shown) to identify a product that matches the image. In another example, the detection engine 412 and/or the storage 510 (e.g., see FIG. 5) may request the search engine to provide information that matches a physical drawing, an image, and/or a tangible object extracted from a video stream.

[0054] It should be understood that the system 400 illustrated in FIG. 4 is provided by way of example, and that a variety of different system environments and configurations are contemplated and are within the scope of the present disclosure. For example, various functionalities may be moved from a server to a client, or vice versa and some implementations may include additional or fewer computing devices, services, and/or networks, and may implement various client or server-side functionalities. In addition, various entities of the system 400 may be integrated into a single computing device or system or divided into additional computing devices or systems, etc.

[0055] FIG. 5 is a block diagram of an example computing device 100. As depicted, the computing device 100 may include a processor 512, a memory 514, a communication unit 516, an input device 518, a display 520, a storage 510, the camera 110, the camera 120, and the orientation sensor 522 communicatively coupled by a bus 508. It should be understood that the computing device 100 is not limited to such and other components are also possible and contemplated.

[0056] The processor 512 may execute software instructions by performing various input/output, logical, and/or mathematical operations. The processor 512 may have various computing architectures to process data signals including, for example, a complex instruction set computer (CISC) architecture, a reduced instruction set computer (RISC) architecture, and/or an architecture implementing a combination of instruction sets. The processor 512 may be physical and/or virtual, and may include a single core or plurality of processing units and/or cores.

[0057] The memory 514 may be a non-transitory computer-readable medium that is configured to store and provide access to data to other components of the computing device 100. In some embodiments, the memory 514 may store instructions and/or data that are executable by the processor 512. For example, the memory 514 may store the detection engine 412, the activity applications 414, and a camera driver 506. The memory 514 may also store other instructions and data, including, for example, an operating system, hardware drivers, other software applications, data, etc. The memory 514 may be coupled to the bus 508 for communication with the processor 512 and other components of the computing device 100.

[0058] The communication unit 516 may include one or more interface devices (I/F) for wired and/or wireless connectivity with the network 406 and/or other devices. In some embodiments, the communication unit 516 may include transceivers for sending and receiving wireless signals. For example, the communication unit 516 may include radio transceivers for communication with the network 406 and for communication with nearby devices using close-proximity connectivity (e.g., Bluetooth®, NFC, etc.). In some embodiments, the communication unit 516 may include ports for wired connectivity with other devices. For example, the communication unit 516 may include a CAT-5 interface, Thunderbolt™ interface, FireWire™ interface, USB interface, etc.

[0059] The display 520 (also referred to as display screen 140) may display electronic images and data output by the computing device 100 for presentation to the user. The display 520 may include any display device, monitor or screen, including, for example, an organic light-emitting diode (OLED) display, a liquid crystal display (LCD), etc. In some embodiments, the display 520 may be a touch-screen display capable of receiving input from one or more fingers of the user. For example, the display 520 may be a capacitive touch-screen display capable of detecting and interpreting multiple points of contact with the display surface. In some embodiments, the computing device 100 may include a graphic adapter (not shown) for rendering and outputting the images and data for presentation on display 520. The graphic adapter may be a separate processing device including a separate processor and memory (not shown) or may be integrated with the processor 512 and memory 514.

[0060] The input device 518 may include any device for inputting information into the computing device 100. In some embodiments, the input device 518 may include one or more peripheral devices. For example, the input device 518 may include a keyboard (e.g., a QWERTY keyboard), a pointing device (e.g., a mouse or touchpad), a microphone, a camera, etc. In some implementations, the input device 518 may include a touch-screen display capable of receiving input from one or more fingers of the user. In some embodiments, the functionality of the input device 518 and the display 520 may be integrated, and the user may interact with the computing device 100 by touching a surface of the display 520. For example, the user may interact with an emulated keyboard (e.g., soft keyboard or virtual keyboard) displayed on the touch-screen display 520 by contacting the display 520 in the keyboard regions using his or her fingers.

[0061] The orientation sensor 522 may include one or more sensors for detecting an orientation of the computing device 100. In some implementations, the orientation sensor 522 may include one or more orientation sensors 522 that can detect the orientation of the computing device 100. The orientation sensors may be configured to detect an angle or tilt of the computing device 100, such as by using an accelerometer or similar sensor relative to a known position, and communicate the angle or tilt to the activity application(s) 414. For example, the orientation sensor 522 can detect the differences in the tilt of the computing device 100 in order to determine when the computing device 100 is positioned in the first orientation 101 or the second orientation 103 and may provide that information to the activity application(s) 414.
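As one possible sketch of the tilt-based determination described above, the following example derives a tilt angle from a three-axis accelerometer reading and compares it against a threshold. It assumes that the z axis of the sensor is normal to the display screen 140 and that the first orientation 101 has the larger tilt angle relative to the physical surface; the 45-degree threshold and the names `tilt_degrees` and `classify_orientation` are hypothetical.

```python
import math


def tilt_degrees(gx, gy, gz):
    """Angle between the screen plane and the horizontal, in degrees.

    (gx, gy, gz) is the gravity vector in device axes, with z assumed to be
    normal to the display. 0 means lying flat; 90 means fully upright.
    """
    magnitude = math.sqrt(gx * gx + gy * gy + gz * gz)
    if magnitude == 0:
        raise ValueError("no gravity reading available")
    # Clamp to guard against rounding slightly above 1.0.
    return math.degrees(math.acos(min(1.0, abs(gz) / magnitude)))


def classify_orientation(gx, gy, gz, threshold_deg=45.0):
    """Label the reading as the 'first' (near vertical) or 'second' (near flat) orientation."""
    return "first" if tilt_degrees(gx, gy, gz) > threshold_deg else "second"
```

The resulting label could then be provided to the activity application(s) 414. A practical implementation would likely also smooth the readings over time, which this sketch omits.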

[0062] The detection engine 412 may include a calibrator 502 and a detector 504. The components 412, 502, and 504 may be communicatively coupled to one another and/or to other components 414, 506, 510, 512, 514, 516, 518, 520, 110, 120, and/or 522 of the computing device 100 by the bus 508 and/or the processor 512. In some embodiments, the components 412, 502, and 504 may be sets of instructions executable by the processor 512 to provide their functionality. In some embodiments, the components 412, 502, and 504 may be stored in the memory 514 of the computing device 100 and may be accessible and executable by the processor 512 to provide their functionality. In any of the foregoing implementations, these components 412, 502, and 504 may be adapted for cooperation and communication with the processor 512 and other components of the computing device 100.

[0063] The calibrator 502 includes software and/or logic for performing image calibration on the video stream captured by the cameras 110 and/or 120. In some embodiments, to perform the image calibration, the calibrator 502 may calibrate the images in the video stream to adapt to the capture position of the cameras 110 and/or 120. The capture position of the cameras 110 and/or 120 may depend on the computing device 100 attributes and/or the orientation of the computing device 100. Capturing the video stream from a camera position in different orientations may cause distortion effects on the video stream. Therefore, the calibrator 502 may adjust one or more operation parameters of the cameras 110 and 120 to compensate for these distortion effects. Examples of the operation parameters being adjusted include, but are not limited to, focus, exposure, white balance, aperture, f-stop, image compression, ISO, depth of field, noise reduction, focal length, etc. Performing image calibration on the captured video streams is advantageous, because it can optimize the images of the video streams to accurately detect the objects depicted therein, and thus the operations of the activity applications 414 based on the objects detected in the video streams can be significantly improved.
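By way of example only, the adjustment of operation parameters according to orientation could be sketched as a lookup of per-orientation overrides applied to a set of defaults. The parameter names, the numeric values, and the function name below are hypothetical placeholders chosen for illustration; the disclosure does not specify them.

```python
# Hypothetical default operation parameters; values are illustrative only.
DEFAULT_PARAMETERS = {"exposure": 0.0, "white_balance_k": 5000, "iso": 200}

# Hypothetical per-orientation adjustments, keyed by orientation reference numeral.
ORIENTATION_ADJUSTMENTS = {
    101: {"exposure": 0.3},
    103: {"exposure": -0.2, "iso": 400},
}


def calibrate(orientation, base=None):
    """Return a new parameter set with the adjustments for the given orientation applied."""
    params = dict(DEFAULT_PARAMETERS if base is None else base)
    # Unknown orientations leave the base parameters unchanged.
    params.update(ORIENTATION_ADJUSTMENTS.get(orientation, {}))
    return params
```

Returning a new dictionary rather than modifying the defaults keeps the parameters for each orientation independent of one another.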

[0064] In some embodiments, the calibrator 502 may also calibrate the images to compensate for the characteristics of the activity surface (e.g., size, angle, topography, etc.). For example, the calibrator 502 may perform the image calibration to account for the discontinuities and/or the non-uniformities of the activity surface, thereby enabling accurate detection of objects when the computing device 100 is set up on various activity surfaces (e.g., bumpy surface, beds, tables, whiteboards, etc.). In some embodiments, the calibrator 502 may calibrate the images to compensate for optical effects caused by the optical elements of the cameras 110 and/or 120. In some embodiments, the calibrator 502 may also calibrate the cameras 110 or 120 to split their field of view into multiple portions with the user being included in one portion of the field of view and the activity surface being included in another portion of the field of view of the cameras 110 and/or 120.
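As one possible illustration of splitting a field of view into portions, a captured frame may be divided along a horizontal boundary. The sketch below assumes a frame represented as a list of pixel rows, and assumes that the upper portion contains the user and the lower portion contains the activity surface. The function name and the split ratio are hypothetical.

```python
def split_field_of_view(frame, split_ratio=0.5):
    """Split a frame (a list of pixel rows) into an upper and a lower portion.

    Assumption: the upper portion depicts the user and the lower portion
    depicts the activity surface.
    """
    if not 0.0 < split_ratio < 1.0:
        raise ValueError("split_ratio must be between 0 and 1, exclusive")
    if len(frame) < 2:
        raise ValueError("frame needs at least two rows to be split")
    split_row = round(len(frame) * split_ratio)
    # Keep at least one row in each portion.
    split_row = max(1, min(len(frame) - 1, split_row))
    return frame[:split_row], frame[split_row:]
```

Each portion could then be processed separately, for example by passing the lower portion to the detector 504.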

[0065] The detector 504 includes software and/or logic for processing the video stream captured by the cameras 110 or 120 to detect the objects present in the video stream. In some embodiments, to detect an object in the video streams, the detector 504 may analyze the images of the video streams to determine line segments, and determine the object that has the contour matching the line segments using the object data in the storage 510. In some embodiments, the detector 504 may provide the tangible objects detected in the video stream to the activity applications 414. In some embodiments, the detector 504 may store the objects detected in the video stream in the storage 510 for retrieval by these components. In some embodiments, the detector 504 may determine whether the line segments and/or the object associated with the line segments can be identified in the video stream, and instruct the calibrator 502 to calibrate the images of the video stream accordingly.
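For illustration only, matching detected line segments against stored object data could be sketched with a simplified, scale-invariant signature built from normalized segment lengths. This signature is a stand-in chosen for brevity and is not the matching technique of the disclosure. The function names, the tolerance, and the layout of the object data are hypothetical.

```python
import math


def segment_length(segment):
    """Length of a segment given as ((x1, y1), (x2, y2))."""
    (x1, y1), (x2, y2) = segment
    return math.hypot(x2 - x1, y2 - y1)


def signature(segments):
    """Sorted segment lengths, normalized so that they sum to 1."""
    lengths = sorted(segment_length(s) for s in segments)
    total = sum(lengths)
    if total == 0:
        raise ValueError("segments have zero total length")
    return [length / total for length in lengths]


def match_object(segments, object_data, tolerance=0.05):
    """Return the name of the best-matching stored object, or None if none is close enough."""
    sig = signature(segments)
    best_name, best_dist = None, tolerance
    for name, ref_sig in object_data.items():
        if len(ref_sig) != len(sig):
            continue
        dist = max(abs(a - b) for a, b in zip(sig, ref_sig))
        if dist <= best_dist:
            best_name, best_dist = name, dist
    return best_name
```

In such a sketch, a result of None would correspond to the case where the object cannot be identified, in which the detector 504 may instruct the calibrator 502 to calibrate the images.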

[0066] The activity application 414 includes software and/or logic executable on the computing device 100. In some embodiments, the activity application 414 may receive the objects detected in the video stream of the activity surface from the detector 504. In some embodiments, the activity application 414 may generate a virtual environment that incorporates, in real-time, the virtualization of the tangible objects and the user manipulation of the tangible objects on the activity surface, and display the virtual environment to the user on the computing device 100. Non-limiting examples of the activity application 414 include video games, learning applications, assistive applications, storyboard applications, collaborative applications, productivity applications, etc. Other types of activity application are also possible and contemplated.

[0067] The camera driver 506 includes software storable in the memory 514 and operable by the processor 512 to control/operate the cameras 110 and 120. For example, the camera driver 506 may be a software driver executable by the processor 512 for instructing the cameras 110 and 120 to capture and provide a video stream and/or a still image, etc. In some embodiments, the camera driver 506 may be capable of controlling various features of the cameras 110 and 120 (e.g., flash, aperture, exposure, focal length, etc.). In some embodiments, the camera driver 506 may be communicatively coupled to the cameras 110 and 120 and other components of the computing device 100 via the bus 508, and these components may interface with the camera driver 506 to capture video and/or still images using the cameras 110 and 120.

[0068] As discussed elsewhere herein, the cameras 110 and 120 are video capture devices (e.g., a camera) adapted to capture video streams and/or images in their field of view. In some embodiments, the cameras 110 and 120 may be coupled to the bus 508 for communication and interaction with the other components of the computing device 100. In some embodiments, one or more of the cameras 110 and 120 may include a lens for gathering and focusing light, a photo sensor including pixel regions for capturing the focused light, and a processor for generating image data based on signals provided by the pixel regions. The photo sensor may be any type of photo sensor (e.g., a charge-coupled device (CCD), a complementary metal-oxide-semiconductor (CMOS) sensor, a hybrid CCD/CMOS device, etc.). In some embodiments, the cameras 110 and 120 may include a microphone for capturing sound. Alternatively, the cameras 110 and 120 may be coupled to a microphone that is coupled to the bus 508 or included in another component of the computing device 100. In some embodiments, the cameras 110 and 120 may also include a flash, a zoom lens, and/or other features. In some embodiments, the processor of the cameras 110 and 120 may store video and/or still image data being captured in the memory 514 and/or provide the video and/or still image data to other components of the computing device 100, such as the detection engine 412 and/or the activity applications 414.

[0069] The storage 510 is a non-transitory storage medium that stores and provides access to various types of data. Non-limiting examples of the data stored in the storage 510 include video streams and/or still images captured by the cameras 110 and 120, object data describing various tangible objects (e.g., object contour, color, shape and size, etc.), object detection results indicating the tangible objects, etc. In some embodiments, the data stored in the storage 510 may also include one or more orientation profiles. For example, an orientation profile may include the position information of the various cameras 110 and 120 as well as expected fields of view in different orientations.
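As a non-limiting sketch, an orientation profile could be represented as a small record that associates each camera with its position and its expected field of view. The class and field names below are hypothetical. The entries for the first orientation 101 follow the arrangement described in this disclosure, in which the first camera 110 is directed towards the physical surface and the second camera 120 is directed towards the user.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CameraEntry:
    camera: int                  # reference numeral, e.g. 110 or 120
    position: str                # where the camera sits on the housing
    expected_field_of_view: str  # what the camera is expected to capture


@dataclass(frozen=True)
class OrientationProfile:
    orientation: int  # reference numeral, e.g. 101
    cameras: tuple    # tuple of CameraEntry records


FIRST_ORIENTATION_PROFILE = OrientationProfile(
    orientation=101,
    cameras=(
        CameraEntry(110, "first peripheral side", "physical surface"),
        CameraEntry(120, "second peripheral side", "user"),
    ),
)


def expected_view(profile, camera):
    """Return the expected field of view for a camera, or None if it is not listed."""
    for entry in profile.cameras:
        if entry.camera == camera:
            return entry.expected_field_of_view
    return None
```

A profile for another orientation would be a further record of the same shape and is omitted here.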

[0070] In some embodiments, the storage 510 may be included in the memory 514 or another storage device coupled to the bus 508. In some embodiments, the storage 510 may be included in a distributed data store, such as a cloud-based computing and/or data storage system. In some embodiments, the storage 510 may include a database management system (DBMS). The DBMS may be a structured query language (SQL) DBMS. For example, the storage 510 may store data in an object-based data store or multi-dimensional tables including rows and columns, and may manipulate (i.e., insert, query, update, and/or delete) data entries stored in the storage 510 using programmatic operations (e.g., SQL queries and statements or a similar database manipulation library). Other implementations of the storage 510 with additional characteristics, structures, acts, and functionalities are also possible and contemplated.
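By way of illustration, the insert, query, update, and delete operations mentioned above could be sketched with an SQL data store. The sketch uses an in-memory SQLite database from the Python standard library purely as an assumption. The table name, the column names, and the sample row are hypothetical.

```python
import sqlite3


def open_store():
    """Create an in-memory store with a hypothetical table of object data."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE object_data ("
        "name TEXT PRIMARY KEY, color TEXT, shape TEXT, size_mm REAL)"
    )
    return conn


conn = open_store()

# Insert a data entry.
conn.execute(
    "INSERT INTO object_data VALUES (?, ?, ?, ?)",
    ("block", "red", "square", 30.0),
)

# Update the entry.
conn.execute("UPDATE object_data SET color = ? WHERE name = ?", ("blue", "block"))

# Query the entry.
row = conn.execute(
    "SELECT color, shape FROM object_data WHERE name = ?", ("block",)
).fetchone()

# Delete the entry and count what remains.
conn.execute("DELETE FROM object_data WHERE name = ?", ("block",))
remaining = conn.execute("SELECT COUNT(*) FROM object_data").fetchone()[0]
conn.close()
```

Parameterized statements are used so that values are passed separately from the SQL text.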

[0071] FIG. 6 depicts an example method for determining what orientation a computing device 100 is positioned in. At block 602, the orientation sensor 522 may determine that the computing device 100 is positioned in a first orientation 101 on a physical surface. The orientation sensor 522 may determine that the computing device 100 is in the first orientation based on an angle detected by one or more orientation sensors 522 that can determine how the computing device 100 is orientated and angled. The computing device 100 may include a first camera 110 configured to capture a first field of view 230 and a second camera 120 configured to capture a second field of view 240 that is different from the first field of view. In some implementations, the first field of view 230 and the second field of view 240 may originate from different camera locations on the computing device 100. In further implementations, the first field of view 230 and the second field of view 240 may overlap over at least a portion of the field of view. In some implementations, the first field of view 230 of the first camera 110 may be directed towards the physical surface and the second field of view 240 of the second camera 120 may be directed towards a user facing the computing device 100 when the computing device 100 is situated in the first orientation 101.

[0072] At block 604, the first camera 110 may capture a first video stream including an activity scene of the physical activity surface in the first field of view 230. The activity scene may be a portion of a physical surface proximate to the computing device 100. In some implementations, one or more objects or other items may be positioned on the physical surface within the field of view 230 of the first camera 110. In further implementations, a user may draw or craft an image on a piece of paper or board situated on the physical surface and within the field of view 230 of the first camera 110. In another implementation, the objects may be passed through the field of view 230 of the first camera 110 without being placed on the physical surface, such as a gesture performed by the user in the area proximate to the computing device 100 and within the field of view 230 of the first camera 110.

[0073] At block 606, the second camera 120 may capture a second video stream including a user in the second field of view 240. The second video stream may include a profile of a user positioned in front of the computing device 100 and within the second field of view 240. For example, the user can be positioned in front of the computing device 100 and viewing content on the display screen 140 while the computing device 100 rests on a table and the user sits in a chair. In some implementations, the video stream may capture at least a portion of a face of a user. In further implementations, the video stream may capture at least a portion of an appendage of the user, such as a hand, to capture a gesture. The video stream may include objects that are being held or manipulated by a user. The video stream may further include the environment around a user, such as posters in a classroom, etc. that may be detectable by the detector 504 and passed on to the activity application(s) 414. In some implementations, the video stream may include facial expression information from the user.

[0074] At block 608, the activity application(s) 414 may determine an operation routine based on the first video stream and the second video stream. For example, the first video stream may include a textbook for a student and the second video stream may identify which specific student is present in front of the computing device 100. The activity application(s) 414 may retrieve a personalized study schedule from the storage 510 related to the identity of the specific student and find which topic the student has been assigned in the specific book that has been identified from the first video stream. The activity application(s) 414 may then cause the specific topic and page number to be displayed on the display screen 140 for the student to open the book to that page without having to receive any directions from the student. This is advantageous as it reduces the busy work and time needed for a student to begin learning assigned material and also reduces the opportunity for mistakes to arise from the student not knowing where to go in the book, etc.
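As a non-limiting sketch of block 608, the textbook example could be expressed as a lookup that combines the book identified from the first video stream with the student identified from the second video stream. The function name, the layout of the study schedules, and the sample data are hypothetical and serve only to illustrate the flow.

```python
def determine_operation_routine(detected_book, detected_student, schedules):
    """Return the text to display, or None if no assignment is found.

    `schedules` maps a student identity to a mapping from book title to an
    assigned (topic, page) pair. This layout is an assumption.
    """
    schedule = schedules.get(detected_student)
    if schedule is None:
        return None
    assignment = schedule.get(detected_book)
    if assignment is None:
        return None
    topic, page = assignment
    return f"{topic}: open {detected_book} to page {page}"


# Hypothetical personalized study schedules, as might be retrieved from the storage 510.
SCHEDULES = {
    "student_a": {"Algebra I": ("Linear equations", 42)},
}

message = determine_operation_routine("Algebra I", "student_a", SCHEDULES)
```

Here `message` holds the topic and page number that would be displayed on the display screen 140, and a result of None indicates that no personalized assignment was found for the detected book and student.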