Streaming Application Upgrading Method, Master Node, and Stream Computing System

Hong; Sibao ; et al.

U.S. patent application number 17/014388, filed with the patent office on 2020-09-08, was published on 2020-12-24 for streaming application upgrading method, master node, and stream computing system. The applicant listed for this patent is Huawei Technologies Co., Ltd. Invention is credited to Sibao Hong, Mingzhen Xia, Songshan Zhang.

| Application Number | 17/014388 (Publication No. 20200404032) |

| Document ID | / |

| Family ID | 1000005076897 |

| Publication Date | 2020-12-24 |

| United States Patent Application | 20200404032 |

| Kind Code | A1 |

| Hong; Sibao ; et al. | December 24, 2020 |

Streaming Application Upgrading Method, Master Node, and Stream Computing System

Abstract

A streaming application upgrading method includes obtaining an updated logical model of a streaming application, and determining a to-be-adjusted stream by comparing the updated logical model with an initial logical model; generating an upgrading instruction according to the to-be-adjusted stream; and delivering the generated upgrading instruction to a worker node, so that the worker node adjusts, according to an indication of the upgrading instruction, a stream between PEs distributed on the worker node.

| Inventors: | Hong; Sibao; (Hangzhou, CN) ; Xia; Mingzhen; (Hangzhou, CN) ; Zhang; Songshan; (Shenzhen, CN) |
|---|---|
| Applicant: | Huawei Technologies Co., Ltd. |
| Family ID: | 1000005076897 |
| Appl. No.: | 17/014388 |
| Filed: | September 8, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15492392 | Apr 20, 2017 | 10785272 |
| 17014388 | | |
| PCT/CN2015/079944 | May 27, 2015 | |
| 15492392 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 8/65 20130101; H04L 65/4069 20130101; G06F 16/00 20190101; H04L 67/10 20130101 |

| International Class: | H04L 29/06 20060101 H04L029/06; G06F 8/65 20060101 G06F008/65; G06F 16/00 20060101 G06F016/00 |

Foreign Application Data

| Date | Code | Application Number |

|---|---|---|

| Oct 22, 2014 | CN | 201410568236.5 |

Claims

1. A method for upgrading an initial logical model of a streaming application deployed on a stream computing system and implemented by a master node of the stream computing system, wherein the method comprises: obtaining an updated logical model of the streaming application when the streaming application is updated, wherein the stream computing system comprises the master node and at least one worker node, wherein a plurality of process elements (PEs) are distributed on one or more worker nodes of the at least one worker node and are each configured to process data of the streaming application, and wherein the initial logical model denotes the PEs processing the data and a direction of a data stream between the PEs; determining, by the master node, a to-be-adjusted data stream by comparing the initial logical model with the updated logical model; generating an upgrading instruction according to the to-be-adjusted data stream; and delivering the upgrading instruction to a first worker node of the at least one worker node, wherein a PE related to the to-be-adjusted data stream is located at the first worker node, and wherein the upgrading instruction instructs the first worker node to adjust a direction of a data stream between PEs distributed on the first worker node.

2. The method of claim 1, wherein the PEs denoted by the initial logical model are the same as PEs denoted by the updated logical model.

3. The method of claim 1, further comprising: comparing the initial logical model with the updated logical model to determine a to-be-adjusted PE, wherein the PEs denoted by the initial logical model are different from PEs denoted by the updated logical model; generating a first upgrading instruction according to the to-be-adjusted data stream; generating a second upgrading instruction according to the to-be-adjusted PE; delivering the first upgrading instruction to the first worker node, wherein the first upgrading instruction instructs the first worker node to adjust the direction of the data stream between the PEs distributed on the first worker node; and delivering the second upgrading instruction to a second worker node at which the to-be-adjusted PE is located, wherein the second upgrading instruction instructs the second worker node to adjust a quantity of PEs distributed on the second worker node.

4. The method of claim 1, further comprising: determining, according to a dependency relationship between an input stream and an output stream of the PE related to the to-be-adjusted data stream, a target PE to perform data recovery and a checkpoint for the target PE performing data recovery; delivering a data recovery instruction to a worker node at which the target PE is located, wherein the data recovery instruction instructs the target PE to recover data according to the checkpoint; and triggering the target PE to input the data that has been recovered to a downstream PE of the target PE for processing after determining that the first worker node has completed adjustment of the direction of the data stream between the PEs distributed on the first worker node.

5. The method of claim 4, wherein the to-be-adjusted data stream comprises a to-be-updated data stream and a to-be-deleted data stream, and wherein the method comprises: determining, according to status data of a PE related to the to-be-updated data stream and the to-be-deleted data stream, the checkpoint for performing data recovery; and determining, according to a dependency relationship between an input stream and an output stream of the PE related to the to-be-updated data stream and the to-be-deleted data stream, the target PE to perform data recovery, wherein status data of each PE is backed up by the PE when triggered by an output event and indicates a status in which the PE processes data.

6. The method of claim 3, wherein the to-be-adjusted PE comprises a to-be-added PE, wherein the method further comprises selecting the second worker node according to a load status of each worker node in the stream computing system, and wherein the second upgrading instruction instructs the second worker node to create the to-be-added PE.

7. The method of claim 3, wherein the to-be-adjusted PE comprises a to-be-deleted PE, wherein the to-be-deleted PE is located at the second worker node, and wherein the second upgrading instruction instructs the second worker node to delete the to-be-deleted PE.

8. The method of claim 1, further comprising: configuring the PEs according to the initial logical model of the streaming application; and processing, by the PEs, the data of the streaming application.

9. The method of claim 1, wherein the initial logical model is denoted using a directed acyclic graph (DAG).

10. A master node for upgrading an initial logical model of a streaming application deployed on a stream computing system, wherein the master node comprises: an input device; an output device; a processor; and a memory storing instructions which, when executed by the processor, cause the master node to: obtain an updated logical model of the streaming application using the input device when the streaming application is updated, wherein the stream computing system comprises the master node and at least one worker node, wherein a plurality of process elements (PEs) are distributed on one or more worker nodes of the at least one worker node and are each configured to process data of the streaming application, and wherein the initial logical model denotes the PEs processing the data and a direction of a data stream between the PEs, and determine a to-be-adjusted data stream by comparing the initial logical model of the streaming application with the updated logical model; generate an upgrading instruction according to the to-be-adjusted data stream; and deliver the upgrading instruction to a first worker node of the at least one worker node using the output device, wherein a PE related to the to-be-adjusted data stream is located at the first worker node, and wherein the upgrading instruction instructs the first worker node to adjust a direction of a data stream between PEs distributed on the first worker node.

11. The master node of claim 10, wherein the PEs denoted by the initial logical model of the streaming application are the same as PEs denoted by the updated logical model.

12. The master node of claim 10, wherein the instructions, when executed by the processor, further cause the master node to: compare the initial logical model with the updated logical model to determine a to-be-adjusted PE, wherein the PEs denoted by the initial logical model are different from PEs denoted by the updated logical model; generate a first upgrading instruction according to the to-be-adjusted data stream; generate a second upgrading instruction according to the to-be-adjusted PE; and deliver the first upgrading instruction to the first worker node using the output device, wherein the first upgrading instruction instructs the first worker node to adjust the direction of the data stream between the PEs distributed on the first worker node; and deliver the second upgrading instruction to a second worker node using the output device, wherein the second worker node comprises a worker node at which the to-be-adjusted PE is located, and wherein the second upgrading instruction instructs the second worker node to adjust a quantity of PEs distributed on the second worker node.

13. The master node of claim 10, wherein the instructions, when executed by the processor, further cause the master node to: determine, according to a dependency relationship between an input stream and an output stream of the PE related to the to-be-adjusted data stream, a target PE that needs to perform data recovery and a checkpoint for the target PE performing data recovery; deliver a data recovery instruction to a worker node at which the target PE is located using the output device, wherein the data recovery instruction instructs the target PE to recover data according to the checkpoint; and trigger the target PE to input the data that has been recovered to a downstream PE of the target PE for processing, wherein the first worker node completes adjustment of the direction of the data stream between the PEs distributed on the first worker node.

14. The master node of claim 13, wherein the to-be-adjusted data stream comprises a to-be-updated data stream and a to-be-deleted data stream, wherein the instructions, when executed by the processor, further cause the master node to: determine, according to status data of a PE related to the to-be-updated data stream and the to-be-deleted data stream, the checkpoint for performing data recovery; and determine, according to a dependency relationship between an input stream and an output stream of the PE related to the to-be-updated data stream and the to-be-deleted data stream, the target PE to perform data recovery, wherein status data of each PE is backed up by the PE when triggered by an output event, and indicates a status in which the PE processes data.

15. The master node of claim 12, wherein the to-be-adjusted PE comprises a to-be-deleted PE, wherein the to-be-deleted PE is located at the second worker node, and wherein the second upgrading instruction instructs the second worker node to delete the to-be-deleted PE.

16. The master node of claim 12, wherein the to-be-adjusted PE comprises a to-be-added PE, wherein the instructions, when executed by the processor, further cause the master node to select the second worker node according to a load status of each worker node in the stream computing system, and wherein the second upgrading instruction instructs the second worker node to create the to-be-added PE.

17. A stream computing system, comprising: at least one worker node comprising a plurality of process elements (PEs), wherein the PEs are distributed on one or more worker nodes of the at least one worker node, and are configured to process data of a streaming application deployed in the stream computing system, wherein an initial logical model of the streaming application denotes the PEs processing the data and a direction of a data stream between the PEs; and a master node coupled to the at least one worker node, wherein the master node is configured to: obtain an updated logical model of the streaming application when the streaming application is updated; determine a to-be-adjusted data stream by comparing the initial logical model of the streaming application with the updated logical model; generate an upgrading instruction according to the to-be-adjusted data stream; and deliver the upgrading instruction to a first worker node of the at least one worker node, wherein a PE related to the to-be-adjusted data stream is located at the first worker node, and wherein the upgrading instruction instructs the first worker node to adjust a direction of a data stream between PEs distributed on the first worker node, and wherein the first worker node is configured to: receive the upgrading instruction from the master node; and adjust, according to an indication of the upgrading instruction, the direction of the data stream between the PEs distributed on the first worker node.

18. The stream computing system of claim 17, wherein the master node is further configured to compare the initial logical model with the updated logical model to determine the to-be-adjusted data stream, wherein the PEs denoted by the initial logical model are the same as PEs denoted by the updated logical model.

19. The stream computing system of claim 17, wherein the master node is further configured to: compare the initial logical model with the updated logical model to determine a to-be-adjusted PE and the to-be-adjusted data stream, wherein the PEs denoted by the initial logical model are different from PEs denoted by the updated logical model; generate a first upgrading instruction according to the to-be-adjusted data stream; and generate a second upgrading instruction according to the to-be-adjusted PE; deliver the first upgrading instruction to the first worker node; and deliver the second upgrading instruction to a second worker node, wherein the to-be-adjusted PE is located at the second worker node, wherein the first worker node is further configured to: receive the first upgrading instruction from the master node; and adjust, according to an indication of the first upgrading instruction, the direction of the data stream between the PEs distributed on the first worker node, and wherein the second worker node is configured to: receive the second upgrading instruction from the master node; and adjust, according to an indication of the second upgrading instruction, a quantity of PEs distributed on the second worker node.

20. The stream computing system of claim 17, wherein the master node is further configured to: determine, according to a dependency relationship between an input stream and an output stream of the PE related to the to-be-adjusted data stream, a target PE to perform data recovery and a checkpoint for the target PE performing data recovery; deliver a data recovery instruction to a worker node at which the target PE is located, wherein the data recovery instruction instructs the target PE to recover data according to the checkpoint; and trigger the target PE to input the data that has been recovered to a downstream PE of the target PE for processing after determining that the first worker node has completed adjustment of the direction of the data stream between the PEs distributed on the first worker node.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of U.S. patent application Ser. No. 15/492,392, filed on Apr. 20, 2017, which is a continuation of International Patent Application No. PCT/CN2015/079944, filed on May 27, 2015, which claims priority to Chinese Patent Application No. 201410568236.5, filed on Oct. 22, 2014. All of the aforementioned patent applications are hereby incorporated by reference in their entireties.

TECHNICAL FIELD

[0002] The present disclosure relates to the field of computer technologies, and in particular, to a streaming application upgrading method, a master node, and a stream computing system.

BACKGROUND

[0003] With the arrival of the big data era, market demand for real-time processing, analysis, and decision-making on massive data continues to grow, for example, precise advertisement push in telecommunications, dynamic real-time analysis of transactions in finance, and real-time monitoring in industry. Against this backdrop, data-intensive applications such as financial services, network monitoring, and telecommunications data management are used increasingly widely, and stream computing systems suited to such applications have emerged. Data generated by a data-intensive application is characterized by large volume, high speed, and time variance. After the data-intensive application is deployed in a stream computing system, the stream computing system may process the data of the application immediately upon receiving it, to ensure real-time performance. As shown in FIG. 1, a stream computing system generally includes a master node and multiple worker nodes. The master node is mainly responsible for scheduling and managing each worker node. A worker node is a logical entity carrying an actual data processing operation, and processes data by invoking several process elements (PEs). A PE is a physical process element of service logic.

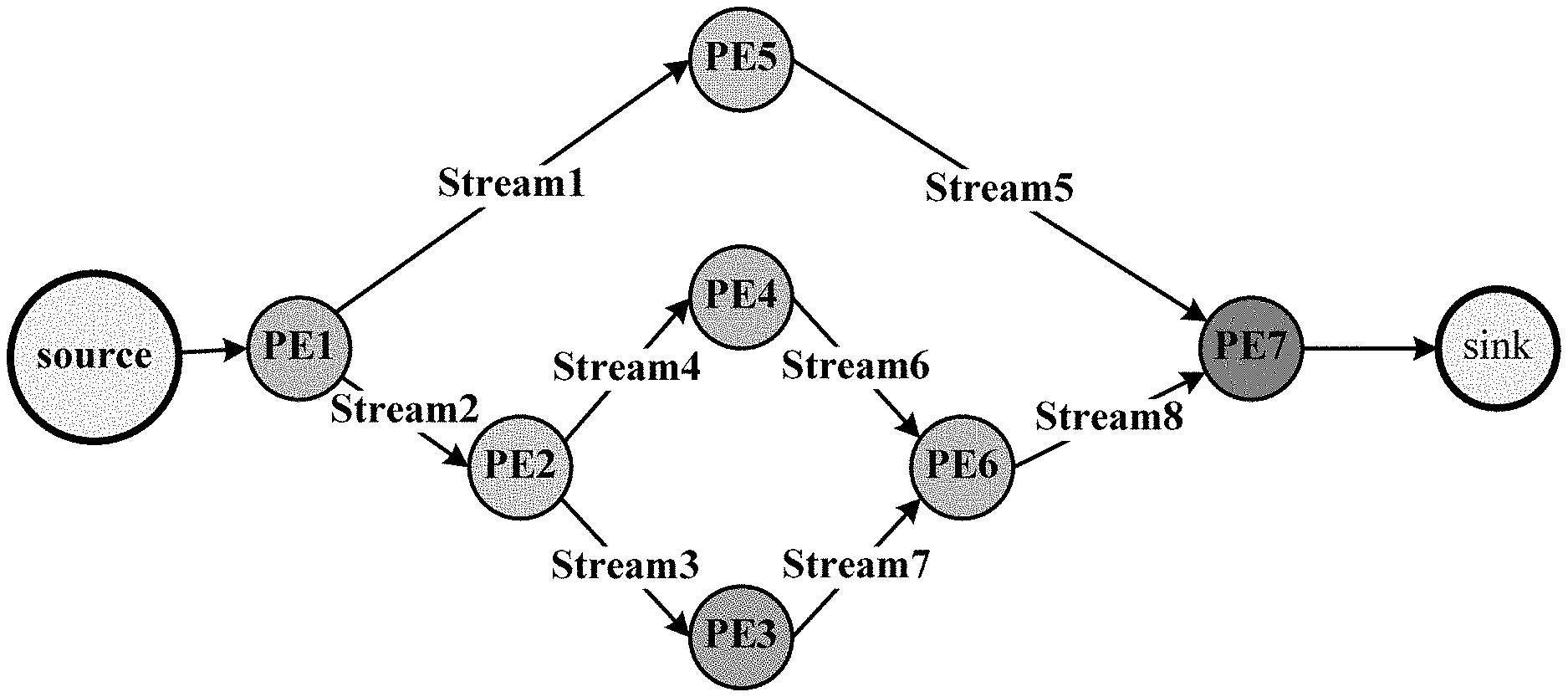

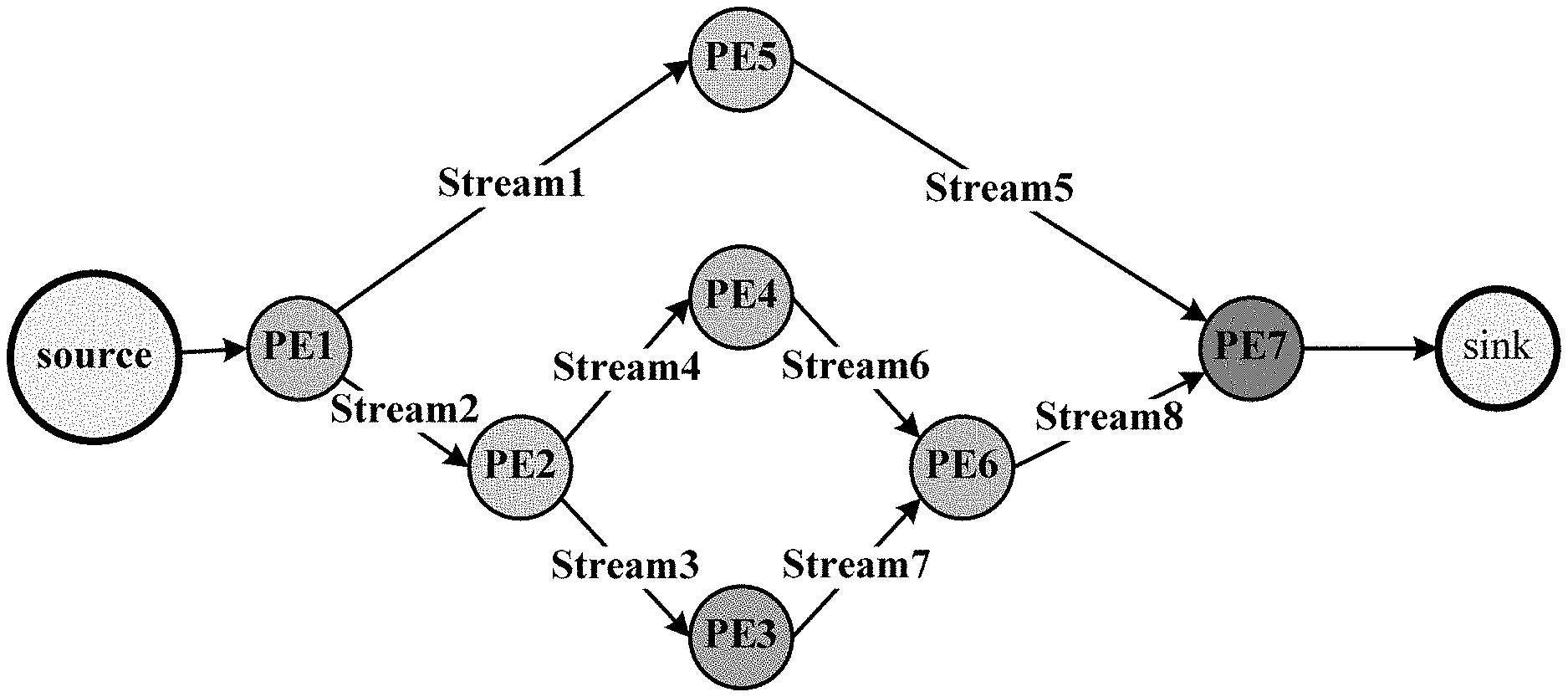

[0004] Generally, an application program or a service deployed in the stream computing system is referred to as a streaming application. In other approaches, when a streaming application is deployed in the stream computing system, a logical model of the streaming application needs to be defined in advance, and the logical model of the streaming application is generally denoted using a directed acyclic graph (DAG). As shown in FIG. 2, a PE is a physical carrier carrying an actual data processing operation, and is also a minimum unit that may be scheduled and executed by the stream computing system. A stream represents a data stream transmitted between PEs, and an arrow denotes a direction of a data stream. A PE may load and execute service logic dynamically, and process data of the streaming application in real time. As shown in FIG. 3, a stream computing system deploys PEs on different worker nodes for execution according to a logical model, and each PE performs computing according to logic of the PE, and forwards a computing result to a downstream PE. However, when a user demand or a service scenario changes, the streaming application needs to be updated or upgraded, and the initial logical model is no longer applicable. Therefore, first, updating of the streaming application needs to be completed offline, and a new logical model is defined. Then the old application is stopped, an updated streaming application is deployed in the stream computing system according to the new logical model, and finally the updated streaming application is started. It can be seen that, in other approaches, a service needs to be interrupted to update the streaming application. Therefore, the streaming application cannot be upgraded online, causing a service loss.

SUMMARY

[0005] Embodiments of the present disclosure provide a streaming application upgrading method, a master node, and a stream computing system, which are used to upgrade a streaming application in a stream computing system online without interrupting a service.

[0006] According to a first aspect, an embodiment of the present disclosure provides a streaming application upgrading method, where the method is applied to a master node in a stream computing system, and the stream computing system includes the master node and at least one worker node, where multiple PEs are distributed on one or more worker nodes of the at least one worker node, and are configured to process data of a streaming application deployed in the stream computing system, where an initial logical model of the streaming application denotes the multiple PEs processing the data of the streaming application and a direction of a data stream between the multiple PEs, and the method includes obtaining, by the master node, an updated logical model of the streaming application, and determining a to-be-adjusted data stream by comparing the initial logical model of the streaming application with the updated logical model when the streaming application is updated, generating an upgrading instruction according to the to-be-adjusted data stream, and delivering the upgrading instruction to a first worker node, where the first worker node is a worker node at which a PE related to the to-be-adjusted data stream is located, and the upgrading instruction instructs the first worker node to adjust a direction of a data stream between PEs distributed on the first worker node.
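The comparison step of the first aspect can be pictured as a set difference over streams. The following is a minimal sketch under stated assumptions, not the method as claimed: the `LogicalModel` class, the `diff_streams` and `build_upgrading_instructions` helpers, the `placement` map, and the `add`/`delete` action names are all hypothetical, and a stream is assumed to be identified by its (upstream PE, downstream PE) pair.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Tuple

Stream = Tuple[str, str]  # (upstream PE, downstream PE); an assumed encoding


@dataclass(frozen=True)
class LogicalModel:
    """Hypothetical logical model: PEs plus directed streams between them."""
    pes: FrozenSet[str]
    streams: FrozenSet[Stream]


def diff_streams(initial: LogicalModel, updated: LogicalModel) -> Dict[str, List[Stream]]:
    """Return the to-be-adjusted streams, split into streams to add and to delete."""
    return {
        "add": sorted(updated.streams - initial.streams),
        "delete": sorted(initial.streams - updated.streams),
    }


def build_upgrading_instructions(
    changes: Dict[str, List[Stream]], placement: Dict[str, str]
) -> Dict[str, List[Tuple[str, Stream]]]:
    """Group stream changes by the worker node that hosts a related PE."""
    instructions: Dict[str, List[Tuple[str, Stream]]] = {}
    for action, streams in changes.items():
        for stream in streams:
            for pe in stream:  # both endpoints are treated as "related" here
                bucket = instructions.setdefault(placement[pe], [])
                if (action, stream) not in bucket:
                    bucket.append((action, stream))
    return instructions


# Same PEs in both models; only the stream into PE3 is redirected.
initial = LogicalModel(frozenset({"PE1", "PE2", "PE3"}),
                       frozenset({("PE1", "PE2"), ("PE2", "PE3")}))
updated = LogicalModel(initial.pes,
                       frozenset({("PE1", "PE2"), ("PE1", "PE3")}))
changes = diff_streams(initial, updated)
instructions = build_upgrading_instructions(
    changes, {"PE1": "worker-1", "PE2": "worker-1", "PE3": "worker-2"})
```

In this sketch each worker node receives only the changes that touch a PE it hosts, which mirrors the idea of delivering the instruction to the worker node at which a related PE is located.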

[0007] In a first possible implementation manner of the first aspect, determining a to-be-adjusted data stream by comparing the initial logical model of the streaming application with the updated logical model includes comparing the initial logical model of the streaming application with the updated logical model, to determine the to-be-adjusted data stream, where the PEs denoted by the initial logical model of the streaming application are the same as PEs denoted by the updated logical model.

[0008] In a second possible implementation manner of the first aspect, determining a to-be-adjusted data stream by comparing the initial logical model of the streaming application with the updated logical model includes comparing the initial logical model of the streaming application with the updated logical model, to determine a to-be-adjusted PE and the to-be-adjusted data stream, where the PEs denoted by the initial logical model of the streaming application are not completely the same as PEs denoted by the updated logical model. Generating an upgrading instruction according to the to-be-adjusted data stream includes generating a first upgrading instruction according to the to-be-adjusted data stream, and generating a second upgrading instruction according to the to-be-adjusted PE. Delivering the upgrading instruction to a first worker node includes delivering the first upgrading instruction to the first worker node, and delivering the second upgrading instruction to a second worker node, where the second worker node includes a worker node at which the to-be-adjusted PE is located, and the first upgrading instruction instructs the first worker node to adjust the direction of the data stream between the PEs distributed on the first worker node, and the second upgrading instruction instructs the second worker node to adjust a quantity of PEs distributed on the second worker node.

[0009] With reference to the first aspect, or either of the first and second possible implementation manners of the first aspect, in a third possible implementation manner, the method further includes determining, by the master node according to a dependency relationship between an input stream and an output stream of the PE related to the to-be-adjusted data stream, a target PE that needs to perform data recovery and a checkpoint for the target PE performing data recovery, delivering a data recovery instruction to a worker node at which the target PE is located, where the data recovery instruction instructs the target PE to recover data according to the checkpoint, and triggering, by the master node, the target PE to input the recovered data to a downstream PE of the target PE for processing after the first worker node completes adjustment, and the PEs distributed on the first worker node get ready.

[0010] With reference to the third possible implementation manner of the first aspect, in a fourth possible implementation manner, the to-be-adjusted data stream includes a to-be-updated data stream and a to-be-deleted data stream, and determining, by the master node according to a dependency relationship between an input stream and an output stream of the PE related to the to-be-adjusted data stream, a target PE that needs to perform data recovery and a checkpoint for the target PE performing data recovery includes determining, by the master node according to status data of a PE related to the to-be-updated data stream and the to-be-deleted data stream, a checkpoint for performing data recovery, and determining, according to a dependency relationship between an input stream and an output stream of the PE related to the to-be-updated data stream and the to-be-deleted data stream, a target PE that needs to perform data recovery, where status data of each PE is backed up by the PE when being triggered by an output event, and indicates a status in which the PE processes data.
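The ordering described in the third and fourth implementation manners (deliver the data recovery instruction first, trigger the replay only after the adjustment is complete) can be illustrated with a small piece of bookkeeping. This is a sketch under assumptions: the `RecoveryCoordinator` class, its method names, and the integer checkpoint identifiers are hypothetical, and the target PEs and checkpoints are taken as already determined, because how they are derived from the dependency relationship and status data is not modeled here. The sketch also generalizes to several adjusting worker nodes.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class RecoveryCoordinator:
    """Hypothetical master-side bookkeeping for the recovery sequence."""
    pending_workers: Set[str]         # workers that have not finished adjusting
    recovery_targets: Dict[str, int]  # target PE -> checkpoint id (assumed given)
    sent: List[str] = field(default_factory=list)
    triggered: bool = False

    def deliver_recovery_instructions(self) -> None:
        """Tell each target PE which checkpoint to recover from."""
        for pe, checkpoint in sorted(self.recovery_targets.items()):
            self.sent.append(f"recover {pe} from checkpoint {checkpoint}")

    def on_adjustment_done(self, worker: str) -> None:
        """Record a completed adjustment; trigger replay once all are done."""
        self.pending_workers.discard(worker)
        if not self.pending_workers and not self.triggered:
            self.triggered = True
            for pe in sorted(self.recovery_targets):
                self.sent.append(f"trigger {pe}")


coordinator = RecoveryCoordinator({"worker-1", "worker-2"}, {"PE1": 7})
coordinator.deliver_recovery_instructions()
coordinator.on_adjustment_done("worker-1")
after_first = list(coordinator.sent)  # no trigger yet: worker-2 is still adjusting
coordinator.on_adjustment_done("worker-2")
```

The `triggered` flag keeps a duplicate completion report from replaying the recovered data twice.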

[0011] With reference to any one of the second to fourth possible implementation manners of the first aspect, in a fifth possible implementation manner, the to-be-adjusted PE includes a to-be-added PE. The second worker node is a worker node selected by the master node according to a load status of each worker node in the stream computing system, and the second upgrading instruction instructs the second worker node to create the to-be-added PE.

[0012] With reference to any one of the second to fifth possible implementation manners of the first aspect, in a sixth possible implementation manner, the to-be-adjusted PE includes a to-be-deleted PE. The second worker node is a worker node at which the to-be-deleted PE is located, and the second upgrading instruction instructs the second worker node to delete the to-be-deleted PE.
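The handling of to-be-added and to-be-deleted PEs in the fifth and sixth implementation manners can be sketched as follows. The text does not say how load status is measured, so this sketch assumes, purely for illustration, that load is the number of PEs on a worker node; `plan_pe_changes`, the `create`/`delete` action names, and the tie-breaking by worker name are likewise hypothetical.

```python
from typing import Dict, List, Set, Tuple


def plan_pe_changes(
    initial_pes: Set[str],
    updated_pes: Set[str],
    placement: Dict[str, str],
    load: Dict[str, int],
) -> Dict[str, List[Tuple[str, str]]]:
    """Build per-worker second upgrading instructions for added and deleted PEs."""
    load = dict(load)  # work on a copy so the caller's view is untouched
    instructions: Dict[str, List[Tuple[str, str]]] = {}
    for pe in sorted(updated_pes - initial_pes):
        # Pick the least loaded worker; ties are broken by worker name.
        worker = min(sorted(load), key=load.get)
        instructions.setdefault(worker, []).append(("create", pe))
        load[worker] += 1
    for pe in sorted(initial_pes - updated_pes):
        # A to-be-deleted PE is removed where it currently runs.
        instructions.setdefault(placement[pe], []).append(("delete", pe))
    return instructions


pe_instructions = plan_pe_changes(
    initial_pes={"PE1", "PE2", "PE3"},
    updated_pes={"PE1", "PE2", "PE4", "PE5"},
    placement={"PE1": "worker-1", "PE2": "worker-1", "PE3": "worker-2"},
    load={"worker-1": 2, "worker-2": 1, "worker-3": 0},
)
```

A production scheduler would likely weigh CPU, memory, or network cost rather than a bare PE count; the count is used here only to keep the example small.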

[0013] With reference to the first aspect, or any one of the first to sixth possible implementation manners of the first aspect, in a seventh possible implementation manner, the method further includes configuring the multiple PEs according to the initial logical model of the streaming application such that the multiple PEs process the data of the streaming application.

[0014] With reference to the first aspect, or any one of the first to seventh possible implementation manners of the first aspect, in an eighth possible implementation manner, the initial logical model of the streaming application is denoted using a DAG.
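Because the logical model is denoted using a DAG, one plausible sanity check before comparing models is to confirm that an updated model is still acyclic. The sketch below uses the standard-library `graphlib` module (Python 3.9 or later); the `is_dag` helper and the (upstream, downstream) stream encoding are assumptions for illustration, and the source does not state that such a check is performed.

```python
from graphlib import CycleError, TopologicalSorter
from typing import Iterable, Tuple


def is_dag(pes: Iterable[str], streams: Iterable[Tuple[str, str]]) -> bool:
    """Return True if the PEs and directed streams form a directed acyclic graph."""
    sorter = TopologicalSorter({pe: set() for pe in pes})
    for upstream, downstream in streams:
        sorter.add(downstream, upstream)  # downstream depends on upstream
    try:
        sorter.prepare()  # raises CycleError if the graph contains a cycle
    except CycleError:
        return False
    return True


pes = {"PE1", "PE2", "PE3"}
acyclic = is_dag(pes, [("PE1", "PE2"), ("PE2", "PE3")])
cyclic = is_dag(pes, [("PE1", "PE2"), ("PE2", "PE3"), ("PE3", "PE1")])
```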

[0015] According to a second aspect, an embodiment of the present disclosure provides a master node in a stream computing system, where the stream computing system includes the master node and at least one worker node, where multiple PEs are distributed on one or more worker nodes of the at least one worker node, and are configured to process data of a streaming application deployed in the stream computing system, where an initial logical model of the streaming application is used to denote the multiple PEs processing the data of the streaming application and a direction of a data stream between the multiple PEs, and the master node includes an obtaining and determining module configured to obtain an updated logical model of the streaming application, and determine a to-be-adjusted data stream by comparing the initial logical model of the streaming application with the updated logical model when the streaming application is updated, an upgrading instruction generating module configured to generate an upgrading instruction according to the to-be-adjusted data stream, and a sending module configured to deliver the upgrading instruction to a first worker node, where the first worker node is a worker node at which a PE related to the to-be-adjusted data stream is located, and the upgrading instruction instructs the first worker node to adjust a direction of a data stream between PEs distributed on the first worker node.

[0016] In a first possible implementation manner of the second aspect, the obtaining and determining module is further configured to compare the initial logical model of the streaming application with the updated logical model, to determine the to-be-adjusted data stream, where the PEs denoted by the initial logical model of the streaming application are the same as PEs denoted by the updated logical model.

[0017] In a second possible implementation manner of the second aspect, the obtaining and determining module is further configured to compare the initial logical model of the streaming application with the updated logical model, to determine a to-be-adjusted PE and the to-be-adjusted data stream, where the PEs denoted by the initial logical model of the streaming application are not completely the same as PEs denoted by the updated logical model. The upgrading instruction generating module is further configured to generate a first upgrading instruction according to the to-be-adjusted data stream, and generate a second upgrading instruction according to the to-be-adjusted PE, and the sending module is further configured to deliver the first upgrading instruction to the first worker node, and deliver the second upgrading instruction to a second worker node, where the second worker node includes a worker node at which the to-be-adjusted PE is located, and the first upgrading instruction instructs the first worker node to adjust the direction of the data stream between the PEs distributed on the first worker node, and the second upgrading instruction instructs the second worker node to adjust a quantity of PEs distributed on the second worker node.

[0018] With reference to the second aspect, or either of the first and second possible implementation manners of the second aspect, in a third possible implementation manner, the master node further includes a data recovery module configured to determine, according to a dependency relationship between an input stream and an output stream of the PE related to the to-be-adjusted data stream, a target PE that needs to perform data recovery and a checkpoint for the target PE performing data recovery, where the sending module is further configured to deliver a data recovery instruction to a worker node at which the target PE is located, where the data recovery instruction instructs the target PE to recover data according to the checkpoint, and the master node further includes an input triggering module configured to trigger the target PE to input the recovered data to a downstream PE of the target PE for processing after the first worker node completes adjustment, and the PEs distributed on the first worker node get ready.

[0019] With reference to the third possible implementation manner of the second aspect, in a fourth possible implementation manner, the to-be-adjusted data stream includes a to-be-updated data stream and a to-be-deleted data stream, and the data recovery module is further configured to determine, according to status data of a PE related to the to-be-updated data stream and the to-be-deleted data stream, a checkpoint for performing data recovery, and determine, according to a dependency relationship between an input stream and an output stream of the PE related to the to-be-updated data stream and the to-be-deleted data stream, a target PE that needs to perform data recovery, where status data of each PE is backed up by the PE when being triggered by an output event, and indicates a status in which the PE processes data.

[0020] With reference to any one of the second to fourth possible implementation manners of the second aspect, in a fifth possible implementation manner, the to-be-adjusted PE includes a to-be-deleted PE. The second worker node is a worker node at which the to-be-deleted PE is located, and the second upgrading instruction instructs the second worker node to delete the to-be-deleted PE.

[0021] With reference to any one of the second to fifth possible implementation manners of the second aspect, in a sixth possible implementation manner, the to-be-adjusted PE includes a to-be-added PE. The second worker node is a worker node selected by the master node according to a load status of each worker node in the stream computing system, and the second upgrading instruction instructs the second worker node to create the to-be-added PE.

[0022] With reference to the second aspect, or any one of the first to sixth possible implementation manners of the second aspect, in a seventh possible implementation manner, the master node further includes a configuration module configured to configure the multiple PEs according to the initial logical model of the streaming application such that the multiple PEs process the data of the streaming application.

[0023] According to a third aspect, an embodiment of the present disclosure provides a stream computing system, including a master node and at least one worker node, where multiple PEs are distributed on one or more worker nodes of the at least one worker node, and are configured to process data of a streaming application deployed in the stream computing system, where an initial logical model of the streaming application denotes the multiple PEs processing the data of the streaming application and a direction of a data stream between the multiple PEs, and the master node is configured to obtain an updated logical model of the streaming application, and determine a to-be-adjusted data stream by comparing the initial logical model of the streaming application with the updated logical model when the streaming application is updated, generate an upgrading instruction according to the to-be-adjusted data stream, and deliver the upgrading instruction to a first worker node, where the first worker node is a worker node at which a PE related to the to-be-adjusted data stream is located, and the upgrading instruction instructs the first worker node to adjust a direction of a data stream between PEs distributed on the first worker node, and the first worker node is configured to receive the upgrading instruction sent by the master node, and adjust, according to an indication of the upgrading instruction, the direction of the data stream between the PEs distributed on the first worker node.

[0024] In a first possible implementation manner of the third aspect, the PEs denoted by the initial logical model of the streaming application are the same as the PEs denoted by the updated logical model.

[0025] In a second possible implementation manner of the third aspect, the PEs denoted by the initial logical model of the streaming application are not completely the same as the PEs denoted by the updated logical model, and the master node is further configured to determine a to-be-adjusted PE by comparing the initial logical model with the updated logical model, generate a first upgrading instruction according to the to-be-adjusted data stream, generate a second upgrading instruction according to the to-be-adjusted PE, deliver the first upgrading instruction to the first worker node, and deliver the second upgrading instruction to a second worker node, where the second worker node includes a worker node at which the to-be-adjusted PE is located. The first worker node is further configured to receive the first upgrading instruction sent by the master node, and adjust, according to an indication of the first upgrading instruction, the direction of the data stream between the PEs distributed on the first worker node, and the second worker node is further configured to receive the second upgrading instruction sent by the master node, and adjust, according to an indication of the second upgrading instruction, a quantity of PEs distributed on the second worker node.

[0026] With reference to the third aspect, or either of the first and second possible implementation manners of the third aspect, in a third possible implementation manner, the master node is further configured to determine, according to a dependency relationship between an input stream and an output stream of the PE related to the to-be-adjusted data stream, a target PE that needs to perform data recovery and a checkpoint for the target PE performing data recovery, deliver a data recovery instruction to a worker node at which the target PE is located, where the data recovery instruction instructs the target PE to recover data according to the checkpoint, and trigger the target PE to input the recovered data to a downstream PE of the target PE for processing after the first worker node completes adjustment and the PEs distributed on the first worker node get ready.

[0027] It can be known from the foregoing technical solutions that, according to the streaming application upgrading method and the stream computing system provided in the embodiments of the present disclosure, a logical model of a streaming application is compared with an updated logical model of the streaming application, to dynamically determine a to-be-adjusted data stream, and a corresponding upgrading instruction is generated according to the to-be-adjusted data stream and delivered to a worker node, thereby upgrading the streaming application in the stream computing system online without interrupting a service.

BRIEF DESCRIPTION OF DRAWINGS

[0028] To describe the technical solutions in the embodiments of the present disclosure more clearly, the following briefly introduces the accompanying drawings required for describing the embodiments. The accompanying drawings in the following description show merely some embodiments of the present disclosure, and persons of ordinary skill in the art may still derive other drawings from these accompanying drawings without creative efforts.

[0029] FIG. 1 is a schematic diagram of an architecture of a stream computing system according to the present disclosure.

[0030] FIG. 2 is a schematic diagram of a logical model of a streaming application according to an embodiment of the present disclosure.

[0031] FIG. 3 is a schematic diagram of deployment of a streaming application according to an embodiment of the present disclosure.

[0032] FIG. 4 is a diagram of a working principle of a stream computing system according to an embodiment of the present disclosure.

[0033] FIG. 5 is a flowchart of a streaming application upgrading method according to an embodiment of the present disclosure.

[0034] FIG. 6 is a schematic diagram of a change to a logical model of a streaming application after the streaming application is updated according to an embodiment of the present disclosure.

[0035] FIG. 7 is a schematic diagram of a change to a logical model of a streaming application after the streaming application is updated according to an embodiment of the present disclosure.

[0036] FIG. 8 is a flowchart of a streaming application upgrading method according to an embodiment of the present disclosure.

[0037] FIG. 9 is a schematic diagram of a logical model of a streaming application according to an embodiment of the present disclosure.

[0038] FIG. 10 is a schematic diagram of an adjustment of a logical model of a streaming application according to an embodiment of the present disclosure.

[0039] FIG. 11 is a schematic diagram of PE deployment after a streaming application is upgraded according to an embodiment of the present disclosure.

[0040] FIG. 12 is a schematic diagram of a dependency relationship between an input stream and an output stream of a PE according to an embodiment of the present disclosure.

[0041] FIG. 13 is a schematic diagram of a dependency relationship between an input stream and an output stream of a PE according to an embodiment of the present disclosure.

[0042] FIG. 14 is a schematic diagram of backup of status data of a PE according to an embodiment of the present disclosure.

[0043] FIG. 15 is a schematic diagram of a master node according to an embodiment of the present disclosure.

[0044] FIG. 16 is a schematic diagram of a stream computing system according to an embodiment of the present disclosure.

[0045] FIG. 17 is a schematic diagram of a master node according to an embodiment of the present disclosure.

DESCRIPTION OF EMBODIMENTS

[0046] To make the objectives, technical solutions, and advantages of the present disclosure clearer, the following describes the technical solutions of the present disclosure with reference to the accompanying drawings in the embodiments of the present disclosure. The following described embodiments are some of the embodiments of the present disclosure. Based on the embodiments of the present disclosure, persons of ordinary skill in the art can obtain other embodiments that can resolve the technical problem of the present disclosure and implement the technical effect of the present disclosure by equivalently altering some or all the technical features even without creative efforts. The embodiments obtained by means of alteration do not depart from the scope disclosed in the present disclosure.

[0047] The technical solutions provided in the embodiments of the present disclosure may be typically applied to a stream computing system. FIG. 4 describes a basic structure of a stream computing system, and the stream computing system includes a master node and multiple worker nodes. During cluster deployment, there may be one or more master nodes and one or more worker nodes, and a master node may be a physical node separated from a worker node. During standalone deployment, a master node and a worker node may be logical units deployed on a same physical node, where the physical node may be a computer or a server. The master node is responsible for scheduling a data stream to the worker node for processing. Generally, one physical node is one worker node. In some cases, one physical node may correspond to multiple worker nodes, and the quantity of worker nodes corresponding to one physical node depends on physical hardware resources of the physical node. One worker node may be understood as one physical hardware resource. Worker nodes corresponding to a same physical node communicate with each other by means of process communication, and worker nodes corresponding to different physical nodes communicate with each other by means of network communication.

[0048] As shown in FIG. 4, a stream computing system includes a master node, a worker node 1, a worker node 2, and a worker node 3.

[0049] The master node deploys, according to a logical model of a streaming application, the streaming application on the three worker nodes (the worker node 1, the worker node 2, and the worker node 3) for processing. The logical model shown in FIG. 4 is a logical relationship diagram including nine PEs, PE1 to PE9, and directions of data streams between the nine PEs, and the directions of the data streams between the PEs also embody dependency relationships between input streams and output streams of the PEs. It should be noted that a data stream in the embodiments of the present disclosure is also briefly referred to as a stream.

[0050] The master node configures PE1, PE2, and PE3 on the worker node 1, PE4, PE7, and PE9 on the worker node 2, and PE5, PE6, and PE8 on the worker node 3 according to the logical model of the streaming application to process a data stream of the streaming application. It can be seen that, after the configuration, a direction of a data stream between the PEs on the worker nodes 1, 2, and 3 matches the logical model of the streaming application.
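The placement described above can be written down as a simple mapping. The following is a minimal sketch only; the dictionary layout and the helper name `worker_of` are illustrative assumptions and are not part of the disclosure.

```python
# Placement from FIG. 4 as described above: worker node -> PEs configured on it.
placement = {
    "worker1": ["PE1", "PE2", "PE3"],
    "worker2": ["PE4", "PE7", "PE9"],
    "worker3": ["PE5", "PE6", "PE8"],
}

# Inverted index (hypothetical helper data): PE -> worker node hosting it.
pe_to_worker = {pe: worker for worker, pes in placement.items() for pe in pes}


def worker_of(pe):
    """Return the worker node on which the given PE is deployed."""
    return pe_to_worker[pe]
```

For example, `worker_of("PE7")` yields `"worker2"`. A lookup of this kind is one way a master node could find the worker node at which a given PE is located.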

[0051] The logical model of the streaming application in the embodiments of the present disclosure may be a DAG, a tree graph, or a cyclic graph. The logical model of the streaming application may be understood by referring to FIG. 2. A diagram of a streaming computing application shown in FIG. 2 includes seven operators from PE1 to PE7, and eight data streams from S1 to S8. FIG. 2 explicitly marks directions of the data streams. For example, the data stream S1 is from PE1 to PE5, which denotes that PE5 processes a stream output by PE1, that is, an output of PE5 depends on an input from PE1. PE5 is generally also referred to as a downstream PE of PE1, and PE1 is an upstream PE of PE5. It can be understood that an upstream PE and a downstream PE are determined according to a direction of a data stream between the PEs, and only two PEs are related to one data stream: a source PE that outputs the data stream, and a destination PE to which the data stream is directed, that is, a PE receiving the data stream. Viewed from a direction of a data stream, a source PE is an upstream PE of a destination PE, and the destination PE is a downstream PE of the source PE. Further, after a data stream S2 is input to PE2 and subjected to logical processing of PE2, two data streams S3 and S4 are generated, and enter PE3 and PE4 respectively for logical processing. Likewise, PE2 is also a downstream PE of PE1, and PE1 is an upstream PE of PE2. The data stream S6 output by PE4 and the data stream S7 output by PE3 are both used as inputs of PE6, that is, an output of PE6 depends on inputs from PE3 and PE4. It should be noted that, in the embodiments of the present disclosure, a PE whose output depends on an input from a single PE is defined as a stateless PE, such as PE5, PE3, or PE4, and a PE whose output depends on inputs from multiple PEs is defined as a stateful PE, such as PE6 or PE7.
A single data segment in a data stream is referred to as a tuple, where the tuple may be structured or unstructured data. Generally, a tuple may denote a status of an object at a specific time point. A PE in the stream computing system processes a data stream generated by the streaming application using a tuple as a unit, and a tuple may also be considered a minimum granularity for division and denotation of data in the stream computing system.
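The upstream and downstream relationships and the stateless/stateful distinction described above can be illustrated with a small directed-graph sketch. It uses only the streams of FIG. 2 whose endpoints are stated in the text (S1, S2, S3, S4, S6, S7); S5 and S8 are omitted because their endpoints are not given here. The names `upstream`, `downstream`, and `kind`, and the label `"source"` for a PE without an input stream, are assumptions made for illustration.

```python
from collections import defaultdict

# Partial model of FIG. 2: stream id -> (source PE, destination PE).
streams = {
    "S1": ("PE1", "PE5"),
    "S2": ("PE1", "PE2"),
    "S3": ("PE2", "PE3"),
    "S4": ("PE2", "PE4"),
    "S6": ("PE4", "PE6"),
    "S7": ("PE3", "PE6"),
}

upstream = defaultdict(set)    # PE -> PEs whose output it consumes
downstream = defaultdict(set)  # PE -> PEs that consume its output
for src, dst in streams.values():
    upstream[dst].add(src)
    downstream[src].add(dst)


def kind(pe):
    """Classify a PE by how many upstream PEs its output depends on."""
    n = len(upstream[pe])
    if n == 0:
        return "source"  # assumed label: no input stream in this partial model
    return "stateless" if n == 1 else "stateful"
```

With this partial model, `kind("PE5")` is `"stateless"` and `kind("PE6")` is `"stateful"`, which agrees with the examples given in the paragraph above.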

[0052] It should be further noted that, the stream computing system is only a typical application scenario of the technical solutions of the present disclosure, and does not constitute any limitation on application scenarios of the present disclosure, and the technical solutions of the embodiments of the present disclosure are all applicable to other application scenarios involved in application deployment and upgrading of a distributed system or a cloud computing system.

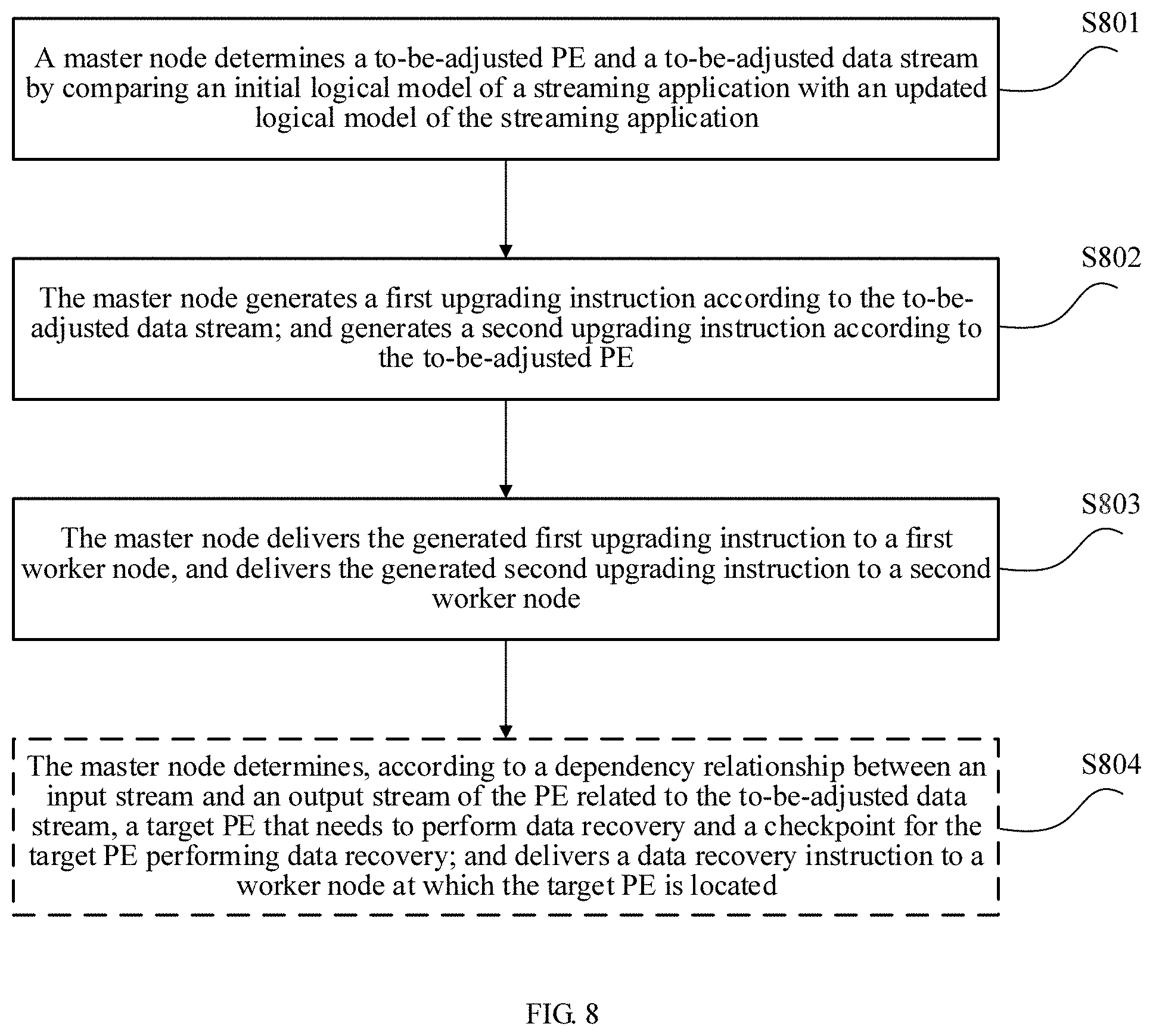

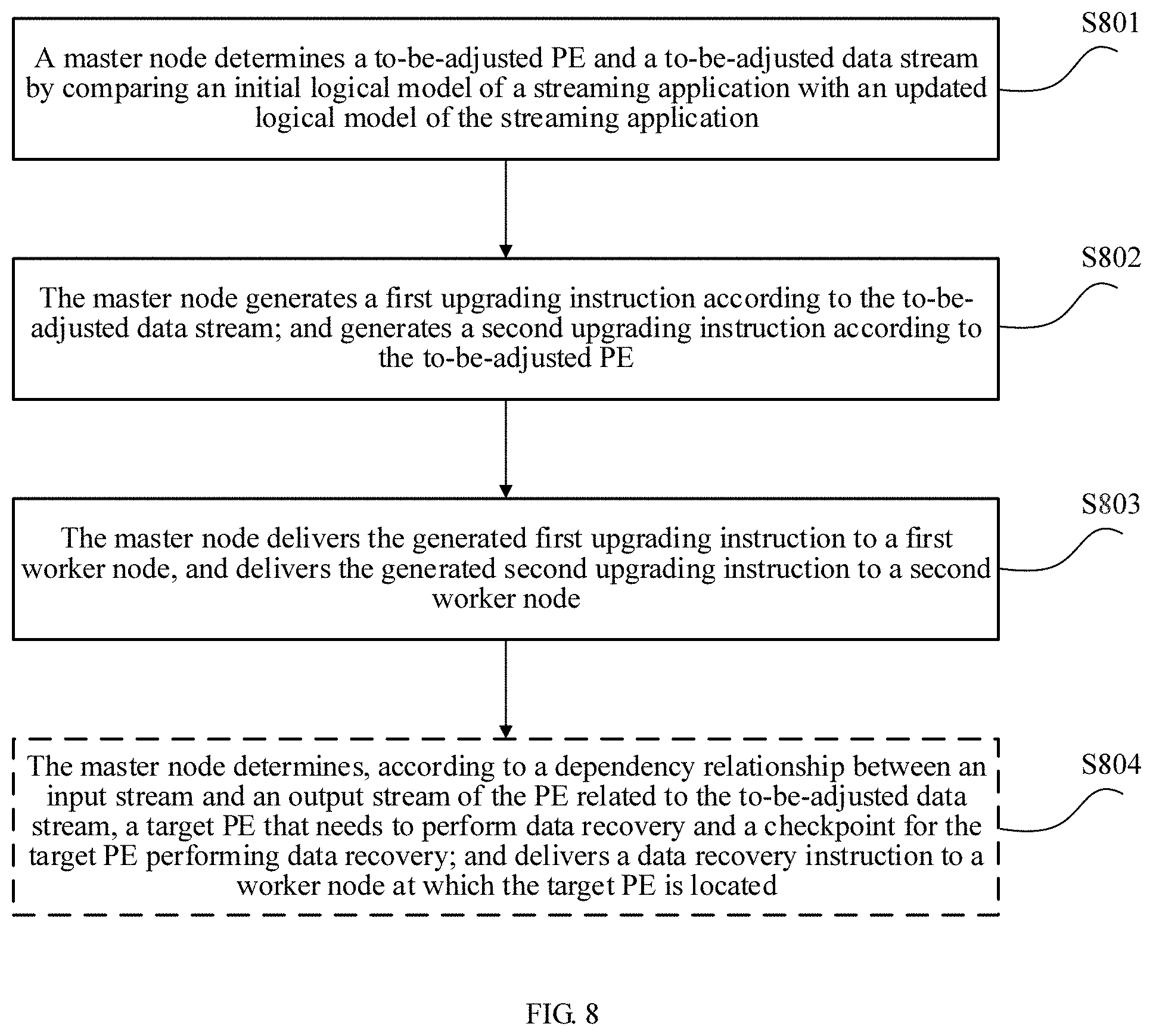

[0053] An embodiment of the present disclosure provides a streaming application upgrading method, where the method may be typically applied to the stream computing system shown in FIG. 1 and FIG. 4. Assuming that a streaming application is deployed in the stream computing system, the master node of the stream computing system deploys multiple PEs according to an initial logical model of the streaming application to process a data stream of the streaming application, where the multiple PEs are distributed on one or more worker nodes of the stream computing system. As shown in FIG. 6, after the streaming application is upgraded or updated, the logical model of the streaming application is correspondingly updated. Updating of the logical model is generally completed by a developer, or by a developer with a development tool, which is not particularly limited in the present disclosure. As shown in FIG. 5, a main procedure of the streaming application upgrading method is described as follows.

[0054] Step S501: A master node of a stream computing system obtains an updated logical model of a streaming application when the streaming application is updated.

[0055] Step S502: The master node determines a to-be-adjusted data stream by comparing the updated logical model with the initial logical model.

[0056] Step S503: The master node generates an upgrading instruction according to the to-be-adjusted data stream.

[0057] Step S504: The master node delivers the generated upgrading instruction to a first worker node, where the first worker node is a worker node at which a PE related to the to-be-adjusted data stream is located, and the upgrading instruction instructs the first worker node to adjust a direction of a data stream between PEs distributed on the first worker node.

[0058] It should be noted that there may be one or more to-be-adjusted data streams in this embodiment of the present disclosure, depending on the specific situation. PEs related to each to-be-adjusted data stream refer to a source PE and a destination PE of the to-be-adjusted data stream, where the source PE of the to-be-adjusted data stream is a PE that outputs the to-be-adjusted data stream, and the destination PE of the to-be-adjusted data stream is a PE receiving the to-be-adjusted data stream or a downstream PE of the source PE of the to-be-adjusted data stream.

[0059] According to the streaming application upgrading method and the stream computing system provided in this embodiment of the present disclosure, a logical model of a streaming application is compared with an updated logical model of the streaming application, to dynamically determine a to-be-adjusted data stream, and a corresponding upgrading instruction is generated according to the to-be-adjusted data stream and delivered to a worker node, thereby upgrading the streaming application in the stream computing system online without interrupting a service.

[0060] In this embodiment of the present disclosure, the logical model of the streaming application denotes multiple PEs processing data of the streaming application and a direction of a data stream between the multiple PEs. The logical model of the streaming application is correspondingly updated after the streaming application is upgraded or updated. Generally, a difference between an updated logical model and the initial logical model is mainly divided into two types:

[0061] (1) The PEs denoted by the initial logical model are completely the same as PEs denoted by the updated logical model, and only a direction of a data stream between PEs changes; and

[0062] (2) The PEs denoted by the initial logical model are not completely the same as the PEs denoted by the updated logical model, and a direction of a data stream between PEs also changes. For the foregoing two types of differences, corresponding processing procedures are described below.

[0063] In a specific embodiment, as shown in FIG. 6, PEs denoted by an initial logical model of a streaming application are completely the same as PEs denoted by an updated logical model of the streaming application, and a direction of a data stream between PEs changes. According to FIG. 6, the PEs in the logical model before updating and the PEs in the updated logical model are both PE1 to PE7 and are completely the same, but a direction of a data stream changes, that is, the data stream S6 from PE4 to PE6 becomes a data stream S11 from PE4 to PE7, and a data stream S12 from PE2 to PE6 is added. In this case, a main procedure of the streaming application upgrading method is as follows.

[0064] Step 1: Determine a to-be-adjusted data stream by comparing an initial logical model of a streaming application with an updated logical model of the streaming application, where the to-be-adjusted data stream includes one or more data streams. Further, in an embodiment, the to-be-adjusted data stream may include at least one of a to-be-added data stream, a to-be-deleted data stream, and a to-be-updated data stream, where the to-be-updated data stream refers to a data stream whose destination node or source node changes after the logical model of the streaming application is updated. Further, as shown in FIG. 6, the to-be-adjusted data stream includes a to-be-added data stream S12, and a to-be-updated data stream S11.

[0065] Step 2: Generate an upgrading instruction according to the to-be-adjusted data stream, where the upgrading instruction may include one or more instructions, and the upgrading instruction is related to a type of the to-be-adjusted data stream. For example, the generated upgrading instruction includes an instruction used to add a data stream and an instruction used to update a data stream if the to-be-adjusted data stream includes a to-be-added data stream and a to-be-updated data stream, where different types of upgrading instructions may be separate instructions, or may be integrated into one instruction, which is not particularly limited in the present disclosure either. Further, as shown in FIG. 6, the generated upgrading instruction includes an instruction for adding the data stream S12 and an instruction for updating a data stream S6 to a data stream S11.

[0066] Step 3: Deliver the generated upgrading instruction to a first worker node, where the first worker node is a worker node at which a PE related to the to-be-adjusted data stream is located. It can be understood that, there may be one or more first worker nodes. After receiving the upgrading instruction, a first worker node performs operations indicated by the upgrading instruction, for example, adding the data stream S12, and updating the data stream S6 to the data stream S11 such that a direction of a data stream between PEs distributed on the first worker node is adjusted, and a direction of a data stream after the adjustment matches the updated logical model.
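Steps 2 and 3 can be sketched for the FIG. 6 example as follows. This is a minimal illustration under stated assumptions: the instruction format (plain dictionaries), the function names `related_pes` and `route`, and the placement of PEs on worker nodes are all hypothetical, since the disclosure does not specify them for FIG. 6. The sketch also assumes that the old destination PE of an updated stream counts as a related PE.

```python
# Hypothetical placement of the PEs touched by the FIG. 6 changes.
pe_to_worker = {"PE2": "worker1", "PE4": "worker2", "PE6": "worker3", "PE7": "worker2"}

# To-be-adjusted streams from FIG. 6: S12 (PE2 -> PE6) is added, and
# S6 (PE4 -> PE6) is updated to S11 (PE4 -> PE7).
instructions = [
    {"op": "add_stream", "stream": "S12", "src": "PE2", "dst": "PE6"},
    {"op": "update_stream", "stream": "S6", "new_stream": "S11",
     "src": "PE4", "old_dst": "PE6", "dst": "PE7"},
]


def related_pes(instr):
    """Source and destination PEs touched by an instruction."""
    pes = {instr["src"], instr["dst"]}
    if "old_dst" in instr:
        pes.add(instr["old_dst"])  # assumption: the old destination is also notified
    return pes


def route(instructions, pe_to_worker):
    """Group instructions by the worker nodes hosting the related PEs."""
    per_worker = {}
    for instr in instructions:
        workers = sorted({pe_to_worker[pe] for pe in related_pes(instr)})
        for worker in workers:
            per_worker.setdefault(worker, []).append(instr["op"] + ":" + instr["stream"])
    return per_worker


deliveries = route(instructions, pe_to_worker)
```

Under this hypothetical placement, worker1 receives only the add instruction, worker2 receives only the update instruction, and worker3 receives both, so there are three first worker nodes.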

[0067] Further, when the first worker node adjusts a data stream between PEs distributed on the first worker node, data being processed may be lost, and therefore the data needs to be recovered. Further, in an embodiment, before the first worker node adjusts a data stream between PEs distributed on the first worker node, a master node determines, according to a dependency relationship between an input stream and an output stream of a PE related to the to-be-adjusted data stream, a target PE that needs to perform data recovery and a checkpoint for the target PE performing data recovery, and delivers a data recovery instruction to a worker node at which the target PE is located, where the data recovery instruction is used to instruct the target PE to recover data according to the checkpoint, and after the master node determines that the first worker node completes adjustment, and the PEs distributed on the first worker node get ready, the master node triggers the target PE to input the recovered data to a downstream PE of the target PE for processing.

[0068] It should be noted that, the master node may perceive a status of a PE on each worker node in the stream computing system by actively sending a query message, or a worker node may report a status of each PE distributed on the worker node to the master node, where a status of a PE includes a running state, a ready state and a stopped state. When a channel between a PE and an upstream or downstream PE is established successfully, the PE is in the ready state, and the PE may receive and process a data stream.
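The status tracking and the readiness condition described above can be sketched as follows. The class name `PEStatus` and the functions `all_ready` and `maybe_trigger` are hypothetical, and the sketch treats only the ready state as satisfying the condition; whether a running PE should also count is left open here.

```python
from enum import Enum


class PEStatus(Enum):
    RUNNING = "running"
    READY = "ready"
    STOPPED = "stopped"


def all_ready(reported, pes):
    """True if every listed PE was reported in the ready state."""
    return all(reported.get(pe) == PEStatus.READY for pe in pes)


def maybe_trigger(adjustment_done, reported, pes_on_first_worker, target_pe):
    """Return the target PE to trigger, or None if replay is not yet safe.

    The master node triggers the target PE to input recovered data only after
    the first worker node has completed adjustment and its PEs are ready.
    """
    if adjustment_done and all_ready(reported, pes_on_first_worker):
        return target_pe
    return None
```

The `reported` mapping may be filled either from replies to query messages sent by the master node or from status reports pushed by worker nodes, matching the two options described above.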

[0069] Optionally, before performing the steps of the foregoing streaming application upgrading method, the master node may further configure multiple PEs according to the initial logical model of the streaming application such that the multiple PEs process data of the streaming application.

[0070] According to the streaming application upgrading method provided in this embodiment of the present disclosure, a logical model of a streaming application is compared with an updated logical model of the streaming application, to dynamically determine a to-be-adjusted data stream, and a corresponding upgrading instruction is generated and delivered to a worker node, to complete online upgrading of the streaming application, thereby ensuring that a service does not need to be interrupted in an application upgrading process, and further, data is recovered in the upgrading process, to ensure that key data is not lost, and service running is not affected.

[0071] In another specific embodiment, as shown in FIG. 7, PEs denoted by an initial logical model of a streaming application are not completely the same as PEs denoted by an updated logical model of the streaming application, and a direction of a data stream between the PEs also changes. According to FIG. 7, a quantity of the PEs in the logical model before updating is different from a quantity of the PEs in the updated logical model (PE2, PE3, PE4 and PE6 are deleted, and PE9, PE10, PE11, PE12 and PE13 are added), and a direction of a data stream also changes, that is, original data streams S4, S5, S6, and S7 are deleted, data streams S11, S12, S13, S14, S15 and S16 are added, a destination PE of an original data stream S3 is updated, and a source PE of an original data stream S9 is updated. In this case, as shown in FIG. 8, a main procedure of the streaming application upgrading method is as follows.

[0072] Step S801: A master node determines a to-be-adjusted PE and a to-be-adjusted data stream by comparing an initial logical model of a streaming application with an updated logical model of the streaming application, where the to-be-adjusted PE includes one or more PEs, and the to-be-adjusted data stream includes one or more data streams. Further, in an embodiment, the to-be-adjusted PE includes at least one of a to-be-added PE and a to-be-deleted PE, and the to-be-adjusted data stream may include at least one of a to-be-added data stream, a to-be-deleted data stream, and a to-be-updated data stream.

[0073] Further, as shown in FIG. 9, the master node may determine, by comparing the logical model of the streaming application before updating with the logical model of the streaming application that is updated, that the initial logical model is the same as the updated logical model only after a logical submodel including PE2, PE3, PE4, and PE6 in the initial logical model is replaced with a logical submodel including PE9, PE10, PE11, PE12 and PE13. Therefore, it is determined that PE2, PE3, PE4, PE6, and PE9, PE10, PE11, PE12 and PE13 are to-be-adjusted PEs (where PE2, PE3, PE4 and PE6 are to-be-deleted PEs, and PE9, PE10, PE11, PE12 and PE13 are to-be-added PEs), and it is determined that data streams related to the to-be-adjusted PEs, that is, all input streams and output streams of the to-be-adjusted PEs are to-be-adjusted streams. As shown in FIG. 9, a stream indicated by a dashed line part is a to-be-deleted data stream, and a stream indicated by a black bold part is a to-be-added data stream, and a stream indicated by a light-colored bold part is a to-be-updated data stream.
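The comparison in step S801 can be sketched as a set difference over PEs and streams. This is an illustration only, not the method of the disclosure: it assumes that each model is given as a set of PEs plus a mapping from stream identifier to (source PE, destination PE), and that a stream keeps its identifier across versions, as S3 and S9 do in the description of FIG. 7. The model below is reduced to the PEs involved in the change, and the endpoints of S4 to S7 and S11 to S16 are hypothetical because the text does not state them.

```python
def diff_models(initial, updated):
    """Compare two logical models and return the to-be-adjusted PEs and streams."""
    old_s, new_s = initial["streams"], updated["streams"]
    return {
        "add_pe": updated["pes"] - initial["pes"],
        "delete_pe": initial["pes"] - updated["pes"],
        "add_stream": new_s.keys() - old_s.keys(),
        "delete_stream": old_s.keys() - new_s.keys(),
        # Same identifier, but the source or destination PE has changed.
        "update_stream": {s for s in old_s.keys() & new_s.keys() if old_s[s] != new_s[s]},
    }


initial = {
    "pes": {"PE1", "PE2", "PE3", "PE4", "PE6", "PE7"},
    "streams": {
        "S3": ("PE1", "PE2"), "S4": ("PE2", "PE3"), "S5": ("PE2", "PE4"),
        "S6": ("PE4", "PE6"), "S7": ("PE3", "PE6"), "S9": ("PE6", "PE7"),
    },
}
updated = {
    "pes": {"PE1", "PE7", "PE9", "PE10", "PE11", "PE12", "PE13"},
    "streams": {
        "S3": ("PE1", "PE9"), "S9": ("PE13", "PE7"),
        "S11": ("PE9", "PE10"), "S12": ("PE9", "PE11"), "S13": ("PE10", "PE12"),
        "S14": ("PE11", "PE12"), "S15": ("PE10", "PE13"), "S16": ("PE12", "PE13"),
    },
}

result = diff_models(initial, updated)
```

On this reduced model the result lists PE2, PE3, PE4 and PE6 as to-be-deleted PEs, PE9 to PE13 as to-be-added PEs, S4 to S7 as to-be-deleted streams, S11 to S16 as to-be-added streams, and S3 and S9 as to-be-updated streams.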

[0074] Step S802: The master node generates a first upgrading instruction according to the to-be-adjusted data stream, and generates a second upgrading instruction according to the to-be-adjusted PE, where the first upgrading instruction and the second upgrading instruction may include one or more instructions each, the first upgrading instruction is related to a type of the to-be-adjusted data stream, and the second upgrading instruction is related to a type of the to-be-adjusted PE. For example, the generated first upgrading instruction includes an instruction used to add a data stream and an instruction used to update a data stream if the to-be-adjusted data stream includes a to-be-added data stream and a to-be-updated data stream, and the generated second upgrading instruction includes an instruction used to add a PE if the to-be-adjusted PE includes a to-be-added PE, where the first upgrading instruction and the second upgrading instruction may be separate instructions, or may be integrated into one instruction, which is not particularly limited in the present disclosure either. Further, as shown in FIG. 7, the generated first upgrading instruction includes an instruction for deleting a data stream, an instruction for adding a data stream, and an instruction for updating a data stream. Further, the second upgrading instruction includes an instruction for adding a PE, and an instruction for deleting a PE.

[0075] In a specific embodiment, as shown in FIG. 9, after determining the to-be-adjusted PE and the to-be-adjusted stream by comparing the logical model of the streaming application before updating with the logical model of the streaming application that is updated, the master node may further determine an adjustment policy, that is, how to adjust a PE and a stream such that PE deployment after the adjustment (including a quantity of PEs and a dependency relationship between data streams between the PEs) matches the updated logical model of the streaming application. The adjustment policy includes two pieces of content:

[0076] (1) A policy of adjusting a quantity of PEs, that is, which PEs need to be added and/or which PEs need to be deleted; and

[0077] (2) A policy of adjusting a direction of a data stream between PEs, that is, directions of which data streams between PEs need to be updated, which data streams need to be added, and which data streams need to be deleted.

[0078] In an embodiment, the adjustment policy mainly includes at least one of the following:

[0079] (1) Update a stream: either a destination node or a source node of a data stream changes;

[0080] (2) Delete a stream: a data stream needs to be discarded after an application is updated;

[0081] (3) Add a stream: no data stream originally exists, and a stream is added after an application is updated;

[0082] (4) Delete a PE: a PE needs to be discarded after an application is updated; and

[0083] (5) Add a PE: a PE is added after an application is updated.

[0084] Further, in the logical models shown in FIG. 7 and FIG. 9, it can be seen with reference to FIG. 10 that, five PEs (PE9 to PE13) need to be added, data streams between PE9 to PE13 need to be added, PE2, PE3, PE4, and PE6 need to be deleted, and data streams between PE2, PE3, PE4, and PE6 need to be deleted. In addition, because a destination PE of an output stream of PE1 changes (from PE2 to PE9), and an input stream of PE7 also changes (from an output stream of PE6 to an output stream of PE13, that is, a source node of a stream changes), an output stream of PE1 and an input stream of PE7 need to be updated. Based on the foregoing analysis, it may be learned that the adjustment policy is as follows.

[0085] (1) Add PE9 to PE13;

[0086] (2) Add streams between PE9 to PE13, where directions of data streams between PE9 to PE13 are determined by the updated logical model;

[0087] (3) Delete PE2, PE3, PE4, and PE6;

[0088] (4) Delete streams between PE2, PE3, PE4, and PE6; and

[0089] (5) Change a destination PE of an output stream of PE1 from PE2 to PE9; and change a source PE of an input stream of PE7 from PE6 to PE13.

[0090] After the adjustment policy is determined, the master node may generate an upgrading instruction based on the determined adjustment policy, where the upgrading instruction is used to instruct a worker node (which is a worker node at which a to-be-adjusted PE is located and a worker node at which a PE related to a to-be-adjusted data stream is located) to implement the determined adjustment policy. Corresponding to the adjustment policy, the upgrading instruction includes at least one of an instruction for adding a PE, an instruction for deleting a PE, an instruction for updating a stream, an instruction for deleting a stream, and an instruction for adding a stream. Further, in the logical models shown in FIG. 7 and FIG. 9, the upgrading instruction includes the following.

[0091] (1) an instruction for adding PE9 to PE13;

[0092] (2) an instruction for adding streams between PE9 to PE13;

[0093] (3) an instruction for deleting PE2, PE3, PE4, and PE6;

[0094] (4) an instruction for deleting streams between PE2, PE3, PE4, and PE6;

[0095] (5) an instruction for changing a destination PE of an output stream of PE1 from PE2 to PE9; and

[0096] (6) an instruction for changing a source PE of an input stream of PE7 from PE6 to PE13.

[0097] Step S803: The master node delivers the generated first upgrading instruction to a first worker node, and delivers the generated second upgrading instruction to a second worker node, where the first worker node is a worker node at which a PE related to the to-be-adjusted data stream is located, and the second worker node includes a worker node at which the to-be-adjusted PE is located. It can be understood that there may be one or more first worker nodes and one or more second worker nodes, and the first worker node and the second worker node may overlap, that is, a worker node may be both a first worker node and a second worker node. The first upgrading instruction instructs the first worker node to adjust the direction of the data stream between the PEs distributed on the first worker node, and the second upgrading instruction instructs the second worker node to adjust a quantity of PEs distributed on the second worker node. After receiving the upgrading instruction, the first worker node and the second worker node perform an operation indicated by the upgrading instruction such that PEs distributed on the first worker node and the second worker node and a direction of a data stream between the PEs are adjusted. It can be understood that adjusting, by the second worker node, a quantity of PEs distributed on the second worker node may be creating a PE and/or deleting a created PE.

[0098] Optionally, in a specific embodiment, if the to-be-adjusted PE includes a to-be-deleted PE, the second worker node includes a worker node at which the to-be-deleted PE is located, and the second upgrading instruction instructs the second worker node to delete the to-be-deleted PE.

[0099] Optionally, in another specific embodiment, if the to-be-adjusted PE includes a to-be-added PE, the second worker node may be a worker node selected by the master node according to a load status of each worker node in the stream computing system, or may be a worker node randomly selected by the master node, and the second upgrading instruction is used to instruct the second worker node to create the to-be-added PE.

[0100] Further, in the logical models shown in FIG. 7 and FIG. 9, as shown in FIG. 11, the master node (not shown) sends, to worker2, an instruction for adding PE9; sends, to worker3, an instruction for adding PE10; sends, to worker4, an instruction for adding PE11 and PE12; and sends, to worker6, an instruction for adding PE13. The master node also sends, to worker3, an instruction for deleting PE2 and PE3, and sends, to worker4, an instruction for deleting PE4 and PE6. In addition, the master node sends, to worker3 (the worker node at which PE2 and PE3 are initially located), an instruction for deleting the stream between PE2 and PE3, and sends, to worker3 (the worker node at which PE3 is located) and worker4 (the worker node at which PE6 is located), an instruction for deleting the data stream between PE3 and PE6. The other instructions can be deduced by analogy, and details are not described herein again. It should be noted that each worker node maintains data stream configuration information of all PEs on the worker node, and the data stream configuration information of each PE includes information such as a source address, a destination address, and a port number; therefore, deletion and updating of a data stream are essentially implemented by modifying the data stream configuration information.
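Because deleting or updating a stream comes down to editing per-PE configuration, a worker-side handler can be small. The sketch below is illustrative only: the `StreamConfig` fields mirror the source address, destination address, and port number mentioned above, while the class names, the keying by (PE name, stream identifier), the address strings, and the port value are assumptions made for the example.

```python
from dataclasses import dataclass, replace
from typing import Dict, Tuple


@dataclass(frozen=True)
class StreamConfig:
    source: str       # address of the upstream PE
    destination: str  # address of the downstream PE
    port: int


class Worker:
    """Holds data stream configuration for every PE placed on this worker."""

    def __init__(self) -> None:
        # Keyed by (PE name, stream id); this layout is hypothetical.
        self.configs: Dict[Tuple[str, str], StreamConfig] = {}

    def add_stream(self, pe: str, sid: str, cfg: StreamConfig) -> None:
        self.configs[(pe, sid)] = cfg

    def delete_stream(self, pe: str, sid: str) -> None:
        # Deleting a stream only removes its configuration entry.
        self.configs.pop((pe, sid), None)

    def update_stream(self, pe: str, sid: str, **changes) -> None:
        # Updating a stream rewrites selected fields of the existing entry.
        self.configs[(pe, sid)] = replace(self.configs[(pe, sid)], **changes)


# Hypothetical worker hosting PE1; addresses are placeholders.
worker = Worker()
worker.add_stream("PE1", "s_in", StreamConfig("worker1/PE1", "worker3/PE2", 9000))

# Change the destination of PE1's output stream from PE2 to PE9.
worker.update_stream("PE1", "s_in", destination="worker2/PE9")
updated = worker.configs[("PE1", "s_in")]

# Remove the stream entirely.
worker.delete_stream("PE1", "s_in")
remaining = dict(worker.configs)
```

Keeping the configuration immutable and swapping whole entries is a design choice of this sketch; it keeps a PE from ever observing a half-updated stream entry.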

[0101] As shown in FIG. 11, according to the upgrading instruction delivered by the master node, PE9 is added to worker2, PE2 and PE3 are deleted from worker3, PE10 is added to worker3, PE6 and PE4 are deleted from worker4, PE11 and PE12 are added to worker4, and PE13 is added to worker6. In addition, worker1 to worker6 also adjust directions of data streams between PEs by performing operations such as an operation for deleting a stream, an operation for adding a stream, and an operation for updating a stream. Further, streams between PE9 to PE13 are added, streams between PE2, PE3, PE4, and PE6 are deleted, and a destination PE of an output stream of PE1 is changed from PE2 to PE9, and a source PE of an input stream of PE7 is changed from PE6 to PE13. It can be seen from FIG. 11 that, PE deployment after the adjustment (including a quantity of PEs and a dependency relationship between data streams between the PEs) matches the updated logical model of the streaming application.

[0102] Further, when the first worker node and the second worker node adjust PEs distributed on the first worker node and the second worker node and a data stream between the PEs, data being processed may be lost, and therefore the data needs to be recovered. Further, in an embodiment, the streaming application upgrading method further includes:

[0103] Step S804: The master node determines, according to a dependency relationship between an input stream and an output stream of the PE related to the to-be-adjusted data stream, a target PE that needs to perform data recovery and a checkpoint for the target PE performing data recovery, and delivers a data recovery instruction to a worker node at which the target PE is located, where the data recovery instruction instructs the target PE to recover data according to the checkpoint, and after the master node determines that the first worker node and the second worker node complete adjustment, and the PEs distributed on the first worker node and the second worker node get ready, the master node triggers the target PE to input the recovered data to a downstream PE of the target PE for processing. It should be noted that, the master node may perceive a status of a PE on each worker node in the stream computing system by actively sending a query message, or a worker node may report a status of each PE distributed on the worker node to the master node, where a status of a PE includes a running state, a ready state and a stopped state. When a channel between a PE and an upstream or downstream PE is established successfully, the PE is in the ready state, and the PE may receive and process a data stream.
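The gating condition in step S804 (replay the recovered data only after the adjusted PEs are ready) can be sketched as follows. This is an assumption-laden illustration: the three states come from the paragraph above, but the function names, the dictionary of reported states, and the `send` callback are hypothetical, and the sketch treats only the ready state as satisfying the condition.

```python
from enum import Enum
from typing import Callable, Dict, Iterable, List


class PEState(Enum):
    RUNNING = "running"
    READY = "ready"
    STOPPED = "stopped"


def all_ready(reported: Dict[str, PEState], adjusted_pes: Iterable[str]) -> bool:
    """True when every adjusted PE has been reported in the ready state.

    A PE with no reported state is treated as not ready (assumption).
    """
    return all(reported.get(pe) is PEState.READY for pe in adjusted_pes)


def trigger_replay_if_ready(reported: Dict[str, PEState],
                            adjusted_pes: Iterable[str],
                            recovered: List[str],
                            send: Callable[[str], None]) -> bool:
    """Send recovered tuples downstream only once all adjusted PEs are ready."""
    if not all_ready(reported, adjusted_pes):
        return False
    for tuple_id in recovered:
        send(tuple_id)
    return True


# Hypothetical status reports collected by the master node.
sent: List[str] = []
status = {"PE9": PEState.READY, "PE13": PEState.STOPPED}
recovered_tuples = ["i1", "i3", "i4"]

first_attempt = trigger_replay_if_ready(
    status, ["PE9", "PE13"], recovered_tuples, sent.append)
status["PE13"] = PEState.READY  # PE13's channels are now established
second_attempt = trigger_replay_if_ready(
    status, ["PE9", "PE13"], recovered_tuples, sent.append)
```

Whether the states are gathered by the master node polling or by worker nodes reporting does not matter to this check; both options described above would simply populate the same status mapping.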

[0104] In a process of updating or upgrading the streaming application, adjustment of a data stream goes together with adjustment of PE deployment, and some data may still be being processed when the PE deployment is adjusted. Therefore, to ensure that data is not lost in the upgrading process, it is necessary to determine, according to a dependency relationship between an original input stream and an original output stream of the PE related to the to-be-adjusted data stream, a target PE that needs to perform data recovery and a checkpoint for the target PE performing data recovery. This ensures that data that has not been completely processed by a PE before the application is upgraded can continue to be processed after the upgrading is completed. The data that needs to be recovered herein generally refers to a tuple.

[0105] In a specific embodiment, as shown in FIG. 12, an input/output relationship of a logical submodel including {PE1, PE2, PE3, PE4, PE6, PE7} related to a to-be-adjusted data stream is as follows. After tuples i.sub.1, i.sub.2, i.sub.3 and i.sub.4 are input from PE1 to PE2, the tuples i.sub.1, i.sub.2, i.sub.3 and i.sub.4 are processed by PE2 to obtain tuples k.sub.1, k.sub.2, k.sub.3 and j.sub.1, and then the tuples k.sub.1, k.sub.2, and k.sub.3 are input to PE4 and processed to obtain m.sub.1, the tuple j.sub.1 is input to PE3 and processed to obtain l.sub.1, and PE6 processes m.sub.1 to obtain O.sub.2, and processes l.sub.1 to obtain O.sub.1. Based on the foregoing input/output relationship, a dependency relationship between an input stream and an output stream of a to-be-adjusted PE may be obtained by means of analysis, as shown in FIG. 13, O.sub.1 depends on an input l.sub.1 of PE6, l.sub.1 depends on j.sub.1, and j.sub.1 depends on i.sub.2. Therefore, for an entire logical submodel, the output O.sub.1 of PE6 depends on the input i.sub.2 of PE2, and O.sub.2 depends on the input m.sub.1 of PE6, m.sub.1 depends on inputs k.sub.1, k.sub.2 and k.sub.3 of PE4, and k.sub.1, k.sub.2 and k.sub.3 also depend on i.sub.1, i.sub.3 and i.sub.4. Therefore, for the entire logical submodel, the output O.sub.2 of PE6 depends on the inputs i.sub.1, i.sub.3 and i.sub.4 of PE2. It can be known using the foregoing dependency relationship obtained by means of analysis that, PE2, PE3, PE4, and PE6 all depend on an output of PE1, and therefore, when the first worker node and the second worker node adjust PEs distributed on the first worker node and the second worker node and a data stream between the PEs, data in PE2, PE3, PE4, and PE6 is not completely processed, and then PE1 needs to recover the data, that is, PE1 is a target PE.
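The dependency analysis above amounts to following each output tuple back to the inputs it was derived from. A minimal sketch of that trace is shown below, assuming the dependencies are recorded as a mapping from a tuple to the tuples it was computed from. The dictionary and function names are hypothetical, and because the text only states that k1, k2 and k3 collectively depend on i1, i3 and i4, the sketch maps each of them to the full set.

```python
from typing import Dict, Set

# Direct dependencies as described for FIG. 12 and FIG. 13.
DEPENDS_ON: Dict[str, Set[str]] = {
    "O1": {"l1"},
    "l1": {"j1"},
    "j1": {"i2"},
    "O2": {"m1"},
    "m1": {"k1", "k2", "k3"},
    # Per-tuple mapping is not given in the text; the full set is assumed.
    "k1": {"i1", "i3", "i4"},
    "k2": {"i1", "i3", "i4"},
    "k3": {"i1", "i3", "i4"},
}

# PE1 outputs the tuples i1..i4 that enter the sub-model.
PRODUCED_BY: Dict[str, str] = {"i1": "PE1", "i2": "PE1", "i3": "PE1", "i4": "PE1"}


def root_inputs(tuple_id: str, depends_on: Dict[str, Set[str]]) -> Set[str]:
    """Follow dependencies back to tuples that have no recorded parents."""
    parents = depends_on.get(tuple_id)
    if not parents:
        return {tuple_id}
    roots: Set[str] = set()
    for parent in parents:
        roots |= root_inputs(parent, depends_on)
    return roots


def target_pes(output: str) -> Set[str]:
    """PEs that must replay data so that `output` can be regenerated."""
    return {PRODUCED_BY[t] for t in root_inputs(output, DEPENDS_ON)}


print(sorted(root_inputs("O1", DEPENDS_ON)))  # inputs that O1 depends on
print(sorted(root_inputs("O2", DEPENDS_ON)))  # inputs that O2 depends on
print(target_pes("O2"))
```

Both outputs trace back to tuples emitted by PE1, which is why PE1 is the target PE in this example.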

[0106] Further, it may be determined, according to latest status data backed up by a PE related to a to-be-adjusted data stream when the first worker node and the second worker node adjust the PEs distributed on the first worker node and the second worker node and the data stream between the PEs, whether data input to the PE related to the to-be-adjusted data stream has been completely processed and is output to a downstream PE, and therefore a checkpoint for the target PE performing data recovery may be determined. It should be noted that, status data of a PE denotes a status in which a PE processes data, and content further included in the status data is well-known by persons skilled in the art. For example, the status data may include one or more types of cache data in a tuple receiving queue, cache data on a message channel, and data generated by a PE in a process of processing one or more common tuples in a receiving queue of the PE, such as a processing result of a common tuple currently processed and intermediate process data. It should be noted that, data recovery does not need to be performed on an added data stream, and therefore when a checkpoint for performing data recovery and a target PE that needs to perform data recovery are determined, neither status information of a PE related to a to-be-added data stream, nor a dependency relationship between an input stream and an output stream of the PE related to the to-be-added data stream needs to be used. 
For example, in an embodiment, if the to-be-adjusted data stream includes a to-be-updated data stream, a to-be-deleted data stream, and a to-be-added data stream, a checkpoint for performing data recovery may be determined according to only status data of a PE related to the to-be-updated data stream and the to-be-deleted data stream, and a target PE that needs to perform data recovery may be determined according to only a dependency relationship between an input stream and an output stream of the PE related to the to-be-updated data stream and the to-be-deleted data stream. Similarly, if the to-be-adjusted data stream includes a to-be-updated data stream and a to-be-added data stream, a checkpoint for performing data recovery and a target PE that needs to perform data recovery may be determined according to only status data of a PE related to the to-be-updated data stream, and a dependency relationship between an input stream and an output stream of the PE related to the to-be-updated data stream.

[0107] It should be noted that, in an embodiment of the present disclosure, status data of a PE is periodically backed up, that is, the stream computing system periodically triggers each PE to back up status data of the PE, and after receiving a checkpoint event, the PE backs up current status data of the PE, records the checkpoint, and clears expired data. It can be understood by persons skilled in the art that a checkpoint may be understood as a record point of data backup or an index of backup data, one checkpoint corresponds to one data backup operation, data backed up at different moments has different checkpoints, and data backed up at a checkpoint may be queried and obtained using the checkpoint. In another embodiment of the present disclosure, status data may be backed up using an output triggering mechanism, that is, a backup triggered by an output of a PE. As shown in FIG. 14, when a PE completes processing on input streams Input_Stream1 to Input_Stream5, and outputs a processing result Output_Stream1, a triggering module triggers a status data processing module, and the status data processing module then starts a new checkpoint to record latest status data of the PE into a memory or a magnetic disk. Such a triggering manner is precise and effective, has higher efficiency compared with a periodic triggering manner, and can avoid excessive resource consumption. The status data processing module may further clear historical data recorded at a previous checkpoint, thereby reducing intermediate data and effectively saving storage space.
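The output triggering mechanism can be illustrated with a small sketch. It is only one possible reading of FIG. 14: the class name, the in-memory dictionary standing in for memory or disk storage, the integer checkpoint identifiers, and the layout of the status data are all assumptions.

```python
import copy
from typing import Any, Dict, Optional


class OutputTriggeredCheckpointer:
    """Starts a new checkpoint each time the PE emits an output."""

    def __init__(self) -> None:
        self.store: Dict[int, Dict[str, Any]] = {}  # checkpoint id -> snapshot
        self.latest: Optional[int] = None
        self._next_id = 1

    def on_output(self, status_data: Dict[str, Any]) -> int:
        """Record the latest status data and clear the previous checkpoint."""
        checkpoint = self._next_id
        self._next_id += 1
        # Deep copy so later changes in the PE do not alter the snapshot.
        self.store[checkpoint] = copy.deepcopy(status_data)
        if self.latest is not None:
            self.store.pop(self.latest, None)  # drop historical data
        self.latest = checkpoint
        return checkpoint

    def restore(self, checkpoint: int) -> Dict[str, Any]:
        """Look up the data backed up at the given checkpoint."""
        return self.store[checkpoint]


# Hypothetical status data of a PE after two successive outputs.
checkpointer = OutputTriggeredCheckpointer()
state = {"receive_queue": ["i1", "i2"], "partial_result": None}
cp1 = checkpointer.on_output(state)
state["receive_queue"].append("i3")
cp2 = checkpointer.on_output(state)
snapshot = checkpointer.restore(cp2)
```

Retaining only the most recent checkpoint reflects the space-saving behavior described above; a system that needs to roll back further would keep more history.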

[0108] Using the situation shown in FIG. 12 as an example, the following describes in detail a process of determining, according to a dependency relationship between an input stream and an output stream of a PE and status data, a target PE that needs to perform data recovery and a checkpoint for the target PE performing data recovery. If it is determined according to status data of {PE1, PE2, PE3, PE4, PE6, PE7} related to a to-be-adjusted data stream that PE6 has not completed processing on the tuple m.sub.1, or that O.sub.2 obtained after processing is performed on the tuple m.sub.1 has not been sent to PE7, a downstream PE of PE6, it may be determined according to the foregoing dependency relationship between an input stream and an output stream that i.sub.1, i.sub.3, and i.sub.4 on which O.sub.2 depends need to be recovered, and that PE1, which outputs i.sub.1, i.sub.3, and i.sub.4, should complete data recovery. That is, the target PE that needs to recover data is PE1, and a checkpoint from which i.sub.1, i.sub.3, and i.sub.4 can be recovered may therefore be determined. In this way, before the first worker node and the second worker node adjust deployment of PEs on the first worker node and the second worker node, the target PE may recover the data i.sub.1, i.sub.3, and i.sub.4 according to the determined checkpoint, and after the first worker node and the second worker node complete adjustment, and the PEs distributed on the first worker node and the second worker node get ready, the target PE sends the recovered data i.sub.1, i.sub.3, and i.sub.4 to a downstream PE of the target PE for processing, thereby ensuring that data loss does not occur in the upgrading process, and achieving an objective of lossless upgrading.

[0109] Optionally, before performing the steps of the foregoing streaming application upgrading method, the master node may further configure multiple PEs according to the initial logical model of the streaming application such that the multiple PEs process data of the streaming application.