System and Method for Providing Patient Record Synchronization In a Healthcare Setting

BORMANN; DANIEL S. ; et al.

U.S. patent application number 16/884194 was filed with the patent office on 2020-05-27 and published on 2020-12-17 for system and method for providing patient record synchronization in a healthcare setting. The applicant listed for this patent is Epic Systems Corporation. Invention is credited to DANIEL S. BORMANN, AARON T. CORNELIUS, TIMOTHY W. ESCHER, ANDREW M. GIESLER, SAMEER GROVER, JASON L. HANSEN, CLIFFORD L. MICHALSKI, VASSIL D. PEYTCHEV.

| Application Number | 20200394208 16/884194 |

| Document ID | / |

| Family ID | 1000005051567 |

| Filed Date | 2020-05-27 |


| United States Patent Application | 20200394208 |

| Kind Code | A1 |

| BORMANN; DANIEL S. ; et al. | December 17, 2020 |

System and Method for Providing Patient Record Synchronization In a Healthcare Setting

Abstract

A system provides an information sharing architecture that allows physically separate healthcare information systems, called "deployments," to share and exchange information. The collection of these participating deployments is referred to as the "Community," and systems within the Community sometimes store records for patients in common. The system allows participants in the Community to share information on data changes to these patients, and to reconcile concurrent and conflicting updates to the patient's record.

| Inventors: | BORMANN; DANIEL S.; (Waunakee, WI); CORNELIUS; AARON T.; (Mount Horeb, WI); ESCHER; TIMOTHY W.; (Chickaloon, AK); GROVER; SAMEER; (Schaumburg, IL); GIESLER; ANDREW M.; (Madison, WI); HANSEN; JASON L.; (Verona, WI); MICHALSKI; CLIFFORD L.; (Fitchburg, WI); PEYTCHEV; VASSIL D.; (Madison, WI) |
|---|---|
| Applicant: | Epic Systems Corporation |
| Family ID: | 1000005051567 |
| Appl. No.: | 16/884194 |
| Filed: | May 27, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15862837 | Jan 5, 2018 | 10698922 |
| 16884194 | | |
| 14326935 | Jul 9, 2014 | |
| 15862837 | | |
| 10794933 | Mar 5, 2004 | 8825502 |
| 14326935 | | |
| 60507419 | Sep 30, 2003 | |
| 60519389 | Nov 12, 2003 | |
| 60533316 | Dec 30, 2003 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G16H 10/60 20180101; G16H 40/00 20180101; A61B 5/0022 20130101; G06Q 50/22 20130101; G06F 16/273 20190101 |

| International Class: | G06F 16/27 20060101 G06F016/27; G06Q 50/22 20060101 G06Q050/22; G16H 10/60 20060101 G16H010/60; G16H 40/00 20060101 G16H040/00 |

Claims

1.-101. (canceled)

102. Within an enterprise healthcare information management system having at least one deployment, the deployment including data stored within a data structure, the data at least relating to a data entity of the enterprise healthcare system and the deployment being operable for autonomous management of the data stored within the data structure, a data record corresponding to the data entity comprising: a first portion of the data record identifying a generation of the data record; and a second portion of the data record identifying an update history of the data record.

103. The data record of claim 102, wherein the data entity comprises at least one of: a patient, a healthcare provider; a healthcare administrator; a benefits provider and a benefits administrator.

104. The data record of claim 102, wherein the data record comprises groups, and wherein each group has associated therewith a first portion identifying a generation of the group and a second portion identifying an update history of the group.

105. The data record of claim 102, wherein the first portion is associated with a current update level of the data record and wherein the second portion is associated with an update history by deployment for the data record.

106. The data record of claim 102, wherein the first portion and the second portion facilitate data conflict resolution.

107. The data record of claim 102, wherein the healthcare information management system comprises a plurality of communicatively coupled deployments, and wherein the update history table reflects each of the plurality of deployments contributing to the data record, and the generation represents a latest update attributable to a corresponding deployment.

108. The data record of claim 102, wherein the generation and update history table reflect sequential continuity of the data record.

109. A method of managing data within a healthcare information management system, the healthcare information management system comprising at least one deployment, the deployment including data stored within a data structure, the data at least relating to a data entity of the enterprise healthcare system and the deployment being operable for autonomous management of the data stored within the data structure, a data record corresponding to the data entity and having a first portion identifying a generation of the data record and a second portion of the data record identifying an update history of the data record; the method comprising the steps of: receiving at a deployment of the healthcare information system a data record for a data entity; updating at the deployment the data record; and correspondingly incrementing the generation to a current generation and the update history table to reflect the updating deployment and the current generation.

110. The method of claim 109, comprising the steps of: determining in the update history table a previous update attributable to the deployment; and discarding from the update history table the previous update.

111. The method of claim 109, wherein the data record comprises a plurality of groups and each group has associated therewith a first portion and a second portion identifying a generation and an update history of the group.

112. The method of claim 109, wherein prior to the step of updating at the deployment the data record the method comprising the step of: determining if the data record is sequentially continuous based upon the generation and the update history table.

113. The method of claim 112, wherein the step of determining if the data record is sequentially continuous based upon the generation and the update history table comprises: determining the data record is an older but sequentially continuous data record; and ignoring the older but sequentially continuous data record.

114. An enterprise healthcare information management system comprising: at least a first deployment and a second deployment; the first deployment having a first data structure with a first data structure configuration for storing data therein and the second deployment having a second data structure with a second data structure configuration, different from the first data structure configuration, for storing data therein, the data at least relating to a data entity of the enterprise healthcare system and each deployment operable for autonomous management of the data stored within its respective data structure; a communication network communicatively coupling the first and second deployments; and each deployment having a data mapping functionality, the data mapping functionality operable to reconcile data stored in one of the first deployment and the second deployment for use in the other of the first deployment and the second deployment upon communication of data from the other deployment to the one deployment.

115. The enterprise healthcare information management system of claim 114, wherein the data mapping functionality is operable to perform database record pointer resolution.

116. The enterprise healthcare information management system of claim 114, wherein the data mapping functionality is operable to perform deployment-to-deployment record pointer resolution.

117. The enterprise healthcare information management system of claim 116, wherein the deployment-to-deployment record pointers comprise selection list data values.

118. The enterprise healthcare information management system of claim 114, wherein data associated with a data entity comprises a community identification and a local deployment identification.

119. The enterprise healthcare information management system of claim 114, wherein data associated with a data entity comprises a direct match identification.

120. The enterprise healthcare information management system of claim 114, wherein the data mapping functionality is operable responsive to a data type and a deployment type.

121. The enterprise healthcare information management system of claim 114, the healthcare information management system comprising a master file index, the data mapping functionality operable responsive to the master file index.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application claims benefit of the following U.S. Provisional Applications: Ser. No. 60/507,419, entitled "System And Method For Providing Patient Record Synchronization In A Healthcare Setting" filed Sep. 30, 2003 (attorney docket no. 29794/39410), Ser. No. 60/519,389, entitled "System And Method Of Synchronizing Data Sets Across Distributed Systems" filed Nov. 12, 2003 (attorney docket no. 29794/39682), Ser. No. 60/533,316, entitled "System And Method Of Synchronizing Category Lists And Master Files Across Distributed Systems" filed Dec. 30, 2003 (attorney docket no. 29794/39682A), the disclosures of which are hereby expressly incorporated herein by reference.

TECHNICAL FIELD

[0002] This patent relates generally to health record management, and more particularly, this patent relates to a system and method for providing an information sharing architecture that allows physically separate health care information systems to share and exchange information.

BACKGROUND

[0003] Many healthcare professionals and most healthcare organizations are familiar with using information technology and accessing systems for their own medical specialty, practice, hospital department, or administration. While the systems servicing these entities have proven that they can be efficient and effective, they have largely been isolated systems that have managed electronic patient data in a closed environment. These systems collected, stored, and viewed the data in homogeneous and compatible IT systems often provided by a single company. Few, if any, connections to the outside world or "community" were established, which eased the protection of patient data immensely. Current interfaces commonly used to communicate between systems have inherent limitations.

[0004] Increased computerization throughout the healthcare industry has given rise to a proliferation of independent systems storing electronic patient data. However, at the point of delivery, more care is being moved into the community and shared among different professionals and organizations. These changes require that patients' records be transferred and combined. Many of the existing systems are capable of accessing data from others in their own hospital, hospital group, healthcare district, or organization. However, these islands of information are typically not capable of linking and sharing information with other islands in the community. Furthermore, as more systems are interconnected, the linkage and sharing problems increase exponentially and become unmanageable.

BRIEF DESCRIPTION OF THE DRAWINGS

[0005] FIG. 1 is an embodiment of an exemplary system to provide an information sharing architecture that allows physically separate health care information systems to share and exchange information.

[0006] FIG. 2 is an exemplary schematic diagram of several system components located in a deployment.

[0007] FIG. 3 is an exemplary block diagram overview of several functional components in a deployment within the system.

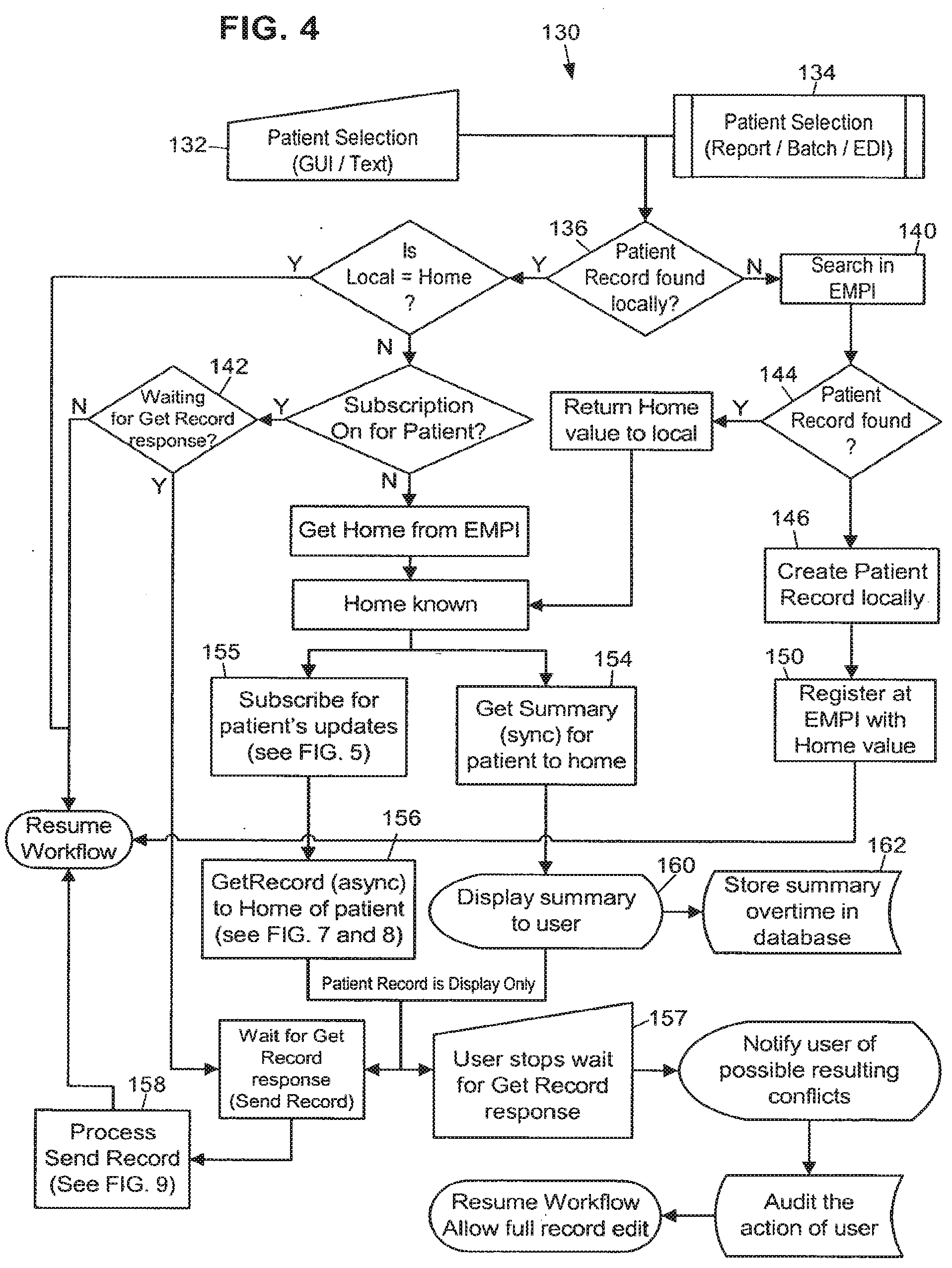

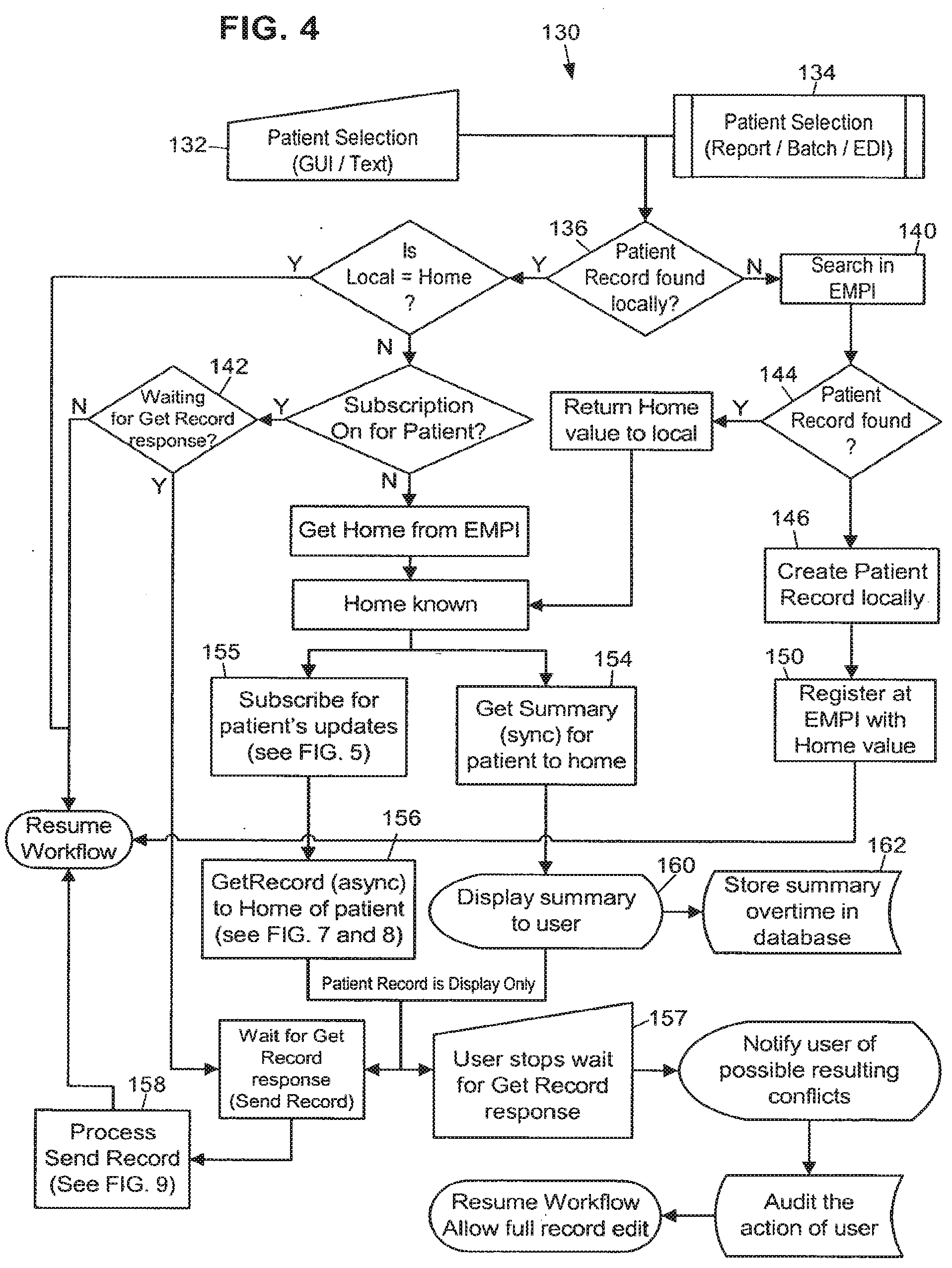

[0008] FIG. 4 is an exemplary flowchart representation of several steps that may be taken in identifying a patient.

[0009] FIG. 5 is an exemplary flowchart representation of several steps that may be involved during the patient subscription process at a deployment.

[0010] FIG. 6 is an exemplary flowchart representation of several steps that may be involved during the unsubscribe process for a patient record at a deployment.

[0011] FIG. 7 is an exemplary flowchart representation of several steps that may be used in pulling a patient's electronic medical record from the record's home deployment to a remote deployment.

[0012] FIG. 8 is a continuation of the exemplary flowchart illustrating more actions used by the home deployment when sending a response to a remote deployment's request for a patient record.

[0013] FIG. 9 is an exemplary flowchart representation of several steps that may be used when a remote deployment processes a patient record it received from the home deployment.

[0014] FIG. 10 is an exemplary block diagram illustrating the hierarchy within a patient record with a small set of clinical data groups and leaf groups.

[0015] FIG. 11 is an exemplary block diagram illustrating an update history table for a demographics group of a patient record.

[0016] FIG. 12 is an exemplary block diagram illustrating a patient record with pointers to external data.

[0017] FIG. 13 is an exemplary flowchart representation of the steps that may be used when publishing a record via the EMFI.

[0018] FIG. 14 is an exemplary block diagram illustrating a mapping technique involving a Community ID.

[0019] FIG. 15 is an exemplary flowchart representation of a routine that may be used in tracking patient level changes.

[0020] FIG. 16 is an exemplary flowchart representation of a routine used in a triggering queue.

[0021] FIG. 17 is an exemplary flowchart representation of a routine used in the processing and sending of updates to a record.

[0022] FIG. 18 is an exemplary flowchart representation of a routine used in receiving and filing data received from the community.

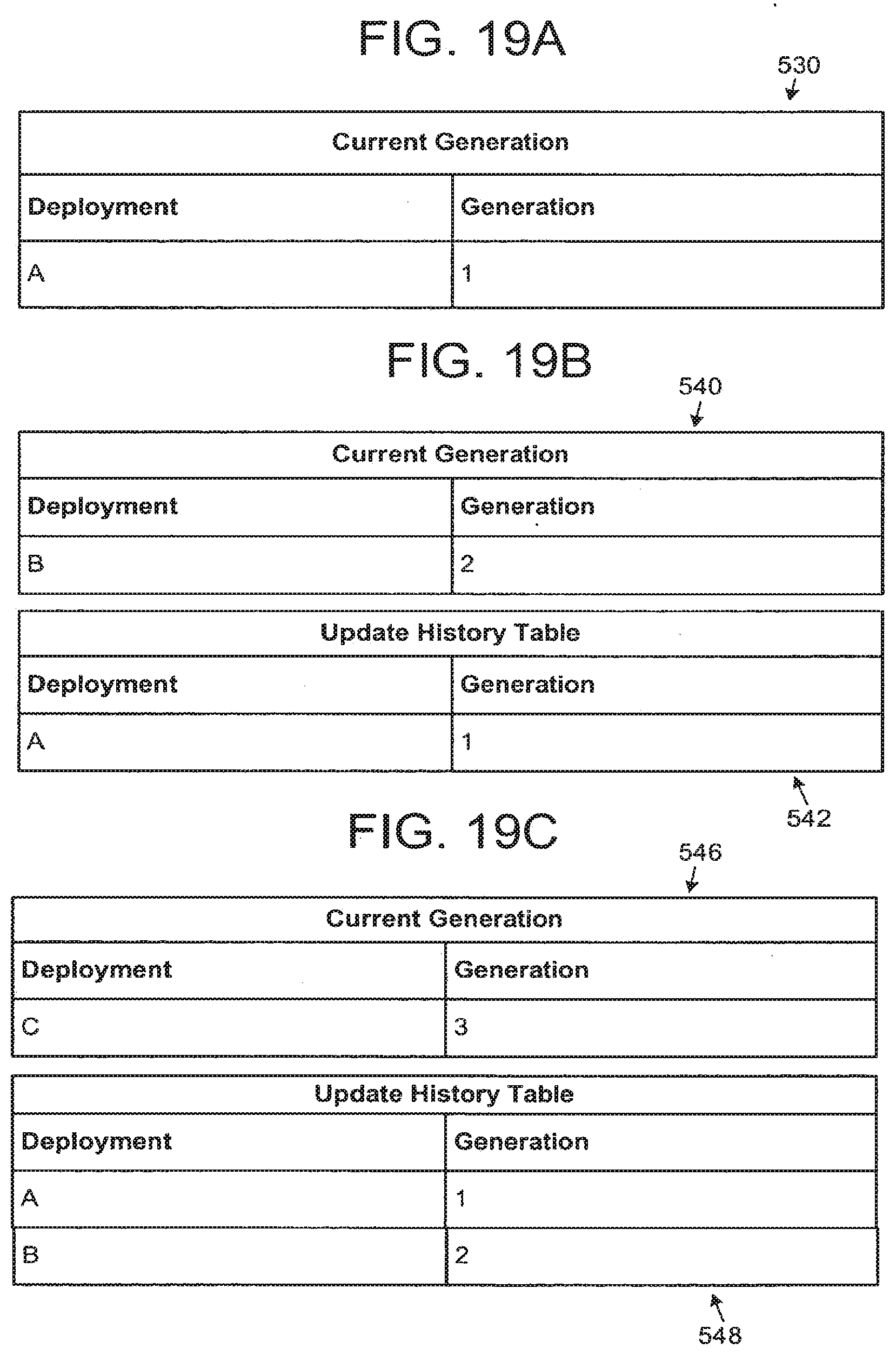

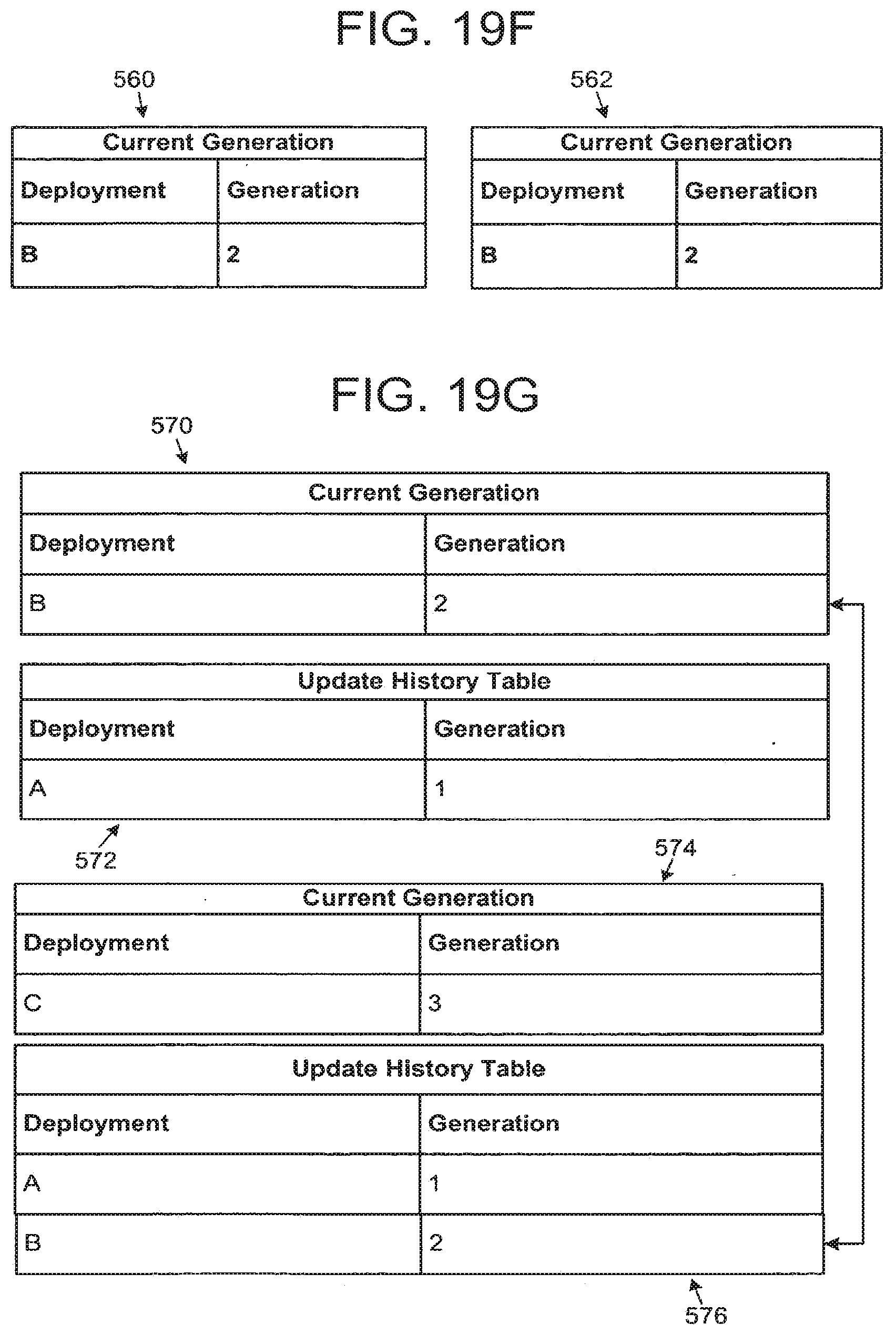

[0023] FIGS. 19A-L illustrate exemplary current generations and update history tables for a number of deployments.

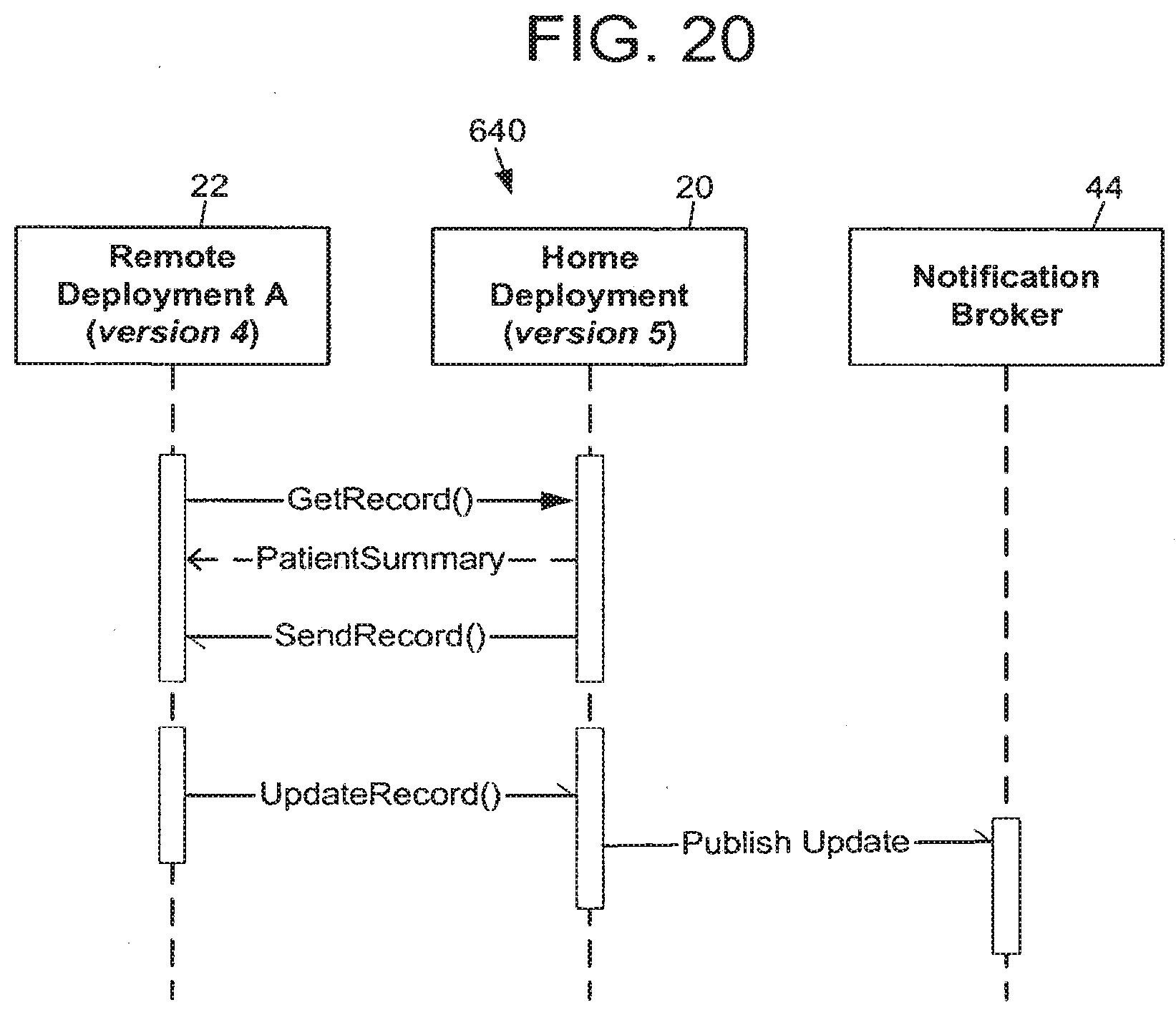

[0024] FIG. 20 is an exemplary block diagram illustrating a patient record pull when a home deployment is on a higher version.

[0025] FIG. 21 is an exemplary block diagram illustrating a patient record pull when a home deployment is on a lower version.

[0026] FIG. 22 is an exemplary block diagram illustrating conflict detection at a physical home and resolution at a logical home.

DETAILED DESCRIPTION

[0027] FIG. 1 illustrates an embodiment of an exemplary system 10 to provide an information sharing architecture that allows physically separate healthcare information systems, called "deployments," to share and exchange information. The collection of these participating deployments is referred to as the "Community," and systems within the Community sometimes store records for patients in common. The system 10 allows participants in the Community to share information on data changes to these patients, and to reconcile concurrent and conflicting updates to the patient's record.

[0028] The system 10 of FIG. 1 shows three deployments 20-24, labeled Home, A, and B. Home deployment 20 is operatively coupled to deployments A 22 and B 24 via the network 26. The deployments 20-24 may be located, by way of example rather than limitation, in separate geographic locations from each other, in different areas of the same city, or in different states. Although the system 10 is shown to include the deployment 20 and two deployments A 22 and B 24, it should be understood that large numbers of deployments may be utilized. For example, the system 10 may include a network 26 having a plurality of network computers and dozens of deployments 20-24, all of which may be interconnected via the network 26.

[0029] Each record that is exchanged throughout the system may be managed, or "owned," by a specific deployment. The deployment owning a record is referred to as the record's "home deployment." When a record is accessed for the first time from a deployment other than its home deployment, referred to as a "remote deployment," the home deployment may send a copy of the record to the requesting remote deployment. The remote deployment may send its updates to the home deployment. The home deployment may coordinate the updates it receives from remote deployments by checking for conflicting data, before publishing the consolidated updates back to the Community of deployments. While the home deployment may have greater responsibility for the records it stores and manages there, it has no greater role in the general system than do the other deployments.
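The coordination step described above, in which the home deployment checks incoming updates for conflicting data before publishing a consolidated result, can be sketched as follows. This is a minimal illustration only: the function name `consolidate_updates`, the flat dictionary representation of a record, and the rule that a conflict is "two remotes proposing different values for the same element" are assumptions made for the example, not details taken from this application. How a flagged conflict is ultimately resolved is left out.

```python
def consolidate_updates(record, updates):
    """Merge remote updates into the home copy of a record.

    `record` is the home deployment's copy, modeled as {element: value}.
    `updates` is a list of (remote_name, {element: new_value}) pairs.
    Returns (consolidated, conflicts). Elements that remotes disagree on
    are reported as conflicts and left unchanged in `record`.
    Values are assumed to be hashable (for example, strings).
    """
    # Collect every proposed value per element, keyed by the remote that sent it.
    proposed = {}
    for remote_name, changes in updates:
        for element, value in changes.items():
            proposed.setdefault(element, {})[remote_name] = value

    consolidated, conflicts = {}, {}
    for element, by_remote in proposed.items():
        distinct_values = set(by_remote.values())
        if len(distinct_values) == 1:
            consolidated[element] = distinct_values.pop()
        else:
            conflicts[element] = by_remote

    # Only the non-conflicting changes are applied to the home copy.
    record.update(consolidated)
    return consolidated, conflicts


home_copy = {"phone": "555-0100", "city": "Madison"}
consolidated, conflicts = consolidate_updates(
    home_copy,
    [("A", {"phone": "555-0199"}), ("B", {"phone": "555-0142", "city": "Verona"})],
)
print(consolidated)  # the changes that would be published back to the Community
print(conflicts)     # the elements that need conflict handling
```

In this sketch the city change from deployment B is applied, while the two different phone numbers are held back as a conflict.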

[0030] By convention, examples throughout this patent involve records homed on the deployment 20 labeled Home. It is important to note that the use of Home as the basis for examples might seem to suggest an inherently greater role for the home deployment 20. In fact, all three deployments 20-24 are peers, and each acts as home to a subset of the system 10's records. In other words, "home" is merely an arbitrary convention for discussion.

[0031] At any given time, the home deployment for a given patient record may need to be changed because the patient moved or for some other infrastructural reason. A utility may be provided to allow authorized users at the home deployment to search for a patient record homed there and initiate a re-home process for the patient record.

[0032] The network 26 may be provided using a wide variety of techniques well known to those skilled in the art for the transfer of electronic data. For example, the network 26 may comprise dedicated access lines, plain ordinary telephone lines, satellite links, local area networks, wide area networks, frame relay, cable broadband connections, synchronous optical networks, combinations of these, etc. Additionally, the network 26 may include a plurality of network computers or server computers (not shown), each of which may be operatively interconnected in a known manner. Where the network 26 comprises the Internet, data communication may take place over the network 26 via an Internet communication protocol.

[0033] The deployments 20-24 may include a production server 30, a shadow server 32, and a dedicated middleware adapter 34. The production server 30 and shadow server 32 may be servers of the type commonly employed in data storage and networking solutions. The servers 30 and 32 may be used to accumulate, analyze, and download data relating to a healthcare facility's medical records. For example, the servers 30 and 32 may periodically receive data from each of the deployments 20-24 indicative of information pertaining to a patient.

[0034] The production servers 30 may be referred to as a production data repository, or as an instance of a data repository. Due to the flexibility in state-of-the-art hardware configurations, the instance may not necessarily correspond to a single piece of hardware (i.e., a single server machine), although that is typically the case. Regardless of the number and variety of user interface options (desktop client, Web, etc.) that are in use, the instance is defined by the data repository. Enterprise reporting may be provided by extracting data from the production server 30, and forwarding the data to reporting repositories. Accordingly, although often configured in a one-to-one correspondence with the production server 30, the reporting repository may be separate from the production server 30.

[0035] The shadow servers 32 are servers optionally dedicated as near-real-time backups of the production servers 30, and are often used to provide failover in the event that a production server 30 becomes unavailable. Shadow servers 32 are also used to improve system performance for larger systems, as they provide the ability to offload display-only activity from the production servers 30.

[0036] The deployments 20-24 may also include a middleware adapter machine 34 which provides transport, message routing, queuing and delivery/processing across a network for communication between the deployments 20-24. To allow for scaling, there may be several middleware adapters 34 that together serve a deployment. For purposes of this discussion, however, all machines that form a "pairing" (production server 30 and one or more middleware adapters) will be collectively referred to as a deployment. The presence of the middleware adapters 34 is not essential to this discussion and they are shown only as a reminder that messaging is necessary and present, and for uniformity with examples/diagrams.

[0037] As the patient is the center of each healthcare experience, the information to be exchanged revolves around the patient and grows into a number of areas that, while related (they apply to the patient), serve different and distinct purposes. This includes, for example, the exchange of clinical information. However, the system provides techniques and conventions for the exchange of non-clinical information as well, including information outside the healthcare domain altogether. As used herein, the term "record" generally refers to a collection of information that might extend beyond the clinical information some might typically expect to make up a medical chart, per se.

[0038] The two types of records that most require ID tracking/management are patient records (a single file for each patient), and master file records. In this document "master file" denotes a database (a collection of data records) which is relatively static in nature, and which is primarily used for reference purposes from other more dynamic databases. For example, a patient database is relatively dynamic, growing and changing on a minute-by-minute basis; dynamic databases are comprised of records that are created as part of the workflow of software applications, such as orders and medical claims. On the other hand, a reference list of all recognized medical procedure codes, or of all recognized medical diagnoses, is relatively more static and is used for lookup purposes, and so would be referred to as a master file.

[0039] The patient record IDs (identification numbers) and the related record IDs may be coordinated across the Community by a dedicated central server that does not host healthcare application workflows, the Enterprise Master Patient Index (EMPI)/Enterprise Master File Index (EMFI) agent 40. The EMPI is the agent used to coordinate patient record IDs across the system 10, and the EMFI is the agent used to coordinate master file IDs across the system 10. This server may also function as the coordinator managing (minimizing) patient duplicate records across deployments. The EMPI/EMFI deployment 40 is aware of the home deployment for all the patients in the Community.

[0040] Each deployment 20, 22, 24, may maintain its own static and dynamic records. For example, the same specific medical order may have one local identification number (ID) at one deployment, and a different local ID at another deployment. Such deployments must agree to use a data mapping technique to resolve foreign keys contained within a patient record that arrives from another deployment. This data mapping process is described in more detail in the section titled Data Mapping.
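One way to picture the foreign key resolution mentioned above is a two-step lookup through a shared identifier. The sketch below assumes that each deployment keeps a table from its own local IDs to a Community-wide ID; the class `IdMap`, the function `translate`, and all ID values are hypothetical and are used only to illustrate the idea. The actual mapping technique is the one described in the Data Mapping section.

```python
class IdMap:
    """Hypothetical two-way map between one deployment's local IDs and Community IDs."""

    def __init__(self):
        self._to_community = {}
        self._to_local = {}

    def register(self, local_id, community_id):
        self._to_community[local_id] = community_id
        self._to_local[community_id] = local_id

    def to_community(self, local_id):
        return self._to_community[local_id]

    def to_local(self, community_id):
        # Returns None when this deployment has no local record for the ID.
        return self._to_local.get(community_id)


def translate(foreign_keys, sender_map, receiver_map):
    """Rewrite sender-local IDs as receiver-local IDs via Community IDs.

    `foreign_keys` is {element_name: sender_local_id}.
    Returns (resolved, unresolved), where `unresolved` lists Community IDs
    that the receiving deployment cannot map to a local record.
    """
    resolved, unresolved = {}, []
    for element_name, sender_local in foreign_keys.items():
        community_id = sender_map.to_community(sender_local)
        receiver_local = receiver_map.to_local(community_id)
        if receiver_local is None:
            unresolved.append(community_id)
        else:
            resolved[element_name] = receiver_local
    return resolved, unresolved


map_a, map_b = IdMap(), IdMap()
map_a.register("ORD-17", "C-900")   # deployment A's local ID for an order
map_b.register("7731", "C-900")     # deployment B's local ID for the same order
map_a.register("ORD-18", "C-901")   # known to A only

print(translate({"order": "ORD-17", "followup": "ORD-18"}, map_a, map_b))
```

Returning the unresolved IDs separately, rather than raising an error, is a design choice of this sketch; it lets the receiving side decide how to handle references it cannot yet resolve.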

[0041] The EMPI/EMFI server 40 is likely a separate machine running a scaled-down repository. As shown in FIG. 1, it is backed by a shadow server 32, and paired with middleware adapter 34.

[0042] A patient record is comprised of one or more "events."

[0043] An event is a data structure which stores all information relevant to a specific situation (typically though not necessarily clinical) that occurs at a specific time. Examples of events include a visit to a doctor's office, or an event that initially registers the patient with the healthcare organization. An event is composed of "data elements."

[0044] Data elements (sometimes referred to as "fields" or "columns") are a specific type of information related to the record. Examples of data elements include the patient's blood pressure, the cost of a procedure, or a doctor's phone number.

[0045] "Store-Once" data elements are shared across all events in a patient's record. Examples include the patient's birth date and eye color. These data elements either do not change over time, or if they do it is not necessary to associate the changes with specific events in the patient's record.

[0046] "Event Data" is associated with a specific event. Examples include the patient's blood pressure and symptoms for a specific visit. This information is not typically meaningful outside the event.
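The record structure described in the preceding paragraphs (a patient record made of events, events made of data elements, with store-once elements shared across all events) can be sketched as a pair of simple data classes. The class names, field names, and the lookup helper `value_at` are assumptions chosen for this illustration and do not reflect the actual data model.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class Event:
    """A specific situation at a specific time, holding its own event data."""
    event_id: str
    occurred_at: datetime
    data: dict = field(default_factory=dict)   # event data, e.g. blood pressure


@dataclass
class PatientRecord:
    """A patient record: store-once elements plus one or more events."""
    patient_id: str
    store_once: dict = field(default_factory=dict)   # e.g. birth date, eye color
    events: list = field(default_factory=list)

    def add_event(self, event):
        self.events.append(event)

    def value_at(self, event_id, element):
        """Look up a data element in the context of one event.

        Event data is checked first; store-once elements apply to every
        event, so they are used when the event has no value of its own.
        """
        for event in self.events:
            if event.event_id == event_id and element in event.data:
                return event.data[element]
        return self.store_once.get(element)


record = PatientRecord("P1", store_once={"birth_date": "1970-01-01"})
record.add_event(Event("E1", datetime(2003, 9, 30, 9, 0), {"blood_pressure": "120/80"}))

print(record.value_at("E1", "blood_pressure"))  # event data for visit E1
print(record.value_at("E1", "birth_date"))      # store-once element
```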

[0047] For effective patient synchronization, it is necessary that every deployment be aware of the other participating deployments in the Community. Numerous settings are provided in the community global settings to make a deployment "community aware."

[0048] Administrators are able to assign community-wide unique identifiers to each deployment. This is important to uniquely identify a deployment when processing incoming and outgoing messages for patient synchronization. These settings are used to notify all the deployments of the software version of each deployment in the Community. This helps to effectively step up or step down version-dependent data in the synchronization messages.

[0049] Any changes to a deployment's software version are published to the Community, so that each deployment is aware of the change. Administrators are able to activate and deactivate deployments in a Community. This way, a deployment can start or stop participating in the Community at any time.
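The community global settings described above (a unique identifier per deployment, a published software version, and an active or inactive status) can be sketched as a small registry. The names `DeploymentInfo`, `CommunitySettings`, and `needs_step_down`, and the use of integer release numbers, are assumptions made for this example only.

```python
from dataclasses import dataclass


@dataclass
class DeploymentInfo:
    deployment_id: str    # community-wide unique identifier
    version: int          # software release, modeled here as an integer
    active: bool = True


class CommunitySettings:
    """Hypothetical registry that makes a deployment 'community aware'."""

    def __init__(self):
        self._deployments = {}

    def register(self, deployment_id, version):
        if deployment_id in self._deployments:
            raise ValueError(f"duplicate deployment id: {deployment_id}")
        self._deployments[deployment_id] = DeploymentInfo(deployment_id, version)

    def set_version(self, deployment_id, version):
        # In the system described, a version change is published to the
        # Community; here it is simply recorded in the shared registry.
        self._deployments[deployment_id].version = version

    def set_active(self, deployment_id, active):
        self._deployments[deployment_id].active = active

    def needs_step_down(self, sender_id, receiver_id):
        """True when the sender runs a newer release than the receiver."""
        sender = self._deployments[sender_id]
        receiver = self._deployments[receiver_id]
        return sender.version > receiver.version

    def active_ids(self):
        return sorted(d.deployment_id for d in self._deployments.values() if d.active)


settings = CommunitySettings()
settings.register("H", 2006)
settings.register("R", 2007)
print(settings.needs_step_down("R", "H"))  # R is newer than H
settings.set_active("R", False)            # R stops participating
print(settings.active_ids())
```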

[0050] Those persons of ordinary skill in the art will appreciate that every event in a patient record has information stored in it to easily determine the deployment that owns the event. This may be the deployment that created the event in the patient record.

[0051] The crossover server 42 allows deployments to operate at differing release versions of system software. The crossover server 42 provides storage/management for records that are extended beyond the data model available at their home deployments. The crossover server 42 allows a good deal of autonomy at the deployment level in that it provides the latitude for deployments to upgrade their version of system software on different timelines. This creates a situation where a record is homed on a deployment that, due to updates by another deployment, can no longer accommodate all of the data elements in the record. For example, if remote deployment R is at version 2007 and updates a patient record, and the patient's record is homed at deployment H which is on version 2006, deployment R may have collected new data elements that were introduced in version 2007, and so which cannot be stored in H's version 2006-based system. In this case, the model will request assistance from the crossover server, a deployment that meets or exceeds the version of any other deployment in the system 10. When the crossover server 42 is used, the record's logical home deployment will remain the same, but the physical home for the patient record will move to the crossover server 42. Like the EMPI/EMFI agent 40, the crossover server 42 is a deployment, albeit a special deployment that does not own patient and other dynamic records, and does not host workflow. The crossover server 42 is backed by a shadow server 32, and paired with middleware adapter service 34. The crossover server 42 is described in more detail in the Version Skew section below.
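The version 2006 and version 2007 example above can be expressed as a small decision function. This is a simplified sketch: the function name `choose_physical_home` and the `versions` dictionary are hypothetical, and the rule used here (any update from a newer release moves the physical home) is an assumption. A real system would presumably check whether the update actually contains data elements the home deployment cannot store.

```python
def choose_physical_home(logical_home, updater, versions, crossover="crossover"):
    """Return the deployment that should physically store the record.

    `versions` maps deployment names (including the crossover server) to
    integer software releases. The logical home never changes; only the
    physical home may move to the crossover server.
    """
    # The crossover server must meet or exceed every other deployment's version.
    if versions[crossover] < max(versions.values()):
        raise ValueError("crossover server is behind another deployment")

    # An update from a newer release may carry elements the home cannot store.
    if versions[updater] > versions[logical_home]:
        return crossover
    return logical_home


versions = {"H": 2006, "R": 2007, "crossover": 2007}
print(choose_physical_home("H", "R", versions))  # R is newer than H
print(choose_physical_home("R", "H", versions))  # H is older than R
```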

[0052] Remote deployments may be "subscribed" to records when they request (pull) a copy of a record from the record's home deployment. Remote deployments may send all changes they make to the patient record to the home deployment via the middleware adapter. In this manner, the design of system 10 ensures that a patient's home deployment always has the latest information about the patient's record. The home deployment publishes the updates it receives from remote deployments back into the Community.

[0053] Through the subscription process, the remote deployment may automatically be signed up to receive updates to the patient record or portion of the patient record as they occur at other deployments. When the home deployment receives updates from a remote deployment and knows that other deployments are subscribed to the record, the updates may be published to a dedicated "notification broker" server 44. The notification broker 44 may then send the updates to all subscribed deployments, via the middleware adapter 34. The notification broker 44 is a publication/subscription manager that is responsible for, as requested by participants in the system 10, registering/unregistering subscribers and distributing messages (typically containing record updates) to active subscribers. The notification broker 44 is not a typical deployment in that its repository primarily includes a list of active subscribers, the topics they are subscribed to, and other technical details about messaging.
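The publication/subscription role of the notification broker can be sketched as follows. The class below is an illustration under stated assumptions: topics are modeled as record identifiers, delivery through the middleware adapter is replaced by an in-memory `outbox` list, and the `exclude` parameter (used to avoid echoing an update back to the deployment that made it) is an addition for this example rather than something stated in the text.

```python
from collections import defaultdict


class NotificationBroker:
    """Hypothetical broker that tracks subscribers and distributes updates."""

    def __init__(self):
        self._subscribers = defaultdict(set)   # topic -> set of deployment ids
        self.outbox = []                       # (deployment_id, topic, message)

    def subscribe(self, topic, deployment_id):
        self._subscribers[topic].add(deployment_id)

    def unsubscribe(self, topic, deployment_id):
        self._subscribers[topic].discard(deployment_id)

    def publish(self, topic, message, exclude=None):
        """Queue `message` for every active subscriber of `topic`.

        Returns the sorted list of recipients so callers can see who
        was notified.
        """
        recipients = sorted(d for d in self._subscribers[topic] if d != exclude)
        for deployment_id in recipients:
            self.outbox.append((deployment_id, topic, message))
        return recipients


broker = NotificationBroker()
broker.subscribe("patient-1", "A")
broker.subscribe("patient-1", "B")

# The home deployment publishes an update that originated at deployment A.
print(broker.publish("patient-1", {"city": "Verona"}, exclude="A"))

broker.unsubscribe("patient-1", "B")
print(broker.publish("patient-1", {"phone": "555-0142"}))
```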

[0054] FIG. 2 is a schematic diagram 20 of one possible embodiment of several components located in deployment 20 labeled Home from FIG. 1. One or more of the deployments 20-24 from FIG. 1 may have the same components. Although the following description addresses the design of the healthcare facilities 20, it should be understood that the design of one or more of the deployments 20-24 may be different than the design of other deployments 20-24. Also, deployments 20-24 may have various different structures and methods of operation. It should also be understood that the embodiment shown in FIG. 2 illustrates some of the components and data connections present in a deployment, however it does not illustrate all of the data connections present in a typical deployment. For exemplary purposes, one design of a deployment is described below, but it should be understood that numerous other designs may be utilized.

[0055] One possible embodiment of one of the production servers 30 and one of the shadow servers 32 shown in FIG. 1 is included. The production server 30 may have a controller 50 that is operatively connected to the middleware adapter 34 via a link 52. The controller 50 may include a program memory 54, a microcontroller or a microprocessor (MP) 56, a random-access memory (RAM) 60, and an input/output (I/O) circuit 62, all of which may be interconnected via an address/data bus 64. It should be appreciated that although only one microprocessor 56 is shown, the controller 50 may include multiple microprocessors 56. Similarly, the memory of the controller 50 may include multiple RAMs 60 and multiple program memories 54. Although the I/O circuit 62 is shown as a single block, it should be appreciated that the I/O circuit 62 may include a number of different types of I/O circuits. The RAM(s) 60 and program memories 54 may be implemented as semiconductor memories, magnetically readable memories, and/or optically readable memories, for example. The controller 50 may also be operatively connected to the shadow server 32 via a link 66. The shadow server 32, if present in the deployment 20, may have similar components 50A, 54A, 56A, 60A, 62A, and 64A.

[0056] All of these memories or data repositories may be referred to as machine-accessible mediums. For the purpose of this description, a machine-accessible medium includes any mechanism that provides (i.e., stores and/or transmits) information in a form accessible by a machine (e.g., a computer, network device, personal digital assistant, manufacturing tool, any device with a set of one or more processors). For example, a machine-accessible medium includes recordable/non-recordable media (e.g., read only memory (ROM); random access memory (RAM); magnetic disk storage media; optical storage media; flash memory devices), as well as electrical, optical, acoustical or other form of propagated signals (e.g., carrier waves, infrared signals, digital signals); etc.

[0057] The deployments 20-24 may have a data repository 70 via a link 72, and a plurality of client device terminals 82 via a network 84. The links 52, 66, 72 and 84 may be part of a wide area network (WAN), a local area network (LAN), or any other type of network readily known to those persons skilled in the art.

[0058] The client device terminals 82 may include a display 96, a controller 97, a keyboard 98 as well as a variety of other input/output devices (not shown) such as a printer, mouse, touch screen, track pad, track ball, isopoint, voice recognition system, etc. Each client device terminal 82 may be signed onto and occupied by a healthcare employee to assist them in performing their duties.

[0059] Typically, the servers 30, 32 store a plurality of files, programs, and other data for use by the client device terminals 82 and other servers located in other deployments. One server 30, 32 may handle requests for data from a large number of client device terminals 82. Accordingly, each server 30, 32 may typically comprise a high end computer with a large storage capacity, one or more fast microprocessors, and one or more high speed network connections. Conversely, relative to a typical server 30, 32, each client device terminal 82 may typically include less storage capacity, a single microprocessor, and a single network connection.

Overall Operation of the System

[0060] One manner in which an exemplary system may operate is described below in connection with a block diagram overview and a number of flow charts which represent a number of routines of one or more computer programs.

[0061] As those of ordinary skill in the art will appreciate, the majority of the software utilized to implement the system 10 is stored in one or more of the memories in the controllers 50 and 50A, or any of the other machines in the system 10, and may be written in any high-level language such as C, C++, C#, Java, or the like, or any low-level, assembly or machine language. By storing the computer program portions therein, various portions of the memories are physically and/or structurally configured in accordance with the computer program instructions. Parts of the software, however, may be stored and run locally on the workstations 82. As the precise location where the steps are executed can be varied without departing from the scope of the invention, the following figures do not address which machine is performing which functions.

[0062] FIG. 3 is an exemplary block diagram overview 100 of several functional components in a deployment within the system 10. The overview 100 includes one or more production servers 30 that are used to store and maintain an electronic patient record, possibly employing a database management system as the data store. The production servers 30 may provide high-availability and data redundancy through fail-over and shadow servers in order to ensure uninterruptible access to the patient record.

[0063] The collection of components illustrated in overview 100 also includes a group of common infrastructure components 102, a group of incoming processing components 104 and a group of outgoing processing components 106. The group of common infrastructure components 102 includes the crossover server 42 and the EMFI/EMPI server 40. As previously mentioned, the EMFI/EMPI server 40 can provide a mechanism for coordinating master files across deployments as well as providing a way to coordinate person identities across deployments.

[0064] The group of incoming processing components 104 includes a communication agent 110 to provide reliable transport for synchronizing a patient record and a version skew agent 112 which is used to identify and handle the need to distribute record changes to multiple versions of system software. The group of incoming processing components 104 may also include a conflict detection agent 114, a conflict resolution agent 116, and a data mapping agent 120. The conflict detection agent 114 provides automated detection of cases where the changes in one deployment may be in conflict with changes made in another deployment. The conflict resolution agent 116 provides automated resolution of certain cases where conflict was detected, and for the cases which require user intervention, provides the necessary tools for the user actions. The data mapping agent 120 converts deployment specific data values from a normalized form when needed. EMPI and EMFI 40 communicate the normalized form to the data mapping agent 120 at the deployments.

[0065] The group of outgoing processing components 106 may include a triggering agent 122, a data mapping agent 120A, a version skew agent 112A and a communication agent 110A. The triggering agent 122 is the sub-system which detects changes and user actions, which need to be communicated to the Community.

[0066] FIG. 4 is an exemplary flowchart representation 130 of several steps that may be taken in identifying a patient and initiating the record pull process. In general terms, the steps illustrated in this flowchart provide a channel to share important information from a patient's medical record between various locations that are using separate information systems. The record pull process accomplishes this by allowing access to and use of patient record information at any location to help physicians and other personnel make sound clinical decisions about the patient's care. The record synchronization process ensures patient confidentiality and abides by the rules and regulations of the organizations exchanging data.

[0067] The steps in the patient record pull process are intended to run in a system such as that illustrated in FIG. 1, where there exists middleware adapter capability at every deployment 20-24 and 40-44 to process the outgoing and incoming messages. Messages are presented in an easily interpreted format, such as XML. This design assumes that the EMPI/EMFI deployment 40 houses the identifiable patient information needed to lookup patients across deployments. Messages to the EMPI/EMFI deployment 40 are sent through the middleware adapter 34 to query for patient record information.

[0068] A patient record pull may be requested when a deployment accesses a patient record not homed in that deployment. The EMPI provides the accessing deployment with the patient's home deployment so that it can request the patient record from the correct deployment. The requesting deployment is now a remote deployment with respect to that patient record. A summary of the patient record is first sent to the remote deployment while the full patient record is retrieved from the home deployment. At the time that the remote deployment requests the patient record from the home deployment, they are also subscribed to the patient record. This means that they may begin receiving all the updates, near real time, for the patient record as published by the home deployment.

[0069] Alternatively, a deployment may only subscribe to the data needed for a particular workflow. For example, a deployment may only subscribe for scheduling-related patient information, or registration-related patient information, thus increasing the data retrieval speed and lowering demand for system resources. A deployment may also choose to synchronize the entire patient record, but specify a period of delay for patient information that is not essential for a particular workflow.

[0070] Still referring to FIG. 4, the first step in patient record pull is to determine whether or not a patient has a different home than the current deployment. During a user workflow in an application, a patient record can be accessed via: (1) a patient lookup (block 132), (2) a report (block 134), and (3) a batch job that processes patient records (block 134), for example. In the above cases, the deployment checks whether the patient's home deployment is the accessing deployment (block 136). The local Master Person Index (MPI) is searched for the patient. If the patient is not found at the local MPI then the EMPI is queried (block 140). The EMPI is also queried for the home deployment of the patient if the patient is found in the local MPI, but the current deployment isn't the home deployment for the record. The EMPI doesn't need to be queried for the home of the patient if the patient subscription is active. (If the patient subscription is active, it can be assumed that the local data for the patient record is current and up to date). Additional description of a patient record subscription is provided below.
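By way of illustration only, the home-deployment check described above may be sketched as follows. The names `LocalEntry` and `resolve_home`, and the use of plain dictionaries to stand in for the local MPI and the EMPI, are hypothetical and are not part of the described system.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass
class LocalEntry:
    """Hypothetical local MPI entry for one patient."""
    home_deployment: str
    subscription_active: bool = False


def resolve_home(
    patient_id: str,
    current_deployment: str,
    local_mpi: Dict[str, LocalEntry],
    empi: Dict[str, str],
) -> Tuple[Optional[str], bool]:
    """Return (home deployment or None, whether the EMPI was queried)."""
    entry = local_mpi.get(patient_id)
    if entry is not None:
        # Homed locally, or an active subscription keeps local data current:
        # in both cases the EMPI does not need to be queried.
        if entry.home_deployment == current_deployment or entry.subscription_active:
            return entry.home_deployment, False
    # Not found locally, or found but homed elsewhere without a subscription.
    return empi.get(patient_id), True
```

A result of `(None, True)` corresponds to the case in which the EMPI does not find the patient, so that the user may create the patient locally.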

[0071] If the EMPI doesn't find the patient at a block 144, then the user is able to create the patient locally (block 146) and register the record with the EMPI for use throughout the Community (block 150). Also, the user is able to assign a home deployment to the patient and update the EMPI with that information. If the EMPI does find the patient at block 144 and the patient record identification is successfully retrieved from the EMPI, then the Subscribe message is sent to the notification broker (block 155). Each deployment may have a global system for tracking all the patient records to which it is subscribed.

[0072] After the identity of a patient record is established and if the home deployment of the patient is not the current deployment, message requests are sent to the patient's home deployment to synchronously retrieve the patient record summary, "Get Summary", (block 154) and also asynchronously pull a copy of the full patient record to the remote deployment, "Get Record" (block 156). The home deployment for a patient record is stored as information in the patient record.
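As a minimal sketch of the pairing of a synchronous "Get Summary" request with an asynchronous "Get Record" request, the following example uses a thread pool to stand in for the messaging layer. The function `open_remote_patient` and the callables passed to it are hypothetical placeholders; the actual transport is not specified here.

```python
from concurrent.futures import Executor, Future
from typing import Callable, Tuple


def open_remote_patient(
    patient_id: str,
    get_summary: Callable[[str], str],
    get_record: Callable[[str], dict],
    executor: Executor,
) -> Tuple[str, Future]:
    """Start the full record pull in the background, then block for the summary."""
    # Asynchronous request: returns immediately with a handle to the result.
    record_future = executor.submit(get_record, patient_id)
    # Synchronous request: the caller waits for the summary before continuing.
    summary = get_summary(patient_id)
    return summary, record_future
```

The caller may display the returned summary at once and consult `record_future.done()` to decide when the full set of patient functions can be enabled.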

[0073] As mentioned above, after the identity of a non-local patient is established and if the record is not yet retrieved from the home deployment, the patient record summary will be requested from the home through a synchronous message (block 154). The patient record summary may be displayed to a user in display-only format (block 160).

[0074] This summary can be viewed by the user at the remote deployment to begin working with the patient while the full patient record is being retrieved through one or more asynchronous messages.

[0075] Furthermore, the summary may be time stamped and stored in the patient record for audit purposes. It may also be stored every time a patient summary is extracted from home (block 162), so that any clinical decisions made by a physician viewing the summary can be verified later.

[0076] While waiting for the patient record pull to complete, the user at the remote deployment may be allowed to perform certain actions on the patient record. For example, the user may be permitted to schedule an appointment or create a new event in the patient's record. For these actions to be performed, some of the patient data is present on the remote deployment in discrete format in the database and not as display-only. When a remote deployment sends a synchronous message to the home deployment, the response can include the additional discrete data elements. This section of the message response may be small in size, in order to ensure a quick response.

[0077] While the user is waiting for the response at the remote deployment, some functions on the patient's record may be unavailable. All the applications' functions can look at the server and see if the full patient record has been synchronized and whether to allow the users to start working on the record.

[0078] It should be noted that at the time of patient selection, if the full patient record is not synchronized the summary may appear. When the user opens such a patient record in any application, the user is allowed to perform relatively few actions against the patient record. Similarly, the user may be notified when the full patient record is synchronized and allowed to perform all the application activities.

[0079] If the user wishes to perform immediate actions instead of waiting for the full patient record synchronization, it is possible for the user to stop waiting for the response (block 157). In this scenario, the user actions may be fully audited and the data which is built by the user for the patient record is marked in conflict with the home deployment data. The system 10 may be restricted to allow only certain authorized users to perform this action. Also, the patient summary may be saved at the remote deployment and time stamped, so that it can be viewed later for audit purposes.

[0080] If the record pull is stopped (block 157), then the response messages of Send Record may be discarded and the update messages going to the home deployment for the new data may be marked as conflicting data at the home deployment. When a user stops the record pull for a patient record, the remote deployment is said to be in Local Activity Mode (LAM) for that patient. In this mode, the remote deployment discards any incoming update messages for that patient, but keeps sending the update messages of data collected at the remote deployment to the home deployment. When the same patient data is edited on different deployments, conflict between deployments can occur. Details of how the conflicts will be resolved and the various steps involved in this are discussed in the Conflict Resolution section below.
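The behavior of a remote deployment in Local Activity Mode may be sketched, under simplifying assumptions, as follows. The class `RemotePatientState` and its fields are hypothetical; in particular, marking an outgoing message with a `conflict` field is only one possible way to flag data as conflicting.

```python
from typing import Any, Dict, List


class RemotePatientState:
    """Hypothetical per-patient state kept at a remote deployment."""

    def __init__(self) -> None:
        self.local_activity_mode = False
        self.filed: List[Any] = []              # incoming updates that were filed
        self.outbox: List[Dict[str, Any]] = []  # updates sent to the home deployment

    def stop_record_pull(self) -> None:
        """User stops waiting for the record pull: enter Local Activity Mode."""
        self.local_activity_mode = True

    def on_incoming_update(self, update: Any) -> bool:
        """File an incoming update unless in LAM; return True if it was filed."""
        if self.local_activity_mode:
            return False  # discarded while in Local Activity Mode
        self.filed.append(update)
        return True

    def on_local_edit(self, data: Any) -> Dict[str, Any]:
        """Always send local edits home; flag them as conflicting while in LAM."""
        message = {"data": data, "conflict": self.local_activity_mode}
        self.outbox.append(message)
        return message
```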

[0081] Still referring to FIG. 4, after it subscribes, the remote deployment sends the patient's home deployment a Get Record message to request the patient record, or portion of the patient record. Any existing data about the patient at the remote deployment, for example, earlier events at that deployment, is display-only at this point. The patient record is sent by the home deployment to the remote deployment asynchronously, in one or more logically affiliated Send Record messages. The remote deployment processes the Send Record message and allows the application to resume its normal workflow.

[0082] With regard to message affinity, the design of system 10 also ensures that multiple messages for record synchronization can be logically affiliated with each other to represent one action message. For example, the home deployment 20 can respond to an asynchronous Get Record message request by packing the patient record into one or more messages that comprise the Send Record message and process them together. Each message in this scenario includes a summary of the kinds of information in the other messages so that the receiving deployment knows what types of messages to expect. Although the messages are linked together for the goal of full record synchronization, these messages can be received and processed in any order. When sending the set of messages for the Send Record message, the home deployment can prioritize the type of data needed to be sent before any other data. A configuration setting allows a user to specify the order in which data groups are packed and sent. This means that high priority patient record information is sent immediately, so that users will have quick access to it.
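One way the prioritized packing of a Send Record message set might look is sketched below. The function `pack_send_record`, the `expected_groups` field, and the fixed number of groups per message are assumptions made for illustration only.

```python
from typing import Any, Dict, List


def pack_send_record(
    groups: Dict[str, Any],
    priority_order: List[str],
    max_groups_per_message: int = 2,
) -> List[Dict[str, Any]]:
    """Split data groups into affiliated messages, highest priority first."""
    rank = {name: i for i, name in enumerate(priority_order)}
    # Groups not named in the configured order are sent after those that are.
    ordered = sorted(groups, key=lambda name: rank.get(name, len(rank)))
    # Every message carries the same summary of what the full set contains,
    # so the receiver knows what to expect regardless of arrival order.
    manifest = sorted(groups)
    messages = []
    for start in range(0, len(ordered), max_groups_per_message):
        chunk = ordered[start:start + max_groups_per_message]
        messages.append({
            "expected_groups": manifest,
            "groups": {name: groups[name] for name in chunk},
        })
    return messages
```

Because each message repeats the manifest, the receiving deployment can process the messages in any order and still determine when the set is complete.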

[0083] FIG. 5 is an exemplary flowchart representation 300 of several steps used in the subscription process. If the remote deployment intends to edit the patient's record, then it subscribes to the patient's updates and the home deployment is updated with any changes it makes to the patient's record. When a patient is selected (block 302), the system checks to see if the patient is homed on the current deployment (block 304). If it is determined at the block 304 that the local deployment is the home for the patient, the software does not cause the remote deployment to subscribe to the record (block 306). If it is determined at the block 304 that the local deployment is not the home deployment for the patient, the system causes the remote deployment to send a Subscribe message, including the patient ID and home deployment ID, to the notification broker 44 (block 310). The notification broker in turn adds the remote deployment to the list of recipients for updates made to that patient's record. The notification broker also notifies the home deployment that a remote deployment is subscribed, and that it should keep the community updated with changes. This is used to optimize the publishing of updates from the home deployment. The updates are not sent if there are no remote deployments subscribed for that patient's updates. The home deployment for the patient record then publishes the patient record changes for the community.

[0084] The home deployment for the patient record may be directly notified by the remote deployment whenever it modifies the patient record (block 312). This is important for effective conflict resolution and stepping up and down between software versions. The home deployment may publish the record updates to the Community through the notification broker 44, and all the deployments that are subscribed for the patient's updates receive the published message. The notification broker 44 is responsible for notifying each deployment of all active subscriptions for the patient records homed at that deployment.

[0085] FIG. 6 is an exemplary flowchart representation 320 of several steps used when closing a subscription, the "unsubscribe" process, to a patient record. When a remote deployment no longer requires updates to a patient record, such as when the patient is no longer receiving care at the deployment, an Unsubscribe message is sent to the notification broker with the patient ID and home deployment ID.

[0086] While accessing the patient record in an application workflow, the user may perform actions that trigger the deployment to unsubscribe to the patient record, and stop receiving updates published by the home deployment. Examples of such actions are closing (finalizing) an outpatient encounter or discharging a patient. When a need to unsubscribe has been identified (block 322), an Unsubscribe message is sent to the notification broker 44 to remove the remote deployment from the recipients list for the patient's updates (block 324). If this is the last remaining remote deployment subscribed to the patient record, the notification broker 44 then notifies the home deployment that remote deployments are no longer subscribed to the patient record and that there is no more need to publish updates (block 326).
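The subscribe and unsubscribe bookkeeping performed by the notification broker may be sketched as follows. The class `NotificationBroker`, the notice strings, and the choice to notify the home deployment only on the first subscription are assumptions for illustration and do not describe the actual broker.

```python
from typing import Dict, List, Set, Tuple


class NotificationBroker:
    """Hypothetical in-memory model of the broker's recipient lists."""

    def __init__(self) -> None:
        # (patient_id, home_id) -> set of subscribed remote deployment IDs
        self.subscribers: Dict[Tuple[str, str], Set[str]] = {}
        # Notices sent to home deployments: (home_id, patient_id, action)
        self.home_notices: List[Tuple[str, str, str]] = []

    def subscribe(self, remote_id: str, patient_id: str, home_id: str) -> None:
        subs = self.subscribers.setdefault((patient_id, home_id), set())
        if not subs:
            # First subscriber: the home deployment should begin publishing.
            self.home_notices.append((home_id, patient_id, "start_publishing"))
        subs.add(remote_id)

    def unsubscribe(self, remote_id: str, patient_id: str, home_id: str) -> None:
        subs = self.subscribers.get((patient_id, home_id), set())
        if remote_id not in subs:
            return  # nothing to remove
        subs.discard(remote_id)
        if not subs:
            # Last subscriber removed: no more need to publish updates.
            self.home_notices.append((home_id, patient_id, "stop_publishing"))

    def recipients(self, patient_id: str, home_id: str) -> List[str]:
        """Deployments that should receive a published update."""
        return sorted(self.subscribers.get((patient_id, home_id), set()))
```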

[0087] Now, if users at the remote deployment wish to modify the patient record through some functions in the application, then the remote deployment has to pull the record of the patient again from the home deployment and start a subscription.

[0088] A configuration setting at each deployment may determine the default number of days that subscriptions are kept open for a patient record or portion thereof after a user commits an action to cancel subscription for a patient's updates. When the user commits an action that causes a subscription to be canceled, the subscription may instead be kept active for that predefined period of time. This is helpful, for example, for encounters and admissions for which charges are entered nightly. For administrative purposes, there may be a tool to explicitly start and end subscriptions for a patient's updates. As mentioned above, if a Subscribe message is sent for a patient from a remote deployment, then an implicit Get Record message may also be sent to the patient's home deployment.
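The configurable period for which a subscription is kept open after a cancelling action may be illustrated with the following sketch. The function names and the representation of the setting as a whole number of days are assumptions.

```python
from datetime import date, timedelta
from typing import Optional


def subscription_end_date(cancel_date: date, keep_open_days: int) -> date:
    """Date on which a cancelled subscription actually closes."""
    return cancel_date + timedelta(days=keep_open_days)


def is_subscription_active(
    today: date, cancel_date: Optional[date], keep_open_days: int
) -> bool:
    """A subscription stays active until the configured period has elapsed."""
    if cancel_date is None:
        return True  # no cancelling action has been committed
    return today < subscription_end_date(cancel_date, keep_open_days)
```

With a setting of three days, for example, charges entered on the two nights following a discharge would still reach the remote deployment.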

[0089] FIG. 7 and its continuation, FIG. 8, is an exemplary flowchart representation 170 of several steps used in synchronizing a patient's existing electronic medical record, whether it exists in out-dated form or not at all at the remote deployment, with the record's home deployment. As described above, the remote deployment requests the up-to-date patient record from the patient's home deployment by sending a Get Record message (block 172) to the home deployment.

[0090] When a Get Record message is sent by a remote deployment, a deployment-side setting is marked at the remote deployment with the patient ID, signifying that a response is expected for this patient's record pull request (block 173). This setting is used to filter any unsolicited messages and also to notify the user at the remote deployment that a patient's full record pull is in progress.

[0091] When the Get Record message is received, the home deployment first confirms that the patient is homed at that deployment. If the Get Record is sent to the wrong deployment, then the system may return a Wrong Home Error code (block 177) message back to the requesting deployment.

[0092] Once it confirms that the patient is homed at the deployment, the system then compares the records and sends any new information for that patient record at the home deployment to the remote deployment in a Send Record message.

[0093] Because the patient record can exist simultaneously at multiple deployments, the system 10 keeps track of the most current data for that patient record, including the updates and iterations of updates made to the record. For the purposes of record synchronization, the patient record and all the related data is divided into hierarchical groups of data. FIG. 10 is an exemplary block diagram 330 illustrating the hierarchy within a patient record with a small set of clinical data groups and leaf groups.

[0094] A reason for creating hierarchical groups of data is that data grouping enables the system to locate, package and send only the changed portions of records instead of the whole record which facilitates performance optimization. Other reasons are that data grouping makes it easier to search for changes in a patient record, and conflicts between multiple deployments updating the same patient record are minimized as a result of data grouping because in many cases the deployments will not edit the same portion of the record at the same time. Thus the home deployment can smoothly compare and merge the updates it receives. Auditing changes at the more granular data element level may provide another method of achieving the same need.

[0095] The exemplary patient record of FIG. 10 is made up of: Store-Once patient specific data groups 332, patient record events 334, and Event Data groups. Examples of Store-Once data groups are: demographics, allergies, problem list 342, and patient preferences. Examples of patient events are: encounters 344, admissions, and appointments. Examples of Event Data groups are: orders 346, vitals 348, admission data 349, and appointment resources.

[0096] Each of the Store-Once and Event Data groups may be further divided into smaller leaf groups which contain a number of data elements. Examples of leaf groups are: Demographics Name, Demographics Address, and Demographics Temporary Address. Some of the conventions for defining a leaf group are: defining a leaf group to have only one type of data, either Store-Once or Event Data elements; defining a leaf group to have multiple parents, and requiring a data element to belong to only one leaf group.

[0097] Data elements within a leaf group can point to dynamic database records. Dynamic database records are created as part of patient workflow in various applications and are synchronized between deployments with the patient synchronization messages. To transfer the dynamic database records along with the patient record, the dynamic databases are also divided into a hierarchical group structure. Generation levels may be assigned to dynamic database records too. Any changes to a dynamic database record's group may be propagated to the patient record and eventually increment the patient generation.

[0098] In order to track changes to a patient record, search for changes within a patient record, and compare the same patient record across deployments, both the patient record itself and its individual groups and events are marked with generation levels when they are modified. Generations are assigned to events so that a particular encounter can be synchronized and compared easily. Each version, or generation, of a group is tracked. When a change is made to a piece of data in the group, the generation is incremented, thus flagging the group as requiring synchronization. Each level in the hierarchy in the patient record is assigned a generation and is incremented if any of the child groups or data elements are edited. In this manner, the system 10 avoids having to send the entire patient record every time a change is made to one part. This concept is discussed in more detail below. Every patient has a generation level 1 when it is published the first time into the Community and it is updated to the next generation level when the record is published.

[0099] Along with the generation for the patient and the groups, the history of the updates from various deployments may also be stored. The update history of the patient record or the group tells us the foundation on which the data group was built. This is important information when update messages are processed and during conflict detection and resolution, described in later sections.

[0100] Each group may contain several special fields with metadata. These fields include, for example: generation (the latest iteration of a group); changed flag (a Boolean flag indicating that a group has changed since its generation was last incremented); generation instant (a time and date indicating when the generation was last incremented); and update history (a table holding the IDs of the deployments that have contributed to a group and the last generation each deployment contributed).
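A simplified sketch of a data group carrying this metadata, with an edit propagating up the hierarchy, is given below. The class `Group` and the method `record_edit` are hypothetical. The changed flag is omitted because its exact set and clear rules are not detailed here, and the starting generation of 1 for every group is an assumption.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Dict, Optional


@dataclass
class Group:
    """Hypothetical node in the patient record hierarchy."""
    name: str
    parent: Optional["Group"] = None
    generation: int = 1
    generation_instant: Optional[datetime] = None
    # Deployment ID -> last generation that deployment contributed.
    update_history: Dict[str, int] = field(default_factory=dict)

    def record_edit(self, deployment_id: str, when: datetime) -> None:
        """Increment this group's generation and that of every ancestor."""
        node: Optional[Group] = self
        while node is not None:
            node.generation += 1
            node.generation_instant = when
            node.update_history[deployment_id] = node.generation
            node = node.parent
```

After an edit to one leaf group, only that leaf and its ancestors carry a new generation, so sibling groups can be skipped when the changed portions of the record are located and packaged.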

[0101] In addition, the top-level group for a record may have an additional number of fields. These may include, for example: Last Generation Published (LGP) (indicates the last generation any deployment has published to the Community); and Update Processing Record (UPR) (flag indicating that an update for this record is currently being processed and sent out).

[0102] The design of system 10 provides a schema definition to represent data groups for a patient's record which includes generation information about each data group, as well as dependable and identifiable representation of data pointers for static master file records, dynamic database records, lookup table values and events. This schema is used to create messages to synchronize patient records across deployments.

[0103] The remote deployment that wishes to retrieve a patient's record from the home deployment for that patient sends a Get Record message to the home deployment (block 172) along with the patient record's and individual groups' generation level information. The remote deployment may send the following identifying information in the Get Record message: the intended receiver (patient's home deployment), the patient ID, the patient record generation and update history (FIGS. 12 and 21 discuss update history in detail), the group hierarchy and each group's generation and update history, the remote deployment's system software version (used for stepping up or down the data in the message format), and the events present at the remote site and their generations and update history with their groups' generations and update history (this may not be true for patients synced for the first time to the remote deployment).

[0104] Because the Get Record message is requested asynchronously, it is added to a queue to be processed by the home deployment and when it is, the Send Record message is sent asynchronously to the remote (block 176 of FIG. 7 and block 190 of FIG. 8).

[0105] The home deployment first compares the generation levels of the patient record on its own server to the generation level sent inside the Get Record message (block 174). The Send Record message (block 191 of FIG. 8) contains information regarding each group and event in the patient record with its compared info. If the generation information on the remote deployment's patient record is lower (older) than that on the copy at the home deployment, a flag indicating that home has newer generation data than remote (block 186 of FIG. 8) is added to the Send Record message. The new data and its generation levels are packed and sent to the remote deployment (block 191 of FIG. 8).

[0106] If the generation information on the remote deployment's patient record is equal to or newer than that found on the home deployment copy of the record and if the update history matches, a flag indicating that the remote has the same or a higher generation of data than the home deployment (block 175 of FIG. 7) is added to the Send Record message.

[0107] If the generation information on the remote deployment's patient record is equal to or newer than that found on the home deployment copy of the record, but the update history does not match, then the home deployment compares all group and event generations and, if the group or event generation of the remote deployment's copy is greater (newer) than that of the home deployment, a flag indicating that the remote has newer generation data than the home deployment (block 189 of FIG. 8) is added to the Send Record message.
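The three comparison outcomes described above may be sketched as follows. The enumeration `Diff`, the function names, and the representation of update history as a dictionary are hypothetical and chosen only for illustration.

```python
from enum import Enum
from typing import Dict, List


class Diff(Enum):
    HOME_NEWER = "home has newer generation data than remote"
    REMOTE_SAME_OR_NEWER = "remote has the same or a higher generation"
    COMPARE_GROUPS = "update histories differ; compare groups and events"


def compare_record(
    home_gen: int,
    home_history: Dict[str, int],
    remote_gen: int,
    remote_history: Dict[str, int],
) -> Diff:
    """Record-level comparison performed by the home deployment."""
    if remote_gen < home_gen:
        return Diff.HOME_NEWER
    if remote_history == home_history:
        return Diff.REMOTE_SAME_OR_NEWER
    return Diff.COMPARE_GROUPS


def groups_newer_on_remote(
    home_groups: Dict[str, int], remote_groups: Dict[str, int]
) -> List[str]:
    """Names of groups or events whose remote generation exceeds the home's."""
    return sorted(
        name for name, gen in remote_groups.items()
        if gen > home_groups.get(name, 0)
    )
```

When `compare_record` returns `Diff.COMPARE_GROUPS`, the per-group helper identifies which groups or events would be flagged as newer on the remote deployment.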

[0108] FIG. 9 is an exemplary flowchart representation 200 of several steps taken by a deployment when it receives and processes a Send Record message. The Send Record message may include, for example, the following information in the message: the intended receiver (remote requester), the patient ID, the patient record generation and update history with flag of the difference in generation for the record (as mentioned above), the group hierarchy and each event or group's generation and update history, with flag of the difference in generation for the group or event, the discrete data for each group with, for example: static master file pointers, dynamic master file pointers, event pointers, lookup table pointers.

[0109] If needed, the Send Record message can be broken into smaller messages to keep the size manageable. Each of the messages may include some important identifying information in the header. The header of the message (or each message, in case there are multiple messages for the response) may have the number of groups and events that exist in the patient record that would be part of the message(s). For example: the patient ID, the intended receiver (remote requester), the total number of events for the patient and total number of groups, which event and groups this message contains. This information can be used to help users keep track of the progress of the patient record synchronization process.

[0110] For optimization, the processing of messages may be shared between the production and the shadow server at any deployment. A Get Record message may be sent out by the production server of a remote deployment and received by the production server of the home deployment. The response message Send Record is created and sent by the shadow server of the home deployment. On the remote deployment the Send Record message is received and processed by the production server.

[0111] By looking at any of the messages, the remote deployment can tell how many of each group to expect for the patient record. As the remote deployment gets more messages, the deployment unpacks each group, marking the group or event "received." When all the messages for the set of Send Record messages have been received, the data in the groups is filed in the patient record. This way the remote deployment can at any given point tell how much of the patient's record has been received. The unpacking of messages and filing of the data is discussed in more detail below.

[0112] When the remote deployment gets the first message in the Send Record message set, it stores the total number of groups of Store-Once data elements, the total events, and the total number of Event Data groups in a deployment-wide setting.

[0113] As mentioned above, the remote deployment keeps track of the amount of data received per patient in the record pull process through the number of groups and events, and once all the groups and events for the patient are received from the home deployment, the data is filed in the patient record and the patient record is marked as synchronized. At this moment, the applications are notified and patient functions become accessible to the user.

[0114] Still referring to FIG. 9, when the remote deployment receives the Send Record message from the home deployment, it reads the patient ID and checks to see if it was expecting a response for the patient (block 202). If it was not expecting a response for the patient, an error is logged locally and an exception is sent to the deployment that sent the Send Record message (block 203). If the remote deployment was expecting a response but an error was found in the message, an error is logged locally, the sending deployment is notified, and the user at the remote deployment is allowed to proceed in Local Activity Mode (LAM) (block 205). If the patient record information is the same as that sent from the home deployment (block 207), the patient is marked as synchronized (block 208) and the user at the remote deployment can proceed to work with the patient record fully.

[0115] If the patient record received from the home deployment in the Send Record message is not the same as that on the remote deployment (block 207), the remote deployment reads the Send Record message and counts the total number of expected groups and events (block 209). If the incoming message contains groups or events that are newer than that stored on the remote deployment, the new information is filed (block 212). If the incoming message contains information that is older than that stored on the remote deployment, it is not filed. Generations and the update history table are used to determine which information is newer. Each group and event is marked as received (block 214). As this is accomplished, the count of expected groups and events is decremented (block 215). When all groups and events are done, the system checks if all groups and events for the patient are synchronized; if they are not all synchronized, the system waits for more Send Record messages (block 218). If they are, the patient record is marked as synchronized (block 208), and the user is able to continue working with the patient record (block 209).
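The filing rule in this paragraph might be sketched as follows. This sketch assumes that "newer" can be decided by comparing integer generation numbers alone; the specification also consults the update history table, which is omitted here. The function name and data layout are hypothetical.

```python
def file_incoming(local, incoming, expected):
    """File newer groups/events and return the count still expected.

    local and incoming map a group or event name to (generation, data).
    expected is the number of groups and events not yet received.
    """
    for name, (generation, data) in incoming.items():
        local_generation = local.get(name, (0, None))[0]
        if generation > local_generation:
            local[name] = (generation, data)   # newer information is filed
        # Older or equal information is not filed, but the item still
        # counts as received, so the expected count is decremented.
        expected -= 1
    return expected


local = {"Demographics": (5, "address A"), "Allergies": (2, "none")}
incoming = {"Demographics": (4, "old address"), "Allergies": (3, "penicillin")}
remaining = file_incoming(local, incoming, expected=3)
```

In this example one item is still outstanding after the message is processed, so the receiver would wait for further Send Record messages before marking the patient as synchronized.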

[0116] FIG. 11 illustrates an exemplary update history table for a Demographics Group of a patient record. As previously mentioned, records include a set of information referred to as the record's update history. Throughout this patent, examples used will illustrate the update history using a table that details, for a group, the deployment and generation that contributed to the current state of the group. In the table header 350, 115781 is the Community ID for the Patient database record being summarized; Demographics indicates the data group to which the table refers. The table lists five deployments in the Community: A, B, C, D and E. The data group Demographics has been updated five times by various deployments in the Community to arrive at generation 5, which was last updated by deployment B. The current generation of the Demographics group, which is the last update generation received or published for the group, is generation 5.

[0117] The update history shown in FIG. 11 tells us that the patient was created at deployment A and the Demographics group was updated by deployment E to generation 2, by deployment D to generation 3, by deployment C to generation 4 and then finally by deployment B to generation 5. Generation 5 is the last updated generation of the group, thus it is stored in the current generation field for the Demographics group.
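For illustration, the update history of FIG. 11 might be represented as an ordered list of (deployment, generation) pairs. This sketch assumes that creation of the patient at deployment A counts as generation 1; the class and attribute names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class UpdateHistory:
    community_id: str
    group: str
    entries: list = field(default_factory=list)  # (deployment, generation) pairs

    @property
    def current_generation(self):
        # The last entry holds the most recent generation; 0 means no updates yet.
        return self.entries[-1][1] if self.entries else 0

    def record_update(self, deployment):
        generation = self.current_generation + 1
        self.entries.append((deployment, generation))
        return generation


history = UpdateHistory("115781", "Demographics")
for deployment in ["A", "E", "D", "C", "B"]:
    history.record_update(deployment)
```

After these five updates the current generation is 5 and the last contributing deployment is B, matching the table described above.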

[0118] The current generation and update history information of the patient record, data groups, events and dynamic database records may be stored along with the patient record. The group information is stored as part of the database schema for the patient record.

[0119] When packing the data groups for a patient's record at the patient's home deployment 20, all the patient-specific Store-Once groups are packed first, followed by the Event Data groups. The Event Data groups are prioritized by a configuration setting which determines the number of days in the past or future to search for events. These events are packed first, before the rest of the events that are part of the patient record. An example of default behavior may be to process, in order: events from the past 30 days, events in the next 30 days, all remaining past events, and all remaining future events. As previously mentioned, each message header may include the correlated information about other messages which contain the rest of the event groups for the patient record.
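One possible reading of this packing order is sketched below: Store-Once groups first, then events ordered by the four tiers of the example default. The function names, the tuple layout of events, and the sample dates are hypothetical.

```python
from datetime import date, timedelta


def event_tier(event_date, today, days_past=30, days_future=30):
    """Return the packing tier of an event (lower tiers are packed first)."""
    if today - timedelta(days=days_past) <= event_date <= today:
        return 0   # recent past
    if today < event_date <= today + timedelta(days=days_future):
        return 1   # near future
    if event_date < today:
        return 2   # older past events
    return 3       # far-future events


def pack_order(store_once_groups, events, today):
    """events is a list of (event_id, event_date); returns names in packing order."""
    # sorted() is stable, so events within a tier keep their input order.
    ordered = sorted(events, key=lambda e: event_tier(e[1], today))
    return list(store_once_groups) + [event_id for event_id, _ in ordered]


today = date(2020, 5, 27)
events = [("old", date(2019, 1, 1)), ("soon", date(2020, 6, 10)),
          ("recent", date(2020, 5, 20)), ("far", date(2021, 1, 1))]
order = pack_order(["Demographics"], events, today)
```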

[0120] For the purposes of this discussion, a tightly affiliated community is assumed. However, different levels of affiliation and data sharing amongst deployments are possible. The deployments participating as a Community can agree to share certain patient data during the record synchronization process, but due to some legal or contractual reasons, they might not agree to share other data. There may be a setting per deployment to filter incoming or outgoing messages depending on these contracts or agreements. Some of the requirements for these settings may include: (1) Deployment settings at each database level of which data groups can be sent out as part of a record synchronization message and (2) Deployment settings at each database level of which data groups can be accepted when receiving a record synchronization message.
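As a simple sketch of the two settings listed above, outgoing and incoming filters might each be modeled as a set of permitted data group names. The group names and the shape of the settings are assumptions made for illustration.

```python
def filter_groups(groups, allowed):
    """Keep only the data groups whose names appear in the allowed set."""
    return {name: data for name, data in groups.items() if name in allowed}


# Hypothetical per-deployment settings.
outgoing_allowed_at_home = {"Demographics", "Allergies"}
incoming_allowed_at_remote = {"Demographics"}

record = {"Demographics": "name and address", "Allergies": "penicillin",
          "Billing": "account details"}

sent = filter_groups(record, outgoing_allowed_at_home)      # applied by the sender
filed = filter_groups(sent, incoming_allowed_at_remote)     # applied by the receiver
```

Here the sender withholds the Billing group, and the receiver additionally declines the Allergies group, so only Demographics is filed.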

[0121] In a loosely affiliated community, a few levels of synchronization are possible, including, for example: Central Home, Distributed Home, and Distributed Home with Focal Point Server. For a community using the Central Home synchronization level, the whole patient record resides on the patient's home deployment and is sent to the requestor from that deployment when a Get Record request is made. It works similarly to the tightly affiliated community.

[0122] For the Distributed Home synchronization level, only a part of the patient record is stored on the home deployment because of certain non-sharing rules between deployments: various pieces of the patient's data are owned by various deployments. When a remote deployment requests a patient's record, it sends the request to the patient's home deployment, which returns only the data owned by the home deployment. The data residing on the other remote deployments is not sent to the requesting deployment.

[0123] In a loosely affiliated community, if there is no EMPI present for patient records, then each deployment publishes each patient to the Community with identifiable information at the time of creation. MPI tables are created in each of the deployments to resolve the patient record. When a deployment publishes a new patient record, the receiver marks the sender deployment as the home deployment for the patient.

[0124] If there is no EMFI present for static master file records, then each deployment may need to publish changes to static master file records and selection lists nightly to the other deployments, using the Master File Index (MFI) and Master Category Index (MCI) techniques to resolve the pointers. Another technique is to use the Active and Passive snapshots described below to synchronize the static master files and lookup table values.

Data Mapping

[0125] When a patient record is synchronized to another deployment, there are various data elements in the patient record--typically pointers to other records or selection list values--which simply cannot be resolved in the receiving deployment, or have pointer values that need to be translated for use at the receiving deployment. For example, FIG. 12 illustrates a block diagram 360 of an exemplary patient record 362 with pointers to external data. As shown in diagram 360, an orders section 364 in the patient record 362 includes pointers to external data in: a provider record 366, a procedure record 368, and a department record 370.

[0126] In addition to reconciling pointers between database records, there will be deployment-to-deployment variations in some selection lists, and these pointers need to be reconciled as well. A "selection list" data value is an entry which is restricted to a limited list of possible answers. For purposes of this discussion, each possible choice in the selection list is composed of a selection list ID (a unique identifier within the list, typically numeric), and a selection list value (the value that the end users will normally see, typically a text value). For example if a data value is restricted to choices of "Yes" or "No," that would normally be represented as a selection list with two possible choices on the list: 1--Yes and 2--No. In this case "1" is an example of a selection list ID, and "Yes" is an example of a selection list value.

[0127] Several techniques may be used to resolve the various data pointers. During patient synchronization, deployments should be able to determine which technique to use to resolve various data pointers within the patient record. These techniques can vary depending on the type of data and the deployment. For events, the technique used should be specified for the database to which the event belongs. For static master file records and dynamic database records, the techniques used for resolving each of the records should be specified for each database. For example, the technique used to resolve data pointers for provider records can differ from that used for order records. For selection lists, the technique used to resolve the data pointers should be specified for the selection list data element. Other data elements pointing to the same selection list use the same technique for resolving data pointers.

[0128] When synchronizing a patient record that includes pointers to static master file records, steps should be taken to ensure that those static master file records are either already present on the other deployment or created on the receiving deployment as a part of the synchronization operation. Some techniques assume that static master file records are always present on receiving deployments, and thus require that the system build process ensures that all the deployments get copies of records whenever they are created in the community. Note that these are records which are usually very static in their life span and generally are created as part of the initial system and software setup through an administrative action. Examples of these are provider records, procedure records and employee (user) records.

[0129] FIG. 13 is an exemplary block diagram 390 of a community record publication process. In this technique, there may be a central hub to assign a Community ID (CID), unique across all deployments, to each static master file record. This central hub, also called the EMFI (Enterprise Master File Index) 392, keeps track of all the static master file records in the Community and the CIDs of each. When Deployment A (block 22) creates a new static master file record (block 394), such as a new provider record, it is sent to the EMFI which assigns a CID to the record before sending the new record (block 396) to Deployment B (block 24). Depending on the Community's choice of method to resolve pointers, Deployment B may then assign the new incoming record a separate, local ID in addition to the CID that the EMFI sent with the record.
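The publication flow of FIG. 13 might be sketched, under stated assumptions, as a hub that hands out Community IDs and a receiving deployment that optionally assigns its own local ID. The class names, the "CID-0001" format, and the local ID numbering are hypothetical.

```python
import itertools


class EMFI:
    """Hypothetical central hub that assigns a CID to each new record."""

    def __init__(self):
        self._counter = itertools.count(1)
        self.index = {}   # CID -> (originating deployment, originating local ID)

    def register(self, origin, local_id):
        cid = f"CID-{next(self._counter):04d}"
        self.index[cid] = (origin, local_id)
        return cid


class Deployment:
    """Hypothetical receiver that keeps a local ID alongside each CID."""

    def __init__(self, name, first_local_id=1000):
        self.name = name
        self.local_by_cid = {}
        self._next_local = itertools.count(first_local_id)

    def receive(self, cid):
        local_id = next(self._next_local)
        self.local_by_cid[cid] = local_id
        return local_id


hub = EMFI()
cid = hub.register("A", 231)          # Deployment A publishes a new provider record
deployment_b = Deployment("B")
local_id_at_b = deployment_b.receive(cid)
```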

[0130] In communities where static master file records are either present on the other deployments or copied to the other deployments when they are created, there are two ways to handle resolving a patient record's pointers to static master files: Direct ID Match and a mapping technique.

[0131] If the participants of a community choose to use the Direct ID Match technique, the system build may require that the static master file records in the Community have mutually identical ID generation mechanisms so that the same record ID is used for the same record across deployments. This technique is very efficient when pointers in the patient record are being resolved because no special mapping is required to translate the data, and the use of CIDs is unnecessary.

[0132] If the community participants agree to use a mapping technique, then individual deployments in the Community may have their own local IDs assigned to a record and the resolution at the time of patient record synchronization may map the static master file records by one of the following exemplary methods: Community ID, Combination of fields matching, and Embedded Master File Index (MFI) table.

[0133] FIG. 14 is an exemplary block diagram 380 of the Community ID technique of mapping static master file records. At the home deployment, a provider record was created with a local ID 231 (block 383). When the provider record was created, it was also assigned a unique Community ID (FIG. 13, block 392), which was communicated to both deployments. The patient (block 382) receives care from provider 231, and the patient record now contains a pointer to this provider record. The provider offers care at both deployments. At deployment B, however, the provider record has a local ID 1902 (block 385).

[0134] When the patient record is synchronized, the data pointer in the record 382 from the home deployment (block 20) points to the provider record 231 on the home deployment (block 383). This provider record may also contain a CID that the receiving deployment A (block 22) may use to "map" the record to local provider record 1902 (block 385), thus resolving the patient record pointer (block 386).
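The Community ID technique might be illustrated with two lookup tables, one on each side. The local IDs 231 and 1902 come from the example above; the CID value and the function name are hypothetical.

```python
# At the home deployment: local provider ID -> Community ID.
home_local_to_cid = {231: "CID-0001"}

# At the receiving deployment: Community ID -> local provider ID.
receiver_cid_to_local = {"CID-0001": 1902}


def resolve_pointer(home_local_id, sender_map, receiver_map):
    """Translate a sender-side local ID to a receiver-side local ID via the CID."""
    cid = sender_map.get(home_local_id)
    if cid is None:
        return None                   # the sender has no CID for this record
    return receiver_map.get(cid)      # None if the receiver does not know the CID


resolved = resolve_pointer(231, home_local_to_cid, receiver_cid_to_local)
unresolved = resolve_pointer(999, home_local_to_cid, receiver_cid_to_local)
```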

[0135] In the technique involving combination of fields matching, the participating deployments may agree on matching certain static master files using a combination of fields within the record. For example, the provider record could be matched using the SSN, Tax ID, Name and Address. So, within the message containing the patient data, the additional information to identify the provider record is included.
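A minimal sketch of matching by a combination of fields follows. The field names, the normalization (trimming and lower-casing), and all sample values are assumptions made for illustration only.

```python
MATCH_FIELDS = ("ssn", "tax_id", "name", "address")


def match_key(record):
    """Build a comparison key from the agreed fields (normalization is assumed)."""
    return tuple(str(record[f]).strip().lower() for f in MATCH_FIELDS)


def find_local_provider(incoming, local_providers):
    """Return the local ID whose agreed fields all match, or None."""
    key = match_key(incoming)
    for local_id, record in local_providers.items():
        if match_key(record) == key:
            return local_id
    return None


local_providers = {
    1902: {"ssn": "000-00-0000", "tax_id": "00-0000000",
           "name": "Pat Example", "address": "1 Main St"},
}
incoming_provider = {"ssn": "000-00-0000", "tax_id": "00-0000000",
                     "name": "PAT EXAMPLE ", "address": "1 main st"}
matched_id = find_local_provider(incoming_provider, local_providers)
```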

[0136] In the technique involving the embedded MFI table, the static master file records are mapped across deployments using a table of cross deployment IDs for each record. For every record, each deployment keeps a table of IDs for that record in other deployments. That table is sent as a part of the synchronization message, and the pointer is resolved during the filing process.
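The embedded MFI table technique might be sketched as follows, with the cross-deployment ID table travelling inside the synchronization message. The message layout and the deployment labels "Home" and "B" are hypothetical.

```python
# Table of IDs for one provider record, keyed by deployment.
mfi_table = {"Home": 231, "B": 1902}

# The table is embedded in the message next to the pointer it describes.
message = {
    "patient_id": "115781",
    "provider_pointer": {"sender_id": 231, "mfi": mfi_table},
}


def resolve_with_mfi(pointer, receiving_deployment):
    """Look up the receiver's own ID for the record in the embedded table."""
    return pointer["mfi"].get(receiving_deployment)


id_at_b = resolve_with_mfi(message["provider_pointer"], "B")
id_at_c = resolve_with_mfi(message["provider_pointer"], "C")
```

A result of `None`, as for deployment "C" here, indicates that the table holds no ID for the receiving deployment and the pointer cannot be resolved by this technique alone.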

[0137] In all the above techniques there may be a way of publishing the static master file records' changes to the Community. This may be done either through a central hub--the EMFI, or through periodic imports of records to individual deployments, which could include nightly imports.

[0138] Because each deployment receives published static master file records from all the other deployments, a deployment has the option to inactivate, or "hibernate," each record. Also, a deployment can keep local autonomy by modifying certain sections of the record to fulfill local needs.