Systems And Methods For Processing Images Of Slides To Automatically Prioritize The Processed Images Of Slides For Digital Pathology

GODRICH; Ran ; et al.

U.S. patent application number 16/887855 was filed with the patent office on 2020-05-29 and published on 2020-12-03 for systems and methods for processing images of slides to automatically prioritize the processed images of slides for digital pathology. This patent application is currently assigned to PAIGE.AI, Inc. The applicant listed for this patent is PAIGE.AI, Inc. Invention is credited to Thomas FUCHS, Ran GODRICH, Leo GRADY, Jillian SUE.

| Application Number | 16/887855 |
|---|---|
| Publication Number | 20200381122 |
| Family ID | 1000004898340 |
| Filed Date | 2020-05-29 |
| Publication Date | 2020-12-03 |


| United States Patent Application | 20200381122 |

| Kind Code | A1 |

| GODRICH; Ran ; et al. | December 3, 2020 |

SYSTEMS AND METHODS FOR PROCESSING IMAGES OF SLIDES TO AUTOMATICALLY PRIORITIZE THE PROCESSED IMAGES OF SLIDES FOR DIGITAL PATHOLOGY

Abstract

Systems and methods are disclosed for processing an electronic image corresponding to a specimen and automatically prioritizing processing of the electronic image. One method includes receiving a target electronic image of a slide corresponding to a target specimen, the target specimen including a tissue sample of a patient; computing, using a machine learning system, a prioritization value of the target electronic image, the machine learning system having been generated by processing a plurality of training images, each training image comprising an image of human tissue and a label characterizing at least one of a slide morphology, a diagnostic value, a pathologist review outcome, and/or an analytic difficulty; and outputting a sequence of digitized pathology images, wherein a placement of the target electronic image in the sequence is based on the prioritization value of the target electronic image.

| Inventors: | GODRICH; Ran (New York, NY); SUE; Jillian (New York, NY); GRADY; Leo (Darien, CT); FUCHS; Thomas (New York, NY) |
|---|---|
| Applicant: | PAIGE.AI, Inc. |
| Assignee: | PAIGE.AI, Inc. |
| Family ID: | 1000004898340 |
| Appl. No.: | 16/887855 |
| Filed: | May 29, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62855199 | May 31, 2019 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06K 2209/05 20130101; G06K 2209/07 20130101; G16B 40/20 20190201; G16H 30/40 20180101; G16H 40/20 20180101; G16H 50/20 20180101; G16H 70/60 20180101; G06K 9/6256 20130101; G16H 10/40 20180101; G16H 70/20 20180101 |

| International Class: | G16H 50/20 20060101 G16H050/20; G06K 9/62 20060101 G06K009/62; G16H 30/40 20060101 G16H030/40; G16H 10/40 20060101 G16H010/40; G16H 70/60 20060101 G16H070/60; G16H 70/20 20060101 G16H070/20; G16H 40/20 20060101 G16H040/20; G16B 40/20 20060101 G16B040/20 |

Claims

1. A computer-implemented method of processing an electronic image corresponding to a specimen and automatically prioritizing processing of the electronic image, the method comprising: receiving a target electronic image of a slide corresponding to a target specimen, the target specimen comprising a tissue sample of a patient; computing, using a machine learning system, a prioritization value of the target electronic image, the machine learning system having been generated by processing a plurality of training images, each training image comprising an image of human tissue and a label characterizing at least one of a slide morphology, a diagnostic value, a pathologist review outcome, and/or an analytic difficulty; and outputting a sequence of digitized pathology images, wherein a placement of the target electronic image in the sequence is based on the prioritization value of the target electronic image.

2. The computer-implemented method of claim 1, wherein the label comprises a preparation value corresponding to a likelihood that further preparation is to be performed for the target electronic image.

3. The computer-implemented method of claim 1, wherein the label comprises a preparation value corresponding to a likelihood that further preparation is to be performed for the target electronic image, and wherein the further preparation is performed for the target electronic image based on at least one of a specimen recut, an immunohistochemical stain, additional diagnostic testing, additional consultation, and/or a special stain.

4. The computer-implemented method of claim 1, wherein the label comprises a diagnostic feature of the target electronic image, the diagnostic feature comprising at least one of cancer presence, cancer grade, treatment effects, precancerous lesions, biomarkers for treatment selection, and/or presence of infectious organisms.

5. The computer-implemented method of claim 1, wherein the prioritization value of the target electronic image comprises a first prioritization value of the target electronic image for a first user and a second prioritization value of the target electronic image for a second user.

6. The computer-implemented method of claim 1, wherein the prioritization value of the target electronic image comprises a first prioritization value of the target image for a first user and a second prioritization value of the target electronic image for a second user, and wherein the first prioritization value is determined based on the first user's preferences and the second prioritization value is determined based on the second user's preferences.

7. The computer-implemented method of claim 1, wherein the label comprises an artifact label corresponding to at least one of scanning lines, missing tissue, and/or blur.

8. A system for processing an electronic image corresponding to a specimen and automatically prioritizing processing of the electronic image, the system comprising: at least one memory storing instructions; and at least one processor configured to execute the instructions to perform operations comprising: receiving a target electronic image of a slide corresponding to a target specimen, the target specimen comprising a tissue sample of a patient; computing, using a machine learning system, a prioritization value of the target electronic image, the machine learning system having been generated by processing a plurality of training images, each training image comprising an image of human tissue and a label characterizing at least one of a slide morphology, a diagnostic value, a pathologist review outcome, and/or an analytic difficulty; and outputting a sequence of digitized pathology images, wherein a placement of the target electronic image in the sequence is based on the prioritization value of the target electronic image.

9. The system of claim 8, wherein the label comprises a preparation value corresponding to a likelihood that further preparation is to be performed for the target electronic image.

10. The system of claim 8, wherein the label comprises a preparation value corresponding to a likelihood that further preparation is to be performed for the target electronic image, and wherein the further preparation is performed for the target electronic image based on at least one of a specimen recut, an immunohistochemical stain, additional diagnostic testing, additional consultation, and/or a special stain.

11. The system of claim 8, wherein the label comprises a diagnostic feature of the target electronic image, the diagnostic feature comprising at least one of cancer presence, cancer grade, treatment effects, precancerous lesions, biomarkers for treatment selection, and/or presence of infectious organisms.

12. The system of claim 8, wherein the prioritization value of the target electronic image comprises a first prioritization value of the target electronic image for a first user and a second prioritization value of the target electronic image for a second user.

13. The system of claim 8, wherein the prioritization value of the target electronic image comprises a first prioritization value of the target image for a first user and a second prioritization value of the target electronic image for a second user, and wherein the first prioritization value is determined based on the first user's preferences and the second prioritization value is determined based on the second user's preferences.

14. The system of claim 8, wherein the label comprises an artifact label corresponding to at least one of scanning lines, missing tissue, and/or blur.

15. A non-transitory computer-readable medium storing instructions that, when executed by at least one processor, cause the at least one processor to perform a method for processing an electronic image corresponding to a specimen and automatically prioritizing processing of the image, the method comprising: receiving a target electronic image of a slide corresponding to a target specimen, the target specimen comprising a tissue sample of a patient; computing, using a machine learning system, a prioritization value of the target electronic image, the machine learning system having been generated by processing a plurality of training images, each training image comprising an image of human tissue and a label characterizing at least one of a slide morphology, a diagnostic value, a pathologist review outcome, and/or an analytic difficulty; and outputting a sequence of digitized pathology images, wherein a placement of the target electronic image in the sequence is based on the prioritization value of the target electronic image.

16. The non-transitory computer-readable medium of claim 15, wherein the label comprises a preparation value corresponding to a likelihood that further preparation is to be performed for the target electronic image.

17. The non-transitory computer-readable medium of claim 15, wherein the label comprises a preparation value corresponding to a likelihood that further preparation is to be performed for the target electronic image, and wherein the further preparation is performed for the target electronic image based on at least one of a specimen recut, an immunohistochemical stain, additional diagnostic testing, additional consultation, and/or a special stain.

18. The non-transitory computer-readable medium of claim 15, wherein the label comprises a diagnostic feature of the target electronic image, the diagnostic feature comprising at least one of cancer presence, cancer grade, treatment effects, precancerous lesions, biomarkers for treatment selection, and/or presence of infectious organisms.

19. The non-transitory computer-readable medium of claim 15, wherein the prioritization value of the target electronic image comprises a first prioritization value of the target electronic image for a first user and a second prioritization value of the target electronic image for a second user.

20. The non-transitory computer-readable medium of claim 15, wherein the prioritization value of the target electronic image comprises a first prioritization value of the target image for a first user and a second prioritization value of the target electronic image for a second user, and wherein the first prioritization value is determined based on the first user's preferences and the second prioritization value is determined based on the second user's preferences.

Description

RELATED APPLICATION(S)

[0001] This application claims priority to U.S. Provisional Application No. 62/855,199 filed May 31, 2019, the entire disclosure of which is hereby incorporated herein by reference in its entirety.

FIELD OF THE DISCLOSURE

[0002] Various embodiments of the present disclosure pertain generally to image-based slide prioritization, streamlining a digital pathology workflow, and related image processing methods. More specifically, particular embodiments of the present disclosure relate to systems and methods for providing an automatic prioritization process for preparing, processing, and reviewing images of slides of tissue specimens.

BACKGROUND

[0003] There is no standardized or efficient way to prioritize the review of images of tissue specimens for pathology patient cases. By extension, there is no standardized process for reviewing pathology slides. In some academic institutions, pathology trainees may perform a preliminary review of patient cases, triaging and prioritizing cases with significant findings and/or which require additional diagnostic workup (e.g., immunohistochemical stains, recuts, molecular studies, special stains, intradepartmental consultation). Meanwhile, patient diagnosis may involve using digitized pathology slides for a primary diagnosis. A desire exists for a way to expedite or streamline the slide preparation process. A desire further exists for a way to ensure that pathology slides have sufficient information to render a diagnosis, by the time the slides are reviewed by a pathologist.

SUMMARY

[0004] According to certain aspects of the present disclosure, systems and methods are disclosed for processing an image corresponding to a specimen and automatically prioritizing processing of the slide.

[0005] A computer-implemented method of processing an electronic image corresponding to a specimen and automatically prioritizing processing of the electronic image includes: receiving a target electronic image of a slide corresponding to a target specimen, the target specimen including a tissue sample of a patient; computing, using a machine learning system, a prioritization value of the target electronic image, the machine learning system having been generated by processing a plurality of training images, each training image including an image of human tissue and a label characterizing at least one of a slide morphology, a diagnostic value, a pathologist review outcome, and/or an analytic difficulty; and outputting a sequence of digitized pathology images, wherein a placement of the target electronic image in the sequence is based on the prioritization value of the target electronic image.
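The three steps of this method (receive a target image, compute a prioritization value, output an ordered sequence) can be sketched roughly as follows. This is a minimal illustration, not the disclosed implementation: `SlideImage`, `prioritize`, and `score_fn` are hypothetical names, and `score_fn` merely stands in for the trained machine learning system, which is assumed here to return a larger value for a more urgent slide.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class SlideImage:
    slide_id: str          # hypothetical identifier for a digitized slide
    pixels: object = None  # stand-in for the actual image data


def prioritize(
    target: SlideImage,
    queue: Iterable[SlideImage],
    score_fn: Callable[[SlideImage], float],
) -> List[SlideImage]:
    """Return the queued images plus the target, highest prioritization value first.

    score_fn is a placeholder for the trained machine learning system.
    """
    images = list(queue) + [target]
    # sorted() is stable, so images with equal scores keep their arrival order.
    return sorted(images, key=score_fn, reverse=True)


# Illustrative scores only; a real system would compute them from the image.
example_scores = {"a": 0.2, "b": 0.9, "c": 0.5}
sequence = prioritize(
    SlideImage("b"),
    [SlideImage("a"), SlideImage("c")],
    lambda s: example_scores[s.slide_id],
)
print([s.slide_id for s in sequence])
```

The placement of the target image in the returned list depends only on its score relative to the other images, which mirrors the final step of the method.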

[0006] A system for processing an electronic image corresponding to a specimen and automatically prioritizing processing of the electronic image includes: at least one memory storing instructions; and at least one processor configured to execute the instructions to perform operations including: receiving a target electronic image of a slide corresponding to a target specimen, the target specimen including a tissue sample of a patient; computing, using a machine learning system, a prioritization value of the target electronic image, the machine learning system having been generated by processing a plurality of training images, each training image including an image of human tissue and a label characterizing at least one of a slide morphology, a diagnostic value, a pathologist review outcome, and/or an analytic difficulty; and outputting a sequence of digitized pathology images, wherein a placement of the target electronic image in the sequence is based on the prioritization value of the target electronic image.

[0007] A non-transitory computer-readable medium storing instructions that, when executed by at least one processor, cause the at least one processor to perform a method for processing an electronic image corresponding to a specimen and automatically prioritizing processing of the image, the method including: receiving a target electronic image of a slide corresponding to a target specimen, the target specimen including a tissue sample of a patient; computing, using a machine learning system, a prioritization value of the target electronic image, the machine learning system having been generated by processing a plurality of training images, each training image including an image of human tissue and a label characterizing at least one of a slide morphology, a diagnostic value, a pathologist review outcome, and/or an analytic difficulty; and outputting a sequence of digitized pathology images, wherein a placement of the target electronic image in the sequence is based on the prioritization value of the target electronic image.

[0008] It is to be understood that both the foregoing general description and the following detailed description are exemplary and explanatory only and are not restrictive of the disclosed embodiments, as claimed.

BRIEF DESCRIPTION OF THE DRAWINGS

[0009] The accompanying drawings, which are incorporated in and constitute a part of this specification, illustrate various exemplary embodiments and together with the description, serve to explain the principles of the disclosed embodiments.

[0010] FIG. 1A is an exemplary block diagram of a system and network for providing an automatic prioritization process for preparing, processing, and reviewing images of slides of tissue specimens, according to an exemplary embodiment of the present disclosure.

[0011] FIG. 1B is an exemplary block diagram of a disease detection platform 100, according to an exemplary embodiment of the present disclosure.

[0012] FIG. 1C is an exemplary block diagram of a slide prioritization tool 101, according to an exemplary embodiment of the present disclosure.

[0013] FIG. 1D is a diagram of an exemplary system for an automatic prioritization process for pathology slide preparation, processing, and review, according to an exemplary embodiment of the present disclosure.

[0014] FIG. 2 is a flowchart of an exemplary method for analyzing an image of a slide corresponding to a specimen and providing automatically prioritized processing of the slide, using machine learning, according to an exemplary embodiment of the present disclosure.

[0015] FIG. 3 is a flowchart of an exemplary embodiment for automatically prioritizing pathology slide preparation, processing, and review, according to an exemplary embodiment of the present disclosure.

[0016] FIG. 4 is a flowchart of an exemplary embodiment of generating and using a quality control-based pathology slide preparation prioritization tool, according to an exemplary embodiment of the present disclosure.

[0017] FIG. 5 is a flowchart of an exemplary embodiment of generating and using a pathology slide preparation prioritization tool, with respect to quality control, according to an exemplary embodiment of the present disclosure.

[0018] FIG. 6 is a flowchart of an exemplary embodiment of generating and using a diagnostic feature prioritization tool, according to an exemplary embodiment of the present disclosure.

[0019] FIG. 7 is a flowchart of an exemplary embodiment of generating and using a pathology slide processing prioritization tool, according to an exemplary embodiment of the present disclosure.

[0020] FIG. 8 is a flowchart of an exemplary embodiment of generating and using a pathology slide review and assignment prioritization tool, according to an exemplary embodiment of the present disclosure.

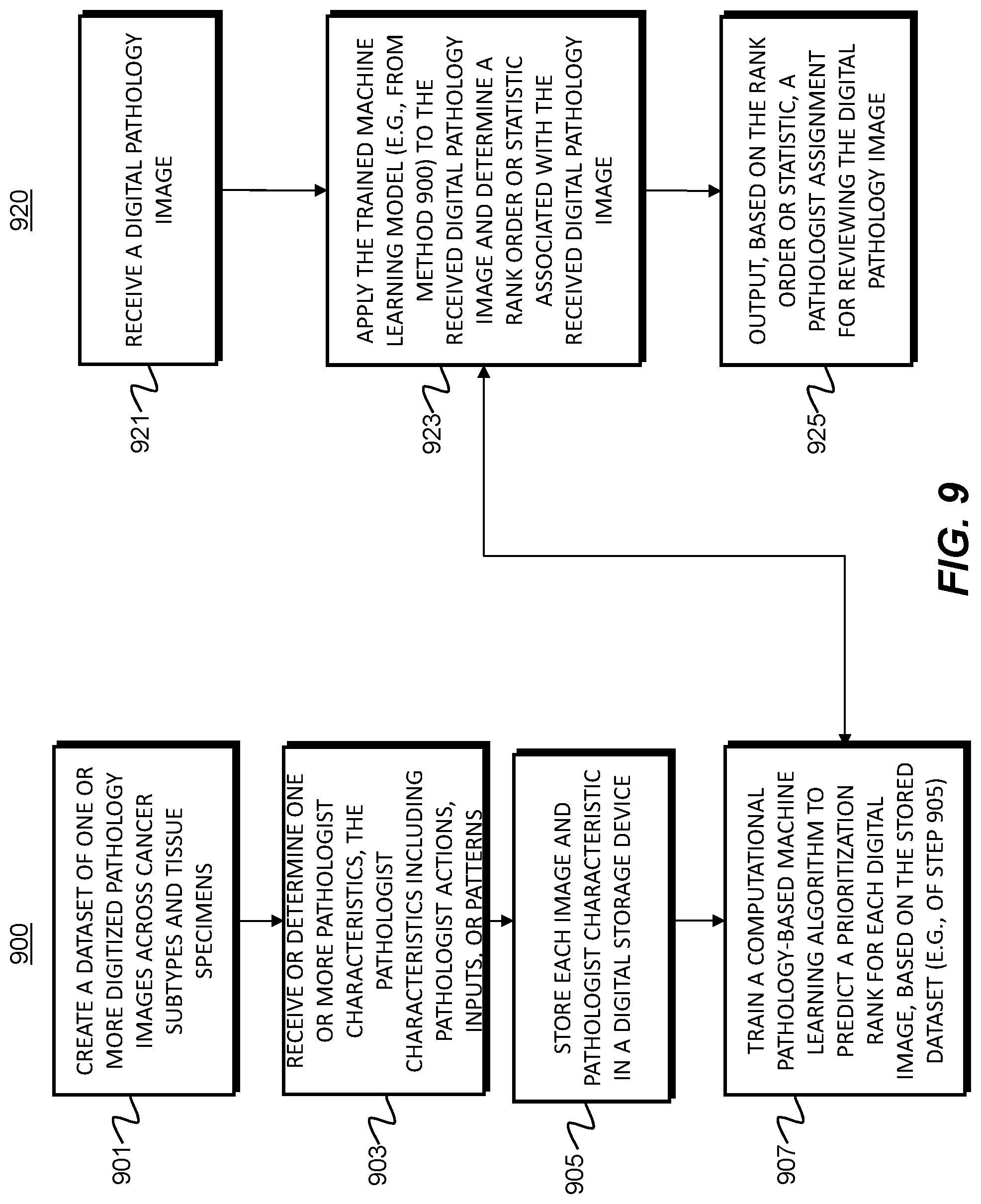

[0021] FIG. 9 is a flowchart of an exemplary embodiment of generating and using a personalized pathology slide prioritization tool, according to an exemplary embodiment of the present disclosure.

[0022] FIG. 10 is a flowchart of an exemplary embodiment of generating and using an educational pathology slide prioritization tool, according to an exemplary embodiment of the present disclosure.

[0023] FIG. 11 depicts an example system that may execute techniques presented herein.

DESCRIPTION OF THE EMBODIMENTS

[0024] Reference will now be made in detail to the exemplary embodiments of the present disclosure, examples of which are illustrated in the accompanying drawings. Wherever possible, the same reference numbers will be used throughout the drawings to refer to the same or like parts.

[0025] The systems, devices, and methods disclosed herein are described in detail by way of examples and with reference to the figures. The examples discussed herein are examples only and are provided to assist in the explanation of the apparatuses, devices, systems, and methods described herein. None of the features or components shown in the drawings or discussed below should be taken as mandatory for any specific implementation of any of these devices, systems, or methods unless specifically designated as mandatory.

[0026] Also, for any methods described, regardless of whether the method is described in conjunction with a flow diagram, it should be understood that, unless otherwise specified or required by context, any explicit or implicit ordering of steps performed in the execution of a method does not imply that those steps must be performed in the order presented but instead may be performed in a different order or in parallel.

[0027] As used herein, the term "exemplary" is used in the sense of "example," rather than "ideal." Moreover, the terms "a" and "an" herein do not denote a limitation of quantity, but rather denote the presence of one or more of the referenced items.

[0028] Pathology refers to the study of diseases. More specifically, pathology refers to performing tests and analysis that are used to diagnose diseases. For example, tissue samples may be placed onto slides to be viewed under a microscope by a pathologist (e.g., a physician who is an expert at analyzing tissue samples to determine whether any abnormalities exist). That is, pathology specimens may be cut into multiple sections, stained, and prepared as slides for a pathologist to examine and render a diagnosis. When uncertain of a diagnostic finding on a slide, a pathologist may order additional cut levels, stains, or other tests to gather more information from the tissue. Technician(s) may then create new slide(s) which may contain the additional information for the pathologist to use in making a diagnosis. This process of creating additional slides may be time-consuming, not only because it may involve retrieving the block of tissue, cutting it to make a new slide, and then staining the slide, but also because it may be batched for multiple orders. This may significantly delay the final diagnosis that the pathologist renders. In addition, even after the delay, there may still be no assurance that the new slide(s) will have information sufficient to render a diagnosis.

[0029] Pathologists may evaluate cancer and other disease pathology slides in isolation. A consolidated workflow may improve diagnosis of cancer and other diseases. The workflow may integrate, for example, slide evaluation, tasks, image analysis and cancer detection artificial intelligence (AI), annotations, consultations, and recommendations in one workstation. In particular, exemplary user interfaces may be available in the workflow, as well as AI tools that may be integrated into the workflow to expedite and improve a pathologist's work.

[0030] For example, computers may be used to analyze an image of a tissue sample to quickly identify whether additional information may be needed about a particular tissue sample, and/or to highlight to a pathologist an area in which he or she should look more closely. Thus, the process of obtaining additional stained slides and tests may be done automatically before being reviewed by a pathologist. When paired with automatic slide segmenting and staining machines, this may provide a fully automated slide preparation pipeline.

[0031] The process of using computers to assist pathologists is known as computational pathology. Computing methods used for computational pathology may include, but are not limited to, statistical analysis, autonomous or machine learning, and AI. AI may include, but is not limited to, deep learning, neural networks, classifications, clustering, and regression algorithms. By using computational pathology, lives may be saved by helping pathologists improve their diagnostic accuracy, reliability, efficiency, and accessibility. For example, computational pathology may be used to assist with detecting slides suspicious for cancer, thereby allowing pathologists to check and confirm their initial assessments before rendering a final diagnosis.

[0032] Histopathology refers to the study of a specimen that has been placed onto a slide. For example, a digital pathology image may comprise a digitized image of a microscope slide containing the specimen (e.g., a smear). One method a pathologist may use to analyze an image on a slide is to identify nuclei and classify whether a nucleus is normal (e.g., benign) or abnormal (e.g., malignant). To assist pathologists in identifying and classifying nuclei, histological stains may be used to make cells visible. Many dye-based staining systems have been developed, including periodic acid-Schiff reaction, Masson's trichrome, Nissl and methylene blue, and Hematoxylin and Eosin (H&E). For medical diagnosis, H&E is a widely used dye-based method, with hematoxylin staining cell nuclei blue, eosin staining cytoplasm and extracellular matrix pink, and other tissue regions taking on variations of these colors. In many cases, however, H&E-stained histologic preparations do not provide sufficient information for a pathologist to visually identify biomarkers that can aid diagnosis or guide treatment. In this situation, techniques such as immunohistochemistry (IHC), immunofluorescence, in situ hybridization (ISH), or fluorescence in situ hybridization (FISH) may be used. IHC and immunofluorescence involve, for example, using antibodies that bind to specific antigens in tissues, enabling the visual detection of cells expressing specific proteins of interest, which can reveal biomarkers that are not reliably identifiable to trained pathologists based on the analysis of H&E-stained slides. ISH and FISH may be employed to assess the number of copies of genes or the abundance of specific RNA molecules, depending on the type of probes employed (e.g., DNA probes for gene copy number and RNA probes for the assessment of RNA expression). If these methods also fail to provide sufficient information to detect some biomarkers, genetic testing of the tissue may be used to confirm if a biomarker is present (e.g., overexpression of a specific protein or gene product in a tumor, amplification of a given gene in a cancer).

[0033] A digitized image may be prepared to show a stained microscope slide, which may allow a pathologist to manually view the image on a slide and estimate a number of stained abnormal cells in the image. However, this process may be time consuming and may lead to errors in identifying abnormalities because some abnormalities are difficult to detect. Computational processes and devices may be used to assist pathologists in detecting abnormalities that may otherwise be difficult to detect. For example, AI may be used to predict biomarkers (such as the over-expression of a protein and/or gene product, amplification, or mutations of specific genes) from salient regions within digital images of tissues stained using H&E and other dye-based methods. The images of the tissues could be whole slide images (WSI), images of tissue cores within microarrays or selected areas of interest within a tissue section. Using staining methods like H&E, these biomarkers may be difficult for humans to visually detect or quantify without the aid of additional testing. Using AI to infer these biomarkers from digital images of tissues has the potential to improve patient care, while also being faster and less expensive.

[0034] The detected biomarkers or the image alone could then be used to recommend specific cancer drugs or drug combination therapies to be used to treat a patient, and the AI could identify which drugs or drug combinations are unlikely to be successful by correlating the detected biomarkers with a database of treatment options. This can be used to facilitate the automatic recommendation of immunotherapy drugs to target a patient's specific cancer. Further, this could be used for enabling personalized cancer treatment for specific subsets of patients and/or rarer cancer types.

[0035] In the field of pathology, it may be difficult to provide systematic quality control ("QC"), with respect to pathology specimen preparation, and quality assurance ("QA"), with respect to the quality of diagnoses, throughout the histopathology workflow. Systematic quality assurance is difficult because it is resource- and time-intensive, as it may require duplicative efforts by two pathologists. Some methods for quality assurance include (1) second review of first-time diagnosis cancer cases; (2) periodic reviews of discordant or changed diagnoses by a quality assurance committee; and/or (3) random review of a subset of cases. These are non-exhaustive, mostly retrospective, and manual. With an automated and systematic QC and QA mechanism, quality can be ensured throughout the workflow for every case. Laboratory quality control and digital pathology quality control are critical to the successful intake, processing, diagnosis, and archiving of patient specimens. Manual and sampled approaches to QC and QA confer substantial benefits. Systematic QC and QA have the potential to provide efficiencies and improve diagnostic quality.

[0036] As described above, example embodiments described herein provide an integrated platform allowing a fully automated process including data ingestion, processing and viewing of digital pathology images via a web-browser or other user interface, while integrating with a laboratory information system (LIS). Further, clinical information may be aggregated using cloud-based data analysis of patient data. The data may come from hospitals, clinics, field researchers, etc., and may be analyzed by machine learning, computer vision, natural language processing, and/or statistical algorithms to do real-time monitoring and forecasting of health patterns at multiple geographic specificity levels.

[0037] Previously, there was no way of prioritizing the production or analysis of pathology slides. Accordingly, example embodiments described herein automatically prioritize slide preparation, processing, and review, in order to streamline and speed digitized pathology image-based diagnoses.

[0038] This automation has, at least, the benefits of (1) minimizing the amount of time wasted by a pathologist determining a slide to be insufficient to make a diagnosis, (2) minimizing the time (e.g., average total time) from specimen acquisition to diagnosis by avoiding the additional time between when additional tests are ordered and when they are produced, (3) allowing higher volumes of slides to be processed or reviewed by a pathologist in a shorter amount of time, (4) contributing to more informed/precise diagnoses by reducing the overhead of requesting additional testing for a pathologist, (5) identifying or verifying correct properties (e.g., pertaining to a specimen type) of a digital pathology image, and/or (6) training pathologists, etc. The present disclosure applies automated detection, prioritization, and triage of all pathology cases to a clinical digital workflow involving digitized pathology slides, such that pathology slide analysis may be prioritized before diagnostic review by a pathologist. For example, the disclosed embodiments may provide case-level prioritization, and prioritize slides with significant findings within each case. These prioritization embodiments may make digital review of pathology slides more efficient in various settings (e.g., academic, commercial lab, hospital, etc.).
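As an illustration of case-level prioritization combined with within-case slide prioritization, the following sketch orders a worklist on two levels. It is an assumption-laden example rather than the disclosed method: the function name `order_worklist`, the tuple layout, and the rule that a case is as urgent as its highest-scoring slide are all hypothetical choices made for this sketch.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# (case_id, slide_id, prioritization value); all values here are illustrative.
ScoredSlide = Tuple[str, str, float]


def order_worklist(slides: List[ScoredSlide]) -> List[Tuple[str, List[str]]]:
    """Order cases by urgency, and slides within each case by their score."""
    by_case: Dict[str, List[ScoredSlide]] = defaultdict(list)
    for slide in slides:
        by_case[slide[0]].append(slide)

    ordered = []
    for case_id, members in by_case.items():
        # Within a case, slides with the most significant findings come first.
        members.sort(key=lambda s: s[2], reverse=True)
        case_score = members[0][2]  # assumption: case urgency = top slide score
        ordered.append((case_score, case_id, [s[1] for s in members]))

    # Across cases, the most urgent case comes first.
    ordered.sort(key=lambda c: c[0], reverse=True)
    return [(case_id, slide_ids) for _, case_id, slide_ids in ordered]


worklist = order_worklist([
    ("case-1", "s1", 0.1),
    ("case-2", "s2", 0.8),
    ("case-1", "s3", 0.4),
    ("case-2", "s4", 0.3),
])
print(worklist)
```

Other aggregation rules (for example, averaging slide scores per case) would fit the same structure; the maximum is used here only because it keeps a single significant slide from being hidden by many unremarkable ones.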

[0039] Exemplary global outputs of the disclosed embodiments may contain information or slide parameter(s) about an entire image or slide, e.g., the depicted specimen type, the overall quality of the cut of the specimen of the slide, the overall quality of the glass pathology slide itself, or tissue morphology characteristics. Exemplary local outputs may indicate information in specific regions of an image or slide, e.g., a particular slide region may be labeled as blurred or containing an irrelevant specimen. The present disclosure includes embodiments for both developing and using the disclosed automatic prioritization process for slide preparation, processing, and review, as described in further detail below.
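One way to picture the distinction between global and local outputs is as a small record type, sketched below. The class names, field names, the pixel-rectangle region format, and the 0.0 to 1.0 quality scale are assumptions introduced for illustration; the disclosure does not prescribe a particular data layout.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class LocalOutput:
    region: Tuple[int, int, int, int]  # (x, y, width, height) in pixels, assumed format
    label: str                         # e.g. "blur" or "irrelevant specimen"


@dataclass
class SlideOutputs:
    specimen_type: str                 # global: depicted specimen type
    cut_quality: float                 # global: 0.0 (poor) to 1.0 (good), assumed scale
    local: List[LocalOutput] = field(default_factory=list)

    def flagged_fraction(self, slide_area: int) -> float:
        """Fraction of the slide area covered by locally flagged regions.

        Overlapping regions are counted twice in this simple sketch,
        so the result is capped at 1.0.
        """
        flagged = sum(r.region[2] * r.region[3] for r in self.local)
        return min(1.0, flagged / slide_area)


outputs = SlideOutputs(
    specimen_type="biopsy",  # placeholder value
    cut_quality=0.9,
    local=[
        LocalOutput((0, 0, 10, 10), "blur"),
        LocalOutput((20, 20, 10, 5), "blur"),
    ],
)
print(outputs.flagged_fraction(slide_area=1000))
```

A summary such as `flagged_fraction` shows how local outputs could feed back into a slide-level decision, for instance whether a rescan is worth requesting.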

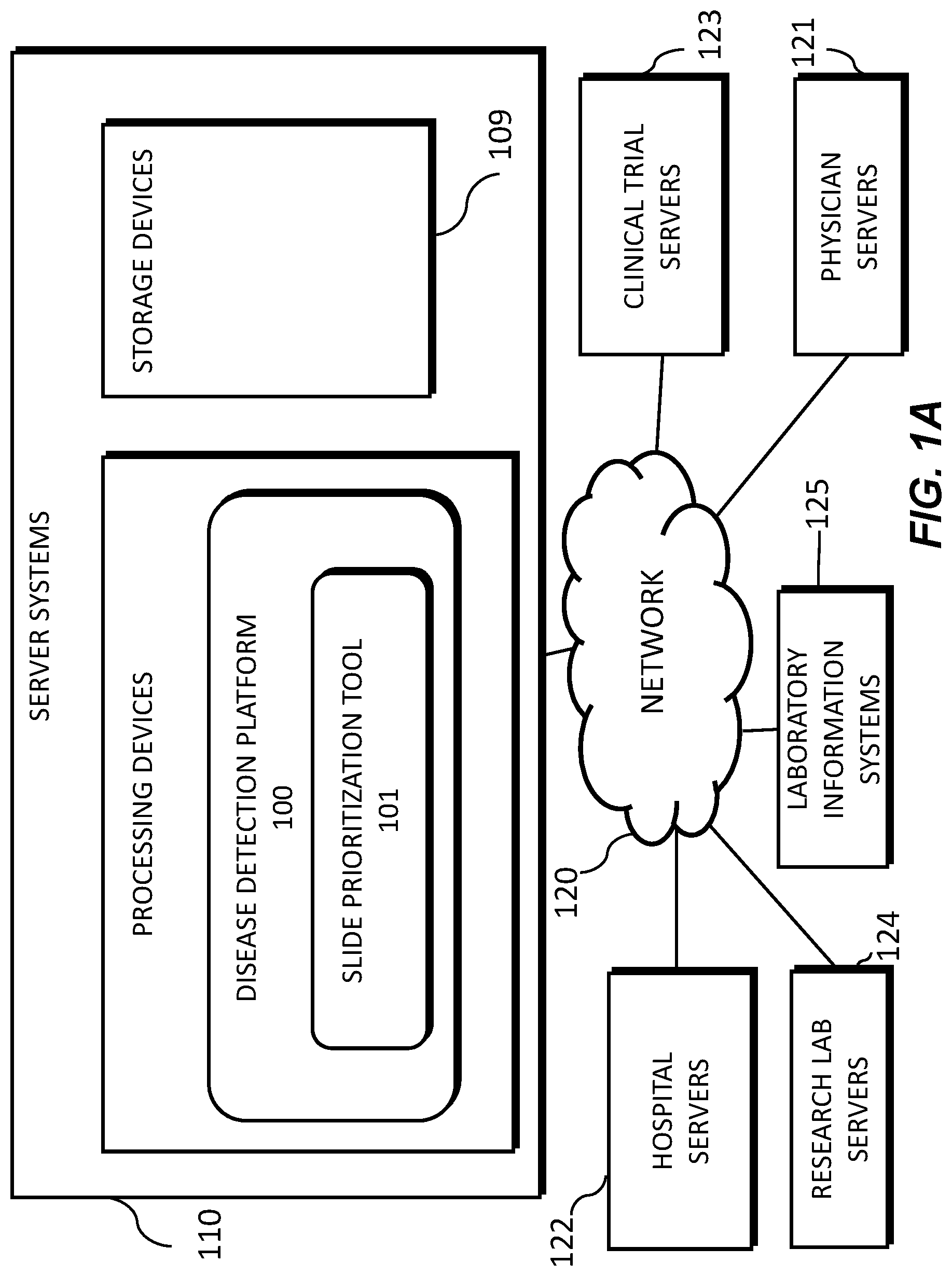

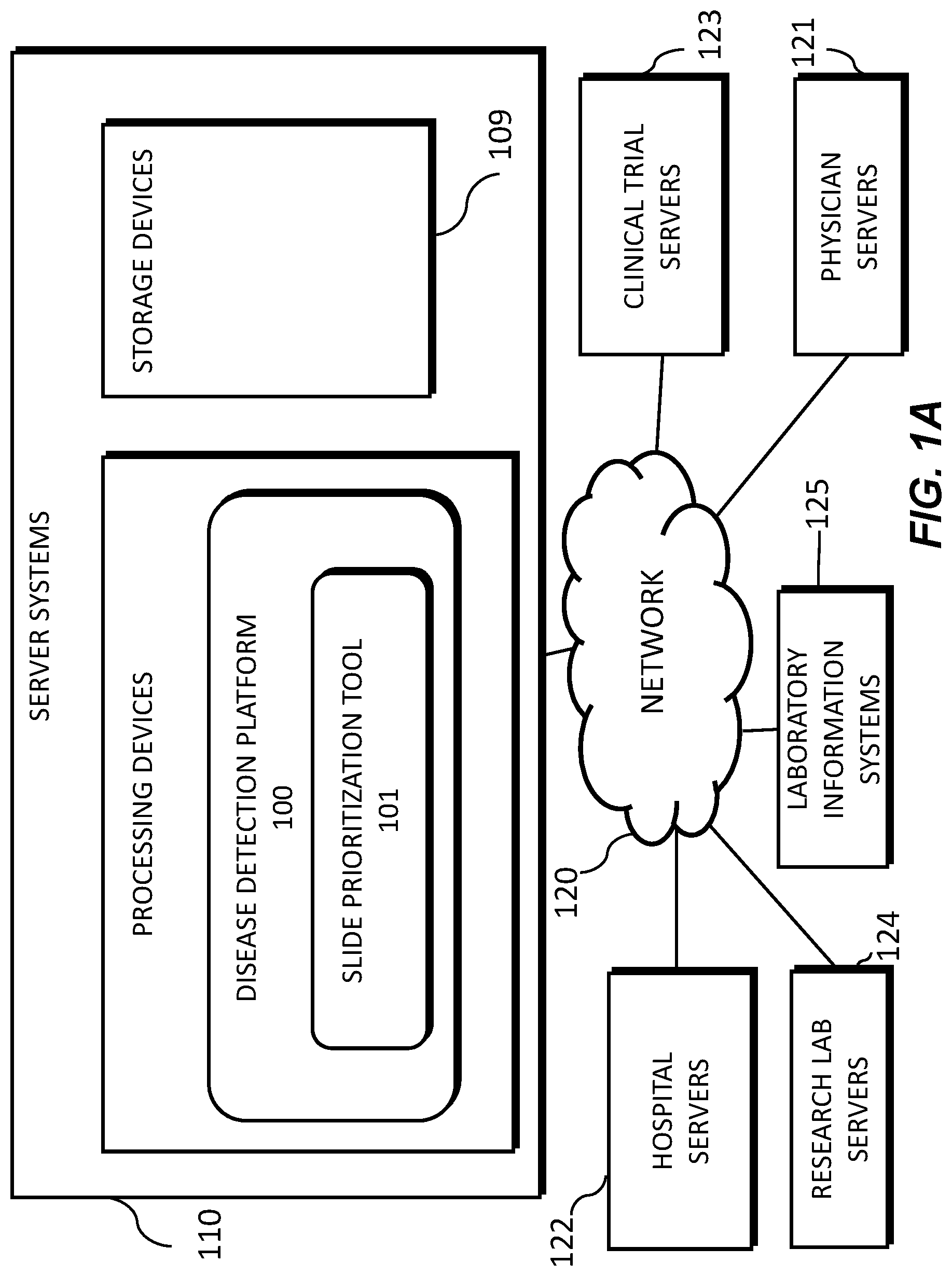

[0040] FIG. 1A illustrates a block diagram of a system and network for providing an automatic prioritization process for preparing, processing, and reviewing images of slides of tissue specimens, using machine learning, according to an exemplary embodiment of the present disclosure.

[0041] Specifically, FIG. 1A illustrates an electronic network 120 that may be connected to servers at hospitals, laboratories, and/or doctors' offices, etc. For example, physician servers 121, hospital servers 122, clinical trial servers 123, research lab servers 124, and/or laboratory information systems 125, etc., may each be connected to an electronic network 120, such as the Internet, through one or more computers, servers, and/or handheld mobile devices. According to an exemplary embodiment of the present application, the electronic network 120 may also be connected to server systems 110, which may include processing devices that are configured to implement a disease detection platform 100, which includes a slide prioritization tool 101 for providing an automatic prioritization process for preparing, processing, and reviewing images of slides of tissue specimens, according to an exemplary embodiment of the present disclosure.

[0042] The physician servers 121, hospital servers 122, clinical trial servers 123, research lab servers 124, and/or laboratory information systems 125 may create or otherwise obtain images of one or more patients' cytology specimen(s), histopathology specimen(s), slide(s) of the cytology specimen(s), digitized images of the slide(s) of the histopathology specimen(s), or any combination thereof. The physician servers 121, hospital servers 122, clinical trial servers 123, research lab servers 124, and/or laboratory information systems 125 may also obtain any combination of patient-specific information, such as age, medical history, cancer treatment history, family history, past biopsy or cytology information, etc. The physician servers 121, hospital servers 122, clinical trial servers 123, research lab servers 124, and/or laboratory information systems 125 may transmit digitized slide images and/or patient-specific information to server systems 110 over the electronic network 120. Server system(s) 110 may include one or more storage devices 109 for storing images and data received from at least one of the physician servers 121, hospital servers 122, clinical trial servers 123, research lab servers 124, and/or laboratory information systems (LIS) 125. Server systems 110 may also include processing devices for processing images and data stored in the storage devices 109. Server systems 110 may further include one or more machine learning tool(s) or capabilities. For example, the processing devices may include a machine learning tool for a disease detection platform 100, according to one embodiment. Alternatively or in addition, the present disclosure (or portions of the system and methods of the present disclosure) may be performed on a local processing device (e.g., a laptop).

[0043] The physician servers 121, hospital servers 122, clinical trial servers 123, research lab servers 124, and/or LIS 125 refer to systems used by pathologists for reviewing the images of the slides. In hospital settings, tissue type information may be stored in a LIS 125. According to an exemplary embodiment of the present disclosure, slides may be automatically prioritized without needing to access the LIS 125. For example, a third party may be given anonymized access to the image content without the corresponding specimen type label stored in the LIS. Additionally, access to LIS content may be limited due to its sensitive content.

[0044] FIG. 1B illustrates an exemplary block diagram of a disease detection platform 100 for providing an automatic prioritization process for preparing, processing, and reviewing images of slides of tissue specimens, using machine learning.

[0045] Specifically, FIG. 1B depicts components of the disease detection platform 100, according to one embodiment. For example, the disease detection platform 100 may include a slide prioritization tool 101, a data ingestion tool 102, a slide intake tool 103, a slide scanner 104, a slide manager 105, a storage 106, and a viewing application tool 108.

[0046] The slide prioritization tool 101, as described below, refers to a process and system for providing an automatic prioritization process for preparing, processing, and reviewing images of slides of tissue specimens, according to an exemplary embodiment.

[0047] The data ingestion tool 102 refers to a process and system for facilitating a transfer of the digital pathology images to the various tools, modules, components, and devices that are used for classifying and processing the digital pathology images, according to an exemplary embodiment.

[0048] The slide intake tool 103 refers to a process and system for scanning pathology images and converting them into a digital form, according to an exemplary embodiment. The slides may be scanned with slide scanner 104, and the slide manager 105 may process the images on the slides into digitized pathology images and store the digitized images in storage 106.

[0049] The viewing application tool 108 refers to a process and system for providing a user (e.g., pathologist) with specimen property or image property information pertaining to digital pathology image(s), according to an exemplary embodiment. The information may be provided through various output interfaces (e.g., a screen, a monitor, a storage device, and/or a web browser, etc.).

[0050] The slide prioritization tool 101, and each of its components, may transmit and/or receive digitized slide images and/or patient information to server systems 110, physician servers 121, hospital servers 122, clinical trial servers 123, research lab servers 124, and/or laboratory information systems 125 over a network 120. Further, server systems 110 may include storage devices for storing images and data received from at least one of the slide prioritization tool 101, the data ingestion tool 102, the slide intake tool 103, the slide scanner 104, the slide manager 105, and viewing application tool 108. Server systems 110 may also include processing devices for processing images and data stored in the storage devices. Server systems 110 may further include one or more machine learning tool(s) or capabilities, e.g., due to the processing devices. Alternatively or in addition, the present disclosure (or portions of the system and methods of the present disclosure) may be performed on a local processing device (e.g., a laptop).

[0051] Any of the above devices, tools, and modules may be located on a device that may be connected to an electronic network 120, such as the Internet or a cloud service provider, through one or more computers, servers, and/or handheld mobile devices.

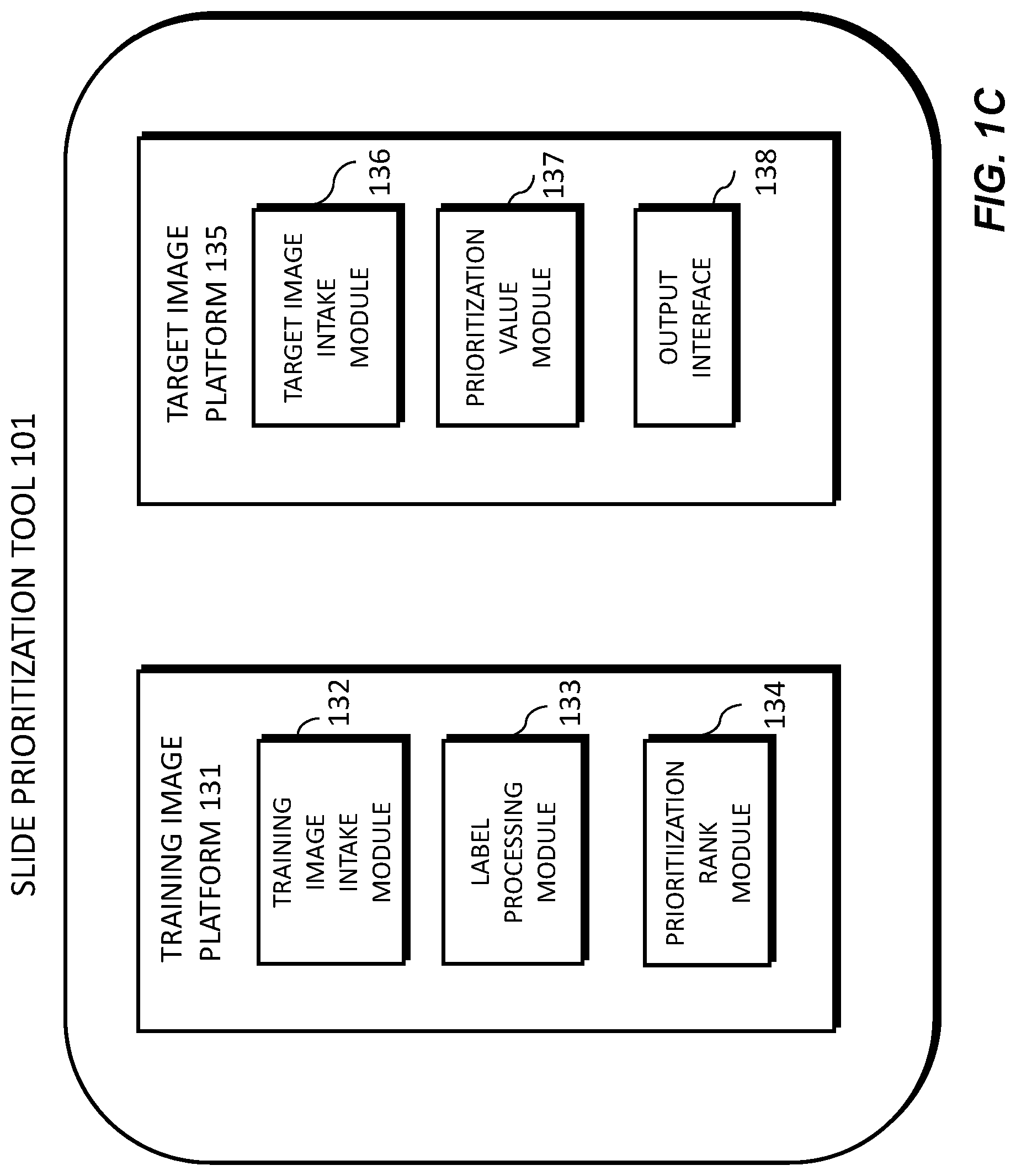

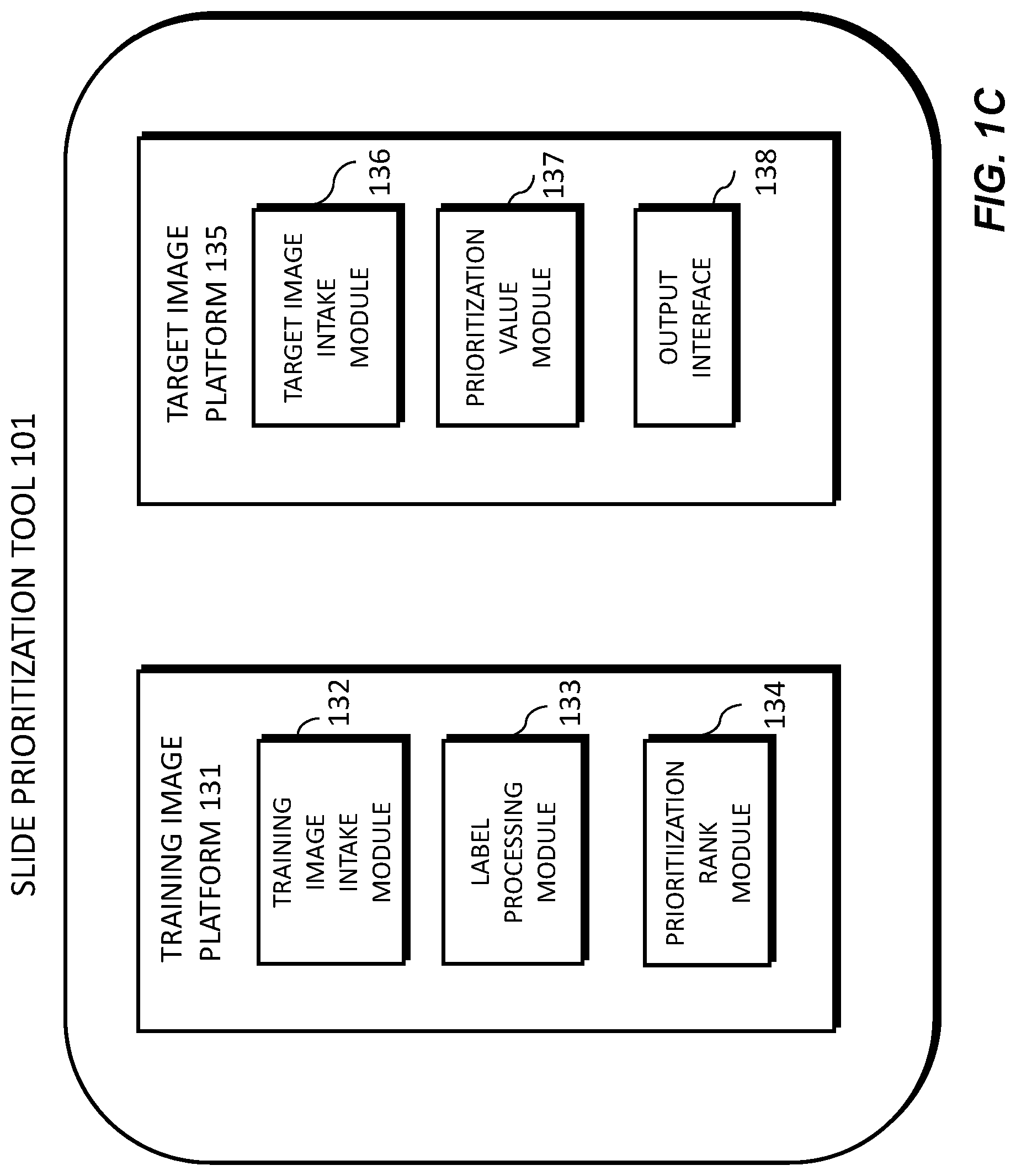

[0052] FIG. 1C illustrates an exemplary block diagram of a slide prioritization tool 101, according to an exemplary embodiment of the present disclosure. The slide prioritization tool 101 may include a training image platform 131 and/or a target image platform 135.

[0053] The training image platform 131 may include a training image intake module 132, a label processing module 133, and/or a prioritization rank module 134.

[0054] The training image platform 131 may create or receive training images that are used to train a machine learning model and/or system to effectively analyze and classify digital pathology images. For example, the training images may be received from any one or any combination of the server systems 110, physician servers 121, hospital servers 122, clinical trial servers 123, research lab servers 124, and/or laboratory information systems 125. Images used for training may come from real sources (e.g., humans, animals, etc.) or may come from synthetic sources (e.g., graphics rendering engines, 3D models, etc.). Examples of digital pathology images may include (a) digitized slides stained with a variety of stains, such as (but not limited to) H&E, Hematoxylin alone, IHC, molecular pathology, etc.; and/or (b) digitized tissue samples from a 3D imaging device, such as microCT.

[0055] The training image intake module 132 may create or receive a dataset comprising one or more training images corresponding to images of a human tissue and/or images that are graphically rendered. For example, the training images may be received from any one or any combination of the server systems 110, physician servers 121, hospital servers 122, clinical trial servers 123, research lab servers 124, and/or laboratory information systems 125. This dataset may be kept on a digital storage device. The label processing module 133 may, for each training image, determine a label characterizing at least one of a slide morphology, a diagnostic value, a pathologist review outcome, and/or an analytic difficulty. The prioritization rank module 134 may process images of tissues and determine a predicted prioritization rank for each training image.

[0056] According to one embodiment, the target image platform 135 may include a target image intake module 136, a prioritization value module 137, and an output interface 138. The target image platform 135 may receive a target image and apply the machine learning model to the target image to compute a prioritization value for the target image. For example, the target image may be received from any one or any combination of the server systems 110, physician servers 121, hospital servers 122, clinical trial servers 123, research lab servers 124, and/or laboratory information systems 125. The target image intake module 136 may receive a target image corresponding to a target specimen. The prioritization value module 137 may apply the machine learning model to the target image to compute a prioritization value for the target image.

[0057] The output interface 138 may be used to output information about the target image and the target specimen (e.g., to a screen, monitor, storage device, web browser, etc.).

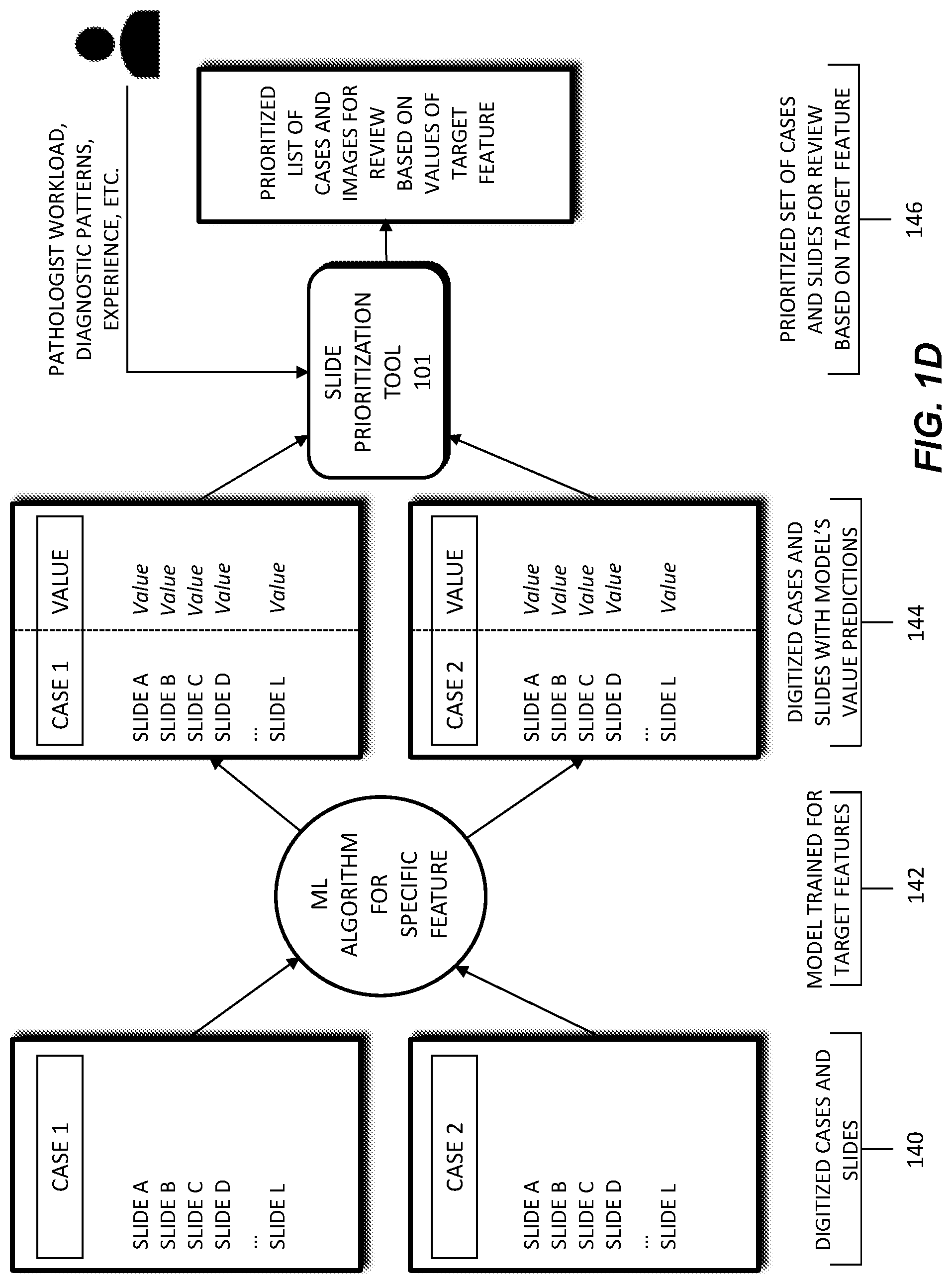

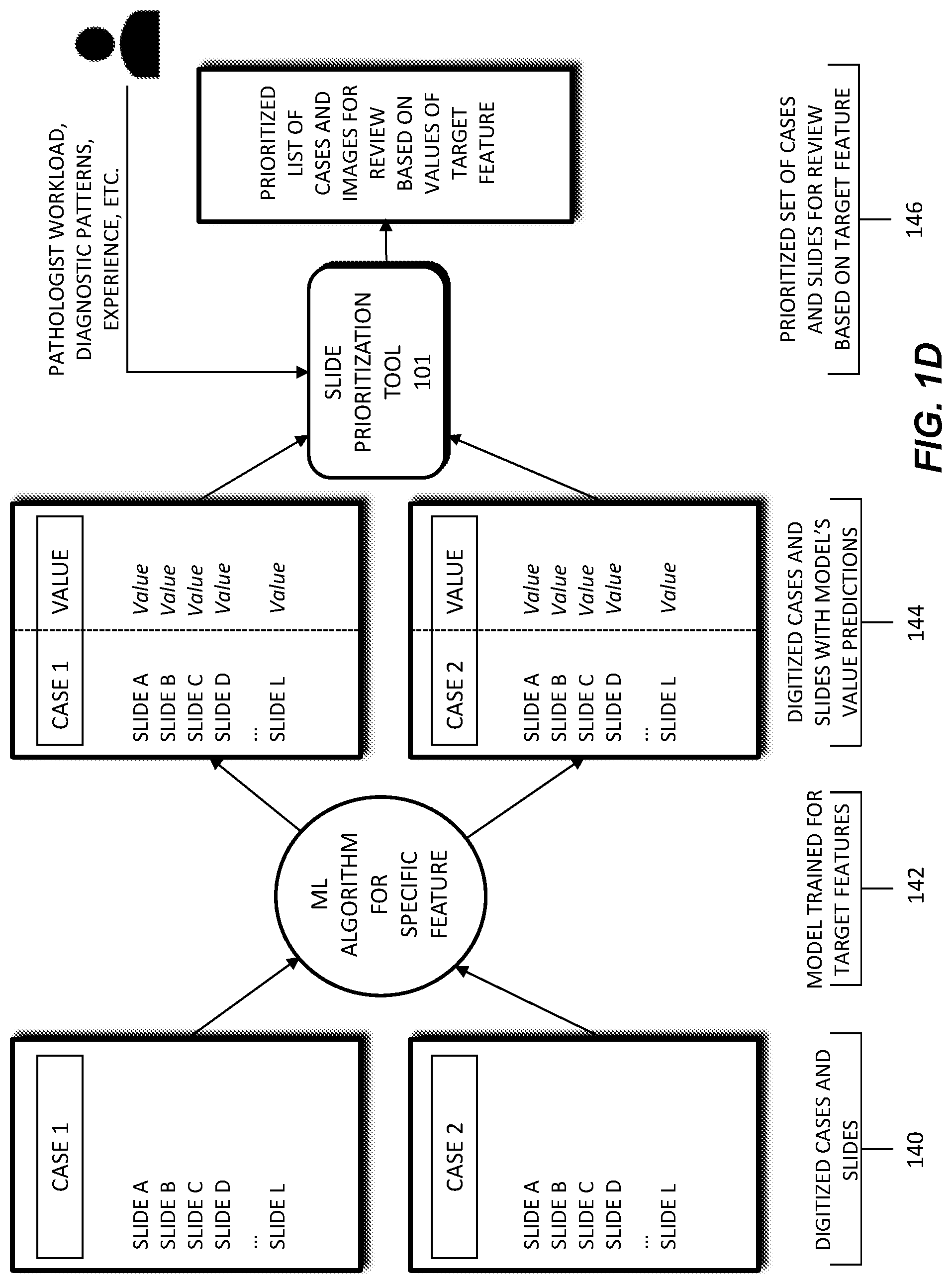

[0058] FIG. 1D depicts a schematic diagram of an exemplary system and workflow for prioritizing slides in a digital pathology workflow. In this workflow, a machine learning model 142 may receive digitized cases and slides 140 as input. The digitized cases and slides 140 may be comprised of images of a patient's pathology slides and/or electronic data regarding patient characteristics, treatment history, case context, slide data, etc. Patient characteristics may include a patient's age, height, body weight, family medical history, allergies, etc. Treatment history may include tests performed on a patient, past procedures performed on a patient, radiation exposure of a patient, etc. Case context may refer to whether a case/slide is part of a clinical study, experimental treatment, follow-up report, etc. Slide data may include stain(s) performed, location of tissue slice, time/date at which a slide was made, lab making the slide, etc.

[0059] The machine learning model 142 may be trained using the digitized cases and slides 140. The trained machine learning model 142 may output one or more prioritization value predictions 144. For example, the trained machine learning model 142 may generate a prioritization value 144 for a selected digitized case/slide. The selected digitized case/slide may be a new or additional case/slide, not included in the input digitized cases and slides 140. Alternately, machine learning model 142 may also be used to output a prioritization value for a selected digitized case/slide that was part of the digitized cases and slides 140.

[0060] A prioritization order 146 may be generated based on the generated prioritization value 144. For example, a prioritization value 144 may be output by the machine learning model 142, for each case/slide in a set of cases/slides. The prioritization order 146 may then be comprised of a listing, or docket, of cases for a pathologist to review, where the cases are listed in an order based on each case's prioritization value 144. This prioritization of cases may allow a pathologist to triage their cases and review cases of higher urgency or priority first. In some cases, the prioritization order 146 may be adjusted, prior to a pathologist's review. For example, a prioritization value 144 of a case may increase if a case has been in queue past a certain amount of time, or if additional information is received on the case. The methods of FIG. 1D are described in further detail below.
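As a non-limiting illustration, the generation and adjustment of a prioritization order described above might be sketched as follows. The `Case` fields, the 48-hour waiting limit, and the 0.2 boost are hypothetical values chosen for the example and are not taken from the disclosure.

```python
from dataclasses import dataclass


@dataclass
class Case:
    case_id: str
    prioritization_value: float  # model output, assumed here to lie in [0, 1]
    hours_in_queue: float


def adjusted_value(case, max_wait_hours=48.0, boost=0.2):
    """Raise the value of a case that has waited past max_wait_hours (hypothetical rule)."""
    if case.hours_in_queue > max_wait_hours:
        return min(1.0, case.prioritization_value + boost)
    return case.prioritization_value


def prioritization_order(cases):
    """Return the cases sorted from highest to lowest adjusted prioritization value."""
    return sorted(cases, key=adjusted_value, reverse=True)


docket = prioritization_order([
    Case("A", 0.55, 2.0),
    Case("B", 0.40, 72.0),  # waited past the limit, so its value is boosted
    Case("C", 0.90, 1.0),
])
print([case.case_id for case in docket])
```

In this sketch the boost is applied at sort time, so the stored model output is left unchanged and the order can be recomputed whenever queue times are updated.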

[0061] FIG. 2 is a flowchart illustrating an exemplary method of a tool for processing an image of a slide corresponding to a specimen and automatically prioritizing processing of the slide, according to an exemplary embodiment of the present disclosure. For example, an exemplary method 200 (e.g., steps 202 to 206) may be performed by the slide prioritization tool 101 automatically or in response to a request from a user (e.g., physician, pathologist, etc.).

[0062] According to one embodiment, the exemplary method 200 for automatically prioritizing processing of the slide may include one or more of the following steps. In step 202, the method may include receiving a target image of a slide corresponding to a target specimen, the target specimen comprising a tissue sample of a patient. For example, the target image may be received from any one or any combination of the server systems 110, physician servers 121, hospital servers 122, clinical trial servers 123, research lab servers 124, and/or laboratory information systems 125.

[0063] In step 204, the method may include computing, using a machine learning model, a prioritization value of the target image, the machine learning model having been generated by processing a plurality of training images, each training image comprising an image of human tissue and a label characterizing at least one of a slide morphology, a diagnostic value, a pathologist review outcome, and/or an analytic difficulty. The label may include a preparation value corresponding to a likelihood that further preparation is to be performed for the target image. Further preparation may be performed for the target image based on at least one of a specimen recut, an immunohistochemical stain, additional diagnostic testing, additional consultation, and/or a special stain. The label may include a diagnostic feature of the target image, the diagnostic feature comprising at least one of cancer presence, cancer grade, treatment effects, precancerous lesions, and/or presence of infectious organisms. The prioritization value of the target image may include a first prioritization value of the target image for a first user and a second prioritization value of the target image for a second user, wherein the first prioritization value may be determined based on the first user's preferences and the second prioritization value may be determined based on the second user's preferences. The label may include an artifact label corresponding to at least one of scanning lines, missing tissue, and/or blur.

[0064] The training images may be received from any one or any combination of the server systems 110, physician servers 121, hospital servers 122, clinical trial servers 123, research lab servers 124, and/or laboratory information systems 125. This dataset may be kept on a digital storage device. Images used for training may come from real sources (e.g., humans, animals, etc.) or may come from synthetic sources (e.g., graphics rendering engines, 3D models, etc.). Examples of digital pathology images may include (a) digitized slides stained with a variety of stains, such as (but not limited to) H&E, Hematoxylin alone, IHC, molecular pathology, etc.; and/or (b) digitized tissue samples from a 3D imaging device, such as microCT.

[0065] In step 206, the method may include outputting a sequence of digitized pathology images, and a placement of the target image in the sequence is based on the prioritization value of the target image.
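As a non-limiting illustration, placing a target image into an existing sequence according to its prioritization value might be sketched as follows. The sketch assumes the sequence is kept in descending order of value; the identifiers and values are hypothetical.

```python
import bisect


def place_in_sequence(sequence, image_id, value):
    """Insert (image_id, value) into a list kept in descending order of value."""
    keys = [-v for _, v in sequence]  # negate so that bisect sees ascending keys
    position = bisect.bisect_right(keys, -value)
    return sequence[:position] + [(image_id, value)] + sequence[position:]


sequence = [("slide_1", 0.91), ("slide_2", 0.64), ("slide_3", 0.12)]
updated = place_in_sequence(sequence, "target", 0.70)
print([image_id for image_id, _ in updated])
```

Using `bisect_right` places a new image after existing images with an equal value, so earlier arrivals keep their position on ties.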

[0066] Different methods for implementing machine learning algorithms and/or architectures may include but are not limited to (1) CNN (Convolutional Neural Network); (2) MIL (Multiple Instance Learning); (3) RNN (Recurrent Neural Network); (4) Feature aggregation via CNN; and/or (5) Feature extraction followed by ensemble methods (e.g., random forest), linear/non-linear classifiers (e.g., SVMs (support vector machines), MLP (multilayer perceptron)), and/or dimensionality reduction techniques (e.g., PCA (principal component analysis), LDA (linear discriminant analysis), etc.). Example features may include vector embeddings from a CNN, single/multi-class output from a CNN, and/or multi-dimensional output from a CNN (e.g., a mask overlay of the original image). A CNN may learn feature representations for classification tasks directly from pixels, which may lead to better diagnostic performance. When detailed annotations for regions or pixel-wise labels are available, a CNN may be trained directly if there is a large amount of labeled data. However, when labels are only at the whole slide level or over a collection of slides in a group (which may be called a "part" in pathology), MIL may be used to train the CNN or another neural network classifier, where MIL learns the image regions that are diagnostic for the classification task leading to the ability to learn without exhaustive annotations. An RNN may be used on features extracted from multiple image regions (e.g., tiles) that it then processes to make a prediction. Other machine learning methods, e.g., random forest, SVM, and numerous others may be used with either features learned by a CNN, a CNN with MIL, or using hand-crafted image features (e.g., SIFT or SURF) to do the classification task, but they may perform poorly when trained directly from pixels. These methods may perform poorly compared to CNN-based systems when there is a large amount of annotated training data available. Dimensionality reduction techniques could be used as a pre-processing step before using any of the classifiers mentioned, which could be useful if there was little data available.
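As a non-limiting illustration of how MIL may operate with only slide level labels, one common formulation (assumed here, and not stated in the disclosure) treats a slide as a bag of tiles and takes the slide score to be the maximum tile score. The tile scores below are hypothetical stand-ins for the per-tile outputs of a CNN.

```python
def slide_score(tile_scores):
    """Max-pooling MIL aggregation: the slide is as suspicious as its most suspicious tile."""
    return max(tile_scores)


def top_k_tiles(tile_scores, k=2):
    """Indices of the k highest-scoring tiles, i.e., the regions driving the slide score."""
    ranked = sorted(range(len(tile_scores)), key=lambda i: tile_scores[i], reverse=True)
    return ranked[:k]


tile_scores = [0.02, 0.10, 0.87, 0.05, 0.40]  # hypothetical per-tile CNN outputs
print(slide_score(tile_scores))
print(top_k_tiles(tile_scores))
```

Under this assumption, only the top-ranked tiles would contribute to the training loss for a slide, which is one way the diagnostic regions may be learned without exhaustive annotations.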

[0067] According to one or more embodiments, any of the above algorithms, architectures, methodologies, attributes, and/or features may be combined with any or all of the other algorithms, architectures, methodologies, attributes, and/or features. For example, any of the machine learning algorithms and/or architectures (e.g., neural network methods, convolutional neural networks (CNNs), recurrent neural networks (RNNs), etc.) may be trained with any of the training methodologies (e.g., Multiple Instance Learning, Reinforcement Learning, Active Learning, etc.).

[0068] The description of the terms below is merely exemplary and is not intended to limit the terms in any way.

[0069] A label may refer to information about an input to a machine learning algorithm that the algorithm is attempting to predict.

[0070] For a given image of size N×M, a segmentation may be another image of size N×M that, for each pixel in an original image, assigns a number that describes the class or type of that pixel. For example, in a WSI, elements in the mask may categorize each pixel in the input image as belonging to the classes of, e.g., background, tissue and/or unknown.
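As a non-limiting illustration, a segmentation of this kind might be represented as a nested list of class numbers with the same dimensions as the image. The 3×4 size and the numeric class codes below are hypothetical.

```python
from collections import Counter

BACKGROUND, TISSUE, UNKNOWN = 0, 1, 2  # hypothetical class codes

# A toy 3x4 mask: one class number per pixel of a 3x4 image.
mask = [
    [0, 0, 1, 1],
    [0, 1, 1, 1],
    [0, 0, 2, 1],
]


def class_fractions(mask):
    """Fraction of pixels assigned to each class in the mask."""
    counts = Counter(label for row in mask for label in row)
    total = sum(counts.values())
    return {label: counts[label] / total for label in (BACKGROUND, TISSUE, UNKNOWN)}


fractions = class_fractions(mask)
print(fractions[TISSUE])
```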

[0071] Slide level information may refer to information about a slide in general, but not necessarily a specific location of that information in the slide.

[0072] A heuristic may refer to a logic rule or function that deterministically produces an output, given inputs. For example: if a prediction that a slide should be prioritized over another slide is greater than or equal to 32%, then output 1; if not, output 0.
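As a non-limiting illustration, the example heuristic above might be written as the following function. The function and parameter names are hypothetical; the 32% threshold is the one given in the example.

```python
def prioritize_heuristic(prediction, threshold=0.32):
    """Deterministic rule: 1 if the prediction meets the threshold, otherwise 0."""
    return 1 if prediction >= threshold else 0


print(prioritize_heuristic(0.32))
print(prioritize_heuristic(0.31))
```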

[0073] Embedding may refer to a conceptual low-dimensional numerical representation of high-dimensional data. For example, if a WSI is passed through a CNN trained to classify tissue type, the numbers on the last layer of the network may provide an array of numbers (e.g., in the order of thousands) that contain information about the slide (e.g., information about a type of tissue).

[0074] Slide level prediction may refer to a concrete prediction about a slide as a whole. For example, a slide level prediction may be that the slide should be prioritized over another slide. Further, slide level prediction may refer to individual probability predictions over a set of defined classes.
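As a non-limiting illustration, individual probability predictions over a set of defined classes might be obtained by normalizing raw model scores with a softmax function. The use of softmax, the class names, and the raw scores are assumptions made for the example.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    shift = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


classes = ["prioritize", "routine", "insufficient"]  # hypothetical class set
probabilities = dict(zip(classes, softmax([2.0, 0.5, -1.0])))
predicted_class = max(probabilities, key=probabilities.get)
print(predicted_class)
```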

[0075] A classifier may refer to a model that is trained to take input data and associate it with a category.

[0076] According to one or more embodiments, the machine learning model may be trained in different ways. For example, the training of the machine learning model may be performed by any one or any combination of supervised training, semi-supervised training, unsupervised training, classifier training, mixed training, and/or uncertainty estimation. The type of training used may depend on an amount of data, a type of data, and/or a quality of data. Table 1 below describes a non-limiting list of some types of training and the corresponding features.

TABLE 1

| Index | Input | Label | Model | Output |
|---|---|---|---|---|
| 1 | WSI, Embedding | Segmentation | CNN, RNN, MLP | Predicted Segmentation, Embedding |
| 2 | WSI, Embedding | Slide Level Information | CNN, RNN, MLP | Embedding, Slide level prediction |
| 3 | WSI, Embedding | -- | CNN, RNN, MLP | Embedding |
| 4 | Embedding | Slide Level Information | SVM, MLP, RNN, Random Forests | Slide level prediction |
| 5 | Slide level prediction | Measure of how wrong the prediction was | MLP, RNN, Statistical Model | Predict a likelihood that an original prediction is wrong |

[0077] Supervised training may be used with a small amount of data to provide a seed for a machine learning model. In supervised training, the machine learning model may look for a specific item (e.g., bubbles, tissue folds, etc.), flag the slide, and quantify how much of the specific item is present in the slide.

[0078] According to one embodiment, an example fully supervised training may take as an input a WSI and may include a label of segmentation. Pipelines for a fully supervised training may include (1) 1; (2) 1, Heuristic; (3) 1, 4, Heuristic; (4) 1, 4, 5, Heuristic; and/or (5) 1, 5, Heuristic. Advantages of a fully supervised training may be that (1) it may require fewer slides and/or (2) the output is explainable because (a) it may be known which areas of the image contributed to the diagnosis; and (b) it may be known why a slide is prioritized over another (e.g., a diagnostic value, an analytic difficulty, etc.). A disadvantage of using a fully supervised training may be that it may require large amounts of segmentation which may be difficult to acquire.
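As a non-limiting illustration, a pipeline such as "1, 4, Heuristic" might be read as a chain in which the output of each Table 1 entry feeds the next. The three stage functions below are toy stand-ins (hypothetical, not trained models) that only show how the stages compose.

```python
import math
from functools import reduce


def stage_1(wsi):
    """Stand-in for Table 1, index 1: WSI in, embedding out (toy: mean of each row)."""
    return [sum(row) / len(row) for row in wsi]


def stage_4(embedding):
    """Stand-in for Table 1, index 4: embedding in, slide level prediction out (toy logistic)."""
    z = sum(embedding) - 1.0
    return 1.0 / (1.0 + math.exp(-z))


def heuristic(prediction, threshold=0.32):
    """Deterministic rule applied at the end of the pipeline."""
    return 1 if prediction >= threshold else 0


def run_pipeline(stages, wsi):
    """Apply each stage to the output of the previous one."""
    return reduce(lambda value, stage: stage(value), stages, wsi)


toy_wsi = [[0.2, 0.4], [0.6, 0.8]]  # hypothetical 2x2 "image"
decision = run_pipeline([stage_1, stage_4, heuristic], toy_wsi)
print(decision)
```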

[0079] According to one embodiment, an example semi-supervised (e.g., weakly supervised) training may take as an input a WSI and may include a label of slide level information. Pipelines for a semi-supervised training may include (1) 2; (2) 2, Heuristic; (3) 2, 4, Heuristic; (4) 2, 4, 5, Heuristic; and/or (5) 2, 5, Heuristic. Advantages of using a semi-supervised training may be that (1) the types of labels required may be present in many hospital records; and (2) output is explainable because (a) it may be known which areas of the image contributed most to the diagnosis; and (b) it may be known why a slide was prioritized over another (e.g., a diagnostic value, an analytic difficulty, etc.). A disadvantage of using a semi-supervised training is that it may be difficult to train. For example, the model may need to use a training scheme such as Multiple Instance Learning, Active Learning, and/or distributed training to account for the fact that there is limited information about where in the slide the information is that should lead to a decision.

[0080] According to one embodiment, an example unsupervised training may take as an input a WSI and may require no label. The pipelines for an unsupervised training may include (1) 3, 4; and/or (2) 3, 4, Heuristic. An advantage of unsupervised training may be that it does not require any labels. Disadvantages of using an unsupervised training may be that (1) it may be difficult to train (for example, it may need to use a training scheme such as Multiple Instance Learning, Active Learning, and/or distributed training to account for the fact that there is limited information about where in the slide the information is that should lead to a decision); (2) it may require additional slides; and/or (3) it may be less explainable because it might output a prediction and probability without explaining why that prediction was made.

[0081] According to one embodiment, an example mixed training may include training any of the example pipelines described above for fully supervised training, semi-supervised training, and/or unsupervised training, and then using the resulting model as an initial point for any of the training methods. Advantages of mixed training may be that (1) it may require less data; (2) it may have improved performance; and/or (3) it may allow a mixture of different levels of labels (e.g., segmentation, slide level information, no information). Disadvantages of mixed training may be that (1) it may be more complicated and/or expensive to train; and/or (2) it may require more code that may increase a number and complexity of potential bugs.

[0082] According to one embodiment, an example uncertainty estimation may include training any of the example pipelines described above for fully supervised training, semi-supervised training, and/or unsupervised training, for any task related to slide data using uncertainty estimation at the end of the pipeline. Further, a heuristic or classifier may be used to predict whether a slide should be prioritized over another based on an amount of uncertainty in the prediction of the test. An advantage of uncertainty estimation may be that it is robust to out-of-distribution data. For example, when unfamiliar data is presented, it may still correctly predict that it is uncertain. Disadvantages of uncertainty estimation may be that (1) it may need more data; (2) it may have poor overall performance; and/or (3) it may be less explainable because the model might not necessarily identify how a slide or slide embedding is abnormal.
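As a non-limiting illustration, an amount of uncertainty in a prediction might be measured by the entropy of the predicted class probabilities, with a heuristic flagging slides whose entropy is close to the maximum. The choice of entropy as the measure and the 0.8 cutoff are assumptions made for the example.

```python
import math


def predictive_entropy(probabilities):
    """Shannon entropy (in nats) of a predicted class distribution."""
    return -sum(p * math.log(p) for p in probabilities if p > 0)


def is_uncertain(probabilities, fraction_of_max=0.8):
    """Flag a prediction whose entropy reaches a set fraction of the maximum possible."""
    max_entropy = math.log(len(probabilities))
    return predictive_entropy(probabilities) >= fraction_of_max * max_entropy


confident = [0.97, 0.02, 0.01]
ambiguous = [0.40, 0.35, 0.25]
print(is_uncertain(confident), is_uncertain(ambiguous))
```

A slide flagged in this way could, for instance, be moved up the order so that a pathologist reviews it sooner.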

[0083] According to one embodiment, an ensembles training may include simultaneously running models produced by any of the example pipelines described above, and combining the outputs by a heuristic or a classifier to produce robust and accurate results. Advantages of ensembles training may be that (1) it is robust to out-of-distribution data; and/or (2) it may combine advantages and disadvantages of other models, resulting in a minimization of disadvantages (e.g., a supervised training model combined with an uncertainty estimation model, and a heuristic that uses a supervised model when incoming data is in distribution and uses an uncertainty model when data is out of distribution, etc.). Disadvantages of ensembles training may be that (1) it may be more complex; and/or (2) it may be expensive to train and run.
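As a non-limiting illustration, the example heuristic that selects a supervised model for in-distribution data and an uncertainty model otherwise might be sketched as follows. All three component functions are toy stand-ins, and the range check used to decide whether data is in distribution is hypothetical.

```python
def make_ensemble(supervised_model, uncertainty_model, in_distribution):
    """Build a predictor that routes each input to one of two models."""
    def predict(embedding):
        if in_distribution(embedding):
            return supervised_model(embedding)
        return uncertainty_model(embedding)
    return predict


def supervised_model(embedding):
    """Toy stand-in: clipped mean of the embedding as a prioritization value."""
    return min(1.0, max(0.0, sum(embedding) / len(embedding)))


def uncertainty_model(embedding):
    """Toy stand-in: treat unfamiliar slides as high priority (an assumption)."""
    return 1.0


def in_distribution(embedding, limit=1.0):
    """Hypothetical check: every feature lies within the range seen in training."""
    return all(abs(x) <= limit for x in embedding)


predict = make_ensemble(supervised_model, uncertainty_model, in_distribution)
print(predict([0.2, 0.4]))
print(predict([0.2, 7.5]))
```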

[0084] Training techniques discussed herein may also proceed in stages, where images with greater annotations are initially used for training, which may allow for more effective later training using slides that have fewer annotations, are less supervised, etc.

[0085] Training may begin using the slides that are the most thoroughly annotated, relative to all the training slide images that may be used. For example, training may begin using supervised learning. A first set of slide images may be received or determined with associated annotations. Each slide may have marked and/or masked regions and may include information such as whether the slide should be prioritized over another. The first set of slides may be provided to a training algorithm, for example a CNN, which may determine correlations between the first set of slides and their associated annotations.

[0086] After training with the first set of images is completed, a second set of slide images may be received or determined having fewer annotations than the first set, for example with partial annotations. In one embodiment, the annotations might only indicate that the slide has a diagnosis or quality issue associated with it, but might not specify what or where disease may be found, etc. The system may be trained on the second set of slide images using a different training algorithm than the first, for example Multiple Instance Learning. The first set of training data may be used to partially train the system, and may make the second training round more effective at producing an accurate algorithm.

[0087] In this way, training may proceed in any number of stages, using any number of algorithms, based on the quality and types of the training slide images. These techniques may be utilized in situations where multiple training sets of images are received, which may be of varying quality, annotation levels, and/or annotation types.

[0088] FIG. 3 illustrates exemplary methods for determining an order in which to analyze a plurality of pathology slides. For example, exemplary methods 300 and 320 (e.g., steps 301-325) may be performed by the slide prioritization tool 101 automatically or in response to a request from a user (e.g., physician, pathologist, etc.).

[0089] According to one embodiment, the exemplary method 300 for determining an order in which to analyze a plurality of pathology slides may include one or more of the steps below. In step 301, the method may include creating a dataset of one or more digitized pathology images across cancer subtypes and tissue specimens (e.g., histology, cytology, hematology, microCT, etc.). In step 303, the method may include receiving or determining one or more labels (e.g., slide morphology, diagnostic, outcome, difficulty, etc.) for each pathology image of the dataset. In step 305, the method may include storing each image and its corresponding label(s) in a digital storage device (e.g., hard drive, network drive, cloud storage, RAM, etc.).

[0090] In step 307, the method may include training a computational pathology-based machine learning algorithm that takes, as input, one or more digital images of a pathology specimen, and predicts a prioritization rank for each digital image. Different methods for implementing the machine learning algorithm may include but are not limited to (1) CNN (Convolutional Neural Network); (2) MIL (Multiple Instance Learning); (3) RNN (Recurrent Neural Network); (4) Feature aggregation via CNN; and/or (5) Feature extraction followed by ensemble methods (e.g., random forest), linear/non-linear classifiers (e.g., SVMs, MLP), and/or dimensionality reduction techniques (e.g., PCA, LDA). Example features may include vector embeddings from a CNN, single/multi-class output from a CNN, and/or multi-dimensional output from a CNN (e.g., a mask overlay of the original image). A CNN may learn feature representations for classification tasks directly from pixels, which may lead to better diagnostic performance. When detailed annotations for regions or pixel-wise labels are available, a CNN may be trained directly if there is a large amount of labeled data. However, when labels are only available at the whole slide level or over a collection of slides in a group (which may be called a "part" in pathology), MIL may be used to train the CNN or another neural network classifier, where MIL learns the image regions that are diagnostic for the classification task, leading to the ability to learn without exhaustive annotations. An RNN may be used on features extracted from multiple image regions (e.g., tiles) that it then processes to make a prediction. Other machine learning methods, e.g., random forest, SVM, and numerous others, may be used with features learned by a CNN, features learned by a CNN with MIL, or hand-crafted image features (e.g., SIFT or SURF) to do the classification task, but they may perform poorly when trained directly from pixels. These methods tend to perform poorly compared to CNN-based systems when there is a large amount of annotated training data available. Dimensionality reduction techniques could be used as a pre-processing step before using any of the classifiers mentioned, which could be useful if little data is available.
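
By way of illustration only, the slide-level use of tile-level predictions described above may be sketched as follows. The sketch assumes that a tile classifier (e.g., a CNN) has already produced one score per tile, and it uses max pooling, which is one common MIL aggregation and is not stated in this disclosure to be the aggregation used. The function names, the example scores, and the convention that a higher score means higher priority are hypothetical.

```python
from typing import Dict, List


def slide_score_max_pool(tile_scores: List[float]) -> float:
    """Aggregate tile-level scores into a single slide-level score.

    Max pooling is an assumed aggregation: the slide is scored by its
    most suspicious tile, so only a slide-level label is needed.
    """
    if not tile_scores:
        raise ValueError("a slide needs at least one tile score")
    return max(tile_scores)


def rank_slides(tile_scores_by_slide: Dict[str, List[float]]) -> List[str]:
    """Return slide identifiers ordered from highest to lowest slide score."""
    slide_scores = {
        slide_id: slide_score_max_pool(scores)
        for slide_id, scores in tile_scores_by_slide.items()
    }
    return sorted(slide_scores, key=lambda sid: slide_scores[sid], reverse=True)


# Hypothetical tile scores, standing in for the output of a trained tile classifier.
example = {
    "slide_A": [0.10, 0.20, 0.15],
    "slide_B": [0.05, 0.92, 0.30],
    "slide_C": [0.40, 0.45],
}
ranked = rank_slides(example)
print(ranked)
```

In this sketch, a slide with a single high-scoring tile is ranked ahead of slides whose tiles are uniformly unremarkable, which reflects the idea that only some image regions are diagnostic.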

[0091] The above description of machine learning algorithms for FIG. 2 (e.g., Table 1 and corresponding description) may also apply to the machine learning algorithms of FIG. 3.

[0092] An exemplary method 320 for using the slide prioritization tool may include one or more of the steps below. In step 321, the method may include receiving a digital pathology image corresponding to a user. In step 323, the method may include determining a rank order or statistic for a slide and/or a case associated with the received digital pathology image. The rank order or statistic may be determined by applying the trained computational pathology-based machine learning algorithm (e.g., of method 300) to the received image. The rank order or statistic may be used to prioritize review or additional slide preparation for the slide associated with the received image or the case associated with the received image.

[0093] In step 325, the method may include outputting the rank order or statistic. One output may include a determination and/or display of one or more variation(s) in order, based on preferences, heuristics, statistics, or objectives of a user (e.g., efficiency, difficulty, urgency, etc.). Alternatively or in addition, an output may include a visual sorting of the received image at a case level, based on the generated order. For example, such visual sorting may include a display comprising a sorting of cases ordered based on maximum or minimum slide probability for a target feature, based on an average probability across all slides for a target feature, based on the raw number of slides showing a target feature, etc. Yet another output may include a visualization of a sorting at the slide level or tissue block level within each case, based on the generated order. The visual sorting may be performed by a user, and/or computationally.
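
As a non-limiting sketch, the case-level sorting options described above (maximum, minimum, or average slide probability, or raw number of slides showing a target feature) may be expressed as interchangeable aggregation functions. The names `AGGREGATORS` and `sort_cases`, the 0.5 cutoff used to decide that a slide "shows" the feature, and the example probabilities are assumptions made for illustration.

```python
from statistics import mean
from typing import Callable, Dict, List

# Assumed cutoff above which a slide is counted as showing the target feature.
POSITIVE_CUTOFF = 0.5

AGGREGATORS: Dict[str, Callable[[List[float]], float]] = {
    "max": max,
    "min": min,
    "mean": mean,
    "count": lambda probs: float(sum(1 for p in probs if p >= POSITIVE_CUTOFF)),
}


def sort_cases(cases: Dict[str, List[float]], strategy: str = "max") -> List[str]:
    """Order case identifiers by an aggregate of their per-slide probabilities.

    Cases with the highest aggregate value come first.
    """
    aggregate = AGGREGATORS[strategy]
    return sorted(cases, key=lambda case_id: aggregate(cases[case_id]), reverse=True)


# Hypothetical per-slide probabilities for a target feature, grouped by case.
cases = {
    "case_1": [0.9, 0.1, 0.1],
    "case_2": [0.6, 0.6, 0.6],
    "case_3": [0.3, 0.2],
}
by_max = sort_cases(cases, "max")
by_mean = sort_cases(cases, "mean")
by_count = sort_cases(cases, "count")
print(by_max, by_mean, by_count)
```

The example shows that the choice of aggregate may change the order: a case with one strongly positive slide leads under the maximum, while a case with several moderately positive slides leads under the average or the count.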

[0094] The above-described slide prioritization tool may include particular applications or embodiments usable in research, and/or production/clinical/industrial settings. The embodiments may occur at various phases of development and use. A tool may employ one or more of the embodiments below.

[0095] According to one embodiment, a prioritization may be based on quality control. Quality control issues may impact a pathologist's ability to render a diagnosis. In other words, quality control issues may increase the turnaround time for a case. For example, a poorly prepared and scanned slide may be sent to a pathologist's queue, before a quality control issue is found. According to one embodiment, the turnaround time may be shortened by identifying a quality control issue before it reaches a pathologist's queue, therefore saving time in a pathology diagnosis workflow. For example, the present embodiment may identify and triage cases/slide(s) with quality control issues and signal the issue to lab and scanner technicians, before the slide(s) reach a pathologist. This quality control catch earlier in the workflow may improve efficiency.

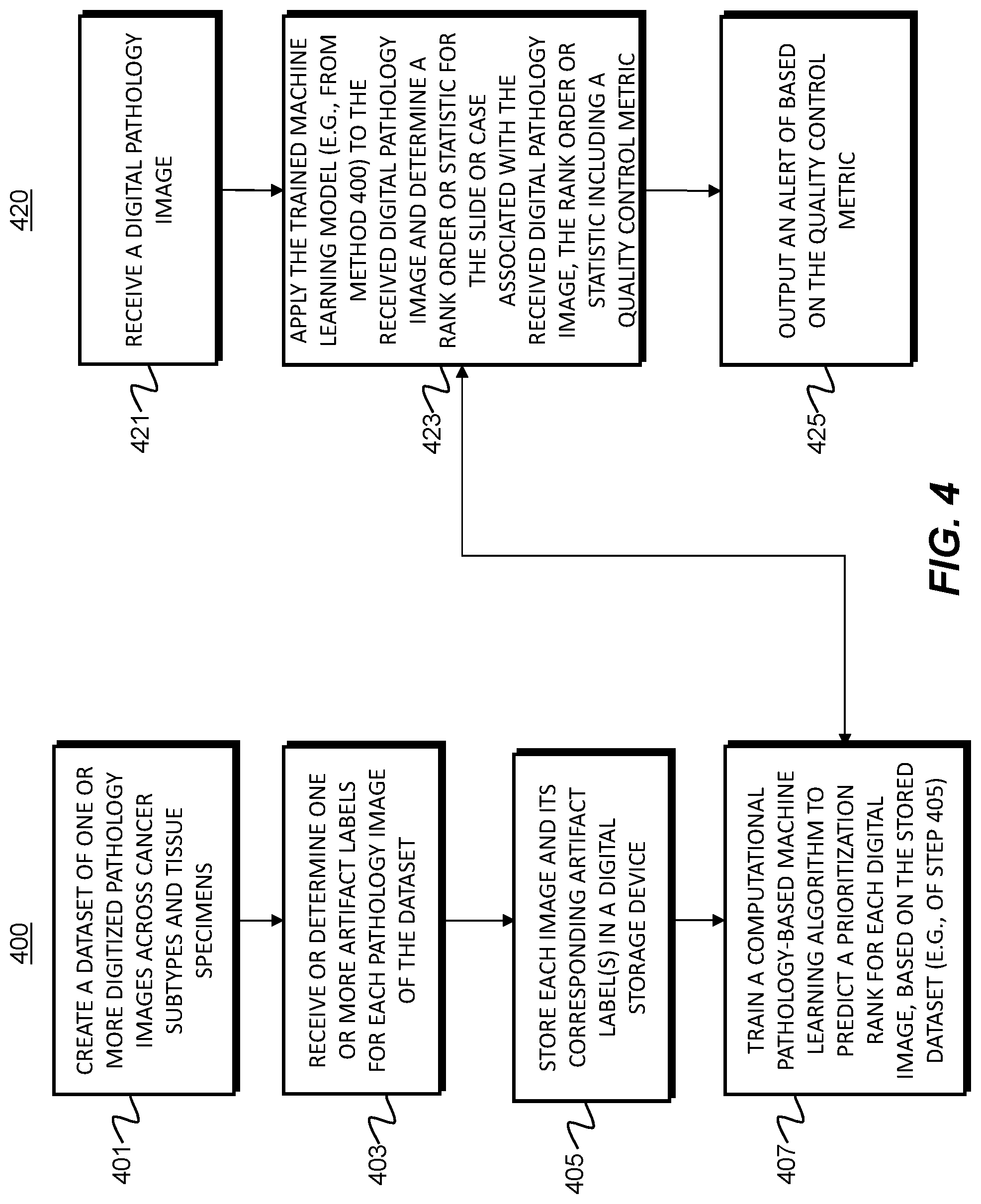

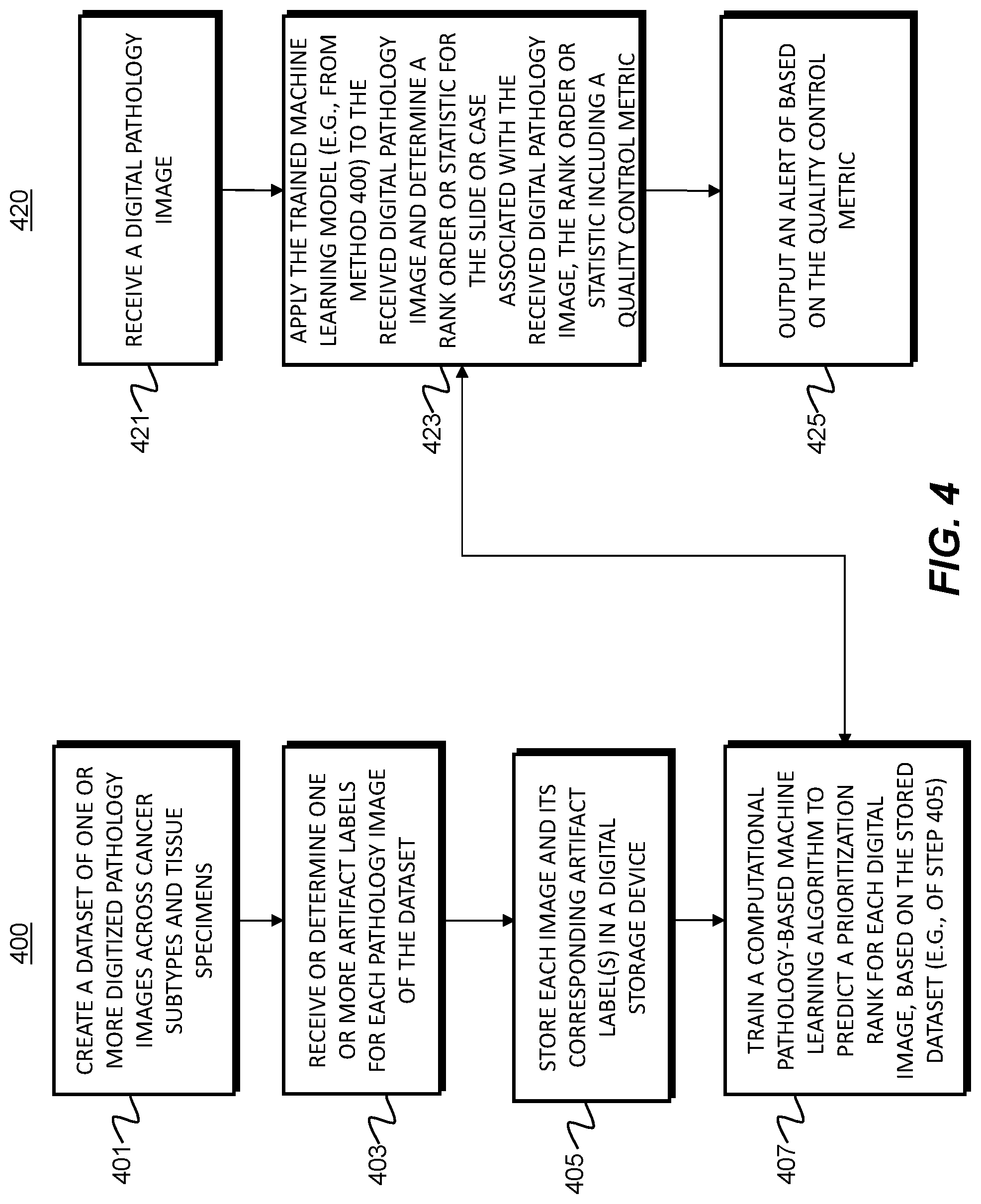

[0096] FIG. 4 illustrates exemplary methods for developing a quality control prioritization tool. For example, exemplary methods 400 and 420 (e.g., steps 401-425) may be performed by the slide prioritization tool 101 automatically or in response to a request from a user (e.g., physician, pathologist, etc.).

[0097] According to one embodiment, the exemplary method 400 for developing a quality control prioritization tool may include one or more of the steps below. In step 401, the method may include creating a dataset of digitized pathology images across cancer subtypes and tissue specimens (e.g., histology, cytology, hematology, microCT, etc.). In step 403, the method may include receiving or determining one or more labels (e.g., slide morphology, diagnostic, outcome, difficulty, etc.) for each pathology image of the dataset. Additional exemplary labels may include but are not limited to scanning artifacts (e.g., scanning lines, missing tissue, blur, etc.) and slide preparation artifacts (e.g., folded tissue, poor staining, damaged slide, marking, etc.). In step 405, the method may include storing each image and its corresponding label(s) in a digital storage device (e.g., hard drive, network drive, cloud storage, RAM, etc.).

[0098] In step 407, the method may include training a computational pathology-based machine learning algorithm that takes, as input, one or more digital images of a pathology specimen, and predicts a prioritization rank for each digital image. Different methods for implementing the machine learning algorithm may include but are not limited to (1) CNN (Convolutional Neural Network); (2) MIL (Multiple Instance Learning); (3) RNN (Recurrent Neural Network); (4) Feature aggregation via CNN; and/or (5) Feature extraction followed by ensemble methods (e.g., random forest), linear/non-linear classifiers (e.g., SVMs, MLP), and/or dimensionality reduction techniques (e.g., PCA, LDA). Example features may include vector embeddings from a CNN, single/multi-class output from a CNN, and/or multi-dimensional output from a CNN (e.g., a mask overlay of the original image). A CNN may learn feature representations for classification tasks directly from pixels, which may lead to better diagnostic performance. When detailed annotations for regions or pixel-wise labels are available, a CNN may be trained directly if there is a large amount of labeled data. However, when labels are only available at the whole slide level or over a collection of slides in a group (which may be called a "part" in pathology), MIL may be used to train the CNN or another neural network classifier, where MIL learns the image regions that are diagnostic for the classification task, leading to the ability to learn without exhaustive annotations. An RNN may be used on features extracted from multiple image regions (e.g., tiles) that it then processes to make a prediction. Other machine learning methods, e.g., random forest, SVM, and numerous others, may be used with features learned by a CNN, features learned by a CNN with MIL, or hand-crafted image features (e.g., SIFT or SURF) to do the classification task, but they may perform poorly when trained directly from pixels. These methods tend to perform poorly compared to CNN-based systems when there is a large amount of annotated training data available. Dimensionality reduction techniques could be used as a pre-processing step before using any of the classifiers mentioned, which could be useful if little data is available.

[0099] The above description of machine learning algorithms for FIG. 2 (e.g., Table 1 and corresponding description) may also apply to the machine learning algorithms of FIG. 4.

[0100] An exemplary method 420 for using the quality control prioritization tool may include one or more of the steps below. In step 421, the method may include receiving a digital pathology image corresponding to a user. In step 423, the method may include determining a rank order or statistic for a slide and/or a case associated with the received digital pathology image. The rank order or statistic may be determined by applying the trained computational pathology-based machine learning algorithm (e.g., of method 400) to the received image. The rank order or statistic may be used to prioritize review or additional slide preparation for the slide associated with the received image or the case associated with the received image.

[0101] In step 425, the method may include outputting the rank order or statistic. One output may include a determination and/or display of one or more variation(s) in order, based on preferences, heuristics, statistics, or objectives of a user (e.g., efficiency, difficulty, urgency, etc.). Alternatively or in addition, an output may include a visual sorting of the received image at a case level, based on the generated order. For example, such visual sorting may include a display comprising a sorting of cases ordered based on maximum or minimum slide probability for a target feature, based on an average probability across all slides for a target feature, based on the raw number of slides showing a target feature, etc. Another output may include a visualization of a sorting at the slide level or tissue block level within each case, based on the generated order. The visual sorting may be performed by a user, and/or computationally. Yet another output may include an identification of a specific quality control issue and/or an alert to address the identified quality control issue. For example, a quality control metric may be computed for each slide. The quality control metric may signify the presence and/or severity of a quality control issue. The alert may be transmitted to particular personnel. For example, this step may include identifying personnel associated with an identified quality control issue and generating the alert for the identified personnel. Another aspect of the alert may include a step of discerning whether the quality control issue impacts rendering of a diagnosis. In some embodiments, the alert may be generated or prompted only if the identified quality control issue impacts rendering a diagnosis. For example, the alert may be generated only if the quality control metric associated with the quality control issue passes a predetermined quality control metric threshold value.
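
One possible form of the threshold-gated alert described above is sketched below. The sketch assumes that the quality control metric is a severity value between 0 and 1 and that "passing" the threshold means exceeding it; neither assumption is stated in this disclosure. The `QCResult` type, the routing table, the default threshold of 0.7, and the fallback recipient are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class QCResult:
    slide_id: str
    issue: str     # e.g., "blur" or "folded tissue"
    metric: float  # assumed severity in [0, 1]; higher means more severe


# Hypothetical routing of issue types to the personnel able to address them.
ISSUE_TO_PERSONNEL = {
    "blur": "scanner technician",
    "scanning lines": "scanner technician",
    "folded tissue": "lab technician",
    "poor staining": "lab technician",
}


def make_alert(result: QCResult, threshold: float = 0.7) -> Optional[str]:
    """Return an alert message only if the metric exceeds the threshold."""
    if result.metric <= threshold:
        return None  # issue assumed not severe enough to impact a diagnosis
    recipient = ISSUE_TO_PERSONNEL.get(result.issue, "lab supervisor")
    return (
        f"{recipient}: slide {result.slide_id} has {result.issue} "
        f"(metric {result.metric:.2f})"
    )


severe = make_alert(QCResult("S1", "blur", 0.90))
mild = make_alert(QCResult("S2", "poor staining", 0.30))
print(severe)
print(mild)
```

Gating on the threshold keeps low-severity findings from generating alerts, so that personnel are notified only of issues assumed likely to affect a diagnosis.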

[0102] According to one embodiment, a prioritization may be designed to increase efficiency. Currently, most institutions and laboratories have standardized turnaround time expectations for each pathologist. The time may be measured from the point of accession of a pathology specimen, to sign-out by a primary pathologist. In practice, pathologists may order additional stains or recuts for more information for some cases before rendering a final diagnosis. The additional stain or recut orders may be more numerous in certain pathology subspecialties. The additional orders may increase turnaround time and thus impact the patient. The current embodiment may prioritize these types of subspecialty cases for review, e.g., so that additional stain(s) or recut(s) may be ordered prior to pathologist review, or so that pathologists may review such slide(s) sooner and order the additional stain(s) or recut(s) sooner. Such prioritization may lower turnaround time and raise efficiency of slide review.

[0103] FIG. 5 illustrates exemplary methods for developing an efficiency prioritization tool. For example, exemplary methods 500 and 520 (e.g., steps 501-525) may be performed by the slide prioritization tool 101 automatically or in response to a request from a user (e.g., physician, pathologist, etc.).

[0104] According to one embodiment, the exemplary method 500 for developing an efficiency prioritization tool may include one or more of the steps below. In step 501, the method may include creating a dataset of digitized pathology images across cancer subtypes and tissue specimens (e.g., histology, cytology, hematology, microCT, etc.). In step 503, the method may include receiving or determining one or more labels (e.g., slide morphology, diagnostic, outcome, difficulty, etc.) for each pathology image of the dataset. Additional exemplary labels may include but are not limited to the following slide preparation labels: (1) likely need for a specimen recut; (2) likely need for an immunohistochemical stain; (3) likely need for additional diagnostic testing (e.g., genomic testing); (4) likely need for a second opinion (consultation); and/or (5) likely need for a special stain.
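
For illustration, the five slide preparation labels listed above may be encoded as a multi-hot target vector, so that a single image may carry several labels at once. The identifier strings and the `encode_labels` helper are hypothetical, and the disclosure does not specify an encoding.

```python
from typing import Iterable, List

# Hypothetical identifiers for the five slide preparation labels, in listed order.
SLIDE_PREP_LABELS = [
    "recut",
    "immunohistochemical_stain",
    "additional_diagnostic_testing",
    "second_opinion",
    "special_stain",
]


def encode_labels(labels: Iterable[str]) -> List[int]:
    """Encode the labels assigned to one image as a multi-hot vector."""
    present = set(labels)
    unknown = present - set(SLIDE_PREP_LABELS)
    if unknown:
        raise ValueError(f"unknown labels: {sorted(unknown)}")
    return [1 if name in present else 0 for name in SLIDE_PREP_LABELS]


# An image annotated as likely needing both a recut and a special stain.
target = encode_labels(["recut", "special_stain"])
print(target)
```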

[0105] In step 505, the method may include storing each image and its corresponding label(s) in a digital storage device (e.g., hard drive, network drive, cloud storage, RAM, etc.). In step 507, the method may include training a computational pathology-based machine learning algorithm that takes, as input, one or more digital images of a pathology specimen, and then predicts a prioritization rank for each digital image. Different methods for implementing the machine learning algorithm may include but are not limited to (1) CNN (Convolutional Neural Network); (2) MIL (Multiple Instance Learning); (3) RNN (Recurrent Neural Network); (4) Feature aggregation via CNN; and/or (5) Feature extraction followed by ensemble methods (e.g., random forest), linear/non-linear classifiers (e.g., SVMs, MLP), and/or dimensionality reduction techniques (e.g., PCA, LDA). Example features may include vector embeddings from a CNN, single/multi-class output from a CNN, and/or multi-dimensional output from a CNN (e.g., a mask overlay of the original image). A CNN may learn feature representations for classification tasks directly from pixels, which may lead to better diagnostic performance. When detailed annotations for regions or pixel-wise labels are available, a CNN may be trained directly if there is a large amount of labeled data. However, when labels are only available at the whole slide level or over a collection of slides in a group (which may be called a "part" in pathology), MIL may be used to train the CNN or another neural network classifier, where MIL learns the image regions that are diagnostic for the classification task, leading to the ability to learn without exhaustive annotations. An RNN may be used on features extracted from multiple image regions (e.g., tiles) that it then processes to make a prediction. Other machine learning methods, e.g., random forest, SVM, and numerous others, may be used with features learned by a CNN, features learned by a CNN with MIL, or hand-crafted image features (e.g., SIFT or SURF) to do the classification task, but they may perform poorly when trained directly from pixels. These methods tend to perform poorly compared to CNN-based systems when there is a large amount of annotated training data available. Dimensionality reduction techniques could be used as a pre-processing step before using any of the classifiers mentioned, which could be useful if little data is available.
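
As a minimal sketch of feature extraction followed by a linear classifier, tile-level vector embeddings may be averaged into one slide-level vector and scored with a logistic model. Mean pooling is an assumed aggregation, and the embeddings, weights, and bias below are hypothetical placeholders rather than the output of any trained model.

```python
import math
from typing import List


def mean_pool(tile_embeddings: List[List[float]]) -> List[float]:
    """Average tile embeddings element-wise into one slide-level vector."""
    if not tile_embeddings:
        raise ValueError("at least one tile embedding is required")
    n_tiles = len(tile_embeddings)
    return [sum(column) / n_tiles for column in zip(*tile_embeddings)]


def linear_score(features: List[float], weights: List[float], bias: float) -> float:
    """Apply a logistic linear classifier and return a probability in (0, 1)."""
    logit = sum(f * w for f, w in zip(features, weights)) + bias
    return 1.0 / (1.0 + math.exp(-logit))


# Hypothetical two-dimensional embeddings for the two tiles of one slide.
embeddings = [[1.0, 0.0], [0.0, 1.0]]
pooled = mean_pool(embeddings)

# Hypothetical classifier parameters; in practice these would be learned.
score = linear_score(pooled, weights=[2.0, -2.0], bias=0.0)
print(pooled, score)
```

The resulting probability could serve as the statistic from which a prioritization rank is derived, in the same way as the output of any of the other classifiers mentioned.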

[0106] The above description of machine learning algorithms for FIG. 2 (e.g., Table 1 and corresponding description) may also apply to the machine learning algorithms of FIG. 5.