Trajectory-Based Viewport Prediction for 360-Degree Videos

Petrangeli; Stefano ; et al.

U.S. patent application number 16/421276 was filed with the patent office on 2019-05-23 and published on 2020-11-26 for trajectory-based viewport prediction for 360-degree videos. This patent application is currently assigned to Adobe Inc. The applicant listed for this patent is Adobe Inc. Invention is credited to Stefano Petrangeli, Gwendal Brieuc Christian Simon, Viswanathan Swaminathan.

| Application Number | 16/421276 (Publication No. 20200374506) |

| Family ID | 1000005207330 |

| Publication Date | 2020-11-26 |

| United States Patent Application | 20200374506 |

| Kind Code | A1 |

| Petrangeli; Stefano ; et al. | November 26, 2020 |

Abstract

In implementations of trajectory-based viewport prediction for 360-degree videos, a video system obtains trajectories of angles of users who have previously viewed a 360-degree video. The angles are used to determine viewports of the 360-degree video, and may include trajectories for a yaw angle, a pitch angle, and a roll angle of a user recorded as the user views the 360-degree video. The video system clusters the trajectories of angles into trajectory clusters, and for each trajectory cluster determines a trend trajectory. When a new user views the 360-degree video, the video system compares trajectories of angles of the new user to the trend trajectories, and selects trend trajectories for a yaw angle, a pitch angle, and a roll angle for the user. Using the selected trend trajectories, the video system predicts viewports of the 360-degree video for the user for future times.

| Inventors: | Petrangeli; Stefano (San Jose, CA); Swaminathan; Viswanathan (Saratoga, CA); Simon; Gwendal Brieuc Christian (San Carlos, CA) |

| Applicant: | Adobe Inc. |

| Assignee: | Adobe Inc., San Jose, CA |

| Family ID: | 1000005207330 |

| Appl. No.: | 16/421276 |

| Filed: | May 23, 2019 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 13/376 20180501; H04N 13/194 20180501 |

| International Class: | H04N 13/194 20060101 H04N013/194; H04N 13/376 20060101 H04N013/376 |

Claims

1. In a digital medium environment for viewport prediction of a 360-degree video, a method implemented by a computing device, the method comprising: receiving trajectories of angles that are sampled at time instances of the 360-degree video, the angles at the time instances corresponding to viewports of the 360-degree video at the time instances; clustering the trajectories into trajectory clusters based on mutual distances between pairs of the trajectories; determining score thresholds for the trajectory clusters from the mutual distances between the pairs of the trajectories belonging to the trajectory clusters; determining trend trajectories for the trajectory clusters, the trend trajectories representing the trajectories belonging to the trajectory clusters; and predicting a user viewport of the 360-degree video for a future time instance from the score thresholds, angle samples of at least one of the trend trajectories, and user angles that correspond to the user viewport, the angle samples and the user angles corresponding to the time instances occurring prior to the future time instance.

2. The method as described in claim 1, wherein the determining the score thresholds for the trajectory clusters includes: determining, for each trajectory cluster of the trajectory clusters, a maximum one of the mutual distances between the pairs of the trajectories belonging to said each trajectory cluster; and generating, for said each trajectory cluster, a score threshold of the score thresholds based on the maximum one of the mutual distances for said each trajectory cluster.

3. The method as described in claim 1, wherein the determining the trend trajectories for the trajectory clusters includes: determining time intervals of the 360-degree video; determining, for each time interval of the time intervals, polynomial coefficients for each trajectory cluster of the trajectory clusters; and forming, for said each trajectory cluster, a union over the time intervals of polynomial functions having the polynomial coefficients.

4. The method as described in claim 1, wherein the determining the trend trajectories for the trajectory clusters includes determining, for each trajectory cluster of the trajectory clusters, a centroid trajectory or a median trajectory.

5. The method as described in claim 1, wherein the angles include, at each time instance of the time instances, at least one of a yaw angle, a pitch angle, a roll angle, or an angle determined from one or more of the yaw angle, the pitch angle, or the roll angle.

6. The method as described in claim 1, wherein the trajectories include separate trajectories for different ones of the angles.

7. The method as described in claim 1, wherein the trajectories include a trajectory for a joint angle that simultaneously represents multiple ones of the angles.

8. The method as described in claim 1, wherein the user angles and the angles correspond to different user-viewings of the 360-degree video.

9. The method as described in claim 1, wherein the user angles and the angles correspond to a shared-viewing of the 360-degree video.

10-20. (canceled)

21. In a digital medium environment, a computing device comprising: a processing system; and a computer-readable storage medium having instructions stored thereon that, responsive to execution by the processing system, causes the processing system to perform operations comprising: receiving trajectories of angles that are sampled at time instances of the 360-degree video, the angles at the time instances corresponding to viewports of the 360-degree video at the time instances; clustering the trajectories into trajectory clusters based on mutual distances between pairs of the trajectories; determining score thresholds for the trajectory clusters from the mutual distances between the pairs of the trajectories belonging to the trajectory clusters; determining trend trajectories for the trajectory clusters, the trend trajectories representing the trajectories belonging to the trajectory clusters; and predicting a user viewport of the 360-degree video for a future time instance from the score thresholds, angle samples of at least one of the trend trajectories, and user angles that correspond to the user viewport, the angle samples and the user angles corresponding to the time instances occurring prior to the future time instance.

22. The computing device as described in claim 21, wherein the determining the score thresholds for the trajectory clusters includes: determining, for each trajectory cluster of the trajectory clusters, a maximum one of the mutual distances between the pairs of the trajectories belonging to said each trajectory cluster; and generating, for said each trajectory cluster, a score threshold of the score thresholds based on the maximum one of the mutual distances for said each trajectory cluster.

23. The computing device as described in claim 21, wherein the determining the trend trajectories for the trajectory clusters includes: determining time intervals of the 360-degree video; determining, for each time interval of the time intervals, polynomial coefficients for each trajectory cluster of the trajectory clusters; and forming, for said each trajectory cluster, a union over the time intervals of polynomial functions having the polynomial coefficients.

24. The computing device as described in claim 21, wherein the determining the trend trajectories for the trajectory clusters includes determining, for each trajectory cluster of the trajectory clusters, a centroid trajectory or a median trajectory.

25. The computing device as described in claim 21, wherein the angles include, at each time instance of the time instances, at least one of a yaw angle, a pitch angle, a roll angle, or an angle determined from one or more of the yaw angle, the pitch angle, or the roll angle.

26. The computing device as described in claim 21, wherein the trajectories include separate trajectories for different ones of the angles.

27. The computing device as described in claim 21, wherein the trajectories include a trajectory for a joint angle that simultaneously represents multiple ones of the angles.

28. The computing device as described in claim 21, wherein the user angles and the angles correspond to different user-viewings of the 360-degree video.

29. The computing device as described in claim 21, wherein the user angles and the angles correspond to a shared-viewing of the 360-degree video.

30. In a digital medium environment for viewport prediction of a 360-degree video, a system comprising: means for receiving trajectories of angles that are sampled at time instances of the 360-degree video, the angles at the time instances corresponding to viewports of the 360-degree video at the time instances; means for clustering the trajectories into trajectory clusters based on mutual distances between pairs of the trajectories; means for determining score thresholds for the trajectory clusters from the mutual distances between the pairs of the trajectories belonging to the trajectory clusters; means for determining trend trajectories for the trajectory clusters, the trend trajectories representing the trajectories belonging to the trajectory clusters; and means for predicting a user viewport of the 360-degree video for a future time instance from the score thresholds, angle samples of at least one of the trend trajectories, and user angles that correspond to the user viewport, the angle samples and the user angles corresponding to the time instances occurring prior to the future time instance.

31. The system as described in claim 30, wherein the means for determining the score thresholds for the trajectory clusters includes: means for determining, for each trajectory cluster of the trajectory clusters, a maximum one of the mutual distances between the pairs of the trajectories belonging to said each trajectory cluster; and means for generating, for said each trajectory cluster, a score threshold of the score thresholds based on the maximum one of the mutual distances for said each trajectory cluster.

Description

BACKGROUND

[0001] Videos in which views in multiple directions are simultaneously recorded (e.g., using an omnidirectional camera or multiple cameras) are referred to as 360-degree videos, immersive videos, or spherical videos, and are used in virtual reality, gaming, and playback situations where a viewer can control his or her viewing direction. The part of a 360-degree video being viewed by a viewer during playback of the 360-degree video is referred to as the viewport, and changes as the viewer changes his or her viewing direction. For instance, when playing a video game that allows a viewer to immerse themselves in the 360-degree video using virtual reality, the viewport corresponding to the viewer may change to display a different portion of the 360-degree video based on the viewer's movements within the video game.

[0002] When delivering a 360-degree video, such as when a server delivers the 360-degree video to a client device over a network, the portion of the 360-degree video corresponding to a current viewport is often delivered at a higher quality (e.g., a higher bit-rate of source encoding) than other portions of the 360-degree video to reduce the bandwidth requirements needed to deliver the 360-degree video. For instance, the 360-degree video can be encoded at different qualities and spatially divided into tiles. During the streaming session, the client device can request the tiles corresponding to the current viewport at the highest qualities. Consequently, when a user changes the viewport of the 360-degree video, such as by moving during playback of the 360-degree video, the user may experience a degradation in the video quality at the transitions of the viewport caused by different encoding qualities of the different portions of the 360-degree video. Hence, many video systems not only request a current viewport for a user at a higher quality, but also predict a future viewport for the user and request the predicted viewport (e.g., tiles of the 360-degree video corresponding to the predicted viewport) at a higher quality than other portions of the 360-degree video to minimize the transitions in quality experienced by the user as they change the viewport. This technique is sometimes referred to as viewport-based adaptive streaming.
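The tile-request behavior described above can be sketched as follows. This is a minimal illustration, not the method of any particular streaming client: the equal-width column layout, the function names `tiles_in_viewport` and `plan_qualities`, and the two quality labels are all assumptions made for the example.

```python
def tiles_in_viewport(yaw_deg, fov_deg, num_tiles):
    """Return the indices of the tile columns that a viewport overlaps.

    Assumed layout: the frame is split into num_tiles equal-width
    columns, with column 0 starting at yaw 0 degrees.
    """
    tile_width = 360.0 / num_tiles
    start = yaw_deg - fov_deg / 2.0
    step = tile_width / 2.0  # sample twice per tile so no column is skipped
    covered = set()
    offset = 0.0
    while offset < fov_deg:
        covered.add(int(((start + offset) % 360.0) // tile_width) % num_tiles)
        offset += step
    # Include the column under the far edge of the viewport.
    covered.add(int(((start + fov_deg) % 360.0) // tile_width) % num_tiles)
    return covered


def plan_qualities(current_yaw, predicted_yaw, fov_deg, num_tiles):
    """Request 'high' quality for tiles in the current or predicted viewport."""
    high = tiles_in_viewport(current_yaw, fov_deg, num_tiles)
    high |= tiles_in_viewport(predicted_yaw, fov_deg, num_tiles)
    return ["high" if i in high else "low" for i in range(num_tiles)]


# Current viewport centered at yaw 10, predicted viewport at yaw 190.
plan = plan_qualities(10.0, 190.0, 90.0, 8)
```

A real client would also account for pitch and for more than two quality levels; the sketch only shows why a wrong prediction leaves the user looking at low-quality tiles.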

[0003] Conventional systems that perform viewport-based adaptive streaming are limited to predicting user viewports for short-term time horizons, typically on the order of milliseconds, and almost always less than a couple of seconds. However, most devices that process and display 360-degree videos are equipped with video buffers having much longer delays than the short-term time horizons of conventional systems that perform viewport-based adaptive streaming. For instance, it is not uncommon for a client device that displays 360-degree videos to include video buffers having 10-15 seconds' worth of storage. Moreover, conventional systems that are limited to predicting user viewports for short-term time horizons do not scale to long-term horizons, since these conventional systems usually rely only on physical movements of a user, and often model these movements using second-order statistics, which do not include the information needed for long-term time horizons corresponding to the delays of video buffers in client devices.

[0004] Hence, conventional systems that perform viewport-based adaptive streaming are not efficient because when a video buffer with a long-term time horizon (e.g., 10-15 seconds) is used, these conventional systems suffer from quality degradation as the user moves, due to the poor performance of short-term (e.g., a few seconds) based prediction algorithms. Conversely, when a video buffer with a short-term time horizon (e.g., 2-3 seconds) is used, the short-term based prediction algorithms may be effective at predicting a user viewport for the short-term time horizon, but delivery of the 360-degree video is more susceptible to bandwidth fluctuations that cause video freezes and other quality degradations. Accordingly, these conventional systems yield poor viewing experiences for users.

SUMMARY

[0005] Techniques and systems are described for trajectory-based viewport prediction for 360-degree videos. A video system obtains trajectories of angles that relate to a user's viewing direction and that determine the user's viewport of the 360-degree video over time. For instance, the trajectories of the angles may include trajectories for a yaw angle, a pitch angle, and a roll angle of a user's head, recorded for users who have previously viewed the 360-degree video. The video system clusters the trajectories of angles into trajectory clusters based on a mutual distance between pairs of trajectories, and determines for each cluster a trend trajectory that represents the trajectories of the trajectory cluster (e.g., an average trajectory for the trajectory cluster) and a score threshold that represents the mutual distances for the pairs of trajectories of the trajectory cluster. When a new user views the 360-degree video, the video system compares trajectories of angles of the new user recorded during a time frame of the 360-degree video to the trend trajectories, and selects trend trajectories for a yaw angle, a pitch angle, and a roll angle for the user based on the comparison and the score thresholds.

[0006] Using the selected trend trajectories for yaw, pitch, and roll angles, the video system predicts viewports of the 360-degree video for the user for future times (e.g., later times than the time frame of the 360-degree video used for the comparison). Hence, the video system predicts a user's viewport of a 360-degree video based on patterns of past viewing behavior of the 360-degree video, e.g., how other users viewed the 360-degree video. Accordingly, the video system can accurately predict a user's viewport for long-term time horizons (e.g., 10-15 seconds) that correspond to a device's video buffer delay, so that the 360-degree video can be efficiently delivered to a device and viewed without undesirable transitions in display quality as the user changes his or her viewport of the 360-degree video.

[0007] This Summary introduces a selection of concepts in a simplified form that are further described below in the Detailed Description. As such, this Summary is not intended to identify essential features of the claimed subject matter, nor is it intended to be used as an aid in determining the scope of the claimed subject matter.

BRIEF DESCRIPTION OF THE DRAWINGS

[0008] The detailed description is described with reference to the accompanying figures. In the figures, the left-most digit(s) of a reference number identifies the figure in which the reference number first appears. The use of the same reference numbers in different instances in the description and the figures may indicate similar or identical items. Entities represented in the figures may be indicative of one or more entities and thus reference may be made interchangeably to single or plural forms of the entities in the discussion.

[0009] FIG. 1 illustrates a digital medium environment in an example implementation that is operable to employ techniques described herein.

[0010] FIG. 2 illustrates an example system usable for trajectory-based viewport prediction for 360-degree videos in accordance with one or more aspects of the disclosure.

[0011] FIG. 3 illustrates a flow diagram depicting an example procedure in accordance with one or more aspects of the disclosure.

[0012] FIG. 4 illustrates a flow diagram depicting an example procedure in accordance with one or more aspects of the disclosure.

[0013] FIG. 5 illustrates a flow diagram depicting an example procedure in accordance with one or more aspects of the disclosure.

[0014] FIG. 6 illustrates example performance measures in accordance with one or more aspects of the disclosure.

[0015] FIG. 7 illustrates an example system including various components of an example device that can be implemented as any type of computing device as described and/or utilized with reference to FIGS. 1-6 to implement aspects of the techniques described herein.

DETAILED DESCRIPTION

[0016] Overview

[0017] A 360-degree video (e.g., an immersive video or spherical video) includes multiple viewing directions for the 360-degree video, and can be used in virtual reality, gaming, and any playback situation where a viewer can control his or her viewing direction and viewport, which is the part of the 360-degree video viewed by a user at a given time. For instance, when viewing a 360-degree video that allows a user to immerse themselves in the 360-degree video using virtual reality, such as in a virtual reality environment of a video game, the viewport corresponding to the user changes over time as the user moves within the virtual reality environment and views different portions of the 360-degree video. Consequently, conventional systems may perform viewport-based adaptive streaming in which a future viewport for a user is predicted, and this future viewport and a current viewport for the user are delivered (e.g., to a client device over a network) at a higher quality than other portions of the 360-degree video that are delivered in a lower quality format to reduce bandwidth requirements.

[0018] However, these conventional systems predict future viewports over short-term time horizons (e.g., less than two seconds), far less than the delays of video buffers typically found on devices that process and display videos (e.g., 10-15 seconds for most client devices). Unfortunately, these conventional systems do not scale to long-term horizons corresponding to the delays of video buffers. For instance, these conventional systems may rely on physical movements of a user without considering how other users viewed the content of the 360-degree video, and often use second-order statistics which simply do not include the information needed for long-term time horizons. When a video buffer with a long-term time horizon is used, these conventional systems suffer from quality degradation as the user moves due to the poor performance of short-term based prediction algorithms. When a video buffer with a short-term time horizon is used, delivery of the 360-degree video is susceptible to bandwidth fluctuations that cause video freezes and quality degradations, even if the short-term based prediction algorithms are effective at predicting a user viewport for the short-term time horizon. Hence, conventional systems that perform viewport-based adaptive streaming are inefficient and result in poor viewing experiences for the user.

[0019] Accordingly, this disclosure describes systems, devices, and techniques for trajectory-based viewport prediction for 360-degree videos. A video system predicts a user viewport for a 360-degree video at a future time based on trajectories of angles that determine viewports for the 360-degree video at earlier times than the future time. Angles may include a yaw angle, a pitch angle, and a roll angle for a user, such as based on a user's head, eyes, virtual reality device (e.g., head-mounted virtual-reality goggles), and the like, that are used to determine a viewport for the user.

[0020] The video system obtains trajectories of angles, such as a trajectory of yaw angles, a trajectory of pitch angles, a trajectory of roll angles, a trajectory that jointly represents two or more angles, or combinations thereof, for a plurality of users for a 360-degree video. The angles are sampled at time instances of the 360-degree video and correspond to viewports of the 360-degree video at the time instances for a plurality of viewers of the 360-degree video, such as users who have previously viewed the 360-degree video. The video system exploits the observation that many users consume a given 360-degree video in similar ways. Hence, the video system clusters the angle trajectories into trajectory clusters, and determines trend trajectories (e.g., average trajectories) and score thresholds for each trajectory cluster. When a new user views the 360-degree video for a time period, the video system can match the new user's angles collected over the time period of the 360-degree video to one or more trajectory clusters based on the trend trajectories and score thresholds, and predict a viewport for the new user at a future time relative to the time period from the trajectory clusters that match the new user's angles.

[0021] The video system can cluster the trajectories of angles into trajectory clusters in any suitable way, including trajectory clusters for yaw angle, trajectory clusters for pitch angle, trajectory clusters for roll angle, and trajectory clusters for a joint angle that jointly represents two or more angles. Hence, the video system can process yaw, pitch, and roll angles independently and cluster the trajectories of angles into trajectory clusters separately for the yaw, pitch, and roll angles. Additionally or alternatively, the video system can process yaw, pitch, and roll angles jointly by processing a joint angle that represents two or more of the yaw, pitch, and roll angles, and cluster trajectories of joint angles into trajectory clusters. Trajectory clusters include trajectories of angles deemed by the video system to be similar. For instance, pairs of trajectories belonging to a trajectory cluster may have affinity scores above a threshold affinity score, and the affinity score for a pair of trajectories may be determined from a mutual distance between the pair of trajectories.
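One way to picture the clustering step is the sketch below. The text leaves the distance, the affinity score, and the clustering algorithm open ("any suitable way"), so everything concrete here is an assumption chosen for brevity: the mean wrapped angular difference as the mutual distance, an exponential kernel with a hypothetical `scale` parameter as the affinity score, and a simple greedy grouping in place of whatever clustering algorithm an implementation would actually use.

```python
import math


def angular_diff(a, b):
    """Smallest absolute difference between two angles, in degrees."""
    d = abs(a - b) % 360.0
    return min(d, 360.0 - d)


def mutual_distance(traj_a, traj_b):
    """Mean angular difference over shared time instances (assumed metric)."""
    n = min(len(traj_a), len(traj_b))
    return sum(angular_diff(traj_a[i], traj_b[i]) for i in range(n)) / n


def affinity(traj_a, traj_b, scale=30.0):
    """Map a mutual distance to a score in (0, 1]; the kernel is an assumption."""
    return math.exp(-mutual_distance(traj_a, traj_b) / scale)


def cluster_trajectories(trajs, min_affinity=0.5):
    """Greedy grouping: a trajectory joins the first cluster in which its
    affinity with every existing member exceeds min_affinity."""
    clusters = []
    for idx, traj in enumerate(trajs):
        for members in clusters:
            if all(affinity(traj, trajs[m]) > min_affinity for m in members):
                members.append(idx)
                break
        else:
            clusters.append([idx])  # no cluster was similar enough
    return clusters


# Yaw trajectories (degrees) sampled at the same three time instances.
yaw_trajs = [
    [0, 10, 20],
    [5, 12, 22],
    [180, 170, 160],
    [175, 172, 158],
    [355, 5, 15],  # close to the first group once wraparound is handled
]
clusters = cluster_trajectories(yaw_trajs)
```

Running the same function separately on pitch and roll trajectories corresponds to the independent processing described above; a joint angle would need a distance defined over the combined representation.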

[0022] The video system determines a score threshold for each trajectory cluster identified by the video system. In one example, the video system determines a score threshold for a trajectory cluster based on the mutual distances of pairs of trajectories that belong to the trajectory cluster. For instance, the video system may determine a maximum mutual distance for pairs of trajectories that belong to the trajectory cluster (e.g., the mutual distance for the pair of trajectories that are farthest from each other among the pairs of trajectories belonging to the trajectory cluster). The video system may determine the score threshold for the trajectory cluster from an affinity score based on the maximum mutual distance for the trajectory cluster. The video system uses the score thresholds for the trajectory clusters to determine if a trajectory of a new user's angles belongs to a trajectory cluster. For instance, the video system may require that a user trajectory (e.g., a trajectory of user angles) and the trend trajectory for the trajectory cluster have an affinity score determined from the mutual distance between the user trajectory and the trend trajectory that is greater than the score threshold for the trajectory cluster.
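The threshold logic in this paragraph can be sketched as below. As before, the mean wrapped angular difference and the exponential affinity kernel (with its hypothetical `scale` parameter) are assumptions for illustration; the text only states that the score threshold is derived from an affinity score based on the maximum mutual distance within the cluster.

```python
import math
from itertools import combinations


def mutual_distance(traj_a, traj_b):
    """Mean wrapped angular difference in degrees (assumed metric)."""
    total = 0.0
    n = min(len(traj_a), len(traj_b))
    for i in range(n):
        d = abs(traj_a[i] - traj_b[i]) % 360.0
        total += min(d, 360.0 - d)
    return total / n


def affinity_from_distance(distance, scale=30.0):
    """Larger distance gives a lower score; the kernel is an assumption."""
    return math.exp(-distance / scale)


def score_threshold(cluster):
    """Affinity score of the two trajectories farthest apart in the cluster.

    A single-member cluster has no pairs; max distance then defaults to 0,
    which yields the strictest possible threshold of 1.0.
    """
    max_d = max(
        (mutual_distance(a, b) for a, b in combinations(cluster, 2)),
        default=0.0,
    )
    return affinity_from_distance(max_d)


def belongs(user_traj, trend_traj, threshold):
    """A user trajectory matches when its affinity with the trend
    trajectory is greater than the cluster's score threshold."""
    return affinity_from_distance(mutual_distance(user_traj, trend_traj)) > threshold


cluster = [[0, 10, 20], [6, 16, 26], [3, 13, 23]]
trend = [3, 13, 23]  # stand-in trend trajectory for the cluster
threshold = score_threshold(cluster)
```

Because the threshold comes from the worst-matched pair inside the cluster, a new user is accepted only if they are at least as close to the trend as the cluster's own members are to each other.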

[0023] The video system can determine a trend trajectory that represents the trajectories of a trajectory cluster in any suitable way. In one example, the video system breaks the 360-degree video into time intervals (e.g., equally-spaced time intervals), and determines, for each time interval, polynomial coefficients of a polynomial function that is fitted to the trajectories of the trajectory cluster over the time interval. The video system forms a union over the time intervals of the polynomial functions having the polynomial coefficients to determine the trend trajectory for each trajectory cluster. Hence, a trend trajectory can be represented as a piecewise polynomial. The video system can fit polynomial coefficients of a polynomial function to the trajectories of the trajectory cluster in any suitable way, such as by selecting the polynomial coefficients to minimize a difference function between the polynomial and a trajectory of angles over all trajectories of the trajectory cluster. In one example, the difference function includes a mean squared error between the polynomial and a trajectory of angles over all trajectories of the trajectory cluster. Additionally or alternatively, the difference function may be minimized subject to a boundary constraint on the polynomial functions at boundaries of the time intervals, to guarantee continuity across the time intervals for the trend trajectory.
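A piecewise-polynomial trend trajectory of the kind described here can be sketched in plain Python as follows. The sketch minimizes squared error over all samples of all trajectories in each time interval, which matches the mean-squared-error example in the text. It makes several simplifying assumptions: all trajectories share the same sample times, angle wraparound is ignored, and the continuity constraint at interval boundaries is omitted. The function names are hypothetical.

```python
def fit_polynomial(times, values, degree):
    """Least-squares coefficients (lowest order first) via normal equations."""
    n = degree + 1
    a = [[sum(t ** (j + k) for t in times) for k in range(n)] for j in range(n)]
    b = [sum(v * t ** j for t, v in zip(times, values)) for j in range(n)]
    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        b[col], b[pivot] = b[pivot], b[col]
        for row in range(col + 1, n):
            factor = a[row][col] / a[col][col]
            for k in range(col, n):
                a[row][k] -= factor * a[col][k]
            b[row] -= factor * b[col]
    # Back substitution.
    coeffs = [0.0] * n
    for row in range(n - 1, -1, -1):
        tail = sum(a[row][k] * coeffs[k] for k in range(row + 1, n))
        coeffs[row] = (b[row] - tail) / a[row][row]
    return coeffs


def fit_trend(times, cluster, interval_len, degree=1):
    """Fit one polynomial per time interval to all trajectories in a cluster.

    Returns a list of (start, end, coeffs); each polynomial is expressed
    in time relative to the start of its interval.
    """
    pieces = []
    start = times[0]
    while start <= times[-1]:
        idx = [i for i, t in enumerate(times) if start <= t < start + interval_len]
        if len(idx) > degree:  # need at least degree + 1 distinct sample times
            ts = [times[i] - start for i in idx for _ in cluster]
            vs = [traj[i] for i in idx for traj in cluster]
            pieces.append((start, start + interval_len, fit_polynomial(ts, vs, degree)))
        start += interval_len
    return pieces


def evaluate_trend(pieces, t):
    """Evaluate the piecewise polynomial at time t (None if t is uncovered)."""
    for start, end, coeffs in pieces:
        if start <= t < end:
            return sum(c * (t - start) ** k for k, c in enumerate(coeffs))
    return None


times = [0, 1, 2, 3]
cluster = [[0, 2, 4, 6], [2, 4, 6, 8]]
trend = fit_trend(times, cluster, interval_len=2, degree=1)
```

With two intervals of two samples each, the fitted lines pass through the per-sample means of the two trajectories, which is the behavior one would expect of an "average" trend.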

[0024] The video system uses the trend trajectories and score thresholds for the trajectory clusters to predict a viewport for a user from user trajectories of angles for the user collected over a time period of the 360-degree video. The user trajectories can include yaw angles, pitch angles, roll angles, or combinations thereof, and determine user viewports of the 360-degree video during the time period. To predict a viewport for the user at a later time (e.g., a future time) relative to the time period, the video system determines affinity scores between the trend trajectories of the trajectory clusters and the user trajectories over the time period, and selects at least one trend trajectory based on comparing the affinity scores to the score thresholds. For instance, if the affinity score for a trend trajectory of a trajectory cluster and a user trajectory is greater than the score threshold for the trajectory cluster, then the video system may determine that the user trajectory belongs to the trajectory cluster. In one example, the video system selects a first trend trajectory for a yaw angle, a second trend trajectory for a pitch angle, and a third trend trajectory for a roll angle based on comparing the affinity scores for the trend trajectories and user trajectories to the score thresholds of the trajectory clusters. The video system predicts a user viewport of the 360-degree video for a later time than the time period based on the first trend trajectory, the second trend trajectory, and the third trend trajectory, such as by evaluating the polynomial functions for the first, second, and third trend trajectories at the later time to determine the user viewport for the later time.
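The selection and prediction steps can be sketched as below, under stated assumptions. Each trajectory cluster is represented by a hypothetical dictionary holding a single polynomial (`coeffs`, lowest order first) and a `threshold`; the affinity kernel is the same illustrative exponential used for clustering; and falling back to the last observed angle when no cluster matches is an assumption of this sketch, since the paragraph does not say what happens in that case.

```python
import math


def eval_poly(coeffs, t):
    """Evaluate a polynomial with coefficients ordered lowest degree first."""
    return sum(c * t ** k for k, c in enumerate(coeffs))


def affinity(user_times, user_angles, coeffs, scale=30.0):
    """Affinity between observed user angles and a trend polynomial sampled
    at the same times (metric and kernel are assumptions)."""
    total = 0.0
    for t, angle in zip(user_times, user_angles):
        d = abs(angle - eval_poly(coeffs, t)) % 360.0
        total += min(d, 360.0 - d)
    return math.exp(-(total / len(user_times)) / scale)


def predict_angle(user_times, user_angles, clusters, future_t):
    """Pick the best-matching trend whose affinity exceeds its cluster's
    score threshold, then evaluate that trend at the future time."""
    best = None
    for cluster in clusters:
        score = affinity(user_times, user_angles, cluster["coeffs"])
        if score > cluster["threshold"] and (best is None or score > best[0]):
            best = (score, cluster)
    if best is None:
        return user_angles[-1]  # assumed fallback: hold the last observed angle
    return eval_poly(best[1]["coeffs"], future_t)


def predict_viewport(user_times, user, clusters, future_t):
    """Predict (yaw, pitch, roll) by handling each angle independently."""
    return tuple(
        predict_angle(user_times, user[name], clusters[name], future_t)
        for name in ("yaw", "pitch", "roll")
    )


clusters = {
    "yaw": [{"coeffs": [0.0, 10.0], "threshold": 0.8},
            {"coeffs": [180.0, -5.0], "threshold": 0.8}],
    "pitch": [{"coeffs": [0.0], "threshold": 0.9}],
    "roll": [{"coeffs": [0.0], "threshold": 0.9}],
}
user = {"yaw": [2, 11, 21], "pitch": [1, 0, -1], "roll": [50, 50, 50]}
viewport = predict_viewport([0, 1, 2], user, clusters, future_t=12)
```

In this example the yaw and pitch trajectories each match a cluster, so their trends are evaluated 10 seconds past the observation window, while the roll trajectory matches nothing and falls back to its last sample.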

[0025] In one example, the video system predicts a user viewport for a viewing (e.g., display or playback) of a 360-degree video from trend trajectories and score thresholds for trajectory clusters that correspond to different viewings of the 360-degree video than the viewing of the 360-degree video. For instance, the video system may be partially implemented by a server that clusters trajectories of angles for users who have previously viewed the 360-degree video (e.g., a history of viewings of the 360-degree video). At a later time when a new user views the 360-degree video on a client device, the server may deliver to the client device the trend trajectories and score thresholds for the trajectory clusters based on data from the history of viewings of the 360-degree video.

[0026] Additionally or alternatively, the video system can predict a user viewport for a display of a 360-degree video from trend trajectories and score thresholds for trajectory clusters that correspond to a same display (e.g., viewing or exposing) of the 360-degree video for which the user viewport is predicted. For instance, when a new user views the 360-degree video on a client device, such as during a live event with multiple simultaneous viewers of the live event, or as part of an interactive and immersive video game with multiple simultaneous users playing the video game, the video system implemented on the client device may obtain trajectories of angles for users who are currently watching the live event or playing the video game with the new user. The video system may cluster the trajectories of angles for the users who are currently watching the live event or playing the video game and determine trend trajectories and score thresholds for the trajectory clusters. Since users may consume portions of the live event or the video game in different orders and prior to the new user, the video system may predict a viewport for the new user from the trend trajectories and score thresholds for the trajectory clusters that include trajectories for the users who are currently viewing the live event or playing the video game with the new user. Hence, the video system may predict or update a prediction of a viewport of a 360-degree video for a user based on most-recently available angle trajectories, including angle trajectories for users who are consuming the 360-degree video simultaneously with the user, such as watching a live event or playing a video game concurrently with the user.

[0027] Accordingly, the video system predicts a user's viewport of a 360-degree video based on patterns of past viewing behavior of the 360-degree video, e.g., how other users viewed the 360-degree video, rather than relying on methods that do not adequately capture the information needed to predict viewports for long-term time horizons, such as second-order statistics or physical models of a user's movement without regard to how other users viewed the content of the 360-degree video. Hence, the video system can accurately predict a user's viewport for long-term time horizons (e.g., 10-15 seconds in the future) that correspond to a device's video buffer delay, so that the 360-degree video can be efficiently delivered and viewed without undesirable transitions in quality as the user changes the viewport of the 360-degree video.

[0028] In the following discussion an example digital medium environment is described that may employ the techniques described herein. Example implementation details and procedures are then described which may be performed in the example digital medium environment as well as other environments. Consequently, performance of the example procedures is not limited to the example environment and the example environment is not limited to performance of the example procedures.

[0029] Example Digital Medium Environment

[0030] FIG. 1 is an illustration of a digital medium environment 100 in an example implementation that is operable to employ techniques described herein. As used herein, the term "digital medium environment" refers to the various computing devices and resources that can be utilized to implement the techniques described herein. The illustrated digital medium environment 100 includes a user 102 having computing device 104 and computing device 106. Computing device 104 is depicted as a pair of goggles (e.g., virtual reality goggles), and computing device 106 is depicted as a smart phone. Computing devices 104 and 106 can include any suitable type of computing device, such as a mobile phone, tablet, laptop computer, desktop computer, gaming device, goggles, glasses, camera, digital assistant, echo device, image editor, non-linear editor, digital audio workstation, copier, scanner, client computing device, and the like. Hence, computing devices 104 and 106 may range from full resource devices with substantial memory and processor resources (e.g., personal computers, game consoles) to a low-resource device with limited memory or processing resources (e.g., mobile devices).

[0031] Computing devices 104 and 106 are illustrated as separate computing devices in FIG. 1 for clarity. In one example, computing devices 104 and 106 are included in a same computing device. Notably, computing devices 104 and 106 can include any suitable number of computing devices, such as one or more computing devices (e.g., a smart phone connected to a tablet). Furthermore, discussion of one of computing devices 104 and 106 is not limited to that one computing device, but generally applies to each of the computing devices 104 and 106.

[0032] In one example, computing devices 104 and 106 are representative of one or a plurality of different devices connected to a network that perform operations "over the cloud" as further described in relation to FIG. 7. Additionally or alternatively, computing device 104 can be communicatively coupled to computing device 106, such as with a low power wireless communication standard (e.g., a Bluetooth® protocol). Hence, an asset (e.g., digital image, video, text, drawing, artwork, document, file, and the like) generated, processed, edited, or stored on one device (e.g., a tablet of computing device 106) can be communicated to, and displayed on and processed by another device (e.g., virtual reality goggles of computing device 104).

[0033] Various types of input devices and input instrumentalities can be used to provide input to computing devices 104 and 106. For example, computing devices 104 and 106 can recognize input as being a mouse input, stylus input, touch input, input provided through a natural user interface, and the like. In one example, computing devices 104 and 106 may display a 360-degree video, such as in a virtual reality environment, and include inputs to interact with the virtual reality environment, such as to facilitate a user moving within the virtual reality environment to change the viewport of the 360-degree video (e.g., the user's viewing perspective of the 360-degree video).

[0034] In this example of FIG. 1, computing device 104 displays a 360-degree video 108, which includes viewport 110 that corresponds to a current viewport of the 360-degree video 108 for the user 102 (e.g., the portion of the 360-degree video 108 that is currently being viewed by the user 102). The 360-degree video 108 may be any suitable size in any suitable dimension. In one example, the 360-degree video 108 spans 360 degrees along at least one axis, such as a horizontal axis. Additionally or alternatively, the 360-degree video 108 may span 360 degrees in multiple axes, such as including a spherical display format in which a user can change his or her viewport 360 degrees in any direction. In one example, the 360-degree video 108 spans less than 360 degrees in at least one axis. For instance, the 360-degree video 108 may include a panoramic video spanning 180 degrees, in which only a portion of the 180-degree span is viewable at any one time.

[0035] While the user 102 views the 360-degree video 108, computing device 104 determines angles 112 for the user 102 that are used to determine a viewport of the 360-degree video 108, such as viewport 110. For instance, computing device 104 may include a gyroscope that measures a yaw angle 114, a pitch angle 116, and a roll angle 118 in any suitable coordinate system, such as a coordinate system for the user's head, a coordinate system for the user's eyes, a coordinate system for goggles or a head-mounted display of the computing device 104, and the like. The yaw angle 114, the pitch angle 116, and the roll angle 118 are used to determine a viewport of the 360-degree video 108 because they correspond to a viewing direction of the 360-degree video 108 for the user 102. Computing device 104 determines values of the yaw angle 114, the pitch angle 116, and the roll angle 118 over time for the user 102, and can store these values as trajectories for the angles, such as a first trajectory including values of the yaw angle 114, a second trajectory including values of the pitch angle 116, and a third trajectory including values of the roll angle 118. The values of the angles may be sampled at time instances of the 360-degree video 108 (e.g., based on a timeline of the 360-degree video 108). Accordingly, the trajectories of the angles 112 represent a trajectory of the viewport 110 for the user 102 as the user changes his or her viewing direction of the 360-degree video 108, such as when the user 102 is immersed in a virtual reality environment represented by the 360-degree video 108 and moves within the environment.
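The per-angle trajectories described above can be pictured with a small data structure. The following is a minimal sketch only; the class name `AngleTrajectories`, its fields, the use of degrees, and the sample values are assumptions made for illustration and do not come from the specification.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AngleTrajectories:
    """Hypothetical container: one trajectory per angle, sharing one timeline."""
    times: List[float] = field(default_factory=list)  # video timeline, seconds
    yaw: List[float] = field(default_factory=list)    # assumed unit: degrees
    pitch: List[float] = field(default_factory=list)  # assumed unit: degrees
    roll: List[float] = field(default_factory=list)   # assumed unit: degrees

    def record(self, t: float, yaw: float, pitch: float, roll: float) -> None:
        """Append one sample taken at time instance t of the video."""
        self.times.append(t)
        self.yaw.append(yaw)
        self.pitch.append(pitch)
        self.roll.append(roll)


# Two illustrative samples, half a second apart on the video timeline.
user = AngleTrajectories()
user.record(0.0, 10.0, -5.0, 0.0)
user.record(0.5, 12.0, -4.0, 0.0)
```

Keeping the three angles in separate lists mirrors the description of a first, second, and third trajectory, and makes it straightforward to process each type of angle on its own.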

[0036] Computing device 104 includes video system 120 that predicts a future viewport of the 360-degree video 108 for user 102 based on the trajectories of the angles 112. For instance, the video system 120 obtains trend trajectories and score thresholds for trajectory clusters, such as clusters of angle trajectories for users who have previously viewed the 360-degree video 108. The video system 120 compares the trajectories of the angles 112 for the user 102 to the trend trajectories, and based on the comparisons and the score thresholds, selects a first trend trajectory for the yaw angle 114, a second trend trajectory for the pitch angle 116, and a third trend trajectory for the roll angle 118 to represent the movement of the user 102 while viewing the 360-degree video 108.

[0037] The video system 120 evaluates the selected first, second, and third trend trajectories at a future time (e.g., a later time than the time period of the trajectories of the angles 112 for the user 102) to predict future viewport 122 of the 360-degree video 108 for the user 102. Because the video system 120 selects the first, second, and third trend trajectories based on users' movements while viewing the 360-degree video 108 itself, and since most users tend to consume 360-degree videos in similar ways, the video system 120 is able to accurately predict the future viewport 122 for the user 102 for long-term time horizons that correspond to typical delays of video buffers, such as 10-15 seconds, which is a significant improvement over conventional systems that are typically limited to viewport prediction for short-term time horizons (e.g., less than two seconds). Accordingly, the video system 120 can deliver the 360-degree video 108 to the user 102 efficiently and without undesirable transitions in the quality of the 360-degree video 108 as the user 102 changes the viewport, such as from viewport 110 to future viewport 122.
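Evaluating the selected trend trajectories at a future time can be sketched as follows. This is an illustration under stated assumptions, not the claimed implementation: each trend trajectory is assumed to be stored as piecewise polynomials in absolute video time, and the names `Segment`, `evaluate`, and `predict_viewport`, as well as the sample coefficients, are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass
class Segment:
    """Hypothetical polynomial piece, valid from t_start until the next piece."""
    t_start: float
    coeffs: Sequence[float]  # c0 + c1*t + c2*t**2 + ...


def evaluate(trend: List[Segment], t: float) -> float:
    """Evaluate a piecewise-polynomial trend trajectory at video time t."""
    seg = trend[0]
    for s in trend:  # pieces are assumed sorted by t_start
        if s.t_start <= t:
            seg = s
    total = 0.0
    for c in reversed(seg.coeffs):  # Horner's rule
        total = total * t + c
    return total


def predict_viewport(yaw: List[Segment], pitch: List[Segment],
                     roll: List[Segment], now: float,
                     horizon: float = 10.0) -> Tuple[float, float, float]:
    """Return the predicted (yaw, pitch, roll) at time now + horizon."""
    t = now + horizon
    return evaluate(yaw, t), evaluate(pitch, t), evaluate(roll, t)


# Illustrative trends: yaw rises 2 degrees/second for 10 s, then holds at 20.
yaw_trend = [Segment(0.0, [0.0, 2.0]), Segment(10.0, [20.0, 0.0])]
pitch_trend = [Segment(0.0, [5.0])]
roll_trend = [Segment(0.0, [0.0])]
predicted = predict_viewport(yaw_trend, pitch_trend, roll_trend, now=2.0)
```

The default horizon of 10 seconds reflects the buffer-delay range mentioned above; any other horizon can be passed in.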

[0038] Computing device 106 is also coupled to network 124, which communicatively couples computing device 106 with server 126. Network 124 may include a variety of networks, such as the Internet, an intranet, local area network (LAN), wide area network (WAN), personal area network (PAN), cellular networks, terrestrial networks, satellite networks, combinations of networks, and the like, and as such may be wired, wireless, or a combination thereof. For clarity, FIG. 1 does not depict computing device 104 as being coupled to network 124, though computing device 104 may also be coupled to network 124 and server 126.

[0039] Server 126 may include one or more servers or service providers that provide services, resources, assets, or combinations thereof to computing devices 104 and 106, such as 360-degree videos. Services, resources, or assets may be made available to video system 120, video support system 128, or combinations thereof, and stored at assets 130 of server 126. Hence, 360-degree video 108 can include any suitable 360-degree video stored at assets 130 of server 126 and delivered to a client device, such as the computing devices 104 and 106.

[0040] Server 126 includes video support system 128 configurable to receive signals from one or both of computing devices 104 and 106, process the received signals, and send the processed signals to one or both of computing devices 104 and 106 to support trajectory-based viewport prediction for 360-degree videos. For instance, computing device 106 may obtain user angle trajectories (e.g., a yaw angle trajectory, a pitch angle trajectory, and a roll angle trajectory) for user 102, and communicate them to server 126. Server 126, using video support system 128, may select trend trajectories for yaw angle, pitch angle, and roll angle based on comparing the user angle trajectories received from computing device 106 to trend trajectories for trajectory clusters corresponding to previous viewings of the 360-degree video 108. Server 126 may then send the selected trend trajectories for yaw angle, pitch angle, and roll angle back to computing device 106, which can predict a future viewport for the user 102 corresponding to a later time with the video system 120. Accordingly, the video support system 128 of server 126 can include a copy of the video system 120. In one example, computing device 106 sends a request for content of the 360-degree video 108 corresponding to the future viewport to server 126, which in response delivers the content of the 360-degree video 108 corresponding to the future viewport to computing device 106 at a higher quality (e.g., encoded at a higher bit rate) than other portions of the 360-degree video 108, to support viewport-based adaptive streaming.

[0041] Computing device 104 includes video system 120 for trajectory-based viewport prediction for 360-degree videos. The video system 120 includes a display 132, which can be used to display any suitable data used by or associated with video system 120. In one example, display 132 displays a viewport of a 360-degree video, such as viewport 110 of the 360-degree video 108. Portions of the 360-degree video 108 outside the current viewport may not be displayed in the display 132. As the current viewport of the 360-degree video 108 is changed over time in response to a user changing his or her viewing direction of the 360-degree video 108, the display 132 may change the portion of the 360-degree video 108 that is displayed. For instance, at a first time, the display 132 may display content of the 360-degree video 108 corresponding to viewport 110, and at a future time (e.g., ten seconds following the first time), the display 132 may display content of the 360-degree video 108 corresponding to viewport 122.

[0042] The video system 120 also includes processors 134. Processors 134 can include any suitable type of processor, such as a graphics processing unit, central processing unit, digital signal processor, processor core, combinations thereof, and the like. Hence, the video system 120 may be implemented at least partially by executing instructions stored in storage 136 on processors 134. For instance, processors 134 may execute portions of video application 152 (discussed below in more detail).

[0043] The video system 120 also includes storage 136, which can be any suitable type of storage accessible by or contained in the video system 120. Storage 136 stores data and provides access to and from memory included in storage 136 for any suitable type of data. For instance, storage 136 includes angle trajectory data 138 including data associated with trajectories of angles for viewers of a 360-degree video, such as representations of trajectory clusters (e.g., identification numbers of trajectory clusters, indications of types of angles of clusters, such as yaw, pitch, roll, or joint angles), angle trajectories belonging to trajectory clusters, identifiers of users corresponding to the angle trajectories, a date of a viewing of a 360-degree video used to determine angle trajectories, mutual distances for pairs of angle trajectories belonging to trajectory clusters, and affinity scores for pairs of angle trajectories belonging to trajectory clusters. Angle trajectory data 138 may also include trend trajectories for trajectory clusters (e.g., polynomial coefficients for piecewise polynomials making up a trend trajectory), a distance measure used to determine trend trajectories (e.g., minimum mean-squared error, absolute value, an indication of a boundary constraint, etc.), and a number of angle trajectories used to determine trend trajectories (e.g., a number of angle trajectories of a trajectory cluster used to determine polynomial coefficients of a trend trajectory for the trajectory cluster). Angle trajectory data 138 may also include score thresholds for trajectory clusters, a mutual distance used to determine a score threshold (e.g., a maximum mutual distance for pairs of angle trajectories belonging to a trajectory cluster), indications of a pair of angle trajectories used to determine a score threshold, statistics of score thresholds across trajectory clusters, such as mean, median, mode, variance, etc., combinations thereof, and the like.

[0044] Storage 136 also includes user trajectory data 140 including data related to a user viewing a 360-degree video, such as angle trajectories that determine user viewports of the 360-degree video, including a yaw angle trajectory, a pitch angle trajectory, a roll angle trajectory, and combinations thereof. User trajectory data 140 may also include an indication of a location of a device (e.g., a gyroscope) that measures the angle trajectories, such as a head of a user, an eye of a user, a pair of goggles, etc., and indicators of the 360-degree video corresponding to the angle trajectories, such as timestamps indicating time instances of the 360-degree video, scene identifiers, chapter identifiers, viewports of the 360-degree video, combinations thereof, and the like.

[0045] Storage 136 also includes affinity score data 142 including data related to affinity scores for trajectory clusters, such as mutual distances between user trajectories (e.g., trajectories of user angles) and trend trajectories, and affinity scores determined from the mutual distances. Affinity score data 142 may also include a time period of the 360-degree video corresponding to the user trajectories (e.g., time instances for which the angles of the user trajectories are sampled), and the like.

[0046] Storage 136 also includes selection data 144 including data related to determining trend trajectories that match user trajectories, such as affinity scores between user trajectories (e.g., trajectories of user angles) and trend trajectories, differences between the affinity scores and score thresholds for the trajectory clusters, and selected trend trajectories (e.g., a first trajectory including values of a yaw angle, a second trajectory including values of a pitch angle, and a third trajectory including values of roll angle) to assign to a user and predict a future viewport for the user. Selection data 144 may also include indications of whether or not the selected trend trajectories comply with a selection constraint, such as requiring the affinity score between a user trajectory and the trend trajectory to be greater than the score threshold for the trajectory cluster represented by the trend trajectory. For instance, when no trend trajectory satisfies the selection constraint, the video system 120 may select a trend trajectory that is closest to a user trajectory based on the affinity score for the trend trajectory and the user trajectory, despite the affinity score being less than the score threshold for the trend trajectory (e.g., for the trajectory cluster represented by the trend trajectory).
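The selection constraint and its fallback can be sketched as below. The function name `select_trend`, the dictionary inputs, and the rule of preferring the highest affinity score among clusters that satisfy the constraint are assumptions for illustration; the passage above only states that the affinity score should exceed the score threshold and that the closest trend trajectory is used when none does.

```python
from typing import Dict, Tuple


def select_trend(affinities: Dict[str, float],
                 thresholds: Dict[str, float]) -> Tuple[str, bool]:
    """Return (cluster_id, constraint_satisfied) for one type of angle.

    affinities[c] is the affinity score between the user trajectory and the
    trend trajectory of cluster c; thresholds[c] is that cluster's threshold.
    """
    eligible = {c: a for c, a in affinities.items() if a > thresholds[c]}
    if eligible:
        # Assumed tie-break: highest affinity among the eligible clusters.
        return max(eligible, key=eligible.get), True
    # Fallback: closest trend trajectory, although below its threshold.
    return max(affinities, key=affinities.get), False


# Cluster "a" passes its threshold, so it is selected with the flag set.
first = select_trend({"a": 0.9, "b": 0.7}, {"a": 0.8, "b": 0.75})
# No cluster passes; "b" has the highest affinity and is used as a fallback.
second = select_trend({"a": 0.3, "b": 0.4}, {"a": 0.8, "b": 0.5})
```

The returned flag corresponds to the indication, stored in selection data 144, of whether the selected trend trajectory complies with the selection constraint.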

[0047] Storage 136 also includes viewport data 146 including data related to viewports of a 360-degree video, such as a predicted viewport (e.g., a viewport for a user predicted by the video system 120) and indications of the trend trajectories used to predict the viewport. Viewport data 146 may also include data related to a time for which the video system 120 predicts the viewport, such as a time horizon (e.g., 10 seconds) from a current time, a percentage of storage of a video buffer corresponding to the time horizon, combinations thereof, and the like. In one example, viewport data 146 includes data related to a viewport, such as content of the 360-degree video for the viewport, an indicator of quality for content of a viewport, such as encoder rate, and the like.

[0048] Furthermore, the video system 120 includes transceiver module 148. Transceiver module 148 is representative of functionality configured to transmit and receive data using any suitable type and number of communication protocols. For instance, data within video system 120 may be transmitted to server 126 with transceiver module 148. Furthermore, data can be received from server 126 with transceiver module 148. Transceiver module 148 can also transmit and receive data between computing devices, such as between computing device 104 and computing device 106. In one example, transceiver module 148 includes a low power wireless communication standard (e.g., a Bluetooth® protocol) for communicating data between computing devices.

[0049] The video system 120 also includes video gallery module 150, which is representative of functionality configured to obtain and manage videos, including 360-degree videos. Hence, video gallery module 150 may use transceiver module 148 to obtain any suitable data for a 360-degree video from any suitable source, including obtaining 360-degree videos from a server, such as server 126, computing device 106, or combinations thereof. Data regarding 360-degree videos obtained by video gallery module 150, such as content of 360-degree videos, viewports of 360-degree videos, encoder rates for content of 360-degree videos, and the like can be stored in storage 136 and made available to modules of the video system 120.

[0050] The video system 120 also includes video application 152. The video application 152 includes angle trajectory module 154, which includes cluster module 156, score threshold module 158, and trend trajectory module 160. The video application 152 also includes user trajectory module 162, affinity score module 164, trajectory selection module 166, and viewport prediction module 168. These modules work in conjunction with each other to facilitate trajectory-based viewport prediction for 360-degree videos.

[0051] Angle trajectory module 154 is representative of functionality configured to determine score thresholds and trend trajectories for trajectory clusters. For instance, cluster module 156 clusters angle trajectories into trajectory clusters, score threshold module 158 determines score thresholds for the trajectory clusters, and trend trajectory module 160 determines trend trajectories for the trajectory clusters that represent the trajectories belonging to the trajectory clusters. Angle trajectory module 154 can determine score thresholds and trend trajectories for trajectory clusters on any suitable device. In one example, angle trajectory module 154 is implemented by a server, such as server 126, and the server provides the score thresholds and trend trajectories for trajectory clusters to a client device (e.g., computing device 104 or computing device 106). Additionally or alternatively, the angle trajectory module 154 can be implemented by a client device, such as computing device 104 or computing device 106.

[0052] Angle trajectory module 154 can determine score thresholds and trend trajectories for trajectory clusters at any suitable time to be used by the video system 120. For instance, a server may provide the score thresholds and trend trajectories for trajectory clusters to computing device 106 periodically (e.g., updated every 24 hours or weekly), in response to the user 102 enabling the 360-degree video 108 (e.g., when the user 102 begins viewing the 360-degree video 108), during a viewing of the 360-degree video 108 (e.g., after the user 102 has begun consuming the 360-degree video 108), combinations thereof, and the like. Additionally or alternatively, a client device may generate the score thresholds and trend trajectories for trajectory clusters periodically, or in response to the user 102 enabling the 360-degree video 108.

[0053] In one example, angle trajectory module 154 generates the score thresholds and trend trajectories for trajectory clusters during a display of the 360-degree video 108 (e.g., after the user 102 has begun consuming the 360-degree video 108). In this case, the score thresholds and the trend trajectories may be based on angle trajectories of users who are consuming the 360-degree video 108 at the same time as the user 102 (e.g., a same viewing of the 360-degree video 108, such as in a multi-player video game). For instance, the 360-degree video 108 may be broken into chapters, and the chapters may be consumed by users in an interactive fashion based on user selections, such as a user's location within a virtual reality environment of a video game. Hence, the chapters may be consumed in different orders by different users of the 360-degree video 108. Accordingly, some users may view some chapters of the 360-degree video 108 prior to the user 102, but still during a same playing of the 360-degree video 108, so that the user movements and angle trajectories may be used by the video system 120 to determine score thresholds and trend trajectories to predict a viewport for the user 102 based on the chapter of the 360-degree video 108 being consumed by the user 102.

[0054] Cluster module 156 is representative of functionality configured to cluster trajectories of angles into trajectory clusters. In one example, cluster module 156 clusters trajectories separately for different types of angles, such as by clustering trajectories of yaw angles into trajectory clusters for yaw angles, clustering trajectories of pitch angles into trajectory clusters for pitch angles, and clustering trajectories of roll angles into trajectory clusters for roll angles.

[0055] Cluster module 156 can cluster trajectories of angles into trajectory clusters in any suitable way. In one example, cluster module 156 determines mutual distances between pairs of angle trajectories, affinity scores from the mutual distances, and angle trajectories belonging to the trajectory clusters from the affinity scores. Cluster module 156 can use any suitable distance measure to determine mutual distances between pairs of angle trajectories. Let $P=[p_1\ p_2\ p_3\ \ldots]$ and $Q=[q_1\ q_2\ q_3\ \ldots]$ represent two trajectories of a type of angle (e.g., a yaw angle), so that $p_i$ and $q_i$ each denote a yaw angle for a different user, and the subscript $i$ denotes a sample value (e.g., a time instance of a 360-degree video). In one example, cluster module 156 determines a mutual distance D(P, Q) between the pair of trajectories P and Q according to

$$D(P, Q) = \operatorname{ord}^{\alpha}_{p \in P}\, d(p), \qquad d(p) = \min_{q \in N(C(p, Q),\, Q)} d(p, q)$$

where C(p, Q) maps a point $p \in P$ to a corresponding point of Q at a same relative position (e.g., sample value or time instance of the 360-degree video), N(q, Q) maps the point $q \in Q$ to the set of neighboring points of Q in the time interval $[t_q - T_l,\ t_q + T_l]$ for time instance $t_q$ of the point q and tunable parameter $T_l$ (e.g., two seconds), and d(p, q) denotes the distance between the points p and q (e.g., the absolute value of the difference between the angles represented by p and q). Cluster module 156 determines the quantity d for each point in P, and the mutual distance D(P, Q) is the value of d that is greater than or equal to a percentage $\alpha$ of all values of d. For instance, for the example where d=[0.1 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1.0] and $\alpha$=80%, the mutual distance is 0.8. By conditioning the mutual distance based on the percentage $\alpha$, cluster module 156 discards outlier values of d. In one example, the percentage $\alpha$ is set to 95%. Generally, the mutual distance is not symmetric, so that $D(P, Q) \neq D(Q, P)$. Hence, a mutual distance between the pair of trajectories P and Q can include both measures D(P, Q) and D(Q, P).
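The mutual distance can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: both trajectories are assumed to be sampled at the same time instances with a fixed period `dt`, so the correspondence C(p, Q) reduces to matching sample indices, and the point distance is the plain absolute difference without angle wrap-around. The names `ord_alpha` and `mutual_distance` and the sample trajectories are hypothetical.

```python
import math
from typing import Sequence


def ord_alpha(values: Sequence[float], alpha: float) -> float:
    """Smallest value that is >= a fraction alpha of all values."""
    ordered = sorted(values)
    # round() guards against float noise in alpha * len(ordered).
    k = math.ceil(round(alpha * len(ordered), 9)) - 1
    return ordered[max(0, k)]


def mutual_distance(P: Sequence[float], Q: Sequence[float], dt: float = 1.0,
                    alpha: float = 0.95, t_l: float = 2.0) -> float:
    """Directed distance D(P, Q); in general not equal to D(Q, P)."""
    w = int(round(t_l / dt))  # neighbors within +/- t_l seconds
    d = []
    for i, p in enumerate(P):
        j = min(i, len(Q) - 1)  # C(p, Q): same time instance, clamped
        lo, hi = max(0, j - w), min(len(Q), j + w + 1)
        d.append(min(abs(p - q) for q in Q[lo:hi]))  # nearest neighbor in N
    return ord_alpha(d, alpha)


# The worked example from the text: alpha = 80% picks 0.8.
example = ord_alpha([0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0], 0.8)

# Asymmetry: Q ends with a jump that P never makes.
P = [0.0, 0.0, 0.0, 0.0]
Q = [0.0, 0.0, 0.0, 10.0]
d_pq = mutual_distance(P, Q, dt=1.0, alpha=1.0, t_l=1.0)
d_qp = mutual_distance(Q, P, dt=1.0, alpha=1.0, t_l=1.0)
d_qp_trimmed = mutual_distance(Q, P, dt=1.0, alpha=0.75, t_l=1.0)
```

In the second example, every point of P finds a nearby point of Q with the same angle, while the last point of Q has no close neighbor in P, which is why the two directed distances differ. Lowering the percentage to 75% discards that single outlier.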

[0056] Cluster module 156 determines the mutual distance, including both D(P, Q) and D(Q, P), between the pair of trajectories P and Q for all trajectories to be clustered together (e.g., for trajectories of yaw angles separately from trajectories of pitch angles and separately from trajectories of roll angles). Based on the mutual distances between pairs of trajectories P and Q, the cluster module 156 determines an affinity score K(P, Q) between the pair of trajectories P and Q for all trajectories to be clustered together. In one example, the cluster module 156 determines an affinity score K(P, Q) according to

$$K(P, Q) = e^{-\frac{D(P, Q)\, D(Q, P)}{2\sigma^{2}}}, \quad \forall P,\ \forall Q$$

where $\sigma$ is a tunable scaling parameter that scales the affinity score. In one example, $\sigma$ is set to a value of ten.
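The affinity score can be computed directly from the two directed mutual distances. The sketch below assumes the distances are already available in a square table `D`, where `D[i][j]` holds the directed distance from trajectory i to trajectory j; the function names and sample values are illustrative only.

```python
import math
from typing import List


def affinity(d_pq: float, d_qp: float, sigma: float = 10.0) -> float:
    """K(P, Q) = exp(-D(P, Q) * D(Q, P) / (2 * sigma**2))."""
    return math.exp(-(d_pq * d_qp) / (2.0 * sigma ** 2))


def affinity_matrix(D: List[List[float]], sigma: float = 10.0) -> List[List[float]]:
    """Build the table of affinity scores for all pairs of trajectories."""
    n = len(D)
    return [[affinity(D[i][j], D[j][i], sigma) for j in range(n)]
            for i in range(n)]


# Illustrative directed distances between two trajectories.
D = [[0.0, 10.0],
     [20.0, 0.0]]
K = affinity_matrix(D)  # K[0][1] = exp(-(10 * 20) / 200) = exp(-1)
```

Because the exponent uses the product of D(P, Q) and D(Q, P), the affinity score is symmetric even though the mutual distance is not, and a trajectory always has an affinity of one with itself.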

[0057] The cluster module 156 includes a spectral clustering algorithm, such as a spectral clustering algorithm as described for vehicle trajectories in "Clustering of Vehicle Trajectories" in IEEE Transactions on Intelligent Systems, 11(3):647-657, September 2010, the disclosure of which is incorporated herein by reference in its entirety. The cluster module 156 provides the affinity scores K(P, Q) for all P and Q as inputs to the spectral clustering algorithm, and the spectral clustering algorithm clusters trajectories that are similar based on the affinity scores into trajectory clusters. For instance, all pairs of trajectories in a trajectory cluster identified by cluster module 156 may have an affinity score greater than a threshold affinity score.

[0058] Trajectory clusters determined by cluster module 156, along with any suitable information, such as mutual distances, affinity scores, numbers of trajectory clusters, numbers of angle trajectories belonging to trajectory clusters, identifiers of trajectories (e.g., trajectory identification numbers), identifiers of the trajectory clusters to which each trajectory belongs (e.g., cluster identification numbers), parameters used to cluster angle trajectories into trajectory clusters, such as percentage $\alpha$, tunable parameter $T_l$, and scaling parameter $\sigma$, combinations thereof, and the like, used by or calculated by cluster module 156 are stored in angle trajectory data 138 of storage 136 and made available to modules of video application 152. In one example, trajectory clusters determined by cluster module 156 are provided to trend trajectory module 160. Additionally or alternatively, cluster module 156 provides mutual distances of pairs of trajectories in trajectory clusters to score threshold module 158.

[0059] Score threshold module 158 is representative of functionality configured to determine score thresholds for trajectory clusters, such as the trajectory clusters identified by cluster module 156. A score threshold determined by score threshold module 158 can be used by video system 120 to determine if a trajectory (e.g., a user trajectory obtained during a viewing of a 360-degree video) belongs to a trajectory cluster, such as by comparing a distance measure between the user trajectory and a trend trajectory for the trajectory cluster to the score threshold for the trajectory cluster.

[0060] Score threshold module 158 can determine score thresholds for trajectory clusters in any suitable way. In one example, score threshold module 158 determines a score threshold for each trajectory cluster from the minimum affinity score for pairs of trajectories belonging to the trajectory cluster. For instance, score threshold module 158 may set the score threshold for a trajectory cluster based on the minimum affinity score among pairs of trajectories belonging to the trajectory cluster, such as equal to the minimum affinity score, a scaled version of the minimum affinity score (e.g., 110% of the minimum affinity score), and the like. Hence, the score threshold can represent a minimum affinity score that a pair of trajectories must have to belong to the trajectory cluster.
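A threshold based on the minimum affinity score can be sketched as follows. The function name `affinity_score_threshold`, the `scale` parameter, and the sample table are assumptions for illustration; `K[i][j]` is taken to be the affinity score between trajectories i and j, and `members` lists the trajectories of one cluster.

```python
from itertools import combinations
from typing import List, Sequence


def affinity_score_threshold(K: List[List[float]], members: Sequence[int],
                             scale: float = 1.0) -> float:
    """Scaled minimum affinity score among pairs of cluster members."""
    pairs = list(combinations(members, 2))
    if not pairs:
        raise ValueError("a cluster needs at least two trajectories")
    return scale * min(K[i][j] for i, j in pairs)


# Illustrative affinity table: trajectories 0 and 1 are close, 2 is far away.
K = [[1.0, 0.9, 0.2],
     [0.9, 1.0, 0.3],
     [0.2, 0.3, 1.0]]
threshold = affinity_score_threshold(K, members=[0, 1])
stricter = affinity_score_threshold(K, members=[0, 1], scale=1.1)
```

A scale above one, such as the 110% mentioned above, makes the threshold stricter than the loosest pair already in the cluster.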

[0061] Additionally or alternatively, since cluster module 156 determines an affinity score for a pair of trajectories based on the mutual distance between the pair of trajectories, score threshold module 158 can determine a score threshold for each trajectory cluster from mutual distances between pairs of trajectories belonging to a trajectory cluster. For instance, score threshold module 158 may determine a score threshold for a trajectory cluster from a maximum mutual distance among the mutual distances of pairs of trajectories belonging to the trajectory cluster (e.g., the maximum mutual distance corresponds to a pair of trajectories that are farthest apart from one another among the pairs of trajectories belonging to the trajectory cluster). Score threshold module 158 may determine a maximum mutual distance in any suitable way, such as according to

$$\max_{P,\, Q} \bigl( D(P, Q) + D(Q, P) \bigr)$$

for pairs of trajectories P, Q belonging to the trajectory cluster. In one example, score threshold module 158 sets the score threshold for a trajectory cluster equal to the maximum mutual distance among the mutual distances of pairs of trajectories belonging to the trajectory cluster. Additionally or alternatively, score threshold module 158 can set the score threshold for a trajectory cluster to a scaled version of the maximum mutual distance (e.g., 90% of the maximum mutual distance). Hence, the score threshold can represent a maximum mutual distance that a pair of trajectories can have to belong to the trajectory cluster.
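The distance-based alternative can be sketched in the same way. Here `D[i][j]` is assumed to hold the directed mutual distance from trajectory i to trajectory j; the function name `distance_score_threshold`, the `scale` parameter, and the sample values are hypothetical.

```python
from itertools import combinations
from typing import List, Sequence


def distance_score_threshold(D: List[List[float]], members: Sequence[int],
                             scale: float = 1.0) -> float:
    """Scaled maximum of D(P, Q) + D(Q, P) among pairs of cluster members."""
    pairs = list(combinations(members, 2))
    if not pairs:
        raise ValueError("a cluster needs at least two trajectories")
    return scale * max(D[i][j] + D[j][i] for i, j in pairs)


# Illustrative directed distances for three trajectories.
D = [[0.0, 2.0, 9.0],
     [3.0, 0.0, 8.0],
     [7.0, 6.0, 0.0]]
threshold = distance_score_threshold(D, members=[0, 1])             # 2 + 3
tighter = distance_score_threshold(D, members=[0, 1], scale=0.9)
```

Note the direction of the comparison: with a distance-based threshold, a smaller value indicates a closer match, so a scale below one (such as 90%) tightens the threshold, whereas with an affinity-based threshold a larger value indicates a closer match.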

[0062] Score thresholds determined by score threshold module 158, along with any suitable information, such as mutual distances, affinity scores, scale factors used to determine the score thresholds, statistics of the score thresholds across the trajectory clusters (e.g., mean, median, mode, standard deviation, etc.), combinations thereof, and the like, used by or calculated by score threshold module 158 are stored in angle trajectory data 138 of storage 136 and made available to modules of video application 152. In one example, score thresholds determined by score threshold module 158 are provided to trajectory selection module 166.

[0063] Trend trajectory module 160 is representative of functionality configured to determine trend trajectories for trajectory clusters, such as the trajectory clusters identified by cluster module 156. The trend trajectories determined by trend trajectory module 160 represent the trajectories belonging to the trajectory clusters. For instance, the trend trajectories can be an average trajectory for a trajectory cluster, one trajectory of a trajectory cluster, a combination of trajectories of a trajectory cluster, or any suitable trajectory that represents the trajectories of a trajectory cluster.

[0064] Trend trajectory module 160 can determine trend trajectories for trajectory clusters in any suitable way. In one example, trend trajectory module 160 selects one trajectory from among trajectories of a trajectory cluster and approximates the one trajectory by a function, such as a polynomial, a spline of polynomials, piecewise connected polynomials, a sum of basis functions, combinations thereof, and the like. For instance, trend trajectory module 160 may break up the one trajectory into segments and fit a function to the one trajectory over each segment. To fit a function to the one trajectory, trend trajectory module 160 may minimize a cost function (e.g., mean-squared error) over parameters of the function (e.g., polynomial coefficients) subject to a boundary constraint at the segment boundaries.

[0065] Selecting one of the trajectories of a trajectory cluster to determine a trend trajectory, rather than multiple trajectories, may reduce the processing required to determine trend trajectories. This reduction in processing requirements can be an advantage in some situations, such as when trend trajectory module 160 must determine the trend trajectories quickly (e.g., responsive to a user request to display a 360-degree video so that perceptible delay to the user is minimized), or during a display of a 360-degree video (e.g., when a user is playing a video game with a 360-degree video environment, the user's viewport can be predicted based on updated trend trajectories that reflect a most recent set of data, including data for users of the video game who are concurrently playing the video game).

[0066] In one example, trend trajectory module 160 determines trend trajectories for trajectory clusters based on multiple trajectories in the trajectory clusters, such as all the trajectories belonging to a trajectory cluster. For instance, trend trajectory module 160 can divide a 360-degree video into intervals (e.g., equally-spaced time intervals for the 360-degree video, for chapters of the 360-degree video, for scenes of the 360-degree video, combinations thereof, and the like). In one example, the intervals include equally-spaced time intervals of the 360-degree video (e.g., three second intervals). For each trajectory cluster determined by cluster module 156, trend trajectory module 160 can determine a function $r_i(t)$, where subscript $i$ denotes a time interval of the 360-degree video. The trend trajectory $R^C$ for the trajectory cluster $C$ is the union of the functions over the time intervals, or $R^C = \bigcup_i r_i(t)$. Trend trajectory module 160 can determine trend trajectories for trajectory clusters by fitting the function $r_i(t)$ to multiple trajectories of the trajectory clusters, such as in a least-squares sense.

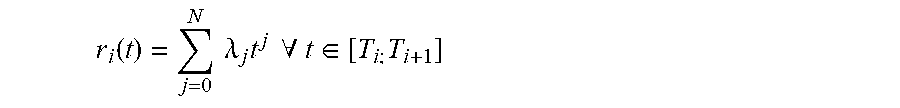

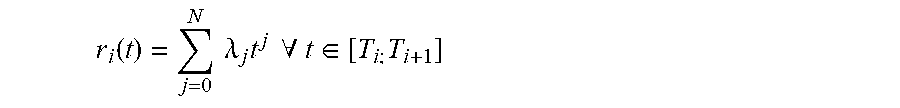

[0067] Trend trajectory module 160 can use any suitable function $r_i(t)$ to construct the trend trajectories, such as polynomials, exponentials, trigonometric functions, wavelets, etc. In one example, trend trajectory module 160 represents each function $r_i(t)$ as an $N^{\text{th}}$ order polynomial, or

$$r_i(t) = \sum_{j=0}^{N} \lambda_j t^j \quad \forall t \in [T_i; T_{i+1}]$$

where $\lambda_j$ are polynomial coefficients. The order of the polynomial can be set to any suitable order. In one example, the order is set to seven (e.g., $N=7$).
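As an illustration of how a trend trajectory built from per-interval polynomials could be evaluated, the following sketch looks up the interval containing a time t and evaluates that interval's polynomial. The function names and the data layout (a list of interval boundaries and one coefficient list per interval, constant term first) are assumptions made for this example.

```python
from bisect import bisect_right


def eval_poly(coeffs, t):
    """Evaluate sum_j coeffs[j] * t**j using Horner's rule."""
    result = 0.0
    for c in reversed(coeffs):
        result = result * t + c
    return result


def eval_trend(boundaries, coeffs_per_interval, t):
    """Evaluate a piecewise-polynomial trend trajectory at time t.

    boundaries          -- [T_0, T_1, ..., T_M], strictly increasing
    coeffs_per_interval -- coeffs_per_interval[i] holds the polynomial
                           coefficients for the interval [T_i, T_{i+1}]
    """
    if not boundaries[0] <= t <= boundaries[-1]:
        raise ValueError("t lies outside the trend trajectory")
    # Index of the interval containing t; the final boundary T_M is
    # assigned to the last interval.
    i = min(bisect_right(boundaries, t) - 1, len(coeffs_per_interval) - 1)
    return eval_poly(coeffs_per_interval[i], t)


# Two three-second intervals: r_0(t) = 1 + 2t, then r_1(t) = 7.
boundaries = [0.0, 3.0, 6.0]
coeffs = [[1.0, 2.0], [7.0, 0.0]]
angle_at_1s = eval_trend(boundaries, coeffs, 1.0)
angle_at_4s = eval_trend(boundaries, coeffs, 4.0)
```

The example coefficients are chosen so that both polynomials agree at the shared boundary t = 3, which keeps the piecewise function continuous.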

[0068] To determine the polynomial coefficients so that the trend trajectories represent the trajectories of a trajectory cluster, trend trajectory module 160 can fit the functions $r_i(t)$ to the trajectories of the trajectory cluster. In one example, trend trajectory module 160 fits the functions $r_i(t)$ to the trajectories based on a distance measure between the trend trajectory and the trajectories in the cluster, such as a minimum mean-squared error distance measure. For instance, trend trajectory module 160 can determine the polynomial coefficients to minimize a mean-squared error cost function subject to a boundary constraint, or

$$\min_{\lambda} \sum_{P \in C} \; \sum_{p \in P[T_i, T_{i+1}]} \lVert p - r_i(t_p) \rVert^2 \quad \text{s.t.} \quad r_i(T_i) = r_{i-1}(T_i)$$

where $t_p$ is the time instance of angle $p$, $P[T_i, T_{i+1}]$ indicates all points of $P$ in the interval $[T_i, T_{i+1}]$, and $P \in C$ denotes multiple trajectories of the trajectory cluster $C$ (e.g., all trajectories of the trajectory cluster $C$). The constraint $r_i(T_i) = r_{i-1}(T_i)$ enforces a boundary condition that guarantees continuity across time intervals for the trend trajectory $R^C$. Trend trajectory module 160 can determine polynomial coefficients for each time interval to determine a trend trajectory for each trajectory cluster identified by cluster module 156.
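One way to carry out the constrained least-squares fit described above is sketched below using NumPy. This is an illustrative sketch under stated assumptions, not the implementation of trend trajectory module 160. The function name `fit_trend` and the input layout are hypothetical. The sketch expresses each polynomial in local time (time measured from the start of its interval), an assumption made for numerical conditioning; in that form the boundary constraint fixes the constant term to the value of the previous polynomial at the shared boundary. The first interval has no predecessor and is fit without a constraint.

```python
import numpy as np
from numpy.polynomial import polynomial as npoly


def fit_trend(trajectories, boundaries, order=7):
    """Fit one polynomial per interval to all trajectories of a cluster.

    trajectories -- list of (times, angles) pairs for one angle type
    boundaries   -- [T_0, T_1, ..., T_M] interval boundaries
    Returns one coefficient array per interval (constant term first),
    expressed in local time tau = t - T_i (an assumption of this sketch).
    """
    coeffs = []
    boundary_value = None  # value of the previous polynomial at T_i
    for t_start, t_end in zip(boundaries[:-1], boundaries[1:]):
        tau_parts, angle_parts = [], []
        for times, angles in trajectories:
            times = np.asarray(times, dtype=float)
            angles = np.asarray(angles, dtype=float)
            inside = (times >= t_start) & (times <= t_end)
            tau_parts.append(times[inside] - t_start)
            angle_parts.append(angles[inside])
        tau = np.concatenate(tau_parts)
        y = np.concatenate(angle_parts)
        design = np.vander(tau, order + 1, increasing=True)
        if boundary_value is None:
            # First interval: ordinary least squares over all coefficients.
            lam, *_ = np.linalg.lstsq(design, y, rcond=None)
        else:
            # Continuity: the constant term equals the previous end value,
            # so only the higher-order coefficients remain free.
            rest, *_ = np.linalg.lstsq(design[:, 1:], y - boundary_value, rcond=None)
            lam = np.concatenate(([boundary_value], rest))
        coeffs.append(lam)
        boundary_value = float(npoly.polyval(t_end - t_start, lam))
    return coeffs


times = np.arange(0.0, 6.5, 0.5)
cluster = [(times, 2 * times + 1), (times, 2 * times + 3)]
fit = fit_trend(cluster, [0.0, 3.0, 6.0], order=1)
```

Eliminating the constant term turns the constrained problem into an unconstrained one in the remaining coefficients, which avoids the need for a general constrained solver.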

[0069] Trend trajectories determined by trend trajectory module 160, along with any suitable information, such as polynomial coefficients, an indication of a number of trajectories of a cluster used to determine the trend trajectory for the cluster (e.g., one, all, or some but not all of the trajectories), values of a cost function (e.g., a minimum mean-squared error used to determine polynomial coefficients), a time duration between samples of angles making up a trajectory of a trajectory cluster, combinations thereof, and the like, used by or calculated by trend trajectory module 160 are stored in angle trajectory data 138 of storage 136 and made available to modules of video application 152. In one example, trend trajectories determined by trend trajectory module 160 are provided to affinity score module 164 and trajectory selection module 166.

[0070] User trajectory module 162 is representative of functionality configured to obtain, during a display of a 360-degree video, user trajectories of user angles for a time period of the 360-degree video being displayed. The user angles correspond to user viewports of the 360-degree video during the time period of the 360-degree video being displayed. For instance, user trajectory module 162 may obtain user trajectories of user angles, including a trajectory of yaw angles, a trajectory of roll angles, and a trajectory of pitch angles collected for a time period while a user is viewing the 360-degree video. The angles 112 in FIG. 1 are examples of user angles in user trajectories obtained by user trajectory module 162. For any given time instance of the time period, the user angles can be used to determine a user viewport during the time period. For instance, for any given time instance of the time period, the user angles correspond to a content portion of the 360-degree video consumed by the user at the given time instance, such as a portion of content in viewport 110.

[0071] User trajectory module 162 can obtain the user trajectories in any suitable way. For instance, user trajectory module 162 may automatically record the user trajectories responsive to the 360-degree video 108 being displayed, such as when a user 102 enables a viewing device (e.g., virtual-reality goggles) to display the 360-degree video 108 and a viewport of the 360-degree video 108 is displayed, such as a first viewport of the 360-degree video 108 displayed to the user 102. Additionally or alternatively, user trajectory module 162 may record the user trajectories responsive to a user selection to enable recording, such as a "record user angles now" button on a display device, e.g., a head-mounted display. In one example, a user may select to disregard previously-recorded user trajectories, such as by selecting a "record over" button on a head-mounted display that erases previously-recorded user trajectories and begins recording new user trajectories when selected. Hence, the user may disregard old or unreliable user trajectories and help the video system 120 better predict a future viewport for the user based on more reliable user trajectories that more accurately reflect the user's movements and viewports, thus improving the delivery and playback of the 360-degree video 108 (e.g., by reducing the transitions in quality as the user moves and changes the viewport).

[0072] User trajectories of user angles obtained by user trajectory module 162 can include timestamps for each user angle, such as a time value indicating a time on a timeline of a 360-degree video, a sample number, a time value indicating a time on a timeline of a chapter or scene of the 360-degree video, a chapter number, a scene number, combinations thereof, and the like. Hence, a timestamp for a user angle can associate the user angle with a specific time of a 360-degree video, specific content of a 360-degree video, a playback sequence of a 360-degree video, combinations thereof, and the like.

[0073] User trajectory module 162 can obtain user trajectories that include angles sampled at any suitable rate. In one example, the sampling rate of the user angles in the user trajectories is based on the 360-degree video being displayed. For instance, the sampling rate may be determined from a rate of the 360-degree video, such as derived from a frame rate of the 360-degree video, or set so that a prescribed number of samples of the user angles are recorded for a chapter or scene of the 360-degree video. In one example, the sampling rate of the user angles in the user trajectories is user-selectable. For instance, a user may select a rate adjuster control via video system 120, such as via a user interface exposed by display 132.
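As a small illustration of deriving a sampling rate from a frame rate, the following sketch generates timestamps for user-angle samples at one sample every few frames. The `AngleSample` record, the `sample_times` function, and the `frames_per_sample` parameter are hypothetical names introduced for this example only.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class AngleSample:
    """One timestamped sample of user angles (hypothetical record layout)."""
    time_s: float  # time on the timeline of the 360-degree video
    yaw: float
    pitch: float
    roll: float


def sample_times(frame_rate_hz, duration_s, frames_per_sample=1):
    """Timestamps for angle samples taken once every frames_per_sample
    frames, covering the period [0, duration_s]."""
    if frame_rate_hz <= 0 or frames_per_sample <= 0:
        raise ValueError("frame rate and frames_per_sample must be positive")
    period = frames_per_sample / frame_rate_hz
    # Small tolerance guards against floating-point rounding at the end.
    count = math.floor(duration_s * frame_rate_hz / frames_per_sample + 1e-9) + 1
    return [k * period for k in range(count)]


# A 30 frames-per-second video sampled every third frame (10 samples/s).
timestamps = sample_times(30.0, 1.0, frames_per_sample=3)
first_sample = AngleSample(time_s=timestamps[0], yaw=0.0, pitch=0.0, roll=0.0)
```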

[0074] User trajectories determined by user trajectory module 162, along with any suitable information, such as a sampling rate of the user angles in the user trajectories, indicators of a type of user angle of the user trajectories (e.g., indicators of yaw angles, pitch angles, and roll angles), timestamps of user angles, combinations thereof, and the like, used by or calculated by user trajectory module 162 are stored in user trajectory data 140 of storage 136 and made available to modules of video application 152. In one example, user trajectories obtained by user trajectory module 162 are provided to affinity score module 164.

[0075] Affinity score module 164 is representative of functionality configured to determine affinity scores for trajectory clusters based on user trajectories and trend trajectories for the trajectory clusters. Affinity score module 164 can determine affinity scores based on the trend trajectories evaluated for times within the time period used to collect the user trajectories. For instance, for each trajectory cluster for a type of angle, such as a yaw angle, affinity score module 164 determines an affinity score for the trajectory cluster by computing the affinity score between the trend trajectory for the trajectory cluster and a user trajectory for the type of angle. Since the user angles of the user trajectory are collected over a time period, affinity score module 164 evaluates the trend trajectory over this time period to compute the affinity score.

[0076] Let user trajectory $U$ represent a user trajectory of any type of user angles, such as a trajectory of yaw angles or a trajectory of pitch angles, collected over the time period $[0; T_n]$. For instance, the user trajectory may be recorded by user trajectory module 162 for the first $T_n$ seconds of a 360-degree video. In one example, the affinity score module 164 processes the user trajectories for the different types of angles separately, such as by first determining affinity scores for yaw angles, followed by determining affinity scores for pitch angles, followed by determining affinity scores for roll angles.

[0077] The affinity score module 164 determines an affinity score between the user trajectory $U$ and each of the trend trajectories representing trajectory clusters for the type of angle (e.g., yaw angles). Hence, the affinity score module 164 determines an affinity score $K(U, R^C[0; T_n])$ based on the mutual distance between the user trajectory and the trend trajectory as described above. Here, $R^C[0; T_n]$ denotes the trend trajectory of trajectory cluster $C$ evaluated for time instances within the time period $[0; T_n]$.
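The evaluation of a trend trajectory over the user's time period, followed by an affinity computation, can be sketched as follows. The exact definitions of the distance and of the affinity function K appear elsewhere in this document and are not reproduced here. This sketch substitutes assumptions for both: a mean absolute difference as the directed distance, and an exponential decay of the mutual distance as the affinity. All names are hypothetical.

```python
import math


def directed_distance(p, q):
    """Stand-in directed distance (an assumption): mean absolute
    difference between aligned samples."""
    return sum(abs(a - b) for a, b in zip(p, q)) / len(p)


def affinity_to_trend(user_times, user_angles, trend, scale=1.0):
    """Affinity between a user trajectory and a trend trajectory.

    trend -- callable returning the trend angle at a time t; it is
             evaluated only at the user's own sample times, i.e. within
             the period over which the user trajectory was collected.
    The mapping exp(-mutual / scale) is an assumed affinity function.
    """
    if not user_times or len(user_times) != len(user_angles):
        raise ValueError("need matching, non-empty times and angles")
    trend_angles = [trend(t) for t in user_times]
    mutual = (directed_distance(user_angles, trend_angles)
              + directed_distance(trend_angles, user_angles))
    return math.exp(-mutual / scale)


# A user whose yaw follows the trend exactly has the highest affinity.
trend_yaw = lambda t: 2.0 * t
exact_match = affinity_to_trend([0.0, 1.0, 2.0], [0.0, 2.0, 4.0], trend_yaw)
offset_user = affinity_to_trend([0.0, 1.0, 2.0], [1.0, 3.0, 5.0], trend_yaw)
```

Under these assumptions a smaller mutual distance yields a larger affinity, so the trend trajectory closest to the user's recorded angles scores highest.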

[0078] Accordingly, for user trajectories obtained by user trajectory module 162, affinity score module 164 can determine a respective affinity score for each trajectory cluster clustered by cluster module 156 based on the trend trajectories for the trajectory clusters and the type of angles included in the trend trajectories. The video system 120 uses the affinity scores to match the user trajectories to the trajectory clusters (e.g., to determine which trajectory cluster, if any, a user trajectory may belong to).

[0079] Affinity scores determined by affinity score module 164, along with any suitable information, such as mutual distances between user trajectories and trend trajectories, statistics of affinity scores across trajectory clusters, such as mean, median, mode, variance, maximum, minimum, etc., a time period of a 360-degree video for which an affinity score is determined (e.g., a time period of user angles included in a user trajectory), combinations thereof, and the like, used by or calculated by affinity score module 164 are stored in affinity score data 142 of storage 136 and made available to modules of video application 152. In one example, affinity scores determined by affinity score module 164 are provided to trajectory selection module 166.

[0080] Trajectory selection module 166 is representative of functionality configured to select trend trajectories based on the affinity scores determined by affinity score module 164 and the score thresholds determined by the score threshold module 158. Trajectory selection module 166 selects trend trajectories for trajectory clusters that match user trajectories. For instance, when trajectory selection module 166 selects a trend trajectory, the trajectory selection module 166 determines that a user trajectory belongs to the trajectory cluster represented by the trend trajectory.

[0081] In one example, trajectory selection module 166 selects a first trend trajectory for a yaw angle, a second trend trajectory for a pitch angle, and a third trend trajectory for a roll angle from the trend trajectories based on the affinity scores and the score thresholds. For instance, among the trend trajectories representing trajectory clusters for yaw angles, trajectory selection module 166 selects the trend trajectory with the highest affinity score as the first trend trajectory. Among the trend trajectories representing trajectory clusters for pitch angles, trajectory selection module 166 selects the trend trajectory with the highest affinity score as the second trend trajectory. Among the trend trajectories representing trajectory clusters for roll angles, trajectory selection module 166 selects the trend trajectory with the highest affinity score as the third trend trajectory.
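The per-angle selection described above amounts to picking, for each type of angle, the trajectory cluster whose trend trajectory has the highest affinity score. A minimal sketch follows. The function name and the dictionary layout are assumptions, and the comparison against score thresholds is intentionally left out.

```python
def select_trend_trajectories(affinity_by_angle):
    """Pick the cluster with the highest affinity score per angle type.

    affinity_by_angle -- e.g. {"yaw": {cluster_id: score, ...}, ...}
    Returns {angle_type: cluster_id}; angle types with no candidate
    clusters are omitted from the result.
    """
    return {
        angle: max(scores, key=scores.get)
        for angle, scores in affinity_by_angle.items()
        if scores
    }


scores = {
    "yaw": {"yaw_cluster_a": 0.42, "yaw_cluster_b": 0.81},
    "pitch": {"pitch_cluster_a": 0.65},
    "roll": {"roll_cluster_a": 0.10, "roll_cluster_b": 0.05},
}
selected = select_trend_trajectories(scores)
```

Each angle type is handled independently, so the selected yaw, pitch, and roll trend trajectories may come from clusters formed by different groups of earlier viewers.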