Image Sensor With Pixels Including Photodiodes Sharing Floating Diffusion Region

SHIM; EUNSUB ; et al.

U.S. patent application number 16/852679 was filed with the patent office on 2020-04-20 and published on 2020-11-12 for image sensor with pixels including photodiodes sharing floating diffusion region. The applicant listed for this patent is SAMSUNG ELECTRONICS CO., LTD. Invention is credited to KYUNGHO LEE, EUNSUB SHIM.

| Application Number | 20200358971 16/852679 |

| Family ID | 1000004815623 |

| Publication Date | 2020-11-12 |

| United States Patent Application | 20200358971 |

| Kind Code | A1 |

| SHIM; EUNSUB ; et al. | November 12, 2020 |

IMAGE SENSOR WITH PIXELS INCLUDING PHOTODIODES SHARING FLOATING DIFFUSION REGION

Abstract

An image sensor operating in multiple resolution modes including a low resolution mode and a high resolution mode includes a pixel array including a plurality of pixels, wherein each pixel in the plurality of pixels comprises a micro-lens, a first subpixel including a first photodiode, a second subpixel including a second photodiode, and the first subpixel and the second subpixel are adjacently disposed and share a floating diffusion region. The image sensor also includes a row driver providing control signals to the pixel array to control performing of an auto focus (AF) function, such that performing the AF function includes performing the AF function according to pixel units in the high resolution mode and performing the AF function according to pixel group units in the low resolution mode. A resolution corresponding to the low resolution mode is equal to or less than 1/4 times a resolution corresponding to the high resolution mode.

| Inventors: | SHIM; EUNSUB (HWASEONG-SI, KR); LEE; KYUNGHO (SUWON-SI, KR) |

| Applicant: | SAMSUNG ELECTRONICS CO., LTD. |

| Family ID: | 1000004815623 |

| Appl. No.: | 16/852679 |

| Filed: | April 20, 2020 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 9/0455 20180801; H04N 5/349 20130101; H04N 5/378 20130101; H04N 5/3698 20130101 |

| International Class: | H04N 5/349 20060101 H04N005/349; H04N 9/04 20060101 H04N009/04; H04N 5/369 20060101 H04N005/369 |

Foreign Application Data

| Date | Code | Application Number |

|---|---|---|

| May 7, 2019 | KR | 10-2019-0053242 |

| Aug 16, 2019 | KR | 10-2019-0100535 |

Claims

1. An image sensor selectively adapted for use in multiple resolution modes including a low resolution mode and a high resolution mode, the image sensor comprising: a pixel array comprising a plurality of pixels, wherein each pixel in the plurality of pixels comprises a micro-lens, a first subpixel including a first photodiode, and a second subpixel including a second photodiode, and the first subpixel and the second subpixel are adjacently disposed and share a floating diffusion region; and a row driver configured to provide control signals to the pixel array to control performing of an auto focus (AF) function, the AF function including performing the AF function according to pixel units in the high resolution mode and performing the AF function according to pixel group units in the low resolution mode, wherein a resolution corresponding to the low resolution mode is equal to or less than 1/4 times a resolution corresponding to the high resolution mode.

2. The image sensor of claim 1, wherein the plurality of pixels includes a first subpixel array and a second subpixel array, the first subpixel array includes horizontal pixels, and the second subpixel array includes vertical pixels.

3. The image sensor of claim 2, wherein the row driver is further configured to provide the control signals such that at least one of the first subpixel and the second subpixel of at least one of the horizontal pixels in the first subpixel array performs the AF function in the high resolution mode.

4. The image sensor of claim 3, wherein the row driver is further configured to provide the control signals such that: photoelectric charge generated by the first subpixels of the at least one of the horizontal pixels is accumulated in the floating diffusion region, then photoelectric charge in the floating diffusion region is reset, and then photoelectric charge generated by the second subpixels of the at least one of the horizontal pixels is accumulated in the floating diffusion region.

5. The image sensor of claim 3, wherein the at least one of the horizontal pixels in the first subpixel array includes not all of the horizontal pixels in the first subpixel array.

6. The image sensor of claim 2, wherein the first subpixel array comprises a first horizontal pixel and a second horizontal pixel adjacently disposed, in response to a control signal provided from the row driver, a first floating diffusion region of the first horizontal pixel and a second floating diffusion region of the second horizontal pixel are electrically connected to each other, thereby enabling the first horizontal pixel and the second horizontal pixel to share the floating diffusion region.

7. The image sensor of claim 2, wherein the horizontal pixels include a first horizontal pixel and a second horizontal pixel sharing the floating diffusion region, and the row driver is configured to provide the control signals, such that the first subpixels of the first horizontal pixel and the second horizontal pixel simultaneously accumulate photoelectric charge in the floating diffusion region in the low resolution mode.

8. The image sensor of claim 2, wherein the horizontal pixels of the first subpixel array include a first pixel group and a second pixel group adjacently disposed to the first pixel group, the first pixel group and the second pixel group include horizontal pixels arranged in a first direction and a second direction, all of the horizontal pixels of the first pixel group are associated with a first color filter, and all of the horizontal pixels of the second pixel group are associated with a second color filter different from the first color filter.

9. The image sensor of claim 2, wherein the horizontal pixels of the first subpixel array include a first pixel group and a second pixel group adjacently disposed to the first pixel group, the first pixel group and the second pixel group include horizontal pixels arranged in a first direction and a second direction, at least one of the horizontal pixels of the first pixel group is associated with a first color filter, and at least one of the horizontal pixels of the second pixel group is associated with a second color filter different from the first color filter.

10. The image sensor of claim 9, wherein the first color filter and the second color filter are respectively selected from a group of color filters including: a red filter, a blue filter, a green filter, a white filter and a yellow filter.

11. The image sensor of claim 2, wherein the horizontal pixels of the first subpixel array include a first pixel group and a second pixel group adjacently disposed to the first pixel group, the first pixel group and the second pixel group include horizontal pixels arranged in a first direction and a second direction, at least one of the horizontal pixels of the first pixel group is associated with a first color filter and at least another one of the horizontal pixels of the first pixel group is associated with a second color filter, and at least one of the horizontal pixels of the second pixel group is associated with a third color filter and at least another one of the horizontal pixels of the second pixel group is associated with a fourth color filter.

12. The image sensor of claim 11, wherein the first color filter and the third color filter are different color filters.

13. The image sensor of claim 12, wherein the second color filter and the fourth color filter are the same color filter.

14. The image sensor of claim 2, wherein the horizontal pixels of the first subpixel array include a first pixel group and a second pixel group adjacently disposed to the first pixel group, the first pixel group and the second pixel group include horizontal pixels arranged in a first direction and a second direction, at least two of the horizontal pixels of the first pixel group are respectively associated with a first color filter and a second color filter different from the first color filter, and at least two of the horizontal pixels of the second pixel group are respectively associated with a third color filter and a fourth color filter different from the third color filter.

15. An image sensor selectively adapted for use in multiple resolution modes including a low resolution mode, a medium resolution mode, and a high resolution mode, the image sensor comprising: a pixel array comprising a plurality of pixels arranged in a row direction and a column direction, wherein each pixel in the plurality of pixels has a shared pixel structure, wherein the shared pixel structure comprises: a first subpixel including a first photoelectric conversion element selectively transmitting photoelectric charge to a floating diffusion region via a first transmission transistor in response to a first transmission signal, a second subpixel including a second photoelectric conversion element selectively transmitting photoelectric charge to the floating diffusion region via a second transmission transistor in response to a second transmission signal, a reset transistor configured to selectively reset photoelectric charge accumulated in the floating diffusion region in response to a reset signal, a driver transistor and a selection transistor selectively connecting the floating diffusion region to a pixel signal output in response to a selection signal, the floating diffusion region, the reset transistor, the driver transistor and the selection transistor are shared by the first subpixel and the second subpixel, and the first subpixel and the second subpixel are adjacently disposed; and a row driver configured to provide the first transmission signal, the second transmission signal, the reset signal and the selection signal, such that performing of an auto focus (AF) function includes performing the AF function according to units of the plurality of pixels in the high mode, performing the AF function according to units of pixels arranged in the same row in one pixel group in the medium resolution, and performing the AF function according to units of pixel groups in low resolution mode.

16. The image sensor of claim 15, wherein a plurality of pixels comprised in the same pixel group among the plurality of pixel groups are configured to share a floating diffusion region, and the row driver is configured to provide the first transmission signal, the second transmission signal, the reset signal and the selection signal so that photoelectric charges generated by the plurality of pixels comprised in the same pixel group are all accumulated into the floating diffusion region and then are reset.

17. The image sensor of claim 15, wherein at least two pixel groups disposed adjacent to each other among a plurality of pixel groups are configured to share a floating diffusion region.

18. The image sensor of claim 15, wherein the high resolution mode has a first resolution, the medium resolution mode has second resolution ranging from between about 1/4 the first resolution to about 1/2 the first resolution, and the low resolution mode has a third resolution less than or equal to about 1/4 the first resolution.

19. An image sensor selectively adapted for use in multiple resolution modes including a low resolution mode, a medium resolution mode, and a high resolution mode, the image sensor comprising: a controller configured to control the operation of a row driver and a signal read unit; a pixel array comprising a plurality of pixels arranged in a row direction and a column direction and configured to provide a pixel signal in response to received incident light, wherein each pixel in the plurality of pixels comprises a micro-lens, a first subpixel including a first photodiode, a second subpixel including a second photodiode, and the first subpixel and the second subpixel are adjacently disposed and share a floating diffusion region, wherein the row driver is configured to provide control signals to the pixel array to control performing of an auto focus (AF) function, such that performing of an auto focus (AF) function includes performing the AF function according to units of the plurality of pixels in the high mode, performing the AF function according to units of pixels arranged in the same row in one pixel group in the medium resolution, and performing the AF function according to units of pixel groups in low resolution mode.

20. The image sensor of claim 19, wherein the high resolution mode has a first resolution, the medium resolution mode has second resolution ranging from between about 1/4 the first resolution to about 1/2 the first resolution, and the low resolution mode has a third resolution less than or equal to about 1/4 the first resolution.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application claims the benefit of Korean Patent Application Nos. 10-2019-0053242, filed on May 7, 2019 and 10-2019-0100535, filed on Aug. 16, 2019, in the Korean Intellectual Property Office, the collective subject matter of which is hereby incorporated by reference.

BACKGROUND

[0002] The inventive concept relates to image sensors, and more particularly, to image sensors including a plurality of pixels, wherein each pixel includes a plurality of subpixels including a photodiode.

[0003] Image sensors include a pixel array. Each pixel among the plurality of pixels in the pixel array may include a photodiode. Certain image sensors may perform an auto-focus (AF) function to improve the imaging accuracy of an object.

SUMMARY

[0004] The inventive concept provides an image sensor capable of accurately performing an auto-focus function across a range of illumination environments.

[0005] According to one aspect of the inventive concept, there is provided an image sensor selectively adapted for use in multiple resolution modes including a low resolution mode and a high resolution mode. The image sensor includes: a pixel array comprising a plurality of pixels, wherein each pixel in the plurality of pixels comprises a micro-lens, a first subpixel including a first photodiode, a second subpixel including a second photodiode, and the first subpixel and the second subpixel are adjacently disposed and share a floating diffusion region. The image sensor also includes a row driver configured to provide control signals to the pixel array to control performing of an auto focus (AF) function, such that performing the AF function includes performing the AF function according to pixel units in the high resolution mode and performing the AF function according to pixel group units in the low resolution mode. A resolution corresponding to the low resolution mode is equal to or less than 1/4 times a resolution corresponding to the high resolution mode.

[0006] According to another aspect of the inventive concept, there is provided an image sensor selectively adapted for use in multiple resolution modes including a low resolution mode, a medium resolution mode and a high resolution mode. The image sensor includes a pixel array including a plurality of pixels arranged in a row direction and a column direction, wherein each pixel in the plurality of pixels has a shared pixel structure. The shared pixel structure includes: a first subpixel including a first photoelectric conversion element selectively transmitting photoelectric charge to a floating diffusion region via a first transmission transistor in response to a first transmission signal, a second subpixel including a second photoelectric conversion element selectively transmitting photoelectric charge to the floating diffusion region via a second transmission transistor in response to a second transmission signal, a reset transistor configured to selectively reset photoelectric charge accumulated in the floating diffusion region in response to a reset signal, a driver transistor and a selection transistor selectively connecting the floating diffusion region to a pixel signal output in response to a selection signal, the floating diffusion region, reset transistor, driver transistor and selection transistor are shared by the first subpixel and the second subpixel and the first subpixel and the second subpixel are adjacently disposed. The image sensor also includes a row driver configured to provide the first transmission signal, the second transmission signal, the reset signal and the selection signal, such that performing of an auto focus (AF) function includes performing the AF function according to units of the plurality of pixels in the high resolution mode, performing the AF function according to units of pixels arranged in the same row in one pixel group in the medium resolution mode, and performing the AF function according to units of pixel groups in the low resolution mode.

[0007] According to another aspect of the inventive concept, there is provided an image sensor selectively adapted for use in multiple resolution modes including a low resolution mode, a medium resolution mode and a high resolution mode. The image sensor includes: a controller configured to control the operation of a row driver and a signal read unit, and a pixel array comprising a plurality of pixels arranged in a row direction and a column direction and configured to provide a pixel signal in response to received incident light, wherein each pixel in the plurality of pixels comprises a micro-lens, a first subpixel including a first photodiode, a second subpixel including a second photodiode, and the first subpixel and the second subpixel are adjacently disposed and share a floating diffusion region, wherein the row driver is configured to provide control signals to the pixel array to control performing of an auto focus (AF) function, such that performing of the AF function includes performing the AF function according to units of the plurality of pixels in the high resolution mode, performing the AF function according to units of pixels arranged in the same row in one pixel group in the medium resolution mode, and performing the AF function according to units of pixel groups in the low resolution mode.

BRIEF DESCRIPTION OF THE DRAWINGS

[0008] Embodiments of the inventive concept may be more clearly understood upon consideration of the following detailed description taken in conjunction with the accompanying drawings in which:

[0009] FIG. 1 is a block diagram illustrating a digital imaging device according to an embodiment of the inventive concept;

[0010] FIG. 2 is a block diagram further illustrating in one embodiment the image sensor 100 of FIG. 1;

[0011] FIGS. 3A, 3B, 4A, 4B and 4C are respective diagrams further illustrating certain aspects of the pixel array 110 of the image sensor 100 of FIG. 2;

[0012] FIG. 5 is a circuit diagram illustrating in one embodiment an arrangement of first and second subpixels sharing a floating diffusion region;

[0013] FIGS. 6A, 6B, 6C, 6D and 6E are respective diagrams further illustrating a plurality of subpixels sharing a floating diffusion region among the subpixels included in a first subpixel array of FIG. 3A;

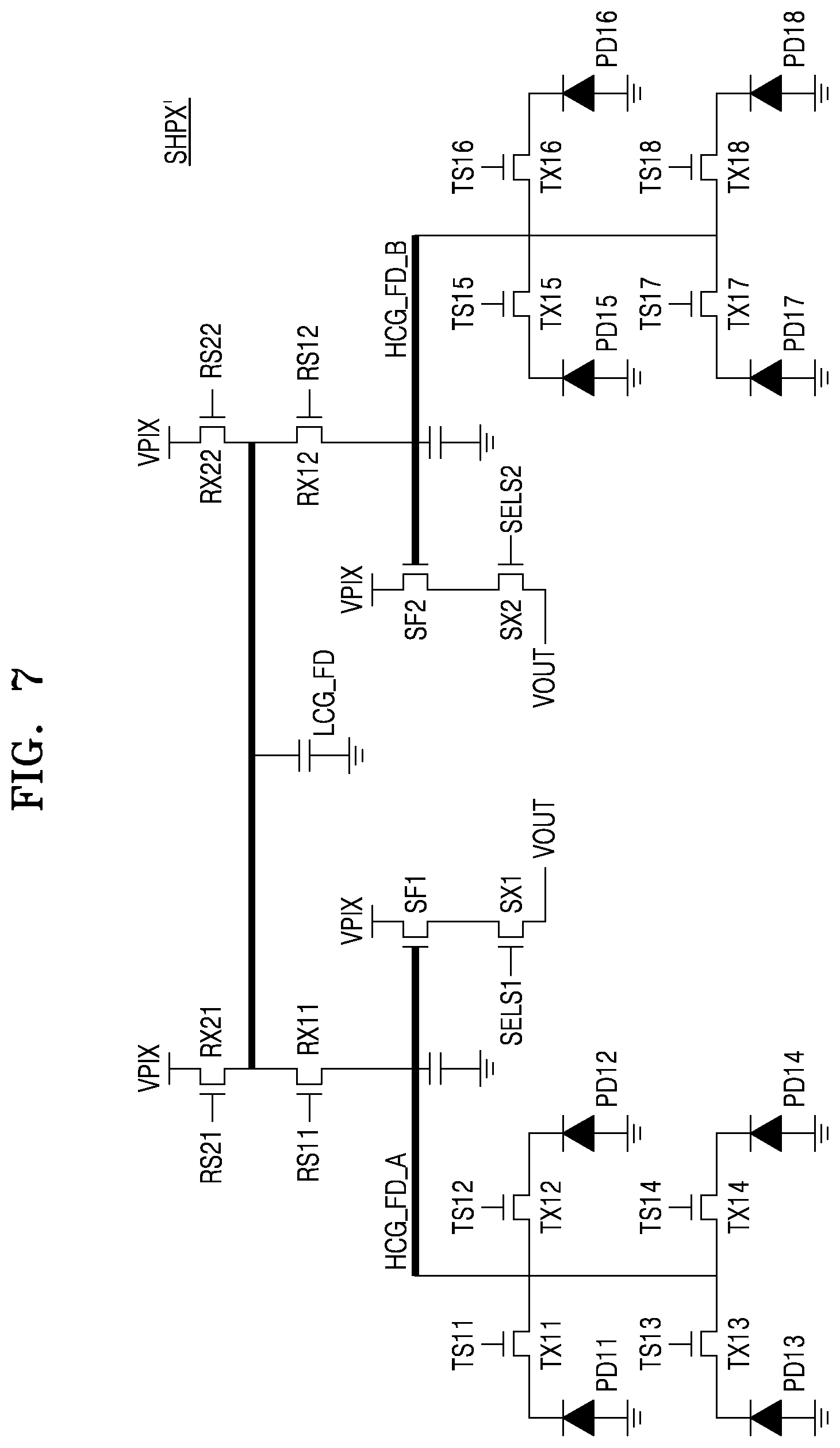

[0014] FIG. 7 is a circuit diagram illustrating in one embodiment an arrangement of subpixels sharing a floating diffusion region;

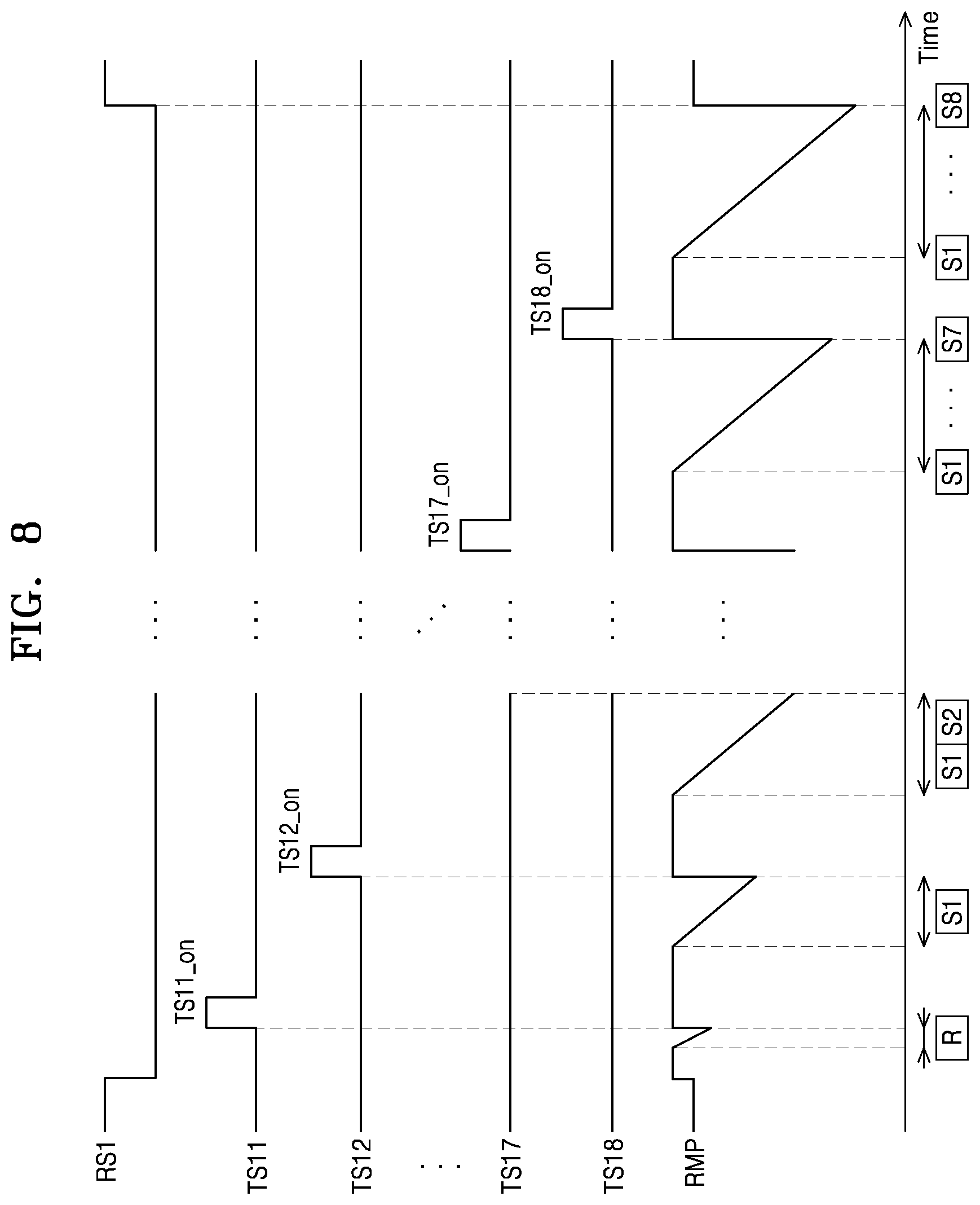

[0015] FIGS. 8, 9, 10 and 11 are respective timing diagrams further illustrating in several embodiments certain timing relationships between various control signals in the operation of the image sensor 100 of FIG. 2; and

[0016] FIG. 12 is a diagram further illustrating in still another embodiment the pixel array 110 of FIG. 2.

DETAILED DESCRIPTION

[0017] Figure (FIG.) 1 is a block diagram illustrating a digital imaging device 1000 according to an embodiment of the inventive concept.

[0018] Referring to FIG. 1, the digital imaging device 1000 generally comprises an imaging unit 1100, an image sensor 100, and a processor 1200. Here, it is assumed that the digital imaging device 1000 is capable of performing a focus detection function.

[0019] The overall operation of the digital imaging device 1000 may be controlled by the processor 1200. In the illustrated embodiment of FIG. 1, it is assumed that the processor 1200 provides certain signals controlling various components of the digital imaging device 1000. For example, the processor 1200 may provide a lens driver signal applied to a lens driver 1120, an aperture driver signal applied to an aperture driver 1140, and a controller signal applied to a controller 120.

[0020] The imaging unit 1100 generally includes one or more element(s) configured to receive incident light associated with an object 2000 being imaged by the digital imaging device 1000. In this regard, the object 2000 may be a single object, a collection of objects, or a distributed field of objects. Further in this regard, the term "incident light" should be broadly construed to mean any selected range of electro-magnetic energy across one or more bands of the electro-magnetic spectrum (e.g., wavelengths discernable to the human eye) capable of being imaged by the digital imaging device 1000.

[0021] In the illustrated embodiment of FIG. 1, the imaging unit 1100 includes the lens driver 1120 and aperture driver 1140, as well as a lens 1110 and an aperture 1130. Here, the lens 1110 may include one or more lenses arranged singularly or in combination to effectively capture the incident light associated with the object 2000.

[0022] In particular, the lens driver 1120 operates to control the operation of the lens 1110 to accurately capture the incident light associated with the object 2000. Accordingly, the lens driver 1120 is responsive to the focus detection function performed by the digital imaging device 1000, as may be transmitted to the lens driver 1120 by the processor 1200. In this manner, the focal position of the lens 1110 may be controlled by one or more control signals provided from the processor 1200.

[0023] In this regard, it should be noted that the term "control signal" is used hereafter to denote one or more signal(s), whether analog or digital in nature and having various formats, that are used to adjust or control the operation of a component within the digital imaging device 1000.

[0024] Thus, the lens driver 1120 may adjust the focal position of the lens 1110 with respect to the movement, orientation and/or distance of the object 2000 relative to the lens 1110 in order to correct focal mismatches between a given focal position of the lens 1110 and the object 2000.

[0025] Within the illustrated embodiment of FIG. 1, the image sensor 100 may be used to convert incident light received via the imaging unit 1100 into a corresponding image signal. The image sensor 100 may generally include a pixel array 110, the controller 120, and a signal processor 130. Here, incident light passing through the lens 1110 and the aperture 1130 reaches an incident light receiving surface of the pixel array 110.

[0026] The pixel array 110 may include a Complementary Metal Oxide Semiconductor (CMOS) image sensor (CIS) capable of converting the energy of the incident light into corresponding electrical signal(s). In this regard, the sensitivity of the pixel array 110 may be adjusted by the controller 120. The resulting collection of corresponding electrical signal(s) may be further processed by the signal processor 130 to provide an image signal.

[0027] In certain embodiments of the inventive concept, the pixel array 110 may include a plurality of pixels selectively capable of performing an auto focus (AF) function or a distance measurement function.

[0028] Thus, in certain embodiments of the inventive concept, the processor 1200 may receive a first image signal and a second image signal from the signal processor 130 and perform a phase difference determination using the first image signal and the second image signal. The processor 1200 may then determine an appropriate focal position, identify a focus direction, and/or calculate a distance between the digital imaging device 1000 and the object 2000 on the basis of a result of the phase difference determination. In this manner, the processor 1200 may be used to provide the signal(s) applied to the lens driver 1120 in order to properly adjust the focal position of the lens 1110 based on the results of the phase difference determination operation.
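The phase difference determination described above may be illustrated with a minimal Python sketch. It is not taken from the application: the function name `estimate_phase_shift`, the `max_shift` search window, and the choice of a mean-absolute-difference cost are all assumptions made for illustration, and the two inputs simply stand in for one line of the first image signal and one line of the second image signal.

```python
import numpy as np


def estimate_phase_shift(first_signal, second_signal, max_shift=8):
    """Return the integer shift s (in samples) for which
    second_signal[i + s] best matches first_signal[i].

    Hypothetical helper: the cost is the mean absolute difference
    over the overlapping samples, searched over -max_shift..max_shift.
    """
    first = np.asarray(first_signal, dtype=float)
    second = np.asarray(second_signal, dtype=float)
    if first.shape != second.shape or first.ndim != 1:
        raise ValueError("expected two 1-D signals of equal length")

    best_shift, best_cost = 0, np.inf
    for shift in range(-max_shift, max_shift + 1):
        if shift >= 0:
            a = first[: len(first) - shift]
            b = second[shift:]
        else:
            a = first[-shift:]
            b = second[: len(second) + shift]
        cost = np.mean(np.abs(a - b))
        if cost < best_cost:
            best_shift, best_cost = shift, cost
    return best_shift


# Example: the second signal is the first one displaced by 3 samples.
x = np.arange(64)
first = np.exp(-((x - 30) ** 2) / 20.0)
second = np.exp(-((x - 33) ** 2) / 20.0)
shift = estimate_phase_shift(first, second)
```

In this sketch a shift of zero would correspond to matched phase signals, and the sign of a non-zero shift could be read as a focus direction; how the shift maps to a lens position is outside what the sketch models.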

[0029] FIG. 2 is a block diagram further illustrating in one embodiment the image sensor 100 of FIG. 1. Here, the image sensor 100 may be selectively adapted for use in various resolution modes in response to the illumination environment associated with the object 2000. That is, in certain embodiments of the inventive concept, the digital imaging device 1000 of FIG. 1 may make a determination regarding an appropriate resolution mode (e.g., low or high) and selectively adapt (or configure) the image sensor 100 accordingly.

[0030] Referring to FIG. 2, the image sensor 100 comprises a row driver 140 and a signal read unit 150 in addition to the pixel array 110, the controller 120 and the signal processor 130. Here, it is assumed that the signal read unit 150 includes a correlated-double sampling circuit (CDS) 151, an analog-to-digital converter (ADC) 153 and a buffer 155.

[0031] The pixel array 110 comprises a plurality of pixels. This plurality of pixels may be variously designated for operation (or functionally divided) into one or more subpixel arrays. For example, the pixel array 110 may include a first subpixel array 110_1 and a second subpixel array 110_2. In certain embodiments of the inventive concept, the first subpixel array 110_1 includes a plurality of horizontal pixels PX_X capable of performing an AF function in a first direction (e.g., a row direction), and the second subpixel array 110_2 includes a plurality of vertical pixels PX_Y capable of performing an AF function in a second direction (e.g., a column direction). In certain embodiments of the inventive concept each subpixel array may be further, functionally divided into two (2) or more pixel groups.

[0032] Those skilled in the art will recognize that the terms "horizontal" and "vertical"; "first direction" and "second direction", as well as "row direction" and "column direction" are relative in nature, and are used to describe various, relative orientation relationships between recited elements and components.

[0033] Each horizontal pixel PX_X of the first subpixel array 110_1 includes at least two (2) photodiodes adjacently disposed in the first (or row) direction of a matrix arrangement including at least one row. Each horizontal pixel PX_X of the first subpixel array 110_1 also includes a micro-lens ML disposed on the at least two (2) photodiodes.

[0034] Each vertical pixel PX_Y of the second subpixel array 110_2 includes at least two (2) photodiodes adjacently disposed in the second (or column) direction of a matrix including at least one column. Each vertical pixel PX_Y of the second subpixel array 110_2 also includes a micro-lens ML disposed on the at least two (2) photodiodes.

[0035] With this configuration, each horizontal pixel PX_X of the first subpixel array 110_1 may perform an AF function in the first direction, and each vertical pixel PX_Y of the second subpixel array 110_2 may perform an AF function in the second direction. Since each of the horizontal pixels PX_X and each of the vertical pixels PX_Y includes at least two photodiodes as well as a micro-lens, each one of the plurality of pixels (including both horizontal pixels PX_X and vertical pixels PX_Y) may generate a pixel signal capable of effectively performing an AF function. In this manner, an image sensor according to an embodiment of the inventive concept may readily provide an enhanced AF function.

[0036] In certain embodiments of the inventive concept, the width of each horizontal pixel PX_X of the first subpixel array 110_1 may range from about 0.5 µm to about 1.8 µm. The width of each vertical pixel PX_Y of the second subpixel array 110_2 may also range from about 0.5 µm to about 1.8 µm. Alternatively, the width of the plurality of horizontal and vertical pixels, PX_X and PX_Y, may range from about 0.64 µm to about 1.4 µm.

[0037] In certain embodiments of the inventive concept, the horizontal pixels PX_X of the first subpixel array 110_1 may be grouped into one or more pixel groups, and the vertical pixels PX_Y of the second subpixel array 110_2 may be grouped into one or more pixel groups.

[0038] According to a given configuration and definition of a plurality of pixels and pixel groups, the image sensor 100 of FIG. 2 may selectively perform an AF function according to pixel units or according to pixel group units. For example, given the digital imaging device 1000 of FIG. 1 is adapted for use in a high resolution mode, the image sensor 100 may perform an AF function according to pixel units (i.e., in response to various pixel signal(s) provided by individual pixels--e.g., horizontal and/or vertical pixels). Alternately, given the digital imaging device 1000 of FIG. 1 is adapted for use in a low resolution mode, the image sensor 100 may perform an AF function according to pixel group units (i.e., in response to various pixel signal(s) provided by pixel groups).
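The distinction between pixel units and pixel group units may be sketched in Python as follows. This is only an illustration under stated assumptions: the function name `af_phase_signals`, the mode strings, and the 2x2 group size are hypothetical, and the sketch sums already-digitized subpixel values, whereas the application describes photoelectric charge being combined in a shared floating diffusion region.

```python
import numpy as np


def af_phase_signals(first_sub, second_sub, mode, group=2):
    """Return the (first, second) phase signal arrays used for AF.

    first_sub / second_sub: 2-D arrays holding one value per pixel for
    the first and second subpixel (hypothetical digitized values).
    mode: "high" keeps one phase pair per pixel (pixel units);
          "low" sums each group x group block (pixel group units).
    """
    first = np.asarray(first_sub, dtype=float)
    second = np.asarray(second_sub, dtype=float)
    if first.shape != second.shape or first.ndim != 2:
        raise ValueError("expected two 2-D arrays of equal shape")

    if mode == "high":
        return first, second
    if mode != "low":
        raise ValueError("mode must be 'high' or 'low'")

    rows, cols = first.shape
    if rows % group or cols % group:
        raise ValueError("array size must be a multiple of the group size")

    def bin_sum(a):
        # Split into group x group blocks and add the values in each block.
        blocks = a.reshape(rows // group, group, cols // group, group)
        return blocks.sum(axis=(1, 3))

    return bin_sum(first), bin_sum(second)
```

With the assumed 2x2 grouping, the low resolution output has one quarter as many phase samples as the high resolution output, which is consistent with the stated bound of 1/4 or less.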

[0039] Respective embodiments further illustrating possible configurations for the first and second subpixel arrays 110_1 and 110_2 will be described hereafter with reference to FIGS. 3A and 3B.

[0040] Returning to FIG. 2, each pixel in the pixel array 110 may respectively output a pixel signal (see e.g., the VOUT signal of FIG. 5) to the CDS 151 through one of the first through (n-1)th column output lines CLO_0 to CLO_n-1. Pixel signals output from the horizontal pixels PX_X of the first subpixel array 110_1 and the vertical pixels PX_Y of the second subpixel array 110_2 may be phase signals used to calculate a phase difference. The phase signals may include information associated with the positioning of object(s) imaged by the image sensor 100. Accordingly, a focus position for the lens 1110 (of FIG. 1) may be calculated in response to the calculated phase difference(s). For example, a focus position for the lens 1110 corresponding to a phase difference of `0` may be deemed an optimal focus position. In certain embodiments of the inventive concept, given operation in the high resolution mode, a selected plurality of pixels (e.g., including both horizontal and vertical pixels, PX_X and PX_Y) from the pixel array 110 may be used to perform an AF function. Indeed, in certain embodiments all of the horizontal pixels in the first subpixel array 110_1 and all of the vertical pixels in the second subpixel array 110_2 may collectively be used to perform the AF function when the digital imaging device 1000 is being operated in the high resolution mode.

[0041] Additionally or alternately, the horizontal pixels PX_X of the first subpixel array 110_1 and the vertical pixels PX_Y of the second subpixel array 110_2 may be used to measure a distance between the object 2000 and the digital imaging device 1000. In order to measure a distance between the digital imaging device 1000 and the object 2000, some additional information may be necessary or convenient to use. Examples of additional information may include: phase difference between the object 2000 and the image sensor 100, lens size for the lens 1110, current focus position for the lens 1110, etc.

[0042] In the embodiment illustrated in FIG. 2, the controller 120 may be used to control the row driver 140, such that the pixel array 110 captures incident light and accumulates corresponding photoelectric charge (or temporarily stores the accumulated photoelectric charge). Here, the term "photoelectric charge" is used to denote electrical charge generated by at least one subpixel in the pixel array 110 in response to incident light. The pixel array 110 may then output an electrical signal (i.e., a pixel signal) corresponding to the accumulated photoelectric charge. Additionally, the controller 120 may control the operation of the signal read unit 150, such that the signal read unit 150 may accurately measure a level of the pixel signal provided by the pixel array 110.

[0043] The row driver 140 may be used to generate various control signals. Here, examples of control signals include: reset control signals RS, transmission control signals TS, and selection signals SELS that may be variously provided to control the operation of the pixel array 110. Those skilled in the art will recognize that the choice, number and definition of the various control signals are matters of design choice.

[0044] In certain embodiments of the inventive concept, the row driver 140 may be used to determine the activation timing and/or deactivation timing (hereafter, singularly or collectively in any pattern, "activation/deactivation") of the reset control signals RS, transmission control signals TS, and selection signals SELS variously provided to the horizontal pixels PX_X of the first subpixel array 110_1 and the vertical pixels PX_Y of the second subpixel array 110_2, in response to various factors, such as high/low resolution mode of operation, types of AF function being performed, distance measurement function, etc.

[0045] The CDS 151 may sample and hold a pixel signal provided from the pixel array 110. The CDS 151 may doubly sample a level of certain noise and a level based on the pixel signal to output a level corresponding to a difference therebetween. Moreover, the CDS 151 may receive a ramp signal generated by a ramp signal generator 157 and may compare the ramp signal with the pixel signal to output a comparison result. The ADC 153 may convert an analog signal, corresponding to the level received from the CDS 151, into a digital signal. The buffer 155 may latch the digital signal, and the latched digital signal may be sequentially output to the outside of the signal processor 130 or the image sensor 100.
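As a rough sketch of the signal path just described, the following models the double sampling as a subtraction and the ramp comparison as a counter that runs until the ramp reaches the sampled level. The signal polarity (the pixel output falling below the reset level as charge accumulates), the single-slope counting scheme, and the names `correlated_double_sample`, `single_slope_adc`, `ramp_step` and `max_counts` are assumptions for illustration only, not details taken from the description.

```python
def correlated_double_sample(reset_level, signal_level):
    """Return the difference between the reset sample and the signal sample.

    Assumed polarity: the pixel output drops below the reset level as
    photoelectric charge accumulates, so the difference is non-negative.
    """
    return reset_level - signal_level


def single_slope_adc(level, ramp_step=1.0, max_counts=1023):
    """Count ramp steps until the ramp reaches `level` (hypothetical model)."""
    count = 0
    ramp = 0.0
    while ramp < level and count < max_counts:
        ramp += ramp_step
        count += 1
    return count


difference = correlated_double_sample(reset_level=1000.0, signal_level=700.0)
code = single_slope_adc(difference)
```

Because the same reset offset appears in both samples, it cancels in the difference, which is the usual motivation for sampling twice.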

[0046] The signal processor 130 may perform signal processing on the basis of the digital signal received from the buffer 155. For example, the signal processor 130 may perform noise decrease processing, gain adjustment, waveform standardization processing, interpolation processing, white balance processing, gamma processing, edge emphasis processing, etc. Moreover, the signal processor 130 may output information, obtained through signal processing performed in an AF operation, to the processor 1200 to allow the processor 1200 to perform a phase difference operation needed for the AF operation. In an embodiment, the signal processor 130 may be included in a processor (1200 of FIG. 1) provided outside the image sensor 100.

[0047] FIGS. 3A and 3B are respective diagrams further illustrating in certain embodiments the pixel array 110 of FIG. 2. FIG. 3A is a diagram further illustrating in one embodiment the first subpixel array 110_1 of the pixel array 110, and FIG. 3B is a diagram further illustrating in another embodiment the second subpixel array 110_2 of the pixel array 110.

[0048] Referring to FIG. 3A, the first subpixel array 110_1 includes a plurality of horizontal pixels PX_X arranged in a matrix defined according to a row direction (i.e., a first direction X) and a column direction (i.e., a second direction Y). Each of the horizontal pixels PX_X in the first subpixel array 110_1 may include a micro-lens ML.

[0049] In the illustrated example of FIG. 3A, the first subpixel array 110_1 includes first to fourth pixel groups PG1, PG2, PG3 and PG4, wherein the first pixel group PG1 and the second pixel group PG2 are adjacently disposed in the first direction X, while the third pixel group PG3 and the fourth pixel group PG4 are adjacently disposed in the first direction X. The first pixel group PG1 and the third pixel group PG3 are adjacently disposed in the second direction Y, while the second pixel group PG2 and the fourth pixel group PG4 are adjacently disposed in the second direction Y.

[0050] In the illustrated embodiment of FIG. 3A, each of the first, second, third and fourth pixel groups PG1 to PG4 includes four (4) horizontal pixels PX_X, but other embodiments of the inventive concept are not limited to this configuration. For example, each of the first, second, third and fourth pixel groups PG1 to PG4 may include eight (8) horizontal pixels PX_X arranged in two (2) rows and four (4) columns.

[0051] Here, however, the first pixel group PG1 includes first to eighth subpixels SPX11 to SPX18, where first subpixel SPX11 and second subpixel SPX12 are configured in one horizontal pixel PX_X, third subpixel SPX13 and fourth subpixel SPX14 are configured in another horizontal pixel PX_X, fifth subpixel SPX15 and sixth subpixel SPX16 are configured in another horizontal pixel PX_X, and seventh subpixel SPX17 and eighth subpixel SPX18 are configured in still another horizontal pixel PX_X.

[0052] With analogous configurations, the second pixel group PG2 includes first to eighth subpixels SPX21 to SPX28; the third pixel group PG3 includes first to eighth subpixels SPX31 to SPX38; and the fourth pixel group PG4 includes first to eighth subpixels SPX41 to SPX48.

[0053] Here, it should be noted that each horizontal pixel PX_X includes two (2) subpixels adjacently disposed to each other in the first direction X.

[0054] The first subpixel array 110_1 may further include one or more color filter(s), such that respective horizontal pixels, respective collection(s) of horizontal pixels and/or respective pixel groups may selectively sense various light wavelengths, such as those conventionally associated with different colors of the visible light spectrum. For example, in certain embodiments of the inventive concept, various color filter(s) associated with the first subpixel array 110_1 may include a red filter (R) for sensing red, a green filter (G) for sensing green, and a blue filter (B) for sensing blue. That is, various color filters (e.g., a first color filter, a second color filter, etc.) may be respectively selected from a group of color filters including: a red filter, a blue filter, a green filter, a white filter, a yellow filter, etc.

[0055] Here, each of the first, second, third and fourth pixel groups PG1 to PG4 may be variously associated with one or more color filters.

[0056] In one embodiment of the inventive concept consistent with the configuration shown in FIG. 3A, the first, second, third and fourth pixel groups PG1 to PG4 may be disposed in the first subpixel array 110_1 according to a Bayer pattern. That is, the first pixel group PG1 and the fourth pixel group PG4 may be associated with a green filter (G), the second pixel group PG2 may be associated with a red filter (R), and the third pixel group PG3 may be associated with a blue filter (B).
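The group-level Bayer arrangement described above can be expanded into a per-pixel filter map, as in the following minimal sketch. It assumes that the four horizontal pixels of each pixel group are laid out in two rows and two columns; that layout, and the names `GROUP_FILTER`, `GROUP_LAYOUT` and `pixel_filter_grid`, are assumptions made for this example.

```python
# Filter assigned to each pixel group, as stated for FIG. 3A.
GROUP_FILTER = {"PG1": "G", "PG2": "R", "PG3": "B", "PG4": "G"}

# PG1 and PG2 are adjacent in the first direction X, with PG3 and PG4 below them.
GROUP_LAYOUT = [["PG1", "PG2"], ["PG3", "PG4"]]


def pixel_filter_grid(pixels_per_side=2):
    """Return a grid of filter letters, one entry per pixel.

    Assumes each group is a square of pixels_per_side x pixels_per_side pixels.
    """
    grid = []
    for group_row in GROUP_LAYOUT:
        for _ in range(pixels_per_side):
            row = []
            for group in group_row:
                row.extend([GROUP_FILTER[group]] * pixels_per_side)
            grid.append(row)
    return grid


for row in pixel_filter_grid():
    print(" ".join(row))
```

Under these assumptions every pixel in a group sees the same color, so summing signals within a group does not mix colors.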

[0057] However, the foregoing embodiment of the first subpixel array 110_1 is just one example of many different configurations wherein a color filter is variously associated with one or more pixels selected from one or more pixel groups. Additionally or alternatively, embodiments of the inventive concept may variously include: a white filter, a yellow filter, a cyan filter, and/or a magenta filter.

[0058] With reference to FIG. 3A, each of the subpixels SPX11 to SPX18, SPX21 to SPX28, SPX31 to SPX38, and SPX41 to SPX48 included in the first subpixel array 110_1 may include a corresponding photodiode. Thus, each of the horizontal pixels PX_X will include at least two (2) photodiodes adjacently disposed to each other in the first direction X. A micro-lens ML may be disposed on the at least two (2) photodiodes.

[0059] With this exemplary configuration in mind, the amount of photoelectric charge generated by the at least two (2) photodiodes included in each horizontal pixel PX_X will vary with the shape and/or refractive index of an associated micro-lens ML. Accordingly, an AF function performed in the first direction X may be based on a pixel signal corresponding to the amount of photoelectric charge generated by the constituent, at least two (2) photodiodes.

[0060] For example, an AF function may be performed by using a pixel signal output by the first subpixel SPX11 of the first pixel group PG1 and a pixel signal output by the second subpixel SPX12 of the first pixel group PG1. Accordingly, an image sensor according to an embodiment of the inventive concept may selectively perform an AF function according to pixel units in a first operating (e.g., a high resolution) mode. The performance of an AF function according to "pixel units" in a high resolution mode allows for the selective use of one, more than one, or all of the horizontal pixels PX_X in the first subpixel array 110_1 during the performance of an AF function.

[0061] By way of comparison, an image sensor according to an embodiment of the inventive concept may selectively perform an AF function according to pixel group units in a second operating (e.g., a low resolution) mode. The performance of an AF function according to "pixel group units" in a low resolution mode allows for the selective use of one, more than one, or all of the pixel groups (e.g., PG1, PG2, PG3 and PG4) in the first subpixel array 110_1 during the performance of an AF function. For example, an AF function may be performed by processing a first pixel signal corresponding to the amount of photoelectric charge generated by a photodiode of each of the first subpixel SPX11, the third subpixel SPX13, the fifth subpixel SPX15, and the seventh subpixel SPX17 of the first pixel group PG1 and a second pixel signal corresponding to the amount of photoelectric charge generated by a photodiode of each of the second subpixel SPX12, the fourth subpixel SPX14, the sixth subpixel SPX16, and the eighth subpixel SPX18 of the first pixel group PG1. Given this selective approach to performing an AF function by the image sensor 100, and even in environmental circumstances wherein a relatively low level of incident light is captured by the pixel array 110 (e.g., a level of incident light conventionally inadequate to accurately perform an AF function), an image sensor according to an embodiment of the inventive concept may nonetheless faithfully perform the AF function.
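The difference between pixel units and pixel group units can be sketched as follows. The list `group_charges` holds hypothetical charge values for SPX11 to SPX18 in order, and the function names are assumptions introduced for this example; only the grouping of subpixels follows the description above.

```python
def af_signals_pixel_unit(group_charges, pixel_index):
    """High resolution mode: the two subpixels of one pixel form the pair."""
    first = group_charges[2 * pixel_index]       # e.g. SPX11 for pixel_index 0
    second = group_charges[2 * pixel_index + 1]  # e.g. SPX12 for pixel_index 0
    return first, second


def af_signals_pixel_group(group_charges):
    """Low resolution mode: like-positioned subpixels of the group are combined."""
    first = sum(group_charges[0::2])   # SPX11, SPX13, SPX15, SPX17
    second = sum(group_charges[1::2])  # SPX12, SPX14, SPX16, SPX18
    return first, second


# Hypothetical charge values for SPX11..SPX18 of the first pixel group PG1.
group_charges = [10, 12, 9, 11, 8, 13, 10, 12]
per_pixel = af_signals_pixel_unit(group_charges, 0)
per_group = af_signals_pixel_group(group_charges)
```

With these example values the group-level pair is roughly four times larger than the pixel-level pair, which illustrates why group units may remain usable at low light levels.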

[0062] In this regard, those skilled in the art will recognize that the terms "high resolution" and "low resolution" are relative terms and may be arbitrarily defined according to design. However, in the context of certain embodiments of the inventive concept, a first level of image resolution associated with a low resolution mode may be understood as being less than or equal to 1/4 of a second level of image resolution associated with a high resolution mode.

[0063] With the foregoing in mind, other embodiments of the inventive concept may provide digital imaging devices capable of operating (or selectively adapted for use) in more than two resolution modes. For example, a digital imaging device according to certain embodiments of the inventive concept may be selectively adapted for use in a low resolution mode and a high resolution mode, as described above, and additionally in a medium resolution mode. Here, for example, an image sensor according to embodiments of the inventive concept may perform an AF function according to pixel units by selecting a set of horizontal pixels PX_X (or a set of vertical pixels PX_Y) included in a single pixel group (e.g., PG1) and arranged in a same row (or the same column).

[0064] Extending this example, an AF function may be performed by processing a first pixel signal corresponding to an amount of photoelectric charge generated by the photodiodes of each of the first subpixel SPX11 and the third subpixel SPX13 of the first pixel group PG1, and a second pixel signal corresponding to the amount of photoelectric charge generated by a photodiode of each of the second subpixel SPX12 and the fourth subpixel SPX14 of the first pixel group PG1. Alternatively, an AF function may be performed by processing a first pixel signal corresponding to an amount of photoelectric charge generated by a photodiode of each of the first subpixel SPX11 and the fifth subpixel SPX15 of the first pixel group PG1 and a second pixel signal corresponding to an amount of photoelectric charge generated by a photodiode of each of the second subpixel SPX12 and the sixth subpixel SPX16 of the first pixel group PG1.
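The two medium resolution alternatives above differ only in which pixels of the group are combined, as the following sketch shows. Pixels are numbered 0 to 3 so that pixel p holds subpixels at list positions 2p and 2p+1; this numbering, the example charges, and the name `af_signals_medium` are assumptions for illustration.

```python
def af_signals_medium(group_charges, pixel_indices):
    """Combine the first subpixels and the second subpixels of selected pixels."""
    first = sum(group_charges[2 * p] for p in pixel_indices)
    second = sum(group_charges[2 * p + 1] for p in pixel_indices)
    return first, second


# Hypothetical charge values for SPX11..SPX18 of the first pixel group PG1.
group_charges = [10, 12, 9, 11, 8, 13, 10, 12]

# First alternative: SPX11 + SPX13 versus SPX12 + SPX14.
first_alternative = af_signals_medium(group_charges, (0, 1))

# Second alternative: SPX11 + SPX15 versus SPX12 + SPX16.
second_alternative = af_signals_medium(group_charges, (0, 2))
```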

[0065] Recognizing here again that the terms "high resolution", "medium resolution", and "low resolution" are relative terms, and may be arbitrarily defined according to design, in the context of certain embodiments of the inventive concept, a third level of image resolution associated with the medium resolution mode may be understood as being greater than 1/4 of a second level of image resolution associated with a high resolution mode, but less than 1/2 of the second level of the image resolution associated with the high resolution mode.
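The resolution relationships stated for the low and medium resolution modes can be written as a small classifier. This is only a sketch: the function name and the "undefined" label for ratios that the description does not address (one half or more, but below the full high resolution) are assumptions.

```python
from fractions import Fraction


def classify_mode(resolution, high_resolution):
    """Classify a resolution relative to the high resolution mode."""
    ratio = Fraction(resolution, high_resolution)
    if ratio == 1:
        return "high"
    if ratio <= Fraction(1, 4):
        return "low"      # less than or equal to 1/4 of the high resolution
    if ratio < Fraction(1, 2):
        return "medium"   # greater than 1/4 but less than 1/2
    return "undefined"    # not covered by the stated relationships


# Example with a hypothetical 48-megapixel high resolution mode.
modes = [classify_mode(mp, 48) for mp in (48, 16, 12)]
```

Exact fractions are used so that the boundary cases at 1/4 and 1/2 are not affected by floating-point rounding.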

[0066] Referring to FIG. 3B, the second subpixel array 110_2 includes a plurality of vertical pixels PX_Y arranged in a matrix defined in relation to the first direction X and the second direction Y. In certain embodiments, each of the vertical pixels PX_Y may include a single micro-lens ML.

[0067] As shown in FIG. 3B, the second subpixel array 110_2 includes first, second, third and fourth pixel groups PG1Y to PG4Y. However, other embodiments of the inventive concept may include fewer or more pixel groups. Here, the first pixel group PG1Y includes first to eighth subpixels SPX11Y to SPX18Y. The first subpixel SPX11Y and the second subpixel SPX12Y are configured as one vertical pixel PX_Y, the third subpixel SPX13Y and the fourth subpixel SPX14Y are configured as another vertical pixel PX_Y, the fifth subpixel SPX15Y and the sixth subpixel SPX16Y are configured as another vertical pixel PX_Y, and the seventh subpixel SPX17Y and the eighth subpixel SPX18Y are configured as still another vertical pixel PX_Y. Moreover, for example, the second pixel group PG2Y may include first to eighth subpixels SPX21Y to SPX28Y, the third pixel group PG3Y may include first to eighth subpixels SPX31Y to SPX38Y, and the fourth pixel group PG4Y may include first to eighth subpixels SPX41Y to SPX48Y. That is, one pixel PX_Y may include two subpixels disposed adjacent to each other in the second direction Y.

[0068] The respective vertical pixels of the second subpixel array 110_2 or various collections of the respective vertical pixels of the second subpixel array 110_2 may be variously associated with one or more color filter(s), as described above in relation to FIG. 3A.

[0069] Thus, each of the subpixels SPX11Y to SPX18Y, SPX21Y to SPX28Y, SPX31Y to SPX38Y, and SPX41Y to SPX48Y included in the second subpixel array 110_2 may include one corresponding photodiode. Therefore, each of the vertical pixels PX_Y will include at least two (2) photodiodes adjacently disposed to each other in the second direction Y. The amount of photoelectric charge generated by the at least two (2) photodiodes included in a vertical pixel PX_Y may vary with the shape and/or the refractive index of the associated micro-lens ML. An AF function in the second direction Y may be performed based on a pixel signal corresponding to the amount of photoelectric charge generated by photodiodes included in one pixel PX_Y. For example, an AF function may be performed by using a first pixel signal output by the first subpixel SPX11Y of the first pixel group PG1Y and a second pixel signal output by the second subpixel SPX12Y of the first pixel group PG1Y. Therefore, the image sensor according to an embodiment may perform an AF function according to pixel units in the high resolution mode.

[0070] On the other hand, an image sensor according to embodiments of the inventive concept may perform an AF function according to pixel groups in the low resolution mode. For example, an AF function in the second direction Y may be performed by processing a first pixel signal corresponding to an amount of photoelectric charge generated by a photodiode of each of the first subpixel SPX11Y, the third subpixel SPX13Y, the fifth subpixel SPX15Y, and the seventh subpixel SPX17Y of the first pixel group PG1Y, and a second pixel signal corresponding to an amount of photoelectric charge generated by a photodiode of each of the second subpixel SPX12Y, the fourth subpixel SPX14Y, the sixth subpixel SPX16Y, and the eighth subpixel SPX18Y of the first pixel group PG1Y.

[0071] Analogously with the foregoing description of the embodiment of FIG. 3A, the embodiment of FIG. 3B may be altered to operate in a medium resolution mode, as well as the high resolution mode and the low resolution mode.

[0072] FIGS. 4A, 4B and 4C are respective diagrams further illustrating in various embodiments the pixel array 110 of the image sensor 100 of FIG. 2. Here, like reference numbers and labels are used among FIGS. 3A, 4A, 4B and 4C.

[0073] Referring to FIG. 4A, the horizontal pixels PX_X of a first subpixel array 110_1a are variously associated with (i.e., functionally configured together with) different color filters: a red filter (R), a green filter (G), a blue filter (B) and a white (or yellow) filter (W) or (Y). Here again, first, second, third, and fourth pixel groups PG1a to PG4a are assumed for the first subpixel array 110_1a.

[0074] In FIG. 4A, both the first pixel group PG1a and the fourth pixel group PG4a have horizontal pixels PX_X variously associated with a green filter (G) and a white filter (W). That is, a seventh subpixel SPX17 and an eighth subpixel SPX18 of the first pixel group PG1a are associated with the white filter (W), and a first subpixel SPX41 and a second subpixel SPX42 of the fourth pixel group PG4a are associated with the white filter (W). Alternatively, the horizontal pixels PX_X of the first pixel group PG1a and the fourth pixel group PG4a might be associated with the green filter (G) and a yellow filter (Y).

[0075] Analogously, horizontal pixels PX_X of the second pixel group PG2a are variously associated with the red filter (R) and the white filter (W). That is, a fifth subpixel SPX25 and a sixth subpixel SPX26 of the second pixel group PG2a are associated with the white filter (W) or alternately the yellow filter (Y), and horizontal pixels PX_X of the third pixel group PG3a are variously associated with the blue filter (B) and the white filter (W). That is, a third subpixel SPX33 and a fourth subpixel SPX34 of the third pixel group PG3a are associated with the white filter (W) or alternately the yellow filter (Y).

[0076] Thus, as illustrated in FIG. 4A certain embodiments of the inventive concept may associate adjacent horizontal pixels PX_X selected from different pixel groups with a color filter, while non-selected horizontal pixels PX_X from each of the different pixel groups are variously associated with different color filters.

[0077] In contrast and as illustrated in FIG. 4B, respective pixel groups (e.g., first to fourth pixel groups PG1b to PG4b) may be respectively associated with one of a set of color filters. For example, the first pixel group PG1b is associated with the green filter (G), the second pixel group PG2b is associated with the red filter (R), the third pixel group PG3b is associated with the blue filter (B), and the fourth pixel group PG4b is associated with the white filter (W) or the yellow filter (Y).

[0078] In further contrast and as illustrated in FIG. 4C, each individual horizontal pixel PX_X may be respectively associated with a selected one of the set of color filters, without regard to inclusion of the individual horizontal pixel PX_X in a particular pixel group. Thus, each one of the first to fourth pixel groups PG1c to PG4c includes color filters having different colors.

[0079] As illustrated in FIG. 4C, the first pixel group PG1c and the fourth pixel group PG4c include a green filter (G) and a white filter (W) or a yellow filter (Y). The first, second, seventh, and eighth subpixels SPX11, SPX12, SPX17, and SPX18 of the first pixel group PG1c are associated with the white filter (W) or the yellow filter (Y), whereas the first, second, seventh, and eighth subpixels SPX41, SPX42, SPX47, and SPX48 of the fourth pixel group PG4c are associated with the white filter (W) or the yellow filter (Y). Moreover, the third, fourth, fifth, and sixth subpixels SPX13, SPX14, SPX15, and SPX16 of the first pixel group PG1c are associated with the green filter (G), whereas the third, fourth, fifth, and sixth subpixels SPX43, SPX44, SPX45, and SPX46 of the fourth pixel group PG4c are associated with the green filter (G).

[0080] The second pixel group PG2c includes horizontal pixels PX_X variously associated with the red filter (R) and the white filter (W) or the yellow filter (Y). Hence, the first, second, seventh, and eighth subpixels SPX21, SPX22, SPX27, and SPX28 of the second pixel group PG2c are each associated with the white filter (W) or the yellow filter (Y), whereas the third, fourth, fifth, and sixth subpixels SPX23, SPX24, SPX25, and SPX26 of the second pixel group PG2c are associated with the red filter (R).

[0081] The third pixel group PG3c includes horizontal pixels PX_X variously associated with the blue filter (B) and the white filter (W) or the yellow filter (Y). Hence, the first, second, seventh, and eighth subpixels SPX31, SPX32, SPX37, and SPX38 of the third pixel group PG3c are associated with the white filter (W) or the yellow filter (Y), whereas the third, fourth, fifth, and sixth subpixels SPX33, SPX34, SPX35, and SPX36 of the third pixel group PG3c are each associated with the blue filter (B).
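The per-subpixel filter assignment described for FIG. 4C follows one rule across all four pixel groups, which the sketch below captures. The name `filter_4c`, the integer numbering of groups and subpixels, and the use of "W" to stand for the white filter (or, alternately, the yellow filter) are assumptions made for this example.

```python
# Non-white filter of each pixel group PG1c..PG4c, per the description above.
GROUP_COLOR = {1: "G", 2: "R", 3: "B", 4: "G"}


def filter_4c(group, subpixel):
    """Return the filter letter for subpixel SPX<group><subpixel> in FIG. 4C.

    The first, second, seventh, and eighth subpixels of every group use the
    white (or yellow) filter; the third to sixth use the group's own color.
    """
    if subpixel in (1, 2, 7, 8):
        return "W"
    return GROUP_COLOR[group]


# Filters for SPX21..SPX28 of the second pixel group PG2c.
pg2c_filters = [filter_4c(2, s) for s in range(1, 9)]
```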

[0082] FIG. 5 is a circuit diagram illustrating an arrangement of first and second subpixels sharing a floating diffusion region according to certain embodiments of the inventive concept. In FIG. 5, a first subpixel and a second subpixel of a pixel (e.g., a horizontal pixel or a vertical pixel) are configured within a shared pixel structure to share a floating diffusion region. However, other embodiments of the inventive concept may include other arrangements of various subpixels sharing a floating diffusion region.

[0083] In FIG. 5, the first subpixel includes a first photodiode PD11, a first transmission transistor TX11, a selection transistor SX1, a drive transistor SF1, and a reset transistor RX1. The second subpixel includes a second photodiode PD12, a second transmission transistor TX12, as well as the selection transistor SX1, the drive transistor SF1, and the reset transistor RX1. With this configuration (e.g., a shared pixel structure SHPX), the first subpixel and the second subpixel may effectively share a floating diffusion region FD1 as well as the selection transistor SX1, the drive transistor SF1, and the reset transistor RX1. Those skilled in the art will recognize that one or more of the selection transistor SX1, the drive transistor SF1, and the reset transistor RX1 may be omitted in other configurations.

[0084] Here, each of the first photodiode PD11 and the second photodiode PD12 may generate photoelectric charge as a function of received incident light. For example, each of the first photodiode PD11 and the second photodiode PD12 may be a P-N junction diode that generates photoelectric charge (i.e., an electron as a negative photoelectric charge and a hole as a positive photoelectric charge) in proportion to an amount of incident light. That is, each of the first photodiode PD11 and the second photodiode PD12 may include at least one photoelectric conversion element, such as a phototransistor, a photogate, a pinned photodiode (PPD), etc.

[0085] The first transmission transistor TX11 may be used to transmit photoelectric charge generated by the first photodiode PD11 to the floating diffusion region FD1 in response to a first transmission control signal TS11 applied to the first transmission transistor TX11. Thus, when the first transmission transistor TX11 is turned ON, photoelectric charge generated by the first photodiode PD11 is transmitted to the floating diffusion region FD1 wherein it is accumulated (or stored) in the floating diffusion region FD1. Likewise, when the second transmission transistor TX12 is turned ON in response to a second transmission control signal TS12, photoelectric charge generated by the second photodiode PD12 is transmitted to, and is accumulated by, the floating diffusion region FD1.

[0086] In this regard, the floating diffusion region FD1 operates as a photoelectric charge capacitor. Thus, as the number of photodiodes operationally connected to the floating diffusion region FD1 increases in certain embodiments of the inventive concept, the charge storing capability (i.e., the capacitance) of the floating diffusion region FD1 must also increase.

[0087] The reset transistor RX1 may be used to periodically reset the photoelectric charge accumulated in the floating diffusion region FD1. A source electrode of the reset transistor RX1 may be connected to the floating diffusion region FD1, and a drain electrode thereof may be connected to a source voltage VPIX. When the reset transistor RX1 is turned ON in response to a reset control signal RS1, the source voltage VPIX connected to the drain electrode of the reset transistor RX1 may be applied to the floating diffusion region FD1. When the reset transistor RX1 is turned ON, photoelectric charge accumulated in the floating diffusion region FD1 may be discharged, and thus, the floating diffusion region FD1 may be reset.

[0088] The drive transistor SF1 may be controlled based on the amount of photoelectric charge accumulated in the floating diffusion region FD1. The drive transistor SF1 may be a buffer amplifier and may buffer a signal in response to the photoelectric charge accumulated by the floating diffusion region FD1. The drive transistor SF1 may amplify a potential varying in the floating diffusion region FD1, and output the amplified potential as a pixel signal VOUT to a column output line (e.g., one of the first to (n-1)th column output lines CLO_0 to CLO_n-1 of FIG. 2).

[0089] A drain terminal of the selection transistor SX1 may be connected to a source terminal of the drive transistor SF1, and in response to a selection signal SELS1, the selection transistor SX1 may output the pixel signal VOUT to a CDS (e.g., the CDS 151 of FIG. 2) through a corresponding column output line.

[0090] One or more of the first transmission control signal TS11, the second transmission control signal TS12, the reset control signal RS1, and the selection signal SELS1, as illustrated in FIG. 5, may be control signals provided by a row driver (e.g., the row driver 140 of FIG. 2) operating in relation to a pixel array (e.g., pixel array 110 of FIG. 2) according to embodiments of the inventive concept.
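The behavior of the shared pixel structure of FIG. 5 can be approximated with a purely behavioral model, sketched below. Charge is tracked as a plain number rather than as a voltage, transfers are assumed to be complete, and the class and method names are assumptions for this example; only the signal names TS11, TS12, RS1 and SELS1 come from the description.

```python
class SharedPixel:
    """Behavioral sketch of two photodiodes sharing one floating diffusion."""

    def __init__(self, pd11_charge, pd12_charge):
        # Charge held by each photodiode, keyed by its transmission signal.
        self.pd = {"TS11": pd11_charge, "TS12": pd12_charge}
        self.fd = 0  # charge accumulated in the floating diffusion region FD1

    def reset(self):
        """RS1 active: the floating diffusion region is discharged."""
        self.fd = 0

    def transfer(self, ts):
        """TS11 or TS12 active: move that photodiode's charge onto FD1."""
        self.fd += self.pd[ts]
        self.pd[ts] = 0

    def read(self):
        """SELS1 active: report the level on FD1 (here, the raw charge)."""
        return self.fd


pixel = SharedPixel(pd11_charge=30, pd12_charge=45)
pixel.reset()
after_reset = pixel.read()
pixel.transfer("TS11")
after_first = pixel.read()
pixel.transfer("TS12")
after_second = pixel.read()
```

Because both transfers land on the same floating diffusion region, the second reading reflects the combined charge of both photodiodes unless a reset is issued between the transfers.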

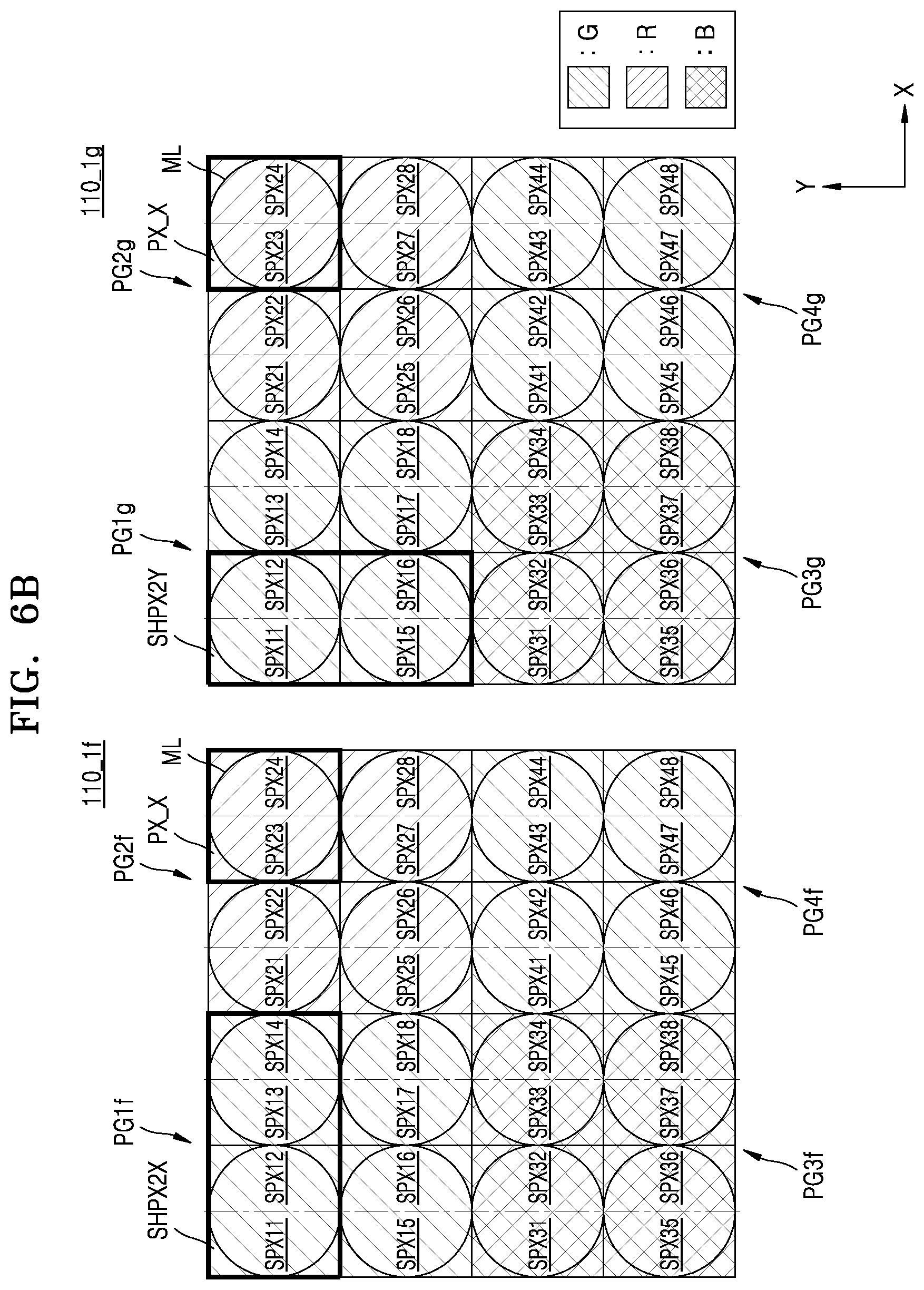

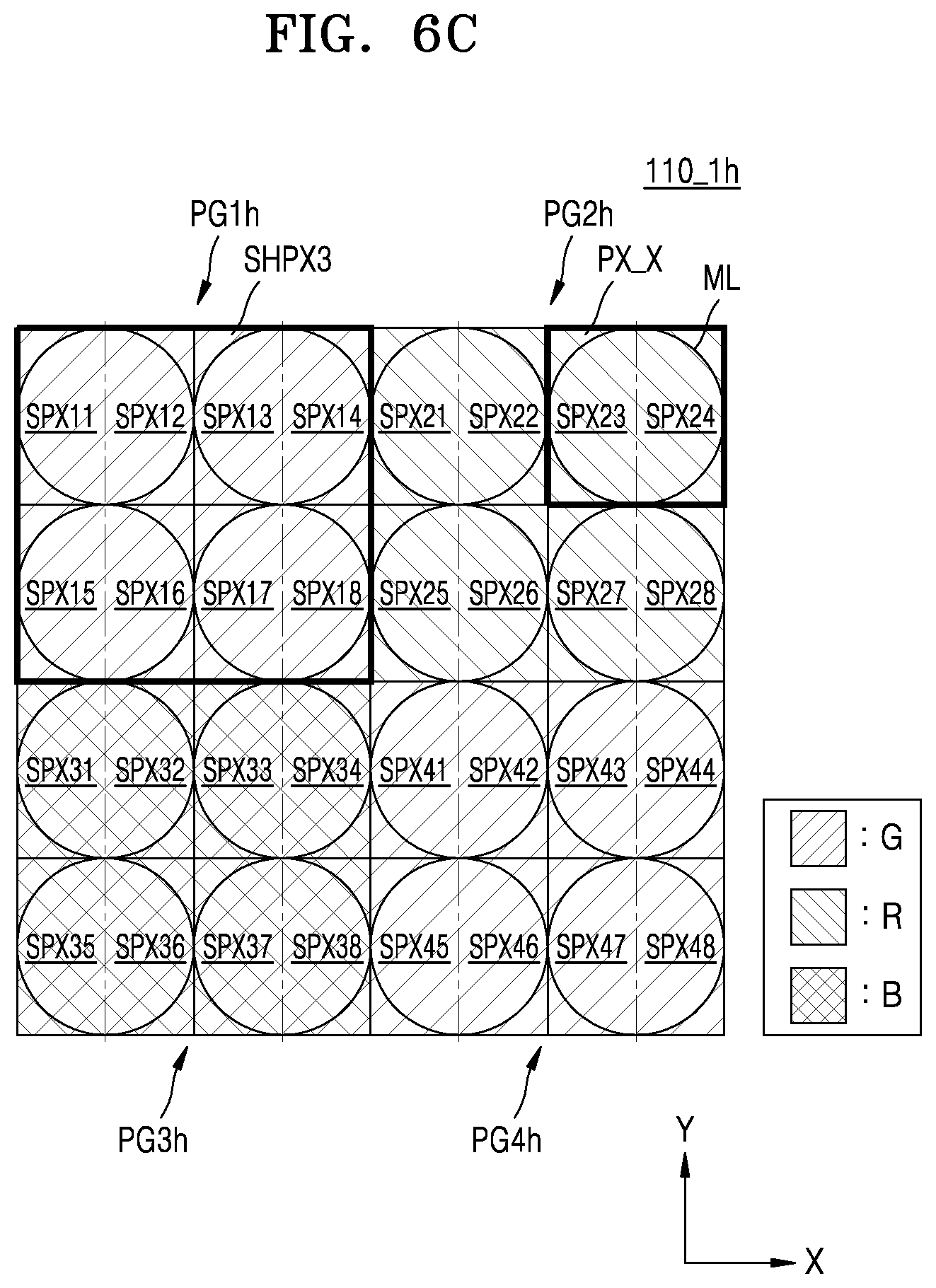

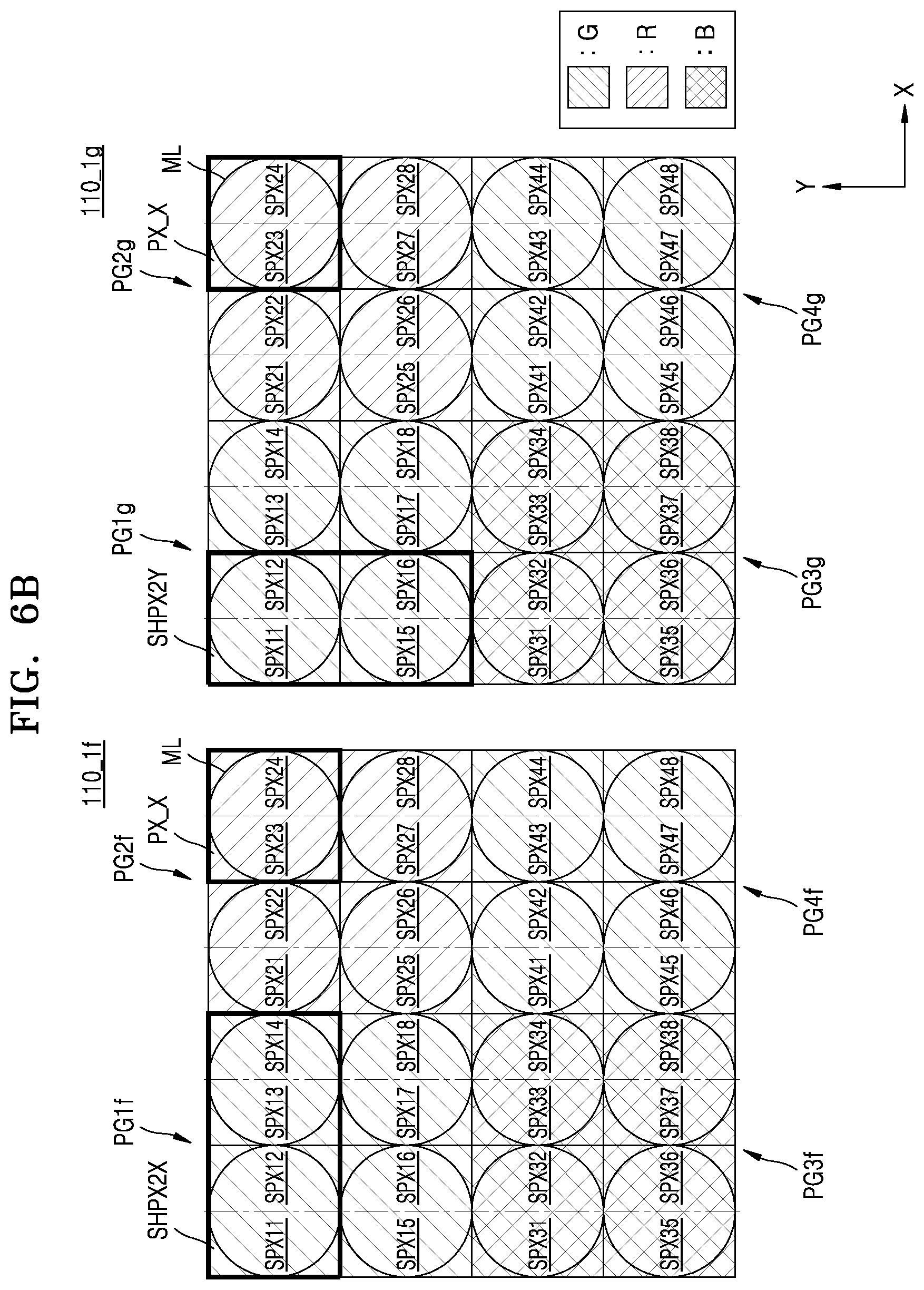

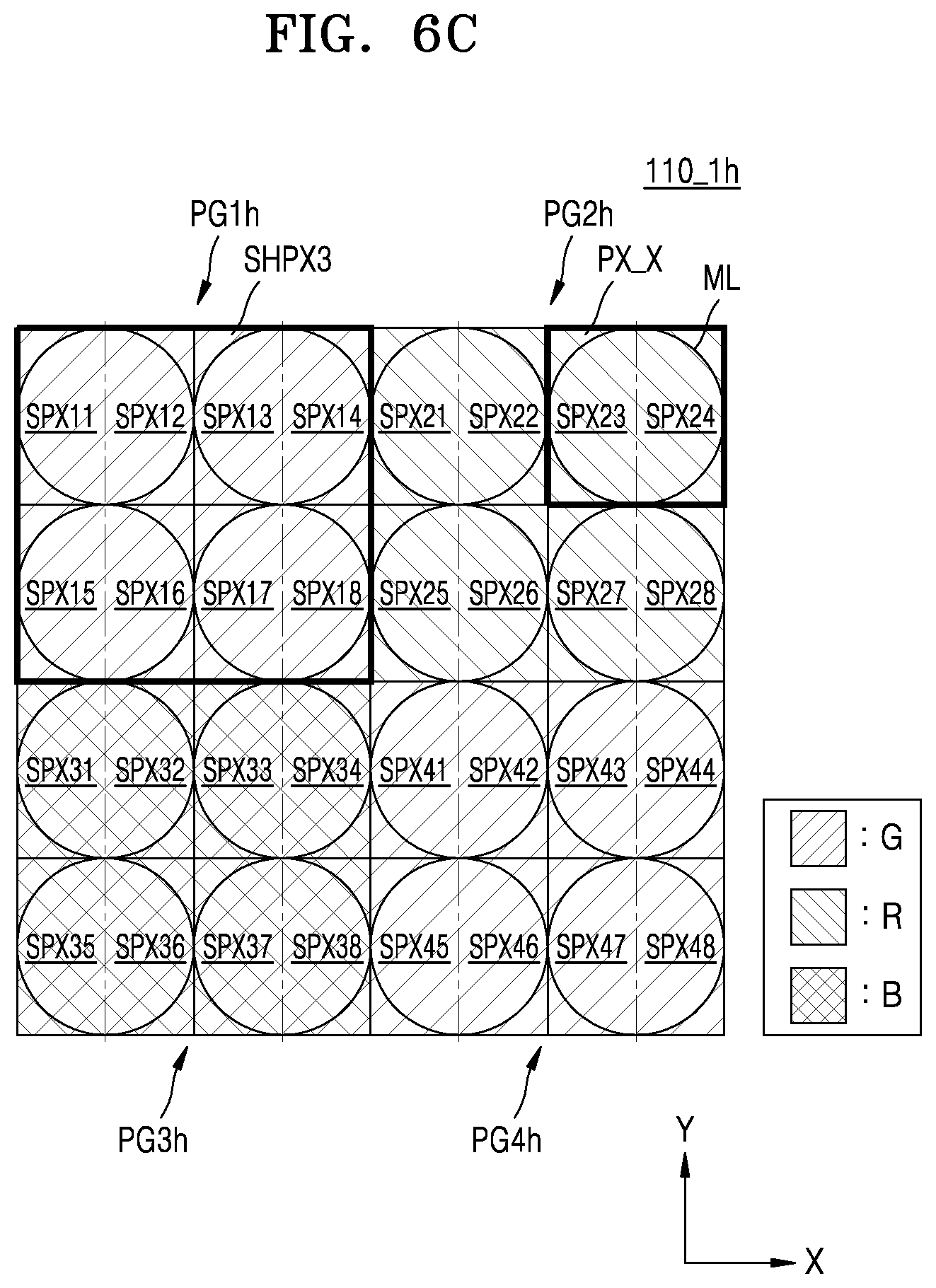

[0091] FIGS. 6A, 6B, 6C, 6D and 6E are respective diagrams variously illustrating subpixel arrangements that share a floating diffusion region. Such subpixel arrangements may be included in subpixel arrays of pixels arrays included in embodiments of the inventive concept (e.g., the first subpixel array 110_1 of FIG. 3A, the second subpixel array 110_2 of FIG. 3B and the subpixel array(s) of FIGS. 4A to 4C).

[0092] Referring to FIG. 6A, a first subpixel array 100_1e may include first to fourth pixel groups PG1e to PG4e, and each of the first to fourth pixel groups PG1e to PG4e may include a plurality of horizontal pixels PX_X. Subpixels included in each of the horizontal pixels PX_X may be configured in a shared pixel structure SHPX1, such that different horizontal pixels use different floating diffusion regions. That is, the shared pixel structure SHPX1 may be a 2-shared structure including two subpixels. Hence, two subpixels will share a single floating diffusion region.

[0093] For example, a first subpixel SPX11 and a second subpixel SPX12 of the first pixel group PG1e may be configured in a shared pixel structure SHPX1 which shares a first floating diffusion region, while a third subpixel SPX13 and a fourth subpixel SPX14 of the first pixel group PG1e may be configured in a shared pixel structure SHPX1 which shares a different floating diffusion region. In this case, the first subpixel SPX11 and the third subpixel SPX13 are associated with different floating diffusion regions.

[0094] The foregoing description of the first pixel group PG1e may be applied to the second to fourth pixel groups PG2e to PG4e.

[0095] In the high resolution mode, the first subpixel array 100_1e may accumulate all of the photoelectric charge generated by the at least two (2) photodiodes of different subpixels in a floating diffusion region. For example, the first subpixel array 100_1e may output a reset voltage as a pixel signal (e.g., VOUT of FIG. 5), and then, may output a pixel signal VOUT based on the first subpixel SPX11, and then may output a pixel signal VOUT based on the first subpixel SPX11 and the second subpixel SPX12. That is, subpixels (e.g., the first subpixel SPX11 and the second subpixel SPX12) configured together in a single horizontal pixel may share a floating diffusion region (e.g., the floating diffusion region FD1 of FIG. 5), and thus, may output a pixel signal VOUT based on a photoelectric charge generated by a first photodiode (e.g., the first photodiode PD11 of FIG. 5) of the first subpixel SPX11 and a photoelectric charge generated by a second photodiode (e.g., the second photodiode PD12 of FIG. 5) of the second subpixel SPX12. Such a readout method may be a reset-signal-signal-reset-signal-signal-reset-signal-signal-reset-signal-signal (RSSRSSRSSRSS) readout method.

[0096] However, operation of an image sensor including the first subpixel array 100_1e in the high resolution mode according to an embodiment is not limited thereto. Although the first subpixel SPX11 and the second subpixel SPX12 share the floating diffusion region FD1, the first subpixel array 100_1e may output a pixel signal VOUT based on the first subpixel SPX11, subsequently reset the floating diffusion region FD1 to output a reset voltage as a pixel signal VOUT, and subsequently output a pixel signal VOUT based on the second subpixel SPX12. Such a readout method may be a reset-signal-reset-signal-reset-signal-reset-signal-reset-signal-reset-signal-reset-signal-reset-signal (RSRSRSRSRSRSRSRS) readout method.

[0097] Thus, image sensors consistent with embodiments of the inventive concept may variously adjust the number of floating diffusion region resets, in obtaining a pixel signal VOUT output from each of subpixels included in one shared pixel structure SHPX1 sharing one floating diffusion region. As the number of floating diffusion region resets increases, the time taken in obtaining a pixel signal VOUT output from each of subpixels included in one shared pixel structure SHPX1 also increases, but a floating diffusion region having a relatively low capacitance may be formed and a conversion gain may increase. On the other hand, as the number of floating diffusion region resets decreases, a floating diffusion region having a high capacitance may be needed. However, the time taken in obtaining a pixel signal VOUT output from each of subpixels included in one shared pixel structure SHPX1 may decrease.
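The trade-off between the two readout methods can be made concrete with the following sketch for one shared pixel structure. Charges are plain numbers, the reset level is taken as zero, and the function names and dictionary keys are assumptions for this example. It shows that the reset-signal-signal sequence recovers the second subpixel's contribution by subtraction, while the floating diffusion region must hold the combined charge.

```python
def readout_rss(q1, q2):
    """Reset once, then sample after each transfer (R, S, S)."""
    fd = 0                 # reset: floating diffusion region emptied
    reset_sample = fd
    fd += q1               # first transfer (e.g. TS11)
    first_sample = fd
    fd += q2               # second transfer (e.g. TS12); charge adds up
    second_sample = fd
    return {
        "first": first_sample - reset_sample,
        "second": second_sample - first_sample,
        "resets": 1,
        "peak_fd_charge": second_sample,
    }


def readout_rsrs(q1, q2):
    """Reset before each transfer (R, S, R, S)."""
    values = []
    peak = 0
    for q in (q1, q2):
        fd = 0             # reset before every transfer
        reset_sample = fd
        fd += q
        peak = max(peak, fd)
        values.append(fd - reset_sample)
    return {
        "first": values[0],
        "second": values[1],
        "resets": 2,
        "peak_fd_charge": peak,
    }


rss = readout_rss(30, 45)
rsrs = readout_rsrs(30, 45)
```

In this example both methods recover the same two subpixel values, but the first uses one reset and a peak charge of 75, while the second uses two resets and a peak charge of 45.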

[0098] In certain embodiments of the inventive concept, the first to fourth pixel groups PG1e to PG4e of the first subpixel array 100_1e may be respectively connected to different column output lines (e.g., respective corresponding column output lines of the first to (n-1)th column output lines CLO_0 to CLO_n-1). For example, a plurality of pixels PX_X of the first pixel group PG1e may be connected to the first column output line CLO_0, a plurality of pixels PX_X of the second pixel group PG2e may be connected to the second column output line CLO_1, a plurality of pixels PX_X of the third pixel group PG3e may be connected to the third column output line CLO_2, and a plurality of pixels PX_X of the fourth pixel group PG4e may be connected to the fourth column output line CLO_3.

[0099] In this case, an image sensor including the first subpixel array 100_1e may perform an analog pixel binning operation in the low resolution mode. That is, in the low resolution mode, the first subpixel array 100_1e may output a reset voltage as a pixel signal VOUT through the first column output line CLO_0, subsequently output a pixel signal VOUT based on the first, third, fifth, and seventh subpixels SPX11, SPX13, SPX15, and SPX17 through the first column output line CLO_0, and subsequently output a pixel signal VOUT based on the first to eighth subpixels SPX11 to SPX18 through the first column output line CLO_0. Such a readout method may be a reset-signal-signal (RSS) readout method.

[0100] Alternatively, in the low resolution mode, the first subpixel array 100_1e may output the reset voltage as a pixel signal VOUT through the first column output line CLO_0, subsequently output the pixel signal VOUT based on the first, third, fifth, and seventh subpixels SPX11, SPX13, SPX15, and SPX17 through the first column output line CLO_0, subsequently output the reset voltage as a pixel signal VOUT through the first column output line CLO_0 again, and subsequently output a pixel signal VOUT based on the second, fourth, sixth, and eighth subpixels SPX12, SPX14, SPX16, and SPX18 through the first column output line CLO_0. Such a readout method may be a reset-signal-reset-signal (RS-RS) readout method.
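The difference between the RSS and RS-RS readout methods of paragraphs [0099] and [0100] can be sketched as follows. This is a simplified model rather than the circuit of the embodiments: signal levels are treated as positive offsets in arbitrary units added to the reset level, noise is ignored, and the names `odd_charge` and `even_charge` (standing for the binned SPX11/13/15/17 and SPX12/14/16/18 contributions) are hypothetical.

```python
def rss_readout(reset_level, odd_charge, even_charge):
    """Reset-signal-signal: the second signal sample is read without an
    intervening reset, so it contains the odd and even contributions together."""
    first = reset_level + odd_charge
    second = first + even_charge
    return [reset_level, first, second]


def decode_rss(samples):
    """Recover (odd, even, total) from the three RSS samples."""
    reset, first, second = samples
    odd = first - reset
    total = second - reset
    return odd, total - odd, total


def rs_rs_readout(reset_level, odd_charge, even_charge):
    """Reset-signal-reset-signal: the FD is reset again before the even
    subpixels are read, so each signal sample has its own reset sample."""
    return [reset_level, reset_level + odd_charge,
            reset_level, reset_level + even_charge]


def decode_rs_rs(samples):
    """Recover (odd, even, total) from the four RS-RS samples."""
    reset1, signal1, reset2, signal2 = samples
    odd = signal1 - reset1
    even = signal2 - reset2
    return odd, even, odd + even


if __name__ == "__main__":
    print(decode_rss(rss_readout(100, 40, 25)))
    print(decode_rs_rs(rs_rs_readout(100, 40, 25)))
```

In this model both methods yield the same odd, even, and total values. RSS needs one fewer sample, while RS-RS never holds more than one group's charge on the floating diffusion region at a time.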

[0101] However, image sensors according to embodiments of the inventive concept are not limited thereto, and the plurality of horizontal pixels PX_X included in the first subpixel array 100_1e may be respectively connected to different column output lines. In this case, an image sensor including the first subpixel array 100_1e may perform a digital pixel binning operation in the low resolution mode. For example, in the low resolution mode, the first subpixel array 100_1e may output different pixel signals VOUT based on the first, third, fifth, and seventh subpixels SPX11, SPX13, SPX15, and SPX17 through different column output lines, and each of the pixel signals VOUT may be converted into a digital signal by a CDS (for example, 151 of FIG. 2) and an ADC (for example, 153 of FIG. 2) and may be stored in a buffer (for example, 155 of FIG. 2). The buffer 155 may output data, corresponding to a pixel signal output from each of the first, third, fifth, and seventh subpixels SPX11, SPX13, SPX15, and SPX17, as one signal to a signal processor (for example, 130 of FIG. 2) or the outside of the image sensor. Subsequently, the buffer 155 may output data, corresponding to a pixel signal output from each of the second, fourth, sixth, and eighth subpixels SPX12, SPX14, SPX16, and SPX18, as one signal to the signal processor 130 or the outside of the image sensor.
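The digital pixel binning of paragraph [0101] can be sketched as a combination of already digitized values held in the buffer. The sketch assumes that "one signal" means the sum of the per-subpixel digital codes; the embodiments do not state whether a sum, an average, or another combination is used. The function name and the sample codes are hypothetical.

```python
ODD_SUBPIXELS = ("SPX11", "SPX13", "SPX15", "SPX17")
EVEN_SUBPIXELS = ("SPX12", "SPX14", "SPX16", "SPX18")


def digital_bin(buffer_codes, subpixel_names):
    """Combine the digital codes of the named subpixels into one value.
    Assumption: combination is a plain sum of the stored codes."""
    return sum(buffer_codes[name] for name in subpixel_names)


if __name__ == "__main__":
    # Hypothetical codes after CDS and ADC, one per subpixel, read over
    # different column output lines and stored in the buffer.
    codes = {"SPX11": 10, "SPX12": 12, "SPX13": 11, "SPX14": 13,
             "SPX15": 9, "SPX16": 14, "SPX17": 10, "SPX18": 11}
    first_output = digital_bin(codes, ODD_SUBPIXELS)    # output first
    second_output = digital_bin(codes, EVEN_SUBPIXELS)  # output subsequently
    print(first_output, second_output)
```

Unlike analog binning, where charge is combined on the floating diffusion region before conversion, each subpixel here is converted separately and the combination happens on digital data.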

[0102] From the foregoing, those skilled in the art will recognize that an image sensor according to embodiments of the inventive concept may include a subpixel array (e.g., the first subpixel array 100_1e of FIG. 6A) and may perform an AF function according to pixel units in the high resolution mode, or perform the AF function according to pixel group units in the low resolution mode.

[0103] In other embodiments of the inventive concept additionally capable of operating in a medium resolution mode, an image sensor including the first subpixel array 100_1e may perform the analog pixel binning operation or the digital pixel binning operation. Therefore, the image sensor including the first subpixel array 100_1e may perform an AF function according to pixel units in the high resolution mode, and may perform the AF function according to pixel group units in the low resolution mode. In this manner, image sensors according to embodiments of the inventive concept may effectively operate in the high resolution mode, the low resolution mode, and the medium resolution mode to properly meet the needs of the illumination environment.

[0104] Referring to FIG. 6B, a first subpixel array 100_1f includes first to fourth pixel groups PG1f to PG4f, wherein each of the first to fourth pixel groups PG1f to PG4f includes a plurality of horizontal pixels PX_X. Adjacently disposed (in the X direction) horizontal pixels PX_X among the plurality of horizontal pixels PX_X may be configured in a shared pixel structure SHPX2X in order to share a single floating diffusion region. That is, the shared pixel structure SHPX2X of FIG. 6B is a 4-shared structure including four subpixels that share a single floating diffusion region.

[0105] An image sensor including the first subpixel array 100_1f may perform an AF function according to pixel units in the high resolution mode, and may perform the AF function according to pixel group units in conjunction with the first to fourth pixel groups PG1f to PG4f in the low resolution mode. For example, in the high resolution mode, the first subpixel array 100_1f may output a pixel signal VOUT according to the above-described RSSRSSRSSRSS readout method. Alternatively, the first subpixel array 100_1f may output the pixel signal VOUT according to the above-described RSRSRSRSRSRSRSRS readout method.

[0106] Alternatively, in the high resolution mode, the first subpixel array 100_1f may accumulate all photoelectric charge, generated by four different photodiodes, into one floating diffusion region. For example, the first subpixel array 100_1f may output a reset voltage as a pixel signal VOUT, and then, may output a pixel signal VOUT based on a first subpixel SPX11, output a pixel signal VOUT based on the first subpixel SPX11 and a second subpixel SPX12, output a pixel signal VOUT based on the first to third subpixels SPX11 to SPX13, and output a pixel signal VOUT based on the first to fourth subpixels SPX11 to SPX14. Such a readout method may be a reset-signal-signal-signal-signal-reset-signal-signal-signal-signal (RSSSSRSSSS) readout method.

[0107] Alternatively, for example, in the high resolution mode, the first subpixel array 100_1f may output the reset voltage as the pixel signal VOUT, and then, may output the pixel signal VOUT based on the first subpixel SPX11, output the pixel signal VOUT based on the first subpixel SPX11 and the second subpixel SPX12, and output the pixel signal VOUT based on the first to fourth subpixels SPX11 to SPX14. That is, in the high resolution mode, the first subpixel array 100_1f may simultaneously accumulate photoelectric charge, generated by photodiodes of the third and fourth subpixels SPX13 and SPX14, into one floating diffusion region. Such a readout method may be a reset-signal-signal-signal-reset-signal-signal-signal (RSSSRSSS) readout method. In this case, the third and fourth subpixels SPX13 and SPX14 may not be used for the AF function, but a total readout speed of the first subpixel array 100_1f may increase.
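The cumulative readouts of paragraphs [0106] and [0107] can be sketched as follows. Because the floating diffusion region is not reset between signal samples, each sample contains all charge transferred so far, and the contribution of each transfer step is the difference between consecutive samples. The sketch uses arbitrary units with signals as positive offsets from the reset level; the function name and the sample values are hypothetical.

```python
def decode_cumulative(samples, steps):
    """Split cumulative samples [reset, s1, s2, ...] into per-step values.

    `steps` lists, for each signal sample, the tuple of subpixels whose
    charge was newly transferred to the FD before that sample."""
    if len(samples) != len(steps) + 1:
        raise ValueError("expected one reset sample plus one sample per step")
    contributions = {}
    previous = samples[0]
    for sample, names in zip(samples[1:], steps):
        contributions[names] = sample - previous
        previous = sample
    return contributions


# One reset followed by four signals (first half of RSSSSRSSSS).
RSSSS_STEPS = [("SPX11",), ("SPX12",), ("SPX13",), ("SPX14",)]
# One reset followed by three signals (first half of RSSSRSSS): the third
# and fourth subpixels are transferred together.
RSSS_STEPS = [("SPX11",), ("SPX12",), ("SPX13", "SPX14")]

if __name__ == "__main__":
    print(decode_cumulative([100, 110, 130, 160, 200], RSSSS_STEPS))
    print(decode_cumulative([100, 110, 130, 200], RSSS_STEPS))
```

In the RSSS case only the combined value of SPX13 and SPX14 is recoverable, which is consistent with those two subpixels not being usable for the AF function while one sample per reset cycle is saved.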

[0108] On the other hand, an image sensor including the first subpixel array 100_1f may perform an analog pixel binning operation in the low resolution mode. For example, the first subpixel array 100_1f may output a pixel signal VOUT based on first, third, fifth, and seventh subpixels SPX11, SPX13, SPX15, and SPX17 to a first column output line CLO_0, and then, may output a pixel signal VOUT based on first to eighth subpixels SPX11 to SPX18 to the first column output line CLO_0. Alternatively, for example, the first subpixel array 100_1f may output the pixel signal VOUT based on the first, third, fifth, and seventh subpixels SPX11, SPX13, SPX15, and SPX17 to the first column output line CLO_0, subsequently output a reset voltage as a pixel signal VOUT to the first column output line CLO_0 again, and subsequently output a pixel signal VOUT based on the second, fourth, sixth, and eighth subpixels SPX12, SPX14, SPX16, and SPX18 to the first column output line CLO_0.

[0109] On the other hand, the first subpixel array 100_1f may perform a digital pixel binning operation in the low resolution mode. For example, the first subpixel array 100_1f may output a pixel signal VOUT based on the first and third subpixels SPX11 and SPX13 and a pixel signal VOUT based on the fifth and seventh subpixels SPX15 and SPX17 to different column output lines, and each of the pixel signals VOUT may be converted into a digital signal by a CDS (for example, 151 of FIG. 2) and an ADC (for example, 153 of FIG. 2) and may be stored in a buffer (for example, 155 of FIG. 2). The buffer 155 may output data, corresponding to a pixel signal output from each of the first, third, fifth, and seventh subpixels SPX11, SPX13, SPX15, and SPX17, as one signal to a signal processor (for example, 130 of FIG. 2) or the outside of the image sensor. Subsequently, the buffer 155 may output data, corresponding to a pixel signal output from each of the second, fourth, sixth, and eighth subpixels SPX12, SPX14, SPX16, and SPX18, as one signal to the signal processor 130 or the outside of the image sensor. Therefore, an image sensor including the first subpixel array 100_1f may perform an AF function according to pixel units in the high resolution mode, and may perform the AF function according to pixel group units in the low resolution mode.

A first subpixel array 100_1g may include first to fourth pixel groups PG1g to PG4g, and each of the first to fourth pixel groups PG1g to PG4g may include a plurality of horizontal pixels PX_X. Adjacently disposed (in the Y direction) horizontal pixels PX_X among the plurality of horizontal pixels PX_X may be configured in a shared pixel structure SHPX2Y which shares a single floating diffusion region. That is, the shared pixel structure SHPX2Y may be a 4-shared structure in which every four subpixels form one shared pixel structure SHPX2Y sharing a floating diffusion region. In each of the high resolution mode and the low resolution mode, the description of the operation of the first subpixel array 100_1f described above may be similarly applied to an operation of the first subpixel array 100_1g.

[0110] Referring to FIG. 6C, a first subpixel array 100_1h includes first to fourth pixel groups PG1h to PG4h, wherein each of the first to fourth pixel groups PG1h to PG4h includes a plurality of horizontal pixels PX_X, and the horizontal pixels PX_X included in the same pixel group are configured in a shared pixel structure SHPX3 which shares a floating diffusion region. That is, the shared pixel structure SHPX3 may be an 8-shared structure in which every eight subpixels form one shared pixel structure SHPX3 sharing a floating diffusion region. Therefore, subpixels included in different pixel groups may not share a floating diffusion region. An operation of the first subpixel array 100_1h in a high resolution mode and an operation of the first subpixel array 100_1h in a low resolution mode will be described below with reference to FIGS. 9 to 11.

[0111] Referring to FIG. 6D, a first subpixel array 100_1i includes first to fourth pixel groups PG1i to PG4i, wherein each of the first to fourth pixel groups PG1i to PG4i includes a plurality of horizontal pixels PX_X. Adjacently disposed (in the X direction) horizontal pixels PX_X may be configured in a shared pixel structure SHPX4X which shares a single floating diffusion region. That is, the shared pixel structure SHPX4X may be a 16-shared structure in which every sixteen subpixels form one shared pixel structure SHPX4X sharing a floating diffusion region.

[0112] For example, first to eighth subpixels SPX11 to SPX18 of the first pixel group PG1i and first to eighth subpixels SPX21 to SPX28 of the second pixel group PG2i may configure one shared pixel structure SHPX4X, which shares a floating diffusion region, and first to eighth subpixels SPX31 to SPX38 of the third pixel group PG3i and first to eighth subpixels SPX41 to SPX48 of the fourth pixel group PG4i may configure one shared pixel structure SHPX4X, which shares a floating diffusion region. Therefore, subpixels included in different pixel groups may share a floating diffusion region.

[0113] A first subpixel array 100_1j includes first to fourth pixel groups PG1j to PG4j, wherein each of the first to fourth pixel groups PG1j to PG4j includes a plurality of horizontal pixels PX_X. Adjacently disposed (in the Y direction) horizontal pixels PX_X may be configured in a shared pixel structure SHPX4Y which shares a single floating diffusion region. That is, the shared pixel structure SHPX4Y may be a 16-shared structure in which every sixteen subpixels form one shared pixel structure SHPX4Y sharing a floating diffusion region.

[0114] For example, first to eighth subpixels SPX11 to SPX18 of the first pixel group PG1j and first to eighth subpixels SPX31 to SPX38 of the third pixel group PG3j may configure one shared pixel structure SHPX4Y, which shares a floating diffusion region, and first to eighth subpixels SPX21 to SPX28 of the second pixel group PG2j and first to eighth subpixels SPX41 to SPX48 of the fourth pixel group PG4j may configure one shared pixel structure SHPX4Y, which shares a floating diffusion region. Therefore, subpixels included in different pixel groups may share a floating diffusion region.
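The sharing arrangements of paragraphs [0110] to [0114] can be summarized in a small lookup sketch. It records only which pixel groups share a floating diffusion region in each shared pixel structure, with eight subpixels per pixel group as in the examples above. The table name, the function names, and the generic group labels PG1 to PG4 (standing for PG1h to PG4h, PG1i to PG4i, and PG1j to PG4j) are hypothetical.

```python
# For each shared pixel structure, the sets of pixel groups whose
# subpixels share one floating diffusion (FD) region.
FD_SHARING = {
    "SHPX3": [("PG1",), ("PG2",), ("PG3",), ("PG4",)],  # 8-shared, per group
    "SHPX4X": [("PG1", "PG2"), ("PG3", "PG4")],          # 16-shared, X direction
    "SHPX4Y": [("PG1", "PG3"), ("PG2", "PG4")],          # 16-shared, Y direction
}


def subpixels_per_fd(structure, subpixels_per_group=8):
    """Number of subpixels connected to one FD region."""
    return len(FD_SHARING[structure][0]) * subpixels_per_group


def share_fd(structure, group_a, group_b):
    """True if the two pixel groups share an FD region in this structure."""
    return any(group_a in groups and group_b in groups
               for groups in FD_SHARING[structure])


if __name__ == "__main__":
    for name in FD_SHARING:
        print(name, subpixels_per_fd(name),
              share_fd(name, "PG1", "PG2"), share_fd(name, "PG1", "PG3"))
```

The lookup makes the distinction explicit: in SHPX3 no two different pixel groups share a floating diffusion region, whereas SHPX4X pairs groups adjacent in the X direction and SHPX4Y pairs groups adjacent in the Y direction.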

[0115] The description of the first subpixel array 100_1h of FIG. 6C in the high resolution mode may be similarly applied to an operation of each of the first subpixel arrays 100_1i and 100_1j in the high resolution mode, and the description of the first subpixel array 100_1h of FIG. 6C in the low resolution mode may be similarly applied to an operation of each of the first subpixel arrays 100_1i and 100_1j in the low resolution mode.