Detecting Malicious Code Received From Malicious Client Side Injection Vectors

Stoletny; Alexey ; et al.

U.S. patent application number 16/827476 was filed with the patent office on 2020-03-23 and published on 2020-11-12 for detecting malicious code received from malicious client side injection vectors. The applicant listed for this patent is Clean.io, Inc. Invention is credited to Seth Demsey, Ivan Soroka, Alexey Stoletny.

| Field | Value |
|---|---|
| United States Patent Application | 20200358818 |
| Application Number | 16/827476 |
| Kind Code | A1 |
| Family ID | 1000004859722 |
| Filed Date | 2020-03-23 |
| Publication Date | November 12, 2020 |
| Inventors | Stoletny; Alexey; et al. |

DETECTING MALICIOUS CODE RECEIVED FROM MALICIOUS CLIENT SIDE INJECTION VECTORS

Abstract

There are disclosed devices, systems and methods for detecting malicious scripts received from malicious client side vectors. First, a script received from a client side injection vector and being displayed to a user in a published webpage is detected. The script may have malicious code configured to cause a browser unwanted action without user action. The script is wrapped in a JavaScript (JS) closure and/or stripped of hyper-text markup language (HTML). The script is then executed in a browser sandbox that is capable of activating the unwanted action, displaying execution of the script, and stopping execution of the unwanted action if a security error resulting from the unwanted action is detected. When a security error results from this execution in the sandbox, executing the malicious code is discontinued, displaying the malicious code is discontinued, and execution of the unwanted action is stopped.

| Inventors: | Stoletny; Alexey (New York, NY); Demsey; Seth (New York, NY); Soroka; Ivan (New York, NY) |
|---|---|
| Applicant: | Clean.io, Inc. |
| Family ID: | 1000004859722 |
| Appl. No.: | 16/827476 |
| Filed: | March 23, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 16409514 | May 10, 2019 | 10599834 |
| 16827476 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 2221/033 20130101; G06Q 30/0277 20130101; H04L 63/1466 20130101; G06F 21/566 20130101; G06F 21/53 20130101; G06Q 30/0248 20130101 |

| International Class: | H04L 29/06 20060101 H04L029/06; G06F 21/53 20060101 G06F021/53; G06F 21/56 20060101 G06F021/56; G06Q 30/02 20060101 G06Q030/02 |

Claims

1. A method for detecting malicious code existing in computer code received from a client side injection vector, the method comprising: detecting receipt of computer code received from a client side injection vector and being displayed to a user in a published webpage, the computer code having malicious code configured to cause a browser unwanted action in the browser when executed; one of wrapping the computer code in a java script (JS) closure to detect the unwanted action caused by the malicious code, or stripping hyper-text markup language (HTML) content from the computer code; executing the computer code and the malicious code in a browser sandbox that activates the unwanted action of the malicious code, that displays execution of the computer code and the malicious code to the user, and that stops execution of the unwanted action if a security error resulting from the unwanted action is detected; wherein the unwanted action causes a browser unwanted action without user action and causes the security error when the unwanted action occurs; detecting whether the security error resulting from the unwanted action exists; based on a type of the security error, detecting a type of client side injection vector that the computer code was received from; and when the security error exists, discontinuing executing the malicious code in the browser sandbox, discontinuing displaying the malicious code on the display, and stopping execution of the unwanted action.

2. The method of claim 1, wherein detecting receipt of computer code received from a client side injection vector and being displayed to a user in a published webpage includes detecting receipt of computer code received in response to a request for an internet advertisement (ad) promoting goods and/or services requested from a third party advertiser by the published webpage being displayed to the user.

3. The method of claim 1, wherein detecting whether a security error resulting from the unwanted action exists includes one of: detecting that the client side injection vector is a network connection vector that monitors network traffic of the browser and hijacks ad placements on the webpage; detecting that the client side injection vector is a browser extension vector of the browser that monitors the browser and hijacks ad placements on the webpage; or detecting that the client side injection vector is a local virus that accesses the computer operating system to proxy web traffic and hijack ad placements or common scripts on the webpage.

4. The method of claim 1, wherein detecting whether a security error resulting from the unwanted action exists includes one of: detecting that the client side injection vector is a local vector between a network and the computer, or detecting that the client side injection vector is a non-ad vector that injects the malicious code independently of a call for an ad or when a call for an ad has not occurred.

5. The method of claim 1, wherein executing the script and the malicious code in a browser sandbox includes: protecting against deferred unwanted actions using browser attributes, and only allowing deferred redirects in response to actual user gesture detection at a user input device.

6. The method of claim 1, wherein executing the script and the malicious code in a browser sandbox that displays execution of the ad and the malicious code to the user includes: writing or rendering a malicious script having images, video and/or audio to the browser sandbox and a display; and continuing to monitor the browser sandbox for unwanted actions by the malicious code.

7. The method of claim 1, wherein the computer code received is an internet advertisement (ad) promoting goods and/or services requested from a third party advertiser; wherein the unwanted action is a first deferred type of unwanted action and the security error is also a deferred type of security error; wherein the first deferred type of unwanted action is a cross-origin script or a cross-origin iframe having malicious code including one of a settimeout function, a setinterval function or an adeventlistener function; wherein the computer code is configured to return a count impression for the third party advertiser when the computer code is executed wherein the HTML content would otherwise provide an extraneous count impression for the third party advertiser; and further comprising: returning a first count impression for the computer code for the third party advertiser; if the computer code is an SRC type document, wrapping the malicious code in a java script (JS) closure to detect the unwanted action requested by the malicious code; and stopping execution of the first deferred type of error event includes capturing that a script on a uniform resource locator (URL) attempted to cause the browser to perform an unwanted action.

8. The method of claim 1, further comprising: detecting receipt of one of a JS closure, a Javascript SRC or a Javascript SRC document; creating a JS wrapped version of the detected JS closure, Javascript SRC or Javascript SRC document; executing the JS wrapped version of the detected JS closure, Javascript SRC or Javascript SRC document in the browser sandbox; and re-throwing the detected security error so it is heard by the browser sandbox or browser by creating a sandbox frame, subscribing to that frame and listening to that frame to hear the error event that result from rethrowing the errors that the browser protected or stopped.

9. The method of claim 1, further comprising: prior to detecting receipt of malicious code: executing a user requested protected published webpage having a call to a protection code source for protection code; executing the call to the protection code source for and downloading the protection code; executing the protection code; and after detecting receipt of malicious code, reporting to the protection code source, detecting information that is based on the detecting, that identifies the client side injection vector and that identifies the executed unwanted acts.

10. A non-transitory machine readable medium storing a program having instructions which when executed by a processor will cause the processor to detect malicious code existing in computer code received from a client side injection vector, the instructions of the program for: detecting receipt of computer code received from a client side injection vector and being displayed to a user in a published webpage, the computer code having malicious code configured to cause a browser unwanted action in the browser when executed; one of wrapping the computer code in a java script (JS) closure to detect the unwanted action caused by the malicious code, or stripping hyper-text markup language (HTML) content from the computer code; executing the computer code and the malicious code in a browser sandbox that activates the unwanted action of the malicious code, that displays execution of the computer code and the malicious code to the user, and that stops execution of the unwanted action if a security error resulting from the unwanted action is detected; wherein the unwanted action causes a browser unwanted action without user action and causes the security error when the unwanted action occurs; detecting whether the security error resulting from the unwanted action exists; based on a type of the security error, detecting a type of client side injection vector that the computer code was received from; and when the security error exists, discontinuing executing the malicious code in the browser sandbox, discontinuing displaying the malicious code on the display, and stopping execution of the unwanted action.

11. The medium of claim 10, wherein detecting whether a security error resulting from the unwanted action exists includes one of: detecting that the client side injection vector is a network connection vector that monitors network traffic of the browser and hijacks ad placements on the webpage; detecting that the client side injection vector is a browser extension vector of the browser that monitors the browser and hijacks ad placements on the webpage; or detecting that the client side injection vector is a local virus that accesses the computer operating system to proxy web traffic and hijack ad placements or common scripts on the webpage.

12. The medium of claim 10, wherein detecting whether a security error resulting from the unwanted action exists includes one of: detecting that the client side injection vector is a local vector between a network and the computer, or detecting that the client side injection vector is a non-ad vector that injects the malicious code independently of a call for an ad or when a call for an ad has not occurred.

13. The medium of claim 10, wherein executing the script and the malicious code in a browser sandbox includes: protecting against deferred unwanted actions using browser attributes, and only allowing deferred redirects in response to actual user gesture detection at a user input device.

14. The medium of claim 10, wherein executing the script and the malicious code in a browser sandbox that displays execution of the ad and the malicious code to the user includes: writing or rendering a malicious script having images, video and/or audio to the browser sandbox and a display; and continuing to monitor the browser sandbox for unwanted actions by the malicious code.

15. The medium of claim 10, wherein the computer code received is an internet advertisement (ad) promoting goods and/or services requested from a third party advertiser; wherein the unwanted action is a first deferred type of unwanted action and the security error is also a deferred type of security error; wherein the first deferred type of unwanted action is a cross-origin script or a cross-origin iframe having malicious code including one of a settimeout function, a setinterval function or an adeventlistener function; wherein the computer code is configured to return a count impression for the third party advertiser when the computer code is executed wherein the HTML content would otherwise provide an extraneous count impression for the third party advertiser; and further comprising: returning a first count impression for the computer code for the third party advertiser; if the computer code is an SRC type document, wrapping the malicious code in a java script (JS) closure to detect the unwanted action requested by the malicious code; and stopping execution of the first deferred type of error event includes capturing that a script on a uniform resource locator (URL) attempted to cause the browser to perform an unwanted action.

16. The medium of claim 10, the instructions of the program further for: detecting receipt of one of a JS closure, a Javascript SRC or a Javascript SRC document; creating a JS wrapped version of the detected JS closure, Javascript SRC or Javascript SRC document; executing the JS wrapped version of the detected JS closure, Javascript SRC or Javascript SRC document in the browser sandbox; and re-throwing the detected security error so it is heard by the browser sandbox or browser by creating a sandbox frame, subscribing to that frame and listening to that frame to hear the error event that result from rethrowing the errors that the browser protected or stopped.

17. The medium of claim 10, the instructions of the program further for: prior to detecting receipt of malicious code: executing a user requested protected published webpage having a call to a protection code source for protection code; executing the call to the protection code source for and downloading the protection code; executing the protection code; and after detecting receipt of malicious code, reporting to the protection code source, detecting information that is based on the detecting, that identifies the client side injection vector and that identifies the executed unwanted acts.

18. The medium of claim 11, the user device further comprising: a user input device; a display device; a processor; and a memory; wherein the processor and the memory comprise circuits and software for performing the instructions on the storage medium.

19. A system for detecting malicious code existing in computer code received from a third party, the system comprising: a user device having protection code instructions to: detect receipt of computer code received from a client side injection vector and being displayed to a user in a published webpage, the computer code having malicious code configured to cause a browser unwanted action in the browser when executed; one of wrap the computer code in a java script (JS) closure to detect the unwanted action caused by the malicious code, or strip hyper-text markup language (HTML) content from the computer code; execute the computer code and the malicious code in a browser sandbox that activates the unwanted action of the malicious code, that displays execution of the computer code and the malicious code to the user, and that stops execution of the unwanted action if a security error resulting from the unwanted action is detected; wherein the unwanted action causes a browser unwanted action without user action and causes the security error when the unwanted action occurs; detect whether the security error resulting from the unwanted action exists; based on a type of the security error, detect a type of client side injection vector that the computer code was received from; and when the security error exists, discontinue executing the malicious code in the browser sandbox, discontinue displaying the malicious code on the display, and stopping execution of the unwanted action.

20. The system of claim 19, the user device further comprising: a user input device; a display device; a processor; and a memory; wherein the processor and the memory comprise circuits and software for performing the protection code instructions.

21. The system of claim 19, wherein detecting the type of client side injection vector that the computer code was received from includes one of: detecting that the type of client side injection vector is a network connection vector; detecting that the type of client side injection vector is a browser extension vector; or detecting that the type of client side injection vector is a local virus.

22. The system of claim 19, wherein detecting whether a security error resulting from the unwanted action exists includes one of: detecting that the client side injection vector is a local vector between a network and the computer, or detecting that the client side injection vector is a non-ad vector that injects the malicious code independently of a call for an ad or when a call for an ad has not occurred.

23. The method of claim 1, wherein detecting the type of client side injection vector that the computer code was received from includes one of: detecting that the type of client side injection vector is a network connection vector; detecting that the type of client side injection vector is a browser extension vector; or detecting that the type of client side injection vector is a local virus.

24. The medium of claim 10, wherein detecting the type of client side injection vector that the computer code was received from includes one of: detecting that the type of client side injection vector is a network connection vector; detecting that the type of client side injection vector is a browser extension vector; or detecting that the type of client side injection vector is a local virus.

Description

NOTICE OF COPYRIGHTS AND TRADE DRESS

[0001] A portion of the disclosure of this patent document contains material which is subject to copyright protection. This patent document may show and/or describe matter which is or may become trade dress of the owner. The copyright and trade dress owner has no objection to the facsimile reproduction by anyone of the patent disclosure as it appears in the Patent and Trademark Office patent files or records, but otherwise reserves all copyright and trade dress rights whatsoever.

RELATED APPLICATION INFORMATION

[0002] This patent is a continuation-in-part of and claims the priority benefit from co-pending patent application Ser. No. 16/409,514, filed May 10, 2019, titled DETECTING MALICIOUS CODE EXISTING IN INTERNET ADVERTISEMENTS, which is incorporated herein by reference, and which will issue on Mar. 24, 2020 as U.S. Pat. No. 10,599,834.

BACKGROUND

Field

[0003] This disclosure relates to detecting malicious code received from malicious client side vectors and executing unwanted acts in a browser.

Description of the Related Art

[0004] Consumers use various computing devices to shop for goods and services on websites they access through a browser. They access websites where they will shop through links in advertisements, emails, texts, coupons and the like which are provided by various sources or "vectors". These vectors may be server or remote vectors that send the links through a network (e.g., the Internet) such as servers, intermediaries, the cloud or content delivery networks. However, the vectors may also be malicious client side injection vectors that hijack the device or browser to send malicious computer scripts as the links, advertisements, emails, texts, coupons, etc. The malicious scripts incorporate malicious code that performs unwanted browser actions, such as non-user-initiated redirects of the user's browser.

[0005] For example, an Internet advertisement ("ad") is typically displayed in a certain area or space of a publisher's Internet webpage, such as a webpage of content for readers to see. The publisher may provide a certain ad space or call in their webpage content for a browser to download an internet ad from an advertiser or server. A publisher may designate a slot (e.g., space, location or placement on their published webpage) and know how and when the ad unit runs. Typically, the ads have pixels, which are usually images that load from some party (e.g., an advertiser or intermediary) and thereby signal to that party that a certain action (such as an impression) has occurred. Then, the loaded ads will typically have a link to click on to go to another webpage. In some cases, the link is a combination of the hyper-text markup language (HTML) tag for a link with the HTML tag for an image or video so that when users click on the link or ad, they are redirected from the publisher's webpage to the advertiser's website to make a purchase. The click on the advertisement activates a browser call to download a page from the associated advertiser's website that the browser can render (e.g., display) on the computing device.

[0006] However, the downloaded ad's access to the computing device may not be secure because the ad may not be sufficiently vetted or reviewed to ensure it does not include malware (e.g., malicious code). This can be a problem, at least for the users, because the ads themselves are a piece of HTML+JavaScript+cascading style sheets (CSS), which runs in the trusted scope of the user browsing session (oftentimes having access to the first party domain from which the user is viewing the ad). This means that many ads, coming from anywhere, actually have full access to what a user does, types or sees on the site because they have access to the first party domain, and malware in those ads can do a lot of damage, with redirects being one type of this damage. Some ads will not have full access because they do not have access to the first party domain. Just as an executable file from an untrusted party may run on a user's computing device (a trusted environment), ads implicitly violate this trust boundary. Users do not realize that the ads on a website may have access to their shopping cart or details they enter on the site. Site owners do not want to let the ads do everything their site can do, and they want to limit what the ad can do to only certain types of activities (e.g., define a policy). However, there is little control of that in the browser, and while some things can be set using browser sandbox attributes, content security policy (CSP), etc., this does not stop sophisticated malicious actors or malware.
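As a rough illustration of the browser-level controls mentioned above, the following sketch assembles an iframe `sandbox` attribute value and a policy header string. It assumes that "CSP" here refers to the browser's Content Security Policy. The helper names (`buildSandboxAttribute`, `buildCspHeader`), the option names and the example host are hypothetical; the sandbox tokens and directive names are standard ones. This is not the protection code of this disclosure, only an example of the baseline controls that the paragraph says are insufficient on their own.

```javascript
// Hypothetical helper: builds the value of an iframe `sandbox` attribute.
// Scripts are always allowed here; top navigation is allowed only when it
// follows a user activation, and popups only when explicitly requested.
function buildSandboxAttribute(allow) {
  const tokens = ["allow-scripts"];
  if (allow.userInitiatedTopNavigation) {
    tokens.push("allow-top-navigation-by-user-activation");
  }
  if (allow.popups) {
    tokens.push("allow-popups");
  }
  return tokens.join(" ");
}

// Hypothetical helper: serializes a map of directive -> source list into a
// Content-Security-Policy header value.
function buildCspHeader(directives) {
  return Object.entries(directives)
    .map(([name, sources]) => name + " " + sources.join(" "))
    .join("; ");
}

// Example usage (the ad host below is a placeholder, not a real endpoint).
const sandboxValue = buildSandboxAttribute({
  userInitiatedTopNavigation: true,
  popups: false,
});
const cspValue = buildCspHeader({
  "default-src": ["'self'"],
  "script-src": ["'self'", "https://ads.example.invalid"],
});
```

A publisher might apply `sandboxValue` to the frame that hosts an ad and send `cspValue` as a response header; as the paragraph notes, such static policies limit some behavior but do not by themselves detect or report a malicious script.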

[0007] In some cases, a malicious client side injection vector may download or inject a malicious script to the computing device that has access to the computing device browser. This is also a problem, because the script can be a piece of HTML+JavaScript+cascading style sheets (CSS), which also runs in the trusted scope of the user browsing session and has full access to what a user does, types or sees on the site. The script may execute unwanted actions on the computing device or browser. Thus, malware in that script can do a lot of damage, such as with redirects. The malicious script can be an executable file from an untrusted party running on a user's computing device and the user does not realize that it has access to their computer or browser.

[0008] And, increasingly, electronic commerce (e-commerce) entities such as e-commerce networks and/or intermediaries have become targets of malware that, effectively, has open access to internet user computing devices. Consequently, there is a problem when a malicious vector sends a malicious script that incorporates malicious code to perform unwanted browser actions (such as non-user-initiated redirects), and/or to force redirects to legitimate sites (e.g., so that an advertiser effectively gets a "100% click-through rate" and can make money on this). When this malware is rendered by the browser it exposes the user's computing device to harmful unwanted actions such as unwanted data access, cryptocurrency mining, "trick" webpages that attempt to force users to do unwanted actions, or to the automatic or near-automatic downloading of unwanted applications, harmful content such as viruses, or unpaid for advertising images.

[0009] Thus, there is a need to detect this malicious code and/or unwanted action on the user's computing device to give users, website owners and e-commerce entities greater control over third party JavaScript code executed on their sites, which otherwise was not available.

DESCRIPTION OF THE DRAWINGS

[0010] FIG. 1A is a block diagram of a system for detecting malicious code received from malicious client side injection vectors.

[0011] FIG. 1B is a block diagram of a user device executing a protected published webpage content that has the ability to detect malicious code received from malicious client side injection vectors.

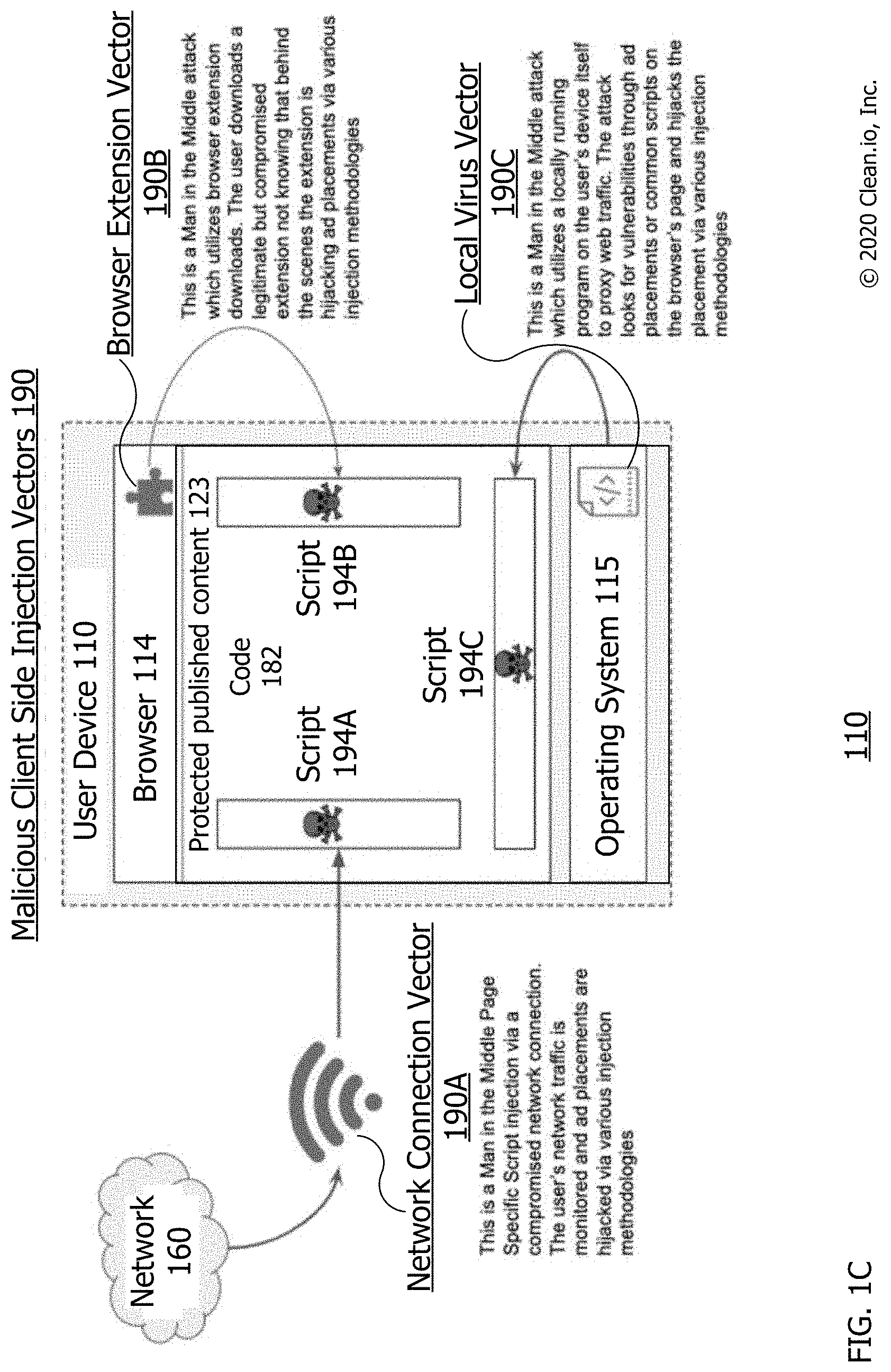

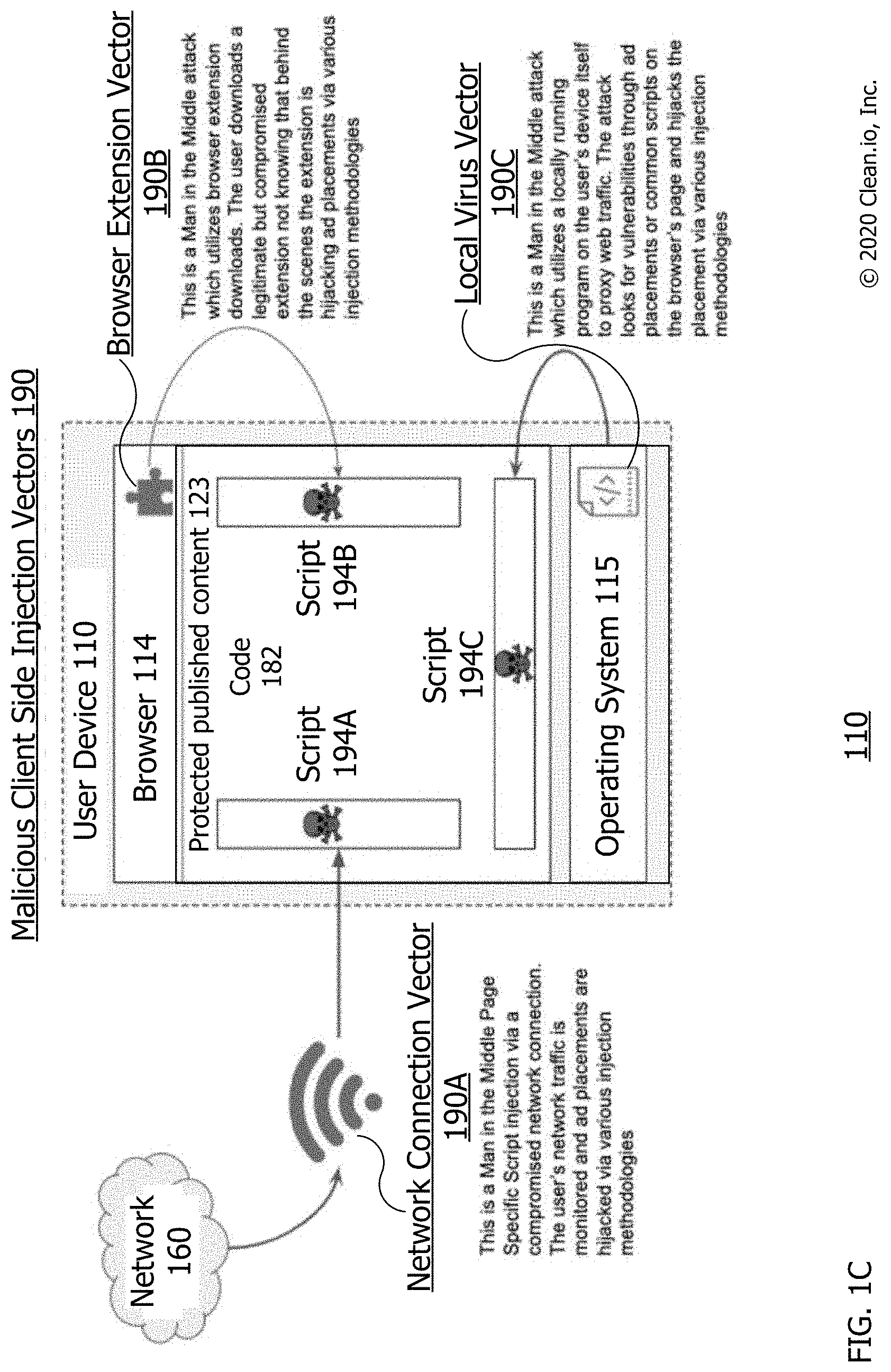

[0012] FIG. 1C is a block diagram of different malicious client side injection vectors.

[0013] FIG. 2 is a flow diagram of an operating environment/process for managing the detecting of malicious code received from malicious client side injection vectors.

[0014] FIG. 3 is a flow diagram of an operating environment/process for detecting malicious code received from malicious client side injection vectors.

[0015] FIG. 4 is a flow diagram of a process for detecting malicious code received from malicious client side injection vectors.

[0016] FIG. 5 is a block diagram of a computing device.

[0017] Throughout this description, elements appearing in figures are assigned three-digit reference designators, where the most significant digit is the figure number and the two least significant digits are specific to the element. An element that is not described in conjunction with a figure may be presumed to have the same characteristics and function as a previously-described element having a reference designator with the same least significant digits.

DETAILED DESCRIPTION

[0018] Technologies described herein include systems and methods for detecting malicious code received from malicious client side injection vectors. The malicious code may be received by a user computing device such as a client computer and may execute unwanted acts on a browser of the device. The system may include the user computing device executing a protection script in the browser to detect the unwanted acts and malicious code. It may receive a publisher's webpage having a call for the protection code. The publisher's webpage may or may not have ad space and a call for an ad. The malicious client side injection vector may be a local vector between a network and the computing device. The malicious vector may be a non-ad vector that injects the malicious code independently of a call for an ad or when a call for an ad has not occurred. The webpage may be a published webpage being displayed to a user that is protected by having protection computer instructions or code that detects, intercepts execution of, stops execution of and/or refuses to execute the unwanted browser actions on the computing device. In this document, the term detect (e.g., and/or detecting, detected etc.) may be used to describe monitoring for, detecting, intercepting execution of, stopping execution of, preventing execution of, modifying and/or refusing to execute unwanted browser actions on a computing device.
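The interception described above can be illustrated with a minimal sketch of closure wrapping, the technique named in the abstract. The sketch makes several assumptions: the function name `runInGuardedClosure`, the guarded objects and the simulated `SecurityError` are all hypothetical, and the example runs outside a browser. It only shadows the names `location` and `window` inside the closure, so it is not a security boundary and is not presented as the protection code of this disclosure.

```javascript
// Illustrative only: runs untrusted source inside a closure whose `location`
// and `window` parameters shadow the real globals. Not a security boundary.
function runInGuardedClosure(source) {
  const attempts = [];

  // Record the attempt, then throw an error that stands in for the browser's
  // security error (the "SecurityError" name is simulated here).
  function block(kind, url) {
    attempts.push({ kind, url: String(url) });
    const err = new Error("blocked " + kind + " to " + url);
    err.name = "SecurityError";
    throw err;
  }

  const guardedLocation = {
    get href() { return "about:blank"; },
    set href(url) { block("redirect", url); },
    assign(url) { block("redirect", url); },
    replace(url) { block("redirect", url); },
  };
  const guardedWindow = {
    location: guardedLocation,
    open(url) { block("popup", url); },
  };

  // The closure: inside it, the names `location` and `window` resolve to the
  // guarded parameters rather than to the real objects.
  const closure = new Function("location", "window", '"use strict";\n' + source);

  let securityError = null;
  try {
    closure(guardedLocation, guardedWindow);
  } catch (err) {
    if (err.name !== "SecurityError") throw err; // unrelated failure
    securityError = err;
  }
  return { attempts, securityError };
}

// Example usage with a script that tries to redirect without user action.
const result = runInGuardedClosure(
  'location.href = "https://example.invalid/landing";'
);
```

Here `result.attempts` holds one recorded redirect attempt and `result.securityError` is set, which is the point at which a caller could stop executing and displaying the script. A script with no navigation attempt returns an empty `attempts` list and a `null` error.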

[0019] As noted herein and quickly referring to FIGS. 1A-B, for a typical or legitimate internet advertisement such as legitimate creative 144, legitimate code 145 may be activated by a user's click (e.g., click with a mouse, keyboard key or touchscreen tap) to cause an instruction or command to be provided to a web browser, sandbox or similar web-based software, that instructs that software to open a destination landing page. This opening may access or request access to content (e.g., computer code to be rendered or downloaded) from a web location different from the one currently being viewed or accessed by that software. When activated, a legitimate code 145 may be or cause a browser legitimate action such as an intentional redirect, opening of a new window, opening of a browser tab, opening of an AppStore or opening of another application. In this legitimate case, the activated legitimate code 145 may be the hypertext markup language link that activates in response to user interaction with an advertisement so as to direct a web browser to legitimate code. In this legitimate case, the downloaded content (e.g., code) can be the content to which an advertisement redirects a browser when a user clicks or otherwise interacts with that advertisement within a web page.

[0020] However, for malicious creatives (e.g., illegitimate or malware ads), malicious script 194 and/or malicious client side injection vectors 190, malicious code 155 (e.g., malware) may automatically (e.g., without user interaction such as without being activated by a user's click) cause an activated malicious code 157 and/or unwanted action 158. The malicious code 155 may be in either a creative 154 or a script 194. Execution of code 155 by the browser 114 and/or code 182 may automatically cause an instruction or command to be provided to a web browser, sandbox or similar web-based software, that instructs that software to open a destination landing page. This automatic causing may occur without user interaction (e.g., the page is opened without being activated by a user's click), and thus be activated malicious code 157 causing an unwanted action 158. It may be an unwanted and automatic redirect action to another webpage (or URL) to which the user does not intend to go. Thus, when code 155 is executing, it may be activated code 157 that automatically causes (e.g., performs, takes, makes, requests, executes) a browser unwanted action 158 of (e.g., on or in) the browser.

[0021] This opening may be or include an instruction or command that automatically activates (e.g., without user 111 interaction, activation or action) as activated malicious code 157 that, when provided to a web browser 114 or similar web-based software, instructs that software to perform an unwanted action 158, such as to request access to harmful content 159 at a web location different from the webpage currently being viewed or accessed by that browser. That is, malicious code 155 may automatically activate as activated malicious code 157 during or after the malicious advertisement or script has been executed or rendered, which may be or cause a browser unwanted action 158 for harmful content 159. The browser unwanted action may be an unwanted redirect, unwanted opening of a new window, unwanted opening of a browser tab, unwanted opening of an AppStore and/or unwanted opening of another application. Activated malicious code 157 may be automatically activated from code 155 without user interaction with an internet advertisement, malicious creative, or malicious script 194, and may direct a web browser 114 to perform (e.g., execute, make and/or take) an unwanted action 158 (e.g., to request, render or download harmful content 159). In some cases, malicious code 157 is a trigger, such as a hypertext markup language link or other executing code, that causes the unwanted action in the browser automatically without user interaction, such as without a user verbal command, clicking a button of a mouse, or pressing a key of a keyboard. Activated malicious code 157 may be or automatically cause an unwanted action 158 which automatically injects or downloads harmful content 159 into browser 114 without a user clicking or otherwise interacting with the malicious creative or script 194 within web page content 123.

[0022] In some cases, the description of capabilities or actions above by malicious code 155 may be capabilities or actions by malicious script 194. That is, script 194 may cause an activated malicious code 157 and/or unwanted action 158 as noted for code 155 because script 194 includes code 155.

[0023] Description of Apparatus

[0024] Referring now to FIG. 1A, there is shown a system 100 for detecting malicious code received from malicious client side injection vectors 190. The system 100 includes the following system components: the user device 110, the webpage publisher 120, the protection code (e.g. computer instructions or software) source 130, the legitimate advertiser 140, the malware (e.g., ad with malicious code 155) vectors 190 for injecting malicious script 194, the network 160 and the content delivery network (CDN) 138 having protection code 180. Each of the components is or includes at least one computing device such as computing device 500 of FIG. 5. Each of these computing devices is connected to the network 160 through a data connection as shown by the lines between each computing device and the network 160. Each system component's computing device may communicate with and transfer data to any other system component's computing device through the network 160 and the data connections between those components. The system 100 may include additional components. For example, there may be numerous user devices 110, vectors 190, intermediary 161, publisher 120, advertiser 140 and advertiser 150 connected to the network 160.

[0025] The device 110 (e.g., page 123 and/or script 182 executing on the device) may automatically detect malicious code received from malicious client side injection vectors. For example, when the user 111 requests the content 123 or receives script 194 from one of vectors 190, the device 110 may automatically detect the malicious code without further input or activation by the user. The device 110 may be used by user 111 to download, execute or render published content 123 and call 127 for a creative. It may automatically perform call 125 for protection code 180 during rendering of content 123. In some cases, call 125 exists in the header of the page having content 123. The user device 110 may be any of computing devices 500 (see FIG. 5) such as a personal computer or client computer located at a business or residence for accessing the Internet.

[0026] The user device 110 has display 113; and input/output (I/O) device 117 for data outputting to and data inputting from user 111. The user may be a person using device 110, such as to surf the Internet by using display 113 and device 117 to access browser 114. The device 110 has browser 114 for rendering protected published content 123, executing call 125 for protection code 180 and call 127 for a creative. Browser 114 may be any of various browsers such as Chrome®, Internet Explorer®, Edge®, Firefox®, Safari® or Opera®.

[0027] The webpage publisher 120 may be a source of published webpages that are or include protected published content 123 having call 125 for protection code 180 and call 127 for internet creatives. The calls 125 and 127 or other calls herein may be HTTP, HTML, IP or other calls known to browser 114 and/or network 160.

[0028] The protection code source 130 may be a developer of the protection code 180 such as a generator, administrator or author of computer instructions or software that is code 180. It has updater 135 for updating code 180 based on reporting information 189.

[0029] The legitimate advertiser 140 may be an advertiser providing internet advertisements or legitimate creatives 144 for goods and/or services having a legitimate code 145. The advertiser 140 may be a server or remote side injection vector of the legitimate script of creative 144 having code 145. The legitimate code 145 may be for legitimate redirecting or action by browser 114 to a website of the advertiser 140 or another legitimate advertiser when user 111 clicks on legitimate creative 144 or legitimate code 145. Activation (e.g., execution or rendering) of code 145 may cause an intended action by the browser 114 after or due to user 111 clicking on an area or location of legitimate creative 144 or code 145. In some cases, an intended action is an action that is intended by the user, desired by the user and/or caused by a user action. In some cases, an intended action is an action taken by a browser or sandbox after and/or due to user 111 clicking on an area or location of a creative or its code (e.g., an action caused when code 145 is activated by a browser or sandbox as noted herein). An intended action may be a pop-up, redirect, playing of video, video stuffing, playing of audio, interstitial, etc. caused by activation of code 145 and an intentional user action such as a click using a mouse pointer or keyboard entry.

[0030] The malware advertiser 150 may be an illegitimate or malware advertiser that creates malicious creative 154 with or as malicious code 155. The advertiser 150 may be a server or remote side injection vector of the malicious script of creative 154 having code 155. In some cases, advertiser 150 adds to or replaces code of internet advertisements for goods and/or services such as legitimate creative 144 with illegitimate or malicious creative 154 or code 155 such as to cause unwanted action by browser 114 to a website other than the intended website (e.g., other than to the advertiser 140 or another legitimate advertiser). The malware advertiser 150 may have a malicious code replacer or adder to put code 155 into legitimate creative 144, thus writing over existing code or adding to code of legitimate code 145 to create malicious creative 154. In other cases, a malware advertiser 150 may simply be an advertiser that creates malware as or within the malicious creative 154. Activation (e.g., execution or rendering) of code 155 may cause unwanted action by the browser 114 prior to or without user 111 clicking on any area or location of malicious creative 154 or code 155. In some cases, an unwanted action is an action that is not intended by the user, not desired by the user and/or not caused by a user action. In some cases, an unwanted action is an action taken by a browser or sandbox prior to and/or without user 111 clicking on any area or location of a creative or its code (e.g., an action caused when code 155 is activated by a browser or sandbox as noted herein). An unwanted action may be an automatic or forced pop-up, redirect, playing of video, video stuffing, playing of audio, interstitial, etc. caused by activation of code 155 and without an intentional user action such as without a click using a mouse pointer or without a keyboard entry.

[0031] The advertisement intermediary 161 may be an intermediary located between webpage publisher 120 and advertisers 140 and/or 150, such as for providing (e.g., serving) advertisements such as creatives 144 and/or 154 to the page 123. The intermediary 161 may be a server or remote side injection vector of the script of creatives 144 and/or 154 to content 123 in response to call 127. The advertisement intermediary 161 may be a supply side platform (SSP) or a demand side platform (DSP) that signs up advertisers 140 and/or 150 and provides the creatives 144 or 154 such as in response to the call 127 by the publisher 120. The advertisement intermediary 161 may unknowingly provide malicious creative 154 to the publisher 120. The intermediary 161 may represent multiple intermediaries between the advertisers 140 and/or 150 and publisher 120.

[0032] The content delivery network (CDN) 138 may be a source of protection code 180 such as provided by protection code source 130. It may receive updated versions of the code 180 from updater 135.

[0033] The vectors 190 may be client side (i.e., local to device 110) injection vectors of the malicious script 194 having malicious code 155. The malicious client side injection vector of vectors 190 may be a local vector because it is a source of the creative 154 or script 194 that is in communication with and between the network 160 and the computing device 110. It may be local because it has direct communication access to device 110 without communicating or sending messages to device 110 through network 160. It may be executing on or as part of device 110. As a network device, it may be in direct communication with device 110 without communicating with device 110 through network 160. It may be local to device 110 while devices that must communicate with device 110 through network 160 are remote to device 110.

[0034] A client side injection vector may be a local vector between a network 160 and the device 110 and/or may be a non-ad vector that injects the malicious code independently of a call 127 for an ad or when a call 127 for an ad has not occurred. As compared to a client side injection vector, a server or remote side injection vector may be located (e.g., be executing on a processor of a device that is) in or on another side of network 160 that is away from device 110, not on device 110 and/or may not be on a device between device 110 and network 160.

[0035] In some cases, a vector of vectors 190 provides or injects a creative 154 as script 194 using the vector.

[0036] The content 123, the creative 144, the code 145, the creative 154, the code 155, the script 194 and/or the code 180 may be computer instructions in one or more software languages such as but not restricted to JavaScript (JS), hyper-text markup language (HTML), cascading style sheets (CSS), and the like. A script 194 may consist of the initial payload, which will then call, include, or otherwise reference additional source files downloaded from external sources (such as additional JS, HTML, CSS, image or other files as defined above), each of which may further reference additional files. The additional files may be used to track visits, serve additional user interface elements, enable animation or cause legitimate or illegitimate redirects to other sites or locations, or other activity.

[0037] The network 160 may be a network that can be used to communicate as noted for the network attached to computing device 500 of FIG. 5, such as the internet. Each of the components of system 100 may have a network interface for communication through a data connection with the network 160 and with other components of the system 100. Each data connection may be or include network connections, communication channels, routers, switches, nodes, hardware, software, wired connections, wireless connections and/or the like. Each data connection may be capable of being used to communicate information and data as described herein.

[0038] Referring now to FIG. 1B, there is shown the user device 110 having browser 114 executing a protected published webpage content 123 that has the ability to detect malicious code received from malicious client side injection vectors 190. The FIG. 1B may show user device 110 at a point in time after the webpage 123 is rendered; and protection code 180 has been downloaded and is executing as executing protection code 182. At this time, malicious script 194 having malicious code 155 has been downloaded or received from one of malicious client side vectors 190.

[0039] FIG. 1B shows code 182 having or executing behavioral sandbox 184 which is executing a wrapped script 185 which may be a JavaScript (JS) wrapped version of malicious script 194 with code 155 in browser 114. Other than a JS wrapped version, other appropriately wrapped versions of malicious script 194 are also considered. Wrapped script 185 may represent a version of script 194 that is wrapped and/or stripped of HTML as noted below. The sandbox 184 may be used by the code 182 to detect and/or intercept malicious code 155, such as immediate and/or deferred types of unwanted action requested by the malicious code 155. The behavioral sandbox 184 may take many forms, but it is defined by its capability to execute software code in an environment in which that code may not perform any important system-level functions or have any effect upon an ongoing browser session. In a preferred form, the behavioral sandbox 184 may operate in a protected portion of memory that is denied access to any other portions of memory.

[0040] FIG. 1B also shows code 182 having or executing browser sandbox 186 which may be a protected part, subpart (e.g. a plugin or extension) or version of a browser 114 executing malicious script 194 with code 155. The sandbox 186 may be used by the code 182 to detect and/or intercept malicious code 155, such as deferred types of unwanted action requested by the malicious code 155. The code 155 may have cross-origin malicious code 162 such as for causing cross-origin type unwanted actions and errors of code 155.

[0041] In some cases, browser sandbox 186 is also executing the wrapped script 185 which may be a JavaScript (JS) wrapped version of malicious script 194 with code 155 in browser 114. In some cases, if the script 194 is an SRC type document, the malicious code is wrapped in a JavaScript (JS) closure to detect an unwanted action requested by the malicious code. Other than a JS wrapped version, other appropriately wrapped versions of malicious script 194 are also considered. The wrapped version executed by sandbox 186 may be an (iframe|script).src (e.g., see at 316) and/or an iframe.src doc if script 194 or code 155 includes a JS protocol.
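
As a non-limiting illustration of wrapping a script in a JS closure, the following minimal sketch shows how script text might be executed inside a function whose `window`, `document` and `location` parameters shadow the real globals with guarded stand-ins. All names here (`SandboxSecurityError`, `makeGuardedLocation`, `wrapInClosure`, `runWrapped`) and the example URLs are hypothetical and are not taken from the protection code 180. A closure of this kind does not by itself prevent access to other globals, so it is only one layer of what the sandboxes 184 and 186 would need to do.

```javascript
// Hypothetical error type standing in for a browser security error.
class SandboxSecurityError extends Error {}

// Stand-in location object whose navigation entry points are guarded.
function makeGuardedLocation(currentHref) {
  const block = (target) => {
    throw new SandboxSecurityError("blocked navigation to " + target);
  };
  return {
    get href() { return currentHref; },
    set href(target) { block(target); },
    assign: block,
    replace: block,
  };
}

// Wrap the script text in a function so that `window`, `document` and
// `location` inside it resolve to the guarded stand-ins passed as arguments.
function wrapInClosure(scriptText) {
  return new Function("window", "document", "location", '"use strict";\n' + scriptText);
}

// Execute the wrapped script and report whether a guarded action was attempted.
function runWrapped(scriptText) {
  const location = makeGuardedLocation("https://publisher.example/page");
  const guardedWindow = { location };
  guardedWindow.top = guardedWindow; // `window.top` also resolves to the stand-in
  const guardedDocument = { location };
  try {
    wrapInClosure(scriptText)(guardedWindow, guardedDocument, location);
    return { blocked: false, reason: null };
  } catch (err) {
    if (err instanceof SandboxSecurityError) {
      return { blocked: true, reason: err.message };
    }
    throw err; // unrelated script errors are not treated as detections
  }
}
```

In this sketch, a script that assigns to `window.top.location.href` without any user action raises the stand-in security error, and `runWrapped` reports the attempt instead of letting it reach the real page.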

[0042] The code 182 also has blacklist 164 for preventing cross-origin type unwanted actions of content 162. The code 182 has ongoing sandbox monitoring 166 which may be part of code 182 that performs continued monitoring of execution of script 194 in sandbox 184 and/or of script 194 rendering in sandbox 186 to detect code 155 or unwanted actions 158 of code 155.

[0043] The code 182 also has activated malicious code 157 which may be malicious code 155 activated by sandbox 186. The activated code 157 may cause a browser unwanted action 158 which can cause harmful content 159, such as the download of harmful content.

[0044] The code 182 has document.write interceptor 170, such as for detecting document.write type writes, unwanted actions and errors caused by code 155. The interceptor 170 may detect and/or intercept document.write types of unwanted actions of or requested by the code 155.
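
As a non-limiting illustration, an interceptor of this kind might be sketched by replacing the `write` method with a wrapper that inspects the markup before delegating to the original method. The sketch below uses a plain object as a stand-in for the browser's `document` so that it can run outside a browser; `installWriteInterceptor` and the `looksLikeForcedRedirect` heuristic are hypothetical names and are not described in the source.

```javascript
// Stand-in for the browser's document object (assumption: run outside a browser).
const fakeDocument = {
  written: [],
  write(...chunks) { this.written.push(chunks.join("")); },
};

// Hypothetical heuristic: markup that touches top-level navigation is suspicious.
function looksLikeForcedRedirect(markup) {
  return /\b(top|window|parent)\.location\b/.test(markup);
}

// Replace doc.write with a wrapper that inspects markup before writing it.
function installWriteInterceptor(doc, isSuspicious, onDetect) {
  const nativeWrite = doc.write;
  doc.write = function (...chunks) {
    const markup = chunks.join("");
    if (isSuspicious(markup)) {
      onDetect({ type: "document.write", markup });
      return undefined; // drop the write instead of passing it through
    }
    return nativeWrite.apply(this, chunks);
  };
  return () => { doc.write = nativeWrite; }; // restores the original method
}

const writeDetections = [];
const restoreWrite = installWriteInterceptor(
  fakeDocument,
  looksLikeForcedRedirect,
  (detection) => writeDetections.push(detection)
);

fakeDocument.write("<p>ad copy</p>");
fakeDocument.write("<script>top.location = 'https://harmful.example/'</script>");
```

After these two calls, only the first write reaches the stand-in document, and the second is recorded in `writeDetections`.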

[0045] Next, the code 182 has element.innerHTML interceptor 172, such as for detecting element.innerHTML type writes, unwanted actions and errors caused by code 155. The interceptor 172 may detect and/or intercept element.innerHTML types of unwanted actions of or requested by the code 155.
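
As a non-limiting illustration, because `innerHTML` is an accessor property, an interceptor might be sketched by redefining the property's setter on a prototype and delegating to the original setter only when the markup passes inspection. The `FakeElement` class below is a stand-in for the browser's element prototype, and `interceptInnerHTML` and `allowsMarkup` are hypothetical names.

```javascript
// Stand-in for a DOM element with an innerHTML accessor (assumption: no browser).
class FakeElement {
  constructor() { this.html = ""; }
  get innerHTML() { return this.html; }
  set innerHTML(value) { this.html = String(value); }
}

// Hypothetical policy: reject markup that embeds a javascript: URL.
function allowsMarkup(markup) {
  return !/javascript:/i.test(markup);
}

// Redefine the innerHTML setter so each write is inspected first.
function interceptInnerHTML(proto, inspect, onDetect) {
  const native = Object.getOwnPropertyDescriptor(proto, "innerHTML");
  Object.defineProperty(proto, "innerHTML", {
    configurable: true,
    enumerable: native.enumerable,
    get() { return native.get.call(this); },
    set(value) {
      const markup = String(value);
      if (inspect(markup)) {
        native.set.call(this, markup);
      } else {
        onDetect({ type: "element.innerHTML", markup });
      }
    },
  });
}

const innerHtmlDetections = [];
interceptInnerHTML(FakeElement.prototype, allowsMarkup, (d) => innerHtmlDetections.push(d));

const slot = new FakeElement();
slot.innerHTML = "<b>sale today</b>";
slot.innerHTML = "<iframe src=\"javascript:top.location='https://harmful.example/'\"></iframe>";
```

In this sketch the second assignment is not applied, so `slot.innerHTML` still holds the first value and the rejected markup is recorded for reporting.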

[0046] In addition, the code 182 has (iframe|script).src interceptor 174, such as for detecting (iframe|script).src type writes, unwanted actions and errors caused by code 155. The interceptor 174 may detect and/or intercept (iframe|script).src types of unwanted actions of or requested by the code 155.

[0047] The code 182 has appendChild, replaceChild and insertBefore interceptor 176, such as for detecting appendChild, replaceChild and insertBefore type writes, unwanted actions and errors caused by code 155. The interceptor 176 may detect and/or intercept appendChild, replaceChild and insertBefore types of unwanted actions of or requested by the code 155.
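
As a non-limiting illustration, the three insertion methods share the property that the node being inserted is their first argument, so a single wrapper can cover all of them. The sketch below uses a `FakeNode` class as a stand-in for DOM nodes; `interceptInsertions`, `isAllowedNode` and the allow-listed host are hypothetical.

```javascript
// Stand-in for a DOM node with the three insertion methods (assumption: no browser).
class FakeNode {
  constructor(tag, src = "") { this.tag = tag; this.src = src; this.children = []; }
  appendChild(child) { this.children.push(child); return child; }
  insertBefore(child, reference) {
    const index = this.children.indexOf(reference);
    this.children.splice(index < 0 ? this.children.length : index, 0, child);
    return child;
  }
  replaceChild(newChild, oldChild) {
    const index = this.children.indexOf(oldChild);
    if (index < 0) throw new Error("oldChild is not a child of this node");
    this.children[index] = newChild;
    return oldChild;
  }
}

// Hypothetical policy: script and iframe nodes may only load from allow-listed hosts.
const allowedHosts = new Set(["cdn.publisher.example"]);
function isAllowedNode(node) {
  if (node.tag !== "script" && node.tag !== "iframe") return true;
  if (!node.src) return true;
  return allowedHosts.has(new URL(node.src).hostname);
}

// Wrap each insertion method; the inserted node is always the first argument.
function interceptInsertions(proto, isAllowed, onDetect) {
  for (const method of ["appendChild", "insertBefore", "replaceChild"]) {
    const native = proto[method];
    proto[method] = function (newNode, ...rest) {
      if (!isAllowed(newNode)) {
        onDetect({ type: method, src: newNode.src });
        return newNode; // the node is returned but never attached
      }
      return native.call(this, newNode, ...rest);
    };
  }
}

const insertionDetections = [];
interceptInsertions(FakeNode.prototype, isAllowedNode, (d) => insertionDetections.push(d));

const container = new FakeNode("div");
const banner = container.appendChild(new FakeNode("img"));
container.appendChild(new FakeNode("script", "https://harmful.example/payload.js"));
container.insertBefore(new FakeNode("script", "https://cdn.publisher.example/ok.js"), banner);
```

Returning the unattached node from a blocked call is a design choice of this sketch, made so that calling code which uses the return value does not fail.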

[0048] The code 182 has click interceptor 178 such as for detecting programmatically generated clicks, unwanted actions and errors caused by code 155. The interceptor 178 may detect and/or intercept programmatically generated click types of unwanted actions of or requested by the code 155.
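
As a non-limiting illustration, one way a programmatically generated click might be distinguished from a user click is the standard `isTrusted` property of an event, which browsers set to false for events created or dispatched by script. The sketch below operates on plain objects shaped like events so that it can run outside a browser; `isProgrammaticClick` and `makeClickGuard` are hypothetical names, and the source does not state that interceptor 178 uses this property.

```javascript
// A click is treated as programmatic when the browser marks it as untrusted.
function isProgrammaticClick(event) {
  return event.type === "click" && event.isTrusted === false;
}

// Build a listener that cancels programmatic clicks and records the detection.
function makeClickGuard(onDetect) {
  return function guard(event) {
    if (!isProgrammaticClick(event)) return true; // user clicks pass through
    if (typeof event.preventDefault === "function") event.preventDefault();
    if (typeof event.stopImmediatePropagation === "function") {
      event.stopImmediatePropagation();
    }
    onDetect({ type: "programmatic-click" });
    return false;
  };
}

const clickDetections = [];
const clickGuard = makeClickGuard((d) => clickDetections.push(d));

// Event-shaped stand-ins: one user click and one script-generated click.
let cancelled = false;
const userClick = { type: "click", isTrusted: true };
const scriptedClick = {
  type: "click",
  isTrusted: false,
  preventDefault() { cancelled = true; },
};

const userAllowed = clickGuard(userClick);
const scriptedAllowed = clickGuard(scriptedClick);
```

In a browser, a guard like this would typically be registered as a capturing listener, for example with `document.addEventListener("click", clickGuard, true)`, so that it runs before listeners on the clicked element.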

[0049] The code 182 has cross-origin interceptor 179, such as for detecting cross-origin source type writes, unwanted actions and errors caused by code 155. The interceptor 179 may detect and/or intercept cross-origin source types of unwanted actions of or requested by the code 155.
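
As a non-limiting illustration, a cross-origin check might be sketched by resolving a source URL against the page URL and comparing origins, with a host lookup standing in for blacklist 164. The function name `classifySource`, the returned field names and the example hosts are hypothetical; the `URL` class used is the standard one available in browsers and in Node.js.

```javascript
// Classify a source URL relative to the page that is about to load it.
function classifySource(src, pageUrl, blacklistedHosts) {
  const resolved = new URL(src, pageUrl); // relative URLs resolve against the page
  const page = new URL(pageUrl);
  return {
    crossOrigin: resolved.origin !== page.origin,
    blacklisted: blacklistedHosts.has(resolved.hostname),
  };
}

// Hypothetical blacklist contents, for illustration only.
const exampleBlacklist = new Set(["harmful.example"]);
const pageAddress = "https://publisher.example/news/today";

const sameOrigin = classifySource("/static/app.js", pageAddress, exampleBlacklist);
const thirdParty = classifySource("https://cdn.example/lib.js", pageAddress, exampleBlacklist);
const knownBad = classifySource("https://harmful.example/payload.js", pageAddress, exampleBlacklist);
```

A cross-origin result alone is not evidence of malicious code, since legitimate creatives are commonly served from third-party origins; in this sketch it only marks a source for closer monitoring, while a blacklist match is treated as a detection.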

[0050] Finally, the code 182 has reporting information 189 such as information to report the detections of the code 155 by code 182, such as by reporting code 155, the unwanted actions requested by code 155, and the types of unwanted action requested by the malicious code 155.

[0051] Each of browser 114, published content 123, call 125, call 127, protection code 180, executing code 182, script 194 and/or any of items 154-179 shown within code 182 may each be computer data and/or at least one computer file.

[0052] Intercepting malicious code (e.g., code 155) by code 182 (e.g., such as for ongoing sandbox monitoring) may include code 182 monitoring for, detecting, stopping execution of, preventing execution of, preventing activation of, discontinuing rendering of, blocking calls from, stopping calls of, stopping any downloads caused by, modifying code 155, and/or refusing to load the malicious code. In some cases, intercepting malicious code includes stopping execution of, preventing execution of, preventing activation of, discontinuing rendering of, blocking calls from, stopping calls of and/or stopping loading the malicious code. Intercepting malicious code may be code 182 stopping or blocking browser 114 from taking any of the above noted intercepting actions.

[0053] In general, the code 182 is designed and operates in such a way that it automatically intercepts or detects actions that are more likely to be used for nefarious purposes or that can otherwise operate negatively or beyond the scope of what is typically necessary for an advertisement. In some cases, code 182 is JavaScript code that focuses on malicious ads and scripts such as script 194 and stops unwanted actions 158 (such as redirects, pop-ups, video stuffing, etc.) by malicious code 155 (e.g., calls, scripts, payload and the like) from happening by offering a more granular control over what JavaScript/ES (JavaScript/ECMAScript, a scripting-language specification standardized by Ecma International in ECMA-262 and ISO/IEC 16262), HTML, and cascading style sheets (CSS) (whether executed as a part of an ad, script or otherwise delivered to the webpage in some way) can do on a webpage (e.g., the content 123). Code 182 may use numerous methods, such as native browser sandboxing and overriding numerous native JavaScript calls (such as document.write, document.appendChild, etc.) used to manipulate the DOM (document object model) of a webpage rendered in the browser 114 from JavaScript.

[0054] In some cases, the code 182 focuses on working with any JavaScript code that gets to a webpage and intends to execute (whether from scripts or not). In this case, the code 182 will not discern "malicious code" from other content or detect it, but rather implement a policy to stop the redirects or actions 158 from happening when called without user action. For example, a vector may deliver a script to the page that has numerous "pixels" or JS code that will fire events back to the vector or an advertiser to notify them of an impression count and when it occurred (regardless of whether the script is malicious or not). The malicious script 194 or advertiser 150, however, will typically include additional pixels or calls to notify and track their own servers or vectors 190 about how the malicious code is executed on the user devices and collect additional data. Specifically, before any script 194 or ad call is executed, code 182 may initialize various interceptors and override various JS methods to monitor (e.g., "watch") scripts and their code as it gets delivered to the webpage. The script 194 can get delivered in many different ways, by different vectors of vectors 190 and/or in different contexts (an inline script being "written" to the page using document.write, a script loaded from a 3rd party URL, as a part of a cross-origin frame, etc.), and each of these may contain one or many different nested scripts and/or "triggers" that cause malicious activity or unwanted actions 158, such as redirects.
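
As a non-limiting illustration of a policy that stops actions called without user action, the sketch below permits a navigation-type action only if a user gesture was recorded shortly before it. The time-window approach, the function name `createActionPolicy` and the one-second window are assumptions made for illustration and are not described in the source. Timestamps are passed in explicitly so that the behavior is deterministic.

```javascript
// Policy object: an action is allowed only shortly after a recorded user gesture.
function createActionPolicy(gestureWindowMs) {
  let lastGestureAt = -Infinity; // no gesture has been seen yet
  return {
    // Call this from trusted user input handlers (e.g., a real click or key press).
    noteUserGesture(nowMs) { lastGestureAt = nowMs; },
    // Call this before permitting a redirect, pop-up or similar action.
    allows(nowMs) { return nowMs - lastGestureAt <= gestureWindowMs; },
  };
}

const policy = createActionPolicy(1000); // hypothetical 1000 ms window

const beforeAnyGesture = policy.allows(5000); // redirect attempted on page load
policy.noteUserGesture(5000);                 // the user clicks the creative
const rightAfterClick = policy.allows(5400);  // redirect caused by that click
const longAfterClick = policy.allows(7000);   // deferred redirect with no new gesture
```

Under this sketch a redirect attempted during page load or well after the last gesture is refused regardless of which script requested it, which matches the stated approach of applying a policy without first discerning malicious code from other content.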

[0055] Referring now to FIG. 1C, there is shown a block diagram 110 of different types of malicious client side injection vectors 190. The diagram 110 shows user device 110 having executing browser 114 after receiving or downloading a malicious script 194 from client side injection vectors 190. As examples of script 194, the diagram shows each of script 194A, 194B and 194C. As examples of vectors 190, the diagram 110 shows browser 114 receiving script 194A from network connection vector 190A, receiving script 194B from browser extension vector 190B, and receiving script 194C from local virus vector 190C. The browser 114 has content 123 and executing code 182. Operating system 115 is the operating system of device 110 and is executing browser 114, extension vector 190B, content 123, code 182, virus vector 190C and the scripts 194.

[0056] The vector 190A is connected between network 160 and device 110 (e.g., browser 114). It is a local network device that is not part of device 110. As a local network device, it may be in direct communication with device 110 without communicating with device 110 through network 160. It is connected between network 160 and device 110. The vector 190B is part of or an extension of browser 114. The vector 190C is part of or executing on operating system 115. Vectors 190B and 190C are executing on or as part of device 110.

[0057] Vector 190A may be a network (e.g., internet) connection, such as a man in the middle Page Specific Script injection via a compromised network connection of network 160. It may be a computer router, a WIFI router, an Ethernet connection or other network connection between network 160 and device 110. In this case, the user's network traffic is monitored by the vector 190A source and ad placements are hijacked via various injection methodologies. For example, an ad or creative received by the vector 190A from network 160 may be replaced by that vector with script 194A having code 155. Thus, a call 127 for an ad by the browser may be responded to by receipt of script 194A. In some cases, this first vector monitoring, hijacking and/or injecting may occur using a system or process as described herein.

[0058] Vector 190B may be a browser extension, such as a man in the middle attack which utilizes browser extension downloads for browser 114. The user downloads a legitimate but compromised browser extension not knowing that behind the scenes the extension is hijacking ad placements via various injection methodologies. It may be downloaded from a malware website that is mimicking a legitimate website or that the user/browser has been maliciously redirected to. An ad or creative received by the browser 114 or by the vector 190B, such as from network 160 may be replaced by that vector with script 194B having code 155. Thus, a call 127 for an ad by the browser may be responded to by receipt of script 194B. In some cases, this second vector monitoring, hijacking and/or injecting may occur using a system or process as described herein.

[0059] Vector 190C may be a local virus, such as a man in the middle attack which utilizes a locally running program on the user's device 110 itself to proxy web traffic. The attack looks for vulnerabilities through ad placements or common scripts on the browser's page and hijacks the placement via various injection methodologies. It may be downloaded from a malware website that is mimicking a legitimate website or that the user/browser has been maliciously redirected to. An ad or creative received by the operating system 115, browser 114 or by the vector 190C, such as from network 160, may be replaced by that vector with script 194C having code 155. Thus, a call 127 for an ad by the browser may be responded to by receipt of script 194C. In another example, vector 190C may send or inject script 194C having code 155 to the operating system 115, browser 114 or content 123 without a call 127 for an ad by the browser. For example, vector 190C may send script 194C to the browser upon it detecting execution of content 123, creative 154 or another script executing on content 123. In some cases, this third vector monitoring, hijacking and/or injecting may occur using a system or process as described herein.

[0060] Any of the 3 vectors 190 may be a man in the middle source of script 194 because it is between a legitimate source such as source 140, intermediary 161 or publisher 120 and the device 110 or browser 114. Any of the 3 vectors 190 may pose as a replacement for source 140, intermediary 161 or publisher 120 because they provide code to the browser 114 in response to or not in response to a call 127 for an ad.

[0061] Any of the 3 vectors 190 may be hijacking the page's existing ad call 127 or sending malicious code that is not a response to (e.g., without responding to) a call 127. In either event, the vector may send an ad, a coupon or other unwanted element having code 155 that it injects or adds to the page 123. The code 155 will then be activated as code 157 by browser 114 or code 182, causing action 158 which is detected, intercepted and reported by code 182.

[0062] If the script 194 is sent by one of the 3 vectors 190 in response to call 127 it may be received by and activated by browser 114 or code 182 as a response to the call 127.

[0063] If the script 194 is sent by one of the 3 vectors 190 not in response to call 127 (e.g., sent by vector 190C) it may be a self-initiated or independent sending of script 194 (e.g., script 194C), such as automatically in response to the vector 190 monitoring browser 114 and detecting opening of or activation of content 123. Then, the sent or injected script 194 may be received by and activated by browser 114 or code 182 not as a response to the call 127. For example, code 182 may detect, intercept and report malicious code 155 sent and detected independently of the ad call 127. In this case, script 194 is not tied to the page's ad code sent in response to call 127 or the page has no ad call 127.

[0064] The script 194 sent by any of the 3 vectors 190 may be received and activated visibly in content 123 so that it is seen by the user. In other cases, it is activated invisibly. In either case, code 182 will detect, intercept and report the unwanted action 158 and code 155.

[0065] Description of Processes

[0066] Using the system 100, it is possible to manage detection of malicious code 155. The management may include communicating between components of the system 100. For example, referring now to FIG. 2, there is shown a flow diagram of an operating environment/process 200 for managing the detecting of malicious code received from malicious client side injection vectors 190. The process 200 may be or describe an operating environment in which the system 100 can perform the managing. The process 200 may be performed by the system 100, such as shown in FIGS. 1A-C. The process 200 starts at 205 and can end at 240, but the process can also be cyclical and return to 205 after 240. For example, the process may return to 205 when a publisher's webpage is requested by a user of any of various user devices 110 connected to network 160.

[0067] The process 200 starts at 205 where a user requested protected publisher webpage is received and executed, such as by the device 110 or the browser 114. The webpage may be or include content 123 having the call 125 to CDN 138 for protection code 180. Content 123 also has call 127 for an internet advertisement or creative. In some cases, call 127 is a call for the creative 144. It may be a call which is responded to by one of the vectors 190 by providing the browser with script 194, such as an ad for goods and/or services having malicious code 155. Call 127 may be for a third party advertisement source such as to advertiser 140 or intermediary 161. As noted, one of vectors 190 may provide script 194 to the operating system 115, browser 114 or content 123 without the script being responsive to call 127. Call 125 may exist in a header of the webpage or content 123 and thus be executed before execution of other content of the webpage such as before call 127 that is not in the header.

[0068] Executing the content 123 may include rendering some of the webpage content by the browser 114 and/or displaying that content on the display 113. Rendering a webpage, ad or malicious code (e.g., computer data, message, packet or a file) may include a browser or computing device requesting (e.g., making a call over a network to a source for), receiving (e.g., over a network from the source, or downloading), executing and displaying that webpage, ad or malicious code.

[0069] Next, at 210 the call 125 for protection code 180 is executed or sent; and the protection code 180 is received or downloaded. Calling and receiving at 210 may include device 110 or browser 114 making the call 125 to content delivery network (CDN) 138 (a source of code 180) or another source of code 180. In some cases, call 125 is to source 130 for protection code 180. Calling and receiving at 210 may include calling for protection code 180, receiving code 180 and executing code 180 as executed code 182.

[0070] Then, at 220 the script 194 having malicious code 155 is received by the operating system 115, browser 114 or content 123. The script 194 may be sent by one of the vectors 190 in response to call 127 being made or sent to a third party internet advertiser for an internet advertisement having malicious code 155. In other cases, it may be sent independently of call 127. Receiving the script at 220 may be or include receiving or downloading the script 194 from one of vectors 190.

[0071] The vectors 190 or webpage publisher 120 may provide a certain placement, space or response to call 127 in the webpage content 123 at which the user's computing device browser 114 downloads the malicious script 194 from the vector. At this point the legitimate source of ads (e.g., other than vectors 190), user 111, device 110, operating system 115 and browser 114 may not know that the malicious script 194 has malicious code 155 (e.g., is malware).

[0072] Now, at 230 the malicious code 155 existing in the script 194 is detected by the executing protection code 182 executed at 210. Detecting at 230 may include code 182 intercepting and monitoring execution or rendering of malicious script 194 and/or code 155. Detecting at 230 may include code 182 detecting and intercepting execution or activation of code 155. For example, the malicious script 194 may have some legitimate code or content such as a legitimate image, coupon, or video promoting goods and/or services that is not code 155; and also has the malicious code 155 which is not legitimate but will cause a malware type of unwanted action during or after loading of malicious script 194 without user action or input using device 117 or 110. In other cases, malicious script 194 is the code 155 such as when rendering malicious script 194 is the same as rendering malicious code 155. Thus, code 182 can monitor and intercept execution of malicious script 194 when detecting execution of code 155.

[0073] The detecting at 230 may protect content 123 from executing or displaying to the user 111, the malicious code 155 and/or harmful content 159 downloaded in response to the activation of the malicious code 155. In some cases, detecting at 230 includes intercepting activation of malicious code 155 from making unwanted action 158, such as a request or redirect for downloading of harmful content 159 in response to the activation of the malicious code 155. Activating a webpage, script or malicious code may include a browser or computing device executing, rendering or displaying that webpage, script or malicious code.

[0074] For example, the publisher's protected webpage or content 123 has the call 125 to the CDN 138 to download and execute code 180 as code 182. Code 182 is not considered "rendered" or displayed because it has no visual part on the browser 114 or display 113. It may be presumed that content 123 has at least one ad or script space at which script 194 can be executed or rendered. It may also be presumed that the malicious script 194 has already been or will be identified, and that the script comes from a vector that is not webpage publisher 120, advertiser 140 or intermediary 161.

[0075] In some cases, executing code 182 will monitor all content of the publisher's page of content 123 without any specific check to see if it has an advertisement, because code 182 knows source 130 of code 180 has been engaged by the publisher 120 to protect their ad space and/or their webpage of content 123. In these cases, code 182 will detect any of the malware scripts 194 and/or unwanted acts 158 noted herein (e.g., see FIGS. 3-4 and such as noted for code 155), anywhere on the webpage or on published content 123. Here, detecting at 230 may include code 182 monitoring all of content 123 for all legitimate and unwanted actions of the webpage, browser or calls of the webpage.

[0076] In other cases, code 182 may separately detect whether there is a call 127 instead of presuming that call 127 exists. After detecting at least one call 127, the code 182 may perform detection of code 155 only for ads such as malicious script 194 instead of for all of the content 123.

[0077] Detecting at 230 will be discussed further below with respect to FIGS. 3-4.

[0078] Next, at 235 the detecting is reported by sending to source 130 reporting information 189 that is based on the detecting at 230. Reporting information 189 may report or include data identifying and/or derived from malicious code 155, unwanted action 158, and/or the harmful content 159. It may include the actual code 155. Reporting at 235 may include reporting, to the protection code source, detecting information that identifies the client side injection vector of vectors 190 and that identifies the executed unwanted action 158.
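
As an illustration only, reporting information of this kind could be assembled as a small structured record. The sketch below is not taken from the disclosure: the function name `buildReport`, the field names, the 200-character truncation limit and the example values are all assumptions made for the example.

```javascript
// Hypothetical shape for reporting information; every field name here is
// an assumption for illustration, not part of the described system.
function buildReport(vectorId, unwantedAction, codeSnippet) {
  return {
    vector: vectorId,          // identifies the client side injection vector
    action: unwantedAction,    // identifies the detected unwanted action
    // Truncate the captured code so the report stays small (assumed limit).
    code: codeSnippet.slice(0, 200),
    detectedAt: new Date().toISOString(),
  };
}

const report = buildReport(
  "190A",
  "redirect",
  "top.location = 'https://example.invalid';"
);

// Serialized form that could be transmitted to the protection code source.
const reportBody = JSON.stringify(report);
```

In a browser, such a serialized body might be transmitted with a standard mechanism such as `navigator.sendBeacon`; the source does not specify the transport or the endpoint.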

[0079] Now, optionally, at 240 the protection code source 130 or updater 135 updates the protection code 180 based on the reporting information 189 and sends the updated protection code 180 to network 138 for downloading by the device 110 or numerous other user devices like device 110. Updating at 240 may be optional. Updating at 240 may include source 130 pushing the updated code 180 to CDN 138 using network 160.

[0080] Using the device 110 it is possible to detect malicious code 155, such as noted at 230. The detecting may include communicating between the system 100 components. For example, FIG. 3 is a flow diagram of an operating environment/process 300 for detecting malicious code 155 received from malicious client side injection vectors. The process 300 may be or describe an operating environment in which the system 100 can perform the detecting. The process 300 may be an example of detecting at 230 (and optionally reporting at 235) performed by the device 110, operating system 115 and/or the browser 114 executing protection code 182 of FIG. 1B. The code 182 can perform process 300. The process 300 starts at 310 and can end at 346; but the process can also be cyclical and return to 310 or 320 after 346. For example, after 346 the process may return to 310 for each internet malicious script 194 that is received at a protected publisher's webpage content 123 and is about to be rendered by the browser 114 of any of various ones of the user device 110. In some cases, determining "if" a condition, occurrence or event happened in process 300 may be determining "when" that condition, occurrence or event happened.

[0081] The process 300 has 4 different stages 310, 320, 330 and 340 that break down the way protected published content 123 gets loaded by browser 114 and how the malicious script 194 that is received is monitored and how code 155 is detected by executing protection code 182 as the malicious script 194 is being executed and/or displayed on the webpage of content 123. As noted above, as content 123 loads, it calls, receives and executes protection code 180 as code 182 prior to receiving malicious script 194.

[0082] For example, prior to scripts rendering stage 310, user 111 may type in or click to an address of published page content 123 to go to that page in the browser 114. Then the browser 114 requests that content 123 and renders it inside the browser 114. As the browser renders the content 123 it makes call 125 for code 180, and receives and executes code 180 as code 182. As the browser renders the content 123 it will then make call 127 to request a script from somewhere, like an ad server; the ad server returns a script, but vectors 190 may hijack or intercept that script and replace it with script 194. In other cases, one of vectors 190 provides or injects script 194 into content 123 with the content calling for an ad. The scripts rendering stage 310 may begin when the browser 114 is about to render content of malicious script 194 on the webpage of content 123. That is, the content of malicious script 194 including code 155 has not yet been loaded onto the webpage when process 300 begins.

[0083] In cases where code 180 or 182 does not exist, when browser 114 loads or renders "malware" malicious code 155, that code or content may be malicious and in most cases 1) can get access to entire page content 123 and data on it; 2) has the ability to navigate the user without their permission to any web address and any scam page; and/or 3) has the ability to mine cryptocurrency in the background based upon the page of content 123.

[0084] Thus, in process 300, code 182 can monitor and protect against the undetected, un-intercepted and/or unmodified rendering of code 155 at the stages 310-340 of rendering malicious script 194. Before malicious script 194 is actually rendered on the page of content 123, code 182 can monitor for and intercept a number of ways in which the malicious script 194 can be rendered upon the page of content 123 by the browser 114 to detect and intercept code 155.

[0085] The process 300 starts at scripts rendering stage 310 which includes 5 example processes for receiving and rendering scripts. It is considered that scripts rendering at 310 may include various computer related script functions and/or script actions. For example, at scripts rendering stage 310 the malicious script 194 that was received from vectors 190 at a protected publisher's webpage content 123 has been received and is about to be rendered by the browser 114 of the user device 110. Scripts rendering stage 310 includes code 182 (in browser 114) receiving and rendering malicious script 194, which includes receiving and rendering code 155.

[0086] The 5 example processes for receiving and rendering scripts are click( ) 311; document.write 312; element.innerHTML 314; (iframe|script).scr 316; and appendChild or replaceChild or insertBefore 318. It can be appreciated that other processes for receiving and rendering scripts are considered. In addition, there may be fewer or more than the 5 example processes mentioned here. Each of the processes 311-318 may be a method that browser 114 exposes to Javascript, which may be what malicious script 194 and code 155 are written in and what protection code 180 is written in. Each of the processes 311-318 may be a method that browser 114 exposes to Javascript to detect, intercept, inject and/or append code into the pages of content 123 that are being shown by the browser 114 to the user 111. Code 180 and 182 each include an interceptor 170-179 for monitoring and intercepting each of these processes 311-318. It can be appreciated that other than Javascript, various other languages or types of code may be used for or to write processes 311-318, malicious script 194, code 155 and/or code 180. In some cases, interceptors 170-179 represent a general "executing code interceptor" which may intercept processes 311-318 and other processes for exposing the browser 114 to Javascript, malicious script 194 and/or code 155. That is, interceptors 170-179 are several examples; there could be others.

[0087] For example, code 180 may be stored on CDN 138 (where it is updated by source 130) and be retrieved by the browser 114 from the CDN using call 125, which is placed on the protected publisher page content 123, in the header of the page. So, protected page content 123 may download code 180 from a CDN when it is loading and install code 180, which the browser 114 executes as executing code 182. Code 182 may execute/run automatically upon receipt of code 180 and invisibly to the user at the top of the webpage of protected content 123. The installed code or executing code 182 installs interceptors 170-179 before the scripts rendering of malicious script 194 begins.
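
The idea of installing an interceptor before any script is rendered can be illustrated with a minimal sketch. It assumes that an interceptor replaces a method with a wrapper that inspects the incoming content before delegating to the original method. The names `installInterceptor`, `fakeDocument` and the blocking rule are hypothetical and not part of the disclosure; a stand-in object is used in place of the browser's `document` so that the sketch runs outside a browser.

```javascript
// Hypothetical helper: replace target[methodName] with a wrapper that
// lets an inspector see the arguments before the original method runs.
function installInterceptor(target, methodName, inspect) {
  const original = target[methodName];
  target[methodName] = function (...args) {
    // The inspector returns false to block the call.
    if (inspect(methodName, args) === false) {
      return undefined;
    }
    return original.apply(this, args);
  };
  // Return a function that restores the original method.
  return function restore() {
    target[methodName] = original;
  };
}

// Stand-in for the browser's document object (assumption for illustration).
const fakeDocument = {
  written: [],
  write(markup) {
    this.written.push(markup);
  },
};

const seenWrites = [];
installInterceptor(fakeDocument, "write", (name, args) => {
  seenWrites.push({ name, content: args[0] });
  // Toy rule (assumption): block markup that tries to set top.location.
  return !/top\.location/.test(args[0]);
});

fakeDocument.write("<p>hello</p>");
fakeDocument.write("<script>top.location='https://example.invalid'</script>");
```

Because the wrapper is installed first, later callers of `write` reach the wrapper rather than the original method, which is the ordering property the paragraph above relies on.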

[0088] A first process for receiving and rendering scripts is click( ) 311. At 311, a first click interceptor 178 of code 182 (e.g., as noted at 342) will monitor and intercept execution of this click( ) method of malicious script 194 to detect and intercept execution of code 155 of the malicious script 194. After process 311, ongoing monitoring stage 340 begins at 342.

[0089] A second process for receiving and rendering scripts is document.write 312, where content of the malicious script 194 is received from an outside source such as one of vectors 190 (e.g., vector 190A) or an ad server and then is written into a webpage using this document.write method. Here, document.write interceptor 170 of executing protection code 182 will monitor for and intercept this process and collect the content of malicious script 194 at 312 before it is written to the page, to detect and intercept execution of code 155 of the malicious script 194. After process 312, behavior analysis stage 320 begins at 322.

[0090] A third process for receiving and rendering scripts is element.innerHTML 314, where someone can create malicious script 194 having an element, such as an HTML element like a frame or something that is shown on the page of content 123, and insert or inject inner HTML into the element in the same way as if they had obtained the code of malicious script 194 somewhere and injected it into the page. Here, element.innerHTML interceptor 172 of executing protection code 182 will monitor for and intercept this process and collect the content of malicious script 194 at 314 before it is written to the page, to detect and intercept execution of code 155 of the malicious script 194. After process 314, behavior analysis stage 320 begins at 322.
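
Intercepting a property assignment such as innerHTML differs from intercepting a method call, because the assignment goes through a property setter. The following is a minimal sketch of one way this might be done, assuming the property is defined as an accessor on a prototype. The class `FakeElement`, the helper `interceptSetter` and the filtering rule are hypothetical stand-ins so that the sketch runs outside a browser.

```javascript
// Stand-in for a DOM element class (assumption; not the browser's Element).
class FakeElement {
  constructor() {
    this._html = "";
  }
  get innerHTML() {
    return this._html;
  }
  set innerHTML(value) {
    this._html = String(value);
  }
}

// Hypothetical helper: wrap the setter of an accessor property on a prototype.
function interceptSetter(proto, prop, inspect) {
  const descriptor = Object.getOwnPropertyDescriptor(proto, prop);
  Object.defineProperty(proto, prop, {
    configurable: true,
    enumerable: descriptor.enumerable,
    get: descriptor.get,
    set(value) {
      // inspect returns the content to write, or null to drop the write.
      const checked = inspect(value);
      if (checked !== null) {
        descriptor.set.call(this, checked);
      }
    },
  });
}

const collectedHtml = [];
interceptSetter(FakeElement.prototype, "innerHTML", (html) => {
  collectedHtml.push(html); // collect content before it reaches the page
  // Toy rule (assumption): drop markup carrying an inline onerror handler.
  return html.includes("onerror=") ? null : html;
});

const fakeEl = new FakeElement();
fakeEl.innerHTML = "<b>ad</b>";
fakeEl.innerHTML = `<img src="x" onerror="top.location='https://example.invalid'">`;
```

After both assignments, the stand-in element still holds only the first, benign markup, while both strings were collected for analysis.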

[0091] A fourth process for receiving and rendering scripts is (iframe/script).scr 316, where someone can create malicious script 194 having an iframe that points to an external page which will have the content of malicious script 194 with a script (e.g., .SCR). Here, (iframe/script).scr interceptor 174 of executing protection code 182 will monitor for and intercept this process and collect the content of malicious script 194 at 316 before it is written to the page, to detect and intercept execution of code 155 of the malicious script 194. After process 316, behavior analysis stage 320 begins at 322.

[0092] A fifth process for receiving and rendering scripts is appendChild or replaceChild or insertBefore 318, where someone can create malicious script 194 having elements, and modify them and append one to another, like modifying a tree of nested elements using appendChild/replaceChild/insertBefore. Here, appendChild, replaceChild and insertBefore interceptor 176 of executing protection code 182 will monitor for and intercept this process and collect the content of malicious script 194 at 318 before it is written to the page, to detect and intercept execution of code 155 of the malicious script 194. After process 318, browser sandboxing stage 330 begins at 332.
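
A single interceptor covering several tree-modifying methods can be sketched by wrapping each method on a shared prototype. The sketch below is an assumption about how such an interceptor might look, not the disclosed implementation. `FakeNode` is a hypothetical stand-in for a DOM node so that the sketch runs outside a browser; its three methods mirror the argument order of the corresponding DOM methods, in which the node being inserted is the first argument.

```javascript
// Stand-in for a DOM node (assumption; not the browser's Node).
class FakeNode {
  constructor(name) {
    this.name = name;
    this.children = [];
  }
  appendChild(child) {
    this.children.push(child);
    return child;
  }
  insertBefore(child, ref) {
    const i = this.children.indexOf(ref);
    this.children.splice(i < 0 ? this.children.length : i, 0, child);
    return child;
  }
  replaceChild(newChild, oldChild) {
    const i = this.children.indexOf(oldChild);
    if (i >= 0) this.children[i] = newChild;
    return oldChild;
  }
}

const insertions = [];
for (const method of ["appendChild", "insertBefore", "replaceChild"]) {
  const original = FakeNode.prototype[method];
  FakeNode.prototype[method] = function (node, ...rest) {
    // Record the node being inserted before the tree is modified.
    insertions.push({ method, parent: this.name, child: node.name });
    return original.call(this, node, ...rest);
  };
}

const rootNode = new FakeNode("div");
const imgNode = rootNode.appendChild(new FakeNode("img"));
rootNode.insertBefore(new FakeNode("iframe"), imgNode);
rootNode.replaceChild(new FakeNode("script"), imgNode);
```

Each wrapped call is recorded with the method used and the inserted node, which gives later analysis a chance to examine the node before it affects the page.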

[0093] Processes 311-318 may be considered native prototypes that are intercepted (e.g., detected and overridden) by code 182 to ingest incoming scripts, such as the malicious script 194. It is known that there are other processes that are allowed to put things on the page of malicious script 194 that code 182 also intercepts; but 312-318 are the most common processes.

[0094] The intercepting at stage 310 and detecting by process 300 reduces a problem for publishers such as publisher 120 (e.g., AOL™, Huffington Post™, ESPN™) whose users are being taken to malicious sites or content due to malware vectors 190, because the code 155 can prohibit the browser 114 from executing a click on the browser back button (<), can divert or stop the publisher from making revenue from content 123 and can cause user complaints. So, the publishers can put code 180 or a call 125 for code 180 at the top of some or every page or content 123 so that code 182 intercepts (e.g., hijacks) all processes 311-318 by being executed, intercepting and/or modifying the publisher's page before any script 194 can get on the page. So, the script 194 is not calling what it thinks it is (e.g., code 155 is not calling an unwanted action or access to a malware directed server because the call will be intercepted or modified by code 182); instead, after the calls are intercepted, script 194 is calling code 182 at stages 320-340.

[0095] For example, after processes 312-316, process 300 moves to behavior analysis stage 320 where code 182 running at the top of the webpage of protected content 123 begins a behavior analysis of these processes intercepted at stage 310. At stage 320, the content of malicious script 194 that is being written to the browser by processes 312-316 is run through behavior analysis of code 182 at 322-326.

[0096] At the stage 320, code 182 performs behavior analysis which allows code 182 to detect code 155 without having to know or focus on only those sets of predefined strings or unwanted actions that a protection code knows of. For example, code 182 does not have to capture every new payload or write to the page to really know that it is going to be an unwanted action. Instead, code 182 uses stage 320, which is aimed at allowing code 182 to understand that a certain piece of code of malicious script 194 that is about to be written to the webpage of content 123 is going to cause an unwanted action somewhere, such as by code 155. For example, stage 320 includes interceptors 172-176 executing and rendering processes 312-316 of malicious script 194, which may include executing and rendering code 155 at 322-326.

[0097] First, at 322, the code 182 "wraps" the HTML it receives from the interceptors 172-174 at 312-316 in a wrapper. Here, code 182 wraps the code or content of malicious script 194 that is about to be written to the page inside of a wrapper of code 182 that allows code 182 to retrieve errors from the wrapped content of the script by performing special error handling, such as at 324, 326 and 342.
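
The wrapping at 322 can be pictured with a minimal sketch, assuming the wrapper is a Javascript closure containing a try/catch block that forwards any error thrown by the wrapped script to a reporting function. The names `wrapInClosure`, `runWrapped` and `__report` are hypothetical. The use of `new Function` here is only a convenient way to evaluate the wrapped source in the sketch and is not a sandbox; an error named `SecurityError` is simulated by the example script itself.

```javascript
// Hypothetical wrapper: surround script source with a closure and a
// try/catch so that errors thrown by the script reach a reporter.
function wrapInClosure(source) {
  return (
    "(function () {\n" +
    "  try {\n" +
    source +
    "\n  } catch (err) {\n" +
    "    __report(err);\n" +
    "  }\n" +
    "})();"
  );
}

// Evaluate the wrapped source with the reporter bound to the name __report.
function runWrapped(source, report) {
  const wrapped = wrapInClosure(source);
  // new Function compiles the wrapped source as a function body whose
  // single parameter is __report; it does not isolate the script.
  new Function("__report", wrapped)(report);
}

const capturedErrors = [];

// A script that runs without error reports nothing.
runWrapped("var x = 1 + 1;", (e) => capturedErrors.push(e.name));

// A script that raises an error is caught by the wrapper.
runWrapped(
  "var e = new Error('blocked navigation'); e.name = 'SecurityError'; throw e;",
  (e) => capturedErrors.push(e.name)
);
```

Only runtime errors thrown inside the wrapped source reach the reporter in this sketch; a syntax error in the source would surface when the wrapper is compiled, outside the try/catch.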