Cleaning Robot And Method For Controlling Cleaning Robot

HAN; Seong Joo

U.S. patent application number 16/947058 was filed with the patent office on 2020-11-05 for cleaning robot and method for controlling cleaning robot. The applicant listed for this patent is Samsung Electronics Co., Ltd. Invention is credited to Seong Joo HAN.

| Application Number | 20200348666 16/947058 |

| Document ID | / |

| Family ID | 1000004958075 |

| Filed Date | 2020-11-05 |


| United States Patent Application | 20200348666 |

| Kind Code | A1 |

| HAN; Seong Joo | November 5, 2020 |

CLEANING ROBOT AND METHOD FOR CONTROLLING CLEANING ROBOT

Abstract

A cleaning robot includes a user interface to display a map image including one or more divided regions, and the user interface displays an icon corresponding to a state value of a main device on the map image.

| Inventors: | HAN; Seong Joo (Yongin-si, KR) |
|---|---|
| Applicant: | Samsung Electronics Co., Ltd. |
| Family ID: | 1000004958075 |
| Appl. No.: | 16/947058 |
| Filed: | July 16, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 16061262 | Jun 11, 2018 | |
| PCT/KR2016/015379 | Dec 28, 2016 | |
| 16947058 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | A47L 9/2842 20130101; A47L 9/2857 20130101; G05D 1/0044 20130101; G05D 2201/0215 20130101; A47L 2201/04 20130101; A47L 9/2894 20130101; A47L 9/2847 20130101; A47L 9/2889 20130101; A47L 9/009 20130101; A47L 11/4011 20130101; A47L 2201/06 20130101; A47L 9/2852 20130101; A47L 2201/00 20130101; A47L 9/2805 20130101; A47L 9/2826 20130101 |

| International Class: | G05D 1/00 20060101 G05D001/00; A47L 9/28 20060101 A47L009/28; A47L 9/00 20060101 A47L009/00; A47L 11/40 20060101 A47L011/40 |

Foreign Application Data

| Date | Code | Application Number |
|---|---|---|
| Dec 28, 2015 | KR | 10-2015-0187427 |
| Mar 4, 2016 | KR | 10-2016-0026295 |

Claims

1. A cleaning robot comprising: a body; a wheel assembly configured to move the body; a brush unit configured to perform cleaning on a bottom surface of a moving path; a communicator configured to communicate with a remote device; and a controller configured to: determine a virtual region based on a user input received through the remote device, and control the wheel assembly and the brush unit to clean a region excluding the virtual region among a cleanable region.

2. The cleaning robot of claim 1, wherein the controller is configured to determine a region among the cleanable region corresponding to a region selected by a user from a map image of the cleanable region as the virtual region.

3. The cleaning robot of claim 2, wherein the user input is received by drag and drop in the map image.

4. The cleaning robot of claim 2, wherein the controller is configured to control the wheel assembly to limit entry into the virtual region.

5. The cleaning robot of claim 2, wherein the controller is configured to control the wheel assembly to autonomously travel in the region other than the virtual region among the cleanable region.

6. The cleaning robot of claim 2, wherein the virtual region comprises a space in a virtual wall forming a closed loop.

7. The cleaning robot of claim 2, wherein the controller is further configured to: divide the cleanable region into at least one divided region based on a structure of the cleanable region, generate the map image comprising the at least one divided region, and control the communicator to transmit the map image to the remote device.

8. The cleaning robot of claim 2, wherein the controller is further configured to determine at least one virtual wall that restricts passage in the cleanable region based on a user input received through the remote device.

9. The cleaning robot of claim 8, wherein the controller is further configured to control the wheel assembly and the brush unit to clean a region of the cleanable region before passing through the at least one virtual wall.

10. The cleaning robot of claim 8, wherein the at least one virtual wall is formed in a straight line or a curve line in the cleanable region.

11. A remote device comprising: a communicator configured to communicate with a cleaning robot; a user interface configured to: display a map image of a cleanable region, and receive input from a user; and a controller configured to control the communicator to transmit information about a virtual region to the cleaning robot in response to receiving the input for the virtual region restricting cleaning of the cleaning robot in the map image.

12. The remote device of claim 11, wherein the controller is further configured to determine the virtual region based on drag and drop in the map image.

13. The remote device of claim 11, wherein the virtual region is formed as a closed loop in the cleanable region.

14. The remote device of claim 11, wherein the controller is further configured to transmit information about at least one virtual wall to the cleaning robot in response to receiving input for the at least one virtual wall that restricts passage of the cleaning robot in the map image.

15. The remote device of claim 14, wherein the at least one virtual wall is formed in a straight line or a curve line in the cleanable region.

16. The remote device of claim 11, wherein the controller is further configured to control the user interface to display the map image as at least one divided area in which the cleanable region is divided based on a structure of the cleanable region.

17. A cleaning robot control system comprising: a cleaning robot; and a remote device configured to receive input from a user and communicate with the cleaning robot, wherein the cleaning robot is configured to: determine a virtual region based on input received through the remote device, and clean a region excluding the virtual region among a cleanable region.

18. The cleaning robot control system of claim 17, wherein the remote device is configured to receive the input for the virtual region based on drag and drop in a map image for the cleanable region.

19. The cleaning robot control system of claim 17, wherein the cleaning robot is configured to restrict entry into the virtual region.

20. The cleaning robot control system of claim 17, wherein the cleaning robot is further configured to: determine at least one virtual wall that restricts passage in the cleanable region based on input received through the remote device, and control a wheel assembly and a brush unit to clean a region of the cleanable region before passing through the at least one virtual wall.

21. A cleaning robot comprising: a body; a wheel assembly configured to move the body; a brush unit configured to perform cleaning on a bottom surface of a moving path; a communicator configured to communicate with a remote device; and a controller configured to: generate a map corresponding to a cleanable area; transmit, via the communicator, the map to the remote device; receive, from the remote device, a user input on the map corresponding to the cleanable area; based on the user input received via the remote device, identify a virtual region in the map to be excluded during cleaning; and after the virtual region in the map is identified, control the wheel assembly and the brush unit to perform cleaning with respect to a first portion of the cleanable area without cleaning with respect to a second portion of the cleanable area corresponding to the virtual region of the map.

22. The cleaning robot of claim 21, wherein the remote device is configured to provide a user interface for displaying the map corresponding to the cleanable area generated by the cleaning robot and for receiving a user input to drag and drop the virtual region over an area of the displayed map to be excluded during cleaning.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of application Ser. No. 16/061,262, which is the 371 National Stage of International Application No. PCT/KR2016/015379, filed Dec. 28, 2016, which claims priority to Korean Patent Application No. 10-2015-0187427, filed Dec. 28, 2015 and Korean Patent Application No. 10-2016-0026295, filed Mar. 4, 2016, the disclosures of which are herein incorporated by reference in their entirety.

BACKGROUND

1. Field

[0002] The present disclosure relates to a cleaning robot and a method of controlling the same.

2. Description of Related Art

[0003] A cleaning robot is an apparatus that automatically cleans a space to be cleaned, without a user's manipulation, by suctioning foreign substances such as dust accumulated on a floor while traveling through the space.

[0004] Conventionally, a cleaning robot performs a traveling function and a cleaning function in a region to be cleaned in accordance with a user command. However, because the cleaning robot simply moves or performs cleaning upon receiving the user command, the user cannot check the state of the cleaning robot.

[0005] Conventionally, in a case in which a user wishes a specific position within a space to be cleaned to be cleaned first, the user has to directly check a position of a cleaning robot and move the cleaning robot to the specific position using a remote controller.

SUMMARY

[0006] It is an aspect of the present disclosure to provide a cleaning robot capable of intuitively displaying a state of a cleaning robot and changes in the state thereof, and a method of controlling the cleaning robot.

[0007] It is another aspect of the present disclosure to provide a cleaning robot capable of providing a user interface (UI) corresponding to a user command, and a method of controlling the cleaning robot.

[0008] It is still another aspect of the present disclosure to provide a cleaning robot capable of, in a divided region of an actual space to be cleaned that corresponds to a divided region of a virtual space to be cleaned that is selected by a user, completely cleaning an empty space within the divided region even when variations occur in the arrangement of obstacles within the divided region, and a method of controlling the cleaning robot.

[0009] In accordance with one aspect of the present disclosure, a cleaning robot includes a user interface to display a map image including one or more divided regions, and the user interface displays an icon corresponding to a state value of a main device on the map image.

[0010] The state value may include any one of a first state value which indicates that the main device is performing cleaning, a second state value which indicates that the main device has completed cleaning, and a third state value which indicates that an error has occurred.
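
As a minimal sketch of how the three state values of paragraph [0010] could be mapped to icons, the following assumes hypothetical names (`StateValue`, `ICON_FOR_STATE`, `icon_for`) and placeholder icon identifiers, none of which appear in the disclosure:

```python
from enum import Enum


class StateValue(Enum):
    """Hypothetical encoding of the three state values named in [0010]."""
    CLEANING = 1   # first state value: the main device is performing cleaning
    COMPLETED = 2  # second state value: the main device has completed cleaning
    ERROR = 3      # third state value: an error has occurred


# Placeholder icon identifiers; the disclosure does not name specific assets.
ICON_FOR_STATE = {
    StateValue.CLEANING: "icon_cleaning",
    StateValue.COMPLETED: "icon_completed",
    StateValue.ERROR: "icon_error",
}


def icon_for(state: StateValue) -> str:
    """Return the identifier of the icon to draw on the map image."""
    return ICON_FOR_STATE[state]
```

A lookup table keeps the state-to-icon relationship in one place, so a further state value would only need one more entry.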

[0011] The cleaning robot may further include a controller to control the main device to travel or perform cleaning, and the user interface may receive a user command; and the controller may control the main device on the basis of the user command.

[0012] The user interface may receive a command to designate at least one divided region, and may change an outline display attribute of the at least one designated divided region.

[0013] In a case in which the user interface receives the command to designate at least one divided region, the user interface may change an outline color or an outline thickness of the at least one designated divided region.

[0014] In a case in which the main device is traveling, the user interface displays a translucent layer over the map image.

[0015] In a case in which the main device is traveling, the user interface may display an animation which indicates that the main device is traveling.

[0016] The user interface may further display a message corresponding to the state value of the main device.

[0017] The user interface may receive a command to designate at least one divided region and may change a name display attribute of the at least one designated divided region.

[0018] The cleaning robot may further include a controller to set a target point within the divided region, set a virtual wall on the map image, and control the main device to perform cleaning from the target point.

[0019] The cleaning robot may further include a storage unit to store the map image, and a controller to set a target point within the divided region, set a virtual wall on the map image, and control the main device to perform cleaning from the target point.

[0020] The storage unit may include information on a region dividing point corresponding to each divided region, and the controller may set the virtual wall at the region dividing point.

[0021] The cleaning robot may further include a driving wheel driver to control driving of a wheel and a main brush driver to control driving of a main brush unit, and the controller may control the driving wheel driver to allow the main device to move to the target point, and control the main brush driver to perform cleaning from the target point.

[0022] The cleaning robot may further include a main device sensor unit, and the main device sensor unit may match a position of the main device with the map image based on position information generated by the main device sensor unit.

[0023] The user interface may receive a selection of the divided region from a user; and the controller may set the target point within the divided region selected by the user.

[0024] The controller may set, as the target point, at least one of a central point of the divided region, a point farthest from surrounding obstacles within the divided region, and a point that is within the divided region selected by the user from the map image and is closest to the current position of the main device.
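
The three target point candidates of paragraph [0024] can be illustrated with a small sketch. It assumes, purely for illustration, that the divided region and the obstacles are given as sets of integer grid cells and that distance is Euclidean; the function names are hypothetical and the disclosure does not prescribe this representation.

```python
from math import dist
from typing import Set, Tuple

Cell = Tuple[int, int]


def central_point(region: Set[Cell]) -> Cell:
    """Region cell nearest to the centroid of the divided region."""
    cx = sum(x for x, _ in region) / len(region)
    cy = sum(y for _, y in region) / len(region)
    # sorted() makes tie-breaking deterministic.
    return min(sorted(region), key=lambda c: dist(c, (cx, cy)))


def farthest_from_obstacles(region: Set[Cell], obstacles: Set[Cell]) -> Cell:
    """Region cell whose nearest obstacle is as far away as possible."""
    return max(sorted(region),
               key=lambda c: min(dist(c, o) for o in obstacles))


def closest_to_robot(region: Set[Cell], robot: Cell) -> Cell:
    """Region cell nearest to the current position of the main device."""
    return min(sorted(region), key=lambda c: dist(c, robot))
```

For a 5 x 5 block of cells, `central_point` returns the middle cell, and `closest_to_robot` returns the boundary cell nearest to a robot standing outside the block.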

[0025] The controller may set a virtual region on the map image.

[0026] The user interface may receive a command to designate a virtual region from the user.

[0027] The controller may control the main device to perform cleaning within the virtual region.

[0028] The controller may control the main device to perform cleaning outside the virtual region.

[0029] The user interface may receive, from the user, a selection of whether the main device performs cleaning within the virtual region or outside the virtual region.

[0030] The virtual area may include a space in a virtual wall forming a closed loop.
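
One way to decide whether a position lies in the space enclosed by a closed loop of virtual walls is a standard ray-casting test. The sketch below is an illustration only: the disclosure does not state which test is used, and the polygon representation and the names `inside_virtual_region` and `may_clean` are assumptions.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def inside_virtual_region(p: Point, loop: List[Point]) -> bool:
    """Ray-casting test: is p inside the closed loop of virtual walls?"""
    x, y = p
    inside = False
    n = len(loop)
    for i in range(n):
        x1, y1 = loop[i]
        x2, y2 = loop[(i + 1) % n]  # wrap around to close the loop
        # Consider only edges that straddle the horizontal line through p.
        if (y1 > y) != (y2 > y):
            # x-coordinate at which the edge crosses that line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def may_clean(p: Point, loop: List[Point], clean_inside: bool) -> bool:
    """Apply the user's selection: clean inside or outside the region."""
    return inside_virtual_region(p, loop) == clean_inside
```

With `clean_inside=False` the function permits cleaning only outside the virtual region, which corresponds to the case of paragraph [0028]; with `clean_inside=True` it corresponds to paragraph [0027].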

[0031] The controller may set a movement path from the current position of the main device to the target point, and move the main device along the movement path.

[0032] The controller may set the virtual wall when the main device is located at the target point.

[0033] The controller may control the main device to perform autonomous traveling from the target point, and restrict entry of the main device into the virtual wall.

[0034] The controller may set a cleaning order for at least one of the divided regions, and move, when the main device completes cleaning for one of the divided areas, the main device to a next divided area.

[0035] When the main device completes cleaning for the one of the divided areas, the controller may remove the virtual wall and move the main device to the next divided area.

[0036] The controller may set the target point of the next divided area and move the main device to the target point of the next divided area.
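
The sequence described in paragraphs [0031] to [0036] can be sketched as a simple loop. The function below only records the order of actions as text; the region names and the function name `run_cleaning_order` are hypothetical, and a real controller would drive the wheel and brush drivers instead of appending strings.

```python
from typing import List


def run_cleaning_order(order: List[str]) -> List[str]:
    """Return the sequence of actions for cleaning divided regions in order."""
    actions: List[str] = []
    for region in order:
        actions.append(f"set target point in {region}")
        actions.append(f"move to target point in {region}")
        # The virtual wall is set once the main device is at the target point.
        actions.append(f"set virtual wall around {region}")
        actions.append(f"clean {region}")
        # The wall is removed so that the main device can leave the region.
        actions.append(f"remove virtual wall around {region}")
    return actions
```

Calling `run_cleaning_order(["Z1", "Z2"])` yields ten actions, with the virtual wall around `Z1` removed before the target point in `Z2` is set.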

[0037] In accordance with another aspect of the present disclosure, a method of controlling a cleaning robot includes displaying a map image including one or more divided regions; and displaying an icon corresponding to a state value of a main device on the map image.

[0038] The method may further include setting a target point within the divided region; setting a virtual wall on the map image; and performing cleaning from the target point.

[0039] According to the above-described cleaning robot and method of controlling the cleaning robot, since a user can intuitively recognize a state of the cleaning robot or changes in the state thereof from a map image, an error in the user's recognition of the state of the cleaning robot can be reduced.

[0040] Further, according to the above-described cleaning robot and method of controlling the cleaning robot, by a user recognizing a state of the cleaning robot from a map image, the user can control the cleaning robot in various ways through a user interface (UI) on the basis of state information of the cleaning robot.

[0041] Further, according to the above-described cleaning robot and method of controlling the cleaning robot, by a virtual wall being set around a divided region selected from a map image, a main device can be blocked from entering a space outside the virtual wall.

[0042] Further, according to the above-described cleaning robot and method of controlling the cleaning robot, by a target point of a main device being set within a divided region selected from a map image and cleaning being started after the main device is first moved to the set target point, a user can complete cleaning in an actual region intended by the user even in a case in which the divided region displayed on the map image does not exactly correspond to the actual area.

BRIEF DESCRIPTION OF THE DRAWINGS

[0043] FIG. 1 is an exterior view of a cleaning robot.

[0044] FIG. 2A is a bottom view of a main device according to one embodiment, and FIG. 2B is an interior view of the main device according to one embodiment.

[0045] FIG. 3 is a block diagram of a control configuration of the cleaning robot.

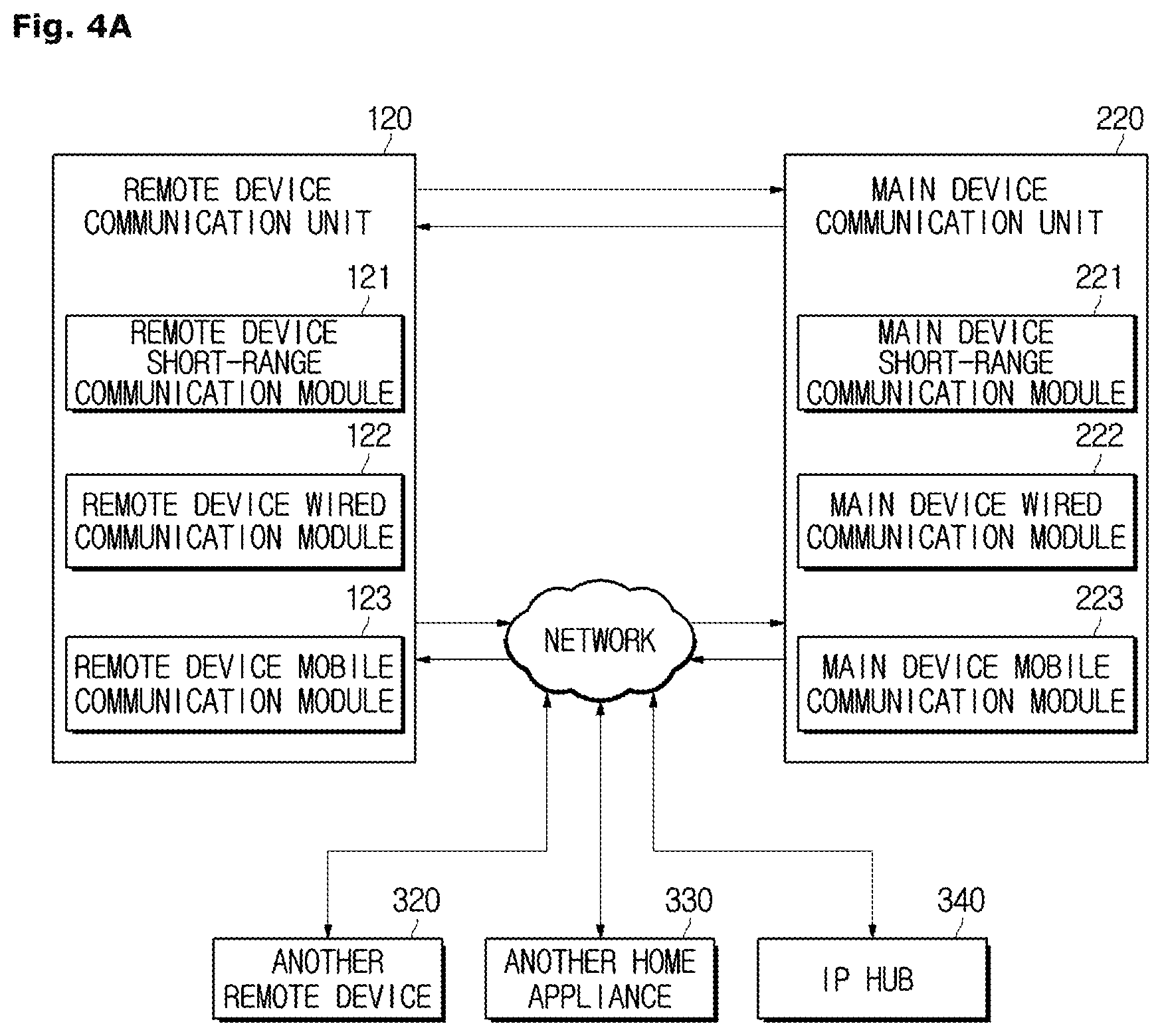

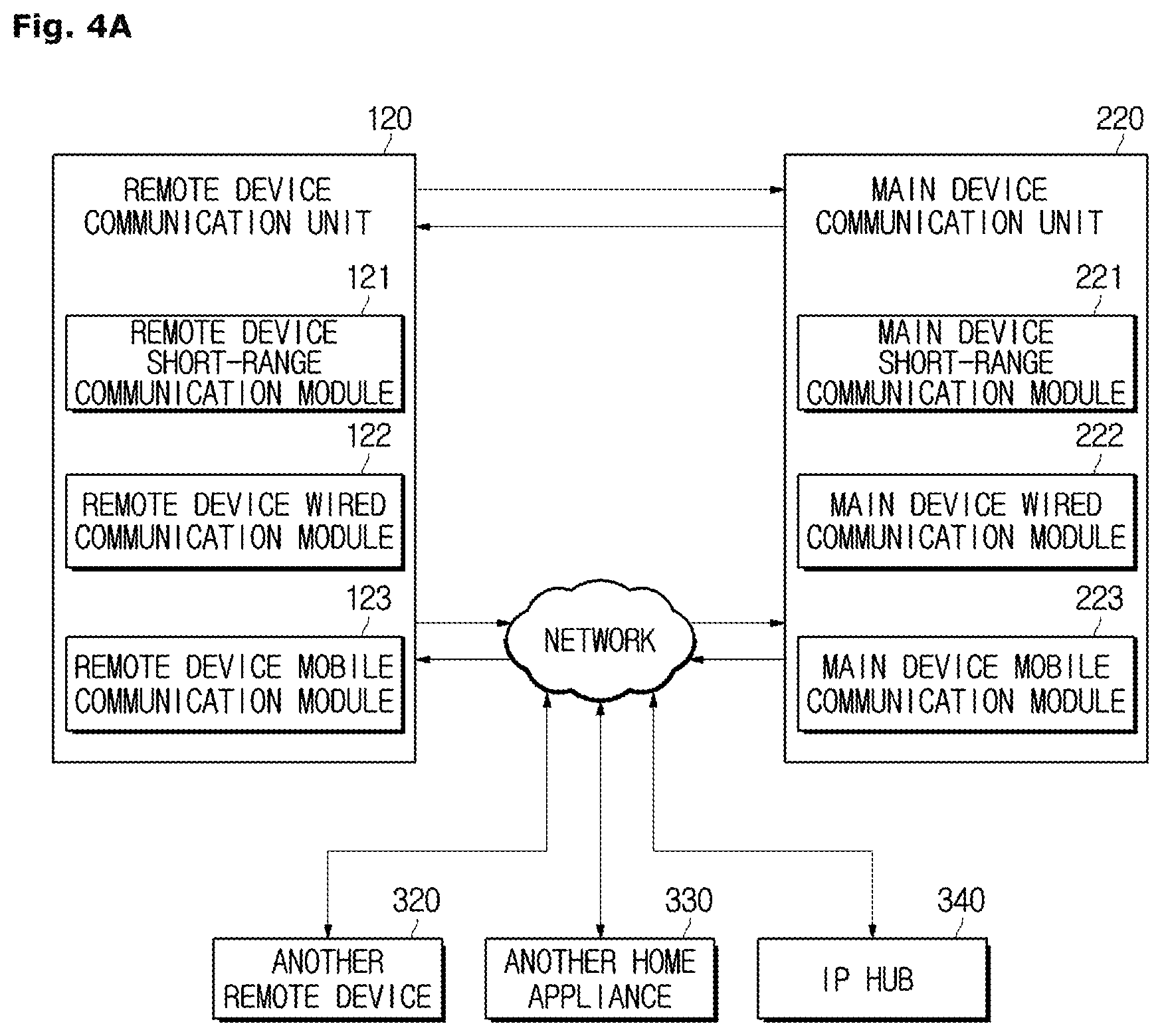

[0046] FIG. 4A is a control block diagram of a communication unit according to one embodiment.

[0047] FIG. 4B is a control block diagram of a communication unit according to another embodiment.

[0048] FIG. 5 is an exemplary view of a home screen of a remote device UI.

[0049] FIG. 6 is an exemplary view of a menu selection screen of the remote device UI.

[0050] FIG. 7 is an exemplary view of a map image displayed by the remote device UI of the cleaning robot according to one embodiment.

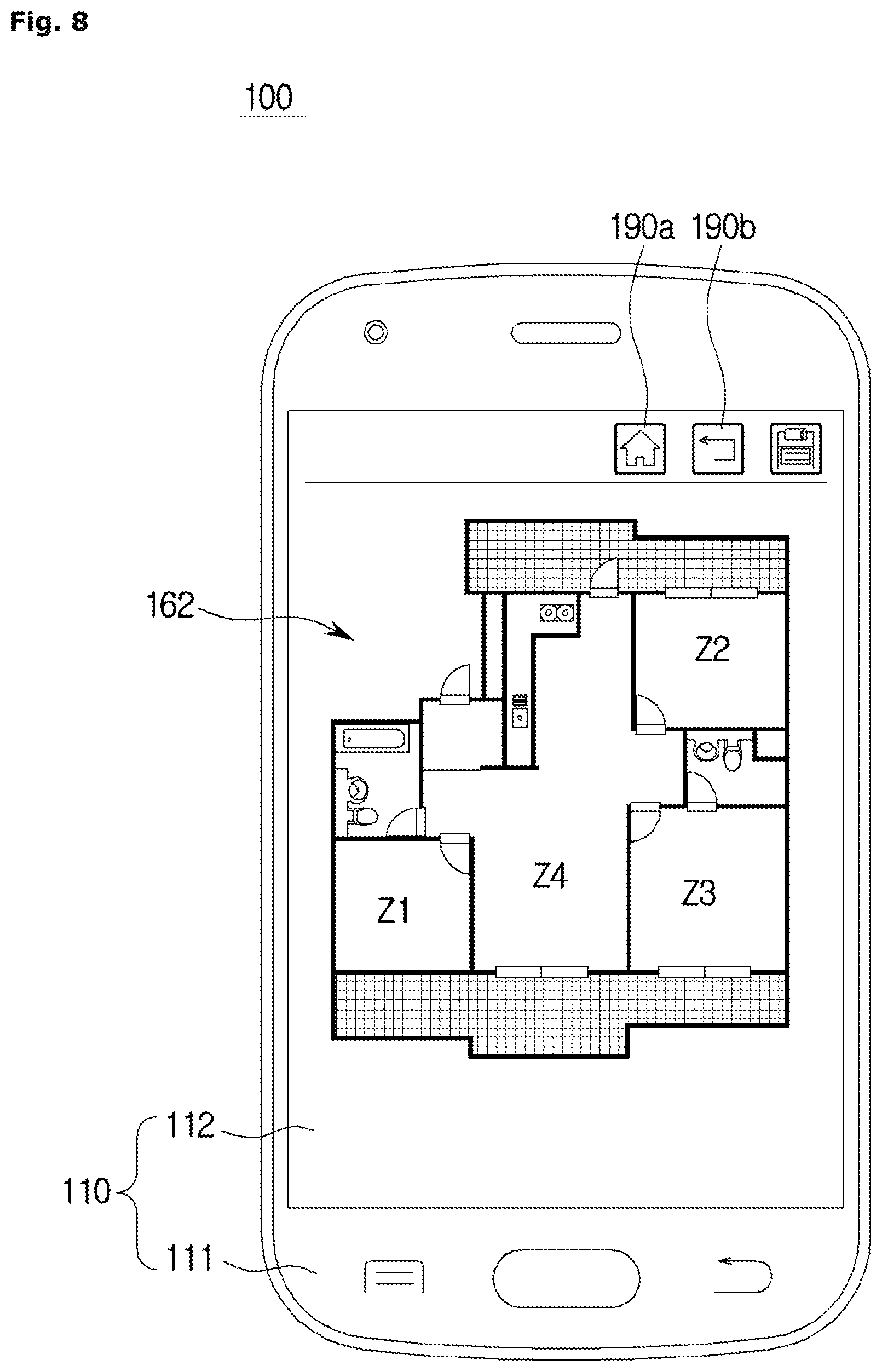

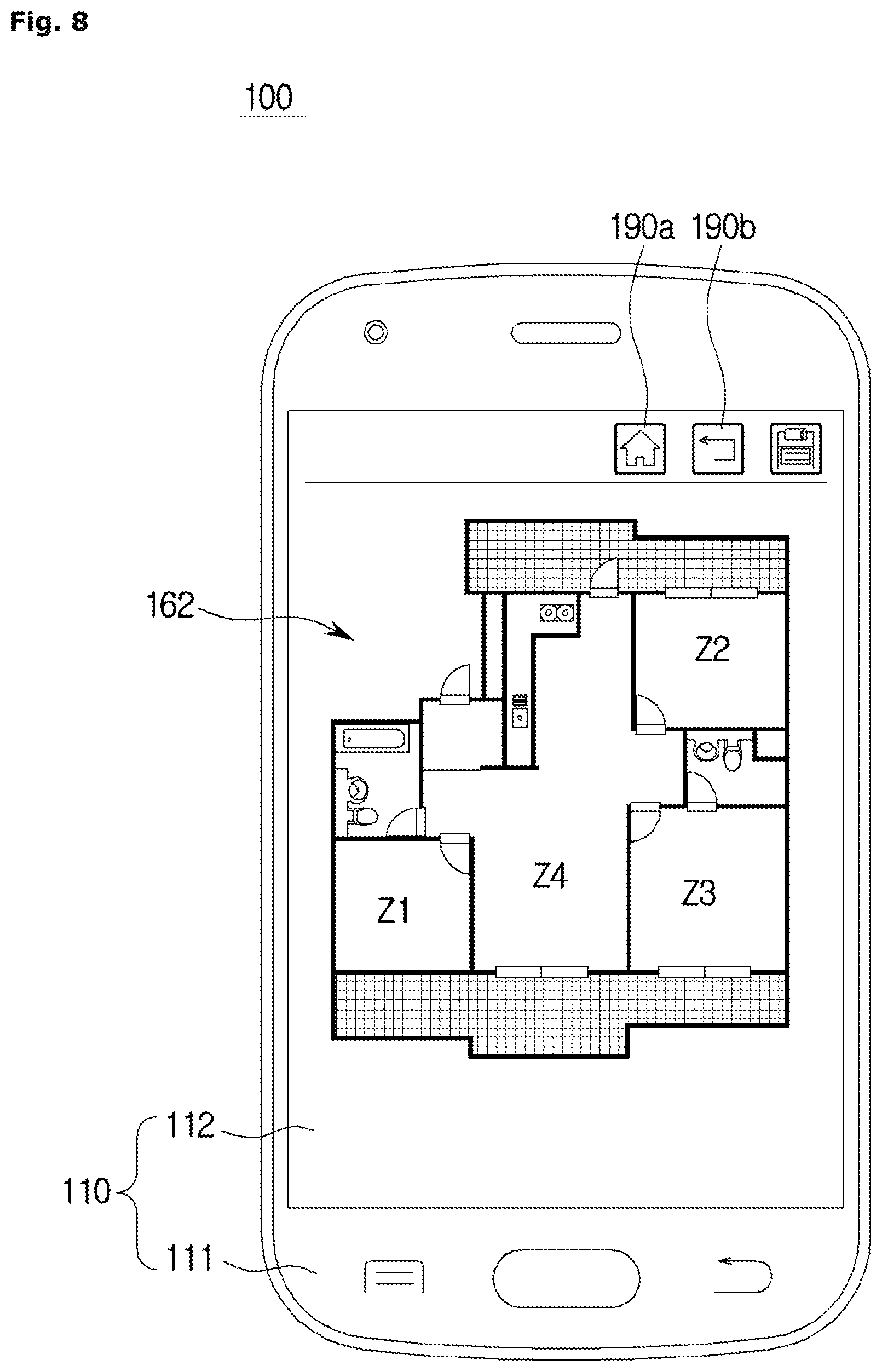

[0051] FIG. 8 is an exemplary view of a map image displayed by the remote device UI of the cleaning robot according to another embodiment.

[0052] FIGS. 9 to 16 are conceptual diagrams for describing processes in which the user commands cleaning operations of the cleaning robot on the basis of a map image displayed by the remote device UI of the cleaning robot and screens output by the remote device UI in accordance with user commands or states of the main device according to one embodiment.

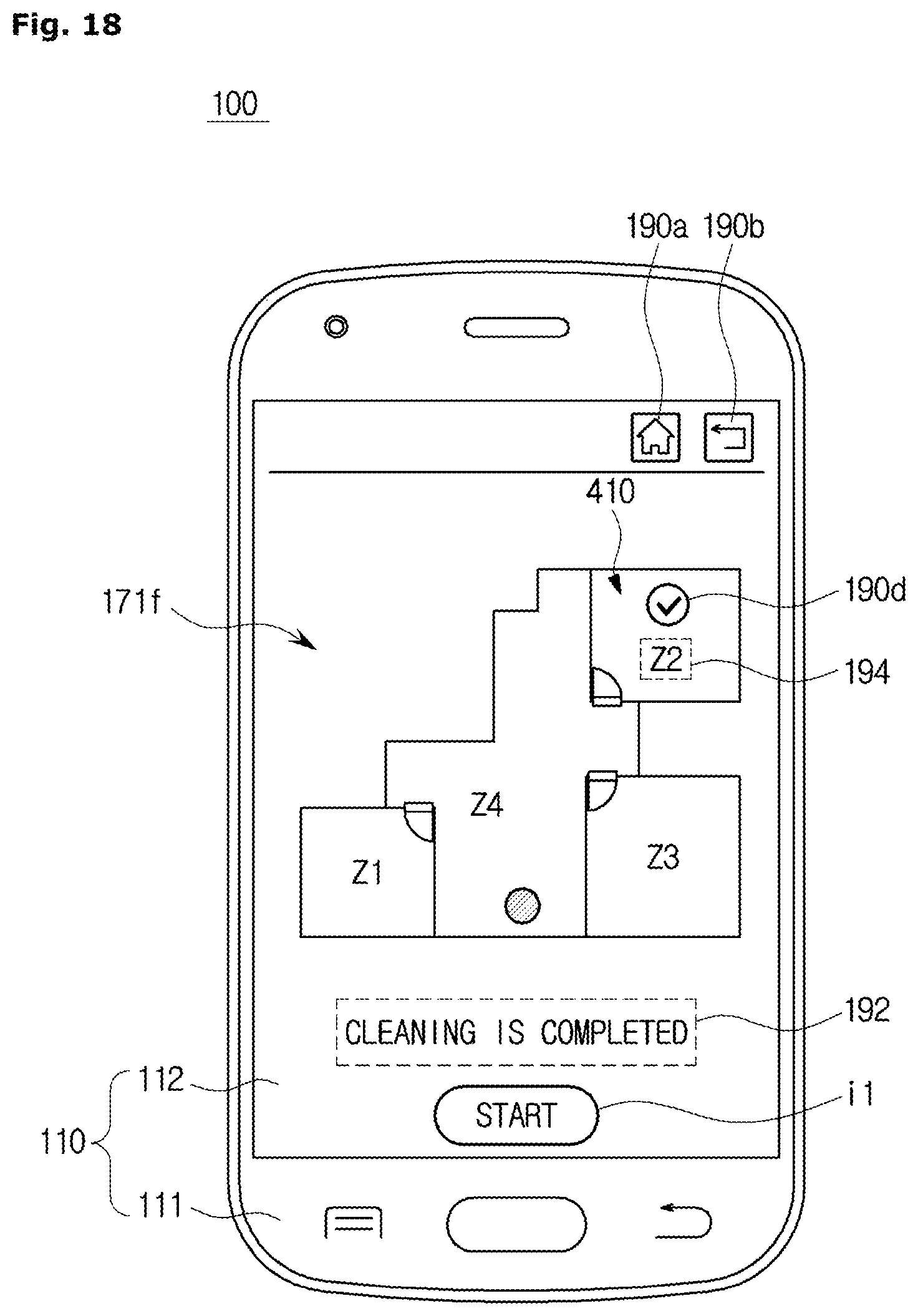

[0053] FIGS. 17 and 18 are conceptual views of screens of a UI according to another embodiment.

[0054] FIG. 19 is a conceptual diagram of a screen through which commands for specifying and designating a plurality of divided regions and a cleaning order of the plurality of divided regions are received.

[0055] FIG. 20 is a detailed control block diagram of the main device according to one embodiment.

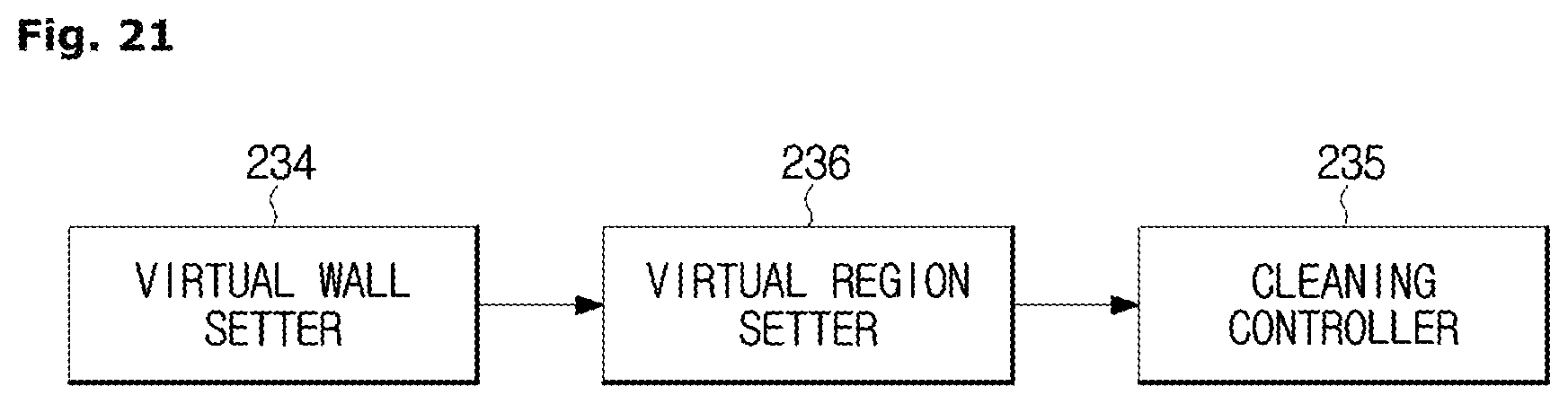

[0056] FIG. 21 is a control block diagram of a virtual wall setter and a virtual region setter.

[0057] FIGS. 22 and 23 are exemplary views for describing a method of setting a movement path of a main device set by a movement path generator and a target point of the main device.

[0058] FIG. 24 is an exemplary view of a virtual wall set by a virtual wall setter.

[0059] FIG. 25 is a view for describing a process in which a main device performs cleaning in a divided region of an actual space to be cleaned in a case in which the virtual wall is set.

[0060] FIGS. 26 and 27 are exemplary views of a virtual region set by a virtual region setter automatically or manually.

[0061] FIG. 28 is an exemplary view of a plurality of virtual walls set in accordance with a user command.

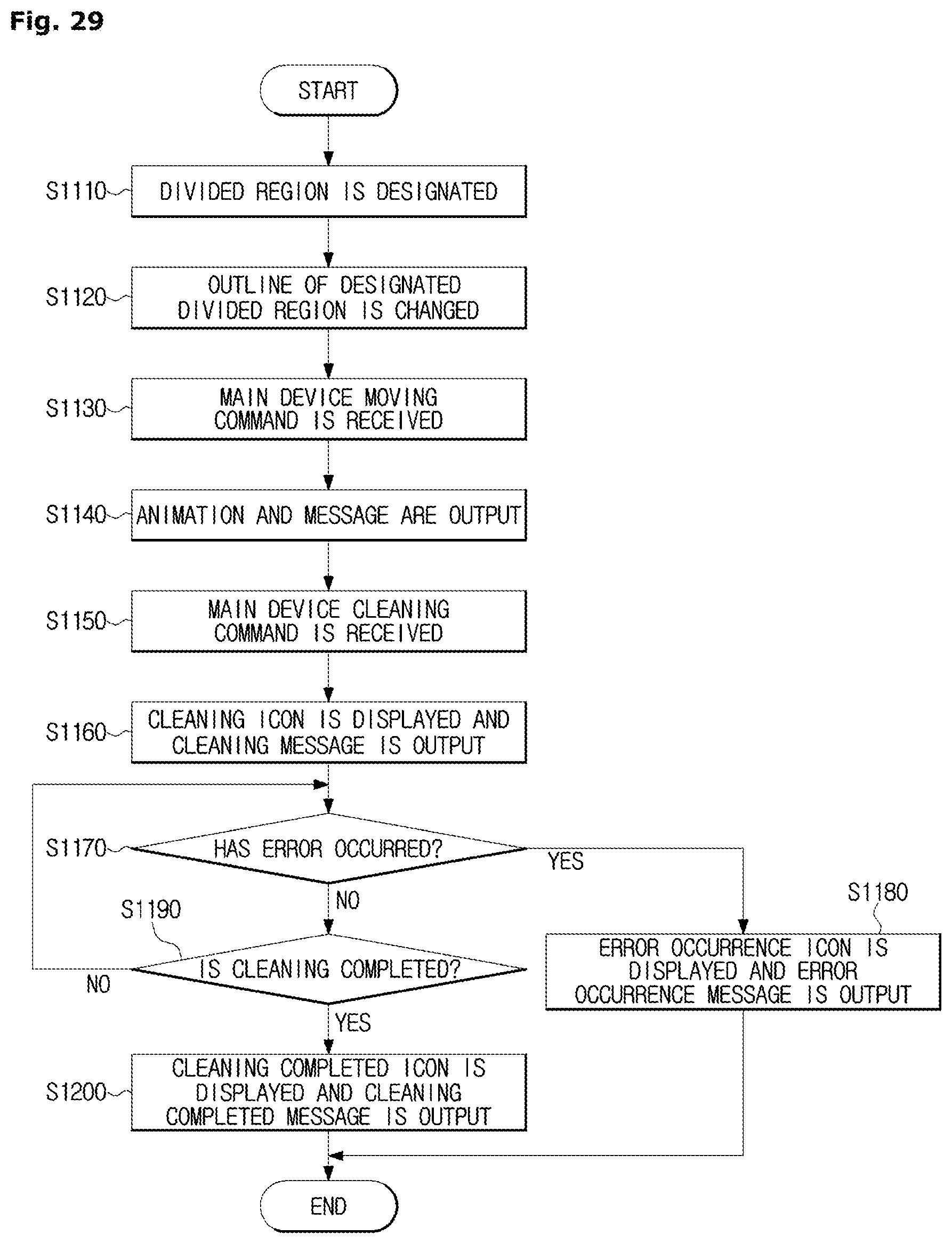

[0062] FIG. 29 is a flowchart of a method of controlling the cleaning robot according to one embodiment.

[0063] FIG. 30 is a flowchart of a method of controlling the cleaning robot according to another embodiment.

DETAILED DESCRIPTION

[0064] Hereinafter, the present disclosure will be described in detail using embodiments, which will be described with reference to the accompanying drawings, for those of ordinary skill in the art to easily understand and practice the disclosure. However, in describing the present disclosure, when it is judged that detailed descriptions on a known function or configuration related to the disclosure might unnecessarily blur the gist of the embodiments of the disclosure, the detailed descriptions thereof will be omitted.

[0065] The terms used below are terms selected in consideration of functions in the embodiments, and meanings of the terms may vary in accordance with an intention, practice, or the like of a user or an operator. Thus, in a case in which the terms used in the embodiments, which will be described below, are specifically defined below, the terms should be interpreted as having the specifically-defined meanings, and in a case in which the terms are not specifically defined below, the terms should be interpreted as having meanings generally understood by those of ordinary skill in the art.

[0066] Further, even when configurations of aspects or embodiments selectively described below are illustrated as a single integrated configuration in the drawings, it should be understood that the configurations may be freely combined with each other when it is not clear to those of ordinary skill in the art that such combinations are technically contradictory, unless described otherwise.

[0067] Hereinafter, embodiments of a cleaning robot and a method of controlling the cleaning robot will be described with reference to the accompanying drawings.

[0068] Hereinafter, a configuration of a cleaning robot according to one embodiment will be described with reference to FIG. 1.

[0069] FIG. 1 is an exterior view of a cleaning robot, FIG. 2A is a bottom view of a main device according to one embodiment, and FIG. 2B is an interior view of the main device according to one embodiment.

[0070] Referring to FIG. 1, a cleaning robot 1 performs cleaning or moves at least one time, generates a map including obstacle information of a space in which the cleaning robot 1 is currently present, generates a map image that is similar to the generated map, and displays the map image on a user interface (UI). Here, "map" refers to spatial image information generated by the cleaning robot 1 on the basis of sensed values prior to structural analysis, and "map image" refers to spatial image information including structural information generated by the cleaning robot 1 as a result of the structural analysis. By the generation of the map image, a virtual space to be cleaned which matches an actual space to be cleaned in which the cleaning robot 1 is present may be provided to a user.

[0071] Specifically, the cleaning robot 1 may grasp the obstacle information of the space in which the cleaning robot 1 is currently present through a sensor unit by performing cleaning or moving at least one time in the space in which the cleaning robot 1 is currently present. The cleaning robot 1 may generate a map including the obstacle information of the space in which the cleaning robot 1 is currently present on the basis of the grasped obstacle information. The cleaning robot 1 may analyze a structure of the map including the obstacle information, and divide the space grasped through performing cleaning or moving at least one time into a plurality of divided regions on the basis of the analyzed structure.

[0072] The cleaning robot 1 may substitute the plurality of divided regions with preset figures and generate a map image in which the plurality of preset figures are combined to have different areas or positions.
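
As a rough illustration of substituting divided regions with preset figures, the sketch below assumes that each divided region is a set of grid cells and that the preset figure is an axis-aligned rectangle. Both assumptions, and the names `bounding_rectangle` and `to_map_image`, are hypothetical and are not taken from this paragraph.

```python
from typing import Dict, Set, Tuple

Cell = Tuple[int, int]
Rect = Tuple[int, int, int, int]  # (min_x, min_y, max_x, max_y)


def bounding_rectangle(cells: Set[Cell]) -> Rect:
    """Smallest axis-aligned rectangle that contains every cell."""
    xs = [x for x, _ in cells]
    ys = [y for _, y in cells]
    return (min(xs), min(ys), max(xs), max(ys))


def to_map_image(regions: Dict[str, Set[Cell]]) -> Dict[str, Rect]:
    """Replace each divided region with its rectangle, keyed by region name."""
    return {name: bounding_rectangle(cells) for name, cells in regions.items()}
```

Because each rectangle keeps the extent and position of its region, the combined figures have different areas and positions, as the paragraph describes.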

[0073] The cleaning robot 1 may generate a map image that substitutes for the map on the basis of a plan view that corresponds to the analyzed structure among pieces of pre-stored plan view data. The cleaning robot 1 may display the generated map image on the UI for the user to easily grasp the structure of the space in which the cleaning robot 1 is currently present and the position of the cleaning robot 1.

[0074] The cleaning robot 1 may include a main device 200 configured to perform cleaning while moving on a space to be cleaned, and a remote device 100 configured to control the operation of the main device 200 from a remote distance and display a current situation or the like of the main device 200. Although a mobile phone may be employed as the remote device 100 as illustrated in FIG. 1, the remote device 100 is not limited thereto, and various hand-held devices other than a mobile phone such as a personal digital assistant (PDA), a laptop, a digital camera, an MP3 player, and a remote controller may also be employed as the remote device 100.

[0075] The remote device 100 may include a remote device UI 110 configured to provide the UI. The remote device UI 110 may include a remote device input unit 111 and a remote device display unit 112. The remote device UI 110 may receive a user command for control of the main device 200 or display various pieces of information of the main device 200.

[0076] The remote device input unit 111 may include hardware devices such as various buttons or switches, a pedal, a keyboard, a mouse, a track-ball, various levers, a handle, or a stick for a user input. The remote device input unit 111 may also include a graphical UI (GUI) such as a touch pad, i.e., a software device, for a user input. The touch pad may be implemented as a touchscreen panel (TSP) and form a layered structure with the remote device display unit 112.

[0077] A cathode ray tube (CRT), a digital light processing (DLP) panel, a plasma display panel, a liquid crystal display (LCD) panel, an electro-luminescence (EL) panel, an electrophoretic display (EPD) panel, an electrochromic display (ECD) panel, a light emitting diode (LED) panel, an organic LED (OLED) panel, or the like may be provided as the remote device display unit 112, but the remote device display unit 112 is not limited thereto.

[0078] In a case in which the remote device display unit 112 is configured as a TSP that forms a layered structure with the touch pad as described above, the remote device display unit 112 may also be used as an input unit in addition to being used as a display unit. Hereinafter, for convenience of descriptions, descriptions will be given by assuming that the remote device display unit 112 is configured as a TSP.

[0079] As illustrated in FIGS. 1 to 2B, the main device 200 may include a body 2 including a main body 2-1 and a sub-body 2-2, a driving wheel assembly 30, a main brush unit 20, a power supply unit 250, a dust collector, a main device communication unit 220, and a user interface unit 210. As illustrated in FIG. 1, the main body 2-1 may have a substantially semicircular shape, and the sub-body 2-2 may have a rectangular parallelepiped shape. Exteriors of the remote device 100 and the main device 200 are merely examples of an exterior of the cleaning robot 1, and the cleaning robot 1 may have various shapes.

[0080] The main device power supply unit 250 supplies driving power for driving the main device 200. The main device power supply unit 250 includes a battery that is electrically connected to driving devices for driving various components mounted on the body 2 and configured to supply the driving power. The battery may be provided as a rechargeable secondary battery and may be charged by receiving electric power from a docking station. Here, the docking station is a device at which the main device 200 is docked when the main device 200 has completed a cleaning process or when a residual amount of the battery is lower than a reference value. The docking station may supply electric power to the docked main device 200 using an external or internal power supply.
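
The docking condition stated in paragraph [0080] reduces to a single check. The function name and the use of a fractional battery level are assumptions made for this sketch.

```python
def should_dock(cleaning_completed: bool,
                battery_level: float,
                reference_level: float) -> bool:
    """Dock when cleaning is complete or the battery is below the reference."""
    return cleaning_completed or battery_level < reference_level
```

For example, `should_dock(False, 0.1, 0.2)` is true because the residual amount is below the reference value, even though cleaning has not been completed.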

[0081] The main device power supply unit 250 may be mounted at the bottom of the body 2 as illustrated in FIGS. 2A and 2B, but embodiments are not limited thereto.

[0082] Although not illustrated, the main device communication unit 220 may be disposed inside the body 2 and allow the body 2 to communicate with the docking station, a virtual guard, the remote device 100, and the like. The main device communication unit 220 may transmit whether the main device 200 has completed cleaning, a residual amount of battery provided in the body 2, a position of the body 2, and the like to the docking station, and receive a position of the docking station and a docking signal that guides docking of the main device 200 from the docking station.

[0083] The main device communication unit 220 may transmit and receive an entry restriction signal to and from a virtual guard configured to form a virtual wall. The virtual guard is an external device configured to transmit an entry restriction signal to a connected path between any divided region and a specific divided region when the main device 200 is traveling. The virtual guard forms the virtual wall. For example, the virtual guard may sense entry of the main device 200 into the specific divided region using an infrared sensor, a magnetic sensor, or the like, and transmit an entry restriction signal to the main device communication unit 220 via a wireless communication network. Here, "region to be cleaned" refers to an entire region on which the main device 200 may travel that includes a plurality of divided regions.

[0084] In this case, the main device communication unit 220 may receive an entry restriction signal and block the main device 200 from entering a specific region.

[0085] The main device communication unit 220 may receive a command input by a user via the remote device 100. For example, the user may input a cleaning start/end command, a cleaning region map generation command, a main device 200 moving command, and the like via the remote device 100, and the main device communication unit 220 may receive a user command from the remote device 100 and allow the main device 200 to perform an operation corresponding to the received user command. The main device communication unit 220 will be described in further detail below.
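
The handling of received user commands in paragraph [0085] can be sketched as a dispatch table. The command strings, the log-based handlers, and the names `make_handlers` and `handle_command` are hypothetical; the disclosure does not define a message format.

```python
from typing import Callable, Dict, List


def make_handlers(log: List[str]) -> Dict[str, Callable[[], None]]:
    """Map each hypothetical command name to the operation it triggers."""
    return {
        "start_cleaning": lambda: log.append("cleaning started"),
        "end_cleaning": lambda: log.append("cleaning ended"),
        "generate_map": lambda: log.append("map generation started"),
        "move": lambda: log.append("main device moving"),
    }


def handle_command(command: str,
                   handlers: Dict[str, Callable[[], None]]) -> bool:
    """Run the operation for a received command; ignore unknown commands."""
    handler = handlers.get(command)
    if handler is None:
        return False
    handler()
    return True
```

Returning a boolean lets the caller report an unrecognized command back to the remote device instead of failing silently.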

[0086] A plurality of driving wheel assemblies 30 may be present. As illustrated in FIGS. 2A and 2B, two driving wheel assemblies 30 may be provided at left and right edges to be symmetrical to each other from the center of the bottom of the body 2. The driving wheel assemblies 30 respectively include driving wheels 33 and 35 that allow moving operations such as moving forward, moving backward, and rotating during a process of performing cleaning. The driving wheel assemblies 30 may be modularized and be detachably mounted on the bottom of the body 2. Therefore, in a case in which a failure occurs in the driving wheels 33 and 35 and repairing is required, only the driving wheel assemblies 30 may be separated from the bottom of the body 2 for repair without disassembling the entire body 2. The driving wheel assemblies 30 may be mounted on the bottom of the body 2 by methods such as hook coupling, screw coupling, and fitting.

[0087] A castor 31 is provided at a front edge from the center of the bottom of the body 2 to allow the body 2 to maintain a stable posture. The castor 31 may also constitute a single assembly like the driving wheel assemblies 30.

[0088] The main brush unit 20 is mounted at a side of a suction hole 23 formed at the bottom of the body 2. The main brush unit 20 includes a main brush 21 and a roller 22. The main brush 21 is disposed at an outer surface of the roller 22 and whirls dust accumulated on a floor surface in accordance with rotation of the roller 22 to guide the dust to the suction hole 23. In this case, the main brush 21 may be formed with various materials having an elastic force. The roller 22 may be formed of a rigid body, but embodiments are not limited thereto.

[0089] Although not illustrated in the drawings, a blower device configured to generate a suction force may be provided inside the suction hole 23, and the blower device may move the dust introduced into the suction hole 23 to the dust collector configured to collect and filter the dust.

[0090] Various sensors may be mounted on the body 2. The various sensors may include at least one of an obstacle sensor 261 and an image sensor 263.

[0091] The obstacle sensor 261 is a sensor configured to sense an obstacle present on a traveling path of the main device 200, e.g., an appliance, a piece of furniture, a wall surface, a wall corner, or the like inside a house. The obstacle sensor 261 may be provided in the form of an ultrasonic sensor capable of recognizing a distance, but embodiments are not limited thereto.

[0092] A plurality of obstacle sensors 261 may be provided at a front portion and a side surface of the body 2 and form a circumference of the body 2. A sensor window may be provided at front surfaces of the plurality of obstacle sensors 261 to protect and block the obstacle sensors 261 from the outside.

[0093] The image sensor 263 refers to a sensor configured to recognize the position of the main device 200 and form a map of a region to be cleaned by the main device 200. The image sensor 263 may be implemented with a device capable of acquiring image data such as a camera and be provided at the top portion of the body 2. In other words, in addition to extracting a feature point from image data of the top of the main device 200, allowing the position of the main device 200 to be recognized using the feature point, and allowing a map image of a region to be cleaned to be generated, the image sensor 263 may also allow the current position of the main device 200 to be grasped from the map image. The obstacle sensor 261 and the image sensor 263 which may be mounted on the body 2 will be described in further detail below.

[0094] A main device UI 280 may be disposed at the top portion of the body 2. The main device UI 280 may include a main device input unit 281 configured to receive a user command and a main device display unit 282 configured to display various states of the main device 200, and provide a UI. For example, a battery charging state, whether the dust collector is fully filled with dust, a cleaning mode, a dormant mode of the main device 200, or the like may be displayed on the main device display unit 282. Since the forms in which the main device input unit 281 and the main device display unit 282 are implemented are the same as the above-described forms of the remote device input unit 111 and the remote device display unit 112, descriptions thereof will be omitted.

[0095] The exterior of the cleaning robot according to one embodiment has been described above. Hereinafter, a configuration of the cleaning robot according to one embodiment will be described in detail with reference to FIG. 3.

[0096] FIG. 3 is a block diagram of a control configuration of the cleaning robot.

[0097] The cleaning robot 1 may include the remote device 100 and the main device 200 connected to each other by wired and wireless communications. The remote device 100 may include a remote device communication unit 120, a remote device controller 130, a remote device storage unit 140, and a remote device UI 110.

[0098] The remote device communication unit 120 transmits and receives various signals and data to and from the main device 200 or an external server via wired and wireless communications. For example, in accordance with a user command through the remote device UI 110, the remote device communication unit 120 may download an application for managing the main device 200 from an external server (for example, a web server, a mobile communication server or the like). The remote device communication unit 120 may also download plan view data of a region to be cleaned from the external server. Here, a plan view is a figure depicting a structure of a space in which the main device 200 is present, and plan view data is data in which a plurality of different plan views of a house are gathered.

[0099] The remote device communication unit 120 may transmit a "generate map" command of the user to the main device 200 and receive a generated map image from the main device 200. The remote device communication unit 120 may transmit a map image edited by the user to the main device 200.

[0100] The remote device communication unit 120 may also transmit commands for controlling the main device 200, such as a "start cleaning" command, an "end cleaning" command, and a "designate divided region" command input by the user, to the main device 200.

[0101] In a case in which the remote device controller 130 generates a movement path of the main device 200, the remote device communication unit 120 may also transmit information on the generated movement path to the main device 200.

[0102] For this, the remote device communication unit 120 may include various communication modules such as a wireless Internet module, a short range communication module, and a mobile communication module.

[0103] The remote device communication unit 120 will be described in detail below with reference to FIG. 4A.

[0104] The remote device controller 130 controls the overall operation of the remote device 100. The remote device controller 130 may control each of the configurations of the remote device 100, i.e., the remote device communication unit 120, the remote device display unit 112, the remote device storage unit 140, and the like, on the basis of a user command input via the remote device UI 110.

[0105] The remote device controller 130 may generate a control signal for the remote device communication unit 120.

[0106] For example, in a case in which the user inputs the "generate map" command, the remote device controller 130 may generate a control signal so that the command to generate a map image including one or more divided regions is transmitted to the main device 200.

[0107] In a case in which the user inputs the "start cleaning" command, the remote device controller 130 may generate a control signal so that the start cleaning command, which moves the main device 200 to a designated divided region (hereinafter, a designated region) and makes the main device 200 perform cleaning in the designated region, is transmitted to the main device 200. Here, in a case in which the user designates a plurality of divided regions, the remote device controller 130 may generate a movement path of the main device 200 so that the main device 200 moves to any one divided region of the plurality of designated divided regions (for example, a divided region that is the closest to the main device 200), and the main device 200 moves along the plurality of designated divided regions in accordance with a set order (for example, an order starting from a divided region which is the closest to the main device 200). Even when the user does not input the "start cleaning" command, the remote device controller 130 may control the start cleaning command to be automatically transmitted to the main device 200 when a predetermined amount of time elapses after the user designates a divided region.
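The ordering of a plurality of designated divided regions described above can be sketched as follows. This is a minimal illustration only, not the method of the embodiment: it assumes that each divided region is represented by a single hypothetical (x, y) point, and it uses a greedy nearest-first rule as one possible "set order" starting from the divided region closest to the main device 200. The function name `order_regions` and the region labels are assumptions introduced for illustration.

```python
from math import hypot


def order_regions(robot_xy, regions):
    """Return region names in visiting order.

    robot_xy: (x, y) position of the main device (assumed representation).
    regions:  dict mapping a region name to one representative (x, y) point
              (hypothetical; the text does not define such a point).
    The first region is the one closest to the robot; each following region
    is the closest remaining one to the region just visited.
    """
    remaining = dict(regions)
    current = robot_xy
    order = []
    while remaining:
        name = min(
            remaining,
            key=lambda n: hypot(remaining[n][0] - current[0],
                                remaining[n][1] - current[1]),
        )
        order.append(name)
        current = remaining.pop(name)
    return order


# Example with three designated regions (labels are illustrative).
route = order_regions(
    (0.0, 0.0),
    {"Z1": (5.0, 0.0), "Z2": (1.0, 1.0), "Z3": (4.0, 1.0)},
)
```

Other set orders are equally possible; the greedy rule is chosen here only because it is short and starts from the closest divided region.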

[0108] In a case in which the remote device controller 130 generates the movement path, the remote device controller 130 may extract region dividing points that respectively correspond to the plurality of divided regions. In a case in which the divided regions are configured as "rooms," the region dividing points may correspond to "doors" of the rooms. A method of generating the movement path and a method of extracting the region dividing points will be described in detail below.

[0109] In a case in which the user inputs the "end cleaning" command, the remote device controller 130 may generate the end cleaning command for the main device 200 to stop cleaning that is being performed. The remote device controller 130 may generate a control signal to transmit the end cleaning command to the main device 200.

[0110] The remote device controller 130 may generate a control signal for the remote device display unit 112.

[0111] Specifically, the remote device controller 130 may generate a control signal so that a screen corresponding to a user command or a state of the main device 200 is output. The remote device controller 130 may generate a control signal so that a screen is switched in accordance with the user command or the state of the main device 200.

[0112] For example, in a case in which the user inputs the "generate map" command, the remote device controller 130 may generate a control signal so that a map image including one or more divided regions is displayed.

[0113] In a case in which the user inputs the "designate divided region" command, the remote device controller 130 may generate a control signal so that a designated region outline display attribute is changed or a designated region name display attribute is changed in accordance with the user command. As an example, the remote device controller 130 may allow color of an outline or name of a designated region to be changed or allow the outline or name of the designated region to be displayed in bold font.

[0114] In a case in which the user has input the "start cleaning" command and the main device 200 is moving to a designated region, the remote device controller 130 may generate a control signal so that the remote device display unit 112 outputs an animation, which indicates that the main device 200 is moving.

[0115] In a case in which the user has input the "start cleaning" command and the main device 200 is performing cleaning in a designated region, the remote device controller 130 may generate a control signal so that the remote device display unit 112 outputs an icon, which indicates that the main device 200 is performing cleaning.

[0116] In a case in which a movement path of the main device 200 is generated, the remote device controller 130 may generate a control signal so that the remote device display unit 112 outputs the generated movement path.

[0117] In a case in which the main device 200 has completed cleaning in the designated region, the remote device controller 130 may generate a control signal so that the remote device display unit 112 outputs an icon, which indicates that cleaning by the main device 200 is completed.

[0118] In a case in which an error occurs while the main device 200 is moving or performing cleaning, the remote device controller 130 may generate a control signal so that the remote device display unit 112 outputs an icon notifying of the occurrence of an error in the main device 200.

[0119] Screens respectively corresponding to user commands or states of the main device 200 will be described in detail below.
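The correspondence between a state of the main device 200 and the output of the remote device display unit 112 described in the preceding paragraphs can be sketched as a simple lookup. The enumeration `StateValue`, the output identifiers, and the function `display_output_for` are hypothetical names introduced for illustration; the text does not specify how state values or icons are encoded.

```python
from enum import Enum


class StateValue(Enum):
    """Hypothetical encoding of the states named in the text."""
    MOVING = "moving"
    CLEANING = "cleaning"
    COMPLETED = "completed"
    ERROR = "error"


# Assumed identifiers for what the display unit outputs in each state.
DISPLAY_OUTPUT = {
    StateValue.MOVING: "moving_animation",
    StateValue.CLEANING: "cleaning_icon",
    StateValue.COMPLETED: "cleaning_completed_icon",
    StateValue.ERROR: "error_icon",
}


def display_output_for(state: StateValue) -> str:
    """Return the identifier of the animation or icon for a state value."""
    return DISPLAY_OUTPUT[state]
```

For example, `display_output_for(StateValue.MOVING)` selects the animation indicating that the main device 200 is moving.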

[0120] The remote device controller 130 may generate a control signal for the remote device storage unit 140. The remote device controller 130 may generate a control signal so that a map image is stored.

[0121] The remote device controller 130 may be various processors including at least one chip in which an integrated circuit is formed. Although the remote device controller 130 may be provided in a single processor, the remote device controller 130 may also be separately provided in a plurality of processors.

[0122] The remote device storage unit 140 temporarily or non-temporarily stores data and programs for the operation of the remote device 100. For example, the remote device storage unit 140 may store an application for managing the main device 200. The remote device storage unit 140 may also store the map image received from the main device 200 and store plan view data downloaded from an external server.

[0123] Such a remote device storage unit 140 may include at least one type of storage medium among a flash memory type, a hard disk type, a multimedia card micro type, a card type memory (for example, a secure digital (SD) or extreme digital (XD) memory, or the like), a random access memory (RAM), a static RAM (SRAM), a read-only memory (ROM), an electrically erasable programmable ROM (EEPROM), a PROM, a magnetic memory, a magnetic disk, and an optical disc. However, the remote device storage unit 140 is not limited thereto and may also be implemented in other arbitrary forms known in the same technical field. The remote device 100 may operate a web storage that performs a storage function on the Internet.

[0124] The remote device UI 110 may receive various commands for controlling the main device 200 from the user. For example, the remote device UI 110 may receive the "generate map" command for generating the map image including one or more divided regions, or receive a "manage map" command for modifying the generated map image, the "designate divided region" command for a divided region to be designated, a "manage cleaning" command for moving the main device 200 to a designated region and making the main device 200 perform cleaning, and the like.

[0125] The remote device UI 110 may also receive a "start/end" command or the like for starting or ending the cleaning by the main device 200.

[0126] The remote device UI 110 may display various pieces of information of the main device 200.

[0127] For example, the remote device UI 110 may display a map image corresponding to a region to be cleaned along which the main device 200 travels.

[0128] In a case in which the user inputs the "designate divided region" command, the remote device UI 110 may change a designated region outline display attribute or change a designated region name display attribute in accordance with a user command. As an example, the remote device controller 130 may allow color of an outline or name of a designated region to be changed or allow the outline or name of the designated region to be displayed in bold font.

[0129] In a case in which the user has input the "start cleaning" command and the main device 200 is moving to a designated region, the remote device UI 110 may output an animation, which indicates that the main device 200 is moving.

[0130] In a case in which the user has input the "start cleaning" command and the main device 200 is performing cleaning in a designated region, the remote device UI 110 may output an icon, which indicates that the main device 200 is performing cleaning.

[0131] In a case in which a movement path of the main device 200 is generated, the remote device UI 110 may display the generated movement path.

[0132] In a case in which the main device 200 has completed cleaning in the designated region, the remote device UI 110 may output an icon, which indicates that cleaning by the main device 200 is completed.

[0133] In a case in which an error occurs while the main device 200 is moving or performing cleaning, the remote device UI 110 may output an icon notifying of the occurrence of an error in the main device 200.

[0134] The main device 200 may include the main device power supply unit 250, a main device sensor unit 260, the main device communication unit 220, a main device controller 230, a main device driver 270, a main device UI 280, and a main device storage unit 240.

[0135] As described with reference to FIGS. 2A and 2B, the main device power supply unit 250 is provided as a battery and supplies driving power for driving the main device 200.

[0136] The main device communication unit 220 transmits and receives various signals and data to and from the remote device 100 or an external device via wired and wireless communications.

[0137] For example, the main device communication unit 220 may receive the "generate map" command of the user from the remote device 100 and transmit a generated map image to the remote device 100. The main device communication unit 220 may receive a map image stored in the remote device 100 and a cleaning schedule stored in the remote device 100. In this case, the stored map image may refer to a finally-stored map image, and the stored cleaning schedule may refer to a finally-stored cleaning schedule.

[0138] The main device communication unit 220 may also transmit a current state value of the main device 200 and cleaning history data to the remote device 100.

[0139] The main device communication unit 220 may receive the "start cleaning" command of the user and data related to a designated region from the remote device 100. When a situation in which a transmitted environment is inconsistent occurs while the main device 200 is performing cleaning, the main device communication unit 220 may transmit an error state value, which indicates that the environment is inconsistent, to the remote device 100. Likewise, in a case in which a region that is unable to be cleaned is generated, the main device communication unit 220 may transmit a state value, which indicates that cleaning is impossible, to the remote device 100.

[0140] In a case in which the remote device 100 generates a movement path, the main device communication unit 220 may also receive information on the generated movement path.

[0141] The main device communication unit 220 may also receive the "end cleaning" command of the user from the remote device 100.

[0142] The main device communication unit 220 will be described in detail below with reference to FIG. 4A.

[0143] The main device sensor unit 260 performs sensing of an obstacle and a state of the ground, which is required for traveling of the main device 200. The main device sensor unit 260 may include the obstacle sensor 261 and the image sensor 263.

[0144] A plurality of obstacle sensors 261 are provided at an outer circumferential surface of the body 2 and are configured to sense an obstacle present in front of or beside the main device 200 and transmit a sensed result to the main device controller 230.

[0145] The obstacle sensors 261 may be provided as contact type sensors or non-contact type sensors in accordance with whether the obstacle sensors 261 come into contact with an obstacle, or may also be provided as a combination of a contact type sensor and a non-contact type sensor. A contact type sensor refers to a sensor that senses an obstacle by the body 2 actually colliding with an obstacle, and a non-contact type sensor refers to a sensor that senses an obstacle without the body 2 colliding with the obstacle or before the body 2 collides with the obstacle.

[0146] The non-contact type sensor may include an ultrasonic sensor, an optical sensor, a radiofrequency (RF) sensor, or the like. In a case in which the obstacle sensors 261 are implemented as ultrasonic sensors, the obstacle sensors 261 may transmit ultrasonic waves to a path on which the main device 200 travels, receive reflected ultrasonic waves, and sense an obstacle. In a case in which the obstacle sensors 261 are implemented as optical sensors, the obstacle sensors 261 may project light in an infrared region or visible light region, receive reflected light, and sense an obstacle. In a case in which the obstacle sensors 261 are implemented as RF sensors, the obstacle sensors 261 may transmit a radio wave having a specific frequency, for example, a microwave, detect changes in the frequency of the reflected wave using the Doppler effect, and sense an obstacle.
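As a rough numerical illustration of the ultrasonic and RF cases, the sketch below uses two standard relations that the text itself does not state: a time-of-flight distance estimate (distance equals the speed of sound multiplied by half the round-trip time) and the two-way Doppler relation for a slow reflector (frequency shift approximately equal to 2 v f / c). The function names, the assumed speed of sound, and the 24 GHz example frequency are assumptions for illustration, not values taken from the embodiment.

```python
SPEED_OF_SOUND_M_S = 343.0          # assumed: dry air at about 20 degrees C
SPEED_OF_LIGHT_M_S = 299_792_458.0  # speed of a radio wave in vacuum


def ultrasonic_distance_m(round_trip_s: float) -> float:
    """Distance to a reflector from the echo round-trip time.

    The wave travels to the obstacle and back, so the one-way
    distance is half of the total path length.
    """
    return SPEED_OF_SOUND_M_S * round_trip_s / 2.0


def doppler_radial_speed_m_s(tx_hz: float, shift_hz: float) -> float:
    """Relative radial speed from a two-way Doppler shift.

    Valid for speeds much smaller than the speed of light, where
    shift_hz is approximately 2 * v * tx_hz / c.
    """
    return shift_hz * SPEED_OF_LIGHT_M_S / (2.0 * tx_hz)


# Example: an echo returning after 10 ms, and a 160 Hz shift at 24 GHz.
distance = ultrasonic_distance_m(0.010)
speed = doppler_radial_speed_m_s(24e9, 160.0)
```

In this example the echo corresponds to an obstacle a little under two meters away, and the frequency shift corresponds to a relative speed of roughly one meter per second.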

[0147] The image sensor 263 may be provided as a device capable of acquiring image data such as a camera, and may be mounted on the top portion of the body 2 to recognize the position of the main device 200. The image sensor 263 extracts a feature point from image data of the top of the main device 200 and recognizes the position of the main device 200 using the feature point. Position information sensed by the image sensor 263 may be transmitted to the main device controller 230.

[0148] Sensor values of the main device sensor unit 260, i.e., sensor values of the obstacle sensors 261 and the image sensor 263, may be transmitted to the main device controller 230, and the main device controller 230 may generate a map of a region to be cleaned on the basis of the received sensor values. Since a method of generating a map on the basis of sensor values is a known technique, descriptions thereof will be omitted. FIG. 4A merely illustrates one example of the main device sensor unit 260. The main device sensor unit 260 may further include other types of sensors or some sensors may be omitted from the main device sensor unit 260 as long as a map of a region to be cleaned may be generated.

[0149] The main device driver 270 may include a driving wheel driver 271 configured to control driving of the driving wheel assemblies 30, and a main brush driver 272 configured to control driving of the main brush unit 20.

[0150] The driving wheel driver 271 is controlled by the main device controller 230 to control the driving wheels 33 and 35 mounted on the bottom of the body 2 and allow the main device 200 to move. In a case in which the "generate map" command, the "start cleaning" command, a "move region" command, or the like of the user is transmitted to the main device 200, the driving wheel driver 271 controls driving of the driving wheels 33 and 35, and the main device 200 travels in accordance with the control. The driving wheel driver 271 may also be included in the driving wheel assemblies 30 and be modularized together with the driving wheel assemblies 30.

[0151] The main brush driver 272 drives the roller 22 mounted at the side of the suction hole 23 of the body 2 in accordance with control by the main device controller 230. In accordance with rotation of the roller 22, the main brush 21 may be rotated and clean a floor surface. When the "start cleaning" command of the user is transmitted to the main device 200, the main brush driver 272 controls driving of the roller 22.
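The relation between user commands and the drivers that operate, as described for the driving wheel driver 271 and the main brush driver 272, can be summarized in a small lookup table. The command strings follow the text; the driver identifiers and the function `drivers_for` are hypothetical names introduced only for this sketch.

```python
# Hypothetical driver identifiers; the numerals follow the reference signs.
WHEELS = "driving_wheel_driver_271"
BRUSH = "main_brush_driver_272"

# Commands named in the text and the drivers each one sets in operation.
DRIVERS_BY_COMMAND = {
    "generate map": frozenset({WHEELS}),
    "start cleaning": frozenset({WHEELS, BRUSH}),
    "move region": frozenset({WHEELS}),
}


def drivers_for(command: str) -> frozenset:
    """Return the drivers to operate; commands not listed operate none."""
    return DRIVERS_BY_COMMAND.get(command, frozenset())
```

Under these assumptions, only the "start cleaning" command operates both the driving wheels 33 and 35 and the roller 22.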

[0152] The main device controller 230 controls the overall operation of the main device 200. The main device controller 230 may control the configurations of the main device 200, i.e., the main device communication unit 220, the main device driver 270, the main device storage unit 240, and the like, and generate a map image.

[0153] Specifically, the main device controller 230 may generate a control signal for the main device driver 270.

[0154] For example, in a case in which the main device controller 230 receives the "generate map" command, the main device controller 230 may generate a control signal for the driving wheel driver 271 to drive the driving wheels 33 and 35. While the driving wheels 33 and 35 are driven, the main device controller 230 may receive sensor values from the main device sensor unit 260 and generate a map image of a region to be cleaned on the basis of the received sensor values.

[0155] In a case in which the main device controller 230 receives the "start cleaning" command and data related to a designated region, the main device controller 230 may generate a control signal for the driving wheel driver 271 for the main device 200 to be moved to the designated region by the user. In a case in which the main device 200 is moved to the designated region, the main device controller 230 may control the main brush driver 272 to drive the main brush unit 20 while generating a control signal related to the driving wheel driver 271 to drive the driving wheels 33 and 35.

[0156] The main device controller 230 may generate a map image.

[0157] Specifically, in a case in which the main device controller 230 receives the "generate map" command, the main device controller 230 may receive sensor values from the main device sensor unit 260, generate a map including obstacle information, analyze a structure of the generated map, and divide the map into a plurality of regions.

[0158] The main device controller 230 may substitute the plurality of divided regions with preset figures different from each other and generate a map image in which the plurality of preset figures are combined.

[0159] The main device controller 230 may find a plan view corresponding to the analyzed map structure among pieces of plan view data stored in the main device storage unit 240 and postprocess the corresponding plan view to generate a map image.

[0160] In a case in which the user designates a plurality of divided regions, although it has been described in the above-described embodiment that the remote device controller 130 generates a movement path of the main device 200, the main device controller 230 may also generate the movement path. In this case, the main device controller 230 may generate a control signal so that the main device communication unit 220 transmits information on the generated movement path to the remote device 100, and the main device driver 270 travels and performs cleaning along the generated movement path.

[0161] When the user inputs the "start cleaning" command, or, even when the user does not input the "start cleaning" command, when a predetermined amount of time elapses after the user designates a divided region, the main device controller 230 may generate a control signal so that the main device driver 270 travels and performs cleaning along the generated movement path.

[0162] Hereinafter, for convenience of description, a case in which the main device controller 230 generates the movement path will be described as an example.

[0163] The main device controller 230 may generate control signals for the main device communication unit 220 and the main device UI 280.

[0164] For example, the main device controller 230 may generate a control signal so that the main device communication unit 220 transmits a generated map image to the remote device 100, and may generate a control signal so that the main device UI 280 displays the generated map image.

[0165] In a case in which the main device controller 230 receives the "start cleaning" command and data related to a designated region, the main device controller 230 may generate a control signal so that the main device communication unit 220 transmits a state value of the main device 200 (for example, whether the main device 200 is moving or performing cleaning, has completed cleaning, or whether an error has occurred) to the remote device 100.

[0166] In a case in which the main device controller 230 generates the movement path of the main device 200, the main device controller 230 may also generate a control signal so that the main device communication unit 220 transmits information on the generated movement path to the remote device 100.

[0167] The main device controller 230 may determine whether an environment is inconsistent while the main device 200 performs cleaning. In a case in which the environment is inconsistent, the main device controller 230 may control the main device communication unit 220 to transmit an error state value, which indicates that the environment is inconsistent, to the remote device 100. The user may check an error state and decide whether to update a map image. In a case in which the main device controller 230 receives an "update map" command, the main device controller 230 updates the map image on the basis of a user command. In a case in which the environment is inconsistent, the main device controller 230 may also automatically update the map image.

[0168] To prevent malfunctioning due to the environmental inconsistency, in a case in which the environment is inconsistent, the main device controller 230 may control the main device 200 to stop cleaning and return to be charged.

[0169] While the main device 200 performs cleaning, the main device controller 230 may determine whether a region that is unable to be cleaned is present. In a case in which a region unable to be cleaned is present, the main device controller 230 may control the main device communication unit 220 to transmit the error state value, which indicates that the region unable to be cleaned is present, to the remote device 100. The user may check that the region unable to be cleaned is present and determine whether to change a region to be cleaned. In a case in which the main device controller 230 receives a "move divided region" command, the main device controller 230 generates a control signal so that the main device 200 moves to the next divided region. In a case in which a region unable to be cleaned is present, the main device controller 230 may automatically generate a control signal for the main device 200 to move to the next divided region along the generated movement path. For example, the order of divided regions in which the main device 200 moves may be set to be clockwise or counterclockwise from a divided region that is the closest to the main device 200 or from a divided region that is the farthest from the main device 200, may be set to be from the largest divided region to the smallest divided region, or may be set to be from the smallest divided region to the largest divided region. In this way, various methods may be employed in accordance with the user's setting. Even in this case, the main device controller 230 may control the main device 200 to stop cleaning and return to be charged.
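Two of the behaviors described above, ordering the divided regions by size and moving on to the next divided region when the current one is unable to be cleaned, can be sketched as follows. The class `DividedRegion`, its fields, and both function names are hypothetical; the clockwise and counterclockwise orders are not covered by this sketch because the text does not define the reference point for the rotation.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class DividedRegion:
    """Hypothetical record for one divided region."""
    name: str
    area_m2: float
    cleanable: bool = True


def order_by_area(regions: List[DividedRegion],
                  largest_first: bool = True) -> List[DividedRegion]:
    """Order regions from largest to smallest, or the reverse."""
    return sorted(regions, key=lambda r: r.area_m2, reverse=largest_first)


def next_cleanable(ordered: List[DividedRegion],
                   current_index: int) -> Optional[DividedRegion]:
    """Return the next region after current_index that can be cleaned.

    Returns None when no such region remains, in which case the main
    device could, for example, stop cleaning and return to be charged.
    """
    for region in ordered[current_index + 1:]:
        if region.cleanable:
            return region
    return None


# Example: the largest region turns out to be unable to be cleaned.
regions = [
    DividedRegion("Z1", 12.0),
    DividedRegion("Z2", 20.0, cleanable=False),
    DividedRegion("Z3", 8.0),
]
ordered = order_by_area(regions)
following = next_cleanable(ordered, 0)
```

Here the order is Z2, Z1, Z3, and because Z2 cannot be cleaned the main device would proceed to Z1.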

[0170] The main device controller 230 may generate a control signal for the main device storage unit 240. The main device controller 230 may also generate a control signal so that a generated map image is stored. The main device controller 230 may generate a control signal so that a map image and a cleaning schedule received from the remote device 100 are stored.

[0171] The main device controller 230 may be various processors including at least one chip in which an integrated circuit is formed. Although the main device controller 230 may be provided in a single processor, the main device controller 230 may also be separately provided in a plurality of processors.

[0172] The main device UI 280 may display a current operation situation of the main device 200 and a map image of a section in which the main device 200 is currently present, and display the current position of the main device 200 on the displayed map image. The main device UI 280 may receive an operation command of the user and transmit the received operation command to a controller. The main device UI 280 may be the same as or different from the main device UI 280 which has been described with reference to FIGS. 1 to 2B.

[0173] The main device UI 280 may include the main device input unit 281 and the main device display unit 282. Since the forms in which the main device input unit 281 and the main device display unit 282 are implemented may be the same as the above-described forms of the remote device input unit 111 and the remote device display unit 112, descriptions thereof will be omitted.

[0174] Although it has been described in the above-described embodiment that the remote device input unit 111 of the remote device UI 110 receives the generate map command, the designate divided region command, the start cleaning command, and the end cleaning command, such user commands may also be directly received by the main device input unit 281 of the main device UI 280.

[0175] Although it has been described in the above-described embodiment that the remote device display unit 112 of the remote device UI 110 outputs a screen corresponding to a user command or a state of the main device 200, the screen corresponding to a user command or a state of the main device 200 may also be directly output by the main device display unit 282 of the main device UI 280.

[0176] The main device storage unit 240 temporarily or non-temporarily stores data and programs for the operation of the main device 200. For example, the main device storage unit 240 may temporarily or non-temporarily store a state value of the main device 200. The main device storage unit 240 may store cleaning history data, and the cleaning history data may be periodically or non-periodically updated. In a case in which the main device controller 230 generates a map image or updates the map image, the main device storage unit 240 may store the generated map image or the updated map image. The main device storage unit 240 may store a map image received from the remote device 100.

[0177] The main device storage unit 240 may store a program for generating the map image or updating the map image. The main device storage unit 240 may store a program for generating or updating the cleaning history data. The main device storage unit 240 may also store a program for determining whether an environment is consistent, a program for determining whether a certain region is a region unable to be cleaned, or the like.

[0178] Such a main device storage unit 240 may include at least one type of storage medium from among a flash memory type, a hard disk type, a multimedia card micro type, a card type memory (for example, an SD or XD memory, or the like), a RAM, an SRAM, a ROM, an EEPROM, a PROM, a magnetic memory, a magnetic disk, and an optical disc. However, the main device storage unit 240 is not limited thereto and may also be implemented in other arbitrary forms known in the same technical field.

[0179] FIG. 4A is a control block diagram of a communication unit according to one embodiment, and FIG. 4B is a control block diagram of a communication unit according to another embodiment.

[0180] Referring to FIG. 4A, a communication unit may include a remote device communication unit 120 included in a remote device 100 and a main device communication unit 220 included in a main device 200.

[0181] The remote device communication unit 120, the main device communication unit 220, and a network may be connected to each other and transmit and receive data to and from each other. For example, the main device communication unit 220 may transmit a map image generated by the main device controller 230, the current position of the main device 200, and a state value of the main device 200 to the remote device 100, and the remote device communication unit 120 may transmit a user command to the main device 200. The remote device communication unit 120 may be connected to the network, receive an operation state of another home appliance 330, and transmit a control command related thereto. The main device communication unit 220 may be connected to another remote device 320 and receive the control command therefrom.
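The exchange described above can be sketched as two message types passed between the communication units. This is a minimal illustration only: the class names, field names, and the JSON encoding are assumptions made for the example and are not taken from the embodiment, which does not specify a wire format.

```python
import json
from dataclasses import dataclass, asdict
from typing import Optional, Tuple


@dataclass
class MainDeviceReport:
    """Hypothetical payload sent from the main device to the remote device."""
    map_image_id: str                # reference to a generated map image
    position: Tuple[float, float]    # current position of the main device
    state_value: str                 # e.g. "moving" or "cleaning"


@dataclass
class UserCommand:
    """Hypothetical payload sent from the remote device to the main device."""
    name: str                                # e.g. "start_cleaning"
    designated_region: Optional[str] = None  # region label, if any


def encode(message) -> bytes:
    # Tag the payload with its type so the receiver can rebuild it.
    body = {"type": type(message).__name__, "fields": asdict(message)}
    return json.dumps(body).encode("utf-8")


def decode(raw: bytes):
    body = json.loads(raw.decode("utf-8"))
    fields = body["fields"]
    if body["type"] == "MainDeviceReport":
        # JSON has no tuple type, so restore the position as a tuple.
        fields["position"] = tuple(fields["position"])
        return MainDeviceReport(**fields)
    if body["type"] == "UserCommand":
        return UserCommand(**fields)
    raise ValueError(f"unknown message type: {body['type']}")
```

In this sketch, either side would call `encode` before handing the bytes to whichever short range, wired, or mobile communication module is in use, and `decode` on receipt.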

[0182] Referring to FIG. 4B, the main device communication unit 220 may be connected to the network and download plan view data from a server 310.

[0183] The remote device communication unit 120 may include a remote device short range communication module 121, which is a short range communication module, a remote device wired communication module 122, which is a wired communication module, and a remote device mobile communication module 123, which is a mobile communication module. The main device communication unit 220 may include a main device short range communication module 221, which is a short range communication module, a main device wired communication module 222, which is a wired communication module, and a main device mobile communication module 223, which is a mobile communication module.

[0184] Here, the short range communication module may be a module for short range communication within a predetermined distance. A short range communication technique may include a wireless local area network (LAN), a wireless fidelity (Wi-Fi), Bluetooth, ZigBee, Wi-Fi Direct (WFD), ultra wideband (UWB), infrared data association (IrDA), Bluetooth low energy (BLE), near field communication (NFC), and the like, but is not limited thereto.

[0185] The wired communication module refers to a module for communication using an electrical signal or an optical signal. A wired communication technique may include a pair cable, a coaxial cable, an optical fiber cable, an Ethernet cable, and the like, but is not limited thereto.

[0186] The mobile communication module may transmit and receive a wireless signal to and from at least one of a base station, an external terminal, and the server 310 in a mobile communication network. The wireless signal may include a voice call signal, a video call signal, or various forms of data in accordance with transmission and reception of text/multimedia messages.

[0187] Hereinafter, a menu selection screen displayed on a remote device according to one embodiment will be described with reference to FIGS. 5 and 6.

[0188] FIG. 5 is an exemplary view of a home screen of a remote device UI.

[0189] The remote device UI 110 including the remote device input unit 111 and the remote device display unit 112 may be provided at a front surface of the remote device 100. The remote device input unit 111 may include a plurality of buttons. In this case, the buttons may be hardware buttons or software buttons. The remote device display unit 112 may be configured as a TSP and sense a user's input.

[0190] An application for managing the main device 200 may be installed in the remote device 100. In this case, the application for managing the main device 200 will be simply referred to as a "cleaning robot application."

[0191] Although a case in which the cleaning robot application is installed in the remote device 100 is employed in the embodiment below, embodiments are not necessarily limited thereto, and the cleaning robot application may also be directly installed in the main device 200.

[0192] The remote device display unit 112 may display the installed application on the home screen and provide convenience for the user to access the application. For example, the remote device display unit 112 may display the installed application with an icon 150 titled "cleaning robot."

[0193] The user may execute the cleaning robot application by touching the "cleaning robot" icon 150. When the cleaning robot application is executed, the remote device display unit 112 may switch the screen to the one illustrated in FIG. 6.

[0194] FIG. 6 is an exemplary view of a menu selection screen of the remote device UI.

[0195] A "home screen" icon 190a may be displayed at the top of a remote device display unit 112 for returning to the home screen. That is, when the "home screen" icon 190a is selected, the screen may be switched back to the one illustrated in FIG. 5. A "map management" icon 160 and a "cleaning management" icon 170 may be sequentially displayed in that order below the "home screen" icon 190a. Here, the "map management" icon 160 is an icon provided for generating or managing a map image of a region in which the main device 200 travels or cleans, i.e., a region to be cleaned. The "cleaning management" icon 170 is an icon provided for designating a specific divided region on the basis of a generated map image and making the main device 200 move or perform cleaning.

[0196] The user may select the "cleaning management" icon 170 and switch the screen of the remote device display unit 112 to a screen for making the main device 200 move or perform cleaning.

[0197] FIG. 7 is an exemplary view of a map image displayed by the remote device UI of the cleaning robot according to one embodiment, and FIG. 8 is an exemplary view of a map image displayed by the remote device UI of the cleaning robot according to another embodiment.

[0198] In accordance with a user's input on a "map generation" icon, a main device controller 230 may perform cleaning or move at least one time in a space in which a main device 200 is currently present, generate a map including obstacle information, and analyze a structure of the map on the basis of the generated map. The main device controller 230 may divide the map into a plurality of regions on the basis of the analyzed map structure, and generate a map image including the plurality of divided regions.

[0199] The map image generated by the main device controller 230 according to one embodiment may be transmitted to a remote device 100 via a main device communication unit 220 and a remote device communication unit 120, and the transmitted map image may be displayed on a remote device UI 110.

[0200] Specifically, in a case in which the main device controller 230 combines four divided regions (first to fourth regions Z1 to Z4) and generates a map image depicting one living room and three rooms, the map image may be generated so that a fourth region Z4, which is a living room, is disposed at the center, a first region Z1, which is a room, is disposed to the left of the fourth region Z4, and a second region Z2 and a third region Z3 are disposed to the right of the fourth region Z4 as shown in FIG. 7. A map image 161 in which figures corresponding to sizes of the divided regions are placed as the first region Z1 to the third region Z3 may be generated, and the generated map image 161 may be displayed on the remote device UI 110. Here, the figures include free figures formed as closed loops.

[0201] The main device controller 230 according to another embodiment may search for a plan view 162 corresponding to a map image as shown in FIG. 8 from the main device storage unit 240 and allow the found plan view 162 to be displayed on the remote device UI 110.

[0202] Cleaning operations and screens of the cleaning robot 1 disclosed in FIGS. 9 to 19 may be performed and displayed in a case in which the user selects the "cleaning management" icon 170 illustrated in FIG. 6, or may be automatically performed and displayed after a map image is generated.

[0203] Hereinafter, for convenience of description, a process performed in a case in which a "manage cleaning" command is input to the cleaning robot will be described using the map image 161 according to one embodiment illustrated in FIG. 7 as an example. FIGS. 9 to 16 are conceptual diagrams for describing processes in which the user commands cleaning operations of the cleaning robot on the basis of a map image displayed by the remote device UI of the cleaning robot and screens output by the remote device UI in accordance with user commands or states of the main device according to one embodiment.

[0204] Referring to FIG. 9, a "home screen" icon 190a and a "previous screen" icon 190b for returning to the previous screen may be displayed at the top of the remote device UI 110 according to one embodiment. That is, when the "previous screen" icon 190b is selected, the screen may be switched back to the previous screen.

[0205] The remote device UI 110 may display a map image 171a showing that a main device 200 is currently at the center of the bottom of a fourth region Z4, which is a living room.

[0206] The current position of the main device 200 may be determined by a main device controller 230 on the basis of values sensed by a main device sensor unit 260, and the main device controller 230 may match the current position of the main device 200 to the map image 171a stored in a main device storage unit 240 to control the UI 110 to display the position of the main device 200 on the map image 171a.

[0207] As illustrated in FIG. 10, a user U may specify and designate a second region 410, in which the user U wishes to perform cleaning or to which the user U wishes to move a main device 200, from a map image 171b-1 using his or her finger. In a case in which the user U designates the second region 410, a remote device UI 110 may change an outline display attribute of the second region 410, for example by displaying the outline of the second region 410 in a different color or in bold (171b-1), so that the second region 410, which is a designated region 410, is differentiated from the other divided regions.
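One way to resolve a touch on the map image to a divided region is a point-in-polygon test against each region's closed outline. The following is a sketch under stated assumptions: the embodiment does not say how the touched region is determined, the ray-casting test is a generic technique chosen for illustration, and the region labels and coordinates are hypothetical.

```python
from typing import Dict, List, Optional, Tuple

Point = Tuple[float, float]


def contains(polygon: List[Point], p: Point) -> bool:
    """Ray-casting test: count edge crossings of a ray going right from p."""
    x, y = p
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through p can cross.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def designate(regions: Dict[str, List[Point]], touch: Point) -> Optional[str]:
    """Return the label of the first region containing the touch, if any."""
    for label, polygon in regions.items():
        if contains(polygon, touch):
            return label
    return None


# Hypothetical layout: a room on the left, a living room in the center,
# and two rooms stacked on the right.
REGIONS = {
    "Z1": [(0, 0), (4, 0), (4, 6), (0, 6)],
    "Z4": [(4, 0), (10, 0), (10, 6), (4, 6)],
    "Z2": [(10, 0), (14, 0), (14, 3), (10, 3)],
    "Z3": [(10, 3), (14, 3), (14, 6), (10, 6)],
}
```

With this layout, `designate(REGIONS, (12, 1))` returns `"Z2"`, and a touch outside every outline returns `None`, in which case the UI would leave all outlines unchanged.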

[0208] In a case in which at least one divided region is designated by the user U, the remote device UI 110 may display a "start cleaning" icon i1 at the bottom of the screen.

[0209] By selecting the "start cleaning" icon i1 at the bottom of the screen, the user U may input the start cleaning command so that the main device 200 moves to the designated region 410 along a generated movement path and performs cleaning. In addition, the user U may input the start cleaning command using various other methods such as a voice command. Various known techniques may be employed as a method of inputting the start cleaning command. The main device 200 may also automatically start cleaning along the movement path when a predetermined amount of time elapses after the designate divided region command is input.
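The automatic start after a predetermined amount of time could be sketched as a small timer object. This is an illustrative assumption only: the class name, the method names, and the use of caller-supplied timestamps in seconds are not part of the embodiment.

```python
from typing import Optional


class AutoStartSketch:
    """Starts cleaning on an explicit command or after a delay (hypothetical)."""

    def __init__(self, delay_s: float):
        self.delay_s = delay_s                      # predetermined amount of time
        self.designated_at: Optional[float] = None  # time of the designation
        self.started = False

    def on_region_designated(self, now: float) -> None:
        # Each new designation restarts the countdown.
        self.designated_at = now

    def on_start_command(self) -> None:
        # Explicit start, e.g. via the "start cleaning" icon or a voice command.
        self.started = True

    def tick(self, now: float) -> bool:
        """Return True once cleaning should be running."""
        if (not self.started
                and self.designated_at is not None
                and now - self.designated_at >= self.delay_s):
            self.started = True
        return self.started
```

Passing the current time in as an argument, rather than reading a clock inside the class, keeps the sketch easy to exercise without waiting for real time to pass.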

[0210] In a case in which the start cleaning command is input, the remote device communication unit 120 transmits information on the input start cleaning command and the designated region 410 designated by the user U to the main device communication unit 220. Then, a main device controller 230 moves the main device 200 to the designated region 410 and, in a case in which the main device 200 is moved to the designated region 410, controls the main device 200 to perform cleaning. In this case, a main device communication unit 220 may transmit a current state value of the main device 200 (for example, whether the main device 200 is moving or performing cleaning, has completed cleaning, or whether an error occurred) and cleaning history data to the remote device communication unit 120.
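The sequence in this paragraph (move to the designated region, clean on arrival, and report each state value) can be sketched as a small state machine. The state names follow the examples given above; the `IDLE` state, the class name, and the event method names are assumptions added for the illustration.

```python
from enum import Enum
from typing import Callable, Optional


class State(Enum):
    IDLE = "idle"            # assumed initial state, not named in the text
    MOVING = "moving"
    CLEANING = "cleaning"
    COMPLETED = "completed"
    ERROR = "error"


class MainDeviceSketch:
    """Hypothetical controller that reports every state change."""

    def __init__(self, report: Callable[[State], None]):
        self.state = State.IDLE
        self.region: Optional[str] = None
        self._report = report  # stands in for the main device communication unit

    def _set(self, state: State) -> None:
        self.state = state
        self._report(state)

    def on_start_cleaning(self, region: str) -> None:
        # Start cleaning command received together with the designated region.
        self.region = region
        self._set(State.MOVING)

    def on_arrived(self) -> None:
        # Cleaning begins only once the device has reached the region.
        if self.state is State.MOVING:
            self._set(State.CLEANING)

    def on_cleaning_done(self) -> None:
        if self.state is State.CLEANING:
            self._set(State.COMPLETED)

    def on_error(self) -> None:
        self._set(State.ERROR)
```

Here the `report` callback is where a current state value would be handed over for transmission to the remote device communication unit 120.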

[0211] In a case in which the main device 200 moves to the designated region 410, the main device 200 may move along a movement path generated by the main device controller 230 or the remote device controller 130. The generation of the movement path will be described below.

[0212] According to one embodiment, in a case in which the remote device communication unit 120 receives a "moving" state value as the current state value of the main device 200 from the main device communication unit 220, as illustrated in FIG. 11, the remote device UI 110 may display a translucent layer 191a over a map image 171c-1 and may display an animation 191b from which a user may intuitively recognize that the main device 200 is moving. In addition, the remote device UI 110 may display a message 192, which indicates that the main device 200 is moving.

[0213] In a case in which the start cleaning command is input, the "start cleaning" icon i1 at the bottom of the screen may be changed to an "end cleaning" icon i2.
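How the screen contents follow from the received state value and the entered commands can be sketched as a pure function. The `Screen` structure, the function name, and the message wording are hypothetical; only the displayed elements (translucent layer 191a, animation 191b, message 192, and the two icons) come from the description above.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Screen:
    """Hypothetical description of what the remote device UI shows."""
    overlays: List[str] = field(default_factory=list)
    message: str = ""
    bottom_icon: str = ""


def render(state_value: str, region_designated: bool,
           cleaning_started: bool) -> Screen:
    screen = Screen()
    # The bottom icon switches once the start cleaning command is input.
    if cleaning_started:
        screen.bottom_icon = "end cleaning"
    elif region_designated:
        screen.bottom_icon = "start cleaning"
    # A "moving" state value adds the layer, the animation, and the message.
    if state_value == "moving":
        screen.overlays = ["translucent layer 191a", "animation 191b"]
        screen.message = "The main device is moving."  # wording assumed
    return screen
```

Keeping the rendering decision in one function of the state value makes it straightforward to add further state values later without touching the communication code.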

[0214] By selecting the "end cleaning" icon i2 at the bottom of the screen, the user U may input the end cleaning command so that the main device 200 stops cleaning that was being performed. In addition, the user U may input the end cleaning command using various other methods such as a voice command. Various known techniques may be employed as a method of inputting the end cleaning command.