Automatic Display Of Previously-acquired Endoluminal Images

TOLKOWSKY; David ; et al.

U.S. patent application number 16/929327 was filed with the patent office on 2020-07-15 and published on 2020-11-05 for automatic display of previously-acquired endoluminal images. The applicant listed for this patent is SYNC-RX, LTD. Invention is credited to Ran COHEN, Eldad KLAIMAN, David TOLKOWSKY.

| Application Number | 20200345321 16/929327 |

| Family ID | 1000004957820 |

| Filed Date | 2020-07-15 |

| United States Patent Application | 20200345321 |

| Kind Code | A1 |

| TOLKOWSKY; David ; et al. | November 5, 2020 |

AUTOMATIC DISPLAY OF PREVIOUSLY-ACQUIRED ENDOLUMINAL IMAGES

Abstract

Apparatus and methods are provided for use with an endoluminal data-acquisition device that acquires a set of endoluminal data-points of a lumen of a subject's body at respective locations inside the lumen, a second endoluminal device, and a display configured to display images. At least one processor includes location-association functionality that associates a given data point acquired by the endoluminal data-acquisition device with a given location within the lumen. Location-determination functionality determines, by means of image processing, in an extraluminal image of the second endoluminal device, a current location of at least a portion of the second endoluminal device. Display-driving functionality drives the display to display an indication of the endoluminal data point associated with the location, in response to determining that the portion of the second device is currently at the location. Other applications are also described.

| Inventors: | TOLKOWSKY; David; (TEL AVIV, IL) ; COHEN; Ran; (PETAH TIKVA, IL) ; KLAIMAN; Eldad; (HAIFA, IL) |

| Applicant: | SYNC-RX, LTD. |

| Family ID: | 1000004957820 |

| Appl. No.: | 16/929327 |

| Filed: | July 15, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 13228185 | Sep 8, 2011 | 10716528 |
| 16929327 | | |
| 12666879 | Mar 29, 2012 | 8781193 |
| 13228185 | | |
| PCT/IL2011/000612 | Jul 28, 2011 | |
| 12666879 | | |
| 12650605 | Dec 31, 2009 | 9855384 |
| PCT/IL2011/000612 | | |
| 12666879 | Mar 29, 2012 | 8781193 |
| PCT/IL2009/001089 | Nov 18, 2009 | |
| 12650605 | | |
| 12075244 | Mar 10, 2008 | |
| 12666879 | | |
| 61344464 | Jul 29, 2010 | |
| 61344875 | Nov 1, 2010 | |
| 61457339 | Mar 3, 2011 | |
| 61457455 | Apr 1, 2011 | |
| 61457780 | Jun 2, 2011 | |
| 61457951 | Jul 15, 2011 | |
| 61193329 | Nov 18, 2008 | |
| 61193915 | Jan 8, 2009 | |
| 61202181 | Feb 4, 2009 | |
| 61202451 | Mar 2, 2009 | |
| 61213216 | May 18, 2009 | |
| 61213534 | Jun 17, 2009 | |
| 61272210 | Sep 1, 2009 | |
| 61272356 | Sep 16, 2009 | |
| 60906091 | Mar 8, 2007 | |
| 60924609 | May 22, 2007 | |
| 60929165 | Jun 15, 2007 | |
| 60935914 | Sep 6, 2007 | |
| 60996746 | Dec 4, 2007 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | A61B 6/12 20130101; A61B 2090/062 20160201; G06T 19/20 20130101; A61F 2/82 20130101; A61B 2017/22044 20130101; G06T 7/00 20130101; G09G 5/363 20130101; A61B 8/0891 20130101; A61B 6/461 20130101; A61B 2017/00252 20130101; A61B 5/0066 20130101; A61B 2017/00694 20130101; A61B 2017/00703 20130101; A61F 2250/0096 20130101; A61B 6/5217 20130101; G06T 1/00 20130101; A61B 6/541 20130101; A61B 2017/22094 20130101; G06T 2219/2024 20130101; A61B 6/503 20130101; A61B 6/504 20130101; A61B 6/463 20130101; A61B 1/0005 20130101; G06F 3/14 20130101; A61F 2/958 20130101 |

| International Class: | A61B 6/00 20060101 A61B006/00; G06F 3/14 20060101 G06F003/14; G06T 1/00 20060101 G06T001/00; G09G 5/36 20060101 G09G005/36; G06T 7/00 20060101 G06T007/00; G06T 19/20 20060101 G06T019/20; A61B 1/00 20060101 A61B001/00; A61B 6/12 20060101 A61B006/12 |

Claims

1. A co-registration system, comprising: a processor configured for communication with an extraluminal imaging device, a first endoluminal data-acquisition device, a different second endoluminal data-acquisition device, and a display, wherein the processor is configured to: receive an extraluminal image of a lumen of a body of a subject; receive, from the first endoluminal data-acquisition device operating according to a first modality and positioned at a first location within the lumen, first endoluminal data associated with the lumen at the first location, wherein the first endoluminal data comprises data of the first modality; associate the first endoluminal data with a first corresponding location of the lumen in the extraluminal image; receive, from the second endoluminal data-acquisition device operating according to a different second modality and positioned at a second location within the lumen, second endoluminal data associated with the lumen at the second location, wherein the second endoluminal data comprises data of the second modality; associate the second endoluminal data with a second corresponding location of the lumen in the extraluminal image; generate, based on associating the first endoluminal data with the first corresponding location and associating the second endoluminal data with the second corresponding location, a screen display comprising: the extraluminal image; a first indication of the first endoluminal data at the first corresponding location in the extraluminal image; and a second indication of the second endoluminal data at the second corresponding location in the extraluminal image; and output the screen display to the display.

2. The co-registration system of claim 1, wherein the first location is different from the second location.

3. The co-registration system of claim 1, wherein the first endoluminal data comprises an endoluminal image of the lumen at the first location.

4. The co-registration system of claim 3, wherein the endoluminal image comprises an intravascular ultrasound image or an optical coherence tomography image.

5. The co-registration system of claim 3, wherein the second endoluminal data comprises at least one of pressure, flow, or temperature within the lumen at the second location.

6. The co-registration system of claim 3, wherein the screen display further comprises: an endoluminal image stack comprising the endoluminal image; and a marking associated with the first location in the endoluminal image stack.

7. The co-registration system of claim 1, wherein the first indication and the second indication are overlaid on the extraluminal image in the screen display.

8. The co-registration system of claim 1, wherein the screen display further comprises at least one of the first endoluminal data or the second endoluminal data overlaid on the extraluminal image.

9. The co-registration system of claim 1, wherein the processor is configured to merge the first endoluminal data and the second endoluminal data.

10. The co-registration system of claim 1, further comprising the display.

11. The co-registration system of claim 1, further comprising the extraluminal imaging device.

12. The co-registration system of claim 1, further comprising the first endoluminal data-acquisition device.

13. The co-registration system of claim 1, further comprising the second endoluminal data-acquisition device.

Description

CROSS REFERENCES TO RELATED APPLICATIONS

[0001] The present application is a continuation of U.S. Non-Provisional patent application Ser. No. 13/228,185, filed Sep. 8, 2011, now U.S. Pat. No. 10,716,528, which is a continuation of U.S. patent application Ser. No. 12/666,879, filed Mar. 29, 2012, now U.S. Pat. No. 8,781,193 and a continuation of International Application No. PCT/IL2011/000612, entitled "Co-use of endoluminal data and extraluminal imaging," filed 28 Jul. 2011, which:

[0002] (a) claims the benefit of:

[0003] U.S. Provisional Patent Application 61/344,464, entitled "Co-use of endoluminal data and extraluminal imaging," filed 29 Jul. 2010;

[0004] U.S. Provisional Patent Application 61/344,875, entitled "Co-use of endoluminal data and extraluminal imaging," filed 1 Nov. 2010;

[0005] U.S. Provisional Patent Application 61/457,339, entitled "Co-use of endoluminal data and extraluminal imaging," filed 3 Mar. 2011;

[0006] U.S. Provisional Patent Application 61/457,455, entitled "Co-use of endoluminal data and extraluminal imaging," filed 1 Apr. 2011;

[0007] U.S. Provisional Patent Application 61/457,780, entitled "Co-use of endoluminal data and extraluminal imaging," filed 2 Jun. 2011; and

[0008] U.S. Provisional Patent Application 61/457,951, entitled "Co-use of endoluminal data and extraluminal imaging," filed 15 Jul. 2011; and

[0009] (b) is a continuation-in-part of U.S. patent application Ser. No. 12/650,605 to Cohen (published as US 2010/0172556), filed Dec. 31, 2009, which:

[0010] (i) is a continuation of U.S. patent application Ser. No. 12/666,879 to Steinberg, filed Mar. 29, 2012, which is the US national phase of PCT Application No. PCT/IL2009/001089 to Cohen (published as WO 10/058398), filed Nov. 18, 2009, which claims priority from the following patent applications:

[0011] U.S. Provisional Patent Application 61/193,329, entitled "Apparatuses and methods for the automatic generation of a road map from angiographic images of a cyclically-moving organ," to Steinberg, filed Nov. 18, 2008;

[0012] U.S. Provisional Patent Application 61/193,915, entitled "Image processing and tool actuation for medical procedures," to Steinberg, filed Jan. 8, 2009;

[0013] U.S. Provisional Patent Application 61/202,181, entitled "Image processing and tool actuation for medical procedures," to Steinberg, filed Feb. 4, 2009;

[0014] U.S. Provisional Patent Application 61/202,451, entitled "Image processing and tool actuation for medical procedures," to Steinberg, filed Mar. 2, 2009;

[0015] U.S. Provisional Patent Application 61/213,216, entitled "Image processing and tool actuation for medical procedures," to Steinberg, filed May 18, 2009;

[0016] U.S. Provisional Patent Application 61/213,534, entitled "Image Processing and Tool Actuation for Medical Procedures," to Steinberg, filed Jun. 17, 2009;

[0017] U.S. Provisional Patent Application 61/272,210, entitled "Image processing and tool actuation for medical procedures," to Steinberg, filed Sep. 1, 2009; and

[0018] U.S. Provisional Patent Application 61/272,356, entitled "Image Processing and Tool Actuation for Medical Procedures," to Steinberg, filed Sep. 16, 2009; and

[0019] (ii) is a continuation-in-part of U.S. patent application Ser. No. 12/075,244 to Tolkowsky (published as US 2008/0221442), filed Mar. 10, 2008, entitled "Imaging for use with moving organs," which claims the benefit of U.S. Provisional Patent Application Nos.:

[0020] 60/906,091 filed on Mar. 8, 2007,

[0021] 60/924,609 filed on May 22, 2007,

[0022] 60/929,165 filed on Jun. 15, 2007,

[0023] 60/935,914 filed on Sep. 6, 2007, and

[0024] 60/996,746 filed on Dec. 4, 2007,

[0025] all entitled "Apparatuses and methods for performing medical procedures on cyclically-moving body organs."

[0026] The present application is related to the following patent applications:

[0027] U.S. patent application Ser. No. 12/075,214 to Iddan (published as US 2008/0221439), filed Mar. 10, 2008, entitled "Tools for use with moving organs."

[0028] U.S. patent application Ser. No. 12/075,252 to Iddan (published as US 2008/0221440), filed Mar. 10, 2008, entitled "Imaging and tools for use with moving organs."

[0029] U.S. patent application Ser. No. 12/781,260 to Blank (published as US 2010/0228076), filed May 17, 2010, entitled "Controlled actuation and deployment of a medical device."

[0030] U.S. patent application Ser. No. 12/487,315 to Iddan (published as US 2009/0306547), filed Jun. 18, 2009, entitled "Stepwise advancement of a medical tool," which claims the benefit of U.S. Provisional Patent Application No. 61/129,331 to Iddan, filed on Jun. 19, 2008, entitled "Stepwise advancement of a medical tool."

[0031] All of the above-mentioned applications are incorporated herein by reference.

FIELD OF EMBODIMENTS OF THE INVENTION

[0032] Some applications of the present invention generally relate to medical imaging. Specifically, some applications of the present invention relate to the co-use of endoluminal data and extraluminal imaging.

BACKGROUND

[0033] Vascular catheterizations, such as coronary catheterizations, are frequently-performed medical interventions. Such interventions are typically performed in order to diagnose the blood vessels for potential disease, and/or to treat diseased blood vessels. Typically, in order to facilitate diagnosis of blood vessels, the catheterization is performed under extraluminal imaging. For some procedures, an endoluminal data-acquisition device is used to perform endoluminal imaging and/or measurements. If appropriate based on the diagnosis, a treatment is applied to the blood vessel. For some procedures, treatment of the blood vessel includes the application of a treatment to the blood vessel by a therapeutic device that is placed endoluminally. For example, a therapeutic device (e.g., a balloon) is placed in the blood vessel temporarily and retrieved subsequent to the treatment having been applied. Alternatively, a therapeutic device (e.g., a stent) may remain implanted inside the blood vessel in order to treat the blood vessel.

SUMMARY OF EMBODIMENTS

[0034] Some applications of the present invention are applied to medical procedures performed, in whole or in part, on or within luminal structures. For some applications, apparatus and methods are provided for facilitating the co-use of extraluminal imaging and endoluminal data (i.e., data that are acquired using an endoluminal data-acquisition device), in performing medical procedures. Endoluminal data may include imaging data (e.g., imaging data acquired using an endoluminal imaging probe), data derived from measurements (e.g., measurements performed using an endoluminal sensor or measuring device), other data, and any combination thereof.

[0035] In accordance with some applications of the present invention, during insertion and deployment of an endoluminal device, e.g., an endoluminal therapeutic device, into a lumen, real-time extraluminal images of the device inside the lumen are displayed together with endoluminal data that were acquired previously and that correspond to the current location of the endoluminal therapeutic device. The cumulative effect of showing the extraluminal images and the endoluminal data is as if the endoluminal therapeutic tool is being inserted and deployed under both extraluminal imaging and endoluminal data acquisition. For some applications, the aforementioned techniques are applied since it is difficult or impossible to acquire the endoluminal data during insertion and deployment of the therapeutic device, because the lumen is too narrow to accommodate both the endoluminal therapeutic device and the endoluminal data-acquisition device. Alternatively, although it may be possible for the lumen to accommodate both the endoluminal therapeutic device and the endoluminal data-acquisition device, the aforementioned techniques may be used to prevent the endoluminal data-acquisition device from interfering with the endoluminal therapeutic device, during insertion and/or deployment of the therapeutic device.

[0036] There is therefore provided, in accordance with some applications of the present invention, apparatus for use with an endoluminal data-acquisition device that is configured to acquire a set of endoluminal data-points with respect to a lumen of a body of a subject at respective locations inside the lumen, a second endoluminal device, and a display configured to display images of the lumen, the apparatus including:

[0037] at least one processor, including:

[0038] location-association functionality configured to associate a given endoluminal data point acquired by the endoluminal data-acquisition device with a given location within the lumen;

[0039] location-determination functionality configured, in an extraluminal image of the second endoluminal device, to determine by means of image processing, a current location of at least a portion of the second endoluminal device inside the lumen;

[0040] display-driving functionality configured, in response to determining that the portion of the second endoluminal device is currently at the given location, to drive the display to display an indication of the endoluminal data point associated with the given location.
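As an illustration only, the three functionalities recited above can be sketched as a lookup from a location to a previously-acquired data point. This sketch is not taken from the application: the one-dimensional position `location_mm`, the `tolerance_mm` parameter, and the names `DataPoint`, `associate`, and `indication_for` are hypothetical, and the image processing that yields the current device location is assumed to have already run.

```python
from bisect import bisect_left
from dataclasses import dataclass


@dataclass
class DataPoint:
    location_mm: float  # hypothetical: distance along the lumen
    label: str          # stand-in for an endoluminal image or measurement


def associate(points):
    """Location-association: index the data points by location."""
    return sorted(points, key=lambda p: p.location_mm)


def indication_for(indexed, current_mm, tolerance_mm=0.5):
    """Return the data point nearest the device's current location.

    Returns None when no data point lies within tolerance_mm.
    """
    if not indexed:
        return None
    locations = [p.location_mm for p in indexed]
    i = bisect_left(locations, current_mm)
    # Only the neighbours on either side of the insertion point can be nearest.
    candidates = indexed[max(i - 1, 0):i + 1]
    best = min(candidates, key=lambda p: abs(p.location_mm - current_mm))
    if abs(best.location_mm - current_mm) <= tolerance_mm:
        return best
    return None
```

In such a sketch, the display-driving step would call `indication_for` each time a new extraluminal frame yields an updated device location, and would show the returned data point, if any.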

[0041] For some applications, the second endoluminal device includes a second endoluminal data-acquisition device configured to acquire a second set of endoluminal data-points with respect to the lumen at respective locations inside the lumen, and the display-driving functionality is configured, in response to determining that the portion of the second endoluminal data-acquisition device is currently at the given location, to drive the display to display:

[0042] an endoluminal image acquired by the first endoluminal data-acquisition device that corresponds to the given location, and

[0043] an endoluminal image acquired by the second endoluminal data-acquisition device that corresponds to the given location.

[0044] For some applications, the second endoluminal device includes a second endoluminal data-acquisition device configured to acquire a second set of endoluminal data-points with respect to the lumen at respective locations inside the lumen, and the display-driving functionality is configured, in response to determining that the portion of the second endoluminal data-acquisition device is currently at the given location, to drive the display to display:

[0045] an endoluminal image acquired by the second endoluminal data-acquisition device that corresponds to the given location, and

[0046] an indication of the given location with respect to an endoluminal image stack of the lumen generated using the endoluminal data points acquired by the first endoluminal data-acquisition device.

[0047] For some applications, the endoluminal data-acquisition device includes an endoluminal imaging probe configured to acquire endoluminal images of the lumen at respective locations inside the lumen, and the location-association functionality is configured to associate a given endoluminal image acquired by the endoluminal imaging probe with a given location within the lumen.

[0048] For some applications, the display-driving functionality is configured, in response to determining that the portion of the second endoluminal device is currently at the given location, to drive the display to display an endoluminal image that corresponds to the given location.

[0049] For some applications, the display-driving functionality is configured, in response to determining that the portion of the second endoluminal device is currently at the given location, to drive the display to display an indication of the given location with respect to an endoluminal image stack of the lumen.

[0050] For some applications, the display-driving functionality is configured, in response to determining that the portion of the second endoluminal device is currently at the given location, to drive the display to display an indication of the given location with respect to the extraluminal image of the lumen.

[0051] For some applications, the second endoluminal device includes a second endoluminal data-acquisition device configured to acquire a second set of endoluminal data-points with respect to the lumen at respective locations inside the lumen, and the display-driving functionality is further configured, in response to determining that the portion of the second endoluminal data-acquisition device is currently at the given location, to drive the display to display:

[0052] an endoluminal image acquired by the first endoluminal data-acquisition device that corresponds to the given location, and

[0053] an endoluminal image acquired by the second endoluminal data-acquisition device that corresponds to the given location.

[0054] For some applications, the second endoluminal device includes a second endoluminal data-acquisition device configured to acquire a second set of endoluminal data-points with respect to the lumen at respective locations inside the lumen, and the display-driving functionality is further configured, in response to determining that the portion of the second endoluminal data-acquisition device is currently at the given location, to drive the display to display:

[0055] an endoluminal image acquired by the second endoluminal data-acquisition device that corresponds to the given location, and

[0056] an indication of the given location with respect to an endoluminal image stack of the lumen generated using the endoluminal data points acquired by the first endoluminal data-acquisition device.

[0057] For some applications,

[0058] the endoluminal data-acquisition device includes a portion that is visible in extraluminal images of the data-acquisition device inside the lumen, and

[0059] the location-association functionality is configured to associate the endoluminal data point with the given location inside the lumen by determining, by means of image-processing, in an extraluminal image of the data-acquisition device inside the lumen, a location of at least the visible portion of the data-acquisition device inside the lumen, at the acquisition of the endoluminal data point.

[0060] For some applications,

[0061] the endoluminal data-acquisition device includes an image-acquiring portion, and

[0062] the location-association functionality is configured to associate the endoluminal data point with the given location inside the lumen by accounting for an offset between the portion of the endoluminal data-acquisition device that is visible in the extraluminal image, and the image-acquiring portion of the endoluminal data-acquisition device.
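A minimal sketch of the offset correction described above, under stated assumptions: the lumen is represented by a hypothetical 2D centerline polyline, the visible portion's position is given as an arc length along that polyline, and a positive offset points toward the end of the polyline. None of these representations, nor the function names, come from the application.

```python
import math


def cumulative_lengths(centerline):
    """Arc length from the first vertex to each vertex of the polyline."""
    lengths = [0.0]
    for (x0, y0), (x1, y1) in zip(centerline, centerline[1:]):
        lengths.append(lengths[-1] + math.hypot(x1 - x0, y1 - y0))
    return lengths


def point_at(centerline, s):
    """Point at arc length s along the polyline, clamped to its ends."""
    lengths = cumulative_lengths(centerline)
    s = min(max(s, 0.0), lengths[-1])
    for k in range(1, len(lengths)):
        if s <= lengths[k]:
            seg = lengths[k] - lengths[k - 1]
            t = 0.0 if seg == 0 else (s - lengths[k - 1]) / seg
            (x0, y0), (x1, y1) = centerline[k - 1], centerline[k]
            return (x0 + t * (x1 - x0), y0 + t * (y1 - y0))
    return centerline[-1]


def imaging_location(centerline, marker_s, offset):
    """Shift the visible marker's position by the known marker-to-sensor offset."""
    return point_at(centerline, marker_s + offset)
```

The data point would then be associated with the shifted location rather than with the location of the visible portion itself.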

[0063] For some applications, the second endoluminal device includes an endoluminal therapeutic device configured to apply a therapy to the lumen, and the location-determination functionality is configured, in an extraluminal image of the endoluminal therapeutic device, to determine by means of image processing, a current location of at least a portion of the endoluminal therapeutic device inside the lumen.

[0064] For some applications, the endoluminal therapeutic device includes a guidewire configured to penetrate an occlusion of the lumen and the endoluminal data-acquisition device includes a forward-looking endoluminal imaging probe, and the location-association functionality is configured to associate the given endoluminal data point with the given location by associating an endoluminal image of a portion of the lumen that is distal to the given location with the given location.

[0065] There is further provided, in accordance with some applications of the present invention, a method, including:

[0066] acquiring a set of endoluminal data points of a lumen of a subject's body;

[0067] determining that one of the endoluminal data points of the set corresponds to a given location inside the lumen; and

[0068] subsequently,

[0069] while a second endoluminal device is inside the lumen:

[0070] acquiring an extraluminal image of the second endoluminal device inside the lumen;

[0071] by means of image processing, determining, based upon the extraluminal image, a current location of at least a portion of the second endoluminal device inside the lumen; and

[0072] in response to determining that the portion of the second endoluminal device is currently at the given location, displaying an indication of the endoluminal data point that corresponds to the given location.

[0073] For some applications, the second endoluminal device includes an endoluminal therapeutic device configured to apply a therapy to the lumen, and acquiring the extraluminal image of the second endoluminal device inside the lumen includes acquiring an extraluminal image of the endoluminal therapeutic device inside the lumen.

[0074] For some applications, the endoluminal therapeutic device includes a guidewire, and the method further includes penetrating an occlusion of the lumen with the guidewire.

[0075] For some applications, acquiring the at least one endoluminal data point includes, while a forward-looking endoluminal imaging probe is at the given location, acquiring an endoluminal image of a portion of the lumen that is distal to the given location.

[0076] There is additionally provided, in accordance with some applications of the present invention, a method for use with an endoluminal data-acquisition device configured to be moved through a lumen of a subject's body, the endoluminal data-acquisition device having a radiopaque marker coupled thereto, including:

[0077] while the endoluminal data-acquisition device is being moved through the lumen, acquiring a plurality of endoluminal data points of the lumen using the endoluminal data-acquisition device;

[0078] determining that a first endoluminal data point corresponds to a first location within the lumen, by:

[0079] acquiring a first angiographic image of the lumen, at a time associated with an acquisition of the first endoluminal data point by the endoluminal data-acquisition device, and

[0080] determining a location of the radiopaque marker within the first angiographic image of the lumen, by performing image processing on the first angiographic image, the location of the radiopaque marker within the first angiographic image of the lumen corresponding to the first endoluminal data point;

[0081] determining that a second endoluminal data point corresponds to a second given location within the lumen, by:

[0082] acquiring a second angiographic image of the lumen, at a time associated with an acquisition of the second endoluminal data point by the endoluminal data-acquisition device, and

[0083] determining a location of the radiopaque marker within the second angiographic image of the lumen by performing image processing on the second angiographic image, the location of the radiopaque marker within the second angiographic image of the lumen corresponding to the second endoluminal data point;

[0084] generating a combined angiographic image of the lumen that includes representations of the first and second marker locations thereon, by co-registering the first and second angiographic images; and

[0085] determining that at least one location on the combined angiographic image that is intermediate to the first and second locations of the radiopaque marker corresponds to an endoluminal data point acquired between the acquisitions of the first and second data points, by interpolating between the first and second locations of the radiopaque marker on the combined angiographic image; and

[0086] generating an output in response thereto.
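The steps above can be sketched as follows, under simplifying assumptions that are not from the application: co-registration of each angiographic image to the combined image is reduced to a known 2D translation (`shift1`, `shift2`), marker locations are pixel coordinates, and the interpolation runs along a straight line between the two marker locations rather than along a curved vessel path. All function names are hypothetical.

```python
def to_combined(point, shift):
    """Map a point from one angiogram into the combined image (translation only)."""
    return (point[0] + shift[0], point[1] + shift[1])


def lerp(p, q, t):
    """Point a fraction t of the way from p to q."""
    return (p[0] + t * (q[0] - p[0]), p[1] + t * (q[1] - p[1]))


def locate_intermediate(marker1, shift1, marker2, shift2, n_between):
    """Estimate combined-image locations for the data points acquired
    between the first and second data points."""
    a = to_combined(marker1, shift1)
    b = to_combined(marker2, shift2)
    return [lerp(a, b, k / (n_between + 1)) for k in range(1, n_between + 1)]
```

For example, `locate_intermediate((100, 50), (0, 0), (140, 48), (0, 2), 3)` places three intermediate data points evenly between the two co-registered marker locations.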

[0087] For some applications, acquiring the plurality of endoluminal data points of the lumen using the endoluminal data-acquisition device while the endoluminal data-acquisition device is being moved through the lumen includes acquiring the plurality of endoluminal data points of the lumen using the endoluminal data-acquisition device while the endoluminal data-acquisition device is being pulled back through the lumen.

[0088] There is further provided, in accordance with some applications of the present invention, apparatus for use with:

[0089] an endoluminal data-acquisition device configured to acquire a plurality of endoluminal data points of a lumen of a body of a subject at respective locations inside the lumen, while the endoluminal data-acquisition device is moved through the lumen, the endoluminal data-acquisition device having a radiopaque marker coupled thereto,

[0090] an angiographic imaging device configured to (a) acquire a first angiographic image of the lumen, at a time associated with an acquisition of a first endoluminal data point by the endoluminal data-acquisition device, and (b) acquire a second angiographic image of the lumen, at a time associated with an acquisition of a second endoluminal data point by the endoluminal data-acquisition device, and

[0091] a display,

[0092] the apparatus including:

[0093] at least one processor, including:

[0094] location-determination functionality configured to:

[0095] determine that the first endoluminal data point corresponds to a first location within the lumen, by determining a location of the radiopaque marker within the first angiographic image of the lumen, by performing image processing on the first angiographic image, the location of the radiopaque marker within the first angiographic image of the lumen corresponding to the first endoluminal data point, and

[0096] determine that a second endoluminal data point corresponds to a second given location within the lumen by determining a location of the radiopaque marker within the second angiographic image of the lumen by performing image processing on the second angiographic image, the location of the radiopaque marker within the second angiographic image of the lumen corresponding to the second endoluminal data point;

[0097] image-co-registration functionality configured to generate a combined angiographic image of the lumen that includes representations of the first and second marker locations thereon, by co-registering the first and second angiographic images;

[0098] location-association functionality configured to determine that at least one location on the combined angiographic image that is intermediate to the first and second locations of the radiopaque marker corresponds to an endoluminal data point acquired between the acquisitions of the first and second data points, by interpolating between the first and second locations of the radiopaque marker on the combined angiographic image;

[0099] display-driving functionality configured to drive the display to display an output, in response to determining that the intermediate location corresponds to the endoluminal data point acquired between the acquisitions of the first and second data points.

[0100] For some applications, the location-determination functionality is configured to:

[0101] determine that the first endoluminal data point corresponds to the first location within the lumen by determining that the first endoluminal data point corresponds to a location in a vicinity of a first end of a luminal segment of interest, and

[0102] determine that the second endoluminal data point corresponds to the second location within the lumen by determining that the second endoluminal data point corresponds to a location in a vicinity of a second end of the luminal segment of interest.

[0103] For some applications,

[0104] the location-determination functionality is configured to:

[0105] determine that the first endoluminal data point corresponds to the first location within the lumen by determining that the first endoluminal data point corresponds to a location in a vicinity of a first end of a luminal segment of interest, and

[0106] determine that the second endoluminal data point corresponds to the second location within the lumen by determining that the second endoluminal data point corresponds to a location between the first end and a second end of the luminal segment of interest, and

[0107] the angiographic imaging device includes an angiographic imaging device that is further configured to acquire a third angiographic image of the lumen, at a time associated with an acquisition of a third endoluminal data point by the endoluminal data-acquisition device,

[0108] the location-determination functionality is further configured to determine that the third endoluminal data point corresponds to a location in a vicinity of the second end of the luminal segment of interest, by determining a location of the radiopaque marker within the third angiographic image of the lumen by performing image processing on the third angiographic image, the location of the radiopaque marker within the third angiographic image of the lumen corresponding to the third endoluminal data point;

[0109] the image-co-registration functionality is further configured to generate a representation of the third marker location on the combined angiographic image, by co-registering the first, second, and third angiographic images; and

[0110] the location-association functionality is further configured to determine that at least one location on the combined angiographic image that is intermediate to the second and third locations of the radiopaque marker corresponds to an endoluminal data point acquired between the acquisitions of the second and third data points, by interpolating between the second and third locations of the radiopaque marker on the combined angiographic image; and

[0111] the display-driving functionality is further configured to drive the display to display an output, in response to determining that the intermediate location corresponds to the endoluminal data point acquired between the acquisitions of the second and third data points.

[0112] For some applications, the location-association functionality is configured to interpolate between the first and second locations of the radiopaque marker on the combined angiographic image by assuming that, between acquiring respective successive pairs of endoluminal data points between the acquisitions of the first and second data points, the endoluminal data acquisition device traveled equal distances.

[0113] For some applications, the location-association functionality is configured to interpolate between the first and second locations of the radiopaque marker on the combined angiographic image by assuming that a rate of the movement of the endoluminal data acquisition device was linear between the acquisitions of the first and second data points.
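The equal-distance interpolation described above can be illustrated with a minimal sketch. It assumes that the lumen between the first and second marker locations is available as a polyline of (x, y) points on the combined angiographic image; the function names and the polyline representation are illustrative assumptions and are not taken from the application.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def _point_at(path: List[Point], seg_lengths: List[float], target: float) -> Point:
    """Return the point lying `target` units of arc length along the polyline."""
    walked = 0.0
    for (a, b), length in zip(zip(path, path[1:]), seg_lengths):
        if length > 0 and target <= walked + length:
            t = (target - walked) / length
            return (a[0] + t * (b[0] - a[0]), a[1] + t * (b[1] - a[1]))
        walked += length
    return path[-1]


def equal_distance_locations(path: List[Point], n_points: int) -> List[Point]:
    """Assign n_points data points to locations spaced equally along the path.

    path[0] is the first marker location and path[-1] the second (assumed).
    The device is assumed to travel equal distances between successive
    data points, as in the interpolation described in the text.
    """
    if len(path) < 2 or n_points < 2:
        raise ValueError("need at least two path points and two data points")
    seg_lengths = [math.dist(a, b) for a, b in zip(path, path[1:])]
    total = sum(seg_lengths)
    return [
        _point_at(path, seg_lengths, total * i / (n_points - 1))
        for i in range(n_points)
    ]
```

For example, five data points acquired along a straight 10-unit segment would be placed at 0, 2.5, 5, 7.5 and 10 units from the first marker location.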

[0114] There is additionally provided, in accordance with some applications of the present invention, a method for use with an endoluminal data-acquisition device configured to be moved through a lumen of a subject's body, the endoluminal data-acquisition device having a radiopaque marker coupled thereto, including:

[0115] while the endoluminal data-acquisition device is being moved through the lumen: [0116] acquiring a plurality of endoluminal data points of the lumen using the endoluminal data-acquisition device; [0117] continuously injecting contrast agent into the lumen; and [0118] acquiring a plurality of angiographic images of the data-acquisition device;

[0119] determining that endoluminal data points correspond to respective locations within the lumen, by determining locations of the radiopaque marker within the angiographic images of the lumen, by performing image processing on the angiographic images, the locations of the radiopaque marker within the angiographic images of the lumen corresponding to respective endoluminal data points; and

[0120] generating an output in response thereto.

[0121] For some applications, continuously injecting the contrast agent into the lumen includes continuously injecting the contrast agent into the lumen for a period of at least two seconds.

[0122] For some applications, acquiring the plurality of endoluminal data points of the lumen using the endoluminal data-acquisition device includes acquiring the plurality of endoluminal data points of the lumen using the endoluminal data-acquisition device while the data-acquisition device is being pulled back through the lumen.

[0123] For some applications, continuously injecting the contrast agent into the lumen includes continuously injecting the contrast agent over at least 50% of a duration of a period over which the endoluminal data-acquisition device acquires the endoluminal data points.

[0124] For some applications, continuously injecting the contrast agent into the lumen includes continuously injecting the contrast agent over at least 80% of a duration of a period over which the endoluminal data-acquisition device acquires the endoluminal data points.
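The 50% and 80% conditions above amount to a simple overlap calculation. The following sketch assumes that the acquisition period and the injection period are each known as a start time and an end time in seconds; the function name and the interval representation are illustrative assumptions.

```python
def injection_coverage(acq_start: float, acq_end: float,
                       inj_start: float, inj_end: float) -> float:
    """Fraction of the data-acquisition period during which contrast was injected."""
    if acq_end <= acq_start:
        raise ValueError("acquisition period must have positive duration")
    # Length of the time interval shared by the two periods (zero if disjoint).
    overlap = max(0.0, min(acq_end, inj_end) - max(acq_start, inj_start))
    return overlap / (acq_end - acq_start)


coverage = injection_coverage(0.0, 10.0, 2.0, 8.0)
meets_50_percent = coverage >= 0.5
meets_80_percent = coverage >= 0.8
```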

[0125] There is further provided, in accordance with some applications of the present invention, apparatus for use with:

[0126] an endoluminal data-acquisition device configured to acquire a plurality of endoluminal data points of a lumen of a body of a subject at respective locations inside the lumen, while the endoluminal data-acquisition device is being moved through the lumen, the endoluminal data-acquisition device having a radiopaque marker coupled thereto,

[0127] contrast agent configured to be continuously injected into the lumen, during the movement of the endoluminal data-acquisition device,

[0128] an angiographic imaging device configured to acquire a plurality of angiographic images of the endoluminal data-acquisition device inside the lumen, during the movement of the endoluminal data-acquisition device, and

[0129] a display configured to display images of the lumen,

[0130] the apparatus including:

[0131] at least one processor, including: [0132] location-association functionality configured to determine that endoluminal data points correspond to respective locations within the lumen, by determining locations of the radiopaque marker within the angiographic images of the lumen, by performing image processing on the angiographic images, the locations of the radiopaque marker within the angiographic images of the lumen corresponding to respective endoluminal data points; and [0133] display-driving functionality configured to drive the display to display an output, in response to determining that the endoluminal data points correspond to respective locations within the lumen.

[0134] For some applications, the endoluminal data-acquisition device includes an endoluminal imaging probe configured to acquire a plurality of endoluminal images at a first frame rate, the angiographic imaging device includes an angiographic imaging device that is configured to acquire the plurality of angiographic images at a second frame rate that is different from the first frame rate, and the location-association functionality is configured to determine that endoluminal data points correspond to respective locations within the lumen by indexing the endoluminal images with respect to the angiographic images.
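One way to picture indexing endoluminal images with respect to angiographic images acquired at a different frame rate is nearest-timestamp matching. The sketch below assumes that both image streams carry acquisition timestamps on a common clock and that the angiographic timestamps are sorted; these assumptions and the function name are illustrative and are not stated in the application.

```python
from bisect import bisect_left
from typing import List


def index_endoluminal_to_angio(endo_times: List[float],
                               angio_times: List[float]) -> List[int]:
    """For each endoluminal frame time, return the index of the nearest angiographic frame."""
    if not angio_times:
        raise ValueError("at least one angiographic frame is required")
    indices = []
    for t in endo_times:
        pos = bisect_left(angio_times, t)
        if pos == 0:
            indices.append(0)
        elif pos == len(angio_times):
            indices.append(len(angio_times) - 1)
        else:
            before, after = angio_times[pos - 1], angio_times[pos]
            # Pick whichever neighbouring angiographic frame is closer in time.
            indices.append(pos if after - t < t - before else pos - 1)
    return indices
```

The marker location found in the matched angiographic frame could then be associated with the endoluminal frame, with interpolation filling in endoluminal frames that fall between angiographic frames.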

[0135] There is additionally provided, in accordance with some applications of the present invention, a method for use with an endoluminal data-acquisition device configured to be moved through a lumen of a subject's body, the endoluminal data-acquisition device having a radiopaque marker coupled thereto, including:

[0136] while the endoluminal data-acquisition device is being moved through the lumen, acquiring a plurality of endoluminal data points of the lumen using the endoluminal data-acquisition device;

[0137] determining that respective endoluminal data points correspond to respective locations within the lumen, by acquiring at least first and second angiographic images of the lumen, and determining first and second locations of the marker respectively within the first and second angiographic images;

[0138] generating a combined angiographic image of the lumen that includes representations thereon of the first and second marker locations within the lumen, by co-registering the first and second angiographic images to one another, by: [0139] designating one of the angiographic images as a baseline image, a shape of the lumen in the baseline image being designated as a baseline shape of the lumen; [0140] determining whether a shape of the lumen in the angiographic image that is not the baseline image is the same as the baseline shape of the lumen; and [0141] in response to determining that the shape of the lumen in the angiographic image that is not the baseline image is not the same as the baseline shape of the lumen: [0142] designating the image that is not the baseline image as a non-baseline image, and [0143] deforming the shape of the lumen in the non-baseline image, such that the shape of the lumen becomes more similar to the baseline shape of the portion than when the lumen in the non-baseline image is not deformed; [0144] based upon the deformation of the non-baseline image, determining a location upon the baseline image at which the marker from within the non-baseline image should be located; and [0145] generating an indication of the marker from within the non-baseline image at the determined location on the baseline image.
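As a rough illustration of transferring a marker location from a non-baseline image onto the baseline image, the sketch below substitutes a much simpler technique for the deformation described above: it assumes that the lumen centerline is available in each image as a polyline spanning the same anatomical segment, and it places the marker at the same fraction of arc length on the baseline centerline. The centerline inputs, the nearest-vertex projection, and the function names are all assumptions made for illustration.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def cumulative_lengths(path: List[Point]) -> List[float]:
    """Arc length from the start of the polyline to each of its vertices."""
    lengths = [0.0]
    for a, b in zip(path, path[1:]):
        lengths.append(lengths[-1] + math.dist(a, b))
    return lengths


def map_marker_to_baseline(marker: Point,
                           non_baseline_path: List[Point],
                           baseline_path: List[Point]) -> Point:
    """Map a marker seen in the non-baseline image onto the baseline centerline."""
    src = cumulative_lengths(non_baseline_path)
    dst = cumulative_lengths(baseline_path)
    if src[-1] == 0 or dst[-1] == 0:
        raise ValueError("centerlines must have non-zero length")
    # Project the marker onto the closest centerline vertex (coarse assumption).
    nearest = min(range(len(non_baseline_path)),
                  key=lambda i: math.dist(marker, non_baseline_path[i]))
    target = (src[nearest] / src[-1]) * dst[-1]
    for i in range(1, len(baseline_path)):
        seg = dst[i] - dst[i - 1]
        if seg > 0 and target <= dst[i]:
            t = (target - dst[i - 1]) / seg
            a, b = baseline_path[i - 1], baseline_path[i]
            return (a[0] + t * (b[0] - a[0]), a[1] + t * (b[1] - a[1]))
    return baseline_path[-1]
```

An indication of the marker could then be drawn at the returned coordinates on the baseline image.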

[0146] For some applications, acquiring the plurality of endoluminal data points of the lumen using the endoluminal data-acquisition device while the endoluminal data-acquisition device is being moved through the lumen includes acquiring the plurality of endoluminal data points of the lumen using the endoluminal data-acquisition device while the endoluminal data-acquisition device is being pulled-back through the lumen.

[0147] There is additionally provided, in accordance with some applications of the present invention, apparatus for use with:

[0148] an endoluminal data-acquisition device configured to acquire a plurality of endoluminal data points of a lumen of a body of a subject at respective locations inside the lumen, while the endoluminal data-acquisition device is moved through the lumen, the endoluminal data-acquisition device having a radiopaque marker coupled thereto,

[0149] an angiographic imaging device configured to acquire respective angiographic images of the lumen, at times associated with acquisitions of respective endoluminal data points by the endoluminal data-acquisition device, and

[0150] a display,

[0151] the apparatus including:

[0152] at least one processor, including: [0153] location-determination functionality configured to determine first and second locations of the radiopaque marker respectively within first and second angiographic images of the lumen; [0154] image-co-registration functionality configured to generate a combined angiographic image of the lumen that includes representations of the first and second marker locations thereon, by co-registering the first and second angiographic images to one another, by: [0155] designating one of the angiographic images as a baseline image, a shape of the lumen in the baseline image being designated as a baseline shape of the lumen; [0156] determining whether a shape of the lumen in the angiographic image that is not the baseline image is the same as the baseline shape of the lumen; and [0157] in response to determining that the shape of the lumen in the angiographic image that is not the baseline image is not the same as the baseline shape of the lumen: [0158] designating the image that is not the baseline image as a non-baseline image, and [0159] deforming the shape of the lumen in the non-baseline image, such that the shape of the lumen becomes more similar to the baseline shape of the portion than when the lumen in the non-baseline image is not deformed; [0160] based upon the deformation of the non-baseline image, determining a location upon the baseline image at which the marker from within the non-baseline image should be located; and [0161] generating an indication of the marker from within the non-baseline image at the determined location on the baseline image; and [0162] display-driving functionality configured to drive the display to display an output, in response to generating the combined angiographic image of the lumen.

[0163] For some applications, the location-determination functionality is configured to:

[0164] determine that a first endoluminal data point corresponds to a first location within the lumen, by determining a location of the radiopaque marker within the first angiographic image of the lumen, by performing image processing on the first angiographic image, the location of the radiopaque marker within the first angiographic image of the lumen corresponding to the first endoluminal data point, and

[0165] determine that a second endoluminal data point corresponds to a second given location within the lumen by determining a location of the radiopaque marker within the second angiographic image of the lumen by performing image processing on the second angiographic image, the location of the radiopaque marker within the second angiographic image of the lumen corresponding to the second endoluminal data point.

[0166] For some applications, the location-determination functionality is configured to:

[0167] determine that the first endoluminal data point corresponds to the first location within the lumen by determining that the first endoluminal data point corresponds to a location in a vicinity of a first end of a luminal segment of interest, and

[0168] determine that the second endoluminal data point corresponds to the second location within the lumen by determining that the second endoluminal data point corresponds to a location in a vicinity of a second end of the luminal segment of interest.

[0169] For some applications, the at least one processor further includes location-association functionality configured to determine that at least one location on the combined angiographic image that is intermediate to the first and second locations of the radiopaque marker corresponds to an endoluminal data point acquired between the acquisitions of the first and second data points, by interpolating between the first and second locations of the radiopaque marker on the combined angiographic image.

[0170] There is further provided, in accordance with some applications of the present invention, a method for imaging a tool inside a portion of a body of a subject that undergoes motion, the tool having contours, the method including:

[0171] acquiring a plurality of image frames of the portion of the subject's body; and

[0172] generating at least one image frame in which the tool is enhanced, by: [0173] identifying radiopaque markers in the image frames; [0174] identifying edge lines in a vicinity of the markers within the image frames, the edge lines corresponding to contours of the tool; [0175] in response to the identifying of the edge lines, selecting a subset of the image frames that are based upon the acquired image frames, based upon a level of similarity between the edge lines in the selected image frames to one another; [0176] aligning the contours in a plurality of the selected image frames, and [0177] averaging the plurality of aligned frames to generate an averaged image frame; and

[0178] displaying the averaged image frame.
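The align-and-average portion of the method above can be sketched as follows. For simplicity, the sketch assumes that frame selection has already been performed, that each frame is a small grayscale image stored as a list of rows, and that alignment is done by integer translation so that one identified marker coincides across frames (rather than by aligning the contours themselves). The data layout and function names are illustrative assumptions.

```python
from typing import List, Tuple

Frame = List[List[float]]


def shift_frame(frame: Frame, dy: int, dx: int, fill: float = 0.0) -> Frame:
    """Translate a frame by (dy, dx) pixels; uncovered pixels take the fill value."""
    h, w = len(frame), len(frame[0])
    out = [[fill] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            ny, nx = y + dy, x + dx
            if 0 <= ny < h and 0 <= nx < w:
                out[ny][nx] = frame[y][x]
    return out


def align_and_average(frames: List[Frame],
                      markers: List[Tuple[int, int]]) -> Frame:
    """Align each frame to the first frame's marker (row, col), then average pixelwise."""
    ref_y, ref_x = markers[0]
    aligned = [shift_frame(f, ref_y - my, ref_x - mx)
               for f, (my, mx) in zip(frames, markers)]
    h, w = len(frames[0]), len(frames[0][0])
    n = len(aligned)
    return [[sum(a[y][x] for a in aligned) / n for x in range(w)]
            for y in range(h)]
```

Averaging aligned frames tends to reinforce features that move with the markers, such as the tool, while blurring background that moves differently. Note that filling uncovered pixels with zero darkens the borders of the averaged frame.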

[0179] There is further provided, in accordance with some applications of the present invention, apparatus for use with a tool configured to be placed inside a portion of a body of a subject that undergoes motion, the tool having contours, an image-acquisition device configured to acquire a plurality of image frames of the portion of the subject's body, and a display, the apparatus including:

[0180] at least one processor configured to generate at least one image frame in which the tool is enhanced, the processor including: [0181] image-receiving functionality configured to receive the plurality of image frames into the processor, [0182] marker-identifying functionality configured to automatically identify radiopaque markers in the image frames, [0183] edge-line-identifying functionality configured to automatically identify edge lines in a vicinity of the radiopaque markers in the image frames, [0184] image-selection functionality configured, in response to the identifying of the edge lines, to select a subset of the image frames that are based upon the acquired image frames, based upon a level of similarity between the edge lines in the selected image frames to one another, [0185] image-alignment functionality configured to align the edge lines in a plurality of the selected image frames, and [0186] image-averaging functionality configured to generate an averaged image frame by averaging the plurality of aligned image frames; and [0187] display-driving functionality configured to drive the display to display the averaged image frame.

[0188] For some applications, the image-selection functionality is configured to select the subset of image frames based upon a level of similarity between shapes of the edge lines in the image frames.

[0189] For some applications, the image-selection functionality is configured to select the subset of image frames based upon a level of alignment between the edge lines and the radiopaque markers in the image frames.

[0190] For some applications, the image-selection functionality is configured to select the subset of image frames by rejecting from being included in the subset, at least one image frame in which edge lines corresponding to the contours of the tool appear.

[0191] For some applications, the image-alignment functionality is configured to align the edge lines in the selected image frames by translating at least one image frame with respect to at least one other image frame of the selected image frames.

[0192] For some applications, the processor is configured to generate a plurality of image frames in which the tool is enhanced, and the display-driving functionality is configured to drive the display to display, as an image stream, the plurality of image frames in which the tool is enhanced.

[0193] For some applications, the tool includes a stent that is inserted into the lumen while disposed on a device, and the marker-identifying functionality is configured to identify the radiopaque markers by identifying radiopaque markers that are coupled to the device, and the edge-line-identifying functionality is configured to identify the edge lines by identifying curved edge lines, corresponding to contours of the stent.

[0194] For some applications, the image-selection functionality is configured to select the subset of image frames based upon a level of similarity between shapes of the curved edge lines in the image frames.

[0195] For some applications, the marker-identifying functionality is configured to identify first and second radiopaque markers that are coupled to the device, and the image-selection functionality is configured to select the subset of image frames based upon a level of alignment between the edge lines and an imaginary line running from the first marker to the second marker in the image frames.
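A level of alignment between an edge line and the imaginary line running between two markers could, for instance, be expressed as the angle between them. The sketch below approximates an edge line by a straight segment between two of its points, which is a simplification of the curved edge lines described above; the threshold value and function names are hypothetical.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def misalignment_degrees(edge_start: Point, edge_end: Point,
                         marker_a: Point, marker_b: Point) -> float:
    """Angle (0 to 90 degrees) between an edge segment and the marker-to-marker line."""
    ex, ey = edge_end[0] - edge_start[0], edge_end[1] - edge_start[1]
    mx, my = marker_b[0] - marker_a[0], marker_b[1] - marker_a[1]
    angle = abs(math.degrees(math.atan2(ex * my - ey * mx, ex * mx + ey * my)))
    # Lines have no direction, so 170 degrees is the same misalignment as 10.
    return min(angle, 180.0 - angle)


def frame_is_selected(edge_start: Point, edge_end: Point,
                      marker_a: Point, marker_b: Point,
                      max_degrees: float = 10.0) -> bool:
    """Keep a frame only if its edge line is roughly parallel to the marker line.

    The 10-degree default is a hypothetical threshold chosen for illustration.
    """
    return misalignment_degrees(edge_start, edge_end, marker_a, marker_b) <= max_degrees
```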

[0196] There is further provided, in accordance with some applications of the present invention, a method for use with an endoluminal data-acquisition device configured to acquire endoluminal data points while moving through a lumen of a subject's body generally in a first direction with respect to the lumen, including:

[0197] while the endoluminal data-acquisition device is being moved through the lumen, acquiring a plurality of endoluminal data points of the lumen using the endoluminal data-acquisition device;

[0198] determining that, at at least one location, two or more endoluminal data points were acquired; and

[0199] generating an output using a portion of the plurality of endoluminal data points of the lumen acquired using the endoluminal data-acquisition device, by using only a single endoluminal data point corresponding to the location.

[0200] There is further provided, in accordance with some applications of the present invention, apparatus for use with an endoluminal data-acquisition device that acquires a plurality of endoluminal data points of a lumen of a body of a subject while being moved through the lumen generally in a first direction with respect to the lumen, and a display, the apparatus including:

[0201] at least one processor including: [0202] duplicate-data-point-identification functionality configured to determine that, at at least one location, two or more endoluminal data points were acquired by the endoluminal data-acquisition device; [0203] data-point-selection functionality configured to generate an output using a portion of the plurality of endoluminal data points of the lumen acquired using the endoluminal data-acquisition device, by using only a single data point corresponding to the location; and [0204] display-driving functionality configured to drive the display to display the output.

[0205] For some applications, the data-point-selection functionality is configured to use only the single data point corresponding to the location by using a single one of the two or more endoluminal data points that were acquired at the at least one location, and rejecting another one of the two or more endoluminal data points from being used in the output.

[0206] For some applications, the data-point-selection functionality is configured to generate the output by selecting for use as the single data point a data point that was acquired at the given location at an earliest time with respect to the data points that were acquired at the given location.
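Selecting the earliest-acquired data point at each location can be sketched as below. The sketch assumes that every data point has already been assigned a quantized location (for example, an integer position index along the lumen), so that two points acquired at the same place compare equal; the tuple layout and function name are illustrative assumptions.

```python
from typing import Hashable, List, Tuple

DataPoint = Tuple[float, Hashable, str]  # (acquisition time, location, frame id)


def select_single_point_per_location(points: List[DataPoint]) -> List[DataPoint]:
    """Keep, for each location, only the data point that was acquired earliest."""
    earliest = {}
    for point in points:
        time, location, _ = point
        if location not in earliest or time < earliest[location][0]:
            earliest[location] = point
    # Return the kept points in acquisition order.
    return sorted(earliest.values(), key=lambda p: p[0])


points = [(0.0, 10, "f0"), (0.1, 9, "f1"), (0.2, 10, "f2"), (0.3, 8, "f3")]
kept = select_single_point_per_location(points)
```

In this example, frame "f2" is rejected because location 10 was already covered by the earlier frame "f0".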

[0207] For some applications, the data-point-selection functionality is configured to generate the output by rejecting from being used in the output an endoluminal data point that was acquired while the device was moving in a second direction with respect to the lumen that is opposite to the first direction.

[0208] For some applications, the data-point-selection functionality is configured:

[0209] to generate the output by generating an indication that one of the two or more endoluminal data points is associated with the location by co-registering the portion of the plurality of endoluminal data points of the lumen with the extraluminal image, and

[0210] to reject the other one of the two or more endoluminal data points from being used in the output by rejecting one of the two or more endoluminal images from being indicated to be associated with the location.

[0211] For some applications,

[0212] the endoluminal data-acquisition device includes an endoluminal imaging probe configured to acquire a plurality of endoluminal image frames of the lumen, and

[0213] the data-point-selection functionality is configured: [0214] to generate the output by generating an endoluminal image stack using some of the plurality of endoluminal image frames of the lumen, and [0215] to reject the other one of the two or more endoluminal data points from being used in the output by rejecting the other one of the two or more endoluminal image frames from being displayed in the image stack.

[0216] For some applications, the duplicate-data-point-identification functionality is configured to determine that, at at least one location, two or more endoluminal data points were acquired by determining that the endoluminal data-acquisition device moved past the location in a second direction with respect to the lumen that is opposite to the first direction, and the data-point-selection functionality is configured to generate the output by placing image frames in order within the image stack based on determining that the endoluminal data-acquisition device moved past the location, in the second direction with respect to the lumen.

[0217] For some applications, the duplicate-data-point-identification functionality is configured to determine that, at at least one location, two or more endoluminal data points were acquired by:

[0218] sensing a signal that is indicative of the subject's cardiac cycle, while the endoluminal data-acquisition device acquires the plurality of data points,

[0219] determining that a given data point was acquired at a given phase of the subject's cardiac cycle, and

[0220] in response thereto, identifying the given data point as having been acquired at a location at which another data point was acquired.

[0221] For some applications, the duplicate-data-point-identification functionality is configured to determine that the given data point was acquired at the given phase of the subject's cardiac cycle by determining that the given data point was acquired during at least a portion of systole.
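Identifying data points by cardiac phase can be sketched as follows, assuming that the sensed signal has already been reduced to a sorted list of R-wave times and that systole is approximated as the first fixed fraction of each R-R interval. The 0.35 fraction is a hypothetical placeholder rather than a value given in the application, and the function names are illustrative.

```python
from bisect import bisect_right
from typing import List, Optional


def cardiac_phase(t: float, r_peaks: List[float]) -> Optional[float]:
    """Return the fraction of the R-R interval elapsed at time t, or None if unknown."""
    i = bisect_right(r_peaks, t) - 1
    if i < 0 or i + 1 >= len(r_peaks):
        return None  # t lies outside the recorded cardiac cycles
    return (t - r_peaks[i]) / (r_peaks[i + 1] - r_peaks[i])


def flag_systolic_points(times: List[float], r_peaks: List[float],
                         systole_end: float = 0.35) -> List[bool]:
    """Flag data points acquired during the assumed systolic portion of the cycle."""
    flags = []
    for t in times:
        phase = cardiac_phase(t, r_peaks)
        flags.append(phase is not None and phase < systole_end)
    return flags
```

Flagged data points could then be treated as candidates for having been acquired at a location at which another data point was acquired.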

[0222] For some applications, the duplicate-data-point-identification functionality is configured to determine that, at at least one location, two or more endoluminal data points were acquired by determining that the endoluminal data-acquisition device moved past the location in a second direction with respect to the lumen that is opposite to the first direction.

[0223] For some applications, the duplicate-data-point-identification functionality is configured to determine that the endoluminal data-acquisition device moved past the location in the second direction with respect to the lumen by performing image processing on extraluminal images of the device moving through the lumen generally in the first direction.

[0224] For some applications, the apparatus further includes a sensor configured to detect movement of a portion of the endoluminal data-acquisition device, and the duplicate-data-point-identification functionality is configured to determine that the endoluminal data-acquisition device moved past the location in the second direction with respect to the lumen, in response to a signal from the sensor.
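Whether the device position comes from image processing or from a sensor, detecting movement in the second direction reduces to examining a sequence of positions. The sketch below assumes one position value along the lumen per data point, with the first direction encoded as a sign (-1 for decreasing position, as in a pullback toward the starting location); this encoding and the function names are illustrative assumptions.

```python
from typing import List


def flag_reverse_motion(positions: List[float], first_direction: int = -1) -> List[bool]:
    """Flag data points acquired after the device moved opposite to the first direction."""
    flags = [False] * min(1, len(positions))
    for prev, cur in zip(positions, positions[1:]):
        flags.append((cur - prev) * first_direction < 0)
    return flags


def flag_revisited(positions: List[float], first_direction: int = -1) -> List[bool]:
    """Flag data points acquired at or behind the furthest position already reached."""
    flags = []
    furthest = None
    for p in positions:
        progress = p * first_direction
        if furthest is None or progress > furthest:
            furthest = progress
            flags.append(False)
        else:
            flags.append(True)
    return flags
```

With positions 10, 9, 8, 8.5, 8.2, 7 during a pullback, only the fourth point is flagged as reverse motion, while both the fourth and fifth points are flagged as revisiting a stretch of lumen that was already covered.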

[0225] There is further provided, in accordance with some applications of the present invention, a method for use with an endoluminal data-acquisition device configured to acquire endoluminal data points while moving through a lumen of a subject's body generally in a first direction with respect to the lumen, including:

[0226] while the endoluminal data-acquisition device is being moved through the lumen, acquiring a plurality of endoluminal data points of the lumen using the endoluminal data-acquisition device;

[0227] determining that, while acquiring at least one of the endoluminal data points, the endoluminal data-acquisition device was moving in a second direction that is opposite to the first direction; and

[0228] in response to the determining, generating an output using at least some of the plurality of endoluminal data points of the lumen acquired using the endoluminal data-acquisition device.

[0229] There is additionally provided, in accordance with some applications of the present invention, apparatus for use with an endoluminal data-acquisition device that acquires a plurality of endoluminal data points of a lumen of a body of a subject while being moved through the lumen generally in a first direction with respect to the lumen, and a display, the apparatus including:

[0230] at least one processor including: [0231] direction-determination functionality configured to determine that, while acquiring at least one of the endoluminal data points, the endoluminal data-acquisition device was moving in a second direction that is opposite to the first direction; [0232] output-generation functionality configured, in response to the determining, to generate an output using at least some of the plurality of endoluminal data points of the lumen acquired using the endoluminal data-acquisition device; and [0233] display-driving functionality configured to drive the display to display the output.

[0234] For some applications, the direction-determination functionality is configured to determine that, while acquiring at least one of the endoluminal data points, the endoluminal data-acquisition device was moving in a second direction that is opposite to the first direction by performing image processing on extraluminal images of the device moving through the lumen generally in the first direction.

[0235] For some applications, the apparatus further includes a sensor configured to detect movement of a portion of the endoluminal data-acquisition device, and the direction-determination functionality is configured to determine that, while acquiring at least one of the endoluminal data points, the endoluminal data-acquisition device was moving in a second direction that is opposite to the first direction, in response to a signal from the sensor.

[0236] For some applications,

[0237] the endoluminal data-acquisition device includes an endoluminal imaging probe configured to acquire a plurality of endoluminal image frames of the lumen, and

[0238] the output-generation functionality is configured to generate the output by generating an endoluminal image stack using at least some of the plurality of endoluminal image frames of the lumen.

[0239] For some applications, the output-generation functionality is configured to generate the output by placing image frames in order within the image stack based on determining that, while acquiring at least one of the endoluminal data points, the endoluminal data-acquisition device was moving in the second direction.

[0240] For some applications, the output-generation functionality is configured to generate the output by generating an indication on the endoluminal image stack of at least a portion of the endoluminal image stack that was acquired by the data-acquisition device while the data-acquisition device was moving in the second direction.
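Ordering frames within an image stack and marking the frames acquired during movement in the second direction might look like the following sketch. It assumes that each frame has an identifier and a known position along the lumen at acquisition, and that the first direction is encoded as a sign (-1 for decreasing position); the tuple layout and function name are illustrative assumptions.

```python
from typing import List, Tuple


def build_image_stack(frames: List[Tuple[str, float]],
                      first_direction: int = -1) -> List[Tuple[str, float, bool]]:
    """Order frames along the lumen and mark those acquired in the second direction.

    frames: (frame id, position) pairs in acquisition order.
    Returns (frame id, position, acquired_in_second_direction) tuples ordered
    by position along the first direction instead of by acquisition time.
    """
    marked = []
    previous = None
    for frame_id, position in frames:
        reverse = previous is not None and (position - previous) * first_direction < 0
        marked.append((frame_id, position, reverse))
        previous = position
    # sorted() is stable, so frames at equal positions keep acquisition order.
    return sorted(marked, key=lambda f: f[1] * first_direction)


stack = build_image_stack([("a", 10.0), ("b", 9.0), ("c", 9.5), ("d", 8.0)])
```

Here frame "c" was acquired after the probe moved back from position 9.0 to 9.5, so it is placed between "a" and "b" in the stack and carries the second-direction mark.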

[0241] For some applications, the direction-determination functionality is configured to determine that, while acquiring at least one of the endoluminal data points, the endoluminal data-acquisition device was moving in a second direction that is opposite to the first direction by:

[0242] sensing a signal that is indicative of the subject's cardiac cycle, while the endoluminal data-acquisition device acquires the plurality of data points,

[0243] determining that a given data point was acquired at a given phase of the subject's cardiac cycle, and

[0244] in response thereto, identifying the given data point as having been acquired while the endoluminal data-acquisition device was moving in the second direction.

[0245] For some applications, the direction-determination functionality is configured to determine that the given data point was acquired at the given phase of the subject's cardiac cycle by determining that the given data point was acquired during at least a portion of systole.

[0246] The present invention will be more fully understood from the following detailed description of embodiments thereof, taken together with the drawings, in which:

BRIEF DESCRIPTION OF THE DRAWINGS

[0247] FIG. 1 is a flow chart, at least some of the steps of which are used in procedures that utilize co-use of endoluminal data and extraluminal imaging, in accordance with some applications of the present invention;

[0248] FIGS. 2A-B are schematic illustrations of an endoluminal device being inserted into a lumen, and (in FIG. 2B) a sensor for sensing the distance traveled through the lumen by the endoluminal device relative to a known starting location, in accordance with some applications of the present invention;

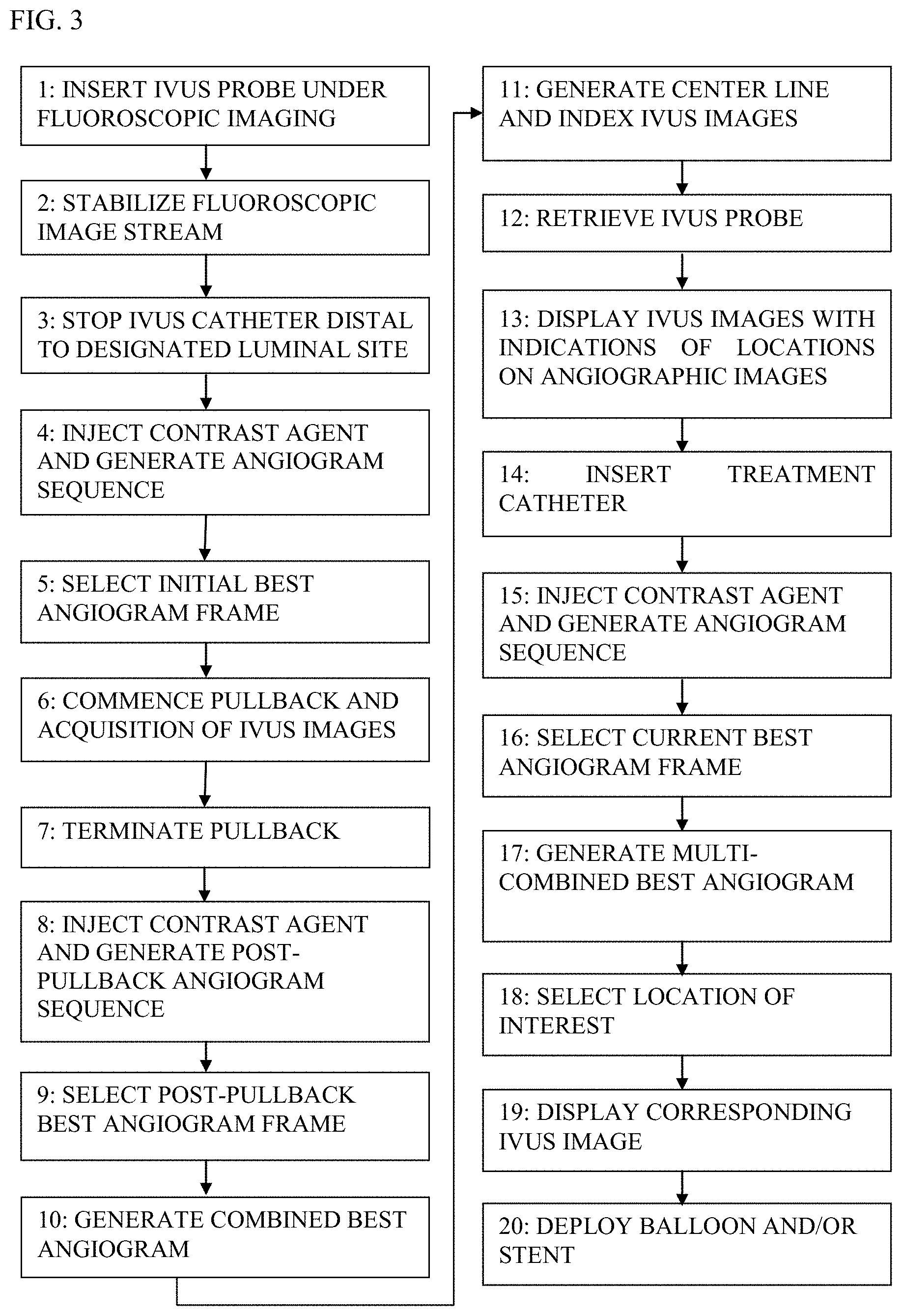

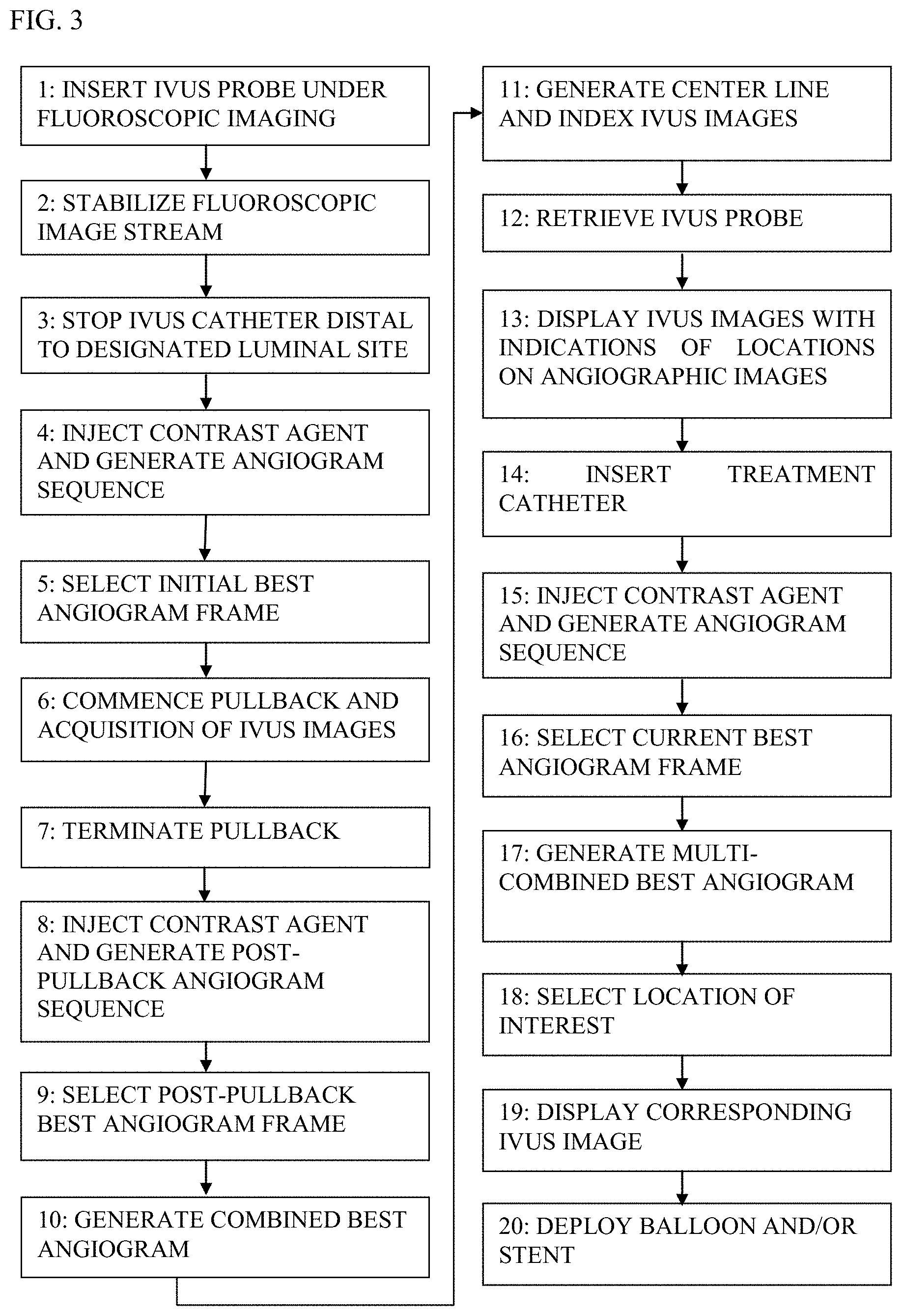

[0249] FIG. 3 is a flow chart, at least some of the steps of which are used in procedures that utilize co-use of endoluminal data and extraluminal imaging, in accordance with some applications of the present invention;

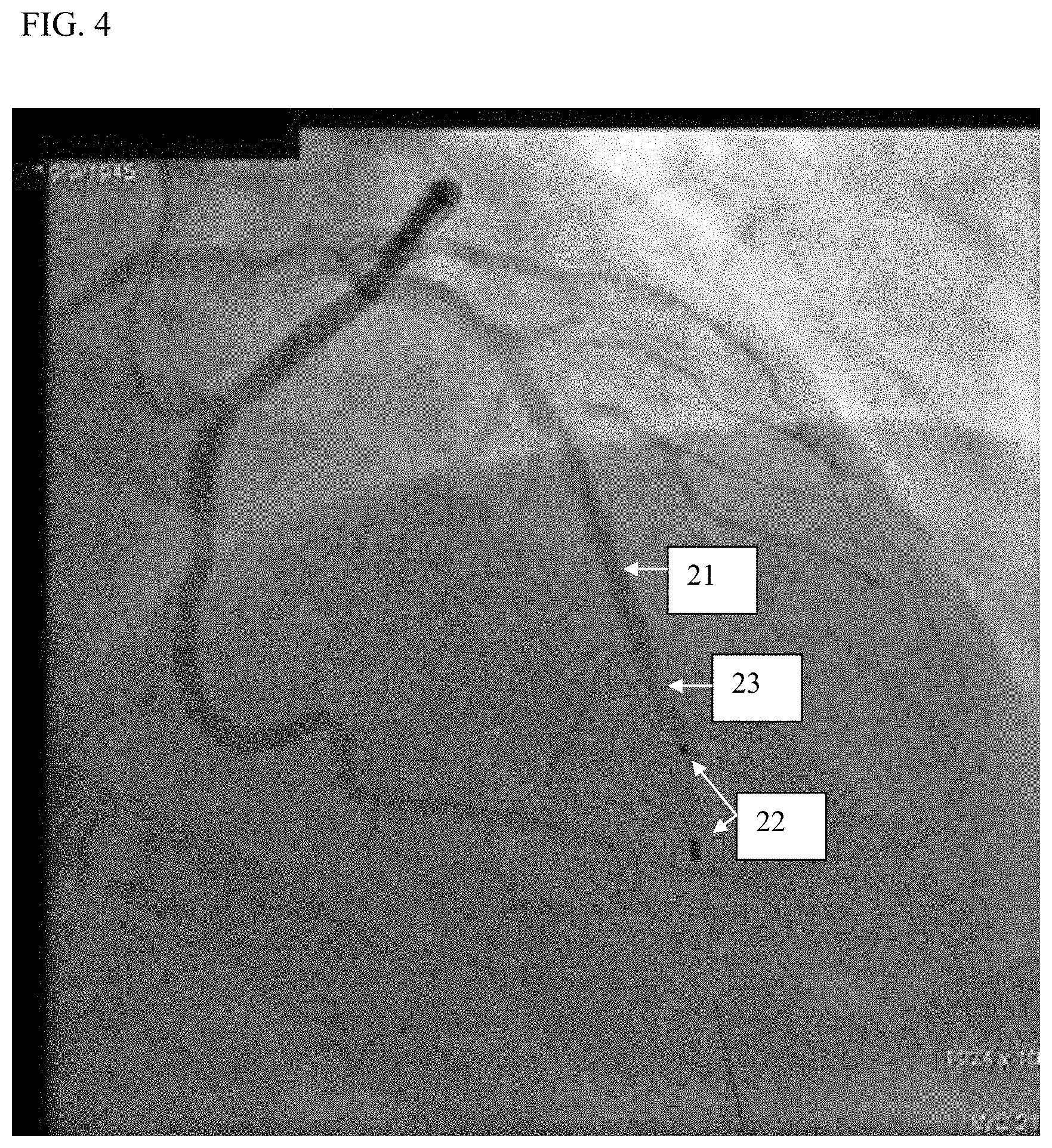

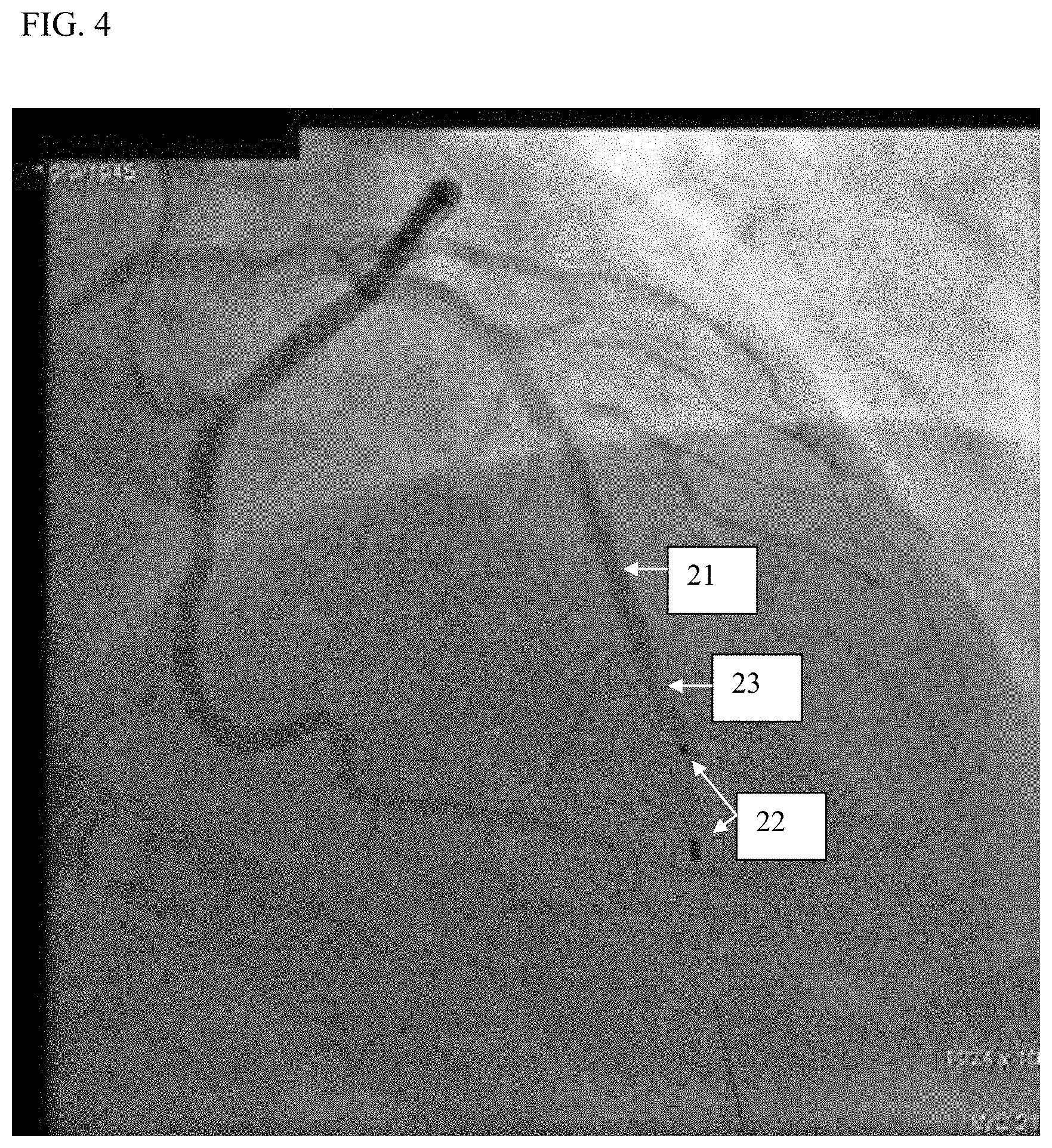

[0250] FIG. 4 shows an initial best angiogram of a luminal segment, the initial best angiogram being generated prior to the commencement of the pullback of an endoluminal imaging probe, in accordance with some applications of the present invention;

[0251] FIG. 5 shows a post-pullback best angiogram of a luminal segment, the post-pullback best angiogram being generated subsequent to the termination of the pullback of an endoluminal imaging probe, in accordance with some applications of the present invention;

[0252] FIG. 6 shows a combined best angiogram of a luminal segment, the combined best angiogram being generated by co-registering the initial best angiogram and the post-pullback best angiogram, in accordance with some applications of the present invention;

[0253] FIG. 7 shows the co-use of previously-acquired endoluminal images and an extraluminal fluoroscopic image, in accordance with some applications of the present invention;

[0254] FIG. 8 shows a location on an extraluminal image of a lumen that has been selected, the index of the corresponding endoluminal image frame being derived in response thereto, in accordance with some applications of the present invention;

[0255] FIG. 9 shows co-display of previously-acquired endoluminal images and an extraluminal fluoroscopic image, in accordance with some applications of the present invention;

[0256] FIG. 10 shows the co-use of previously-acquired endoluminal images and a current extraluminal fluoroscopic image stream, in accordance with some applications of the present invention;

[0257] FIG. 11 shows the co-use of a stack of previously-acquired IVUS images and a current, extraluminal fluoroscopic image stream, in accordance with some applications of the present invention;

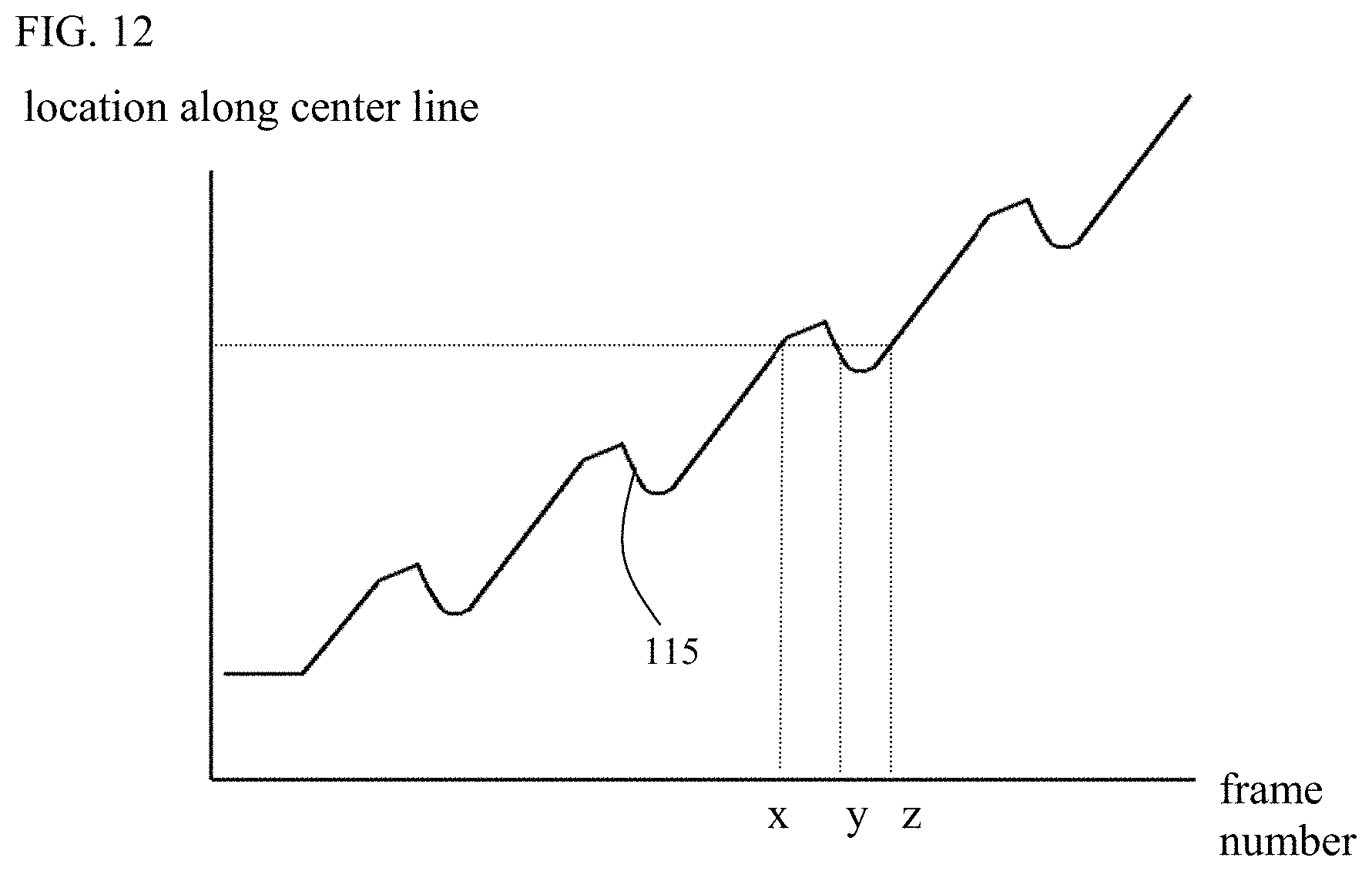

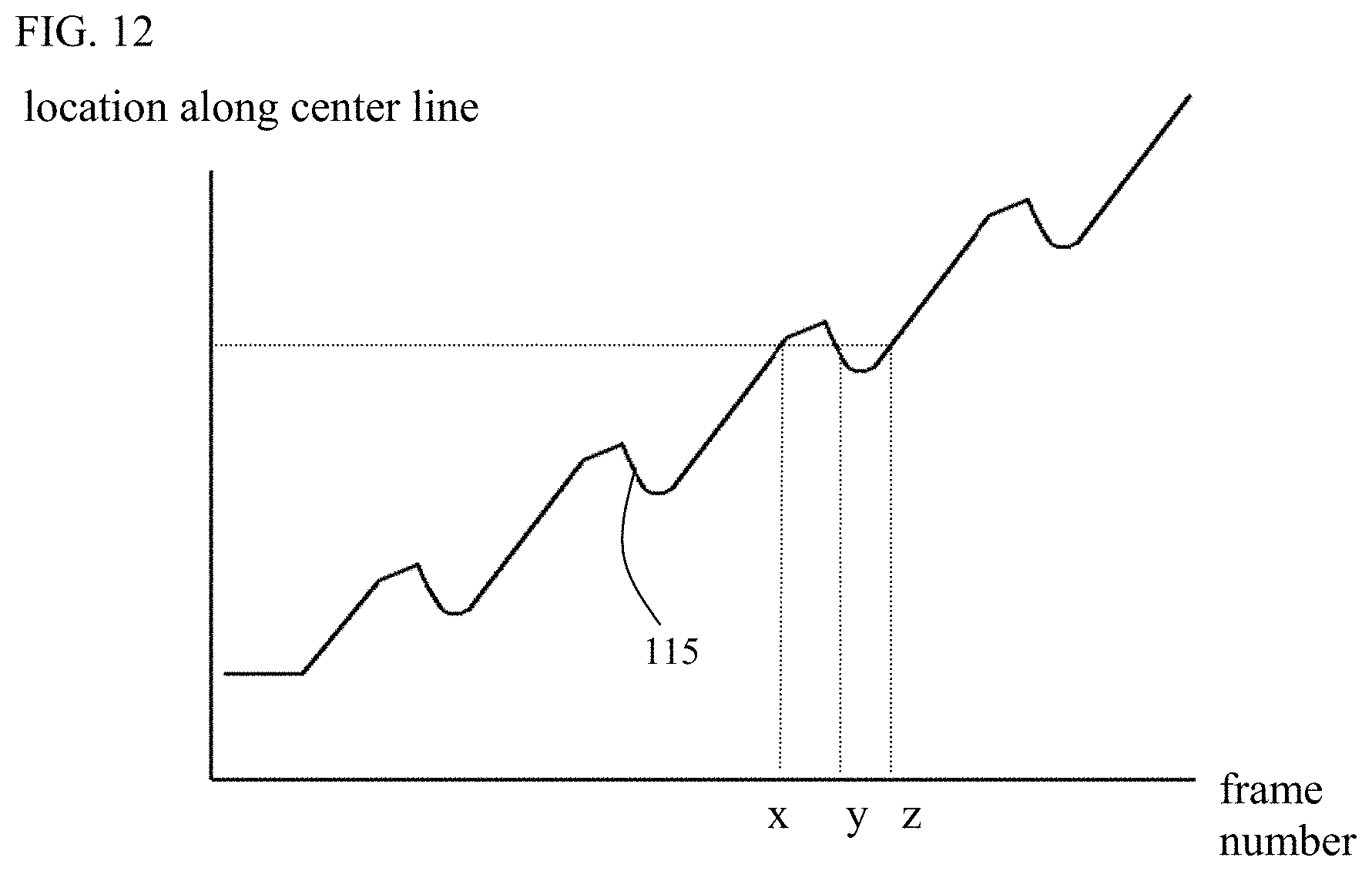

[0258] FIG. 12 is a graph indicating typical movement of an endoluminal imaging probe during pullback of the probe; and

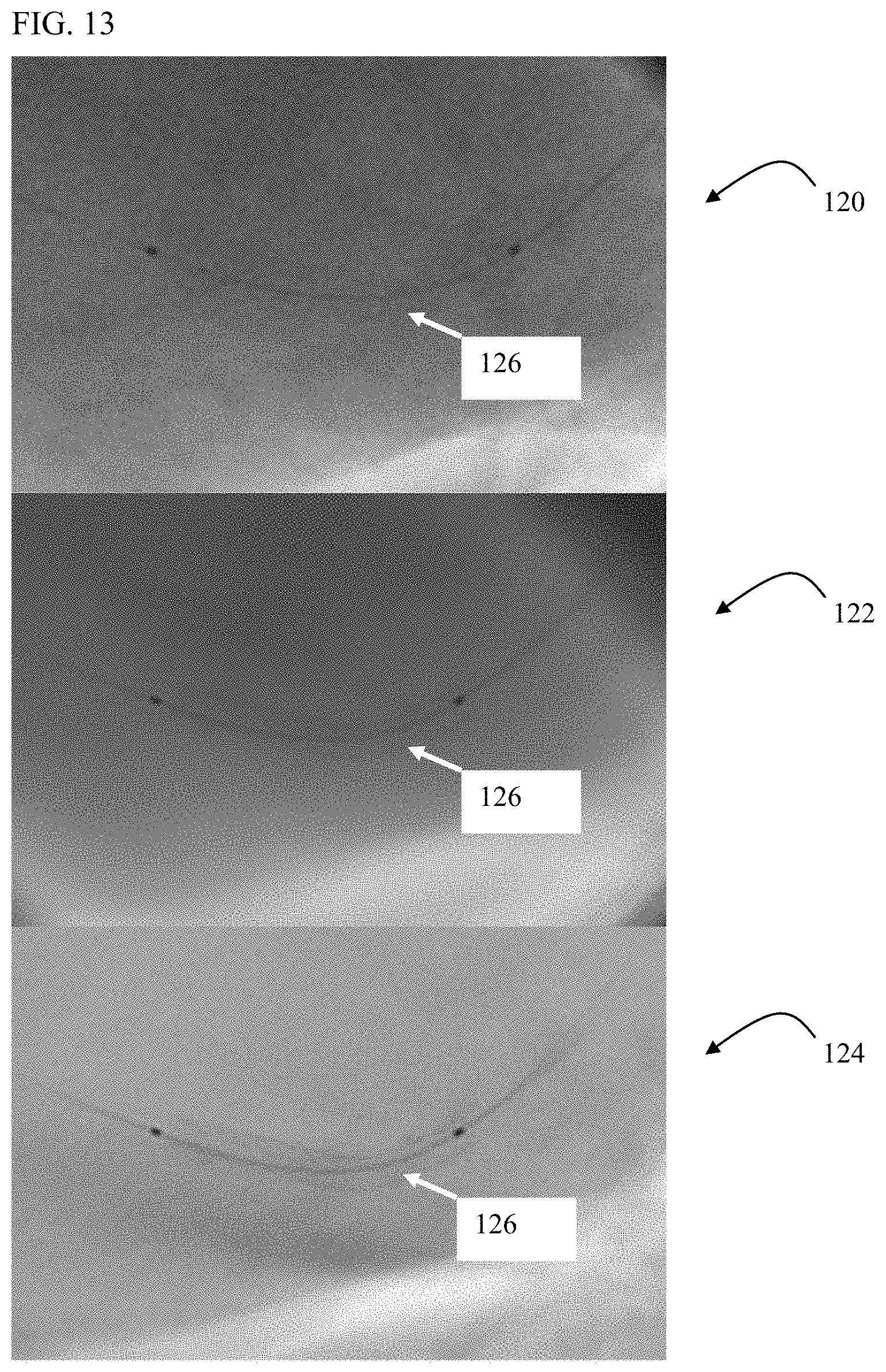

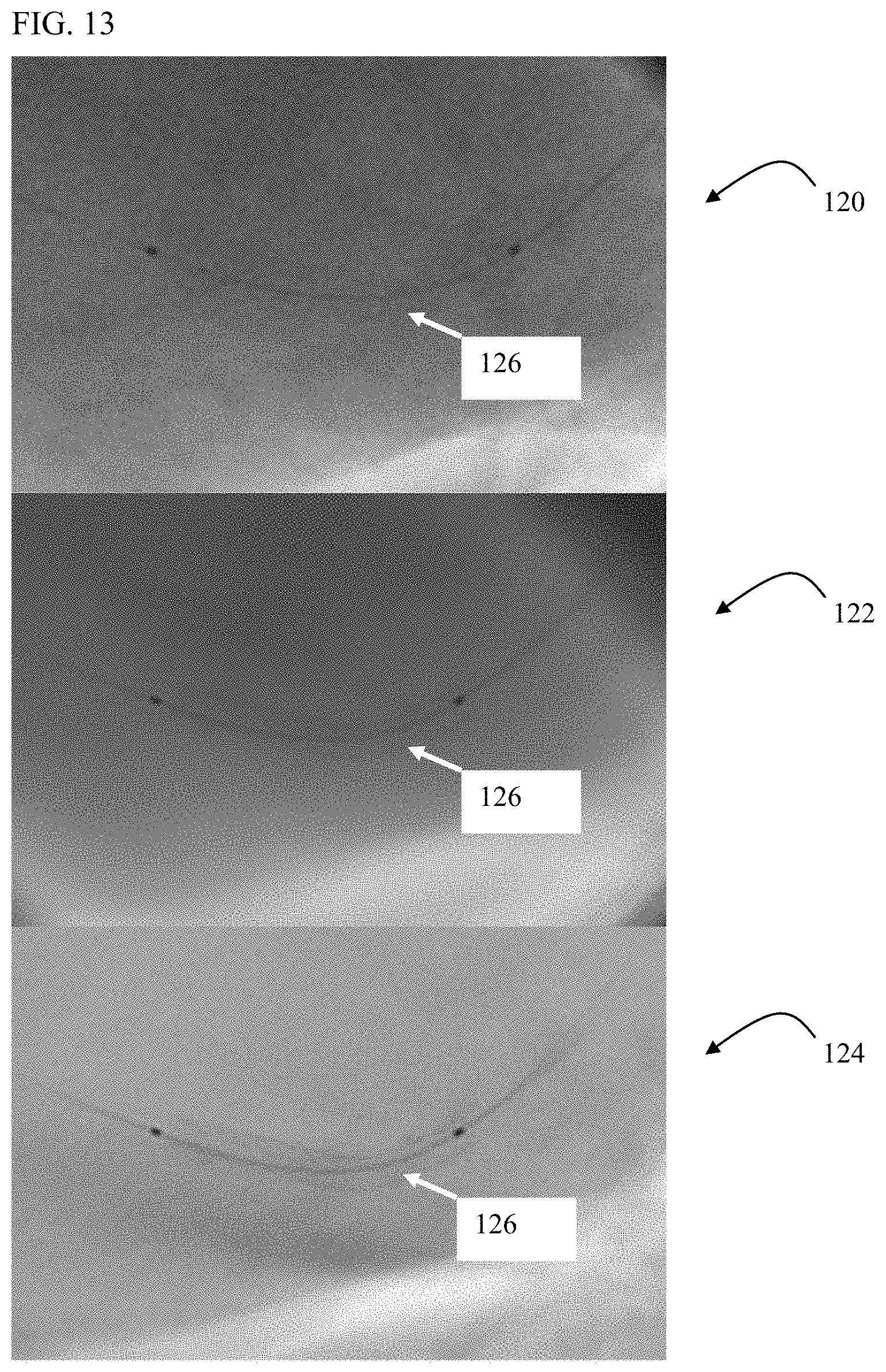

[0259] FIG. 13 shows an extraluminal image of a stent inside a blood vessel that has been enhanced, in accordance with some applications of the present invention.

DETAILED DESCRIPTION OF EMBODIMENTS

[0260] The terms "medical tool," "tool," "device," and "probe" refer to any type of a diagnostic or therapeutic or other functional tool including, but not limited to, a cardiovascular catheter, a stent delivery and/or placement and/or retrieval tool, a balloon delivery and/or placement and/or retrieval tool, a valve delivery and/or repair and/or placement and/or retrieval tool, a graft delivery and/or placement and/or retrieval tool, a tool for the delivery and/or placement and/or retrieval of an implantable device or of parts of such device, an implantable device or parts thereof, a tool for closing a gap, a tool for closing a septal defect, a guide wire, a marker wire, a suturing tool, a clipping tool (such as a valve-leaflet-clipping tool), a biopsy tool, an aspiration tool, a navigational tool, a localization tool, a probe comprising one or more location sensors, a tissue characterization probe, a probe for the analysis of fluid, a measurement probe, an electrophysiological probe, a stimulation probe, an ablation tool, a tool for penetrating or opening partial or total occlusions in blood vessels, a drug or substance delivery tool, a chemotherapy tool, a photodynamic therapy tool, a brachytherapy tool, a local irradiation tool, a laser device, a tool for delivering energy, a tool for delivering markers or biomarkers, a tool for delivering biological glue, an irrigation device, a suction device, a ventilation device, a device for delivering and/or placing and/or retrieving a lead of an electrophysiological device, a lead of an electrophysiological device, a pacing device, a coronary sinus device, an imaging device, a sensing probe, a probe comprising an optical fiber, a robotic tool, a tool that is controlled remotely, an excision tool, a plaque excision tool (such as a plaque excision catheter), or any combination thereof.

[0261] The terms "image" and "imaging" refer to any type of medical imaging, typically presented as a sequence of images and including, but not limited to, imaging using ionizing radiation, imaging using non-ionizing radiation, video, fluoroscopy, angiography, ultrasound, CT, MR, PET, PET-CT, CT angiography, SPECT, Gamma camera imaging, Optical Coherence Tomography (OCT), Near-Infra-Red Spectroscopy (NIRS), Vibration Response Imaging (VRI), Optical Imaging, infrared imaging, electrical mapping imaging, other forms of Functional Imaging, or any combination or fusion thereof.

[0262] Examples of ultrasound imaging include Endo-Bronchial Ultrasound (EBUS), Trans-Thoracic Echo (TTE), Trans-Esophageal Echo (TEE), Intra-Vascular Ultrasound (IVUS), Intra-Cardiac Ultrasound (ICE), or any combination thereof.

[0263] The term "contrast agent," when used in reference to its application in conjunction with imaging, refers to any substance that is used to highlight, and/or enhance in another manner, the anatomical structure, functioning, and/or composition of a bodily organ while the organ is being imaged.

[0264] The term "stabilized," when used in the context of displayed images, means a display of a series of images in a manner such that periodic, cyclical, and/or other motion of the body organ(s) being imaged, and/or of a medical tool being observed, is partially or fully reduced, with respect to the entire image frame, or at least a portion thereof.

[0265] The term "automatic," when used for describing the generation and utilization of the road map, means "without necessitating user intervention or interaction." (Such interaction or intervention may still however be optional in some cases.)

[0266] The term "real time" means without a noticeable delay.

[0267] The term "near real time" means with a short noticeable delay (such as approximately one or two motion cycles of the applicable organ, and, in the case of procedures relating to organs or vessels the motion of which is primarily a result of the cardiac cycle, less than two seconds).

[0268] The term "on-line," when used in reference to image processing, or to measurements being made on images, means that the image processing is performed, and/or the measurements are made, intra-procedurally, in real time or near real time.

[0269] Applications of the present invention are typically used during medical procedures that are performed, in whole or in part, on or within luminal structures. For some applications, apparatus and methods provided herein facilitate the co-use of extraluminal imaging and endoluminal data in performing such medical procedures. Endoluminal data may include imaging data, data derived from measurements, other data, or any combination thereof.

[0270] For some applications, the co-use of the endoluminal data and the extraluminal images is performed in the following manner. Endoluminal data are acquired by positioning an endoluminal data-acquisition device along a luminal segment of interest that includes a designated luminal site. Subsequently, while observing extraluminal images of the luminal segment, one or more locations along that segment are indicated by a user input device. In response to the indication of the one or more locations by the user input device, the corresponding, previously-acquired endoluminal images are displayed.
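
By way of illustration only, the lookup described in this paragraph may be sketched as follows. The sketch assumes that each previously-acquired endoluminal frame has already been associated with a position along the lumen, expressed here as a distance in millimeters from an arbitrary reference point. The names `EndoluminalFrame` and `frame_for_location`, and the nearest-position rule, are hypothetical and are not taken from the present application.

```python
from bisect import bisect_left
from dataclasses import dataclass


@dataclass(frozen=True)
class EndoluminalFrame:
    frame_id: int        # index of the frame in the acquisition sequence
    position_mm: float   # assumed position along the lumen (hypothetical unit)


def frame_for_location(frames, selected_mm):
    """Return the frame whose associated position is closest to the
    location indicated by the user on the extraluminal image."""
    if not frames:
        raise ValueError("no endoluminal frames available")
    # Sort by position so that a binary search can be used.
    ordered = sorted(frames, key=lambda f: f.position_mm)
    positions = [f.position_mm for f in ordered]
    i = bisect_left(positions, selected_mm)
    # Only the neighbors on either side of the insertion point can be nearest.
    candidates = ordered[max(i - 1, 0):i + 1]
    return min(candidates, key=lambda f: abs(f.position_mm - selected_mm))


# Example: a pullback acquires frames from distal (30 mm) to proximal (10 mm).
pullback = [
    EndoluminalFrame(0, 30.0),
    EndoluminalFrame(1, 20.0),
    EndoluminalFrame(2, 10.0),
]
selected = frame_for_location(pullback, 18.0)
```

In this example the user indicates a location 18 mm along the lumen, and the frame associated with 20 mm is selected for display. A location outside the acquired range is clamped to the nearest end of the range, which is a design choice of the sketch rather than a requirement stated above.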

[0271] Typically, the designated luminal site includes a site being diagnosed, and at which, subject to the outcome of the diagnosis, a therapeutic device will be positioned and deployed, e.g., the site of an anatomical feature, the implantation site of a previously-implanted device, and/or a site at a defined location with respect to the implantation site. For example, the designated luminal site may include a portion of the lumen that is narrow with respect to surrounding portions of the lumen, and/or the site of a lesion.

[0272] For some applications, the co-use of the endoluminal data and the extraluminal images is performed in the following manner. Endoluminal data are acquired by positioning an endoluminal data-acquisition device at a designated luminal site. Subsequently, an endoluminal therapeutic device is positioned and deployed at the designated luminal site under extraluminal imaging, while concurrently viewing on-line the endoluminal data that were previously acquired by the endoluminal data-acquisition device at the current location of the therapeutic device. Typically, endoluminal data are acquired at respective endoluminal sites in the vicinity of the designated endoluminal site. Subsequently, when the endoluminal therapeutic device is placed inside the lumen, previously-acquired endoluminal data are displayed and updated, typically automatically and typically on-line, to correspond to the current location of the therapeutic device (or of a portion thereof), the location of the therapeutic device typically changing during the positioning of the therapeutic device.
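
The automatic updating described in this paragraph may be illustrated, under stated assumptions, by the following sketch. It assumes that the current location of the therapeutic device is supplied repeatedly as a distance in millimeters along the lumen, and that the positions associated with the previously-acquired endoluminal frames are known. The class `EndoluminalDisplayUpdater` and the callback `show_frame` are hypothetical names introduced for illustration.

```python
class EndoluminalDisplayUpdater:
    """Keeps the displayed endoluminal frame in step with the device location."""

    def __init__(self, frame_positions_mm, show_frame):
        if not frame_positions_mm:
            raise ValueError("at least one frame position is required")
        self._positions = list(frame_positions_mm)
        self._show_frame = show_frame   # callback that drives the display
        self._current = None            # index of the frame now displayed

    def on_device_location(self, location_mm):
        """Call once per newly determined device location."""
        # Pick the frame whose associated position is nearest the device.
        idx = min(
            range(len(self._positions)),
            key=lambda i: abs(self._positions[i] - location_mm),
        )
        # Redraw only when the corresponding frame actually changes.
        if idx != self._current:
            self._current = idx
            self._show_frame(idx)
        return idx


# Example: record which frames would be displayed as the device advances.
shown = []
updater = EndoluminalDisplayUpdater([0.0, 1.0, 2.0], shown.append)
for location in [0.1, 0.2, 0.9, 1.6, 1.6]:
    updater.on_device_location(location)
```

The display is driven only when the nearest frame changes, so that repeated location estimates at the same site do not cause redundant redraws.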

[0273] For some applications, extraluminal imaging and the previously-acquired endoluminal data are co-used such that it is as if the therapeutic device is being positioned and deployed under both real-time extraluminal imaging and real-time endoluminal data acquisition. This is because (a) the extraluminal imaging is performed in real-time, and (b), although the endoluminal data are not acquired in real-time, endoluminal data are displayed that correspond to the current location of the therapeutic device.

[0274] In accordance with some applications of the present invention, when the therapeutic device is disposed inside the lumen, the location of the device within the lumen is determined by performing image processing on the extraluminal image of the device inside the lumen.

[0275] For some applications, the image processing includes tracking of one or more visible portions of a moving therapy-applying portion of the device in the extraluminal images. Typically, the tracking is performed in real time, and, typically, in accordance with techniques described in US 2010/0228076 to Blank, which is incorporated herein by reference.

[0276] For some applications, the image processing includes stabilization of an image stream produced by the extraluminal imaging. Typically, the stabilization is performed in real time, and typically in accordance with techniques described in US 2008/0221442 to Tolkowsky, or US 2010/0228076 to Blank, both of which applications are incorporated herein by reference. Typically, the stabilization facilitates the co-use of the endoluminal data with the extraluminal images (particularly in cases of intense organ motion). This is because it is typically easier to determine the luminal location of the therapeutic device based upon a stabilized image stream than to determine the luminal location of the therapeutic device on a native, non-stabilized image stream.
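
The benefit of stabilization for location determination may be illustrated by the following simplified sketch. It is not the technique of the applications cited above; it merely assumes that an anatomical landmark and the device have each been tracked as pixel coordinates in every extraluminal frame, and it removes the landmark's translation from the device coordinates. The function name `stabilize_track` is hypothetical.

```python
def stabilize_track(device_xy, landmark_xy):
    """Translate device coordinates so that the landmark appears fixed
    at its position in the first frame (translation-only compensation)."""
    if len(device_xy) != len(landmark_xy):
        raise ValueError("one landmark position is required per frame")
    if not device_xy:
        return []
    ref_x, ref_y = landmark_xy[0]
    stabilized = []
    for (dx, dy), (lx, ly) in zip(device_xy, landmark_xy):
        # Subtract the landmark's displacement relative to the first frame.
        stabilized.append((dx - (lx - ref_x), dy - (ly - ref_y)))
    return stabilized


# Example: the organ (and hence both points) moves cyclically, while the
# device does not move relative to the vessel.
landmark = [(100, 100), (103, 96), (98, 105)]
device = [(120, 110), (123, 106), (118, 115)]
steady = stabilize_track(device, landmark)
```

In the example, the raw device coordinates differ from frame to frame, whereas the stabilized coordinates are identical in all three frames, which is why a stationary device is easier to localize along the lumen in the stabilized data.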

[0277] For some applications, the stabilized image stream is also enhanced, typically in real time, typically in accordance with techniques described in US 2010/0228076 to Blank.

[0278] For some applications, during the acquisition of the endoluminal data by the endoluminal data-acquisition device, the location of the endoluminal data-acquisition device is determined by advancing the endoluminal data-acquisition device under extraluminal imaging and image processing the extraluminal images to determine the location of a moving data-acquiring portion of the endoluminal data-acquisition device. For some applications, during this stage, the extraluminal image stream is stabilized and/or enhanced, as described hereinabove, to facilitate the determination of the location of the endoluminal data-acquisition device, based upon the extraluminal images. Alternatively, other techniques are used for determining the location of the endoluminal data-acquisition device, as described hereinbelow.
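
One possible way of associating acquired data points with locations during this stage is sketched below. The sketch assumes that image processing of the extraluminal images yields timestamped positions of the data-acquiring portion of the device, that each endoluminal data point carries a timestamp on the same clock, and that linear interpolation between tracked positions is acceptable. These assumptions, and the name `associate_frames_with_locations`, are illustrative and are not taken from the present application.

```python
from bisect import bisect_right


def associate_frames_with_locations(tracked, frame_times):
    """Estimate a lumen position for each endoluminal data point.

    tracked     -- list of (time_s, position_mm), sorted by time, obtained
                   from the extraluminal images (assumed input format)
    frame_times -- acquisition times of the endoluminal data points
    """
    if not tracked:
        raise ValueError("no tracked positions available")
    times = [t for t, _ in tracked]
    positions = []
    for ft in frame_times:
        j = bisect_right(times, ft)
        if j == 0:
            # Before the first tracked sample: clamp to the first position.
            positions.append(tracked[0][1])
        elif j == len(tracked):
            # After the last tracked sample: clamp to the last position.
            positions.append(tracked[-1][1])
        else:
            # Interpolate linearly between the two surrounding samples.
            (t0, p0), (t1, p1) = tracked[j - 1], tracked[j]
            w = (ft - t0) / (t1 - t0)
            positions.append(p0 + w * (p1 - p0))
    return positions


# Example: device tracked at 0 s, 1 s and 2 s during a pullback.
tracked_positions = [(0.0, 40.0), (1.0, 39.0), (2.0, 37.0)]
frame_positions = associate_frames_with_locations(
    tracked_positions, [-0.5, 0.5, 1.0, 1.5, 3.0]
)
```

Data points acquired before the first or after the last tracked position are assigned the nearest tracked position rather than being extrapolated, which is a conservative choice of the sketch.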

[0279] For some applications, the luminal structure to which the apparatus and methods described herein are applied includes a lumen in the vascular system, the respiratory tract, the digestive tract, the urinary tract, or any other luminal structure within a patient's body.

[0280] For some applications, the endoluminal data-acquisition device is an imaging probe. For some applications, the imaging probe is an IVUS probe, an EBUS probe, another ultrasound probe, an OCT probe, an NIRS probe, an MR probe, or any combination thereof.

[0281] For some applications, the endoluminal data-acquisition device performs additional functions. For example, the endoluminal data-acquisition device may comprise a probe, such as the VIBE.TM. RX Vascular Imaging Balloon Catheter, marketed by Volcano Corporation (San Diego, USA), that includes both IVUS and coronary balloon functionalities.

[0282] For some applications, the endoluminal data-acquisition device acquires data in a form other than images. For example, the data may include data related to pressure, flow, temperature, electrical activity, or any combination thereof. For some applications, and typically when data are acquired with respect to a coronary vessel, the endoluminal data-acquisition device is a Fractional Flow Reserve (FFR) probe.

[0283] For some applications, the extraluminal imaging is fluoroscopy, CT, MR, PET, SPECT, ultrasound, or any combination thereof.

[0284] For some applications, the apparatus and methods described herein are used with a therapeutic device that is positioned and/or deployed at an anatomical feature that requires or potentially requires treatment, such as a partial or total occlusion, a native valve, an aneurysm, a dissection, a malformation, a septal defect, a mass suspected of being malignant, a mass suspected of being inflammatory, etc. The endoluminal data are typically determined at, and/or in the vicinity of, the anatomical feature.

[0285] For some applications, apparatus and methods described herein are used with a therapeutic device that is positioned and/or deployed at an implantation site of a previously-implanted device such as a stent, a graft or a replacement valve. The endoluminal data are determined at, and/or in the vicinity of, the implantation site. For example, the techniques described herein may be used during the placement of a new prosthetic aortic valve at the site of (e.g., inside) a previously implanted prosthetic aortic valve that is no longer functioning.

[0286] For some applications, apparatus and methods described herein are used with a therapeutic device that is positioned and/or deployed at a defined location relative to a previously-implanted device such as a stent, a graft or a replacement valve. The endoluminal data are determined at and in the vicinity of the defined location. For example, the techniques described herein may be used during the placement of a coronary stent such that the new stent overlaps with or is adjacent to a previously-implanted stent, in order to treat a long lesion and/or a lesion that has diffused along a coronary artery.

[0287] Reference is now made to FIG. 1, which is a flow chart, at least some of the steps of which are used in the course of co-use of endoluminal data and extraluminal imaging, in accordance with some applications of the current invention. It is noted that, for some applications, some of the steps shown in FIG. 1 may be practiced, without all of the steps shown in FIG. 1 necessarily being practiced in combination.