Processing Communications Signals Using A Machine-learning Network

O'Shea; Timothy James; et al.

U.S. patent application number 16/856760 was filed with the patent office on 2020-04-23 and published on 2020-10-29 for processing communications signals using a machine-learning network. The applicant listed for this patent is DeepSig Inc. Invention is credited to Johnathan Corgan, Timothy James O'Shea, Nathan West.

| Field | Value |
|---|---|
| United States Patent Application | 20200343985 |
| Application Number | 16/856760 |
| Kind Code | A1 |
| Family ID | 1000004795451 |
| Publication Date | October 29, 2020 |
| Inventors | O'Shea; Timothy James; et al. |

PROCESSING COMMUNICATIONS SIGNALS USING A MACHINE-LEARNING NETWORK

Abstract

Methods, systems, and apparatus, including computer programs encoded on computer-storage media, for processing communications signals using a machine-learning network are disclosed. In some implementations, pilot and data information are generated for a data signal. The data signal is generated using a modulator for orthogonal frequency-division multiplexing (OFDM) systems. The data signal is transmitted through a communications channel to obtain modified pilot and data information. The modified pilot and data information are processed using a machine-learning network. A prediction corresponding to the data signal transmitted through the communications channel is obtained from the machine-learning network. The prediction is compared to a set of ground truths and updates, based on a corresponding error term, are applied to the machine-learning network.

| Inventors: | O'Shea; Timothy James (Arlington, VA); West; Nathan (Washington, DC); Corgan; Johnathan (San Jose, CA) |
|---|---|
| Applicant: | DeepSig Inc. |
| Family ID: | 1000004795451 |
| Appl. No.: | 16/856760 |
| Filed: | April 23, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62837631 | Apr 23, 2019 | |
| 63005599 | Apr 6, 2020 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06N 3/0472 20130101; H04L 27/0008 20130101; H04B 17/3912 20150115; H04B 17/3911 20150115; G06N 20/00 20190101; G06N 3/08 20130101 |

| International Class: | H04B 17/391 20060101 H04B017/391; G06N 20/00 20060101 G06N020/00; G06N 3/04 20060101 G06N003/04; G06N 3/08 20060101 G06N003/08; H04L 27/00 20060101 H04L027/00 |

Claims

1. A method performed by at least one processor to train at least one machine-learning network to process a received communication signal, the method comprising: generating one or more of pilot and data information for a data signal, wherein one or more elements of the pilot and data information each correspond to a particular time and a particular frequency in a time-frequency spectrum; generating the data signal by modulating the pilot and data information using a modulator for an orthogonal frequency-division multiplexing (OFDM) system; transmitting the data signal through a communications channel to obtain modified pilot and data information; processing the modified pilot and data information using a machine-learning network; in response to the processing using the machine-learning network, obtaining, from the machine-learning network, a prediction corresponding to the data signal transmitted through the communications channel; computing an error term by comparing the prediction to a set of ground truths; and updating the machine-learning network based on the error term.

2. The method of claim 1, wherein the machine-learning network performs operations corresponding to pilot estimation, interpolation, and equalization.

3. The method of claim 1, wherein the communications channel is a simulated channel that includes one or more of an Additive White Gaussian Noise (AWGN) or Rayleigh fading channel model, International Telecommunication Union (ITU) or 3rd Generation Partnership Project (3GPP) fading channel models, emulated radio emissions, propagation models, ray tracing within simulated geometry or an environment to produce channel effects, or a machine-learning network trained to approximate measurements over a real channel.

4. The method of claim 1, wherein the communications channel is a real communications channel between a first device and a second device, and wherein transmitting the data signal through the communications channel comprises transmitting the data signal from the first device to the second device and obtaining the modified pilot and data information comprising a version of the data signal received by the second device.

5. The method of claim 1, wherein the prediction obtained from the machine-learning network is one of a channel response of the communications channel, an inverse channel response of the communication channel, or values of the pilot and data information prior to transmitting the data signal through the communications channel.

6. The method of claim 1, wherein the set of ground truths are values of equalized data symbols or channel estimates determined from one or more of a process of generating the pilot and data information, a decision feedback process, pilot subcarriers, or an out-of-band communication.

7. The method of claim 1, wherein updating the machine-learning network based on the error term comprises: determining, based on a loss function, a rate of change of one or more weight values within the machine-learning network; and performing an optimization process using the rate of change to update the one or more weight values within the machine-learning network.

8. The method of claim 7, wherein the optimization process comprises one or more of gradient descent, stochastic gradient descent (SGD), Adam, RAdam, AdamW, or Lookahead neural network optimization.

9. The method of claim 7, wherein the optimization process involves minimizing a loss value between predicted and actual values of subcarriers or channel responses.

10. The method of claim 1, wherein the machine-learning network is a fully convolutional neural network or a partially convolutional neural network.

11. The method of claim 1, wherein the OFDM system includes one or more elements of cyclic-prefix orthogonal frequency division multiplexing (CP-OFDM), single carrier frequency division multiplexing (SCFDM), filter bank multicarrier (FBMC), or elements of other variants of OFDM.

12. A non-transitory computer-readable medium storing one or more instructions executable by a computer system to perform operations comprising: generating one or more of pilot and data information for a data signal, wherein one or more elements of the pilot and data information each correspond to a particular time and a particular frequency in a time-frequency spectrum; generating the data signal by modulating the pilot and data information using a modulator for an orthogonal frequency-division multiplexing (OFDM) system; transmitting the data signal through a communications channel to obtain modified pilot and data information; processing the modified pilot and data information using a machine-learning network; in response to the processing using the machine-learning network, obtaining, from the machine-learning network, a prediction corresponding to the data signal transmitted through the communications channel; computing an error term by comparing the prediction to a set of ground truths; and updating the machine-learning network based on the error term.

13. The non-transitory computer-readable medium of claim 12, wherein the machine-learning network performs operations corresponding to pilot estimation, interpolation, and equalization.

14. The non-transitory computer-readable medium of claim 12, wherein the communications channel is a simulated channel that includes one or more of an Additive White Gaussian Noise (AWGN) or Rayleigh fading channel model, International Telecommunication Union (ITU) or 3rd Generation Partnership Project (3GPP) fading channel models, emulated radio emissions, propagation models, ray tracing within simulated geometry or an environment to produce channel effects, or a machine-learning network trained to approximate measurements over a real channel.

15. The non-transitory computer-readable medium of claim 12, wherein the communications channel is a real communications channel between a first device and a second device, and wherein transmitting the data signal through the communications channel comprises transmitting the data signal from the first device to the second device and obtaining the modified pilot and data information comprising a version of the data signal received by the second device.

16. The non-transitory computer-readable medium of claim 12, wherein the prediction obtained from the machine-learning network is one of a channel response of the communications channel, an inverse channel response of the communication channel, or values of the pilot and data information prior to transmitting the data signal through the communications channel.

17. The non-transitory computer-readable medium of claim 12, wherein the set of ground truths are values of equalized data symbols or channel estimates determined from one or more of a process of generating the pilot and data information, a decision feedback process, pilot subcarriers, or an out-of-band communication.

18. The non-transitory computer-readable medium of claim 12, wherein updating the machine-learning network based on the error term comprises: determining, based on a loss function, a rate of change of one or more weight values within the machine-learning network; and performing an optimization process using the rate of change to update the one or more weight values within the machine-learning network.

19. The non-transitory computer-readable medium of claim 18, wherein the optimization process comprises one or more of gradient descent, stochastic gradient descent (SGD), Adam, RAdam, AdamW, or Lookahead neural network optimization.

20. The non-transitory computer-readable medium of claim 12, wherein the machine-learning network is a fully convolutional neural network or a partially convolutional neural network.

21. The non-transitory computer-readable medium of claim 12, wherein the OFDM system includes one or more elements of cyclic-prefix orthogonal frequency division multiplexing (CP-OFDM), single carrier frequency division multiplexing (SCFDM), filter bank multicarrier (FBMC), or elements of other variants of OFDM.

22. A system, comprising: one or more processors; and machine-readable media interoperably coupled with the one or more processors and storing one or more instructions that, when executed by the one or more processors, perform operations comprising: generating one or more of pilot and data information for a data signal, wherein one or more elements of the pilot and data information each correspond to a particular time and a particular frequency in a time-frequency spectrum; generating the data signal by modulating the pilot and data information using a modulator for an orthogonal frequency-division multiplexing (OFDM) system; transmitting the data signal through a communications channel to obtain modified pilot and data information; processing the modified pilot and data information using a machine-learning network; in response to the processing using the machine-learning network, obtaining, from the machine-learning network, a prediction corresponding to the data signal transmitted through the communications channel; computing an error term by comparing the prediction to a set of ground truths; and updating the machine-learning network based on the error term.

23. The system of claim 22, wherein the machine-learning network performs operations corresponding to pilot estimation, interpolation, and equalization.

24. The system of claim 22, wherein the communications channel is a simulated channel that includes one or more of an Additive White Gaussian Noise (AWGN) or Rayleigh fading channel model, International Telecommunication Union (ITU) or 3rd Generation Partnership Project (3GPP) fading channel models, emulated radio emissions, propagation models, ray tracing within simulated geometry or an environment to produce channel effects, or a machine-learning network trained to approximate measurements over a real channel.

25. The system of claim 22, wherein the communications channel is a real communications channel between a first device and a second device, and wherein transmitting the data signal through the communications channel comprises transmitting the data signal from the first device to the second device and obtaining the modified pilot and data information comprising a version of the data signal received by the second device.

26. The system of claim 22, wherein the prediction obtained from the machine-learning network is one of a channel response of the communications channel, an inverse channel response of the communication channel, or values of the pilot and data information prior to transmitting the data signal through the communications channel.

27. The system of claim 22, wherein the set of ground truths are values of equalized data symbols or channel estimates determined from one or more of a process of generating the pilot and data information, a decision feedback process, pilot subcarriers, or an out-of-band communication.

28. The system of claim 22, wherein updating the machine-learning network based on the error term comprises: determining, based on a loss function, a rate of change of one or more weight values within the machine-learning network; and performing an optimization process using the rate of change to update the one or more weight values within the machine-learning network.

29. The system of claim 28, wherein the optimization process comprises one or more of gradient descent, stochastic gradient descent (SGD), Adam, RAdam, AdamW, or Lookahead neural network optimization.

30. The system of claim 22, wherein the machine-learning network is a fully convolutional neural network or a partially convolutional neural network and wherein the OFDM system includes one or more elements of cyclic-prefix orthogonal frequency division multiplexing (CP-OFDM), single carrier frequency division multiplexing (SCFDM), filter bank multicarrier (FBMC), or elements of other variants of OFDM.

Description

CROSS REFERENCE TO RELATED APPLICATIONS

[0001] This application claims the benefit of U.S. Provisional Application No. 62/837,631, filed on Apr. 23, 2019, and U.S. Provisional Application No. 63/005,599, filed on Apr. 6, 2020, both of which are incorporated herein by reference.

TECHNICAL FIELD

[0002] This specification generally relates to communications systems that use machine learning and includes processing of communications signals using a machine-learning network.

BACKGROUND

[0003] Communications systems involve transmitting and receiving various types of communication media, e.g., over the air, through fiber optic cables or metallic cables, under water, or through outer space. In some cases, communications channels use radio frequency (RF) waveforms to transmit information, in which the information is modulated onto one or more carrier waveforms operating at RF frequencies. In other cases, RF waveforms are themselves information, such as outputs of sensors or probes. Information that is carried in RF waveforms, or other communication channels, is typically processed, stored, and/or transported through other forms of communication, such as through an internal system bus in a computer or through local or wide-area networks.

SUMMARY

[0004] In general, the subject matter described in this disclosure can be embodied in methods, apparatuses, and systems for training and deploying machine-learning networks to replace elements of processing within a system for communications signals. In some implementations, the communications signals include digital communications signals. By consolidating multiple functions within the transmitter or receiver units into approximate networks trained through an optimization approach with different free parameters, a lower bit error rate, improved error vector magnitude and frame error rate, and enhanced bitrates, among other improvements, can be attained over a given communications channel as compared to existing baseline methods conventionally used for digital communications.

[0005] In one implementation, a system and method include replacing the tasks of pilot estimation, interpolation, and equalization with a machine-learning network. By consolidating and accomplishing the tasks jointly within an appropriate machine-learning network architecture, lower error rates, lower complexity, or improved user density, among other performance improvements, can be obtained in processing a given data signal compared to today's commonly used approaches such as linear minimum-mean-squared error (LMMSE) or minimum-mean-squared error (MMSE).

[0006] In other implementations, a machine-learning network approach to processing digital communications can be extended to include several additional signal processing stages that are used in 3rd Generation Partnership Project (3GPP), 4th generation (4G), 5th generation (5G), and other orthogonal frequency-division multiplexing (OFDM) systems, including spatial combining, multiple-input and multiple-output (MIMO) processing as well as beam forming (BF), non-linearity compensation, symbol detection, or pre-coding weight generation.

[0007] In one aspect, a method is performed by at least one processor to train at least one machine-learning network to perform one or more tasks related to the processing of digital information in a communications system. In some cases, the communications channel can be a form of radio frequency (RF) communications channel. The method includes: generating one or more of pilot and data information for a data signal, where one or more elements of the pilot and data information each correspond to a particular time and a particular frequency in a time-frequency spectrum; generating the data signal by modulating the pilot and data information using a modulator for an orthogonal frequency-division multiplexing (OFDM) system; transmitting the data signal through a communications channel to obtain modified pilot and data information; processing the modified pilot and data information using a machine-learning network; in response to the processing using the machine-learning network, obtaining, from the machine-learning network, a prediction corresponding to the data signal transmitted through the communications channel; computing an error term by comparing the prediction to a set of ground truths; and updating the machine-learning network based on the error term.
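As a non-limiting illustration, the generating, modulating, and transmitting steps above can be sketched in plain Python. The grid size, pilot positions, QPSK alphabet, two-tap channel, and all function names are assumptions introduced here for illustration and do not come from the specification; a practical system would use an FFT library rather than the naive transforms shown.

```python
import cmath
import random


def dft(samples):
    """Naive discrete Fourier transform (time domain -> subcarriers)."""
    n = len(samples)
    return [sum(samples[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]


def idft(subcarriers):
    """Naive inverse DFT (subcarriers -> time domain)."""
    n = len(subcarriers)
    return [sum(subcarriers[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]


def ofdm_modulate(subcarriers, cp_len):
    """Convert one OFDM symbol to time-domain samples and prepend a cyclic prefix."""
    time = idft(subcarriers)
    return time[len(time) - cp_len:] + time


def multipath_channel(samples, taps, noise_std, rng):
    """Convolve the samples with the channel taps and add complex Gaussian noise."""
    out = []
    for n in range(len(samples)):
        acc = sum(taps[d] * samples[n - d] for d in range(len(taps)) if n - d >= 0)
        out.append(acc + complex(rng.gauss(0, noise_std), rng.gauss(0, noise_std)))
    return out


def ofdm_demodulate(samples, cp_len):
    """Drop the cyclic prefix and return the received subcarrier values."""
    return dft(samples[cp_len:])


# Hypothetical parameters, chosen only for illustration.
rng = random.Random(0)
N, CP = 8, 2
PILOT_IDX = (0, 4)                  # subcarriers carrying known pilot values
QPSK = [complex(a, b) / 2 ** 0.5 for a in (1, -1) for b in (1, -1)]
grid = [1 + 0j if k in PILOT_IDX else rng.choice(QPSK) for k in range(N)]

taps = [1.0, 0.5j]                  # two-tap channel, shorter than the cyclic prefix
tx = ofdm_modulate(grid, CP)
rx = multipath_channel(tx, taps, noise_std=0.0, rng=rng)
received = ofdm_demodulate(rx, CP)  # the "modified pilot and data information"

# Per-subcarrier frequency response of the channel, for comparison.
channel = dft(taps + [0.0] * (N - len(taps)))
```

Because the cyclic prefix in this sketch is at least as long as the channel memory, each received subcarrier equals the transmitted value multiplied by one complex channel coefficient; that per-subcarrier relationship is what a receiver, conventional or learned, has to undo.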

[0008] Implementations may include one or more of the following features. In some implementations, a machine-learning network performs operations corresponding to pilot estimation, interpolation, and equalization. The communications channel may be a simulated channel that includes one or more of an Additive White Gaussian Noise (AWGN) or Rayleigh fading channel model, International Telecommunication Union (ITU) or 3rd Generation Partnership Project (3GPP) fading channel models, emulated radio emissions, propagation models, ray tracing within simulated geometry or an environment to produce channel effects, or a machine-learning network trained to approximate measurements over a real channel.
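By way of example only, two of the simulated channel models named above, flat Rayleigh fading and AWGN, can be sketched as follows. The function names, the unit-power normalization, and the definition of SNR relative to unit transmit power are assumptions made for this sketch.

```python
import random


def rayleigh_tap(rng):
    """Draw one flat-fading coefficient: complex Gaussian with unit average power."""
    s = 0.5 ** 0.5
    return complex(rng.gauss(0, s), rng.gauss(0, s))


def awgn(samples, snr_db, rng):
    """Add complex white Gaussian noise; SNR is defined relative to unit signal power."""
    noise_power = 10 ** (-snr_db / 10)
    s = (noise_power / 2) ** 0.5
    return [x + complex(rng.gauss(0, s), rng.gauss(0, s)) for x in samples]


def rayleigh_awgn_channel(samples, snr_db, rng):
    """Apply one random flat-fading tap to all samples, then add noise.

    Returns the tap as well, so it can serve as a ground-truth channel response.
    """
    h = rayleigh_tap(rng)
    return h, awgn([h * x for x in samples], snr_db, rng)


rng = random.Random(1)
h, faded = rayleigh_awgn_channel([1 + 0j] * 4, snr_db=20, rng=rng)
```

Returning the tap alongside the faded samples reflects one convenience of a simulated channel during training: the true channel response is available as a ground truth.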

[0009] In some implementations, the communications channel includes a real communications channel between a first device and a second device, and where transmitting the data signal through the communications channel includes transmitting the data signal from the first device to the second device and obtaining the modified pilot and data information including a version of the data signal received by the second device.

[0010] In some implementations, the pilot and data information includes one or more of pilot subcarriers, data subcarriers, pilot resource elements, or data resource elements.

[0011] In some implementations, the prediction obtained from the machine-learning network includes one of a channel response of the communications channel, an inverse channel response of the communication channel, or values of the pilot and data information prior to transmitting the data signal through the communications channel.

[0012] In some implementations, the set of ground truths are values of equalized data symbols or channel estimates determined from one or more of a process of generating the pilot and data information, a decision feedback process, pilot subcarriers, or an out-of-band communication.

[0013] In some implementations, updating the machine-learning network based on the error term includes determining, based on a loss function, a rate of change of one or more weight values within the machine-learning network; and performing an optimization process using the rate of change to update the one or more weight values within the machine-learning network.

[0014] In some implementations, the optimization process includes one or more of gradient descent, stochastic gradient descent (SGD), Adam, RAdam, AdamW, or Lookahead neural network optimization.

[0015] In some implementations, the optimization process involves minimizing a loss value between predicted and actual values of subcarriers or channel responses.
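As a simplified, non-limiting illustration of the update described above, the sketch below trains a single complex weight by stochastic gradient descent to minimize the squared error between predicted and actual subcarrier values. The single weight stands in for a machine-learning network and is an assumption of this sketch; with one weight the rule reduces to the classic least-mean-squares update. The channel value, learning rate, and names are hypothetical.

```python
import random


def sgd_step(w, y, x_true, lr):
    """One SGD step on loss = |w * y - x_true|^2 for a single complex weight w."""
    error = w * y - x_true
    # Gradient of the loss with respect to conj(w), up to a constant factor.
    grad = error * y.conjugate()
    return w - lr * grad, abs(error) ** 2


rng = random.Random(0)
QPSK = [complex(a, b) / 2 ** 0.5 for a in (1, -1) for b in (1, -1)]
h = 0.8 - 0.6j          # hypothetical flat channel with unit magnitude
w = 0j                  # initial weight
losses = []
for _ in range(200):
    x = rng.choice(QPSK)            # ground-truth transmitted symbol
    y = h * x                       # symbol after the channel
    w, loss = sgd_step(w, y, x, lr=0.1)
    losses.append(loss)
```

In this toy setting the weight converges toward the inverse channel response 1/h, which corresponds to one of the prediction targets listed above.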

[0016] In some implementations, the machine-learning network is a fully convolutional neural network or a partially convolutional neural network.

[0017] In some implementations, the pilot and data information represents one or more signals transmitted over a communications system corresponding to one or more radio frequencies or one or more distinct radios.

[0018] In some implementations, the orthogonal frequency-division multiplexing (OFDM) system includes one or more elements of cyclic-prefix orthogonal frequency division multiplexing (CP-OFDM), single carrier frequency division multiplexing (SCFDM), filter bank multicarrier (FBMC), or elements of other variants of orthogonal frequency-division multiplexing (OFDM).

[0019] Implementations of the above techniques include methods, systems, apparatuses and computer program products. One such system includes one or more processors, and memory storing instructions that, when executed, cause the one or more processors to perform some or all of the above-described operations. Particular implementations of the system include one or more user equipment (UE) or base stations, or both, that are configured to perform some or all of the above-described operations. One such computer program product is suitably embodied in one or more non-transitory machine-readable media that stores instructions executable by one or more processors. The instructions are configured to cause the one or more processors to perform some or all of the above-described operations.

[0020] Advantageous implementations can include using a machine-learning network approach to scale a system from a small number of transmit or receive antennas to massive multiple input, multiple output (MIMO) systems with a large number of antenna elements, e.g., 32, 64, 128, 256, 512, 1024, or more. The machine-learning network approach can scale close to linearly while alternative, conventional approaches, including linear minimum-mean-squared error (LMMSE) or linear zero-forcing (ZF) approaches to estimation, equalization, and pre-coding matrix calculation, often involve algorithms with higher order such as O(N^3) (where N is an integer >0) or exponential complexity as the number of users (e.g., mobile user equipment terminals) or the number of antennas increases. The machine-learning network approach discussed in this specification reduces the complexity of systems, for example systems with relatively large numbers of elements, compared to conventional approaches, while offering improved performance to enhance current day wireless standards and to be used in future wireless standards, such as 3GPP 6th Generation (6G) cellular networks or future Wi-Fi standards, among others.

[0021] The details of one or more embodiments of the invention are set forth in the accompanying drawings and the description below. Other features and advantages of the invention will become apparent from the description, the drawings, and the claims.

BRIEF DESCRIPTION OF THE DRAWINGS

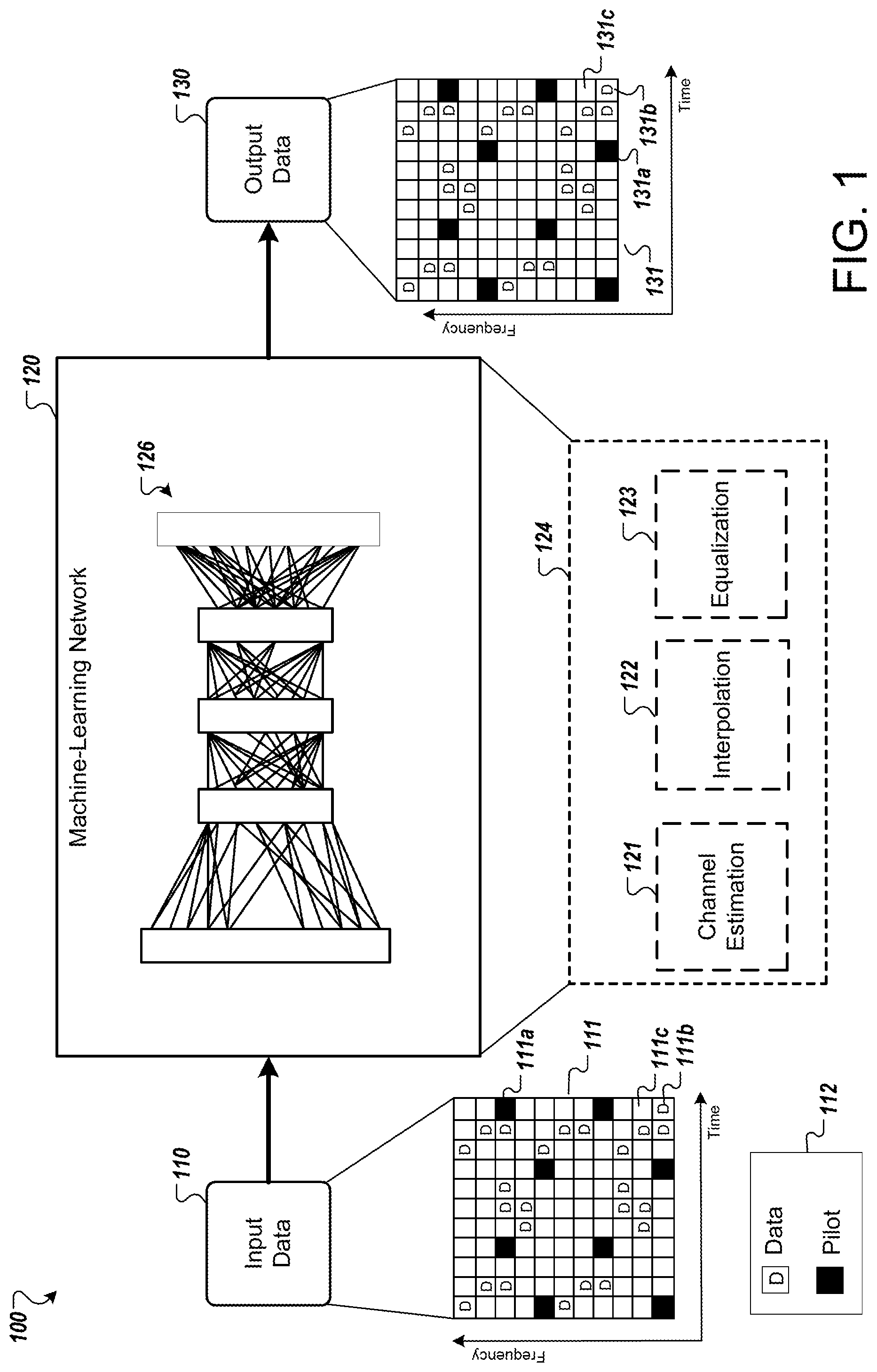

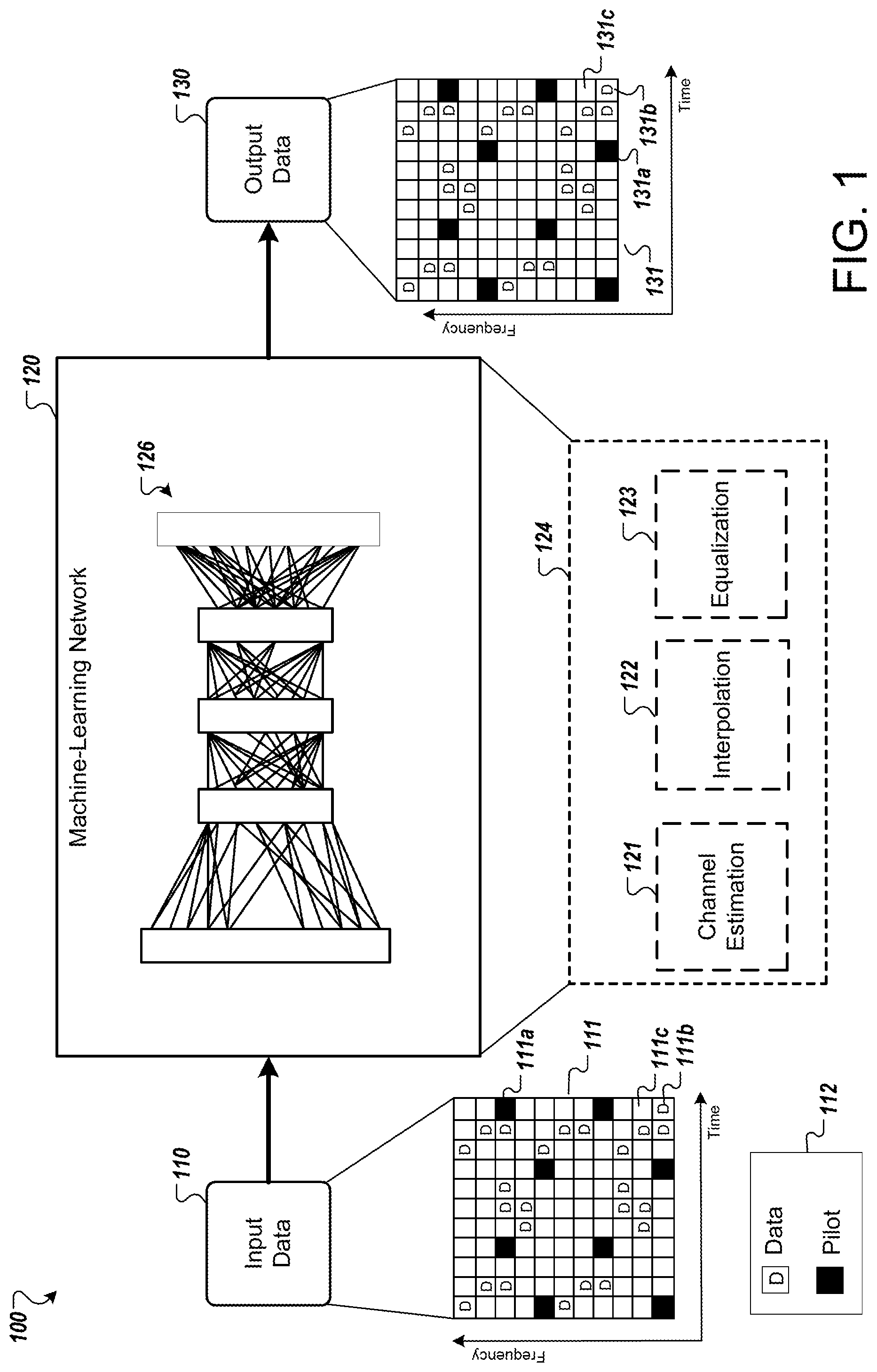

[0022] FIG. 1 is a diagram showing an example of a system for processing digital communications using a machine-learning network.

[0023] FIG. 2A is a diagram showing an example of a system for training a machine-learning network for processing digital communications.

[0024] FIG. 2B is a diagram showing an example of improved error vector magnitude (EVM) upon using a machine-learning network for processing digital communications.

[0025] FIG. 2C is a diagram showing an example of improved bit error rate (BER) over signal-to-noise ratio (SNR) upon using a machine-learning network for processing digital communications.

[0026] FIG. 3 is a flow diagram illustrating an example of a method for training a machine-learning network for processing digital communications.

[0027] FIGS. 4A and 4B are block diagrams showing a system for processing digital communications without and with using a machine-learning network, respectively.

[0028] FIG. 5 is a diagram showing processing stages within a communications system.

[0029] FIG. 6 is a diagram showing a front-haul scenario of a communications system.

[0030] FIG. 7 is a diagram illustrating an example of a computing system used for processing digital communications using a machine-learning network.

[0031] Like reference numbers and designations in the various drawings indicate like elements.

DETAILED DESCRIPTION

[0032] The disclosed implementations present techniques for processing communications signals using a machine-learning network. Using the disclosed techniques, multiple processing stages involved in digital communications can be adapted and consolidated into approximate networks within a machine-learning network trained through an optimization approach with different free parameters. The machine-learning network approach to processing digital communications enables a lower bit error rate over the same communications channel as compared to existing baseline methods. For example, in conventional radio receivers, estimation, interpolation, and equalization may be performed using existing baseline methods such as minimum-mean-squared error (MMSE), linear interpolation, or various others. In this scenario, as well as others, a machine-learning network can be used to perform the same tasks as the existing baseline methods with improved performance metrics such as bit error rate (BER) and error vector magnitude (EVM) among others. In addition, the machine-learning networks or approximate networks can often be run on more concurrent and more energy efficient hardware, such as systolic arrays or similar classes of processor grids. The networks can also run at lower precision, such as float16, int8, int4, or others, rather than the float32 precision of conventional systems. The networks can be further enhanced, in terms of efficiency or performance, through the inclusion of additional techniques such as radio transformer networks and neural architecture search, or network pruning, among several other techniques, which reduce the computational complexity of a specific task or approximate signal processing function through modifications to the processing network or graph.
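As one possible illustration of running a network at lower precision, the sketch below applies symmetric int8 quantization to a list of weights. The per-tensor scaling scheme, the function names, and the example weights are assumptions made here; the specification does not prescribe a particular quantization method.

```python
def quantize_int8(weights):
    """Map float weights to integers in [-127, 127] using one shared scale factor."""
    scale = max(abs(w) for w in weights) / 127 or 1.0  # fall back to 1.0 if all weights are zero
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the quantized integers."""
    return [v * scale for v in q]


weights = [0.5, -1.0, 0.25, 0.0]     # hypothetical float32 weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
```

The rounding error per weight is bounded by half the scale factor, which is the kind of accuracy-for-efficiency trade that lower-precision inference accepts.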

[0033] In this context, digital communications includes OFDM radio signals, OFDM variant signals (e.g., cyclic-prefix OFDM (CP-OFDM)), 4th generation (4G) Long Term Evolution (LTE) communication signals, 5G communication signals including 5G new radio (5G-NR) physical (PHY) channel signals and other similar 3GPP radio access network (RAN)-like signals (e.g., beyond-5G and 6G candidates). Digital communications can also include RF signals in Wi-Fi communications networks, e.g., wireless networks using IEEE 802.11 protocols, or a plurality of additional internet of things (IoT) or other wireless standards.

[0034] In some implementations, the machine-learning network approach is applied in non-OFDM systems. For example, the machine-learning network approach can be used within the context of radar systems. Radar systems with multi-antenna processing for channel estimation, target estimation, or spatial estimation are currently challenged by the high complexity and model deficit features of conventional methods such as LMMSE, among others, for estimation used today in many systems. Within such non-OFDM systems, a machine-learning network approach offers improvements in efficiency as well as improvements in performance over conventionally used methods.

[0035] In some implementations, a system for processing digital communications as described in this specification uses a machine-learning network to perform one or more tasks for transmitting or receiving, or both, RF signals. In some cases, these tasks include pilot estimation, interpolation, and equalization. Some implementations include additional tasks performed using a machine-learning network, such as spatial combining, beam-forming (BF), non-linearity compensation, symbol detection, pre-coding weight generation, or other signal processing stages used in 5G and other orthogonal frequency-division multiplexing (OFDM) systems.

[0036] In some implementations, a machine-learning network encompasses other aspects. For example, a machine-learning network can encompass aspects such as error correction decoding, source and/or channel coding or decoding, or synchronization or other signal compensation or transformation functions. The machine-learning network can be trained before deployment, after deployment using one or more communications channels, or a combination of the two (e.g., trained before deployment and updated after deployment). In some implementations, training includes training one or more aspects of a machine-learning network before deployment and further training the one or more aspects, or other aspects, after deployment. For example, a model and architecture of a machine-learning network can be trained and optimized offline before deployment to create a starting condition. After deployment, the machine-learning network can be further optimized from the starting condition to improve based on one or more operating conditions. In some cases, the one or more operating conditions can include effects of a communications channel.

[0037] In conventional communications systems, processing for digital communications can take place in stages. For example, a number of systems use multi-carrier signal modulation schemes, such as OFDM, to transmit information. Some of the time-frequency subcarriers within an OFDM grid can be allocated as reference tones or pilot signals. Pilot signals can be resource elements with known values; these can be referred to as pilot resource elements. Other resource elements within the OFDM grid can carry data; these can be referred to as data resource elements. In some cases, other multiple access schemes may be used including variations that employ similar allocations of resources compared to OFDM. Variations, such as SCFDMA, CP-OFDM, WPM, or other basis functions can also be used. The data resource elements, or data subcarriers, can be used to carry modulated information bits. By properly allocating subcarrier width and length such that flat fading may be assumed for a single slot, that is, coherence time and frequency over one subcarrier, equalization may be performed through complex multiplication of a channel inverse tap with each subcarrier value.
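For illustration, the single-tap equalization described above, in which each subcarrier value is multiplied by a channel inverse tap, can be sketched as follows. The channel taps and symbol values are hypothetical, and the channel is assumed to be known exactly and noise-free.

```python
def one_tap_equalize(received, channel):
    """Multiply each received subcarrier by the inverse of its channel tap."""
    return [y * (1 / h) for y, h in zip(received, channel)]


sent = [1 + 1j, -1 + 1j, 1 - 1j, -1 - 1j]               # transmitted subcarrier values
channel = [0.9 + 0.1j, 0.5 - 0.5j, 1.2j, -0.7 + 0.2j]   # hypothetical flat-fading taps
received = [h * x for h, x in zip(channel, sent)]       # effect of the channel
recovered = one_tap_equalize(received, channel)
```

This works only because flat fading is assumed over each subcarrier, so the channel acts as a single complex multiplier per resource element.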

[0038] A sparse set of channel response values for each subcarrier between the known pilot signals can be estimated by applying a method of equalization (e.g., zero-forcing (ZF) or minimum-mean squared error (MMSE), among other methods) and then applying a method of interpolation (e.g., a linear interpolation, spline interpolation, Fast Fourier Transform (FFT) interpolation, sinc interpolation, or Wiener filtering, among other interpolation methods). In some cases, the sparse set of channel response values can be estimated by using data from a given signal or other signals received in a data-aided way. The channel inverse taps may then be applied in an equalization step that multiplies the inverse channel response with the full set of subcarriers to estimate the transmitted values prior to modification. In some cases, estimation of the channel can occur without relying on the transmitted data. For example, instead of known values, properties of the modulation, such as constant modulus algorithm equalizers (CMA), among others, can be used to estimate the channel. In some cases, modification of transmitted values can be obtained from output of a fading channel. The equalization, interpolation or estimation above, among other tasks, are performed in conventional communications systems in different stages using separate models, for example, using separate hardware or software routines, or both. In some cases, specific algorithms are developed and implemented within this process. For example, algorithms for interferer cancellation or nulling explicitly within the estimated or received signals can be developed and implemented.
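The staged conventional processing described above can be illustrated with a minimal Python sketch. It assumes a single OFDM symbol, a noiseless channel, estimation by dividing received pilots by their known values, linear interpolation, and zero-forcing equalization. The function name `conventional_equalize`, the pilot layout, and the channel values are hypothetical and chosen only for illustration.

```python
import numpy as np

def conventional_equalize(rx, pilot_idx, pilot_vals):
    """Estimate at pilots, interpolate, then zero-forcing equalize one OFDM symbol."""
    # Channel estimate at pilot positions: received pilot divided by known pilot.
    h_pilots = rx[pilot_idx] / pilot_vals
    # Linear interpolation of the real and imaginary parts across all subcarriers.
    k = np.arange(rx.size)
    h_est = (np.interp(k, pilot_idx, h_pilots.real)
             + 1j * np.interp(k, pilot_idx, h_pilots.imag))
    # Zero-forcing equalization: multiply each subcarrier by the channel inverse tap.
    return rx * (1.0 / h_est), h_est

rng = np.random.default_rng(0)
n_sc = 16
pilot_idx = np.array([0, 5, 10, 15])          # hypothetical pilot positions
tx = (rng.choice([-1.0, 1.0], n_sc) + 1j * rng.choice([-1.0, 1.0], n_sc)) / np.sqrt(2)
tx[pilot_idx] = 1.0 + 0.0j                    # known pilot values
# Illustrative channel that varies linearly over frequency, so that
# linear interpolation between pilots recovers it exactly.
h_true = np.linspace(1.0, 0.5, n_sc) + 1j * np.linspace(0.2, -0.3, n_sc)
rx = h_true * tx                              # noiseless reception
eq, h_est = conventional_equalize(rx, pilot_idx, tx[pilot_idx])
```

In this idealized setting the three stages recover the transmitted values exactly; with noise, interference, or a channel that is not linear between pilots, each stage contributes its own error, which is the motivation for learning the stages jointly.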

[0039] The disclosed implementations for processing digital communications using a machine-learning network can replace the separate stages used in conventional systems for applications such as OFDM signal transmission and processing. In some implementations, the machine-learning network does not use separate models for estimation, interpolation, or equalization, but instead jointly learns these tasks using real data. By adopting an end-to-end learning approach to replace or supplement processing steps conventionally performed in stages, the disclosed implementations enable improved performance while reducing complexity and lowering cost of operation, for example, lower power consumption.

[0040] In some implementations, the machine-learning network enhances the reception of radio signals, e.g., OFDM signals or other communication signals, by leveraging learned relationships within the machine-learning network used for processing. For example, learned solutions can exploit features of both data-aided and non-data aided equalizers in the learned solutions, exploiting both known pilot values and distributions of certain unknown-data modulations in solutions. Similar solutions would be comparatively challenging to accomplish in a closed form statistical approach. In addition, the systems can perform learning enhanced schemes which take into account common phenomena, such as interferers, distortion or other effects. These phenomena would, in a conventional approach, require special algorithms or logic within estimation stages, such as cancelling narrowband tones or bursts, among others, which could negatively impact (e.g., destroy) pilots and interpolation steps using the conventional statistical approach. In contrast, the machine-learning approach is able to use both known pilot values and distribution of certain unknown-data modulations to determine solutions.

[0041] Using the disclosed techniques, systems such as OFDM, 4G, 5G-NR, 5G+, CP-OFDM, Single-carrier frequency division multiple access (SC-FDMA), filter-bank multicarrier (FBMC), Discrete Fourier Transform-spread-OFDM (DFT-s-OFDM), among others, can be enhanced. The disclosed techniques also enable such systems to be incrementally expanded, in which a greater share of the signal processing functions are replaced by jointly learned machine learning approximations, which perform better and reduce complexity compared to presently deployed systems. The incremental expansion includes expanding more of the reception and transmission processes as improvements are demonstrated in each process, justifying the changes. In some implementations, the process is driven by existing deficiencies in a given approach. For example, statistical models for a channel typically can have a degree of inaccuracy in a real system. In some cases, the degree of inaccuracy in a given system can be used to expand the use of a machine-learning network approach to portions of the given system involved in the inaccuracy. In existing systems, the estimation and equalization as well as spatial processing stages can often be core aspects, in which the disclosed techniques for data-driven learning can enhance performance over naive assumptions often made in conventional approaches involving linear processing and system modeling.

[0042] The process of incremental expansion (e.g., of the processing stages incorporated in the machine-learning network or approximation network) can follow evolutions in the parameters or signal processing functions (e.g., changes to modulation, coding, error correction, among others) for RF signal waveforms for 3GPP communications signals, such that incremental changes in the system can occur to improve performance. These incremental changes, such as transmitter adaptation of shaping, modulation, or pre-coding, may occur as feedback mechanisms within a system including a machine-learning network (e.g., where the channel estimation can be exploited or transformed to improve or implement these functions). The feedback mechanisms can involve channel state information feedback or compressed forms of channel state information being processed by the machine-learning network of a communications system, or elements of another communications system communicably connected to the machine-learning network. In some instances, feedback mechanisms may implement protocols such as channel state information reference signal (CSI-RS) in 5G-NR systems, which transmit signal quality or other CSI data over the RAN protocol in a wireless system. Feedback mechanisms may also include weight or parameter modifications to improve the performance of one or more machine-learning networks within one or more of the communications systems, or the conveyance of error statistics such as error vectors, error magnitudes, bit error or frame error information, among others.

[0043] The tasks performed by a machine-learning network as disclosed in this specification can increase over time. For example, feedback of other processing data related to a communications channel or communications sent over a communications channel, can be used by a machine-learning network within a given communications system to increase the capability of the machine-learning network to perform one or more additional tasks, or increase the amount of communications processed by the machine-learning network. For example, certain cell deployments, geometries, features, patterns of life, or information traffic may lend themselves over time to specific sets of network weights or estimation stages or other signal processing stage approximations. Performance of the machine-learning network approach can be enhanced by leveraging this information in the form of past feedback data.

[0044] In some implementations, data from communications processed by one or more machine-learning networks is used to inform the modifying of weights or parameters of at least one of the one or more machine-learning networks. For example, one or more machine-learning networks within a shared system or otherwise communicably connected can transmit data on one or more previously processed signals. The data from the one or more previously processed signals can be used by a member of the one or more machine-learning networks, or a device controlling the member of the one or more machine-learning network, to modify weights or parameters within the member to take advantage of the data captured by the one or more machine-learning networks. In some cases, the data on one or more previously processed signals can be information related to a fade of a signal across a communications channel. In some cases, the data from one or more communication systems may be used to build models or approximations of behaviors across one or more cells. For instance, radios experiencing similar forms of interference, fading, or other channel effects may be aggregated to build approximate signal processing functions which perform well across that class of effect, or to build approximate models in order to simulate the channel effects experienced by those radios.

[0045] In the following sections, the disclosed techniques are described primarily with respect to cellular communications networks, e.g., 3GPP 5G-NR cellular systems. However, the disclosed techniques are also applicable to other systems as noted above, including, for example, 4G LTE, 6G, or Wi-Fi networks. These techniques are further applicable to other domains. For example, the disclosed techniques can be applied to optical signal processing where high rate fiber optic or other systems may seek to perform signal processing functions at high bit rates and with low error rates while maximizing performance. The disclosed techniques using the machine-learning network approach can be used to achieve improved performance while being able to handle hard to model impairments.

[0046] FIG. 1 is a diagram showing an example of a system 100 for processing digital communications using a machine-learning network. The system 100 includes input data 110 fed into a machine-learning network 120 that produces output data 130. The machine-learning network 120 can perform tasks such as channel estimation, interpolation, and equalization, replacing separate components for performing these tasks that are used in deployed present day systems, for example, components for channel estimation 121, interpolation 122 and equalization 123 that would be used in a present day processing stage group 124. As discussed above, other tasks performed in transmitting or processing a signal can also be a part of the machine-learning network 120. The example system 100, and the tasks in which the machine-learning network 120 performs, is not meant to limit the scope of the present disclosure. Implementations of systems with additional tasks replaced by a machine-learning network are discussed later in this disclosure, for example in reference to FIG. 5 and corresponding description.

[0047] In some implementations, the input data 110 is an OFDM or CP-OFDM signal (e.g., in the 3GPP 5G-NR uplink (UL) or downlink (DL) PHY), as shown graphically as an input plot 111 that is a time-frequency spectrum grid of pilot and data subcarriers and time slots within an OFDM signal block. Each grid element in the input plot 111 is referred to as a tile, e.g., tiles 111a and 111b. The tiles 111a and 111b represent a pilot subcarrier and data subcarrier, respectively. A legend 112 describes the visual symbols that denote a pilot subcarrier and a data subcarrier used in the example of FIG. 1. The visual symbols as shown in the legend 112 are for illustration purposes only. Although the tile 111a is a pilot subcarrier and the tile 111b is a data subcarrier, both the tiles 111a and 111b are resource elements or subcarriers that carry information over a communications channel. The input plot 111 can also be referred to as an unequalized resource grid that includes tiles. The subcarriers carry pilot (reference) signals or tones in pilot tiles, and data signals or tones in data tiles. The pilot tiles include filled-in tiles with the letter "P", e.g., tile 111a. The data tiles are shown as non-filled-in tiles with the letter "D", e.g., tile 111b. Unoccupied tiles not denoting pilot or data subcarriers are shown as non-filled-in tiles without any letter, e.g., tile 111c. In some cases, all tiles within the input plot 111 are occupied, e.g., with pilot or data subcarriers, or both.

[0048] In some implementations, pilot and data information, such as pilot and data subcarriers, or other resource elements, are transmitted. In other implementations, only pilot information or only data information are transmitted and a non-data-aided learned approach can be used. In some cases, subcarriers change between pilot and data information elements several times over a given slot. In this context, pilot and data information refers to pilot subcarriers, data subcarriers, pilot resource elements, or data resource elements, or any combination of these.

[0049] In some implementations, inputs into the machine-learning network 120 include other items. For example, masks can be sent as input into the machine-learning network 120. The masks can indicate to the machine-learning network 120 which resource elements are pilots, which are data, and in some cases, which elements are from different users or allocations, or what is any of the known values or modulation types of each element. In other implementations, inputs into the machine-learning network 120 may not take the form of such a single or multi-layer OFDM grid but may take the form of raw time-series sample data which may or may not be synchronized depending on which approximation stages and transforms are learned.
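As one possible illustration of mask inputs, the following Python sketch stacks a received resource grid with a pilot mask and the known pilot values into a multi-plane real-valued array. The plane ordering, the function name `build_network_input`, and the 2x4 example grid are assumptions made for illustration and are not specified in this disclosure.

```python
import numpy as np

def build_network_input(rx_grid, pilot_mask, known_pilots):
    """Stack a received grid with mask and known-value planes.

    rx_grid:      complex array, shape (n_symbols, n_subcarriers)
    pilot_mask:   bool array, True where the resource element is a pilot
    known_pilots: complex array of known transmitted values (used only at pilots)
    Returns a float32 array of shape (5, n_symbols, n_subcarriers).
    """
    mask = pilot_mask.astype(np.float32)
    # Known values are exposed only at pilot positions; data positions are zeroed.
    ref = np.where(pilot_mask, known_pilots, 0)
    planes = [rx_grid.real, rx_grid.imag, mask, ref.real, ref.imag]
    return np.stack(planes).astype(np.float32)

# Hypothetical 2-symbol by 4-subcarrier grid with two pilots.
rx_grid = np.array([[0.9 + 0.1j, -0.7 + 0.6j, 0.2 - 0.8j, 1.1 + 0.0j],
                    [0.5 + 0.5j, -0.4 - 0.9j, 0.8 + 0.2j, -0.6 + 0.7j]])
pilot_mask = np.array([[True, False, False, True],
                       [False, False, False, False]])
known_pilots = np.ones_like(rx_grid)
features = build_network_input(rx_grid, pilot_mask, known_pilots)
```

Additional planes, for example per-user allocation masks or modulation-type indicators, could be appended in the same way.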

[0050] In 3GPP cellular networks, the pilot tiles are populated using primary and secondary synchronization signals or other protocol information such as uplink directives to UEs as well as other signals that may be computed or predicted from cell information, such as cell identification (ID), physical cell ID, or other transmitter state information. Data tiles typically carry symbol values corresponding to the information transmitted. In some cases, the symbol values of one or more data tiles may be unknown. Even when the symbol values are unknown, the modulation, the set of possible discrete constellation points, and the layout in resource elements may be known (e.g., MCS or FRC assigned to the burst).

[0051] During a typical OFDM reception process, pilot tiles are used to estimate the communications channel response (e.g., by using a MMSE or zero forcing (ZF) algorithm to perform estimation) for each time-frequency tile. The estimates for pilot tiles may then be interpolated across data tiles to obtain estimates for the data tiles as well. Finally, estimated channel values may be divided from the received symbol values (or typically multiplied with the channel inverse), to receive estimates for the transmitted tiles (which include both pilot tiles and data tiles). However, in general, the task is to obtain an accurate estimate of the correct transmitted symbol values, given the received pilot tiles and data tiles that may be sparse or irregularly spaced over the received time-frequency grid, and where the positions of the transmitted pilot tiles within the OFDM signal block are known and the positions of the transmitted data tiles are partially unknown. For example, the system 100 may know what constellation was transmitted, or some probability distribution over the possible values, but the system does not know, with certainty, the values of data tiles transmitted. If the exact value transmitted is known, that transmitted value would be a reference tone.

[0052] In contrast to the conventional approach of breaking the reception process into separate channel estimation 121, interpolation 122, and equalization 123 stages, the system 100 uses machine-learning network 120 to leverage end-to-end learning. The machine-learning network 120 learns a compact joint estimator that approximates the transmitted values directly from the sparse grid of received values, such as the input data 110, to produce estimates of the channel or transmitted symbols, such as the output data 130.

[0053] The machine-learning network 120 learns to accomplish these tasks jointly and, in doing so, learns to compensate for channel effects and to interpolate the channel response estimate properly across a sparse grid, in some cases leveraging both data aided (e.g., reference) and non-data aided (e.g., non-reference) resource elements. Channel estimation, interpolation, and equalization are now performed collectively by the machine-learning network 120, which can enable a more accurate match of underlying propagation phenomena received in one or more signals such as the output data 130.

[0054] In some implementations, the machine-learning network 120 uses structured information within the channel response (e.g., deterministic, known, high probability behaviors, structure or geometry leading to stabilities or simplifications in the estimation and interpolation tasks, among others). Using the structured information within the channel response enables the machine-learning network 120 to improve on conventional approaches of performing channel estimation 121, interpolation 122, and equalization 123 with separate stages or models. The machine-learning network 120 is able to provide significant performance improvements over the conventional approach. Some of the performance improvements are described in greater detail below with respect to FIGS. 2B and 2C.

[0055] In some implementations, tiles for multiple layers may be received. For example, time-frequency tiles for multiple layers may be received from different antennas, antenna combines or spatial modes. An estimation task, such as the channel estimation, may then consume multiple input values such as a three-dimensional (3D) array over time, frequency and space. From at least the multiple input values, estimated transmit symbols or channel estimates for an arbitrary number of information channels such as one or more information input streams to a multiple input, multiple output (MIMO) system or code can be produced and transmitted across one or more communications channels. While this generally considers "digital combining", in some implementations, analog-digital combining schemes such as millimeter wave (mmWave) networks are also addressed by the disclosed techniques. For example, the machine-learning network approach may be applied by adapting and pushing weights down to analog combining components on an antenna combining network or array calibration network or set of weights.

[0056] In some implementations, the machine-learning network 120 is implemented as a plurality of fully connected layers as shown graphically in item 126. The illustrated layers of the graphical representation 126 are meant to convey two or more layers of the machine-learning network 120 and not all layers or aspects of the machine-learning network 120.

[0057] In some implementations, the machine-learning network 120 is a convolutional neural network, or another form of neural network. Different alternative implementations are discussed later in this specification.

[0058] The machine-learning network 120 processes the input data 110 to produce the output data 130. Where the input data 110, in the example of FIG. 1, is a collection of unequalized resource elements or subcarriers, the output data 130 is a collection of equalized resource elements or subcarriers, which is shown as the output plot 131. In some cases, for the equalized resource elements, the complex value of each grid element in the output data 130 closely resembles the values transmitted prior to transmission over the channel, having removed random phase and amplitude changes, or the addition of other interference or channel effects on these elements, which can be present in the unequalized grid. The output data 130 represents an estimation of a received signal depicted as the input plot 111. As discussed later in this specification, the equalized collection of resource elements or subcarriers can be used in further processes involved in transmission or reception of communications signals. In some cases, the output data 130 of the machine-learning network 120 may be a grid of channel inverse taps, which is multiplied with the input data 110 to attain a good estimate of the originally transmitted OFDM symbol grid. In some cases, the information in the output data 130 may represent soft-log-likelihoods of bits, hard bits, or decoded codewords in the originally transmitted information. In some cases, the output data 130 may correspond to specific grid regions or allocations, but may not take the form of a specific OFDM grid in all instances.

[0059] In some cases, the machine-learning network 120 can run alongside other similar calculations within a system. For example, in the system 100, conventional stages for channel estimation 121, interpolation 122, and equalization 123 can be performed together with processes corresponding to the machine-learning network 120. In some cases, calculations by the conventional stages can be used to determine one or more comparison values between the conventional approach and the machine-learning network techniques. In some cases, calculations by conventional stages can be used to help train the machine-learning network 120. In some cases, a system variable (e.g., a received signal strength indicator (RSSI) or metrics of CSI stability), or other parameter or notification, can enable the use of the machine-learning network approach over the conventional approach, or vice versa. For example, in a small communications network that does not experience much data traffic or does not have a currently functioning machine-learning network code base or hardware to perform the machine-learning network approach, the conventional approach can be used. In some cases, a threshold number of communications or signals received or a performance metric related to the performance of the conventional or the learned approximation network can trigger the use of one approach over the other. For example, when a larger antenna array, such as a 64-element antenna array, is in use for transmission or reception within a communications system (e.g., in massive MIMO cellular networks) or the EVM or FER of a learned estimation network outperforms the EVM or FER of a conventional approach, this can be a trigger to use the machine-learning network approach.
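A performance-metric trigger of the kind described above might be sketched as follows in Python. The sketch assumes that EVM is computed as an RMS error normalized by reference power and that the learned approach is selected whenever its EVM is lower by at least a configurable margin. The function names, the `margin` parameter, and the example values are hypothetical.

```python
import numpy as np

def evm_rms(estimated, reference):
    """RMS error vector magnitude, normalized by mean reference power."""
    err_power = np.mean(np.abs(estimated - reference) ** 2)
    ref_power = np.mean(np.abs(reference) ** 2)
    return float(np.sqrt(err_power / ref_power))

def select_approach(evm_learned, evm_conventional, margin=0.0):
    """Return which approach to use; 'margin' is an illustrative hysteresis term."""
    if evm_learned + margin < evm_conventional:
        return "learned"
    return "conventional"

# Hypothetical reference symbols and the outputs of the two approaches.
reference = np.array([1.0, -1.0, 1.0j, -1.0j])
learned_out = reference + 0.01        # small residual error
conventional_out = reference + 0.10   # larger residual error
choice = select_approach(evm_rms(learned_out, reference),
                         evm_rms(conventional_out, reference))
```

A frame error rate comparison, or a threshold on the number of received signals, could be substituted for the EVM comparison without changing the structure of the selection.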

[0060] The machine-learning network 120 is trained to receive input data, such as the input data 110, and produce output data, such as the output data 130. FIG. 2A is a diagram showing an example of a system 200 for training a machine-learning network 212 for processing digital communications between devices 201 and 208. In some implementations, the machine-learning network 212 is similar to the machine-learning network 120 of FIG. 1. However, the machine-learning network 212 can also be different than the machine-learning network 120 in other implementations.

[0061] The machine-learning network 212 is trained or deployed, or both, over one or more communications channels 207, or approximations of a communications channel, which can be, for example, a 5G-NR wireless communications channel for transmitting or receiving data in a cellular network. In some cases, the system 200 is used after deployment of the machine-learning network 212. The illustration of FIG. 2A shows device 201 transmitting signals to device 208, and system 200. The machine-learning network 212 is employed in the receiving device 208 to detect or estimate properties of a transmitted signal. However, in other cases, the device 201 may receive signals from the device 208; in such cases, a similar machine-learning network could be used in the device 201. In some implementations, the device 201 or the device 208 is a mobile device, such as a cellular phone, a tablet or a notebook, while the other device is a network base station.

[0062] The operations of the system 200 are shown in stages A to D in one example process of training the machine-learning network 212. Stage A shows pilot and data insertion 202 for a given data signal that is to be transmitted from the transmission device 201 to the receiving device 208. The pilot and data information is then modulated using a modulation process 204. In some implementations, the pilot and data information is modulated using a multi-carrier transmission scheme such as OFDM. In some cases, a 5G-NR test signal modulator is alternatively used for modulating the pilot and data information. The modulated information is then converted to analog form for transmission using a converter 206 (e.g., a digital to analog converter (DAC)). The analog information is then transmitted as an RF signal over the communications channel 207 to the receiving device 208. The analog information may pass through various RF components such as amplifiers, filters, attenuators, or other components which affect the signal.

[0063] Stage B shows the analog RF signal sent over the communications channel 207 being received by the receiving device 208. The received signal may pass through a number of analog RF components and is then converted to a digital signal using a converter 209 (e.g., an analog to digital converter (ADC)). In the example of FIG. 2A, existing methods of transmitting and receiving analog signals from a transmitting device to a receiving device can be used. The converted digital information is then synchronized and subcarriers are extracted using a synchronization and extraction process 210. In some cases, synchronization stages or additional linearity compensation stages may also be performed by machine learning networks. The subcarriers in this case are a form of unequalized resource grid within a frequency-time spectrum over one or more layers or antennas. The subcarriers are processed by the machine-learning network 212. In this example, the subcarriers are also sent to a machine-learning network update component 214, to be used for training the machine-learning network 212, as described below with respect to stage C of FIG. 2A.

[0064] In some implementations, the machine-learning network 212 is a neural network that performs a set of parametric algebraic operations on an input vector to produce an output. The machine-learning network 212 includes several fully connected layers (FC), with a layer performing matrix multiplications of an input vector with a weight vector followed by summation to produce an output vector. In some implementations, the machine-learning network 212 includes non-linearity, such as a rectified linear unit (ReLU), sigmoid, parametric rectified linear unit (PReLU), MISH neural activation function, SWISH activation function, or other non-linearity. In some cases, the machine-learning network 212 leverages convolutional layers, skip connections, transformer layers, recurrent layers, residual layers, upsampling or downsampling layers, or a number of other techniques that serve to improve the performance of the machine-learning network 212, for example by achieving an improved performance architecture. In some implementations, the machine-learning network 212 is a convolutional neural network. In some cases this takes the form of a backbone network, u-Net, or other similar network that incorporates appropriate transformers, invariances, or efficient layers, which improve performance while reducing computational complexity.
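A forward pass through a small stack of fully connected layers with a ReLU non-linearity can be sketched in Python as follows. The layer sizes, random initialization, and the choice of a linear output layer are assumptions for illustration only and do not reflect a particular architecture of the machine-learning network 212.

```python
import numpy as np

def relu(x):
    """Rectified linear unit: element-wise max(x, 0)."""
    return np.maximum(x, 0.0)

def forward(x, layers):
    """Apply fully connected layers; ReLU between layers, linear output.

    layers: list of (weights, bias) pairs, weights shaped (n_out, n_in).
    """
    for i, (w, b) in enumerate(layers):
        x = w @ x + b                 # matrix multiplication followed by summation
        if i < len(layers) - 1:
            x = relu(x)               # non-linearity on hidden layers only
    return x

rng = np.random.default_rng(0)
sizes = [8, 16, 4]                    # hypothetical input, hidden, output widths
layers = [(0.1 * rng.standard_normal((n_out, n_in)), np.zeros(n_out))
          for n_in, n_out in zip(sizes[:-1], sizes[1:])]
x = rng.standard_normal(sizes[0])     # e.g., flattened real and imaginary tile values
y = forward(x, layers)
```

Convolutional, residual, or transformer layers would replace or augment the `w @ x + b` step while keeping the same forward-pass structure.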

[0065] In some instances, complex valued multiplication in layers of the machine-learning network 212, including FC layers or convolutional layers, is used to aid in training the system 200 by training the machine-learning network 212. In some implementations, the machine-learning network 212 includes multiplying pairs of complex weights with pairs of complex inputs to mirror complex valued multiplication tasks that are performed in complex analytic form (e.g., outputs (y0, y1) = (x0*w0 - x1*w1, x0*w1 + x1*w0)). In this way, complex layers can sometimes reduce the parametric complexity and overfitting of the network, resulting in faster training and lower computational, training, and data complexity of the result.
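The paired real-valued form of complex multiplication given above can be checked with a short Python sketch. Here (x0, x1) and (w0, w1) are taken to be the real and imaginary parts of an input and a weight, respectively; the function name `complex_mul_pairs` is hypothetical.

```python
import numpy as np

def complex_mul_pairs(x0, x1, w0, w1):
    """Multiply (x0 + j*x1) by (w0 + j*w1) using only real arithmetic."""
    y0 = x0 * w0 - x1 * w1   # real part of the product
    y1 = x0 * w1 + x1 * w0   # imaginary part of the product
    return y0, y1

# Compare against native complex multiplication on random values.
rng = np.random.default_rng(0)
x = rng.standard_normal(5) + 1j * rng.standard_normal(5)
w = rng.standard_normal(5) + 1j * rng.standard_normal(5)
y0, y1 = complex_mul_pairs(x.real, x.imag, w.real, w.imag)
matches = np.allclose(y0 + 1j * y1, x * w)
```

Because one complex weight is shared across both output components, this form uses two real parameters where an unconstrained real-valued layer mapping two inputs to two outputs would use four.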

[0066] In some implementations, by conducting a forward pass through the set of operations performed by the machine-learning network 212, a prediction is made of the output values, which may be a prediction of the channel response of the communications channel 207, the inverse channel response of the channel 207, the tile values of the RF signal prior to transmission, or related values (e.g., transmitted codewords or bits, among others). The related values can be used to calculate the channel response of the channel 207, the inverse channel response of the channel 207, or the tile values of the RF signal prior to transmission. In some cases, the channel response or the inverse channel response of the channel 207 is predicted per-tile from within a resource grid of signals within a frequency-time spectrum, such as the resource grid shown by input plot 111 of FIG. 1.

[0067] The device 208 uses the output prediction from the machine-learning network 212 for detecting symbols, using symbol detection component 216, where the detected symbols are estimates of the symbols transmitted from the device 201, using the prediction output by the machine-learning network 212. The detected symbols are used in performance analysis 218.

[0068] Stage C in FIG. 2A shows that data from the synchronization and extraction 210 and data from the symbol detection 216 are used to obtain machine-learning network updates 214 for the machine-learning network 212. The machine-learning network update component 214 computes a loss function, which measures a distance (e.g., a difference) between the known reference or data subcarriers 220 and the estimates of the transmitted symbols obtained from symbol detection 216. In some cases, this loss or difference may also consist of a maximum of an L1 loss or scaled L1 loss, and an L2 loss or scaled L2 loss, combining multiple distance metrics to exploit the best properties of both L1 and L2 loss convergence in their differing performance regions. This process may be referred to as the changeover value in denominator loss, or the constellation value inverse decay loss. In some cases, a rate of change of the loss function is used to update one or more weights or parameters within the machine-learning network 212. Actual transmitted symbol values used for computing the loss function are determined from known transmissions, in which the known reference and data subcarriers 220 can be pre-determined. For example, in some cases known or repeated data is transmitted over the air, which enables predicting data values. In some cases, test sequences, such as pseudo random bit sequences (PRBS), may be transmitted such that both the transmitter and receiver are able to compute the same bits or symbol values at either end of the link error free. In some implementations, such sounding or known-data training operations are realized in simulation or link sounding scenarios or within excess cell capacity.
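One possible reading of a loss formed as the maximum of a scaled L1 loss and a scaled L2 loss is sketched below in Python. The sketch assumes the maximum is taken per element before averaging; the disclosure does not specify this detail, and the scale parameters `l1_scale` and `l2_scale` are hypothetical.

```python
import numpy as np

def max_l1_l2_loss(estimate, target, l1_scale=1.0, l2_scale=1.0):
    """Mean over elements of max(scaled L1 error, scaled L2 error).

    Assumption: the maximum is applied element-wise. With unit scales, the
    L1 term dominates for errors below 1 and the L2 term for errors above 1.
    """
    err = np.abs(estimate - target)
    l1 = l1_scale * err
    l2 = l2_scale * err ** 2
    return float(np.mean(np.maximum(l1, l2)))

# Small error (0.5) is scored by L1, large error (2.0) by L2.
estimate = np.array([0.5 + 0.0j, 0.0 + 2.0j])
target = np.zeros(2, dtype=complex)
loss = max_l1_l2_loss(estimate, target)
```

Adjusting the two scales moves the error magnitude at which the loss changes over from L1-like to L2-like behavior.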

[0069] In some implementations, the known reference and data subcarriers 220 come from a different source. For example, demodulation or decoding through conventional statistical methods, such as MMSE or belief propagation, can estimate the most likely bits, symbols, or values seen based on values in the received signal, with some degree of error correction or fault tolerance. Furthermore, estimation of the symbols or bits can occur within the machine learning network as well. Whether by the conventional or MMSE approach or through the machine learning network, bits, codewords, or other information can be estimated. This estimation is performed, in some cases, with a given error correction capability, such as within the Polar or low-density parity-check (LDPC) block code decoders used within the 5G-NR standard, and either cyclic redundancy checks (CRC) or simply forward error correction (FEC) codeword check bits such as in LDPC, providing a rapid indication about the reception of the information, for example whether all of the bits in a codeword have been received correctly. Upon knowledge of a correct frame (e.g., the checksum passes, LDPC check bits are correct), bits can be re-modulated to provide ground truth symbol values, correct bits, or log-likelihood ratios can be computed from the received and ground truth symbol locations or channel estimates. These ground truth symbol values, correct bits, or log-likelihood ratios, among others, can be used within the distance metric in order to update the machine learning model and its weights.
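The idea of deriving ground truth symbols from a frame that passes its checksum can be sketched in Python as follows. This is a simplified illustration that assumes uncoded QPSK, hard-decision demodulation, and a 32-bit CRC (computed with `zlib.crc32`) appended to the payload; it does not model the Polar or LDPC decoders of 5G-NR, and all function names are hypothetical.

```python
import zlib
import numpy as np

def qpsk_modulate(bits):
    """Map bit pairs to unit-energy QPSK symbols (illustrative mapping)."""
    b = np.asarray(bits, dtype=float).reshape(-1, 2)
    return ((1 - 2 * b[:, 0]) + 1j * (1 - 2 * b[:, 1])) / np.sqrt(2)

def qpsk_hard_demod(symbols):
    """Hard decisions matching the mapping used in qpsk_modulate."""
    bits = np.empty((symbols.size, 2), dtype=np.uint8)
    bits[:, 0] = symbols.real < 0
    bits[:, 1] = symbols.imag < 0
    return bits.reshape(-1)

def append_crc(payload_bits):
    """Append a 32-bit CRC of the packed payload as 32 bits, MSB first."""
    crc = zlib.crc32(np.packbits(payload_bits).tobytes())
    crc_bits = np.unpackbits(np.array([crc], dtype=">u4").view(np.uint8))
    return np.concatenate([payload_bits, crc_bits])

def crc_passes(frame_bits):
    """Recompute the CRC over the payload and compare with the received CRC."""
    payload, crc_bits = frame_bits[:-32], frame_bits[-32:]
    received_crc = int.from_bytes(np.packbits(crc_bits).tobytes(), "big")
    return zlib.crc32(np.packbits(payload).tobytes()) == received_crc

def ground_truth_from_frame(equalized_symbols):
    """Return re-modulated symbols if the frame checks out, else None."""
    bits = qpsk_hard_demod(equalized_symbols)
    if not crc_passes(bits):
        return None
    return qpsk_modulate(bits)

rng = np.random.default_rng(0)
payload = rng.integers(0, 2, 64, dtype=np.uint8)
tx_symbols = qpsk_modulate(append_crc(payload))
noise = 0.05 * (rng.standard_normal(tx_symbols.size)
                + 1j * rng.standard_normal(tx_symbols.size))
truth = ground_truth_from_frame(tx_symbols + noise)

corrupted = tx_symbols + noise
corrupted[0] = -corrupted[0]          # flip one symbol so the CRC fails
rejected = ground_truth_from_frame(corrupted)
```

Only frames for which `ground_truth_from_frame` returns symbols would contribute to the distance metric, so decoding errors are not fed back as incorrect training targets.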

[0070] As another example, known reference or data subcarriers can come from out-of-band coordination from other user equipment (UE), next generation nodeB (gNB) or other base stations, network elements, or prior knowledge of content. In some cases, application data or probabilistic information on one or more of these items can be used to infer transmitted symbols. The known reference or data subcarriers 220 obtained by the system 200 can be stored in a form of digital data storage communicably connected to an element for obtaining the machine-learning network updates 214 for the machine-learning network 212.

[0071] Model updates calculated in the system 200 by elements such as the machine-learning network updates 214 allow model predictions to improve over time and iteratively provide improved estimates of the transmitted symbol values upon training in representative channel conditions. In some implementations, baseline models are used to provide estimation. In other implementations, the machine-learning network 212 is used to provide estimations with a form of error feedback to enable iterative training.

[0072] Training the machine-learning network 212 can take place using one or more received input signals as input data for the machine-learning network 212. In some implementations, given input data is used for two or more iterations and the machine-learning network 212 learns to model particular parameters or weights based on the given input data. In other implementations, new data is used for each iteration of training. In some cases, data used for training the machine-learning network can be chosen based on aspects of the data. For example, in a scenario where data sent through a communications channel suffers a particular type of fade or other distortion, the machine-learning network 212 can learn the particular distortion and translate corresponding input data to output data with a lower bit error rate and with less complexity and power usage compared to conventional systems. In some cases, data or models for users of different fading or mobility or spatial locality may be employed or aggregated further to train specific models for sets of users or user scenarios within various sectors or cells.

[0073] Stage D shows the output signal from the symbol detection 216 is sent for further processing 222, which can represent any other process after symbol detection 216. For example, further processing 222 can include subsequent modem stages such as error correction decoding, cyclic redundancy checks, LDPC check bits, de-framing, decryption, integrity checks, source decoding, or other processes.

[0074] In some implementations, the performance analysis 218 is used to compute values associated with one or more communications handled by the machine-learning network 212. For example, the performance analysis 218 can use symbol values or bits to compute quantitative quality metrics such as error vector magnitude (EVM), bit error rate (BER), frame error rate (FER), or code-block error rate (BLER). Such quantitative quality metrics can help determine comparative measurements between one or more communications processing systems or between one or more different sets of machine learning models, architectures, or sets of weights.
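
As an illustration only, the quality metrics named above can be computed as sketched below. The function names and the normalization of EVM by the average reference power are assumptions made for this sketch; other EVM normalizations (e.g., by peak constellation power) are also in common use.

```python
import numpy as np

def evm_rms(estimates, reference):
    """RMS error vector magnitude, normalized by average reference power."""
    error_power = np.mean(np.abs(estimates - reference) ** 2)
    reference_power = np.mean(np.abs(reference) ** 2)
    return np.sqrt(error_power / reference_power)

def bit_error_rate(bits_hat, bits):
    """Fraction of bits that differ from the known bits."""
    return np.mean(np.asarray(bits_hat) != np.asarray(bits))

def block_error_rate(bits_hat, bits, block_len):
    """Fraction of blocks of block_len bits containing at least one error."""
    errors = np.asarray(bits_hat) != np.asarray(bits)
    return np.mean(errors.reshape(-1, block_len).any(axis=1))

reference = np.array([1, -1, 1j, -1j])
evm = evm_rms(1.1 * reference, reference)   # 10% amplitude error on every symbol

sent = [0, 1, 0, 0, 1, 1, 0, 0]
received = [0, 1, 1, 0, 1, 1, 0, 0]         # one bit error, in the first block
ber = bit_error_rate(received, sent)
bler = block_error_rate(received, sent, block_len=4)
```

Computing the same metrics for two receivers on the same received data gives the kind of comparative measurement the paragraph describes.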

[0075] In some implementations, output from the performance analysis 218 is used by the machine-learning network updates 214 to help improve the machine-learning network 212. For example, quantitative quality metrics or other data calculated or obtained by the performance analysis 218 can be used to help improve the machine-learning network 212. In some cases, the performance analysis 218 can detect trends or other data related to one or more calculations performed by the machine-learning network 212. This data can be used to inform specific weight or parameter modifications within the machine-learning network 212. For example, common objectives and distances include minimizing EVM or BER over the link by updating the weights on the same input data measured. In some cases, augmentations may be applied to the input data in order to magnify the effective number of input values that are being optimized. For example, the phase, amplitude, fading, or other effects applied to the input value may be altered upon input to the machine learning network update process to accelerate training on a smaller quantity of data.

[0076] As shown by FIG. 2A, in some implementations, elements including machine-learning network updates 214, the performance analysis 218, and the known reference and data subcarriers 220 as well as related operations in stage C are performed by the receiving device 208. In other implementations, operations related to stage C are performed by other elements. For example, an external element communicably connected to the receiving device 208 can be used to obtain the known reference and data subcarriers 220, as well as perform the corresponding performance analysis 218 from the symbol detection 216 as means of obtaining the machine-learning network updates 214.

[0077] Similarly, sub-elements shown in the example of FIG. 2A within elements such as the transmission device 201 and the receiving device 208 are, in some implementations, not within either the transmission device 201 or the receiving device 208. For example, the machine-learning network 212 can be stored within a separate device that is communicably connected to the receiving device 208. As another example, the reception and digital conversion 209 can perform the main operations of the receiving device 208 and a separate device communicably connected to the receiving device 208 can perform other operations, including, for example, synchronizing and extracting subcarriers and, in general, perform operations discussed in reference to the synchronization and extraction 210. The device communicably connected to the receiving device 208 can store and execute the various layers of machine-learning network 212 and, in general, perform operations discussed in reference to the machine-learning network 212. The device communicably connected to the receiving device 208 can also detect symbols based on the output of the machine-learning network 212 and, in general, perform operations discussed in reference to the symbol detection 216.

[0078] In some implementations, training the machine-learning network 212 is performed over the air by sending RF signals over an actual physical communications channel 207 between the transmission device 201 and the receiving device 208. Over-the-air training or online training may be performed prior to system deployment, or the machine-learning network 212 and the system 200 can continue to perform updates either continuously or periodically while carrying communications traffic.

[0079] In some implementations, a set of profiles, such as urban, rural, indoor, macro, micro, femto, or other profiles related to channel behavior correlated to or predicted by deployment scenario, is used to determine an initial model of the machine-learning network 212 that is deployed, and used to configure processes for determining augmentation or other training parameters for the machine-learning network 212. In some instances, data or models may be shared in cloud environments or network sharing configurations between specific gNB cells to improve initial machine-learning network models, or to jointly improve models within multiple environments with shared phenomena. For example, cells within a grid of cells that share similar interference, cells with similar delay spreads, or cells with other similar behaviors, can be used to improve the effectiveness, speed, or performance of the machine-learning network 212.

[0080] In some implementations, a simulated communications channel 207 is used for training the machine-learning network 212. For example, channel models, such as 5G-NR standardized tapped delay line (TDL) models, Rayleigh or Rician channels, or standard algorithms with standardized channels such as International Telecommunication Union (ITU) or 3GPP fading channel models including fixed taps, delay spreads, Doppler rates, or other parameters within a well-defined random process, can be used to simulate the communications channel 207.
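
As an illustration only, a simulated channel of this kind can be sketched as a tapped delay line with Rayleigh-distributed taps and additive white Gaussian noise. The function name, the three-tap power profile, and the static (zero Doppler) taps are assumptions made for this sketch; they are illustrative values and not a standardized 3GPP or ITU profile.

```python
import numpy as np

def simulate_rayleigh_tdl(tx, tap_powers_db, snr_db, rng):
    """Pass tx through random Rayleigh taps and add noise at the given SNR."""
    power = 10.0 ** (np.asarray(tap_powers_db, dtype=float) / 10.0)
    power /= power.sum()                      # normalize total average tap power to 1
    n_taps = len(power)
    # Complex Gaussian taps: the magnitude of each tap is Rayleigh distributed.
    taps = np.sqrt(power / 2.0) * (
        rng.standard_normal(n_taps) + 1j * rng.standard_normal(n_taps)
    )
    faded = np.convolve(tx, taps)[: len(tx)]  # multipath as a linear convolution
    noise_var = np.mean(np.abs(faded) ** 2) / 10.0 ** (snr_db / 10.0)
    noise = np.sqrt(noise_var / 2.0) * (
        rng.standard_normal(len(tx)) + 1j * rng.standard_normal(len(tx))
    )
    return faded + noise, taps

rng = np.random.default_rng(0)
tx = (rng.choice([-1.0, 1.0], 256) + 1j * rng.choice([-1.0, 1.0], 256)) / np.sqrt(2)
rx, taps = simulate_rayleigh_tdl(tx, tap_powers_db=[0.0, -3.0, -6.0], snr_db=20.0, rng=rng)
```

Drawing new taps for each call yields a different channel realization per training example, while the returned taps remain available as known values for computing a training target.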

[0081] In some implementations, the machine-learning network 212 is pre-trained. Pre-training can be based on simulation. Pre-training, depending on implementation, can use simplified statistical models (e.g., Rayleigh or Rician, among others), a COST 2100 model, a tapped delay line (TDL-A, TDL-B, TDL-x, among others) model, or a standard LTE or NR channel model, among other models. Pre-training can also use ray tracing or a geometric model of the sector for deployment, or channel generative adversarial network (GAN) machine learning networks trained to reproduce the channel response of one or more cells based on prior measurement or simulation.

[0082] In some cases, the training may use known values in order to compute the channel response at each step. For instance, preamble values, pilot values, known references, known data values can be used in training. In some cases, a decision feedback approach can be used. For instance, a decision feedback approach can include demodulating or decoding data to obtain the estimated symbols or bits for the allocation. The data can be one or more of a resource element, a packet, burst, frame, resource unit (RU) allocation, a codeword (e.g., LDPC or Polar code block), physical downlink shared channel (PDSCH) allocation, or physical uplink shared channel (PUSCH) allocation, among other forms of data. In some cases, the data can then be verified, for example by checking CRC fields, encryption or HMAC fields, or parity information (e.g., LDPC parity check bits), and then by using these values in order to provide target information for updating the machine learning model.

[0083] In some cases, training may include using other estimation or equalization approaches. For example, linear MMSE, maximum likelihood, successive interference cancellation (SIC), or other suitable approaches can be used to produce estimates of the channel response in certain instances (e.g., when the machine-learning network model is not well trained). Alternatively, the training may use the existing learned estimation or equalization model to produce the estimates and use information such as decision or FEC feedback to improve machine-learning network models prior to training. In the latter case, transition from a general statistical model to a learned model may occur when the signal to interference plus noise ratio (SINR) exceeds a threshold value, or at another point (e.g., based on channel characteristics or output performance measures) where the learned model outperforms the general statistical model.

[0084] In some cases, augmentation is used to improve or accelerate the training of the machine-learning network 212. In such cases, multiple copies of data specific to training may be used with different augmentations when training the machine-learning network 212. For example, different channel effects such as noise, phase rotation, angle of arrival, or fading channel response, among others, can be applied to one or more transmit or receive antennas of the devices 201 or 208, or both. The copies of data can be used to expand a finite or smaller set of measurement data into a near-infinite set of augmented measurement or simulated data, increasing the amount of effective usable training data. This can assist in faster model training of the machine-learning network 212 and in training more resilient, more generalizable, or less-overfit models used for the machine-learning network 212, over much less data and training time, among others.
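
As an illustration only, producing multiple augmented copies of one measured block can be sketched as follows. The function name, the choice of a single random phase rotation per copy, and the noise parameterization are assumptions made for this sketch; angle-of-arrival or fading augmentations would follow the same pattern. The sketch also assumes the rotation is treated as a channel effect, so the training targets remain the original transmitted symbols.

```python
import numpy as np

def augment(rx, n_copies, noise_std, rng):
    """Return n_copies of rx, each with a random phase rotation and added noise."""
    # One random phase per copy, broadcast across all samples of that copy.
    phases = rng.uniform(0.0, 2.0 * np.pi, size=(n_copies, 1))
    rotated = rx[np.newaxis, :] * np.exp(1j * phases)
    # Complex Gaussian noise with standard deviation noise_std per sample.
    noise = (noise_std / np.sqrt(2.0)) * (
        rng.standard_normal(rotated.shape) + 1j * rng.standard_normal(rotated.shape)
    )
    return rotated + noise

rng = np.random.default_rng(2)
rx = (rng.choice([-1.0, 1.0], 64) + 1j * rng.choice([-1.0, 1.0], 64)) / np.sqrt(2)

noisy_copies = augment(rx, n_copies=8, noise_std=0.1, rng=rng)
clean_copies = augment(rx, n_copies=8, noise_std=0.0, rng=rng)
```

Because the random draws differ on every call, repeated calls on the same measurement keep producing new training inputs from a fixed amount of captured data.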

[0085] Some of the performance improvements, as discussed in reference to the implementation shown in the example of FIG. 1, are shown in FIGS. 2B and 2C. FIGS. 2B and 2C represent two examples of performance improvements corresponding to the machine-learning network approach of the implementation of FIG. 1. The performance improvements shown in FIGS. 2B and 2C, however, do not represent all possible improvements from all possible implementations of the machine-learning network approach applied to other tasks or processes related to processing digital communications. Other improvements are also possible upon using the machine-learning network for processing digital communications, as described in this specification.

[0086] FIG. 2B is a diagram showing an example of improved error vector magnitude (EVM) upon using a machine-learning network for processing digital communications. The figure presents a performance comparison between a conventional approach to estimation and equalization involving MMSE algorithms (plot 230) and a machine-learning network approach to the same task (plot 240). Plot 230 illustrates the recovered data symbol tiles produced by using MMSE, which involves multiplication of the estimated channel inverses from the network with the received symbol value tiles. Plot 240 illustrates recovered data symbol tiles produced by a machine-learning network approach as discussed with respect to FIGS. 1 and 2A.
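
As an illustration only, the conventional baseline of multiplying received symbols by an estimated channel inverse can be sketched as a one-tap, per-subcarrier equalizer. The function name, the assumption of unit-power transmitted symbols, and the availability of a per-subcarrier channel estimate and noise variance are assumptions made for this sketch and are not details taken from FIG. 2B.

```python
import numpy as np

def mmse_equalize(rx, h_est, noise_var):
    """One-tap MMSE equalizer per subcarrier, assuming unit-power symbols.

    With noise_var = 0 this reduces to multiplying by the channel inverse 1/h.
    """
    return np.conj(h_est) * rx / (np.abs(h_est) ** 2 + noise_var)

rng = np.random.default_rng(3)
n_subcarriers = 32
tx = (rng.choice([-1.0, 1.0], n_subcarriers)
      + 1j * rng.choice([-1.0, 1.0], n_subcarriers)) / np.sqrt(2)
h = (rng.standard_normal(n_subcarriers)
     + 1j * rng.standard_normal(n_subcarriers)) / np.sqrt(2)
rx = h * tx                                    # noiseless flat fading per subcarrier

inverted = mmse_equalize(rx, h, noise_var=0.0)      # pure channel inversion
regularized = mmse_equalize(rx, h, noise_var=0.1)   # MMSE shrinks weak subcarriers
```

The regularization term limits noise amplification on deeply faded subcarriers, at the cost of biasing those estimates toward zero.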

[0087] Both the conventional approach involving an MMSE algorithm and the machine-learning network approach obtain a Quadrature Phase Shift Keying (QPSK) received symbol set correctly. However, plot 240 shows that the machine-learning network approach produces more concentrated clusters of point estimates surrounding the possible symbol values. A visual comparison of the two approaches can be made by comparing item 235, representing estimation produced by MMSE equalization, and item 245, representing estimation produced by the machine-learning network equalization. The cluster for item 245 is more concentrated than the cluster for item 235, indicating a lower EVM when receive signals are processed using the machine-learning network. Processing receive signals using the machine-learning network produces a lower cluster variance and lower EVM, compared to using MMSE. Lower cluster variance and lower EVM correlate to better signal reception and better receiver performance within a communications processing system.