Advanced Image Recognition for Threat Disposition Scoring

Givental; Gary I. ; et al.

U.S. patent application number 16/391485 was filed with the patent office on 2019-04-23 and published on 2020-10-29 for advanced image recognition for threat disposition scoring. The applicant listed for this patent is International Business Machines Corporation. Invention is credited to Aankur Bhatia, Gary I. Givental, Wesley A. Khademi, Srinivas B. Tummalapenta.

| Publication Number | 20200342252 |

| Application Number | 16/391485 |

| Family ID | 1000004071280 |

| Publication Date | 2020-10-29 |


| United States Patent Application | 20200342252 |

| Kind Code | A1 |

| Givental; Gary I. ; et al. | October 29, 2020 |

Advanced Image Recognition for Threat Disposition Scoring

Abstract

Mechanisms are provided to implement an image based event classification engine having an event image encoder and a first neural network computer model. The event image encoder receives an event data structure comprising a plurality of event attributes, where the event data structure represents an event occurring in association with a computing resource. The event image encoder executes, for each event attribute, a corresponding event attribute encoder that encodes the event attribute as a pixel pattern in a predetermined grid of pixels, corresponding to the event attribute, of an event image. The event image is input to a neural network computer model which applies one or more image feature extraction operations and image feature analysis algorithms to the event image to generate a classification prediction classifying the event into one of a plurality of predefined classifications and outputs the classification prediction.

| Inventors: | Givental; Gary I.; (Bloomfield Hills, MI) ; Khademi; Wesley A.; (Costa Mesa, CA) ; Bhatia; Aankur; (Bethpage, NY) ; Tummalapenta; Srinivas B.; (Broomfield, CO) |

| Applicant: | International Business Machines Corporation |

| Family ID: | 1000004071280 |

| Appl. No.: | 16/391485 |

| Filed: | April 23, 2019 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06K 9/00771 20130101; G06N 3/088 20130101; G08B 13/194 20130101; G06K 9/6267 20130101; G06K 9/6202 20130101 |

| International Class: | G06K 9/62 20060101 G06K009/62; G06K 9/00 20060101 G06K009/00; G08B 13/194 20060101 G08B013/194; G06N 3/08 20060101 G06N003/08 |

Claims

1. A method, in a data processing system comprising at least one processor and at least one memory, wherein the at least one memory comprises instructions which are executed by the at least one processor and specifically configure the at least one processor to implement an image based event classification engine comprising an event image encoder and a first neural network computer model, wherein the method comprises: receiving, by the event image encoder, an event data structure comprising a plurality of event attributes, wherein the event data structure represents an event occurring in association with at least one computing resource in a monitored computing environment; executing, by the event image encoder, for each event attribute in the plurality of event attributes, a corresponding event attribute encoder that encodes the event attribute as a pixel pattern in a predetermined grid of pixels, corresponding to the event attribute, of an event image representation data structure; inputting, into the first neural network computer model, the event image representation data structure; processing, by the first neural network computer model, the event image representation data structure by applying one or more image feature extraction operations and image feature analysis algorithms to the event image representation data structure to generate a classification prediction output classifying the event into one of a plurality of predefined classifications; and outputting, by the first neural network computer model, a classification output indicating a prediction of one of the predefined classifications that applies to the event.

2. The method of claim 1, wherein the computing environment monitoring system is a security intelligence event monitoring system and wherein the event is an alert notification generated by the security intelligence event monitoring system.

3. The method of claim 1, wherein the event attribute encoder comprises one or more trained second neural network computer models trained to encode one or more of the event attributes in the plurality of event attributes into a corresponding pixel pattern in a corresponding predetermined grid of pixels.

4. The method of claim 1, wherein the first neural network computer model is trained via an unsupervised machine learning process to detect anomalies within the event image representation data structure.

5. The method of claim 1, wherein the event image encoder is deployed in an end user computing device of the monitored computing environment and the first neural network computer model is deployed in a server computing device outside the monitored computing environment, and wherein inputting the event image representation comprises transmitting the event image representation outside the monitored computing environment to the server computing device without outputting the event data structure outside the monitored computing environment.

6. The method of claim 1, wherein the event attributes comprise at least one of a customer identifier, a source address, a destination address, an event name, a triggering rule identification, or a duration, and wherein different event attribute encoders are executed on different types of event attributes.

7. The method of claim 1, wherein the plurality of predefined classifications comprise a true-threat classification indicating the event to represent an attack on the at least one computing resource, and a false-positive classification indicating that the event represents a falsely identified attack on the at least one computing resource.

8. The method of claim 1, wherein executing, for each event attribute in the plurality of event attributes, a corresponding event attribute encoder further comprises, for the event attribute: selecting a set of bits of the event attribute; converting the set of bits to a corresponding set of color properties for pixels in a corresponding pixel pattern; and applying a bitmask to bits of the event attribute to determine a location within a corresponding predetermined grid of pixels to place the pixels to generate a corresponding pixel pattern.

9. The method of claim 1, wherein the event image representation comprises a visual pattern discernable by the human eye but whose underlying meaning is not readily apparent to a human being without the processing, by the first neural network computing model, of the event image representation data structure to classify the event into one of the plurality of predefined classifications.

10. The method of claim 1, wherein the first neural network computing model comprises a pre-processing layer to perform image transformation operations to generate a pre-processed image, a feature detection layer that applies the one or more image feature extraction operations to detect and extract a set of features from the pre-processed image, a pooling layer which pools outputs of the feature detection layer into a smaller set of one or more feature arrays, a flattening layer that converts the one or more feature arrays generated by the pooling layer into a linear vector, one or more fully connected hidden layers which have trained non-linear functions of combinations of the features represented by the linear vector, and an output layer which outputs the classification output based on the outputs of the one or more fully connected hidden layers.

11. A computer program product comprising a computer readable storage medium having a computer readable program stored therein, wherein the computer readable program, when executed on a computing device, causes the computing device to implement an image based event classification engine comprising an event image encoder and a first neural network computer model, that operates to: receive, by the event image encoder, an event data structure comprising a plurality of event attributes, wherein the event data structure represents an event occurring in association with at least one computing resource in a monitored computing environment; execute, by the event image encoder, for each event attribute in the plurality of event attributes, a corresponding event attribute encoder that encodes the event attribute as a pixel pattern in a predetermined grid of pixels, corresponding to the event attribute, of an event image representation data structure; input, into the first neural network computer model, the event image representation data structure; process, by the first neural network computer model, the event image representation data structure by applying one or more image feature extraction operations and image feature analysis algorithms to the event image representation data structure to generate a classification prediction output classifying the event into one of a plurality of predefined classifications; and output, by the first neural network computer model, a classification output indicating a prediction of one of the predefined classifications that applies to the event.

12. The computer program product of claim 11, wherein the computing environment monitoring system is a security intelligence event monitoring system and wherein the event is an alert notification generated by the security intelligence event monitoring system.

13. The computer program product of claim 11, wherein the event attribute encoder comprises one or more trained second neural network computer models trained to encode one or more of the event attributes in the plurality of event attributes into a corresponding pixel pattern in a corresponding predetermined grid of pixels.

14. The computer program product of claim 11, wherein the first neural network computer model is trained via an unsupervised machine learning process to detect anomalies within the event image representation data structure.

15. The computer program product of claim 11, wherein the event image encoder is deployed in an end user computing device of the monitored computing environment and the first neural network computer model is deployed in a server computing device outside the monitored computing environment, and wherein inputting the event image representation comprises transmitting the event image representation outside the monitored computing environment to the server computing device without outputting the event data structure outside the monitored computing environment.

16. The computer program product of claim 11, wherein the event attributes comprise at least one of a customer identifier, a source address, a destination address, an event name, a triggering rule identification, or a duration, and wherein different event attribute encoders are executed on different types of event attributes.

17. The computer program product of claim 11, wherein the plurality of predefined classifications comprise a true-threat classification indicating the event to represent an attack on the at least one computing resource, and a false-positive classification indicating that the event represents a falsely identified attack on the at least one computing resource.

18. The computer program product of claim 11, wherein the computer readable program further causes the computing device to execute, for each event attribute in the plurality of event attributes, a corresponding event attribute encoder at least by, for the event attribute: selecting a set of bits of the event attribute; converting the set of bits to a corresponding set of color properties for pixels in a corresponding pixel pattern; and applying a bitmask to bits of the event attribute to determine a location within a corresponding predetermined grid of pixels to place the pixels to generate a corresponding pixel pattern.

19. The computer program product of claim 11, wherein the event image representation comprises a visual pattern discernable by the human eye but whose underlying meaning is not readily apparent to a human being without the processing, by the first neural network computing model, of the event image representation data structure to classify the event into one of the plurality of predefined classifications.

20. An apparatus comprising: a processor; and a memory coupled to the processor, wherein the memory comprises instructions which, when executed by the processor, cause the processor to implement an image based event classification engine comprising an event image encoder and a first neural network computer model, that operates to: receive, by the event image encoder, an event data structure comprising a plurality of event attributes, wherein the event data structure represents an event occurring in association with at least one computing resource in a monitored computing environment; execute, by the event image encoder, for each event attribute in the plurality of event attributes, a corresponding event attribute encoder that encodes the event attribute as a pixel pattern in a predetermined grid of pixels, corresponding to the event attribute, of an event image representation data structure; input, into the first neural network computer model, the event image representation data structure; process, by the first neural network computer model, the event image representation data structure by applying one or more image feature extraction operations and image feature analysis algorithms to the event image representation data structure to generate a classification prediction output classifying the event into one of a plurality of predefined classifications; and output, by the first neural network computer model, a classification output indicating a prediction of one of the predefined classifications that applies to the event.

Description

BACKGROUND

[0001] The present application relates generally to an improved data processing apparatus and method and more specifically to mechanisms for performing advanced image recognition to identify and score threats to computing system resources.

[0002] Security intelligence event monitoring (SIEM) is an approach to security management that combines security information management with security event monitoring functions into a single security management system. A SIEM system aggregates data from various data sources in order to identify deviations in the operation of the computing devices associated with these data sources from a normal operational state and then take appropriate responsive actions to the identified deviations. SIEM systems may utilize multiple collection agents that gather security related events from computing devices, network equipment, firewalls, intrusion prevention systems, antivirus systems, and the like. The collection agents may then send this information, or a subset of this information that has been pre-processed to identify only certain events for forwarding, to a centralized management console where security analysts examine the collected event data and prioritize events as to their security threats for appropriate responsive actions. The responsive actions may take many different forms, such as generating alert notifications, inhibiting operation of particular computer components, or the like.

[0003] IBM® QRadar® Security Intelligence Platform, available from International Business Machines (IBM) Corporation of Armonk, N.Y., is an example of one SIEM system which is designed to detect well-orchestrated, stealthy attacks as they are occurring and immediately set off the alarms before any data is lost. By correlating current and historical security information, the IBM® QRadar® Security Intelligence Platform solution is able to identify indicators of advanced threats that would otherwise go unnoticed until it is too late. Events related to the same incident are automatically chained together, providing security teams with a single view into the broader threat. With QRadar®, security analysts can discover advanced attacks earlier in the attack cycle, easily view all relevant events in one place, and quickly and accurately formulate a response plan to block advanced attackers before damage is done.

[0004] In many SIEM systems, the SIEM operations are implemented using SIEM rules that perform tests on computing system events, data flows, or offenses, which are then correlated at a central management console system. If all the conditions of a rule test are met, the rule generates a response. This response typically results in an offense or incident being declared and investigated.

[0005] Currently, SIEM rules are created, tested, and applied to a system manually and sourced from out-of-the-box rules (the base set of rules that come with a SIEM system), use case library rules ("template" rules provided by the provider that are organized by category, e.g., NIST, Industry, etc.), custom rules (rules that are manually developed based on individual requirements), and emerging threat rules (manually generated rules derived from a "knee jerk" reaction to an emerging threat or an attack). All of these rules must be manually created, tested, and constantly reviewed as part of a rule life-cycle. The life-cycle determines if the rule is still valid, still works, and still applies. Furthermore, the work involved in rule management does not scale across different customer SIEM systems due to differences in customer industries, customer systems, log sources, and network topology.

[0006] SIEM rules require constant tuning and upkeep as new systems come online, new software releases are deployed, and new vulnerabilities are discovered. Moreover, security personnel can only create SIEM rules to detect threats that they already know about. SIEM rules are not a good defense against "Zero Day" threats and other threats unknown to the security community at large.

SUMMARY

[0007] This Summary is provided to introduce a selection of concepts in a simplified form that are further described herein in the Detailed Description. This Summary is not intended to identify key factors or essential features of the claimed subject matter, nor is it intended to be used to limit the scope of the claimed subject matter.

[0008] In one illustrative embodiment, a method is provided, in a data processing system comprising at least one processor and at least one memory, wherein the at least one memory comprises instructions which are executed by the at least one processor and specifically configure the at least one processor to implement an image based event classification engine comprising an event image encoder and a first neural network computer model. The method comprises receiving, by the event image encoder, an event data structure comprising a plurality of event attributes. The event data structure represents an event occurring in association with at least one computing resource in a monitored computing environment. The method further comprises executing, by the event image encoder, for each event attribute in the plurality of event attributes, a corresponding event attribute encoder that encodes the event attribute as a pixel pattern in a predetermined grid of pixels, corresponding to the event attribute, of an event image representation data structure. In addition, the method comprises inputting, into the first neural network computer model, the event image representation data structure, and processing, by the first neural network computer model, the event image representation data structure by applying one or more image feature extraction operations and image feature analysis algorithms to the event image representation data structure to generate a classification prediction output classifying the event into one of a plurality of predefined classifications. Moreover, the method comprises outputting, by the first neural network computer model, a classification output indicating a prediction of one of the predefined classifications that applies to the event.

[0009] In other illustrative embodiments, a computer program product comprising a computer useable or readable medium having a computer readable program is provided. The computer readable program, when executed on a computing device, causes the computing device to perform various ones of, and combinations of, the operations outlined above with regard to the method illustrative embodiment.

[0010] In yet another illustrative embodiment, a system/apparatus is provided. The system/apparatus may comprise one or more processors and a memory coupled to the one or more processors. The memory may comprise instructions which, when executed by the one or more processors, cause the one or more processors to perform various ones of, and combinations of, the operations outlined above with regard to the method illustrative embodiment.

[0011] These and other features and advantages of the present invention will be described in, or will become apparent to those of ordinary skill in the art in view of, the following detailed description of the example embodiments of the present invention.

BRIEF DESCRIPTION OF THE DRAWINGS

[0012] The invention, as well as a preferred mode of use and further objectives and advantages thereof, will best be understood by reference to the following detailed description of illustrative embodiments when read in conjunction with the accompanying drawings, wherein:

[0013] FIG. 1A is an example diagram illustrating the primary operational elements for a training phase of an advanced image recognition for threat disposition scoring (AIRTDS) computing system, and a workflow of these primary operational elements, in accordance with one illustrative embodiment;

[0014] FIG. 1B is an example diagram illustrating the primary operational elements for runtime operation of an AIRTDS computing system, after training of the AIRTDS computing system, and a workflow of these primary operational elements, in accordance with one illustrative embodiment;

[0015] FIG. 2A is an example diagram illustrating a data layout of extracted security alert attributes for a security alert that may be generated by the IBM® QRadar® Security Intelligence Platform in accordance with one illustrative embodiment;

[0016] FIG. 2B is an example diagram illustrating the image encoding of the industry identifier/event vendor in accordance with one illustrative embodiment;

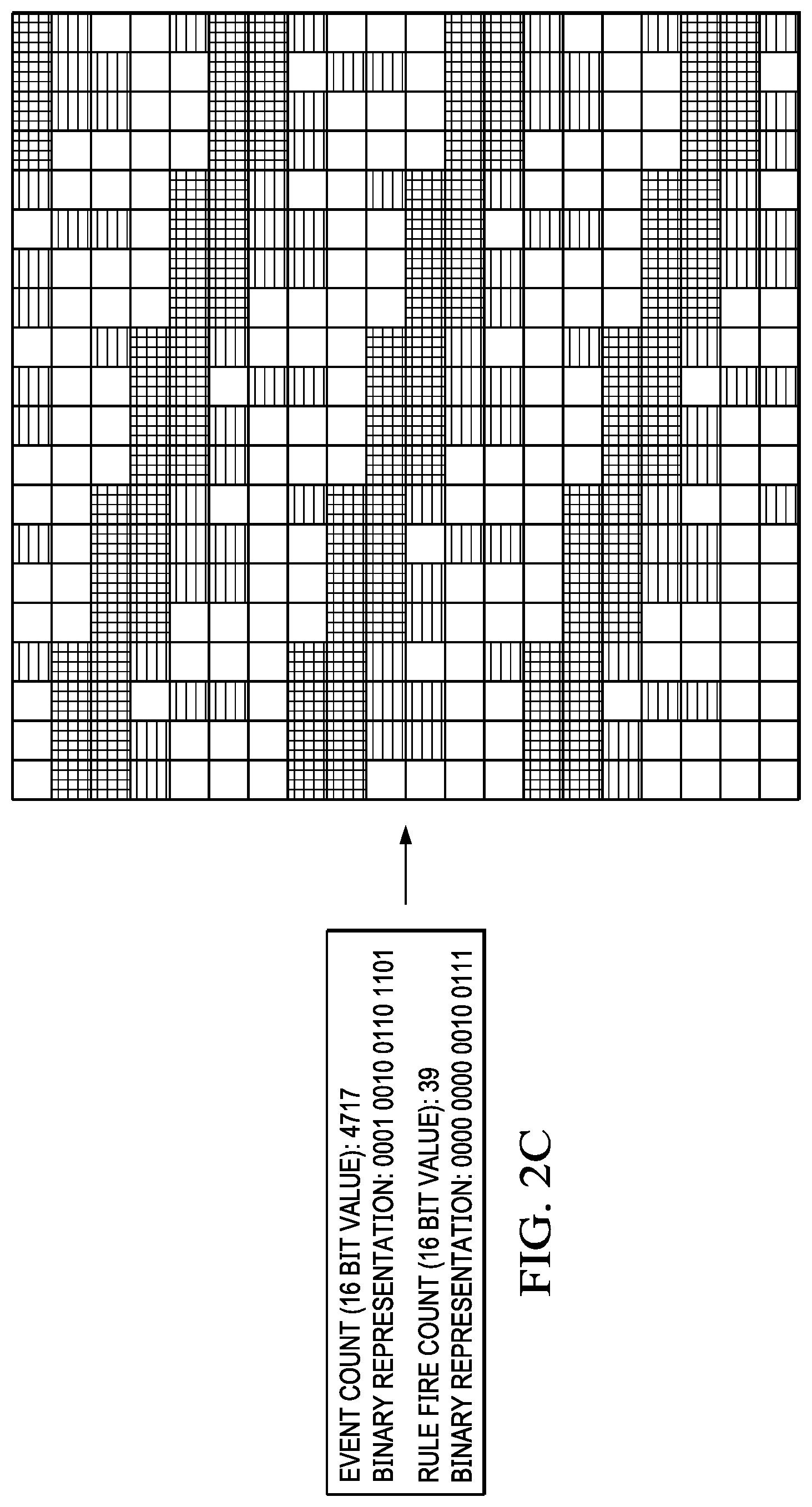

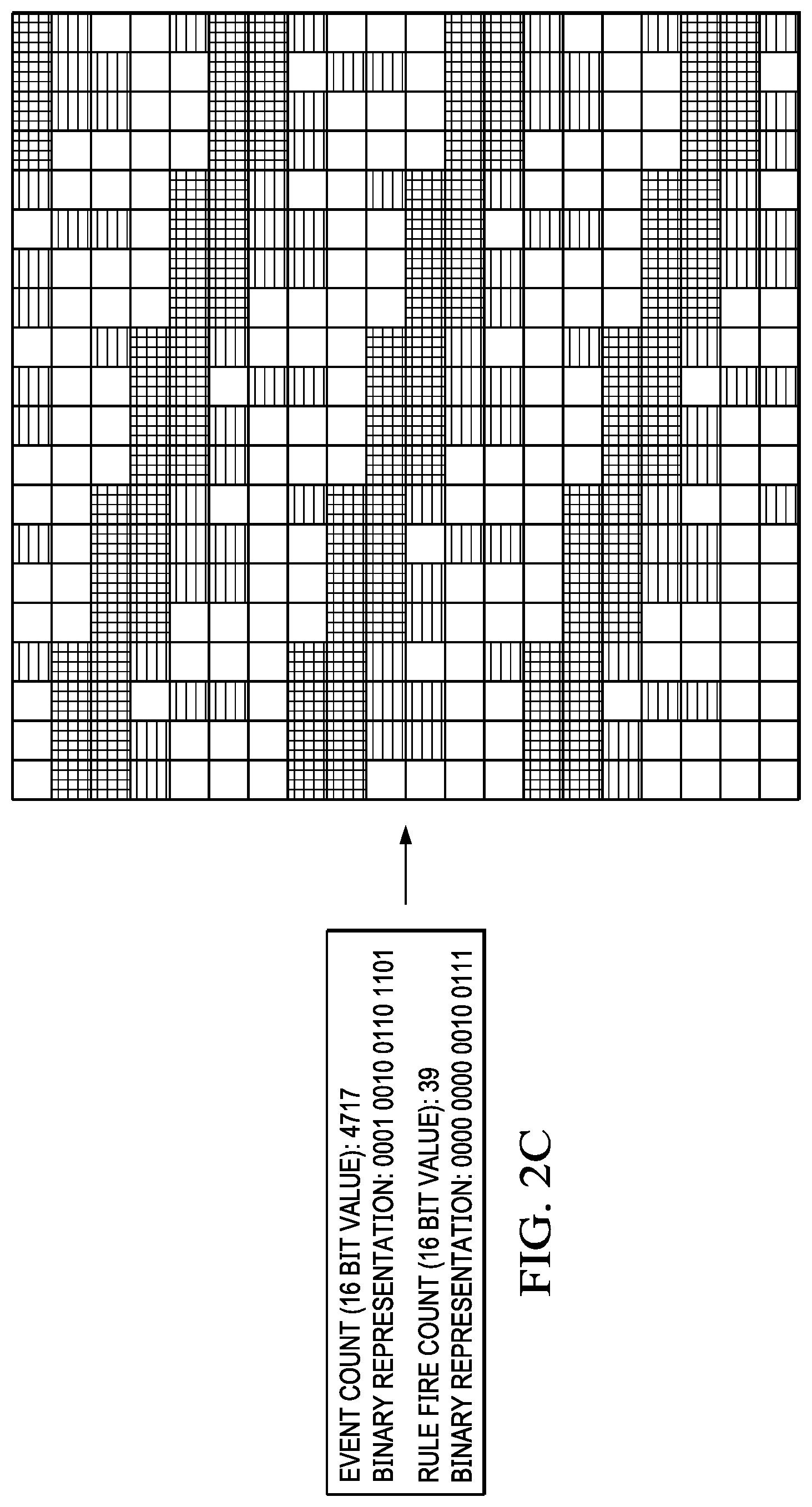

[0017] FIG. 2C is an example diagram illustrating one example of this event and rule fire count attribute image encoding in accordance with one illustrative embodiment;

[0018] FIG. 3A is a block diagram illustrating the operation of the alert attribute image encoding operation according to one illustrative embodiment;

[0019] FIG. 3B is an example diagram illustrating an encoding of an alert generated by the IBM® QRadar® Security Intelligence Platform as an alert image representation in accordance with one illustrative embodiment;

[0020] FIG. 4 is an example diagram of a convolutional neural network model that may be implemented as the predictive model of the AIRTDS system in accordance with one illustrative embodiment;

[0021] FIG. 5A is a flowchart outlining an example operation of an AIRTDS system during a training of the predictive model in accordance with one illustrative embodiment;

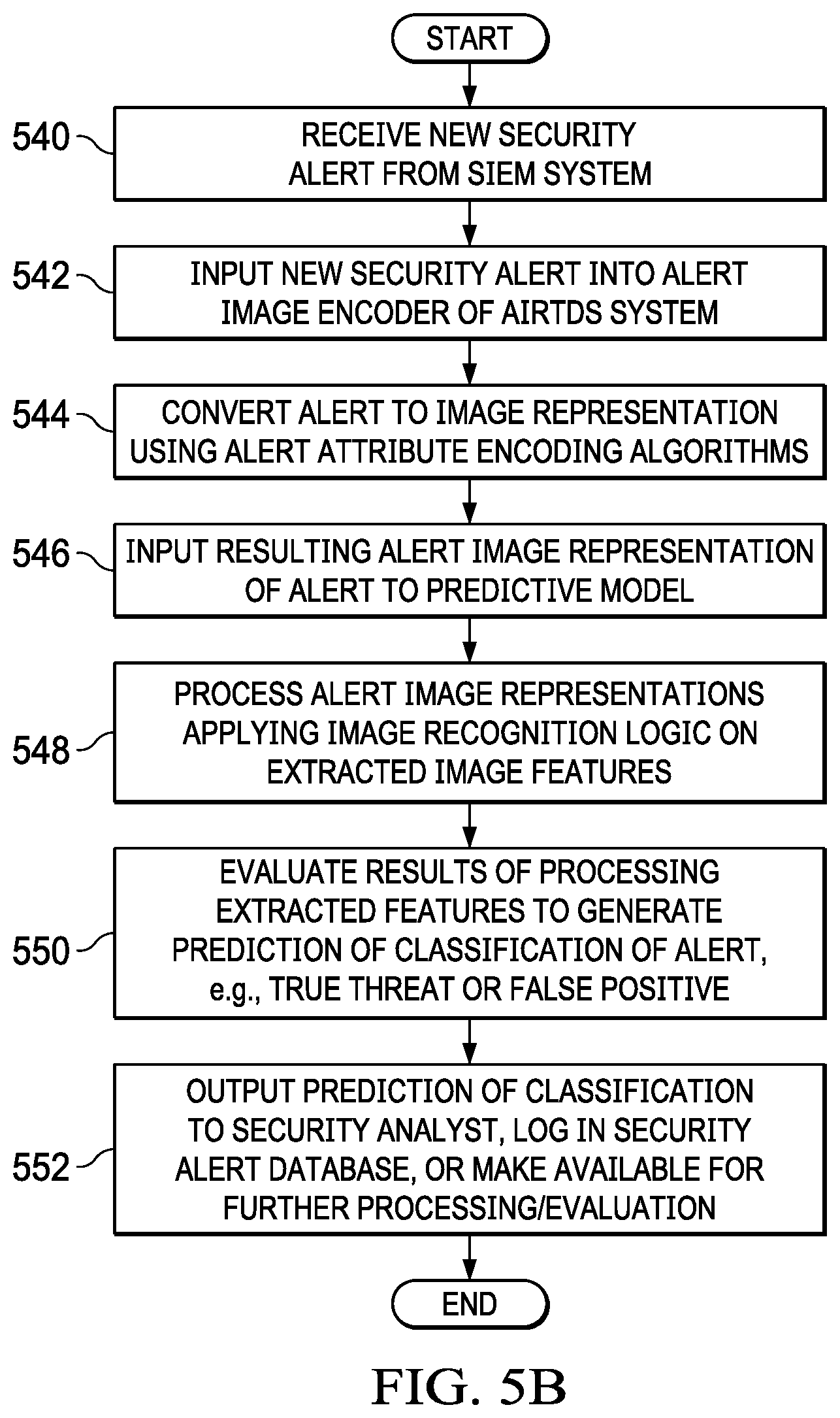

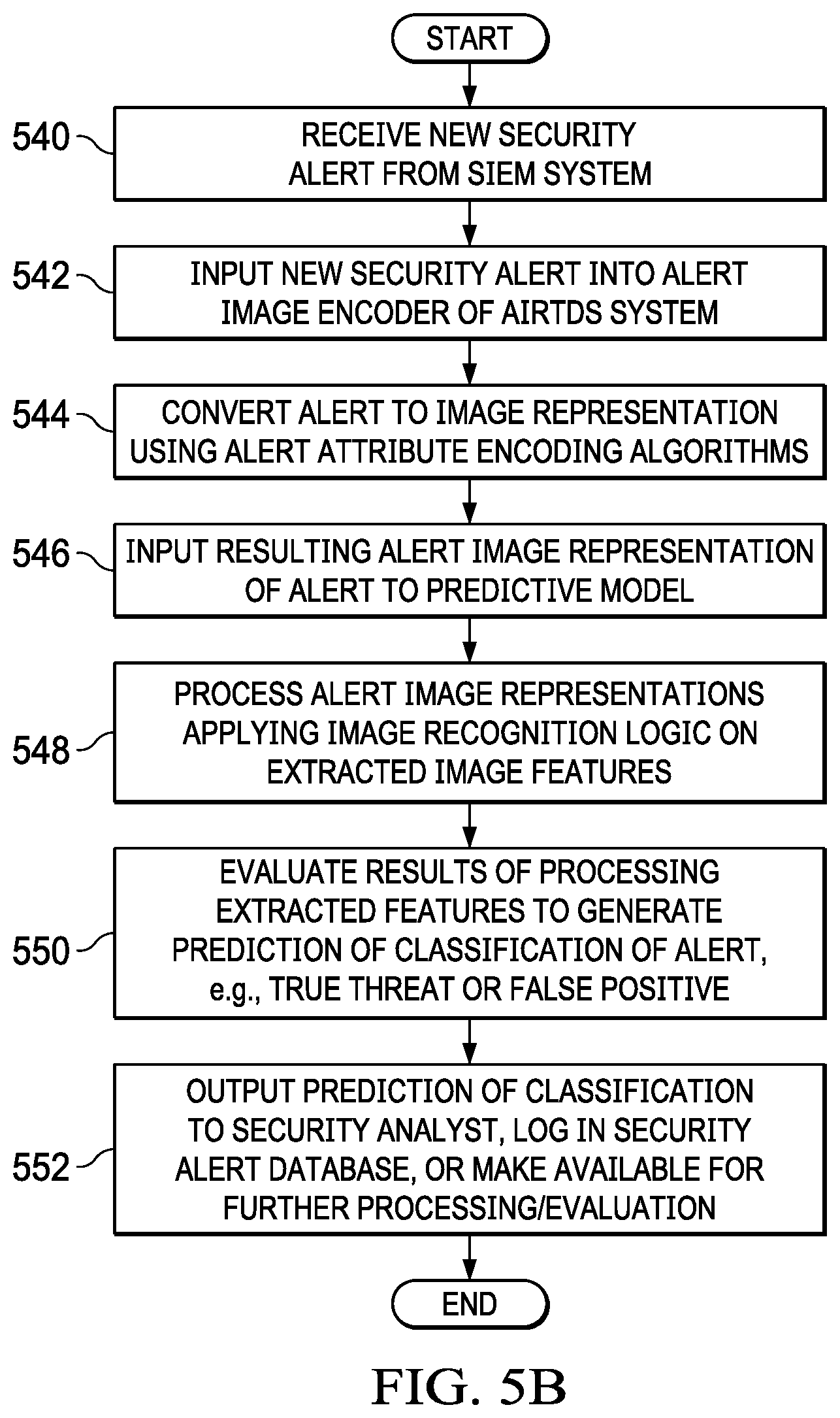

[0022] FIG. 5B is a flowchart outlining an example operation of an AIRTDS system during runtime operation in accordance with one illustrative embodiment;

[0023] FIG. 6 is an example diagram of a distributed data processing system in which aspects of the illustrative embodiments may be implemented; and

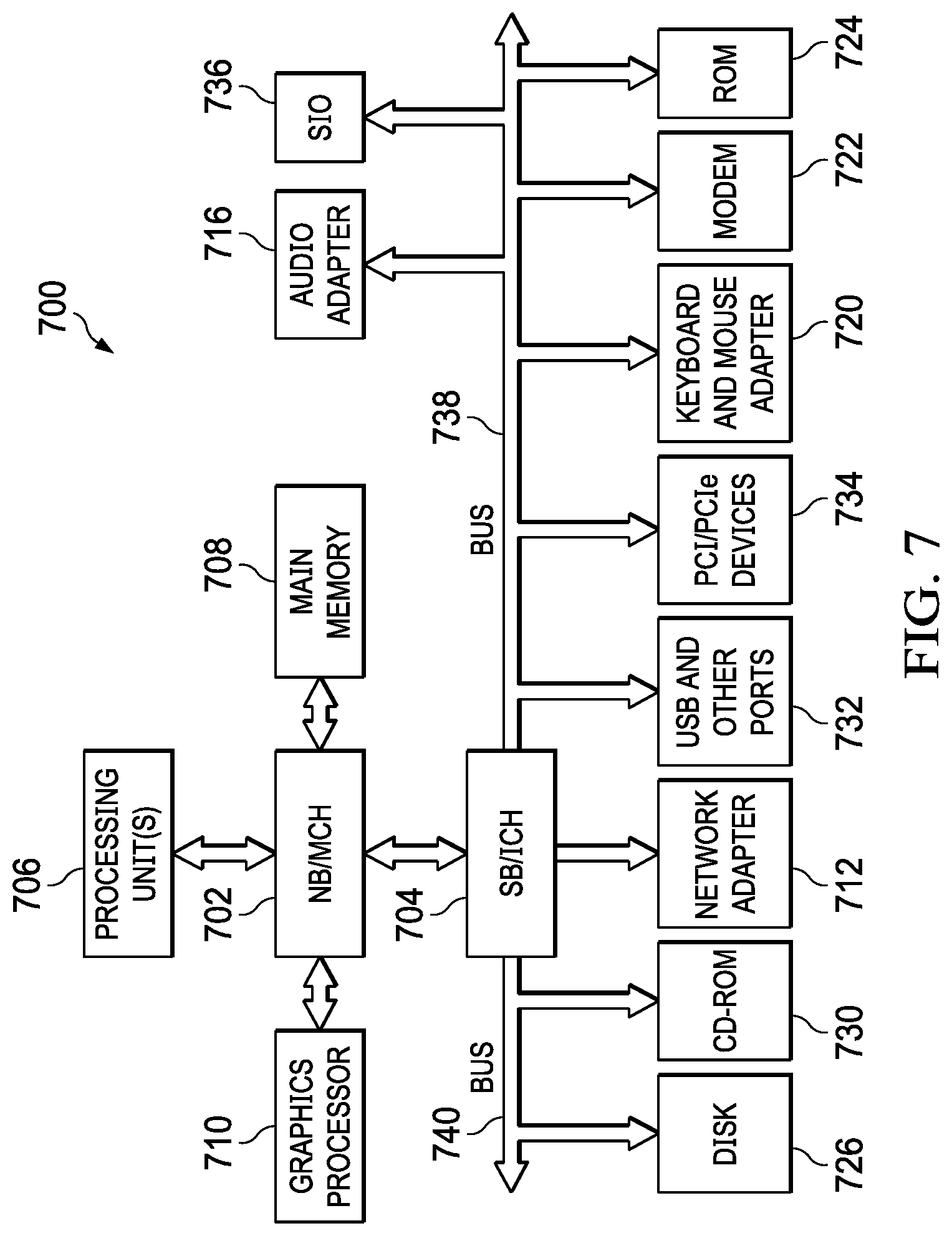

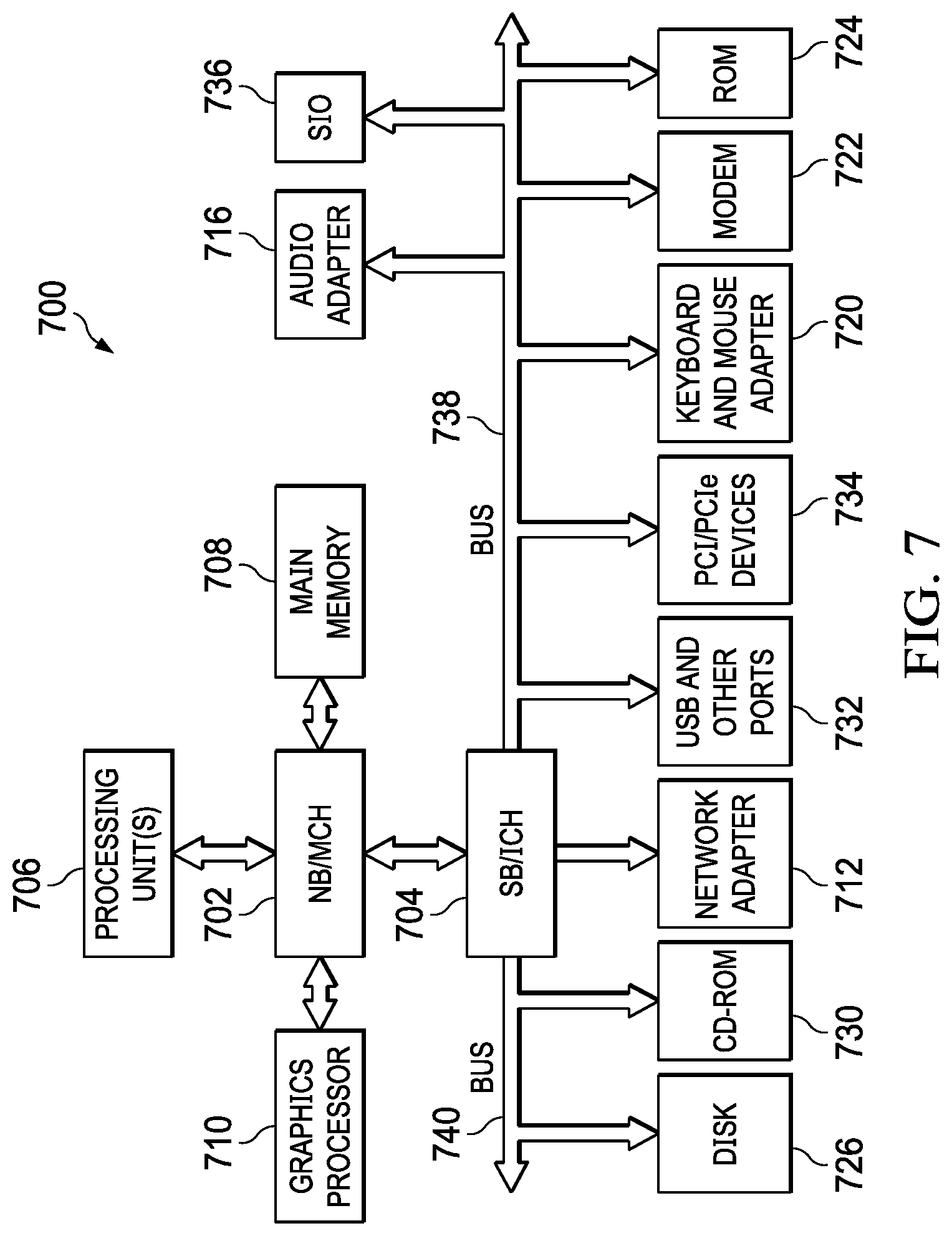

[0024] FIG. 7 is an example block diagram of a computing device in which aspects of the illustrative embodiments may be implemented.

DETAILED DESCRIPTION

[0025] Mechanisms are provided that process alerts (or offenses), such as those that may be generated by a security intelligence event monitoring (SIEM) system and/or stored in constituent endpoint logs, and encode them into an image that is then processed by a trained image recognition system to classify the alerts (or offenses) as to whether they are true threats or false positives. In performing the transformation of alerts into an image, the mechanisms of the illustrative embodiments utilize one or more transformation algorithms to transform or encode one or more of the alert attributes into corresponding portions of an image at a specified location and with image features that correspond to the content of the alert attribute. Different alert attributes may be transformed or encoded into different sections of the image, with the transformation or encoding specifically designed to generate a human visible pattern which is recognizable by the human eye as a visual pattern, but may not necessarily be human understandable as to what the pattern itself represents. In other words, the image recognition system is able to understand the meaning behind the encoded image corresponding to the alert, while the human user may recognize the visual pattern as being a pattern, but not know the meaning of the pattern in the way that the image recognition system is able to understand the meaning.

[0026] It should be appreciated that while the primary illustrative embodiments described herein will make reference specifically to SIEM systems and SIEM alerts or offenses, the illustrative embodiments are not limited to such. Rather, the mechanisms of the illustrative embodiments may be implemented with any computer data analytics systems which generate events or log occurrences of situations for which classification of the events or logged entries may be performed to evaluate the veracity of the event or logged entry. For ease of explanation of the illustrative embodiments, and to illustrate the applicability of the present invention to security data analytics, the description of the illustrative embodiments set forth herein will assume a SIEM system based implementation, but again should not be construed as being limited to such.

[0027] As noted previously, data analytics systems, such as a security intelligence event monitoring (SIEM) computing system, operate based on a set of SIEM rules which tend to be static in nature. That is, the SIEM rules employed by a SIEM computing system to identify potential threats to computing resources and then generate alerts/log entries are often generated manually and in response to known threats, rather than automatically and in a proactive manner. As a result, such data analytics systems struggle to keep up with the ever-changing security landscape. That is, as these systems are primarily rule driven, security experts constantly have to modify and manage the SIEM rules in order to keep up with new threats.

[0028] Often the SIEM computing systems suffer from a vast number of false-positive alerts they generate, causing a large amount of manual effort for security analysts and experts to investigate these false-positive alerts to determine whether they are actual threats requiring the creation or modification of SIEM rules. Thus, it would be beneficial to reduce the false-positives and identify true positives, i.e. true threats to computing resources, for further investigation. In so doing, the amount of manual effort needed to investigate alerts and generate appropriate responses to actual threats, e.g., new or modified SIEM rules, may be reduced.

[0029] The illustrative embodiments provide an improved computing tool to differentiate false-positive alerts from true threat alerts for further investigation by subject matter experts, e.g., security analysts and experts, and remediation of such threats. The improved computing tool of the illustrative embodiments includes a trained neural network model that translates attributes of alerts into images and then uses a trained image classification neural network model to classify the alert image into a corresponding class, e.g., false-positive alert image, true threat alert image, or indeterminate threat image. The mechanisms of the illustrative embodiments provide an analytics machine learning computing system that auto-disposes alerts based on learning prior security analyst/expert decisions and applying them to the alert images generated by the mechanisms of the illustrative embodiments. The mechanisms of the illustrative embodiments utilize unsupervised machine learning with similarly encoded images to detect anomalies within alert images, further augmenting computing system capabilities to automatically disposition true threats.

[0030] In addition, the mechanisms of the illustrative embodiments may be used directly in an end user's computing environment in order to obfuscate sensitive data and securely send it over the network directly into the alert image classification computing tool of the illustrative embodiments for auto-dispositioning. That is, at the end user's computing environment, alerts and logged entries may be translated into an image representation of the alert or logged entry, referred to herein as an alert image. The alert image may then be transmitted to a remotely located alert image classification computing tool of the illustrative embodiments, thereby ensuring that only the alert image data is exposed outside the end user's computing environment. Hence, an unauthorized interloper will at most be able to access the encoded alert image data, but without knowing the manner by which the encoded alert image data is encoded from the original alert or logged entries, will be unable to ascertain the private or sensitive data from which the encoded alert image data was generated. Additional security mechanisms, such as private-public key mechanisms, random seed values, and the like, may be used to increase the security of the alert image generation process and decrease the likelihood that an interloper will be able to extract the sensitive underlying alert/log data that is the basis of the alert image data transmitted to the alert image classification computing tool of the illustrative embodiments.
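One way to picture the role a random seed value might play in the image generation process is a seed-dependent rearrangement of pixels. The following is a minimal sketch under stated assumptions, not the mechanism of the application: the function names `shuffle_pixels` and `unshuffle_pixels` are hypothetical, and Python's `random.Random` is used only for illustration (it is not a cryptographically secure generator).

```python
import random

def _pixel_order(n_pixels, seed):
    """Seed-dependent permutation of pixel indices (illustrative only)."""
    order = list(range(n_pixels))
    random.Random(seed).shuffle(order)   # same seed -> same permutation
    return order

def shuffle_pixels(image, seed):
    """Rearrange the pixels of a row-major image using a shared seed."""
    width = len(image[0])
    flat = [px for row in image for px in row]
    order = _pixel_order(len(flat), seed)
    shuffled = [flat[src] for src in order]
    return [shuffled[r * width:(r + 1) * width] for r in range(len(image))]

def unshuffle_pixels(image, seed):
    """Invert shuffle_pixels for a party that knows the same seed."""
    width = len(image[0])
    flat = [px for row in image for px in row]
    order = _pixel_order(len(flat), seed)
    restored = [None] * len(flat)
    for dst, src in enumerate(order):
        restored[src] = flat[dst]        # put each pixel back where it came from
    return [restored[r * width:(r + 1) * width] for r in range(len(image))]

# Tiny 4x6 stand-in for an alert image; the seed value is a placeholder.
original = [[(r, c, 0) for c in range(6)] for r in range(4)]
sent = shuffle_pixels(original, seed=2019)
received = unshuffle_pixels(sent, seed=2019)
```

In this sketch only `sent` would leave the end user's environment; a receiver holding the same seed can restore the pixel layout, or the classifier could equally be trained on images generated with that seed.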

[0031] In one illustrative embodiment, the advanced image recognition for threat disposition scoring (AIRTDS) computing tool comprises two primary cognitive computing system elements which may comprise artificial intelligence based mechanisms for processing threat alerts/logged data to identify and differentiate true threat based alerts/log entries from false-positive threat alerts/log entries. A first primary cognitive computing system element comprises an alert/log entry image encoding mechanism (referred to hereafter as an alert image encoder). The alert image encoder comprises one or more algorithms for encoding alert/log entry attributes into corresponding pixels of a digital image representation of the alert/log entry having properties, e.g., pixel location within the digital image representation, color of pixels, etc., that are set to correspond to the encoded alert/log entry attribute. The alert/log attributes that are encoded may comprise various types of alert/log attributes extracted from the alert/log entry, such as remedy customer ID, source Internet Protocol (IP) address, destination IP, event name, SIEM rule triggering alert/log entry, attack duration, and the like. Different encoding algorithms may be applied to each type of alert/log entry attribute and each encoding algorithm may target a predefined section of the image representation where the corresponding alert/log entry attribute is encoded.
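The idea of per-type attribute encoders can be illustrated with a short sketch. Everything concrete here is an assumption made for illustration: the 90-wide by 5-high section size, the one-pixel-per-octet scheme for IP addresses, the use of SHA-256 for textual attributes, and the names `encode_ipv4`, `encode_text`, and `ENCODERS`. Each encoder selects bits of the attribute, turns some of them into pixel color values, and masks others to choose a pixel location within its section.

```python
import hashlib
import ipaddress

STRIP_WIDTH, STRIP_HEIGHT = 90, 5   # assumed section size: 90 pixels wide, 5 high
BLACK = (0, 0, 0)

def blank_strip():
    """Return an all-black STRIP_HEIGHT x STRIP_WIDTH grid of RGB tuples."""
    return [[BLACK] * STRIP_WIDTH for _ in range(STRIP_HEIGHT)]

def encode_ipv4(address):
    """Hypothetical encoder for IP attributes: one pixel per octet."""
    value = int(ipaddress.IPv4Address(address))      # the attribute as 32 bits
    grid = blank_strip()
    for row in range(4):
        octet = (value >> (8 * (3 - row))) & 0xFF    # select a set of 8 bits
        color = (octet, 255 - octet, 128)            # bits -> color properties
        col = octet & 0x3F                           # 6-bit mask -> column 0..63
        grid[row][col] = color
    return grid

def encode_text(text):
    """Hypothetical encoder for textual attributes (e.g. an event name)."""
    digest = hashlib.sha256(text.encode("utf-8")).digest()   # 32 bytes
    grid = blank_strip()
    for i in range(8):                               # 8 pixels x 4 bytes each
        chunk = digest[4 * i: 4 * i + 4]
        color = (chunk[0], chunk[1], chunk[2])       # first 3 bytes -> RGB
        row = chunk[3] & 0x03                        # 2-bit mask -> row 0..3
        col = (chunk[3] >> 2) & 0x3F                 # next 6 bits -> column 0..63
        grid[row][col] = color
    return grid

# Different attribute types are routed to different encoders.
ENCODERS = {
    "source_ip": encode_ipv4,
    "destination_ip": encode_ipv4,
    "event_name": encode_text,
}

strip = ENCODERS["source_ip"]("192.168.1.10")
print(strip[0][0], strip[1][40])   # -> (192, 63, 128) (168, 87, 128)
```

Because both encoders are deterministic, identical attribute values always yield identical pixel patterns, which is what lets a downstream image classifier learn recurring patterns.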

[0032] While each encoding algorithm may encode the corresponding alert/log entry attribute in a different section of the image representation, the encoding algorithm is configured such that the combination of sections of the image representation are encoded in a manner where a visual pattern that is present across the sections is generated. Thus, the algorithms are specifically configured to work together to generate a visual pattern, i.e. a repeatable set of pixel properties and locations within a section, that spans across the plurality of sections of the image representation. The visual pattern is visually discernable by the human eye even if the underlying meaning of the visual pattern is not human recognizable from the visual nature, e.g., the human viewing the image representation of the alert can see that there is a pattern, but cannot ascertain that the particular pattern, to a cognitive computing system of the illustrative embodiments, represents a true threat to a computing resource.

[0033] A second primary cognitive computing system element comprises an alert/log entry classification machine learning mechanism that classifies alerts/log entries as to whether they represent true threats, false-positives, or indeterminate alerts/log entries. The alert/log entry classification machine learning mechanism may comprise a neural network model, e.g., a convolutional neural network (CNN), that is trained to classify images into one of a plurality of pre-defined classes of images based on image analysis algorithms applied by the nodes of the neural network model to extract and process features of the input image based on learned functions of the extracted features. In one illustrative embodiment, the neural network model classifies an encoded image representation of an alert/log entry into either a true-threat classification or a false-positive classification (not a true threat). In this implementation, the neural network model only processes alerts/log entries that correspond to potential threats to computing resources identified by a monitoring tool, such as a SIEM system. In some implementations, additional classifications may also be utilized, e.g., indeterminate threat classification where it cannot be determined whether the alert/log entry corresponds to one of the other predetermined classes, non-threat classification in embodiments where all log entries over a predetermined period of time are processed to generate image representations which are then evaluated as to whether they represent a threat or a non-threat, etc. For purposes of illustration, it will be assumed that an embodiment is implemented where the predetermined classifications consist of a true-threat classification and a false-positive classification.

[0034] Assuming a SIEM computing system based implementation of the illustrative embodiments, during a training phase of operation, the mechanisms of the AIRTDS computing tool process a set of alerts (offenses) generated by the SIEM computing system which are then dispositioned by a human security analyst/expert with a label of "true threat" or "false positive." That is, a human security analyst/expert is employed to generate a set of training data comprising alerts that are labeled by the human security analyst/expert as to whether they are true threats or false positives. Each alert is encoded as an image representation by extracting the alert attributes from the alert that are to be encoded, and then applying corresponding encoding algorithms to encode those extracted alert attributes into sections of the image representation. For example, in one illustrative embodiment, the image representation of an alert/log entry is a 90×90 pixel image made up of smaller 90×5 pixel grids, each smaller grid representing an area for encoding individual alert attributes, or encoding of combinations of a plurality, or subset, of alert attributes.
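The section layout in this example can be sketched as follows. This is only an illustration in Python, assuming the 90-by-5 grids are stacked as 18 horizontal bands of 5 rows each (the orientation is not stated in the text). The names `IMAGE_SIZE`, `BAND_HEIGHT`, `blank_image`, `section_rows`, and `write_section` are hypothetical.

```python
IMAGE_SIZE = 90      # the image is 90 x 90 pixels, per the example above
BAND_HEIGHT = 5      # assumption: each section is 90 pixels wide and 5 pixels tall
NUM_SECTIONS = IMAGE_SIZE // BAND_HEIGHT  # 18 sections under this assumption


def blank_image():
    """Return a 90 x 90 image of black RGB pixels as nested lists."""
    return [[(0, 0, 0) for _ in range(IMAGE_SIZE)] for _ in range(IMAGE_SIZE)]


def section_rows(index):
    """Return the row indices of the image covered by section `index`."""
    if not 0 <= index < NUM_SECTIONS:
        raise ValueError("section index out of range")
    start = index * BAND_HEIGHT
    return range(start, start + BAND_HEIGHT)


def write_section(image, index, band):
    """Copy a 5 x 90 band of pixels into the rows reserved for one section."""
    for offset, row in enumerate(section_rows(index)):
        image[row] = list(band[offset])
    return image
```

Under this layout, each attribute encoder only needs to produce a 5-row band, and the section index determines where that band lands in the final image.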

[0035] For example, using an example of an IBM® QRadar® Security Intelligence Platform as a SIEM system implementation, the mechanisms of the illustrative embodiments may extract a remedy customer ID (e.g., remedy customer ID: JPID001059) from an alert generated by the SIEM system and encode the remedy customer ID by converting a predetermined set of bits of the remedy customer ID, e.g., the last 6 digits of the remedy customer ID (e.g., 001059, treating the digits as a 24 bit value), to corresponding red, green, blue (RGB) color properties of a set of one or more pixels in a section of the image representation of the alert. The whole customer ID may be used to set pixel locations for the corresponding colored pixels by applying a bit mask to each character's ASCII value to determine whether or not to place a colored pixel, having the RGB color corresponding to the predetermined set of bits of the remedy customer ID, at a corresponding location.
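One possible reading of this customer ID encoding is sketched below in Python. Several details are assumptions rather than statements from the text: the last 6 digits are read as a hexadecimal 24-bit value, each character is given 8 adjacent pixel columns (one per bit of its ASCII code, most significant bit first), and the same row is repeated over a 5-row band. The function names are hypothetical.

```python
def customer_id_color(customer_id):
    """Map the last 6 characters, read as a 24-bit value, to an (R, G, B) tuple."""
    value = int(customer_id[-6:], 16)  # assumption: the digits are read as hexadecimal
    return ((value >> 16) & 0xFF, (value >> 8) & 0xFF, value & 0xFF)


def encode_customer_id(customer_id, width=90, height=5):
    """Return a band of pixels whose colored positions follow the ID's ASCII bits."""
    color = customer_id_color(customer_id)
    row = [(0, 0, 0)] * width
    for i, ch in enumerate(customer_id):
        code = ord(ch)
        for bit in range(8):
            col = i * 8 + bit
            if col >= width:
                break  # characters that do not fit in the band are ignored
            if code & (0x80 >> bit):  # bit mask applied to the ASCII value
                row[col] = color
    # Assumption: the same pixel row is repeated for every row of the band.
    return [list(row) for _ in range(height)]
```

For the ID `JPID001059`, `customer_id_color` yields `(0, 16, 89)`, and the first character `J` (ASCII 74, binary 01001010) places colored pixels at columns 1, 4, and 6 of the band.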

[0036] Other encoding of other alert attributes may be performed to generate, for each section of the image representation, a corresponding sub-image pattern which together with the other sub-image patterns of the various sections of the image representation, results in a visual image pattern present across the various sections. Examples of various types of encoding algorithms that may be employed to encode alert attributes as portions of an image representation of the alert/log entry will be provided in more detail hereafter. A combination of a plurality of these different encoding algorithms for encoding alert attributes may be utilized, and even other encoding algorithms that may become apparent to those of ordinary skill in the art in view of the present description may be employed, depending on the desired implementation. The result is an encoded image representation of the original alert generated by the SIEM computing system which can then be processed using image recognition processing mechanisms of a cognitive computing system, such as an alert/log entry classification machine learning computing system.

[0037] That is, in accordance with the illustrative embodiments, the encoded image representation is then input to the alert/log entry classification machine learning mechanism which processes the image to classify the image as to whether the alert/log entry classification machine learning mechanism determines the image represents a true threat or is a false positive. In some illustrative embodiments, the alert/log entry classification machine learning mechanism comprises a convolutional neural network (CNN) that comprises an image pre-processing layer to perform image transformation operations, e.g., resizing, rescaling, shearing, zoom, flipping pixels, etc., a feature detection layer that detects and extracts a set of features (e.g., the layer may comprise 32 nodes that act as feature detectors to detect 32 different features) from the pre-processed image, and a pooling layer which pools the outputs of the previous layer into a smaller set of features, e.g., a max-pooling layer that uses the maximum value from each of a cluster of neurons or nodes at the prior layer (feature extraction layer in this case). The CNN may further comprise a flattening layer that converts the resultant arrays generated by the pooling layer into a single long continuous linear vector, fully connected dense hidden layers which learn non-linear functions of combinations of the extracted features (now flattened into a vector representation by the flattening layer), and an output layer which, in some illustrative embodiments, may be a single neuron or node layer that outputs a binary output indicating whether or not the input image representation is representative of a true threat or a false positive.
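The layer sequence described above can be illustrated with a small forward pass written in Python with NumPy. This is a sketch with random, untrained weights, not the trained model of the illustrative embodiments. The 3×3 filter size, the 2×2 pooling window, the single hidden layer of 16 nodes, and the names `init_params` and `forward` are all assumptions; only the 32 feature detectors, the max-pooling, the flattening, the dense layers, and the single output node come from the text. Pre-processing is reduced to rescaling.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def relu(x):
    return np.maximum(x, 0.0)


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def init_params(rng, n_filters=32, hidden=16, size=90, channels=3):
    """Create random (untrained) weights for the sketch."""
    pooled = (size - 2) // 2                 # 3x3 convolution, then 2x2 pooling
    flat = pooled * pooled * n_filters
    return {
        "filters": rng.normal(0.0, 0.1, (n_filters, channels, 3, 3)),
        "w_hidden": rng.normal(0.0, 0.01, (flat, hidden)),
        "b_hidden": np.zeros(hidden),
        "w_out": rng.normal(0.0, 0.1, hidden),
        "b_out": 0.0,
    }


def forward(image, params):
    """Return a score in [0, 1] for a (90, 90, 3) image with 0-255 pixel values."""
    x = image.astype(np.float64) / 255.0                     # pre-processing: rescale
    windows = sliding_window_view(x, (3, 3), axis=(0, 1))    # (88, 88, C, 3, 3)
    features = relu(np.einsum("ijcuv,fcuv->ijf", windows, params["filters"]))
    h, w, f = features.shape
    pooled = features[: h - h % 2, : w - w % 2]
    pooled = pooled.reshape(h // 2, 2, w // 2, 2, f).max(axis=(1, 3))  # max-pooling
    flat = pooled.reshape(-1)                                # flattening layer
    hidden = relu(flat @ params["w_hidden"] + params["b_hidden"])     # dense layer
    return float(sigmoid(hidden @ params["w_out"] + params["b_out"])) # output node
```

A binary output could then be obtained by thresholding the score, for example treating values of 0.5 or more as a true threat; that threshold is likewise an assumption.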

[0038] In some illustrative embodiments, the neurons or nodes may utilize a rectified linear unit (ReLU) activation function, while in other illustrative embodiments, other types of activation functions may be utilized, e.g., softmax, radial basis functions, various sigmoid activation functions, and the like. Moreover, while a CNN implementation having a particular configuration as outlined above is used in the illustrative embodiments described herein, other configurations of machine learning or deep learning neural networks may also be used without departing from the spirit and scope of the illustrative embodiments.

[0039] Through a machine learning process, such as a supervised machine learning process, the alert/log entry classification machine learning mechanism is trained based on the human security analyst/expert provided labels for the corresponding alerts, to recognize true threats and false positives. For example, the machine learning process modifies operational parameters, e.g., weights and the like, of the nodes of the neural network model employed by the alert/log entry classification machine learning mechanism so as to minimize a loss function associated with the neural network model, e.g., a binary cross-entropy loss function, or the like. Once trained based on the training data set comprising the alert image representations and corresponding human analyst/expert label annotations as to whether the alert is a "true threat" (anomaly detected) or "false positive" (anomaly not detected), the trained alert/log entry classification machine learning mechanism may be applied to new alert image representations to classify the new alert image representations as to whether they represent true threats or false positives.
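For reference, the binary cross-entropy loss mentioned above is commonly written in the form below. The symbols are not taken from the original text: N is the number of labeled alert images, y_i is the analyst-provided label of the i-th alert (assumed here to be 1 for a true threat and 0 for a false positive), and \hat{y}_i is the model output for that alert.

```latex
L = -\frac{1}{N} \sum_{i=1}^{N} \left[ y_i \log \hat{y}_i + (1 - y_i) \log\left(1 - \hat{y}_i\right) \right]
```

The loss approaches zero as each output approaches its label, which is why minimizing it drives the model toward the analyst's dispositions.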

[0040] As mentioned previously, in accordance with some illustrative embodiments, one significant benefit obtained by the mechanisms of the present invention is the ability to maintain the security of an end user's private or sensitive data, e.g., SIEM event data, within the end user's own computing environment. That is, in some illustrative embodiments, the alert image encoder may be deployed within the end user's own computing environment so that the encoding of alerts as alert image representations may be performed within the end user's own computing environment with only the alert image representation being exported outside the end user's computing environment for processing by the alert/log entry classification machine learning mechanisms of the illustrative embodiments. Thus, the end user's sensitive or private data does not leave the end user's secure computing environment and third parties are not able to discern the sensitive or private data without having an intimate knowledge of the encoding algorithms specifically used. It should further be appreciated that other security mechanisms, such as encryption, random or pseudo-random seed values used in the encrypting algorithms, and the like, may be used to add additional variability and security to the encryption algorithms and to the generated alert image representation to make it less likely that a third party will be able to reverse engineer the alert image representation to generate the sensitive or private data.

[0041] Thus, the illustrative embodiments provide mechanisms for converting alerts/log entries into an image representation of the alerts/log entries for processing by cognitive image recognition mechanisms that use machine learning to recognize alert/log entry image representations that represent true threats or false positives. By converting the alerts/log entries into image representations and using machine learning to classify the alerts/log image representations, the evaluation of the alerts/log entries is not dependent on strict alphanumeric text comparisons which may be apt to either miss actual threats or be over-inclusive of alerts/log entries that do not represent actual threats. Moreover, because the mechanisms of the illustrative embodiments utilize image recognition based on image patterns, the mechanisms of the illustrative embodiments are adaptable to new alert/log entry attribute combinations that result in such patterns, without having to wait for human intervention to generate new rules to recognize new threats.

[0042] Before discussing the various aspects of the illustrative embodiments further, it should first be appreciated that throughout this description the term "mechanism" will be used to refer to elements of the present invention that perform various operations, functions, and the like. A "mechanism," as the term is used herein, may be an implementation of the functions or aspects of the illustrative embodiments in the form of an apparatus, a procedure, or a computer program product. In the case of a procedure, the procedure is implemented by one or more devices, apparatus, computers, data processing systems, or the like. In the case of a computer program product, the logic represented by computer code or instructions embodied in or on the computer program product is executed by one or more hardware devices in order to implement the functionality or perform the operations associated with the specific "mechanism." Thus, the mechanisms described herein may be implemented as specialized hardware, software executing on general purpose hardware, software instructions stored on a medium such that the instructions are readily executable by specialized or general purpose hardware, a procedure or method for executing the functions, or a combination of any of the above.

[0043] The present description and claims may make use of the terms "a", "at least one of", and "one or more of" with regard to particular features and elements of the illustrative embodiments. It should be appreciated that these terms and phrases are intended to state that there is at least one of the particular feature or element present in the particular illustrative embodiment, but that more than one can also be present. That is, these terms/phrases are not intended to limit the description or claims to a single feature/element being present or require that a plurality of such features/elements be present. To the contrary, these terms/phrases only require at least a single feature/element with the possibility of a plurality of such features/elements being within the scope of the description and claims.

[0044] Moreover, it should be appreciated that the use of the term "engine," if used herein with regard to describing embodiments and features of the invention, is not intended to be limiting of any particular implementation for accomplishing and/or performing the actions, steps, processes, etc., attributable to and/or performed by the engine. An engine may be, but is not limited to, software, hardware and/or firmware or any combination thereof that performs the specified functions including, but not limited to, any use of a general and/or specialized processor in combination with appropriate software loaded or stored in a machine readable memory and executed by the processor. Further, any name associated with a particular engine is, unless otherwise specified, for purposes of convenience of reference and not intended to be limiting to a specific implementation. Additionally, any functionality attributed to an engine may be equally performed by multiple engines, incorporated into and/or combined with the functionality of another engine of the same or different type, or distributed across one or more engines of various configurations.

[0045] In addition, it should be appreciated that the following description uses a plurality of various examples for various elements of the illustrative embodiments to further illustrate example implementations of the illustrative embodiments and to aid in the understanding of the mechanisms of the illustrative embodiments. These examples are intended to be non-limiting and are not exhaustive of the various possibilities for implementing the mechanisms of the illustrative embodiments. It will be apparent to those of ordinary skill in the art in view of the present description that there are many other alternative implementations for these various elements that may be utilized in addition to, or in replacement of, the examples provided herein without departing from the spirit and scope of the present invention.

[0046] The present invention may be a system, a method, and/or a computer program product. The computer program product may include a computer readable storage medium (or media) having computer readable program instructions thereon for causing a processor to carry out aspects of the present invention.

[0047] The computer readable storage medium can be a tangible device that can retain and store instructions for use by an instruction execution device. The computer readable storage medium may be, for example, but is not limited to, an electronic storage device, a magnetic storage device, an optical storage device, an electromagnetic storage device, a semiconductor storage device, or any suitable combination of the foregoing. A non-exhaustive list of more specific examples of the computer readable storage medium includes the following: a portable computer diskette, a hard disk, a random access memory (RAM), a read-only memory (ROM), an erasable programmable read-only memory (EPROM or Flash memory), a static random access memory (SRAM), a portable compact disc read-only memory (CD-ROM), a digital versatile disk (DVD), a memory stick, a floppy disk, a mechanically encoded device such as punch-cards or raised structures in a groove having instructions recorded thereon, and any suitable combination of the foregoing. A computer readable storage medium, as used herein, is not to be construed as being transitory signals per se, such as radio waves or other freely propagating electromagnetic waves, electromagnetic waves propagating through a waveguide or other transmission media (e.g., light pulses passing through a fiber-optic cable), or electrical signals transmitted through a wire.

[0048] Computer readable program instructions described herein can be downloaded to respective computing/processing devices from a computer readable storage medium or to an external computer or external storage device via a network, for example, the Internet, a local area network, a wide area network and/or a wireless network. The network may comprise copper transmission cables, optical transmission fibers, wireless transmission, routers, firewalls, switches, gateway computers and/or edge servers. A network adapter card or network interface in each computing/processing device receives computer readable program instructions from the network and forwards the computer readable program instructions for storage in a computer readable storage medium within the respective computing/processing device.

[0049] Computer readable program instructions for carrying out operations of the present invention may be assembler instructions, instruction-set-architecture (ISA) instructions, machine instructions, machine dependent instructions, microcode, firmware instructions, state-setting data, or either source code or object code written in any combination of one or more programming languages, including an object oriented programming language such as Java, Smalltalk, C++ or the like, and conventional procedural programming languages, such as the "C" programming language or similar programming languages. The computer readable program instructions may execute entirely on the user's computer, partly on the user's computer, as a stand-alone software package, partly on the user's computer and partly on a remote computer or entirely on the remote computer or server. In the latter scenario, the remote computer may be connected to the user's computer through any type of network, including a local area network (LAN) or a wide area network (WAN), or the connection may be made to an external computer (for example, through the Internet using an Internet Service Provider). In some embodiments, electronic circuitry including, for example, programmable logic circuitry, field-programmable gate arrays (FPGA), or programmable logic arrays (PLA) may execute the computer readable program instructions by utilizing state information of the computer readable program instructions to personalize the electronic circuitry, in order to perform aspects of the present invention.

[0050] Aspects of the present invention are described herein with reference to flowchart illustrations and/or block diagrams of methods, apparatus (systems), and computer program products according to embodiments of the invention. It will be understood that each block of the flowchart illustrations and/or block diagrams, and combinations of blocks in the flowchart illustrations and/or block diagrams, can be implemented by computer readable program instructions.

[0051] These computer readable program instructions may be provided to a processor of a general purpose computer, special purpose computer, or other programmable data processing apparatus to produce a machine, such that the instructions, which execute via the processor of the computer or other programmable data processing apparatus, create means for implementing the functions/acts specified in the flowchart and/or block diagram block or blocks. These computer readable program instructions may also be stored in a computer readable storage medium that can direct a computer, a programmable data processing apparatus, and/or other devices to function in a particular manner, such that the computer readable storage medium having instructions stored therein comprises an article of manufacture including instructions which implement aspects of the function/act specified in the flowchart and/or block diagram block or blocks.

[0052] The computer readable program instructions may also be loaded onto a computer, other programmable data processing apparatus, or other device to cause a series of operational steps to be performed on the computer, other programmable apparatus or other device to produce a computer implemented process, such that the instructions which execute on the computer, other programmable apparatus, or other device implement the functions/acts specified in the flowchart and/or block diagram block or blocks.

[0053] The flowchart and block diagrams in the Figures illustrate the architecture, functionality, and operation of possible implementations of systems, methods, and computer program products according to various embodiments of the present invention. In this regard, each block in the flowchart or block diagrams may represent a module, segment, or portion of instructions, which comprises one or more executable instructions for implementing the specified logical function(s). In some alternative implementations, the functions noted in the block may occur out of the order noted in the figures. For example, two blocks shown in succession may, in fact, be executed substantially concurrently, or the blocks may sometimes be executed in the reverse order, depending upon the functionality involved. It will also be noted that each block of the block diagrams and/or flowchart illustration, and combinations of blocks in the block diagrams and/or flowchart illustration, can be implemented by special purpose hardware-based systems that perform the specified functions or acts or carry out combinations of special purpose hardware and computer instructions.

[0054] FIG. 1A is an example diagram illustrating the primary operational elements for a training phase of an advanced image recognition for threat disposition scoring (AIRTDS) computing system, and a workflow of these primary operational elements, in accordance with one illustrative embodiment. FIG. 1B is an example diagram illustrating the primary operational elements for runtime operation of an AIRTDS computing system, after training of the AIRTDS computing system, and a workflow of these primary operational elements, in accordance with one illustrative embodiment. Similar reference numbers in FIGS. 1A and 1B represent similar elements and workflow operations. FIGS. 1A and 1B assume an implementation that is directed to a security intelligence event monitoring (SIEM) based embodiment in which a SIEM system provides security alerts for classification as to whether they represent true threats or false positives (it can be appreciated that a SIEM system will not generate an alert for events that do not represent potential threats and thus, security alerts only represent either true threats or false positives). However, as noted above, it should be appreciated that the illustrative embodiments are not limited to operation with SIEM systems or security alerts and may be used with any mechanism in which event notifications/logged entries need to be classified into one of a plurality of classes, and which can be converted into an image representation in accordance with the mechanisms of the illustrative embodiments.

[0055] As shown in FIG. 1A, a SIEM computing system 110, which may be positioned in an end user computing environment 105, obtains security log information from managed computing resources 101 in the end user computing environment 105, e.g., servers, client computing devices, computing network devices, firewalls, database systems, software applications executing on computing devices, and the like. Security monitoring engines 103 may be provided in association with these managed computing resources, such as agents deployed and executing on endpoint computing devices, which collect security events and provide the security event data to the SIEM computing system 110 where it is logged in a security log data structure 112. In some illustrative embodiments, the security monitoring engine(s) 103 themselves may be considered an extension of the SIEM computing system 110 and may apply SIEM rules to perform analysis of the security events to identify event data indicative of suspicious activity that may be indicative of a security attack or vulnerability, triggering a security alert 120 or security log entry to be generated. Moreover, the security monitoring engine(s) may provide such security event log information to the SIEM system 110 for further evaluation. In other illustrative embodiments, the security event data gathered by the security monitoring engines may be provided to the SIEM system 110 for logging in a security log 112 and for generation of security alerts 120.

[0056] The security event data may specify various events associated with the particular managed computing resources 101 that represent events of interest to security evaluations, e.g., failed login attempts, password changes, network traffic patterns, system configuration changes, etc. SIEM rules are applied by the agents 103 and/or SIEM computing system 110, e.g., IBM® QRadar® Security Intelligence Platform, to the security log information to identify potential threats to computing resources and generate corresponding security alerts 120. The security alerts 120 may be logged in one or more security alert log entries or may be immediately output to a threat monitoring interface 130 as they occur.

[0057] The threat monitoring interface 130 is a user interface that may be utilized by a security analyst to view security alerts 120 and determine the veracity of the security alerts 120, i.e. determine whether the security alerts 120 represent an actual security threat or a false-positive generated by the SIEM rules applied by the SIEM computing system 110. The threat monitoring interface 130 receives security alerts 120 and displays the security alert attributes to a security analyst 131 via a graphical user interface so that the security analyst 131 is able to manually view the security alert attributes, investigate the basis of the security alert 132, and then label the security alert 120 as being representative of an actual threat 134 to computing resources 101 of the end user computing environment 105, or a false positive alert 136. The labeled security alert data is then stored in an entry in the security alert database 138, thereby generating a training dataset comprising security alert entries and corresponding labels as to the correct classification of the alert as to whether it represents a true threat or a false positive.

[0058] The training dataset, comprising the security alerts and their corresponding correct classifications, is exported 142 into an alert image encoder 144 of the AIRTDS system 140. The alert image encoder 144 extracts alert attributes from the security alert entries in the training dataset exported from the security alert database 138 and applies image encoding algorithms 143 to the corresponding extracted alert attributes to encode portions of an image representation of the security alert. Thus, for each security alert, and for each alert attribute of each security alert, a corresponding encoded section of an alert image representation is generated. Each alert attribute may have a different encoding performed by a different type of alert attribute image encoding algorithm that is specific to the particular alert attribute type. Examples of the alert attribute encodings for a variety of different alert attributes will be described hereafter with regard to example illustrative embodiments.
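The per-attribute dispatch performed by the alert image encoder can be sketched in Python as a table that maps each attribute type to a section and an encoding function. The two encoders below, `encode_ip` and `encode_text`, are hypothetical placeholders chosen only to make the dispatch concrete; they are not the encoding algorithms 143. The attribute keys, the section assignments, and the 5-row band layout are also assumptions.

```python
WIDTH, BAND = 90, 5
BLACK = (0, 0, 0)


def _band(row):
    """Repeat one pixel row to fill a 5-row section."""
    return [list(row) for _ in range(BAND)]


def encode_ip(value):
    """Placeholder: color from the first three octets, run length from the fourth."""
    a, b, c, d = (int(part) for part in value.split("."))
    length = d * WIDTH // 256
    return _band([(a, b, c)] * length + [BLACK] * (WIDTH - length))


def encode_text(value):
    """Placeholder: one gray pixel per character, brightness from its character code."""
    row = [(ord(ch) % 256,) * 3 for ch in value[:WIDTH]]
    return _band(row + [BLACK] * (WIDTH - len(row)))


# Hypothetical mapping: attribute name -> (section index, attribute-specific encoder).
ENCODERS = {
    "source_ip": (0, encode_ip),
    "destination_ip": (1, encode_ip),
    "event_name": (2, encode_text),
}


def encode_alert(alert):
    """Encode each known attribute of an alert into its own section of the image."""
    image = [[BLACK] * WIDTH for _ in range(WIDTH)]
    for name, (section, encoder) in ENCODERS.items():
        if name not in alert:
            continue  # a missing attribute leaves its section black
        for offset, row in enumerate(encoder(alert[name])):
            image[section * BAND + offset] = row
    return image
```

Keeping the mapping in one table means a new attribute type can be supported by adding an encoder and a section index without changing `encode_alert`.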

[0059] The resulting encoded alert image representations 146 are input to a cognitive predictive computer model 148 which is then trained based on the encoded alert image representations 146 and the corresponding correct labels, i.e. "true threat" or "false positive", associated with the corresponding security alert entry exported 142 to the alert image encoder 144. The cognitive predictive computer model 148 may be the alert/log entry classification machine learning mechanism previously described above which may employ a neural network model, such as a convolutional neural network (CNN) model, that is trained through a machine learning process using the training dataset comprising the alert image representations 146 and the corresponding ground truth of the correct labels generated by the security analyst 131 and stored in the security alert database 138.

[0060] As mentioned previously, this machine learning may be a supervised or unsupervised machine learning operation, such as by executing the CNN model on the alert image representation for security alerts to thereby perform image recognition operations on image features extracted from the alert image representation, generating a classification of the alert as to whether it represents a true threat or a false positive, and then comparing the result generated by the CNN model to the correct classification for the security alert. Based on a loss function associated with the CNN model, a loss, or error, is calculated and operational parameters of nodes or neurons of the CNN model are modified in an attempt to reduce the loss or error calculated by the loss function. This process may be repeated iteratively with the same or different alert image representations and security alert correct classifications. Once the loss or error is equal to or less than a predetermined threshold loss or error, the training of the CNN model is determined to have converged and the training process may be discontinued. The trained CNN model, i.e. the trained alert/log entry classification machine learning mechanism 148 may then be deployed for runtime execution on new security alerts to classify their corresponding alert image representations as to whether they represent true threats to computing resources or are false positives generated by the SIEM system 110.
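The iterative procedure described above (compute predictions, compute the loss, adjust parameters, and stop once the loss reaches a threshold) can be sketched in Python with NumPy. To keep the example short, a single-layer logistic model stands in for the CNN model; that substitution, the learning rate, the threshold value, and the names `bce` and `train` are assumptions made for illustration.

```python
import numpy as np


def bce(y_true, y_pred, eps=1e-12):
    """Binary cross-entropy averaged over the examples."""
    y_pred = np.clip(y_pred, eps, 1.0 - eps)
    return float(-np.mean(y_true * np.log(y_pred) + (1 - y_true) * np.log(1 - y_pred)))


def train(features, labels, threshold=0.1, lr=0.5, max_iters=10000):
    """Gradient descent that stops when the loss is at or below `threshold`."""
    weights = np.zeros(features.shape[1])
    bias = 0.0
    loss = float("inf")
    for _ in range(max_iters):
        preds = 1.0 / (1.0 + np.exp(-(features @ weights + bias)))
        loss = bce(labels, preds)
        if loss <= threshold:
            break  # training is treated as converged
        error = preds - labels  # gradient of the loss w.r.t. the pre-activation
        weights -= lr * features.T @ error / len(labels)
        bias -= lr * error.mean()
    return weights, bias, loss
```

With a real CNN, the same loop structure applies, but the parameter update is computed by backpropagation through all layers rather than by the closed-form gradient used here.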

[0061] FIG. 1B illustrates an operation of the trained alert/log entry classification machine learning mechanism 148 during runtime operation on new security alerts 150 generated by the SIEM system 110. It should be noted that in the runtime operation shown in FIG. 1B, the threat monitoring interface 130 is not necessary to the runtime operation as the threat monitoring interface 130 was utilized to allow a security analyst 131 to provide a correct label for the security alerts 120 for purposes of training. Since the training has been completed prior to the runtime operation of FIG. 1B, the new security alerts 150 may be input directly into the AIRTDS system 140. That is, similar to the operation of the SIEM system 110 during the training operation, the SIEM system 110 generates new security alerts 150 which, during runtime operation, are sent directly to the AIRTDS system 140 for encoding by the alert image encoder 144 as alert image representations 160. The alert image representations, as with the alert image representations generated during training, are data structures specifying image pixel characteristics for each pixel of a predefined size alert image and which encode alert attributes in predefined sections of the alert image representation. As with the training operation, the alert image encoder 144 applies the encoding algorithms 143 to the extracted alert attributes from the security alert 150 and generates the alert image representation 160 that is input to the trained predictive model 148.

[0062] The trained predictive model 148 operates on the alert image representation 160 to apply the trained image recognition operations of the nodes/neurons of the CNN to the input alert image representation data and generates a classification of the alert image representation 160 as to whether it represents a true threat to computing resources detected by the SIEM system 110, or is a false positive, i.e. an alert generated by the SIEM system 110, but which is not in fact representative of a true threat to computing resources. The output classification generated by the predictive model 148 may be provided to a security analyst, such as via the threat monitoring interface 130 in FIG. 1A, for further evaluation, logged in the security alert database 138, or otherwise made available for further use in determining the correct/incorrect operation of the SIEM system 110 and performing responsive actions to improve the operation of the SIEM system 110 and/or protect the computing resources of the end user computing environment 105.

[0063] It should be appreciated that there are many modifications that may be made to the example illustrative embodiments shown in FIGS. 1A and 1B without departing from the spirit and scope of the illustrative embodiments. For example, while FIGS. 1A and 1B illustrate the alert image encoder 144 as being part of the AIRTDS system 140 in a separate computing environment from the end user computing environment 105, the illustrative embodiments are not limited to such. Rather, in some illustrative embodiments, the alert image encoder 144 may be deployed in the end user computing environment 105 and may operate on security alerts 120, 150 generated by the SIEM system 110, within the end user computing environment 105, as represented by the dashed line alternative shown in FIGS. 1A and 1B. In such embodiments, rather than exporting the security alerts 120, 150 to the threat monitoring interface 130 or AIRTDS system 140, instead the encoded alert image representations may be exported. During training, the security analyst 131 may view the security alerts 120, 150 using a user interface that is accessible within the end user computing environment 105 and the exported alert image representations 120 may be output along with their corresponding labels as to whether they are true threats or false positives. The outputting of such alert image representations and corresponding correct labels may be performed as a training dataset that is exported directly to the AIRTDS system 140 for processing by the predictive model 148.

[0064] In addition, as another modification example, rather than training the predictive model 148 on both true threat and false positive alert image representations, the predictive model 148 may be trained using an unsupervised training operation on only false positive alert image representations so that the predictive model 148 learns how to recognize alert image representations that correspond to false positives. In this way, the predictive model 148 regards false positives as a "normal" output and any anomalous inputs, i.e. alert image representations that are not false positives, as true threats. Hence a single node output layer may be provided that indicates 1 (normal--false positive) or 0 (abnormal--true threat), for example.

[0065] Other modifications may also be made to the mechanisms and training/runtime operation of the AIRTDS system 140 without departing from the spirit and scope of the illustrative embodiments. For example, various activation functions, image encoding algorithms, loss functions, and the like may be employed depending on the desired implementation.

[0066] As noted above, the alert image encoder 144 applies a variety of different image encoding algorithms to the alert attributes extracted from the security alerts 120, 150 generated by the SIEM system 110. To illustrate examples of such image encoding algorithms, an example SIEM system security alert 120, 150 generated by an implementation of the SIEM system 110 as the IBM® QRadar® Security Intelligence Platform will now be described. FIG. 2A is an example diagram illustrating a data layout of extracted security alert attributes for a security alert that may be generated by the IBM® QRadar® Security Intelligence Platform in accordance with one illustrative embodiment. As shown in FIG. 2A, the alert attributes include a remedy customer ID, an industry identifier, a QRadar rule name/QRadar rule name count/isQRalert attribute, an alert score QSeverity/Magnitude attribute, Event Count/Rule Fire Count attribute, a Source (Src) Geo/Src Geo count attribute, a Destination (Dst) geo/Dst Geo Count attribute, Event Names/Event Names count attribute, Event Vendor attribute, Event Groups/Event Groups count attribute, various Source and Destination IP count attributes and traffic count attributes, an attack duration attribute, and an alert creation time attribute. These are only examples; other SIEM systems may generate other types of attributes that may be the basis of the operation of the illustrative embodiments with regard to alert image representation generation and image analysis to identify the veracity of an alert in accordance with one or more illustrative embodiments.

[0067] The alert image encoding algorithms 143 applied by the alert image encoder 144 are selected and configured so that each block of alert attribute data is visually easy to identify when represented in image form. That is, the alert image encoding algorithms 143 are specifically selected and configured such that they generate a visual pattern identifiable by the human eye as a pattern, even though the underlying meaning of the pattern is not necessarily human understandable. Moreover, these algorithms 143 are selected and configured such that the patterns may be recognized across multiple sections of the resulting alert image representation, which comprises sections, each section corresponding to a different alert attribute, or subset of attributes, encoded using a different alert image encoding algorithm 143 or set of algorithms. Moreover, the alert image encoding algorithms 143 are selected and configured to ensure scalability by modifying the sections (or grids) of the alert image representation attributed to the corresponding alert attribute(s), i.e. if there are more alert attributes to consider for a particular implementation, the dimensions of the sections or grids are decreased and an additional grid is added for the additional alert attributes.

[0068] Furthermore, in some illustrative embodiments, each alert attribute is afforded a fixed size section or grid in the alert image representation, which avoids certain alert attributes having priority over others. However, in some illustrative embodiments, the sizes of the sections or grids may be dynamically modified based on a determined relative priority of the corresponding alert attributes to the overall identification of a true threat from a false positive. For example, if it is determined that a particular attribute, e.g., source IP address, is more consistently representative of a true threat/false positive than other attributes, then the section (grid) of the alert image representation corresponding to the source IP address may have its dimensions modified to give it greater priority in the results generated by the image recognition processing performed by the trained predictive model 148.
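
As a non-limiting illustration of dynamically sized sections, the following Python sketch divides the rows of an alert image among alert attributes in proportion to priority weights. The proportional rule, the minimum row count, the function name `allocate_section_rows`, and the example weights are all assumptions made for illustration; the illustrative embodiments do not prescribe how the dimensions are computed.

```python
def allocate_section_rows(priorities, total_rows=90, min_rows=1):
    """Split total_rows among attributes in proportion to positive priority weights.

    priorities: dict mapping attribute name -> positive weight (hypothetical values).
    Every attribute receives at least min_rows rows; the result sums to total_rows.
    """
    names = list(priorities)
    if total_rows < min_rows * len(names):
        raise ValueError("not enough rows for the requested minimum")
    spare = total_rows - min_rows * len(names)
    total_weight = sum(priorities.values())
    shares = {n: spare * priorities[n] / total_weight for n in names}
    rows = {n: min_rows + int(shares[n]) for n in names}
    leftover = total_rows - sum(rows.values())
    # Hand out the rows lost to rounding, largest fractional share first.
    by_fraction = sorted(names, key=lambda n: shares[n] - int(shares[n]), reverse=True)
    for n in by_fraction[:leftover]:
        rows[n] += 1
    return rows


# Example: source IP is weighted twice as heavily as the other two attributes.
rows = allocate_section_rows(
    {"source_ip": 2.0, "industry": 1.0, "event_vendor": 1.0}, total_rows=90, min_rows=5
)
```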

[0069] With the alert image encoding performed by the alert image encoder 144, the alert image representation is configured to a predefined image size having sections, or grids, of a predetermined size. Each section or grid corresponds to a single alert attribute encoded as an image pattern, or a subset of alert attributes that are encoded as an image pattern present within that section or grid. In one illustrative embodiment, the alert image representation is a 90×90 pixel image having sections that are 90×5 pixel grids.
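
As a non-limiting illustration, a 90 by 90 pixel image divided into 90 by 5 pixel sections yields 90 / 5 = 18 sections. The following Python sketch lays these out as horizontal bands, each 90 pixels wide and 5 pixels tall. The band orientation, the representation of pixels as RGB tuples, and the helper names are assumptions made for illustration.

```python
IMAGE_WIDTH = 90
IMAGE_HEIGHT = 90
SECTION_HEIGHT = 5
SECTION_COUNT = IMAGE_HEIGHT // SECTION_HEIGHT  # 18 bands of 90 x 5 pixels

def section_rows(section_index):
    """Return the image row indices covered by the given section."""
    if not 0 <= section_index < SECTION_COUNT:
        raise IndexError("section index out of range")
    top = section_index * SECTION_HEIGHT
    return range(top, top + SECTION_HEIGHT)

def blank_image():
    """Create a 90 x 90 image of black RGB pixels as a list of rows."""
    return [[(0, 0, 0)] * IMAGE_WIDTH for _ in range(IMAGE_HEIGHT)]

def write_section(image, section_index, section_pixels):
    """Copy a 5-row by 90-column block of RGB tuples into its band of the image."""
    for offset, row in enumerate(section_rows(section_index)):
        image[row] = list(section_pixels[offset])
```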

[0070] As an example of one type of alert attribute image encoding that may be performed by a selected and configured alert image encoding algorithm 143, the remedy customer ID may have certain bits of the customer ID selected and used to set color characteristics of pixels in a corresponding section of the alert image representation with the location of these pixels being dependent upon the application of a bit mask to each character of the customer ID. For example, assume a remedy customer ID of "JPID001059." In one illustrative embodiment, the last 6 digits of the remedy customer ID, i.e. "001059," may be extracted and treated as a 24 bit value to set each of red, green, and blue (RGB) pixel color values. In this case, the value "001059" is treated as the 24 bit value "000000000000010000100011" and each of the R, G, and B values for the pixels are set to this 24 bit value. A bit mask is applied to each character's ASCII value to decide whether or not to place the colored pixel at a corresponding location in the corresponding section, or grid, of the alert image representation.
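
As a non-limiting illustration of the customer ID example, the following Python sketch converts the last 6 digits of "JPID001059" into the 24 bit value given above and applies a bit mask to each character's ASCII value. Two points are assumptions made for illustration and are not stated in the description: the 24 bits are split into three 8 bit channels to obtain R, G, and B, and a set bit in an 8 bit character code marks a pixel location, most significant bit first. The function names are hypothetical.

```python
def customer_id_color_bits(customer_id):
    """Treat the last 6 digits of the ID as a number and render it as 24 bits."""
    digits = customer_id[-6:]
    return format(int(digits), "024b")  # raises ValueError if not all digits

def split_rgb(bits24):
    """Assumption: the 24 bits are read as three consecutive 8 bit channels."""
    return tuple(int(bits24[i:i + 8], 2) for i in (0, 8, 16))

def mask_positions(text, bits_per_char=8):
    """Return the cell offsets at which a colored pixel would be placed.

    Each character contributes bits_per_char consecutive cells; a cell is
    selected when the matching bit of the character code is set.
    """
    positions = []
    for char_index, ch in enumerate(text):
        code = ord(ch)
        for bit in range(bits_per_char):
            if code & (1 << (bits_per_char - 1 - bit)):
                positions.append(char_index * bits_per_char + bit)
    return positions


bits = customer_id_color_bits("JPID001059")  # "000000000000010000100011"
color = split_rgb(bits)                      # (0, 4, 35) under the split assumption
cells = mask_positions("JPID001059")         # cell offsets within the section
```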

[0071] As a further example, for the industry identifier and/or event vendor identifiers, a predetermined set of characters of the string may be used to set an initial pixel value, with the remaining characters being used to provide a binary representation that identifies the location of where the colored pixels are to be placed within the section or grid, using again a bit mask mechanism. For example, the first 3 characters of a character string specifying the industry identifier or the event vendor may be used to identify the red, green, and blue pixel color characteristics, e.g., Red: Char1, Green: Char2, and Blue: Char3. The 4th character and thereafter may be used to determine the location of colored pixels having these R, G, and B color characteristics. Once a character has been mapped to the image within the corresponding section or grid, that character may replace any older RGB value, e.g., any of R, G, or B values, and then a next character of the character string, e.g., the 5th character and so on, may be processed.

[0072] FIG. 2B is an example diagram illustrating the image encoding of the industry identifier/event vendor in accordance with one illustrative embodiment. The example shown in FIG. 2B assumes an industry identifier/event vendor string of "a4vxb". In this example, the characters "a", "4", and "v" are mapped to the RGB channels of the pixel and the binary representation of character "x" (e.g., 0111100001) is mapped to the image. Once "x" has been mapped to the image, "x" is mapped to the R (Red) channel, i.e. the red pixel characteristic is reset to a new value based on the "x" character, and the map now proceeds to encoding the character "b". This process may be repeated for each subsequent character until the entirety of the industry identifier and/or event vendor string is encoded as an image pattern for the section of the alert image representation.
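
As a non-limiting illustration of the industry identifier/event vendor encoding, the following Python sketch processes the string "a4vxb". It rests on several assumptions that are not stated in the description: each character is reduced to an 8 bit code, set bits are written most significant bit first into consecutive cells of a 90 by 5 section, and after each character is mapped it replaces the R, G, and B channels in rotation, starting with R as in the example above. The function name `encode_identifier` is hypothetical.

```python
def encode_identifier(text, width=90, height=5):
    """Encode an identifier string as a height x width grid of RGB tuples."""
    if len(text) < 4:
        raise ValueError("need 3 color characters plus at least 1 location character")
    grid = [[(0, 0, 0)] * width for _ in range(height)]
    # The first three characters set the initial R, G, and B values.
    color = [ord(c) & 0xFF for c in text[:3]]
    channel = 0  # next channel to be replaced (assumed rotation R, G, B)
    cursor = 0   # next cell to consider, in row-major order
    for ch in text[3:]:
        code = ord(ch) & 0xFF
        for bit in range(8):
            if cursor >= width * height:
                break  # section is full
            if code & (0x80 >> bit):
                row, col = divmod(cursor, width)
                grid[row][col] = tuple(color)
            cursor += 1
        # After mapping, the character replaces one of the older channel values.
        color[channel] = code
        channel = (channel + 1) % 3
    return grid


section = encode_identifier("a4vxb")
```

Under these assumptions, the pixels placed for "x" carry the color (97, 52, 118) derived from "a", "4", and "v", while the pixels placed for "b" carry (120, 52, 118), because "x" has by then replaced the red value.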

[0073] As another encoding example, consider the QRadar Rule alert attribute information, which actually includes 3 attributes: QRadar Rule Name, QRadar Rule Name Count, and IsQRalert. In one illustrative embodiment of an image encoding algorithm operating on the QRadar Rule alert attribute information, the IsQRalert attribute is used to determine how much of the corresponding section or grid to fill with colored pixels. For example, if the IsQRalert attribute is set to a value of "true", then the full pixel grid or section is utilized. If the IsQRalert attribute is set to a value of "false," then only a sub-portion of the section or grid is utilized, e.g., a first half of the pixel grid is utilized.

[0074] The QRadar Rule Name Count attribute may be used to determine the pixel color of the pixels in the corresponding portion of the section or grid. For example, the QRadar Rule Name Count may be normalized and then values may be scaled up to be in the range 0-255, for example. The pixel color characteristics, i.e. the RGB values, are set based on the scaled count value, i.e. the value in the range from 0-255. For example, if the scaled count is 255, the RGB value may be set to (230, 0, 0), i.e. "red," otherwise the RGB characteristics are set to (count, count, count) where count is the scaled count value.
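
As a non-limiting illustration of the count based coloring, the following Python sketch normalizes a count, scales it to the range 0-255, and maps it to RGB values. The use of min-max normalization and the function names are assumptions made for illustration; the description states only that the count is normalized and scaled.

```python
def scale_count(count, min_count, max_count):
    """Min-max normalize a count (an assumed normalization) and scale it to 0-255."""
    if max_count == min_count:
        return 0
    normalized = (count - min_count) / (max_count - min_count)
    normalized = min(max(normalized, 0.0), 1.0)  # clamp out-of-range counts
    return round(normalized * 255)

def count_to_rgb(scaled):
    """A scaled count of 255 maps to (230, 0, 0); any other value maps to a gray level."""
    if scaled == 255:
        return (230, 0, 0)
    return (scaled, scaled, scaled)


count_to_rgb(scale_count(10, 0, 10))  # (230, 0, 0)
count_to_rgb(scale_count(2, 0, 10))   # (51, 51, 51)
```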

[0075] The QRadar Rule Name is used to identify the pixel location, within the portion of the section or grid (determined based on the value of the IsQRalert), where the pixel, having the pixel color characteristics determined based on the QRadar Rule Name Count attribute, is to be placed. In one illustrative embodiment a special character summation is utilized where each character added to the sum is multiplied by its index in the string. A bit mask is then applied to the summation to determine whether or not to place the colored pixel at the corresponding location.
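
As a non-limiting illustration of the rule name placement, the following Python sketch computes a summation in which each character code is multiplied by its index in the string, and then uses the bits of that summation as a mask over the usable portion of the section. The 1-based indexing, the cyclic reuse of the mask bits across cells, the reading of "first half" as the first half of the cells in row-major order, and the function names are all assumptions made for illustration.

```python
def weighted_char_sum(name):
    """Sum of character codes, each multiplied by its (assumed 1-based) index."""
    return sum(index * ord(ch) for index, ch in enumerate(name, start=1))

def rule_pixel_positions(rule_name, is_qr_alert, width=90, height=5):
    """Return the cell offsets that receive a colored pixel.

    The full grid is usable when is_qr_alert is true; otherwise only the
    first half of the cells is usable.
    """
    total_cells = width * height
    usable = total_cells if is_qr_alert else total_cells // 2
    mask_bits = format(weighted_char_sum(rule_name), "b")
    # Walk the usable cells and reuse the mask bits cyclically (an assumption).
    return [cell for cell in range(usable)
            if mask_bits[cell % len(mask_bits)] == "1"]


# A cell offset converts to a (row, column) pair with divmod(cell, 90).
cells = rule_pixel_positions("ab", is_qr_alert=False)
```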

[0076] In another example, for source/destination geographical identifier and/or source/destination geographical count, the count may be used again as a basis for setting the pixel color by normalizing the source/destination geographical count and then scaling the values up to be in the 0-255 range. The resulting scaled count values may be used to set the RGB color characteristics for the pixel, again with a count of 255 being set to (230, 0, 0) while other counts have the RGB values set to (count, count, count). The source/destination geographical identifier may be used to determine the location of the pixels within the corresponding section or grid by applying a bit mask to each character in the source/destination geographical identifier string to determine whether or not to place the colored pixel at the corresponding location.