Efficient Architectures For Deep Learning Algorithms

Chilappagari; Shashi Kiran ; et al.

U.S. patent application number 16/397401 was filed with the patent office on 2020-10-29 for efficient architectures for deep learning algorithms. The applicant listed for this patent is DeGirum Corporation. Invention is credited to Shashi Kiran Chilappagari, Winston Lee.

| Application Number | 20200341772 16/397401 |

| Document ID | / |

| Family ID | 1000004082167 |

| Filed Date | 2020-10-29 |

View All Diagrams

| United States Patent Application | 20200341772 |

| Kind Code | A1 |

| Chilappagari; Shashi Kiran ; et al. | October 29, 2020 |

Efficient Architectures For Deep Learning Algorithms

Abstract

A computer system including a plurality of SIMD engines and a corresponding plurality of output register sets. Operand A register file stores one or more Operand A values, each including a plurality of operand words. Operand B register file stores one or more Operand B values, each including a plurality of operand words. Operand A distribution circuit receives an Operand A value from the Operand A register file, and selectively routes one or more of the operand words of the received Operand A value to create a plurality of input Operand A values, which are selectively routed to the SIMD engines. Operand B distribution circuit receives one or more Operand B values from the Operand B register file, and selectively routes one or more of the operand words of the Operand B value(s) to create a plurality of input Operand B values, which are selectively routed to the SIMD engines.

| Inventors: | Chilappagari; Shashi Kiran; (San Jose, CA) ; Lee; Winston; (Palo Alto, CA) | ||||||||||

| Applicant: |

|

||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|

| Family ID: | 1000004082167 | ||||||||||

| Appl. No.: | 16/397401 | ||||||||||

| Filed: | April 29, 2019 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 9/3824 20130101; G06F 9/30109 20130101; G06F 9/30141 20130101; G06F 9/3887 20130101 |

| International Class: | G06F 9/38 20060101 G06F009/38; G06F 17/16 20060101 G06F017/16; G06F 9/30 20060101 G06F009/30; G06N 20/00 20060101 G06N020/00 |

Claims

1. A computer system comprising: a plurality of single instruction, multiple data (SIMD) engines; a plurality of output register sets, each coupled to a corresponding one of the plurality of SIMD engines; a first operand register file that stores a first plurality of operand values, wherein each of the first plurality of operand values includes a plurality of operand words; a second operand register file that stores a second plurality of operand values, wherein each of the second plurality of operand values includes a plurality of operand words; an first input distribution circuit coupled to receive a first operand value from the first operand register file, wherein the first input distribution circuit selectively routes one or more of the operand words of the first operand value to create a plurality of first input operand values, wherein each of the first input operand values is routed to a corresponding one of the plurality of SIMD engines; and a second input distribution circuit coupled to receive one or more second operand values from the second operand register file, wherein the second input distribution circuit selectively routes one or more of the operand words of the one or more second operand values to create a plurality of second input operand values, wherein each of the second input operand values is routed to a corresponding one of the plurality of SIMD engines.

2. The computer system of claim 1, wherein the first input distribution circuit selectively routes the first operand value as the first input operand value to each of the plurality of SIMD engines.

3. The computer system of claim 1, wherein the first input distribution circuit selectively routes a single one of the operand words of the first operand value to create all of the first input operand values.

4. The computer system of claim 1, wherein the first input distribution circuit selectively routes the operand words of the first operand value such that each of the first input operand values comprises a repeated operand word of the first operand value.

5. The computer system of claim 1, wherein the second input distribution circuit selectively routes a different second operand value to each of the plurality of SIMD engines.

6. The computer system of claim 1, wherein the second input distribution circuit selectively routes a different second operand value to at least two of the plurality of SIMD engines.

7. The computer system of claim 1, wherein the second input distribution circuit selectively routes the same second operand value to each of the plurality of SIMD engines.

8. The computer system of claim 1, wherein the second input distribution circuit includes a first plurality of second operand buffers, each of the first plurality of second operand buffers configured to store one of the second operand values from the second operand register file.

9. The computer system of claim 8, wherein the second operand register file comprises a plurality of register files, each coupled to a corresponding one of the second operand buffers.

10. The computer system of claim 8, wherein the second input distribution circuit comprises means for coupling the second operand buffers to the plurality of SIMD engines in parallel.

11. The computer system of claim 8, wherein the second input distribution circuit further includes a second plurality of second operand buffers, each of the second plurality of second operand buffers configured to store one of the second operand values from the second operand register file.

12. The computer system of claim 1, wherein the second operand register file comprises a plurality of register files, wherein the plurality of register files are coupled to the second input distribution circuit in parallel.

13. The computer system of claim 12, wherein the second input distribution circuit comprises a shift logic circuit coupled to receive a plurality of the second operand values from the plurality of register files of the second operand register file, wherein the shift logic circuit is configured to shift the operand words of the plurality of the second operand values to create a plurality of input second operand values.

14. The computer system of claim 13, further comprising a plurality of second operand buffers, coupled to receive the plurality of input second operand values from the shift logic circuit.

15. The computer system of claim 14, further comprising switching circuitry coupling the plurality of second operand buffers to the plurality of SIMD engines. (FIG. 19)

16. The computer system of claim 1, further comprising: an output circuit including a plurality of output register sets, wherein each of the output register sets is coupled to a corresponding one of the SIMD engines.

17. The computer system of claim 16, wherein each of the output register sets is configured to provide an accumulation value to the corresponding SIMD engine and store an accumulation value provided by the corresponding SIMD engine.

18. The computer system of claim 16, wherein each of the output register sets is independently addressed.

19. The computer system of claim 1, wherein each of the SIMD engines is configured to multiply the operand words of the first input operand value received from the first input distribution circuit with the operand words of the second input operand value received from the second input distribution circuit, whereby each of the SIMD engines generates a corresponding plurality of product values.

20. The computer system of claim 19, further comprising an output circuit including a plurality of output register sets, wherein each of the output register sets is coupled to a corresponding one of the SIMD engines.

21. The computer system of claim 20, wherein each of the output register sets is configured is configured to provide a corresponding plurality of accumulation values to the corresponding one of the SIMD engines, wherein each of the SIMD engines is configured to add the received accumulation values to the corresponding generated product values, whereby each of the SIMD engines generates a corresponding plurality of updated accumulation values.

22. The computer system of claim 21, wherein each of the output register sets is configured to receive and store the corresponding plurality of updated accumulation values from the corresponding one of the SIMD engines.

23. A method of performing matrix multiplication of a first matrix and a second matrix using a computer system including a plurality of single instruction multiple data (SIMD) engines and a plurality of corresponding output registers, the method comprising: identifying a plurality of non-zero entries included in the first matrix, wherein each of the non-zero entries has a corresponding column address and a corresponding row address within the first matrix; for each non-zero entry of the identified non-zero entries, identifying one of the SIMD engines and a corresponding one of the output registers to process the non-zero entry in response to the corresponding row address of the non-zero entry; sorting the non-zero entries based on the identified SIMD engines and corresponding output registers, thereby creating a plurality of first operand values, wherein each of the first operand values includes a plurality of the non-zero entries, each having a different identified SIMD engine and corresponding output register; and routing the first operand values to the SIMD engines to perform multiply operations, wherein the routing causes each of the non-zero entries included in the first operand values to be provided to the identified SIMD engines.

24. The method of claim 23, further comprising: for each non-zero entry of the identified non-zero entries, identifying a row of entries within the second matrix in response to the corresponding column address of the non-zero entry; and routing the identified rows of entries to the SIMD engines to perform multiply operations, wherein the each of the SIMD engines multiples a non-zero entry with its identified row of entries.

25. The method of claim 24, further comprising: for each non-zero entry of the identified non-zero entries, identifying a row within the corresponding one of the output registers in response to the corresponding row address of the non-zero entry; and performing accumulate operations by accessing the identified rows of the output registers.

26. The method of claim 23, wherein each row of the first matrix represents a weight vector in a machine learning system, and each column of the second matrix represents an activation vector in the machine learning system.

Description

FIELD OF THE INVENTION

[0001] Multiple energy efficient architectures are provided that use single instruction multiple data (SIMD) engines to perform computations such as matrix multiplication and convolution. These operations are at the heart of various machine learning algorithms that are currently being implemented in various applications such as computer vision, machine translation and automatic speech recognition.

BACKGROUND

[0002] Conventional dot products and matrix multiplication are described in detail below.

[0003] The dot product of two vectors can be defined as follows. Let p=[p1 p2 . . . pn] and q=[q1 q2 . . . qn] be two vectors of length n. The dot product of p and q, denoted by Pq is defined as:

p q = ? ? pi * qi ? indicates text missing or illegible when filed ( 1 ) ##EQU00001##

Computing the dot product of two vectors of length n requires n multiplications and (n-1) additions.

[0004] The multiplication of two vectors can be defined as follows. Let A be an (m.times.n) matrix (i.e., A is a matrix with m rows (horizontal) and n columns (vertical)). Let a.sub.i,j denote an element in the i.sup.th row and j.sup.th column of matrix A. Let B be an (n.times.k) matrix. Matrices A and B are represented below.

A = [ a 1 , 1 a 1 , 2 a 1 , 3 a 1 , n a 2 , 1 a 2 , 2 a 2 , 3 a 2 , n a m , 1 a m , 2 a m , 3 a m , n ] B = [ b 1 , 1 b 1 , 2 b 1 , 3 b 1 , k b 2 , 1 b 2 , 2 b 2 , 3 b 2 , k b n , 1 b n , 2 b n , 3 b n , k ] ( 2 ) ##EQU00002##

[0005] The matrices A and B can be multiplied only if their dimensions are compatible (i.e., if the number of columns in A is equal to the number of rows in B). The product C of matrices A and B is defined below.

c i , j = r = 1 ? ? .A-inverted. i .di-elect cons. { 1 , 2 , , m } and .A-inverted. j .di-elect cons. { 1 , 2 , , k } ? indicates text missing or illegible when filed ( 3 ) ##EQU00003##

[0006] From equation (3), it can be seen that the matrix C has m rows and k columns. It should also be noted that it is possible that A.times.B exists, but B.times.A does not exist.

[0007] One way to think about matrix multiplication is as follows. The element in the i.sup.th row and j.sup.th column in matrix C is the dot product of the i.sup.th row in matrix A and the j.sup.th column in matrix B. If the i.sup.th row in matrix A is denoted by a.sub.i,: and the j.sup.th column in matrix B is denoted by b.sub.:,j, then

c.sub.i,j=a.sub.i,:b.sub.:,j (4)

[0008] Hence, the multiplication of two matrices of dimensions (m.times.n) and (n.times.k) consists of computing (m.times.k) dot products of n length vectors.

[0009] As noted above, computing the dot product of two vectors of length n requires n multiplications and (n-1) additions. The dot product computation is generally implemented as a series of multiply-accumulate operations. The multiply-accumulate operation computes the product of two numbers and adds that product to an accumulator. This can be represented as:

c.rarw.c+a*b (5)

[0010] where a and b are the two numbers that are multiplied and c is the accumulator. The hardware unit that performs the multiply-accumulate operation is known as a multiplier-accumulator (MAC) unit. In this document, we also refer to the multiply-accumulate operation as MAC. FIG. 1 is a block diagram of a simple MAC unit 100 that includes input operand registers 101 and 102, which store operands a and b, respectively, multiply circuit 103, addition circuit 104 and accumulator register 105, which stores the accumulator value c.

[0011] As described above, matrix multiplication involves computing multiple dot products. Hence, matrix multiplication can be parallelized by employing multiple MACs. FIG. 2 is a block diagram of a system 200 that includes multiple parallel MACs 201-204 for performing multiple dot products in parallel. MACs 201, 202, 203, and 204 include operand registers 211-212, 213-214, 215-216 and 217-218, respectively, multiplier circuits 221, 222, 223 and 224, respectively, addition circuits 231, 232, 233 and 234, respectively, and accumulators 241, 242, 243 and 244, respectively.

[0012] System 200 includes four parallel MACs in which MAC 201 computes the dot product of a.sub.1,: with b.sub.:,1, MAC 202 computes the dot product of a.sub.2,: with b.sub.:,1, MAC 203 computes the dot product of a.sub.3,: with b.sub.:,1 and MAC 204 computes the dot product of a.sub.4,: with b.sub.:,1. It should be noted that while the four MACs 201-204 use different rows of matrix A, they all use the same column of matrix B. Supplying the MACs 201-204 with the input data is a challenge that needs to be solved. It would therefore be desirable to have efficient ways to supply computation units such as MACs 201-204 with the required data.

[0013] It is noted that all the MAC units 201-204 of FIG. 2 are performing the same operations, but with different inputs. Hence, instead of providing separate instructions to each of the MACs 201-204, it is possible to group all the MACs 201-204 together to form a single instruction multiple data (SIMD) engine that operates in response to a common instruction.

[0014] FIG. 3 is a block diagram of a SIMD engine 300 that groups operand registers 211, 213, 215 and 217 of MACs 201-204 to form a first operand register 301, and groups operand registers 212, 214, 216 and 218 of MACs 201-204 to form a second operand register 302. The multiplier circuits 221-224 of MACs 201-204 are combined to form multiplier 321, and the addition circuit 231-234 of MACs 201-204 are combined to form addition circuit 331. The accumulators 241-244 of MACs 201-204 are combined to form an accumulator 341. In this manner, the various elements of parallel MACs 201-204 are combined to form SIMD engine 300. It is important to note that the scalar inputs for the different MACs 201-204 are be combined to form vector inputs in the SIMD engine 300. In addition, the output of the accumulator 341 of the SIMD engine 300 will also be a vector.

[0015] It is noted that SIMD engines can accomplish much more than a simple multiply-accumulate operation using vector inputs. Generally, a SIMD engine is designed as a block with an instruction set that allows it to carry out different operations. A few of the operations that a SIMD engine can be built to handle include (but not limited to): vector addition, vector multiplication, dot product of two vectors and vector comparison (min and max).

[0016] Another important feature of a SIMD engine is the ability to handle different levels of precision. For example, a SIMD engine with input vector width of 128 bits can interpret the input vector as: 1 scalar of 128 bits, 2 scalars each of 64 bits, 4 scalars each of 32 bits, 8 scalars each of 16 bits, or 16 scalars each of 8 bits. Thus, the SIMD engine can operate in different `modes` such as 8-bit mode, 16-bit mode or 32-bit mode.

[0017] While SIMD engines are efficient in processing vectors and are capable of executing a variety of instructions, they require significant control logic and local memory. It can be seen that in order to get the maximum number of operations per unit silicon area, the number of SIMD engines needs to be maximized. One way to achieve this is to have a design in which multiple SIMD engines can share control logic and memory resources. However, this imposes restrictions on the type of operations that can be performed by the SIMD engines and may require additional logic to drive the SIMD engines. It would therefore be desirable to have improved computer architectures that include SIMD engines.

SUMMARY

[0018] Accordingly, the present invention provides an improved computer architecture that includes a plurality of single instruction, multiple data (SIMD) engines that operate in parallel. An Operand A register file stores a first set of one or more operand values (Operand A values), wherein each of the Operand A values includes a plurality of operand words. An Operand B register file stores a second plurality of one or more operand values (Operand B values), wherein each of the Operand B values includes a plurality of operand words. In a particular embodiment, each of the Operand A and Operand B values includes four 32-bit operand words. In another embodiment, each of the Operand A and Operand B values includes eight 16-bit operand words.

[0019] An input distribution block that includes an Operand A distribution circuit and an Operand B distribution circuit.

[0020] The Operand A distribution circuit is coupled to receive an Operand A value from the Operand A register file. The Operand A distribution circuit selectively routes one or more of the operand words of the received Operand A value to create a plurality of input Operand A values, wherein each of the input Operand A values is routed to a corresponding one of the plurality of SIMD engines.

[0021] In one mode, the Operand A distribution circuit is controlled to route the received Operand A value to each of the SIMD engines in parallel. For example, if the received Operand A value includes four operand words [w, x, y, z], and there are four parallel SIMD engines, then each of the SIMD engines would receive the four operand words [w, x, y, z].

[0022] In another mode, the Operand A distribution circuit is controlled to route a single one of the operand words of the received Operand A value to each of the SIMD engines in parallel. In this mode, the selected single one of the operand words is repeated multiple to create each of the input Operand A values. For example, if the received Operand A value includes four operand words [w, x, y, z], operand word [w] is selected, and there are four parallel SIMD engines, then each of the SIMD engines would receive an input Operand A value that includes four operand words [w, w, w, w].

[0023] In yet another mode, the Operand A distribution circuit is controlled to route a different operand word of the received Operand A value to each of the SIMD engines in parallel. In this mode, each different operand word is repeated multiple times to create each of the input Operand A values. For example, if the received Operand A value includes four operand words [w, x, y, z] and there are four parallel SIMD engines, then one of the four SIMD engines would receive input Operand A value [w, w, w, w], one of the four SIMD engines would receive input Operand A value [x, x, x, x], one of the four SIMD engines would receive input Operand A value [y, y, y, y], and one of the four SIMD engines would receive input Operand A value [z, z, z, z].

[0024] The Operand B distribution circuit is coupled to receive one or more Operand B values from the Operand B register file. The Operand B distribution circuit selectively routes one or more of the operand words from one or more of the received Operand B values to create a plurality of input Operand B values, wherein each of the input Operand B values is routed to a corresponding one of the plurality of SIMD engines.

[0025] In one mode, the Operand B distribution circuit is controlled to route a received Operand B value to each of the SIMD engines in parallel. For example, if the received Operand B value includes four operand words [a, b, c, d], and there are four parallel SIMD engines, then each of the SIMD engines would receive the four operand words [a, b, c, d].

[0026] In another mode, the Operand B distribution circuit includes a plurality of buffers to store a plurality of Operand B values. Operand B select logic is used to select which of the SIMD engines receive which of the buffered Operand B values. For example, if the buffered Operand B values include [a, b, c, d], [e, f, g, h], [i, j, k, l] and [m, n, o, p], and there are four parallel SIMD engines, then then one of the four SIMD engines could receive input Operand B value [a, b, c, d], one of the four SIMD engines could receive input Operand B value [e, f, g, h], one of the four SIMD engines could receive input Operand B value [i, j, k, l], and one of the four SIMD engines could receive input Operand B value and [m, n, o, p]. Alternately, two of the four SIMD engines could receive input Operand B value [a, b, c, d], one of the four SIMD engines could receive input Operand B value [e, f, g, h], and one of the four SIMD engines could receive input Operand B value [i, j, k, l].

[0027] In various embodiments, the Operand B register file can include a single register file (such that the plurality of Operand B values are loaded into the Operand B buffers in a serial manner), or a plurality of register files (such that the plurality of Operand B values are loaded into the Operand B buffers in parallel). If Operand B register file is implemented using a plurality of register files, then the Operand B buffers can be implemented using a double buffer configuration, wherein Operand B values are transferred from the Operand B register file to the Operand B distribution circuit at the same time that Operand B value are transferred from the Operand B distribution circuit to the SIMD engines.

[0028] In yet another mode, the Operand B distribution circuit receives a plurality of Operand B values in parallel from the Operand B register file. These received Operand B values are provided to a shift logic circuit within the Operand B distribution circuit. Control logic specifies an amount of shift (in operand words) that the shift logic circuit introduces to the received Operand B values. The shifted Operand B values are buffered within the Operand B distribution circuit, and are then routed to the SIMD engines in parallel.

[0029] The improved computer architecture also includes a plurality of output register sets, each coupled to a corresponding one of the plurality of SIMD engines. Data (e.g., dot product values) are selectively transferred between the output register sets and the corresponding SIMD engines, enabling operations such as multiply-accumulate operations. In one embodiment, each of the plurality of output register sets is independently addressed, providing flexibility to the operations performed.

[0030] In particular embodiments, the computer architecture of the present invention enables efficient sparse matrix multiplication.

[0031] The present invention will be more fully understood in view of the following description and drawings.

BRIEF DESCRIPTION OF THE DRAWINGS

[0032] FIG. 1 is a block diagram of a conventional multiplier-accumulator (MAC) unit.

[0033] FIG. 2 is a block diagram of a conventional system that includes multiple parallel MAC units for calculating multiple dot products in parallel.

[0034] FIG. 3 is a block diagram of a conventional single instruction multiple data (SIMD) engine that is created by grouping various elements of the multiple parallel MAC units of FIG. 2.

[0035] FIG. 4 is a block diagram of a computer system in accordance with one embodiment of the present invention.

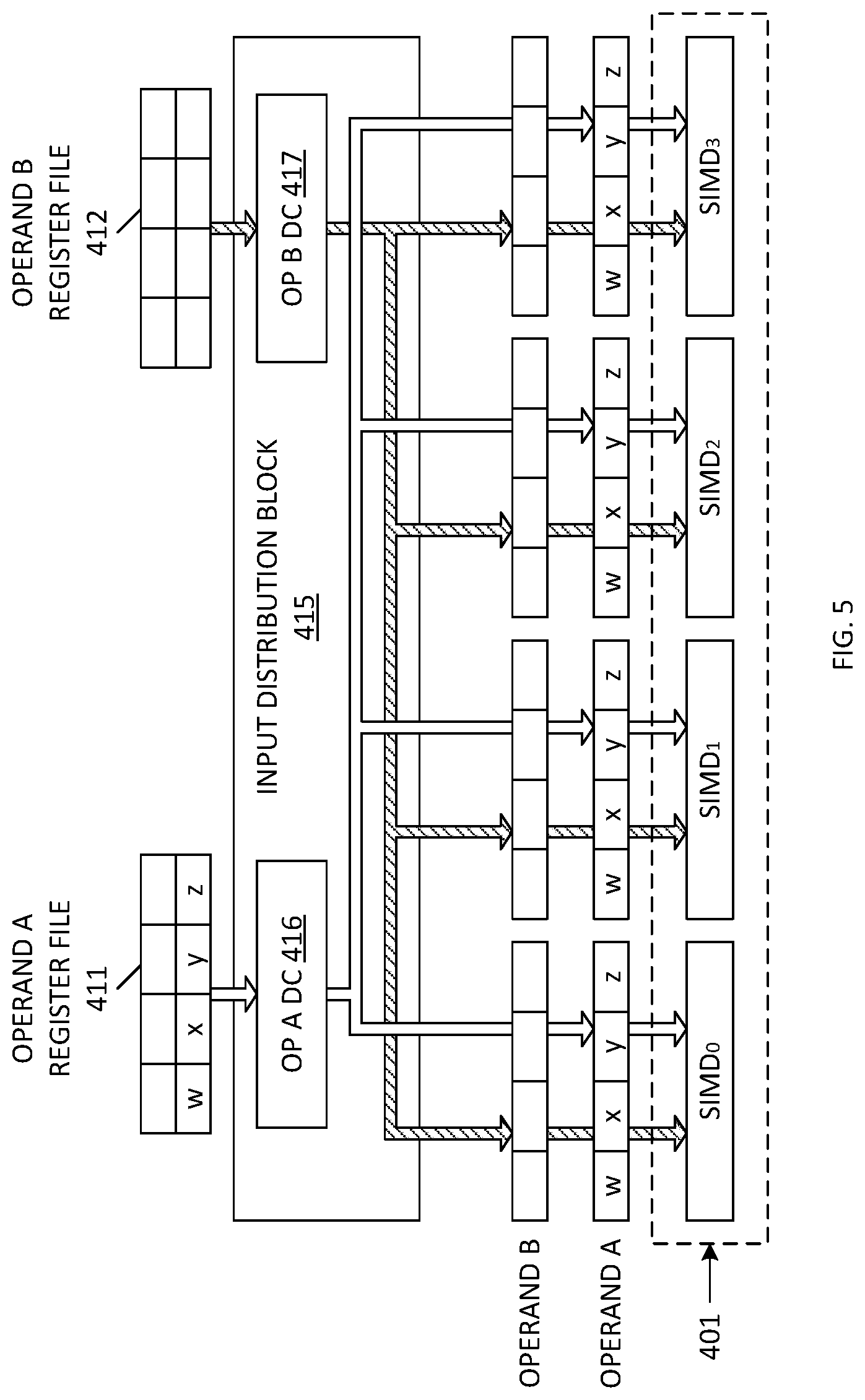

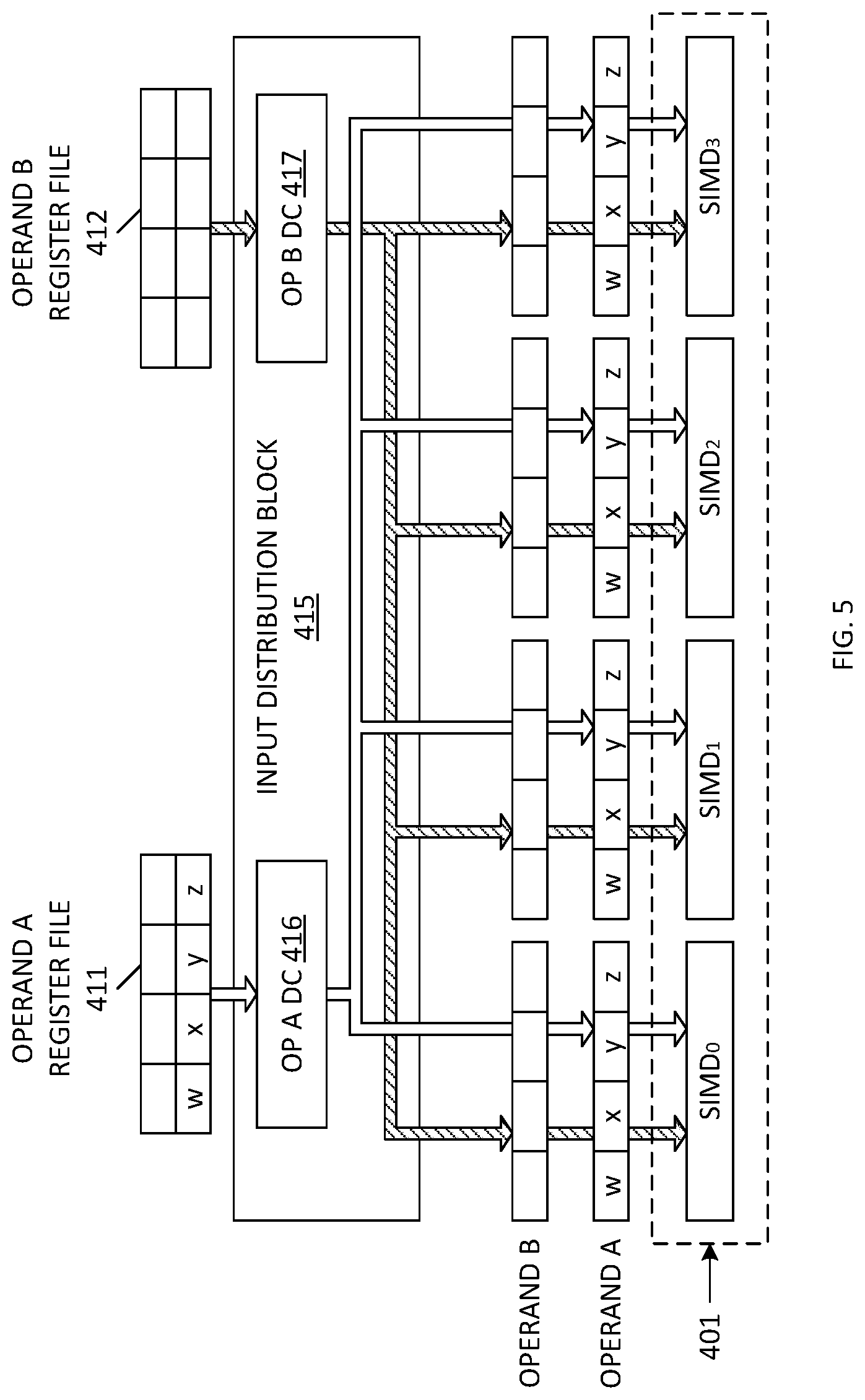

[0036] FIG. 5 is a block diagram illustrating an architecture (Architecture 1A) for routing a first operand value (Operand A) having four 32-bit operand words from an Operand A register file to a plurality of SIMD engines in accordance with one embodiment of the present invention.

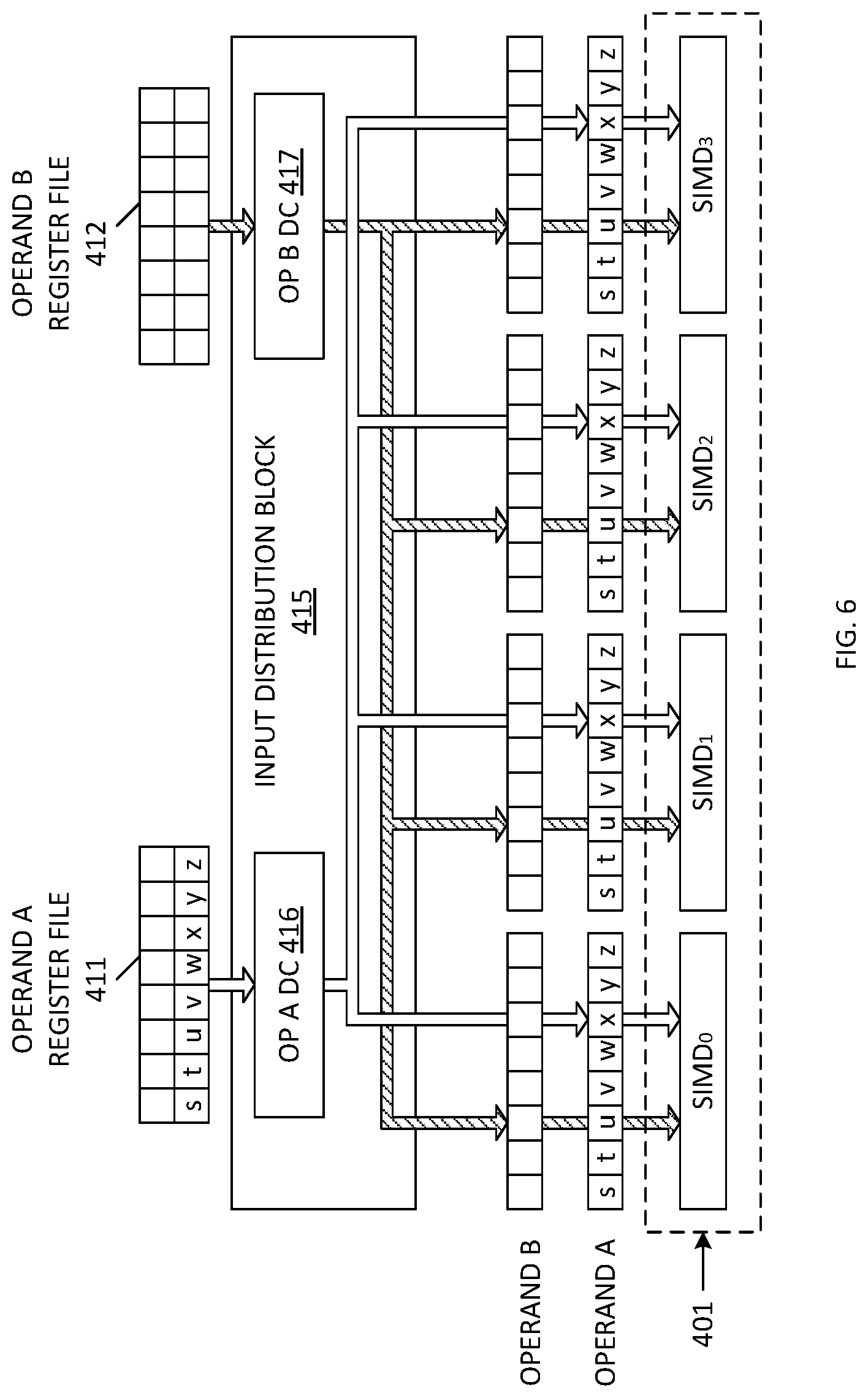

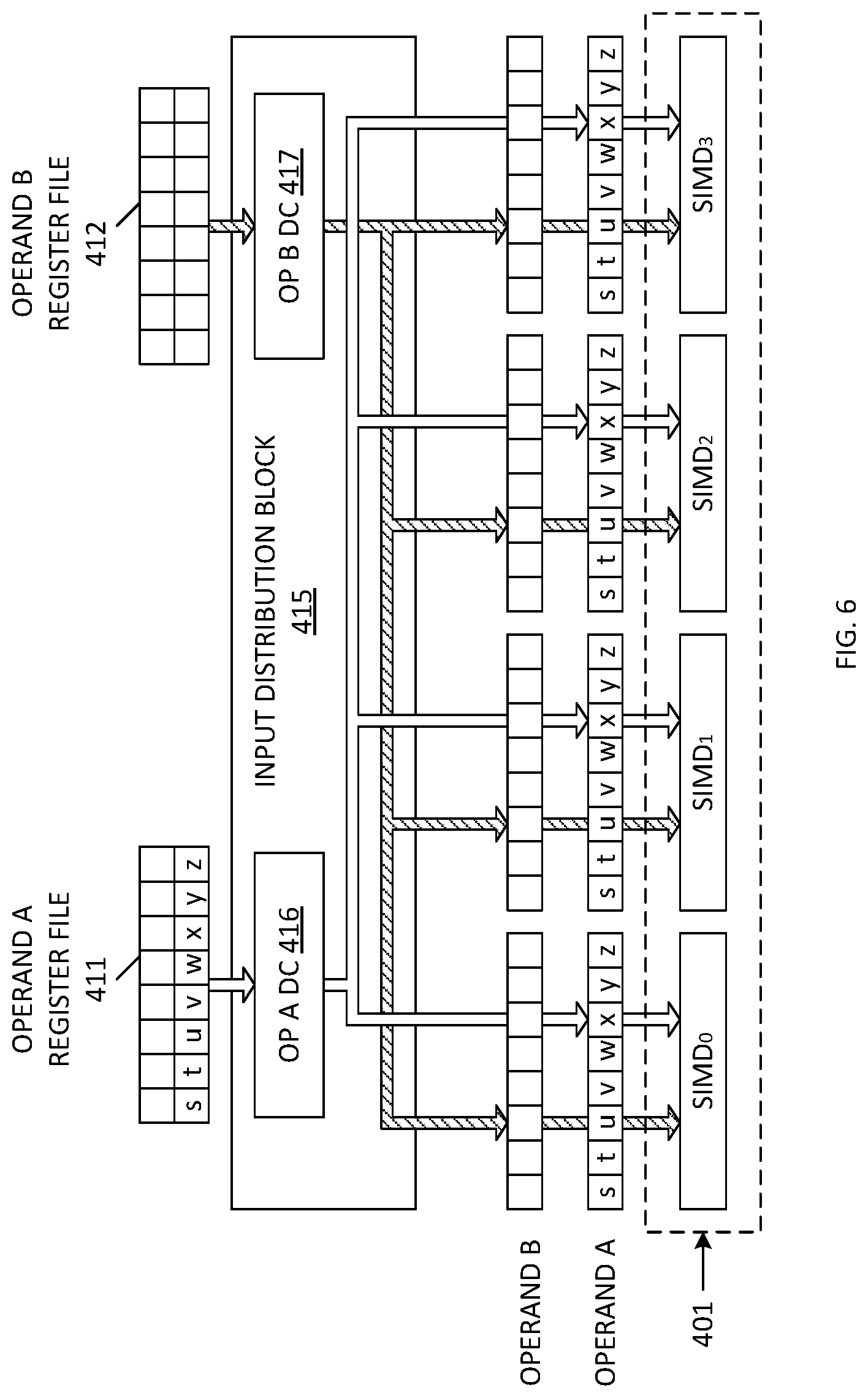

[0037] FIG. 6 is a block diagram illustrating an architecture (Architecture 1A) for routing a first operand value (Operand A) having eight 16-bit operand words from an Operand A register file to a plurality of SIMD engines in accordance with one embodiment of the present invention.

[0038] FIG. 7 and FIG. 8 are block diagrams illustrating an architecture (Architecture 2A), for routing a first operand value (Operand A) having four 32-bit operand words from an Operand A register file to a plurality of SIMD engines in accordance with one embodiment of the present invention.

[0039] FIG. 9 and FIG. 10 are block diagrams illustrating an architecture (Architecture 2A), for routing a first operand value (Operand A) having eight 16-bit operand words from an Operand A register file to a plurality of SIMD engines in accordance with one embodiment of the present invention.

[0040] FIG. 11 is a block diagram illustrating an architecture (Architecture 3A) for routing a first operand value (Operand A) having four 32-bit operand words from an Operand A register file to a plurality of SIMD engines in accordance with one embodiment of the present invention.

[0041] FIG. 12 and FIG. 13 are block diagrams illustrating an architecture (Architecture 3A) for routing a first operand value (Operand A) having eight 16-bit operand words from an Operand A register file to a plurality of SIMD engines in accordance with one embodiment of the present invention.

[0042] FIG. 14 is a block diagram illustrating an architecture (Architecture 1B) for routing a second operand value (Operand B) having four 32-bit operand words from an Operand B register file to a plurality of SIMD engines in accordance with one embodiment of the present invention.

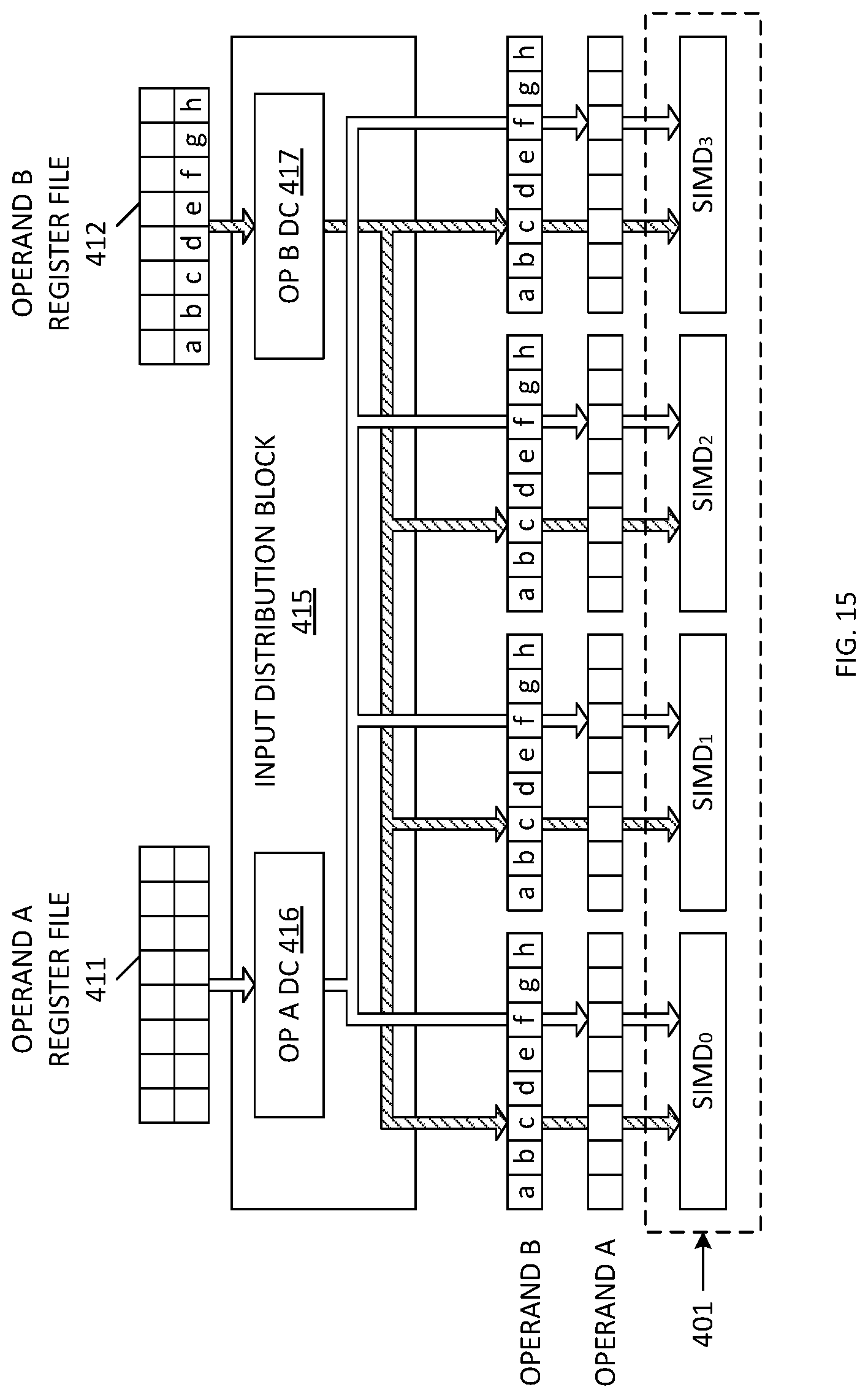

[0043] FIG. 15 is a block diagram illustrating an architecture (Architecture 1B) for routing a second operand value (Operand B) having eight 16-bit operand words from an Operand B register file to a plurality of SIMD engines in accordance with one embodiment of the present invention.

[0044] FIG. 16 is a block diagram illustrating an architecture (Architecture 2B) for routing a second operand value (Operand B) having four 32-bit operand words from an Operand B register file and a plurality of Operand B buffers to a plurality of SIMD engines in accordance with one embodiment of the present invention.

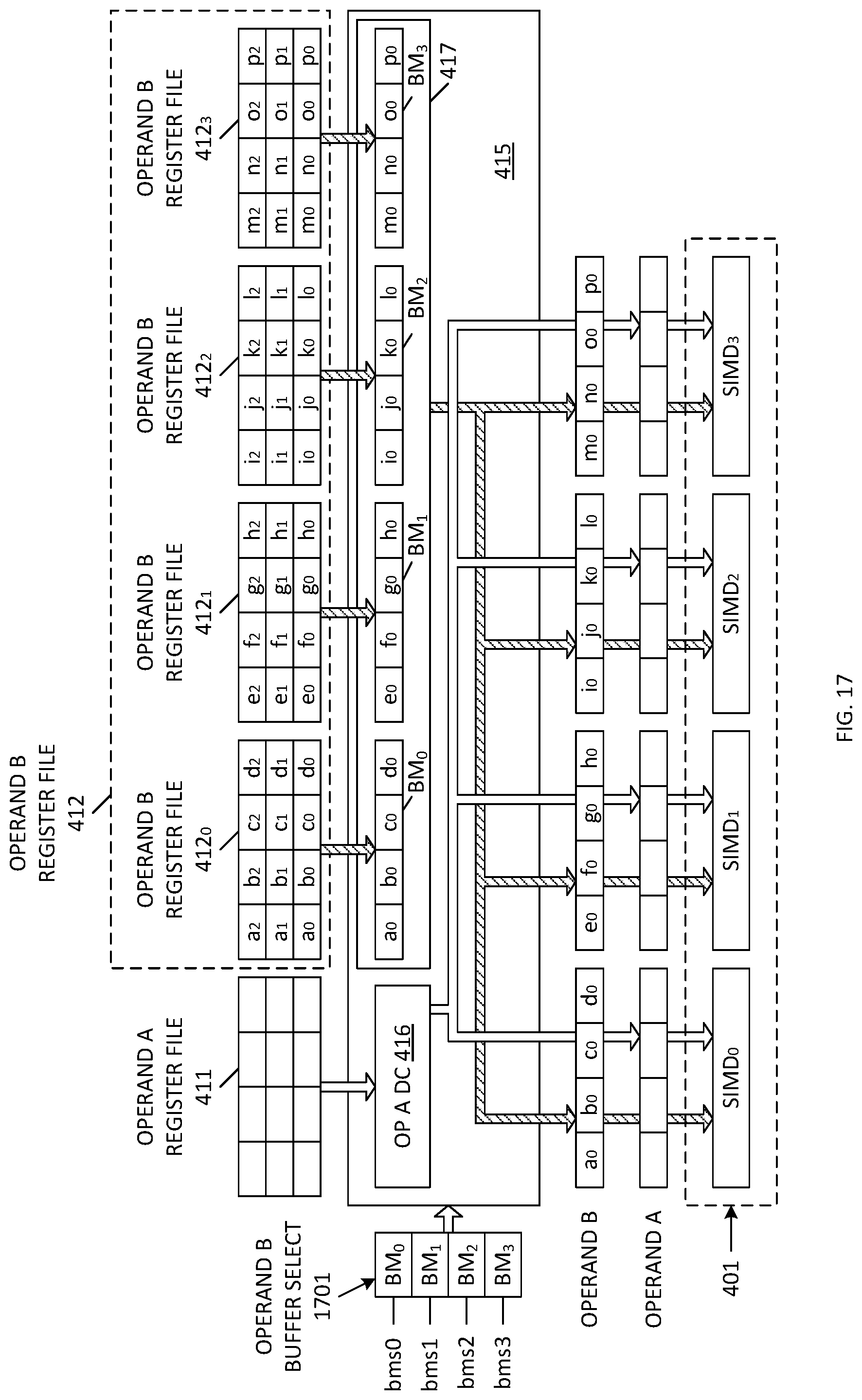

[0045] FIG. 17 is a block diagram illustrating an architecture (Architecture 3B) for routing a second operand value (Operand B) having four 32-bit operand words from a plurality of parallel Operand B register files and a plurality of Operand B buffers to a plurality of SIMD engines in accordance with one embodiment of the present invention.

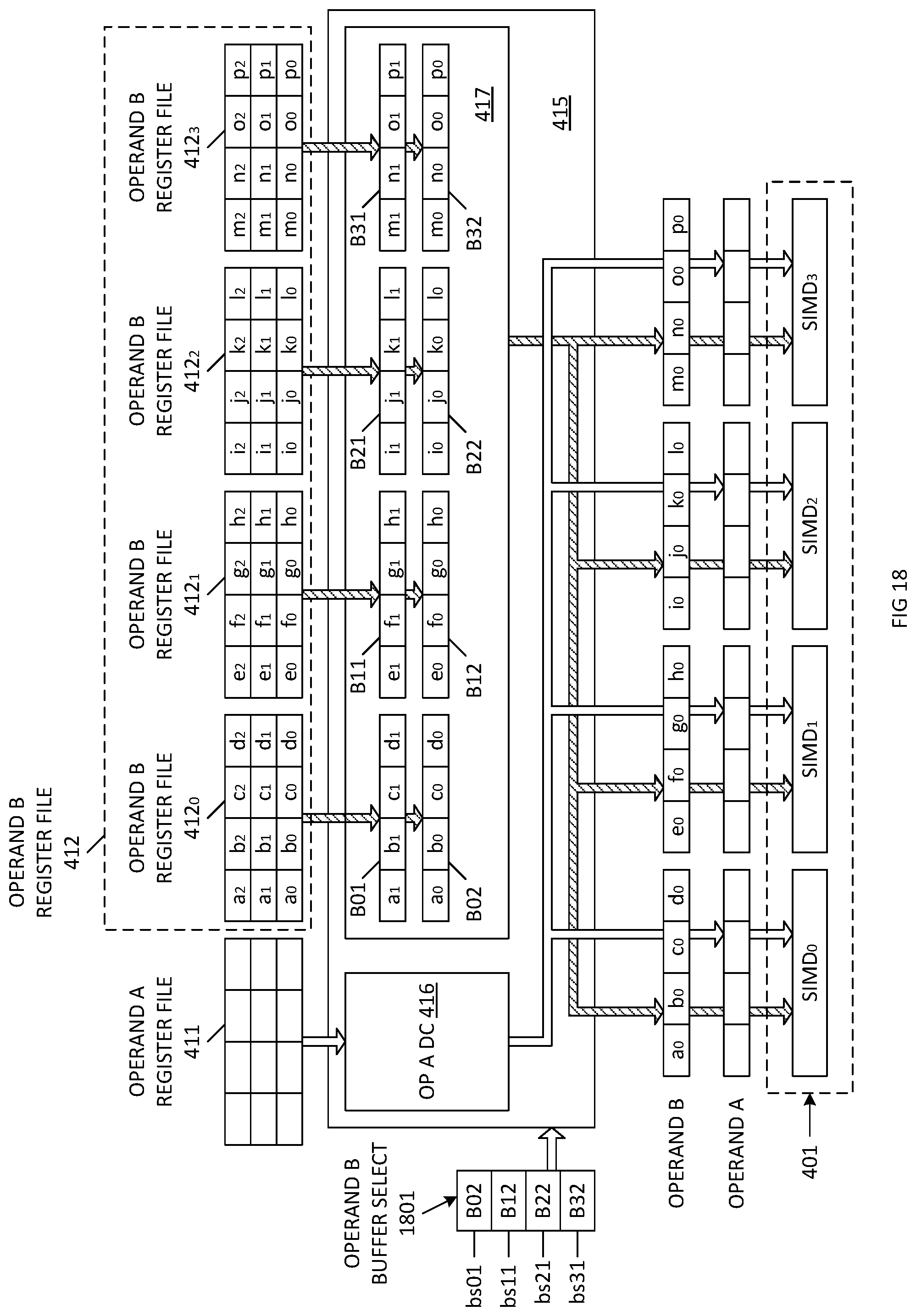

[0046] FIG. 18 is a block diagram illustrating an architecture (Architecture 3B) for routing a second operand value (Operand B) having four 32-bit operand words from a plurality of parallel Operand B register files and a plurality of Operand B double buffers to a plurality of SIMD engines in accordance with one embodiment of the present invention.

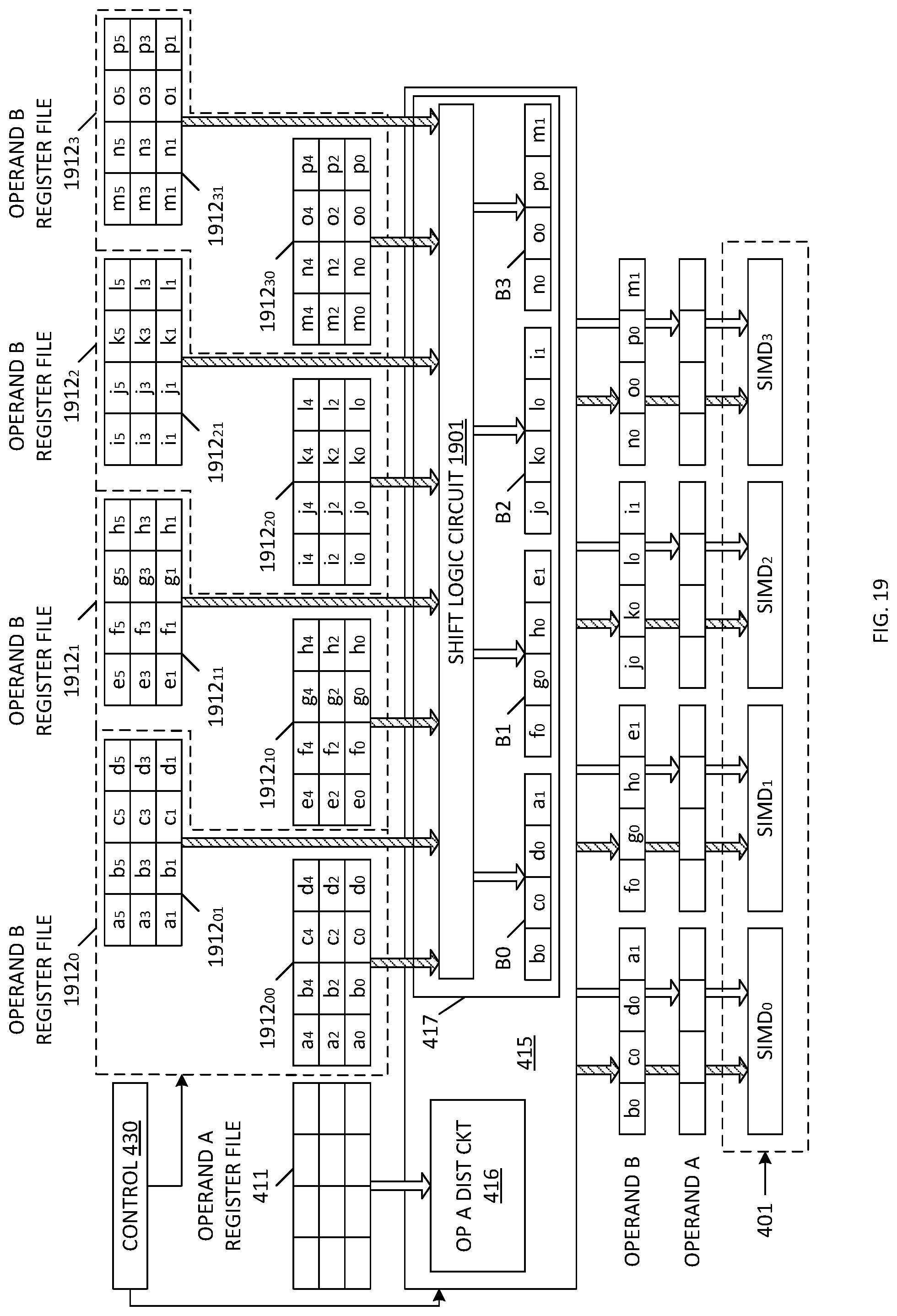

[0047] FIG. 19 is a block diagram illustrating an architecture (Architecture 4B) for routing a second operand value (Operand B) having four 32-bit operand words from a plurality of parallel Operand B register files, a shift logic circuit and a plurality of Operand B buffers to a plurality of SIMD engines in accordance with one embodiment of the present invention.

[0048] FIG. 20 is a block diagram of a computer system that includes a SIMD block having four parallel SIMD engines and an output circuit having four parallel output register sets in accordance with one embodiment of the present invention.

[0049] FIG. 21 is a block diagram of the computer system of FIG. 20, which illustrates the addressing of the four parallel output register sets in accordance with one embodiment of the present invention.

[0050] FIG. 22 is a block diagram of a hardware system that unifies the various architectures and features for different operands and outputs specified by FIGS. 4-21 in accordance with one embodiment of the present invention.

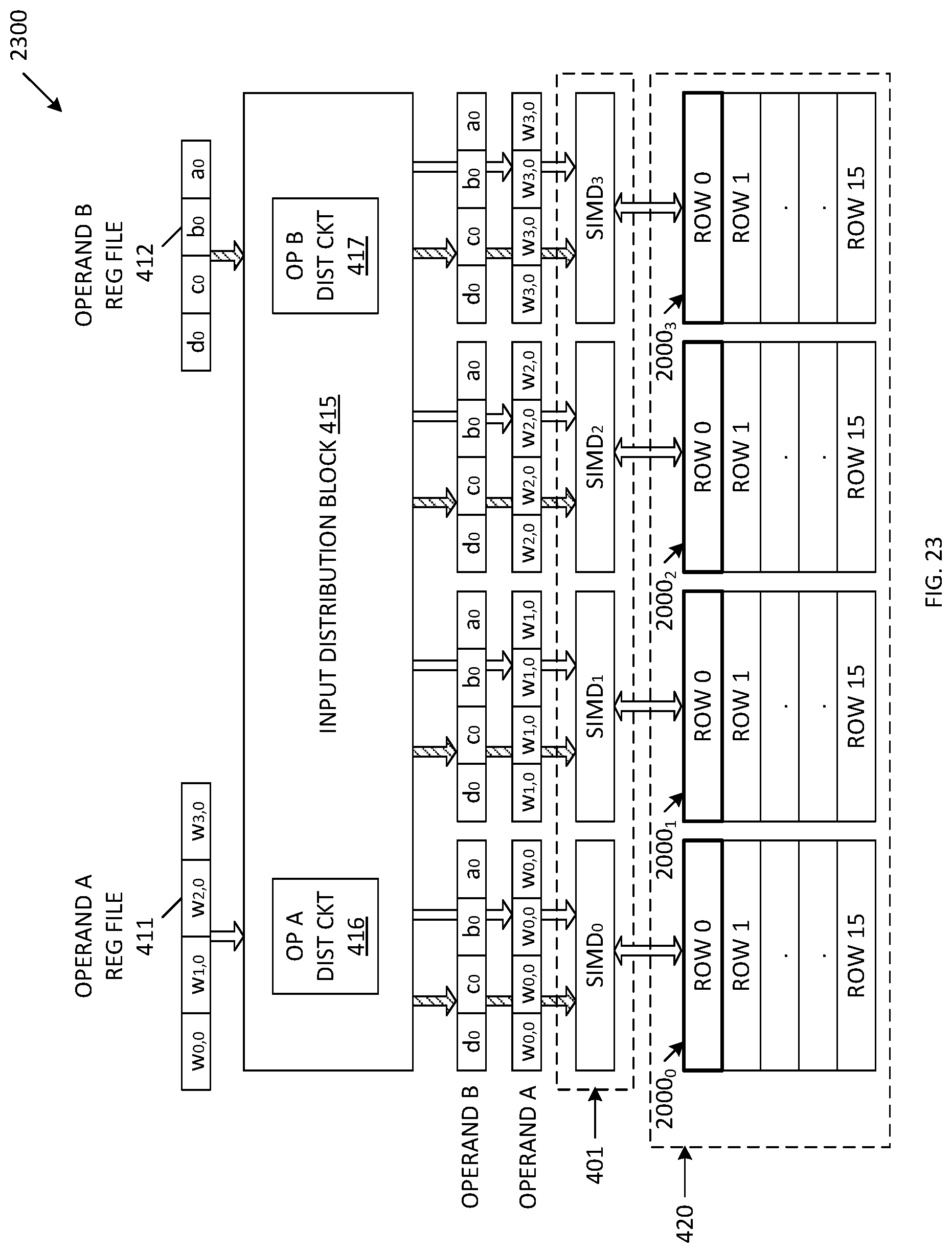

[0051] FIG. 23 is a block diagram of a computer architecture used to perform matrix multiplication in accordance with one embodiment of the present invention.

[0052] FIG. 24 is a diagram illustrating two matrices I and J to be multiplied by the computer architecture of FIG. 23 in accordance with one embodiment of the present invention.

[0053] FIG. 25 is a block diagram illustrating the manner in which the contents of Matrix I and Matrix J of FIG. 24 are logically stored within system memory in accordance with one embodiment of the present invention.

[0054] FIG. 26 is a block diagram illustrating the manner in which results of the multiplication of Matrix I and Matrix J of FIG. 24 are stored within the output register sets of the computer architecture of FIG. 23 in accordance with one embodiment of the present invention.

[0055] FIG. 27 and FIG. 28 are block diagrams of a computer architecture during various stages of a sparse matrix multiplication in accordance with one embodiment of the present invention.

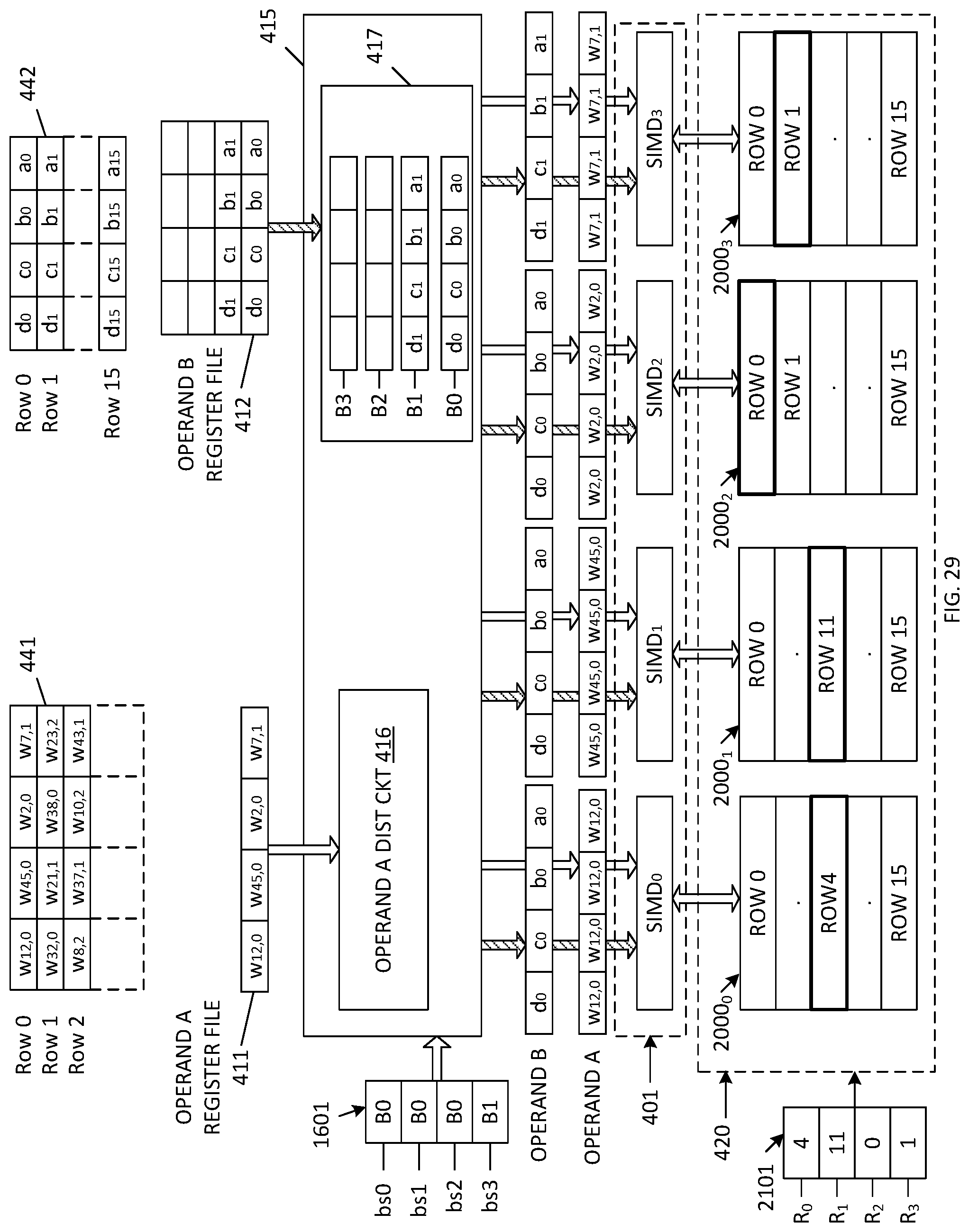

[0056] FIG. 29, FIG. 30 and FIG. 31 are block diagrams of a computer architecture during various stages of a sparse matrix multiplication in accordance with an alternate embodiment of the present invention.

DETAILED DESCRIPTION

[0057] The following subsections describe various efficient SIMD engine architectures. Specifically, ways to operate multiple SIMD engines in parallel are proposed, and manners for supplying the SIMD engines with inputs are described. While the following description uses examples that implement 128-bit wide input operands and 4 SIMD engines, it is noted that the described examples can be extended to smaller or larger input operand widths and/or fewer or more SIMD engines.

[0058] FIG. 4 is a block diagram of a computer system 400 that includes various hardware resources needed for operating a SIMD block 401 in accordance with one embodiment. These resources include an operand buffer 410 (which includes Operand A register file 411 and Operand B register file 412), input distribution block 415 (which includes operand A distribution circuit 416 and operand B distribution circuit 417), SIMD block 401, output circuit 420, control logic 430 (which includes state machine and scheduler 431, control registers 432 and operand packaging circuit 433), and system memory 440. The important parameters for the SIMD operation are the operands, the type of operation and the addresses for the output circuit. These parameters are described in more detail in the subsequent sections.

[0059] In general, control logic 430 controls writing operand values (e.g., matrix entries) into Operand A register file 411 and Operand B register file 412. More specifically, state machine and scheduler 431 causes operand packaging circuit 433 to retrieve matrix entries that are stored in system memory 440. Operand packaging circuit 433 packages these matrix entries to form operand values in accordance with the operation to be performed. In one embodiment, the various operations are defined by entries in the control registers 432. As described in more detail below, some operations (e.g., sparse matrix multiplication) require that matrix entries having zero values are omitted from the operand values provided to the operand buffer 410. State machine and scheduler 431 controls the writing of operand values provided by the operand packaging circuit 433 to the Operand A register file 411 and the Operand B register file 412. State machine and scheduler 431 also controls the reading of operand values from Operand A register file 411 and Operand B register file 412, wherein these read values are provided to Operand A distribution circuit 416 and Operand B distribution circuit 417 within input distribution block 415.

[0060] In general, state machine and scheduler 431 provides addresses to input distribution block 415, wherein these addresses control the manner in which the Operand A distribution circuit 416 routes the Operand A values received from Operand A register file 411 to SIMD block 401, and also control the manner in which the Operand B distribution circuit 417 routes the Operand B values received from Operand B register file 412 are routed to SIMD block 401. As described in more detail below, Operand B distribution circuit 417 may include buffers to store multiple Operand B values, as well as shift logic that controls an amount of shift to be applied to the Operand B values received from Operand B register file 412.

[0061] State machine and scheduler 431 also provides addresses used to access memory banks included within the output circuit 420. These addresses include read addresses, which enable accumulation values stored in the memory banks to be routed to the SIMD block 401 for multiply-accumulate operations, as well as write addresses, which enable updated accumulation values provided by SIMD block 401 to be written back to the memory banks within output circuit 420.

[0062] Control registers 432 store values that control the manner in which the state machine and scheduler 431 generates the various addresses for different modes of operation (which are described in more detail below). The operation of the various elements of computer system 400 is described in more detail below for various modes (i.e., architectures).

Architectures for Operand A

[0063] Three architectures, which are described in more detail below, are proposed for input Operand A. In these following examples, the SIMD block 401 includes four SIMD engines operating in parallel, wherein each of the four SIMD engines receives a corresponding input Operand A having a width of 128 bits. A single entry from the Operand A register file 411 (which is included in operand buffer 410) is 128 bits. This entry is hereinafter referred to as a register file word. In the described embodiments, each of the four SIMD engines within SIMD block 401 is identical to the SIMD engine 300 of FIG. 3.

Architecture 1A

[0064] In a first architecture for providing Operand A to the SIMD block 401 (Architecture 1A), each of the four SIMD engines (SIMD.sub.0, SIMD.sub.1, SIMD.sub.2, SIMD.sub.3) included in the SIMD block 401 receives a full register file word (which includes four 32-bit word values w, x, y and z) as the input Operand A.

[0065] FIG. 5 is a block diagram illustrating one embodiment of the first Operand A architecture (Architecture 1A), wherein four 32-bit input words [w, x, y, z] stored in Operand A register file 411 are routed to Operand A distribution circuit 416. In one embodiment, Operand A distribution circuit 416 includes a buffer that stores the received input words [w, x, y, z]. Operand A distribution circuit 416 also includes circuitry for performing a switching/demultiplexing function, wherein the buffered input words [w, x, y, z] are routed in parallel to each of the four SIMD engines (SIMD.sub.0, SIMD.sub.2, SIMD.sub.2, SIMD.sub.3) included in the SIMD block 401. Thus, in Architecture 1A, each of the SIMD engines (SIMD.sub.0, SIMD.sub.1, SIMD.sub.2, SIMD.sub.3) receives the full register file word [w, x, y, z] as input Operand A.

[0066] FIG. 6 is a block diagram illustrating another embodiment of the first Operand A architecture (Architecture 1A), wherein eight 16-bit input words [s, t, u, v, w, x, y, z] stored in Operand A register file 411 are routed to Operand A distribution circuit 416. These input words [s, t, u, v, w, x, y, z] are buffered within Operand A distribution circuit 416, and are then routed in parallel to each of the four SIMD engines (SIMD.sub.0, SIMD.sub.1, SIMD.sub.2, SIMD.sub.3) included in the SIMD block 401. Again, in Architecture 1A, each of the SIMD engines (SIMD.sub.0, SIMD.sub.1, SIMD.sub.2, SIMD.sub.3) receives the full register file word [s, t, u, v, w, x, y z] as input Operand A.

Architecture 2A

[0067] In a second architecture for providing Operand A to the SIMD block 401 (Architecture 2A), each of the four SIMD engines (SIMD.sub.0, SIMD.sub.1, SIMD.sub.2, SIMD.sub.3) included in the SIMD block 401 receives a single input word from the operand A register file 411, wherein this single input word is repeated a number of times to match the input width of Operand A. Input distribution block 415 selects the single input word by specifying the index of the single input word to be broadcast within the operand A register file 411.

[0068] FIG. 7 is a block diagram illustrating one embodiment of the second Operand A architecture (Architecture 2A), wherein four 32-bit input words [w, x, y, z] stored in Operand A register file 411 are routed to Operand A distribution circuit 416. As described above, Operand A distribution circuit 416 includes a buffer that stores the received input words [w, x, y, z]. State machine and scheduler 431 provides an index value to Operand A distribution circuit 416, wherein this index value specifies the input word [w]. In response, Operand A distribution circuit 416 performs a switching/demultiplexing operation, wherein the input word [w] is routed in parallel to each of the four SIMD engines (SIMD.sub.0, SIMD.sub.2, SIMD.sub.2, SIMD.sub.3) included in the SIMD block 401. That is, the 32-bit input word [w] is effectively repeated four times to provide a 128-bit input Operand A, which consists of the 32-bit word [w] repeated four times. This 128-bit input Operand A [w, w, w, w] is provided to each of the SIMD engines (SIMD.sub.0, SIMD.sub.2, SIMD.sub.2, SIMD.sub.3) in parallel.

[0069] FIG. 8 is a block diagram illustrating another example of the second Operand A architecture (Architecture 2A), wherein the state machine and scheduler 431 provides an index value that specifies the input word y to Operand A distribution circuit 416. In response, Operand A distribution circuit 416 performs a switching/demultiplexing operation, wherein the input word [y] is routed in parallel to each of the four SIMD engines (SIMD.sub.0, SIMD.sub.2, SIMD.sub.2, SIMD.sub.3) included in the SIMD block 401. That is, the 32-bit input word [y] is effectively repeated four times to provide a 128-bit input Operand A [y, y, y, y], which is provided to each of the SIMD engines (SIMD.sub.0, SIMD.sub.1, SIMD.sub.2, SIMD.sub.3) in parallel.

[0070] FIG. 9 is a block diagram illustrating another embodiment of the second Operand A architecture (Architecture 2A), wherein eight 16-bit input words [s, t, u, v, w, x, y, z] from Operand A register file 411 are buffered in Operand A distribution circuit 416. State machine and scheduler 431 provides an index value to Operand A distribution circuit 416, wherein this index value specifies the input word [u]. In response, Operand A distribution circuit 416 performs a switching/demultiplexing operation, wherein the input word [u] is routed in parallel to each of the four SIMD engines (SIMD.sub.0, SIMD.sub.1, SIMD.sub.2, SIMD.sub.3) included in the SIMD block 401. That is, the 16-bit input word [u] is effectively repeated eight times to provide a 128-bit input Operand A [u, u, u, u, u, u, u, u], which is provided to each of the SIMD engines (SIMD.sub.0, SIMD.sub.1, SIMD.sub.2, SIMD.sub.3) in parallel.

[0071] FIG. 10 is a block diagram illustrating another example of the second Operand A architecture (Architecture 2A), wherein eight 16-bit input words [s, t, u, v, w, x, y, z] from Operand A register file 411 are buffered in Operand A distribution circuit 416. State machine and scheduler 431 provides an index value to Operand A distribution circuit 416, wherein this index value specifies the input word [z]. In response, Operand A distribution circuit 416 performs a switching/demultiplexing operation, wherein the input word [z] is routed in parallel to each of the four SIMD engines (SIMD.sub.0, SIMD.sub.1, SIMD.sub.2, SIMD.sub.3) included in the SIMD block 401. That is, the 16-bit input word [z] is effectively repeated eight times to provide a 128-bit input Operand A [z, z, z, z, z, z, z, z], which is provided to each of the SIMD engines (SIMD.sub.0, SIMD.sub.1, SIMD.sub.2, SIMD.sub.3) in parallel.

Architecture 3A

[0072] In a third architecture for providing the input Operand A to the SIMD block 401 (Architecture 3A), each of the four SIMD engines (SIMD.sub.0, SIMD.sub.1, SIMD.sub.2, SIMD.sub.3) included in the SIMD block 401 receives a single input word from the operand A register file 411, wherein this single input word is repeated a number of times to match the input width of Operand A. However, different SIMD engines are provided with different input words. In one embodiment, the input words are assigned to the SIMD engines in a round-robin manner. Input distribution block 415 selects the single input word for each SIMD by specifying the index of each input word to be provided to each SIMD.

[0073] FIG. 11 is a block diagram illustrating one embodiment of the third Operand A architecture (Architecture 3A), wherein four 32-bit input words [w, x, y, z] stored in Operand A register file 411 are received by (and buffered in) Operand A distribution circuit 416. State machine and scheduler 431 provides index values to Operand A distribution circuit 416, wherein these index values specify which of the input words [w, x, y, z] are to be provided to the SIMD engines. In the present example, the index values specify that the input words w, x, y and z are to be provided to SIMD.sub.0, SIMD.sub.1, SIMD.sub.2 and SIMD.sub.3, respectively. In response to the index values, Operand A distribution circuit 416 performs switching/demultiplexing operations, wherein: the 32-bit input word [w] is repeated four times to create a 128-bit Operand A value of [w, w, w, w], which is routed to SIMD.sub.0; the 32-bit input word [x] is repeated four times to create a 128-bit Operand A value of [x, x, x, x], which is routed to SIMD.sub.1; the 32-bit input word [y] is repeated four times to create a 128-bit Operand A value of [y, y, y, y], which is routed to SIMD.sub.2; and the 32-bit input word [z] is repeated four times to create a 128-bit Operand A value of [z, z, z, z], which is routed to SIMD.sub.3. In the foregoing manner, the four 32-bit entries [w, x, y, z] of the 128-bit register file word are distributed to the four SIMD engines in a round-robin manner (i.e., SIMD.sub.0 is assigned w, SIMD.sub.1 is assigned x, SIMD.sub.2 is assigned y and SIMD.sub.3 is assigned z).

[0074] FIG. 12 is a block diagram illustrating another embodiment of the third Operand A architecture (Architecture 3A), wherein eight 16-bit input words [s, t, u, v, w, x, y, z] stored in Operand A register file 411 are received by (and buffered in) Operand A distribution circuit 416. State machine and scheduler 431 provides index values to Operand A distribution circuit 416, wherein these index values specify which of the input words [s, t, u, v, w, x, y, z] are to be provided to the SIMD engines. In the present example, the index values specify that the input words s, t, u and v are to be provided to SIMD.sub.0, SIMD.sub.1, SIMD.sub.2 and SIMD.sub.3, respectively. In response to the index values, Operand A distribution circuit 416 performs switching/demultiplexing operations, wherein: the 16-bit input word [s] is repeated eight times to create a 128-bit Operand A value of [s, s, s, s, s, s, s, s], which is routed to SIMD.sub.0; the 16-bit input word [t] is repeated eight times to create a 128-bit Operand A value of [t, t, t, t, t, t, t, t], which is routed to SIMD.sub.1; the 16-bit input word [u] is repeated eight times to create a 128-bit Operand A value of [u, u, u, u, u, u, u, u], which is routed to SIMD.sub.2; and the 16-bit input word [v] is repeated eight times to create a 128-bit Operand A value of [v, v, v, v, v, v, v, v], which is routed to SIMD.sub.3. In the foregoing manner, the four 16-bit entries [s, t, u, v] of the 128-bit register file word are distributed to the four SIMD engines in a round-robin manner.

[0075] Note that in the 16-bit input mode represented by FIG. 12, there are more entries (8) in the register file word than the number of SIMD engines (4). So, the same register file word can be used two times: once to distribute the first four entries to the four SIMD engines (shown in FIG. 12) and another time to distribute the last four entries to the four SIMD engines (shown in FIG. 13). In one embodiment, index values provided by state machine and scheduler 431 specify which four entries of the register file word are distributed to provide the input operand A.

[0076] FIG. 13 is a block diagram illustrating the continuation of the distribution started by FIG. 12, wherein the 16-bit input word [w] is repeated eight times to create a 128-bit Operand A value of [w, w, w, w, w, w, w, w], which is routed to SIMD.sub.0; the 16-bit input word [x] is repeated eight times to create a 128-bit Operand A value of [x, x, x, x, x, x, x, x], which is routed to SIMD.sub.2; the 16-bit input word [y] is repeated eight times to create a 128-bit Operand A value of [y, y, y, y, y, y, y, y], which is routed to SIMD.sub.2; and the 16-bit input word [z] is repeated eight times to create a 128-bit Operand A value of [z, z, z, z, z, z, z, z], which is routed to SIMD.sub.3.

[0077] More details regarding the routing of input operand A in accordance with Architectures 1A, 2A and 3A are provided below in connection with FIG. 22.

[0078] Note that the preceding descriptions of Architectures 1A, 2A and 3A implement 32-bit input and 16-bit input modes. However, these embodiments are provided for illustration purpose only. The ideas are general and can be extended in a straightforward manner to other input modes (e.g., 8-bit input mode). Moreover, although the Architectures 1A, 2A and 3A have been described in connection with embodiments that include 4 SIMD engines and a 128-bit register file word, other numbers of SIMD engines and register file word widths can be used in other embodiments in a straightforward manner.

[0079] In actual hardware implementation, multiple architectures can be implemented together by sharing hardware resources. The hardware can be programmed to operate different architectures as modes that can be chosen by some register settings. For example, control registers 432 (FIG. 4) can store values that cause state machine and scheduler 431 implement Architecture 1A, Architecture 2A or Architecture 3A for Operand A in the manners described above. That is, the switching and demultiplexing logic implemented in the Operand A distribution circuit 416 can be driven by settings stored by control registers 432.

[0080] For example, in Architecture 2A, the index of the single value to be broadcast needs to be provided to the Operand A distribution circuit 416. The index can have different interpretations depending on whether the data is 8-bit, 16-bit or 32-bit wide (which could be specified by a control register setting).

[0081] Similarly, in Architecture 3A, the index of the value to be broadcast to SIMD.sub.0 needs to be provided to the Operand A distribution circuit 416. From this index, the indices for the values to be broadcast to the other SIMD engines (SIMD.sub.1, SIMD.sub.2 and SIMD.sub.3) can be inferred by the hardware by incrementing. Or all the four indices can be provided to the Operand A distribution circuit 416.

[0082] The data stored in the buffers of the Operand A distribution circuit 416 can be reused over multiple cycles so that the register file words do not need to be read every cycle from the Operand A register file 411. Separate control logic can supply a flag specifying which cycles need to load the data from the Operand A register file 411. Additionally, the Operand A distribution circuit 416 can contain multiple buffers to hold the register file word data with control logic specifying the buffer indices to use for writing and reading. In one embodiment, the Operand A distribution circuit 416 contains two buffers: one for writing and one for reading, which are used in a ping-pong manner. The state machine and scheduler 431 automatically manages the read and write indices. This scheme is generally known as double buffering. In such cases, no additional control logic is needed to specify buffer indices for read and write.

Architectures for Operand B

[0083] In accordance other embodiments, multiple architectures are used to provide the input Operand B to the SIMD block 401. As described below, Operand B distribution circuit 417 can be configured in four different architectures (Architecture 1B, Architecture 2B, Architecture 3B and Architecture 4B) to provide the input Operand B to SIMD block 401.

Architecture 1B

[0084] In a first architecture for providing Operand B to the SIMD block 401 (Architecture 1B), each of the four SIMD engines (SIMD.sub.0, SIMD.sub.1, SIMD.sub.2, SIMD.sub.3) included in the SIMD block 401 receives a full register file word (which includes four 32-bit word values a, b, c and d) as the input Operand B. Note that Architecture 1B for providing Operand B to the SIMD block 401 is similar to Architecture 1A for providing Operand A to the SIMD block 401.

[0085] FIG. 14 is a block diagram illustrating one embodiment of the first Operand B architecture (Architecture 1B), wherein four 32-bit input words [a, b, c, d] stored in Operand B register file 412 are routed to Operand B distribution circuit 417. In one embodiment, Operand B distribution circuit 417 includes a buffer that stores the received input words [a, b, c, d]. Operand B distribution circuit 417 also includes circuitry for performing a switching/demultiplexing function, wherein the buffered input words [a, b, c, d] are routed in parallel to each of the four SIMD engines (SIMD.sub.0, SIMD.sub.2, SIMD.sub.2, SIMD.sub.3) included in the SIMD block 401. Thus, in Architecture 1B, each of the SIMD engines (SIMD.sub.0, SIMD.sub.1, SIMD.sub.2, SIMD.sub.3) receives the full register file word [a, b, c, d] as input Operand B.

[0086] FIG. 15 is a block diagram illustrating another embodiment of the first Operand B architecture (Architecture 1B), wherein eight 16-bit input words [a, b, c, d, e, f, g, h] stored in Operand B register file 412 are routed to Operand B distribution circuit 417. These input words [a, b, c, d, e, f, g, h] are buffered within Operand B distribution circuit 417, and are then routed in parallel to each of the four SIMD engines (SIMD.sub.0, SIMD.sub.1, SIMD.sub.2, SIMD.sub.3) included in the SIMD block 401. Again, in Architecture 1B, each of the SIMD engines (SIMD.sub.0, SIMD.sub.1, SIMD.sub.2, SIMD.sub.3) receives the full register file word [a, b, c, d, e, f, g, h] as input Operand B.

Architecture 2B

[0087] In the architectures considered so far (for Operand A as well as for Operand B), all the SIMD engines use data from a single register file word at a given time. This can make the architectures rigid in terms of the type of operations they can support. In accordance with one embodiment, different SIMD engines are provided with different register file words from the operand register files.

[0088] One method to achieve this would be to allow multiple reads to the register file simultaneously. While this is possible, the hardware complexity can be prohibitive.

[0089] In one embodiment, multiple entries (not necessarily distinct) can be read simultaneously from Operand B register file 412. The most general way to implement this is to use a multi-read-port memory to implement this register file 412. A memory with four read ports can be used to simultaneously read four entries from the Operand B register file 412. However, such a memory configuration has a high hardware complexity (occupies a relatively large area and consumes a relatively high power). Thus, preferred embodiments of the present invention include low complexity methods and structures for supplying the different SIMD engines with (possibly) different input Operand B values. While these preferred embodiments may not provide as much generality as the broad (multiple read port) method, they are efficient for the purposes of the algorithms to be implemented.

[0090] In accordance with one embodiment, a second architecture (Architecture 2B) for providing input Operand B to the SIMD engines is provided, wherein a small number of entries from the Operand B register file 412 are buffered in the Operand B distribution circuit 417 and then distributed to the SIMD engines of SIMD block 401. Intuitively, this can be thought of as an approach that gives some flexibility for each SIMD by allowing them to address any entry from a small number of entries. This keeps hardware complexity small.

[0091] The main characteristics of the second architecture (Architecture 2B) for providing the input Operand B to the SIMD block 401 can be defined as follows. The Operand B distribution circuit 417 includes a plurality of Operand B buffers to hold values read from the Operand B register file 412. Each of these Operand B buffers can hold one full register file word. Each SIMD can receive the register file word stored in any one of the Operand B buffers. A buffer select mechanism is used to specify which of the Operand B buffers is coupled to each of the SIMD engines. The Operand B buffers are filled one at a time from the Operand B register file 412. When a new register file word needs to be loaded into the Operand B buffers from Operand B register file 412, one of the previous Operand B buffers is overwritten. There can be multiple schemes to determine which Operand B buffer needs to be overwritten. One simple scheme is that the Operand B buffer with oldest data is overwritten (i.e., the Operand B buffers are used in a round-robin fashion). In another scheme, control logic 430 can specify which Operand B buffer needs to be overwritten.

[0092] It is not necessary to load the data from the Operand B register file 412 into the operand B buffers during every cycle. Separate control logic 430 can specify a flag for every cycle to indicate if new data needs to be read from the Operand B register file 412 into the Operand B buffers of Operand B distribution circuit 417. In the actual hardware implementation, each Operand B buffer may use a double buffering scheme so that read and write operations to an Operand B buffer do not occur in the same cycle.

[0093] FIG. 16 is a block diagram of the second architecture (Architecture 2B) for providing input Operand B to the SIMD engines in accordance with one embodiment. In the illustrated example, Operand B distribution logic 417 includes four Operand B buffers B0-B3, each of which is capable of storing a full register word from Operand B register file 412. Although four Operand B buffers are shown, it is understood that the number of operand buffers can be any number greater than one. In the illustrated example, Operand B buffers B0-B3 store register file words received from operand B register file 412. More specifically, Operand B buffers B0, B1, B2 and B3 store values [a, b, c, d], [e, f, g, h], [i, j, k, l] and [m, n, o, p], respectively (wherein each of the values a-p is a 32-bit word).

[0094] Operand B buffer select logic 1601 (which may be included in the state machine and scheduler 431 of control logic 430) is used to determine the manner in which the contents of Operand B buffers B0, B1, B2 and B3 are provided to SIMD.sub.0, SIMD.sub.2, SIMD.sub.2 and SIMD.sub.3. In one embodiment, Operand B buffer select logic 1601 includes four buffer select entries bs0, bs1, bs2 and bs3, which store values that specify which of the Operand B buffers B0-B3 provide their contents to SIMD.sub.0, SIMD.sub.1, SIMD.sub.2 and SIMD.sub.3, respectively. In the illustrated example, the four entries bs0, bs1, bs2 and bs3 specify operand B buffers B0, B0, B1 and B2, respectively, indicating that the contents of operand B buffer B0 (i.e., [a, b, c, d]) are provided to SIMD.sub.0 and SIMD.sub.1, the contents of operand B buffer B1 (i.e., [e, f, g, h]) are provided to SIMD.sub.2, and the contents of operand B buffer B2 (i.e., [i, j, k, l]) are provided to SIMD.sub.3. In subsequent cycles, the buffer selection may change by changing the buffer select entries bs0, bs1, bs2 and bs3. It is noted that if the number of operand buffers is reduced to 1, then Architecture 2B would be equivalent to Architecture 1B.

Architecture 3B

[0095] Another approach to effectively allow for multiple reads from the operand B register file 412 is to implement the operand B register file 412 using a plurality of register files, each of which allows a single read operation to be performed at a time. As noted before, having one large memory with 4 read ports can be more expensive than four smaller memories with one read port each. However, it is worth noting that the larger memory with 4 read ports offers more flexibility in terms of the data that can be read. When four smaller memories with single read port are used, four entries can be read at a given time, but each of the entries has to belong to a different memory. This is not the case with a 4 read-port memory that allows any 4 entries to be read simultaneously.

[0096] The main characteristics of the third architecture (Architecture 3B) for providing the input Operand B to the SIMD block 401 can be defined as follows. There is more than one Register File for Operand B. In one specific case, the number of Register Files for Operand B is equal to the number of SIMD engines included in SIMD block 401. Thus, if there are four SIMD engines, then there will be four corresponding Operand B register files. However, other cases are possible and it is easy to extend the architecture to those cases.

[0097] The multiple Operand B Register Files can be read simultaneously. In a simple case, each SIMD receives its input Operand B directly from one of the Operand B register files. If the number of SIMD engines is equal to the number of operand B register files, then each of the SIMD engines can receive an input Operand B from a corresponding one of the Operand B register files.

[0098] In the general case, the Operand B distribution circuit 417 can contain operand buffers (similar to Architecture 2B) to hold the data read from the Operand B register files. This can allow multiple cycles to use same data. Also, the Operand B register files need not be read every cycle due to reuse of the buffered data. A load flag can specify the cycles in which data needs to be read from the Operand B register files to the Operand B distribution circuit 417. A separate block can also specify the address of the buffer to load for every SIMD, as described above in connection with Architecture 2B.

[0099] FIG. 17 is a block diagram of the third architecture (Architecture 3B) for providing the input Operand B to the SIMD block 401 in accordance with one embodiment. In the illustrated example, four Operand B register files 412.sub.0, 412.sub.1, 412.sub.2 and 413.sub.3 provide four corresponding Operand B register words (e.g., [a0, b0, c0, d0] [e0, f0, g0, h0] [i0, j0, k0, l0] and [m0, n0, o0, p0]) to Operand B distribution circuit 417. In the illustrated example, the Operand B distribution circuit 417 routes the register file words provided by operand B register files 412.sub.0, 412.sub.1, 412.sub.2 and 413.sub.3 to buffer memories BM.sub.0, BM.sub.1, BM.sub.2 and BM.sub.3, respectively, within Operand B distribution circuit 417.

[0100] Operand B buffer select logic 1701 (which may be included in the state machine and scheduler 431 of control logic 430) is used to determine the manner in which the contents of Operand B buffers BM.sub.0, BM.sub.1, BM.sub.2 and BM.sub.3 are provided to SIMD.sub.0, SIMD.sub.1, SIMD.sub.2 and SIMD.sub.3. In one embodiment, Operand B buffer select logic 1701 includes four buffer select entries bms0, bms1, bms2 and bms3, which store values that specify which of the Operand B buffers BM.sub.0-BM.sub.3 provide their contents to SIMD.sub.0, SIMD.sub.1, SIMD.sub.2 and SIMD.sub.3, respectively. In the illustrated example, the four entries bms0, bms1, bms2 and bms3 specify operand B buffers BM.sub.0, BM.sub.1, BM.sub.2 and BM.sub.3, respectively, indicating that the contents of operand B buffer BM.sub.0 (i.e., [a0, b0, c0, d0]) are provided to SIMD.sub.0, the contents of operand B buffer BM.sub.1 (i.e., [e0, f0, g0, h0]) are provided to SIMD.sub.1, the contents of operand B buffer BM.sub.2 (i.e., [i0, j0, k0, l0]) are provided to SIMD.sub.2, and the contents of operand B buffer BM.sub.3 (i.e., [m0, n0, o0, p0]) are provided to SIMD.sub.3. In subsequent cycles, the buffer selection may change by changing the buffer memory select entries bms0, bms1, bms2 and bms3.

[0101] FIG. 18 is a block diagram of the third architecture (Architecture 3B) for providing input Operand B to the SIMD block 401 in accordance with an alternate embodiment. In the embodiment of FIG. 18, Operand B distribution circuit 417 includes double Operand B buffers B01-B02, B11-B12, B21-B22 and B31-B32, which store data provided by the Operand B register files 412.sub.0, 412.sub.1, 412.sub.2 and 413.sub.3, respectively. In the illustrated example, Operand B register file words [a0, b0, c0, d0] and [a1, b1, c1, d1] from operand B register file 412.sub.0 are stored in Operand B buffers B02 and B01, respectively. Operand B register file words [e0, f0, g0, h0] and [e1, f1, g1, h1] from operand B register file 412.sub.1 are stored in Operand B buffers B12 and B11, respectively. Operand B register file words [i0, j0, k0, l0] and [i1, j1, k1, 11] from operand B register file 412.sub.2 are stored in Operand B buffers B22 and B21, respectively. Operand B register file words [m0, n0, o0, p0] and [m1, n1, o1, p1] from operand B register file 412.sub.3 are stored in Operand B buffers B32 and B31, respectively.

[0102] Operand B buffer select logic 1801 (which may be included in the state machine and scheduler 431 of control logic 430) is used to determine the manner in which the contents of Operand B buffers B01-B02, B11-B12, B21-B22 and B31-B32 are provided to SIMD.sub.0, SIMD.sub.1, SIMD.sub.2 and SIMD.sub.3. In one embodiment, Operand B buffer select logic 1801 includes four buffer select entries bs01, bs11, bs21 and bs31, which store values that specify which of the Operand B buffers B01-B02, B11-B12, B21-B22 and B31-B32 provide their contents to SIMD.sub.0, SIMD.sub.1, SIMD.sub.2 and SIMD.sub.3, respectively. In the illustrated example, the four entries bs01, bs11, bs21 and bs31 specify operand B buffers B02, B12, B22 and B32, respectively, indicating that the contents of Operand B buffer B02 (i.e., [a0, b0, c0, d0]) are provided to SIMD.sub.0, the contents of Operand B buffer B12 (i.e., [e0, f0, g0, h0]) are provided to SIMD.sub.1, the contents of Operand B buffer B22 (i.e., [i0, j0, k0, l0]) are provided to SIMD.sub.2, and the contents of Operand B buffer B32 (i.e., [m0, n0, o0, p0) are provided to SIMD.sub.3. In one embodiment, Operand B distribution circuit 417 includes switching/demultiplexing circuitry that performs the above-described routing in response to the buffer select entries bs01, bs11, bs21 and bs31. Note that Operand B buffer select logic 1801 can select any of the operand buffers B01-B02, B11-B12, B21-B22 and B31-B32 to provide input Operand B to any of the SIMD engines. For example, buffer select entry bs01 may store a value (B31) that causes the contents of Operand B buffer B31 (i.e., [m1, n1, o1, p1] to be routed to SIMD.sub.0. In subsequent cycles, the buffer selection may change by changing the buffer select entries bs01, bs11, bs21 and bs31. Note that in other embodiments, different numbers of Operand B buffers can be included in Operand B distribution circuit 417.

Architecture 4B

[0103] In a fourth architecture for providing Operand B to the SIMD block 401 (Architecture 4B), an architecture similar to Architecture 3B is provided, with the added feature that each Operand B register file allows reading two entries at a time and choosing one register file word worth of data by applying some shifting operations. Control logic 340 specifies the addresses of two rows to be read from each Operand B register file, as well as the amount of shift to be applied to the entries read from these two rows. This functionality is typically realized in hardware by implementing each Operand B register file memory as two banks of memory. This allows reading two entries at the same time. The two register file words are then fed into a shifting logic module that receives an amount of shift as an input parameter and outputs one register file word worth of data. The addresses for the two banks and the amount of shift are supplied by state machine and scheduler 431.

[0104] FIG. 19 is a block diagram of the fourth architecture (Architecture 4B) for providing input Operand B to the SIMD block 401 in accordance with one embodiment. In the illustrated embodiment, there are four Operand B register files 1912.sub.0-1912.sub.3, wherein each of these Operand B register files includes two memory banks. Thus, Operand B register files 1912.sub.0, 1912.sub.1 1912.sub.2, and 1912.sub.3 include memory banks 1912.sub.00-1912.sub.01, 1912.sub.10-1912.sub.11, 1912.sub.20-1912.sub.21 and 1912.sub.30-1912.sub.31, respectively. Each of the memory bank pairs 1912.sub.00-1912.sub.01, 1912.sub.10-1912.sub.11, 1912.sub.20-1912.sub.21 and 1912.sub.30-1912.sub.31 store different register file words. In the illustrated example, memory bank 1912.sub.00 stores register file words [a0, b0, c0, d0], [a2, b2, c2, d2] and [a4, b4, c4, d4] and memory bank 1912.sub.01 stores register file words [a1, b1, c1, d1], [a3, b3, c3, d3] and [a5, b5, c5, d5].

[0105] Memory bank 1912.sub.10 stores register file words [e0, f0, g0, h0], [e2, f2, g2, h2] and [e4, f4, g4, h4] and memory bank 1912.sub.11 stores register file words [e1, f1, g1, h1], [e3, f3, g3, h3] and [e5, f5, g5, h5].

[0106] Memory bank 1912.sub.20, stores register file words [i0, j0, k0, l0], [i2, j2, k2, l2] and [i4, j4, k4, l4] and memory bank 1912.sub.21 stores register file words [i1, j1, k1, l1], [i3, j3, k3, l3] and [i5, j5, k5, l5].

[0107] Memory bank 1912.sub.30, stores register file words [m0, n0, o0, p0], [m2, n2, o2, p2] and [m4, n4, o4, p4] and memory bank 1912.sub.31 stores register file words [m1, n1, o1, p1], [m3, n3, o3, p3] and [m5, n5, o5, p5].

[0108] Register file words read from the memory bank pairs 1912.sub.00-1912.sub.01, 1912.sub.10-1912.sub.11, 1912.sub.20-1912.sub.21 and 1912.sub.30-1912.sub.31 are provided to shift logic circuit 1901 in Operand B distribution circuit 417. Outputs of shift logic circuit 1901 are provided to Operand B buffers B0, B1, B2 and B3 in Operand B distribution circuit 417.

[0109] Control logic 430 (and more specifically, state machine and scheduler 431) controls the register file words read from memory banks 1912.sub.00-1912.sub.01, 1912.sub.10-1912.sub.11, 1912.sub.20-1912.sub.21 and 1912.sub.30-1912.sub.31. In general, control logic 430 causes register file words to be simultaneously read from the memory banks 1912.sub.00-1912.sub.01, 1912.sub.10-1912.sub.11, 1912.sub.20-1912.sub.21 and 1912.sub.30-1912.sub.31. The addresses provided to each of the memory bank pairs may selected such that two different consecutive register file words are read from each of the memory banks, thereby providing the register file words necessary to perform a shifting operation. For example, register file words [a0, b0, c0, d0] and [a1, b1, c1, d1] may be simultaneously read from memory banks 1912.sub.00 and 1912.sub.01, respectively; register file words [e0, f0, g0, h0] and [e1, f1, g1, h1] may be simultaneously read from memory banks 1912.sub.10 and 1912.sub.11, respectively; register file words [i0, j0, k0, l0] and [i1, j1, k1, 11] may be simultaneously read from memory banks 1912.sub.20 and 1912.sub.21, respectively; and register file words [m0, n0, o0, p0] and [m1, n1, o1, p1] may be simultaneously read from memory banks 1912.sub.30 and 1912.sub.31, respectively. The shift logic circuit 1901 receives the eight register file words provided by Operand B register files 1912.sub.0-1912.sub.3.

[0110] Control logic 340 also controls the amount of shift introduced by shift logic circuit 1901. In general, Table 1 below defines the values provided by shift logic circuit 1901 to operand buffers B0-B3 in the present example, for various shift values. Note that each shift value introduces an additional 32-bit shift to the received pairs of register file words. FIG. 19 illustrates the results for a shift value of 1.

TABLE-US-00001 TABLE 1 Shift B0 B1 B2 B3 0 [a0 b0 c0 d0] [e0 f0 g0 h0] [i0 j0 k0 l0] [m0 n0 o0 p0] 1 [b0 c0 d0 a1] [f0 g0 h0 e1] [j0 k0 l0 i1] [n0 o0 p0 m1] 2 [c0 d0 a1 b1] [g0 h0 e1 f1] [k0 l0 i1 j1] [o0 p0 m1 n1] 3 [d0 a1 b1 c1] [h0 e1 f1 g1] [l0 i1 j1 k1] [p0 m1 n1 o1] 4 [a1 b1 c1 d1] [e1 f1 g1 h1] [i1 j1 k1 l1] [m1 n1 o1 p1]

[0111] The contents of operand B buffers B0, B1, B2 and B3 are routed to SIMD.sub.0, SIMD.sub.1, SIMD.sub.2 and SIMD.sub.3, respectively, as input operand B. In the foregoing manner, shifting may be efficiently performed within the register file words stored by Operand B register files 1912.sub.0-1912.sub.3.

[0112] Again, in actual hardware implementation, multiple architectures can be implemented together by sharing hardware resources. The hardware can be programmed to operate different architectures as modes that can be chosen by a register.

[0113] More details regarding the routing of input Operand B in accordance with Architectures 1B, 2B, 3B and 4B are provided below in connection with FIG. 22.

[0114] Although the preceding descriptions of Architectures 1B, 2B, 3B and 4B implement 32-bit input and 16-bit input modes, it is understood that these architectures can easily be modified to implement input modes of other widths (e.g., 8-bit input mode). Moreover, although the Architectures 1B, 2B, 3B and 4B have been described in connection with embodiments that include 4 SIMD engines and a 128-bit register file word, other numbers of SIMD engines and register file word widths can be used in other embodiments in a straightforward manner.

[0115] In actual hardware implementation, multiple architectures can be implemented together by sharing hardware resources. The hardware can be programmed to operate different architectures as modes that can be chosen by some register settings. For example, control registers 432 (FIG. 4) can store values that configure Operand B distribution circuit 415 to implement Architecture 1B, 2B, 3B or 4B for Operand B in the manners described above.

Output Circuitry

[0116] Output circuit 420 (FIG. 4) is used for storing (and specifying addresses for) the outputs of the SIMD engines (SIMD.sub.0, SIMD.sub.2, SIMD.sub.2 and SIMD.sub.3). Each SIMD can write the output of an operation performed within the SIMD to certain number of output registers within output circuit 420.

[0117] FIG. 20 is a block diagram that shows each of the SIMD engines (SIMD.sub.0, SIMD.sub.2, SIMD.sub.2 and SIMD.sub.3) coupled to corresponding memory banks 2000.sub.0-2000.sub.3, wherein each memory bank includes k rows, with each row forming an output register. In the case of accumulation operations, the control logic 340 specifies a row address within each of the memory banks 2000.sub.0-2000.sub.3, such that previously stored accumulation values are read from the addressed output registers of the memory banks 2000.sub.0-2000.sub.3, and are provided to the corresponding SIMD engines, SIMD.sub.0-SIMD.sub.3. In response, the SIMD engines (SIMD.sub.0-SIMD.sub.3) perform multiply-accumulate operations to generate updated accumulation values, which are then written back to the addressed output registers within the corresponding memory banks 2000.sub.0-2000.sub.3.

[0118] As described in more detail below, the row addresses of the output registers associated with each SIMD can be thought of as input signals to the SIMD engines. The row address is the index of the row within the SIMD (referred to as relative index within the SIMD).

[0119] FIG. 21 is a block diagram that shows register select logic 2101 used to determine the manner in which the contents of output register sets 2000.sub.0, 2000.sub.1, 2000.sub.2 and 2000.sub.3 are provided to SIMD engines SIMD.sub.0, SIMD.sub.1, SIMD.sub.2 and SIMD.sub.3, respectively. Register select logic 2101 is implemented within state machine and scheduler 431 of control logic 430. In one embodiment, register select logic 2101 includes four register select entries R0, R1, R2 and R3, which store row address values that specify which of the output registers within output register sets 2000.sub.0, 2000.sub.1, 2000.sub.2 and 2000.sub.3 provide their contents to SIMD.sub.0, SIMD.sub.1, SIMD.sub.2 and SIMD.sub.3, respectively (or store values received from SIMD.sub.0, SIMD.sub.1, SIMD.sub.2 and SIMD.sub.3, respectively). In the illustrated example, the four register select entries R0, R1, R2 and R3 specify the output registers in Row 1, Row(K-1), Row 0 and Row 2 of output register sets 2000.sub.0, 2000.sub.1, 2000.sub.2 and 2000.sub.3, respectively (indicating that the contents of these output registers are provided to SIMD.sub.0, SIMD.sub.1, SIMD.sub.2 and SIMD.sub.3, respectively). Note that the selected output registers are highlighted in FIG. 21. In subsequent cycles, the register selection may change by changing the register select entries R0, R1, R2 and R3.

A Unified Architecture

[0120] FIG. 22 is a block diagram of a hardware system 2200 that unifies the various architectures and features proposed above for the different operands and outputs. The system 2200 includes (1) an operand block 2210 that includes one or more register files for storing each of the operands (Operand A and Operand B), (2) an input distribution block (IDB) 2220 that includes one or more buffers for each of the operands and a logic block for each of the operands, (3) a SIMD block 2230 that includes one or more SIMD engines, and (4) an output block 2240 that includes one or more output register files for each of the SIMD engines. In the illustrated system 2200, operand block 2210 includes operand A register file(s) 2211 and operand B register file(s) 2212, which may be used to implement the various embodiments of Operand A register file 411 and Operand B register file 412 described above. Input distribution block (IDB) 2220 includes Operand A IDB buffers 2221 and Operand A IDB logic 2223, which may be used to implement the various embodiments of Operand A distribution circuit 416 described above. Input distribution block 2220 also includes Operand B IDB buffers 2222 and Operand B IDB shift logic 2224, which may be used to implement the various embodiments of Operand B distribution circuit 417 described above. SIMD block 2240, which may be used to implement the various embodiments of SIMD block 401 described above, includes SIMD.sub.0, SIMD.sub.1, SIMD.sub.2 and SIMD.sub.3. Output block 2240, which may be used to implement the various embodiments of output circuit 420 described above, includes output register files 2241, 2242, 2243 and 2244, which are coupled to SIMD.sub.0, SIMD.sub.1, SIMD.sub.2 and SIMD.sub.3, respectively.

[0121] The operand block 2210 is responsible for holding the operand data. The data from operand register files 2211 and 2212 is transferred to the input distribution block 2220 based on control signals that are explained below. In one embodiment of the unified architecture of system 2200, Operand A register file 2211 includes one register file, and Operand B register files 2212 include four register files. Further, the four register files for Operand B allow two simultaneous reads (i.e., the memory is split into two banks). While the general system can contain arbitrary number of register files for each of the operands, the optimal hardware should be designed such that it uses the least number of register files but supports all required operations.

[0122] In the illustrated, three control signals, OP_A_RF_SRC_ADDR_SEL, OP_A_RF_DEST_ADDR_SEL and OP_A_RF_LOAD_FLAG, are used to control the operation of Operand A register files 2211. Similarly, three control signals, OP_B_RF_SRC_ADDR_SEL, OP_B_RF_DEST_ADDR_SEL and OP_B_RF_LOAD_FLAG, are used to control the operation of Operand B register files 2212.