Systems, Environment And Methods For Identification And Analysis Of Recurring Transitory Physiological States And Events Using A Portable Data Collection Device

SAHIN; Nedim T

U.S. patent application number 16/843712 was filed with the patent office on 2020-04-08 and published on 2020-10-29 for systems, environment and methods for identification and analysis of recurring transitory physiological states and events using a portable data collection device. The applicant listed for this patent is Nedim T SAHIN. Invention is credited to Nedim T SAHIN.

| Application Number | 20200337631 16/843712 |


| Family ID | 1000004944570 |

| Publication Date | 2020-10-29 |


| United States Patent Application | 20200337631 |

| Kind Code | A1 |

| SAHIN; Nedim T | October 29, 2020 |

SYSTEMS, ENVIRONMENT AND METHODS FOR IDENTIFICATION AND ANALYSIS OF RECURRING TRANSITORY PHYSIOLOGICAL STATES AND EVENTS USING A PORTABLE DATA COLLECTION DEVICE

Abstract

In one aspect, the systems, environment, and methods described herein support anticipation and identification of adverse health events and/or atypical behavioral episodes such as Autistic behaviors, epileptic seizures, heart attack, stroke, and/or narcoleptic "sleep attacks" using a portable data collection device. In another aspect, the systems, environment, and methods described herein support measurement of motions and vibrations associated with recurring transitory physiological states and events using a portable data collection device. For example, motion and vibration measurements may be analyzed to identify pronounced head motion patterns indicative of specific heart defects, neurodegenerative conditions, inner ear or other balance problems, or types of cardiac disease. In another example, motion and vibration measurements may be analyzed to identify slow-wave changes indicative of temporary conditions such as intoxication, fatigue, distress, aggression, attention deficit, anger, and/or narcotic ingestion as well as temporary or periodic normal events, such as ovulation, pregnancy, and sexual arousal.

| Inventors: | SAHIN; Nedim T; (Boston, MA) |
|---|---|
| Applicant: | SAHIN; Nedim T |
| Family ID: | 1000004944570 |
| Appl. No.: | 16/843712 |
| Filed: | April 8, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15897891 | Feb 15, 2018 | |
| 16843712 | | |
| 14693641 | Apr 22, 2015 | 9936916 |
| 15897891 | | |
| 14511039 | Oct 9, 2014 | 10405786 |
| 14693641 | | |
| 61943727 | Feb 24, 2014 | |
| 61888531 | Oct 9, 2013 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | A61B 5/163 20170801; A61B 5/6803 20130101; A61B 5/486 20130101; A61B 5/743 20130101; A61B 5/0022 20130101; A61B 5/1112 20130101; A61B 5/1123 20130101; G16H 40/67 20180101; A61B 2560/0242 20130101 |

| International Class: | A61B 5/00 20060101 A61B005/00; A61B 5/11 20060101 A61B005/11; G16H 40/67 20060101 G16H040/67 |

Claims

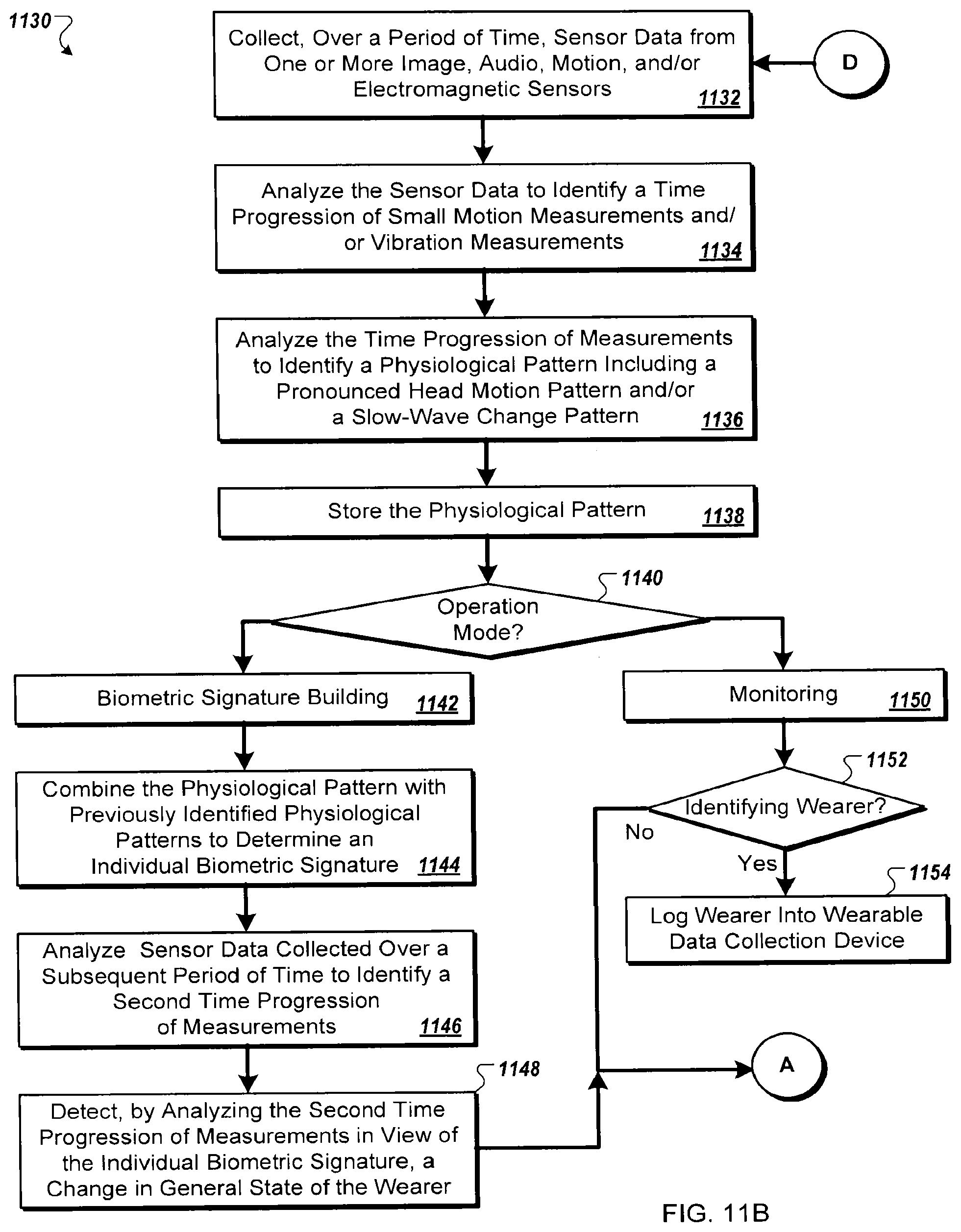

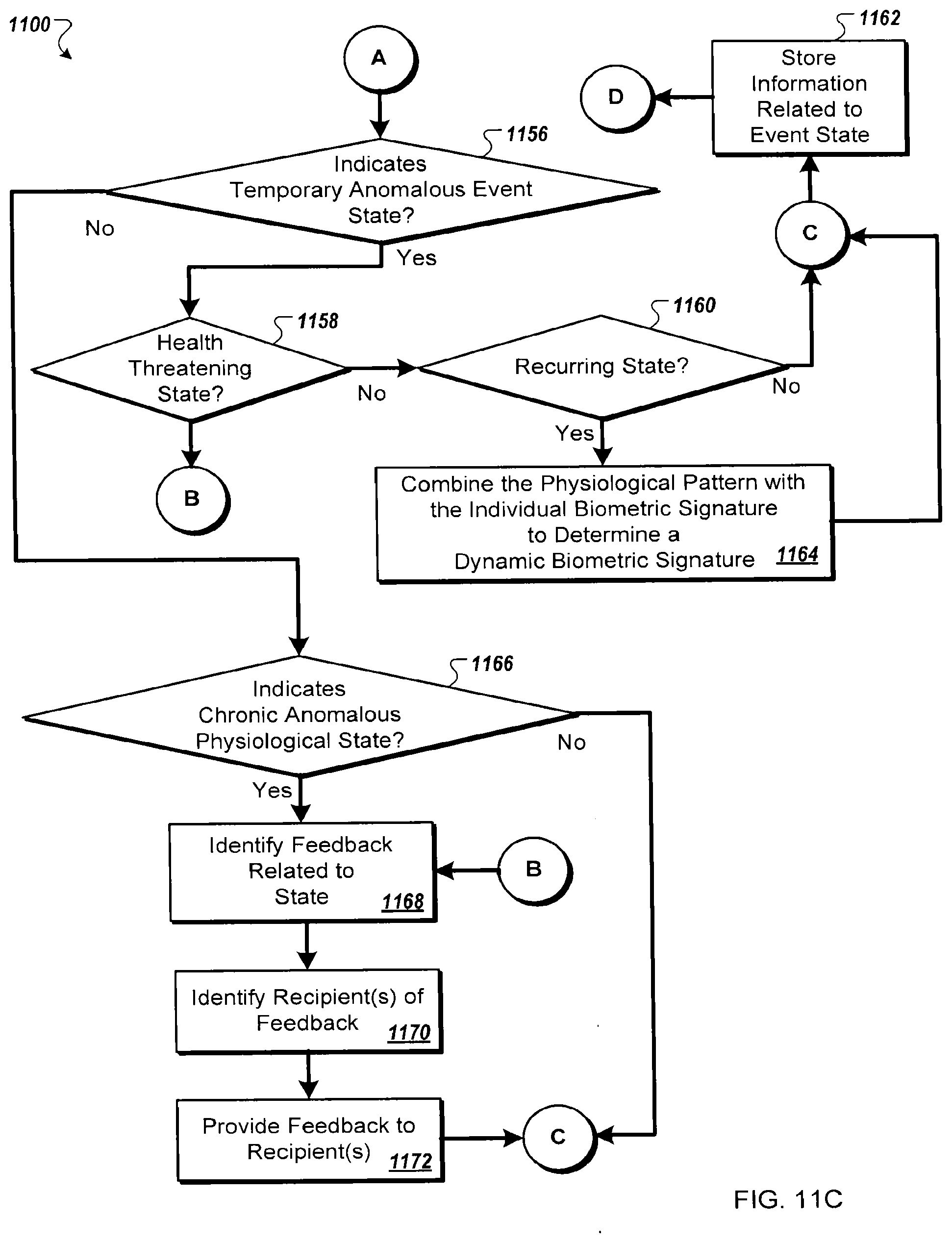

1. A system comprising: a wearable data collection device designed to be worn upon a head of a wearer, the wearable data collection device comprising processing circuitry, and a non-transitory computer readable medium having instructions stored thereon, and one or more input capture elements connected to and/or in communication with the wearable data collection device, wherein the one or more input capture elements are positioned upon or proximate to the head of the wearer; wherein the instructions, when executed by the processing circuitry, cause the processing circuitry to: collect, over a period of time via at least one of the one or more input capture elements, sensor data, wherein the sensor data includes at least one of image data, audio data, electromagnetic data, and motion data, analyze the sensor data to identify a time progression of measurements including at least one of a) a plurality of small motion measurements, and b) a plurality of vibration measurements, analyze the time progression of measurements to identify a physiological pattern, wherein the physiological pattern comprises at least one of a pronounced head motion pattern and a slow-wave change pattern, store, upon a non-transitory computer readable storage device, the physiological pattern, and provide, to at least one of a wearer of the wearable data collection device and a third party computing device, feedback corresponding to the physiological pattern, wherein providing feedback to the wearer comprises providing, via at least one output feature of one or more output features of the wearable data collection device responsive to a physiological state indicated by the physiological pattern, at least one of visual, audible, haptic, and neural stimulation feedback to the wearer, and providing feedback to the third party computing device comprises transmitting, via a wired or wireless transmission link, a data transmission to the third party computing device identifying at least one of the physiological pattern and the identification of the physiological state.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of U.S. patent application Ser. No. 15/897,891 entitled "Systems, Environment and Methods for Identification and Analysis of Recurring Transitory Physiological States and Events Using a Portable Data Collection Device" filed Feb. 15, 2018, which is a continuation of U.S. patent application Ser. No. 14/693,641 entitled "Systems, Environment and Methods for Identification and Analysis of Recurring Transitory Physiological States and Events Using a Portable Data Collection Device" filed Apr. 22, 2015 (now U.S. Pat. No. 9,936,916), which is a continuation-in-part of U.S. patent application Ser. No. 14/511,039 entitled "Systems, Environment and Methods for Evaluation and Management of Autism Spectrum Disorder using a Wearable Data Collection Device" filed Oct. 9, 2014 (now U.S. Pat. No. 10,405,786), which claims the benefit of priority of U.S. Provisional Application No. 61/888,531 entitled "A Method and Device to Provide Information Regarding Autism Spectrum Disorders" filed Oct. 9, 2013, and U.S. Provisional Application No. 61/943,727 entitled "Method, System, and Wearable Data Collection Device for Evaluation and Management of Autism Spectrum Disorder" filed Feb. 24, 2014. All above identified applications are hereby incorporated by reference in their entireties.

BACKGROUND

[0002] Autism probably begins in utero, and can be diagnosed at 4-6 months. However, right now in America, Autism is most often diagnosed at 4-6 years. The median diagnosis age in children with only 7 of the 12 classic Autism Spectrum Disorder symptoms is over 8. In these missed years, the child falls much further behind his or her peers than necessary. This tragedy is widespread, given that 1 in 42 boys is estimated to have Autism (1 in 68 children overall) (based upon U.S. Centers for Disease Control and Prevention, surveillance year 2010). Additionally there are few methods of managing or treating Autism, and almost no disease-modifying medical treatments. Why do these diagnosis and treatment gaps exist?

[0003] There is no blood test for autism. Nor is there a genetic, neural or physiological test. Astonishingly, the only way parents can know if their child has autism is to secure an appointment with multiple doctors (pediatrician, speech pathologist, perhaps neurologist) who observe the child playing and interacting with others, especially with the caregiver. This process is time-consuming, must be done during doctors' hours, is challenging and contains subjective components, varies by clinician, does not usually generate numerical data or closely quantified symptoms or behaviors, and demands resources, knowledge and access to the health system, all of which contribute to delayed diagnosis.

[0004] There are also social factors. A parent's suspicion that his/her child has autism generally takes time to grow, especially with the first child or in parents with little child experience (no frame of reference). Furthermore, the decision to seek help may be clouded by fear, doubt, denial, guilt, stigma, embarrassment, lack of knowledge, distrust of the medical system, and confusion. Once the decision is made, it can be a protracted, uphill battle to find the right care center and secure the screening appointment and a correct diagnosis. All these factors are amplified for at-risk families with low SES, low education level, language and cultural barriers, familial ASD; and in single-parent or dual job families. Time that passes before diagnosis reduces the child's social and emotional development, learning of language, and eventual level of function in society.

[0005] Even if the family surmounts various hurdles and comes in for an official diagnosis, hospital admission and the test environment can be daunting and unnatural, especially for those with language, cultural or SES barriers.

[0006] In this context, a shy child may seem autistic and an ASD child may completely shut down, especially since ASD children are particularly averse to changes in familiar settings and routines. Thus, the child may be diagnosed as further along the Autism spectrum than is the reality, and false diagnoses such as retardation may be attached. This has profound consequences in terms of what schooling options are available to the child, how the parents and community treat the child, and the relationship that gets set up between the parents and the healthcare system. Even in a friendly testing lab, clinicians cannot see the child play and interact exactly as he/she does in the familiar home environment, and can never see the child through the caregiver's eyes, nor see the world through the child's eyes. Importantly, there are no widely adopted systems for objectively quantifying behavioral markers or neural signals associated with ASD, especially at home.

[0007] Even when and if a diagnosis is achieved, there are few options available to the family (or to the school or health care giver) that quantify the degree of severity of the child's symptoms. Autism is a spectrum of course, and people with autism spectrum disorders have a range of characteristic symptoms and features, each to varying degrees of severity if at all. Measuring these and characterizing the overall disorder fingerprint for each person is an important advance for the initial characterization, as per above, but importantly this fingerprint is dynamic over time, especially in the context of attempted treatments and schooling options, so measuring the changing severity and nature of each feature is important. This is the tracking or progress-assessment framework. Additionally, perhaps one of the greatest unmet needs within ASD comes in terms of the treatment or training framework. That is to say, mechanisms for providing intervention of one kind or another that can have a disease-modifying or symptom-modifying impact. There are few options available to the families affected, and again, there are few options for rigorously quantifying the results.

SUMMARY

[0008] Various systems and methods described herein support anticipation and identification of adverse health events and/or atypical behavioral episodes such as Autistic behaviors, epileptic seizures, heart attack, stroke, and/or narcoleptic "sleep attacks" using a wearable data collection device. In another aspect, the systems, environment, and methods described herein support measurement of motions and vibrations associated with recurring transitory physiological states and events using a wearable data collection device.

[0009] In one aspect, the present disclosure relates to systems and methods developed to better track, quantify, and educate an individual with an unwellness condition or neurological development challenge. In some embodiments, certain systems and methods described herein monitor and analyze an individual's behaviors and/or physiology. The analysis, for example, may identify recurring transient physiological states or events. For example, motion and vibration measurements may be analyzed to identify pronounced head motion patterns indicative of specific heart defects, neurodegenerative conditions, inner ear or other balance problems, or types of cardiac disease. During the pulse cycle, for example, blockages of the atrium may cause a particular style of motion, while blockages of the ventricle may cause a different particular style of motion (e.g., back and forth vs. side-to-side, etc.). Vestibular inner ear issues, for example as a result of a percussive injury such as a blast injury disrupting inner ear physiology, can lead to poor balance and balance perception, resulting in measurable tilt and head motion. In another example, motion and vibration measurements may be analyzed to identify slow-wave changes indicative of temporary anomalous states such as intoxication, fatigue, and/or narcotic ingestion as well as temporary or periodic normal events, such as ovulation, pregnancy, and sexual arousal.
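As one illustration of how motion measurements might be reduced to a directional pattern such as back and forth versus side-to-side, the sketch below finds the axis that carries most of the variance in a short run of three-axis head motion samples. It is a minimal sketch under stated assumptions, not the analysis described in this disclosure: the function name `dominant_motion_axis`, the (x, y, z) sample layout, and any mapping of an axis to a named direction are hypothetical.

```python
from statistics import pvariance


def dominant_motion_axis(samples):
    """Return (axis_name, share_of_total_variance) for (x, y, z) samples.

    Hypothetical helper for illustration only. Which axis corresponds to
    side-to-side or back-and-forth motion depends on sensor mounting and is
    an assumption left to the caller.
    """
    if not samples:
        raise ValueError("at least one sample is required")
    names = ("x", "y", "z")
    # Population variance per axis measures how much motion each axis carries.
    variances = {
        name: pvariance([sample[index] for sample in samples])
        for index, name in enumerate(names)
    }
    total = sum(variances.values())
    if total == 0:
        # No motion at all: there is no dominant axis to report.
        return None, 0.0
    best = max(variances, key=variances.get)
    return best, variances[best] / total
```

A caller could, for example, treat a high variance share on one axis as a candidate directional pattern and pass it to later analysis stages; the cutoff for "high" would need to be chosen empirically.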

[0010] A slow-wave change can be measurable over a lengthier period of time such as a day, series of days, week(s), month(s), or even year. Mean activity, for example, may be affected by time of the day and/or time of the year. The motions, for example, may include small eye motions, heart rate, mean heart variability, respiration, etc. Any of these systemic motions may become dysregulated and demonstrate anomalies. Certain systems and methods described herein, in some embodiments, provide assistance to the individual based upon analysis of data obtained through monitoring.
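A slow-wave change of this kind could be flagged, in one simple form, by comparing the mean of a recent window of daily measurements against a longer preceding baseline. The following is a minimal sketch assuming one summary value per day (for example, mean daily heart rate); the function name `detect_slow_wave_change`, the 28-day and 7-day windows, and the z-score threshold are hypothetical choices and do not come from this disclosure.

```python
from statistics import mean, stdev


def detect_slow_wave_change(daily_values, baseline_days=28, recent_days=7,
                            z_threshold=2.0):
    """Return True if the recent mean drifts away from the baseline mean.

    Hypothetical illustration: window lengths and threshold are assumptions.
    """
    needed = baseline_days + recent_days
    if baseline_days < 2 or recent_days < 1:
        raise ValueError("baseline_days must be >= 2 and recent_days >= 1")
    if len(daily_values) < needed:
        raise ValueError("not enough daily values for both windows")
    # Baseline is the block of days immediately before the recent window.
    baseline = daily_values[-needed:-recent_days]
    recent = daily_values[-recent_days:]
    base_mean = mean(baseline)
    base_sd = stdev(baseline)
    shift = mean(recent) - base_mean
    if base_sd == 0:
        # A perfectly flat baseline: any shift at all counts as a change.
        return shift != 0
    # Standard error of a mean over recent_days samples drawn from the baseline.
    z_score = shift / (base_sd / recent_days ** 0.5)
    return abs(z_score) > z_threshold
```

Longer windows make the check less sensitive to day-to-day noise at the cost of slower detection, so the window lengths would need tuning to the time scale of the state being tracked.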

[0011] In one aspect, motion signatures may be derived from a baseline activity signature particular to an individual or group of individuals, such as a common gait, customary movements during driving, or customary movements while maintaining a relaxed standing position. In relation to a group of individuals, for example, the group may contemplate similar physiological disabilities, genetic backgrounds (e.g., family members), sex, age, race, size, sensory sensitivity profiles (e.g., auditory vs. visual vs. haptic, etc.), responsiveness to pharmaceuticals, behavioral therapies, and/or other interventions, and/or types of digestive problems.

[0012] In one aspect, the present disclosure relates to systems and methods for inexpensive, non-invasive measuring and monitoring of breathing, heart rate, and/or cardiovascular dynamics using a portable or wearable data collection device. Breathing, heart rate, and/or cardiovascular dynamics, in one aspect, may be derived through analysis of a variety of motion sensor data and/or small noise data. It is advantageous to be able to measure heart rate and cardiovascular dynamics as non-invasively as possible. For instance, the ability to avoid electrodes, especially electrodes that must be adhered or otherwise attached to the skin, is in most situations preferable, particularly for children who do not like extraneous sensory stimulus on their skin. It is also advantageous to be able to derive, from a non-invasive signal, additional cardiovascular dynamics beyond simply heart rate, such as dynamics that may indicate unwellness and which may usually require multi-lead ECG setups and complex analysis.

[0013] In some embodiments, a wearable data collection device including one or more motion sensors and/or electromagnetic sensors capable of discerning small motions of the body and/or one or more microphones capable of discerning small noises of the body is placed comfortably and removably on an individual without need for gels or adhesives. In a further example, the wearable data collection device may include one or more imaging sensors for capturing a time series of images or video imagery. The time progression of image data may be analyzed to identify small motions attributable to the wearer. The wearable data collection device may be a device specifically designed to measure and monitor cardiovascular dynamics of the body or a more general purpose personal wearable computing device capable of executing a software application for analyzing small motion data (e.g., motion sensor data, audio data, electromagnetic data, and/or small noise data) to obtain physiological characteristics such as cardiovascular dynamics data or a biometric signature pattern.
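To make the idea of deriving a physiological characteristic from small motion data concrete, the sketch below estimates a heart rate from a single axis of motion samples by searching for the dominant repetition period with autocorrelation. Autocorrelation is a technique substituted here for illustration; the disclosure does not state that it is the method used. The function name `estimate_heart_rate_bpm`, the 40 to 180 beats-per-minute search range, and the assumption of a clean, evenly sampled signal are all hypothetical.

```python
def estimate_heart_rate_bpm(samples, sample_rate_hz, min_bpm=40.0, max_bpm=180.0):
    """Estimate beats per minute from evenly sampled small-motion data.

    Hypothetical illustration: picks the lag (in samples) with the highest
    autocorrelation inside the plausible heart-rate range.
    """
    n = len(samples)
    # Convert the plausible heart-rate range into a range of lags in samples.
    min_lag = max(1, int(sample_rate_hz * 60.0 / max_bpm))
    max_lag = int(sample_rate_hz * 60.0 / min_bpm)
    if n <= 2 * max_lag:
        raise ValueError("need more than two of the slowest beat periods of data")
    # Remove the constant offset (for example, gravity on an accelerometer axis).
    average = sum(samples) / n
    centered = [value - average for value in samples]
    best_lag, best_score = min_lag, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        overlap = n - lag
        # Mean product of the signal with a copy of itself shifted by `lag`.
        score = sum(centered[i] * centered[i + lag] for i in range(overlap)) / overlap
        if score > best_score:
            best_lag, best_score = lag, score
    return 60.0 * sample_rate_hz / best_lag
```

Real head-motion data would likely need band-pass filtering and artifact rejection before a step like this, and the resolution of the estimate is limited by the sample rate because lags are whole numbers of samples.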

[0014] In some implementations, the system goes beyond the evaluation stage to track an individual's ongoing progress. The system, for example, could provide high-frequency (e.g., up to daily) assessments, each with perhaps hundreds or thousands or more data points or samples such as, in some examples, assessments of chronic anomalous physiological states and events (e.g., balance problems, Autistic behaviors, slow-wave changes indicative of unwellness conditions, and small head motion patterns indicative of unwellness conditions), assessments of chronic and normal physiological events (e.g., heart rate, breathing, etc.), and assessments of temporary anomalous events (e.g., heart attack, stroke, seizure, falls, etc.). Assessments can be incorporated into the individual's everyday home life to measure the individual's ongoing progress (e.g., symptom management, condition progress, etc.).

[0015] To enable such ongoing assessment as well as to support the training and education of an individual with a neurological development disorder or unwellness condition, in some implementations, applications for use with a portable computing device or wearable data collection device may be made available for download to or streaming on the wearable data collection device via a network-accessible content store such as iTunes® by Apple, Inc. of Cupertino, Calif. or Google Play™ store by Google Inc. of Mountain View, Calif., or YouTube™ by Google Inc. or other content repositories, or other content collections. Content providers, in some examples, can include educators, clinicians, physicians, and/or parents supplied with development abilities to build new modules for execution on the wearable data collection device evaluation and progress tracking system. Content can range in nature from simple text, images, or video content or the like, to fully elaborated software applications ("apps") or app suites. Content can be stand-alone, can be playable on a wearable data-collection device based on its existing capabilities to play content (such as in-built ability to display text, images, videos, apps, etc., and to collect data), or can be played or deployed within a content-enabling framework or platform application that is designed to incorporate content from content providers. Content consumers, furthermore, can include individuals diagnosed with a particular unwellness condition or their families as well as clinicians, physicians, and/or educators who wish to incorporate system modules into their professional practices.

[0016] In some implementations, in addition to assessment, one or more modules of the system provide training mechanisms for supporting the individual's coping and development with an unwellness condition and its characteristics. In the aspect of a balance problem such as inner ear damage, a balance coaching training mechanism may be used to accurately compensate for the effects of the vestibular system damage through correction and feedback. In the aspect of ASD, training mechanisms may include, in some examples, training mechanisms to assist in recognition of emotional states of others, social eye contact, language learning, language use and motivation for instance in social contexts, identifying socially relevant events and acting on them appropriately, regulating vocalizations, regulating overt inappropriate behaviors and acting-out, regulating temper and mood, regulating stimming and similar behaviors, coping with sensory input and aversive sensory feelings such as overload, and among several other things, the learning of abstract categories.

BRIEF DESCRIPTION OF THE FIGURES

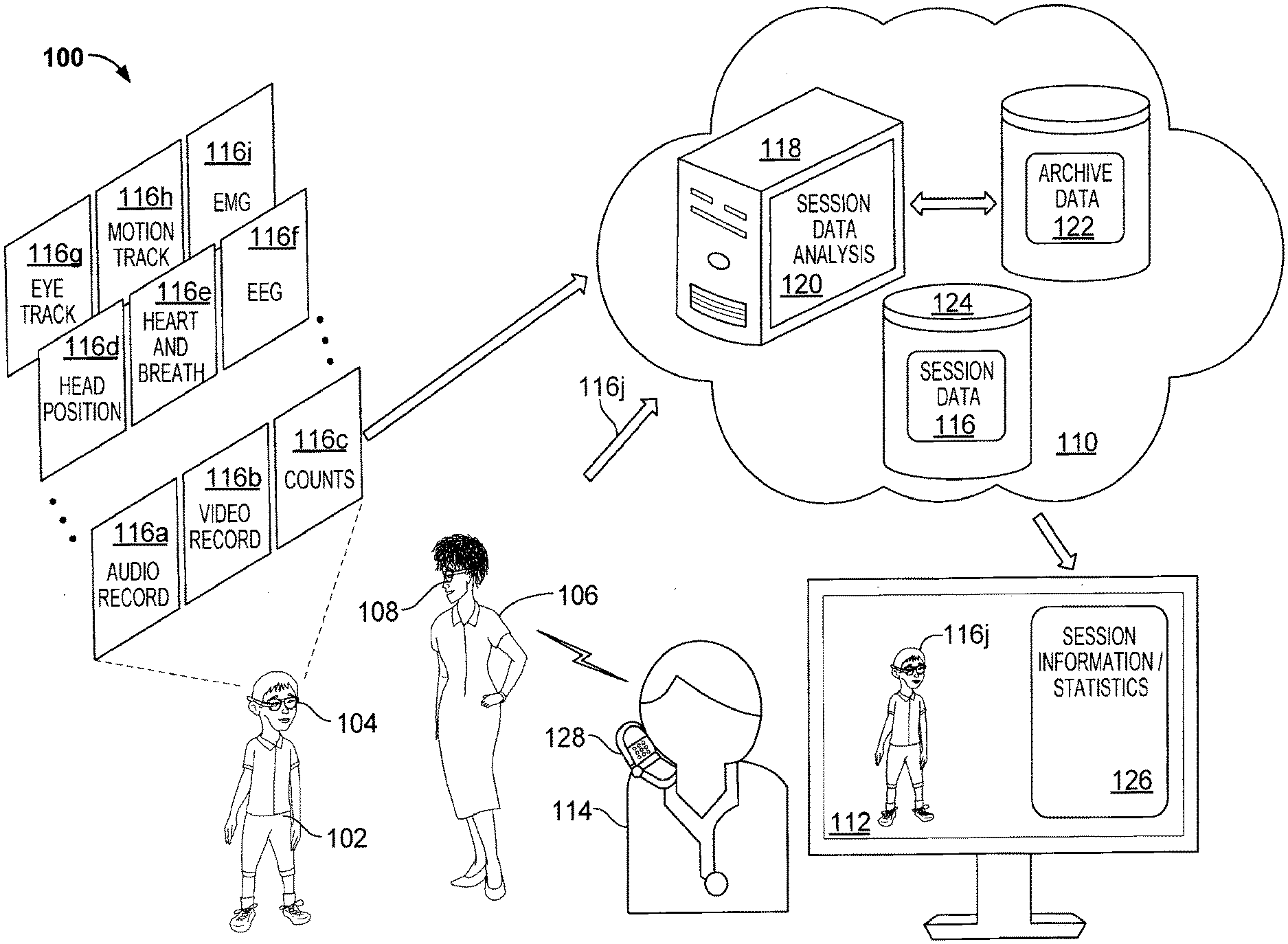

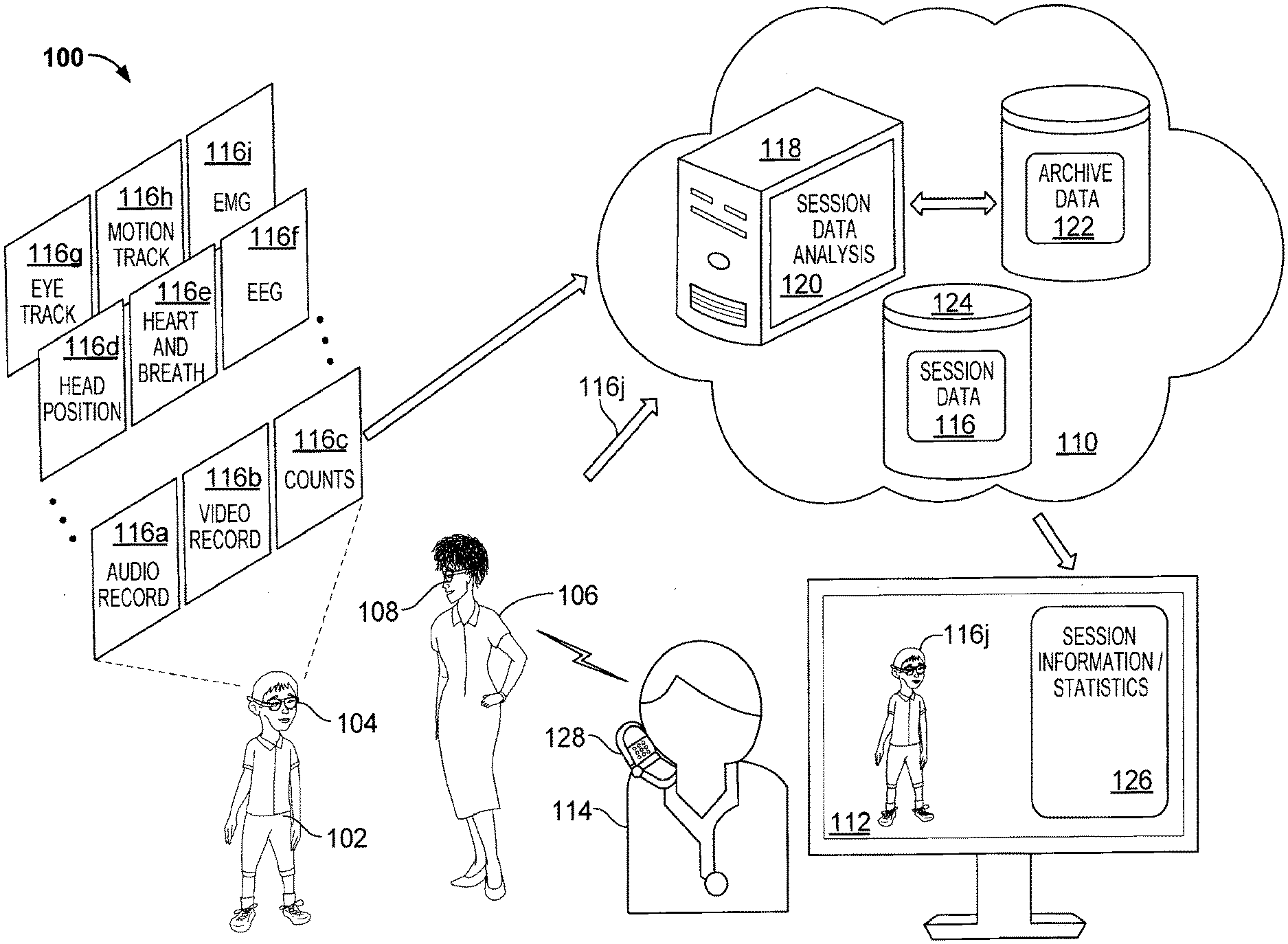

[0017] FIG. 1A is a block diagram of an example environment for evaluating an individual for Autism Spectrum Disorder using a wearable data collection device;

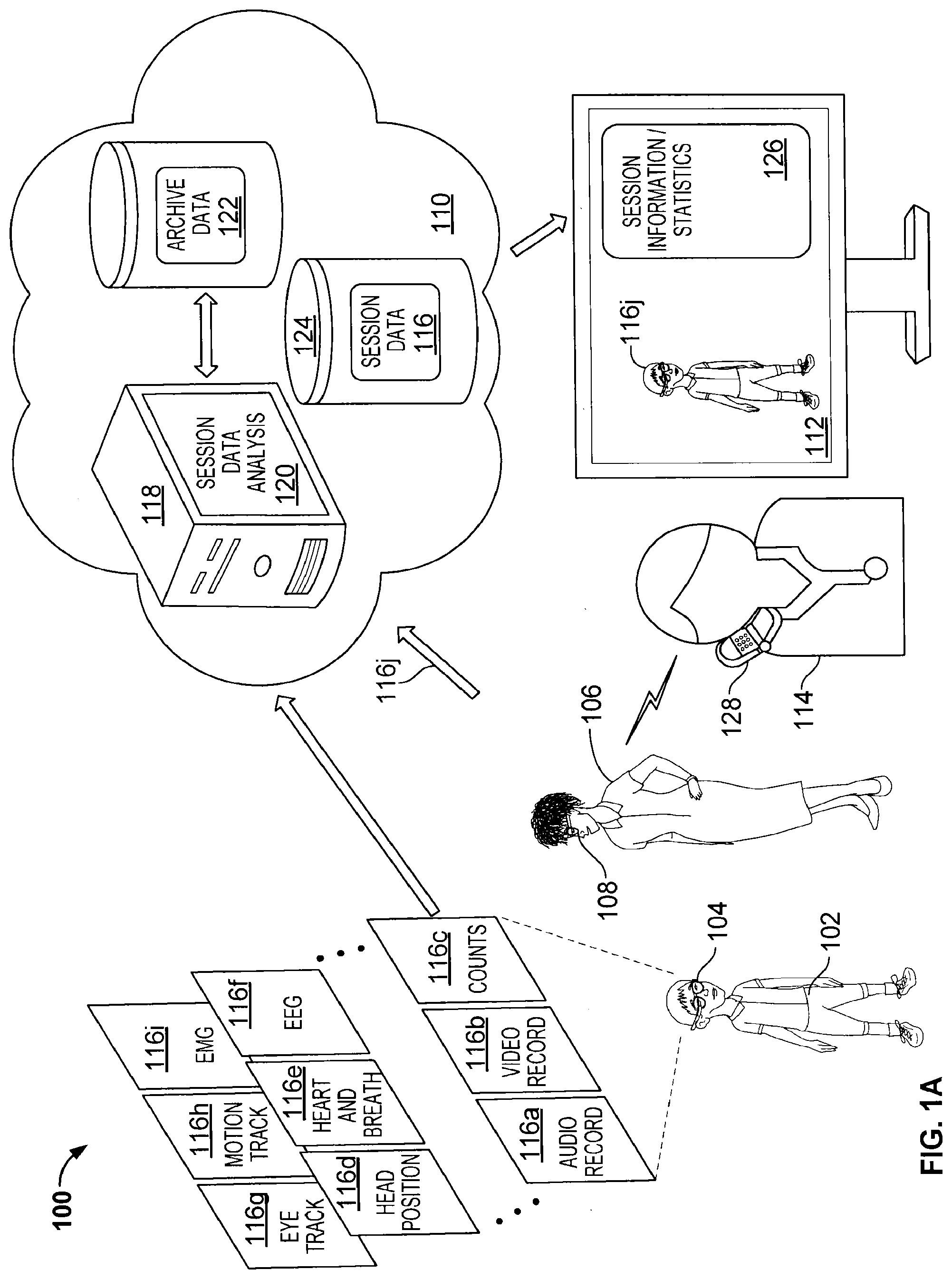

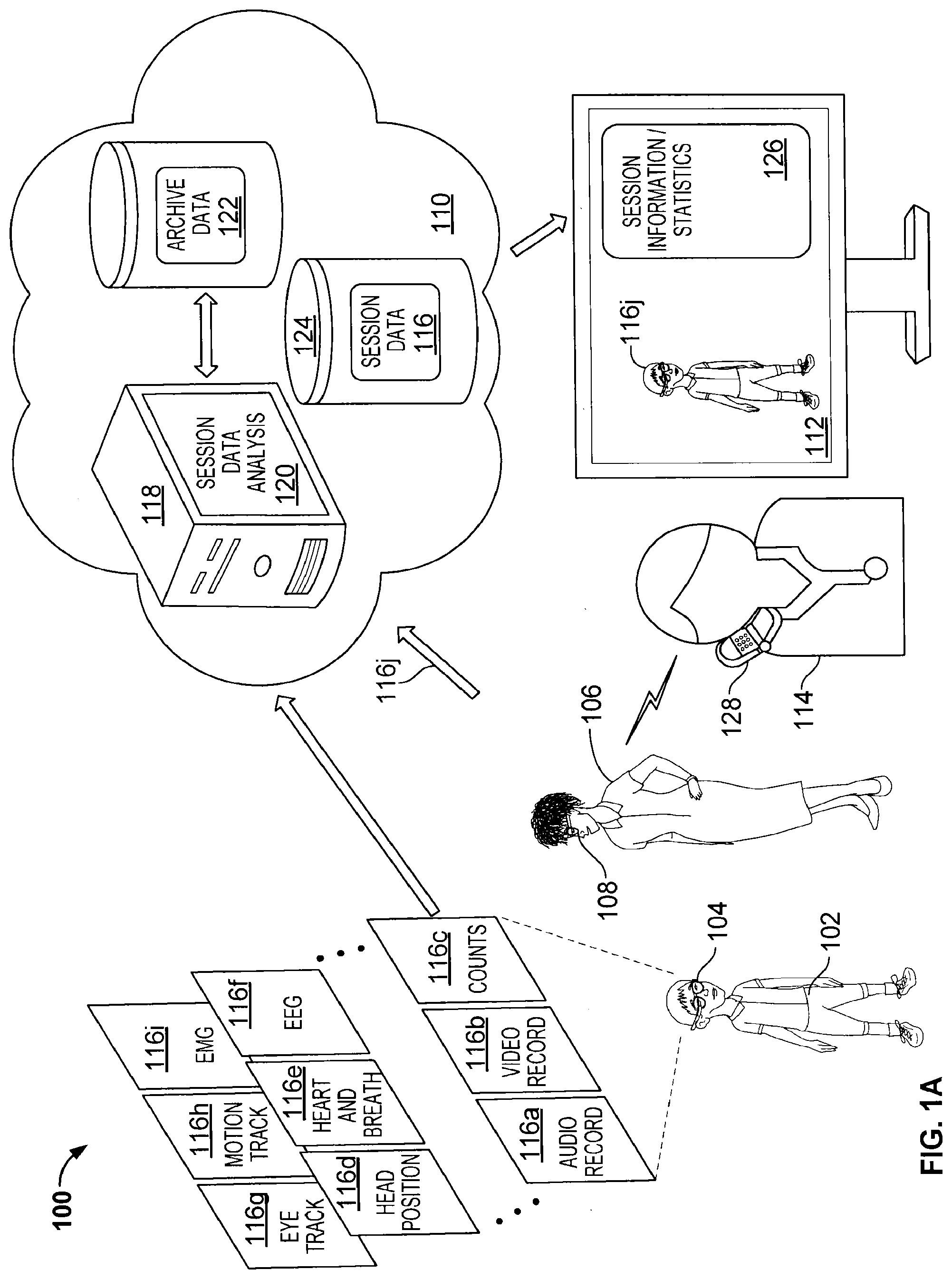

[0018] FIG. 1B is a block diagram of an example system for evaluation and training of an individual using a wearable data collection device;

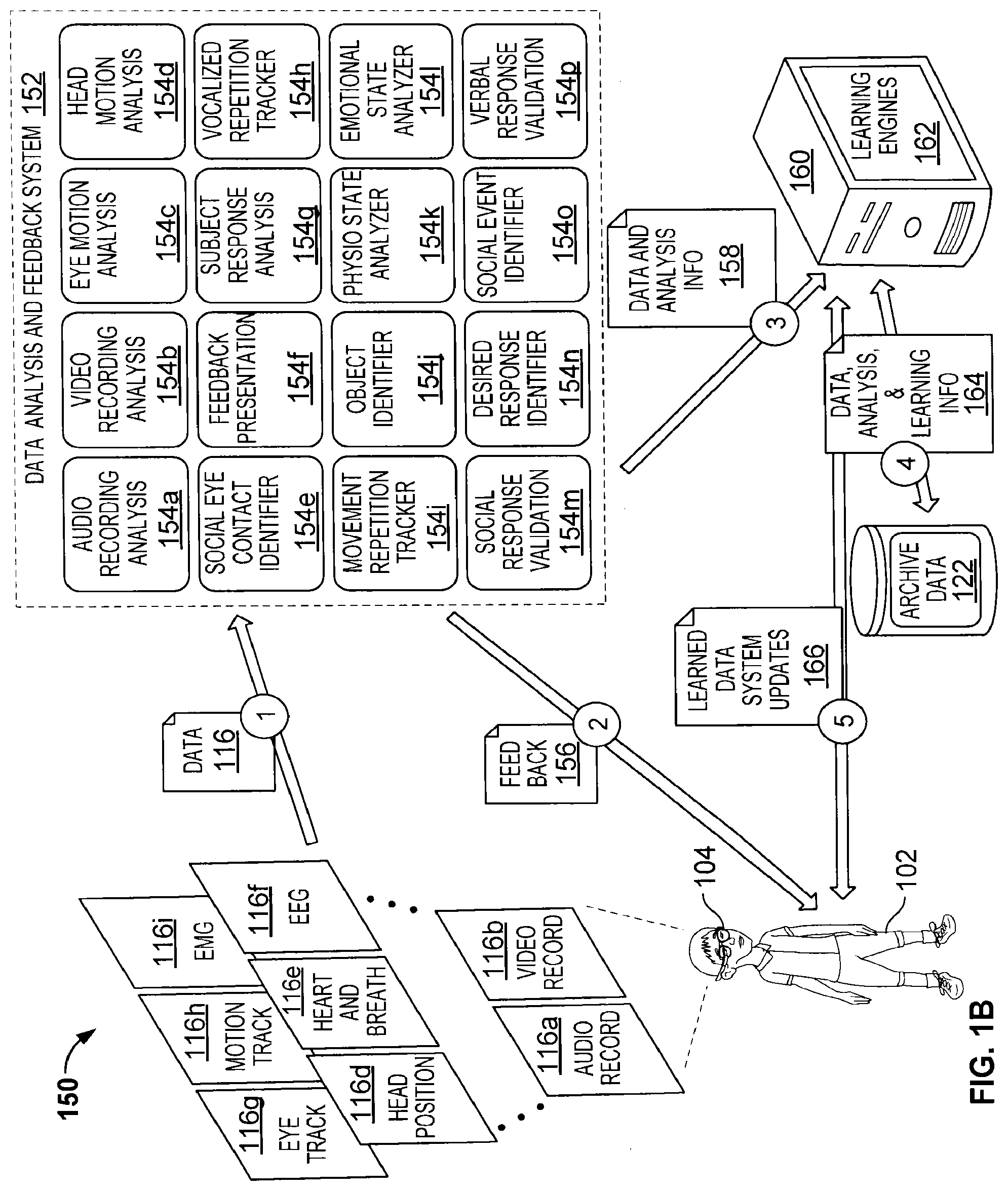

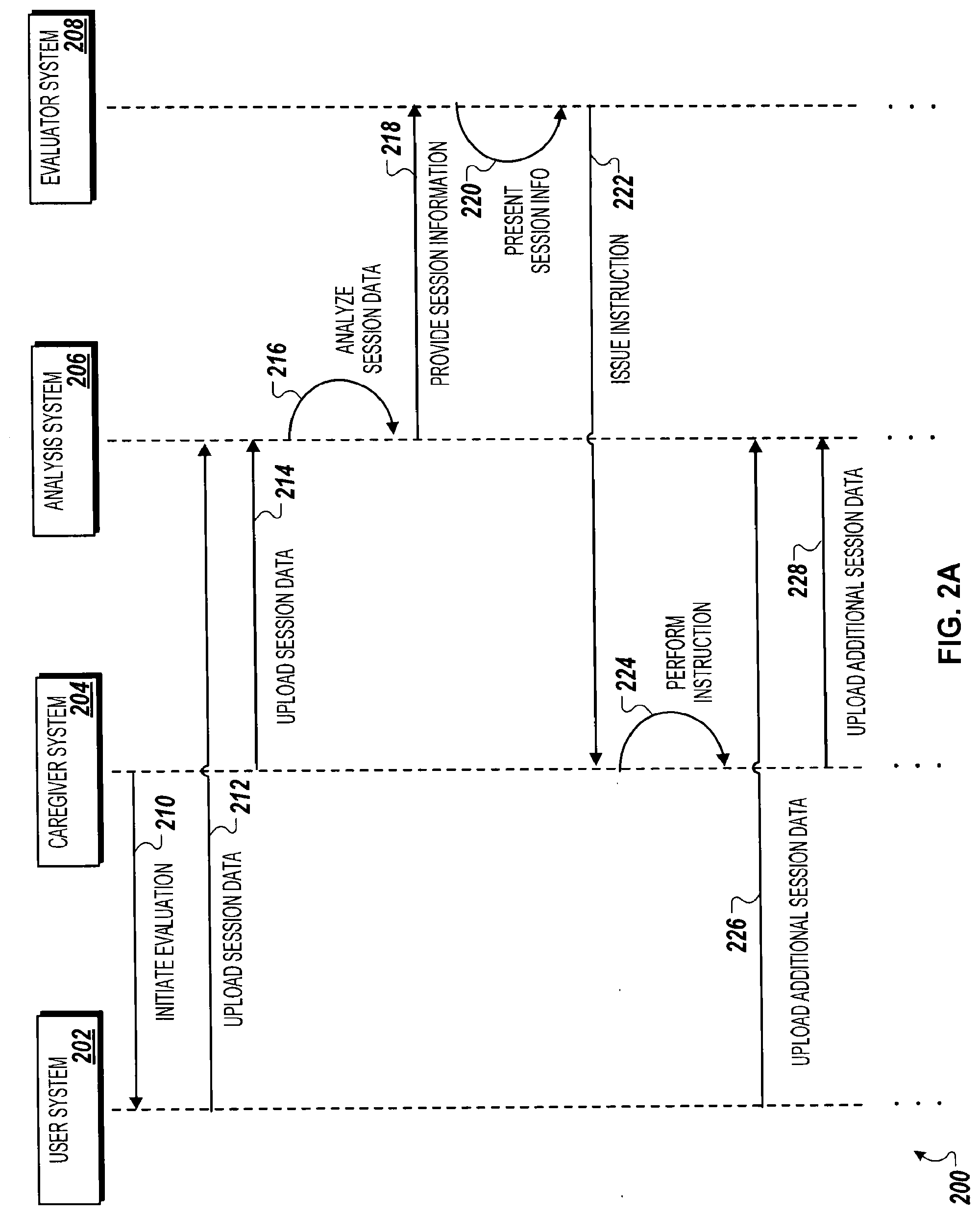

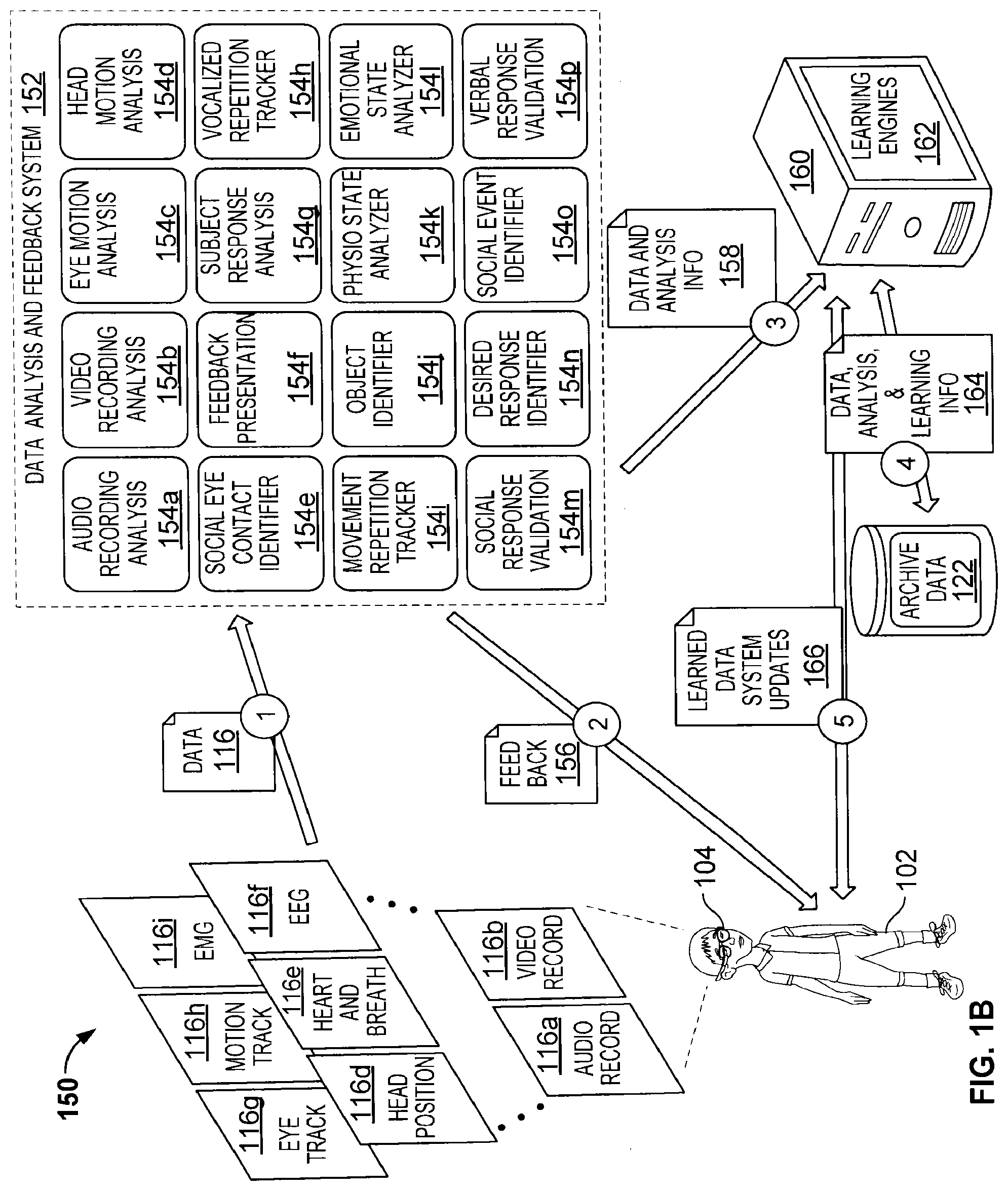

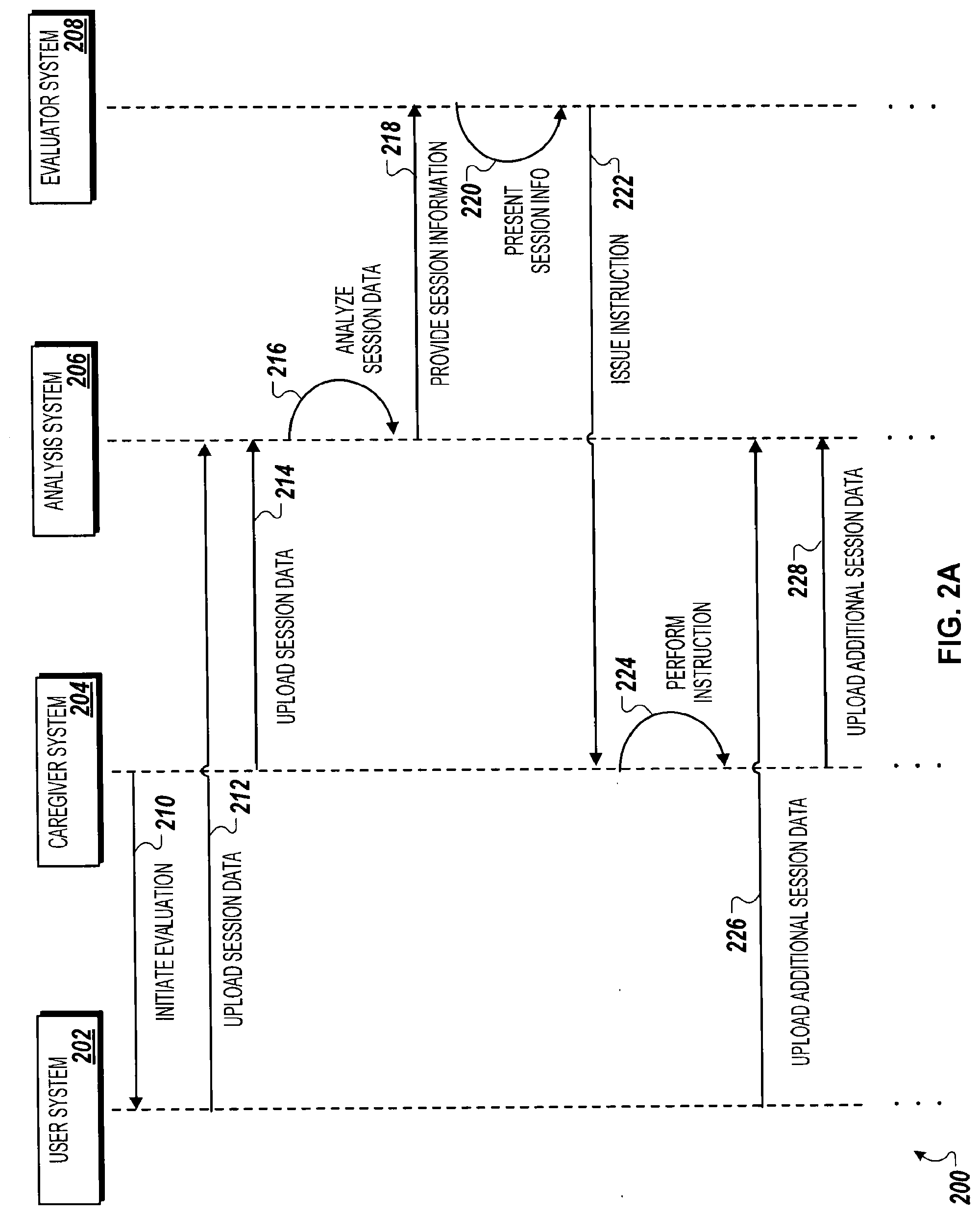

[0019] FIGS. 2A and 2B are a swim lane diagram of an example method for performing a remote evaluation of an individual using a wearable data collection device;

[0020] FIG. 3A is a block diagram of an example computing system for training and feedback software modules incorporating data derived by a wearable data collection device;

[0021] FIG. 3B is a block diagram of an example computing system for analyzing and statistically learning from data collected through wearable data collection devices;

[0022] FIG. 4 is a flow chart of an example method for conducting an evaluation session using a wearable data collection device donned by a caregiver of an individual being evaluated for Autism Spectrum Disorder;

[0023] FIG. 5A is a block diagram of an example environment for augmented reality learning using a wearable data collection device;

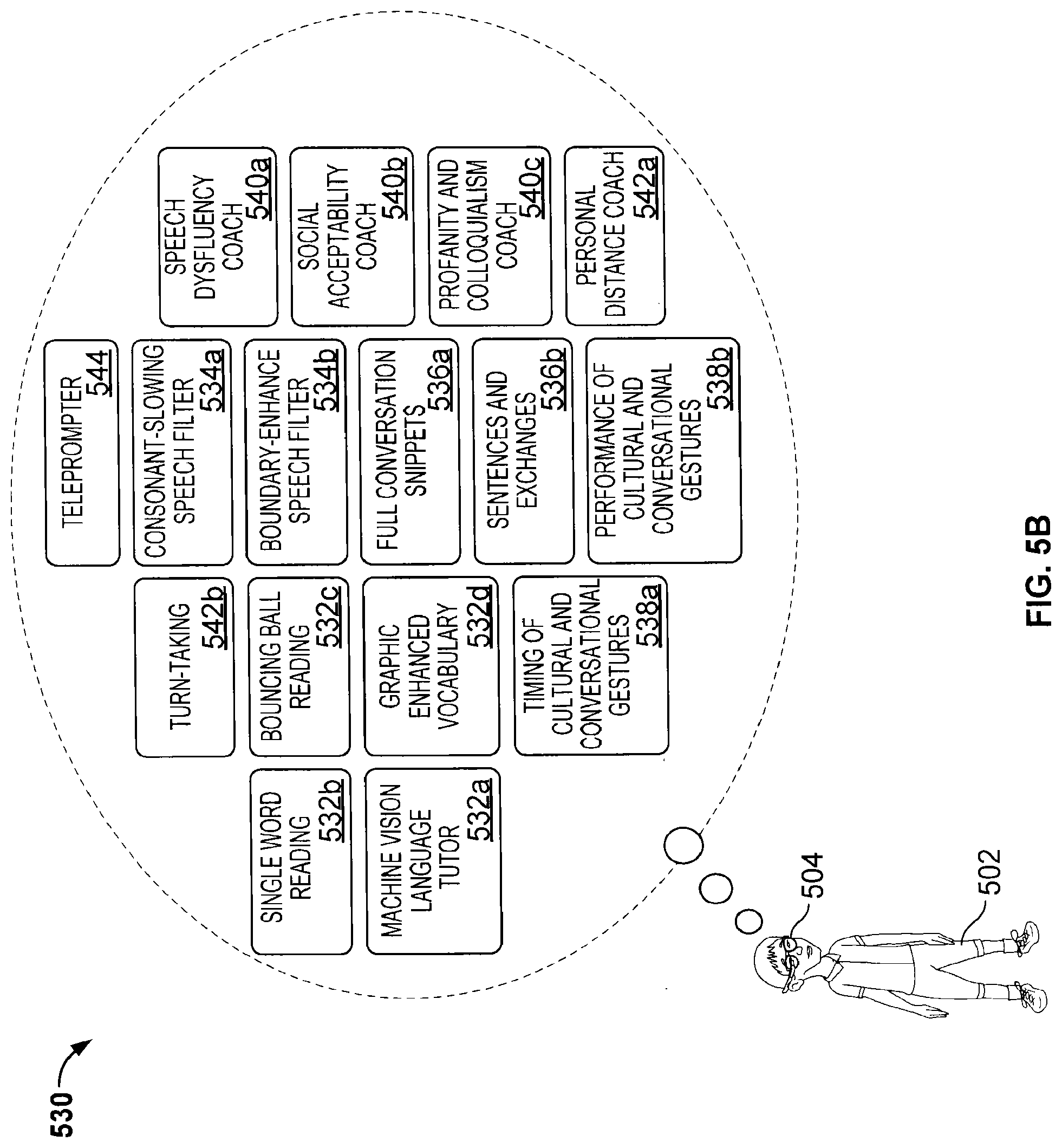

[0024] FIG. 5B is a block diagram of an example collection of software algorithms or modules for implementing language and communication skill training, assessment, and coaching using a wearable data collection device;

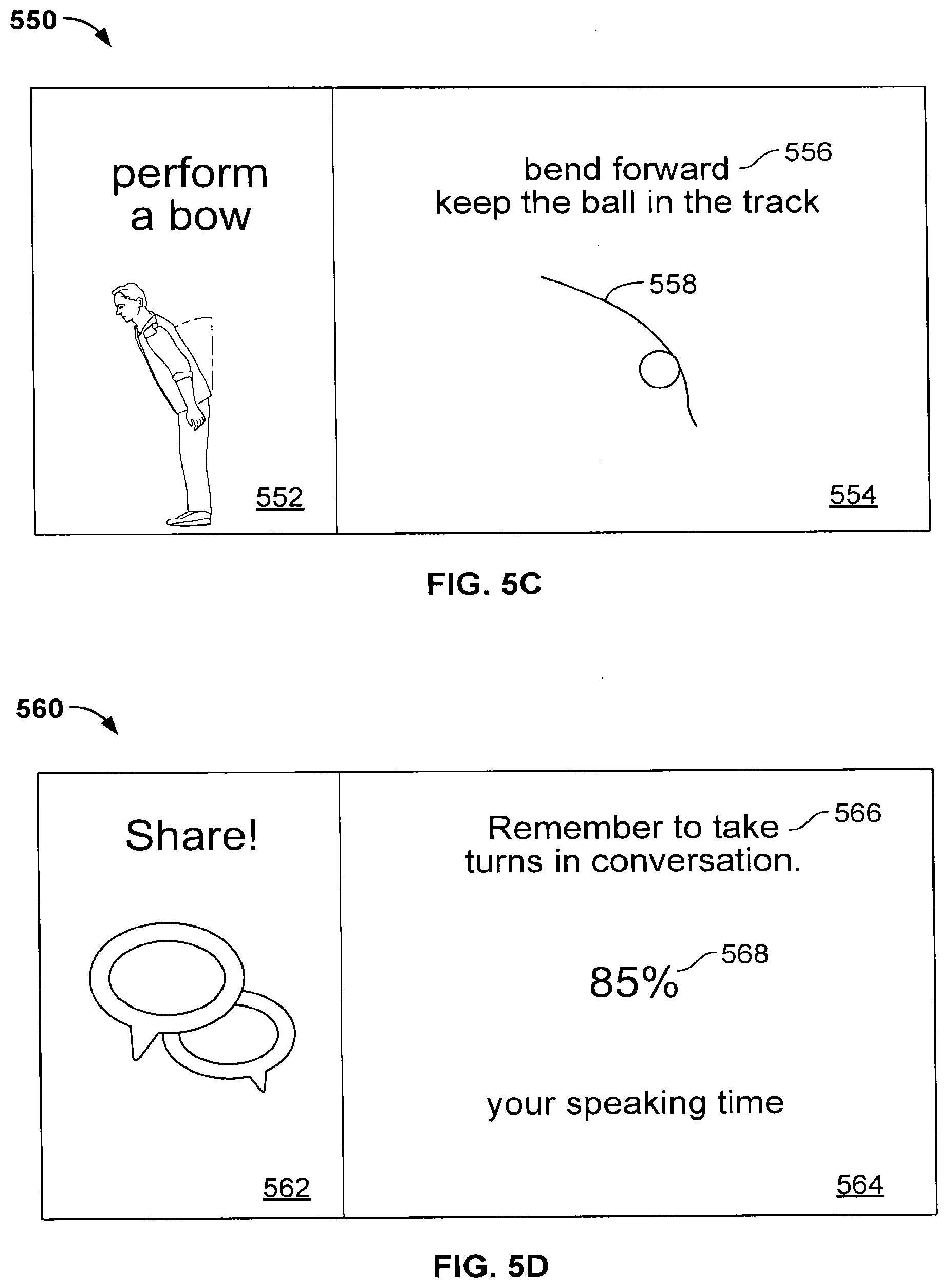

[0025] FIG. 5C is a screen shot of an example display for coaching a user in performing a bow;

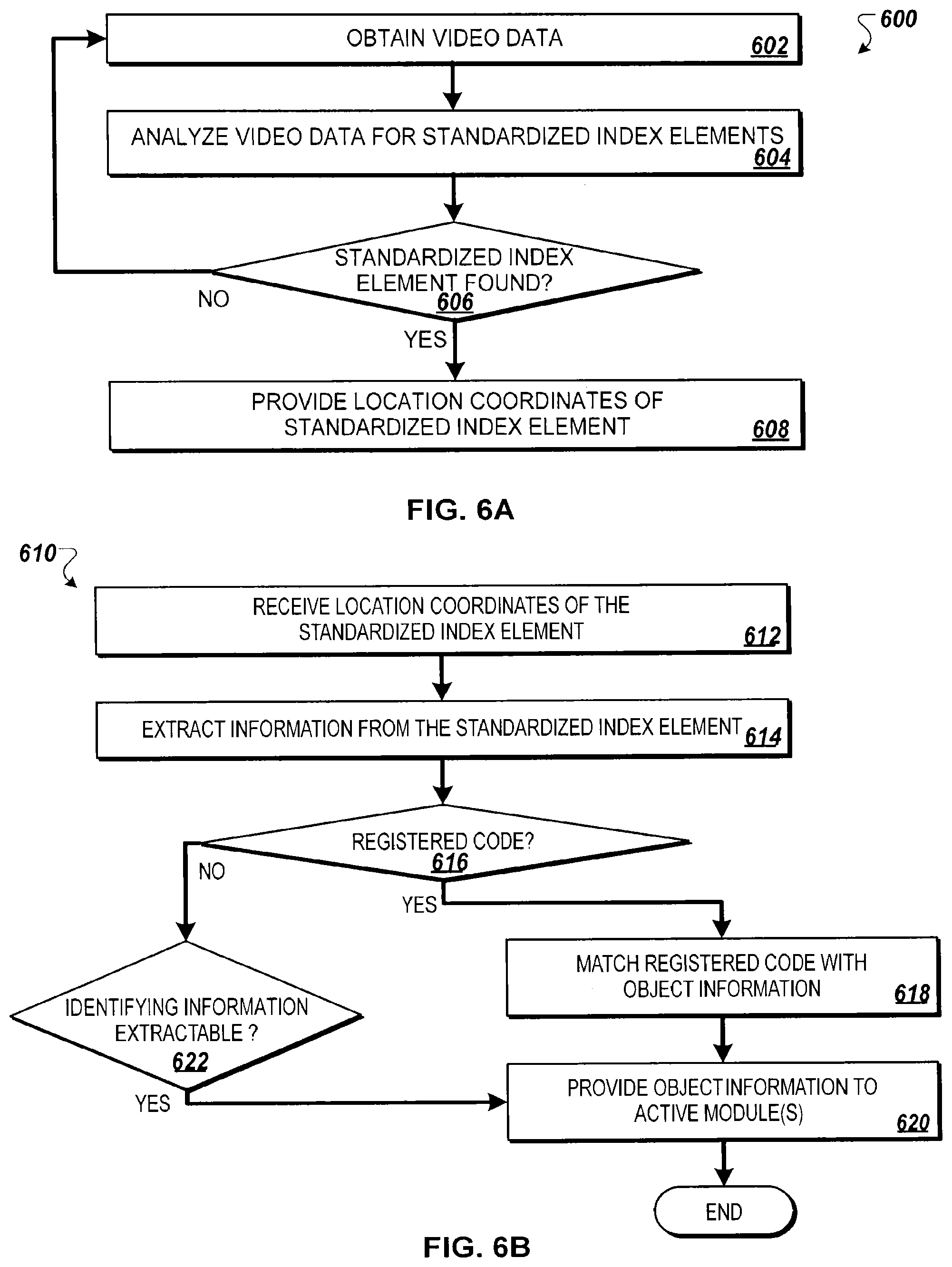

[0026] FIG. 5D is a screen shot of an example display for providing conversation skill feedback to a user;

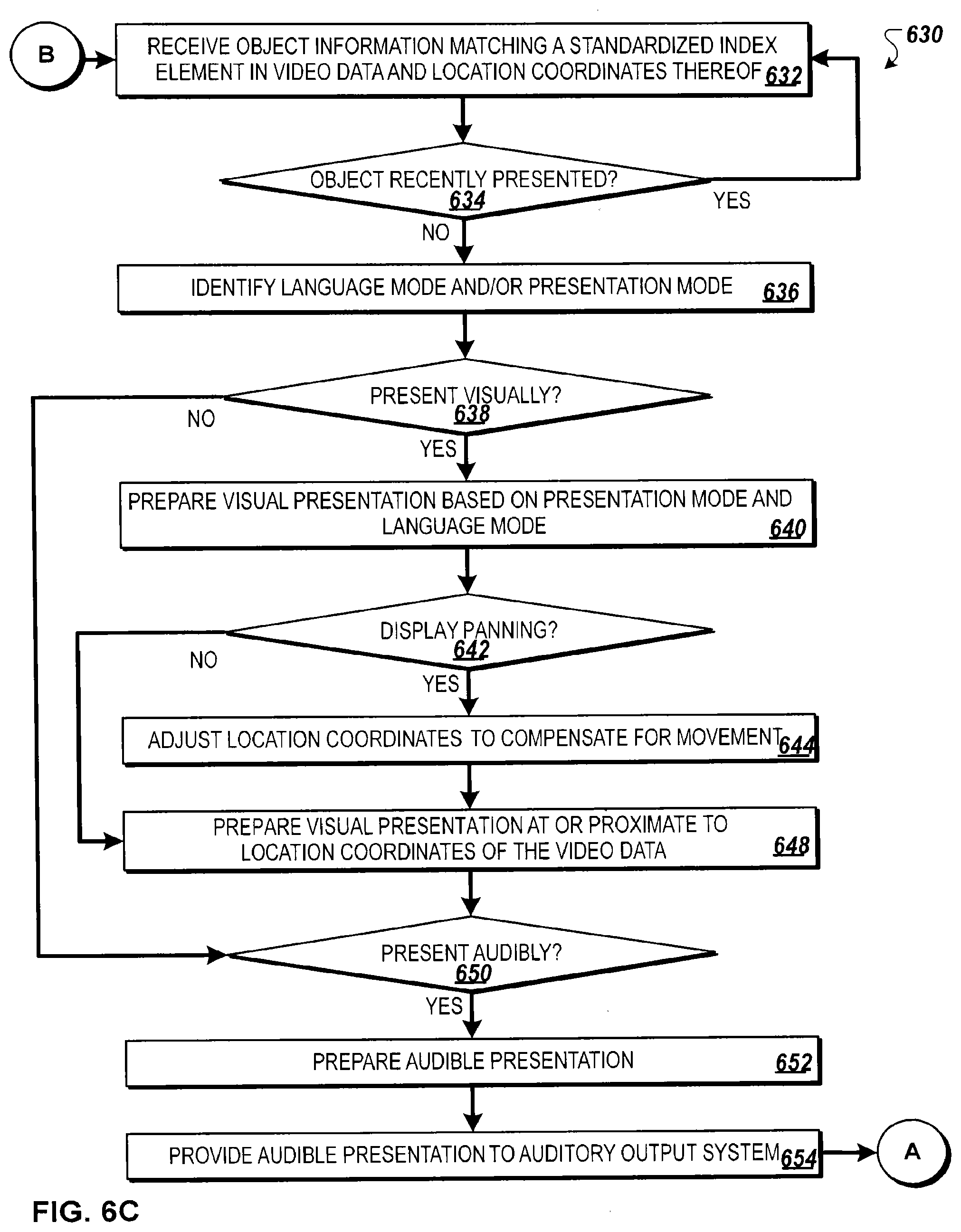

[0027] FIGS. 6A through 6D illustrate a flow chart of an example method for augmented reality learning using a wearable data collection device;

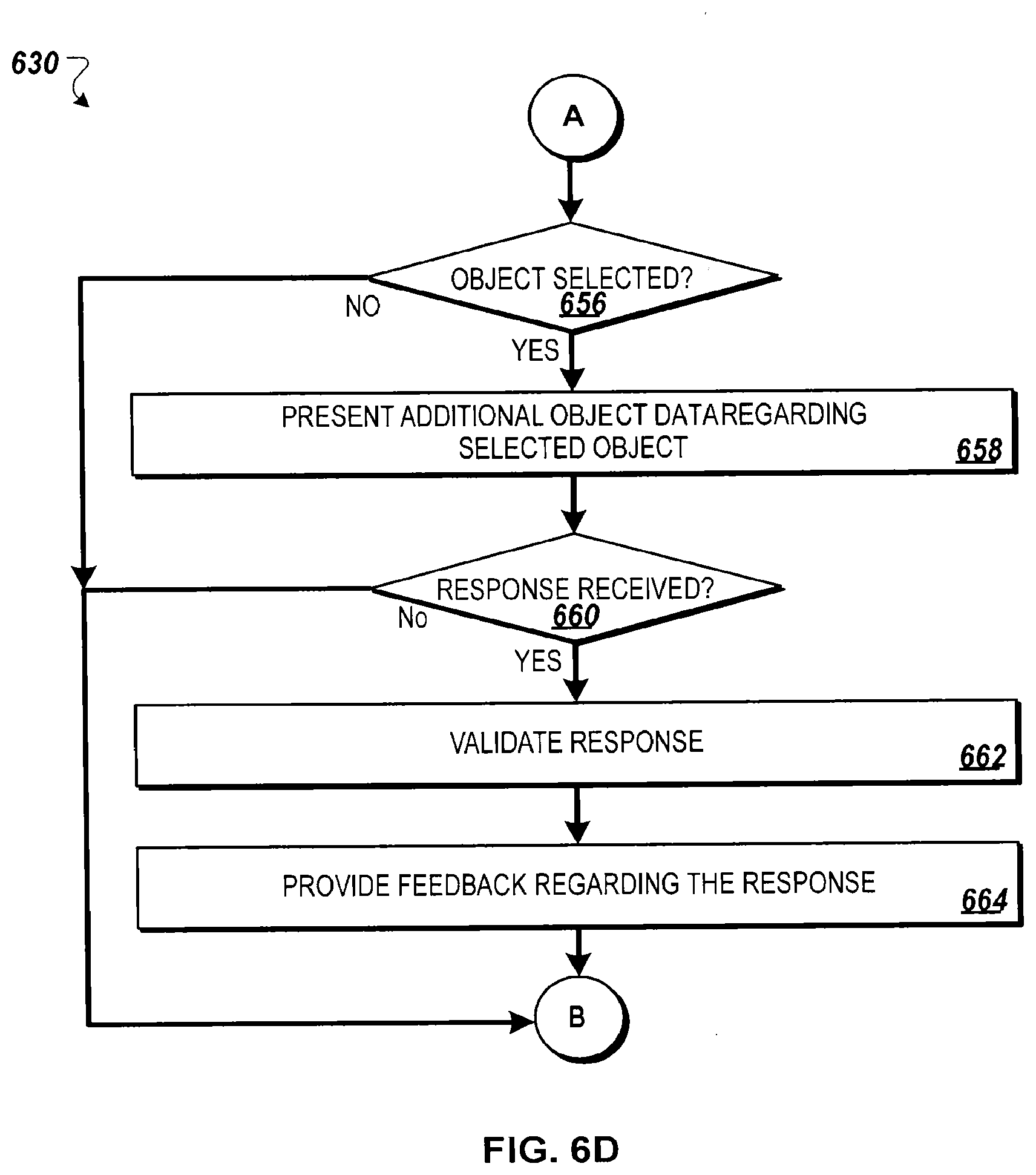

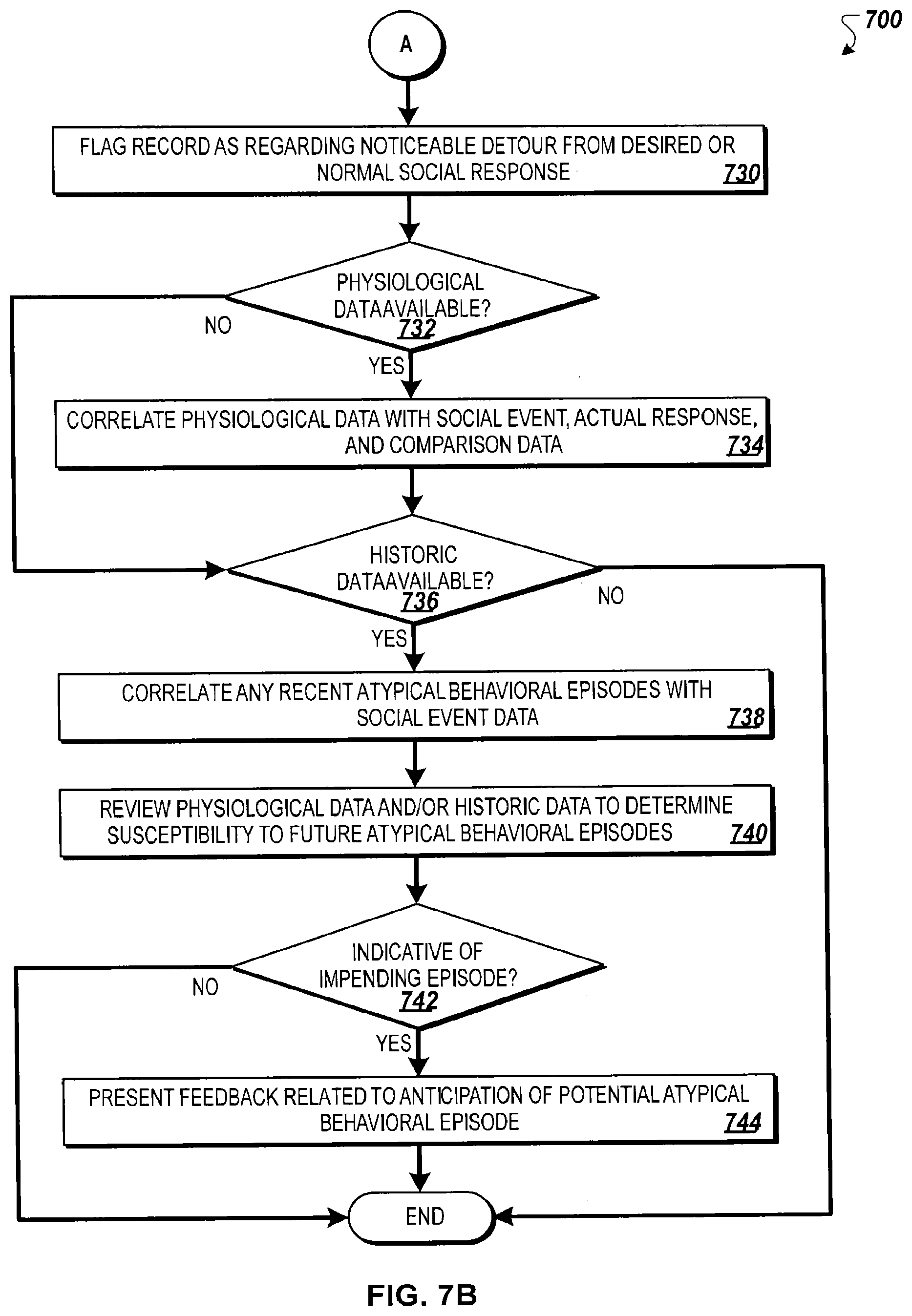

[0028] FIGS. 7A through 7C illustrate a flow chart of an example method for identifying socially relevant events and collecting information regarding the response of an individual to socially relevant events;

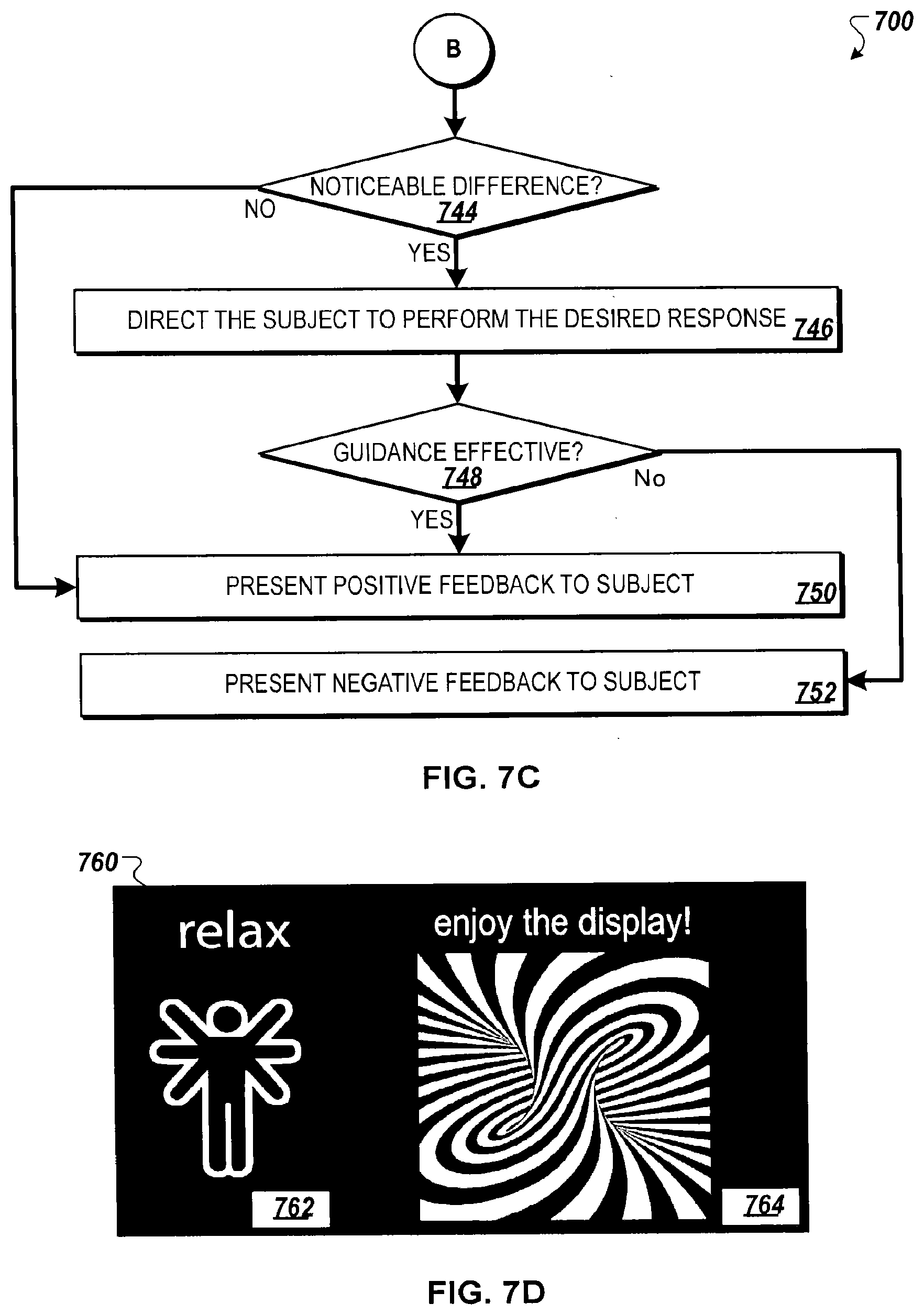

[0029] FIG. 7D illustrates a screen shot of an example feedback display for suggesting an intervention to a user;

[0030] FIG. 8 is a flow chart of an example method for conditioning social eye contact response through augmented reality using a wearable data collection device;

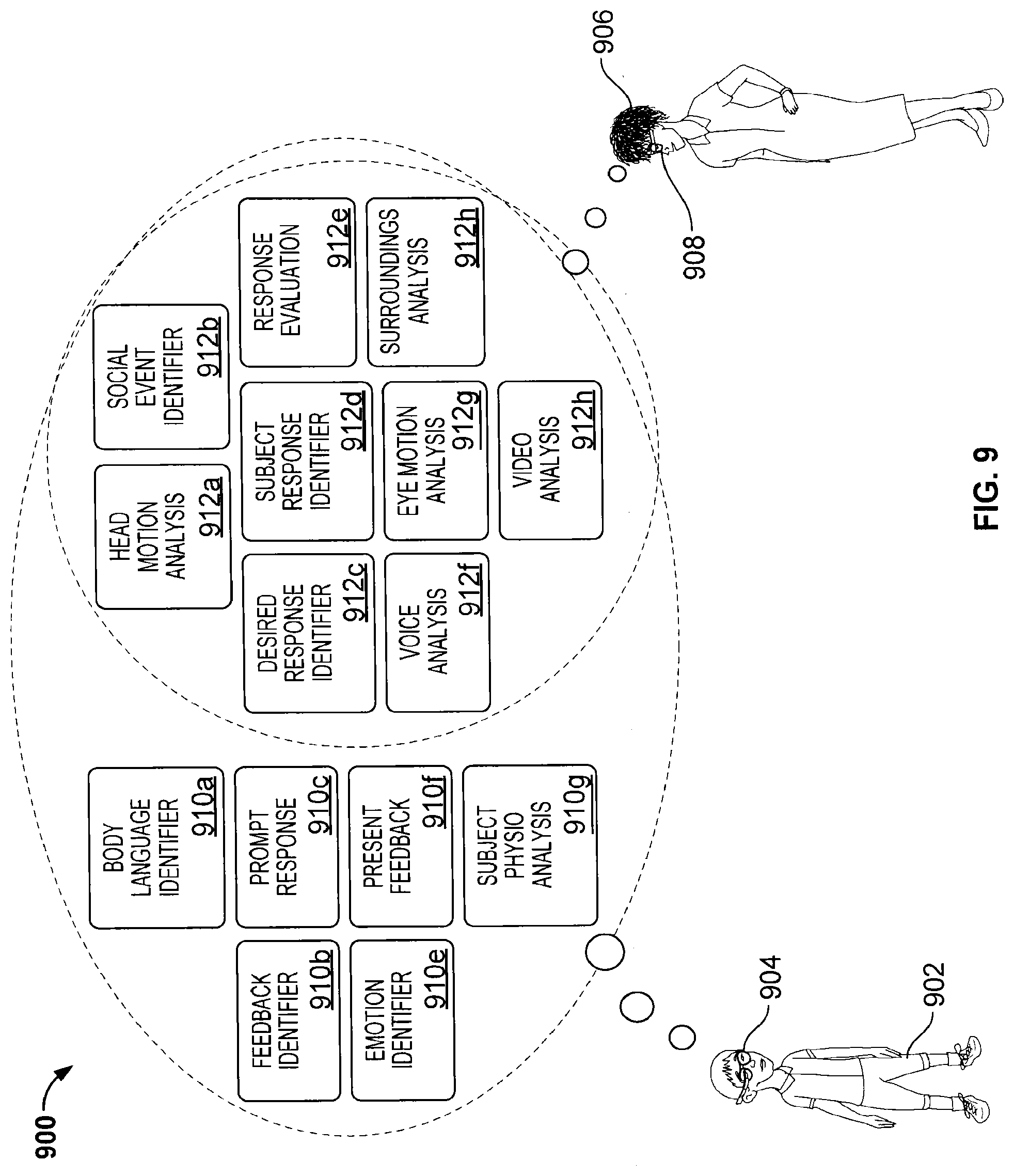

[0031] FIG. 9 is a block diagram of an example collection of software algorithms for implementing identification of and gauging reaction to socially relevant events;

[0032] FIG. 10A is a flow chart of an example method for identifying and presenting information regarding emotional states of individuals near an individual;

[0033] FIGS. 10B and 10C are screen shots of example user interfaces for identifying and presenting information regarding emotional states of an individual based upon facial expression;

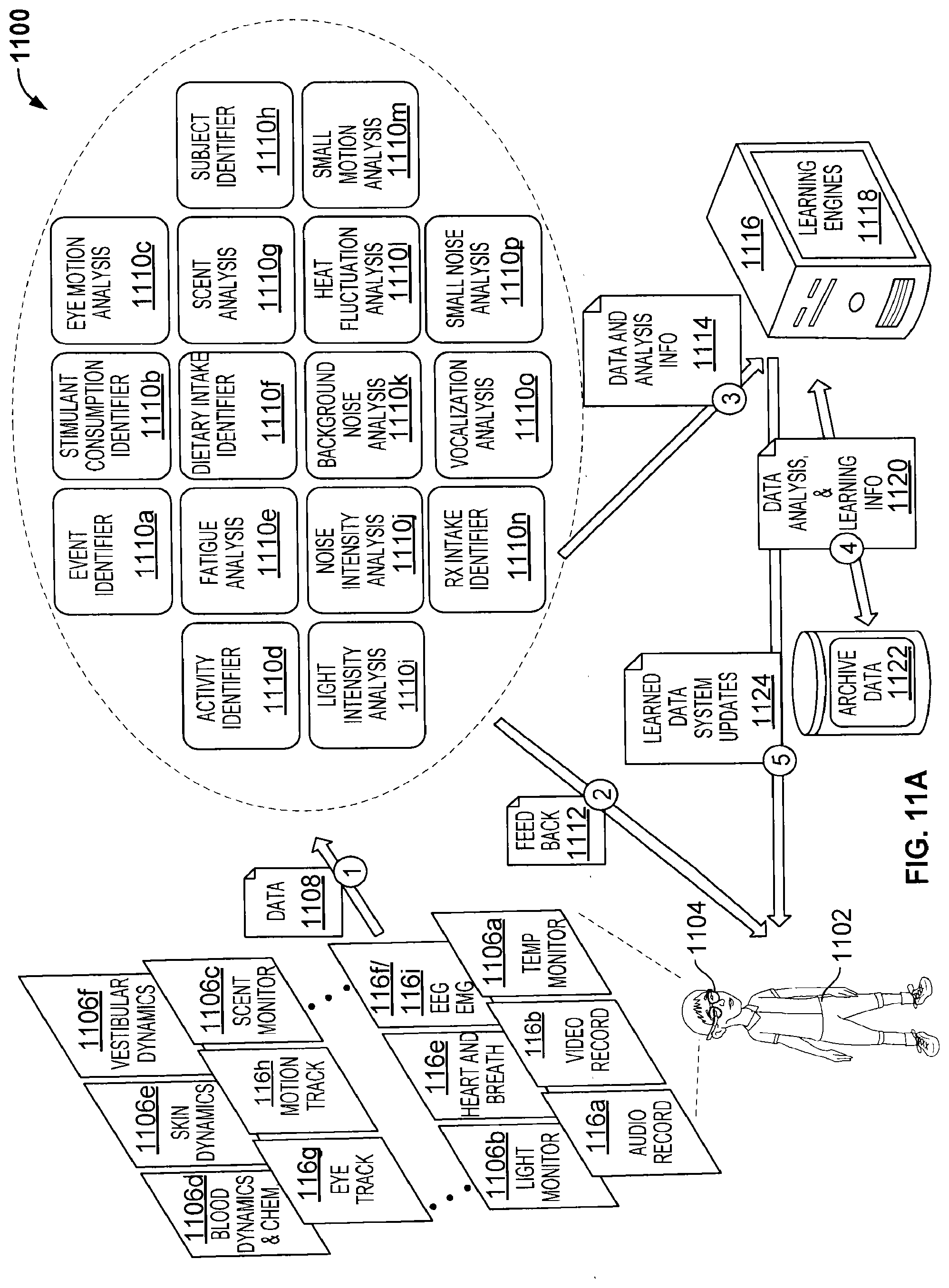

[0034] FIG. 11A is a block diagram of an example system for identifying and analyzing circumstances surrounding adverse health events and/or atypical behavioral episodes and for learning potential triggers thereof;

[0035] FIGS. 11B and 11C illustrate a flow chart of an example method for identifying and analyzing circumstances surrounding adverse health events and/or atypical behavioral episodes;

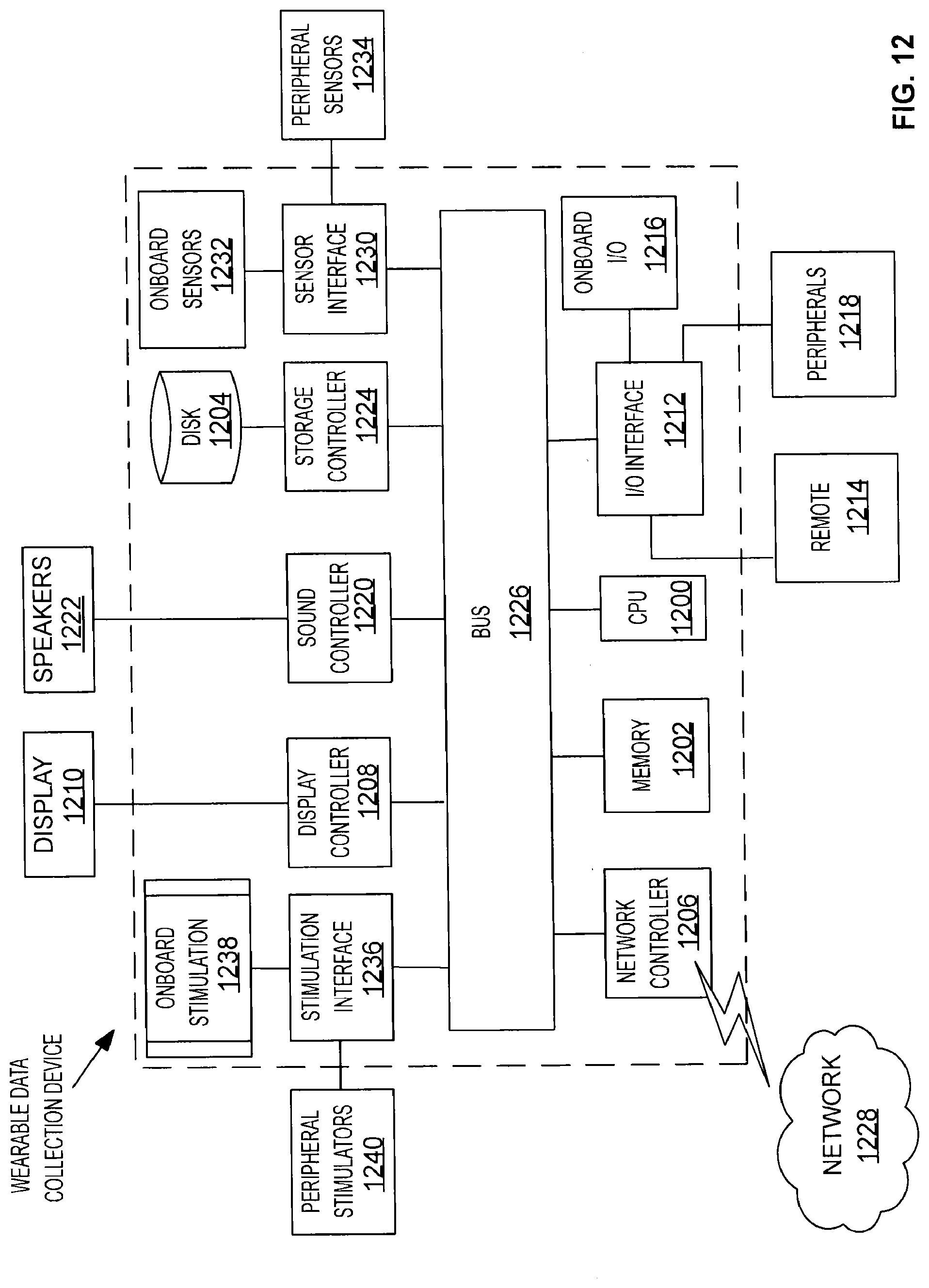

[0036] FIG. 12 is a block diagram of an example wearable computing device;

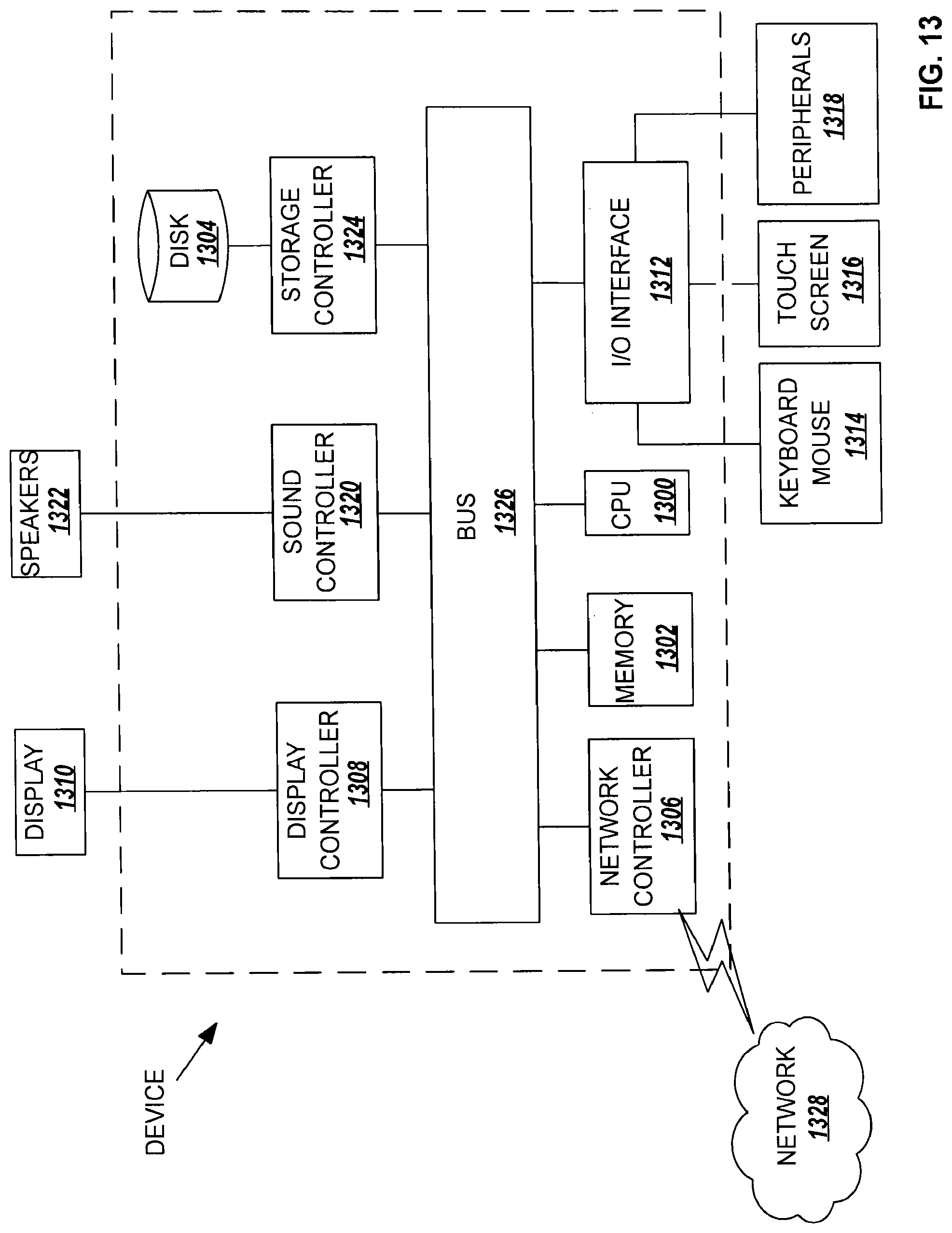

[0037] FIG. 13 is a block diagram of an example computing system;

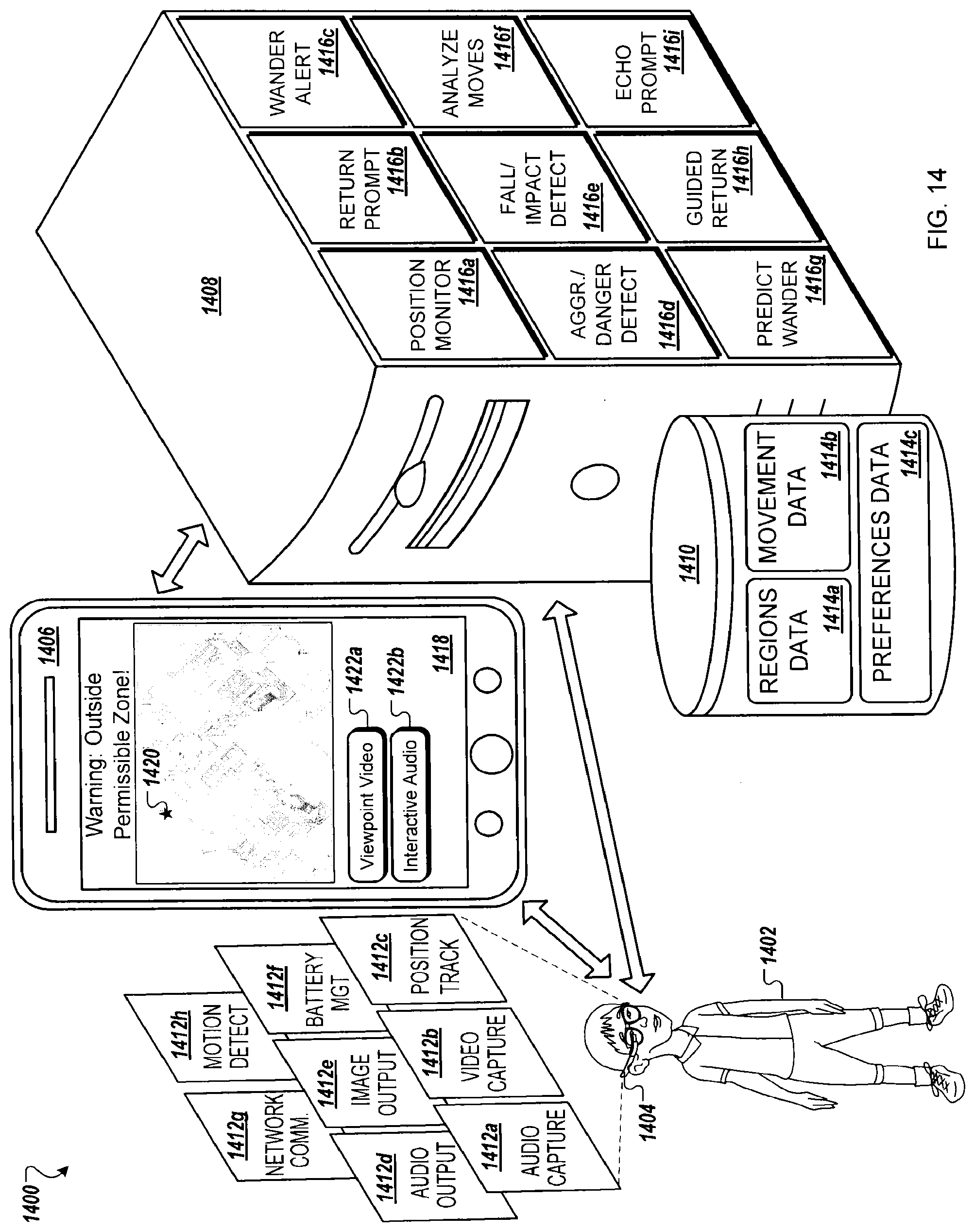

[0038] FIG. 14 is a block diagram of an example system for tracking location of an individual via a portable computing device; and

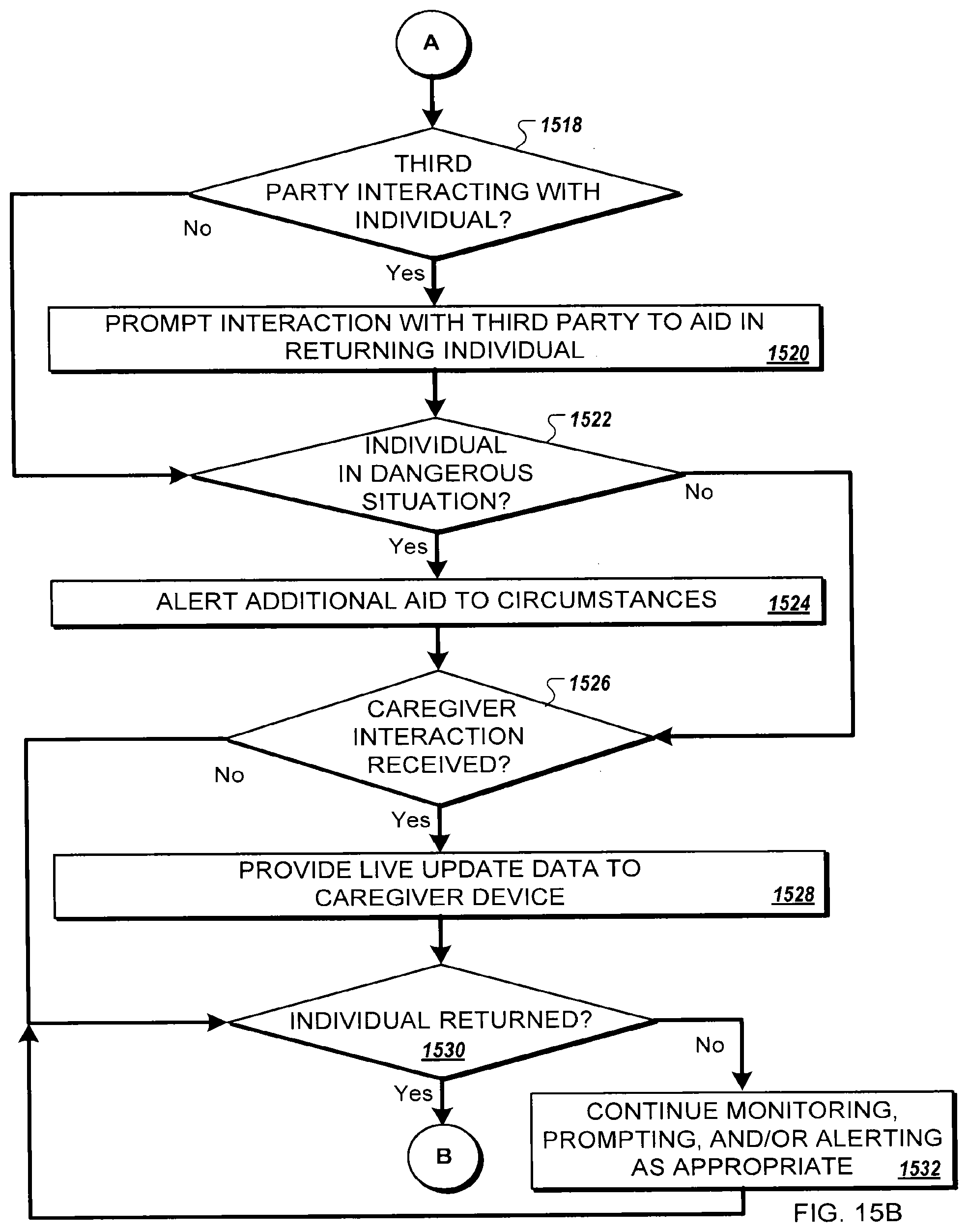

[0039] FIGS. 15A and 15B illustrate a flow chart of an example method for tracking location of an individual via a portable computing device.

DETAILED DESCRIPTION

[0040] As illustrated in FIG. 1A, an environment 100 for evaluating an individual 102 for autism spectrum disorder includes a wearable data collection device 104 worn by the individual 102 and/or a wearable data collection device 108 worn by a caregiver 106, such that data 116 related to the interactions between the individual 102 and the caregiver 106 are recorded by at least one wearable data collection device 104, 108 and uploaded to a network 110 for analysis, archival, and/or real-time sharing with a remotely located evaluator 114. In this manner, evaluation activities, to be evaluated in real time or after the fact by the evaluator 114, may be conducted in the individual's accustomed surroundings without the stress and intimidation of the evaluator 114 being present. For example, evaluation activities may be conducted in a family's home environment at a time convenient for the family members.

[0041] Evaluation activities, in some implementations, include a set of play session phases incorporating, for example, various objects for encouraging interaction between the caregiver 106 and the individual 102. For example, the caregiver 106 may be supplied with an evaluation kit including one or both of the individual's data collection device 104, the caregiver data collection device 108, a set of interactive objects, and instructions on how to conduct the session. The set of interactive objects, in one example, may include items similar to those included within the Screening Tool for Autism in Toddlers (STAT™) test kit developed by the Vanderbilt University Center for Technology Transfer & Commercialization of Nashville, Tenn. The instructions, in one example, may be provided textually, either online or in a booklet supplied in the evaluation kit. In another example, the instructions are presented in video form, either online or in a video recording (e.g., DVD) included in the kit.

[0042] In some implementations, the instructions are supplied via the caregiver wearable data collection device 108. For example, the wearable data collection device 108 may include an optical head-mounted display (OHMD) such that the caregiver may review written and/or video instructions after donning the wearable data collection device 108. The caregiver may perform a play session or test session based on the instructions, or by mirroring or responding to step-by-step directions supplied by a remote evaluator 114, who can be a trained clinician or autism specialist, such that the remote evaluator 114 can walk the caregiver 106 through the process step by step, and the remote evaluator 114 can observe and evaluate the process and the behaviors of the individual 102 and other data in real time and directly through the eyes of the caregiver 106 (via a camera feed from the data collection device 108).

[0043] The wearable data collection device 104 or 108, in some implementations, is a head-mounted wearable computer. For example, the wearable data collection device 104 or 108 may be a standard or modified form of Google Glass™ by Google Inc. of Mountain View, Calif. In other examples, the wearable data collection device 104 or 108 is mounted in a hat, headband, tiara, or other accessory worn on the head. The caregiver 106 may use a different style of data collection device 108 than the individual 102. For example, a caregiver may use a glasses style wearable data collection device 108, while the subject uses a head-mounted visor style of data collection device 104.

[0044] In some implementations, the data collection device 104 for the individual 102 and/or the data collection device 108 for the caregiver 106 is composed of multiple portions 105 of body-mountable elements configured to mount on different areas of the body. In general, the wearable data collection device 104 or 108 may be configured as a single, physically-contiguous device, or as a collection of two or more units that can be physically independent or semi-independent of each other but function as a whole as a wearable data collection device 104 or 108. For example, the data collection device 104 or 108 may have a first portion including an optical head-mounted display (OHMD) and which therefore is mounted on or about the head such as in a modified version of eyeglasses or on a visor, hat, headband, tiara or other accessory worn on the head. Further, the data collection device 104 or 108 may have a second portion separate from the first portion configured for mounting elsewhere on the head or elsewhere on the body. The second portion can contain, in some examples, sensors, power sources, computational components, data and power transmission apparatuses, and other components. For instance, in an illustrative example, the first portion of data collection device 104 or 108 may be used to display information to the user and/or perform various tasks of user interface, whereas the second portion of data collection device 104 or 108 may be configured to perform sensing operations that are best suited to specific parts of the body, and/or may be configured to perform computation and in so doing may consume power all of which may require a size and bulk that is better suited to be elsewhere on the body than a head-mounted device. Further to the example, the second portion of data collection device 104 or 108 may be configured to mount on the wrist or forearm of the wearer. In a particular configuration, the second portion may have a design similar to a watch band, where the second portion can be interchanged with that of a standard-sized wrist watch and thereby convert an off-the-shelf wrist watch into a part of a smart ecosystem and furthermore hide the presence of the second portion of the data collection device 104 or 108. Although described as having two portions, in other implementations, the wearable data collection device 104 or 108 may include three or more portions physically independent of each other with each portion capable of inter-communicating with at least one of the other portions. Many other configurations are also anticipated.

[0045] The wearable data collection device 104 for the subject may be customized for use by an individual, for instance by making it fit the head better of someone of the age and size of a given individual 102, or by modifying the dynamics of the display such that it is minimally distracting for the individual 102. Another possible customization of the wearable data collection device 104 includes regulating the amount of time that the wearable data collection device 104 can be used so as to cause minimal change to the individual 102, such as to the developing visual system of the individual 102. The wearable data collection device 104, in a further example, may be customized for the individual 102 to make the wearable data collection device 104 palatable or desirable to be worn by the individual 102 for instance by cosmetic or sensory modifications of the wearable data collection device 104.

[0046] The wearable data collection device 104 or 108, in some implementations, can be modified for the type of usage discussed herein, for instance by equipping it with an extended-life power source or by equipping it with an extended capacity for data acquisition such as video data acquisition with features such as extended memory storage or data streaming capabilities, or the like.

[0047] Rather than performing the described functionality entirely via a wearable data collection device 104 or 108, in some implementations, the data collection device 104 or 108 includes a bionic contact lens. For example, the OHMD may be replaced with a bionic contact lens capable of providing augmented reality functionality. In another example, an implantable device, such as a visual prosthesis (e.g., bionic eye) may provide augmented reality functionality.

[0048] The wearable data collection device 104 or 108 can be arranged on the body, near the body, or embedded within the body, in part or entirely. When one or more components of the wearable data collection device 104 or 108 are embedded within the body, the one or more components can be embedded beneath the skin; within the brain; in contact with input or output structures of the body such as peripheral nerves, cranial nerves, ganglia, or the spinal cord; within deep tissue such as muscles or organs; within body cavities; between organs; in the blood; in other fluid or circulatory systems; inside cells; between cells (such as in the interstitial space); or in any other manner arranged in a way that is embedded within the body, permanently or temporarily. When one or more components of the wearable data collection device 104 or 108 are embedded within the body, the one or more components may be inserted into the body surgically, by ingestion, by absorption, via a living vector, by injection, or by other means. When one or more components of the wearable data collection device 104 or 108 are embedded within the body, the one or more components may include data collection sensors placed in direct contact with tissues or systems that generate discernible signals within the body, or stimulator units that can directly stimulate tissue or organs or systems that can be modulated by stimulation. Data collection sensors and stimulator units are described in greater detail in relation to FIG. 12.

[0049] The wearable data collection device 104 or 108 can be configured to collect a variety of data 116. For example, a microphone device built into the data collection device 104 or 108 may collect voice recording data 116a, while a video camera device built into the data collection device 104 or 108 may collect video recording data 116b. The voice recording data 116a and video recording data 116b, for example, may be streamed via the network 110 to an evaluator computing device (illustrated as a display 112) so that the evaluator 114 can review interactions between the individual 102 and the caregiver 106 in real time. For example, as illustrated on the display 112, the evaluator is reviewing video recording data 116j recorded by the caregiver wearable data collection device 108. Additionally, the evaluator may be listening to voice recording data 116a.

[0050] Furthermore, in some implementations, the wearable data collection device 104 is configured to collect a variety of data regarding the movements and behaviors of the individual 102 during the evaluation session. For example, the wearable data collection device 104 may include motion detecting devices, such as one or more gyroscopes, accelerometers, global positioning system, and/or magnetometers used to collect motion tracking data 116h regarding motions of the individual 102 and/or head position data 116d regarding motion particular to the individual's head. The motion tracking data 116h, for example, may track the individual's movements throughout the room during the evaluation session, while the head position data 116d may track head orientation. In another example, the motion tracking data 116h may collect data to identify repetitive motions, such as jerking, jumping, flinching, fist clenching, hand flapping, or other repetitive self-stimulating ("stimming") behaviors.

[0051] In some implementations, the wearable data collection device 104 is configured to collect eye tracking data 116g. For example, the wearable data collection device 104 may include an eye tracking module configured to identify when the individual 102 is looking straight ahead (for example, through the glasses style wearable data collection device 104) and when the individual 102 is peering up, down, or off to one side. Techniques for identifying eye gaze direction, for example, are described in U.S. Patent Application No. 20130106674 entitled "Eye Gaze Detection to Determine Speed of Image Movement" and filed Nov. 2, 2011, the contents of which are hereby incorporated by reference in their entirety. In another example, the individual's data collection device 104 is configured to communicate with the caregiver data collection device 108, such that the wearable data collection devices 104, 108 can identify when the individual 102 and the caregiver 106 have convergent head orientation. In some examples, a straight-line wireless signal, such as a Bluetooth signal, infrared signal, or RF signal, is passed between the individual's wearable data collection device 104 and the caregiver wearable data collection device 108, such that a wireless receiver acknowledges when the two wearable data collection devices 104, 108 are positioned in a substantially convergent trajectory.

[0052] The wearable data collection device 104, in some implementations, is configured to monitor physiological functions of the individual 102. In some examples, the wearable data collection device 104 may collect heart and/or breathing rate data 116e (or, optionally, electrocardiogram (EKG) data), electroencephalogram (EEG) data 116f, and/or electromyography (EMG) data 116i. The wearable data collection device 104 may interface with one or more peripheral devices, in some embodiments, to collect the physiological data. For example, the wearable data collection device 104 may have a wired or wireless connection with a separate heart rate monitor, EEG unit, or EMG unit. In other embodiments, at least a portion of the physiological data is collected via built-in monitoring systems. Unique methods for non-invasive physiological monitoring are described in greater detail in relation to FIGS. 11A through 11C. Optional onboard and peripheral sensor devices for use in monitoring physiological data are described in relation to FIG. 12.

[0053] In some implementations, during an evaluation session, the individual's wearable data collection device 104 gathers counts data 116c related to patterns identified within other data 116. For example, the individual's data collection device 104 may count verbal (word and/or other vocalization) repetitions identified within the voice recording data 116a and movement repetitions identified in the head position data 116d and/or the motion tracking data 116h. The baseline analysis for identifying repetitions (e.g., time span between repeated activity, threshold number of repetitions, etc.), in some embodiments, may be tuned by educators and/or clinicians based upon baseline behavior analysis of "normal" individuals or typical behaviors indicative of individuals with a particular clinical diagnosis such as ASD. For example, verbal repetition counts 116c may be tuned to identify repetitive vocalizations separate from excited stuttering or other repetitive behaviors typical of children of an age or age range of the individual. In another example, movement repetition counts 116c may be tuned to distinguish repetitive movements from the dancing and playful repetitive behaviors of a young child. Autism assessment, progress monitoring, and coaching are currently done with little or no support from structured, quantitative data, which is one reason that rigorous counts 116c are important. Counts 116c can include other types of behavior such as rocking, self-hugging, self-injurious behaviors, eye movements and blink dynamics, unusually low-movement periods, unusually high-movement periods, irregular breathing and gasping, behavioral or physiological signs of seizures, irregular eating behaviors, and other repetitive or irregular behaviors.
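
As a minimal sketch of how the tunable parameters mentioned above (time span between repeated activity and threshold number of repetitions) might be applied, the following Python example counts runs of a repeated event. The `Event` structure, the function names, and the default values of `max_gap_s` and `min_repeats` are hypothetical and are not taken from this disclosure; in practice such values would be set by educators and/or clinicians.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Optional


@dataclass(frozen=True)
class Event:
    """One detected vocalization or movement (hypothetical structure)."""
    label: str     # e.g., a recognized word or a motion type
    time_s: float  # seconds since the start of the session


def _flush(counts: Dict[str, int], label: Optional[str], run_len: int,
           min_repeats: int) -> None:
    # Record a finished run only if it met the repetition threshold.
    if label is not None and run_len >= min_repeats:
        counts[label] = counts.get(label, 0) + 1


def count_repetitions(events: Iterable[Event], max_gap_s: float = 2.0,
                      min_repeats: int = 3) -> Dict[str, int]:
    """Count runs in which one label recurs at least min_repeats times,
    with no more than max_gap_s between consecutive occurrences."""
    counts: Dict[str, int] = {}
    run_label: Optional[str] = None
    run_len = 0
    last_t = 0.0
    for ev in sorted(events, key=lambda e: e.time_s):
        if ev.label == run_label and ev.time_s - last_t <= max_gap_s:
            run_len += 1  # same activity, close enough in time
        else:
            _flush(counts, run_label, run_len, min_repeats)
            run_label, run_len = ev.label, 1  # start a new run
        last_t = ev.time_s
    _flush(counts, run_label, run_len, min_repeats)
    return counts
```

In this simplified sketch, any event with a different label ends the current run; a tuned implementation might instead track each label independently.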

[0054] In other implementations, rather than collecting the counts data 116c, a remote analysis and data management system 118 (e.g., networked server, cloud-based processing system, etc.) analyzes a portion of the session data 116 to identify at least a portion of the counts data 116c (e.g., verbal repetition counts and/or movement repetition counts). For example, a session data analysis engine 120 of the remote analysis and data management system 118 may analyze the voice recording data 116a, motion tracking data 116h, and/or head position data 116d to identify the verbal repetition counts and/or movement repetition counts.

[0055] In some implementations, the analysis is done at a later time. For example, the analysis and data management system 118 may archive the session data 116 in an archive data store 122 for later analysis. In other implementations, the session data analysis engine 120 analyzes at least a portion of the session data 116 in real-time (e.g., through buffering the session data 116 in a buffer data store 124). For example, a real-time analysis of a portion of the session data 116 may be supplied to the evaluator 114 during the evaluation session. The real-time data analysis, for example, may be presented on the display 112 as session information and statistics information 126. In some examples, statistics information 126 includes presentation of raw data values, such as a graphical representation of heart rate or a graphical presentation of present EEG data. In other examples, statistics information 126 includes data analysis output, such as a color-coded presentation of relative excitability or stimulation of the subject (e.g., based upon analysis of a number of physiological factors) or graphic indications of identified behaviors (e.g., an icon displayed each time social eye contact is registered).

[0056] Session information and statistics information 126 can be used to perform behavioral decoding. Behavioral decoding is like language translation except that it decodes the behaviors of an individual 102 rather than verbal language utterances. For instance, a result of analysis by the session data analysis engine 120 might be that a pattern emerges whereby repetitive vocalizations of a particular type as well as repeated touching of the cheek are correlated, in the individual 102, with ambient temperature readings below a certain temperature level, and the behaviors cease when the temperature rises. Once this pattern has been reliably measured by the system 100, upon future episodes of those behaviors, the system 100 could present to the caregiver 106 or evaluator 114 some information such as that the subject is likely too cold. The system 100 can also interface directly with control systems in the environment, for instance in this case the system 100 may turn up a thermostat to increase the ambient temperature. This example is illustrative of many possibilities for behavioral decoding. The system 100 increases in ability to do behavioral decoding the longer it interacts with the individual 102 to learn the behavioral language of the individual 102. Furthermore, the greater the total number of individuals interacting with the system 100, the greater the capacity of the system 100 to learn from normative data to identify stereotypical communication strategies of individuals within subgroups of various conditions, such as subgroups of the autism spectrum.
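
The temperature example above can be illustrated with a simple sketch. The following Python code is not the analysis performed by the system 100; it assumes a deliberately simple rule (comparing how often a behavior is observed below versus at or above a temperature threshold), and the function names, the threshold, and the parameters `min_samples` and `min_rate_gap` are hypothetical.

```python
from typing import Iterable, Optional, Tuple


def behavior_tracks_cold(samples: Iterable[Tuple[float, bool]],
                         threshold_c: float,
                         min_samples: int = 5,
                         min_rate_gap: float = 0.5) -> Optional[bool]:
    """samples holds (ambient temperature in Celsius, behavior observed?).

    Returns None when there is too little data on either side of the
    threshold; otherwise returns whether the behavior is markedly more
    frequent below the threshold than at or above it.
    """
    data = list(samples)
    below = [seen for temp, seen in data if temp < threshold_c]
    above = [seen for temp, seen in data if temp >= threshold_c]
    if len(below) < min_samples or len(above) < min_samples:
        return None  # pattern not yet reliably measured
    rate_below = sum(below) / len(below)
    rate_above = sum(above) / len(above)
    return rate_below - rate_above >= min_rate_gap


def decode(samples: Iterable[Tuple[float, bool]], threshold_c: float,
           current_temp_c: float, behavior_now: bool) -> Optional[str]:
    """Return a message for the caregiver or evaluator, if one applies."""
    if (behavior_now and current_temp_c < threshold_c
            and behavior_tracks_cold(samples, threshold_c)):
        return "subject is likely too cold"
    return None
```

Returning `None` until enough observations exist on both sides of the threshold reflects the requirement that the pattern be reliably measured before it is used for decoding.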

[0057] During an evaluation session, in an illustrative example, the caregiver 106 is tasked with performing interactive tasks with the individual 102. Video recording data 116j collected by the caregiver wearable data collection device 108 is supplied to a computing system of the evaluator 114 in real-time via the analysis and data management system 118 such that the evaluator 114 is able to see the individual 102 more or less "through the eyes of" the caregiver 106 during the evaluation session. The evaluator 114 may also receive voice recording data 116a from either the caregiver wearable data collection device 108 or the subject wearable data collection device 104.

[0058] Should the evaluator 114 wish to intercede during the evaluation session, in some implementations, the evaluator 114 can call the caregiver 106 using a telephone 128. For example, the caregiver 106 may have a cell phone or other personal phone for receiving telephone communications from the evaluator 114. In another example, the caregiver wearable data collection device 108 may include a cellular communications system such that a telephone call placed by the evaluator 114 is connected to the caregiver wearable data collection device 108. In this manner, for example, the caregiver 106 may receive communications from the evaluator 114 without disrupting the evaluation session.

[0059] In other implementations, a computer-aided (e.g., voice over IP, etc.) communication session is established between the evaluator 114 computing system and the caregiver wearable data collection device 108. For example, the analysis and data management system 118 may establish and coordinate a communication session between the evaluator system and the caregiver wearable data collection device 108 for the duration of the evaluation session. Example techniques for establishing communication between a wearable data collection device and a remote computing system are described in U.S. Patent Application No. 20140368980 entitled "Technical Support and Remote Functionality for a Wearable Computing System" and filed Feb. 7, 2012, the contents of which are hereby incorporated by reference in their entirety. Further, the analysis and data management system 118, in some embodiments, may collect and store voice recording data of commentary supplied by the evaluator 114.

[0060] In some examples, the evaluator 114 may communicate with the caregiver 106 to instruct the caregiver 106 to perform certain interactions with the individual 102 or to repeat certain interactions with the individual 102. Prior to or at the end of an evaluation session, furthermore, the evaluator 114 may discuss the evaluation with the caregiver 106. In this manner, the caregiver 106 may receive immediate feedback and support of the evaluator 114 from the comfort of her own home.

[0061] FIG. 1B is a block diagram of an example system 150 for evaluation and training of the individual 102 using the wearable data collection device 104. Data 116 collected by the wearable data collection device 104 (and, optionally or alternatively, data collected by the caregiver data collection device 108 described in relation to FIG. 1A) is used by a number of algorithms 154 developed to analyze the data 116 and determine feedback 156 to provide to the individual 102 (e.g., via the wearable data collection device 104 or another computing device). Furthermore, additional algorithms 532, 534, 536, 538, 540, 542, and 544 described in relation to FIG. 5B and/or algorithms 910 and 912 described in relation to FIG. 9 may take advantage of components of the system 150 in execution. The algorithms 154 may further generate analysis information 158 to supply, along with at least a portion of the data 116, to learning engines 162. The analysis information 158 and data 116, along with learning information 164 generated by the learning engines 162, may be archived as archive data 122 for future use, such as for pooled statistical learning. The learning engines 162, furthermore, may provide learned data 166 and, potentially, other system updates for use by the wearable data collection device 104. The learned data 166, for example, may be used by one or more of the algorithms 154 residing upon the wearable data collection device 104. A portion or all of the data analysis and feedback system 152, for example, may execute upon the wearable data collection device 104. Conversely, in some implementations, a portion or all of the data analysis and feedback system 152 is external to the wearable data collection device 104. 
For example, certain algorithms 154 may reside upon a computing device in communication with the wearable data collection device 104, such as a smart phone, smart watch, tablet computer, or other personal computing device in the vicinity of the individual 102 (e.g., belonging to a caregiver, owned by the individual 102, etc.). Certain algorithms 154, in another example, may reside upon a computing system accessible to the wearable data collection device 104 via a network connection, such as a cloud-based processing system.

[0062] The algorithms 154 represent a sampling of potential algorithms available to the wearable data collection device 104 (and/or the caregiver wearable data collection device 108 as described in relation to FIG. 1A). The algorithms 154 include an audio recording analysis algorithm 154a, a video recording analysis algorithm 154b, an eye motion analysis algorithm 154c, a head motion analysis algorithm 154d, a social eye contact identification algorithm 154e, a feedback presentation algorithm 154f, a subject response analysis algorithm 154g, a vocalized repetition tracking algorithm 154h (e.g., to generate a portion of the counts data 116c illustrated in FIG. 1A), a movement repetition tracking algorithm 154i (e.g., to generate a portion of the counts data 116c illustrated in FIG. 1A), an object identification algorithm 154j, a physiological state analysis algorithm 154k, an emotional state analysis algorithm 154l, a social response validation algorithm 154m, a desired response identification algorithm 154n, a social event identification algorithm 154o, and a verbal response validation algorithm 154p. Versions of one or more of the algorithms 154 may vary based upon whether they are executed upon the individual's wearable data collection device 104 or the caregiver wearable data collection device 108. For example, the social eye contact identification algorithm 154e may differ when interpreting video recording data 116b supplied from the viewpoint of the individual 102 as compared to video recording data 116b supplied from the viewpoint of the caregiver 106 (illustrated in FIG. 1A).

[0063] The algorithms 154 represent various algorithms used in performing various methods described herein. For example, method 600 regarding identifying objects labeled with standardized index elements (described in relation to FIG. 6A) and/or method 610 regarding extracting information from objects with standardized index elements (described in relation to FIG. 6B), may be performed by the object identification algorithm 154j. Step 662 of method 630 (described in relation to FIG. 6D) regarding validating the subject's response may be performed by the verbal response validation algorithm 154p. Step 664 of method 630 (described in relation to FIG. 6D) regarding providing feedback regarding the subject's response may be performed by the feedback presentation algorithm 154f. Step 704 of method 700 regarding detection of a socially relevant event, described in relation to FIG. 7A, may be performed by the social event identification algorithm 154o. Step 716 of method 700 regarding determination of a desired response to a socially relevant event may be performed by the desired response identification algorithm 154n. Step 718 of method 700 regarding comparison of the subject's actual response may be performed by the social response validation algorithm 154m. Step 740 of method 700 regarding reviewing physiological data, described in relation to FIG. 7B, may be performed by the physiological state analysis algorithm 154k. Step 802 of method 800 regarding identification of faces in video data, described in relation to FIG. 8, may be performed by the video recording analysis algorithm 154b. Step 810 of method 800 regarding identification of social eye contact may be performed by the social eye contact identification algorithm 154e.
The social eye contact identification algorithm 154e, in turn, may utilize the eye motion analysis algorithm 154c and/or the head motion analysis algorithm 154d in identifying instances of social eye contact between the individual 102 and another individual. Step 816 of method 800 regarding ascertaining an individual's reaction to feedback may be performed by the subject response analysis algorithm 154g. Step 1006 of method 1000 regarding identifying an emotional state of an individual, described in relation to FIG. 10A, may be performed by the emotional state analysis algorithm 154l. Step 1010 of method 1000 regarding analyzing audio data for emotional cues may be performed by the audio recording analysis algorithm 154a.
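
For reference, the step-to-algorithm assignments recited in this paragraph can be summarized as a lookup table. The following Python sketch is illustrative only: the method, step, and algorithm reference numerals are transcribed from the text above, while the dictionary layout and the `algorithm_for` helper are hypothetical. Methods 600 and 610, which are assigned as a whole to the object identification algorithm 154j, are omitted because no individual steps are recited for them.

```python
from typing import Dict, Optional, Tuple

# (method number, step number) -> algorithm reference numeral.
STEP_TO_ALGORITHM: Dict[Tuple[int, int], str] = {
    (630, 662): "154p",
    (630, 664): "154f",
    (700, 704): "154o",
    (700, 716): "154n",
    (700, 718): "154m",
    (700, 740): "154k",
    (800, 802): "154b",
    (800, 810): "154e",
    (800, 816): "154g",
    (1000, 1006): "154l",
    (1000, 1010): "154a",
}

# Reference numeral -> algorithm name as used in the description.
ALGORITHM_NAMES: Dict[str, str] = {
    "154a": "audio recording analysis algorithm",
    "154b": "video recording analysis algorithm",
    "154e": "social eye contact identification algorithm",
    "154f": "feedback presentation algorithm",
    "154g": "subject response analysis algorithm",
    "154k": "physiological state analysis algorithm",
    "154l": "emotional state analysis algorithm",
    "154m": "social response validation algorithm",
    "154n": "desired response identification algorithm",
    "154o": "social event identification algorithm",
    "154p": "verbal response validation algorithm",
}


def algorithm_for(method: int, step: int) -> Optional[str]:
    """Return the algorithm named for a step, or None if none is recited."""
    ref = STEP_TO_ALGORITHM.get((method, step))
    return None if ref is None else f"{ALGORITHM_NAMES[ref]} {ref}"
```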

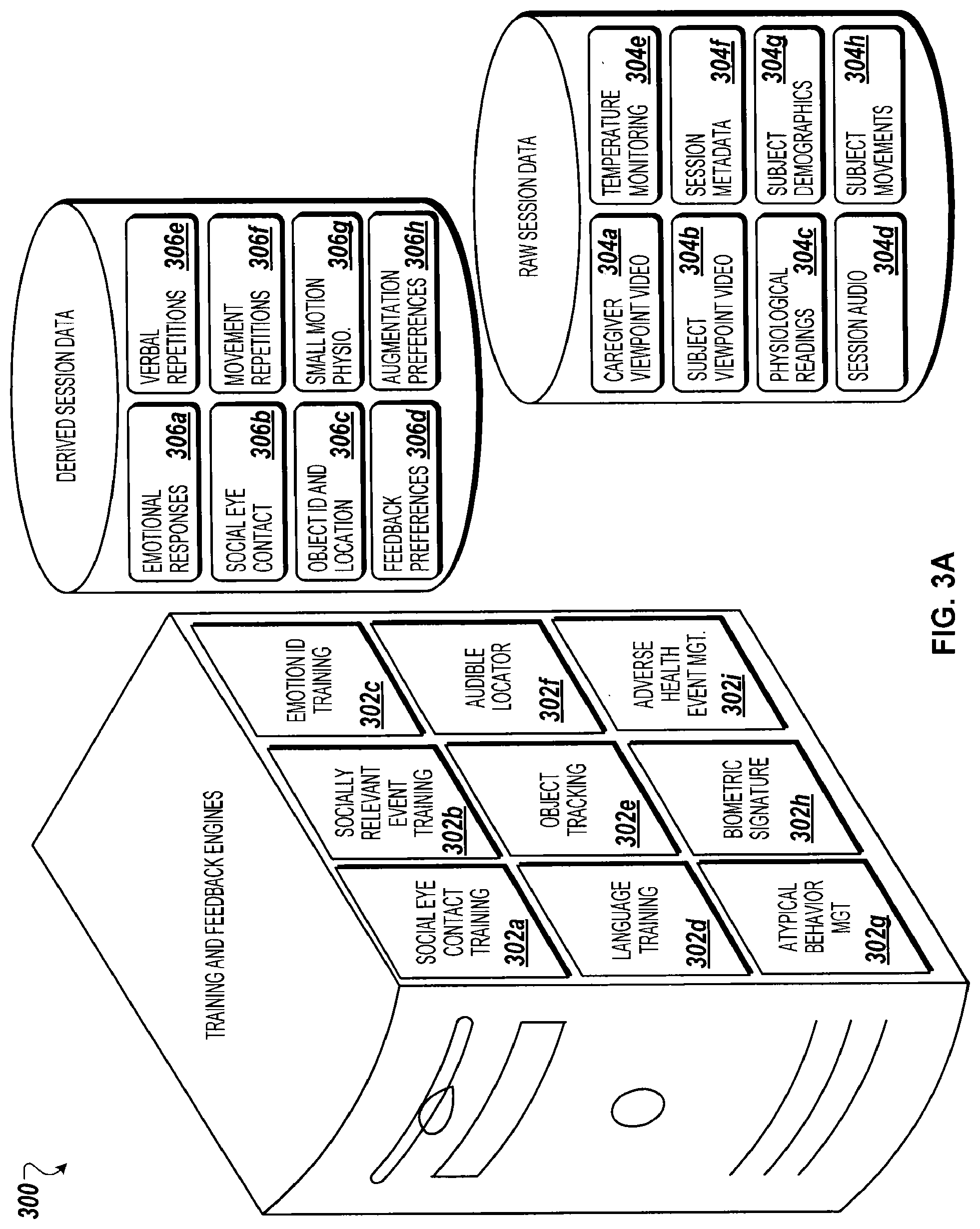

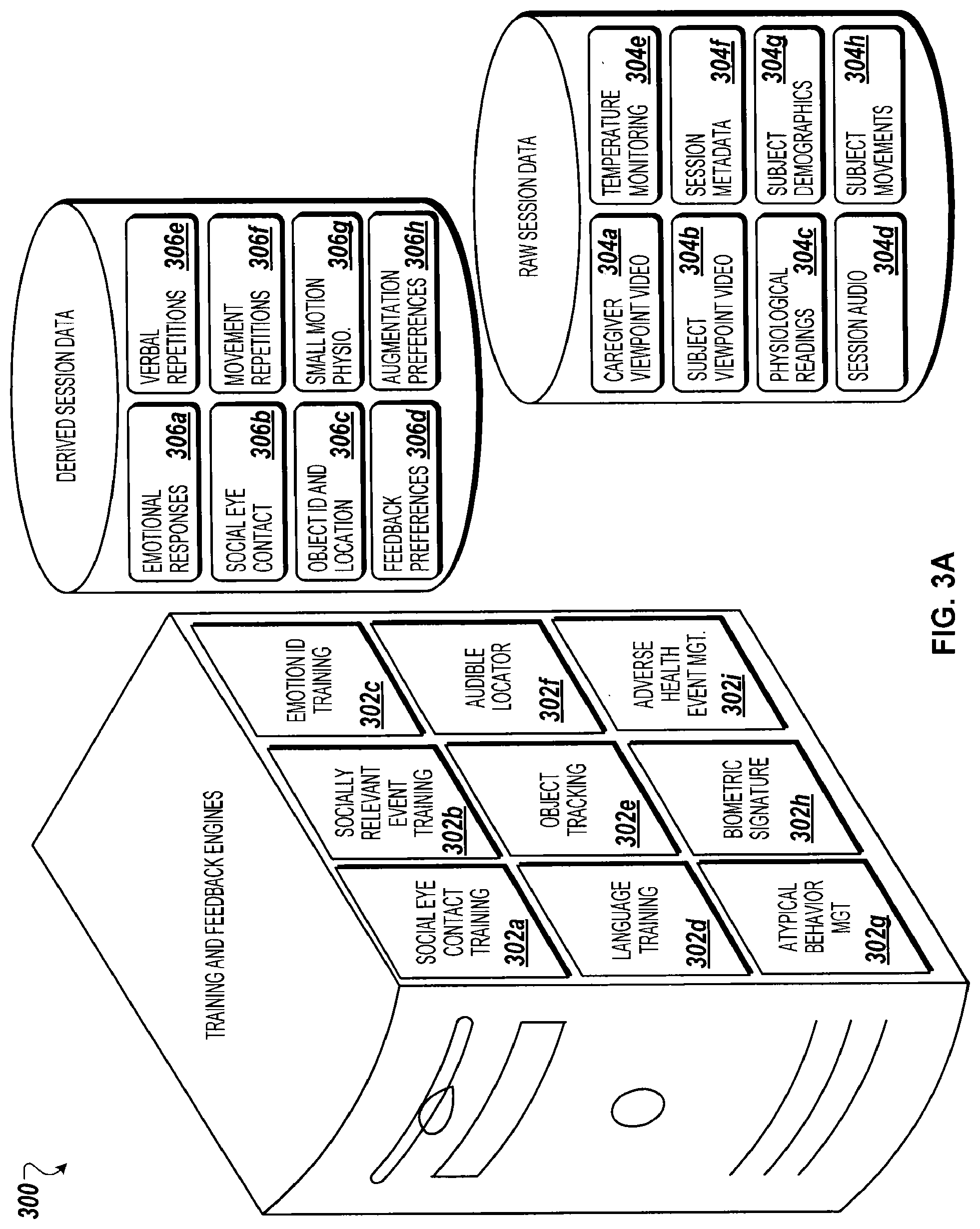

[0064] The algorithms 154, in some implementations, are utilized by various software modules 302 described in relation to FIG. 3A. For example, a social eye contact training module 302a may utilize the social eye contact identification algorithm 154e. A socially relevant event training module 302b, in another example, may utilize the social response validation algorithm 154m, the desired response identification algorithm 154n, and/or the social event identification algorithm 154o.

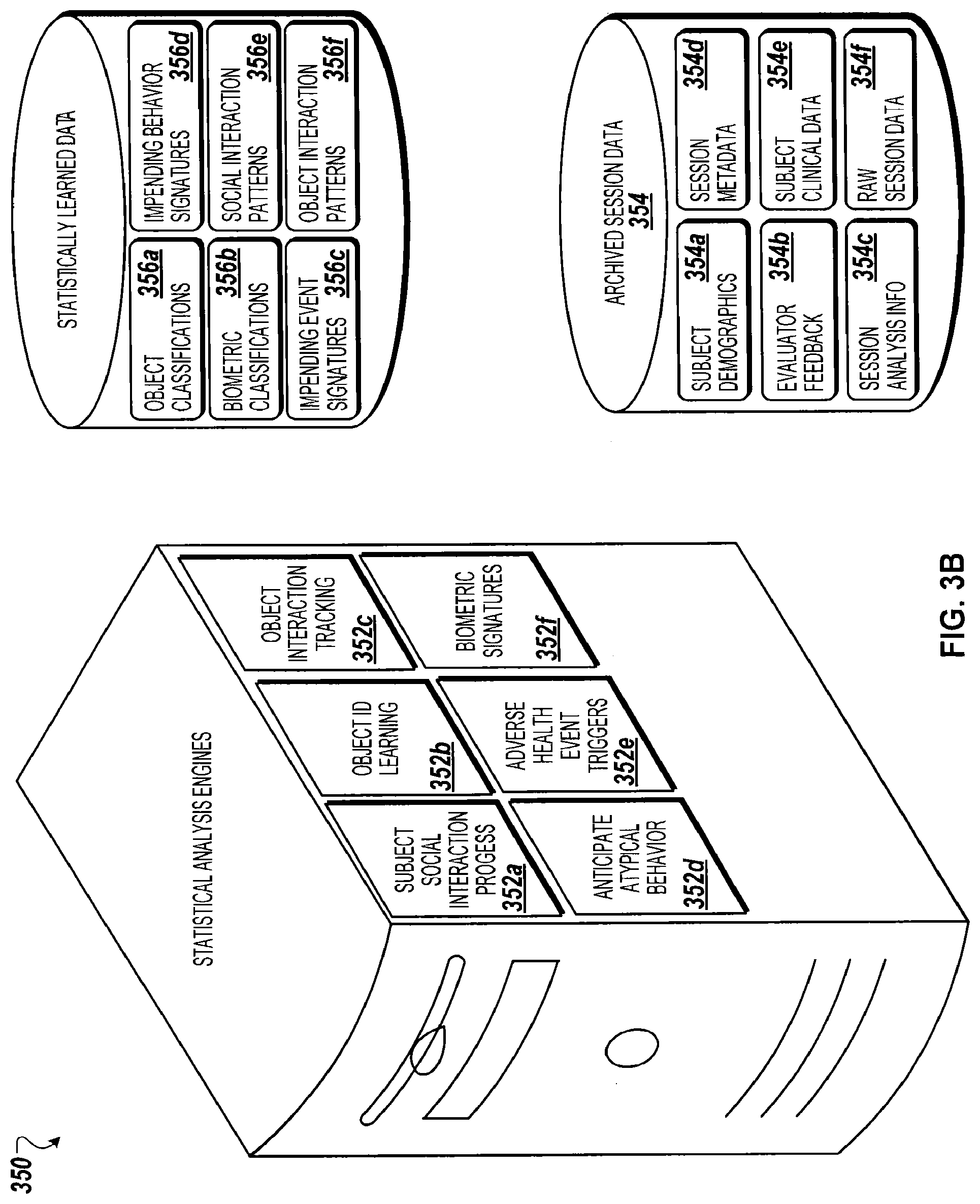

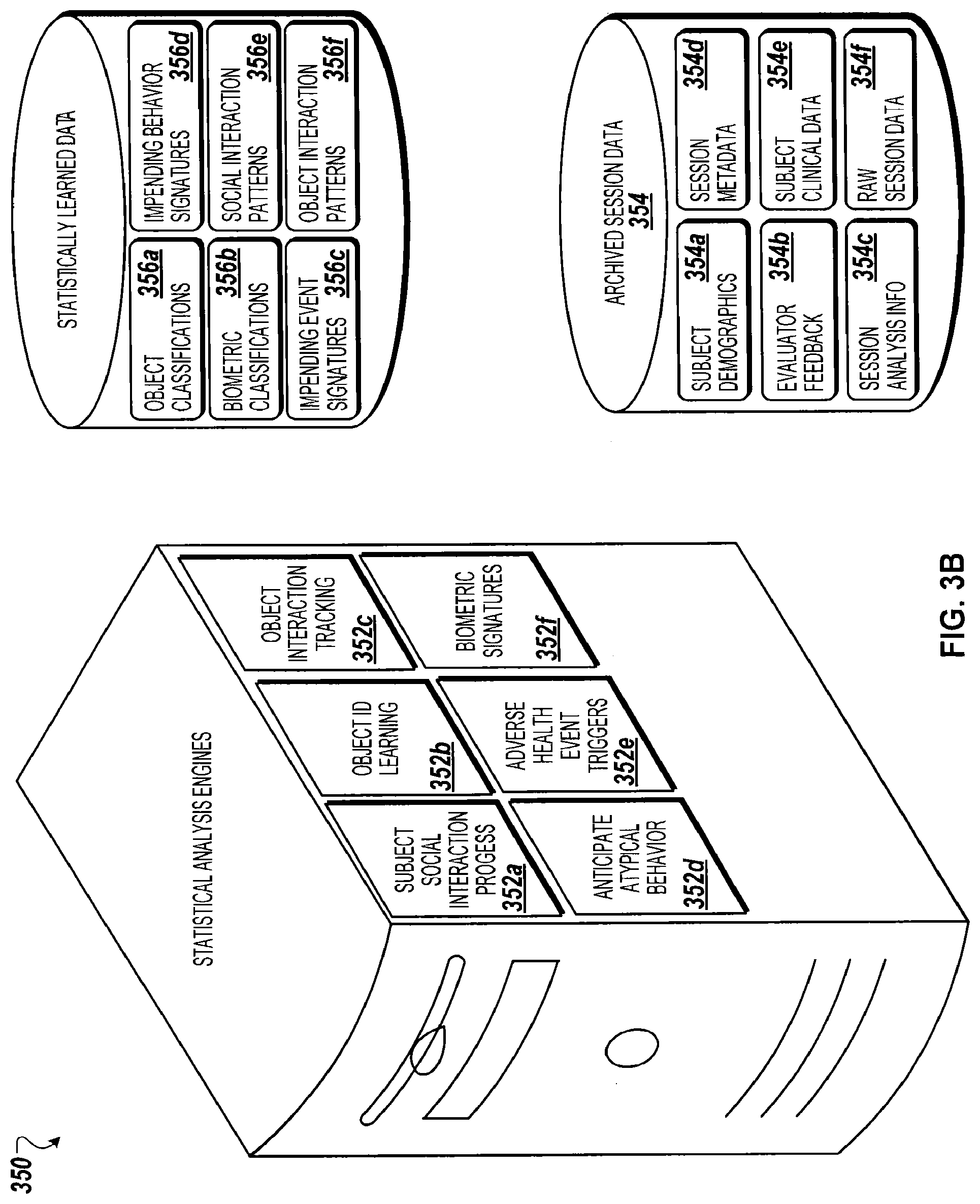

[0065] The algorithms 154, in some implementations, generate analysis information 158 such as, for example, the derived session data 306 illustrated in FIG. 3A. The analysis information 158 may be provided in real time and/or in batch mode to a learning and statistical analysis system 160 including the learning engines 162. The learning engines 162, for example, may include the statistical analysis software modules 352 illustrated in FIG. 3B. A portion of the statistical analysis system 160 may execute upon the wearable data collection device 104. Conversely, in some implementations, a portion or all of the statistical analysis system 160 is external to the wearable data collection device 104. For example, certain learning engines 162 may reside upon a computing device in communication with the wearable data collection device 104, such as a smart phone, smart watch, tablet computer, or other personal computing device in the vicinity of the individual 102 (e.g., belonging to a caregiver, owned by the individual 102, etc.). The statistical analysis system 160, in another example, may reside upon a computing system accessible to the wearable data collection device 104 via a network connection, such as a cloud-based processing system.

[0066] The learning engines 162, in some implementations, generate learning information 164. For example, as illustrated in FIG. 3B, statistically learned data 356 may include social interaction patterns 356e. The learning engines 162 may execute a subject social interaction progress software module 352a to track progress of interactions of the individual 102 with the caregiver 106. Further, statistically learned data 356, in some implementations, may lead to system updates 166 presented to improve and refine the performance of the wearable data collection device 104. Statistically learned data 356, in some implementations, can be used to predict acting out or episodes in people with ASD. In some implementations, statistically learned data 356 can be used to predict, based on current conditions and environmental features as well as physiological or behavioral signals from the subject, unwellness or health episodes such as seizures or migraine onset or heart attacks or other cardiovascular episodes, or other outcomes such as are related to ASD. Statistically learned data 356 can be used to provide behavioral decoding. For instance, statistically learned data 356 may indicate that one type of self-hitting behavior plus a specific vocalization occurs in an individual 102 most frequently before meal times, and these behaviors are most pronounced if a meal is delayed relative to a regular meal time, and that they are extinguished as soon as a meal is provided and prevented if snacks are given before a regular meal. In this context, these behaviors may be statistically associated with hunger. The prior example is simplistic in nature--a benefit of computer-based statistical learning is that the statistical learning data 356 can allow the system to recognize patterns that are less obvious than this illustrative example. 
In the present example, at future times, statistical learning data 356 that resulted in recognition of a pattern such as mentioned can provide for behavioral decoding such as recognizing the behaviors as an indicator that the individual 102 is likely hungry.

[0067] Behavioral decoding can be used for feedback and/or for intervention. For instance, in terms of feedback, the system, in some implementations, provides visual, textual, auditory or other feedback to the individual 102, caregiver 106, and/or evaluator 114 (e.g., feedback identifying that the individual 102 is likely hungry). Behavioral decoding can also be used for intervention. For instance, in this case, when the aforementioned behaviors start emerging, a control signal can be sent from the system 100 to trigger an intervention that will reduce hunger, such as, in this case, ordering food or instructing the caregiver to provide food.

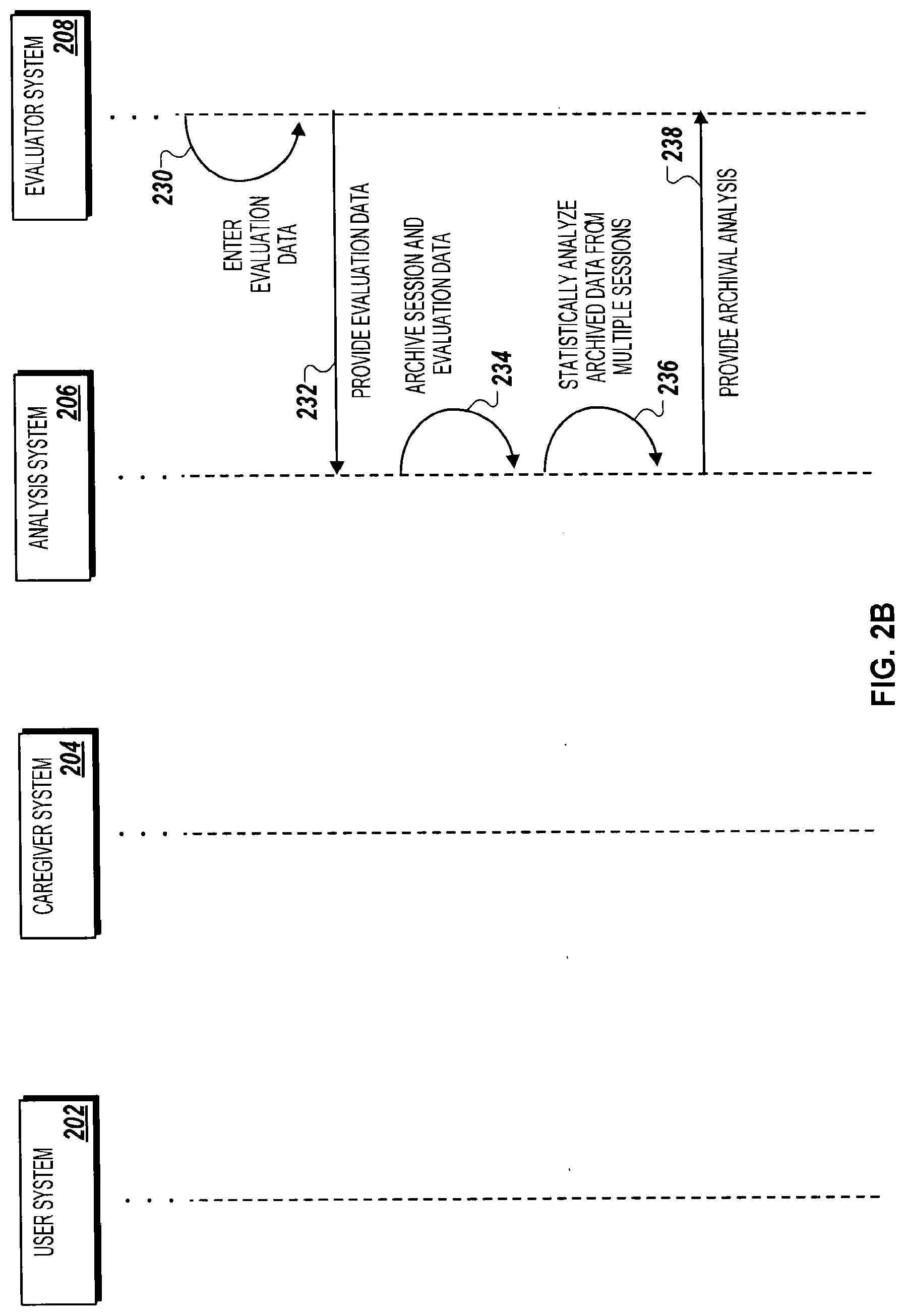

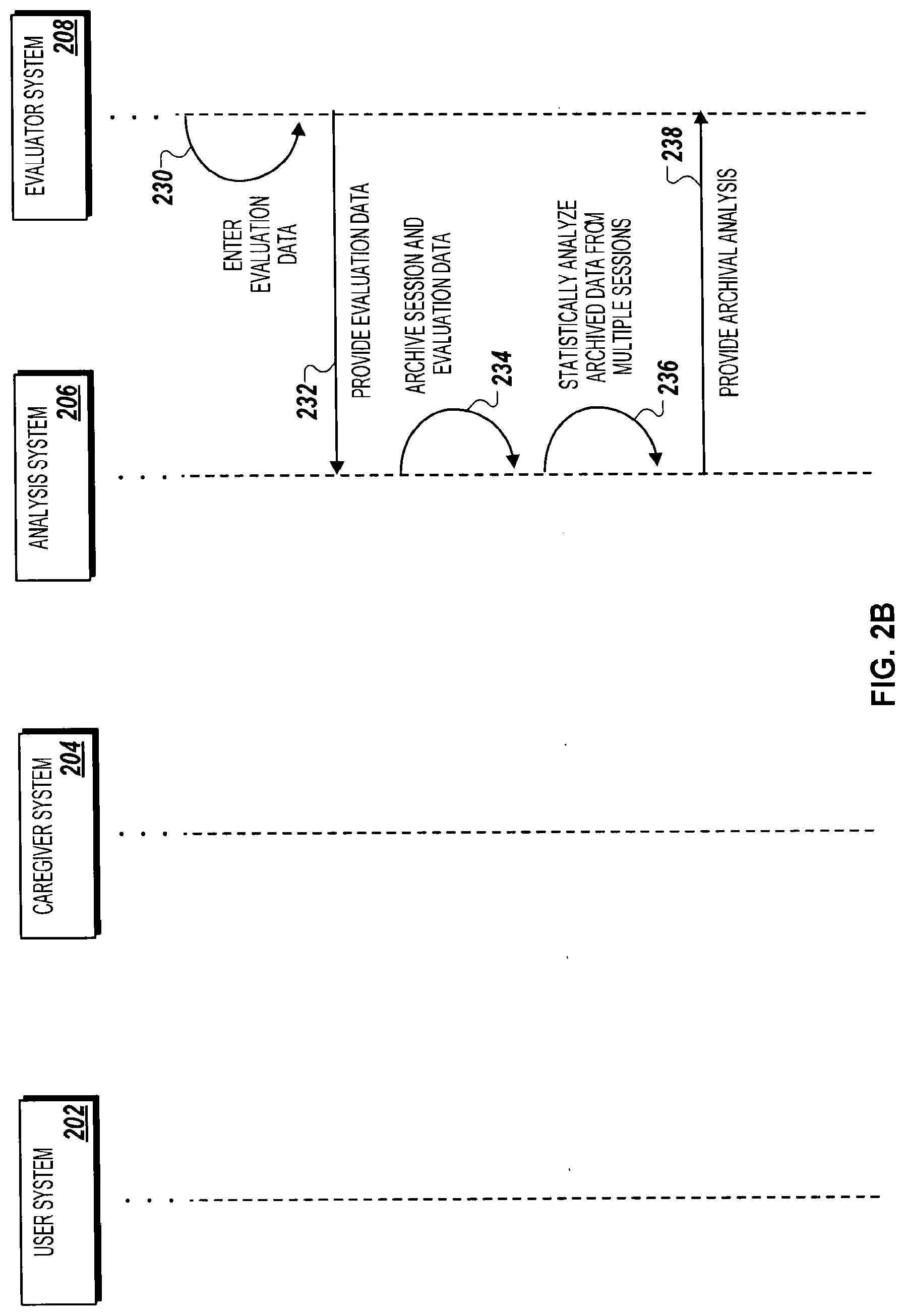

[0068] Turning to FIGS. 2A and 2B, a swim lane diagram illustrates a method 200 for conducting an evaluation session through a caregiver system 204 and a user system 202 monitored by an evaluator system 208. Information passed between the evaluator system 208 and either the caregiver system 204 or the user system 202 is managed by an analysis system 206. The caregiver system 204 and/or the user system 202 include a wearable data collection device, such as the wearable data collection devices 104 and 108 described in relation to FIG. 1A. The evaluator system 208 includes a computing system and display for presentation of information collected by the wearable data collection device(s) to an evaluator, such as the evaluator 114 described in relation to FIG. 1A. The analysis system 206 includes a data archival system such as the buffer data store 124 and/or the archive data store 122 described in relation to FIG. 1A, as well as an analysis module, such as the session data analysis engine 120 described in relation to FIG. 1A.

[0069] In some implementations, the method 200 begins with initiating an evaluation session (210) between the caregiver system 204 and the user system 202. An evaluator may have defined parameters regarding the evaluation session, such as a length of time, activities to include within the evaluation session, and props or objects to engage with during the evaluation session. In initiating the evaluation session, a software application functioning on the caregiver system 204 may communicate with a software application on the user system 202 to coordinate timing and initialize any data sharing parameters for the evaluation session. For example, information may be shared between the caregiver system 204 and the user system 202 using techniques described in U.S. Pat. No. 8,184,983 entitled "Wireless Directional Identification and Subsequent Communication Between Wearable Electronic Devices" and filed Jun. 9, 2011, the contents of which are hereby incorporated by reference in their entirety. In a particular example, the caregiver system 204 may issue a remote control "trigger" to the user system 202 (e.g., wearable data collection device) to initiate data collection by the user system 202. Meanwhile, the caregiver system 204 may initiate data collection locally (e.g., audio and/or video recording).

[0070] In some implementations, initiating the evaluation session further includes opening a real-time communication channel with the evaluator system 208. For example, the real-time evaluation session may be open between the caregiver system 204 and the evaluator system 208 and/or the user system 202 and the evaluator system 208. In some implementations, the caregiver system 204 initiates the evaluation session based upon an initiation trigger supplied by the evaluator system 208.

[0071] In some implementations, session data is uploaded (212) from the user system 202 to the analysis system 206. For example, data collected by one or more modules functioning upon the user system 202, such as a video collection module and an audio collection module, may be passed from the user system 202 to the analysis system 206. The data, in some embodiments, is streamed in real-time. In other embodiments, the data is supplied at set intervals, such as, in some examples, after a threshold quantity of data has been collected, after a particular phase of the session has been completed, or upon pausing an ongoing evaluation session. The data, in further examples, can include eye tracking data, motion tracking data, EMG data, EEG data, heart rate data, breathing rate data, and data regarding subject repetitions (e.g., repetitive motions and/or vocalizations).
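
The interval-based upload triggers listed above can be sketched as follows. This Python example is only an illustration under stated assumptions: the `SessionUploader` class, its method names, and the `send` callback (a stand-in for the actual transfer to the analysis system 206) are hypothetical and do not describe any particular implementation of the user system 202.

```python
from typing import Any, Callable, List


class SessionUploader:
    """Buffers session samples and hands them to `send` when a trigger fires."""

    def __init__(self, send: Callable[[str, List[Any]], None],
                 max_buffered: int = 100) -> None:
        self._send = send                  # stand-in for the network upload
        self._max_buffered = max_buffered  # threshold quantity of data
        self._buffer: List[Any] = []

    def add(self, sample: Any) -> None:
        self._buffer.append(sample)
        # Trigger: a threshold quantity of data has been collected.
        if len(self._buffer) >= self._max_buffered:
            self._flush("threshold")

    def phase_completed(self) -> None:
        # Trigger: a particular phase of the session has been completed.
        self._flush("phase_completed")

    def paused(self) -> None:
        # Trigger: the ongoing evaluation session has been paused.
        self._flush("paused")

    def _flush(self, reason: str) -> None:
        if self._buffer:  # nothing is sent when the buffer is empty
            self._send(reason, list(self._buffer))
            self._buffer.clear()
```

Setting `max_buffered` to 1 makes the same class behave approximately like the real-time streaming case, at the cost of one upload per sample.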

[0072] Furthermore, in some implementations, session data is uploaded (214) from the caregiver system 204 to the analysis system 206. For example, audio data and/or video data collected by a wearable data collection device worn by the caregiver may be uploaded to the analysis system 206. Similar to the upload from the user system 202 to the analysis system 206, data upload from the caregiver system 204 to the analysis system 206 may be done in real time, periodically, or based upon one or more triggering events.

[0073] In some implementations, the analysis system 206 analyzes (216) the session data. Data analysis can include, in some examples, identifying instances of social eye contact between the individual and the caregiver, identifying emotional words, and identifying vocalization of the subject's name. The analysis system 206, in some embodiments, determines counts of movement repetitions and/or verbal repetitions during recording of the individual's behavior. Further, in some embodiments, data analysis includes deriving emotional state of the individual from one or more behavioral and/or physiological cues (e.g., verbal, body language, EEG, EMG, heart rate, breathing rate, etc.). For example, the analysis system 206 may analyze the reaction and/or emotional state of the individual to the vocalization of her name. The analysis system 206, in some embodiments, further analyzes caregiver reactions to identified behaviors of the individual such as, in some examples, social eye contact, repetitive behaviors, and vocalizations. For example, the analysis system 206 may analyze body language, emotional words, and/or vocalization tone derived from audio and/or video data to determine caregiver response.

[0074] In some implementations, analyzing the session data (216) includes formatting session data into presentation data for the evaluator system 208. For example, the analysis system 206 may process heart rate data received from the user system 202 to identify and color code instances of elevated heart rate, and may prepare the heart rate data in graphic format for presentation to the evaluator. If prepared in real time, the session data supplied by the user system 202 and/or the caregiver system 204 may be time delayed such that raw session information (e.g., video feed) may be presented to the evaluator simultaneously with the processed data feed (e.g., heart rate graph).
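
A minimal sketch of the color coding and time delay described above is given below. The color names, the ratio thresholds relative to a resting heart rate, the millisecond timestamps, and the function names are all assumptions made for illustration; the disclosure does not specify how elevated heart rate is defined or how the delay is chosen.

```python
from typing import Any, List, Tuple


def color_for_heart_rate(bpm: float, resting_bpm: float,
                         elevated_ratio: float = 1.2,
                         high_ratio: float = 1.5) -> str:
    """Map a heart rate to a display color relative to a resting rate."""
    ratio = bpm / resting_bpm
    if ratio >= high_ratio:
        return "red"
    if ratio >= elevated_ratio:
        return "yellow"
    return "green"


def paired_feed(raw_frames: List[Tuple[int, Any]],
                heart_rates: List[Tuple[int, float]],
                resting_bpm: float,
                delay_ms: int) -> List[Tuple[int, Any, float, str]]:
    """Pair each raw frame with the processed heart rate captured at the
    same timestamp, and schedule both for display after a fixed delay so
    the raw and processed feeds reach the evaluator together.

    Both inputs hold (capture time in ms, value) tuples.
    """
    bpm_by_time = dict(heart_rates)
    feed = []
    for capture_ms, frame in raw_frames:
        if capture_ms in bpm_by_time:
            bpm = bpm_by_time[capture_ms]
            color = color_for_heart_rate(bpm, resting_bpm)
            feed.append((capture_ms + delay_ms, frame, bpm, color))
    return feed
```

In this sketch, frames without a matching heart rate sample are dropped; a fuller implementation might instead pair each frame with the nearest sample in time.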

[0075] The analysis system 206, in some implementations, archives at least a portion of the session data. For example, the session data may be archived for review by an evaluator at a later time. In another example, archived session data may be analyzed in relation to session data derived from a number of additional subjects to derive learned statistical data (described in greater detail in relation to FIG. 3B).

[0076] In some implementations, the analysis system 206 provides (218) session information, including raw session data and/or processed session data, to the evaluator system 208. At least a portion of the session data collected from the user system 202 and/or the caregiver system 204, in one example, is supplied in real time or near-real time to the evaluator system 208. As described above, the session information may include enhanced processed session data prepared for graphical presentation to the evaluator. In another example, the evaluator system 208 may request the session information from the analysis system 206 at a later time. For example, the evaluator may review the session after the individual and caregiver have completed and authorized upload of the session to the analysis system. In this manner, the evaluator may review session data at leisure without needing to coordinate scheduling with the caregiver.

[0077] In some implementations, if the evaluator is reviewing the session information in near-real-time, the evaluator system 208 issues (222) an instruction to the caregiver system 204. The evaluator, for example, may provide verbal instructions via a telephone call to the caregiver system 204 or an audio communication session between the evaluator system 208 and the caregiver system 204. For example, a voice data session may be established between the evaluator system 208 and the caregiver's wearable data collection device. In another example, the evaluator system 208 may supply written instructions or a graphic cue to the caregiver system 204. In a particular example, a graphic cue may be presented upon a heads-up display of the caregiver's wearable data collection device (such as the heads-up display described in U.S. Pat. No. 8,203,502 entitled "Wearable Heads-Up Display with Integrated Finger-Tracking Input Sensor" and filed May 25, 2011, the contents of which are hereby incorporated by reference in their entirety) to prompt the caregiver to interact with the individual using a particular object.

[0078] Rather than issuing an instruction, in some implementations the evaluator system 208 takes partial control of either the caregiver system 204 or the user system 202. In some examples, the evaluator system 208 may assert control to speak through the user system 202 to the individual or to adjust present settings of the wearable data collection device of the caregiver. In taking partial control of the caregiver system 204 or the user system 202, the evaluator system 208 may communicate directly with either the caregiver system 204 or the user system 202 rather than via the relay of the analysis system 206.

[0079] Similarly, although the instruction, as illustrated, bypasses the analysis system 206, the communication session between the evaluator system 208 and the caregiver system 204, in some implementations, is established by the analysis system 206. The analysis system 206, in some embodiments, may collect and archive a copy of any communications supplied to the caregiver system 204 by the evaluator system 208.

[0080] In some implementations, the caregiver system 204 performs (224) the instruction. For example, the instruction may initiate collection of additional data and/or real-time supply of additional data from one of the caregiver system 204 and the subject system 202 to the evaluator system 208 (e.g., via the analysis system 206). The evaluator system 208, in another example, may cue a next phase of the evaluation session by presenting instructional information to the caregiver via the caregiver system 204. For example, upon cue by the evaluator system 208, the caregiver system 204 may access and present instructions for performing the next phase of the evaluation session by presenting graphical and/or audio information to the caregiver via the wearable data collection device.

[0081] In some implementations, the user system 202 uploads (226) additional session data and the caregiver system 204 uploads (228) additional session data. The data upload process may continue throughout the evaluation session, as described, for example, in relation to steps 212 and 214.

[0082] Turning to FIG. 2B, in some implementations, the evaluator enters (230) evaluation data via the evaluator system 208. For example, the evaluator may include comments, characterizations, caregiver feedback, and/or recommendations regarding the session information reviewed by the evaluator via the evaluator system 208.

[0083] In some implementations, the evaluator system 208 provides (232) the evaluation data to the analysis system 206. The evaluation data, for example, may be archived along with the session data. At least a portion of the evaluation data, furthermore, may be supplied from the analysis system 206 to the caregiver system 204, for example as immediate feedback to the caregiver. In some embodiments, a portion of the evaluation data includes standardized criteria, such that the session data may be compared to session data of other individuals characterized in a same or similar manner during evaluation.

[0084] In some implementations, the analysis system 206 archives (234) the session and evaluation data. For example, the session and evaluation data may be uploaded to long term storage in a server farm or cloud storage area. Archival of the session data and evaluation data, for example, allows data availability for further review and/or analysis. The session data and evaluation data may be anonymized, secured, or otherwise protected from misuse prior to archival.
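By way of non-limiting illustration, one way a record might be anonymized prior to archival is to replace the subject identifier with a keyed hash. The disclosure does not name any particular technique; the use of HMAC-SHA-256, the `subject_id` field, and the `pseudonymize` function are assumptions made for this sketch only.

```python
import hashlib
import hmac


def pseudonymize(record: dict, secret_key: bytes) -> dict:
    """Return a copy of the record with 'subject_id' replaced by a keyed
    hash token. The same subject always maps to the same token, so
    sessions remain linkable without exposing the original identifier."""
    out = dict(record)                      # leave the caller's record intact
    subject = out.pop("subject_id")         # hypothetical field name
    out["subject_token"] = hmac.new(
        secret_key, subject.encode("utf-8"), hashlib.sha256
    ).hexdigest()
    return out
```

Because the token is deterministic for a given key, archived sessions of the same individual may still be compared during later analysis.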

[0085] In some implementations, the analysis system 206 statistically analyzes (236) the archived data from multiple sessions. In one example, archived session data may be compared to subsequent session data to reinforce characterizations or to track progress of the individual. In another example, as described above, the session data may be evaluated in relation to session data obtained from further individuals to derive learning statistics regarding similarly characterized individuals. The evaluation data supplied by the evaluator in step 230, in one example, may include an indication of desired analysis of the session data. For example, the session data may be compared to session data collected during evaluation of a sibling of the subject on a prior occasion.
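By way of non-limiting illustration, tracking progress of the individual across sessions may be sketched as a comparison of a per-session metric. The metric itself (for example, a score assigned under standardized criteria) and the `progress_delta` function are hypothetical; the disclosure does not prescribe a specific statistic.

```python
from statistics import mean
from typing import Sequence


def progress_delta(prior_scores: Sequence[float],
                   current_scores: Sequence[float]) -> float:
    """Difference between the mean score of the current session and the
    mean score of a prior session; a positive value indicates an increase."""
    return mean(current_scores) - mean(prior_scores)
```

For instance, `progress_delta([2, 4], [5, 7])` evaluates to 3, since the session means are 3 and 6.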

[0086] In some implementations, the analysis system 206 provides (238) analysis information derived from the archived session data to the evaluator system 208. For example, upon analyzing the session data in view of prior session data with the same individual, progress data may be supplied to the evaluator system 208 for review by the evaluator.

[0087] FIG. 3A is a block diagram of a computing system 300 for training and feedback software modules 302 for execution in relation to a wearable data collection device. The training and feedback software modules 302 incorporate various raw session data 304 obtained by a wearable data collection device, and generate various derived session data 306. The training and feedback software modules 302, for example, may include software modules capable of executing on any one of the subject wearable data collection device 104, the caregiver wearable data collection device 108, and the analysis and data management system 118 of FIG. 1A. Further, at least a portion of the training and feedback software modules 302 may be employed in a system 500 of FIG. 5A, for example in a wearable data collection device 504 and/or a learning data analysis system 520, or in a system 1100 of FIG. 11A, for example in a wearable data collection device 1104 and/or a learning data analysis system 1118. The raw session data 304, for example, may represent the type of session data shared between the subject system 202 or the caregiver system 204 and the analysis system 206, as described in relation to FIG. 2A.

[0088] FIG. 3B is a block diagram of a computing system 350 for analyzing and statistically learning from data collected through wearable data collection devices. The archived session data 354 may include data stored as archive data 122 as described in FIG. 1A and/or data stored as archive data 1122 as described in FIG. 11A. For example, the analysis system 206 of FIG. 2B, when statistically analyzing the archived data in step 236, may execute one or more of the statistical analysis software modules 352 upon a portion of the archived session data 354.

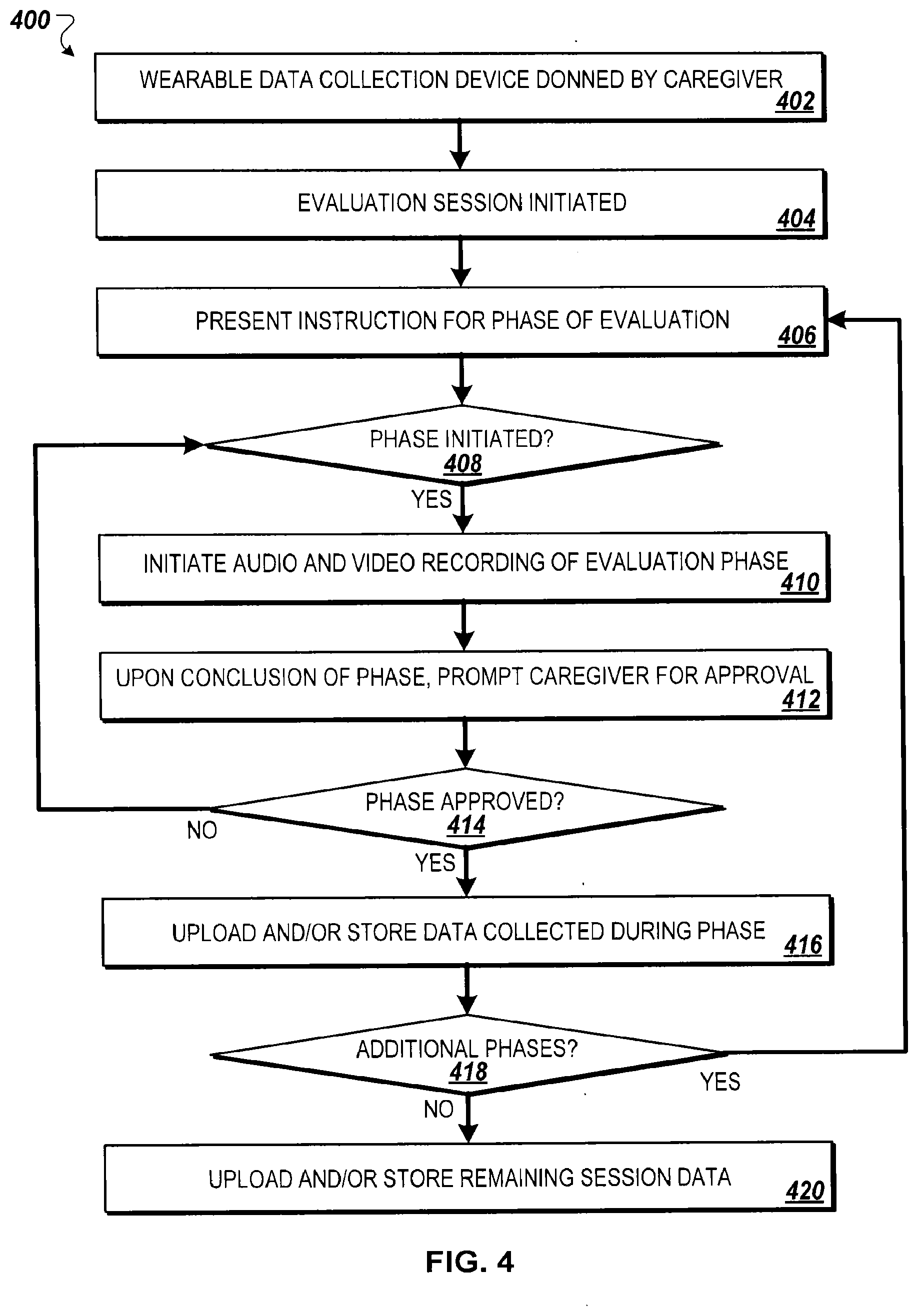

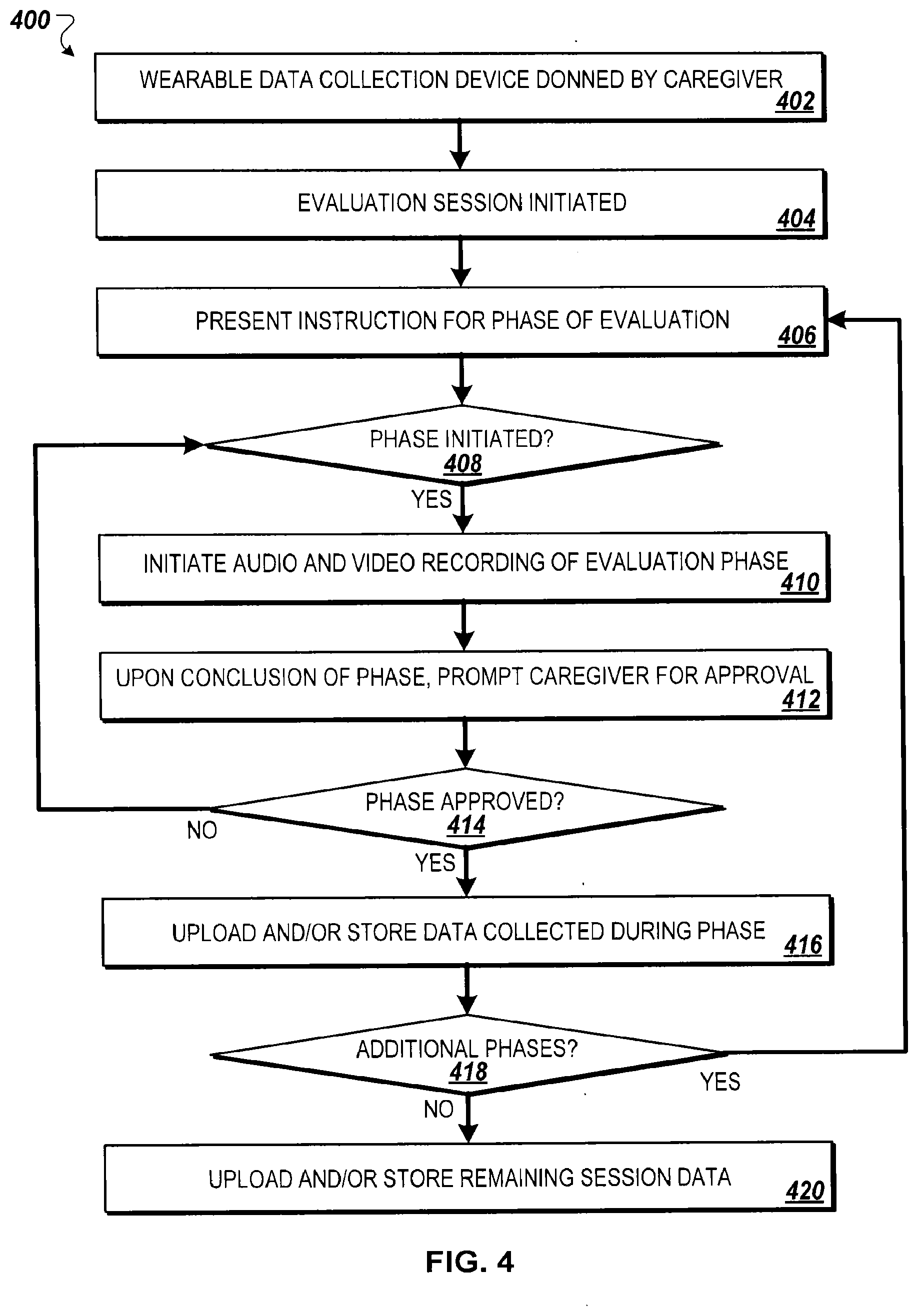

[0089] FIG. 4 is a flow chart of an example method 400 for conducting an evaluation session using a wearable data collection device donned by a caregiver of an individual being evaluated for Autism Spectrum Disorder. The method 400, for example, may be performed independent of an evaluator in the comfort of the caregiver's home. The caregiver may be supplied with a kit including a wearable data collection device and instructions for performing an evaluation session. The kit may optionally include a wearable data collection device for the individual.

[0090] In some implementations, the method 400 begins with the caregiver donning the wearable data collection device (402). Examples of a wearable data collection device are described in relation to FIG. 1A. The wearable data collection device, for example, may include a head-mounted lens for a video recording system, a microphone for audio recording, and a head-mounted display. Further, the wearable data collection device may include a storage medium for storing data collected during the evaluation session.

[0091] In some implementations, the evaluation session is initiated (404). Upon powering and donning the wearable data collection device, or launching an evaluation session application, the evaluation session may be initiated. Initiation of the evaluation session may include, in some embodiments, establishment of a communication channel between the wearable data collection device and a remote computing system.

[0092] In some implementations, instructions are presented for a first phase of evaluation (406). The instructions may be in textual, video, and/or audio format. Instructions, for example, may be presented upon a heads-up display of the wearable data collection device. If a communication channel was established with the remote computing system, the instructions may be relayed to the wearable data collection device from the remote computing system. In other embodiments, the instructions may be programmed into the wearable data collection device. The evaluation kit, for example, may be preprogrammed to direct the caregiver through an evaluation session tailored for a particular individual (e.g., first evaluation of a 3-year-old male lacking verbal communication skills versus follow-on evaluation of an 8-year-old female performing academically at grade level). In another example, the caregiver may be prompted for information related to the individual, and a session style may be selected based upon demographic and developmental information provided. In other implementations, rather than presenting instructions, the caregiver may be prompted to review a booklet or separate video to familiarize himself with the instructions.

[0093] The evaluation session, in some implementations, is performed as a series of stages. Each stage, for example, may include one or more activities geared towards encouraging interaction between the caregiver and the individual. After reviewing the instructions, the caregiver may be prompted to initiate the first phase of evaluation. If the phase is initiated (408), in some implementations, audio and video recording of the evaluation phase is initiated (410). The wearable data collection device, for example, may proceed to collect data related to the identified session.

[0094] In some implementations, upon conclusion of the phase, the caregiver is prompted for approval (412). The caregiver may be provided the opportunity to approve the phase of evaluation, for example, based upon whether the phase was successfully completed. A phase may have failed to complete successfully, in some examples, due to unpredicted interruption (e.g., visitor arriving at the home, child running from the room and refusing to participate, etc.).

[0095] In some implementations, if the phase has not been approved (414), the phase may be repeated by re-initiating the current phase (408) and repeating collection of audio and video recording (410). In this manner, if the evaluation session phase is interrupted or otherwise fails to run to completion, the caregiver may re-try a particular evaluation phase.

[0096] Upon approval by the caregiver of the present phase (414), in some implementations, session data associated with the particular phase is stored and/or uploaded (416). The data, for example, may be maintained in a local storage medium by the wearable data collection device or uploaded to the remote computing system. Metadata, such as a session identifier, phase identifier, subject identifier, and timestamp, may be associated with the collected data. In some implementations, for storage or transfer, the wearable data collection device secures the data using one or more security algorithms to protect the data from unauthorized review.
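By way of non-limiting illustration, associating the metadata listed above with collected phase data may be sketched as follows. The field types, the ISO 8601 timestamp format, and the names `PhaseMetadata` and `tag_phase_data` are assumptions made for this example; the disclosure names only the kinds of metadata, not their representation.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class PhaseMetadata:
    session_id: str   # session identifier
    phase_id: int     # phase identifier
    subject_id: str   # subject identifier
    timestamp: str    # assumed here to be ISO 8601, UTC


def tag_phase_data(payload: bytes, session_id: str, phase_id: int,
                   subject_id: str,
                   now: Optional[datetime] = None) -> dict:
    """Bundle recorded phase data with its metadata for storage or upload."""
    now = now or datetime.now(timezone.utc)
    meta = PhaseMetadata(session_id, phase_id, subject_id, now.isoformat())
    return {"metadata": asdict(meta), "payload": payload}
```

Any security algorithm applied for storage or transfer would operate on the resulting bundle and is outside the scope of this sketch.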

[0097] In some implementations, if additional phases of the session exist (418), instructions for a next phase of the evaluation are presented (406). As described above in relation to step 406, for example, the wearable data collection device may present instructions for caregiver review or prompt the caregiver to review separate instructions related to the next phase.

[0098] In some implementations, at the end of each phase, the caregiver may be provided the opportunity to suspend a session, for example to allow the individual to take a break or to tend to some other activity prior to continuing the evaluation session. In other implementations, the caregiver is encouraged to proceed with the evaluation session, for example to allow an evaluator later to review the individual's responses as phase activities are compounded.

[0099] If no additional phases exist in the evaluation session (418), in some implementations, remaining session data is uploaded or stored (420) as described in step 416. If the phase data was previously stored locally on the wearable data collection device, at this point, the entire session data may be uploaded to the remote computing system. In other embodiments, the session data remains stored on the wearable data collection device, and the wearable data collection device may be returned for evaluation and reuse purposes. In addition to the session data, the caregiver may be prompted to provide additional data regarding the session, such as a session feedback survey or comments regarding the individual's participation in the evaluation session compared to the individual's typical at-home behaviors. This information may be uploaded or stored along with the data collected for each evaluation phase.
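By way of non-limiting illustration, the phase loop of method 400 may be sketched as follows. The callback names and the `max_attempts` bound are assumptions introduced for this example; the disclosure does not limit the number of times a phase may be repeated, and presentation of instructions (406) is omitted for brevity.

```python
from typing import Callable, List


def run_session(phases: List[str],
                record_phase: Callable[[str], bytes],
                caregiver_approves: Callable[[str], bool],
                store: Callable[[str, bytes], None],
                max_attempts: int = 3) -> List[str]:
    """Run each phase in order, repeating a phase until the caregiver
    approves it or the (hypothetical) attempt limit is reached."""
    completed: List[str] = []
    for phase in phases:                      # next phase, if any (418)
        for _ in range(max_attempts):
            data = record_phase(phase)        # initiate and record (408, 410)
            if caregiver_approves(phase):     # prompt for approval (412, 414)
                store(phase, data)            # store and/or upload (416)
                completed.append(phase)
                break                         # proceed to the next phase
    return completed
```

A phase that is never approved within the attempt limit is simply skipped in this sketch; an actual implementation might instead suspend the session or prompt the caregiver.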

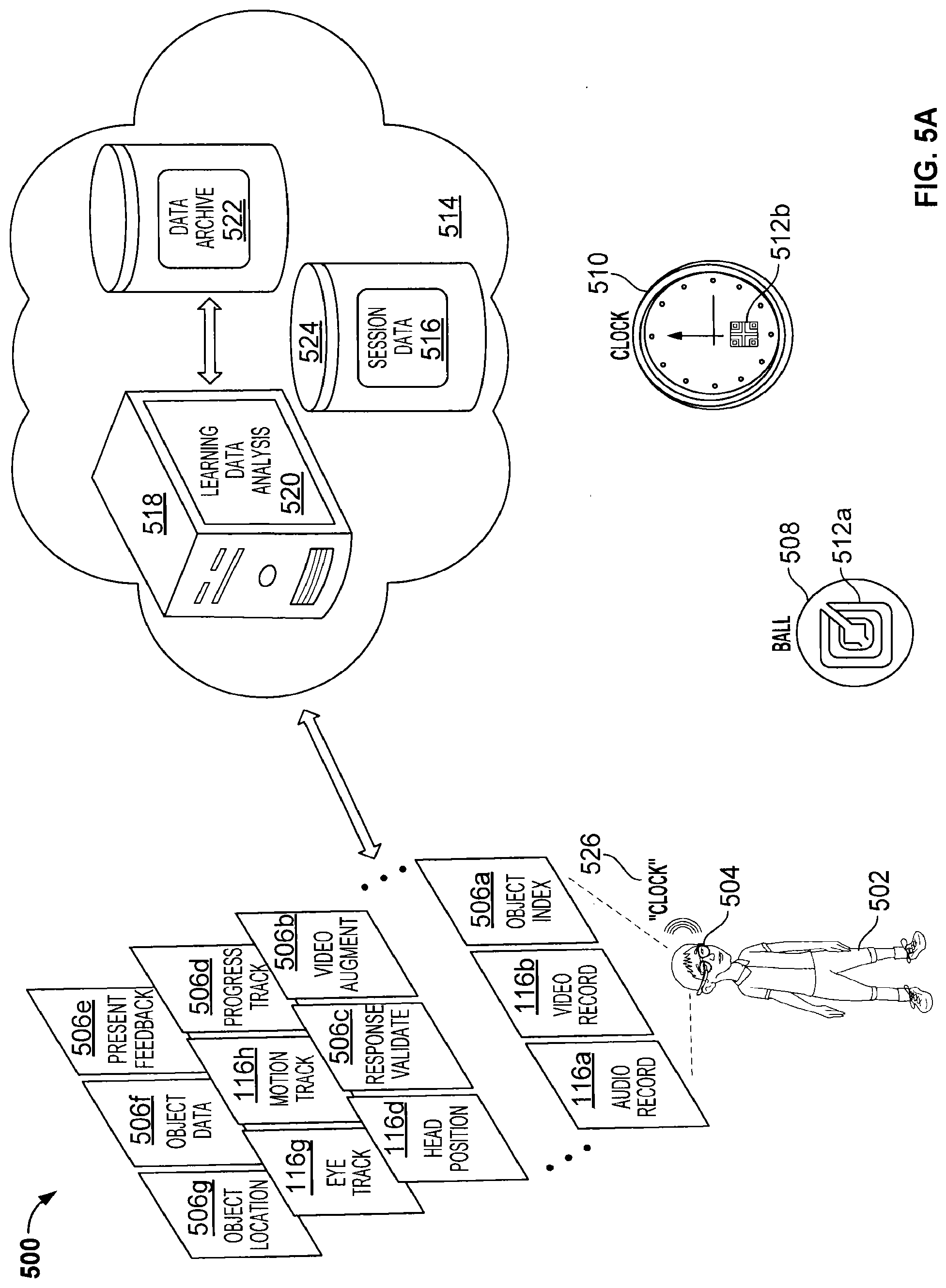

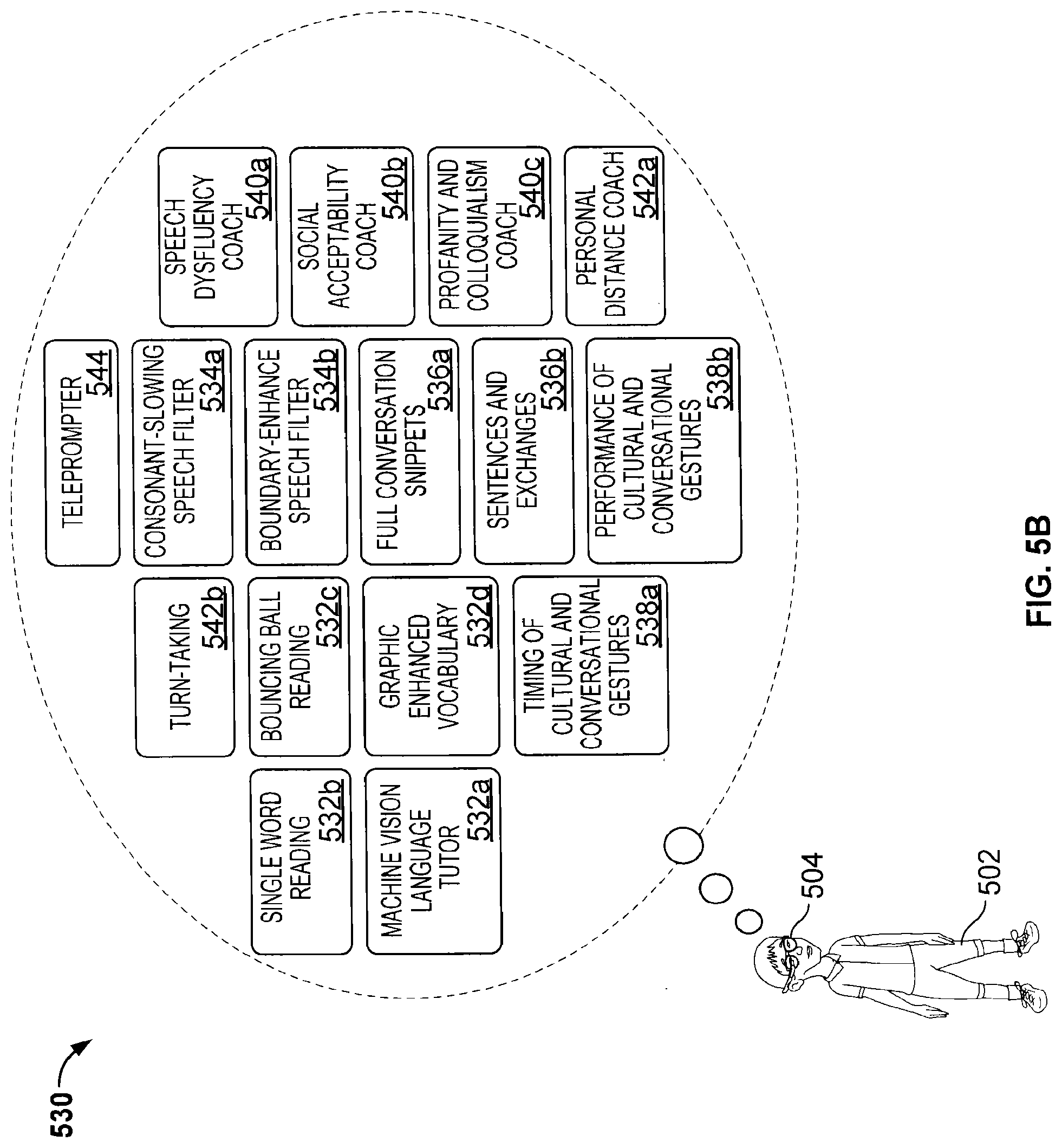

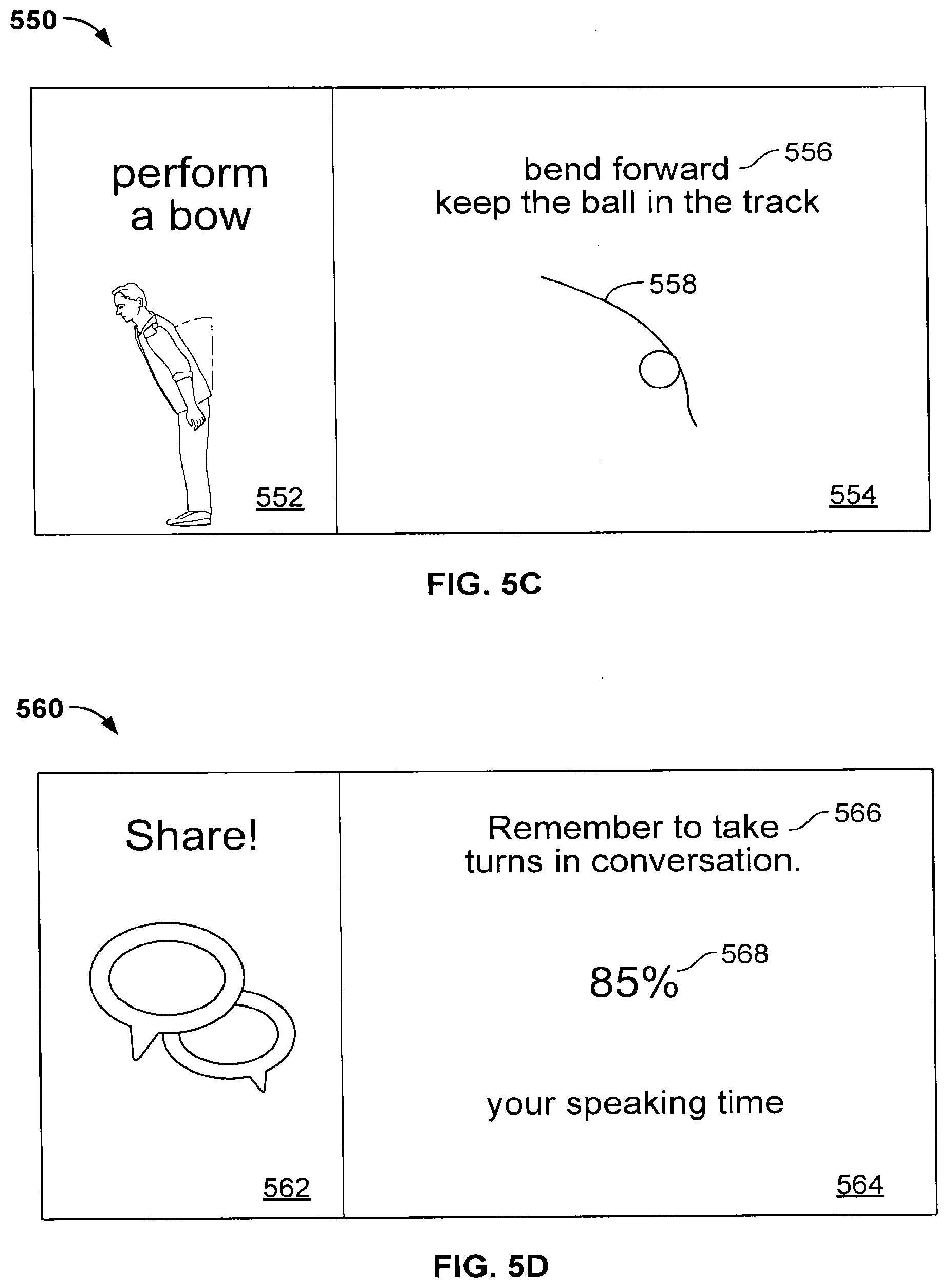

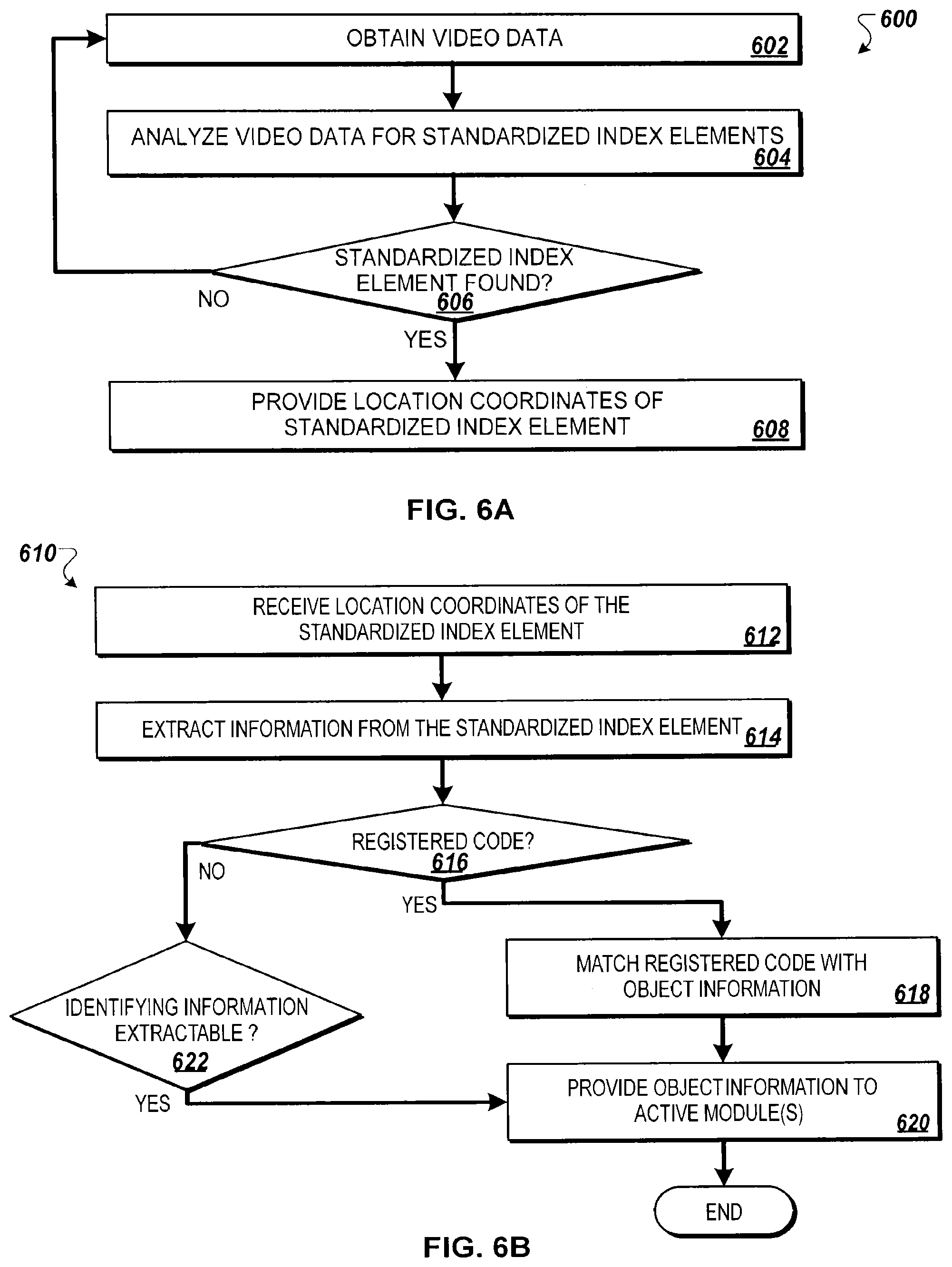

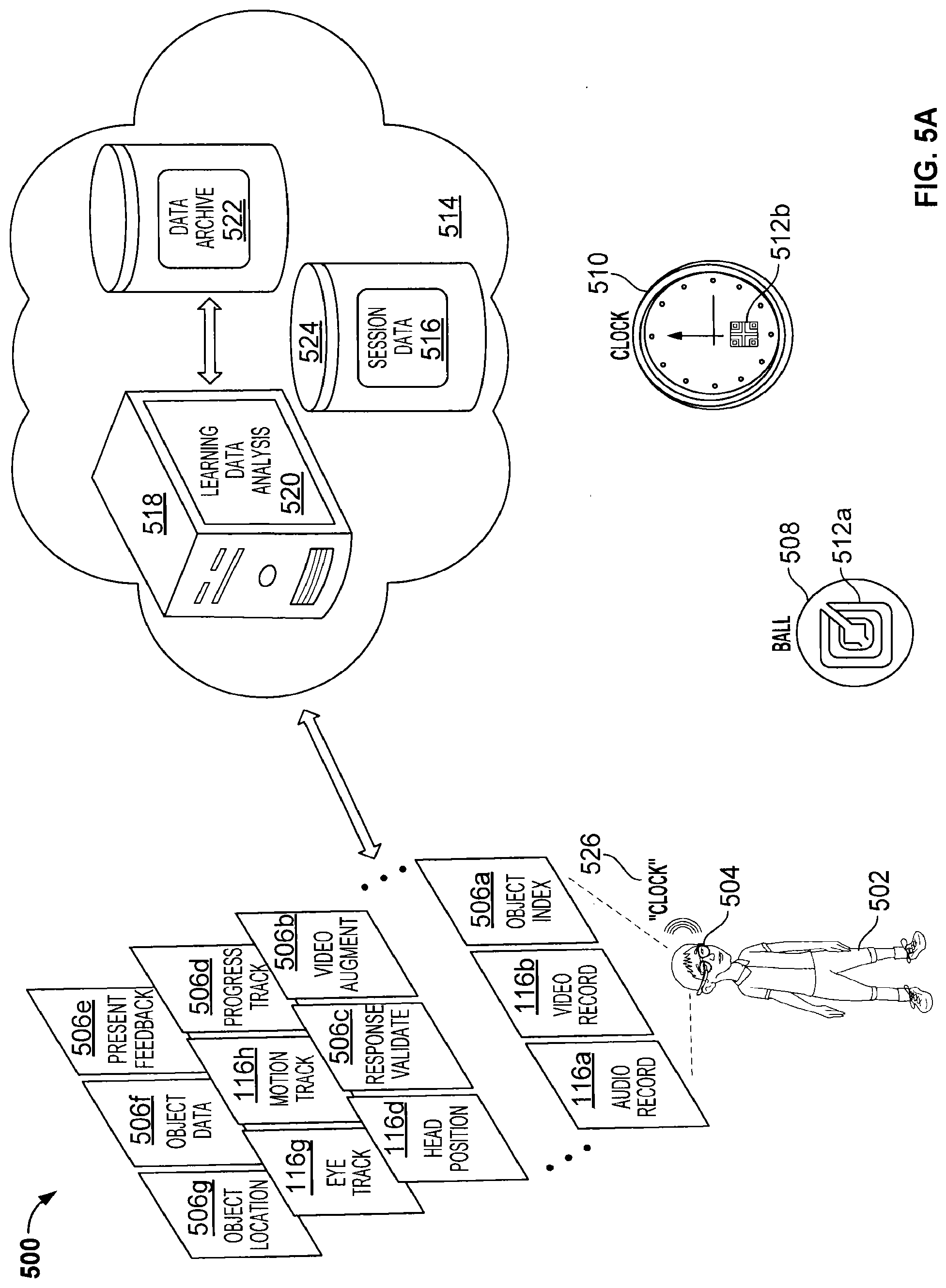

[0100] FIG. 5A is a block diagram of an example environment 500 for augmented reality learning, coaching, and assessment using a wearable data collection device 504. As illustrated, the wearable data collection device 504 shares many of the same data collection features 116 as the wearable data collection devices 104 and 108 described in relation to FIG. 1A. Additionally, the wearable data collection device includes data collection and interpretation features 506 configured generally for identifying objects and individuals within a vicinity of an individual 502 and for prompting, coaching, or assessing interactions between the individual 502 and those objects and individuals within the vicinity.

[0101] In some implementations, the example environment includes a remote analysis system 514 for analyzing the data 116 and/or 506 using one or more learning data analysis modules 520 executing upon a processing system 518 (e.g., one or more computing devices or other processing circuitry). The learning data analysis module(s) 520 may store raw and/or analyzed data 116, 506 as session data 516 in a data store 524. Further, the remote analysis system 514 may archive collected data 116 and/or 506 in a data archive 522 for later analysis or for crowd-sourced sharing to support learning engines to enhance performance of the learning data analysis modules 520.