Reception Apparatus, Reception Method, Transmission Apparatus, And Transmission Method

KITAZATO; Naohisa ; et al.

U.S. patent application number 16/922671 was filed with the patent office on 2020-07-07 and published on 2020-10-22 for reception apparatus, reception method, transmission apparatus, and transmission method. This patent application is currently assigned to SONY CORPORATION. The applicant listed for this patent is SONY CORPORATION. Invention is credited to Jun KITAHARA, Naohisa KITAZATO, Yasuaki YAMAGISHI.

| Application Number | 16/922671 (Publication No. 20200336797) |

| Family ID | 1000004942717 |

| Filed Date | 2020-07-07 |

| United States Patent Application | 20200336797 |

| Kind Code | A1 |

| KITAZATO; Naohisa ; et al. | October 22, 2020 |

RECEPTION APPARATUS, RECEPTION METHOD, TRANSMISSION APPARATUS, AND TRANSMISSION METHOD

Abstract

The present technology relates to a reception apparatus, a reception method, a transmission apparatus, and a transmission method, by which an increase in data size of signaling information transmitted by broadcasting can be suppressed. Provided is a reception apparatus including: a reception unit that receives a broadcast wave of digital broadcasting using an IP (Internet Protocol) transmission system; and a control unit that controls, on the basis of information for managing only a broadcast component transmitted by a broadcast wave of the digital broadcasting, which is first signaling information transmitted by a broadcast wave of the digital broadcasting, or information for managing at least one component of the broadcast component and a communication component transmitted by communication, which is second signaling information transmitted by communication, operations of respective units for acquiring the at least one component of the broadcast component and the communication component. The present technology is applicable to a television receiver, for example.

| Inventors: | KITAZATO; Naohisa (Tokyo, JP); YAMAGISHI; Yasuaki (Kanagawa, JP); KITAHARA; Jun (Shizuoka, JP) |

| Applicant: | SONY CORPORATION |

| Assignee: | SONY CORPORATION (Tokyo, JP) |

| Family ID: | 1000004942717 |

| Appl. No.: | 16/922671 |

| Filed: | July 7, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15300564 | Sep 29, 2016 | 10743082 |
| PCT/JP2015/059587 | Mar 27, 2015 | |
| 16922671 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04L 67/06 20130101; H04N 21/64322 20130101; H04L 67/02 20130101; H04N 21/2362 20130101; H04N 21/4345 20130101; H04H 2201/16 20130101; H04N 21/8586 20130101; H04L 65/608 20130101; H04L 65/4076 20130101; H04H 20/93 20130101; H04N 21/65 20130101 |

| International Class: | H04N 21/643 20060101 H04N021/643; H04H 20/93 20060101 H04H020/93; H04L 29/06 20060101 H04L029/06; H04L 29/08 20060101 H04L029/08; H04N 21/2362 20060101 H04N021/2362; H04N 21/434 20060101 H04N021/434; H04N 21/65 20060101 H04N021/65; H04N 21/858 20060101 H04N021/858 |

Foreign Application Data

| Date | Code | Application Number |
|---|---|---|
| Apr 11, 2014 | JP | 2014-081536 |

Claims

1. (canceled)

2. A reception apparatus, comprising: a tuner configured to receive a digital broadcast signal based on an Internet Protocol (IP) transmission system; and one or more processors configured to: acquire service configuration information transmitted via the digital broadcast signal, the service configuration information including at least one of bootstrap information or communication delivery information corresponding to signaling information, the bootstrap information including IP address information and port information about the signaling information transmitted via the digital broadcast signal, the communication delivery information including information which indicates communication delivery of the signaling information, receive a user selection for a service corresponding to the service configuration information, the user selection being performed using a remote controller, acquire, based on the service configuration information, the signaling information corresponding to the service selected by the user selection, acquire, based on the signaling information, at least one of a broadcast component transmitted via the digital broadcast signal or a communication component transmitted via a communication other than the digital broadcast signal, and output the at least one of the broadcast component or the communication component.

3. The reception apparatus according to claim 2, wherein the information in the communication delivery information indicating the communication delivery of the signaling information includes location information including a piece of a uniform resource locator (URL) corresponding to a signaling server.

4. The reception apparatus according to claim 2, wherein the one or more processors are configured to determine acquisition of the signaling information transmitted via the communication other than the digital broadcast signal based on the service configuration information and capability of the reception apparatus.

5. The reception apparatus according to claim 2, wherein the one or more processors are configured to acquire the broadcast component based on the signaling information transmitted via the digital broadcast signal.

6. The reception apparatus according to claim 2, wherein the one or more processors are configured to acquire the communication component based on the signaling information transmitted via the communication other than the digital broadcast signal.

7. The reception apparatus according to claim 2, wherein the one or more processors are configured to receive input from a microphone.

8. A reception method performed in a reception apparatus, the reception method comprising: receiving, by a tuner, a digital broadcast signal based on an Internet Protocol (IP) transmission system, acquiring, by one or more processors, service configuration information transmitted via the digital broadcast signal, the service configuration information including at least one of bootstrap information or communication delivery information corresponding to signaling information, the bootstrap information including IP address information and port information about the signaling information transmitted via the digital broadcast signal, the communication delivery information including information which indicates communication delivery of the signaling information; receiving, by the one or more processors, a user selection for a service corresponding to the service configuration information, the user selection being performed using a remote controller; acquiring, by the one or more processors, based on the service configuration information, the signaling information corresponding to the service selected by the user selection; acquiring, by the one or more processors, based on the signaling information, at least one of a broadcast component transmitted via the digital broadcast signal or a communication component transmitted via a communication other than the digital broadcast signal; and output, by the one or more processors, the at least one of the broadcast component or the communication component.

9. The reception method according to claim 8, wherein the information in the communication delivery information indicating that the communication delivery of the signaling information includes location information including a piece of a uniform resource locator (URL) corresponding to a signaling server.

10. The reception method according to claim 8, comprising: determining, by the one or more processors, to acquire the signaling information transmitted via the communication other than the digital broadcast signal based on the service configuration information and capability of the reception apparatus.

11. The reception method according to claim 8, wherein the acquiring, by the one or more processors, the broadcast component is based on the signaling information transmitted via the digital broadcast signal.

12. The reception method according to claim 8, further comprising: acquiring, by the one or more processors, the communication component based on the signaling information transmitted via the communication other than the digital broadcast signal.

13. The reception method according to claim 8, further comprising: receiving, by the one or more processors, input from a microphone.

Description

CROSS REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of U.S. patent application Ser. No. 15/300,564, filed on Sep. 29, 2016, which is a U.S. National Phase of International Patent Application No. PCT/JP2015/059587, filed on Mar. 27, 2015, which claims priority benefit of Japanese Patent Application No. JP 2014-081536, filed in the Japan Patent Office on Apr. 11, 2014. The benefit of priority is claimed to each of the foregoing, and the entire contents of each of the foregoing are incorporated herein by reference.

TECHNICAL FIELD

[0002] The present technology relates to a reception apparatus, a reception method, a transmission apparatus, and a transmission method and more particularly to a reception apparatus, a reception method, a transmission apparatus, and a transmission method, by which an increase in data size of signaling information transmitted by broadcasting can be suppressed.

BACKGROUND ART

[0003] In recent years, in the field of digital broadcasting, in addition to services utilizing broadcasting, hybrid services cooperating with communication have been introduced (e.g., see Patent Document 1). In such hybrid services, components such as video, audio, and closed captions for providing those services are transmitted by broadcasting or communication as streams.

[0004] Patent Document 1: Japanese Patent Application Laid-open No. 2011-66556

SUMMARY OF INVENTION

Problem to be Solved by the Invention

[0005] When such hybrid services are introduced, information relating to both broadcasting and communication must be described in the signaling information, so the data size of the signaling information transmitted by broadcasting is expected to increase. The data size of the signaling information transmitted by broadcasting therefore needs to be reduced.

[0006] The present technology has been made in view of the above-mentioned circumstances and makes it possible to suppress an increase in data size of signaling information transmitted by broadcasting.

Means for Solving the Problem

[0007] A reception apparatus according to a first aspect of the present technology is a reception apparatus including: a reception unit that receives a broadcast wave of digital broadcasting using an IP (Internet Protocol) transmission system; and a control unit that controls, on the basis of information for managing only a broadcast component transmitted by a broadcast wave of the digital broadcasting, which is first signaling information transmitted by a broadcast wave of the digital broadcasting, or information for managing at least one component of the broadcast component and a communication component transmitted by communication, which is second signaling information transmitted by communication, operations of respective units for acquiring the at least one component of the broadcast component and the communication component.

[0008] The first signaling information may be information in units of services and may include a plurality of management information items for acquiring the broadcast component transmitted through a FLUTE (File Delivery over Unidirectional Transport) session.

[0009] The first signaling information may be information in units of services and may include one management information item obtained by integrating a plurality of management information items for acquiring the broadcast component transmitted through a FLUTE session as a parameter defined at a component level.

[0010] The first signaling information and the second signaling information may include management information defining information relating to the second signaling information as a parameter at a service level.

[0011] The management information may include information indicating a range of signaling information, version information, and a URL (Uniform Resource Locator) indicating an acquisition source, as the information relating to the second signaling information.

[0012] The management information may further include information indicating an update interval of the second signaling information, as the information relating to the second signaling information.

[0013] The management information may further include information indicating a timing to terminate acquisition of the second signaling information, as the information relating to the second signaling information.

[0014] The second signaling information may be information in units of services and may include a plurality of management information items for acquiring the broadcast component and an MPD (Media Presentation Description) complying with a standard of MPEG-DASH (Moving Picture Experts Group-Dynamic Adaptive Streaming over HTTP) as management information for acquiring the communication component.

[0015] The first signaling information may be transmitted in an upper layer than an IP layer in a hierarchy of a protocol in the IP transmission system, and a common IP address may be assigned to the broadcast component configuring a particular service and the first signaling information.

[0016] The reception apparatus may be an independent apparatus or may be an internal block configuring a single apparatus.

[0017] A reception method according to the first aspect of the present technology is a reception method corresponding to the reception apparatus according to the first aspect of the present technology.

[0018] In the reception apparatus and the reception method according to the first aspect of the present technology, a broadcast wave of digital broadcasting using an IP transmission system is received, and, on the basis of information for managing only a broadcast component transmitted by a broadcast wave of the digital broadcasting, which is first signaling information transmitted by a broadcast wave of the digital broadcasting, or information for managing at least one component of the broadcast component and a communication component transmitted by communication, which is second signaling information transmitted by communication, operations of respective units for acquiring the at least one component of the broadcast component and the communication component are controlled.

[0019] A transmission apparatus according to a second aspect of the present technology is a transmission apparatus including: a first acquisition unit that acquires first signaling information for managing only a broadcast component transmitted by a broadcast wave of digital broadcasting using an IP transmission system; a second acquisition unit that acquires one or more broadcast components configuring a service; and a transmission unit that transmits the first signaling information together with the broadcast component by a broadcast wave of the digital broadcasting using the IP transmission system.

[0020] The first signaling information may be information in units of services and may include a plurality of management information items for acquiring the broadcast component transmitted through a FLUTE session.

[0021] The first signaling information may be information in units of services and may include one management information item obtained by integrating a plurality of management information items for acquiring the broadcast component transmitted through a FLUTE session as a parameter defined at a component level.

[0022] A receiver that receives a broadcast wave of the digital broadcasting using the IP transmission system may be capable of acquiring information for managing the at least one component of the broadcast component and a communication component transmitted by communication, which is second signaling information transmitted by communication, and the first signaling information and the second signaling information may include management information defining information relating to the second signaling information as a parameter at a service level.

[0023] The management information may include information indicating a range of signaling information, version information, and a URL of an acquisition source, as the information relating to the second signaling information.

[0024] The management information may further include information indicating an update interval of the second signaling information, as the information relating to the second signaling information.

[0025] The management information may further include information indicating a timing to terminate acquisition of the second signaling information, as the information relating to the second signaling information.

[0026] The second signaling information may be information in units of services and may include the plurality of management information items for acquiring the broadcast component and an MPD complying with a standard of MPEG-DASH as the management information for acquiring the communication component.

[0027] The first signaling information may be transmitted in an upper layer than an IP layer in a hierarchy of a protocol in the IP transmission system, and a common IP address may be assigned to the broadcast component configuring a particular service and the first signaling information.

[0028] The transmission apparatus may be an independent apparatus or may be an internal block configuring a single apparatus.

[0029] A transmission method according to the second aspect of the present technology is a transmission method corresponding to the transmission apparatus according to the second aspect of the present technology.

[0030] In the transmission apparatus and the transmission method according to the second aspect of the present technology, first signaling information for managing only a broadcast component transmitted by a broadcast wave of digital broadcasting using an IP transmission system is acquired, one or more broadcast components configuring the service are acquired, and the first signaling information is transmitted together with the broadcast component by a broadcast wave of the digital broadcasting using the IP transmission system.

Effects of the Invention

[0031] In accordance with the first aspect and the second aspect of the present technology, it is possible to suppress an increase in data size of signaling information transmitted by broadcasting.

[0032] It should be noted that the effect described here is not necessarily limitative and may be any effect described in the present disclosure.

BRIEF DESCRIPTION OF DRAWINGS

[0033] FIG. 1 A diagram showing a protocol stack of digital broadcasting in an IP transmission system.

[0034] FIG. 2 A diagram showing a configuration of a broadcast wave of the digital broadcasting in the IP transmission system.

[0035] FIG. 3 A diagram showing a structure of signaling information according to Solution 1.

[0036] FIG. 4 A diagram explaining a channel selection scenario of a basic service according to Solution 1.

[0037] FIG. 5 A diagram explaining a channel selection scenario of a hybrid service according to Solution 1.

[0038] FIG. 6 A diagram showing a structure of signaling information according to Solution 2.

[0039] FIG. 7 A diagram explaining a channel selection scenario of a basic service according to Solution 2.

[0040] FIG. 8 A diagram explaining a channel selection scenario of a hybrid service according to Solution 2.

[0041] FIG. 9 A diagram for explaining a scenario in the case where a transition from the basic service to the hybrid service is made.

[0042] FIG. 10 A diagram for explaining a scenario in the case where an acquisition source of streams is changed in the hybrid service.

[0043] FIG. 11 A diagram for explaining another scenario in the case where the acquisition source of the streams is changed in the hybrid service.

[0044] FIG. 12 A diagram for explaining a scenario in the case where a transition from the hybrid service to the basic service is made.

[0045] FIG. 13 A diagram for explaining another scenario in the case where a transition from the hybrid service to the basic service is made.

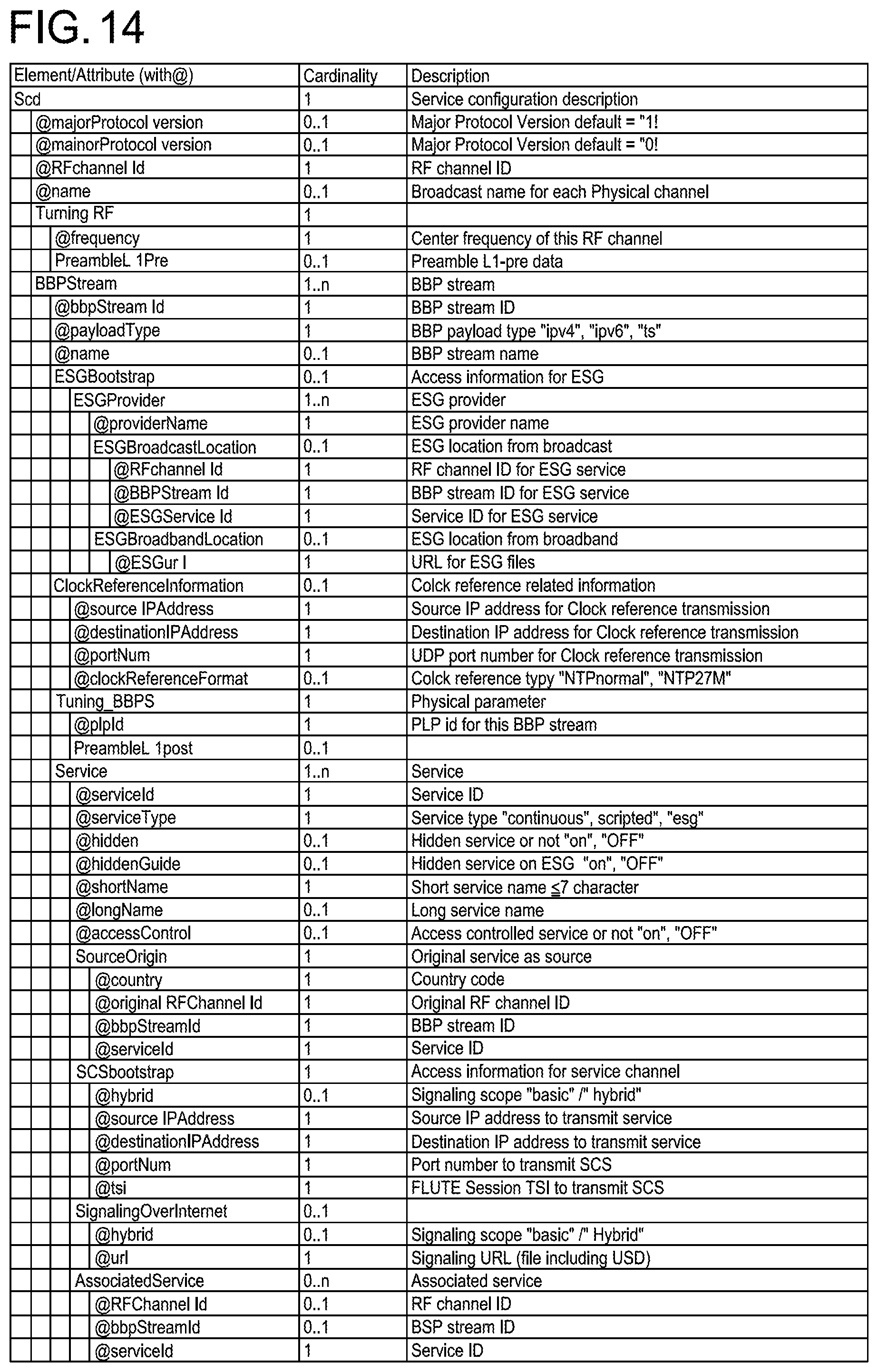

[0046] FIG. 14 A diagram showing a syntax of an SCD common to Solutions 1 and 2.

[0047] FIG. 15 A diagram showing an SPD according to Solution 1.

[0048] FIG. 16 A diagram showing an SPD according to Solution 2.

[0049] FIG. 17 A diagram showing details of a ComponentLocation element.

[0050] FIG. 18 A diagram showing an SPD common to Solutions 1 and 2.

[0051] FIG. 19 A diagram showing a syntax of a Protocol Version Descriptor.

[0052] FIG. 20 A diagram showing a syntax of an NRT Service Descriptor.

[0053] FIG. 21 A diagram showing a syntax of a Capability Descriptor.

[0054] FIG. 22 A diagram showing a syntax of an Icon Descriptor.

[0055] FIG. 23 A diagram showing a syntax of an ISO-639 Language Descriptor.

[0056] FIG. 24 A diagram showing a syntax of a Receiver Targeting Descriptor.

[0057] FIG. 25 A diagram showing a syntax of an Associated Service Descriptor.

[0058] FIG. 26 A diagram showing a syntax of a Content Advisory Descriptor.

[0059] FIG. 27 A diagram showing a syntax of an AVC Video Descriptor.

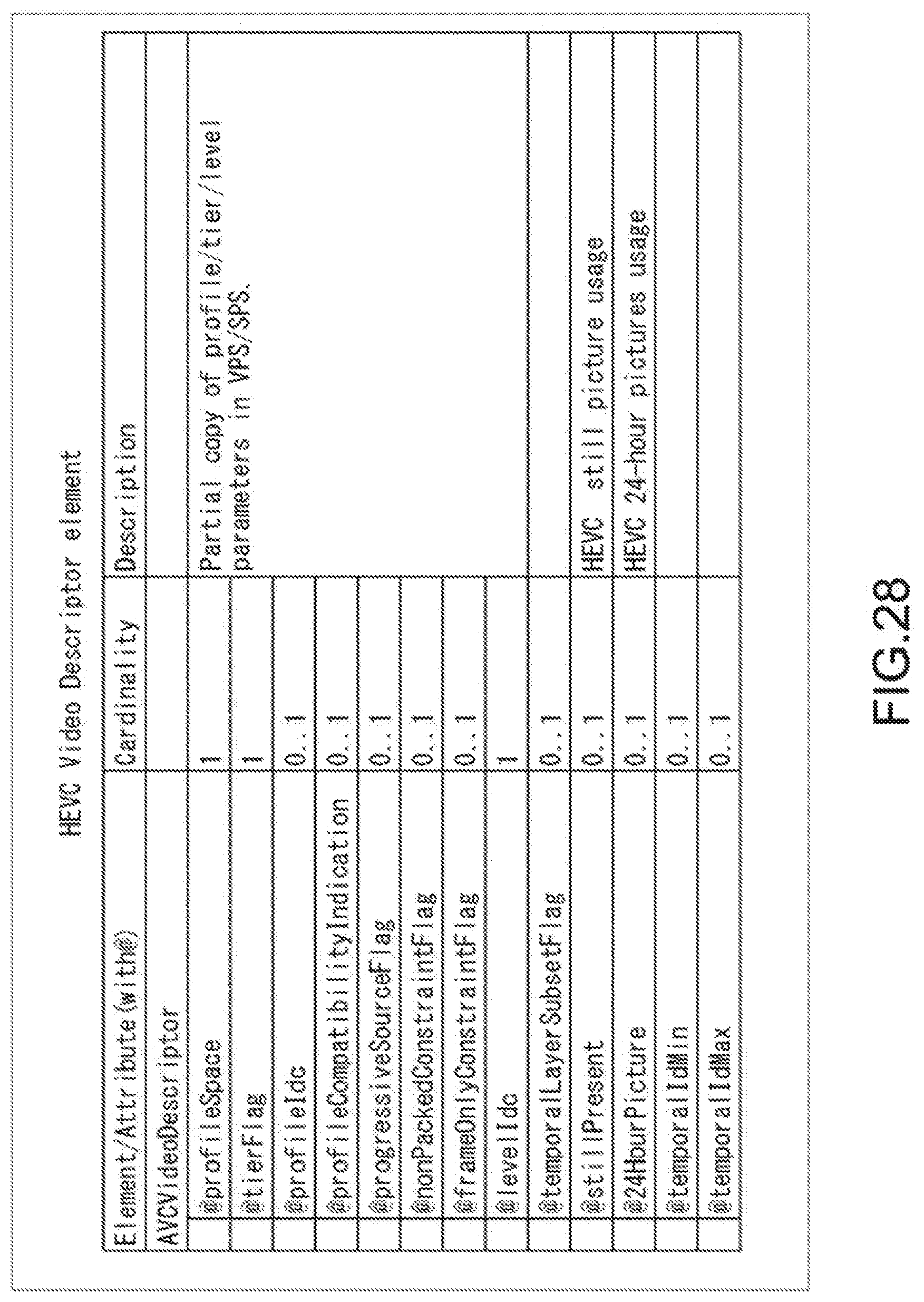

[0060] FIG. 28 A diagram showing a syntax of an HEVC Video Descriptor.

[0061] FIG. 29 A diagram showing a syntax of an MPEG4 AAC Audio Descriptor.

[0062] FIG. 30 A diagram showing a syntax of an AC3 Audio Descriptor.

[0063] FIG. 31 A diagram showing a syntax of Caption Parameters.

[0064] FIG. 32 A diagram showing a configuration example of a broadcast communication system.

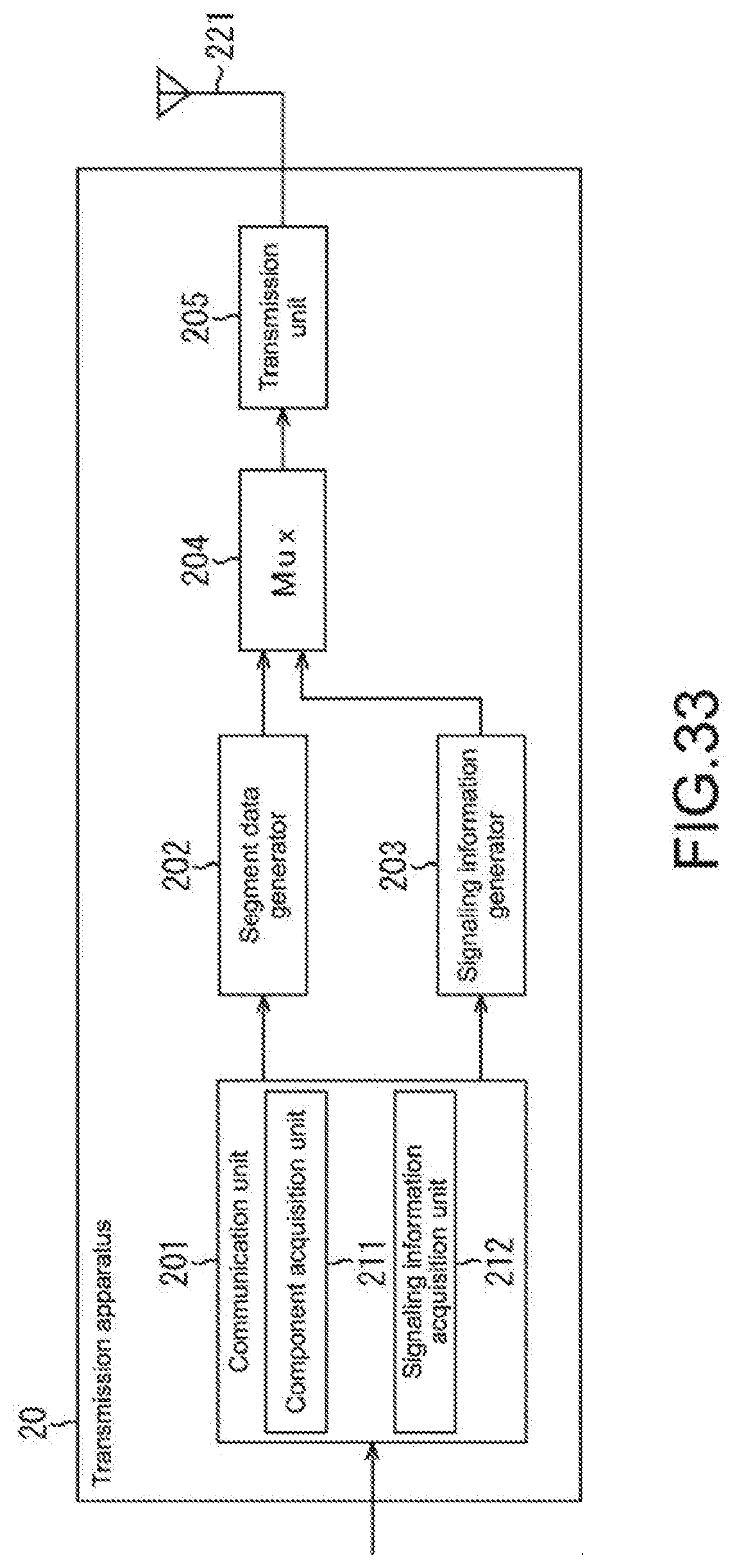

[0065] FIG. 33 A diagram showing a configuration example of a transmission apparatus.

[0066] FIG. 34 A diagram showing a configuration example of an Internet server.

[0067] FIG. 35 A diagram showing a configuration example of a reception apparatus.

[0068] FIG. 36 A flowchart explaining transmission processing.

[0069] FIG. 37 A flowchart explaining streaming delivery processing.

[0070] FIG. 38 A flowchart explaining signaling information-providing processing.

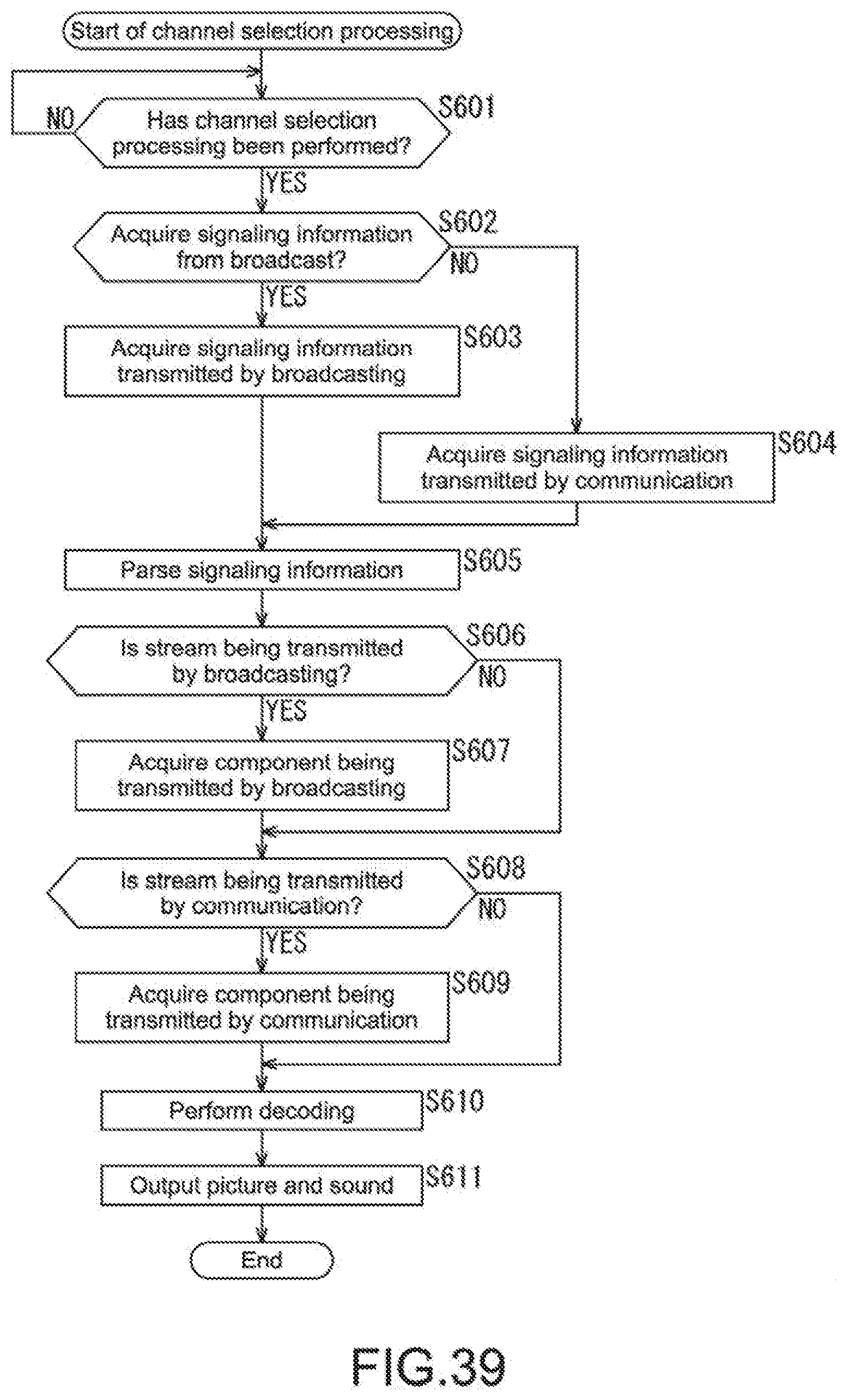

[0071] FIG. 39 A flowchart explaining channel selection processing.

[0072] FIG. 40 A diagram showing a configuration example of a computer.

MODE(S) FOR CARRYING OUT THE INVENTION

[0073] Hereinafter, embodiments of the present technology will be described with reference to the drawings. Note that descriptions will be made in the following order.

[0074] 1. Outline of Digital Broadcasting in IP Transmission System

[0075] 2. Solution 1

[0076] (1) Structure of Signaling Information

[0077] (2) Specific Application Example

[0078] 3. Solution 2

[0079] (1) Structure of Signaling Information

[0080] (2) Specific Application Example

[0081] 4. Common to Solutions 1 and 2

[0082] (1) Structure of Signaling Information

[0083] (2) Specific Application Example

[0084] 5. Syntax

[0085] (1) Syntax of SCD

[0086] (2) Syntax of SPD

[0087] 6. System Configuration

[0088] 7. Flows of Processing Executed by Apparatuses

[0089] 8. Configuration of Computer

[0090] <1. Outline of Digital Broadcasting in IP Transmission System>

[0091] (Protocol Stack)

[0092] FIG. 1 is a diagram showing a protocol stack of digital broadcasting in an IP transmission system.

[0093] As shown in FIG. 1, a lowest layer is a physical layer. The frequency band of broadcast waves assigned for a service (channel) corresponds to this. An upper layer that is adjacent to the physical layer is an IP layer sandwiching a BBP stream (Base Band Packet Stream) therebetween. The BBP stream is a stream including packets storing various types of data of the IP transmission system.

[0094] The IP layer corresponds to IP (Internet Protocol) in the TCP/IP protocol stack. IP packets are identified by IP addresses. An upper layer adjacent to the IP layer is a UDP layer. In a further upper layer, RTP and FLUTE/ALS are shown. Thus, in the digital broadcasting in the IP transmission system, packets in which a port number of UDP (User Datagram Protocol) is specified are transmitted and an RTP (Real-time Transport Protocol) session or a FLUTE (File Delivery over Unidirectional Transport) session is established, for example.

[0095] In an upper layer adjacent to the FLUTE/ALS, fMP4 (Fragmented MP4) is shown. In addition, in an upper layer adjacent to the RTP and the fMP4, video data (Video), audio data (Audio), and closed caption data (Closed Caption) are shown, for example. Thus, the RTP session is used when the video data and the audio data are transmitted as streams, and the FLUTE session is used when the video data and the audio data are transmitted as files.

[0096] Further, in an upper layer of the FLUTE/ALS, NRT content, ESG, and SCS are shown. The NRT content, ESG, and SCS are transmitted through the FLUTE session. The NRT content is content transmitted by NRT (Non-Real Time) broadcasting, stored in storage of a receiver, and then reproduced. Note that the NRT content is an example of the content and other content files may be transmitted through the FLUTE session. The ESG is an electronic service guide.

[0097] The SCS (Service Channel Signaling) is signaling information in units of services and transmitted through the FLUTE session. For example, USD (User Service Description), MPD (Media Presentation Description), SDP (Session Description Protocol), FDD (File Delivery Description), SPD (Service Parameter Description), and IS (Initialization Segment) are transmitted as the SCS.

[0098] LLS (Low Layer Signaling) is low-layer signaling information and transmitted in the BBP stream. For example, service configuration information items such as SCD (Service Configuration Description), EAD (Emergency Alerting Description), and RRD (Region Rating Description) are transmitted as the LLS.

[0099] (Configuration of Broadcast Wave in IP Transmission System)

[0100] FIG. 2 is a diagram showing a configuration of a broadcast wave of the digital broadcasting in the IP transmission system.

[0101] As shown in FIG. 2, a plurality of BBP streams are transmitted in a broadcast wave (RF Channel) having a predetermined frequency band. Further, each of the BBP streams includes an NTP (Network Time Protocol), a plurality of service channels, an electronic service guide (ESG Service), and an LLS. Note that the NTP, the service channels, and the electronic service guide are transmitted according to a UDP/IP protocol while the LLS is transmitted in the BBP stream. Further, the NTP is time information and can be common to the plurality of service channels.

[0102] The service channels (hereinafter, referred to as "services") include components such as video, audio, and closed captions, which are information items configuring a program, and an SCS such as USD and SPD. A common IP address is added to the services. Using this IP address, the components, the SCS, and the like can be packaged for one or more services.

[0103] An RF channel ID (RF channel id) is assigned to a broadcast wave (RF Channel) having a predetermined frequency band. Further, a BBP stream ID (BBP stream id) is assigned to one or more BBP streams transmitted by each broadcast wave. In addition, a service ID (service id) is assigned to one or more services transmitted by each of the BBP streams.

[0104] The IP transmission system employs, as its ID system, a configuration corresponding to the combination of network ID (network id), transport stream ID (transport stream id), and service ID (service id) used in the MPEG2-TS (Moving Picture Experts Group 2-Transport Stream) system (hereinafter, this combination is referred to as the "triplet"). This triplet indicates a BBP stream configuration and a service configuration in a broadcasting network.

[0105] The use of this ID system can achieve compatibility with the currently widely spread MPEG2-TS system, and hence it is possible to easily perform simulcast during transition from the MPEG2-TS system to the IP transmission system, for example. It should be noted that the RF channel ID and the BBP stream ID in the ID system of the IP transmission system correspond to the network ID and the transport stream ID in the MPEG2-TS system, respectively.
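As an illustration only, the correspondence between the two ID systems can be sketched in Python as follows. The class and function names (`IpTriplet`, `Mpeg2TsTriplet`, `to_mpeg2ts`) are hypothetical and are not defined by the present technology, and carrying the service ID over unchanged is an assumption of this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IpTriplet:
    """ID system of the IP transmission system (names are illustrative)."""
    rf_channel_id: int
    bbp_stream_id: int
    service_id: int


@dataclass(frozen=True)
class Mpeg2TsTriplet:
    """Triplet used in the MPEG2-TS system."""
    network_id: int
    transport_stream_id: int
    service_id: int


def to_mpeg2ts(t: IpTriplet) -> Mpeg2TsTriplet:
    # RF channel ID -> network ID, BBP stream ID -> transport stream ID.
    # Assumption: the service ID is carried over unchanged.
    return Mpeg2TsTriplet(t.rf_channel_id, t.bbp_stream_id, t.service_id)
```

Because the mapping is field by field, a receiver built around the MPEG2-TS triplet could, under these assumptions, index services of the IP transmission system in the same way.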

[0106] <2. Solution 1>

[0107] If a hybrid service is introduced into the digital broadcasting in the IP transmission system, information relating to broadcasting and communication must be described in the signaling information, so the data size of the signaling information transmitted by broadcasting is expected to increase and needs to be reduced. To address this requirement, the present technology proposes two solutions, Solution 1 and Solution 2. Solution 1 is described first.

(1) Structure of Signaling Information

[0108] FIG. 3 is a diagram showing a structure of signaling information according to Solution 1. In FIG. 3, different acquisition sources for signaling information and components are in a left-hand region and a right-hand region with respect to a boundary line shown as the bold line extending in a vertical direction of the figure. The left-hand region shows "broadcasting acquisition" in which the acquisition source is broadcasting and the right-hand region shows "communication acquisition" in which the acquisition source is communication.

[0109] As the signaling information in the broadcasting acquisition in the left-hand region of the figure, LLS (Low Layer Signaling) and SCS (Service Channel Signaling) are provided. The LLS is acquired when the receiver performs initial scanning, for example. SCD, EAD, and RRD are acquired as the LLS. The SCD (Service Configuration Description) employs the triplet used in the MPEG2-TS system. This triplet indicates the BBP stream configuration and the service configuration in the broadcasting network. The SCD further includes information on an IP address and the like serving as attribute/setting information in units of services, bootstrap information for acquiring the SCS and ESG, and the like. The SCD further includes, if the signaling information is transmitted by communication, information relating to the signaling information transmitted by communication.

[0110] The EAD (Emergency Alerting Description) includes information relating to emergency notice. The RRD (Region Rating Description) includes rating information. Note that the SCD, EAD, and RRD are described with a markup language, for example, an XML (Extensible Markup Language).

[0111] The SCS is transmitted through the FLUTE session, and is hence acquired according to the IP address, port number, and TSI described in the SCS bootstrap information of the SCD. USD, MPD, SDP, FDD, SPD, and IS are acquired as the SCS. The USD (User Service Description) includes link information for referring to the MPD, SDP, and FDD. Note that the USD is in some cases called USBD (User Service Bundle Description). The MPD (Media Presentation Description) includes information on URLs (Uniform Resource Locators) and the like for streams (components) transmitted in units of services. Note that the MPD complies with the MPEG-DASH (Moving Picture Experts Group-Dynamic Adaptive Streaming over HTTP) standard.
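As an illustration only, the SCS bootstrap information can be treated as a filter on received packets. The following Python sketch assumes a hypothetical `ScsBootstrap` record and `is_scs_packet` helper; neither these names nor the example address, port, and TSI values are taken from the present technology.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScsBootstrap:
    """SCS bootstrap information read from the SCD (field names are hypothetical)."""
    ip_address: str
    port: int
    tsi: int


def is_scs_packet(bootstrap: ScsBootstrap, dst_ip: str, dst_port: int, tsi: int) -> bool:
    # A packet belongs to the SCS FLUTE session only if the destination
    # IP address, the UDP port number, and the TSI all match.
    return (dst_ip, dst_port, tsi) == (bootstrap.ip_address, bootstrap.port, bootstrap.tsi)


# Example values are made up for illustration.
bootstrap = ScsBootstrap(ip_address="239.0.0.1", port=5000, tsi=1)
```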

[0112] The SDP (Session Description Protocol) includes a service attribute in units of services, component configuration information, a component attribute, component filter information, component location information, and the like. The FDD (File Delivery Description) includes, as index information for each TSI (Transport Session Identifier), location information (e.g., URL) and information on TOI (Transport Object Identifier) and the like. Note that the FDD may be included as an element in the USD.
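As an illustration only, the index role of the FDD can be sketched as a lookup from a location (URL) to the TSI and TOI of the object carrying it. The in-memory form `Fdd` and the helper `locate` are hypothetical; the actual encoding of the FDD is not assumed here.

```python
from typing import Dict, Optional, Tuple

# Hypothetical in-memory form of an FDD: for each TSI, a map from
# location (URL) to TOI.
Fdd = Dict[int, Dict[str, int]]


def locate(fdd: Fdd, url: str) -> Optional[Tuple[int, int]]:
    """Return (TSI, TOI) of the object carrying `url`, or None if it is not listed."""
    for tsi, entries in fdd.items():
        toi = entries.get(url)
        if toi is not None:
            return tsi, toi
    return None
```

With such an index, a segment named by a URL can be mapped to the FLUTE session (TSI) and the object within that session (TOI) that should be received.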

[0113] The SPD (Service Parameter Description) includes various parameters defined at a service level and a component level. The SPD further includes, if the signaling information is transmitted by communication, information relating to the signaling information transmitted by communication. The IS (Initialization Segment) is control information that is transmitted together with media segments storing segment data if (files of) the components are segmented and transmitted as segments complying with the ISO Base Media File Format standard. Note that the IS is transmitted in units of components such as video and audio.

[0114] If the components such as the video and audio are transmitted as streams in units of segments by broadcasting through the FLUTE, a segment transmitted through the FLUTE session is determined by using the signaling information such as the SDP, FDD, and IS and a broadcast component is acquired.

[0115] On the other hand, the USD, MPD, SDP, FDD, SPD, and IS are acquired as the signaling information in the communication acquisition on the right-hand side of the figure. That is, the signaling information transmitted by communication has a signaling system identical to that of the SCS in the broadcasting acquisition. This signaling information is acquired according to a URL of a signaling server that is described in the SCD transmitted by broadcasting. If the signaling information is transmitted by communication, the URL of the signaling server that provides the signaling information is described in the SPD acquired by broadcasting, and hence the signaling information may be acquired according to that URL. That is, the SCD is acquired during initial scanning or the like, and hence the URL described in the SCD is a stationary URL (Fixed URL). On the other hand, the SPD is acquired during channel selection or the like, and hence it can be said that the URL described in the SPD is a dynamic URL.

[0116] Then, if the components such as the video and audio are transmitted as streams by broadcasting through the FLUTE in units of segments, a segment is determined by using the signaling information such as the SDP, FDD, and IS, and a broadcast component (Component) is acquired. If the components are, as the streams, transmitted by communication in units of segments, a segment is determined by using the signaling information such as the MPD and a communication component (Component) is acquired. Note that the files of the USD, MPD, SDP, FDD, SPD, IS, and the like acquired by communication can be handled as a single file in the ZIP file format, for example.
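The handling of the signaling files acquired by communication as a single ZIP file can be illustrated with a minimal sketch. The file names (usd.xml, mpd.xml) and the helper names are hypothetical and are not taken from the present disclosure; the bundle is built in memory here so that the example runs without a signaling server.

```python
import io
import zipfile
from typing import Dict


def unpack_signaling_bundle(bundle: bytes) -> Dict[str, bytes]:
    """Return a mapping of file name -> file contents for a ZIP bundle."""
    with zipfile.ZipFile(io.BytesIO(bundle)) as archive:
        return {name: archive.read(name) for name in archive.namelist()}


def build_example_bundle() -> bytes:
    """Build a tiny in-memory bundle (hypothetical file names and contents)."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as archive:
        archive.writestr("usd.xml", "<USD/>")
        archive.writestr("mpd.xml", "<MPD/>")
    return buffer.getvalue()


# In a receiver, the bytes would come from the signaling server instead.
files = unpack_signaling_bundle(build_example_bundle())
```

Bundling the files in this way would let a receiver obtain the USD, MPD, and the related files with a single request.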

[0117] As described above, in the signaling information acquired by broadcasting, only the information relating to the broadcast component (stream) transmitted by broadcasting is described and the information relating to the communication component (stream) transmitted by communication is not described. Therefore, the increase in data size of signaling information transmitted by broadcasting can be suppressed. In contrast, in the signaling information acquired by communication, the information relating to the broadcast component (stream) transmitted by broadcasting and the information relating to the communication component (stream) transmitted by communication are both described. The signaling information transmitted by communication is acquired via the Internet, and hence there is less need to worry about the data size in comparison with the signaling information transmitted by broadcasting. Thus, this is not an issue.

(2) Specific Application Example

[0118] Next, a specific application example according to Solution 1 will be described. Here, a service formed of only streams transmitted by broadcasting (hereinafter, referred to as "basic service") and a service formed of streams transmitted by broadcasting and communication (hereinafter, referred to as "hybrid service") will be described in the stated order.

[0119] (2-1) Basic Service

[0120] FIG. 4 is a diagram for explaining a channel selection scenario of the basic service according to Solution 1.

[0121] In FIG. 4, a transmitter of a broadcasting station (broadcaster) transmits, through a broadcast wave (RF Channel) of the digital broadcasting using the IP transmission system, components and signaling information configuring each service in a BBP stream. It should be noted that the above-mentioned ID system is employed in this digital broadcasting. Further, files of the components and signaling information are transmitted through the FLUTE session. In the basic service, only streams transmitted by broadcasting are acquired and streams and signaling information transmitted by communication from an Internet server such as a streaming server and a signaling server are not acquired.

[0122] As shown in FIG. 4, a receiver placed in each house or the like acquires an SCD transmitted in LLS by initial scanning and records it on an NVRAM (Procedure 1). This SCD includes SCS Bootstrap information in which IP address, port number, and TSI for acquiring the SCS are described. When the user selects a particular service (basic service) (Procedure 2), the receiver reads out the SCD from the NVRAM (Procedure 3), connects to the SCS transmitted by a broadcast wave according to the SCS Bootstrap information, and acquires signaling information (Procedures 4 and 5).

[0123] The file of the SCS is being transmitted through the FLUTE session, and hence the signaling information such as the USD, MPD, SDP, and FDD is acquired by parsing data stored in LCT packets (Procedure 6). Although a deliveryMethod element is considered as a child element of the USD, for the sake of description, it is shown separately from the USD in the figure. Further, link information is described in the USD and this link information is used for acquiring the MPD, SDP, and FDD. Those signaling information items are all included in the SCS, and hence all of them can also be acquired from it.

[0124] In AdaptationSet elements of the MPD, Representation elements are provided and components transmitted as streams by broadcasting or communication are enumerated. In the Representation elements, segment URLs indicating acquisition sources of the components are enumerated other than representation IDs. In the example of the MPD of FIG. 4, video and audio components are enumerated in the Representation elements within the AdaptationSet elements. Further, in the deliveryMethod element of the USD, information for identifying a delivery mode for the components is specified.

[0125] In the channel selection scenario of FIG. 4, the basic service, that is, the component is transmitted only by broadcasting, and hence a broadcastAppService element is provided in the deliveryMethod element and the URL of the component transmitted by broadcasting is specified in a basepattern element. By matching the segment URLs described in the MPD with the URL described in the deliveryMethod element, it is determined that the video and audio components enumerated in the MPD are being transmitted by broadcasting (Procedure 7).

[0126] Further, a tsi attribute, a contentLocation attribute, and a toi attribute are described in the FDD. In the tsi attribute, a TSI (Transport Session Identifier) that is identification information of each FLUTE session is specified. Further, in the toi attribute, a TOI (Transport Object Identifier) that is identification information of a plurality of objects transmitted in each FLUTE session is specified. In the contentLocation attribute, the URL of the file is specified. By matching the segment URLs described in the MPD with the URLs described in the FDD, the TSI and TOI for acquiring the components enumerated in the MPD are determined (Procedure 8). In addition, by referring to the SPD, IP addresses and port numbers for acquiring those video and audio components are determined (Procedure 8).
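The matching of a segment URL of the MPD against the contentLocation attribute of the FDD can be sketched as follows. This is only an illustration under stated assumptions: the FddFile type, the exact-match comparison, and the example URLs and identifier values are hypothetical, and the FDD is assumed to have been parsed into a list beforehand.

```python
from typing import List, NamedTuple, Optional, Tuple


class FddFile(NamedTuple):
    """One FDD entry: tsi, toi, and contentLocation (hypothetical container)."""
    tsi: int
    toi: int
    content_location: str


def resolve_tsi_toi(segment_url: str,
                    fdd_files: List[FddFile]) -> Optional[Tuple[int, int]]:
    """Return (TSI, TOI) for the FDD entry whose contentLocation equals the URL."""
    for entry in fdd_files:
        if entry.content_location == segment_url:
            return entry.tsi, entry.toi
    return None  # the segment is not carried in any listed FLUTE session


# Hypothetical FDD contents for a video and an audio component.
fdd = [
    FddFile(tsi=1, toi=10,
            content_location="http://bc.example.com/service1/video/seg1.mp4"),
    FddFile(tsi=2, toi=20,
            content_location="http://bc.example.com/service1/audio/seg1.mp4"),
]
video_location = resolve_tsi_toi(
    "http://bc.example.com/service1/video/seg1.mp4", fdd)
```

The IP address and port number are not resolved here, since they are obtained separately by referring to the SPD.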

[0127] In this manner, the IP address, port number, TSI, and TOI for acquiring the video component and the IP address, port number, TSI, and TOI for acquiring the audio component are acquired. Using the IP addresses, port numbers, TSIs, and TOIs for the video and audio components, the receiver is connected to the video and audio streams being transmitted through the FLUTE session, and acquires the LCT packets (Procedures 9 and 10). Then, the receiver extracts segment data (media segment) stored in the LCT packets and temporarily stores it in a buffer for buffering (Procedures 11 and 12), and performs rendering (Procedure 13). With this, at the receiver, a picture of a program corresponding to the particular service (basic service) selected by the user is displayed and at the same time sound synchronized with that picture is output.

[0128] As described above, in the basic service, the video and audio components (streams) are delivered only by broadcasting, and hence the information relating to those components is described in the signaling information (SCS) transmitted by broadcasting. Therefore, the receiver acquires the video and audio components (streams) on the basis of the signaling information transmitted by broadcasting. At this time, only the information relating to the components being transmitted by broadcasting is described in this signaling information, and hence the increase in data size can be suppressed. Note that the receiver not adapted for the hybrid service basically cannot be connected to the Internet, and hence cannot acquire the signaling information transmitted by communication. Even such a receiver can acquire the signaling information transmitted by broadcasting, and hence it is possible to view the program of the basic service.

[0129] (2-2) Hybrid Service

[0130] FIG. 5 is a diagram for explaining a channel selection scenario of a hybrid service according to Solution 1.

[0131] In FIG. 5, as in FIG. 4, the transmitter of the broadcasting station is transmitting, through a broadcast wave of the digital broadcasting using the IP transmission system, components and signaling information configuring each service in a BBP stream. Further, in the hybrid service, at the receiver, streams transmitted by communication are also acquired, and hence a stream of audio (A2: Audio2) is transmitted from the streaming server. Further, signaling information (e.g., ZIP file of USD) is transmitted from the signaling server.

[0132] As shown in FIG. 5, a receiver placed in each house or the like acquires an SCD transmitted in LLS by initial scanning and records it on an NVRAM (Procedure 1). In this SCD, a SignalingOverinternet element, and a hybrid attribute and an url attribute as descendants thereof are described. In the hybrid attribute, "basic" is specified in the case of the basic service and "hybrid" is specified in the case of the hybrid service. Therefore, "hybrid" is specified here. In the url attribute, a URL of a signaling server, for example, is specified as an acquisition source of the signaling information.

[0133] Note that the initial scanning is performed at the start of the use of the receiver, for example. Thus, it is not frequently performed. Therefore, even if, at the receiver adapted for the hybrid service, "hybrid" is specified as the hybrid attribute in the SignalingOverinternet element of the SCD acquired in the initial scanning, it is assumed that the streams of the basic service are received. That is, when "hybrid" is specified in this hybrid attribute, it can be said that it indicates that streams of the hybrid service may be received.

[0134] When the user selects a particular service (hybrid service) (Procedure 2), the receiver reads out the SCD from the NVRAM (Procedure 3), and accesses the signaling server via the Internet according to the URL specified as the url attribute of the SignalingOverinternet element, such that the signaling information such as the USD, MPD, SDP, and FDD is acquired (Procedures 4, 5, and 6).
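Reading the SignalingOverinternet element of the stored SCD and deciding where to acquire the signaling information can be sketched as follows. The XML layout (attributes placed directly on the element, a Service parent element) and the example URL are assumptions made for illustration; the actual SCD schema is not reproduced here.

```python
import xml.etree.ElementTree as ET
from typing import Optional, Tuple

# Hypothetical SCD fragment; only the element and attribute names
# (SignalingOverinternet, hybrid, url) come from the description.
EXAMPLE_SCD = (
    '<SCD><Service>'
    '<SignalingOverinternet hybrid="hybrid" '
    'url="http://signaling.example.com/service1.zip"/>'
    '</Service></SCD>'
)


def signaling_source(scd_xml: str) -> Tuple[str, Optional[str]]:
    """Return ("communication", url) for a hybrid service, else ("broadcast", None)."""
    root = ET.fromstring(scd_xml)
    element = root.find(".//SignalingOverinternet")
    if element is not None:
        url = element.get("url")
        if element.get("hybrid") == "hybrid" and url:
            return "communication", url
    # No element, "basic", or no URL: use the SCS transmitted by broadcasting.
    return "broadcast", None


source = signaling_source(EXAMPLE_SCD)
```

Because "hybrid" in the SCD only indicates that streams of the hybrid service may be received, a receiver following this sketch would still need to fall back to the broadcast signaling when the signaling server cannot be reached.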

[0135] In the example of the MPD of FIG. 5, video and audio components are enumerated in the Representation elements within the AdaptationSet elements. Further, in the channel selection scenario of FIG. 5, the hybrid service, that is, the component is transmitted by broadcasting and communication, and hence a broadcastAppService element and a unicastAppService element are provided in a deliveryMethod element of the USD. In a basepattern element of the broadcastAppService element, a URL of a component transmitted by broadcasting is specified. In a basepattern element of the unicastAppService element, a URL of a component transmitted by communication is specified.

[0136] By matching the segment URLs described in the MPD with the URLs described in the deliveryMethod element of the USD, it is determined that, out of the components enumerated in the MPD, the video component is being transmitted by broadcasting and the audio component is being transmitted by communication (Procedure 8). Further, by matching the segment URLs described in the MPD with the URLs described in the FDD, the TSI and TOI for acquiring the video component transmitted by broadcasting are determined (Procedure 9). In addition, by referring to the SPD, the IP address and port number for acquiring the video component are determined (Procedure 9). Note that the audio component is transmitted by communication, and hence the segment URL of the MPD corresponding to this audio component is the URL of the streaming server delivering the component (stream) of the audio (A2: Audio2).
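The determination of the delivery mode by matching the segment URLs of the MPD with the basepattern elements of the deliveryMethod element can be sketched as follows. Prefix matching is an assumption made for this illustration, and the URLs are hypothetical.

```python
from typing import Iterable


def classify_delivery(segment_url: str,
                      broadcast_patterns: Iterable[str],
                      unicast_patterns: Iterable[str]) -> str:
    """Classify a segment URL by the basepattern it starts with (assumed rule)."""
    if any(segment_url.startswith(p) for p in broadcast_patterns):
        return "broadcast"       # matched under broadcastAppService
    if any(segment_url.startswith(p) for p in unicast_patterns):
        return "communication"   # matched under unicastAppService
    return "unknown"


# Hypothetical segment URLs taken from the Representation elements of an MPD.
mpd_segments = {
    "video": "http://bc.example.com/service1/video/seg1.mp4",
    "audio": "http://stream.example.com/service1/audio2/seg1.mp4",
}
broadcast = ["http://bc.example.com/service1/video/"]
unicast = ["http://stream.example.com/service1/audio2/"]

delivery = {name: classify_delivery(url, broadcast, unicast)
            for name, url in mpd_segments.items()}
```

With these example values the video component is classified as broadcast and the audio component as communication, which mirrors the hybrid scenario of FIG. 5.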

[0137] In this manner, the IP address, port number, TSI, and TOI for acquiring the video component and the URL of the streaming server for acquiring the audio component are acquired. Using the IP address, port number, TSI, and TOI of the video component, the receiver is connected to the video stream being transmitted through the FLUTE session, and acquires LCT packets (Procedure 10-1). Then, the receiver extracts segment data (media segment) stored in the LCT packets (Procedure 12). Further, the receiver accesses the streaming server via the Internet according to the segment URL of the MPD, and is connected to the audio stream (Procedure 10-2).

[0138] As a result, the video and audio streams are acquired (Procedure 11), and hence the receiver temporarily stores data of them in a buffer for buffering (Procedure 13), and performs rendering (Procedure 14). With this, at the receiver, a picture of a program corresponding to the particular service (hybrid service) selected by the user is displayed and at the same time sound synchronized with that picture is output.

[0139] As described above, in the hybrid service, the video and audio components (streams) are delivered by broadcasting and communication, and hence the information relating to those components is described in the signaling information transmitted by communication. Therefore, the receiver acquires the video and audio components (streams) on the basis of the signaling information transmitted by communication. At this time, in this signaling information, the information items relating to the components transmitted by broadcasting and communication are both described. However, in the case of the signaling information transmitted by communication, there is less need to worry about the data size in comparison with the signaling information transmitted by broadcasting. Thus, this is not an issue. Note that the receiver adapted for the hybrid service can be connected to the Internet as a premise, and hence even if the signaling information is transmitted by communication, this is not an issue.

[0140] <3. Solution 2>

[0141] Next, Solution 2 will be described. In Solution 2, the signaling information acquired by broadcasting is simplified in comparison with Solution 1 described above.

(1) Structure of Signaling Information

[0142] FIG. 6 is a diagram showing a structure of signaling information according to Solution 2. In FIG. 6, as in FIG. 3, the left-hand region shows the "broadcasting acquisition" and the right-hand region shows the "communication acquisition." Further, out of the signaling information in broadcasting acquisition in the left-hand region of the figure, the LLS is the same as that in FIG. 3 and the SCS is simplified in comparison with FIG. 3. That is, in FIG. 6, the SPD and the IS are acquired as the SCS.

[0143] Here, the SPD includes various parameters defined at the service level and the component level. As this parameter at the component level, information (e.g., port number, TSI, and TOI) for acquiring the component transmitted by broadcasting is described. Thus, a segment transmitted through the FLUTE session is determined and a broadcast component (Component) is acquired.

[0144] On the other hand, as the signaling information in the communication acquisition on the right-hand side of the figure, the USD, MPD, SDP, FDD, SPD, and IS are acquired as in FIG. 3. Then, by using the signaling information such as the SDP, FDD, and IS, a segment transmitted through the FLUTE session is determined and a broadcast component (Component) is acquired. Further, by using the signaling information such as the MPD, a segment is determined and a communication component (Component) is acquired.

[0145] As described above, information items for acquiring the broadcast components (streams) transmitted by broadcasting are collected in the SPD, and hence the signaling information can be simplified without using the USD, MPD, SDP, and FDD. Further, in the structure of the signaling information according to Solution 2, as in Solution 1, in the signaling information acquired by the broadcasting, only the information relating to the broadcast component (stream) transmitted by broadcasting is described and the information relating to the communication component transmitted by communication (stream) is not described, and hence it is possible to suppress the increase in data size of signaling information transmitted by broadcasting.

(2) Specific Application Example

[0146] Next, a specific application example according to Solution 2 will be described, and, as in Solution 1 described above, channel selection scenarios in the basic service and the hybrid service will be described.

[0147] (2-1) Basic Service

[0148] FIG. 7 is a diagram for explaining a channel selection scenario of a basic service according to Solution 2.

[0149] In FIG. 7, as in FIG. 4, the transmitter of the broadcasting station is transmitting, through a broadcast wave of the digital broadcasting using the IP transmission system, components and signaling information configuring each service in a BBP stream. Further, in the basic service, only streams transmitted by broadcasting are acquired, and streams and signaling information transmitted by communication from the Internet server are not acquired.

[0150] As shown in FIG. 7, a receiver placed in each house or the like acquires an SCD transmitted in LLS by initial scanning and records it on an NVRAM (Procedure 1). This SCD includes SCS Bootstrap information in which IP address, port number, and TSI for acquiring the SCS are described. When the user selects a particular service (basic service) (Procedure 2), the receiver reads out the SCD from the NVRAM (Procedure 3), connects to the SCS transmitted by a broadcast wave according to the SCS Bootstrap information, and acquires the signaling information (Procedures 4 and 5).

[0151] The file of the SCS is being transmitted through the FLUTE session, and hence the signaling information such as the SPD is acquired by parsing data stored in LCT packets (Procedure 6). Here, in the SPD, componentId attribute, componentType attribute, and ComponentLocation element are described as the parameters at the level of the components such as the video and audio. In the componentId attribute, a component ID is specified. In the componentType attribute, type information of the component is specified.

[0152] Further, in the ComponentLocation element, a portNum attribute, a tsi attribute, a startToi attribute, and an endToi attribute are described as location information for each component. That is, port number, TSI, and TOI are specified as information for acquiring the components (streams) transmitted by broadcasting. Note that, in the startToi attribute, a start value of the TOI is specified for the case where the TOI changes in time series. Further, in the endToi attribute, an end value of the TOI is specified for the case where the TOI changes in time series. That is, when the startToi attribute and the endToi attribute are specified, the TOI value is sequentially incremented from the start value to the end value.
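The component-level parameters of the SPD can be read as in the following sketch. The enclosing Component element, the numeric values, and the treatment of the endToi value as inclusive are assumptions made for illustration; only the attribute names and the ComponentLocation element come from the description.

```python
import xml.etree.ElementTree as ET
from typing import Dict, List, NamedTuple


class BroadcastLocation(NamedTuple):
    """Location information of one broadcast component (hypothetical container)."""
    component_type: str
    port: int
    tsi: int
    tois: List[int]


# Hypothetical SPD fragment.
EXAMPLE_SPD = """
<SPD>
  <Component componentId="1" componentType="video">
    <ComponentLocation portNum="3000" tsi="10" startToi="100" endToi="103"/>
  </Component>
  <Component componentId="2" componentType="audio">
    <ComponentLocation portNum="3001" tsi="11" startToi="200" endToi="201"/>
  </Component>
</SPD>
"""


def parse_spd_locations(spd_xml: str) -> Dict[str, BroadcastLocation]:
    """Map each componentId to its port number, TSI, and sequence of TOIs."""
    root = ET.fromstring(spd_xml.strip())
    locations: Dict[str, BroadcastLocation] = {}
    for component in root.iter("Component"):
        loc = component.find("ComponentLocation")
        if loc is None:
            continue
        start, end = int(loc.get("startToi")), int(loc.get("endToi"))
        locations[component.get("componentId")] = BroadcastLocation(
            component_type=component.get("componentType"),
            port=int(loc.get("portNum")),
            tsi=int(loc.get("tsi")),
            # TOI incremented from the start value to the end value (inclusive).
            tois=list(range(start, end + 1)),
        )
    return locations


locations = parse_spd_locations(EXAMPLE_SPD)
```

In this sketch a single SPD lookup yields everything needed to join the FLUTE session for a component, which reflects why the USD, MPD, SDP, and FDD are not needed for the basic service in Solution 2.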

[0153] In this manner, the IP address, port number, TSI, and TOI for acquiring the video component and the IP address, port number, TSI, and TOI for acquiring the audio component are acquired (Procedure 7). Using the IP addresses, port numbers, TSIs, and TOIs for the video and audio components, the receiver is connected to the stream being transmitted through the FLUTE session, and acquires the LCT packets (Procedures 8 and 9). Then, the receiver extracts segment data (media segment) stored in the LCT packets and temporarily stores it in a buffer for buffering (Procedures 10 and 11), and performs rendering (Procedure 12). With this, at the receiver, a picture of a program corresponding to the particular service (basic service) selected by the user is displayed and at the same time sound synchronized with that picture is output.

[0154] As described above, in the basic service, the video and audio components (streams) are delivered only by broadcasting, and hence the information relating to those components is described in the signaling information (SCS) transmitted by broadcasting. Therefore, the receiver acquires the video and audio components (streams) on the basis of the signaling information transmitted by broadcasting. At this time, only the information relating to the components being transmitted by broadcasting is described in this signaling information, and hence the increase in data size can be suppressed.

[0155] (2-2) Hybrid Service

[0156] FIG. 8 is a diagram for explaining a channel selection scenario of a hybrid service according to Solution 2.

[0157] In FIG. 8, as in FIG. 4, the transmitter of the broadcasting station is transmitting, through a broadcast wave of the digital broadcasting using the IP transmission system, components and signaling information configuring each service in a BBP stream. Further, in the hybrid service, at the receiver, streams transmitted by communication are also acquired, and hence a stream of audio (A2: Audio2) is transmitted from the streaming server. Further, signaling information (e.g., ZIP file including USD and the like) is being transmitted from the signaling server.

[0158] As shown in FIG. 8, a receiver placed in each house or the like acquires an SCD transmitted in LLS by initial scanning, and records it on an NVRAM (Procedure 1). In this SCD, a SignalingOverinternet element, and a hybrid attribute and an url attribute as descendants thereof are described. In the hybrid attribute, "hybrid" is specified. Further, the URL of the signaling server, for example, is specified in the url attribute. Note that, as described above, even when "hybrid" is specified as the hybrid attribute in the SignalingOverinternet element, it is assumed that the streams of the basic service are received. Therefore, when "hybrid" is specified in this hybrid attribute, it can be said that it indicates that streams of the hybrid service may be received.

[0159] When the user selects a particular service (hybrid service) (Procedure 2), the receiver reads out the SCD from the NVRAM (Procedure 3), and accesses the signaling server via the Internet according to the URL specified as the url attribute of the SignalingOverinternet element, such that the signaling information such as the USD, MPD, SDP, and FDD is acquired (Procedures 4, 5, and 6).

[0160] In the example of the MPD of FIG. 8, video and audio components are enumerated in the Representation elements within the AdaptationSet elements. Further, in the channel selection scenario of FIG. 8, the hybrid service, that is, the component is transmitted by broadcasting and communication, and hence the broadcastAppService element and the unicastAppService element are provided in the deliveryMethod element of the USD.

[0161] Then, by matching the segment URLs described in the MPD with the URLs described in the deliveryMethod element of the USD, it is determined that, out of the components enumerated in the MPD, the video component is being transmitted by broadcasting and the audio component is being transmitted by communication (Procedure 8). Further, by matching the segment URLs described in the MPD with the URLs described in the FDD, the TSI and TOI for acquiring the video component transmitted by broadcasting are determined (Procedure 9).

[0162] In addition, by referring to the SPD, the IP address and port number for acquiring the video component are determined (Procedure 9). Note that the audio component is transmitted by communication, and hence the segment URL of the MPD corresponding to this audio component is the URL of the streaming server delivering the component (stream) of the audio (A2: Audio2).

[0163] In this manner, the IP address, port number, TSI, and TOI for acquiring the video component and the URL of the streaming server for acquiring the audio component are acquired. Using the IP address, port number, TSI, and TOI of the video component, the receiver is connected to the video stream being transmitted through the FLUTE session, and acquires LCT packets (Procedure 10-1). Then, the receiver extracts segment data (media segment) stored in the LCT packets (Procedure 12). Further, the receiver accesses the streaming server via the Internet according to the segment URL of the MPD, and is connected to the audio stream (Procedure 10-2).

[0164] As a result, the video and audio streams are acquired (Procedure 11), and hence the receiver temporarily stores data of them in a buffer for buffering (Procedure 13), and performs rendering (Procedure 14). With this, in the receiver, a picture of a program corresponding to the particular service (hybrid service) selected by the user is displayed and at the same time sound synchronized with that picture is output.

[0165] As described above, in the hybrid service, the video and audio components (streams) are delivered by broadcasting and communication, and hence the information relating to those components is described in the signaling information transmitted by communication. Therefore, the receiver acquires the video and audio components (streams) on the basis of the signaling information transmitted by communication. At this time, in this signaling information, the information items relating to the components transmitted by broadcasting and communication are both described. In the case of the signaling information transmitted by communication, there is less need to worry about the data size in comparison with the signaling information transmitted by broadcasting. Thus, this is not an issue.

[0166] <4. Solutions 1 and 2>

[0167] By the way, at the receiver, the selected particular service is continued in the hybrid service. However, when the program changes, for example, a transition from the basic service to the hybrid service or a transition from the hybrid service to the basic service is conceivable, in addition to a change in the acquisition source of streams. Hereinafter, those cases will be described. It should be noted that the following descriptions are common to Solution 1 and Solution 2, and hence the two solutions will be described together, not separately.

(1) Structure of Signaling Information

[0168] As the structure of the signaling information, either the structure according to Solution 1 (FIG. 3) or the structure according to Solution 2 (FIG. 6) is used.

(2) Specific Application Example

[0169] (2-1) Transition from Basic Service to Hybrid Service

[0170] FIG. 9 is a diagram for explaining a scenario in the case where a transition from the basic service to the hybrid service is made.

[0171] In FIG. 9, as in FIG. 4, the transmitter of the broadcasting station is transmitting, through a broadcast wave of the digital broadcasting using the IP transmission system, components and signaling information configuring each service in a BBP stream. Further, in the hybrid service, at the receiver, streams transmitted by communication are also acquired, and hence a stream of audio (A2: Audio2) is being transmitted from the streaming server. Further, the signaling information is being transmitted from the signaling server.

[0172] As shown in FIG. 9, at the receiver placed in each house or the like, the particular service selected by the user is a basic service, and hence video and audio streams transmitted by broadcasting are acquired by using signaling information transmitted by broadcasting. With this, at the receiver, a picture of a program corresponding to the basic service is displayed and at the same time sound synchronized with that picture is output (Procedure 1). Note that this Procedure 1 corresponds to, in the case of Solution 1, the procedures in the channel selection scenario of FIG. 4 and corresponds to, in the case of Solution 2, the procedures in the channel selection scenario of FIG. 7.

[0173] At the receiver, the signaling information (SCS) transmitted by broadcasting is acquired according to the SCS Bootstrap information of the SCD, and the contents of the SignalingOverinternet element described in the SPD are constantly monitored (Procedure 2). Here, in the SignalingOverinternet element, the hybrid attribute and url attribute are described as the descendants thereof. In the hybrid attribute, "basic" is specified in the case of the basic service and "hybrid" is specified in the case of the hybrid service. In the url attribute, the URL of the signaling server, for example, is specified as the acquisition source of the signaling information.

[0174] That is, if the service selected by the user is a basic service, "basic" is specified in the hybrid attribute. However, if this service is transitioned from the basic service to the hybrid service, the value of the hybrid attribute of the SignalingOverinternet element is changed from "basic" to "hybrid." In this case, the receiver accesses the signaling server via the Internet according to the URL specified as the url attribute of the SignalingOverinternet element and acquires the signaling information (Procedures 3 and 4).

[0175] Then, at the receiver, the video component transmitted by broadcasting and the audio component transmitted by communication are acquired by using the signaling information transmitted by communication. With this, at the receiver, a picture of a program corresponding to the hybrid service is displayed and at the same time sound synchronized with that picture is output (Procedures 5 and 6). Note that Procedures 4 to 6 correspond to, in the case of Solution 1, the procedures in the channel selection scenario of FIG. 5 and correspond to, in the case of Solution 2, the procedures in the channel selection scenario of FIG. 8.

[0176] As described above, in the case where a transition from the basic service to the hybrid service is made, in the basic service before transition, the video and audio components are delivered only by broadcasting, and hence the information relating to those components is described in the signaling information (SCS) transmitted by broadcasting. Therefore, the receiver acquires the video and audio components (streams) on the basis of the signaling information transmitted by broadcasting. At this time, only the information relating to the components being transmitted by broadcasting is described in this signaling information, and hence the increase in data size can be suppressed.

[0177] On the other hand, in the hybrid service after transition, the video and audio components are delivered by broadcasting and communication, and hence the information relating to those components is described in the signaling information transmitted by communication. Therefore, the receiver acquires the video and audio components (streams) on the basis of the signaling information transmitted by communication. At this time, in this signaling information, the information items relating to the components transmitted by broadcasting and communication are both described. In the case of the signaling information transmitted by communication, there is less need to worry about the data size in comparison with the signaling information transmitted by broadcasting. Thus, this is not an issue. Note that, even in the case where the transition is made to the hybrid service, if the user wishes to view the basic service, the receiver supports only the basic service, or the receiver is not connected to the Internet, the reception of the basic service is continued without referring to the SignalingOverinternet element.
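The conditions at the end of the preceding paragraph can be sketched as a single decision function. This is only an illustration: the function name and parameter names are hypothetical, and the three overriding conditions are checked first so that the hybrid attribute is not consulted when any of them applies.

```python
def use_communication_signaling(
    hybrid_attr: str,
    user_wants_basic: bool,
    supports_hybrid: bool,
    internet_connected: bool,
) -> bool:
    """Decide whether the receiver should switch to the signaling
    information transmitted by communication."""
    # In any of these cases the basic service continues to be received
    # and the SignalingOverinternet element is not referred to.
    if user_wants_basic or not supports_hybrid or not internet_connected:
        return False
    # Otherwise the hybrid attribute decides.
    return hybrid_attr == "hybrid"
```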

[0178] (2-2) Change of Stream Acquisition Source in Hybrid Service

[0179] FIG. 10 is a diagram for explaining a scenario in the case where the acquisition source of the streams is changed in the hybrid service.

[0180] In FIG. 10, as in FIG. 4, the transmitter of the broadcasting station is transmitting, through a broadcast wave of the digital broadcasting using the IP transmission system, components and signaling information configuring each service in a BBP stream. Further, in the hybrid service, at the receiver, streams transmitted by communication are also acquired, and hence an audio stream is being transmitted from the streaming server. It should be noted that, in this hybrid service, different types of audio of programs are provided, and hence there are provided a streaming server (Streaming Server1) that provides audio 2 (A2) and a streaming server (Streaming Server2) that provides audio 3 (A3). Further, signaling information (e.g., ZIP file including USD and the like) is being transmitted from the signaling server.

[0181] As shown in FIG. 10, at the receiver placed in each house or the like, using the signaling information transmitted by communication, a video component transmitted by broadcasting and an audio-2 component transmitted by communication from the streaming server (Streaming Server1) are acquired. With this, at the receiver, a picture of a program corresponding to the hybrid service is displayed and at the same time sound corresponding to the audio 2 synchronized with that picture is output (Procedure 1). Note that this Procedure 1 corresponds to, in the case of Solution 1, the procedures in the channel selection scenario of FIG. 5 and corresponds to, in the case of Solution 2, the procedures in the channel selection scenario of FIG. 8.

[0182] Further, in the SignalingOverinternet element of the SPD in the signaling information transmitted by communication, a version attribute and a minUpdatePeriod attribute are described in addition to the hybrid attribute and the url attribute. In the version attribute, version information of the signaling information is specified. In the minUpdatePeriod attribute, an update interval of the signaling information is specified. Therefore, the receiver accesses the signaling server at the update interval of the signaling information according to the URL of the signaling server, and acquires the signaling information (Procedure 2). Then, the receiver checks the attribute values of the hybrid attribute and the version attribute of the SPD, to thereby check whether or not the contents of the signaling information have been updated (Procedure 3).
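Procedures 2 and 3 amount to a polling loop, which might look like the following sketch. The names `SignalingState` and `poll` are hypothetical, the `fetch` callable stands in for the access to the signaling server, and treating the minUpdatePeriod value as a number of seconds is an assumption, since the unit is not stated here.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class SignalingState:
    hybrid: str
    version: str


def poll(
    fetch: Callable[[], Dict[str, str]],
    current: SignalingState,
    rounds: int,
    sleep: Callable[[float], None] = lambda seconds: None,
) -> List[int]:
    """Poll the signaling server `rounds` times and return the indices of
    the rounds in which the hybrid or version attribute had changed."""
    updated_rounds: List[int] = []
    for i in range(rounds):
        attrs = fetch()  # Procedure 2: acquire the signaling information
        seen = SignalingState(
            attrs.get("hybrid", current.hybrid),
            attrs.get("version", current.version),
        )
        if seen != current:  # Procedure 3: compare hybrid and version
            current = seen
            updated_rounds.append(i)
        # Wait for the update interval (assumed to be in seconds).
        sleep(float(attrs.get("minUpdatePeriod", "0")))
    return updated_rounds
```

In a real receiver, `sleep` would be `time.sleep` and `fetch` would download and parse the signaling information; both are injected here so that the comparison logic can be exercised without a network.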

[0183] In the scenario of FIG. 10, at a timing when the program of the hybrid service is changed, the contents of the signaling information are updated and the audio is changed from the audio 2 to the audio 3. Therefore, at the receiver, using the updated signaling information, a video component transmitted by broadcasting and a component of the audio 3 transmitted by communication from the streaming server (Streaming Server2) are acquired (Procedures 4 and 5). With this, at the receiver, a picture of a program corresponding to the hybrid service is displayed and at the same time sound corresponding to the audio 3 synchronized with that picture is output. Note that those Procedures 4 and 5 correspond to, in the case of Solution 1, the procedures in the channel selection scenario of FIG. 5 and correspond to, in the case of Solution 2, the procedures in the channel selection scenario of FIG. 8.

[0184] FIG. 11 is a diagram for explaining another scenario in the case where the acquisition source of the streams is changed in the hybrid service.

[0185] FIG. 11 shows, as in FIG. 10, a scenario in the case where the acquisition source of the streams is changed in the hybrid service. They are different from each other in that the update of the signaling information is checked using the signaling information transmitted by communication in FIG. 10 while the update of the signaling information is checked using the signaling information transmitted by broadcasting in FIG. 11.

[0186] Specifically, as shown in FIG. 11, at the receiver placed in each house or the like, using the signaling information transmitted by communication, a video component transmitted by broadcasting and an audio-2 component transmitted by communication from the streaming server (Streaming Server1) are acquired. With this, at the receiver, a picture of a program corresponding to the hybrid service is displayed and at the same time sound corresponding to the audio 2 synchronized with that picture is output (Procedure 1). Note that this Procedure 1 corresponds to, in the case of Solution 1, the procedures in the channel selection scenario of FIG. 5 and corresponds to, in the case of Solution 2, the procedures in the channel selection scenario of FIG. 8.

[0187] At the receiver, the signaling information (SCS) transmitted by broadcasting is acquired according to the SCS Bootstrap information of the SCD, and the contents of the SignalingOverinternet element described in the SPD are constantly monitored (Procedure 2). Then, the receiver checks the attribute values of the hybrid attribute and version attribute of the SPD, to thereby check whether or not the contents of the signaling information transmitted by communication have been updated (Procedure 3). If the signaling information transmitted by communication has been updated, the receiver accesses the signaling server according to the URL of the signaling server, and acquires the updated signaling information (Procedure 4).
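In contrast to periodic polling of the signaling server, the scenario of FIG. 11 accesses the server only when the SPD received by broadcasting indicates a change. A rough sketch, assuming the SPD attributes have already been parsed into a dictionary and using the hypothetical names `check_broadcast_spd` and `fetch`:

```python
from typing import Callable, Dict, Optional, Tuple

Known = Tuple[Optional[str], Optional[str]]  # (hybrid, version)


def check_broadcast_spd(
    attrs: Dict[str, str],
    known: Known,
    fetch: Callable[[str], bytes],
) -> Tuple[Known, Optional[bytes]]:
    """Compare the hybrid and version attributes seen in the broadcast SPD
    with the last known values; access the signaling server only when
    they differ."""
    seen: Known = (attrs.get("hybrid"), attrs.get("version"))
    if seen == known:
        # Procedure 3 found no update, so no server access is made.
        return known, None
    # Procedure 4: acquire the updated signaling information.
    return seen, fetch(attrs.get("url", ""))
```

The design difference from the FIG. 10 scenario is that the number of server accesses here depends on how often the signaling information changes rather than on the update interval.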

[0188] In the scenario of FIG. 11, as in the scenario of FIG. 10, at a timing when the program of the hybrid service is changed, the contents of the signaling information are updated and the audio is changed from the audio 2 to the audio 3, and hence, at the receiver, a video component transmitted by broadcasting and a component of the audio 3 transmitted by communication from the streaming server (Streaming Server2) are acquired by using the updated signaling information (Procedures 5 and 6). With this, at the receiver, a picture of a program corresponding to the hybrid service is displayed and at the same time sound corresponding to the audio 3 synchronized with that picture is output. Note that those Procedures 5 and 6 correspond to, in the case of Solution 1, the procedures in the channel selection scenario of FIG. 5 and correspond to, in the case of Solution 2, the procedures in the channel selection scenario of FIG. 8.

[0189] As described above, in the case where the acquisition source of the streams is changed in the hybrid service, the video and audio components (streams) are delivered by broadcasting and communication, and hence the information relating to those components is described in the signaling information transmitted by communication. Therefore, the receiver acquires the video and audio components (streams) on the basis of the signaling information transmitted by communication. At this time, in this signaling information, the information items relating to the components transmitted by broadcasting and communication are both described. In the case of the signaling information transmitted by communication, there is less need to worry about the data size in comparison with the signaling information transmitted by broadcasting. Thus, this is not an issue.

[0190] (2-3) Transition from Hybrid Service to Basic Service

[0191] FIG. 12 is a diagram for explaining a scenario in the case where a transition from the hybrid service to the basic service is made.

[0192] In FIG. 12, as in FIG. 4, the transmitter of the broadcasting station is transmitting, through a broadcast wave of the digital broadcasting using the IP transmission system, components and signaling information configuring each service in a BBP stream. Further, in the hybrid service, at the receiver, streams transmitted by communication are also acquired, and hence an audio stream is being transmitted from the streaming server. It should be noted that, in this hybrid service, audio 2 (A2) and audio 3 (A3) are provided, and hence a plurality of streaming servers (Streaming Servers 1 and 2) are provided. Further, signaling information (e.g., ZIP file including USD and the like) is being transmitted from the signaling server.

[0193] As shown in FIG. 12, at the receiver placed in each house or the like, the particular service selected by the user is a hybrid service, and hence a video component transmitted by broadcasting and a component of the audio 3 transmitted by communication from the streaming server (Streaming Server2) are acquired by using the signaling information transmitted by communication. With this, at the receiver, a picture of a program corresponding to the hybrid service is displayed and at the same time sound corresponding to the audio 3 synchronized with that picture is output (Procedure 1). Note that this Procedure 1 corresponds to, in the case of Solution 1, the procedures in the channel selection scenario of FIG. 5 and corresponds to, in the case of Solution 2, the procedures in the channel selection scenario of FIG. 8.

[0194] Further, in the signaling information transmitted by communication, in addition to the hybrid attribute, the version attribute, and the url attribute, the minUpdatePeriod attribute and the endtime attribute are described in the SignalingOverinternet element of the SPD. In the minUpdatePeriod attribute, an update interval of the signaling information is specified. In the endtime attribute, the point of time at which the communication acquisition of the signaling information ends is specified. Therefore, the receiver accesses the signaling server at the update interval of the signaling information according to the URL of the signaling server, and acquires the signaling information (Procedure 2). Then, the receiver checks the attribute value of the endtime attribute of the SPD, to thereby check whether or not to terminate the communication acquisition of the signaling information (Procedure 3).

[0195] When the communication acquisition of the signaling information is terminated, at the receiver, the signaling information (SCS) transmitted by broadcasting is acquired according to the SCS Bootstrap information of the SCD (Procedure 4). That is, the signaling information is changed from the communication acquisition to the broadcasting acquisition and a transition from the hybrid service to the basic service is made.
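The endtime check of Procedure 3 and the switch of Procedure 4 can be sketched as follows. The function name `next_signaling_path` is hypothetical, and the encoding of the endtime attribute as an ISO 8601 timestamp with an explicit UTC offset is an assumption, since the attribute format is not given here.

```python
from datetime import datetime
from typing import Optional


def next_signaling_path(endtime_attr: Optional[str], now: datetime) -> str:
    """Return "broadcast" once the end time of the communication
    acquisition has been reached, otherwise "communication"."""
    if endtime_attr is None:
        # No end time announced: keep acquiring by communication.
        return "communication"
    # Assumed format, e.g. "2020-10-22T12:00:00+00:00".
    end = datetime.fromisoformat(endtime_attr)
    return "broadcast" if now >= end else "communication"
```

When the function returns "broadcast", the receiver would go back to acquiring the SCS according to the SCS Bootstrap information of the SCD, which corresponds to the transition from the hybrid service to the basic service.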

[0196] Then, at the receiver, using signaling information (SCS) transmitted by broadcasting, the video and audio components transmitted by broadcasting are acquired (Procedures 5 and 6). With this, at the receiver, a picture of a program corresponding to the basic service is displayed and at the same time sound synchronized with that picture is output. Note that those Procedures 5 and 6 correspond to, in the case of Solution 1, the procedures in the channel selection scenario of FIG. 4 and correspond to, in the case of Solution 2, the procedures in the channel selection scenario of FIG. 7.

[0197] FIG. 13 is a diagram for explaining another scenario in the case where a transition from the hybrid service to the basic service is made.

[0198] FIG. 13 shows, as in FIG. 12, the scenario in the case where a transition from the hybrid service to the basic service is made. They are different in that the end of the communication acquisition of the signaling information is checked using the signaling information transmitted by communication in FIG. 12 while the end of the communication acquisition of the signaling information is checked using the signaling information transmitted by broadcasting in FIG. 13.

[0199] Specifically, at the receiver placed in each house or the like, the particular service selected by the user is a hybrid service, and hence a video component transmitted by broadcasting and a component of the audio 3 transmitted by communication from the streaming server (Streaming Server2) are acquired by using the signaling information transmitted by communication. With this, at the receiver, a picture of a program corresponding to the hybrid service is displayed and at the same time sound corresponding to the audio 3 synchronized with that picture is output (Procedure 1). Note that this Procedure 1 corresponds to, in the case of Solution 1, the procedures in the channel selection scenario of FIG. 5 and corresponds to, in the case of Solution 2, the procedures in the channel selection scenario of FIG. 8.