Merge Tree Garbage Metrics

Boles; David ; et al.

U.S. patent application number 16/921371 was filed with the patent office on 2020-10-22 for merge tree garbage metrics. The applicant listed for this patent is Micron Technology, Inc.. Invention is credited to David Boles, John M. Groves, Steven Moyer, Alexander Tomlinson.

| Application Number | 20200334295 16/921371 |

| Document ID | / |

| Family ID | 1000004939630 |

| Filed Date | 2020-10-22 |

View All Diagrams

| United States Patent Application | 20200334295 |

| Kind Code | A1 |

| Boles; David ; et al. | October 22, 2020 |

MERGE TREE GARBAGE METRICS

Abstract

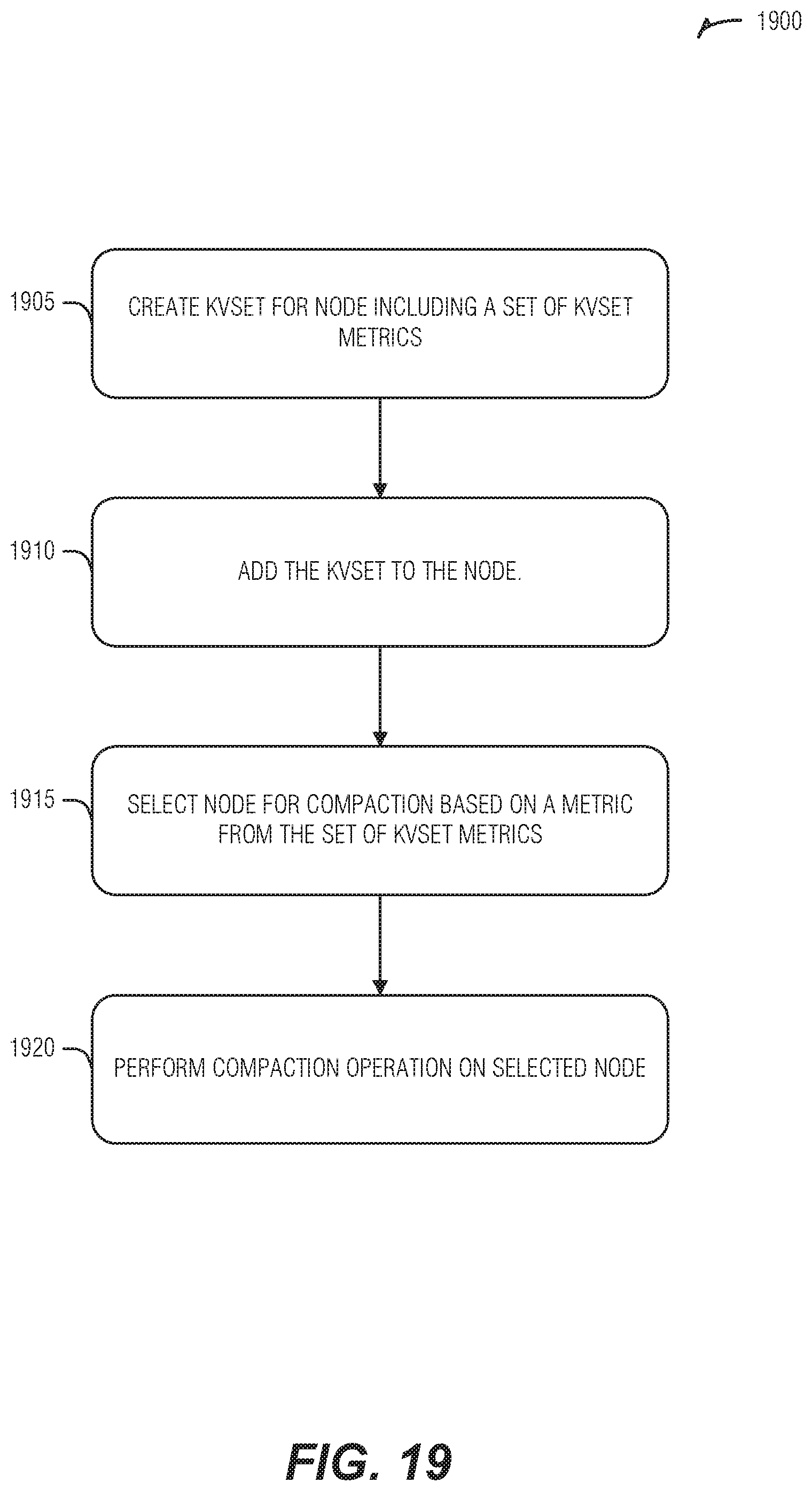

Systems and techniques for collecting and using merge tree garbage metrics are described herein. A kvset is created for a node in a KVS tree. Here, a set of kvset metrics for the kvset are computed as part of the node creation. The kvset is added to the node. The node is selected for a compaction operation based on a metric in the set of kvset metrics. The compaction operation is performed on the node.

| Inventors: | Boles; David; (Austin, TX) ; Groves; John M.; (Austin, TX) ; Moyer; Steven; (Round Rock, TX) ; Tomlinson; Alexander; (Austin, TX) | ||||||||||

| Applicant: |

|

||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|

| Family ID: | 1000004939630 | ||||||||||

| Appl. No.: | 16/921371 | ||||||||||

| Filed: | July 6, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | ||

|---|---|---|---|---|

| 15428912 | Feb 9, 2017 | 10706105 | ||

| 16921371 | ||||

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 16/9027 20190101 |

| International Class: | G06F 16/901 20060101 G06F016/901 |

Claims

1. A system comprising processing circuitry configured to perform operations comprising: generating a key-value set (kvset) for a node in a key-value set tree, the generation of the kvset comprising computation of a set of kvset metrics for the kvset, the node comprising a temporally ordered sequence of kvsets, and the temporally ordered sequence comprising an oldest kvset at one end of the temporally ordered sequence and a newest kvset at another end of the temporally ordered sequence; adding the kvset to the temporally ordered sequence of kvsets of the node; selecting the node for a compaction operation based on a metric in the set of kvset metrics; and performing the compaction operation on the node.

2. The system of claim 1, wherein the generating the kvset is performed in response to execution of a compaction operation, the compaction operation comprising at least one of a key compaction, a key-value compaction, a spill compaction, or a hoist compaction.

3. The system of claim 1, wherein the generating the kvset is performed in response to execution of a compaction operation, the compaction operation comprising a key compaction, and the set of kvset metrics comprising metrics of unreferenced values in the kvset as a result of the key compaction.

4. The system of claim 1, wherein the set of kvset metrics comprises an estimate of obsolete key-value pairs in the kvset, the estimate of obsolete key-value pairs being calculated by summing a number of key entries from pre-compaction kvsets that were not included in the kvset.

5. The system of claim 1, wherein the set of kvset metrics comprises an estimated storage size of obsolete key-value pairs in the kvset, the estimated storage size of obsolete key-value pairs being calculated by summing storage sizes of key entries and corresponding values from pre-compaction kvsets that were not included in the kvset.

6. The system of claim 1, wherein the set of kvset metrics comprises an estimated storage size of valid key-value pairs in the kyset, the estimated storage size of valid key-value pairs being calculated by summing storage sizes of key entries and corresponding values from pre-compaction kvsets that were included in the kvset.

7. The system of claim 1, wherein the operations further comprise modifying node metrics in response to adding the kvset to the node.

8. The system of claim 7, wherein the node metrics comprise a value of a fraction of estimated obsolete key-value pairs in kvsets subject to prior compactions performed on a node group comprising the node.

9. The system of claim 8, wherein the node metrics comprise a summation of like metrics in the set of kvset metrics resulting from a compaction operation and previous kvset metrics from compaction operations performed on the node.

10. The system of claim 8, wherein the value is a mean of the fraction of estimated obsolete key-value pairs in kvsets subject to a set number of most recent prior compactions for the node.

11. The system of claim 8, wherein the node metrics comprise an estimated number of keys that are the same in the kvset and a different kvset of the node.

12. The system of claim 11, wherein the operations further comprise: calculating the estimated number of keys by: obtaining a first key bloom filter from the kvset; obtaining a second key bloom filter from the different kyset; and intersecting the first key bloom filter and the second key bloom filter to produce a node bloom filter estimated cardinality (NBEC).

13. The system of claim 1, wherein the selecting the node for the compaction operation based on the metric in the set of kvset metrics comprises: collecting sets of kvset metrics for a multiple of nodes comprising the node; sorting the multiple of nodes based on the sets of kvset metrics; and selecting a subset of the multiple of nodes based on a sort order from the sorting, the performing the compaction operation on the node comprising performing the compaction operation on each node in the subset of the multiple of nodes, and the subset of the multiple of nodes comprising the node.

14. The system of claim 13, wherein a cardinality of the subset of the multiple of nodes is set by a performance value.

15. At least one non-transitory machine readable medium comprising instructions that, when executed by a machine, cause the machine to perform operations comprising: generating a key-value set (kvset) for a node in a key-value set tree, the generation of the kvset comprising computation of a set of kvset metrics for the kvset, the node comprising a temporally ordered sequence of kvsets, and the temporally ordered sequence comprising an oldest kvset at one end of the temporally ordered sequence and a newest kvset at another end of the temporally ordered sequence; adding the kvset to the temporally ordered sequence of kvsets of the node; selecting the node for a compaction operation based on a metric in the set of kvset metrics; and performing the compaction operation on the node.

16. The at least one non-transitory machine readable medium of claim 15, wherein the generating the kvset is performed in response to execution of a compaction operation, the compaction operation comprising at least one of a key compaction, a key-value compaction, a spill compaction, or a hoist compaction.

17. The at least one non-transitory machine readable medium of claim 15, wherein the generating the kvset is performed in response to execution of a compaction operation, the compaction operation comprising a key compaction, and the set of kvset metrics comprising metrics of unreferenced values in the kvset as a result of the key compaction.

18. The at least one non-transitory machine readable medium of claim 15, wherein the operations further comprise modifying node metrics in response to adding the kvset to the node.

19. The at least one non-transitory machine readable medium of claim 18, wherein the node metrics comprise a value of a fraction of estimated obsolete key-value pairs in kvsets subject to prior compactions performed on a node group comprising the node.

20. A method comprising: generating, by processing circuitry, a key-value set (kvset) for a node in a key-value set tree, the generation of the kvset comprising computation of a set of kvset metrics for the kvset, the node comprising a temporally ordered sequence of kvsets, and the temporally ordered sequence comprising an oldest kvset at one end of the temporally ordered sequence and a newest kvset at another end of the temporally ordered sequence; adding, by the processing circuitry, the kvset to the temporally ordered sequence of kvsets of the node; selecting, by the processing circuitry, the node for a compaction operation based on a metric in the set of kvset metrics; and performing, by the processing circuitry, the compaction operation on the node.

Description

PRIORITY APPLICATION

[0001] This application is a continuation of U.S. application Ser. No. 15/428,912, filed Feb. 9, 2017, which is incorporated herein by reference in its entirety.

TECHNICAL FIELD

[0002] Embodiments described herein generally relate to a key-value data store and more specifically to implementing merge tree garbage metrics and use.

BACKGROUND

[0003] Data structures are organizations of data that permit a variety of ways to interact with the data stored therein. Data structures may be designed to permit efficient searches of the data, such as in a binary search tree, to permit efficient storage of sparse data, such as with a linked list, or to permit efficient storage of searchable data such as with a B-tree, among others.

[0004] Key-value data structures accept a key-value pair and are configured to respond to queries for the key. Key-value data structures may include such structures as dictionaries (e.g., maps, hash maps, etc.) in which the key is stored in a list that links (or contains) the respective value. While these structures are useful in-memory (e.g., in main or system state memory as opposed to storage), storage representations of these structures in persistent storage (e.g., on-disk) may be inefficient. Accordingly, a class of log-based storage structures have been introduced. An example is the log structured merge tree (LSM tree).

[0005] There have been a variety of LSM tree implementations, but many conform to a design in which key-value pairs are accepted into a key-sorted in-memory structure. As that in-memory structure fills, the data is distributed amongst child nodes. The distribution is such that keys in child nodes are ordered within the child nodes themselves as well as between the child nodes. For example, at a first tree-level with three child nodes, the largest key within a left-most child node is smaller than a smallest key from the middle child node and the largest key in the middle child node is smaller than the smallest key from the right-most child node. This structure permits an efficient search for both keys, but also ranges of keys in the data structure.

BRIEF DESCRIPTION OF THE DRAWINGS

[0006] In the drawings, which are not necessarily drawn to scale, like numerals may describe similar components in different views. Like numerals having different letter suffixes may represent different instances of similar components. The drawings illustrate generally, by way of example, but not by way of limitation, various embodiments discussed in the present document.

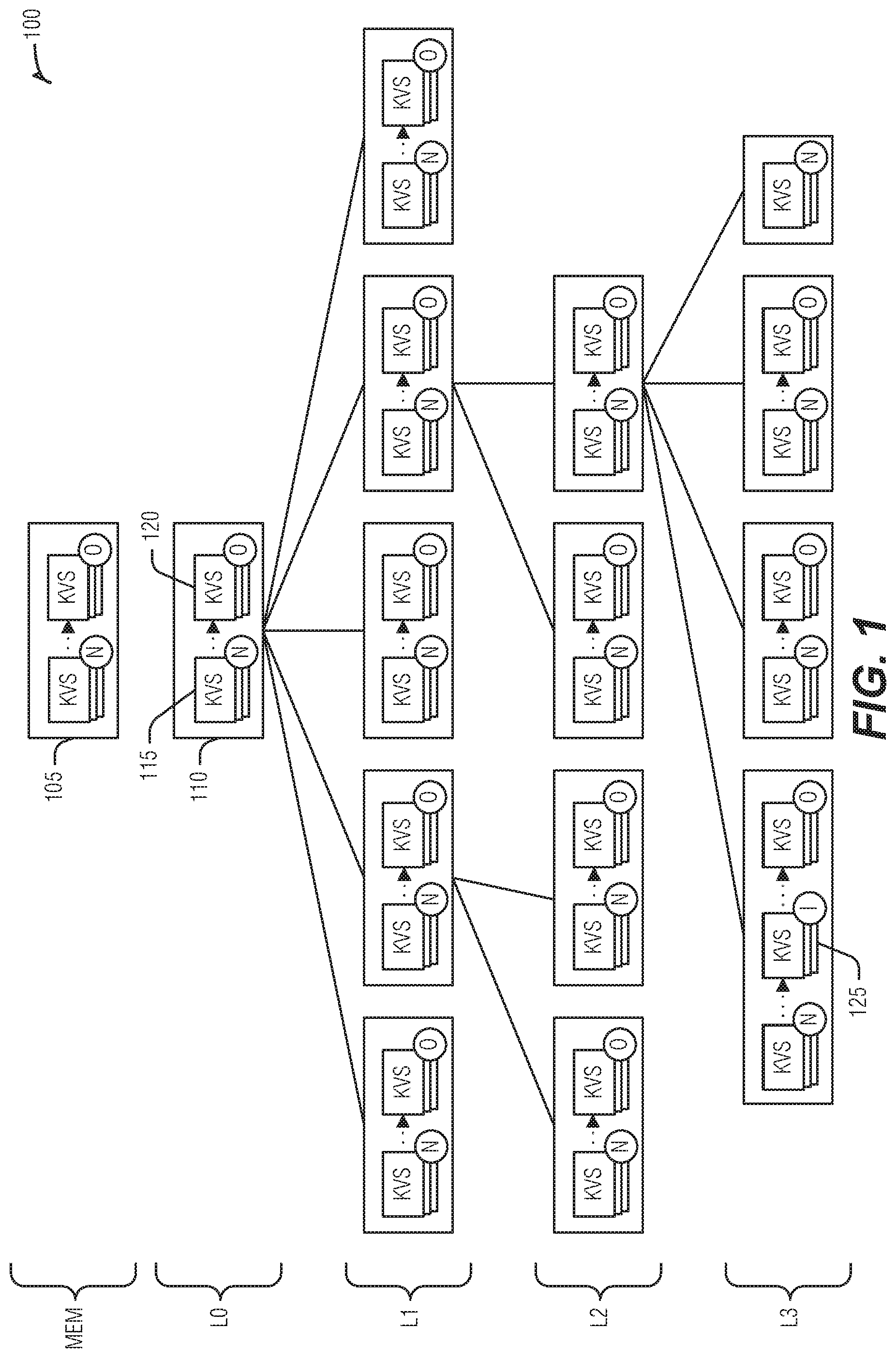

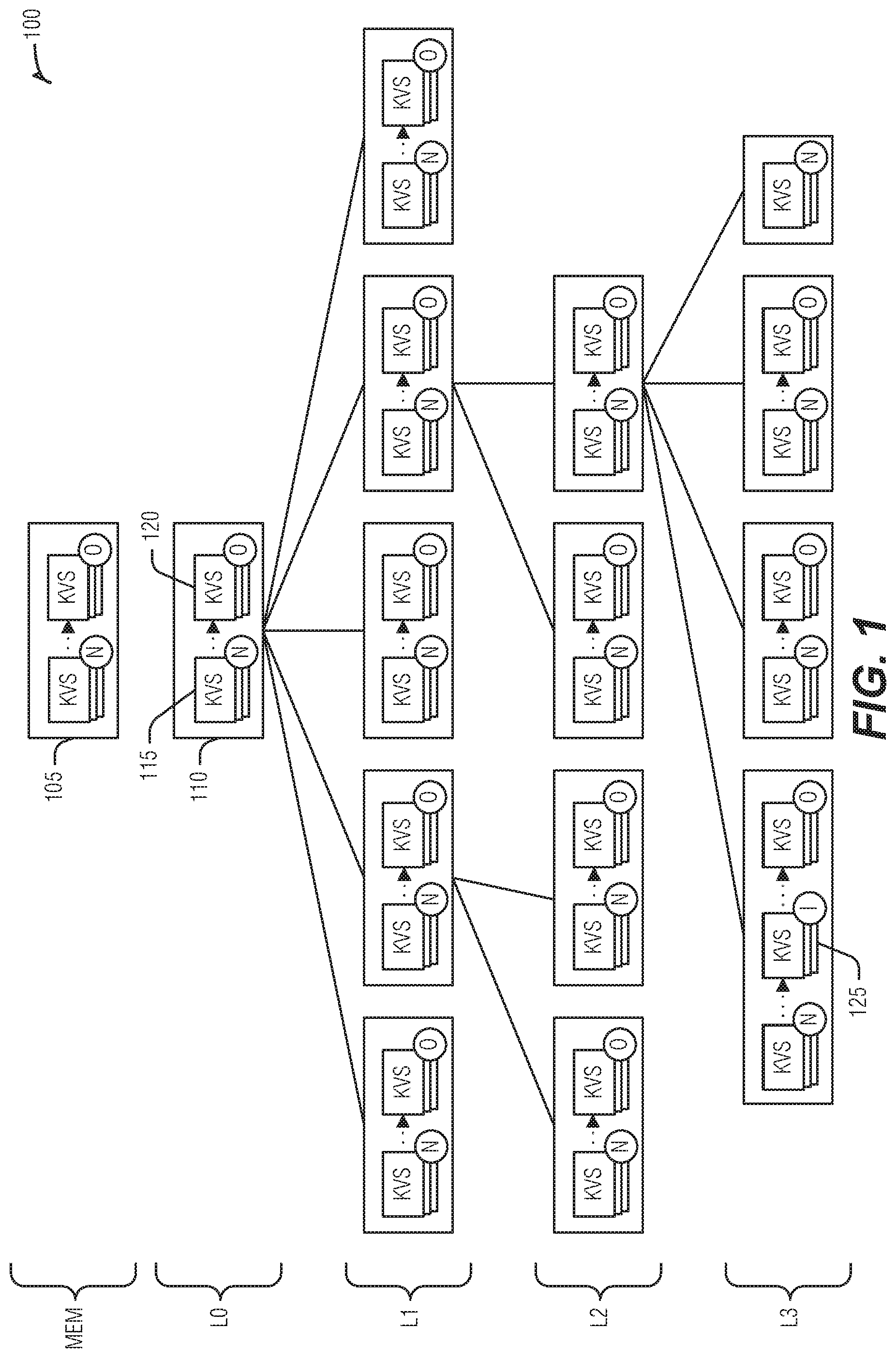

[0007] FIG. 1 illustrates an example of a KVS tree, according to an embodiment.

[0008] FIG. 2 is a block diagram illustrating an example of a write to a multi-stream storage device, according to an embodiment.

[0009] FIG. 3 illustrates an example of a method to facilitate writing to a multi-stream storage device, according to an embodiment.

[0010] FIG. 4 is a block diagram illustrating an example of a storage organization for keys and values, according to an embodiment.

[0011] FIG. 5 is a block diagram illustrating an example of a configuration for key-blocks and value-blocks, according to an embodiment.

[0012] FIG. 6 illustrates an example of a KB tree, according to an embodiment.

[0013] FIG. 7 is a block diagram illustrating KVS tree ingestion, according to an embodiment.

[0014] FIG. 8 illustrates an example of a method for KVS tree ingestion, according to an embodiment.

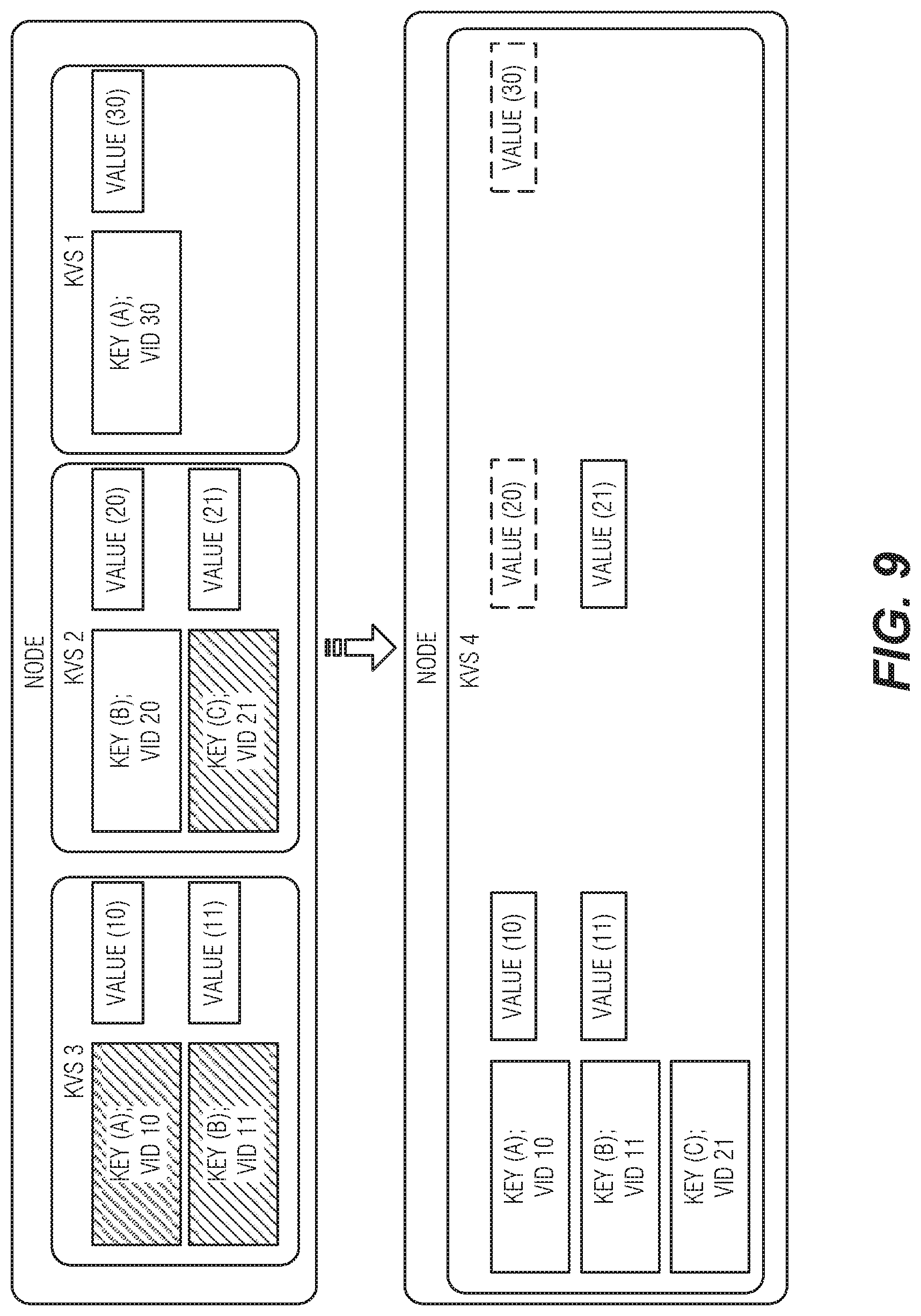

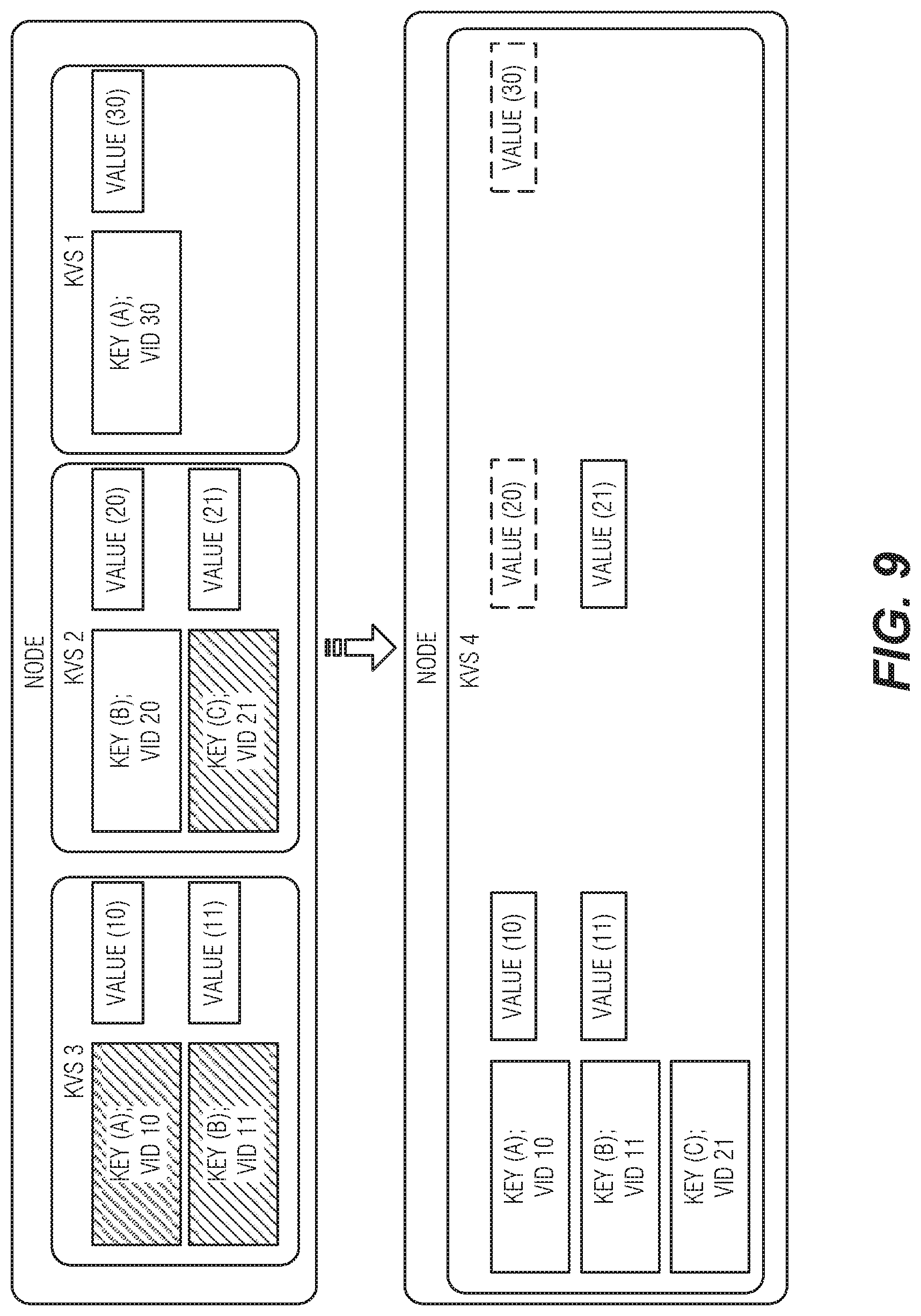

[0015] FIG. 9 is a block diagram illustrating key compaction, according to an embodiment.

[0016] FIG. 10 illustrates an example of a method for key compaction, according to an embodiment.

[0017] FIG. 11 is a block diagram illustrating key-value compaction, according to an embodiment.

[0018] FIG. 12 illustrates an example of a method for key-value compaction, according to an embodiment.

[0019] FIG. 13 illustrates an example of a spill value and its relation to a tree, according to an embodiment.

[0020] FIG. 14 illustrates an example of a method for a spill value function, according to an embodiment.

[0021] FIG. 15 is a block diagram illustrating spill compaction, according to an embodiment.

[0022] FIG. 16 illustrates an example of a method for spill compaction, according to an embodiment.

[0023] FIG. 17 is a block diagram illustrating hoist compaction, according to an embodiment.

[0024] FIG. 18 illustrates an example of a method for hoist compaction, according to an embodiment.

[0025] FIG. 19 illustrates an example of a method for performing maintenance on a KVS tree, according to an embodiment.

[0026] FIG. 20 illustrates an example of a method for modifying KVS tree operation, according to an embodiment.

[0027] FIG. 21 is a block diagram illustrating a key search, according to an embodiment.

[0028] FIG. 22 illustrates an example of a method for performing a key search, according to an embodiment.

[0029] FIG. 23 is a block diagram illustrating a key scan, according to an embodiment.

[0030] FIG. 24 is a block diagram illustrating a key scan, according to an embodiment.

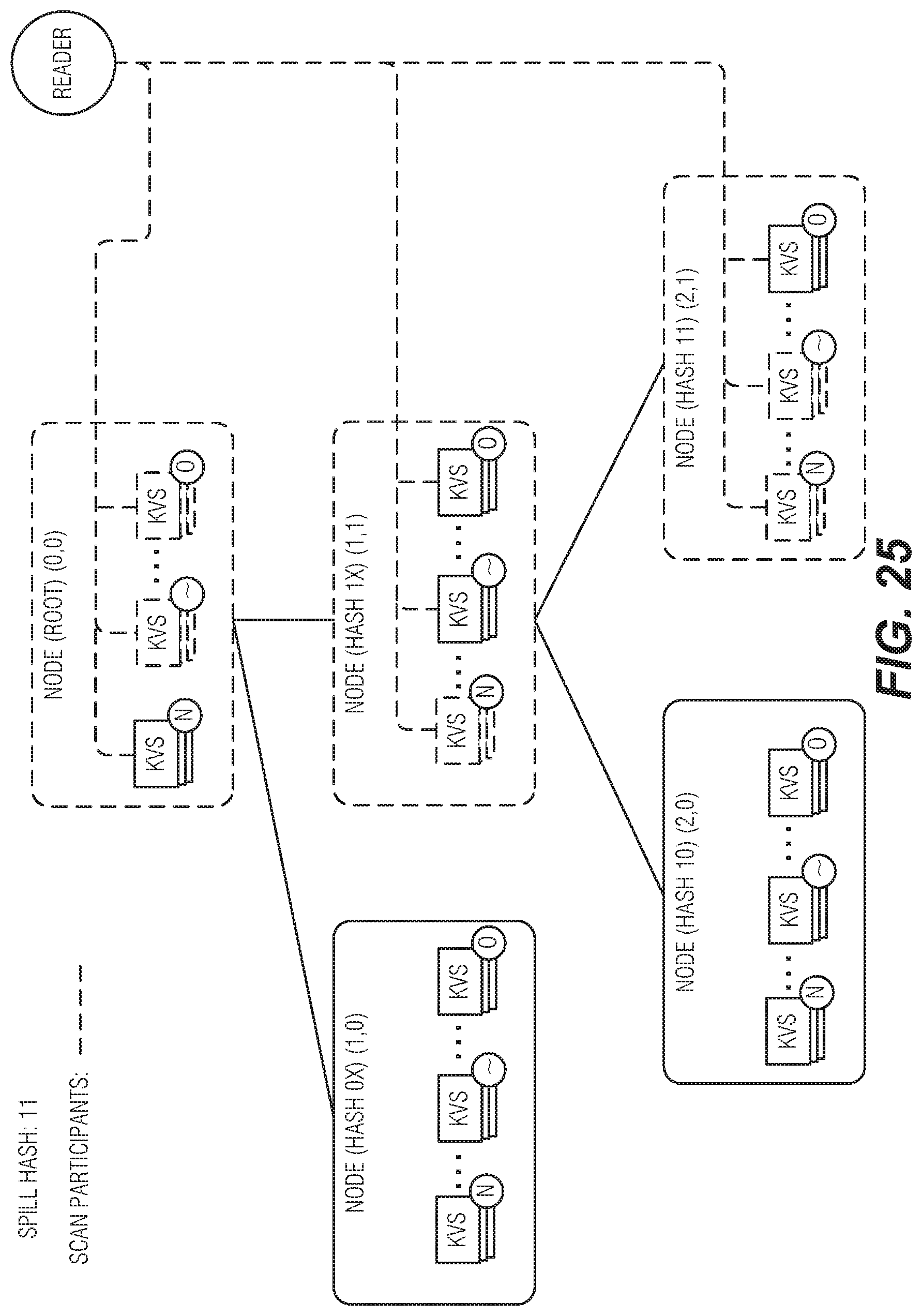

[0031] FIG. 25 is a block diagram illustrating a prefix scan, according to an embodiment.

[0032] FIG. 26 is a block diagram illustrating an example of a machine upon which one or more embodiments may be implemented.

DETAILED DESCRIPTION

[0033] LSM trees have become a popular storage structure for data in which high volume writes are expected and also for which efficient access to the data is expected. To support these features, portions of the LSM are tuned for the media upon which they are kept and a background process generally addresses moving data between the different portions (e.g., from the in-memory portion to the on-disk portion). Herein, in-memory refers to a random access and byte-addressable device (e.g., static random access memory (SRAM) or dynamic random access memory (DRAM)) and on-disk refers to a block addressable device (e.g., hard disk drive, compact disc, digital versatile disc, or solid-state drive (SSD) such as a flash memory based device), which also be referred to as a media device or a storage device. LSM trees leverage the ready access provided by the in-memory device to sort incoming data, by key, to provide ready access to the corresponding values. As the data is merged onto the on-disk portion, the resident on-disk data is merged with the new data and written in blocks back to disk.

[0034] While LSM trees have become a popular structure underlying a number of data base and volume storage (e.g., cloud storage) designs, they do have some drawbacks. First, the constant merging of new data with old to keep the internal structures sorted by key results in significant write amplification. Write amplification is an increase in the minimum number of writes for data that is imposed by a given storage technique. For example, to store data, it is written at least once to disk. This may be accomplished, for example, by simply appending the latest piece of data onto the end of already written data. This structure, however, is slow to search (e.g., it grows linearly with the amount of data), and may result in inefficiencies as data is changed or deleted. LSM trees increase write amplification as they read data from disk to be merged with new data and then re-write that data back to disk. The write amplification problem may be exacerbated when storage device activities are included, such as defragmenting hard disk drives or garbage collection of SSDs. Write amplification on SSDs may be particularly pernicious as these devices may "wear out" as a function of a number of writes. That is, SSDs have a limited lifetime measured in writes. Thus, write amplification with SSDs works to shorten the usable life of the underlying hardware.

[0035] A second issue with LSM trees includes the large amount of space that may be consumed while performing the merges. LSM trees ensure that on-disk portions are sorted by key. If the amount of data resident on-disk is large, a large amount of temporary, or scratch, space may be consumed to perform the merge. This may be somewhat mitigated by dividing the on-disk portions into non-overlapping structures to permit merges on data subsets, but a balance between structure overhead and performance may be difficult to achieve.

[0036] A third issue with LSM trees includes possibly limited write throughput. This issue stems from the essentially always sorted nature of the entirety of the LSM data. Thus, large volume writes that overwhelm the in-memory portion must wait until the in-memory portion is cleared with a possibly time-consuming merge operation. To address this issue, a write buffer (WB) tree has been proposed in which smaller data inserts are manipulated to avoid the merge issues in this scenario. Specifically, a WB tree hashes incoming keys to spread data, and stores the key-hash and value combinations in smaller intake sets. These sets may be merged at various times or written to child nodes based on the key-hash value. This avoids the expensive merge operation of LSM trees while being performant in looking up a particular key. However, WB trees, being sorted by key-hash, result in expensive whole tree scans to locate values that are not directly referenced by a key-hash, such as happens when searching for a range of keys.

[0037] To address the issues noted above, a KVS tree and corresponding operations are described herein. KVS trees are a tree data structure including nodes with connections between parent and child based on a predetermined derivation of a key rather than the content of the tree. The nodes include temporally ordered sequences of key-value sets (kvsets). The kvsets contain key-value pairs in a key-sorted structure. Kvsets are also immutable once written. The KVS tree achieves the write-throughput of WB trees while improving upon WB tree searching by maintaining kvsets in nodes, the kvsets including sorted keys as well as, in an example, key metrics (such as bloom filters, minimum and maximum keys, etc.), to provide efficient search of the kvsets. In many examples, KVS trees may improve upon the temporary storage issues of LSM trees by separating keys from values and merging smaller kvset collections. Additionally, the described KVS trees may reduce write amplification through a variety of maintenance operations on kvsets. Further, as the kvsets in nodes are immutable, issues such as write wear on SSDs may be managed by the data structure, reducing garbage collection activities of the device itself. This has the added benefit of freeing up internal device resources (e.g., bus bandwidth, processing cycles, etc.) that result in better external drive performance (e.g., read or write speed). Additional details and example implementations of KVS trees and operations thereon are described below.

[0038] FIG. 1 illustrates an example of a KVS tree 100, according to an embodiment. The KVS tree 100 is a key-value data structure that is organized as a tree. As a key-value data structure, values are stored in the tree 100 with corresponding keys that reference the values. Specifically, key-entries are used to contain both the key and additional information, such as a reference to the value, however, unless otherwise specified, the key-entries are simply referred to as keys for simplicity. Keys themselves have a total ordering within the tree 100. Thus, keys may be sorted amongst each other. Keys may also be divided into sub-keys. Generally, sub-keys are non-overlapping portions of a key. In an example, the total ordering of keys is based on comparing like sub-keys between multiple keys (e.g., a first sub-key of a key is compared to the first sub-key of another key). In an example, a key prefix is a beginning portion of a key. The key prefix may be composed of one or more sub-keys when they are used.

[0039] The tree 100 includes one or more nodes, such as node 110. The node 110 includes a temporally ordered sequence of immutable key-value sets (kvsets). As illustrated, kvset 115 includes an `N` badge to indicate that it is the newest of the sequence while kvset 120 includes an `O` badge to indicate that it is the oldest of the sequence. Kvset 125 includes an `I` badge to indicate that it is intermediate in the sequence. These badges are used throughout to label kvsets, however, another badge (such as an `X`) denotes a specific kvset rather than its position in a sequence (e.g., new, intermediate, old, etc.), unless it is a tilde `.about.` in which case it is simply an anonymous kvset. As is explained in greater detail below, older key-value entries occur lower in the tree 100. Thus, bringing values up a tree-level, such as from L2 to L1 results in a new kvset in the oldest position in the recipient node.

[0040] The node 110 also includes a determinative mapping for a key-value pair in a kvset of the node to any one child node of the node 110. As used herein, the determinative mapping means that, given a key-value pair, an external entity could trace a path through the tree 100 of possible child nodes without knowing the contents of the tree 100. This, for example, is quite different than a B-tree, for example, where the contents of the tree will determine where a given key's value will fall in order to maintain the search-optimized structure of the tree. Instead, here, the determinative mapping provides a rule such that, for example, given a key-value pair, one may calculate the child at L3 this pair would map even if the maximum tree-level (e.g., tree depth) is only at L. In an example, the determinative mapping includes a portion of a hash of a portion of the key. Thus, a sub-key may be hashed to arrive at a mapping set. A portion of this set may be used for any given level of the tree. In an example, the portion of the key is the entire key. There is no reason that the entire key may not be used.

[0041] In an example, the hash includes a multiple of non-overlapping portions including the portion of the hash. In an example, each of the multiple of non-overlapping portions corresponds to a level of the tree. In an example, the portion of the hash is determined from the multiple of non-overlapping portions by a level of the node. In an example, a maximum number of child nodes for the node is defined by a size of the portion of the hash. In an example, the size of the portion of the hash is a number of bits. These examples may be illustrated by taking a hash of a key that results in 8 bits. These eight bits may be divided into three sets of the first two bits, bits three through six (resulting in four bits), and bits seven and eight. Child nodes may be index based on a set of bits, such that children at the first level (e.g., L1) have two bit names, children on the second level (e.g., L2) have four-bit names, and children on the third level (e.g., L3) have two bit names. An expanded discussion is included below with regard to FIGS. 13 and 14.

[0042] Kvsets are the key and value store organized in the nodes of the tree 100. The immutability of the kvsets means that the kvset, once placed in a node, does not change. A kvset may, however, be deleted, some or all of its contents may be added to a new kysets, etc. In an example, the immutability of the kvset also extends to any control or meta-data contained within the kvset. This is generally possible because the contents to which the meta-data applies are unchanging and thus, often the meta-data will also be static at that point.

[0043] Also of note, the KVS tree 100 does not require uniqueness among keys throughout the tree 100, but a kvset does have only one of a key. That is, every key in a given kvset is different than the other keys of the kvset. This last statement is true for a particular kvset, and thus may not apply when, for example, a kvset is versioned. Kvset versioning may be helpful for creating a snapshot of the data. With a versioned kvset, the uniqueness of a key in the kvset is determined by a combination of the kvset identification (ID) and the version. However, two different kvsets (e.g., kvset 115 and kvset 120) may each include the same key.

[0044] In an example, the kvset includes a key-tree to store key entries of key-value pairs of the kvset. A variety of data structures may be used to efficiently store and retrieve unique keys in the key-tree (it may not even be a tree), such as binary search trees, B-trees, etc. In an example, the keys are stored in leaf nodes of the key-tree. In an example, a maximum key in any subtree of the key-tree is in a rightmost entry of a rightmost child. In an example, a rightmost edge of a first node of the key-tree is linked to a sub-node of the key-tree. In an example, all keys in a subtree rooted at the sub-node of the key-tree are greater than all keys in the first node of the key tree. These last few examples illustrate features of a KB tree, as discussed below with regard to FIG. 6.

[0045] In an example, key entries of the kvset are stored in a set of key-blocks including a primary key-block and zero or more extension key-blocks. In an example, members of the set of key-blocks correspond to media blocks for a storage medium, such as an SSD, hard disk drive, etc. In an example, each key-block includes a header to identify it as a key-block. In an example, the primary key-block includes a list of media block identifications for the one or more extension key-blocks of the kvset.

[0046] In an example, the primary key-block includes a header to a key-tree of the kvset. The header may include a number of values to make interacting with the keys, or kvset generally, easier. In an example, the primary key-block, or header, includes a copy of a lowest key in a key-tree of the kvset. Here, the lowest key is determined by a pre-set sort-order of the tree (e.g., the total ordering of keys in the tree 100). In an example, the primary key-block includes a copy of a highest key in a key-tree of the kvset, the highest key determined by a pre-set sort-order of the tree. In an example, the primary key-block includes a list of media block identifications for a key-tree of the kvset. In an example, the primary key-block includes a bloom filter header for a bloom filter of the kvset. In an example, the primary key-block includes a list of media block identifications for a bloom filter of the kvset.

[0047] In an example, values of the kvset are stored in a set of value-blocks. Here, members of the set of value-blocks correspond to media blocks for the storage medium. In an example, each value-block includes a header to identify it as a value-block. In an example, a value block includes storage section to one or more values without separation between. Thus, the bits of a first value run into bits of a second value on the storage medium without a guard, container, or other delimiter between them. In an example, the primary key-block includes a list of media block identifications for value-blocks in the set of value blocks. Thus, the primary key-block manages storage references to value-blocks.

[0048] In an example, the primary key-block includes a set of metrics for the kvset. In an example, the set of metrics include a total number of keys stored in the kvset. In an example, the set of metrics include a number of keys with tombstone values stored in the kvset. As used herein, a tombstone is a data marker indicating that the value corresponding to the key has been deleted. Generally, a tombstone will reside in the key entry and no value-block space will be consumed for this key-value pair. The purpose of the tombstone is to mark the deletion of the value while avoiding the possibly expensive operation of purging the value from the tree 100. Thus, when one encounters the tombstone using a temporally ordered search, one knows that the corresponding value is deleted even if an expired version of the key-value pair resides at an older location within the tree 100.

[0049] In an example, the set of metrics stored in the primary key-block include a sum of all key lengths for keys stored in the kvset. In an example, the set of metrics include a sum of all value lengths for keys stored in the kyset. These last two metrics give an approximate (or exact) amount of storage consumed by the kvset. In an example, the set of metrics include an amount of unreferenced data in value-blocks (e.g., unreferenced values) of the kvset. This last metric gives an estimate of the space that may be reclaimed in a maintenance operation. Additional details of key-blocks and value-blocks are discussed below with respect to FIGS. 4 and 5.

[0050] In an example, the tree 100 includes a first root 105 in a first computer readable medium of the at least one machine readable medium, and a second root 110 in a second computer readable medium of the at least one computer readable medium. In an example, the second root is the only child to the first root. In an example, the first computer readable medium is byte addressable and wherein the second computer readable is block addressable. This is illustrated in FIG. 1 with node 105 being in the MEM tree-level to signify its in-memory location while node 110 is at L0 to signify it being in the root on-disk element of the tree 100.

[0051] The discussion above demonstrates a variety of the organization attributes of a KVS tree 100. Operations to interact with the tree 100, such as tree maintenance (e.g., optimization, garbage collection, etc.), searching, etc. are discussed below with respect to FIGS. 7-25. Before proceeding to these subjects, FIGS. 2 and 3 illustrate a technique to leverage the structure of the KVS tree 100 to implement an effective use of multi-stream storage devices.

[0052] Storage devices comprising flash memory, or SSDs, may operate more efficiently and have greater endurance (e.g., will not "wear out") if data with a similar lifetime is grouped in flash erase blocks. Storage devices comprising other non-volatile media may also benefit from grouping data with a similar lifetime, such as shingled magnetic recording (SMR) hard-disk drives (HDDs). In this context, data has a similar lifetime if it is deleted at the same time, or within a relatively small time interval. The method for deleting data on a storage device may include explicitly deallocating, logically overwriting, or physically overwriting the data on the storage device.

[0053] As a storage device may be generally unaware of the lifetime of the various data to be stored within it, the storage device may provide an interface for data access commands (e.g., reading or writing) that identify a logical lifetime group with which the data is associated. For example, the industry standard SCSI and proposed NVMe storage device interfaces specify write commands comprising data to be written to a storage device and a numeric stream identifier (stream ID) for a lifetime group called a stream, to which the data corresponds. A storage device supporting a plurality of streams is a multi-stream storage device.

[0054] Temperature is a stability value to classify data, whereby the value corresponds to a relative probability that the data will be deleted in any given time interval. For example, HOT data may be expected to be deleted (or changed) within a minute while COLD data may be expected to last an hour. In an example, a finite set of stability values may be used to specify such a classification. In an example, the set of stability values may be {Hot, Warm, Cold} where, in a given time interval, data classified as Hot has a higher probability of being deleted than data classified as Warm, which in turn has a higher probability of being deleted than data classified as Cold.

[0055] FIGS. 2 and 3 address assigning different stream IDs to different writes based on a given stability value as well as one or more attributes of the data with respect to one or more KVS trees. Thus, continuing the prior example, for a given storage device a first set of stream identifiers may be used with write commands for data classified as Hot, a second set of stream identifiers may be used with write commands for data classified as Warm, and a third set of stream identifiers may be used with write commands for data classified as Cold, where a stream identifier is in at most one of these three sets.

[0056] The following terms are provided for convenience in discussing the multi-stream storage device systems and techniques of FIGS. 2 and 3: [0057] DID is a unique device identifier for a storage device. [0058] SID is a stream identifier for a stream on a given storage device. [0059] TEMPSET is a finite set of temperature values. [0060] TEMP is an element of TEMPSET. [0061] FID is a unique forest identifier for a collection of KVS trees. [0062] TID is a unique tree identifier for a KVS tree. The KVS tree 100 has a TID. [0063] LNUM is a level number in a given KVS tree, where, for convenience, the root node of the KVS tree is considered to be at tree-level 0, the child nodes of the root node (if any) are considered to be at tree-level 1, and so on. Thus, as illustrated, KVS tree 100 includes tree-levels L0 (including node 110) through L3. [0064] NNUM is a number for a given node at a given level in a given KVS tree, where, for convenience, NNUM may be a number in the range zero through (NodeCount(LNUM)-1), where NodeCount(LNUM) is the total number of nodes at a tree-level LNUM, such that every node in the KVS tree 100 is uniquely identified by the tuple (LNUM, NNUM). As illustrated in FIG. 1, the complete listing of node tuples, starting at node 110 and progressing top-to-bottom, left-to-right, would be: [0065] L0 (root): (0.0.0) [0066] L1: (1,0), (1,1), (1,2), (1,3), (1,4) [0067] L2: (2,0), (2,1), (2,2), (2,3) [0068] L3: (3,0), (3,1), (3,2), (3,3) [0069] KVSETID is a unique kvset identifier. [0070] WTYPE is the value KBLOCK or VBLOCK as discussed below. [0071] WLAST is a Boolean value (TRUE or FALSE) as discussed below.

[0072] FIG. 2 is a block diagram illustrating an example of a write to a multi-stream storage device (e.g., device 260 or 265), according to an embodiment. FIG. 2 illustrates multiple KVS trees. KVS tree 205 and KVS tree 210. As illustrated, each tree is respectively performing a write operation 215 and 220. These write operations are handled by a storage subsystem 225. The storage subsystem may be a device driver, such as for device 260, may be a storage product to manage multiple devices (e.g., device 260 and device 265) such as those found in operating systems, network attached storage devices, etc. In time the storage subsystem 225 will complete the writes to the storage devices in operations 250 and 255 respectively. The stream-mapping circuits 230 provide a stream ID to a given write 215 to be used in the device write 250.

[0073] In the KVS tree 205, the immutability of kvsets results in entire kvsets being written or deleted at a time. Thus, the data comprising a kvset has a similar lifetime. Data comprising a new kvset may be written to a single storage device or to several storage devices (e.g., device 260 and device 265) using techniques such as erasure coding or RAID. Further, as the size of kvsets may be larger than any given device write 250, writing the kvset may involve directing multiple write commands to a given storage device 260. To facilitate operation of the stream-mapping circuits 230, one or more of the following may be provided for selecting a stream ID for each such write command 250: [0074] A) KVSETID of the kvset being written; [0075] B) DID for the storage device; [0076] C) FID for the forest to which the KVS tree belongs; [0077] D) TID for the KVS tree; [0078] E) LNUM of the node in the KVS tree containing the kvset; [0079] F) NNUM of the node in the KVS tree containing the kvset; [0080] G) WTYPE is KBLOCK if the write command is for a key-block for KVSETID on DID, or is VBLOCK if the write command is for a value-block for KVSETID on DID [0081] H) WLAST is TRUE if the write command is the last for a KVSETID on DID, and is FALSE otherwise In an example, for each such write command, the tuple (DID, FID, TID, LNUM, NNUM, KVSETID, WTYPE, WLAST)-referred to as a stream-mapping tuple--may be sent to the stream-mapping circuits 230. The stream-mapping circuits 230 may then respond with the stream ID for the storage subsystem 225 to use with the write command 250.

[0082] The stream-mapping circuits 230 may include an electronic hardware implemented controller 235, accessible stream ID (A-SID) table 240 and a selected stream ID (S-SID) table 245. The controller 235 is arranged to accept as input a stream-mapping tuple and respond with the stream ID. In an example, the controller 235 is configured to a plurality of storage devices 260 and 265 storing a plurality of KVS trees 205 and 210. The controller 235 is arranged to obtain (e.g., by configuration, querying, etc.) a configuration for accessible devices. The controller 235 is also arranged to configure the set of stability values TEMPSET, and for each value TEMP in TEMPSET configure a fraction, number, or other determiner of the number of streams on a given storage device to use for data classified by that value.

[0083] In an example, the controller 235 is arranged to obtain (e.g., receive via configuration, message, etc., retrieve from configuration device, firmware, etc.) a temperature assignment method. The temperature assignment method will be used to assign stability values to the write request 215 in this example. In an example, a stream-mapping tuple may include any one or more of DID, FID, TID, LNUM, NNUM, KVSETID, WTYPE or WLAST and be used as input to the temperature assignment method executed by the controller 235 to select a stability value TEMP from the TEMPSET. In an example, a KVS tree scope is a collection of parameters for a write specific to the KVS tree component (e.g., kvset) being written. In an example, the KVS tree scope includes one or more of FID, TID, LNUM, NNUM, or KVSETID. Thus, in this example, the stream-mapping tuple may include components of the KVS tree scope as well as device specific or write specific components, such as DID. WLAST, or WTYPE. In an example, a stability, or temperature, scope tuple TSCOPE is derived from the stream-mapping tuple. The following are example constituent KVS tree scope components that may be used to create TSCOPE: [0084] A) TSCOPE computed as (FID, TID, LNUM); [0085] B) TSCOPE computed as (LNUM); [0086] C) TSCOPE computed as (TID); [0087] D) TSCOPE computed as (TID. LNUM); or [0088] E) TSCOPE computed as (TID, LNUM, NNUM).

[0089] In an example, the controller 235 may implement a static temperature assignment method. The static temperature assignment method may read the selected TEMP, for example, from a configuration file, database, KVS tree meta data, or meta data in the KVS tree 105 TID or other database, including metadata stored in the KVS tree TID. In this example, these data sources include mappings from the TSCOPE to a stability value. In an example, the mapping may be cached (e.g., upon controller 235's activation or dynamically during later operation) to speed the assignment of stability values as write requests arrive.

[0090] In an example, the controller 235 may implement a dynamic temperature assignment method. The dynamic temperature assignment method may compute the selected TEMP based on a frequency with which kvsets are written to TSCOPE. For example, the frequency with which the controller 235 executes the temperature assignment method for a given TSCOPE may be measured and clustered around TEMPS in TEMPSET. Thus, such a computation may, for example, define a set of frequency ranges and a mapping from each frequency range to a stability value so that the value of TEMP is determined by the frequency range containing the frequency with which kvsets are written to TSCOPE.

[0091] The controller 235 is arranged to obtain (e.g., receive via configuration, message, etc., retrieve from configuration device, firmware, etc.) a stream assignment method. The stream assignment method will consume the KVS tree 205 aspects of the write 215 as well as the stability value (e.g., from the temperature assignment) to produce the stream ID. In an example, controller 235 may use the stream-mapping tuple (e.g., including KVS tree scope) in the stream assignment method to select the stream ID. In an example, any one or more of DID, FID, TID, LNUM. NNUM, KVSETID. WTYPE or WLAST along with the stability value may be used in the stream assignment method executed by the controller 235 to select the stream ID. In an example, a stream-scope tuple SSCOPE is derived from the stream-mapping tuple. The following are example constituent KVS tree scope components that may be used to create SSCOPE: [0092] A) SSCOPE computed as (FID. TID. LNUM. NNUM) [0093] B) SSCOPE computed as (KVSETID) [0094] C) SSCOPE computed as (TID) [0095] D) SSCOPE computed as (TID, LNUM) [0096] E) SSCOPE computed as (TID. LNUM, NNUM) [0097] F) SSCOPE computed as (LNUM)

[0098] The controller 235 may be arranged to, prior to accepting inputs, initialize the A-SID table 240 and the S-SID table 245. A-SID table 240 is a data structure (table, dictionary, etc.) that may store entries for tuples (DID, TEMP, SID) and may retrieve such entries with specified values for DID and TEMP. The notation A-SID(DID, TEMP) refers to all entries in A-SID table 240, if any, with the specified values for DID and TEMP. In an example, the A-SID table 240 may be initialized for each configured storage device 260 and 265 and temperature value in TEMPSET. The A-SID table 240 initialization may proceed as follows: For each configured storage device DID, the controller 235 may be arranged to:

A) Obtain the number of streams available on DID, referred to as SCOUNT; B) Obtain a unique SID for each of the SCOUNT streams on DID; and C) For each value TEMP in TEMPSET: a) Compute how many of the SCOUNT streams to use for data classified by TEMP in accordance with the configured determiner for TEMP, referred to as TCOUNT: and b) Select TCOUNT SIDs for DID not yet entered in the A-SID table 240 and, for each selected TCOUNT SID for DID, create one entry (e.g., row) in A-SID table 240 for (DID, TEMP. SID).

[0099] Thus, once initialized, the A-SID table 240 includes an entry for each configured storage device DID and value TEMP in TEMPSET assigned a unique SID. The technique for obtaining the number of streams available for a configured storage device 260 and a usable SID for each differs by storage device interface, however, these are readily accessible via the interfaces of multi-stream storage devices

[0100] The S-SID table 245 maintains a record of streams already in use (e.g., already a part of a given write). S-SID table 245 is a data structure (table, dictionary, etc.) that may store entries for tuples (DID, TEMP, SSCOPE. SID, Timestamp) and may retrieve or delete such entries with specified values for DID. TEMP, and optionally SSCOPE. The notation S-SID(DID. TEMP) refers to all entries in S-SID table 245, if any, with the specified values for DID and TEMP. Like the A-SID table 240, the S-SID table 245 may be initialized by the controller 235. In an example, the controller 235 is arranged to initialize the S-SID table 245 for each configured storage device 260 and 265 and temperature value in TEMPSET.

[0101] As noted above, the entries in S-SID table 245 represent currently, or already, assigned streams for write operations. Thus, generally, the S-SID table 245 is empty after initiation, entries being created by the controller 235 as stream IDs are assigned.

[0102] In an example, the controller 235 may implement a static stream assignment method. The static stream assignment method selects the same stream ID for a given DID, TEMP, and SSCOPE. In an example, the static stream assignment method may determine whether S-SID(DID. TEMP) has an entry for SSCOPE. If there is no conforming entry, the static stream assignment method selects a stream ID SID from A-SID(DID, TEMP) and creates an entry in S-SID table 245 for (DID, TEMP, SSCOPE. SID, timestamp), where timestamp is the current time after the selection. In an example, the selection from A-SID(DID, TEMP) is random, or the result of a round-robin process. Once the entry from S-SID table 245 is either found or created, the stream ID SID is returned to the storage subsystem 225. In an example, if WLAST is true, the entry in S-SID table 245 for (DID, TEMP, SSCOPE) is deleted. This last example demonstrates the usefulness of having WLAST to signal the completion of a write 215 for a kvset or the like that would be known to the tree 205 but not to the storage subsystem 225.

[0103] In an example, the controller 235 may implement a least recently used (LRU) stream assignment method. The LRU stream assignment method selects the same stream ID for a given DID, TEMP, and SSCOPE within a relatively small time interval. In an example, the LRU assignment method determines whether S-SID(DID, TEMP) has an entry for SSCOPE. If the entry exists, the LRU assignment method thens select the stream ID in this entry and sets the timestamp in this entry in S-SID table 245 to the current time.

[0104] If the SSCOPE entry is not in S-SID(DID, TEMP), the LRU stream assignment method determines whether the number of entries S-SID(DID, TEMP) equals the number of entries A-SID(DID, TEMP). If this is true, then the LRU assignment method selects the stream ID SID from the entry in S-SID(DID. TEMP) with the oldest timestamp. Here, the entry in S-SID table 245 is replaced with the new entry (DID, TEMP, SSCOPE, SID, timestamp) where timestamp is the current time after the selection.

[0105] If there are fewer S-SSID(DID, TEMP) entries than A-SID(DID, TEMP) entries, the method selects a stream ID SID from A-SID(DID. TEMP) such that there is no entry in S-SID(DID, TEMP) with the selected stream ID and creates an entry in S-SID table 245 for (DID, TEMP, SSCOPE, SID, timestamp) where timestamp is the current time after the selection.

[0106] Once the entry from S-SID table 245 is either found or created, the stream ID SID is returned to the storage subsystem 225. In an example, if WLAST is true, the entry in S-SID table 245 for (DID, TEMP, SSCOPE) is deleted.

[0107] In operation the controller 235 is configured to assign a stability value for a given stream-mapping tuple received as par of the write request 215. Once the stability value is determined, the controller 235 is arranged to assign the SID. The temperature assignment and stream assignment methods may each reference and update the A-SID table 240 and the S-SID table 245. In an example, the controller 235 is also arranged to provide the SID to a requester, such as the storage subsystem 225.

[0108] Using the stream ID based on the KVS tree scope permits like data to be colocated in erase blocks 270 on multi-stream storage device 260. This reduces garbage collection on the device and thus may increase device performance and longevity. This benefit may be extended to multiple KVS trees. KVS trees may be used in a forest, or grove, whereby several KVS trees are used to implement a single structure, such as a file system. For example, one KVS tree may use block number as the key and bits in the block as a value while a second KVS tree may use file path as the key and a list of block numbers as the value. In this example, it is likely that kvsets for a given file referenced by path and the kvsets holding the block numbers have similar lifetimes. Thus the inclusion of FID above.

[0109] The structure and techniques described above provide a number of advantages in systems implementing KVS trees and storage devices such as flash storage devices. In an example, a computing system implementing several KVS trees stored on one or more storage devices may use knowledge of the KVS tree to more efficiently select streams in multi-stream storage devices. For example, the system may be configured so that the number of concurrent write operations (e.g., ingest or compaction) executed for the KVS trees is restricted based on the number of streams on any given storage device that are reserved for the temperature classifications assigned to kvset data written by these write operations. This is possible because, within a kvset, the life expectancy of that data is the same as kvsets are written and deleted in their entirety. As noted elsewhere, keys and values may be separated. Thus, key write will have the same life-time which is likely shorter than value life-times when key compaction, discussed below, is performed. Additionally, tree-level experimentally appears to be a strong indication of data life-time, the older data, and thus greater (e.g., deeper) tree-level, having a longer life-time than younger data at higher tree-levels.

[0110] The following scenario may further elucidate the operation of the stream-mapping circuits 230 to restrict writes, consider [0111] A) Temperature values {Hot. Cold}, with H streams on a given storage device used for data classified as Hot, and C streams on a given storage device used for data classified as Cold. [0112] B) A temperature assignment method configured with TSCOPE computed as (LNUM) whereby data written to L0 in any KVS tree is assigned a temperature value of Hot, and data written to L1 or greater in any KVS tree is assigned a temperature value of Cold. [0113] C) An LRU stream assignment method configured with SSCOPE computed as (TID, LNUM). In this case, the total number of concurrent ingest and compaction operations-operations producing a write--for all KVS trees follows these conditions: concurrent ingest operations for all KVS trees is at most H-because the data for all ingest operations is written to level 0 in a KVS tree and hence will be classified as Hot--and concurrent compaction operations for all KVS trees is at most C-because the data for all spill compactions, and the majority of other compaction operations, is written to level 1 or greater and hence will be classified as Cold.

[0114] Other such restrictions are possible and may be advantageous depending on certain implementation details of the KVS tree and controller 235. For example, given controller 235 configured as above, it may be advantageous for the number of ingest operations to be a fraction of H (e.g., one-half) and the number of compaction operations to be a fraction of C (e.g., three-fourths) because LRU stream assignment with SSCOPE computed as (TID, LNUM) may not take advantage of WLAST in a stream-mapping tuple to remove unneeded S-SID table 245 entries upon receiving the last write for a given KVSET in TID, resulting in a suboptimal SID selection.

[0115] Although the operation of the stream-mapping circuits 230 are described above in the context of KVS trees, other structures, such as LSM tree implementations, may equally benefit from the concepts presented herein. Many LSM Tree variants store collections of key-value pairs and tombstones whereby a given collection may be created by an ingest operation or garbage collection operation (often referred to as a compaction or merge operation), and then later deleted in whole as the result of a subsequent ingest operation or garbage collection operation. Hence the data comprising such a collection has a similar lifetime, like the data comprising a kvset in a KVS tree. Thus, a tuple similar to the stream-mapping tuple above, may be defined for most other LSM Tree variants, where the KVSETID may be replaced by a unique identifier for the collection of key-value pairs or tombstones created by an ingest operation or garbage collection operation in a given LSM Tree variant. The stream-mapping circuits 230 may then be used as described to select stream identifiers for the plurality of write commands used to store the data comprising such a collection of key-value pairs and tombstones.

[0116] FIG. 3 illustrates an example of a method 300 to facilitate writing to a multi-stream storage device, according to an embodiment. The operations of the method 300 are implemented with electronic hardware, such as that described throughout at this application, including below with respect to FIG. 26 (e.g., circuits). The method 300 provides a number of examples to implement the discussion above with respect to FIG. 2.

[0117] At operation 305, notification of a KVS tree write request for a multi-stream storage device is received. In an example, the notification includes a KVS tree scope corresponding to data in the write request. In an example, the KVS tree scope includes at least one of: a kvset ID corresponding to a kvset of the data; a node ID corresponding to a node of the KVS tree corresponding to the data; a level ID corresponding to a tree-level corresponding to the data; a tree ID for the KVS tree; a forest ID corresponding to the forest to which the KVS tree belongs; or a type corresponding to the data. In an example, the type is either a key-block type or a value-block type.

[0118] In an example, the notification includes a device ID for the multi-stream device. In an example, the notification includes a WLAST flag corresponding to a last write request in a sequence of write requests to write a kvset, identified by the kvset ID, to the multi-stream storage device.

[0119] At operation 310, a stream identifier (ID) is assigned to the write request based on the KVS tree scope and a stability value of the write request. In an example, assigning the stability value includes: maintaining a set of frequencies of stability value assignments for a level ID corresponding to a tree-level, each member of the set of frequencies corresponding to a unique level ID: retrieving a frequency from the set of frequencies that corresponds to a level ID in the KVS tree scope; and selecting a stability value from a mapping of stability values to frequency ranges based on the frequency.

[0120] In an example, assigning the stream ID to the write request based on the KVS tree scope and the stability value of the write request includes creating a stream-scope value from the KVS tree scope. In an example, the stream-scope value includes a level ID for the data. In an example, the stream-scope value includes a tree ID for the data. In an example, the stream-scope value includes a level ID for the data. In an example, the stream-scope value includes a node ID for the data. In an example, the stream-scope value includes a kvset ID for the data.

[0121] In an example, assigning the stream ID to the write request based on the KVS tree scope and the stability value of the write request also includes performing a lookup in a selected-stream data structure using the stream-scope value. In an example, performing the lookup in the selected-stream data structure includes: failing to find the stream-scope value in the selected-stream data structure; performing a lookup on an available-stream data structure using the stability value; receiving a result of the lookup that includes a stream ID; and adding an entry to the selected-stream data structure that includes the stream ID, the stream-scope value, and a timestamp of a time when the entry is added. In an example, multiple entries of the available-stream data structure correspond to the stability value, and wherein the result of the lookup is at least one of a round-robin or random selection of an entry from the multiple entries. In an example, the available-stream data structure may be initialized by: obtaining a number of streams available from the multi-stream storage device; obtain a stream ID for all streams available from the multi-stream storage device, each stream ID being unique; add stream IDs to stability value groups; and creating a record in the available-stream data structure for each stream ID, the record including the stream ID, a device ID for the multi-stream storage device, and a stability value corresponding to a stability value group of the stream ID.

[0122] In an example, performing the lookup in the selected-stream data structure includes: failing to find the stream-scope value in the selected-stream data structure; locating a stream ID from either the selected-stream data structure or an available-stream data structure based on the contents of the selected stream data structure; and creating an entry to the selected-stream data structure that includes the stream ID, the stream-scope value, and a timestamp of a time when the entry is added. In an example, locating the stream ID from either the selected-stream data structure or an available-stream data structure based on the contents of the selected stream data structure includes: comparing a first number of entries from the selected-stream data structure to a second number of entries from the available-stream data structure to determine that the first number of entries and the second number of entries are equal; locating a group of entries from the selected-stream data structure that correspond to the stability value; and returning a stream ID of an entry in the group of entries that has the oldest timestamp. In an example, locating the stream ID from either the selected-stream data structure or an available-stream data structure based on the contents of the selected stream data structure includes: comparing a first number of entries from the selected-stream data structure to a second number of entries from the available-stream data structure to determine that the first number of entries and the second number of entries are not equal; performing a lookup on the available-stream data structure using the stability value and stream IDs in entries of the selected-stream data structure; receiving a result of the lookup that includes a stream ID that is not in the entries of the selected-stream data structure; and adding an entry to the selected-stream data structure that includes the stream ID, the stream-scope value, and a timestamp of a time when the entry is added.

[0123] In an example, assigning the stream ID to the write request based on the KVS tree scope and the stability value of the write request also includes returning a stream ID corresponding to the stream-scope from the selected-stream data structure. In an example, returning the stream ID corresponding to the stream-scope from the selected-stream data structure includes updating a timestamp for an entry in the selected-stream data structure corresponding to the stream ID. In an example, the write request includes a WLAST flag, and wherein returning the stream ID corresponding to the stream-scope from the selected-stream data structure includes removing an entry from the selected-stream data structure corresponding to the stream ID.

[0124] In an example, the method 300 may be extended to include removing entries from the selected-stream data structure with a timestamp beyond a threshold.

[0125] At operation 315, the stream ID is returned to govern stream assignment to the write request, with the stream assignment modifying a write operation of the multi-stream storage device.

[0126] In an example, the method 300 may be optionally extended to include assigning the stability value based on the KVS tree scope. In an example, the stability value is one of a predefined set of stability values. In an example, the predefined set of stability values includes HOT, WARM, and COLD, wherein HOT indicates a lowest expected lifetime of the data on the multi-stream storage device and COLD indicates a highest expected lifetime of the data on the multi-stream storage device.

[0127] In an example, assigning the stability value includes locating the stability value from a data structure using a portion of the KVS tree scope. In an example, the portion of the KVS tree scope includes a level ID for the data. In an example, the portion of the KVS tree scope includes a type for the data.

[0128] In an example, the portion of the KVS tree scope includes a tree ID for the data. In an example, the portion of the KVS tree scope includes a level ID for the data. In an example, the portion of the KVS tree scope includes a node ID for the data.

[0129] FIG. 4 is a block diagram illustrating an example of a storage organization for keys and values, according to an embodiment. A kvset may be stored using key-blocks to hold keys (along with tombstones as needed) and value-blocks to hold values. For a given kvset, the key-blocks may also contain indexes and other information (such as bloom filters) for efficiently locating a single key, locating a range of keys, or generating the total ordering of all keys in the kvset, including key tombstones, and for obtaining the values associated with those keys, if any.

[0130] A single kvset is represented in FIG. 4. The key-blocks include a primary key block 410 that includes header 405 and an extension key-block 415 that includes an extension header 417. The value blocks include headers 420 and 440 respectively as well as values 425, 430, 435, and 445. The second value block also includes free space 450.

[0131] A tree representation for the kvset is illustrated to span the key-blocks 410 and 415. In this illustration, the leaf nodes contain value references (VID) to the values 425, 430, 435, and 445, and two keys with tombstones. This illustrates that, in an example, the tombstone does not have a corresponding value in a value block, even though it may be referred to as a type of key-value pair.

[0132] The illustration of the value blocks demonstrates that each may have a header and values that run next to each other without delineation. The reference to particular bits in the value block for a value, such as value 425, are generally stored in the corresponding key entry, for example, in an offset and extent format.

[0133] FIG. 5 is a block diagram illustrating an example of a configuration for key-blocks and value-blocks, according to an embodiment. The key-block and value block organization of FIG. 5 illustrates the generally simple nature of the extension key-block and the value-blocks. Specifically, each are generally a simple storage container with a header to identify its type (e.g., key-block or value-block) and perhaps a size, location on storage, or other meta data. In an example, the value-block includes a header 540 with a magic number indicating that it is a value-block and storage 545 to store bits of values. The key-extension block includes a header 525 indicating that it is an extension block and stores a portion of the key structure 530, such as a KB tree, B-tree, or the like.

[0134] The primary key-block provides a location for many kvset meta data in addition to simply storing the key structure. The primary key-block includes a root of the key structure 520. The primary key block may also include a header 505, bloom filters 510, or a portion of the key structure 515.

[0135] Reference to the components of the primary key-block are included in the header 505, such as the blocks of the bloom filter 510, or the root node 520. Metrics, such as kvset size, value-block addresses, compaction performance, or use may also be contained in the header 505.

[0136] The bloom filters 510 are computed when the kvset is created and provide a ready mechanism to ascertain whether a key is not in the kvset without performing a search on the key structure. This advance permits greater efficiency in scanning operations as noted below.

[0137] FIG. 6 illustrates an example of a KB tree 600, according to an embodiment. An example key structure to use in a kvset's key-blocks is the KB tree. The KB tree 600 has structural similarities to B+ trees. In an example, the KB tree 600 has 4096-byte nodes (e.g., node 605, 610, and 615). All keys of the KB tree reside in leaf nodes (e.g., node 615). Internal nodes (e.g., node 610) have copies of selected leaf-node keys to navigate the tree 600. The result of a key lookup is a value reference, which may be, in an example, to a value-block ID, an offset and a length.

[0138] The KB tree 600 has the following properties: [0139] A) All keys in the subtree rooted at an edge key K's child node are less than or equal to K. [0140] B) The maximum key in any tree or subtree is the right-most entry in the right-most leaf node. [0141] C) Given a node N with a right-most edge that points to child R, all keys in the subtree rooted at node R are greater than all keys in node N.

[0142] The KB tree 600 may be searched via a binary search among the keys in the root node 605 to find the appropriate "edge" key. The link to the edge key's child may be followed. This procedure is then repeated until a match is found in a leaf node 615 or no match is found.

[0143] Because kvsets are created once and not changed, creating the KB tree 600 may be different than other tree structures that mutate over time. The KB tree 600 may be created in a bottom-up fashion. In an example, the leaf nodes 615 are created first, followed by their parents 610, and so on until there is one node left--the root node 605. In an example, creation starts with a single empty leaf node, the current node. Each new key is added to the current node. When the current node becomes full, a new leaf node is created and it becomes the current node. When the last key is added, all leaf nodes are complete. At this point, nodes at the next level up (i.e., the parents of the leaf nodes) are created in a similar fashion, using the maximum key from each leaf node as the input stream. When those keys are exhausted, that level is complete. This process repeats until the most recently created level consists of a single node, the root node 605.

[0144] If, during creation, the current key-block becomes full, new nodes may be written to an extension key-block. In an example, an edge that crosses from a first key-block to a second key-block includes a reference to the second key-block.

[0145] FIG. 7 is a block diagram illustrating KVS tree ingestion, according to an embodiment. In a KVS tree, the process of writing a new kvset to the root node 730 is referred to as an ingest. Key-value pairs 705 (including tombstones) are accumulated in-memory 710 of the KVS tree, and are organized into kvsets ordered from newest 715 to oldest 720. In an example, the kvset 715 may be mutable to accept key-value pairs synchronously. This is the only mutable kvset variation in the KVS tree.

[0146] The ingest 725 writes the key-value pairs and tombstones in the oldest kvset 720 in main memory 710 to a new (and the newest) kvset 735 in the root node 730 of the KVS tree, and then deletes that kvset 720 from main memory 710.

[0147] FIG. 8 illustrates an example of a method 800 for KVS tree ingestion, according to an embodiment. The operations of the method 800 are implemented with electronic hardware, such as that described throughout at this application, including below with respect to FIG. 26 (e.g., circuits).

[0148] At operation 805, a key-value set (kvset) is received to store in a key-value data structure. Here, the key-value data structure is organized as a tree and the kvset includes a mapping of unique keys to values. The keys and the values of the kvset are immutable and nodes of the tree have a temporally ordered sequence of kvsets.

[0149] In an example, when a kvset is written to the at least one storage medium, the kvset is immutable. In an example, wherein key entries of the kvset are stored in a set of key-blocks including a primary key-block and zero or more extension key-blocks. Here, members of the set of key-blocks correspond to media blocks for the at least one storage medium with each key-block including a header to identify it as a key-block.

[0150] In an example, the primary key-block includes a list of media block identifications for the one or more extension key-blocks of the kvset. In an example, the primary key-block includes a list of media block identifications for value-blocks in the set of value blocks. In an example, the primary key-block includes a copy of a lowest key in a key-tree of the kvset, the lowest key determined by a pre-set sort-order of the tree. In an example, the primary key-block includes a copy of a highest key in a key-tree of the kvset, the highest key determined by a pre-set sort-order of the tree. In an example, the primary key-block includes a header to a key-tree of the kvset. In an example, the primary key-block includes a list of media block identifications for a key-tree of the kvset. In an example, the primary key-block includes a bloom filter header for a bloom filter of the kvset. In an example, the primary key-block includes a list of media block identifications for a bloom filter of the kvset.

[0151] In an example, values are stored in a set of value-blocks operation 805. Here, members of the set of value-blocks corresponding to media blocks for the at least one storage medium with each value-block including a header to identify it as a value-block. In an example, a value block includes storage section to one or more values without separation between values.

[0152] In an example, the primary key-block includes a set of metrics for the kvset. In an example, the set of metrics include a total number of keys stored in the kvset. In an example, the set of metrics include a number of keys with tombstone values stored in the kvset. In an example, the set of metrics include a sum of all key lengths for keys stored in the kvset. In an example, the set of metrics include a sum of all value lengths for keys stored in the kvset. In an example, the set of metrics include an amount of unreferenced data in value-blocks of the kvset.

[0153] At operation 810, the kvset is written to a sequence of kvsets of a root-node of the tree.

[0154] The method 800 may be extended to include operations 815-825.

[0155] At operation 815, a key and a corresponding value to store in the key-value data structure are received.

[0156] At operation 820, the key and the value are placed in a preliminary kvset, the preliminary kvset being mutable. In an example, a rate of writing to the preliminary root node is beyond a threshold. In this example, the method 800 may be extended to throttle write requests to the key-value data structure.

[0157] At operation 825, the kvset is written to the key-value data structure when a metric is reached. In an example, the metric is a size of a preliminary root node. In an example, the metric is an elapsed time.

[0158] Once ingestion has occurred, a variety of maintenance operations may be employed to maintain the KVS tree. For example, if a key is written at one time with a first value and at a later time with a second value, removing the first key-value pair will free up space or reduce search times. To address some of these issues, KVS trees may use compaction. Details of several compaction operations are discussed below with respect to FIGS. 9-18. The illustrated compaction operations are forms of garbage collection because they may remove obsolete data, such as keys or key-value pairs during the merge.

[0159] Compaction occurs under a variety of triggering conditions, such as when the kvsets in a node meet specified or computed criteria. Examples of such compaction criteria include the total size of the kvsets or the amount of garbage in the kvsets. One example of garbage in kvsets is key-value pairs or tombstones in one kvset rendered obsolete, for example, by a key-value pair or tombstone in a newer kvset, or a key-value pair that has violated a time-to-live constraint, among others. Another example of garbage in kvsets is unreferenced data in value-blocks (unreferenced values) resulting from key compactions.

[0160] Generally, the inputs to a compaction operation are some or all of the kvsets in a node at the time the compaction criteria are met. These kvsets are called a merge set and comprise a temporally consecutive sequence of two or more kvsets.

[0161] As compaction is generally triggered when new data is ingested, the method 800 may be extended to support compaction, however, the following operations may also be triggered when, for example, there are free processing resources, or other convenient scenarios to perform the maintenance.

[0162] Thus, the KVS tree may be compacted. In an example, the compacting is performed in response to a trigger. In an example, the trigger is an expiration of a time period.

[0163] In an example, the trigger is a metric of the node. In an example, the metric is a total size of kvsets of the node. In an example, the metric is a number of kvsets of the node. In an example, the metric is a total size of unreferenced values of the node. In an example, the metric is a number of unreferenced values.

[0164] FIG. 9 is a block diagram illustrating key compaction, according to an embodiment. Key compaction reads the keys and tombstones, but not values, from the merge set, removes all obsolete keys or tombstones, writes the resulting keys and tombstones into one or more new kvsets (e.g., by writing into new key-blocks), deletes the key-stores, but not the values, from the node. The new kvsets atomically replace, and are logically equivalent to, the merge set both in content and in placement within the logical ordering of kvsets from newest to oldest in the node.

[0165] As illustrated, the kvsets KVS3 (the newest), KVS2, and KVS1 (the oldest) undergo key compaction for the node. As the key-stores for these kvsets are merged, collisions on keys A and B occur. As the new kvset. KVS4 (illustrated below), may only contain one of each merged key, the collisions are resolved in favor of the most recent (the leftmost as illustrated) keys, referring to value ID 10 and value ID 11 for keys A and B respectively. Key C has no collision and so will be included in the new kvset. Thus, the key entries that will be part of the new kvset. KVS4, are shaded in the top node.

[0166] For illustrative purposes. KVS4 is drawn to span KVS1. KVS2, and KVS3 in the node and the value entries are drawn in a similar location in the node. The purpose of these positions demonstrates that the values are not changed in a key compaction, but rather only the keys are changed. As explained below, this provides a more efficient search by reducing the number of kvsets searched in any given node and may also provide valuable insights to direct maintenance operations. Also note that the values 20 and 30 are illustrated with dashed lines, denoting that they persist in the node but are no longer referenced by a key entry as their respective key entries were removed in the compaction.

[0167] Key compaction is non-blocking as a new kvset (e.g., KVS5) may be placed in the newest position (e.g., to the left) of KVS3 or KVS4 during the compaction because, by definition, the added kvset will be logically newer than the kvset resulting from the key compaction (e.g., KVS4).

[0168] FIG. 10 illustrates an example of a method 1000 for key compaction, according to an embodiment. The operations of the method 1000 are implemented with electronic hardware, such as that described throughout at this application, including below with respect to FIG. 26 (e.g., circuits).

[0169] At operation 1005, a subset of kvsets from a sequence of kvsets for the node is selected. In an example, the subset of kvsets are contiguous kvsets and include an oldest kvset.

[0170] At operation 1010, a set of collision keys is located. Members of the set of collision keys including key entries in at least two kvsets in the sequence of kvsets for the node.

[0171] At operation 1015, a most recent key entry for each member of the set of collision keys is added to a new kvset. In an example, where the node has no children, and where the subset of kvsets includes the oldest kvset, writing the most recent key entry for each member of the set of collision keys to the new kvset and writing entries for each key in members of the subset of kvsets that are not in the set of collision keys to the new kvset includes omitting any key entries that include a tombstone. In an example, where the node has no children, and where the subset of kvsets includes the oldest kvset, writing the most recent key entry for each member of the set of collision keys to the new kvset and writing entries for each key in members of the subset of kvsets that are not in the set of collision keys to the new kvset includes omitting any key entries that are expired.

[0172] At operation 1020, entries for each key in members of the subset of kvsets that are not in the set of collision keys are added to the new kvset. In an example, operation 1020 and 1015 may operate concurrently to add entries to the new kvset.

[0173] At operation 1025, the subset of kvsets is replaced with the new kvset by writing the new kvset and removing (e.g., deleting, marking for deletion, etc.) the subset of kvsets.

[0174] FIG. 11 is a block diagram illustrating key-value compaction, according to an embodiment. Key value compaction differs from key compaction in its treatment of values. Key-value compaction reads the key-value pairs and tombstones from the merge set, removes obsolete key-value pairs or tombstones, writes the resulting key-value pairs and tombstones to one or more new kvsets in the same node, and deletes the kvsets comprising the merge set from the node. The new kvsets atomically replace, and are logically equivalent to, the merge set both in content and in placement within the logical ordering of kvsets from newest to oldest in the node.

[0175] As illustrated, kvsets KVS3, KVS2, and KVS1 comprise the merge set. The shaded key entries and values will be kept in the merge and placed in the new KVS4, written to the node to replace KVS3, KVS2, and KVS1. Again, as illustrated above with respect to key compaction, the key collisions for keys A and B are resolved in favor of the most recent entries. What is different in key-value compaction from key compaction is the removal of the unreferenced values. Thus, here, KVS4 is illustrated to consume only the space required to hold its current keys and values.