Systems and Methods for Integration of Application Performance Monitoring with Logs and Infrastructure Using a Common Schema

Martin; Mathieu ; et al.

U.S. patent application number 16/456377 was filed with the patent office on 2020-10-15 for systems and methods for integration of application performance monitoring with logs and infrastructure using a common schema. The applicant listed for this patent is Elasticsearch B.V.. Invention is credited to Ron Cohen, Rasmus Makwarth, Mathieu Martin, Michael D. Paquette.

| Application Number | 20200326819 16/456377 |

| Document ID | / |

| Family ID | 1000005117860 |

| Filed Date | 2020-10-15 |

| United States Patent Application | 20200326819 |

| Kind Code | A1 |

| Martin; Mathieu ; et al. | October 15, 2020 |

Systems and Methods for Integration of Application Performance Monitoring with Logs and Infrastructure Using a Common Schema

Abstract

Methods and corresponding systems for integration of different data for a distributed search, visualization and analysis platform, the method comprising automatically correlating data sources associated with execution of an application, the data from the data sources adhering to a common schema. The method further comprises providing a separate UI for each of the data sources for visualization and analysis; for each UI, providing a menu for selecting other correlated data sources; and in response to a menu selection, causing display of the other correlated data source and its corresponding UI. The data sources may include application performance monitoring (APM) data, log data, and infrastructure data. The common schema provides a core set of fields to be used in event messages stored for APM data, log data, and infrastructure data, and is configured to enable adding new data sources while enabling reuse of existing analysis content for the new data sources.

| Inventors: | Martin; Mathieu (Laval, CA); Paquette; Michael D. (Ludlow, MA); Makwarth; Rasmus (Copenhagen, DK); Cohen; Ron (Copenhagen, DK) |

| Applicant: | Elasticsearch B.V. |

| Family ID: | 1000005117860 |

| Appl. No.: | 16/456377 |

| Filed: | June 28, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 16382005 | Apr 11, 2019 | 10756959 |
| 16456377 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04L 43/06 20130101; G06F 3/0482 20130101; H04L 41/22 20130101 |

| International Class: | G06F 3/0482 20060101 G06F003/0482; H04L 12/26 20060101 H04L012/26; H04L 12/24 20060101 H04L012/24 |

Claims

1. A method for providing integration of different data for a distributed search, data visualization and analysis platform, the method comprising: automatically correlating a plurality of data sources associated with execution of an application, at least some of the data, from each of at least two of the plurality of data sources, adhering to a common schema; providing a separate user interface (UI) for each of the plurality of data sources for data visualization and analysis; for each of the separate UIs, providing a menu for selecting one of the other correlated data sources associated with the other UIs; and in response to a menu selection by a user, causing display of the other correlated data source and corresponding UI to the user; wherein the common schema further provides a set of rules for naming fields for consistency in the common schema; wherein a platform enforces the set of rules for the common schema; and wherein the common schema includes a superset of predefined fields and corresponding data types, the platform enforcing that the customization not conflict with a superset of predefined fields.

2. The method of claim 1, wherein all of the data from each of the plurality of data sources adheres to the common schema.

3. The method of claim 1, wherein the plurality of data sources includes application performance monitoring data, log data, and infrastructure data.

4. The method of claim 3, wherein the log data is from one or more services invoked for the execution, infrastructure data is associated with the execution, and the application performance monitoring (APM) data comprises trace information indicating in real time at least execution time information for the one or more services.

5. The method of claim 1, wherein the common schema provides for core, common fields for certain data appearing in different ones of the plurality of data sources.

6. The method of claim 1, wherein the common schema includes a list of names of fields, corresponding field types, and corresponding field definitions.

7. The method of claim 1, wherein the common schema defines a common set of document fields to be used in event messages stored for application performance monitoring data, log data, and infrastructure.

8. The method of claim 1, further comprising configuring the common schema so as to enable the addition of a new data source and also enabling reuse of existing analysis content for the new data source.

9. The method of claim 8, wherein the existing analysis content includes searches, visualizations, dashboards, alerts, reports, and machine learning jobs.

10. The method of claim 5, wherein the common schema provides for customization by a user a new field based on at least one of the core, common fields for ones of the plurality of data sources.

11. The method of claim 1, further comprising receiving inputs from a user for customizing a field for a particular one of the plurality of data sources.

12. The method of claim 11, wherein the inputs specify particular key value pairs associated with one or more unique fields, corresponding data types, and functionality enabled thereby.

13-15. (canceled)

16. The method of claim 11, wherein a rename processor for renaming a unique field to a matching core, common field when a name for a customized field conflicts with a predefined object of the common schema.

17. The method of claim 1, wherein the menu for each of the separate UIs includes a plurality of selectable actions, the method further comprising, in response to user selection of one of the plurality of selectable actions, causing display of data, associated with the selectable action, via the separate UI for the selected other data source.

18. The method of claim 17, wherein the plurality of selectable actions includes show container logs, show host logs, show container metrics, and show host metrics.

19. A system comprising: a processor; and a memory communicatively coupled to the processor, the memory storing instructions executable by the processor to perform a method for integration of application performance monitoring with logs and infrastructure data, the method comprising: automatically correlating a plurality of data sources associated with execution of an application, at least some of the data, from each of at least two of the plurality of data sources, adhering to a common schema; providing a separate user interface (UI) for each of the plurality of data sources for data visualization and analysis; for each of the separate UIs, providing a menu for selecting one of the other correlated data sources associated with the other UIs; and in response to a menu selection by a user, causing display of the other correlated data source and corresponding UI to the user; wherein the common schema further provides a set of rules for naming fields for consistency in the common schema; wherein a platform enforces the set of rules for the common schema; and wherein the common schema includes a superset of predefined fields and corresponding data types, the platform enforcing that the customization not conflict with a superset of predefined fields.

20. A computer-implemented method for providing integration of log data, infrastructure data, and application performance monitoring data in a microservices architecture, the method comprising: automatically correlating a plurality of data sources associated with execution of an application, the data adhering to a common schema, the plurality of data sources including application performance monitoring (APM) data, log data, and infrastructure data; providing a separate user interface (UI) for each of the plurality of data sources for data visualization and data analysis; for each of the separate UIs, providing a menu for selecting one of the other correlated data sources associated with the other UIs; and in response to a menu selection by a user, causing display of the other correlated data source and corresponding UI to the user; wherein the common schema further provides a set of rules for naming fields for consistency in the common schema; wherein a platform enforces the set of rules for the common schema; and wherein the common schema includes a superset of predefined fields and corresponding data types, the platform enforcing that the customization not conflict with a superset of predefined fields.

Description

CROSS REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation-in-part of U.S. application Ser. No. 16/382,005, filed Apr. 11, 2019, which application is hereby incorporated by reference herein in its entirety.

FIELD

[0002] The present technology pertains in general to application performance monitoring and more specifically, to providing integration of application performance monitoring with logs and infrastructure, using a common schema, for data visualization and analysis.

SUMMARY

[0003] This summary is provided to introduce a selection of concepts in a simplified form that are further described in the Detailed Description below. This summary is not intended to identify key or essential features of the claimed subject matter, nor is it intended to be used as an aid in determining the scope of the claimed subject matter.

[0004] The present disclosure provides various embodiments of methods and systems for integration of different data for a distributed search, visualization and analysis platform. In some embodiments, the method comprises automatically correlating a plurality of data sources associated with execution of an application, at least some of the data, from each of at least two of the plurality of data sources, adhering to a common schema; providing a separate user interface (UI) for each of the plurality of data sources for data visualization and analysis; for each of the separate UIs, providing a menu for selecting one of the other correlated data sources associated with the other UIs; and in response to a menu selection by a user, causing display of the other correlated data source and corresponding UI to the user.

[0005] The data sources may include application performance monitoring (APM) data, log data, and infrastructure data. The common schema provides a core set of fields to be used in event messages stored for APM data, log data, and infrastructure data, and is configured to enable adding new data sources while enabling reuse of existing analysis content for the new data sources. The common schema provides for customization by a user a new field based on at least one of the core, common fields for ones of the plurality of data sources. A corresponding system is disclosed that includes a processor and a memory communicatively coupled to the processor, the memory storing instructions executable by the processor to perform the method.

[0006] In other embodiments, a computer-implemented method is provided for integration of log data, infrastructure data, and application performance monitoring data in a microservices architecture, the method comprising automatically correlating data from a plurality of data sources associated with execution of an application, the data adhering to a common schema, the plurality of data sources including application performance monitoring data, log data, and infrastructure data; providing a separate user interface (UI) for each of the correlated data sources for visualization and data analysis; and in response to a selection by a user at one of the separate UIs, causing display of one of the other correlated data sources at another of the separate UIs.

BRIEF DESCRIPTION OF THE DRAWINGS

[0007] Embodiments are illustrated by way of example, and not by limitation, in the figures of the accompanying drawings, in which like references indicate similar elements and in which:

[0008] FIG. 1 is a simplified block diagram of a system having a distributed application structure, according to some embodiments.

[0009] FIG. 2 is an example overall diagram illustrating various aspects and process flow, according to example embodiments.

[0010] FIG. 3 is an example overall diagram showing various application performance monitoring (APM) aspects within the environment in the example in FIG. 2, according to some embodiments.

[0011] FIG. 4 is a diagram illustrating part of a screenshot for an example log files UI, according to some embodiments.

[0012] FIG. 5 is a diagram illustrating an example inventory snapshot of the infrastructure, e.g., containers and hosts in this example embodiment.

[0013] FIG. 6 shows an expanded view of a portion of the diagram in the example in FIG. 5 for easier reference.

[0014] FIG. 7 is a diagram illustrating a partial screenshot showing an example UI presenting distributed trace information, for example, for a container in FIGS. 5-6, according to an example embodiment.

[0015] FIG. 8 is a diagram illustrating a partial screenshot 800 showing an example UI including graphs for various container metrics, according to an example embodiment.

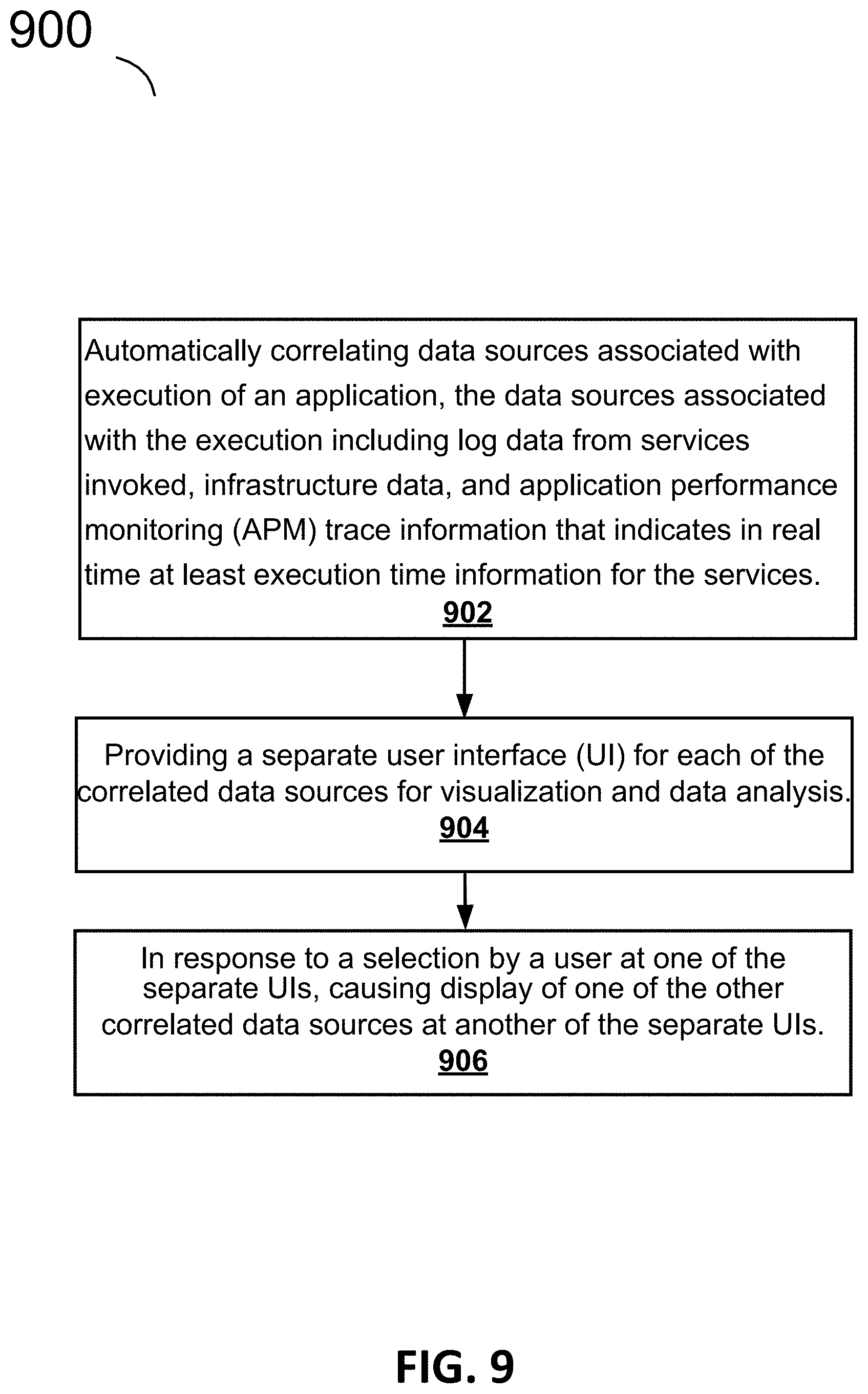

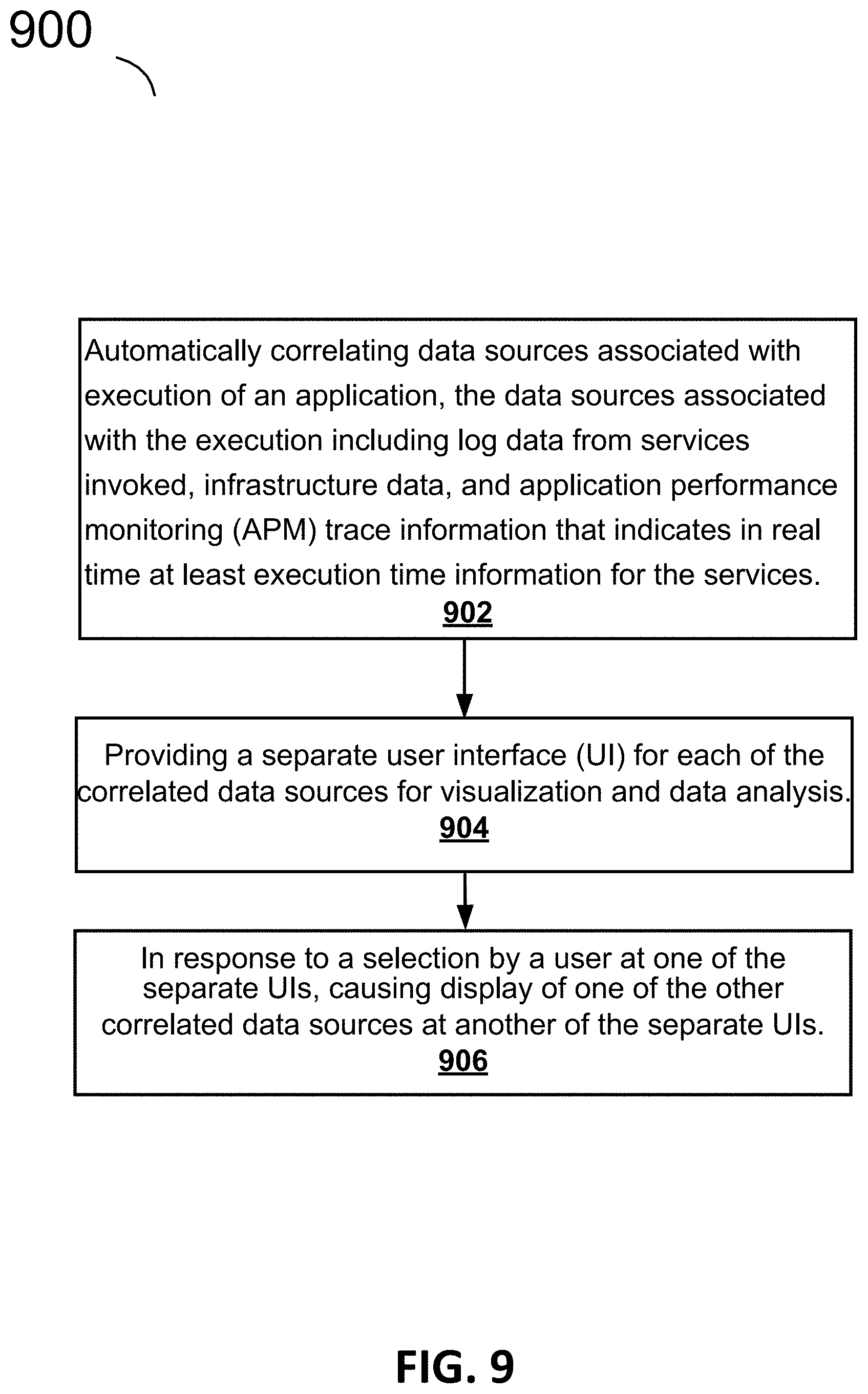

[0016] FIG. 9 is a flow diagram of a method, according to an example embodiment.

[0017] FIG. 10 shows a table of example core (common) fields and some corresponding extended fields for an example fields set, according to an example embodiment.

[0018] FIG. 11 illustrates another example of field sets and their description for core fields, according to another example embodiment.

[0019] FIG. 12 illustrates an example of a combination of fields that may be used for logging along with some corresponding descriptions, levels, types, and examples, according to an example embodiment.

[0020] FIG. 13 illustrates an example of a combination of fields that may be used for APM, along with some corresponding descriptions, levels, types, and examples, according to an example embodiment.

[0021] FIG. 14 illustrates example fields for infrastructure, more specifically, container fields, according to an example embodiment.

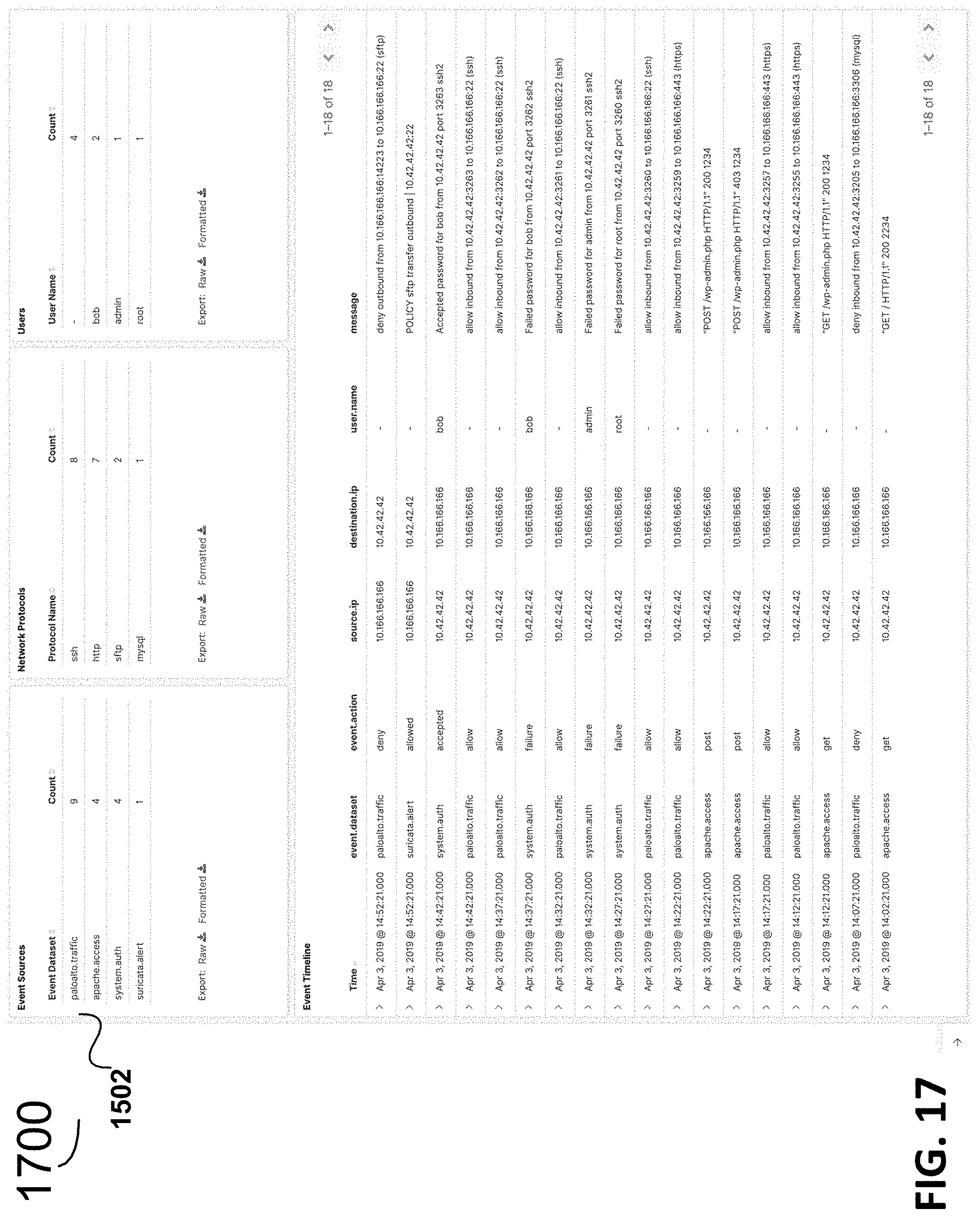

[0022] FIG. 15, FIG. 16, and FIG. 17 illustrate a top third, middle third, and bottom third, respectively, of an example dashboard that applies the common schema for visualizing multiple sources of network data.

[0023] FIG. 18 is a simplified block diagram of a computing system, according to some embodiments.

DETAILED DESCRIPTION

[0024] While this technology is susceptible of embodiment in many different forms, there is shown in the drawings and will herein be described in detail several specific embodiments with the understanding that the present disclosure is to be considered as an exemplification of the principles of the technology and is not intended to limit the technology to the embodiments illustrated. The terminology used herein is for the purpose of describing particular embodiments only and is not intended to be limiting of the technology. As used herein, the singular forms "a," "an," and "the" are intended to include the plural forms as well, unless the context clearly indicates otherwise. It will be further understood that the terms "comprises," "comprising," "includes," and/or "including," when used in this specification, specify the presence of stated features, integers, steps, operations, elements, and/or components, but do not preclude the presence or addition of one or more other features, integers, steps, operations, elements, components, and/or groups thereof. It will be understood that like or analogous elements and/or components, referred to herein, may be identified throughout the drawings with like reference characters. It will be further understood that several of the figures are merely schematic representations of the present technology. As such, some of the components may have been distorted from their actual scale for pictorial clarity.

[0025] The present disclosure is related to various embodiments of systems and methods for providing integration of application performance monitoring with logs and infrastructure for data visualization and analysis. In some embodiments, this functionality is configured to be provided in a distributed, multitenant-capable full-text analytics and search engine environment.

[0026] FIGS. 1-3 provide an overview of an example overall system and some aspects and components that may be used for some embodiments.

[0027] FIG. 1 is a simplified diagram of a system 100 that illustrates certain concepts of the distributed nature and distributed application structure, according to some embodiments. System 100 includes client application 110A, one or more nodes 120_1-120_X, and connections 140. Collectively, one or more nodes 120_1-120_X form cluster 130A. When only one node (e.g., node 120_1) is running, then cluster 130A is just one node. In various embodiments, a cluster (e.g., cluster 130A) is a collection of one or more nodes (servers) (e.g., one or more nodes 120_1-120_X) that together store data and provide federated indexing and search capabilities across all nodes. A cluster can be identified by a unique name, such that a node can be part of a cluster when the node is set up to join the cluster by its name. A cluster may have only one node in it. In some embodiments, a node (e.g., one or more nodes 120_1-120_X) is a single server that is part of a cluster (e.g., cluster 130A), stores data, and participates in the cluster's indexing and search capabilities. A node can be identified by a name which by default is a random Universally Unique IDentifier (UUID) that is assigned to the node at startup. Any number of nodes can be in a single cluster. In some embodiments, nodes (e.g., one or more nodes 120_1-120_X) can communicate using an application protocol (e.g., Hypertext Transfer Protocol (HTTP)), a transport layer protocol (e.g., Transmission Control Protocol (TCP)), and the like. Nodes can know about all the other nodes in the cluster (e.g., cluster 130A) and can forward client (e.g., client 110A) requests to the appropriate node. Each node can serve one or more purposes, such as master node and data node.
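By way of illustration only, the cluster and node relationship described above can be sketched in Python as follows. This is a minimal sketch, not the disclosed implementation: the class names `Node` and `Cluster`, the `join`, `index`, and `get` methods, and the CRC-based routing rule are all hypothetical and are introduced here only to show a node joining a cluster by name, receiving a random UUID as its default name, and having requests forwarded to the node that holds a document.

```python
import uuid
import zlib
from dataclasses import dataclass, field


@dataclass
class Node:
    """A single server that stores data and is configured with a cluster name."""
    cluster_name: str
    # Default node name: a random UUID assigned when the node is created.
    name: str = field(default_factory=lambda: str(uuid.uuid4()))
    documents: dict = field(default_factory=dict)


class Cluster:
    """A named collection of one or more nodes (hypothetical sketch)."""

    def __init__(self, name):
        self.name = name
        self.nodes = []

    def join(self, node):
        # A node becomes part of the cluster only if it was set up with
        # this cluster's name.
        if node.cluster_name != self.name:
            return False
        self.nodes.append(node)
        return True

    def _owner(self, doc_id):
        # Assumed routing rule for illustration: a stable hash of the
        # document id selects the node that holds the document.
        return self.nodes[zlib.crc32(doc_id.encode()) % len(self.nodes)]

    def index(self, doc_id, doc):
        self._owner(doc_id).documents[doc_id] = doc

    def get(self, doc_id):
        # Whichever node receives the request, it is forwarded to the owner.
        return self._owner(doc_id).documents.get(doc_id)
```

In this sketch the routing rule assumes membership does not change after documents are indexed; a real system would need to rebalance data when nodes join or leave.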

[0028] Each of client application 110A and one or more nodes 120_1-120_X can be a container, physical computing system, virtual machine, and the like. Generally, client application 110A can run on the same or different physical computing system, virtual machine, container, and the like as each of one or more nodes 120_1-120_X. Each of one or more nodes 120_1-120_X can run on the same or different physical computing system, virtual machine, container, and the like as the others of one or more nodes 120_1-120_X. A physical computing system is described further in relation to the exemplary computer system 1500 of FIG. 15. Virtual machines may provide a substitute for a physical computing system and the functionality needed to execute entire operating systems.

[0029] When client application 110A runs on a different physical server from a node (e.g., of one or more nodes 120_1-120_X), connections 140 can be a data communications network (e.g., various combinations and permutations of wired and wireless networks such as the Internet, local area networks (LAN), metropolitan area networks (MAN), wide area networks (WAN), and the like using Ethernet, Wi-Fi, cellular networks, and the like). When a node (of one or more nodes 120_1-120_X) runs on a different physical computing system from another node (of one or more nodes 120_1-120_X), connections 140 can be a data communications network. Further details regarding the distributed application structure can be found in commonly assigned U.S. patent application Ser. No. 16/047,959, filed Jul. 27, 2018 and incorporated by reference herein.

[0030] Having provided the above details of certain concepts of the distributed application structure described above, the description now turns to further detailing aspects of the present technology according to various embodiments.

[0031] Although various example embodiments are described herein with respect to KIBANA and other elements of an integration solution called ELASTIC STACK, the present technology is not so limited.

[0032] KIBANA provides for data visualization and exploration, for example, for log and time-series data analytics, application monitoring, and other use cases regarding a user's data on its servers, cloud-based services used, etc.

[0033] FIG. 2 is an example diagram of a system 200 illustrating KIBANA connections and flow with respect to other aspects of an integrated solution referred to as ELASTIC STACK. BEATS 202 can capture various items including but not limited to audit data (AUDITBEAT), log files (FILEBEAT), availability (HEARTBEAT), metrics (METRICBEAT), network traffic (PACKETBEAT), and Windows event logs (WINLOGBEAT). Although each of those is shown in FIG. 2, BEATS need not include all of those elements in this example. BEATS can send data directly into ELASTICSEARCH 204 or via LOGSTASH 206 (a data-collection and log-parsing engine), where the data can be further processed and enhanced before being visualized, analyzed, and explored using KIBANA 208. Although FIG. 2 includes KIBANA 208 and other particular aspects and components, the present technology is not limited to utilizing some or all of the components and aspects.

[0034] KIBANA 208 can provide a powerful and easy-to-use visual interface with features such as histograms, line graphs, pie charts, sunbursts, and the like, and can enable a user to design their own visualization, e.g., leveraging the full aggregation capabilities of ELASTICSEARCH 204 (a distributed, multitenant-capable full-text analytics and search engine). In that regard, KIBANA 208 can provide tight integration with ELASTICSEARCH 204 for visualizing data stored in ELASTICSEARCH 204. KIBANA 208 may also leverage the Elastic Maps Service to visualize geospatial data, or get creative and visualize custom location data on a schematic of the user's choosing. Regarding time series data, KIBANA 208 can also perform advanced time series analysis on a company's or other user's ELASTICSEARCH 204 data with curated time series user interfaces (UIs). Queries, transformations, and visualizations can be described with powerful, easy-to-learn expressions. Relationships can be analyzed with graph exploration.

[0035] With KIBANA 208, a user may take the relevance capabilities of a search engine, combine them with graph exploration, and uncover the uncommonly common relationships in the user's ELASTICSEARCH 204 data. In addition, KIBANA 208 can enable a user to detect the anomalies hiding in a user's ELASTICSEARCH 204 data and explore the properties that significantly influence them with unsupervised machine learning features. A user could also, e.g., using CANVAS, infuse their style and creativity into presenting the story of their data, including live data, with the logos, colors, and design elements that make their brand unique. This covers just an exemplary subset of the capabilities of KIBANA 208.

[0036] The user can be enabled to share visualizations and dashboards (e.g., KIBANA 208 or other visualizations and dashboards) within a space or spaces (e.g., using KIBANA SPACES), with others, e.g., a user's team members, the user's boss, their boss, a user's customers, compliance managers, and contractors, while access remains controlled.

[0037] FIG. 3 is an example overall diagram 300 showing various application performance monitoring (APM) aspects within the environment in the example in FIG. 2, according to some embodiments. In the example in FIG. 3, a plurality of APM agents 302 are included. In various embodiments, the APM agents are open source libraries written in the same language as a user's service. A user may install APM agents 302 into their service as the user would install any other library. The APM agents 302 can instrument a user's code and collect performance data and errors at runtime. In various embodiments, the collected performance data and errors (also referred to collectively as collected data or just data) are buffered for a short period and sent on to APM Server 304. In some embodiments, the APM Server 304 is an open source application which typically runs on dedicated servers. The APM Server 304 may receive the collected data from the APM agents 302 through an application programming interface (API). In some embodiments, the APM Server 304 creates documents from the collected data from the APM agents 302 and stores the documents in the full-text search and analytics engine, e.g., ELASTICSEARCH 204 in this example. ELASTICSEARCH 204 can allow the user to store, search, and analyze big volumes of data quickly and in near real time. The documents can include APM performance metrics. As further described herein, KIBANA 208 is an open source analytics and visualization platform designed to work with ELASTICSEARCH 204. KIBANA 208 may be used to search, view, and interact with data stored in ELASTICSEARCH 204. KIBANA 208 may also be used to visualize APM data by utilizing the APM UI.

[0038] In various embodiments, the APM agents 302 capture different types of information from within their instrumented applications, known as events. The events may be errors, spans, or transactions. These events may be then streamed to the APM Server 304 which validates and processes the events.
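As a non-limiting illustration of the agent and server roles described above, the following Python sketch shows an agent that buffers captured events briefly and sends them in a batch to a server, which validates each event before storing it as a document. The class names `ApmAgent` and `ApmServer`, the `max_buffered` parameter, the dictionary layout of an event, and the validation rule are assumptions made for this sketch only and do not reflect the actual interfaces of any APM agent or server.

```python
# The three event kinds named in the description.
EVENT_TYPES = {"error", "span", "transaction"}


class ApmServer:
    """Validates incoming events and keeps accepted ones as documents."""

    def __init__(self):
        # Stand-in for documents stored in the search and analytics engine.
        self.documents = []

    def receive(self, events):
        accepted = 0
        for event in events:
            # Assumed validation rule: a known event type and a service name.
            if event.get("type") in EVENT_TYPES and event.get("service"):
                self.documents.append(dict(event))
                accepted += 1
        return accepted


class ApmAgent:
    """Buffers events for a short period, then sends them as one batch."""

    def __init__(self, server, max_buffered=3):
        self.server = server
        self.max_buffered = max_buffered  # hypothetical flush threshold
        self.buffer = []

    def capture(self, event):
        self.buffer.append(event)
        if len(self.buffer) >= self.max_buffered:
            self.flush()

    def flush(self):
        if self.buffer:
            self.server.receive(self.buffer)
            self.buffer = []
```

A size-based flush is used here for simplicity; a time-based flush would match "buffered for a short period" more literally but would make the sketch harder to follow.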

[0039] Historically, software applications have been monolithic in that the code was contained within a single application. Modern architectures often include multiple services and multiple applications that talk to each other. This is also referred to as a microservice architecture. Microservices are also referred to herein simply as services for conciseness. Applications and services may also be referred to herein as just services for conciseness.

[0040] As part of serving a web request on a particular website there might be, for example, several services invoked. There could be two or dozens of services invoked for one request. A web request is also referred to herein as a request or an HTTP request. Part of serving a particular request could involve queries made to back-end services such as to www.google.com, and while waiting for response in a browser, the GOOGLE back-end is getting requests through several services, which could also delay getting a response to the particular request. In various embodiments, distributed tracing allows a user (such as a developer and the like) to follow a request as it comes in and how it automatically propagates through the services. In various embodiments, a user can see a visualization of how long the entire request took to be served (e.g., from the incoming request to the time the response was ultimately served). A user may also see, on a per service level, the location of the biggest bottlenecks as part of serving that particular request. The bottlenecks can be related to time and resources used, to name a few. Without distributed tracing with a distributed, multitenant-capable full-text analytics and search engine environment, developers and other users would have to manually determine a first service involved, look into that first service to determine manually what other services are involved, and manually call up various metrics, etc. to try to identify a bottleneck, which is a very time consuming process and sometimes impossible to do for servicing complex requests, for instance. A complex request may invoke hundreds of services, making the aforementioned manual process impossible to perform in a timely manner.

[0041] In assessing a bottleneck in processing a request, it is key to identify which services are involved, trace each one, and narrow down which service(s) are the problem, and then delve even more granularly within the service. In various embodiments, instrumentation is also provided on a service level which provides a granular view of exactly what a particular service was spending its time on.

[0042] All of the time consuming tasks that a certain service is doing when performing a response to a request may be traced. So within the scope of that one service, methods and systems according to certain aspects provide a very granular view of where time was spent. In addition to this granular breakdown, a user can also be provided with the duration that each service was occupying as part of the entire trace. Thus, both the granular level within a service, and the duration of each service, are some of the aspects provided in various embodiments. For example, the user can be enabled in certain embodiments to visualize which part (e.g., service) of a trace was slowest in terms of the dynamics of the services and why that part (e.g., service) was so slow. The distributed tracing can be structured to function in particular environments and enables a user to analyze performance throughout their microservices architecture all in one view, with that environment. Transactions and spans can form the trace. Traces can group together events that have a common root.
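For illustration, the two views described above (the duration of each service within a trace, and the granular breakdown within one service) can be sketched in Python as follows. This is a simplified sketch under stated assumptions: the event layout, the field names `trace_id`, `kind`, `service`, `name`, and `duration_ms`, and the function names are hypothetical, and each transaction's duration is assumed to be the time spent in that service alone, excluding downstream calls.

```python
from collections import defaultdict


def group_by_trace(events):
    """Group events that share a common root, identified by trace_id."""
    traces = defaultdict(list)
    for event in events:
        traces[event["trace_id"]].append(event)
    return dict(traces)


def time_per_service(trace_events):
    """Total transaction time per service within one trace."""
    totals = defaultdict(float)
    for event in trace_events:
        if event["kind"] == "transaction":
            totals[event["service"]] += event["duration_ms"]
    return dict(totals)


def slowest_service(trace_events):
    """Service that occupied the most time in the trace."""
    totals = time_per_service(trace_events)
    return max(totals, key=totals.get)


def span_breakdown(trace_events, service):
    """Spans of one service, longest first: the granular view."""
    spans = [e for e in trace_events
             if e["kind"] == "span" and e["service"] == service]
    return sorted(spans, key=lambda e: e["duration_ms"], reverse=True)


# Hypothetical sample data: two traces, the first spanning two services.
EVENTS = [
    {"trace_id": "t1", "kind": "transaction", "service": "frontend",
     "name": "GET /cart", "duration_ms": 40.0},
    {"trace_id": "t1", "kind": "transaction", "service": "cart",
     "name": "load cart", "duration_ms": 310.0},
    {"trace_id": "t1", "kind": "span", "service": "cart",
     "name": "SELECT items", "duration_ms": 280.0},
    {"trace_id": "t1", "kind": "span", "service": "cart",
     "name": "render", "duration_ms": 20.0},
    {"trace_id": "t2", "kind": "transaction", "service": "frontend",
     "name": "GET /", "duration_ms": 15.0},
]
```

With this sample data, `slowest_service` identifies which service to examine first, and `span_breakdown` then shows where time was spent within that service.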

[0043] In debugging a production environment, three different sources of information can be invaluable. Log data, infrastructure metrics, and APM data are the holy trinity of sources when it comes to debugging production environments. Historically, developers (and other IT professionals) have these three data sources in different verticals; e.g., having one system to handle all log files, another system to handle all of the infrastructure metrics, and a third system that handles all of the APM data. (Developers and other IT professionals are collectively referred to as just "developers" for conciseness herein.) This can mean the developers historically would have to buy three different vendor licenses and have increased storage requirements. In addition to the increased license cost(s) and increased storage requirements, a developer historically would have to use a manual process, manually going to each of these verticals and determining at each one whether there is any interesting debugging information for a given point in time, which is a tedious process. Moreover, sometimes using these three sources for debugging is impossible because a developer does not have access to one or more of them, or it is just too time consuming to manually stitch together information from the three separate verticals.

[0044] In various embodiments, a user can have ready access to all three of these data sources--log data, infrastructure metrics, and APM data--which are very efficiently stored, e.g., in ELASTICSEARCH for one example.

[0045] Various embodiments provide methods and systems that make it very easy for users to jump between the three most important data sources of log data, infrastructure metrics, and APM data.

[0046] FIG. 4 is a diagram illustrating part 400 of a screenshot from a browser for an example log files UI. In various embodiments, these log files can be consumed within a visualization platform. In some embodiments, the platform is KIBANA, which provides visualization of the data in ELASTICSEARCH. Although KIBANA and ELASTICSEARCH are included for illustration purposes, the present technology is not limited to use with KIBANA and ELASTICSEARCH. A search field 402 may be provided to enable the user to specify (e.g., input or select) which logs to display, to narrow the logs down to more relevant logs.

[0047] In various embodiments, another view is provided for the infrastructure metrics and can be readily viewed by the user, e.g., in another tab of the same browser (the logs being viewable in a different tab). FIG. 5 is a diagram illustrating, at the other browser tab, an example inventory snapshot 500 of the infrastructure, e.g., containers and hosts in an example embodiment. For clarity, the snapshot 500 in FIG. 5 is just a portion of the entire browser tab displayed in this example.

[0048] Key indicators can be shown for a large number of devices; ten devices are shown in FIG. 5, however, a smaller or larger number of devices may be shown on the UI. In the example in FIG. 5, the key indicator is a selected metric, i.e., "Metric: CPU Usage" identified at 504. That metric is shown in FIG. 5 for each of the ten containers in the portion of the tab shown at 502. As can be seen, some containers are performing worse than others in regard to CPU usage. FIG. 6 shows an expanded view of portion 502 for easier reference. One of the containers, at 506 in FIGS. 5-6, has the highest CPU usage at 38.6% in this example.

[0049] In response to user selection of the container 506 in this example, the full identification can be shown as "localtesting_7.0.0-rd1_elasticsearch" with the 38.6% CPU usage. The full identification at 508 can also be shown in response to the pointer hovering over the container 506 icon. Also in response to the selection, a menu 510 can be displayed. A search field 502 may be provided to enable the user to specify (e.g., input or select) which containers to display, so that only the desired containers are displayed. Although DOCKER containers are noted in FIG. 5, other types of devices including, for example, KUBERNETES pods may be used.

[0050] FIG. 6 shows the details enlarged for the menu 510, which has items 512 (View logs), 514 (View metrics) and 516 (View container APM traces). If a user such as a developer wishes to view the container APM traces for this container 506, menu item 516 can be selected in this UI. In response to the selection on the UI, various embodiments present the container traces in a UI at which the user can view details and drill down to further details to attempt to pinpoint the problem, e.g., excess CPU usage in the example in FIGS. 5-6.

[0051] FIG. 7 is a diagram 700 illustrating a screenshot (partial) showing an example UI presenting distributed trace information, for example, shown in response to "View container APM traces" being selected from the menu in FIG. 6 for the selected container in FIGS. 5-6, according to an example embodiment. For clarity, FIG. 7 shows just part of a web browser tab. Diagram 700 shows on the UI (also referred to herein as the APM UI) the type of distributed trace information for trace 714 which could be shown for the container in FIGS. 5-6 exhibiting excess CPU usage, to greatly aid a user in debugging. It should be appreciated that the APM UI could be viewed first and then the container logs or container metrics, etc. could be selected for viewing using the menu 702 as described further below.

[0052] The trace information provides insights to the user in a waterfall breakdown showing execution times for various associated executed services. Further details regarding distributed traces and associated data is found in U.S. patent application Ser. No. 16/381,997, filed Apr. 11, 2019, entitled "Distributed Tracing for Application Performance Monitoring" and incorporated by reference in its entirety herein.

[0053] For example, the user can look on the UI 700 to see how the machines were performing at a particular time to determine the cause of a slowdown/bottleneck. In various embodiments, the UI and information presented enable the user to select a trace that appears slow based on execution time to help debug the code, e.g., determine if there are problems with the database queries, the code itself, or outside factors. A trace might be slow due to issues with the container it was served from, not the code itself. Such an outside factor could be that the machine on which the transaction is being executed is overwhelmed with traffic, causing the slowdown. If the cause is not apparent from the information in the APM traces and other information on the UI 700, various embodiments enable the user to select various actions.

[0054] In various embodiments, an APM UI provides integration between the APM, Logging and Infrastructure functionality. When looking at a trace in the APM UI, a user can now quickly jump to the host or container metrics and logs by clicking an Actions menu (e.g., Actions menu 702 in FIG. 7).

[0055] For example, various embodiments enable the user to jump to viewing the container metrics for the time at issue, by selecting actions at 706 for the trace. The actions may be predefined. In response to the selection, the Actions menu 702 can be shown, on which an action "Show container metrics" at 704 can be chosen to view metrics for the container at the relevant point in time. The example selectable actions that may be provided on menu 702 include show container logs at 708, show host logs at 712, show container metrics at 704, and show host metrics at 710, according to this example embodiment.

[0056] For one example, the "Show container metrics" selection at 704 can show container metrics (see FIG. 8 described further below) that this particular trace (and particular span selected within the trace) was recorded on and enable the user to jump to the particular time of interest.

[0057] In various embodiments, an APM UI provides integration between the APM, Logging and Infrastructure functionality. When looking at a trace in the APM UI, a user can now quickly jump to the host or container metrics and logs by clicking an Actions menu.

[0058] FIG. 8 is a diagram illustrating a screenshot (partial) showing an example UI 800 including graphs for various container metrics for a particular container, according to an example embodiment. In the example in FIG. 8, the container metrics are shown for a window of time and include graphs for CPU usage, memory usage, network traffic, and disk I/O over time within the relevant time window. A user might then determine the cause of the problem based on viewing the metrics shown. However, the user may also need to view the container logs (and/or host logs) that were generated during this time in order to pinpoint the problem.

[0059] Referring back to FIG. 7, in various embodiments, at the APM trace UI 700, show container logs and show host logs can be selected at 708 and 712 respectively, in this example. In response, a log viewer can show the corresponding log. In various embodiments, the log viewer is as shown in the example in FIG. 4, but the log viewer may also be filtered down in some embodiments by the relevant time and the selected container ID for which the particular APM trace was served. In response to the request to filter, the relevant log messages are shown to the user for the selected container ID and the time relevant for the selection, according to some embodiments.
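The filtering described above can be sketched in a few lines. This is a minimal illustration only, not the platform's implementation: events are assumed to be flat dictionaries, the "@timestamp" key and the five-minute default window are assumptions, and `filter_logs` is a hypothetical helper name.

```python
from datetime import datetime, timedelta


def filter_logs(events, container_id, center, window=timedelta(minutes=5)):
    """Return log events for one container within +/- window of center."""
    start, end = center - window, center + window
    return [
        event for event in events
        if event.get("container.id") == container_id
        and start <= event["@timestamp"] <= end
    ]


# Hypothetical sample data: the time at which the selected trace was served.
trace_time = datetime(2019, 6, 28, 12, 0)
events = [
    {"@timestamp": datetime(2019, 6, 28, 11, 58), "container.id": "abc", "message": "slow query"},
    {"@timestamp": datetime(2019, 6, 28, 12, 30), "container.id": "abc", "message": "outside window"},
    {"@timestamp": datetime(2019, 6, 28, 12, 1), "container.id": "xyz", "message": "other container"},
]
matching = filter_logs(events, "abc", trace_time)
```

Only the first sample event satisfies both the container ID filter and the time filter.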

[0060] Certain fields in the log UI, e.g., UI 400, may be linkable to aid in debugging by enabling a user to quickly jump between different UIs/views. For example, the trace.id field may be linked to the distributed tracing UI view (e.g., UI 700 in FIG. 7). Similarly, the container.id field may be linked to the relevant container metrics in the infrastructure UI (e.g., UI 800 in FIG. 8). In addition, the host.hostname field may be linked to the relevant host metrics in an infrastructure UI (alternatively, the host metrics are selectable via 710 in FIG. 7). These are merely nonlimiting examples; other fields may also be linkable and thus selectable by a user, and in response to the selection, an automatic jump is made to an associated UI/view. Having a trace ID attached to log messages, for example, can enable a user to select (e.g., click on or otherwise actuate) the trace ID in the log messages (e.g., log UI 400) and in response, the UI/view automatically jumps straight to the corresponding actual trace. The method in some embodiments works similarly for container IDs or the host name to jump to a corresponding infrastructure view.
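One way to picture linkable fields is as a lookup from field name to a target view. The sketch below is illustrative only: the URL paths, the `id` query parameter, and the `links_for` helper are hypothetical and do not correspond to any actual KIBANA route.

```python
from urllib.parse import urlencode

# Hypothetical view paths; only the field names come from the description above.
LINK_TARGETS = {
    "trace.id": "/apm/traces",
    "container.id": "/infrastructure/containers",
    "host.hostname": "/infrastructure/hosts",
}


def links_for(log_event):
    """Build one link per linkable field that is present in a log event."""
    links = {}
    for field, path in LINK_TARGETS.items():
        value = log_event.get(field)
        if value is not None:
            links[field] = f"{path}?{urlencode({'id': value})}"
    return links
```

A log message that carries a trace ID then yields a link into the tracing view, while fields absent from the message produce no link.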

[0061] Methods and systems in various embodiments thus can show APM data, relevant infrastructure metrics, and relevant log messages, all in response to just a few selections by a user on a UI (or multiple UIs) presented via a web browser. Having logs, metrics, and APM traces stored and indexed, according to various embodiments, provides several advantages. UIs are also configured such that methods and systems according to various embodiments enable a user to view corresponding information for data sources like infrastructure metrics, logs and traces, enabling the user to debug the root cause much faster. This provides substantial advantages over the user having to log into three different systems (one for APM traces, another for infrastructure metrics, and another for log messages) and having to do the correlations manually. In addition to various embodiments being configured to provide automatic correlating in response to user inputs, various embodiments eliminate the increased license cost(s) and increased storage requirements that are historically required for the manual process of going to each of these verticals for debugging. Having all of the logs, metrics, APM, and distributed tracing together enables a user to use all of the analysis and visualization tools across all of their data.

[0062] In various embodiments, a common schema provides a common data model that aids in automatically correlating data despite the presence of disparate data types (e.g., logs, metrics, APM, flows, contextual data); heterogeneous environments with diverse vendor standards; and similar but different data sources.

[0063] The common schema can facilitate, among other things, the automatic correlations of data from sources such as logs, metrics, and various analytics using the common data model. For this common schema, data may be structured as a key and a value. For example, a particular container ID includes a particular key and value and if the system is configured to align on all the keys and values across all the elements a user wants to investigate, it becomes easier to link around to the different elements. This enables a user to take advantage of the common schema across certain data sources and create their own custom actions, in some embodiments.
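As a minimal sketch of why aligned keys make correlation easy, the following groups events from several sources by the value of one shared field. The sample events, the `cpu_pct` key, and the `correlate` helper are hypothetical; events are assumed to be flat dictionaries.

```python
def correlate(sources, key):
    """Group events from every source by the value of one shared field."""
    grouped = {}
    for source_name, events in sources.items():
        for event in events:
            if key in event:
                grouped.setdefault(event[key], {}).setdefault(source_name, []).append(event)
    return grouped


# Hypothetical events from three sources that all name the container the same way.
sources = {
    "logs": [{"container.id": "c1", "message": "timeout"}],
    "metrics": [{"container.id": "c1", "cpu_pct": 38.6}, {"container.id": "c2", "cpu_pct": 2.1}],
    "apm": [{"container.id": "c1", "trace.id": "t1"}],
}
by_container = correlate(sources, "container.id")
```

Because every source uses the same key, no per-source translation is needed before grouping; `by_container["c1"]` holds the log, metric, and APM events for that container.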

[0064] For example, under the common schema, metrics, logs, and APM data (and, in some embodiments, any data that has been shipped into ELASTICSEARCH through official components) all refer to a container in the same way and all refer to an IP address in the same way. In embodiments with the common schema implemented, if a first user wants to look at data sources that were generated by a second user on a certain IP address, the first user will be viewing the same fields across all the data, e.g., for fields that are part of the common schema.

[0065] FIG. 9 is a simplified flow diagram of a method 900, according to an example embodiment. Operation 902 includes automatically correlating data sources associated with execution of an application, the data sources associated with the execution including log data from services invoked, infrastructure data, and application performance monitoring (APM) trace information that indicates in real time at least execution time information for the services, as described further herein. In some embodiments, this operation includes automatically correlating three data sources associated with execution of an application, the three data sources including log data from one or more services invoked for the execution, infrastructure associated with the execution, along with application performance monitoring (APM) trace information indicating in real time at least execution time information for the one or more services, as described further herein.

[0066] Operation 904 includes further providing a separate user interface (UI) for each of the correlated data sources for visualization and data analysis, as described further herein. In some embodiments, this operation includes providing a separate user interface (UI) for at least the logs, infrastructure, and APM trace information data sources for data visualization and analysis; and for the separate UIs for at least the infrastructure and APM trace information correlated data sources, providing a menu for selecting one of the other correlated data sources associated with the other UIs, as described further herein.

[0067] Operation 906 includes, in response to a selection by a user at one of the separate UIs, causing display of one of the other correlated data sources at another of the separate UIs, as described further herein. In some embodiments, this operation includes, in response to a menu selection by a user, causing display of the other correlated data source and corresponding UI to the user, as described further herein.

[0068] Further regarding the common schema and, for example, operation 902 in the example in FIG. 9, the common schema can substantially enable the automatic correlating of the data sources associated with execution of an application (including, e.g., log data from services invoked, infrastructure data, and application performance monitoring (APM) trace information that indicates in real time at least execution time information for the services). Utilizing the common schema can enable a user to have access to automatic correlations across data sources of particular interest to the user (e.g., those of interest for debugging a particular problem), much more easily than if the user had to do the correlations manually. Various embodiments enable automatically correlating data from diverse data sources. The data sources may be, for instance, from diverse vendors and technologies, e.g., APACHE web logs, CISCO NetFlow, TANIUM endpoint events, to name just a few examples. The data sources may be similar but different sources of endpoint data, e.g., Auditbeat, Cylance, and Tanium, to name just a few examples.

[0069] Various embodiments enable a user to create custom actions for the common schema. The custom actions that a user can create can be in addition to or in place of the predefined actions, e.g., predefined actions at actions menu 702 in FIG. 7, and can, for just one example, provide a more meaningful correlation for particular debugging. Without the common schema according to various embodiments, actions such as searching for a specific user within data originating from multiple sources would be time consuming and inefficient. Searching for this one field would likely require accounting for multiple field names, such as user, username, nginx.access.user_name, and login. Drilling into and pivoting around that data would present an even greater challenge. Even further, in developing analytics content, such as a visualization, alert, or machine learning job, each new data source would add either complexity or duplication if the common schema was not implemented.
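The difference can be sketched by comparing a search that must account for every field-name variant with a search against a single shared field. The variant names are the ones listed above; the shared field name `user.name`, the helper names, and the sample events are assumptions made for illustration.

```python
# Field-name variants that may hold the same information in unnormalized data.
USER_FIELD_VARIANTS = ("user", "username", "nginx.access.user_name", "login")

# Assumed name of the single shared field once data follows a common schema.
COMMON_USER_FIELD = "user.name"


def find_user_without_schema(events, name):
    """Every known variant must be checked, and new sources add more variants."""
    return [e for e in events if any(e.get(f) == name for f in USER_FIELD_VARIANTS)]


def find_user_with_schema(events, name):
    """With a shared field name, one comparison covers every source."""
    return [e for e in events if e.get(COMMON_USER_FIELD) == name]


# Hypothetical events: the same two logins before and after normalization.
raw_events = [{"username": "alice"}, {"nginx.access.user_name": "alice"}, {"login": "bob"}]
normalized_events = [{"user.name": "alice"}, {"user.name": "alice"}, {"user.name": "bob"}]
```

Both searches find the same two events for "alice", but only the first has to be revisited whenever a new data source introduces another field name.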

[0070] In various embodiments, the common schema provides both the predictability of a purpose-built taxonomy and the versatility of an inclusive specification that adapts for custom use cases. The common schema's taxonomy can distribute data elements across fields that are organized into three levels, in various embodiments. One level provided can be, for example, core fields that can provide a fully defined set of field names that exists under a defined set of top-level objects. These core fields are common across most use cases, according to various embodiments, and are also referred to as common fields or compatible fields. In various embodiments, another level provided is extended fields, which can be a partially defined set of field names that exists under the same set of top-level objects. The extended fields may apply to narrower use cases or be more open to interpretation depending on the use case. In various embodiments, an example third level may be custom fields, which can be defined and named by the user for their custom use cases. Custom fields can be added where there is no corresponding core or extended field in the common schema; however, the custom fields may not conflict with the core and extended fields.
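The three levels and the no-conflict rule for custom fields can be sketched as follows. The assignment of particular names to the core and extended sets is purely illustrative (the figures, not this sketch, define the actual sets), and both helper functions are hypothetical.

```python
# Illustrative membership only; not the actual core or extended field lists.
CORE_FIELDS = frozenset({"host.id", "host.hostname", "container.id", "source.ip"})
EXTENDED_FIELDS = frozenset({"error.message", "user.name"})


def field_level(name, custom_fields=frozenset()):
    """Return the level of a field name, or None if the name is unknown."""
    if name in CORE_FIELDS:
        return "core"
    if name in EXTENDED_FIELDS:
        return "extended"
    if name in custom_fields:
        return "custom"
    return None


def register_custom_field(custom_fields, name):
    """Return a new set of custom fields, rejecting names that would conflict."""
    if name in CORE_FIELDS or name in EXTENDED_FIELDS:
        raise ValueError(f"{name!r} conflicts with a predefined field")
    return frozenset(custom_fields) | {name}
```

For example, registering a hypothetical `billing.plan` field succeeds, while attempting to register `host.id` as a custom field raises an error.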

[0071] In just one example, FIG. 10 shows a table of example core (common) field sets 1002 and their underlying fields, and extended field sets 1004 and their underlying fields. A field set as used herein is a grouping of fields, e.g., host is a field set whereas host.id is a field in the host field set. In addition to host, the field sets 1002 for the core fields in the example in FIG. 10 include event, device, agent, network, source, destination, service, and resource, with the corresponding example core fields listed under each. It should be appreciated that this is just one example of core (common) fields for a common schema; other sets of core fields (see, e.g., FIG. 11) can be used. FIG. 10 also shows some mapping between the example extended fields 1004 and the example core fields 1002. The field sets for the extended fields in the example in FIG. 10 include error, network protocols, threat, user, geoip, and various services. It should be appreciated that the fields and field sets in the example in FIG. 10 are merely examples; other suitable fields and field sets could be used in other embodiments to practice the common schema.

[0072] FIG. 11 illustrates another example 1100 of field sets and their description for various core and extended fields, according to an example embodiment. In the example 1100, there are a number of fields in each field set. Other fields may be used in various embodiments of the common schema, e.g., see example in FIG. 10.

[0073] FIG. 12 illustrates an example of a combination of fields that may be used for logging (logging fields 1200) along with some corresponding descriptions, levels, types, and examples, according to an example embodiment.

[0074] FIG. 13 illustrates an example of a combination of fields that may be used for APM (APM fields 1300), along with descriptions, levels, types, and examples.

[0075] FIG. 14 illustrates example fields 1400 for infrastructure, more specifically, container fields 1400 in this example. The container fields in this example can be used for meta information about the specific container that information is coming from. These core fields can help to correlate data based on containers. Other suitable core fields may be provided for other infrastructure.

[0076] In one example, the common schema defines a common set of document fields (and their respective field names) to be used in event messages stored in a distributed, multitenant-capable full-text analytics and search engine environment such as ELASTICSEARCH as part of any logging or metrics use case of the ELASTIC STACK, including IT operations analytics and security analytics. It should be appreciated that core (common) fields are provided in various embodiments for other aspects besides the logging, APM, and infrastructure examples provided herein.

[0077] FIG. 15, FIG. 16, and FIG. 17 illustrate a top third 1500, middle third 1600, and bottom third 1700, respectively, of an example dashboard that applies the common schema for visualizing multiple sources of network data. The sources are identified at 1502 in the example dashboard shown in three parts in FIG. 15, FIG. 16, and FIG. 17.

[0078] Non-limiting example use cases include monitoring an entity's web stack for threats with several sources of network data: a Palo Alto Next-Gen Firewall on the perimeter, and the Suricata IDS generating events and alerts. There is a challenge, for example, in how to extract the source.ip and network.direction fields from each message in a way that enables centralized visualization and vendor-agnostic drill-down and pivoting. The common schema according to various embodiments, can help readily meet that challenge, allowing performance of centralized monitoring more easily than was previously possible.
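A minimal sketch of that extraction step follows. The raw field names, the raw direction values, and the source labels `firewall` and `ids` are hypothetical stand-ins and are not the actual log formats of any vendor; only source.ip and network.direction come from the description above.

```python
# Hypothetical per-source mappings: raw field name -> common schema field name.
FIELD_MAPS = {
    "firewall": {"src": "source.ip", "dir": "network.direction"},
    "ids": {"src_addr": "source.ip", "flow_dir": "network.direction"},
}

# Hypothetical raw direction values translated into one shared vocabulary.
DIRECTION_VALUES = {
    "in": "inbound",
    "out": "outbound",
    "to_server": "inbound",
    "to_client": "outbound",
}


def to_common(source, raw):
    """Rename source-specific fields and normalize direction values."""
    mapping = FIELD_MAPS[source]
    event = {}
    for raw_name, value in raw.items():
        common_name = mapping.get(raw_name, raw_name)
        if common_name == "network.direction":
            value = DIRECTION_VALUES.get(value, value)
        event[common_name] = value
    return event
```

Once both sources emit the same field names and values, a single visualization or drill-down keyed on source.ip and network.direction can serve either of them.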

[0079] In various embodiments, the common schema provides a set of rules for naming fields for consistency in the common schema. In example embodiments, the rules could include, but are not limited to, having field names in lower case, avoiding abbreviations, avoiding special characters except underscore, nesting fields within a field set using dots, and using a prefix for all fields except the base fields (for example, one rule could be that all host fields are prefixed with host, where in this example, host is a field set). Other rules can also apply in some embodiments.
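Several of these naming rules are mechanical enough to check automatically. The sketch below covers lower case, allowed characters, dot nesting, and the field set prefix; it does not attempt to detect abbreviations. The helper name, the message strings, and the decision to allow digits are assumptions.

```python
import re

# Lower-case letters, digits, and underscores, nested with single dots.
FIELD_NAME = re.compile(r"[a-z0-9_]+(\.[a-z0-9_]+)*")


def check_field_name(name, field_sets, base_fields=frozenset()):
    """Return a list of rule violations for a proposed field name."""
    problems = []
    if name != name.lower():
        problems.append("not lower case")
    if not FIELD_NAME.fullmatch(name.lower()):
        problems.append("disallowed characters or malformed nesting")
    prefix, dot, _ = name.partition(".")
    if name not in base_fields and (not dot or prefix not in field_sets):
        problems.append("missing field set prefix")
    return problems
```

With `{"host"}` as the known field sets, `host.id` passes every check, while `host.host-name` is flagged for the hyphen.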

[0080] The common schema can include a list of field names and corresponding definitions. The common schema can further include several data types. Various embodiments include configuring the common schema so as to enable the addition of a new data source and also enabling reuse of existing analysis content for the new data source. The analysis content may variously include searches, visualizations, dashboards, alerts, reports, and machine learning jobs.

[0081] In various embodiments, a platform enforces the common schema. The common schema can include a superset of predefined fields and corresponding data types, the platform enforcing that the customization not conflict with a superset of predefined fields.

[0082] The common schema can provide for user customization of a new custom field based on at least one of the common fields for ones of the plurality of data sources, the customization received from the user specifying particular key value pairs associated with one or more unique fields, corresponding data types, and functionality enabled thereby. Various embodiments of the method include receiving inputs from a user for customizing a field for a particular one of the plurality of data sources. As part of enforcement, a rename processor functionality can be provided for renaming a unique field to a matching common field when a name for a customized field conflicts with a predefined object of the common schema. This and other aspects can enable users, e.g., users of a distributed search engine platform, to normalize their event data, so the users can better analyze, visualize, and correlate the data represented in their events.
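The renaming step can be pictured with a plain Python stand-in. This is a sketch of the idea only, not the platform's rename processor: the function name, the choice not to overwrite an existing common field, and the sample field names other than host.hostname are assumptions.

```python
def rename_processor(event, renames):
    """Rename unique fields to their matching common fields.

    A rename is skipped when the common field is already present, so
    existing normalized values are never overwritten.
    """
    result = dict(event)
    for unique_name, common_name in renames.items():
        if unique_name in result and common_name not in result:
            result[common_name] = result.pop(unique_name)
    return result


# Hypothetical usage: a source-specific "hostname" key mapped to host.hostname.
normalized = rename_processor(
    {"hostname": "web-1", "message": "started"},
    {"hostname": "host.hostname"},
)
```

The input event is copied rather than modified, so the original record remains available if the mapping needs to be revised.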

[0083] FIG. 18 illustrates an exemplary computer system 1800 that may be used to implement some embodiments of the present invention. The computer system 1800 in FIG. 18 may be implemented in the contexts of the likes of computing systems, networks, servers, or combinations thereof. The computer system 1800 in FIG. 18 includes one or more processor unit(s) 1810 and main memory 1820. Main memory 1820 stores, in part, instructions and data for execution by processor unit(s) 1810. Main memory 1820 stores the executable code when in operation, in this example. The computer system 1800 in FIG. 18 further includes a mass data storage 1830, portable storage device 1840, output devices 1850, user input devices 1860, a graphics display system 1870, and peripheral device(s) 1880.

[0084] The components shown in FIG. 18 are depicted as being connected via a single bus 1890. The components may be connected through one or more data transport means. Processor unit(s) 1810 and main memory 1820 are connected via a local microprocessor bus, and the mass data storage 1830, peripheral device(s) 1880, portable storage device 1840, and graphics display system 1870 are connected via one or more input/output (I/O) buses.

[0085] Mass data storage 1830, which can be implemented with a magnetic disk drive, solid state drive, or an optical disk drive, is a non-volatile storage device for storing data and instructions for use by processor unit(s) 1810. Mass data storage 1830 stores the system software for implementing embodiments of the present disclosure for purposes of loading that software into main memory 1820.

[0086] Portable storage device 1840 operates in conjunction with a portable non-volatile storage medium, such as a flash drive, floppy disk, compact disk, digital video disc, or Universal Serial Bus (USB) storage device, to input and output data and code to and from the computer system 1800 in FIG. 18. The system software for implementing embodiments of the present disclosure is stored on such a portable medium and input to the computer system 1800 via the portable storage device 1840.

[0087] User input devices 1860 can provide a portion of a user interface. User input devices 1860 may include one or more microphones, an alphanumeric keypad, such as a keyboard, for inputting alphanumeric and other information, or a pointing device, such as a mouse, a trackball, stylus, or cursor direction keys. User input devices 1860 can also include a touchscreen. Additionally, the computer system 1800 as shown in FIG. 18 includes output devices 1850. Suitable output devices 1850 include speakers, printers, network interfaces, and monitors.

[0088] Graphics display system 1870 includes a liquid crystal display (LCD) or other suitable display device. Graphics display system 1870 is configurable to receive textual and graphical information and process the information for output to the display device. Peripheral device(s) 1880 may include any type of computer support device to add additional functionality to the computer system.

[0089] Some of the components provided in the computer system 1800 in FIG. 18 can be those typically found in computer systems that may be suitable for use with embodiments of the present disclosure and are intended to represent a broad category of such computer components. Thus, the computer system 1800 in FIG. 18 can be a personal computer (PC), hand held computer system, telephone, mobile computer system, workstation, tablet, phablet, mobile phone, server, minicomputer, mainframe computer, wearable, or any other computer system. The computer may also include different bus configurations, networked platforms, multi-processor platforms, and the like. Various operating systems may be used including MAC OS, UNIX, LINUX, WINDOWS, PALM OS, QNX, ANDROID, IOS, CHROME, TIZEN, and other suitable operating systems.

[0090] Some of the above-described functions may be composed of instructions that are stored on storage media (e.g., computer-readable medium). The instructions may be retrieved and executed by the processor. Some examples of storage media are memory devices, tapes, disks, and the like. The instructions are operational when executed by the processor to direct the processor to operate in accord with the technology. Those skilled in the art are familiar with instructions, processor(s), and storage media.

[0091] In some embodiments, the computing system 1800 may be implemented as a cloud-based computing environment, such as a virtual machine operating within a computing cloud. In other embodiments, the computing system 1800 may itself include a cloud-based computing environment, where the functionalities of the computing system 1800 are executed in a distributed fashion. Thus, the computing system 1800, when configured as a computing cloud, may include pluralities of computing devices in various forms, as will be described in greater detail below.

[0092] In general, a cloud-based computing environment is a resource that typically combines the computational power of a large grouping of processors (such as within web servers) and/or that combines the storage capacity of a large grouping of computer memories or storage devices. Systems that provide cloud-based resources may be utilized exclusively by their owners or such systems may be accessible to outside users who deploy applications within the computing infrastructure to obtain the benefit of large computational or storage resources.

[0093] The cloud is formed, for example, by a network of web servers that comprise a plurality of computing devices, such as the computing system 1800, with each server (or at least a plurality thereof) providing processor and/or storage resources. These servers manage workloads provided by multiple users (e.g., cloud resource customers or other users). Typically, each user places workload demands upon the cloud that vary in real-time, sometimes dramatically. The nature and extent of these variations typically depend on the type of business associated with the user.

[0094] It is noteworthy that any hardware platform suitable for performing the processing described herein is suitable for use with the technology. The terms "computer-readable storage medium" and "computer-readable storage media" as used herein refer to any medium or media that participate in providing instructions to a CPU for execution. Such media can take many forms, including, but not limited to, non-volatile media, volatile media and transmission media. Non-volatile media include, e.g., optical, magnetic, and solid-state disks, such as a fixed disk. Volatile media include dynamic memory, such as system random-access memory (RAM). Transmission media include coaxial cables, copper wire and fiber optics, among others, including the wires that comprise one embodiment of a bus. Transmission media can also take the form of acoustic or light waves, such as those generated during radio frequency (RF) and infrared (IR) data communications. Common forms of computer-readable media include, e.g., a floppy disk, a flexible disk, a hard disk, magnetic tape, any other magnetic medium, a CD-ROM disk, digital video disk (DVD), any other optical medium, any other physical medium with patterns of marks or holes, a RAM, a programmable read-only memory (PROM), an erasable programmable read-only memory (EPROM), an electrically erasable programmable read-only memory (EEPROM), a Flash memory, any other memory chip or data exchange adapter, a carrier wave, or any other medium from which a computer can read.

[0095] Various forms of computer-readable media may be involved in carrying one or more sequences of one or more instructions to a CPU for execution. A bus carries the data to system RAM, from which a CPU retrieves and executes the instructions. The instructions received by system RAM can optionally be stored on a fixed disk either before or after execution by a CPU.

[0096] Computer program code for carrying out operations for aspects of the present technology may be written in any combination of one or more programming languages, including an object oriented programming language such as PYTHON, RUBY, JAVASCRIPT, JAVA, SMALLTALK, C++ or the like and conventional procedural programming languages, such as the "C" programming language or similar programming languages. The program code may execute entirely on the user's computer, partly on the user's computer, as a stand-alone software package, partly on the user's computer and partly on a remote computer or entirely on the remote computer or server. In the latter scenario, the remote computer may be connected to the user's computer through any type of network, including a local area network (LAN) or a wide area network (WAN), or the connection may be made to an external computer (e.g., through the Internet using an Internet Service Provider).

[0097] The corresponding structures, materials, acts, and equivalents of all means or step plus function elements in the claims below are intended to include any structure, material, or act for performing the function in combination with other claimed elements as specifically claimed. The description of the present technology has been presented for purposes of illustration and description, but is not intended to be exhaustive or limited to the invention in the form disclosed. Many modifications and variations will be apparent to those of ordinary skill in the art without departing from the scope and spirit of the invention. Exemplary embodiments were chosen and described in order to best explain the principles of the present technology and its practical application, and to enable others of ordinary skill in the art to understand the invention for various embodiments with various modifications as are suited to the particular use contemplated.

[0098] Aspects of the present technology are described above with reference to flowchart illustrations and/or block diagrams of methods, apparatus (systems) and computer program products according to embodiments of the invention. It will be understood that each block of the flowchart illustrations and/or block diagrams, and combinations of blocks in the flowchart illustrations and/or block diagrams, can be implemented by computer program instructions. These computer program instructions may be provided to a processor of a general purpose computer, special purpose computer, or other programmable data processing apparatus to produce a machine, such that the instructions, which execute via the processor of the computer or other programmable data processing apparatus, create means for implementing the functions/acts specified in the flowchart and/or block diagram block or blocks.

[0099] These computer program instructions may also be stored in a computer readable medium that can direct a computer, other programmable data processing apparatus, or other devices to function in a particular manner, such that the instructions stored in the computer readable medium produce an article of manufacture including instructions which implement the function/act specified in the flowchart and/or block diagram block or blocks.

[0100] The computer program instructions may also be loaded onto a computer, other programmable data processing apparatus, or other devices to cause a series of operational steps to be performed on the computer, other programmable apparatus or other devices to produce a computer implemented process such that the instructions which execute on the computer or other programmable apparatus provide processes for implementing the functions/acts specified in the flowchart and/or block diagram block or blocks.

[0101] The flowchart and block diagrams in the Figures illustrate the architecture, functionality, and operation of possible implementations of systems, methods and computer program products according to various embodiments of the present technology. In this regard, each block in the flowchart or block diagrams may represent a module, segment, or portion of code, which comprises one or more executable instructions for implementing the specified logical function(s). It should also be noted that, in some alternative implementations, the functions noted in the block may occur out of the order noted in the figures. For example, two blocks shown in succession may, in fact, be executed substantially concurrently, or the blocks may sometimes be executed in the reverse order, depending upon the functionality involved. It will also be noted that each block of the block diagrams and/or flowchart illustration, and combinations of blocks in the block diagrams and/or flowchart illustration, can be implemented by special purpose hardware-based systems that perform the specified functions or acts, or combinations of special purpose hardware and computer instructions.

[0102] The description of the present technology has been presented for purposes of illustration and description, but is not intended to be exhaustive or limited to the invention in the form disclosed. Many modifications and variations will be apparent to those of ordinary skill in the art without departing from the scope and spirit of the invention. Exemplary embodiments were chosen and described in order to best explain the principles of the present technology and its practical application, and to enable others of ordinary skill in the art to understand the invention for various embodiments with various modifications as are suited to the particular use contemplated.

* * * * *

