Methods And Apparatus For Processing Audio Signals

ABOLFATHI; Amir A.

U.S. patent application number 16/908415, filed on 2020-06-22, was published by the patent office on 2020-10-08 for methods and apparatus for processing audio signals. This patent application is currently assigned to SoundMed, LLC. The applicant listed for this patent is SoundMed, LLC. Invention is credited to Amir A. ABOLFATHI.

| Application Number | 20200322741 16/908415 |


| Family ID | 1000004906205 |

| Publication Date | 2020-10-08 |


| United States Patent Application | 20200322741 |

| Kind Code | A1 |

| ABOLFATHI; Amir A. | October 8, 2020 |

METHODS AND APPARATUS FOR PROCESSING AUDIO SIGNALS

Abstract

Various methods and apparatus for processing audio signals are disclosed herein. The assembly may be attached, adhered, or otherwise embedded into or upon a removable oral appliance to form a hearing aid assembly. Such an oral appliance may be a custom-made device which can enhance and/or optimize received audio signals for vibrational conduction to the user. Received audio signals may be processed to cancel acoustic echo such that undesired sounds received by one or more intra-buccal and/or extra-buccal microphones are eliminated or mitigated. Additionally, a multiband actuation system may be used where two or more transducers each deliver sounds within certain frequencies. The assembly may also utilize the sensation of directionality via the conducted vibrations to emulate directional perception of audio signals received by the user. Another feature may include the ability to vibrationally conduct ancillary audio signals to the user along with primary audio signals.

| Inventors: | ABOLFATHI; Amir A. (Petaluma, CA) |
|---|---|
| Applicant: | SoundMed, LLC |
| Assignee: | SoundMed, LLC (Mountain View, CA) |
| Family ID: | 1000004906205 |
| Appl. No.: | 16/908415 |
| Filed: | June 22, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Continued by Application |
|---|---|---|---|
| 15676807 | Aug 14, 2017 | 10735874 | 16908415 |
| 14936548 | Nov 9, 2015 | 9781526 | 15676807 |
| 13526923 | Jun 19, 2012 | 9185485 | 14936548 |
| 12862933 | Aug 25, 2010 | 8233654 | 13526923 |
| 11672271 | Feb 7, 2007 | 7801319 | 12862933 |
| 60809244 | May 30, 2006 | | |
| 60820223 | Jul 24, 2006 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | B33Y 80/00 20141201; H04R 1/46 20130101; A61C 8/0098 20130101; H04R 25/554 20130101; H04R 2225/31 20130101; A61C 8/0093 20130101; A61C 5/00 20130101; H04R 25/602 20130101; H04R 2460/13 20130101; H04R 25/604 20130101; B33Y 70/00 20141201; H04R 25/606 20130101; H04R 2420/07 20130101; H04R 2460/01 20130101; H04R 3/04 20130101; H04R 2225/67 20130101 |

| International Class: | H04R 25/00 20060101 H04R025/00; B33Y 80/00 20060101 B33Y080/00; A61C 8/00 20060101 A61C008/00; H04R 1/46 20060101 H04R001/46; H04R 3/04 20060101 H04R003/04; A61C 5/00 20060101 A61C005/00 |

Claims

1. Any apparatus disclosed herein.

2. Any method disclosed herein.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of U.S. patent application Ser. No. 15/676,807 filed Aug. 14, 2017, which is a continuation of U.S. patent application Ser. No. 14/936,548 filed Nov. 9, 2015 (now U.S. Pat. No. 9,781,526 issued Oct. 3, 2017), which is a continuation of U.S. patent application Ser. No. 13/526,923 filed Jun. 19, 2012 (now U.S. Pat. No. 9,185,485 issued Nov. 10, 2015), which is a continuation of U.S. patent application Ser. No. 12/862,933 filed Aug. 25, 2010 (now U.S. Pat. No. 8,233,654 issued Jul. 31, 2012), which is a continuation of U.S. patent application Ser. No. 11/672,271 filed Feb. 7, 2007 (now U.S. Pat. No. 7,801,319 issued Sep. 21, 2010), which claims the benefit of U.S. Provisional Patent Application Nos. 60/809,244 filed May 30, 2006 and 60/820,223 filed Jul. 24, 2006, each of which is incorporated herein by reference in its entirety for all purposes.

FIELD OF THE INVENTION

[0002] The present invention relates to methods and apparatus for processing and/or enhancing audio signals for transmitting these signals as vibrations through teeth or bone structures in and/or around a mouth. More particularly, the present invention relates to methods and apparatus for receiving audio signals and processing them to enhance their quality and/or to emulate various auditory features for transmitting these signals via sound conduction through teeth or bone structures in and/or around the mouth such that the transmitted signals correlate to auditory signals received by a user.

BACKGROUND OF THE INVENTION

[0003] Hearing loss affects over 31 million people in the United States (about 13% of the population). As a chronic condition, the incidence of hearing impairment rivals that of heart disease and, like heart disease, the incidence of hearing impairment increases sharply with age.

[0004] While the vast majority of those with hearing loss can be helped by a well-fitted, high quality hearing device, only 22% of the total hearing impaired population own hearing devices. Current products and distribution methods are not able to satisfy or reach over 20 million persons with hearing impairment in the U.S. alone.

[0005] Hearing loss adversely affects a person's quality of life and psychological well-being. Individuals with hearing impairment often withdraw from social interactions to avoid frustrations resulting from inability to understand conversations. Recent studies have shown that hearing impairment causes increased stress levels, reduced self-confidence, reduced sociability and reduced effectiveness in the workplace.

[0006] The human ear generally comprises three regions: the outer ear, the middle ear, and the inner ear. The outer ear generally comprises the external auricle and the ear canal, which is a tubular pathway through which sound reaches the middle ear. The outer ear is separated from the middle ear by the tympanic membrane (eardrum). The middle ear generally comprises three small bones, known as the ossicles, which form a mechanical conductor from the tympanic membrane to the inner ear. Finally, the inner ear includes the cochlea, which is a fluid-filled structure that contains a large number of delicate sensory hair cells that are connected to the auditory nerve.

[0007] Hearing loss can also be classified in terms of being conductive, sensorineural, or a combination of both. Conductive hearing impairment typically results from diseases or disorders that limit the transmission of sound through the middle ear. Most conductive impairments can be treated medically or surgically. Purely conductive hearing loss represents a relatively small portion of the total hearing impaired population (estimated at less than 5% of the total hearing impaired population).

[0008] Sensorineural hearing losses occur mostly in the inner ear and account for the vast majority of hearing impairment (estimated at 90-95% of the total hearing impaired population). Sensorineural hearing impairment (sometimes called "nerve loss") is largely caused by damage to the sensory hair cells inside the cochlea. Sensorineural hearing impairment occurs naturally as a result of aging or prolonged exposure to loud music and noise. This type of hearing loss cannot be reversed nor can it be medically or surgically treated; however, the use of properly fitted hearing devices can improve the individual's quality of life.

[0009] Conventional hearing devices are the most common devices used to treat mild to severe sensorineural hearing impairment. These are acoustic devices that amplify sound to the tympanic membrane. These devices are individually customizable to the patient's physical and acoustical characteristics over four to six separate visits to an audiologist or hearing instrument specialist. Such devices generally comprise a microphone, amplifier, battery, and speaker. Recently, hearing device manufacturers have increased the sophistication of sound processing, often using digital technology, to provide features such as programmability and multi-band compression. Although these devices have been miniaturized and are less obtrusive, they are still visible and have major acoustic limitations.

[0010] Industry research has shown that the primary obstacles to purchasing a hearing device generally include: a) the stigma associated with wearing a hearing device; b) dissenting attitudes on the part of the medical profession, particularly ENT physicians; c) product value issues related to perceived performance problems; d) general lack of information and education at the consumer and physician level; and e) negative word-of-mouth from dissatisfied users.

[0011] Other devices such as cochlear implants have been developed for people who have severe to profound hearing loss and are essentially deaf (approximately 2% of the total hearing impaired population). The electrode of a cochlear implant is inserted into the inner ear in an invasive and non-reversible surgery. The electrode electrically stimulates the auditory nerve through an electrode array that provides audible cues to the user, which are not usually interpreted by the brain as normal sound. Users generally require intensive and extended counseling and training following surgery to achieve the expected benefit.

[0012] Other devices such as electronic middle ear implants generally are surgically placed within the middle ear of the hearing impaired. They are surgically implanted devices with an externally worn component.

[0013] The manufacture, fitting and dispensing of hearing devices remain an arcane and inefficient process. Most hearing devices are custom manufactured, fabricated by the manufacturer to fit the ear of each prospective purchaser. An impression of the ear canal is taken by the dispenser (either an audiologist or licensed hearing instrument specialist) and mailed to the manufacturer for interpretation and fabrication of the custom molded rigid plastic casing. Hand-wired electronics and transducers (microphone and speaker) are then placed inside the casing, and the final product is shipped back to the dispensing professional after some period of time, typically one to two weeks.

[0014] The time cycle for dispensing a hearing device, from the first diagnostic session to the final fine-tuning session, typically spans several weeks, such as six to eight weeks, and involves multiple visits with the dispenser.

[0015] Moreover, typical hearing aid devices fail to eliminate background noises or fail to distinguish between background noise and desired sounds. Accordingly, there exists a need for methods and apparatus for receiving audio signals and processing them to enhance their quality and/or to emulate various auditory features for transmitting these signals via sound conduction through teeth or bone structures in and/or around the mouth for facilitating the treatment of hearing loss in patients.

SUMMARY OF THE INVENTION

[0016] An electronic and transducer device may be attached, adhered, or otherwise embedded into or upon a removable dental or oral appliance to form a hearing aid assembly. Such a removable oral appliance may be a custom-made device fabricated from a thermal forming process utilizing a replicate model of a dental structure obtained by conventional dental impression methods. The electronic and transducer assembly may receive incoming sounds either directly or through a receiver to process and amplify the signals and transmit the processed sounds via a vibrating transducer element coupled to a tooth or other bone structure, such as the maxillary, mandibular, or palatine bone structure.

[0017] The assembly for transmitting vibrations via at least one tooth may generally comprise a housing having a shape which is conformable to at least a portion of the at least one tooth, and an actuatable transducer disposed within or upon the housing and in vibratory communication with a surface of the at least one tooth. Moreover, the transducer itself may be a separate assembly from the electronics and may be positioned along another surface of the tooth, such as the occlusal surface, or even attached to an implanted post or screw embedded into the underlying bone.

[0018] In receiving and processing the various audio signals typically received by a user, various configurations of the oral appliance and processing of the received audio signals may be utilized to enhance and/or optimize the conducted vibrations which are transmitted to the user. For instance, in configurations where one or more microphones are positioned within the user's mouth, filtering features such as Acoustic Echo Cancellation (AEC) may be optionally utilized to eliminate or mitigate undesired sounds received by the microphones. In such a configuration, at least two intra-buccal microphones may be utilized to separate out desired sounds (e.g., sounds received from outside the body such as speech, music, etc.) from undesirable sounds (e.g., sounds resulting from chewing, swallowing, breathing, self-speech, teeth grinding, etc.).

[0019] If these undesirable sounds are not filtered or cancelled, they may be amplified along with the desired audio signals making for potentially unintelligible audio quality for the user. Additionally, desired audio sounds may be generally received at relatively lower sound pressure levels because such signals are more likely to be generated at a distance from the user and may have to pass through the cheek of the user while the undesired sounds are more likely to be generated locally within the oral cavity of the user. Samples of the undesired sounds may be compared against desired sounds to eliminate or mitigate the undesired sounds prior to actuating the one or more transducers to vibrate only the resulting desired sounds to the user.
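The comparison of undesired-sound samples against the desired sounds described above can be illustrated with an adaptive filter. The following is a minimal sketch only, assuming a normalized least-mean-squares (NLMS) filter; the text does not name a specific algorithm, and the function names, tap count, step size, and synthetic test signals are all hypothetical choices made for illustration.

```python
import math
import random


def nlms_cancel(primary, reference, taps=8, mu=0.2, eps=1e-8):
    """Remove from `primary` whatever can be predicted from `reference`.

    primary   -- samples from a microphone carrying desired + undesired sound
    reference -- samples from a microphone dominated by the undesired sound
    Returns the residual, which serves as the estimate of the desired sound.
    NLMS is an assumed technique here, not one stated in the source text.
    """
    weights = [0.0] * taps
    history = [0.0] * taps  # most recent reference samples, newest first
    cleaned = []
    for d, x in zip(primary, reference):
        history = [x] + history[:-1]
        estimate = sum(w * h for w, h in zip(weights, history))
        error = d - estimate  # what remains after subtracting the estimate
        power = sum(h * h for h in history) + eps
        step = mu * error / power  # step size normalized by reference power
        weights = [w + step * h for w, h in zip(weights, history)]
        cleaned.append(error)
    return cleaned


def mean_power(samples):
    """Average squared amplitude of a list of samples."""
    return sum(s * s for s in samples) / len(samples)


def make_demo_signals(count=4000, sample_rate_hz=16000, seed=0):
    """Synthetic data: a quiet 440 Hz tone buried in louder local noise."""
    rng = random.Random(seed)
    noise = [rng.uniform(-1.0, 1.0) for _ in range(count)]
    desired = [0.1 * math.sin(2 * math.pi * 440 * n / sample_rate_hz)
               for n in range(count)]
    primary = [desired[n] + 0.8 * noise[n] + (0.3 * noise[n - 1] if n else 0.0)
               for n in range(count)]
    return desired, noise, primary


if __name__ == "__main__":
    desired, noise, primary = make_demo_signals()
    cleaned = nlms_cancel(primary, noise)
    tail = slice(-1000, None)
    before = mean_power([p - d for p, d in zip(primary[tail], desired[tail])])
    after = mean_power([c - d for c, d in zip(cleaned[tail], desired[tail])])
    print("undesired power before:", before, "after:", after)
```

In this sketch the quiet tone stands in for distant speech and the louder noise for locally generated sounds such as chewing; the residual would then drive the one or more transducers.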

[0020] Independent from or in combination with acoustic echo cancellation, another processing feature for the oral appliance may include use of a multiband actuation system to facilitate the efficiency with which audio signals may be conducted to the user. Rather than utilizing a single transducer to cover the entire range of the frequency spectrum (e.g., 200 Hz to 10,000 Hz), one variation may utilize two or more transducers where each transducer is utilized to deliver sounds within certain frequencies. For instance, a first transducer may be utilized to deliver sounds in the 200 Hz to 2000 Hz frequency range and a second transducer may be used to deliver sounds in the 2000 Hz to 10,000 Hz frequency range. Alternatively, these frequency ranges may be discrete or overlapping. As individual transducers may be configured to handle only a subset of the frequency spectrum, the transducers may be more efficient in their design.
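As a rough illustration of routing a signal to two transducers by frequency, the sketch below splits samples at a 2000 Hz crossover, matching the example ranges above. The one-pole filter, the complementary high band (input minus low band), and all function names are assumptions made for this example; a practical design would likely use steeper filters.

```python
import math


def split_two_bands(samples, crossover_hz, sample_rate_hz):
    """Split `samples` into (low_band, high_band) around `crossover_hz`.

    Uses a one-pole low-pass filter (an assumed, deliberately simple choice)
    and defines the high band as the remainder, so low + high == input.
    """
    alpha = 1.0 - math.exp(-2.0 * math.pi * crossover_hz / sample_rate_hz)
    low_band, high_band = [], []
    state = 0.0
    for x in samples:
        state += alpha * (x - state)  # low-pass update
        low_band.append(state)        # would drive the first transducer
        high_band.append(x - state)   # would drive the second transducer
    return low_band, high_band


def tone(freq_hz, sample_rate_hz, count):
    """Unit-amplitude sine wave used as a test signal."""
    return [math.sin(2.0 * math.pi * freq_hz * n / sample_rate_hz)
            for n in range(count)]


def band_power(samples):
    """Average squared amplitude of a list of samples."""
    return sum(s * s for s in samples) / len(samples)


if __name__ == "__main__":
    fs = 48000
    for freq in (200, 8000):
        low, high = split_two_bands(tone(freq, fs, 4800), 2000, fs)
        print(freq, "Hz -> low power", band_power(low),
              "high power", band_power(high))
```

Because the two bands sum back to the input, the ranges in this sketch overlap gently around the crossover rather than being strictly discrete.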

[0021] Yet another process which may utilize the multiple transducers may include the utilization of directionality via the conducted vibrations to emulate the directional perception of audio signals received by the user. In one example for providing the perception of directionality with an oral appliance, two or more transducers may be positioned apart from one another along respective retaining portions. One transducer may be actuated corresponding to an audio signal while the other transducer may be actuated corresponding to the same audio signal but with a phase and/or amplitude and/or delay difference intentionally induced corresponding to a direction emulated for the user. Generally, upon receiving a directional audio signal and depending upon the direction to be emulated and the separation between the respective transducers, a particular phase and/or gain and/or delay change to the audio signal may be applied to the respective transducer while leaving the other transducer to receive the audio signal unchanged.
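The delay and gain change applied to one transducer can be sketched as follows. This is a hedged illustration: the path-difference formula (separation times the sine of the angle, divided by the speed of sound in air) is an assumed far-field model that the text does not specify, and the function names, the 0.15 m separation, and the gain value are hypothetical.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # assumption: air at roughly 20 degrees C


def direction_to_delay_samples(angle_deg, spacing_m, sample_rate_hz):
    """Whole-sample delay for a source `angle_deg` away from straight ahead.

    Assumed model: delay = spacing * sin(angle) / speed of sound.
    """
    delay_s = spacing_m * math.sin(math.radians(abs(angle_deg))) / SPEED_OF_SOUND_M_S
    return round(delay_s * sample_rate_hz)


def emulate_direction(signal, delay_samples, far_gain):
    """Return (near, far) drive signals for two transducers.

    The near transducer receives the audio signal unchanged; the far one
    receives the same signal delayed by `delay_samples` and scaled by
    `far_gain`, truncated to the original length.
    """
    near = list(signal)
    far = [0.0] * delay_samples + [far_gain * s for s in signal]
    return near, far[:len(signal)]


if __name__ == "__main__":
    delay = direction_to_delay_samples(30, 0.15, 48000)
    near, far = emulate_direction([1.0, 0.5, -0.5, -1.0] * 8, delay, 0.7)
    print("delay in samples:", delay)
    print("first far samples:", far[:delay + 2])
```

Which transducer is treated as "near" would depend on the side from which the sound is to appear to arrive.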

[0022] Another feature which may utilize the oral appliance and processing capabilities may include the ability to vibrationally conduct ancillary audio signals to the user, e.g., the oral appliance may be configured to wirelessly receive and conduct signals from secondary audio sources to the user. Examples may include the transmission of an alarm signal which only the user may hear or music conducted to the user in public locations, etc. The user may thus enjoy privacy in receiving these ancillary signals while also being able to listen and/or converse in an environment where a primary audio signal is desired.
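One simple way to picture conducting an ancillary signal alongside a primary signal is a weighted mix of the two streams. The sketch below is an assumption for illustration only; the function name, the default gains, and the hard limit on the output are hypothetical and are not taken from the text.

```python
def mix_channels(primary, ancillary, primary_gain=1.0, ancillary_gain=0.5, limit=1.0):
    """Mix a primary and an ancillary sample stream into one drive signal.

    The shorter stream is treated as silence once it ends, and each output
    sample is clamped to [-limit, limit] so the sum cannot exceed the
    assumed drive range.
    """
    length = max(len(primary), len(ancillary))
    mixed = []
    for n in range(length):
        p = primary[n] if n < len(primary) else 0.0
        a = ancillary[n] if n < len(ancillary) else 0.0
        sample = primary_gain * p + ancillary_gain * a
        mixed.append(max(-limit, min(limit, sample)))
    return mixed


if __name__ == "__main__":
    speech = [0.2, 0.4, -0.1]        # stand-in for the primary audio signal
    alarm = [0.2, 0.2, 0.2, 0.2]     # stand-in for a private alarm tone
    print(mix_channels(speech, alarm))
```

Lowering `ancillary_gain` would keep the secondary source in the background while the user listens or converses.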

BRIEF DESCRIPTION OF THE DRAWINGS

[0023] FIG. 1 illustrates the dentition of a patient's teeth and one variation of a hearing aid device which is removably placed upon or against the patient's tooth or teeth as a removable oral appliance.

[0024] FIG. 2A illustrates a perspective view of the lower teeth showing one exemplary location for placement of the removable oral appliance hearing aid device.

[0025] FIG. 2B illustrates another variation of the removable oral appliance in the form of an appliance which is placed over an entire row of teeth in the manner of a mouthguard.

[0026] FIG. 2C illustrates another variation of the removable oral appliance which is supported by an arch.

[0027] FIG. 2D illustrates another variation of an oral appliance configured as a mouthguard.

[0028] FIG. 3 illustrates a detail perspective view of the oral appliance positioned upon the patient's teeth utilizable in combination with a transmitting assembly external to the mouth and wearable by the patient in another variation of the device.

[0029] FIG. 4 shows an illustrative configuration of one variation of the individual components of the oral appliance device having an external transmitting assembly with a receiving and transducer assembly within the mouth.

[0030] FIG. 5 shows an illustrative configuration of another variation of the device in which the entire assembly is contained by the oral appliance within the user's mouth.

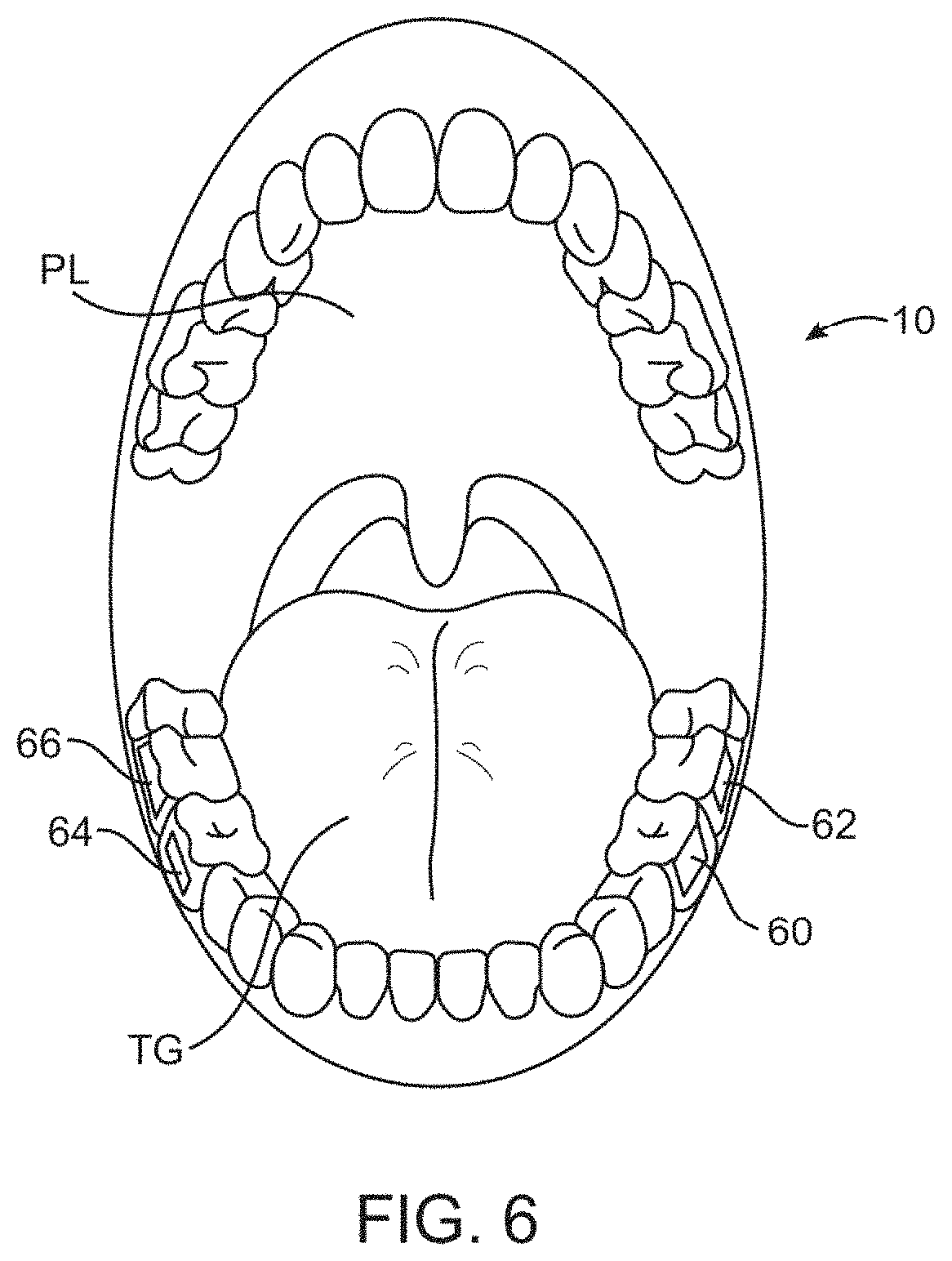

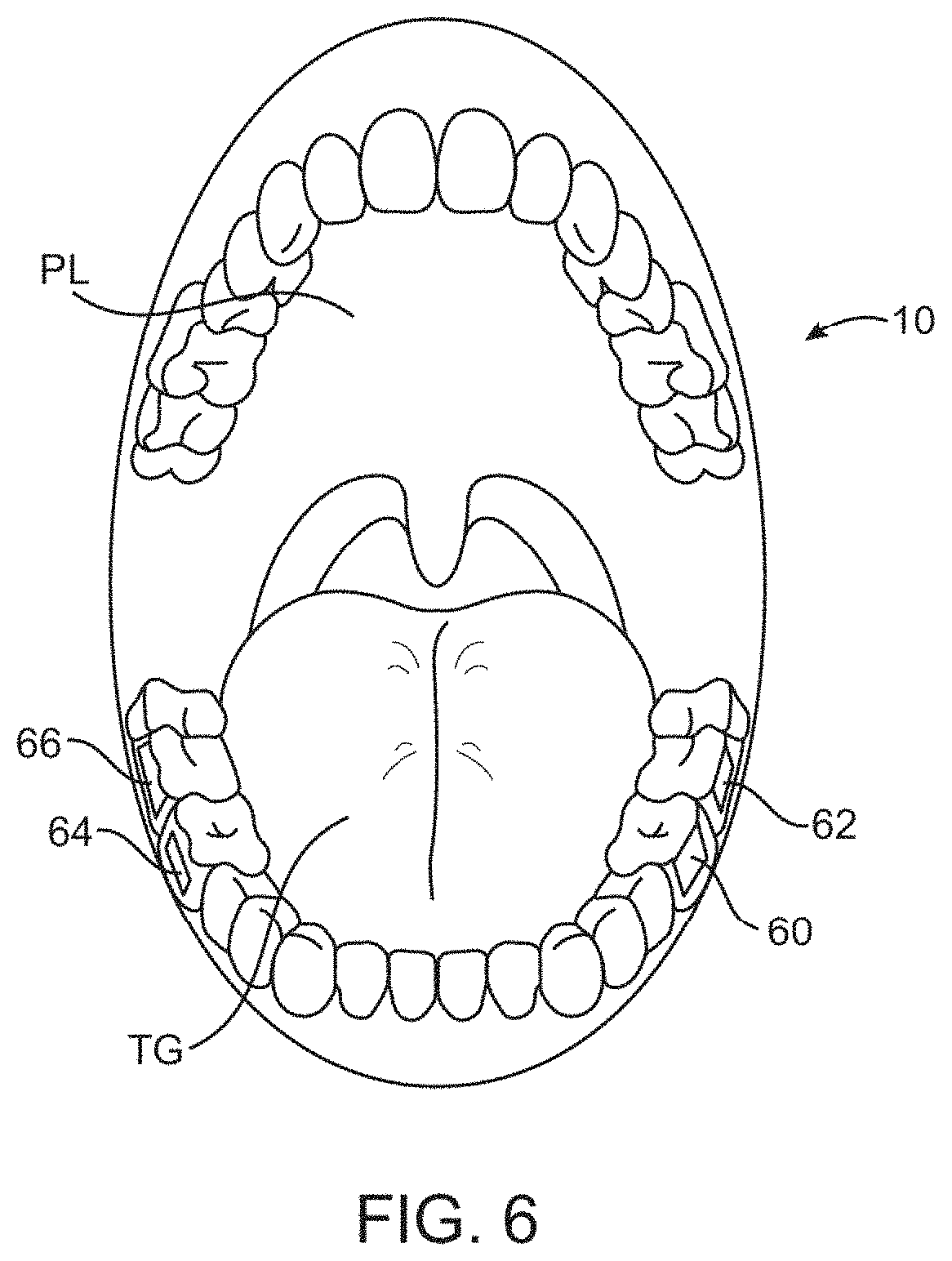

[0031] FIG. 6 illustrates an example of how multiple oral appliance hearing aid assemblies or transducers may be placed on multiple teeth throughout the patient's mouth.

[0032] FIG. 7 illustrates another variation of a removable oral appliance supported by an arch and having a microphone unit integrated within the arch.

[0033] FIG. 8A illustrates another variation of the removable oral appliance supported by a connecting member which may be positioned along the lingual or buccal surfaces of a patient's row of teeth.

[0034] FIGS. 8B to 8E show examples of various cross-sections of the connecting support member of the appliance of FIG. 8A.

[0035] FIG. 9 shows yet another variation illustrating at least one microphone and optionally additional microphone units positioned around the user's mouth and in wireless communication with the electronics and/or transducer assembly.

[0036] FIG. 10 illustrates yet another example of a configuration for positioning multiple transducers and/or processing units along a patient's dentition.

[0037] FIG. 11A illustrates another variation on the configuration for positioning multiple transducers and/or processors supported via an arched connector.

[0038] FIG. 11B illustrates another variation on the configuration utilizing a connecting member positioned along the lingual surfaces of a patient's dentition.

[0039] FIG. 12A shows a configuration for positioning one or more transducers with multiple microphones.

[0040] FIG. 12B schematically illustrates an example for integrating an acoustic echo cancellation system with the oral appliance.

[0041] FIG. 13A shows a configuration for positioning and utilizing multiple band transducers with the oral appliance.

[0042] FIG. 13B schematically illustrates another example for integrating multiple band transducers with the oral appliance.

[0043] FIG. 14A shows a configuration for positioning multiple transducers for emulating directionality of audio signals perceived by a user.

[0044] FIG. 14B schematically illustrates an example for emulating the directionality of detected audio signals via multiple transducers.

[0045] FIG. 15 schematically illustrates an example for activating one or more transducers to emulate directionality utilizing phase and/or amplitude modified signals.

[0046] FIG. 16 schematically illustrates an example for optionally compensating for the relative positioning of the microphones with respect to the user for emulating directionality of perceived audio signals.

[0047] FIG. 17A shows a configuration for positioning multiple transducers which may be configured to provide for one or more ancillary auditory/conductance channels.

[0048] FIG. 17B schematically illustrates an example for providing one or more ancillary channels for secondary audio signals to be provided to a user.

[0049] FIG. 17C illustrates another variation where a secondary device may directly transmit audio signals wirelessly via the oral appliance.

[0050] FIG. 18 schematically illustrates an example for optionally adjusting features of the device such as the manner in which ancillary auditory signals are transmitted to the user.

[0051] FIG. 19A illustrates one method for delivering ancillary audio signals as vibrations transmitted in parallel.

[0052] FIG. 19B illustrates another method for delivering ancillary audio signals as vibrations transmitted in series.

[0053] FIG. 19C illustrates yet another method for delivering ancillary audio signals as vibrations transmitted in a hybrid form utilizing signals transmitted in both parallel and series.

DETAILED DESCRIPTION OF THE INVENTION

[0054] An electronic and transducer device may be attached, adhered, or otherwise embedded into or upon a removable oral appliance or other oral device to form a hearing aid assembly. Such an oral appliance may be a custom-made device fabricated from a thermal forming process utilizing a replicate model of a dental structure obtained by conventional dental impression methods. The electronic and transducer assembly may receive incoming sounds either directly or through a receiver to process and amplify the signals and transmit the processed sounds via a vibrating transducer element coupled to a tooth or other bone structure, such as the maxillary, mandibular, or palatine bone structure.

[0055] As shown in FIG. 1, a patient's mouth and dentition 10 is illustrated showing one possible location for removably attaching hearing aid assembly 14 upon or against at least one tooth, such as a molar 12. The patient's tongue TG and palate PL are also illustrated for reference. An electronics and/or transducer assembly 16 may be attached, adhered, or otherwise embedded into or upon the assembly 14, as described below in further detail.

[0056] FIG. 2A shows a perspective view of the patient's lower dentition illustrating the hearing aid assembly 14 comprising a removable oral appliance 18 and the electronics and/or transducer assembly 16 positioned along a side surface of the assembly 14. In this variation, oral appliance 18 may be fitted upon two molars 12 within tooth engaging channel 20 defined by oral appliance 18 for stability upon the patient's teeth, although in other variations, a single molar or tooth may be utilized. Alternatively, more than two molars may be utilized for the oral appliance 18 to be attached upon or over. Moreover, electronics and/or transducer assembly 16 is shown positioned upon a side surface of oral appliance 18 such that the assembly 16 is aligned along a buccal surface of the tooth 12; however, other surfaces such as the lingual surface of the tooth 12 and other positions may also be utilized. The figures are illustrative of variations and are not intended to be limiting; accordingly, other configurations and shapes for oral appliance 18 are intended to be included herein.

[0057] FIG. 2B shows another variation of a removable oral appliance in the form of an appliance 15 which is placed over an entire row of teeth in the manner of a mouthguard. In this variation, appliance 15 may be configured to cover an entire bottom row of teeth or alternatively an entire upper row of teeth. In additional variations, rather than covering the entire row of teeth, a majority of the row of teeth may instead be covered by appliance 15. Assembly 16 may be positioned along one or more portions of the oral appliance 15.

[0058] FIG. 2C shows yet another variation of an oral appliance 17 having an arched configuration. In this appliance, one or more tooth retaining portions 21, 23, which in this variation may be placed along the upper row of teeth, may be supported by an arch 19 which may lie adjacent or along the palate of the user. As shown, electronics and/or transducer assembly 16 may be positioned along one or more portions of the tooth retaining portions 21, 23. Moreover, although the variation shown illustrates an arch 19 which may cover only a portion of the palate of the user, other variations may be configured to have an arch which covers the entire palate of the user.

[0059] FIG. 2D illustrates yet another variation of an oral appliance in the form of a mouthguard or retainer 25 which may be inserted and removed easily from the user's mouth. Such a mouthguard or retainer 25 may be used in sports where conventional mouthguards are worn; however, mouthguard or retainer 25 having assembly 16 integrated therein may be utilized by persons, hearing impaired or otherwise, who may simply hold the mouthguard or retainer 25 via grooves or channels 26 between their teeth for receiving instructions remotely and communicating over a distance.

[0060] Generally, the volume of electronics and/or transducer assembly 16 may be minimized so as to be as unobtrusive and comfortable as possible to the user when placed in the mouth. Although the size may be varied, a volume of assembly 16 may be less than 800 cubic millimeters. This volume is, of course, illustrative and not limiting, as the size and volume of assembly 16 may be varied accordingly between different users.

[0061] Moreover, removable oral appliance 18 may be fabricated from various polymeric or a combination of polymeric and metallic materials using any number of methods, such as computer-aided machining processes using computer numerical control (CNC) systems or three-dimensional printing processes, e.g., stereolithography apparatus (SLA), selective laser sintering (SLS), and/or other similar processes utilizing three-dimensional geometry of the patient's dentition, which may be obtained via any number of techniques. Such techniques may include use of scanned dentition using intra-oral scanners such as laser, white light, ultrasound, mechanical three-dimensional touch scanners, magnetic resonance imaging (MRI), computed tomography (CT), other optical methods, etc.

[0062] In forming the removable oral appliance 18, the appliance 18 may be optionally formed such that it is molded to fit over the dentition and at least a portion of the adjacent gingival tissue to inhibit the entry of food, fluids, and other debris into the oral appliance 18 and between the transducer assembly and tooth surface. Moreover, the greater surface area of the oral appliance 18 may facilitate the placement and configuration of the assembly 16 onto the appliance 18.

[0063] Additionally, the removable oral appliance 18 may be optionally fabricated to have a shrinkage factor such that when placed onto the dentition, oral appliance 18 may be configured to securely grab onto the tooth or teeth as the appliance 18 may have a resulting size slightly smaller than the scanned tooth or teeth upon which the appliance 18 was formed. The fitting may result in a secure interference fit between the appliance 18 and underlying dentition.

[0064] In one variation, with assembly 14 positioned upon the teeth, as shown in FIG. 3, an extra-buccal transmitter assembly 22 located outside the patient's mouth may be utilized to receive auditory signals for processing and transmission via a wireless signal 24 to the electronics and/or transducer assembly 16 positioned within the patient's mouth, which may then process and transmit the processed auditory signals via vibratory conductance to the underlying tooth and consequently to the patient's inner ear.

[0065] The transmitter assembly 22, as described in further detail below, may contain a microphone assembly as well as a transmitter assembly and may be configured in any number of shapes and forms worn by the user, such as a watch, necklace, lapel, phone, belt-mounted device, etc.

[0066] FIG. 4 illustrates a schematic representation of one variation of hearing aid assembly 14 utilizing an extra-buccal transmitter assembly 22, which may generally comprise microphone or microphone array 30 (referred to as "microphone 30" for simplicity) for receiving sounds and which is electrically connected to processor 32 for processing the auditory signals. Processor 32 may be connected electrically to transmitter 34 for transmitting the processed signals to the electronics and/or transducer assembly 16 disposed upon or adjacent to the user's teeth. The microphone 30 and processor 32 may be configured to detect and process auditory signals in any practicable range, but may be configured in one variation to detect auditory signals ranging from, e.g., 250 Hertz to 20,000 Hertz.

[0067] With respect to microphone 30, a variety of microphone systems may be utilized. For instance, microphone 30 may be a digital, analog, and/or directional type microphone. Such various types of microphones may be interchangeably configured to be utilized with the assembly, if so desired. Moreover, various configurations and methods for utilizing multiple microphones within the user's mouth may also be utilized, as further described below.

[0068] Power supply 36 may be connected to each of the components in transmitter assembly 22 to provide power thereto. The transmitter signals 24 may be in any wireless form utilizing, e.g., radio frequency, ultrasound, microwave, Bluetooth® (BLUETOOTH SIG, INC., Bellevue, Wash.), etc. for transmission to assembly 16. Assembly 22 may also optionally include one or more input controls 28 that a user may manipulate to adjust various acoustic parameters of the electronics and/or transducer assembly 16, such as acoustic focusing, volume control, filtration, muting, frequency optimization, sound adjustments, and tone adjustments, etc.

[0069] The signals 24 transmitted by transmitter 34 may be received by electronics and/or transducer assembly 16 via receiver 38, which may be connected to an internal processor for additional processing of the received signals. The received signals may be communicated to transducer 40, which may vibrate correspondingly against a surface of the tooth to conduct the vibratory signals through the tooth and bone and subsequently to the middle ear to facilitate hearing of the user. Transducer 40 may be configured as any number of different vibratory mechanisms. For instance, in one variation, transducer 40 may be an electromagnetically actuated transducer. In other variations, transducer 40 may be in the form of a piezoelectric crystal having a range of vibratory frequencies, e.g., between 250 and 4000 Hz.

[0070] Power supply 42 may also be included with assembly 16 to provide power to the receiver, transducer, and/or processor, if also included. Although power supply 42 may be a simple battery, replaceable or permanent, other variations may include a power supply 42 which is charged by inductance via an external charger. Additionally, power supply 42 may alternatively be charged via direct coupling to an alternating current (AC) or direct current (DC) source. Other variations may include a power supply 42 which is charged via a mechanical mechanism, such as an internal pendulum or slidable electrical inductance charger as known in the art, which is actuated via, e.g., motions of the jaw and/or movement for translating the mechanical motion into stored electrical energy for charging power supply 42.

[0071] In another variation of assembly 16, rather than utilizing an extra-buccal transmitter, hearing aid assembly 50 may be configured as an independent assembly contained entirely within the user's mouth, as shown in FIG. 5. Accordingly, assembly 50 may include at least one internal microphone 52 in communication with an on-board processor 54. Internal microphone 52 may comprise any number of different types of microphones, as described below in further detail. At least one processor 54 may be used to process any received auditory signals for filtering and/or amplifying the signals and transmitting them to transducer 56, which is in vibratory contact against the tooth surface. Power supply 58, as described above, may also be included within assembly 50 for providing power to each of the components of assembly 50 as necessary.

[0072] In order to transmit the vibrations corresponding to the received auditory signals efficiently and with minimal loss to the tooth or teeth, secure mechanical contact between the transducer and the tooth is ideally maintained to ensure efficient vibratory communication. Accordingly, any number of mechanisms may be utilized to maintain this vibratory communication.

[0073] For any of the variations described above, they may be utilized as a single device or in combination with any other variation herein, as practicable, to achieve the desired hearing level in the user. Moreover, more than one oral appliance device and electronics and/or transducer assemblies may be utilized at any one time. For example, FIG. 6 illustrates one example where multiple transducer assemblies 60, 62, 64, 66 may be placed on multiple teeth. Although shown on the lower row of teeth, multiple assemblies may alternatively be positioned and located along the upper row of teeth or both rows as well. Moreover, each of the assemblies may be configured to transmit vibrations within a uniform frequency range. Alternatively in other variations, different assemblies may be configured to vibrate within overlapping or non-overlapping frequency ranges between each assembly. As mentioned above, each transducer 60, 62, 64, 66 can be programmed or preset for a different frequency response such that each transducer may be optimized for a different frequency response and/or transmission to deliver a relatively high-fidelity sound to the user.

[0074] Moreover, each of the different transducers 60, 62, 64, 66 can also be programmed to vibrate in a manner which indicates the directionality of sound received by the microphone worn by the user. For example, different transducers positioned at different locations within the user's mouth can vibrate in a specified manner by providing sound or vibrational cues to inform the user from which direction a sound was detected relative to an orientation of the user, as described in further detail below. For instance, a first transducer located, e.g., on a user's left tooth, can be programmed to vibrate for sound detected originating from the user's left side. Similarly, a second transducer located, e.g., on a user's right tooth, can be programmed to vibrate for sound detected originating from the user's right side. Other variations and cues may be utilized as these examples are intended to be illustrative of potential variations.

[0075] FIG. 7 illustrates another variation 70 which utilizes an arch 19 connecting one or more tooth retaining portions 21, 23, as described above. However, in this variation, the microphone unit 74 may be integrated within or upon the arch 19 separated from the transducer assembly 72. One or more wires 76 routed through arch 19 may electrically connect the microphone unit 74 to the assembly 72. Alternatively, rather than utilizing a wire 76, microphone unit 74 and assembly 72 may be wirelessly coupled to one another, as described above.

[0076] FIG. 8A shows another variation 80 which utilizes a connecting member 82 which may be positioned along the lingual or buccal surfaces of a patient's row of teeth to connect one or more tooth retaining portions 21, 23. Connecting member 82 may be fabricated from any number of non-toxic materials, such as stainless steel, Nickel, Platinum, etc. and affixed or secured 84, 86 to each respective retaining portion 21, 23. Moreover, connecting member 82 may be shaped to be as non-obtrusive to the user as possible. Accordingly, connecting member 82 may be configured to have a relatively low-profile for placement directly against the lingual or buccal teeth surfaces. The cross-sectional area of connecting member 82 may be configured in any number of shapes so long as the resulting geometry is non-obtrusive to the user. FIG. 8B illustrates one variation of the cross-sectional area which may be configured as a square or rectangle 90. FIG. 8C illustrates another connecting member geometry configured as a semi-circle 92 where the flat portion may be placed against the teeth surfaces. FIGS. 8D and 8E illustrate other alternative shapes such as an elliptical shape 94 and circular shape 96. These variations are intended to be illustrative and not limiting as other shapes and geometries, as practicable, are intended to be included within this disclosure.

[0077] In yet another variation for separating the microphone from the transducer assembly, FIG. 9 illustrates another variation where at least one microphone 102 (or optionally any number of additional microphones 104, 106) may be positioned within the mouth of the user while physically separated from the electronics and/or transducer assembly 100. In this manner, the one or optionally more microphones 102, 104, 106 may be wirelessly or by wire coupled to the electronics and/or transducer assembly 100 in a manner which attenuates or eliminates feedback from the transducer, also described in further detail below.

[0078] In utilizing multiple transducers and/or processing units, several features may be incorporated with the oral appliance(s) to effect any number of enhancements to the quality of the conducted vibratory signals and/or to emulate various perceptual features to the user to correlate auditory signals received by a user for transmitting these signals via sound conduction through teeth or bone structures in and/or around the mouth.

[0079] As illustrated in FIG. 10, another variation for positioning one or more transducers and/or processors is shown. In this instance generally, at least two microphones may be positioned respectively along tooth retaining portions 21, 23, e.g., outer microphone 110 positioned along a buccal surface of retaining portion 23 and inner microphone 112 positioned along a lingual surface of retaining portion 21. The one or more microphones 110, 112 may receive the auditory signals which are processed and ultimately transmitted through sound conductance via one or more transducers 114, 116, 118, one or more of which may be tuned to actuate only along certain discrete frequencies, as described in further detail below.

[0080] Moreover, the one or more transducers 114, 116, 118 may be positioned along respective retaining portions 21, 23 and configured to emulate directionality of audio signals received by the user to provide a sense of direction with respect to conducted audio signals. Additionally, one or more processors 120, 124 may also be provided along one or both retaining portions 21, 23 to process received audio signals, e.g., to translate the audio signals into vibrations suitable for conduction to the user, as well as providing for other functional features. Furthermore, an optional processor 122 may also be provided along one or both retaining portions 21, 23 for interfacing and/or receiving wireless signals from other external devices such as an input control, as described above, or other wireless devices.

[0081] FIG. 11A illustrates another configuration utilizing an arch 130 similar to the configuration shown in FIG. 7 for connecting the multiple transducers and processors positioned along tooth retaining portions 21, 23. FIG. 11B illustrates yet another configuration utilizing a connecting member 132 positioned against the lingual surfaces of the user's teeth, similar to the configuration shown in FIG. 8A, also for connecting the multiple transducers and processors positioned along tooth retaining portions 21, 23.

[0082] In configurations particularly where the one or more microphones are positioned within the user's mouth, filtering features such as Acoustic Echo Cancellation (AEC) may be optionally utilized to eliminate or mitigate undesired sounds received by the microphones. AEC algorithms are well utilized and are typically used to anticipate the signal which may re-enter the transmission path from the microphone and cancel it out by digitally sampling an initial received signal to form a reference signal. Generally, the received signal is produced by the transducer and any reverberant signal which may be picked up again by the microphone is again digitally sampled to form an echo signal. The reference and echo signals may be compared such that the two signals are summed ideally at 180° out of phase to result in a null signal, thereby cancelling the echo.

[0083] In the variation shown in FIG. 12A, at least two intra-buccal microphones 110, 112 may be utilized to separate out desired sounds (e.g., sounds received from outside the body such as speech, music, etc.) from undesirable sounds (e.g., sounds resulting from chewing, swallowing, breathing, self-speech, teeth grinding, etc.). If these undesirable sounds are not filtered or cancelled, they may be amplified along with the desired audio signals making for potentially unintelligible audio quality for the user. Additionally, desired audio sounds may be generally received at relatively lower sound pressure levels because such signals are more likely to be generated at a distance from the user and may have to pass through the cheek of the user while the undesired sounds are more likely to be generated locally within the oral cavity of the user.

[0084] Samples of the undesired sounds may be compared against desired sounds to eliminate or mitigate the undesired sounds prior to actuating the one or more transducers to vibrate only the resulting desired sounds to the user. In this example, first microphone 110 may be positioned along a buccal surface of the retaining portion 23 to receive desired sounds while second microphone 112 may be positioned along a lingual surface of retaining portion 21 to receive the undesirable sound signals. Processor 120 may be positioned along either retaining portion 21 or 23, in this case along a lingual surface of retaining portion 21, and may be in wired or wireless communication with the microphones 110, 112.

[0085] Although audio signals may be attenuated by passing through the cheek of the user, especially when the mouth is closed, first microphone 110 may still receive the desired audio signals for processing by processor 120, which may also amplify the received audio signals. As illustrated schematically in FIG. 12B, audio signals for desired sounds, represented by far end speech 140, are shown as being received by first microphone 110. Audio signals for the undesired sounds 152, represented by near end speech 150, are shown as being received by second microphone 112. Although it may be desirable to position the microphones 110, 112 in their respective positions to optimize detection of their respective desirable and undesirable sounds, they may of course be positioned at other locations within the oral cavity as so desired or practicable. Moreover, while it may also be desirable for first and second microphone 110, 112 to detect only their respective audio signals, this is not required. However, having the microphones 110, 112 detect different versions of the combination of desired and undesired sounds 140, 150, respectively, may be desirable so as to effectively process these signals via AEC processor 120.

[0086] The desired audio signals may be transmitted via wired or wireless communication along a receive path 142 where the signal 144 may be sampled and received by AEC processor 120. A portion of the far end speech 140 may be transmitted to one or more transducers 114 where it may initially conduct the desired audio signals via vibration 146 through the user's bones. Any resulting echo or reverberations 148 from the transmitted vibration 146 may be detected by second microphone 112 along with any other undesirable noises or audio signals 150, as mentioned above. The undesired signals 148, 150 detected by second microphone 112 or the sampled signal 144 received by AEC processor 120 may be processed and shifted out of phase, e.g., ideally 180° out of phase, such that the summation 154 of the two signals results in a cancellation of any echo 148 and/or other undesired sounds 150.

[0087] The resulting summed audio signal may be redirected through an adaptive filter 156 and re-summed 154 to further clarify the audio signal until the desired audio signal is passed along to the one or more transducers 114 where the filtered signal 162, free or relatively free from the undesired sounds, may be conducted 160 to the user. Although two microphones 110, 112 are described in this example, an array of additional microphones may be utilized throughout the oral cavity of the user. Alternatively, as mentioned above, one or more microphones may also be positioned or worn by the user outside the mouth, such as in a bracelet, necklace, etc. and used alone or in combination with the one or more intra-buccal microphones. Furthermore, although three transducers 114, 116, 118 are illustrated, other variations may utilize a single transducer or more than three transducers positioned throughout the user's oral cavity, if so desired.
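By way of illustration only, the echo cancellation loop described above may be sketched as follows. The disclosure does not name a particular adaptive algorithm; this sketch assumes a normalized least-mean-squares (NLMS) filter standing in for adaptive filter 156, and the function name, tap count, step size, and test signal are all hypothetical.

```python
import random

def nlms_echo_cancel(reference, mic, taps=8, mu=0.5, eps=1e-8):
    """Subtract an adaptive estimate of the echo of `reference` from `mic`.

    reference: samples sent toward the transducer (cf. sampled signal 144).
    mic:       samples picked up by the second microphone (echo plus other sound).
    Returns (residual, weights). NLMS is an assumed choice, not the disclosed one.
    """
    weights = [0.0] * taps
    history = [0.0] * taps            # most recent reference samples, newest first
    residual = []
    for x, d in zip(reference, mic):
        history = [x] + history[:-1]
        echo_estimate = sum(w * h for w, h in zip(weights, history))
        e = d - echo_estimate         # same as summing with a 180-degree shifted estimate
        power = sum(h * h for h in history) + eps
        weights = [w + mu * e * h / power for w, h in zip(weights, history)]
        residual.append(e)
    return residual, weights

# Hypothetical test signal: the "echo" is the reference delayed by two samples
# and attenuated to 60 percent, with no other sound present.
random.seed(0)
reference = [random.uniform(-1.0, 1.0) for _ in range(2000)]
echo = [0.0, 0.0] + [0.6 * s for s in reference[:-2]]
residual, weights = nlms_echo_cancel(reference, echo)
```

In this idealized case the filter weight at the two-sample lag converges toward the echo gain and the residual decays toward zero; with real near-end sounds present, the residual would instead retain whatever is not correlated with the reference.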

[0088] Independent from or in combination with acoustic echo cancellation, another processing feature for the oral appliance may include use of a multiband actuation system to facilitate the efficiency with which audio signals may be conducted to the user. Rather than utilizing a single transducer to cover the entire range of the frequency spectrum (e.g., 200 Hz to 10,000 Hz), one variation may utilize two or more transducers where each transducer is utilized to deliver sounds within certain frequencies. For instance, a first transducer may be utilized to deliver sounds in the 200 Hz to 2000 Hz frequency range and a second transducer may be used to deliver sounds in the 2000 Hz to 10,000 Hz frequency range. Alternatively, these frequency ranges may be discrete or overlapping. As individual transducers may be configured to handle only a subset of the frequency spectrum, the transducers may be more efficient in their design.

[0089] Additionally, for certain applications where high fidelity signals are not necessary to be transmitted to the user, individual higher frequency transducers may be shut off to conserve power. In yet another alternative, certain transducers may be omitted, particularly transducers configured for lower frequency vibrations.

[0090] As illustrated in FIG. 13A, a configuration for utilizing multiple transducers is shown where individual transducers may be attuned to transmit only within certain frequency ranges. For instance, transducer 116 may be configured to transmit audio signals within the frequency range from, e.g., 200 Hz to 2000 Hz, while transducers 114 and/or 118 may be configured to transmit audio signals within the frequency range from, e.g., 2000 Hz to 10,000 Hz. Although the three transducers are shown, this is intended to be illustrative and fewer than three or more than three transducers may be utilized in other variations. Moreover, the audible frequency ranges are described for illustrative purposes and the frequency range may be sub-divided in any number of sub-ranges correlating to any number of transducers, as practicable. The choice of the number of sub-ranges and the lower and upper limits of each sub-range may also be varied depending upon a number of factors, e.g., the desired fidelity levels, power consumption of the transducers, etc.

[0091] One or both processors 120 and/or 124, which are in communication with the one or more transducers (in this example transducers 114, 116, 118), may be programmed to treat the audio signals for each particular frequency range similarly or differently. For instance, processors 120 and/or 124 may apply a higher gain level to the signals from one band with respect to another band. Additionally, one or more of the transducers 114, 116, 118 may be configured differently to optimally transmit vibrations within their respective frequency ranges. In one variation, one or more of the transducers 114, 116, 118 may be varied in size or in shape to effectuate an optimal configuration for transmission within their respective frequencies.

[0092] As mentioned above, the one or more of transducers 114, 116, 118 may also be powered on or off by the processor to save on power consumption in certain listening applications. As an example, higher frequency transducers 114, 118 may be shut off when higher frequency signals are not utilized such as when the user is driving. In other examples, the user may activate all transducers 114, 116, 118 such as when the user is listening to music. In yet another variation, higher frequency transducers 114, 118 may also be configured to deliver high volume audio signals, such as for alarms, compared to lower frequency transducers 116. Thus, the perception of a louder sound may be achieved just by actuation of the higher frequency transducers 114, 118 without having to actuate any lower frequency transducers 116.

[0093] An example of how audio signals received by a user may be split into sub-frequency ranges for actuation by corresponding lower or higher frequency transducers is schematically illustrated in FIG. 13B. In this example, an audio signal 170 received by the user via microphones 110 and/or 112 may be transmitted 172 to one or more processors 120 and/or 124. Once the audio signals have been received by the respective processor, the signal may be filtered by two or more respective filters to transmit frequencies within specified bands. For instance, first filter 174 may receive the audio signal 172 and filter out the frequency spectrum such that only the frequency range between, e.g., 200 Hz to 2000 Hz, is transmitted 178. Second filter 176 may also receive the audio signal 172 and filter out the frequency spectrum such that only the frequency range between, e.g., 2000 Hz to 10,000 Hz, is transmitted 180.

[0094] Each respective filtered signal 178, 180 may be passed on to a respective processor 182, 184 to further process each band's signal according to an algorithm to achieve any desired output per transducer. Thus, processor 182 may process the signal 178 to create the output signal 194 to vibrate the lower frequency transducer 116 accordingly while the processor 184 may process the signal 180 to create the output signal 196 to vibrate the higher frequency transducers 114 and/or 118 accordingly. An optional controller 186 may receive control data 188 from user input controls, as described above, for optionally sending signals 190, 192 to respective processors 182, 184 to shut on/off each respective processor and/or to append ancillary data and/or control information to the subsequent transducers.
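As a minimal sketch of the band-splitting arrangement of FIG. 13B, the following assumes a first-order low-pass filter with a complementary high-pass branch and a single 2000 Hz crossover. The filter type, the 48 kHz sample rate, and all function names are assumptions made for illustration; the outer band edges (200 Hz and 10,000 Hz) are not modeled.

```python
import math

def split_two_bands(samples, fs=48000.0, crossover_hz=2000.0):
    """Split samples into a low band and a complementary high band."""
    alpha = 1.0 - math.exp(-2.0 * math.pi * crossover_hz / fs)
    low, high, state = [], [], 0.0
    for x in samples:
        state += alpha * (x - state)   # one-pole low-pass (cf. first filter 174)
        low.append(state)
        high.append(x - state)         # remainder goes to the high band (cf. filter 176)
    return low, high

def drive_bands(samples, low_gain=1.0, high_gain=1.0, high_enabled=True):
    """Apply a per-band gain and optionally shut off the high band to save power."""
    low, high = split_two_bands(samples)
    low_out = [low_gain * v for v in low]
    high_out = [high_gain * v if high_enabled else 0.0 for v in high]
    return low_out, high_out

def tone(freq_hz, n=4800, fs=48000.0):
    """Hypothetical test tone."""
    return [math.sin(2.0 * math.pi * freq_hz * i / fs) for i in range(n)]

def energy(samples):
    return sum(v * v for v in samples)

low_500, high_500 = split_two_bands(tone(500.0))
low_6k, high_6k = split_two_bands(tone(6000.0))
```

A complementary split is used here so that the two bands sum back to the input; steeper or overlapping filters could equally be substituted depending on the desired fidelity and power budget.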

[0095] In addition to or independent from either acoustic echo cancellation and/or multiband actuation of transducers, yet another process which may utilize the multiple transducers may include the utilization of directionality via the conducted vibrations to emulate the directional perception of audio signals received by the user. Generally, human hearing is able to distinguish the direction of a sound wave by perceiving differences in sound pressure levels between the two cochleae. In one example for providing the perception of directionality with an oral appliance, two or more transducers, such as transducers 114, 118, may be positioned apart from one another along respective retaining portions 21, 23, as shown in FIG. 14A.

[0096] One transducer may be actuated corresponding to an audio signal while the other transducer is actuated corresponding to the same audio signal but with a phase and/or amplitude and/or delay difference intentionally induced corresponding to a direction emulated for the user. Generally, upon receiving a directional audio signal and depending upon the direction to be emulated and the separation between the respective transducers, a particular phase and/or gain and/or delay change to the audio signal may be applied to the respective transducer while leaving the other transducer to receive the audio signal unchanged.

[0097] As illustrated in the schematic illustration of FIG. 14B, audio signals received by the one or more microphones 110, 112, which may include an array of intra-buccal and/or extra-buccal microphones as described above, may be transmitted wirelessly or via wire to the one or more processors 120, 124, as above. The detected audio signals may be processed to estimate the direction of arrival of the detected sound 200 by applying any number of algorithms as known in the art. The processor may also simply reproduce a signal that carries the information of the received sound 202 detected from the microphones 110, 112. This may entail a transfer of the information from one of the microphones, a sum of the signals received from the microphones, or a weighted sum of the signals received from the microphones, etc., as known in the art. Alternatively, the reproduced sound 202 may simply pass the information in the audio signals from any combination of the microphones or from any single one of the microphones, e.g., a first microphone, a last microphone, a random microphone, a microphone with the strongest detected audio signals, etc.
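The disclosure leaves the direction-of-arrival algorithm open. One commonly used approach, sketched here purely as an assumption, estimates the time difference of arrival between two microphones by cross-correlation and converts it to an angle. The microphone spacing, sample rate, speed of sound in air, sign convention (positive angles toward the user's right), and function names are hypothetical.

```python
import math
import random

def estimate_lag(left, right, max_lag):
    """Return the lag in samples by which `right` trails `left`."""
    best_lag, best_score = 0, float("-inf")
    n = len(left)
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for i in range(n):
            j = i + lag
            if 0 <= j < n:
                score += left[i] * right[j]   # cross-correlation at this lag
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag

def lag_to_angle_deg(lag, fs=48000.0, spacing_m=0.05, speed_of_sound=343.0):
    """Convert a lag to an angle; positive angles are toward the right."""
    ratio = speed_of_sound * (lag / fs) / spacing_m
    ratio = max(-1.0, min(1.0, ratio))        # clamp against measurement noise
    # A positive lag means the right microphone heard the sound later,
    # so the source lies toward the left (negative angle).
    return -math.degrees(math.asin(ratio))

# Hypothetical test signal: the right channel is the left channel delayed by 3 samples.
random.seed(1)
left = [random.uniform(-1.0, 1.0) for _ in range(400)]
right = [0.0] * 3 + left[:-3]
lag = estimate_lag(left, right, max_lag=8)
angle = lag_to_angle_deg(lag)
```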

[0098] With the estimated direction of arrival of the detected sound 200 determined, the data may be modified for phase and/or amplitude and/or delay adjustments 204 as well as for orientation compensation 208, if necessary, based on additional information received from the microphones 110, 112 and relative orientation of the transducers 114, 116, 118, as described in further detail below. The process of adjusting for phase and/or amplitude and/or delay 204 may involve calculating one phase adjustment for one of the transducers. This may simply involve an algorithm where given a desired direction to be emulated, a table of values may correlate a set of given phase and/or amplitude and/or delay values for adjusting one or more of the transducers. Because the adjustment values may depend on several different factors, e.g., speed of sound conductance through a user's skull, distance between transducers, etc., each particular user may have a specific table of values. Alternatively, standard set values may be determined for groups of users having similar anatomical features, such as jaw size among other variations, and requirements. In other variations, rather than utilizing a table of values in adjusting for phase and/or amplitude and/or delay 204, set formulas or algorithms may be programmed in processor 120 and/or 124 to determine phase and/or amplitude and/or delay adjustment values. Use of an algorithm could simply utilize continuous calculations in determining any adjustment which may be needed or desired whereas the use of a table of values may simply utilize storage in memory.
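A table-of-values approach of the kind described above might be sketched as follows. The table entries are invented placeholders rather than calibrated values, the linear interpolation between entries is an assumption, and the names are hypothetical. Angles are taken as 0 degrees straight ahead with positive values toward the user's right.

```python
from bisect import bisect_left

# Hypothetical per-user table: (angle in degrees off center,
# gain for the far-side transducer, delay for the far-side transducer in microseconds).
CALIBRATION_TABLE = [
    (0.0, 1.0, 0.0),
    (30.0, 0.9, 40.0),
    (60.0, 0.8, 90.0),
    (90.0, 0.7, 125.0),
]

def lookup_adjustment(angle_deg):
    """Return (source_side, gain, delay_us) for the direction to be emulated."""
    source_side = "right" if angle_deg >= 0 else "left"
    a = min(abs(angle_deg), 90.0)                 # clamp to the range covered by the table
    angles = [row[0] for row in CALIBRATION_TABLE]
    i = bisect_left(angles, a)
    if angles[i] == a:                            # exact table entry
        _, gain, delay_us = CALIBRATION_TABLE[i]
        return source_side, gain, delay_us
    a0, g0, d0 = CALIBRATION_TABLE[i - 1]         # interpolate between neighbors
    a1, g1, d1 = CALIBRATION_TABLE[i]
    t = (a - a0) / (a1 - a0)
    return source_side, g0 + t * (g1 - g0), d0 + t * (d1 - d0)
```

For instance, `lookup_adjustment(-45.0)` falls midway between the 30 and 60 degree rows and reports the source on the left. Storing one such table per user trades memory for computation, whereas a formula-based variant would compute the same quantities on demand.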

[0099] Once any adjustments in phase and/or amplitude and/or delay 204 are determined and with the reproduced signals 202 processed from the microphones 110, 112, these signals may then be processed to calculate any final phase and/or amplitude and/or delay adjustments 206 and these final signals may be applied to the transducers 114, 116, 118, as illustrated, to emulate the directionality of received audio signals to the user. A detailed schematic illustration of the final phase and/or amplitude and/or delay adjustments 206 is illustrated in FIG. 15 where the signals received from 202 may be split into two or more identical signals 214, 216 which may correlate to the number of transducers utilized to emulate the directionality. The phase and/or amplitude and/or delay adjustments 204 may be applied to one or more of the received signals 214, 216 by applying either a phase adjustment (Φ) 210, e.g., any phase adjustment from 0° to 360°, and/or amplitude adjustment (α) 212, e.g., any amplitude adjustment from 1.0 to 0.7, and/or delay (τ) 213, e.g., any time delay of 0 to 125 µsec, to result in at least one signal 218 which has been adjusted for transmission via the one or more transducers. These values are presented for illustrative purposes and are not intended to be limiting. Although the adjustments may be applied to both signals 214, 216, they may also be applied to a single signal 214 while one of the received signals 216 may be unmodified and passed directly to one of the transducers.
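The signal split and adjustment of FIG. 15 may be sketched as below for the amplitude and delay cases. This is a minimal illustration under assumed conditions: a 48 kHz sample rate, linear interpolation for fractional-sample delays, and hypothetical function names. A frequency-independent phase adjustment is omitted, since it would require additional processing not described here.

```python
def apply_adjustment(signal, gain, delay_us, fs=48000.0):
    """Return `signal` scaled by `gain` and delayed by `delay_us` microseconds."""
    delay_samples = delay_us * fs / 1e6
    whole = int(delay_samples)
    frac = delay_samples - whole

    def sample(i):
        # Samples outside the buffer are treated as silence.
        return signal[i] if 0 <= i < len(signal) else 0.0

    out = []
    for n in range(len(signal)):
        # Linear interpolation between the two nearest delayed samples.
        delayed = (1.0 - frac) * sample(n - whole) + frac * sample(n - whole - 1)
        out.append(gain * delayed)
    return out

def split_and_adjust(signal, gain, delay_us, fs=48000.0):
    """Split into two copies: one passed unmodified, one adjusted."""
    direct = list(signal)                                   # cf. unmodified signal 216
    adjusted = apply_adjustment(signal, gain, delay_us, fs) # cf. adjusted signal 218
    return direct, adjusted

# Hypothetical example: an impulse, attenuated to 0.7 and delayed by 125 microseconds,
# which corresponds to six samples at the assumed 48 kHz rate.
impulse = [1.0] + [0.0] * 9
direct, adjusted = split_and_adjust(impulse, gain=0.7, delay_us=125.0)
```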

[0100] As mentioned above, compensating 208 for an orientation of the transducers relative to one another as well as relative to an orientation of the user may be taken into account in calculating any adjustments to phase and/or amplitude and/or delay of the signals applied to the transducers. For example, the direction 230 perpendicular to a line 224 connecting the microphones 226, 228 (intra-buccal and/or extra-buccal) may define a zero degree direction of the microphones. A zero degree direction of the user's head may be indicated by the direction 222, which may be illustrated in FIG. 16 as the direction the user's nose points towards. The difference between the zero degree direction of the microphones and the zero degree direction of the user's head may define an angle, Θ, which may be taken into account as a correction factor when determining the phase and/or amplitude adjustments. Accordingly, if the positioning of microphones 226, 228 is such that their zero degree direction is aligned with the zero degree direction of the user's head, then little or no correction may be necessary. If the positioning of the microphones 226, 228 is altered relative to the user's body and an angle is formed relative to the zero degree direction of the user's head, then the audio signals received by the user and the resulting vibrations conducted by the transducers to the user may be adjusted for phase and/or amplitude taking into account the angle, Θ, when emulating directionality with the vibrating transducers.
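The orientation correction may be sketched as a simple angular offset. The sign convention is an assumption: Θ is taken here as the angle of the microphones' zero degree direction measured from the head's zero degree direction, with both angles positive in the same rotational sense. The function name is hypothetical.

```python
def compensate_orientation(doa_rel_mics_deg, theta_deg):
    """Convert a direction measured relative to the microphones into a
    direction relative to the user's head, wrapped to [-180, 180) degrees."""
    return (doa_rel_mics_deg + theta_deg + 180.0) % 360.0 - 180.0

# With aligned zero directions (theta = 0) no correction is applied.
aligned = compensate_orientation(30.0, 0.0)
# With the microphones rotated 20 degrees, the same reading maps to 50 degrees.
rotated = compensate_orientation(30.0, 20.0)
```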

[0101] In addition to or independent from any of the processes described above, another feature which may utilize the oral appliance and processing capabilities may include the ability to vibrationally conduct ancillary audio signals to the user, e.g., the oral appliance may be configured to wirelessly receive and conduct signals from secondary audio sources to the user. Examples may include the transmission of an alarm signal which only the user may hear or music conducted to the user in public locations, etc. The user may thus enjoy privacy in receiving these ancillary signals while also being able to listen and/or converse in an environment where a primary audio signal is desired.

[0102] FIG. 17A shows an example of placing one or more microphones 110, 112 as well as an optional wireless receiver 122 along one or both retaining portions 21, 23, as above. In a schematic illustration shown in FIG. 17B, one variation for receiving and processing multiple audio signals is shown where various audio sources 234, 238 (e.g., alarms, music players, cell phones, PDA's, etc.) may transmit their respective audio signals 236, 240 to an audio receiver processor 230, which may receive the sounds via the one or more microphones and process them for receipt by an audio application processor 232, which may take the combined signal received from audio receiver processor 230 and apply it via one or more transducers to the user 242. FIG. 17C illustrates another variation where a wireless receiver and/or processor 122 located along one or more of the retaining portions 21 may be configured to wirelessly receive audio signals from multiple electronic audio sources 244. This feature may be utilized with any of the variations described herein alone or in combination.

[0103] The audio receiver processor 230 may communicate wirelessly or via wire with the audio application processor 232. During one example of use, a primary audio signal 240 (e.g., conversational speech) along with one or more ancillary audio signals 236 (e.g., alarms, music players, cell phones, PDA's, etc.) may be received by the one or more microphones of a receiver unit 250 of audio receiver processor 230. The primary signal 252 and ancillary signals 254 may be transmitted electrically to a multiplexer 256 which may combine the various signals 252, 254 in view of optional methods, controls and/or priority data 262 received from a user control 264, as described above. Parameters such as prioritization of the signals as well as volume, timers, etc., may be set by the user control 264. The multiplexed signal 258 having the combined audio signals may then be transmitted to processor 260, which may transmit the multiplexed signal 266 to the audio application processor 232, as illustrated in FIG. 18.

[0104] As described above, the various audio signals 236, 240 may be combined and multiplexed in various forms 258 for transmission to the user 242. For example, one variation for multiplexing the audio signals via multiplexer 256 may entail combining the audio signals such that the primary 240 and ancillary 236 signals are transmitted by the transducers in parallel where all audio signals are conducted concurrently to the user, as illustrated in FIG. 19A, which graphically illustrates transmission of a primary signal 270 in parallel with the one or more ancillary signals 272, 274 over time, T. The transmitted primary signal 270 may be transmitted at a higher volume, i.e., a higher dB level, than the other ancillary signals 272, 274, although this may be varied depending upon the user preferences.

[0105] Alternatively, the multiplexed signal 258 may be transmitted such that the primary 240 and ancillary 236 signals are transmitted in series, as graphically illustrated in FIG. 19B. In this variation, the transmitted primary 270 and ancillary 272, 274 signals may be conducted to the user in a pre-assigned, random, preemptive, or non-preemptive manner where each signal is conducted serially.

[0106] In yet another example, the transmitted signals may be conducted to the user in a hybrid form combining the parallel and serial methods described above and as graphically illustrated in FIG. 19C. Depending on user settings or preferences, certain audio signals 274, e.g., emergency alarms, may preempt primary 270 and/or ancillary 272 signals from other sources at preset times 276 or intermittently or in any other manner such that the preemptive signal 274 is the only signal played back to the user while it is active.
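The parallel, serial, and hybrid multiplexing modes of FIGS. 19A to 19C may be sketched as follows. Signals are represented as plain lists of samples, and the function names, the ancillary gain of 0.5, and the sample values are hypothetical choices made for illustration.

```python
def mix_parallel(primary, ancillaries, ancillary_gain=0.5):
    """Conduct all signals concurrently, with ancillary signals at a lower level."""
    out = list(primary)
    for sig in ancillaries:
        for i, v in enumerate(sig[:len(out)]):
            out[i] += ancillary_gain * v
    return out

def mix_serial(signals):
    """Conduct the signals one after another, in the order given."""
    out = []
    for sig in signals:
        out.extend(sig)
    return out

def mix_hybrid(primary, ancillaries, alarm, alarm_start, ancillary_gain=0.5):
    """Parallel mix in which a preemptive signal replaces everything else
    for as long as it is active."""
    out = mix_parallel(primary, ancillaries, ancillary_gain)
    for k, v in enumerate(alarm):
        idx = alarm_start + k
        if 0 <= idx < len(out):
            out[idx] = v              # only the preemptive signal is played here
    return out

# Hypothetical sample values.
parallel = mix_parallel([1.0] * 4, [[2.0] * 4])
serial = mix_serial([[1.0, 1.0], [2.0]])
hybrid = mix_hybrid([1.0] * 4, [[2.0] * 4], alarm=[9.0, 9.0], alarm_start=1)
```

In practice the ordering in the serial mode and the preemption rules in the hybrid mode would follow the priority data set through the user control.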

[0107] The applications of the devices and methods discussed above are not limited to the treatment of hearing loss but may include any number of further treatment applications. Moreover, such devices and methods may be applied to other treatment sites within the body. Modification of the above-described assemblies and methods for carrying out the invention, combinations between different variations as practicable, and variations of aspects of the invention that are obvious to those of skill in the art are intended to be within the scope of the claims.

* * * * *
