Method, Apparatus, Medium, And Device For Processing Multi-angle Free-perspective Video Data

Sheng; Xiaojie

U.S. patent application number 16/810681 was filed with the patent office on 2020-03-05 and published on 2020-09-10 as publication number 20200288112, for method, apparatus, medium, and device for processing multi-angle free-perspective video data. The applicant listed for this patent is Alibaba Group Holding Limited. Invention is credited to Xiaojie Sheng.

| Application Number | 16/810681 |

| Publication Number | 20200288112 |

| Family ID | 1000004701058 |

| Filed Date | 2020-03-05 |


| United States Patent Application | 20200288112 |

| Kind Code | A1 |

| Sheng; Xiaojie | September 10, 2020 |

METHOD, APPARATUS, MEDIUM, AND DEVICE FOR PROCESSING MULTI-ANGLE FREE-PERSPECTIVE VIDEO DATA

Abstract

A method, an apparatus, a medium, and a device for processing multi-angle free-perspective video data are disclosed. The method includes: parsing acquired video data to obtain multiple data combinations of different frame moments, the data combinations including pixel data and depth data of multiple synchronized images, and the multiple synchronized images having different perspectives on a to-be-viewed area; and reconstructing an image associated with a respective virtual viewpoint based on the respective data combination for each frame moment, the respective virtual viewpoint being selected from a multi-angle free-perspective range, and the multi-angle free-perspective range being a range that supports viewing and switching of viewpoints on the to-be-viewed area. The technical solutions in the embodiments of the present disclosure can support viewing and switching of viewpoints within a multi-angle free-perspective range.

| Inventors: | Sheng; Xiaojie (Shanghai, CN) |
|---|---|
| Applicant: | Alibaba Group Holding Limited |
| Family ID: | 1000004701058 |
| Appl. No.: | 16/810681 |
| Filed: | March 5, 2020 |
| Current U.S. Class: | 1/1 |
| Current CPC Class: | H04N 13/282 20180501; G06T 3/4038 20130101; H04N 13/279 20180501; H04N 13/268 20180501; H04N 13/293 20180501 |
| International Class: | H04N 13/282 20060101 H04N013/282; H04N 13/268 20060101 H04N013/268; H04N 13/279 20060101 H04N013/279; H04N 13/293 20060101 H04N013/293; G06T 3/40 20060101 G06T003/40 |

Foreign Application Data

| Date | Code | Application Number |
|---|---|---|
| Mar 7, 2019 | CN | 201910172717.7 |
| Mar 7, 2019 | CN | 201910172720.9 |
| Mar 7, 2019 | CN | 201910172727.0 |
| Mar 7, 2019 | CN | 201910172729.X |
| Mar 7, 2019 | CN | 201910172742.5 |
| Mar 7, 2019 | CN | 201910172743.X |
| Mar 7, 2019 | CN | 201910172761.8 |
| Mar 7, 2019 | CN | 201910173413.2 |
| Mar 7, 2019 | CN | 201910173414.7 |
| Mar 7, 2019 | CN | 201910173415.1 |
| Mar 7, 2019 | CN | 201910177941.5 |

Claims

1. A method implemented by one or more computing devices, the method comprising: parsing acquired video data to obtain multiple data combinations of different frame moments, the data combinations including pixel data and depth data of multiple synchronized images, and the multiple synchronized images having different perspectives on a to-be-viewed area; and reconstructing an image associated with a respective virtual viewpoint based on the respective data combination for each frame moment, the respective virtual viewpoint being selected from a multi-angle free-perspective range, and the multi-angle free-perspective range being a range that supports viewing and switching of viewpoints on the to-be-viewed area.

2. The method according to claim 1, wherein the depth data of each image of the synchronized images is a set of depth values that are in one-to-one correspondence with pixels of the respective image.

3. The method according to claim 1, wherein the depth data of each image of the synchronized images is data obtained after down-sampling a depth map, the depth map being an image formed by a set of depth values that are in one-to-one correspondence with pixels of the respective image and arranged according to pixel points of the respective image.

4. The method according to claim 3, wherein reconstructing the image associated with the respective virtual viewpoint based on the respective data combination comprises: up-sampling the depth data to obtain the set of depth values that are in one-to-one correspondence with the pixels of the image; and reconstructing the image associated with the virtual viewpoint according to the pixel data of the synchronized multiple images and the set of depth values.

5. The method according to claim 1, wherein reconstructing the image associated with the respective virtual viewpoint based on the respective data combination comprises: determining parameter data of each of the multiple synchronized images, the parameter data including data of a shooting position and a shooting angle of the respective image; determining parameter data of the virtual viewpoint, the parameter data of the virtual viewpoint including a virtual viewing position and a virtual viewing angle; determining a plurality of target images among the multiple synchronized images; mapping, for each target image, depth data to the virtual viewpoint according to a relationship between the parameter data of the virtual viewpoint and parameter data of the image; and generating a reconstructed image according to the depth data mapped to the virtual viewpoint and pixel data of the target image.

6. The method according to claim 5, wherein determining the plurality of target images among the multiple synchronized images comprises: selecting a target image from among the multiple images according to the relationship between the parameter data of the virtual viewpoint and parameter data of the image.

7. The method according to claim 6, wherein all of the multiple synchronized images are treated as the target images.

8. The method according to claim 6, wherein parameters of the image further comprise internal parameter data, the internal parameter data including property data of a shooting device of the image.

9. The method according to claim 1, wherein: prior to reconstructing the image associated with the virtual viewpoint, the method further comprises: receiving parameter data of the virtual viewpoint.

10. The method according to claim 9, wherein after reconstructing the image associated with the virtual viewpoint, the method further comprises: sending the reconstructed image to an image display device.

11. One or more computer readable media storing executable instructions that, when executed by one or more processors, cause the one or more processors to perform acts comprising: parsing acquired video data to obtain multiple data combinations of different frame moments, the data combinations including pixel data and depth data of multiple synchronized images, and the multiple synchronized images having different perspectives on a to-be-viewed area; and reconstructing an image associated with a respective virtual viewpoint based on the respective data combination for each frame moment, the respective virtual viewpoint being selected from a multi-angle free-perspective range, and the multi-angle free-perspective range being a range that supports viewing and switching of viewpoints on the to-be-viewed area.

12. The one or more computer readable media according to claim 11, wherein the depth data of each image of the synchronized images is a set of depth values that are in one-to-one correspondence with pixels of the respective image.

13. The one or more computer readable media according to claim 11, wherein the depth data of each image of the synchronized images is data obtained after down-sampling a depth map, the depth map being an image formed by a set of depth values that are in one-to-one correspondence with pixels of the respective image and arranged according to pixel points of the respective image.

14. The one or more computer readable media according to claim 13, wherein reconstructing the image associated with the respective virtual viewpoint based on the respective data combination comprises: up-sampling the depth data to obtain the set of depth values that are in one-to-one correspondence with the pixels of the image; and reconstructing the image associated with the virtual viewpoint according to the pixel data of the synchronized multiple images and the set of depth values.

15. The one or more computer readable media according to claim 11, wherein reconstructing the image associated with the respective virtual viewpoint based on the respective data combination comprises: determining parameter data of each of the multiple synchronized images, the parameter data including data of a shooting position and a shooting angle of the respective image; determining parameter data of the virtual viewpoint, the parameter data of the virtual viewpoint including a virtual viewing position and a virtual viewing angle; determining a plurality of target images among the multiple synchronized images; mapping, for each target image, depth data to the virtual viewpoint according to a relationship between the parameter data of the virtual viewpoint and parameter data of the image; and generating a reconstructed image according to the depth data mapped to the virtual viewpoint and pixel data of the target image.

16. The one or more computer readable media according to claim 15, wherein determining the plurality of target images among the multiple synchronized images comprises: selecting a target image from among the multiple images according to the relationship between the parameter data of the virtual viewpoint and parameter data of the image.

17. The one or more computer readable media according to claim 16, wherein parameters of the image further comprise internal parameter data, the internal parameter data including property data of a shooting device of the image.

18. The one or more computer readable media according to claim 11, wherein: prior to reconstructing the image associated with the virtual viewpoint, the acts further comprise: receiving parameter data of the virtual viewpoint.

19. The one or more computer readable media according to claim 18, wherein after reconstructing the image associated with the virtual viewpoint, the acts further comprise: sending the reconstructed image to an image display device.

20. An apparatus comprising: one or more processors; memory; a parsing unit stored in the memory and executable by the one or more processors to parse acquired video data to obtain multiple data combinations of different frame moments, the data combinations including pixel data and depth data of multiple synchronized images, and the multiple synchronized images having different perspectives on a to-be-viewed area; and a virtual viewpoint image reconstruction unit stored in the memory and executable by the one or more processors to reconstruct an image associated with a respective virtual viewpoint based on the respective data combination for each frame moment, the respective virtual viewpoint being selected from a multi-angle free-perspective range, and the multi-angle free-perspective range being a range that supports viewing and switching of viewpoints on the to-be-viewed area.

Description

CROSS REFERENCE TO RELATED PATENT APPLICATIONS

[0001] This application claims priority to the following Chinese Patent Applications: (1) CN201910177941.5, filed on 7 Mar. 2019, entitled "Method, Apparatus, Terminal, Capturing System, and Device for Setting Capturing Devices", (2) CN201910172743.X, filed on 7 Mar. 2019, entitled "Method, Apparatus, Medium, and Device for Generating Multi-Angle Free-Perspective Image Data", (3) CN201910172727.0, filed on 7 Mar. 2019, entitled "Method, Apparatus, Medium, and Server for Generating Multi-angle Free-perspective Video Data", (4) CN201910172742.5, filed on 7 Mar. 2019, entitled "Method, Apparatus, Medium, Terminal, and Device for Processing Multi-Angle Free-Perspective Data", (5) CN201910172729.X, filed on 7 Mar. 2019, entitled "Method, Apparatus, Medium, Terminal, and Device for Multi-Angle Free-Perspective Interaction", (6) CN201910173415.1, filed on 7 Mar. 2019, entitled "Method, Apparatus, Medium, Terminal, and Device for Multi-Angle Free-Perspective Interaction", (7) CN201910173413.2, filed on 7 Mar. 2019, entitled "Method, Apparatus, Medium, and Device for Processing Multi-Angle Free-Perspective Image Data", (8) CN201910173414.7, filed on 7 Mar. 2019, entitled "Method, Apparatus, Medium, and Device for Processing Multi-Angle Free-Perspective Video Data", (9) CN201910172761.8, filed on 7 Mar. 2019, entitled "Video Generating Method, Apparatus, Medium, and Terminal", (10) CN201910172717.7, filed on 7 Mar. 2019, entitled "Video Reconstruction Method, System, Device, and Computer Readable Storage Medium", (11) CN201910172720.9, filed on 7 Mar. 2019, entitled "Image Reconstruction Method, System, Device, and Computer-Readable Storage Medium", which are hereby incorporated by reference in their entirety.

TECHNICAL FIELD

[0002] The present disclosure relates to the field of data processing, and in particular, to methods, apparatuses, media, and devices for processing multi-angle free-perspective video data.

BACKGROUND

[0003] In the field of data processing, video data can be received, and a video can be played to a user based on the video data. This type of video playback is usually based on a fixed perspective, and the user experience needs to be improved.

SUMMARY

[0004] This Summary is provided to introduce a selection of implementations in a simplified form that are further described below in Detailed Description. This Summary is not intended to identify all features of the claimed subject matter, nor is it intended to be used alone as an aid in determining the scope of the claimed subject matter. The term "techniques," for instance, may refer to device(s), system(s), method(s) and/or processor-readable/computer-readable instructions as permitted by the context above and throughout the present disclosure.

[0005] A technical problem to be solved by example embodiments of the present disclosure is to provide a method for processing multi-angle free-perspective video data that supports viewpoint-switching playback within a multi-angle free-perspective range.

[0006] In order to solve the above technical problems, the embodiments of the present disclosure provide a method for processing multi-angle free-perspective video data, which includes:

[0007] parsing acquired video data to obtain multiple data combinations of different frame moments, the data combinations including pixel data and depth data of multiple synchronized images, and the multiple synchronized images having different perspectives on a to-be-viewed area; and reconstructing an image associated with a respective virtual viewpoint based on the respective data combination for each frame moment, the respective virtual viewpoint being selected from a multi-angle free-perspective range, and the multi-angle free-perspective range being a range that supports viewing and switching of viewpoints on the to-be-viewed area.
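As a rough illustration of the two steps above, the following Python sketch groups per-view records into one data combination per frame moment and then runs a reconstruction callback for each frame moment. The record layout (`frame_moment`, `camera_id`, `pixels`, `depth`), the class names, and the callback signatures are hypothetical choices made for this sketch; the disclosure does not prescribe them.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Sequence


@dataclass
class SyncImage:
    """One synchronized image: pixel data plus the depth data of the same view."""
    camera_id: int
    pixels: List[List[int]]    # illustrative H x W sample values
    depth: List[List[float]]   # depth data for this view


@dataclass
class DataCombination:
    """All synchronized images that belong to one frame moment."""
    frame_moment: int
    images: List[SyncImage]


def parse_video_data(records: Sequence[dict]) -> List[DataCombination]:
    """Group hypothetical per-view records into one combination per frame moment."""
    by_moment: Dict[int, List[SyncImage]] = {}
    for rec in records:
        image = SyncImage(rec["camera_id"], rec["pixels"], rec["depth"])
        by_moment.setdefault(rec["frame_moment"], []).append(image)
    return [
        DataCombination(moment, sorted(images, key=lambda i: i.camera_id))
        for moment, images in sorted(by_moment.items())
    ]


def reconstruct_video(
    combinations: Sequence[DataCombination],
    viewpoint_for: Callable[[int], Any],
    reconstruct: Callable[[DataCombination, Any], Any],
) -> List[Any]:
    """Reconstruct one image per frame moment for that moment's virtual viewpoint."""
    return [reconstruct(c, viewpoint_for(c.frame_moment)) for c in combinations]
```

Passing the virtual viewpoint as a function of the frame moment is one way to let the viewpoint change between frames, which is what switching within the free-perspective range amounts to in this sketch.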

[0008] In an example embodiment, for each image of the synchronized images, the depth data is a set of depth values that are in one-to-one correspondence with pixels of the respective image.

[0009] In an example embodiment, for each image of the synchronized images, the depth data is data obtained after down-sampling a depth map, the depth map being an image formed by a set of depth values that are in one-to-one correspondence with pixels of the respective image and arranged according to pixel points of the respective image.

[0010] In an example embodiment, reconstructing the image associated with the respective virtual viewpoint based on the respective data combination includes: up-sampling the depth data to obtain the set of depth values that are in one-to-one correspondence with the pixels of the image; and reconstructing the image associated with the virtual viewpoint according to the pixel data of the synchronized multiple images and the set of depth values.
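The up-sampling step can be sketched as follows, assuming the stored depth data has a lower resolution than the image. Nearest-neighbour interpolation is used here only because it is the simplest option; the disclosure does not state which interpolation is applied, and the function name is hypothetical.

```python
from typing import List


def upsample_depth(depth: List[List[float]], height: int, width: int) -> List[List[float]]:
    """Nearest-neighbour up-sampling of a low-resolution depth grid.

    Returns a height x width grid, so that each image pixel gets
    exactly one depth value.
    """
    src_h, src_w = len(depth), len(depth[0])
    return [
        [depth[y * src_h // height][x * src_w // width] for x in range(width)]
        for y in range(height)
    ]
```

For example, a 2 x 2 depth grid up-sampled to 4 x 4 repeats each stored value over a 2 x 2 block of pixels.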

[0011] In an example embodiment, reconstructing the image associated with the respective virtual viewpoint based on the respective data combination includes: determining parameter data of each of the multiple synchronized images, the parameter data including data of a shooting position and a shooting angle of the respective image; determining parameter data of the virtual viewpoint, the parameter data of the virtual viewpoint including a virtual viewing position and a virtual viewing angle; determining a plurality of target images among the multiple synchronized images; mapping, for each target image, depth data to the virtual viewpoint according to a relationship between the parameter data of the virtual viewpoint and parameter data of the image; and generating a reconstructed image according to the depth data mapped to the virtual viewpoint and pixel data of the target image.
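One possible reading of the mapping step is a forward warp under a pinhole camera model, sketched below for a single target image. Everything specific here is an assumption made for illustration: the `Camera` fields (focal length, principal point, world-to-camera rotation, camera position), depth measured along the optical axis, rounding to the nearest pixel, and a z-buffer that keeps the nearest surface. Pixels that receive no sample stay `None`; how such holes are filled is outside this sketch.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Vec3 = Tuple[float, float, float]
Mat3 = Tuple[Vec3, Vec3, Vec3]


@dataclass
class Camera:
    focal: float     # focal length in pixels (internal parameter, assumed)
    cx: float        # principal point x (assumed)
    cy: float        # principal point y (assumed)
    rotation: Mat3   # world -> camera rotation (shooting / viewing angle)
    position: Vec3   # camera centre in world coordinates (shooting / viewing position)


def mat_vec(m: Mat3, v: Vec3) -> Vec3:
    return tuple(sum(m[r][c] * v[c] for c in range(3)) for r in range(3))


def transpose(m: Mat3) -> Mat3:
    return tuple(tuple(m[c][r] for c in range(3)) for r in range(3))


def warp_to_virtual(pixels, depth, src: Camera, dst: Camera, height: int, width: int):
    """Forward-warp one target image and its depth to the virtual viewpoint."""
    out_pix: List[List[Optional[int]]] = [[None] * width for _ in range(height)]
    out_depth = [[float("inf")] * width for _ in range(height)]
    cam_to_world = transpose(src.rotation)
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            # Back-project pixel (u, v) with depth z into source camera coordinates.
            p_cam = ((u - src.cx) * z / src.focal, (v - src.cy) * z / src.focal, z)
            # Source camera -> world coordinates.
            p_world = tuple(a + b for a, b in zip(mat_vec(cam_to_world, p_cam), src.position))
            # World -> virtual camera coordinates.
            q = mat_vec(dst.rotation, tuple(a - b for a, b in zip(p_world, dst.position)))
            if q[2] <= 0:
                continue  # point lies behind the virtual camera
            u2 = round(dst.focal * q[0] / q[2] + dst.cx)
            v2 = round(dst.focal * q[1] / q[2] + dst.cy)
            # Z-buffer test: keep only the nearest surface per output pixel.
            if 0 <= u2 < width and 0 <= v2 < height and q[2] < out_depth[v2][u2]:
                out_depth[v2][u2] = q[2]
                out_pix[v2][u2] = pixels[v][u]
    return out_pix, out_depth
```

With several target images, the warped results would still have to be combined into one reconstructed image; that combination step is not shown.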

[0012] In an example embodiment, determining the plurality of target images among the multiple synchronized images includes: selecting a target image from among the multiple images according to a relationship between the parameter data of the virtual viewpoint and parameter data of the image.
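A minimal sketch of one such selection rule follows, assuming that the "relationship" is the Euclidean distance between each shooting position and the virtual viewing position, and that a fixed number of nearest images is kept. Both the criterion and the default of two images are illustrative assumptions, not details taken from the disclosure.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def select_target_images(
    camera_positions: Sequence[Vec3],
    virtual_position: Vec3,
    count: int = 2,
) -> List[int]:
    """Return the indices of the `count` images shot closest to the virtual viewpoint."""
    ranked = sorted(
        range(len(camera_positions)),
        key=lambda i: math.dist(camera_positions[i], virtual_position),
    )
    return sorted(ranked[:count])
```

Setting `count` to the number of synchronized images selects every image as a target image.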

[0013] In an example embodiment, all of the multiple synchronized images are treated as the target images.

[0014] In an example embodiment, parameters of the image further include internal parameter data, the internal parameter data including property data of a shooting device of the image.

[0015] In an example embodiment, prior to reconstructing the image associated with the virtual viewpoint, the method further includes: receiving parameter data of the virtual viewpoint.

[0016] In an example embodiment, after reconstructing the image associated with the virtual viewpoint, the method further includes: sending the reconstructed image to an image display device.

[0017] The embodiments of the present disclosure further provide a method for processing multi-angle free-perspective video data, which includes: performing image reconstruction of a virtual viewpoint using the method for processing multi-angle free-perspective video data as described above; and performing a video playback based on reconstructed images of different frame moments.

[0018] In an example embodiment, prior to performing the image reconstruction of the virtual viewpoint, the method further includes: receiving an instruction from a user, and determining the virtual viewpoint according to the user instruction.

[0019] The embodiments of the present disclosure further provide a method for processing multi-angle free-perspective video data, which includes: receiving an image after performing a reconstruction of a virtual viewpoint, the image reconstruction of the virtual viewpoint being performed using the method for processing multi-angle free-perspective video data as described above; and performing a video playback based on reconstructed images of different frame moments.

[0020] In an example embodiment, the reconstructed images are received from an edge computing node.

[0021] In an example embodiment, the method further includes: sending parameter data of the virtual viewpoint to an edge computing node.

[0022] The embodiments of the present disclosure further provide an apparatus for processing multi-angle free-perspective video data including:

[0023] a parsing unit, adapted to parse acquired video data to obtain multiple data combinations of different frame moments, the data combinations including pixel data and depth data of multiple synchronized images, and the multiple synchronized images having different perspectives on a to-be-viewed area; and a virtual viewpoint image reconstruction unit, adapted to reconstruct an image associated with a respective virtual viewpoint based on the respective data combination for each frame moment, the respective virtual viewpoint being selected from a multi-angle free-perspective range, and the multi-angle free-perspective range being a range that supports viewing and switching of viewpoints on the to-be-viewed area.

[0024] The embodiments of the present disclosure further provide an apparatus for processing multi-angle free-perspective video data including: a reconstruction unit, adapted to perform image reconstruction of a virtual viewpoint using the apparatus for processing multi-angle free-perspective video data as described above; and a playing unit, adapted to perform a video playback based on reconstructed images of different frame moments.

[0025] The embodiments of the present disclosure further provide an apparatus for processing multi-angle free-perspective video data including: a receiving unit, adapted to receive an image after performing a reconstruction of a virtual viewpoint, the image reconstruction of the virtual viewpoint being performed using the apparatus for processing multi-angle free-perspective video data as described above; and a playing unit, adapted to perform a video playback based on reconstructed images of different frame moments.

[0026] The embodiments of the present disclosure further provide a computer-readable storage medium having computer instructions stored thereon. When the computer instructions are executed, the steps of the method for processing multi-angle free-perspective video data are performed.

[0027] The embodiments of the present disclosure further provide an edge computing node including a memory and a processor, where the memory stores computer instructions capable of running on the processor, and when the computer instructions are executed by the processor, the steps of the method for processing multi-angle free-perspective video data are performed.

[0028] The embodiments of the present disclosure further provide a terminal including a memory and a processor, where the memory stores computer instructions capable of running on the processor, and when the computer instructions are executed by the processor, the steps of the method for processing multi-angle free-perspective video data are performed.

[0029] The embodiments of the present disclosure further provide a mobile device including a communication component, a processor, and a display component, the communication component being configured to receive multi-angle free-perspective video data, the multi-angle free-perspective video data including a data combination; the processor being configured to render based on the multi-angle free-perspective video data to generate video data corresponding to different virtual viewpoints; and the display component being configured to display the video data corresponding to the different virtual viewpoints.

[0030] Compared with existing technologies, the technical solutions of the embodiments of the present disclosure have the following beneficial effects.

[0031] In the embodiments of the present disclosure, parsing is performed on acquired video data to obtain multiple data combinations of different frame moments. The data combinations include pixel data and depth data of multiple synchronized images, and the multiple synchronized images have different perspectives on a to-be-viewed area. For each frame moment, an image reconstruction of a respective virtual viewpoint is performed based on the respective data combination. A video playback is performed based on reconstructed images of different frame moments. Therefore, the method for processing multi-angle free-perspective video data in the embodiments of the present disclosure can support viewing and switching of viewpoints within a multi-angle free-perspective range.

BRIEF DESCRIPTION OF THE DRAWINGS

[0032] In order to illustrate the example embodiments of the present disclosure more clearly, the drawings used in the description of the example embodiments are briefly introduced below. The drawings in the following description represent only some of the example embodiments of the present disclosure, and those skilled in the art may obtain other drawings from these drawings without creative effort.

[0033] FIG. 1 is a schematic diagram of a to-be-viewed area in an example embodiment of the present disclosure;

[0034] FIG. 2 is a schematic diagram of a setting method of capturing devices in an example embodiment of the present disclosure;

[0035] FIG. 3 is a schematic diagram of a multi-angle free-perspective display system in an example embodiment of the present disclosure;

[0036] FIG. 4 is a schematic diagram of a device display in an example embodiment of the present disclosure;

[0037] FIG. 5 is a schematic diagram of a control performed on a device in an example embodiment of the present disclosure;

[0038] FIG. 6 is a schematic diagram of another control performed on a device in an example embodiment of the present disclosure;

[0039] FIG. 7 is a schematic diagram of another setting method of capturing devices in an example embodiment of the present disclosure;

[0040] FIG. 8 is a schematic diagram of another control performed on a device in an example embodiment of the present disclosure;

[0041] FIG. 9 is a schematic diagram of another device display in an example embodiment of the present disclosure;

[0042] FIG. 10 is a flowchart of a setting method of capturing devices in an example embodiment of the present disclosure;

[0043] FIG. 11 is a schematic diagram of a multi-angle free-perspective range in an example embodiment of the present disclosure;

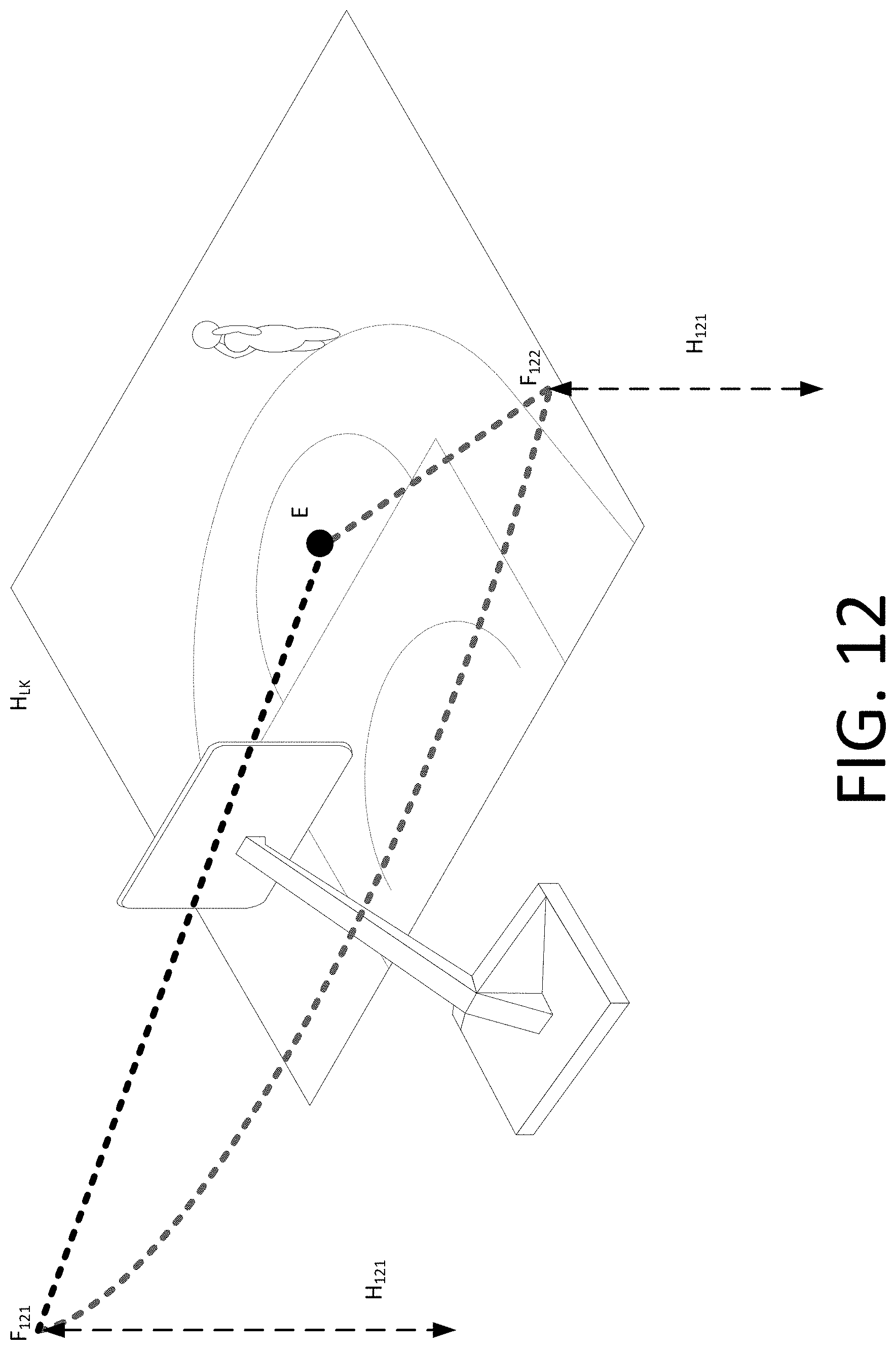

[0044] FIG. 12 is a schematic diagram of another multi-angle free-perspective range in an example embodiment of the present disclosure;

[0045] FIG. 13 is a schematic diagram of another multi-angle free-perspective range in an example embodiment of the present disclosure;

[0046] FIG. 14 is a schematic diagram of another multi-angle free-perspective range in an example embodiment of the present disclosure;

[0047] FIG. 15 is a schematic diagram of another multi-angle free-perspective range in an example embodiment of the present disclosure;

[0048] FIG. 16 is a schematic diagram of another setting method of capturing devices in an example embodiment of the present disclosure;

[0049] FIG. 17 is a schematic diagram of another setting method of capturing devices in an example embodiment of the present disclosure;

[0050] FIG. 18 is a schematic diagram of another setting method of capturing devices in an example embodiment of the present disclosure;

[0051] FIG. 19 is a flowchart of a method for generating multi-angle free-perspective data in an example embodiment of the present disclosure;

[0052] FIG. 20 is a schematic diagram of distribution positions of the pixel data and the depth data of a single image in an example embodiment of the present disclosure;

[0053] FIG. 21 is a schematic diagram of distribution positions of the pixel data and the depth data of another single image in an example embodiment of the present disclosure;

[0054] FIG. 22 is a schematic diagram of distribution positions of the pixel data and the depth data of another image in an example embodiment of the present disclosure;

[0055] FIG. 23 is a schematic diagram of distribution positions of the pixel data and the depth data of another image in another example embodiment of the present disclosure;

[0056] FIG. 24 is a schematic diagram of distribution positions of the pixel data and the depth data of another image in an example embodiment of the present disclosure;

[0057] FIG. 25 is a schematic diagram of distribution positions of the pixel data and the depth data of another image in another example embodiment of the present disclosure;

[0058] FIG. 26 is a schematic diagram of image area stitching in an example embodiment of the present disclosure;

[0059] FIG. 27 is a schematic diagram of a structure of a stitched image in an example embodiment of the present disclosure;

[0060] FIG. 28 is a schematic diagram of another structure of a stitched image in an example embodiment of the present disclosure;

[0061] FIG. 29 is a schematic diagram of another structure of a stitched image in an example embodiment of the present disclosure;

[0062] FIG. 30 is a schematic diagram of another structure of a stitched image in an example embodiment of the present disclosure;

[0063] FIG. 31 is a schematic diagram of another structure of a stitched image in an example embodiment of the present disclosure;

[0064] FIG. 32 is a schematic diagram of another structure of a stitched image in an example embodiment of the present disclosure;

[0065] FIG. 33 is a schematic diagram of the pixel data distribution of an image in an example embodiment of the present disclosure;

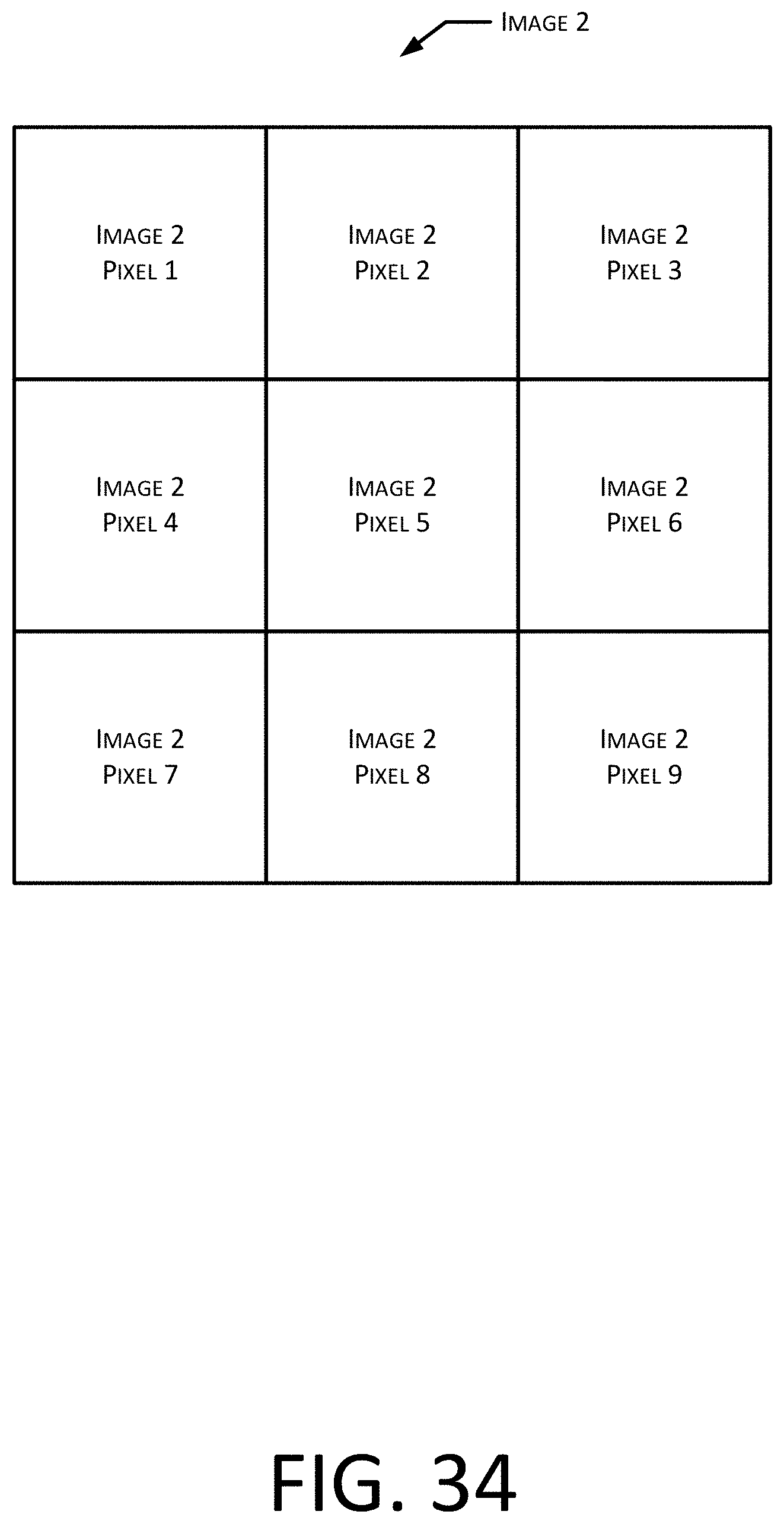

[0066] FIG. 34 is a schematic diagram of another pixel data distribution of an image in an example embodiment of the present disclosure;

[0067] FIG. 35 is a schematic diagram of data storage in a stitched image in an example embodiment of the present disclosure;

[0068] FIG. 36 is a schematic diagram of another data storage in a stitched image in an example embodiment of the present disclosure;

[0069] FIG. 37 is a flowchart of a method for generating multi-angle free-perspective video data in an example embodiment of the present disclosure;

[0070] FIG. 38 is a flowchart of a method for processing multi-angle free-perspective data in an example embodiment of the present disclosure;

[0071] FIG. 39 is a flowchart of a method for reconstructing an image for a virtual viewpoint in an example embodiment of the present disclosure;

[0072] FIG. 40 is a flowchart of a multi-angle free-perspective image data processing method in an example embodiment of the present disclosure;

[0073] FIG. 41 is a flowchart of a method for processing multi-angle free-perspective video data in an example embodiment of the present disclosure;

[0074] FIG. 42 is a flowchart of a multi-angle free-perspective interaction method in an example embodiment of the present disclosure;

[0075] FIG. 43 is a schematic diagram of another control performed on a device in an example embodiment of the present disclosure;

[0076] FIG. 44 is a schematic diagram of another device display in an example embodiment of the present disclosure;

[0077] FIG. 45 is a schematic diagram of another control performed on a device in an example embodiment of the present disclosure;

[0078] FIG. 46 is a schematic diagram of another device display in an example embodiment of the present disclosure;

[0079] FIG. 47 is a structural schematic diagram of an apparatus for processing multi-angle free-perspective video data in an example embodiment of the present disclosure;

[0080] FIG. 48 is a structural schematic diagram of a virtual viewpoint image reconstruction unit in an example embodiment of the present disclosure;

[0081] FIG. 49 is a structural schematic diagram of another virtual viewpoint image reconstruction unit in an example embodiment of the present disclosure;

[0082] FIG. 50 is a structural schematic diagram of an apparatus for processing multi-angle free-perspective video data in an example embodiment of the present disclosure;

[0083] FIG. 51 is a structural schematic diagram of an apparatus for processing multi-angle free-perspective video data in an example embodiment of the present disclosure;

[0084] FIG. 52 is a schematic diagram of a process for generating multi-angle free-perspective data in an example embodiment of the present disclosure;

[0085] FIG. 53 is a schematic diagram of a multi-camera 6DoF capturing system in an example embodiment of the present disclosure;

[0086] FIG. 54 is a schematic diagram of generating and processing of 6DoF video data in an example embodiment of the present disclosure;

[0087] FIG. 55 is a structural schematic diagram of the data header file in an example embodiment of the present disclosure;

[0088] FIG. 56 is a schematic diagram of 6DoF video data processing on the user side in an example embodiment of the present disclosure;

[0089] FIG. 57 is a schematic diagram of input and output of a reference software in an example embodiment of the present disclosure; and

[0090] FIG. 58 is a schematic diagram of an algorithm architecture of a reference software in an example embodiment of the present disclosure.

DETAILED DESCRIPTION

[0091] As mentioned in the background section, in the field of data processing, video data can be received, and a video can be played to a user based on the video data. This type of video playback is usually based on a fixed perspective, and the user experience needs to be improved.

[0092] In the embodiments of the present disclosure, parsing is performed on acquired video data to obtain multiple data combinations of different frame moments. A data combination includes pixel data and depth data of multiple synchronized images, and the multiple synchronized images have different perspectives on a to-be-viewed area. For each frame moment, an image associated with a respective virtual viewpoint is reconstructed based on the respective data combination, and thereby video can be played based on respective reconstructed images at different frame moments. Reconstructing an image associated with a virtual viewpoint makes it possible to acquire an image of the to-be-viewed area as viewed from the virtual viewpoint. Therefore, the method for processing multi-angle free-perspective video data in the embodiments of the present disclosure can support video playback with viewpoint switching within a multi-angle free-perspective range.

[0093] In a data processing method that may support a user's perspective switching, image data is stored in a manner of a point cloud, and the three-dimensional positions and pixel information of all points in the to-be-viewed area are specifically expressed and stored, which requires a larger amount of storage resources. Correspondingly, a relatively large amount of computing resources is needed for processing the data that is stored in such manner. If data corresponding to different moments are stored in this manner, the amount of data is large. Accordingly, it is difficult to meet the requirement for the smooth playing of real video if the video is played based on the data stored in this manner.

[0094] In the embodiments of the present disclosure, switching the viewpoint of a video during playback is based on images that are reconstructed at different frame moments. The reconstruction of the images is implemented according to the data combinations. The amount of data that needs to be processed according to the embodiments of the present disclosure is much smaller than that of point cloud data.

[0095] In order to make the above objectives, features, and beneficial effects of the present disclosure more comprehensible, example embodiments of the present disclosure will be described in detail hereinafter with reference to the accompanying drawings.

[0096] As an example embodiment of the present disclosure, the applicant describes the following steps. The first step is capturing and depth map calculation, including three main steps, which respectively are multi-camera video capturing, camera internal and external parameter calculation (camera parameter estimation), and depth map calculation. For multi-camera capturing, the videos captured by respective cameras are required to be aligned at the frame level. Referring to FIG. 52, through the multi-camera video capturing at 5202, a texture image may be obtained at 5204, i.e., the multiple synchronized images as described hereinafter. Through the calculation of camera internal and external parameters at 5206, camera parameters may be obtained at 5208, including internal parameter data and external parameter data as described hereinafter. Through the depth map calculation at 5210, a depth map may be obtained at 5212.

[0097] In this solution, no special camera, such as a light field camera, is required to capture the video. Similarly, no complicated camera calibration is required before capturing. Positions of multiple cameras may be laid out and arranged to better capture the objects or scenarios that need to be captured. Referring to FIG. 53, multiple capturing devices, such as camera 1 to camera N, may be set in the to-be-viewed area.

[0098] After the above three steps are processed, the texture image captured from multiple cameras, all camera parameters, and the depth map of each camera are obtained. These three pieces of data may be referred to as data files in multi-angle free-perspective video data, and may also be referred to as 6 degrees of freedom video data (6DoF video data) 5214. Because of these pieces of data, the user terminal may generate a virtual viewpoint based on the virtual 6 degrees of freedom (DoF) position, thereby providing a 6DoF video experience.

[0099] Referring to FIG. 54, 6DoF video data and indicative data at 5402 may be compressed and transmitted to the user side at 5404, where the indicative data may also be referred to as metadata. The user side may obtain the user-side 6DoF expression at 5406 according to the received data, i.e., the above 6DoF video data and metadata.

[0100] Referring to FIG. 55, metadata may be used to describe the data pattern of 6DoF video data, and may include stitching pattern metadata 5502, which is used to indicate storage rules of the pixel data and the depth data of multiple images in the stitched image; padding pattern metadata 5504, which may be used to indicate the padding pattern in the stitched image; and other metadata 5506. The metadata may be stored in the data header file, and the storage order may be as shown in FIG. 55, or may be another order.

[0101] Referring to FIG. 56, the user terminal obtains 6DoF video data, which includes the 6DoF position 5602, camera parameters 5604, the texture image and the depth map 5606, and descriptive metadata 5608, as well as interaction behavior data of the user terminal 5610. With these pieces of data, the user may use 6DoF rendering based on depth image-based rendering (DIBR) 5612 to generate the virtual viewpoint image at the 6DoF position generated according to the user behavior, that is, to determine, according to the user instruction, the virtual viewpoint of the 6DoF position corresponding to the instruction.

[0102] In an example embodiment implemented during a test, each test example includes 20 seconds of video data. The video data is 30 frames/second with a resolution of 1920*1080. For any one of the 30 cameras, there are 600 frames of data in total. The main folder includes the texture image folder and the depth map folder. Under the texture image folder, the secondary directories from 0 to 599 may be found. These secondary directories respectively represent 600 frames of content corresponding to the 20-second video. Each secondary directory includes texture images captured by 30 cameras, named from 0.yuv to 29.yuv in the format of yuv420. Accordingly, in the depth map folder, each secondary directory includes 30 depth maps calculated by the depth estimation algorithm. Each depth map corresponds to the texture image with the same name. The texture images and corresponding depth maps of multiple cameras belong to a certain frame moment in the 20-second video.
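
The folder layout and frame size described in this test example can be sketched as follows. This is a minimal illustration only: the folder names `texture` and `depth`, the root name, and the helper functions are assumptions (the disclosure states only that a texture image folder and a depth map folder exist), and the size calculation assumes 8-bit planar yuv420 samples.

```python
from pathlib import PurePosixPath

WIDTH, HEIGHT = 1920, 1080
FPS, SECONDS, NUM_CAMERAS = 30, 20, 30
NUM_FRAMES = FPS * SECONDS  # 600 frame moments in the 20-second video


def yuv420_frame_bytes(width: int, height: int) -> int:
    """Bytes in one 8-bit yuv420 frame: a full Y plane plus quarter-size U and V planes."""
    return width * height * 3 // 2


def _check(frame: int, camera: int) -> None:
    if not (0 <= frame < NUM_FRAMES and 0 <= camera < NUM_CAMERAS):
        raise ValueError("frame or camera index out of range")


def texture_path(root: str, frame: int, camera: int) -> PurePosixPath:
    """Texture image of one camera at one frame moment ('texture' is a hypothetical name)."""
    _check(frame, camera)
    return PurePosixPath(root) / "texture" / str(frame) / f"{camera}.yuv"


def depth_path(root: str, frame: int, camera: int) -> PurePosixPath:
    """Depth map with the same file name as its texture image ('depth' is a hypothetical name)."""
    _check(frame, camera)
    return PurePosixPath(root) / "depth" / str(frame) / f"{camera}.yuv"
```

Under these assumptions, the texture image and the depth map of a given camera at a given frame moment differ only in their top-level folder, which mirrors the same-name correspondence described above.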

[0103] All depth maps in the test example are generated by a preset depth estimation algorithm. In the test, these depth maps may provide good virtual viewpoint reconstruction quality at the virtual 6DoF position. In one case, a reconstructed image of the virtual viewpoint may be generated directly from the given depth maps. Alternatively, the depth map may also be generated or improved by the depth calculation algorithm based on the original texture image.

[0104] In addition to the depth map and the texture image, the test example also includes a .sfm file, which is used to describe the parameters of all 30 cameras. The data of the .sfm file is written in binary format. The data format is described hereinafter. Considering the adaptability to different cameras, a fisheye camera model with distortion parameters was used in the test. How to read and use camera parameter data from the file may be understood with reference to DIBR reference software provided by us. The camera parameter data includes the following fields:

(1) krt_R is the rotation matrix of the camera; (2) krt_cc is the optical center position of the camera; (3) krt_WorldPosition is the three-dimensional space coordinate of the camera; (4) krt_kc is the distortion coefficient of the camera; (5) src_width is the width of the calibration image; (6) src_height is the height of the calibration image; and (7) fisheye_radius and lens_fov are parameters of the fisheye camera.

[0105] In the technical solutions implemented by the present disclosure, the user may find the detailed code of how to read the corresponding parameters in the .sfm file from the preset parameter reading function (set_sfm_parameters function).
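
For illustration, the camera parameter fields listed above could be held in a simple container such as the one below. This is a sketch under stated assumptions: the class name `CameraParameters`, the Python types, and the 3x3 and 3-element shapes are assumptions, and no attempt is made to parse the binary .sfm file because its byte layout is not given in this passage.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CameraParameters:
    """In-memory view of the fields named in the disclosure (types and shapes are assumed)."""
    krt_R: List[List[float]]         # rotation matrix of the camera (assumed 3x3)
    krt_cc: List[float]              # optical center position of the camera
    krt_WorldPosition: List[float]   # three-dimensional space coordinate of the camera
    krt_kc: List[float]              # distortion coefficients (count not stated in the source)
    src_width: int                   # width of the calibration image
    src_height: int                  # height of the calibration image
    fisheye_radius: float            # fisheye camera parameter
    lens_fov: float                  # fisheye camera parameter

    def validate(self) -> None:
        """Reject obviously malformed parameter sets."""
        if len(self.krt_R) != 3 or any(len(row) != 3 for row in self.krt_R):
            raise ValueError("krt_R is expected to be a 3x3 matrix")
        if len(self.krt_WorldPosition) != 3:
            raise ValueError("krt_WorldPosition is expected to have 3 components")
        if self.src_width <= 0 or self.src_height <= 0:
            raise ValueError("calibration image size must be positive")
```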

[0106] In the DIBR reference software, camera parameters, the texture image, the depth map, and the 6DoF position of the virtual camera are received as inputs, and the generated texture image and depth map at the virtual 6DoF position are output at the same time. The 6DoF position of the virtual camera is the above 6DoF position determined according to user behavior. The DIBR reference software may be the software that implements image reconstruction based on the virtual viewpoint in the example embodiments of the present disclosure.

[0107] Referring to FIG. 57, in the reference software, camera parameters 5702, the texture image 5704, the depth map 5706, and the 6DoF position of the virtual camera 5708 are received as inputs, and generated texture image 5710 and generated depth map 5712 at the virtual 6DoF position are output at the same time.

[0108] Referring to FIG. 58, the software may include the following processing steps: camera selection 5802, forward projection of the depth map 5804 and 5806, postprocessing of the depth map 5808 and 5810, backward projection of the texture image 5812 and 5814, fusion of multi-camera projected texture image 5816, and inpainting of the image 5818.
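
The forward projection step can be illustrated with a single pixel. The sketch below is not the reference software: it assumes an ideal pinhole camera without distortion (the test described above used a fisheye model with distortion parameters), a world-to-camera convention of X_cam = R (X_world - C), and hypothetical parameter names `fx`, `fy`, `cx`, `cy`.

```python
from typing import Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Mat3 = Sequence[Sequence[float]]


def mat_vec(m: Mat3, v: Sequence[float]) -> Vec3:
    """Multiply a 3x3 matrix by a 3-vector."""
    return tuple(sum(m[i][j] * v[j] for j in range(3)) for i in range(3))


def transpose(m: Mat3) -> list:
    """Transpose of a 3x3 matrix (the inverse, for a rotation matrix)."""
    return [[m[j][i] for j in range(3)] for i in range(3)]


def forward_project(u: float, v: float, depth: float,
                    fx: float, fy: float, cx: float, cy: float,
                    r_src: Mat3, c_src: Vec3,
                    r_dst: Mat3, c_dst: Vec3) -> Optional[Vec3]:
    """Map a source pixel with known depth to (u, v, depth) in the virtual camera.

    Returns None when the point lies behind the virtual camera.
    """
    # Back-project the pixel into the source camera's coordinate frame.
    p_cam = (depth * (u - cx) / fx, depth * (v - cy) / fy, depth)
    # Source camera frame -> world frame: X_world = R^T * X_cam + C.
    rotated = mat_vec(transpose(r_src), p_cam)
    p_world = tuple(a + b for a, b in zip(rotated, c_src))
    # World frame -> virtual camera frame.
    p_dst = mat_vec(r_dst, tuple(a - b for a, b in zip(p_world, c_dst)))
    if p_dst[2] <= 0:
        return None
    return (fx * p_dst[0] / p_dst[2] + cx, fy * p_dst[1] / p_dst[2] + cy, p_dst[2])
```

With identical orientations, moving the virtual camera sideways shifts a pixel by an amount inversely proportional to its depth, which is why nearer objects move more between viewpoints.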

[0109] In the reference software, two cameras closest to the virtual 6DoF position may be selected by default to generate the virtual viewpoint.
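
A default selection of this kind can be sketched as a nearest-neighbor search. The function name and the use of plain Euclidean distance between positions (ignoring orientation) are assumptions made for illustration.

```python
import math
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]


def select_nearest_cameras(virtual_pos: Vec3,
                           camera_positions: Dict[int, Vec3],
                           count: int = 2) -> List[int]:
    """Return the ids of the `count` cameras closest to the virtual viewpoint position."""
    if len(camera_positions) < count:
        raise ValueError("not enough cameras to select from")
    ranked = sorted(camera_positions,
                    key=lambda cam_id: math.dist(virtual_pos, camera_positions[cam_id]))
    return ranked[:count]
```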

[0110] In the postprocessing step of the depth map, the quality of the depth map may be improved by various methods, such as foreground padding, pixel-level filtering, and the like.

[0111] For the output generated image, a method for fusing texture images from two cameras is used. The fusion weight is a global weight and is determined by the distance of the position of the virtual viewpoint from the position of the reference camera. When the pixel of the output virtual viewpoint image is projected to only one camera, the projected pixel may be directly used as the value of the output pixel.
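
The fusion rule can be sketched as follows. The disclosure states only that the global weight is determined by distance; the linear normalization used here, in which the closer camera receives the larger weight, is an assumption, as are the function names.

```python
from typing import Optional, Tuple

Pixel = Tuple[float, float, float]


def global_weights(dist_a: float, dist_b: float) -> Tuple[float, float]:
    """Weights for cameras A and B from their distances to the virtual viewpoint.

    Assumed rule: each weight is proportional to the other camera's distance,
    so the closer camera contributes more.
    """
    total = dist_a + dist_b
    if total == 0:
        return 0.5, 0.5
    return dist_b / total, dist_a / total


def fuse_pixel(pix_a: Optional[Pixel], pix_b: Optional[Pixel],
               w_a: float, w_b: float) -> Optional[Pixel]:
    """Blend two projected pixels; None marks a pixel that a camera did not project to."""
    if pix_a is None and pix_b is None:
        return None  # still hollow after fusion
    if pix_a is None:
        return pix_b  # projected from only one camera: use it directly
    if pix_b is None:
        return pix_a
    return tuple(w_a * a + w_b * b for a, b in zip(pix_a, pix_b))
```

Because the weights are global, they are computed once per output image rather than once per pixel.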

[0112] After the fusion step, if there are still hollow pixels that have not been projected to, an inpainting method may be used to fill the hollow pixels.
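
The disclosure does not specify which inpainting method is used. As a deliberately simple stand-in, the sketch below fills each hollow pixel in a row with the nearest valid value in that row; the function name and the use of `None` to mark hollow pixels are assumptions.

```python
from typing import List, Optional


def inpaint_row(row: List[Optional[int]]) -> List[Optional[int]]:
    """Fill None entries with the nearest valid value in the same row.

    When two valid pixels are equally close, the one on the left is used.
    A row with no valid pixels is returned unchanged.
    """
    valid = [i for i, value in enumerate(row) if value is not None]
    if not valid:
        return list(row)
    filled = list(row)
    for i, value in enumerate(row):
        if value is None:
            nearest = min(valid, key=lambda j: (abs(j - i), j))
            filled[i] = row[nearest]
    return filled
```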

[0113] For the output depth map, for the convenience of error analysis, a depth map obtained by projecting from one of the cameras to the position of the virtual viewpoint may be used as the output.

[0114] Additionally, the 6DoF position of the virtual camera 5520 and camera parameters 5522 may be used as the input for the camera selection step 5802.

[0115] Those skilled in the art may understand that the above example embodiments are merely examples and are not limitations on the implementation manners. The technical solutions in the present disclosure will be further described hereinafter.

[0116] Referring to FIG. 1, the to-be-viewed area may be a basketball court, and multiple capturing devices may be provided to perform data capturing on the to-be-viewed area.

[0117] For example, referring to FIG. 2, several capturing devices may be set along a certain path at a height H.sub.LK higher than the hoop. For example, six capturing devices may be set along the arc, i.e., the capturing devices CJ.sub.1 to CJ.sub.6. Those skilled in the art may understand that the setting position, number, and supporting manners of the capturing devices may be various, and there is no limitation herein.

[0118] The capturing device may be a camera or a video camera capable of synchronous shooting, for example, a camera or a video camera capable of synchronous shooting through a hardware synchronization line. With multiple capturing devices capturing data in the to-be-viewed area, multiple images or video streams in synchronization may be obtained. According to the video streams captured by multiple capturing devices, multiple synchronized frame images may also be obtained as multiple synchronized images. Those skilled in the art may understand that, ideally, the term synchronization refers to corresponding to the same moment, but the existence of errors and deviations may also be tolerated.

[0119] Referring to FIG. 3, in the embodiments of the present disclosure, data may be captured in the to-be-viewed area through the capturing system 31 including multiple capturing devices. The acquired multiple synchronized images may be processed by the capturing system 31 or the server 32 to generate multi-angle free-perspective data, which is capable of supporting virtual viewpoint switching by the device 33 that performs displaying. The device 33 that performs displaying may display the reconstructed image generated based on the multi-angle free-perspective data. The reconstructed image corresponds to the virtual viewpoint. According to the user instruction, reconstructed images corresponding to different virtual viewpoints may be displayed, and the viewing position and viewing angle may be switched.

[0120] In implementations, the process of performing image reconstruction to obtain a reconstructed image may be implemented by the device 33 that performs displaying, or may be implemented by a device located on a Content Delivery Network (CDN) in an edge computing manner. Those skilled in the art may understand that FIG. 3 is merely an example, and is not a limitation on the capturing system, the server, the device that performs displaying, and the implementation manner. The process of image reconstruction based on multi-angle free-perspective data will be described in detail hereinafter with reference to FIG. 38 to FIG. 41 and will not be repeated herein.

[0121] Referring to FIG. 4, following the previous example, the user may watch the to-be-viewed area through the device that performs displaying. In this example embodiment, the to-be-viewed area is a basketball court. As described above, the viewing position and viewing angle may be switched.

[0122] For example, the user may slide the screen to switch the virtual viewpoint. In an example embodiment of the present disclosure, referring to FIG. 5, when the user slides the screen with his/her finger to the right, the virtual viewpoint for viewing may be switched. Still referring to FIG. 2, the position of the virtual viewpoint before sliding may be VP.sub.1. The position of the virtual viewpoint may be VP.sub.2 after the virtual viewpoint is switched by sliding the screen. After sliding the screen, the reconstructed image displayed on the screen may be as shown in FIG. 6. The reconstructed image may be obtained by performing image reconstruction based on multi-angle free-perspective data generated from data captured by multiple capturing devices in an actual capturing scenario.

[0123] Those skilled in the art may understand that the image viewed before switching may also be a reconstructed image. The reconstructed image may be a frame image in a video stream. In addition, there are various manners to switch the virtual viewpoint according to the user instruction, which is not limited herein.

[0124] In implementations, the virtual viewpoint may be represented by 6 degrees of freedom (DoF) coordinates, where the spatial position of the virtual viewpoint may be represented as (x, y, z), and the perspective may be represented as three directions of rotation (.theta., .phi., .gamma.).
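
A 6DoF virtual viewpoint of this form can be sketched as a small immutable record. The class name, the field names, and the helper methods are assumptions for illustration; the disclosure specifies only the six coordinates.

```python
from dataclasses import dataclass, replace
from typing import Tuple


@dataclass(frozen=True)
class VirtualViewpoint:
    """Spatial position (x, y, z) plus three directions of rotation (theta, phi, gamma)."""
    x: float
    y: float
    z: float
    theta: float
    phi: float
    gamma: float

    def position(self) -> Tuple[float, float, float]:
        return (self.x, self.y, self.z)

    def rotation(self) -> Tuple[float, float, float]:
        return (self.theta, self.phi, self.gamma)

    def moved(self, dx: float = 0.0, dy: float = 0.0, dz: float = 0.0) -> "VirtualViewpoint":
        """Return a new viewpoint translated by the given offsets, with the same perspective."""
        return replace(self, x=self.x + dx, y=self.y + dy, z=self.z + dz)
```

Switching the virtual viewpoint then amounts to producing a new record with a different position, a different rotation, or both.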

[0125] The virtual viewpoint is a three-dimensional concept. Three-dimensional information is required to generate the reconstructed image. In an implementation manner, the multi-angle free-perspective data may include the depth data for providing third-dimensional information outside the plane image. Compared with other implementation manners, such as providing three-dimensional information through point cloud data, the data amount of the depth data is smaller. Implementations of generating multi-angle free-perspective data will be described in detail hereinafter with reference to FIG. 19 to FIG. 37 and will not be repeated herein.

[0126] In the embodiments of the present disclosure, the switching of the virtual viewpoint may be performed within a certain range, which is the multi-angle free-perspective range. That is, within the multi-angle free-perspective range, the position of the virtual viewpoint and the perspective may be arbitrarily switched.

[0127] The multi-angle free-perspective range is related to the arrangement of the capturing devices. The broader the shooting coverage of the capturing devices is, the larger the multi-angle free-perspective range is. The quality of the picture displayed by the device that performs displaying is related to the number of capturing devices. Generally, the more capturing devices are set, the fewer hollow areas appear in the displayed picture.

[0128] Referring to FIG. 7, if two rows (an upper row and a lower row) of capturing devices are set in the basketball court, i.e., the upper row of capturing devices CJ.sub.1 to CJ.sub.6 and the lower row of capturing devices CJ.sub.11 to CJ.sub.16, respectively, compared with setting only one row of capturing devices, the multi-angle free-perspective range thereof is greater.

[0129] Referring to FIG. 8, the user's finger may slide upward to switch the virtual viewpoint for viewing. After sliding the screen, the image displayed on the screen may be as shown in FIG. 9.

[0130] In implementations, if only one row of capturing devices is set, a certain degree of freedom in the vertical direction may also be obtained in the process of image reconstruction to obtain the reconstructed image, but the multi-angle free-perspective range thereof is smaller than that of the scenario where two rows of capturing devices are set in the vertical direction.

[0131] Those skilled in the art may understand that the above respective example embodiments and corresponding drawings are merely for illustrative purposes and are not intended to limit the association relationship between the setting of the capturing devices and the multi-angle free-perspective range, nor are they limitations of operation manners or obtained display effects of the device that performs displaying. Implementations of switching the virtual viewpoint for viewing the to-be-viewed area according to the user instruction will be described in detail hereinafter with reference to FIG. 43 to FIG. 47 and will not be repeated herein.

[0132] Hereinafter, a setting method of capturing devices is further described specifically.

[0133] FIG. 10 is a flowchart of a setting method 1000 of capturing devices in an example embodiment of the present disclosure, which may include the following steps:

[0134] Step S1002, determining a multi-angle free-perspective range, where virtual viewpoint switching viewing in the to-be-viewed area is supported within the multi-angle free-perspective range;

[0135] Step S1004, determining setting positions of the capturing devices according to at least the multi-angle free-perspective range, where the setting positions are suitable for setting the capturing devices to perform data capturing in the to-be-viewed area.

[0136] Those skilled in the art may understand that a completely free perspective may refer to a perspective with 6 degrees of freedom. That is, the user may freely switch the spatial position and perspective of the virtual viewpoint on the device that performs displaying, where the spatial position of the virtual viewpoint may be expressed as (x, y, z), and the perspective may be expressed as three directions of rotation (.theta., .phi., .gamma.). There are 6 degrees of freedom in total, and thus the perspective is referred to as a perspective with 6 degrees of freedom.

[0137] As described above, in the embodiments of the present disclosure, the switching of the virtual viewpoint may be performed within a certain range, which is the multi-angle free-perspective range. That is, within the multi-angle free-perspective range, the position of the virtual viewpoint and the perspective may be arbitrarily switched.

[0138] The multi-angle free-perspective range may be determined according to the needs of the application scenario. For example, in some scenarios, the to-be-viewed area may have a core focus, such as the center of the stage, or the center of the basketball court, or the hoop of the basketball court. In such scenarios, the multi-angle free-perspective range may include a planar or three-dimensional area including the core focus. Those skilled in the art may understand that the to-be-viewed area may be a point, a plane, or a three-dimensional area, which is not limited herein.

[0139] As described above, the multi-angle free-perspective range may be various areas, and further examples are described hereinafter with reference to FIG. 11 to FIG. 15.

[0140] Referring to FIG. 11, point O represents the core focus. The multi-angle free-perspective range may be a sector area with the core focus as the center and located in the same plane as the core focus, such as the sector area A.sub.1OA.sub.2, or the sector area B.sub.1OB.sub.2. The multi-angle free-perspective range may also be a circular plane centered at point O.

[0141] Taking the multi-angle free-perspective range as the sector area A.sub.1OA.sub.2 as an example, the position of the virtual viewpoint may be continuously switched in this area. For example, the position of the virtual viewpoint may be continuously switched from A.sub.1 along the arc segment A.sub.1A.sub.2 to A.sub.2. Alternatively, the position of the virtual viewpoint may also be continuously switched along the arc segment L.sub.1L.sub.2. Alternatively, the position is switched in the multi-angle free-perspective range in other manners. Accordingly, the perspective of the virtual viewpoint may also be changed in this area.
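
Whether a candidate viewpoint position lies inside a planar sector area such as A.sub.1OA.sub.2 can be checked as sketched below. The function name, the use of degrees, and the counter-clockwise angle convention are assumptions; the sector is assumed to span less than a full circle.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def in_sector(point: Point, center: Point, radius: float,
              start_deg: float, end_deg: float) -> bool:
    """True if `point` lies in the sector swept counter-clockwise from start_deg to end_deg."""
    dx, dy = point[0] - center[0], point[1] - center[1]
    if math.hypot(dx, dy) > radius:
        return False  # beyond the arc
    if dx == 0 and dy == 0:
        return True  # the core focus itself
    angle = math.degrees(math.atan2(dy, dx)) % 360.0
    span = (end_deg - start_deg) % 360.0
    return (angle - start_deg) % 360.0 <= span
```

A viewer could use such a check to keep a continuously switched viewpoint position inside the supported range.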

[0142] Further referring to FIG. 12, the core focus may be the center point E of the basketball court. The multi-angle free-perspective range may be a sector area with the center point E as the center and located in the same plane as the center point E, such as the sector area F.sub.121EF.sub.122. The center point E of the basketball court may be located on the ground of the court. Alternatively, the center point E of the basketball court may be at a certain height from the ground. The height of the arc endpoint F.sub.121 and the height of the arc endpoint F.sub.122 of the sector area may be the same, for example, the height H121 in the figure.

[0143] Referring to FIG. 13, the core focus is represented by point O. The multi-angle free-perspective range may be a part of a sphere centered on the core focus. For example, the area C.sub.1C.sub.2C.sub.3C.sub.4 is used to illustrate a partial area of the spherical surface, and the multi-angle free-perspective range may be a three-dimensional range formed by the area C.sub.1C.sub.2C.sub.3C.sub.4 and the point O. Any point within this range may be used as the position of the virtual viewpoint.

[0144] Further referring to FIG. 14, the core focus may be the center point E of the basketball court. The multi-angle free-perspective range may be a part of the sphere centered on the center point E. For example, the area F.sub.131F.sub.132F.sub.133F.sub.134 illustrates a partial area of the spherical surface. The multi-angle free-perspective range may be a three-dimensional range formed by the area F.sub.131F.sub.132F.sub.133F.sub.134 and the center point E.

[0145] In the scenario with the core focus, the position of the core focus may be various, and the multi-angle free-perspective range may also be various, which are not listed herein one by one. Those skilled in the art may understand that the above respective example embodiments are merely examples and are not limitations on the multi-angle free-perspective range. Moreover, the shapes shown therein are not limitations on actual scenarios and applications.

[0146] In implementations, the core focus may be determined according to the scenario. In a shooting scenario, there may also be multiple core focuses, and the multi-angle free-perspective range may be a superposition of multiple sub-ranges.

[0147] In other application scenarios, the multi-angle free-perspective range may also be without the core focus. For example, in some application scenarios, it is necessary to provide multi-angle free-perspective viewing of historic buildings, or to provide multi-angle free-perspective viewing of art exhibitions. Accordingly, the multi-angle free-perspective range may be determined according to the requirements of these scenarios.

[0148] Those skilled in the art may understand that the shape of the multi-angle free-perspective range may be arbitrary. Any point within the multi-angle free-perspective range may be used as the position of the virtual viewpoint.

[0149] Referring to FIG. 15, the multi-angle free-perspective range may be the cube D.sub.1D.sub.2D.sub.3D.sub.4D.sub.5D.sub.6D.sub.7D.sub.8, and the to-be-viewed area is the surface D.sub.1D.sub.2D.sub.3D.sub.4. Then, any point in the cube D.sub.1D.sub.2D.sub.3D.sub.4D.sub.5D.sub.6D.sub.7D.sub.8 may be used as the position of the virtual viewpoint. The perspective of the virtual viewpoint, i.e., the viewing angle, may be various. For example, the position E.sub.6 on the surface D.sub.5D.sub.6D.sub.7D.sub.8 may be selected to view with the perspective of E.sub.6D.sub.1 or to view along the angle of E.sub.6D.sub.9, where the point D.sub.9 is selected from the to-be-viewed area.

[0150] In implementations, after the multi-angle free-perspective range is determined, the positions of the capturing devices may be determined according to the multi-angle free-perspective range.

[0151] In an example embodiment, the setting positions of the capturing devices may be selected within the multi-angle free-perspective range. For example, the setting positions of the capturing devices may be determined at boundary points of the multi-angle free-perspective range.

[0152] Referring to FIG. 16, the core focus may be the center point E of the basketball court, and the multi-angle free-perspective range may be the sector area with the center point E as the center and located in the same plane as the center point E, such as the sector area F.sub.61EF.sub.62. The capturing devices may be set inside the multi-angle free-perspective range, for example, along the arc F.sub.65F.sub.66. Areas that are not covered by the capturing devices may be reconstructed using algorithms. In implementations, the capturing devices may also be set along the arc F.sub.61F.sub.62, and the capturing devices may be set at the ends of the arc to improve the quality of the reconstructed image. Each capturing device may be set towards the center point E of the basketball court. The position of the capturing device may be represented by spatial position coordinates, and the orientation of the capturing device may be represented by three rotation directions.

[0153] In implementations, two or more setting positions may be set, and correspondingly, two or more capturing devices may be set. The number of capturing devices may be determined according to the requirements of the quality of the reconstructed image or video. In a scenario with a higher requirement on the picture quality of the reconstructed image or video, the number of capturing devices may be greater. In a scenario with a lower requirement on the picture quality of the reconstructed image or video, the number of capturing devices may be smaller.

[0154] Still referring to FIG. 16, those skilled in the art may understand that if the higher picture quality of reconstructed image or video and a reduction in the number of holes in the reconstructed image are pursued, a larger number of capturing devices may be set along the arc F.sub.61F.sub.62. For example, 40 cameras may be set.

[0155] Referring to FIG. 17, the core focus may be the center point E of the basketball court, and the multi-angle free-perspective range may be a part of the sphere centered on the center point E. For example, the area F.sub.61F.sub.62F.sub.63F.sub.64 illustrates a partial area of the spherical surface, and the multi-angle free-perspective range may be a three-dimensional range formed by the area F.sub.61F.sub.62F.sub.63F.sub.64 and the center point E. The capturing devices may be set inside the multi-angle free-perspective range, for example, along the arc F.sub.65F.sub.66 and the arc F.sub.67F.sub.68. Similar to the previous example, areas that are not covered by the capturing devices may be reconstructed using algorithms. In implementations, the capturing devices may also be set along the arc F.sub.61F.sub.62 and the arc F.sub.63F.sub.64, and the capturing devices may be set at the ends of the arc to improve the quality of the reconstructed image.

[0156] Each capturing device may be set to face the center point E of the basketball court. Those skilled in the art may understand that, although not being shown in the figure, the number of capturing devices along the arc F.sub.61F.sub.62 may be more than the number of capturing devices along the arc F.sub.63F.sub.64.

[0157] As described above, in some application scenarios, the to-be-viewed area may include the core focus. Accordingly, the multi-angle free-perspective range includes the area where the perspective is directed to the core focus. In such an application scenario, the setting positions of the capturing devices may be selected from an arc-shaped area whose concave direction (radius direction) points to the core focus.

[0158] When the to-be-viewed area includes the core focus, the setting positions are selected in the arc-shaped area pointing to the core focus in the concave direction, so that the capturing devices are arranged with an arc shape. Because the to-be-viewed area includes the core focus, the perspective points to the core focus. In such a scenario, the capturing devices are arranged with the arc shape, such that fewer capturing devices may be used to cover a larger multi-angle free-perspective range.
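
An arc-shaped arrangement in which every capturing device faces the core focus can be sketched in a plane as follows. Even angular spacing, the function name, and the representation of orientation as a single yaw angle are assumptions; as noted above, a full orientation may be represented by three rotation directions.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]
Pose = Tuple[float, float, float]  # x, y, yaw in degrees


def arc_camera_poses(focus: Point, radius: float,
                     start_deg: float, end_deg: float, count: int) -> List[Pose]:
    """Place `count` cameras evenly on an arc around `focus`, each aimed at the focus."""
    if count < 2:
        raise ValueError("at least two setting positions are expected")
    poses = []
    for i in range(count):
        angle = math.radians(start_deg + (end_deg - start_deg) * i / (count - 1))
        x = focus[0] + radius * math.cos(angle)
        y = focus[1] + radius * math.sin(angle)
        # The viewing direction points from the camera back toward the core focus.
        yaw = math.degrees(math.atan2(focus[1] - y, focus[0] - x))
        poses.append((x, y, yaw))
    return poses
```

For example, `arc_camera_poses((0.0, 0.0), 10.0, 0.0, 90.0, 6)` yields six poses on a quarter circle, with the first and last cameras at the ends of the arc.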

[0159] In implementations, the setting positions of the capturing devices may be determined with reference to the perspective range and the boundary shape of the to-be-viewed area. For example, the setting positions of the capturing devices may be determined at a preset interval along the boundary of the to-be-viewed area within the perspective range.
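
Placing setting positions at a preset interval along a boundary can be sketched by walking a polyline. The boundary is assumed here to be an open planar polyline given by its vertices, and the function name is hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def sample_along_boundary(vertices: List[Point], interval: float) -> List[Point]:
    """Return points spaced `interval` apart along the polyline, starting at the first vertex."""
    if interval <= 0:
        raise ValueError("interval must be positive")
    points = [vertices[0]]
    carried = 0.0  # distance walked since the last emitted point
    for (x0, y0), (x1, y1) in zip(vertices, vertices[1:]):
        seg = math.hypot(x1 - x0, y1 - y0)
        if seg == 0:
            continue
        pos = interval - carried  # distance into this segment of the next point
        while pos <= seg + 1e-9:
            t = pos / seg
            points.append((x0 + t * (x1 - x0), y0 + t * (y1 - y0)))
            pos += interval
        carried = seg - (pos - interval)
    return points
```

The interval is measured along the boundary itself, so spacing is preserved across corners.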

[0160] Referring to FIG. 18, the multi-angle free-perspective range may be without the core focus. For example, the position of the virtual viewpoint may be selected from the hexahedron F.sub.81F.sub.82F.sub.83F.sub.84F.sub.85F.sub.86F.sub.87F.sub.88, and the virtual viewpoint position is used for viewing the to-be-viewed area. The boundary of the to-be-viewed area may be the ground boundary of the court. The capturing devices may be set along the intersecting line B.sub.89B.sub.94 of the ground boundary line with the to-be-viewed area. For example, six capturing devices may be set at positions B.sub.89 to B.sub.94. The degree of freedom in the up and down direction may be realized by an algorithm. Alternatively, another row of capturing devices may be set at the positions where the horizontal projection positions thereof are in the intersection line B.sub.89 to B.sub.94.

[0161] In implementations, the multi-angle free-perspective range may also support viewing from the upper side of the to-be-viewed area, and the upper side is in a direction away from the horizontal plane.

[0162] Accordingly, the capturing device may be set on the upper side of the to-be-viewed area by mounting the capturing device on a drone, or may be set on the top of the building where the to-be-viewed area is located. The top of the building is the part of the structure in the direction away from the horizontal plane.

[0163] For example, the capturing device may be set on the top of the basketball stadium, or may be carried by a drone that hovers above the basketball court. Similarly, the capturing device may be set on the top of the stadium where the stage is located, or may be carried by the drone.

[0164] By setting the capturing device on the upper side of the to-be-viewed area, the multi-angle free-perspective range may include the perspective above the to-be-viewed area.

[0165] In implementations, the capturing device may be a camera or a video camera, and the captured data may be pictures or video data.

[0166] Those skilled in the art may understand that the manner in which the capturing device is set at the setting position may be various. For example, the capturing device may be supported by the support frame at the setting position, or in other setting manners.

[0167] In addition, those skilled in the art may understand that the above respective example embodiments are merely examples for illustration, and are not limitations on the setting manner of capturing devices. In various application scenarios, the implementations of determining the setting positions of the capturing devices and setting the capturing devices for capturing according to the multi-angle free-perspective range are all within the protection scope of the present disclosure.

[0168] Hereinafter, the method for generating multi-angle free-perspective data is further described.

[0169] As described above, still referring to FIG. 3, the acquired multiple synchronized images may be processed by the capturing system 31 or the server 32 to generate multi-angle free-perspective data that is capable of supporting the device 33 that performs displaying to switch the virtual viewpoint. The multi-angle free-perspective data may indicate the third-dimension information outside the two-dimensional image through the depth data.

[0170] In an example embodiment, referring to FIG. 19, a method 1900 for generating the multi-angle free-perspective data may include the following steps:

[0171] Step S1902, acquiring multiple synchronized images, where the shooting angles of the multiple images are different;

[0172] Step S1904, determining the depth data of each image based on the multiple images;

[0173] Step S1906, for each of the images, storing the pixel data of each image in a first field, and storing the depth data in at least a second field associated with the first field.
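Steps S1902 to S1906 may be sketched, by way of illustration only, as follows. The names `ViewRecord` and `generate_free_perspective_data` are hypothetical, the images are simplified to rows of single-valued pixels, and the depth estimation is passed in as a caller-supplied function `estimate_depth` because the manner of determining the depth data is not fixed here.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence

Image = List[List[int]]     # rows of pixel values (single channel for brevity)
DepthMap = List[List[int]]  # rows of depth values


@dataclass
class ViewRecord:
    first_field: Image      # pixel data of one image
    second_field: DepthMap  # depth data associated with first_field


def generate_free_perspective_data(
    images: Sequence[Image],
    estimate_depth: Callable[[Sequence[Image]], List[DepthMap]],
) -> List[ViewRecord]:
    # S1902: `images` are the synchronized images with different shooting angles.
    if len(images) < 2:
        raise ValueError("multiple synchronized images are required")
    # S1904: the depth data of each image is determined from all of the images.
    depth_maps = estimate_depth(images)
    if len(depth_maps) != len(images):
        raise ValueError("expected one depth map per image")
    # S1906: store each image's pixel data with its associated depth data.
    return [ViewRecord(img, dep) for img, dep in zip(images, depth_maps)]
```

Keeping the pixel data and the depth data of one image in a single record is only one way to express the association between the first field and the second field.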

[0174] The multiple synchronized images may be images captured by the camera or frame images in video data captured by the video camera. In the process of generating the multi-angle free-perspective data, the depth data of each image may be determined based on the multiple images.

[0175] The depth data may include a depth value corresponding to a pixel of the image. The distance from the capturing device to each point in the to-be-viewed area may be used as the above depth value, and the depth value may directly reflect the geometry of the visible surface in the to-be-viewed area. The depth value may be the distance from respective points in the to-be-viewed area along the optical axis of the camera to the optical center, and the origin of the camera coordinate system may be used as the optical center. Those skilled in the art may understand that the distance may be a relative value, and multiple images may be based on the same reference.
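As a minimal sketch of a depth value measured along the optical axis, the following assumes a conventional pinhole camera model in which a world point X is mapped into the camera coordinate system as R X + t, with the optical center at the origin and the optical axis along the z axis. The function name and the extrinsic parameters `rotation` and `translation` are assumptions for illustration.

```python
def depth_along_optical_axis(point_world, rotation, translation):
    """Depth of a world point: its z coordinate in the camera frame.

    Assumes X_cam = R * X_world + t, with the optical axis along +z.
    `rotation` is a 3x3 nested list and `translation` a 3-element list.
    """
    third_row = rotation[2]
    return sum(r * p for r, p in zip(third_row, point_world)) + translation[2]
```

Under this convention, the depth value of a point differs from its straight-line distance to the optical center unless the point lies on the optical axis.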

[0176] Further, the depth data may include depth values corresponding to the pixels of the image on a one-to-one basis. Alternatively, the depth data may be some values selected from a set of depth values corresponding to the pixels of the image on a one-to-one basis.

[0177] Those skilled in the art may understand that the set of depth values may be stored in the form of a depth map. The original depth map is the image form in which the set of depth values corresponding to the pixels of the image on a one-to-one basis is stored according to the arrangement of the pixel points of the image. In implementations, the depth data may be data obtained by down-sampling the original depth map.
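One simple way to down-sample a depth map, shown only as an example, is to keep every `factor`-th depth value in each direction. The function name and the use of plain decimation (rather than averaging or another filter) are assumptions; other down-sampling manners may equally be used.

```python
def downsample_depth_map(depth_map, factor):
    """Keep every `factor`-th row and column of `depth_map` (a list of rows)."""
    if factor < 1:
        raise ValueError("factor must be at least 1")
    return [row[::factor] for row in depth_map[::factor]]
```

With a factor of 2, a depth map of 4 by 4 values is reduced to 2 by 2 values, i.e., one quarter of the original amount of depth data.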

[0178] In implementations, the pixel data of the image stored in the first field may be original image data, such as data obtained from the capturing device, or may be data with a reduced resolution of the original image data. Further, the pixel data of the image may be the original pixel data of the image, or the pixel data with reduced resolution. The pixel data of the image may be any one of YUV data and RGB data, or may be other data capable of expressing the image.

[0179] In implementations, the amount of the depth data stored in the second field may be the same as or different from the amount of pixel points corresponding to the pixel data of the image stored in the first field. The amount may be determined according to the bandwidth limitation of data transmission of the device terminal that processes the multi-angle free-perspective image data. If the bandwidth is small, the amount of data may be reduced in the above manners such as down-sampling or resolution reduction, and the like.

[0180] In implementations, for each of the images, the pixel data of the image may be sequentially stored in multiple fields in a preset order, and these fields may be consecutive or may be distributed in an interleaving manner with the second field. The fields storing the pixel data of the image may be used as the first fields. Hereinafter, examples are provided for explanation.

[0181] Referring to FIG. 20, the pixel data of an image, represented by pixel 1 to pixel 6 and other pixels not shown in the figure, may be stored in multiple consecutive fields in a preset order. These consecutive fields may be used as the first fields. The depth data corresponding to the image, represented by depth value 1 to depth value 6 and other depth values not shown in the figure, may be stored in multiple consecutive fields in a preset order. These consecutive fields may be used as the second fields. The preset order may be a line-by-line order that follows the distribution positions of the image pixels, or may be another order.

[0182] Referring to FIG. 21, the pixel data and corresponding depth values of an image may also be stored in multiple fields alternately. Multiple fields storing the pixel data may be used as the first fields, and multiple fields storing the depth values may be used as the second fields.

[0183] In implementations, the depth data may be stored in the same order as the pixel data of the image, so that a respective field in the first fields may be associated with a respective field in the second fields, thereby reflecting the depth value corresponding to each pixel.
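The consecutive arrangement of FIG. 20 and the alternating arrangement of FIG. 21 may be sketched as follows for a single image. The function names are hypothetical, each field is simplified to one list element, and the alternating arrangement is assumed here to carry one depth value per pixel.

```python
def pack_consecutive(pixels, depths):
    """FIG. 20 style: all first fields, followed by all second fields."""
    return list(pixels) + list(depths)


def unpack_consecutive(fields, num_pixels):
    """Split consecutive fields back into (pixels, depths)."""
    return fields[:num_pixels], fields[num_pixels:]


def pack_interleaved(pixels, depths):
    """FIG. 21 style: pixel 1, depth value 1, pixel 2, depth value 2, ..."""
    if len(pixels) != len(depths):
        raise ValueError("interleaving assumes one depth value per pixel")
    fields = []
    for pixel, depth in zip(pixels, depths):
        fields.append(pixel)
        fields.append(depth)
    return fields


def unpack_interleaved(fields):
    """Even positions hold pixel data, odd positions hold depth values."""
    return fields[0::2], fields[1::2]
```

In both arrangements the depth values follow the same order as the pixel data, so the k-th depth value recovered is the one associated with the k-th pixel.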

[0184] In implementations, the pixel data and the depth data of multiple images may be stored in various ways. Hereinafter, examples are provided for further explanation.

[0185] Referring to FIG. 22, respective pixels of image 1 are represented by image 1 pixel 1, image 1 pixel 2, and other pixels not shown in the figure, and may be stored in consecutive fields, which may be used as the first fields. The depth data of image 1 is represented by image 1 depth value 1, image 1 depth value 2, and the other depth data not shown in the figure, and may be stored in the fields adjacent to the first fields. These fields may be used as the second fields. Similarly, the pixel data of image 2 may be stored in the first fields, and the depth data of image 2 may be stored in the adjacent second fields.

[0186] Those skilled in the art may understand that respective images in the image stream or respective frame images in the video stream that are continuously captured by one capturing device of multiple synchronized capturing devices may be used as the above image 1 respectively. Similarly, among the multiple synchronized capturing devices, the image captured in synchronization with image 1 may be used as image 2. The capturing device may be the capturing device shown in FIG. 2, or capturing devices in other scenarios.

[0187] Referring to FIG. 23, the pixel data of image 1 and the pixel data of image 2 may be stored in multiple adjacent first fields, and the depth data of image 1 and the depth data of image 2 may be stored in multiple adjacent second fields.

[0188] Referring to FIG. 24, the pixel data of each image in the multiple images may be stored in multiple fields respectively, and these fields may be used as the first fields. Fields storing the pixel data may be interleaved with fields storing the depth values.

[0189] Referring to FIG. 25, the pixel data and the depth values of different images may also be arranged in the interleaving manner. For example, image 1 pixel 1, image 1 depth value 1, image 2 pixel 1, image 2 depth value 1, . . . may be sequentially stored until the completion of storing the pixel data and the depth data corresponding to the first pixel of each image of the multiple images. The adjacent fields thereof store image 1 pixel 2, image 1 depth value 2, image 2 pixel 2, image 2 depth value 2, . . . until the completion of storing of the pixel data and the depth data of each image.

[0190] In summary, the fields storing the pixel data of each image may be used as the first fields, and the fields storing the depth data of the image may be used as the second fields. For each image, the first fields and the second fields associated with the first fields may be stored respectively.

[0191] Those skilled in the art may understand that the above respective example embodiments are merely examples, and are not limitations on the type, size, and arrangement of the fields.
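For illustration, the field orders of FIG. 22, FIG. 23, and FIG. 25 may be enumerated as follows. The function `field_order` and the labels it produces (for example, `I1P2` for image 1 pixel 2 and `I2D1` for image 2 depth value 1) are hypothetical and serve only to make the orderings explicit.

```python
def field_order(num_images, num_pixels, layout):
    """Return field labels in storage order for the named layout."""
    images = range(1, num_images + 1)
    pixels = range(1, num_pixels + 1)
    if layout == "fig22":
        # Per image: its pixel fields, then its adjacent depth fields.
        order = []
        for i in images:
            order += [f"I{i}P{k}" for k in pixels]
            order += [f"I{i}D{k}" for k in pixels]
        return order
    if layout == "fig23":
        # Pixel fields of all images first, then depth fields of all images.
        return ([f"I{i}P{k}" for i in images for k in pixels]
                + [f"I{i}D{k}" for i in images for k in pixels])
    if layout == "fig25":
        # For each pixel index: pixel and depth value of every image in turn.
        return [f"I{i}{kind}{k}"
                for k in pixels for i in images for kind in "PD"]
    raise ValueError(f"unknown layout: {layout}")
```

For two images of two pixels each, the `"fig25"` layout yields the order image 1 pixel 1, image 1 depth value 1, image 2 pixel 1, image 2 depth value 1, followed by the corresponding fields for pixel 2.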

[0192] Referring to FIG. 3, the multi-angle free-perspective data including the first fields and the second fields may be stored in the server 32 in the cloud, and may be transmitted to the CDN or to the device 33 that performs displaying, for reconstructing the image.

[0193] In implementations, both the first fields and the second fields may be pixel fields in the stitched image. The stitched image is used to store the pixel data and the depth data of the multiple images. By using an image format for data storage, the amount of data, the time length of data transmission, and the resource occupation may each be reduced.

[0194] The stitched image may be an image in various formats such as BMP format, JPEG format, PNG format, and the like. These image formats may be the compressed format or the uncompressed format. Those skilled in the art may understand that the image in various formats may include fields corresponding to respective pixels, which are referred to as pixel fields. The size of the stitched image, i.e., parameters like the number of pixels and the aspect ratio of the stitched image, may be determined according to needs, for example, may be determined based on the number of the multiple synchronized images, the amount of data to be stored in each image, the amount of the depth data to be stored in each image, and other factors.

[0195] In implementations, for each image among the multiple synchronized images, the number of bits of the depth data corresponding to the pixels of the image and the number of bits of the pixel data may be associated with the format of the stitched image.

[0196] For example, when the format of the stitched image is the BMP format, the range of the depth value may be 0-255, which is 8-bit data, and the data may be stored as the gray value in the stitched image. Alternatively, the depth value may also be 16-bit data, which may be stored as the gray value at two pixel positions in the stitched image, or stored in two channels at one pixel position in the stitched image.

[0197] When the format of the stitched image is the PNG format, the depth value may also be 8-bit or 16-bit data. In the PNG format, the depth value of 16-bit may be stored as the gray value of one pixel position in the stitched image.
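Storing a 16-bit depth value as two 8-bit values, whether at two pixel positions or in two channels at one pixel position, may be sketched as follows. The function names and the choice to place the high-order byte first are assumptions; the byte order only needs to be the same when storing and when reading.

```python
def split_depth16(value):
    """Split a 16-bit depth value into (high byte, low byte)."""
    if not 0 <= value <= 0xFFFF:
        raise ValueError("depth value must fit in 16 bits")
    return value >> 8, value & 0xFF


def join_depth16(high, low):
    """Rebuild the 16-bit depth value from its two 8-bit parts."""
    return (high << 8) | low
```

Each of the two parts lies in the range 0-255 and can therefore be stored as an 8-bit gray value or channel value.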

[0198] Those skilled in the art may understand that the above example embodiments are not limitations on the storage manner or the number of data bits, and other data storage manners that may be implemented by those skilled in the art fall within the protection scope of the present disclosure.

[0199] In implementations, the stitched image may be split into an image area and a depth map area. The pixel fields of the image area store the pixel data of the multiple images, and the pixel fields of the depth map area store the depth data of the multiple images. The pixel fields storing the pixel data of each image in the image area are used as the first fields, and the pixel fields storing the depth data of each image in the depth map area are used as the second fields.

[0200] In implementations, the image area may be a continuous area, and the depth map area may also be a continuous area.

[0201] Further, in implementations, the stitched image may be equally split, and the two split parts are used as the image area and the depth map area respectively. Alternatively, the stitched image may also be split in an unequal manner according to the amount of the pixel data and the amount of the depth data of the image to be stored.

[0202] For example, referring to FIG. 26, if each minimum square represents one pixel, the image area may be area 1 within the dashed frame, i.e., the upper half area after the stitched image is split equally into an upper half and a lower half. The lower half area of the stitched image may be used as the depth map area.

[0203] Those skilled in the art may understand that FIG. 26 is merely for illustration, and the number of the minimum squares therein is not a limitation on the number of pixels of the stitched image. In addition, the equal splitting may instead split the stitched image into a left half and a right half.
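A stitched image that is split equally into an upper image area and a lower depth map area may be sketched as follows. The function names, the side-by-side placement of the images within each area, and the assumption that every depth map has the same dimensions as its image are choices made for illustration only.

```python
def _side_by_side(tiles):
    """Concatenate equally sized tiles (lists of rows) from left to right."""
    rows = len(tiles[0])
    return [[v for tile in tiles for v in tile[r]] for r in range(rows)]


def stitch(images, depth_maps):
    """Upper half: image area; lower half: depth map area, in the same order."""
    if len(images) != len(depth_maps):
        raise ValueError("expected one depth map per image")
    return _side_by_side(images) + _side_by_side(depth_maps)


def split_equally(stitched):
    """Return (image_area, depth_map_area) of an equally split stitched image."""
    half = len(stitched) // 2
    return stitched[:half], stitched[half:]


def extract_view(stitched, index, width):
    """Return (pixel data, depth data) of the image at position `index`."""
    image_area, depth_area = split_equally(stitched)
    cols = slice(index * width, (index + 1) * width)
    return ([row[cols] for row in image_area],
            [row[cols] for row in depth_area])
```

Because the two areas use the same arrangement, the pixel fields of an image and the pixel fields holding its depth data occupy the same columns in the upper half and the lower half respectively.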