Virtual Dispersive Networking Systems And Methods

Twitchell, JR.; Robert W.

U.S. patent application number 16/812676 was filed with the patent office on 2020-03-09 and published on 2020-09-03 as publication number 20200280510, for virtual dispersive networking systems and methods. The applicant listed for this patent is DISPERSIVE NETWORKS, INC. Invention is credited to Robert W. Twitchell, JR.

| Application Number | 16/812676 (Publication No. 20200280510) |

| Family ID | 1000004838270 |

| Filed Date | 2020-03-09 |

| United States Patent Application | 20200280510 |

| Kind Code | A1 |

| Twitchell, JR.; Robert W. | September 3, 2020 |

VIRTUAL DISPERSIVE NETWORKING SYSTEMS AND METHODS

Abstract

A method of communicating data using virtualization includes splitting, at endpoint software running on a first device, first data for communication to a destination device into a first plurality of data streams; selecting, at the first device by the endpoint software, a first plurality of deflects for use in communicating the first plurality of data streams; communicating each of the first plurality of data streams over a different one of the selected first plurality of deflects; splitting, at the first deflect, a particular data stream of the first plurality of data streams into a second plurality of data streams; selecting, at the first deflect, a second plurality of deflects for use in communicating the second plurality of data streams; and communicating each of the second plurality of data streams over a different one of the selected second plurality of deflects.

| Inventors: | Twitchell, JR.; Robert W. (Alpharetta, GA) |

| Applicant: | DISPERSIVE NETWORKS, INC. |

| Family ID: | 1000004838270 |

| Appl. No.: | 16/812676 |

| Filed: | March 9, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15965459 | Apr 27, 2018 | 10693764 |
| 16812676 | | |
| PCT/US16/60196 | Nov 2, 2016 | |
| 15965459 | | |
| 62249619 | Nov 2, 2015 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04L 45/124 20130101; H04L 63/1408 20130101; G06F 9/45558 20130101; H04L 67/38 20130101; H04L 63/0442 20130101; H04L 45/24 20130101; G06F 2009/4557 20130101; G06F 2009/45595 20130101; H04L 69/14 20130101; H04L 45/06 20130101; H04L 63/18 20130101 |

| International Class: | H04L 12/721 20060101 H04L012/721; H04L 12/707 20060101 H04L012/707; H04L 29/06 20060101 H04L029/06; G06F 9/455 20060101 G06F009/455 |

Claims

1. A method of communicating data using virtualization, the method comprising: (a) spawning, at a first device, a first plurality of virtual machines that each virtualizes network capabilities of the first device such that a first plurality of virtual network connections are provided; (b) splitting, at endpoint software running on the first device, first data for communication to a destination device into a first plurality of data streams; (c) selecting, at the first device by the endpoint software, a first plurality of deflects for use in communicating the first plurality of data streams; (d) communicating each of the first plurality of data streams using a different one of the first plurality of virtual network connections over a different one of the selected first plurality of deflects; (e) spawning, at a first deflect of the selected first plurality of deflects, a second plurality of virtual machines that each virtualizes network capabilities of the first deflect such that a second plurality of virtual network connections are provided; (f) receiving, at the first deflect, a first data stream of the first plurality of data streams; (g) splitting, at the first deflect, the first data stream into a second plurality of data streams; (h) selecting, at the first deflect, a second plurality of deflects for use in communicating the second plurality of data streams; (i) communicating each of the second plurality of data streams using a different one of the second plurality of virtual network connections over a different one of the selected second plurality of deflects; (j) receiving, at a second deflect from a first set of deflects, the second plurality of data streams; (k) reassembling, at the second deflect, the second plurality of data streams into the first data stream, and communicating the first data stream onward to another device; (l) receiving, at a second device from a second set of deflects, the first plurality of data streams including the first data stream; and (m) reassembling, at the second device, the first plurality of data streams into the first data.

2. The method of claim 1, wherein spawning, at the first deflect, the second plurality of virtual machines occurs before receiving, at the first deflect, the first data stream of the first plurality of data streams.

3. The method of claim 1, wherein receiving, at the first deflect, the first data stream of the first plurality of data streams occurs before spawning, at the first deflect, the second plurality of virtual machines.

4. The method of claim 1, wherein spawning, at the first deflect, the second plurality of virtual machines occurs in response to receiving, at the first deflect, the first data stream of the first plurality of data streams.

5. The method of claim 1, wherein the method further comprises spawning, at the second deflect, a third plurality of virtual machines that each virtualizes network capabilities of the second deflect such that a third plurality of virtual network connections are provided.

6. The method of claim 1, wherein the method further comprises spawning, at the second deflect, a third plurality of virtual machines that each virtualizes network capabilities of the second deflect such that a third plurality of virtual network connections are provided, and wherein receiving, at the second deflect, the second plurality of data streams comprises receiving, at the second deflect via the third plurality of virtual network connections, the second plurality of data streams.

7. The method of claim 1, wherein the method further comprises spawning, at the second device, a fourth plurality of virtual machines that each virtualizes network capabilities of the second device such that a fourth plurality of virtual network connections are provided.

8. The method of claim 1, wherein the method further comprises spawning, at the second device, a fourth plurality of virtual machines that each virtualizes network capabilities of the second device such that a fourth plurality of virtual network connections are provided, and wherein receiving, at the second device, the first plurality of data streams comprises receiving, at the second device via the fourth plurality of virtual network connections, the first plurality of data streams.

9. The method of claim 1, wherein the first set of deflects comprises one or more of the second plurality of deflects.

10. The method of claim 1, wherein the first set of deflects comprises none of the second plurality of deflects.

11. The method of claim 1, wherein the first set of deflects is the second plurality of deflects.

12. The method of claim 1, wherein the second set of deflects comprises one or more of the first plurality of deflects.

13. The method of claim 1, wherein the second set of deflects comprises none of the first plurality of deflects.

14. The method of claim 1, wherein the second set of deflects comprises the second deflect.

15. The method of claim 1, wherein the second set of deflects does not comprise the second deflect.

16. The method of claim 1, wherein each deflect represents deflect software loaded on a distinct physical computing device.

17. The method of claim 1, wherein two or more deflects represent deflect software loaded on the same physical computing device.

18. The method of claim 1, wherein the first device or the second device has both deflect software and endpoint software loaded thereon.

19. The method of claim 1, wherein one or more deflects represent deflect software loaded on a guest operating system running in a virtual machine.

20. The method of claim 1, wherein the method involves communication of a data stream between two virtual machines running on the same physical computing device.

21. The method of claim 1, wherein the method involves utilizing encryption for communications between nodes.

22. The method of claim 1, wherein the method involves utilizing node-to-node encryption for communications between nodes in combination with end-to-end encryption for the entire communication from the first device to the second device.

23. The method of claim 1, wherein application-level encryption is utilized.

24. The method of claim 1, wherein public-key encryption is utilized and a key is communicated utilizing a methodology involving splits inside of splits.

25. The method of claim 1, wherein interleaving is utilized inside of one or more splits.

26. The method of claim 1, wherein the insertion of red herring data is utilized within one or more splits.

27. The method of claim 1, wherein selecting, at the first device by the endpoint software, the first plurality of deflects for use in communicating the first plurality of data streams comprises selecting the first plurality of deflects based at least in part on networking information.

28. The method of claim 1, wherein endpoint software is loaded on a desktop computer.

29. The method of claim 1, wherein endpoint software is loaded on a laptop computer.

30. The method of claim 1, wherein endpoint software is loaded on a tablet.

31. The method of claim 1, wherein endpoint software is loaded on a smartphone.

32. The method of claim 1, wherein endpoint software is loaded on a smart watch.

33. The method of claim 1, wherein endpoint software is loaded on a wearable computing device.

34. The method of claim 1, wherein endpoint software is loaded on a smart appliance.

35. The method of claim 1, wherein deflect software is loaded on a desktop computer.

36. The method of claim 1, wherein deflect software is loaded on a laptop computer.

37. The method of claim 1, wherein deflect software is loaded on a tablet.

38. The method of claim 1, wherein deflect software is loaded on a smartphone.

39. The method of claim 1, wherein deflect software is loaded on a smart watch.

40. The method of claim 1, wherein deflect software is loaded on a wearable computing device.

41. The method of claim 1, wherein deflect software is loaded on a smart appliance.

42. A method of communicating data using virtualization, the method comprising: (a) spawning, at a first device, a first plurality of virtual machines that each virtualizes network capabilities of the first device such that a first plurality of virtual network connections are provided; (b) splitting, at endpoint software running on the first device, first data for communication to a destination device into a first plurality of data streams; (c) selecting, at the first device by the endpoint software, a first plurality of deflects for use in communicating the first plurality of data streams; (d) communicating each of the first plurality of data streams using a different one of the first plurality of virtual network connections over a different one of the selected first plurality of deflects; (e) spawning, at a first deflect of the selected first plurality of deflects, a second plurality of virtual machines that each virtualizes network capabilities of the first deflect such that a second plurality of virtual network connections are provided; (f) splitting, at the first deflect, a particular data stream of the first plurality of data streams into a second plurality of data streams; (g) selecting, at the first deflect, a second plurality of deflects for use in communicating the second plurality of data streams; (h) communicating each of the second plurality of data streams using a different one of the second plurality of virtual network connections over a different one of the selected second plurality of deflects.

43. A method of communicating data using virtualization, the method comprising: (a) splitting, at endpoint software running on a first device, first data for communication to a destination device into a first plurality of data streams; (b) selecting, at the first device by the endpoint software, a first plurality of deflects for use in communicating the first plurality of data streams; (c) communicating each of the first plurality of data streams over a different one of the selected first plurality of deflects; (d) splitting, at the first deflect, a particular data stream of the first plurality of data streams into a second plurality of data streams; (e) selecting, at the first deflect, a second plurality of deflects for use in communicating the second plurality of data streams; and (f) communicating each of the second plurality of data streams over a different one of the selected second plurality of deflects.

44. One or more computer readable media containing computer executable instructions for performing a disclosed method.

45. A system for performing a disclosed method.

46. Software for performing a disclosed method.

47. A system as disclosed.

48. A method as disclosed.

49. Software as disclosed.

Description

CROSS-REFERENCE TO RELATED APPLICATION

[0001] The present application hereby incorporates by reference the disclosure of each of U.S. provisional patent application 62/249,619 and WO/2017/079359, both from which priority is claimed.

COPYRIGHT STATEMENT

[0002] All of the material in this patent document is subject to copyright protection under the copyright laws of the United States and other countries. The copyright owner has no objection to the facsimile reproduction by anyone of the patent document or the patent disclosure, as it appears in official governmental records but, otherwise, all other copyright rights whatsoever are reserved.

BACKGROUND OF THE INVENTION

[0003] The present invention generally relates to network routing and network communications.

[0004] Conventional networks, such as the Internet, rely heavily on centralized routers to perform routing tasks in accomplishing network communications. The vulnerability and fragility of these conventional networks make entities feel insecure about using them.

[0005] Further, network attacks are a common occurrence in today's cyber environment. Computers that are not protected by firewalls are under constant attack. Servers are especially vulnerable due to the fact that they must keep a port open on their firewall to enable a connection to a client. Hackers use this vulnerability to attack the server or computer with a Denial of Service (DoS) attack to deny use of the server.

[0006] Cyber security is under siege from hackers. Since cyber security solutions can be bought, studied, and reverse engineered, hackers often have had a free hand attacking and stealing information from individuals, companies, and nations. One major issue is that, traditionally, the security industry creates "a single lock" for all of its customers and assumes that no one can break the lock. However, this paradigm is not working, as companies and nations have had information stolen. Recently, for example, Sony and the United States Office of Personnel Management have been the victims of hacks. Once a hacker figures out a technique for breaking a cyber defense tool such as a firewall, deep packet inspection, virus protection, an intrusion detection system, an IPS, or others, the technique generally becomes part of the hacker's playbook.

[0007] Further, when vendors develop red team tools that are used to test a company's defenses, these tools can simply be pointed at other companies and used by hackers to break encryption and hack firewalls and intrusion detection systems.

[0008] Needs exist for improvement in cyber security. For example, needs exist for security mechanisms that are not algorithmic in nature and which can be modified in unique ways so that security can be achieved with simple software modifications. Needs exist for system configuration to be leveraged to change operation functionality as well. One or more of these needs is addressed by one or more aspects of the present invention.

SUMMARY OF THE INVENTION

[0009] The present invention includes many aspects and features. Moreover, while many aspects and features relate to, and are described in, the context of network routing and network communications associated with the Internet, the present invention is not limited to use only in conjunction with the Internet and is applicable in other networked systems not associated with the Internet, as will become apparent from the following summaries and detailed descriptions of aspects, features, and one or more embodiments of the present invention.

[0010] A first aspect relates to a method of communicating data using virtualization which includes splitting, at endpoint software running on a first device, first data for communication to a destination device into a first plurality of data streams; selecting, at the first device by the endpoint software, a first plurality of deflects for use in communicating the first plurality of data streams; communicating each of the first plurality of data streams over a different one of the selected first plurality of deflects; splitting, at the first deflect, a particular data stream of the first plurality of data streams into a second plurality of data streams; selecting, at the first deflect, a second plurality of deflects for use in communicating the second plurality of data streams; and communicating each of the second plurality of data streams over a different one of the selected second plurality of deflects.

[0011] Another aspect relates to a method of communicating data using virtualization that includes spawning, at a first device, a first plurality of virtual machines that each virtualizes network capabilities of the first device such that a first plurality of virtual network connections are provided; splitting, at endpoint software running on the first device, first data for communication to a destination device into a first plurality of data streams; selecting, at the first device by the endpoint software, a first plurality of deflects for use in communicating the first plurality of data streams; communicating each of the first plurality of data streams using a different one of the first plurality of virtual network connections over a different one of the selected first plurality of deflects; spawning, at a first deflect of the selected first plurality of deflects, a second plurality of virtual machines that each virtualizes network capabilities of the first deflect such that a second plurality of virtual network connections are provided; splitting, at the first deflect, a particular data stream of the first plurality of data streams into a second plurality of data streams; selecting, at the first deflect, a second plurality of deflects for use in communicating the second plurality of data streams; and communicating each of the second plurality of data streams using a different one of the second plurality of virtual network connections over a different one of the selected second plurality of deflects.

[0012] Another aspect relates to a method of communicating data using virtualization. The method includes spawning, at a first device, a first plurality of virtual machines that each virtualizes network capabilities of the first device such that a first plurality of virtual network connections are provided; splitting, at endpoint software running on the first device, first data for communication to a destination device into a first plurality of data streams; selecting, at the first device by the endpoint software, a first plurality of deflects for use in communicating the first plurality of data streams; communicating each of the first plurality of data streams using a different one of the first plurality of virtual network connections over a different one of the selected first plurality of deflects; spawning, at a first deflect of the selected first plurality of deflects, a second plurality of virtual machines that each virtualizes network capabilities of the first deflect such that a second plurality of virtual network connections are provided; receiving, at the first deflect, a first data stream of the first plurality of data streams; splitting, at the first deflect, the first data stream into a second plurality of data streams; selecting, at the first deflect, a second plurality of deflects for use in communicating the second plurality of data streams; communicating each of the second plurality of data streams using a different one of the second plurality of virtual network connections over a different one of the selected second plurality of deflects; receiving, at a second deflect from a first set of deflects, the second plurality of data streams; reassembling, at the second deflect, the second plurality of data streams into the first data stream, and communicating the first data stream onward to another device; receiving, at a second device from a second set of deflects, the first plurality of data streams including the first data stream; and reassembling, at the second device, the first plurality of data streams into the first data.
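As a purely illustrative sketch of the splitting and reassembly recited in the foregoing aspects, the following Python models first data being split into a first plurality of data streams, one of those streams being split again at a first deflect, and both levels then being reassembled. The round-robin chunking, the `chunk_size` parameter, the sequence-number framing, and the function names `split`, `reassemble`, `encode`, and `decode` are all assumptions made for illustration; the disclosure does not prescribe a particular splitting scheme. Virtual machines, deflect selection, and network transport are omitted.

```python
import struct
from typing import List, Tuple

Chunk = Tuple[int, bytes]   # (sequence number, payload); hypothetical framing
Stream = List[Chunk]


def split(data: bytes, n_streams: int, chunk_size: int = 4) -> List[Stream]:
    """Deal fixed-size chunks of data round-robin into n_streams streams."""
    if n_streams < 1 or chunk_size < 1:
        raise ValueError("n_streams and chunk_size must be positive")
    streams: List[Stream] = [[] for _ in range(n_streams)]
    for seq, start in enumerate(range(0, len(data), chunk_size)):
        streams[seq % n_streams].append((seq, data[start:start + chunk_size]))
    return streams


def reassemble(streams: List[Stream]) -> bytes:
    """Merge streams back into the original bytes using the sequence numbers."""
    chunks = [chunk for stream in streams for chunk in stream]
    return b"".join(payload for _, payload in sorted(chunks))


def encode(stream: Stream) -> bytes:
    """Serialize one stream so that it can itself be split at a deflect."""
    return b"".join(struct.pack("!II", seq, len(p)) + p for seq, p in stream)


def decode(blob: bytes) -> Stream:
    """Inverse of encode: recover the (sequence number, payload) chunks."""
    stream, pos = [], 0
    while pos < len(blob):
        seq, length = struct.unpack_from("!II", blob, pos)
        pos += 8
        stream.append((seq, blob[pos:pos + length]))
        pos += length
    return stream


first_data = b"virtual dispersive networking"
first_streams = split(first_data, 3)                   # at the first device
second_streams = split(encode(first_streams[0]), 2)    # at the first deflect
restored_stream = decode(reassemble(second_streams))   # at the second deflect
received = [restored_stream] + first_streams[1:]       # at the second device
assert reassemble(received) == first_data
```

In this sketch the second device cannot tell that one of its streams was split a second time along the way, which is the property that nesting a split inside a split relies on.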

[0013] In a feature of this aspect, spawning, at the first deflect, the second plurality of virtual machines occurs before receiving, at the first deflect, the first data stream of the first plurality of data streams.

[0014] In a feature of this aspect, receiving, at the first deflect, the first data stream of the first plurality of data streams occurs before spawning, at the first deflect, the second plurality of virtual machines.

[0015] In a feature of this aspect, spawning, at the first deflect, the second plurality of virtual machines occurs in response to receiving, at the first deflect, the first data stream of the first plurality of data streams.

[0016] In a feature of this aspect, the method further comprises spawning, at the second deflect, a third plurality of virtual machines that each virtualizes network capabilities of the second deflect such that a third plurality of virtual network connections are provided.

[0017] In a feature of this aspect, the method further comprises spawning, at the second deflect, a third plurality of virtual machines that each virtualizes network capabilities of the second deflect such that a third plurality of virtual network connections are provided, and wherein receiving, at the second deflect, the second plurality of data streams comprises receiving, at the second deflect via the third plurality of virtual network connections, the second plurality of data streams.

[0018] In a feature of this aspect, the method further comprises spawning, at the second device, a fourth plurality of virtual machines that each virtualizes network capabilities of the second device such that a fourth plurality of virtual network connections are provided.

[0019] In a feature of this aspect, the method further comprises spawning, at the second device, a fourth plurality of virtual machines that each virtualizes network capabilities of the second device such that a fourth plurality of virtual network connections are provided, and wherein receiving, at the second device, the first plurality of data streams comprises receiving, at the second device via the fourth plurality of virtual network connections, the first plurality of data streams.

[0020] In a feature of this aspect, the first set of deflects comprises one or more of the second plurality of deflects.

[0021] In a feature of this aspect, the first set of deflects comprises none of the second plurality of deflects.

[0022] In a feature of this aspect, the first set of deflects is the second plurality of deflects.

[0023] In a feature of this aspect, the second set of deflects comprises one or more of the first plurality of deflects.

[0024] In a feature of this aspect, the second set of deflects comprises none of the first plurality of deflects.

[0025] In a feature of this aspect, the second set of deflects comprises the second deflect.

[0026] In a feature of this aspect, the second set of deflects does not comprise the second deflect.

[0027] In a feature of this aspect, each deflect represents deflect software loaded on a distinct physical computing device.

[0028] In a feature of this aspect, two or more deflects represent deflect software loaded on the same physical computing device.

[0029] In a feature of this aspect, the first device or the second device has both deflect software and endpoint software loaded thereon.

[0030] In a feature of this aspect, one or more deflects represent deflect software loaded on a guest operating system running in a virtual machine.

[0031] In a feature of this aspect, the method involves communication of a data stream between two virtual machines running on the same physical computing device.

[0032] In a feature of this aspect, the method involves utilizing encryption for communications between nodes.

[0033] In a feature of this aspect, the method involves utilizing node-to-node encryption for communications between nodes in combination with end-to-end encryption for the entire communication from the first device to the second device.

[0034] In a feature of this aspect, application-level encryption is utilized.

[0035] In a feature of this aspect, public-key encryption is utilized and a key is communicated utilizing a methodology involving splits inside of splits.

[0036] In a feature of this aspect, interleaving is utilized inside of one or more splits.

[0037] In a feature of this aspect, the insertion of red herring data is utilized within one or more splits.

[0038] In a feature of this aspect, selecting, at the first device by the endpoint software, the first plurality of deflects for use in communicating the first plurality of data streams comprises selecting the first plurality of deflects based at least in part on networking information.

[0039] In a feature of this aspect, endpoint software is loaded on a desktop computer.

[0040] In a feature of this aspect, endpoint software is loaded on a laptop computer.

[0041] In a feature of this aspect, endpoint software is loaded on a tablet.

[0042] In a feature of this aspect, endpoint software is loaded on a smartphone.

[0043] In a feature of this aspect, endpoint software is loaded on a smart watch.

[0044] In a feature of this aspect, endpoint software is loaded on a wearable computing device.

[0045] In a feature of this aspect, endpoint software is loaded on a smart appliance.

[0046] In a feature of this aspect, deflect software is loaded on a desktop computer.

[0047] In a feature of this aspect, deflect software is loaded on a laptop computer.

[0048] In a feature of this aspect, deflect software is loaded on a tablet.

[0049] In a feature of this aspect, deflect software is loaded on a smartphone.

[0050] In a feature of this aspect, deflect software is loaded on a smart watch.

[0051] In a feature of this aspect, deflect software is loaded on a wearable computing device.

[0052] In a feature of this aspect, deflect software is loaded on a smart appliance.

[0053] Another aspect relates to one or more computer readable media containing computer executable instructions for performing a disclosed method.

[0054] Another aspect relates to a system for performing a disclosed method.

[0055] Another aspect relates to software for performing a disclosed method.

[0056] In addition to the disclosed aspects and features of the present invention, it should be noted that the present invention further encompasses the various possible combinations and subcombinations of such aspects and features. Thus, for example, any aspect may be combined with an aforementioned feature in accordance with the present invention without requiring any other aspect or feature.
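The interleaving and red herring features summarized above can be pictured with a short Python sketch. It is a minimal illustration under stated assumptions, not the disclosed implementation: the block-interleaver ordering, the `depth` and `count` parameters, the use of a shared `seed` so that the receiver can locate the decoys, and all function names are hypothetical. Python's `random` module is used only to keep the example short and is not suitable for real security purposes.

```python
import random
from typing import List


def interleave(chunks: List[bytes], depth: int) -> List[bytes]:
    """Reorder chunks by taking every depth-th item, one residue class at a time."""
    return [c for r in range(depth) for c in chunks[r::depth]]


def deinterleave(chunks: List[bytes], depth: int) -> List[bytes]:
    """Undo interleave by writing chunks back to their original positions."""
    out = [b""] * len(chunks)
    source = iter(chunks)
    for r in range(depth):
        for i in range(r, len(chunks), depth):
            out[i] = next(source)
    return out


def _decoy(rng: random.Random) -> bytes:
    """Produce four pseudo-random bytes to serve as a red herring chunk."""
    return bytes(rng.getrandbits(8) for _ in range(4))


def add_red_herrings(chunks: List[bytes], count: int, seed: int) -> List[bytes]:
    """Insert count decoy chunks at positions derived from the shared seed."""
    rng = random.Random(seed)
    out = list(chunks)
    for _ in range(count):
        pos = rng.randrange(len(out) + 1)
        out.insert(pos, _decoy(rng))
    return out


def strip_red_herrings(chunks: List[bytes], count: int, seed: int) -> List[bytes]:
    """Replay the sender's random choices and delete the decoys in reverse order."""
    rng = random.Random(seed)
    real = len(chunks) - count
    positions = []
    for k in range(count):
        positions.append(rng.randrange(real + k + 1))
        _decoy(rng)  # consume the same random values the sender consumed
    out = list(chunks)
    for pos in reversed(positions):
        del out[pos]
    return out


chunks = [b"AA", b"BB", b"CC", b"DD", b"EE"]
sent = add_red_herrings(interleave(chunks, 2), count=2, seed=1234)
received = deinterleave(strip_red_herrings(sent, count=2, seed=1234), 2)
assert received == chunks
```

An observer of a single split would see reordered chunks mixed with decoys, while a receiver holding the same parameters can recover the original order.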

BRIEF DESCRIPTION OF THE DRAWINGS

[0057] One or more preferred embodiments of the present invention now will be described in detail with reference to the accompanying drawings.

[0058] FIG. 1 illustrates components of a VDR software client loaded onto a client device in accordance with an embodiment of the present invention.

[0059] FIG. 2 illustrates how a VDR client gathers LAN routing information and queries an external network for backbone information and application-specific routing information in accordance with an embodiment of the present invention.

[0060] FIG. 3 illustrates how data is added to the payload of a packet on each of a plurality of hops in accordance with an embodiment of the present invention.

[0061] FIGS. 4A-C provide a simplified example of a VDR software response to a network attack in accordance with an embodiment of the present invention.

[0062] FIGS. 5A-C illustrate an exemplary VDR implementation in accordance with a preferred embodiment of the present invention.

[0063] FIG. 6 includes Table 1, which table details data stored by a node in the payload of a packet.

[0064] FIG. 7 illustrates a direct connection between two clients in accordance with one or more preferred implementations.

[0065] FIG. 8 illustrates an exemplary process for direct transfer of a file from a first client to a second client in accordance with one or more preferred implementations.

[0066] FIG. 9B illustrates an exemplary user interface for a Sharzing file transfer application in accordance with one or more preferred implementations.

[0067] FIG. 10 presents table 9, which illustrates potential resource reduction in accordance with one or more preferred implementations.

[0068] FIG. 11 illustrates client and server architectures in accordance with one or more preferred implementations.

[0069] FIGS. 12 and 13 illustrate exemplary processes for downloading of a file in accordance with one or more preferred implementations.

[0070] FIG. 14 illustrates the use of a dispersive virtual machine implemented as part of a software application that can be easily downloaded to a device such as a PC, smart phone, tablet, or server.

[0071] FIG. 15 illustrates a methodology in which data to be sent from a first device to another device is split up into multiple parts which are sent separately over different routes and then reassembled at the other device.

[0072] FIG. 16 illustrates how multiple packets can be sent over different deflects in a direct spreading of packets methodology.

[0073] FIG. 17 illustrates how multiple packets can be sent to different IP addresses and/or ports in a hopping IP addresses and ports methodology.

[0074] FIG. 18 illustrates an exemplary system architecture configured to allow clients in a task network to access the Internet through an interface server using virtual dispersive networking (VDN) spread spectrum protocols.

[0075] FIG. 19 illustrates an exemplary system architecture configured to enable a workstation with four independent connections to the internet to send traffic using virtual dispersive networking spread spectrum protocols to an interface server from each independent internet connection.

[0076] FIGS. 20-23 illustrate an exemplary scenario utilizing point of entry gateways.

[0077] FIGS. 24-27 illustrate a scenario similar to that illustrated in FIGS. 20-23, only with one or more deflects utilized for some communications or connections.

[0078] FIG. 28 illustrates the overlapping of data by sending it to a mobile device via two different wireless networks.

[0079] FIG. 29 illustrates VDN routing from a first virtual thin client to another virtual thin client using two deflects that are configured simply to pass data through.

[0080] FIG. 30 illustrates a system in which deflects are configured to reformat data to another protocol.

[0081] FIG. 31A illustrates a first packet which includes data A, as well as a second packet which includes data B.

[0082] FIG. 31B illustrates a superframe which has been constructed that includes both data A and data B.

[0083] FIGS. 32-33 illustrate a process in which a superframe is sent from a first virtual thin client to a deflect.

[0084] FIG. 34 illustrates a superframe which itself includes a superframe (which includes two packets) as well as a packet.

[0085] FIG. 35 illustrates how communications are intercepted at the session layer and parsed out to a plurality of links.

[0086] FIG. 36 illustrates a plurality of data paths from a first end device to a second end device.

[0087] FIG. 37 illustrates several data paths that pass through multiple deflects.

[0088] FIG. 38 illustrates how a connection from a first VTC to a second VTC through a second deflect is still possible even though it is not possible to connect through a first or third deflect.

[0089] FIG. 39 illustrates a system including two end clients that are communicating over a network that includes three deflects.

[0090] FIGS. 40-47 illustrate exemplary systems utilizing splitting of communications.

DETAILED DESCRIPTION

[0091] As a preliminary matter, it will readily be understood by one having ordinary skill in the relevant art ("Ordinary Artisan") that the present invention has broad utility and application. Furthermore, any embodiment discussed and identified as being "preferred" is considered to be part of a best mode contemplated for carrying out the present invention. Other embodiments also may be discussed for additional illustrative purposes in providing a full and enabling disclosure of the present invention. Moreover, many embodiments, such as adaptations, variations, modifications, and equivalent arrangements, will be implicitly disclosed by the embodiments described herein and fall within the scope of the present invention.

[0092] Accordingly, while the present invention is described herein in detail in relation to one or more embodiments, it is to be understood that this disclosure is illustrative and exemplary of the present invention, and is made merely for the purposes of providing a full and enabling disclosure of the present invention. The detailed disclosure herein of one or more embodiments is not intended, nor is to be construed, to limit the scope of patent protection afforded the present invention, which scope is to be defined by the claims and the equivalents thereof. It is not intended that the scope of patent protection afforded the present invention be defined by reading into any claim a limitation found herein that does not explicitly appear in the claim itself.

[0093] Thus, for example, any sequence(s) and/or temporal order of steps of various processes or methods that are described herein are illustrative and not restrictive. Accordingly, it should be understood that, although steps of various processes or methods may be shown and described as being in a sequence or temporal order, the steps of any such processes or methods are not limited to being carried out in any particular sequence or order, absent an indication otherwise. Indeed, the steps in such processes or methods generally may be carried out in various different sequences and orders while still falling within the scope of the present invention. Accordingly, it is intended that the scope of patent protection afforded the present invention is to be defined by the appended claims rather than the description set forth herein.

[0094] Additionally, it is important to note that each term used herein refers to that which the Ordinary Artisan would understand such term to mean based on the contextual use of such term herein. To the extent that the meaning of a term used herein--as understood by the Ordinary Artisan based on the contextual use of such term--differs in any way from any particular dictionary definition of such term, it is intended that the meaning of the term as understood by the Ordinary Artisan should prevail.

[0095] Furthermore, it is important to note that, as used herein, "a" and "an" each generally denotes "at least one," but does not exclude a plurality unless the contextual use dictates otherwise. Thus, reference to "a picnic basket having an apple" describes "a picnic basket having at least one apple" as well as "a picnic basket having apples." In contrast, reference to "a picnic basket having a single apple" describes "a picnic basket having only one apple."

[0096] When used herein to join a list of items, "or" denotes "at least one of the items," but does not exclude a plurality of items of the list. Thus, reference to "a picnic basket having cheese or crackers" describes "a picnic basket having cheese without crackers", "a picnic basket having crackers without cheese", and "a picnic basket having both cheese and crackers." Finally, when used herein to join a list of items, "and" denotes "all of the items of the list." Thus, reference to "a picnic basket having cheese and crackers" describes "a picnic basket having cheese, wherein the picnic basket further has crackers," as well as describes "a picnic basket having crackers, wherein the picnic basket further has cheese."

[0097] Further, as used herein, the term server may be utilized to refer to either a single server or a plurality of servers working together.

[0098] Referring now to the drawings, one or more preferred embodiments of the present invention are next described. The following description of one or more preferred embodiments is merely exemplary in nature and is in no way intended to limit the invention, its implementations, or uses.

VDR

[0099] Virtual dispersive routing (hereinafter, "VDR") relates generally to providing routing capabilities at a plurality of client devices using virtualization. Whereas traditional routing calls for most, if not all, routing functionality to be carried out by centrally located specialized routing devices, VDR enables dispersed client devices to assist with, or even take over, routing functionality, and thus is properly characterized as dispersive. Advantageously, because routing is performed locally at a client device, a routing protocol is selected by the client based upon connection requirements of the local application initiating the connection. A protocol can be selected for multiple such connections and multiple routing protocols can even be utilized simultaneously. The fragile nature of the routing protocols will be appreciated, and thus virtualization is utilized together with the localization of routing to provide a much more robust system. Consequently, such dispersive routing is properly characterized as virtual.

[0100] More specifically, preferred VDR implementations require that a VDR software client be loaded on each client device to help control and optimize network communications and performance. Preferably, VDR is implemented exclusively as software and does not include any hardware components. Preferably, the basic components of a VDR software client include a routing platform (hereinafter, "RP"); a virtual machine monitor (hereinafter, "VMM"); a dispersive controller (hereinafter, "DC"); and an application interface (hereinafter, "AI"). FIG. 1 illustrates each of these components loaded onto a client device. Each of these components is now discussed in turn.

The Routing Platform (RP) and Multiple Routing Protocols

[0101] Despite eschewing the traditional routing model utilizing central points of control, VDR is designed to function with existing routing protocols. Supported routing protocols, together with software necessary for their use, are included in the routing platform component of the VDR software, which can be seen in FIG. 1. For example, the RP includes software to implement and support the Interior Gateway Routing Protocol ("IGRP"), the Enhanced Interior Gateway Routing Protocol ("EIGRP"), the Border Gateway Protocol ("BGP"), the Open Shortest Path First ("OSPF") protocol, and the Constrained Shortest Path First ("CSPF") protocol. It will be appreciated that in at least some embodiments, a port will be needed to allow conventional routing software to run on a chip core (for example, a core of an Intel chip) at a client device. Preferably, multi-core components are used to allow routing protocols to be run on multiple cores to improve overall performance.

[0102] Moreover, it will be appreciated that the ability to support multiple routing protocols allows VDR to meet the needs of applications having varying mobility requirements. Applications can be supported by ad hoc algorithms such as pro-active (table driven) routing, reactive (on-demand) routing, flow oriented routing, adaptive (situation aware) routing, hybrid (pro-active/reactive) routing, hierarchical routing, geographical routing, and power aware routing. Further, the use of multiple protocols supports broadcasting, multi-casting, and simul-casting. It will be appreciated that the use of multiple protocols provides support for multi-threaded networking as well.

The Virtual Machine Monitor (VMM) and Virtualization

[0103] It will be appreciated that virtualization is known in some computing contexts, such as virtualization of memory and processing. Virtualization enables the abstraction of computer resources and can make a single physical resource appear, and function, as multiple logical resources. Traditionally, this capability enables developers to abstract development of an application so that it runs homogeneously across many hardware platforms. More generally, virtualization is geared to hiding technical detail through encapsulation. This encapsulation provides the mechanism to support complex networking and improved security that is required to enable routing at client devices.

[0104] More specifically, a virtual machine (hereinafter, "VM") is a software copy of a real machine interface. The purpose of running a VM is to provide an environment that enables a computer to isolate and control access to its services. The virtual machine monitor (VMM) component is used to run a plurality of VMs on a real machine and interface directly with that real machine. As an example, consider a VMM on a real machine that creates and runs a plurality of VMs. A different operating system is then loaded onto each VM. Each VM provides a virtual interface that would appear to each operating system to be a real machine. The VMM runs the plurality of VMs and interfaces with the real machine.

[0105] In a VDR implementation, a VMM is utilized to create a VM for each distinct connection. It is helpful to explain at this juncture that what comprises a connection can vary, but in general includes a transfer of data in the form of packets from a first end device to a second end device along a path (or route). It will be appreciated that a single application can require multiple connections; for example, an application may require multiple connections because of its bandwidth requirements and performance requirements. In this event, each connection preferably interfaces with its own VM, and each connection can utilize (sometimes referred to as being tied to) the same routing protocol or different routing protocols, even though the connections are themselves necessitated by the same application. Similarly, although two connections may at times travel along an identical path, the connections themselves are nevertheless distinct, and each will preferably still continue to interface with its own VM.

The Dispersive Controller (DC) and Optimizing Performance

[0106] When the client is in need of a new connection, a dispersive controller located between an operating system and a driver that controls network hardware (such as a NIC card) intercepts the request for a new connection and tells the VMM to spawn a new VM associated with the desired connection. The DC then queries the application interface and utilizes any information obtained to select a routing protocol from among those supported by the RP. This selected routing protocol, however, is currently believed to be generally useless without knowledge of the surrounding network. To this end, the DC allows each client to find other clients, interrogate network devices, and utilize system resources. Thus, each VDR client is "network aware", in that routing information is gathered and maintained at each client by the DC.
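The sequence just described (intercept a connection request, have the VMM spawn a VM for that connection, query the application interface, then select a routing protocol) can be sketched roughly as follows. This is a minimal illustration only: the class names, the `query_app_interface` callable, and the `choose` policy are hypothetical stand-ins, and the `VirtualMachine` object is a plain record rather than an actual virtual machine.

```python
from dataclasses import dataclass
from itertools import count
from typing import Callable, Dict, List, Optional


@dataclass
class VirtualMachine:
    """Placeholder record for a per-connection VM (no real isolation here)."""
    vm_id: int
    connection_id: str
    protocol: Optional[str] = None


class DispersiveController:
    """Hypothetical DC: one VM per connection, protocol chosen from the RP's list."""

    def __init__(self,
                 supported_protocols: List[str],
                 query_app_interface: Callable[[str], dict],
                 choose: Callable[[dict, List[str]], str]):
        self.supported_protocols = supported_protocols
        self.query_app_interface = query_app_interface  # stands in for the AI
        self.choose = choose                            # assumed selection policy
        self.vms: Dict[str, VirtualMachine] = {}
        self._ids = count(1)

    def on_connection_request(self, app_name: str, connection_id: str) -> VirtualMachine:
        # Step 1: spawn a VM dedicated to this connection.
        vm = VirtualMachine(next(self._ids), connection_id)
        # Step 2: ask the application interface what the application needs.
        app_info = self.query_app_interface(app_name)
        # Step 3: pick a protocol from those the routing platform supports.
        protocol = self.choose(app_info, self.supported_protocols)
        if protocol not in self.supported_protocols:
            raise ValueError(f"unsupported protocol: {protocol}")
        vm.protocol = protocol
        self.vms[connection_id] = vm
        return vm
```

Two connections requested by the same application each receive their own VM in this sketch, consistent with the per-connection virtualization described above.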

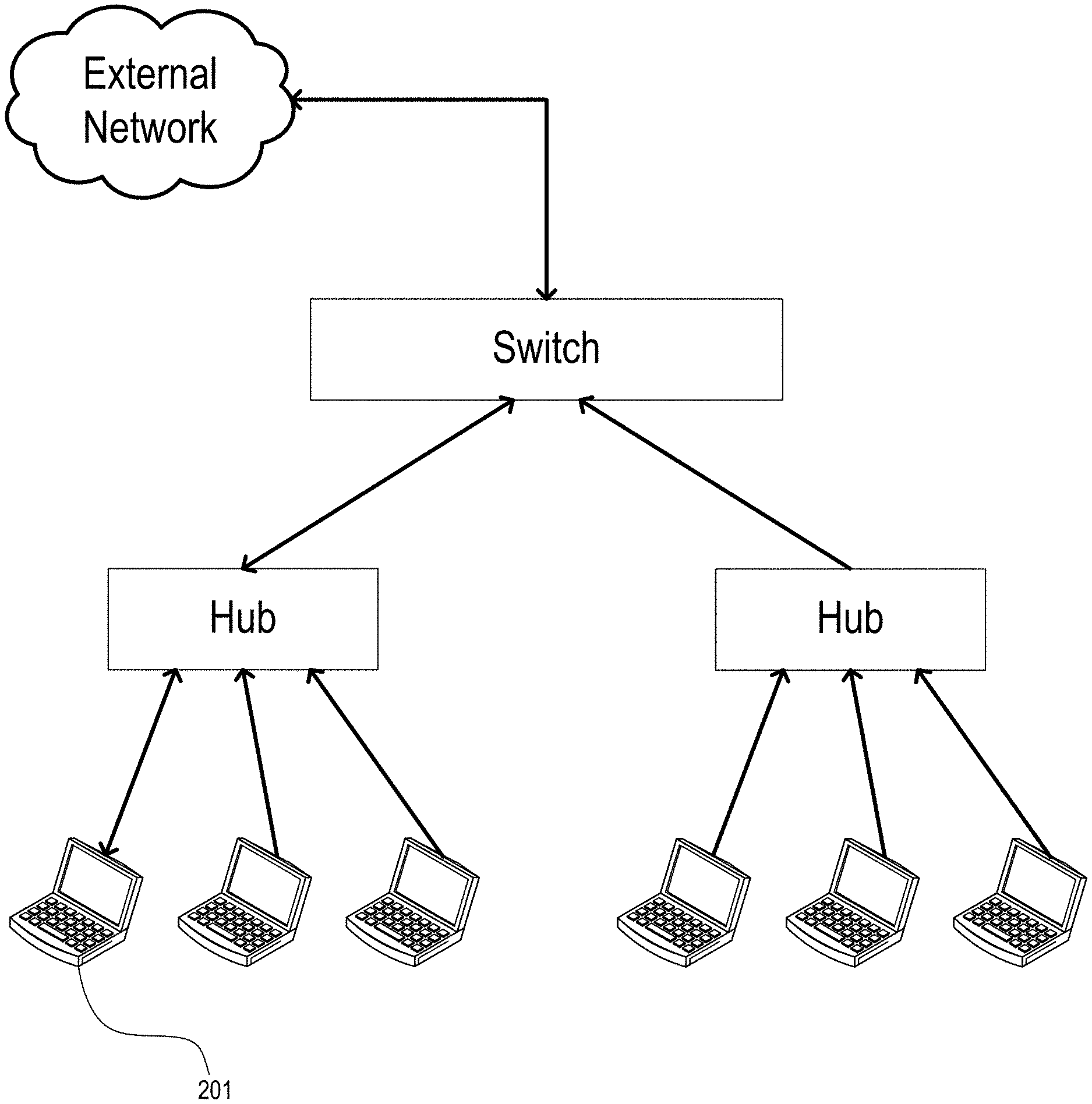

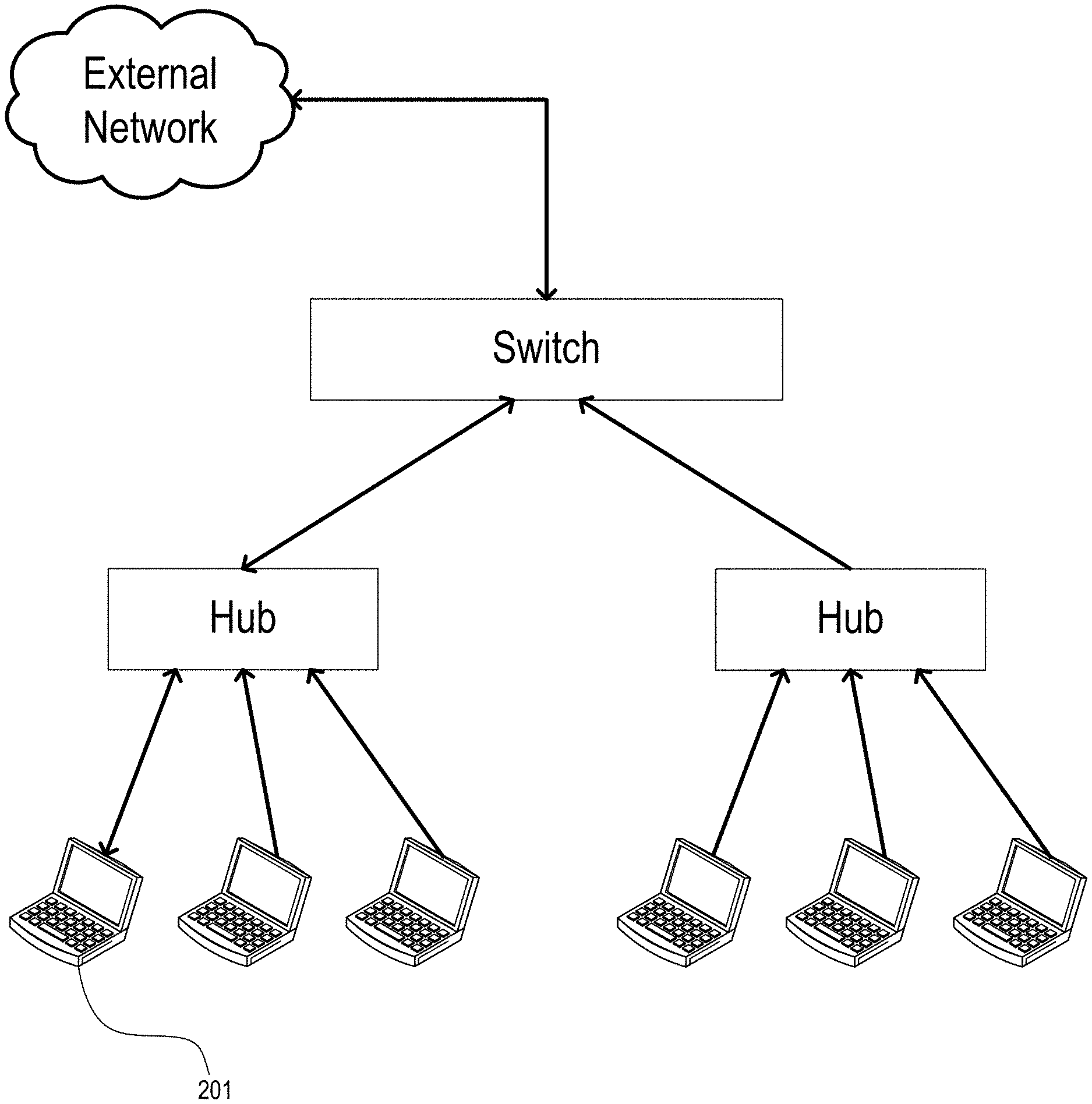

[0107] FIG. 2 illustrates how a VDR client 201 gathers LAN routing information and queries an external network for backbone information and application-specific routing information. In response to these queries, routing information is returned. This returned routing information is cached, processed, data mined, compared to historical data, and used to calculate performance metrics to gauge and determine the overall effectiveness of the network. This is possible because the resources available at a VDR client will typically be greater than those available at a conventional router.

[0108] In at least some embodiments, a VDR network functions in some ways similarly to a conventional network. In a conventional network, data, in the form of packets, is sent to a router to be routed according to a routing table maintained at the router. Similarly, in a VDR network, after utilizing gathered network information to generate a routing table, a client device utilizes this generated routing table to select a route and transmit a packet accordingly, which packet is then received by another client device and routed according to that client's routing table, and so on, until the packet reaches its destination.
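As a rough sketch of the hop-by-hop behavior described above, each client can be modeled as holding its own routing table that maps a destination to a next hop. The table layout, the `route` function, and the hop limit are illustrative assumptions, not details taken from the disclosure.

```python
from typing import Dict, List

# Assumed table shape: destination -> next hop, one table per client.
RoutingTable = Dict[str, str]


def route(source: str, destination: str,
          tables: Dict[str, RoutingTable], max_hops: int = 32) -> List[str]:
    """Follow each client's own routing table until the destination is reached."""
    path = [source]
    current = source
    while current != destination:
        if len(path) > max_hops:
            raise RuntimeError("hop limit exceeded (possible routing loop)")
        table = tables.get(current, {})
        if destination not in table:
            raise LookupError(f"{current} has no route to {destination}")
        current = table[destination]  # the next client routes from its own table
        path.append(current)
    return path
```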

[0109] However, rather than simply passing on received packets from client to client, in a manner akin to a traditional router, VDR, via the DC, instead takes advantage of the storage and processing resources available at each client, while still remaining compatible with existing network architecture, by attaching lower level protocol data to the payload of transmitted packets for subsequent client analysis.

[0110] More specifically, when a packet is received at a VDR client, a virtual machine intercepts the packet passed from the networking hardware (for example, a NIC card) and places it in memory. The VDR client then processes the packet data. When the data is subsequently passed on, this processed data is appended to the payload of the packet together with information relating to the VDR client for analysis at the destination. As can be seen in FIG. 3, the result of this process is that each hop causes additional information to be added to the payload of a packet, and thus results in a direct increase in payload size proportionate to the number of hops taken by the packet. Specifically, each hop is believed to result in an increase of 35 bytes for an IPv4 implementation, and 59 bytes for an IPv6 implementation. Table 1 of FIG. 6 details the information stored from each layer, along with the number of bytes allotted for each field. It will be appreciated that different or additional information could be stored in alternative embodiments.

[0111] Currently, 128-bit addressing provides support for IPv4 and IPv6 addressing, but support for additional addressing schemes is contemplated. It will be appreciated that for a typical communication over the Internet, i.e., one consisting of around 20 hops, the overhead appended to the payload will be around 700 bytes utilizing IPv4 and around 1180 bytes utilizing IPv6. It is believed that, in a worst case scenario, an extra IP datagram could be required for every datagram sent. Although some of this data may seem redundant at first blush, some repetition is tolerable and even necessary because network address translation ("NAT") can change source or destination fields. That being said, it is contemplated that some implementations use caching to lower this overhead. Additionally, in at least some implementations, the VDR client utilizes application specific knowledge to tailor the information that is appended to the needs of a specific application.
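The overhead figures above follow directly from multiplying the per-hop increase by the number of hops. A short check of that arithmetic, using only the 35-byte and 59-byte per-hop values stated in the text (the function and constant names are illustrative):

```python
# Per-hop payload growth quoted in the text, in bytes.
PER_HOP_OVERHEAD_BYTES = {"ipv4": 35, "ipv6": 59}


def appended_overhead(hops: int, ip_version: str) -> int:
    """Total bytes appended to a packet's payload after the given number of hops."""
    if hops < 0:
        raise ValueError("hops must be non-negative")
    return hops * PER_HOP_OVERHEAD_BYTES[ip_version]


if __name__ == "__main__":
    for version in ("ipv4", "ipv6"):
        print(version, appended_overhead(20, version))
```

For a 20-hop path this gives 20 x 35 = 700 bytes for IPv4 and 20 x 59 = 1180 bytes for IPv6, matching the figures above.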

[0112] Conventionally, when a packet is received at a router, routing information is typically stripped off each packet by the router and disregarded. This is because each router has limited memory and handles an enormous number of packets. When a packet is received at a destination VDR client, however, the destination client has sufficient resources to store and process the information delivered to it. Additionally, to the extent that client resources may be taxed, the VDR client need not always store this information in every packet received, as in at least some embodiments application knowledge provides the client with an understanding of which packets are important to applications running on the client. Regardless of whether some or all of this information delivered in the payload of each data packet is processed, the information that is processed is analyzed to create a "network fingerprint" of the nodes involved in the communication link. Thus, VDR software loaded on nodes along a path enables the nodes to append information regarding a path of a packet, which in turn enables the generation of a network fingerprint at the destination device, which network fingerprint represents a historical record that is stored and maintained for later forensic analysis. In addition to forensic analysis by the client, the maintenance of network information on the client enables forensic analysis by a server as well.
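One way to picture the network fingerprint is as the ordered list of per-hop records that have accumulated in a packet by the time it arrives. The sketch below assumes a simplified record holding only a node name and a protocol name; the actual fields are those listed in Table 1 of FIG. 6, which is not reproduced here, and all names in the code are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass(frozen=True)
class HopRecord:
    """Simplified stand-in for the per-hop data appended to the payload."""
    node: str
    protocol: str


@dataclass
class Packet:
    data: bytes
    hop_records: List[HopRecord] = field(default_factory=list)


def forward(packet: Packet, node: str, protocol: str) -> Packet:
    """Each VDR node appends its own record before passing the packet on."""
    packet.hop_records.append(HopRecord(node, protocol))
    return packet


def network_fingerprint(packet: Packet) -> Tuple[HopRecord, ...]:
    """At the destination, the accumulated records form the path's fingerprint."""
    return tuple(packet.hop_records)
```

A destination client could keep such tuples in a history list for later comparison or forensic review.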

The Application Interface (AI) & Application Knowledge

[0113] One of the benefits of providing routing functionality at a client device is that the client is able to utilize its knowledge of the application initiating a connection to enhance routing performance for that application. This knowledge is provided to the DC via an application interface, as can be seen in FIG. 1. Utilizing application knowledge to enhance routing performance could be useful to a variety of applications, such as, for example, computer games including massively multiplayer online role playing games.

[0114] The virtualization of routing functionality at a client device, as described hereinabove, allows multiple routing protocols and algorithms to be run simultaneously on a client device. Thus, the DC utilizes the application interface to obtain required criteria for an application connection and then chooses from among the protocols and algorithms available via the RP.

[0115] For example, Application "A" may need to communicate very large amounts of data, and thus require a routing protocol that optimizes bandwidth, while Application "B" may only need to communicate very small amounts of data at very fast speeds, and thus require a routing protocol that minimizes latency irrespective of bandwidth. A traditional router cannot tell the difference between packets originating from Application "A" and those originating from Application "B", and thus will utilize the same routing protocol for packets from each application. A VDR client, however, is aware of applications running locally, and thus can be aware, through the AI, of various connection criteria for each application. These connection criteria can then be utilized by the VDR client in selecting a routing protocol or algorithm. Furthermore, as described hereinabove, both the selected routing protocol and the originating application associated with a packet can be communicated to other client nodes via data appended to the payload of the packet. Thus, the protocol selected at a source client can be utilized to route the packet throughout its path to a destination client. Further, because virtualization allows multiple routing protocols to be run on a single client, each application can utilize its own routing protocol.
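The contrast between Application "A" and Application "B" can be illustrated with a small selection routine. This is a sketch under stated assumptions: the `ConnectionCriteria` and `ProtocolProfile` types, the numeric bandwidth and latency figures a caller would supply, and the tie-breaking rule (lowest expected latency) are all hypothetical and do not describe how any real routing protocol performs.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class ConnectionCriteria:
    """Hypothetical per-application requirements obtained through the AI."""
    min_bandwidth_mbps: float
    max_latency_ms: float


@dataclass(frozen=True)
class ProtocolProfile:
    """Hypothetical expected characteristics of a protocol on this client."""
    name: str
    expected_bandwidth_mbps: float
    expected_latency_ms: float


def select_protocol(criteria: ConnectionCriteria,
                    profiles: Iterable[ProtocolProfile]) -> Optional[ProtocolProfile]:
    """Return the lowest-latency profile meeting both criteria, or None."""
    candidates = [
        p for p in profiles
        if p.expected_bandwidth_mbps >= criteria.min_bandwidth_mbps
        and p.expected_latency_ms <= criteria.max_latency_ms
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda p: p.expected_latency_ms)
```

Because selection runs per connection, two applications on the same client can end up with different protocols.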

[0116] Moreover, a VDR client can utilize knowledge of the path of a specific connection to further optimize performance. Because a network fingerprint can be gathered detailing the nodes in a communication path, a VDR client running on a client device can analyze each network fingerprint to determine whether the associated connection satisfies the connection criteria of the application desiring to utilize the connection. If the connection does not satisfy the connection criteria, then the client can attempt to find a connection that does satisfy the criteria by switching to a different protocol and/or switching to a different first node in its routing table. Combinations utilizing various protocols and selecting a variety of first nodes can be attempted, and the resultant paths evaluated until a path is found that does satisfy connection criteria. Additionally, combinations utilizing various protocols and selecting a variety of first nodes can be utilized to create route redundancy. Such route redundancy can provide to an application both higher bandwidth and controllable quality of service.
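The search over combinations of protocols and first nodes might look like the following. The `probe` callable (which would attempt a connection and return its fingerprint, or `None` on failure) and the `satisfies` predicate are hypothetical placeholders; the text does not specify the order in which combinations are tried, so simple nested iteration is assumed.

```python
from itertools import product
from typing import Callable, Iterable, Optional, Tuple, TypeVar

F = TypeVar("F")  # whatever type represents a network fingerprint


def find_satisfactory_path(
    protocols: Iterable[str],
    first_nodes: Iterable[str],
    probe: Callable[[str, str], Optional[F]],
    satisfies: Callable[[F], bool],
) -> Optional[Tuple[str, str, F]]:
    """Try each (protocol, first node) pair until one yields an acceptable path."""
    for protocol, first_node in product(protocols, first_nodes):
        fingerprint = probe(protocol, first_node)  # None means no path was found
        if fingerprint is not None and satisfies(fingerprint):
            return protocol, first_node, fingerprint
    return None
```

Collecting every acceptable pair instead of returning the first would give the route redundancy mentioned above.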

[0117] Although connection criteria for source and destination clients will often be identical, there are many situations where this will not be the case. For example, if one client is downloading streaming video from another client, then the connection requirements for each client will likely not be identical. In this and other situations, connections between two clients may be asymmetrical, i.e., client "A" transmits packets to client "B" over path 1, but client "B" transmits packets to client "A" over path 2. In each case, because path information gleaned from the payload of packets is stored and processed at the destination client, the evaluation of whether the path meets the required connection criteria is made at the destination client. In the example above, client "B" would determine whether path 1 satisfies its application's connection criteria, while client "A" would determine whether path 2 satisfies its application's connection criteria.

[0118] Perhaps the epitome of a connection that does not satisfy connection criteria is a broken, or failed, connection. In the event of a connection break, VDR enjoys a significant advantage over more traditional routing. Conventionally, recognition of a connection break would require a timeout at an upper level application, with either the path being re-routed subsequent to the timeout or a connection failure message being presented to a user. A VDR client, however, is aware of generally how long it should take to receive a response to a transmitted communication, and can utilize this awareness to speed up route convergence for additional network connections to ensure application robustness and satisfy performance requirements. Performance requirements are defined as criteria that must be met to allow the application to run properly; e.g., video conferencing can't wait too long for packets to show up or else the audio "crackles" and the image "freezes." For example, a VDR client may be aware that it should receive a response to a communication in 500 ms. If a response has not been received after 500 ms, the VDR client can initiate a new connection utilizing a different routing protocol and/or first node as outlined above with respect to finding a satisfactory connection path.
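The 500 ms example can be expressed as a simple deadline check. The `PendingRequest` type and its field names are illustrative assumptions; timestamps are passed in explicitly so the logic can be exercised without a real clock.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PendingRequest:
    """Tracks one transmitted communication awaiting a response."""
    sent_at_ms: float
    expected_response_ms: float = 500.0   # figure taken from the example in the text
    response_at_ms: Optional[float] = None

    def timed_out(self, now_ms: float) -> bool:
        """True when no response has arrived within the expected window."""
        if self.response_at_ms is not None:
            return False
        return now_ms - self.sent_at_ms >= self.expected_response_ms
```

When `timed_out` returns true, the client would begin establishing a replacement connection rather than waiting for an upper-level application timeout.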

[0119] In addition to performance optimization, application knowledge can also be utilized to enhance network security. For example, an application may have certain security requirements. A VDR client aware of these requirements can create a "trusted network" connection that can be used to transfer information securely over this connection in accordance with the requirements of the application. A more traditional routing scheme could not ensure such a trusted connection, as it could not differentiate between packets needing this secure connection and other packets to be routed in a conventional manner.

[0120] But before elaborating on security measures that may be built in to a VDR implementation, it is worth noting that a VDR client is able to work in concert with an existing client firewall to protect software and hardware resources. It will be appreciated that conventional firewalls protect the flow of data into and out of a client and defend against hacking and data corruption. Preferably, VDR software interfaces with any existing client firewall for ease of integration with existing systems, but it is contemplated that in some implementations VDR software can include its own firewall. In either implementation, the VDR software can interface with the firewall to open and close ports as necessary, thereby controlling the flow of data in and out.

[0121] In addition to this firewall security, by utilizing application knowledge the VDR software can filter and control packets relative to applications running on the client. Thus, packets are checked not only to ensure a correct destination address, but further are checked to ensure that they belong to a valid client application.

[0122] One way VDR software can accomplish this is by utilizing "spiders" to thread together different layers of the protocol stack to enable data communication, thereby reducing delays and taking advantage of network topologies. Each spider represents software that is used to analyze data from different layers of the software stack and make decisions. These threaded connections can be used to speed data transfer in static configurations and modify data transfer in dynamic circumstances. As an example, consider a client device running a secure email application which includes a security identification code. Packets for this application include a checksum that when run will come up with this identification code. A spider would allow this upper level application security identification code to be connected to the lower layer. Thus, the lower layer could run a checksum on incoming packets and discard those that do not produce the identification code. It will be appreciated that a more complex MD5 hash algorithm could be utilized as well.
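The checksum example leaves the exact scheme open. One possible reading, offered purely as an assumption, is that the sender attaches a 4-byte tag chosen so that combining the tag with a checksum of the payload reproduces the application's identification code; the lower layer then recomputes that value and discards mismatches. The sketch uses CRC-32 from Python's standard `zlib` module; the function names and packet layout are hypothetical. A plain checksum of this kind detects accidental or naive alteration only and is not a substitute for a keyed integrity check.

```python
import zlib
from typing import Iterable, List

TAG_LEN = 4  # bytes reserved for the tag at the end of each packet (assumed layout)


def make_packet(payload: bytes, identification_code: int) -> bytes:
    """Append a tag so that crc32(payload) XOR tag equals the identification code."""
    tag = (zlib.crc32(payload) ^ identification_code) & 0xFFFFFFFF
    return payload + tag.to_bytes(TAG_LEN, "big")


def recovered_code(packet: bytes) -> int:
    """Recompute the code the packet 'comes up with' when the checksum is run."""
    payload, tag = packet[:-TAG_LEN], int.from_bytes(packet[-TAG_LEN:], "big")
    return (zlib.crc32(payload) ^ tag) & 0xFFFFFFFF


def accept(packets: Iterable[bytes], identification_code: int) -> List[bytes]:
    """Keep only packets whose recomputed code matches the application's code."""
    return [
        p for p in packets
        if len(p) >= TAG_LEN and recovered_code(p) == identification_code
    ]
```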

[0123] Moreover, because the VDR software is knowledgeable of the application requiring a particular connection, the software can adaptively learn and identify atypical behavior from an outside network and react by quarantining an incoming data stream until it can be verified. This ability to match incoming data against application needs and isolate any potential security issues significantly undermines the ability of a hacker to gain access to client resources.

[0124] Additionally, when such a security issue is identified, a VDR client can take appropriate steps to ensure that it does not compromise the network. Because a VDR client is network aware and keeps track of other clients that it has been communicating with, when a security issue is identified, the VDR client can not only isolate the suspect connection, the VDR client can further initiate a new connection utilizing a different routing protocol and/or first node as outlined above with respect to finding a satisfactory connection path. Alternatively, or additionally, the VDR client could simply choose to switch protocols on the fly and communicate this switch to each client with which it is in communication.

[0125] FIGS. 4A-C provide a simplified example of such action for illustrative effect. In FIG. 4A, VDR client 403 is communicating with VDR client 405 over connection 440. In FIG. 4B, external computer 411 tries to alter packet 491 transmitted from client 403 to client 405. Client 405 runs a hashing algorithm on the received packet 491 and identifies that it has been corrupted. Client 405 then quarantines packets received via connection 440 and, as can be seen in FIG. 4C, establishes a new connection 450 with client 403.
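The behavior of client 405 in FIGS. 4A-C can be sketched as follows. The text says only that a hashing algorithm is run; SHA-256 from Python's standard `hashlib` is used here as an assumed choice, and the sketch further assumes the receiver already knows the expected digest by some means not described. Class and attribute names are hypothetical.

```python
import hashlib
from dataclasses import dataclass, field
from typing import List


@dataclass
class Connection:
    conn_id: int
    quarantined: bool = False
    held: List[bytes] = field(default_factory=list)  # packets kept for verification


class ReceivingClient:
    """Hypothetical receiver that quarantines a connection on a digest mismatch."""

    def __init__(self, conn_id: int, next_conn_id: int):
        self.active = Connection(conn_id)
        self.old: List[Connection] = []
        self._next_conn_id = next_conn_id

    def receive(self, payload: bytes, expected_digest: str) -> bool:
        """Return True if the packet verifies; otherwise quarantine and reconnect."""
        if hashlib.sha256(payload).hexdigest() == expected_digest:
            return True
        suspect = self.active
        suspect.quarantined = True
        suspect.held.append(payload)
        self.old.append(suspect)  # left in place rather than torn down
        self.active = Connection(self._next_conn_id)
        self._next_conn_id += 1
        return False
```

Using the reference numerals from the figures, a client created with `ReceivingClient(440, 450)` would move from connection 440 to connection 450 after the first packet that fails verification.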

[0126] Upon discovery of an "attack" on a network or specific network connection, a VDR client can monitor the attack, defend against the attack, and/or attack the "hacker". Almost certainly, a new, secure connection will be established as described above. However, after establishing a new connection, the VDR client can then choose to simply kill the old connection, or, alternatively, leave the old connection up so that the attacker will continue to think the attack has some chance of success. Because each connection is virtualized, as described hereinabove, a successful attack on any single connection will not spill over and compromise the client as a whole, as crashing the VM associated with a single connection would not affect other VMs or the client device itself. It is contemplated that a VDR client will attempt to trace back the attack and attack the original attacker, or alternatively, and preferably, communicate its situation to another VDR client configured to do so.

An Exemplary Implementation

[0127] Traditionally, wired and wireless networks have tended to be separate and distinct. Recently, however, these types of networks have begun to merge, with the result being that the routing of data around networks has become much more complex. Further, users utilizing such a merged network desire a high level of performance from the network regardless of whether they are connected wirelessly or are connected via a fixed line. As discussed hereinabove, VDR enables a client to monitor routing information and choose an appropriate routing protocol to achieve the desired performance while still remaining compatible with existing network architecture. VDR can be implemented with wired networks, wireless networks (including, for example, Wi-Fi), and networks having both wired and wireless portions.

[0128] FIG. 5A illustrates an exemplary local area network 510 (hereinafter, "LAN") utilizing VDR. The LAN 510 includes three internal nodes 511,513,515, each having VDR software loaded onto a client of the respective node. The internal nodes 511,513,515 can communicate with one another, and further can communicate with edge nodes 512,514,516,518, each also having VDR software loaded onto a client of the respective node. The coverage area 519 of the LAN 510 is represented by a dotted circle. It will be appreciated that the edge nodes 512,514,516,518 are located at the periphery of the coverage area 519. The primary distinction between the internal nodes 511,513,515 and the edge nodes 512,514,516,518 is that the internal nodes 511,513,515 are adapted only to communicate over the LAN 510, while the edge nodes 512,514,516,518 are adapted to communicate both with the internal nodes 511,513,515 and with edge nodes of other LANs through one or more wide area networks (hereinafter, "WANs"). As one of the nodes 511,513,515 moves within the LAN 510 (or, if properly adapted, moves to another LAN or WAN), VDR allows it to shift to ad hoc, interior, and exterior protocols. This ability to shift protocols allows the node to select a protocol which will provide the best performance for a specific application.

[0129] FIG. 5B illustrates an exemplary path between node 513 in LAN 510 and node 533 in LAN 530. It will be appreciated that an "interior" protocol is utilized for communications inside each LAN, and an "exterior" protocol is utilized for communications between edge nodes of different LANs. Thus, it will likewise be appreciated that each edge node must utilize multiple protocols: an interior protocol to communicate with interior nodes, and an exterior protocol to communicate with other edge nodes of different LANs. Further, at any time an ad hoc protocol could be set up which is neither a standard interior nor exterior protocol.

[0130] In FIG. 5B, LAN 510 and LAN 530 are both using CSPF as an interior protocol, while LAN 520 and LAN 540 are utilizing EIGRP as an interior protocol. All edge nodes of each of the LANs 510,520,530 are connected to a WAN utilizing BGP to communicate between edge nodes.

[0131] The exemplary path between node 513 and node 533 includes node 515, edge node 518, edge node 522, node 521, node 523, node 525, edge node 528, edge node 534, and node 531. Further, because a particular protocol was not selected and propagated by the transmitting node, this connection utilizes CSPF for internal communications within LAN 510 and LAN 530. EIGRP for internal communications within LAN 520, and BGP for external communications between edge nodes. At one or both end nodes, the VDR software can analyze this information and determine whether the combination of protocols along this path is satisfactory for the communicating application. It will be appreciated that the VDR software can further analyze the information gathered and determine whether the path meets application requirements for throughput, timing, security, and other important criteria.
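The protocol used on each hop of the exemplary path can be derived mechanically from the interior and exterior protocols stated for FIG. 5B. The sketch below assumes, based on the reference numerals, that a node numbered 5xy belongs to LAN 5x0 (for example, node 513 to LAN 510); that mapping and the function names are illustrative conveniences rather than anything the disclosure defines.

```python
from typing import List

# Interior protocol per LAN and the exterior protocol, as stated for FIG. 5B.
INTERIOR_PROTOCOL = {510: "CSPF", 520: "EIGRP", 530: "CSPF", 540: "EIGRP"}
EXTERIOR_PROTOCOL = "BGP"

# Exemplary path from node 513 to node 533 through LAN 520.
PATH = [513, 515, 518, 522, 521, 523, 525, 528, 534, 531, 533]


def lan_of(node: int) -> int:
    """Assumed numbering convention: node 5xy belongs to LAN 5x0."""
    return (node // 10) * 10


def protocols_along(path: List[int]) -> List[str]:
    """Protocol for each hop: interior within a LAN, exterior between LANs."""
    result = []
    for a, b in zip(path, path[1:]):
        if lan_of(a) == lan_of(b):
            result.append(INTERIOR_PROTOCOL[lan_of(a)])
        else:
            result.append(EXTERIOR_PROTOCOL)
    return result
```

Applied to `PATH`, this yields two CSPF hops in LAN 510, a BGP hop from edge node 518 to edge node 522, four EIGRP hops in LAN 520, a BGP hop from edge node 528 to edge node 534, and two CSPF hops in LAN 530.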

[0132] In a static environment, this path may represent a connection that meets application requirements and thus no further adjustment would be needed. However, if a network outage were to occur, a network or a node were to move, or another dynamic event were to occur, the path might need to be altered.

[0133] For example, if LAN 520 were to move out of range, node 533 might analyze the path information appended to a packet received after the movement and determine that increased latency resulting from this movement rendered this path unsuitable per application requirements. Node 533 would then attempt to establish a new connection utilizing a different route that would satisfy application requirements. FIG. 5C illustrates such a new connection, which remains between node 513 and node 533, but rather than being routed through LAN 520 as with the path illustrated in FIG. 5B, the path is instead routed through LAN 540.

[0134] It will be appreciated that the ability to influence path selection based on client application needs significantly enhances the performance, flexibility, and security of the network.

[0135] It will further be appreciated from the above description that one or more aspects of the present invention are contemplated for use with end, client, or end-client devices. A personal or laptop computer is an example of such a device, but a mobile communications device, such as a mobile phone, or a video game console is also an example of such a device. Still further, it will be appreciated that one or more aspects of the present invention are contemplated for use with financial transactions, as the increased security that can be provided by VDR is advantageous to these transactions.

Network Data Transfer

[0136] It will be appreciated that the transmission of data over the Internet, or one or more similar networks, often utilizes precious server processing, memory, and bandwidth, as the data is often delivered from, or processed at, a server. In implementations in accordance with one or more preferred embodiments of the present invention, some of this server load is mitigated by use of a direct connection between two end-user devices, such as, for example two end-user devices having virtualized routing capabilities as described hereinabove. Preferably, packets are then routed between the two end-user devices without passing through a conventional server.

[0137] Notably, however, although transferred data packets do not pass through a server, a server may still be utilized to establish, monitor, and control a connection, as illustrated in FIG. 7. Specifically, FIG. 7 illustrates two clients and an IP server which determines that the clients are authorized to communicate with one another, and which passes connection information to the clients that is utilized to establish a direct connection between the clients. Importantly, the IP server is not involved in this direct connection, i.e. data transferred via this direct connection is not routed through or processed by the IP server, as such routing or processing would require the use of additional resources of the IP server.

[0138] It will be appreciated that, in some networks, a firewall may be set up to prevent an end-user device from accepting connections from incoming requests. There are three basic scenarios that can occur. In a first case, there is no firewall obstruction. In this case, either client can initiate the connection for the direct connect. In a second case, a single client has a firewall obstructing the connection. In this case, the client that is obstructed from accepting the connection is instructed by the IP server to initiate the connection to the client that is unobstructed by the firewall. In a third case, both clients have firewalls obstructing the connection. In this case, a software router, or software switch, is used to accept the connection of the two clients and pass the packets through to the clients directly. Notably, this software router truly acts as a switch, and does not modify the payload as it passes the packet through. In a preferred implementation, a software router is implemented utilizing field programmable gate arrays (FPGAs) or other specific hardware designed to implement such cross-connect functionality.
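The three firewall scenarios above reduce to a small decision table, sketched below. The names ConnectionPlan and plan_connection are hypothetical and chosen only for illustration; the sketch assumes the IP server already knows, for each client, whether a firewall blocks incoming connections.

```python
from enum import Enum


class ConnectionPlan(Enum):
    EITHER_INITIATES = "either client may initiate"
    FIRST_INITIATES = "first client initiates"
    SECOND_INITIATES = "second client initiates"
    RELAY_VIA_SOFTWARE_ROUTER = "both connect out to a software router"


def plan_connection(first_blocked: bool, second_blocked: bool) -> ConnectionPlan:
    """Pick who initiates, given which clients cannot accept incoming connections."""
    if first_blocked and second_blocked:
        # Third case: neither side can accept, so both connect to a pass-through switch.
        return ConnectionPlan.RELAY_VIA_SOFTWARE_ROUTER
    if first_blocked:
        # Second case: the obstructed client is the one told to initiate.
        return ConnectionPlan.FIRST_INITIATES
    if second_blocked:
        return ConnectionPlan.SECOND_INITIATES
    # First case: no obstruction.
    return ConnectionPlan.EITHER_INITIATES
```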

[0139] A preferred system for such a described direct connection includes one or more end-user devices having client software loaded thereon, an IP server, or control server, having server software loaded thereon, and one or more networks (such as, for example, Internet, Intranet or Extranet supported by Ethernet, mobile phone data networks, e.g. CDMA, WiMAX, GSM, WCDMA and others, wireless networks, e.g. Bluetooth, WiFi, and other wireless data networks) for communication.

[0140] In a preferred implementation, client software installed at an end-user device is configured to communicate with an IP server, which associates, for example in a database, the IP address of the end-user device with a unique identification of a user, such as an email address, telephone number, or other unique identification. The client then periodically "checks in" with the IP server and conveys its IP address to the server, for example by providing its IP address together with the unique identification of the user. This checking in occurs when the client is "turned on", e.g. when the end-user device is turned on or when the client software is loaded, as well as when the IP address has changed, or upon the occurrence of any other network event that would change the path between the client and server, or in accordance with other configured or determined events, times, or timelines, which may be user-configurable.

[0141] By collecting, and updating, the current IP address of a user, other users may communicate with that user as the user moves from place to place. The IP server thus acts as a registry providing updated IP addresses associated with users. This capability also enables multiple device delivery of content to multiple end-user devices a user designates or owns.
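The registry role of the IP server can be sketched as follows. The class name IPRegistry, its methods, and the use of a device identifier are assumptions made for illustration; the description specifies only that an IP address is associated with a unique identification of a user and is refreshed on check-in.

```python
class IPRegistry:
    """Hypothetical in-memory registry mapping a user's unique identification
    to the current IP address of each of that user's devices."""

    def __init__(self):
        self._addresses = {}  # user_id -> {device_id: ip_address}

    def check_in(self, user_id, device_id, ip_address):
        """Record or refresh the address a device reports when it checks in."""
        self._addresses.setdefault(user_id, {})[device_id] = ip_address

    def lookup(self, user_id):
        """Return the current addresses for a user, or an empty list if unknown."""
        return sorted(self._addresses.get(user_id, {}).values())


registry = IPRegistry()
registry.check_in("user@example.com", "laptop", "192.0.2.10")
registry.check_in("user@example.com", "phone", "198.51.100.7")
# The phone changes networks and checks in again with its new address.
registry.check_in("user@example.com", "phone", "203.0.113.5")
```

Keeping one entry per device is one way to support delivery of content to several devices of the same user; a deployed server would presumably also persist and expire entries.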

[0142] Preferably, such functionality is utilized in combination with virtualized routing capability as described hereinabove. Specifically, it will be appreciated that, currently, Internet communications utilize sessions, and that upon being dropped, e.g. due to a lost connection, a new session must be initialized.

[0143] In a preferred implementation, however, rather than having to re-initiate a new session, for example upon obtaining a new IP address, a new session is created and data is transferred from the old session to the new session while maintaining the state of the old session. In this way, a near-seamless transition is presented to a user between an old session and a new session. For example, a user might be connected via their mobile device to a Wi-Fi connection while they are on the move. They might move out of range of the Wi-Fi connection, but still be in range of a cellular connection. Rather than simply dropping their session, a new session is preferably created, and data from the old session copied over, together with the state of the old session. In this way, although the end-user device is now connected via a cellular connection, rather than via a Wi-Fi connection, the user's experience is not interrupted.
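The session hand-over described above can be sketched as copying state and buffered data into a freshly created session. The Session fields and the migrate function are hypothetical; the description does not say what the session state contains, so a plain dictionary is assumed here.

```python
import copy
import itertools
from dataclasses import dataclass, field

_session_ids = itertools.count(1)  # hypothetical source of session identifiers


@dataclass
class Session:
    ip_address: str
    state: dict = field(default_factory=dict)    # assumed application-level state
    pending: list = field(default_factory=list)  # data not yet delivered
    session_id: int = field(default_factory=lambda: next(_session_ids))


def migrate(old: Session, new_ip: str) -> Session:
    """Create a new session on the new address, carrying over the old
    session's state and undelivered data so the user sees no interruption."""
    return Session(
        ip_address=new_ip,
        state=copy.deepcopy(old.state),
        pending=list(old.pending),
    )


wifi = Session("192.0.2.10", {"offset": 4096}, [b"chunk-5"])
cellular = migrate(wifi, "198.51.100.7")
```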

One Client to One Client--File Transfer Implementation

[0144] In a preferred implementation, direct connections between end-user devices having virtualized routing capabilities are utilized in a file transfer context, such as, for example, with a file sharing application.

[0145] FIG. 8 illustrates an exemplary file transfer use scenario between two clients. As described above, each client is in communication with an IP server, for example to communicate its IP address to the IP server. Such communications are exemplified by steps 1010 and 1020.

[0146] In use, a first client communicates to an IP server a request to connect to a particular client, user, or end-user device at step 1030. The IP server, or control server, determines whether or not the other client, user, or end-user device is available, e.g. online, and, if so, looks up the current IP address or addresses associated with the specified client, user, or end-user device. If the client, user, or end-user device is either not online or has left the network, a connection failure message is sent. If the client, user, or end-user device is online, the IP server will take action based upon a pre-selected preference setting. Preferably, each user may choose to accept connection requests automatically, require a confirmation of acceptance, or require some other authentication information, such as an authentication certificate, in order to accept a connection request. If the connection request is accepted, either automatically or manually, the IP server enables the transfer, e.g. by communicating to a second client that the first client has a file for transfer, as exemplified by step 1040.
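The server-side handling of a connection request can be sketched as a short decision function. The enum values, function name, and return strings are illustrative assumptions; the description names the three preference settings but not how they are represented.

```python
from enum import Enum


class Preference(Enum):
    AUTO_ACCEPT = 1        # accept connection requests automatically
    CONFIRM = 2            # require a confirmation of acceptance
    AUTHENTICATE = 3       # require authentication information, e.g. a certificate


def handle_request(target_online, preference, confirmed=False, credential_valid=False):
    """Return 'failure', 'accepted', or 'rejected' for a connection request."""
    if not target_online:
        # Target is offline or has left the network: send a failure message.
        return "failure"
    if preference is Preference.AUTO_ACCEPT:
        return "accepted"
    if preference is Preference.CONFIRM:
        return "accepted" if confirmed else "rejected"
    # Preference.AUTHENTICATE
    return "accepted" if credential_valid else "rejected"
```

On an "accepted" result, the server would go on to notify the second client that the first client has a file for transfer, as in step 1040.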

[0147] Preferably, the IP server notifies each client involved in the transfer of required security levels and protocols, such as, for example, hashing algorithms used to confirm that received packets have not been altered. The IP server also ensures that the client software at each end-user device has not been tampered with, altered, or hacked.
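As one illustration of confirming that a received packet has not been altered, the sketch below compares a SHA-256 digest computed by the receiver against the digest supplied by the sender. SHA-256 is an assumed choice, since the description does not name a specific hashing algorithm, and the sketch further assumes the expected digest reaches the receiver over a channel it trusts.

```python
import hashlib
import hmac


def packet_digest(payload: bytes) -> str:
    """Hex-encoded SHA-256 digest of a packet payload."""
    return hashlib.sha256(payload).hexdigest()


def is_unaltered(payload: bytes, expected_digest: str) -> bool:
    """True if the payload still hashes to the digest the sender reported.
    compare_digest avoids leaking the mismatch position through timing."""
    return hmac.compare_digest(packet_digest(payload), expected_digest)


sent = b"file chunk 1"
digest = packet_digest(sent)
```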

[0148] The clients complete a messaging "handshake", and then begin transfer of a file. More specifically, the second client requests a connection with the first client at step 1050, the first client notifies the IP server of its status, e.g. that it is beginning a transfer, at step 1060, the first client grants the second client's request at step 1070, and the second client notifies the IP server of its status, e.g. that its connection request was accepted, at step 1080. The file transfer begins at step 1090.

[0149] Periodically, both clients will update the server on the status of the download, as illustrated by exemplary steps 1100 and 1110. The server will keep track of the file transfer and compare the information received from both clients for completeness and security. Once the file transfer is completed, at step 1120, a status of each client is sent to the IP server at steps 1130 and 1140, and the connection is terminated at step 1150. The clients continue to update their availability with the IP server for future downloads, as illustrated by exemplary steps 1160 and 1170.
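The server's comparison of the two clients' reports can be sketched as below. The contents of a status report are not specified in the description, so the StatusReport fields (a byte count and a digest of the file) and the function reports_agree are assumptions made purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StatusReport:
    client_id: str
    bytes_transferred: int  # assumed completeness indicator
    digest: str             # assumed digest of the data sent or received


def reports_agree(sender: StatusReport, receiver: StatusReport, expected_size: int) -> bool:
    """True if both clients report the full file size and matching digests."""
    complete = sender.bytes_transferred == receiver.bytes_transferred == expected_size
    consistent = sender.digest == receiver.digest
    return complete and consistent


sender_report = StatusReport("first", 1024, "abc123")
receiver_ok = StatusReport("second", 1024, "abc123")
receiver_short = StatusReport("second", 512, "abc123")
receiver_bad = StatusReport("second", 1024, "ffffff")
```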

[0150] It will be appreciated that because one of the problems with the TCP/IP protocol is that significant timing delays can occur between communications, using a virtual machine advantageously allows messages to be sent at the lowest levels of the stack between virtual machines of different clients, thus helping ensure that communications are not delayed. Further, the inclusion of local routing capabilities enables each client to set up another communication link, if needed, for example to continue a stalled download. Further still, as preferably both clients include such routing capability, either client can reinitiate a separate communication to the other client, thus helping ensure that TCP/IP packet delay timeouts do not draw out the communication.

[0151] Additionally, to facilitate more robust transfers, one of the clients can instruct the other to open other TCP/IP connections independent of the server link. For example, a first client may receive an IP address for a second client via the IP server, and the second client could then communicate additional IP addresses to the first client and communicate duplicate packets via connections established with these additional IP addresses, thus increasing the reliability of the link. Additionally, the client could send multiple packets over separate IP addresses to ensure a different starting point for transmission, and thus ensure unique paths. It will be appreciated that this might advantageously allow for the continuing transfer of packets even if one of the connection paths fails. Notably, each path is closed upon completion of the transmission.
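The benefit of sending duplicate packets over several connections can be illustrated from the receiver's side. The sketch assumes each packet carries a sequence number, which the description does not state, and the function name reassemble is hypothetical.

```python
def reassemble(arrivals):
    """Rebuild the payload from (path_id, sequence_number, data) tuples.
    Duplicates arriving on different paths are dropped; the first copy wins."""
    first_copy = {}
    for _path_id, seq, data in arrivals:
        first_copy.setdefault(seq, data)
    return b"".join(first_copy[seq] for seq in sorted(first_copy))


# Path "b" fails after delivering its first packet; path "a" delivers everything,
# and packets arrive out of order.
arrivals = [
    ("b", 0, b"vir"),
    ("a", 1, b"tu"),
    ("a", 0, b"vir"),
    ("a", 2, b"al"),
]
```

Because every packet was also sent on path "a", the loss of path "b" does not leave a gap in the reassembled data.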

[0152] FIG. 9 illustrates a user interface for an exemplary file sharing application in accordance with a preferred implementation. To initiate a transfer, a user clicks on an application icon to open the user's Friend's List and a "Sharzing" window. Bold texted names identify on-line contacts, while grey texted names indicate off-line contacts. When the blades of the graphical connection representation on the right side of the window, i.e. the Sharzing, are shut, the Sharzing is inactive. Clicking on an on-line contact opens the blades and establishes a Sharzing connection. The user may then "drag and drop" a file onto the open Sharzing.

[0153] Once a Sharzing connection is established, multiple files can be transferred in either direction. Further, multiple Sharzings can be opened simultaneously to different users. Preferably, when a Sharzing is connected, wallpaper of the opposite PC that is being connected to is displayed. As a file is "dragged and dropped" on the Sharzing, the Sharzing displays the progress of the file transfer. Using a Sharzing skin, a Sharzing depiction can take on identities such as, for example, a futuristic StarGate motif. In the case where such a StarGate motif is used, flash wormhole turbulence may begin when a file is placed in the Sharzing, and, subsequently, an opening at the end of the wormhole may emerge to display an image of the file and/or the recipient's desktop wallpaper. Preferably, when the transferred file is visible on the destination desktop, the transfer is complete.

Many Clients to Many Clients--Video and Audio Conferencing Implementation

[0154] In another preferred implementation, direct connections between end-user devices having virtualized routing capabilities are utilized in a telecommunications context, such as, for example, in an audio and video conferencing application.