Coordinated Garbage Collection In Distributed Systems


U.S. patent application number 16/864042 was filed with the patent office on 2020-04-30 and published on 2020-08-13 for coordinated garbage collection in distributed systems. The applicant listed for this patent is Oracle International Corporation. Invention is credited to Timothy L. Harris, Martin C. Maas.

| Application Number | 16/864042 |

| Document ID | 20200257573 / US20200257573 |

| Family ID | 1000004810760 |

| Filed Date | 2020-04-30 |


| United States Patent Application | 20200257573 |

| Kind Code | A1 |

| Harris; Timothy L. ; et al. | August 13, 2020 |

COORDINATED GARBAGE COLLECTION IN DISTRIBUTED SYSTEMS

Abstract

Fast modern interconnects may be exploited to control when garbage collection is performed on the nodes (e.g., virtual machines, such as JVMs) of a distributed system in which the individual processes communicate with each other and in which the heap memory is not shared. A garbage collection coordination mechanism (a coordinator implemented by a dedicated process on a single node or distributed across the nodes) may obtain or receive state information from each of the nodes and apply one of multiple supported garbage collection coordination policies to reduce the impact of garbage collection pauses, dependent on that information. For example, if the information indicates that a node is about to collect, the coordinator may trigger a collection on all of the other nodes (e.g., synchronizing collection pauses for batch-mode applications where throughput is important) or may steer requests to other nodes (e.g., for interactive applications where request latencies are important).

| Inventors: | Harris; Timothy L. (Cambridge, GB); Maas; Martin C. (Berkeley, CA) |

| Applicant: | Oracle International Corporation |

| Family ID: | 1000004810760 |

| Appl. No.: | 16/864042 |

| Filed: | April 30, 2020 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 14723425 | May 27, 2015 | 10642663 |
| 16864042 | | |
| 62048752 | Sep 10, 2014 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 11/301 20130101; G06F 12/0253 20130101; G06F 2009/45583 20130101; G06F 9/45558 20130101; G06F 11/3409 20130101; G06F 12/0276 20130101; G06F 11/34 20130101; G06F 2212/1024 20130101; G06F 2212/152 20130101; G06F 2212/154 20130101; G06F 9/522 20130101 |

| International Class: | G06F 9/52 20060101 G06F009/52; G06F 12/02 20060101 G06F012/02; G06F 9/455 20060101 G06F009/455; G06F 11/30 20060101 G06F011/30; G06F 11/34 20060101 G06F011/34 |

Claims

1.-20. (canceled)

21. A system, comprising: a plurality of computing nodes interconnected via a network, each comprising at least one processor and one or more heap memories and hosting one or more virtual machine instances, wherein each of the virtual machine instances executes a respective process of a distributed application that communicates over the network with one or more other processes of the distributed application executing on respective other virtual machine instances, and wherein a node of the plurality of computing nodes is configured to: request, from a garbage collection coordinator, to perform a garbage collection on the respective one or more heap memories, and responsive to receiving a reply, from the garbage collection coordinator, granting the performing of the garbage collection: stop execution of the respective one or more virtual machine instances hosted by the node; and perform the garbage collection on the respective one or more heap memories; and the garbage collection coordinator, configured to: receive the request from the node to perform the garbage collection on the respective one or more heap memories; determine, responsive to receiving the request, that a number of granted garbage collections is below a number of garbage collections allowed to be performed at a same time, and responsive to the determining: send a reply to the node granting the performing of the garbage collection; and cause work directed to the node to be steered to one or more other nodes of the plurality of computing nodes.

22. The system of claim 21, wherein the garbage collection coordinator comprises a pool of zero or more tokens for granting garbage collections; wherein to determine that the number of granted garbage collections is below a number of garbage collections allowed to be performed at the same time, the garbage collection coordinator is configured to determine that at least one token for granting garbage collections exists in the pool; and wherein to send the reply to the node granting the performing of the garbage collection, the garbage collection coordinator is configured to allocate a token from the pool and send the allocated token to the node.

23. The system of claim 22, wherein the garbage collection coordinator enforces an upper bound on the number of computing nodes allowed to perform garbage collections at the same time.

24. The system of claim 22, wherein the node of the plurality of computing nodes is further configured to return the token to the garbage collection coordinator responsive to completion of the garbage collection on the respective one or more heap memories, and wherein the garbage collection coordinator is further configured to return the token to the pool responsive to receiving the token from the node.

25. The system of claim 24, wherein to determine that at least one token for granting garbage collections exists in the pool, the garbage collection coordinator is configured to: wait for a token to be returned to the pool responsive to determining that no tokens for granting garbage collections exist in the pool.

26. The system of claim 21, wherein the garbage collection coordinator comprises a single garbage collection coordinator component on one of the plurality of computing nodes.

27. The system of claim 21, wherein the distributed application is an application that was written in a garbage collected programming language; and wherein the request to perform a garbage collection is based, at least in part, on determining that a garbage collection should be performed on the node and that execution of the distributed application on the node should be paused or stopped while the garbage collection is performed.

28. A method, comprising: sending a request, by a computing node to a garbage collection coordinator, to perform a garbage collection on one or more heap memories, wherein the computing node is one of a plurality of computing nodes, each comprising at least one processor and one or more heap memories and hosting one or more virtual machine instances, wherein each of the virtual machine instances executes a respective process of a distributed application that communicates over a network with one or more other processes of the distributed application executing on respective other virtual machine instances; determining, by the garbage collection coordinator responsive to receiving the request, that a number of granted garbage collections is below a number allowed to be performed at a same time, and responsive to the determining: sending a reply to the computing node granting the performing of the garbage collection; and causing work directed to the computing node to be steered to one or more other computing nodes of the plurality of computing nodes; and responsive to receiving a reply granting the performing of the garbage collection: stopping execution of the respective one or more virtual machine instances hosted by the computing node; and performing the garbage collection on the one or more heap memories by the computing node.

29. The method of claim 28, wherein the garbage collection coordinator comprises a pool of zero or more tokens for granting garbage collections; wherein determining that the number of granted garbage collections is below the number allowed to perform garbage collections at the same time comprises determining that at least one token for granting garbage collections exists in the pool; and wherein sending the reply to the computing node granting the performing of the garbage collection comprises allocating a token from the pool and sending the allocated token to the computing node.

30. The method of claim 29, wherein the garbage collection coordinator enforces an upper bound on the number of computing nodes allowed to perform garbage collections at the same time.

31. The method of claim 28, further comprising: returning, by the computing node, the token to the garbage collection coordinator responsive to completion of the garbage collection on the one or more heap memories; and returning, by the garbage collection coordinator, the token to the pool responsive to receiving the token from the computing node.

32. The method of claim 28, wherein the determining that at least one token for granting garbage collections exists in the pool comprises waiting for a token to be returned to the pool responsive to determining that no tokens for granting garbage collections exist in the pool.

33. The method of claim 28, wherein the garbage collection coordinator comprises a single garbage collection coordinator component on one of the plurality of computing nodes.

34. The method of claim 28, wherein the distributed application is an application that was written in a garbage collected programming language; and wherein the request to perform a garbage collection is based, at least in part, on determining that a garbage collection should be performed on the computing node and that execution of the distributed application on the computing node should be paused or stopped while the garbage collection is performed.

35. One or more non-transitory computer-readable storage media storing program instructions that when executed on or across one or more computing nodes cause the one or more computing nodes to implement a garbage collection coordinator to perform: receiving a request, from a computing node, to grant a garbage collection on one or more heap memories, wherein the computing node is one of a plurality of computing nodes, each comprising at least one processor and one or more heap memories and hosting one or more virtual machine instances, wherein each of the virtual machine instances executes a respective process of a distributed application that communicates over a network with one or more other processes of the distributed application executing on respective other virtual machine instances; determining, responsive to receiving the request, that a number of granted garbage collections is below a number allowed to be performed at a same time, and responsive to the determining: sending a reply to the computing node granting the performing of the garbage collection; and causing work directed to the computing node to be steered to one or more other computing nodes of the plurality of computing nodes.

36. The one or more non-transitory computer-readable storage media of claim 35, wherein the garbage collection coordinator comprises a pool of zero or more tokens for granting garbage collections; wherein determining that the number of granted garbage collections is below the number allowed to perform garbage collections at the same time comprises determining that at least one token for granting garbage collections exists in the pool; and wherein sending the reply to the computing node granting the performing of the garbage collection comprises allocating a token from the pool and sending the allocated token to the computing node.

37. The one or more non-transitory computer-readable storage media of claim 35, wherein the garbage collection coordinator enforces an upper bound on the number of computing nodes allowed to perform garbage collections at the same time.

38. The one or more non-transitory computer-readable storage media of claim 35, wherein the garbage collection coordinator further performs: receiving, from the computing node, the token to the garbage collection coordinator responsive to completion of the garbage collection on the one or more heap memories; and returning the token to the pool responsive to receiving the token from the computing node.

39. The one or more non-transitory computer-readable storage media of claim 35, wherein the determining that at least one token for granting garbage collections exists in the pool comprises waiting for a token to be returned to the pool responsive to determining that no tokens for granting garbage collections exist in the pool.

40. The one or more non-transitory computer-readable storage media of claim 35, wherein the distributed application is an application that was written in a garbage collected programming language; and wherein the request to perform a garbage collection is based, at least in part, on determining that a garbage collection should be performed on the computing node and that execution of the distributed application on the computing node should be paused or stopped while the garbage collection is performed.

Description

[0001] This application is a continuation of U.S. patent application Ser. No. 14/723,425, filed May 27, 2015, which claims benefit of priority of U.S. Provisional Application Ser. No. 62/048,752, filed Sep. 10, 2014, the contents of which are hereby incorporated by reference herein in their entirety.

BACKGROUND

[0002] Large software systems often include multiple virtual machine instances (e.g., virtual machines that adhere to the Java® Virtual Machine Specification published by Sun Microsystems, Inc. or, later, Oracle America, Inc., which are sometimes referred to herein as Java® Virtual Machines or JVMs) running on separate host machines in a cluster and communicating with one another as part of a distributed system. The performance of modern garbage collectors is typically good on individual machines, but may contribute to poor performance in distributed systems.

[0003] In some existing systems, both minor garbage collections (e.g., garbage collections that target young generation portions of heap memory) and major garbage collections (e.g., garbage collections that target old generation portions of heap memory) are "stop the world" events. In other words, regardless of the type of collection being performed, all threads of any executing applications are stopped until the garbage collection operation is completed. Major garbage collection events can be much slower than minor garbage collection events because they involve all live objects in the heap.

[0004] Some workloads involve "barrier" operations which require synchronization across all of the machines. That is, if any one machine is delayed (e.g., performing garbage collection) then every other machine may have to wait for it. The impact of this problem may grow as the size of the cluster grows, harming scalability. Other workloads, such as key-value stores, may involve low-latency request-response operations, perhaps with an average-case delay of 1 millisecond (exploiting the fact that a modern interconnect, such as one that adheres to the InfiniBand™ interconnect architecture developed by the InfiniBand® Trade Association, may provide network communication of the order of 1-2 μs). A single user-facing operation (e.g., producing information for a web page) may involve issuing queries to dozens of key-value stores, and so may be held up by the latency of the longest "straggler" query taking 10 or 100 times longer than the average case. Young-generation garbage collection may also be a source of pauses which cause stragglers, even when using an optimized parallel collector.

SUMMARY

[0005] Many software systems comprise multiple processes running in separate Java Virtual Machines (JVMs) on different host machines in a cluster. For example, many applications written in the Java™ programming language (which may be referred to herein as Java applications) run over multiple JVMs, letting them scale to use resources across multiple physical machines, and allowing decomposition of software into multiple interacting services. Examples include popular frameworks such as the Apache® Hadoop framework and the Apache® Spark framework. The performance of garbage collection (GC) within individual virtual machine instances (VMs) may have a significant impact on a distributed application as a whole: garbage collection behavior may decrease throughput for batch-style analytics applications, and may cause high tail-latencies for interactive requests.

[0006] In some embodiments of the systems described herein, coordination between VMs, enabled by the low communication latency possible on modern interconnects, may mitigate the impact of garbage collection. For example, in some embodiments, fast modern interconnects may be exploited to control when garbage collection is performed on particular ones of the nodes (e.g., VMs) of a distributed system in which separate, individual processes communicate with each other and in which the heap memory is not shared between the nodes. These interconnects may be exploited to control when each of the VMs performs its garbage collection cycles, which may reduce the delay that garbage collection pauses introduce into the overall performance of the software or into the latency of particular individual operations (e.g., query requests).

[0007] In various embodiments, a garbage collection coordination mechanism (e.g., a garbage collection coordinator process) may obtain (e.g., through monitoring) and/or receive state information from each of the nodes and apply a garbage collection coordination policy to reduce the impact of garbage collection pauses, dependent on that information. For example, if, while executing a batch-mode application in which the overall throughput of the application is a primary objective, the information indicates that a node is about to collect, the coordinator may trigger a collection on all of the other nodes, synchronizing collection pauses for all of the nodes. In another example, if, while executing an interactive application that is sensitive to individual request latencies, the information indicates that a node is about to collect, the coordinator may steer requests to other nodes, steering them away from nodes that are performing, or are about to perform, a collection.

[0008] In some embodiments, the garbage collection coordinator process may be implemented as a dedicated process executing on a single node in the distributed system. In other embodiments, portions of the garbage collection coordinator process may be distributed across the nodes in the distributed system to collectively provide the functionality of a garbage collection coordinator. In some embodiments, multiple garbage collection coordination policies may be supported in the distributed system, including, but not limited to, one or more policies that apply a "stop the world everywhere" approach, and one or more policies that apply a staggered approach to garbage collection (some of which make use of a limited number of tokens to control how many nodes can perform garbage collection at the same time).
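The token-limited staggered approach mentioned above can be illustrated with a minimal sketch. It is not the implementation described in this application: the class name `GcCoordinator`, its methods, and the use of an in-process semaphore in place of messages sent over the interconnect are all assumptions made for illustration.

```python
import threading


class GcCoordinator:
    """Hypothetical coordinator that hands out a bounded number of GC tokens."""

    def __init__(self, node_ids, max_concurrent_gcs):
        self._nodes = list(node_ids)
        # One semaphore permit stands in for one token in the pool.
        self._tokens = threading.Semaphore(max_concurrent_gcs)
        self._lock = threading.Lock()
        self._collecting = set()

    def request_gc(self, node_id, timeout=None):
        """Wait for a free token; return True if granted, False on timeout."""
        if not self._tokens.acquire(timeout=timeout):
            return False
        with self._lock:
            self._collecting.add(node_id)
        return True

    def gc_done(self, node_id):
        """Return the node's token to the pool once its collection finishes."""
        with self._lock:
            if node_id not in self._collecting:
                return  # ignore a release from a node that holds no token
            self._collecting.remove(node_id)
        self._tokens.release()

    def eligible_nodes(self):
        """Nodes that work may be steered to (those not holding a token)."""
        with self._lock:
            return [n for n in self._nodes if n not in self._collecting]
```

In this sketch a node would call `request_gc` before pausing and `gc_done` afterwards, while a request router would consult `eligible_nodes` to steer work away from nodes that currently hold a token. The size of the pool bounds how many nodes can be paused at the same time.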

[0009] In various embodiments, a GC-aware communication library and/or GC-related APIs may be used to implement (and/or configure) a variety of mechanisms for performing coordinated garbage collection, each of which may reduce the impact of garbage collection pauses during execution of applications having different workload characteristics and/or performance goals.

BRIEF DESCRIPTION OF THE DRAWINGS

[0010] FIG. 1 is a block diagram illustrating a distributed system, according to one embodiment.

[0011] FIG. 2 is a graph illustrating the duration of each superstep of a benchmark distributed application and the number of garbage collection operations on any node occurring during each superstep.

[0012] FIG. 3 is a block diagram illustrating an example database system including a four node cluster, according to one embodiment.

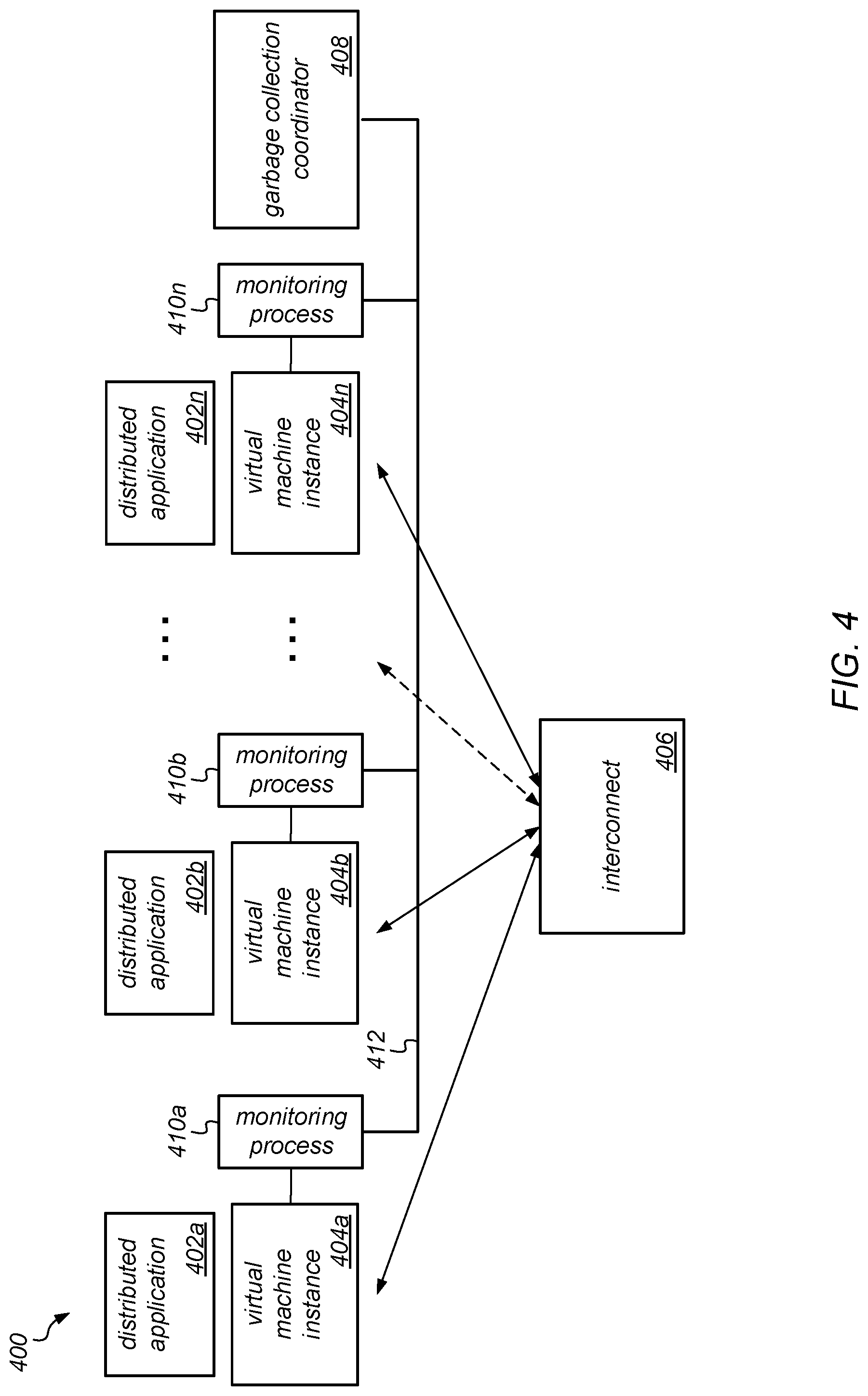

[0013] FIG. 4 is a block diagram illustrating one embodiment of a system configured for implementing coordinated garbage collection.

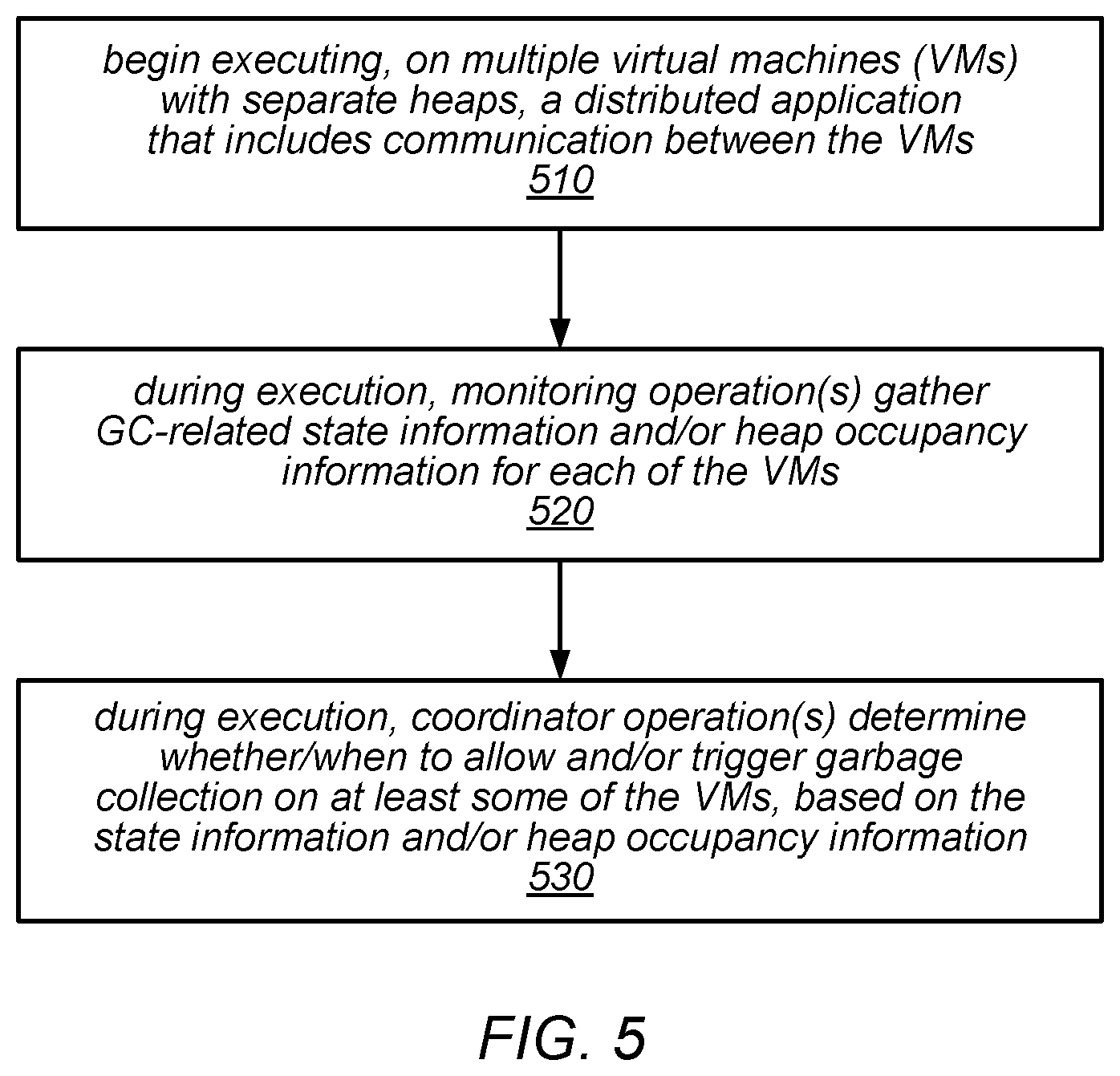

[0014] FIG. 5 is a flow diagram illustrating one embodiment of a method for coordinating garbage collection for a distributed application executing on multiple virtual machine instances.

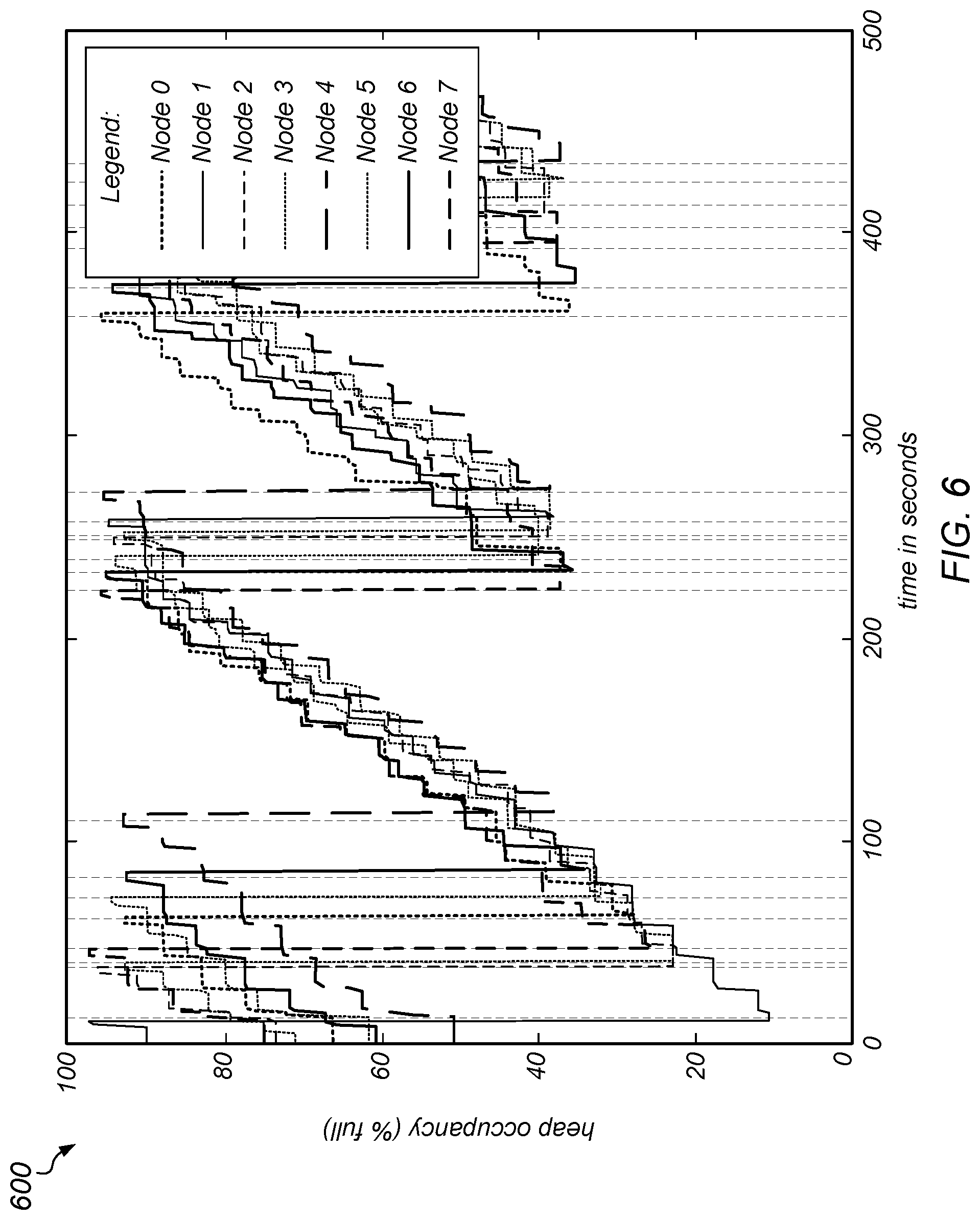

[0015] FIG. 6 is a graph illustrating the old generation size on the different nodes of a PageRank computation over time without coordination, as in one embodiment.

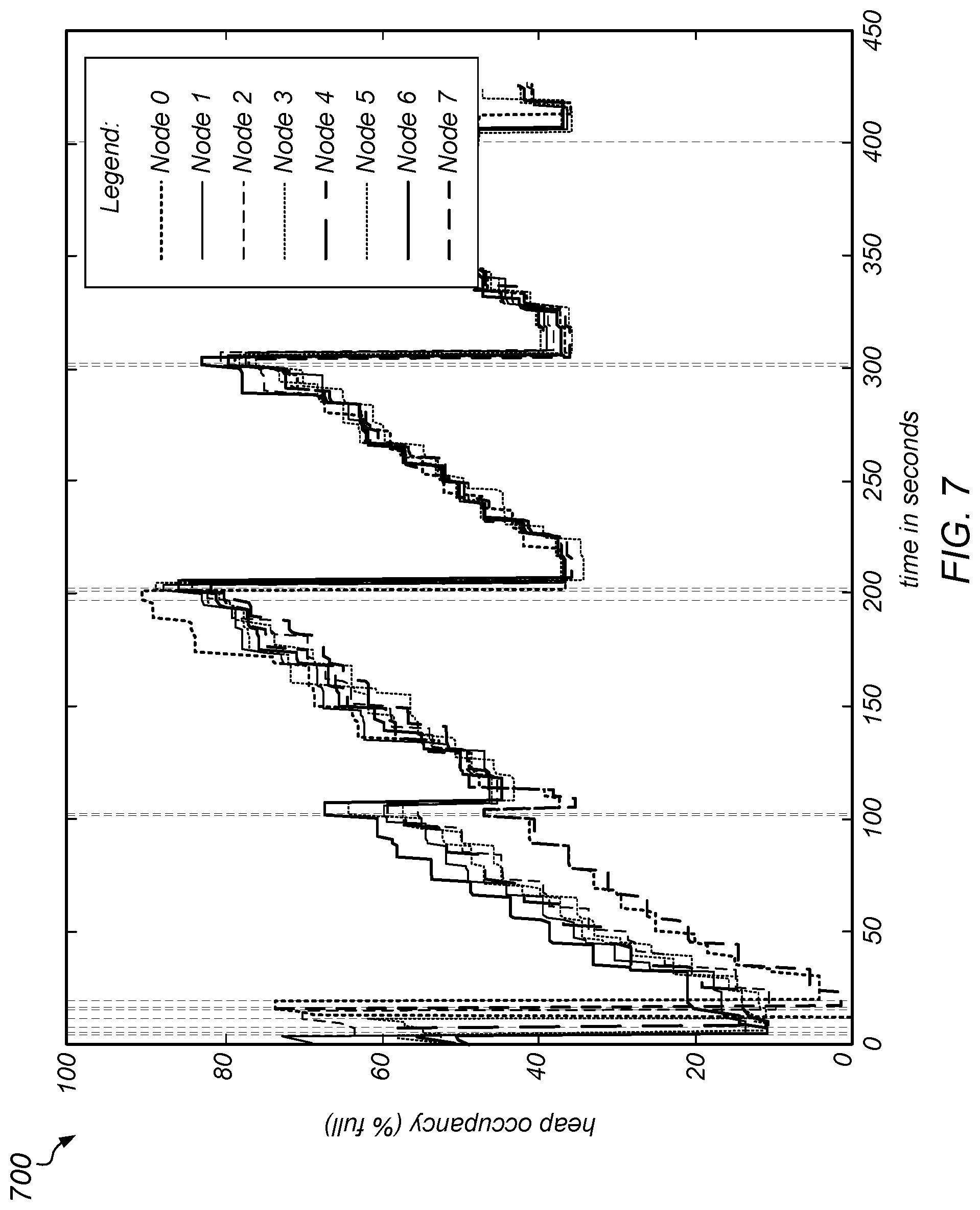

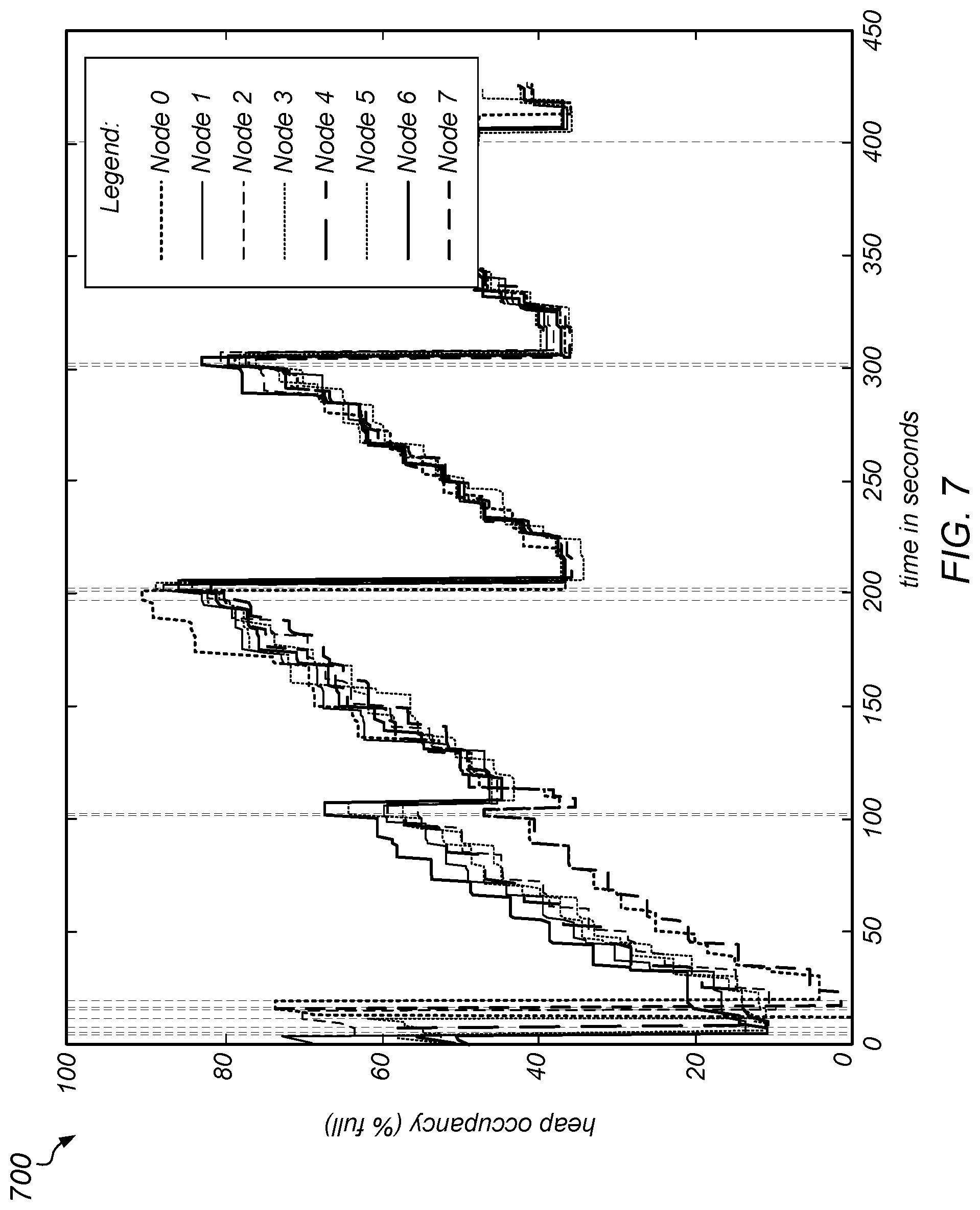

[0016] FIG. 7 is a graph illustrating the triggering of a collection on a fixed interval, according to one embodiment.

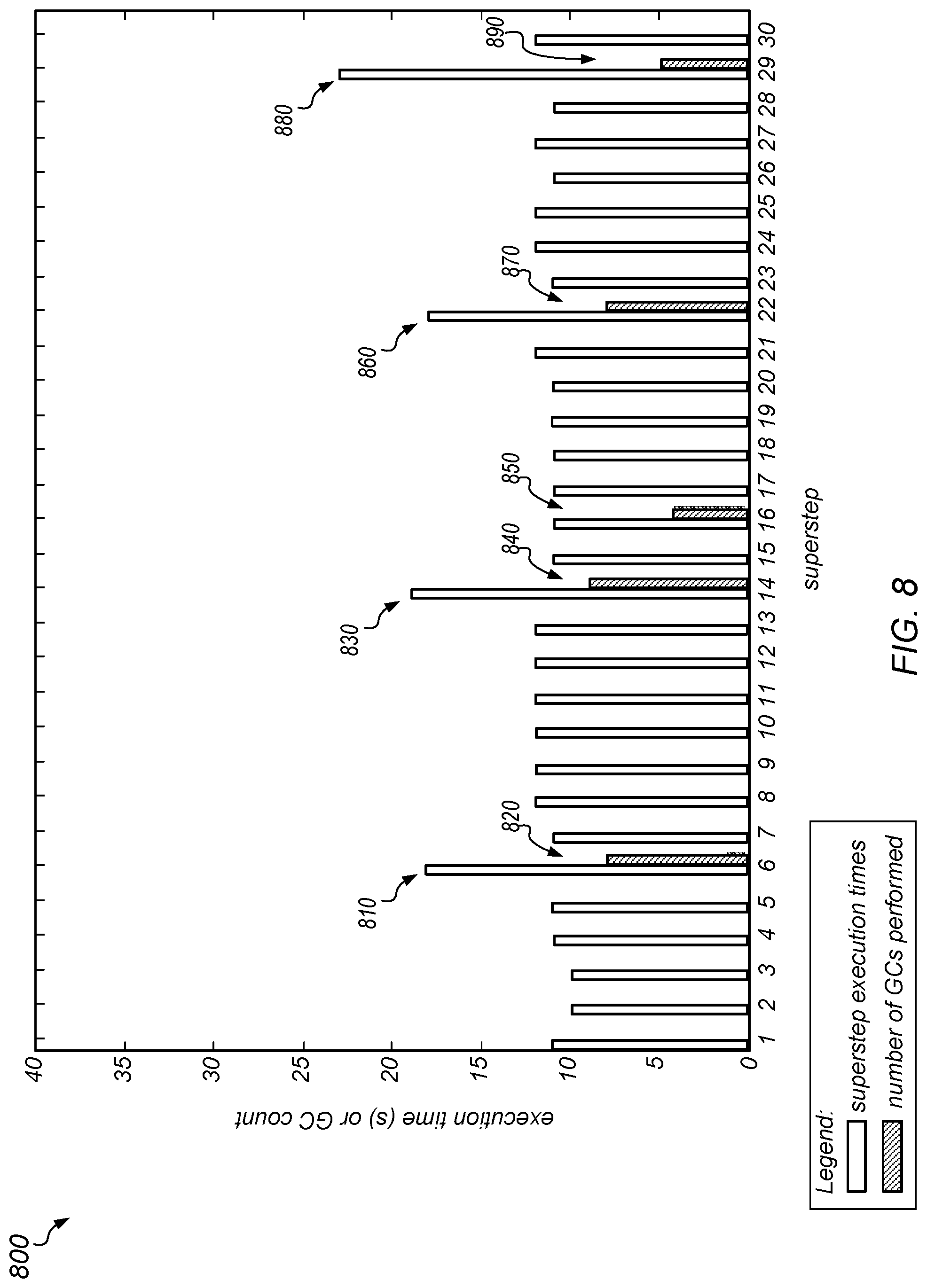

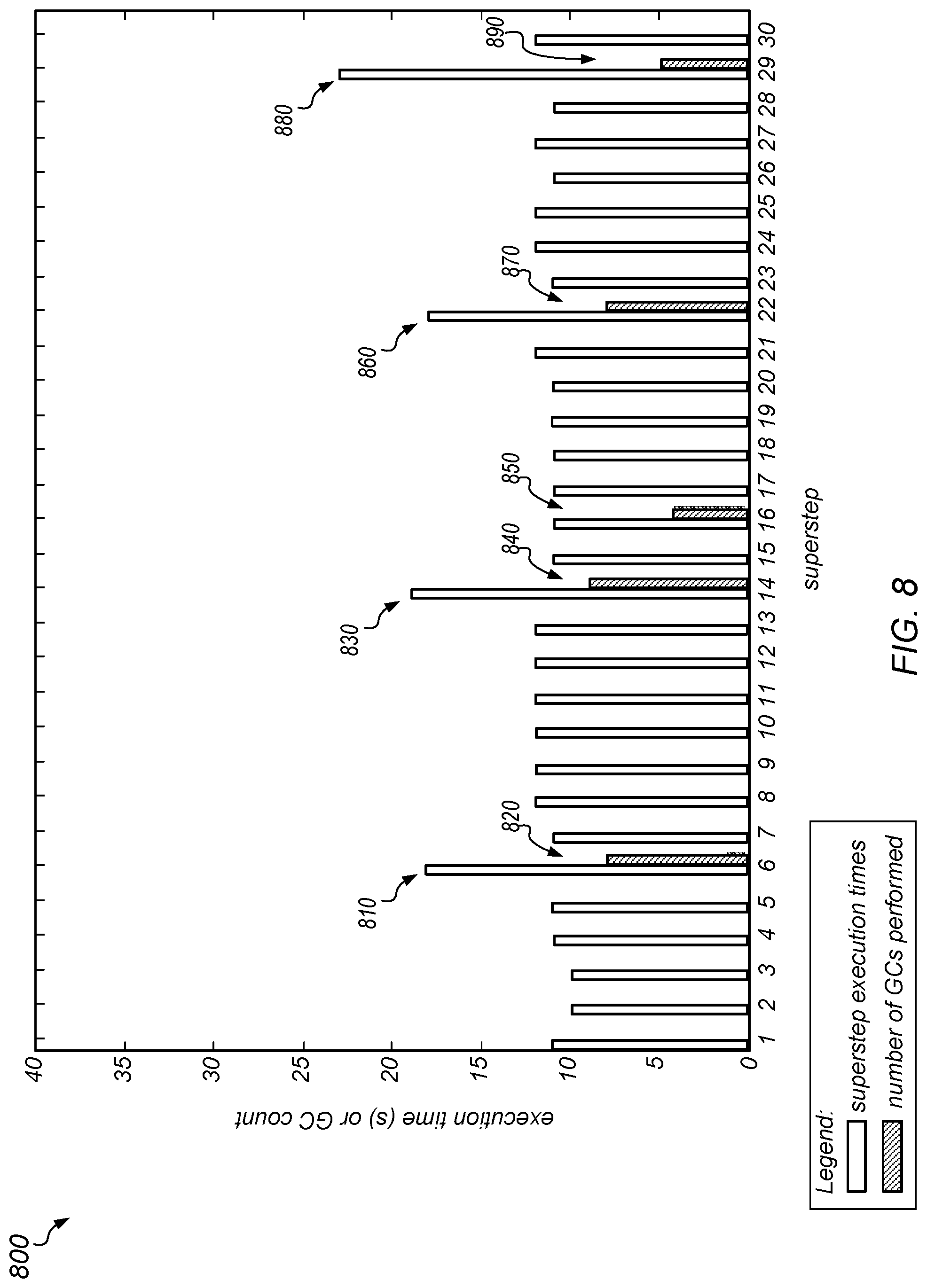

[0017] FIG. 8 is a graph illustrating the duration of each superstep of the PageRank computation when a coordinated collection is triggered on all nodes at a fixed interval, as in one embodiment.

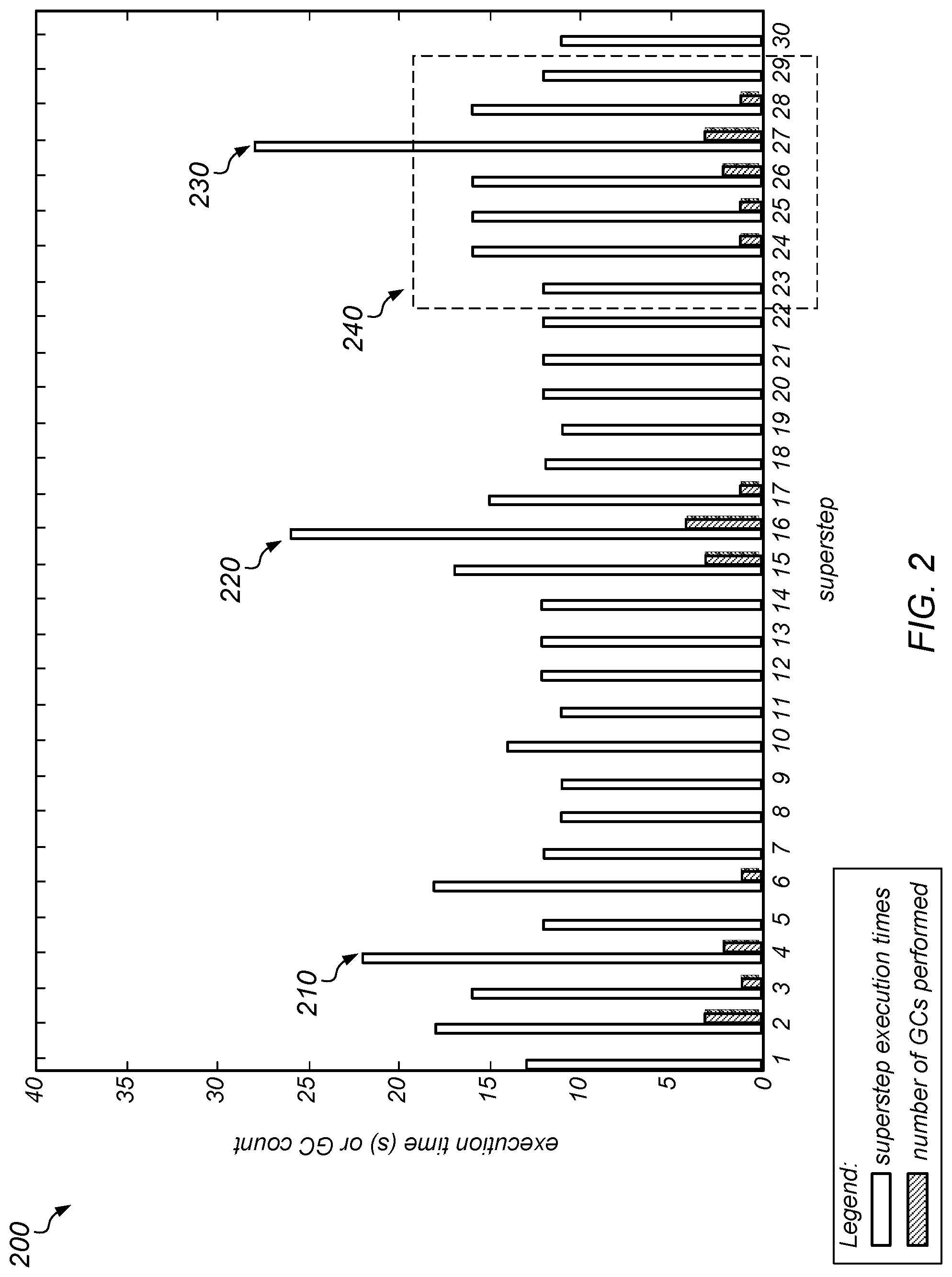

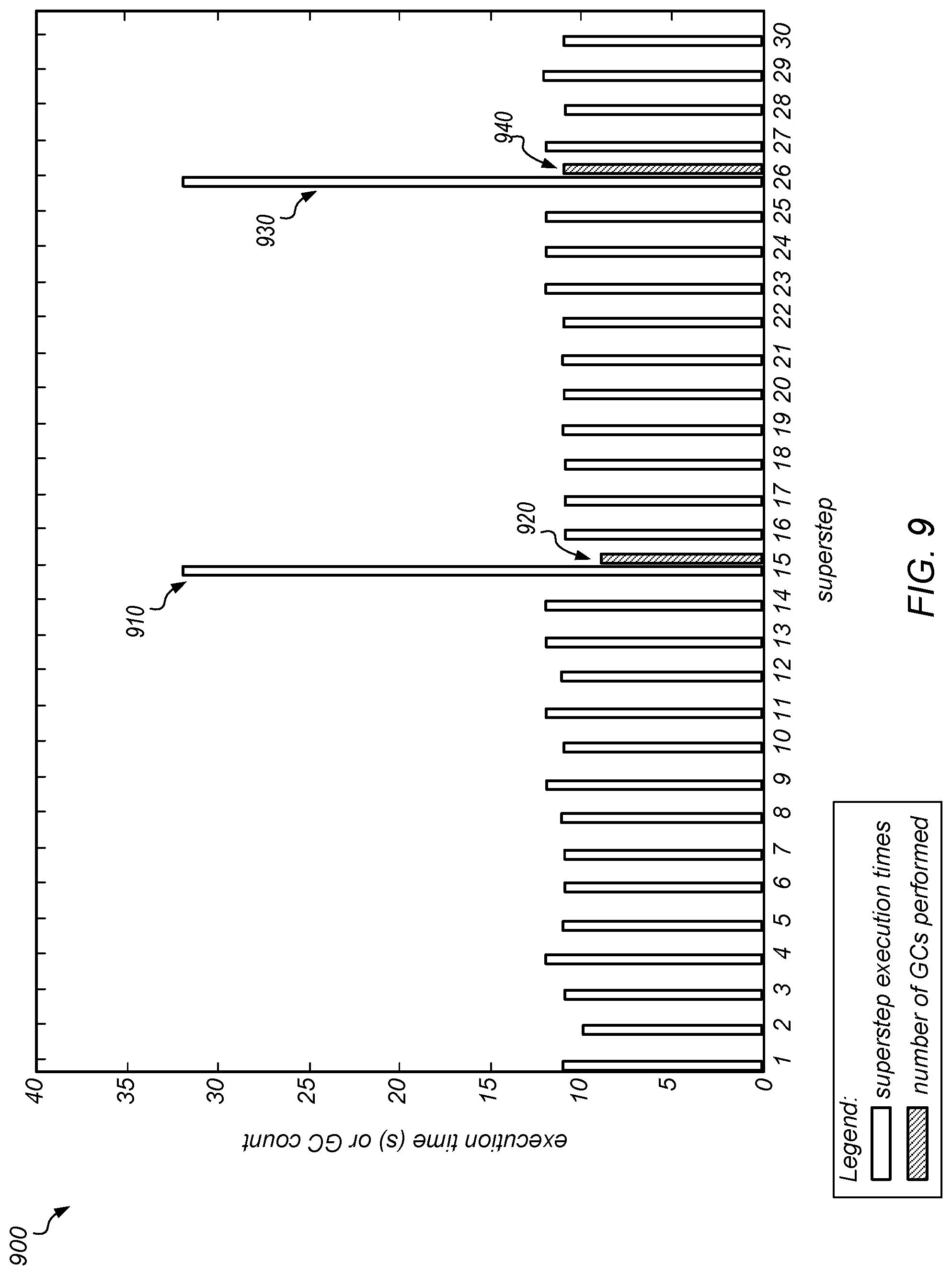

[0018] FIG. 9 is a graph illustrating the duration of each superstep of the PageRank computation when a coordinated collection is triggered on all nodes when one of them reaches a maximum heap occupancy threshold, as in one embodiment.

[0019] FIG. 10 is a graph illustrating a comparison of different garbage collection coordination policies based on execution time, according to at least some embodiments.

[0020] FIGS. 11 and 12 are graphs illustrating heap occupancies and corresponding read query latencies without garbage collection coordination and with garbage collection coordination, respectively, according to one embodiment.

[0021] FIGS. 13 and 14 are graphs illustrating response time distributions for read queries and update queries, respectively, without GC-aware query steering and with GC-aware query steering, according to one embodiment.

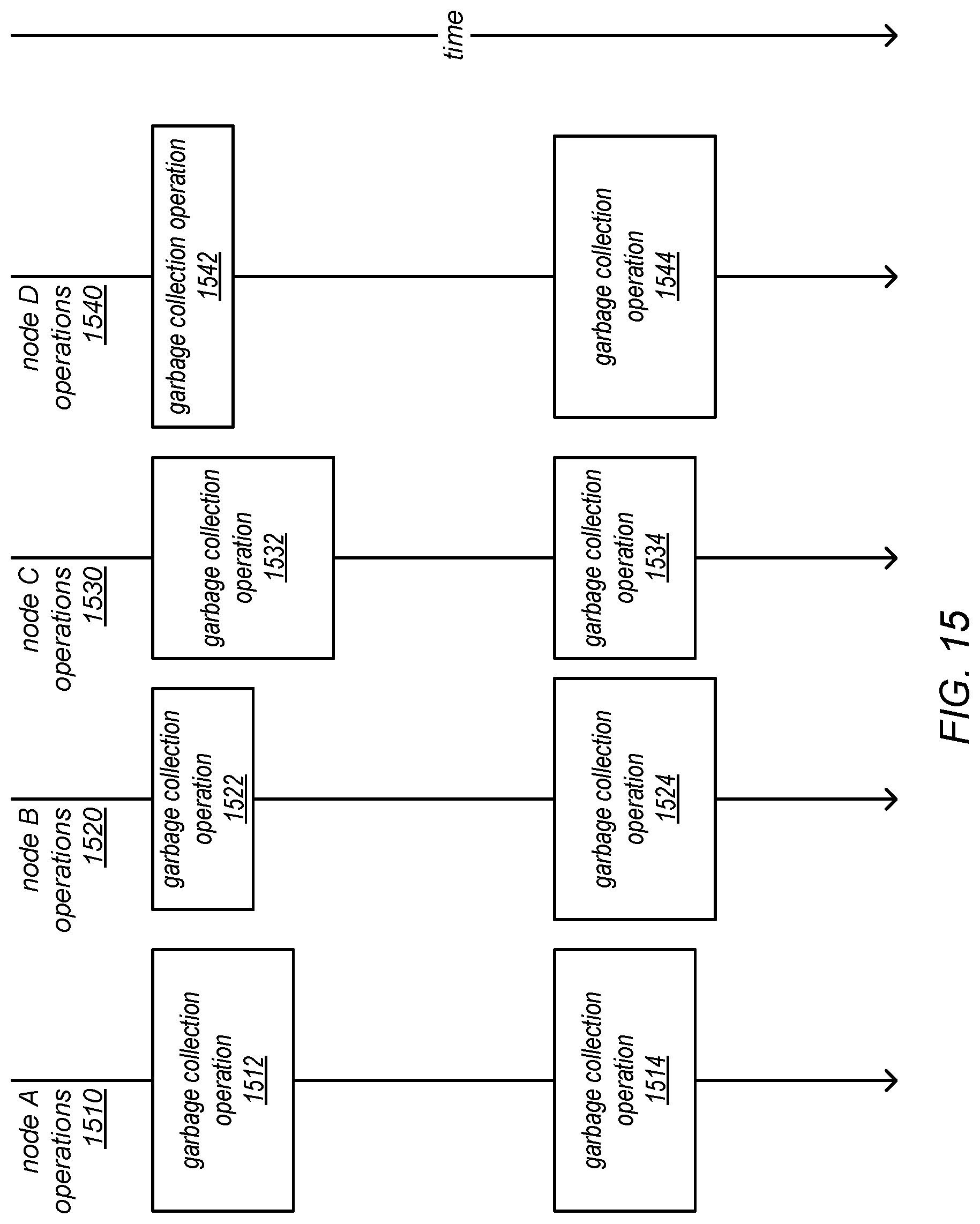

[0022] FIG. 15 is a block diagram illustrating a "stop the world everywhere" approach for implementing coordinated garbage collection, according to one embodiment.

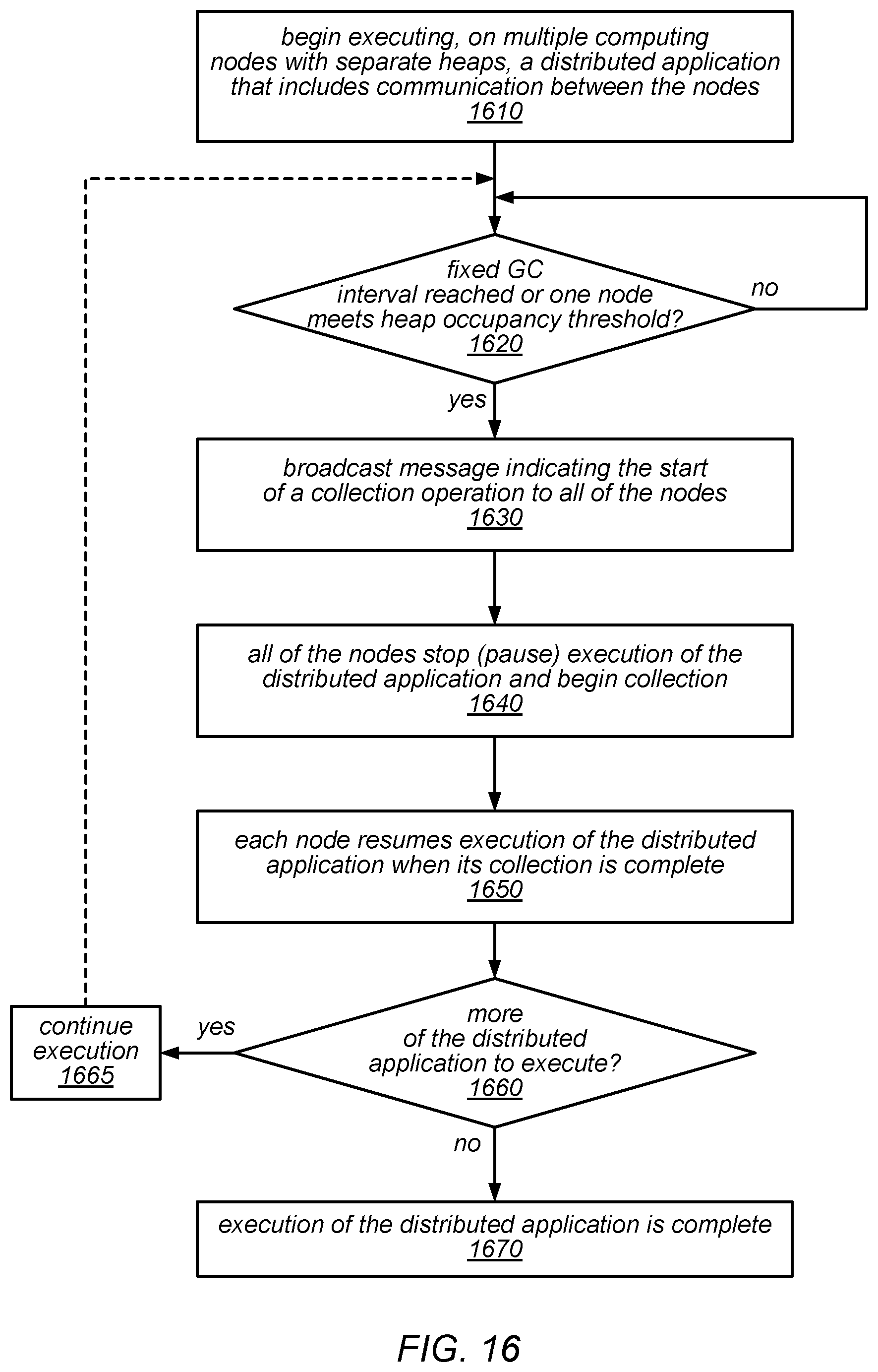

[0023] FIG. 16 is a flow diagram illustrating one embodiment of a method for synchronizing the start of collection across all nodes in a system under a "stop the world everywhere" approach for implementing coordinated garbage collection.

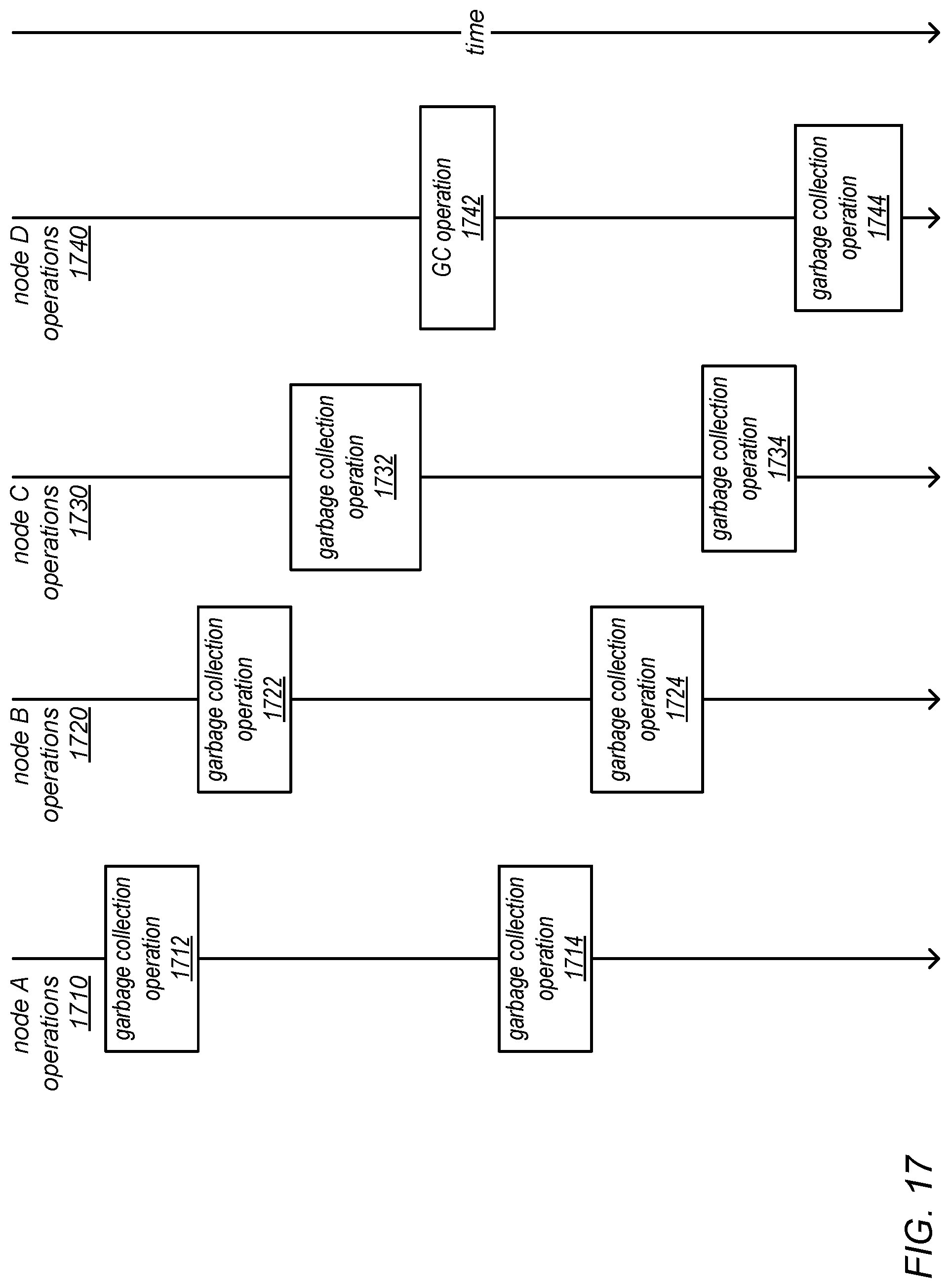

[0024] FIG. 17 is a block diagram illustrating a "staggered garbage collections" approach for implementing coordinated garbage collection, according to one embodiment.

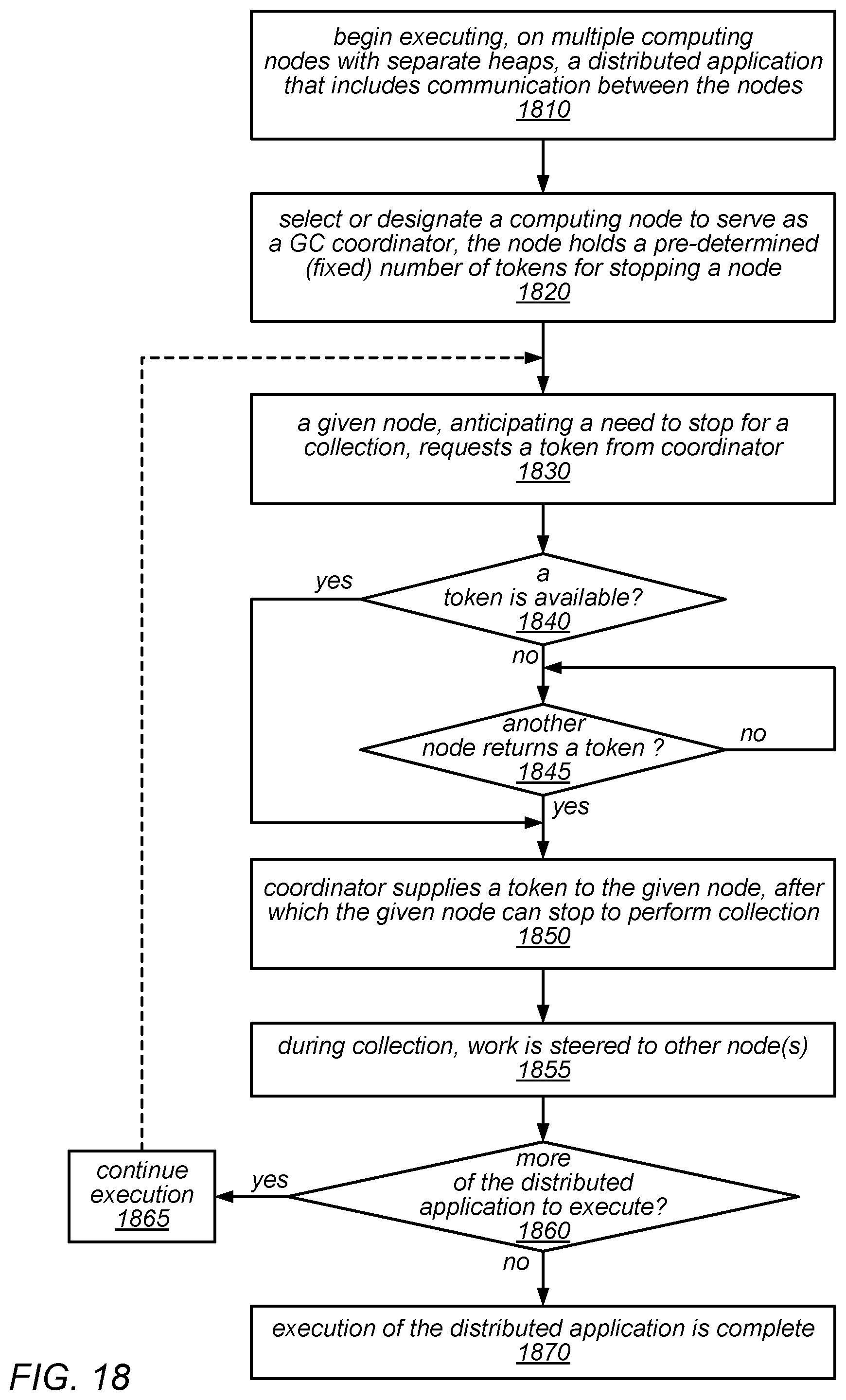

[0025] FIG. 18 is a flow diagram illustrating one embodiment of a method for implementing GC-aware work distribution that includes deliberately staggering collections across nodes.

[0026] FIG. 19 is a flow diagram illustrating one embodiment of a method for selecting a garbage collection coordination policy from among multiple garbage collection coordination policies that are supported in a single system.

[0027] FIG. 20 is a flow diagram illustrating one embodiment of a method for coordinating the execution of particular operations that are performed when executing a distributed application on multiple computing nodes.

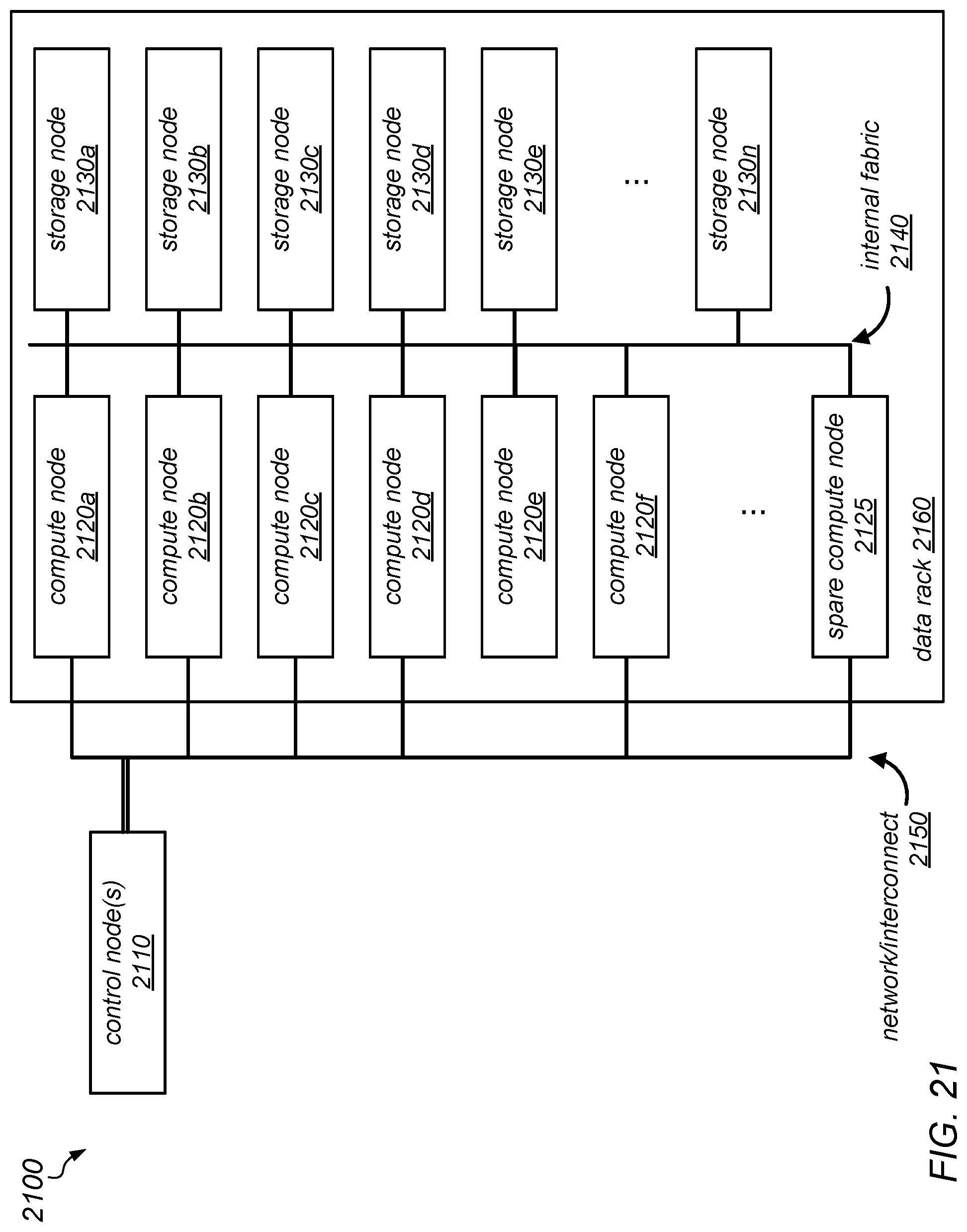

[0028] FIG. 21 is a block diagram illustrating a rack-scale system configured to implement coordinated garbage collection, according to one embodiment.

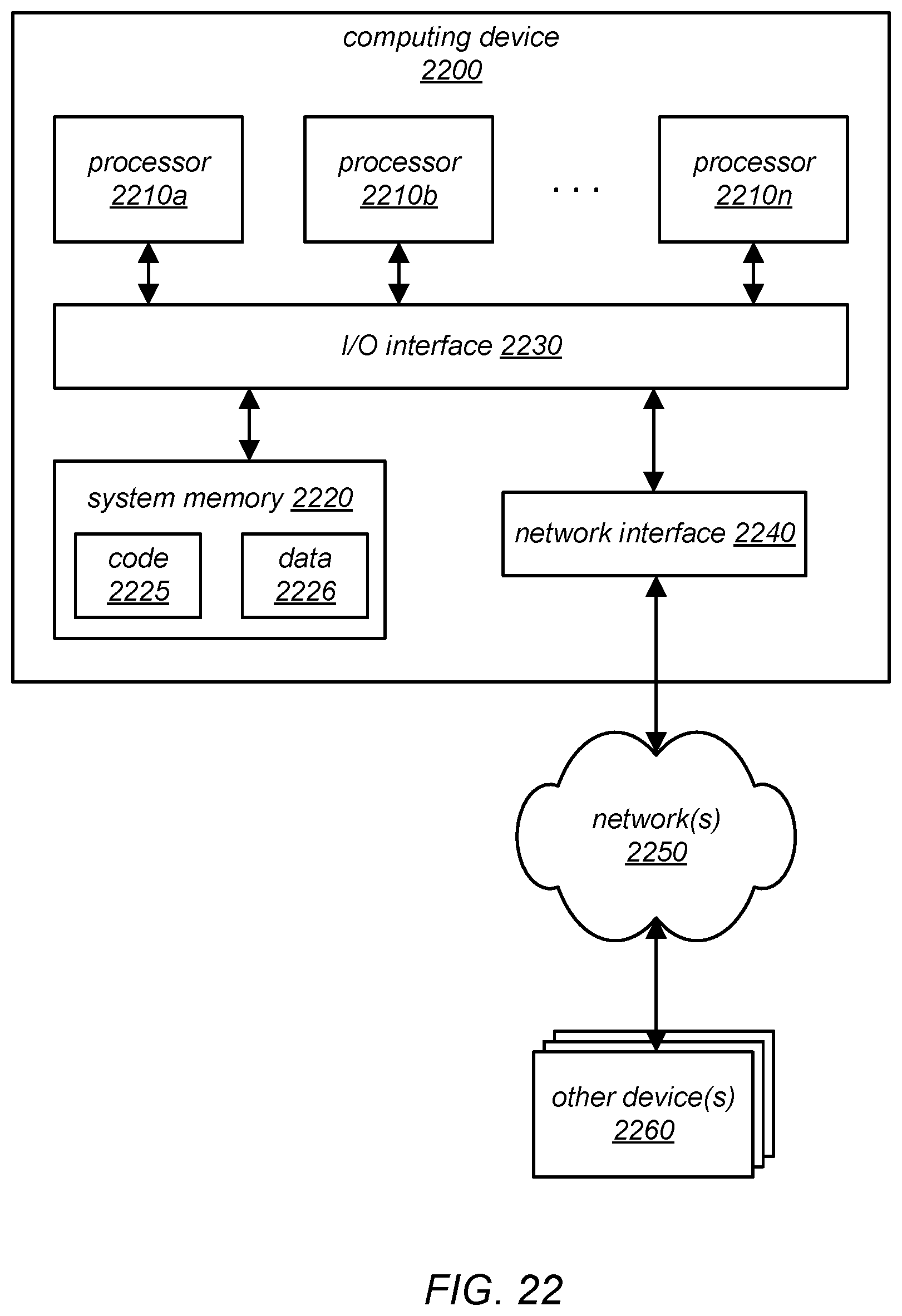

[0029] FIG. 22 is a block diagram illustrating a computing system configured to implement coordinated garbage collection, according to various embodiments.

[0030] While various embodiments are described herein by way of example for several embodiments and illustrative drawings, those skilled in the art will recognize that embodiments are not limited to the embodiments or drawings described. It should be understood that the drawings and detailed description thereto are not intended to limit the embodiments to the particular form disclosed, but on the contrary, the intention is to cover all modifications, equivalents and alternatives falling within the spirit and scope of the disclosure. Any headings used herein are for organizational purposes only and are not meant to be used to limit the scope of the description. As used throughout this application, the word "may" is used in a permissive sense (i.e., meaning having the potential to), rather than the mandatory sense (i.e., meaning must). Similarly, the words "include", "including", and "includes" mean including, but not limited to.

DETAILED DESCRIPTION OF EMBODIMENTS

[0031] As noted above, many software systems comprise multiple processes running in separate Java Virtual Machines (JVMs) on different machines in a cluster. For example, many Java applications run over multiple JVMs, letting them scale to use resources across multiple physical machines, and allowing decomposition of software into multiple interacting services. Examples include popular frameworks such as the Hadoop framework and the Spark framework.

[0032] As described in more detail herein, the performance of garbage collection (GC) within individual virtual machine instances (VMs) can have a significant impact on a distributed application as a whole. For example, garbage collection behavior can decrease throughput for batch-style analytics applications, and can cause high tail-latencies for interactive requests.

[0033] In some embodiments of the systems described herein, fast modern interconnects (such as those in Oracle® Engineered Systems) may be exploited to control when the separate JVMs perform their garbage collection cycles, which may reduce the delay that these introduce to the software's overall performance. For example, they may be exploited to synchronize collection pauses so that they occur at the same time, or to steer requests away from JVMs that are currently paused for collection, in different embodiments. In other words, coordination between VMs, enabled by the low communication latency possible on modern interconnects, may (in at least some embodiments) mitigate problems that can occur when performing uncoordinated garbage collection operations. For example, the use of coordinated garbage collection, as described herein, may reduce the impact of garbage collection pauses in a batch workload, and may reduce the extent to which garbage collection causes "stragglers" in interactive settings.

[0034] While the features, techniques and methods of coordinated garbage collection are described herein mainly in terms of systems based on the Java programming language, they may also be applicable in other distributed systems that employ garbage collection (such as those built over the Microsoft® .NET™ framework) and to distributed systems in which nodes may need to pause temporarily for other activities (e.g., not necessarily garbage collection), according to various embodiments. For example, "housekeeping" activities within an application itself (such as resizing a hash table, or restructuring a software-managed cache) or system activities (such as virtual machine live migration) may also be coordinated using the techniques described herein.

[0035] Some existing methods for preventing long latencies related to garbage collections may include:

[0036] Using C/C++ instead of Java for latency-sensitive applications (e.g., applications having interactive workloads). For example, the Apache® Cassandra™ key-value store from the Apache Software Foundation (originally developed at Facebook, Inc.) may utilize this technique.

[0037] Writing code in contorted ways to avoid allocating memory (and hence the need for garbage collection), e.g., representing data within a single large array of bytes, rather than as separate objects.

[0038] Setting heap sizes to prevent garbage collection from ever occurring, and restarting an application periodically to avoid performing garbage collection (e.g., restarting a trading application at the beginning of each day). Note, however, that this approach may involve inefficient use of resources. Note also that this approach may fail if the heap size is reached on a day on which the application experiences an unexpectedly large workload.

[0039] In latency-sensitive workloads, duplicating requests to multiple servers, and picking the first response (e.g., hoping that there will be at most one straggler amongst these requests). Note that duplicating requests may also involve inefficient use of resources.

[0040] As shown above, some attempts to mitigate straggler problems may involve replicating work (at the cost of poor resource utilization), or changing code to avoid performing allocation at all (at the cost of poor software engineering, a reduction in the adoption of Java, or fragile performance, e.g., working in some cases, but failing unexpectedly).

[0041] Modern cluster interconnects may allow processes to communicate at a much lower latency than the start/end of activities such as garbage collection (even young-generation collection). In some embodiments, coordinated garbage collection in distributed systems may take advantage of modern cluster interconnect communication to reduce the impact that activities such as garbage collection have on the overall performance of the application. For example, in some embodiments, requests may be steered away from a process that is about to perform garbage collection.

[0042] In general, the systems and techniques described herein for performing coordinated garbage collection may improve the performance of distributed Java applications, in at least some embodiments. Additionally, when developing software for systems that implement coordinated garbage collection, programmers who might otherwise have chosen to write their software in C/C++ may instead choose to write it in Java.

INTRODUCTION

[0043] As described herein, it has been demonstrated that systems software and language runtime systems may be able to evolve in a manner that better supports "rack scale" machines in which tightly-coupled sets of machines are deployed and used together as a single system. In some cases, rack-scale systems (including some that have been developed as university research projects) may include specialized processors, storage devices, and/or interconnects. These systems may blur the boundaries between "distributed systems" and "single machines." For example, in various embodiments, they may exhibit one or more of the following features: (i) hardware may be designed and provisioned together, (ii) components such as power supplies and storage arrays may be shared across machines, (iii) IO devices may be accessed across an internal fabric rather than being attached directly to processor motherboards, and (iv) message passing within the system may be more reliable (e.g., as compared to traditional networking), and interconnect latencies may be low (e.g., sub-μs on research systems, and few-μs on commodity hardware).

[0044] As described in more detail herein, the performance of distributed Java applications running on multiple nodes in a rack-scale cluster (e.g., with and without support for coordinated garbage collection) has been investigated. For example, some of the coordinated garbage collection techniques described herein have been applied in investigations into whether low latency interconnects may enable useful coordination between language runtime systems at the rack-level (e.g., distributed Java applications running on multiple nodes in a rack-scale cluster), as has been hypothesized. As described herein, it has been demonstrated that low latency interconnect communication may be exploited to reduce the impact of garbage collection on an application's performance. For example, in some embodiments, low latency interconnect communication may improve performance of Java-based systems that involve "big data" analytics workloads (e.g., without having to rewrite them in C/C++ in order to avoid garbage collection latencies).

[0045] Some preliminary results of the application of coordinated garbage collection techniques are described herein in reference to two particular issues that were examined: (i) pauses in batch computations caused by software on one machine trying to synchronize with software on another machine that is currently stopped for garbage collection, and (ii) latency spikes in interactive workloads caused by one or more garbage collections coinciding with the handling of a request. More specifically, various features, methods, and/or techniques for implementing coordinated garbage collection are described herein in reference to two example systems: a batch-mode system (e.g., one that implements a Spark framework) on which the PageRank graph computation is executed, and a distributed NoSQL data management system (e.g., an Apache Cassandra database system). As demonstrated using these example systems, the introduction of these features, methods, and/or techniques to provide coordination between JVMs may mitigate at least some of the problems related to garbage collection latencies.

[0046] In a batch workload, any application-wide synchronization may have to wait if any of the processes involved has stopped for garbage collection. In other words, a garbage collection on a single machine may stall the entire distributed application. In some embodiments of the systems described herein, the use of a "stop the world everywhere" policy, in which the garbage collections across all of the processes may be forced to occur at the same time, may address (or mitigate) this issue.

[0047] In an interactive workload, an individual request's latency may be negatively impacted if a garbage collection occurs while it is being serviced. In some embodiments of the systems described herein, the use of heap-aware work distribution, in which requests may not be sent to machines if (and when) they are about to pause for collection, may address (or mitigate) this issue. In some embodiments, the replication of data in the system (which may already be utilized for robustness) may be exploited to redirect requests to another machine that is not paused (and that is not about to pause) to perform garbage collection.

[0048] In some ways, the two techniques described above (e.g., the "stop the world everywhere" technique and heap-aware work distribution) may be considered instances of a single, more general, technique for coordinating garbage collection in various target systems.

[0049] Some existing garbage collection algorithms perform well for single-machine (e.g., non-distributed) workloads. Other previous work on distributed garbage collection has been focused on systems and scenarios in which one large application is running across multiple JVMs, the data for the application is spread across the individual machines, and there is a single shared heap for all of the machines. In these systems and scenarios, there may be pointers from the objects on one machine to objects on another machine, with the primary challenges for the distributed garbage collector being determining which objects can be de-allocated and determining how to handle cycles of references between objects on two machines. For example, various distributed garbage collection techniques for heaps that span multiple machines are described in "Garbage Collection", by R. Jones and R. Lins, published by John Wiley & Sons Ltd., New York, 1996.

[0050] In contrast to these earlier approaches, the systems and methods for implementing coordinated garbage collection described herein may be applied to distributed systems in which the application itself is distributed, e.g., in systems in which each computing node in the distributed system runs in its own separate virtual machine instance (e.g., in its own separate JVM) and has its own heap memory (as opposed to the computing nodes or virtual machine instances sharing a single, distributed heap). More specifically, coordinated garbage collection, as described herein, may be well suited for application in systems in which a distributed application is executing on multiple virtual machine instances (e.g., multiple virtual machine instances, each of which is hosted on a respective physical computing node) and in which the distributed application includes frequent communication between the nodes. In some embodiments, the physical computing nodes on which the virtual machine instances are hosted may be components of a rack-scale machine, and may be connected to each other over a low latency interconnect such as an InfiniBand interconnect or a fast Ethernet network (e.g., one with a latency of a few microseconds).

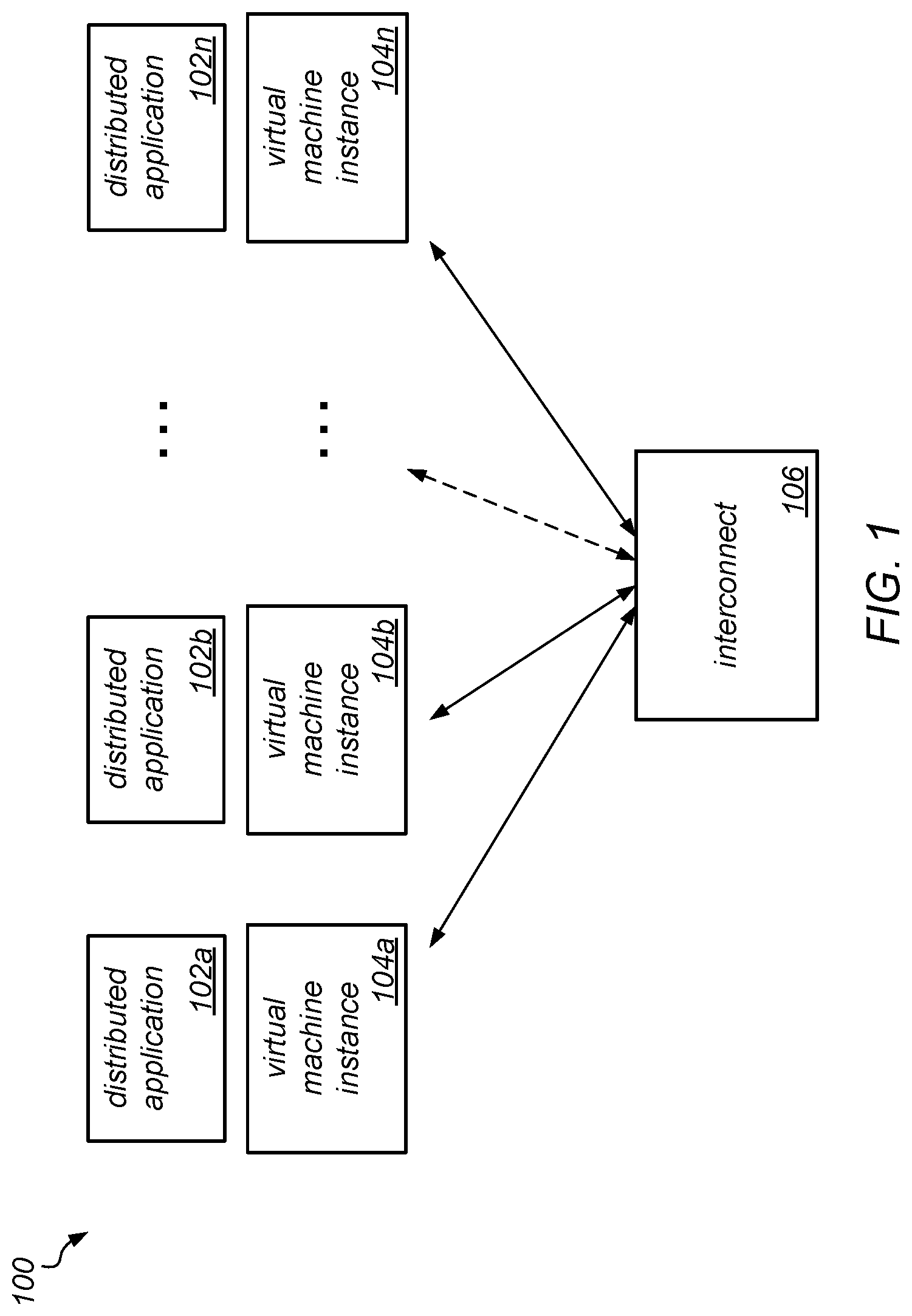

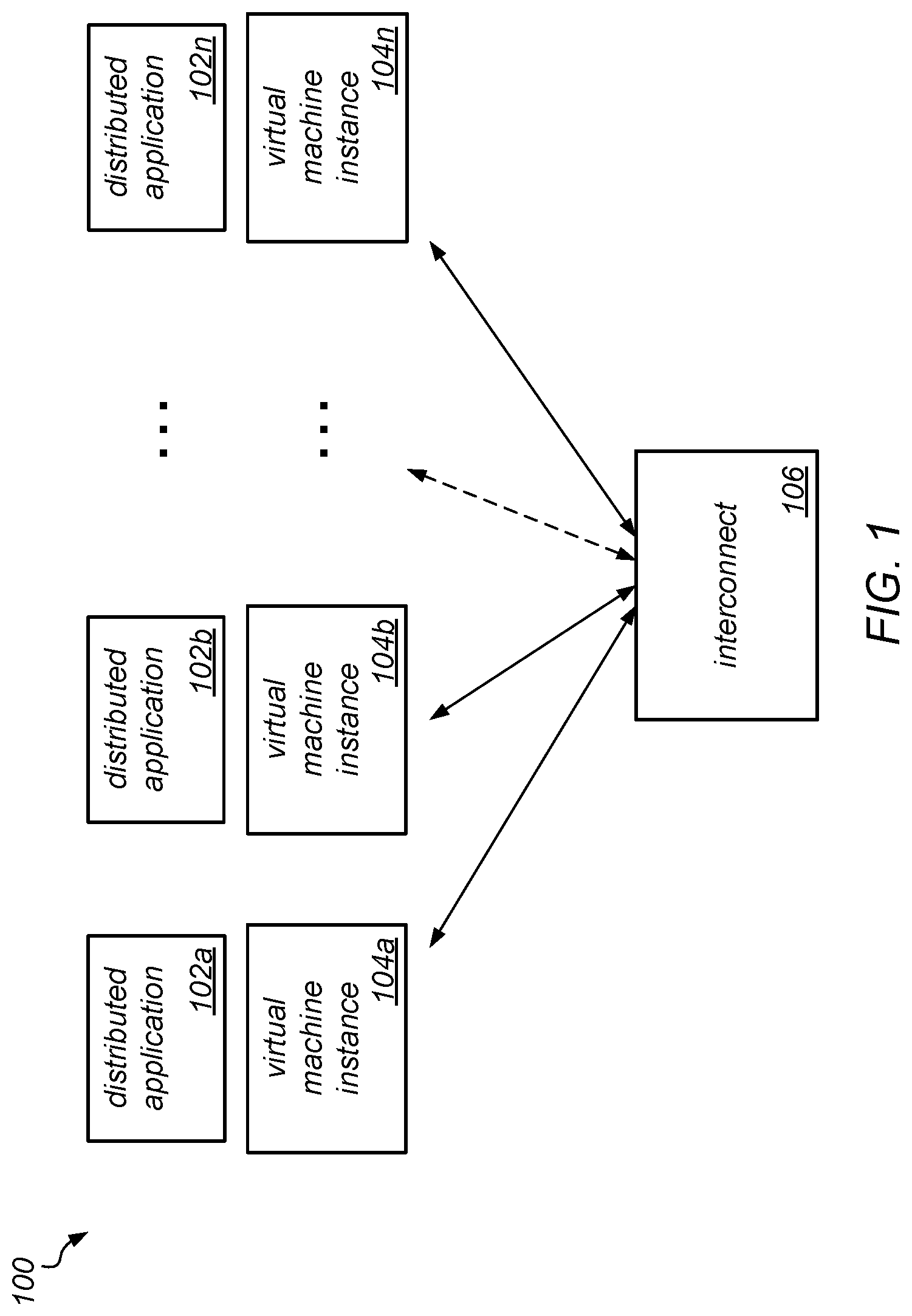

[0051] One example of a distributed system to which coordinated garbage collection may be applied is illustrated in FIG. 1, according to at least some embodiments. In this example, a distributed system 100 includes multiple virtual machine instances, shown as virtual machine instances 104a-104n, that communicate with each other over interconnect 106. Note that, in some embodiments, each virtual machine instance may be hosted on a different physical computing node, while in other embodiments, two or more of such virtual machine instances may be hosted on the same one of multiple physical computing nodes. In this example, different portions of a distributed application (shown as 102a-102n) are executing on each of the virtual machine instances 104a-104n.

[0052] In this type of distributed system, the virtual machine instances (e.g., JVMs) may be completely separate machines running separate, individual processes. Here, the heap may not be shared across the machines. Instead, in some embodiments of the systems described herein, the timing of the garbage collections performed on the individual machines may be controlled, and the timing of the garbage collections may be coordinated in a manner that mitigates the impact of those garbage collections on the performance of the complete distributed application as a whole. In other words, the techniques described herein may be directed to improvements in "garbage collection for distributed systems" rather than in "distributed garbage collection" (as in previous work). As described in more detail below using two example case studies, these techniques may be applied to applications that have very different workloads. The performance improvements achieved in these two (very different) case studies demonstrate that performance improvements due to the application of these techniques may be expected in the general case, in other embodiments.

[0053] In the type of distributed system to which the coordinated garbage collection techniques described herein are targeted (e.g., those in which the virtual machine instances are separate machines running separate, individual processes that communicate with each other and in which the heap memory is not shared across the machines), it may be common for one of the following two recurring problems to occur, depending on the workload: decreased throughput of applications or spikes in tail-latencies.

[0054] Decreased throughput of applications. Distributed applications often must perform operations to implement synchronization between the different nodes. For example, they may be synchronized through the use of distributed barriers or locks, or software running on one node may need to wait for a response to a message it has sent to another node. In such applications, if a garbage collection pause occurs on a node that is holding a lock or that has not yet reached a barrier, all other nodes may be stalled waiting for the garbage collection to finish. This problem may become more significant (in terms of its effect on the overall performance of the application) as the number of nodes in a system increases. For example, in some embodiments, even if the percentage of time that a given node is garbage collecting remains fixed, as the number of nodes in a system increases, the percentage of time that at least one such node is garbage collecting may increase.
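
As a non-limiting illustration of the scaling effect described above, the following Python sketch estimates the probability that at least one node is garbage collecting at a given instant. It assumes, purely for illustration, that each node spends the same fixed fraction of its time collecting and that the nodes pause independently of one another; the function name and the 1% figure are hypothetical and are not taken from the experiments described herein.

```python
def prob_any_node_collecting(gc_fraction, num_nodes):
    """Probability that at least one of num_nodes is in a GC pause.

    Assumption (illustrative only): every node spends the same fraction of
    its time collecting, and nodes pause independently of one another.
    """
    if not 0.0 <= gc_fraction <= 1.0:
        raise ValueError("gc_fraction must be between 0 and 1")
    if num_nodes < 0:
        raise ValueError("num_nodes must be non-negative")
    # Complement of "no node is collecting".
    return 1.0 - (1.0 - gc_fraction) ** num_nodes


if __name__ == "__main__":
    # Hypothetical per-node GC fraction of 1%, for a growing cluster.
    for n in (1, 8, 64, 1000):
        print(n, round(prob_any_node_collecting(0.01, n), 4))
```

Under these assumptions the per-node fraction stays fixed while the system-wide probability approaches one as nodes are added, which is the effect that may limit scalability when every pause can stall a barrier or a lock holder.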

[0055] Spikes in tail-latencies. Interactive workloads may be expected to serve requests within time frames that are shorter than typical garbage collection pauses (such as <0.5 ms). Some examples of software applications that exhibit these workloads include web servers, key value stores, web caches, and so on. In many cases, these workloads have a large fan-out, meaning that a request to one server may cause a cascade of requests to additional servers whose responses are combined to form a result. Consequently, the overall performance of a request may be dependent on the slowest server that is contacted. Once again, the problem may become more significant as the number of VMs grows.

[0056] Typically, to avoid the issues of decreased throughput and tail-latency spikes, programmers may either (i) avoid Java for these interactive workloads, (ii) use unusual programming idioms to avoid garbage collection (e.g., keeping data in large scalar arrays), (iii) perform most computations in C/C++ code accessed through a native interface, such as the Java Native Interface (JNI), or (iv) over-provision machines (e.g., with enough physical memory to avoid the need to garbage collect at all) and/or utilize other ad hoc practices (e.g., restarting a trading application every day) to avoid collection while an application is active.

[0057] While the impact of garbage collection pauses may, in some circumstances, be mitigated by using concurrent or incremental collectors, such as concurrent mark sweep (CMS) or garbage-first (G1) collectors, in practice, developers often opt for "stop the world" collectors due to their high throughput (e.g., at least for major collections). For instance, the throughput achieved by concurrent collectors may be insufficient to keep up with allocation for very large heaps. Furthermore, the performance overhead of memory barriers required by concurrent collectors may decrease the effective performance of mutator threads.

[0058] In some embodiments of the systems described herein, rather than trying to avoid "stop the world" pauses, garbage collection pauses may be coordinated across multiple nodes in order to avoid them affecting an application's overall performance. For example, for batch workloads, computations may span multiple garbage collection cycles. When utilizing coordinated garbage collection, the impact of garbage collection on the application as a whole may be no worse than the impact on an individual machine (e.g., rather than incurring decreased performance with additional machines, even when the rest of the application scales well). In some embodiments, when utilizing coordinated garbage collections for interactive workloads, where computations may be much smaller than inter-GC periods, response times may not be affected by garbage collection at all.

[0059] As noted above, the features, techniques and/or methods of coordinated garbage collection described herein may be utilized with any of various types of systems. For instance, one example system on which some of the experiments described herein were executed was a cluster comprising 2-socket machines with processors running at 2.6 GHz. Each socket had 8 cores, each of which implemented 2 hardware contexts, for a total of 32 hardware contexts per machine. Each machine included 128 GB RAM, and all of the machines were connected via a high throughput network communications link. In this example embodiment, the experiments used between 8 and 16 machines, each using a JVM with default settings and running on the Linux™ operating system.

[0060] For these experiments, in order to demonstrate the features of coordinated garbage collection, a set of scripts (in this case, written in the Python programming language) were developed and utilized to initialize workloads on the cluster. In this example embodiment, the scripts were designed to take a general description of a workload and run it across a set of machines, enabling the pinning of each workload to specific nodes of the cluster, and to specific cores within each machine.

[0061] In some embodiments, including in the example system used in the experiments, additional features may be added. For example, these additional features may be useful for bringing up composite workloads (e.g., waiting for certain services to come up), determining the servers associated with a particular service, and/or shutting down all services cleanly at the end of a run. In various embodiments, including in the example system used in the experiments, such scripts may be used to bring up the components of a specific setup (e.g., Hadoop, Hive, Spark, or Shark frameworks, and applications running on top of them) one after another, automatically populate them with the correct data, and run benchmarks against them. As described herein, such scripts may also be used to run a garbage collection coordinator on all the nodes, connect it to the JVMs spawned by the different workloads and collect the data in a central location (e.g., for collecting and reporting of GC-related latency data).

[0062] Various problems associated with traditional garbage collection techniques in distributed workloads may be demonstrated using a computation to rank websites within search engine results (e.g., a PageRank computation) using a data analytics cluster computing framework (e.g., the Spark computation framework, which was originally developed at UC Berkeley and is now part of the Apache computation ecosystem).

[0063] Workload overview. The Spark framework is based around distributed data structures called "Resilient Distributed Datasets", which support general MapReduce-style operations on distributed data (such as filter or map operations) but enable iterative computation without having to materialize data between steps. On the Spark Framework, this may be achieved through keeping track of transformations that were performed on the data. In the case of a node failure, the data on the node may be reconstructed from the last materialized version by performing the same transformations again.

[0064] One type of problem associated with traditional garbage collection in distributed workloads has been demonstrated using in-memory computations on a big data set. More specifically, distributed 8-node PageRank computations were performed on a 56 GB web page dump. In experiments performed as part of this demonstration, each PageRank step consisted of three phases that the nodes performed independently, with all-to-all communication taking place at the end of each phase. The end of each phase effectively acted as a cluster-wide barrier, with no node being able to continue execution until all nodes finished that phase. With these types of workloads, load balancing may be important. Thus, a partitioning mechanism that spreads different parts of the graph evenly across nodes (one that was provided by the execution framework) was utilized, in these experiments.

[0065] In contrast to domain-specific language (DSL) frameworks for graph data analysis (such as Green-Marl), a data analytics cluster computing framework, such as Spark, may not be specialized for performing graph computations, but may be used for many different workloads, including Machine Learning workloads or workloads that involve serving SQL Queries. While the graph performance of a data analytics cluster computing framework may not be competitive with specialized graph data analysis frameworks, the underlying patterns of cluster-wide synchronization may apply to other frameworks.

[0066] In a first example, a long-running computation having a lot of communication between the processes on separate machines was run over a Spark framework. In this example, at regular intervals during the communication, all of the machines needed to synchronize with one another, and as each of the machines reached the synchronization point, it was stalled (i.e., it could not proceed) until all of the machines had reached the synchronization point. In other words, all of the machines were held up if any one of them was delayed in reaching the synchronization point. By observing the interaction of the Spark framework with the garbage collector, it was determined that if garbage collection executes on one of the machines in between two of these synchronization points, that garbage collection will delay the threads running on that machine, which in turn delays the threads running on the other machines (because they are being held up waiting for the synchronization to occur). In this example, even if the programmer who has written the application has designed it to scale well as machines are added to the system, as extra machines are added, the likelihood that any one of the machines stops to do garbage collection at any given time goes up. For example, if there are 1000 machines, it becomes very likely that, at any given instant, at least a handful of them are going to be stopped to perform garbage collection, and even one garbage collection operation being executed on one machine can hold up all of the other machines. In this manner, the garbage collection may harm the scalability of the application as a whole.
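
The stalling behavior described above may be sketched as follows. This Python fragment is a simplified model, not the benchmark itself: it assumes that each superstep ends at a cluster-wide barrier, so that the duration of a superstep is the longest per-node time (compute time plus any garbage collection pause). The node count, the 11-second compute time, and the 10-second pauses are hypothetical values chosen only for illustration.

```python
def superstep_duration(compute_s, gc_pause_s):
    """Duration of one barrier-synchronized superstep.

    Every node must reach the barrier, so the step takes as long as the
    slowest node (its compute time plus any GC pause it incurred).
    """
    return max(c + p for c, p in zip(compute_s, gc_pause_s))


def total_runtime(pause_schedule, compute_s=11.0):
    """Sum of superstep durations; pause_schedule[step][node] is a pause in seconds."""
    return sum(
        superstep_duration([compute_s] * len(pauses), pauses)
        for pauses in pause_schedule
    )


# Hypothetical schedules for 4 nodes over 4 supersteps; each node pauses once.
staggered = [[10, 0, 0, 0], [0, 10, 0, 0], [0, 0, 10, 0], [0, 0, 0, 10]]
aligned = [[10, 10, 10, 10], [0, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0]]

if __name__ == "__main__":
    print("staggered pauses:", total_runtime(staggered))
    print("aligned pauses:  ", total_runtime(aligned))
```

In this model, staggered pauses lengthen every superstep, whereas pauses that coincide lengthen only one, which is the intuition behind forcing collections on all nodes to occur at the same time.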

[0067] More specifically, this first example illustrates the impact of garbage collection utilizing the PageRank benchmark with default garbage collection settings (e.g., parallel GC settings). The benchmark was set to run a large number of supersteps (iterations). Note that the default number of supersteps may be on the order of 10, which may or may not be sufficiently long-running to reach steady-state JVM performance. Therefore, in this example, the Spark framework was configured to keep all data in memory as intended (although in other embodiments, the Spark framework may use its own algorithm to write temporary data out to disk, in which case the disk performance may dwarf other factors). In this example, a heap size of 64 GB was used for the master and driver, and a heap size of 32 GB for spawned Spark executors (which may be considered the components performing the most work).

[0068] In this example, the PageRank computation ran for 30 supersteps and recorded the time that each of the supersteps took to execute. A profile analysis of the PageRank supersteps that required a synchronization barrier across the distributed system and the points at which a full garbage collection was performed on a particular node being profiled illustrated the effects of performing a garbage collection run in the middle of a superstep, with one runnable task locally. The analysis showed that, after a long loading phase that stresses the file system, there may be significant variation in the duration of each superstep. For example, while many steps completed in about 11 s, others took up to 28 s. In some cases, it appeared that the variation in the duration of each superstep may be due to work "stalling" across the system when any one (or more) of the nodes is performing a full collection. Using an analysis that examined the amount of work on each of two different nodes (e.g., the number of tasks to complete), along with the start/finish times of the collections, it was demonstrated that a full garbage collection pause on either of these machines tended to coincide with a lack of work on the other machine. For example, the first node was without work while the second node was in its first full garbage collection pause, and vice versa.

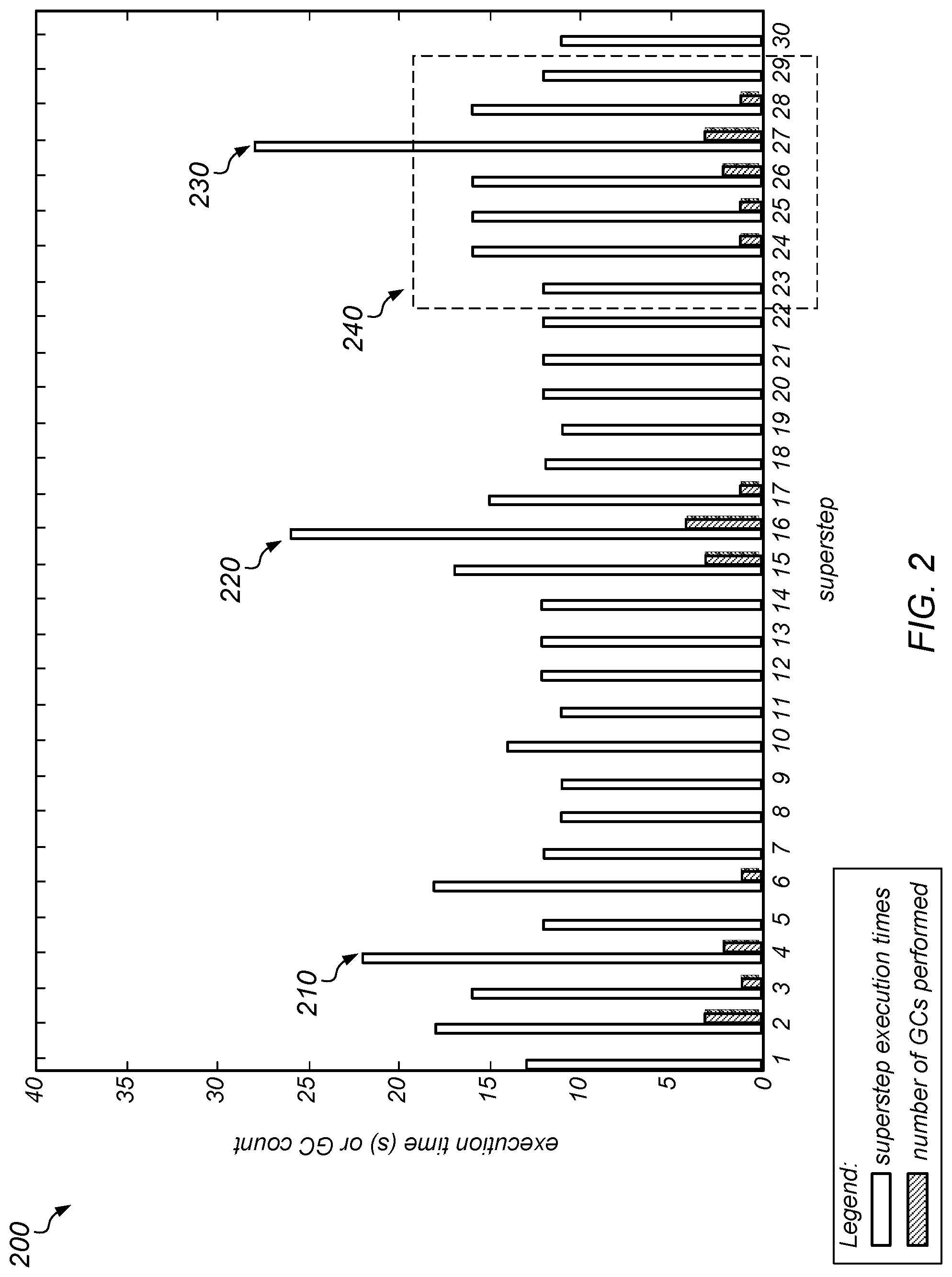

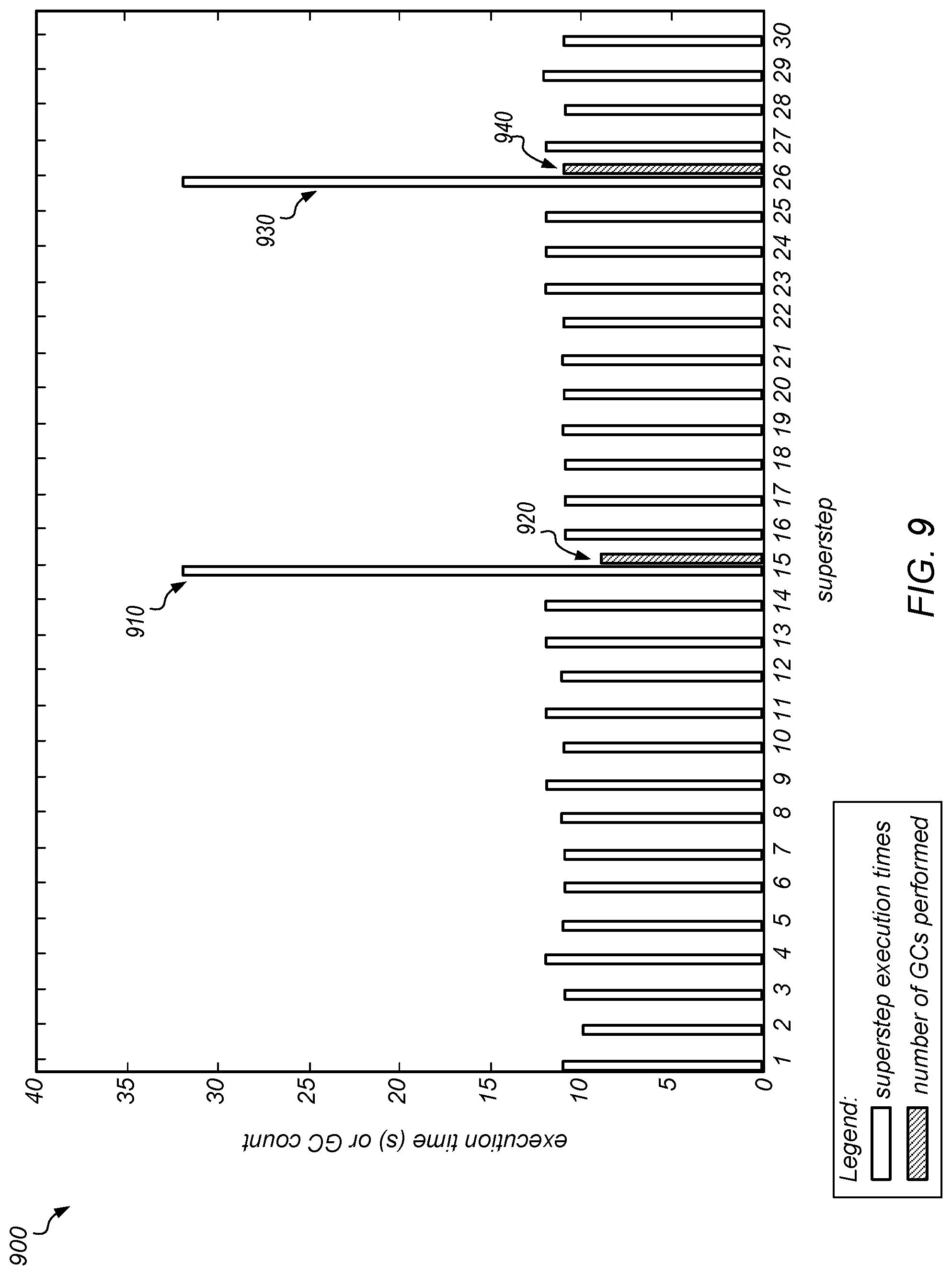

[0069] FIG. 2 is a graph illustrating the duration of each superstep of a benchmark distributed application and the number of garbage collection operations that are performed on any node during each superstep in a system that does not implement garbage collection coordination. More specifically, the unfilled bars in graph 200 illustrate the execution times (in seconds) of each superstep of a PageRank algorithm (e.g., they illustrate the lengths of the intervals between pairs of synchronization points during execution of the benchmark), while the hashed bars indicate the number of nodes, if any, that performed a full garbage collection during each superstep.

[0070] As shown in FIG. 2, supersteps that do not include garbage collection operations may have relatively low runtimes (e.g., all supersteps that do not include any garbage collection operations take roughly the same amount of time, in this example). However, if and when a garbage collection operation occurs on any node, this may significantly increase the runtime of a superstep. Here, the delays are largely due to garbage collection operations causing some machines to stall (i.e., while waiting for the collection operations to complete). For example, while most supersteps took approximately 12 seconds to complete, bars 210, 220, and 230 indicate that supersteps 4, 16, and 27, respectively, took much longer than this (e.g., approximately 22 seconds, 26 seconds, and 28 seconds, respectively). In other words, the highest peaks occurred when garbage collection operations were happening on a large number of nodes. As shown by the supersteps within dashed area 240, clumps of garbage collection activities may be spread out over time, impacting multiple supersteps. Note that when garbage collection did occur, in this example, the delays were generally proportional to the number of collections that took place in the superstep. Note also that any individual collection operation (anywhere in the system) can stall the entire application and harm performance, even though it occurs only on a single machine.

[0071] As described herein, there may be different garbage collection issues associated with different types of workloads. For example, some of the problems associated with garbage collection in interactive applications may be demonstrated using a distributed database management system, such as an Apache Cassandra database system. As used herein, the term "interactive" may refer to an application that makes low-latency responses to network requests (e.g., as opposed to a desktop application).

[0072] A NoSQL database, such as an Apache Cassandra database, may be optimized for low query latencies and scalability to large numbers of nodes. As such, it may serve as an example of a distributed, latency-sensitive workload. More specifically, this database uses consistent hashing to map each data row to a set of nodes in a cluster which store replicas of that data. A client can send a request to any node within a cluster (e.g., the node to which a request is sent is not necessarily one that is holding the requested data). That node may then act as the coordinator for the request, and may forward it to the nodes holding replicas of the data. The coordinator may respond to the client once replies are received from a quorum of replicas. For example, some of the experiments described herein use a replication factor of three nodes (meaning that the system holds three replicas for each data element, one on each of three different nodes), and a quorum size of two (meaning that two of the three nodes holding a replica of a given data element must return the same information in order to return a valid response to the requestor).

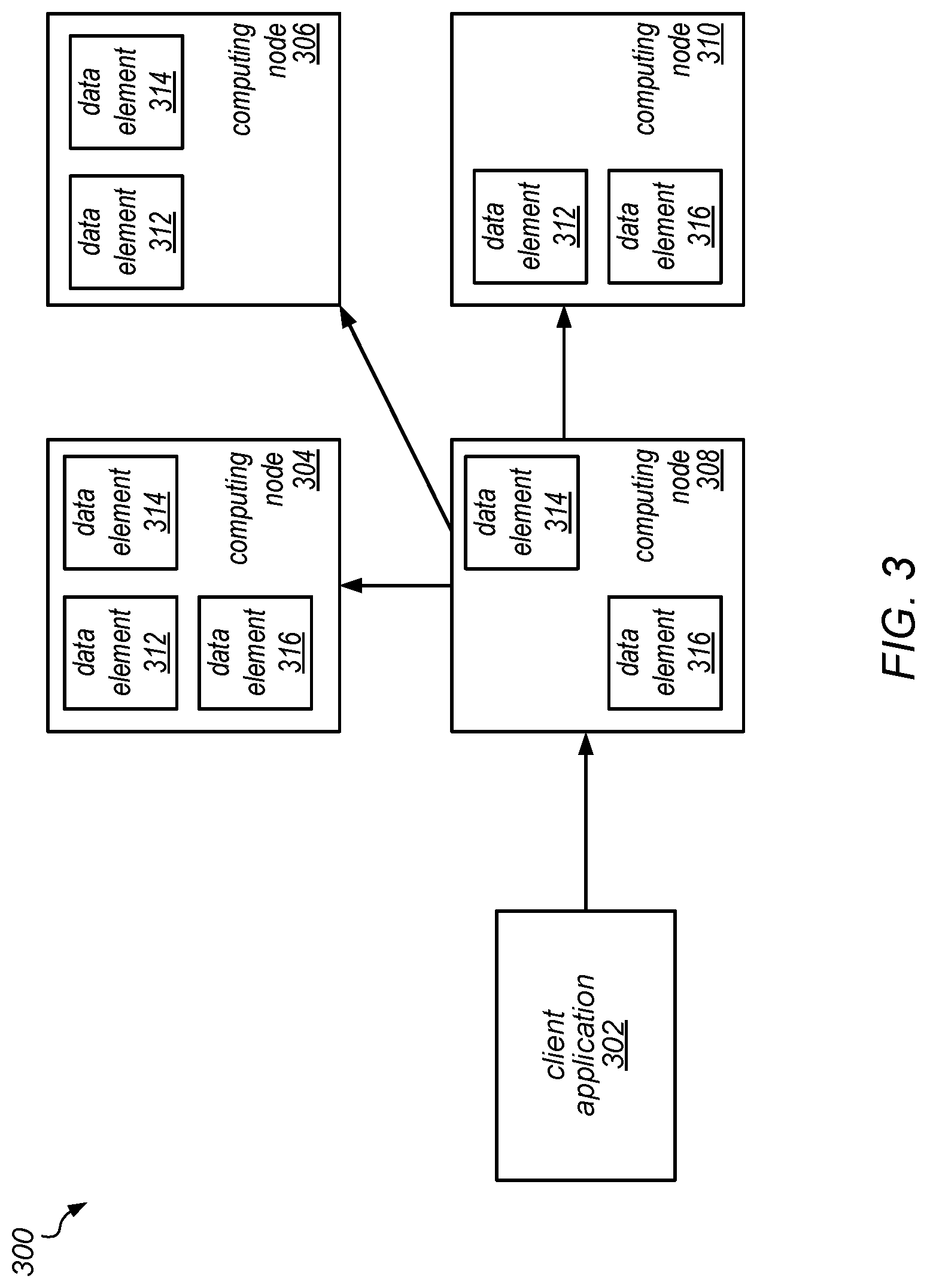

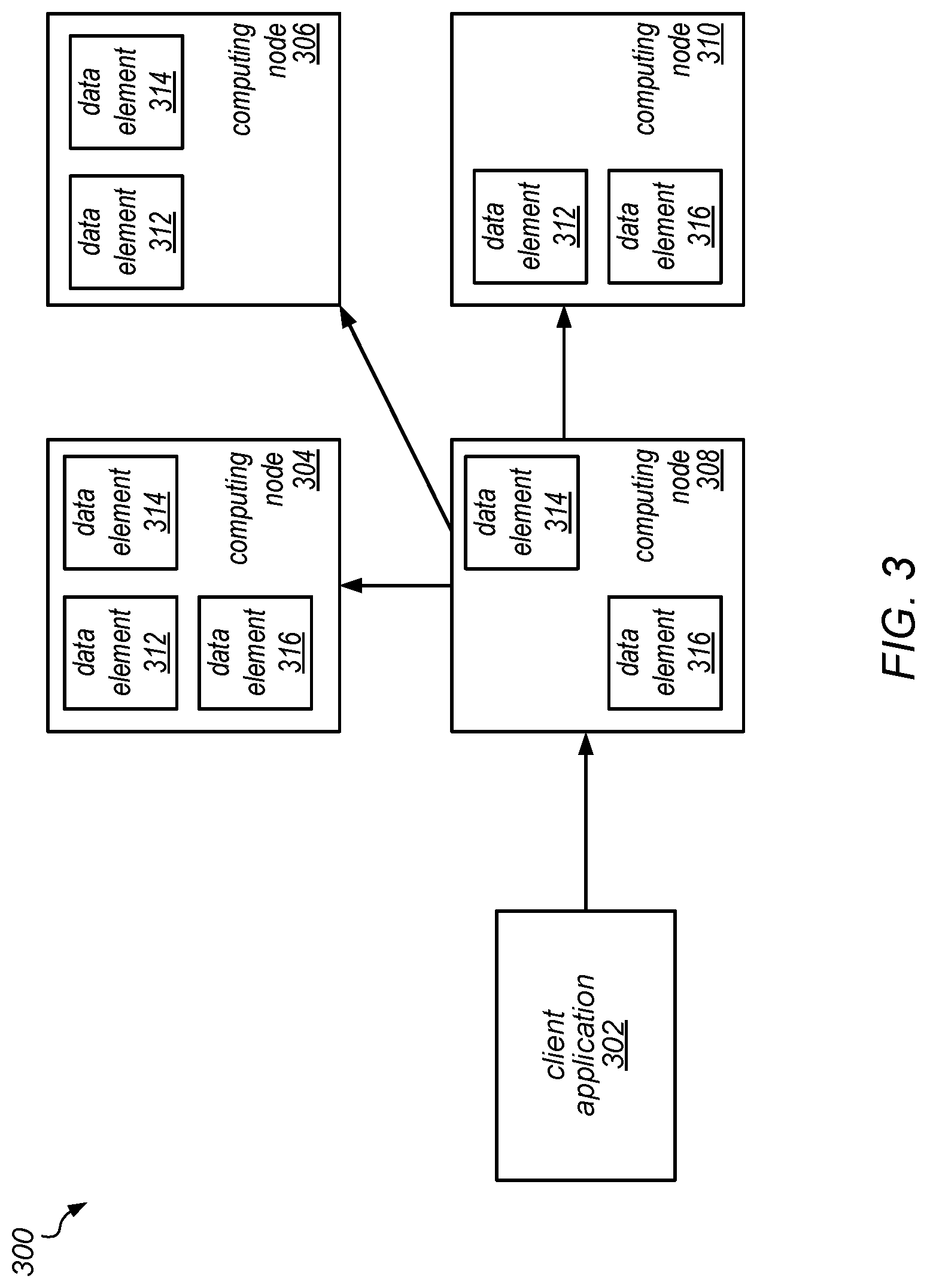

[0073] One example database system (e.g., a NoSQL database system) that includes a four node cluster is illustrated by the block diagram in FIG. 3, according to at least some embodiments. In this example, the data maintained in database system 300 is three-way replicated. Here, a client may contact any node in the cluster (i.e., a node that may or may not hold the requested data), and the contacted node contacts one or more of the nodes that hold replicas of the requested data. In this example, computing node 304 holds data items 312, 314, and 316; computing node 306 holds data items 312 and 314; computing node 308 holds data items 316 and 314; and computing node 310 holds data items 312 and 316. In this example, a client application 302 (e.g., a benchmark application described below) may contact computing node 308 to communicate read and/or update requests, and computing node 308 may pass at least some of those requests to other ones of the computing nodes that hold the requested data.

[0074] This example database system (which may implement a key-value store) may experience a workload in which the latency of individual requests is more important (e.g., to the client) than the overall throughput of the server. This workload may be representative of many different server workloads in which the server is receiving requests and making responses to clients, and in which the server must be able to supply responses quickly and within a predictable amount of time (e.g., with 99% of responses being provided within 1 millisecond, according to an applicable service level agreement). Note that this workload (and the expectations on the server performance for this workload) are quite different from those of the previous example. For example, in the previous case, a goal was to coordinate the old generation garbage collections. These are typically the longest types of pauses that are introduced due to garbage collection. For example, in some settings, these may last 5-10 seconds or longer (which may be multiple orders of magnitude longer than the young generation garbage collection pauses that are experienced in this second example, which may take on the order of 1 millisecond or 10 milliseconds). Note that young generation garbage collection pauses may be short enough that they do not significantly impact the overall performance of an interactive application executing on a desktop machine, but they may be significant in a server that is bound by an agreement to respond to requests within a period of time that is much shorter than the time it takes to perform garbage collection.

[0075] In some embodiments, the systems described herein may exploit the fact that, in this type of distributed system, the client application (such as client application 302 in FIG. 3) is able to contact any one of the server machines (shown as computing nodes 304, 306, 308, and 310) and the data that the client is accessing is replicated across multiple ones of these server machines. Here, if the client wants to access a particular data element, it could send a request to any of the four server machines, and that server machine (if it does not hold the requested data element) would forward the request on to one that holds the requested data element. For example, if the client wants to access data element 312, it could send a request to computing node 308 (even though it does not hold data element 312), and computing node 308 would forward the request on to one of the machines that holds data element 312 (e.g., computing node 304, 306, or 310). Note that, in some cases, the client may choose to send the request to a particular one of the servers that holds the requested data item, if it is possible to identify them. In some embodiments of the systems described herein, the latency of individual requests may be improved by avoiding contacting a server that is performing a garbage collection or that is about to pause to perform garbage collection. In such embodiments, when there is no garbage collection happening on a particular server that holds the requested data, the server may be able to reply reliably within a small time interval (e.g., within a 1 millisecond interval for the vast majority of requests). In such embodiments, as long as the client is able to avoid the servers that might be paused (e.g., for 10 milliseconds) to perform a garbage collection, then the client may not observe the effects of that garbage collection.
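
One way in which a client might avoid servers that are paused (or about to pause) is sketched below in Python. The replica placement mirrors the example of FIG. 3 (computing nodes 304-310 and data items 312-316); the function names, the representation of pausing nodes as a set, and the fallback behavior when every replica is pausing are assumptions made for illustration only.

```python
# Replica placement from the FIG. 3 example: node id -> data items held.
PLACEMENT = {
    304: {312, 314, 316},
    306: {312, 314},
    308: {316, 314},
    310: {312, 316},
}


def replicas_for(item):
    """Return the sorted ids of the nodes that hold a replica of item."""
    return sorted(node for node, items in PLACEMENT.items() if item in items)


def choose_replica(item, pausing_nodes):
    """Pick a replica holder that is not paused or about to pause for GC.

    Assumption: if every replica holder is pausing, fall back to the first
    replica rather than failing the request.
    """
    replicas = replicas_for(item)
    if not replicas:
        raise KeyError(item)
    available = [node for node in replicas if node not in pausing_nodes]
    return (available or replicas)[0]


if __name__ == "__main__":
    # Node 304 is about to collect, so a request for item 312 is steered away.
    print(choose_replica(312, pausing_nodes={304}))
```

Because each data item in this example is held on three nodes, a request may be redirected as long as at least one replica holder is not collecting.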

[0076] It is hypothesized that, for workloads such as these, a request may be delayed if (i) the coordinator pauses for garbage collection while handling the request, or (ii) so many nodes pause for garbage collection while handling the request that the coordinator does not receive timely responses from a quorum. Experiments testing this hypothesis are described below.
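
The two delay mechanisms in this hypothesis may be illustrated with a simplified latency model, sketched in Python below. The model assumes that a request completes when the coordinator has received replies from a quorum of replicas, so that its latency is the quorum-th fastest reply time plus any pause incurred by the coordinator itself. The function name and all latency values are hypothetical, and network and processing costs are deliberately ignored.

```python
def request_latency_ms(coordinator_pause_ms, replica_latencies_ms, quorum):
    """Simplified request latency under quorum replication.

    The coordinator answers once `quorum` replicas have replied, so the
    wait is the quorum-th smallest replica latency; a GC pause on the
    coordinator itself is added on top (an illustrative simplification).
    """
    if not 1 <= quorum <= len(replica_latencies_ms):
        raise ValueError("quorum must be between 1 and the number of replicas")
    return coordinator_pause_ms + sorted(replica_latencies_ms)[quorum - 1]


if __name__ == "__main__":
    # Replication factor 3, quorum 2, hypothetical reply times in milliseconds.
    print(request_latency_ms(0.0, [0.4, 0.5, 10.0], 2))   # one replica paused
    print(request_latency_ms(0.0, [0.4, 10.0, 12.0], 2))  # two replicas paused
    print(request_latency_ms(10.0, [0.4, 0.5, 0.6], 2))   # coordinator paused
```

In this model, a single paused replica is masked by the quorum, whereas a paused coordinator, or pauses on enough replicas to prevent a quorum, delays the request.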

[0077] A workload generator for NoSQL databases, such as one conforming to the Yahoo! Cloud Serving Benchmark (YCSB) open-source specification and/or developed using (or in accordance with) the YCSB program suite (framework), can use multiple threads and multiple servers to evaluate a range of NoSQL databases. In one example, the impact of garbage collection has been demonstrated by running such a benchmark on one server with ten client threads. In this example, a YCSB workload having 50% reads and 50% writes was run to perform 10M operations on a Cassandra database with 1M entries on 8 nodes, and heap occupancy was observed on the different nodes over time. In this example, the old generation heap size of the Cassandra database grew steadily over time, which may imply that a full collection becomes necessary only once in a relatively great while (e.g., once every 1-2 hours). These results may indicate that, at the timescale of individual requests, the focus for improvements in performance may be on the behavior of minor garbage collections. In some embodiments of the systems described herein, coordinated garbage collection may be utilized to alleviate the impact of minor garbage collections.

[0078] More specifically, the benchmark was run without coordinated garbage collection, and then with coordinated garbage collection. In this example, the average latency per query (for read queries and for update queries) was measured over a 10 millisecond interval after an initial warm-up phase on a multi-node system running a YCSB workload with 10 threads on a Cassandra database with 1M entries. Without coordinated garbage collection, the mean latency for responding to requests was centered at approximately 0.5 milliseconds for much of the time, but included occasional (or periodic) spikes going up to tens, or even hundreds, of milliseconds (e.g., up to 200 milliseconds or more for read queries). In other words, most requests were handled very quickly (well below 1 ms). However, the occasional high-latency spikes can have a significant impact on the overall performance of an application built over a NoSQL database, such as Cassandra. Many such applications (or applications with similar workloads) may issue multiple queries (e.g., to fetch different pieces of information needed for a web page), and the application's final result may only be produced once all of the queries have completed.

[0079] By comparing the times at which the high-latency spikes occurred and the times at which a minor garbage collection was performed on any of the nodes in the test system, it was observed that the times at which a server had an unexpectedly long response time corresponded to times during which there was garbage collection going on somewhere in the system (e.g., on at least one node in the system). This may suggest that garbage collection pauses may be the main contributor to these spikes and that alleviating the impact of garbage collection, such as by utilizing coordinated garbage collection, may avoid many of them. Based on these observations, garbage collection coordination efforts targeting these types of applications may be focused on controlling where garbage collection takes place (e.g., on which of the individual machines it takes place at different times) and where the client sends its requests (e.g., directing them away from machines that are performing, or are about to perform, a collection), in some embodiments. Note that in these experiments, no major garbage collections were observed during execution.

[0080] As observed during these experiments, many garbage collection pauses may be quite short, with the occasional pause being orders of magnitude longer. Thus, coordinating garbage collection over small timescales (e.g., over milliseconds rather than seconds) may alleviate the effect of garbage collection pauses, in at least some embodiments, and these timescales may be within the communication latency possible on modern clusters.

Coordinating Garbage Collection

[0081] As described herein, a prototype system has been built to assess the potential benefits of coordinating garbage collection across nodes in a rack-scale machine, according to different embodiments. In some embodiments, coordinated garbage collection may be implemented without requiring any changes to the JVM. Instead, a machine statistics monitoring tool, such as the jstat tool, may be used to periodically query a running JVM for the occupancy of the different parts of its heap through any of various suitable interfaces, such as the JMX interface, according to various embodiments. In some embodiments, garbage collections may be externally triggered via a command, such as via the jcmd command line utility, for example. While in some embodiments coordinated garbage collection may be implemented using a command line tool to trigger garbage collection, in other embodiments different methods for monitoring and for triggering garbage collection may be used.
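As an illustration of monitoring and triggering from outside the JVM, the following minimal sketch builds the command lines that a monitoring client might run. It assumes the standard JDK invocations `jstat -gc <pid> <interval>` (periodic heap statistics) and `jcmd <pid> GC.run` (a request for a collection, equivalent to calling `System.gc()`). The helper names and the process ID 1234 are hypothetical, and the sketch only constructs the commands rather than executing them.

```python
from typing import List


def jstat_command(pid: int, interval_ms: int = 200) -> List[str]:
    """Command that prints heap capacity/usage columns every interval_ms."""
    return ["jstat", "-gc", str(pid), f"{interval_ms}ms"]


def jcmd_gc_command(pid: int) -> List[str]:
    """Command that asks the target JVM to run a garbage collection."""
    return ["jcmd", str(pid), "GC.run"]


# Hypothetical JVM process ID, used only for illustration.
print(" ".join(jstat_command(1234)))
print(" ".join(jcmd_gc_command(1234)))
```

A client could pass these lists to `subprocess.Popen` or `subprocess.run`; whether the requested collection actually occurs depends on the JVM's configuration.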

[0082] In some embodiments, a central server may be executed on one node and all other nodes may spawn a monitoring client process that connects back to the server. In order to monitor heap occupancy, each client may launch an instance of the jstat tool in the background that samples the local JVM's heap occupancy periodically (e.g., every 200 ms, according to one embodiment).
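A monitoring client of this kind needs to turn the tool's text output into occupancy figures. The following minimal sketch parses one sample of `jstat -gc` output by column name, assuming the usual capacity and usage columns (`S0C`, `S1C`, `S0U`, `S1U`, `EC`, `EU`, `OC`, `OU`, in KB). The sample text and its values are made up for illustration, and the function names are hypothetical.

```python
# Made-up sample in the shape of `jstat -gc` output (header plus one row).
SAMPLE = """\
 S0C    S1C    S0U    S1U      EC       EU        OC         OU
1024.0 1024.0  0.0   512.0  8192.0   4096.0   20480.0    5120.0
"""


def parse_latest_sample(text):
    """Map column names to the raw string values of the last data row."""
    lines = [line for line in text.splitlines() if line.strip()]
    header = lines[0].split()
    latest = lines[-1].split()
    return dict(zip(header, latest))


def heap_occupancy(row):
    """Return young and old generation occupancy as fractions of capacity."""
    used = float(row["EU"]) + float(row["S0U"]) + float(row["S1U"])
    capacity = float(row["EC"]) + float(row["S0C"]) + float(row["S1C"])
    return {
        "young": used / capacity,
        "old": float(row["OU"]) / float(row["OC"]),
    }


print(heap_occupancy(parse_latest_sample(SAMPLE)))
```

Only the columns that are needed are converted to numbers, so unrelated columns that the tool may print in other formats do not affect the result.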

[0083] The monitoring clients may check in with the server periodically (e.g., every 10 ms) to send the updated heap occupancy and to receive any commands related to coordinated garbage collection. For example, monitoring clients may receive commands related to triggering a full collection, to monitoring heap occupancy, or to querying the JVM for its uptime (e.g., for use in synchronizing timing numbers from different nodes).

[0084] The server may continuously collect the updated information from the monitoring clients and may (e.g., periodically) make decisions about whether or not to trigger a garbage collection. In some embodiments, the server may base coordinated garbage collection decisions on a pre-selected policy. If a garbage collection is triggered, the server may send the corresponding command to a client the next time that client checks in (e.g., within 10 ms), according to some embodiments. In other embodiments, the server may be configured to push a coordinated garbage collection command to one or more client nodes without waiting for the client to check in. In some embodiments, the server may be, or may include, a coordinated garbage collection coordinator (e.g., a GC Coordinator).
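The check-in exchange described above can be sketched as follows. This is a minimal in-process illustration, assuming a single threshold-based policy in which a collection is queued for every node as soon as any node reports high occupancy; the class name, the 0.8 threshold, and the `"collect"` command string are hypothetical and are not taken from the prototype.

```python
class GCCoordinator:
    """Hypothetical stand-in for the central server's decision logic."""

    def __init__(self, nodes, threshold=0.8):
        self.threshold = threshold
        self.occupancy = {node: 0.0 for node in nodes}
        self.pending = {node: [] for node in nodes}

    def check_in(self, node, heap_occupancy):
        """Record the node's latest occupancy and return its queued commands."""
        self.occupancy[node] = heap_occupancy
        if max(self.occupancy.values()) >= self.threshold:
            self._trigger_everywhere()
        commands, self.pending[node] = self.pending[node], []
        return commands

    def _trigger_everywhere(self):
        """Queue one collection per node, delivered at each node's next check-in."""
        for node in self.pending:
            if "collect" not in self.pending[node]:
                self.pending[node].append("collect")
            # Assume the collection empties the heap until a fresh sample arrives.
            self.occupancy[node] = 0.0


coordinator = GCCoordinator(["a", "b", "c"])
print(coordinator.check_in("a", 0.30))  # nothing queued yet
print(coordinator.check_in("b", 0.85))  # crosses the threshold
print(coordinator.check_in("a", 0.35))  # receives the queued command
```

In this sketch a command waits in the queue until the target node next checks in, which mirrors the pull-based variant; a push-based variant would send the command immediately instead of queuing it.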

[0085] In other embodiments, however, coordinated garbage collection may be implemented without a central server. For instance, the various nodes may communicate and coordinate among themselves to implement a garbage collection policy allowing coordination of garbage collection. For example, in one embodiment, each node may monitor its own heap occupancy (e.g., as a percentage) and whenever one of the nodes determines that it should perform garbage collection, that node may send a message to the other nodes. In response, the other nodes may also perform garbage collection, thereby coordinating garbage collection among the nodes.
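The serverless variant can be sketched in the same spirit. The following minimal illustration models each node as an object that notifies its peers when its own occupancy crosses a threshold; the class, method names, and threshold are hypothetical, and direct method calls stand in for messages sent over the interconnect.

```python
class Node:
    """Hypothetical node that coordinates collections with its peers."""

    def __init__(self, name, threshold=0.8):
        self.name = name
        self.threshold = threshold
        self.peers = []
        self.collections = 0

    def observe(self, heap_occupancy):
        """Called with each local occupancy sample (a fraction of capacity)."""
        if heap_occupancy >= self.threshold:
            self.collect()
            for peer in self.peers:
                peer.on_peer_collecting(self.name)

    def on_peer_collecting(self, sender):
        """Collect in response to a peer; do not re-broadcast, to avoid loops."""
        self.collect()

    def collect(self):
        self.collections += 1  # placeholder for triggering the local collection


nodes = [Node("a"), Node("b"), Node("c")]
for node in nodes:
    node.peers = [other for other in nodes if other is not node]

nodes[1].observe(0.9)  # node "b" decides to collect
print([node.collections for node in nodes])
```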

[0086] In yet other embodiments, the nodes may communicate among themselves to nominate and/or elect a leader to act as a GC Coordinator which may then coordinate the garbage collection activities of the nodes, as will be described in more detail below.

[0087] The techniques described herein for performing coordinated garbage collection may be implemented in a variety of systems, in different embodiments. However, the use of these techniques may be further illustrated by way of specific example systems. For example, in one embodiment, each of multiple machines (e.g., JVMs) in a distributed system may be extended to include a monitoring process that records the occupancy of the garbage collected heap. In this example, the system may exploit the fact that the interconnect between the machines has very low latency. For example, the system may provide a 1 millisecond query response time (on average), but may include an InfiniBand interconnect that allows messages to be passed between nodes (e.g., JVMs) within a few microseconds. In this example, each of the JVMs may record its heap occupancy (e.g., locally) and may periodically send that information to a garbage collection coordinator process. The coordinator process may be running on a machine that hosts one of the JVMs or on a separate machine (e.g., a machine other than those hosting the JVMs), in different embodiments. In one example embodiment, the monitoring processes may be attached to the JVMs through a debugging interface provided by the JVM, rather than through a modification of the JVMs themselves. In other embodiments, the JVMs may be modified to achieve tighter coupling between the monitoring components and the JVMs, potentially reducing the time it takes (within a machine) between gathering and/or recording heap information and sending it to the coordinator process. In general, logically speaking, the monitoring components may reside in a separate module or within the JVM itself.

[0088] In some embodiments, the coordinator process may be responsible for receiving the heap information, and for deciding when to trigger garbage collection on each of the machines, and what kind of garbage collection to trigger (e.g., whether to trigger a minor garbage collection, which removes objects from young generation heap space, or a major garbage collection, which removes objects from old generation heap space). In some embodiments, the coordinator process may also implement and/or apply distributed system-wide policies that specify when to expand or contract the heaps of the different JVMs. In this example (and in other embodiments), the garbage collection coordinator process may take advantage of the fact that (due to improvements in interconnect technologies) the latency for communication between nodes is now much lower than the garbage collection time itself. Therefore, even when a young generation collection can take 1 millisecond or 10 milliseconds, there may easily be enough time for several messages to make round trips between the JVM monitor and the coordinator process, thus allowing the coordinator process to enforce the kinds of garbage collection policies described herein (e.g., the "stop the world everywhere" policy).
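The timing argument can be made concrete with a small calculation. The following sketch assumes a one-way message latency of 5 microseconds as a stand-in for "a few microseconds"; that value and the function name are assumptions made for illustration only.

```python
def round_trips_during_pause(pause_ms, one_way_latency_us):
    """Number of complete monitor-to-coordinator round trips that fit in a pause."""
    pause_us = pause_ms * 1000
    return pause_us // (2 * one_way_latency_us)


# Assumed one-way latency of 5 microseconds.
print(round_trips_during_pause(1, 5))   # 1 ms young generation collection
print(round_trips_during_pause(10, 5))  # 10 ms young generation collection
```

Under this assumption, on the order of a hundred round trips fit within a 1 millisecond collection and on the order of a thousand within a 10 millisecond collection.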

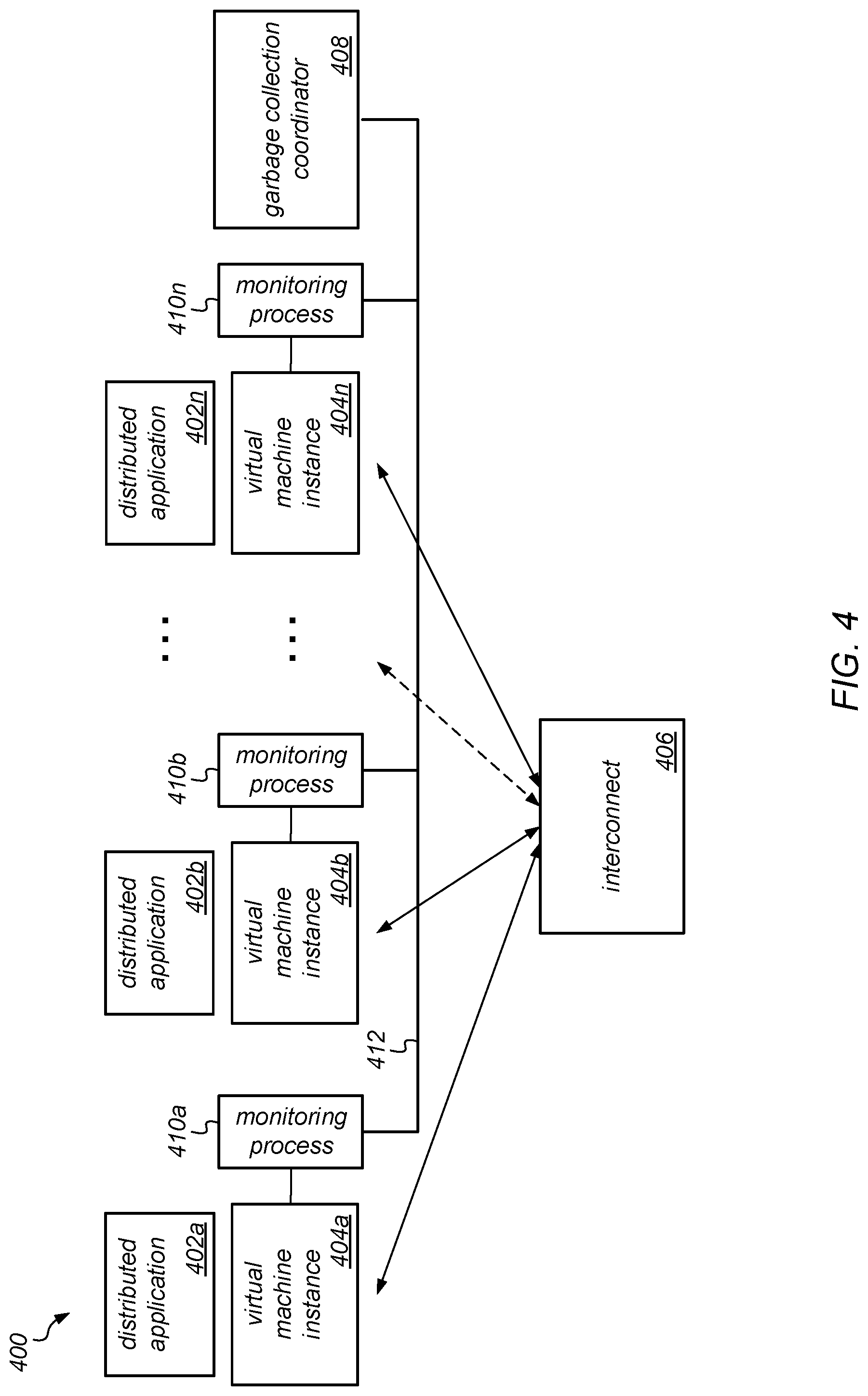

[0089] FIG. 4 is a block diagram illustrating one embodiment of a system configured for implementing coordinated garbage collection as described herein. In the example illustrated in FIG. 4, system 400 may include one or more physical computing nodes, each of which hosts one or more virtual machine instances, each virtual machine instance having a monitoring process or being associated with a corresponding respective monitoring process that is also executing on the computing node. For example, system 400 includes virtual machine instance 404a that is associated with monitoring process 410a; virtual machine instance 404b that is associated with monitoring process 410b; virtual machine instance 404n that is associated with monitoring process 410n; and so on. In some embodiments, each monitoring process 410 may be co-located with a corresponding virtual machine instance 404. In various embodiments, each of these monitoring processes 410a-410n may gather information from the corresponding virtual machine instances 404a-404n that can be used to coordinate garbage collection on the virtual machine instances (or underlying physical computing nodes). For example, the monitoring processes 410a-410n may collect heap occupancy information from virtual machine instances 404a-404n (e.g., using the jstat tool, or similar), and may trigger garbage collection (e.g., using the jcmd command line utility, or similar) on one or more virtual machine instances (or computing nodes), as appropriate, according to an applicable garbage collection coordination policy. In another example, the monitoring processes 410a-410n may collect (or determine) the readiness state of the virtual machine instances 404a-404n (e.g., the readiness of each node to receive communication from other ones of the nodes, dependent on whether it is performing, or is about to perform, a collection).
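One way the readiness state might be used is to steer a request away from a node that is performing, or is about to perform, a collection. The following minimal sketch assumes a round-robin choice that skips nodes reported as not ready; the function name, node names, and fallback behavior are hypothetical.

```python
def pick_node(nodes, readiness, start=0):
    """Return the first ready node at or after position `start`, wrapping around."""
    count = len(nodes)
    for offset in range(count):
        candidate = nodes[(start + offset) % count]
        if readiness.get(candidate, False):
            return candidate
    # Assumed fallback: if no node is ready, use the original choice anyway.
    return nodes[start % count]


nodes = ["n0", "n1", "n2"]
readiness = {"n0": False, "n1": True, "n2": True}  # n0 is collecting
print(pick_node(nodes, readiness, start=0))
```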

[0090] As illustrated in FIG. 4, each virtual machine instance 404 may also be configured to execute one or more applications (or portions thereof). These are illustrated in FIG. 4 as distributed applications 402a-402n. In the example illustrated in FIG. 4, coordinated garbage collection may be implemented using a garbage collection coordinator 408. The garbage collection coordinator 408 may be configured to implement coordinated garbage collection to address the problems related to garbage collection described above. In various embodiments, the monitoring processes 410a-410n may exchange information with each other and/or with garbage collection coordinator 408 on a periodic basis (e.g., once every 10 ms) or on an as-needed basis (e.g., when a trigger condition is met for performing a collection on one of the virtual machine instances). For example, the garbage collection coordinator 408 may receive heap usage information from across system 400 and may select when and where (e.g., when and on what node(s)) to trigger major or minor garbage collection operations. In various embodiments, a garbage collection coordinator process may execute as a separate server or as an elected leader from among the cooperating computing nodes (e.g., those that host virtual machine instances 404a-404n). Note that, in some embodiments, the monitoring processes 410a-410n may exchange information with each other and/or with garbage collection coordinator 408 over interconnect 406, while in other embodiments, they may exchange information with each other and/or with garbage collection coordinator 408 over a separate interconnect (shown as interconnect 412, in this example).

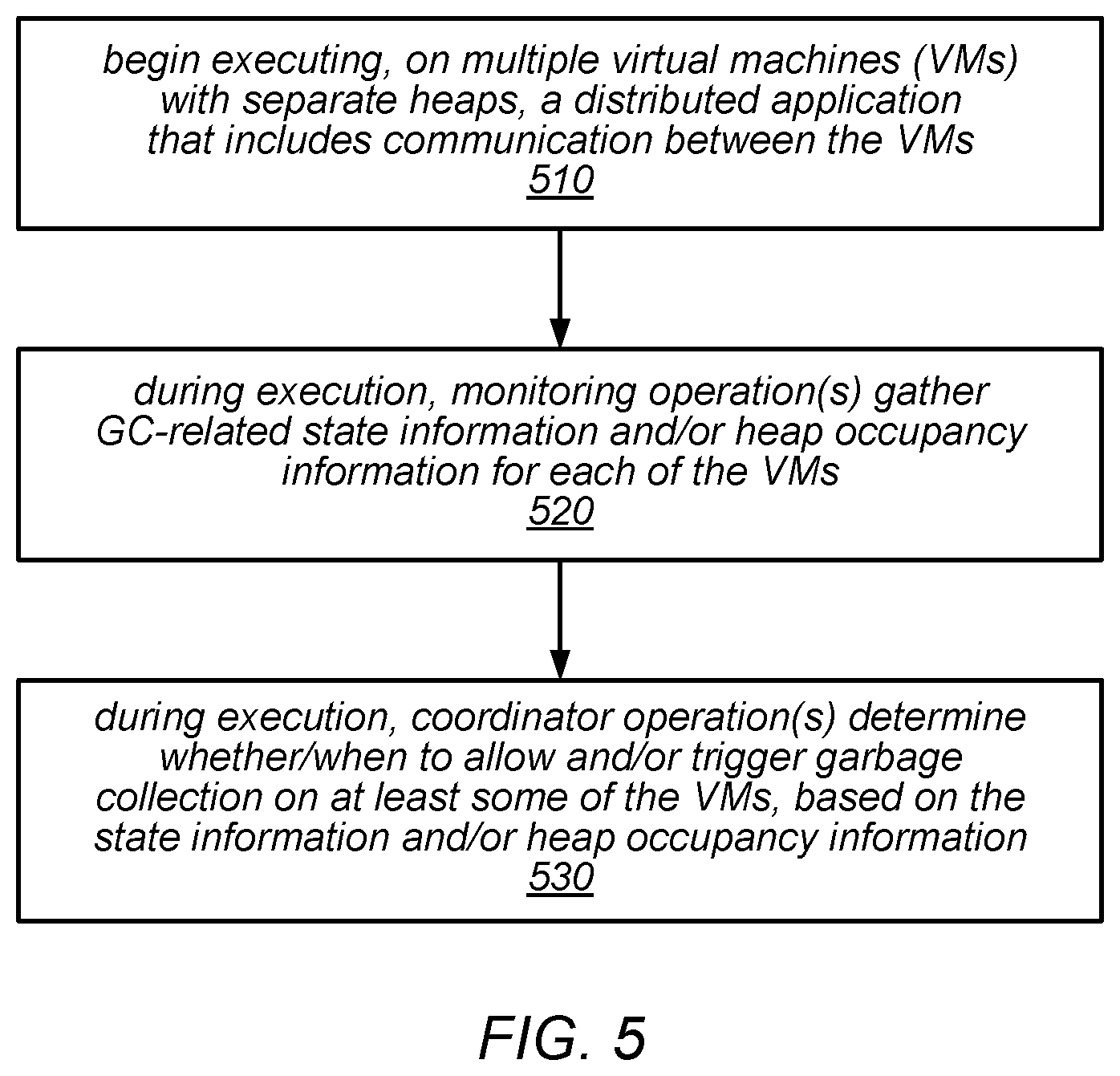

[0091] One embodiment of a method for coordinating garbage collection for a distributed application executing on multiple virtual machine instances is illustrated by the flow diagram in FIG. 5. As illustrated at 510, in this example, the method may include beginning execution, on multiple virtual machine instances (VMs) each having its own separate heap, of a distributed application that includes communication between the virtual machine instances.

[0092] The method may include one or more monitoring operations (e.g., a centralized monitoring operation or multiple monitoring operations that are distributed across some or all of the virtual machine instances) gathering GC-related state information and/or heap occupancy information for each of the virtual machine instances during execution of the distributed application, as in 520. The method may also include one or more coordinator operations (e.g., a centralized coordinator operation or multiple coordinator operations that are distributed across some or all of the virtual machine instances) determining whether and/or when to allow (and/or trigger) major or minor garbage collection on at least some of the virtual machine instances, based on the state information and/or heap occupancy information that is gathered during execution, as in 530. For example, such a determination may be based on whether collections are taking place (or are about to take place) on other ones of the virtual machine instances.

[0093] In some embodiments, the systems described herein may implement application program interfaces (APIs) for performing operations that support the coordinated garbage collection techniques described herein. For example, they may include an API that is usable to send heap information from each node (e.g., each JVM) to a coordinator process, and one or more other APIs that are usable by the coordinator to trigger minor or major garbage collection activity on particular nodes. In some embodiments, they may also include APIs for expanding or contracting the heap on a particular node (e.g., on a particular JVM). For example, in some cases, there may be a reason that particular node(s) need to collect more frequently than the others, rather than having the heaps of all of the nodes being of equal size and/or adhering to the same policies for when to trigger a collection. In such cases, the coordinator process may be configured to take that into account and invoke an operation to expand the heap(s) on those particular node(s).
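The shape of such APIs can be sketched as an abstract interface. The following is an illustration only: the interface, its method names, and its parameters are hypothetical and are not defined by the systems described herein, and the recording implementation exists solely to show how a coordinator might call the interface.

```python
from abc import ABC, abstractmethod


class GCCoordinationApi(ABC):
    """Hypothetical operations exchanged between nodes and the coordinator."""

    @abstractmethod
    def report_heap_info(self, node_id, occupancy): ...

    @abstractmethod
    def trigger_minor_gc(self, node_id): ...

    @abstractmethod
    def trigger_major_gc(self, node_id): ...

    @abstractmethod
    def resize_heap(self, node_id, delta_bytes): ...


class RecordingApi(GCCoordinationApi):
    """Test double that records each call instead of contacting a JVM."""

    def __init__(self):
        self.calls = []

    def report_heap_info(self, node_id, occupancy):
        self.calls.append(("heap_info", node_id, occupancy))

    def trigger_minor_gc(self, node_id):
        self.calls.append(("minor_gc", node_id))

    def trigger_major_gc(self, node_id):
        self.calls.append(("major_gc", node_id))

    def resize_heap(self, node_id, delta_bytes):
        # Positive delta expands the heap; negative delta contracts it.
        self.calls.append(("resize_heap", node_id, delta_bytes))


api = RecordingApi()
api.trigger_minor_gc("n1")
api.resize_heap("n2", 64 * 1024 * 1024)  # expand a frequently collecting node
print(api.calls)
```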

[0094] As discussed above, problems related to garbage collection may stem from different nodes performing garbage collection at different times. As a result, nodes may not be able to perform useful work while other nodes perform garbage collection. In some embodiments, the use of load balancing within a data analytics cluster computing framework (e.g., the Spark framework) may allow the heap growth rates to be set to be similar across all of the nodes. In such embodiments, an application as a whole may tend to need to collect on each node at approximately the same time, and a coordinated garbage collection policy may change the timing of collections on each node but may not substantially increase their frequency.

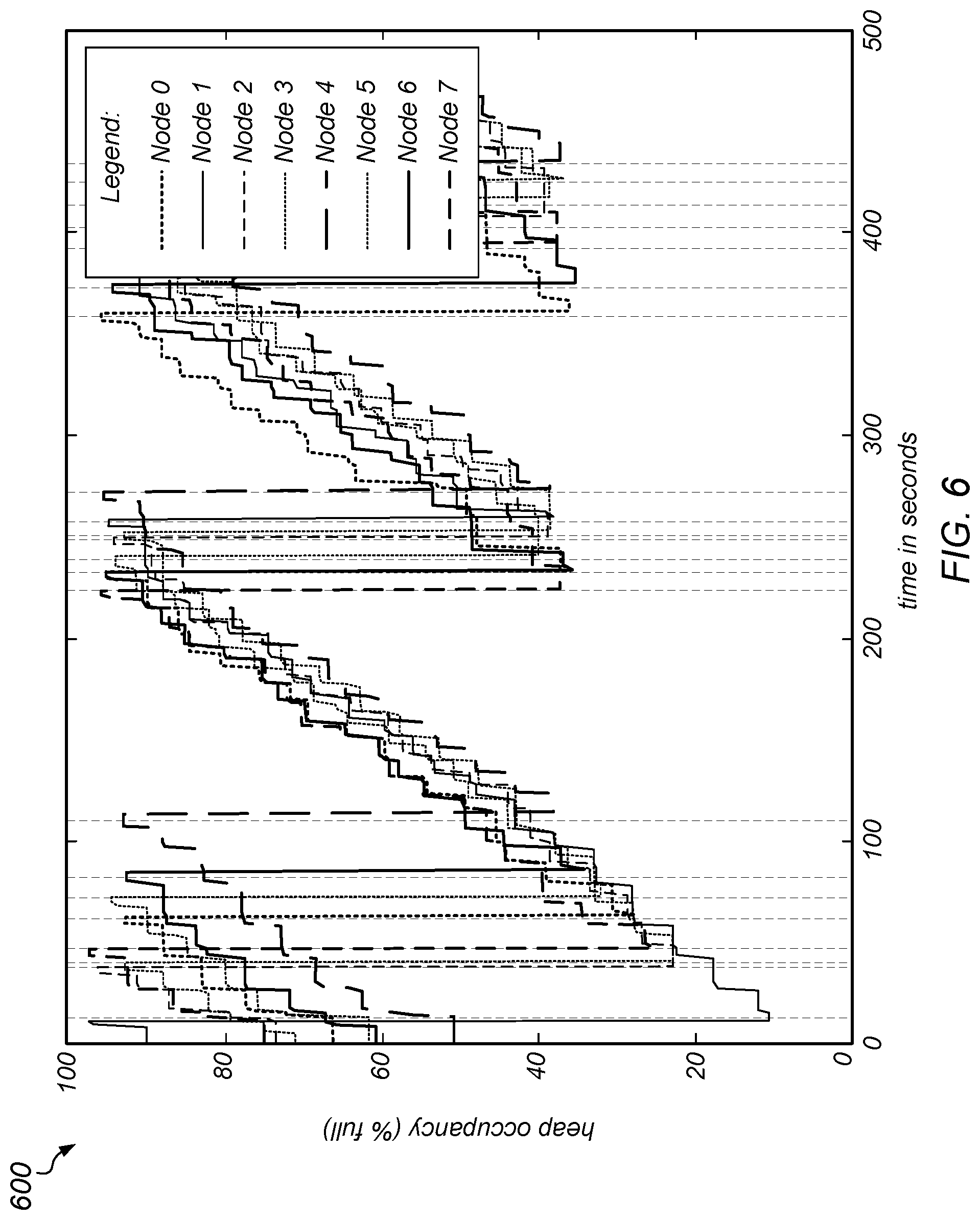

[0095] To test this hypothesis, the old generation size on different nodes was measured over time. More specifically, old generation size was measured on the different nodes of the PageRank computation over time without garbage collection coordination, and the results are illustrated in FIG. 6. In this example, the vertical lines in graph 600 indicate points at which garbage collection pauses were taken on any of the nodes, and each of the patterned lines indicates the old generation size of a respective number of nodes (according to the Legend). It was observed that the old generation fills up at a similar rate on the different nodes, but garbage collection is triggered at different times, causing garbage collection pauses to not overlap. In this example, as the heap on each node grew (as the computation proceeded), the time between synchronization intervals on that node also grew. The synchronization interval then dropped back down after garbage collection was performed. In this example, which does not include garbage collection coordination, each machine performed garbage collection whenever it determined that it needed to (without regard to what any other nodes were doing), which delayed the other nodes. Note that the amount of work represented in the graph in FIG. 6 took approximately 500 seconds to perform without garbage collection coordination.